Strategies for Reducing False Positives in NGS Variant Calling: A Comprehensive Guide for Biomedical Researchers


Abstract

Next-generation sequencing (NGS) has revolutionized genetic research and clinical diagnostics, yet false positive variant calls remain a significant challenge that can misdirect research and clinical decision-making. This article provides researchers, scientists, and drug development professionals with a comprehensive framework for understanding, identifying, and mitigating false positives across NGS workflows. Drawing on the latest research and consensus recommendations, we explore the technical foundations of variant calling errors, advanced methodological approaches including AI-based solutions, practical troubleshooting strategies for complex genomic regions, and rigorous validation frameworks. By synthesizing current best practices and emerging technologies, this guide aims to enhance the reliability and reproducibility of genomic studies in biomedical research.

Understanding the Roots of False Positives in NGS Data

In clinical next-generation sequencing (NGS), a false positive occurs when a variant is reported that is not actually present in the patient's genome. These errors present an immediate challenge to diagnostic accuracy, potentially leading to misdiagnoses, unnecessary treatments, and significant psychological distress for patients [1]. The American College of Medical Genetics and Genomics (ACMG) and the College of American Pathologists (CAP) recommend orthogonal confirmation (e.g., Sanger sequencing) for reported variants to mitigate this risk, but this approach increases both cost and turnaround time [2].

The following guide provides a structured framework for understanding, identifying, and reducing false positive variant calls in clinical NGS workflows, featuring case studies, troubleshooting guides, and validated solutions for researchers and clinical laboratories.

Case Study: A Pediatric Pitfall in Hereditary Pancreatitis

A clinical case highlights the real-world dangers of false positives and the necessity of confirmation testing.

Case Presentation and Initial Findings

A 6-year-old boy with a history of epilepsy presented with acute abdominal pain. Laboratory tests confirmed acute pancreatitis. His medication included valproic acid (VPA), initiated five months prior. As no common causes of pancreatitis were identified (biliary, traumatic, metabolic, or infectious), and given the known association of VPA with drug-induced pancreatitis, this was deemed the suspected cause. VPA was discontinued, leading to a gradual improvement in his symptoms and biochemical markers [3].

Due to a family history of pancreatitis, clinicians investigated potential genetic causes. Whole-exome sequencing (WES) initially identified two heterozygous variants in the PRSS1 gene (c.47C>T p.A16V and c.86A>T p.N29I), both of which are known to be associated with autosomal dominant hereditary pancreatitis [3].

The False Positive Revelation

Despite the initial NGS findings, subsequent Sanger sequencing of all five PRSS1 exons failed to confirm these variants in either the patient or his parents. The WES results were false positives, likely arising from difficulties in accurately aligning and calling variants within highly homologous genomic regions. The diagnosis of valproic acid-induced acute pancreatitis was confirmed, a conclusion supported by a high score on the Naranjo Adverse Drug Reaction Probability Scale [3].

Clinical Impact and Consequences

This case underscores a critical pitfall: reliance solely on NGS data, without confirmatory testing, could have led to:

- Misdiagnosis: An incorrect diagnosis of hereditary pancreatitis.

- Misguided Genetic Counseling: Inaccurate assessment of recurrence risks for the patient and family.

- Overshadowed Etiology: The correct, drug-related cause might have been overlooked [3].

Table 1: Clinical and Genetic Findings in the Pediatric Pancreatitis Case

| Clinical Element | Finding |
|---|---|
| Presenting Symptom | Acute abdominal pain |
| Key Laboratory Finding | Elevated amylase (773 U/L) |
| Suspect Etiology | Valproic acid exposure |
| Initial NGS Finding | Two PRSS1 variants (p.A16V & p.N29I) |
| Sanger Sequencing Result | Variants not confirmed in patient or parents |
| Final Diagnosis | Valproic acid-induced acute pancreatitis |

Troubleshooting Guide: FAQs on Reducing False Positives

During Library Preparation and Sequencing

Q: Our NGS runs consistently show high numbers of false positive variant calls. What are the most common preparation-related causes?

A: Failures in library preparation are a major source of false positives. Key issues and solutions include:

- Adapter Contamination: Inefficient ligation or overly aggressive amplification can lead to adapter-dimer formation, which appears as a sharp peak at ~70-90 bp on an electropherogram. Reads carrying residual adapter sequence can misalign and create false variant calls [4].

- Solution: Titrate adapter-to-insert molar ratios and optimize ligation conditions. Use fluorometric quantification (e.g., Qubit) over UV absorbance for accurate molarity.

- Over-amplification: Too many PCR cycles introduce duplication artifacts and base substitution errors, especially in later cycles.

- Solution: Use the minimum number of PCR cycles necessary. If yield is low, it is better to repeat the amplification from the ligation product than to over-amplify a weak product [4].

- Input DNA Quality: Degraded DNA or samples contaminated with salts, phenol, or ethanol can inhibit enzymes, leading to non-uniform coverage and erroneous calls.

- Solution: Re-purify input DNA, ensure high purity ratios (260/280 ~1.8), and use fluorometric quantification methods [4].
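
The molarity and adapter-ratio calculations mentioned in the solutions above can be sketched as follows. This is a minimal illustration, not a kit-specific protocol: it assumes the common approximation of 660 g/mol per base pair of double-stranded DNA, and the function names and the example ratio are hypothetical.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA mass concentration (ng/uL) to molarity (nM).

    Assumes ~660 g/mol per base pair of double-stranded DNA.
    """
    if mean_fragment_bp <= 0:
        raise ValueError("mean_fragment_bp must be positive")
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def adapter_volume_ul(insert_nM: float, insert_ul: float,
                      adapter_stock_nM: float, molar_ratio: float) -> float:
    """Volume of adapter stock (uL) giving `molar_ratio` adapters per insert."""
    insert_fmol = insert_nM * insert_ul  # nM x uL = fmol
    return insert_fmol * molar_ratio / adapter_stock_nM


if __name__ == "__main__":
    # Example: 10 ng/uL library with a 400 bp mean fragment size.
    print(round(library_molarity_nM(10, 400), 2), "nM")
    # Hypothetical 10:1 adapter:insert ratio from a 1000 nM adapter stock.
    print(adapter_volume_ul(10, 5, 1000, 10), "uL adapter")
```

Because the result scales inversely with fragment length, an inaccurate size estimate shifts the adapter-to-insert ratio just as much as an inaccurate concentration does.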

Q: How can I troubleshoot sporadic, operator-dependent false positives in my lab?

A: Sporadic failures often point to human error during manual library prep.

- Root Causes: Common issues include accidental discarding of beads during cleanup, improper ethanol wash concentrations, deviations from mixing protocols, and pipetting inaccuracies [4].

- Corrective Actions:

- Implement detailed Standard Operating Procedures (SOPs) with critical steps highlighted.

- Use master mixes to reduce pipetting steps and variability.

- Introduce temporary "waste plates" to allow sample retrieval in case of mistaken discards.

- Enforce operator checklists and redundant logging of key steps [4].

During Bioinformatic Analysis

Q: What bioinformatic strategies can we employ to reduce false positives without sacrificing sensitivity?

A: Two powerful approaches are ensemble genotyping and machine learning-based filtering.

- Ensemble Genotyping: This method integrates the results of multiple, independent variant-calling algorithms on the same dataset. By requiring a consensus, it significantly reduces false positives that are specific to one caller's methodology. One study demonstrated that this approach excluded >98% of false positives in de novo mutation discovery while retaining >95% of true positives [5].

- Machine Learning (ML) Filtering: ML models can be trained to distinguish true variants from false positives using quality metrics from the variant call format (VCF) files as features (e.g., read depth, strand bias, mapping quality). One implementation, the STEVE framework, reduced the need for orthogonal Sanger confirmation by 71% while maintaining high accuracy by automatically learning complex interactions between these metrics [2].
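
The consensus idea behind ensemble genotyping can be illustrated with a simple voting scheme. This sketch is an assumption about one way to combine callers, not the integration method of the cited study [5]: variants are represented as `(chrom, pos, ref, alt)` tuples, and `consensus_calls` and `min_callers` are hypothetical names.

```python
from collections import Counter


def consensus_calls(call_sets, min_callers=2):
    """Keep variants reported by at least `min_callers` independent callers.

    call_sets: dict mapping caller name -> iterable of (chrom, pos, ref, alt).
    """
    counts = Counter()
    for calls in call_sets.values():
        counts.update(set(calls))  # count each caller at most once per variant
    return {variant for variant, n in counts.items() if n >= min_callers}


if __name__ == "__main__":
    calls = {
        "caller_a": [("chr1", 100, "A", "G"), ("chr1", 200, "C", "T")],
        "caller_b": [("chr1", 100, "A", "G")],
        "caller_c": [("chr1", 100, "A", "G"), ("chr2", 50, "G", "A")],
    }
    print(sorted(consensus_calls(calls, min_callers=2)))
```

Raising `min_callers` trades sensitivity for specificity, so the vote threshold should be chosen against a truth set rather than fixed in advance.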

Q: Are there specific genomic regions that are more prone to false positive variant calls?

A: Yes, false positives are not uniformly distributed. Special attention is needed for:

- Highly Homologous Regions: Such as segmental duplications or gene families. The PRSS1 gene case is a prime example, where homologous sequences can mislead alignment software [3].

- Repetitive Sequences: Regions flagged by RepeatMasker show higher false positive rates, particularly for insertions and deletions (indels) [5].

- Context-Specific Errors: PCR-induced errors are a major source, with G>A and C>T transitions being the most common. The use of high-fidelity, proofreading DNA polymerases during library prep can significantly reduce these artifacts [6].

Quantitative Data: Assessing the Scale of the Problem

Understanding the performance of different filtering methods is key to optimizing a pipeline. The following table summarizes the effectiveness of various approaches as demonstrated in recent studies.

Table 2: Performance of Different False Positive Filtering Methods in NGS

| Filtering Method / Metric | Key Performance Outcome | Study Context |
|---|---|---|
| Machine Learning (STEVE) | Reduced need for Sanger confirmation by 71%; identified 99.5% of false positive SNVs and indels [2]. | Clinical genome sequencing (cGS) of GIAB samples. |
| Ensemble Genotyping | Excluded >98% (105,080/107,167) of false positives while retaining >95% (897/937) of true positives in DNM discovery [5]. | Whole-genome sequencing of an extended family. |
| Logistic Regression (LR) Filtering | Significantly reduced false negative rates by 1.1- to 17.8-fold compared to standard genotype quality filtering [5]. | Comparison of Illumina and Complete Genomics WGS data. |
| PCR Enzyme Optimization | Reliably detected JAK2 c.1849G>T mutations at Variant Allele Frequencies (VAFs) as low as 0.0015% by reducing transition errors [6]. | Targeted NGS for minimal residual disease (MRD) detection. |
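
The ensemble genotyping percentages in the table follow directly from the reported counts, as the short calculation below shows. The final quantity (the share of surviving candidates that are true) is an inference from those counts, assuming they describe a single candidate set; it is not a figure reported in the source.

```python
# Counts as reported for ensemble genotyping in DNM discovery [5].
fp_excluded, fp_total = 105_080, 107_167
tp_retained, tp_total = 897, 937

fp_exclusion_rate = fp_excluded / fp_total  # fraction of false positives removed
tp_retention_rate = tp_retained / tp_total  # fraction of true positives kept

# Derived quantity (an inference, not a reported result):
# proportion of the remaining candidates that are true positives.
fp_remaining = fp_total - fp_excluded
precision_after = tp_retained / (tp_retained + fp_remaining)

print(f"False positives excluded: {fp_exclusion_rate:.2%}")   # 98.05%
print(f"True positives retained:  {tp_retention_rate:.2%}")   # 95.73%
print(f"Precision after filtering: {precision_after:.2%}")    # 30.06%
```

Under that assumption, even a filter that removes more than 98% of false positives can leave a candidate list where false calls outnumber true ones, which is why downstream validation still matters.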

Experimental Protocols for Validation and Improvement

Protocol: Implementing a Machine Learning Filter for Clinical Genomes

This protocol is based on the STEVE framework, which uses GIAB truth sets for training [2].

Data Set Generation:

- Sequence well-characterized reference genomes (e.g., GIAB samples HG001-HG005) using your standard clinical NGS pipeline.

- Process the raw data through your secondary analysis pipeline (alignment and variant calling) to generate VCF files.

- Use the GIAB benchmark variant calls as the "truth set." Compare your VCFs to this set using a tool like RTG vcfeval to label each of your variant calls as a "True Positive" or "False Positive."

Feature Extraction and Modeling:

- Extract quality metrics (e.g., read depth, genotype quality, allele balance, strand bias) from the VCF files to use as machine learning features.

- Divide the labeled variant calls into six distinct data sets based on variant type and genotype: heterozygous SNVs, homozygous SNVs, complex heterozygous SNVs, heterozygous indels, homozygous indels, and complex heterozygous indels.

- For each of the six data sets, train a separate machine learning model (e.g., using a supervised classification algorithm) to predict the "True Positive" label.
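
The six-way split described above might be implemented along these lines. This is a sketch under stated assumptions: "complex heterozygous" is taken here to mean a genotype with two different non-reference alleles (e.g., 1/2), and the `stratum` function and its label strings are hypothetical rather than part of the STEVE framework.

```python
def stratum(ref, alts, genotype):
    """Assign a diploid call to one of six strata by variant type and genotype.

    ref: reference allele string
    alts: list of alternate allele strings (VCF ALT column, split on ',')
    genotype: tuple of allele indices, e.g. (0, 1), (1, 1) or (1, 2)
    """
    called = [a for a in genotype if a > 0]
    if not called:
        raise ValueError("reference-only genotype has no variant stratum")

    # Treat the call as an SNV only if every called allele is a 1-base substitution.
    is_snv = all(len(ref) == 1 and len(alts[a - 1]) == 1 for a in called)
    kind = "snv" if is_snv else "indel"

    if len(set(genotype)) == 1:
        zygosity = "hom"
    elif 0 in genotype:
        zygosity = "het"
    else:
        zygosity = "complex_het"  # assumption: two different ALT alleles
    return f"{zygosity}_{kind}"


if __name__ == "__main__":
    print(stratum("A", ["G"], (0, 1)))        # het_snv
    print(stratum("AT", ["A"], (1, 1)))       # hom_indel
    print(stratum("A", ["G", "T"], (1, 2)))   # complex_het_snv
```

Each labeled call can then be routed to the training set for its stratum, so that one model is fitted per group.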

Validation and Implementation:

- Validate model performance on a held-out test set of data, ensuring it meets predefined clinical sensitivity and specificity thresholds.

- Integrate the trained models into your clinical pipeline. Variants classified by the model as high-confidence true positives can be reported with a reduced need for orthogonal confirmation, while others can be flagged for mandatory Sanger sequencing.
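
One way to turn validated model scores into a reporting rule is sketched below. It is illustrative only: the policy of allowing zero false positives above the threshold on held-out data, and the names `pick_threshold` and `route_variant`, are assumptions rather than the procedure used in the cited work [2].

```python
def pick_threshold(scored, max_false_pass=0):
    """Choose the most permissive score threshold that still meets the target.

    scored: list of (probability_true, is_true_positive) from a held-out set.
    Returns the lowest threshold at which no more than `max_false_pass`
    false positives score at or above it, or None if no threshold qualifies.
    """
    for threshold in sorted({p for p, _ in scored}):  # ascending order
        false_pass = sum(1 for p, is_true in scored if p >= threshold and not is_true)
        if false_pass <= max_false_pass:
            return threshold
    return None


def route_variant(probability_true, threshold):
    """Report high-confidence calls; send everything else to Sanger confirmation."""
    return "report" if probability_true >= threshold else "sanger_confirm"


if __name__ == "__main__":
    held_out = [(0.99, True), (0.97, True), (0.95, True), (0.90, False), (0.50, False)]
    t = pick_threshold(held_out)
    print(t, route_variant(0.96, t), route_variant(0.80, t))
```

In practice a separate threshold would be chosen for each of the six models, since SNVs and indels typically differ in baseline error rate.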

Protocol: Wet-Lab Optimization for Sensitive SNV Detection

This protocol outlines steps to minimize false positives arising from library preparation, crucial for detecting low-frequency variants [6].

Polymerase Selection: Use a high-fidelity, proofreading DNA polymerase during the target amplification PCR steps. This is critical for reducing PCR-induced substitution errors, which are a major source of false positives, particularly G>A and C>T transitions.

Minimize PCR Cycles: Use the minimum number of PCR cycles necessary to obtain sufficient library yield. Over-amplification increases the chance of propagating early errors.

Analytical Threshold Setting: For applications like MRD detection, establish site-specific analytical thresholds (cut-offs) for variant calling. Account for the underlying transition/transversion error bias, as detection limits will be lower for transversions (e.g., G>T) which occur less frequently as artifacts.
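
A site-specific cut-off can be derived from the background signal seen in negative controls. The sketch below assumes a mean-plus-three-standard-deviations rule, which is a common convention but is not specified by the source; the function name and the example VAF values are hypothetical.

```python
from statistics import mean, stdev


def site_cutoff(control_vafs, n_sd=3.0):
    """Analytical threshold for one site and substitution type.

    control_vafs: background variant allele fractions measured at this site
    in known-negative control samples (at least two values).
    """
    if len(control_vafs) < 2:
        raise ValueError("need at least two control measurements")
    return mean(control_vafs) + n_sd * stdev(control_vafs)


if __name__ == "__main__":
    # Hypothetical background: transitions are noisier than transversions.
    transition_bg = [0.0001, 0.0002, 0.0003]
    transversion_bg = [0.00001, 0.00002, 0.00003]
    print(site_cutoff(transition_bg))
    print(site_cutoff(transversion_bg))
```

With lower background noise, the transversion cut-off comes out lower, which matches the point that detection limits are lower for changes such as G>T.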

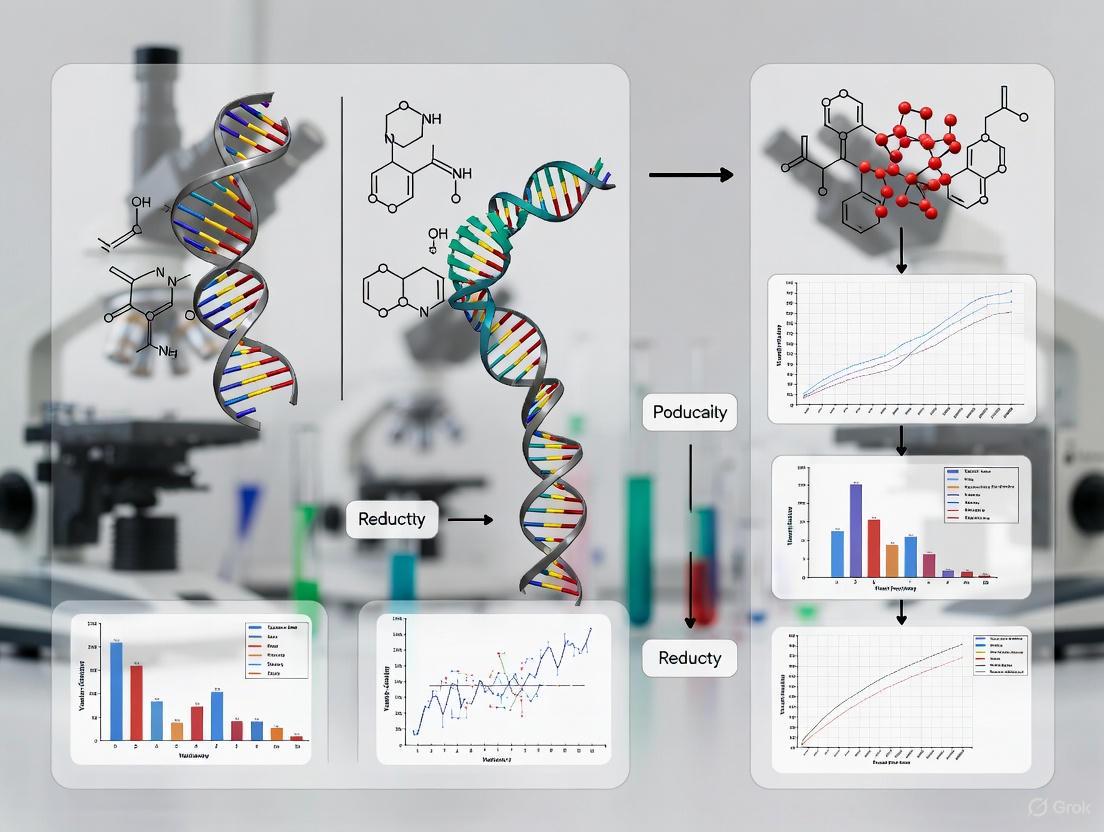

Visualizing Workflows and Decision Pathways

The following diagram illustrates a robust clinical NGS workflow that incorporates multiple checkpoints to minimize the impact of false positives, from sample to clinical report.

Diagram: A Robust Clinical NGS Workflow with False Positive Mitigation

The Scientist's Toolkit: Essential Reagents and Software

This table lists key resources cited in the literature for constructing a reliable NGS pipeline with low false positive rates.

Table 3: Key Research Reagent Solutions for Reducing False Positives

| Tool / Reagent | Function / Purpose | Role in Reducing False Positives |
|---|---|---|
| GIAB Reference Materials | Characterized human genome samples (e.g., NA12878) | Provides a gold-standard "truth set" for benchmarking pipeline performance and training ML models [2]. |
| High-Fidelity DNA Polymerase | Enzyme for PCR amplification during library prep | Reduces PCR-induced substitution errors (e.g., G>A, C>T transitions), a major source of false low-frequency variants [6]. |
| Torrent Suite / Ion Reporter | Software for primary analysis, variant calling, and annotation | Integrated platforms that provide quality metrics for initial variant filtering and annotation [7]. |
| Ensemble Genotyping Pipeline | Bioinformatic method combining multiple variant callers | Increases specificity by requiring consensus from different calling algorithms, effectively filtering platform-specific errors [5]. |
| Machine Learning Frameworks (e.g., STEVE) | Automated variant classification | Uses multiple quality metrics to probabilistically classify true vs. false variants, dramatically reducing need for costly confirmation [2]. |

This guide addresses the major technical sources of error in Next-Generation Sequencing (NGS) that contribute to false positives in variant calling, providing troubleshooting strategies to enhance the accuracy and reliability of your data.

Frequently Asked Questions (FAQs)

Q1: What are the most common laboratory preparation steps that introduce false positives? Errors during library preparation are a primary source of false positives. Common issues include:

- Cross-contamination between samples, which can be mitigated by using unique dual indices (UDIs) and including negative controls.

- PCR artifacts caused by over-amplification or mispriming, which introduce stochastic errors that are later amplified. Limiting PCR cycles and using high-fidelity polymerases is crucial [4].

- Insufficient purification of libraries, leading to carryover of adapter dimers or contaminants that inhibit enzymes and skew sequencing [4].

Q2: How do Unique Molecular Identifiers (UMIs) reduce false positives, and what are their limitations? UMIs are short, random DNA sequences used to uniquely tag individual DNA molecules before PCR amplification. This allows bioinformatics tools to group sequencing reads derived from the same original molecule and generate a consensus sequence, effectively filtering out errors introduced during PCR or sequencing [8].

- Limitations: The effectiveness of UMIs can be compromised by UMI collisions (different molecules tagged with the same UMI) and by PCR or sequencing errors within the UMI sequence itself, which can lead to incorrect grouping of reads and the creation of artifactual consensus sequences [8].
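
The grouping-and-consensus step can be sketched as follows. This is a simplified illustration, not the algorithm of any specific tool: it assumes reads in a family start at the same position and have equal length, it groups by exact UMI match only (so it does not address the UMI-error limitation described above), and the function name and thresholds are hypothetical.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=3, min_agreement=0.6):
    """Build one consensus sequence per UMI family by per-position majority vote.

    reads: iterable of (umi, sequence) pairs.
    Families smaller than `min_family_size` are dropped; positions where the
    majority base falls below `min_agreement` are masked with 'N'.
    """
    families = defaultdict(list)
    for umi, seq in reads:
        families[umi].append(seq)

    consensus = {}
    for umi, seqs in families.items():
        if len(seqs) < min_family_size:
            continue
        bases = []
        for column in zip(*seqs):  # iterate over aligned positions
            base, count = Counter(column).most_common(1)[0]
            bases.append(base if count / len(seqs) >= min_agreement else "N")
        consensus[umi] = "".join(bases)
    return consensus


if __name__ == "__main__":
    reads = [("AAC", "ACGT"), ("AAC", "ACGT"), ("AAC", "ACTT"), ("GGT", "TTTT")]
    print(umi_consensus(reads))
```

In this example the single discordant base in the `AAC` family is outvoted, while the singleton `GGT` family is discarded because it offers no redundancy for error correction.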

Q3: My sequencing run had high coverage, but I still have many false positives. Why? High but uneven coverage can be misleading. If certain genomic regions have low coverage, variants called there will have low confidence. More critically, the source of your false positives is likely earlier in the workflow. Focus on the pre-sequencing steps: input DNA quality, library preparation fidelity, and the efficiency of cleanup steps. A high duplication rate often indicates low library complexity or PCR bias, which can inflate false positives [4].

Q4: What is the difference between a clastogen and a mutagen, and how does this impact assay choice? This distinction is critical for accurate genotoxicity assessment:

- A mutagen directly causes changes to the DNA sequence (point mutations). It should be detected by mutagenicity assays like error-corrected NGS (ecNGS) [9].

- A clastogen causes chromosomal breaks, leading to large-scale structural damage. It is best detected by cytogenetic assays like the micronucleus test [9].

Some compounds, like etoposide, are clastogenic and will trigger a strong cytogenetic response without increasing point mutation frequency. Combining assays (e.g., ecNGS with a micronucleus test) is therefore essential for a complete genotoxic profile [9].

Troubleshooting Common NGS Errors

Library Preparation Artifacts

Library preparation is a foundational step where initial errors can occur and be massively amplified.

- Problem: Low library yield and high adapter-dimer content.

- Root Causes:

- Degraded or contaminated input DNA: Inhibitors can reduce enzyme efficiency in fragmentation and ligation steps [4].

- Inaccurate quantification: Over-estimation of input DNA leads to suboptimal adapter-to-insert ratios, promoting adapter-dimer formation [4].

- Over-aggressive purification: Sample loss during size selection or bead cleanups [4].

- Solutions:

- Use fluorometric quantification (e.g., Qubit) instead of absorbance alone to accurately measure double-stranded DNA [4].

- Titrate adapter concentrations to find the optimal molar ratio for your insert size.

- Visually inspect your library profile using an Agilent Bioanalyzer or TapeStation to check for a clean, specific peak and the absence of a ~70-90 bp adapter-dimer peak [4].

PCR Amplification Bias and Errors

PCR is necessary to amplify libraries but is a major source of artifacts.

- Problem: Overamplification leads to high duplicate rates, skewed coverage, and introduction of polymerase errors that manifest as false low-frequency variants [4].

- Root Causes:

- Too many PCR cycles.

- Inefficient polymerase or the presence of polymerase inhibitors in the library [4].

- Solutions:

- Use the minimum number of PCR cycles necessary for adequate library yield.

- Use high-fidelity DNA polymerases to reduce the intrinsic error rate during amplification.

- Employ UMIs to bioinformatically identify and correct for PCR errors [8].

Sequencing Errors and Inadequate QC

Inherent sequencing chemistry errors and poor data quality directly cause false positives.

- Problem: High per-base error rates from the sequencer, often in a non-random pattern (e.g., associated with specific sequences or flow cells).

- Root Causes: The biochemical process of Sequencing by Synthesis (SBS) is not 100% efficient, leading to misincorporation and phasing errors [10].

- Solutions:

- Perform rigorous quality control (QC) on raw sequencing reads using tools like FastQC [8].

- Trim adapters and low-quality bases using tools like Trim Galore [8].

- For detecting very low-frequency variants (<1%), standard NGS is insufficient; implement error-corrected NGS (ecNGS) methods like duplex sequencing, which can achieve error rates below 1 in 10^7 [9].
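
To make the quality-control step concrete, the sketch below converts Phred scores to error probabilities and trims low-quality bases from the 3' end of a read. It is a deliberately simple illustration: dedicated trimmers such as Trim Galore use more refined algorithms, and the function names and the Q20 default are assumptions.

```python
def phred_to_error_prob(q):
    """Phred score Q corresponds to an error probability of 10^(-Q/10)."""
    return 10 ** (-q / 10)


def trim_3prime(seq, quals, min_q=20):
    """Remove trailing bases whose quality is below `min_q`.

    seq: read sequence; quals: list of integer Phred scores, one per base.
    """
    end = len(seq)
    while end > 0 and quals[end - 1] < min_q:
        end -= 1
    return seq[:end], quals[:end]


if __name__ == "__main__":
    print(phred_to_error_prob(30))  # about 1 error in 1000 base calls
    print(trim_3prime("ACGTAC", [30, 30, 30, 30, 10, 5]))
```

Even at Q30, roughly one base call in a thousand is expected to be wrong, which is why raw reads alone cannot support variant detection far below 1% allele fraction.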

Bioinformatics Challenges: Alignment and Variant Calling

Computational steps can introduce or fail to correct errors.

- Problem: Misalignment of reads to repetitive or homopolymer regions, leading to false indel calls.

- Root Causes: Short reads often cannot be mapped uniquely within repetitive or otherwise complex regions of the reference genome [10].

- Solutions:

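- Use indel-aware alignment and calling, for example tools that locally reassemble haplotypes around candidate indels instead of relying on the initial read alignment alone.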

- For complex genomic regions, consider using long-read sequencing technologies (e.g., PacBio SMRT or Oxford Nanopore) which generate reads thousands of bases long and can span repetitive areas unambiguously [10].

- Implement AI-based variant callers like DeepVariant, which uses a deep learning model to distinguish true variants from alignment artifacts more accurately than traditional statistical methods [11].

Experimental Protocols for Error Suppression

Protocol 1: Error-Corrected NGS (ecNGS) using Duplex Sequencing

This protocol, adapted from recent literature, uses UMIs and consensus sequencing to achieve ultra-high accuracy [9] [8].

1. DNA Shearing and UMI Ligation:

- Fragment genomic DNA to the desired size (e.g., ~300 bp) using focused acoustic shearing [12].

- Ligate double-stranded adapters containing a random UMI sequence to both ends of each DNA fragment. This uniquely tags every original molecule [8].

2. Library Amplification and Sequencing:

- Amplify the library with a limited number of PCR cycles.

- Sequence the library on a short-read platform (e.g., Illumina NovaSeq), ensuring the read structure allows for accurate UMI extraction [9].

3. Bioinformatics Processing with AFUMIC:

- UMI Clustering: Use an advanced, alignment-free UMI clustering tool like AFUMIC.

- AFUMIC groups reads based on UMI sequence similarity, correcting for PCR and sequencing errors within the UMIs themselves. This reduces singleton families and increases data retention [8].

- Consensus Generation:

- Generate Single-Strand Consensus Sequences (SSCS) for each group of reads sharing a UMI. This eliminates single-stranded errors.

- Pair complementary SSCSs from the same original DNA duplex to generate a Duplex Consensus Sequence (DCS). A true variant must be present in both strands. This step reduces the error rate to ~1x10^-9 [8].
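
The duplex step can be sketched as a position-by-position comparison of the two single-strand consensus sequences. This minimal example assumes both SSCSs have already been oriented to the same reference strand and are of equal length; the function name is hypothetical and the code is not taken from AFUMIC or any other tool.

```python
def duplex_consensus(sscs_top, sscs_bottom):
    """Combine two single-strand consensus sequences into a duplex consensus.

    A base is kept only when both strands agree on it; disagreements and
    positions already masked as 'N' on either strand become 'N'.
    """
    if len(sscs_top) != len(sscs_bottom):
        raise ValueError("SSCS pair must have equal length")
    return "".join(
        a if a == b and a != "N" else "N"
        for a, b in zip(sscs_top, sscs_bottom)
    )


if __name__ == "__main__":
    # The third position differs between strands, so it is masked.
    print(duplex_consensus("ACGT", "ACTT"))
```

An artifact confined to one strand, such as damage to a single base before amplification, therefore cannot reach the duplex consensus, because the complementary strand does not support it.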

Protocol 2: AI-Enhanced Variant Calling for False Positive Reduction

This protocol leverages modern machine learning to improve variant calling accuracy [11].

1. Standard Alignment and Processing:

- Align your NGS reads to a reference genome (e.g., GRCh37) using a preferred aligner (e.g., BWA).

- Perform standard post-alignment processing (sorting, duplicate marking, base quality score recalibration).

2. Variant Calling with an AI-Based Tool:

- Use a deep learning-based variant caller like DeepVariant.

- DeepVariant transforms alignment data (pileups) into images and uses a convolutional neural network (CNN) to learn the features of true variants versus sequencing artifacts [11].

- Alternative Tools: Consider DeepTrio for family-based studies or DNAscope for a computationally efficient, machine-learning-enhanced pipeline [11].

3. Validation and Filtering:

- Filter the resulting VCF file based on the quality metrics output by the AI caller.

- For clinical or critical research applications, validate key findings using an orthogonal method (e.g., digital PCR or Sanger sequencing).
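
The filtering step can be illustrated with a small pure-Python pass over VCF records. This is a sketch that relies only on the standard VCF column order (QUAL is the sixth column, FILTER the seventh); the `min_qual` value is an arbitrary placeholder, not a recommended threshold for any particular caller.

```python
def filter_vcf_lines(lines, min_qual=20.0):
    """Yield header lines plus records that pass FILTER and meet `min_qual`.

    lines: iterable of VCF text lines (tab-separated records).
    Records with a FILTER other than 'PASS' or '.', or with missing QUAL,
    are dropped.
    """
    for line in lines:
        if line.startswith("#"):
            yield line  # keep meta-information and column header lines
            continue
        fields = line.rstrip("\n").split("\t")
        qual, filter_status = fields[5], fields[6]
        if filter_status not in ("PASS", "."):
            continue
        if qual != "." and float(qual) >= min_qual:
            yield line


if __name__ == "__main__":
    example = [
        "##fileformat=VCFv4.2\n",
        "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO\n",
        "chr1\t100\t.\tA\tG\t45.0\tPASS\t.\n",
        "chr1\t200\t.\tC\tT\t5.0\tPASS\t.\n",
        "chr1\t300\t.\tG\tA\t50.0\tRefCall\t.\n",
    ]
    for kept in filter_vcf_lines(example):
        print(kept, end="")
```

Any threshold used in production should be calibrated against a benchmark truth set, since quality scores are not directly comparable between callers.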

Table 1: Common NGS Preparation Errors and Their Impact

| Error Category | Typical Failure Signals | Impact on False Positives | Corrective Action |
|---|---|---|---|
| Sample Input/Quality | Low yield; smear in electropherogram [4] | High false negatives & positives due to enzyme inhibition | Re-purify input; use fluorometric quantification [4] |
| Fragmentation/Ligation | Unexpected fragment size; adapter-dimer peaks [4] | Skewed coverage; artifactual indels; sequence dropout | Optimize shearing parameters; titrate adapter ratio [4] |
| Amplification/PCR | High duplicate rate; overamplification artifacts [4] | Polymerase errors appear as low-frequency variants | Use minimum PCR cycles; employ UMIs [8] [4] |
| Purification/Cleanup | Incomplete removal of small fragments; sample loss [4] | Adapter-dimer reads; low library complexity | Optimize bead-based cleanup ratios; avoid bead over-drying [4] |

Table 2: Performance of Advanced Error Suppression Methods

| Method / Tool | Key Mechanism | Reported Performance Improvement | Best Use Case |
|---|---|---|---|
| Duplex Sequencing [9] | UMI-based duplex consensus | Detects mutations at frequencies as low as 1 in 10^7; distinguishes mutagens from clastogens [9] | Ultra-sensitive variant detection; genotoxicity screening |
| AFUMIC UMI Clustering [8] | Collision-resilient UMI grouping & CQS-guided consensus | 3.84x increase in DCS output; error-free positions raised from 45.27% to 99.85% [8] | High-sensitivity detection of low-frequency variants (e.g., in liquid biopsy) |
| DeepVariant [11] | Deep learning on pileup images | Higher accuracy than GATK, SAMTools; automatically produces filtered variants [11] | General variant calling; large-scale genomic studies (e.g., UK Biobank) |
| DNAscope [11] | Machine learning-enhanced HaplotypeCaller | High SNP/InDel accuracy with reduced computational cost vs. DeepVariant/GATK [11] | Efficient, high-throughput variant calling in production environments |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Reagents for Error-Reduced NGS Workflows

| Item | Function | Example Use Case |
|---|---|---|
| High-Fidelity DNA Polymerase | Reduces errors introduced during PCR amplification, preserving sequence accuracy. | Library amplification in ecNGS protocols to minimize polymerase-derived false variants [9]. |
| UMI-Adapters | Uniquely tags each original DNA molecule for error correction. | Foundational for all UMI-based methods, including duplex sequencing, to track PCR duplicates and generate consensus [9] [8]. |
| Size Selection Beads | Precisely cleans up reaction products and selects for a target fragment size range. | Removing adapter dimers after ligation and performing precise size selection to ensure library uniformity [4]. |
| HepaRG Cells | Metabolically competent human liver cells expressing key xenobiotic-metabolizing enzymes. | A human-relevant in vitro model for genotoxicity testing that can bioactivate pro-mutagens like Benzo[a]pyrene [9]. |
| AI-Based Variant Caller (e.g., DeepVariant) | Uses trained neural networks to distinguish true genetic variants from sequencing/alignment artifacts. | Final analytical step to maximize variant calling accuracy and reduce false positives after sequencing [11]. |

Workflow Visualization

NGS Error and Mitigation Workflow: This diagram maps major technical error sources (red) to specific steps in the NGS process and pairs them with corresponding mitigation strategies (green) to reduce false positives.

FAQs: Understanding and Addressing Common Issues

Q1: What types of genomic regions are most prone to false positive variant calls in NGS data? Regions with high sequence homology, such as segmental duplications or multi-gene families, are particularly problematic. In these areas, sequence reads can map incorrectly to a highly similar region of the genome instead of their true origin, creating false positive variant calls. This issue, known as reference bias, is especially challenging for detecting structural variants and variants in repetitive sequences [13] [14]. Complex loci, like the CBS gene which can contain a 68-base pair insertion, also present significant challenges for accurate genotyping and phasing using standard alignment methods [14].

Q2: What specific problem can occur at the CBS gene locus, and why is it difficult to detect? The CBS gene can harbor a complex variant where a single nucleotide variant (c.833T>C) exists in cis with a 68 bp insertion (c.844_845ins68). The high sequence similarity (~96% identical) between this 68 bp insertion and the reference genome sequence causes alignment algorithms to force reads containing the complex variant to the standard reference. This mapping bias can result in the failure to detect the insertion and/or the misclassification of the c.833T>C variant, potentially leading to a false positive call for homocystinuria if the phasing is not correctly determined [14].

Q3: What computational strategy can improve detection and phasing of complex variants? A custom scaffolds approach can circumvent these limitations. This method involves creating supplementary reference sequences tailored to specific complex variants. In the case of the CBS gene, two scaffolds are constructed: one representing the wild-type sequence and another incorporating the 68 bp insertion. During alignment, reads with the insertion will map preferentially to the custom scaffold containing it, enabling correct variant calling and providing direct phasing information. This method has demonstrated 100% accuracy in resolving all genotype combinations for the CBS complex variant in simulated reads and has been successfully applied to over 60,000 clinical specimens [14].

Q4: Beyond complex SNPs/indels, what other variant types are challenging to call? The accurate detection of structural variants (SVs), including copy number variants (CNVs) and large genomic rearrangements, remains a significant challenge in NGS data analysis. These variants are difficult to call in regions with uneven read coverage, which is often the case in repetitive or homologous regions [13]. Furthermore, emerging complex biomarkers in oncology, such as Homologous Recombination Deficiency (HRD), Tumor Mutational Burden (TMB), and Microsatellite Instability (MSI), require sophisticated bioinformatics pipelines that often employ machine learning and statistical methods for accurate determination [13].

Q5: How can I troubleshoot a sudden increase in false positive variant calls across my dataset? A systematic check of your workflow is essential. First, verify that the correct version of the reference genome is being used and that it is properly indexed. Next, examine the raw sequencing data using quality control tools like FastQC to check for issues like adapter contamination or a drop in base quality scores, which may require trimming [15]. Also, review your library preparation process; an increase in false positives can sometimes be traced back to issues in fragmentation, ligation, or amplification during library prep, such as over-cycling during PCR [4].

Troubleshooting Guides

Guide 1: Resolving False Positives from Mapping Bias with Custom Scaffolds

Problem: Inaccurate variant calls and phasing due to reference genome bias in regions with high homology or complex structural variants.

Solution: Implement a custom scaffolds approach for read alignment.

Experimental Protocol:

Step 1: Identify the Problematic Locus. Define the genomic region of interest and the specific complex variant(s). For the CBS gene example, this includes the c.833T>C SNV and the c.844_845ins68 insertion.

Step 2: Design Custom Scaffold Sequences. Construct two or more reference sequences:

- Wild-type Scaffold: A sequence encompassing the genomic region of interest based on the standard reference genome (e.g., GRCh37/hg19).

- Variant Scaffold(s): The same genomic region, but modified to include the complex variant (e.g., the 68 bp insertion). To further enhance phasing of closely linked variants, you can introduce an artificial marker (e.g., a silent base change) into the variant scaffold [14].

Step 3: Integrate Scaffolds into Alignment Workflow. Combine the custom scaffolds with the standard primary reference genome to create a composite reference file for read alignment. This allows the alignment algorithm to choose the best-matching reference for each read.

Step 4: Analyze Alignment Output. Reads originating from haplotypes with the complex variant will align to the variant scaffold, while reads from wild-type haplotypes will align to the wild-type scaffold. This segregation allows for accurate genotyping and provides direct phasing information based on the read alignment [14].
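
Scaffold construction (Step 2) and composite reference assembly (Step 3) can be sketched as plain string operations. The example below uses toy sequences rather than real CBS coordinates, and the function names, scaffold names, and insertion offset are hypothetical placeholders.

```python
def make_variant_scaffold(wild_type, insert_after, inserted_seq):
    """Return the wild-type sequence with `inserted_seq` placed after
    the first `insert_after` bases (0-based offset into the scaffold)."""
    if not 0 <= insert_after <= len(wild_type):
        raise ValueError("insert_after is outside the scaffold")
    return wild_type[:insert_after] + inserted_seq + wild_type[insert_after:]


def fasta_record(name, seq, width=60):
    """Format one FASTA record with fixed-width sequence lines."""
    lines = [seq[i:i + width] for i in range(0, len(seq), width)]
    return f">{name}\n" + "\n".join(lines) + "\n"


if __name__ == "__main__":
    wild_type = "ACGTACGT"            # toy stand-in for the locus sequence
    variant = make_variant_scaffold(wild_type, 4, "TTT")
    # These records would be appended to the primary reference FASTA
    # before building the aligner index.
    composite = fasta_record("locus_wt", wild_type) + fasta_record("locus_ins", variant)
    print(composite, end="")
```

After the composite file is re-indexed, the fraction of locus reads assigned to each scaffold gives a direct readout of genotype at the complex variant.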

Guide 2: General Wet-Lab Best Practices to Minimize False Positives

Many false positives originate from artifacts introduced during library preparation. Adhering to rigorous protocols is crucial.

Common Pitfalls and Corrective Actions:

| Category | Typical Failure Signals | Common Root Causes | Corrective Actions |

|---|---|---|---|

| Sample Input / Quality | Low library complexity; smear in electropherogram [4] | Degraded DNA/RNA; sample contaminants (phenol, salts) [4] | Re-purify input; use fluorometric quantification (Qubit) over UV absorbance; check purity ratios (260/230 > 1.8) [4] [16] |

| Fragmentation & Ligation | Unexpected fragment size; high adapter-dimer peaks [4] | Over-/under-shearing; improper adapter-to-insert ratio [4] | Optimize fragmentation parameters; titrate adapter concentration; use fresh ligase buffer [4] |

| Amplification / PCR | High duplicate rate; over-amplification artifacts [4] | Too many PCR cycles; enzyme inhibitors [4] | Reduce the number of amplification cycles; ensure complete removal of PCR inhibitors during cleanup [4] |

| Purification & Cleanup | Incomplete removal of adapter dimers; significant sample loss [4] | Incorrect bead-to-sample ratio; over-drying beads [4] | Precisely follow cleanup protocol ratios; do not over-dry magnetic beads [4] |

Workflow Diagrams

[Diagram: Standard vs. Custom Scaffolds Alignment]

[Diagram: Custom Scaffolds Analysis Workflow]

Key Experimental Data and Performance

Table 1: Performance of the Custom Scaffolds Method for CBS Variant Detection

| Metric | Result | Context / Details |

|---|---|---|

| Analytical Accuracy | 100% | Resolution of all possible genotype combinations for CBS c.833T>C and c.844_845ins68 using simulated reads [14]. |

| Clinical Scale Validation | > 60,000 specimens | Successful application in clinical genetic testing, outperforming standard GRCh37 alignment [14]. |

| Variant Discovery | Previously undetected | Identification of the c.[833T>C; 844_845ins68] complex variant in two 1000 Genomes Project trios where it was previously missed [14]. |

Table 2: Impact of Integrated Technologies on Newborn Screening Accuracy

| Method | Sensitivity | False Positive Reduction | Key Finding |

|---|---|---|---|

| Metabolomics with AI/ML | 100% (35/35 true positives) | Varied by condition | Effectively identified all confirmed cases, but ability to exclude false positives was disorder-dependent [12] [17]. |

| Genome Sequencing | 89% (31/35 true positives) | 98.8% | Effectively ruled out disease in false-positive cases, but missed some true positives due to lack of two reportable variants [12] [17]. |

| Integrated Approach | High | High | Combining metabolomics and sequencing data provides a more balanced and accurate result, enhancing precision [12] [17]. |

The Scientist's Toolkit: Research Reagent Solutions

Essential Materials for Complex Variant Analysis:

| Item | Function in the Context of Problematic Regions |

|---|---|

| High-Quality Input DNA | Minimizes artifacts from degraded or contaminated samples that compound alignment issues in difficult regions. Use fluorometry for quantification [4] [16]. |

| Custom-Designed Scaffold Sequences | Synthetic DNA fragments or bioinformatic constructs that serve as alternative references for specific complex variants, enabling correct read alignment and phasing [14]. |

| Robust Library Prep Kit | Kits with optimized enzymes and buffers reduce bias during fragmentation, adapter ligation, and amplification, which is critical for maintaining uniform coverage in complex loci [4]. |

| Size Selection Beads | Magnetic beads used in precise cleanup and size selection to effectively remove adapter dimers and select the desired insert size, improving library quality [4]. |

| Fresh Wash Buffers | Critical for purification steps; degraded ethanol washes can lead to carryover of contaminants that inhibit enzymes and increase error rates [4]. |

| Composite Reference Genome | A bioinformatic file combining the standard primary reference (e.g., GRCh38) with one or more custom scaffolds, used as the alignment target [14]. |

| Alignment Software (e.g., BWA-MEM) | The tool that performs the actual mapping of sequencing reads to the composite reference, sensitive to parameters like mismatch and gap penalties [18] [14]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my variant calling pipeline produce a high number of false positives in certain genomic regions?

False positives are disproportionately high in complex genomic regions, such as those with repetitive sequences or high homology. A 2025 investigative study on esophageal squamous cell carcinoma (ESCC) provided a stark example: standard bioinformatics pipelines generated extensive false positive calls in the MUC3A gene, with false positive rates approaching 100%. This occurred despite using multiple variant calling algorithms and a Panel of Normals (PON) filtering strategy [19].

The primary reasons for this failure include:

- Inherent Sequence Complexity: Standard alignment and variant calling tools struggle to accurately map short sequencing reads to repetitive regions or areas with many similar sequences [19].

- Limitations of Standard Filters: Common strategies like using a PON or multi-tool consensus can be insufficient on their own to correct for these inherent technical artifacts [19].

- Sequencing Errors: Even minor inaccuracies introduced during library preparation or the sequencing process itself can be misinterpreted as genuine variants, especially in these challenging regions [20].

Recommendation: The study strongly recommends mandatory quantitative laboratory validation (e.g., PCR-based confirmation) for any variants identified in genes with known complex sequence architectures to prevent the propagation of spurious findings [19].
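
One way to operationalize this recommendation is to route every call that falls in a curated list of complex regions to a validation queue instead of reporting it directly. The sketch below assumes such a list exists; the interval, contig name, and class names are hypothetical placeholders, not real MUC3A coordinates.

```python
from typing import NamedTuple


class Region(NamedTuple):
    chrom: str
    start: int  # 0-based, inclusive (BED-style)
    end: int    # 0-based, exclusive
    label: str


class Call(NamedTuple):
    chrom: str
    pos: int    # 1-based, VCF-style
    ref: str
    alt: str


def overlapping_region(call, regions):
    """Return the first complex region containing the call, or None."""
    zero_based = call.pos - 1
    for region in regions:
        if region.chrom == call.chrom and region.start <= zero_based < region.end:
            return region
    return None


def triage(calls, regions):
    """Split calls into (reportable, needs_orthogonal_validation)."""
    reportable, needs_validation = [], []
    for call in calls:
        target = needs_validation if overlapping_region(call, regions) else reportable
        target.append(call)
    return reportable, needs_validation


# Hypothetical interval standing in for a gene with complex sequence architecture.
complex_regions = [Region("chrToy", 100, 200, "toy_repeat_gene")]
calls = [
    Call("chrToy", 100, "A", "G"),  # 0-based 99: just outside the region
    Call("chrToy", 101, "C", "T"),  # 0-based 100: first base inside
    Call("chrToy", 200, "G", "A"),  # 0-based 199: last base inside
    Call("chrToy", 201, "T", "C"),  # 0-based 200: just outside
]
reportable, needs_validation = triage(calls, complex_regions)
print(len(reportable), len(needs_validation))
```

The explicit conversion between 1-based VCF positions and 0-based BED intervals is deliberate, since off-by-one errors at region boundaries are an easy way to let calls slip past such a gate.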

FAQ 2: My sequencing run had low library yield. What went wrong in the preparation stage and how can I fix it?

Low library yield is a common issue often stemming from problems during the initial sample and library preparation phases. Addressing this is critical, as errors introduced early on can lead to biased data and false positives downstream—a classic "garbage in, garbage out" scenario [16].

The table below summarizes the primary causes and corrective actions for low library yield:

| Cause | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality / Contaminants | Enzyme inhibition from residual salts, phenol, or EDTA [4]. | Re-purify input sample; ensure high purity (e.g., 260/230 > 1.8); use fresh wash buffers [4]. |

| Inaccurate Quantification | Over-estimating input concentration leads to suboptimal enzyme reactions [4]. | Use fluorometric methods (Qubit) over UV absorbance (NanoDrop); calibrate pipettes [4] [21]. |

| Suboptimal Adapter Ligation | Poor ligase performance or incorrect adapter-to-insert ratio reduces efficiency [4]. | Titrate adapter:insert molar ratios; ensure fresh ligase and optimal reaction conditions [4]. |

| Overly Aggressive Purification | Desired fragments are accidentally excluded during clean-up steps [4]. | Optimize bead-based clean-up ratios; avoid over-drying beads to ensure efficient resuspension [4]. |
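
Titrating adapter:insert molar ratios requires converting the insert mass to moles. The sketch below shows that arithmetic, assuming the common approximation of about 660 g/mol per base pair of double-stranded DNA. The 10:1 ratio, 15 µM adapter stock, and function names are illustrative assumptions, not kit recommendations.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate mass of one dsDNA base pair


def dsdna_pmol(mass_ng, length_bp):
    """Convert a dsDNA mass (ng) and mean fragment length (bp) to picomoles."""
    return mass_ng * 1000.0 / (length_bp * AVG_BP_MASS_G_PER_MOL)


def adapter_volume_ul(insert_ng, insert_bp, adapter_to_insert_ratio, adapter_stock_um):
    """Volume of adapter stock (µL) giving the requested molar ratio.

    1 µM equals 1 pmol/µL, so pmol divided by µM yields µL.
    """
    insert_pmol = dsdna_pmol(insert_ng, insert_bp)
    return insert_pmol * adapter_to_insert_ratio / adapter_stock_um


# Example: 100 ng of ~300 bp fragments, hypothetical 10:1 ratio, 15 µM stock.
insert_pmol = dsdna_pmol(100, 300)
volume = adapter_volume_ul(100, 300, 10, 15)
print(round(insert_pmol, 3), round(volume, 3))
```

Because the conversion depends on fragment length, the same mass of shorter fragments contains more molecules; an input that is over-sheared or inaccurately quantified therefore shifts the effective adapter:insert ratio even when the pipetted volumes are unchanged.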

Additional Solution: Consider leveraging multiplexed library preparation kits that feature auto-normalization. These can maintain consistent read depths across a wide range of input concentrations, reducing the risk of yield-related failures and the associated errors [21].

FAQ 3: Are there more accurate variant calling tools that can help reduce false positives?

Yes, a new generation of Artificial Intelligence (AI)-based variant callers has emerged, leveraging machine learning (ML) and deep learning (DL) to improve accuracy and reduce false positives in complex genomic contexts [22].

The following table compares several state-of-the-art AI-based variant callers:

| Tool | Technology | Key Features & Strengths | Limitations |

|---|---|---|---|

| DeepVariant [22] | Deep Learning (CNN) | Uses pileup images; high accuracy; eliminates need for manual post-calling filtering; supports short and long-read data. | High computational cost [22]. |

| DeepTrio [22] | Deep Learning (CNN) | Extends DeepVariant; analyzes family trios to improve accuracy, especially for de novo mutations and in challenging regions. | Designed for trio analysis, not single samples [22]. |

| DNAscope [22] | Machine Learning | Optimized for speed and efficiency; combines GATK HaplotypeCaller with an AI-based genotyping model; reduces computational cost. | Does not use deep learning architectures [22]. |

| VarRNA [23] | Machine Learning (XGBoost) | Specialized for calling and classifying variants from RNA-Seq data; distinguishes germline, somatic, and artifact variants without a matched normal DNA sample. | Developed for RNA-Seq data, not DNA [23]. |

These tools demonstrate that AI can capture complex patterns in sequencing data that traditional statistical methods might miss, leading to more robust variant calls [22].

Troubleshooting Guides

Guide 1: How to Systematically Troubleshoot NGS Library Preparation Failures

Library preparation is a frequent source of error. The following workflow provides a systematic diagnostic strategy to identify and correct common issues.

Case Example: Addressing Intermittent Failures in a Core Lab. A shared core facility that experienced sporadic library prep failures traced the issue to operator-to-operator variation in manual pipetting and to reagent degradation [4].

- Root Causes: Deviations from SOPs (e.g., mixing methods), evaporation of ethanol wash solutions, and accidental discarding of beads during clean-up [4].

- Proven Fixes:

Guide 2: A Protocol for Validating Putative Variants in Complex Genomic Regions

As demonstrated in the MUC3A case study, computational predictions in complex regions require experimental confirmation. This protocol outlines a robust validation methodology [19].

Objective: To quantitatively confirm the presence of somatic mutations identified by a computational pipeline in a gene with complex sequence architecture.

Experimental Workflow:

Key Materials and Reagents:

- Original DNA Sample: The same genomic DNA used for the initial WGS/WES.

- PCR Primers: Designed to specifically flank the putative variant site identified in the complex region.

- High-Fidelity DNA Polymerase: To minimize errors during the PCR amplification step.

- Orthogonal Sequencing Technology:

  - Sanger Sequencing: The gold standard for confirming specific variants. It provides long, accurate reads ideal for validating individual loci [10].

  - Third-Generation Long-Read Sequencing (PacBio HiFi, Oxford Nanopore): Particularly valuable for complex regions as long reads can span repetitive elements, providing context that short-read technologies miss [10] [22].

Procedure:

- Design Primers: Create primers to amplify a region of a few hundred base pairs encompassing the putative variant.

- Amplify Target: Perform PCR amplification on the original DNA sample using a high-fidelity polymerase.

- Purify Amplicons: Clean up the PCR product to remove primers and enzymes.

- Sequence: Submit the purified amplicon for Sanger sequencing or prepare a library for long-read sequencing.

- Analyze Data: Align the validation sequencing data to the reference genome and inspect the specific base or region in question.

Interpretation:

- If the putative variant is confirmed by the orthogonal method, it can be considered a true positive.

- If the putative variant is absent in the validation data, it is a computational false positive, as was the case for all putative mutations in the MUC3A gene in the cited study [19]. This highlights the critical limitation of standard pipelines in these regions.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their functions for improving the accuracy of NGS-based variant detection, particularly in challenging scenarios.

| Item | Function & Application |

|---|---|

| High-Fidelity DNA Polymerase | Reduces PCR errors during library amplification, preventing the introduction of artifactual mutations that can be mistaken for true variants [4]. |

| Fluorometric Quantification Kits (Qubit) | Accurately measures concentration of double-stranded DNA without interference from common contaminants, ensuring correct input amounts for library prep and preventing yield failures [4] [21]. |

| Automated Liquid Handling Systems | Minimizes human pipetting error and sample cross-contamination, increasing reproducibility and reducing batch effects in high-throughput workflows [21]. |

| Panel of Normals (PON) | A computational reagent; a database of common artifacts found in control samples. Used to filter out systematic false positives recurring across specific lab workflows [19]. |

| AI-Based Variant Callers (e.g., DeepVariant) | Uses deep learning on pileup images of aligned reads to distinguish true genetic variation from sequencing and alignment artifacts, offering higher accuracy than traditional methods [22]. |

Advanced Bioinformatics and AI Approaches for Accurate Variant Detection

Machine Learning and Logistic Regression Models for Variant Filtering

Next-Generation Sequencing (NGS) has revolutionized genetic research and clinical diagnostics, enabling comprehensive mutation profiling across the genome and exome. However, a significant challenge persists: the accurate distinction between true biological variants and false positives (FPs) arising from technical artifacts. These FPs can originate from multiple sources, including sequencing errors, inadequate library preparation, oxidative DNA damage during ultrasonic fragmentation, and alignment difficulties in complex genomic regions [24] [25] [4]. The presence of FPs confounds downstream analysis, leading to incorrect biological interpretations, wasted resources on orthogonal validation, and potential errors in clinical reporting.

To address this, machine learning (ML) models have emerged as powerful tools that surpass traditional threshold-based filtering. By integrating multiple quality metrics and genomic features, ML approaches can learn complex patterns that distinguish true variants from artifacts with high precision. This technical support guide details the implementation of ML-based filtering strategies, particularly focusing on logistic regression and random forest models, to enhance the specificity of variant calling without compromising sensitivity, directly supporting research aims focused on reducing false positives in NGS data.

Key Concepts: ML Approaches for Variant Filtering

Why Machine Learning?

Traditional variant filtering in the Genome Analysis Toolkit (GATK) often relies on Hard Filtering (HF), which applies static thresholds to a limited set of quality metrics [25]. This approach is limiting because a single annotation falling outside a threshold can filter out a true variant even if all other annotations suggest it is genuine [25]. Variant Quality Score Recalibration (VQSR) replaces fixed cutoffs with a Gaussian mixture model over the same annotations, but it needs large call sets and curated training resources, which restricts its use on small panels or single exomes. Supervised machine learning models address both limitations by weighing multiple features simultaneously, including non-linear relationships in the case of tree-based models. They can be trained on high-confidence "truth sets" to learn a probabilistic model that assigns a confidence score to each variant call, allowing for a more nuanced and accurate classification [25] [5].

Several supervised ML models have been successfully applied to the variant filtering problem. The choice of model often involves a trade-off between interpretability, performance, and computational complexity.

- Logistic Regression (LR): A linear model that predicts the probability of a variant being a true positive. Its key advantage is high interpretability, as the contribution of each feature to the final decision can be easily understood [26] [5]. Studies have shown LR to be highly effective in capturing false positives [26].

- Random Forest (RF): An ensemble method that constructs multiple decision trees during training and outputs the mode of their classes. It is robust to overfitting and can model complex, non-linear relationships between features. It has demonstrated high false positive capture rates [25] [26].

- Gradient Boosting (GB): Another ensemble technique that builds trees sequentially, with each new tree correcting the errors of the previous ones. This model has been shown to achieve an excellent balance between false positive capture and true positive retention [26].

The following table summarizes the performance characteristics of these models as reported in recent studies:

Table 1: Performance Comparison of Machine Learning Models for Variant Filtering

| Model | Key Strengths | Reported Performance |

|---|---|---|

| Logistic Regression | Highly interpretable, efficient to train, provides feature coefficients | High false positive capture rate; effective for probabilistic filtering [26] [5] |

| Random Forest | Robust, handles non-linear relationships, reduces overfitting | High false positive capture rate; outperforms threshold-based methods [25] [26] |

| Gradient Boosting | High predictive accuracy, handles complex feature interactions | Achieves best balance between FP capture and TP retention [26] |
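
To show what training the simplest of these models involves, here is a minimal logistic regression fitted by batch gradient descent in plain Python. It is a teaching sketch rather than a production implementation (a library such as scikit-learn would normally be used). The two standardized features and the toy data are invented for illustration, and the optional class weighting is an assumed way of compensating for the rarer artifact class.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency: n / (n_classes * count)."""
    counts = {label: labels.count(label) for label in set(labels)}
    return {label: len(labels) / (len(counts) * n) for label, n in counts.items()}


def predict_probability(weights, bias, features):
    """Probability that a call is a true variant (label 1)."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train_logistic_regression(X, y, class_weight, learning_rate=0.5, epochs=500):
    """Fit weights and bias by weighted batch gradient descent on the log loss."""
    weights = [0.0] * len(X[0])
    bias = 0.0
    total = sum(class_weight[label] for label in y)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for features, label in zip(X, y):
            error = class_weight[label] * (
                predict_probability(weights, bias, features) - label
            )
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        weights = [w - learning_rate * g / total for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / total
    return weights, bias


# Toy standardized features (e.g. a depth-normalized quality and a mapping quality).
# Label 1 = true variant, 0 = artifact. Values are invented for illustration.
X = [(1.0, 0.8), (0.9, 1.0), (0.8, 0.9), (1.1, 0.7), (0.7, 1.0), (1.0, 1.0),
     (-1.0, -0.8), (-0.9, -1.0)]
y = [1, 1, 1, 1, 1, 1, 0, 0]

class_weight = balanced_class_weights(y)
weights, bias = train_logistic_regression(X, y, class_weight)
print(class_weight, weights, bias)
```

The fitted `weights` are the feature coefficients referred to in the table: their sign and size indicate how each standardized feature pushes a call toward the true-variant or artifact class, which is the interpretability advantage of this model.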

Experimental Protocols: Implementing an ML Filtering Pipeline

This section provides a detailed methodology for developing and validating a machine learning model for filtering false-positive single nucleotide variants (SNVs).

Data Preparation and Feature Engineering

The foundation of a robust ML model is a high-quality training dataset with accurate labels.

- Sample Selection and Sequencing: Begin with genomic DNA from well-characterized reference samples, such as those from the Genome in a Bottle (GIAB) Consortium (e.g., NA12878). Perform whole exome or whole genome sequencing using your standard laboratory protocol. It is critical to document all steps in the library preparation process, as factors like the fragmentation method (enzymatic vs. ultrasonic) can introduce specific artifacts and significantly impact the feature landscape [24].

- Variant Calling and Labeling: Process the raw sequencing data through your established bioinformatics pipeline (e.g., based on GATK Best Practices [27]). The resulting variant calls (VCF file) must then be compared against a high-confidence truth set, such as the GIAB benchmark files [25] [26]. Variants present in the truth set are labeled "True Positive (TP)," while those absent are labeled "False Positive (FP)."

- Feature Extraction: For each variant, compile a comprehensive set of features. These typically fall into two categories:

  - Variant Caller Quality Metrics: Standard metrics from callers like GATK, including Quality-by-Depth (QD), Mapping Quality (MQ), Strand Bias (FS, SOR), Read Position Bias (ReadPosRankSum), and Allele Frequency [25] [26].

  - Genomic Context Features: These are crucial for capturing systematic errors and have been shown to be highly informative [25]. Key features include:

    - Local GC content: Calculated in a window around the variant.

    - Sequence context: e.g., presence in a homopolymer run, CpG island, or segmental duplication.

    - Substitution type: Classifying the mutation as a transition or transversion (e.g., C>A/G>T transversions are often linked to oxidative artifacts [24]).

    - Evolutionary context: Ancestral allele status and conservation scores.
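
The labeling step and a few of the genomic context features above can be sketched as follows. This is a simplified illustration: calls are labeled by exact match against the truth set, whereas real benchmarking tools also reconcile differing variant representations. The toy reference, window size, and function names are assumptions made for the example.

```python
TRANSITIONS = {("A", "G"), ("G", "A"), ("C", "T"), ("T", "C")}
OXIDATION_LIKE = {("C", "A"), ("G", "T")}  # the same change seen from either strand


def label_calls(calls, truth_set):
    """Label each (chrom, pos, ref, alt) call TP if present in the truth set, else FP."""
    return {call: "TP" if call in truth_set else "FP" for call in calls}


def gc_content(sequence):
    """Fraction of G and C bases in a sequence (0.0 for an empty string)."""
    sequence = sequence.upper()
    if not sequence:
        return 0.0
    return sum(base in "GC" for base in sequence) / len(sequence)


def homopolymer_run_length(sequence, index):
    """Length of the run of identical bases that contains `index`."""
    base = sequence[index]
    left = index
    while left > 0 and sequence[left - 1] == base:
        left -= 1
    right = index
    while right < len(sequence) - 1 and sequence[right + 1] == base:
        right += 1
    return right - left + 1


def substitution_class(ref, alt):
    """Classify an SNV as a transition or a transversion."""
    return "transition" if (ref.upper(), alt.upper()) in TRANSITIONS else "transversion"


def context_features(reference, index, alt, window=5):
    """Context features for an SNV at 0-based `index` of `reference`."""
    ref = reference[index]
    start = max(0, index - window)
    end = min(len(reference), index + window + 1)
    return {
        "gc_content": gc_content(reference[start:end]),
        "homopolymer_length": homopolymer_run_length(reference, index),
        "substitution_class": substitution_class(ref, alt),
        "oxidation_like": (ref, alt) in OXIDATION_LIKE,
    }


toy_reference = "ACGTTTTTGCA"
features = context_features(toy_reference, index=5, alt="G")
labels = label_calls(
    [("chrToy", 6, "T", "G"), ("chrToy", 9, "G", "T")],
    truth_set={("chrToy", 6, "T", "G")},
)
print(features, labels)
```

Each call's feature dictionary, joined with the caller's own quality metrics and the TP/FP label, forms one row of the training table.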

Model Training and Validation

Once the labeled dataset with features is prepared, the model training process begins.

- Data Splitting: Split your data into training and testing sets. To avoid bias, ensure variants from the same sequencing run or sample are not spread across both sets. Techniques like leave-one-sample-out cross-validation are recommended [26].

- Model Training: Train selected models (LR, RF, GB) using the training set. Given that true variants typically far outnumber false positives in a dataset, it is essential to address this class imbalance. Techniques like cost-sensitive learning during training or oversampling the minority class (FP variants) can prevent the model from being biased toward the majority class [25].

- Performance Assessment: Evaluate the trained models on the held-out test set. Key metrics include:

  - Precision: The proportion of predicted true positives that are correct (critical for reducing confirmatory testing).

  - Recall/Sensitivity: The proportion of actual true positives that are correctly identified.

  - Specificity: The proportion of actual false positives that are correctly identified.

  - False Discovery Rate (FDR): The proportion of predicted true positives that are actually false.
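
The splitting rule and the four metrics can be written down compactly. In the sketch below the positive class is "true variant" (label 1) and the negative class is "artifact" (label 0), matching the definitions above. The sample identifiers and the toy labels are illustrative, and the helper names are not from any cited pipeline.

```python
def leave_one_sample_out(sample_ids):
    """Yield (held_out_sample, train_indices, test_indices) for each sample.

    Every variant from the held-out sample goes to the test fold, so no
    sample contributes to both training and testing.
    """
    for held_out in sorted(set(sample_ids)):
        train = [i for i, s in enumerate(sample_ids) if s != held_out]
        test = [i for i, s in enumerate(sample_ids) if s == held_out]
        yield held_out, train, test


def _ratio(numerator, denominator):
    return numerator / denominator if denominator else float("nan")


def filtering_metrics(y_true, y_pred):
    """Precision, recall, specificity and FDR with 1 = true variant, 0 = artifact."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "precision": _ratio(tp, tp + fp),
        "recall": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "fdr": _ratio(fp, tp + fp),
    }


sample_ids = ["HG001", "HG001", "HG002", "HG002", "HG002", "HG003", "HG003", "HG001"]
folds = list(leave_one_sample_out(sample_ids))

y_true = [1, 1, 1, 1, 0, 0, 0, 1]
y_pred = [1, 1, 1, 0, 0, 0, 1, 1]
metrics = filtering_metrics(y_true, y_pred)
print(folds[0], metrics)
```

Note that precision and FDR always sum to one, so reporting either is sufficient; specificity is the figure that corresponds to the "false positive capture rate" discussed for the models above.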

The workflow for this entire process is summarized in the diagram below:

Diagram 1: ML Variant Filtering Workflow. This diagram outlines the key steps in creating a machine learning model for variant filtering, from sample preparation to model evaluation.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My model has high precision but low recall (sensitivity). Am I missing too many true variants? This is a common outcome of class imbalance or an overly conservative model. To address this:

- Refine the Training Set: Ensure your truth set comprehensively covers different genomic contexts (e.g., low-complexity regions, high-GC areas).

- Adjust Classification Threshold: The default 0.5 probability threshold might be too high. Use a Precision-Recall curve to find an optimal threshold that balances your specific needs for sensitivity and precision [25].

- Implement Cost-Sensitive Learning: Penalize the misclassification of true positives more heavily during model training to reduce false negatives [25].
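
The threshold adjustment can be illustrated with a small sweep over candidate cutoffs. This sketch picks, among the observed scores, the threshold with the highest recall that still meets a minimum precision. The scores, labels, and the 0.8 precision floor are made-up values for illustration.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when calls scoring >= threshold are kept as true variants."""
    kept = [score >= threshold for score in scores]
    tp = sum(1 for t, k in zip(y_true, kept) if t == 1 and k)
    fp = sum(1 for t, k in zip(y_true, kept) if t == 0 and k)
    fn = sum(1 for t, k in zip(y_true, kept) if t == 1 and not k)
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def best_threshold(y_true, scores, min_precision):
    """Return (threshold, precision, recall) maximizing recall subject to min_precision.

    Candidates are the distinct observed scores; ties keep the lowest threshold.
    Returns None if no candidate reaches the precision floor.
    """
    best = None
    for threshold in sorted(set(scores)):
        precision, recall = precision_recall_at(y_true, scores, threshold)
        if precision >= min_precision and (best is None or recall > best[2]):
            best = (threshold, precision, recall)
    return best


# 1 = true variant, 0 = artifact; scores are model probabilities (toy values).
y_true = [1, 1, 1, 1, 1, 0, 0, 0]
scores = [0.95, 0.80, 0.62, 0.45, 0.40, 0.55, 0.30, 0.10]

default_point = precision_recall_at(y_true, scores, 0.5)
chosen = best_threshold(y_true, scores, min_precision=0.8)
print(default_point, chosen)
```

On this toy data the default 0.5 cutoff discards two true variants that score just below it, while a cutoff of 0.40 recovers them without admitting any additional artifact.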

Q2: Can I use a model trained on public data (like NA12878) for my own project's data? While a pre-trained model can be a good starting point, performance may suffer if your experimental protocols (e.g., sequencing platform, library prep kit) differ significantly. For optimal results, retraining or fine-tuning the model on a subset of your own data that has been orthogonally validated is strongly recommended [25] [26]. Pipeline-specific differences in quality features necessitate de novo model building for clinical-grade applications [26].

Q3: My lab uses enzymatic fragmentation instead of ultrasonic shearing. Do I still need to worry about oxidative artifacts? Yes, but the burden may be lower. Ultrasonic fragmentation is a principal source of oxidative artifacts like 8-oxoguanine, which lead to specific C>A/G>T transversions [24]. While enzymatic fragmentation minimizes these artifacts, other sources of error persist. Your ML model will learn the specific artifact signatures present in your data, but including features like substitution type and strand bias will help it identify any residual oxidative damage.

Troubleshooting Common Experimental Issues

ML models can also help diagnose wet-lab issues. The table below links common experimental problems to their signatures in the data and proposed ML-focused solutions.

Table 2: Troubleshooting Guide for NGS Preparation Errors Impacting Variant Calling

| Problem | Failure Signatures in Data | Corrective Actions & ML Integration |

|---|---|---|

| Oxidative Damage during Fragmentation [24] | Enrichment of low-frequency C>A / G>T transversions; strong batch effects. | Switch to enzymatic fragmentation. Use ML features: substitution type, VAF, strand orientation bias (SOB) to model these artifacts. |

| Low Library Yield / Complexity [4] | High duplicate read rate; low on-target rate; uneven coverage. | Re-purify input DNA; optimize fragmentation; use fluorometric quantification. Low complexity can be a feature for the ML model. |

| Adapter Contamination / Dimer Formation [4] | Sharp peak at ~70-90 bp in Bioanalyzer trace; low yield. | Titrate adapter:insert ratio; optimize bead-based cleanup. ML can help filter spurious calls that originate from residual adapter sequence. |

| Over-amplification PCR Artifacts [4] | High duplicate rate; sequence-dependent bias; elevated error rates. | Reduce PCR cycles; use robust polymerases. The resulting errors can be learned by the model from quality metrics. |

Table 3: Key Research Reagent Solutions for ML-Based Variant Filtering Workflows

| Item | Function / Application | Example & Notes |

|---|---|---|

| GIAB Reference Materials | Provides genomic DNA from characterized cell lines for model training and validation. | Available from Coriell Institute. Essential for creating labeled training data [26]. |

| Enzymatic Fragmentation Kits | Minimizes introduction of oxidative DNA damage artifacts during library prep compared to ultrasonic shearing. | Kapa HyperPlus reagents [26]. Reduces specific C>A/G>T false positives [24]. |

| Automated Library Prep Systems | Increases reproducibility and reduces human error, leading to more consistent data for modeling. | Hamilton NGS STAR workstation [26]. Standardization minimizes batch-effect features. |

| Targeted Capture Panels | For exome or custom target enrichment. Probe design impacts coverage uniformity. | Custom panels from Twist Bioscience [26]. Inefficient capture can be a source of false positives. |

| Fluorometric Quantification Kits | Accurately measures DNA/RNA concentration for optimal library prep, preventing yield issues. | Qubit HS assay [28]. Prevents quantification errors that lead to failed libraries [4]. |

Visualizing the Logistic Regression Process

Logistic regression is a particularly interpretable model. The following diagram illustrates the process it uses to classify a variant call.

Diagram 2: Logistic Regression Classification Process. This diagram shows how a logistic regression model uses a set of input features from a variant call to calculate a probability and make a final classification.
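
In code, the classification process amounts to a weighted sum passed through the logistic function. The sketch below uses hypothetical coefficients for standardized features. The feature names follow the GATK annotations mentioned earlier, but the numeric values are invented and do not come from any trained model.

```python
import math

# Hypothetical coefficients for standardized features (illustration only).
COEFFICIENTS = {"QD": 1.2, "MQ": 0.8, "FS": -0.9, "in_homopolymer": -0.7}
INTERCEPT = 0.5


def classify(features, threshold=0.5):
    """Return (probability, label, per-feature contributions) for one variant call."""
    contributions = {
        name: coefficient * features[name]
        for name, coefficient in COEFFICIENTS.items()
    }
    z = INTERCEPT + sum(contributions.values())   # linear score (log-odds)
    probability = 1.0 / (1.0 + math.exp(-z))      # logistic function
    label = "TP" if probability >= threshold else "FP"
    return probability, label, contributions


clean_call = {"QD": 1.0, "MQ": 0.5, "FS": -0.5, "in_homopolymer": 0}
suspect_call = {"QD": -1.5, "MQ": -1.0, "FS": 2.0, "in_homopolymer": 1}

for name, call in (("clean", clean_call), ("suspect", suspect_call)):
    probability, label, contributions = classify(call)
    print(name, round(probability, 3), label, contributions)
```

The `contributions` dictionary is what makes the model interpretable: for any filtered call, it shows which features pulled the log-odds down and by how much.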

Next-generation sequencing (NGS) has revolutionized genomics, but accurate variant calling remains challenging. False positive variant calls can lead to incorrect biological conclusions, misdiagnosis in clinical settings, and wasted research resources. The integration of artificial intelligence (AI), particularly deep learning, has introduced a paradigm shift in tackling this challenge. Unlike traditional statistical methods, AI-based callers learn complex patterns from large-scale genomic datasets to distinguish true biological variants from sequencing artifacts with unprecedented accuracy [22] [29].

This technical support center focuses on three leading AI-powered variant callers (DeepVariant, Clair3, and DNAscope) that aim to reduce false positives. DeepVariant and Clair3 use deep neural networks, while DNAscope pairs a haplotype-based caller with a machine-learned genotyping model; together they cover sequencing technologies from Illumina short reads to PacBio HiFi and Oxford Nanopore long reads [22] [30]. Below, you will find performance comparisons, detailed experimental protocols, and troubleshooting guides to help you implement these solutions effectively in your research workflow.

Performance Comparison: Quantitative Benchmarking Data

Accuracy Metrics Across Sequencing Technologies

Benchmarking studies using Genome in a Bottle (GIAB) reference samples provide critical insights into the performance of AI-based variant callers. The following table summarizes their accuracy in calling single nucleotide variants (SNVs) and insertions/deletions (indels) across different sequencing platforms [30].

Table 1: Variant Calling Performance Across Sequencing Technologies

| Variant Caller | Sequencing Technology | Variant Type | Precision (%) | Recall (%) | F1-Score (%) |

|---|---|---|---|---|---|

| DeepVariant | Illumina (Short-read) | SNV | 98.95 | 93.27 | 96.07 |

| DeepVariant | Illumina (Short-read) | Indel | 97.19 | 70.21 | 81.41 |

| DeepVariant | PacBio HiFi (Long-read) | SNV | >99.9 | >99.9 | >99.9 |

| DeepVariant | PacBio HiFi (Long-read) | Indel | >99.5 | >99.5 | >99.5 |

| DeepVariant | ONT (Long-read) | SNV | 97.84 | 98.12 | 97.98 |

| DeepVariant | ONT (Long-read) | Indel | 94.11 | 69.84 | 80.10 |

| DNAscope | Illumina (Short-read) | SNV | 94.48 | 95.35 | 94.91 |

| DNAscope | Illumina (Short-read) | Indel | 44.78 | 83.60 | 57.53 |

| DNAscope | PacBio HiFi (Long-read) | SNV | >99.9 | >99.9 | >99.9 |

| DNAscope | PacBio HiFi (Long-read) | Indel | >99.5 | >99.5 | >99.5 |

| Clair3 | ONT (Long-read) | SNV | High* | High* | High* |

*Clair3 demonstrates performance comparable to DeepVariant on ONT data, particularly excelling at lower coverages [22] [31].

Computational Resource Requirements

Computational efficiency is a crucial practical consideration for selecting a variant caller. The table below compares resource usage for processing a typical human whole genome [30].

Table 2: Computational Resource Requirements

| Variant Caller | AI Architecture | Sequencing Data | Runtime (Hours) | Memory (GB) | GPU Required |

|---|---|---|---|---|---|

| DeepVariant | Deep CNN | Illumina | 17.32 | 5.70 | No (Optional) |

| DeepVariant | Deep CNN | PacBio HiFi | 36.89 | 16.53 | No (Optional) |

| DeepVariant | Deep CNN | ONT | 105.22 | 9.85 | No (Optional) |

| DNAscope | Machine Learning | Illumina | 4.17 | 7.62 | No |

| DNAscope | Machine Learning | PacBio HiFi | 11.66 | 17.21 | No |

| Clair3 | Deep CNN | ONT | Faster than peers | Not Reported | No (Optional) |

| BCFTools | Conventional | Illumina | 0.34 | 0.49 | No |

| GATK4 | Conventional | Illumina | 44.19 | 27.60 | No |

Experimental Protocols: Benchmarking Workflow

Standardized Benchmarking Using GIAB Reference Materials

A robust protocol for benchmarking variant callers against GIAB gold standard datasets ensures consistent and comparable results. This methodology is widely used in published comparative studies [32] [30].

Step-by-Step Protocol:

Data Acquisition: Download whole-exome or whole-genome sequencing data for GIAB reference samples (e.g., HG001, HG002, HG003) from public repositories like NCBI Sequence Read Archive (SRA). Use the corresponding Agilent SureSelect BED file for exome analyses [32].

Read Alignment: Preprocess raw FASTQ files by aligning to the human reference genome GRCh38 using BWA-MEM. Sort and mark duplicates in the resulting BAM files using tools like Samtools or GATK [33].

Variant Calling: Execute the AI-based variant callers on the processed BAM files. Use default parameters for initial benchmarking:

- DeepVariant: Run with platform-specific models (e.g., `--model_type=WGS` for whole-genome data) [22].

- Clair3: Specify the platform and the path to the pre-trained model (e.g., `--platform=ont` for Nanopore data) [22].

- DNAscope: Use the appropriate pipeline for your data type (e.g., DNAscope HiFi for PacBio data) [22].

Performance Evaluation: Compare the output VCF files against the GIAB high-confidence truth sets (v4.2.1) using the Variant Calling Assessment Tool (VCAT) or hap.py. Ensure comparisons are restricted to high-confidence regions and the exome capture kit BED file, if applicable [32].

Metric Calculation: Calculate precision, recall, and F1-score from the VCAT output to quantitatively assess performance. Precision is particularly critical for evaluating the reduction of false positives [32] [30].
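
For reference, the metric calculation reduces to a few lines once the benchmarking tool has reported true positive, false positive, and false negative counts. The sketch below assumes those counts are available; the example counts are invented, while the precision and recall pair used to check the F1 formula is the DeepVariant ONT SNV row from Table 1.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def metrics_from_counts(tp, fp, fn):
    """Precision, recall and F1 (as fractions) from benchmark counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {"precision": precision, "recall": recall, "f1": f1_score(precision, recall)}


# Check against Table 1: DeepVariant, ONT, SNV (precision 97.84, recall 98.12).
table_f1 = round(f1_score(97.84, 98.12), 2)

# Invented counts for illustration.
example = metrics_from_counts(tp=950, fp=50, fn=100)
print(table_f1, example)
```

Because F1 is a harmonic mean, it is dominated by the weaker of the two inputs: a caller with high recall but low precision (many false positives) scores poorly even though it misses few variants.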

Research Reagent Solutions and Essential Materials

Table 3: Key Reagents and Materials for Benchmarking Experiments

| Item | Function/Benefit | Example/Reference |

|---|---|---|

| GIAB Reference DNA | Provides gold-standard, well-characterized genomic material for benchmarking. | HG001-HG007 series [32] |

| Agilent SureSelect Exome Kit | Captures exonic regions for consistent whole-exome sequencing comparisons. | Agilent SureSelect Human All Exon V5 [32] |

| Reference Genome | Standardized baseline for read alignment and variant calling. | GRCh38/hg38 [32] [33] |

| High-Confidence Region BED Files | Defines genomic regions for reliable variant assessment, excluding ambiguous areas. | GIAB v4.2.1 [32] |

| Pre-Trained AI Models | Platform-specific models enabling accurate variant calling without custom training. | DeepVariant WGS model, Clair3 ONT model [22] |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Which AI caller is most effective for reducing false positive indels in Illumina data? A: For Illumina short-read data, DeepVariant consistently demonstrates superior precision for indel calling, significantly reducing false positives compared to other tools. While DNAscope may achieve high recall, its precision for indels can be substantially lower (e.g., 44.78% vs. DeepVariant's 97.19%), resulting in many more false positives [30]. For the most accurate indel detection with minimal false positives, DeepVariant is the recommended choice.

Q2: Are deep learning models like DeepVariant and Clair3 applicable to bacterial genomics? A: Yes. Recent evidence confirms that deep learning variant callers, particularly Clair3 and DeepVariant, significantly outperform traditional methods on bacterial nanopore sequence data. These tools achieve accuracy that matches or even exceeds the traditional "gold standard" of Illumina short-read sequencing, even when the models were originally trained on human data [31]. This makes them highly suitable for microbial genomics applications such as outbreak investigation and antimicrobial resistance detection.

Q3: What is the main computational limitation when implementing these AI callers? A: The primary constraint is often runtime and memory requirements. While DNAscope is optimized for speed and does not require a GPU, DeepVariant can be computationally intensive, especially with long-read data (e.g., >100 hours for ONT data) [30]. For large-scale studies, consider using high-performance computing clusters or cloud-based solutions. DNAscope offers a favorable balance of speed and accuracy, particularly for short-read data.

Q4: Can I use these tools for somatic variant calling in cancer research? A: DeepVariant is primarily designed for germline variant calling. For somatic variant detection in cancer (e.g., tumor-normal pairs), specialized tools like GATK Mutect2 are more appropriate. However, emerging machine learning approaches, such as Random Forest models, are being developed to filter somatic variants in circulating tumor DNA (ctDNA) data, demonstrating the potential for AI to also improve somatic mutation detection [33].

Troubleshooting Common Issues

Problem: Low Precision (High False Positives) in Specific Genomic Regions

- Cause: All variant callers, including AI-based ones, can struggle with complex genomic regions such as segmental duplications, homopolymer-rich sequence, or low-complexity repeats [10] [22].

- Solution:

- Utilize Hybrid Sequencing: Consider a hybrid approach that combines both short-read and long-read data. A hybrid DeepVariant model that jointly processes Illumina and Nanopore data has been shown to improve germline variant detection accuracy, leveraging the strengths of both technologies [34].

- Apply Region-Based Filtering: Use high-confidence region BED files (e.g., from GIAB) to filter out calls in problematic regions that are known to be prone to false positives [32].
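Region-based filtering can be sketched in plain Python as below. This is an illustrative simplification, not a replacement for dedicated tools: the function names are hypothetical, the BED intervals are assumed to be non-overlapping (as in merged high-confidence BED files), and only the variant's POS field is tested, so an indel that starts inside a region but extends past its end is still kept.

```python
from bisect import bisect_right


def load_bed(lines):
    """Parse BED lines into {chrom: sorted [(start, end)]}; BED is 0-based, half-open."""
    regions = {}
    for line in lines:
        if not line.strip() or line.startswith(("#", "track", "browser")):
            continue
        chrom, start, end = line.split()[:3]
        regions.setdefault(chrom, []).append((int(start), int(end)))
    for intervals in regions.values():
        intervals.sort()
    return regions


def in_regions(regions, chrom, pos):
    """True if the 1-based VCF position lies inside one of the intervals."""
    intervals = regions.get(chrom, [])
    zero_based = pos - 1
    # Index of the last interval whose start is <= the position
    i = bisect_right(intervals, (zero_based, float("inf"))) - 1
    return i >= 0 and intervals[i][0] <= zero_based < intervals[i][1]


def filter_vcf_lines(vcf_lines, regions):
    """Yield header lines unchanged and only those records inside the regions."""
    for line in vcf_lines:
        if line.startswith("#"):
            yield line
            continue
        chrom, pos = line.split("\t", 2)[:2]
        if in_regions(regions, chrom, int(pos)):
            yield line


# Hypothetical inputs, for illustration only
bed_lines = ["chr1\t100\t200"]
vcf_lines = [
    "##fileformat=VCFv4.2",
    "chr1\t100\t.\tA\tG\t50\tPASS\t.",   # 0-based 99: just outside the interval
    "chr1\t101\t.\tC\tT\t50\tPASS\t.",   # 0-based 100: inside
    "chr2\t150\t.\tG\tA\t50\tPASS\t.",   # chromosome not in the BED file
]
regions = load_bed(bed_lines)
kept = list(filter_vcf_lines(vcf_lines, regions))
```

The off-by-one between 1-based VCF positions and 0-based, half-open BED intervals is the most common source of error in hand-written filters of this kind, which is why the conversion is made explicit.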

Problem: Excessive Computational Time or Memory Usage

- Cause: Deep learning models are computationally intensive, especially when processing high-coverage whole genomes or long-read data [30].

- Solution:

- Optimize Resource Allocation: Ensure you are using the most recent version of the software, as performance optimizations are frequently implemented.

- Select the Right Tool for the Task: If computational resources are limited, consider DNAscope for short-read analyses, as it provides a good balance of accuracy and speed without requiring a GPU [22] [30].

- Subsample Data for Testing: When optimizing parameters, use a subset of your data (e.g., a single chromosome) to reduce runtime during the testing phase.

Problem: Poor Performance on Long-Read Data (ONT/PacBio)

- Cause: Basecalling errors and higher error rates inherent in long-read technologies, particularly in homopolymer regions, can challenge variant callers [31].

- Solution:

- Use the Highest Quality Basecalling: For ONT data, use the super-accuracy (sup) basecalling model and, if available, duplex reads. This significantly improves input data quality, which directly enhances variant calling accuracy for all downstream tools [31].

- Employ Specialized Pipelines: For ONT data, use pipelines specifically designed for long-reads, such as the PEPPER-Margin-DeepVariant pipeline, which is optimized for this data type and can outperform conventional tools [30].

Frequently Asked Questions (FAQs)

Q: What is ensemble genotyping and why is it used in NGS analysis?

Ensemble genotyping is a bioinformatics approach that integrates the results from multiple variant calling algorithms to produce a more accurate and confident set of genetic variants. It aims to reduce false positives—variants mistakenly identified due to sequencing or analysis errors—without significantly sacrificing sensitivity. Different variant callers use distinct statistical models and heuristics, making them susceptible to different types of errors. By combining them, ensemble methods leverage their complementary strengths, providing higher confidence in the final variant calls, which is crucial for both research and clinical diagnostics [5] [35] [18].

Q: How does ensemble genotyping specifically help in reducing false positives?

Ensemble genotyping significantly reduces false positives by requiring consensus or using machine learning to weigh evidence from multiple, independent variant callers. One study demonstrated that an ensemble genotyping approach successfully excluded > 98% (105,080 of 107,167) of false positives while retaining > 95% (897 of 937) of true positives in de novo mutation discovery. This performance was superior to a simple consensus method using two different sequencing platforms [5]. Another method, VariantMetaCaller, uses a support vector machine (SVM) to combine rich annotation data from multiple callers, achieving higher sensitivity and precision than any single tool alone [35].

Q: What are the common challenges when setting up an ensemble genotyping workflow?

Researchers often face several challenges:

- Tool Variability and Standardization: Different variant callers can produce conflicting results, making integration complex. Using standardized pipelines where possible helps reduce inconsistencies [20].

- Computational Demands: Running multiple callers and the subsequent ensemble process requires significant computational resources and time, which can be a bottleneck for large-scale studies [20].

- Data Integration: Effectively combining the high-dimensional, heterogeneous data and annotations from different callers requires sophisticated statistical models or machine learning, which demands expertise [35].

- Determining Thresholds: Finding the optimal balance between sensitivity (avoiding false negatives) and precision (avoiding false positives) is not always straightforward [5] [35].

Q: Which variant callers are commonly integrated into ensemble methods?

There is no single fixed combination, as the choice depends on the specific application (e.g., germline vs. somatic variants). Callers commonly used and evaluated in ensemble studies include GATK HaplotypeCaller, GATK UnifiedGenotyper, FreeBayes, and SAMtools [35] [18].

The key is to use callers that are orthogonal, meaning they employ different underlying algorithms, to maximize the benefit of combination [35] [18].

Troubleshooting Guides

Problem: High False Positive Rate in Final Variant Set

Potential Causes and Solutions:

- Cause 1: Inadequate Consensus. A variant called by only one out of multiple tools is more likely to be a false positive.

- Cause 2: Poor Quality Genomic Regions. Certain parts of the genome (e.g., repetitive regions, areas with high or low GC content) are prone to systematic sequencing and alignment errors, leading to false positives.

- Solution: Use predefined sets of "low-quality" genomic regions to filter your variants. One study showed that 86-89% of false-positive SNVs were found in such regions. Filtering these out can dramatically reduce false positives with a minimal cost to true positives [36].

- Cause 3: Lack of Quantitative Filtering. Relying only on a "hard" consensus (e.g., present/absent in callers) ignores valuable quantitative data.

- Solution: Implement a machine learning-based filter. Tools like VariantMetaCaller (which uses SVM) or logistic regression models can use quality metrics from multiple callers (e.g., read depth, mapping quality, strand bias) to calculate a probability of a variant being a true positive. This allows for precision-based filtering tailored to your study's needs [5] [35] [2].

Problem: Low Concordance Between Individual Variant Callers

Potential Causes and Solutions:

- Cause 1: Differences in Alignment. The initial read alignment is a critical step that greatly influences downstream variant calling.

- Cause 2: Suboptimal Input Data Quality. The principle of "garbage in, garbage out" applies strongly to variant calling. Poor quality DNA, library preparation artifacts, or low sequencing coverage can cause callers to disagree.

Problem: Computational Bottlenecks in the Ensemble Workflow

Potential Causes and Solutions:

- Cause: Running multiple full variant calling pipelines is inherently resource-intensive.

- Solution:

- Optimize Pipeline Efficiency: Use efficient tools like the DRAGEN pipeline or Sentieon, which are optimized for speed [2].

- Leverage Pre-called Data: If available, some ensemble methods can work with the variant call format (VCF) files and their annotations from previous runs, avoiding the need to re-call variants every time [5].

- Strategic Caller Selection: Benchmark a smaller set of 2-3 complementary callers to find a balance between performance and computational load [18].


Performance Data

The quantitative benefits of ensemble genotyping and related filtering methods are demonstrated in the following tables.

Table 1: Performance of Ensemble Genotyping in Reducing False Positives

| Metric | Performance with Ensemble Genotyping | Context |
|---|---|---|
| False Positives Excluded | > 98% (105,080 of 107,167) | De novo mutation discovery [5] |
| True Positives Retained | > 95% (897 of 937) | De novo mutation discovery [5] |
| Reduction in Confirmatory Testing | 85% for SNVs; 75% for indels | Clinical genome sequencing using an ML model [2] |
| Overall Reduction in Sanger Sequencing | 71% | Clinical practice after implementing an ML filter [2] |
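The percentages in the first two rows of Table 1 follow directly from the reported counts. The short check below reproduces that arithmetic; the variable names are illustrative, and the counts are those cited from [5].

```python
# Counts reported for de novo mutation discovery [5]
fp_excluded, fp_total = 105_080, 107_167
tp_retained, tp_total = 897, 937

fp_exclusion_rate = fp_excluded / fp_total   # fraction of false positives removed
tp_retention_rate = tp_retained / tp_total   # fraction of true positives kept
fp_remaining = fp_total - fp_excluded        # false positives that survive filtering

print(f"False positives excluded: {fp_exclusion_rate:.2%}")
print(f"True positives retained:  {tp_retention_rate:.2%}")
print(f"False positives remaining: {fp_remaining}")
```

The remaining count is worth noting alongside the rate: even a greater than 98% exclusion rate leaves 2,087 false positives in this dataset, so downstream validation is still needed.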

Table 2: Theoretical Variant Recall by Sequencing Depth and Allele Frequency

| Variant Allele Frequency (VAF) | Theoretical Recall at 30x Coverage | Theoretical Recall at 75x/100x Coverage |
|---|---|---|
| ≥ 0.2 (20%) | Confidently detectable | Confidently detectable |
| ~ 0.15 (15%) | - | High recall in high-quality genomic regions [36] |
| ≤ 0.1 (10%) | Low recall | Challenging, requires deeper sequencing [36] |

This table highlights that even with ensemble methods, the ability to detect low-frequency variants is constrained by sequencing depth and genomic context [36].
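One way to build intuition for how depth and VAF interact is a simple binomial model: if each read independently carries the variant allele with probability equal to the VAF, the chance of seeing at least a minimum number of supporting reads can be computed directly. This is a sketch under stated assumptions and not necessarily the model used in [36]; the function name and the threshold of 5 supporting reads are hypothetical, and sequencing error, mapping bias, and caller-specific filters are ignored.

```python
from math import comb


def theoretical_recall(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    p_below = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_below


# Assumed detection rule: a variant is callable with >= 5 supporting reads
for depth in (30, 75, 100):
    for vaf in (0.2, 0.15, 0.1):
        recall = theoretical_recall(depth, vaf, min_alt_reads=5)
        print(f"depth={depth:>3}x  VAF={vaf:.2f}  recall={recall:.3f}")
```

Under this model, recall for a fixed VAF rises with depth because the expected number of supporting reads (depth × VAF) moves further above the detection threshold.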

Experimental Protocols

Protocol 1: Implementing a Basic Consensus Ensemble Method

This protocol outlines a foundational approach for combining variant calls from multiple tools.

- Alignment: Align your raw sequencing data (FASTQ) to a reference genome (e.g., GRCh38) using an aligner like BWA-MEM to create a BAM file [18].

- Variant Calling: Run the aligned BAM file through at least two, but preferably three or more, distinct variant callers (e.g., GATK HaplotypeCaller, FreeBayes, SAMtools) to generate individual VCF files [35] [18].

- Variant Normalization: Decompose and normalize the variants in all VCF files using a tool like `bcftools norm`. This ensures consistent representation of complex variants (e.g., multinucleotide polymorphisms) across callers, which is essential for accurate comparison [18].

- Intersection: Use a tool like `bcftools isec` to find the intersection of variants present in the normalized VCF files.

- Set Consensus Threshold: Define your final high-confidence variant set. A common threshold is variants called by at least 2 out of your N callers [5] [18].
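The consensus threshold in the final step can be illustrated with a small voting function. This is a sketch only: it assumes the variants have already been normalized and reduced to (chromosome, position, ref, alt) keys, and the function name and example calls are hypothetical.

```python
from collections import Counter


def consensus_variants(call_sets, min_callers=2):
    """Return variant keys reported by at least `min_callers` of the call sets."""
    # set(calls) guards against a caller listing the same variant twice
    counts = Counter(key for calls in call_sets for key in set(calls))
    return {key for key, n in counts.items() if n >= min_callers}


# Hypothetical, already-normalized calls keyed by (chrom, pos, ref, alt)
haplotypecaller = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T")}
freebayes = {("chr1", 1000, "A", "G"), ("chr2", 500, "G", "GA")}
samtools = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T"), ("chr3", 42, "T", "C")}

high_confidence = consensus_variants(
    [haplotypecaller, freebayes, samtools], min_callers=2
)
print(sorted(high_confidence))
```

Raising `min_callers` to the number of callers gives a strict intersection, which trades sensitivity for precision; lowering it to 1 gives the union of all calls.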

Protocol 2: Machine Learning-Based Ensemble Using VariantMetaCaller

This protocol uses a more advanced, quantitative approach to combine evidence.

- Data Preparation: Generate VCF files from multiple variant callers (e.g., GATK HaplotypeCaller, GATK UnifiedGenotyper, FreeBayes, SAMtools) as in the basic protocol [35].

- Feature Extraction: Extract a comprehensive set of quality metrics and annotations from each VCF file. These are the features for the model and can include read depth (DP), genotype quality (GQ), mapping quality (MQ), allele balance, and strand bias metrics [35] [2].

- Model Training: Use VariantMetaCaller, which employs a Support Vector Machine (SVM), to train a model on a dataset with known true and false variants. Training on benchmark sets like Genome in a Bottle (GIAB) is ideal [35] [2].

- Probability Prediction: Apply the trained model to your experimental VCF data. VariantMetaCaller will output a probability score for each variant, representing its likelihood of being a true positive [35].

- Precision-Based Filtering: Filter variants based on their probability score. You can set a threshold that matches the desired balance between sensitivity and precision for your specific project [35].
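The precision-based filtering step can be illustrated independently of any particular tool. The sketch below is not VariantMetaCaller's interface; it is a generic, hypothetical helper that takes probability scores for variants with known truth labels (for example, from a benchmark sample) and finds the lowest score cutoff at which the retained set still meets a target precision.

```python
def threshold_for_precision(scored, target_precision):
    """Return the lowest score cutoff whose retained set meets target_precision.

    `scored` is a list of (probability, is_true_variant) pairs from a labeled
    benchmark set. Returns None if no cutoff reaches the target.
    """
    ranked = sorted(scored, key=lambda item: item[0], reverse=True)
    best = None
    tp = fp = 0
    for i, (score, is_true) in enumerate(ranked):
        if is_true:
            tp += 1
        else:
            fp += 1
        # Evaluate only once per group of tied scores
        if i + 1 < len(ranked) and ranked[i + 1][0] == score:
            continue
        if tp / (tp + fp) >= target_precision:
            best = score
    return best


# Hypothetical scores and truth labels, for illustration only
scored = [
    (0.99, True), (0.95, True), (0.90, False), (0.80, True),
    (0.60, True), (0.40, False), (0.30, False),
]
cutoff = threshold_for_precision(scored, target_precision=0.9)
kept = [s for s, _ in scored if cutoff is not None and s >= cutoff]
print(cutoff, kept)
```

Choosing the lowest qualifying cutoff keeps as many variants as possible while meeting the precision target, which mirrors the sensitivity versus precision trade-off described in the protocol.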

Workflow Diagrams

Diagram Title: Ensemble Genotyping Workflow

Diagram Title: Machine Learning Filtering Process

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Resources for Ensemble Genotyping Experiments