Strategic Cost-Reduction in the Clinic: Implementing Economical NGS for Precision Medicine

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals seeking to implement cost-effective Next-Generation Sequencing (NGS) in clinical and translational research. It explores the foundational economic evidence supporting NGS over single-gene testing, details methodological approaches from targeted panels to automation, and offers practical strategies for workflow optimization and quality management. Furthermore, it covers validation frameworks and a comparative analysis of sequencing approaches, synthesizing key takeaways to empower labs of all sizes to enhance genomic research efficiency and accelerate drug discovery.

The Economic Case for NGS: From Single-Gene Testing to Comprehensive Genomic Profiling

The adoption of Next-Generation Sequencing (NGS) represents a paradigm shift in molecular diagnostics for advanced cancers. Current guidelines from leading oncological societies, including the European Society for Medical Oncology (ESMO) and the National Comprehensive Cancer Network (NCCN), recommend NGS for comprehensive biomarker testing in patients with advanced non–small cell lung cancer (NSCLC) and other malignancies [1] [2]. Unlike sequential single-gene testing (SGT), which evaluates biomarkers one at a time, NGS uses a panel-based approach to analyze multiple genomic alterations simultaneously from a single tissue sample [2]. While the implementation of NGS has been slowed by perceptions of high cost and lack of standardization, a growing body of economic evidence demonstrates that NGS provides significant clinical and economic advantages over traditional testing approaches [1] [3] [2]. This article frames the cost-benefit analysis of NGS within the broader context of developing cost-effective strategies for clinical laboratories, providing both economic evidence and practical technical support for researchers and drug development professionals implementing these technologies.

Quantitative Cost-Benefit Analysis

Recent studies across multiple countries and healthcare settings have consistently demonstrated the economic advantage of NGS-based testing strategies compared to sequential SGT, particularly as the number of clinically actionable biomarkers increases.

Table 1: Key Cost-Effectiveness Findings for NGS vs. SGT in Advanced NSCLC

| Study Context & Population | Economic Outcome Measures | NGS Performance vs. SGT | Citation |
|---|---|---|---|
| Spanish reference centers (Target population: 9,734 patients with advanced NSCLC) | Incremental Cost-Utility Ratio (ICUR): €25,895 per Quality-Adjusted Life-Year (QALY) gained; Additional alterations detected: 1,873; Additional patients enrolled in trials: 82; Total QALYs gained: 1,188 | Cost-effective (below standard thresholds) | [1] |
| Global multicenter study (10 countries, 4,491 patients with nonsquamous aNSCLC) | Cost per Patient (Real-world model): 18% lower for NGS in 2021-2022; 26% lower for NGS in 2023-2024; Tipping Point: NGS costs less when >10 biomarkers tested | Cost-saving | [2] |
| Genomic Testing Cost Calculator (Multiple cancer types) | Cost Per Correctly Identified Patient (CCIP) for nonsquamous NSCLC: SGT €1,983; NGS €658 | 67% cost reduction with NGS | [3] |
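
As a quick arithmetic check on the last row of Table 1, the reported 67% reduction can be reproduced from the two CCIP values. This is only a minimal sketch: the function name `percent_reduction` is illustrative and not taken from the cited calculator.

```python
def percent_reduction(baseline: float, alternative: float) -> float:
    """Relative reduction of `alternative` versus `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - alternative) / baseline * 100.0


# CCIP values for nonsquamous NSCLC as reported in Table 1 (EUR).
ccip_sgt = 1983.0
ccip_ngs = 658.0

reduction = percent_reduction(ccip_sgt, ccip_ngs)
print(f"CCIP reduction with NGS: {reduction:.0f}%")
```

The same helper can be applied to any pair of per-patient cost figures when comparing testing strategies locally.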

Detailed Costing Methodology and Experimental Protocol

The economic evidence supporting NGS is derived from robust costing methodologies. The 2025 global multicenter study employed micro-costing analyses based on data from 10 international pathology centers [2]. The research was structured around three temporal scenarios:

- 'Starting Point' (SP): Reflects practice in 2021-2022

- 'Current Practice' (CP): Reflects practice in 2023-2024

- 'Future Horizons' (FH): Projects practice for 2025-2028

The study utilized two distinct models [2]:

- Real-world model: Incorporated the specific biomarkers and testing techniques actually used by each center.

- Standardized model: Employed a predefined, identical set of biomarkers and testing techniques across all centers to enable direct comparison.

Total costs included personnel costs, consumables, equipment, and overheads. Cost calculations also accounted for the need for retesting when initial samples were insufficient [2]. A deterministic sensitivity analysis (DSA) was performed, varying individual cost parameters by ±20% to test the robustness of the results, which confirmed that the core findings were stable [2].
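
The one-way sensitivity analysis described above can be sketched as follows. The cost model, parameter names, and all numeric values here are hypothetical placeholders chosen for illustration; they are not figures from the cited study, and the study's actual micro-costing model is likely more detailed.

```python
from typing import Callable, Dict, List, Tuple

CostModel = Callable[[Dict[str, float]], float]


def total_cost(params: Dict[str, float]) -> float:
    """Hypothetical per-patient cost: summed components, inflated by the retest rate."""
    base = (
        params["personnel"]
        + params["consumables"]
        + params["equipment"]
        + params["overheads"]
    )
    return base * (1.0 + params["retest_rate"])


def one_way_dsa(
    model: CostModel, base_params: Dict[str, float], variation: float = 0.20
) -> List[Tuple[str, float, float]]:
    """Vary each parameter by +/- `variation` while holding the others at base values."""
    results = []
    for name, value in base_params.items():
        low = model({**base_params, name: value * (1.0 - variation)})
        high = model({**base_params, name: value * (1.0 + variation)})
        results.append((name, low, high))
    # Largest swing first, as in a tornado diagram.
    results.sort(key=lambda r: abs(r[2] - r[1]), reverse=True)
    return results


# Illustrative inputs only (EUR per patient; retest_rate is a fraction).
base = {
    "personnel": 120.0,
    "consumables": 300.0,
    "equipment": 60.0,
    "overheads": 40.0,
    "retest_rate": 0.10,
}

dsa = one_way_dsa(total_cost, base)
for name, low, high in dsa:
    print(f"{name:12s} low={low:8.2f} high={high:8.2f}")
```

Sorting by swing makes it easy to see which cost component most influences the result; a finding is usually called robust when no single ±20% change reverses the comparison between strategies.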

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing a robust NGS workflow in a clinical research setting requires several key components beyond the sequencer itself.

Table 2: Essential Materials for NGS Implementation in Clinical Research

| Item Category | Specific Examples | Function in NGS Workflow |
|---|---|---|
| Nucleic Acid Quality Assessment | Nucleic acid quantitation instrument, Quality analyzer (e.g., Bioanalyzer) | Ensures input sample quality and quantity for reliable library preparation [4]. |
| Library Preparation | Library prep kits, Enzymes, Adapters, Barcodes | Fragments DNA/RNA and adds platform-specific adapters for sequencing [4]. |
| Cluster Generation | Flow cells, Cluster generation reagents (varies by platform) | Amplifies individual DNA fragments locally on a surface to create detectable signals during sequencing [4]. |
| Sequencing Consumables | Sequencing reagents, Buffers, Wash solutions | Provides nucleotides, enzymes, and buffers required for the sequencing-by-synthesis chemistry [5] [4]. |
| Data Analysis | Analysis software licenses, Reference genomes, Compute infrastructure | Converts raw signal to base calls, aligns reads, and identifies variants for biological interpretation [4]. |

Technical Support Center: NGS Troubleshooting Guides and FAQs

Common Instrumental Issues and Solutions

Table 3: Troubleshooting Common NGS Instrument Issues

| Instrument System | Problem / Error Message | Possible Cause | Recommended Action |
|---|---|---|---|
| Ion PGM System | "W1 pH out of range" error | pH of W1 buffer out of spec; insufficient volume; measurement glitch [5]. | Check W1 volume; restart measurement. If error persists, note pH/volume/error and contact support [5]. |
| Ion PGM System | "Server not connected" | Communication failure between sequencer and server [5]. | Reboot both system and server. If immediate reboot is not possible, runs can be saved locally on the instrument and transferred later [5]. |
| Ion S5 / S5 XL | Red "Alarms" or "Events" message | Software update available; network connectivity issues [5]. | Check for and install software updates; check ethernet connection and network router; power cycle the instrument [5]. |
| Ion S5 / S5 XL | "Chip Check Failed" | Clamp not closed; chip improperly seated; damaged chip [5]. | Open clamp, remove and inspect chip for damage, reseat or replace chip, close clamp firmly, and rerun Chip Check [5]. |
| NextSeq 1000/2000 | "Error performing SequencingProtocolNameSafeState" | Communication issue on the instrument interrupting the run [6]. | Power cycle the instrument; prepare a new flow cell; set up a new run with the same cartridge and the new flow cell [6]. |

Data Analysis Bottlenecks and Best Practices

FAQ 1: What are the most critical steps to ensure accurate NGS data analysis?

- Rigorous Quality Control (QC): Use QC tools (e.g., FastQC) to evaluate raw sequencing reads for issues like low base quality, adapter contamination, and overrepresented sequences before proceeding with analysis [7] [8]. Trim low-quality bases and adapter sequences, and filter out low-quality reads [8].

- Appropriate Tool Selection and Parameter Optimization: Do not rely solely on default settings. Choose aligners and variant callers suited to your experimental goal (e.g., germline vs. somatic variants) and fine-tune parameters to balance sensitivity and specificity [7] [8].

- Comprehensive Variant Filtering and Annotation: After variant calling, filter variants based on quality scores, read depth, and allele frequency. Annotate variants with functional impact, population frequency, and clinical databases to aid biological interpretation [8].
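
The filtering step in the last bullet can be sketched as a simple threshold check on quality, depth, and allele fraction. The `Variant` class, the thresholds (`min_qual`, `min_depth`, `min_af`), and the example calls are all assumptions made for illustration; real pipelines typically filter VCF records with dedicated tools, and suitable cut-offs depend on the assay and its validation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    chrom: str
    pos: int
    ref: str
    alt: str
    qual: float     # variant quality score
    depth: int      # total reads covering the site
    alt_reads: int  # reads supporting the alternate allele

    @property
    def allele_fraction(self) -> float:
        return self.alt_reads / self.depth if self.depth > 0 else 0.0


def passes_filters(
    v: Variant, min_qual: float = 30.0, min_depth: int = 100, min_af: float = 0.05
) -> bool:
    """Keep a call only if it clears every threshold (illustrative defaults)."""
    return (
        v.qual >= min_qual
        and v.depth >= min_depth
        and v.allele_fraction >= min_af
    )


# Made-up calls, one per failure mode plus one that passes.
calls = [
    Variant("chr1", 1000, "T", "G", qual=60.0, depth=850, alt_reads=170),  # passes
    Variant("chr1", 2000, "C", "T", qual=18.0, depth=400, alt_reads=60),   # low quality
    Variant("chr1", 3000, "C", "T", qual=55.0, depth=40, alt_reads=20),    # low depth
    Variant("chr1", 4000, "G", "A", qual=45.0, depth=600, alt_reads=6),    # low allele fraction
]

kept = [v for v in calls if passes_filters(v)]
print(f"{len(kept)} of {len(calls)} calls retained")
```

Keeping the thresholds as named parameters makes it straightforward to tune sensitivity against specificity, for example by lowering `min_af` for somatic calling at high depth.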

FAQ 2: Our analysis pipeline is computationally slow. How can we improve efficiency?

- Utilize Standardized Workflows: Using established, standardized pipelines (e.g., those from GATK or nf-core) can reduce inconsistencies and improve computational efficiency [7].

- Plan for Computational Demands: Whole-genome and transcriptome studies generate large datasets that require powerful servers with significant memory and processing power. Ensure your computational resources are scaled appropriately for your projects to avoid significant delays [7].

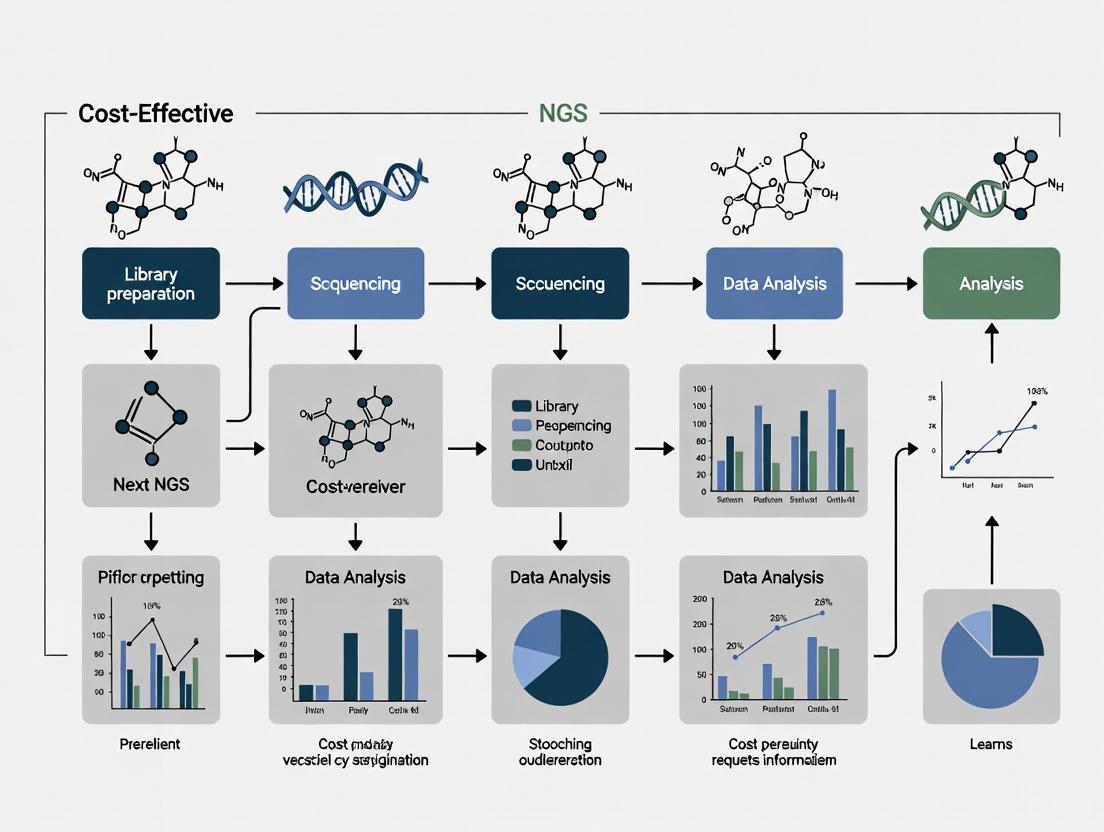

Workflow and Logical Relationship Diagrams

NGS Clinical Testing and Analysis Workflow

NGS vs SGT Cost-Benefit Decision Logic

The body of economic evidence demonstrates that NGS is not merely a technologically superior testing modality but also a cost-effective and often cost-saving strategy for molecular profiling in oncology, particularly for advanced NSCLC. The key drivers of this economic benefit include the ability to test multiple biomarkers concurrently from a limited tissue sample, reduced turnaround times enabling faster treatment decisions, and the detection of more actionable alterations that can lead to enrollment in clinical trials or use of targeted therapies [1] [3] [2]. For clinical laboratories, the decision to implement NGS should be guided by the volume of testing and the number of biomarkers required. Current evidence indicates a tipping point of approximately 10 biomarkers, beyond which NGS becomes the economically rational choice [2]. By combining this economic rationale with robust technical protocols and troubleshooting guidance, research institutions and drug development professionals can strategically implement NGS to maximize both clinical outcomes and operational efficiency.

The global clinical next-generation sequencing (NGS) market is undergoing a significant transformation, driven by a convergence of technological innovation and growing clinical demand. Valued at $6.2 billion in 2024, the clinical NGS market is projected to reach $15.2 billion by 2032, registering a compound annual growth rate (CAGR) of 13.6% [9]. Similarly, the U.S. NGS market, encompassing research and clinical applications, is projected to grow from $3.88 billion in 2024 to $16.57 billion by 2033, a CAGR of 17.5% [10]. This growth is fueled by the declining costs of sequencing technologies and their expanding utility in personalized medicine, oncology, and infectious disease diagnostics.

The core thesis of this technical support center is that strategic implementation of cost-effective NGS workflows enables clinical laboratories to maximize diagnostic yield while managing operational expenses. Key to this strategy is understanding that holistic cost assessments—which factor in turnaround time, personnel requirements, and the number of hospital visits—often reveal NGS to be more economical than traditional single-gene testing approaches, especially when multiple genes require analysis [11]. This resource provides troubleshooting guides and FAQs to help researchers and clinicians overcome common technical barriers to implementing robust and cost-efficient NGS in their workflows.

Market Data and Cost Analysis

Understanding the quantitative market landscape and cost structures is essential for strategic planning in clinical NGS implementation.

Table 1: Clinical and General NGS Market Projections

| Market Segment | 2024 Market Value | Projected 2032/2033 Value | CAGR (Compound Annual Growth Rate) | Key Drivers |
|---|---|---|---|---|
| Global Clinical NGS Market [9] | USD 6.2 billion | USD 15.2 billion (2032) | 13.6% | Personalized medicine demand, reduced sequencing costs, increased R&D investments. |
| U.S. NGS Market [10] | USD 3.88 billion | USD 16.57 billion (2033) | 17.5% | Demand for tailored medications, use in environmental/agricultural research, advances in automation. |
| Global NGS Market [12] [13] | - | USD 42.25 billion (2033) | 18.0% (2025-2033) | Genomic research demand, technological advancement, adoption in clinical diagnostics (NIPT, rare diseases, oncology). |
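
For readers who want to sanity-check growth figures like those in the table, the standard CAGR formula is (end / start)^(1 / periods) - 1. The sketch below applies it to the U.S. row. The choice of nine annual compounding periods (2024 to 2033) is an assumption; the cited report's exact base year and compounding window are not stated here.

```python
def cagr(start_value: float, end_value: float, periods: float) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or end_value <= 0 or periods <= 0:
        raise ValueError("values and periods must be positive")
    return (end_value / start_value) ** (1.0 / periods) - 1.0


# U.S. NGS market figures from the table (USD billions); 9 periods is an assumption.
us_cagr = cagr(3.88, 16.57, periods=9)
print(f"Implied U.S. NGS market CAGR: {us_cagr:.1%}")
```

Published CAGR values can differ slightly from a recomputation like this when the report uses a different base year or forecast window.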

The driving forces behind this growth are multifaceted. Technologically, continuous innovation in sequencing platforms (e.g., Illumina's NovaSeq X series) has dramatically reduced the cost of sequencing a human genome to approximately $200 while simultaneously increasing throughput and speed [10]. Clinically, the shift towards personalized medicine is paramount, with NGS becoming indispensable for tailoring treatments to individual patients based on their genetic profiles, thereby minimizing ineffective therapies and improving outcomes [9] [10].

A critical consideration for labs is the cost-effectiveness of NGS compared to traditional single-gene tests. Evidence from a systematic review indicates that targeted NGS panels become cost-effective compared to single-gene tests when four or more genes require analysis [11]. Furthermore, when holistic costs are considered—including turnaround time, healthcare staff requirements, and the number of hospital visits—targeted panel testing consistently provides cost savings [11].

Table 2: NGS Cost-Effectiveness Analysis versus Single-Gene Testing

| Analysis Type | Scenario | Cost-Effectiveness Outcome | Key Insights |
|---|---|---|---|
| Direct Testing Cost Comparison [11] | Testing 4+ genes | Cost-effective | Targeted NGS panels (2-52 genes) reduce costs versus sequential single-gene tests. |
| Holistic Cost Comparison [11] | Any multi-gene testing | Cost-saving | NGS reduces turnaround time, staff requirements, number of hospital visits, and overall hospital costs. |
| Long-Term Outcome Comparison [11] | Including cost of targeted therapies | Variable | Incremental cost-effectiveness ratio may be above common thresholds, but provides valuable patient benefits. |
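
The "four or more genes" threshold reflects a simple crossover: sequential testing cost grows with each added gene, while a panel's cost is roughly fixed. The sketch below finds that crossover for a given pair of prices. The prices used (`panel_cost=700`, `cost_per_gene=200`) are hypothetical and were picked only so that the example lands at four genes; they are not taken from the cited review.

```python
from typing import Optional


def first_gene_count_where_panel_cheaper(
    panel_cost: float, cost_per_gene: float, max_genes: int = 52
) -> Optional[int]:
    """Smallest number of genes at which a fixed-price panel undercuts sequential tests.

    Returns None if the panel is never cheaper within `max_genes`.
    """
    for n_genes in range(1, max_genes + 1):
        if panel_cost < n_genes * cost_per_gene:
            return n_genes
    return None


# Hypothetical prices (EUR), for illustration only.
crossover = first_gene_count_where_panel_cheaper(panel_cost=700.0, cost_per_gene=200.0)
print(f"Panel becomes cheaper at {crossover} genes")
```

A laboratory can substitute its own fully loaded per-test costs to see where its local crossover falls; holistic costs such as repeat visits would shift it further in favor of the panel.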

Troubleshooting Guides and FAQs

This section addresses common, specific issues users encounter during NGS experiments, providing root causes and actionable solutions.

Common Sequencing Preparation Problems

Robust library preparation is the foundation of a successful NGS run. The following table outlines frequent failure points.

Table 3: Troubleshooting Common NGS Library Preparation Issues

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective & Preventive Actions |
|---|---|---|---|
| Sample Input & Quality [14] [15] | Low starting yield; smear in electropherogram; low library complexity. | Degraded DNA/RNA; contaminants (phenol, salts); inaccurate quantification [14]. | Re-purify samples [15]. Use fluorometric quantification (e.g., Qubit) over UV absorbance [14]. |
| Fragmentation & Ligation [14] | Unexpected fragment size; inefficient ligation; sharp ~70-90 bp adapter-dimer peaks. | Over-/under-shearing; improper buffer conditions; suboptimal adapter-to-insert ratio [14]. | Optimize fragmentation parameters; titrate adapter:insert molar ratios; ensure fresh ligase [14]. |
| Amplification & PCR [14] | Overamplification artifacts; high duplicate rate; bias. | Too many PCR cycles; inefficient polymerase due to inhibitors; primer exhaustion [14]. | Reduce PCR cycles; re-amplify from leftover ligation product; ensure optimal primer design and annealing [14]. |
| Purification & Cleanup [16] [14] | Incomplete removal of adapter dimers; high sample loss; carryover of salts. | Wrong bead:sample ratio; over-dried beads; inadequate washing; pipetting errors [14]. | Precisely follow cleanup protocols; avoid bead over-drying; use master mixes to reduce pipetting error [14]. |

Frequently Asked Questions (FAQs)

Q1: My NGS library yield is unexpectedly low after preparation. What are the primary causes and how can I fix this? [14]

- Cause: The most common causes are poor input DNA/RNA quality (degradation or contaminants), inaccurate quantification or pipetting errors, and inefficiencies during fragmentation or adapter ligation.

- Solution: Re-purify your input sample and use a fluorometric method (Qubit) for accurate quantification. Calibrate pipettes and use master mixes to reduce pipetting error. Systematically optimize fragmentation time and adapter-to-insert molar ratios.

Q2: How can I reduce the risk of human error and contamination in my manual NGS workflow? [16] [14]

- Solution: Implement automated sample preparation systems where possible. Automation eliminates researcher-to-researcher variation, reduces hands-on time, and minimizes environmental exposure through closed systems [16]. For manual protocols, introduce detailed SOPs with highlighted critical steps, use "waste plates" to prevent accidental sample discarding, and enforce operator checklists [14].

Q3: I see a sharp peak at ~70-90 bp in my Bioanalyzer trace. What is it and how do I remove it? [14]

- Answer: This peak is characteristic of adapter dimers, which result from unligated adapters ligating to each other. Their primary causes are an excessive amount of adapters relative to insert DNA or inefficient ligation.

- Solution: Optimize the adapter-to-insert molar ratio during ligation. Improve size selection during cleanup by adjusting bead-to-sample ratios to more effectively exclude small fragments. If dimers persist, a re-purification of the final library is recommended.
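
Optimizing the adapter-to-insert molar ratio starts with converting DNA mass to moles. A minimal sketch follows, using the common approximation of 660 g/mol per base pair of double-stranded DNA. The example input (100 ng of 300 bp fragments) and the 10:1 target ratio are hypothetical; follow the ratio recommended by your library prep kit.

```python
AVG_MASS_PER_BP = 660.0  # g/mol per base pair of dsDNA (standard approximation)


def dsdna_pmol(mass_ng: float, length_bp: float) -> float:
    """Convert a dsDNA mass (ng) at a mean fragment length (bp) to picomoles."""
    if mass_ng < 0 or length_bp <= 0:
        raise ValueError("mass must be non-negative and length positive")
    return mass_ng * 1000.0 / (length_bp * AVG_MASS_PER_BP)


def adapter_pmol_needed(insert_ng: float, insert_bp: float, molar_ratio: float) -> float:
    """Picomoles of adapter required for a given adapter:insert molar ratio."""
    return dsdna_pmol(insert_ng, insert_bp) * molar_ratio


# Hypothetical example: 100 ng of 300 bp inserts at a 10:1 adapter:insert ratio.
insert_pmol = dsdna_pmol(100.0, 300.0)
adapter_pmol = adapter_pmol_needed(100.0, 300.0, molar_ratio=10.0)
print(f"Insert: {insert_pmol:.3f} pmol, adapter needed: {adapter_pmol:.2f} pmol")
```

Because shorter fragments contain more molecules per nanogram, the same mass of input can require noticeably different adapter amounts after a change in fragmentation conditions.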

Q4: What is a cost-effective strategy to improve the diagnostic yield of Whole-Exome Sequencing (WES) without moving to Whole-Genome Sequencing (WGS)? [17]

- Answer: Employ an extended WES approach. Standard WES targets protein-coding regions (CDS), but many pathogenic variants lie in deep intronic regions, untranslated regions (UTRs), or are structural variants (SVs). By designing custom capture probes to include intronic/UTR regions of clinically relevant genes, disease-associated repeat regions, and the mitochondrial genome, you can significantly increase diagnostic yield at a cost comparable to conventional WES [17].

Featured Protocol: Extended Whole-Exome Sequencing for Improved Diagnostic Yield

This protocol is based on a peer-reviewed study demonstrating a cost-effective method to expand the diagnostic capabilities of WES [17].

Methodology

- Objective: To detect pathogenic variants located outside standard exome capture regions (e.g., deep intronic, UTR, structural, and mitochondrial variants) without the need for more expensive WGS.

- Probe Design: Custom capture probes are designed to target:

- Intronic and UTR regions of a clinically relevant gene set (e.g., 188 genes from the Japanese insurance-covered multi-gene testing list).

- Intronic and UTR regions of genes recommended for secondary findings (e.g., 81 genes from ACMG SF v3.2).

- 70 known disease-associated repeat regions.

- The entire mitochondrial genome (using a commercial panel kit).

- Library Preparation: Genomic DNA is used as input. The library is constructed using a commercial kit (e.g., Twist Library Preparation EF Kit 2.0). The custom probe mix is added to the standard exome capture probes (e.g., Twist Exome 2.0) at an optimized ratio (e.g., 0.25x to 1x) to ensure sufficient coverage of the expanded regions without overwhelming the reaction.

- Sequencing & Analysis: Libraries are sequenced on an Illumina platform (e.g., NextSeq 500) with 150 bp paired-end reads. Data analysis involves:

- Variant Calling: GATK Best Practices workflow for SNVs and indels.

- SV Detection: Tools like DRAGEN and CNVkit.

- Repeat Expansion Analysis: Tools like ExpansionHunter and STRipy.

[Figure: Workflow diagram of the key steps in the extended WES protocol]

The Scientist's Toolkit: Key Reagents and Materials

Table 4: Essential Research Reagent Solutions for Extended WES

| Item | Function/Description | Example Product/Catalog |
|---|---|---|
| Library Prep Kit | Prepares genomic DNA for sequencing by fragmenting, end-repairing, A-tailing, and adapter ligating. | Twist Library Preparation EF Kit 2.0 [17] |
| Core Exome Capture Probes | Targets the standard protein-coding exome regions. | Twist Exome 2.0 [17] |
| Custom Capture Probes | Expands target regions to include introns, UTRs, repeats, and mtDNA for improved diagnostic yield. | Custom-designed probes (e.g., from Twist Bioscience) [17] |
| Mitochondrial Panel | Specifically designed to capture the entire mitochondrial genome with high uniformity. | Twist Mitochondrial Panel Kit [17] |
| Variant Caller | Software for identifying single nucleotide variants and small insertions/deletions. | GATK [17] |
| SV Caller | Software for detecting larger genomic rearrangements (deletions, duplications, etc.). | DRAGEN or CNVkit [17] |
| Repeat Expansion Detector | Specialized tool for identifying and sizing disease-associated short tandem repeats. | ExpansionHunter [17] |

Next-Generation Sequencing (NGS) has revolutionized biomedical research and clinical diagnostics by providing a powerful, high-throughput method for analyzing genetic information. For researchers, scientists, and drug development professionals working in clinical labs, implementing cost-effective NGS strategies is crucial for advancing personalized medicine and streamlining drug development pipelines. This technical support center addresses the most common experimental challenges while framing solutions within the broader context of maximizing return on investment and operational efficiency in clinical lab settings. The following sections provide practical troubleshooting guidance, detailed protocols, and strategic insights to optimize your NGS workflows.

NGS Troubleshooting Guide: Common Issues and Solutions

Library Preparation Problems

Problem: Low Library Yield After Preparation

Low library yield is a frequent challenge that can compromise entire sequencing runs. This often stems from issues with sample input quality, fragmentation efficiency, or adapter ligation.

- Root Causes and Corrective Actions:

- Poor input quality/degradation: Re-purify input samples using clean columns or beads; verify sample integrity post-extraction [14].

- Contaminants inhibiting enzymes: Check the 260/230 and 260/280 absorbance ratios; 260/230 should be >1.8 and 260/280 should be ~1.8; dilute residual inhibitors if necessary [14].

- Fragmentation inefficiency: Optimize fragmentation parameters (time, energy, enzyme concentrations) for your specific sample type; verify fragment distribution before proceeding [14].

- Suboptimal adapter ligation: Titrate adapter:insert molar ratios; use fresh ligase and buffer; maintain optimal temperature at ~20°C [14].

Problem: High Adapter Dimer Formation

Adapter dimers manifest as sharp peaks around 70-90 bp on electropherograms and compete with library fragments during sequencing.

- Diagnosis and Resolution:

- Excess adapters: Precisely calculate and optimize adapter:insert molar ratios to prevent adapter-adapter ligation [14].

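- Inefficient cleanup: Adjust the bead-to-sample ratio during purification so that short fragments are excluded; with SPRI-type beads this generally means lowering the ratio, because higher ratios retain smaller fragments [14].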

- Validation: Always check final library profile on BioAnalyzer or similar system before sequencing [14].

Instrumentation and Sequencing Issues

Problem: Chip Initialization Failures (Ion S5/S5 XL Systems)

Initialization failures prevent sequencing runs from starting, potentially resulting in lost time and reagents.

- Troubleshooting Steps:

- Chip seating: Open the chip clamp, remove the chip, and inspect it; confirm there is no visible damage and no moisture outside the flow cell, then reseat it properly [18].

- Socket issues: If chip passes visual inspection but fails initialization, replace with new chip; persistent failures may indicate socket problems requiring technical support [18].

- Control particles: Confirm that Control Ion Sphere Particles were properly added to the sample [18].

Problem: Poor Data Quality or Low Signal

Inadequate signal during sequencing can result in poor quality data and reduced throughput.

- Investigation Protocol:

- Verify library and template preparation quality and quantity [18]

- Check for proper chip loading and seating

- Confirm reagent integrity and proper storage conditions

- Validate instrument calibration and performance metrics

NGS Experimental Protocols and Methodologies

Standardized NGS Library Preparation Workflow

Implementing consistent, optimized protocols is essential for generating reproducible, high-quality NGS data in clinical research settings.

Sample Quality Control and Quantification:

- Use fluorometric methods (Qubit, PicoGreen) rather than UV absorbance for template quantification [14]

- Assess sample integrity via agarose gel electrophoresis or BioAnalyzer; for transcriptome studies, aim for an RNA Integrity Number (RIN) >8.0

- Ensure purity ratios (260/280 ~1.8, 260/230 >1.8) indicate minimal contamination
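
The acceptance criteria in this list can be sketched as a small QC gate run before library preparation. The function name `qc_failures` and the numeric window used to interpret "~1.8" for the 260/280 ratio (1.7 to 2.0) are assumptions for illustration; each laboratory should apply the limits set in its own validated SOP.

```python
from typing import List, Optional, Tuple


def qc_failures(
    a260_280: float,
    a260_230: float,
    rin: Optional[float] = None,
    ratio_280_window: Tuple[float, float] = (1.7, 2.0),  # assumed reading of "~1.8"
) -> List[str]:
    """Return human-readable reasons a sample fails QC; an empty list means pass."""
    failures = []
    low, high = ratio_280_window
    if not (low <= a260_280 <= high):
        failures.append(f"260/280 = {a260_280:.2f} outside {low}-{high}")
    if a260_230 <= 1.8:
        failures.append(f"260/230 = {a260_230:.2f} not above 1.8")
    # RIN applies only to RNA samples intended for transcriptome work.
    if rin is not None and rin <= 8.0:
        failures.append(f"RIN = {rin:.1f} not above 8.0")
    return failures


good = qc_failures(a260_280=1.82, a260_230=2.05, rin=9.1)
bad = qc_failures(a260_280=1.55, a260_230=1.20, rin=6.0)
print("good sample:", good or "PASS")
print("bad sample:", bad)
```

Returning the reasons rather than a bare pass/fail flag makes it easier to log why a sample was rejected and to decide between re-purification and re-extraction.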

Fragmentation and Size Selection:

- Optimize fragmentation conditions to achieve target insert size (typically 200-500 bp for Illumina platforms)

- Perform rigorous size selection using bead-based methods or gel extraction to remove adapter dimers and short fragments

- Validate size distribution using BioAnalyzer or TapeStation before proceeding

Adapter Ligation and Amplification:

- Use validated, platform-specific adapters with appropriate barcodes for multiplexing

- Limit PCR cycles (typically 4-15) during library amplification to minimize bias and duplicate reads

- Employ high-fidelity polymerases with low error rates for amplification steps

Preventing Common Experimental Errors

Case Study: Manual NGS Library Prep in Core Facility

A core laboratory experiencing sporadic failures correlated with different operators implemented these corrective measures:

- Problem: Inconsistent results across technicians, occasional complete failures [14]

- Root Cause: Deviations from protocol details, pipetting errors, reagent degradation [14]

- Solutions Implemented:

- Introduced "waste plates" to temporarily catch discarded material, allowing retrieval in case of mistakes [14]

- Highlighted critical steps in SOPs with bold text and colors to draw attention [14]

- Switched to master mixes to reduce pipetting steps and errors [14]

- Enforced cross-checking, operator checklists, and redundant logging of steps [14]

Cost-Benefit Analysis of NGS Implementation

Economic Value of NGS in Clinical Settings

Multiple studies have demonstrated that NGS-based approaches can be more cost-effective than traditional single-gene testing (SGT) strategies, particularly when evaluating multiple genomic alterations.

Table: Cost Comparison of NGS vs. Single-Gene Testing in Oncology [19]

| Testing Scenario | Patients/Year | Savings with NGS (€ per patient) | Break-Even Threshold |
|---|---|---|---|
| aNSCLC - Path 1 | 364 | 1249 | Immediate savings |
| aNSCLC - Path 2 | 317 | 30 | Above 40 patients |
| mCRC - Path 3 | 260 | 945 | Immediate savings |
| mCRC - Path 4 | 225 | 25 | Above 55 patients |

Key Findings from Cost Analysis:

- NGS-based strategy was cost-saving in 15 of 16 testing cases evaluated [19]

- Savings increase with the number of patients tested and molecular alterations analyzed [19]

- The economic advantage of NGS grows as more biomarkers become clinically actionable [19]

The Scientist's Toolkit: Essential Research Reagents

Table: Key Reagent Solutions for NGS Experiments [14]

| Reagent Category | Specific Examples | Function | Critical Quality Controls |
|---|---|---|---|
| Fragmentation Enzymes | Tagmentase, Fragmentase | Controlled DNA shearing | Lot consistency, activity validation |
| Library Preparation Kits | Illumina DNA Prep | End repair, A-tailing, adapter ligation | Efficiency, low bias, minimal adapter dimer formation |
| Cleanup Beads | SPRIselect, AMPure XP | Size selection and purification | Bead:sample ratio optimization, freshness |
| Polymerases | High-fidelity PCR enzymes | Library amplification | Low error rate, high processivity |
| Quantification Assays | Qubit dsDNA HS, qPCR | Accurate library quantification | Standard curve validation, sensitivity |

NGS Applications in Personalized Medicine and Drug Development

Clinical Applications and Workflows

NGS technologies have transformed multiple aspects of clinical medicine and therapeutic development through comprehensive genomic analysis.

Oncology and Rare Disease Diagnostics:

- Comprehensive Genomic Profiling: Simultaneous assessment of multiple biomarker classes (SNVs, indels, CNVs, fusions) from limited tissue [20]

- Rare Disease Diagnosis: Approximately 71.9% of rare diseases are genetic, with NGS enabling accurate diagnosis in previously undiagnosed cases [20]

- Liquid Biopsies: Non-invasive monitoring of tumor dynamics through circulating tumor DNA (ctDNA) analysis [20]

Infectious Disease Management:

- Pathogen Detection: Rapid identification of both known and novel pathogens through metagenomic approaches [21]

- Outbreak Investigation: Genomic characterization of emerging pathogens like SARS-CoV-2, MERS-CoV, and Bas-Congo virus [21]

Drug Development Pipeline Applications

NGS informs multiple stages of pharmaceutical development, from target identification to post-marketing surveillance.

Table: NGS Applications Across the Drug Development Pipeline [22]

| Development Stage | NGS Application | Key Benefits |
|---|---|---|
| Target Identification | Whole genome/exome sequencing of patient cohorts | Identifies novel disease-associated genes and pathways |
| Preclinical Development | RNA sequencing, epigenetic profiling | Characterizes drug mechanism of action, identifies biomarkers |
| Clinical Trials | Patient stratification, pharmacogenomics | Enriches for responders, identifies resistance mechanisms |
| Companion Diagnostics | Targeted panels (e.g., TruSight Oncology) | Identifies patients likely to respond to targeted therapies |
| Post-Market Surveillance | Liquid biopsy monitoring | Tracks resistance development, disease recurrence |

Workflow Visualization: NGS in Clinical Research and Drug Development

[Figure: NGS Clinical Research Pathway]

[Figure: NGS in Drug Development Pipeline]

Future Perspectives and Strategic Implementation

The clinical genomics market is projected to grow from US$1.06 billion in 2024 to US$5.34 billion by 2034, reflecting a compound annual growth rate (CAGR) of 17.54% [23]. This expansion is driven by increasing adoption of NGS in clinical diagnostics, rising demand for personalized medicine, and ongoing technological advancements. Laboratory leaders should prioritize several key trends when planning their 2025 NGS strategies:

- Automation and IoMT: Implementation of automated systems and Internet of Medical Things connectivity to enhance efficiency and reduce manual errors [24]

- Advanced Data Analytics: Integration of AI-driven bioinformatic tools for improved variant interpretation and clinical decision support [24] [20]

- Sustainability Initiatives: Adoption of greener processes and energy-efficient equipment that also offer long-term cost savings [24]

- Point-of-Care Testing: Development of decentralized testing solutions for increased accessibility and faster turnaround times [24]

Successful implementation of cost-effective NGS strategies requires careful consideration of both technical and economic factors. Laboratories should evaluate testing volume, required genomic coverage, bioinformatic infrastructure, and personnel expertise when selecting platforms and approaches. As the field continues to evolve, NGS technologies will play an increasingly central role in delivering personalized healthcare and accelerating therapeutic development.

Frequently Asked Questions

What is the break-even threshold for NGS, and why is it important? The break-even threshold is the minimum number of patient tests required for a next-generation sequencing (NGS)-based strategy to become less costly than a single-gene testing (SGT)-based approach. Understanding this metric is crucial for laboratories to plan testing volumes and realize cost savings. When testing volume is above this threshold, NGS provides significant economic benefits [19].

In which scenarios is NGS most likely to be cost-saving? Research indicates that an NGS-based approach is a cost-saving alternative to SGT in the vast majority of testing cases, particularly when four or more genes require analysis. Savings are consistently achieved in holistic analyses that account for factors like turnaround time, healthcare staff requirements, and the number of hospital visits [19] [11].

What are the typical per-patient savings when using NGS? Reported savings vary based on the specific testing pathway and patient volume. One study of Italian hospital pathways found that savings obtained using an NGS-based approach ranged from €30 to €1249 per patient. In a single case where NGS was more costly, the additional cost was a relatively small €25 per patient [19].

Does the number of genes tested affect cost-effectiveness? Yes, the number of molecular alterations tested is a primary driver of cost-effectiveness. Targeted NGS panels (2-52 genes) are considered cost-effective when four or more genes are assessed. The savings generated by NGS increase with the number of patients tested and the number of different molecular alterations analyzed [19] [11].

Troubleshooting Guide: Achieving Cost-Effectiveness with NGS

Problem: Failure to Reach the Economic Break-Even Point

Your laboratory has implemented NGS but is not achieving the projected cost savings compared to single-gene testing.

Diagnosis and Solutions

- Verify Testing Volume: Compare your current patient test volume against the established break-even threshold for your specific cancer type and panel. The required volume varies significantly depending on the molecular alterations tested and the techniques adopted [19].

- Review Gene Panel Composition: Ensure your NGS panel is optimized for your clinical needs. Panels designed to test four or more actionable genes are typically the point at which NGS becomes cost-effective compared to running multiple single-gene tests [11].

- Analyze Holistic Costs: Conduct a thorough review of all associated costs, not just direct testing expenses. A holistic cost analysis should include personnel time, equipment usage, reagent costs, and overheads. In many cases, NGS reduces costs by streamlining workflows and reducing technician time [19] [11].

Problem: High Per-Patient Costs Despite Adequate Volume

Your lab is running sufficient NGS tests but per-patient costs remain high compared to expectations.

Diagnosis and Solutions

- Optimize Batching Strategies: Implement efficient sample batching protocols to maximize reagent use and instrument run capacity. Proper planning can significantly reduce the cost per sample.

- Evaluate Redo Rates: High redo rates for library preparation or sequencing runs dramatically increase costs. Implement quality control checkpoints and standardized protocols to minimize repeat testing. New automated sample prep systems specifically aim to reduce redo rates in targeted workflows [25].

- Assay Consolidation: Where possible, consolidate multiple testing strategies into a single NGS assay. Research shows that a single CNV/SNV NGS pipeline, as opposed to multi-tiered tests, streamlines the process and provides significant cost savings [26].
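The combined effect of batching and redo rates on per-patient cost can be illustrated with a short calculation. The sketch below is a simplified model, not a validated costing tool: it assumes the run cost is fixed regardless of how many samples are loaded, and that each attempt fails independently with the same probability. The function name and all monetary values are hypothetical.

```python
def cost_per_reportable_sample(run_cost, prep_cost_per_sample,
                               samples_loaded, run_capacity, redo_rate):
    """Estimate the cost of one reportable result (illustrative model).

    run_cost             -- fixed cost of one sequencing run (flow cell, reagents)
    prep_cost_per_sample -- library prep consumables per sample
    samples_loaded       -- samples actually batched on the run
    run_capacity         -- maximum samples the run can hold
    redo_rate            -- fraction of attempts that must be repeated (0 <= r < 1)
    """
    if not 0 < samples_loaded <= run_capacity:
        raise ValueError("samples_loaded must be between 1 and run_capacity")
    if not 0 <= redo_rate < 1:
        raise ValueError("redo_rate must be in [0, 1)")
    # The fixed run cost is shared only by the samples actually loaded.
    cost_per_attempt = run_cost / samples_loaded + prep_cost_per_sample
    # With independent failures, the expected number of attempts is 1 / (1 - r).
    return cost_per_attempt / (1 - redo_rate)


# Hypothetical figures: a 24-sample run costing 1200, prep at 80 per sample.
half_full = cost_per_reportable_sample(1200, 80, 12, 24, redo_rate=0.10)
full = cost_per_reportable_sample(1200, 80, 24, 24, redo_rate=0.10)
full_low_redo = cost_per_reportable_sample(1200, 80, 24, 24, redo_rate=0.02)
print(round(half_full, 2), round(full, 2), round(full_low_redo, 2))
```

In this model, filling the run and lowering the redo rate both reduce the cost per reportable sample, which is why the two checks above are worth reviewing together.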

Economic Evidence for NGS Implementation

Table 1: Economic Comparison of NGS vs. Single-Gene Testing (SGT)

| Study / Context | Testing Strategy | Key Economic Finding | Break-Even Considerations |

|---|---|---|---|

| Italian Hospitals (aNSCLC & mCRC) [19] | NGS-based panel vs. SGT-based | NGS was cost-saving in 15/16 testing cases | Threshold varies; NGS less costly above specific patient volumes |

| | | Savings: €30-€1249 per patient | In 9/16 cases, NGS was less costly at any test volume |

| Systematic Review (Oncology) [11] | Targeted NGS panels (2-52 genes) | Cost-effective when ≥4 genes require testing | Larger panels (hundreds of genes) generally not cost-effective |

| | Holistic NGS implementation | Reduces turnaround time, staff requirements, and hospital visits | Holistic analysis consistently demonstrates cost savings |

| U.S. Economic Model (mNSCLC) [26] | Upfront NGS vs. sequential single-gene tests | Saved $1.4-2.1 million for CMS insurers | Upfront NGS identified more actionable mutations faster |

| | | Saved ~$3,800-$250,000 for commercial insurers | Faster results by 2.7-2.8 weeks compared to other strategies |

Experimental Protocols for Economic Analysis

Protocol: Conducting a Break-Even Analysis for NGS Implementation

- Define Testing Pathways: Identify and map the specific testing pathways for your laboratory context, similar to the approach used in the Italian hospital study which defined four distinct pathways for advanced non-small-cell lung cancer (aNSCLC) and metastatic colorectal cancer (mCRC) [19].

- Catalog Cost Components: Document all relevant costs including:

  - Personnel time for testing and analysis

  - Consumables and reagents

  - Equipment and instrumentation

  - Overhead and facility costs

- Calculate SGT Baseline: Compute the total cost of the sequential single-gene testing approach for your required biomarker panel.

- Model NGS Costs: Calculate NGS implementation costs, accounting for:

  - Library preparation and sequencing reagents

  - Instrument run costs

  - Bioinformatics analysis

  - Personnel requirements

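- Determine Break-Even Point: Identify the patient volume at which total NGS costs equal total SGT costs: Break-Even Volume = NGS Fixed Costs / (SGT Cost per Test - NGS Variable Cost per Test). The formula applies only when the SGT cost per test exceeds the NGS variable cost per test; otherwise NGS does not break even at any volume.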

- Perform Sensitivity Analysis: Test how changes in key variables (e.g., reagent costs, personnel time, test volume) affect the break-even point.
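The break-even and sensitivity steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: fixed costs are treated as belonging to NGS only (instrument, validation, setup), SGT is modeled as a pure per-patient cost, and every figure is hypothetical rather than taken from the cited studies.

```python
import math


def break_even_volume(ngs_fixed_cost, ngs_variable_cost, sgt_cost_per_patient):
    """Smallest patient count at which total NGS cost <= total SGT cost.

    Returns None when the NGS variable cost is not below the SGT cost,
    because NGS then never recovers its fixed costs.
    """
    margin = sgt_cost_per_patient - ngs_variable_cost
    if margin <= 0:
        return None
    return math.ceil(ngs_fixed_cost / margin)


def sensitivity(base, parameter, factors):
    """Recompute the break-even volume while scaling one input parameter."""
    results = {}
    for factor in factors:
        scenario = dict(base)
        scenario[parameter] = base[parameter] * factor
        results[factor] = break_even_volume(**scenario)
    return results


# Hypothetical inputs (same currency unit throughout).
base_case = {
    "ngs_fixed_cost": 50_000,
    "ngs_variable_cost": 400,
    "sgt_cost_per_patient": 900,
}
print(break_even_volume(**base_case))  # 100 patients
print(sensitivity(base_case, "ngs_variable_cost", [0.8, 1.0, 1.2]))
```

Varying one parameter at a time keeps the sensitivity analysis easy to read; a laboratory with correlated cost drivers may prefer to vary several inputs together.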

Table 2: Key Research Reagent Solutions for NGS Economic Studies

| Reagent / Material | Function in Economic Analysis |

|---|---|

| NGS Library Prep Kits | Foundation for calculating per-sample consumable costs |

| Target Enrichment Panels | Determine gene coverage and impact on testing comprehensiveness |

| Quality Control Kits | Assess input DNA/RNA quality to prevent failed runs and associated costs |

| Bioinformatics Pipelines | Critical for analyzing personnel time and computational resource needs |

| Automated Prep Systems | Impact personnel costs and redo rates; newer systems aim to reduce both [25] |

Visualizing the Break-Even Analysis

Building Efficient NGS Workflows: Technology Selection and Application-Specific Strategies

For researchers and drug development professionals, selecting the optimal next-generation sequencing (NGS) method is crucial for balancing cost, data quality, and clinical utility. The choice between targeted panels, whole exome sequencing (WES), and whole genome sequencing (WGS) directly impacts project budgets, experimental success, and the potential for discovery. This section provides a structured guide to help you navigate this decision, troubleshoot common experimental issues, and implement the most cost-effective strategies for your clinical lab.

NGS Approach Comparison at a Glance

The table below summarizes the core characteristics of the three primary NGS approaches to guide your initial selection [27] [28].

| Feature | Targeted Panels | Whole Exome Sequencing (WES) | Whole Genome Sequencing (WGS) |

|---|---|---|---|

| Target Region | A select set of genes or regions of interest (~2 to 52 genes for common panels) [11] | All protein-coding regions (exons), ~2% of the genome [29] [28] | The entire genome, including coding and non-coding regions [28] |

| Typical Coverage Depth | Very High (>500x) | High (50x for germline, ≥200x for somatic) [29] | Moderate (30x) |

| Key Advantages | Most cost-effective for specific goals; high sensitivity for low-frequency variants; simplified data analysis | Good balance of cost and breadth; captures ~85% of known disease-related mutations [29] [27] | Most comprehensive; detects variants in non-coding regions; identifies structural variants |

| Primary Limitations | Limited to known genes; cannot discover novel gene-disease associations | Misses non-coding and regulatory variants; inconsistent coverage of some exonic regions [27] | Highest cost for sequencing and data storage; challenging variant interpretation in non-coding regions [27] |

| Ideal Use Case | Confirming suspected mutations in a known set of genes (e.g., oncology hotspots) [27] | Identifying the genetic cause of diseases with heterogeneous or nonspecific symptoms [27] | Discovery research, identifying novel structural variants, or when previous testing is negative [27] |

Cost-Effectiveness in Clinical Research

Economic evaluations are critical for lab sustainability. Evidence shows that an NGS-based strategy can be more cost-effective than single-gene testing (SGT), especially as the number of genes tested increases.

- Break-Even Point: A targeted NGS panel becomes less costly than SGT when testing for four or more genes [11]. The minimum number of patients needed to reach this break-even point varies by panel and institutional costs [19].

- Holistic Savings: When considering the full diagnostic pathway, NGS reduces turnaround time, healthcare staff requirements, and the number of hospital visits, leading to significant systemic cost savings compared to multiple sequential SGT tests [11].

- Broader Context: Larger panels (hundreds of genes) carry higher upfront costs and were generally not found to be cost-effective in the systematic review [11]. In complex diagnostic odysseys, however, their broader coverage may avoid repeat testing, which can offset part of that cost over time [27].

Decision Workflow for Selecting an NGS Approach

This flowchart outlines a logical pathway for choosing the most appropriate NGS method based on your research goals and constraints.
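As a text companion to the selection logic, the "Ideal Use Case" row of the comparison table can be written as a small decision function. This is a rough sketch based only on that table, with hypothetical parameter names; it does not account for budget, turnaround time, or sample type, and is not a clinical decision rule.

```python
def suggest_ngs_approach(known_gene_set, needs_noncoding_or_structural,
                         prior_testing_negative):
    """Suggest a starting NGS approach from three yes/no questions.

    known_gene_set                -- the variants of interest lie in a defined gene list
    needs_noncoding_or_structural -- non-coding or structural variants are in scope
    prior_testing_negative        -- panel or exome testing was already uninformative
    """
    # WGS is the only listed approach covering non-coding regions and
    # structural variants, and the suggested step after negative testing.
    if needs_noncoding_or_structural or prior_testing_negative:
        return "WGS"
    # A defined gene list favors a targeted panel (highest depth, lowest cost).
    if known_gene_set:
        return "targeted panel"
    # Heterogeneous or nonspecific presentations default to the exome.
    return "WES"


print(suggest_ngs_approach(True, False, False))
print(suggest_ngs_approach(False, False, False))
print(suggest_ngs_approach(False, True, False))
```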

Troubleshooting Guide & FAQs

Common Library Preparation Issues

Missteps during library preparation are a primary source of NGS failure. The table below outlines common problems, their causes, and proven solutions [14].

| Problem & Symptoms | Root Cause | Corrective Action |

|---|---|---|

| Low Library Yield: low final concentration; broad/faint electropherogram peaks | Input DNA/RNA degradation or contaminants (phenol, salts) [14] [15]; inaccurate quantification (e.g., NanoDrop overestimation) [14] [30]; overly aggressive purification [14] | Re-purify samples and check 260/230 and 260/280 ratios [14]; use fluorometric quantification (Qubit) [14] [30]; optimize bead-based cleanup ratios [14] |

| Adapter Dimer Contamination: sharp peak at ~70-90 bp in Bioanalyzer | Inefficient ligation [14]; suboptimal adapter-to-insert molar ratio [14]; incomplete cleanup post-ligation | Titrate adapter:insert ratios [14]; ensure fresh ligase and optimal reaction conditions [14]; use bead cleanups with optimized ratios to remove short fragments [14] |

| High Duplication Rate / PCR Bias: overamplification artifacts; uneven sequencing coverage | Too many PCR cycles during amplification [14]; low input material leading to overamplification [31] | Reduce the number of PCR cycles [14]; increase input DNA if possible; use PCR enzymes designed to minimize bias [31] |

| Insufficient Sequencing Coverage: low cluster density; high rate of duplicate reads | Poor quality or quantity of starting material [30]; degraded DNA or contamination with host genomic DNA [30] | Check DNA integrity (e.g., gel electrophoresis, Bioanalyzer) [30]; perform a new plasmid/DNA prep to rule out contamination [30] |
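Titrating the adapter:insert ratio requires converting DNA mass to molar amounts. The sketch below uses the common approximation of about 660 g/mol per base pair of double-stranded DNA; the function names, the 10:1 ratio, and the 15 µM adapter stock are illustrative assumptions, so follow the kit manufacturer's recommended ratios in practice.

```python
AVG_BP_MASS = 660.0  # approximate g/mol per base pair of dsDNA (assumption)


def dsdna_pmol(mass_ng, length_bp):
    """Convert a dsDNA mass (ng) and mean fragment length (bp) to picomoles."""
    return mass_ng * 1000.0 / (length_bp * AVG_BP_MASS)


def adapter_volume_ul(insert_ng, insert_bp, molar_ratio, adapter_stock_um):
    """Volume of adapter stock (µL) giving the requested adapter:insert ratio.

    adapter_stock_um is the stock concentration in µM; 1 µM equals 1 pmol/µL.
    """
    adapter_pmol = dsdna_pmol(insert_ng, insert_bp) * molar_ratio
    return adapter_pmol / adapter_stock_um


# Hypothetical example: 100 ng of 300 bp fragments, 10:1 ratio, 15 µM stock.
insert_pmol = dsdna_pmol(100, 300)
volume = adapter_volume_ul(100, 300, molar_ratio=10, adapter_stock_um=15)
print(f"insert: {insert_pmol:.3f} pmol, adapter stock: {volume:.2f} µL")
```

Because the calculation depends on an accurate input mass, it is only as reliable as the quantification method, which is one more reason to prefer fluorometric measurement.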

Frequently Asked Questions (FAQs)

Q1: My WES data came back negative. What should I do next? A negative WES result does not rule out a genetic cause. Consider the following steps:

- Data Reanalysis: Periodically reanalyze the existing data. One study found that 23% of positive WES findings were in genes discovered within the prior two years [27].

- Upgrade to WGS: If reanalysis is inconclusive, WGS can detect variants in non-coding regions and structural variants that are missed by WES [27].

Q2: How much coverage depth is sufficient for my project? The required depth depends on the application and variant type [29]:

- Germline / Frequent variants: 50-100x

- Somatic / Rare variants (e.g., tumor samples): ≥200x

- Population studies: 50-100x
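To translate a target depth into a sequencing budget, mean coverage can be approximated as (number of reads × read length) / target size. The sketch below rearranges that relationship and optionally discounts off-target and duplicate reads. The function name and the example panel size, on-target fraction, and duplicate fraction are hypothetical; real assays should use values measured during validation.

```python
import math


def reads_required(target_size_bp, mean_depth, read_length_bp,
                   on_target_fraction=1.0, duplicate_fraction=0.0):
    """Estimate individual reads needed to reach a mean depth over a target.

    Counts single reads; for paired-end runs, halve the result to get pairs.
    """
    usable_fraction = on_target_fraction * (1.0 - duplicate_fraction)
    if usable_fraction <= 0:
        raise ValueError("usable read fraction must be positive")
    bases_needed = mean_depth * target_size_bp
    return math.ceil(bases_needed / (read_length_bp * usable_fraction))


# Hypothetical 1 Mb panel sequenced with 150 bp reads.
ideal = reads_required(1_000_000, mean_depth=500, read_length_bp=150)
realistic = reads_required(1_000_000, mean_depth=500, read_length_bp=150,
                           on_target_fraction=0.6, duplicate_fraction=0.1)
print(ideal, realistic)
```

Mean depth says nothing about uniformity, so a panel with uneven coverage may need more reads than this estimate to keep every region above its minimum depth.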

Q3: When is WGS recommended over WES as a first-tier test? The American College of Medical Genetics and Genomics (ACMG) recommends WES or WGS for patients with rare diseases like congenital abnormalities or developmental delay [27]. WGS is typically reserved for cases where pathogenic variants are not detected by WES or targeted sequencing, or when comprehensive detection of structural variants is required [27].

Q4: What is the most common cause of failed plasmid sequencing? The most common reason is inaccurate DNA concentration measurement by photometric methods (e.g., NanoDrop), which tend to overestimate concentration. This leads to insufficient material for sequencing. Always use a fluorometric method (e.g., Qubit) for accurate double-stranded DNA quantification [30].

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function | Key Consideration |

|---|---|---|

| Fluorometric Quantification Kits (Qubit) | Accurately measures concentration of double-stranded DNA or RNA [30] | More accurate than spectrophotometry (NanoDrop) for sequencing prep; avoids overestimation from contaminants [14] [30] |

| Magnetic Beads (AMPure XP) | Purifies and size-selects nucleic acid fragments after enzymatic reactions [14] | The bead-to-sample ratio is critical for removing adapter dimers and selecting the desired insert size [14] |

| High-Fidelity DNA Polymerase | Amplifies library fragments during PCR | Minimizes amplification bias and errors, reducing duplicate rates and ensuring even coverage [31] |

| Twist Human Comprehensive Exome Panel | Target-enrichment method for WES to capture exonic regions [29] | Different commercial kits have variations in targeted regions and data quality; choice impacts consistency [27] |

| Bioanalyzer / Fragment Analyzer | Provides high-sensitivity assessment of nucleic acid size distribution and quality [14] [30] | Essential for diagnosing adapter dimer contamination, RNA integrity, and DNA fragmentation quality [14] |

Automating Next-Generation Sequencing (NGS) library preparation is a key strategy for clinical and research laboratories aiming to enhance efficiency, ensure reproducibility, and manage costs. Automated systems address the inherent challenges of manual workflows, such as pipetting variability, contamination risks, and lengthy hands-on time, which are significant concerns in high-throughput clinical diagnostics [32]. With automation tailored to their workflows, laboratories can achieve the robust, standardized processes required for cost-effective genomic testing and research without compromising data quality.

Hardware Selection Guide

Selecting the appropriate automation platform requires a careful assessment of your laboratory's specific needs. The goal is to match the system's capabilities to your workflow demands, ensuring a cost-effective investment that can scale with your projects.

Key Selection Criteria

When evaluating automated NGS library preparation systems, consider the following factors:

- Throughput and Scalability: Assess your current and projected sample volumes. Systems offer variable throughput, from a few to hundreds of samples per run. Platforms with modular designs allow labs to scale operations up or down as needed [33].

- Protocol Flexibility and Compatibility: Determine if the system uses locked, vendor-established protocols or offers software for creating and modifying custom workflows. Flexibility is crucial for labs using diverse assay types or custom reagent kits [33].

- Integration and Footprint: Verify the instrument can seamlessly integrate with your existing laboratory infrastructure, including Laboratory Information Management Systems (LIMS) and downstream analysis pipelines [32]. Consider the physical space required for the instrument and its peripherals.

- Total Cost of Ownership: Look beyond the initial purchase price. Factor in the costs of annual preventative maintenance contracts (which can range from $15,000 to $30,000), proprietary consumables, reagents, and the required training for personnel [33].
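A simple total-cost-of-ownership calculation makes the last criterion concrete. The sketch below is illustrative only: the maintenance figure sits inside the $15,000 to $30,000 range quoted above, while the purchase price, consumables, training cost, and sample volume are hypothetical placeholders to be replaced with vendor quotes.

```python
def total_cost_of_ownership(purchase_price, annual_maintenance,
                            annual_consumables, training_cost, years):
    """Sum one-time and recurring costs over the planning horizon."""
    one_time = purchase_price + training_cost
    recurring = years * (annual_maintenance + annual_consumables)
    return one_time + recurring


def automation_cost_per_sample(tco, samples_per_year, years):
    """Spread the total cost of ownership over all samples processed."""
    return tco / (samples_per_year * years)


# Hypothetical five-year plan for a mid-range liquid handler.
tco = total_cost_of_ownership(purchase_price=150_000,
                              annual_maintenance=20_000,
                              annual_consumables=12_000,
                              training_cost=5_000,
                              years=5)
print(tco, round(automation_cost_per_sample(tco, samples_per_year=2_000, years=5), 2))
```

Running the same calculation for each candidate platform at the laboratory's projected volume gives a like-for-like figure that the purchase price alone does not provide.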

Quantitative Comparison of Automation Systems

The table below summarizes key characteristics of different automation approaches to aid in the selection process.

Table: Overview of NGS Automation System Considerations

| System Characteristic | Low-Throughput / Benchtop | Medium- to High-Throughput |

|---|---|---|

| Sample Throughput per Run | 4 - 24 samples [33] | 96 - 384 samples [33] [34] |

| Approximate Initial Investment | Lower cost platforms available | ~$45,000 - $300,000 [33] |

| Typical Hands-On Time | Significant reduction from manual methods | Approximately 30 minutes for setup [33] |

| Best Suited For | Small labs, specialized assays, pilot studies | Large academic cores, clinical diagnostic labs, pharmaceutical R&D |

| Example Applications | Targeted gene panels, small-scale RNA-seq | Whole genomes/exomes, large-scale population studies, high-throughput screening [34] |

Troubleshooting and FAQs

This section addresses common questions and issues encountered when implementing and operating automated NGS systems.

Frequently Asked Questions

Q: What is the primary financial benefit of automating NGS library preparation? A: While the initial investment is substantial, the primary return on investment comes from significant reductions in hands-on technician time and improved operational efficiency. Automation minimizes reagent waste through precise liquid handling and reduces costs associated with failed runs by enhancing reproducibility [32] [33].

Q: How does automation improve data quality? A: Automated liquid handlers perform precise, sub-microliter pipetting, which removes most of the variability introduced by manual technique. This leads to more consistent library fragment sizes, more uniform sequencing coverage, and a lower failure rate, which is critical for clinical data integrity [33].

Q: Can I use my existing manual library prep kits on an automated platform? A: It depends on the platform. Some systems have "open" software that allows users to program custom protocols for any kit. Others operate with "locked" protocols optimized for specific vendor-branded reagents. This is a key factor to verify during the selection process [33].

Q: What are the key personnel considerations for implementing automation? A: Successful implementation requires training staff not only to operate the system but also to perform basic troubleshooting. It is highly recommended to train at least two "super users" to protect against knowledge loss due to staff turnover. Maintaining competency in the manual method is also advised as a backup [33].

Common Error Messages and Resolutions

Table: Troubleshooting Common Automated NGS System Errors

| Error / Problem Indicator | Possible Cause | Recommended Action |

|---|---|---|

| Chip/Door Open Error | Chip clamp not fully closed; chip not seated properly; damaged chip [5]. | Open the clamp, remove and inspect the chip for damage or moisture. Replace if faulty, re-seat, and ensure the clamp is fully closed before re-running the check [5]. |

| Low Signal/Keypass Error | Problem during library or template preparation; control beads not added [5]. | Verify that control beads were added to the sample. Check the quantity and quality of the input library and template [5]. |

| Instrument-Server Connectivity Loss | Network issues; software glitch [5]. | Restart the instrument and server. If the issue persists, some systems can store runs locally and transfer data once the connection is restored [5]. |

| Poor Library Quality | Sample contamination (e.g., salt, solvents); inaccurate DNA quantification [15]. | Re-purify the sample via ethanol precipitation. Always quantify DNA immediately before library prep using a fluorometric method (e.g., Qubit) [15]. |

| Liquid Handling Failure (e.g., "W1 Empty") | Empty reagent bottle; blocked fluidic line; loose sippers [5]. | Check reagent volumes and ensure all bottles and sippers are secure. Prime or clear the fluidic lines as per the manufacturer's instructions [5]. |

Experimental Protocols for Automated Workflows

Protocol: Implementing an Automated NGS Workflow

Principle: This protocol outlines the steps for transitioning a manual NGS library preparation workflow to an automated liquid handler, using a streamlined kit like the seqWell ExpressPlex as an example [34].

Reagents and Materials:

- DNA samples (e.g., plasmid or amplicon)

- ExpressPlex Library Preparation Kit (or equivalent automated-grade kit)

- Nuclease-free water

- Reagent reservoir plates

- Sample plates (96-well or 384-well)

- Automated Liquid Handler (e.g., Tecan Fluent, Opentrons Flex, SPT Labtech firefly)

- On-deck or external thermocycler

Methodology:

- System Preparation: Power on the liquid handler and associated thermocycler. Ensure the instrument is calibrated and all required maintenance has been performed.

- Reagent Plating: Dispense all necessary library preparation reagents (enzymes, buffers, master mix) into a chilled reagent reservoir plate according to the calculated volumes for your sample number.

- Sample Loading: Transfer quantified and normalized DNA samples to the designated wells of the sample plate.

- Workflow Setup: Load the automated protocol into the liquid handler's software. Following the on-screen deck layout, place the reagent plate, sample plate, tip boxes, and any other required labware onto the designated deck positions.

- Run Initiation: Start the automated protocol. The system will perform all liquid transfers, mixing, and incubation steps. For a system like the Tecan Fluent running ExpressPlex, hands-on time is minimal after setup, and the run can be completed in approximately 90 minutes [34].

- Post-Processing: Once the run is complete, retrieve the plate containing the prepared libraries. Proceed with library quantification, normalization, and pooling as required before sequencing.
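The reagent plating step depends on volumes calculated for the sample number, including an allowance for pipetting overage and reservoir dead volume. The sketch below shows one way to compute them. The reagent names, per-reaction volumes, 10% overage, and dead volume are hypothetical and are not taken from the ExpressPlex protocol; use the kit manual and the liquid handler's labware definitions for real runs.

```python
def reagent_volumes(per_reaction_ul, n_samples,
                    overage_fraction=0.10, dead_volume_ul=0.0):
    """Total volume (µL) of each reagent to load for a run.

    per_reaction_ul  -- dict mapping reagent name to volume per sample (µL)
    overage_fraction -- extra fraction added to cover pipetting losses
    dead_volume_ul   -- volume the reservoir well cannot dispense
    """
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    totals = {}
    for name, volume in per_reaction_ul.items():
        needed = volume * n_samples * (1.0 + overage_fraction) + dead_volume_ul
        totals[name] = round(needed, 1)
    return totals


# Hypothetical per-sample volumes for a 96-sample plate.
recipe = {"reaction_buffer": 4.0, "enzyme_mix": 2.0, "nuclease_free_water": 10.0}
for reagent, total in reagent_volumes(recipe, n_samples=96,
                                      dead_volume_ul=20.0).items():
    print(f"{reagent}: {total} µL")
```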

Protocol: Validation of an Automated NGS System

Principle: Before implementing an automated system for clinical or critical research samples, a rigorous validation against the established manual method is essential to demonstrate non-inferiority in performance [33].

Reagents and Materials:

- A set of standardized, well-characterized DNA samples (e.g., reference cell line DNA)

- Identical library preparation kits for both manual and automated methods

- All equipment and consumables for both workflows

- NGS sequencer

- Bioinformatics pipeline for data analysis

Methodology:

- Parallel Processing: Split the same set of DNA samples. Process one subset using the established manual protocol and the other using the new automated protocol.

- Metric Tracking: For both sets of libraries, record and compare the following metrics:

  - Hands-on time: Measure the active time a technician spends on each method.

  - Library Yield: Quantify final library concentration (e.g., via qPCR).

  - Library Quality: Assess size distribution (e.g., via Bioanalyzer/TapeStation).

  - Sequencing Metrics: Sequence all libraries on the same platform and compare key outcomes including coverage uniformity, on-target rate, and duplicate read rate.

- Data Analysis: Use statistical tests to confirm that the data generated by the automated method is equivalent or superior to the manual method in terms of quality and reproducibility.

- Documentation: Fully document the validation process, results, and any protocol adjustments made. This is critical for regulatory compliance in clinical settings [32].
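For the data analysis step, one possible approach is an equivalence check on paired samples: compute the automated-minus-manual difference for each sample and test whether a confidence interval for the mean difference lies entirely inside a pre-specified margin. The sketch below uses a percentile bootstrap with the Python standard library. The 90% interval, the margin, and the yield values are illustrative assumptions, and a bootstrap on very few samples is only a rough guide; the acceptance criteria should come from the laboratory's validation plan.

```python
import random
import statistics


def paired_equivalence(manual, automated, margin,
                       n_boot=2000, alpha=0.10, seed=0):
    """Bootstrap interval for the mean paired difference (automated - manual).

    Returns (lower, upper, equivalent), where equivalent is True when the
    whole interval lies strictly inside (-margin, +margin).
    """
    if len(manual) != len(automated) or len(manual) < 2:
        raise ValueError("need two equal-length series with at least 2 samples")
    diffs = [a - m for m, a in zip(manual, automated)]
    rng = random.Random(seed)  # fixed seed so the validation record is reproducible
    boot_means = sorted(
        statistics.fmean(rng.choices(diffs, k=len(diffs)))
        for _ in range(n_boot)
    )
    lower = boot_means[round(alpha / 2 * n_boot)]
    upper = boot_means[round((1 - alpha / 2) * n_boot) - 1]
    return lower, upper, (-margin < lower and upper < margin)


# Hypothetical library yields (nM) for the same six samples.
manual_yield = [10.0, 11.0, 9.5, 10.5, 10.2, 9.8]
automated_yield = [10.1, 10.9, 9.6, 10.4, 10.3, 9.9]
print(paired_equivalence(manual_yield, automated_yield, margin=0.5))
```

The same function can be applied to each tracked metric (yield, on-target rate, duplicate rate) with a margin chosen per metric before the data are collected.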

Workflow Diagrams

The following diagrams illustrate the logical pathways for selecting hardware and executing an automated NGS experiment.

Diagram: Hardware Selection Pathway. This flowchart outlines the key decision-making process for selecting an NGS automation platform, from initial needs assessment to final implementation.

Diagram: Automated NGS Workflow. This diagram visualizes the streamlined workflow from sample to data, highlighting the core steps that are typically automated in a cost-effective NGS pipeline.

The Scientist's Toolkit: Research Reagent Solutions

The table below details essential reagents and materials used in automated NGS workflows, with a focus on their function in ensuring a successful and reliable process.

Table: Essential Reagents for Automated NGS Workflows

| Reagent / Material | Function | Considerations for Automation |

|---|---|---|

| Library Prep Kits (e.g., ExpressPlex) | Provides all enzymes, buffers, and adapters for converting DNA/RNA into sequencer-compatible libraries. | Select kits validated for automation with stable, pre-mixed reagents that reduce pipetting steps and deck footprint [34]. |

| Magnetic Beads | Used for DNA/RNA purification, size selection, and cleanup between library prep steps. | Bead consistency is critical. Automated protocols require precise control over incubation, magnet engagement time, and washing [33]. |

| Liquid Handler Tips | Disposable tips for aspirating and dispensing samples and reagents. | Low-retention tips are essential for accuracy with small volumes. Availability in 384-well formats enables high-throughput processing [34]. |

| Fluorometric QC Kits (e.g., Qubit) | Precisely quantifies DNA/RNA concentration using fluorescent dyes. | More accurate for NGS than spectrophotometry. Automated versions can be integrated into the liquid handling platform [15]. |

| Library QC Kits (e.g., Bioanalyzer) | Analyzes library fragment size distribution and quality. | Provides an electropherogram to confirm successful library preparation before costly sequencing [15]. |

| Positive Control DNA | A well-characterized DNA sample (e.g., from a reference cell line). | Used in every run to monitor the performance and reproducibility of the automated library prep protocol [33]. |

Next-Generation Sequencing (NGS) has revolutionized genomic analysis, with the global NGS library preparation market projected to grow from $2.07 billion in 2025 to $6.44 billion by 2034 [35]. For clinical laboratories operating under budget constraints, strategic custom panel design is often the most cost-effective approach to genomic testing. Unlike whole-genome sequencing, targeted panels focus on specific genes of interest, reducing data noise, lowering costs, and accelerating turnaround times [36]. This section provides guidance for researchers developing custom NGS panels for oncology, rare diseases, and infectious diseases, with troubleshooting protocols to support implementation in clinical research settings.

Custom Panel Design by Disease Domain

Oncology Panels

Oncology panels target genes associated with cancer biology, enabling tumor profiling, mutation identification, and therapy selection [36]. Custom cancer panels can be tailored for solid tumors, hematological malignancies, germline risk assessment, or immuno-oncology applications [37].

Table: Custom Cancer Panel Configurations

| Panel Type | Gene Count | Key Applications | Detectable Variants |

|---|---|---|---|

| Core Panel | Focused gene set | Essential somatic mutations | SNVs, indels |

| 50-Gene Panel | ~50 genes | Common cancer drivers | SNVs, indels, CNVs |

| 100-Gene Panel | ~100 genes | Comprehensive profiling | SNVs, indels, CNVs, fusions |

| 400-Gene Panel | ~400 genes | Pan-cancer analysis | SNVs, indels, CNVs, fusions, MSI, TMB |

Technical Considerations: Effective oncology panels must detect diverse variant types including single nucleotide variants (SNVs), insertions/deletions (indels), copy number variations (CNVs), gene rearrangements, microsatellite instability (MSI), and tumor mutational burden (TMB) [37]. Hybridization-based capture methods using platforms like CDCAP and CDAMP enable this comprehensive variant detection across DNA and RNA targets [37].

Rare Disease Panels

Rare disease panels present unique challenges due to phenotypic and genotypic heterogeneity, with approximately 80% of rare diseases having a genetic origin [38]. Successful rare disease investigation requires integrating genotype and phenotype information using standardized data models like the OMOP-based Rare Diseases Common Data Model (RD-CDM) [38].

Panel Design Strategy: Focus on genes with established associations to rare conditions while incorporating flexibility for novel gene discovery. Given the diagnostic challenges, rare disease panels often require broader design than oncology panels, sometimes spanning hundreds of genes related to specific clinical presentations.

Infectious Disease Panels

Infectious disease panels target pathogen-specific sequences for identification, strain typing, and antimicrobial resistance detection. The expansion of point-of-care testing (POCT) capabilities drives innovation in this area [24].

Design Approach: Target conserved regions for species identification alongside variable regions for strain differentiation. Incorporate resistance markers to guide therapeutic decisions. Multiplexing capabilities are essential for panels detecting multiple pathogens from a single sample.

Experimental Protocol: End-to-End Panel Workflow

Custom NGS Panel Workflow

Sample Collection & Quality Control

Sample Types:

- Tissue biopsies: Fresh frozen or FFPE (Formalin-Fixed Paraffin-Embedded)

- Blood: Peripheral blood for germline DNA or liquid biopsies

- Liquid biopsies: Plasma for circulating tumor DNA (ctDNA) in oncology [36]

Quality Assessment:

- DNA: Quantify using fluorometry (Qubit), assess integrity via Bioanalyzer/TapeStation

- RNA: Confirm an RNA Integrity Number (RIN) >7 for expression studies

- FFPE: Assess fragment size and degradation level

Library Preparation Methods

Table: Library Preparation Kit Options

| Kit Type | Fragmentation Method | Processing Time | Best For |

|---|---|---|---|

| Sonicator-based standard | Acoustic shearing | 4-6 hours | High-quality DNA inputs |

| Fragmentase-based standard | Enzymatic | 3-5 hours | Standard DNA samples |

| Enzymatic preparation kit | Single-tube reaction | 2-3 hours | High-throughput workflows |

| Targeted library kit with barcodes | PCR-based | 3-4 hours | Low-input samples |

Protocol Selection: Choose fragmentation method based on input material quality and quantity. FFPE samples often benefit from enzymatic fragmentation, while high-quality DNA can utilize sonication-based approaches [37]. Unique molecular identifiers (UMIs) are recommended for low-frequency variant detection in liquid biopsies.
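The role of UMIs in low-frequency variant detection can be shown with a toy example: reads that share the same alignment start and the same UMI are assumed to derive from one original molecule, so they are collapsed into a single consensus call, which suppresses PCR duplicates and isolated sequencing errors. The sketch below is a deliberately simplified illustration with made-up reads; it matches UMIs exactly and does not correct UMI sequencing errors, which production tools handle.

```python
from collections import Counter, defaultdict


def collapse_by_umi(reads):
    """Collapse reads into one consensus base per original molecule.

    reads -- iterable of (chrom, start, umi, base_at_site) tuples
    Returns a dict mapping (chrom, start, umi) to
    (consensus_base, supporting_reads, family_size).
    """
    families = defaultdict(list)
    for chrom, start, umi, base in reads:
        # Same position and same UMI are treated as copies of one molecule.
        families[(chrom, start, umi)].append(base)
    consensus = {}
    for key, bases in families.items():
        base, support = Counter(bases).most_common(1)[0]
        consensus[key] = (base, support, len(bases))
    return consensus


# Made-up reads covering one site: two molecules, one with a sequencing error.
example_reads = [
    ("chr7", 100, "AAT", "T"),
    ("chr7", 100, "AAT", "T"),
    ("chr7", 100, "AAT", "C"),  # minority base within the family, outvoted
    ("chr7", 100, "GGC", "C"),
]
for molecule, call in collapse_by_umi(example_reads).items():
    print(molecule, call)
```

Counting consensus molecules rather than raw reads gives a variant allele fraction that reflects the original sample rather than amplification depth.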

Target Enrichment Strategies

Target Enrichment Methods

Hybrid Capture-Based Enrichment:

- Uses biotinylated probes to capture target regions

- Suitable for large target areas (>500 kb)

- Better uniformity and coverage of complex genomic regions

- Protocol: Fragment DNA, add adapters, hybridize with probes, capture with streptavidin beads, wash, and amplify [36]

Amplicon-Based Enrichment:

- Uses targeted primers to amplify regions of interest

- Ideal for smaller panels (<50 genes)

- Higher sensitivity for low-frequency variants

- Protocol: Design multiplex PCR primers, amplify targets, add sequencing adapters

Sequencing Platform Selection

Table: NGS Platform Comparison

| Platform | Read Length | Output | Strengths | Cost Consideration |

|---|---|---|---|---|

| Illumina | Short-read (75-300 bp) | High | Accuracy, throughput | Higher per-Gb cost |

| Ion Torrent | Short-read (200-400 bp) | Medium | Speed, simplicity | Lower instrument cost |

| Oxford Nanopore | Long-read (>10 kb) | Variable | Real-time, structural variants | Lower capital investment |

| PacBio | Long-read (10-25 kb) | High | Accuracy, complex regions | Higher reagent cost |

Matching Platform to Application: Oncology panels requiring high sensitivity for low-frequency variants perform well on Illumina platforms. Rare disease panels benefiting from structural variant detection may leverage long-read technologies [36]. Consider data analysis infrastructure when selecting platforms, as computational requirements vary significantly.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents for Custom NGS Panels

| Reagent/Category | Function | Application Notes |

|---|---|---|

| Library Prep Kits | Convert nucleic acids to sequenceable libraries | Select based on input material: sonication-based for high-quality DNA, enzymatic for degraded samples |

| Hybridization Kits | Enrich target regions using probe capture | Standard kits for common applications; enhanced kits for difficult regions |

| Clean-up Beads | Purify and size-select fragments | Magnetic bead-based systems enable automation compatibility |

| Polymerase Amplification Kits | Amplify library molecules | High-fidelity enzymes critical for accuracy; optimize cycle number to avoid duplicates |

| Molecular Barcodes (UMIs) | Tag individual molecules | Essential for liquid biopsy applications to distinguish low-frequency variants |

| Quality Control Reagents | Assess library quality and quantity | qPCR assays for quantification; Bioanalyzer for fragment distribution |

Troubleshooting Guides & FAQs

Common Library Preparation Issues

Q: Our library yields are consistently low across multiple samples. What could be causing this?

A: Low library yields can result from several factors:

- Input DNA Quality: Verify DNA integrity (DV200 >30% for FFPE). Degraded samples require specialized library prep kits designed for low-input material [35]

- Enzymatic Reaction Efficiency: Check enzyme storage conditions and expiration dates. Ensure proper mixing of reactions

- Purification Losses: Increase bead-to-sample ratio during cleanups. Extend incubation time with beads

- Adapter Concentration: Titrate adapter amounts for low-input samples. Excessive adapters can cause adapter dimer formation

Q: We observe high duplicate rates in our sequencing data despite adequate input. How can we resolve this?

A: High duplicate rates indicate issues during library amplification:

- Over-amplification: Reduce PCR cycles. For high-quality inputs, 8-10 cycles may be sufficient

- Insufficient Library Complexity: Increase input DNA where possible. For limited samples, incorporate unique molecular identifiers (UMIs)

- Quantification Errors: Use qPCR-based quantification rather than fluorometry alone to accurately measure amplifiable library fragments
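
To illustrate why UMIs help with limited samples, the sketch below computes a duplicate rate two ways: from alignment coordinates alone, and from coordinates plus UMI. It is a minimal illustration rather than a substitute for a production deduplication tool. The `duplicate_rate` function, the tuple layout, and the example reads are all hypothetical.

```python
def duplicate_rate(reads, use_umi=False):
    """Return the fraction of reads that duplicate another read.

    Each read is a tuple (chrom, start, strand, umi). Without UMIs, reads
    that share coordinates are collapsed. With UMIs, reads must share both
    coordinates and UMI to count as duplicates.
    """
    if not reads:
        return 0.0
    keys = [read if use_umi else read[:3] for read in reads]
    return 1 - len(set(keys)) / len(reads)


# Hypothetical reads: three start at the same position, but only two of
# them carry the same UMI (a true PCR duplicate pair).
reads = [
    ("chr7", 55191822, "+", "ACGT"),
    ("chr7", 55191822, "+", "ACGT"),
    ("chr7", 55191822, "+", "TTGA"),
    ("chr12", 25245350, "-", "GGCA"),
]

coordinate_only = duplicate_rate(reads)
umi_aware = duplicate_rate(reads, use_umi=True)
print(f"Coordinate-only duplicate rate: {coordinate_only:.2f}")
print(f"UMI-aware duplicate rate: {umi_aware:.2f}")
```

In this toy input, coordinate-only marking discards a read that came from a distinct molecule, while the UMI-aware count keeps it. Real pipelines also tolerate sequencing errors within the UMI, which this sketch does not attempt.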

Target Enrichment Problems

Q: Our coverage uniformity is poor, with some regions having significantly lower reads. What improvements can we make?

A: Poor uniformity stems from enrichment inefficiencies:

- Hybridization Conditions: Optimize hybridization time and temperature. For GC-rich regions, increase hybridization time

- Probe Design: Verify probe specificity and tiling density. Problematic regions may require overlapping probes

- Blocking Agents: Ensure sufficient blocking of adapters and repetitive elements. Increase Cot-1 DNA for human samples

- Capture Efficiency: Extend capture incubation time. Evaluate different capture buffer formulations
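
Before adjusting hybridization or probe design, it helps to quantify uniformity and list the regions that fall short. The sketch below assumes per-region mean depths are already available (for example, from a coverage report) and flags regions below a fraction of the panel-wide mean. The 0.2 cutoff, the function name, and the region labels are illustrative assumptions, not values from this guide.

```python
from statistics import mean


def coverage_uniformity(region_depths, fraction_of_mean=0.2):
    """Return (uniformity, low_regions) for a mapping of region -> mean depth.

    uniformity is the share of regions at or above fraction_of_mean times
    the panel-wide mean depth; low_regions lists the regions below it.
    Raises statistics.StatisticsError if region_depths is empty.
    """
    depths = list(region_depths.values())
    cutoff = fraction_of_mean * mean(depths)
    low_regions = sorted(
        name for name, depth in region_depths.items() if depth < cutoff
    )
    uniformity = 1 - len(low_regions) / len(depths)
    return uniformity, low_regions


# Hypothetical per-region mean depths from one sample.
region_depths = {
    "EGFR_exon19": 520,
    "KRAS_exon2": 480,
    "TP53_exon5": 450,
    "TERT_promoter": 50,
}

uniformity, low_regions = coverage_uniformity(region_depths)
print(f"Uniformity: {uniformity:.2f}; low-coverage regions: {low_regions}")
```

Tracking the same flagged regions across runs helps separate a probe design problem (the same regions fail every time) from a run-specific hybridization problem.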

Q: We're detecting false positives in our variant calls. How can we improve specificity?

A: False positives often originate from technical artifacts:

- PCR Errors: Incorporate polymerase with proofreading capability. Reduce PCR cycles when possible

- Cross-Contamination: Implement strict workflow separation (pre- and post-PCR areas). Use UV irradiation and enzymatic decontamination

- Mapping Errors: Optimize alignment parameters. For difficult regions, implement local reassembly

- UMI Integration: Employ unique molecular identifiers to distinguish true mutations from amplification artifacts [36]

Sequencing & Instrumentation

Q: Our Ion S5 system shows a "Chip Check Fail" error. What steps should we take?

A: This indicates issues with chip seating or integrity [5]:

- Open the chip clamp, remove the chip, and inspect for physical damage or moisture

- If damaged, replace with a new chip

- Ensure the chip is properly seated and the clamp is fully closed

- Run Chip Check again

- If failure persists, contact Technical Support as there may be a socket issue

Q: Our Illumina sequencing quality scores drop precipitously in later cycles. What is the likely cause?

A: This pattern typically indicates:

- Reagent Depletion: Check reagent volumes and flow cell integrity

- Phasing/Prephasing: Optimize cluster density. Overclustering accelerates signal decay

- Instrument Maintenance: Perform recommended cleaning cycles. Check laser alignment and camera sensitivity

- Library Quality: Assess library for overcycling artifacts or adapter dimers that may affect later cycles

Cost-Effective Implementation Strategies

Workflow Optimization

Automation Integration: The laboratory automation market is growing at a 13.47% CAGR, with automated library preparation instruments representing the fastest-growing segment [35]. Implementing automation for library prep increases throughput, reduces hands-on time, and improves reproducibility. For medium-to-high volume labs (≥96 samples/week), automated platforms provide significant return on investment through reduced reagent costs and staffing requirements.

Reagent Management:

- Utilize lyophilized kits to eliminate cold-chain shipping constraints [35]

- Implement reagent tracking systems to minimize waste

- Purchase in bulk for high-volume applications

- Evaluate kit performance based on cost per sample, not just unit cost
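
The difference between unit cost and cost per sample can be made concrete with a small calculation. The sketch below is one possible model, assuming each failed library is repeated until it succeeds (so the expected number of attempts is 1 / (1 - repeat rate)) and that labor is charged per attempt. All prices, rates, and the function name are hypothetical.

```python
def cost_per_reportable_sample(kit_price, reactions_per_kit, repeat_rate,
                               hands_on_hours, labor_rate_per_hour):
    """Estimate the cost of one successful library, including repeats."""
    if not 0 <= repeat_rate < 1:
        raise ValueError("repeat_rate must be in [0, 1)")
    reagent_per_attempt = kit_price / reactions_per_kit
    labor_per_attempt = hands_on_hours * labor_rate_per_hour
    # Assumption: failures are independent and repeated until success.
    expected_attempts = 1 / (1 - repeat_rate)
    return (reagent_per_attempt + labor_per_attempt) * expected_attempts


# Hypothetical kits: Kit B has the lower list price but needs more
# hands-on time and fails more often.
kit_a = cost_per_reportable_sample(2400, 96, 0.02, 0.5, 40)
kit_b = cost_per_reportable_sample(1800, 96, 0.15, 1.0, 40)
print(f"Kit A: ${kit_a:.2f} per reportable sample")
print(f"Kit B: ${kit_b:.2f} per reportable sample")
```

With these made-up inputs the cheaper kit ends up more expensive per reportable sample, which is the comparison the last bullet recommends making.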

Panel Design Economics

Right-Sizing Panels: Balance comprehensiveness with cost efficiency. The U.S. NGS market is projected to grow from $3.88 billion in 2024 to $16.57 billion by 2033, driven by personalized medicine [10]. Design panels focused on clinically actionable genes rather than maximally large panels. Implement modular designs that allow cost-effective expansion as new genes demonstrate clinical utility.

Multiplexing Strategies: Maximize sequencing capacity by barcoding samples for pooled sequencing. The key is balancing multiplexing level with maintaining sufficient coverage depth for variant detection sensitivity.
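
The balance between multiplexing level and depth can be estimated before a run. The sketch below assumes a simple model in which only on-target, non-duplicate bases contribute to usable depth. The function name and every input value are illustrative assumptions and should be replaced with figures measured for your own assay and instrument.

```python
import math


def max_samples_per_run(run_output_bases, panel_size_bases, target_depth,
                        on_target_fraction, duplicate_fraction):
    """Return how many samples fit in one run at the requested mean depth."""
    usable_fraction = on_target_fraction * (1 - duplicate_fraction)
    if usable_fraction <= 0:
        raise ValueError("usable fraction of sequenced bases must be positive")
    # Raw bases each sample needs so that usable bases reach target depth.
    raw_bases_per_sample = panel_size_bases * target_depth / usable_fraction
    return math.floor(run_output_bases / raw_bases_per_sample)


# Hypothetical run: 15 Gb output, 1.5 Mb panel, 500x mean depth,
# 60% on-target reads, 20% duplicates.
samples = max_samples_per_run(15e9, 1.5e6, 500, 0.60, 0.20)
print(f"Samples per run: {samples}")
```

Because this estimates mean depth only, labs with uneven coverage may want to pool fewer samples than the calculation suggests so that the weakest regions still reach the depth needed for the intended sensitivity.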

Custom NGS panel design represents the most cost-effective approach for clinical laboratories entering the genomic testing space. By focusing on disease-specific targets, labs can deliver clinically actionable results while managing operational costs. Success requires careful consideration of panel content, appropriate technology selection, robust quality control, and systematic troubleshooting.

The integration of automation, advanced data analytics, and sustainable practices will define the next generation of NGS implementations [24]. As the field evolves toward increased standardization, custom panels will continue to bridge the gap between comprehensive genomic analysis and practical clinical utility, enabling broader access to precision medicine approaches across oncology, rare diseases, and infectious diseases.

Leveraging Cloud Computing and Vendor-Agnostic Platforms for Scalability and Flexibility

For clinical labs engaged in research, next-generation sequencing (NGS) presents a dual challenge: generating vast amounts of data and managing the substantial computational cost of its analysis. A strategic combination of cloud computing and vendor-agnostic bioinformatics platforms provides a powerful solution, enabling scalable, flexible, and cost-effective genomic research. This technical support center outlines the core concepts, troubleshooting guides, and FAQs to help your lab implement these technologies successfully.

Core Concepts: Cloud & Vendor-Agnostic Platforms

Cloud Computing Service Models

Cloud computing provides on-demand IT resources over the internet, transforming large capital expenditures into manageable operational costs [39]. The model you choose depends on the level of control and management your team requires.

Table 1: Cloud Computing Service Models for Clinical Research

| Service Model | Management Responsibility | Healthcare & Research Use Cases | Flexibility |

|---|---|---|---|

| IaaS (Infrastructure-as-a-Service) [39] [40] | You manage OS, applications, and data; Provider manages infrastructure. | Hosting custom research databases, large medical image archives, high-performance computing (HPC) for genomic sequencing [39]. | High |

| PaaS (Platform-as-a-Service) [39] [40] | You build and deploy applications; Provider manages OS and infrastructure. | Developing custom patient apps, building and deploying AI/ML tools for data analysis [39]. | Medium |

| SaaS (Software-as-a-Service) [39] [40] | Provider manages the complete application and all underlying infrastructure. | Electronic Health Record (EHR) systems, telemedicine applications, and many billing platforms [39]. | Low |

Vendor-Agnostic Bioinformatics Platforms

Vendor-agnostic platforms are designed to import and analyze raw data from virtually any sequencing instrument or assay kit [41]. This eliminates vendor "lock-in," allowing your lab to choose the most cost-effective or technologically advanced sequencing platforms without overhauling your bioinformatics pipeline. Key features include:

- Unified Interface: A single interface to ingest data from any major NGS system (e.g., Illumina, Thermo Fisher, PacBio) [41].

- Extensible Architecture: A plugin-based architecture allows for the addition of new analysis tools and adaptation to future kits and technologies [41].

- Provenance Tracking: Maintains data provenance and audits usage, which is critical for reproducible research and regulatory compliance [41].

Troubleshooting Guides

Guide 1: Resolving Cloud Pipeline Performance Issues

Problem: NGS data analysis pipelines (e.g., Sentieon, Parabricks) are running slowly or timing out on the cloud, leading to delayed results and increased costs.

Investigation & Resolution:

Step 1: Benchmark Virtual Machine (VM) Configuration

- Action: Compare your current cloud VM setup against published benchmarks for your specific pipeline. The table below provides an example benchmark for rapid whole-genome sequencing on Google Cloud Platform (GCP).

- Solution: Reconfigure your VM to match the optimal specifications.

Table 2: Cloud VM Benchmark for Ultra-Rapid NGS Analysis on GCP [42]

| Analysis Pipeline | Recommended VM Configuration | Cost per Hour | Typical WGS Runtime |

|---|---|---|---|

| Sentieon DNASeq | 64 vCPUs, 57 GB Memory (n1-highcpu-64) [42] | ~$1.79 [42] | ~2.5 hours [42] |

| Clara Parabricks Germline | 48 vCPUs, 58 GB Memory, 1x NVIDIA T4 GPU (g2-standard-48) [42] | ~$1.65 [42] | ~1 hour [42] |
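
Hourly price alone does not show which configuration is cheaper per genome, since runtime differs. The short sketch below multiplies the approximate hourly rates and runtimes from Table 2 to estimate compute cost per whole genome. It covers VM time only; storage, data transfer, and software licensing are outside this estimate, and the function name is hypothetical.

```python
def compute_cost_per_genome(hourly_rate_usd, runtime_hours):
    """Return VM compute cost for one genome (rate x runtime)."""
    return hourly_rate_usd * runtime_hours


# Approximate values taken from Table 2: (cost per hour, WGS runtime in hours).
benchmarks = {
    "Sentieon DNASeq": (1.79, 2.5),
    "Clara Parabricks Germline": (1.65, 1.0),
}

costs = {
    pipeline: compute_cost_per_genome(rate, hours)
    for pipeline, (rate, hours) in benchmarks.items()
}
for pipeline, cost in costs.items():
    print(f"{pipeline}: ~${cost:.2f} per genome (compute only)")
```

Running the same calculation with your own observed runtimes is a quick way to confirm whether a reconfigured VM actually reduced cost, not just wall-clock time.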

Step 2: Check for I/O Bottlenecks

- Symptom: Pipeline stages involving file reading/writing (e.g., alignment, sorting) are slow.

- Solution: Ensure you are using high-performance, network-attached storage (e.g., Google Persistent SSD, AWS io2 Block Express) designed for high-throughput data operations, not standard storage tiers.

Step 3: Verify Software Licensing

- Symptom: Pipeline fails to start or halts unexpectedly.

- Solution: For licensed software like Sentieon, confirm the license file is correctly mounted on the VM and accessible. Cloud-based license servers can sometimes have connectivity issues [42].

Guide 2: Addressing Data Import Errors in Vendor-Agnostic Platforms

Problem: A vendor-agnostic platform (e.g., Parabon Fx) fails to import or recognize raw data files from a new sequencer or kit.

Investigation & Resolution:

- Step 1: Validate File Format and Integrity

- Action: Confirm the raw data files (e.g., FASTQ, BCL) are not corrupted and adhere to standard formats. Use command-line tools like `md5sum` to verify file integrity and `fastqc` for basic FASTQ quality checks.
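
Where a scripted check is preferable to running tools by hand, the same two ideas (a checksum comparison and a basic FASTQ sanity check) can be sketched with the Python standard library. This is a minimal sketch under stated assumptions: it only checks the four-line record structure, it does not replace a full quality report, and the function names are hypothetical.

```python
import gzip
import hashlib


def md5_of_file(path, chunk_size=1 << 20):
    """Return the MD5 hex digest of a file, read in chunks to limit memory."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def count_valid_fastq_records(path, max_records=10000):
    """Check up to max_records four-line FASTQ records and return the count.

    Raises ValueError on the first record whose header, separator, or
    sequence/quality lengths do not follow the FASTQ layout.
    """
    opener = gzip.open if str(path).endswith(".gz") else open
    checked = 0
    with opener(path, "rt") as handle:
        while checked < max_records:
            header = handle.readline()
            if not header:
                break  # end of file
            sequence = handle.readline().rstrip("\n")
            separator = handle.readline()
            quality = handle.readline().rstrip("\n")
            if not header.startswith("@"):
                raise ValueError(f"record {checked + 1}: header lacks '@'")
            if not separator.startswith("+"):
                raise ValueError(f"record {checked + 1}: separator lacks '+'")
            if len(sequence) != len(quality):
                raise ValueError(f"record {checked + 1}: length mismatch")
            checked += 1
    return checked
```

Compare the digest returned by `md5_of_file` with the checksum supplied alongside the data (if one was provided) before attempting the import. A mismatch points to a transfer problem rather than a platform compatibility problem.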

- Step 2: Review Platform Documentation for Specific Requirements

- Action: Vendor-agnostic platforms often require specific adapter sequences or file structure layouts. Consult the platform's documentation for the exact requirements for your sequencing instrument.

- Solution: Pre-process the raw data using tools like `bcl2fastq` or `cutadapt` to ensure it meets the platform's import specifications.

- Step 3: Utilize the Platform's Extensible Architecture