Small Sample Sizes in Medical Machine Learning: A 2025 Guide to Robust Models and Reliable Clinical Translation


Abstract

This article provides a comprehensive guide for researchers and drug development professionals tackling the pervasive challenge of small sample sizes in medical machine learning (ML). It explores the foundational consequences of inadequate data on model performance, fairness, and clinical utility. The content details methodological solutions, including synthetic data generation and resampling techniques, and offers troubleshooting strategies for optimization. Finally, it covers rigorous validation frameworks and comparative analyses of different ML algorithms to ensure models are reliable, transparent, and ready for regulatory scrutiny and clinical application.

Why Size Matters: The Critical Impact of Sample Size on Medical AI Reliability and Safety

Technical Support Center: FAQs on Sample Size in Medical AI

This technical support center provides troubleshooting guides and FAQs to help researchers navigate the critical challenge of sample size determination in medical machine learning (ML) studies.

Frequently Asked Questions

Q1: Why is my machine learning model performing well during training but failing on new data? This is a classic symptom of overfitting, often caused by a sample size that is too small relative to the model's complexity. In small datasets (typically N ≤ 300), models can learn noise and spurious correlations specific to your training set rather than the underlying biological signal. This is especially prevalent with complex models such as neural networks and with large, high-dimensional feature sets [1]. To troubleshoot, compare your cross-validation performance with your holdout test set performance; a large discrepancy indicates overfitting.
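The check can be sketched as follows, assuming scikit-learn and a synthetic stand-in dataset. The data and the helper name `cv_holdout_gap` are illustrative and do not come from the cited study. The sketch compares the mean cross-validated AUC on the training split with the AUC on a held-out split, once for a simple model and once for a flexible one:

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score, train_test_split


def cv_holdout_gap(model, X, y, test_size=0.3, cv=5, seed=0):
    """Return (mean CV AUC on the training split, holdout AUC, CV minus holdout)."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=test_size, stratify=y, random_state=seed
    )
    cv_auc = cross_val_score(model, X_tr, y_tr, cv=cv, scoring="roc_auc").mean()
    model.fit(X_tr, y_tr)  # refit on the whole training split before testing
    test_auc = roc_auc_score(y_te, model.predict_proba(X_te)[:, 1])
    return float(cv_auc), float(test_auc), float(cv_auc - test_auc)


# Hypothetical small dataset: 200 patients, 30 features, 5 of them informative.
X, y = make_classification(n_samples=200, n_features=30, n_informative=5, random_state=42)
for name, model in [
    ("logistic regression", LogisticRegression(max_iter=1000)),
    ("random forest", RandomForestClassifier(n_estimators=100, random_state=0)),
]:
    gaps = [cv_holdout_gap(model, X, y, seed=s)[2] for s in range(3)]
    print(f"{name}: mean CV-minus-holdout AUC gap over 3 splits = {np.mean(gaps):+.3f}")
```

At this sample size a single split is itself noisy, which is why the loop averages over several seeds. A gap that stays consistently positive is the warning sign.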

Q2: How can I estimate an appropriate sample size for a clinical validation study of a predictive model? Unlike sample size calculations for traditional hypothesis tests, sample size for model validation should be chosen to achieve precise and accurate performance estimates (e.g., for AUC or calibration slope). One method is SSAML (Sample Size Analysis for Machine Learning), which involves:

- Specifying your target performance metrics (e.g., AUC, C-index).

- Defining your required precision (e.g., relative width of confidence interval ≤ 0.5) and accuracy (e.g., percent bias ≤ ±5%) [2].

- Using bootstrapping on pilot data to find the minimum sample size that meets your precision, accuracy, and confidence level (e.g., 95%) requirements for all metrics simultaneously [2].

Q3: My dataset is fixed and cannot be enlarged. What strategies can I use to improve robustness? When collecting more data is not feasible, consider these approaches:

- Model Simplification: Use simpler, less flexible models (e.g., Logistic Regression or Naive Bayes) which are less prone to overfitting on small samples [1].

- Feature Selection: Reduce the number of input features to only the most informative ones. Using a small set of powerful features can yield better performance than a large set of weak ones [1].

- Leverage External Knowledge: Incorporate information from existing literature or clinical expertise. Emerging techniques use Large Language Models (LLMs) to derive informed prior distributions in Bayesian models, effectively increasing the statistical power of your analysis without needing more patient data [3].
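The simplest conjugate case shows why an informative prior can act like extra data. In the sketch below, a binary response rate gets a Beta prior, and a Beta(a, b) prior contributes roughly a + b pseudo-patients, so the posterior interval narrows. The prior parameters and pilot counts are hypothetical. How the prior is elicited (from literature, experts, or an LLM as in [3]) is outside this sketch. If the prior is wrong, it biases the estimate instead of just sharpening it.

```python
from scipy.stats import beta


def posterior_summary(successes, n, prior_a, prior_b, level=0.95):
    """Beta-binomial update: return (posterior mean, lower, upper) for a rate."""
    a_post = prior_a + successes
    b_post = prior_b + (n - successes)
    lower, upper = beta.interval(level, a_post, b_post)  # equal-tailed credible interval
    return a_post / (a_post + b_post), float(lower), float(upper)


# Hypothetical pilot: 12 responders among 30 patients.
flat = posterior_summary(12, 30, prior_a=1, prior_b=1)
# Hypothetical informative prior: mean 0.40, worth about 8 + 12 = 20 pseudo-patients.
informed = posterior_summary(12, 30, prior_a=8, prior_b=12)

for label, (mean, lo, hi) in [("flat Beta(1,1)", flat), ("informed Beta(8,12)", informed)]:
    print(f"{label}: mean={mean:.3f}, 95% interval=({lo:.3f}, {hi:.3f}), width={hi - lo:.3f}")
```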

Q4: Is there a minimum sample size "rule of thumb" for medical ML studies? While requirements vary, several studies provide empirical guidance:

- For digital mental health intervention dropout prediction, N = 500–1000 is suggested as a minimum to mitigate overfitting and ensure performance convergence [1].

- In natural language processing tasks, performance often plateaus with training samples of around N = 500 [4].

- Crucially, a dataset's quality and discriminative power are as important as its size. A small dataset with strong, clear signals can be more valuable than a large, indeterminate dataset [5].

Troubleshooting Guides

Problem: High Variance in Model Performance and Effect Sizes

- Symptoms: Model accuracy and estimated effect sizes change dramatically with small changes in the training data (e.g., adding or removing a few dozen samples) [5].

- Underlying Cause: The sample size is insufficient to provide a stable estimate of the model's true performance or the underlying population effect.

- Solution:

- Calculate the confidence intervals for your performance metrics (e.g., AUC). Wide intervals indicate high uncertainty.

- Plot a learning curve: train on systematically increasing sample sizes and plot the resulting performance. This helps visualize whether performance has begun to converge [5] [1].

- The minimal sample size can be set where the relative performance gains from adding more data become negligible and the confidence intervals sufficiently narrow.
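To make the first step concrete, the sketch below computes a percentile bootstrap confidence interval for the AUC of a fixed set of predictions, assuming NumPy and scikit-learn. The helper name `bootstrap_auc_ci` and the simulated scores are illustrative only. Running it at two test-set sizes shows the interval narrowing as N grows:

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def bootstrap_auc_ci(y_true, y_score, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap CI for the AUC of a fixed set of predictions."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score)
    if len(np.unique(y_true)) != 2:
        raise ValueError("y_true must contain both classes")
    rng = np.random.default_rng(seed)
    n = len(y_true)
    aucs = []
    while len(aucs) < n_boot:
        idx = rng.integers(0, n, size=n)  # resample patients with replacement
        if len(np.unique(y_true[idx])) < 2:
            continue  # AUC is undefined when a resample has only one class
        aucs.append(roc_auc_score(y_true[idx], y_score[idx]))
    tail = (1 - level) / 2
    lower, upper = np.quantile(aucs, [tail, 1 - tail])
    return float(roc_auc_score(y_true, y_score)), float(lower), float(upper)


# Hypothetical scores from the same model at two test-set sizes.
rng = np.random.default_rng(1)
for n in (60, 600):
    y = rng.integers(0, 2, size=n)
    score = y + rng.normal(0, 1.0, size=n)  # informative but noisy scores
    auc, lo, hi = bootstrap_auc_ci(y, score, n_boot=1000)
    print(f"n={n}: AUC={auc:.3f}, 95% CI=({lo:.3f}, {hi:.3f}), width={hi - lo:.3f}")
```

For the learning curve itself, you can refit the model on increasing training subsets and record performance at each size. scikit-learn's `sklearn.model_selection.learning_curve` utility automates this loop.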

Problem: Indeterminate Dataset with Poor Performance

- Symptoms: Both model accuracy and effect sizes (e.g., Cohen's d) are low (e.g., accuracy < 80%, effect size < 0.5) and do not improve significantly even when increasing the sample size [5].

- Underlying Cause: The dataset may lack predictive power. The chosen features may not be discriminative enough to separate the classes or predict the outcome effectively.

- Solution:

- Re-evaluate your feature set. Conduct exploratory data analysis to check if there are any meaningful statistical differences between your groups for key variables.

- Consider collecting different types of data or designing new features that are more directly related to the clinical outcome.

- If the data quality is poor (e.g., noisy labels), efforts to clean the data or use noise-robust algorithms may be more beneficial than simply collecting more samples.
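A quick exploratory screen is to compute Cohen's d for each feature between outcome groups and compare it with the effect-size threshold of 0.5 mentioned above [5]. The sketch below assumes NumPy, a binary outcome, and hypothetical feature names. A univariate screen like this is only a first look, because features that are weak on their own can still be useful together.

```python
import numpy as np


def cohens_d(x_pos, x_neg):
    """Cohen's d (positive-class mean minus negative-class mean) with pooled SD."""
    x_pos = np.asarray(x_pos, dtype=float)
    x_neg = np.asarray(x_neg, dtype=float)
    n1, n0 = len(x_pos), len(x_neg)
    pooled_var = ((n1 - 1) * x_pos.var(ddof=1) + (n0 - 1) * x_neg.var(ddof=1)) / (n1 + n0 - 2)
    return float((x_pos.mean() - x_neg.mean()) / np.sqrt(pooled_var))


def rank_features_by_effect(X, y, names, threshold=0.5):
    """Return [(name, d, meets_threshold)] sorted by |d|, largest first."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    rows = []
    for j, name in enumerate(names):
        d = cohens_d(X[y == 1, j], X[y == 0, j])
        rows.append((name, d, abs(d) >= threshold))
    return sorted(rows, key=lambda r: -abs(r[1]))


# Hypothetical cohort: one marker shifted by about 0.8 SD between groups, one pure noise.
rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=200)
X = np.column_stack([rng.normal(0.8 * y, 1.0), rng.normal(0.0, 1.0, size=200)])
for name, d, ok in rank_features_by_effect(X, y, ["marker_a", "marker_b"]):
    print(f"{name}: d={d:+.2f} {'(>= 0.5)' if ok else '(weak)'}")
```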

Detailed Methodology: The SSAML Framework

For clinical validation studies, the SSAML framework provides a robust methodology for sample size estimation [2].

- Define Performance Metrics: Select primary metrics for model discrimination (e.g., AUC for classification, C-index for survival analysis) and calibration (e.g., calibration slope, calibration-in-the-large).

- Set Precision, Accuracy, and Coverage Goals: Define the required precision (relative width of the confidence interval, RWD ≤ 0.5), accuracy (percent bias, BIAS ≤ ±5%), and coverage (coverage probability of the confidence interval, COVP ≥ 95%) for these metrics.

- Perform Double Bootstrapping:

- From your available dataset, draw a bootstrap sample of a specific size (N); this mimics one validation study of that size.

- On this sample, compute your performance metrics and their confidence intervals by bootstrapping the sample itself (the second, inner layer of bootstrapping).

- Repeat the outer draw M times to estimate the mean RWD, BIAS, and COVP for each performance metric at sample size N.

- Iterate and Determine Minimum N: Repeat the double-bootstrapping step for increasing sample sizes (N). The minimum required sample size is the smallest N for which all performance metrics simultaneously meet the pre-specified RWD, BIAS, and COVP criteria.
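The following is a simplified sketch of the double-bootstrap idea for a single metric (AUC). It assumes that pilot labels and scores from an already trained model are available as NumPy arrays. This is not the published SSAML code. The function names, the use of the full-pilot AUC as the reference "true" value, and the definition RWD = CI width / point estimate are all illustrative assumptions. To extend it to calibration metrics, compute those in the same loops and require every metric to pass.

```python
import numpy as np
from scipy.stats import rankdata


def auc(y, s):
    """Mann-Whitney AUC; ties get half credit through average ranks."""
    n1 = int(y.sum())
    n0 = len(y) - n1
    if n1 == 0 or n0 == 0:
        return np.nan
    ranks = rankdata(s)
    return (ranks[y == 1].sum() - n1 * (n1 + 1) / 2) / (n1 * n0)


def double_bootstrap_auc(y, s, n, m_outer=200, b_inner=200, level=0.95, seed=0):
    """Estimate mean RWD, percent BIAS and COVP of the AUC for a study of size n."""
    y, s = np.asarray(y).astype(int), np.asarray(s, dtype=float)
    rng = np.random.default_rng(seed)
    ref = auc(y, s)  # assumption: the full pilot AUC stands in for the true value
    tail = (1 - level) / 2
    est, rwd, covered = [], [], []
    for _ in range(m_outer):
        idx = rng.integers(0, len(y), size=n)  # outer layer: one simulated study
        ys, ss = y[idx], s[idx]
        point = auc(ys, ss)
        if np.isnan(point):
            continue  # simulated study contained a single class
        inner = []
        for _ in range(b_inner):  # inner layer: bootstrap CI within that study
            j = rng.integers(0, n, size=n)
            inner.append(auc(ys[j], ss[j]))
        lo, hi = np.nanquantile(inner, [tail, 1 - tail])
        est.append(point)
        rwd.append((hi - lo) / point)
        covered.append(lo <= ref <= hi)
    return {
        "RWD": float(np.mean(rwd)),
        "BIAS": float(100 * (np.mean(est) - ref) / ref),
        "COVP": float(np.mean(covered)),
    }


def minimum_n(y, s, candidates, rwd_max=0.5, bias_max=5.0, covp_min=0.95, **kwargs):
    """Smallest candidate n meeting all three criteria, or (None, None)."""
    for n in sorted(candidates):
        r = double_bootstrap_auc(y, s, n, **kwargs)
        if r["RWD"] <= rwd_max and abs(r["BIAS"]) <= bias_max and r["COVP"] >= covp_min:
            return n, r
    return None, None


# Hypothetical pilot data: 300 patients with scores from an already-trained model.
rng = np.random.default_rng(0)
y_pilot = rng.integers(0, 2, size=300)
s_pilot = y_pilot + rng.normal(0, 1.2, size=300)
for n in (50, 150, 400):
    r = double_bootstrap_auc(y_pilot, s_pilot, n, m_outer=100, b_inner=100)
    print(f"N={n}: RWD={r['RWD']:.3f}, BIAS={r['BIAS']:+.2f}%, COVP={r['COVP']:.2f}")
n_min, _ = minimum_n(y_pilot, s_pilot, [50, 200, 400], m_outer=100, b_inner=100)
print("smallest N meeting all criteria:", n_min)
```

`minimum_n` can return `None`: percentile bootstrap intervals often cover slightly below their nominal level, so a strict COVP ≥ 0.95 criterion may not be met at any candidate size.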

Quantitative Data on Sample Size and Performance

Table 1: Empirical Recommendations for Minimum Sample Sizes from Research

| Research Context | Proposed Minimum Sample Size | Key Findings & Rationale |
|---|---|---|
| Digital Mental Health (Dropout Prediction) [1] | N = 500–1000 | Mitigates overfitting; performance converges between N = 750–1500. |
| Natural Language Processing [4] | N ≈ 500 | Validity and reliability plateau after ~500 observations for many target variables. |
| General ML Classification [5] | N/A (criteria-based) | Suggests sample size is suitable when effect size ≥ 0.5 and ML accuracy ≥ 80%. |

Table 2: Impact of Sample Size and Model Choice on Overfitting

| Factor | Impact on Overfitting in Small Samples (N ≤ 300) | Recommendation |
|---|---|---|
| Model Complexity | Complex models (Random Forest, Neural Networks) overfit more severely [1]. | Use simpler models (Logistic Regression, Naive Bayes) when data is limited [1]. |
| Number of Features | Models with many features (high dimensionality) are more prone to overfitting [1]. | Use feature selection to reduce dimensionality and improve generalizability [1]. |
| Data Quality | Uninformative feature sets show high overfitting, and performance does not improve with more data [5]. | Focus on data with good discriminative power between classes. |

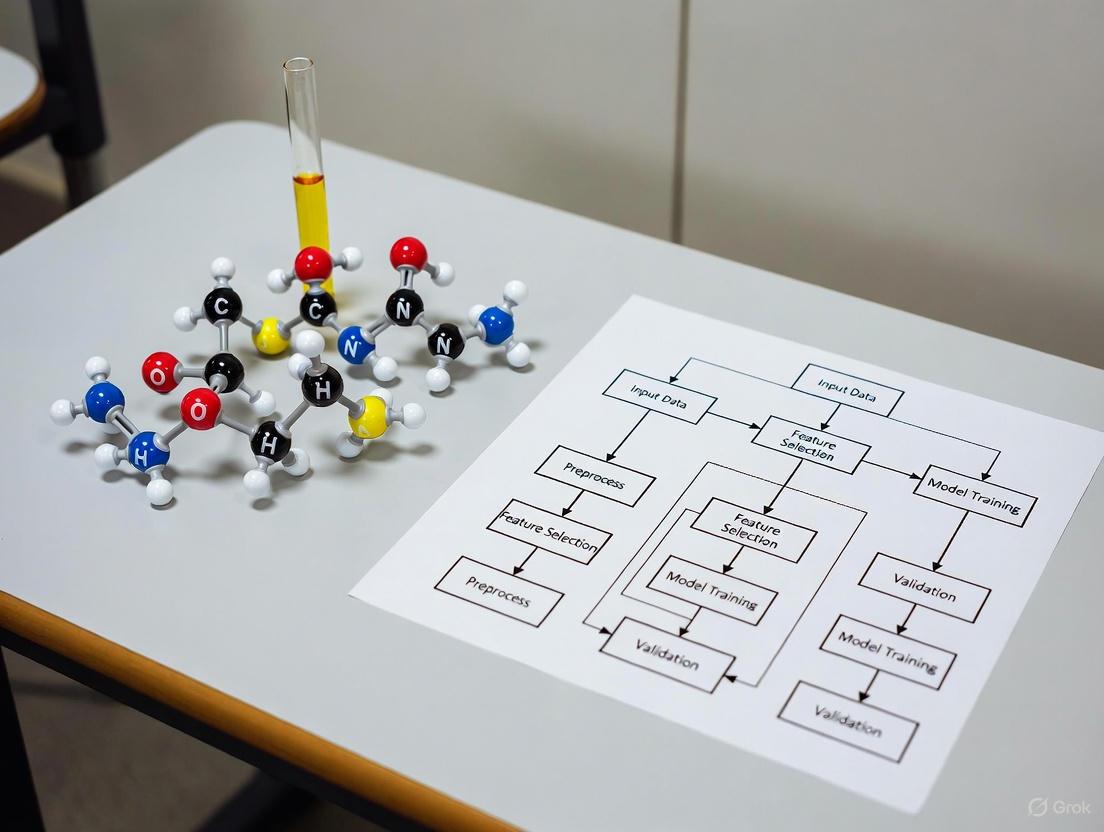

Methodological Workflow Visualizations

SSAML Sample Size Calculation

LLM-Informed Bayesian Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Sample Size and Validation

| Tool / Solution | Function | Application Context |
|---|---|---|
| SSAML | An open-source method for sample size calculation for ML clinical validation studies. It estimates the sample needed to achieve precise and accurate performance metrics [2]. | Clinical validation of any ML model; agnostic to data type and model. |
| LLM-Derived Priors | Using Large Language Models (e.g., Llama 3.3, MedGemma) to systematically elicit informative prior distributions for Bayesian models [3]. | Incorporating clinical expertise into hierarchical models; can increase effective sample size in clinical trials. |
| Learning Curves | A diagnostic plot showing model performance (e.g., accuracy) as a function of training set size. | Identifying if a model would benefit from more data and estimating the point of diminishing returns [5] [1]. |
| Double Bootstrapping | A resampling technique used to estimate the sampling distribution of a statistic and evaluate the stability of model performance. | Used within SSAML to reliably estimate precision (RWD) and accuracy (BIAS) of performance metrics [2]. |
| Hierarchical Bayesian Model | A statistical model that pools information across groups (e.g., clinical sites) while accounting for group-specific variation. | Modeling multi-center clinical trial data, especially with limited patients per site [3]. |

This guide addresses two critical performance issues—degraded discrimination and poor calibration—that researchers often encounter when building machine learning (ML) models with small sample sizes in medical research. These issues can mislead clinical decision-making, leading to overtreatment, undertreatment, or unfair outcomes. The following sections provide diagnostic and remediation strategies to help you develop more reliable and equitable models.

Frequently Asked Questions

What are the practical consequences of poor calibration in a clinical model?

Poor calibration means a model's predicted probabilities do not match the observed event rates. This inaccuracy can have significant consequences in clinical settings [6]:

- Misleading Clinical Decisions: A poorly calibrated model can give false expectations to patients and healthcare professionals. For example, a model that systematically overestimates the chance of success for in vitro fertilization (IVF) can give couples false hope and expose them to unnecessary treatments and potential side effects [6].

- Resource Misallocation: Overestimation of risk leads to overtreatment, while underestimation leads to undertreatment. In one example, a cardiovascular risk model that overestimated risk would identify nearly twice as many men for high-risk intervention compared to a well-calibrated model, leading to unnecessary costs and patient anxiety [6].

Even a model with high discrimination (AUC) can be poorly calibrated, and a well-calibrated but less "accurate" model can be more clinically useful [6] [7].

My model performs well on training data but poorly on the test set. Is this due to my small sample size?

Quite possibly. Small datasets are a primary cause of this kind of overfitting. Research on digital mental health interventions has empirically shown that models trained on small datasets (N ≤ 300) are highly prone to overfitting, learning noise in the training data instead of generalizable patterns [1].

- Performance Gaps: In small datasets (N ≤ 300), the difference between cross-validation (CV) results and holdout test results can be as large as 0.12 in AUC, with an average overestimation of 0.05 AUC [1].

- Model Choice Matters: Complex, flexible models like Random Forests and Neural Networks are especially prone to overfitting in small-sample scenarios. One study found that at a sample size of N=100, tree-based models overestimated their performance by at least 0.10 AUC in over 40% of cases compared to the test set [1].

- Minimum Sample Sizes: While N = 500 can substantially reduce overfitting, performance metrics may not stabilize until N = 750–1500. It is therefore recommended to aim for minimum dataset sizes of N = 500–1000 for development [1].

Does poor calibration mean the model's rankings are also wrong?

Not necessarily, but it is possible. A model can be poorly calibrated yet still correctly rank patients from highest to lowest risk. This means the model is useful for identifying which patients are at relatively higher risk but should not be used to communicate exact probabilities [8].

However, in cases of severe miscalibration, the ranking can also become invalid. For instance, a calibration curve that is not monotonically increasing has sections where higher predicted probabilities actually correspond to lower observed event rates. This means a patient with a higher predicted score might be at lower actual risk than a patient with a lower score, breaking the ranking [8]. You should always check the calibration curve for such decreasing sections if you plan to use the model for ranking [8].

How can I prevent my model from learning discriminatory biases from the data?

ML models can learn and amplify societal biases present in historical data. To prevent this, you must use methods that go beyond simply removing the protected attribute (e.g., race) [9].

- Proxy Discrimination: Simply removing a protected feature like race is often insufficient because the model may use proxy features highly correlated with race (e.g., "public or private institution") to make decisions, thereby inducing indirect discrimination [9].

- Bias Mitigation Techniques: Several methodological approaches exist:

- Learning methods preventing disparate impact: These remove the impact of all features related to a protected attribute, which can ensure fairness but may reduce model accuracy if some of those features are relevant [9].

- Fair and eXplainable AI (FaX AI): This is a post-processing technique that prevents direct discrimination and the induction of indirect discrimination through proxies, while preserving the use of relevant features associated with the protected group for business necessity reasons [9].
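One common audit is to test whether the remaining features can predict the protected attribute you removed. This is sketched here as an assumption and is not the FaX AI method from [9]. A high cross-validated AUC means the attribute is recoverable, so dropping the column alone does not prevent proxy discrimination. The feature names and data below are hypothetical.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def protected_attribute_auc(X, protected, cv=5):
    """Cross-validated AUC for recovering a binary protected attribute from X."""
    clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    return float(cross_val_score(clf, X, protected, cv=cv, scoring="roc_auc").mean())


def rank_candidate_proxies(X, protected, names):
    """Rank features by absolute correlation with the binary protected attribute."""
    scores = [abs(np.corrcoef(X[:, j], protected)[0, 1]) for j in range(X.shape[1])]
    return sorted(zip(names, scores), key=lambda t: -t[1])


# Hypothetical data: race is NOT a column, but 'institution_type' tracks it closely.
rng = np.random.default_rng(0)
race = rng.integers(0, 2, size=500)
X = np.column_stack([
    race + rng.normal(0, 0.5, size=500),  # institution_type (proxy)
    rng.normal(60, 10, size=500),         # age (unrelated in this simulation)
    rng.normal(1.0, 0.3, size=500),       # lab_value (unrelated in this simulation)
])
names = ["institution_type", "age", "lab_value"]
print("AUC for recovering race from the features:", round(protected_attribute_auc(X, race), 3))
print(rank_candidate_proxies(X, race, names))
```

A high score does not prove the clinical model actually uses the proxy. It shows that the model could, and it tells you which features to examine more closely with explainability tools.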

Troubleshooting Guides

How to Diagnose Poor Calibration

Assessing calibration is a multi-step process. Calibration can be assessed at different levels of stringency, from the mean to a flexible calibration curve [6].

Levels of Calibration Assessment [6]:

- Mean Calibration: Compare the average predicted risk across all patients with the overall event rate in your dataset. A large difference indicates general overestimation or underestimation.

- Weak Calibration: Fit a logistic regression model to the true outcomes using the model's predicted log-odds as the sole predictor. The ideal calibration intercept is 0, and the ideal calibration slope is 1.

- A slope < 1 suggests predictions are too extreme (high risks are overestimated, low risks are underestimated).

- A slope > 1 suggests predictions are too modest.

- Moderate Calibration (Calibration Curve): Plot the predicted probabilities (x-axis) against the observed event frequencies (y-axis) for groups of patients (typically using bins or a smoothing function like loess).

Avoid the Hosmer-Lemeshow test. It is not recommended due to its reliance on arbitrary risk grouping, low statistical power, and an uninformative P-value [6].
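The three levels can be sketched as follows, assuming NumPy and scikit-learn's `calibration_curve`. The calibration intercept is estimated with the slope fixed at 1 (the log-odds enter as an offset), which is a common convention. The slope comes from a model with the log-odds as the sole predictor. The helper names and the simulated data are illustrative only. The `decreasing_bins` output flags the non-monotone sections discussed earlier, though with small bins some dips will just be noise.

```python
import numpy as np
from sklearn.calibration import calibration_curve


def _fit_logistic(X, y, offset, n_iter=100, tol=1e-10):
    """Unpenalized logistic regression by Newton-Raphson (fine for 1-2 parameters)."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        mu = 1.0 / (1.0 + np.exp(-(X @ beta + offset)))
        grad = X.T @ (y - mu)
        hess = X.T @ (X * (mu * (1.0 - mu))[:, None])
        step = np.linalg.solve(hess, grad)
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    return beta


def assess_calibration(y, p, n_bins=10, eps=1e-6):
    """Mean, weak (intercept/slope) and moderate (curve) calibration for binary outcomes."""
    y = np.asarray(y).astype(int)
    p = np.clip(np.asarray(p, dtype=float), eps, 1 - eps)
    lp = np.log(p / (1 - p))  # predicted log-odds
    yf = y.astype(float)
    ones = np.ones((len(y), 1))
    # Calibration intercept: slope fixed at 1 by entering lp as an offset.
    intercept = _fit_logistic(ones, yf, offset=lp)[0]
    # Calibration slope: lp as the sole predictor (plus an intercept).
    slope = _fit_logistic(np.column_stack([ones, lp]), yf, offset=np.zeros(len(y)))[1]
    # Moderate calibration: observed event rate per quantile bin of predicted risk.
    observed, predicted = calibration_curve(y, p, n_bins=n_bins, strategy="quantile")
    return {
        "observed_rate": float(yf.mean()),
        "mean_predicted": float(p.mean()),
        "intercept": float(intercept),
        "slope": float(slope),
        "curve": (predicted, observed),
        # Bins where observed risk falls as predicted risk rises (a ranking concern).
        "decreasing_bins": np.flatnonzero(np.diff(observed) < 0),
    }


# Hypothetical validation set where the true risks are known.
rng = np.random.default_rng(0)
true_lp = rng.normal(0.0, 1.5, size=20000)
y = rng.binomial(1, 1 / (1 + np.exp(-true_lp)))
well = assess_calibration(y, 1 / (1 + np.exp(-true_lp)))
extreme = assess_calibration(y, 1 / (1 + np.exp(-2 * true_lp)))  # too-extreme predictions
print(f"well calibrated: intercept={well['intercept']:.2f}, slope={well['slope']:.2f}")
print(f"too extreme:     intercept={extreme['intercept']:.2f}, slope={extreme['slope']:.2f}")
```

When the predicted log-odds are twice the true ones, the slope lands near 0.5. That is the "predictions too extreme" pattern described above.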

How to Address Overfitting in Small Samples

When working with limited data, a strategic approach to model development is crucial to prevent overfitting. The steps below outline a systematic workflow.

Detailed Methodologies:

- Simplify the Modeling Approach [6] [10]:

- Algorithm Choice: Start with simpler, more interpretable models like Logistic Regression or Naive Bayes, which are less prone to overfitting. Studies show these produce more stable results on small datasets [1].

- Feature Reduction: Limit the number of candidate predictors. Use domain knowledge for feature selection rather than purely statistical methods. "Small dataset" problems are often linked to high-dimensional feature spaces [11].

- Prevent Overfitting During Development [6]:

- Penalized Regression: Use techniques like Lasso or Ridge regression, which constrain model complexity by penalizing large coefficients.

- Sample Size Considerations: Be aware that models developed on small datasets (N ≤ 300) tend to greatly overestimate their predictive power. If possible, aim for sample sizes of N = 500–1000 to mitigate overfitting and allow performance to converge [1].

- Implement a Rigorous Validation Framework [1]:

- Always use a hold-out test set or careful cross-validation to evaluate performance.

- Monitor the gap between training and test performance. A large gap is a clear indicator of overfitting.

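- Update and Calibrate [6]: Check calibration on held-out data and, if the predicted probabilities are off, recalibrate them with a post-processing method such as Platt scaling or isotonic regression [7].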
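The penalized-regression step in the list above can be sketched with scikit-learn's `LogisticRegressionCV`, assuming a binary outcome. The dataset is synthetic, and the settings (20 candidate penalty strengths, 5 inner folds, AUC as the selection score) are illustrative choices, not recommendations from [6]. An L1 (lasso) penalty can set coefficients exactly to zero, which performs feature selection as a side effect. An L2 (ridge) penalty shrinks every coefficient but keeps all of them.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical small, wide dataset: 150 patients, 40 candidate predictors.
X, y = make_classification(n_samples=150, n_features=40, n_informative=5,
                           n_redundant=0, random_state=1)


def penalized_logistic(penalty):
    # Standardize first so the penalty treats all predictors on the same scale;
    # the penalty strength C is chosen by internal 5-fold CV on AUC.
    solver = "liblinear" if penalty == "l1" else "lbfgs"
    return make_pipeline(
        StandardScaler(),
        LogisticRegressionCV(Cs=20, cv=5, penalty=penalty, solver=solver,
                             scoring="roc_auc", max_iter=5000),
    )


lasso = penalized_logistic("l1").fit(X, y)
ridge = penalized_logistic("l2").fit(X, y)
coef_l1 = lasso[-1].coef_.ravel()
coef_l2 = ridge[-1].coef_.ravel()
print(f"Lasso keeps {np.count_nonzero(coef_l1)} of {X.shape[1]} predictors "
      f"(C={lasso[-1].C_[0]:.3g})")
print(f"Ridge keeps {np.count_nonzero(coef_l2)} of {X.shape[1]} predictors, all shrunk")
```

Because C is tuned on the same data, the whole pipeline (scaling, tuning, and fitting) still needs evaluation on a held-out set or under nested cross-validation.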

Key Research Reagent Solutions

The following table lists essential methodological "reagents" for developing robust models with small medical samples.

| Research Reagent | Function in Small-Sample Context |
|---|---|
| Penalized Regression (Lasso/Ridge) | Prevents overfitting by adding a penalty term to the model's loss function, shrinking coefficient estimates and simplifying the model [6]. |
| Platt Scaling / Isotonic Regression | Post-processing calibration methods that adjust a model's output probabilities to better match observed event rates [7]. |
| Data Augmentation Techniques | Artificially increases the effective size and diversity of the training dataset (e.g., SMOTE for tabular data); identified as a key theme in small data research [11]. |
| Explainability Tools (e.g., SHAP) | Helps identify if a model is relying on proxy features for a protected attribute, thereby aiding in bias detection and model debugging [9]. |
| Bias Mitigation Algorithms (e.g., FaX AI) | Post-processing techniques designed to remove the influence of protected attributes without inducing indirect discrimination through proxies, ensuring fairer outcomes [9]. |
| Simple Baselines (e.g., Linear Model) | Serves as a sanity check on whether a complex model learns anything useful beyond a simple, interpretable approach [10]. |
| Learning Curves | A diagnostic tool that plots model performance against dataset size, helping to determine if collecting more data will improve results [1]. |
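The Platt scaling and isotonic regression entries can be tried with scikit-learn's `CalibratedClassifierCV`, as in this sketch on synthetic data. The random forest and the split sizes are arbitrary, illustrative choices. For brevity, the sketch summarizes each model with the Brier score. In practice, also inspect the calibration intercept, slope, and curve on held-out data.

```python
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import brier_score_loss
from sklearn.model_selection import train_test_split

# Hypothetical dataset, split so calibration is judged on unseen patients.
X, y = make_classification(n_samples=600, n_features=20, n_informative=6, random_state=3)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.5, stratify=y, random_state=0)


def forest():
    return RandomForestClassifier(n_estimators=100, random_state=0)


models = {
    "uncalibrated": forest(),
    # Platt scaling: a logistic (sigmoid) map fitted on internal CV predictions.
    "platt": CalibratedClassifierCV(forest(), method="sigmoid", cv=5),
    # Isotonic regression: a monotone step function; more flexible, needs more data.
    "isotonic": CalibratedClassifierCV(forest(), method="isotonic", cv=5),
}
brier = {}
for name, model in models.items():
    p = model.fit(X_tr, y_tr).predict_proba(X_te)[:, 1]
    brier[name] = brier_score_loss(y_te, p)
    print(f"{name}: Brier score on held-out data = {brier[name]:.4f}")
```

With only a few hundred patients, isotonic regression can itself overfit. The sigmoid (Platt) method is therefore usually the safer first choice for small samples.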

Experimental Protocol: Evaluating Model Robustness to Small Sample Sizes

This protocol allows you to empirically determine the minimal dataset size required for your specific medical ML task and evaluate the stability of different algorithms.

Objective: To investigate the interaction effects of dataset size, model type, and feature set on performance and overfitting.

Methodology (Based on [1]):

Data Preparation:

- Start with your full dataset (e.g., N = 3654 patients as in the referenced study).

- Define multiple feature groups with varying predictive power and dimensionality (e.g., a simple 7-feature set vs. an extended 129-feature set).

- Hold out a fixed, sufficiently large test set (e.g., 20% of the data) that will be used for all evaluations.

Experimental Loop:

- For a range of training subset sizes (e.g., from N=100 to N=2923 in steps), repeatedly perform the following:

- Randomly sample a subset of the specified size from the training portion.

- Train multiple model types (e.g., Naive Bayes, Logistic Regression, Random Forest, Neural Network) on this subset.

- For each model, perform 10-fold Cross-Validation on the training subset and evaluate on the fixed hold-out test set.

- Record key performance metrics (e.g., AUC, calibration slope) for both CV and the test set.

Key Analysis and Outputs:

- Learning Curves: Plot training and test performance (AUC) against the dataset size for each model and feature group.

- Overfitting Assessment: Calculate the average gap between CV and test performance at each sample size.

- Convergence Point: Identify the sample size at which performance metrics stabilize (i.e., the point of diminishing returns).

- Variance Analysis: Assess the stability of results across different random samples at small sizes (e.g., N=100).
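Below is a scaled-down sketch of the experimental loop, assuming scikit-learn. To keep run time short, it uses a synthetic stand-in for the cohort, two model types, 2 repeats, and 5 folds. The protocol in [1] used real data, more model types, and 10-fold CV. The function names are hypothetical. A calibration slope could be recorded in the same inner loop.

```python
import numpy as np
from sklearn.base import clone
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split


def subsample_experiment(X, y, models, sizes, n_repeats=5, n_folds=10, seed=0):
    """Rows of (model, n, repeat, CV AUC, test AUC) on a fixed 20% hold-out set."""
    rng = np.random.default_rng(seed)
    X_pool, X_test, y_pool, y_test = train_test_split(
        X, y, test_size=0.2, stratify=y, random_state=seed)
    rows = []
    for n in sizes:
        for rep in range(n_repeats):
            idx = rng.choice(len(y_pool), size=n, replace=False)  # random training subset
            X_n, y_n = X_pool[idx], y_pool[idx]
            folds = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=rep)
            for name, model in models.items():
                cv_auc = cross_val_score(clone(model), X_n, y_n, cv=folds,
                                         scoring="roc_auc").mean()
                fitted = clone(model).fit(X_n, y_n)
                test_auc = roc_auc_score(y_test, fitted.predict_proba(X_test)[:, 1])
                rows.append((name, n, rep, float(cv_auc), float(test_auc)))
    return rows


def summarize(rows):
    """Per (model, n): mean test AUC, its SD across repeats, and mean CV-minus-test gap."""
    grouped = {}
    for name, n, _, cv_auc, test_auc in rows:
        grouped.setdefault((name, n), []).append((cv_auc, test_auc))
    summary = {}
    for key, pairs in grouped.items():
        cv, test = np.array(pairs).T
        summary[key] = {"test_auc": test.mean(), "test_sd": test.std(),
                        "gap": (cv - test).mean()}
    return summary


# Hypothetical stand-in for the full cohort.
X, y = make_classification(n_samples=3000, n_features=30, n_informative=6, random_state=0)
models = {"LR": LogisticRegression(max_iter=1000),
          "RF": RandomForestClassifier(n_estimators=50, random_state=0)}
rows = subsample_experiment(X, y, models, sizes=[100, 300, 1000], n_repeats=2, n_folds=5)
for (name, n), s in sorted(summarize(rows).items()):
    print(f"{name} n={n:>4}: test AUC={s['test_auc']:.3f} ± {s['test_sd']:.3f}, "
          f"CV-test gap={s['gap']:+.3f}")
```

Plotting `test_auc` against `n` for each model gives the learning curves. The `gap` and `test_sd` columns give the overfitting and variance analyses.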

Expected Outcomes (Based on [1]): You will likely observe that:

- Sophisticated models (RF, NN) overfit significantly on small datasets (N ≤ 300) but achieve the best performance on larger datasets.

- Simpler models (NB, LR) produce more stable results on small datasets.

- Performance and calibration may not converge until N = 750–1500, providing an empirical basis for recommending minimal sample sizes.

Troubleshooting Guides

Guide 1: Diagnosing Bias in Small Sample Medical Datasets

Q: My medical imaging AI model performs well overall but shows significant performance drops for racial minority subgroups. What steps should I take to diagnose the issue?

A: This pattern often indicates sample-size-induced bias. Follow this diagnostic protocol:

Step 1: Quantify Representation Imbalance. Create a table showing sample sizes and prevalence rates for each demographic subgroup in your training data. Significant underrepresentation (e.g., <5–10% of total samples) often leads to poor model generalization for those groups [12].

Step 2: Analyze Performance Disparities. Calculate performance metrics (AUROC, F1-score, FPR, FNR) stratified by demographic attributes. Research shows models can exhibit up to 30% higher error rates for underrepresented age groups, even when overall performance appears strong [13].

Step 3: Test for Shortcut Learning. Use feature attribution methods to determine whether your model relies on demographic shortcuts instead of clinically relevant features. Studies show that disease classification models can encode demographic information in their latent representations, leading to biased predictions when these shortcuts do not hold in new environments [13].

Step 4: Evaluate Metric Stability. Common classification metrics become unstable with small sample sizes, and this sample-size-induced bias can make fairness assessments unreliable when subgroup sizes are small [14].
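Steps 1, 2, and 4 can share one per-subgroup table. The sketch below assumes binary labels, model scores, a decision threshold of 0.5, and a single demographic column, all hypothetical. It reports n and share next to every metric so that Step 4 stays in view: an AUROC computed from a handful of patients is not a stable estimate.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def subgroup_report(y_true, y_score, groups, threshold=0.5):
    """Per-subgroup size, share, prevalence, AUROC, FPR and FNR."""
    y_true, y_score, groups = map(np.asarray, (y_true, y_score, groups))
    y_pred = (y_score >= threshold).astype(int)
    report = {}
    for g in np.unique(groups):
        m = groups == g
        yt, ys, yp = y_true[m], y_score[m], y_pred[m]
        pos, neg = yt == 1, yt == 0
        report[g] = {
            "n": int(m.sum()),
            "share": float(m.mean()),
            "prevalence": float(pos.mean()),
            # AUROC needs both classes; tiny subgroups often lack one.
            "auroc": float(roc_auc_score(yt, ys)) if pos.any() and neg.any() else float("nan"),
            "fpr": float(yp[neg].mean()) if neg.any() else float("nan"),
            "fnr": float(1 - yp[pos].mean()) if pos.any() else float("nan"),
        }
    return report


# Hypothetical labels, scores and subgroup labels for six patients.
report = subgroup_report(
    y_true=[0, 0, 1, 1, 0, 1],
    y_score=[0.1, 0.6, 0.8, 0.4, 0.2, 0.9],
    groups=["a", "a", "a", "a", "b", "b"],
)
for g, row in report.items():
    print(g, row)
```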

Guide 2: Addressing Bias Amplification in Predictive Policing Models

Q: Our predictive policing algorithm, trained on historical crime data, is disproportionately flagging neighborhoods with high non-white populations. How can we troubleshoot this bias amplification?

A: This pattern is consistent with a classic feedback loop, in which biased historical data generates biased predictions:

Step 1: Identify Proxy Variables. Audit your features for variables serving as proxies for protected attributes. For example, postal codes often correlate strongly with race and socioeconomic status [15].

Step 2: Analyze Data Generation Process. Determine whether your training data reflects ground truth or reporting biases. One study found predictive policing algorithms predicted 20% more high-crime locations in districts with high report volumes, reflecting social bias in who gets reported rather than actual crime patterns [15].

Step 3: Implement Bias Audits. Conduct regular bias audits using multiple fairness metrics. Be cautious with small subgroup sizes, as metrics like the four-fifths rule can produce false positives when sample sizes are insufficient [16].

Step 4: Break Feedback Loops. Implement human-in-the-loop systems where algorithm recommendations are reviewed before deployment, preventing biased outputs from becoming reinforced in future training data [15].
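For Step 3, the sketch below computes four-fifths-rule impact ratios. Groups below a minimum share of the sample are skipped instead of scored, using the 2% threshold cited in [16]. The helper name and data are hypothetical. The rule is defined for favorable outcomes. For an adverse outcome such as being flagged, pass the complement (1 = not flagged).

```python
import numpy as np


def impact_ratios(selected, groups, min_share=0.02, threshold=0.8):
    """Selection-rate ratios relative to the highest-rate group (four-fifths rule).

    Groups below `min_share` of the sample are reported as skipped rather than
    scored, because their rates are too unstable to compare.
    """
    selected = np.asarray(selected, dtype=float)
    groups = np.asarray(groups)
    rates, skipped = {}, []
    for g in np.unique(groups):
        mask = groups == g
        if mask.mean() < min_share:
            skipped.append(g)
            continue
        rates[g] = selected[mask].mean()
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any favorable outcomes; ratios are undefined")
    ratios = {g: r / best for g, r in rates.items()}
    flagged = sorted(g for g, r in ratios.items() if r < threshold)
    return ratios, flagged, skipped


# Hypothetical favorable outcomes (1 = favorable) for three groups of 60, 39 and 1.
groups = np.array(["A"] * 60 + ["B"] * 39 + ["C"] * 1)
selected = np.array([1] * 30 + [0] * 30 + [1] * 13 + [0] * 26 + [1])
ratios, flagged, skipped = impact_ratios(selected, groups)
print("ratios:", ratios, "below 0.8:", flagged, "too small to score:", skipped)
```

For group C, a single observation yields a rate of either 0 or 1. Skipping it, or merging it with a related category, avoids exactly the kind of false positive described in Step 3.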

Guide 3: Mitigating Small Sample Bias in Clinical Risk Prediction

Q: Our clinical risk prediction model shows significantly lower accuracy for Black patients despite appearing fair during development. How can we resolve this?

A: This problem often stems from underrepresented groups in training data:

Step 1: Expand Data Representation. Prioritize data collection for underrepresented groups, and use any lead time before an audit or deployment to increase subgroup sample sizes. In the hiring context, for example, the delayed enforcement of NYC's bias audit law gave organizations time to collect additional data for more robust analysis [16].

Step 2: Address Label Bias. Scrutinize your outcome variables. A landmark study found that a commercial risk prediction tool used healthcare costs as a proxy for health needs and therefore falsely scored Black patients as healthier, because less money had been spent on their care, despite their higher severity indexes [17] [12].

Step 3: Apply Bias Mitigation Techniques. Implement algorithms designed to remove spurious correlations, such as adversarial removal of demographic information from model representations (e.g., DANN, CDANN) or distributionally robust optimization that targets worst-group performance (e.g., GroupDRO) [13].

Step 4: Validate Across Distributions. Test your model on external datasets from different clinical environments. Studies show models with less demographic encoding often perform more fairly in new test settings, becoming "globally optimal" [13].
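The GroupDRO idea can be made concrete with a minimal full-batch sketch on synthetic data. It assumes plain logistic regression and group labels that are known at training time. It follows the general GroupDRO scheme: raise the weight of whichever group currently has the highest loss, then take a gradient step on the weighted loss. It is a toy, not the implementation evaluated in [13], and the learning rates and step count are arbitrary.

```python
import numpy as np


def _sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def _group_losses(X1, y, w, masks):
    """Mean log loss within each group."""
    p = np.clip(_sigmoid(X1 @ w), 1e-12, 1 - 1e-12)
    loss = -(y * np.log(p) + (1 - y) * np.log(1 - p))
    return np.array([loss[m].mean() for m in masks])


def train_logreg(X, y, groups, dro=False, lr=0.5, eta_q=0.01, n_steps=3000):
    """Full-batch logistic regression trained by ERM or a GroupDRO-style update.

    Returns (weights, final per-group losses, final group weights q).
    """
    X1 = np.column_stack([np.ones(len(y)), X])    # intercept column
    w = np.zeros(X1.shape[1])
    masks = [groups == g for g in np.unique(groups)]
    share = np.array([m.mean() for m in masks])   # ERM weights = group shares
    q = np.full(len(masks), 1.0 / len(masks))     # GroupDRO weights start uniform
    for _ in range(n_steps):
        p = _sigmoid(X1 @ w)
        grads = np.array([X1[m].T @ (p[m] - y[m]) / m.sum() for m in masks])
        if dro:
            # Exponentiated-gradient step: upweight groups with higher current loss.
            q = q * np.exp(eta_q * _group_losses(X1, y, w, masks))
            q = q / q.sum()
            weights = q
        else:
            weights = share
        w = w - lr * (weights @ grads)
    return w, _group_losses(X1, y, w, masks), q


# Hypothetical data: the outcome depends on x0 in the majority group (90%)
# but on x1 in the minority group (10%).
rng = np.random.default_rng(0)
groups = np.array([0] * 900 + [1] * 100)
X = rng.normal(size=(1000, 2))
true_logit = np.where(groups == 0, 3 * X[:, 0], 3 * X[:, 1])
y = rng.binomial(1, _sigmoid(true_logit)).astype(float)

_, erm_loss, _ = train_logreg(X, y, groups)
_, dro_loss, q = train_logreg(X, y, groups, dro=True)
print("ERM group losses:", erm_loss.round(3), "worst:", erm_loss.max().round(3))
print("DRO group losses:", dro_loss.round(3), "worst:", dro_loss.max().round(3), "q:", q.round(2))
```

ERM optimizes average loss, which the majority group dominates. The DRO variant trades some majority-group fit for a lower worst-group loss.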

Table 1: Key Materials for Bias Mitigation Experiments

| Research Reagent | Function/Application | Key Considerations |
|---|---|---|
| Bias Audit Frameworks (e.g., HolisticAI) | Calculate impact ratios, disparate impact, and other fairness metrics | For small samples, use metrics robust to sample size; combine categories when samples are very small [16] |
| Adversarial Removal Algorithms (e.g., DANN, CDANN) | Remove demographic information from model representations | Effective for creating "locally optimal" models within original data distribution [13] |
| Distributionally Robust Optimization (e.g., GroupDRO) | Optimize for worst-group performance rather than average performance | Particularly valuable when subgroup sample sizes are imbalanced [13] |
| Synthetic Data Generation | Augment underrepresented subgroups with synthetic samples | Ensure synthetic data preserves clinical validity and doesn't introduce new biases |
| Cross-Validation Techniques | Model selection while maintaining fairness across groups | Use stratified sampling to maintain subgroup representation in all folds [18] |

Table 2: Quantitative Evidence of Small Sample Bias in Medical AI

| Domain | Sample Size Disparity | Performance Impact | Reference |
|---|---|---|---|
| Chest X-ray Classification | Black patients: ~5–10% representation in training data | ≈50% reduction in diagnostic accuracy for Black patients vs. original claims | [12] |
| Skin Lesion Classification | Training on predominantly white patient images | Half the diagnostic accuracy for Black patients compared to white patients | [12] |
| Genomic Studies | European ancestry populations vastly overrepresented | Polygenic risk scores perform less accurately for non-European ancestry | [17] |
| Bias Audits | Subgroups <2% of sample size | Fairness metrics become unreliable; recommended minimum 5–10% per subgroup | [16] |

Frequently Asked Questions

Q: What is the minimum sample size required for meaningful fairness testing? A: While there's no universal threshold, the EEOC recommends analysis only for groups representing at least 2% of the sample. For robust fairness measurement, aim for subgroups comprising 5-10% of your total sample. For smaller groups, consider combining categories or explicitly acknowledging limited statistical power [16].

Q: How does algorithmic bias amplification actually work? A: Bias amplification occurs through several mechanisms: (1) Feedback loops where biased outputs influence future data collection; (2) Optimization for narrow metrics that don't capture real-world complexity; (3) Cascading errors where bias in early processing stages amplifies through the pipeline; and (4) Scale and automation that magnify small biases across large populations [19].

Q: Can we create completely unbiased models if we remove demographic information? A: No. Merely removing explicit demographic variables is insufficient because algorithms can infer protected attributes from proxy variables (e.g., postal codes correlating with race). Studies show medical imaging AI can predict patient race from X-rays with high accuracy, even when clinicians cannot. The solution requires addressing bias throughout the ML pipeline, not just removing demographic fields [15] [13].

Q: What's the difference between "locally optimal" and "globally optimal" fair models? A: "Locally optimal" models are fair within their original training distribution but may fail during real-world deployment. "Globally optimal" models maintain fairness when deployed in new environments. Surprisingly, research shows models with less demographic encoding often generalize more fairly across clinical sites, making them "globally optimal" [13].

Experimental Protocols

Protocol 1: Measuring Sample-Size-Induced Metric Bias

Objective: Quantify how small sample sizes distort fairness metrics in classification tasks.

Methodology:

- Stratified Sampling: From a large medical imaging dataset, create subsets with varying representation of minority groups (e.g., 1%, 2%, 5%, 10% of total samples)

- Metric Calculation: Compute common fairness metrics (demographic parity, equalized odds, predictive equality) for each subset

- Bias Measurement: Compare metrics against ground truth values from the full dataset

- Statistical Analysis: Calculate confidence intervals for each metric at different sample sizes

Expected Results: Metrics are expected to show increasing variance and systematic bias as sample sizes decrease, particularly for subgroups representing <5% of total samples [14].
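A simplified version of Protocol 1 is sketched below. It assumes a large labelled dataset with a binary group column, simulated here with the same true FPR in both groups so that any observed gap is pure sampling noise. Function and variable names are illustrative. The sketch tracks the FPR gap, one ingredient of equalized odds and predictive equality; other metrics fit into the same loop.

```python
import numpy as np


def fpr(y_true, y_pred):
    """False positive rate; NaN if the sample has no true negatives."""
    neg = y_true == 0
    return y_pred[neg].mean() if neg.any() else np.nan


def fpr_gap_by_share(y_true, y_pred, group, shares, n_total=1000, n_repeats=300, seed=0):
    """Bias and spread of the minority-minus-majority FPR gap at each minority share."""
    rng = np.random.default_rng(seed)
    idx_min, idx_maj = np.flatnonzero(group == 1), np.flatnonzero(group == 0)
    full_gap = fpr(y_true[idx_min], y_pred[idx_min]) - fpr(y_true[idx_maj], y_pred[idx_maj])
    results = {}
    for share in shares:
        n_min = max(2, round(share * n_total))
        n_maj = n_total - n_min
        gaps = []
        for _ in range(n_repeats):
            a = rng.choice(idx_min, size=n_min, replace=False)
            b = rng.choice(idx_maj, size=n_maj, replace=False)
            gaps.append(fpr(y_true[a], y_pred[a]) - fpr(y_true[b], y_pred[b]))
        gaps = np.asarray(gaps, dtype=float)
        results[share] = {
            "bias": float(np.nanmean(gaps) - full_gap),  # vs. full-dataset value
            "sd": float(np.nanstd(gaps)),
            "interval_95": np.nanquantile(gaps, [0.025, 0.975]),
        }
    return full_gap, results


# Simulated "full dataset": identical classifier behaviour in both groups.
rng = np.random.default_rng(0)
N = 20000
group = (rng.random(N) < 0.2).astype(int)  # 20% minority in the full data
y_true = (rng.random(N) < 0.3).astype(int)
y_pred = np.where(y_true == 1, rng.random(N) < 0.8, rng.random(N) < 0.2).astype(int)
full_gap, results = fpr_gap_by_share(y_true, y_pred, group, shares=[0.01, 0.02, 0.05, 0.10])
print(f"full-data FPR gap: {full_gap:+.3f}")
for share, r in results.items():
    lo, hi = r["interval_95"]
    print(f"minority share {share:.0%}: SD={r['sd']:.3f}, 95% of gaps in [{lo:+.3f}, {hi:+.3f}]")
```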

Protocol 2: Evaluating Cross-Site Generalization of "Fair" Models

Objective: Determine whether fairness interventions that work in development environments maintain effectiveness during real-world deployment.

Methodology:

- Model Training: Train multiple models using different bias mitigation approaches (adversarial removal, resampling, GroupDRO) on a source medical dataset

- Local Evaluation: Assess fairness metrics (FPR/FNR gaps) on held-out test data from the same distribution

- External Validation: Evaluate the same models on external datasets from different clinical environments

- Shortcut Assessment: Measure the degree of demographic encoding in each model's representations

Expected Results: Models with strong demographic encoding will show larger fairness gaps during external validation, even if they appear fair locally. Models with less demographic shortcut learning will demonstrate better "global optimality" [13].

Workflow diagrams: bias amplification mechanism; small sample bias mitigation workflow.

Frequently Asked Questions (FAQs)

Q1: Why are small sample sizes a major threat to clinical adoption of machine learning models?

Small sample sizes in medical machine learning (ML) research lead to unreliable and non-generalizable models, which directly erode clinical trust and pose risks to patient safety. Studies with small samples (e.g., N ≤ 300) notoriously overestimate predictive performance and are prone to overfitting, meaning the model learns the noise in the limited dataset rather than a generalizable pattern [1]. When such a model fails in a real-world clinical setting, it can result in misdiagnosis or inappropriate treatment, causing direct patient harm and justified skepticism among clinicians [20] [1].

Q2: What specific problems arise from using small datasets in medical ML?

- High Variance and Unreliable Results: As demonstrated through simulation, conclusions drawn from small samples (e.g., n=10 per group) can be wildly inconsistent. Different random samples from the same underlying population can lead to opposite statistical conclusions, making the results a matter of chance rather than a true effect [21].

- Overfitting: This occurs when a model performs well on its training data but poorly on new, unseen data (the test set). This is a substantial problem for small datasets (N ≤ 300), where the gap between training and test performance can be very large [1].

- Inadequate Statistical Power: A small sample size increases the probability of a Type II error (a false negative), meaning the study may fail to detect a true effect of a treatment or a model's true predictive capability [22].
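The link between sample size and Type II error can be made concrete with the standard normal-approximation formula for comparing two group means, n per group = 2(z₁₋α/₂ + z₁₋β)² / d², where d is the standardized effect size (Cohen's d). The sketch below assumes a two-sided test with equal group sizes; the helper names are hypothetical, and it uses only Python's standard library.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate per-group n for a two-sided, two-sample comparison of means."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)   # critical value, about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)            # about 0.84 for 80% power
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)


def achieved_power(effect_size, n, alpha=0.05):
    """Approximate power of the same test with n subjects per group."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    # Ignores the negligible chance of rejecting in the wrong direction
    return z.cdf(effect_size * math.sqrt(n / 2) - z_alpha)


print(n_per_group(0.5))                    # 63 per group for a medium effect
print(round(achieved_power(0.5, 10), 2))   # about 0.2 with n = 10 per group
```

With n = 10 per group, a medium effect (d = 0.5) would be missed roughly four times out of five. The normal approximation slightly underestimates the exact t-test requirement (about 64 per group), so dedicated tools remain preferable for formal planning.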

Q3: Beyond sample size, what other factors threaten trust in clinical ML systems?

- Cybersecurity Vulnerabilities: The healthcare sector faces escalating cyber threats, including ransomware attacks and data breaches. A successful attack can disrupt patient care, alter treatment plans, and compromise sensitive health data, posing an immediate threat to patient safety and eroding trust in digital systems [23] [24].

- Poor Model Generalizability: Even with a sufficient sample size, models can fail if they are not evaluated on appropriate, independent test data. Information leakage from the test set into the training process is a common reason models fail to generalize [25].

- Inadequate Governance of AI: Insufficient oversight and regulation of AI tools in clinical settings can lead to the deployment of unsafe or biased models, resulting in medical errors and delays in care [24].

Troubleshooting Guides

Problem: My model performs well during training but fails on new clinical data.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient Sample Size | Calculate statistical power or plot learning curves to see if performance has plateaued [1]. | Acquire more data. If not possible, use data augmentation (e.g., for images or time series) or transfer learning. Simplify the model to reduce overfitting [25]. |
| Data Leakage | Audit the data preprocessing pipeline. Ensure the test set was completely isolated and not used for any step, including feature selection or normalization [25]. | Re-split the data, ensuring the test set is held out from the very beginning. Use nested cross-validation for rigorous hyperparameter tuning [25]. |
| Overfitting on Small Data | Compare training and test set performance metrics (e.g., AUC). A large gap indicates overfitting [1]. | Increase regularization, perform feature selection to reduce dimensionality, or switch to a simpler, less flexible model (e.g., Logistic Regression over a large Neural Network) [1]. |
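The Data Leakage row is easiest to appreciate with a simulation on pure noise, where no honest model should beat chance. This sketch (scikit-learn; the dataset sizes and `k=20` are illustrative assumptions) compares feature selection performed once on all data against selection refit inside each cross-validation training fold via a `Pipeline`.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
# Pure noise: 60 "patients", 2,000 features, labels unrelated to the features
X = rng.normal(size=(60, 2000))
y = np.array([0, 1] * 30)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features chosen using ALL labels, including those later used for validation
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_auc = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y,
                            cv=cv, scoring="roc_auc").mean()

# Correct: selection is refit on each training fold only, inside the pipeline
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest_auc = cross_val_score(pipe, X, y, cv=cv, scoring="roc_auc").mean()

print(f"leaky AUC = {leaky_auc:.2f}, honest AUC = {honest_auc:.2f}")
```

The leaky estimate is typically far above 0.5 even though the data contain no signal, while the pipeline estimate stays near chance. Putting every data-dependent step inside the pipeline is the practical safeguard.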

Problem: I have limited data and cannot collect more.

| Strategy | Protocol Description | Key Considerations |
|---|---|---|
| Cross-Validation | Use k-fold cross-validation to make better use of limited data. The data is split into 'k' folds; the model is trained on k-1 folds and validated on the remaining fold, repeated for each fold [25]. | Provides a more robust estimate of performance than a single train-test split. Does not eliminate the need for a final, completely held-out test set [25]. |
| Data Augmentation | Artificially expand the training set by creating modified versions of existing data points (e.g., rotating images, adding noise to time-series signals) [25]. | Must be applied only to the training data after the train-test split to avoid data leakage. The transformations should be realistic for the clinical domain [25]. |
| Transfer Learning | Leverage a pre-trained model developed for a related task or larger dataset, and fine-tune it on your specific, smaller clinical dataset. | Effective when the source and target tasks are related. Can yield good performance with far less target data than training from scratch [25]. |
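For the noise-based augmentation of time-series signals described in the Data Augmentation row, a minimal "jitter" sketch is shown below. The random arrays stand in for real recordings, and `noise_scale` is an illustrative default, not a clinically validated setting; the function name `jitter_augment` is hypothetical. The key point is the ordering: split first, then augment only the training portion.

```python
import numpy as np
from sklearn.model_selection import train_test_split


def jitter_augment(X, y, n_copies=2, noise_scale=0.03, seed=0):
    """Append n_copies noisy versions of each series in X (samples x timesteps).

    Noise is scaled by each series' own standard deviation.
    """
    rng = np.random.default_rng(seed)
    sd = X.std(axis=1, keepdims=True)
    noisy = [X + rng.normal(size=X.shape) * noise_scale * sd for _ in range(n_copies)]
    X_aug = np.concatenate([X, *noisy])
    y_aug = np.concatenate([y] * (n_copies + 1))   # labels are unchanged by jitter
    return X_aug, y_aug


rng = np.random.default_rng(1)
signals = rng.normal(size=(100, 250))   # stand-in for 100 ECG-like recordings
labels = rng.integers(0, 2, size=100)

# Split FIRST; only the training portion is augmented, the test set stays untouched
X_train, X_test, y_train, y_test = train_test_split(
    signals, labels, test_size=0.2, random_state=0, stratify=labels)
X_train_aug, y_train_aug = jitter_augment(X_train, y_train)
print(X_train.shape, X_train_aug.shape, X_test.shape)
```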

Experimental Protocols for Robust Research

Protocol 1: Conducting a Sample Size and Learning Curve Analysis

Purpose: To empirically determine if the available dataset is sufficient for developing a robust model and to estimate the potential performance gains with more data.

Materials:

- Dataset with clinical outcomes (e.g., patient diagnosis, treatment dropout).

- Machine learning environment (e.g., Python with scikit-learn).

Methodology:

- Define Feature Sets: Group your features by type and predictive power (e.g., basic demographics, questionnaire data, complex behavioral data) [1].

- Select Model Algorithms: Choose a range of models with varying complexity (e.g., Logistic Regression, Random Forest, a simple Neural Network) [1].

- Generate Learning Curves:

  - Start with a small subset of your data (e.g., N=100).

  - Train each model on this subset using cross-validation and evaluate on the same held-out test set.

  - Incrementally increase the training set size (e.g., N=200, 300, 500, 750, 1000, etc.), repeating the training and evaluation at each step.

  - Record the cross-validation and test set performance (e.g., AUC) for each data size, feature set, and model.

- Analysis: Plot the learning curves (performance vs. dataset size). The point where the test set performance curve begins to plateau suggests a sufficient sample size for that model and feature set. Large gaps between cross-validation and test performance at smaller sizes indicate overfitting [1].
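A compact version of this protocol in scikit-learn might look like the sketch below. Assumptions: `make_classification` stands in for a real clinical dataset, only two model types are compared, and each subset is drawn independently at random from the development data. Feature sets could be compared by wrapping the loop in an outer loop over column subsets.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_score, train_test_split

# Synthetic stand-in for a clinical dataset (replace with real features/outcomes)
X, y = make_classification(n_samples=2000, n_features=20, n_informative=5, random_state=0)
X_dev, X_test, y_dev, y_test = train_test_split(X, y, test_size=0.25, stratify=y, random_state=0)

models = {
    "logistic": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}
sizes = [100, 200, 300, 500, 750, 1000, 1500]
rng = np.random.default_rng(0)
results = []
for n in sizes:
    idx = rng.choice(len(X_dev), size=n, replace=False)   # random subset of the development data
    X_sub, y_sub = X_dev[idx], y_dev[idx]
    for name, model in models.items():
        cv_auc = cross_val_score(model, X_sub, y_sub, cv=5, scoring="roc_auc").mean()
        model.fit(X_sub, y_sub)                            # refit on the whole subset
        test_auc = roc_auc_score(y_test, model.predict_proba(X_test)[:, 1])
        results.append({"n": n, "model": name, "cv_auc": cv_auc,
                        "test_auc": test_auc, "gap": cv_auc - test_auc})

for r in results:
    print(f"{r['model']:>13}  N={r['n']:>5}  CV={r['cv_auc']:.3f}  "
          f"test={r['test_auc']:.3f}  gap={r['gap']:+.3f}")
```

Plotting `test_auc` against `n` per model gives the learning curve; the `gap` column corresponds to the overfitting measure (CV AUC minus test AUC) reported in Table 1.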

Protocol 2: Rigorous Train-Validation-Test Split to Prevent Data Leakage

Purpose: To ensure a model's performance is evaluated on completely unseen data, providing an unbiased estimate of its real-world performance.

Methodology:

- Initial Split: Randomly split the entire dataset into a development set (e.g., 80%) and a final held-out test set (e.g., 20%). The test set must be locked away and not used for any model development [25].

- Model Development: Use only the development set for all steps, including:

  - Exploratory data analysis

  - Feature selection and engineering

  - Hyperparameter tuning (using cross-validation on the development set)

- Final Evaluation: Train the final model on the entire development set using the chosen hyperparameters. Evaluate this single model once on the locked-away test set to report its final performance [25].
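The three steps of this protocol map directly onto scikit-learn's `train_test_split`, `Pipeline`, and `GridSearchCV`. The sketch assumes a synthetic dataset and a logistic regression whose only tuned hyperparameter is `C`; any model and grid could be substituted.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=400, n_features=30, n_informative=6, random_state=42)

# 1. Initial split: lock away 20% as the final test set before anything else
X_dev, X_test, y_dev, y_test = train_test_split(X, y, test_size=0.2, stratify=y, random_state=42)

# 2. Development: scaling lives inside the pipeline, so it is refit on each CV
#    training fold and never sees the validation fold or the locked test set
pipe = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
search = GridSearchCV(
    pipe,
    param_grid={"logisticregression__C": [0.01, 0.1, 1.0, 10.0]},
    scoring="roc_auc",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=42),
)
search.fit(X_dev, y_dev)   # refit=True (default) retrains the best setting on all of X_dev

# 3. Final evaluation: a single look at the locked-away test set
test_auc = roc_auc_score(y_test, search.predict_proba(X_test)[:, 1])
print(search.best_params_, round(test_auc, 3))
```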

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Medical ML Research |
|---|---|
| nQuery | A validated sample size software used to determine the minimum number of participants required for a study to achieve adequate statistical power, often required for regulatory approval [22]. |
| Cross-Validation (e.g., k-fold) | A resampling procedure used to evaluate models on limited data. It provides a more robust estimate of skill than a single train-test split [25]. |
| Data Augmentation Techniques | Methods to artificially increase the size and diversity of a training dataset without collecting new data, helping to improve model generality and reduce overfitting [25]. |
| Learning Curves | A diagnostic tool that plots model performance against the training set size. It is essential for identifying underfitting, overfitting, and estimating the benefit of adding more data [1]. |
| Nested Cross-Validation | A method used for both model selection and hyperparameter tuning, as well as performance evaluation. It provides an almost unbiased estimate of the true performance of a model [25]. |
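Nested cross-validation can be expressed in scikit-learn by passing a `GridSearchCV` object (the inner loop) to `cross_val_score` (the outer loop). The sketch below assumes an SVM with a small illustrative grid on synthetic data.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=200, n_features=20, n_informative=5, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)   # tunes hyperparameters
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)   # estimates performance

tuned_svm = GridSearchCV(
    make_pipeline(StandardScaler(), SVC()),
    param_grid={"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]},
    scoring="roc_auc",
    cv=inner_cv,
)
# Each outer training fold runs its own inner grid search;
# the outer validation fold is never used for tuning
outer_scores = cross_val_score(tuned_svm, X, y, cv=outer_cv, scoring="roc_auc")
print(f"nested CV AUC: {outer_scores.mean():.3f} ± {outer_scores.std():.3f}")
```

The spread across outer folds is itself informative on small datasets: a wide standard deviation signals that any single train-test split could have given a misleading result.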

Table 1: Impact of Dataset Size on Model Performance and Overfitting (AUC) [1]

| Dataset Size (N) | Average Overfitting (CV AUC - Test AUC) | Interpretation |
|---|---|---|
| N ≤ 300 | 0.05 (up to 0.12) | Severe overfitting; results are unreliable. |
| N ≥ 500 | 0.02 (max 0.06) | Overfitting is substantially reduced. |
| N = 750-1500 | Minimal | Model performance begins to converge. |

Table 2: Recommended Minimum Dataset Sizes for Medical ML [21] [20] [1]

| Context | Recommended Minimum Sample Size | Rationale |
|---|---|---|
| General Clinical Research | n > 50 to approach a normal distribution; much larger for robust inference. | Small samples (n = 10-30) produce unreliable estimates of means, medians, and P-values [21]. |
| Digital Mental Health (Dropout Prediction) | N = 500-1000 | Mitigates overfitting and allows performance to converge, as shown by empirical learning curves [1]. |
| AI-Based Prediction Models | No fixed minimum; a formal justification is required, yet sample sizes in published studies are often inadequate. | Regulatory agencies such as the FDA require sample size justification to ensure reliable findings and patient safety [20] [22]. |

Workflow: From Data to Trustworthy Model

A rigorous workflow for developing machine learning models in clinical settings emphasizes steps that mitigate the risks of small sample sizes and build trust (diagram not reproduced).

Frequently Asked Questions

1. Why is sample size a focus in Good Machine Learning Practice principles? Sample size is directly relevant to multiple GMLP principles because it is foundational for developing models that are safe, effective, and high-quality [26]. An inadequate sample size can lead to models that fail to generalize to the intended patient population, producing unreliable and potentially harmful predictions [20]. Regulatory bodies have identified this as a key area for international harmonization and the development of consensus standards [26].

2. My dataset is small due to a rare disease. How can I comply with GMLP? GMLP emphasizes that your dataset must be "representative of the intended patient population" and of "adequate size" [26]. While a small sample is challenging, the focus should be on its representativeness and quality. You must leverage specific methodologies to mitigate the risks of small sample sizes, such as data augmentation, transfer learning, and choosing model designs tailored to the available data [11] [26]. Furthermore, rigorous testing on independent datasets and clear documentation of the model's limitations are essential [26].

3. How does sample size relate to the number of features in my model? There is a direct relationship. One GMLP principle states that "Model Design Is Tailored to the Available Data" to mitigate known risks like overfitting [26]. Using a sample size that is too small for the number of candidate features (high dimensionality) will almost certainly result in an unreliable model. Research suggests that for a model to be rigorously validated, machine learning can require up to 200 events per candidate feature, far more than traditional statistical methods [27]. This highlights the "data-hungry" nature of many ML algorithms [27].
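The events-per-variable (EPV) arithmetic behind this answer is simple enough to check before modeling. In the sketch below, the study numbers are hypothetical, and the 10 EPV target reflects the widely cited rule of thumb for traditional regression models, included here only as a point of comparison with the 200 EPV figure above.

```python
import math


def events_per_variable(n_events, n_candidate_features):
    """Outcome events (cases of the rarer outcome) per candidate feature."""
    return n_events / n_candidate_features


def events_needed(n_candidate_features, target_epv):
    """Minimum number of outcome events needed to reach a target EPV."""
    return math.ceil(n_candidate_features * target_epv)


# Hypothetical study: 500 patients, 60 of whom experience the outcome, 25 candidate features
print(events_per_variable(60, 25))   # 2.4
print(events_needed(25, 10))         # 250 events under the classic ~10 EPV rule of thumb
print(events_needed(25, 200))        # 5000 events at 200 EPV
```

Even the modest regression target would require roughly four times more events than this hypothetical study has, which is why reducing the number of candidate features is often the only realistic lever.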

4. What is the regulatory expectation for testing dataset independence? The GMLP principles are explicit: "Training Data Sets Are Independent of Test Sets" [26]. You must select and maintain training and test datasets that are appropriately independent. This requires considering and addressing all potential sources of dependence, including patient, data acquisition, and site factors, to ensure a statistically sound evaluation of device performance [26].
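One common source of dependence is multiple records from the same patient landing in both training and test sets. A minimal sketch using scikit-learn's `GroupShuffleSplit` is shown below; the data are random placeholders, and the same idea applies to site-level independence by passing a site identifier as `groups` (or by using `LeaveOneGroupOut` for leave-one-site-out evaluation).

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

rng = np.random.default_rng(0)
# Hypothetical data: 300 scans from 100 patients (3 scans each)
patient_id = np.repeat(np.arange(100), 3)
X = rng.normal(size=(300, 10))
y = rng.integers(0, 2, size=300)

# test_size is the proportion of PATIENTS (groups), not of individual records
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
train_idx, test_idx = next(splitter.split(X, y, groups=patient_id))

# No patient contributes records to both partitions
overlap = set(patient_id[train_idx]) & set(patient_id[test_idx])
print(len(train_idx), len(test_idx), len(overlap))
```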

Troubleshooting Guide: Common Sample Size Scenarios

| Scenario | Symptom | Root Cause | GMLP-Aligned Solution |
|---|---|---|---|
| Limited Patient Population | Model performance degrades dramatically when deployed in a new clinic. | Dataset is not representative of the full intended patient population, failing GMLP principle 3 [26]. | Employ data augmentation techniques to create synthetic data and expand the training set's diversity [11]. Intentionally collect data from multiple sites to ensure representation of key subgroups. |
| High-Dimensional Data | The model performs perfectly on training data but poorly on validation data (overfitting). | Sample size is inadequate for the number of features, violating the GMLP principle that model design must be tailored to available data [26] [27]. | Perform dimensionality reduction (e.g., PCA) or feature selection to reduce the number of parameters before modeling [11] [18]. Use simpler, more interpretable models. |
| Uncertain Sample Size Needs | Unable to provide a rationale for the chosen sample size during regulatory review. | No sample size determination methodology was used, a common issue in medical AI research [28]. | Use a post-hoc curve-fitting approach: empirically test model performance on subsets of your data, model the performance-to-sample-size relationship, and extrapolate to estimate the sample needed for target performance [28]. |
| Class Imbalance | The model is highly accurate but fails to identify the rare condition of interest. | The dataset is imbalanced; one target class has very few samples, making the model biased toward the majority class [11] [18]. | Apply resampling techniques (oversampling the minority class or undersampling the majority class) during training to rebalance the dataset [18]. |

Experimental Protocol: A Step-by-Step Workflow for Sample Size Determination

The following steps outline a methodology for planning and evaluating sample size in line with GMLP principles.

Sample Size Determination Workflow

Step 1: Define Clinical Context and Performance Goals

- Action: Clearly articulate the device's intended use, indications for use, and the target patient population as required by GMLP principles 1 and 6 [26]. Identify clinically meaningful performance goals for the model.

- Deliverable: A predefined statistical analysis plan that includes performance targets (e.g., sensitivity >0.85) and the subgroups for analysis.

Step 2: Conduct a Literature Review and Collect Pilot Data

- Action: Investigate existing similar models and their sample sizes. If no prior data exists, begin with an initial pilot study.

- Deliverable: A preliminary dataset and a report on existing evidence to inform the choice of sample size methodology.

Step 3: Select and Execute a Sample Size Determination Method

- Action: Choose between two common methodological categories identified in research [28]:

  - Pre-Hoc Model-Based Approach: Uses characteristics of the algorithm and data (e.g., number of features, expected effect size) to calculate a required sample size before data collection.

  - Post-Hoc Curve-Fitting Approach: Requires an initial dataset. Model performance is tested on increasingly larger subsets of this data, and a curve is fitted to extrapolate the sample size needed to achieve the target performance [28].

- Deliverable: A calculated sample size (N) required for model training and testing.
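The post-hoc curve-fitting approach can be sketched with SciPy's `curve_fit`. This example assumes an inverse power law, performance(n) = a - b·n^(-c), which is one common choice of learning-curve model but not the only one. The "pilot" AUC values are generated from a known curve purely for illustration; in practice they would come from a learning-curve analysis like Protocol 1.

```python
import numpy as np
from scipy.optimize import curve_fit


def inverse_power_law(n, a, b, c):
    """Learning-curve model: performance rises toward the plateau a as n grows."""
    return a - b * np.power(n, -c)


# Illustrative pilot measurements (generated from a=0.85, b=1.0, c=0.5);
# replace with test AUCs measured on increasingly larger subsets of real data
sizes = np.array([50, 100, 200, 300, 500, 750], dtype=float)
auc = inverse_power_law(sizes, 0.85, 1.0, 0.5)

params, _ = curve_fit(inverse_power_law, sizes, auc, p0=(0.9, 1.0, 0.5), maxfev=10000)
a, b, c = params


def required_n(target):
    """Invert the fitted curve; only defined for targets below the plateau a."""
    if target >= a:
        raise ValueError("target is at or above the fitted performance plateau")
    return int(np.ceil((b / (a - target)) ** (1 / c)))


print(f"plateau ~ {a:.3f}; N for AUC 0.82 ~ {required_n(0.82)}")
```

Extrapolating well beyond the largest pilot size is inherently uncertain, so the estimate is best reported with that caveat and revisited as data accumulate (Step 5).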

Step 4: Data Collection and Partitioning

- Action: Collect the full dataset of size N, ensuring it is representative of the intended population [26]. Then, partition it into independent training and test sets, addressing all potential sources of dependence (e.g., patient, site) as mandated by GMLP principle 4 [26].

- Deliverable: A partitioned, well-characterized dataset with a description of how independence was maintained.

Step 5: Model Training, Testing, and Iteration

- Action: Train the model on the training set and evaluate its performance on the held-out independent test set. The testing must demonstrate performance during clinically relevant conditions (GMLP principle 8) [26].

- Deliverable: A performance report. If performance does not meet pre-specified goals, return to Step 2 to collect more data or refine the model.

The Scientist's Toolkit: Key Research Reagents & Solutions

| Item | Function in Context of Small Samples |
|---|---|
| Synthetic Data Generation | Creates new, artificial data instances that follow the same distribution as the original, limited dataset. This is a key data augmentation technique for expanding training sets in a statistically sound way [11]. |
| Representative Reference Datasets | Best-available, well-characterized datasets used as a benchmark (reference standard) to promote and demonstrate model robustness and generalizability across the intended population, as per GMLP principle 5 [26]. |
| Feature Selection and Dimensionality Reduction | Methods (e.g., univariate selection, tree-based importance, or feature extraction via Principal Component Analysis (PCA)) that reduce the number of input variables, thereby lowering model complexity and the risk of overfitting on small samples [11] [18]. |
| Cross-Validation | A resampling technique used to assess model performance. It maximizes the use of limited data by repeatedly partitioning it into training and validation sets, providing a more reliable estimate of performance than a single train-test split [18]. |
| Transfer Learning | A methodology where a model developed for one task is reused as the starting point for a model on a second, related task. This is particularly valuable when the target dataset is small but a large source dataset exists [27]. |

Practical Solutions: From Synthetic Data to Resampling for Enhanced Model Training

Class imbalance is a pervasive challenge in medical machine learning (ML), where the number of patients in one category (e.g., healthy) significantly outweighs the number in another (e.g., diseased) [29]. Models trained on such imbalanced data tend to be biased toward the majority class, leading to poor performance in identifying the minority class, which is often the class of greater clinical interest (e.g., patients with a rare disease) [30]. This primer introduces foundational data-level techniques—Random Oversampling (ROS), Random Undersampling (RUS), SMOTE, and ADASYN—to combat this issue, providing troubleshooting guidance for researchers and scientists in healthcare and drug development.

The following table summarizes the key mechanisms, advantages, and limitations of the four core techniques discussed in this guide.

| Technique | Core Mechanism | Key Advantages | Primary Limitations |
|---|---|---|---|
| Random Oversampling (ROS) | Duplicates existing minority class instances at random [31]. | Simple to implement and understand [32]. | High risk of overfitting, as it does not add new information [31] [32]. |
| Random Undersampling (RUS) | Randomly removes instances from the majority class [31]. | Reduces computational cost and training time [31] [33]. | Loss of potentially useful information from the removed data [32]. |
| SMOTE | Generates synthetic minority samples via linear interpolation between existing minority instances and their nearest neighbors [30] [32]. | Creates more diverse samples than ROS, improving model generalization [30] [32]. | May generate noisy samples in overlapping regions and can over-amplify minority class clusters [30] [32]. |
| ADASYN | Uses a weighted distribution to generate more synthetic samples for "hard-to-learn" minority instances [32] [34]. | Adaptively shifts the decision boundary to focus on difficult cases [32] [34]. | Can be sensitive to outliers and may itself generate noisy synthetic samples [32] [34]. |

Detailed Methodologies and Experimental Protocols

This section provides detailed, step-by-step protocols for implementing the discussed sampling techniques in a medical ML workflow.

General Experimental Setup for Medical Data

- Data Partitioning: Split your dataset into training and testing sets before applying any sampling technique. A typical split is 60-40 or 70-30 [31].

- Apply Sampling Exclusively to Training Data: Perform ROS, RUS, SMOTE, or ADASYN only on the training set [31] [32]. The test set must remain untouched to provide an unbiased evaluation of model performance on the original, real-world data distribution.

- Model Training and Evaluation: Train your classifier on the resampled training data. Evaluate its performance on the original, unmodified test set using metrics appropriate for imbalanced data, such as F1-score, G-mean, and AUC-ROC [30] [32].
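The three setup steps can be sketched with scikit-learn alone. Assumptions: a synthetic dataset with roughly 10% positives stands in for clinical data, and simple random oversampling via `sklearn.utils.resample` stands in for whichever sampling technique is chosen (imbalanced-learn provides ready-made samplers). G-mean is computed as the square root of sensitivity times specificity.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score, recall_score, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.utils import resample

# Imbalanced stand-in dataset: roughly 10% positives
X, y = make_classification(n_samples=1000, n_features=10, weights=[0.9, 0.1], random_state=0)

# 1. Partition first (70/30, stratified so the test set keeps the real class ratio)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)

# 2. Resample the TRAINING set only (here: random oversampling of the minority class)
X_min, X_maj = X_tr[y_tr == 1], X_tr[y_tr == 0]
X_min_up = resample(X_min, replace=True, n_samples=len(X_maj), random_state=0)
X_bal = np.vstack([X_maj, X_min_up])
y_bal = np.concatenate([np.zeros(len(X_maj), dtype=int), np.ones(len(X_min_up), dtype=int)])

# 3. Train on resampled data, evaluate on the untouched test set
clf = LogisticRegression(max_iter=1000).fit(X_bal, y_bal)
pred = clf.predict(X_te)
sens = recall_score(y_te, pred, pos_label=1)   # sensitivity
spec = recall_score(y_te, pred, pos_label=0)   # specificity
print(f"F1={f1_score(y_te, pred):.3f}  G-mean={np.sqrt(sens * spec):.3f}  "
      f"AUC={roc_auc_score(y_te, clf.predict_proba(X_te)[:, 1]):.3f}")
```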

Protocol 1: Implementing Random Oversampling (ROS) and Undersampling (RUS)

Objective: To balance class distribution by replicating minority samples (ROS) or eliminating majority samples (RUS).

Procedure:

- Load Data: Load the imbalanced medical dataset (e.g., from a CSV file).

- Identify Class Counts: Calculate the number of instances in the majority (N_maj) and minority (N_min) classes.

- For ROS:

  - Set the desired number of minority class samples (typically equal to N_maj).

  - Randomly select (N_maj - N_min) instances from the minority class with replacement.

  - Add these duplicated instances to the original training set [31].

- For RUS:

  - Randomly select N_min instances from the majority class without replacement.

  - Remove the unselected majority class instances from the training set [31].

- Output: A resampled training dataset with a balanced class distribution.
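The ROS and RUS steps above translate almost line for line into NumPy. This is a minimal sketch for binary labels; the function names are hypothetical, and imbalanced-learn's `RandomOverSampler` and `RandomUnderSampler` offer maintained equivalents.

```python
import numpy as np


def random_oversample(X, y, minority_label, seed=0):
    """ROS: add (N_maj - N_min) minority rows drawn with replacement."""
    rng = np.random.default_rng(seed)
    min_idx = np.flatnonzero(y == minority_label)
    maj_idx = np.flatnonzero(y != minority_label)
    extra = rng.choice(min_idx, size=len(maj_idx) - len(min_idx), replace=True)
    keep = np.concatenate([np.arange(len(y)), extra])   # original rows + duplicates
    return X[keep], y[keep]


def random_undersample(X, y, minority_label, seed=0):
    """RUS: keep N_min majority rows drawn without replacement, drop the rest."""
    rng = np.random.default_rng(seed)
    min_idx = np.flatnonzero(y == minority_label)
    maj_idx = np.flatnonzero(y != minority_label)
    kept_maj = rng.choice(maj_idx, size=len(min_idx), replace=False)
    keep = np.concatenate([min_idx, kept_maj])
    return X[keep], y[keep]


X = np.arange(20, dtype=float).reshape(10, 2)
y = np.array([0] * 8 + [1] * 2)
X_ros, y_ros = random_oversample(X, y, minority_label=1)
X_rus, y_rus = random_undersample(X, y, minority_label=1)
print(np.bincount(y_ros), np.bincount(y_rus))   # [8 8] [2 2]
```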

Protocol 2: Implementing the SMOTE Algorithm

Objective: To generate synthetic minority class samples to balance the dataset.

Procedure:

- Input Parameters: Set the number of nearest neighbors `k` (default is 5) and the desired oversampling amount `N` [32].

- Iterate over Minority Instances: For each instance `x_i` in the minority class:

  a. Find its `k` nearest neighbors from the minority class.

  b. Randomly select `N` of these neighbors.

  c. For each selected neighbor `x_zi`, generate a synthetic sample `x_new` using the formula `x_new = x_i + λ * (x_zi - x_i)`, where `λ` is a random number between 0 and 1 [30] [32].

- Output: Add the generated synthetic samples to the original training dataset.
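The procedure above can be sketched with NumPy and scikit-learn's `NearestNeighbors`. Assumptions: `X_min` contains only minority-class rows from the training set, `N` is interpreted as the number of synthetic samples per minority instance (so `N <= k`), and there are no duplicate rows (so each point's first neighbor is itself). The function name `smote` is hypothetical; imbalanced-learn's `SMOTE` is the production-grade option.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors


def smote(X_min, n_per_sample, k=5, seed=0):
    """Minimal SMOTE sketch: x_new = x_i + lam * (x_zi - x_i), lam ~ U(0, 1)."""
    if n_per_sample > k:
        raise ValueError("n_per_sample (N) cannot exceed k in this sketch")
    rng = np.random.default_rng(seed)
    # a. k nearest minority neighbours (k + 1 because each point's closest match is itself)
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    neighbors = nn.kneighbors(X_min, return_distance=False)[:, 1:]
    synthetic = []
    for i, x_i in enumerate(X_min):
        # b. randomly choose N of the k neighbours
        for z in rng.choice(neighbors[i], size=n_per_sample, replace=False):
            lam = rng.random()                              # c. lambda in [0, 1)
            synthetic.append(x_i + lam * (X_min[z] - x_i))
    return np.array(synthetic)


rng = np.random.default_rng(42)
X_min = rng.normal(size=(20, 3))        # stand-in minority-class training rows
X_syn = smote(X_min, n_per_sample=2, k=5)
print(X_syn.shape)   # (40, 3)
```

Because each synthetic point lies on the segment between two real minority points, it always falls inside the minority class's bounding box; this is also why SMOTE can place points in overlapping regions when minority neighbors straddle the majority class.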

Workflow Diagram: SMOTE Data Generation (figure not reproduced)

Protocol 3: Implementing the ADASYN Algorithm

Objective: To adaptively generate more synthetic samples for "hard-to-learn" minority instances.

Procedure:

- Calculate Data Distribution:

  - Let `ms` be the number of minority class instances and `ml` the number of majority class instances.

  - Calculate the degree of imbalance: `d = ms / ml`. If `d` is less than a preset threshold `d_th`, proceed [34].

- Determine Total Synthetic Samples: Calculate the total number of synthetic samples to generate: `G = (ml - ms) * β`, where `β` is a parameter in [0, 1] that specifies the desired balance level after oversampling (`β = 1` yields a fully balanced dataset) [32] [34].

- Calculate Density Distribution for Each Minority Sample (`x_i`): Find the `K` nearest neighbors of `x_i` in the full training set and compute `r_i = Δ_i / K`, where `Δ_i` is the number of those neighbors that belong to the majority class. Normalize the ratios so they sum to one: `r_hat_i = r_i / Σ r_i`.

- Calculate Per-Sample Synthetic Count: For each minority sample `x_i`, the number of synthetic samples to generate is `g_i = r_hat_i * G` [34].

- Generate Samples: For each `x_i`, generate `g_i` synthetic samples using the same interpolation method as SMOTE, so that instances with a higher `r_hat_i` receive proportionally more synthetic samples [32] [34].
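A minimal NumPy/scikit-learn sketch of these steps follows, for binary labels only. The function name `adasyn` and the defaults (`beta=1.0`, `k=5`, `d_th=0.75`) are illustrative assumptions rather than recommended settings; imbalanced-learn's `ADASYN` class is the maintained implementation. Rounding each `g_i` to an integer means the total can differ slightly from `G`.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.neighbors import NearestNeighbors


def adasyn(X, y, minority_label=1, beta=1.0, k=5, d_th=0.75, seed=0):
    """Minimal ADASYN sketch for binary labels; returns only the synthetic minority rows."""
    rng = np.random.default_rng(seed)
    X_min = X[y == minority_label]
    ms, ml = len(X_min), int(np.sum(y != minority_label))
    d = ms / ml                                  # degree of imbalance
    if d >= d_th:                                # imbalance tolerable: generate nothing
        return np.empty((0, X.shape[1]))
    G = int(round((ml - ms) * beta))             # total synthetic samples

    # r_i = Delta_i / K: share of majority points among the K neighbours of x_i in the full data
    nn_all = NearestNeighbors(n_neighbors=k + 1).fit(X)
    neigh_all = nn_all.kneighbors(X_min, return_distance=False)[:, 1:]   # drop x_i itself
    r = np.sum(y[neigh_all] != minority_label, axis=1) / k
    if r.sum() == 0:                             # no minority point has majority neighbours
        r = np.ones(ms)
    r_hat = r / r.sum()                          # density distribution, sums to 1
    g = np.rint(r_hat * G).astype(int)           # g_i per minority sample

    # SMOTE-style interpolation towards minority-class neighbours
    nn_min = NearestNeighbors(n_neighbors=k + 1).fit(X_min)
    neigh_min = nn_min.kneighbors(X_min, return_distance=False)[:, 1:]
    synthetic = [
        X_min[i] + rng.random() * (X_min[rng.choice(neigh_min[i])] - X_min[i])
        for i in range(ms)
        for _ in range(g[i])
    ]
    return np.array(synthetic).reshape(-1, X.shape[1])


X, y = make_classification(n_samples=500, n_features=5, weights=[0.9, 0.1], random_state=0)
X_new = adasyn(X, y)
print(len(X_new), "synthetic minority samples")
```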

Workflow Diagram: ADASYN Data Generation (figure not reproduced)

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: My model's overall accuracy improved after ROS, but it's now missing critical rare disease cases. What went wrong?

- Diagnosis: This is a classic symptom of overfitting due to ROS. By simply duplicating minority samples, the model memorizes the specific instances instead of learning generalizable patterns [32].

- Solution:

- Switch to a technique that creates new data points, such as SMOTE or ADASYN.

- Avoid using accuracy as your sole metric. Instead, monitor Recall (Sensitivity) and the F1-score, which are more robust for evaluating performance on the minority class [32]. A successful intervention should show a significant increase in recall, even if accuracy slightly decreases [35].

Q2: After applying RUS, my model seems less stable and its performance varies greatly with different data splits. Why?

- Diagnosis: RUS may have removed critical information from the majority class, making the model's learned decision boundary highly sensitive to the specific majority samples that remained [32].

- Solution:

- Consider using SMOTE or hybrid methods like SMOTEENN (SMOTE + Edited Nearest Neighbors) or SMOTETomek, which combine oversampling of the minority class with intelligent undersampling of the majority class to achieve cleaner class clusters [32] [29].

- Ensure you are using techniques like stratified k-fold cross-validation to better account for variability in your performance estimates [35].
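To see how much RUS results vary, resampling can be applied inside each fold of a stratified k-fold loop, and the spread of the per-fold metric can be reported. The sketch below uses synthetic data and a hypothetical `undersample` helper; `RepeatedStratifiedKFold` can be swapped in to average over several shuffles.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import StratifiedKFold


def undersample(X, y, rng):
    """Random undersampling of class 0 down to the size of class 1 (binary labels)."""
    min_idx = np.flatnonzero(y == 1)
    maj_idx = rng.choice(np.flatnonzero(y == 0), size=len(min_idx), replace=False)
    keep = np.concatenate([min_idx, maj_idx])
    return X[keep], y[keep]


X, y = make_classification(n_samples=600, n_features=10, weights=[0.9, 0.1], random_state=3)
rng = np.random.default_rng(0)
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
recalls = []
for train_idx, val_idx in skf.split(X, y):
    # Resample only the training folds; the validation fold keeps the true class ratio
    X_bal, y_bal = undersample(X[train_idx], y[train_idx], rng)
    clf = LogisticRegression(max_iter=1000).fit(X_bal, y_bal)
    recalls.append(recall_score(y[val_idx], clf.predict(X[val_idx])))

print(f"recall: {np.mean(recalls):.3f} ± {np.std(recalls):.3f} across folds")
```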

Q3: I used SMOTE, but my classifier's performance did not improve, or it got worse. What could be the cause?

- Diagnosis 1: Generation of Noisy Samples. SMOTE can create synthetic samples in the overlapping region between classes or around outliers, introducing ambiguity [30] [36].

- Diagnosis 2: Ignoring Data Density. Standard SMOTE treats all minority regions equally, potentially over-allocating samples to already dense areas [30].

Q4: For a typical medical dataset like the Pima Indians Diabetes, which technique should I try first?

- Recommendation: Based on empirical studies, a hybrid method like SMOTEENN (SMOTE + Edited Nearest Neighbors) often performs well on clinical datasets [29]. It not only generates new minority samples but also cleans the resulting dataset by removing samples from both classes that are misclassified by their nearest neighbors, leading to better-defined class clusters [32].

- Evidence: One study comparing techniques across five clinical datasets (including Pima Indians Diabetes) found that SMOTEENN often performed better than other balancing techniques across multiple classifiers [29].

The Scientist's Toolkit: Essential Research Reagents

The following table lists key computational "reagents" and resources essential for experiments in handling class-imbalanced medical data.

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| imbalanced-learn (Python) | An open-source library providing implementations of ROS, RUS, SMOTE, ADASYN, and numerous other sampling techniques [31]. | The primary library for implementing all sampling protocols described in this guide. |
| Stratified k-Fold Cross-Validation | A resampling technique that preserves the class distribution in each fold, ensuring reliable performance estimation on imbalanced data [35]. | Used during model training and validation to prevent biased performance estimates. |
| Local Outlier Factor (LOF) | An unsupervised algorithm used for outlier detection, which can help identify noisy samples in the minority class before or after applying SMOTE [34]. | Integrated into methods like ADASYN-LOF to clean the synthetic dataset and improve quality [34]. |
| Clinical Datasets (UCI, KEEL) | Public repositories providing benchmark imbalanced clinical datasets (e.g., Breast Cancer, Pima Indians Diabetes) for method development and comparison [30] [29]. | Used for benchmarking and validating the performance of different sampling strategies. |
| Cost-Sensitive Learning | An algorithmic-level approach (as opposed to data-level) that assigns a higher misclassification cost to the minority class during model training [33]. | An alternative or complementary strategy to data sampling, often used in ensemble methods. |
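In scikit-learn, many classifiers support cost-sensitive learning through the `class_weight` parameter. The sketch below compares minority-class recall with and without `class_weight="balanced"` on a synthetic dataset with about 5% positives; it illustrates the mechanism rather than recommending a particular weighting.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=2000, n_features=10, weights=[0.95, 0.05], random_state=7)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=7)

plain = LogisticRegression(max_iter=1000).fit(X_tr, y_tr)
# "balanced" weights each class by n_samples / (n_classes * class_count), so errors on
# the rare class cost more during training; the data themselves are not resampled
weighted = LogisticRegression(max_iter=1000, class_weight="balanced").fit(X_tr, y_tr)

recall_plain = recall_score(y_te, plain.predict(X_te))
recall_weighted = recall_score(y_te, weighted.predict(X_te))
print(f"minority recall: unweighted={recall_plain:.3f}, balanced={recall_weighted:.3f}")
```

Higher minority recall usually comes at the cost of more false positives, so the trade-off should be judged with the imbalance-aware metrics discussed above rather than accuracy alone.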

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using Deep-CTGAN over traditional oversampling methods like SMOTE for medical tabular data?

Deep-CTGAN offers significant advantages for handling the complexity of medical data. While traditional methods like SMOTE and ADASYN create new samples through simple interpolation in feature space, they often fail to capture the complex, non-linear relationships and multi-modal distributions present in clinical datasets [37] [38]. Deep-CTGAN, particularly when integrated with ResNet, uses deep learning to learn the underlying data distribution, generating more realistic and diverse synthetic samples. Research shows that while SMOTE can outperform deep generative models on small datasets, an ensemble of deep generative models performs better on large, complex datasets [38]. Furthermore, one study reported that disease prediction models trained on Deep-CTGAN synthesized data achieved accuracy exceeding 99% [37].

Q2: How does the integration of ResNet architectures enhance Deep-CTGAN for medical data generation?

Integrating ResNet (Residual Network) with Deep-CTGAN addresses a key challenge in training deep networks: gradient vanishing and explosion [37] [39]. The residual connections in ResNet allow the model to be much deeper, enabling it to learn more complex patterns from the data without performance degradation. This is particularly crucial for medical data, which often involves intricate dependencies between patient attributes. The ResNet integration enhances the feature learning capability of the Deep-CTGAN, allowing it to better capture the complex patterns and relationships within heterogeneous clinical datasets, leading to the generation of higher-fidelity synthetic patient records [37].

Q3: My model is experiencing mode collapse, where it generates limited varieties of synthetic samples. How can I resolve this?

Mode collapse is a common challenge where the generator produces synthetic data with low diversity. To mitigate this in CTGAN training, you can:

- Implement Gradient Penalty: Replace traditional weight clipping with a gradient penalty, as used in Wasserstein GAN with Gradient Penalty (WGAN-GP). This promotes more stable training and helps prevent mode collapse by enforcing a Lipschitz constraint [39].

- Modify the Loss Function: Incorporate a custom loss function that includes terms to maximize the diversity of generated samples and ensure they cover different modes of the data distribution [40].

- Conduct Robustness Validation: Use strategies like identifying "weak robust samples" from your training data to understand model vulnerabilities. Augmenting training with these challenging samples can lead to a more robust generator that better captures the entire data distribution [41].

Q4: How can I validate that my synthetic medical data is both realistic and preserves patient privacy?

A robust validation strategy should assess both fidelity (realism) and privacy.

- Fidelity and Utility: Use the "Training on Synthetic, Testing on Real" (TSTR) framework [37]. Train a downstream machine learning model (e.g., a classifier) on your synthetic data and test its performance on the real, held-out data. High performance indicates the synthetic data maintains utility. You can also compute statistical similarity scores between real and synthetic data distributions [37].

- Privacy: Employ privacy metrics that estimate the risk of re-identification. For instance, calculate the probability that a synthetic record could be matched to a real patient in the original dataset. One study using GANs reported a very low identification probability of 0.008% [42]. A good synthetic dataset should find a balance between being useful for analysis and providing strong privacy protection [42].
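Both checks can be prototyped before a full generator is in place. In the sketch below, `naive_synthesizer` is a hypothetical stand-in for a CTGAN-style model (class-conditional Gaussians), TSTR is compared against a Train-on-Real baseline, and distance to closest record (DCR) is used as a simple privacy heuristic. DCR is a swapped-in technique, not the identification-probability metric cited above: very small distances flag synthetic records that may be near-copies of real patients.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import NearestNeighbors


def naive_synthesizer(X, y, n, rng):
    """Hypothetical stand-in for a CTGAN-style generator: class-conditional Gaussians."""
    Xs, ys = [], []
    for label in np.unique(y):
        Xc = X[y == label]
        m = int(round(n * len(Xc) / len(X)))   # keep the real class proportions
        Xs.append(rng.multivariate_normal(Xc.mean(axis=0), np.cov(Xc, rowvar=False), size=m))
        ys.append(np.full(m, label))
    return np.vstack(Xs), np.concatenate(ys)


def auc_on_real_test(X_fit, y_fit):
    model = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_fit, y_fit)
    return roc_auc_score(y_real_te, model.predict_proba(X_real_te)[:, 1])


rng = np.random.default_rng(0)
X, y = make_classification(n_samples=800, n_features=8, n_informative=5, n_redundant=0, random_state=0)
X_real_tr, X_real_te, y_real_tr, y_real_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)
X_syn, y_syn = naive_synthesizer(X_real_tr, y_real_tr, n=len(X_real_tr), rng=rng)

tstr = auc_on_real_test(X_syn, y_syn)           # Train on Synthetic, Test on Real
trtr = auc_on_real_test(X_real_tr, y_real_tr)   # Train on Real, Test on Real (reference)

# Privacy heuristic: distance from each synthetic record to its closest real training record
dcr = NearestNeighbors(n_neighbors=1).fit(X_real_tr).kneighbors(X_syn)[0].ravel()
print(f"TSTR AUC={tstr:.3f}  TRTR AUC={trtr:.3f}  "
      f"min DCR={dcr.min():.3f}  median DCR={np.median(dcr):.3f}")
```

A TSTR score close to TRTR suggests good utility; comparing the DCR distribution against real-to-real nearest-neighbor distances is one way to judge whether the synthetic records sit suspiciously close to actual patients.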

Troubleshooting Guides

Issue 1: Unstable Training and Failure to Converge

Symptoms: Large fluctuations in loss values, the generator or discriminator loss quickly goes to zero, and the quality of generated samples does not improve over time.

| Potential Cause | Solution | Key References |
|---|---|---|
| Unbalanced Network Capacity | Ensure the generator (G) and discriminator (D) have comparable model capacity. If D becomes too powerful too quickly, it doesn't provide useful gradients for G to learn. | [40] |
| Inappropriate Loss Function | Use more stable loss functions like Wasserstein loss with gradient penalty. This provides smoother gradients and helps stabilize training. | [39] |
| Poorly Tuned Hyperparameters | Systematically optimize hyperparameters such as learning rate, batch size, and the number of D updates per G update. A lower learning rate (e.g., 1e-4) is often more stable. | [43] |
| Improper Data Preprocessing | Ensure categorical variables are properly encoded (e.g., one-hot encoded, with the generator producing a softmax output for each categorical column) and continuous variables are normalized. CTGAN uses mode-specific normalization for continuous columns. | [37] |

Issue 2: Poor Quality of Generated Synthetic Data

Symptoms: Synthetic data lacks realism, fails to capture correlations between features, or results in poor performance in the TSTR evaluation.

| Potential Cause | Solution | Key References |
|---|---|---|
| Insufficient Training Data | Even with small sample sizes, ensure you are using all available data. Leverage techniques like k-fold cross-validation during model development to maximize data usage. | [43] |
| Ignoring Data Multi-modality | Implement mode-specific normalization for continuous features. This allows the model to better handle features with complex, multi-peaked distributions. | [37] [42] |
| Failure to Capture Feature Dependencies | Use architectural improvements and loss functions that explicitly encourage the model to learn relationships between attributes (e.g., "gender" must be consistent with "pregnancy status"). | [42] |
| Class Imbalance in Original Data | Use conditional generation. Feed class labels as an additional input to both the generator and discriminator, forcing the GAN to controllably generate samples for underrepresented classes. | [39] |
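The conditional-generation fix can be sketched as follows. This is a minimal NumPy illustration, assuming a generator that consumes `[noise, one-hot label]` vectors. The helper name `sample_conditional_inputs` and the choice to draw labels uniformly across classes are assumptions for illustration, not details taken from [39]. The discriminator would receive the same one-hot vector concatenated to each real or synthetic row.

```python
import numpy as np

def sample_conditional_inputs(n_samples, n_classes, noise_dim, rng):
    """Hypothetical helper: build generator inputs [z, one_hot(y)].

    Labels are drawn uniformly over classes (not at their empirical
    frequency), so the generator is asked for as many minority-class
    samples as majority-class ones.
    """
    labels = rng.integers(0, n_classes, size=n_samples)   # uniform class labels
    one_hot = np.eye(n_classes)[labels]                   # (n_samples, n_classes)
    z = rng.standard_normal((n_samples, noise_dim))       # latent noise
    return np.hstack([z, one_hot]), labels

rng = np.random.default_rng(0)
gen_input, labels = sample_conditional_inputs(1000, n_classes=3, noise_dim=16, rng=rng)
print(gen_input.shape)       # (1000, 19)
print(np.bincount(labels))   # roughly equal counts per class
```

Sampling labels uniformly is only one option; sampling them in proportion to a target class ratio works the same way.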

Issue 3: Overfitting on Small Medical Datasets

Symptoms: The synthetic data is too similar to the original training data, raising privacy concerns, and the model does not generalize well to create plausible variations.

| Potential Cause | Solution | Key References |
|---|---|---|
| Lack of Diversity in Training Set | Introduce targeted data augmentation on "weak robust samples" (the most vulnerable samples in your training set) to force the model to learn a more robust decision boundary. | [41] |
| Overly Complex Model | Regularize the generator and discriminator networks using techniques like dropout or weight decay. Reduce model capacity if the dataset is very small. | [44] |
| Insufficient Validation | Employ a rigorous validation framework. Use a hold-out validation set to monitor for overfitting during training and apply early stopping. | [41] |

Experimental Protocols & Methodologies

Protocol 1: Benchmarking Deep-CTGAN with ResNet Integration

This protocol outlines the steps to evaluate the performance of a Deep-CTGAN model integrated with ResNet for synthetic data generation on a small medical dataset.

1. Data Preprocessing:

- Categorical Variables: Encode using one-hot encoding.

- Continuous Variables: Apply mode-specific normalization. Fit a variational Gaussian mixture model (VGM) to each continuous column to identify the modes of its distribution, then represent each value as a one-hot indicator of its mode plus a scalar normalized within that mode [37].

- Class Labels: For conditional generation, encode the target variable and use it as an input to the generator and discriminator.
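A minimal sketch of mode-specific normalization for a single continuous column is shown below, using scikit-learn's `BayesianGaussianMixture` as the variational mixture. The helper names `fit_modes`, `encode`, and `decode`, the cap of 10 modes, and the data are hypothetical. The division by four standard deviations follows the formulation in the CTGAN paper. Library implementations such as CTGAN handle these details internally.

```python
import numpy as np
from sklearn.mixture import BayesianGaussianMixture

def fit_modes(x, max_modes=10, seed=0):
    """Fit a variational Gaussian mixture to one continuous column."""
    vgm = BayesianGaussianMixture(
        n_components=max_modes,
        weight_concentration_prior_type="dirichlet_process",
        weight_concentration_prior=1e-3,  # lets unneeded modes shrink to ~0 weight
        max_iter=500,
        random_state=seed,
    )
    vgm.fit(x.reshape(-1, 1))
    return vgm

def encode(vgm, x, rng):
    """Return (alpha, one_hot_mode): a within-mode scalar plus a mode indicator."""
    probs = vgm.predict_proba(x.reshape(-1, 1))                 # (n, K) mode responsibilities
    modes = np.array([rng.choice(len(p), p=p) for p in probs])  # sample one mode per value
    means = vgm.means_.ravel()
    stds = np.sqrt(vgm.covariances_.ravel())
    alpha = (x - means[modes]) / (4 * stds[modes])              # scale by 4 std of the chosen mode
    one_hot = np.eye(len(means))[modes]
    return alpha, one_hot

def decode(vgm, alpha, one_hot):
    """Invert the encoding, mapping (alpha, mode) back to the original scale."""
    modes = one_hot.argmax(axis=1)
    means = vgm.means_.ravel()
    stds = np.sqrt(vgm.covariances_.ravel())
    return alpha * 4 * stds[modes] + means[modes]

rng = np.random.default_rng(0)
# Hypothetical bimodal lab value (e.g., two patient subgroups)
x = np.concatenate([rng.normal(5.0, 0.5, 300), rng.normal(12.0, 1.0, 200)])
vgm = fit_modes(x)
alpha, one_hot = encode(vgm, x, rng)
x_back = decode(vgm, alpha, one_hot)
print(np.allclose(x, x_back))  # True: the encoding is invertible
```

The generator then learns to output the `alpha` scalar and the mode indicator, which is easier than modeling a multi-peaked raw column directly.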

2. Model Architecture & Training:

- Generator: A deep residual network (ResNet) that takes random noise and a conditional vector (class label) as input. The ResNet blocks help alleviate vanishing gradient problems.

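- Discriminator: A second ResNet that scores inputs as real or synthetic and also predicts the conditional label (auxiliary classifier). Under the Wasserstein loss, it acts as a critic that outputs an unbounded score rather than a probability.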

- Training Loop: Use the Wasserstein loss with gradient penalty. A suggested default is to update the discriminator 5 times for every generator update. Train for a predetermined number of epochs or until convergence is observed [39].

3. Evaluation via TSTR:

- Step 1: Train the Deep-CTGAN model on the entire (small) real training dataset.

- Step 2: Generate a synthetic dataset of a desired size.

- Step 3: Train a downstream classifier (e.g., TabNet, Random Forest) exclusively on the synthetic data.

- Step 4: Test the classifier on the held-out real test dataset.

- Step 5: Record performance metrics (Accuracy, F1-score) and compare them against models trained with other augmentation methods (e.g., SMOTE, ADASYN, vanilla CTGAN) [37].
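The TSTR steps can be sketched with scikit-learn as follows. This is a hedged illustration, not the benchmark from [37]. A Random Forest stands in for the downstream classifier, and TabNet would slot into the same fit/predict pattern. `make_classification` stands in for a real clinical table. The "generator" is a placeholder that jitters training rows and should be replaced by samples from Deep-CTGAN or a comparison method. The helper `fit_and_score` is hypothetical, and the train-real/test-real (TRTR) reference score is an extra anchor we add for comparison.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, f1_score
from sklearn.model_selection import train_test_split

def fit_and_score(X_fit, y_fit, X_test_real, y_test_real, seed=0):
    """Train a classifier on (X_fit, y_fit) and evaluate it on real test data."""
    clf = RandomForestClassifier(n_estimators=200, random_state=seed)
    clf.fit(X_fit, y_fit)
    pred = clf.predict(X_test_real)
    return {"accuracy": accuracy_score(y_test_real, pred),
            "f1": f1_score(y_test_real, pred)}

# Small, imbalanced stand-in for a real clinical table
X, y = make_classification(n_samples=300, n_features=10, weights=[0.8, 0.2], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Placeholder "generator": jittered copies of training rows.
# Replace with samples from Deep-CTGAN, vanilla CTGAN, SMOTE, ADASYN, etc.
rng = np.random.default_rng(0)
idx = rng.integers(0, len(X_train), size=1000)
X_synth = X_train[idx] + rng.normal(0, 0.1, size=(1000, X.shape[1]))
y_synth = y_train[idx]

trtr = fit_and_score(X_train, y_train, X_test, y_test)  # real-data reference
tstr = fit_and_score(X_synth, y_synth, X_test, y_test)  # train synthetic, test real
print("TRTR:", trtr)
print("TSTR:", tstr)
```

The real test split is never shown to the generator or the TSTR classifier. This is what makes the score a fair estimate of synthetic-data utility.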

Protocol 2: Validating Synthetic Data Utility and Privacy

This protocol checks that generated data are both useful and private, a level of rigor appropriate for a medical research thesis.

1. Utility Assessment:

- Statistical Similarity: Calculate metrics like Total Variation Distance (TVD) or Jensen-Shannon divergence between the distributions of real and synthetic data for key features. One study reported similarity scores of 84-87% for clinical datasets [37].

- Downstream Model Performance: As in the TSTR framework above, the ultimate test of utility is the performance of a model trained on synthetic data when applied to real-world tasks [37].
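Per-feature statistical similarity can be computed as shown below. This is a minimal NumPy sketch, assuming continuous features are discretized on shared histogram bins. For categorical features, use category frequencies directly. Reporting 1 - TVD as a "similarity score" is one common convention. We do not know which exact metric produced the figures reported in [37]. The helper names are hypothetical.

```python
import numpy as np

def shared_histograms(real, synth, bins=20):
    """Discretize both samples on shared bin edges; return probability vectors."""
    edges = np.histogram_bin_edges(np.concatenate([real, synth]), bins=bins)
    p, _ = np.histogram(real, bins=edges)
    q, _ = np.histogram(synth, bins=edges)
    return p / p.sum(), q / q.sum()

def total_variation_distance(p, q):
    """TVD = 0.5 * sum |p - q|, bounded in [0, 1]."""
    return 0.5 * np.abs(p - q).sum()

def jensen_shannon_divergence(p, q):
    """JS divergence in bits (base 2), bounded in [0, 1]."""
    m = 0.5 * (p + q)
    def kl(a, b):
        mask = a > 0  # terms with a == 0 contribute 0
        return np.sum(a[mask] * np.log2(a[mask] / b[mask]))
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

rng = np.random.default_rng(0)
real = rng.normal(0.0, 1.0, 500)    # hypothetical real feature
synth = rng.normal(0.2, 1.1, 500)   # hypothetical synthetic counterpart
p, q = shared_histograms(real, synth)
tvd = total_variation_distance(p, q)
print(f"TVD={tvd:.3f}  similarity=1-TVD={1 - tvd:.3f}  JSD={jensen_shannon_divergence(p, q):.3f}")
```

Both metrics compare one feature at a time. Preserved correlations between features still need a separate check, which the downstream TSTR test partly provides.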

2. Privacy Risk Assessment:

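- Membership Inference Attack: Train an attack model to determine whether a given record was part of the GAN's training data. On a balanced set of members and non-members, the attack should perform close to random guessing (accuracy near 50%, AUC near 0.5).

- Distance-to-Closest-Record (DCR): For each synthetic sample, compute the distance to its nearest neighbor in the real training dataset. Higher distances generally indicate better privacy protection. Distances near zero indicate near-copies of real patients. A useful reference is the DCR of a real holdout set to the training set. Synthetic DCRs well below that baseline suggest memorization.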

- Re-identification Risk: Quantitatively estimate the probability of identifying a real individual from the synthetic data, aiming for a very low rate (e.g., <0.01%) [42].
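Two of these privacy checks can be sketched with scikit-learn, as shown below. The attack here is a simple distance-based membership inference, named plainly as a stand-in for the trained attack model described above. It guesses "member" for records that lie close to the synthetic data. Euclidean distance on already-standardized features and all data are assumptions. The "leaky" and "fresh" synthetic sets are constructed to show the contrast.

```python
import numpy as np
from sklearn.metrics import roc_auc_score
from sklearn.neighbors import NearestNeighbors

def distance_to_closest_record(query, reference):
    """Euclidean distance from each query row to its nearest row in `reference`."""
    nn = NearestNeighbors(n_neighbors=1).fit(reference)
    dist, _ = nn.kneighbors(query)
    return dist.ravel()

def distance_mia_auc(train_real, holdout_real, synthetic):
    """Naive membership inference: closer to the synthetic data => guessed member.
    An AUC near 0.5 means the attack does no better than chance."""
    d_members = distance_to_closest_record(train_real, synthetic)
    d_nonmembers = distance_to_closest_record(holdout_real, synthetic)
    scores = -np.concatenate([d_members, d_nonmembers])  # smaller distance => higher score
    labels = np.concatenate([np.ones(len(d_members)), np.zeros(len(d_nonmembers))])
    return roc_auc_score(labels, scores)

rng = np.random.default_rng(0)
train_real = rng.normal(size=(200, 5))     # records the generator was trained on
holdout_real = rng.normal(size=(200, 5))   # records it never saw
leaky_synth = train_real[:150] + rng.normal(0, 0.01, size=(150, 5))  # near-copies
fresh_synth = rng.normal(size=(150, 5))                               # independent draws

for name, synth in [("leaky", leaky_synth), ("fresh", fresh_synth)]:
    dcr = distance_to_closest_record(synth, train_real)
    auc = distance_mia_auc(train_real, holdout_real, synth)
    print(f"{name}: median DCR={np.median(dcr):.3f}  MIA AUC={auc:.3f}")
```

The leaky set shows near-zero DCRs and a clearly elevated attack AUC. A well-behaved generator should look like the fresh set on both measures.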

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Solution | Function in Experiment | Specification Notes |
|---|---|---|
| Deep-CTGAN Model | Core generative model for synthesizing tabular data. | Look for implementations that support conditional generation and mode-specific normalization. |
| ResNet Module | Enhances feature learning and mitigates vanishing gradients in deep networks. | Can be integrated as building blocks within the generator and/or discriminator. |
| TabNet Classifier | High-performance deep learning model for tabular data; well suited to TSTR evaluation. | Uses sequential attention to choose which features to reason from at each step [37]. |
| Wasserstein Loss with Gradient Penalty | Training objective that improves stability and helps mitigate mode collapse. | More reliable than the original minimax GAN loss [39]. |
| SHAP (SHapley Additive exPlanations) | Explainable AI tool for interpreting model predictions and feature importance. | Provides insights into which features are driving the generative model's decisions [37]. |
| k-fold Cross-Validation | Resampling technique for robust model evaluation with limited data. | Essential for reliably estimating model performance when sample sizes are small [43]. |
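For small cohorts, repeated stratified k-fold reduces the variance of a single random split. Below is a minimal scikit-learn sketch on a hypothetical dataset. Scaling is placed inside a pipeline so that it is refit on each training fold, which avoids leakage. By the same logic, any synthetic data or resampling should be generated from the training folds only.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import RepeatedStratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical small, imbalanced cohort
X, y = make_classification(n_samples=120, n_features=8, weights=[0.75, 0.25], random_state=0)

# 5 folds x 10 repeats: stratification keeps the minority-class share in every fold,
# and repetition averages out the luck of any single split.
cv = RepeatedStratifiedKFold(n_splits=5, n_repeats=10, random_state=0)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
print(f"AUC = {scores.mean():.3f} +/- {scores.std():.3f} over {len(scores)} folds")
```

Reporting the spread across folds, not just the mean, makes the uncertainty of a small-sample estimate visible.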

Workflow Diagrams

[Figures not reproduced: "Synthetic Data Validation Workflow" and "ResNet-Enhanced Generator Architecture".]

Frequently Asked Questions

Q1: What is the fundamental difference between Cost-Sensitive Learning and standard learning algorithms? Standard machine learning algorithms are designed to minimize the overall error rate and typically assume that all misclassification errors carry the same cost [45] [46]. In contrast, Cost-Sensitive Learning is a subfield that explicitly defines and uses costs during training, focusing on minimizing the total cost of misclassification rather than just the error rate [45]. This is particularly crucial in medical applications where misclassifying a sick patient as healthy (false negative) is often far more serious than misclassifying a healthy patient as sick (false positive) [47] [45].
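One direct way to act on unequal costs is to move the decision threshold. This is a minimal sketch, assuming calibrated probabilities and zero cost for correct predictions. Under those assumptions, predicting "sick" minimizes expected cost whenever p·C_FN > (1 − p)·C_FP, that is, when p > C_FP / (C_FP + C_FN). The cost values, data, and helper names are hypothetical.

```python
import numpy as np

def cost_sensitive_threshold(cost_fp, cost_fn):
    """Expected-cost-minimizing threshold, assuming correct predictions cost 0:
    predict positive when p * cost_fn > (1 - p) * cost_fp."""
    return cost_fp / (cost_fp + cost_fn)

def total_cost(y_true, y_pred, cost_fp, cost_fn):
    """Sum of misclassification costs over a set of predictions."""
    fp = np.sum((y_pred == 1) & (y_true == 0))
    fn = np.sum((y_pred == 0) & (y_true == 1))
    return fp * cost_fp + fn * cost_fn

# Hypothetical costs: missing a sick patient is 10x worse than a false alarm
t = cost_sensitive_threshold(cost_fp=1.0, cost_fn=10.0)
print(round(t, 4))  # 0.0909

y_true = np.array([1, 1, 0, 0, 0, 0])
p_sick = np.array([0.30, 0.08, 0.20, 0.05, 0.60, 0.10])
for name, thr in [("default 0.5", 0.5), ("cost-sensitive", t)]:
    y_pred = (p_sick >= thr).astype(int)
    print(name, "total cost:", total_cost(y_true, y_pred, 1.0, 10.0))
```

On this toy example, the default threshold incurs a total cost of 21 versus 13 for the cost-derived threshold. It trades two missed patients for two extra false alarms.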

Q2: When should I use Focal Loss instead of traditional loss functions like Cross-Entropy? You should consider Focal Loss when working on highly imbalanced segmentation or detection tasks where the structures of interest (e.g., small tumors, aneurysms) occupy a very small volume—often less than 1% of the total image [48]. It is particularly beneficial when your model is missing small structures and producing high false negatives. If your dataset does not have severe class imbalance or you are already achieving high performance with Dice Loss alone, Focal Loss may be unnecessary [48].

Q3: How do I determine the appropriate misclassification costs for my medical classification problem?

Determining accurate costs often requires collaboration with domain experts to analyze the clinical consequences of different error types [46]. However, a practical implementation approach is to treat costs as hyperparameters and use grid or random search to optimize them against your performance metric [46]. A common heuristic is to set the class weights inversely proportional to the class distribution in your dataset, which is implemented in libraries like Scikit-learn via the class_weight='balanced' parameter [46].
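Both approaches from this answer are shown below on a hypothetical dataset. First, we check the `class_weight='balanced'` heuristic against its formula, n_samples / (n_classes × count per class). Second, we treat the minority-class weight as a hyperparameter in a grid search. The candidate weights and the `balanced_accuracy` scoring choice are assumptions. In practice, pick a metric that reflects the clinical cost trade-off.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical imbalanced dataset (about 10% positives)
X, y = make_classification(n_samples=200, n_features=6, weights=[0.9, 0.1], random_state=0)

# class_weight="balanced" assigns n_samples / (n_classes * count(class))
classes = np.unique(y)
balanced = compute_class_weight(class_weight="balanced", classes=classes, y=y)
manual = len(y) / (len(classes) * np.bincount(y))
print(dict(zip(classes.tolist(), balanced.round(3).tolist())))

# Treat the minority-class cost as a hyperparameter and tune it
param_grid = {"class_weight": [None, "balanced"] + [{0: 1, 1: w} for w in (2, 5, 10, 20)]}
search = GridSearchCV(
    LogisticRegression(max_iter=1000),
    param_grid,
    scoring="balanced_accuracy",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
)
search.fit(X, y)
print("best class_weight:", search.best_params_["class_weight"])
```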

Q4: Why is my model with Focal Loss performing worse than with Cross-Entropy, even though the gradients check out? Even with correct gradient calculations, training dynamics can differ significantly. This could be due to improper hyperparameter tuning (α and γ values) or an imbalance between loss components if you're using a combined loss function [48] [49]. Start with a baseline using Dice + BCE Loss, then gradually introduce Focal Loss with conservative weights (e.g., γ=2, α=0.25) and monitor performance changes carefully [48].

Q5: Can Cost-Sensitive Learning and data resampling techniques be used together? Yes, these strategies are complementary. While Cost-Sensitive Learning modifies the algorithm's objective function to account for varying misclassification costs, resampling techniques (like SMOTE) physically alter the training data distribution [47] [50]. Research has shown that cost-sensitive methods can sometimes outperform resampling alone because they preserve the original data distribution while directly addressing the imbalance during training [50].

Troubleshooting Guides

Problem: Model Fails to Detect Minority Class in Medical Imaging

Symptoms: High false negative rate, poor recall for minority class, missed detections of small pathological structures.

Diagnosis and Solutions:

Implement Focal Loss for Segmentation Tasks

- Solution: Replace or combine standard Cross-Entropy loss with Focal Loss to make the model focus on hard-to-classify examples.

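- Implementation: Use the formula

$$\mathrm{FL}(p_t) = -\alpha_t\,(1-p_t)^{\gamma}\,\log(p_t)$$

where pₜ is the model's predicted probability for the correct class. The class-balancing weight αₜ equals α for the positive (typically minority) class and 1−α for the negative class. The parameter γ ≥ 0 controls how strongly well-classified (easy) examples are down-weighted, and γ = 0 recovers α-weighted cross-entropy [48].

- Recommended Parameters: Start with γ=2.0 and α=0.25, then adjust based on performance [48].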
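A minimal NumPy sketch of the binary focal loss above follows. It is written per pixel and averaged, as it would be applied to a segmentation probability map. The αₜ convention follows the original focal loss formulation. The function name and the ε value are assumptions. Deep learning frameworks provide their own implementations, which should be preferred for training.

```python
import numpy as np

def binary_focal_loss(p, y, alpha=0.25, gamma=2.0, eps=1e-7):
    """Mean binary focal loss.

    p: predicted probability of the positive class, y: labels in {0, 1}.
    alpha weights the positive class and (1 - alpha) the negative class.
    """
    p = np.clip(p, eps, 1.0 - eps)                  # avoid log(0)
    p_t = np.where(y == 1, p, 1.0 - p)              # probability of the true class
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    return np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t))

one = np.array([1])
focal_easy = binary_focal_loss(np.array([0.95]), one)  # confidently correct pixel
focal_hard = binary_focal_loss(np.array([0.30]), one)  # poorly classified pixel
ce_easy, ce_hard = -np.log(0.95), -np.log(0.30)
# The (1 - p_t)^gamma factor shrinks easy-example losses far more than cross-entropy does
print(f"easy/hard ratio  focal: {focal_easy / focal_hard:.2e}  cross-entropy: {ce_easy / ce_hard:.2e}")
```

Because easy background pixels contribute almost nothing, the gradient signal is dominated by the rare, hard foreground pixels.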

Combine Multiple Loss Functions

- Solution: Use a weighted combination of Dice Loss, BCE Loss, and Focal Loss rather than relying on a single loss function.

- Formula:

$$\mathcal{L}_{\text{total}} = a\,\mathcal{L}_{\text{Dice}} + b\,\mathcal{L}_{\text{BCE}} + c\,\mathcal{L}_{\text{Focal}}$$

where a, b, and c are tunable weights [48].

- Role of Each Component:

- Dice Loss: Optimizes segmentation shape and overlap

- BCE Loss: Ensures per-pixel accuracy

- Focal Loss: Helps focus on hard-to-classify structures [48]
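The weighted combination can be sketched in NumPy as follows, using a soft Dice term on probability maps. The default weights a=1, b=1, c=0.25 and the toy mask are placeholders, not values from [48]. Starting with c small matches the advice to begin from a Dice + BCE baseline and introduce the focal term gradually.

```python
import numpy as np

EPS = 1e-7

def soft_dice_loss(p, y, smooth=1.0):
    """1 - soft Dice coefficient computed over all pixels."""
    inter = np.sum(p * y)
    return 1.0 - (2.0 * inter + smooth) / (np.sum(p) + np.sum(y) + smooth)

def bce_loss(p, y):
    p = np.clip(p, EPS, 1.0 - EPS)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def focal_loss(p, y, alpha=0.25, gamma=2.0):
    p = np.clip(p, EPS, 1.0 - EPS)
    p_t = np.where(y == 1, p, 1.0 - p)
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    return np.mean(-alpha_t * (1.0 - p_t) ** gamma * np.log(p_t))

def combined_loss(p, y, a=1.0, b=1.0, c=0.25):
    """Total = a*Dice + b*BCE + c*Focal (placeholder weights, to be tuned)."""
    return a * soft_dice_loss(p, y) + b * bce_loss(p, y) + c * focal_loss(p, y)

# Toy 8x8 mask with a single 2x2 "lesion" (about 6% foreground)
y = np.zeros((8, 8))
y[3:5, 3:5] = 1
miss = np.full_like(y, 0.05)          # predicts background everywhere
hit = np.where(y == 1, 0.9, 0.05)     # finds the lesion
print(f"miss: {combined_loss(miss, y):.3f}  hit: {combined_loss(hit, y):.3f}")
```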

Hyperparameter Tuning Strategy

- To Reduce False Negatives: Increase γ (e.g., 2.0 → 3.0) and increase Focal Loss weight (e.g., 0.25 → 0.4) [48]

- To Reduce False Positives: Increase BCE Loss weight and decrease Focal Loss weight [48]

- To Strengthen Focal Loss Impact: Increase α (e.g., 0.25 → 0.35) and increase Focal Loss weight in the combined loss function [48]

Problem: Poor Performance with Small Medical Datasets

Symptoms: Overfitting, high variance, poor generalization despite using class weights.

Diagnosis and Solutions:

Cost-Sensitive Algorithm Modifications

- Solution: Modify the objective functions of traditional algorithms to incorporate misclassification costs directly.

- Implementation Examples:

- Validation: Research on medical datasets including Pima Indians Diabetes, Haberman Breast Cancer, and Cervical Cancer Risk Factors has shown cost-sensitive methods yield superior performance compared to standard algorithms [50]
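As an illustration of this kind of modification, the sketch below compares standard and cost-weighted versions of three scikit-learn classifiers by total misclassification cost. It does not reproduce the study in [50]. In scikit-learn, `class_weight` scales each class's contribution to the training objective. For `SVC`, it rescales the penalty C per class. The cost values, the choice to set class weights equal to those costs, and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

COST_FP, COST_FN = 1.0, 5.0  # hypothetical misclassification costs

def misclassification_cost(y_true, y_pred):
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return float(fp * COST_FP + fn * COST_FN)

X, y = make_classification(n_samples=400, n_features=8, weights=[0.85, 0.15], random_state=1)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=1)

cost_weights = {0: COST_FP, 1: COST_FN}  # errors on class 1 weigh more in training
models = {
    "logreg": (LogisticRegression(max_iter=1000),
               LogisticRegression(max_iter=1000, class_weight=cost_weights)),
    "svm": (SVC(), SVC(class_weight=cost_weights)),
    "random_forest": (RandomForestClassifier(random_state=0),
                      RandomForestClassifier(random_state=0, class_weight=cost_weights)),
}
results = {}
for name, (standard, weighted) in models.items():
    results[name] = {
        variant: misclassification_cost(y_te, m.fit(X_tr, y_tr).predict(X_te))
        for variant, m in (("standard", standard), ("cost_sensitive", weighted))
    }
    print(name, results[name])
```

Evaluating on total cost rather than accuracy keeps the comparison aligned with the objective that cost-sensitive training optimizes.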

Leverage Transfer Learning

Cost-Sensitive Active Learning

- Solution: Implement active learning that considers both annotation cost and informational value when selecting samples for labeling.

- Protocol:

- Train a linear regression model to estimate actual annotation time per sample

- Select samples that maximize information gain while minimizing annotation cost [52]

- Results: Studies on breast cancer phenotyping showed cost-sensitive active learning achieved AUC scores of 0.8673-0.9006 while saving 60-70% annotation time compared to random sampling [52]
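The two protocol steps can be sketched as follows. This is a hedged illustration, not the method of [52]. Informativeness is measured as predictive entropy, and cost-awareness is expressed by dividing that entropy by the regressed annotation time. Both are assumptions. The "note length" cost feature, the simulated annotation times, and the helper `select_batch` are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LinearRegression, LogisticRegression

def select_batch(clf, time_model, X_pool, cost_features_pool, k):
    """Pick the k pool samples with the highest predictive entropy per predicted second."""
    proba = np.clip(clf.predict_proba(X_pool), 1e-12, 1.0)
    entropy = -np.sum(proba * np.log(proba), axis=1)                    # informativeness
    est_time = np.maximum(time_model.predict(cost_features_pool), 1.0)  # seconds, floored at 1
    score = entropy / est_time                                          # information per unit cost
    return np.argsort(score)[::-1][:k]

rng = np.random.default_rng(0)
X, y = make_classification(n_samples=500, n_features=10, random_state=0)
note_len = rng.integers(50, 800, size=(500, 1)).astype(float)  # hypothetical cost feature (words)

labeled = rng.choice(500, size=40, replace=False)
pool = np.setdiff1d(np.arange(500), labeled)

# Step 1: regress recorded annotation times on the cost feature (simulated here)
observed_time = 20 + 0.15 * note_len[labeled, 0] + rng.normal(0, 5, size=40)
time_model = LinearRegression().fit(note_len[labeled], observed_time)

# Step 2: rank the unlabeled pool by information gain per estimated annotation second
clf = LogisticRegression(max_iter=1000).fit(X[labeled], y[labeled])
batch = pool[select_batch(clf, time_model, X[pool], note_len[pool], k=10)]
print("next samples to annotate:", batch)
```

After the selected samples are annotated, their measured times can be added to the regression, so the cost model improves along with the classifier.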

Problem: Gradient Issues with Custom Focal Loss Implementation

Symptoms: Unstable training, vanishing/exploding gradients, different convergence behavior compared to standard loss functions.

Diagnosis and Solutions:

Gradient Verification

- Solution: Systematically verify that your Focal Loss implementation produces correct gradients.

- Testing Protocol:

- Troubleshooting: If gradients match but training behavior differs, investigate hyperparameter settings and loss balancing [49]
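A gradient check can be run without any framework by comparing a hand-derived gradient with central finite differences. This NumPy sketch works with logits z, where p = sigmoid(z). Differentiating the focal loss gives dFL/dz = s·αₜ(1−pₜ)^γ(γ·pₜ·log pₜ + pₜ − 1), with s = +1 for positive and −1 for negative labels. The function names and tolerances are assumptions. In PyTorch, `torch.autograd.gradcheck` performs the equivalent comparison for custom autograd functions in double precision.

```python
import numpy as np

def focal_loss_from_logits(z, y, alpha=0.25, gamma=2.0):
    """Per-sample binary focal loss computed from logits z."""
    p = 1.0 / (1.0 + np.exp(-z))
    p_t = np.where(y == 1, p, 1.0 - p)
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    return -alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)

def focal_grad_analytic(z, y, alpha=0.25, gamma=2.0):
    """Hand-derived dFL/dz = s * alpha_t * (1 - p_t)^gamma * (gamma*p_t*log(p_t) + p_t - 1)."""
    p = 1.0 / (1.0 + np.exp(-z))
    p_t = np.where(y == 1, p, 1.0 - p)
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    s = np.where(y == 1, 1.0, -1.0)
    return s * alpha_t * (1.0 - p_t) ** gamma * (gamma * p_t * np.log(p_t) + p_t - 1.0)

def focal_grad_numeric(z, y, h=1e-5, **kw):
    """Central differences; shifting all logits at once is valid because each
    sample's loss depends only on its own logit."""
    return (focal_loss_from_logits(z + h, y, **kw)
            - focal_loss_from_logits(z - h, y, **kw)) / (2 * h)

rng = np.random.default_rng(0)
z = rng.uniform(-4, 4, size=1000)
y = rng.integers(0, 2, size=1000)
for gamma in (0.0, 1.0, 2.0, 5.0):
    err = np.max(np.abs(focal_grad_analytic(z, y, gamma=gamma)
                        - focal_grad_numeric(z, y, gamma=gamma)))
    print(f"gamma={gamma}: max abs gradient error = {err:.2e}")
```

A useful sanity check is that γ = 0 and α = 0.5 give half the familiar binary cross-entropy gradient, 0.5·(p − y).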

Numerical Stability Improvements

- Solution: Add epsilon smoothing to probability calculations and implement gradient clipping.

- Implementation: Ensure all probability values in Focal Loss calculations are clamped to [ε, 1-ε] to prevent log(0) errors.
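As an alternative to clamping, the focal loss can be computed directly from logits in log space, using log(sigmoid(z)) = −logaddexp(0, −z). This stays finite even for saturated logits. The sketch below also shows global-norm gradient clipping, the same idea as PyTorch's `torch.nn.utils.clip_grad_norm_`. It is a NumPy illustration, and the function names are hypothetical.

```python
import numpy as np

def stable_focal_loss_from_logits(z, y, alpha=0.25, gamma=2.0):
    """Binary focal loss computed in log space, so no probability is clamped.

    log(sigmoid(z)) = -logaddexp(0, -z) and log(1 - sigmoid(z)) = -logaddexp(0, z)
    remain finite for very large |z|.
    """
    log_p = -np.logaddexp(0.0, -z)
    log_1mp = -np.logaddexp(0.0, z)
    log_pt = np.where(y == 1, log_p, log_1mp)       # log(p_t)
    log_1m_pt = np.where(y == 1, log_1mp, log_p)    # log(1 - p_t)
    alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
    return np.mean(-alpha_t * np.exp(gamma * log_1m_pt) * log_pt)

def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient arrays so their combined L2 norm is <= max_norm."""
    total = np.sqrt(sum(np.sum(g ** 2) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-12))
    return [g * scale for g in grads], total

z = np.array([-800.0, 0.0, 800.0])   # saturated logits: sigmoid would underflow to 0 or 1
y = np.array([1, 1, 0])
print(stable_focal_loss_from_logits(z, y))   # finite, no log(0)

clipped, norm_before = clip_by_global_norm([np.array([3.0, 4.0]), np.array([12.0])], max_norm=1.0)
print(norm_before)  # 13.0
```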

Performance Comparison Tables

Comparison of Loss Functions for Medical Image Segmentation

| Loss Function | Best For | Strengths | Limitations | Typical Performance |
|---|---|---|---|---|
| Standard Cross-Entropy | Balanced datasets | Stable training, good convergence | Poor on imbalanced data | Low Dice on small structures |
| Dice Loss | Moderate class imbalance | Optimizes directly for overlap metrics | Can struggle with very small structures | Variable performance |
| Focal Loss | Extreme class imbalance (foreground <1% of the image) | Reduces false negatives, focuses on hard examples | Requires careful tuning of α and γ | Improved sensitivity for small structures [48] |
| Unified Focal Loss | General class imbalance | Generalizes Dice- and cross-entropy-based losses, robust | More complex implementation | Outperformed the other losses across the datasets evaluated in [53] |