Sample Quality in Cancer Molecular Testing: Foundational Principles, Methodological Advances, and Optimization Strategies for Researchers


Abstract

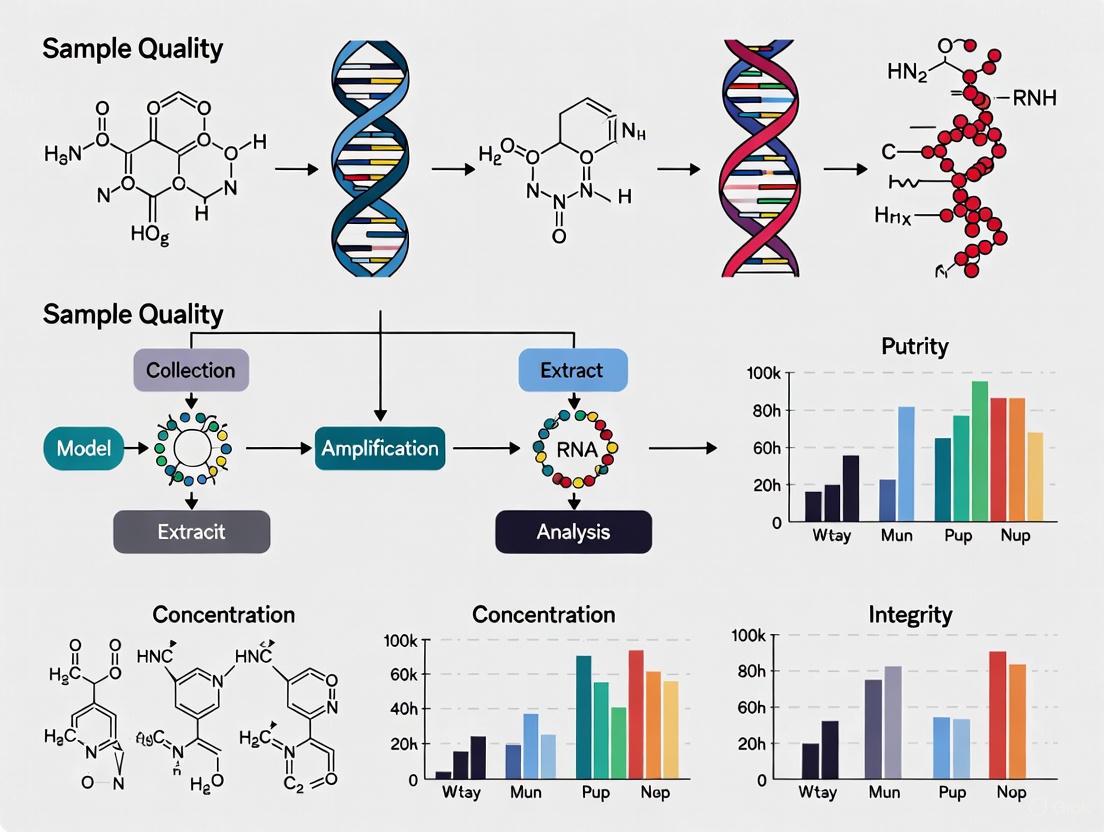

This comprehensive review addresses the critical role of sample quality in cancer molecular testing, a fundamental determinant of diagnostic accuracy and research validity. For researchers, scientists, and drug development professionals, we explore the foundational impact of pre-analytical variables on biomarker detection, evaluate advanced methodologies for challenging samples, provide systematic troubleshooting frameworks for common quality issues, and establish validation standards for emerging technologies. By synthesizing current evidence and practical guidelines, this article aims to empower precision oncology research through enhanced understanding of sample quality considerations across diverse testing platforms and cancer types.

The Critical Link Between Sample Quality and Molecular Testing Outcomes in Cancer Research

Impact of Pre-analytical Variables on Biomarker Detection Accuracy

Accurate biomarker detection is the cornerstone of modern cancer molecular testing, directly influencing patient diagnosis, treatment selection, and therapeutic monitoring. However, a significant challenge in biomarker research lies in the pre-analytical phase—the procedures involving sample collection, processing, and storage before analysis. Evidence indicates that pre-analytical errors contribute to 60-70% of all laboratory errors, with poor blood sample quality alone accounting for 80-90% of these pre-analytical issues [1]. For cancer research, where biomarkers often include labile molecules such as cell-free DNA, proteins, and RNA, the integrity of these analytes is highly susceptible to handling conditions. Suboptimal practices can alter the molecular profile of a biospecimen, leading to unreliable data, failed validation studies, and ultimately, a loss of translational potential for clinical applications [2]. This guide addresses the most impactful pre-analytical variables through troubleshooting FAQs and structured data to help researchers safeguard their biomarker data quality.

Frequently Asked Questions (FAQs)

1. How does a delay in processing my blood samples affect common biomarkers?

The time interval between blood collection and centrifugation (delay to processing) is a critical variable. Cellular metabolism continues in collected blood, leading to measurable changes in analyte concentration. The table below summarizes the effects of a 24-hour processing delay at room temperature on key serum biomarkers [3].

Table 1: Effect of 24-Hour Processing Delay on Serum Biomarkers

| Biomarker | Change after 24-hour delay | Magnitude of Change |
|---|---|---|
| Glucose | Decrease | ~1.6-fold decrease (approx. 1.387 mg/dL per hour) |
| Lactate Dehydrogenase (LDH) | Increase | Significant |
| Gamma-Glutamyl Transferase (GGT) | Increase | Significant |
| Aspartate Aminotransferase (AST) | Increase | Significant |
| C-Reactive Protein (CRP) | No significant change | Stable |

2. What is the impact of repeated freeze-thaw cycles on my archived plasma and serum samples?

Repeated freezing and thawing can denature and degrade proteins, leading to inaccurate results. Stability varies by biomarker, and some are particularly vulnerable: studies have shown that AST, blood urea nitrogen (BUN), GGT, and LDH are sensitive to multiple freeze-thaw cycles [3]. Best practice is to aliquot samples at initial processing so that each aliquot is thawed only once.

3. Why might my PD-L1 immunohistochemistry staining be inconsistent, and how can I control for pre-analytical variables?

PD-L1 expression, a critical biomarker for immunotherapy, is sensitive to pre-analytical handling, particularly cold ischemic time (the time between tissue resection and formalin fixation). While an optimal cold ischemic time is often considered to be ≤ 12 hours, the acceptable duration can depend on the specific protein and tissue type [2]. Inconsistencies can also arise from:

- Fixation Type and Time: Use of neutral-buffered formalin and standardized fixation times is crucial. Prolonged fixation can mask epitopes.

- Assay Platform: Different FDA-approved PD-L1 assays (e.g., Dako 22C3, Ventana SP142) may yield different results on case-matched samples, highlighting the need for standardized and validated protocols for each specific assay [2].

4. I am getting high background in my ELISA. What are the most common causes?

High background signal in immunoassays like ELISA is a frequent issue, often traced to the following pre-analytical and analytical factors [4] [5]:

- Insufficient Washing: Inadequate washing leaves unbound antibody or enzyme conjugate in the wells, where it reacts with the substrate. Use a robust washing procedure, and consider adding a 30-second soak step between washes.

- Contaminated Buffers: Make fresh buffers to avoid contamination from metals, bacteria, or residual peroxidase.

- Plate Sealers: Reusing plate sealers can transfer residual HRP enzyme between wells, causing non-specific signal. Always use a fresh sealer.

- Over-long Incubation: Incubating with the detection antibody or substrate for longer than the protocol recommends can increase background.

5. How does sample collection from an indwelling catheter introduce error, and how can it be prevented?

Blood samples drawn from an indwelling catheter are susceptible to contamination from the flush solution (e.g., normal saline or heparin). This can cause a dilution of all analytes and a direct bias for electrolytes present in the flush fluid [6]. For example, normal saline has high concentrations of Na+ (154 mmol/L) and Cl- (154 mmol/L).

- Prevention: Before collecting a sample, withdraw and discard a volume of blood that is at least three times the dead space volume of the catheter and line. Always follow the manufacturer's recommendations for the specific intravascular line product [6].
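The dilution and electrolyte bias described above follow from simple mixing arithmetic. The sketch below assumes the collected sample behaves as an ideal mixture of blood and flush solution; the function names, the 10% contamination fraction, and the patient values are hypothetical and chosen only for illustration.

```python
def contaminated_value(true_conc, flush_conc, flush_fraction):
    """Concentration measured when a fraction of the sample volume is flush solution.

    Assumes ideal mixing (an illustrative simplification).
    """
    if not 0.0 <= flush_fraction <= 1.0:
        raise ValueError("flush_fraction must be between 0 and 1")
    return (1.0 - flush_fraction) * true_conc + flush_fraction * flush_conc


def minimum_discard_volume(dead_space_ml, factor=3.0):
    """Discard volume as a multiple of the line dead space (at least 3x, per the text)."""
    return factor * dead_space_ml


# Hypothetical example: 10% contamination with normal saline (Na+ 154 mmol/L, no K+).
sodium = contaminated_value(true_conc=140.0, flush_conc=154.0, flush_fraction=0.10)
potassium = contaminated_value(true_conc=4.0, flush_conc=0.0, flush_fraction=0.10)
discard_ml = minimum_discard_volume(dead_space_ml=1.5)

print(f"Na+ {sodium:.1f} mmol/L, K+ {potassium:.2f} mmol/L, discard {discard_ml:.1f} mL")
```

In this illustration, sodium is biased upward toward the saline concentration, while an analyte absent from the flush, such as potassium, is simply diluted.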

The following tables consolidate quantitative data on the effects of various pre-analytical variables, providing a quick reference for experimental planning and data interpretation.

Table 2: Impact of Pre-analytical Variables on Gene Expression Analysis [7]

| Pre-analytical Variable | Average Number of Genes with 2-Fold Expression Change | Average Consistency of Relative Expression Orderings (REOs) |
|---|---|---|
| Sampling Method (Biopsy vs. Surgical) | 3286 | 86% |
| Tumor Sample Heterogeneity (Low vs. High Tumor Cell %) | 5707 | 89.24% |
| Fixation Delay (48-hr delay vs. 0-hr) | 2970 | 85.63% |
| Preservation (FFPE vs. Fresh-Frozen) | 5009 - 10388 | 84.64% - 86.42% |

Table 3: Common Blood Sample Quality Issues and Their Prevalence [1]

| Sample Quality Issue | Approximate Prevalence among Pre-analytical Errors |
|---|---|
| Hemolyzed Sample | 40% - 70% |
| Insufficient Sample Volume | 10% - 20% |
| Use of Wrong Container | 5% - 15% |
| Clotted Sample | 5% - 10% |

Experimental Protocols for Validating Pre-analytical Conditions

Validating the stability of your target biomarkers under specific pre-analytical conditions is essential for developing a robust laboratory protocol. Below is a generalized methodology that can be adapted for various analyte types.

Protocol 1: Assessing the Impact of Delayed Processing on a Serum/Plasma Biomarker

This protocol is designed to systematically evaluate the stability of your biomarker of interest in blood samples over time [3].

1. Sample Collection:

- Collect venous blood from consented donors (e.g., n=10-50) into appropriate vacutainer tubes (e.g., SST for serum, EDTA for plasma).

2. Experimental Time-Course Setup:

- For each donor, process multiple tubes at different time points after collection (e.g., 0.5 h, 1 h, 2 h, 4 h, 24 h).

- Hold all tubes at room temperature until processing.

- Control (Reference) Sample: Centrifuge one set of tubes at 0.5 hours post-collection. Aliquot and freeze the serum/plasma immediately. This serves as the baseline.

3. Sample Processing:

- At each designated time point, centrifuge the tubes at a standardized force (e.g., 3000 × g for 10 minutes).

- Immediately aliquot the supernatant (serum/plasma) into cryovials.

- Flash-freeze aliquots and store at -80°C until analysis.

4. Data Analysis:

- Measure the concentration of your target biomarker and common clinical analytes (e.g., glucose, LDH) in all aliquots using a validated assay (e.g., automated chemistry analyzer, ELISA).

- Perform statistical analysis (e.g., repeated-measures ANOVA) to compare analyte levels at each time point against the reference (0.5 h) sample.

- Determine the Significant Change Limit (SCL), defined as the reference mean ± 2.8 times the usual standard deviation (USD), to identify clinically relevant changes [3].
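The SCL step in Protocol 1 can be sketched as below. This is a minimal illustration, assuming the usual standard deviation (USD) is supplied from the laboratory's own quality-control data; the function names and all numeric values are hypothetical and are not taken from the cited study.

```python
from statistics import mean


def significant_change_limits(reference_values, usual_sd, k=2.8):
    """Return (lower, upper) SCL: reference mean +/- k * usual standard deviation."""
    ref_mean = mean(reference_values)
    return ref_mean - k * usual_sd, ref_mean + k * usual_sd


def flag_time_points(time_point_means, limits):
    """Map each time point to True if its mean falls outside the SCL."""
    low, high = limits
    return {t: not (low <= value <= high) for t, value in time_point_means.items()}


# Hypothetical glucose data (mg/dL): reference tubes processed at 0.5 h.
reference = [92.0, 88.0, 90.0, 91.0, 89.0]
limits = significant_change_limits(reference, usual_sd=2.0)

# Hypothetical mean values at later processing time points.
flags = flag_time_points({"1h": 89.0, "4h": 85.0, "24h": 57.0}, limits)

print(limits)
print(flags)
```

Time points flagged `True` lie outside the limits and would warrant a shorter maximum processing delay for that analyte.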

Protocol 2: Evaluating the Effect of Freeze-Thaw Cycles on Biomarker Stability

This protocol tests the resilience of your biomarker to the freeze-thaw stress encountered during long-term storage and repeated use.

1. Sample Preparation:

- Process blood samples from multiple donors within 30-60 minutes of collection to obtain serum or plasma.

- Pool serum/plasma from each donor in a large tube, then aliquot into a large number of identical cryovials.

2. Freeze-Thaw Cycling:

- Cycle 0 (Reference): Analyze one set of aliquots immediately after processing without freezing.

- Subsequent Cycles: Freeze the remaining aliquots at -80°C. For each target number of cycles (e.g., 1, 3, 6, or 9), assign a set of aliquots, then thaw them completely at room temperature and refreeze them until that number is reached. After the final thaw, analyze the aliquots and compare them with the reference.

3. Data Analysis:

- Measure biomarker concentrations in all samples.

- Calculate the percent recovery compared to the reference (Cycle 0) sample. A deviation in recovery greater than a pre-set threshold (e.g., 15% from the reference) indicates instability to freeze-thaw cycles.
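The recovery calculation in Protocol 2 can be sketched as follows. The sketch reads the 15% example as the maximum tolerated deviation from the Cycle 0 value, which is an assumption about how the threshold is applied; the function names and concentrations are hypothetical.

```python
def percent_recovery(measured, reference):
    """Measured concentration expressed as a percentage of the Cycle 0 reference."""
    if reference <= 0:
        raise ValueError("reference concentration must be positive")
    return 100.0 * measured / reference


def is_stable(measured, reference, max_deviation_pct=15.0):
    """True if recovery stays within +/- max_deviation_pct of 100%."""
    return abs(percent_recovery(measured, reference) - 100.0) <= max_deviation_pct


# Hypothetical concentrations (arbitrary units) after 1, 3, 6, and 9 cycles.
cycle_0 = 100.0
by_cycle = {1: 98.0, 3: 93.0, 6: 84.0, 9: 70.0}

stability = {n: is_stable(value, cycle_0) for n, value in by_cycle.items()}
print(stability)
```

The highest cycle count that still passes gives a practical upper limit on how many times an aliquot of that analyte can be reused.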

Workflow and Decision Diagrams

Biomarker Validation Workflow

Assay Problem-Solving Guide

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Materials for Managing Pre-analytical Variables

| Item | Function & Critical Feature | Application Example |
|---|---|---|
| Dry Electrolyte-Balanced Heparin | Anticoagulant for blood gas/electrolyte tests. Dry form prevents sample dilution; electrolyte-balanced prevents cation binding (e.g., to Ca2+). | Blood gas analysis to avoid falsely low cCa2+ [6]. |
| Bar-Coded Sample Tubes | Patient identification and sample tracking. Links patient, operator, and sample ID to minimize risk of patient sample mix-up. | Phlebotomy and biobanking to reduce labeling errors [6]. |
| Automated Homogenizer (e.g., Omni LH 96) | Standardized tissue/cell disruption. High-throughput, single-use tips reduce cross-contamination and operator-dependent variability. | Preparing homogeneous lysates from tumor tissues for nucleic acid or protein extraction [8]. |
| ELISA Plates (not Tissue Culture Plates) | Solid phase for antibody binding. Specifically designed for high protein-binding capacity to ensure efficient capture antibody coating. | Developing or running sandwich ELISAs for cytokine or protein biomarker detection [4] [5]. |
| Fresh, Aliquoted, Quality-Controlled Reagents | Components of assays (buffers, substrates, antibodies). Freshness and proper QC prevent contamination and ensure activity, reducing background and signal issues. | All immunoassays and molecular assays to ensure reproducibility [4] [9]. |

Understanding Tumor Purity and Its Impact on Molecular Analysis

What is tumor purity and why is it a critical parameter in cancer genomics? Tumor purity, or the proportion of cancer cells in a tissue sample, is crucial because molecular profiles from bulk tissue represent a mixture of cancer, immune, and stromal cells. This admixture confounds the biological signal, potentially altering the interpretation of genomic assays and subsequent clinical decisions. Accurate assessment is vital for parameterizing genomic analyses and correctly interpreting the clinical properties of a tumor [10].

What methods are available to estimate tumor purity, and how do they compare? Tumor purity can be estimated through pathological review or in silico from genomic, epigenomic, or transcriptomic data. Estimates can differ substantially depending on the method, and the choice of method can profoundly affect the interpretation of genomic assays [10]. A systematic pan-cancer analysis found that DNA-, RNA-, and methylation-based estimates were highly concordant with one another but correlated less well with pathologist-derived estimates from immunohistochemistry (IHC) [11].

What are the clinical consequences of variable tumor purity? Variable tumor purity can distort the interpretation of molecular data and the clinical decisions that follow. It also confounds the correlation and clustering of tumors based on transcriptomic data. For example, after accounting for tumor purity in differential expression analysis, an immunotherapy gene signature was found in several cancer types that was not detected by traditional methods [11].

Table 1: Common Tumor Purity Estimation Methods and Their Characteristics

| Method Type | Underlying Data | Key Principle | Reported Challenges |
|---|---|---|---|
| Pathology Review | Histology (H&E slides) | Visual estimation of cancer cell fraction by pathologist | Inconsistent between pathologists; may not represent profiled region [10]. |
| ESTIMATE | Transcriptome (RNA-seq) | Uses expression of 141 immune and 141 stromal genes [11] | Does not account for non-immune/stromal normal cells [11]. |
| ABSOLUTE | Genome (Copy-number data) | Models somatic copy-number alterations and allelic frequencies [10] [11] | Can fail on "quiet" genomes with few alterations; lower median purity estimates [10] [11]. |
| LUMP | Epigenome (Methylation) | Averages 44 non-methylated immune-specific CpG sites [11] | Specifically estimates immune cell infiltration as an inverse of purity [11]. |

Strategies for Overcoming Insufficient Tissue Material

What is the typical failure rate for molecular testing due to insufficient tissue, and in which cancers is this most prevalent? In non-small cell lung cancer (NSCLC), a disease where molecular testing is standard for therapy selection, up to 40% of initial biopsies can be inadequate for molecular testing, necessitating repeat invasive procedures [12].

What procedural techniques can improve sample adequacy for molecular testing? Combining different biopsy types significantly increases success rates. In Endobronchial Ultrasound (EBUS) procedures, using core needle biopsy (CNB) alone had a 20% inadequacy rate, while combining CNB with FNA smears reduced the inadequacy rate to 11.4% [12]. For CT-guided core needle biopsies, performing 5 or more passes achieved an 85% adequacy rate, which increased to 100% with over 7 passes [12].

How can cytology specimens be better utilized to avoid repeat biopsies? Cytology specimens, including smears and cell blocks, are a proven approach for genetic sequencing but are often underutilized. When tissue specimens are inadequate, cytology specimens can be a viable alternative for comprehensive genomic profiling, enhancing diagnostic accuracy and reducing the need for repeat biopsies [13]. Ensuring proper collection and processing is key to their success.

Table 2: Sample Adequacy Rates by Biopsy Technique (NSCLC Study)

| Biopsy Procedure | Sample Type | Key Finding | Recommended Best Practice |
|---|---|---|---|
| EBUS-Guided | FNA Smears Only | 35.3% inadequacy rate for NGS [12] | Combine FNA smears with core needle biopsy (CNB) [12]. |
| EBUS-Guided | CNB Only | 20.0% inadequacy rate for NGS [12] | Combine CNB with FNA smears [12]. |
| EBUS-Guided | FNA + CNB Combined | 11.4% inadequacy rate for NGS [12] | Optimal approach for lymph node sampling [12]. |
| CT-Guided | CNB | 85% adequacy with ≥5 passes; 100% with >7 passes [12] | Aim for 5-7 passes during procedure [12]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Research Tools and Technologies for Challenging Samples

| Tool / Technology | Primary Function | Application in Sample Challenges |
|---|---|---|
| ESTIMATE Algorithm | Estimates tumor purity from RNA-Seq data [11] | Informs analysis of transcriptomic data confounded by stromal and immune cells. |
| ABSOLUTE Algorithm | Estimates purity/ploidy from copy-number data [10] [11] | Provides DNA-based purity estimate for interpreting somatic alterations. |
| SLIMamp Technology | Handles degraded/low-input DNA from FFPE samples [14] | Enables NGS on samples that fail standard QC; 77% reportability in failed samples [14]. |
| Personalized ctDNA Assays | Detects structural variants in blood [15] | Monitors disease recurrence or progression via liquid biopsy, circumventing tissue limitations. |
| Dual-Platform Liquid Biopsy | Analyzes both cfDNA and CTC DNA [15] | Provides a more comprehensive and accurate mutational profile from a blood sample. |

FAQ: Addressing Common Experimental Scenarios

A pathologist estimated my tumor sample at 80% purity, but my genomic analysis suggests it is much lower. Which should I trust? This is a common finding. Systematic benchmarking shows poor concordance between pathologic and molecular purity estimates. It is recommended to parameterize genomic analyses with tumor purity estimated from the matched molecular analyte being analyzed (e.g., use a DNA-based purity estimate for DNA sequencing analysis). Pathology estimates can be inconsistent and may not represent the specific region used for DNA/RNA extraction [10].
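To see why the purity value used to parameterize an analysis matters, consider the variant allele fraction (VAF) expected for a clonal somatic variant. The sketch below uses the standard tumor/normal mixture model, which is general background rather than a method described in the cited studies; the function name and example purities are hypothetical.

```python
def expected_vaf(purity, mutant_copies=1, tumor_copy_number=2, normal_copy_number=2):
    """Expected VAF of a clonal somatic variant in a tumor/normal mixture.

    VAF = p * m / (p * C_tumor + (1 - p) * C_normal)
    """
    if not 0.0 < purity <= 1.0:
        raise ValueError("purity must be in the interval (0, 1]")
    tumor_alleles = purity * tumor_copy_number
    normal_alleles = (1.0 - purity) * normal_copy_number
    return purity * mutant_copies / (tumor_alleles + normal_alleles)


# Heterozygous variant in a diploid region at two hypothetical purities.
vaf_pathology = expected_vaf(0.80)  # purity taken from a pathology estimate
vaf_molecular = expected_vaf(0.30)  # purity taken from a DNA-based estimate

print(f"{vaf_pathology:.2f} vs {vaf_molecular:.2f}")
```

Under these assumptions, a variant observed at roughly 15% VAF is consistent with a clonal heterozygous mutation at 30% purity, but would look subclonal if the 80% estimate were used instead.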

My sample has failed standard QC for our NGS panel due to low DNA quantity/quality. What are my options? Specialized library preparation technologies like SLIMamp are designed for challenging formalin-fixed, paraffin-embedded (FFPE) samples with low tumor purity, poor-quality DNA, or low-input DNA. One study demonstrated that this technology could generate clinical reports for 77% of samples (37/48) that had previously failed standard preanalytical QC, identifying clinically significant variants that would have otherwise been missed [14].

We are planning a study on a cancer type known for low-yield biopsies (e.g., lung cancer). How can we proactively minimize tissue adequacy issues? Engage with your clinical and pathology team to implement optimized sampling protocols. For lesions accessible by EBUS, advocate for a combined approach using both FNA smears and core needle biopsies. For CT-guided biopsies, ensure the operator is aware that 5 or more passes dramatically increase adequacy rates. Furthermore, validate the use of cytology smears for your NGS workflows to unlock an underutilized source of material [12] [13].

How does the tissue source (e.g., lymph node vs. liver) impact the success of molecular testing? The biopsy site can significantly affect adequacy. One root-cause analysis found that lymph node CNB had a 30% inadequacy rate, while liver and soft tissue biopsies showed lower rates (14.3% and 15.4%, respectively), suggesting that intrinsic lymph node heterogeneity may pose a greater challenge for obtaining sufficient tumor cells for sequencing [12].

Troubleshooting Guides

How can I reduce errors and save time during sample labeling and identification?

Incorrect sample labeling is a frequent issue that can lead to significant setbacks, including false research outcomes [16]. Implementing a dual-check system, where two personnel verify the sample labeling, can drastically reduce these errors [16]. For larger-scale operations, technological solutions are highly effective.

- Solution: Implement barcode or RFID (Radio-Frequency Identification) technology for sample tracking [16]. Each sample receives a unique identifier that, when scanned, pulls up its entire history. This not only saves time but also virtually eliminates the risk of sample misidentification [16].

- Supporting Protocol: Regular training workshops that emphasize the importance of precise labeling reinforce its significance and help maintain high standards [16].

What strategies can prevent delays in sample processing?

Processing samples promptly is essential, as delays risk sample viability and the accuracy of outcomes [16]. Bottlenecks often occur in manual, repetitive tasks.

- Solution: Streamline your lab's workflow through automation. Automated systems for sample sorting, labeling, and initial analysis steps can significantly reduce processing times and reduce the potential for human error [16].

- Supporting Protocol: Implement a lean management system. By analyzing and optimizing each step of the sample processing sequence, you can identify and remove bottlenecks, enhancing speed without compromising quality [16].

How can I improve the organization and tracking of samples in storage?

Samples that are stored incorrectly lead to wasted materials and may compromise entire studies [16]. Inefficient tracking, often reliant on error-prone manual logs, creates a ripple effect of inefficiency [16].

- Solution: Establish a tight organizational system with clear storage protocols and designated spots for every sample to prevent overcrowding and confusion [16].

- Supporting Protocol: Implement a digital management system, such as a Laboratory Information Management System (LIMS). This electronic system makes tracking every sample's location, condition, and history straightforward and can provide alerts for mishandled samples [16]. Frequent audits of the storage system ensure ongoing organization [16].

What are the best practices for maintaining sample integrity during processing and storage?

Maintaining sample integrity is paramount for accurate research and diagnostic outcomes. Common culprits that compromise sample quality include temperature fluctuations, cross-contamination, and improper handling [16] [17].

- Solution for Temperature Control: Invest in high-quality, reliable refrigeration systems with real-time monitoring and alerts for temperature deviations. Regular maintenance checks on these systems are essential [16].

- Solution for Contamination: Minimize cross-contamination with rigorous adherence to cleaning protocols and the use of disposable materials whenever possible. Upgrading storage solutions to minimize human handling through automation further preserves sample integrity [16].

- Supporting Protocol for Filtration: When sample filtration is needed, ensure chemical compatibility between the filter membrane and your solvent system to prevent leaching of interferents. For analytes prone to adsorption (like proteins and peptides), use low-binding filters such as PVDF or PES, and always conduct a filter binding investigation during method development [17].
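The temperature-monitoring idea above can be illustrated with a small excursion detector. This is a sketch only: the -70°C alarm limit, the 10-minute minimum duration, and the data structure are hypothetical choices, not values from the cited sources or from any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    minute: int    # minutes since monitoring started
    temp_c: float  # logged freezer temperature in degrees Celsius


def find_excursions(readings, max_temp_c=-70.0, min_duration_min=10):
    """Return (start, end) minute pairs where the temperature stayed above
    max_temp_c for at least min_duration_min."""
    excursions = []
    start = None
    last = None
    for r in sorted(readings, key=lambda r: r.minute):
        if r.temp_c > max_temp_c:
            if start is None:
                start = r.minute
            last = r.minute
        else:
            if start is not None and last - start >= min_duration_min:
                excursions.append((start, last))
            start = None
    # Close an excursion that is still open at the end of the log.
    if start is not None and last - start >= min_duration_min:
        excursions.append((start, last))
    return excursions


# Hypothetical log from a -80°C freezer, one reading every 5 minutes.
temps = [-80.0, -79.0, -68.0, -65.0, -66.0, -78.0, -69.0, -80.0]
readings = [Reading(minute=5 * i, temp_c=t) for i, t in enumerate(temps)]

print(find_excursions(readings))
```

Requiring a minimum duration is one way to avoid alerting on brief door openings; the appropriate limits depend on the analytes stored.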

How can we address equipment failure and reagent shortages that disrupt workflows?

In resource-limited settings, laboratory service interruptions are often caused by equipment malfunction, lack of maintenance, and stockouts of reagents [18]. Between 50% and 96% of medical equipment in low-income nations may be broken and not in use [18].

- Solution: Establish a robust equipment maintenance program. This includes adequate operator training, performing regular preventive maintenance, and ensuring a supply of necessary spare parts [18].

- Supporting Protocol: For critical equipment like personal air sampling pumps, ensure batteries are fully charged and calibrate pumps before and after each day of sampling [19]. Implement a reliable supply chain management system for laboratory consumables to prevent stockouts [18].

What role can specialized staff play in streamlining biomarker testing workflows?

The complexity of biomarker testing often leads to operational inefficiencies. Many laboratories lack dedicated staff for coordinating these complex tasks, which can lead to communication gaps and delays [20].

- Solution: The introduction of a Biomarker Testing Navigator (BTN) is a novel and feasible solution. A BTN is a laboratory professional dedicated to coordinating the biomarker testing process [20].

- Supporting Protocol: In a feasibility pilot, BTNs were shown to coordinate multigene testing panels, expedite key steps to ensure optimal preanalytical processes, reduce testing delays, and smooth overall operations. Their key functions include improving communication, better tracking of send-out tests, and enhancing task efficiency [20].

Frequently Asked Questions (FAQs)

What is the single most impactful change we can make to improve sample processing efficiency?

The most impactful change is often the implementation of an electronic tracking system, such as a LIMS, combined with barcode or RFID technology [16]. This addresses multiple hurdles at once by reducing manual data entry errors, drastically speeding up sample identification and location, and providing a clear, accessible record of each sample's lifecycle, which enhances overall workflow transparency and accountability.

How can we optimize our workflow on a limited budget?

Not all optimizations require significant financial investment. Start with process analysis techniques like value stream mapping or the DMAIC (Define, Measure, Analyze, Improve, Control) method from Six Sigma to identify and eliminate non-value-added steps and bottlenecks in your current workflow [21]. Implementing a dual-check system for labeling and establishing clear, standardized protocols for common tasks are low-cost, high-impact improvements [16] [21].

Our team is resistant to new workflows. How can we manage this change?

Resistance to change is a common challenge in process optimization [21]. Successful implementation requires:

- Clear Communication: Explain the reasons for the change and the benefits it will bring.

- Adequate Training: Ensure all staff are thoroughly trained on new procedures and technologies [21].

- Stakeholder Involvement: Involve team members in the planning and testing phases to build ownership and address concerns early [21].

Why is the pre-analytical phase so critical in molecular testing for cancer?

The pre-analytical phase—encompassing sample collection, processing, and storage—directly determines the quality and quantity of biological material available for analysis [22]. In molecular pathology for cancers like NSCLC, the pathologist must evaluate the sample for tumor cell percentage and necrosis. Inadequate samples can lead to false-negative, inconclusive, or incomplete molecular results, which in turn can result in an inappropriate choice of therapeutic strategy and potentially poor patient outcomes [22]. Meticulous tissue handling is required to ensure robust molecular analyses and to avoid exhausting limited tissue samples [23].

Data Presentation

Table 1: Key Challenges and Corresponding Solutions in Sample Processing

| Operational Hurdle | Root Cause | Proposed Solution | Key Benefit |
|---|---|---|---|
| Inaccurate Sample Labeling | Human error during manual entry [16]. | Implement barcode/RFID systems and a dual-check verification process [16]. | Drastically reduced misidentification and false outcomes [16]. |
| Delayed Sample Processing | Manual, repetitive tasks; workflow bottlenecks [16]. | Automate sorting/labeling steps; apply lean management to remove bottlenecks [16]. | Improved speed and maintained sample viability [16]. |
| Inefficient Sample Tracking | Reliance on manual logs and poor organization [16]. | Implement a digital LIMS; establish clear storage protocols [16]. | Real-time location and history tracking; reduced time spent searching [16]. |
| Sample Integrity Loss | Temperature fluctuations; cross-contamination; improper handling [16] [17]. | Use monitored storage; rigorous cleaning; low-binding filters [16] [17]. | Reliable and accurate analytical results [16]. |
| Equipment & Reagent Failure | Lack of maintenance; supply chain issues [18]. | Schedule preventive maintenance; improve supply chain management [18]. | Reduced downtime and service interruptions [18]. |
| Complex Biomarker Testing | Lack of dedicated coordination; poor communication [20]. | Introduce a Biomarker Testing Navigator (BTN) role [20]. | Improved communication, reduced turnaround time, smoother operations [20]. |

Experimental Protocols

Protocol 1: Implementing a Barcode-Based Sample Tracking System

- Needs Assessment: Identify the types of samples, data fields required (e.g., patient ID, date, sample type), and volume of samples processed daily.

- System Selection: Choose a LIMS or standalone software that integrates with barcode scanners and printers.

- Hardware Setup: Acquire barcode printers and durable labels resistant to lab conditions (e.g., frost-free, solvent-resistant). Install barcode scanners.

- Database Creation: Develop a centralized database within the LIMS to store all sample information.

- Staff Training: Conduct comprehensive training on the new procedure, including label printing, application, and scanning [16].

- Pilot and Roll-out: Run a pilot with a small team to identify issues before a lab-wide rollout.

- Audit and Refine: Regularly audit the system for compliance and accuracy, and refine processes as needed.
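The core data model behind such a tracking system can be sketched in a few lines. The example below is an in-memory stand-in, not the API of any real LIMS; the class names, fields, and barcode format are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class SampleRecord:
    barcode: str
    patient_id: str
    sample_type: str
    history: list = field(default_factory=list)  # (timestamp, event, location) tuples


class SampleRegistry:
    """Minimal in-memory registry keyed by barcode (illustrative only)."""

    def __init__(self):
        self._records = {}

    def register(self, barcode, patient_id, sample_type):
        # Reject duplicates so that each barcode identifies exactly one sample.
        if barcode in self._records:
            raise ValueError(f"duplicate barcode: {barcode}")
        self._records[barcode] = SampleRecord(barcode, patient_id, sample_type)
        return self.scan(barcode, "registered", "accessioning")

    def scan(self, barcode, event, location):
        # Every scan appends to the sample's history, building an audit trail.
        record = self._records.get(barcode)
        if record is None:
            raise KeyError(f"unknown barcode: {barcode}")
        record.history.append((datetime.now(timezone.utc), event, location))
        return record


registry = SampleRegistry()
registry.register("S-0001", patient_id="P-17", sample_type="FFPE block")
record = registry.scan("S-0001", event="stored", location="freezer A / rack 3")

for timestamp, event, location in record.history:
    print(timestamp.isoformat(), event, location)
```

Rejecting duplicate barcodes at registration and logging every scan are the two properties that the audit step in the protocol would check.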

Protocol 2: Filter Binding Investigation for Sample Preparation

- Sample Preparation: Prepare a standard solution of the analyte(s) of interest in the appropriate matrix.

- Divide Sample: Split the sample into two equal aliquots.

- Processing:

- Centrifuge one aliquot to remove particulates (unfiltered sample).

- Pass the second aliquot through the filter membrane being evaluated (filtered sample).

- Analysis: Analyze both the filtered and unfiltered samples using your standard analytical method (e.g., LC-MS).

- Calculation: Compare the instrument response (e.g., peak area) for each analyte between the filtered and unfiltered samples.

- Interpretation: A significant loss in response (>5-10%) in the filtered sample indicates analyte adsorption to the filter. A different filter material (e.g., PVDF or PTFE for low molecular weight analytes) should be evaluated [17].
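The calculation and interpretation steps above can be sketched as follows. The default 10% threshold is taken from the upper end of the range quoted in the protocol; the function names and peak areas are hypothetical.

```python
def percent_loss(unfiltered_response, filtered_response):
    """Loss of instrument response after filtration, as a percentage of the
    unfiltered (centrifuged) sample."""
    if unfiltered_response <= 0:
        raise ValueError("unfiltered response must be positive")
    return 100.0 * (unfiltered_response - filtered_response) / unfiltered_response


def adsorption_suspected(unfiltered_response, filtered_response, threshold_pct=10.0):
    """True if the loss exceeds the chosen threshold (5-10% in the protocol)."""
    return percent_loss(unfiltered_response, filtered_response) > threshold_pct


# Hypothetical LC-MS peak areas: (unfiltered, filtered) per analyte.
peak_areas = {
    "analyte_A": (20000.0, 19600.0),
    "analyte_B": (20000.0, 17000.0),
}

results = {
    name: (percent_loss(u, f), adsorption_suspected(u, f))
    for name, (u, f) in peak_areas.items()
}
print(results)
```

Passing `threshold_pct=5.0` applies the stricter end of the range for low-concentration analytes.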

Workflow and Relationship Diagrams

Sample Processing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Sample Management and Molecular Profiling

| Item | Function | Application Note |
|---|---|---|
| Barcode/RFID Labels | Provides a unique, machine-readable identifier for each sample [16]. | Choose durable labels resistant to low temperatures, solvents, and abrasion. |
| LIMS (Laboratory Information Management System) | A software platform for centralizing sample data, tracking location, and managing workflows [16]. | Essential for standardizing processes and providing a secure, accessible database. |
| Low-Binding Filters (e.g., PVDF, PES) | Syringe filters designed to minimize analyte adsorption during sample cleanup [17]. | Critical for filtering proteinaceous samples or low-concentration analytes to avoid loss. |
| Liquid Biopsy Kits | For isolation of cell-free DNA (cfDNA) or circulating tumor DNA (ctDNA) from blood [22]. | A minimally invasive tool for dynamic tumor monitoring when tissue is unavailable. |
| Next-Generation Sequencing (NGS) Kits | Allow for parallel sequencing of multiple genes from a single sample [23] [22]. | Recommended for comprehensive genomic profiling to identify multiple actionable biomarkers. |
| Stabilization Buffers (e.g., for RNA) | Preserve nucleic acids in tissue or blood samples immediately after collection [23]. | Prevents degradation and maintains integrity for accurate downstream molecular analysis. |

Financial and Infrastructure Limitations Affecting Sample Quality Management

Technical Support Center

Troubleshooting Guide: Common Sample Quality Issues

This guide assists researchers in identifying and resolving common pre-analytical challenges that compromise sample quality in cancer molecular testing.

TABLE: Troubleshooting Common Sample Quality Issues

| Problem Scenario | Root Cause | Impact on Molecular Testing | Corrective & Preventive Actions |

|---|---|---|---|

| Poor Fixation: Specimen fixed in unbuffered formalin or for an insufficient time [24]. | Acidic formalin degrades DNA; cold ischemia alters RNA/protein [24]. | Degraded nucleic acids; high failure rates for sequencing; erroneous results [24]. | Use 10% neutral buffered formalin; fix for 48-72 hours; ensure 10:1 formalin-to-tissue volume ratio [24]. |

| Inadequate Tissue/ Tumor Content: Biopsy with low tumor cell percentage [24]. | Sample does not meet minimum tumor content threshold for assay sensitivity. | False-negative results; inability to detect low-frequency somatic variants [24]. | Macrodissection or manual microdissection to enrich tumor content; pre-review of H&E slide by pathologist [24]. |

| Suboptimal Nucleic Acid Quality: FFPE sample with low DNA/RNA integrity [25]. | Prolonged formalin fixation causing cross-linking; improper storage [24]. | Low on-target rate & sequencing depth; poor coverage of housekeeping genes (RNA) [25]. | Employ quality metrics (ddCq, Q-value, DV200) to triage samples; optimize extraction protocols for FFPE [25]. |

| Sample Identification Error: Mislabelled specimen container or cassette [24]. | Breach in standard operating procedure during collection or processing. | Incorrect patient diagnosis and treatment; invalid research data [24]. | Implement barcode-based sample tracking (LIS); automate tissue processing to minimize manual handling [24]. |

Frequently Asked Questions (FAQs)

Q1: What are the most critical steps I can control to ensure high-quality DNA from FFPE samples for sequencing?

A: The pre-analytical phase is paramount. First, ensure rapid and adequate fixation: transfer tissue to a sufficient volume of 10% neutral buffered formalin within an hour of resection. Fixation should continue for 48-72 hours to prevent degradation [24]. Second, avoid acidic or outdated formalin, which fragments DNA. Third, use small, thin tissue sections and controlled processing equipment to obtain consistent quality suitable for sensitive sequencing methods [24].

Q2: Our research lab faces budget constraints. What are cost-effective strategies for implementing quality control (QC) for samples?

A: Proactive investment in QC is ultimately cost-saving by reducing assay failure rates. Strategically:

- Leverage Pre-Existing Data: Use quality metrics often provided by commercial sequencing labs (e.g., ddCq, Q-value, DV200) to retrospectively analyze which sample types perform best in your lab [25].

- Prioritize Tests: Triage samples using a small set of affordable, high-impact QC assays (e.g., qPCR-based DNA quality check) before committing to more expensive comprehensive genomic profiling [25].

- Standardize Protocols: In-house standardization of fixation and processing protocols reduces inter-sample variability and the need for repeated experiments [24].

Q3: How does sample quality directly impact the performance and cost-effectiveness of comprehensive genomic profiling?

A: Poor sample quality has a direct cascading effect [25]:

- Performance: Low-quality DNA/RNA leads to poor sequencing metrics such as a low on-target rate and poor coverage uniformity. This increases the likelihood of missing critical mutations (false negatives) or generating inaccurate data [25].

- Cost: A failed sequencing run wastes significant funds on reagents and sequencing capacity. The subsequent need to repeat the test with a new sample doubles the cost and delays research timelines. Investing in upfront quality management is therefore essential for financial sustainability.

Q4: Our collaborative study involves samples from multiple hospitals. How can we manage inter-site variability in sample quality?

A: Significant inter-hospital differences in DNA quality metrics (ddCq, Q-value) are a documented challenge [25]. To manage this:

- Develop a Shared SOP: Create and distribute a detailed, standardized protocol for sample collection, fixation, and processing to all collaborating sites [24].

- Centralize QC: Implement a central laboratory to perform uniform quality control checks on all incoming samples before inclusion in the study [25].

- Audit and Feedback: Regularly assess quality metrics from each site and provide feedback to harmonize practices and improve overall sample quality across the network [25].
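The audit step above can be sketched as a per-site summary of a single quality metric. This is a minimal illustration, assuming each sample record carries a site label and a ddCq value; the site names, the values, and the review limit of 2.5 are hypothetical and would need to be replaced by study-specific data and thresholds.

```python
from collections import defaultdict
from statistics import median


def summarize_sites(records, ddcq_limit):
    """Group (site, ddCq) records by site and flag sites whose median exceeds the limit."""
    by_site = defaultdict(list)
    for site, ddcq in records:
        by_site[site].append(ddcq)
    summary = {}
    for site, values in by_site.items():
        med = median(values)
        summary[site] = {
            "n": len(values),
            "median_ddcq": med,
            "needs_feedback": med > ddcq_limit,
        }
    return summary


# Hypothetical records: (collecting site, ddCq of one sample).
records = [
    ("hospital_A", 1.0), ("hospital_A", 1.5), ("hospital_A", 2.0),
    ("hospital_B", 3.0), ("hospital_B", 4.0), ("hospital_B", 5.0),
]
summary = summarize_sites(records, ddcq_limit=2.5)
for site, stats in summary.items():
    print(site, stats)
```

The median is used here because a few badly degraded blocks should not dominate a site's summary; a real audit would likely also report the spread and the number of failed samples.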

Experimental Protocols: Assessing Nucleic Acid Quality

Methodology for Pre-Sequencing Quality Control

This protocol outlines the use of key quality metrics to predict the success of comprehensive cancer genomic profiling.

TABLE: Key Quality Metrics for Nucleic Acids [25]

| Metric Name | Nucleic Acid Type | Measurement Technique | Ideal Outcome | Indication of Poor Quality |

|---|---|---|---|---|

| ddCq | DNA | qPCR-based assay | Lower values (indicating less degradation) | High ddCq suggests DNA fragmentation, predicting low sequencing depth [25]. |

| Q-value | DNA | qPCR-based assay | Higher values (indicating better integrity) | Low Q-value suggests the presence of inhibitors or damage, predicting poor coverage uniformity [25]. |

| DV200 | RNA | Fragment Analyzer/Bioanalyzer | Higher percentage of RNA fragments >200 nucleotides | Low DV200 indicates extensive RNA degradation, predicting poor coverage of target genes [25]. |

Procedure:

- Nucleic Acid Extraction: Isolate DNA and RNA from FFPE tissue sections using dedicated, validated kits.

- Quality Assessment:

  - For DNA, perform a multiplex qPCR assay that amplifies a long and a short target. Calculate ddCq (the difference in Cq values) and the Q-value, a quantitative measure of amplifiable DNA [25].

  - For RNA, use a capillary electrophoresis system (e.g., Agilent Bioanalyzer) to determine the DV200 metric [25].

- Data Interpretation: Establish lab-specific quality thresholds for each metric. For example, samples with a Q-value below a certain cutoff or a DV200 < 30% should be flagged as high-risk for sequencing failure and may require exclusion or alternative processing methods [25].
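The interpretation step can be expressed as a simple triage function. The sketch below rests on several assumptions: ddCq is taken as Cq(long) minus Cq(short), whereas commercial QC kits may normalize against a reference DNA; the Q-value is treated as a number already reported by the assay; and the ddCq and Q-value cutoffs are hypothetical placeholders for lab-specific thresholds. Only the DV200 < 30% cutoff comes from the text above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SampleQC:
    sample_id: str
    cq_long: float                     # Cq of the long qPCR amplicon
    cq_short: float                    # Cq of the short qPCR amplicon
    q_value: float                     # amplifiable-DNA metric reported by the QC assay
    dv200_pct: Optional[float] = None  # % RNA fragments >200 nt; None if no RNA was tested


def ddcq(sample: SampleQC) -> float:
    """Assumed definition: Cq(long) - Cq(short); larger values mean more fragmentation."""
    return sample.cq_long - sample.cq_short


def triage(sample: SampleQC, max_ddcq: float, min_q_value: float,
           min_dv200_pct: float = 30.0) -> List[str]:
    """Return the reasons a sample is high-risk; an empty list means it passes."""
    reasons = []
    if ddcq(sample) > max_ddcq:
        reasons.append("ddCq above lab threshold")
    if sample.q_value < min_q_value:
        reasons.append("Q-value below lab threshold")
    if sample.dv200_pct is not None and sample.dv200_pct < min_dv200_pct:
        reasons.append("DV200 below threshold")
    return reasons


# Hypothetical thresholds and samples, for illustration only.
LAB_MAX_DDCQ = 3.0
LAB_MIN_Q = 0.3

good = SampleQC("S1", cq_long=26.0, cq_short=25.0, q_value=0.8, dv200_pct=55.0)
poor = SampleQC("S2", cq_long=31.5, cq_short=26.0, q_value=0.1, dv200_pct=22.0)

for s in (good, poor):
    flags = triage(s, LAB_MAX_DDCQ, LAB_MIN_Q)
    print(s.sample_id, "PASS" if not flags else "HIGH RISK: " + "; ".join(flags))
```

Returning the list of reasons rather than a single pass/fail value makes it easier to decide between exclusion and alternative processing for each flagged sample.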

Molecular Signaling Pathway in Colorectal Cancer

Understanding the pathways tested is crucial for sample quality goals. In colorectal cancer, the EGFR signaling pathway is a key therapeutic target. The following diagram illustrates this pathway and where common molecular tests for mutations in KRAS, NRAS, and BRAF genes impact treatment decisions.

Sample Processing and Quality Control Workflow

A standardized workflow from sample collection to analysis is essential for maintaining quality. The following chart outlines the critical stages and key decision points.

The Scientist's Toolkit: Research Reagent Solutions

TABLE: Essential Materials for Sample Quality Management in Molecular Testing

| Item | Function in Experiment | Critical Specification |

|---|---|---|

| 10% Neutral Buffered Formalin | Primary tissue fixative. Preserves tissue architecture and prevents nucleic acid degradation. | pH 7.2-7.4; must be fresh; use within a defined shelf-life [24]. |

| FFPE Tissue Processing Cassettes | Holds tissue during dehydration, clearing, and paraffin infiltration in an automated processor. | Withstand high temperatures; secure lid to prevent cross-contamination [24]. |

| High-Purity Paraffin | For embedding tissue; provides support for microtomy. | Low contaminant levels; consistent melting point for uniform sectioning [24]. |

| DNA/RNA Extraction Kits (FFPE-specific) | Isolate nucleic acids from complex, cross-linked FFPE tissue. | Optimized for paraffin removal and reversal of formalin-induced cross-links [24] [25]. |

| qPCR Assay Kits for QC | Quantify and assess the quality (ddCq, Q-value) of DNA prior to sequencing. | Must include assays for multiple amplicon sizes to assess fragmentation [25]. |

| RNA Integrity Number (RIN) or DV200 Assay | Assess the degree of RNA fragmentation (e.g., via Bioanalyzer). | Critical for determining RNA sample suitability for sequencing; DV200 > 30% is often a minimum threshold [25]. |

Advanced Methodologies and Technological Solutions for Quality-Challenged Samples

Next-Generation Sequencing Applications for Low-Quantity and Degraded Samples

Next-Generation Sequencing (NGS) has transformed cancer diagnostics and treatment by enabling comprehensive genomic profiling of tumors. However, successful sequencing depends heavily on sample quality and quantity. This technical support center addresses the specific challenges researchers face when working with low-quantity and degraded samples, particularly in cancer molecular testing research. Below you'll find troubleshooting guides, FAQs, and detailed protocols to optimize your NGS workflows for the most challenging sample types.

Troubleshooting Guides

Common NGS Failure Patterns and Solutions

Table 1: Troubleshooting Common NGS Preparation Issues with Challenging Samples

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |

|---|---|---|---|

| Sample Input/Quality | Low starting yield; smear in electropherogram; low library complexity [26] | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification [26] | Re-purify input sample; use fluorometric quantification (Qubit) instead of just UV; ensure high purity ratios (260/280 ~1.8 for DNA) [26] |

| Fragmentation & Ligation | Unexpected fragment size; inefficient ligation; adapter-dimer peaks [26] | Over- or under-shearing; improper buffer conditions; suboptimal adapter-to-insert ratio [26] | Optimize fragmentation parameters; titrate adapter:insert molar ratios; ensure fresh ligase and buffer [26] |

| Amplification/PCR | Overamplification artifacts; bias; high duplicate rate [26] [27] | Too many PCR cycles; inefficient polymerase or inhibitors; primer exhaustion [26] | Reduce the number of PCR cycles; use high-fidelity polymerases; optimize annealing conditions [27] |

| Purification & Cleanup | Incomplete removal of small fragments; sample loss; carryover of salts [26] | Wrong bead:sample ratio; bead over-drying; inefficient washing [26] | Precisely follow bead cleanup ratios; avoid over-drying beads; use fresh wash buffers [26] |

NGS Quality Control Metrics and Standards

Table 2: Essential Quality Control Checkpoints for Challenging Samples

| QC Checkpoint | Assessment Method | Acceptance Criteria | Implications of Failure |

|---|---|---|---|

| Nucleic Acid Purity | Spectrophotometry (NanoDrop) [28] | A260/A280 ~1.8 (DNA), ~2.0 (RNA); A260/A230 >1.8 [28] | Enzyme inhibition in downstream steps; reduced library yield [26] |

| Nucleic Acid Integrity | Electrophoresis (TapeStation, Bioanalyzer) [28] | DNA Integrity Number (DIN) >7; RNA Integrity Number (RIN) >8 [28] | Poor library complexity; high duplication rates; sequencing bias [26] |

| Library Quantification | Fluorometric methods (Qubit), qPCR [26] | Concentration suitable for sequencer loading, confirmed by qPCR as the amplifiable concentration [26] | Over- or under-clustering on sequencer; failed run [26] |

| Library Size Distribution | Electropherogram [26] | Sharp peak at expected size; absence of adapter dimer (~70-90 bp peak) [26] | Inefficient sequencing; high adapter content in data [28] |

| Sequencing Run Quality | FASTQ Q-scores, Cluster Density [28] | Q-score >30; >80% clusters passing filter [28] | High error rates; reduced yield and confidence in variant calling [28] |
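The acceptance criteria in the table above can be encoded as simple gate functions. This is a sketch under stated assumptions: the table gives A260/A280 only as "~1.8", so the ±0.2 tolerance is an illustrative choice, and the function names and example readings are hypothetical.

```python
from typing import List


def check_dna_input(a260_280: float, a260_230: float, din: float,
                    ratio_tolerance: float = 0.2) -> List[str]:
    """Check DNA purity and integrity against the table's criteria."""
    failures = []
    if abs(a260_280 - 1.8) > ratio_tolerance:  # tolerance is an assumption
        failures.append("A260/A280 not close to 1.8")
    if a260_230 <= 1.8:
        failures.append("A260/A230 not above 1.8")
    if din <= 7:
        failures.append("DIN not above 7")
    return failures


def check_run(mean_q_score: float, pct_clusters_pf: float) -> List[str]:
    """Check sequencing run quality: Q-score >30 and >80% clusters passing filter."""
    failures = []
    if mean_q_score <= 30:
        failures.append("Q-score not above 30")
    if pct_clusters_pf <= 80:
        failures.append("clusters passing filter not above 80%")
    return failures


# Hypothetical readings, for illustration only.
print(check_dna_input(a260_280=1.85, a260_230=2.1, din=8.2))   # clean sample
print(check_dna_input(a260_280=1.45, a260_230=1.2, din=4.0))   # contaminated, degraded
print(check_run(mean_q_score=33.0, pct_clusters_pf=75.0))
```

For FFPE-derived material these thresholds are often unattainable, so a lab may choose to record the failures and proceed with a protocol designed for degraded input rather than reject the sample outright.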

Frequently Asked Questions (FAQs)

What are the main causes of DNA degradation in clinical samples, and how can they be minimized? DNA degradation occurs through several mechanisms: oxidation (from heat or UV exposure), hydrolysis (breaking DNA backbone bonds), enzymatic breakdown (from nucleases), and mechanical shearing [29]. In clinical contexts, formalin fixation of FFPE samples causes cross-linking and fragmentation, while delayed processing or improper storage of frozen tissues accelerates degradation [30]. Minimization strategies include using antioxidants, proper storage at -80°C, employing nuclease inhibitors like EDTA, and optimizing mechanical homogenization to avoid excessive shearing [29].

Why does my NGS library from an FFPE sample have low complexity and high duplication rates? This is a classic symptom of degraded starting material. FFPE processing fragments DNA into small pieces, reducing the diversity of unique DNA molecules available for library construction [31] [30]. During PCR amplification, the few intact molecules are over-amplified, leading to a high percentage of duplicate reads. To mitigate this, use extraction protocols designed for FFPE, input more DNA if possible, and employ library prep kits that specialize in short fragments [31].

My sequencing data shows a high percentage of adapter dimers. What went wrong in the library prep, and how can I fix it? A prominent ~70-90 bp peak on an electropherogram indicates adapter dimers, a common failure in challenging samples [26]. The root causes are often an imbalance in the adapter-to-insert ratio (too much adapter) or inefficient ligation due to poor enzyme performance or contaminants [26]. Corrective actions include: (1) accurately quantifying your fragmented DNA before adapter ligation, (2) titrating the adapter concentration, (3) ensuring a second cleanup step to remove excess adapters, and (4) verifying that ligation reagents are fresh and active [26].

What are the best practices for quantifying DNA from low-quality samples before NGS? Relying solely on UV absorbance (e.g., NanoDrop) is a common pitfall, as it overestimates concentration by counting contaminants and degraded nucleic acids [26]. A robust workflow uses a tiered approach:

- UV Spectrophotometry: For a quick purity check (260/280 and 260/230 ratios) [28].

- Fluorometry: Use a Qubit or similar instrument for a specific and accurate measurement of double-stranded DNA concentration [26].

- qPCR: For the most accurate measure of amplifiable DNA, which is critical for predicting library prep success [26].

Are there alternative technologies if my sample consistently fails NGS? Yes. If comprehensive NGS profiling fails repeatedly due to sample quality, targeted technologies with lower input requirements and tolerance for fragmentation can be a solution. For example, the MassARRAY System uses short amplicon PCR (80-120 bp) and mass spectrometry, enabling it to generate results from FFPE samples that had previously failed NGS, achieving high sensitivity with as little as 20 ng of input DNA [31].

Detailed Methodologies & Protocols

Protocol 1: Optimized DNA Extraction from Challenging FFPE Samples

Principle: Efficiently recover fragmented DNA while removing formalin-induced cross-links and PCR inhibitors common in FFPE tissue [29] [30].

Reagents and Equipment:

- Xylene or another deparaffinization agent

- Ethanol (100%, 96%)

- Proteinase K

- Lysis buffer with EDTA

- RNAse A

- Magnetic bead-based purification kit or spin columns

- Heat block or water bath (56°C, 80°C)

- Centrifuge

- Vortex mixer

Procedure:

- Sectioning and Deparaffinization: Cut 3-5 sections of 10 µm thickness into a microfuge tube. Add 1 ml of xylene, vortex, and incubate at room temperature for 5 minutes. Centrifuge at full speed for 2 minutes and carefully remove the supernatant. Repeat once.

- Ethanol Wash: Add 1 ml of 100% ethanol to the pellet, vortex, and centrifuge. Remove the supernatant. Repeat with 96% ethanol. Air-dry the pellet for 5-10 minutes.

- Lysis and De-crosslinking: Resuspend the pellet in 200 µl of lysis buffer containing 20 µl of Proteinase K. Incubate at 56°C with agitation until the tissue is completely dissolved (several hours to overnight).

- RNAse Treatment: Add 2 µl of RNAse A, mix, and incubate at room temperature for 2 minutes.

- Purification: Purify the DNA using a magnetic bead-based cleanup system (e.g., following the Bead Ruptor Elite protocol for tough samples [29]) or a spin column, following the manufacturer's instructions. Elute in a low-EDTA buffer or nuclease-free water.

- Quality Control: Quantify DNA using fluorometry (Qubit) and assess integrity via fragment analyzer (TapeStation). A DV200 value (percentage of fragments >200 bp) of >50% is generally desirable for NGS.

Protocol 2: Saline Freezing for Sample Preservation in Low-Resource Settings

Principle: When standard FFPE processing leads to severe DNA degradation, freezing tissue in saline provides a cost-effective alternative that preserves high DNA integrity, requiring only basic equipment [30].

Reagents and Equipment:

- Sterile physiological saline (0.9% NaCl)

- Cryogenic vials

- -20°C freezer (a standard laboratory freezer is sufficient)

Procedure:

- Immediately after collection, place the fresh tissue biopsy (e.g., core needle biopsy) in a cryovial filled with sterile physiological saline.

- Ensure the tissue is fully submerged.

- Place the vial directly into a -20°C freezer for storage.

- For DNA extraction, thaw the sample on ice and proceed with a standard tissue DNA extraction protocol. This method has been shown to yield DNA of significantly higher integrity than matched FFPE samples, enabling robust NGS library construction [30].

Workflow Visualization

The following diagram illustrates the complete NGS workflow for challenging samples, highlighting key quality control checkpoints and potential failure points.

NGS Workflow with QC Gates

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for Challenging NGS Samples

| Item | Function | Application Notes |

|---|---|---|

| Bead Ruptor Elite | Mechanical homogenizer for efficient cell lysis and DNA extraction from tough samples (e.g., bone, tissue) [29] | Provides precise control over homogenization parameters to minimize DNA shearing; specialized bead tubes optimize recovery [29]. |

| Magnetic Bead Cleanup Kits | For post-extraction and post-ligation purification to remove contaminants, salts, and short fragments [26] [27] | Critical for removing adapter dimers; the bead-to-sample ratio must be followed precisely to prevent sample loss or inefficient cleanup [26]. |

| NGS Library Prep Kits for FFPE/Low Input | Specialized reagents for constructing sequencing libraries from degraded or limited material [31] [27] | Often incorporate protocols for enzymatic fragmentation and are optimized for shorter fragment sizes, improving success rates with suboptimal samples [31]. |

| MassARRAY System | Mass spectrometry-based platform for targeted genotyping [31] | An alternative rescue technology for samples failing NGS; uses short amplicons (80-120 bp) requiring minimal DNA input (≤20 ng) [31]. |

| EDTA | Chelating agent used in lysis buffers [29] | Demineralizes tough tissues (e.g., bone) and inhibits nucleases that degrade DNA; concentration must be balanced as it can also inhibit PCR [29]. |

Integrated RNA and DNA Sequencing Approaches to Overcome Technical Limitations

Frequently Asked Questions (FAQs)

General Principles and Rationale

Q1: Why is integrating RNA and DNA sequencing particularly important for cancer research? In cancer molecular testing, DNA sequencing identifies mutations, but RNA sequencing reveals which of those mutations are actively expressed and likely to impact protein function. This integration helps distinguish driver mutations from passive events and uncovers biologically active gene fusions and splicing variants that DNA-seq alone can miss. RNA-seq bridges the "DNA to protein divide," providing more clarity and therapeutic predictability for precision oncology [32].

Q2: When should I use a targeted RNA-seq panel versus whole transcriptome sequencing? Targeted RNA-seq panels are recommended when you need deeper coverage of specific cancer-related genes, higher detection accuracy for rare alleles, and more reliable variant identification. Whole transcriptome sequencing is more suitable for discovery-based research, such as identifying novel fusion genes or unanticipated splicing events [32].

Troubleshooting Common Experimental Challenges

Q3: My RNA-seq data shows a high false positive rate for variant calling. How can I improve accuracy? Implement stringent bioinformatics parameters and leverage a high-confidence negative position list to control the false positive rate. Key steps include:

- Setting a minimum variant allele frequency (VAF) threshold (e.g., ≥2%)

- Ensuring sufficient read depth (e.g., DP ≥20) and alternative allele depth (e.g., ADP ≥2)

- Using multiple variant callers (e.g., VarDict, Mutect2, LoFreq) and consensus approaches [32]
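The consensus approach in the last step can be sketched as a vote across callers. The example assumes each caller's output has already been parsed and normalized to (chromosome, position, ref, alt) tuples; the caller labels, the variants, and the two-caller minimum are hypothetical, and no real caller API is used here.

```python
from collections import Counter


def consensus_variants(calls_by_caller, min_callers=2):
    """Keep variants reported by at least `min_callers` independent callers."""
    counts = Counter()
    for variants in calls_by_caller.values():
        counts.update(set(variants))  # set() so a caller cannot vote twice
    return {variant for variant, n in counts.items() if n >= min_callers}


# Hypothetical, already-normalized calls: (chrom, pos, ref, alt).
v1 = ("chr1", 100, "A", "G")
v2 = ("chr2", 200, "C", "T")
v3 = ("chr3", 300, "G", "A")
v4 = ("chr4", 400, "T", "C")

calls = {
    "caller_A": [v1, v2, v3],
    "caller_B": [v1, v2],
    "caller_C": [v1, v4],
}
print(sorted(consensus_variants(calls, min_callers=2)))
```

Raising `min_callers` trades sensitivity for specificity, so the setting should be chosen together with the VAF and depth thresholds listed above.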

Q4: I am getting low library yields from my NGS prep. What are the most common causes? Low library yield is a frequent challenge with several potential root causes and solutions [26]:

| Common Cause | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality | Enzyme inhibition from contaminants (phenol, salts, EDTA). | Re-purify input sample; ensure 260/230 >1.8 and 260/280 ~1.8. |

| Quantification Errors | Overestimating usable DNA/RNA concentration. | Use fluorometric methods (Qubit) over UV absorbance (NanoDrop). |

| Fragmentation Issues | Over- or under-fragmentation produces suboptimal fragment sizes. | Optimize fragmentation time/energy; check fragment distribution pre-ligation. |

| Suboptimal Ligation | Poor ligase performance or incorrect adapter:insert ratio. | Titrate adapter ratios; ensure fresh ligase/buffer; optimize incubation. |

Q5: A variant was detected by DNA-seq but not by RNA-seq. What does this mean? This typically indicates that the mutation is not expressed or is expressed at very low levels in the tumor sample. This could mean the mutation occurred in a non-expressed gene, in a subclone of tumor cells, or in non-tumor cells (e.g., due to clonal hematopoiesis). From a clinical perspective, an unexpressed variant may be of lower therapeutic relevance, as the aberrant protein is not being produced [32].

Q6: My sequencing data shows high adapter-dimer contamination. How can I prevent this? A sharp peak at ~70-90 bp on an electropherogram indicates adapter dimers. To prevent this [26]:

- Accurately quantify input DNA to use the optimal adapter-to-insert molar ratio.

- Avoid using an excess of adapters.

- Optimize bead-based cleanup parameters (e.g., adjust bead-to-sample ratio) to more effectively remove short fragments.

- Consider a two-step indexing PCR protocol instead of a one-step protocol to reduce artifact formation.
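Setting an adapter-to-insert molar ratio requires converting the measured DNA mass into moles. The sketch below uses the common approximation of about 660 g/mol per base pair of double-stranded DNA; the 10:1 ratio, the 15 µM adapter stock, and the input amounts are hypothetical values chosen for illustration, and the kit manufacturer's recommended ratio should take precedence.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate average mass of one dsDNA base pair


def dsdna_pmol(mass_ng: float, length_bp: float) -> float:
    """Convert a dsDNA mass (ng) at a given mean fragment length (bp) to pmol."""
    if mass_ng < 0 or length_bp <= 0:
        raise ValueError("mass must be non-negative and length positive")
    return mass_ng * 1000.0 / (length_bp * AVG_BP_MASS_G_PER_MOL)


def adapter_volume_ul(insert_ng: float, insert_bp: float,
                      adapter_to_insert_ratio: float,
                      adapter_stock_um: float) -> float:
    """Volume of adapter stock (µL) that gives the requested molar ratio."""
    adapter_pmol = dsdna_pmol(insert_ng, insert_bp) * adapter_to_insert_ratio
    return adapter_pmol / adapter_stock_um  # 1 µM equals 1 pmol per µL


# Hypothetical example: 100 ng of fragments averaging 300 bp, 10:1 ratio, 15 µM stock.
insert_pmol = dsdna_pmol(100.0, 300.0)
volume = adapter_volume_ul(100.0, 300.0, 10.0, 15.0)
print(f"insert: {insert_pmol:.3f} pmol; adapter stock needed: {volume:.2f} µL")
```

Because the calculation depends on both mass and mean fragment length, an overestimated concentration or a degraded sample with shorter fragments than assumed shifts the effective ratio, which is one reason accurate quantification is listed first above.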

Data Analysis and Interpretation

Q7: How can I best integrate DNA and RNA sequencing data for a holistic view of a tumor's biology? A robust integrative analysis involves both confirmation and discovery [32]:

- Use RNA-seq to verify and prioritize DNA variants: Confirm that DNA mutations are transcribed, suggesting functional relevance.

- Use RNA-seq for independent variant detection: It can uniquely identify expressed variants missed by DNA-seq, especially in complex genomic regions.

- Leverage RNA-seq for fusion and splicing analysis: It is indispensable for detecting gene fusions, alternative splicing, and other transcriptomic alterations.

Q8: What are the key quality control metrics for a successful integrated sequencing experiment? For both DNA and RNA sequencing, key QC metrics include [32] [26]:

- For Input Sample: High-quality, high-molecular-weight DNA/RNA (e.g., RIN >7 for RNA).

- For Library Prep: High library concentration, correct fragment size distribution, and low adapter-dimer peak.

- For Sequencing Data:

- High read depth (coverage) over your regions of interest.

- High mapping rate to the reference genome.

- For RNA-seq, minimal 3' bias and expected expression of housekeeping genes.

Troubleshooting Guides

Guide 1: Addressing Sample Quality and Preparation Failures

Sample quality is the foundation of successful sequencing. Failures at this stage can compromise your entire experiment.

Problem: Low or Failed Library Yield.

Step-by-Step Diagnosis and Solution:

- Check Input DNA/RNA Quality:

  - Symptoms: Degraded nucleic acids appear as a smear (rather than a sharp band) on an electropherogram.

  - Solution: Re-extract samples, minimize freeze-thaw cycles, and use proper storage conditions. For RNA, always work in an RNase-free environment [26].

- Check for Contaminants:

  - Symptoms: Low 260/230 and 260/280 ratios from a spectrophotometer.

  - Solution: Re-purify the sample using clean columns or beads to remove salts, phenol, or other inhibitors. Ensure wash buffers are fresh [26].

- Verify Quantification:

  - Symptoms: Yield is low despite good spectrophotometer readings.

  - Solution: Do not rely solely on UV absorbance (NanoDrop), which can overestimate concentration by detecting contaminants. Use fluorometric methods (Qubit, PicoGreen) that are specific for nucleic acids [26].

- Review Fragmentation and Ligation Steps:

  - Symptoms: Incorrect fragment size distribution or high adapter-dimer peaks.

  - Solution:

    - Optimize fragmentation parameters (time, energy) for your specific instrument.

    - Titrate the adapter-to-insert molar ratio to find the optimal balance that maximizes ligation efficiency while minimizing adapter-dimer formation [26].

Guide 2: Resolving Discrepancies Between DNA and RNA Sequencing Results

Encountering a variant in one dataset but not the other is a common challenge in integrated analysis. The following workflow guides you through a systematic investigation.

Investigation Workflow for DNA/RNA Variant Discrepancies

Guide 3: Choosing the Right Sequencing Platform for Integrated Analysis

The choice of sequencing platform is a strategic decision that depends on the specific research questions and applications. The table below compares key technologies.

Comparison of Next-Generation Sequencing Platforms [33] [34] [35]

| Platform (Technology) | Read Length | Key Strengths | Common Applications in Cancer Genomics | Technical Limitations |

|---|---|---|---|---|

| Illumina (Short-read) | 75-300 bp | High accuracy (~99.9%), low cost per base, high throughput. | Whole genome/exome sequencing, targeted panels, RNA-seq. | Struggles with repetitive regions, phasing, and structural variants. |

| PacBio HiFi (Long-read) | >15,000 bp | High accuracy (>99.9%), resolves complex regions. | Genome assembly, phasing, structural variant detection, isoform sequencing. | Higher cost per sample, lower throughput than Illumina. |

| Oxford Nanopore (Long-read) | Up to 2+ Mb | Ultra-long reads, real-time analysis, direct RNA sequencing. | Real-time pathogen detection, structural variants, epigenetic modifications. | Higher raw error rate than Illumina, requires specific bioinformatics. |

Experimental Protocols

Protocol: Targeted RNA-Seq for Expressed Variant Validation

This protocol is designed to validate and prioritize DNA-identified variants using targeted RNA sequencing [32].

1. Sample Preparation and Input QC

- Input Material: 50-100 ng of total RNA with a RIN (RNA Integrity Number) > 7.0.

- QC Method: Assess RNA quality using an Agilent BioAnalyzer or TapeStation. Use fluorometry (Qubit) for accurate quantification.

2. Library Construction

- Enrichment Method: Use targeted RNA-seq panels (e.g., Agilent ClearSeq, Roche Comprehensive Cancer) with probes designed for cancer-related genes.

- Probe Design: Panels should include exon-exon junction spanning probes to accurately capture spliced transcripts.

- Fragmentation & Library Prep: Follow manufacturer's instructions for the selected kit. Typically involves RNA fragmentation, cDNA synthesis, adapter ligation, and PCR amplification.

3. Sequencing

- Platform: Illumina systems (e.g., NovaSeq, MiSeq) are commonly used for targeted sequencing.

- Coverage: Sequence to a high average depth (>500x) over the targeted regions to ensure sensitivity for detecting lowly expressed variants.

4. Data Analysis

- Alignment: Map reads to the reference genome using a splice-aware aligner (e.g., STAR).

- Variant Calling: Use a pipeline optimized for RNA-seq data. It is recommended to use multiple callers (e.g., VarDict, Mutect2) and aggregate the results.

- Filtering: Apply stringent filters:

- Minimum Depth: DP ≥ 20

- Alternative Allele Depth: ADP ≥ 2

- Variant Allele Frequency: VAF ≥ 2%

- Validation: Compare the final list of high-confidence RNA variants against the original DNA-seq findings to confirm expression.
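The filtering and validation steps of this protocol can be sketched as follows. The thresholds (DP ≥ 20, ADP ≥ 2, VAF ≥ 2%) are those stated above; the data structures, the variant keys, and the example read counts are hypothetical, and the code assumes calls have already been parsed from the variant caller output.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple

VariantKey = Tuple[str, int, str, str]  # (chrom, pos, ref, alt)


@dataclass(frozen=True)
class RnaCall:
    key: VariantKey
    depth: int       # total read depth at the position (DP)
    alt_depth: int   # reads supporting the alternative allele (ADP)

    @property
    def vaf(self) -> float:
        return self.alt_depth / self.depth if self.depth else 0.0


def passes_filters(call: RnaCall, min_dp: int = 20, min_adp: int = 2,
                   min_vaf: float = 0.02) -> bool:
    """Apply the depth, alternative-allele depth, and VAF filters."""
    return call.depth >= min_dp and call.alt_depth >= min_adp and call.vaf >= min_vaf


def expression_status(dna_keys: Iterable[VariantKey],
                      rna_calls: Iterable[RnaCall]) -> Dict[VariantKey, bool]:
    """For each DNA-seq variant, report whether a high-confidence RNA call confirms it."""
    expressed = {c.key for c in rna_calls if passes_filters(c)}
    return {key: key in expressed for key in dna_keys}


# Hypothetical variants and read counts, for illustration only.
a = ("chr1", 100, "A", "G")
b = ("chr2", 200, "C", "T")
c = ("chr3", 300, "G", "A")

rna_calls = [
    RnaCall(a, depth=500, alt_depth=50),    # passes all filters
    RnaCall(b, depth=15, alt_depth=5),      # fails minimum depth
    RnaCall(c, depth=1000, alt_depth=10),   # fails VAF (1%)
]
status = expression_status([a, b, c], rna_calls)
for key, confirmed in status.items():
    print(key, "expressed" if confirmed else "not confirmed in RNA")
```

A DNA variant reported as not confirmed is not necessarily unexpressed: low coverage of the gene in the RNA data gives the same result, so depth at the position should be checked before drawing a conclusion.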

Key Research Reagent Solutions for Integrated Sequencing [32] [26] [36]

| Item | Function | Example/Notes |

|---|---|---|

| Targeted RNA Panels | Enriches sequencing reads for specific genes of interest, allowing for deeper coverage and more sensitive mutation detection. | e.g., Afirma Xpression Atlas (593 genes), Agilent ClearSeq, Roche Comprehensive Cancer panels. |

| DNA/RNA Extraction Kits | Isolates high-quality, high-molecular-weight nucleic acids from various sample types (FFPE, fresh frozen). | Ensure kits are validated for your sample type and minimize contaminant carryover. |

| Library Prep Kits | Prepares nucleic acid fragments for sequencing by adding platform-specific adapters and barcodes. | Choice depends on platform (Illumina, Nanopore) and application (DNA, RNA). |

| Nuclease-free Water | A critical reagent used to dilute samples and reagents without degrading nucleic acids. | |

| Magnetic Beads (SPRI) | Used for post-reaction cleanups and size selection to remove unwanted fragments like adapter dimers. | The bead-to-sample ratio is critical for optimal size selection. |

| Flow Cells | The consumable where the actual sequencing reaction occurs. | Platform-specific (e.g., Illumina S-series, Nanopore MinION R10.4.1). |

| QC Instruments | Assesses the quantity and quality of input samples and final libraries. | Fluorometer (Qubit) for concentration; Bioanalyzer/TapeStation for fragment size. |

Tissue biopsy has long been the gold standard for cancer diagnosis and molecular profiling. However, it faces significant limitations including invasive sampling procedures, tumor heterogeneity, and challenges in longitudinal monitoring. Liquid biopsy emerges as a transformative technology that circumvents these tissue quality issues by analyzing tumor-derived biomarkers from bodily fluids, enabling less invasive sampling, real-time monitoring, and a comprehensive view of tumor heterogeneity [37] [38].

This technical support center provides researchers and clinicians with practical guidance for implementing liquid biopsy technologies, focusing on methodologies, troubleshooting, and reagent solutions to address common experimental challenges.

Frequently Asked Questions (FAQs)

What is the primary advantage of liquid biopsy over tissue biopsy for molecular profiling?

The primary advantage is the ability to obtain a comprehensive molecular profile without the invasiveness and limitations of tissue sampling. Liquid biopsy analyzes circulating tumor biomarkers (CTCs, ctDNA, EVs) from blood or other bodily fluids, providing a real-time snapshot of tumor heterogeneity across multiple disease sites and enabling serial monitoring of treatment response and resistance evolution [37] [38]. This is particularly valuable when tissue is insufficient, inaccessible, or when repeated sampling is needed to monitor disease progression.

Which biomarkers can be detected via liquid biopsy and what are their key applications?

Table 1: Liquid Biopsy Biomarkers and Applications

| Biomarker | Description | Primary Applications |

|---|---|---|

| Circulating Tumor DNA (ctDNA) | Fragments of tumor-derived DNA in bloodstream [38] | Somatic mutation detection; treatment monitoring; minimal residual disease (MRD) assessment |

| Circulating Tumor Cells (CTCs) | Intact cells shed from primary/metastatic tumors [38] | Prognostic assessment; studying metastasis mechanisms |

| Tumor-Derived Extracellular Vesicles (EVs) | Membrane-bound vesicles carrying proteins, nucleic acids [37] | Proteomic profiling; RNA analysis |

When is liquid biopsy clinically indicated versus when should tissue biopsy be prioritized?

Liquid biopsy is considered medically necessary in specific clinical scenarios, particularly for patients with advanced cancers. Key indications include:

- When a companion diagnostic test is required for patient selection for a specific therapy and tissue results are unavailable [39].

- For patients with locally advanced or metastatic NSCLC when tumor tissue is insufficient for testing or biopsy is unsafe [39].

- To identify specific mutations (e.g., PIK3CA in breast cancer) to guide targeted therapy in the metastatic setting [39].

Tissue biopsy remains the gold standard for initial diagnosis and can provide architectural information that liquid biopsy cannot. The decision should be based on clinical context and testing objectives [38] [39].

What are the most common causes of false-negative liquid biopsy results?

- Low tumor DNA shedding: The amount of ctDNA released into the bloodstream varies by cancer type, disease burden, and tumor characteristics. Cancers such as brain, renal, and thyroid tumors typically shed less DNA [40].

- Inadequate analytical sensitivity of the assay, especially for early-stage disease or minimal residual disease with very low ctDNA concentrations [40].

- Pre-analytical errors: Improper blood collection, processing, or storage can degrade biomarkers [41].
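To make the sensitivity limit concrete, the following sketch estimates how many mutant molecules a given cfDNA input is expected to contain at one locus, and the chance that at least one is present at all. It assumes roughly 3.3 pg of DNA per haploid human genome (a commonly used approximation) and simple Poisson sampling. The function names and the example input are illustrative and are not taken from the cited sources.

```python
import math

# Assumption: approximate mass of one haploid human genome copy, in picograms.
PG_PER_HAPLOID_GENOME = 3.3


def genome_equivalents(cfdna_ng: float) -> float:
    """Approximate number of haploid genome copies in a cfDNA input mass (ng)."""
    return cfdna_ng * 1000.0 / PG_PER_HAPLOID_GENOME


def prob_at_least_one_mutant(cfdna_ng: float, vaf: float) -> float:
    """Poisson probability that the input holds >= 1 mutant molecule at a locus."""
    expected_mutants = genome_equivalents(cfdna_ng) * vaf
    return 1.0 - math.exp(-expected_mutants)


if __name__ == "__main__":
    # Hypothetical example: 10 ng cfDNA, variant allele fraction of 0.01%.
    print(round(genome_equivalents(10)))
    print(round(prob_at_least_one_mutant(10, 0.0001), 2))
```

Under these assumptions, 10 ng of cfDNA corresponds to about 3,000 genome copies, so a variant at 0.01% allele fraction is expected to be present in fewer than one molecule per sample. No assay can detect a molecule that was never collected, which is why input mass and plasma volume matter as much as assay chemistry.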

How can variants from clonal hematopoiesis (CHIP) be distinguished from true tumor-derived variants?

Clonal hematopoiesis of indeterminate potential (CHIP) is a phenomenon where non-cancerous blood or bone marrow cells develop genomic variants in cancer-associated genes. These CHIP variants are detectable in liquid biopsy and can be misinterpreted as tumor-derived, potentially leading to inappropriate treatment decisions [40]. Differentiation strategies include:

- Paired white blood cell (WBC) sequencing: Sequencing a matched WBC sample allows for subtraction of CHIP-associated variants [40].

- Bioinformatic filtering: Using databases of known CHIP mutations and specific mutational signatures [40].

- Correlation with clinical context: Considering the mutation profile in relation to the patient's specific cancer type.
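The paired WBC strategy can be sketched as a simple set comparison. The example below is a minimal illustration, assuming variant calls are already available as dictionaries that map a variant to its allele fraction. The `Variant` tuple, the `min_wbc_vaf` threshold, and the toy coordinates are hypothetical and do not correspond to any specific pipeline.

```python
from typing import Dict, NamedTuple, Tuple


class Variant(NamedTuple):
    chrom: str
    pos: int
    ref: str
    alt: str


def split_by_wbc(
    plasma_vafs: Dict[Variant, float],
    wbc_vafs: Dict[Variant, float],
    min_wbc_vaf: float = 0.0,
) -> Tuple[Dict[Variant, float], Dict[Variant, float]]:
    """Partition plasma variants by whether the matched WBC sample also carries them.

    Variants seen in WBC DNA above min_wbc_vaf are set aside as likely
    hematopoietic (CHIP or germline); the rest remain tumor candidates.
    """
    tumor_candidates, wbc_derived = {}, {}
    for variant, vaf in plasma_vafs.items():
        if wbc_vafs.get(variant, 0.0) > min_wbc_vaf:
            wbc_derived[variant] = vaf
        else:
            tumor_candidates[variant] = vaf
    return tumor_candidates, wbc_derived


if __name__ == "__main__":
    # Toy coordinates, for illustration only.
    v1 = Variant("chr1", 100, "C", "T")
    v2 = Variant("chr2", 200, "G", "A")
    tumor, chip = split_by_wbc({v1: 0.012, v2: 0.020}, {v2: 0.018})
    print(sorted(tumor), sorted(chip))
```

In practice the WBC sample must be sequenced deeply enough to see low-frequency clones; a variant absent from shallow WBC data is not proof of tumor origin.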

Troubleshooting Common Experimental Challenges

Challenge: Low ctDNA Yield or Purity

Table 2: Troubleshooting Low ctDNA Yield

| Observed Problem | Potential Root Cause | Recommended Solution |

|---|---|---|

| Consistently low ctDNA yield across samples | Delayed sample processing; improper blood collection tube; inefficient DNA extraction method | Process plasma within 2-4 hours of blood draw [41]; use validated blood collection tubes (e.g., Streck, EDTA); implement extraction methods optimized for short-fragment cfDNA |

| High background of wild-type DNA masking variants | Low tumor fraction; inadequate assay specificity | Use assays with high sequencing depth (>10,000X) for low-frequency variants [41]; employ unique molecular identifiers (UMIs) to reduce PCR errors |
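The rationale for high sequencing depth can be illustrated with a binomial model. The sketch below assumes that each read at a locus independently supports the variant with probability equal to the variant allele fraction, and it ignores sequencing error. The function name and the five-read calling threshold are hypothetical choices for illustration.

```python
import math


def prob_detect(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    p_below = 0.0
    for k in range(min_alt_reads):
        p_below += math.comb(depth, k) * vaf**k * (1.0 - vaf) ** (depth - k)
    return 1.0 - p_below


if __name__ == "__main__":
    # Hypothetical scenario: 0.1% allele fraction, caller requires >= 5 supporting reads.
    for depth in (1_000, 10_000):
        print(depth, round(prob_detect(depth, 0.001, 5), 3))
```

Under these assumptions a 0.1% variant is almost never called at 1,000X but is called in the large majority of cases at 10,000X. The depth that counts is the number of unique molecules after UMI collapsing, not the raw read count.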

Challenge: Inconsistent CTC Capture or Viability

Table 3: Troubleshooting CTC Isolation

| Observed Problem | Potential Root Cause | Recommended Solution |

|---|---|---|

| Low CTC recovery rates | Over-reliance on EpCAM-based capture for epithelial-mesenchymal transition (EMT) cells; excessive blood cell contamination | Use multi-marker approaches (e.g., include EMT markers) [38]; implement size-based or density-based pre-enrichment methods |

| Isolated CTCs non-viable for culture | Cellular damage during isolation; extended processing time | Use gentler microfluidic technologies; reduce time from draw to processing; optimize culture media immediately post-isolation |

Experimental Protocols for Key Liquid Biopsy Applications

Protocol 1: ctDNA Extraction and Mutation Analysis from Plasma

Principle: Isolate cell-free DNA (cfDNA) from plasma and analyze for tumor-specific mutations via next-generation sequencing (NGS) [38] [41].

Methodology:

- Blood Collection and Processing: Collect venous blood into stabilized blood collection tubes (e.g., Streck Cell-Free DNA BCT). Process within 2-4 hours by double centrifugation (e.g., 1600 × g for 10 min, then 16,000 × g for 10 min) to obtain platelet-poor plasma [41].

- cfDNA Extraction: Use commercial silica-membrane column or magnetic bead-based kits specifically validated for cfDNA isolation. Quantify yield using fluorometry (e.g., Qubit).

- Library Preparation and Sequencing: Prepare sequencing libraries from cfDNA using kits compatible with low input DNA. Incorporate Unique Molecular Identifiers (UMIs) to correct for amplification errors and enable accurate quantification. Use targeted NGS panels covering relevant cancer genes with high sequencing depth (>10,000X coverage).

- Bioinformatic Analysis: Align sequences to reference genome. Use UMI consensus reads to generate high-confidence variant calls. Filter against population databases and, if possible, matched white blood cell DNA to exclude CHIP variants [40].
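The UMI consensus step in the bioinformatic analysis can be sketched as follows. This is a simplified illustration, not the method of any particular tool: it assumes reads are already grouped by UMI and alignment start, that reads within a family have equal length, and that a per-position majority vote is acceptable. The parameter names and default thresholds are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple

Read = Tuple[str, int, str]  # (umi, alignment start, sequence)


def umi_consensus(
    reads: Iterable[Read],
    min_family_size: int = 3,
    min_agreement: float = 0.9,
) -> Dict[Tuple[str, int], str]:
    """Collapse reads sharing (UMI, start position) into one consensus sequence each."""
    families = defaultdict(list)
    for umi, pos, seq in reads:
        families[(umi, pos)].append(seq)

    consensus = {}
    for key, seqs in families.items():
        if len(seqs) < min_family_size:
            continue  # too few copies to out-vote a PCR or sequencing error
        bases = []
        for column in zip(*seqs):  # one column per read position
            base, count = Counter(column).most_common(1)[0]
            # Mask positions where the family does not agree strongly enough.
            bases.append(base if count / len(column) >= min_agreement else "N")
        consensus[key] = "".join(bases)
    return consensus


if __name__ == "__main__":
    reads = [
        ("AAC", 100, "ACGT"),
        ("AAC", 100, "ACGT"),
        ("AAC", 100, "ACTT"),  # one copy carries an error at the third base
        ("GGT", 100, "ACGT"),  # singleton family, dropped
    ]
    print(umi_consensus(reads, min_agreement=0.6))
```

Because an error introduced during amplification appears in only some copies of a molecule, it is out-voted or masked, whereas a true variant appears in every copy of its family.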

Protocol 2: Circulating Tumor Cell (CTC) Enrichment and Identification

Principle: Enrich and identify rare circulating tumor cells from peripheral blood based on physical and/or biological properties [38].

Methodology:

- Sample Preparation: Collect blood in EDTA tubes. Process within 24-48 hours. Perform red blood cell lysis using ammonium chloride solution.

- CTC Enrichment (choose one or combine approaches):

  - Positive Immunomagnetic Selection: Incubate with antibody-coated magnetic beads (e.g., anti-EpCAM). Isolate using a magnetic separator. Note: This may miss EpCAM-low CTCs.

  - Negative Selection: Deplete hematopoietic cells using CD45-coated beads.

  - Size-Based Filtration: Use microfluidic devices with precise pore sizes to separate larger CTCs from blood cells.

- CTC Identification and Staining: Fix and permeabilize enriched cells. Stain with fluorescently labeled antibodies: anti-cytokeratin (CK+ for epithelial origin), anti-CD45 (leukocyte marker, should be negative), and DAPI (nuclear stain). Vimentin staining can detect mesenchymal-like CTCs.

- Enumeration and Analysis: Identify CTCs as nucleated (DAPI+), CK+, CD45- cells using fluorescence microscopy or automated scanning systems.
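The enumeration rule (DAPI+, CK+, CD45-) can be expressed as a short classification function. The sketch below assumes that per-cell fluorescence intensities have already been extracted by imaging software. The `Cell` fields and all threshold values are hypothetical and would need to be set from stained control samples.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Cell:
    dapi: float  # nuclear stain intensity
    ck: float    # cytokeratin intensity
    cd45: float  # leukocyte marker intensity


def is_ctc(cell: Cell, dapi_min: float, ck_min: float, cd45_max: float) -> bool:
    """True for nucleated (DAPI+), cytokeratin-positive, CD45-negative events."""
    return cell.dapi >= dapi_min and cell.ck >= ck_min and cell.cd45 <= cd45_max


def count_ctcs(cells: Iterable[Cell], dapi_min: float, ck_min: float, cd45_max: float) -> int:
    """Count events that satisfy the CTC definition."""
    return sum(is_ctc(c, dapi_min, ck_min, cd45_max) for c in cells)


if __name__ == "__main__":
    # Hypothetical intensities in arbitrary units.
    cells = [
        Cell(dapi=900, ck=700, cd45=20),   # candidate CTC
        Cell(dapi=850, ck=30, cd45=600),   # leukocyte
        Cell(dapi=10, ck=650, cd45=15),    # no nucleus, likely debris
    ]
    print(count_ctcs(cells, dapi_min=200, ck_min=200, cd45_max=100))
```

A rule based only on cytokeratin will not count mesenchymal-like CTCs; adding a vimentin channel would require extending the definition accordingly.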

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Reagents for Liquid Biopsy Research

| Reagent / Material | Function | Example Application Notes |

|---|---|---|

| Stabilized Blood Collection Tubes | Preserves cell-free DNA and cellular integrity post-draw | Critical for multi-center trials; prevents genomic DNA contamination and cfDNA degradation during transport [41]. |

| cfDNA Extraction Kits | Isolate and purify short-fragment DNA from plasma | Select kits optimized for <300bp fragments; avoid genomic DNA co-extraction. |

| UMI Adapters | Tag individual DNA molecules pre-amplification | Enables error correction and accurate quantification of variant allele frequency in NGS [41]. |

| Anti-EpCAM Magnetic Beads | Immuno-affinity capture of epithelial CTCs | Effective for carcinomas; may require combination with other markers (e.g., Vimentin) for EMT-type CTCs [38]. |

| Microfluidic Chips (e.g., CTC-iChip) | Size-based and inertial focusing for CTC isolation | Allows marker-independent isolation, preserving CTC viability for downstream culture or functional analysis. |

Rapid Molecular Testing Platforms for Time-Sensitive Clinical Scenarios

Frequently Asked Questions (FAQs)

1. What are the key advantages of using rapid molecular syndromic panels in critical care?

Rapid Multiplex Molecular Syndromic Panels (RMMSP) are designed to simultaneously detect multiple pathogens (typically 3 or more) and genotypic resistance markers with a time-to-result of less than 6 hours. Their implementation significantly enhances diagnostic accuracy, reduces the time-to-appropriate antimicrobial treatment, decreases inappropriate empiric therapy, and shortens the duration of antibiotic therapy. This contributes positively to antimicrobial stewardship and may be associated with lower in-hospital mortality and potential cost savings [42].

2. How can sample quality impact the results of my cancer molecular test?

Poor sample quality is a primary cause of test failure or unreliable results in molecular assays. For tests like PCR, poor DNA template integrity (such as sheared or nicked DNA from improper isolation) can lead to amplification failure. Similarly, low-purity templates containing residual inhibitors like phenol, EDTA, or excess salts can prevent successful amplification. It is crucial to use proper isolation techniques, store DNA correctly in molecular-grade water or TE buffer, and assess DNA integrity via gel electrophoresis when necessary [43].

3. My PCR reaction produced no amplification product. What are the most common causes?

A complete lack of PCR product can stem from several issues related to the template, primers, or reaction conditions:

- Template DNA: The target sequence might be absent, the template could be degraded, or inhibitors might be present.

- Primers: They may be poorly designed, non-specific, or used at an insufficient concentration.

- Reaction Components: The reaction mix might be missing a component, or the concentration of Mg2+ or DNA polymerase may be incorrect.

- Thermal Cycling: The denaturation, annealing, or extension temperatures and times may be suboptimal, or an insufficient number of cycles may have been run [43] [44].

4. What should I do if my PCR results show multiple non-specific bands?

Non-specific amplification is often due to low reaction specificity. Solutions include:

- Employing a Hot-Start DNA Polymerase to prevent activity during reaction setup.

- Increasing the annealing temperature in 2°C increments to enhance stringency.

- Optimizing Mg2+ concentration, as excess Mg2+ can stabilize non-specific primer binding and reduce fidelity.

- Checking primer design to ensure specificity and avoid self-complementarity.

- Reducing primer or template concentration if they are in excess [43] [44].
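The annealing-temperature adjustment can be planned with a quick calculation. The sketch below uses the Wallace rule (2 °C per A/T, 4 °C per G/C), which is only a rough estimate suited to short oligonucleotides, and starts about 5 °C below the lower primer Tm, a common rule of thumb rather than a value from the cited sources. The primer sequences and function names are hypothetical.

```python
from typing import List


def wallace_tm(primer: str) -> int:
    """Rough melting temperature (°C) by the Wallace rule: 2*(A+T) + 4*(G+C)."""
    p = primer.upper()
    at = p.count("A") + p.count("T")
    gc = p.count("G") + p.count("C")
    return 2 * at + 4 * gc


def annealing_gradient(
    fwd: str, rev: str, steps: int = 4, increment: int = 2, offset: int = 5
) -> List[int]:
    """Candidate annealing temperatures, rising in fixed increments.

    Starts `offset` degrees below the lower of the two primer Tm estimates.
    """
    start = min(wallace_tm(fwd), wallace_tm(rev)) - offset
    return [start + i * increment for i in range(steps)]


if __name__ == "__main__":
    # Hypothetical 18-nt primers, for illustration only.
    fwd = "AGCTTGACCTGAAGTCCA"
    rev = "GGTCAAGCTTCAGGACTG"
    print(wallace_tm(fwd), wallace_tm(rev))
    print(annealing_gradient(fwd, rev))
```

Running each temperature in a separate well of a gradient cycler identifies the highest annealing temperature that still yields the intended product, which is usually the most specific condition.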

5. How do I ensure my diagnostic model is robust when using machine learning with molecular data?

For a robust molecular classifier, as demonstrated in cancer grading research, key considerations include:

- Using a rigorous multi-phase study design (discovery, screening, validation, model construction) with progressively expanded cohorts to refine biomarkers and validate models.

- Applying appropriate data preprocessing, such as rank transformation of gene expression data, which conserves gene relationships and allows for single-sample analysis without batch correction.

- Validating the model's performance using independent datasets and relevant statistical measures, such as Receiver Operating Characteristic (ROC) curves and Area Under the Curve (AUC) values [45] [46].
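Two of these considerations, rank transformation and AUC-based validation, can be illustrated in a few lines. The sketch below is a simplified stand-in for the cited workflows: it ranks genes within a single sample (ties share the average rank) and computes AUC as the probability that a positive case scores higher than a negative one. The gene names and scores are invented for illustration.

```python
from typing import Dict, List


def rank_transform(expression: Dict[str, float]) -> Dict[str, float]:
    """Replace each gene's value with its within-sample rank (1 = lowest)."""
    genes = sorted(expression, key=expression.get)
    ranks: Dict[str, float] = {}
    i = 0
    while i < len(genes):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(genes) and expression[genes[j + 1]] == expression[genes[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for gene in genes[i : j + 1]:
            ranks[gene] = average_rank
        i = j + 1
    return ranks


def auc(scores: List[float], labels: List[int]) -> float:
    """AUC as P(score of a positive > score of a negative); ties count one half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required to compute AUC")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    sample = {"GENE_A": 5.0, "GENE_B": 1.0, "GENE_C": 9.0}
    shifted = {g: 2 * v + 1 for g, v in sample.items()}  # simulated batch effect
    print(rank_transform(sample) == rank_transform(shifted))
    print(auc([0.9, 0.2, 0.3, 0.1], [1, 1, 0, 0]))
```

Because ranks depend only on the ordering of genes within one sample, any monotonic shift or scaling applied to that sample leaves them unchanged, which is why this preprocessing supports single-sample analysis without batch correction.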

Troubleshooting Guides

Common PCR Issues and Solutions

Table: Troubleshooting Common PCR Problems

| Observation | Possible Cause(s) | Recommended Solution(s) |

|---|---|---|

| No Amplification Product | Poor template quality or integrity [43]. | Isolate DNA with minimal shearing. Assess integrity by gel electrophoresis. Re-purify to remove inhibitors [43]. |

| | Poor primer design or specificity [44]. | Verify primer sequence complementarity to the target. Use primer design tools. Avoid complementary regions [43] [44]. |

| | Suboptimal reaction conditions [44]. | Optimize Mg2+ concentration and annealing temperature. Ensure all reaction components are added [43] [44]. |

| Multiple or Non-Specific Bands | Primer annealing temperature too low [43]. | Increase annealing temperature in 2°C increments. Use a gradient cycler for optimization [43]. |

| | Excess Mg2+ concentration [43]. | Adjust Mg2+ concentration in 0.5 mM increments to find the optimal range [43]. |

| | Non-hot-start DNA polymerase activity at low temps [44]. | Use a hot-start DNA polymerase. Set up reactions on ice [43] [44]. |

| Faint Bands | Insufficient template or primer quantity [43]. | Increase the amount of input DNA. Optimize primer concentration (typically 0.1–1 μM) [43]. |

| | Insufficient number of cycles [43]. | Increase the number of cycles (up to 40 for low-copy templates) [43]. |

| | Suboptimal extension time/temperature [43]. | Prolong extension time for longer amplicons. Ensure extension temperature is correct for the polymerase [43]. |

| Smear or High Background | Excessive DNA input [43]. | Lower the amount of template DNA in the reaction [43]. |

| | Non-specific priming [44]. | Increase annealing temperature. Redesign primers for greater specificity [43] [44]. |

| | Contamination with foreign DNA [44]. | Use dedicated workspace and filtered pipette tips. Use UV irradiation to decontaminate surfaces [44]. |

Pre-analytical Sample Quality Checklist

This checklist is critical for ensuring reliable results in cancer molecular testing research.

- □ Sample Collection: Use appropriate collection tubes (e.g., EDTA, PAXgene) and standardize procedures.