ROC Curve Analysis for Pharmacophore Model Validation: A Comprehensive Guide for Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Receiver Operating Characteristic (ROC) curve analysis to evaluate pharmacophore model performance. It covers foundational principles of ROC curves and pharmacophore modeling, practical methodologies for performance assessment, strategies for troubleshooting and optimization, and advanced validation techniques. By integrating ROC analysis into virtual screening workflows, scientists can quantitatively measure model sensitivity and specificity, select optimal screening thresholds, and improve the efficiency of identifying bioactive compounds, ultimately accelerating the drug discovery process.

Understanding ROC Curves and Pharmacophore Modeling Fundamentals

What is a Pharmacophore? Defining Steric and Electronic Features

A pharmacophore is an abstract model fundamental to modern drug discovery, representing the molecular features necessary for a ligand to interact with a biological target. According to the International Union of Pure and Applied Chemistry (IUPAC), a pharmacophore is defined as "an ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target and to trigger (or block) its biological response" [1] [2]. This conceptual framework explains how structurally diverse ligands can bind to a common receptor site by capturing the essential, shared interaction capabilities of active molecules, rather than specific chemical structures [1] [3]. The pharmacophore concept has evolved into a critical tool in computer-aided drug design (CADD), enabling efficient virtual screening, de novo molecular design, and scaffold hopping to identify novel bioactive compounds across various therapeutic areas, including cancer, viral infections, and central nervous system disorders [4] [5] [6].

Historical Development and Core Principles

The modern concept of the pharmacophore was popularized by Lemont Kier in the late 1960s and formally termed in 1971 [1]. Contrary to common belief, the concept does not originate from Paul Ehrlich's work, as neither his publications nor his documented research mentions the term or employs the conceptual framework [1]. The fundamental principle underlying pharmacophores is the distinction between molecular structure and function – different chemical scaffolds can exhibit similar biological activity if they share a common spatial arrangement of key interaction features [3]. This abstraction allows medicinal chemists to transcend specific chemical functionalities and focus on the essential steric and electronic requirements for target recognition and activation or inhibition.

Formal Definition and Conceptual Significance

The IUPAC definition emphasizes that a pharmacophore "does not represent a real molecule or a real association of functional groups, but a purely abstract concept that accounts for the common molecular interaction capacities of a group of compounds towards their target structure" [2]. It represents the largest common denominator shared by a set of active molecules [2]. This definition deliberately excludes the misuse sometimes found in literature where specific chemical functionalities (e.g., guanidines, sulfonamides) or structural skeletons (e.g., flavones, steroids) are incorrectly labeled as pharmacophores [2]. The power of the pharmacophore concept lies in its ability to facilitate "scaffold hopping" – identifying structurally distinct compounds that share the same biological activity through common interaction features [5] [3].

Essential Steric and Electronic Features of Pharmacophores

Fundamental Feature Types and Their Geometric Representations

Pharmacophore models consist of distinct steric and electronic features that represent potential interaction points with biological targets. These features are defined by their chemical nature and spatial orientation, creating a three-dimensional pattern necessary for biological activity [1] [3]. The table below summarizes the core pharmacophore features, their geometric representations, and their roles in molecular recognition.

Table 1: Fundamental Pharmacophore Features and Their Characteristics

| Feature Type | Geometric Representation | Complementary Feature Type(s) | Interaction Type(s) | Structural Examples |
|---|---|---|---|---|
| Hydrogen-Bond Acceptor (HBA) | Vector or Sphere | HBD | Hydrogen Bonding | Amines, Carboxylates, Ketones, Alcohols, Fluorine Substituents |
| Hydrogen-Bond Donor (HBD) | Vector or Sphere | HBA | Hydrogen Bonding | Amines, Amides, Alcohols |
| Aromatic (AR) | Plane or Sphere | AR, PI | π-Stacking, Cation-π | Any Aromatic Ring |
| Positive Ionizable (PI) | Sphere | AR, NI | Ionic, Cation-π | Ammonium Ion, Metal Cations |
| Negative Ionizable (NI) | Sphere | PI | Ionic | Carboxylates |
| Hydrophobic (H) | Sphere | H | Hydrophobic Contact | Halogen Substituents, Alkyl Groups, Alicycles, Weakly Polar or Non-Polar Aromatic Rings |

Source: Adapted from [3]

Vector and plane representations are typically used for feature types whose interactions are directed (e.g., hydrogen bonds), requiring specific mutual orientation of complementary features [3]. Sphere representations are used for features with undirected interactions or where orientation cannot be determined (e.g., hydrophobic interactions, rotatable -OH groups) [3]. The arrangement of these features in three-dimensional space defines the pharmacophore model necessary for biological activity.
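As an illustration of the geometric representations described above, the following minimal Python sketch stores a feature as a tolerance sphere with an optional direction vector. The class name `Feature`, the function `is_matched`, and the 1.5 Å default tolerance are assumptions made for this example and are not taken from any pharmacophore package.

```python
from dataclasses import dataclass
from math import dist
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Feature:
    """One pharmacophore feature (hypothetical representation for illustration)."""
    kind: str                         # e.g. "HBA", "HBD", "AR", "PI", "NI", "H"
    center: Vec3                      # position in angstroms
    tolerance: float = 1.5            # sphere radius; illustrative default only
    direction: Optional[Vec3] = None  # set for directed features (vector/plane)


def is_matched(query: Feature, ligand_kind: str, ligand_pos: Vec3) -> bool:
    """True if a ligand feature of the same kind lies inside the tolerance sphere.

    The direction vector is ignored here; a fuller check for directed
    features would also compare orientations.
    """
    return query.kind == ligand_kind and dist(query.center, ligand_pos) <= query.tolerance


hba = Feature(kind="HBA", center=(0.0, 0.0, 0.0), direction=(1.0, 0.0, 0.0))
hydrophobe = Feature(kind="H", center=(4.0, 1.0, 0.0))  # undirected: sphere only
```

Treating undirected features as plain spheres and attaching a direction only where the interaction is directed mirrors the distinction drawn in the paragraph above.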

Exclusion Volumes and Shape Constraints

Beyond the essential interaction features, pharmacophore models often incorporate exclusion volumes to represent spatial constraints imposed by the binding site shape [3]. These volumes identify receptor areas where ligand occupation would cause steric clashes, preventing binding [3]. Exclusion volumes can be derived from X-ray structures of ligand-receptor complexes or computational methods that distribute spheres based on the union of molecular shapes of aligned known actives [3]. The most reliable spatial information comes from experimental structures, though computational approaches can provide reasonable approximations when structural data is unavailable [3].
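The steric role of exclusion volumes can be sketched as a simple distance test. The example below assumes each exclusion volume is a sphere given as a (center, radius) pair and that a ligand is a list of atom coordinates; the function name `clashes` is hypothetical, and real software may apply additional tolerances or atom radii.

```python
from math import dist


def clashes(ligand_atoms, exclusion_spheres):
    """Return True if any ligand atom center falls inside any exclusion sphere.

    ligand_atoms:      iterable of (x, y, z) coordinates in angstroms
    exclusion_spheres: iterable of ((x, y, z), radius) pairs
    """
    return any(
        dist(atom, center) < radius
        for atom in ligand_atoms
        for center, radius in exclusion_spheres
    )


spheres = [((0.0, 0.0, 0.0), 1.0)]
pose_ok = [(3.0, 0.0, 0.0), (4.2, 0.5, 0.0)]
pose_bad = [(0.5, 0.0, 0.0), (4.2, 0.5, 0.0)]
```

A pose such as `pose_bad`, with one atom inside the sphere, would be rejected even if it matched every interaction feature.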

Pharmacophore Model Development and Validation

Model Generation Methodologies

Pharmacophore models can be developed through three primary approaches, each with distinct advantages and requirements.

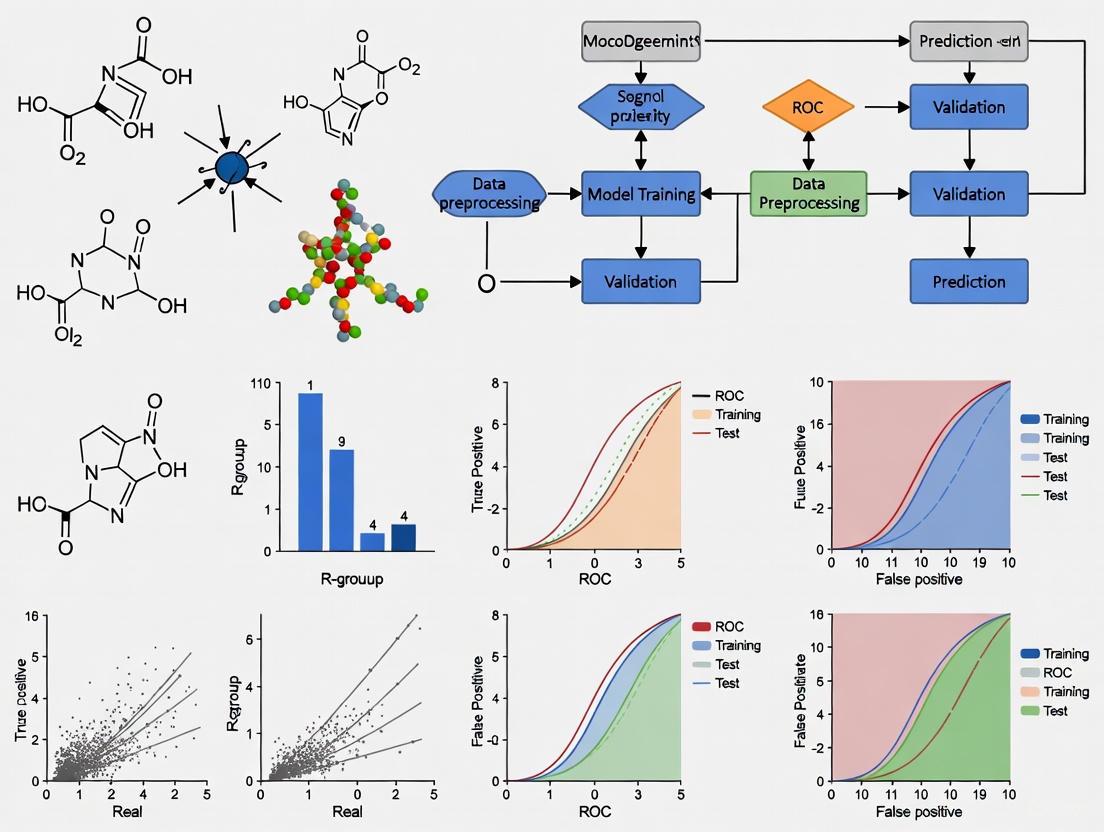

Figure 1: Pharmacophore model generation methodologies and their workflows

Structure-Based Pharmacophore Modeling

Structure-based approaches derive pharmacophore models directly from three-dimensional structures of target proteins, often in complex with ligands [3] [6]. When a bioactive ligand conformation is known from crystallographic data, atomic coordinates directly guide feature placement [3]. Software tools like LigandScout can automatically generate structure-based pharmacophore models by analyzing protein-ligand interactions in complexes [4] [6]. For example, in a study targeting the XIAP protein, researchers used the crystal structure (PDB: 5OQW) in complex with a known inhibitor to generate a pharmacophore model containing hydrophobic features, hydrogen bond donors/acceptors, positive ionizable features, and exclusion volumes [6]. Structure-based models benefit from incorporating precise binding site shape information but require high-quality structural data.

Ligand-Based Pharmacophore Modeling

When target structure information is unavailable, ligand-based approaches construct pharmacophores from a set of known active compounds [1] [3]. The development process typically involves: (1) selecting a training set of structurally diverse active molecules, (2) conformational analysis to generate low-energy conformations, (3) molecular superimposition to identify common spatial arrangements, (4) abstraction to transform superimposed molecules into abstract features, and (5) validation to ensure the model accounts for biological activity differences [1]. A critical prerequisite is that all active ligands bind to the same receptor site in the same orientation; otherwise, the resulting model will not accurately represent the essential features [3].

Manual Pharmacophore Construction

Manual construction requires significant expert knowledge about the biological target and key structural characteristics of active compounds [3]. While largely supplanted by computational methods, manual intervention remains valuable for refining automatically generated models based on medicinal chemistry intuition and additional biological insights [3].

Validation Using ROC Curve Analysis

Receiver Operating Characteristic (ROC) curve analysis provides a robust statistical framework for validating pharmacophore models and quantifying their ability to distinguish active from inactive compounds [4] [6]. The validation process involves testing the model against a dataset containing known active compounds and decoy molecules (presumed inactives), then plotting the true positive rate against the false positive rate at various classification thresholds [4] [6].

The Area Under the Curve (AUC) summarizes model performance in a single value between 0 and 1 [6]. An AUC of 0.5 corresponds to random discrimination, values of 0.71-0.8 indicate good performance, and values above 0.8 indicate excellent performance [4] [6]. The enrichment factor (EF) complements the AUC by quantifying how strongly active compounds are concentrated in the top-ranked fraction of the screened library relative to random selection [4] [6].
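A minimal sketch of the enrichment factor follows, assuming the common definition EF = (actives in top fraction / compounds in top fraction) / (total actives / total compounds) and that higher scores mean better pharmacophore fit. The function name and the toy data are illustrative; individual studies may round the top fraction differently.

```python
def enrichment_factor(scores, labels, fraction):
    """Enrichment factor in the top `fraction` of a score-ranked list.

    scores:   fit scores, higher = better match (assumption)
    labels:   1 for known actives, 0 for decoys/inactives
    fraction: e.g. 0.01 for EF1%
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    # At least one compound is always inspected; rounding is a design choice.
    n_top = max(1, round(n_total * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_in_top = sum(label for _, label in ranked[:n_top])
    return (hits_in_top / n_top) / (n_actives / n_total)


# Toy library: 20 compounds, 4 actives, 4 of the 5 best-scored are active.
scores = list(range(20, 0, -1))
labels = [1, 1, 1, 0, 1] + [0] * 15
ef_25 = enrichment_factor(scores, labels, 0.25)
```

An EF of 1 means the top fraction is no richer in actives than the whole library, so values well above 1 indicate useful early enrichment.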

In a study targeting the BRD4 protein for neuroblastoma treatment, researchers validated their structure-based pharmacophore model using 36 known active antagonists and corresponding decoys from the DUD-E database [4]. The model demonstrated exceptional performance with an AUC of 1.0 and enrichment factors of 11.4-13.1, indicating strong discriminatory power [4]. Similarly, a pharmacophore model developed for XIAP protein inhibition achieved an AUC value of 0.98 with an early enrichment factor (EF1%) of 10.0, confirming its ability to identify true actives [6].

Table 2: Pharmacophore Model Validation Metrics from Case Studies

| Target Protein | Application | Number of Actives | AUC Value | Enrichment Factor | Reference |
|---|---|---|---|---|---|
| BRD4 | Neuroblastoma Treatment | 36 | 1.0 | 11.4-13.1 | [4] |
| XIAP | Anti-Cancer Agents | 10 | 0.98 | 10.0 (EF1%) | [6] |

Performance Comparison of Pharmacophore Modeling Approaches

Virtual Screening Performance Across Multiple Targets

Recent studies demonstrate the effectiveness of pharmacophore-based virtual screening across various biological targets. The table below compares performance metrics of pharmacophore approaches applied to different protein targets and therapeutic areas.

Table 3: Performance Comparison of Pharmacophore Modeling in Virtual Screening

| Target Protein | Therapeutic Area | Screening Database | Initial Hits | Final Candidates | Key Features Identified | Reference |
|---|---|---|---|---|---|---|
| BRD4 | Neuroblastoma | ZINC Natural Products | 136 compounds | 4 compounds | 6 hydrophobic contacts, 2 hydrophilic interactions, 1 negative ionizable bond | [4] |
| XIAP | Anti-Cancer | ZINC Natural Compounds | 7 hit compounds | 3 compounds | 4 hydrophobic, 1 positive ionizable, 3 HBA, 5 HBD features | [6] |
| SARS-CoV-2 PLpro | Antiviral | Marine Natural Products | 66 initial hits | 1 lead compound | 9-feature model engaging all 5 binding sites | [7] |
| Alpha Estrogen Receptor | Breast Cancer | Generated de novo | N/A | Multiple novel candidates | Balanced pharmacophore similarity and structural diversity | [8] |

The consistent identification of viable lead candidates across diverse targets highlights the robustness of pharmacophore-based approaches. Successful implementations typically identify key interaction features complementary to the target binding site, then screen large compound databases to find matches [4] [6] [7]. The structural diversity of natural product databases often makes them particularly valuable screening sources [3] [6].

Comparison of Modern Generative Pharmacophore Models

Recent advances integrate pharmacophore concepts with generative artificial intelligence models for de novo molecular design. These approaches condition molecule generation on pharmacophoric constraints, potentially enhancing novelty while maintaining bioactivity.

Table 4: Performance Comparison of Pharmacophore-Informed Generative Models

| Model Name | Architecture | Key Innovation | Performance Highlights | Experimental Validation | Reference |
|---|---|---|---|---|---|
| TransPharmer | GPT-based with pharmacophore fingerprints | Integrates ligand-based pharmacophore fingerprints with generative framework | Superior performance in de novo generation and scaffold elaboration; top rank in GuacaMol benchmark | 3 of 4 synthesized PLK1 compounds showed submicromolar activity (most potent: 5.1 nM) | [5] |
| PharmacoForge | Diffusion model | Generates 3D pharmacophores conditioned on protein pockets | Surpasses automated methods in LIT-PCBA benchmark; produces valid, commercially available molecules | Retrospective screening on DUD-E showed similar docking performance to de novo ligands | [9] |
| Framework by Podplutova et al. | Reinforcement learning | Balances pharmacophore similarity with structural diversity | Generated compounds with high pharmacophoric fidelity (cosine similarity: 0.94±0.06) and 100% novelty | Improved drug-likeness (QED: 0.33±0.13) and synthetic accessibility (SA: 4.64±0.51) | [8] |

Generative pharmacophore models demonstrate particular strength in scaffold hopping – producing structurally distinct compounds that maintain key pharmacophoric features [5]. The TransPharmer model, for example, generated compounds with a novel 4-(benzo[b]thiophen-7-yloxy)pyrimidine scaffold that showed high potency and selectivity for PLK1, demonstrating the approach's ability to explore novel chemical space while maintaining target engagement [5].

Experimental Protocols for Pharmacophore Modeling

Structure-Based Pharmacophore Modeling Workflow

The following protocol outlines the key steps for developing and validating structure-based pharmacophore models, based on established methodologies from recent literature [4] [6] [7]:

1. Target Preparation: Obtain the three-dimensional structure of the target protein, preferably in complex with a known active ligand, from sources like the Protein Data Bank (PDB). Prepare the structure by removing water molecules (except structurally relevant ones), adding hydrogen atoms, and correcting any missing residues or atoms.

2. Pharmacophore Feature Identification: Use molecular interaction analysis software (e.g., LigandScout) to automatically identify and map interaction features between the ligand and protein. Key features include hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, and ionizable groups [4] [6].

3. Exclusion Volume Placement: Define exclusion volumes based on the protein structure to represent steric constraints where ligand atoms cannot be positioned without causing clashes [3] [6]. These volumes are typically generated automatically based on the protein's van der Waals surface.

4. Model Validation Using ROC Analysis:

- Decoy Set Generation: Obtain a set of known active compounds and corresponding decoy molecules from databases like DUD-E [4] [6].

- Screening and ROC Calculation: Screen the active and decoy compounds against the pharmacophore model. Calculate true positive and false positive rates across different fit thresholds.

- Performance Metrics: Compute the Area Under the ROC Curve (AUC) and early enrichment factors (EF) to quantify model quality [4] [6]. AUC values >0.8 generally indicate good model performance.

5. Virtual Screening Application: Apply the validated model to screen large compound databases (e.g., ZINC, marine natural product libraries) [4] [6] [7]. Select compounds matching the pharmacophore features for further investigation through molecular docking and molecular dynamics simulations.
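The screening and ROC calculation step of this workflow can be sketched as follows. The example assumes the screening software exports a fit value only for compounds that match the model, and that non-matching compounds are assigned a score of 0.0; the function names, compound identifiers, and fit values are all hypothetical.

```python
def label_screening_results(fit_values, active_ids, decoy_ids, no_match_score=0.0):
    """Return (compound_id, score, label) rows sorted by descending score.

    fit_values: dict mapping compound id -> fit value for matched compounds.
    Compounds absent from fit_values did not match the model and receive
    `no_match_score` (an assumption of this sketch).
    """
    rows = [(cid, fit_values.get(cid, no_match_score), 1) for cid in active_ids]
    rows += [(cid, fit_values.get(cid, no_match_score), 0) for cid in decoy_ids]
    return sorted(rows, key=lambda row: row[1], reverse=True)


def rates_at_threshold(rows, threshold):
    """True and false positive rates when score >= threshold counts as a hit."""
    tp = sum(1 for _, s, y in rows if y == 1 and s >= threshold)
    fn = sum(1 for _, s, y in rows if y == 1 and s < threshold)
    fp = sum(1 for _, s, y in rows if y == 0 and s >= threshold)
    tn = sum(1 for _, s, y in rows if y == 0 and s < threshold)
    return tp / (tp + fn), fp / (fp + tn)


# Hypothetical export: two actives and one decoy matched the model.
fit_values = {"act1": 62.0, "act2": 55.5, "dec1": 58.0}
rows = label_screening_results(
    fit_values,
    active_ids=["act1", "act2", "act3"],
    decoy_ids=["dec1", "dec2", "dec3", "dec4"],
)
tpr, fpr = rates_at_threshold(rows, threshold=50.0)
```

Repeating `rates_at_threshold` over every distinct fit value yields the coordinate pairs of the ROC curve.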

Performance Validation Through Integrated Computational Approaches

Comprehensive validation of pharmacophore models typically involves multiple computational techniques in an integrated workflow:

1. Molecular Docking: Screen pharmacophore-matched compounds using molecular docking programs (e.g., AutoDock, AutoDock Vina) to evaluate binding poses and predicted affinities [4] [6] [7]. Consensus docking using multiple engines enhances reliability [7].

2. ADMET Profiling: Predict absorption, distribution, metabolism, excretion, and toxicity properties using in silico tools to filter out compounds with unfavorable pharmacokinetic or safety profiles [4] [6].

3. Molecular Dynamics (MD) Simulations: Perform MD simulations (typically 50-200 ns) to evaluate compound stability in the binding site, assess conformational changes, and calculate binding free energies using MM-GBSA/PBSA methods [4] [6] [7].

4. Experimental Verification: Synthesize or acquire top-ranking compounds for in vitro and in vivo testing to confirm biological activity and therapeutic potential [5].

Table 5: Key Resources for Pharmacophore Modeling and Validation

| Resource Category | Specific Tools & Databases | Primary Function | Application Context |
|---|---|---|---|
| Software Platforms | Phase (Schrödinger), LigandScout | Pharmacophore model generation, screening, and analysis | Structure-based and ligand-based pharmacophore modeling; virtual screening [6] [10] |
| Compound Databases | ZINC Database, Comprehensive Marine Natural Products Database (CMNPD) | Sources of screening compounds for virtual screening | Commercial availability; diverse natural product space [4] [6] [7] |
| Validation Tools | DUD-E Database, ROC Curve Analysis | Model validation and performance assessment | Decoy generation; calculation of AUC and enrichment factors [4] [6] |
| Complementary Methods | AutoDock, AutoDock Vina, GROMACS | Molecular docking, dynamics simulations, and binding affinity calculations | Binding pose prediction; stability assessment; free energy calculations [4] [6] [7] |
| Generative AI Models | TransPharmer, PharmacoForge, PGMG | De novo molecular generation guided by pharmacophore constraints | Scaffold hopping; novel ligand design [5] [8] [9] |

Pharmacophores represent a fundamental abstraction in medicinal chemistry, capturing the essential steric and electronic features necessary for molecular recognition and biological activity. The core feature set – including hydrogen bond donors/acceptors, hydrophobic areas, aromatic rings, and ionizable groups – forms a three-dimensional pattern that transcends specific chemical structures and enables scaffold hopping [1] [3]. Modern computational approaches leverage both structure-based and ligand-based methodologies to develop pharmacophore models, with ROC curve analysis providing robust validation of model quality through AUC values and enrichment factors [4] [6].

The integration of pharmacophore modeling with virtual screening has proven highly effective across diverse therapeutic targets, from cancer-related proteins like BRD4 and XIAP to viral targets such as SARS-CoV-2 PLpro [4] [6] [7]. Recent advances in generative AI models that incorporate pharmacophore constraints demonstrate exceptional potential for de novo molecular design, successfully balancing structural novelty with maintained bioactivity [5] [8]. These approaches have yielded experimentally validated compounds with nanomolar potency, highlighting the continued relevance and evolving sophistication of the pharmacophore concept in modern drug discovery [5]. As computational power and algorithmic sophistication advance, pharmacophore-based strategies will continue to play a crucial role in bridging the gap between molecular structure and biological function in therapeutic development.

Core Concepts of ROC Analysis

Receiver Operating Characteristic (ROC) analysis is a fundamental method for evaluating the performance of binary classification systems, such as diagnostic tests or, in the context of this paper, computational models used in drug discovery [11] [12]. It graphically represents the diagnostic ability of a test by illustrating the trade-off between its sensitivity and its false positive rate across all possible decision thresholds [13]. Originally developed for signal detection in radar during World War II, ROC analysis has since become a cornerstone in medical decision-making, machine learning, and predictive model assessment [11] [12] [13].

The following table summarizes the key terminology and metrics essential for understanding ROC analysis.

Table 1: Key Terminology and Metrics in ROC Analysis

| Term | Definition | Calculation | Interpretation |
|---|---|---|---|
| True Positive Rate (TPR)/Sensitivity | Proportion of actual positives correctly identified [11]. | TP / (TP + FN) [12] | High sensitivity means few actual positives are missed, so a negative result helps rule out the condition. |
| False Positive Rate (FPR) | Proportion of actual negatives incorrectly identified as positive [11]. | FP / (FP + TN) or 1 - Specificity [12] | Indicates the rate of false alarms. |
| Specificity | Proportion of actual negatives correctly identified [11]. | TN / (TN + FP) [12] | High specificity means few false alarms, so a positive result helps rule in the condition. |
| Threshold/Cut-off | The value used to dichotomize continuous results into positive or negative classes [11]. | N/A | Determines the balance between TPR and FPR; varying it generates the ROC curve. |
| Area Under the Curve (AUC) | A single measure of the classifier's overall performance across all thresholds [11] [14]. | N/A | Ranges from 0 to 1; 0.5 indicates random guessing, 1.0 indicates perfect discrimination [14]. |

The ROC curve itself is a plot with the False Positive Rate (1 - Specificity) on the x-axis and the True Positive Rate (Sensitivity) on the y-axis [11] [12]. Each point on the curve represents a sensitivity/specificity pair corresponding to a particular decision threshold. A perfect test would yield a point in the upper-left corner (0 FPR, 1 TPR), representing perfect classification. A test with no discriminatory power will have an ROC curve that lies along the diagonal line of no-discrimination (the "line of randomness"), where the probability of a true positive equals the probability of a false positive at every threshold [12] [13]. The overall performance of a test is often summarized by the Area Under the ROC Curve (AUC), which provides a single scalar value representing the probability that the classifier will rank a randomly chosen positive instance higher than a randomly chosen negative instance [11] [13].
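The probabilistic reading of the AUC given above can be checked directly by comparing every active/decoy pair. The sketch below assumes higher scores indicate a better fit and counts ties as half a win, which is the usual convention; the function name and the scores are illustrative.

```python
def auc_rank_probability(active_scores, decoy_scores):
    """AUC as the fraction of (active, decoy) pairs ranked in the right order.

    Ties contribute 0.5. Runtime is O(n_actives * n_decoys), which is fine
    for a sketch but slow for very large libraries.
    """
    if not active_scores or not decoy_scores:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in active_scores:
        for d in decoy_scores:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(active_scores) * len(decoy_scores))


actives = [0.9, 0.8, 0.6, 0.5]
decoys = [0.7, 0.4, 0.3, 0.2]
auc = auc_rank_probability(actives, decoys)  # 14 of 16 pairs ordered correctly
```

Here only the decoy scored 0.7 outranks two actives, so 14 of the 16 pairs are correctly ordered and the AUC is 0.875.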

ROC Analysis in Pharmacophore Model Validation

In the field of computer-aided drug design, pharmacophore modeling is a vital technique for identifying the essential steric and electronic features responsible for a molecule's biological activity [15]. Once a pharmacophore model is developed, it is used as a query to screen large chemical databases, classifying molecules as either "active" (potential hits) or "inactive" [16] [15]. Since this prediction is rarely perfect, ROC analysis serves as a critical tool for objectively quantifying the model's ability to discriminate between known active and inactive compounds.

A prominent example comes from a study on sigma-1 receptor (σ1R) ligands [17]. Researchers generated new structure-based pharmacophore models using a crystal structure and compared them against previously published models. To validate performance, they screened an internal database of over 25,000 compounds with experimentally measured σ1R affinity. The predictive power of each pharmacophore model was evaluated using ROC analysis, which calculated the models' ability to correctly prioritize active compounds over inactive ones. The best-performing model, 5HK1–Ph.B, achieved a ROC-AUC value above 0.8, demonstrating excellent discriminatory power. The study reported that this model also showed enrichment values above 3 at different fractions of the screened sample, meaning it was over three times more likely to identify an active compound compared to random selection [17]. This case highlights how ROC analysis provides a robust, empirical basis for selecting the best computational model for virtual screening campaigns.

Table 2: Performance Metrics from a Pharmacophore Model Validation Study [17]

| Pharmacophore Model | ROC-AUC | Enrichment Factor | Key Strengths |
|---|---|---|---|
| 5HK1–Ph.B | > 0.80 | > 3.0 | Best overall discrimination between active/inactive compounds. |
| 5HK1–Ph.A | Data not fully specified | Data not fully specified | Generated from crystal structure; outperformed docking. |
| Langer–Ph | Data not fully specified | Data not fully specified | A previously published ligand-based model. |
| Glennon–Ph | Data not fully specified | Data not fully specified | An early qualitative 2D model. |

Another application is found in the development of novel machine learning-based virtual screening techniques [18]. A stitched neural network architecture with trainable, graph convolution-based fingerprints was assessed using standardized virtual screening databases like DUD-E and LIT-PCBA. The model's performance in the binary classification of ligands (based on a docking score threshold) was evaluated using metrics including precision, recall, and receiver operating characteristics [18]. The use of these standardized benchmarks, which contain confirmed active and decoy molecules, allows for a fair and rigorous comparison of different algorithms via ROC analysis, ensuring that new methods offer a genuine improvement over contemporary counterparts.

Experimental Protocols for ROC Assessment

Implementing ROC analysis in pharmacophore model validation requires a structured experimental protocol. The following methodology, adapted from recent literature, outlines the key steps.

Protocol: Validating a Pharmacophore Model using ROC Analysis

1. Preparation of the Validation Dataset:

- Active Compounds (ACs): Curate a set of known active compounds for the target from reliable sources like ChEMBL [16] [15] or internal assay data. For example, a study on acetylcholinesterase inhibitors used 176 actives with pIC50 ≥ 8 [16].

- Inactive Compounds/Decoys (DCs): Assemble a set of molecules confirmed to be inactive or, more commonly, generate a large set of "decoys": molecules that resemble the actives in physicochemical properties but differ in topology, which limits bias in the benchmark [15] [17]. The same acetylcholinesterase study used 1070 inactives with pIC50 ≤ 6 [16]. The σ1R study instead used an internal database of over 25,000 compounds with measured affinity [17].

2. Virtual Screening with the Pharmacophore Model:

- Use the pharmacophore model as a search query to screen the combined dataset of actives and decoys.

- For each screened compound, the software will return a "fit value" or a binary outcome (match/no match) if a rigid threshold is used. To generate an ROC curve, the screening must be performed in a manner that yields a rank-ordered list or a continuous score for each compound [17].

3. Calculation of ROC Curve and AUC:

- True/False Positive Determination: Based on the model's predictions and the known activity of the compounds, populate the confusion matrix (True Positives, False Positives, True Negatives, False Negatives) at various score thresholds [12].

- Plotting the Curve: For each possible threshold, calculate the corresponding TPR (Sensitivity) and FPR (1 - Specificity). Plot these coordinate points on a graph with FPR on the x-axis and TPR on the y-axis [11] [13].

- Calculate AUC: Compute the Area Under the ROC Curve using statistical software or libraries (e.g., scikit-learn in Python) [18]. The AUC can be calculated using non-parametric (empirical) methods, which are most common and make no distributional assumptions, or parametric methods, which assume a binormal distribution of test results [11] [13].

4. Interpretation and Threshold Selection:

- Model Performance: Assess the AUC value. An AUC of 0.5 suggests no discriminative power, 0.7-0.8 is considered acceptable, 0.8-0.9 is excellent, and >0.9 is outstanding [13] [14].

- Optimal Cut-off Selection: The AUC summarizes performance across all thresholds and therefore does not itself identify an operating cut-off. A common choice is the point on the ROC curve closest to the top-left corner, which balances sensitivity and specificity; alternatively, the cut-off can be set according to the goals of the screening campaign (e.g., favoring high sensitivity to avoid missing hits) [11] [14].
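Steps 3 and 4 of the protocol can be sketched in plain Python as shown below. This is an empirical (non-parametric) calculation using the trapezoidal rule, with the cut-off chosen as the ROC point closest to the top-left corner; the function names and toy scores are assumptions for illustration, and a library such as scikit-learn would normally be used in practice.

```python
from math import hypot


def roc_curve_points(scores, labels):
    """Return (threshold, fpr, tpr) tuples, one per distinct score, highest first.

    A compound counts as predicted active when its score is >= threshold.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one active and one inactive compound")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    points, tp, fp, i = [], 0, 0, 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume every compound tied at this score before emitting a point.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((threshold, fp / n_neg, tp / n_pos))
    return points


def auc_trapezoid(points):
    """Empirical AUC by the trapezoidal rule, starting from the origin (0, 0)."""
    area, prev_fpr, prev_tpr = 0.0, 0.0, 0.0
    for _, fpr, tpr in points:
        area += (fpr - prev_fpr) * (tpr + prev_tpr) / 2.0
        prev_fpr, prev_tpr = fpr, tpr
    return area


def closest_to_top_left(points):
    """Threshold whose ROC point has the smallest distance to (FPR=0, TPR=1)."""
    return min(points, key=lambda p: hypot(p[1], 1.0 - p[2]))[0]


# Toy ranked list: 4 actives (1) and 4 decoys (0).
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 1, 0, 0, 0]
points = roc_curve_points(scores, labels)
auc = auc_trapezoid(points)            # 0.875 for this toy data
cutoff = closest_to_top_left(points)   # 0.5 for this toy data
```

Maximizing Youden's J (TPR minus FPR) is a closely related criterion and selects the same cut-off on this toy data, although the two rules can disagree on other curves.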

The Scientist's Toolkit: Essential Reagents and Software

Table 3: Key Research Reagents and Software for ROC-Based Pharmacophore Validation

| Item Name | Function/Description | Application in Protocol |
|---|---|---|
| Standardized Benchmarking Databases (DUD-E, LIT-PCBA) | Public databases containing known active ligands and property-matched decoy molecules [18]. | Provides a pre-curated, unbiased validation set for assessing model performance [18]. |
| Chemical Databases (ZINC, ChEMBL, NCI) | Public repositories of purchasable and annotated chemical compounds [18] [16] [19]. | Source for building custom active/inactive datasets and for prospective virtual screening. |
| Pharmacophore Modeling Software (Discovery Studio, MOE, LigandScout) | Commercial software suites for creating, visualizing, and screening with structure-based and ligand-based pharmacophore models [16] [15] [17]. | Used to generate the pharmacophore model and perform the virtual screening step. |
| Python with scikit-learn/R Libraries | Open-source programming languages with extensive statistical and machine learning libraries [18]. | Used to calculate ROC curves, AUC, precision, recall, and other performance metrics from screening results [18]. |
| CORAL Software | Software for building QSAR models using Monte Carlo optimization and optimal descriptors [20]. | Can be used to generate predictive models whose classification performance is then evaluated with ROC analysis. |

Contents

- Introduction to AUC-ROC in Model Evaluation

- Interpreting the AUC Score: A Standardized Scale

- AUC in Action: Performance Benchmarks from Recent Research

- Experimental Protocols for AUC Validation

- Essential Research Toolkit for ROC Curve Analysis

In the fields of machine learning and computational drug discovery, the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) is a paramount metric for evaluating the performance of classification models. The ROC curve itself is a graphical plot that illustrates the diagnostic ability of a binary classifier system by mapping the relationship between its True Positive Rate (TPR) and False Positive Rate (FPR) across various classification thresholds [21]. The AUC quantifies this entire curve into a single scalar value, representing the model's overall ability to distinguish between positive and negative classes [21]. A model with perfect discrimination has an AUC of 1.0, while a model with no discriminative power, equivalent to random guessing, has an AUC of 0.5 [22] [23].

The principal advantage of AUC is that it is threshold-independent. Unlike accuracy, which provides a performance snapshot at a single decision threshold, AUC summarizes performance across all possible thresholds [21]. This characteristic is particularly valuable when working with imbalanced datasets, a common scenario in pharmacovigilance and drug discovery where the number of inactive compounds vastly outweighs the active ones. In such contexts, AUC provides a more reliable and robust assessment of a model's intrinsic discriminatory power than metrics reliant on a fixed threshold [21].
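The contrast between accuracy and AUC on imbalanced data can be illustrated with a deliberately useless model. The sketch below assumes a library of 1,000 compounds with 10 actives and a model that assigns every compound the same score and labels everything inactive; all names and numbers are invented for the example.

```python
def accuracy(predicted, actual):
    """Fraction of compounds whose predicted class equals the known class."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def pairwise_auc(scores, labels):
    """AUC as the share of (active, inactive) pairs ranked correctly; ties = 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# 10 actives among 1,000 compounds (1% prevalence).
labels = [1] * 10 + [0] * 990

# A model that scores everything identically and calls every compound inactive.
constant_scores = [0.0] * 1000
all_inactive = [0] * 1000

acc = accuracy(all_inactive, labels)           # 0.99 despite finding no actives
auc = pairwise_auc(constant_scores, labels)    # 0.5, i.e. random ranking
```

The 99% accuracy reflects only the class imbalance, whereas the AUC of 0.5 correctly reports that the model cannot rank actives above inactives.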

Interpreting the AUC Score: A Standardized Scale

While there is no universal "good" AUC score applicable to all contexts due to its dependence on the specific task and data complexity [22] [23], the research community employs general guidelines for interpretation. These guidelines, as established by Hosmer and Lemeshow, offer a standardized scale for qualifying model discrimination [23].

The table below outlines this conventional interpretation framework.

Table 1: Standard Interpretations of AUC Values

| AUC Value Range | Level of Discrimination | Interpretation |

|---|---|---|

| 0.9 - 1.0 | Outstanding | Model has excellent ability to distinguish between classes. |

| 0.8 - 0.9 | Excellent | Model has very good discriminatory power. |

| 0.7 - 0.8 | Acceptable | Model has fair discriminatory power. |

| 0.5 - 0.7 | Poor | Model has low discriminatory power. |

| 0.5 | No Discrimination | Performance is no better than random guessing. |

It is critical to understand that these are guidelines, not absolute standards. A "good" AUC is highly context-dependent [23]. In medical diagnostics, where the cost of a false negative is extremely high, researchers may seek AUC scores above 0.95 to be considered useful [23]. Conversely, in early-stage virtual screening of compounds, a model with an AUC of 0.75 might represent a significant and valuable improvement over existing tools [22].
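As a small illustration, the scale in Table 1 can be encoded as a lookup. This is only a sketch: the table's ranges share their boundary values, so assigning each boundary to the higher category is an assumption made here, as is labeling values at or below 0.5 as "No Discrimination".

```python
def interpret_auc(auc):
    """Map an AUC value to the Table 1 category (boundary handling is an assumption)."""
    if not 0.0 <= auc <= 1.0:
        raise ValueError("AUC must lie between 0 and 1")
    if auc >= 0.9:
        return "Outstanding"
    if auc >= 0.8:
        return "Excellent"
    if auc >= 0.7:
        return "Acceptable"
    if auc > 0.5:
        return "Poor"
    return "No Discrimination"


for value in (0.95, 0.872, 0.707, 0.60, 0.50):
    print(value, interpret_auc(value))
```

Such a helper is convenient for reporting, but the label should always be read alongside the task context described above.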

AUC in Action: Performance Benchmarks from Recent Research

Recent scientific literature provides concrete examples of AUC scores achieved in various biomedical and pharmacological applications, offering realistic benchmarks for researchers. The following table summarizes AUC performances from recent peer-reviewed studies, demonstrating the metric's application in evaluating everything from diagnostic criteria to complex machine learning models.

Table 2: AUC Performance Benchmarks from Recent Research

| Study / Model Context | Reported AUC | Performance Classification |

|---|---|---|

| Gold Coast Criteria for ALS Diagnosis [24] | 0.95 | Outstanding |

| AI for HCC Screening (Strategy 4) [25] | 0.872 | Excellent |

| LivNet Model for Liver Lesion Classification [25] | 0.837 | Excellent |

| UniMatch Model for Liver Lesion Detection [25] | 0.887 | Excellent |

| Logistic Regression for Severe Adverse Drug Reactions [26] | 0.707 (test set) | Acceptable (borderline) |

These real-world examples highlight the variability of performance expectations across different tasks. The outstanding AUC of 0.95 for the Gold Coast Criteria in a meta-analysis signifies a highly effective diagnostic tool [24]. In contrast, a logistic regression model for predicting Severe Adverse Drug Reactions (SADRs) with an AUC of 0.707 was considered the best among three machine learning models in that specific study. This shows that in complex, real-world pharmacological problems, even an AUC at the lower edge of the "acceptable" range can hold significant predictive value and represent a meaningful step forward [26].

Experimental Protocols for AUC Validation

A robust AUC score is underpinned by a rigorous experimental protocol. The following workflow, common in computational pharmacology, outlines the key steps for developing and validating a model whose performance is measured by AUC.

Detailed Protocol Steps:

Data Curation and Partitioning: The foundation of any model is a high-quality dataset. For a typical classification task in drug discovery, this involves gathering confirmed active and inactive compounds. The dataset must then be partitioned into a training set for model development and a hold-out test set for final validation. A common practice, as seen in a recent SADR study, is to use a fixed ratio like 75%-25% for this partition, aligning with modern reporting standards like TRIPOD-AI [26]. This step is critical to avoid over-optimistic performance estimates.

Model Training and Prediction: Using the training set, the model (e.g., a pharmacophore ensemble, logistic regression, or random forest) is developed and its parameters are learned [26] [27]. The trained model is then used to generate a prediction score (e.g., a probability or a fit value) for every instance in the test set. These scores reflect the model's confidence that an instance belongs to the positive class [21].

ROC Curve Construction and AUC Calculation: A classification threshold is varied across the range of possible prediction scores (e.g., from 0 to 1). At each threshold, the True Positive Rate (TPR) and False Positive Rate (FPR) are calculated and plotted against each other [28] [21]. The AUC is then computed, which represents the probability that the model will rank a randomly chosen positive instance higher than a randomly chosen negative one [21]. This process is efficiently handled by libraries like scikit-learn in Python, which provides the functions roc_curve and roc_auc_score [21].
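The probabilistic reading of AUC can be checked directly by comparing every positive score with every negative score. The sketch below uses only the Python standard library and hypothetical test-set scores; the function name auc_by_pairs is introduced here for illustration, and counting ties as half a win is a common convention assumed for this example.

```python
def auc_by_pairs(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical test-set scores; label 1 = active, 0 = inactive.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 0, 1, 0, 0, 1, 0]

print(f"AUC = {auc_by_pairs(scores, labels):.2f}")
```

The pairwise loop is quadratic in the number of compounds, so for large screening sets a library routine such as scikit-learn's roc_auc_score is the more practical choice.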

Essential Research Toolkit for ROC Curve Analysis

For researchers implementing ROC curve analysis, particularly in computational pharmacology, a specific set of computational tools and resources is essential. The table below details key components of the research toolkit.

Table 3: Essential Research Reagent Solutions for ROC Analysis

| Tool / Resource | Function | Application in Research |

|---|---|---|

| Python & scikit-learn | Programming environment and ML library. | Provides functions (roc_curve, roc_auc_score) for calculating ROC curves and AUC, and for model comparison [21]. |

| Statistical Text (e.g., Hosmer & Lemeshow) | Reference for established guidelines. | Offers widely accepted benchmarks for interpreting AUC values (e.g., Poor, Acceptable, Excellent) [23]. |

| Compound Databases (e.g., ZINC, BindingDB) | Repository of chemical structures. | Source of known active and inactive compounds for training and testing predictive models [27] [29]. |

| Pharmacophore Modeling Software | Platform for creating and screening structure- and ligand-based models. | Used to build predictive models whose performance is then evaluated using AUC [27] [15]. |

| High-Performance Computing (HPC) Cluster | Infrastructure for computationally intensive tasks. | Enables large-scale virtual screening, molecular dynamics simulations, and model validation [27] [29]. |

The relationship between the ROC curve, its AUC, and the model's underlying score distribution is conceptually fundamental. The following diagram illustrates how the separation of scores for positive and negative classes directly translates to the shape of the ROC curve and the value of the AUC.

In summary, the AUC metric provides a powerful, threshold-independent measure for evaluating the discriminatory power of classification models. Its interpretation, guided by established standards and contextualized with real-world benchmarks, is indispensable for researchers and scientists, especially in the high-stakes field of drug discovery and development. A rigorous experimental protocol and a well-equipped computational toolkit are fundamental to obtaining and validating a meaningful AUC score.

Integrating ROC Analysis into the Pharmacophore Validation Workflow

The validation of pharmacophore models is a critical step in structure-based drug design, ensuring that computational models possess the predictive power to identify true active compounds during virtual screening. This guide objectively compares the performance and application of Receiver Operating Characteristic (ROC) curve analysis against other validation methods within the pharmacophore modeling workflow. Data synthesized from recent peer-reviewed studies demonstrates that ROC analysis, characterized by the Area Under the Curve (AUC) metric, provides a robust and standardized framework for evaluating model selectivity. When integrated with cost analysis, Fischer's randomization, and decoy set validation, ROC analysis forms the cornerstone of a comprehensive validation protocol, significantly enhancing the reliability of virtual screening campaigns for identifying novel therapeutic agents.

Pharmacophore modeling is an established computational technique that abstracts the essential steric and electronic features responsible for a ligand's biological activity. The core challenge lies in validating the quality of the generated pharmacophore hypothesis before its deployment in costly virtual screening (VS) campaigns. A poorly validated model can yield an unacceptably high rate of false positives, wasting computational resources and experimental effort [30].

This guide examines ROC analysis as a standard for quantifying pharmacophore model performance. ROC analysis objectively measures a model's ability to discriminate between active and inactive compounds, providing a benchmark against which other methods, such as cost function analysis and Fischer's randomization, can be contextualized. We present comparative data from recent studies, detailed experimental protocols, and key reagent solutions to equip researchers with a practical framework for rigorous pharmacophore validation.

Performance Comparison of Validation Methodologies

A comprehensive pharmacophore validation strategy typically employs multiple techniques to assess different aspects of model quality. The table below summarizes the performance of ROC analysis alongside other common validation methods, based on data from recent research applications.

Table 1: Comparison of Pharmacophore Model Validation Methods

| Validation Method | Measured Parameter | Performance Interpretation | Reported Performance in Recent Studies |

|---|---|---|---|

| ROC Curve Analysis | Area Under the Curve (AUC) | Excellent: 0.9-1.0; Good: 0.8-0.9; Acceptable: 0.7-0.8; Chance: 0.5 [6] [31] | AUC of 0.98 for an XIAP inhibitor model [6]; AUC of 0.972 for a PAD2 inhibitor model [32] |

| Decoy Set Validation | Enrichment Factor (EF) | Measures the fold-increase in hit rate vs. random selection; higher values indicate better performance [4] | EF of 10.0-13.1 for a Brd4 inhibitor model [4] |

| Cost Function Analysis | Total Cost vs. Null Cost | A model is significant if the cost difference (Δ) is > 60 bits [30] | Used to establish robustness during model generation [30] [33] |

| Fischer's Randomization | Statistical Significance | Checks if the original model's correlation is non-random; a 95% confidence level is standard [30] | Employed to rule out chance correlation in QSAR models [30] |

| Test Set Prediction | R²pred, rmse | Assesses the model's predictive power for an external set of compounds; R²pred > 0.5 is acceptable [30] | R²pred of 0.96 for a COX-2 inhibitor QSAR model [33] |

ROC analysis distinguishes itself by providing a single, standardized metric (AUC) that is easy to interpret and compare across different models and studies. For instance, a model targeting the XIAP protein achieved an excellent AUC of 0.98, indicating a strong ability to distinguish true actives from decoys [6]. Similarly, a model for PAD2 inhibitors showed an AUC of 0.972, indicating comparably strong discrimination [32]. While the Enrichment Factor (EF) from decoy set validation offers concrete insight into early enrichment (e.g., an EF of 13.1 for a Brd4 model [4]), ROC analysis gives a holistic view of model performance across all thresholds. Cost analysis and Fischer's randomization are crucial for establishing the statistical foundation of a model during the hypothesis generation phase, but they do not directly quantify screening performance as ROC analysis does.

Experimental Protocols for Key Validation Steps

Protocol 1: ROC Curve Analysis using Decoy Sets

This protocol evaluates a model's ability to retrieve known active compounds from a database spiked with decoy molecules.

- Decoy Set Generation: Generate decoy molecules for your known active compounds using a dedicated server like DUD-E (https://dude.docking.org/generate). Decoys should be physically similar but chemically distinct from the actives to avoid bias, matching properties like molecular weight, hydrogen bond donors/acceptors, and logP [30].

- Database Creation: Merge the known active compounds (typically 10-40 molecules) with their corresponding decoys (often thousands of molecules) into a single screening database [6] [32].

- Pharmacophore Screening: Screen the combined database against the pharmacophore model. The software will return a list of "hits," ranking them based on their fit value.

- Calculate ROC Curve: As you move down the ranked hit list, calculate the cumulative True Positive Rate (Sensitivity) and False Positive Rate (1-Specificity). Plot the True Positive Rate against the False Positive Rate.

- Calculate AUC: Determine the Area Under the ROC Curve (AUC). An AUC of 1 represents a perfect model, while 0.5 indicates performance no better than random [6] [31]. An AUC value above 0.7 is generally considered acceptable, with values above 0.9 indicating an excellent model [6].
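The last two steps of this protocol can be sketched in a few lines of Python. The example assumes the screening output has already been reduced to a list of labels ordered by decreasing fit value (1 = known active, 0 = decoy), with compounds that did not match the model placed at the bottom; the function names and the ranked list are hypothetical, and tied fit values are not treated specially.

```python
def roc_from_ranked_hits(ranked_labels):
    """Walk down a ranked hit list and return ROC points as (FPR, TPR) pairs."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    tp = fp = 0
    points = [(0.0, 0.0)]
    for label in ranked_labels:
        if label == 1:
            tp += 1  # one more active retrieved
        else:
            fp += 1  # one more decoy retrieved
        points.append((fp / n_decoys, tp / n_actives))
    return points


def trapezoid_auc(points):
    """Area under the ROC points using the trapezoidal rule."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Hypothetical ranked list, best fit value first.
ranked = [1, 1, 0, 1, 0, 0, 1, 0]
points = roc_from_ranked_hits(ranked)
print(f"AUC = {trapezoid_auc(points):.2f}")
```

Plotting the returned points with any charting library gives the ROC curve itself.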

Protocol 2: Cost Analysis and Fischer's Randomization

This protocol assesses the statistical significance of the pharmacophore hypothesis.

- Cost Function Analysis: During the model generation process (e.g., in software like Discovery Studio or LigandScout), analyze the hypothesis cost values. The key metrics are the total hypothesis cost, the null cost, and the configuration cost.

- Fischer's Randomization Test:

  - Randomly shuffle the biological activity data (e.g., pIC50 values) among the training set compounds, creating a new dataset with no inherent structure.

  - Generate new pharmacophore models using this randomized dataset.

  - Repeat this process 10-100 times to create a distribution of correlation coefficients from random chance.

  - Compare the correlation coefficient of your original model to this randomized distribution. If the original correlation falls in the tail of the randomized distribution (e.g., p < 0.05), the model is considered statistically significant and not a product of chance correlation [30].
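The logic of the randomization test can be illustrated with a simplified stand-in. In practice the pharmacophore software regenerates hypotheses for each shuffled dataset; the sketch below instead assumes a single hypothetical descriptor whose Pearson correlation with activity plays the role of the model's correlation coefficient. All data, names, and the choice of 99 trials are illustrative assumptions.

```python
import random
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def randomization_test(descriptor, activity, n_trials=99, seed=0):
    """Shuffle activities, recompute the correlation, and estimate a p-value."""
    rng = random.Random(seed)
    r_orig = abs(pearson_r(descriptor, activity))
    shuffled = list(activity)
    n_as_good = 0
    for _ in range(n_trials):
        rng.shuffle(shuffled)  # break any real descriptor-activity link
        if abs(pearson_r(descriptor, shuffled)) >= r_orig:
            n_as_good += 1
    # The original model counts as one member of the reference distribution.
    p_value = (n_as_good + 1) / (n_trials + 1)
    return r_orig, p_value


# Hypothetical training set: one descriptor value and one pIC50 per compound.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
activity = [5.1, 5.4, 5.9, 6.2, 6.4, 7.1, 7.3, 7.8, 8.1, 8.6]

r_orig, p_value = randomization_test(descriptor, activity)
print(f"original |r| = {r_orig:.3f}, p = {p_value:.2f}")
```

A p-value below 0.05 here corresponds to the 95% confidence criterion mentioned above.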

Visualization of the Integrated Validation Workflow

The following diagram illustrates the logical sequence of the integrated pharmacophore validation workflow, highlighting the role of ROC analysis as a critical performance check.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Successful implementation of the validation workflow requires specific software tools and databases. The following table details key solutions used in the studies cited within this guide.

Table 2: Key Research Reagent Solutions for Pharmacophore Validation

| Tool/Solution | Type | Primary Function in Validation | Example Use Case |

|---|---|---|---|

| LigandScout | Software | Structure & ligand-based pharmacophore generation and screening [4] [6] [34]. | Used to generate and validate the model for anti-HBV flavonols [34]. |

| DUD-E Server | Online Database | Generates decoy molecules for known actives to create benchmark datasets for validation [30]. | Provided decoys for validating a pharmacophore model against the XIAP protein [6]. |

| Discovery Studio | Software | Provides a comprehensive suite for pharmacophore modeling (HypoGen), screening, and statistical analysis (e.g., cost analysis) [32]. | Employed for structure-based pharmacophore modeling of PAD2 inhibitors [32]. |

| ZINC Database | Chemical Database | A curated collection of commercially available compounds used for virtual screening after model validation [4] [6] [32]. | Screened to identify natural compounds as novel Brd4 inhibitors [4]. |

| ChEMBL Database | Bioactivity Database | A repository of bioactive molecules with curated bioactivity data, used to gather known active compounds for model training and validation [4] [6] [34]. | Sourced active antagonists for XIAP to validate the pharmacophore model [6]. |

| ROC Curve Analysis | Analytical Method | A graphical plot and AUC metric that illustrates the diagnostic ability of a classifier system [6] [30] [32]. | Central to the validation workflow, as demonstrated in models for XIAP, PAD2, and PD-L1 [6] [32] [31]. |

Integrating ROC analysis into the pharmacophore validation workflow provides an objective, quantitative, and standardized measure of model performance that is easily communicable across the scientific community. While methods like cost analysis and Fischer's randomization are indispensable for establishing the statistical soundness of a model internally, ROC analysis offers an external and practical assessment of its discriminative power in a simulated screening environment. As evidenced by multiple successful applications in drug discovery projects—from targeting Brd4 in neuroblastoma to XIAP in liver cancer—the combination of ROC analysis with complementary validation techniques forms a robust framework. This multi-faceted approach significantly de-risks the subsequent virtual screening process, leading to more efficient identification of novel, potent lead compounds.

Key Applications in Virtual Screening for Hit Identification

Virtual screening (VS) has become a cornerstone of modern drug discovery, providing a computational strategy to identify novel hit compounds from vast chemical libraries before they are synthesized and tested experimentally. A critical analysis of virtual screening results published between 2007 and 2011 revealed over 400 studies reporting active compounds identified by these methods, demonstrating the widespread adoption of VS technologies [35]. The fundamental goal of virtual screening is to identify initial hit compounds that provide novel chemical scaffolds for further medicinal chemistry optimization, serving as a complementary approach to traditional high-throughput screening (HTS) and fragment-based screening [35]. With the advent of readily accessible chemical libraries containing billions of compounds, there has been increasing interest in screening expansive chemical space for lead discovery, though only a few successful virtual screening campaigns using ultra-large libraries have been reported [36].

The success of virtual screening campaigns depends crucially on the accuracy of computational methods to predict binding poses and affinities between small molecules and target proteins [36]. While the hit identification criteria for traditional HTS are well-defined, there has been less consensus on how to define a hit compound identified from computational screening methods based on experimental activity [35]. This guide explores key applications in virtual screening for hit identification, with particular focus on performance evaluation using ROC curve analysis within the context of pharmacophore model research.

Core Virtual Screening Methodologies

Structure-Based Virtual Screening

Structure-based virtual screening relies on the three-dimensional structural information of biological targets to identify potential ligands. This approach uses the 3D structure of a macromolecule target, typically obtained from sources like the RCSB Protein Data Bank or through computational techniques like homology modeling, to identify compounds that can potentially bind to the target [37]. The workflow consists of protein preparation, identification of ligand binding sites, pharmacophore feature generation, and selection of relevant features for ligand activity [37].

Leading structure-based docking programs include Schrödinger Glide, CCDC GOLD, and AutoDock Vina, though many of these are not freely available to researchers [36]. A recently developed open-source alternative, RosettaVS, implements two docking modes: virtual screening express (VSX) for rapid initial screening and virtual screening high-precision (VSH) for more accurate final ranking of top hits, with the key difference being the inclusion of full receptor flexibility in VSH [36]. These methods have demonstrated remarkable success; for instance, RosettaVS was used to screen multi-billion compound libraries against unrelated targets (KLHDC2 and NaV1.7), discovering hit compounds with single-digit micromolar binding affinities in less than seven days [36].

Ligand-Based Virtual Screening

Ligand-based virtual screening approaches develop 3D pharmacophore models and quantitative structure-activity relationship (QSAR) models using only the physicochemical properties of known active molecules when the target structure is unavailable [37]. The underlying principle is that molecules sharing common chemical functionalities and similar spatial arrangement are likely to exhibit similar biological activity on the same target [37].

Pharmacophore models represent these chemical functionalities as abstract features including hydrogen bond acceptors (HBAs), hydrogen bond donors (HBDs), hydrophobic areas (H), positively and negatively ionizable groups (PI/NI), aromatic groups (AR), and metal coordinating areas [37]. These are represented as geometric entities such as spheres, planes, and vectors in 3D space, with additional shape or exclusion volumes (XVOL) added to represent the binding pocket's spatial constraints [37]. The advantage of pharmacophore models is their scaffold-hopping capability—the ability to identify chemically divergent molecules that can trigger similar biological responses due to shared pharmacophoric features [37].

Hybrid and AI-Accelerated Approaches

Recent advances combine multiple virtual screening approaches with artificial intelligence to enhance hit identification. Schrödinger's Virtual Screening Web Service combines physics-based methods with machine learning to screen ultra-large-scale purchasable compound libraries, allowing researchers to identify novel hits from libraries of over one billion compounds in approximately one week [38]. These integrated platforms benefit from parallel screening approaches, where different screening technologies have been shown to produce unique ligand scaffolds, thereby maximizing chemical diversity [38].

Another innovative approach, PGMG (Pharmacophore-Guided deep learning approach for bioactive Molecule Generation), uses pharmacophore hypotheses as a bridge to connect different types of activity data [39]. This method employs a graph neural network to encode spatially distributed chemical features and a transformer decoder to generate molecules, introducing a latent variable to solve the many-to-many mapping between pharmacophores and molecules and thereby improve diversity [39]. Such approaches are particularly valuable for targets with insufficient activity data, as they can represent different types of activity data uniformly and use them to steer molecule design in a biologically meaningful way [39].

Performance Evaluation Using ROC Curve Analysis

Fundamentals of ROC Analysis in Virtual Screening

Receiver Operating Characteristic (ROC) curve analysis provides a robust framework for evaluating the performance of virtual screening methods by measuring their ability to distinguish true active compounds from inactive ones. The ROC curve plots the true positive rate (sensitivity) against the false positive rate (1-specificity) across different classification thresholds [36]. In virtual screening applications, the area under the ROC curve (AUC) serves as a key metric, with 0.5 corresponding to random performance and 1.0 to perfect discrimination [36].

A complementary metric, computed from the same ranked list, is the enrichment factor (EF), which measures how strongly true positives are concentrated within a given top percentage of the ranked compounds [36]. The success rate of placing the best binder among the top 1%, 5%, or 10% of ranked ligands across target proteins provides additional performance assessment [36]. These metrics are particularly valuable for comparing different virtual screening methods and optimizing parameters for specific target classes.

Benchmark Studies and Comparative Performance

Virtual screening methods are typically benchmarked on standardized datasets to enable objective comparison. The Directory of Useful Decoys (DUD) dataset, consisting of 40 pharmaceutically relevant protein targets with over 100,000 small molecules, serves as a common benchmark, with AUC and ROC enrichment used to quantify virtual screening performance [36]. The Comparative Assessment of Scoring Functions 2016 (CASF-2016) dataset, comprising 285 diverse protein-ligand complexes, provides another standard benchmark specifically designed for scoring function evaluation [36].

Recent studies demonstrate the advancing performance of state-of-the-art methods. For example, RosettaGenFF-VS achieved a top 1% enrichment factor (EF1%) of 16.72 on the CASF-2016 benchmark, significantly outperforming the second-best method (EF1% = 11.9) [36]. Its binding-funnel analysis likewise shows superior performance across a broad range of ligand RMSDs, suggesting a more efficient search for the lowest energy minimum than other methods [36].

Table 1: Performance Comparison of Virtual Screening Methods on Standard Benchmarks

| Method | Type | EF1% (CASF-2016) | AUC (DUD) | Key Advantages |

|---|---|---|---|---|

| RosettaGenFF-VS | Physics-based docking | 16.72 | Not reported | Models receptor flexibility; superior enrichment |

| Glide | Physics-based docking | 11.9 (2nd best) | Not reported | Industry standard; well-validated |

| PGMG | Pharmacophore-guided AI | Not reported | Not reported | Flexible generation without fine-tuning |

| Structure-based pharmacophore | Feature-based | Varies by implementation | Varies by implementation | Directly uses target structure information |

| Ligand-based pharmacophore | Feature-based | Varies by implementation | Varies by implementation | Works without target structure |

Experimental Protocols for ROC Validation

To ensure meaningful ROC analysis for pharmacophore model performance, researchers should follow standardized experimental protocols:

Dataset Preparation: Utilize standardized benchmarking datasets like DUD or CASF-2016 to ensure comparable results across studies. These datasets provide carefully curated active compounds and decoy molecules that resemble actives in physical properties but differ in chemical structure [36].

Method Application: Implement the virtual screening protocol on the benchmark dataset, ensuring consistent parameters across all targets. For structure-based methods, this includes standardized protein preparation, binding site definition, and docking parameters [36].

Pose Prediction and Scoring: Generate binding poses for each compound and assign scoring values. For methods incorporating receptor flexibility, like RosettaVS VSH mode, allow sidechain and limited backbone movement during docking [36].

ROC Calculation: Rank compounds based on their docking scores and calculate true positive and false positive rates across the ranked list. Plot the ROC curve and compute the AUC value [36].

Enrichment Analysis: Calculate early enrichment factors (EF1%, EF5%) by determining the ratio of true actives found in the top 1% or 5% of the ranked list compared to random selection [36].

Statistical Validation: Perform multiple runs with different random seeds where applicable, and report the mean and standard deviation of each performance metric so that run-to-run variability is visible [36].
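The enrichment and statistical validation steps above can be sketched as follows. The enrichment factor is computed here as the hit rate in the top fraction of the ranked list divided by the hit rate in the whole list, which is the usual definition; the ranked list, the per-run EF values, and the function name are hypothetical illustration data, not results from any cited study.

```python
from statistics import mean, stdev


def enrichment_factor(ranked_labels, fraction):
    """EF at a top fraction: hit rate in the top slice / hit rate overall."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_labels[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)


# Hypothetical ranked list: 1000 compounds, 20 actives, 5 of them in the top 10.
ranked = [1, 1, 0, 1, 0, 1, 0, 0, 1, 0] + [0] * 490 + [1] * 15 + [0] * 485

ef1 = enrichment_factor(ranked, 0.01)
ef5 = enrichment_factor(ranked, 0.05)
print(f"EF1% = {ef1:.1f}, EF5% = {ef5:.1f}")

# Hypothetical EF1% values from repeated runs with different random seeds.
ef1_runs = [24.0, 25.0, 26.0]
print(f"EF1% = {mean(ef1_runs):.1f} +/- {stdev(ef1_runs):.1f}")
```

An EF of 1 means no enrichment over random selection, and the maximum attainable EF at a given fraction depends on the ratio of actives to decoys in the dataset.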

Research Reagent Solutions for Virtual Screening

Table 2: Essential Research Reagents and Computational Tools for Virtual Screening

| Resource Category | Specific Tools/Resources | Function in Virtual Screening | Accessibility |

|---|---|---|---|

| Protein Structure Databases | RCSB Protein Data Bank (PDB) | Source of experimental 3D structures for structure-based methods | Public |

| Compound Libraries | ZINC, ChEMBL, Enamine REAL | Collections of purchasable compounds for virtual screening | Mixed (public/commercial) |

| Docking Software | Schrödinger Glide, AutoDock Vina, RosettaVS, GOLD | Predict binding poses and affinities for ligand-receptor complexes | Mixed (open-source/commercial) |

| Pharmacophore Modeling | Phase, MOE, LigandScout | Create and validate structure-based and ligand-based pharmacophore models | Primarily commercial |

| Molecular Dynamics | GROMACS, AMBER, Desmond | Assess binding stability and refine docking poses through simulation | Mixed (open-source/commercial) |

| Validation Benchmarks | DUD, DUD-E, CASF-2016 | Standardized datasets for method validation and comparison | Public |

| AI-Accelerated Platforms | Schrödinger VS Web Service, OpenVS | High-throughput screening of billion-compound libraries using cloud computing | Primarily commercial |

Integrated Workflows and Signaling Pathways

The virtual screening process follows logical workflow pathways that integrate multiple computational methods. The diagram below illustrates a typical structure-based virtual screening workflow that incorporates ROC validation for performance assessment.

Virtual Screening Workflow with ROC Validation

The pharmacophore modeling and screening process involves distinct pathways depending on the available input data. The diagram below illustrates both structure-based and ligand-based approaches to pharmacophore model development and their application in virtual screening.

Pharmacophore Modeling Approaches for Virtual Screening

Virtual screening has evolved into a sophisticated toolkit for hit identification in drug discovery, with diverse methodologies ranging from traditional structure-based docking to modern AI-accelerated platforms. The performance evaluation of these methods using ROC curve analysis provides critical validation of their utility in identifying true active compounds while minimizing false positives. As virtual screening continues to advance, integrating multiple approaches—combining structure-based docking with pharmacophore constraints and machine learning acceleration—shows promise for further enhancing hit rates and chemical diversity. The development of open-source platforms like OpenVS and innovative methodologies like PGMG demonstrates the ongoing evolution of this field, making powerful virtual screening capabilities more accessible to the research community and accelerating the discovery of novel therapeutic agents.

Implementing ROC Analysis in Pharmacophore Validation: A Step-by-Step Guide

In the field of computer-aided drug design, virtual screening is a fundamental technique for identifying potential active compounds from vast chemical libraries. To rigorously evaluate the performance of virtual screening methods, researchers employ carefully designed benchmarking experiments that assess a model's ability to distinguish active compounds from inactive ones [40]. This process relies on the creation of active compound sets and decoy databases, which together form the ground truth for validation.

The core challenge in virtual screening lies in the biased distribution of real-world compound activity data, where active molecules are vastly outnumbered by inactive ones [40]. Decoy databases address this imbalance by providing putative inactive compounds that are similar enough to active molecules to challenge screening models, yet different enough to have low probability of actual activity [41]. The quality of these datasets directly impacts the reliability of performance metrics, particularly Receiver Operating Characteristic (ROC) curve analysis, which quantifies a model's ability to discriminate between active and inactive compounds across all classification thresholds [6].

This guide examines experimental methodologies for preparing active compound sets and decoy databases, comparing popular approaches and their implications for pharmacophore model validation.

Fundamental Concepts and Definitions

Active Compounds

Active compounds are molecules with experimentally verified activity against a specific biological target. These are typically gathered from:

- Public databases like ChEMBL [42] [40] [43] and BindingDB [44] [40]

- Scientific literature and patents [40]

- High-throughput screening (HTS) campaigns [43]

Active sets should be curated with strict adherence to activity thresholds (e.g., IC50 ≤ 200 nM) [42] and experimental consistency to ensure reliable benchmarking.

Decoy Compounds

Decoys are putative inactive molecules used to challenge virtual screening methods by mimicking the chemical space of active compounds while lacking actual biological activity. Ideal decoys should [41]:

- Exhibit similar physical properties (molecular weight, lipophilicity) to active compounds

- Display comparable chemical features while avoiding structural motifs associated with activity

- Have low structural similarity to known actives, reducing the chance that a decoy is in fact an untested active (a false negative in the reference labels)

- Be readily synthesizable or commercially available for experimental follow-up

ROC Curve Analysis in Pharmacophore Evaluation

ROC curve analysis is a fundamental statistical tool for evaluating the diagnostic ability of binary classifiers. In pharmacophore model validation [6]:

- The x-axis represents the false positive rate (decoy compounds incorrectly classified as active)

- The y-axis represents the true positive rate (active compounds correctly identified)

- The Area Under the Curve (AUC) quantifies overall discriminative ability. It ranges from 0 to 1, where 0.5 corresponds to random ranking and 1.0 to perfect classification; values below 0.5 indicate a model that tends to rank decoys above actives

Table 1: Interpretation of AUC Values in Pharmacophore Model Validation

| AUC Value Range | Classification Performance | Implication for Virtual Screening |
|---|---|---|
| 0.90-1.00 | Excellent | Highly reliable for hit identification |
| 0.80-0.90 | Good | Suitable for practical applications |
| 0.70-0.80 | Fair | May require improvement |
| 0.60-0.70 | Poor | Limited practical utility |
| 0.50-0.60 | Fail | No discriminative ability |
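
The AUC can also be read as the probability that a randomly chosen active receives a higher score than a randomly chosen decoy. The sketch below computes it that way and maps the result to the labels of Table 1. The fit scores are made-up illustration values, and treating each lower bound as inclusive is an assumption, since the table's ranges share their boundaries.

```python
def rank_auc(active_scores, decoy_scores):
    """AUC as the fraction of (active, decoy) pairs in which the active
    scores higher; tied pairs count as half a win."""
    wins = 0.0
    for a in active_scores:
        for d in decoy_scores:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(active_scores) * len(decoy_scores))


def interpret_auc(auc):
    """Map an AUC to the labels of Table 1 (lower bounds inclusive: assumption)."""
    if auc >= 0.90:
        return "Excellent"
    if auc >= 0.80:
        return "Good"
    if auc >= 0.70:
        return "Fair"
    if auc >= 0.60:
        return "Poor"
    if auc >= 0.50:
        return "Fail"
    return "Worse than random"


# Hypothetical pharmacophore fit scores (higher = better fit).
actives = [0.91, 0.85, 0.62, 0.40]
decoys = [0.70, 0.55, 0.35, 0.30, 0.20]

auc = rank_auc(actives, decoys)
print(f"AUC = {auc:.2f} ({interpret_auc(auc)})")
```

The pairwise loop is quadratic in the number of compounds, so it suits small validation sets; for large libraries a rank-based or library implementation would be preferable.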

Methodologies for Decoy Database Generation

Sequence-Based Decoy Generation

Sequence-based methods primarily generate decoys for protein targets, particularly useful for docking studies and proteomic applications [45]:

Diagram 1: Sequence-based decoy generation workflow

- Reverse Protein: Simple reversal of amino acid sequences for entire proteins [45]

- Reverse Peptide: Reversal of amino acid sequences while preserving tryptic cleavage sites (K/R positions) [45]

- Random AA: Complete randomization of amino acids according to occurrence frequencies [45]

- Random AA Trypsin: Randomization while preserving tryptic cleavage sites [45]

- Random Dipeptide: Randomization based on dipeptide occurrence frequencies [45]
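
A minimal sketch of three of these strategies follows. The function names are illustrative, the cleavage rule is the simple "cut after K or R" version (no proline exception), and the random variant samples from the composition of the input sequence itself, whereas a real workflow would more likely use database-wide occurrence frequencies.

```python
import random
from collections import Counter


def reverse_protein(seq):
    """Reverse Protein: reverse the whole amino acid sequence."""
    return seq[::-1]


def reverse_peptides(seq, cleavage="KR"):
    """Reverse Peptide: reverse each tryptic peptide but keep the
    C-terminal K/R at its original position."""
    out, peptide = [], []
    for aa in seq:
        if aa in cleavage:
            out.append("".join(reversed(peptide)) + aa)
            peptide = []
        else:
            peptide.append(aa)
    out.append("".join(reversed(peptide)))  # trailing peptide without K/R
    return "".join(out)


def random_aa(seq, rng):
    """Random AA: draw residues according to their frequency in `seq`
    (assumption: frequencies come from this sequence only)."""
    counts = Counter(seq)
    residues = rng.choices(list(counts), weights=list(counts.values()), k=len(seq))
    return "".join(residues)


target = "MKTAYRGL"            # toy sequence, not a real protein
rng = random.Random(0)         # fixed seed for reproducibility

print(reverse_protein(target))
print(reverse_peptides(target))
print(random_aa(target, rng))
```

In the reverse-peptide variant the K and R residues stay at the same indices, so the decoy peptides keep the same lengths as the target peptides after in-silico digestion.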

Ligand-Based Decoy Generation

Ligand-based methods create decoys for small molecule targets, essential for ligand-based virtual screening [41] [4]:

- DUD-E (Database of Useful Decoys: Enhanced): A widely adopted benchmark that generates decoys with similar physical properties but dissimilar 2D structures to active compounds [4] [40] [6]

- LUDe (LIDeB's Useful Decoys): An open-source tool designed to reduce the probability of generating decoys topologically similar to known actives [41]

- Property-Matched Decoys: Selection from available compound libraries based on similar molecular weight, logP, hydrogen bond donors/acceptors, and rotatable bonds [41]
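
Property matching can be sketched as a simple window filter over a compound library. The tolerance windows, the property keys, and the compound records below are hypothetical values chosen for illustration; they are not the settings used by DUD-E or LUDe.

```python
# Hypothetical tolerance windows around each property of the active.
TOLERANCES = {"mw": 25.0, "logp": 1.0, "hbd": 1, "hba": 2, "rotb": 2}


def is_property_matched(active, candidate, tolerances=TOLERANCES):
    """True if every property of the candidate lies within its window."""
    return all(abs(active[key] - candidate[key]) <= tol
               for key, tol in tolerances.items())


def select_decoys(active, library, n_decoys, tolerances=TOLERANCES):
    """Pick up to n_decoys property-matched compounds, closest in
    molecular weight first so the selection is deterministic."""
    matched = [c for c in library if is_property_matched(active, c, tolerances)]
    matched.sort(key=lambda c: abs(c["mw"] - active["mw"]))
    return matched[:n_decoys]


active = {"id": "A1", "mw": 350.0, "logp": 3.0, "hbd": 2, "hba": 5, "rotb": 4}
library = [
    {"id": "Z1", "mw": 345.0, "logp": 3.4, "hbd": 2, "hba": 4, "rotb": 5},
    {"id": "Z2", "mw": 420.0, "logp": 3.1, "hbd": 2, "hba": 5, "rotb": 4},  # too heavy
    {"id": "Z3", "mw": 360.0, "logp": 2.5, "hbd": 1, "hba": 6, "rotb": 3},
    {"id": "Z4", "mw": 352.0, "logp": 5.2, "hbd": 2, "hba": 5, "rotb": 4},  # too lipophilic
]

selected = select_decoys(active, library, n_decoys=2)
print([c["id"] for c in selected])
```

Property matching alone does not guarantee topological dissimilarity to the actives, so a structural similarity filter would normally follow this step.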

Table 2: Comparison of Major Decoy Generation Tools

| Tool | Methodology | Key Features | Performance Metrics |
|---|---|---|---|
| DUD-E | Property-based matching with topological dissimilarity | Widely adopted benchmark; includes 2D similarity filtering | Prone to artificial enrichment; established baseline |
| LUDe | Optimized chemical similarity assessment | Open-source; reduces topological similarity to actives; can be run locally | Better DOE scores across 102 targets; reduced artificial enrichment [41] |
| Custom Property Matching | Selection from compound libraries based on physicochemical properties | Highly flexible; adaptable to specific targets | Dependent on library diversity; requires careful parameter tuning |

Experimental Protocols for Database Preparation

Active Compound Curation Protocol

Step 1: Data Collection

- Extract compounds from ChEMBL [42] [40] or BindingDB [44] with reported activity values (IC50, Ki, EC50)

- Apply consistent activity thresholds (e.g., ≤ 200 nM for high-affinity binders) [42]

- Include only compounds with explicit experimental verification

Step 2: Structural Standardization

- Convert structures to standardized representation (canonical SMILES)

- Remove duplicates, salts, and inorganic compounds

- Apply filters for drug-likeness (e.g., Lipinski's Rule of Five)

Step 3: Activity Annotation

- Record exact experimental values and measurement conditions

- Note protein target, assay type, and data source

- Categorize by confidence level based on experimental evidence
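
The filtering and de-duplication logic of this protocol can be sketched in plain Python. The record fields (`smiles`, `type`, `value_nm`, `source`) are a hypothetical schema rather than the actual ChEMBL or BindingDB export format, and the SMILES strings are assumed to be canonicalized and desalted already by a cheminformatics toolkit.

```python
ACTIVITY_TYPES = {"IC50", "Ki", "EC50"}
THRESHOLD_NM = 200.0  # example cutoff from the protocol above


def curate_actives(records, threshold_nm=THRESHOLD_NM):
    """Keep records with an accepted activity type at or below the
    threshold; for duplicate structures keep the most potent record."""
    best = {}
    for rec in records:
        if rec["type"] not in ACTIVITY_TYPES:
            continue
        if rec["value_nm"] is None or rec["value_nm"] > threshold_nm:
            continue
        key = rec["smiles"]
        if key not in best or rec["value_nm"] < best[key]["value_nm"]:
            best[key] = rec
    # Most potent compounds first; annotations travel with each record.
    return sorted(best.values(), key=lambda r: r["value_nm"])


# Toy records with placeholder structures, for illustration only.
records = [
    {"smiles": "CCO", "type": "IC50", "value_nm": 150.0, "source": "ChEMBL"},
    {"smiles": "CCO", "type": "IC50", "value_nm": 90.0, "source": "BindingDB"},
    {"smiles": "c1ccccc1", "type": "IC50", "value_nm": 500.0, "source": "ChEMBL"},
    {"smiles": "CCN", "type": "Inhibition", "value_nm": 50.0, "source": "ChEMBL"},
    {"smiles": "CCC", "type": "EC50", "value_nm": 200.0, "source": "ChEMBL"},
]

curated = curate_actives(records)
for rec in curated:
    print(rec["smiles"], rec["type"], rec["value_nm"], rec["source"])
```

Keeping the full record rather than only the structure preserves the assay type and data source called for in Step 3.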

Decoy Generation and Validation Protocol

Step 1: Selection of Generation Method

- Choose between sequence-based (for proteins) or ligand-based (for small molecules) approaches

- Consider screening context: structure-based vs. ligand-based virtual screening

Step 2: Generation Process

- For DUD-E: Match physicochemical properties while ensuring topological dissimilarity [4] [40]

- For LUDe: Implement optimized similarity thresholds to avoid structural analogs of actives [41]

- Generate 50-100 decoys per active compound to ensure statistical robustness [6]

Step 3: Quality Control

- Calculate Doppelganger Score to identify decoys too similar to actives [41]

- Verify chemical stability and synthetic accessibility

- Ensure adequate property matching while maintaining chemical diversity
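
One simple quality-control check is to flag decoys whose maximum Tanimoto similarity to any active exceeds a cutoff. The sketch below is not the published Doppelganger Score; it is only a max-similarity filter in the same spirit. Fingerprints are represented as sets of hypothetical feature identifiers, and the 0.75 cutoff is an arbitrary illustration value.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints stored as sets."""
    if not fp_a and not fp_b:
        return 0.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)


def flag_similar_decoys(active_fps, decoy_fps, cutoff=0.75):
    """Return {decoy_id: max similarity} for decoys that are too close
    to at least one active."""
    flagged = {}
    for decoy_id, fp in decoy_fps.items():
        max_sim = max(tanimoto(fp, afp) for afp in active_fps.values())
        if max_sim >= cutoff:
            flagged[decoy_id] = max_sim
    return flagged


# Toy fingerprints: each integer stands for one "on" bit.
active_fps = {"A1": {1, 2, 3, 4}, "A2": {5, 6, 7}}
decoy_fps = {
    "D1": {1, 2, 3, 4, 8},   # shares 4 of 5 bits with A1
    "D2": {9, 10, 11},       # unrelated to both actives
    "D3": {5, 6, 12, 13},    # partial overlap with A2
}

flagged = flag_similar_decoys(active_fps, decoy_fps)
print(flagged)
```

Flagged decoys would typically be removed or replaced before the benchmark is used, since they may be unrecognized actives.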

Performance Evaluation Framework

ROC Curve Generation [6]:

- Screen combined active and decoy sets using the pharmacophore model

- Rank compounds by fit score or predicted activity

- Calculate true positive and false positive rates across score thresholds

- Plot ROC curve and calculate AUC value
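
These four steps can be sketched without external libraries: rank the compounds, accumulate true and false positives while lowering the threshold, and integrate with the trapezoidal rule. The scores and labels are invented for illustration (1 = active, 0 = decoy), and higher scores are assumed to mean a better pharmacophore fit.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) points, sweeping the threshold from the
    highest score down to the lowest."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = 0
    for i, (score, label) in enumerate(ranked):
        if label == 1:
            tp += 1
        else:
            fp += 1
        # Emit a point only once all compounds tied at this score are counted.
        if i + 1 == len(ranked) or ranked[i + 1][0] != score:
            points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_auc(points):
    """Area under the piecewise-linear ROC curve."""
    return sum((x1 - x0) * (y0 + y1) / 2.0
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Combined screening result: 4 actives and 5 decoys.
scores = [0.91, 0.85, 0.70, 0.62, 0.55, 0.40, 0.35, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0]

points = roc_points(scores, labels)
auc = trapezoid_auc(points)
print(points)
print(f"AUC = {auc:.3f}")
```

The list of points can be passed directly to any plotting tool to draw the curve; the diagonal from (0, 0) to (1, 1) is the random-ranking reference.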

Additional Validation Metrics:

- Enrichment Factor (EF): Measures early recognition capability [4] [6]

- BEDROC (Boltzmann-enhanced discrimination of ROC): Emphasizes early enrichment through an exponential weighting of ranks controlled by a tunable parameter

- Robust Initial Enhancement (RIE): Quantifies early performance with exponential weighting
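
Of these metrics, the enrichment factor is the simplest to compute: it compares the hit rate in the top fraction of the ranked list with the hit rate in the whole library. The sketch below uses the common definition EF = (hits in top / size of top) / (total actives / library size); the ranking and the 10% cutoff are illustration values, and rounding the top-list size to the nearest integer is a design assumption.

```python
def enrichment_factor(scores, labels, fraction):
    """Enrichment factor for the top `fraction` of the ranked list."""
    n_total = len(scores)
    n_actives = sum(labels)
    # Size of the top list, at least one compound (rounding is an assumption).
    n_top = max(1, int(round(fraction * n_total)))
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)


# 20 ranked compounds; actives sit at ranks 1, 2, 5 and 12.
scores = [float(s) for s in range(20, 0, -1)]
labels = [0] * 20
for rank in (1, 2, 5, 12):
    labels[rank - 1] = 1

ef_10 = enrichment_factor(scores, labels, fraction=0.10)
print(f"EF(10%) = {ef_10:.1f}")
```

The maximum attainable value is bounded by the library size divided by the number of actives (when the top list is no larger than the number of actives), which is why EF values are only comparable between benchmarks with similar active-to-decoy ratios.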

Diagram 2: Complete database preparation workflow

Comparative Analysis of Decoy Generation Strategies

Performance in Virtual Screening Contexts

Different decoy generation methods significantly impact virtual screening performance assessment:

Sequence Reversal vs. Randomization [45]:

- Stochastic (randomization-based) methods generally produce higher false discovery rate (FDR) estimates than sequence-reversal approaches

- This difference diminishes when multiple filters are applied during screening

- Reverse methods may underestimate false positive rates in single-filter contexts

DUD-E vs. LUDe [41]:

- LUDe demonstrates improved deviation from optimal embedding (DOE) scores across multiple targets, indicating reduced artificial enrichment

- Both tools show comparable Doppelganger scores, with slight improvement for LUDe

- LUDe's open-source implementation allows local execution, facilitating large-scale applications

Impact on Pharmacophore Model Validation

The choice of decoy database directly influences pharmacophore model assessment [4] [6]:

- Overly simplistic decoys may inflate performance metrics through artificial enrichment

- Excessively challenging decoys may underestimate model capability

- Optimal decoys balance molecular similarity with functional dissimilarity

Table 3: Methodological Considerations for Different Screening Contexts

| Screening Context | Recommended Approach | Key Considerations | Validation Metrics |
|---|---|---|---|
| Structure-Based Virtual Screening | Sequence-based decoys for targets; property-matched for ligands | Ensure binding site compatibility; consider protein flexibility | AUC; BEDROC; docking score distribution |
| Ligand-Based Virtual Screening | DUD-E or LUDe decoys with optimized similarity thresholds | Focus on 2D/3D similarity measures; avoid analogs | ROC-AUC; enrichment factors; similarity to known actives |
| Machine Learning Model Training | LUDe decoys with diverse chemical space coverage | Prevent data leakage; ensure representative negative examples | Precision-recall AUC; cross-validation performance |

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Reagents and Resources for Database Preparation

| Resource | Type | Function | Access |
|---|---|---|---|
| ChEMBL Database | Active compound database | Provides curated bioactivity data for drug discovery | https://www.ebi.ac.uk/chembl/ [42] [40] |
| DUD-E | Decoy generation tool | Benchmark for virtual screening; property-matched decoys | http://dude.docking.org/ [4] [40] |
| LUDe | Decoy generation tool | Open-source alternative with reduced topological bias | https://github.com/LIDeB/LUDe.v1.0 [41] |
| ZINC Database | Compound library | Source for purchasable compounds for custom decoy sets | https://zinc.docking.org/ [4] [6] |
| ROC Analysis Tools | Statistical software | Performance evaluation of classification models | R (pROC), Python (scikit-learn) |

Proper experimental design for preparing active compound sets and decoy databases is fundamental to reliable virtual screening performance assessment. Based on current methodologies and comparative analyses:

- Active compounds should be rigorously curated from reliable sources with consistent activity thresholds and experimental verification [42] [40]

- Decoy selection should balance molecular similarity with structural diversity to avoid artificial enrichment [41]

- LUDe represents an improvement over DUD-E in reducing topological similarity to known actives while maintaining property matching [41]

- ROC curve analysis provides comprehensive assessment of discriminative ability, particularly when supplemented with early enrichment metrics [6]

The field continues to evolve with new benchmarking approaches such as the CARA benchmark that better reflect real-world drug discovery challenges, including biased data distributions and the presence of congeneric compounds [40]. Future developments will likely focus on addressing these complexities while maintaining methodological rigor in virtual screening validation.

In pharmacophore-based virtual screening, accurately evaluating a model's ability to discriminate between active and inactive compounds is paramount. The Receiver Operating Characteristic (ROC) curve provides a comprehensive visual tool for assessing this discriminatory performance across all possible classification thresholds [31]. Originally developed during World War II for radar signal detection, ROC analysis has become an indispensable method in machine learning and cheminformatics for quantifying classification performance [46] [47].

For drug development professionals, the ROC curve offers more than just a model evaluation metric—it enables informed decision-making about threshold selection based on the specific costs of false positives (e.g., pursuing non-active compounds) versus false negatives (e.g., missing potential drug candidates) [46]. This guide examines the theoretical foundations and practical applications of ROC analysis specifically within the context of pharmacophore model validation, providing experimental protocols and comparative data to facilitate its implementation in drug discovery pipelines.

Theoretical Foundations: TPR, FPR, and Thresholds

Core Definitions and Calculations

The ROC curve illustrates the relationship between two fundamental metrics: the True Positive Rate (TPR) and the False Positive Rate (FPR) across all classification thresholds [48]. These metrics derive from the confusion matrix, which categorizes predictions into True Positives (TP), False Positives (FP), True Negatives (TN), and False Negatives (FN) [47].

True Positive Rate (TPR), also called sensitivity or recall, measures the proportion of actual positives correctly identified:

\[ TPR = \frac{TP}{TP + FN} \]

False Positive Rate (FPR) quantifies the proportion of actual negatives incorrectly classified as positive:

\[ FPR = \frac{FP}{FP + TN} \]

For pharmacophore models, TPR represents the ability to correctly identify true active compounds, while FPR indicates the tendency to mistakenly classify inactive compounds as active [31].
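
As a worked example of these two definitions, the sketch below counts the confusion-matrix cells for one threshold and derives TPR and FPR from them. The scores, labels, and the 0.6 threshold are invented for illustration; compounds scoring at or above the threshold are treated as predicted actives.

```python
def confusion_counts(scores, labels, threshold):
    """Count TP, FP, TN, FN when score >= threshold means 'predicted active'."""
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        predicted_active = score >= threshold
        if predicted_active and label == 1:
            tp += 1
        elif predicted_active and label == 0:
            fp += 1
        elif label == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def rates(tp, fp, tn, fn):
    """TPR = TP / (TP + FN) and FPR = FP / (FP + TN)."""
    return tp / (tp + fn), fp / (fp + tn)


scores = [0.91, 0.85, 0.70, 0.62, 0.55, 0.40, 0.35, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0]   # 1 = active, 0 = decoy

tp, fp, tn, fn = confusion_counts(scores, labels, threshold=0.6)
tpr, fpr = rates(tp, fp, tn, fn)
print(f"TP={tp} FP={fp} TN={tn} FN={fn}  TPR={tpr:.2f}  FPR={fpr:.2f}")
```

Here three of the four actives and one of the five decoys score at least 0.6, so this threshold contributes the single point (FPR, TPR) = (0.20, 0.75) to the ROC curve.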

The Role of Classification Thresholds

The classification threshold is a critical parameter that determines how prediction scores are converted into binary classes [49]. In pharmacophore modeling, this threshold might be a similarity score or fit value between a compound and the pharmacophore model.

- High threshold: Makes the model more conservative, reducing false positives but potentially increasing false negatives [49]

- Low threshold: Makes the model more inclusive, increasing true positives but also raising false positives [49]

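These extremes assume scores normalized to the range 0 to 1. With a threshold at the top of the range (nominally 1.0, or any value above the highest score), the model predicts all instances as negative (TPR=0, FPR=0). With a threshold of 0.0, the model predicts all instances as positive (TPR=1, FPR=1) [48].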

Table 1: Effect of Threshold Selection on Model Behavior

| Threshold Level | Effect on TPR | Effect on FPR | Use Case Scenario |
|---|---|---|---|
| High (≥0.8) | Lower | Lower | When false positives are costly |
| Moderate (0.4-0.7) | Balanced | Balanced | General screening purposes |
| Low (≤0.3) | Higher | Higher | When missing actives is unacceptable |
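
The trade-off in the table can be made concrete by sweeping a few thresholds over one scored set. The sketch below assumes fit scores normalized to the range 0 to 1; the scores, labels, and threshold values are illustrative only.

```python
def tpr_fpr(scores, labels, threshold):
    """TPR and FPR when compounds scoring >= threshold are called active."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    return tp / n_pos, fp / n_neg


scores = [0.91, 0.85, 0.70, 0.62, 0.55, 0.40, 0.35, 0.30, 0.20]
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0]   # 1 = active, 0 = decoy

thresholds = [1.0, 0.8, 0.5, 0.3, 0.0]  # from most to least conservative
sweep = [(t, *tpr_fpr(scores, labels, t)) for t in thresholds]

for t, tpr, fpr in sweep:
    print(f"threshold={t:.1f}  TPR={tpr:.2f}  FPR={fpr:.2f}")
```

Lowering the threshold can only add compounds to the predicted-active set, so both rates rise (or stay level) together; choosing an operating point is therefore a matter of weighing the cost of following up false positives against the cost of missing actives.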

Experimental Protocol for ROC Curve Generation

Step-by-Step Methodology

Generating a ROC curve for pharmacophore model validation involves a systematic process that can be implemented using common programming libraries or specialized software tools.