Overcoming the Hurdles: A Strategic Guide to Next-Generation Sequencing Challenges in Modern Cancer Diagnostics

Abstract

Next-generation sequencing (NGS) has fundamentally transformed oncology, enabling comprehensive genomic profiling that guides precision therapy. However, its clinical integration faces significant challenges, including technical complexity, data interpretation hurdles, high costs, and regulatory considerations. This article provides a detailed exploration of these obstacles, offering a strategic framework for researchers, scientists, and drug development professionals. We examine the foundational principles of NGS, its methodological applications in tumor profiling and liquid biopsy, practical troubleshooting for optimization, and rigorous validation approaches. By synthesizing current evidence and real-world implementation studies, this guide aims to equip experts with the knowledge to enhance the reliability, accessibility, and clinical impact of NGS in cancer care.

The NGS Revolution in Oncology: Core Principles and Current Roadblocks

The evolution of sequencing technology from Sanger methods to Next-Generation Sequencing (NGS) represents a transformative leap in cancer research and diagnostics. Sanger sequencing, developed in the 1970s, uses the chain termination method with dideoxynucleotides (ddNTPs) to generate DNA fragments of varying lengths, which are separated by capillary electrophoresis to determine the sequence [1]. While this method provides long, high-quality reads (500-1000 bp) with exceptional accuracy (exceeding 99.999%), its fundamental limitation is low throughput: each reaction sequences only a single DNA fragment [2] [1].

NGS, also known as massively parallel sequencing, has revolutionized genomic analysis by sequencing millions to billions of DNA fragments simultaneously [2] [1]. This jump in scale has enabled comprehensive genomic studies previously considered impractical, making NGS an indispensable tool for precision oncology. The core distinction lies in their architectures: Sanger sequencing reads one targeted region per reaction, whereas NGS can read a multigene panel, the exome, or the entire genome in a single run, dramatically accelerating the pace of discovery in cancer research [1] [3].

Technical Comparison: Sanger Sequencing vs. Next-Generation Sequencing

Fundamental Methodological Differences

The operational principles of Sanger sequencing and NGS diverge significantly in their approach to sequence determination. Sanger sequencing relies on the chain termination method: DNA polymerase synthesizes a complementary strand from a single-stranded template in a reaction containing normal dNTPs plus a small proportion of fluorescently labeled ddNTPs. Because ddNTPs lack a 3'-hydroxyl group, each ddNTP incorporation stops extension, producing a population of fragments that terminate at every position of the sequence [1]. The fragments are separated by size via capillary electrophoresis, and the sequence is read from the dye label of the terminal ddNTP in order of increasing fragment length [1].
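
The length-ordered read-out can be illustrated with a toy model. The sketch below, using hypothetical function names, represents each terminated fragment as a (length, terminal base) pair and recovers the synthesized strand by sorting on length, as capillary electrophoresis does physically. It assumes an idealized ladder with exactly one fragment per position; real base callers work on continuous fluorescence traces.

```python
import random


def simulate_ladder(synthesized):
    """Idealized Sanger products: one fragment ending at each position.

    Each fragment is (length, terminal_base), where terminal_base is the
    dye-labelled ddNTP that stopped extension.
    """
    return [(pos + 1, base) for pos, base in enumerate(synthesized)]


def read_sanger_ladder(fragments):
    """Recover the synthesized strand by ordering fragments by length."""
    ordered = sorted(fragments, key=lambda frag: frag[0])
    lengths = [length for length, _ in ordered]
    # In this idealized model every length 1..n must appear exactly once.
    if lengths != list(range(1, len(ordered) + 1)):
        raise ValueError("ladder has missing or duplicate fragment lengths")
    return "".join(base for _, base in ordered)


if __name__ == "__main__":
    fragments = simulate_ladder("GATTACA")
    random.shuffle(fragments)  # products leave the reaction in no particular order
    print(read_sanger_ladder(fragments))
```

Note that the recovered string is the synthesized strand, which is the complement of the template.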

In contrast, NGS encompasses a range of chemistries united by massive parallelism. One prominent method is Sequencing by Synthesis (SBS), in which fluorescently labeled, reversible-terminator nucleotides are incorporated one base at a time across millions of clustered DNA fragments on a solid surface [1]. After each incorporation cycle, the fluorescent signal is captured by imaging, and the dye and terminating group are cleaved to restore the 3'-OH for the next cycle [1]. Other NGS chemistries include ion detection (measuring the hydrogen ions released during nucleotide incorporation) and ligation-based methods [1] [3].
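
The cycle structure of SBS, and why parallelism matters, can be shown with an idealized model in which every cluster is extended and imaged in lockstep. This is a hypothetical sketch that ignores phasing, dye cross-talk and errors: read length equals the number of cycles, and each cycle reports one base for every cluster at once.

```python
COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A"}


def sbs_run(templates, cycles):
    """Idealized sequencing by synthesis across many clusters.

    templates: one template per cluster, written in the order its bases are
    read. Returns one read per cluster, each at most `cycles` bases long.
    """
    reads = [[] for _ in templates]
    for cycle in range(cycles):
        # One imaging step records the incorporated base in every cluster.
        for read, template in zip(reads, templates):
            if cycle < len(template):
                # The reversible terminator allows only one base per cycle.
                read.append(COMPLEMENT[template[cycle]])
        # Dye and terminator cleavage before the next cycle is implicit here.
    return ["".join(read) for read in reads]


print(sbs_run(["TACG", "GGCA"], cycles=3))  # ['ATG', 'CCG']
```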

Performance Metrics and Capabilities

Table 1: Comparative Analysis of Sanger Sequencing and NGS Technologies

| Feature | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Fundamental Method | Chain termination using ddNTPs [1] | Massively parallel sequencing (e.g., SBS, ion detection) [1] |
| Throughput | Low to medium (individual samples or small batches) [1] | Extremely high (entire genomes, exomes, or multiple multiplexed samples) [2] [1] |
| Output Type | Single, long contiguous read per reaction (500-1000 bp) [1] | Millions to billions of short reads (typically 50-300 bp) [1] |
| Detection Sensitivity | ~15-20% limit of detection [2] | Down to 1% for low-frequency variants [2] |
| Key Strengths | High per-base accuracy for defined targets; long read length; operational simplicity [1] | Comprehensive genomic coverage; high discovery power; low cost per base at scale [2] |
| Primary Limitations | Low throughput and discovery power; not cost-effective for >20 targets [2] | Substantial bioinformatics requirements; high initial capital investment [1] |
| Optimal Application | Targeted confirmation; single-gene variant screening; PCR product sequencing [1] | Whole-genome/exome sequencing; transcriptomics; rare variant detection; complex cancer profiling [2] [1] |

Economic and Operational Considerations

The choice of platform largely determines the economics of sequencing. NGS fundamentally changed the cost and speed equation through its massively parallel architecture, processing gigabases to terabases of data in a single run [1]. This capacity translates to a much lower cost per base pair, making large-scale projects financially viable [1]. Although the initial capital investment for NGS is substantial, economies of scale quickly favor NGS for high-volume or extensive genomic analyses [1].

Sanger sequencing has a lower initial instrument cost and remains cost-effective for single-target or very small-scale projects [1]. However, its reliance on separate reactions for each template and sequential fragment separation results in a high cost per base when scaling to larger projects [1]. The capacity for high-degree multiplexing in NGS, where hundreds of unique barcoded samples can be pooled and sequenced simultaneously, further optimizes reagent use and operational time compared to Sanger workflows [1].
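
The multiplexing trade-off can be estimated with simple arithmetic: reads per sample fall as more barcoded samples share a run, and mean on-target depth is roughly reads × read length × on-target fraction ÷ target size. The sketch below assumes reads are split evenly across barcodes; the function names are hypothetical, and any numbers passed to them should come from your own platform and panel, not from this example.

```python
def mean_depth_per_sample(run_reads, n_samples, read_length_bp,
                          target_size_bp, on_target_fraction=1.0):
    """Estimate mean on-target depth per sample, assuming an even barcode split."""
    if n_samples <= 0 or target_size_bp <= 0:
        raise ValueError("n_samples and target_size_bp must be positive")
    reads_per_sample = run_reads / n_samples
    on_target_bases = reads_per_sample * read_length_bp * on_target_fraction
    return on_target_bases / target_size_bp


def max_samples_for_depth(run_reads, read_length_bp, target_size_bp,
                          desired_depth, on_target_fraction=1.0):
    """Largest number of samples that can still reach `desired_depth` on average."""
    if target_size_bp <= 0 or desired_depth <= 0:
        raise ValueError("target_size_bp and desired_depth must be positive")
    on_target_bases = run_reads * read_length_bp * on_target_fraction
    return int(on_target_bases // (target_size_bp * desired_depth))
```

For a hypothetical run of 400 million 150 bp reads on a 1.5 Mb panel with 70% of reads on target, 96 pooled samples would average roughly 290x each; real runs need headroom because barcode representation is never perfectly even.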

NGS Implementation in Cancer Diagnostics: Applications and Workflows

Comprehensive Genomic Profiling in Oncology

NGS has become the cornerstone of comprehensive genomic profiling (CGP) in oncology, enabling simultaneous analysis of a broad array of genetic alterations across hundreds of cancer-related genes [3]. CGP provides significant advantages over traditional single-gene tests by requiring smaller tissue samples, reducing the time needed to test for multiple biomarkers, and offering a more complete picture of a tumor's genetic landscape [3]. This approach is particularly valuable for identifying targetable mutations, gene fusions, and splicing variants that inform personalized treatment strategies [3].

In clinical oncology, NGS applications include whole-genome sequencing (WGS) for comprehensive analysis of the entire genome; whole-exome sequencing (WES) for focused examination of protein-coding regions; transcriptomics (RNA-Seq) for quantitative gene expression analysis; and targeted panels for deep sequencing of specific gene sets relevant to particular cancers [1] [4]. These approaches have reshaped cancer management by enabling molecularly driven classification and treatment selection [3].

Liquid Biopsy and Cancer Monitoring

The integration of NGS with liquid biopsy has emerged as a groundbreaking approach in cancer diagnostics and monitoring [3]. Liquid biopsy involves the non-invasive analysis of tumor-derived material, particularly circulating tumor DNA (ctDNA), present in blood samples [3]. This approach offers a dynamic snapshot of cancer's genetic landscape, enabling real-time assessment of tumor evolution, resistance mechanisms, and treatment efficacy [3].

The applications of NGS in liquid biopsy include identification of actionable mutations to guide targeted therapies, monitoring treatment response through changes in ctDNA levels, detection of minimal residual disease (MRD) after treatment, and identification of resistance mechanisms that emerge during therapy [3]. The sensitivity of NGS enables detection of variants at low allele frequencies (down to about 1%), making it particularly valuable for monitoring cancer progression and therapeutic resistance [2] [3].

Troubleshooting Guide: Addressing Common NGS Challenges in Cancer Research

Pre-Analytical Variables and Sample Quality Issues

Problem: Low DNA yield from FFPE tumor samples

- Cause: Excessive DNA fragmentation and cross-linking due to formalin fixation and processing methods [5].

- Solution: Optimize DNA extraction protocols specifically for FFPE tissue. Use fully demineralized pulverized tissue and implement specialized purification systems [5]. Aim for tumor content above 10% whenever possible; in the cited data, samples with 10-20% tumor content showed sequencing success rates similar to those with >30% tumor content [5].
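
Tumor content matters because normal cells dilute somatic variants. A minimal sketch, assuming a clonal mutation present in every tumor cell, diploid normal cells and a user-supplied tumor copy number: the expected variant allele frequency (VAF) is the mutant allele count divided by all alleles in the mixture. In the default diploid heterozygous case this reduces to purity ÷ 2, so a sample at the 10% threshold would carry such a variant at only about 5% VAF. The function name is hypothetical.

```python
def expected_vaf(purity, tumor_copy_number=2, mutant_copies=1):
    """Expected VAF of a clonal somatic variant in a tumor/normal cell mixture.

    Assumes normal cells are diploid and all tumor cells carry the variant.
    """
    if not 0.0 <= purity <= 1.0:
        raise ValueError("purity must be between 0 and 1")
    if tumor_copy_number < 1 or not 0 <= mutant_copies <= tumor_copy_number:
        raise ValueError("need tumor_copy_number >= 1 and 0 <= mutant_copies <= it")
    mutant_alleles = purity * mutant_copies
    total_alleles = purity * tumor_copy_number + (1.0 - purity) * 2
    return mutant_alleles / total_alleles


for purity in (0.1, 0.2, 0.3):
    print(f"purity {purity:.0%}: expected VAF {expected_vaf(purity):.1%}")
```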

Problem: Failed sequencing of bone metastasis samples

- Cause: DNA degradation during decalcification procedures; very low success rate (42.1%) observed in bone specimens compared to lung samples (79.8%) [5].

- Solution: Implement gentler decalcification protocols. Consider alternative metastatic sites for biopsy when possible. Use specialized library preparation kits designed for degraded DNA [5].

Problem: Poor library preparation efficiency

- Cause: Insufficient DNA quantity or quality; inappropriate fragment size selection [5].

- Solution: Quantify DNA with fluorometric methods rather than spectrophotometry for greater accuracy. Use magnetic beads or agarose gel size selection to remove adapter dimers and select appropriate fragment sizes (around 300 bp) [6]. Assess library quantity and quality by quantitative PCR before sequencing [6].
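
Fluorometric readings give mass concentration, whereas pooling and loading calculations are usually done in molar terms. The sketch below converts between the two using the common approximation of about 660 g/mol per base pair of double-stranded DNA and a mean fragment size taken from a fragment analyzer; the function names are hypothetical. qPCR-based kits, by contrast, estimate amplifiable library concentration directly against DNA standards.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate mass of one double-stranded base pair


def library_molarity_nM(conc_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA mass concentration (ng/uL) to nanomolar."""
    if conc_ng_per_ul < 0 or mean_fragment_bp <= 0:
        raise ValueError("concentration must be >= 0 and fragment size > 0")
    # ng/uL -> g/L is x1e-3; divide by g/mol; mol/L -> nM is x1e9; net factor 1e6.
    return conc_ng_per_ul * 1e6 / (AVG_BP_MASS_G_PER_MOL * mean_fragment_bp)


def dilution_volume_ul(stock_nM, target_nM, final_volume_ul):
    """Volume of stock to bring to final_volume_ul at target_nM (C1*V1 = C2*V2)."""
    if stock_nM <= 0 or target_nM > stock_nM:
        raise ValueError("stock must be positive and at least the target")
    return target_nM * final_volume_ul / stock_nM
```

For example, a 2 ng/µL library with a 300 bp mean fragment size is about 10.1 nM.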

Instrumentation and Technical Failures

Problem: Chip initialization failure on Ion Torrent systems

- Cause: Bubbles or residue on chip surface; improper chip seating; damaged chip [7].

- Solution: Rinse the chip by pipetting 100 μL of isopropanol into the chip, followed by 100 μL of water. Ensure the chip is properly seated and the clamp is fully closed. If the chip appears damaged, replace it with a new one [7].

Problem: Connectivity issues between sequencer and server

- Cause: Network connectivity problems; software updates required; hardware detection failures [7].

- Solution: Disconnect and re-connect the Ethernet cable. Confirm router operation and network status. Check for software updates in the main menu under Options > Updates. For persistent issues, power cycle the instrument: shut down completely, wait 30 seconds, then restart [7].

Problem: Low sequence yield or poor quality scores

- Cause: Problems during library or template preparation; inadequate quantification; poor cluster amplification [7].

- Solution: Verify the quantity and quality of library and template preparations using appropriate methods. Ensure Control Ion Sphere particles were added to the sample (for Ion Torrent systems). Check reagent volumes and freshness [7].

Analytical and Bioinformatics Challenges

Problem: Difficulty detecting low-frequency variants

- Cause: Insufficient sequencing depth; high background noise; low tumor purity [2] [5].

- Solution: Increase sequencing depth to 1000x or higher for rare variant detection. Use duplicate removal and base quality score recalibration. Implement unique molecular identifiers (UMIs) to correct for amplification artifacts and improve accuracy [2].
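
Why depth matters for rare variants can be quantified with a binomial model: at depth d, the number of reads carrying a variant at allele frequency f is approximately Binomial(d, f), and a caller that requires a minimum number of supporting reads detects the variant only when that count is reached. The sketch below ignores sequencing errors, PCR duplicates and strand bias, and the five-read threshold in the example loop is a hypothetical caller setting.

```python
from math import comb


def detection_probability(depth, vaf, min_alt_reads):
    """P(at least `min_alt_reads` variant reads) when reads ~ Binomial(depth, vaf).

    Ignores sequencing errors, PCR duplicates and strand bias.
    """
    if depth < 0 or not 0.0 <= vaf <= 1.0:
        raise ValueError("depth must be >= 0 and vaf in [0, 1]")
    if min_alt_reads > depth:
        return 0.0
    p_fewer = sum(comb(depth, k) * vaf**k * (1.0 - vaf) ** (depth - k)
                  for k in range(min_alt_reads))
    return 1.0 - p_fewer


for depth in (100, 500, 1000):
    print(depth, round(detection_probability(depth, vaf=0.01, min_alt_reads=5), 3))
```

With a five-read threshold, a 1% VAF variant is detected with well under 1% probability at 100x but with roughly 97% probability at 1000x, which is why rare-variant work targets depths of 1000x or more.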

Problem: High false positive rates in variant calling

- Cause: Sequencing artifacts; mapping errors; contamination [8].

- Solution: Implement rigorous quality control metrics, including cross-sample contamination checks. Use multiple variant calling algorithms, and confirm candidate variants with an orthogonal method (e.g., Sanger sequencing). Participate in proficiency testing programs to identify recurrent assay weaknesses [8].
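
Combining several callers is often implemented as a vote: a variant is kept when at least a minimum number of callers report it. The sketch below is one simple, hypothetical way to do this on (chromosome, position, ref, alt) tuples. It assumes the calls have already been normalized (for example, indels left-aligned) so that identical variants compare equal; the caller names and coordinates are placeholders.

```python
from collections import Counter


def consensus_calls(callsets, min_support=2):
    """Keep variants reported by at least `min_support` callers.

    callsets: mapping of caller name -> iterable of (chrom, pos, ref, alt).
    """
    support = Counter()
    for calls in callsets.values():
        support.update(set(calls))  # count each caller at most once per variant
    return {variant for variant, n in support.items() if n >= min_support}


calls = {
    "caller_a": [("chr1", 100, "C", "T"), ("chr1", 200, "G", "A")],
    "caller_b": [("chr1", 100, "C", "T")],
    "caller_c": [("chr1", 100, "C", "T"), ("chr2", 300, "A", "G")],
}
print(consensus_calls(calls))
```

A vote reduces caller-specific artifacts but cannot remove errors that all callers share, such as library artifacts, which is why orthogonal confirmation is still recommended.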

Problem: Interpretation of variants of unknown significance (VUS)

- Cause: Limited evidence for clinical impact; insufficient population frequency data [3].

- Solution: Utilize multiple annotation databases and computational prediction tools. Incorporate functional studies when possible. Establish multidisciplinary molecular tumor boards for consensus interpretation [3].

Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for NGS in Cancer Diagnostics

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of DNA/RNA from various sample types | Select specialized kits for FFPE tissue; include RNase inhibitors for RNA sequencing [5] |
| Library Preparation Kits | Fragmentation, adapter ligation, and amplification of DNA | Choose targeted panels for specific cancers or comprehensive kits for whole-genome analysis [6] |
| Barcoding/Indexing Adapters | Sample multiplexing | Enable pooling of multiple samples; reduce cost per sample [1] |
| Sequence Capture Probes | Target enrichment | Essential for targeted sequencing panels; design should cover regions of interest with padding [6] |
| Quality Control Reagents | Assessment of nucleic acid and library quality | Include fluorometric quantitation, fragment analyzers, and qPCR assays [6] |
| Sequencing Chemicals | Nucleotides, enzymes, and buffers for sequencing | Platform-specific reagents; ensure proper storage and stability [7] |

Workflow Diagrams for NGS Implementation

Figure (not reproduced): NGS Library Preparation Workflow

Figure (not reproduced): Decision Pathway for Sequencing Technology Selection

Future Directions and Implementation Strategies

Emerging Technologies and Approaches

The future of NGS in cancer diagnostics continues to evolve with several promising directions. Single-cell sequencing technologies are enabling the dissection of tumor heterogeneity at unprecedented resolution, revealing cellular subpopulations and their distinct molecular features [3]. Liquid biopsy applications are expanding beyond blood to include urine, cerebrospinal fluid, and other bodily fluids, providing less invasive options for cancer monitoring [3].

Fragmentomics, the analysis of cell-free DNA fragmentation patterns, shows promise as a novel approach for cancer detection and tissue-of-origin determination [3]. Integration of multi-omics data (genomics, transcriptomics, epigenomics, and proteomics) through advanced computational methods is creating more comprehensive models of cancer biology, potentially leading to more effective therapeutic strategies [3].

Overcoming Implementation Barriers

Successful implementation of NGS in cancer diagnostics requires addressing several critical barriers. Reimbursement challenges, including prior authorization complexities and administrative burdens, were reported by 87.5% of physicians as significant obstacles [9]. Lack of knowledge about NGS testing methodologies (81.0%) and insufficient evidence of clinical utility (80.0%) were also commonly cited barriers [9].

Strategies to overcome these challenges include developing standardized reimbursement frameworks, enhancing education for healthcare providers on NGS technologies and interpretation, generating robust clinical utility evidence through prospective trials, and establishing multidisciplinary molecular tumor boards for case review [9]. Additionally, investment in bioinformatics infrastructure and computational resources is essential for managing the massive datasets generated by NGS and translating them into clinically actionable insights [1] [3].

The continued advancement and implementation of NGS technologies hold tremendous promise for further personalizing cancer care, improving patient outcomes, and deepening our understanding of cancer biology. As these technologies become more accessible and interpretable, they are poised to become increasingly integral to routine cancer diagnosis, monitoring, and treatment selection.

Next-generation sequencing (NGS) has revolutionized cancer diagnostics, enabling comprehensive genomic profiling that guides precision oncology. This powerful technology allows researchers to sequence millions of DNA fragments simultaneously, providing unprecedented insights into the genetic drivers of cancer [6] [10]. However, the path from sample to biological insight is complex, with potential bottlenecks at every stage that can compromise data quality and reliability. This technical support center addresses the most common challenges in the NGS workflow, providing researchers, scientists, and drug development professionals with practical troubleshooting guides and FAQs to ensure the generation of robust, reproducible data for cancer research.

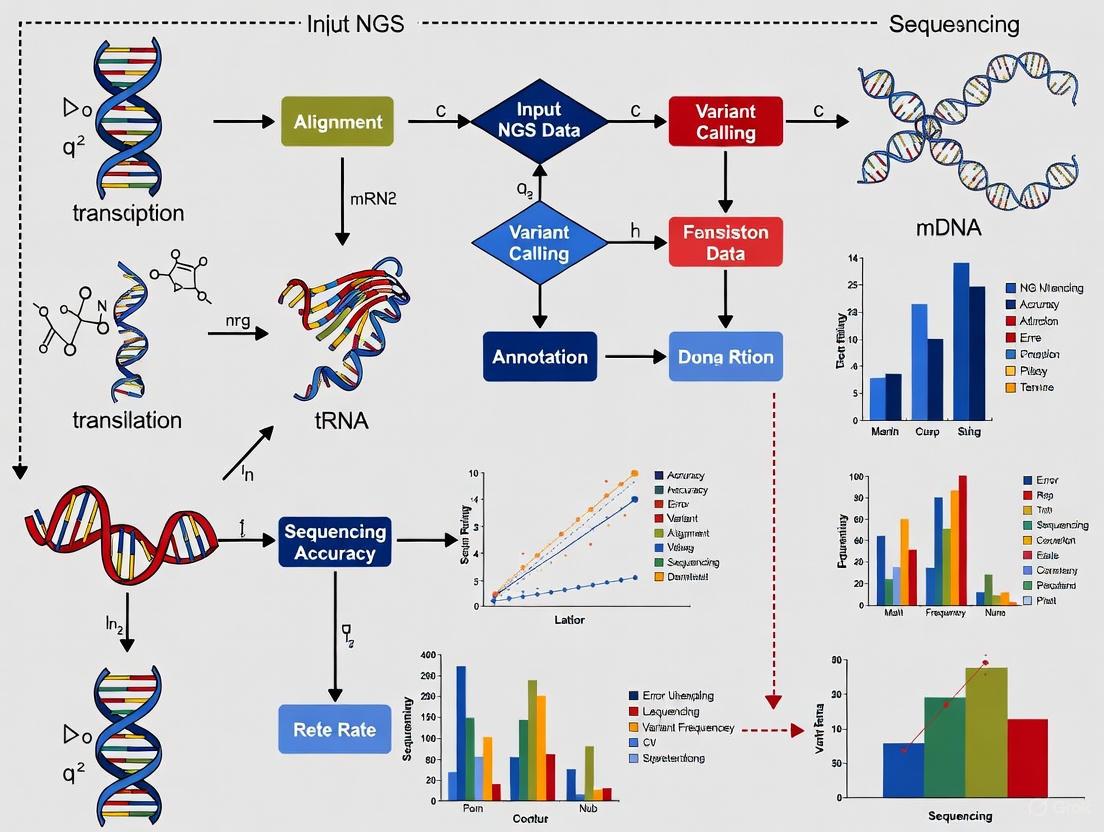

The NGS Workflow

The NGS process involves multiple interconnected steps, each critical to the success of the entire workflow: sample preparation, library preparation, sequencing, and data analysis.

Troubleshooting Guide: Common NGS Challenges and Solutions

Library Preparation Issues

Problem: Low Library Yield

Low library yield is one of the most frequent challenges in NGS workflow optimization, particularly when working with limited clinical samples.

Table 1: Causes and Solutions for Low Library Yield

| Root Cause | Failure Mechanism | Corrective Action |
|---|---|---|
| Poor input quality/contaminants | Enzyme inhibition from residual salts, phenol, or EDTA | Re-purify input sample; ensure 260/230 > 1.8 and 260/280 ~1.8 ratios; use fresh wash buffers [11] |
| Inaccurate quantification | Under- or over-estimating input concentration leads to suboptimal enzyme stoichiometry | Use fluorometric methods (Qubit) rather than UV for quantification; calibrate pipettes; run technical replicates [11] |
| Fragmentation inefficiency | Over- or under-fragmentation reduces adapter ligation efficiency | Optimize fragmentation parameters (time, energy); verify fragmentation distribution before proceeding [11] |
| Suboptimal adapter ligation | Poor ligase performance or incorrect molar ratios reduce adapter incorporation | Titrate adapter:insert molar ratios; ensure fresh ligase and buffer; maintain optimal temperature [11] |
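
The purity targets in the table above can be turned into an automated pre-check. The hypothetical helper below flags absorbance ratios outside those targets; the ±0.1 tolerance around 1.8 for 260/280 is an assumption, and the interpretations (low 260/280 suggesting protein or phenol, high 260/280 suggesting RNA in a DNA prep, low 260/230 suggesting salt, phenol or EDTA carry-over) are general rules of thumb rather than diagnoses. Ratios say nothing about degradation or true concentration, so they complement rather than replace fluorometric quantification.

```python
def check_input_purity(a260_280, a260_230, target_260_280=1.8,
                       tolerance_260_280=0.1, min_260_230=1.8):
    """Return a list of warnings for absorbance ratios outside the targets.

    The +/-0.1 tolerance on 260/280 is an assumed default.
    """
    warnings = []
    if a260_280 < target_260_280 - tolerance_260_280:
        warnings.append("low 260/280: possible protein or phenol carry-over")
    elif a260_280 > target_260_280 + tolerance_260_280:
        warnings.append("high 260/280: possible RNA in a DNA prep")
    if a260_230 <= min_260_230:
        warnings.append("low 260/230: possible salt, phenol or EDTA carry-over")
    return warnings


print(check_input_purity(1.55, 1.2))
```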

Problem: Adapter Dimer Formation

Adapter dimers may form during the adapter ligation step and can significantly reduce sequencing efficiency. On electrophoretograms they appear as sharp peaks at ~70 bp for non-barcoded libraries or ~90 bp for barcoded libraries [12]. To prevent this issue:

- Perform additional clean-up steps prior to template preparation

- Ensure proper size selection to remove dimer artifacts

- Optimize adapter concentrations to prevent self-ligation

- Use fresh ethanol for wash steps and pre-wet pipette tips to ensure accurate volume transfer during clean-up [12]

Problem: PCR Amplification Artifacts

Overamplification during library preparation can introduce significant bias and artifacts:

- Limit the number of amplification cycles (typically 1-3 additional cycles if needed)

- Add cycles to the initial amplification rather than the final amplification step

- Avoid overamplification as it introduces bias toward smaller fragments

- If yield remains low after optimization, repeat the amplification reaction rather than adding excessive cycles [12]
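
The guidance to limit extra cycles follows from exponential amplification: each cycle multiplies product by at most (1 + E), where E is the per-cycle efficiency, so three additional cycles can raise yield at most 2³ = 8-fold even at perfect efficiency. The hypothetical helpers below count how many extra cycles would be needed to reach a target yield and recommend repeating the reaction when that exceeds a limit. The 90% default efficiency and the three-cycle limit are assumptions; because late cycles plateau in practice, treat the count as a lower bound rather than a recipe.

```python
def additional_cycles_needed(current_ng, target_ng, efficiency=0.9):
    """Extra PCR cycles to reach target_ng assuming constant per-cycle efficiency."""
    if current_ng <= 0:
        raise ValueError("current_ng must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    cycles, yield_ng = 0, current_ng
    while yield_ng < target_ng:
        yield_ng *= 1 + efficiency  # ideal exponential growth for one cycle
        cycles += 1
    return cycles


def plan_reamplification(current_ng, target_ng, max_extra_cycles=3, efficiency=0.9):
    """Suggest extra cycles if within the limit, otherwise a repeat reaction."""
    needed = additional_cycles_needed(current_ng, target_ng, efficiency)
    if needed <= max_extra_cycles:
        return f"add {needed} cycle(s)"
    return "repeat the amplification reaction instead of adding cycles"


print(plan_reamplification(5, 20))
print(plan_reamplification(1, 100))
```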

Sequencing Data Quality Issues

Problem: High Error Rates in Sequencing Data

Sequencing errors are key confounding factors for detecting the low-frequency genetic variants that are crucial in cancer research. Different error types have characteristic profiles:

Table 2: NGS Error Profiles and Their Sources

| Error Type | Typical Rate | Primary Sources | Impact on Cancer Diagnostics |
|---|---|---|---|
| A>G/T>C | ~10⁻⁴ | Sequencing process itself | Medium impact - can mimic true somatic variants |
| A>C/T>G, C>A/G>T, C>G/G>C | ~10⁻⁵ | Sample handling, polymerase errors | Lower frequency but still confounding |
| C>T/G>A | ~10⁻⁴ to 10⁻³ | Strong sequence context dependency, cytosine deamination | High impact - can create false positive cancer-associated mutations |
| All substitutions post-enrichment PCR | ~6-fold increase | Target-enrichment PCR | Significant - limits detection of low-frequency variants [13] |

Solution: Computational error suppression can reduce substitution error rates to 10⁻⁵ to 10⁻⁴, which is 10- to 100-fold lower than generally considered achievable (10⁻³) in standard NGS workflows [13]. This enhanced sensitivity is particularly valuable for detecting subclonal populations in tumors and minimal residual disease.
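
One simple way to use substitution-specific error rates, shown here as an illustrative alternative rather than the specific suppression method of [13], is to ask whether a candidate's alternate-read count exceeds what background errors of that substitution class could plausibly produce. The sketch below applies a one-sided binomial test, computed in log space to avoid overflow at high depth, using rates taken loosely from the orders of magnitude in the error-profile table (C>T/G>A set at the upper end of its range, for caution). The rates, the significance threshold and the function names are all assumptions.

```python
import math

# Illustrative per-base background rates by substitution class (assumed values
# based on the error-profile table; C>T/G>A uses the upper bound).
BACKGROUND_RATE = {
    ("A", "G"): 1e-4, ("T", "C"): 1e-4,
    ("C", "T"): 1e-3, ("G", "A"): 1e-3,
}
DEFAULT_RATE = 1e-5  # remaining substitution classes


def _binom_log_pmf(k, n, p):
    """log P(X = k) for X ~ Binomial(n, p)."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log1p(-p))


def upper_tail_p(alt_reads, depth, error_rate):
    """P(X >= alt_reads) when X ~ Binomial(depth, error_rate)."""
    if alt_reads <= 0:
        return 1.0
    lower = sum(math.exp(_binom_log_pmf(k, depth, error_rate))
                for k in range(min(alt_reads, depth + 1)))
    return max(0.0, 1.0 - lower)


def passes_background_filter(ref, alt, alt_reads, depth, alpha=1e-6):
    """True if alt_reads is unlikely to arise from background errors alone."""
    rate = BACKGROUND_RATE.get((ref, alt), DEFAULT_RATE)
    return upper_tail_p(alt_reads, depth, rate) < alpha
```

At 1000x, three T reads at a C position (about 0.3% VAF) are compatible with C>T background under these assumed rates, whereas the same count for a rarer substitution class such as A>C would pass the filter.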

Data Analysis Challenges

Problem: Bioinformatics Bottlenecks

NGS data analysis faces multiple computational challenges that can slow research progress:

- Tool variability: Different alignment algorithms or variant calling methods may produce conflicting results, complicating interpretation [14]

- Computational demands: Large datasets from whole-genome or transcriptome studies often require powerful servers and optimized workflows [14]

- Data interpretation complexity: Accurately interpreting the vast amount of data generated presents significant challenges, requiring robust bioinformatics support [6]

Solution: Implement standardized pipelines to reduce inconsistencies while maintaining flexibility for specific research goals. Utilize robust quality control measures at every stage and ensure adequate computational resources for the scale of analysis required [14].

Frequently Asked Questions (FAQs)

Q: What are the critical steps for ensuring high-quality NGS library preparation? A: The most critical steps include: (1) Using high-quality, pure input DNA/RNA with proper quality control metrics; (2) Optimizing fragmentation to achieve the desired insert size; (3) Careful adapter ligation with appropriate adapter:insert ratios; (4) Limited-cycle amplification to prevent bias; (5) Meticulous clean-up and size selection to remove adapter dimers and other artifacts [12] [11].

Q: How can I improve detection of low-frequency variants in cancer samples? A: Enhancing low-frequency variant detection requires both experimental and computational approaches: (1) Use computational error suppression to reduce background error rates; (2) Increase sequencing depth to improve statistical power; (3) Implement duplex sequencing methods where feasible; (4) Carefully control for sample-specific artifacts that can mimic true variants [13].
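
Unique molecular identifiers (UMIs) illustrate how molecule-level error suppression works: reads carrying the same UMI derive from one original molecule, so an error introduced in a later PCR copy or during sequencing is outvoted within its family. The sketch below is a simplified single-strand consensus at one position; duplex methods additionally require agreement between the consensuses of the two original strands. The family-size and agreement thresholds and the function names are hypothetical, and errors arising in the first PCR cycle or from DNA damage can still pass a single-strand consensus.

```python
from collections import Counter, defaultdict


def umi_consensus(observations, min_family_size=3, min_agreement=0.9):
    """Collapse reads sharing a UMI into one consensus base at a single position.

    observations: iterable of (umi, base) pairs from reads covering the position.
    Returns {umi: consensus_base} for families passing both thresholds.
    """
    families = defaultdict(list)
    for umi, base in observations:
        families[umi].append(base)
    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few copies to outvote a PCR or sequencing error
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base
    return consensus


def consensus_vaf(consensus, alt_base):
    """Allele frequency counted over original molecules rather than raw reads."""
    if not consensus:
        return 0.0
    return sum(base == alt_base for base in consensus.values()) / len(consensus)
```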

Q: What quality control metrics should I check at each stage of the NGS workflow? A: Implement a comprehensive QC protocol including: (1) Sample preparation: Nucleic acid purity (260/280, 260/230 ratios), integrity (RIN/DIN), and accurate quantification; (2) Library preparation: Fragment size distribution, adapter dimer presence, and library concentration; (3) Sequencing: Cluster density, Q-score distribution, and base call quality; (4) Data analysis: Alignment metrics, coverage uniformity, and variant quality scores [14] [12] [11].
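
Two of these metrics are easy to compute from raw outputs. The sketch below decodes FASTQ quality strings using the Phred+33 encoding of Sanger-format and current Illumina FASTQ files, converts a Q-score to an error probability (Q = -10·log10 p, so Q30 corresponds to 1 error in 1,000 bases), and summarizes per-position depths as mean coverage plus the fraction of positions at or above 0.2x the mean. That 0.2x uniformity definition is one commonly used convention, exposed here as a parameter; the function names are hypothetical.

```python
def phred_scores(quality_string, offset=33):
    """Decode a FASTQ quality string (Phred+33 by default) into Q-scores."""
    return [ord(ch) - offset for ch in quality_string]


def error_probability(q):
    """Convert a Phred score to a per-base error probability: p = 10^(-Q/10)."""
    return 10 ** (-q / 10)


def coverage_summary(depths, uniformity_fraction=0.2):
    """Return (mean depth, fraction of positions >= uniformity_fraction * mean)."""
    if not depths:
        raise ValueError("depths must not be empty")
    mean_depth = sum(depths) / len(depths)
    threshold = uniformity_fraction * mean_depth
    uniform = sum(1 for d in depths if d >= threshold) / len(depths)
    return mean_depth, uniform


print(phred_scores("I#5"))  # [40, 2, 20]
print(coverage_summary([100, 100, 100, 10]))  # (77.5, 0.75)
```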

Q: How does NGS compare to Sanger sequencing for cancer mutation detection? A: NGS offers significant advantages for comprehensive cancer genomic profiling:

Table 3: NGS vs. Sanger Sequencing for Cancer Diagnostics

| Feature | Next-Generation Sequencing | Sanger Sequencing |
|---|---|---|
| Cost-effectiveness | Cost-effective for large-scale projects | Cost-effective for small-scale projects |
| Speed | Rapid sequencing of multiple samples | Time-consuming for multiple targets |
| Application | Whole-genome, exome, targeted sequencing; ideal for comprehensive profiling | Ideal for sequencing single genes or few amplicons |
| Throughput | Multiple sequences simultaneously | Single sequence at a time |
| Data output | Large amount of data from a single run | Limited data output |
| Clinical utility in oncology | Detects mutations, structural variants, copy number changes, and gene expression | Identifies specific mutations in known hotspots [6] |

Essential Research Reagent Solutions

Table 4: Key Reagents for Robust NGS Workflows

| Reagent/Category | Function | Considerations for Cancer Research |
|---|---|---|
| High-Fidelity Polymerases | Amplification during library prep | Critical for maintaining sequence accuracy; Q5 and Kapa polymerases show different error profiles [13] |
| Nucleic Acid Binding Beads | Clean-up and size selection | Fresh ethanol and proper bead:sample ratios are essential; avoid over-drying or under-drying beads [12] |
| Adapter Oligos | Ligate to DNA fragments for sequencing | Optimize adapter:insert ratio to minimize dimer formation; barcoded adapters enable multiplexing [6] |
| Quantification Kits | Precisely measure library concentration | Use qPCR-based quantification (e.g., Ion Library Quantitation Kit); note that the assay cannot differentiate primer-dimers from library fragments, so check the size profile as well [12] |
| Target Enrichment Panels | Select cancer-relevant genomic regions | Choose panels covering relevant cancer genes; be aware that enrichment PCR increases error rates ~6-fold [13] |

Successful NGS implementation in cancer diagnostics requires meticulous attention to each step of the workflow, from sample preparation through data analysis. By understanding common failure points and implementing the troubleshooting strategies outlined in this guide, researchers can significantly improve the quality, reliability, and reproducibility of their sequencing results. As NGS technologies continue to evolve, maintaining rigorous quality control standards and staying informed about best practices will remain essential for leveraging the full potential of this transformative technology in precision oncology.

Next-Generation Sequencing (NGS) has revolutionized cancer diagnostics and research, enabling comprehensive genomic profiling that guides precision medicine. However, implementing this powerful technology in clinical settings presents significant challenges spanning technical, operational, and analytical domains. This technical support center addresses these hurdles through practical troubleshooting guides and FAQs designed to help researchers, scientists, and drug development professionals navigate the complexities of NGS implementation in cancer diagnostics research.

The table below summarizes the major categories of challenges encountered when implementing NGS in clinical cancer research settings.

| Challenge Category | Specific Barriers | Impact on Research & Diagnostics |
|---|---|---|
| Technical & Analytical | Sample quality issues (FFPE degradation, low input) [15], platform-specific errors [7], bioinformatics complexity [16] [6] | Reduced data accuracy, false positives/negatives, compromised assay sensitivity and specificity [17] [15] |
| Operational & Workforce | High instrumentation costs [6], lack of standardized SOPs [16], lengthy validation processes [16], staffing shortages and high turnover [16] | Increased operational costs, implementation delays, inconsistent results, reduced institutional capacity [16] |
| Interpretation & Regulatory | Data interpretation complexities [6] [17], evolving regulatory requirements (CLIA, FDA) [16], reimbursement challenges [9] | Barriers to clinical adoption, misinterpretation of genomic variants, financial sustainability issues [9] |

NGS Troubleshooting FAQs

Sample Preparation and Quality Control

Q: Our FFPE-derived DNA yields are low and fragmented. What approaches can improve NGS success with these samples?

A: DNA from FFPE samples is highly fragmented and cross-linked, making it challenging for NGS [15]. To optimize results:

- Use Targeted Sequencing Panels: Employ amplicon-based targeted sequencing designed for short DNA fragments (e.g., 300 bp) [15]. These require lower input (as little as 10 ng) and are more tolerant of degraded material compared to whole genome or exome sequencing [15].

- Quality Control: Assess DNA fragment length using gel electrophoresis and use fluorescence-based methods for accurate concentration measurement [15]. Avoid UV absorbance methods, which can overestimate concentration due to contaminants [15].

- Tumor Enrichment: Ensure sample has adequate tumor content (typically 10-20% minimum) through macrodissection or pathologist review of slides [15].

Q: What are the minimum sample quality requirements for different NGS methods in cancer research?

A: Requirements vary significantly by method, as detailed in the table below.

| NGS Method | Recommended Sample Type | Input Requirement | DNA Quality | Key Considerations |
|---|---|---|---|---|
| Whole Genome Sequencing (WGS) | gDNA from blood, fresh-frozen biopsy [15] | High (typically 1 µg) [15] | High-molecular weight genomic DNA [15] | Not practical for FFPE or small biopsies [15] |
| Exome Sequencing | gDNA from blood, fresh-frozen biopsy [15] | Moderate (typically 500 ng) [15] | High-quality DNA [15] | Not recommended for FFPE [15] |
| Targeted Sequencing Panels | gDNA/RNA from blood, FFPE, fine needle aspirates [15] | Low (minimum 10 ng) [15] | Tolerant of fragmented DNA [15] | Most reliable for FFPE samples; can analyze DNA and RNA in same assay [15] |
| Liquid Biopsy (cfDNA) | Plasma or serum [15] | cfDNA from single blood draw (typical 7.5 mL) [15] | Very short fragments; degrades rapidly [15] | Requires specialized tubes and rapid processing; tumor DNA is small percentage of total [15] |
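
The requirements in this table can be encoded as a first-pass screening rule. The hypothetical helper below returns the methods whose typical input and sample-type requirements a specimen meets, using the table's figures (about 1 µg for WGS, 500 ng for exome, 10 ng for targeted panels, and FFPE excluded from WGS and exome). The sample-type labels are assumed strings, and the rule does not assess DNA integrity, tumor content or cfDNA yield, which still need separate QC.

```python
def suggest_ngs_methods(sample_type, input_ng):
    """First-pass screen of methods a specimen could support (per the table)."""
    sample = sample_type.strip().lower()
    if sample in {"plasma", "serum"}:
        # cfDNA workflows depend on tube type and processing time, not checked here.
        return ["liquid biopsy (cfDNA) panel"]
    intact_dna = sample in {"blood", "fresh-frozen"}  # high-molecular-weight gDNA
    methods = []
    if intact_dna and input_ng >= 1000:
        methods.append("whole genome sequencing")
    if intact_dna and input_ng >= 500:
        methods.append("exome sequencing")
    if input_ng >= 10:
        methods.append("targeted panel")  # tolerant of FFPE and fragmented DNA
    return methods


print(suggest_ngs_methods("FFPE", 50))
print(suggest_ngs_methods("blood", 1200))
```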

Instrumentation and Platform-Specific Issues

Q: Our Ion S5 system is showing a "Chip Not Found" error during initialization. What steps should we take?

A: This error indicates communication issues between the chip and instrument [7]. Follow this troubleshooting protocol:

- Open the chip clamp, remove the chip, and inspect for physical damage or moisture outside the flow cell [7].

- If damaged, replace with a new chip [7].

- Ensure the chip is properly seated and close the clamp firmly [7].

- Run Chip Check again. If failure persists, there may be a problem with the chip socket; contact Technical Support [7].

Q: During a run, our Ion PGM system reports "W1 Empty" error, but the bottle has sufficient solution. What could be wrong?

A: This error can indicate a blockage in the fluidics system [7]. Recommended actions:

- Check that the sippers and bottles are securely attached and not loose [7].

- If components are secure, the line between W1 and W2 may be blocked. Run the line clear procedure [7].

- If error persists, detach reagent bottles, water-clean, and shut down the instrument. Restart with fresh W1 solution (prepared with 350 µL of 100 mM NaOH) [7].

Data Analysis and Bioinformatics

Q: What are the essential components of an NGS data analysis workflow for cancer research?

A: A robust bioinformatics pipeline includes multiple stages [18]:

- Primary Analysis: Base calling, demultiplexing, and quality control of raw sequence reads [18].

- Secondary Analysis: Reference genome alignment, variant calling (SNVs, indels, CNVs, SVs), and gene expression quantification [18].

- Tertiary Analysis: Pathway analysis, variant annotation, and clinical interpretation for actionable biomarkers [18].
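
Demultiplexing, part of primary analysis, assigns each read to a sample by its index (barcode) sequence. The sketch below is a simplified, hypothetical implementation that tolerates a small number of mismatches and sends ambiguous or unmatched reads to an "undetermined" bin; production tools read indexes from FASTQ or BCL files and apply additional checks, and the barcodes used in testing are placeholders. Tolerating one mismatch is only safe when the barcode set is designed with enough distance that a single error cannot turn one barcode into another.

```python
def hamming(a, b):
    """Number of mismatched positions between equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def demultiplex(reads, sample_barcodes, max_mismatches=1):
    """Bin reads by index sequence.

    reads: iterable of (index_sequence, read) pairs.
    sample_barcodes: mapping of sample name -> expected index sequence.
    """
    bins = {name: [] for name in sample_barcodes}
    bins["undetermined"] = []
    for index_seq, read in reads:
        hits = [name for name, barcode in sample_barcodes.items()
                if len(barcode) == len(index_seq)
                and hamming(barcode, index_seq) <= max_mismatches]
        # Exactly one match is required; ambiguous or unmatched reads are set aside.
        bins[hits[0] if len(hits) == 1 else "undetermined"].append(read)
    return bins
```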

Q: Our bioinformatics team struggles with the volume and complexity of NGS data. What resources are available?

A: Several approaches can address this challenge:

- Standardized Pipelines: Implement established pipelines like those available from the Frederick Sequencing and Genomics Core on GitHub [18].

- Quality Metrics: Utilize MultiQC reports for comprehensive quality assessment across multiple samples [18].

- Computational Resources: Leverage high-performance computing environments such as NIH's Biowulf for data-intensive analyses [18].

Figure (not reproduced): NGS Challenge Relationships and Solutions

Figure (not reproduced): NGS Sample Processing Workflow

Research Reagent Solutions for NGS Implementation

The table below outlines essential reagents and materials required for successful NGS workflows in cancer research, along with their specific functions.

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA/RNA from various sample types [15] | Use sample type-specific kits; FFPE requires specialized protocols to address cross-linking [15] |
| Library Preparation Kits | Fragmentation, adapter ligation, and amplification of target sequences [6] [19] | Targeted panels recommended for FFPE and low-input samples; amplicon size should match fragment length [15] |
| Sequence Adapters | Platform-specific oligonucleotides for binding DNA fragments to flow cells [6] | Contain binding sites for cluster generation and sequencing primers; often include barcodes for multiplexing [6] |
| Quality Control Assays | Fluorometric quantification and fragment analysis [15] | Essential for determining library concentration and size distribution; critical for optimizing sequencing runs [15] |
| Target Enrichment Panels | Selection of cancer-relevant genes/regions for targeted sequencing [15] | Can be amplicon-based or hybrid capture; focus on clinically actionable cancer genes and biomarkers [15] |
| Control Ion Sphere Particles | Quality monitoring for semiconductor sequencing [7] | Included in Ion S5 Installation Kit; essential for verifying template preparation and sequencing performance [7] |

Implementing NGS in clinical cancer research presents multifaceted challenges spanning technical, operational, and analytical domains. Success requires careful attention to sample quality, appropriate platform selection, robust bioinformatics pipelines, and systematic troubleshooting of technical issues. By addressing these hurdles through standardized protocols, comprehensive training, and ongoing quality improvement, research institutions can harness the full potential of NGS to advance precision oncology and improve patient outcomes.

Next-Generation Sequencing (NGS) has revolutionized cancer diagnostics, enabling comprehensive genomic profiling that guides personalized treatment strategies [6] [20]. However, beneath the transformative potential of this technology lies a substantial hidden burden: significant economic and workforce constraints that hinder its widespread adoption in clinical and research settings. These challenges manifest as complex, labor-intensive workflows, specialized training requirements, and substantial financial investments that create barriers to implementation, particularly in resource-limited environments. This technical support center provides practical guidance to help researchers, scientists, and drug development professionals overcome these hurdles through optimized protocols, troubleshooting guides, and strategic workflow planning.

NGS Workflow and Economic Impact

The standard NGS workflow consists of multiple technically demanding steps where economic and workforce constraints frequently emerge. Understanding this workflow is essential for identifying cost-saving and efficiency opportunities.

The NGS workflow encompasses nucleic acid extraction, library construction, template amplification, sequencing reaction, and data analysis [21]. Economic and workforce pressures manifest prominently in the library preparation and data analysis stages, which are notably labor-intensive and require specialized expertise [22].

Economic Impact of Workflow Stages

Table: Economic and Workforce Impact Across NGS Workflow Stages

| Workflow Stage | Primary Economic Impact | Primary Workforce Impact | Typical Duration |
|---|---|---|---|
| Library Preparation | High reagent costs, consumable expenses | Labor-intensive processes requiring technical staff | 6-8 hours (manual) |
| Template Amplification | PCR reagent costs, equipment maintenance | Cross-contamination risks requiring careful technique | 2-4 hours |
| Sequencing Reaction | High instrument costs, sequencing reagents | Technical operation expertise | 1-48 hours (varies by platform) |
| Data Analysis | Bioinformatics software, computational resources | Specialized bioinformatics expertise | Several hours to days |

Troubleshooting Guide: Common NGS Challenges and Solutions

Library Preparation Issues

Problem: Low Library Yield

Low library yield results in poor sequencing performance and insufficient data generation, often requiring costly process repetition.

- Symptoms: Final library concentrations below expected values; broad or faint peaks on electropherogram; dominance of adapter dimer peaks (~70-90 bp) [11].

- Root Causes:
  - Degraded or contaminated nucleic acid input
  - Inaccurate quantification methods (e.g., relying solely on absorbance)
  - Inefficient fragmentation or ligation
  - Overly aggressive purification or size selection [11]

- Solutions:
  - Re-purify input sample using clean columns or beads
  - Use fluorometric quantification methods (Qubit, PicoGreen) rather than UV absorbance
  - Optimize fragmentation parameters for specific sample types
  - Titrate adapter:insert molar ratios to improve ligation efficiency [11]
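Fluorometric concentrations (ng/µL) must be converted to molar units before you titrate adapter:insert ratios or load a sequencer. The minimal sketch below assumes the common rule-of-thumb average mass of 660 g/mol per base pair of double-stranded DNA and a known mean fragment length (for example, from a fragment analyzer trace). The helper names are hypothetical.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate mass of one dsDNA base pair (rule of thumb)


def molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA concentration in ng/uL to nM for a given mean fragment length."""
    return conc_ng_per_ul * 1e6 / (AVG_BP_MASS_G_PER_MOL * mean_fragment_bp)


def pmol_from_ng(mass_ng: float, fragment_bp: float) -> float:
    """Amount (pmol) of dsDNA fragments in a given mass."""
    return mass_ng * 1e3 / (AVG_BP_MASS_G_PER_MOL * fragment_bp)


def adapter_pmol_for_ratio(insert_ng: float, insert_bp: float, molar_ratio: float) -> float:
    """Adapter amount (pmol) needed for a target adapter:insert molar ratio."""
    return molar_ratio * pmol_from_ng(insert_ng, insert_bp)


if __name__ == "__main__":
    # 1 ng/uL of a library with a 500 bp mean fragment length
    print(f"{molarity_nM(1.0, 500):.2f} nM")
    # Adapter needed for a 10:1 ratio with 100 ng of 300 bp inserts
    print(f"{adapter_pmol_for_ratio(100, 300, 10):.2f} pmol adapter")
```

To titrate in practice, test a small series of ratios around the kit's recommended value and compare yield and dimer peaks. The 10:1 ratio above is only a placeholder.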

Problem: High Duplication Rates

Elevated duplication rates indicate poor library complexity, reducing effective sequencing depth and increasing costs per usable data point.

- Symptoms: High percentage of PCR duplicate reads; overamplification artifacts; reduced unique read coverage [11].

- Root Causes:
  - Insufficient starting material
  - Too many PCR amplification cycles
  - Inefficient polymerase or presence of inhibitors
  - Primer exhaustion or mispriming [11]

- Solutions:
  - Increase input DNA where possible
  - Reduce number of amplification cycles
  - Ensure fresh polymerase and optimal reaction conditions
  - Use two-step indexing instead of one-step PCR approaches [11]
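Duplication rate is usually estimated from aligned reads. Reads that share the same mapping coordinates and orientation are treated as likely PCR copies of one original molecule. The sketch below uses a simplified single-end key (chromosome, 5' start, strand) to illustrate the idea. It does not replace dedicated tools such as Picard MarkDuplicates, which also consider mate positions and base qualities.

```python
from collections import Counter

ReadKey = tuple[str, int, str]  # (chromosome, 5' start position, strand)


def duplication_rate(read_keys: list[ReadKey]) -> float:
    """Fraction of reads whose (chrom, start, strand) key repeats an earlier read's key."""
    if not read_keys:
        return 0.0
    unique = len(set(read_keys))
    return 1.0 - unique / len(read_keys)


def largest_duplicate_sets(read_keys: list[ReadKey], n: int = 3) -> list[tuple[ReadKey, int]]:
    """Most frequently repeated keys, useful for spotting over-amplified fragments."""
    return [(key, count) for key, count in Counter(read_keys).most_common(n) if count > 1]


if __name__ == "__main__":
    reads = [("chr1", 100, "+"), ("chr1", 100, "+"), ("chr1", 100, "+"),
             ("chr1", 250, "-"), ("chr2", 50, "+")]
    print(duplication_rate(reads))        # 3 unique keys among 5 reads
    print(largest_duplicate_sets(reads))
```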

Sequencing and Data Analysis Challenges

Problem: High Error Rates in Sequencing Data

NGS technologies have inherent error rates (0.26%-1.78% depending on platform) that can obscure true biological variants, particularly in SNP detection or low-abundance mutation analysis [21].

- Symptoms: Elevated false-positive variant calls; inconsistent base calling; platform-specific error patterns.

- Root Causes:
  - PCR amplification artifacts introducing base errors
  - Platform-specific limitations (e.g., homopolymer errors in Ion Torrent)
  - Sequence-context issues (e.g., AT-rich or GC-rich regions in Illumina) [21]

- Solutions:
  - Implement duplicate read removal in the bioinformatics pipeline
  - Use unique molecular identifiers (UMIs) to distinguish true variants from PCR and sequencing errors
  - Choose a platform appropriate for the application (e.g., SOLiD for highest accuracy)
  - Increase sequencing depth for critical regions [21]
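A binomial model shows why per-base error rates obscure low-abundance variants. At depth d, the number of reads showing one particular wrong base is approximately Binomial(d, p). The sketch below assumes that errors are independent. As a simplification, it also assumes that a substitution error is equally likely to produce any of the three alternative bases, so p = error rate / 3. Real error profiles depend on sequence context, which is one reason UMIs and duplicate handling help.

```python
from math import comb


def prob_at_least_k(depth: int, k: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(depth, p)."""
    return 1.0 - sum(comb(depth, i) * p**i * (1 - p) ** (depth - i) for i in range(k))


if __name__ == "__main__":
    depth, min_alt_reads = 1000, 5
    for error_rate in (0.0026, 0.0178):  # ends of the platform error range quoted above
        p_specific = error_rate / 3      # simplifying assumption: errors spread over 3 bases
        false_pos = prob_at_least_k(depth, min_alt_reads, p_specific)
        print(f"error rate {error_rate:.2%}: P(>= {min_alt_reads} error reads) = {false_pos:.3g}")
    # A true variant at 1% VAF yields about 10 supporting reads on average at this depth.
    print(f"detection at 1% VAF: {prob_at_least_k(depth, min_alt_reads, 0.01):.3f}")
```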

Problem: Bioinformatics Bottlenecks

NGS data analysis faces challenges including sequencing errors, tool variability, and computational limits that slow analysis and require specialized expertise [14].

- Symptoms: Extended processing times; conflicting results between analysis tools; insufficient computational resources.

- Root Causes:
  - Lack of standardized analysis pipelines
  - Inadequate computational infrastructure
  - Variability in bioinformatics tool algorithms
  - Insufficient bioinformatics training [14]

- Solutions:
  - Implement standardized workflows with quality control checkpoints
  - Utilize high-performance computing resources or cloud-based solutions
  - Establish consensus variant calling using multiple algorithms
  - Invest in bioinformatics training for research staff [14]
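In its simplest form, consensus variant calling keeps only the calls supported by a minimum number of independent callers. In this sketch the caller names are hypothetical placeholders, and variants are keyed by (chrom, pos, ref, alt). In practice, normalize the calls first (for example, left-align indels and split multi-allelic records) so that the same variant reported by different tools compares equal.

```python
from collections import Counter

Variant = tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def consensus_calls(callsets: dict[str, set[Variant]], min_support: int = 2) -> set[Variant]:
    """Return variants reported by at least `min_support` callers."""
    support: Counter = Counter()
    for calls in callsets.values():
        support.update(set(calls))  # each caller counts at most once per variant
    return {variant for variant, n in support.items() if n >= min_support}


if __name__ == "__main__":
    # Hypothetical outputs from three callers (names and coordinates are illustrative).
    callsets = {
        "caller_a": {("chr7", 1000, "T", "A"), ("chr12", 2000, "C", "T")},
        "caller_b": {("chr7", 1000, "T", "A"), ("chr17", 3000, "G", "A")},
        "caller_c": {("chr7", 1000, "T", "A"), ("chr12", 2000, "C", "T")},
    }
    print(sorted(consensus_calls(callsets)))
```

Raising `min_support` trades sensitivity for specificity. This trade-off matters most for low-VAF calls, where callers tend to disagree.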

Cost-Benefit Analysis of NGS Implementation Strategies

Table: Economic Comparison of NGS Implementation Approaches

| Implementation Strategy | Initial Investment | Operational Costs | Workforce Requirements | Suitable Settings |
|---|---|---|---|---|
| Manual Library Preparation | Low ($-$$) | High (reagents, labor) | Technical staff with specialized training | Low-volume academic labs |
| Automated Sample Prep | High ($$$$) | Moderate (reagents, maintenance) | Technical staff with automation training | High-throughput clinical labs |
| Centralized Sequencing Core | Very High ($$$$$) | Moderate (service fees) | Minimal technical staff required | Multi-institutional consortia |
| On-Site NGS Testing | High ($$$$) | Low-moderate (reagents) | Technical and bioinformatics staff | Clinical trial sites, hospital networks |

Essential Research Reagent Solutions

Table: Key Reagents and Materials for NGS Workflows

| Reagent/Material | Function | Considerations for Economic Constraints |
|---|---|---|
| Fragmentation Enzymes | Shears DNA into appropriately-sized fragments for sequencing | Optimize reaction conditions to prevent over-/under-shearing and reduce reagent waste |
| Adapter Oligos | Attach to DNA fragments for platform-specific sequencing | Titrate adapter:insert ratio to minimize dimer formation and reduce costs |
| Polymerase Enzymes | Amplify library fragments prior to sequencing | Select high-fidelity enzymes to reduce errors and need for repetition |
| Size Selection Beads | Purify and select appropriately-sized library fragments | Optimize bead:sample ratios to improve yield and reduce reagent consumption |
| Unique Molecular Identifiers (UMIs) | Distinguish true biological variants from PCR/sequencing errors | Implement to reduce false positives and need for confirmatory testing |
| Automated Prep Systems | Reduce manual processing and improve reproducibility | High initial investment but long-term labor and reagent savings |

Frequently Asked Questions (FAQs)

What are the most cost-effective strategies for implementing NGS in resource-limited settings?

Prioritize targeted sequencing panels over whole-genome approaches to reduce data analysis burdens and costs. Implement automated sample preparation systems where possible despite their higher initial investment, as they reduce reagent costs and labor requirements over time [22]. Consider shared instrumentation models or core facilities to distribute equipment maintenance costs across multiple research groups.

How can we minimize workforce training requirements while maintaining NGS quality?

Develop standardized operating procedures (SOPs) that highlight critical steps to reduce inter-operator variability [11]. Implement automated sample prep systems to minimize manual handling errors and reduce technical training needs [22]. Create detailed checklists and use "waste plates" during critical purification steps to prevent sample loss from handling errors [11].

What are the primary causes of NGS workflow failures, and how can they be prevented?

Common failure points include sample quality issues, library preparation errors, and contamination. Prevention strategies include:

- Validate input DNA/RNA quality using multiple quantification methods (fluorometric and spectrophotometric)

- Implement rigorous contamination controls including negative extraction and amplification controls

- Standardize library preparation protocols with clear quality checkpoints

- Regular maintenance and calibration of laboratory equipment [11]

How can we address the bioinformatics bottleneck without hiring additional staff?

Utilize cloud-based analysis platforms with pre-configured pipelines to reduce local computational infrastructure needs. Implement standardized, automated analysis workflows to minimize manual intervention requirements. Pursue collaborative partnerships with bioinformatics cores or service providers for complex analyses rather than maintaining full-time specialized staff [14].

What operational changes provide the best return on investment for improving NGS efficiency?

Implementing automated sample preparation systems demonstrates significant ROI through reduced hands-on time, improved reproducibility, and decreased reagent consumption [22]. Transitioning from manual library preparation to automated or semi-automated workflows can reduce processing time by up to 70% while significantly improving inter-experiment consistency [22]. Additionally, optimizing template amplification to require fewer PCR cycles reduces duplicate rates and improves library complexity.
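You can check the ROI argument for automation with a simple break-even calculation: the number of samples after which per-sample savings repay the up-front investment. All figures in the example are hypothetical placeholders, not vendor prices. The model also ignores maintenance contracts, financing, and throughput limits.

```python
import math
from typing import Optional


def break_even_samples(initial_investment: float,
                       manual_cost_per_sample: float,
                       automated_cost_per_sample: float) -> Optional[int]:
    """Samples needed before automation pays for itself; None if it never does."""
    savings = manual_cost_per_sample - automated_cost_per_sample
    if savings <= 0:
        return None
    return math.ceil(initial_investment / savings)


if __name__ == "__main__":
    # Hypothetical: per-sample cost includes reagents plus hands-on labor.
    n = break_even_samples(initial_investment=150_000,
                           manual_cost_per_sample=120.0,
                           automated_cost_per_sample=80.0)
    samples_per_year = 2_000  # hypothetical lab volume
    print(f"break-even after {n} samples (~{n / samples_per_year:.1f} years)")
```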

Optimized NGS Protocol for Resource-Constrained Settings

Efficient Library Preparation Protocol

This protocol balances cost considerations with technical performance for cancer research applications:

Input DNA Quality Control: Assess DNA quality using fluorometric methods (Qubit) supplemented with fragment analyzer systems to ensure accurate quantification and integrity assessment [11].

Enzymatic Fragmentation: Use validated enzymatic fragmentation kits with optimized reaction conditions specific to DNA source (FFPE vs. fresh frozen) to maximize efficiency and minimize over-processing [21].

Adapter Ligation Optimization: Employ reduced adapter concentrations with extended ligation times (2-4 hours at 20°C) to maintain efficiency while reducing reagent costs [11].

Limited-Cycle Amplification: Determine minimum PCR cycle requirements through pilot studies for each sample type, typically 8-12 cycles, to maintain library complexity while reducing duplication rates and bias [21].

Pooling Strategy: Implement combinatorial dual indexing to enable sample multiplexing while maintaining flexibility in sequencing depth allocation across projects [22].

This protocol emphasizes strategic resource allocation while maintaining data quality, addressing both economic and workforce constraints through optimized processes and reduced technical hands-on time.
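The pooling step can be sketched in two parts. First, assign each sample a unique i7/i5 index pair under combinatorial dual indexing. Second, compute equimolar pooling volumes: since 1 nM equals 1 fmol/µL, the volume in µL is the target amount in fmol divided by the concentration in nM. Index names, concentrations, and the target amount below are hypothetical.

```python
from itertools import product


def assign_combinatorial_indexes(samples: list[str],
                                 i7: list[str],
                                 i5: list[str]) -> dict[str, tuple[str, str]]:
    """Give each sample a unique (i7, i5) pair drawn from all combinations."""
    pairs = list(product(i7, i5))
    if len(samples) > len(pairs):
        raise ValueError(f"{len(samples)} samples but only {len(pairs)} index combinations")
    return dict(zip(samples, pairs))


def equimolar_volumes_ul(library_nM: dict[str, float],
                         fmol_per_library: float) -> dict[str, float]:
    """Volume of each library (uL) that contributes the same molar amount to the pool."""
    return {name: fmol_per_library / conc for name, conc in library_nM.items()}


if __name__ == "__main__":
    i7 = ["i7_01", "i7_02"]            # hypothetical index names
    i5 = ["i5_01", "i5_02", "i5_03"]   # 2 x 3 = 6 combinations
    samples = ["S1", "S2", "S3", "S4"]
    print(assign_combinatorial_indexes(samples, i7, i5))
    print(equimolar_volumes_ul({"S1": 4.0, "S2": 2.5, "S3": 8.0, "S4": 5.0}, fmol_per_library=20.0))
```

Combinatorial pairs make efficient use of a small index set. On patterned flow cells, however, unique dual indexes are generally preferred because they allow index-hopping events to be filtered out.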

Strategic NGS Applications in Cancer: From Tumor Genomes to Liquid Biopsies

Frequently Asked Questions (FAQs)

Q1: What is Comprehensive Genomic Profiling (CGP) and how does it differ from traditional single-gene tests? Comprehensive Genomic Profiling (CGP) is a next-generation sequencing (NGS) method that detects multiple classes of genomic alterations (SNVs, indels, CNVs, fusions, MSI, TMB) across a broad panel of cancer-related genes simultaneously from a single tumor sample [23]. Unlike traditional single-gene tests which analyze one biomarker at a time, CGP utilizes a hybrid capture-based NGS assay to provide a complete genomic picture, optimizing tissue use and identifying more therapeutic options, including clinical trial eligibility [24] [25].

Q2: What is the typical actionable mutation rate detected by CGP in real-world practice? Actionable mutation rates vary by tumor type and testing methodology. A 2023 retrospective study of 170 solid tumor patients in a clinical practice setting found that 46.4% of cases had actionable mutations with FDA-approved medications for their specific tumor histology, while an additional 37.6% had alterations with approved drugs for other cancer types [24]. This demonstrates the substantial potential of CGP to guide targeted therapy decisions.

Q3: How can CGP results impact patient diagnosis beyond treatment selection? CGP can complement traditional pathology and in some cases lead to diagnostic recharacterization. A 2025 study highlighted 28 cases where CGP findings prompted re-evaluation of initial diagnoses, resulting in tumor reclassification or refinement (particularly for cancers of unknown primary) [25]. This more precise diagnosis subsequently unlocked more accurate therapeutic strategies tailored to the updated diagnosis [25].

Q4: What are the main challenges in implementing CGP in clinical practice? Key challenges include variability in testing timing, reporting practices, and interpretation complexities [26]. Additional barriers, especially in developing countries, involve cost, long turnaround times, lack of local clinical guidelines, insufficient clinician education, and limited conclusive cost-benefit studies for policymakers [24]. Standardizing reporting formats and leveraging multidisciplinary tumor boards are critical to overcoming these hurdles [26].

Troubleshooting Common CGP Workflow Issues

Pre-Analytical and Sequencing Preparation

Problem: Low Library Yield

- Failure Signals: Low final library concentration, broad or faint peaks on electropherogram, high adapter dimer presence [11].

- Root Causes & Corrective Actions:
  - Poor Input Quality: Re-purify input DNA/RNA to remove contaminants (phenol, salts); ensure 260/230 >1.8 and 260/280 ~1.8 [11].
  - Quantification Errors: Use fluorometric methods (Qubit) instead of UV absorbance (NanoDrop) for accurate template quantification [11] [27].
  - Fragmentation Issues: Optimize fragmentation parameters (time, energy) for your sample type (e.g., FFPE, GC-rich) [11].
  - Adapter Ligation Problems: Titrate adapter-to-insert molar ratio; ensure fresh ligase and optimal reaction conditions [11].

Problem: High Adapter Dimer Contamination

- Failure Signals: Sharp peak at ~70-90 bp on electropherogram [11].

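- Root Causes & Corrective Actions:
  - Excess Adapters Relative to Insert: Titrate the adapter-to-insert molar ratio to minimize dimer formation.
  - Low or Inaccurately Quantified Input: Confirm the input amount with fluorometric quantification, since low insert amounts favor adapter-adapter ligation.
  - Incomplete Cleanup: Optimize bead-to-sample ratios during purification and size selection, or add a further bead cleanup, to remove dimers before sequencing.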

Data Analysis and Interpretation

Problem: Insufficient Sequencing Coverage

- Failure Signals: Low average coverage across target regions, poor variant calling confidence [27].

- Root Causes & Corrective Actions:
  - Sample Degradation: Check RNA/DNA integrity number (RIN/DIN) before library prep; use freshly extracted nucleic acids where possible [11].
  - Library Quantity: Accurately quantify the final library using qPCR before sequencing [11].
  - Sequencing Load: Adjust template concentration on the flow cell or sequencing chip according to platform specifications [27].

Problem: Discordant Results Between CGP and Initial Diagnosis

- Scenario: CGP reveals molecular findings inconsistent with initial pathological assessment [25].

- Resolution Strategy:
  - Initiate secondary integrated clinicopathological review of all findings [25].
  - Correlate specific genomic biomarkers with their typical diagnostic associations (e.g., TMPRSS2-ERG fusions in prostate cancer, IDH1 mutations in cholangiocarcinoma) [25].
  - Utilize molecular tumor boards to reconcile discordance and determine if reclassification is warranted [26] [25].

Quantitative Data on Actionable Genomic Alterations

Table 1: Actionable Genomic Alterations in Colorectal Carcinoma (N=575) [28]

| Biomarker Category | Prevalence in MSS CRC | Prevalence in MSI-H CRC | Potential Therapeutic Implications |
|---|---|---|---|
| MSI Status | 82% | 18% | Immunotherapy response predictor |
| TMB (Median) | 3.9 mut/Mb | 37.8 mut/Mb | Immunotherapy response predictor |
| Driver Mutations | | | |
| - APC | 74% | - | - |
| - TP53 | 67% | - | - |
| - KRAS | 47% | - | Anti-EGFR resistance |
| - PIK3CA | 21% | - | PI3K pathway inhibitors |
| - BRAF | 13% | - | BRAF/MEK inhibitors |
| Anti-EGFR Resistance | 59% in RAS/RAF WT | - | Alternative therapies |
| Clinical Actionability | | | |
| - Standard care (Level 1/2) | - | - | 51% of late-stage patients |
| - Clinical trials (Level 3/4) | - | - | 49% of late-stage patients |

Table 2: CGP Testing Outcomes Across Multiple Solid Tumors (N=170) [24]

| Testing Outcome | Percentage of Cases | Clinical Implications |
|---|---|---|
| Actionable mutations with FDA-approved drugs | 46.4% | Directly eligible for targeted therapy |
| Alterations with drugs approved for other histologies | 37.6% | Potential for off-label use or trial eligibility |
| Total with therapy-directing recommendations | 84% | Majority benefit from genomic guidance |
| Tier I alterations (strongest evidence) | 22.1% | Highest confidence therapeutic targets |
| Tier II alterations | 11.0% | Validated targets with clinical evidence |
| Common tumor types tested | | |
| - Lung primary tumors | 52.9% | Major application area |
| - Tumors of unknown primary | 10.0% | High diagnostic value |

Essential Research Reagent Solutions

Table 3: Key Reagents for CGP Laboratory Workflow

| Reagent/Category | Function | Technical Considerations |
|---|---|---|
| FFPE DNA/RNA Extraction Kits | Nucleic acid isolation from clinical specimens | Optimized for degraded, cross-linked samples; assess DNA integrity number (DIN) |
| Hybrid Capture Panels | Target enrichment for cancer genes | Comprehensive content (300-500+ genes); coverage uniformity; ability to detect all variant types |
| Library Preparation Master Mixes | NGS library construction | High efficiency for low-input samples; minimal bias; compatibility with degraded DNA |
| MSI and TMB Bioinformatics Algorithms | Genomic signature analysis | Validated against gold standards; appropriate threshold settings (e.g., TMB-H: ≥10 mut/Mb) |
| Tumor Purity Estimation Tools | Sample quality assessment | Integration with copy number analysis; critical for accurate variant calling |

Figure: CGP clinical application pathway

Figure: Biomarker-driven diagnostic reclassification

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using liquid biopsy for treatment response monitoring compared to traditional tissue biopsy? Liquid biopsy offers a minimally invasive method for real-time monitoring of tumor dynamics through a simple blood draw. Unlike tissue biopsies, which are invasive, cannot be frequently repeated, and may not capture tumor heterogeneity, liquid biopsy allows for longitudinal assessment of tumor evolution, treatment efficacy, and emergence of resistance mechanisms by analyzing circulating tumor DNA (ctDNA) shed into the bloodstream from multiple tumor sites [29] [30] [31].

Q2: What factors can lead to a false-negative liquid biopsy result? A false-negative result, where ctDNA is not detected despite the presence of cancer, can occur due to:

- Low tumor shedding: Some cancer types (e.g., brain, renal, thyroid) inherently shed less DNA into the bloodstream [32] [3].

- Low tumor burden: Early-stage cancers or small lesions may release ctDNA levels below the assay's limit of detection [29] [33].

- Successful treatment: Effective therapy that reduces the tumor mass will also lower ctDNA levels, which can be misinterpreted without clinical context [32].

Q3: How do I choose between a targeted and an untargeted NGS approach for ctDNA analysis? The choice depends on the clinical or research objective.

- Targeted NGS (e.g., using multiplex PCR or hybridization capture) sequences a pre-defined panel of genes. It allows for deep sequencing (high sensitivity) to detect low-frequency variants and is ideal for identifying known, actionable mutations for therapy selection [34] [3].

- Untargeted NGS (e.g., Whole-Genome or Whole-Exome Sequencing) does not use a pre-selection step. It provides a broader, hypothesis-free exploration of the genome, useful for discovering novel alterations and researching tumor heterogeneity, but at a lower sequencing depth [34].

Q4: What is clonal hematopoiesis (CHIP), and how can it confound liquid biopsy results? Clonal hematopoiesis of indeterminate potential (CHIP) is an age-related phenomenon in which hematopoietic stem cells acquire mutations that are unrelated to the solid tumor. DNA from these mutant blood cells is released into the plasma and detected as part of the cfDNA. CHIP-associated variants can therefore be mistaken for tumor-derived mutations, leading to false-positive results and potentially incorrect treatment decisions [32] [30].

Q5: What is the significance of Variant Allele Frequency (VAF) in ctDNA analysis? Variant Allele Frequency (VAF) is the percentage of DNA fragments carrying a specific mutation out of the total DNA fragments at that genomic locus. It is a crucial quantitative biomarker that can serve as a surrogate for tumor burden, help monitor treatment response (decreasing VAF indicates response, increasing VAF suggests progression), and provide insights into tumor heterogeneity and the clonality of mutations [34] [3].
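VAF is the ratio of variant-supporting reads to total reads at a locus, and serial values can be compared to flag a response or progression signal. The sketch below is illustrative only. The two-fold change threshold is a hypothetical parameter, and clinical interpretation also depends on assay noise, input amount, and clinical context.

```python
def variant_allele_frequency(alt_reads: int, total_reads: int) -> float:
    """VAF = reads supporting the variant / all reads covering the locus."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    if not 0 <= alt_reads <= total_reads:
        raise ValueError("alt_reads must be between 0 and total_reads")
    return alt_reads / total_reads


def classify_vaf_change(baseline: float, follow_up: float, min_fold: float = 2.0) -> str:
    """Label a serial ctDNA change; min_fold is a hypothetical threshold."""
    if baseline == 0 and follow_up == 0:
        return "not detected"
    if follow_up * min_fold <= baseline:
        return "decreasing (consistent with response)"
    if follow_up >= baseline * min_fold:
        return "increasing (possible progression)"
    return "stable"


if __name__ == "__main__":
    before = variant_allele_frequency(alt_reads=60, total_reads=2000)  # 3.0%
    after = variant_allele_frequency(alt_reads=8, total_reads=2500)    # 0.32%
    print(f"{before:.2%} -> {after:.2%}: {classify_vaf_change(before, after)}")
```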

Troubleshooting Common Experimental Challenges

Challenge: Low ctDNA Concentration or Yield

Low ctDNA yield can compromise assay sensitivity and mutation detection.

Potential Causes and Solutions:

- Cause: Inefficient Blood Collection and Processing.
  - Solution: Use blood collection tubes designed to stabilize cfDNA (e.g., Streck Cell-Free DNA BCT). With standard EDTA tubes, separate plasma by centrifugation within a short window after the draw (e.g., within 2-6 hours); stabilizing tubes extend this window according to the manufacturer's specifications. In both cases, the aim is to prevent leukocyte lysis, which contaminates the plasma cfDNA with genomic DNA [31].

- Cause: Low Tumor Shedding.
  - Solution: Optimize the NGS wet-lab protocol for low inputs. This includes using Unique Molecular Identifiers (UMIs) to tag individual DNA molecules before amplification to correct for PCR errors and duplicates, and increasing the sequencing depth (coverage) to enhance the probability of detecting low-frequency variants [34] [31].
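Low input limits sensitivity before any sequencing happens. If only a few thousand genome copies enter the library, a variant at very low VAF may not be present in any of them. The rough sketch below assumes about 3.3 pg of DNA per haploid human genome and random sampling of molecules. Under these assumptions, the chance of capturing at least one mutant copy is 1 - (1 - VAF)^N for N genome equivalents. The function names are hypothetical.

```python
PG_PER_HAPLOID_GENOME = 3.3  # approximate mass of one haploid human genome (pg)


def haploid_genome_equivalents(input_ng: float) -> float:
    """Approximate number of haploid genome copies in a DNA input."""
    return input_ng * 1000 / PG_PER_HAPLOID_GENOME


def prob_mutant_copy_sampled(input_ng: float, vaf: float) -> float:
    """Chance that at least one mutant copy is present in the input (random sampling)."""
    n = haploid_genome_equivalents(input_ng)
    return 1 - (1 - vaf) ** n


if __name__ == "__main__":
    for ng in (1, 5, 10, 30):
        p = prob_mutant_copy_sampled(ng, vaf=0.001)  # 0.1% VAF
        copies = haploid_genome_equivalents(ng)
        print(f"{ng:>3} ng (~{copies:,.0f} copies): P(>=1 mutant copy) = {p:.2f}")
```

Confident detection usually requires several supporting molecules rather than one, so this value is an upper bound on sensitivity. UMIs and deeper sequencing cannot recover molecules that were never in the input.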

Challenge: Inability to Detect Low-Frequency Variants

Detecting mutations with very low VAF is critical for early detection of resistance or minimal residual disease (MRD).

Potential Causes and Solutions:

- Cause: Insufficient Sequencing Depth and Technical Noise.
  - Solution: Implement highly sensitive NGS methods designed for low-VAF detection. Key techniques include:
    - Unique Molecular Identifiers (UMIs): Tag individual DNA molecules to distinguish true mutations from PCR/sequencing errors [34].
    - Tagged-Amplicon Deep Sequencing (TAm-Seq): A highly multiplexed PCR approach that can detect mutations at mutant allele fractions (MAFs) as low as 0.25% [34].
    - CAncer Personalized Profiling by deep Sequencing (CAPP-Seq): Uses a selector probe library to enrich for recurrently mutated regions and combines this with deep sequencing and computational error suppression [34].


Challenge: Distinguishing Somatic Tumor Mutations from CHIP

Misattributing CHIP variants to the solid tumor can lead to inaccurate genomic profiling.

Potential Causes and Solutions:

- Cause: Lack of a Paired Normal Control.
  - Solution: Always sequence a matched normal sample (e.g., peripheral blood mononuclear cells, PBMCs) from the same patient. Bioinformatic subtraction of variants found in the PBMCs from those in the plasma helps filter out CHIP-derived mutations. If a matched normal is unavailable, use bioinformatics databases and tools that catalog common CHIP mutations to aid in variant annotation and filtering [32] [30].
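Matched-normal subtraction amounts to a set difference on normalized variant keys. For cases without a matched normal, the sketch below flags variants for review rather than removing them. It uses a small illustrative set of genes frequently involved in CHIP (DNMT3A, TET2, ASXL1). The gene set, data layout, and coordinates are hypothetical, and this is not a validated CHIP filter.

```python
from typing import Optional

Variant = tuple[str, str, int, str, str]  # (gene, chrom, pos, ref, alt)

# Illustrative only; real workflows rely on curated CHIP resources.
COMMON_CHIP_GENES = frozenset({"DNMT3A", "TET2", "ASXL1"})


def filter_chip(plasma: set[Variant],
                matched_normal: Optional[set[Variant]] = None) -> tuple[set[Variant], set[Variant]]:
    """Return (retained, set_aside) plasma variants.

    With a matched white-blood-cell normal, variants also seen there (CHIP or germline)
    are set aside. Without one, variants in common CHIP genes are flagged for review.
    """
    if matched_normal is not None:
        set_aside = plasma & matched_normal
        return plasma - set_aside, set_aside
    flagged = {v for v in plasma if v[0] in COMMON_CHIP_GENES}
    return plasma - flagged, flagged


if __name__ == "__main__":
    plasma = {("KRAS", "chr12", 1000, "C", "T"), ("DNMT3A", "chr2", 2000, "G", "A")}
    pbmc = {("DNMT3A", "chr2", 2000, "G", "A")}
    print(filter_chip(plasma, pbmc))  # with a matched normal
    print(filter_chip(plasma))        # without: DNMT3A variant flagged for review
```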

Essential Experimental Protocols

Protocol: ctDNA Extraction and Library Preparation for Targeted NGS

Principle: To isolate high-quality ctDNA from plasma and prepare a sequencing library optimized for the detection of low-frequency variants.

Reagents and Materials:

- Streck Cell-Free DNA BCT blood collection tubes.

- DNA extraction kit for circulating nucleic acids.

- NGS library preparation kit.

- Targeted gene panel (commercially available or custom-designed).

- Unique Molecular Index (UMI) adapters.

- PCR purification beads.

Methodology:

- Blood Collection and Plasma Separation: Collect venous blood into cfDNA-stabilizing tubes. Centrifuge at 1600 × g for 10-20 minutes to separate plasma from cells. Transfer the supernatant to a new tube and perform a second, high-speed centrifugation (16,000 × g for 10 minutes) to remove any remaining cellular debris [31].

- cfDNA Extraction: Extract cfDNA from the clarified plasma using a silica-membrane column or magnetic bead-based kit according to the manufacturer's protocol. Elute in a low-volume elution buffer.

- Quantification and Quality Control: Quantify cfDNA using a fluorescence-based assay (e.g., Qubit). Assess fragment size distribution using a Bioanalyzer or TapeStation; the main peak should be ~160-170 bp [34].

- Library Preparation with UMIs:
  - a. End-Repair and A-Tailing: Repair DNA ends and add an 'A' base to the 3' ends.
  - b. Ligation of UMI Adapters: Ligate double-stranded adapters containing UMIs to the cfDNA fragments.
  - c. Target Enrichment: Perform hybrid capture or multiplex PCR amplification using the targeted gene panel.
  - d. Library Amplification: Amplify the captured/library fragments with a limited number of PCR cycles.
  - e. Clean-up and QC: Purify the final library using magnetic beads and quantify. Validate library size and quality prior to sequencing [34] [31].
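The plasma separation step specifies centrifugation in × g (relative centrifugal force), but many benchtop centrifuges are set in rpm. The standard conversion is RCF = 1.118 × 10^-5 × r × rpm², where r is the rotor radius in cm. The sketch assumes a hypothetical 10 cm radius; substitute your rotor's actual radius.

```python
import math

RCF_CONSTANT = 1.118e-5  # from RCF = 1.118e-5 * r_cm * rpm^2


def rcf_from_rpm(rpm: float, radius_cm: float) -> float:
    """Relative centrifugal force (x g) for a given speed and rotor radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


def rpm_for_rcf(rcf: float, radius_cm: float) -> float:
    """Speed (rpm) needed to reach a target x g at a given rotor radius."""
    return math.sqrt(rcf / (RCF_CONSTANT * radius_cm))


if __name__ == "__main__":
    radius = 10.0  # hypothetical rotor radius in cm
    for g_force in (1600, 16000):  # the two spins in the plasma separation step
        print(f"{g_force} x g ~ {rpm_for_rcf(g_force, radius):,.0f} rpm at r = {radius} cm")
```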

Protocol: Bioinformatic Processing for Low-VAF Variant Calling

Principle: To accurately identify true somatic mutations from NGS data while minimizing false positives from technical artifacts and CHIP.

Workflow:

- Raw Data Processing: Demultiplex sequencing data (bcl2fastq).

- Quality Control: Assess raw read quality (FastQC).

- Adapter Trimming and Read Alignment: Trim adapter sequences and align reads to the reference genome (e.g., BWA-MEM).

- UMI Processing: Group reads by their unique molecular identifiers and build a consensus to generate a single, high-quality read pair per original molecule, correcting for errors.

- Variant Calling: Use a variant caller suited to low-VAF somatic detection (e.g., Mutect2, VarScan2) with stringent filters.

- CHIP Filtering: Compare called variants against a database of known CHIP mutations and subtract variants also found in the matched PBMC sample.

- Annotation and Reporting: Annotate filtered variants for functional impact and clinical actionability (e.g., using SnpEff, VEP).
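The UMI processing step can be illustrated with a toy majority-vote consensus. The sketch assumes that reads are already grouped by alignment position and trimmed to equal length, and it treats UMIs as exact matches. Production tools (for example, fgbio or UMI-tools) also handle UMI sequencing errors, paired reads, and base qualities. The family-size and agreement thresholds here are hypothetical.

```python
from collections import Counter, defaultdict


def umi_consensus(reads: list[tuple[str, str]],
                  min_family_size: int = 2,
                  min_agreement: float = 0.6) -> dict[str, str]:
    """Collapse reads sharing a UMI into one consensus sequence per UMI family.

    reads: (umi, sequence) pairs from the same alignment position (assumed).
    Positions where no base reaches `min_agreement` are masked as 'N'.
    Families smaller than `min_family_size` are dropped.
    """
    families: dict[str, list[str]] = defaultdict(list)
    for umi, seq in reads:
        families[umi].append(seq)

    consensus: dict[str, str] = {}
    for umi, seqs in families.items():
        if len(seqs) < min_family_size:
            continue
        length = min(len(s) for s in seqs)
        bases = []
        for i in range(length):
            base, count = Counter(s[i] for s in seqs).most_common(1)[0]
            bases.append(base if count / len(seqs) >= min_agreement else "N")
        consensus[umi] = "".join(bases)
    return consensus


if __name__ == "__main__":
    reads = [
        ("AAT", "ACGT"), ("AAT", "ACGT"), ("AAT", "ACTT"),  # one error in the third base
        ("GGC", "TTTT"),                                   # singleton family, dropped
    ]
    print(umi_consensus(reads))
```

An error present in only one member of a family is outvoted. This is why UMI consensus suppresses PCR and sequencing errors but cannot correct an error introduced before tagging.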

Workflow and Relationship Diagrams

Figure: Liquid biopsy ctDNA analysis workflow

Figure: Overcoming the low-VAF detection challenge

Research Reagent Solutions

Table 1: Essential Reagents and Materials for ctDNA NGS Analysis

| Item | Function/Description | Key Consideration |
|---|---|---|
| cfDNA Blood Collection Tubes | Tubes with preservatives to prevent white blood cell lysis and stabilize cfDNA. | Critical for preserving sample integrity during transport and storage. Example: Streck Cell-Free DNA BCT [31]. |
| cfDNA Extraction Kits | Kits based on magnetic beads or silica membranes to purify cfDNA from plasma. | Select kits optimized for low-concentration, fragmented DNA to maximize yield [31]. |
| UMI Adapters | NGS adapters containing random molecular barcodes to uniquely tag each original DNA molecule. | Enables bioinformatic error correction and accurate quantification of variant alleles [34]. |
| Targeted Gene Panels | A pre-designed set of probes or primers to enrich for cancer-related genes. | Panels can be tailored for specific cancer types or designed as comprehensive cancer gene panels (e.g., covering 70+ genes) [34] [31]. |
| Hybrid Capture or Multiplex PCR Reagents | Reagents for enriching targeted genomic regions from the sequencing library. | Hybrid capture allows for larger panel designs, while multiplex PCR can be faster and require less input DNA [34]. |
| High-Sensitivity DNA Assays | Fluorometric or qPCR-based assays for accurate quantification of low-concentration libraries. | Essential for normalizing library input before sequencing to ensure optimal cluster density on the flow cell. |

Core Biomarkers and Their Clinical Significance

What are the key biomarkers for predicting response to immune checkpoint inhibitors?

The most established biomarkers for predicting response to immune checkpoint inhibitors (ICIs) are tumor mutational burden (TMB), microsatellite instability (MSI), and PD-L1 expression. Each measures a different aspect of tumor-immune system interaction and has varying predictive value across cancer types [35].

PD-L1 Expression is assessed via immunohistochemistry (IHC) using different antibody clones (22C3, 28-8, SP142, SP263) and scoring systems (TPS, CPS). It is approved as a companion diagnostic for several ICIs across multiple tumor types including NSCLC, gastric cancer, and HNSCC. However, its utility is limited by significant tumor heterogeneity, temporal variability, and lack of universal cutoff standards [35].

Microsatellite Instability (MSI) and its counterpart mismatch repair deficiency (dMMR) represent the first tumor-agnostic biomarkers approved for immunotherapy. MSI/dMMR status can be detected via IHC (for MMR proteins MLH1, MSH2, MSH6, PMS2), PCR, or NGS. These biomarkers are strong predictors of response to ICIs like pembrolizumab across all tumor types [35] [36].

Tumor Mutational Burden (TMB) quantifies the total number of somatic non-synonymous mutations within a tumor's genome, serving as a proxy for neoantigen load. The FDA has approved TMB-high (≥10 mutations/megabase) as a tissue-agnostic biomarker for pembrolizumab based on the KEYNOTE-158 trial, which showed a 29% overall response rate in TMB-high solid tumors [35] [37].
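In its simplest form, TMB is a count of qualifying somatic mutations divided by the sequenced territory in megabases, compared against the ≥10 mut/Mb cutoff. The sketch below is a simplified illustration. Assays differ in which variant classes count and in whether they include synonymous variants, exclude known drivers, or filter germline variants, which is one source of the lack of standardization across TMB assays. The class labels and panel size are hypothetical.

```python
NONSYNONYMOUS_CLASSES = frozenset({
    "missense", "nonsense", "frameshift", "inframe_indel", "splice_site",
})  # hypothetical labels for variant consequence classes


def tumor_mutational_burden(variant_classes: list[str], territory_mb: float) -> float:
    """Qualifying somatic mutations per megabase of sequenced territory."""
    if territory_mb <= 0:
        raise ValueError("territory_mb must be positive")
    count = sum(1 for c in variant_classes if c in NONSYNONYMOUS_CLASSES)
    return count / territory_mb


def is_tmb_high(tmb: float, cutoff: float = 10.0) -> bool:
    """Apply the TMB-high threshold (mut/Mb)."""
    return tmb >= cutoff


if __name__ == "__main__":
    calls = ["missense"] * 12 + ["frameshift"] * 3 + ["synonymous"] * 6
    tmb = tumor_mutational_burden(calls, territory_mb=1.5)  # hypothetical panel size
    print(f"TMB = {tmb:.1f} mut/Mb, TMB-high: {is_tmb_high(tmb)}")
```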

Table 1: Clinically Validated Immunotherapy Biomarkers

| Biomarker | Predictive Value | Detection Methods | Key Limitations |
|---|---|---|---|
| PD-L1 | Predicts response in NSCLC, HNSCC, gastric, TNBC, cervical, urothelial cancers | IHC (clones: 22C3, 28-8, SP142, SP263) | Tumor heterogeneity, assay variability, lack of universal cutoff [35] |
| dMMR/MSI-H | Strong predictor of response; FDA-approved for pembrolizumab (tissue-agnostic) | IHC (MMR proteins), PCR, NGS | Discordant cases between methods may still respond to therapy [35] |
| TMB-H | Predicts response; FDA-approved for pembrolizumab (tissue-agnostic) | Targeted panels, WES, WGS, liquid biopsy (ctDNA) | Lack of standardization, variable predictive value across tumor types [35] [36] |

How do TMB and MSI interrelate in predicting immunotherapy response?

Although TMB and MSI are both markers of hypermutation, they reflect distinct biological phenomena and overlap only partially. MSI-high status is one specific mechanism that can produce a high TMB, but many tumors with high TMB are microsatellite stable (MSS) [36].

MSI arises exclusively from mismatch repair deficiency, caused by inactivation of MLH1, MSH2, MSH6, or PMS2 (through germline or somatic mutation or, for MLH1, promoter hypermethylation). In contrast, high TMB can result from multiple mechanisms, including MMR deficiency, mutations in the exonuclease domains of POLE/POLD1, exposure to mutagens (UV light, tobacco smoke), or APOBEC-mediated mutagenesis [36].

Research has shown that the predictive power of TMB varies substantially across cancer types. In "category I" cancers (non-small cell lung cancer, melanoma, bladder carcinoma, colorectal cancer), increasing TMB correlates strongly with improved response to ICIs. In "category II" cancers (breast, head and neck, gastroesophageal, prostate, and renal carcinomas), the relationship is much weaker. One study reported a response rate of 22.6% for MSS/TMB-high category I tumors versus only 5% for the corresponding category II tumors, suggesting the need for cancer-type-specific TMB cutoffs [36].

Table 2: Comparative Analysis of MSI and TMB Biomarkers

| Characteristic | MSI/MMR Deficiency | Tumor Mutational Burden |
|---|---|---|
| Primary Mechanism | Defective DNA mismatch repair | Multiple mechanisms: MMR-D, POLE mutations, environmental mutagens [36] |
| Nature of Biomarker | Dichotomous (present/absent) | Continuous variable with an arbitrary cutoff [36] |
| Pan-Cancer Prevalence / Overlap | ~4% of all cancers | ~16% of TMB-high cases have MMR-D [36] |
| Response in Prostate Cancer | 45% response rate to ICIs | Limited benefit with TMB 10-15 mut/Mb; better response >24.9 mut/Mb [36] |
| Optimal Cutoff | Not applicable (defined biological state) | Varies by cancer type; 10 mut/Mb may be suboptimal [36] |

Troubleshooting NGS Workflows for Biomarker Detection

How can I troubleshoot low library yield in NGS preparation for TMB analysis?

Low library yield is a common challenge in NGS preparation that can significantly impact downstream TMB analysis. The root causes typically fall into four categories, each with distinct failure signals and corrective actions [11].

Sample Input and Quality Issues: Degraded DNA/RNA reduces the number of usable template molecules, and contaminants (phenol, salts, EDTA) can inhibit enzymatic reactions. Failure signals include low starting yield, a smear in the electropherogram, or low library complexity. Corrective actions include re-purifying input samples, using fresh wash buffers, and targeting purity ratios of 260/230 > 1.8 and 260/280 ≈ 1.8 [11].
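The purity targets can be turned into a simple pre-library gate. This is a minimal sketch: the function name is hypothetical, and the ±0.1 window around 1.8 is an assumed reading of "≈ 1.8" rather than a published tolerance. Absorbance ratios say nothing about fragmentation or true DNA quantity, so an electropherogram and fluorometric quantification are still needed alongside this check.

```python
def input_purity_issues(a260_280: float, a260_230: float,
                        target_260_280: float = 1.8, tolerance: float = 0.1,
                        min_260_230: float = 1.8) -> list[str]:
    """Return QC flags for a DNA input; an empty list means the ratios look acceptable.

    The +/-0.1 window around 1.8 is an assumed interpretation of "~1.8".
    """
    issues = []
    if a260_280 < target_260_280 - tolerance:
        issues.append("260/280 low: possible protein or phenol carryover; re-purify")
    elif a260_280 > target_260_280 + tolerance:
        issues.append("260/280 high: possible RNA carryover in a DNA prep")
    if a260_230 <= min_260_230:
        issues.append("260/230 low: possible salt, EDTA, or phenol carryover; "
                      "re-purify with fresh wash buffers")
    return issues


if __name__ == "__main__":
    for sample, ratios in {"clean": (1.82, 2.05), "dirty": (1.55, 1.20)}.items():
        print(sample, input_purity_issues(*ratios) or "pass")
```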

Fragmentation and Ligation Failures: Over- or under-fragmentation reduces adapter ligation efficiency. Failure signals include unexpected fragment size distribution and sharp adapter-dimer peaks at ~70-90 bp. Corrective actions involve optimizing fragmentation parameters (time, energy, enzyme concentrations) and titrating adapter:insert molar ratios [11].
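Titrating the adapter:insert molar ratio requires converting the insert mass into moles. The sketch below assumes ~660 g/mol per base pair and uses a hypothetical set of ratios (5:1, 10:1, 20:1); kit manufacturers specify their own recommended ranges, and too much adapter tends to raise adapter-dimer formation while too little lowers ligation efficiency.

```python
def pmol_from_ng(ng: float, length_bp: float, mass_per_bp: float = 660.0) -> float:
    """Convert a mass of dsDNA to picomoles: pmol = ng * 1000 / (bp * 660)."""
    if length_bp <= 0:
        raise ValueError("length_bp must be positive")
    return ng * 1000.0 / (length_bp * mass_per_bp)


def adapter_titration(insert_ng: float, insert_bp: float,
                      ratios: tuple[float, ...] = (5.0, 10.0, 20.0)) -> dict[float, float]:
    """Map each adapter:insert molar ratio (hypothetical defaults) to pmol of adapter."""
    insert_pmol = pmol_from_ng(insert_ng, insert_bp)
    return {r: insert_pmol * r for r in ratios}


if __name__ == "__main__":
    # Hypothetical input: 100 ng of fragmented DNA with a ~300 bp mode
    for ratio, pmol in adapter_titration(100, 300).items():
        print(f"{ratio:>4}:1 -> {pmol:.2f} pmol adapter")
```

Running the ligation at each ratio and comparing adapter-dimer peaks and final yield on an electropherogram is one straightforward way to pick a working ratio for a given input type.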

Amplification Problems: Overcycling introduces size bias and duplicates. Failure signals include overamplification artifacts and high duplicate rates. Corrective actions include reducing PCR cycles, using efficient polymerases, and optimizing annealing conditions [11].
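One way to avoid overcycling is to estimate the minimum number of cycles needed to reach the required yield, assuming ideal exponential growth, N = N₀(1 + E)ⁿ. The 90% efficiency and the 10 ng → 500 ng scenario below are hypothetical. Real reactions plateau, so cycles beyond this estimate mostly add duplicates and size bias rather than new unique molecules.

```python
import math


def min_pcr_cycles(input_ng: float, target_ng: float, efficiency: float = 0.9) -> int:
    """Smallest cycle count n with input * (1 + efficiency)**n >= target.

    Assumes ideal exponential amplification at a constant (assumed) efficiency.
    """
    if input_ng <= 0 or not 0 < efficiency <= 1:
        raise ValueError("input must be positive and efficiency in (0, 1]")
    if target_ng <= input_ng:
        return 0
    n = math.log(target_ng / input_ng) / math.log(1 + efficiency)
    return math.ceil(n - 1e-9)  # tolerance guards against float round-off


if __name__ == "__main__":
    # Hypothetical: 10 ng of adapter-ligated library, 500 ng needed downstream
    print(min_pcr_cycles(10, 500, efficiency=0.9))   # 7
```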

Purification and Size Selection Errors: Incorrect bead ratios or over-drying beads cause sample loss. Failure signals include incomplete removal of small fragments or adapter dimers. Corrective actions involve optimizing bead:sample ratios, avoiding bead over-drying, and ensuring adequate washing [11].
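Bead ratios are expressed relative to the sample volume, and a common source of error in double-sided size selection is computing the second addition relative to the wrong volume. The sketch below assumes SPRI-style beads, where a lower ratio binds only larger fragments; the 0.6x/0.8x values are hypothetical and should come from the kit protocol and the desired insert size.

```python
def single_cleanup_volume(sample_ul: float, ratio: float) -> float:
    """Bead volume for a single cleanup at `ratio` x (beads relative to sample volume)."""
    if sample_ul <= 0 or ratio <= 0:
        raise ValueError("sample volume and ratio must be positive")
    return sample_ul * ratio


def double_sided_volumes(sample_ul: float, first_ratio: float,
                         final_ratio: float) -> tuple[float, float]:
    """Bead volumes for a two-step (double-sided) size selection.

    Step 1 at first_ratio binds the largest fragments, which are discarded with
    the beads while the supernatant is kept. Step 2 adds beads so that the
    cumulative ratio, relative to the ORIGINAL sample volume, reaches final_ratio;
    target fragments bind, and adapter dimers and short fragments stay in solution.
    """
    if not 0 < first_ratio < final_ratio:
        raise ValueError("require 0 < first_ratio < final_ratio")
    return sample_ul * first_ratio, sample_ul * (final_ratio - first_ratio)


if __name__ == "__main__":
    first, second = double_sided_volumes(50.0, 0.6, 0.8)   # hypothetical ratios
    print(f"add {first:.1f} uL, then {second:.1f} uL to the supernatant")
```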

Table 3: Troubleshooting Guide for Low NGS Library Yield

| Root Cause | Failure Signals | Corrective Actions |
|---|---|---|
| Poor Input Quality | Low yield, smeared electropherogram, enzyme inhibition | Re-purify sample; check 260/230 and 260/280 ratios; use fluorometric quantification [11] |
| Fragmentation Issues | Unexpected fragment sizes, inefficient ligation | Optimize fragmentation parameters; verify size distribution before proceeding [11] |
| Adapter Ligation Problems | High adapter-dimer peaks, low efficiency | Titrate adapter:insert ratio; ensure fresh ligase/buffer; optimize incubation [11] |
| Amplification Errors | High duplication rates, bias, artifacts | Reduce PCR cycles; use high-efficiency polymerases; avoid inhibitors [11] |
| Purification/Sizing Loss | Sample loss, carryover contaminants, incomplete dimer removal | Optimize bead ratios; avoid over-drying; improve washing techniques [11] |

What are common pitfalls in MSI detection via NGS and how can they be resolved?

MSI detection through NGS requires careful attention to several technical challenges that can lead to false positives or negatives:

Sample Degradation and Contamination: Degraded DNA or contamination with host genomic DNA can significantly reduce MSI calling accuracy. Similar warning signs appear in other sequencing applications; in plasmid sequencing, for example, degraded samples produce multiple peaks in read-length histograms or a dominant peak with substantial background noise. Solution: Perform quality control with gel electrophoresis or a Bioanalyzer/Fragment Analyzer (for plasmid templates, preferably after linearization), and consider gel size selection to remove degraded or contaminating DNA [27].

Insufficient Sequencing Coverage: Inadequate read depth prevents accurate variant calling in microsatellite regions. Researchers at City of Hope have developed AI tools such as MSI-SEER that can identify MSI-high regions often missed by traditional testing, underscoring the need for sensitive detection methods [38]. Solution: Ensure sufficient coverage (typically >100x for tumor samples) across microsatellite loci, and use validated bioinformatics pipelines designed specifically for MSI detection.
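A coverage check across the microsatellite panel can be run before any MSI call. In this minimal sketch, 100x is taken as the per-locus minimum from the text, while the requirement that 90% of loci be evaluable is a hypothetical placeholder; the locus names are generic labels.

```python
def msi_coverage_report(depth_by_locus: dict[str, int], min_depth: int = 100,
                        min_fraction_evaluable: float = 0.9) -> dict:
    """Summarize which microsatellite loci meet a minimum read depth.

    min_fraction_evaluable = 0.9 is an assumed acceptance criterion.
    """
    if not depth_by_locus:
        raise ValueError("no loci supplied")
    evaluable = sorted(loc for loc, d in depth_by_locus.items() if d >= min_depth)
    low = sorted(loc for loc, d in depth_by_locus.items() if d < min_depth)
    fraction = len(evaluable) / len(depth_by_locus)
    return {"evaluable": evaluable, "low_coverage": low,
            "fraction_evaluable": fraction,
            "pass": fraction >= min_fraction_evaluable}


if __name__ == "__main__":
    depths = {"MS_001": 250, "MS_002": 80, "MS_003": 150, "MS_004": 120, "MS_005": 300}
    print(msi_coverage_report(depths))
```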

Bioinformatic Challenges: Microsatellite regions are particularly prone to alignment errors and sequencing artifacts. Solution: Implement specialized MSI callers that account for the unique characteristics of repetitive regions, and use established reference panels of microsatellite loci.
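The core idea behind many NGS-based MSI callers is to compare, locus by locus, the distribution of repeat lengths in tumor reads against a matched normal and report the percentage of unstable loci. The sketch below uses total variation distance as a simple stand-in statistic; it is not the method of any particular published caller, which apply their own statistical tests and validated cutoffs. The availability of a matched normal, `min_reads`, and `tv_threshold` are all assumptions.

```python
from collections import Counter


def length_distribution(repeat_lengths: list[int]) -> dict[int, float]:
    """Fraction of spanning reads supporting each observed repeat length at one locus."""
    counts = Counter(repeat_lengths)
    total = sum(counts.values())
    return {length: n / total for length, n in counts.items()}


def total_variation(p: dict[int, float], q: dict[int, float]) -> float:
    """Total variation distance (0 = identical, 1 = disjoint length profiles)."""
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


def msi_score(tumor: dict[str, list[int]], normal: dict[str, list[int]],
              min_reads: int = 20, tv_threshold: float = 0.2) -> float:
    """Percentage of evaluable loci whose tumor length profile departs from the normal.

    min_reads and tv_threshold are hypothetical placeholders, not validated values.
    """
    evaluable = unstable = 0
    for locus, tumor_lengths in tumor.items():
        normal_lengths = normal.get(locus, [])
        if len(tumor_lengths) < min_reads or len(normal_lengths) < min_reads:
            continue  # too few spanning reads to judge this locus
        evaluable += 1
        distance = total_variation(length_distribution(tumor_lengths),
                                   length_distribution(normal_lengths))
        if distance > tv_threshold:
            unstable += 1
    if evaluable == 0:
        raise ValueError("no evaluable microsatellite loci")
    return 100.0 * unstable / evaluable
```

Skipping low-depth loci instead of scoring them as stable keeps insufficient coverage from silently pulling the score toward MSS.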

Tumor Purity and Heterogeneity: Low tumor purity or intratumoral heterogeneity can mask MSI signals. Solution: Establish minimum tumor purity thresholds (typically >20%) and consider orthogonal validation with IHC for MMR proteins in ambiguous cases.
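The reason low purity masks MSI is dilution: reads from normal cells carry stable repeat lengths and swamp the tumor signal. For a clonal heterozygous variant in a diploid tumor, the expected allele fraction is roughly purity/2, so at 20% purity only about 10% of reads carry it. The sketch below makes that arithmetic explicit and applies the >20% threshold from the text; the ploidy defaults and the function names are assumptions for illustration.

```python
def expected_vaf(purity: float, tumor_ploidy: int = 2, mutant_copies: int = 1,
                 normal_ploidy: int = 2) -> float:
    """Expected allele fraction of a clonal somatic variant in a tumor/normal mixture.

    Default ploidy values (diploid tumor, one mutant copy) are assumptions.
    """
    if not 0.0 <= purity <= 1.0:
        raise ValueError("purity must be between 0 and 1")
    tumor_alleles = purity * tumor_ploidy
    normal_alleles = (1.0 - purity) * normal_ploidy
    return purity * mutant_copies / (tumor_alleles + normal_alleles)


def purity_gate(purity: float, min_purity: float = 0.20) -> str:
    """Decide whether an NGS MSI call can stand alone or needs orthogonal MMR IHC."""
    if not 0.0 <= purity <= 1.0:
        raise ValueError("purity must be between 0 and 1")
    if purity > min_purity:
        return "proceed with NGS MSI call"
    return "below purity threshold: confirm with MMR IHC"


if __name__ == "__main__":
    for p in (0.15, 0.20, 0.45):
        print(p, round(expected_vaf(p), 3), purity_gate(p))
```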

Advanced Biomarkers and Emerging Applications

What emerging biomarkers beyond TMB and MSI show promise for immunotherapy?

Several novel biomarkers are under investigation to improve patient stratification for immunotherapy:

Tumor Inflammation Signature: Analysis of the CheckMate 142 study in colorectal cancer showed that higher scores on inflammation-related gene expression signatures (GES) and the presence of tertiary lymphoid structures (TLS) were associated with improved response to nivolumab monotherapy. A four-gene inflammatory GES was particularly predictive: high expression was associated with significantly improved PFS (HR, 0.23) and OS (HR, 0.13) [39].

Tumor Indel Burden (TIB) and MSI Degree: Beyond binary MSI classification, the degree of microsatellite instability and the frameshift indel burden show predictive value. In the CheckMate 142 cohort treated with nivolumab plus ipilimumab, higher TIB and a higher degree of MSI were associated with improved response and survival, suggesting that these quantitative measures may refine prediction beyond a simple MSI-high/MSS call [39].

SWI/SNF Complex Mutations: Mutations in chromatin remodeling genes including ARID1A, PBRM1, SMARCA4, and SMARCB1 are emerging as potential predictors of immunotherapy response, though they remain exploratory [35].

POLE/POLD1 Mutations: Mutations in the exonuclease domain of POLE create an ultramutator phenotype associated with exceptional responses to immunotherapy, though clinical application is limited by very low prevalence (<3%) in most cancers [35].

Resistance Mutations: Alterations in B2M, JAK1/2, STK11/LKB1, KEAP1, EGFR, PTEN, and MDM2 have been associated with primary resistance or hyperprogression on ICIs, though their predictive value remains context-dependent [35].

How can dual biomarker matching improve outcomes for combination therapies?

Emerging evidence suggests that matching patients to both targeted therapy and immunotherapy based on distinct genomic and immune biomarkers can yield significant clinical benefits, even in heavily pretreated patients [40].

A University of California, San Diego study of 17 patients with advanced cancers treated with both targeted agents and ICIs matched to dual biomarkers showed a disease control rate of 53%, with median PFS of 6.1 months and OS of 9.7 months despite 29% of patients having undergone ≥3 prior therapies. Remarkably, three patients (~18%) achieved prolonged PFS and OS exceeding 23 months [40].

Despite this promise, an analysis of clinical trials reveals that only 1.3% (4/314) of trials combining targeted therapy with immunotherapy employ biomarkers for both therapeutic modalities. The majority (75%) do not assess any biomarkers for patient inclusion [40].

This dual-matched approach requires sophisticated diagnostic platforms capable of comprehensive genomic and immune profiling. The diagnostic workflow integrates NGS for mutation detection, IHC for protein expression, and advanced bioinformatics to identify actionable biomarkers for both targeted agents and immunotherapies.

Research Reagent Solutions for Biomarker Detection

Table 4: Essential Research Reagents for Immunotherapy Biomarker Detection

| Reagent Category | Specific Examples | Application & Function |
|---|---|---|
| IHC Antibodies | PD-L1 clones (22C3, 28-8, SP142, SP263); MMR proteins (MLH1, MSH2, MSH6, PMS2) | Protein expression analysis; companion diagnostics [35] |
| NGS Library Prep | Illumina Nextera, Thermo Fisher Ion AmpliSeq, QIAseq panels | Target enrichment; library construction for sequencing [41] |
| NGS Panels | FoundationOne CDx, MSK-IMPACT, Tempus xT | Comprehensive genomic profiling; TMB and MSI detection [41] [36] |
| DNA Quantitation | Qubit dsDNA HS/BR assays, PicoGreen | Fluorometric quantification for accurate input [11] [27] |
| Quality Control | Bioanalyzer, Fragment Analyzer, TapeStation | Nucleic acid quality assessment; size distribution [11] |
| Automation Platforms | Hamilton STAR and other liquid-handling systems | Standardization; reduced operator variability [11] |

FAQ: Addressing Common Technical Challenges

How should we handle discordant results between different biomarker testing methods?

Discordant results between IHC, PCR, and NGS methods for MSI/MMR testing occasionally occur, with studies showing that some discordant cases may still respond to immunotherapy. Resolution strategies include:

- Orthogonal Validation: Use an alternative method to confirm results

- Tumor Content Reassessment: Verify adequate tumor purity and cellularity

- Technical Review: Check assay performance controls and thresholds

- Clinical Correlation: Consider patient and family history when available [35]
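The resolution steps listed above can be attached to a simple concordance check across methods. In this sketch, the label vocabulary and the mapping of MSI-L to "proficient" are hypothetical assumptions (laboratories define their own reporting categories), and the function only flags discordance; it does not decide which result is correct.

```python
# Resolution steps, in the order listed in the text
REVIEW_STEPS = (
    "orthogonal validation with an alternative method",
    "reassess tumor purity and cellularity",
    "review assay controls and calling thresholds",
    "correlate with patient and family history",
)

# Hypothetical normalization of method-specific labels to one vocabulary
NORMALIZE = {
    "MSI-H": "deficient", "dMMR": "deficient",
    "MSS": "proficient", "MSI-L": "proficient", "pMMR": "proficient",
}


def review_mmr_results(calls: dict[str, str]) -> dict:
    """calls maps a method name (e.g. 'IHC', 'PCR', 'NGS') to its reported label."""
    normalized = {method: NORMALIZE[label] for method, label in calls.items()}
    concordant = len(set(normalized.values())) <= 1
    return {"normalized": normalized, "concordant": concordant,
            "actions": [] if concordant else list(REVIEW_STEPS)}


if __name__ == "__main__":
    print(review_mmr_results({"IHC": "pMMR", "PCR": "MSI-H", "NGS": "MSI-H"}))
```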

What is the optimal TMB cutoff for predicting immunotherapy response?

The FDA has approved a cutoff of ≥10 mutations/Mb for pembrolizumab based on the KEYNOTE-158 trial. However, emerging evidence suggests this cutoff may be suboptimal for certain cancer types:

- Category I cancers (NSCLC, melanoma): 10 mut/Mb may be adequate

- Category II cancers (prostate, breast): Higher cutoffs (16-20 mut/Mb) may be needed

- Prostate cancer specifically: Patients with TMB 10-15 mut/Mb show limited benefit, while those >24.9 mut/Mb demonstrate better responses [36]
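For research stratification, the cutoffs above can be encoded as a lookup. This is a sketch, not a clinical decision rule: the approved pembrolizumab threshold remains 10 mut/Mb regardless of tumor type. Using 16 mut/Mb (the lower end of the 16-20 range) for category II is an assumption, and the 15-24.9 mut/Mb prostate band, which the cited data do not characterize, is reported as indeterminate.

```python
FDA_CUTOFF = 10.0          # mut/Mb, pembrolizumab tissue-agnostic approval
CATEGORY_II_CUTOFF = 16.0  # assumption: lower end of the proposed 16-20 mut/Mb range

# Category assignments as described earlier in this section
CANCER_CATEGORY = {
    "NSCLC": "I", "melanoma": "I", "bladder": "I", "colorectal": "I",
    "breast": "II", "head_and_neck": "II", "gastroesophageal": "II",
    "prostate": "II", "renal": "II",
}


def interpret_tmb(tmb: float, cancer_type: str) -> str:
    """Research-only interpretation of a TMB value (mut/Mb); not a clinical rule."""
    if tmb < FDA_CUTOFF:
        return "below FDA cutoff"
    if cancer_type == "prostate":
        if tmb > 24.9:
            return "high (better responses reported)"
        if tmb <= 15.0:
            return "FDA-high, limited benefit reported"
        return "FDA-high, indeterminate"  # 15-24.9 mut/Mb: not characterized above
    if CANCER_CATEGORY.get(cancer_type) == "II" and tmb < CATEGORY_II_CUTOFF:
        return "FDA-high, below proposed category II cutoff"
    return "high"


if __name__ == "__main__":
    for tmb, ctype in [(12, "NSCLC"), (12, "breast"), (12, "prostate"), (30, "prostate")]:
        print(ctype, tmb, interpret_tmb(tmb, ctype))
```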

How can we improve NGS success rates with challenging tumor samples?

Challenging samples (low purity, degraded, low input) require specialized approaches:

- Input Quality Control: Use fluorometric methods (Qubit) instead of spectrophotometry for accurate quantification

- Library Protocol Selection: Choose methods optimized for degraded samples (e.g., hybrid capture)

- Duplicate Marking: Implement careful bioinformatic handling of PCR duplicates

- Panel Optimization: Use targeted panels with high on-target rates for low-input samples [11] [41]
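With low-input samples, a large share of reads can be PCR copies of the same original molecule, and counting them inflates apparent depth and can distort variant allele fractions. The sketch below shows the basic position-based idea: fragments with identical mapping coordinates and strand are treated as duplicates. It is a simplification; production tools such as Picard MarkDuplicates and samtools markdup handle clipping, mate information, and representative-read selection. The optional UMI key assumes the library carries unique molecular identifiers, which lets independent molecules that share coordinates be kept apart.

```python
from typing import NamedTuple, Optional


class Fragment(NamedTuple):
    chrom: str
    start: int            # leftmost aligned position of the fragment
    end: int              # rightmost aligned position of the fragment
    strand: str           # "+" or "-"
    umi: Optional[str] = None   # assumed UMI tag, if the library has one


def mark_duplicates(fragments: list[Fragment], use_umi: bool = False) -> list[bool]:
    """Flag each fragment whose coordinates (and optionally UMI) were already seen.

    Simplification: the first occurrence is kept, whereas production tools
    typically pick a representative read by a quality score.
    """
    seen = set()
    flags = []
    for f in fragments:
        key = (f.chrom, f.start, f.end, f.strand, f.umi if use_umi else None)
        flags.append(key in seen)
        seen.add(key)
    return flags


def duplicate_rate(fragments: list[Fragment], use_umi: bool = False) -> float:
    """Fraction of fragments flagged as duplicates."""
    flags = mark_duplicates(fragments, use_umi)
    return sum(flags) / len(flags) if flags else 0.0
```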

What are the key considerations for implementing in-house NGS testing?

Bringing NGS testing in-house requires careful planning:

- Test Menu: Balance between large comprehensive panels and smaller, rapid panels for time-sensitive results