Overcoming Genetic Heterogeneity in Cancer Biomarker Discovery: Integrated Strategies for Precision Oncology

Abstract

Genetic heterogeneity presents a fundamental challenge in oncology, undermining the discovery and clinical application of reliable biomarkers for cancer diagnosis, prognosis, and treatment. This article synthesizes current knowledge and emerging strategies to address this complexity. We first deconstruct the nature of genetic heterogeneity and its impact on biomarker performance. We then explore innovative methodological approaches, including multi-omics integration, liquid biopsies, and AI-driven analytics, which are revolutionizing biomarker discovery. The discussion critically assesses translational bottlenecks and offers optimization frameworks for study design and analysis. Finally, we evaluate rigorous validation paradigms and performance metrics essential for establishing clinical utility. This comprehensive resource equips researchers and drug development professionals with the conceptual and practical tools needed to advance biomarker science in the era of precision medicine.

Deconstructing the Challenge: How Genetic Heterogeneity Undermines Cancer Biomarker Development

Conceptual Troubleshooting Guide: Understanding Heterogeneity

Q1: What are the main categories of heterogeneity I might encounter in my cancer genomics research?

A foundational challenge is accurately identifying the type of heterogeneity affecting your experiments. We propose a three-category framework to guide your analysis [1].

- Feature Heterogeneity: This refers to variation in your explanatory variables (inputs). In a genomics study, this could be heterogeneity in gene expression, epigenetic marks, or the cellular microenvironment across your samples. This variation can be a source of noise or a confounder if not properly accounted for [1].

- Outcome Heterogeneity: This describes variation in your dependent variables (outputs). In cancer research, this includes clinical heterogeneity (variability in symptoms) or phenotype heterogeneity (variation in disease presentation among individuals with the same genetic drivers) [1].

- Associative Heterogeneity (Genetic Heterogeneity): This is the core challenge in biomarker discovery. It occurs when the same or similar disease phenotype is caused by different genetic mechanisms in different individuals. This is not simple variation in data, but a complex pattern of association that can lead to missed discoveries and incorrect inferences if not modeled correctly [1].

Q2: Why does my single-gene biomarker fail to generalize across patient cohorts?

This is a classic symptom of unaccounted-for genetic heterogeneity. Your biomarker might be suffering from locus heterogeneity, where mutations in different genes (e.g., RHO and PRPF31) all lead to the same disease outcome (e.g., retinitis pigmentosa) [2]. Alternatively, allelic heterogeneity, where different mutations within the same gene (e.g., over 2,000 variants in CFTR for cystic fibrosis) cause the same disease, can also complicate biomarker specificity [2]. A single-gene approach often cannot capture this complexity.

Q3: My bulk RNA-seq analysis of a tumor shows a clear signal, but subsequent single-cell analysis reveals overwhelming diversity. What happened?

Your experiment has encountered intra-tumor heterogeneity. Bulk sequencing provides an average signal across all cells in a sample, masking the presence of distinct cellular subpopulations [2]. Your "clear signal" from bulk data might be an average of several conflicting signals from different subclones. Single-cell sequencing has revealed that a single tumor can contain genetically distinct cancer cell populations with different mutation profiles, growth rates, and metastatic potential [2]. This diversity is a major driver of drug resistance, as a treatment may eliminate one subclone while leaving another unaffected.
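The averaging effect can be illustrated with a minimal sketch. The subclone fractions and expression values below are hypothetical numbers chosen for illustration, not data from the cited studies.

```python
# Hypothetical expression of one gene in three subclones (arbitrary units).
subclones = {
    "subclone_A": {"fraction": 0.6, "expression": 10.0},
    "subclone_B": {"fraction": 0.3, "expression": 2.0},
    "subclone_C": {"fraction": 0.1, "expression": 0.0},
}


def bulk_signal(clones):
    """Fraction-weighted average, which is roughly what a bulk assay reports."""
    return sum(c["fraction"] * c["expression"] for c in clones.values())


bulk = bulk_signal(subclones)
print(f"bulk signal: {bulk:.2f}")
for name, clone in subclones.items():
    print(f"{name}: {clone['fraction']:.0%} of cells, expression {clone['expression']}")
```

The bulk value looks like a moderate, uniform signal, yet no subclone in this toy example actually expresses the gene at that level, and one subclone does not express it at all.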

Technical Troubleshooting Guide: Methodological Challenges

Q4: My multi-omics clustering results are inconsistent and not biologically reproducible. What can I do?

Consider moving beyond single-method clustering to a consensus approach. Inconsistent results often stem from technical noise and the high dimensionality of multi-omics data. The consensus MSClustering method is an unsupervised hierarchical network approach designed to address this [3]. It integrates diverse data types to identify robust molecular subtypes and has demonstrated superior performance over existing methods (like COCA/SNF) in classification accuracy, cluster robustness, and computational efficiency [3].
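To make the idea of a consensus concrete, here is a minimal sketch of a generic co-association approach. It is not the MSClustering algorithm from [3]; the function names, the threshold, and the example labels are all assumptions made for illustration. Each input list is the cluster assignment produced by one clustering run (for example, one per omics layer), and samples are grouped when they co-cluster in most runs.

```python
from itertools import combinations


def co_association(label_sets):
    """Fraction of clustering runs in which each pair of samples shares a cluster."""
    n = len(label_sets[0])
    counts = {pair: 0 for pair in combinations(range(n), 2)}
    for labels in label_sets:
        for i, j in counts:
            if labels[i] == labels[j]:
                counts[(i, j)] += 1
    return {pair: c / len(label_sets) for pair, c in counts.items()}


def consensus_clusters(label_sets, threshold=0.5):
    """Group samples connected by co-association above the threshold."""
    n = len(label_sets[0])
    parent = list(range(n))

    def find(x):
        # Union-find root lookup with path compression.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for (i, j), frac in co_association(label_sets).items():
        if frac > threshold:
            parent[find(i)] = find(j)

    groups = {}
    for sample in range(n):
        groups.setdefault(find(sample), []).append(sample)
    return sorted(groups.values())


# Hypothetical cluster labels for six samples from three separate runs.
runs = [
    [0, 0, 0, 1, 1, 1],
    [1, 1, 0, 0, 0, 0],
    [0, 0, 0, 1, 1, 2],
]
print(consensus_clusters(runs))
```

Label values are arbitrary within each run; only whether two samples share a label matters. A production analysis would typically use hierarchical clustering on the co-association matrix and resampling rather than a fixed threshold.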

- Protocol Outline: Consensus MSClustering for Robust Subtyping

- Data Input: Integrate multi-omics data (e.g., genomic, transcriptomic, epigenomic) from your tumor samples.

- Key Gene Selection: Use a heterogeneity index to select a functionally coherent set of key genes (e.g., 167 genes were selected in one pan-cancer study) [3].

- Network Construction & Consensus Clustering: Apply an unsupervised hierarchical network approach to build a consensus across the data.

- Validation: Validate the resulting subtypes through:

Q5: How can I accurately model the tumor microenvironment given its immense cellular heterogeneity?

A multi-modal approach that combines single-cell resolution with spatial context is essential. Relying on a single technology will give an incomplete picture.

- Experimental Protocol: Mapping the Tumor Microenvironment

- Single-Cell RNA Sequencing (scRNA-seq): Perform scRNA-seq on dissociated tumor samples. This allows for unsupervised clustering and identification of all major cell types (neoplastic epithelial, immune, stromal, endothelial) and their transcriptionally distinct sub-states [4]. For example, in breast cancer, this has revealed 15 major cell clusters and numerous subclusters of fibroblasts, endothelial, and myeloid cells with unique functional programs [4].

- Spatial Transcriptomics: Integrate spatial transcriptomic data from consecutive tissue sections. This preserves the architectural context that scRNA-seq loses.

- Data Integration and Deconvolution:

- Use computational tools like `inferCNV` for copy number variation inference to classify tumor vs. non-tumor areas.

- Use deconvolution tools (e.g., `CARD`) to map the cell types identified in step 1 onto the spatial locations from step 2 [4].

- This reveals region-specific cell distribution, such as immune-enriched versus tumor-enriched zones, and their associations with tumor grade [4].

Q6: My patient-derived organoid (PDO) models show high variability. How can I improve reproducibility?

High variability in PDO generation is a common hurdle, often linked to sample quality and handling. Here is a standardized troubleshooting protocol for establishing colorectal cancer PDOs [5].

- Troubleshooting Protocol: High-Efficiency PDO Generation

- CRITICAL STEP - Tissue Procurement: Transfer samples immediately into cold Advanced DMEM/F12 medium supplemented with antibiotics. Minimize processing delays to preserve cell viability [5].

- CRITICAL STEP - Handling Delays:

- If delay is 6-10 hours: Perform an antibiotic wash and store the tissue at 4°C in DMEM/F12 with antibiotics. Process the next morning.

- If delay exceeds 14 hours: Cryopreserve the tissue. Wash with antibiotic solution and cryopreserve using a freezing medium (e.g., 10% FBS, 10% DMSO in 50% L-WRN conditioned medium). Note that a 20-30% variability in live-cell viability can be expected between these two preservation methods [5].

- Culture Establishment: Isolate crypts and embed in Matrigel. Culture in a specialized medium containing essential growth factors (e.g., EGF, Noggin, R-spondin1) to support long-term expansion [5].

Frequently Asked Questions (FAQs)

Q1: What is the difference between inter-tumor and intra-tumor heterogeneity?

- Inter-tumor heterogeneity refers to differences between tumors from different patients. Tumors of the same histological type can have completely different genetic mutation profiles, which is why a treatment effective for one patient may not work for another [2].

- Intra-tumor heterogeneity refers to the genetic and phenotypic diversity of cancer cells within a single tumor or lesion. This arises from ongoing genetic instability and clonal evolution, leading to subpopulations of cells with different capacities for growth, metastasis, and drug resistance [2].

Q2: What are the most powerful techniques currently available to study tumor heterogeneity?

The table below summarizes the key techniques and their primary applications.

| Technique | Primary Application in Studying Heterogeneity | Key Strength |
|---|---|---|
| Single-Cell Sequencing (scRNA-seq, scDNA-seq) | Analyzing genomic and transcriptomic profiles of individual cells; identifying rare subpopulations and reconstructing clonal evolution [2]. | Reveals diversity masked by bulk sequencing. |
| Spatial Transcriptomics / Multiplex Imaging | Visualizing how different cell populations are organized and interact within the tumor microenvironment [4] [2]. | Provides crucial spatial context. |
| Liquid Biopsy (e.g., ctDNA analysis) | Non-invasively capturing a snapshot of tumor-derived genetic material to monitor heterogeneity, treatment response, and emerging resistance in real-time [6] [2]. | Enables longitudinal monitoring. |
| Consensus Multi-Omic Clustering (e.g., MSClustering) | Integrating multiple data types to discover robust molecular subtypes across different cancers [3]. | Improves classification accuracy and prognostic stratification. |

Q3: How does genetic heterogeneity impact the development of biomarkers for early cancer detection?

Genetic heterogeneity is a major translational barrier. A biomarker based on a single genetic alteration may only be effective for a small subset of patients whose tumors are driven by that specific alteration [6]. For example, emerging biomarkers like circulating tumor DNA (ctDNA) must overcome the challenge of low concentration and high fragmentation, which is compounded by the fact that the genetic alterations being sought can differ vastly between patients [6]. Successful biomarker strategies must therefore target conserved pathways or use multi-analyte panels (e.g., combining ctDNA, exosomes, and microRNAs) to capture a broader range of heterogeneity [6].

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key materials used in the advanced experiments cited in this guide.

| Research Reagent / Material | Function in Experimental Protocols |
|---|---|
| Advanced DMEM/F12 Medium | Serves as the base medium for tissue transport and the foundation for organoid culture growth medium [5]. |
| L-WRN Conditioned Medium | Provides a consistent source of essential growth factors (Wnt3a, R-spondin, Noggin) for establishing and expanding colorectal organoids [5]. |
| Matrigel | A basement membrane extract used as a 3D scaffold to support the self-organization and growth of patient-derived organoids [5]. |
| Antibiotic Solution (e.g., Penicillin-Streptomycin) | Prevents microbial contamination during tissue procurement, transport, and the initial phases of organoid culture establishment [5]. |
| 167 Key Genes (from Heterogeneity Index) | A functionally coherent set of genes, identified via a heterogeneity index, used for precise molecular classification and subtype discovery in pan-cancer studies [3]. |

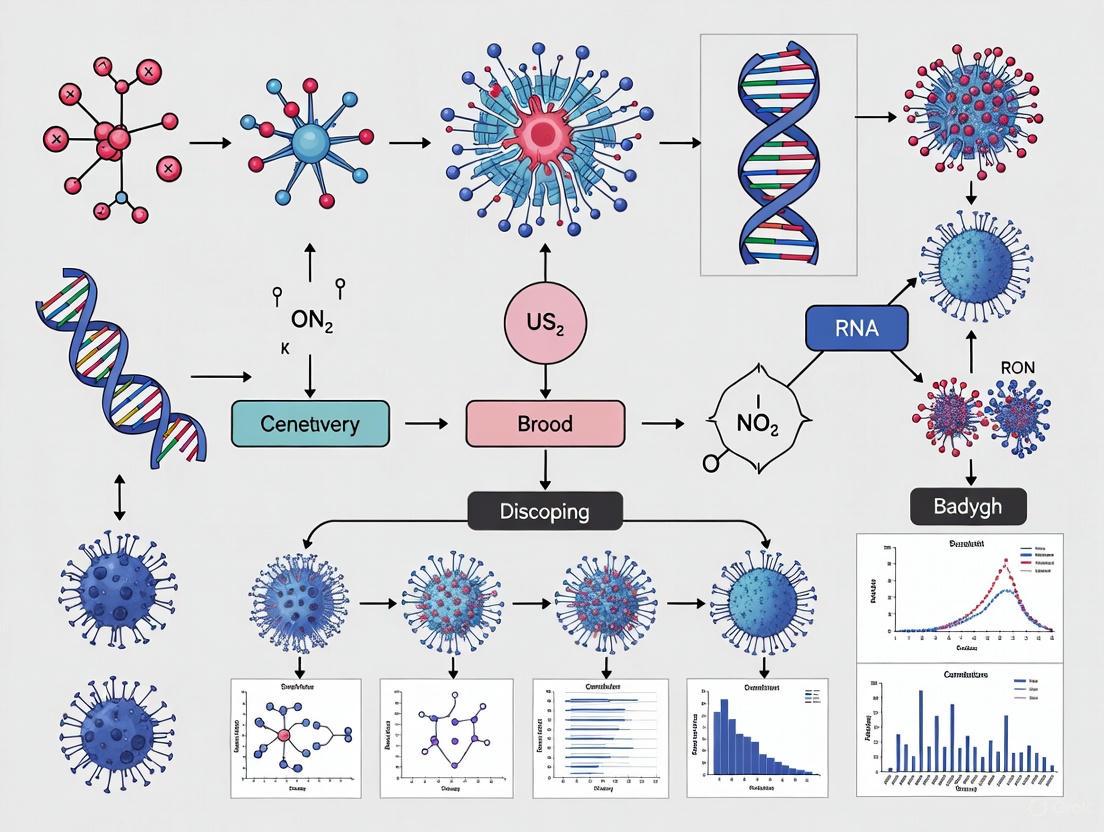

Visualizing Concepts and Workflows

Genetic Heterogeneity Categorization

Single-Cell & Spatial Analysis Workflow

Consensus Clustering for Robust Subtyping

Troubleshooting Guide: Common Biomarker Failure Points

Why do most proposed single-molecule biomarkers fail to reach clinical practice?

The failure rate for new cancer biomarkers is exceptionally high, with less than 1% entering clinical practice [7]. The challenges are particularly pronounced for single markers intended to detect heterogeneous diseases, where a complex interplay of biological, technical, and statistical factors leads to failure.

- Primary Issue: A single biomarker often cannot capture the molecular diversity of a heterogeneous disease. A marker effective for one disease subtype may have low sensitivity for other subtypes, capping its overall performance at the prevalence of that subtype [8].

- Underlying Biology: Diseases like cancer are not monolithic. High-grade serous ovarian cancer (HGSC), for example, shows substantial anatomical site-to-site variation in protein expression, meaning a biomarker discovered in ovarian tissue may not perform reliably in metastatic omental tissue from the same patient [9].

- Troubleshooting Step: If your single-marker candidate shows promising but inconsistent results in your initial cohort, investigate whether its performance is linked to a specific molecular or anatomical subset of the disease.

How does intra-tumoral heterogeneity specifically cause biomarker failure?

Intra-tumoral heterogeneity is a major driver of failure for single-marker strategies, leading to both false-negative results and inaccurate disease classification.

- Primary Issue: A single biopsy may not capture the full genetic, epigenetic, and phenotypic diversity present throughout the entire tumor or in metastatic sites [10]. A biomarker targeting a specific mutation present in only a subpopulation of cells will miss other malignant cells, leading to treatment resistance and disease recurrence [10].

- Experimental Evidence: A 2025 proteomic study of HGSC demonstrated that while many proteins show stable expression within an individual, a significant number vary between the primary ovarian site and the omental metastasis in the same patient. This spatial heterogeneity directly complicates the discovery of a universally reliable single marker [9].

- Troubleshooting Step: When designing a discovery study, profile multiple spatially separated samples from the same tumor and from different metastatic sites, if available. This will help you assess the variability of your candidate marker and avoid overestimating its utility.

What are the critical statistical pitfalls in biomarker discovery for heterogeneous diseases?

Inappropriate statistical methods and insufficient sample sizes are common errors that generate non-reproducible results.

- Primary Issue: Standard statistical tests (e.g., t-tests) that look for a consistent mean shift between all cases and controls are poorly suited for detecting signals that are only present in a disease subtype [8]. This can cause a powerful biomarker for a 20%-prevalent subtype to be discarded for having "low sensitivity."

- Quantitative Data: Simulation studies show that discovering biomarkers for a heterogeneous disease requires more than a 2-fold larger sample size compared to a homogeneous disease to achieve the same statistical power. The table below summarizes the performance of different statistical selection methods in this context [8].

Table 1: Performance of Statistical Selection Methods for Heterogeneous Diseases

| Method Category | Example Methods | Performance in Heterogeneous Disease |
|---|---|---|
| Tests of Mean Difference | t-test, Welch's t-test, moderated t-test | Suboptimal; fails to detect subtype-specific signals |
| Tests of Stochastic Dominance | Mann-Whitney U test, Kolmogorov-Smirnov test | Better than t-tests, but not ideal |
| Tail-Based Metrics | Sensitivity at fixed specificity (e.g., 95%), Partial AUC | Optimal; directly targets the clinically relevant portion of the ROC curve |

- Troubleshooting Step: For heterogeneous diseases, prioritize statistical methods that are sensitive to changes in the tail of the distribution, such as tests based on sensitivity at high fixed specificity or the partial AUC. Ensure your sample size is calculated to account for disease subtype prevalence [8].
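As a rough illustration of a tail-based metric, the sketch below computes sensitivity at a fixed specificity from raw marker values. The function name, the thresholding convention (a sample is called positive when it exceeds the control-derived cutoff), and the toy data are assumptions for illustration; they are not taken from [8].

```python
import math
from statistics import mean


def sensitivity_at_specificity(controls, cases, specificity=0.95):
    """Set the cutoff so at least `specificity` of controls fall at or below it,
    then report the fraction of cases above that cutoff."""
    ranked = sorted(controls)
    n = len(ranked)
    # Smallest index k with (k + 1) / n >= specificity; the epsilon guards float rounding.
    k = max(math.ceil(specificity * n - 1e-9) - 1, 0)
    threshold = ranked[k]
    sensitivity = sum(1 for x in cases if x > threshold) / len(cases)
    return sensitivity, threshold


# Toy data: only 2 of 10 cases (a 20% subtype) carry a strongly elevated marker.
controls = list(range(1, 21))
cases = [30, 35, 5, 8, 10, 12, 9, 11, 7, 14]

sens, cutoff = sensitivity_at_specificity(controls, cases)
print(f"mean controls = {mean(controls):.1f}, mean cases = {mean(cases):.1f}")
print(f"cutoff = {cutoff}, sensitivity at 95% specificity = {sens:.0%}")
```

In this toy example the shift in means is modest, while the tail metric isolates exactly the subtype-positive cases. With real data, ties and small control groups make the achieved specificity only approximate.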

What is the difference between technical validation and clinical validation, and why does the latter often fail?

A crucial misunderstanding is that technical validation of an assay is sufficient to prove a biomarker's clinical value.

- Technical Validation establishes that the test method itself is reliable, evaluating its accuracy, precision, sensitivity, and specificity [11]. It confirms you are "measuring the thing right."

- Clinical Validation establishes that the biomarker acceptably identifies, measures, or predicts the concept of interest for its intended clinical use [11]. It confirms you are "measuring the right thing" for a clinical decision.

- Point of Failure: A biomarker can be technically perfect but fail clinical validation. This happens when the discovered association does not hold in an independent, clinically representative population because it was initially overfitted to a specific dataset or confounded by unaccounted biological variables like heterogeneity [7].

- Troubleshooting Step: Before initiating costly clinical validation, ensure your analytical and clinical validation plans are based on a precisely defined Context of Use (COU), which specifies the biomarker's category and intended clinical decision-making role [11].

Frequently Asked Questions (FAQs)

What is the fundamental reason single biomarkers struggle with heterogeneous diseases like cancer?

Heterogeneous diseases such as cancer consist of multiple molecular subtypes. A single biomarker is analogous to a single key trying to open many different locks; it may work for one but fails for the others. The overall sensitivity of the biomarker is therefore capped by the prevalence of the subtype it detects [8]. Tumor heterogeneity, characterized by diverse genetic, epigenetic, and phenotypic variations within and between tumors, ensures that a single molecular target is seldom present across all malignant cells [10].

Are there experimental designs that can mitigate the risk of failure?

Yes, employing a two-stage design can be a cost-effective strategy. In the first stage, a moderate number of samples are used to screen a large number of candidate biomarkers. The most promising candidates are then advanced to a second stage for validation with the remaining samples. This approach can achieve nearly the same statistical power as a single-stage design at a significantly reduced cost, allowing resources to be focused on the most viable candidates [8]. Furthermore, proactively planning for multimodal biomarker approaches that integrate genomic, proteomic, and clinical data may be necessary to capture a sufficiently full picture of complex biology [12].
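The cost argument can be sketched with simple arithmetic, under the assumption (not stated in [8]) that cost scales with the number of sample-by-candidate measurements. All counts below are hypothetical.

```python
def measurement_counts(n_samples, n_candidates, n_stage1, n_advanced):
    """Number of sample-by-candidate measurements in each design.

    Single-stage: every candidate is measured in every sample.
    Two-stage: all candidates in the stage-1 samples, then only the
    advanced candidates in the remaining samples.
    """
    single_stage = n_samples * n_candidates
    two_stage = n_stage1 * n_candidates + (n_samples - n_stage1) * n_advanced
    return single_stage, two_stage


single, two = measurement_counts(
    n_samples=200, n_candidates=1000, n_stage1=60, n_advanced=50
)
print(f"single-stage: {single} measurements")
print(f"two-stage:    {two} measurements ({1 - two / single:.1%} fewer)")
```

This only quantifies the measurement burden. Whether statistical power is preserved depends on the stage-1 sample size and the selection rule, which would need to be checked by simulation for a given study.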

How can we discover stable biomarker signatures in a heterogeneous disease?

The key is to focus on identifying molecular features with stable expression within an individual but variable expression between individuals. A 2025 proteomic study on HGSC successfully used this approach. Researchers applied a rigorous qualification filter, requiring proteins to have low variation (Coefficient of Variation < 25%) between multiple samples from the same patient while also showing non-uniform detection across the cohort. This process identified 1,651 stable discriminative proteins, which formed co-expression modules reflecting core biological processes like interferon-mediated inflammation, providing a more robust foundation for biomarker development [9].
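A minimal sketch of the within-patient stability filter follows. It assumes the coefficient of variation is computed as sample standard deviation divided by mean across a patient's samples, which may differ from the exact procedure in [9]; the protein names and abundances are hypothetical, and the second criterion (non-uniform detection across the cohort) is not implemented here.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation relative to the mean."""
    m = mean(values)
    return stdev(values) / m * 100 if m else float("inf")


def stable_within_patients(per_patient, max_cv=25.0):
    """True if the CV across samples is below max_cv for every patient."""
    return all(coefficient_of_variation(v) < max_cv for v in per_patient.values())


# Hypothetical abundances: {protein: {patient: [sample 1, sample 2, sample 3]}}
measurements = {
    "PROT_A": {"P1": [100, 110, 95], "P2": [40, 42, 38]},
    "PROT_B": {"P1": [100, 30, 200], "P2": [50, 55, 45]},
}

stable = [name for name, data in measurements.items() if stable_within_patients(data)]
print(stable)
```

Here `PROT_A` passes because it is consistent within each patient even though its level differs between patients, which is the property the filter is meant to select for.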

What are the operational considerations for a successful biomarker test?

Beyond pure performance, several practical factors determine adoption [12]:

- Actionability: The test result must clearly support a clinical decision (e.g., "use drug A").

- Turnaround Time: Rapid results cause less disruption to clinical workflow.

- Sample Type: Tests using routinely collected biomaterial (e.g., FFPE tissue, blood) are more easily adopted.

- Cost and Reimbursement: The test must demonstrate cost-effectiveness to payers.

Visualizing the Challenge: From Heterogeneity to Biomarker Failure

[Diagram: intra-tumoral heterogeneity leads to the failure of single-marker approaches, creating a path to biomarker failure that can only be overcome by robust, multi-faceted strategies.]

The Scientist's Toolkit: Key Reagents & Materials for Robust Biomarker Discovery

Table 2: Essential Research Reagents and Materials for Biomarker Discovery in Heterogeneous Cancers

| Item | Function in Research | Consideration for Heterogeneity |
|---|---|---|
| Fresh Frozen (FF) & Formalin-Fixed Paraffin-Embedded (FFPE) Tissues | Source of biomolecules for analysis. | Using matched FF and FFPE samples from the same patient validates biomarker stability across handling protocols [9]. |
| Multi-Region Tumor Samples | Tissue samples from the primary tumor and its metastatic sites. | Critical for assessing spatial heterogeneity and ensuring a candidate biomarker is not site-specific [9]. |
| DNA/RNA Extraction Kits | Isolation of nucleic acids from tissue or blood. | Quality control is paramount. Ensure high-quality yields from both high-tumor-purity and stroma-rich samples. |
| Mass Spectrometry Reagents | For proteomic profiling via Data-Independent Acquisition (DIA-MS). | Allows for deep, quantitative profiling of thousands of proteins to discover stable signatures beyond genomics [9]. |
| Next-Generation Sequencing (NGS) Panels | For mutation profiling and copy number variation analysis. | Helps correlate biomarker expression with underlying genetic drivers of heterogeneity (e.g., TP53, BRCA1/2 status) [9]. |
| Immune Deconvolution Algorithms (e.g., CIBERSORTx) | Computational tool to estimate immune cell abundance from RNA-Seq data. | Quantifies tumor microenvironment heterogeneity, which can confound biomarker signals [9]. |
| Stromal & Immune Signature Panels | Pre-defined gene/protein sets for pathway analysis. | Helps determine if a candidate biomarker's signal is derived from cancer cells or the surrounding microenvironment [9]. |

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center is designed for researchers grappling with the challenges of genetic heterogeneity in cancer biomarker discovery. The following guides provide targeted solutions for common experimental issues.

Frequently Asked Questions

FAQ 1: How can we obtain a representative molecular profile when our tumor biopsy seems to contain multiple distinct cell populations?

Answer: A single biopsy is often insufficient due to spatial heterogeneity. To address this, consider these approaches:

- Multi-Region Sampling: If feasible, collect multiple samples from different regions of the tumor. For glioblastoma, studies using 5-aminolevulinic acid (5-ALA) fluorescence-guided surgery have successfully isolated distinct cellular populations (e.g., from the fluorescent tumor core, the pale infiltrating margin, and the non-fluorescent healthy tissue) for separate analysis [13].

- Liquid Biopsy: Utilize circulating tumor DNA (ctDNA) or circulating tumor cells (CTCs) to capture a global, cross-sectional snapshot of the tumor's genetic landscape from the blood. This can provide a more comprehensive view than a single tissue biopsy [14] [15].

- Single-Cell Sequencing: Employ single-cell RNA or DNA sequencing to deconvolute the different cell populations within a bulk sample. This technology has been pivotal in revealing unique gene expression patterns and cell-to-cell variability in cancers like breast cancer [16].

FAQ 2: Our discovered biomarker shows high sensitivity for only a subset of patient samples. Is this a failure?

Answer: Not necessarily. This is a classic signature of disease heterogeneity. A biomarker with high sensitivity for a specific molecular subtype will have its overall sensitivity capped by the prevalence of that subtype [8]. The solution is to:

- Re-stratify Your Cohort: Re-analyze your data by segregating patients based on established molecular subtypes (e.g., luminal A, basal-like, HER2+ for breast cancer) [16].

- Develop a Multi-Biomarker Panel: Instead of a single biomarker, aim to discover a panel of biomarkers where each member is specific to a different disease subtype. This combined approach can significantly increase overall sensitivity [8].
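The gain from a panel, and its cost in specificity, can be sketched as follows. The sketch assumes each marker detects only its own subtype, that the panel is called positive when any marker is positive, and that markers produce false positives independently in controls. The prevalence and performance figures are hypothetical.

```python
def panel_sensitivity(prevalence, marker_sensitivity):
    """Overall sensitivity: sum over subtypes of prevalence x sensitivity
    of the marker covering that subtype (0 if no marker covers it)."""
    return sum(p * marker_sensitivity.get(subtype, 0.0) for subtype, p in prevalence.items())


def panel_specificity(marker_specificities):
    """Specificity of an 'any marker positive' rule under independence."""
    result = 1.0
    for spec in marker_specificities:
        result *= spec
    return result


prevalence = {"subtype_1": 0.5, "subtype_2": 0.3, "subtype_3": 0.2}

single_marker = panel_sensitivity(prevalence, {"subtype_3": 0.98})
two_marker_panel = panel_sensitivity(prevalence, {"subtype_1": 0.90, "subtype_2": 0.80})

print(f"single marker for subtype_3: {single_marker:.3f}")
print(f"two-marker panel:            {two_marker_panel:.3f}")
print(f"panel specificity (2 markers at 0.98 each): {panel_specificity([0.98, 0.98]):.4f}")
```

Each added marker raises the ceiling on sensitivity but also adds false positives, so per-marker thresholds usually need to be tightened as the panel grows.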

FAQ 3: Our in vitro drug sensitivity results do not translate to our animal model. What could be going wrong?

Answer: This discrepancy often stems from a lack of tumor microenvironment (TME) in simple cell culture systems. The TME is a critical contributor to heterogeneity and drug response [17].

- Use Advanced Models: Transition to more complex models that better recapitulate the in vivo TME. Patient-derived organoids or patient-derived xenograft (PDX) models maintain more of the original tumor's cellular diversity and stromal interactions [17].

- Characterize Your Model: For PDX models, ensure you are using severely immunocompromised mice to improve engraftment rates. For immunotherapy studies, consider "humanized" mouse models that possess a reconstituted human immune system to provide the necessary immune context [17].

Troubleshooting Guides

Problem: Inconsistent results from bulk sequencing of tumor tissues.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| High Intratumor Heterogeneity | Perform single-cell RNA sequencing on a subset of samples to identify distinct subpopulations. | Shift to single-cell or spatial transcriptomics [16]; use multi-region sampling [13]; use liquid biopsy for a global profile [15]. |
| Sampling Bias | Compare the histology of the sampled region with other regions of the tumor. | Implement image-guided biopsy (e.g., using 5-ALA in glioma) to ensure sampling of representative and viable tumor regions [13]. |
| Low Tumor Purity | Review H&E-stained sections and estimate the percentage of tumor nuclei. | Use laser-capture microdissection to enrich for tumor cells before nucleic acid extraction. |

Problem: Isolated cancer stem cells (CSCs) show variable morphology, growth patterns, and drug responses.

| Observation | Interpretation | Recommended Action |
|---|---|---|
| Mixed adherent and sphere-forming clones from a single tumor. | Evidence of functional heterogeneity at the cellular level, even within the CSC population [18]. | Subclone and characterize individually. Isolate single cells and expand clonally. Compare their growth kinetics, marker expression, and tumorigenic potential in vivo [18]. |
| Differential expression of surface markers (e.g., CD133, CD44, CD24) between subclones. | Indicates the presence of multiple CSC subpopulations, which may have different roles in tumor progression [18]. | Use a panel of markers for isolation and study, rather than relying on a single marker like CD133. |
| Variable drug sensitivity in subclones, e.g., to EGFR inhibitors. | Demonstrates that therapeutic resistance can be intrinsic to specific subclones [18]. | Profile signaling pathways (e.g., PI3K-Akt, MAPK-Erk1/2) in each subclone to identify the molecular basis of resistance and test combination therapies [18]. |

Structured Data & Protocols

Quantitative Data from Key Studies

Table 1: Biomarker Performance in Heterogeneous vs. Homogeneous Disease Models

Data derived from simulation studies comparing statistical power in different disease models [8].

| Disease Model | Sensitivity at 95% Specificity | Area Under Curve (AUC) | Required Sample Size (N per group) for 80% Power |
|---|---|---|---|
| Homogeneous Disease | 20% | 0.71 | ~50 |
| Heterogeneous Disease | 20% | 0.59 | >100 |

Table 2: Experimental Profile of Single-Cell Derived Glioblastoma Subclones

Data summarizing the functional heterogeneity found in four subclones derived from a single patient's glioblastoma [18].

| Clone ID | In Vitro Morphology | Proliferative Capacity | Tumorigenic Potential In Vivo | Sensitivity to EGFR Inhibitor (Gefitinib) |
|---|---|---|---|---|
| #2 | Sphere-forming | High | High / Lethal | Insensitive |
| #4 | Sphere-forming | High | High / Lethal | Sensitive |
| #3 | Adherent | Low | Low | Insensitive |
| #5 | Adherent | Low | Low | Sensitive |

Detailed Experimental Protocols

Protocol 1: Establishing Single-Cell Derived Subclones from Glioblastoma

This protocol is adapted from the methodology used to demonstrate functional heterogeneity in GBM [18].

- Tissue Dissociation: Mechanically and enzymatically dissociate fresh glioblastoma tissue into a single-cell suspension.

- Single-Cell Plating: Using limiting dilution or fluorescence-activated cell sorting (FACS), deposit individual cells into the wells of an uncoated 96-well plate.

- Stem Cell Culture: Culture the cells in serum-free medium supplemented with epidermal growth factor (EGF) and fibroblast growth factor (FGF).

- Clonal Expansion: Monitor wells and expand colonies originating from a single cell. Passage them serially to establish stable subclones.

- Phenotypic Characterization:

- Morphology: Document growth patterns (e.g., spherical vs. adherent).

- Proliferation: Perform growth kinetic assays by counting cell numbers over time.

- Stem Cell Markers: Confirm the expression of neural stem cell markers like Nestin, Sox2, and Musashi-1 via immunocytochemistry.

- Functional Analysis: Proceed with molecular profiling (e.g., FACS for surface markers, cDNA microarrays) and in vivo tumorigenesis assays.
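When single-cell plating is done by limiting dilution, the chance that a growing well is truly clonal can be estimated with a standard Poisson model. The sketch below assumes cells are distributed across wells at random (Poisson) and that every seeded cell is able to grow; neither assumption comes from [18], and the seeding densities shown are illustrative.

```python
import math


def seeding_probabilities(mean_cells_per_well):
    """Poisson probabilities for a well seeded at the given mean density."""
    if mean_cells_per_well <= 0:
        raise ValueError("mean_cells_per_well must be positive")
    lam = mean_cells_per_well
    p_empty = math.exp(-lam)
    p_single = lam * p_empty
    return {
        "empty": p_empty,
        "single": p_single,
        "multiple": 1.0 - p_empty - p_single,
        # Probability that an occupied well started from exactly one cell.
        "clonal_given_occupied": p_single / (1.0 - p_empty),
    }


for density in (0.3, 0.5, 1.0):
    p = seeding_probabilities(density)
    print(
        f"{density:.1f} cells/well: empty={p['empty']:.2f}, "
        f"single={p['single']:.2f}, clonal|occupied={p['clonal_given_occupied']:.2f}"
    )
```

Lower seeding densities leave more wells empty but raise confidence that each colony is clonal, which is the trade-off to weigh against FACS-based single-cell deposition.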

Protocol 2: Multi-Region Sampling and Microenvironment Analysis via 5-ALA FGS

This protocol leverages fluorescence-guided surgery to study region-specific heterogeneity in GBM [13].

- Patient Preparation: Administer 5-aminolevulinic acid (5-ALA) prior to surgery as per clinical guidelines.

- Intraoperative Sampling: Under fluorescent light, collect separate tissue specimens from:

- ALA+ Region: Solid tumor core with bright fluorescence.

- ALA PALE Region: Infiltrating margin with pale fluorescence.

- ALA- Region: Non-fluorescent, presumably healthy tissue.

- Histopathological Confirmation: Fix a portion of each sample for H&E staining to confirm the histological features of each region.

- Stromal Cell Isolation: Mechanically and enzymatically dissociate the remaining tissue from each region. Isolate glioma-associated stem cells (GASC) or other stromal components by culturing in appropriate mesenchymal stem cell media.

- Comparative Analysis: Compare the isolated cells from different regions in terms of:

- Growth kinetics.

- Cell surface phenotype (e.g., by flow cytometry).

- Transcriptomic profiles using targeted gene arrays (e.g., for cancer inflammation and immunity genes).

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Heterogeneity Studies

| Item | Function/Application in Heterogeneity Research |
|---|---|
| 5-Aminolevulinic Acid (5-ALA) | A metabolic precursor that tumor cells convert into fluorescent protoporphyrin IX; used in guided surgery to visually distinguish the tumor core (ALA+), infiltrating margin (ALA PALE), and healthy tissue (ALA-), enabling region-specific sampling and analysis [13]. |
| Epithelial Cell Adhesion Molecule (EpCAM) | A common surface marker used for the immunomagnetic enrichment and detection of circulating tumor cells (CTCs) from blood samples [14]. |
| Cancer Stem Cell Markers (e.g., CD133, CD44) | Antibodies against these cell surface proteins are used to isolate and study cancer stem cell (CSC) populations via flow cytometry, which often represent a source of functional heterogeneity [18]. |
| EGFR Inhibitors (e.g., Gefitinib) | Small molecule inhibitors used in functional assays to test the sensitivity of different tumor subclones to targeted therapy, revealing heterogeneity in drug response pathways [18]. |
| Patient-Derived Xenograft (PDX) Models | Immunodeficient mice engrafted with human tumor tissue. These models maintain the heterogeneity of the original patient tumor and are used for in vivo drug testing and biology studies [17]. |

Signaling Pathways & Experimental Workflows

[Diagram: Tumor Heterogeneity and Research Pathways]

[Diagram: Single-Cell Subcloning Workflow]

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: Why does my discovered biomarker show high sensitivity in some patient cohorts but fail in others?

This is a classic symptom of underlying disease heterogeneity. What is often clinically diagnosed as a single disease (e.g., breast cancer) frequently comprises multiple molecular subtypes, each with unique biological drivers [8]. A biomarker may be exquisitely sensitive for one specific molecular subtype but have little to no sensitivity for others. Its overall performance is therefore capped by the prevalence of that subtype within the tested population [8]. For example, a biomarker with 98% sensitivity for a subtype that constitutes 20% of the patient population, and no sensitivity for the other subtypes, achieves only about 19.6% overall sensitivity (0.98 × 0.20) and can never exceed 20% [8].

- Recommended Action: Re-analyze your discovery cohort data to check for underlying clusters or subgroups. Consider using statistical methods designed for heterogeneous populations, such as tests of stochastic dominance (e.g., Mann-Whitney U test) or metrics focused on the tail of the distribution (e.g., sensitivity at high specificity), which can outperform standard t-tests in these conditions [8].
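The prevalence cap described in this answer is a weighted average, which a short sketch can make concrete. The function name and the two-subtype cohort below are illustrative assumptions, not taken from the cited study; the 0% sensitivity outside the target subtype is a simplifying assumption as well.

```python
def overall_sensitivity(subtypes):
    """Population-level sensitivity as a prevalence-weighted average.

    subtypes: list of (prevalence, sensitivity) pairs; prevalences must sum to 1.
    """
    total_prevalence = sum(p for p, _ in subtypes)
    if abs(total_prevalence - 1.0) > 1e-9:
        raise ValueError("subtype prevalences must sum to 1")
    return sum(p * s for p, s in subtypes)


# Example from the text: 98% sensitivity in a subtype making up 20% of patients,
# and (as an assumption) no signal at all in the remaining 80%.
cohort = [(0.20, 0.98), (0.80, 0.00)]
print(f"{overall_sensitivity(cohort):.3f}")  # 0.196
```

Changing the second pair to a small non-zero sensitivity shows how quickly the overall figure is dominated by the majority subtypes.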

FAQ 2: My validation study failed to replicate the promising results from my initial biomarker discovery. Could heterogeneity be the cause?

Yes, this is a common consequence of poor patient stratification and unaccounted-for heterogeneity during the discovery phase. If the initial discovery cohort unintentionally over-represents a particular disease subtype, the biomarker will appear strong. When validated in a separate, more representative cohort where that subtype's prevalence is lower, the biomarker's performance will drop significantly [8]. This is often compounded by underpowered studies; heterogeneous diseases require significantly larger sample sizes (more than 2-fold in some simulations) to ensure all relevant subtypes are adequately represented [8].

- Recommended Action: Ensure your discovery cohort is large and clinically diverse. Employ a two-stage discovery design: a first stage to screen many candidates with a moderate number of samples, and a second stage to validate top candidates with the remaining samples. This can achieve nearly the same statistical power as a single-stage design at a reduced cost [8].

FAQ 3: How does intra-tumoral heterogeneity impact the reliability of tissue-based biomarkers?

Spatial heterogeneity within a single tumor and between anatomical sites (e.g., primary ovary vs. metastatic omentum in ovarian cancer) can lead to profound sampling bias [9]. A protein that is highly expressed in one region of a tumor may be absent in another. A biomarker discovered from a single biopsy may not represent the entire tumor's molecular landscape, limiting its utility as a clinical predictive tool [9].

- Recommended Action: For biomarker discovery, prioritize proteins or signatures that demonstrate stable expression across multiple samples from the same individual, yet show variable expression between individuals (stable discriminative proteins) [9]. In high-grade serous ovarian cancer, for instance, focusing on such stable features has revealed consistent inflammatory pathways like the cGAS-STING pathway, which are more reliable for biomarker development [9].

FAQ 4: What are the major pitfalls in using machine learning for patient stratification, and how can I avoid them?

Machine learning (ML) models for stratification are highly vulnerable to overfitting, especially when trained on small, low-quality, or biased datasets [19]. Common flaws include:

- Data Leakage: Improper handling of feature selection during cross-validation, which inflates performance estimates.

- Lack of External Validation: Few models are tested on independent datasets from different clinical sites, which is critical for assessing real-world generalizability [19].

- Selection Bias: Training data from Electronic Health Records (EHR) often over-represents certain patient groups, leading to models that perform poorly on underrepresented populations [19].

- Recommended Action: Use large, representative datasets. Embed all preprocessing and feature selection steps rigorously within cross-validation. The most critical step is to validate your final model on a completely external dataset not used in any part of the training or optimization process [19].
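To illustrate what "embedding feature selection within cross-validation" means in practice, here is a minimal standard-library sketch. The scoring rule (difference in class means), the nearest-centroid classifier, and all function names are assumptions chosen for brevity, not the methods used in the cited work. The key point is that `select_top_features` only ever sees the training rows of the current fold.

```python
import random
import statistics


def select_top_features(rows, labels, k):
    """Rank features by absolute difference in class means, using ONLY the rows given."""
    scores = []
    for j in range(len(rows[0])):
        pos = [r[j] for r, y in zip(rows, labels) if y == 1]
        neg = [r[j] for r, y in zip(rows, labels) if y == 0]
        scores.append((abs(statistics.fmean(pos) - statistics.fmean(neg)), j))
    return [j for _, j in sorted(scores, reverse=True)[:k]]


def centroid_predict(train_rows, train_labels, test_row, features):
    """Nearest-centroid classifier restricted to the selected features."""
    best_label, best_dist = None, float("inf")
    for label in (0, 1):
        members = [r for r, y in zip(train_rows, train_labels) if y == label]
        centroid = [statistics.fmean(r[j] for r in members) for j in features]
        dist = sum((test_row[j] - c) ** 2 for j, c in zip(features, centroid))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


def cross_validated_accuracy(rows, labels, k_features=5, n_folds=5, seed=0):
    """k-fold CV in which feature selection is refit inside every training fold."""
    order = list(range(len(rows)))
    random.Random(seed).shuffle(order)
    folds = [order[i::n_folds] for i in range(n_folds)]
    correct = 0
    for held_out in folds:
        held = set(held_out)
        train_idx = [i for i in order if i not in held]
        train_rows = [rows[i] for i in train_idx]
        train_labels = [labels[i] for i in train_idx]
        # Selection sees training rows only, so held-out samples cannot leak in.
        features = select_top_features(train_rows, train_labels, k_features)
        for i in held_out:
            pred = centroid_predict(train_rows, train_labels, rows[i], features)
            correct += pred == labels[i]
    return correct / len(rows)


# Pure-noise data: labels are unrelated to the 200 features, so an honest
# estimate should hover around chance rather than look impressive.
rng = random.Random(1)
noise_labels = [i % 2 for i in range(40)]
noise_rows = [[rng.gauss(0.0, 1.0) for _ in range(200)] for _ in noise_labels]
print(cross_validated_accuracy(noise_rows, noise_labels))
```

Selecting features once on the full dataset before splitting would typically report a much higher accuracy on the same noise, which is the inflation described under "Data Leakage". Even a leak-free cross-validated estimate does not replace validation on an external dataset.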

Quantitative Data: Impact of Heterogeneity on Study Design

The table below summarizes key quantitative findings from simulation studies on biomarker discovery in heterogeneous diseases.

Table 1: Sample Size and Method Selection for Biomarker Discovery

| Factor | Homogeneous Disease | Heterogeneous Disease | Implications and Recommendations |

|---|---|---|---|

| Required Sample Size | Smaller (Baseline) | >2-fold larger [8] | Larger samples are needed to ensure adequate representation of all disease subtypes. |

| Optimal Statistical Methods | Traditional t-tests, linear models [8] | Tests of stochastic dominance (e.g., Mann-Whitney U), partial AUC, sensitivity at fixed specificity [8] | Methods focused on distribution tails or stochastic dominance are more robust for detecting subtype-specific signals. |

| Biomarker Performance | Consistent across population | Capped by subtype prevalence [8] | A biomarker's overall sensitivity is limited by the fraction of patients who have the subtype it detects. |

| Study Design Efficiency | Single-stage design | Two-stage design [8] | A two-stage design can achieve similar power to a single-stage design at significantly reduced cost for large studies. |
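The contrast between a whole-distribution metric and a tail-focused metric can be sketched with a toy cohort in which only 20% of cases carry an elevated marker. The data and function names below are illustrative assumptions; the AUC is computed from the Mann-Whitney U statistic (U divided by the number of case-control pairs).

```python
import math


def mann_whitney_auc(controls, cases):
    """Probability that a random case scores higher than a random control
    (ties count as 0.5). Equals U / (n_cases * n_controls)."""
    wins = 0.0
    for x in cases:
        for y in controls:
            if x > y:
                wins += 1.0
            elif x == y:
                wins += 0.5
    return wins / (len(cases) * len(controls))


def sensitivity_at_specificity(controls, cases, specificity=0.95):
    """Fraction of cases above the threshold that keeps at least the requested
    fraction of controls at or below it."""
    ranked = sorted(controls)
    # Index of the control value at the requested quantile (rounded to avoid float noise).
    index = max(0, math.ceil(round(specificity * len(ranked), 9)) - 1)
    threshold = ranked[index]
    return sum(1 for x in cases if x > threshold) / len(cases)


# Toy data: 8 of 10 cases look like controls, 2 of 10 belong to an elevated subtype.
controls = [float(v) for v in range(1, 21)]
cases = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0, 30.0, 35.0]
print(mann_whitney_auc(controls, cases))            # 0.54
print(sensitivity_at_specificity(controls, cases))  # 0.2
```

In this toy case the AUC is close to chance, while sensitivity at 95% specificity recovers exactly the 20% of cases in the elevated subtype. That is the kind of subtype-specific signal the tail-focused metrics in the table are meant to detect.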

Detailed Experimental Protocol: Identifying Stable Biomarkers in Heterogeneous Tissues

This protocol is adapted from a study on high-grade serous ovarian cancer (HGSC) to discover biomarkers that remain stable despite spatial heterogeneity [9].

Objective: To identify proteins with stable expression within an individual patient but variable expression between patients, making them suitable candidates for clinical biomarkers.

Materials:

- Tissue Samples: Multiple fresh-frozen (FF) and formalin-fixed, paraffin-embedded (FFPE) tissue samples from different anatomical sites (e.g., ovary and omentum) from the same patient cohort.

- Proteomic Profiling: Data-Independent Acquisition Mass Spectrometry (DIA-MS) platform.

- Bioinformatic Tools: Software for coefficient of variation (CV) calculation, weighted correlation network analysis (WGCNA), and single-sample gene set enrichment analysis (ssGSEA).

Methodology:

- Extensive Multi-Site Sampling: Collect a large number of samples per patient (e.g., 11-80) from both primary and metastatic sites [9].

- Proteomic Data Generation: Perform DIA-MS on all samples to quantify protein abundance. The cited study quantified a median of 5,299 proteins per sample [9].

- Protein Matrix Qualification: Apply a series of filters to the raw protein data:

- Detection Filter: Include only proteins detected in both FF and FFPE tissue types.

- Stability Filter: Retain proteins with a low Coefficient of Variation (CV < 25%) across multiple samples from the same individual. This ensures the protein's expression is consistent within a patient [9].

- Discriminative Power Filter: Exclude proteins that are uniformly detected across all patients to avoid housekeeping proteins. The goal is to find proteins that can differentiate between patients [9].

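- Network and Pathway Analysis: Apply WGCNA to the stable discriminative proteins to identify co-expression modules, then use ssGSEA to score pathway activity (e.g., DNA sensing/inflammation) in individual samples [9].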

Expected Outcome: A refined list of proteins (e.g., 1,651 stable discriminative proteins as in the cited study) and co-expression modules (e.g., a 52-protein module reflecting interferon inflammation) that are robust to intra-tumoral heterogeneity and represent reliable features for biomarker development [9].
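The stability and discriminative-power filters in the protocol above can be sketched as follows. This is a minimal illustration, not the cited study's pipeline. The 25% within-patient CV cut-off comes from the protocol, whereas the between-patient threshold (`min_between_cv`), the nested-dictionary layout, and the protein names are hypothetical. Abundances are assumed to be on a linear (not log) scale.

```python
import statistics


def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean, as a percentage."""
    mean = statistics.fmean(values)
    if mean == 0:
        return float("inf")
    return 100.0 * statistics.stdev(values) / abs(mean)


def stable_discriminative(protein_by_patient, max_within_cv=25.0, min_between_cv=25.0):
    """protein_by_patient: {protein: {patient: [abundance per sample]}}."""
    selected = []
    for protein, per_patient in protein_by_patient.items():
        within = [coefficient_of_variation(v) for v in per_patient.values()]
        patient_means = [statistics.fmean(v) for v in per_patient.values()]
        is_stable = max(within) < max_within_cv  # consistent inside every patient
        is_discriminative = coefficient_of_variation(patient_means) > min_between_cv
        if is_stable and is_discriminative:
            selected.append(protein)
    return selected


example = {
    # Stable within patients, different between patients: kept.
    "PROT_A": {"P1": [100, 105, 95], "P2": [200, 210, 190], "P3": [50, 52, 48]},
    # Stable everywhere but identical between patients (housekeeping-like): dropped.
    "PROT_B": {"P1": [100, 102, 98], "P2": [101, 99, 100], "P3": [100, 101, 99]},
    # Varies strongly between samples of the same patient: dropped.
    "PROT_C": {"P1": [10, 100, 50], "P2": [200, 20, 90], "P3": [5, 60, 30]},
}
print(stable_discriminative(example))  # ['PROT_A']
```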

Visualizing the Workflow and Signaling Pathway

[Diagram: Biomarker Discovery Workflow]

[Diagram: DNA Sensing and Inflammation in HGSC]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomarker Discovery in Heterogeneous Cancers

| Research Reagent / Material | Function in Experimental Protocol |

|---|---|

| Fresh-Frozen (FF) & Formalin-Fixed Paraffin-Embedded (FFPE) Tissues | Provides complementary sample types for discovery and validation. FF tissue is ideal for high-quality proteomics, while FFPE allows the use of vast archival clinical repositories [9]. |

| Data-Independent Acquisition Mass Spectrometry (DIA-MS) | A high-sensitivity proteomic platform capable of quantifying thousands of proteins from biopsy-sized tissue samples, enabling deep profiling of the tumor proteome [9]. |

| Stable Isotope-Labeled Peptide Standards | Used in mass spectrometry for absolute quantification of proteins, improving the accuracy and reproducibility of biomarker measurements across many samples. |

| Weighted Correlation Network Analysis (WGCNA) | A bioinformatic algorithm (R package) used to identify modules of highly correlated proteins or genes. It helps reduce data dimensionality and find co-regulated biological pathways [9]. |

| Single-Sample GSEA (ssGSEA) | An algorithm that calculates the enrichment of a predefined gene or protein set in a single sample. It is used to score pathway activity (e.g., DNA sensing/inflammation score) in individual tumor samples [9]. |

| CIBERSORTx | A computational tool to impute immune cell composition from bulk tumor gene expression or proteomic data, allowing assessment of the tumor immune microenvironment alongside biomarker discovery [9]. |

Innovative Approaches and Technologies for Heterogeneity-Aware Biomarker Discovery

Intratumour heterogeneity (ITH) presents a significant challenge in cancer biomarker discovery, as molecular profiles can vary dramatically between different regions of the same tumour. This variability makes RNA expression-based biomarkers derived from a single biopsy vulnerable to tumour sampling bias, which leads to unreliable patient stratification. Multi-marker panels and signature-based methods show considerable potential to overcome these limitations by providing a more comprehensive molecular portrait that is less sensitive to regional genetic variation.

The ITH Challenge in Cancer Biomarker Research

Intratumour heterogeneity manifests at multiple biological levels, including genomic, transcriptomic, proteomic, and metabolomic dimensions. At the transcriptomic level, studies have revealed substantial heterogeneity that directly confounds existing expression-based biomarkers across multiple cancer types.

Quantifying the ITH Problem

Research in hepatocellular carcinoma (HCC) has quantified this challenge, demonstrating that applying 13 published prognostic signatures to classify tumour regions from the same patient resulted in an average discordance rate of 39.9% at the level of individual patients [20]. Similarly, in colorectal cancer (CRC), stromal-derived ITH has been shown to undermine molecular stratification of patients into appropriate prognostic/predictive subgroups, with significant variations observed between central tumour, invasive front, and lymph node metastasis regions from the same patients [21].

Table 1: Impact of Transcriptomic ITH on Biomarker Concordance in HCC and CRC

| Metric | Finding | Implication |

|---|---|---|

| Regional classification discordance | 39.9% average discordance across 13 signatures | Single-biopsy approaches yield unreliable patient stratification |

| Sample clustering by patient-of-origin | 0-88% depending on signature type | Signature design determines resistance to ITH effects |

| Cancer-cell intrinsic signatures | Significantly higher concordance | Focusing on cancer-cell intrinsic genes limits confounding by stromal-derived ITH |
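A patient-level discordance rate of the kind reported above can be computed from multiregional classifications. The sketch below uses one plausible definition: a patient is discordant if any two of their tumour regions receive different classes. The cited studies may define it differently, and the patient labels and risk calls are invented for illustration.

```python
def discordance_rate(calls_by_patient):
    """Fraction of patients whose tumour regions do not all receive the same class."""
    discordant = sum(1 for calls in calls_by_patient.values() if len(set(calls)) > 1)
    return discordant / len(calls_by_patient)


# Hypothetical risk calls from one signature applied to each sampled region.
calls = {
    "P1": ["high", "high", "high"],
    "P2": ["high", "low"],
    "P3": ["low", "low"],
    "P4": ["low", "high", "low"],
    "P5": ["high", "high"],
}
print(discordance_rate(calls))  # 0.4
```

Averaging this rate over several signatures gives a summary comparable in spirit to the figure quoted for the 13 HCC signatures.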

Multi-Omics Integration Strategies

Multi-omics strategies integrating genomics, transcriptomics, proteomics, and metabolomics have revolutionized biomarker discovery by providing a multidimensional framework for understanding cancer biology [22]. This approach enables the characterization of molecular signatures that drive tumour initiation, progression, and therapeutic resistance beyond what single analytes can reveal.

Multi-Omics Workflow for Overcoming ITH

[Diagram: multi-omics data integration workflow for building biomarkers that are robust to heterogeneity effects]

Analytical Techniques for Multi-Omics Data

The computational integration of multi-omics datasets employs both horizontal and vertical integration strategies, complemented by sophisticated machine learning and deep learning approaches for data interpretation [22]. Publicly available multi-omics databases include:

- DriverDBv4: Encompasses data from over 70 cancer cohorts, integrating genomic, epigenomic, transcriptomic, and proteomic data

- GliomaDB: Specifically focuses on glioma research, integrating 21,086 glioma samples

- HCCDBv2: A comprehensive liver cancer multi-omics database incorporating clinical phenotype data and multiple transcriptomic technologies [22]

Signature-Based Approaches to Overcome ITH

Cancer-Cell Intrinsic Signatures

Research in colorectal cancer has demonstrated that signatures focused on cancer-cell intrinsic gene expression produce more clinically useful, patient-centred classifiers. The CRC intrinsic signature (CRIS) exemplifies this approach, robustly clustering samples by patient-of-origin rather than region-of-origin, thereby minimizing the confounding effects of stromal-derived ITH [21].

In comparative analyses, cancer-cell intrinsic signatures significantly outperformed stroma-influenced signatures:

Table 2: Performance Comparison of CRC Gene Signatures Against ITH

| Signature | Clustering by Patient-of-Origin | Resistance to Stromal ITH |

|---|---|---|

| Kennedy et al. | 88% | High |

| Popovici et al. | 88% | High |

| Sadanandam et al. (CRCA) | 54% | Moderate |

| Eschrich et al. | 38% | Low |

| Jorissen et al. | 29% | Low |

| Stromal-derived signature | 0% | None |

Development of ITH-Free Biomarkers

A novel strategy for developing ITH-free biomarkers involves quantifying transcriptomic heterogeneity using multiregional transcriptome datasets. The AUGUR approach exemplifies this methodology.

This de novo strategy based on heterogeneity metrics was used to develop a surveillant biomarker (AUGUR) that showed significant positive associations with adverse features of HCC and maintained prognostic concordance across multiple cohorts [20].

Liquid Biopsy and Multi-Cancer Detection Platforms

Liquid biopsy approaches represent another powerful strategy for overcoming ITH by capturing tumour heterogeneity through minimally invasive blood-based tests.

Multi-Cancer Detection (MCD) Tests

MCD tests, also referred to as multi-cancer early detection (MCED) tests, measure biological substances that cancer cells may shed in blood and other body fluids [23]. These include:

- Circulating tumor cells (CTCs)

- Circulating tumor DNA (ctDNA)

- Extracellular vesicles (EVs)

- Proteins and metabolites

MCD tests differ from other cancer screening tests in that they use a single blood test to check for many types of cancer from different organ sites simultaneously [23]. Current MCD tests in development measure different biological signals in blood plasma, including changes in DNA and/or RNA sequences, patterns of DNA methylation, patterns of DNA fragmentation, levels of protein biomarkers, and antibodies against tumor components [23].

Multibiomarker Panels in Pancreatic Cancer

For challenging malignancies like pancreatic ductal adenocarcinoma (PDAC), multibiomarker panels in liquid biopsy show promise for early detection. Single biomarkers such as CA19-9 lack sufficient sensitivity and/or specificity for reliable PDAC detection, especially in early stages [24]. Combining circulating biomarkers in multimarker panels significantly improves the sensitivity and specificity of blood test-based diagnosis.

Table 3: Liquid Biopsy Biomarkers for Multi-Marker Panels in PDAC

| Biomarker Category | Specific Analytes | Advantages | Challenges |

|---|---|---|---|

| Cellular Biomarkers | CTCs, cCAFs | Representative of tumour heterogeneity | Low abundance in early stages |

| Nucleic Acids | ctDNA, cfRNA, miRNA | Genetic and epigenetic information | Low concentration in early disease |

| Proteins | CA19-9, novel protein panels | Established methodologies | Limited specificity of individual markers |

| Extracellular Vesicles | Proteins, nucleic acids | Protected cargo, abundant | Standardization of isolation methods |

Technical Support Center

Troubleshooting Guides

FAQ: How can we validate that our multi-marker signature truly overcomes ITH?

Issue: Uncertainty in determining whether a signature reliably classifies patients regardless of tumour sampling region.

Solution:

- Utilize multiregional sampling datasets from public repositories or generate in-house data

- Apply signature to each region independently and assess classification concordance

- Employ correlation analyses comparing intra-patient versus inter-patient sample similarity

- Validate signature performance in multiple independent cohorts with different sampling protocols

Methodology:

- Calculate Pearson correlation coefficients between multi-region samples from the same patient versus different patients

- Implement hierarchical clustering to visualize whether samples cluster by patient-of-origin rather than region-of-origin

- Benchmark signature stability against a cancer-cell intrinsic classifier such as CRIS, which demonstrated 88% concordant clustering in CRC multi-region data [21]
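The intra-patient versus inter-patient correlation check in the methodology above can be sketched with the standard library alone. The sample layout (a list of patient ID and signature-expression vector pairs) and the toy values are assumptions for illustration.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length vectors."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def intra_vs_inter(samples):
    """samples: list of (patient_id, signature_expression_vector).

    Returns (mean intra-patient r, mean inter-patient r) over all sample pairs.
    """
    intra, inter = [], []
    for (p1, v1), (p2, v2) in combinations(samples, 2):
        (intra if p1 == p2 else inter).append(pearson(v1, v2))
    return sum(intra) / len(intra), sum(inter) / len(inter)


# Two regions per patient, four signature genes each (toy values).
samples = [
    ("A", [1.0, 2.0, 3.0, 4.0]),
    ("A", [1.1, 2.1, 2.9, 4.2]),
    ("B", [4.0, 3.0, 2.0, 1.0]),
    ("B", [4.2, 2.8, 2.1, 0.9]),
]
intra_r, inter_r = intra_vs_inter(samples)
print(f"intra-patient r = {intra_r:.2f}, inter-patient r = {inter_r:.2f}")
```

A signature that resists ITH should show intra-patient correlations clearly above inter-patient ones; similar values would suggest that region-of-origin, not patient-of-origin, drives the signal.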

FAQ: What are common computational challenges in multi-omics integration?

Issue: Technical difficulties in integrating diverse data types with different scales, dimensions, and noise characteristics.

Solution:

- Implement batch effect correction methods to address technical variations

- Employ dimensionality reduction techniques (PCA, t-SNE, UMAP) for visualization

- Use supervised and unsupervised integration algorithms (MOFA, iCluster, mixOmics)

- Apply machine learning approaches for feature selection and pattern recognition

Methodology:

- Pre-process each omics dataset individually with appropriate normalization

- Address missing data using imputation methods or model-based approaches

- Select integration methods based on research question: early (data concatenation), intermediate (transformation to common space), or late (separate analysis with model integration)

- Validate integrated signatures using cross-validation and independent test sets [22]
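As a minimal sketch of the "early" integration option above, each omics block can be standardized separately and then concatenated per sample, so that no layer dominates purely because of its measurement scale. The function names and toy matrices are assumptions. Dedicated tools such as those listed in the solution handle weighting, missing values, and dimensionality far more carefully.

```python
import statistics


def zscore_columns(matrix):
    """Standardize each feature (column) to mean 0 and unit sample SD."""
    scaled = []
    for col in zip(*matrix):
        mean, sd = statistics.fmean(col), statistics.stdev(col)
        scaled.append([(v - mean) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled)]


def early_integration(*omics_blocks):
    """Concatenate per-sample feature vectors after scaling each block separately.

    Every block is a samples x features matrix with samples in the same order.
    """
    scaled_blocks = [zscore_columns(block) for block in omics_blocks]
    n_samples = len(scaled_blocks[0])
    if any(len(block) != n_samples for block in scaled_blocks):
        raise ValueError("all blocks must contain the same samples in the same order")
    return [sum((block[i] for block in scaled_blocks), []) for i in range(n_samples)]


# Toy blocks on very different scales: transcript counts vs. protein intensities.
rna = [[100.0, 2000.0], [200.0, 4000.0], [300.0, 6000.0]]
protein = [[0.1], [0.2], [0.3]]
for row in early_integration(rna, protein):
    print([round(v, 3) for v in row])
```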

FAQ: How do we address reproducibility issues in multi-marker panel development?

Issue: Inconsistent results across different experimental batches or platforms.

Solution:

- Implement rigorous quality control measures at each processing step

- Use standardized protocols for sample collection, storage, and processing

- Incorporate internal controls and reference materials

- Apply cross-platform normalization methods when combining datasets

Methodology:

- Establish standard operating procedures (SOPs) for sample processing

- Utilize automation to minimize manual variability (e.g., automated homogenization reduces contamination risks and improves consistency) [25]

- Include technical replicates and randomize processing order

- Validate findings in multiple independent cohorts with different demographic characteristics [20]
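Randomizing processing order is easier to enforce when batch assignment is generated in code. The sketch below is one possible scheme under stated assumptions: samples are shuffled within each group, dealt round-robin across batches so that groups stay balanced, and then shuffled again within each batch to randomize run order. The function name, group labels, and seed are illustrative.

```python
import random
from collections import defaultdict


def balanced_random_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, group). Returns a list of batches, each a
    list of (sample_id, group) in randomized run order."""
    rng = random.Random(seed)  # fixed seed keeps the allocation reproducible
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)
    batches = [[] for _ in range(n_batches)]
    position = 0
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)
        for sample_id in ids:
            batches[position % n_batches].append((sample_id, group))
            position += 1
    for batch in batches:
        rng.shuffle(batch)  # randomize processing order inside the batch
    return batches


cohort = [(f"case{i}", "case") for i in range(8)] + [(f"ctrl{i}", "control") for i in range(8)]
for number, batch in enumerate(balanced_random_batches(cohort, n_batches=4), start=1):
    print(number, batch)
```

Keeping cases and controls balanced within each batch prevents batch from becoming confounded with disease status, which would otherwise make later batch correction unreliable.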

Research Reagent Solutions

Table 4: Essential Research Tools for Multi-Marker Panel Development

| Reagent/Technology | Function | Application in Biomarker Discovery |

|---|---|---|

| Next-generation sequencing (NGS) platforms | Comprehensive molecular profiling | Genomics, transcriptomics, epigenomics |

| Mass spectrometry systems | Protein and metabolite identification | Proteomics, metabolomics |

| Automated homogenization systems | Standardized sample preparation | Reduces cross-contamination, improves reproducibility [25] |

| Multiplex immunoassay platforms | Simultaneous protein marker measurement | Validation of protein signatures |

| Single-cell RNA sequencing | Resolution of cellular heterogeneity | Identification of cell-type specific markers |

| Spatial transcriptomics technologies | Tissue context preservation | Correlation of molecular features with histopathology |

The transition from single-analyte biomarkers to multi-marker panels and signature-based approaches represents a paradigm shift in cancer biomarker research that directly addresses the challenge of intratumour heterogeneity. Through strategies including multi-omics integration, cancer-cell intrinsic signature development, liquid biopsy platforms, and sophisticated computational integration, researchers can now develop classification systems that remain robust despite the sampling biases introduced by ITH. As these technologies continue to evolve and are validated in larger clinical cohorts, they hold considerable promise for delivering on the goal of precision oncology: reliable patient stratification for improved diagnosis, prognosis, and therapeutic selection.

Frequently Asked Questions (FAQs)

FAQ 1: What are the core components of a liquid biopsy, and how do they help overcome tumor heterogeneity?

Liquid biopsy focuses on analyzing tumor-derived components from bodily fluids. The key biomarkers are:

- Circulating Tumor Cells (CTCs): Intact cells shed from primary or metastatic tumors into the bloodstream. They provide a global view of the tumor as they can originate from different sites, capturing cellular-level heterogeneity. They allow for functional analyses and culture [14] [26].

- Circulating Tumor DNA (ctDNA): Short DNA fragments released into the blood from apoptotic or necrotic tumor cells. ctDNA carries genetic alterations (e.g., mutations) from all tumor subclones, enabling a comprehensive genomic profile of the disease burden. It has a short half-life, allowing for real-time monitoring of tumor dynamics [14] [26] [27].

FAQ 2: My tissue biopsy results show a specific mutation, but my liquid biopsy is negative. Why might this happen?

This discrepancy can often be attributed to tumor heterogeneity. The tissue biopsy may have sampled a specific region of the tumor harboring the mutation, while the liquid biopsy captures DNA shed from all tumor sites. If the mutation is not present in all subclones or is shed inefficiently into the bloodstream, it may fall below the detection limit of the liquid biopsy assay [8] [9]. Interpret results in the clinical context and consider re-testing if clinical suspicion remains high.

FAQ 3: How can I improve the capture efficiency of rare CTCs from a blood sample?

Capturing rare CTCs (as few as 1 per billion blood cells) is a technical challenge [26]. The optimal method depends on your research question. The table below summarizes the primary technologies:

| Method | Principle | Advantages | Limitations |

|---|---|---|---|

| Immunomagnetic Positive Enrichment [26] | Uses antibodies (e.g., anti-EpCAM) on magnetic beads to capture CTCs. | High specificity for EpCAM-positive CTCs. | Misses CTCs that have downregulated epithelial markers (e.g., during EMT). |

| Microfluidics [26] | Uses fluid dynamics and surface markers to isolate CTCs. | High capture efficiency, can process small volumes. | Can be limited by predefined surface markers. |

| Size-Based Filtration [26] | Filters blood based on the larger size and rigidity of most CTCs. | Preserves cell viability, not reliant on surface markers. | May miss small or deformable CTCs; low purity. |

| Density Gradient Centrifugation [26] | Separates CTCs based on buoyant density. | Low cost, can isolate various cell types. | Low separation efficiency and recovery. |

FAQ 4: What are the best practices for ensuring the quality of ctDNA samples for downstream mutation analysis?

The quality of ctDNA analysis is highly dependent on the pre-analytical phase. Key considerations include:

- Blood Collection: Use specific blood collection tubes designed to stabilize nucleated cells and prevent genomic DNA contamination from white blood cell lysis.

- Plasma Separation: Process blood samples within a few hours of collection. A double-centrifugation protocol is recommended to obtain cell-free plasma and remove residual cells.

- DNA Extraction: Use dedicated kits optimized for recovering short-fragment DNA. Quantify ctDNA yield and quality, remembering it often constitutes only 0.1-1.0% of total cell-free DNA [14].

- Analysis: Employ highly sensitive and validated methods like PCR-based assays or next-generation sequencing (NGS) to detect low-frequency mutations.

Troubleshooting Common Experimental Challenges

Problem: Low detection rate of ctDNA mutations in early-stage cancer.

- Potential Cause: The tumor burden may be too low, resulting in ctDNA levels below the analytical sensitivity of your assay [14].

- Solutions:

- Increase Input Volume: Use a larger volume of plasma for DNA extraction to obtain more template molecules.

- Use Ultra-Sensitive Assays: Switch to more sensitive technologies like digital PCR or targeted NGS with unique molecular identifiers (UMIs) that can detect mutations at an allele frequency of 0.1% or lower.

- Analyze Multiple Time Points: A single negative result may not be conclusive. Serial monitoring can help detect the emergence of ctDNA as the disease progresses.
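The benefit of a larger plasma input can be estimated with a back-of-the-envelope calculation. The sketch below assumes roughly 3.3 pg of DNA per haploid human genome copy, a hypothetical cfDNA yield of 5 ng per mL of plasma, perfect extraction efficiency, and Poisson sampling of mutant fragments. These are illustrative assumptions, not values from the cited sources.

```python
import math

HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome copy (pg)


def expected_mutant_copies(plasma_ml, cfdna_ng_per_ml, vaf):
    """Expected number of mutant genome copies in the extracted cfDNA."""
    genome_copies = plasma_ml * cfdna_ng_per_ml * 1000.0 / HAPLOID_GENOME_PG
    return genome_copies * vaf


def probability_at_least_one(plasma_ml, cfdna_ng_per_ml, vaf):
    """Poisson chance that the sample contains at least one mutant fragment."""
    return 1.0 - math.exp(-expected_mutant_copies(plasma_ml, cfdna_ng_per_ml, vaf))


for volume_ml in (1, 2, 4):
    copies = expected_mutant_copies(volume_ml, cfdna_ng_per_ml=5.0, vaf=0.001)
    chance = probability_at_least_one(volume_ml, cfdna_ng_per_ml=5.0, vaf=0.001)
    print(f"{volume_ml} mL plasma: ~{copies:.1f} mutant copies, P(>=1) = {chance:.2f}")
```

At a 0.1% allele frequency only a handful of mutant molecules are present per millilitre under these assumptions, so no assay can detect the variant reliably if the input volume is too small, regardless of its analytical sensitivity.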

Problem: Inconsistent CTC counts between replicate samples.

- Potential Cause: The inherent rarity of CTCs and potential technical variability in enrichment and identification protocols [26].

- Solutions:

- Standardize Protocols: Ensure all sample processing steps (blood draw, storage, enrichment) are rigorously standardized.

- Implement Spike-In Controls: Use defined numbers of cultured tumor cells spiked into healthy donor blood to validate the entire workflow and calculate recovery rates.

- Automate Processes: Where possible, use automated platforms to reduce manual handling variability.
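Recovery rates from spike-in controls are simple ratios, but tracking their mean and spread across replicates makes workflow drift visible. The replicate numbers below are hypothetical, and the function names are illustrative.

```python
import statistics


def recovery_rate(spiked_cells, recovered_cells):
    """Fraction of spiked-in tumour cells recovered by the full workflow."""
    if spiked_cells <= 0:
        raise ValueError("spiked_cells must be positive")
    return recovered_cells / spiked_cells


def summarize_recovery(runs):
    """runs: list of (spiked, recovered). Returns (mean recovery, CV in percent)."""
    rates = [recovery_rate(spiked, recovered) for spiked, recovered in runs]
    mean_rate = statistics.fmean(rates)
    cv_percent = 100.0 * statistics.stdev(rates) / mean_rate
    return mean_rate, cv_percent


# Hypothetical replicates: 100 cultured tumour cells spiked into healthy donor blood.
runs = [(100, 82), (100, 78), (100, 80)]
mean_rate, cv_percent = summarize_recovery(runs)
print(f"mean recovery {mean_rate:.2f}, CV {cv_percent:.1f}%")
```

Because patient CTC counts are often in the single digits, some replicate-to-replicate variation is expected from sampling alone, even when spike-in recovery is stable.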

Problem: High background noise in ctDNA sequencing from wild-type DNA.

- Potential Cause: The signal from non-tumor-derived cell-free DNA can obscure low-frequency tumor variants [14].

- Solutions:

- Optimize Bioinformatics Pipelines: Use robust bioinformatics tools specifically designed to distinguish true low-frequency variants from sequencing errors and background noise.

- Apply Duplex Sequencing: Use sequencing methods that sequence both strands of a DNA molecule, significantly reducing errors.

- Improve Blood Collection: As noted in the pre-analytical best practices, cell-stabilizing blood collection tubes minimize white blood cell lysis and the resulting release of wild-type DNA.

Experimental Protocols for Key Applications

Protocol 1: Isolation and Enumeration of CTCs using Immunomagnetic Enrichment

This protocol is based on the principles of the FDA-cleared CellSearch system [14] [26].

1. Sample Preparation:

- Collect peripheral blood (7.5-10 mL) into a CellSave-type tube or similar, which contains an anticoagulant and preservative.

- Store samples at room temperature and process within a specified window (e.g., 96 hours).

2. CTC Enrichment:

- Incubate the blood sample with ferromagnetic nanoparticles coated with antibodies against Epithelial Cell Adhesion Molecule (EpCAM).

- Pass the sample through a magnetic field. The EpCAM-positive CTCs are retained while other blood components are washed away.

3. CTC Identification:

- Stain the enriched cells with fluorescently labeled antibodies:

- Pan-cytokeratin (CK): Positive stain for epithelial-derived CTCs.

- CD45: Negative stain to exclude leukocytes.

- DAPI: Nuclear stain to identify nucleated cells.

- A CTC is defined as a DAPI+/CK+/CD45- cell. Analyze slides using a semi-automated fluorescence microscope system.

Protocol 2: Detection of Somatic Mutations from Plasma ctDNA

This protocol outlines a common workflow for targeted mutation detection [14].

1. Plasma Processing and ctDNA Extraction:

- Centrifuge whole blood to separate plasma. Perform a second, high-speed centrifugation of the plasma to remove any residual cells.

- Extract cell-free DNA from the plasma using a commercial kit (e.g., QIAamp Circulating Nucleic Acid Kit). Elute in a low-volume buffer.

2. Library Preparation and Target Enrichment:

- Prepare a sequencing library from the extracted DNA.

- Use a targeted gene panel (e.g., covering hotspots in KRAS, EGFR, TP53, APC) to enrich for regions of interest via hybrid capture or amplicon-based approaches. Incorporating UMIs at the library preparation step is highly recommended for error correction.

3. Sequencing and Data Analysis:

- Sequence the libraries on a high-throughput sequencer (e.g., Illumina platform).

- Process the raw data through a bioinformatics pipeline: align reads to a reference genome, group reads by UMI to create consensus sequences, and call variants using specialized algorithms for low-frequency mutations.
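The UMI grouping step in the data analysis above can be illustrated with a simplified consensus caller. This sketch assumes reads are already aligned and reduced to (UMI, position, base) tuples, ignores sequencing errors within the UMI itself, and uses a strict-majority vote. Production pipelines are considerably more involved, and the function name and `min_family_size` parameter are assumptions.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=2):
    """reads: iterable of (umi, position, base).

    Returns {(umi, position): consensus_base} for read families that have at
    least min_family_size members and a strict-majority base.
    """
    families = defaultdict(list)
    for umi, position, base in reads:
        families[(umi, position)].append(base)
    consensus = {}
    for key, bases in families.items():
        if len(bases) < min_family_size:
            continue  # singletons cannot be error-corrected
        base, count = Counter(bases).most_common(1)[0]
        if count > len(bases) / 2:
            consensus[key] = base
    return consensus


reads = [
    ("AAC", 100, "T"), ("AAC", 100, "T"), ("AAC", 100, "T"), ("AAC", 100, "C"),
    ("GGT", 100, "C"), ("GGT", 100, "C"),
    ("TTA", 100, "T"),  # singleton family, dropped
]
print(umi_consensus(reads))
```

The lone "C" in the AAC family is treated as a PCR or sequencing error and outvoted, which is how UMI consensus suppresses artefacts before low-frequency variant calling.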

Table 1: Key Characteristics of Liquid Biopsy Components [14] [26]

| Biomarker | Origin | Approximate Concentration in Blood | Half-Life | Primary Information Carried |

|---|---|---|---|---|

| CTC | Shed from primary or metastatic tumors | 1-10 cells per mL of blood in metastatic cancer | 1-2.5 hours | Whole genome, transcriptome, proteome, functional capacity |

| ctDNA | Released from apoptotic or necrotic tumor cells | 0.1-1.0% of total cell-free DNA | ~2 hours | Somatic mutations, copy number alterations, methylation patterns |

Table 2: Comparison of CTC Isolation Technologies [26]

| Technology | Enrichment Principle | Purity | Cell Viability | Throughput |

|---|---|---|---|---|

| Immunomagnetic (CellSearch) | Biological (EpCAM antibody) | Moderate | Low (fixed cells) | Medium |

| Microfluidic Chips | Biological/Physical | High | High | Low to Medium |

| Size-Based Filtration | Physical (size/deformability) | Low | High | Medium |

| Density Centrifugation | Physical (density) | Low | Variable | High |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Liquid Biopsy Research

| Item | Function/Description | Example Application |

|---|---|---|

| CellSave Tubes | Blood collection tubes with preservative for CTC stabilization | Maintains CTC integrity for up to 96 hours post-draw [26]. |

| EpCAM-coated Magnetic Beads | Antibody-conjugated beads for immunomagnetic positive selection of epithelial CTCs | Isolation of CTCs from whole blood for enumeration or molecular analysis [26]. |

| CD45 Antibody | Marker for hematopoietic cells (leukocytes) | Used in negative enrichment strategies or as a fluorescent stain to exclude white blood cells during CTC identification [26]. |

| Cell-Free DNA Blood Collection Tubes | Tubes containing reagents to prevent white blood cell lysis | Preserves the native cell-free DNA profile and prevents dilution of ctDNA by genomic DNA [27]. |

| Circulating Nucleic Acid Kit | Silica-membrane based kits for isolating short-fragment DNA | Extraction of high-quality ctDNA from plasma or serum [14]. |

| Digital PCR Master Mix | Reagents for partitioning DNA into thousands of individual reactions | Absolute quantification of low-frequency mutations (e.g., KRAS, EGFR) in ctDNA with high sensitivity [14]. |

Workflow and Pathway Visualizations

[Diagram: Liquid Biopsy Workflow for Heterogeneity Assessment]

[Diagram: Tumor Heterogeneity & Biomarker Discovery Challenge]

[Diagram: cGAS-STING Pathway in Tumor Microenvironment]

Core Challenges in Multi-Omic Data Integration

FAQ: What are the primary technical hurdles when combining genomics, proteomics, and epigenetics data?

The integration of multi-omics data presents several key challenges that can impact the robustness of your analysis and the validity of your biological conclusions.

Data Heterogeneity and Scale: Different omics layers produce data in vastly different formats, scales, and dimensions. For instance, RNA-seq can yield tens of thousands of transcripts, while proteomics and metabolomics may produce only hundreds to thousands of features. This complicates direct comparison and integration [28]. Furthermore, the relationship between molecular layers is not linear; a single gene can produce multiple transcripts, which in turn can be translated into different protein isoforms with various post-translational modifications, each potentially having distinct functions [28].

Missing Data Points: Inherent technical limitations lead to missing data across omics layers. Proteomics and metabolomics are particularly affected due to limitations in mass spectrometry, including varying ionization efficiencies and the presence of isomers [28]. Single-cell techniques can have missing value rates as high as 30% due to low capture efficiency and technical variation [28].

Batch Effects and Technical Variation: Unwanted technical variability, such as differences in sample processing days or reagent batches, can introduce strong artifacts that obscure biological signals. If not corrected, analytical models will prioritize capturing this technical noise over more subtle biological variation [29].

Biological Interpretation: Successfully integrating data is only the first step. The subsequent challenge is interpreting the complex, non-linear relationships between different molecular types to extract meaningful biological insights, such as understanding how a genetic variant ultimately influences metabolite abundance [30] [28].
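For the missing-data challenge described above, a useful first diagnostic is the per-feature missing rate, followed by removal of features that are mostly unobserved. The following is a minimal sketch using NumPy, assuming missing values are encoded as NaN. The 30% cut-off and the function name are illustrative assumptions, not a recommended standard.

```python
import numpy as np


def filter_by_missingness(X, max_missing=0.3):
    """Drop features (columns) whose fraction of NaN values exceeds max_missing.

    X is a samples x features matrix with NaN marking missing measurements.
    Returns the filtered matrix, the boolean keep-mask, and the per-feature rates.
    """
    missing_rate = np.isnan(X).mean(axis=0)
    keep = missing_rate <= max_missing
    return X[:, keep], keep, missing_rate


# Simulated proteomics-like matrix: 20 samples x 5 proteins
rng = np.random.default_rng(0)
proteins = rng.normal(size=(20, 5))
proteins[:10, 1] = np.nan  # protein 1 is missing in 50% of samples
proteins[:2, 3] = np.nan   # protein 3 is missing in 10% of samples

filtered, keep, rate = filter_by_missingness(proteins, max_missing=0.3)
print("missing rate per protein:", rate)
print("kept proteins:", np.flatnonzero(keep))
```

Features that survive the filter may still need imputation, and the appropriate threshold depends on the platform and on whether values are missing at random.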

FAQ: How does tumor heterogeneity specifically complicate multi-omics biomarker discovery?

Intratumor heterogeneity (ITH) presents a significant obstacle for reliable biomarker discovery, as molecular profiles can vary substantially within a single tumor.

Spatial Heterogeneity: Molecular profiles differ between anatomical sites. In high-grade serous ovarian cancer (HGSC), for example, inflammatory and immune responses are significantly higher in omental (metastatic) sites compared to ovarian (primary) sites [9]. This means a biomarker identified from a single biopsy may not represent the entire tumor.

Cellular Heterogeneity: A tumor consists of diverse subpopulations of cancer cells with distinct molecular features, alongside various non-malignant cell types like cancer-associated fibroblasts and immune cells, each contributing to the overall molecular signature [31]. A single biopsy may miss critical subclones that drive therapy resistance or metastasis [31].

Epigenetic Plasticity: Epigenetic modifications, such as DNA methylation and histone modifications, can vary between cancer cells without underlying genetic changes and are influenced by the tumor microenvironment [31]. This plasticity allows tumors to adapt and survive under therapeutic pressure, making biomarkers based on a single epigenetic snapshot potentially unreliable over time.

Experimental Design & Quality Control

FAQ: How should I design a robust multi-omics study from the start?

A well-designed experiment is the foundation for successful multi-omics integration. Careful planning at this stage prevents insurmountable problems during analysis.

Define a Clear Biological Question: Let your specific research question guide which omics layers to include, how many time points to collect, and from what sample sources. For complex questions like therapy resistance in cancer, multiple omics approaches applied to the same samples are often necessary [28].

Ensure Adequate Sample Size: Multi-omics studies require sufficient statistical power. The sample size needed is strongly influenced by background noise and expected effect size. Tools like MultiPower can help estimate the optimal sample size for your specific experimental design [28]. As a general rule, factor analysis models require a minimum of 15 samples to be useful [29].

Plan for Technical Replicates: Include technical replicates during sample preparation and analysis stages to objectively assess the reproducibility and variability of your data. Statistical metrics like the coefficient of variation (CV) can be used to quantify reproducibility across omics layers [30].

Standardize Sample Collection: To minimize batch effects, process samples in randomized order across batches whenever possible. For multi-site studies, implement standard operating procedures (SOPs) for sample collection, storage, and nucleic acid/protein extraction to ensure consistency [32].
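The coefficient of variation mentioned for technical replicates is simply the standard deviation divided by the mean, computed per feature. A minimal sketch follows, assuming the replicate measurements are on a linear (not log-transformed) scale and that no feature has a mean of zero. The function name and the toy values are illustrative.

```python
import numpy as np


def coefficient_of_variation(replicates):
    """Per-feature CV (%) across technical replicates.

    replicates is a replicates x features matrix on a linear scale.
    Uses the sample standard deviation (ddof=1).
    """
    mean = replicates.mean(axis=0)
    sd = replicates.std(axis=0, ddof=1)
    return 100.0 * sd / mean


# Three technical replicates of the same sample, three features
reps = np.array([
    [100.0, 50.0, 10.0],
    [110.0, 50.0, 20.0],
    [90.0, 50.0, 30.0],
])
cv = coefficient_of_variation(reps)
print(cv)  # approximately [10, 0, 50]
```

Comparing the distribution of CVs between omics layers gives a quick view of which assay contributes the most technical noise.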

Table: Key Considerations for Multi-Omics Experimental Design

| Consideration | Genomics/Epigenetics | Transcriptomics | Proteomics |
|---|---|---|---|
| Sample Input Requirements | Varies by method (e.g., WGBS, RRBS) | RNA quantity and quality (RIN) | Protein amount; consider FFPE compatibility |
| Common Normalization Methods | Quantile normalization, Beta-value transformation | Size factor + variance stabilization, log transformation | Total ion current normalization, log transformation |
| Primary QC Metrics | Bisulfite conversion efficiency (WGBS), peak distribution (ChIP-seq) | Library size, gene body coverage, 3' bias | Ion injection time, number of MS/MS spectra, missing data per sample |
| Handling of Missing Data | Usually minimal with sufficient coverage | Imputation for low-expression genes | High rate of missing data; requires careful imputation or filtering |

FAQ: What are the critical pre-processing steps before data integration?

Proper data pre-processing and normalization are crucial to ensure that different omics datasets are compatible and that technical artifacts are minimized.

Normalize to Remove Technical Bias: Each data type requires a specific normalization approach. For count-based data like RNA-seq or ATAC-seq, size factor normalization followed by variance-stabilizing transformation (e.g., log-transformation) is recommended. For DNA methylation array data (beta values), quantile normalization is often applied [29]. Metabolomics data frequently benefits from log transformation to stabilize variance and reduce skewness [30].

Filter Uninformative Features: It is strongly recommended to filter for highly variable features (for example, highly variable genes, HVGs) within each assay before integration. This reduces noise and computational load. When working with multiple sample groups, regress out the group effect before selecting these features [29].

Explicitly Regress Out Batch Effects: If you have known technical covariates (e.g., processing batch, sequencing lane), use methods like linear models (limma) to regress them out prior to integration. Failure to do this will cause integration algorithms to focus on this dominant technical variation, potentially missing more subtle biological signals [29].

Address Data Dimensionality Imbalance: Larger data modalities (e.g., transcriptomics with 20,000 genes) can dominate the integration model over smaller ones (e.g., proteomics with 5,000 proteins). Filter uninformative features from the larger datasets to bring the dimensionality of different views to a similar order of magnitude [29].
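Feature filtering and dimensionality balancing can be combined in one step: rank the features of the larger view by variance and keep only as many as the smaller view contains. The sketch below assumes the input has already been normalized and transformed, since variances of raw counts are dominated by expression level. The function name and the simulated matrices are hypothetical.

```python
import numpy as np


def top_variable_features(X, n_top):
    """Return the (sorted) column indices of the n_top highest-variance features.

    X is a samples x features matrix that has already been normalized.
    NaN values are ignored when computing the variance.
    """
    variances = np.nanvar(X, axis=0)
    n_top = min(n_top, X.shape[1])
    idx = np.argsort(variances)[::-1][:n_top]
    return np.sort(idx)


rng = np.random.default_rng(1)
# Simulated views: 30 samples, 2,000 transcripts versus 500 proteins
rna = rng.normal(size=(30, 2000)) * rng.uniform(0.1, 3.0, size=2000)
prot = rng.normal(size=(30, 500))

# Shrink the larger view to the size of the smaller one
rna_idx = top_variable_features(rna, n_top=prot.shape[1])
rna_filtered = rna[:, rna_idx]
print(rna_filtered.shape, prot.shape)
```

Matching the views exactly is not required; the aim stated above is only to bring them to a similar order of magnitude.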

Data Processing & Integration Workflows

The following diagram illustrates a generalized workflow for processing and integrating multi-omics data, from raw input to biological insight.

FAQ: What are the main computational strategies for integrating different omics layers?

Integration methods can be broadly categorized based on when the different datasets are combined in the analytical pipeline.

Horizontal (Early) Integration: This method involves concatenating or merging different omics datasets into a single large matrix before analysis. While straightforward, this approach can be challenging due to the high dimensionality and heterogeneous scales of the data. It requires careful normalization and scaling to ensure one data type does not dominate [22] [32].

Vertical (Intermediate) Integration: These methods project different omics datasets into a common latent space, where shared sources of variation across the datasets are captured. Tools like Multi-Omics Factor Analysis (MOFA) are powerful examples. MOFA extracts a set of factors that capture the major axes of variability across all omics layers, which can then be interpreted by examining the feature weights for each factor [22] [29].

Multi-Stage (Late) Integration: In this approach, analyses are performed separately on each omics dataset, and the results are combined at the end. For example, you might perform feature selection on each omics type independently, then integrate the selected features into a final predictive model, as seen in the PRISM framework [33]. This can be more flexible but may miss interactions between molecular layers.
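To make the scaling concern in early integration concrete, the sketch below z-scores every feature and then divides each block by the square root of its feature count before concatenation, so that each omics block contributes the same total variance to the merged matrix. This is one common weighting heuristic shown under simple assumptions (complete data, matched samples in the same row order). It is not the procedure of any specific tool named in this article, and the function names are illustrative.

```python
import numpy as np


def zscore(X):
    """Center each feature and scale it to unit sample variance."""
    mu = X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # leave constant features at zero instead of dividing by 0
    return (X - mu) / sd


def early_integrate(blocks):
    """Concatenate omics blocks column-wise after per-block weighting.

    Each block is a samples x features matrix with samples in the same order.
    Dividing by sqrt(n_features) gives every block equal total variance.
    """
    scaled = [zscore(X) / np.sqrt(X.shape[1]) for X in blocks]
    return np.hstack(scaled)


rng = np.random.default_rng(3)
rna = rng.normal(loc=8.0, scale=2.0, size=(25, 1000))  # e.g. log expression
prot = rng.normal(loc=20.0, scale=0.5, size=(25, 200))  # e.g. log intensity
combined = early_integrate([rna, prot])
print(combined.shape)
```

Without the block weighting, the 1,000 transcript columns would outweigh the 200 protein columns five to one in any variance-based downstream method such as PCA.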

FAQ: How do I choose the right normalization method for each data type?

Selecting an appropriate normalization method is critical for making different omics datasets comparable.

Genomics/Epigenomics (e.g., DNA methylation arrays): Use quantile normalization to make the overall distribution of probe intensities consistent across samples. For bisulfite sequencing data (WGBS, RRBS), ensure proper correction for bisulfite conversion efficiency [34] [35].

Transcriptomics (RNA-seq): For count-based data, implement size factor normalization (as in DESeq2) to account for differences in library size, followed by a variance-stabilizing transformation (e.g., log2(x+1)). Avoid inputting raw counts directly into models that assume a Gaussian distribution [29] [33].

Proteomics (LC-MS): Apply total ion current (TIC) normalization to correct for overall differences in protein concentration between samples. Log-transformation is also commonly used to stabilize variance [30].
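The RNA-seq recommendation above can be illustrated with a small NumPy sketch of median-of-ratios size factors followed by log2(x+1). It follows the general idea behind DESeq2's size factor estimation but is a simplified illustration, not a reimplementation of that package. It assumes at least one gene has non-zero counts in every sample, and the function names are hypothetical.

```python
import numpy as np


def size_factors(counts):
    """Median-of-ratios size factors for a samples x genes raw count matrix."""
    counts = np.asarray(counts, dtype=float)
    expressed = (counts > 0).all(axis=0)    # genes detected in every sample
    log_counts = np.log(counts[:, expressed])
    log_geo_mean = log_counts.mean(axis=0)  # per-gene log geometric mean
    # Each sample's factor is the median ratio to the per-gene reference
    return np.exp(np.median(log_counts - log_geo_mean, axis=1))


def normalize_log2(counts):
    """Divide by size factors, then apply log2(x + 1)."""
    counts = np.asarray(counts, dtype=float)
    sf = size_factors(counts)
    return np.log2(counts / sf[:, None] + 1.0)


# Same underlying profile sequenced at 1x, 2x and 4x depth
base = np.array([10, 200, 35, 0, 80])
counts = np.vstack([base, 2 * base, 4 * base])

sf = size_factors(counts)
norm = normalize_log2(counts)
print(sf)  # approximately [0.5, 1.0, 2.0]
```

After normalization the three rows are identical, which is the expected behaviour when samples differ only in sequencing depth.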

Table: Common Tools for Multi-Omics Data Processing and Integration

| Tool Name | Primary Function | Key Strengths | Applicable Omics |
|---|---|---|---|
| MOFA2 | Vertical integration via factor analysis | Identifies shared & specific sources of variation; handles missing data | Genomics, Transcriptomics, Epigenomics, Proteomics |
| WGCNA | Network-based integration | Identifies co-expression modules correlated with traits | Transcriptomics, Proteomics, Metabolomics |
| DMRichR | Differential methylation analysis | Statistical analysis and visualization of DMRs from bisulfite sequencing | DNA Methylation (WGBS, RRBS) |
| ChAMP | Quality control and analysis of methylation arrays | Comprehensive pipeline for 450K/EPIC array data, includes CNV detection | DNA Methylation (Array) |
| nf-core/chipseq & nf-core/rnaseq | Standardized pipeline for sequencing data | Portable, reproducible Nextflow workflows for ChIP-seq and RNA-seq | Epigenomics (ChIP-seq), Transcriptomics |
| mixOmics (R) | Multivariate analysis for integration | Wide range of methods (DIABLO, sGCCA) for multi-omics data exploration | All major omics types |

Analytical Approaches & Troubleshooting

FAQ: My integrated model is dominated by technical artifacts. How can I fix this?

If technical factors like batch effects are dominating your model, you need to address them prior to integration.

Proactive Batch Correction: If you have clear technical factors (e.g., processing date), regress them out a priori using a linear model (e.g., limma). This is more effective than hoping the integration model will ignore them [29].

Validate with Positive Controls: Include known biological positive controls in your experiment. If your model fails to capture variation associated with these controls, it suggests technical noise is masking the biological signal.

Leverage Multi-Group Frameworks: If your experimental design includes multiple groups (e.g., different treatment conditions), use the multi-group functionality in tools like MOFA. This framework is designed to identify sources of variability that are shared across groups versus those that are group-specific, after regressing out the mean group effect [29].
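The proactive batch correction described above amounts to fitting a linear model with batch as the explanatory variable and keeping the residuals. The sketch below shows this with ordinary least squares in NumPy. It is loosely analogous in spirit to limma's removeBatchEffect but is not a reimplementation of it, and it assumes batch is not confounded with the biological groups of interest. The function name and simulated data are hypothetical.

```python
import numpy as np


def regress_out_batch(X, batch):
    """Remove per-batch mean shifts from every feature.

    X is a samples x features matrix (already normalized);
    batch holds one label per sample.
    """
    batch = np.asarray(batch)
    levels = np.unique(batch)
    # One-hot design matrix: samples x batches
    design = (batch[:, None] == levels[None, :]).astype(float)
    coef, *_ = np.linalg.lstsq(design, X, rcond=None)  # fitted batch means
    residuals = X - design @ coef
    return residuals + X.mean(axis=0)  # restore the overall feature means


rng = np.random.default_rng(4)
X = rng.normal(size=(12, 4))
batch = ["day1"] * 6 + ["day2"] * 6
X[6:] += 5.0  # simulate a strong shift on the second processing day

corrected = regress_out_batch(X, batch)
print(corrected[:6].mean(axis=0) - corrected[6:].mean(axis=0))
```

If a biological condition is unevenly distributed across batches, this simple version removes part of the biological signal as well; in that case the condition should be included in the model so that only the batch term is subtracted.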

FAQ: How can I link genomic variations to changes in other omics layers?

Connecting genetic variants to downstream molecular phenotypes is a key goal of multi-omics studies.

Correlation-Based Approaches: Perform statistical correlation analyses (e.g., Spearman or Pearson correlation) to assess relationships between genetic variant alleles and transcript, protein, or metabolite levels. A significant correlation, whether positive or negative, suggests a potential regulatory relationship, although correlation alone does not establish causality [30].

Pathway-Centric Integration: Map genes, proteins, and metabolites to known biological pathways using databases like KEGG, Reactome, or MetaCyc. If a set of genes involved in a specific pathway shows coordinated changes in both protein and metabolite levels, it provides strong evidence for pathway regulation [30] [28].

Employ Multi-Omic QTL Mapping: Extend the concept of expression Quantitative Trait Loci (eQTLs) to other molecular layers by searching for genomic loci associated with protein abundance (pQTLs) or metabolite levels (mQTLs). This directly links genetic variation to its molecular consequences [30].
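The correlation and QTL ideas above share the same core calculation: relate the allele dosage at each variant (0, 1, or 2 copies of the alternate allele) to a molecular trait measured in the same samples. The sketch below runs that additive-model scan with NumPy on simulated data. It omits covariates, population structure, and multiple-testing correction, all of which a real eQTL or pQTL analysis would need. The function name and the simulated effect are assumptions for illustration.

```python
import numpy as np


def qtl_scan(dosage, phenotype):
    """Additive-model association of each variant with one molecular trait.

    dosage is a samples x variants matrix of alternate-allele counts (0/1/2);
    phenotype is one value per sample (e.g. protein abundance).
    Returns the regression slope and Pearson r for every variant.
    Assumes every variant is polymorphic in the sample set.
    """
    g = dosage - dosage.mean(axis=0)
    y = phenotype - phenotype.mean()
    cross = (g * y[:, None]).sum(axis=0)
    ssg = (g ** 2).sum(axis=0)
    slope = cross / ssg
    r = cross / np.sqrt(ssg * (y ** 2).sum())
    return slope, r


rng = np.random.default_rng(2)
dosage = rng.integers(0, 3, size=(200, 5)).astype(float)
# Simulated pQTL: variant 2 raises protein abundance by 1.5 units per allele
protein = 1.5 * dosage[:, 2] + rng.normal(scale=0.5, size=200)

slope, r = qtl_scan(dosage, protein)
best = int(np.argmax(np.abs(r)))
print("strongest association at variant", best)
```

Ranking by the absolute value of r matters here, because an allele that lowers abundance is as informative as one that raises it.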

Validation & Interpretation

FAQ: How do I resolve discrepancies between transcriptomics, proteomics, and metabolomics data?

Lack of direct correlation between different molecular layers is common and can be biologically informative.