Optimizing Oncology Trials: A Strategic Guide to NGS-Guided Patient Enrollment

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing next-generation sequencing (NGS) to enhance clinical trial enrollment. It explores the foundational role of NGS in precision oncology, details methodological approaches for integrating genomic data into trial workflows, addresses key implementation barriers and optimization strategies, and examines the evidence validating this approach. The content synthesizes current data, including real-world implementation studies and systematic reviews, to offer an actionable framework for leveraging comprehensive genomic profiling to accelerate patient matching, improve trial efficiency, and advance the development of targeted cancer therapies.

The Foundation of Precision Enrollment: How NGS is Reshaping Oncology Trial Design

Defining NGS's Role in Modern Oncology Trials

Next-generation sequencing (NGS) has fundamentally transformed the framework of oncology clinical trials, shifting enrollment from a tissue-of-origin model to a biomarker-driven paradigm. The technology sequences millions of DNA fragments in parallel, enabling comprehensive genomic profiling that identifies actionable mutations across many genes in a single assay [1]. Integrating NGS into trial workflows allows researchers to match cancer patients with targeted therapies based on the specific molecular alterations driving their disease, rather than on cancer type or histology alone [2]. This section details the quantitative impact, procedural protocols, and essential resources for implementing NGS to enhance patient stratification and accelerate enrollment in modern oncology trials.

Quantitative Impact of NGS on Trial Accrual and Outcomes

Actionable Mutation Detection and Trial Enrollment Rates

Table 1: Impact of NGS Panel Size on Actionable Mutation Detection and Clinical Trial Enrollment

| Metric | Routine NGS Panel | Broad Panel (FoundationOne CDx) |
|---|---|---|
| Patient Cohort Size | 1,456 patients | Not specified |
| Actionable Alterations Detected | 34.0% | 64.0% |
| Key Mutations Identified | KRAS (43.6%), BRAF (19.0%), PIK3CA (10.8%) | Not specified |
| Eligible Patients Enrolled in Trials | 10.6% (19/179 eligible) | 16.0% |
| Primary Enrollment Barrier | Undocumented reasons (78.8% of eligible patients not enrolled) | Not specified |

Data from a retrospective cohort analysis show that comprehensive genomic profiling substantially increases the detection of targetable alterations, nearly doubling the proportion of patients with actionable findings from 34.0% to 64.0% when a broader panel is used instead of routine testing [3]. Trial enrollment was also higher with more extensive sequencing (16.0% versus 10.6%) [3], although the size of the broad-panel cohort is not reported, so the two rates are not a like-for-like comparison.
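As a quick check on the routine-panel figures in Table 1, the sketch below recomputes the enrollment proportion (19 of 179 eligible patients) and attaches a 95% Wilson score interval. The Wilson interval is one standard choice for binomial proportions and is an assumption here, not necessarily the method used in [3]; the function name is illustrative.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return centre - half_width, centre + half_width


# Routine-panel cohort from Table 1: 19 enrolled out of 179 eligible patients.
enrolled, eligible = 19, 179
rate = enrolled / eligible
low, high = wilson_interval(enrolled, eligible)
print(f"enrollment {rate:.1%} (95% CI {low:.1%} to {high:.1%})")
```

With only 179 eligible patients the interval is wide, and its upper bound lands close to the 16.0% broad-panel figure, which is one more reason to compare the two panels cautiously.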

Operational Efficiency Gains with Rapid NGS

Table 2: Operational Impact of Traditional vs. Rapid On-Site NGS Testing

| Parameter | Centralized/Traditional Testing | Rapid On-Site NGS |
|---|---|---|
| Average Turnaround Time | Weeks | As little as 24 hours [2] |
| Clinical Trial Delays | 12.2 months longer than planned [2] | Significant reduction |
| Patient Access Impact | Limited to urban/academic centers | Enables community and rural hospital enrollment [2] |
| Sample Integrity Risk | Higher during transport | Minimal (processed on-site) [2] |
| Therapy Decision Context | Patients often start standard treatment while waiting | Enables treatment hold for trial eligibility assessment [2] |

Decentralizing genomic testing with rapid NGS solutions directly addresses critical bottlenecks in clinical trial enrollment. Traditional pathways suffer from significant delays, with trials extending an average of 12.2 months beyond original timelines [2]. Implementing on-site NGS testing slashes turnaround times to as little as 24 hours, allowing patients to hold treatment while determining trial eligibility rather than defaulting to standard care during prolonged waiting periods [2].

Experimental Protocols for NGS-Guided Patient Stratification

Comprehensive Genomic Profiling (CGP) Protocol for Trial Screening

Objective: To identify actionable genomic alterations in tumor samples for precision oncology trial enrollment.

Sample Requirements:

- Tumor Tissue: FFPE blocks with ≥20% tumor content or core biopsies with sufficient cellularity

- Blood Samples: Whole blood collected in cell-free DNA stabilization tubes for ctDNA (liquid biopsy) analysis

- Quality Control: DNA/RNA quantification via fluorometry, integrity assessment via fragment analyzer

Library Preparation Workflow:

- Nucleic Acid Extraction: Isolate DNA and RNA using silica-membrane or magnetic bead-based methods.

- Fragmentation: Shear DNA to ~300 bp fragments by acoustic shearing or enzymatic fragmentation.

- Adapter Ligation: Ligate platform-specific adapters carrying unique molecular identifiers (UMIs) so that PCR duplicates can be identified and collapsed during analysis.

- Target Enrichment: Hybridize with biotinylated probes targeting cancer-relevant genes (e.g., 500-gene panel).

- Library Amplification: Perform limited-cycle PCR to amplify captured libraries.

- Quality Control: Validate library size distribution and quantity via capillary electrophoresis and qPCR.
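UMIs only help if the analysis uses them. A minimal sketch of the idea, assuming each read's UMI has already been parsed from its header and that reads are represented by the hypothetical `Read` tuple below: reads sharing mapping position, strand, and UMI are treated as PCR copies of one original molecule and collapsed to a single representative. Production tools such as UMI-tools or fgbio additionally tolerate sequencing errors within UMIs and build consensus reads rather than picking one read.

```python
from collections import defaultdict
from typing import Iterable, List, NamedTuple


class Read(NamedTuple):
    name: str
    chrom: str
    start: int        # alignment start position
    strand: str       # "+" or "-"
    umi: str          # UMI sequence, assumed already extracted from the read header
    mean_qual: float  # mean base quality, used to choose the representative read


def collapse_by_umi(reads: Iterable[Read]) -> List[Read]:
    """Keep one read per (chrom, start, strand, UMI) family.

    Reads with identical coordinates AND identical UMIs are treated as PCR copies
    of a single original molecule; the highest-quality read represents the family.
    """
    families = defaultdict(list)
    for read in reads:
        families[(read.chrom, read.start, read.strand, read.umi)].append(read)
    return [max(family, key=lambda r: r.mean_qual) for family in families.values()]


# Illustrative reads: r1/r2 share position and UMI (PCR duplicates);
# r3 shares only the position, so it is kept as a distinct molecule.
reads = [
    Read("r1", "chr7", 55191822, "+", "ACGTAC", 35.0),
    Read("r2", "chr7", 55191822, "+", "ACGTAC", 37.5),
    Read("r3", "chr7", 55191822, "+", "TTGCAA", 36.0),
]
print(sorted(r.name for r in collapse_by_umi(reads)))  # ['r2', 'r3']
```

Coordinate-only deduplication would discard r3 as a duplicate of r1; keeping it preserves independent evidence, which matters most for low-VAF variants sequenced at high depth.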

Sequencing Parameters:

- Platform: Illumina NovaSeq 6000 (or equivalent)

- Configuration: Paired-end 2x150bp sequencing

- Coverage: ≥500x mean coverage for tumor DNA, ≥1000x for ctDNA

- Controls: Include positive and negative control samples in each run
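The coverage targets above translate into a sequencing budget per sample. Mean on-target coverage is roughly (read pairs × 2 × read length × on-target fraction × (1 − duplicate rate)) / target size, so the sketch below inverts that relation for 2x150 bp reads. The 1.5 Mb panel footprint, 60% on-target fraction, and 30% duplicate rate are placeholder assumptions; real values should come from the assay's validation data or pilot runs.

```python
import math


def read_pairs_needed(target_coverage: float, target_size_bp: int,
                      read_length: int = 150, on_target_fraction: float = 0.6,
                      duplicate_rate: float = 0.3) -> int:
    """Estimate paired-end read pairs needed for a given mean target coverage.

    Mean coverage ~= pairs * 2 * read_length * on_target * (1 - dup) / target_size.
    Ignores mate overlap and uneven capture, so plan extra headroom in practice.
    """
    if not (0 < on_target_fraction <= 1 and 0 <= duplicate_rate < 1):
        raise ValueError("fractions out of range")
    usable_bases_per_pair = 2 * read_length * on_target_fraction * (1 - duplicate_rate)
    return math.ceil(target_coverage * target_size_bp / usable_bases_per_pair)


PANEL_BP = 1_500_000  # hypothetical panel footprint
tissue_pairs = read_pairs_needed(500, PANEL_BP)   # >=500x tumor DNA target
ctdna_pairs = read_pairs_needed(1000, PANEL_BP)   # >=1000x ctDNA target
print(f"tissue: {tissue_pairs:,} pairs; ctDNA: {ctdna_pairs:,} pairs")
```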

Bioinformatics Analysis Pipeline

Data Processing Steps:

- Base Calling and Demultiplexing: Generate FASTQ files and assign reads to samples.

- Quality Assessment: Evaluate sequence quality with FastQC.

- Alignment: Map reads to reference genome (GRCh38) using optimized aligners (BWA-MEM).

- Variant Calling:

  - Single Nucleotide Variants (SNVs): Use Mutect2 for tumor-normal pairs or VarScan2 for tumor-only samples.

  - Insertions/Deletions (Indels): Apply Pindel or Scalpel.

  - Copy Number Variations (CNVs): Use CONTRA or CNVkit.

  - Gene Fusions: Analyze RNA-seq data with STAR-Fusion or Arriba.

- Annotation: Annotate variants using databases (ClinVar, COSMIC, OncoKB, CIViC).

- Actionability Assessment: Interpret variants against the ESMO Scale for Clinical Actionability of Molecular Targets (ESCAT) and against clinical trial eligibility criteria.
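The processing steps above are usually chained by a workflow manager. The sketch below only assembles argument lists for one tumor-normal DNA sample using FastQC, BWA-MEM, samtools, GATK4's Mutect2 and FilterMutectCalls, and CNVkit; it executes nothing. File names and sample IDs are hypothetical, and duplicate marking and fusion calling are omitted to keep it short, so check each tool's documentation for the version you run.

```python
import shlex
from pathlib import Path


def build_tumor_normal_commands(sample: str, normal_sample: str, ref: Path,
                                r1: Path, r2: Path, normal_bam: Path,
                                targets_bed: Path, outdir: Path,
                                threads: int = 8) -> dict[str, list[str]]:
    """Assemble argv lists for one tumor-normal DNA sample; nothing is executed."""
    tumor_bam = outdir / f"{sample}.sorted.bam"
    raw_vcf = outdir / f"{sample}.mutect2.vcf.gz"
    # bwa mem itself expands the literal backslash-t sequences in the read group.
    read_group = f"@RG\\tID:{sample}\\tSM:{sample}\\tPL:ILLUMINA"
    return {
        # Read-level quality report for both mates.
        "qc": ["fastqc", "-t", str(threads), "-o", str(outdir), str(r1), str(r2)],
        # Alignment; SAM on stdout is meant to be piped into the "sort" step.
        "align": ["bwa", "mem", "-t", str(threads), "-R", read_group,
                  str(ref), str(r1), str(r2)],
        # Coordinate sort, reading SAM from stdin ("-").
        "sort": ["samtools", "sort", "-@", str(threads), "-o", str(tumor_bam), "-"],
        # Somatic SNV/indel calling against the matched normal, limited to panel targets.
        "snv_indel": ["gatk", "Mutect2", "-R", str(ref), "-I", str(tumor_bam),
                      "-I", str(normal_bam), "-normal", normal_sample,
                      "-L", str(targets_bed), "-O", str(raw_vcf)],
        # Apply Mutect2's somatic filtering model.
        "filter": ["gatk", "FilterMutectCalls", "-R", str(ref), "-V", str(raw_vcf),
                   "-O", str(outdir / f"{sample}.filtered.vcf.gz")],
        # Copy-number calling over the same target intervals.
        "cnv": ["cnvkit.py", "batch", str(tumor_bam), "--normal", str(normal_bam),
                "--targets", str(targets_bed), "--fasta", str(ref),
                "--output-dir", str(outdir / "cnvkit")],
    }


cmds = build_tumor_normal_commands(
    sample="PT001_T", normal_sample="PT001_N", ref=Path("GRCh38.fa"),
    r1=Path("PT001_T_R1.fastq.gz"), r2=Path("PT001_T_R2.fastq.gz"),
    normal_bam=Path("PT001_N.sorted.bam"), targets_bed=Path("panel_targets.bed"),
    outdir=Path("results"))
for step, argv in cmds.items():
    print(f"{step}: {shlex.join(argv)}")
```

Keeping commands as argument lists rather than shell strings avoids quoting problems with the read-group string; only the `bwa mem | samtools sort` pipe needs explicit wiring, for example with `subprocess.Popen`.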

Validation and Reporting:

- Technical Validation: Confirm low-frequency variants with a sensitive orthogonal method such as ddPCR; Sanger sequencing is suitable only for variants at higher allele fractions (its detection limit is roughly 15-20% variant allele fraction).

- Clinical Report: Generate a structured report indicating:

  - Tier I/II actionable alterations with associated clinical trials

  - Investigational biomarkers with preclinical evidence

  - Germline findings relevant to hereditary cancer syndromes

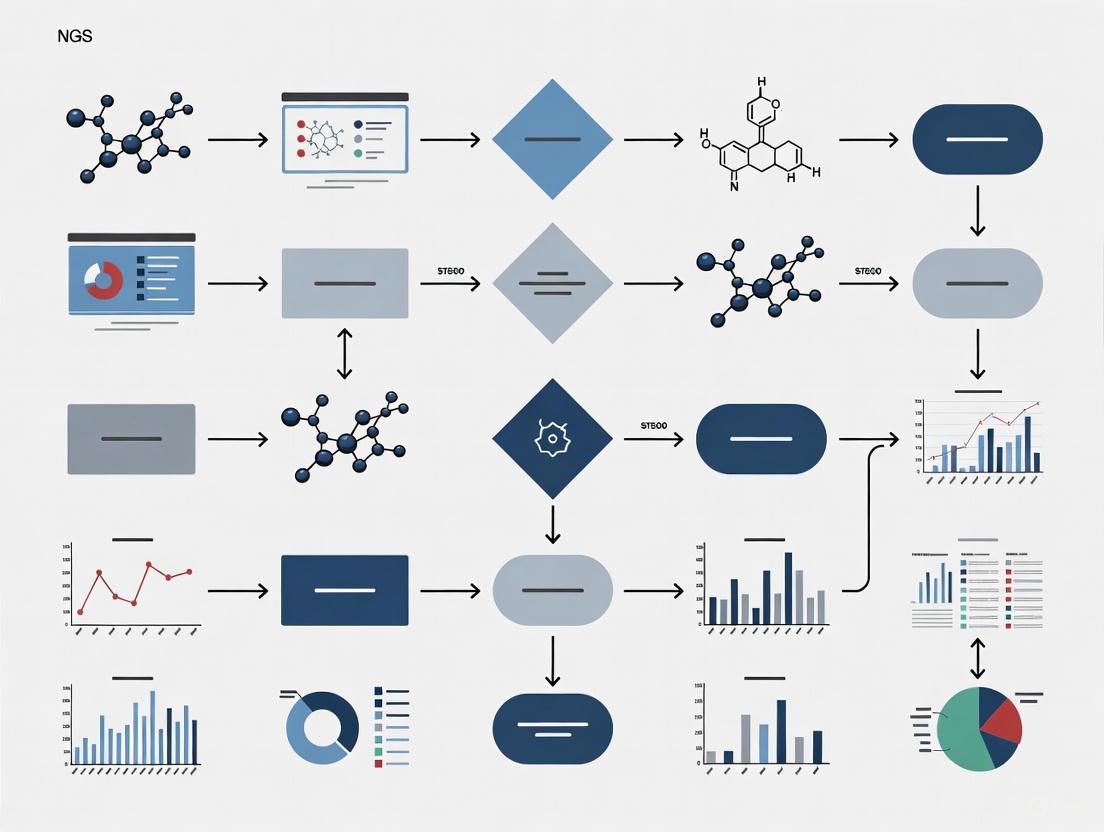

NGS Clinical Trial Screening Workflow

Essential Research Reagent Solutions for NGS-Based Trial Screening

Table 3: Essential Research Reagents for NGS-Based Clinical Trial Screening

| Category | Specific Product/Kit | Application in NGS Workflow |
|---|---|---|
| Nucleic Acid Extraction | QIAamp DNA FFPE Tissue Kit, MagMAX Cell-Free DNA Isolation Kit | Isolation of high-quality DNA from FFPE tissue and plasma ctDNA samples |
| Library Preparation | Illumina TruSight Oncology 500, Thermo Fisher Oncomine Comprehensive Assay | Target enrichment and library construction for comprehensive genomic profiling |
| Target Capture | IDT xGen Pan-Cancer Panel, Roche NimbleGen SeqCap EZ Choice | Hybridization-based capture of cancer-relevant genes |
| Sequencing Reagents | Illumina NovaSeq 6000 SP Reagent Kit, Thermo Fisher Ion 540 Kit | Template preparation and sequencing chemistry |
| Quality Control | Agilent High Sensitivity DNA Kit, KAPA Library Quantification Kit | Assessment of nucleic acid quality and library quantification |
| Bioinformatics | Illumina DRAGEN Bio-IT Platform, Partek Flow Software | Secondary and tertiary analysis of NGS data |

Molecular Pathways in Patient Stratification for Targeted Trials

Figure: NGS Biomarker-Driven Trial Matching

Implementation Challenges and Future Directions

Despite its transformative potential, implementing NGS in clinical trials faces several challenges. The transition from single-gene testing to comprehensive genomic profiling requires significant infrastructure investment, bioinformatics expertise, and multidisciplinary molecular tumor boards for data interpretation [1]. Evidence shows that even when actionable alterations are detected, trial enrollment remains low at 10.6-16.0%, highlighting the need for improved physician awareness of available trials and streamlined enrollment mechanisms [3].

Future innovations focus on integrating multiomics data (genomic, transcriptomic, proteomic) to refine patient stratification beyond DNA sequencing alone [4]. Liquid biopsy approaches using ctDNA enable dynamic monitoring of treatment response and resistance mechanisms, creating opportunities for adaptive trial designs. The recent FDA approval of distributable comprehensive genomic profiling tests, such as TruSight Oncology Comprehensive, promises to expand access to standardized NGS testing across diverse clinical settings [4]. As the field advances, the synergy between NGS technology and clinical trial design will continue to accelerate the development of personalized cancer therapies and improve patient outcomes through precision oncology.

The paradigm of cancer diagnosis and treatment has undergone a fundamental transformation, moving from histopathological classification toward molecular characterization. This shift has been driven by the evolution of biomarker testing from single-gene assays to comprehensive multi-gene panels, enabling unprecedented precision in oncology. Next-generation sequencing (NGS) technologies now form the cornerstone of this approach, providing researchers and clinicians with powerful tools to uncover the complex genetic alterations driving tumorigenesis [5]. The clinical implementation of these advanced profiling techniques has created new opportunities for guiding patient enrollment in clinical trials, ensuring that targeted therapies reach the patients most likely to benefit from them [6].

This evolution reflects our growing understanding of cancer as a genetically heterogeneous disease, where multiple molecular pathways can drive progression within and across cancer types. Where traditional methods examined individual genes sequentially, consuming valuable tissue samples and time, NGS-based panels now facilitate simultaneous assessment of hundreds of genes from minimal tissue input [7]. For researchers designing clinical trials and enrollment strategies, this comprehensive profiling approach provides the molecular data necessary to match patients with targeted therapies and immunotherapies based on their tumor's unique genetic signature, ultimately accelerating drug development and improving patient outcomes [8].

The Trajectory of Biomarker Testing in Oncology

Limitations of Single-Gene Testing Approaches

Traditional single-gene biomarker testing dominated oncology for decades, focusing on individual mutations such as KRAS in colorectal cancer or EGFR in non-small cell lung cancer (NSCLC) [5]. These approaches, while valuable for specific clinical decisions, presented significant limitations for comprehensive tumor profiling:

- Tissue exhaustion: Sequential single-gene tests consumed precious tissue samples from biopsies, often leaving insufficient material for additional testing [9]

- Incomplete genomic picture: Focusing on individual genes failed to capture the complex interplay of co-mutations and resistance mechanisms that influence treatment response

- Limited clinical trial matching: With information on only a handful of biomarkers, many potential therapeutic options remained unidentified for individual patients

The Rise of Comprehensive Genomic Profiling

The advent of NGS technologies enabled a fundamental shift toward multi-gene panels that assess hundreds of cancer-related genes simultaneously. This comprehensive approach has transformed biomarker testing from a targeted interrogation to a broad discovery tool [6]. The advantages of multi-gene panels include:

- Maximized tissue utilization: Comprehensive data from limited tissue specimens, particularly critical for difficult-to-biopsy tumors

- Identification of rare alterations: Detection of low-frequency mutations and novel biomarkers across diverse cancer types

- Co-mutation analysis: Ability to understand mutation patterns and their implications for therapeutic response and resistance

- Efficiency in clinical workflows: Reduced turnaround time from biopsy to treatment decision compared to sequential single-gene testing

Table 1: Comparative Analysis of Single-Gene vs. Multi-Gene Testing Approaches

| Parameter | Single-Gene Testing | Multi-Gene NGS Panels |
|---|---|---|
| Genes Interrogated | 1-3 genes | Hundreds of genes simultaneously [6] |
| Tissue Consumption | High (with sequential testing) | Minimal (maximizes tissue utility) [9] |
| Turnaround Time | Variable (weeks for multiple tests) | 1-2 weeks for comprehensive profile [9] |
| Therapeutic Targets Identified | Limited to known biomarkers | Broad range including rare alterations [6] |
| Clinical Trial Matching Potential | Restricted | Extensive across multiple biomarkers [8] |

NGS-Based Multi-Gene Panel Testing: Protocols and Methodologies

Sample Preparation and Quality Control

Robust sample preparation is fundamental to successful NGS-based biomarker testing. The following protocol outlines critical steps for processing formalin-fixed paraffin-embedded (FFPE) tissue specimens, the most common sample type in clinical cancer research [6] [7]:

FFPE Tissue Processing:

- Sectioning: Cut 10 μm sections from FFPE tissue blocks using a rotary microtome [7]

- Macrodissection: Manually dissect representative tumor areas with sufficient tumor cellularity (>20% tumor content recommended) [6]

- DNA Extraction: Isolate genomic DNA using commercial FFPE-specific extraction kits (e.g., QIAamp DNA FFPE Tissue kit) [6]

- Quality Assessment:

Library Preparation:

- Library Construction: Enrich targets using amplicon-based (e.g., Illumina AmpliSeq Focus Panel) or hybrid capture-based (e.g., Agilent SureSelectXT) methods [6] [7]

- Quality Control: Assess library size distribution (150-400 base pairs) using microfluidics-based systems (e.g., Agilent High Sensitivity DNA Kit) [6] [7]

- Sequencing: Process qualified libraries on NGS platforms (e.g., Illumina NextSeq 550Dx) with minimum coverage of 500x and Phred quality score ≥30 [6] [7]
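The Phred ≥30 criterion corresponds to an estimated base-call error probability of at most 1 in 1,000, since Q = -10·log10(P_error). Run-level QC usually reports the percentage of bases at or above Q30; the sketch below computes that metric from FASTQ quality strings, assuming the standard ASCII offset of 33 used by current Illumina instruments. Function names are illustrative.

```python
def phred_scores(quality_string: str, offset: int = 33) -> list[int]:
    """Decode a FASTQ quality string (Sanger / Illumina 1.8+ encoding, ASCII offset 33)."""
    return [ord(ch) - offset for ch in quality_string]


def error_probability(q: int) -> float:
    """Phred definition: Q = -10 * log10(P_error), hence P_error = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def fraction_at_or_above(quality_strings, threshold: int = 30) -> float:
    """Fraction of all bases whose Phred score is >= threshold (the %>=Q30 metric)."""
    total = passing = 0
    for qs in quality_strings:
        scores = phred_scores(qs)
        total += len(scores)
        passing += sum(q >= threshold for q in scores)
    return passing / total if total else 0.0


# With offset 33: '?' encodes Q30, 'F' encodes Q37, '5' encodes Q20.
print(error_probability(30))                    # 1 expected error per 1,000 bases
print(fraction_at_or_above(["FFFF?", "55FF"]))  # 7 of 9 bases reach Q30
```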

Bioinformatic Analysis and Interpretation

The computational pipeline for processing NGS data requires multiple validation steps to ensure accurate variant calling:

Primary Analysis:

- Alignment: Map sequencing reads to reference genome (hg19/GRCh37) using optimized aligners [6]

- Variant Calling:

  - Identify single nucleotide variants (SNVs) and small insertions/deletions (INDELs) using Mutect2 with a variant allele frequency (VAF) threshold of ≥2% [6]

  - Detect copy number variations (CNVs) using CNVkit (amplification threshold: average CN ≥5) [6]

  - Identify gene fusions using structural variant callers (e.g., LUMPY) [6]

- Annotation: Annotate variants using SnpEff and cross-reference with population databases (gnomAD) and clinical databases (ClinVar) [6]
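The reporting thresholds above (VAF ≥2% for SNVs/indels, average copy number ≥5 for amplifications) can be expressed as a simple post-calling filter. A minimal sketch: the `Call` record and function name are hypothetical, and real pipelines apply many further filters (depth, strand bias, panel-of-normals, population frequency in gnomAD) before this step.

```python
from dataclasses import dataclass
from typing import Optional

MIN_VAF = 0.02     # SNV/indel threshold: VAF >= 2%
MIN_AMP_CN = 5.0   # amplification threshold: average copy number >= 5


@dataclass
class Call:
    gene: str
    kind: str                            # "snv", "indel", "cnv" or "fusion"
    vaf: Optional[float] = None          # variant allele fraction (SNV/indel)
    copy_number: Optional[float] = None  # average copy number (CNV)


def passes_reporting_thresholds(call: Call) -> bool:
    """Apply the VAF and copy-number cut-offs described in the text."""
    if call.kind in ("snv", "indel"):
        return call.vaf is not None and call.vaf >= MIN_VAF
    if call.kind == "cnv":
        # Only amplifications are handled; deletions would need their own rule.
        return call.copy_number is not None and call.copy_number >= MIN_AMP_CN
    # Fusions pass through here; their evidence filters are caller-specific.
    return call.kind == "fusion"


calls = [
    Call("KRAS", "snv", vaf=0.18),
    Call("PIK3CA", "snv", vaf=0.012),      # below 2% VAF
    Call("ERBB2", "cnv", copy_number=8.4),
    Call("MET", "cnv", copy_number=3.1),   # below CN 5
    Call("ALK", "fusion"),
]
print([c.gene for c in calls if passes_reporting_thresholds(c)])  # ['KRAS', 'ERBB2', 'ALK']
```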

Clinical Interpretation:

- Variant Classification: Categorize variants according to the Association for Molecular Pathology (AMP)/ASCO/CAP joint guidelines [6]:

  - Tier I: Variants of strong clinical significance

  - Tier II: Variants of potential clinical significance

  - Tier III: Variants of unknown significance

  - Tier IV: Benign or likely benign variants

- Actionability Assessment: Interpret variants for clinical trial eligibility based on their functional and predictive implications
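The four tiers rest on evidence levels A to D defined in the 2017 AMP/ASCO/CAP consensus guideline: levels A and B place a variant in Tier I, levels C and D in Tier II. The sketch below encodes only that mapping; deciding which evidence level applies still requires curated knowledge bases and expert review, and the function name is hypothetical.

```python
from enum import Enum
from typing import Optional


class Evidence(Enum):
    """AMP/ASCO/CAP levels of evidence for a somatic variant."""
    A = "FDA-approved therapy or professional guideline for this tumor type"
    B = "well-powered studies with expert consensus"
    C = "approved therapy in another tumor type, investigational therapy, or multiple small studies"
    D = "preclinical studies or a few case reports"


def amp_tier(best_evidence: Optional[Evidence], benign: bool = False) -> str:
    """Map the strongest available evidence level to an AMP/ASCO/CAP tier."""
    if benign:
        return "IV"   # benign or likely benign
    if best_evidence in (Evidence.A, Evidence.B):
        return "I"    # strong clinical significance
    if best_evidence in (Evidence.C, Evidence.D):
        return "II"   # potential clinical significance
    return "III"      # no supporting evidence: unknown significance


print([amp_tier(Evidence.A), amp_tier(Evidence.C), amp_tier(None), amp_tier(None, benign=True)])
# ['I', 'II', 'III', 'IV']
```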

Figure: NGS Analysis Workflow for Clinical Trial Enrollment

Application in Clinical Trial Enrollment and Precision Oncology

Biomarker-Driven Patient Stratification

The implementation of NGS-based multi-gene panels has revolutionized clinical trial enrollment by enabling sophisticated patient stratification based on comprehensive molecular profiles rather than traditional histology-based classifications. This approach aligns with the growing recognition that molecular alterations often transcend organ-based cancer classification [8]. Real-world evidence demonstrates the substantial impact of this approach:

In a study of 990 patients with advanced solid tumors who underwent NGS testing using a 544-gene panel, 26.0% (257/990) harbored Tier I variants with strong clinical significance, and 86.8% (859/990) carried Tier II variants with potential clinical significance [6]. Among patients with Tier I variants, 13.7% received NGS-based therapy, with the highest rates in thyroid cancer (28.6%) and skin cancer (25.0%), followed by gynecologic cancer (10.8%) and lung cancer (10.7%) [6].

Table 2: Frequency of Actionable Genetic Alterations Identified by NGS in Solid Tumors

| Gene Alteration | Frequency in Cited Cohort | Common Cancer Types | Clinical Trial Implications |
|---|---|---|---|
| KRAS | 10.7% [6] | Colorectal, lung, pancreatic | KRAS G12C inhibitors, downstream pathway targeting |
| EGFR | 2.7% [6] | NSCLC, glioblastoma | EGFR inhibitors, combination therapies |
| BRAF | 1.7% [6] | Melanoma, colorectal, NSCLC | BRAF/MEK inhibitor combinations |
| PIK3CA | 2.4% [7] | Breast, colorectal, endometrial | PI3K inhibitors, AKT inhibitors |
| KIT | 12.3% [7] | GIST, melanoma | KIT inhibitors, immunotherapy combinations |

Biomarker Testing Operational Framework for Clinical Trials

Implementing NGS-based biomarker testing for clinical trial programs requires careful operational planning:

Pre-analytical Phase:

- Establish specimen eligibility criteria (tumor content, necrosis, decalcification status)

- Define optimal biopsy procedures to ensure sufficient material for NGS testing

- Implement standardized tissue handling protocols to preserve nucleic acid integrity

Analytical Phase:

- Select appropriate NGS panels based on trial objectives (targeted vs. comprehensive)

- Validate testing protocols in CLIA-certified, CAP-accredited laboratories

- Establish quality metrics (coverage depth, quality scores, validation parameters)

Post-analytical Phase:

- Develop molecular tumor boards for complex interpretation

- Create standardized reporting templates highlighting trial-relevant alterations

- Implement data integration systems for matching patients to appropriate trial arms
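The last item, matching patients to trial arms, can be prototyped as a rule check over a patient's reported alterations. The sketch below is hypothetical throughout: arm IDs and criteria are invented for illustration, and real eligibility also depends on non-molecular criteria (performance status, prior lines of therapy, organ function) that this code ignores.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set, Tuple

Alteration = Tuple[str, str]  # (gene, alteration), e.g. ("KRAS", "G12C")


@dataclass(frozen=True)
class TrialArm:
    arm_id: str
    requires_any: FrozenSet[Alteration]            # eligible if any one is present
    excludes: FrozenSet[Alteration] = frozenset()  # ineligible if any is present
    tumor_types: Optional[FrozenSet[str]] = None   # None means tumor-agnostic


def match_arms(tumor_type: str, alterations: Set[Alteration],
               arms: List[TrialArm]) -> List[str]:
    """Return IDs of arms whose histology and molecular criteria the patient meets."""
    matches = []
    for arm in arms:
        if arm.tumor_types is not None and tumor_type not in arm.tumor_types:
            continue  # histology-restricted arm, wrong tumor type
        if alterations & arm.excludes:
            continue  # carries an exclusionary alteration
        if alterations & arm.requires_any:
            matches.append(arm.arm_id)
    return matches


# Hypothetical arms, for illustration only.
arms = [
    TrialArm("ARM-A", frozenset({("KRAS", "G12C")}), tumor_types=frozenset({"NSCLC", "CRC"})),
    TrialArm("ARM-B", frozenset({("NTRK1", "fusion"), ("NTRK3", "fusion")})),
    TrialArm("ARM-C", frozenset({("MET", "amplification")}),
             excludes=frozenset({("EGFR", "C797S")}), tumor_types=frozenset({"NSCLC"})),
]
print(match_arms("NSCLC", {("EGFR", "L858R"), ("MET", "amplification")}, arms))  # ['ARM-C']
print(match_arms("CRC", {("KRAS", "G12C")}, arms))                               # ['ARM-A']
```

Storing criteria as data rather than code lets the same function serve every arm, and new or amended arms only require editing the arm definitions.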

Emerging Technologies and Future Directions

Artificial Intelligence and Advanced Computational Methods

The integration of artificial intelligence (AI) and machine learning (ML) is poised to further transform biomarker discovery and clinical trial enrollment [5] [8] [10]. These technologies enable:

- Predictive modeling of treatment responses based on complex multi-omic biomarker profiles [10]

- Automated interpretation of NGS data, reducing turnaround time and increasing accessibility [5]

- Image analysis of histopathology slides to infer transcriptomic profiles and identify potential biomarkers [8]

- Pattern recognition in large datasets to identify novel biomarker signatures for patient stratification [10]

The ARPA-H ADAPT program exemplifies this direction, developing AI-powered platforms that integrate diverse data types to recommend optimal therapy strategies and dynamically track tumor evolution throughout treatment [11].

Liquid Biopsy and Dynamic Monitoring

Liquid biopsy approaches analyzing circulating tumor DNA (ctDNA) are emerging as complementary tools for clinical trial enrollment and monitoring [5] [10]. These technologies offer:

- Non-invasive assessment of tumor biomarkers, enabling repeated sampling throughout trial participation

- Real-time monitoring of treatment response and emerging resistance mechanisms [10]

- Detection of molecular residual disease for adjuvant trial enrollment [8]

- Insight into tumor heterogeneity beyond what is possible with single-site biopsies

Ongoing research is focused on enhancing the sensitivity and specificity of these assays, with the Galleri multi-cancer early detection test representing one such advancement currently in clinical trials [5].
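One way to reason about ctDNA sensitivity is sampling: a variant can only be detected if mutant fragments are actually present in the extracted cell-free DNA. The sketch below treats each haploid genome equivalent as an independent draw carrying the variant with probability equal to the VAF (a binomial model), and converts input mass using the common approximation of about 3.3 pg of DNA per haploid human genome. The 20 ng input and the requirement of 3 supporting mutant molecules are illustrative assumptions, and the result is an upper bound because library-conversion losses and sequencing errors are ignored.

```python
import math


def detection_probability(vaf: float, genome_equivalents: int,
                          min_mutant_molecules: int = 1) -> float:
    """P(at least `min_mutant_molecules` mutant fragments are present in the sample).

    Binomial sampling model: each genome equivalent independently carries the
    variant with probability `vaf`. Ignores library-conversion losses and
    sequencing errors, so this is an upper bound on assay sensitivity.
    """
    p_fewer = sum(
        math.comb(genome_equivalents, k) * vaf**k * (1 - vaf) ** (genome_equivalents - k)
        for k in range(min_mutant_molecules)
    )
    return 1.0 - p_fewer


def haploid_genome_equivalents(cfdna_ng: float, pg_per_genome: float = 3.3) -> int:
    """Convert cfDNA mass to haploid genome equivalents (~3.3 pg per haploid genome)."""
    return int(cfdna_ng * 1000 / pg_per_genome)


ge = haploid_genome_equivalents(20)  # 20 ng input -> about 6,000 genome equivalents
for vaf in (0.005, 0.001, 0.0005):
    p = detection_probability(vaf, ge, min_mutant_molecules=3)
    print(f"VAF {vaf:.2%}: P(>=3 mutant molecules) = {p:.3f}")
```

Under this model, detecting variants at very low VAF depends on how much input DNA is available, not only on sequencing depth.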

Multi-Omics Integration

The future of biomarker testing lies in integrating multiple data types beyond genomic sequencing alone [5] [10]. Multi-omics approaches combine:

- Genomics: DNA-level alterations (mutations, CNVs, fusions)

- Transcriptomics: Gene expression patterns and splicing variants

- Proteomics: Protein expression and post-translational modifications

- Epigenomics: DNA methylation and chromatin accessibility states

This comprehensive profiling enables deeper understanding of disease mechanisms and more precise patient stratification for clinical trials [10]. The convergence of these technologies with NGS-based DNA analysis will create increasingly sophisticated biomarkers for trial enrollment in the coming years.

Figure: Future Biomarker-Driven Clinical Trial Framework

Essential Research Reagent Solutions

Successful implementation of NGS-based biomarker testing requires carefully selected reagents and platforms. The following solutions represent critical components for research and clinical applications:

Table 3: Essential Research Reagents for NGS-Based Biomarker Testing

| Reagent Category | Specific Examples | Research Application |
|---|---|---|
| Nucleic Acid Extraction | QIAamp DNA FFPE Tissue kit [6] | Isolation of high-quality DNA from challenging FFPE specimens |
| Library Preparation | Illumina AmpliSeq focus panel [7], Agilent SureSelectXT [6] | Target enrichment for specific gene panels |
| Targeted Sequencing Panels | SNUBH Pan-Cancer v2.0 (544 genes) [6] | Comprehensive genomic profiling across cancer types |
| Quality Control | Qubit dsDNA HS Assay [6], Agilent High Sensitivity DNA Kit [6] | Quantification and qualification of nucleic acids throughout workflow |
| NGS Platforms | Illumina NextSeq 550Dx [6], Illumina MiniSeq [7] | Sequencing execution with required throughput and quality |

The evolution from single-gene to multi-gene panel testing represents a fundamental advancement in cancer research and clinical trial design. NGS-based comprehensive genomic profiling has enabled more precise patient stratification, accelerated enrollment in biomarker-driven clinical trials, and facilitated the development of targeted therapies for previously untreatable malignancies. As AI-driven analysis, liquid biopsy applications, and multi-omics integration mature, the scope for more sophisticated biomarker-driven trial designs will keep expanding. For researchers and drug development professionals, embracing these evolving technologies and methodologies is essential for advancing precision oncology and delivering more effective, personalized cancer treatments to patients.

Key Biomarkers and Actionable Mutations for Trial Stratification

The paradigm of oncology drug development has fundamentally shifted with the integration of molecular biomarkers into clinical trial design. Next-generation sequencing (NGS) technologies now enable comprehensive genomic profiling that identifies actionable mutations, allowing for precise patient stratification in oncology trials [12]. Biomarkers, defined as measurable indicators of biological processes, serve two primary functions in this context: prognostic biomarkers predict disease aggressiveness regardless of treatment, while predictive biomarkers identify patients likely to benefit from specific therapeutic interventions [12]. The European Society for Medical Oncology Scale for Clinical Actionability of Molecular Targets (ESCAT) provides a standardized framework for categorizing molecular targets into evidence-based tiers, enabling clinicians and researchers to prioritize targets according to the strength of clinical evidence [13].

The clinical utility of this approach is demonstrated by real-world evidence from institutional precision medicine programs. At the Vall d'Hebron Institute of Oncology (VHIO), the detection rate of actionable alterations increased substantially from 10.1% in 2014 to 53.1% in 2024, paralleling advances in sequencing technology, expanded biomarker knowledge, and broader assay utilization [13]. Similarly, access to molecularly matched therapies rose from 1% to 14.2% over the same period, with 23.5% of patients with actionable alterations ultimately receiving biomarker-guided therapies, primarily through clinical trials [13]. These findings underscore the critical importance of robust biomarker stratification strategies in modern oncology research and drug development.

Key Biomarkers and Their Prevalence Across Cancers

Established and Emerging Biomarkers

The landscape of actionable biomarkers continues to expand, with certain molecular alterations now recognized as pan-cancer predictors of treatment response. Tumor-agnostic biomarkers represent particularly valuable targets for clinical trial stratification as they transcend traditional histology-based classifications. A comprehensive Asian pan-cancer study of 1,166 tissue samples across 29 cancer types found that 8.4% of samples harbored at least one established tumor-agnostic biomarker, including high tumor mutation burden (TMB-high), microsatellite instability (MSI-high), NTRK fusions, RET fusions, and BRAF V600E mutations [14]. These biomarkers were distributed across 26 different cancer types, highlighting their broad relevance for basket trial designs that enroll patients based on molecular rather than histological characteristics.
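Once tumor mutational burden is computed (qualifying somatic mutations divided by the coding territory sequenced, in megabases), checking a profile for the five established tumor-agnostic biomarkers listed above is a short rule set. The sketch uses the 10 mutations/Mb cut-off from the FDA's tumor-agnostic pembrolizumab approval as an assumed TMB-high threshold; which mutations count toward TMB differs between assays, and the `SampleProfile` fields and panel size are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set

TMB_HIGH_CUTOFF = 10.0  # mutations/Mb, as in the FDA tumor-agnostic pembrolizumab approval


@dataclass
class SampleProfile:
    counted_somatic_mutations: int   # mutations that qualify under the assay's TMB rules
    coding_region_mb: float          # coding territory interrogated, in megabases
    msi_high: bool = False
    fusions: Set[str] = field(default_factory=set)   # genes in detected fusions, e.g. {"NTRK3"}
    variants: Set[str] = field(default_factory=set)  # e.g. {"BRAF V600E"}


def tmb(profile: SampleProfile) -> float:
    """Tumor mutational burden in mutations per megabase."""
    return profile.counted_somatic_mutations / profile.coding_region_mb


def tumor_agnostic_biomarkers(profile: SampleProfile) -> List[str]:
    """List which of the five established tumor-agnostic biomarkers a sample carries."""
    hits = []
    if tmb(profile) >= TMB_HIGH_CUTOFF:
        hits.append("TMB-high")
    if profile.msi_high:
        hits.append("MSI-high")
    hits += sorted(f"{gene} fusion" for gene in profile.fusions
                   if gene.startswith("NTRK") or gene == "RET")
    if "BRAF V600E" in profile.variants:
        hits.append("BRAF V600E")
    return hits


# Hypothetical 1.1 Mb coding footprint: 14 mutations gives TMB of about 12.7 mut/Mb.
print(tumor_agnostic_biomarkers(SampleProfile(14, 1.1, fusions={"NTRK3"})))
# ['TMB-high', 'NTRK3 fusion']
print(tumor_agnostic_biomarkers(SampleProfile(5, 1.1, variants={"BRAF V600E"})))
# ['BRAF V600E']
```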

Several emerging tumor-agnostic biomarkers show significant promise for future trial stratification. Homologous recombination deficiency (HRD) was observed in 34.9% of samples in the Asian cohort, with particularly high prevalence in breast (50%), colon (49.0%), lung (44.2%), ovarian (42.2%), and gastric (39.5%) cancers [14]. ERBB2 amplification was identified in 3.6% of samples overall, with highest frequency in breast (15.0%), endometrial (11.8%), and ovarian tumors (8.9%) [14]. Other emerging biomarkers include FGFR fusions/mutations, NRG1 fusions, MTAP loss, ALK fusions, KRAS G12C, and TP53 Y220C, all of which are expected to further transform the drug development landscape [14].

The ESCAT classification system provides a critical framework for prioritizing biomarkers based on clinical evidence levels. In the VHIO precision medicine program, 12.7% of samples harbored Tier I alterations (targets linked to approved standard-of-care therapies), while 6.0% contained Tier II alterations (targets with clinical trial evidence but without established standard-of-care status) [13]. This tiered approach enables researchers to stratify patients according to the strength of evidence supporting biomarker-therapy matching, optimizing trial designs for both established and investigational targets.
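Program-level figures such as the share of samples with Tier I or Tier II alterations are naturally computed from each sample's strongest finding. A minimal sketch, assuming findings have already been assigned ESCAT tiers (I to V, plus X for lack of evidence) by curators; this is one plausible way to count, and the exact method in [13] may differ. Because ESCAT tiers depend on tumor type, the tier labels in the example records are placeholders rather than real assignments.

```python
from collections import Counter
from typing import Dict, List, Tuple

ESCAT_RANK = {"I": 1, "II": 2, "III": 3, "IV": 4, "V": 5, "X": 6}  # lower = stronger evidence
Finding = Tuple[str, str, str]  # (gene, alteration, ESCAT tier)


def best_tier(findings: List[Finding]) -> str:
    """Strongest ESCAT tier among a sample's findings, or "none" if it has none."""
    if not findings:
        return "none"
    return min((tier for _, _, tier in findings), key=ESCAT_RANK.__getitem__)


def best_tier_fractions(samples: Dict[str, List[Finding]]) -> Dict[str, float]:
    """Share of samples whose strongest finding falls in each tier."""
    if not samples:
        return {}
    counts = Counter(best_tier(findings) for findings in samples.values())
    return {tier: n / len(samples) for tier, n in counts.items()}


# Placeholder tier labels; real ESCAT tiers depend on the tumor type.
samples = {
    "S1": [("ERBB2", "amplification", "I"), ("TP53", "R273H", "X")],
    "S2": [("PIK3CA", "E545K", "II")],
    "S3": [],
    "S4": [("KRAS", "G12D", "III"), ("FGFR2", "fusion", "II")],
}
print(best_tier_fractions(samples))  # {'I': 0.25, 'II': 0.5, 'none': 0.25}
```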

Cancer-Type Specific Biomarker Landscapes

Lung Cancer

Lung cancer represents a paradigm for precision medicine, with numerous biomarkers guiding treatment decisions and trial enrollment. The ATLAS study, which performed comprehensive molecular profiling on 455 patients with advanced non-small cell lung cancer (NSCLC), demonstrated that centralized NGS testing increased the detection of druggable mutations from 7.9% by local pathology assessments to 25.9% [15]. KRAS G12C was the most prevalent druggable alteration (53.6% of druggable mutations), followed by MET amplification (8.1%) and MET exon 14 skipping (7.3%) [15]. Importantly, 34.5% of patients had molecular alterations matching clinical trials available within the same country, highlighting the critical role of biomarker testing in connecting patients with investigational therapies [15].

In EGFR-mutant NSCLC, understanding resistance mechanisms is essential for trial stratification in the post-osimertinib setting. Resistance mechanisms include on-target mutations (e.g., C797S), bypass signaling (e.g., MET amplification, which occurs in approximately 15-20% of cases), histological transformation (e.g., small cell lung cancer transformation), and downstream pathway activation [16]. The SACHI study demonstrated that combining the MET inhibitor savolitinib with osimertinib in patients with MET-amplified, EGFR-mutant NSCLC after third-generation EGFR-TKI failure significantly improved progression-free survival compared to chemotherapy (8.2 months vs. 4.5 months, HR=0.34) [16]. These findings underscore the importance of repeat biopsy and comprehensive genomic profiling at disease progression to identify resistance mechanisms and guide subsequent trial enrollment.

Brain Tumors

Brain tumors exhibit distinct molecular landscapes that vary significantly across the lifespan, necessitating age-stratified biomarker approaches. In pediatric low-grade gliomas, MAPK/ERK pathway alterations predominate, with BRAF V600E mutations present in 20-25% of cases and KIAA1549-BRAF fusions in 30-40% [17]. BRAF V600E predicts response to BRAF inhibitors (dabrafenib, vemurafenib), and MAPK-altered tumors more broadly can respond to MEK inhibitors (trametinib); the combination of dabrafenib plus trametinib has received regulatory approval for BRAF V600E-mutant pediatric low-grade glioma [17]. In contrast, adult gliomas more frequently feature IDH mutations, TERT promoter mutations, and EGFR amplifications [17]. The FDA accelerated approval of ONC201 (dordaviprone) for recurrent H3 K27M-mutant diffuse midline glioma in August 2025 represents a significant advancement for this previously untreatable malignancy [17].

Table 1: Key Biomarkers and Their Clinical Implications Across Major Cancer Types

| Cancer Type | Key Biomarkers | Prevalence | Clinical Actionability | Trials/Agents |
|---|---|---|---|---|
| NSCLC | KRAS G12C | 53.6% of druggable mutations [15] | Tier I (approved therapies) | Sotorasib, Adagrasib |
| | MET amplification | 8.1% of druggable mutations [15] | Tier I/II | Savolitinib combinations [16] |
| | MET exon 14 skipping | 7.3% of druggable mutations [15] | Tier I | Capmatinib, Tepotinib |
| | EGFR C797S | ~7-15% post-osimertinib [16] | Investigational | Fourth-generation EGFR TKIs |
| Breast Cancer | PIK3CA mutations | 39% [14] | Tier I | Alpelisib + Fulvestrant |
| | ERBB2 amplification | 15% [14] | Tier I | Trastuzumab, ADCs |
| | BRCA1/2 somatic | Varies | Tier I/II | PARP inhibitors |
| Brain Tumors (Pediatric) | BRAF V600E | 20-25% of pLGG [17] | Tier I | Dabrafenib, Vemurafenib |
| | KIAA1549-BRAF fusion | 30-40% of pLGG [17] | Tier I | Trametinib, Tovorafenib |
| | H3 K27M | 70-80% of DMG [17] | Tier II | ONC201 (dordaviprone) [17] |
| Multiple Solid Tumors | MSI-High | 1.4% overall (up to 5.9% in endometrial) [14] | Tier I (tumor-agnostic) | Pembrolizumab, Nivolumab |
| | TMB-High | 6.6% overall [14] | Tier I (tumor-agnostic) | Immune checkpoint inhibitors |
| | NTRK fusions | ~0.2-0.3% overall [14] | Tier I (tumor-agnostic) | Larotrectinib, Entrectinib |

Quantitative Data on Biomarker Actionability

Understanding the prevalence and actionability of molecular alterations across different cancer types is essential for designing adequately powered clinical trials. Recent large-scale studies provide comprehensive data on biomarker frequencies and their potential for matching to targeted therapies.
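Prevalence figures translate directly into screening burden: to enroll a target number of biomarker-positive patients, a program must profile roughly target / (prevalence × enrollment fraction) patients. The sketch applies that arithmetic to prevalence values quoted in this section (TMB-high 6.6%, MSI-high 1.4%, NTRK fusions ~0.25% [14]); the target of 40 patients and the 23.5% enrollment fraction (borrowed from the VHIO share of patients with actionable alterations who received matched therapy [13]) are illustrative assumptions.

```python
import math


def patients_to_screen(target_enrolled: int, prevalence: float,
                       enrollment_fraction: float = 1.0) -> int:
    """Expected number of patients to profile to enroll `target_enrolled` biomarker-positive patients."""
    if not (0 < prevalence <= 1 and 0 < enrollment_fraction <= 1):
        raise ValueError("prevalence and enrollment_fraction must be in (0, 1]")
    return math.ceil(target_enrolled / (prevalence * enrollment_fraction))


TARGET = 40              # hypothetical enrollment goal for one biomarker cohort
ENROLL_FRACTION = 0.235  # illustrative share of biomarker-positive patients who enroll
for biomarker, prevalence in [("TMB-high", 0.066), ("MSI-high", 0.014), ("NTRK fusion", 0.0025)]:
    n = patients_to_screen(TARGET, prevalence, ENROLL_FRACTION)
    print(f"{biomarker}: screen ~{n:,} patients")
```

This gives an expected value only; screening enough patients to reach the target with high probability needs a larger margin, for example by modelling the number of positives as binomial.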

The VHIO precision medicine program, which enrolled 12,168 unique patients between 2014 and 2024, demonstrated a steady increase in both actionable alteration detection and access to matched therapies over time [13]. The rate of patients receiving molecularly matched therapies rose from 1% in 2014 to 14.2% in 2024, with annual rates ranging from 19.5% to 32.7% among patients with actionable alterations [13]. These findings highlight both the progress and persistent challenges in translating biomarker identification to treatment access.

A pan-cancer Asian study utilizing comprehensive genomic profiling on 1,166 samples found that 62.3% contained actionable biomarkers and that 4.7% of somatic variants were potentially targetable by regulatory-approved therapies [14]. The likelihood of identifying at least one actionable molecular alteration varied significantly by cancer type, with the highest rates in CNS tumors (83.6%), lung cancer (81.2%), and breast cancer (79.0%) [14]. These data underscore the importance of cancer-type-specific approaches to biomarker testing and trial stratification.

Table 2: Actionability Rates Across Major Cancer Types Based on Real-World Evidence

| Cancer Type | Samples with Actionable Alterations | ESCAT Tier I Alterations | ESCAT Tier II Alterations | Tumor-Agnostic Biomarkers |
|---|---|---|---|---|
| All Cancers | 62.3% [14] | 12.7% [13] | 6.0% [13] | 8.4% [14] |
| CNS Tumors | 83.6% [14] | Varies by age and subtype [17] | Varies by age and subtype [17] | ~2-5% (including BRAF V600E) [17] |
| Lung Cancer | 81.2% [14] | 25.9% with druggable mutations [15] | 34.5% matching clinical trials [15] | 16.8% [14] |
| Breast Cancer | 79.0% [14] | 39% with PIK3CA mutations [14] | Varies (e.g., ERBB2 mutations) [14] | Varies (e.g., MSI-H, TMB-H) [14] |
| Colorectal Cancer | Not reported | Not reported | Not reported | 12% with tumor-agnostic biomarkers [14] |
| Prostate Cancer | Not reported | n = 22 with BRCA1/2 alterations [14] | n = 17 with PTEN alterations [14] | n = 5 with MSI-H [14] |

The ATLAS study in NSCLC provides particularly compelling evidence for the value of comprehensive NGS testing in clinical trial matching. Beyond the increased detection of druggable mutations (from 7.9% to 25.9%), the study found that a remarkable 34.5% of patients had molecular alterations matching clinical trials available within Spain [15]. This finding highlights the critical role of biomarker testing in identifying patients for targeted therapy trials, especially in malignancies like NSCLC where multiple biomarker-directed therapeutic options exist.

Experimental Protocols for Biomarker Identification

Comprehensive Genomic Profiling Workflow

Implementing robust NGS-based biomarker testing requires standardized protocols from sample collection through data interpretation. The following workflow, adapted from established precision medicine programs, outlines key steps for comprehensive genomic profiling in clinical trial contexts:

Sample Acquisition and Processing: The VHIO precision medicine program utilizes both archived formalin-fixed paraffin-embedded (FFPE) tumor tissues and circulating tumor DNA (ctDNA) from liquid biopsies, without matched germline sequencing [13]. Sample quality control is critical, with pathologist review ensuring adequate tumor content (>20% typically required) and DNA/RNA quality metrics (e.g., DIN >4 for DNA, RIN >6 for RNA). For liquid biopsies, accurate blood sample collection, handling, and storage procedures are essential for reliable ctDNA extraction, with plasma separation within specified timeframes to prevent cell lysis and genomic DNA contamination [12].

Library Preparation and Sequencing: The VHIO program employs multiple NGS assays tailored to specific applications. Their ISO 15189-certified Broad NGS tissue v2.0 panel (Agilent) covers 431 genes and assesses genomic signatures including microsatellite instability (MSI) and tumor mutational burden (TMB) [13]. For liquid biopsies, the Guardant360 CDx assay (Guardant Health) enables ctDNA analysis through technology transfer and subsequent ISO certification [13]. Library preparation follows manufacturer protocols with unique molecular identifiers (UMIs) to enable error correction and more accurate variant calling. Sequencing is typically performed to achieve minimum coverage of 500x for tissue and 10,000x for liquid biopsies, with higher coverage for low-frequency variants.
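
The gap between the 500x tissue and 10,000x liquid biopsy depth targets follows from sampling statistics: at a given variant allele fraction (VAF), the number of reads supporting a variant is roughly binomial in the number of unique reads. The sketch below is a simplified model, assuming independent deduplicated reads, no sequencing error, and a hypothetical calling threshold of five supporting reads; real assays set their thresholds during analytical validation.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int = 5) -> float:
    """Probability of observing at least `min_alt_reads` variant-supporting reads.

    Simplified binomial model: each of `depth` unique reads independently carries
    the variant with probability `vaf`. Sequencing error and capture bias are ignored.
    The default of 5 supporting reads is a hypothetical calling threshold.
    """
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


if __name__ == "__main__":
    # A 0.5% VAF variant (plausible for low-shedding ctDNA) at tissue-like vs liquid-biopsy depth
    for depth in (500, 10_000):
        print(depth, round(detection_probability(depth, vaf=0.005), 3))
```

At 500x, a 0.5% VAF variant yields only about 2.5 supporting reads on average, so it is usually missed; at 10,000x the expected count is about 50 and detection becomes near-certain under this model.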

Bioinformatic Analysis and Interpretation: Data analysis pipelines include quality control metrics (sequencing depth, uniformity, base quality), alignment to reference genome (GRCh38), variant calling (SNVs, indels, CNVs, fusions), and annotation using curated databases (e.g., COSMIC, ClinVar, OncoKB). The VHIO program standardizes interpretation and therapy prioritization through regular multidisciplinary molecular tumor boards, classifying alterations according to ESCAT tiers [13]. Actionability is determined based on evidence levels ranging from standard-of-care biomarkers to exploratory targets suitable for clinical trial enrollment.
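
Tier-based prioritization of this kind can be sketched as an ordering over ESCAT levels. The level labels below follow the ESCAT scale (I-A through X); the `Alteration` record and the choice of IV-B as the weakest level still flagged for trial review are hypothetical and would in practice be set by the tumor board's own policy.

```python
from dataclasses import dataclass

# ESCAT evidence levels, strongest first (I-A = ready for routine use; X = no evidence of actionability)
ESCAT_LEVELS = ["I-A", "I-B", "I-C", "II-A", "II-B", "III-A", "III-B", "IV-A", "IV-B", "V", "X"]
RANK = {level: i for i, level in enumerate(ESCAT_LEVELS)}


@dataclass
class Alteration:
    gene: str
    variant: str
    escat: str  # level assigned by the molecular tumor board


def prioritize(alterations: list[Alteration]) -> list[Alteration]:
    """Order alterations from strongest to weakest ESCAT evidence."""
    return sorted(alterations, key=lambda a: RANK[a.escat])


def flag_for_trial_review(alterations: list[Alteration], weakest_level: str = "IV-B") -> list[Alteration]:
    """Keep alterations at or above a hypothetical evidence cutoff for trial screening."""
    cutoff = RANK[weakest_level]
    return [a for a in prioritize(alterations) if RANK[a.escat] <= cutoff]
```

Keeping the ordering in a single list makes the cutoff a policy parameter rather than logic scattered across the pipeline.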

Specialized Assays for Specific Biomarker Classes

Different biomarker classes require specialized methodological approaches for optimal detection:

DNA-based Alterations: Single nucleotide variants (SNVs), small insertions/deletions (indels), and copy number variations (CNVs) are detected through DNA sequencing. The Asian pan-cancer study used a comprehensive DNA/RNA panel (UNITED DNA/RNA multigene panel) that identified 4.6% of targetable variants through DNA analysis alone [14]. Copy number analysis requires careful normalization to control samples and accounting for tumor purity and ploidy.
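
The purity correction can be illustrated with a simple two-component mixture model. For a region with tumor copy number C in a sample of purity p, with normal cells contributing two copies and average tumor ploidy ψ, the expected depth ratio against a diploid reference is R = (pC + 2(1 - p)) / (pψ + 2(1 - p)). The sketch below inverts that relation; it assumes an accurate purity estimate and ignores subclonality and allele-specific information.

```python
import math


def absolute_copy_number(log2_ratio: float, purity: float, tumor_ploidy: float = 2.0) -> float:
    """Convert an observed log2 depth ratio into an estimated tumor copy number.

    Mixture model: R = (p*C + 2*(1-p)) / (p*ploidy + 2*(1-p)),
    where p is tumor purity and normal cells are assumed diploid.
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    ratio = 2 ** log2_ratio
    normal_copies = 2 * (1 - purity)
    return (ratio * (purity * tumor_ploidy + normal_copies) - normal_copies) / purity


if __name__ == "__main__":
    # The same six-copy amplification produces a weaker log2 signal as purity falls
    for p in (1.0, 0.5, 0.2):
        observed = math.log2((p * 6 + 2 * (1 - p)) / 2)
        print(p, round(observed, 2), round(absolute_copy_number(observed, p), 2))
```

A log2 ratio of 1.0 corresponds to four copies in a pure tumor but six copies at 50% purity, which is why uncorrected ratios understate amplifications in low-purity samples.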

RNA-based Alterations: Gene fusions and alternative splicing events require RNA sequencing for optimal detection. The same Asian study found that 0.1% of targetable variants were identified exclusively through RNA analysis [14]. The VHIO program employs targeted fusion panels (Fusion v2.0, Agilent) in addition to comprehensive NGS assays to ensure sensitive fusion detection [13].

Genomic Signatures: MSI status is determined by analyzing mononucleotide repeat loci relative to reference samples, while TMB is calculated as the number of somatic mutations per megabase of sequenced genomic territory (for targeted panels, the panel's assessed footprint rather than the whole genome). The Asian study defined TMB-high as ≥10 mutations/Mb, identifying 6.6% of samples as TMB-high [14]. HRD status can be assessed through genomic scar analysis (loss of heterozygosity, telomeric allelic imbalance, large-scale state transitions) or through HRD-associated mutational signatures.
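
The TMB calculation and the ≥10 mutations/Mb cutoff reduce to a few lines. In this sketch the 1.2 Mb panel footprint and mutation count are hypothetical, and which mutations count toward TMB (e.g., whether synonymous or known germline variants are excluded) is assay-specific and assumed to be handled upstream.

```python
def tumor_mutational_burden(n_eligible_mutations: int, panel_bp: int) -> float:
    """Somatic mutations per megabase of assessed sequence."""
    if panel_bp <= 0:
        raise ValueError("panel size must be positive")
    return n_eligible_mutations / (panel_bp / 1_000_000)


def tmb_category(tmb: float, threshold: float = 10.0) -> str:
    """Apply the >=10 mutations/Mb cutoff used in the Asian pan-cancer study."""
    return "TMB-high" if tmb >= threshold else "TMB-low"


if __name__ == "__main__":
    # Hypothetical panel assessing 1.2 Mb with 14 eligible somatic mutations
    tmb = tumor_mutational_burden(14, 1_200_000)
    print(f"{tmb:.1f} mut/Mb -> {tmb_category(tmb)}")
```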

NGS Biomarker Testing Workflow: This diagram illustrates the comprehensive pathway from sample collection through clinical interpretation for biomarker-guided trial stratification.

Signaling Pathways and Resistance Mechanisms

Key Oncogenic Signaling Pathways

Understanding the molecular pathways driving oncogenesis is fundamental to effective biomarker stratification. Several key pathways frequently altered in cancer represent prime targets for therapeutic intervention:

MAPK/ERK Pathway: This pathway is frequently activated in multiple cancer types, particularly in pediatric low-grade gliomas, where BRAF alterations (V600E mutations or KIAA1549-BRAF fusions) occur in up to 60% of cases [17]. In the Asian pan-cancer cohort, BRAF V600E mutations were identified across multiple cancer types, including colorectal cancer, melanoma, thyroid cancer, CNS tumors, and cancers of unknown primary [14]. These alterations constitutively activate the MAPK signaling cascade and drive uncontrolled cell proliferation, which makes them targets for BRAF and MEK inhibitors.

Receptor Tyrosine Kinase Signaling: Multiple receptor tyrosine kinases (RTKs), including EGFR, MET, HER2, and ALK, play critical roles in oncogenic signaling. In NSCLC, EGFR mutations occur in 30-60% of cases among Asian patients and confer sensitivity to EGFR tyrosine kinase inhibitors [16]. MET amplification serves as both a primary oncogenic driver and a resistance mechanism to EGFR inhibitors, with the SACHI study demonstrating that combined MET and EGFR inhibition can overcome this resistance [16]. ERBB2 (HER2) amplification, identified in 3.6% of the Asian pan-cancer cohort, activates downstream PI3K/AKT and MAPK pathways and confers sensitivity to HER2-targeted therapies [14].

DNA Damage Response Pathway: Homologous recombination deficiency (HRD), observed in 34.9% of the Asian pan-cancer cohort, represents a therapeutic vulnerability to PARP inhibitors and platinum-based chemotherapy [14]. HRD is particularly prevalent in ovarian, breast, pancreatic, and prostate cancers, creating synthetic lethality opportunities when DNA repair pathways are compromised.

Oncogenic Signaling Pathways: This diagram illustrates key signaling pathways frequently altered in cancer, with associated biomarkers that guide targeted therapy selection.

Resistance Mechanism Networks

Understanding resistance networks is essential for designing sequential trial strategies and combination therapies. In EGFR-mutant NSCLC, four primary resistance mechanisms have been characterized:

On-target Resistance: Secondary mutations within the EGFR kinase domain, most commonly C797S, prevent covalent binding of third-generation EGFR inhibitors such as osimertinib while preserving downstream signaling [16]. The allelic configuration of co-occurring resistance mutations (e.g., T790M and C797S in cis vs. in trans) determines sensitivity to next-generation inhibitors and combination approaches.
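
Because EGFR codons 790 and 797 lie only seven codons (roughly 20 nucleotides) apart in exon 20, short sequencing reads often span both, so the cis or trans configuration can frequently be read directly from NGS data. The sketch below assumes a hypothetical pre-processed input in which each read is reduced to whether it carries T790M and C797S (`None` when the read does not cover that codon); the 80% threshold is illustrative.

```python
from typing import Optional

# Each read reduced to (carries_T790M, carries_C797S); None = codon not covered by the read.
Read = tuple[Optional[bool], Optional[bool]]


def phase_c797s(reads: list[Read], min_fraction: float = 0.8) -> str:
    """Classify the allelic configuration of C797S relative to T790M."""
    if not any(t790m for t790m, _ in reads):
        return "no T790M detected"
    # Only reads that carry C797S and also cover codon 790 are informative for phasing.
    linked = [t790m for t790m, c797s in reads if c797s and t790m is not None]
    if not linked:
        return "undetermined"
    fraction_cis = sum(linked) / len(linked)
    if fraction_cis >= min_fraction:
        return "cis"
    if fraction_cis <= 1 - min_fraction:
        return "trans"
    return "mixed"
```

Reads carrying T790M without C797S are deliberately ignored for phasing, since a T790M-positive subclone lacking C797S does not say anything about whether C797S sits on the same allele.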

Bypass Signaling: Activation of alternative signaling pathways compensates for inhibited EGFR signaling. MET amplification represents the most common bypass mechanism, occurring in approximately 15-20% of osimertinib-resistant cases [16]. Other bypass mechanisms include HER2 amplification, BRAF V600E mutation, KRAS mutations, and RET or ALK fusions, each requiring specific targeted approaches.

Histological Transformation: Lineage switching from adenocarcinoma to small cell lung cancer (SCLC) or squamous cell carcinoma occurs in 3-15% of EGFR-TKI-resistant cases, typically accompanied by loss of EGFR dependency and acquisition of new therapeutic vulnerabilities [16]. SCLC transformation is strongly associated with concurrent TP53 and RB1 inactivation and is typically managed with platinum-etoposide chemotherapy rather than continued EGFR inhibition.

Downstream Pathway Activation: Mutations in downstream effectors, particularly in the MAPK and PI3K-AKT pathways, can maintain oncogenic signaling despite effective EGFR inhibition. These alterations may coexist with other resistance mechanisms, creating complex molecular landscapes that necessitate comprehensive genomic profiling at progression.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of biomarker-driven trial stratification requires access to specialized reagents, platforms, and analytical tools. The following table outlines essential components of the modern cancer biomarker research toolkit:

Table 3: Essential Research Reagents and Platforms for Biomarker Discovery and Validation

| Category | Specific Tools/Platforms | Key Features | Application in Trial Stratification |
|---|---|---|---|
| NGS Platforms | Illumina NovaSeq, NextSeq; Ion Torrent Genexus | High-throughput sequencing, automated workflows | Comprehensive genomic profiling, variant detection [13] [14] |
| Targeted Panels | VHIO Broad NGS panels (59-431 genes); Oncomine Focus Assay | Focused content, optimized coverage, ISO certification | Targeted biomarker detection, clinical trial eligibility [13] [15] |
| Liquid Biopsy Assays | Guardant360 CDx; in-house ctDNA assays | Non-invasive monitoring, dynamic biomarker assessment | Resistance mechanism detection, therapy response monitoring [13] [12] |
| Bioinformatic Tools | Variant callers (GATK, VarScan); annotation tools (OncoKB, CIViC) | Variant prioritization, clinical interpretation | ESCAT classification, actionability assessment [13] |
| Preclinical Models | Patient-derived organoids, CRISPR-modified cell lines | Physiological relevance, genetic manipulability | Functional validation of biomarkers, drug screening [18] |
| Tissue-Based Assays | IHC, FISH, RAD51 foci immunofluorescence | Protein expression, chromosomal rearrangements, functional HRD | Complementary to NGS, biomarker confirmation [13] [17] |

The selection of appropriate testing platforms depends on the specific trial context, including the biomarkers of interest, sample type and quantity, turnaround time requirements, and regulatory considerations. The VHIO program exemplifies how integrated diagnostic platforms evolve over time, expanding from focused NGS panels to comprehensive genomic profiling encompassing DNA and RNA sequencing, with ISO certification enabling their use as in-house alternatives to companion diagnostics for approved drugs and clinical trials [13].

Liquid biopsy technologies represent particularly valuable tools for clinical trial contexts, enabling non-invasive assessment of tumor genomics and monitoring of resistance emergence. The VHIO program introduced a liquid biopsy assay (Broad NGS liquid v1.0) through technology transfer from Guardant Health, subsequently obtaining ISO certification in 2024 [13]. Liquid biopsies are especially useful for assessing dynamic changes in mutation profiles during treatment and capturing spatial heterogeneity, although limitations remain in detecting certain alteration types such as copy number changes and gene fusions in low ctDNA fraction samples [12].

The strategic integration of biomarker-driven approaches into clinical trial design has fundamentally transformed oncology drug development. The systematic classification of molecular alterations using frameworks like ESCAT, combined with comprehensive genomic profiling technologies, enables precise patient stratification that maximizes therapeutic benefit while accelerating drug development timelines. Real-world evidence from large precision medicine programs demonstrates both the substantial progress achieved and the ongoing challenges in matching patients to targeted therapies, with current rates of matched therapy access approaching 14-24% among comprehensively profiled patients [13].

Future directions in biomarker-guided trial stratification will likely include increased incorporation of liquid biopsy technologies for dynamic biomarker monitoring, expanded use of complex biomarker signatures beyond single-gene alterations, and the development of innovative trial designs that accommodate multiple biomarker-defined subgroups within unified master protocols. Additionally, the integration of artificial intelligence and machine learning approaches for biomarker discovery and interpretation holds promise for identifying novel predictive signatures beyond currently established biomarkers. As the field continues to evolve, the systematic application of comprehensive biomarker assessment will remain essential for realizing the full potential of precision oncology in clinical trial contexts and ultimately improving outcomes for cancer patients.

The Growing Market and Adoption Trends for NGS in Clinical Oncology

The integration of next-generation sequencing (NGS) into clinical oncology represents a paradigm shift toward molecularly driven cancer care. This transformation is particularly pivotal within the context of clinical trials, where NGS serves as a fundamental technology for enabling precision oncology by identifying eligible patients based on their tumor's genomic profile. The global clinical oncology NGS market is experiencing robust growth, projected to expand from an estimated $744.4 million in 2025 to over $3.13 billion by 2034, reflecting a compound annual growth rate (CAGR) of 17.3% [19]. This expansion is fueled by the critical need to align the right targeted therapy with the right patient at the right moment, a challenge that remains central to accelerating clinical trial breakthroughs in oncology [20]. As the industry confronts rising cancer incidence rates and the paradox of low clinical trial enrollment, NGS technologies are evolving to democratize genomic testing, reduce turnaround times, and create more efficient patient-trial matching systems that ultimately serve the broader goal of personalized cancer treatment.

The clinical oncology NGS market demonstrates vigorous growth dynamics across all segments, driven by technological advancements, increasing cancer prevalence, and the expanding application of precision medicine principles. The market's trajectory reflects its evolving role from a specialized research tool to an indispensable clinical asset for therapy selection and trial enrollment.

Table 1: Global Clinical Oncology NGS Market Size Projections

| Source | 2024/2025 Base Value | 2034 Projection | CAGR |
|---|---|---|---|
| Future Market Insights [19] | USD 744.4 million (2025) | USD 3.13 billion | 17.3% |
| Market.us [21] | USD 0.7 billion (2024) | USD 3.4 billion | 17.2% |
| Nova One Advisor [22] | USD 551.43 million (2025) | USD 2,129.82 million | 16.2% |
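
The three projections can be cross-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. This sketch uses the base and 2034 values from Table 1 (in USD millions) and assumes each forecast window runs from the stated base year to 2034.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Base and 2034 values from Table 1, in USD millions; windows assumed to end in 2034
projections = {
    "Future Market Insights": (744.4, 3130.0, 2034 - 2025),
    "Market.us": (700.0, 3400.0, 2034 - 2024),
    "Nova One Advisor": (551.43, 2129.82, 2034 - 2025),
}

if __name__ == "__main__":
    for source, (start, end, years) in projections.items():
        print(f"{source}: {cagr(start, end, years):.1%} per year over {years} years")
```

Run as written, the Future Market Insights and Nova One Advisor figures reproduce their published rates to one decimal place, while the Market.us figures give about 17.1% rather than 17.2%, a gap consistent with rounding of the USD 0.7 billion base value.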

This growth is structurally supported by several key drivers: the rising global cancer burden with an estimated 20 million new cases diagnosed in 2022 [22], the continuous innovation in sequencing technologies, and the expanding clinical utility of NGS in guiding treatment decisions and clinical trial enrollment [21]. The International Agency for Research on Cancer anticipates approximately 27.5 million new cancer cases by 2040, further underscoring the growing need for advanced molecular diagnostics [21].

Table 2: Clinical Oncology NGS Market Share by Segment (2024)

| Segment | Leading Sub-category | Market Share | High-Growth Sub-category |
|---|---|---|---|
| Technology | Targeted Sequencing & Resequencing | 48.6% [21] | Whole Genome Sequencing |
| Workflow | NGS Sequencing | 45.8% [21] | NGS Data Analysis |
| Application | Screening | 52.3% [21] | Companion Diagnostics |
| End-use | Laboratories | 57.4% [21] | Clinics |
| Component | Kits and Reagents | 60.9% [19] | - |

Targeted sequencing maintains dominance due to its clinical utility, faster turnaround times, and lower cost per reportable finding, which aligns well with therapy selection and reimbursement realities [21]. However, whole genome and whole exome sequencing are gaining traction in complex cases and research-driven centers as cost curves continue to fall [21]. The kits and reagents segment remains indispensable, holding approximately 60.9% market share in 2024, as each application requires specialized consumables designed to meet precise assay needs [19].

Geographically, North America leads the market with a 41.3% share in 2023, supported by high cancer incidence, advanced infrastructure, and established precision medicine programs [21]. Europe is advancing through national genomics strategies, while the Asia Pacific region is expected to register the fastest growth, driven by investments in genomics infrastructure, rising healthcare expenditure, and growing awareness [21] [22]. Country-specific projections highlight France as a particularly high-growth market, with an anticipated CAGR of 21.6% through 2035, followed by the United States (19.4%) and Germany (19.2%) [19].

Key Adoption Trends and Innovation Drivers

Liquid Biopsy and Minimal Residual Disease Monitoring

Liquid biopsy represents one of the most transformative applications of NGS in clinical oncology, enabling non-invasive cancer detection and monitoring through analysis of circulating tumor DNA (ctDNA) [19]. This technology enables clinicians to track tumor dynamics and treatment responses through a simple blood draw, reducing reliance on invasive tissue biopsies [19]. The clinical value of liquid biopsy is particularly evident in minimal residual disease (MRD) monitoring, which offers a sensitive method to detect cancer recurrence at earlier stages than conventional imaging [1]. The adoption momentum is reflected in recent market developments, such as the May 2025 launch of liquid biopsy testing services by Florida Cancer Specialists & Research Institute for common cancers including breast, lung, colorectal, and prostate cancers [22]. The development of specialized library preparation kits, such as the cfDNA Library Preparation Kit launched by Twist Bioscience in February 2024, further supports this trend by maximizing the capture of unique cfDNA molecules [19].

Artificial Intelligence and Bioinformatics Integration

The integration of artificial intelligence (AI) and machine learning into NGS data analysis is reshaping clinical oncology applications [19]. AI algorithms are increasingly embedded throughout the NGS workflow, supporting variant calling, annotation, and interpretation to detect medically relevant mutations and predict therapeutic responses [19]. Leading companies are actively deploying AI-powered solutions: Illumina uses AI-powered software for variant interpretation and machine learning algorithms for tumor-only and tumor-normal analysis [19]; Thermo Fisher Scientific employs AI-driven classifiers to identify rare mutations in liquid biopsy samples [19]; and F. Hoffmann-La Roche has developed the NAVIFY digital ecosystem, which combines sequencing data, electronic health records, and AI to generate personalized insights for cancer treatment planning [19]. The May 2025 release of Illumina's DRAGEN version 4.4 software illustrates this trend, adding oncology applications intended to simplify NGS analysis and expand multiomics capabilities [22].

Decentralization of Testing and Workflow Automation

A significant trend transforming the clinical trial landscape is the decentralization of NGS testing from large academic centers to community hospitals and local laboratories [20]. This shift is crucial for addressing the clinical trial enrollment bottleneck because it expands access to genomic testing where most patients receive care [20]. Automation plays a pivotal role in this transition, with modern NGS solutions incorporating streamlined library preparation, sequencing, and data analysis workflows that minimize manual intervention and reduce turnaround times [20]. Thermo Fisher Scientific's Ion Torrent Genexus System exemplifies this trend with its end-to-end automation and real-time data analysis capabilities [19]. The practical impact of these advances is substantial: they democratize precision medicine by making rapid NGS testing viable in broader clinical settings, thereby expanding patient access to clinical trials and targeted therapies [20].

Companion Diagnostic Expansion and Regulatory Evolution

The development of NGS-based companion diagnostics (CDx) is accelerating in tandem with targeted therapy approvals [19] [22]. Pharmaceutical companies are increasingly integrating CDx into their drug development workflows to enhance clinical trial success rates and secure regulatory approvals [22]. The regulatory landscape is evolving in parallel, with the FDA classifying NGS-based oncology diagnostics as Class II or Class III medical devices that require 510(k) clearance or premarket approval (PMA), respectively [19]. A landmark development occurred in May 2025 when Illumina introduced the TruSight Oncology Comprehensive test, the first FDA-approved distributable genomic profiling kit with pan-cancer companion diagnostic claims [19]. This test evaluates both DNA and RNA, enabling rapid tumor profiling and personalized therapy matching, and reflects the convergence of regulatory maturation, assay comprehensiveness, and clinical utility [19]. Comparable regulatory frameworks are being implemented globally, including the European Union's In Vitro Diagnostic Regulation (IVDR) and China's NMPA requirements for NGS platform approval [19].

Experimental Protocols for NGS in Clinical Trial Enrollment

Sample Processing and Library Preparation Protocol

The initial phase of NGS testing requires meticulous sample handling and preparation to ensure reliable results for clinical trial eligibility assessment. The protocol varies based on sample type (tissue or blood) but follows consistent principles for preserving nucleic acid integrity and enabling comprehensive genomic analysis.

Tissue Sample Processing:

- DNA Extraction: Extract genomic DNA from formalin-fixed paraffin-embedded (FFPE) tissue sections or fresh frozen tissue using commercially available kits designed for clinical samples [1]. Assess DNA quality and quantity through spectrophotometry (A260/A280 ratio) and fluorometric methods, with minimum requirements of 50-100 ng DNA for targeted panels.

- RNA Extraction (when required): Isolate total RNA using silica-membrane or magnetic bead-based methods, followed by reverse transcription to generate complementary DNA (cDNA) for expression analysis or fusion detection [1].

- Tumor Content Assessment: Have a qualified pathologist review hematoxylin and eosin (H&E) stained sections to confirm adequate tumor cellularity (typically >20%) and mark areas for macrodissection if needed to enrich tumor content [1].
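
These tissue acceptance criteria lend themselves to an automated pre-analytical gate that records why a sample fails rather than a bare pass/fail flag. In the sketch below, the 20% tumor content and 50 ng DNA minimums come from the steps above, while the 1.7-2.0 A260/A280 window and the `TissueSample` record are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class TissueSample:
    sample_id: str
    tumor_content_pct: float  # pathologist estimate from H&E review
    dna_ng: float             # fluorometric DNA yield
    a260_a280: float          # spectrophotometric purity ratio


def qc_failures(
    sample: TissueSample,
    min_tumor_pct: float = 20.0,
    min_dna_ng: float = 50.0,
    a260_a280_range: tuple[float, float] = (1.7, 2.0),  # assumed acceptance window
) -> list[str]:
    """Return the reasons a sample fails pre-analytical QC (empty list = pass)."""
    failures = []
    if sample.tumor_content_pct < min_tumor_pct:
        failures.append(f"tumor content {sample.tumor_content_pct}% < {min_tumor_pct}%")
    if sample.dna_ng < min_dna_ng:
        failures.append(f"DNA input {sample.dna_ng} ng < {min_dna_ng} ng")
    low, high = a260_a280_range
    if not low <= sample.a260_a280 <= high:
        failures.append(f"A260/A280 {sample.a260_a280} outside {low}-{high}")
    return failures
```

Returning explicit reasons lets a screening coordinator decide between macrodissection, re-extraction, or switching to a liquid biopsy.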

Liquid Biopsy Processing:

- Blood Collection and Plasma Separation: Collect blood in cell-stabilizing tubes (e.g., Streck Cell-Free DNA BCT) and process within the window specified by the tube manufacturer; if standard EDTA tubes are used instead, separate plasma within about 6 hours of collection. Centrifuge at 1600-2000 × g for 10 minutes to separate plasma, followed by a second centrifugation at 16,000 × g for 10 minutes to remove residual cells [19].

- Cell-Free DNA Extraction: Isolate cfDNA from 4-10 mL of plasma using magnetic bead-based kits specifically validated for low-abundance DNA recovery. Elute in 20-50 μL of TE buffer or molecular grade water [19].

- cfDNA Quality Control: Quantify cfDNA using fluorometric methods sensitive to low DNA concentrations (e.g., Qubit dsDNA HS Assay). Assess fragment size distribution using microfluidic electrophoresis (e.g., Bioanalyzer, TapeStation) to confirm expected cfDNA peak at ~166 bp [19].
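
Because ctDNA is often a small fraction of total cfDNA, the input mass sets a hard floor on sensitivity. A haploid human genome weighs roughly 3.3 pg, so 1 ng of cfDNA holds about 300 genome copies, and a variant at 0.1% VAF is present in only a handful of fragments. The sketch below assumes perfect extraction and library conversion and a Poisson model for sampling mutant fragments, so real-world yields will be lower.

```python
import math

PG_PER_HAPLOID_GENOME = 3.3  # approximate mass of one haploid human genome, in picograms


def haploid_genome_equivalents(cfdna_ng: float) -> float:
    """Number of haploid genome copies in a given cfDNA mass."""
    return cfdna_ng * 1000 / PG_PER_HAPLOID_GENOME


def expected_mutant_copies(cfdna_ng: float, vaf: float) -> float:
    """Expected mutant fragments at one locus, assuming every genome copy is recovered."""
    return haploid_genome_equivalents(cfdna_ng) * vaf


def prob_at_least_one_mutant(cfdna_ng: float, vaf: float) -> float:
    """Poisson probability that the input contains at least one mutant fragment."""
    return 1 - math.exp(-expected_mutant_copies(cfdna_ng, vaf))


if __name__ == "__main__":
    for ng in (2, 10, 30):
        print(ng, "ng:", round(expected_mutant_copies(ng, vaf=0.001), 1), "mutant copies expected at 0.1% VAF")
```

No amount of sequencing depth can recover a mutant molecule that was never in the tube, which is why plasma volume and cfDNA yield matter as much as coverage.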

Library Construction:

- DNA Fragmentation: For high molecular weight DNA (from tissue), fragment to ~300 bp using acoustic shearing or enzymatic fragmentation methods [1]. cfDNA typically does not require additional fragmentation.

- Adapter Ligation: Attach platform-specific adapters containing unique molecular identifiers (UMIs) to DNA fragments using ligase-based methods. UMIs are essential for distinguishing true low-frequency variants from PCR and sequencing errors, particularly critical for liquid biopsy applications [19] [1].

- Library Amplification: Amplify adapter-ligated fragments using limited-cycle PCR (typically 4-10 cycles) to generate sufficient material for sequencing. Use polymerase systems with high fidelity and minimal GC bias [1].

- Library Quality Control: Quantify the final library using fluorometric methods and assess size distribution via microfluidic electrophoresis. For targeted sequencing, proceed to hybridization capture [1].
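
UMI-based error correction can be sketched as grouping reads that share a UMI and alignment start into a "family" and taking a per-position majority vote: a base that disagrees with most of its family is outvoted or masked, whereas a true variant is present in every member. This simplified version assumes reads are already aligned, trimmed to equal length within each family, and keyed by (UMI, start); the family-size and agreement thresholds are illustrative, and production consensus callers use more elaborate error models.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=3, min_agreement=0.7):
    """Collapse reads sharing (UMI, start) into one consensus sequence per family.

    `reads` is an iterable of (umi, start, sequence) tuples. Positions where no base
    reaches `min_agreement` within a family are masked as 'N'. Families smaller than
    `min_family_size` are discarded.
    """
    families = defaultdict(list)
    for umi, start, sequence in reads:
        families[(umi, start)].append(sequence)

    consensus = {}
    for key, sequences in families.items():
        if len(sequences) < min_family_size:
            continue
        bases = []
        # zip(*) walks the sequences column by column (assumes equal lengths)
        for column in zip(*sequences):
            base, count = Counter(column).most_common(1)[0]
            bases.append(base if count / len(column) >= min_agreement else "N")
        consensus[key] = "".join(bases)
    return consensus
```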

Target Enrichment (for Targeted Panels):

- Hybridization Capture: Incubate library with biotinylated oligonucleotide probes targeting specific genomic regions of clinical relevance. Use thermal cycler programs with precise temperature control to ensure specific hybridization [1].

- Magnetic Bead Capture: Bind probe-library hybrids to streptavidin-coated magnetic beads, followed by stringent washes to remove non-specifically bound DNA [1].

- Post-Capture Amplification: Amplify captured libraries with limited-cycle PCR (typically 8-12 cycles) to enrich for target regions while maintaining representation of original fragments [1].

- Final Library QC: Confirm library concentration and size distribution before sequencing. Pool libraries at appropriate molar ratios for multiplexed sequencing [1].
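
Converting a library's mass concentration to molarity uses the average mass of a double-stranded base pair (about 660 g/mol), so nM = (ng/µL × 10^6) / (660 × mean fragment length in bp). The sketch below applies that conversion and then computes per-library volumes for an equimolar pool; the 4 nM pool concentration, 20 µL pool volume, and library QC values are hypothetical placeholders for the platform's own loading protocol.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA library concentration (ng/uL) to nM using ~660 g/mol per bp."""
    return conc_ng_per_ul * 1e6 / (660 * mean_fragment_bp)


def equimolar_pool(molarities_nM: dict[str, float], pool_nM: float = 4.0, pool_volume_ul: float = 20.0):
    """Return (volume of each library in uL, diluent volume in uL) for an equimolar pool."""
    per_library_nM = pool_nM / len(molarities_nM)
    volumes = {name: per_library_nM * pool_volume_ul / m for name, m in molarities_nM.items()}
    diluent = pool_volume_ul - sum(volumes.values())
    if diluent < 0:
        raise ValueError("libraries too dilute for the requested pool concentration and volume")
    return volumes, diluent


if __name__ == "__main__":
    libraries = {
        "patient_01": library_molarity_nM(2.0, 400),  # hypothetical QC values
        "patient_02": library_molarity_nM(5.5, 350),
    }
    volumes, diluent = equimolar_pool(libraries)
    for name, vol in volumes.items():
        print(f"{name}: {vol:.2f} uL")
    print(f"diluent: {diluent:.2f} uL")
```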

Sequencing and Data Analysis Protocol

The sequencing and analysis phase transforms prepared libraries into clinically interpretable data for trial matching, requiring robust bioinformatics pipelines and quality control measures throughout the process.

Sequencing Operation:

- Platform Selection: Choose appropriate sequencing platform based on clinical application: Illumina systems for high-depth targeted sequencing, Ion Torrent for rapid turnaround, or Pacific Biosciences for structural variant detection [1].

- Cluster Generation (Illumina Platforms): Load libraries onto flow cells for bridge amplification, creating millions of clonal clusters to enable detection of incorporated nucleotides [1].

- Sequencing Chemistry: Perform sequencing-by-synthesis with fluorescently labeled nucleotides (Illumina) or semiconductor-based detection of hydrogen ions released during DNA polymerization (Ion Torrent) [1].

- Read Length Configuration: Program instrument for appropriate read lengths (typically 2×75 bp to 2×150 bp for targeted panels) to ensure adequate overlap for paired-end alignment and accurate variant calling [1].
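
Read length, panel size, and coverage targets together determine how much sequencing each sample needs. This sketch estimates paired-end read pairs for a target mean coverage; the 1.5 Mb panel, 60% on-target rate, and 30% duplicate rate in the example are hypothetical, and because it ignores quality trimming and overlapping read pairs, the result is best treated as a lower bound.

```python
import math


def read_pairs_required(target_coverage: float, target_bp: int, read_length: int,
                        on_target_fraction: float, duplicate_fraction: float) -> int:
    """Estimate paired-end read pairs needed to reach a mean target coverage.

    Usable bases per pair = 2 x read_length x on-target fraction x (1 - duplicate fraction).
    """
    usable_bases_per_pair = 2 * read_length * on_target_fraction * (1 - duplicate_fraction)
    return math.ceil(target_coverage * target_bp / usable_bases_per_pair)


if __name__ == "__main__":
    # 500x mean coverage over a hypothetical 1.5 Mb panel with 2x150 bp reads
    pairs = read_pairs_required(500, 1_500_000, 150, on_target_fraction=0.6, duplicate_fraction=0.3)
    print(f"{pairs / 1e6:.2f} million read pairs")
```

Since the text specifies a minimum rather than a mean coverage, the mean target in practice needs to be set higher to account for uneven coverage across the panel.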

Primary Data Analysis:

- Base Calling: Convert raw signal data (images or voltage changes) into nucleotide sequences with quality scores using platform-specific algorithms (e.g., Illumina's RTA) [1].

- Demultiplexing: Assign sequences to individual samples based on unique barcode sequences incorporated during library preparation [1].

- Quality Control Metrics: Generate quality reports including Q-score distributions, percent bases above quality thresholds, and cluster density statistics to identify potential issues early in the workflow [1].
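
The Q-score metrics in these reports rest on the Phred scale, Q = -10 log10(P_error), so Q30 corresponds to a 1-in-1,000 chance that a base call is wrong. FASTQ files from current Illumina software encode each quality as an ASCII character offset by 33 (Phred+33); the sketch assumes that encoding when computing the percentage of bases at or above Q30.

```python
def phred_to_error_probability(q: float) -> float:
    """Phred scale: Q = -10 * log10(P_error), so Q30 means a 1-in-1,000 error rate."""
    return 10 ** (-q / 10)


def fraction_bases_at_or_above(quality_string: str, threshold: int = 30, offset: int = 33) -> float:
    """Fraction of bases in a FASTQ quality string with Q >= threshold (Phred+33 assumed)."""
    if not quality_string:
        raise ValueError("empty quality string")
    scores = [ord(ch) - offset for ch in quality_string]
    return sum(q >= threshold for q in scores) / len(scores)
```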

Secondary Analysis - Sequence Alignment and Variant Calling:

- Read Alignment: Map sequenced reads to the reference genome (GRCh38) using established aligners, such as BWA-MEM for DNA or STAR for RNA, followed by duplicate marking to flag PCR duplicates [1].

- Variant Calling: Identify somatic mutations using specialized callers:

  - Single Nucleotide Variants (SNVs): Use MuTect2, VarScan2, or similar tools with parameters optimized for clinical sensitivity and specificity [1].

  - Insertions/Deletions (Indels): Apply callers with local assembly capabilities such as Pindel or Scalpel, particularly important for frameshift mutations in genes like BRCA1/2 [1].

  - Copy Number Variations (CNVs): Implement read-depth based methods (e.g., CNVkit, ADTEx) with GC-content correction and normalization to matched normal or pooled controls [1].

  - Structural Variants (SVs): Use split-read and discordant read-pair approaches (e.g., Manta, Delly) for detecting gene fusions and chromosomal rearrangements [1].

- Variant Annotation: Annotate variants using databases such as dbSNP, ClinVar, COSMIC, and OncoKB to determine functional impact and clinical relevance [1].

Tertiary Analysis - Clinical Interpretation and Trial Matching:

- Variant Filtering and Prioritization: Filter variants based on population frequency (<1% in gnomAD), functional impact (missense, nonsense, splice-site), and clinical evidence (OncoKB levels) [19].

- Actionability Assessment: Compare molecular profile against clinical trial databases (e.g., ClinicalTrials.gov) and drug label indications to identify potential therapeutic options [20].

- AI-Enhanced Interpretation: Apply machine learning algorithms to integrate genomic data with clinical variables (tumor type, prior therapies, performance status) for optimized trial matching [19].

- Report Generation: Create clinician-friendly reports highlighting actionable biomarkers, matched therapeutic options (including clinical trials), and evidence levels supporting each recommendation [19].
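
The filtering and matching steps can be sketched as below. The `Variant` and `Trial` records and the `TRIAL-A`-style identifiers are hypothetical in-memory structures; a real pipeline would draw variants from an annotated VCF and trial criteria from a registry such as ClinicalTrials.gov, whose free-text eligibility usually needs curation first. The retained consequence classes mirror those named above plus frameshifts (an assumption), the 1% population-frequency cutoff follows the text, and clinical variables such as prior therapies are deliberately left out.

```python
from dataclasses import dataclass

# Illustrative consequence classes retained for review
RETAINED_CONSEQUENCES = {"missense", "nonsense", "frameshift", "splice_site"}


@dataclass
class Variant:
    gene: str
    alteration: str
    consequence: str
    gnomad_af: float  # population allele frequency


@dataclass
class Trial:
    trial_id: str          # hypothetical identifier, not a registry entry
    gene: str
    alterations: set[str]  # empty set = any retained alteration in the gene qualifies
    tumor_types: set[str]


def filter_variants(variants: list[Variant], max_population_af: float = 0.01) -> list[Variant]:
    """Drop common polymorphisms (AF >= 1%) and non-retained consequence classes."""
    return [v for v in variants
            if v.gnomad_af < max_population_af and v.consequence in RETAINED_CONSEQUENCES]


def match_trials(variants: list[Variant], trials: list[Trial], tumor_type: str) -> list[tuple[str, str, str]]:
    """Return (gene, alteration, trial_id) for each filtered variant meeting a trial's criteria."""
    matches = []
    for v in filter_variants(variants):
        for t in trials:
            if (t.gene == v.gene
                    and (not t.alterations or v.alteration in t.alterations)
                    and tumor_type in t.tumor_types):
                matches.append((v.gene, v.alteration, t.trial_id))
    return matches
```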

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagents for NGS-Based Clinical Trial Screening

| Reagent Category | Specific Examples | Function in Workflow | Clinical Trial Application |
|---|---|---|---|
| Nucleic Acid Extraction and Quantification Kits | QIAamp DNA FFPE Tissue Kit, QIAamp Circulating Nucleic Acid Kit, Qubit dsDNA HS Assay | Isolation and quantification of high-quality DNA/RNA from diverse sample types | Ensures input material meets quality thresholds for reliable variant detection in trial eligibility assessment |
| Library Preparation Kits | Illumina DNA Prep Kit, Twist cfDNA Library Preparation Kit, KAPA HyperPrep Kit | Fragmentation, adapter ligation, and amplification of DNA for sequencing | Standardizes pre-sequencing workflow; UMI incorporation enables sensitive variant detection in liquid biopsies |
| Hybridization Capture Reagents | Illumina TruSight Oncology 500, Thermo Fisher Oncomine Comprehensive Assay, IDT xGen Pan-Cancer Panel | Target enrichment for specific genomic regions of clinical interest | Focuses sequencing resources on clinically actionable genes; reduces sequencing costs and data burden |
| Sequencing Consumables | Illumina MiSeq/NextSeq Reagent Kits, Ion Torrent Ion 540/550 Chips, SMRT Cells | Platform-specific reagents for cluster generation and sequencing | Generates raw sequencing data; different platforms offer trade-offs in throughput, speed, and read length |
| Bioinformatics Tools | Illumina DRAGEN Platform, GATK, GEMINI, GENEIA, QCI Interpret | Secondary and tertiary analysis of sequencing data | Converts raw sequencing data to clinically actionable information; AI-enhanced tools improve trial matching accuracy |

The integration of NGS into clinical oncology represents a transformative shift in cancer research and treatment, particularly for clinical trial enrollment. Adoption is growing, driven by liquid biopsy applications, AI-enhanced interpretation, and decentralized testing models, and this growth is helping to address the critical challenge of matching eligible patients with appropriate targeted therapy trials. Market estimates project growth from approximately $0.7-0.74 billion in 2024-2025 to $3.13-3.4 billion by 2034, as the ecosystem evolves toward more accessible, efficient, and comprehensive genomic profiling solutions [19] [21]. For researchers, scientists, and drug development professionals, understanding these trends and implementing standardized protocols for NGS-based patient screening is increasingly essential for accelerating trial enrollment and advancing precision oncology. Continued innovation in reagent systems, sequencing platforms, and analytical frameworks should further strengthen the role of NGS in connecting cancer patients with potentially life-extending clinical trials and in realizing the promise of personalized cancer medicine.

Clinical Guidelines and Evidence Supporting NGS for Trial Eligibility

Next-generation sequencing (NGS) has revolutionized oncology by enabling comprehensive molecular profiling of tumors, which is increasingly critical for determining eligibility for clinical trials. This application note details the clinical evidence and provides structured protocols for implementing NGS to enhance precision oncology and clinical trial enrollment. The content is framed within a broader research thesis on NGS-guided clinical trial enrollment for cancer patients, addressing the needs of researchers, scientists, and drug development professionals. We summarize quantitative evidence of clinical utility, outline validated methodological workflows, and provide resources to facilitate the integration of NGS into clinical trial screening pipelines.

Clinical Evidence for NGS-Guided Trial Enrollment

A comprehensive literature review of 31 publications found that NGS-informed treatment selection was associated with improved survival across various cancer types [23]. Patients receiving genomically matched therapy based on NGS results showed statistically significant improvements in progression-free survival (PFS) in 11 publications and in overall survival (OS) in 16 publications.

Table 1: Survival Outcomes with NGS-Informed Therapy Based on Pan-Cancer Analysis

| Outcome Measure | Number of Publications Reporting Significant Improvement | Range of Hazard Ratios (HR) Reported | Mean HR Reported |
|---|---|---|---|
| Progression-Free Survival (PFS) | 11 publications | 0.24-0.67 | 0.47 |
| Overall Survival (OS) | 16 publications | Not consistently reported | Not consistently reported |

Across the publications reporting PFS hazard ratios, the mean reported HR of 0.47 corresponds to roughly a halving of the hazard of disease progression or death with NGS-matched therapy compared with non-matched therapy [23].
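As a worked check on this interpretation, the relative reduction in hazard implied by a hazard ratio is 1 − HR. Applied to the PFS values in Table 1, the sketch below gives roughly 33% to 76% lower hazard, with the mean HR of 0.47 corresponding to about 53%. An HR describes the instantaneous rate of progression or death over follow-up, not the cumulative risk at a fixed time point, so these percentages should not be read as absolute risk differences.

```python
def relative_hazard_reduction(hr: float) -> float:
    """1 - HR: proportional reduction in the hazard of the event in the matched arm."""
    if hr <= 0:
        raise ValueError("hazard ratio must be positive")
    return 1.0 - hr


# PFS hazard ratios from Table 1: lowest reported, mean reported, highest reported.
for label, hr in [("lowest HR", 0.24), ("mean HR", 0.47), ("highest HR", 0.67)]:
    print(f"{label} {hr:.2f}: {relative_hazard_reduction(hr):.0%} lower hazard of progression or death")
```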

Real-World Clinical Implementation Data

A large-scale real-world study at Seoul National University Bundang Hospital (SNUBH) involving 990 patients with advanced solid tumors demonstrated successful implementation of NGS testing in routine practice [6]. This study utilized the SNUBH Pan-Cancer v2.0 panel targeting 544 genes and reported microsatellite instability (MSI) status and tumor mutational burden (TMB).

Table 2: NGS Detection Rates and Therapy Matching in Real-World Practice (n=990)

| Parameter | Result | Clinical Implications |
|---|---|---|
| Patients with Tier I variants (strong clinical significance) | 26.0% (257/990) | Potential for FDA-approved or guideline-recommended therapies |
| Patients with Tier II variants (potential clinical significance) | 86.8% (859/990) | Eligibility for investigational therapies or off-label use |
| Most frequently altered Tier I genes | KRAS (10.7%), EGFR (2.7%), BRAF (1.7%) | Common therapeutic targets across multiple cancer types |
| Patients receiving NGS-based therapy | 13.7% of those with Tier I variants | Direct impact on treatment selection |
| Objective response rate in NGS-matched therapy | 37.5% (12/32 with measurable lesions) | Demonstrated clinical efficacy |

Among patients with measurable lesions who received NGS-based therapy, 37.5% achieved partial response and 34.4% achieved stable disease, demonstrating meaningful clinical benefit from precision oncology approaches [6].
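The response figures above can be reproduced from counts. In this sketch the stable-disease count of 11 is inferred from 34.4% of 32 patients, no complete responses are assumed because only partial responses are reported, and the disease control rate (DCR) uses the common CR + PR + SD definition. These inferred counts are not additional data from the study.

```python
def response_rates(n_evaluable: int, n_cr: int, n_pr: int, n_sd: int):
    """Return (objective response rate, disease control rate) as fractions."""
    if n_evaluable <= 0:
        raise ValueError("need at least one evaluable patient")
    orr = (n_cr + n_pr) / n_evaluable            # ORR = (CR + PR) / evaluable
    dcr = (n_cr + n_pr + n_sd) / n_evaluable     # DCR = (CR + PR + SD) / evaluable
    return orr, dcr


# 32 patients with measurable lesions; 12 PR reported, 0 CR and 11 SD inferred.
orr, dcr = response_rates(n_evaluable=32, n_cr=0, n_pr=12, n_sd=11)
print(f"ORR {orr:.1%}, DCR {dcr:.1%}")   # ORR 37.5%, DCR 71.9%
```

The roughly 72% disease control rate is simply the sum of the reported partial-response and stable-disease proportions.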

Experimental Protocols for NGS Implementation

Sample Preparation and Quality Control

Protocol: Tumor Sample Assessment and Nucleic Acid Extraction

- Specimen Review: For solid tumors, microscopic review by a qualified pathologist is essential to confirm tumor presence, mark areas for macrodissection/microdissection, and estimate tumor cell fraction [24].

- Nucleic Acid Extraction: Extract genomic DNA from formalin-fixed paraffin-embedded (FFPE) tumor specimens using commercial kits (e.g., QIAamp DNA FFPE Tissue kit) [6].

- Quality Assessment: Quantify DNA concentration using fluorometric methods (e.g., Qubit dsDNA HS Assay) and assess purity via spectrophotometry (A260/A280 ratio between 1.7 and 2.2) [6].

- Minimum Requirements: Use at least 20 ng of DNA input for library preparation, with proper quality control metrics established during validation [6] [24].

Critical Validation Parameters: Establish minimum tumor cellularity requirements (typically >20%), minimum DNA input, and maximum degradation thresholds during assay validation [24].
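The acceptance criteria above can be expressed as a pre-analytical QC gate. This minimal sketch assumes one record per specimen holding a pathologist-estimated tumor fraction, a fluorometric DNA yield, and an A260/A280 ratio. The `SampleQC` type and its field names are hypothetical, and the default thresholds are the example values from the protocol text; each laboratory would substitute the limits established during its own validation.

```python
from dataclasses import dataclass
from typing import List

# Example thresholds from the protocol text; replace with limits from your own validation.
MIN_TUMOR_FRACTION = 0.20      # tumor cellularity must exceed 20%
MIN_DNA_INPUT_NG = 20.0        # at least 20 ng DNA for library preparation
PURITY_RANGE = (1.7, 2.2)      # acceptable A260/A280 window (inclusive)


@dataclass
class SampleQC:                # hypothetical per-specimen QC record
    sample_id: str
    tumor_fraction: float      # pathologist estimate, 0-1
    dna_ng: float              # fluorometric (e.g. Qubit) DNA available for input
    a260_a280: float           # spectrophotometric purity ratio


def qc_failures(s: SampleQC) -> List[str]:
    """Return human-readable reasons the specimen fails QC (empty list means pass)."""
    failures = []
    if s.tumor_fraction <= MIN_TUMOR_FRACTION:
        failures.append(f"tumor fraction {s.tumor_fraction:.0%} not above {MIN_TUMOR_FRACTION:.0%}")
    if s.dna_ng < MIN_DNA_INPUT_NG:
        failures.append(f"DNA input {s.dna_ng:g} ng below {MIN_DNA_INPUT_NG:g} ng")
    low, high = PURITY_RANGE
    if not low <= s.a260_a280 <= high:
        failures.append(f"A260/A280 {s.a260_a280:.2f} outside {low}-{high}")
    return failures


for sample in [SampleQC("S1", 0.35, 48.0, 1.88), SampleQC("S2", 0.15, 12.5, 1.55)]:
    reasons = qc_failures(sample)
    print(sample.sample_id, "PASS" if not reasons else "FAIL: " + "; ".join(reasons))
```

Returning every failure reason, rather than stopping at the first, gives the laboratory a complete picture when deciding whether to request a new specimen.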

Library Preparation and Sequencing

Protocol: Hybrid Capture-Based Library Preparation

Two major approaches exist for targeted NGS: hybrid capture-based and amplification-based methods. The following protocol focuses on hybrid capture, which offers advantages in detecting diverse variant types:

- Library Preparation: Fragment DNA and ligate sample-specific indexed adapters to enable sample multiplexing [6] [25].

- Target Enrichment: Use biotinylated oligonucleotide probes complementary to regions of interest (e.g., Agilent SureSelectXT Target Enrichment System) [6].

- Quality Assessment: Evaluate final library size (250-400 bp) and quantity using automated electrophoresis systems (e.g., Agilent 2100 Bioanalyzer) [6].

- Sequencing: Perform massively parallel sequencing on platforms such as the Illumina NextSeq 550Dx to a minimum mean depth of coverage of 500-1000× [6] [24].
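To check whether a run can reach the target mean depth, a common back-of-the-envelope estimate divides the required on-target bases by the usable bases per read pair. The sketch below assumes paired-end reads and treats on-target rate and duplicate rate as known inputs. The example values (a 2 Mb target region, 2 × 150 bp reads, 60% on-target, 30% duplicates) are illustrative assumptions, not figures from the cited studies. The estimate covers mean depth only and ignores coverage uniformity and mate overlap, both of which matter in practice.

```python
import math


def read_pairs_needed(mean_depth: float, target_bp: int, read_len: int,
                      on_target_rate: float, duplicate_rate: float) -> int:
    """Estimate read pairs needed to reach a mean depth over a target region.

    usable bases per pair = 2 * read_len * on_target_rate * (1 - duplicate_rate)
    """
    if not (0 < on_target_rate <= 1 and 0 <= duplicate_rate < 1):
        raise ValueError("rates must be fractions in the expected range")
    usable_bases_per_pair = 2 * read_len * on_target_rate * (1 - duplicate_rate)
    return math.ceil(mean_depth * target_bp / usable_bases_per_pair)


# Illustrative (hypothetical) panel and run parameters.
for depth in (500, 1000):
    pairs = read_pairs_needed(depth, 2_000_000, 150, 0.60, 0.30)
    print(f"{depth}x -> {pairs / 1e6:.1f} M read pairs")
```

With these inputs, a 500× mean depth needs roughly 7.9 million read pairs per sample and 1000× roughly 15.9 million, which can then be compared with the per-run output of the chosen sequencer to set the number of samples per run.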

Bioinformatics Analysis: Implement pipelines for base calling, read alignment (to hg19 reference genome), variant identification (using tools like Mutect2 for SNVs/indels, CNVkit for copy number variations, and LUMPY for structural variants), and annotation [6].
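The secondary-analysis steps above can be sketched as an ordered list of command lines. This dry-run builder only assembles and prints commands; it does not execute them. It assumes BWA-MEM for alignment (the source does not name an aligner), samtools for sorting and indexing, GATK4 `Mutect2` in tumor-only mode followed by `FilterMutectCalls`, and CNVkit's `batch` command with a flat reference. File names, the sample ID, and the thread count are placeholders, and structural-variant calling with LUMPY is omitted. A clinical pipeline would additionally supply a panel of normals and germline resource to Mutect2, a pooled normal reference to CNVkit, and the validated parameters of the laboratory's own assay.

```python
import shlex
from pathlib import Path
from typing import List


def build_commands(sample: str, r1: str, r2: str, ref: str, targets: str,
                   outdir: str = "results", threads: int = 4) -> List[List[str]]:
    """Assemble (but do not run) alignment, SNV/indel, and CNV commands for one tumor sample.

    Assumes ref has been indexed for bwa and has .fai/.dict companion files for GATK.
    """
    out = Path(outdir)
    bam = str(out / f"{sample}.sorted.bam")
    raw_vcf = str(out / f"{sample}.mutect2.unfiltered.vcf.gz")
    filtered_vcf = str(out / f"{sample}.mutect2.filtered.vcf.gz")
    # bwa expects a literal "\t" between read-group fields; GATK tools require read groups.
    read_group = f"@RG\\tID:{sample}\\tSM:{sample}\\tPL:ILLUMINA"
    return [
        # Alignment: bwa mem writes SAM to stdout, to be piped into samtools sort ("-" = stdin).
        ["bwa", "mem", "-t", str(threads), "-R", read_group, ref, r1, r2],
        ["samtools", "sort", "-o", bam, "-"],
        ["samtools", "index", bam],
        # Somatic SNV/indel calling in tumor-only mode, restricted to the panel targets.
        ["gatk", "Mutect2", "-R", ref, "-I", bam, "-L", targets, "-O", raw_vcf],
        ["gatk", "FilterMutectCalls", "-R", ref, "-V", raw_vcf, "-O", filtered_vcf],
        # Copy-number calling; "--normal" with no files tells CNVkit to build a flat reference.
        ["cnvkit.py", "batch", bam, "--normal", "--targets", targets, "--fasta", ref,
         "--output-reference", str(out / "flat_reference.cnn"),
         "--output-dir", str(out / "cnvkit")],
    ]


for cmd in build_commands("TUMOR01", "TUMOR01_R1.fastq.gz", "TUMOR01_R2.fastq.gz",
                          "hg19.fa", "panel_targets.bed"):
    print(shlex.join(cmd))
```

Building commands as argument lists, rather than strings, avoids shell-quoting errors and makes each step easy to log alongside the report for traceability.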

Analytical Validation Requirements

Protocol: Assay Validation Following Professional Guidelines

The Association for Molecular Pathology (AMP) and College of American Pathologists (CAP) provide joint recommendations for validating NGS panels:

- Performance Establishment: Determine positive percentage agreement and positive predictive value for each variant type (SNVs, indels, CNVs, fusions) using validated reference materials [24].

- Sample Requirements: Use a minimum number of samples to establish test performance characteristics, with recommendations varying by variant type [24].

- Quality Control: Implement an error-based approach that identifies potential sources of errors throughout the analytical process and addresses them through test design and quality controls [24].

- Variant Classification: Report variants using standardized guidelines such as the AMP/ASCO/CAP tier system [6]:

  - Tier I: Variants of strong clinical significance

  - Tier II: Variants of potential clinical significance

  - Tier III: Variants of unknown significance

  - Tier IV: Benign or likely benign variants
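Positive percentage agreement (PPA) and positive predictive value (PPV) are computed per variant type from concordance against a reference material: PPA = TP / (TP + FN) and PPV = TP / (TP + FP). The counts in this sketch are hypothetical placeholders, not validation data from any cited study; the acceptance limits applied to these metrics are set by each laboratory during its own validation.

```python
from typing import NamedTuple


class Concordance(NamedTuple):
    tp: int  # variants in the reference truth set that the assay detected
    fp: int  # variants the assay reported that are absent from the truth set
    fn: int  # truth-set variants the assay missed


def ppa(c: Concordance) -> float:
    """Positive percentage agreement (sensitivity against the reference)."""
    return c.tp / (c.tp + c.fn) if (c.tp + c.fn) else float("nan")


def ppv(c: Concordance) -> float:
    """Positive predictive value of a reported call."""
    return c.tp / (c.tp + c.fp) if (c.tp + c.fp) else float("nan")


# Hypothetical per-variant-type concordance counts.
validation = {
    "SNV": Concordance(tp=196, fp=1, fn=4),
    "indel": Concordance(tp=47, fp=2, fn=3),
    "CNV": Concordance(tp=18, fp=1, fn=2),
    "fusion": Concordance(tp=14, fp=0, fn=1),
}

for vtype, c in validation.items():
    print(f"{vtype:>6}: PPA {ppa(c):.1%}  PPV {ppv(c):.1%}")
```

Reporting the metrics separately per variant type, as the guideline text recommends, keeps a strong SNV result from masking weaker performance on CNVs or fusions.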

Visualization of NGS Clinical Trial Matching Workflow

Figure 1: End-to-End NGS Clinical Trial Matching Workflow. This diagram illustrates the complete pathway from patient identification through to improved survival outcomes, highlighting the critical role of comprehensive genomic profiling and automated trial matching technologies.

Technical Methodology for NGS Analysis

Figure 2: NGS Technical Workflow from Sample to Report. This diagram details the key technical steps in NGS testing, from initial nucleic acid extraction through to final clinical reporting of actionable genomic alterations.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for NGS Implementation

| Reagent/Resource | Function | Example Products/Platforms |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA from FFPE tissue | QIAamp DNA FFPE Tissue Kit (Qiagen) [6] |
| Target Enrichment Systems | Capture of genomic regions of interest | Agilent SureSelectXT (hybrid capture) [6] |
| Library Preparation Kits | Fragmentation and adapter ligation for sequencing | Illumina DNA Prep kits [26] |
| NGS Sequencers | Massively parallel sequencing platform | Illumina NextSeq 550Dx [6] |
| Bioinformatics Tools | Variant calling and annotation | Mutect2 (SNVs/indels), CNVkit (CNVs), LUMPY (structural variants, including rearrangements) [6] |
| Reference Materials | Assay validation and quality control | Cell lines with known mutations [24] |
| Clinical Trial Matching AI | Automated patient-to-trial matching | Massive Bio, Lifebit platforms [27] [28] |

The integration of NGS testing into oncology practice offers substantial benefits for clinical trial enrollment and patient outcomes. Evidence from large-scale real-world studies and comprehensive literature reviews indicates that NGS-informed treatment matching is associated with improved progression-free survival and, in 16 of the 31 reviewed publications, improved overall survival across multiple cancer types. Standardized protocols for sample processing, sequencing, and bioinformatic analysis that follow established professional guidelines support reliable identification of actionable genomic alterations. Combined with AI-powered trial matching platforms, NGS testing can substantially increase the number of patients identified as eligible for biomarker-directed clinical trials, accelerating precision oncology research and expanding treatment options for patients with advanced cancer.

From Sequence to Strategy: Implementing NGS in the Clinical Trial Workflow

Next-generation sequencing (NGS) has revolutionized cancer research and treatment by enabling the simultaneous analysis of numerous genetic alterations. The selection of an appropriate gene panel size—ranging from limited panels targeting a few genes to large panels encompassing hundreds of genes—represents a critical strategic decision in precision oncology. This decision directly impacts the comprehensiveness of biomarker detection, which is fundamental for identifying eligible patients for targeted therapies and clinical trials [25] [29].

The evolution from single-gene tests to massively parallel sequencing technologies reflects the growing understanding of cancer as a complex genetic disease characterized by multiple molecular alterations and clonal evolution [25]. In the context of clinical trial enrollment, comprehensive biomarker capture is particularly important because it enables researchers to identify the patient subgroups most likely to respond to investigational therapies based on their molecular profiles [30].

Comparative Analysis: Large vs. Small Targeted Panels

Technical and Clinical Performance Characteristics

Targeted NGS panels can be broadly categorized by size and application. Small panels (typically <50 genes) focus on established biomarkers with proven clinical utility, while large panels (>50 genes, often hundreds) provide a more comprehensive genomic profile [29] [31].

Table 1: Comparative Performance of Large vs. Small NGS Panels

| Parameter | Larger Panels | Smaller Panels |
|---|---|---|
| Biomarker Detection Rate | 51.6% (F1CDX, 324 genes) [30] | 36.9% (CTL, 87 genes) [30] |
| Therapeutic Targets Identified | 14.8-percentage-point increase [30] | Baseline reference |
| Tumor Mutational Burden (TMB) | Can be assessed [30] | Generally not assessed |
| Gene Rearrangements | Comprehensive detection [30] | Limited detection |
| Sample Requirements | Higher input requirements [29] | Suitable for limited samples [29] |
| Cost-Effectiveness | Dominant strategy for aNSCLC [31] | Higher long-term costs [31] |
| Turnaround Time | Potentially longer [31] | Typically faster [29] |
| Data Interpretation Complexity | High, with more variants of unknown significance [29] | More straightforward [29] |

Note: The detection-rate comparison from [30] contrasts a 324-gene panel with an 87-gene panel, so both exceed the 50-gene cutoff defined above; it compares a larger with a smaller panel rather than panels on either side of that threshold.

Impact on Clinical Trial Enrollment and Targeted Therapy