Optimizing Machine Learning Pipelines for Cancer Diagnostics: From Data to Clinical Deployment

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on building and optimizing robust machine learning pipelines for cancer diagnostics. It covers the foundational principles of ML in oncology, explores advanced methodological applications like multimodal AI, addresses critical troubleshooting and optimization challenges in production environments, and presents rigorous validation frameworks. By synthesizing the latest research and real-world case studies, this resource aims to bridge the gap between experimental models and reliable, clinically impactful tools that can enhance diagnostic accuracy, personalize treatment strategies, and ultimately improve patient outcomes.

The Foundation of AI in Oncology: Core Concepts and Clinical Imperatives

The Growing Cancer Burden and the Need for Advanced Diagnostics

Cancer remains a principal cause of global mortality, with approximately 35 million new cases projected for the year 2050 [1]. This alarming rise underscores the critical imperative to accelerate progress in cancer research and develop more effective diagnostic strategies. Traditional methods for cancer detection and diagnosis, including tissue biopsies, present several limitations, such as invasive acquisition, clinical complications, and an inability to fully capture tumor heterogeneity [2].

In response to these challenges, artificial intelligence (AI) and machine learning (ML) are revolutionizing the landscape of oncological research and clinical practice [1]. These technologies leverage sophisticated algorithms to analyze complex datasets, enabling automated cancer detection with unprecedented speed, accuracy, and scalability [3] [4]. This document provides detailed application notes and experimental protocols for implementing ML pipelines in cancer diagnostics research, with a specific focus on liquid biopsy analysis and imaging-based detection.

Key Machine Learning Protocols in Cancer Diagnostics

The successful implementation of machine learning for cancer diagnostics relies on a rigorous, multi-stage protocol. The following section outlines the core procedures, from data preparation to model evaluation.

Data Preprocessing and Feature Engineering

Data preprocessing is a foundational step that significantly influences the performance of subsequent ML models [2]. High-dimensional data from liquid biopsies or medical images require careful curation to ensure robust and generalizable model performance.

- Missing Value Solutions: Simply deleting samples with missing values can introduce bias and discard valuable data [2]. Preferred imputation methods include:

- Simple Imputation: Replacing missing values with the feature's mean, median, or mode.

- Model-Based Imputation: Using inference from existing data to predict missing values. For a feature with missing data, a regression or classification model is built using other complete features from the same dataset. This model then predicts the missing values [2].

- Data Normalization: Normalization prevents predictions from being dominated by features with large numeric ranges and ensures comparability across samples [2]. Common techniques include:

- Z-Score Standardization: $x' = (x - \mu) / \sigma$, where $\mu$ is the mean and $\sigma$ is the standard deviation of the feature.

- Max-Min Normalization: $x' = (x - x_{\min}) / (x_{\max} - x_{\min})$, which rescales features to the $[0, 1]$ range.

- Decimal Scaling: $x' = x / 10^{j}$, where $j$ is the smallest integer such that $\max(|x'|) < 1$.

- Dimension Reduction: High-dimensional data can lead to model overfitting and increased computational cost. Dimension reduction techniques are essential to mitigate these issues [2].

- Feature Extraction: This method transforms the original high-dimensional space into a new, lower-dimensional space. Principal Component Analysis (PCA) is a widely used linear feature extraction technique [2].

- Feature Selection: This technique directly selects a valuable subset of the original features, retaining their interpretability. It is categorized into three types:

- Filter Methods: Assess feature importance based on statistical properties (e.g., Pearson correlation, F-statistic) independent of any ML model.

- Wrapper Methods: Use a search algorithm to generate feature subsets and evaluate them by training and testing a specific ML model (e.g., Sequential Selection, Genetic Algorithms).

- Embedded Methods: Perform feature selection during the model training process itself (e.g., LASSO, Elastic Net regression) [2].
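The imputation and normalization steps above can be sketched in plain Python. This is a minimal, dependency-free illustration that assumes each feature is a list of numbers with `None` marking a missing value. The function names are hypothetical; the model-based imputer uses a single-predictor linear regression as the simplest stand-in for the "regression or classification model" described in [2], and decimal scaling restricts $j$ to non-negative values, which is the usual convention.

```python
import statistics

def impute_median(column):
    """Simple imputation: replace None with the median of the observed values."""
    observed = [v for v in column if v is not None]
    fill = statistics.median(observed)
    return [fill if v is None else v for v in column]

def impute_by_regression(target, predictor):
    """Model-based imputation: fit target = a + b * predictor on rows where the
    target is observed, then predict the missing entries.
    Assumes the predictor column itself is complete."""
    pairs = [(x, y) for x, y in zip(predictor, target) if y is not None]
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    mx, my = statistics.mean(xs), statistics.mean(ys)
    b = sum((x - mx) * (y - my) for x, y in pairs) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return [a + b * x if y is None else y for x, y in zip(predictor, target)]

def z_score(column):
    """x' = (x - mu) / sigma, using the population standard deviation.
    A constant column (sigma = 0) raises ZeroDivisionError."""
    mu, sigma = statistics.mean(column), statistics.pstdev(column)
    return [(x - mu) / sigma for x in column]

def min_max(column):
    """x' = (x - min) / (max - min), rescaling to [0, 1]."""
    lo, hi = min(column), max(column)
    return [(x - lo) / (hi - lo) for x in column]

def decimal_scaling(column):
    """x' = x / 10**j with the smallest non-negative j such that max(|x'|) < 1."""
    peak = max(abs(x) for x in column)
    j = 0
    while peak / 10 ** j >= 1:
        j += 1
    return [x / 10 ** j for x in column]
```

In a real pipeline, the medians, regression coefficients, and scaling parameters should be estimated on the training split only and then reused on validation and test data, so that test statistics do not leak into preprocessing.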

Model Evaluation and Selection

Once data is preprocessed, the next critical step is to evaluate and select the most appropriate model. This process should incorporate rigorous validation techniques to ensure the model generalizes well to unseen data.

- Performance Metrics: The choice of metrics depends on the clinical task. For classification, key metrics include sensitivity (recall), specificity, precision, and area under the receiver operating characteristic curve (AUC-ROC) [5].

- Validation and Hypothesis Testing: Models must be evaluated on held-out test datasets that were not used during training or model selection. In addition, statistical hypothesis testing should be used to confirm that a proposed model's performance (or its improvement over a baseline) is statistically significant rather than due to chance [2].
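The metrics and the significance check above can be computed directly from labels and scores. The sketch below assumes binary labels (1 = cancer), hard predictions for the threshold-based metrics, and continuous model scores for AUC-ROC. AUC is computed as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one (ties count one half), and the label-permutation test is one simple way, among several, to check that an AUC exceeds chance; it is not presented as the specific test used in [2]. All function names are hypothetical.

```python
import random

def confusion_metrics(y_true, y_pred):
    """Sensitivity (recall), specificity, and precision from hard 0/1 predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "precision": tp / (tp + fp),  # undefined if no positive predictions
    }

def auc_roc(y_true, scores):
    """AUC as P(score of random positive > score of random negative); ties = 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def permutation_p_value(y_true, scores, n_perm=999, seed=0):
    """One-sided permutation test of H0: AUC = 0.5 (labels unrelated to scores)."""
    rng = random.Random(seed)
    observed = auc_roc(y_true, scores)
    labels = list(y_true)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(labels)
        if auc_roc(labels, scores) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)
```

The pairwise AUC loop is quadratic in the number of cases, which is fine for a sketch; library implementations such as scikit-learn's `roc_auc_score` use a sort-based computation instead.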

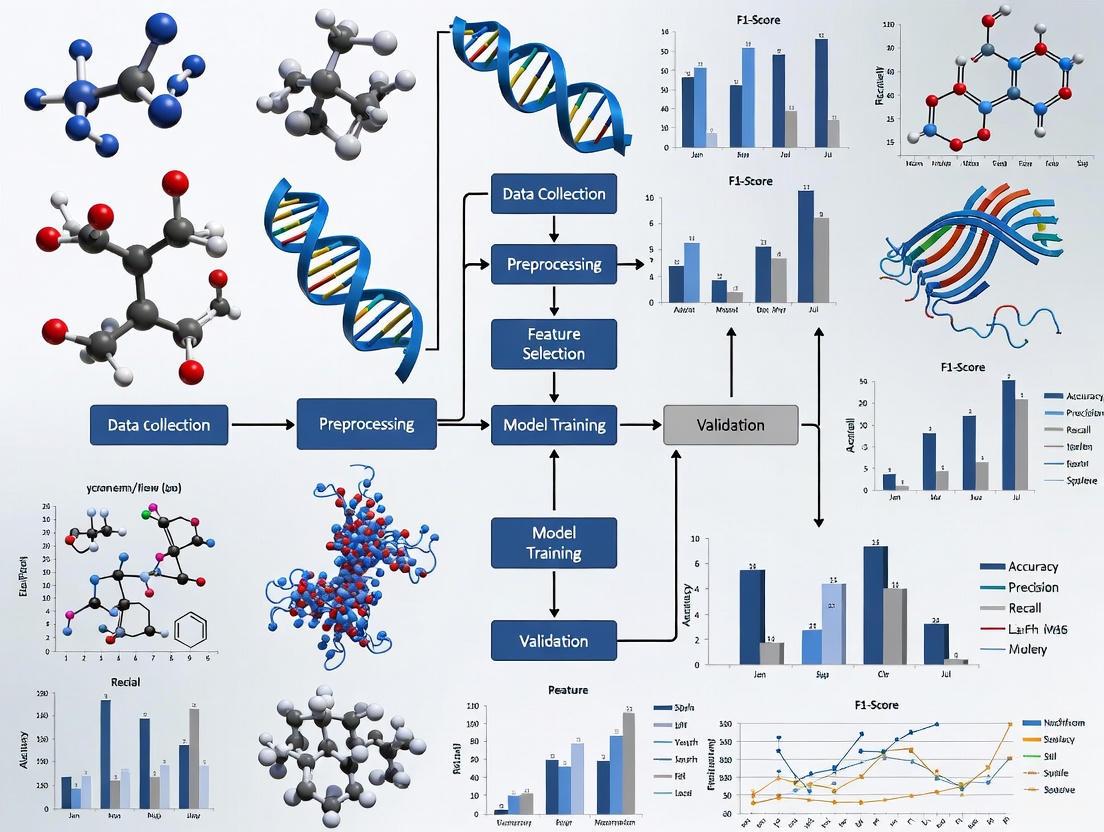

The workflow for these core protocols is outlined in the diagram below.

Application Notes: AI-Powered Diagnostic Tools

Automated Detection in Liquid Biopsy

Liquid biopsy, which analyzes components such as circulating tumor DNA (ctDNA) in blood, offers a non-invasive alternative to tissue biopsies [2]. A novel AI algorithm named RED (Rare Event Detection) has been developed to automate the detection of rare cancer cells in blood samples [3].

- Principle: Unlike traditional methods that search for known cancer cell features, the RED algorithm uses a deep learning approach to identify unusual patterns, ranking cells by rarity. This allows the "most unusual" cells, which are likely to be cancerous, to rise to the top for review, functioning as a "needle in a haystack" detector [3].

- Performance: In validation tests, the RED algorithm demonstrated high sensitivity, finding 99% of added (spiked-in) epithelial cancer cells and 97% of added endothelial cells. It also reduced the amount of data a human specialist needed to review by a factor of 1,000 and identified twice as many "interesting" cells associated with cancer as previous methods [3].

- Protocol Summary:

- Sample Acquisition: Collect peripheral blood sample.

- Sample Processing: Isolate and prepare mononuclear cells from the blood sample.

- Image Acquisition: Capture high-resolution images of the cells.

- AI Analysis: Process cell images using the RED algorithm to identify and rank rare, anomalous cells based on deep learning-derived patterns.

- Pathologist Review: Examine the top-ranked cells flagged by the AI to confirm the presence of cancer.
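The "rank by rarity" idea in the AI-analysis step can be illustrated with a much simpler anomaly score. RED itself uses a deep learning approach [3]; the sketch below swaps in a k-nearest-neighbour distance score computed on hypothetical per-cell feature vectors (for example, embeddings produced by an image model), so it shows only the ranking principle, not RED's method. It is quadratic in the number of cells; a real blood sample with millions of cells would need an approximate nearest-neighbour index.

```python
import math

def knn_rarity_scores(features, k=3):
    """Rarity = mean Euclidean distance from each cell to its k nearest other cells."""
    scores = []
    for i, a in enumerate(features):
        dists = sorted(math.dist(a, b) for j, b in enumerate(features) if j != i)
        scores.append(sum(dists[:k]) / k)
    return scores

def rank_for_review(cell_ids, features, k=3, top_n=10):
    """Return the top_n cell IDs ordered from most to least unusual."""
    scores = knn_rarity_scores(features, k)
    order = sorted(range(len(cell_ids)), key=lambda i: scores[i], reverse=True)
    return [cell_ids[i] for i in order[:top_n]]
```

The returned list corresponds to the queue a pathologist would review first in the final step.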

Multi-Cancer Early Detection Tests

Multi-cancer early detection (MCED) tests represent a transformative application of liquid biopsy, screening for multiple cancers from a single blood draw [5]. Evaluating their potential population impact requires a specific quantitative framework.

- Key Outcome Metrics:

- Cancers Detected (CD): The expected number of true positive findings, $CD = N(\rho_A MS_A + \rho_B MS_B)$, where $N$ is the number tested, $\rho$ is cancer prevalence, and $MS$ is marginal sensitivity [5].

- Exposed to Unnecessary Confirmation (EUC): The expected number of people directed to unnecessary confirmatory tests (e.g., biopsies) due to false positives or a correct cancer signal with an incorrect tissue of origin. For a two-cancer test, $EUC = N[\rho_A P_A(T^+)(1 - L_A(T^+)) + \rho_B P_B(T^+)(1 - L_B(T^+)) + (1 - \rho_A - \rho_B)(1 - Sp)]$, where $P(T^+)$ is the probability of a positive test in a person with that cancer, $L(T^+)$ is the probability that a positive test is attributed to the correct tissue of origin, and $Sp$ is specificity [5].

- Lives Saved (LS): The expected number of cancer deaths averted, $LS = N(m_A MS_A R_A + m_B MS_B R_B)$, where $m$ is the probability of cancer death without screening and $R$ is the mortality reduction from early detection [5].

- Framework Insight: The harm-benefit tradeoff is overwhelmingly determined by test specificity. For a given specificity, the ratio of unnecessary confirmations per cancer detected (EUC/CD) is most favorable for higher-prevalence cancers. Similarly, the tradeoff improves when the test includes cancers with higher mortality for which effective treatments exist [5].
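The three outcome formulas can be evaluated directly. The sketch below assumes every input is a per-person probability supplied by the user; marginal sensitivity is taken as an input rather than derived from $P(T^+)$ and $L(T^+)$. It generalizes the two-cancer sums to a list of any length (an extension for illustration; the two-cancer formulas are the case of two entries), and the class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class CancerInputs:
    prevalence: float           # rho: probability of having this cancer at screening
    marginal_sens: float        # MS: marginal sensitivity (input, not derived here)
    p_positive: float           # P(T+): probability of a positive test given this cancer
    p_correct_origin: float     # L(T+): probability a positive is localized correctly
    death_prob: float           # m: probability of death from this cancer without screening
    mortality_reduction: float  # R: mortality reduction from early detection

def cancers_detected(n, cancers):
    """CD = N * sum(rho * MS)."""
    return n * sum(c.prevalence * c.marginal_sens for c in cancers)

def exposed_unnecessary(n, cancers, specificity):
    """EUC = N * [sum(rho * P(T+) * (1 - L(T+))) + (1 - sum(rho)) * (1 - Sp)]."""
    wrong_origin = sum(c.prevalence * c.p_positive * (1 - c.p_correct_origin) for c in cancers)
    cancer_free = 1 - sum(c.prevalence for c in cancers)
    return n * (wrong_origin + cancer_free * (1 - specificity))

def lives_saved(n, cancers):
    """LS = N * sum(m * MS * R)."""
    return n * sum(c.death_prob * c.marginal_sens * c.mortality_reduction for c in cancers)
```

With these helpers, the EUC/CD ratio is `exposed_unnecessary(...) / cancers_detected(...)`. Because the cancer-free term is multiplied by $1 - Sp$ and applies to almost the whole screened population, even small changes in specificity move EUC far more than changes in sensitivity, which is the framework insight stated above.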

The relationship between test performance and clinical outcomes is quantified in the table below.

Table 1: Quantitative Framework for a Hypothetical Multi-Cancer Test (Single-Occasion Screening)

| Cancer Type | Prevalence (per 100,000) | Test Sensitivity | Marginal Sensitivity | Specificity | EUC/CD Ratio | Lives Saved per 100,000 Screened |
|---|---|---|---|---|---|---|
| Breast + Lung | ~300-400 | ~60-90%* | Varies by stage | 99.0% | 1.1 | ~20 (assuming 10% mortality reduction) |
| Breast + Liver | ~100-200 | ~60-90%* | Varies by stage | 99.0% | 1.3 | ~10 (assuming 10% mortality reduction) |
| Breast + Pancreatic | ~100-200 | ~60-90%* | Varies by stage | 99.5% | 0.7 | ~15 (assuming 10% mortality reduction) |

Note: *Sensitivities are often stage-dependent, with lower sensitivity for early-stage cancers. EUC/CD and Lives Saved are illustrative estimates based on the framework from [5].

Enhanced Detection in Medical Imaging

AI is playing an increasingly important role in improving the speed and accuracy of cancer detection from medical images, including colonoscopy, mammography, and histopathology slides [1].

- Colorectal Cancer (CRC):

- CADe (Computer-Aided Detection): Deep learning models like CRCNet are trained on large annotated datasets of colonoscopic images to enable real-time, automated polyp detection. Multiple AI systems have received regulatory clearance (FDA K211951, K223473) [1].

- CADx (Computer-Aided Diagnosis): These systems go beyond detection to distinguish benign from malignant lesions. Some research suggests autonomous AI diagnosis can achieve non-inferior accuracy to endoscopists for determining surveillance intervals [1].

- Breast Cancer (BC):

- Mammography: Studies have reported AI systems that outperform radiologists in identifying breast cancer from 2D and 3D mammograms. FDA-cleared products (e.g., K220105, K211541) are now available to aid radiologists [1].

- Risk Prediction: Systems like Mirai can predict future five-year breast cancer risk directly from mammograms, offering potential for personalized screening schedules [1].

- Protocol Summary for AI in Histopathology:

- Tissue Preparation: Process tissue sample and create a whole-slide image (WSI).

- AI Segmentation: Use a Convolutional Neural Network (CNN) to segment and classify glands and tumor regions in the WSI.

- Quantitative Analysis: The AI model extracts quantitative features, such as the Tumor-Stroma Ratio (TSR), which has been validated as a prognostic factor for patient survival in colorectal cancer [1].

- Pathologist Review: The pathologist reviews the AI-generated annotations and quantitative data to make a final diagnosis.
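As a sketch of the quantitative-analysis step, the tumor-stroma ratio can be computed from a segmentation mask as the stroma proportion of the tumor-plus-stroma area. This assumes a hypothetical label map in which 1 marks tumor pixels and 2 marks stroma pixels (all other labels are ignored). The 50% cut-off separating "stroma-high" from "stroma-low" is a convention commonly used in colorectal cancer studies and is assumed here rather than taken from [1].

```python
from collections import Counter

TUMOR, STROMA = 1, 2  # hypothetical label codes in the segmentation mask

def stroma_percentage(mask):
    """Stroma pixels as a percentage of tumor + stroma pixels in a 2D label mask."""
    counts = Counter(label for row in mask for label in row)
    tumor, stroma = counts[TUMOR], counts[STROMA]
    if tumor + stroma == 0:
        raise ValueError("mask contains no tumor or stroma pixels")
    return 100.0 * stroma / (tumor + stroma)

def tsr_category(mask, cutoff=50.0):
    """'stroma-high' above the assumed cut-off, otherwise 'stroma-low'."""
    return "stroma-high" if stroma_percentage(mask) > cutoff else "stroma-low"
```

On a whole-slide image the mask would come from the CNN segmentation step, typically restricted to a selected region of interest before the ratio is computed.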

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and data repositories essential for conducting research in AI and cancer diagnostics.

Table 2: Essential Data and Biospecimen Resources for Cancer Diagnostics Research

| Resource Name | Type | Description & Function | Access |
|---|---|---|---|
| The Cancer Genome Atlas (TCGA) [6] | Genomics Data | A comprehensive, publicly available repository of genomic, epigenomic, transcriptomic, and proteomic data from over 30 cancer types. Used for biomarker discovery and model training. | Open |
| Genomic Data Commons (GDC) [6] | Genomics Data | A unified data repository that supports several NCI cancer genome programs (including TCGA), enabling data sharing and analysis for precision medicine. | Open / Controlled |
| The Cancer Imaging Archive (TCIA) [6] | Imaging Data | A curated archive of medical images (e.g., MRI, CT) linked to other data types like genomics and pathology. Essential for training AI models in radiology. | Open |
| NCI Clinical and Translational Data Commons (CTDC) [6] | Clinical Data | Provides access to clinical and translational data from NCI-funded clinical trials and correlative studies, including the Cancer Moonshot Biobank. | Controlled |
| CellMinerCDB / NCI-60 [6] | Drug Discovery | A database containing the NCI-60 panel of 60 human tumor cell lines, with drug screening data for over 100,000 compounds. Used for drug response studies. | Open |
| RED Algorithm [3] | Software Tool | An AI algorithm for rare event detection in liquid biopsy samples, used to automate the finding of cancer cells in blood. | Upon Request / Code Publication |

Implementation Challenges and Future Directions

Despite its promise, the integration of AI into clinical oncology faces several substantial challenges that must be addressed for broader adoption [4].

- Data Quality and Standardization: AI models require large volumes of high-quality, well-annotated, and standardized data. Variations in data acquisition protocols across institutions can hinder model generalizability [4].

- Model Interpretability and Transparency: The "black box" nature of some complex AI models can erode trust among clinicians. The field of Explainable AI (XAI) is emerging to make model decisions more transparent and understandable [4].

- Ethical and Regulatory Concerns: Key issues include patient data privacy, security, and the potential for algorithmic bias if models are trained on non-representative datasets. Robust regulatory frameworks are needed to ensure the safety and efficacy of AI-based medical devices [1] [7].

- Emerging Solutions:

- Federated Learning: This technique allows AI models to be trained across multiple institutions without sharing raw patient data, thus preserving privacy [4].

- Synthetic Data Generation: Using Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs) to create synthetic, realistic patient data can help augment limited datasets and mitigate bias [4].

- Interdisciplinary Collaboration: Close collaboration between computer scientists, oncologists, pathologists, and regulatory experts is crucial for developing clinically impactful and ethically sound AI tools [4].

The interconnected nature of these challenges and solutions is visualized below.

The optimization of machine learning (ML) pipelines is critical for advancing cancer diagnostics research. Such a pipeline provides a structured, reproducible framework for transforming raw, heterogeneous medical data into reliable, deployable diagnostic tools. For researchers and drug development professionals, a well-defined pipeline ensures that models are not only statistically sound but also clinically relevant and robust enough for real-world application. This document details the core components of an ML pipeline—data preparation, model development, and deployment strategies—within the context of cancer diagnostics, providing application notes and experimental protocols to guide research and implementation.

The Data Preparation Phase

Data Acquisition and Preprocessing

The foundation of any effective ML pipeline in cancer diagnostics is high-quality, well-curated data. This typically involves acquiring multi-modal data, such as histopathology images, ultrasound and mammography scans, and structured or unstructured data from Electronic Health Records (EHRs) [8] [9] [10].

A critical challenge in medical ML is class imbalance, where one class (e.g., non-cancerous cases) significantly outnumbers another (e.g., cancerous cases), which can bias models toward the majority class. Data augmentation techniques are routinely employed to address this. For image data, these include geometric transformations (rotation, flipping) and color space adjustments [8]. For tabular data, such as patient risk factors, synthetic minority over-sampling techniques like K-Means SMOTE have been reported to be effective: paired with a Multi-Layer Perceptron on a lung cancer dataset, K-Means SMOTE reached 93.55% accuracy and an AUC-ROC of 96.76% [11].
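The core of SMOTE-style oversampling is linear interpolation between a minority sample and one of its nearest minority neighbours. The sketch below shows only this plain SMOTE step on hypothetical numeric feature tuples; K-Means SMOTE [11] roughly adds a preceding k-means clustering stage and oversamples only within selected clusters, which is not reproduced here. For real use, a maintained implementation (for example, the `imbalanced-learn` package) is the safer choice.

```python
import math
import random

def smote(minority, n_new, k=5, seed=0):
    """Plain SMOTE: each synthetic sample lies on the segment between a random
    minority sample and one of its k nearest minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        x = minority[i]
        others = [m for j, m in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda m: math.dist(x, m))[:k]
        nb = rng.choice(neighbours)
        u = rng.random()  # interpolation factor in [0, 1)
        synthetic.append(tuple(a + u * (b - a) for a, b in zip(x, nb)))
    return synthetic
```

Oversampling should be applied to the training split only, after partitioning, so that synthetic points derived from test patients never reach training.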

Clinical notes within EHRs contain invaluable patient information but are often unstructured. Natural Language Processing (NLP) techniques, particularly Named Entity Recognition (NER) models, can extract critical patient characteristics such as cognitive frailty or non-adherence to medication. Studies show that NER models can achieve high recall (0.81-0.90) and specificity (0.96-1.00) for such tasks, outperforming simpler rule-based queries for complex terminology [12].

Research Reagent Solutions: Data & Annotation

Table 1: Essential Reagents for Data Preparation

| Reagent Category | Example / Tool | Primary Function in the Pipeline |
|---|---|---|
| Structured Datasets | Kaggle Lung Cancer Dataset [11] | Provides a standardized, annotated benchmark for model training and validation. |
| Clinical Text Annotation | SpaCy NLP Library [12] | Facilitates the creation of custom NER models to extract structured data from clinical notes. |
| Data Augmentation | K-Means SMOTE [11] | Generates synthetic samples for minority classes to mitigate dataset imbalance and model bias. |
| Image Pre-processing | ITK-SNAP Software [10] | Used for cropping irrelevant regions and defining regions of interest in medical images. |

Model Development and Experimental Protocols

Model Architecture Selection and Optimization

Selecting the right model architecture is a balance between computational efficiency and diagnostic performance. Research indicates that lightweight models like MobileNet can achieve superior results in certain diagnostic tasks. For instance, in breast cancer diagnosis from ultrasound images, MobileNet with a 224x224 input resolution achieved an Area Under the Curve (AUC) of 0.924, outperforming both senior radiologists and more complex, dense networks [9].

Ensemble methods, which combine the strengths of multiple architectures, have also demonstrated remarkable accuracy. An ensemble of EfficientNetB0, ResNet50, and DenseNet121, optimized using Cat Swarm Optimization (CSO), reported a classification accuracy of 98.19% for breast histopathology images [13]. Similarly, a pipeline integrating EfficientNetV2L for feature extraction with LightGBM (LGBM) for classification achieved a validation accuracy of 99.93% for skin cancer detection [8]. For multi-modal data, fusion models that integrate features from different imaging types, such as a deep learning network combining ultrasound and mammography (DL-UM), have shown improved sensitivity and specificity over single-modality models [10].
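A common way to combine several CNNs is weighted soft voting over their predicted probabilities. The sketch below assumes each model outputs one malignancy probability per image; the weights are placeholders that a metaheuristic such as CSO, or a simple search on the validation set, could tune. How exactly [13] applies CSO (to ensemble weights or to other hyperparameters) is not specified in this section, so treat this as an illustration of the ensembling idea only. The function names are hypothetical.

```python
def soft_vote(prob_lists, weights):
    """Weighted average of each model's predicted malignancy probability, per sample.
    prob_lists[m][i] is model m's probability for sample i."""
    if len(prob_lists) != len(weights):
        raise ValueError("one weight per model is required")
    total = sum(weights)
    n_samples = len(prob_lists[0])
    return [
        sum(w * probs[i] for w, probs in zip(weights, prob_lists)) / total
        for i in range(n_samples)
    ]

def predict_labels(fused, threshold=0.5):
    """Binarize fused probabilities (1 = malignant)."""
    return [1 if p >= threshold else 0 for p in fused]
```

Averaging probabilities rather than hard votes lets a confident model outweigh two uncertain ones, which is usually why soft voting is preferred for calibrated classifiers.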

Experimental Protocol: Image-Based Cancer Classification

This protocol outlines the key steps for training and validating a deep learning model for cancer diagnosis from medical images, based on methodologies from recent studies [8] [9] [10].

- Objective: To develop a robust deep learning model for the binary classification (benign vs. malignant) of cancer from medical images (e.g., skin lesions, breast ultrasound).

Materials and Equipment:

- Dataset of annotated medical images (e.g., 3,297 skin lesion images [8]).

- High-performance computing workstation with GPU(s) (e.g., NVIDIA RTX 3090).

- Deep Learning Frameworks: TensorFlow or PyTorch.

- Data preprocessing tools (e.g., ITK-SNAP, OpenCV).

Procedure:

- Data Partitioning: Randomly split the dataset at the patient level into training (e.g., 70-80%), validation (e.g., 10-15%), and a held-out independent testing set (e.g., 10-20%) to ensure no data leakage.

- Preprocessing and Augmentation:

- Resize all images to a uniform resolution (e.g., 224x224, 320x320, or 448x448 pixels) [9].

- Normalize pixel values to a [0, 1] range.

- Apply data augmentation techniques to the training set, such as random rotation, flipping, and color jittering.

- Model Training:

- Initialize a pre-trained model (e.g., MobileNet, EfficientNetV2L).

- Train the model using an optimizer (e.g., Adam or AdamW) with a learning rate scheduler (e.g., cosine annealing).

- Use a loss function suitable for imbalanced data (e.g., focal loss [10] or weighted cross-entropy).

- Validate the model on the validation set after each epoch and save the checkpoint with the best validation performance.

- Model Evaluation:

- Evaluate the final model on the independent test set.

- Report standard metrics: Accuracy, Precision, Recall, F1-Score, and AUC-ROC.

- Use 5-fold cross-validation, with folds also split at the patient level, to assess result stability [8].
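Three of the steps above (patient-level partitioning, the cosine-annealing schedule, and focal loss) are simple enough to write out directly. The sketch below is framework-agnostic Python for illustration only; in TensorFlow or PyTorch you would normally rely on built-in utilities such as PyTorch's `CosineAnnealingLR` scheduler. The split fractions, seed, and learning-rate bounds are hypothetical defaults, and alpha = 0.25, gamma = 2 are the values commonly used with focal loss, not necessarily those of [10].

```python
import math
import random

def patient_level_split(patient_ids, fractions=(0.7, 0.15, 0.15), seed=42):
    """Return image indices per split, with all images of a patient in one split.
    patient_ids[i] is the patient that image i belongs to."""
    patients = sorted(set(patient_ids))
    random.Random(seed).shuffle(patients)
    n_train = round(fractions[0] * len(patients))
    n_val = round(fractions[1] * len(patients))
    members = {
        "train": set(patients[:n_train]),
        "val": set(patients[n_train:n_train + n_val]),
        "test": set(patients[n_train + n_val:]),
    }
    return {name: [i for i, p in enumerate(patient_ids) if p in group]
            for name, group in members.items()}

def cosine_annealing_lr(epoch, total_epochs, lr_max=1e-3, lr_min=1e-6):
    """Cosine annealing without restarts: decays from lr_max to lr_min."""
    return lr_min + 0.5 * (lr_max - lr_min) * (1 + math.cos(math.pi * epoch / total_epochs))

def binary_focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Focal loss for one prediction p = P(malignant), label y in {0, 1}:
    FL = -alpha_t * (1 - p_t)**gamma * log(p_t)."""
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)
```

The $(1 - p_t)^\gamma$ factor shrinks the loss of well-classified examples, so the abundant easy negatives contribute little and training concentrates on hard, often minority-class, cases.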

Performance Metrics from Recent Studies

Table 2: Model Performance in Recent Cancer Diagnostic Studies

| Cancer Type | Proposed Model | Key Performance Metrics | Citation |
|---|---|---|---|
| Skin Cancer | EfficientNetV2L + LightGBM | Validation Accuracy: 99.93%; Test Accuracy: 99.90%; Precision: 0.99 (Benign), 0.98 (Malignant) | [8] |
| Breast Cancer | MobileNet (224x224) | AUC: 0.924; Accuracy: 87.3% (outperformed senior radiologists) | [9] |
| Breast Cancer | CSO-Ensemble (EfficientNetB0, ResNet50, DenseNet121) | Accuracy: 98.19% | [13] |
| Lung Cancer | K-Means SMOTE + Multi-Layer Perceptron | Accuracy: 93.55%; AUC-ROC: 96.76% | [11] |

Deployment and MLOps in Clinical Practice

From Model to Clinical Tool

Deploying a validated model into a clinical environment requires a robust MLOps (Machine Learning Operations) framework. Current MLOps practice emphasizes automated continuous integration and delivery (CI/CD) pipelines, real-time monitoring, and scalable deployment strategies [14].

A key decision is the choice of deployment environment. Edge computing allows for models to be run on local devices (e.g., an ultrasound machine), which is ideal for low-latency applications and preserving data privacy. Studies have successfully evaluated models on edge-computing devices like the Jetson AGX Xavier to simulate clinical deployment [9]. Alternatively, cloud-based deployment offers greater scalability and easier updates but may introduce latency and data transmission concerns.

Ensuring Robust Deployment

Once deployed, models must be continuously monitored for model drift, where the statistical properties of the live data change over time, degrading model performance [15]. MLOps practices mandate the establishment of a feedback loop where model predictions and real-world outcomes are logged. This data is used to trigger alerts and schedule model retraining, ensuring long-term reliability.
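One widely used drift statistic is the population stability index (PSI), which compares the binned distribution of a feature or model score in live data against a reference distribution (for example, scores on the validation set). This is a minimal sketch, not a method prescribed by [15]: the equal-width binning over the reference range, the epsilon floor for empty bins, and any alert threshold (rules of thumb often treat PSI above roughly 0.25 as a major shift) are assumptions to be tuned per deployment.

```python
import math

def population_stability_index(reference, live, bins=10, eps=1e-6):
    """PSI = sum over bins of (live% - ref%) * ln(live% / ref%).
    Bins are equal-width over the reference range; out-of-range live values are
    clipped into the edge bins; empty bins are floored at eps to avoid log(0)."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]

    ref_p, live_p = proportions(reference), proportions(live)
    return sum((cur - ref) * math.log(cur / ref) for ref, cur in zip(ref_p, live_p))
```

A monitoring job could compute this per feature or on the model's output scores over a rolling window and raise an alert, or queue retraining, when the value crosses the chosen threshold.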

Furthermore, for clinical adoption, model interpretability is paramount. Techniques like LIME (Local Interpretable Model-agnostic Explanations) can be employed to provide post-hoc explanations for individual predictions, helping clinicians understand the model's reasoning and build trust [11]. Integrating model outputs and heatmaps into clinical workflows has been shown to improve radiologists' diagnostic confidence and interobserver agreement [10].

A meticulously defined machine learning pipeline is the cornerstone of translating algorithmic innovation into tangible improvements in cancer diagnostics. By systematically addressing the challenges of data preparation, model selection and optimization, and deployment through modern MLOps practices, researchers can develop tools that not only achieve high statistical performance but also integrate seamlessly into clinical workflows. The continuous monitoring and refinement of these deployed systems will be crucial for sustaining their accuracy and building the trust required to usher in a new era of AI-assisted medicine.

The advancement of cancer diagnostics is increasingly powered by the integration of multimodal data. Imaging, genomics, and clinical records form the foundational triad of data modalities that, when processed through modern machine learning pipelines, enable a more comprehensive understanding of tumor biology and heterogeneity. The convergence of these data types allows researchers and clinicians to move beyond traditional diagnostic silos, facilitating a holistic approach that spans from macroscopic phenotypic manifestations to molecular and clinical characteristics. This integration is critical for developing robust predictive models that can inform personalized treatment strategies and improve patient outcomes in oncology.

The field of imaging genomics (also known as radiogenomics) exemplifies this integrative approach, seeking to establish connections between medical imaging features and genomic characteristics [16]. This interdisciplinary field lies at the intersection of medical imaging and genomics, with the primary objective of identifying relationships between image features and genomic information to construct association maps that can be correlated with clinical outcomes [16]. The underlying premise is that distinct phenotypic patterns observed on medical images reflect specific molecular alterations within tumors, creating a bridge between macroscopic imaging findings and microscopic genomic drivers.

Table 1: Key Characteristics of Primary Data Modalities in Cancer Diagnostics

| Data Modality | Data Sources & Examples | Key Quantitative Features | Primary Applications in Cancer Diagnostics |
|---|---|---|---|
| Medical Imaging | CT, MRI, PET, X-ray, Digital Pathology [17] [16] | Tumor size, shape, margin, texture, radiomic features (first-order statistics, GLCM, GLRLM) [16] [18] | Early detection, tumor segmentation, treatment response monitoring, subtype classification [17] [19] |
| Genomics | Whole Genome Sequencing, Targeted NGS Panels, Transcriptomics [16] [18] | Mutations, copy number variants, structural variants, gene expression profiles, pathway alterations [20] [18] | Molecular subtyping, identification of actionable mutations, prognosis prediction, targeted therapy selection [21] [18] |
| Clinical Records | EHRs, Pathology Reports, Clinical Notes, Lab Values [22] [1] | Structured data (lab values, medications) and unstructured data (clinical notes) processed via NLP [1] [19] | Patient risk stratification, outcome prediction, treatment trajectory analysis, comorbidity assessment [1] |

Table 2: Data Volume and Processing Considerations by Modality

| Data Modality | Annual Data Generation | Common Data Formats | Primary ML Approaches | Key Preprocessing Challenges |
|---|---|---|---|---|
| Medical Imaging | Hospitals generate ~50 petabytes/year collectively [22] | DICOM, NIfTI, Whole Slide Images (WSI) [19] | Convolutional Neural Networks (CNNs), Deep Learning [17] [20] | Standardization across devices, tumor segmentation, feature extraction [16] [19] |
| Genomics | Whole genome sequencing produces ~200 GB per sample [20] | FASTQ, BAM, VCF, GFF | Recurrent Neural Networks (RNNs), Transformers, Graph Neural Networks (GNNs) [1] [20] | Sequence alignment, variant calling, batch effects, pathway analysis [20] [18] |
| Clinical Records | An estimated ~80% of medical data is unstructured [22] | HL7 FHIR, CSV, JSON, Plain Text | Natural Language Processing (NLP), Transformer models [1] [19] | De-identification, structuring unstructured notes, data harmonization across systems [22] |

Experimental Protocols for Multimodal Data Integration

Protocol 1: Radiogenomic Analysis for Glioblastoma Subtyping

This protocol details a methodology for identifying distinct glioblastoma subtypes through joint learning applied to radiomic and genomic data, based on a study of 571 IDH-wildtype glioblastoma patients [18].

Materials and Equipment

Table 3: Research Reagent Solutions for Radiogenomic Analysis

| Item | Specification/Function | Implementation Example |
|---|---|---|
| Imaging Data | Pre-operative multi-parametric MRI scans (T1, T1-Gd, T2, T2-FLAIR, DTI, DSC-MRI) [18] | 3 Tesla scanner with standardized acquisition parameters |
| Genomic Data | Targeted Next-Generation Sequencing (NGS) panels for glioblastoma-associated genes [18] | Custom panels covering key pathways (RB1, P53, MAPK, PI3K, RTK) |
| Image Processing Platform | Software for co-registration, normalization, and feature extraction [18] | CaPTk (Cancer Imaging Phenomics Toolkit) version 1.9.0 |
| Feature Selection Method | Algorithm for identifying most informative features from high-dimensional data [18] | L21-norm minimization for radiomic feature selection |
| Joint Learning Framework | Computational method for integrating multimodal data with incomplete entries [18] | Anchor-based Partial Multi-modal Clustering (APMC) |
| Statistical Analysis Tools | Software for survival analysis and cluster validation [18] | R or Python with survival, clustering, and CCA packages |

Procedure

Data Acquisition and Preprocessing

- Acquire pre-operative multi-parametric MRI scans according to standardized protocols [18]

- Perform image preprocessing: reorient to the LPS coordinate system, co-register and resample to 1 mm³ isotropic resolution using the Greedy registration tool, and skull-strip using CaPTk [18]

- Segment tumors into three subregions: enhancing tumor (ET), non-enhancing core (NC), and peritumoral edema (ED) [18]

- Extract radiomic features from all subregions using CaPTk, including first-order statistics, histogram, volumetric, GLCM, GLRLM, GLSZM, NGTDM, and Collage features (total 971 features) [18]

- Normalize features using z-scoring, remove dimensions with small standard deviation (σ ≤ 1E-6) and high correlation (r ≥ 0.85) [18]
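The normalization and filtering rules in the last step can be sketched as below. The function name is hypothetical, and two details are assumptions because the step as summarized does not specify them: correlated pairs are resolved greedily by keeping whichever feature was seen first, and the absolute correlation $|r|$ is used so that strong negative correlations also count as redundant.

```python
import statistics

def filter_radiomic_features(features, sd_min=1e-6, r_max=0.85):
    """features: dict of feature name -> values (one per patient).
    Drops near-constant features (raw SD <= sd_min), z-scores the rest, and
    greedily drops any feature whose |Pearson r| with an already-kept feature
    is >= r_max. Returns dict of kept name -> z-scored values."""
    kept = {}
    for name, values in features.items():
        sd = statistics.pstdev(values)
        if sd <= sd_min:
            continue  # checked on raw values: after z-scoring every SD is 1
        mu = statistics.mean(values)
        z = [(v - mu) / sd for v in values]
        n = len(z)
        # For population z-scores, Pearson r is the mean of elementwise products.
        redundant = any(
            abs(sum(a * b for a, b in zip(z, other)) / n) >= r_max
            for other in kept.values()
        )
        if not redundant:
            kept[name] = z
    return kept
```

With 971 features this pairwise check is cheap; the main design choice is the ordering, since a different feature order can keep a different member of each correlated group.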

Genomic Data Processing

- Sequence tumor samples using targeted NGS panels covering key glioblastoma genes [18]

- Focus on 13 genes from 5 core signaling pathways: RB1 pathway (RB1, CDKN2A), P53 pathway (MDM4, TP53), MAPK pathway (BRAF, NF1), PI3K pathway (PTEN, PIK3R1, PIK3CA), RTK pathway (FGFR2, MET, PDGFRA, EGFR) [18]

- Exclude patients with IDH1 or IDH2 mutations to maintain cohort homogeneity [18]

Feature Selection

- Employ L21-norm minimization for radiomic feature selection, using pathway mutation information as supervised labels [18]

- Apply leave-one-out cross validation to determine optimal number of selected features (12 imaging features selected in reference study) [18]

- Divide patient cohort equally into discovery and replication sets [18]
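For reference, a standard form of L2,1-regularized supervised feature selection is written below, with $X$ the $n \times d$ matrix of radiomic features, $Y$ the $n \times c$ matrix of pathway-mutation labels, $W$ the $d \times c$ weight matrix, and $\mathbf{w}^{i}$ the $i$-th row of $W$. This is a generic formulation assumed for illustration; the exact objective and solver used in [18] may differ.

$$
\min_{W}\; \lVert XW - Y \rVert_F^{2} + \lambda \lVert W \rVert_{2,1},
\qquad
\lVert W \rVert_{2,1} = \sum_{i=1}^{d} \sqrt{\sum_{j=1}^{c} W_{ij}^{2}} = \sum_{i=1}^{d} \lVert \mathbf{w}^{i} \rVert_2
$$

Because the penalty sums the L2 norms of whole rows, it drives entire rows of $W$ toward zero, so each feature can be scored by $\lVert \mathbf{w}^{i} \rVert_2$ and the top $k$ retained ($k = 12$ in the reference study, chosen by leave-one-out cross validation).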

Joint Learning and Subtyping

- Apply Anchor-based Partial Multi-modal Clustering to handle data incompleteness (some subjects missing one modality) [18]

- Construct anchor graphs to connect all patients' modalities, establish stationary Markov random walks over the graph [18]

- Calculate one-step and two-step transition probabilities to serve as similarities [18]

- Perform Spectral Clustering on the fused similarity matrix [18]

- Determine optimal number of clusters using gap statistic [18]
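To make the random-walk step concrete: given a non-negative similarity matrix $W$ over nodes, row-normalizing it gives the one-step transition matrix $P$, and $P^2$ gives the two-step transition probabilities. The sketch below shows only this generic Markov-chain calculation on a small dense matrix with hypothetical function names. It is not the APMC algorithm of [18], which builds its graph through anchors and handles patients with a missing modality; how APMC combines one- and two-step probabilities is not reproduced here.

```python
def transition_matrix(similarity):
    """One-step transition probabilities P[i][j] = W[i][j] / sum_k W[i][k]."""
    out = []
    for row in similarity:
        total = sum(row)
        out.append([w / total for w in row])
    return out

def matmul(a, b):
    """Plain matrix product of two lists-of-lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def one_and_two_step(similarity):
    """Return (P, P @ P): one- and two-step random-walk transition probabilities."""
    p = transition_matrix(similarity)
    return p, matmul(p, p)
```

A similarity derived from these matrices would then be passed to a spectral clustering routine, with the number of clusters chosen by the gap statistic as described above.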

Subtype Analysis and Validation

- Perform Kaplan-Meier survival analysis to identify distinct subtypes based on overall survival [18]

- Characterize imaging and genomic features of each subtype [18]

- Apply Canonical Correlation Analysis (CCA) to quantify relationships between the two modalities [18]

- Validate subtypes in replication cohort [18]
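The Kaplan-Meier estimator used in the survival analysis multiplies, at each distinct death time $t$, the conditional survival $1 - d_t / n_t$, where $d_t$ is the number of deaths at $t$ and $n_t$ the number of patients still at risk. The sketch below is a dependency-free illustration with a hypothetical function name; for real analyses, including log-rank tests between subtypes, established packages such as R's `survival` or Python's `lifelines` are the safer choice.

```python
def kaplan_meier(times, events):
    """Return [(t, S(t))] at each distinct event time.
    events[i] is 1 if patient i died at times[i], 0 if censored then."""
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = censored = 0
        while i < len(data) and data[i][0] == t:  # group tied times
            if data[i][1]:
                deaths += 1
            else:
                censored += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= deaths + censored  # censored patients leave the risk set after t
    return curve
```

Running this separately for each subtype gives the step curves that are compared visually and, with a log-rank test, statistically.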

Diagram 1: Radiogenomic analysis workflow for GBM subtyping.

Protocol 2: Deep Learning for Multi-modal Cancer Detection

This protocol outlines a comprehensive methodology for applying deep learning architectures to integrated imaging and genomic data for cancer detection, based on current approaches in the field [20] [4].

Materials and Equipment

Table 4: Research Reagent Solutions for Deep Learning Implementation

| Item | Specification/Function | Implementation Example |
|---|---|---|
| Deep Learning Framework | Software environment for building and training neural networks | TensorFlow, PyTorch, or Keras with GPU acceleration |
| Convolutional Neural Networks (CNNs) | Architecture for processing imaging data [20] | Models for CT, MRI, or histopathology image analysis |
| Recurrent Neural Networks (RNNs) | Architecture for processing sequential genomic data [20] | LSTM or GRU variants for gene sequence analysis |
| Data Standardization Tools | Methods for normalizing heterogeneous data sources | Z-scoring, min-max scaling, batch normalization |
| Fusion Architectures | Models for integrating multimodal data | Early, intermediate, or late fusion approaches |
| Validation Framework | Methods for assessing model performance | Cross-validation, bootstrapping, external validation sets |

Procedure

Data Preparation and Preprocessing

- Imaging Data: Collect and preprocess medical images (CT, MRI, PET, or digital pathology) according to clinical standards [20]

- Apply data augmentation techniques (rotation, flipping, intensity adjustments) to increase dataset diversity and reduce overfitting [20]

- Genomic Data: Process whole genome or targeted sequencing data, including quality control, alignment, and variant calling [20]

- Encode genomic variants using appropriate numerical representations (one-hot encoding, embeddings) [20]

- Clinical Data: Extract structured and unstructured data from EHRs, applying NLP techniques where necessary for unstructured text [1]

Model Architecture Selection and Design

- Imaging Pathway: Implement CNN architectures (e.g., ResNet, DenseNet) for feature extraction from images [20]

- Genomic Pathway: Implement RNN variants (LSTM, GRU) or Transformers for sequence data processing [20]

- Clinical Data Pathway: Implement MLP or Transformer architectures for structured clinical data [1]

- Fusion Strategy: Select an appropriate fusion approach:

  - Early Fusion: Concatenate raw data inputs before processing

  - Intermediate Fusion: Combine features from intermediate layers

  - Late Fusion: Combine predictions from separate models [20]
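The difference between early and late fusion can be shown with simple linear models. The sketch below is a hedged stand-in: it assumes each modality has already been reduced to a tabular feature matrix (for example, radiomic features and encoded variants), uses scikit-learn's `LogisticRegression` in place of the CNN/RNN pathways, and omits intermediate fusion, which requires a neural network with shared hidden layers.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

def early_fusion(train_views, y, test_views):
    """Concatenate per-modality feature matrices, then fit a single model."""
    clf = LogisticRegression(max_iter=1000)
    clf.fit(np.hstack(train_views), y)
    return clf.predict_proba(np.hstack(test_views))[:, 1]

def late_fusion(train_views, y, test_views, weights=None):
    """Fit one model per modality, then average their predicted probabilities."""
    probs = []
    for X_train, X_test in zip(train_views, test_views):
        clf = LogisticRegression(max_iter=1000).fit(X_train, y)
        probs.append(clf.predict_proba(X_test)[:, 1])
    return np.average(probs, axis=0, weights=weights)
```

Early fusion lets the model learn interactions between modalities, while late fusion keeps each modality's model independent, which makes it easier to drop a modality for an ablation study or when it is missing for a patient.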

Model Training and Optimization

- Implement appropriate loss functions for the specific task (cross-entropy for classification, mean squared error for regression) [20]

- Apply regularization techniques (dropout, weight decay) to prevent overfitting [20]

- Utilize optimization algorithms (Adam, SGD with momentum) for parameter optimization [20]

- Employ learning rate scheduling and early stopping based on validation performance [20]
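Early stopping and learning-rate scheduling are framework-independent ideas. The sketch below shows one common variant of each, a patience-based stopper and a step-decay schedule; the parameter names and default values are illustrative assumptions, and most deep learning frameworks ship their own equivalents.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved by at least
    `min_delta` for `patience` consecutive epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def step_decay(base_lr, epoch, step_size=10, gamma=0.5):
    """Multiply the learning rate by `gamma` every `step_size` epochs."""
    return base_lr * gamma ** (epoch // step_size)

# Hypothetical validation-loss curve.
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate([1.0, 0.8, 0.7, 0.71, 0.72, 0.69, 0.70, 0.71, 0.72]):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
```

The weights saved at `stopper.best_epoch`, not those from the final epoch, would normally be restored for evaluation.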

Model Interpretation and Validation

- Apply interpretability techniques (attention mechanisms, saliency maps, SHAP) to understand model decisions [20] [4]

- Perform rigorous validation using held-out test sets and external validation cohorts where available [20]

- Conduct ablation studies to understand contribution of different data modalities [20]

Diagram 2: Deep learning architecture for multi-modal cancer detection.

Implementation Considerations for ML Pipelines

Data Quality and Harmonization

The implementation of robust machine learning pipelines for cancer diagnostics requires careful attention to data quality and harmonization across modalities. Medical data often suffers from heterogeneity due to variations in equipment, protocols, and institutional practices [22]. This is particularly challenging for imaging data, where differences in scanner manufacturers, acquisition parameters, and reconstruction algorithms can introduce significant variability that negatively impacts model generalizability [16]. Establishing standardized preprocessing protocols is essential, including image resampling to consistent resolutions, intensity normalization, and appropriate data augmentation strategies to increase dataset diversity while preserving biological signals [20].

Genomic data presents its own harmonization challenges, with batch effects, different sequencing depths, and variant calling pipelines potentially introducing technical artifacts [18]. Implementing rigorous quality control metrics, utilizing batch correction algorithms, and standardizing processing workflows across datasets are critical steps to ensure data consistency [20]. For clinical records, the extensive use of unstructured text requires robust natural language processing (NLP) approaches to extract structured information, while dealing with variations in terminology, abbreviations, and documentation practices across healthcare systems [1]. The emergence of large language models (LLMs) has significantly advanced capabilities in processing clinical text, enabling more accurate extraction of key clinical concepts and relationships from unstructured narratives [1].
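A minimal form of batch correction is location/scale adjustment per batch. The sketch below z-scores each feature within its batch and maps it back onto the pooled mean and standard deviation. It is a simplified stand-in for methods such as ComBat: there is no empirical-Bayes shrinkage and no protection of biological covariates, so if batch is confounded with a clinical group, it will also remove real signal.

```python
import numpy as np

def per_batch_standardize(X, batch):
    """Remove per-batch shifts in feature mean and scale.

    X: (n_samples, n_features) measurements; batch: batch label per sample.
    Each batch is z-scored per feature, then rescaled to the pooled statistics.
    """
    X = np.asarray(X, float)
    batch = np.asarray(batch)
    grand_mean, grand_std = X.mean(0), X.std(0)
    out = np.empty_like(X)
    for b in np.unique(batch):
        m = batch == b
        mu, sd = X[m].mean(0), X[m].std(0)
        out[m] = (X[m] - mu) / np.where(sd > 0, sd, 1.0) * grand_std + grand_mean
    return out
```

In a train/test setting, the per-batch statistics for new data would have to be estimated without access to test labels, which is one reason dedicated harmonization tools are preferred for production pipelines.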

Computational Infrastructure and Model Optimization

The computational demands of processing multimodal cancer data require specialized infrastructure and careful model optimization. Deep learning approaches, particularly for high-resolution imaging data, typically require GPU acceleration and distributed computing resources to manage training times effectively [20]. Memory management becomes particularly important when working with whole slide images in digital pathology or high-resolution 3D medical images, which can exceed several gigabytes per patient [19].

Model optimization should address both performance and efficiency considerations. Techniques such as transfer learning can leverage pre-trained models on large-scale datasets (e.g., ImageNet for CNN architectures) to improve performance with limited medical data [20]. Appropriate regularization strategies, including dropout, batch normalization, and data augmentation, help prevent overfitting given the typically limited dataset sizes in medical applications [20]. For genomic sequence analysis, specialized architectures such as Transformers and Graph Neural Networks (GNNs) have shown promise in capturing long-range dependencies and topological relationships within biological data [20].

Validation Strategies and Clinical Translation

Rigorous validation is essential for establishing the reliability and generalizability of multimodal cancer diagnostic models. Internal validation through techniques such as k-fold cross-validation provides initial performance estimates, but external validation on completely independent datasets from different institutions is necessary to assess true model generalizability [20]. The use of synthetic data generation through approaches such as Generative Adversarial Networks (GANs) can help address data scarcity issues and create diverse validation scenarios, though careful attention must be paid to preserving biological fidelity [4].
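One detail that internal k-fold validation must get right is the unit of splitting: when a patient contributes several samples (slides, tiles, or scans), splitting at the sample level leaks patient-specific information into the test folds. The sketch below uses scikit-learn's `GroupKFold` with patient identifiers as groups; the identifiers in the example are hypothetical.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

def patient_level_folds(patient_ids, n_splits=5):
    """Yield (train_idx, test_idx) so that every sample from a given patient
    falls on the same side of each split."""
    patient_ids = np.asarray(patient_ids)
    X_dummy = np.zeros((len(patient_ids), 1))   # GroupKFold only needs the row count
    splitter = GroupKFold(n_splits=n_splits)
    for train_idx, test_idx in splitter.split(X_dummy, groups=patient_ids):
        yield train_idx, test_idx

# Hypothetical cohort: 10 patients with 4 tiles each.
ids = np.repeat(np.arange(10), 4)
folds = list(patient_level_folds(ids))
```

External validation goes one step further by holding out entire institutions rather than individual patients.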

Clinical translation of these models requires additional considerations beyond technical performance. Model interpretability is crucial for building clinician trust and facilitating integration into clinical workflows [20] [4]. Techniques such as attention mechanisms, saliency maps, and SHAP (SHapley Additive exPlanations) values can help elucidate the contribution of different input features to model predictions [4]. Regulatory compliance, including adherence to frameworks such as HIPAA for data privacy and FDA requirements for software as a medical device, must be addressed throughout the development process [22]. Finally, prospective clinical validation studies are ultimately necessary to demonstrate real-world clinical utility and impact on patient outcomes before widespread clinical adoption [20].

Artificial intelligence (AI) is revolutionizing the landscape of oncological research and the advancement of personalized clinical interventions [23] [1]. Progress in three interconnected areas has converged to produce promising new applications of AI in cancer research: methods and algorithms for training AI models, specialized computing hardware, and access to large volumes of cancer data such as imaging, genomics, and clinical information [23]. The selection of AI models depends fundamentally on the data type and clinical objective, with Convolutional Neural Networks (CNNs) and Transformers emerging as two of the most impactful architectures driving innovations across the cancer care continuum [23].

This application note provides a structured overview of these major AI model types, their specific oncological applications, detailed experimental protocols for their implementation, and essential reagent solutions for researchers building optimized machine learning pipelines for cancer diagnostics.

Convolutional Neural Networks (CNNs) in Oncology

Architecture and Clinical Rationale

CNNs are deep learning architectures designed for processing structured, grid-like data such as images, which makes them well suited to analyzing medical images including histopathology slides, mammograms, and radiology scans [23]. Their convolutional layers act as learnable filters that slide across the input image to extract hierarchical features, from simple edges and textures in early layers to complex morphological patterns and tissue structures in deeper layers [24]. This spatial hierarchy enables CNNs to identify subtle cancerous patterns that may be difficult for human readers to perceive.
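The "learnable filter" idea can be made concrete with a single hand-set filter. The sketch below computes the 2D cross-correlation that CNN convolution layers apply (no padding, stride 1) and uses a Sobel-like vertical-edge kernel as an example of the kind of early-layer feature a trained network tends to learn; in a real CNN the kernel weights are learned from data, not fixed by hand.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """2D cross-correlation with no padding and stride 1, as in a CNN layer."""
    kh, kw = kernel.shape
    H, W = image.shape
    out = np.zeros((H - kh + 1, W - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hand-set vertical-edge filter (Sobel-like).
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], float)

img = np.zeros((5, 6))
img[:, 3:] = 1.0                      # dark left half, bright right half
response = conv2d_valid(img, sobel_x)
```

The response is zero over uniform regions and peaks where the window straddles the intensity edge; stacking such layers with nonlinearities and pooling is what builds the deeper, more abstract features.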

Key Oncological Applications and Performance

CNNs have demonstrated remarkable success across multiple cancer types and diagnostic modalities. The table below summarizes quantitative performance data from recent studies implementing CNN architectures for various oncological applications.

Table 1: Performance Metrics of CNN Applications in Oncology

| Cancer Type | Application | AI Model | Dataset Size | Key Metric | Performance | Reference |
|---|---|---|---|---|---|---|
| Colorectal Cancer | Histopathology tissue classification | Lightweight CNN | NCT-CRC-HE-100K & CRC-VAL-HE-7K datasets | Test Accuracy | 0.990 ± 0.003 | [24] [25] |
| Breast Cancer | Screening detection on 2D mammography | Ensemble of three DL models | 25,856 women (UK) & 3,097 women (US) | AUC | 0.889 (UK) & 0.810 (US) | [23] |
| Colorectal Cancer | Polyp detection during colonoscopy | CRCNet | 464,105 training images from 12,179 patients | Sensitivity | 91.3% vs. 83.8% (human) | [23] |
| Breast Cancer | Detection on 2D/3D mammography | Progressively trained RetinaNet | 131 index cancers + 154 confirmed negatives | Absolute Sensitivity Increase | +14.2% at average reader specificity | [23] |

Specialized CNN Architectures

Beyond standard architectures, specialized CNN implementations are addressing specific clinical challenges:

- Lightweight CNNs: Designed for resource-constrained environments, these models offer high performance with significantly reduced computational requirements. One recent example achieves 99.0% accuracy in colon cancer tissue classification with only 4.4 million parameters and a model size of 16.9 megabytes, enabling deployment on mobile health applications and embedded devices [24].

- Hybrid CNN-Transformer Architectures: Frameworks like TransBreastNet combine CNNs for spatial encoding of lesions with Transformer modules for temporal encoding, enabling simultaneous prediction of breast cancer subtypes (95.2% accuracy) and lesion progression stages (93.8% accuracy) [26]. This approach integrates longitudinal image sequences with clinical metadata for more holistic patient assessment.

Transformer Architectures in Oncology

Architecture and Clinical Rationale

Transformer architectures use self-attention mechanisms to weigh the importance of each element in a sequence relative to every other element, enabling them to capture complex, long-range dependencies and contextual relationships [27]. Whereas CNNs are specialized for spatial, grid-like data, Transformers operate on sequences of tokens and have demonstrated remarkable flexibility across diverse data modalities, including genomic sequences, clinical time-series data, and structured electronic health records [23] [27].

The self-attention mechanism is particularly valuable in oncology for interpreting multimodal patient data, where the clinical significance of a single biomarker often depends on the context provided by other clinical and molecular features [27].
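The contextual weighting described above is scaled dot-product self-attention. The single-head sketch below assumes, for illustration, that each token is an embedding of one patient feature (a biomarker, a lab value, a mutation status); the projection matrices would be learned in a real model and are random here.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (n_tokens, d_model) token embeddings, e.g. one token per clinical feature.
    Returns the attended outputs and the (n_tokens, n_tokens) attention weights.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])          # pairwise token relevance
    scores -= scores.max(axis=1, keepdims=True)      # numerical stability
    A = np.exp(scores)
    A /= A.sum(axis=1, keepdims=True)                # row-wise softmax
    return A @ V, A

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))                          # 4 hypothetical feature tokens
Wq, Wk, Wv = (rng.normal(size=(8, 8)) for _ in range(3))
out, A = self_attention(X, Wq, Wk, Wv)
```

Row `i` of `A` shows how much each other feature contributed to the updated representation of feature `i`, which is one reason attention weights are used as a (partial) interpretability signal.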

Key Oncological Applications and Performance

Transformers are advancing oncology applications, particularly those involving complex, multimodal data integration. The table below summarizes performance data from recent transformer implementations.

Table 2: Performance Metrics of Transformer Applications in Oncology

| Application Domain | Specific Task | Model Name | Dataset | Key Metric | Performance | Reference |
|---|---|---|---|---|---|---|
| Survival Prediction | Pan-cancer immunotherapy response prediction | Clinical Transformer | 12 datasets, 156,192 patients | Concordance Index (C-index) | 0.73 | [27] |
| Biomarker Discovery | FGFR alteration prediction in bladder cancer | Vision Transformer (ViT) foundation model | >58,000 whole slide images | AUC | 80-86% | [28] |
| Treatment Outcome Prediction | Long-term outcome in NSCLC patients | Transformer-based AI (NAIM) | 1,050 patients across 61 institutions | C-index for risk of death | 62.98 ± 2.11 | [29] |
| Mutational Analysis | Classification of pathogenic variants | Large Language Model | TCGA & AACR Project GENIE | Validation against known pathways | Consistent with Vogelstein model | [30] |

Advanced Transformer Implementations

- Clinical Transformer Framework: This specialized implementation addresses unique challenges in clinical oncology, including small dataset sizes, sparse features, and missing data [27]. Through transfer learning and self-supervised pretraining on large datasets, the model can be fine-tuned for specific prediction tasks while maintaining interpretability through attention mechanisms and SHapley Additive exPlanations (SHAP) analysis [27].

- Vision Transformers in Digital Pathology: Applied to whole slide images, Vision Transformers can predict molecular alterations directly from H&E-stained slides, potentially reducing reliance on more costly molecular tests [28]. For example, Johnson & Johnson's MIA:BLC-FGFR algorithm predicts FGFR alterations in bladder cancer with an AUC of 80-86%, addressing tissue scarcity challenges in non-muscle invasive bladder cancer [28].

Experimental Protocols for AI Implementation in Oncology

Protocol: Developing a Lightweight CNN for Histopathology Classification

This protocol outlines the procedure for developing and validating a lightweight CNN for colon cancer tissue classification using histopathology images [24].

1. Data Acquisition and Curation

- Obtain annotated histopathology image datasets (e.g., NCT-CRC-HE-100K and CRC-VAL-HE-7K).

- Implement a parametric Gaussian distribution-based data cleaning approach to remove outliers and enhance data quality.

- Partition data into training, validation, and test sets (typical ratio: 70:15:15).
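The protocol does not define the Gaussian cleaning criterion, so the sketch below is an assumption: it fits a Gaussian to a per-image summary statistic (for instance, mean tile intensity) and discards images more than `z_max` standard deviations from the mean, then produces a random 70:15:15 split. Because histopathology tiles from one patient are correlated, a patient-level split is preferable whenever patient identifiers are available.

```python
import numpy as np

def gaussian_outlier_mask(stat, z_max=3.0):
    """Keep samples whose summary statistic lies within z_max standard
    deviations of a Gaussian fitted to the whole dataset."""
    stat = np.asarray(stat, float)
    mu, sigma = stat.mean(), stat.std()
    return np.abs(stat - mu) <= z_max * sigma

def split_70_15_15(n, seed=0):
    """Random train/validation/test index split in a 70:15:15 ratio."""
    idx = np.random.default_rng(seed).permutation(n)
    n_train, n_val = int(0.70 * n), int(0.15 * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

rng = np.random.default_rng(0)
mean_intensity = np.r_[rng.normal(0.5, 0.02, 99), 5.0]   # one corrupted tile
keep = gaussian_outlier_mask(mean_intensity)
train, val, test = split_70_15_15(100)
```

A single extreme value inflates the fitted standard deviation, so robust alternatives (median and median absolute deviation) are worth considering when many outliers are expected.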

2. Model Architecture Design

- Design a non-pretrained CNN architecture optimized for computational efficiency.

- Configure convolutional layers with an increasing number of filters (e.g., 32, 64, 128) to capture hierarchical features.

- Incorporate pooling layers for spatial dimension reduction and dropout layers for regularization.

- Set the final fully connected layer with softmax activation for multi-class classification.
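Why this kind of design stays lightweight can be checked by counting parameters. The sketch below assumes 3x3 kernels, RGB input, the 32/64/128 filter progression above, global average pooling before the classifier, and the nine tissue classes of NCT-CRC-HE-100K; pooling and dropout layers add no parameters. It illustrates the arithmetic only and does not reproduce the architecture of the reference study.

```python
def conv_params(kernel, c_in, c_out):
    """k*k*c_in weights per filter plus one bias per filter."""
    return (kernel * kernel * c_in + 1) * c_out

def dense_params(n_in, n_out):
    """Weight matrix plus one bias per output unit."""
    return (n_in + 1) * n_out

def lightweight_cnn_params(in_channels=3, filters=(32, 64, 128), kernel=3, n_classes=9):
    total, c = 0, in_channels
    for f in filters:
        total += conv_params(kernel, c, f)
        c = f
    total += dense_params(c, n_classes)   # classifier after global average pooling
    return total

n_params = lightweight_cnn_params()
size_mb = n_params * 4 / 1e6              # float32 weights, 4 bytes each
```

Replacing global average pooling with flattening would multiply the classifier's input size by the final feature-map area, which is usually where parameter counts grow fastest.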

3. Model Training and Optimization

- Initialize model parameters using He or Xavier initialization.

- Select appropriate loss function (categorical cross-entropy for multi-class classification).

- Implement an optimization algorithm (Adam or SGD with momentum) with learning rate scheduling.

- Train for a sufficient number of epochs (typically 50-100) with batch sizes of 32-64.

- Apply data augmentation techniques (rotation, flipping, color jittering) to improve generalization.

4. Model Validation and Interpretation

- Evaluate model performance on the held-out test set using accuracy, precision, recall, specificity, and F1 scores.

- Generate confusion matrices to identify class-specific performance patterns.

- Implement visualization techniques (Grad-CAM, attention maps) to interpret model decisions and highlight clinically relevant regions.
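The per-class metrics in step 4 all derive from the confusion matrix. The sketch below computes accuracy and one-vs-rest precision, recall (sensitivity), specificity, and F1 per class using NumPy; scikit-learn's metrics module offers equivalents, though specificity usually has to be derived by hand as done here.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows = true class, columns = predicted class."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm

def per_class_metrics(cm):
    """One-vs-rest metrics for each class of a square confusion matrix."""
    tp = np.diag(cm).astype(float)
    fp = cm.sum(axis=0) - tp
    fn = cm.sum(axis=1) - tp
    tn = cm.sum() - tp - fp - fn

    def safe(a, b):                                   # 0 where the denominator is 0
        return np.divide(a, b, out=np.zeros_like(a), where=b > 0)

    precision = safe(tp, tp + fp)
    recall = safe(tp, tp + fn)                        # sensitivity
    specificity = safe(tn, tn + fp)
    f1 = safe(2 * precision * recall, precision + recall)
    return {"accuracy": tp.sum() / cm.sum(), "precision": precision,
            "recall": recall, "specificity": specificity, "f1": f1}

cm = confusion_matrix([0, 0, 1, 1, 2, 2], [0, 1, 1, 1, 2, 0], n_classes=3)
m = per_class_metrics(cm)
```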

Protocol: Implementing a Clinical Transformer for Survival Prediction

This protocol details the process for implementing a transformer-based model for predicting cancer survival outcomes [29] [27].

1. Data Preprocessing and Integration

- Collect multimodal patient data including clinical variables, genomic features, and treatment histories.

- Handle missing data through explicit encoding of missingness or using model capabilities that natively handle missing values without imputation.

- Normalize continuous variables and encode categorical variables appropriately.

- Structure data into feature matrices with associated survival time and event indicators.
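Explicit missingness encoding can be as simple as pairing each normalized value with an observed/missing flag. The sketch below is one hedged way to do it: features are z-scored using observed values only, missing entries are set to 0, and a 0/1 mask is returned alongside so a model can distinguish "missing" from "average". In a real pipeline the means and standard deviations would be computed on the training split only.

```python
import numpy as np

def encode_with_missingness(values):
    """values: (n_patients, n_features) array with NaN marking missing entries.

    Returns (z, observed): z-scored values with missing entries set to 0, and a
    0/1 mask recording which entries were actually observed.
    """
    values = np.asarray(values, float)
    observed = ~np.isnan(values)
    mu = np.nanmean(values, axis=0)
    sd = np.nanstd(values, axis=0)
    z = (values - mu) / np.where(sd > 0, sd, 1.0)
    return np.where(observed, z, 0.0), observed.astype(int)

# Hypothetical cohort: two continuous features plus survival targets.
features = [[1.0, np.nan], [3.0, 4.0], [5.0, 8.0]]
z, mask = encode_with_missingness(features)
survival_time = np.array([14.0, 30.0, 9.0])   # months
event = np.array([1, 0, 1])                   # 1 = death observed, 0 = censored
```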

2. Model Configuration and Pretraining

- Implement transformer encoder layers with multi-head self-attention mechanisms.

- Utilize transfer learning by pretraining on large datasets (e.g., TCGA, GENIE) using self-supervised learning for masked feature prediction.

- Gradually fine-tune the model on the target dataset and specific survival prediction task.

- Configure output heads for survival prediction, typically using a Cox proportional hazards formulation.
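A Cox proportional hazards head is typically trained by minimizing the negative Cox partial log-likelihood of the model's predicted risk scores. The sketch below implements that loss in NumPy with the Breslow convention for tied times (every patient whose time is at least the event time stays in the risk set); the normalization by the number of events is a common but not universal choice.

```python
import numpy as np

def cox_neg_partial_log_likelihood(risk, time, event):
    """Negative Cox partial log-likelihood, averaged over observed events.

    risk: predicted log-hazard per patient (model output).
    time: follow-up time; event: True/1 if death observed, False/0 if censored.
    """
    risk = np.asarray(risk, float)
    time = np.asarray(time, float)
    event = np.asarray(event, bool)
    loss = 0.0
    for i in np.flatnonzero(event):
        r = risk[time >= time[i]]                      # Breslow risk set
        m = r.max()
        log_denom = m + np.log(np.exp(r - m).sum())    # stable log-sum-exp
        loss -= risk[i] - log_denom
    return loss / max(int(event.sum()), 1)
```

Assigning higher risk to patients who die earlier lowers the loss, which is exactly the ordering the C-index later measures.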

3. Model Interpretation and Validation

- Apply explainable AI techniques (SHAP, attention rollout) to quantify feature contributions to predictions.

- Validate model performance on independent test sets using concordance index (C-index) and time-dependent AUC metrics.

- Generate Kaplan-Meier curves to visualize survival stratification between risk groups.

- Perform ablation studies to confirm the contribution of individual model components.
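The concordance index used for validation can be computed directly from its definition. The sketch below implements Harrell's C-index: among comparable pairs (one patient has an observed event before the other's follow-up time), it counts the fraction in which the earlier event received the higher predicted risk, with tied risks counted as half. Library implementations (for example in lifelines or scikit-survival) are faster and handle more edge cases.

```python
import numpy as np

def harrell_c_index(risk, time, event):
    """Harrell's concordance index; higher risk should mean shorter survival."""
    risk = np.asarray(risk, float)
    time = np.asarray(time, float)
    event = np.asarray(event, bool)
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue                        # censored patients cannot anchor a pair
        for j in range(len(time)):
            if time[j] > time[i]:           # j outlived i's observed event
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")
```

A value of 0.5 corresponds to random ordering and 1.0 to perfect ordering.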

Essential Research Reagent Solutions

The table below catalogues key computational tools and resources essential for developing AI models in oncological research.

Table 3: Essential Research Reagent Solutions for AI Oncology Research

| Reagent Category | Specific Tool/Platform | Primary Function | Application Example | Reference |
|---|---|---|---|---|
| Histopathology Datasets | NCT-CRC-HE-100K & CRC-VAL-HE-7K | Annotated colon tissue images for training | CNN development for colorectal cancer classification | [24] [25] |
| Genomic Data Resources | TCGA & AACR Project GENIE | Curated cancer genomic datasets | Pretraining foundation models for variant interpretation | [30] |
| Vision Foundation Models | Pre-trained Vision Transformers (ViT) | Feature extraction from whole slide images | Predicting molecular alterations from H&E slides | [28] |
| Explainability Frameworks | SHAP (SHapley Additive exPlanations) | Model interpretation and feature importance | Identifying key predictors in survival models | [29] [27] |
| Digital Pathology Platforms | Concentriq, Aperture | Whole slide image management and analysis | Deploying AI algorithms in clinical workflows | [28] |

The integration of CNNs and Transformers into oncology research represents a paradigm shift in cancer diagnostics and treatment optimization. CNNs excel at extracting spatial features from medical images, while Transformers capture complex contextual relationships in multimodal clinical and genomic data. Together, these architectures are enabling more precise cancer classification, prognostic stratification, and treatment response prediction. As these technologies continue to evolve, their clinical translation will increasingly depend on robust validation, interpretability, and seamless integration into diagnostic workflows. The experimental protocols and reagent solutions outlined in this document provide a foundation for researchers to build optimized machine learning pipelines that advance the field of computational oncology.

In cancer diagnostics research, the transition from a high-performing experimental model to a reliable clinical tool represents a critical challenge. Machine Learning Operations (MLOps) provides the essential engineering culture and practices to bridge this gap, ensuring that predictive models for tasks such as tumor detection or risk stratification become dependable production assets [31] [32]. This discipline adapts DevOps principles to manage the unique complexities of ML systems, where performance depends not only on code but also on evolving data and models [33]. Framing this approach within oncology is paramount, as it directly impacts the development of tools that can accelerate progress toward improved health outcomes for all populations [23].

The Core Distinction: Experimentation vs. Production

The fundamental distinction between the experimental and production mindsets lies in their primary objectives. Experimentation is a research-centric process focused on exploratory data analysis, hypothesis testing, and achieving the highest possible predictive performance on historical datasets. In contrast, production is an engineering discipline concerned with reliability, scalability, monitoring, and maintaining model performance over time in a live clinical environment [34] [33].

This dichotomy manifests in the tools and methodologies employed. Data scientists often work interactively with notebooks to verify the applicability of ML for a given problem, delivering a stable proof-of-concept model [34] [33]. The production phase, or "ML Operations," uses established engineering practices such as testing, versioning, continuous delivery, and monitoring to deploy this model into a real-world setting [34].

Table 1: Characterizing the Experimentation and Production Environments in Cancer Research

| Dimension | Experimentation (The Lab) | Production (The Clinic) |
|---|---|---|
| Primary Goal | Verify ML applicability; maximize offline metric performance on holdout datasets [34]. | Deliver reliable, low-latency predictions; maintain performance on live, evolving data [32]. |
| Process | Manual, script-driven, interactive iteration of algorithms and parameters [33]. | Automated, orchestrated pipelines for retraining, validation, and deployment [31]. |
| Output | A single trained model artifact and an evaluation report [33]. | A deployed prediction service (e.g., REST API) with continuous monitoring [33]. |
| Data | Static, historical dataset, often split into train/validation/test sets [35]. | Continuously arriving live data subject to concept drift and shifting distributions [32]. |
| Key Metrics | Offline accuracy, F1-score, Area Under the Curve (AUC) [35]. | Up-time, inference latency, data drift, and business KPIs tied to clinical outcomes [32]. |

A Maturity Framework for MLOps in Healthcare

The progression from a purely manual process to a fully automated MLOps pipeline can be understood through a maturity framework. This framework helps diagnostic research teams assess their current state and identify the next steps toward robust operationalization [31].

Table 2: MLOps Maturity Levels for a Cancer Diagnostics Pipeline

| Maturity Level | Key Characteristics | Training & Deployment Trigger | Monitoring & Retraining |
|---|---|---|---|
| Level 0: Manual Process | Entirely manual, interactive process driven by notebooks; disconnect between ML and operations teams [33]. | Manually triggered by data scientists [33]. | No active performance monitoring; retraining is an infrequent, manual event [33]. |
| Level 1: ML Pipeline Automation | Introduction of automated data and model pipelines; continuous training of the model [34]. | Automated pipeline execution triggered by new data availability [34]. | Presence of continuous monitoring (CM); model retraining is triggered manually by engineers [31]. |
| Level 2: CI/CD Pipeline Automation | Full automation with a CI/CD system; fast and reliable deployments [34]. | Automated triggers from new data, model code changes, or performance alerts [34]. | Presence of continuous monitoring (CM) and continual learning (CL); fully automated retraining and deployment [31]. |

The following workflow diagram illustrates the automated pipeline architecture characteristic of a high-maturity (Level 2) MLOps system in a cancer diagnostics context.

Quantitative Data: Evidence of MLOps Impact in Healthcare

A scoping review on MLOps implementations in healthcare provides quantitative insight into its current adoption. The review analyzed 19 studies and synthesized the reported MLOps workflow components and maturity levels [31].

Table 3: MLOps Workflow Implementation in Healthcare (n=19 Studies)

| MLOps Workflow Stage | Implementation Rate |
|---|---|
| Data Extraction | 19/19 (100%) |
| Data Preparation and Engineering | 18/19 (95%) |
| Model Training | 19/19 (100%) |
| Model Evaluation (ML Metrics) | 17/19 (89%) |
| Model Serving and Deployment | 15/19 (79%) |
| Model Validation and Test in Production | 14/19 (74%) |
| Continuous Monitoring (CM) | 14/19 (74%) |
| Continual Learning (CL) | 13/19 (68%) |

Table 4: Reported MLOps Maturity in Healthcare Studies

| Maturity Level | Prevalence | Key Characteristics |
|---|---|---|
| Low Maturity | 5/19 Studies | Absence of Continuous Monitoring (CM) and Continual Learning (CL) [31]. |
| Partial Maturity | 1/19 Studies | Presence of CM, but lack of CL (model retraining manually triggered) [31]. |
| Full Maturity | 13/19 Studies | Presence of both CM and CL, enabling automated retraining and deployment [31]. |

Experimental Protocols for MLOps Implementation

Protocol: Establishing Model Evaluation and Quality Standards

Objective: To define and automate rigorous evaluation metrics that align model performance with clinical business KPIs, ensuring only high-quality models progress to production [32].

Methodology:

- Define Metric Thresholds: Establish performance thresholds (e.g., minimum precision for cancer detection, maximum mean absolute error for survival prediction) that are directly tied to clinical success criteria [32].

- Build Testing Suites: Develop automated testing suites that execute with every training job and check each candidate model against the metric thresholds defined above before it can be registered.

- Capture Lineage Artifacts: For full reproducibility, automatically capture and store data snapshots, hyperparameters, environment details, and evaluation reports for every training run in a model registry [32].
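Lineage capture does not depend on any particular registry. The tool-agnostic sketch below hashes each input data file, records hyperparameters, environment details, and evaluation metrics, and returns a JSON-serializable record that could be stored next to the model artifact or logged to a registry such as MLflow. The field names are illustrative assumptions, not a standard schema.

```python
import hashlib
import json
import platform
import sys
import time

def file_sha256(path, chunk=1 << 20):
    """SHA-256 of a file, read in chunks so large images or tables fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for block in iter(lambda: f.read(chunk), b""):
            h.update(block)
    return h.hexdigest()

def lineage_record(data_paths, hyperparams, metrics):
    """Assemble a JSON-serializable lineage record for one training run."""
    return {
        "timestamp_utc": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "data": {p: file_sha256(p) for p in data_paths},   # data snapshot fingerprints
        "hyperparameters": hyperparams,
        "environment": {"python": sys.version.split()[0],
                        "platform": platform.platform()},
        "metrics": metrics,
    }
```

Storing the hash rather than the data itself keeps the record small while still proving which snapshot a model was trained on.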

Protocol: Deploying Comprehensive Production Monitoring

Objective: To detect model performance degradation and data drift in real-time after deployment, enabling proactive intervention [32].

Methodology:

- Instrument Inference Services: Capture and log every prediction request, including the feature vector, model version, prediction, and confidence score [32].

- Monitor Key Signals:

  - Data Drift: Statistically compare the distribution of live production features against the training data baseline using metrics like Population Stability Index (PSI) [32].

  - Concept Drift: Monitor for decay in the relationship between input features and the target output by tracking performance proxies or comparing predictions with later-arriving ground-truth labels [31] [33].

  - Business KPIs: Track operational metrics such as inference latency and service uptime [32].

- Configure Alerting: Set up alerting policies tied to service-level objectives. For example, trigger an alert if data drift for a key feature like "tumor size" exceeds a predefined limit, prompting investigation or automated retraining [32].
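The Population Stability Index compares binned proportions of a feature in live traffic (a) against the training baseline (e), as PSI = sum((a_i - e_i) * ln(a_i / e_i)). The sketch below bins on baseline quantiles, lets the outer bins catch values outside the training range, and clips empty bins to avoid division by zero. The feature name is hypothetical, and the 0.1 / 0.25 cut-offs often quoted for "moderate" and "major" shift are industry rules of thumb rather than clinical standards.

```python
import numpy as np

def population_stability_index(baseline, live, n_bins=10, eps=1e-6):
    """PSI between a training-time baseline and a live sample of one feature."""
    baseline = np.asarray(baseline, float)
    live = np.asarray(live, float)
    # Interior cut points at baseline quantiles; the outer bins are open-ended.
    cuts = np.unique(np.quantile(baseline, np.linspace(0, 1, n_bins + 1)[1:-1]))
    n_out = len(cuts) + 1
    e = np.bincount(np.searchsorted(cuts, baseline, side="right"), minlength=n_out) / len(baseline)
    a = np.bincount(np.searchsorted(cuts, live, side="right"), minlength=n_out) / len(live)
    e, a = np.clip(e, eps, None), np.clip(a, eps, None)
    return float(np.sum((a - e) * np.log(a / e)))

rng = np.random.default_rng(0)
tumor_size_train = rng.normal(0, 1, 5000)          # hypothetical standardized feature
psi_stable = population_stability_index(tumor_size_train, rng.normal(0, 1, 5000))
psi_shifted = population_stability_index(tumor_size_train, rng.normal(1, 1, 5000))
ALERT_THRESHOLD = 0.25                              # assumed, rule-of-thumb limit
```

An alerting job would compute this per feature on a schedule and raise an alert (or open a retraining ticket) when `psi > ALERT_THRESHOLD`.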

Protocol: Implementing a Continuous Integration Pipeline for ML

Objective: To automate the building, testing, and validation of ML assets upon every change, reducing manual hand-offs and accelerating release cycles [34] [33].

Methodology:

- Store Pipeline Definitions: Use infrastructure-as-code to define the entire ML pipeline (data processing, training, evaluation) and store it in a version control system (e.g., Git) alongside application code [34].

- Automate the Pipeline: Configure a CI system to automatically trigger the pipeline on every code commit. The pipeline should:

  - Lint code and run unit tests.

  - Validate data schemas and run data quality checks.

  - Execute the model training process.

  - Run the comprehensive evaluation suite defined in the model evaluation and quality standards protocol.

  - Package the validated model artifact and store it in a model registry [32].

- Integrate Quality Guardrails: Embed automated evaluation as a gate in the pipeline. If a model fails to meet the predefined accuracy, fairness, or data quality thresholds, the pipeline is halted, and the model is not promoted [32].
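A quality gate reduces to comparing evaluation metrics with declared limits and failing the CI job when any limit is violated. In the sketch below, the metric names and threshold values are hypothetical examples of accuracy, fairness, and data-quality criteria; a CI step would call `promotion_gate` after evaluation and exit with a non-zero status (for example via `sys.exit(1)`) to halt promotion.

```python
def promotion_gate(metrics, thresholds):
    """Check evaluation metrics against promotion thresholds.

    thresholds maps metric name -> (kind, limit), where kind is "min"
    (metric must be >= limit) or "max" (metric must be <= limit).
    A metric absent from `metrics` counts as a failure.
    Returns (passed, list_of_failure_messages).
    """
    failures = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: missing")
        elif kind == "min":
            if value < limit:
                failures.append(f"{name}: {value} < {limit}")
        elif kind == "max":
            if value > limit:
                failures.append(f"{name}: {value} > {limit}")
        else:
            raise ValueError(f"unknown threshold kind {kind!r} for {name}")
    return not failures, failures

# Hypothetical thresholds covering accuracy, fairness, and data quality.
THRESHOLDS = {
    "sensitivity": ("min", 0.90),
    "subgroup_sensitivity_gap": ("max", 0.05),
    "missing_feature_rate": ("max", 0.02),
}
candidate = {"sensitivity": 0.93, "subgroup_sensitivity_gap": 0.08,
             "missing_feature_rate": 0.01}
passed, failures = promotion_gate(candidate, THRESHOLDS)
```

Treating a missing metric as a failure keeps the gate closed if an evaluation step silently stops reporting.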

The Scientist's Toolkit: Essential MLOps Research Reagents

Implementing a robust MLOps pipeline requires a suite of tools and components that act as the essential "research reagents" for operationalizing cancer diagnostics models.

Table 5: Essential MLOps Components for Cancer Diagnostics Research

| Tool Category | Function | Example Solutions |
|---|---|---|
| Source Control | Versioning for code, data, and ML model artifacts to ensure auditable and reproducible training [34]. | Git, DVC |
| Experiment Tracking | Tracking hyperparameters and metrics of parallel ML experiments to decide which model to promote [34]. | Weights & Biases (wandb), MLflow |
| Feature Store | Providing identical feature transformation logic for both model training and inference to prevent training-serving skew [34] [32]. | Tecton, Feast |
| Model Registry | A centralized repository for storing, versioning, and managing trained ML models throughout their lifecycle [34]. | MLflow Model Registry |
| ML Pipeline Orchestrator | Automating and coordinating the steps of the end-to-end ML workflow, from data ingestion to model deployment [34]. | Kubeflow Pipelines, Apache Airflow |
| Monitoring Platform | Continuously tracking model performance, data drift, and business metrics in production [32]. | Galileo, Evidently AI |

Adopting the MLOps mindset is not merely a technical shift but a fundamental cultural one that is critical for translating predictive models from experimental research into reliable clinical tools. By embracing automation, continuous monitoring, and rigorous governance, research teams can build cancer diagnostic systems that are not only accurate but also resilient, scalable, and trustworthy. This evolution from a focus on isolated model performance to a holistic view of the entire system lifecycle is the key to unlocking the full potential of machine learning in the fight against cancer.

Building Effective Diagnostic Pipelines: Methods and Real-World Applications

Multimodal artificial intelligence (MMAI) is redefining oncology by integrating heterogeneous datasets from diverse diagnostic modalities into cohesive analytical frameworks for more accurate and personalized cancer care [36]. Cancer manifests across multiple biological scales, from molecular alterations and cellular morphology to tissue organization and clinical phenotype [36]. Predictive models relying on a single data modality fail to capture this multiscale heterogeneity, limiting their ability to generalize across patient populations [36].

MMAI approaches enhance predictive accuracy and robustness by contextualizing molecular features within anatomical and clinical frameworks, yielding a more comprehensive representation of disease [36]. Such models are more likely to support mechanistically plausible inferences, improving interpretability and clinical relevance [36]. This integration enables a holistic view of tumor biology that mirrors clinical decision-making, where physicians naturally synthesize information from multiple sources—including imaging results, clinical data, and family history—to reach accurate diagnoses [37].

Current State of MMAI Applications in Oncology

Multimodal AI applications span the entire cancer care continuum, from prevention and early detection to diagnosis, treatment selection, and monitoring. The table below summarizes key applications and representative studies.

Table 1: MMAI Applications Across the Cancer Care Continuum

| Application Area | Specific Task | Data Modalities Integrated | Reported Performance |
|---|---|---|---|
| Cancer Diagnosis | Distinguishing cancer subtypes [37] | Histopathology WSIs, pathology reports | 94.65% accuracy, 0.9553 precision, 0.9472 recall [37] |
| Non-oncology Diagnosis | Alzheimer's disease diagnosis [38] | Imaging, clinical, genetic information | AUC of 0.993 [38] |
| Risk Stratification | Breast cancer risk prediction [36] | Clinical metadata, mammography, trimodal ultrasound | Similar or better than pathologist-level assessments [36] |
| Risk Stratification | Lung cancer risk prediction [36] | Low-dose CT scans | ROC-AUC up to 0.92 [36] |
| Treatment Response | Melanoma relapse prediction [36] | Histology, genomics | ROC-AUC 0.833 for 5-year relapse [36] |
| Treatment Response | Glioma and renal cell carcinoma risk stratification [36] | Histology, genomics | Outperformed WHO 2021 classification [36] |
| Survival Prediction | Colorectal cancer overall survival [1] | Histology WSIs (tumor-stroma ratio) | Validated in two independent cohorts [1] |
| Drug Development | Target identification [39] | Multi-omics data (genomics, transcriptomics, proteomics) | Reduced discovery timeline from years to months [39] |

The integration of MMAI in clinical workflows addresses fundamental limitations of unimodal approaches. In digital pathology, for instance, AI-assisted diagnostic approaches have demonstrated 96.3% sensitivity and 93.3% specificity across common tumor-type classifiers in a meta-analysis [36]. Furthermore, lightweight architectures can infer genomic alterations directly from histology slides (ROC-AUC 0.89), reducing turnaround time and cost of targeted sequencing across solid tumors [36].

Technical Frameworks for Multimodal Integration

Fusion Strategies and Architectures

Multimodal fusion techniques can be categorized based on the stage at which integration occurs, each with distinct advantages and limitations:

- Early Fusion: Raw data from different modalities are combined before feature extraction. This approach preserves potential cross-modal interactions but requires solving the alignment problem between heterogeneous data sources [40].

- Intermediate (Feature-level) Fusion: Features extracted from unimodal networks are combined using various techniques including operation-based (concatenation, element-wise operations), subspace-based, tensor-based, or graph-based methods [40]. This allows the model to learn cross-modal interactions while handling modality-specific characteristics.

- Late Fusion: Decisions from unimodal models are combined through majority vote, weighted sum, or averaging [40]. This approach is computationally simpler but may miss important cross-modal correlations.
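To make the three integration points concrete, here is a minimal NumPy sketch. The CT/PET pairing, the feature sizes, and the late-fusion weights are illustrative assumptions rather than values from the cited work; in a real pipeline each unimodal model would be a trained network.

```python
import numpy as np


def early_fusion(ct_slice: np.ndarray, pet_slice: np.ndarray) -> np.ndarray:
    """Early fusion: stack co-registered raw images as input channels.

    The shapes must match exactly, i.e. the alignment problem has already
    been solved (e.g. by image registration) before this step.
    """
    if ct_slice.shape != pet_slice.shape:
        raise ValueError("modalities must be spatially aligned before early fusion")
    return np.stack([ct_slice, pet_slice], axis=0)  # (2, H, W)


def intermediate_fusion(*features: np.ndarray) -> np.ndarray:
    """Operation-based feature-level fusion: concatenate unimodal feature vectors."""
    return np.concatenate(features)


def late_fusion(probs, weights) -> np.ndarray:
    """Decision-level fusion: weighted average of per-modality class probabilities."""
    w = np.asarray(weights, dtype=float)
    stacked = np.stack(probs)                      # (n_modalities, n_classes)
    return (w[:, None] * stacked).sum(axis=0) / w.sum()


# Hypothetical inputs for a single patient.
ct, pet = np.zeros((64, 64)), np.ones((64, 64))
img_feat, clin_feat = np.ones(16), np.zeros(8)
p_img, p_clin = np.array([0.7, 0.3]), np.array([0.4, 0.6])

print(early_fusion(ct, pet).shape)                          # (2, 64, 64)
print(intermediate_fusion(img_feat, clin_feat).shape)       # (24,)
print(late_fusion([p_img, p_clin], weights=[0.75, 0.25]))   # [0.625 0.375]
```

Late fusion needs only the unimodal outputs, so a missing modality can be handled by dropping its term and renormalizing the weights; the trade-off is that no cross-modal interaction is learned.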

Table 2: Multimodal Fusion Architectures and Their Applications

| Architecture | Mechanism | Advantages | Clinical Applications |
|---|---|---|---|
| Transformer-based Models [38] | Self-attention weights the importance of different data components | Parallel processing, handles sequential data well, models long-range dependencies | Cancer subtype classification [37], survival prediction [36] |
| Graph Neural Networks (GNNs) [38] | Represents data as graphs of nodes and edges | Handles non-Euclidean data structures, captures complex relationships between modalities | Tumor microenvironment modeling [38], cellular interaction networks [41] |
| Tensor Fusion Networks [37] | Uses outer products to model intermodal and intramodal feature interactions | Captures higher-order interactions between modalities | Pathomic fusion (histopathology + genomics) [37] |
| Multiple Instance Learning (MIL) [37] | Aggregates patch-level information under slide-level supervision | Handles gigapixel WSIs with weak supervision | WSI classification [37], tumor-stroma ratio quantification [1] |
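The outer-product mechanism listed for Tensor Fusion Networks is compact enough to show directly. The sketch below follows the common formulation in which a constant 1 is appended to each embedding, so the flattened product retains the original unimodal features alongside every pairwise cross-modal product. The embedding values are made up, and a learned projection would normally follow.

```python
import numpy as np


def tensor_fusion(h_a: np.ndarray, h_b: np.ndarray) -> np.ndarray:
    """Outer-product fusion of two unimodal embeddings.

    Appending 1 to each vector keeps the unimodal (intramodal) terms in the
    result alongside the bimodal (intermodal) products h_a[i] * h_b[j].
    """
    za = np.append(h_a, 1.0)
    zb = np.append(h_b, 1.0)
    return np.outer(za, zb).ravel()  # flattened for a downstream dense layer


h_path = np.array([0.5, -1.0, 2.0])  # hypothetical histology embedding
h_gen = np.array([3.0, 0.1])         # hypothetical genomic embedding
z = tensor_fusion(h_path, h_gen)
print(z.shape)  # (12,) because (3 + 1) * (2 + 1) = 12
```

Because the output size is the product of the augmented input sizes, two 512-dimensional embeddings would already yield 263,169 features. This is one reason to reduce each embedding's dimension before fusing.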

Foundation Models in Computational Pathology

Recent advances in foundation models are transforming computational pathology by enabling the development of AI tools for diagnosis, prognosis, and biomarker prediction from digitized tissue sections [42]. The TITAN (Transformer-based pathology Image and Text Alignment Network) model represents a notable advance: it is a multimodal whole-slide foundation model pretrained on 335,645 whole-slide images through visual self-supervised learning and through vision-language alignment with the corresponding pathology reports and 423,122 synthetic captions [42].

TITAN introduces a large-scale pretraining paradigm that leverages millions of high-resolution regions of interest (ROIs) for scalable whole-slide image encoding [42]. Without any fine-tuning or clinical labels, TITAN can extract general-purpose slide representations and generate pathology reports, and these capabilities generalize to resource-limited clinical scenarios such as rare disease retrieval and cancer prognosis [42].
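Slide-level embeddings such as TITAN's are typically used for retrieval by ranking archived slides by their similarity to a query. The sketch below assumes the embeddings have already been computed by some whole-slide encoder. It does not use TITAN's own interface, and the 2-dimensional vectors are toy stand-ins for real embeddings.

```python
import numpy as np


def rank_by_cosine(query: np.ndarray, gallery: np.ndarray, top_k: int = 3) -> np.ndarray:
    """Return indices of the top_k gallery rows most similar to `query`.

    query: (D,) embedding of the query slide (or of a text prompt, for
    zero-shot use with an aligned vision-language model).
    gallery: (N, D) embeddings of archived slides.
    """
    q = query / np.linalg.norm(query)
    g = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    sims = g @ q                      # cosine similarity to each archived slide
    return np.argsort(-sims)[:top_k]  # most similar first


gallery = np.array([[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]])
print(rank_by_cosine(np.array([1.0, 0.0]), gallery, top_k=2))  # [0 2]
```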

Experimental Protocols for MMAI Implementation

Multimodal Whole-Slide Image Classification

The MPath-Net framework provides a reproducible protocol for integrating histopathology images with clinical data for cancer subtype classification [37]:

Data Preparation:

- Collect whole-slide images (WSIs) and corresponding pathology reports from cancer genomics programs (e.g., TCGA dataset) [37].

- For WSIs: Extract smaller patches (512×512 pixels) from regions of interest using automated segmentation or tissue detection algorithms [37].

- For text data: Process pathology reports with natural language processing tools such as SciSpaCy (e.g., for tokenization) to extract meaningful clinical features [37].
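Patch extraction can be illustrated with a simple grid tiler. The minimal NumPy sketch below assumes the region has already been loaded as an RGB array scaled to [0, 1]; production pipelines read gigapixel WSIs tile by tile with a library such as OpenSlide. The intensity-threshold tissue detector and both thresholds are illustrative assumptions, not MPath-Net settings.

```python
import numpy as np


def tile_tissue_patches(region: np.ndarray, patch: int = 512,
                        bg_thresh: float = 0.8, min_tissue_frac: float = 0.5):
    """Return (row, col, tile) for non-overlapping tiles that are mostly tissue.

    region: (H, W, 3) RGB array with values in [0, 1].
    A pixel is treated as tissue when its mean intensity is below bg_thresh,
    a crude proxy for separating stained tissue from bright background glass.
    """
    h, w = region.shape[:2]
    kept = []
    for r in range(0, h - patch + 1, patch):
        for c in range(0, w - patch + 1, patch):
            tile = region[r:r + patch, c:c + patch]
            tissue_frac = (tile.mean(axis=2) < bg_thresh).mean()
            if tissue_frac >= min_tissue_frac:
                kept.append((r, c, tile))
    return kept


# Toy 1024 x 1024 region: white background with one darker "tissue" quadrant.
region = np.ones((1024, 1024, 3), dtype=np.float32)
region[:512, :512] = 0.3
patches = tile_tissue_patches(region)
print([(r, c) for r, c, _ in patches])  # [(0, 0)]
```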

Feature Extraction:

- Image features: Use Multiple Instance Learning (MIL) approaches (ABMIL, TransMIL) with self-supervised pretraining on patch-level features [37].

- Text features: Generate embeddings using Sentence-BERT or ClinicalBERT transformers, frozen during initial training to preserve pretrained contextual representations [37].
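Attention-based MIL (ABMIL) aggregates patch features into a slide-level embedding using attention weights, and those weights are also what attention heatmaps visualize for interpretability. The NumPy sketch below shows the pooling step in the basic (non-gated) form, where each patch weight is proportional to exp(w^T tanh(V h_k)). The random V and w stand in for parameters that would be learned during training, and the dimensions are assumptions.

```python
import numpy as np


def abmil_pool(H: np.ndarray, V: np.ndarray, w: np.ndarray):
    """Attention-based MIL pooling over one bag (slide) of patch embeddings.

    H: (K, D) patch embeddings; V: (L, D) and w: (L,) attention parameters.
    Returns the (D,) slide embedding and the (K,) attention weights.
    """
    scores = np.tanh(H @ V.T) @ w      # (K,) unnormalized attention scores
    scores -= scores.max()             # stabilize the softmax
    a = np.exp(scores) / np.exp(scores).sum()
    return a @ H, a                    # attention-weighted mean of patches


rng = np.random.default_rng(0)
H = rng.normal(size=(200, 512))        # 200 hypothetical patch embeddings
V = rng.normal(scale=0.05, size=(128, 512))
w = rng.normal(size=128)
slide_emb, attn = abmil_pool(H, V, w)
print(slide_emb.shape, attn.shape)     # (512,) (200,)
```

TransMIL replaces this pooling with Transformer layers over the patch tokens, which is not shown here.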

Multimodal Fusion and Training:

- Concatenate 512-dimensional image and text embeddings [37].

- Pass combined features through custom fine-tuning layers (fully connected layers with dropout) [37].

- Employ end-to-end training where image encoder and fusion layers are trained jointly [37].

- Use cross-entropy loss for classification tasks with Adam optimizer and learning rate 1e-4 [37].

- Validate using k-fold cross-validation (typically k=5), evaluating each fold on data held out from training [37].
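The fusion step amounts to concatenation followed by a small classifier head. The NumPy sketch below shows only the forward pass and the cross-entropy loss. The hidden width of 256, the ReLU activation, and the dropout rate are assumptions. In practice, backpropagation and the Adam update with learning rate 1e-4 would be handled by a framework such as PyTorch (`torch.optim.Adam(model.parameters(), lr=1e-4)`).

```python
import numpy as np

rng = np.random.default_rng(42)


def fusion_head_forward(img_emb, txt_emb, params, p_drop=0.3, train=True):
    """Concatenate embeddings, then FC -> ReLU -> dropout -> FC (logits)."""
    W1, b1, W2, b2 = params
    x = np.concatenate([img_emb, txt_emb], axis=1)   # (N, 512 + 512)
    h = np.maximum(0.0, x @ W1 + b1)                  # hidden layer with ReLU
    if train:                                         # inverted dropout
        keep = rng.random(h.shape) >= p_drop
        h = h * keep / (1.0 - p_drop)
    return h @ W2 + b2                                # (N, n_classes)


def cross_entropy(logits, labels):
    """Mean softmax cross-entropy for integer class labels."""
    z = logits - logits.max(axis=1, keepdims=True)
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


n, n_classes, hidden = 4, 3, 256
params = (rng.normal(0, 0.02, (1024, hidden)), np.zeros(hidden),
          rng.normal(0, 0.02, (hidden, n_classes)), np.zeros(n_classes))
img_emb = rng.normal(size=(n, 512))   # stand-ins for MIL slide embeddings
txt_emb = rng.normal(size=(n, 512))   # stand-ins for frozen report embeddings
labels = np.array([0, 2, 1, 0])
logits = fusion_head_forward(img_emb, txt_emb, params)
print(logits.shape, cross_entropy(logits, labels))
```

At inference time, call `fusion_head_forward(..., train=False)` so dropout is disabled and outputs are deterministic.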

Performance Evaluation:

- Assess using accuracy, precision, recall, F1-score, and AUC-ROC [37].

- Generate attention heatmaps for model interpretability and tumor localization [37].

- Compare against unimodal baselines and alternative fusion strategies [37].
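For multi-class subtype classification, precision, recall, and F1 are usually macro-averaged over classes. The pure-Python sketch below spells out that computation on toy labels. Macro averaging is an assumption (weighted averaging is also common), and AUC-ROC is better left to a library routine such as scikit-learn's `roc_auc_score`.

```python
from collections import Counter


def classification_metrics(y_true, y_pred):
    """Accuracy and macro-averaged precision, recall, and F1."""
    classes = sorted(set(y_true) | set(y_pred))
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # true positives per class
    n_pred, n_true = Counter(y_pred), Counter(y_true)
    prec, rec, f1 = [], [], []
    for c in classes:
        p = correct[c] / n_pred[c] if n_pred[c] else 0.0
        r = correct[c] / n_true[c] if n_true[c] else 0.0
        prec.append(p)
        rec.append(r)
        f1.append(2 * p * r / (p + r) if p + r else 0.0)
    k = len(classes)
    return {
        "accuracy": sum(correct.values()) / len(y_true),
        "precision": sum(prec) / k,
        "recall": sum(rec) / k,
        "f1": sum(f1) / k,
    }


y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
print(classification_metrics(y_true, y_pred))
```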

MMAI for Survival Prediction

For survival analysis using multimodal data, the protocol below outlines a typical workflow:

Data Integration:

- Combine histopathology WSIs with genomic features (mutation status, gene expression) and clinical variables (age, stage, treatment history) [41].

- Process WSIs using deep learning models (ResNet-50, VGG) pretrained on natural images or via self-supervised learning on medical images [1].

- Encode genomic data using pathway analysis or gene signature methods (PAM50, Oncotype DX) [41].
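One simple way to turn expression data into pathway-level features is to score each pathway as the average z-scored expression of its member genes. This is a generic sketch, not the PAM50 or Oncotype DX algorithms, which use their own published gene sets and scoring procedures. Established tools such as GSVA implement more robust rank-based scores. The gene and pathway names below are hypothetical.

```python
import numpy as np


def pathway_scores(expr, genes, pathways):
    """Score each pathway as the mean z-scored expression of its member genes.

    expr: (n_patients, n_genes) expression matrix, e.g. log-transformed counts.
    genes: gene symbols in the same order as the columns of expr.
    pathways: dict mapping pathway name -> list of member gene symbols.
    Returns the sorted pathway names and an (n_patients, n_pathways) matrix.
    """
    expr = np.asarray(expr, dtype=float)
    sd = np.maximum(expr.std(axis=0), 1e-8)          # avoid division by zero
    z = (expr - expr.mean(axis=0)) / sd              # z-score each gene across patients
    col = {g: i for i, g in enumerate(genes)}
    names = sorted(pathways)
    scores = []
    for name in names:
        members = [col[g] for g in pathways[name] if g in col]  # skip unmeasured genes
        scores.append(z[:, members].mean(axis=1) if members
                      else np.full(expr.shape[0], np.nan))
    return names, np.column_stack(scores)


expr = np.array([[1.0, 2.0, 3.0], [3.0, 2.0, 1.0]])
names, S = pathway_scores(expr, ["GENE_A", "GENE_B", "GENE_C"],
                          {"PATHWAY_1": ["GENE_A"],
                           "PATHWAY_2": ["GENE_A", "GENE_B", "GENE_X"]})
print(names, S)
```

In a train/test setting, the gene means and standard deviations should be estimated on the training cohort only, to avoid leaking test-set information.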

Fusion Architecture:

- Implement cross-modal attention mechanisms to weight importance of features from different modalities [41].

- Use Cox proportional hazards models with neural network extensions for survival prediction [40].

- Regularize using L1/L2 penalties to prevent overfitting on high-dimensional multimodal data [41].
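Neural extensions of the Cox model, such as DeepSurv-style networks, keep the Cox partial likelihood as the training objective and let a network produce the log-risk score. The NumPy sketch below computes that loss with the Breslow approximation for tied times, together with an L1/L2 (elastic-net) penalty. The penalty strengths are placeholder assumptions, and a framework would supply the gradients in practice.

```python
import numpy as np


def cox_nll(risk, time, event):
    """Negative Cox log partial likelihood, averaged over observed events.

    risk: (N,) predicted log-risk scores (e.g. output of a fused network).
    time: (N,) follow-up times; event: (N,) 1 = event observed, 0 = censored.
    Ties are handled with the Breslow approximation.
    """
    risk = np.asarray(risk, dtype=float)
    time = np.asarray(time, dtype=float)
    event = np.asarray(event, dtype=bool)
    at_risk = time[None, :] >= time[:, None]   # at_risk[i, j]: patient j still at risk at time[i]
    m = risk.max()                             # log-sum-exp shift for stability
    log_denom = m + np.log((np.exp(risk - m)[None, :] * at_risk).sum(axis=1))
    return -(risk[event] - log_denom[event]).sum() / max(event.sum(), 1)


def elastic_net_penalty(weights, l1=1e-4, l2=1e-3):
    """L1 + L2 penalty on a flat parameter vector (strengths are placeholders)."""
    weights = np.asarray(weights, dtype=float)
    return l1 * np.abs(weights).sum() + l2 * np.square(weights).sum()


times, events = np.array([1.0, 2.0, 3.0]), np.array([1, 1, 1])
good = cox_nll([2.0, 1.0, 0.0], times, events)  # higher risk fails earlier
bad = cox_nll([0.0, 1.0, 2.0], times, events)   # ordering reversed
print(good < bad)  # True
```

The full training objective would then be `cox_nll(...)` plus `elastic_net_penalty(...)` applied to the network's weights.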

Validation:

- Evaluate using concordance index (c-index) to measure agreement between predicted risk and observed survival [40].

- Perform stratified analysis across cancer subtypes and demographic groups to ensure generalizability [41].

- Use time-dependent AUC and calibration plots to assess predictive performance at specific timepoints [41].
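Harrell's c-index counts how often, among comparable patient pairs, the patient with the higher predicted risk experiences the event first. The quadratic-time pure-Python sketch below illustrates the definition on toy data.

```python
def harrell_c_index(risk, time, event):
    """Harrell's concordance index for right-censored survival data.

    A pair (i, j) is comparable when patient i had an observed event and
    time[i] < time[j]; it is concordant when risk[i] > risk[j].
    Tied risk scores count as half-concordant.
    """
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue                      # censored patients cannot anchor a pair
        for j in range(len(time)):
            if time[i] < time[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable if comparable else float("nan")


print(harrell_c_index(risk=[3, 1, 2], time=[1, 2, 3], event=[1, 0, 1]))  # 1.0
```

For real cohorts, a tested implementation such as `lifelines.utils.concordance_index` is preferable. Note that it expects scores where higher means longer survival, so risk scores should be negated before passing them in.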

Figure: MMAI Integration Workflow

Research Resources for MMAI

Successful implementation of MMAI pipelines requires specific computational tools and data resources. The table below details essential components for developing and validating multimodal AI systems in oncology research.

Table 3: Essential Research Resources for MMAI in Oncology

| Resource Category | Specific Tools/Platforms | Key Functionality | Application Context |
|---|---|---|---|
| Whole-Slide Image Analysis | TITAN [42] | Whole-slide foundation model for general-purpose slide representation | Rare cancer retrieval, zero-shot classification, pathology report generation |
| Whole-Slide Image Analysis | CONCH [42] | Patch encoder for feature extraction from histopathology images | Preprocessing WSIs for slide-level representation learning |
| Genomic Data Processing | GATK [41] | Genome Analysis Toolkit for variant discovery | Somatic mutation calling from tumor-normal pairs |
| Genomic Data Processing | DESeq2, edgeR [41] | Differential expression analysis | Identifying gene expression patterns across cancer subtypes |
| Multimodal Fusion Frameworks | MPath-Net [37] | End-to-end multimodal framework combining WSIs and pathology reports | Cancer subtype classification |
| Multimodal Fusion Frameworks | Pathomic Fusion [36] | Fusion strategy combining histology and genomics | Glioma and renal cell carcinoma risk stratification |
| Medical Imaging Platforms | MONAI [36] | Open-source PyTorch-based framework for medical imaging | Radiology image analysis, tumor segmentation, detection |
| Data Resources | TCGA [37] | The Cancer Genome Atlas, providing multi-omics and clinical data | Pan-cancer analysis, model training and validation |
| Data Resources | CPTAC [40] | Clinical Proteomic Tumor Analysis Consortium | Proteogenomic correlation studies |

Implementation Challenges and Future Directions

Despite promising advances, several challenges remain in the widespread clinical adoption of MMAI systems:

Data Heterogeneity and Quality: Different modalities vary in format, structure, and coding standards, often originating from multiple vendors or institutions, making normalization and harmonization crucial before integration [41]. Data quality issues such as missing values, inconsistencies, and noise can compromise integration efforts and model performance [41].
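As a minimal illustration of harmonization, the sketch below imputes missing values and standardizes each feature within each contributing institution. This deliberately simple approach is chosen for illustration only. Per-site z-scoring also removes genuine between-site differences in case mix, whereas dedicated batch-correction methods such as ComBat model site effects more carefully.

```python
import numpy as np


def harmonize_by_site(X, site):
    """Median-impute missing values, then z-score each feature within each site.

    X: (N, F) array with np.nan marking missing entries; site: (N,) site labels.
    Returns a new array; the input is not modified.
    """
    X = np.array(X, dtype=float)                 # copy of the input
    site = np.asarray(site)
    for s in np.unique(site):
        rows = site == s
        block = X[rows]
        med = np.nanmedian(block, axis=0)        # NaN if a feature is missing for the whole site
        block = np.where(np.isnan(block), med, block)
        sd = block.std(axis=0)
        sd[sd == 0] = 1.0                        # leave constant features at zero
        X[rows] = (block - block.mean(axis=0)) / sd
    return X


X = [[1.0, np.nan], [3.0, 4.0], [10.0, 6.0], [20.0, 8.0]]
print(harmonize_by_site(X, ["A", "A", "B", "B"]))
```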

Computational Demands: The storage and processing requirements for large-scale multimodal datasets—particularly high-resolution imaging and raw genomics data—necessitate advanced infrastructure and scalable analytical tools [37] [41].

Interpretability and Validation: Many AI models, especially deep learning, operate as "black boxes," limiting mechanistic insight into their predictions [39]. Extensive preclinical and clinical validation remains resource-intensive, requiring rigorous evaluation across diverse patient populations [1].

Future development should focus on creating standardized methodologies and workflows for multimodal fusion [41], improving model interpretability through attention mechanisms and explainable AI techniques [37], and advancing federated learning approaches to enable collaboration while preserving data privacy [39]. As these technical and validation challenges are addressed, MMAI is poised to fundamentally transform oncology research and clinical practice, ultimately enabling more precise, personalized cancer care.
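Federated learning is commonly built on federated averaging (FedAvg). Each institution trains locally, and a coordinating server averages the resulting parameters, weighting each site by its local sample size. The sketch below shows only that aggregation step, with hypothetical two-site parameters; secure aggregation, communication, and local training are not shown.

```python
import numpy as np


def fedavg(client_params, client_sizes):
    """Sample-size-weighted average of per-site model parameters.

    client_params: one list of numpy arrays (one array per layer) per site.
    client_sizes: number of local training samples at each site.
    Only parameters are shared; patient-level data never leave a site.
    """
    total = float(sum(client_sizes))
    n_layers = len(client_params[0])
    return [
        sum(params[k] * (n / total) for params, n in zip(client_params, client_sizes))
        for k in range(n_layers)
    ]


site_a = [np.array([1.0, 1.0]), np.array([0.0])]   # hypothetical layer weights
site_b = [np.array([3.0, 3.0]), np.array([4.0])]
print(fedavg([site_a, site_b], client_sizes=[100, 300]))
```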

Figure: MMAI Fusion Strategies

Homologous Recombination Deficiency (HRD) Detection

Homologous recombination deficiency (HRD) is a characteristic of cancer cells that impairs their ability to repair double-strand DNA breaks accurately. It arises from defects in the homologous recombination repair (HRR) pathway, a high-fidelity DNA repair mechanism [43]. HRD status is clinically important because it is a key predictive biomarker for response to PARP inhibitors (PARPi) and platinum-based chemotherapy [43] [44]. HRD-positive tumors exhibit genomic instability and cannot reliably repair the DNA damage these therapies cause. Platinum agents form DNA crosslinks that require HRR to resolve, while PARP inhibition leads to double-strand breaks that HR-deficient cells cannot repair, a synthetic lethal interaction.

Traditional methods for HRD detection rely on molecular biology assays, including genomic instability scoring (e.g., assessment of loss of heterozygosity, telomeric allelic imbalance, and large-scale state transitions), mutational signature analysis, and sequencing of HRR-related genes such as BRCA1 and BRCA2 [43]. While these approaches are established in clinical practice, they present substantial limitations, including high costs, extended turnaround times, and significant failure rates (reported to be 20-30%) due to insufficient tissue quality or quantity [21]. Furthermore, access to these advanced molecular tests is often restricted to specialized centers in high-income countries, creating substantial healthcare disparities in cancer diagnostics and precision oncology implementation [44].
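Genomic-scar assays commonly combine the three instability measures named above into a single score. The sketch below uses an unweighted sum of the LOH, TAI, and LST counts. It calls a tumor HRD-positive if that score reaches a threshold or if a deleterious BRCA1/2 variant is present. The default threshold of 42 follows a cutoff used by one commercial assay, but cutoffs are assay- and tumor-type-specific and should be treated as a configurable assumption.

```python
def genomic_instability_score(loh: int, tai: int, lst: int) -> int:
    """Unweighted sum of loss-of-heterozygosity, telomeric allelic imbalance,
    and large-scale state transition counts."""
    return loh + tai + lst


def is_hrd_positive(loh: int, tai: int, lst: int,
                    brca_deleterious: bool = False, threshold: int = 42) -> bool:
    """HRD-positive if a deleterious BRCA1/2 variant is present or the
    genomic instability score meets the (assay-specific) threshold."""
    return brca_deleterious or genomic_instability_score(loh, tai, lst) >= threshold


print(is_hrd_positive(20, 15, 10))                         # True  (score 45)
print(is_hrd_positive(10, 10, 10))                         # False (score 30)
print(is_hrd_positive(10, 10, 10, brca_deleterious=True))  # True
```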