NGS vs Sanger Sequencing in Oncology: A Strategic Guide for Precision Mutation Detection

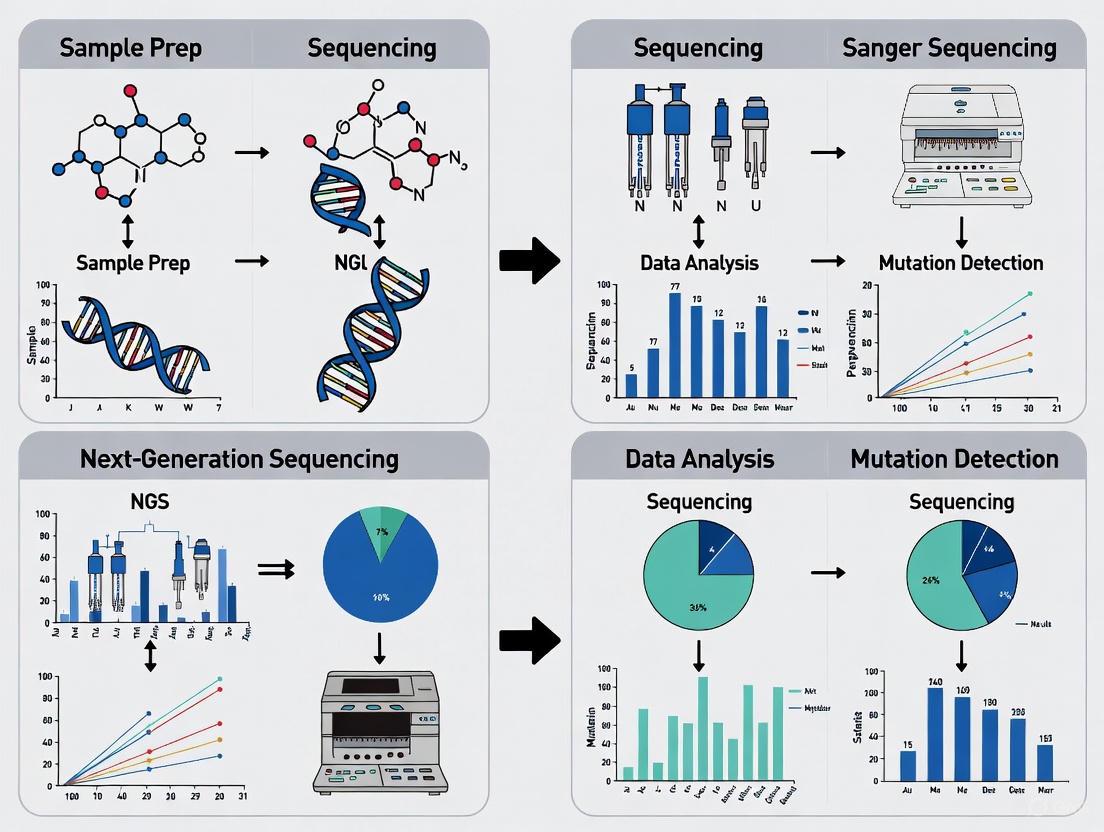

This article provides a comprehensive comparison of Next-Generation Sequencing (NGS) and Sanger sequencing for cancer mutation detection, tailored for researchers and drug development professionals.

NGS vs Sanger Sequencing in Oncology: A Strategic Guide for Precision Mutation Detection

Abstract

This article provides a comprehensive comparison of Next-Generation Sequencing (NGS) and Sanger sequencing for cancer mutation detection, tailored for researchers and drug development professionals. It covers the foundational principles of both technologies, explores their methodological applications in oncology, addresses troubleshooting and optimization strategies for complex genomic analyses, and critically examines validation protocols and comparative performance data. Together, these four themes provide a practical framework for selecting the appropriate sequencing technology to advance precision medicine, biomarker discovery, and therapeutic development.

Core Technologies Unveiled: The Fundamental Principles of Sanger and NGS

In the evolving landscape of genomic analysis, Sanger sequencing coupled with capillary electrophoresis (CE) maintains a critical role in modern molecular laboratories, particularly for targeted applications in cancer research. Despite the rise of massively parallel next-generation sequencing (NGS), Sanger sequencing remains the gold standard for validating NGS findings and for focused mutation detection because of its exceptional accuracy and long read lengths [1] [2] [3]. This guide examines the principles, performance, and continuing role of CE-based Sanger sequencing in cancer mutation detection, providing researchers with a clear framework for selecting sequencing methods based on experimental requirements.

Sanger sequencing, developed by Frederick Sanger in 1977, revolutionized molecular biology by providing the first practical method for deciphering DNA sequences [4]. The subsequent integration of capillary electrophoresis in the 1990s automated and streamlined this process, enabling the high-throughput completion of the Human Genome Project [5]. While next-generation sequencing (NGS) now dominates large-scale genomic studies, Sanger sequencing maintains irreplaceable value in clinical research environments, especially for confirmatory testing of oncogenic mutations like KRAS and FLT3, where its >99.99% accuracy provides essential validation [1] [4] [6].

The core principle of Sanger sequencing involves the selective incorporation of chain-terminating dideoxynucleotides (ddNTPs) during DNA replication, generating DNA fragments of varying lengths that collectively represent the template sequence [4]. Capillary electrophoresis then separates these fragments with single-base resolution, providing the precise readout that has established this technology as a foundational tool in precision oncology [5] [3].
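The chain-termination principle can be illustrated with a toy Python model. It is a conceptual sketch only, not a model of real reaction kinetics: it assumes exactly one terminated product for every position of the newly synthesized strand, ignores the primer, and treats capillary electrophoresis as a perfect sort by fragment length. The function names and the template are hypothetical.

```python
import random

COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def new_strand_from_template(template_5to3: str) -> str:
    """The synthesized strand is the reverse complement of the template (both written 5'->3')."""
    return "".join(COMPLEMENT[base] for base in reversed(template_5to3))


def chain_terminated_products(new_strand: str, seed: int = 0) -> list[tuple[int, str]]:
    """One product per position: (fragment length, dye-labelled ddNTP at its 3' end), in random order."""
    products = [(i + 1, new_strand[i]) for i in range(len(new_strand))]
    random.Random(seed).shuffle(products)
    return products


def capillary_readout(products: list[tuple[int, str]]) -> str:
    """Sort by length (shortest fragments reach the detector first) and read the terminal dyes."""
    return "".join(base for _, base in sorted(products))


template = "TTGACCGATC"  # hypothetical template fragment
strand = new_strand_from_template(template)
print(strand)  # GATCGGTCAA
print(capillary_readout(chain_terminated_products(strand)))  # GATCGGTCAA
```

Reading the dye colors in size order recovers the synthesized strand; its reverse complement gives back the template.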

Principles of Capillary Electrophoresis in Sanger Sequencing

Fundamental Separation Mechanisms

Capillary electrophoresis separates DNA sequencing fragments by electrokinetic transport within narrow capillaries (typically 50-100 μm in internal diameter). Three separation regimes are usually distinguished; which one applies depends on whether a sieving matrix is present and on the size of the DNA fragment relative to the pores of that matrix:

- Free-Zone Capillary Electrophoresis: In the absence of a sieving matrix, separation depends on the charge-to-size ratio of the analytes. This mode offers little resolution for DNA sequencing because every nucleotide carries the same charge, so DNA fragments of different lengths have nearly identical charge-to-mass ratios and migrate at nearly the same speed [7].

- Ogston Sieving: This mechanism dominates the separation of smaller DNA fragments (typically <500 bp). The DNA molecules behave as incompressible spheres that migrate through the pores of the polymer matrix. Smaller fragments navigate the porous network more efficiently, resulting in faster migration and excellent resolution ideal for sequencing applications [7].

- Reptation: Larger DNA fragments that cannot pass through the matrix pores as coils elongate and thread through the polymer network in a snake-like motion. In this regime, mobility becomes less dependent on size and resolution declines, which helps explain why Sanger sequencing read lengths are typically limited to 500-1000 bases [7].

Evolution from Slab Gels to Capillary Systems

The transition from slab gel electrophoresis to capillary electrophoresis represented a watershed moment in DNA sequencing technology. Traditional slab gel methods were labor-intensive, requiring manual pouring of gels, loading of samples in individual lanes, and extended separation times [5]. The introduction of capillary array electrophoresis by Mathies et al. enabled 96 samples to be processed in parallel, dramatically accelerating throughput while maintaining separation efficiency [5]. This innovation was pivotal for large-scale projects like the Human Genome Project, establishing the automated, high-throughput paradigm that modern sequencing relies upon [5].

Sieving Matrices: From Cross-Linked to Replaceable Polymers

The development of advanced sieving matrices was crucial for robust CE performance:

- Linear Polyacrylamide (LPA): Early capillary systems used cross-linked polyacrylamide gels, which suffered from bubble formation and limited stability [5]. The introduction of non-crosslinked LPA solutions addressed these issues while maintaining high separation efficiency, with early demonstrations achieving remarkable resolution of polydeoxyadenylic acids [5].

- Polydimethylacrylamide (POP-4): This later development offered lower viscosity while effectively suppressing electroosmotic flow, making it well suited to automated systems. POP-4 provides single-base resolution for fragments up to 250 bases and two-base resolution up to 350 bases, which suits fragment analysis and shorter sequencing reads [7].

- Hydroxyethylcellulose: As a polysaccharide-based gel, this matrix offers low cost and low viscosity but requires additional capillary coatings to control electroosmotic flow [7].

The critical innovation of replaceable polymer matrices enabled automatic replenishment of the separation matrix between runs, facilitating the 24/7 operation necessary for production-scale sequencing [5].

Technical Comparison: Sanger Sequencing vs. NGS in Cancer Research

Performance Metrics and Operational Characteristics

Table 1: Technical comparison between Sanger sequencing and Next-Generation Sequencing

| Feature | Sanger Sequencing (CE-based) | Next-Generation Sequencing (NGS) |
|---|---|---|
| Fundamental Method | Chain termination using ddNTPs [6] | Massively parallel sequencing (e.g., Sequencing by Synthesis) [6] |
| Throughput | Single DNA fragment per reaction [2] | Millions to billions of fragments simultaneously [8] [2] |
| Read Length | 500-1000 bp (long contiguous reads) [4] [6] | 50-300 bp (short-read); >100,000 bp (long-read) [8] [6] |
| Accuracy | >99.99% (Phred score > Q50) [4] [6] | 99.9% (0.1% error rate); improved by high coverage [8] [6] |
| Sensitivity | ~15-20% variant detection limit [8] [2] | ~1% variant detection limit [8] [2] |
| Cost Basis | High cost per base, low cost per run (small projects) [6] | Low cost per base, high capital and reagent cost per run [6] |
| Optimal Sample Number | Cost-effective for 1-20 targets [2] | Cost-effective for high sample volumes/many targets [2] |
| Primary Applications | Targeted confirmation, single-gene variants, validation [1] [6] | Whole genomes, exomes, transcriptomes, rare variants [8] [6] |

Diagnostic Performance in Cancer Mutation Detection

A recent meta-analysis of 56 studies involving 7,143 patients provides quantitative insight into the performance of NGS relative to standard testing methods for non-small cell lung cancer (NSCLC) mutation profiling:

Table 2: Diagnostic accuracy of NGS versus standard methods in NSCLC [9]

| Mutation Type | Sample Type | Sensitivity (%) | Specificity (%) | Recommended Use |
|---|---|---|---|---|
| EGFR mutations | Tissue | 93 | 97 | First-line testing with NGS [9] |
| ALK rearrangements | Tissue | 99 | 98 | First-line testing with NGS [9] |
| EGFR, BRAF V600E, KRAS G12C | Liquid Biopsy | 80 | 99 | When tissue unavailable [9] |
| ALK, ROS1, RET, NTRK rearrangements | Liquid Biopsy | Limited sensitivity | >95 | Require tissue confirmation [9] |

Turnaround time: liquid biopsy testing had a turnaround time of 8.18 days (significantly shorter, p<0.001), which matters when clinical urgency is high [9].

These data indicate that NGS provides comprehensive mutation analysis with high accuracy in tissue samples, while liquid biopsy is less sensitive for gene rearrangements. Sanger sequencing retains its role for targeted verification of specific mutations identified through NGS, particularly when high confidence in an individual variant call is required [9].

Experimental Protocols for Mutation Detection in Cancer Research

KRAS Mutation Detection via Sanger Sequencing

Background: Kirsten rat sarcoma viral oncogene homologue (KRAS) is frequently mutated in multiple cancer types and is associated with poor prognosis. Detection of KRAS mutations is crucial for guiding targeted therapy decisions [1].

Protocol Details:

- DNA Extraction: Isolate genomic DNA from tumor tissue (FFPE or fresh frozen) or liquid biopsy samples

- PCR Amplification: Amplify target regions containing known KRAS hotspots (e.g., codons 12, 13, 61) using sequence-specific primers

- Sequencing Reaction: Utilize dye-terminator sequencing chemistry with fluorescently labeled ddNTPs

- Purification: Remove unincorporated dyes using the BigDye XTerminator purification system

- Capillary Electrophoresis: Inject samples onto CE instrument with polymer sieving matrix (e.g., POP-4)

- Data Analysis: Identify mutations by comparing to wild-type sequences using variant analysis software

Performance Metrics: This approach provides a scalable workflow for rapid, reproducible identification of KRAS mutations (e.g., G12A) in less than six hours with single-base resolution [1].
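The data analysis step (comparing the sample to the wild-type sequence) can be sketched in Python as a codon-by-codon comparison reported in protein notation such as G12A. This is a minimal sketch under strong assumptions: it takes clean, in-frame consensus base calls, whereas real tumor traces are usually heterozygous and need chromatogram-level (mixed-peak) analysis, which it does not attempt. The reference fragment `KRAS_WT_CDS` (codons 1-18, covering the codon 12 and 13 hotspots) is included for illustration and should be checked against a reference transcript; `call_codon_changes` is a hypothetical name.

```python
from itertools import product

# Standard genetic code, codons enumerated in the conventional T, C, A, G order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# Illustrative wild-type KRAS coding sequence, codons 1-18 (covers the codon 12/13 hotspots).
# Assumption: verify against a reference transcript before any real use.
KRAS_WT_CDS = "ATGACTGAATATAAACTTGTGGTAGTTGGAGCTGGTGGCGTAGGCAAGAGTGCC"


def call_codon_changes(reference: str, sample: str, first_codon: int = 1) -> list[str]:
    """Compare two in-frame consensus sequences and report changes as e.g. 'G12A'."""
    if len(reference) != len(sample) or len(reference) % 3 != 0:
        raise ValueError("sequences must be in frame and of equal length")
    changes = []
    for start in range(0, len(reference), 3):
        ref_codon, alt_codon = reference[start:start + 3], sample[start:start + 3]
        if ref_codon != alt_codon:
            codon_number = first_codon + start // 3
            changes.append(f"{CODON_TABLE[ref_codon]}{codon_number}{CODON_TABLE[alt_codon]}")
    return changes


# Hypothetical sample: codon 12 GGT -> GCT (second base G>C, 0-based index 34).
sample = KRAS_WT_CDS[:34] + "C" + KRAS_WT_CDS[35:]
print(call_codon_changes(KRAS_WT_CDS, sample))  # ['G12A']
```

Working at the codon level keeps the output in the protein notation used clinically; a synonymous change would still be listed (for example G12G) and would need filtering downstream.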

FLT3-ITD Mutation Analysis by Fragment Analysis

Background: FLT3 (FMS-related tyrosine kinase-3) internal tandem duplication (ITD) mutations occur in approximately 30% of acute myeloid leukemia (AML) patients and confer poor prognosis [1].

Protocol Details:

- DNA Extraction: Obtain high-quality DNA from bone marrow or peripheral blood samples

- PCR Amplification: Utilize tandem duplication (TD)-PCR method with fluorescently labeled primers

- Fragment Separation: Perform capillary electrophoresis with high-resolution sieving matrix

- Size Determination: Compare amplified fragments to size standards for precise length determination

- Quantification: Assess mutant allele burden based on peak heights

Performance Metrics: This method detects ITD mutations ranging from 3 to over 400 bp with sensitivity down to four copies of mutant DNA, enabling accurate minimal residual disease monitoring [1].
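The size-determination and quantification steps above can be sketched in Python. This is an illustrative sketch, not vendor sizing software: it sizes peaks by linear interpolation between the two flanking size-standard peaks (instrument software often uses other sizing models), and it expresses allele burden as a mutant-to-wild-type peak-height ratio, one common convention (some laboratories use peak areas instead). The ladder, scan positions, and peak heights are hypothetical.

```python
from bisect import bisect_left


def scan_to_bp(scan: float, ladder: list[tuple[float, float]]) -> float:
    """Size a peak by linear interpolation between the two flanking size-standard peaks.

    ladder: (scan position, known size in bp) pairs sorted by scan position.
    """
    scans = [s for s, _ in ladder]
    if not scans[0] <= scan <= scans[-1]:
        raise ValueError("peak lies outside the size-standard range")
    j = bisect_left(scans, scan)
    if scans[j] == scan:
        return float(ladder[j][1])
    (s0, b0), (s1, b1) = ladder[j - 1], ladder[j]
    return b0 + (b1 - b0) * (scan - s0) / (s1 - s0)


def summarize_flt3_itd(wt_peak, itd_peak, ladder) -> dict:
    """wt_peak / itd_peak: (scan position, peak height) for wild-type and ITD amplicons."""
    wt_bp = scan_to_bp(wt_peak[0], ladder)
    itd_bp = scan_to_bp(itd_peak[0], ladder)
    insert_bp = round(itd_bp - wt_bp)
    return {
        "itd_length_bp": insert_bp,
        "in_frame": insert_bp % 3 == 0,  # FLT3-ITDs are typically in frame
        "allelic_ratio": itd_peak[1] / wt_peak[1],  # mutant : wild-type
        "mutant_fraction": itd_peak[1] / (itd_peak[1] + wt_peak[1]),
    }


# Hypothetical size standard and peaks (scan units are arbitrary).
ladder = [(1000, 250), (1200, 300), (1400, 350), (1600, 400)]
result = summarize_flt3_itd(wt_peak=(1320, 4000), itd_peak=(1464, 2000), ladder=ladder)
print(result["itd_length_bp"], result["in_frame"], result["allelic_ratio"])  # 36 True 0.5
```

The ITD length is simply the size difference between the mutant and wild-type amplicons, so sizing accuracy near the wild-type peak matters most.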

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key research reagent solutions for capillary electrophoresis-based Sanger sequencing

| Reagent/Material | Function | Application Notes |
|---|---|---|
| BigDye Terminator v3.1 | Fluorescent dideoxy chain terminators for sequencing reactions | Provides balanced ddNTP incorporation for even peak heights [1] |
| POP-4 or POP-7 Polymer | Sieving matrix for fragment separation | POP-4: fragment analysis; POP-7: sequencing applications [7] |
| BigDye XTerminator Kit | Purification of sequencing reactions | Removes unincorporated dyes before CE injection [1] |
| Linear Polyacrylamide (LPA) | Alternative sieving matrix | High resolution but higher viscosity than POP polymers [5] [7] |
| Capillary Arrays | Separation channel for electrophoresis | 1-96 capillary formats available for different throughput needs [5] |
| CRISPR-Cas9 Systems | Gene editing verification | Used with TIDE decomposition analysis for editing efficiency [1] |
| Bisulfite Conversion Reagents | DNA methylation analysis | Enables detection of 5-methylcytosine in CpG islands [1] |

Sanger sequencing by capillary electrophoresis maintains a critical niche in contemporary cancer research despite the expanding dominance of NGS technologies. Its unparalleled accuracy for targeted sequencing, relatively low operational costs for small-scale projects, and established validation protocols make it indispensable for confirming oncogenic mutations like KRAS and FLT3-ITD [1] [6]. The technology's ability to generate long, contiguous reads (>500 bp) with minimal infrastructure requirements ensures its continued relevance in both research and clinical settings [4] [10].

Nevertheless, NGS surpasses Sanger sequencing in comprehensive genomic profiling, particularly for detecting rare somatic variants in heterogeneous tumor samples and identifying novel cancer biomarkers [8] [9]. The massively parallel nature of NGS provides unprecedented depth of coverage, enabling researchers to detect mutations at variant allele frequencies as low as 1%, far below the ~15-20% detection limit of Sanger sequencing [2]. This sensitivity is crucial for understanding tumor evolution, heterogeneity, and resistance mechanisms.

The future of cancer genomics lies not in choosing one technology over the other, but in strategically deploying both in a complementary framework. Sanger sequencing provides the gold-standard validation for NGS discoveries, while NGS offers the discovery power to identify novel therapeutic targets. This synergistic approach leverages the unique strengths of both technologies, advancing precision oncology through both comprehensive genomic assessment and unequivocal confirmation of clinically actionable mutations [6] [10] [9].

Next-Generation Sequencing (NGS) has fundamentally transformed cancer research by introducing a massively parallel approach to DNA analysis. This technology represents a radical departure from traditional Sanger sequencing, enabling researchers to sequence millions to billions of DNA fragments simultaneously rather than processing single fragments sequentially [8] [11]. The implications for cancer mutation detection are profound: where Sanger sequencing provided a limited snapshot of the cancer genome, NGS delivers a comprehensive landscape of genetic alterations driving tumorigenesis [2].

This revolutionary capacity stems from NGS's core architectural principle—massive parallelism. While Sanger sequencing employs the chain-termination method using dideoxynucleoside triphosphates (ddNTPs) to halt DNA synthesis, followed by capillary electrophoresis to separate fragments by size, NGS technologies utilize diverse chemical approaches including sequencing-by-synthesis, ion semiconductor sequencing, or nanopore sequencing, all sharing the common feature of concurrently processing enormous numbers of DNA fragments [6]. This technical evolution has redefined the scale and scope of cancer genomics, making large-scale projects like comprehensive tumor genomic profiling financially and technically feasible for research laboratories and clinical settings alike [8] [12].

Technical Comparison: NGS Versus Sanger Sequencing

Fundamental Technological Differences

The operational distinction between these sequencing technologies manifests most significantly in their throughput capabilities. Sanger sequencing processes a single DNA fragment per reaction, generating one long contiguous read typically ranging from 500 to 1,000 base pairs with exceptional accuracy (Phred score > Q50 or 99.999% accuracy) in the central read region [6]. In stark contrast, NGS platforms sequence millions to billions of fragments in parallel, producing vast quantities of shorter reads (typically 50-300 bp for short-read platforms) that collectively provide comprehensive genomic coverage [6] [2].

This differential approach creates complementary roles for these technologies in modern research workflows. Sanger sequencing remains the "gold standard" for validating variants identified through NGS screening and for sequencing single-gene targets where long read lengths are advantageous [6]. Meanwhile, NGS has become the preferred technology for discovery-phase research, comprehensive genomic profiling, and applications requiring detection of rare variants in heterogeneous samples [2].

Performance Metrics for Cancer Research

For cancer mutation detection specifically, sensitivity and variant detection capability are critical parameters. Sanger sequencing has a limited detection sensitivity of approximately 15-20% variant allele frequency (VAF), meaning that mutations whose variant alleles make up less than about 15-20% of the sequenced DNA may go undetected [8] [2]. Because a heterozygous mutation accounts for only one of the two alleles in each cell that carries it, a 15-20% VAF corresponds to the mutation being present in roughly 30-40% of the cells (assuming a diploid locus with no copy-number change). This limitation is particularly problematic for cancer research, where tumor heterogeneity and stromal contamination often result in driver mutations occurring at lower frequencies. NGS, particularly when using deep sequencing approaches, can detect variants with frequencies as low as 1% VAF, providing substantially greater power to identify subclonal mutations that may have clinical significance [8] [2].
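The relationship between tumor content, VAF, and sequencing depth can be worked through with a short Python sketch. Under the simplifying assumptions stated in the code (heterozygous SNV, diploid locus, no copy-number change), a clonal mutation in a sample with 30% tumor content is expected at about 15% VAF, right at the Sanger limit. The detection estimate uses a plain binomial read-sampling model that ignores sequencing errors, PCR duplicates, and allelic bias; the minimum number of supporting reads is a hypothetical calling threshold, and the function names are illustrative.

```python
from math import comb


def variant_allele_frequency(alt_reads: int, total_reads: int) -> float:
    """Fraction of reads at a position that carry the variant allele."""
    return alt_reads / total_reads


def expected_vaf(tumor_purity: float, clonal_fraction: float = 1.0) -> float:
    """Expected VAF of a heterozygous somatic SNV at a diploid locus with no copy-number change."""
    return tumor_purity * clonal_fraction / 2


def detection_probability(depth: int, true_vaf: float, min_alt_reads: int) -> float:
    """P(observing >= min_alt_reads variant reads) under a simple binomial read-sampling model."""
    p_below = sum(comb(depth, k) * true_vaf**k * (1 - true_vaf) ** (depth - k)
                  for k in range(min_alt_reads))
    return 1.0 - p_below


print(expected_vaf(tumor_purity=0.3))  # 0.15
for depth in (100, 500, 1000):
    print(depth, round(detection_probability(depth, true_vaf=0.01, min_alt_reads=5), 3))
```

The loop shows why 1% VAF detection requires deep coverage: at low depth, a 1% variant rarely yields enough supporting reads to pass a calling threshold.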

Table 1: Key Technical Specifications for Cancer Mutation Detection

| Parameter | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Throughput | Single DNA fragment per reaction [6] | Millions to billions of fragments simultaneously [6] [2] |
| Detection Sensitivity | ~15-20% variant allele frequency [8] [2] | As low as 1% variant allele frequency with deep sequencing [8] [2] |
| Read Length | 500-1000 bp (long contiguous reads) [6] | 50-300 bp (short-read platforms); up to millions of bp (long-read platforms) [6] [11] |
| Variant Detection Capability | Limited to specific targeted regions; primarily SNPs and small indels [6] | Comprehensive detection of SNPs, indels, CNVs, structural variants, and gene fusions [8] [6] |
| Cost Efficiency | Cost-effective for 1-20 targets [2] | Lower cost per base for large-scale projects; higher upfront costs [6] |

Table 2: Application-Based Technology Selection for Cancer Research

| Research Application | Recommended Technology | Rationale |
|---|---|---|
| Single-gene validation | Sanger Sequencing | High accuracy for focused regions; established validation standard [6] [2] |
| Comprehensive tumor profiling | NGS | Detects multiple variant types across hundreds of genes simultaneously [8] [6] |
| Low-frequency variant detection | NGS with deep sequencing | High sensitivity down to 1% VAF for heterogeneous tumor samples [8] [2] |
| Liquid biopsy applications | NGS | Enables detection of circulating tumor DNA against background normal DNA [8] |
| Structural variant analysis | NGS (especially long-read) | Identifies chromosomal rearrangements, gene fusions, and large deletions [8] [11] |

Experimental Design and Methodologies

NGS Workflow for Cancer Mutation Detection

Implementing NGS for cancer research requires a multi-step experimental workflow that differs significantly from Sanger-based approaches. The process begins with library preparation, where DNA is fragmented, and adapter sequences are ligated to enable binding to the sequencing platform and serve as priming sites for amplification [11]. For cancer studies, both tumor and matched normal samples are typically processed to distinguish somatic (acquired) mutations from germline (inherited) variants.

The subsequent cluster generation phase involves amplifying individual DNA fragments on a solid surface (flow cell) to create millions of identical copies, generating sufficient signal for detection during sequencing [11]. This step is followed by the actual sequencing phase, most commonly using sequencing-by-synthesis technology where fluorescently labeled nucleotides are incorporated one base at a time, with imaging capturing the incorporated base at millions of clusters simultaneously [11].

The final data analysis phase represents the most computationally intensive component, requiring alignment of millions of short reads to a reference genome, followed by variant calling using specialized algorithms to distinguish true somatic mutations from sequencing artifacts [6]. For cancer applications, additional analyses might include determining tumor mutation burden, microsatellite instability status, or specific mutational signatures that have implications for both carcinogenesis and treatment response [8].
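The tumor-normal comparison and a tumor mutation burden (TMB) estimate can be sketched in Python. This is a deliberately simplified illustration, not a substitute for dedicated somatic callers such as GATK Mutect2: all thresholds, coordinates, and the `nonsynonymous` annotation flag are hypothetical, and TMB definitions (which mutation classes are counted, what territory is used as the denominator) vary between assays.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantCall:
    chrom: str
    pos: int
    ref: str
    alt: str
    alt_reads: int
    depth: int
    nonsynonymous: bool  # hypothetical flag supplied by an upstream annotation step

    @property
    def key(self) -> tuple[str, int, str, str]:
        return (self.chrom, self.pos, self.ref, self.alt)

    @property
    def vaf(self) -> float:
        return self.alt_reads / self.depth if self.depth else 0.0


def somatic_calls(tumor, normal, min_depth=100, min_vaf=0.05, max_normal_vaf=0.02):
    """Keep tumor calls that pass depth/VAF filters and are absent (or near-absent) in the matched normal."""
    normal_by_key = {v.key: v for v in normal}
    kept = []
    for call in tumor:
        if call.depth < min_depth or call.vaf < min_vaf:
            continue  # too little evidence in the tumor
        match = normal_by_key.get(call.key)
        if match is not None and match.vaf > max_normal_vaf:
            continue  # also present in the normal sample: likely germline
        kept.append(call)
    return kept


def tumor_mutation_burden(somatic, callable_megabases: float) -> float:
    """Nonsynonymous somatic mutations per megabase of sequenced (callable) territory."""
    return sum(call.nonsynonymous for call in somatic) / callable_megabases


# Hypothetical calls (coordinates and read counts are illustrative only).
tumor = [
    VariantCall("chr7", 1000, "T", "A", 60, 400, True),    # somatic candidate
    VariantCall("chr12", 2000, "C", "T", 200, 420, True),  # germline: also in normal
    VariantCall("chr17", 3000, "G", "A", 4, 30, True),     # fails depth filter
    VariantCall("chr3", 4000, "A", "G", 80, 500, False),   # somatic but synonymous
]
normal = [VariantCall("chr12", 2000, "C", "T", 190, 400, True)]
kept = somatic_calls(tumor, normal)
print([c.chrom for c in kept], tumor_mutation_burden(kept, callable_megabases=1.0))  # ['chr7', 'chr3'] 1.0
```

Keeping the germline check separate from the depth and VAF filters makes each rejection reason explicit, which helps when reviewing borderline calls.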

Quality Control and Validation Frameworks

Ensuring data quality in NGS experiments requires rigorous quality control measures throughout the workflow. The PhiX control is commonly used as an in-run control for sequencing quality monitoring, helping to assess base calling accuracy and detect any systematic errors [13]. Quality scores (Q-scores) provide a quantitative measure of base-calling accuracy, with Q30 representing a benchmark for high-quality data (99.9% accuracy, or 1 error in 1,000 bases) [13].
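Phred quality scores follow Q = -10 log10(P), where P is the probability of an incorrect base call, so Q30 corresponds to 1 error in 1,000 bases and Q50 (the figure quoted for Sanger reads) to 1 in 100,000. The Python sketch below converts between the two and computes the share of bases at or above Q30 in a FASTQ quality string. It assumes the Phred+33 ASCII encoding used by current Illumina output; the quality string is hypothetical.

```python
import math


def phred_to_error_probability(q: float) -> float:
    """Q = -10 * log10(P)  =>  P = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


def error_probability_to_phred(p: float) -> float:
    return -10 * math.log10(p)


def fraction_at_or_above(quality_string: str, threshold: int = 30, offset: int = 33) -> float:
    """Share of bases in a FASTQ quality string with Q >= threshold (Phred+33 encoding assumed)."""
    scores = [ord(symbol) - offset for symbol in quality_string]
    return sum(q >= threshold for q in scores) / len(scores)


for q in (20, 30, 40, 50):
    error = phred_to_error_probability(q)
    print(f"Q{q}: error rate {error:.0e}, accuracy {1 - error:.3%}")
print(fraction_at_or_above("II?5"))  # 0.75
```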

For cancer research applications, validation of NGS assays typically involves establishing analytical sensitivity (the ability to detect true mutations), analytical specificity (the ability to avoid false positives), and precision (reproducibility across replicates) [8]. Given the potential clinical implications of findings, many laboratories employ orthogonal validation using Sanger sequencing for a subset of variants, particularly those with potential clinical significance [6] [2].
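Analytical sensitivity and specificity against an orthogonal method such as Sanger sequencing can be summarized from a concordance table, as in the Python sketch below. It reports point estimates with Wilson score 95% confidence intervals, which is one common choice for binomial proportions; the counts are hypothetical, and precision (reproducibility across replicates) would be assessed separately from repeated runs.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score confidence interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


def concordance_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Sensitivity, specificity and PPV of an assay against an orthogonal reference method."""
    return {
        "sensitivity": (tp / (tp + fn), wilson_interval(tp, tp + fn)),
        "specificity": (tn / (tn + fp), wilson_interval(tn, tn + fp)),
        "ppv": (tp / (tp + fp), wilson_interval(tp, tp + fp)),
    }


# Hypothetical validation run: NGS calls compared with an orthogonal method.
metrics = concordance_metrics(tp=48, fp=5, tn=145, fn=2)
for name, (estimate, (low, high)) in metrics.items():
    print(f"{name}: {estimate:.3f} (95% CI {low:.3f}-{high:.3f})")
```

Reporting intervals alongside point estimates makes clear how much a small validation set constrains the true performance.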

Table 3: Essential Research Reagents and Platforms for NGS Cancer Studies

| Category | Specific Examples | Research Function |
|---|---|---|
| NGS Platforms | Illumina NovaSeq X, PacBio Revio, Oxford Nanopore | High-throughput sequencing instruments with varying read lengths and applications [12] |
| Library Prep Kits | Illumina DNA Prep, Twist Human Core Exome | Reagents for fragmenting DNA and adding platform-specific adapters [11] |
| Target Enrichment | Hybridization capture panels, Amplicon panels | Systems to focus sequencing on cancer-relevant genes [8] |
| Quality Controls | PhiX Control, DNA Quantitation Standards | Materials to monitor sequencing performance and library quantification [13] |
| Analysis Tools | GATK, DeepVariant, ICE | Bioinformatics software for variant calling and interpretation [12] [14] |

Experimental Data and Performance Benchmarks

Sensitivity in Detecting Somatic Mutations

The enhanced sensitivity of NGS for detecting low-frequency variants has been demonstrated in several settings. In a study of cerebral cortical malformations, a non-cancer setting, NGS identified somatic mutations with variant allele frequencies as low as 1% that were undetectable by Sanger sequencing because of its higher detection limit [2]. The same sensitivity advantage is particularly important in cancer, where tumor heterogeneity produces subclonal populations harboring clinically relevant mutations that less sensitive methods would miss.

For liquid biopsy applications, which detect circulating tumor DNA (ctDNA) in blood samples, NGS's sensitivity becomes even more critical since ctDNA often represents a small fraction of total cell-free DNA [8]. Research in breast cancer monitoring demonstrated that NGS-based liquid biopsies could track treatment response and identify emerging resistance mutations months before clinical progression became apparent through traditional imaging [11].

Comprehensive Genomic Profiling in Oncology Research

The capacity of NGS to simultaneously evaluate hundreds of cancer-associated genes has enabled comprehensive genomic profiling approaches that are transforming oncology research. Unlike Sanger sequencing, which requires separate reactions for each gene, NGS can interrogate entire pathways and biological processes in a single assay [8]. This comprehensive approach has revealed the remarkable genomic complexity of many cancers, with individual tumors often harboring dozens of somatic mutations across different genes.

In lung cancer research, NGS-based profiling has identified potentially actionable mutations in over 50% of patients, including alterations in EGFR, ALK, ROS1, BRAF, and other genes that can be targeted with specific therapies [8]. Similar comprehensive profiling approaches have been applied to colorectal, breast, and hematological malignancies, generating vast datasets that are refining cancer classification and revealing new therapeutic opportunities [8].

Integration with Machine Learning Approaches

The rich datasets generated by NGS are increasingly being analyzed with advanced computational approaches, including machine learning algorithms. In a recent study classifying five cancer types (BRCA1, KIRC, COAD, LUAD, and PRAD) based on DNA sequencing data, a blended approach combining logistic regression with Gaussian Naive Bayes achieved accuracies of 100% for BRCA1, KIRC, and COAD, and 98% for LUAD and PRAD [15]. These results demonstrated improvements of 1-2% over recent deep-learning and multi-omic benchmarks, highlighting how NGS data coupled with sophisticated analytical methods can enhance cancer classification [15].

The study employed a 10-fold cross-validation approach with the dataset partitioned into training (194 patients), validation (98 patients), and testing (98 patients) subsets [15]. Feature importance analysis revealed that model decisions were dominated by a small subset of genes—most notably gene28, gene30, gene18, gene44, and gene_45—with importance dropping off sharply after roughly the top 10-12 genes, indicating strong potential for dimensionality reduction with minimal performance loss in cancer prediction models [15].
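The 10-fold cross-validation scheme described above can be sketched in plain Python. This is a generic, unstratified k-fold splitter, not the cited study's code; running it over the 194-patient training subset is an assumption made for illustration, and `k_fold_indices` is a hypothetical name. For multi-class data like this, a stratified variant that balances cancer types across folds would likely be preferable.

```python
import random


def k_fold_indices(n_samples: int, k: int = 10, seed: int = 0):
    """Yield (train_indices, test_indices) for k-fold cross-validation over shuffled samples."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and the number of samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so fold sizes differ by at most one sample.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


# Hypothetical: 10-fold CV over a 194-sample training subset.
for fold, (train, test) in enumerate(k_fold_indices(194, k=10)):
    print(fold, len(train), len(test))
```

Each sample appears in exactly one test fold, so every patient contributes one out-of-fold prediction to the averaged performance estimate.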

Future Directions and Research Applications

Emerging Technologies and Applications

The NGS landscape continues to evolve with third-generation sequencing technologies offering advantages for specific research applications. Long-read sequencing platforms from Pacific Biosciences and Oxford Nanopore Technologies address the short-read limitation of earlier NGS systems by generating reads thousands to millions of base pairs long [11]. These technologies are particularly valuable for resolving complex genomic regions, detecting large structural variations, and characterizing epigenetic modifications directly from native DNA [11].

Single-cell sequencing represents another frontier, enabling researchers to profile genomic, transcriptomic, or epigenomic features at single-cell resolution [12]. This approach is particularly powerful for cancer research, where it can reveal tumor heterogeneity, identify rare cell populations (including cancer stem cells), and trace clonal evolution with unprecedented resolution [12]. When combined with spatial transcriptomics, which maps gene expression patterns within the context of tissue architecture, researchers can now correlate genomic alterations with their spatial distribution in the tumor microenvironment [12].

Multi-Omics Integration in Cancer Research

The integration of NGS with other data modalities is creating new opportunities for comprehensive molecular profiling of cancers. Multi-omics approaches combine genomic data with transcriptomic, proteomic, metabolomic, and epigenomic information to build a more complete picture of tumor biology [12]. This integrative strategy helps bridge the gap between genetic alterations and their functional consequences, potentially revealing novel therapeutic vulnerabilities that would not be apparent from genomic analysis alone.

In cancer research, multi-omics studies have been particularly valuable for understanding therapy resistance, tumor heterogeneity, and the complex interactions between cancer cells and their microenvironment [12]. The analysis of these rich multidimensional datasets is increasingly relying on artificial intelligence and machine learning approaches that can identify complex patterns across different data types [12]. Tools like Google's DeepVariant utilize deep learning to identify genetic variants with greater accuracy than traditional methods, demonstrating how computational innovations are enhancing the value of NGS data [12].

The revolution ushered in by Next-Generation Sequencing has fundamentally transformed cancer research, enabling comprehensive genomic profiling that reveals the molecular complexity of malignancies with unprecedented resolution. The massively parallel architecture of NGS provides distinct advantages over Sanger sequencing for most research applications, particularly in sensitivity for low-frequency variants, comprehensive mutation detection across multiple gene classes, and cost-effectiveness when analyzing large genomic regions or multiple samples [8] [6] [2].

While Sanger sequencing maintains an important role as a validation tool for specific variants and for applications requiring long read lengths of limited genomic regions [6] [2], NGS has become the foundational technology for modern cancer genomics. Its integration with emerging approaches—including long-read sequencing, single-cell analysis, spatial transcriptomics, artificial intelligence, and multi-omics integration—promises to further advance our understanding of cancer biology and accelerate the development of more effective, personalized cancer treatments [8] [12].

In the field of cancer genomics, the choice of sequencing technology is fundamentally dictated by the scale of the biological question being asked. Throughput—the amount of genetic data that can be generated in a single experiment—and interrogation scale—the breadth of genomic regions examined—represent critical differentiators between traditional Sanger sequencing and next-generation sequencing (NGS) [2] [16]. Sanger sequencing, developed in the 1970s, operates on a single-gene scale, sequencing individual DNA fragments one at a time [17] [18]. In contrast, NGS technologies perform massively parallel sequencing, simultaneously processing millions to billions of DNA fragments, thereby enabling whole-genome interrogation [2] [19]. This capability has positioned NGS as the cornerstone of precision oncology, facilitating comprehensive genomic profiling of tumors to identify actionable mutations and guide targeted therapy decisions [16].

The evolution from single-gene to whole-genome interrogation represents more than just a technical improvement; it signifies a paradigm shift in cancer research and diagnostics. While Sanger sequencing remains the gold standard for accuracy and continues to play important roles in validation and focused studies [20] [18], the massively parallel nature of NGS has unlocked unprecedented capabilities for discovering novel cancer biomarkers, understanding tumor heterogeneity, and monitoring treatment response [21] [16]. This article provides a detailed comparison of these technologies, focusing specifically on their throughput characteristics and appropriate applications across different scales of genomic interrogation in cancer research.

Technology Comparison: Throughput and Scale Capabilities

The fundamental distinction between Sanger sequencing and NGS lies in their approach to DNA fragment processing. Sanger sequencing employs the chain-termination method, using dideoxynucleotides (ddNTPs) to randomly terminate DNA synthesis during a thermal-cycled, single-primer extension reaction (cycle sequencing), followed by capillary electrophoresis to separate the resulting fragments by size [6] [17]. This linear process generates a single, long contiguous read per reaction, typically between 500-1000 base pairs [6] [20]. While this approach yields exceptionally high accuracy (exceeding 99.999% for the central read regions), its throughput is inherently limited by its one-fragment-at-a-time processing [6] [18].

NGS technologies, conversely, employ various massively parallel sequencing chemistries—most commonly sequencing-by-synthesis (Illumina), ion semiconductor sequencing (Ion Torrent), or nanopore sequencing (Oxford Nanopore) [6] [19]. These methods simultaneously sequence millions to billions of DNA fragments, generating enormous volumes of data in a single run [2] [19]. While individual NGS reads are typically shorter than Sanger reads (50-500 base pairs depending on the platform), the collective data output is several orders of magnitude greater [6]. This high-throughput capability comes with a significantly lower cost per base, though often with higher initial instrument costs and more complex bioinformatics requirements [6].

Table 1: Key Technical Specifications Comparing Sanger Sequencing and NGS

| Feature | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Fundamental Method | Chain termination with ddNTPs [6] [17] | Massively parallel sequencing (e.g., SBS, ion detection) [2] [6] |
| Sequencing Volume | Single DNA fragment per run [2] | Millions to billions of fragments simultaneously [2] [19] |
| Read Length | 500-1000 bp (long contiguous reads) [6] [20] | 50-500 bp (short reads, platform-dependent) [6] |
| Data Output | Limited data per run [16] | Gigabases to terabases per run [6] |
| Detection Sensitivity | ~15-20% variant allele frequency [21] [20] | As low as 1% variant allele frequency [21] [2] |
| Cost Efficiency | Low cost per run, high cost per base [6] | High capital cost, low cost per base [6] |

Table 2: Application-Based Comparison for Cancer Research

| Application | Recommended Technology | Rationale |
|---|---|---|
| Single-gene variant confirmation | Sanger sequencing [6] [18] | Gold-standard accuracy for known targets; cost-effective for small batches [17] [18] |
| CRISPR editing validation | Sanger sequencing [22] | Accurate sequence confirmation for engineered constructs [22] |
| Multigene panel analysis | Targeted NGS [21] [16] | Cost-effective simultaneous sequencing of hundreds of genes [2] |
| Novel mutation discovery | NGS [2] [16] | Unbiased detection across targeted regions or whole genome [16] |
| Tumor heterogeneity studies | NGS [21] [16] | High sensitivity for low-frequency variants (down to 1%) [21] [2] |
| Whole-genome analysis | NGS [16] [6] | Only feasible technology for comprehensive genomic profiling [6] |

Experimental Data: Direct Comparisons in Cancer Genomics

PIK3CA Mutation Analysis in Breast Cancer

A 2015 study directly compared NGS and Sanger sequencing for detecting PIK3CA mutations in 186 breast carcinoma samples, providing compelling evidence of NGS's superior sensitivity in detecting low-frequency variants [21]. Researchers used a customized targeted NGS panel covering six exons of PIK3CA (1, 4, 7, 9, 13, and 20) alongside traditional Sanger sequencing of the primary hotspot regions (exons 9 and 20) [21]. The experimental protocol involved DNA extraction from formalin-fixed paraffin-embedded (FFPE) tumor samples, with library preparation using 10 ng of genomic DNA and semiconductor-based sequencing on an Ion PGM system [21].

The results showed that 64 tumors harbored PIK3CA mutations, 55 of them in the conventional exon 9 and 20 hotspots [21]. Concordance between NGS and Sanger for these hotspot regions was 98.4%; the discrepancies were three mutations with variant frequencies below 10% that NGS detected and Sanger sequencing missed [21]. NGS also identified mutations in non-traditional exons (1, 4, 7, and 13) in 4.8% of tumors, expanding the mutational spectrum detectable in clinical samples [21]. The study therefore showed that NGS provides more comprehensive mutational profiling, which is particularly valuable for samples with low tumor content or subclonal mutations [21].
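The reported percentages can be cross-checked with simple arithmetic. This sketch assumes two things the text does not state explicitly: that the 98.4% concordance is scored per tumor across all 186 samples, and that each mutated tumor carries a single PIK3CA mutation. Under those assumptions the numbers reproduce both the 98.4% and the 4.8% quoted above, and they show that 98.4% is not the same as Sanger's hotspot detection rate (52/55).

```python
def percent(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a percentage."""
    return 100.0 * numerator / denominator


TOTAL_TUMORS = 186
HOTSPOT_MUTATIONS_NGS = 55   # exons 9 and 20, all found by NGS
MISSED_BY_SANGER = 3         # all with variant frequency below 10%
MUTATED_TUMORS_NGS = 64      # tumors with a mutation in any of the six exons

# Assumption: concordance is scored per tumor over the whole cohort.
concordance = percent(TOTAL_TUMORS - MISSED_BY_SANGER, TOTAL_TUMORS)

# Share of the NGS-detected hotspot mutations that Sanger also found.
sanger_hotspot_detection = percent(
    HOTSPOT_MUTATIONS_NGS - MISSED_BY_SANGER, HOTSPOT_MUTATIONS_NGS
)

# Assumption: one mutation per mutated tumor.
non_hotspot_tumors = MUTATED_TUMORS_NGS - HOTSPOT_MUTATIONS_NGS
non_hotspot_share = percent(non_hotspot_tumors, TOTAL_TUMORS)

print(f"per-tumor concordance: {concordance:.1f}%")                 # 98.4%
print(f"Sanger hotspot detection: {sanger_hotspot_detection:.1f}%") # 94.5%
print(f"non-hotspot tumors: {non_hotspot_tumors} ({non_hotspot_share:.1f}%)")  # 9 (4.8%)
```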

MinION vs. Sanger in Hematological Malignancies

A 2025 study comparing Oxford Nanopore MinION technology with Sanger sequencing for detecting variants in hematological malignancies further illustrates the evolving landscape of sequencing technologies [20]. The research analyzed 164 samples with known mutations across 15 genes relevant to myeloproliferative neoplasms, acute myeloid leukemia, and related conditions [20]. The experimental workflow involved DNA/RNA extraction from peripheral blood or bone marrow, followed by marker-specific PCR and library preparation for MinION sequencing according to manufacturer protocols [20].

The results demonstrated 99.43% concordance between MinION and Sanger sequencing while highlighting significant advantages of the nanopore technology [20]. Most notably, MinION offered a turnaround time of under 24 hours for urgent cases, compared to 3-4 days for outsourced Sanger sequencing in their setup, and provided sensitivity comparable to NGS (<1% variant allele frequency) rather than the 15-20% typical of Sanger [20]. This combination of speed and sensitivity positions third-generation sequencing technologies as compelling alternatives for clinical diagnostics where both rapid results and detection of low-frequency variants are critical [20].

Workflow and Experimental Design

The experimental workflows for Sanger sequencing and NGS differ significantly in complexity, timing, and resource requirements, reflecting their fundamentally different approaches to sequence determination. Understanding these workflow differences is essential for researchers planning genomic studies in cancer research.

The Sanger sequencing workflow is relatively straightforward, beginning with DNA extraction followed by PCR amplification of the specific target region [17] [18]. The amplified product is then purified to remove residual primers and enzymes [23]. The critical sequencing reaction utilizes fluorescently labeled dideoxynucleotides (ddNTPs) that terminate DNA strand elongation when incorporated, generating fragments of varying lengths [17]. These fragments are separated by size via capillary electrophoresis, with a laser detecting the fluorescent label of the terminating nucleotide at each position [17] [18]. The final output is a chromatogram showing peak fluorescence corresponding to each base in the sequence [17].
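To make the chain-termination logic concrete, here is a toy Python model, not a description of any instrument's software. It assumes an idealized reaction in which one fragment terminates at every position, writes the template so the new strand reads left to right, and represents each dye simply by the base it labels. Real traces have uneven peak heights and lose quality at both ends of the read.

```python
"""Toy model of dye-terminator Sanger sequencing (illustrative only)."""
import random

# Watson-Crick pairing used to build the newly synthesized strand.
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def synthesized_strand(template: str) -> str:
    """Strand the polymerase builds on `template`, read left to right."""
    return "".join(COMPLEMENT[base] for base in template)


def terminated_fragments(template: str) -> list[tuple[int, str]]:
    """One fragment per ddNTP incorporation site.

    Each tuple is (fragment length, dye of the terminal ddNTP); the dye is
    represented by the base it labels.
    """
    new_strand = synthesized_strand(template)
    return [(i + 1, base) for i, base in enumerate(new_strand)]


def read_by_electrophoresis(fragments: list[tuple[int, str]]) -> str:
    """Order fragments by size (shortest migrates first) and read the dyes."""
    return "".join(dye for _, dye in sorted(fragments))


if __name__ == "__main__":
    fragments = terminated_fragments("TACGGATC")
    random.shuffle(fragments)  # termination events occur in no fixed order
    print(read_by_electrophoresis(fragments))  # ATGCCTAG
```

Sorting by fragment length plays the role of capillary electrophoresis: the order in which terminal dyes pass the detector spells out the sequence, regardless of the order in which termination events happened.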

The NGS workflow is considerably more complex, reflecting its massively parallel nature. After DNA extraction, the sample undergoes library preparation where DNA is fragmented and platform-specific adapters are ligated to each fragment [16] [19]. For targeted sequencing approaches, an additional enrichment step using hybridization capture or PCR is performed to isolate specific genomic regions of interest [16]. The library molecules are then immobilized on a solid surface (flow cell) or in emulsion droplets and amplified to create clusters or polonies containing identical copies of each original fragment [16] [19]. The actual sequencing occurs through repeated cycles of nucleotide incorporation and detection, with the specific chemistry varying by platform [19]. The tremendous volume of data generated requires sophisticated bioinformatics analysis for base calling, read alignment to a reference genome, and variant identification [16] [19].
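The final variant-identification step can be illustrated with a toy pileup. The sketch below assumes reads are already aligned to the reference without insertions or deletions; the function names and the `min_depth`/`min_vaf` thresholds are hypothetical and do not correspond to any particular variant caller, which would also model base qualities, strand bias, and sequencing error.

```python
from collections import Counter


def pileup_vaf(reference: str, aligned_reads: list[tuple[int, str]],
               position: int) -> tuple[int, float]:
    """Return (depth, variant allele fraction) at a 0-based reference position.

    `aligned_reads` holds (start, sequence) pairs assumed to be already
    aligned to `reference` without insertions or deletions.
    """
    bases = Counter()
    for start, seq in aligned_reads:
        offset = position - start
        if 0 <= offset < len(seq):
            bases[seq[offset]] += 1
    depth = sum(bases.values())
    if depth == 0:
        return 0, 0.0
    non_reference = depth - bases[reference[position]]
    return depth, non_reference / depth


def call_variants(reference, aligned_reads, min_depth=3, min_vaf=0.2):
    """List (position, depth, vaf) for sites passing both hypothetical thresholds."""
    calls = []
    for pos in range(len(reference)):
        depth, vaf = pileup_vaf(reference, aligned_reads, pos)
        if depth >= min_depth and vaf >= min_vaf:
            calls.append((pos, depth, vaf))
    return calls


if __name__ == "__main__":
    reference = "ACGTACGTAC"
    reads = [(0, "ACGTA"), (2, "GTACG"), (3, "TGCGT"), (4, "ACGTA"), (6, "GTAC")]
    print(call_variants(reference, reads))  # [(4, 4, 0.25)]
```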

Table 3: Essential Research Reagent Solutions for Sequencing Workflows

| Reagent Category | Specific Examples | Function in Workflow |
|---|---|---|
| Nucleic Acid Extraction | QIAamp DNA Mini Kit [21], QIAamp FFPE DNA extraction kit [23] | Isolation of high-quality DNA from various sample types including FFPE tissue |
| PCR Amplification | Emerald GT PCR master mix [23], Ion AmpliSeq Library Kit [21] | Amplification of target regions prior to sequencing or library preparation |
| Library Preparation | High-Resolution Master mix [23], Ion OneTouch 200 Template Kit [21] | Preparation of DNA fragments for sequencing, including fragmentation and adapter ligation |
| Sequencing Chemistry | BigDye Terminator cycle sequencing kit [23], Ion AmpliSeq custom panels [21] | Platform-specific reagents for the actual sequencing reactions |
| Purification Kits | HighPure PCR product purification kit [23], QIAamp purification systems [21] | Removal of enzymes, salts, and other impurities between workflow steps |

The choice between Sanger sequencing and NGS for cancer mutation detection research is fundamentally determined by the required scale of genomic interrogation. Sanger sequencing remains the optimal choice for applications requiring high accuracy for single-gene targets, validation of known variants, or situations where rapid turnaround for a limited number of samples is prioritized [6] [18]. Its simplicity, long read lengths, and minimal bioinformatics requirements make it ideal for focused investigations [17].

In contrast, NGS technologies provide unparalleled advantages for comprehensive genomic profiling, discovery of novel mutations, and analysis of complex tumor heterogeneity [21] [16]. The massively parallel nature of NGS enables researchers to examine entire genomes, transcriptomes, or customized multigene panels in a single experiment, providing a systems-level view of cancer genomics that is simply unattainable with Sanger sequencing [2] [19]. While NGS requires more substantial infrastructure investment and bioinformatics expertise, its superior throughput, sensitivity for low-frequency variants, and cost-effectiveness at scale have established it as the foundational technology for modern precision oncology research [16] [6].

As sequencing technologies continue to evolve, the distinction between these platforms is becoming increasingly nuanced with the emergence of third-generation technologies like Oxford Nanopore that offer both long reads and high throughput [20]. Nevertheless, the fundamental principle remains: matching the technology to the biological question's scale ensures efficient resource utilization and maximizes scientific insight in cancer genomics research.

Next-generation sequencing (NGS) has fundamentally transformed the approach to cancer mutation detection, offering a powerful alternative to traditional Sanger sequencing. The shift towards molecularly driven cancer care relies on precise genomic profiling to identify actionable mutations, guide targeted therapies, and monitor treatment response [16] [24]. For research and drug development professionals, selecting the appropriate sequencing technology is a critical decision that directly impacts data reliability, sensitivity, and ultimately, research outcomes.

This guide provides an objective comparison of NGS and Sanger sequencing by examining three fundamental technical metrics: read length, coverage depth, and error profiles. Understanding these parameters is essential for designing robust experiments, accurately interpreting genomic data in the context of tumor heterogeneity, and advancing personalized cancer treatment strategies [16] [25].

Core Metric Comparison: NGS vs. Sanger Sequencing

The following table summarizes the fundamental technical differences between NGS and Sanger sequencing that are critical for cancer research applications.

Table 1: Core Technical Metrics for Sanger and Next-Generation Sequencing

| Technical Metric | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Principle of Operation | Dideoxy chain termination with capillary electrophoresis [26] | Massively parallel sequencing of millions of fragments [16] [24] |
| Typical Read Length | Up to 1000 base pairs [24] | 75-300 bp (Illumina short-read); thousands of bp (PacBio, Nanopore long-read) [24] [27] |
| Throughput & Scalability | Low; processes one DNA fragment at a time [26] [24] | Very high; sequences millions of fragments simultaneously [16] [26] |
| Detection Limit (Variant Allele Frequency) | ~15-20% [26] [24] | ~1% or lower, depending on coverage [26] [24] |
| Typical Cost & Application Fit | Cost-effective for a limited number of targets (e.g., single genes) [26] [24] | Cost-effective for large-scale projects and multi-gene panels [16] [26] |
| Error Profile | Very low error rate (~0.001%) [28] | Varies by platform: ~0.1-0.8% (Illumina), ~1.78% (Ion Torrent) [28] |

Experimental Validation in Cancer Research

Case Study: PIK3CA Mutation Detection in Breast Cancer

A direct comparative study on 186 breast carcinoma samples evaluated the concordance between NGS and Sanger sequencing for detecting mutations in the PIK3CA gene, a critical oncogene in breast cancer [21].

- Methodology: Tumor DNA was extracted from formalin-fixed, paraffin-embedded (FFPE) tissue samples containing at least 30% tumor cells. A customized targeted NGS panel covering six exons of PIK3CA was used, with a mean coverage ranging from 1,552X to 5,237X per amplicon. All samples were also subjected to Sanger sequencing of exons 9 and 20 for comparison [21].

- Key Findings: The study identified 55 PIK3CA mutations in exons 9 and 20. Sanger sequencing failed to detect three of these mutations, all of which had low variant allele frequencies (VF) below 10%. This resulted in a 98.4% concordance between the two methods for these exons. Furthermore, NGS identified additional mutations in exons 1, 4, 7, and 13, which were not part of the standard Sanger sequencing protocol, accounting for 4.8% of the tumors [21].

- Conclusion: The study demonstrated that NGS is superior for correctly assessing mutation status, especially in samples with low tumor cellularity or subclonal mutations, and for simultaneous interrogation of multiple genomic regions [21].

Error Profile Analysis and Impact on Low-Frequency Variant Detection

The accurate identification of low-frequency variants is paramount in cancer research for detecting subclonal populations, minimal residual disease, and heterogeneous tumor cell populations [25]. Sequencing errors are a major confounding factor in these applications.

- Methodology: Researchers systematically investigated substitution error rates using deep sequencing datasets from multiple sequencing centers. They performed a dilution experiment using matched cancer/normal cell lines (COLO829/COLO829BL) to establish a truth set of 19 somatic single-nucleotide variants (SNVs). This allowed them to benchmark the limit of variant detection and quantify errors introduced during sample handling, library preparation, enrichment PCR, and the sequencing process itself [25].

- Key Findings: The study found that the substitution error rate of conventional NGS could be computationally suppressed to between 10⁻⁵ and 10⁻⁴, which is 10 to 100 times lower than the often-cited rate of 10⁻³. Error rates were also found to differ by substitution type and were influenced by sequence context and sample-specific effects. Critically, target-enrichment PCR was shown to increase the overall error rate by approximately six-fold [25].

- Conclusion: With in silico error suppression, the study confirmed that over 70% of known hotspot variants can be reliably detected at frequencies as low as 0.1% to 0.01% using current NGS technology, highlighting its power for sensitive cancer genomic applications [25].
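Why a 10- to 100-fold lower error rate matters for 0.1% variants can be shown by comparing expected signal and noise at a single site. This is a back-of-the-envelope sketch, not the cited study's method: the 10,000x depth is a hypothetical example, and errors are assumed to be split evenly across the three possible wrong bases, although the study found that rates differ by substitution type and sequence context.

```python
def expected_read_counts(depth: int, vaf: float,
                         error_rate: float) -> tuple[float, float]:
    """Expected reads carrying one specific alternate base at a site.

    Returns (true variant reads, error reads that mimic the same base).
    Simplification: substitution errors are spread evenly over the three
    possible wrong bases.
    """
    true_alt = depth * vaf
    false_alt = depth * (1.0 - vaf) * error_rate / 3.0
    return true_alt, false_alt


if __name__ == "__main__":
    for rate in (1e-3, 1e-4, 1e-5):
        signal, noise = expected_read_counts(depth=10_000, vaf=0.001, error_rate=rate)
        print(f"error rate {rate:.0e}: signal {signal:.1f} reads, noise {noise:.2f} reads")
```

At the often-cited 10⁻³ rate, error reads are of the same order as the roughly ten true variant reads; at 10⁻⁵ they fall well below one read, which is consistent with the text's point that error suppression is what makes 0.1% detection feasible.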

The process of generating sequencing data involves multiple steps, each with distinct error profiles. Understanding this workflow is key to optimizing experiments and interpreting results.

The diagram illustrates a standard NGS workflow and its associated primary error sources. A critical factor influencing data quality in this workflow is the choice of sequencing coverage and read length.

- Sequencing Coverage (Depth): This refers to the average number of reads that align to a known reference at a particular base location [29]. Deep coverage is crucial in cancer genomics because it increases confidence in base calls and enables the detection of low-frequency variants [29] [27]. For human genetic applications, coverage may be 30x or less, while cancer applications often require >1000x coverage to identify rare subclonal mutations [29].

- Read Length and Type: Read length is the number of base pairs sequenced from a DNA fragment [27]. Longer reads are beneficial for assembling complex genomic regions, while shorter reads are cost-effective for targeted sequencing [29] [27]. Furthermore, paired-end reading (sequencing both ends of a fragment) significantly improves the ability to detect insertions, deletions, and gene rearrangements compared to single-end reads, which is invaluable for cancer genome analysis [29].
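The link between coverage depth and low-frequency variant detection can be made explicit with a simple binomial model. The sketch assumes each read independently carries the variant allele with probability equal to the VAF and ignores sequencing error and PCR duplicates; the requirement of at least five supporting reads is a hypothetical calling threshold, not a published standard.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least `min_alt_reads` variant-supporting reads) at a given depth.

    Binomial model: each read independently carries the variant allele with
    probability `vaf`; sequencing errors and PCR duplicates are ignored.
    """
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1.0 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


if __name__ == "__main__":
    for depth in (30, 100, 1000):
        p = detection_probability(depth, vaf=0.01, min_alt_reads=5)
        print(f"{depth:>5}x coverage, 1% VAF: P(detect) = {p:.3f}")
```

Under this model a 1% variant is almost never seen five times at 30x but is detected in most trials at 1000x, which mirrors the coverage ranges quoted above.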

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful NGS experimentation relies on a suite of specialized reagents and materials. The following table details key components used in targeted NGS panels for cancer research.

Table 2: Essential Research Reagents and Materials for Targeted NGS

| Reagent/Material | Function in Workflow | Research Application Context |
|---|---|---|
| Hybridization Capture Probes | Biotinylated oligonucleotides designed to enrich specific genomic regions of interest from a sequencing library [30]. | Target enrichment for cancer gene panels (e.g., 61-gene oncopanels) to focus sequencing power on clinically actionable mutations [30]. |
| Molecular Barcodes (Indexes) | Short, unique DNA sequences ligated to DNA fragments during library prep to allow sample multiplexing [29]. | Enables pooling of dozens or hundreds of different tumor samples in a single sequencing run, drastically reducing per-sample cost [29]. |
| High-Fidelity DNA Polymerase | Enzyme used for PCR amplification during library construction and target enrichment with low error rates [28]. | Critical for minimizing false positive variant calls caused by polymerase errors during amplification, especially for low-frequency variant detection [28]. |
| PhiX Control Library | A well-characterized, standardized library used as an in-run control for sequencing quality monitoring [13]. | Serves as a quality control metric to monitor base-calling accuracy, cluster density, and overall run performance on Illumina platforms [13]. |
| Magnetic Beads (SPRI) | Solid-phase reversible immobilization beads for size selection and purification of DNA fragments during library prep [16]. | Used to remove unwanted artifacts like primer dimers and to select for optimal insert sizes, which improves library complexity and data uniformity [29]. |
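How index sequences let pooled samples be separated after a run can be sketched as nearest-match assignment with a mismatch tolerance. The index sequences and sample names below are hypothetical, and allowing one mismatch is a common default rather than a universal rule; real demultiplexing tools also handle dual indexes and index-hopping.

```python
from typing import Dict, Optional


def hamming(a: str, b: str) -> int:
    """Number of mismatching positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("index sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def assign_sample(observed: str, sample_indexes: Dict[str, str],
                  max_mismatches: int = 1) -> Optional[str]:
    """Return the sample whose index is the unique closest match, else None."""
    distances = {name: hamming(observed, index) for name, index in sample_indexes.items()}
    best = min(distances.values())
    closest = [name for name, d in distances.items() if d == best]
    if best > max_mismatches or len(closest) != 1:
        return None  # treated as an undetermined read
    return closest[0]


if __name__ == "__main__":
    # Hypothetical 8-bp indexes for three pooled libraries.
    indexes = {"tumor_01": "ACGTACGT", "tumor_02": "TGCATGCA", "normal_01": "GATCGATC"}
    print(assign_sample("ACGTACGA", indexes))  # tumor_01 (one mismatch)
    print(assign_sample("TTTTTTTT", indexes))  # None
```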

The comparison of key technical metrics shows that NGS offers significant advantages over Sanger sequencing for comprehensive cancer mutation detection research. Its ability to profile hundreds of genes simultaneously, detect the low-frequency variants that are critical for understanding tumor heterogeneity, and scale cost-effectively to large studies makes it an indispensable tool for modern oncology research and drug development [16] [26] [24].

While Sanger sequencing retains its utility for validating specific variants and sequencing single genes, the depth, breadth, and sensitivity of NGS have solidified its role as the cornerstone of precision oncology. As the technology continues to evolve with improvements in read length, error correction, and bioinformatic analysis, its impact on accelerating cancer discovery and personalized therapeutic strategies is poised to grow even further [16] [28] [24].

From Bench to Bedside: Applying Sequencing Technologies in Cancer Research

In the era of next-generation sequencing (NGS), which provides comprehensive genomic profiles for cancer research, Sanger sequencing maintains a critical, well-defined role in molecular diagnostics. While NGS enables massive parallel sequencing of millions of DNA fragments for discovering novel mutations across hundreds to thousands of genes, Sanger sequencing provides exceptional accuracy for focused applications. For researchers and drug development professionals, understanding the strategic implementation of both technologies is essential for rigorous experimental design. This guide details the specific scenarios where Sanger sequencing remains the gold standard, particularly for variant validation and targeted single-gene analysis.

Technical Comparison: Sanger Sequencing vs. NGS

The choice between Sanger and NGS is fundamentally dictated by the research question's scope and scale. The table below summarizes their core technical differences.

Table 1: Key Technical Characteristics of Sanger Sequencing and NGS

| Feature | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Fundamental Method | Chain termination using dideoxynucleotides (ddNTPs) [6] [2]. | Massively parallel sequencing (e.g., Sequencing by Synthesis) [8] [6]. |
| Throughput | Processes a single DNA fragment per reaction [8] [16]. | Sequences millions to billions of fragments simultaneously [8] [16]. |
| Read Length | Long, contiguous reads (500–1000 base pairs) [10] [6]. | Shorter reads (50-300 bp for short-read platforms) [8] [6]. |
| Sensitivity (Limit of Detection) | Lower sensitivity (~15-20% variant allele frequency) [8] [2]. | High sensitivity (down to ~1% for low-frequency variants) [8] [2]. |
| Primary Data Output | Single, high-quality sequence per reaction [6]. | Massive datasets of short reads requiring complex bioinformatics analysis [8] [6]. |
| Optimal Sample Number/Targets | Cost-effective for sequencing 1-20 targets or a limited number of samples [2]. | Cost-effective for high sample volumes or interrogating hundreds to thousands of genes [8] [2]. |
| Key Strength | "Gold standard" for accuracy on defined targets; simple data analysis [10] [6]. | Unbiased discovery power; comprehensive genomic coverage; detects novel/rare variants [8] [16]. |

Core Use Cases for Sanger Sequencing in Modern Research

Orthogonal Validation of NGS Identified Variants

Despite the high accuracy of modern NGS platforms, orthogonal confirmation of clinically or scientifically significant variants using Sanger sequencing remains a recommended practice, especially in diagnostic and clinical trial settings [31]. NGS is a powerful discovery tool, but its data is based on complex computational interpretation of short reads. Sanger sequencing provides an independent verification using a different biochemical method, ensuring that reported variants are not artifacts of the NGS process.

A 2025 study systematically analyzed the concordance between whole-genome sequencing (WGS) and Sanger validation for 1,756 variants. The research established that while "high-quality" NGS variants show near-perfect concordance, a subset of lower-quality calls still requires confirmation. The study achieved 99.72% concordance and demonstrated that applying specific quality thresholds (e.g., depth of coverage ≥ 15, allele frequency ≥ 0.25) could streamline workflows by reducing the need for Sanger validation to just 4.8% of variants, focusing confirmation efforts where they are most needed [31].
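The threshold-based triage described above can be sketched as a simple filter. Only the two cut-offs quoted in the text (depth ≥ 15, allele fraction ≥ 0.25) are used here; the study may apply additional quality metrics that are not reproduced, the variant records are hypothetical, and an allele-fraction cut-off of 0.25 suits germline heterozygous calls rather than low-VAF somatic variants.

```python
from dataclasses import dataclass

MIN_DEPTH = 15              # depth-of-coverage threshold quoted in the text
MIN_ALLELE_FRACTION = 0.25  # allele-fraction threshold quoted in the text


@dataclass
class VariantCall:
    name: str
    depth: int
    allele_fraction: float


def needs_sanger(call: VariantCall) -> bool:
    """Flag calls that fall below either quality threshold for confirmation."""
    return call.depth < MIN_DEPTH or call.allele_fraction < MIN_ALLELE_FRACTION


if __name__ == "__main__":
    calls = [
        VariantCall("var_1", depth=42, allele_fraction=0.48),
        VariantCall("var_2", depth=12, allele_fraction=0.51),
        VariantCall("var_3", depth=30, allele_fraction=0.18),
    ]
    print([c.name for c in calls if needs_sanger(c)])  # ['var_2', 'var_3']
```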

Table 2: Key Reagents for Sanger Sequencing Validation Workflow

| Research Reagent Solution | Function in the Experimental Protocol |
|---|---|
| High-Fidelity DNA Polymerase | Enzyme that synthesizes new DNA strands from the template during the PCR amplification and sequencing reaction. Optimized enzymes have strong proofreading activity to reduce base mismatches and improve accuracy [10]. |
| Fluorescently-labeled ddNTPs | Dideoxynucleotides (ddNTPs) lack a 3'-hydroxyl group, causing DNA synthesis to terminate at specific bases. Each base (A, T, C, G) is labeled with a distinct fluorescent dye for detection [6] [2]. |
| Capillary Electrophoresis Sequencer | Instrument that separates the terminated DNA fragments by size via capillary electrophoresis. A laser detects the fluorescent dye of the terminal ddNTP, determining the DNA sequence [10] [6]. |
| PCR Primers | Specific oligonucleotides designed to flank the genomic region of interest. They are used for the initial PCR amplification and, in some protocols, for the subsequent sequencing reaction itself [31]. |
| Sequence Analysis Software | Software that translates the fluorescent trace data from the capillary sequencer into a base-called sequence and facilitates alignment to a reference sequence for variant identification [10]. |

Decision Workflow for Sanger Validation of NGS Variants

Interrogation of Focused Single-Gene Panels

For many research and diagnostic questions, the target of interest is a single gene or a small set of known genes. In these cases, the extensive discovery power of NGS is unnecessary. Sanger sequencing is exceptionally well-suited for simple variant screening in known loci, such as verifying a specific mutation in an oncogene (e.g., BRAF V600E) or tumor suppressor gene [6].

Its long read length (up to 1000 bp) allows it to cover entire exons or small genes in a single reaction, simplifying the workflow and data analysis compared to the assembly of short NGS reads [10] [6]. This makes Sanger sequencing a first-line tool for focused applications like gene editing verification (e.g., confirming CRISPR-Cas9 edits), plasmid sequencing, and testing for highly penetrant hereditary cancer mutations in a family when a specific syndrome is suspected [10].

Decision Framework: Selecting the Right Sequencing Technology

The following diagram outlines the decision-making process for choosing between Sanger sequencing and NGS based on the research objective.

Sequencing Technology Selection Workflow
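The selection criteria used throughout this guide can be condensed into a rough first-pass heuristic. This is a hypothetical sketch, not a validated decision rule: the cut-offs come from the ranges cited above (a Sanger detection limit of roughly 15-20% VAF and cost-effectiveness for about 1-20 targets), and real decisions also weigh budget, turnaround, and sample availability.

```python
SANGER_DETECTION_LIMIT = 0.15  # lower bound of the ~15-20% VAF range cited above
SANGER_MAX_TARGETS = 20        # upper end of the "1-20 targets" range cited above


def choose_technology(n_targets: int, lowest_vaf_of_interest: float,
                      discovery: bool) -> str:
    """Rough first-pass heuristic; not a substitute for study-specific review."""
    if discovery or n_targets > SANGER_MAX_TARGETS:
        return "NGS"  # breadth or unbiased discovery favors massively parallel sequencing
    if lowest_vaf_of_interest < SANGER_DETECTION_LIMIT:
        return "NGS"  # variant likely below the Sanger detection limit
    return "Sanger"


if __name__ == "__main__":
    print(choose_technology(1, 0.5, discovery=False))    # confirm a clonal variant
    print(choose_technology(1, 0.05, discovery=False))   # subclonal variant
    print(choose_technology(300, 0.05, discovery=True))  # multigene profiling
```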

Experimental Protocol for Key Applications

Protocol: Sanger Sequencing for Variant Validation

This protocol is adapted from methodologies used in recent studies for confirming NGS-derived variants [31].

- Primer Design: Design PCR primers that flank the genomic variant of interest. Ensure the amplicon size is between 500-1000 bp for optimal Sanger sequencing performance.

- PCR Amplification: Perform PCR amplification of the target region from the sample DNA using a high-fidelity DNA polymerase to minimize PCR-introduced errors.

- PCR Product Purification: Clean the PCR product to remove excess primers, dNTPs, and enzymes that could interfere with the sequencing reaction. This can be done using enzymatic clean-up protocols or bead-based purification kits.

- Sanger Sequencing Reaction: Set up the sequencing reaction using fluorescently labeled ddNTPs (chain-terminating nucleotides) and a specific sequencing primer. The reaction will generate a mixture of DNA fragments of varying lengths, each terminating at a specific base.

- Capillary Electrophoresis: Purify the sequencing reaction product to remove unincorporated dyes and load it into a capillary electrophoresis sequencer. The instrument will separate the fragments by size and detect the fluorescent dye at the terminus of each fragment.

- Data Analysis: Use sequence analysis software to compare the resulting chromatogram to the reference sequence. The software will identify the base at each position, allowing for visual confirmation of the variant initially detected by NGS.
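The primer-design step can be sanity-checked programmatically before ordering primers. In this sketch the coordinates are hypothetical, the 500-1000 bp window comes from the protocol above, and the 50 bp `edge_margin` is an assumption reflecting the fact that bases close to the primer and the start of a Sanger read are usually poorly resolved.

```python
def check_amplicon(forward_start: int, reverse_end: int, variant_pos: int,
                   min_len: int = 500, max_len: int = 1000,
                   edge_margin: int = 50) -> list[str]:
    """Return design problems for one amplicon (an empty list means it passes).

    Coordinates are 0-based and inclusive. `edge_margin` is an assumed buffer
    keeping the variant away from the poorly resolved ends of the read.
    """
    problems = []
    length = reverse_end - forward_start + 1
    if not min_len <= length <= max_len:
        problems.append(f"amplicon is {length} bp, outside {min_len}-{max_len} bp")
    if not forward_start + edge_margin <= variant_pos <= reverse_end - edge_margin:
        problems.append("variant lies too close to an amplicon end")
    return problems


if __name__ == "__main__":
    print(check_amplicon(1000, 1649, variant_pos=1320))  # passes
    print(check_amplicon(1000, 1399, variant_pos=1020))  # too short, variant near edge
```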

Protocol: Sanger Sequencing for Single-Gene Mutation Screening

This protocol is ideal for screening a cohort of samples for mutations in a specific cancer-related gene.

- Target Selection and Primer Design: Identify all exons and flanking splice sites of the gene of interest. Design PCR primers to generate overlapping amplicons that cover the entire coding region.

- Multi-sample PCR: Perform PCR for each amplicon across all patient DNA samples. The use of a 96-well or 384-well plate format is standard for this medium-throughput application.

- PCR Clean-up: Purify the PCR products as in the validation protocol.

- Sequencing and Analysis: Conduct the Sanger sequencing reaction and capillary electrophoresis for each sample and amplicon. Analyze the sequences for the presence of variants by comparing them to the wild-type gene sequence.
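Planning overlapping amplicons across a gene is essentially interval tiling. The sketch below splits each exon, padded by an assumed 20 bp on each side to include splice sites, into windows of at most 800 bp that overlap by 50 bp; the exon coordinates and all three parameters are hypothetical. Final primer positions would still be chosen with dedicated primer-design software inside these windows.

```python
def tile_region(start: int, end: int, max_amplicon: int = 800,
                overlap: int = 50) -> list[tuple[int, int]]:
    """Split the half-open interval [start, end) into overlapping amplicon windows."""
    if start >= end:
        raise ValueError("start must be less than end")
    if not 0 <= overlap < max_amplicon:
        raise ValueError("overlap must be non-negative and smaller than max_amplicon")
    tiles = []
    pos = start
    while True:
        tile_end = min(pos + max_amplicon, end)
        tiles.append((pos, tile_end))
        if tile_end == end:
            return tiles
        pos = tile_end - overlap  # step back so adjacent windows overlap


def tile_gene(exons: dict[str, tuple[int, int]], flank: int = 20,
              **kwargs) -> dict[str, list[tuple[int, int]]]:
    """Tile every exon plus `flank` bp on each side to include splice sites."""
    return {name: tile_region(s - flank, e + flank, **kwargs)
            for name, (s, e) in exons.items()}


if __name__ == "__main__":
    hypothetical_exons = {"exon1": (100, 350), "exon2": (2000, 3700)}
    for exon, tiles in tile_gene(hypothetical_exons).items():
        print(exon, tiles)
```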

Sanger sequencing remains an indispensable tool in the cancer research arsenal, not as a competitor to NGS, but as a complementary technology. Its optimal use cases are clearly defined: providing gold-standard validation for critical variants discovered by NGS and conducting cost-effective, accurate sequencing of single genes or small genomic regions. By leveraging the respective strengths of both Sanger and NGS technologies within an integrated workflow, researchers and drug developers can ensure both the broad discovery power and the specific, high-confidence data required to advance precision oncology.

Cancer was previously regarded as a single disease, but it is now understood to be a collection of hundreds of diseases, each driven by unique genomic characteristics. This means that even when tumor location is the same, the DNA changes that caused the cancer may make each cancer unique [32]. This fundamental shift in understanding has triggered a move away from traditional 'one-size-fits-all' treatment approaches toward therapy that targets the specific genetic changes driving cancer growth [32].

This evolution in cancer treatment has been enabled by parallel advances in DNA sequencing technologies. Historically, Sanger sequencing served as the gold standard for detecting DNA mutations. However, its limitations in sensitivity and inability to perform parallel investigation of multiple targets created bottlenecks in comprehensive cancer analysis [21]. The emergence of next-generation sequencing (NGS) has addressed these challenges through massively parallel sequencing, which increases speed, efficiency, and discovery power for mutation testing in molecular pathology [21] [2]. The convergence of medical knowledge, technology, and data science is now revolutionizing patient care through precision oncology approaches powered by NGS.

Technical Comparison: NGS vs. Sanger Sequencing

Fundamental Technological Differences

In principle, the concepts behind Sanger sequencing and the widely used sequencing-by-synthesis form of NGS are similar: in both, DNA polymerase extends a new strand complementary to the DNA template, and fluorescent labels identify the incorporated nucleotides. The critical difference lies in sequencing volume. While the Sanger method sequences only a single DNA fragment per reaction, NGS is massively parallel, sequencing millions of fragments simultaneously per run [2].

Sanger sequencing operates by incorporating fluorescently tagged dideoxynucleotides (ddNTPs) during DNA synthesis. Each ddNTP halts DNA strand elongation at precise nucleotide locations, facilitating sequence determination through capillary electrophoresis [26]. This method provides high-quality data for regions up to 500-700 base pairs [17] but has limited sensitivity for detecting low-frequency variants.
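One way to see why Sanger's sensitivity is limited: a low-frequency variant appears in the chromatogram only as a small secondary peak beneath the main peak. The sketch below uses the ratio of peak heights as a rough proxy for allele fraction and compares it with a threshold taken from the ~15-20% detection limit cited in this guide. Both the proportionality of peak height to allele fraction and the example heights are simplifying assumptions; real peak heights vary with dye chemistry and sequence context.

```python
def minor_peak_fraction(major_height: float, minor_height: float) -> float:
    """Fraction of signal in the secondary peak at one trace position."""
    total = major_height + minor_height
    if total <= 0:
        raise ValueError("peak heights must sum to a positive value")
    return minor_height / total


def likely_visible(major_height: float, minor_height: float,
                   threshold: float = 0.15) -> bool:
    """True if the secondary peak reaches the assumed detection threshold."""
    return minor_peak_fraction(major_height, minor_height) >= threshold


if __name__ == "__main__":
    # Hypothetical peak heights (arbitrary fluorescence units).
    print(likely_visible(1000, 250))  # ~20% minor allele
    print(likely_visible(1000, 60))   # ~6% minor allele
```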

Next-generation sequencing utilizes a diverse array of mechanisms, including reversible terminator chemistry, real-time single-molecule sequencing, and nanopore-based sequencing to accomplish high-throughput sequencing [26]. This parallel processing capability enables researchers to sequence hundreds to thousands of genes simultaneously, providing comprehensive genomic coverage that would be costly and time-consuming with Sanger sequencing [2].

Performance Comparison in Oncology Applications

Table 1: Comparative performance of Sanger sequencing and NGS in cancer genomics

| Parameter | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Detection Limit | ~15-20% allele frequency [2] [26] | As low as 1% allele frequency [2] [26] |
| Throughput | Sequences single DNA fragment per run [2] | Millions of fragments simultaneously [2] |
| Multiplexing Capability | Limited; costly for >20 targets [2] | High; sequences hundreds to thousands of genes [2] |
| Discovery Power | Limited for novel variant discovery [2] | High; identifies novel/rare variants [2] |
| Mutation Resolution | Limited to single nucleotide changes [2] | Detects SNVs, indels, CNAs, fusions [32] |
| Cost-Effectiveness | Cost-effective for 1-20 targets [2] [17] | Cost-effective for larger target numbers [2] [17] |
| Turnaround Time | Faster for low target numbers [17] | Faster for high sample volumes [2] |

Table 2: Concordance study results between NGS and Sanger sequencing for PIK3CA mutation detection in breast cancer

| Sequencing Method | Mutations Detected in Exons 9 & 20 | Additional Mutations Detected Outside Exons 9 & 20 | Overall Concordance |
|---|---|---|---|
| Sanger Sequencing | 52/55 mutations | Not assessed (exons outside 9 & 20 not sequenced) | 98.4% for exons 9 & 20 |
| Next-Generation Sequencing | 55/55 mutations | 4.8% of tumors had mutations in exons 1, 4, 7, 13 | Reference standard |

The performance advantages of NGS are particularly evident in clinical oncology studies. A 2015 study investigating PIK3CA mutation status in 186 breast carcinomas demonstrated the superior sensitivity of NGS, which detected three mutations in exons 9 and 20 that Sanger sequencing missed because of their low variant frequencies (below 10%) [21]. NGS also identified mutations outside the primary hotspot regions (exons 1, 4, 7, and 13) in 4.8% of tumors; these would have gone undetected with the conventional Sanger approach, which covered only exons 9 and 20 [21].

Comprehensive Genomic Profiling: Expanding Diagnostic Capabilities

Defining Comprehensive Genomic Profiling

Comprehensive genomic profiling (CGP) is an advanced NGS approach that detects novel and known variants across the four main classes of genomic alterations: base substitutions, insertions and deletions, copy number alterations, and rearrangements or fusions [32]. Unlike traditional single-gene tests or hotspot panels that focus on narrow targets, CGP interrogates a broad panel of cancer-related genes simultaneously from a single tissue sample, providing information on both common oncogenic drivers and complex or rare biomarkers [32].

CGP can be performed on tumor DNA and RNA, as well as on liquid specimens such as blood, pleural effusion, and ascites [33]. This approach helps uncover the unique "fingerprint" of a tumor, giving physicians a deeper understanding of what is driving an individual's cancer and helping them determine the best possible treatment [32].
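As a minimal sketch of how the four alteration classes relate to variant-call data, the example below labels small variants from VCF-style REF/ALT strings. The `AlterationClass` enum and `classify_small_variant` function are hypothetical names; the key point is that only substitutions and indels can be read off REF/ALT alone, whereas copy number alterations and fusions require separate depth- or junction-based evidence.

```python
from enum import Enum


class AlterationClass(Enum):
    """The four main classes of genomic alterations reported by CGP."""
    BASE_SUBSTITUTION = "base substitution"
    INDEL = "insertion/deletion"
    COPY_NUMBER = "copy number alteration"
    REARRANGEMENT = "rearrangement/fusion"


def classify_small_variant(ref: str, alt: str) -> AlterationClass:
    """Classify a VCF-style REF/ALT pair as a substitution or an indel.

    Copy number alterations and fusions cannot be inferred from REF/ALT alone,
    so this helper never returns COPY_NUMBER or REARRANGEMENT.
    """
    if not ref or not alt:
        raise ValueError("REF and ALT must be non-empty")
    if len(ref) == len(alt):
        # SNV (A>G) or multi-nucleotide substitution (AT>GC)
        return AlterationClass.BASE_SUBSTITUTION
    # length change: insertion, deletion, or complex deletion-insertion
    return AlterationClass.INDEL


print(classify_small_variant("A", "G"))   # AlterationClass.BASE_SUBSTITUTION
print(classify_small_variant("G", "GA"))  # AlterationClass.INDEL
```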

Clinical Impact and Diagnostic Recharacterization

The comprehensive nature of CGP has revealed an unexpected application in diagnostic medicine: tumor reclassification and refinement. In rare cases, CGP has uncovered inconsistencies between primary diagnosis and molecular findings, triggering secondary comprehensive reviews that can result in tumor reclassification or refinement [34].

A 2025 study highlighted 28 cases where CGP findings led to diagnostic re-evaluation. The study documented disease reclassification events in seven cases where initial diagnoses (including NSCLC, sarcoma, and neuroendocrine carcinoma) were reclassified to different tumor types (including renal cell carcinoma, medullary thyroid carcinoma, and melanoma) based on molecular findings [34]. Additionally, disease refinement events occurred in 21 cases where initial diagnoses of "carcinoma of unknown primary" were refined to specific tumor classifications, including NSCLC, cholangiocarcinoma, and high-grade serous ovarian carcinoma [34].

This recharacterization has direct therapeutic implications. In one published case report, NGS testing helped correct an inaccurate primary diagnosis of leiomyosarcoma to liposarcoma. Following tumor reclassification, the patient received indication-matched treatment and exhibited clinical benefit, including improved progression-free survival and quality of life [34].

Liquid Biopsies: Non-Invasive Molecular Profiling

Principles and Advantages of Liquid Biopsy

Liquid biopsy involves the analysis of tumor-derived components from bodily fluids, most commonly blood, but also including urine, cerebrospinal fluid, and pleural effusions [35] [36]. This approach analyzes various tumor-derived components including circulating tumor cells (CTCs), circulating tumor DNA (ctDNA), tumor extracellular vesicles (EVs), and tumor-educated platelets (TEPs) [35].

Liquid biopsy offers several significant advantages over traditional tissue biopsy:

- Minimal invasiveness: Avoids invasive surgical procedures associated with tissue biopsies [35]

- Real-time monitoring: Allows for serial sampling to monitor tumor evolution and treatment response over time [35]

- Assessment of tumor heterogeneity: Captures a more comprehensive representation of tumor heterogeneity than single-site biopsies [35]

- Potential for early detection: Enables detection of minimal residual disease and early relapse [35]

Liquid biopsy is particularly valuable in metastatic settings where tumors have disseminated and continuously undergo evolutionary changes. In these scenarios, obtaining comprehensive molecular information through multiple tissue biopsies presents significant challenges [35].
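One reason low-level disease (such as minimal residual disease) is hard to detect in blood is that a mutant fragment must first be present in the sampled material at all. The sketch below uses a standard Poisson approximation, which is an assumption introduced here rather than a method from the cited studies: with N genome equivalents of input and a mutant allele fraction f, the expected number of mutant copies is N·f and the chance that at least one is present is 1 − e^(−N·f). The input amounts and the 0.1% fraction are hypothetical.

```python
import math


def prob_mutant_fragment_sampled(genome_equivalents: float, vaf: float) -> float:
    """P(at least one mutant fragment is present in the input).

    Poisson approximation: expected mutant copies = genome_equivalents * vaf.
    """
    expected = genome_equivalents * vaf
    return 1.0 - math.exp(-expected)


# Hypothetical inputs: 1,000 to 10,000 genome equivalents, 0.1% mutant fraction
for ge in (1_000, 5_000, 10_000):
    p = prob_mutant_fragment_sampled(ge, vaf=0.001)
    print(ge, round(p, 3))
```

The takeaway is that sequencing depth cannot compensate for too little input: if no mutant molecule was drawn into the tube, no assay can detect it.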

Emerging Applications in Lung Cancer Diagnostics

Recent advances in liquid biopsy have expanded beyond traditional DNA-based analysis to include RNA and other molecular species. A 2025 study developed a machine-learning model to analyze small RNA sequencing data from 1446 tissue samples to identify a diagnostic tRNA signature for non-small cell lung cancer (NSCLC) [36].

The researchers identified a robust six-tRNA signature with strong diagnostic performance, achieving Area Under the Curve (AUC) values of 0.97 in discovery, 0.96 in hold-out validation, and 0.84 in independent validation using plasma exosome samples [36]. The signature distinguished cancerous from benign samples (AUC = 0.85) and performed consistently across clinical and demographic subgroups (AUC > 0.80), including for early-stage lung cancer [36].

This research underscores the diagnostic power of tRNA signatures for NSCLC liquid biopsy and provides epigenetic insights that enhance our understanding of oncogenic molecular pathophysiology [36].
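The AUC values reported above can be interpreted as the probability that a randomly chosen cancer sample receives a higher signature score than a randomly chosen non-cancer sample. The sketch below computes AUC directly from that pairwise (Mann-Whitney) definition; the scores are invented for illustration and are not data from the study, and the study's own scoring model is not reproduced here.

```python
from itertools import product


def auc_mann_whitney(pos_scores, neg_scores):
    """AUC = P(random positive scores higher than random negative); ties count 0.5."""
    wins = 0.0
    for p, n in product(pos_scores, neg_scores):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


cancer = [0.9, 0.8, 0.4]  # hypothetical signature scores for NSCLC samples
benign = [0.7, 0.3, 0.2]  # hypothetical scores for benign/control samples
print(auc_mann_whitney(cancer, benign))  # 8 of 9 pairs ranked correctly, about 0.889
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, so the drop from 0.97 (discovery) to 0.84 (independent plasma validation) reflects a real but still useful loss of discrimination.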

Experimental Approaches and Methodologies

Key Experimental Protocols in NGS-Based Cancer Genomics

Targeted NGS for Mutation Detection

A 2015 study on PIK3CA mutations in breast cancer provides a representative protocol for targeted NGS in oncology [21]:

Sample Preparation: Representative tumor samples containing at least 30% tumor cells were selected. Ten consecutive 10-μm-thick sections were cut; the first was stained with hematoxylin and eosin, and a pathologist marked the tumor area. The corresponding area was then manually microdissected from the consecutive unstained sections.
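The 30% tumor-cell threshold matters because tumor purity dilutes the variant allele fraction (VAF). The sketch below uses the standard mixture model for a clonal somatic mutation, assuming diploid normal cells that carry no mutant allele; the function name `expected_vaf` and its parameters are hypothetical.

```python
def expected_vaf(purity: float, mutant_copies: int = 1, tumor_copy_number: int = 2) -> float:
    """Expected VAF of a clonal somatic mutation in a tumor/normal cell mixture.

    purity: fraction of cells that are tumor cells (0-1)
    mutant_copies: mutant allele copies per tumor cell
    tumor_copy_number: total copies of the locus per tumor cell
    Normal cells are assumed diploid with no mutant allele.
    """
    mutant = purity * mutant_copies
    total = purity * tumor_copy_number + (1 - purity) * 2
    return mutant / total


# Heterozygous mutation in a diploid tumor at the 30% minimum purity: about 0.15
print(round(expected_vaf(0.30), 3))
```

At the minimum accepted purity, a heterozygous clonal mutation is expected near 15% VAF, which sits at the lower edge of Sanger's ~15-20% detection limit; subclonal mutations fall lower still, which is consistent with the low-frequency calls that only NGS recovered.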

DNA Extraction: DNA was extracted using the QIAamp DNA Mini Kit with enzymatic lysis performed using Proteinase K for 1 hour at 56°C. Total nucleic acid concentrations were measured with a Qubit fluorometer HS DNA Assay.

Library Preparation and Sequencing: Ten nanograms of genomic DNA were used for library preparation with the Ion AmpliSeq Library Kit 2.0. A customized sequencing panel of 154 amplicons from 48 genes was designed to cover the most frequent somatic mutations in breast cancer, including six amplicons located in PIK3CA exons 1, 4, 7, 9, 13, and 20. Samples were 8-fold multiplexed and amplified on Ion Sphere Particles using the Ion OneTouch 200 Template Kit. Sequencing was performed on the Ion 318 chip.
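A simple planning calculation links panel size, multiplexing, and depth. The sketch below estimates the mapped reads a chip must deliver so that every amplicon in every sample reaches a target mean depth. The uniform-coverage and one-read-per-amplicon assumptions, the `on_target_fraction` parameter, and the function name are hypothetical simplifications, not details from the cited protocol; the depth used is the lowest mean amplicon coverage reported in the study.

```python
def reads_required(n_amplicons: int, mean_depth: float, n_samples: int,
                   on_target_fraction: float = 1.0) -> float:
    """Mapped reads needed per chip for a given mean depth on every amplicon.

    Assumes uniform coverage and that each read covers one amplicon end-to-end;
    on_target_fraction < 1 inflates the requirement to account for off-target reads.
    """
    return n_amplicons * mean_depth * n_samples / on_target_fraction


# 154 amplicons, 8 samples per chip, lowest reported mean depth (1552x)
print(f"{reads_required(154, 1552, 8):,.0f}")  # 1,912,064
```

Because real amplicon coverage is uneven, a practical design would budget above this uniform-coverage estimate.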

Data Analysis: Base calling and alignment to the human genome (hg19) were performed with Torrent Suite Software 4.0.3. Variant calling was performed using the Torrent Variant Caller 4.2 with low-stringency settings. Mean amplicon coverage ranged from 1552× (exon 20) to 5237× (exon 4) [21].
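Coverage of this order is what makes ~1% VAF detection feasible. As a simplified model (sequencing error, strand bias, and amplification bias are ignored), the number of variant-supporting reads at a site follows a binomial distribution with n = depth and p = VAF. The minimum of 10 alternate reads below is a hypothetical caller requirement, not a Torrent Variant Caller setting, and `detection_probability` is a hypothetical name.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(X >= min_alt_reads) for X ~ Binomial(depth, vaf).

    Ignores sequencing error and amplification bias.
    """
    p_below = sum(comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
                  for k in range(min_alt_reads))
    return 1.0 - p_below


# Hypothetical caller threshold of >= 10 alt reads at the lowest reported depth (1552x)
for vaf in (0.01, 0.05):
    print(vaf, round(detection_probability(1552, vaf, 10), 4))
```

The same reasoning explains the Sanger limit: a capillary trace reports only relative peak heights from the pooled signal, so a minor allele well below ~15-20% is hard to distinguish from background, whereas NGS counts individual reads.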

Liquid Biopsy and Exosome Analysis for tRNA Signatures

A 2025 study on NSCLC diagnosis developed the following protocol for liquid biopsy-based tRNA analysis [36]:

Plasma Sample Collection: Plasma specimens and associated patient information were obtained from medical centers, comprising cohorts of individuals diagnosed with NSCLC, subjects with benign lung conditions, and healthy controls.

Exosome Isolation: Exosomes were isolated from each plasma specimen using the Capturem Extracellular Vesicle Isolation Kit. Starting from 500 μL of plasma, exosomes were eluted in 200 μL of buffer.

RNA Extraction and Sequencing: RNA was extracted from isolated exosomes followed by small RNA sequencing. The researchers employed a machine-learning approach to analyze sequencing data and identify diagnostic tRNA signatures.

Data Analysis and Validation: The diagnostic performance of the identified tRNA signature was assessed using Area Under the Curve (AUC) metrics across discovery, hold-out validation, and independent validation cohorts. Signature tRNAs were evaluated across various clinical and demographic variables, with further survival analysis conducted to explore prognostic significance.

Research Reagent Solutions for NGS Oncology Studies

Table 3: Essential research reagents and materials for NGS-based oncology studies

| Reagent/Material | Function | Example Products |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA/RNA from tissue or liquid samples | QIAamp DNA Mini Kit [21] |
| Target Enrichment Panels | Selective amplification of cancer-related genes for targeted sequencing | Ion AmpliSeq Cancer Panels [21] |
| Library Preparation Kits | Preparation of sequencing libraries with appropriate adapters | Ion AmpliSeq Library Kit 2.0 [21] |
| Template Preparation Kits | Generation of template-positive Ion Sphere Particles for sequencing | Ion OneTouch 200 Template Kit [21] |
| Exosome Isolation Kits | Isolation of extracellular vesicles from liquid biopsy samples | Capturem Extracellular Vesicle Isolation Kit [36] |
| Sequencing Chips | Platforms for massively parallel sequencing | Ion 318 chip [21] |
| Variant Caller Software | Identification of genetic variants from sequencing data | Torrent Variant Caller [21] |

Visualizing NGS Workflows in Oncology

Figure: Comprehensive Genomic Profiling Workflow

Figure: Liquid Biopsy Analysis Pipeline

The integration of next-generation sequencing into oncology has fundamentally transformed cancer diagnosis and treatment. NGS technologies have demonstrated clear advantages over Sanger sequencing in sensitivity, throughput, and comprehensive genomic coverage, particularly for complex cancer genomes [21] [2]. The ability of comprehensive genomic profiling to detect diverse genomic alterations from a single test provides unprecedented insights into the molecular drivers of malignancy, in some cases even leading to diagnostic recharacterization that directly impacts therapeutic decisions [34] [32].

The emergence of liquid biopsy platforms represents another revolutionary advancement, enabling non-invasive, real-time monitoring of tumor dynamics through the analysis of circulating tumor-derived biomarkers [35] [36]. As the field continues to evolve, the convergence of NGS technologies, liquid biopsy approaches, and advanced computational analysis promises to further advance precision oncology, offering new hope for improved patient outcomes through more accurate diagnosis and personalized treatment strategies.

The precise detection of somatic mutations is foundational to modern oncology research and therapy development. Cancer genomes are characterized by a spectrum of alterations, including single nucleotide variants (SNVs), insertions and deletions (indels), copy number variations (CNVs), and gene fusions—each with distinct clinical implications for diagnosis, prognosis, and treatment selection. The choice of sequencing technology profoundly impacts the sensitivity, scope, and efficiency of mutation detection. For decades, Sanger sequencing represented the gold standard for DNA sequencing, but its technical limitations restrict its utility in comprehensive cancer genomics. The emergence of next-generation sequencing (NGS) has introduced a paradigm shift, enabling massively parallel analysis that dramatically expands mutational profiling capabilities while reducing costs [6] [11].

This guide provides an objective comparison of NGS and Sanger sequencing technologies specifically for detecting key cancer mutations. It synthesizes performance data, details experimental methodologies, and frames these findings within the broader thesis of optimal technology selection for cancer research and drug development. Understanding the relative strengths and limitations of each platform is crucial for researchers designing studies to uncover the genetic drivers of malignancy and to develop targeted therapeutic interventions.

Technical Comparison: NGS vs. Sanger Sequencing

Fundamental Technological Differences

The core distinction between Sanger sequencing and NGS lies in their underlying architecture and scalability. Sanger sequencing, also known as the chain-termination method or first-generation sequencing, relies on dideoxynucleoside triphosphates (ddNTPs) to terminate DNA synthesis at specific bases. The resulting fragments are separated by capillary electrophoresis, producing a single, long contiguous read per reaction [6] [8]. In contrast, NGS employs massively parallel sequencing, simultaneously processing millions to billions of DNA fragments on a solid surface or in microchambers. This is achieved through various chemistries, such as sequencing-by-synthesis (SBS), ion semiconductor sequencing, or ligation-based methods [6] [11] [19]. This parallel architecture is what enables NGS to achieve its much higher throughput and discovery power.
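The chain-termination principle can be illustrated with a toy simulation. In this sketch (a deliberate simplification: primers, dye chemistry, and peak shapes are ignored, and the function names are hypothetical), the reaction yields one fragment ending at every position of the newly synthesized strand, each labeled by its terminal ddNTP. Sorting fragments by length, as capillary electrophoresis does, and reading the terminal labels in order recovers the sequence.

```python
import random

# Watson-Crick pairing used to build the newly synthesized strand
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def chain_termination_fragments(template: str) -> list[tuple[int, str]]:
    """Toy Sanger reaction: one fragment per position, ending in a labeled ddNTP.

    `template` is written in the order the polymerase reads it.
    Returns (fragment length, terminal base) pairs.
    """
    synthesized = "".join(COMPLEMENT[b] for b in template)
    return [(i + 1, synthesized[i]) for i in range(len(synthesized))]


def read_capillary(fragments: list[tuple[int, str]]) -> str:
    """Capillary electrophoresis: shortest fragments pass the detector first."""
    return "".join(base for _, base in sorted(fragments))


frags = chain_termination_fragments("TACGGT")
random.shuffle(frags)         # the reaction yields a mixed population of fragments
print(read_capillary(frags))  # ATGCCA
```

Because every read requires physically separating one population of fragments, Sanger throughput scales with the number of capillaries; NGS instead reads millions of clonally amplified or single molecules in parallel.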

Performance Metrics for Cancer Mutation Detection

The following table summarizes the critical performance characteristics of each technology for detecting various classes of cancer mutations.

Table 1: Performance Comparison for Key Cancer Mutation Types

| Mutation Type | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Single Nucleotide Variants (SNVs) | Limited sensitivity (~15-20% variant allele frequency) [2]. Suitable for high-frequency mutations in homogeneous samples. | High sensitivity (down to ~1% variant allele frequency) [8] [2]. Enables detection of low-frequency variants in heterogeneous tumors. |
| Insertions/Deletions (Indels) | Can detect small indels in targeted regions but suffers from decreased sensitivity, especially for complex patterns [6]. | Excellent detection capability for small to medium indels. Performance depends on read length and alignment algorithms [8]. |