NGS vs. Sanger Sequencing: A 2025 Guide to Validation, Performance, and Clinical Application in Cancer Genomics

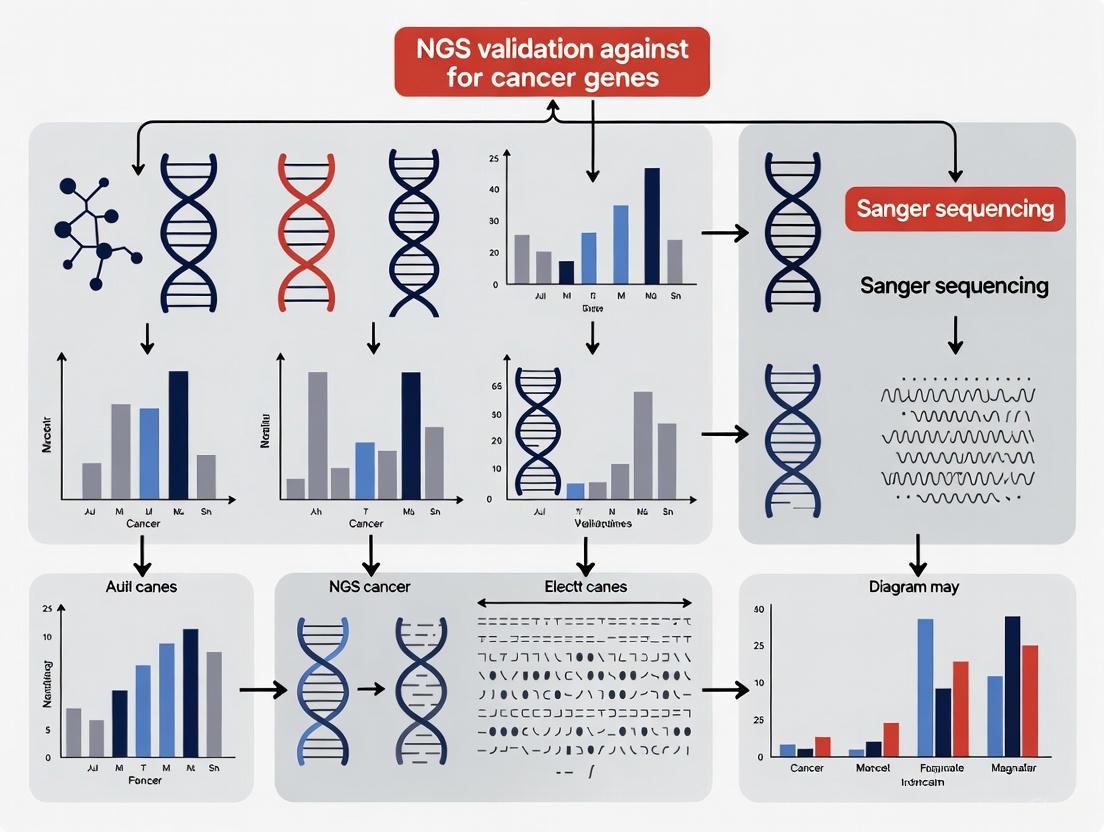

This article provides a comprehensive analysis of next-generation sequencing (NGS) validation against the traditional gold standard, Sanger sequencing, for profiling cancer genes.


Abstract

This article provides a comprehensive analysis of next-generation sequencing (NGS) validation against the traditional gold standard, Sanger sequencing, for profiling cancer genes. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of both technologies, details modern NGS methodology and its clinical applications, addresses common troubleshooting and optimization challenges, and presents extensive validation data and comparative performance metrics from recent studies. The synthesis of current evidence demonstrates that rigorously validated NGS panels are not only highly concordant with Sanger sequencing but also offer superior throughput, sensitivity for low-frequency variants, and faster turnaround times, supporting their integration into routine clinical diagnostics and precision oncology workflows.

From Sanger to NGS: Understanding the Technological Revolution in Cancer Genomics

The evolution of DNA sequencing from the sequential approach of first-generation methods to the massively parallel architecture of next-generation sequencing (NGS) represents a paradigm shift in molecular biology and oncology research. This transition has transformed our capacity to interrogate cancer genomes, enabling comprehensive genomic profiling that informs personalized treatment strategies. Sanger sequencing, developed in 1977, long served as the gold standard for genetic analysis, but its linear, single-fragment-at-a-time methodology inherently limits throughput and sensitivity [1] [2]. NGS technologies, which emerged in the mid-2000s, introduced a different core principle: massively parallel sequencing, in which millions to billions of DNA fragments are sequenced simultaneously in a single run [1] [3]. This architectural shift has dramatically reduced the cost and time of genomic analyses and opened research applications that were previously impractical, particularly in cancer genomics, where tumor heterogeneity, low-frequency somatic variants, and multifaceted resistance mechanisms demand high analytical sensitivity and breadth [1] [3].

In cancer gene research specifically, this technological evolution has necessitated rigorous validation protocols to ensure the analytical validity of NGS findings. The research community has traditionally relied on Sanger sequencing as an orthogonal validation method for NGS-detected variants, creating a dynamic interplay between established and emerging technologies [4]. This guide objectively compares the performance of these sequencing approaches through experimental data, detailed methodologies, and practical implementation frameworks relevant to researchers, scientists, and drug development professionals working in oncology.

Fundamental Technological Differences

The core distinction between Sanger sequencing and NGS lies not merely in their contemporary applications but in their fundamental biochemical approaches to determining DNA sequences. Sanger sequencing, also known as chain-termination or dideoxy sequencing, relies on the random incorporation of fluorescently-labeled dideoxynucleotides (ddNTPs) during DNA polymerase-mediated replication [2] [3]. These ddNTPs lack the 3'-hydroxyl group necessary for chain elongation, causing termination of DNA synthesis at specific base positions. The resulting DNA fragments of varying lengths are separated by capillary gel electrophoresis, and the sequence is determined by detecting the fluorescent signal of the terminal nucleotide in each fragment [2]. While modern capillary electrophoresis has streamlined this process, the method remains inherently limited to sequencing one DNA fragment per reaction, creating a natural throughput bottleneck [5].

In contrast, NGS technologies employ diverse biochemical approaches united by their implementation of massively parallel sequencing [1] [3]. One prominent method, Sequencing by Synthesis (SBS), utilizes fluorescently-labeled reversible terminators that allow for the sequential addition of single nucleotides across millions of DNA clusters immobilized on a flow cell surface [3]. After each incorporation cycle, imaging captures the fluorescent signal identifying the base at each cluster, followed by terminator cleavage to enable subsequent cycles. This parallel architecture enables NGS to simultaneously sequence millions to billions of DNA fragments in a single run, generating unprecedented volumes of data that provide both breadth of coverage and depth of sampling for confident variant detection [5] [1].

Table 1: Core Technological Principles Comparison

| Technological Aspect | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Fundamental Method | Chain termination using ddNTPs | Massively parallel sequencing (e.g., Sequencing by Synthesis) |
| Sequencing Scale | Single DNA fragment per reaction | Millions to billions of fragments simultaneously |
| Read Structure | Long, contiguous reads (500-1000 bp) | Short reads (50-300 bp for Illumina; longer for third-gen) |
| Detection System | Capillary electrophoresis with fluorescent detection | High-resolution optical imaging of clustered fragments |
| Throughput Capacity | Low to medium throughput | Extremely high throughput |
| Data Output Volume | Small data per run (single sequence chromatograms) | Massive datasets (gigabases to terabases per run) |

Performance Comparison in Cancer Genomics

Empirical studies directly comparing Sanger sequencing and NGS in cancer research settings consistently demonstrate distinct performance characteristics that inform their optimal applications. A critical performance differentiator lies in analytical sensitivity – the minimum variant allele frequency (VAF) detectable by each method. Sanger sequencing typically has a detection limit of approximately 15-20% allele frequency, meaning subclonal mutations present in minor tumor cell populations may remain undetected [5] [1]. This limitation proves particularly problematic in analyzing heterogeneous tumor samples or detecting minimal residual disease where cancer-associated mutations may exist at very low frequencies.

NGS platforms significantly surpass this sensitivity threshold through their deep sequencing capabilities. Depending on sequencing depth, NGS can reliably detect variants at frequencies as low as 1-5% [5] [1]. In a 2015 study focused on PIK3CA mutations in breast cancer, NGS identified mutations with variant frequencies below 10% that were missed by Sanger sequencing [6]. This enhanced sensitivity enables researchers to identify subclonal populations within tumors, track evolving mutation patterns during therapy, and detect emerging resistance mechanisms at earlier timepoints.
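The dependence of sensitivity on sequencing depth can be illustrated with a simple binomial model. The sketch below is only an idealized approximation: it assumes each read samples the variant allele independently with probability equal to the VAF, ignores sequencing error and capture bias, and uses a hypothetical calling threshold of five variant-supporting reads that does not come from the cited studies.

```python
from math import comb


def prob_detect(depth: int, vaf: float, min_alt_reads: int) -> float:
    """Probability of observing at least `min_alt_reads` variant reads.

    Assumes reads are independent binomial draws with success
    probability `vaf` (an idealization; real data are noisier).
    """
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


# Hypothetical threshold: require >= 5 variant-supporting reads.
for depth in (30, 100, 500, 1000):
    p = prob_detect(depth, vaf=0.05, min_alt_reads=5)
    print(f"depth {depth:>4}x, VAF 5%: P(detect) = {p:.3f}")
```

Under these assumptions, the probability of catching a 5% variant rises steeply with depth, which is why low-frequency somatic variant detection is tied to deep coverage rather than to the platform alone.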

The throughput and multiplexing capacity of the two methods also differ substantially. Sanger sequencing operates most efficiently when interrogating a small number of genomic targets (typically 1-20 targets) across limited sample numbers [5] [2]. In contrast, NGS can simultaneously evaluate hundreds to thousands of genes in a single assay, making it uniquely suited for comprehensive genomic profiling [1]. This capability proves invaluable in oncology research, where multiple driver mutations across numerous genes may contribute to tumor pathogenesis and treatment response.

Table 2: Analytical Performance Comparison in Cancer Research

| Performance Metric | Sanger Sequencing | Next-Generation Sequencing | Experimental Evidence |
|---|---|---|---|
| Sensitivity (Limit of Detection) | ~15-20% variant allele frequency | As low as 1% variant allele frequency | NGS detected PIK3CA mutations with <10% VAF missed by Sanger [6] |
| Variant Concordance | Gold standard for single variants | >99.9% concordance for high-quality variants | 99.965% validation rate for NGS variants in ClinSeq study (5,800+ variants) [7] |
| Multiplexing Capacity | Limited; cost-effective for 1-20 targets | High; simultaneous analysis of hundreds to thousands of targets | Custom panels with 57-97 genes enable comprehensive profiling [4] |
| Cost Efficiency | Lower cost for limited targets; high cost per base | Higher initial cost; lower cost per base for large regions | More cost-effective for sequencing multiple genes [6] [5] |
| Discovery Power | Limited to known targets in amplified regions | High; detects novel variants, structural variants, CNVs | Identifies mutations outside traditional hotspots (exons 1, 4, 7, 13 of PIK3CA) [6] |

Experimental Validation and Methodologies

Validation Studies and Concordance Metrics

Rigorous validation studies have quantified the analytical performance and concordance between NGS and Sanger sequencing in cancer gene research. A landmark analysis from the ClinSeq project systematically evaluated Sanger-based validation of NGS variants across 684 exomes [7]. From over 5,800 NGS-derived variants subjected to orthogonal Sanger confirmation, only 19 were not initially validated by Sanger sequencing. Upon further investigation using newly designed sequencing primers, 17 of these 19 variants were confirmed by Sanger, while the remaining two exhibited low quality scores in the exome sequencing data [7]. This resulted in an overall validation rate of 99.965% for NGS variants using Sanger sequencing, leading researchers to question the utility of routine orthogonal validation for NGS variants that meet established quality thresholds [7].
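The arithmetic behind these validation rates is easy to reproduce. The sketch below assumes a total of exactly 5,800 variants; the text only says "over 5,800", so the output approximates rather than reproduces the published 99.965% figure.

```python
def validation_rate(total_variants: int, unconfirmed: int) -> float:
    """Percentage of NGS variants confirmed by Sanger sequencing."""
    return 100.0 * (total_variants - unconfirmed) / total_variants


# Assumption: the exact denominator is not given in the text ("over 5,800").
TOTAL_VARIANTS = 5800

initial_rate = validation_rate(TOTAL_VARIANTS, unconfirmed=19)  # first Sanger pass
final_rate = validation_rate(TOTAL_VARIANTS, unconfirmed=2)     # after primer redesign

print(f"Initial Sanger pass:   {initial_rate:.2f}%")
print(f"After primer redesign: {final_rate:.2f}%")
```

The comparison makes the study's point concrete: 17 of the 19 initial failures were resolved by redesigning Sanger primers, so most of the apparent discordance came from the validation assay rather than from the NGS calls.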

Similar findings emerged from a 2015 breast cancer study of PIK3CA mutation status [6]. In this analysis of 186 breast carcinomas, 55 PIK3CA mutations occurred in exons 9 and 20, and 52 of them were detected by both NGS and Sanger sequencing, for a reported concordance of 98.4% between the platforms [6]. That figure is consistent with agreement at the sample level (183 of 186 samples); counted per mutation, 52 of 55 corresponds to about 94.5%. Notably, the three mutations missed by Sanger sequencing all had variant frequencies below 10%, highlighting the sensitivity advantage of NGS for detecting subclonal mutations in heterogeneous tumor samples [6]. Additionally, NGS identified mutations in exons 1, 4, 7, and 13 of PIK3CA that would have been missed by conventional Sanger approaches targeting only known hotspot regions [6].

Detailed Experimental Protocol

For researchers seeking to implement similar validation studies, the following methodology from published literature provides a robust framework:

DNA Extraction and Quality Control

- Extract genomic DNA from tumor samples (e.g., using QIAamp DNA Mini Kit) [6]

- Quantify DNA concentration using fluorometric methods (e.g., Qubit fluorometer) [6]

- Assess DNA quality and ensure absence of degradation

- For tumor samples, ensure representative tumor content (typically ≥30% tumor cells) [6]

Library Preparation for Targeted NGS

- Utilize 10-50 ng of genomic DNA as input [6] [4]

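- Employ a targeted enrichment approach, such as multiplex PCR-based amplicon enrichment (e.g., Ion AmpliSeq) or hybrid capture (e.g., SureSelect) [6] [4]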

- Incorporate molecular barcodes (unique identifiers) for sample multiplexing [6]

- Validate library quality and quantity before sequencing (e.g., using Qubit instrument) [6]

NGS Sequencing and Data Analysis

- Perform sequencing on appropriate platform (e.g., Illumina MiSeq, Ion PGM) with sufficient coverage [6] [4]

- Ensure minimum coverage depth of 30×, with recommended 100× or higher for sensitive variant detection [4]

- Implement a bioinformatic pipeline to align reads to the reference sequence and identify variants

Sanger Sequencing Validation

- Design PCR primers flanking variant regions using Primer3 algorithm [7] [4]

- Verify primer specificity and absence of polymorphisms in binding sites [4]

- Perform PCR amplification and purify products

- Conduct Sanger sequencing using fluorescent terminator chemistry

- Analyze chromatograms and confirm variants through bidirectional sequencing [7]

Visualization of Sequencing Workflows

[Figure: Sanger sequencing workflow]

[Figure: Next-generation sequencing workflow]

Research Reagent Solutions for Sequencing Experiments

Table 3: Essential Research Reagents for Sequencing Studies

| Reagent/Kit | Function | Application Notes |
|---|---|---|
| QIAamp DNA Mini Kit (Qiagen) | DNA extraction from blood or tissue | Provides high-quality DNA for downstream sequencing; suitable for FFPE samples with modifications [6] |
| Ion AmpliSeq Library Kit (Thermo Fisher) | Targeted library preparation for NGS | Enables multiplex PCR-based target enrichment; optimal for small amplicons (<175 bp) [6] |
| SureSelect Target Enrichment (Agilent) | Hybrid capture-based library preparation | Uses biotinylated RNA baits for target capture; suitable for custom gene panels [4] |
| FastStart Taq DNA Polymerase (Roche) | PCR amplification for Sanger sequencing | Hot-start Taq polymerase for target amplification in validation studies [4] |
| BigDye Terminator v3.1 (Thermo Fisher) | Cycle sequencing for Sanger method | Fluorescent dye terminators for capillary electrophoresis [7] |
| MiSeq Reagent Kits (Illumina) | Sequencing chemistry for NGS | Provides cluster generation and sequencing-by-synthesis reagents for Illumina platforms [4] |

The shift from sequential to massively parallel sequencing represents more than a technological upgrade; it constitutes a fundamental transformation in how researchers approach cancer genomics. The core principles of NGS – massive parallelism, deep sequencing, and multiplexing capacity – provide distinct advantages for comprehensive genomic profiling in oncology research, particularly for detecting low-frequency variants, identifying novel cancer-associated mutations outside traditional hotspots, and analyzing complex, heterogeneous tumor samples [6] [1].

While Sanger sequencing maintains its role as a gold standard for validating specific variants and for projects requiring limited targeted sequencing [2] [3], the overwhelming evidence from validation studies demonstrates that NGS technologies deliver exceptional accuracy (>99.9% concordance) when appropriate quality metrics are maintained [7]. The research community is increasingly questioning the necessity of routine orthogonal Sanger validation for all NGS-detected variants, particularly as NGS platforms continue to improve in accuracy and reliability [7] [4].

For cancer researchers and drug development professionals, strategic implementation of both technologies involves matching the sequencing approach to the specific research question. Sanger sequencing remains optimal for simple validation studies and low-target-number projects, while NGS provides unparalleled power for discovery-based research, comprehensive genomic profiling, and studies requiring detection of low-frequency variants in complex cancer genomes. As NGS technologies continue to evolve and integrate with emerging analytical approaches like artificial intelligence and single-cell sequencing, their central role in advancing precision oncology will only intensify, further solidifying the paradigm shift from sequential to massively parallel sequencing.

Within cancer genomics, the accurate detection of somatic and germline variants is paramount for driving research and therapeutic development. For decades, Sanger sequencing has served as the undisputed gold standard for DNA sequencing, providing the foundational data for the Human Genome Project and countless clinical assays. However, the rise of next-generation sequencing (NGS) has transformed the scale of genomic inquiry, enabling the parallel interrogation of hundreds of cancer-related genes. This guide objectively compares the performance of Sanger sequencing against NGS technologies, with a specific focus on the critical practice of using Sanger to validate NGS-derived variants in cancer gene research. We summarize comparative performance data, detail experimental protocols from key studies, and provide a toolkit for researchers navigating the integration of these complementary technologies.

Sanger Sequencing as a Gold Standard

Sanger sequencing, developed in 1977, operates on the principle of chain-termination. It utilizes fluorescently labeled dideoxynucleotides (ddNTPs) that, when incorporated by DNA polymerase, halt DNA strand elongation. The resulting fragments are separated by capillary electrophoresis, generating a chromatogram that reveals the DNA sequence [8] [9]. Its status as a gold standard is anchored on two pillars: exceptional accuracy and widespread application in clinical validation.

Unmatched Accuracy and Reliability

Sanger sequencing is renowned for its high base-calling accuracy, typically cited at about 99.99% (an error rate near 0.01%), with reported error rates as low as 0.001% [10] [8]. This precision stems from its robust biochemistry, which is less susceptible to context-specific errors (e.g., in homopolymer regions) that can plague some NGS technologies. The output, a clear chromatogram, allows human experts to verify variants visually, including heterozygous calls [11] [10].

The Traditional Role in Orthogonal Validation

Orthogonal validation, meaning confirmation of a result by a method based on a different principle, is a cornerstone of clinical and research genomics. For years, guidelines from bodies like the American College of Medical Genetics and Genomics (ACMG) have recommended Sanger sequencing as the orthogonal method to confirm variants identified by NGS before reporting [12] [13]. This practice was born from the early need to verify findings from nascent, high-throughput but potentially error-prone NGS platforms.

Performance Comparison: Sanger Sequencing vs. NGS

The following tables provide a quantitative and qualitative comparison of Sanger sequencing and NGS, synthesizing data from multiple studies to offer a clear performance overview.

Table 1: Key Technical and Operational Specifications

| Aspect | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Principle | Chain-termination, capillary electrophoresis [8] | Massively parallel sequencing (e.g., reversible terminators, semiconductor) [5] [9] |
| Throughput | Low (one fragment per reaction) [14] | Very High (millions to billions of fragments per run) [5] [9] |
| Read Length | Long (500–1000 bp) [8] [14] | Short to Long (Illumina: 150-600 bp; PacBio/Nanopore: >10,000 bp) [14] |
| Typical Accuracy | ~99.99% [10] [8] | >99.9% (varies by platform and base position) [14] |
| Detection Limit (VAF) | ~15–20% [5] [9] | ~1–5% (with sufficient depth) [5] [14] |
| Best Applications | Single gene testing, known variant confirmation, validation [11] [9] | Large gene panels, whole exome/genome, novel discovery, low-frequency variant detection [5] [9] |

Table 2: Experimental Validation Data from Comparative Studies

| Study Description | Total NGS Variants | Variants Not Validated by Initial Sanger | Final Concordance Rate | Key Findings |
|---|---|---|---|---|
| ClinSeq Exome Study (2016) [7] | ~5,800 | 19 | 99.97% | 17 of 19 discrepancies were due to Sanger primer issues, not NGS errors. |
| Whole Genome Sequencing Study (2025) [12] [13] | 1,756 | 5 | 99.72% | Proposed quality filters (QUAL≥100, DP≥15, AF≥0.25) could reduce needed Sanger validation to 1.2% of variants. |
| Targeted NGS Panels (Various) [12] | Varies | Varies | 91.29%–98.7% | Concordance is highly dependent on the enrichment panel and specific quality filters applied. |

Key Performance Insights from Data

- Extremely High Concordance: When NGS variants are of high quality, Sanger validation confirms them at a rate exceeding 99.9%, challenging the universal necessity of orthogonal confirmation [7].

- The Source of Discrepancies: A significant portion of initial validation failures can be attributed not to NGS errors but to limitations in Sanger sequencing itself, such as primer binding issues that prevent the variant from being amplified and sequenced [7].

- Shifting Validation Paradigm: Recent large-scale studies conclude that a single round of Sanger sequencing is statistically more likely to incorrectly refute a true NGS variant than to correctly identify a false positive. The field is moving towards using quality thresholds (read depth, allele frequency, quality scores) to define a subset of "high-quality" NGS variants that do not require Sanger validation [7] [12].

Inherent Limitations of Sanger Sequencing

Despite its gold-standard status, Sanger sequencing possesses several inherent limitations that become apparent when compared to NGS, especially in a cancer research context.

Low Throughput and Scalability

Sanger sequencing processes a single DNA fragment per reaction. Sequencing a large gene or multiple genes requires numerous individual reactions, making it cost-prohibitive and inefficient for projects larger than a handful of targets [5] [10] [14]. This is fundamentally incompatible with the scale of modern cancer panel, exome, or genome sequencing.

Poor Sensitivity for Low-Frequency Variants

Sanger sequencing's detection limit for a variant allele in a mixed sample is typically 15–20% [5] [9]. The method generates a composite chromatogram, where a minor allele must be present in a high proportion to be distinguishable from background noise. This makes it ineffective for detecting somatic mutations in heterogeneous tumor samples, minimal residual disease, or subclonal populations—a critical application in cancer genomics where NGS excels with its ability to detect variants at frequencies of 1% or even lower [5] [14].

Limited Discovery Power

Sanger sequencing is a targeted method, ideal for confirming known or suspected variants. It offers minimal power for novel discovery, such as identifying new fusion genes, non-coding drivers, or complex structural variations across the genome [5]. NGS, with its hypothesis-free and comprehensive genomic coverage, is uniquely suited for these discovery applications.

Experimental Protocols for NGS Validation

The standard protocol for validating NGS variants using Sanger sequencing involves a multi-step process to ensure robustness.

[Diagram: core workflow and decision-making pathway for Sanger validation of NGS variants, based on current best practices]

Detailed Methodology for Sanger Validation

The following protocol is adapted from large-scale validation studies [7] [12].

Variant Selection and Prioritization:

- Select NGS variants for confirmation based on clinical or biological significance.

- Apply quality filters (e.g., depth of coverage (DP) ≥ 15, allele frequency (AF) ≥ 0.25, quality score (QUAL) ≥ 100) to identify variants that may not require validation [12].
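The quality-filter step above can be sketched as a simple triage function. The thresholds are the ones quoted from [12]; the `Variant` record, its field names, and the example values are hypothetical and stand in for whatever a real pipeline would parse from its variant call files.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    """Hypothetical minimal variant record (not a real VCF parser)."""
    chrom: str
    pos: int
    qual: float             # QUAL score reported by the variant caller
    depth: int              # DP: read depth at the site
    allele_fraction: float  # AF: fraction of reads supporting the variant


def is_high_quality(v: Variant, min_qual: float = 100.0,
                    min_depth: int = 15, min_af: float = 0.25) -> bool:
    """True if the variant passes all three thresholds quoted from [12]."""
    return (v.qual >= min_qual
            and v.depth >= min_depth
            and v.allele_fraction >= min_af)


def triage(variants: list[Variant]) -> tuple[list[Variant], list[Variant]]:
    """Split variants into (high quality, candidates for Sanger confirmation)."""
    high_quality = [v for v in variants if is_high_quality(v)]
    needs_sanger = [v for v in variants if not is_high_quality(v)]
    return high_quality, needs_sanger


# Illustrative values only; coordinates and scores are made up.
calls = [
    Variant("chr3", 1000, qual=850.0, depth=120, allele_fraction=0.48),
    Variant("chr17", 2000, qual=60.0, depth=40, allele_fraction=0.31),
    Variant("chr7", 3000, qual=300.0, depth=12, allele_fraction=0.50),
]
passed, to_confirm = triage(calls)
print(f"{len(passed)} high-quality, {len(to_confirm)} flagged for Sanger")
```

Note that these thresholds were proposed for the germline whole-genome setting described in [12]; an AF cutoff of 0.25 would exclude the low-frequency somatic variants discussed elsewhere in this guide, so somatic workflows would need their own validated criteria.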

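Primer Design:

- Design PCR primers flanking the variant of interest (e.g., with Primer3) [7].

- Verify primer specificity and check the binding sites for known polymorphisms, since primer binding issues were the main cause of initial validation failures [7].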

PCR Amplification:

- Perform PCR using the original genomic DNA as template.

- Use high-fidelity DNA polymerase to minimize PCR errors.

Sequencing Reaction and Cleanup:

- Perform the cycle-sequencing PCR using fluorescent dye-terminator chemistry (e.g., BigDye).

- Clean up the sequencing reaction to remove unincorporated terminators.

Capillary Electrophoresis:

- Load the purified product onto a capillary sequencer.

Data Analysis:

- Manually inspect the chromatogram files using software such as Sequencher.

- For heterozygous variants, confirm the presence of double peaks (overlapping A/C/G/T) at the variant position. A single, clean peak indicates a homozygous call.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for Sanger Sequencing Validation

| Item | Function | Example/Note |
|---|---|---|
| High-Fidelity DNA Polymerase | Amplifies the target region from genomic DNA with minimal errors. | Enzymes with proofreading activity (e.g., Pfu) are preferred. |
| Dye-Terminator Kit | Fluorescently labels DNA fragments during the chain-termination sequencing reaction. | BigDye Terminator v3.1 is a common commercial kit. |
| Capillary Sequencer | Separates fluorescently labeled DNA fragments by size and detects the fluorescence. | Applied Biosystems 3130xl or 3500xl Series. |
| Primer Design Software | Designs specific oligonucleotide primers that flank the variant of interest. | Primer3, Primer-BLAST. |
| Sequence Analysis Software | Aligns sequencing traces to a reference sequence and facilitates manual variant review. | Sequencher (Gene Codes); manual review is critical. |

The Evolving Role of Sanger in the NGS Era

The relationship between Sanger sequencing and NGS is best described as complementary, not competitive [9]. While NGS is unequivocally superior for discovery and high-throughput screening, Sanger sequencing retains a vital, albeit more focused, role in the modern genomics laboratory.

Its primary applications are now:

- Validation of Critical Variants: Providing an extra layer of confidence for key findings before publication or clinical reporting, especially when NGS quality metrics are borderline.

- Filling in Gaps: Sequencing regions with poor or no coverage in NGS data due to high GC-content or other technical challenges.

- Rapid, Targeted Sequencing: For projects where only one or a few known loci need to be interrogated, Sanger remains the most cost-effective and rapid technology [11] [9].

As NGS technology continues to mature and quality metrics become more reliable, the mandatory use of Sanger validation is expected to further decline. The future of Sanger sequencing lies not as a universal validator, but as a specialized tool within a broader genomic toolkit, its use dictated by specific project needs rather than blanket policy [7] [12].

Next-generation sequencing (NGS) has revolutionized genomic analysis in cancer research, presenting a paradigm shift from traditional Sanger sequencing. While Sanger sequencing has served as the gold standard for decades, providing high accuracy for interrogating single genes, its limitations in throughput and scalability have become increasingly apparent in oncology, where tumor heterogeneity and complex mutational profiles are the norm [15]. In contrast, NGS technologies leverage massively parallel sequencing, enabling researchers to process millions of DNA fragments simultaneously [5]. This fundamental difference in approach has profound implications for throughput, scale, and cost-effectiveness in cancer gene research. The transition from single-gene analysis to comprehensive genomic profiling represents a critical evolution in molecular diagnostics, allowing scientists to uncover novel biomarkers, identify rare variants, and develop more personalized treatment strategies for cancer patients [16] [15]. This guide objectively compares these technologies within the context of validating NGS for cancer gene research, providing researchers with the analytical framework needed to select the appropriate sequencing method for their specific experimental requirements.

Technology Comparison: Fundamental Differences and Capabilities

The core distinction between Sanger and next-generation sequencing lies in their underlying methodologies and resulting capabilities. Sanger sequencing, also known as dideoxy or capillary electrophoresis sequencing, employs a chain-termination method that generates DNA fragments of varying lengths which are separated by capillary gel electrophoresis [17]. This process sequences a single DNA fragment at a time, making it reliable for small-scale applications but inherently limited for comprehensive genomic studies [5]. The technology requires a homogeneous template for optimal results and provides data in the form of chromatograms (trace or AB1 files) from which DNA sequences are determined [18].

In contrast, NGS technologies utilize massively parallel sequencing, processing millions of fragments simultaneously per run [5]. This high-throughput approach enables the sequencing of hundreds to thousands of genes concurrently, providing unprecedented discovery power to detect novel or rare variants through deep sequencing [5]. The NGS workflow typically involves DNA fragmentation, adapter ligation for library preparation, massive parallel sequencing, and sophisticated bioinformatic analysis to align sequences and identify variants [15]. The output consists of raw data in FASTQ format, which requires specialized computational pipelines for processing and interpretation [18].

Table 1: Core Technology Comparison Between Sanger and Next-Generation Sequencing

| Feature | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Sequencing Principle | Chain termination with dideoxynucleotides | Massively parallel sequencing |
| Throughput | Single DNA fragment per run | Millions of fragments simultaneously |
| Target Discovery | Limited to known, predefined targets | Unbiased sequence discovery possible |
| Data Output | Limited data output | Large amount of data |
| Multiplexing Capability | Not possible | High capacity with sample multiplexing |
| Quantitative Analysis | Not quantitative; limited heterogeneity detection | Quantitative capability |
| Applications in Cancer Research | Ideal for sequencing single known cancer genes | Detects mutations, structural variants across multiple genes |

Throughput and Scale: A Quantitative Analysis

The throughput advantage of NGS over Sanger sequencing is not incremental; it amounts to an orders-of-magnitude improvement that transforms research capabilities in cancer genomics. Whereas Sanger sequencing processes one DNA fragment per reaction and therefore needs a separate reaction for each target, NGS can sequence hundreds to thousands of genes simultaneously in a single run [5] [18]. This massive parallelization enables comprehensive genomic profiling that would be impractical with Sanger technology alone.

The scale of data generation highlights this dramatic difference. A single NGS run can generate gigabases to terabases of sequence data, whereas Sanger sequencing produces limited data output in comparison [15]. This high-throughput capability makes NGS particularly valuable for analyzing complex cancer genomes, where multiple genes and regulatory regions must be interrogated to understand tumorigenesis fully. The technology can identify various genomic alterations—including single nucleotide variants (SNVs), insertions and deletions (Indels), copy number variations (CNVs), and structural variants—from a single assay [19].

In practical terms, this throughput advantage translates directly to research efficiency. A 2025 study validating a 61-gene oncopanel demonstrated that NGS successfully detected 794 mutations across 43 unique samples, including all 92 known variants previously identified by orthogonal methods [16]. The assay achieved 98.23% sensitivity for detecting unique variants with 99.99% specificity, performance metrics that would require an impractical number of Sanger reactions to replicate [16]. This comprehensive profiling capability is further enhanced by the ability to multiplex numerous samples in a single sequencing run, dramatically increasing throughput while reducing per-sample costs for large-scale studies [5].
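For readers reproducing such metrics on their own validation sets, sensitivity and specificity follow directly from the confusion-matrix counts. The sketch below uses the standard definitions; apart from the 92 of 92 known variants reported above, the counts are hypothetical and are not taken from the study.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Fraction of real variants that the assay detects: TP / (TP + FN)."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Fraction of reference positions correctly called negative: TN / (TN + FP)."""
    return true_neg / (true_neg + false_pos)


# From the text: all 92 previously known variants were detected.
print(f"Known-variant detection: {sensitivity(92, 0):.2%}")

# Hypothetical counts for illustration only (not from the cited study).
print(f"Example sensitivity: {sensitivity(true_pos=480, false_neg=20):.2%}")
print(f"Example specificity: {specificity(true_neg=99_990, false_pos=10):.2%}")
```

Specificity in panel validation is usually dominated by the very large number of true-negative positions, which is why values close to 100% are common even when a handful of false positives occur.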

Cost-Effectiveness Analysis: Beyond Per-Base Calculations

When evaluating the cost-effectiveness of sequencing technologies, it is essential to consider both direct costs and holistic economic factors within the research context. While Sanger sequencing remains cost-effective for interrogating fewer than 20 targets, its cost structure becomes prohibitive for larger-scale projects, with expenses reaching approximately $500 per megabase [20]. In contrast, NGS costs have decreased dramatically to less than $0.50 per megabase for platforms like Illumina HiSeq2000, making it significantly more economical for comprehensive genomic studies [20].
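A back-of-the-envelope comparison shows how the per-megabase figures interact with sequencing depth. The sketch below uses the two prices quoted above; the target size and depth values are hypothetical, and the model deliberately ignores library preparation, instrument, labor, and per-run fixed costs, which often dominate in practice.

```python
SANGER_USD_PER_MB = 500.0  # approximate figure quoted in the text [20]
NGS_USD_PER_MB = 0.50      # upper bound quoted in the text [20]


def raw_sequencing_cost(target_mb: float, depth: float, usd_per_mb: float) -> float:
    """Cost of the sequenced bases only: target size x depth x price per Mb."""
    return target_mb * depth * usd_per_mb


fold_difference = SANGER_USD_PER_MB / NGS_USD_PER_MB
print(f"Per-megabase price ratio: {fold_difference:.0f}x")

# Hypothetical scenario: a 0.3 Mb target read bidirectionally by Sanger (2x)
# versus 500x average depth by NGS.
sanger_cost = raw_sequencing_cost(0.3, depth=2, usd_per_mb=SANGER_USD_PER_MB)
ngs_cost = raw_sequencing_cost(0.3, depth=500, usd_per_mb=NGS_USD_PER_MB)
print(f"Sanger: ${sanger_cost:.2f}  NGS: ${ngs_cost:.2f}")
```

Because NGS must sequence each position many times to reach useful depth, the effective advantage for a given target is smaller than the raw per-megabase ratio suggests, which is one reason cost-effectiveness depends on the number of targets analyzed.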

A systematic review of cost-effectiveness evidence found that targeted NGS panels (2-52 genes) become cost-effective when 4 or more genes require analysis [21]. This economic advantage extends beyond direct sequencing costs to encompass holistic factors including reduced turnaround time, decreased personnel requirements, fewer hospital visits, and lower overall institutional costs [21]. The economic model shifts from a per-reaction to a per-information basis, with NGS providing substantially more genomic data per dollar invested.

Table 2: Cost and Operational Efficiency Comparison

| Cost Factor | Sanger Sequencing | Next-Generation Sequencing |

|---|---|---|

| Cost per Megabase | ~$500/Mb [20] | <$0.50/Mb (Illumina HiSeq2000) [20] |

| Cost-Effectiveness Threshold | Economical for 1-20 targets [5] | Cost-effective when ≥4 genes require testing [21] |

| Personnel Requirements | Higher per-gene personnel time | Reduced staffing needs through automation |

| Turnaround Time | Faster for single genes; slower for multiple genes | ~4 days for comprehensive 61-gene panel [16] |

| Sample Requirements | Increasing sample amount needed for more targets | More information per sample amount [17] |

| Multiplexing Capability | Not possible | Significant cost savings through sample multiplexing |

The implementation of in-house NGS testing has demonstrated substantial operational efficiencies. A 2025 study reported reducing turnaround time from approximately 3 weeks with external testing to just 4 days with an in-house NGS workflow, while also lowering costs associated with shipping and service fees [16]. This accelerated timeline can be critical in cancer research programs where rapid genomic characterization directly impacts project timelines and therapeutic development.

Experimental Validation: Protocols and Performance Metrics

Validation Protocols for NGS in Cancer Research

Robust experimental validation is crucial for implementing NGS in cancer gene research. A comprehensive protocol for validating targeted NGS panels should include:

Panel Design and Target Enrichment: The TTSH-oncopanel study targeted 61 cancer-associated genes using a hybridization-capture-based DNA target enrichment method with library kits compatible with an automated library preparation system [16]. Compared with manual methods, this approach reduces human error and contamination risk and improves consistency.

Sequencing and Quality Control: Researchers performed sequencing using the MGI DNBSEQ-G50RS sequencer with combinatorial Probe-Anchor Synthesis (cPAS) technology [16]. Quality metrics should include assessment of base call quality (percentage of bases ≥ Q30), coverage uniformity (>99%), and percentage of target regions with sufficient coverage (>98% at ≥100× unique molecules) [16].
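The quality metrics above can be computed directly from sequencer output. The sketch below is a minimal illustration, assuming Phred+33 encoded FASTQ quality strings and a precomputed list of per-position unique-molecule depths; the helper names and the way depths are supplied are assumptions, not the pipeline used in the cited study.

```python
from typing import Sequence


def fraction_q30(quality_string: str, phred_offset: int = 33) -> float:
    """Fraction of bases with Phred quality >= 30 in one FASTQ quality string."""
    if not quality_string:
        return 0.0
    scores = [ord(ch) - phred_offset for ch in quality_string]
    return sum(q >= 30 for q in scores) / len(scores)


def fraction_covered(depths: Sequence[int], min_depth: int = 100) -> float:
    """Fraction of target positions whose depth reaches min_depth."""
    if not depths:
        return 0.0
    return sum(d >= min_depth for d in depths) / len(depths)


def passes_coverage_qc(depths: Sequence[int],
                       min_depth: int = 100,
                       min_fraction: float = 0.98) -> bool:
    """Apply the '>98% of targets at >=100x' style threshold quoted in the text."""
    return fraction_covered(depths, min_depth) > min_fraction
```

In practice these values are usually reported by the instrument software or by standard coverage tools; the point of the sketch is only to make the definitions of the thresholds explicit.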

Variant Calling and Annotation: The protocol should utilize validated bioinformatics pipelines, such as the Sophia DDM software which employs machine learning for variant analysis and connects molecular profiles to clinical insights through OncoPortal Plus, classifying somatic variations by clinical significance [16].

Analytical Validation: Determine the limit of detection (LOD) by titrating DNA input and variant allele frequency (VAF). The TTSH-oncopanel study established a required DNA input of ≥50 ng and a minimum detectable VAF of 2.9% for both SNVs and indels [16].
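To make the limit-of-detection thresholds concrete, the following sketch computes VAF from read counts and checks a call against the 2.9% VAF and 50 ng input values quoted above. The function names and the simple pass/fail rule are illustrative assumptions; a validated pipeline would apply additional filters such as strand bias and minimum supporting reads.

```python
MIN_VAF = 0.029        # minimum detectable VAF reported for the panel [16]
MIN_INPUT_NG = 50.0    # required DNA input reported for the panel [16]


def variant_allele_frequency(alt_reads: int, total_reads: int) -> float:
    """VAF = reads supporting the variant / total reads at the position."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    if not 0 <= alt_reads <= total_reads:
        raise ValueError("alt_reads must be between 0 and total_reads")
    return alt_reads / total_reads


def above_limit_of_detection(alt_reads: int, total_reads: int,
                             dna_input_ng: float) -> bool:
    """True when both the input amount and the observed VAF meet the thresholds."""
    vaf = variant_allele_frequency(alt_reads, total_reads)
    return dna_input_ng >= MIN_INPUT_NG and vaf >= MIN_VAF
```

For example, 30 variant reads out of 1,000 at a position gives a VAF of 3.0%, which is above the stated threshold provided the sample met the input requirement.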

Diagram: NGS Cancer Gene Research Workflow

Performance Metrics and Validation Against Sanger

Recent large-scale studies have systematically evaluated NGS accuracy compared to Sanger sequencing. A landmark analysis from the ClinSeq project compared over 5,800 NGS-derived variants against Sanger sequencing data, measuring a validation rate of 99.965% for NGS variants using Sanger sequencing as the reference [7]. Notably, when discrepancies occurred, a single round of Sanger sequencing was more likely to incorrectly refute a true positive variant from NGS than to correctly identify a false positive variant from NGS [7].

In another comparative study of HIV-1 gp160 amplicons, NGS consensus sequences were either identical or nearly identical to Sanger sequences when available, and in cases of mismatches, the nucleotide in the NGS sequence matched all other sequences from that patient, suggesting potential errors in the Sanger sequence [22]. These findings challenge the conventional wisdom that Sanger sequencing should routinely validate NGS results.

Performance metrics from a 2025 pan-cancer validation study demonstrate the robust analytical performance achievable with NGS: sensitivity of 98.23%, specificity of 99.99%, precision of 97.14%, and accuracy of 99.99% at 95% confidence intervals [16]. The assay also showed 99.99% repeatability and 99.98% reproducibility across technical replicates [16].
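For readers reproducing this kind of analysis, the sketch below shows how sensitivity, specificity, precision, and accuracy follow from a confusion matrix, together with a Wilson score interval as one common way to attach a 95% confidence interval to a proportion. The counts in the usage example are hypothetical and are not the study's data; the source does not state which interval method the authors used, so the Wilson interval is an assumption.

```python
from dataclasses import dataclass
from math import sqrt
from typing import Tuple


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # variants called by NGS and confirmed by the reference method
    fp: int  # variants called by NGS but not confirmed
    tn: int  # positions correctly called as reference
    fn: int  # known variants missed by NGS


def sensitivity(c: ConfusionCounts) -> float:
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    return c.tn / (c.tn + c.fp)


def precision(c: ConfusionCounts) -> float:
    return c.tp / (c.tp + c.fp)


def accuracy(c: ConfusionCounts) -> float:
    return (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


if __name__ == "__main__":
    counts = ConfusionCounts(tp=90, fp=10, tn=9890, fn=10)  # hypothetical counts
    print(sensitivity(counts), specificity(counts), precision(counts), accuracy(counts))
    print(wilson_interval(counts.tp, counts.tp + counts.fn))
```

Because true negatives vastly outnumber true positives in panel sequencing, specificity and accuracy tend to sit very close to 100% even when sensitivity and precision are noticeably lower, which is the pattern seen in the figures above.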

Essential Research Toolkit for NGS Implementation

Successful implementation of NGS in cancer gene research requires specific reagents, instruments, and computational resources. The following toolkit outlines essential components for establishing a robust NGS workflow:

Table 3: Research Reagent Solutions for NGS Cancer Gene Studies

| Component | Function | Implementation Example |

|---|---|---|

| Library Preparation Kit | Fragments DNA and adds adapters for sequencing | Sophia Genetics library kits with automated MGI SP-100RS system [16] |

| Target Enrichment System | Captures genomic regions of interest | Hybridization-capture with custom biotinylated oligonucleotides for 61-gene panel [16] |

| Sequencing Platform | Performs massively parallel sequencing | MGI DNBSEQ-G50RS sequencer with cPAS technology [16] |

| Bioinformatics Software | Analyzes sequencing data, calls variants | Sophia DDM with machine learning for variant analysis [16] |

| Reference Standards | Validates assay performance | HD701 positive control with 13 known mutations [16] |

| Quality Control Tools | Assesses DNA quality and quantity | Quantitative PCR for library quantification [16] |

Diagram: NGS Workflow Component Relationships

In addition to these core components, successful NGS implementation requires appropriate computational infrastructure for data storage and analysis, as a single whole-genome sequencing run can generate 2.5 terabytes of data [20]. The bioinformatics pipeline must include tools for read alignment, variant calling, annotation, and prioritization of clinically actionable mutations in cancer genes such as KRAS, EGFR, ERBB2, PIK3CA, TP53, and BRCA1 [16].

The comparative analysis of NGS and Sanger sequencing reveals a clear technological evolution in cancer genomics research. NGS provides overwhelming advantages in throughput, scale, and cost-effectiveness for comprehensive genomic studies requiring analysis of multiple gene targets. The validation data demonstrate that NGS achieves exceptional accuracy (99.99%), which challenges the necessity of routine Sanger confirmation [16] [7]. For research focused on interrogating a small number of targets (≤3 genes), Sanger sequencing remains a cost-effective and efficient option [5] [21]. However, for comprehensive cancer gene profiling, targeted NGS panels offer superior discovery power, better mutation resolution, and greater overall value when considering holistic research costs [21].

The decision framework for technology selection should consider project scope, target number, available budget, and required turnaround time. As NGS technologies continue to evolve with emerging applications in liquid biopsy, single-cell sequencing, and spatial transcriptomics, their central role in advancing cancer research will undoubtedly expand [19] [15]. Research institutions should prioritize developing the infrastructure and expertise needed to leverage these transformative technologies, ensuring that cancer patients benefit from the most comprehensive genomic insights available.

In the landscape of modern genomics, next-generation sequencing (NGS) has undeniably transformed cancer research with its massively parallel architecture, enabling the comprehensive profiling of tumors across hundreds of genes simultaneously [1] [15]. Despite this revolutionary capability, a critical dependency remains: the validation of NGS-derived findings by Sanger sequencing, the established gold standard for accuracy [3] [23]. First developed by Frederick Sanger in 1977, this method's enduring role is not a relic of tradition but a testament to its unparalleled precision for confirming critical genetic variants [23]. Within oncology research and drug development, where a single-nucleotide error can alter therapeutic decisions or trial outcomes, Sanger sequencing provides the definitive benchmark against which NGS results are verified [24]. This article delineates the technical and methodological reasons for this hierarchical relationship, providing researchers with a clear framework for integrating both technologies into robust, reliable genomic workflows.

Technical Comparison: Throughput Versus Precision

The fundamental distinction between NGS and Sanger sequencing lies in their underlying approach. Sanger sequencing, a capillary electrophoresis-based method, processes a single DNA fragment per reaction, generating a long, contiguous read with exceptional per-base accuracy [3] [24]. In contrast, NGS employs massively parallel sequencing, simultaneously analyzing millions of DNA fragments to deliver immense throughput but yielding shorter reads [1] [25]. This core difference dictates their respective roles in the research pipeline.

Table 1: Fundamental Technical Characteristics Compared

| Feature | Sanger Sequencing | Next-Generation Sequencing (NGS) |

|---|---|---|

| Fundamental Method | Chain termination with dideoxynucleotides (ddNTPs) and capillary electrophoresis [3] | Massively parallel sequencing (e.g., Sequencing by Synthesis) [1] [3] |

| Throughput | Low; one fragment per reaction [24] | Extremely high; millions to billions of fragments simultaneously [1] [25] |

| Read Length | Long (500–1000 base pairs) [3] [24] | Short (typically 50-600 base pairs) [25] |

| Detection Sensitivity | Low (~15-20% variant allele frequency) [1] | High (down to ~1% variant allele frequency) [1] |

| Primary Clinical Utility | Ideal for sequencing single genes and validating specific variants [15] [24] | Comprehensive genomic profiling, detecting novel variants, and analyzing complex structural rearrangements [1] [15] |

The critical advantage of Sanger sequencing is its high per-base accuracy, typically exceeding 99.999% (Phred score > Q50) for the central portion of the read, making it the industry standard for definitive sequence verification [3]. While the per-read accuracy of NGS is lower, its power derives from depth of coverage; by sequencing the same genomic location dozens to thousands of times, statistical models can correct for random errors, achieving high consensus accuracy [3]. However, for confirming a single, defined locus—such as an actionable cancer mutation in EGFR or KRAS—the operational simplicity and definitive result of Sanger are preferred [3].
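The relationship between Phred scores and per-base accuracy referenced above follows the standard definition Q = -10 log10(P), where P is the probability that a base call is wrong. A minimal Python sketch of the conversion (function names are illustrative):

```python
import math


def phred_to_error_probability(q: float) -> float:
    """Probability that a base call is wrong for Phred score q."""
    return 10 ** (-q / 10)


def error_probability_to_phred(p: float) -> float:
    """Phred score for a given base-call error probability."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -10 * math.log10(p)


def phred_to_accuracy(q: float) -> float:
    """Per-base accuracy implied by Phred score q."""
    return 1 - phred_to_error_probability(q)


if __name__ == "__main__":
    for q in (20, 30, 40, 50):
        print(f"Q{q}: accuracy {phred_to_accuracy(q):.5%}")
```

Under this definition Q30 corresponds to one error in 1,000 bases (99.9% accuracy) and Q50 to one error in 100,000 bases (99.999%).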

The Validation Workflow: From NGS Discovery to Sanger Confirmation

A standard genomic workflow in cancer research leverages the respective strengths of each technology: NGS for high-throughput discovery and Sanger for confirmatory validation. This two-tiered approach ensures that key findings, particularly those with clinical or therapeutic implications, are verified with the highest possible accuracy before being reported or acted upon.

The process begins with DNA extraction from patient samples, such as tumor biopsies. The DNA is prepared for NGS through library construction, where it is fragmented and adapter-ligated before undergoing massively parallel sequencing [15]. Bioinformatics pipelines then analyze the millions of short reads, aligning them to a reference genome and calling variants [15]. Variants of high interest—including potential driver mutations, those qualifying patients for clinical trials, or unexpected findings—are flagged for confirmation. For this critical step, specific primers are designed to flank the variant site. The region is amplified via PCR, and the product is sequenced using the Sanger method. The resulting chromatogram provides a clear, visual representation of the base sequence at that locus, allowing for unambiguous confirmation or rejection of the NGS-called variant [23].

Diagram 1: The NGS Discovery and Sanger Validation Workflow. This flowchart outlines the standard practice of using NGS for broad screening and Sanger sequencing for confirming critical genetic variants.

Key Metrics and Experimental Data

The rationale for using Sanger as a validation benchmark is rooted in quantifiable performance metrics. The most significant differentiator is detection sensitivity, which defines the lowest level of a genetic variant that a method can reliably identify. This is particularly crucial in cancer genomics, where tumor heterogeneity means that driver mutations may not be present in all cells.

Table 2: Comparative Performance Metrics for Cancer Genomics

| Metric | Sanger Sequencing | NGS |

|---|---|---|

| Variant Detection Sensitivity | ~15-20% Variant Allele Frequency (VAF) [1] | ~1-5% VAF (can be lower with ultra-deep sequencing) [1] [3] |

| Typical Read Depth | Not applicable (single reaction) | 100x - 1000x+ (depending on application) [3] |

| Optimal Use Case | Confirmatory testing of known or suspected variants [24] | Discovery-based screening for novel and low-frequency variants [1] |

| Ability to Detect Structural Variants | Limited | High (across multiple genes) [1] |

| Cost Model | Cost-effective for low numbers of targets [24] | Cost-effective for high numbers of targets/samples [24] |
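The link between read depth and detection sensitivity can be illustrated with a simple binomial model: if a variant is present at a given VAF and each read samples the locus independently, the number of variant-supporting reads at depth n follows Binomial(n, VAF). The sketch below computes the probability of seeing at least a chosen number of supporting reads. It is an idealized model that ignores sequencing error, PCR duplicates, and sampling of input molecules, and the minimum-read threshold is a hypothetical caller setting.

```python
from math import comb


def prob_at_least_k_alt_reads(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(X >= min_alt_reads) for X ~ Binomial(depth, vaf)."""
    if depth < 0 or min_alt_reads < 0:
        raise ValueError("depth and min_alt_reads must be non-negative")
    if not 0 <= vaf <= 1:
        raise ValueError("vaf must be between 0 and 1")
    # Sum the probabilities of observing fewer than min_alt_reads variant reads.
    upper = min(min_alt_reads, depth + 1)
    p_fewer = sum(
        comb(depth, i) * vaf ** i * (1 - vaf) ** (depth - i)
        for i in range(upper)
    )
    return 1 - p_fewer


if __name__ == "__main__":
    for depth in (100, 500, 1000):
        p = prob_at_least_k_alt_reads(depth, vaf=0.01, min_alt_reads=5)
        print(f"depth {depth:>4}x, VAF 1%: P(>=5 alt reads) = {p:.3f}")
```

Under this model, the chance of collecting enough supporting reads for a 1% variant rises steeply with depth, which is consistent with the table's note that sensitivity can be pushed lower with ultra-deep sequencing.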

Experimental protocols for cross-platform validation are methodical. In a typical experiment, a set of samples with variants identified by NGS is re-analyzed by Sanger sequencing. The methodology for Sanger validation involves:

- Primer Design: Designing specific primers to amplify a 500-1000 bp region encompassing the variant.

- PCR Amplification: Amplifying the target region from the original DNA sample.

- Purification: Cleaning the PCR product to remove excess primers and nucleotides.

- Sequencing Reaction: Performing the cycle sequencing reaction with fluorescently-labeled ddNTPs.

- Capillary Electrophoresis: Running the reaction on an automated sequencer to generate the chromatogram [3] [23].
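The primer design step above starts from choosing an amplicon that spans the variant. The sketch below only computes a candidate amplicon window of 500-1000 bp roughly centered on the variant position; it does not design primers (melting temperature, specificity, and secondary-structure checks require dedicated tools). The `Variant` class, the default length, and the example coordinate are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Variant:
    chrom: str
    pos: int   # 1-based position of the variant
    ref: str
    alt: str


def amplicon_window(variant: Variant,
                    amplicon_length: int = 700,
                    chrom_length: Optional[int] = None) -> Tuple[int, int]:
    """Return a 1-based inclusive (start, end) window around the variant."""
    if not 500 <= amplicon_length <= 1000:
        raise ValueError("amplicon_length should be within 500-1000 bp")
    half = amplicon_length // 2
    start = max(1, variant.pos - half)
    end = start + amplicon_length - 1
    # Shift the window left if it would run past the end of the chromosome.
    if chrom_length is not None and end > chrom_length:
        end = chrom_length
        start = max(1, end - amplicon_length + 1)
    return start, end


if __name__ == "__main__":
    v = Variant("chr1", 10_000, "A", "G")  # made-up coordinate for illustration
    print(amplicon_window(v))
```

Keeping the variant near the middle of the amplicon helps it fall in the central, highest-quality portion of the Sanger read rather than near the read ends.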

The results are then compared. A study evaluating a pan-cancer NGS liquid biopsy assay demonstrated this process, using orthogonal methods (including Sanger) to validate its findings and reporting a high concordance of 94% for clinically actionable variants [19]. This experimental paradigm underscores Sanger's role in ensuring the veracity of NGS results before they impact clinical decision-making or drug development pathways.

Essential Research Reagent Solutions

A reliable validation workflow depends on consistent performance from key laboratory reagents. The following table details essential materials and their critical functions in the Sanger sequencing process.

Table 3: Key Research Reagent Solutions for Sanger Sequencing Validation

| Item | Function in the Workflow |

|---|---|

| High-Fidelity DNA Polymerase | Ensures accurate amplification of the target DNA region during PCR, minimizing introduction of replication errors that could be misinterpreted as variants [23]. |

| Fluorescently-Labeled ddNTPs | The chain-terminating nucleotides used in the sequencing reaction; each base (A, T, C, G) is tagged with a distinct fluorescent dye for detection [3]. |

| Capillary Electrophoresis Sequencer | The automated instrument that separates DNA fragments by size via capillary electrophoresis and detects the fluorescent signal to determine the base sequence [3] [23]. |

| Sequence Analysis Software | Specialized software that translates the fluorescent trace data into a sequence chromatogram, facilitating base calling and variant interpretation [23]. |

In conclusion, the narrative of Sanger sequencing versus NGS is not one of obsolescence but of symbiosis. While NGS provides the powerful, wide-angle lens for genomic discovery, Sanger sequencing remains the indispensable magnifying glass for detailed, definitive inspection. Its status as the validation benchmark is anchored in its proven, uncompromising accuracy for targeted sequencing, a quality that continues to be indispensable in cancer research and diagnostic development [3] [24]. As NGS technologies evolve towards greater precision and new methods like nanopore sequencing emerge, the fundamental principle of independent validation will persist [26] [23]. For the foreseeable future, a robust genomic workflow in oncology will continue to rely on the parallel use of both technologies, leveraging the high-throughput capacity of NGS for screening while deferring to the gold-standard accuracy of Sanger sequencing for final confirmation.

Implementing Modern NGS Panels: From Workflow to Clinical Decision-Making

Next-generation sequencing (NGS), also known as massively parallel sequencing (MPS), is a high-throughput technology that enables the simultaneous sequencing of millions to billions of short DNA or RNA fragments in a single run [27]. This core principle of massive parallelization stands in stark contrast to traditional Sanger sequencing, which processes only a single DNA fragment at a time [5] [24]. In the context of cancer research, this transformative capability allows researchers to move beyond examining single genes to performing comprehensive genomic analyses, including whole genomes, exomes, and transcriptomes, providing a systems-level view of the genetic alterations driving cancer progression [28] [29].

The standard NGS workflow consists of four integrated steps: nucleic acid extraction, library preparation, sequencing, and data analysis [30] [31] [32]. This structured pipeline transforms raw biological samples into interpretable genetic data, enabling the discovery of a wide spectrum of genomic variants—from single nucleotide changes to large structural rearrangements—all of which are crucial for understanding tumorigenesis, heterogeneity, and therapeutic resistance [29] [33].

The NGS Workflow: A Step-by-Step Guide

Step 1: Nucleic Acid Extraction

The NGS workflow begins with the isolation of genetic material from samples such as tissue, cells, or biofluids. The quality of this starting material fundamentally impacts all subsequent steps and the reliability of the final data [31] [32]. In cancer research, sample types are diverse, ranging from fresh-frozen tissue and formalin-fixed paraffin-embedded (FFPE) blocks to liquid biopsies containing circulating tumor DNA (ctDNA). Each sample type presents unique challenges; FFPE-derived DNA is often fragmented and cross-linked, while ctDNA is typically very low in abundance within a background of normal cell-free DNA [28].

Three critical metrics must be assessed before proceeding:

- Yield: Sufficient quantity (nanograms to micrograms) must be obtained, especially for limited samples like biopsies [31] [32].

- Purity: Isolated nucleic acids must be free of contaminants like phenol or heparin that can inhibit enzymatic reactions in later steps [31] [32].

- Quality: DNA and RNA integrity is vital. This is often assessed via metrics like the RNA Integrity Number (RIN) for RNA or gel electrophoresis for DNA fragment size [31].

Step 2: Library Preparation

Library preparation converts the isolated nucleic acids into a format compatible with the sequencing platform. This process involves fragmenting the DNA or RNA into smaller pieces and ligating specialized adapter sequences onto them [31] [32]. These adapters serve as universal handles that allow the fragments to bind to the sequencing flow cell and be amplified. Barcodes (or indexes)—short, unique DNA sequences—can also be added during this stage, enabling the pooling (multiplexing) of dozens of samples in a single sequencing run, which dramatically increases throughput and reduces per-sample costs [32].
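The barcode-based pooling described above is reversed computationally by demultiplexing, in which each read is assigned back to its sample according to its index sequence. The sketch below is a simplified illustration that tolerates a configurable number of mismatches and sends ambiguous or unmatched reads to an "undetermined" bin. The barcode sequences and sample names are invented, and production demultiplexing is normally handled by the sequencer vendor's software.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple


def hamming(a: str, b: str) -> int:
    """Number of mismatching positions between two equal-length strings."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def assign_sample(index_seq: str,
                  barcode_to_sample: Dict[str, str],
                  max_mismatches: int = 1) -> Optional[str]:
    """Return the sample whose barcode uniquely matches, else None."""
    hits = [
        sample
        for barcode, sample in barcode_to_sample.items()
        if len(barcode) == len(index_seq)
        and hamming(barcode, index_seq) <= max_mismatches
    ]
    return hits[0] if len(hits) == 1 else None


def demultiplex(reads: Iterable[Tuple[str, str]],
                barcode_to_sample: Dict[str, str],
                max_mismatches: int = 1) -> Dict[str, List[str]]:
    """Group (index_seq, read_seq) pairs by sample name."""
    bins: Dict[str, List[str]] = defaultdict(list)
    for index_seq, read_seq in reads:
        sample = assign_sample(index_seq, barcode_to_sample, max_mismatches)
        bins[sample or "undetermined"].append(read_seq)
    return dict(bins)
```

Allowing a mismatch is only safe when the barcodes in a pool differ from one another at several positions, which is why index sets are designed with a minimum pairwise distance.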

For targeted sequencing approaches, which are common in cancer research, an enrichment step is included to isolate specific genomic regions of interest, such as known cancer genes [32]. This can be achieved either through amplicon sequencing (using PCR to amplify targets) or hybridization capture (using probe-based pulldown). Targeted panels allow for deeper sequencing of relevant genes, making them ideal for detecting low-frequency variants in heterogeneous tumor samples or liquid biopsies [28] [33].

Step 3: Clonal Amplification and Sequencing

Prior to sequencing, the adapter-ligated DNA fragments are amplified clonally on a flow cell to create millions of clusters, each representing a single original fragment. This intense local amplification is necessary for the sequencer's optical sensor to detect the fluorescence signal during the sequencing reaction [31].

The core of NGS technology is sequencing by synthesis (SBS), a process where DNA polymerase incorporates fluorescently labeled nucleotides into the growing complementary strand one base at a time [5] [31]. Illumina platforms, the most widely used systems, employ a "reversible terminator" method. Each nucleotide is chemically blocked after incorporation, allowing only a single base to be added per cluster per cycle. After imaging to determine the base identity, the terminator is cleaved, and the cycle repeats for the next base [31]. This process generates hundreds of millions of short reads (typically 50-300 base pairs) in a massively parallel fashion.

Step 4: Data Analysis and Interpretation

The final and most computationally intensive step is bioinformatic analysis of the raw sequencing data [30] [29]. This multi-stage process converts raw signal data into biological insights, which is particularly complex in cancer genomics due to tumor heterogeneity and the need to distinguish somatic (tumor-specific) mutations from germline variants.

Table 1: Key Stages in NGS Data Analysis for Cancer Research

| Stage | Key Processes | Common Tools & Applications |

|---|---|---|

| Read Processing | Base calling, adapter trimming, quality filtering, and demultiplexing [32]. | Removes low-quality data and prepares clean reads for analysis. |

| Alignment & Variant Calling | Mapping reads to a reference genome; identifying variants like SNVs and indels [29]. | SAMtools, GATK, VarScan, SomaticSniper; discovers tumor mutations [29]. |

| Interpretation | Pathway analysis, identifying biomarkers/drug targets, correlating variants with clinical data [31] [33]. | PathScan, NetBox; derives biological meaning and clinical relevance [29]. |
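As a concrete illustration of the read-processing stage, the sketch below trims an adapter sequence from the 3' end of a read and then discards reads that are too short or of low mean quality. It is a teaching example only: it looks for an exact, complete adapter match, whereas dedicated trimming tools also handle partial and mismatched adapters. The thresholds and function names are assumptions, and Phred+33 quality encoding is assumed.

```python
from typing import Optional, Tuple


def trim_adapter(seq: str, qual: str, adapter: str) -> Tuple[str, str]:
    """Cut the read at the first exact occurrence of the adapter, if any."""
    idx = seq.find(adapter)
    if idx == -1:
        return seq, qual
    return seq[:idx], qual[:idx]


def mean_quality(qual: str, phred_offset: int = 33) -> float:
    """Mean Phred score of a FASTQ quality string."""
    if not qual:
        return 0.0
    return sum(ord(ch) - phred_offset for ch in qual) / len(qual)


def process_read(seq: str, qual: str, adapter: str,
                 min_mean_q: float = 20.0,
                 min_length: int = 30) -> Optional[Tuple[str, str]]:
    """Return the trimmed read, or None if it fails length or quality filters."""
    seq, qual = trim_adapter(seq, qual, adapter)
    if len(seq) < min_length or mean_quality(qual) < min_mean_q:
        return None
    return seq, qual
```

Filtering before alignment reduces the number of spurious mismatches that the variant caller would otherwise have to distinguish from true low-frequency somatic variants.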

The following diagram illustrates the logical progression and key decision points within the core NGS workflow:

Diagram 1: The Core NGS Workflow. This diagram outlines the four major steps, from sample to insight, with detailed sub-processes for library preparation and data analysis.

NGS vs. Sanger Sequencing: A Comparative Analysis for Cancer Research

While both NGS and Sanger sequencing determine the order of nucleotides, their underlying technologies and applications differ substantially [5] [24]. Sanger sequencing, the historical gold standard, is a targeted method best suited for analyzing a single gene or a few amplicons. Its high per-base accuracy over short target regions and its simple workflow make it well suited to confirming known mutations. NGS, with its massively parallel nature, provides a panoramic view of the genome, making it the superior tool for discovery and comprehensive profiling [5] [34].

Table 2: Comparative Analysis: NGS vs. Sanger Sequencing in Cancer Research

| Factor | NGS | Sanger Sequencing |

|---|---|---|

| Throughput | High: Millions of reads per run [5] [24]. | Low: Single fragment per run [24]. |

| Genomic Scope | Whole genomes, exomes, transcriptomes, targeted panels [28] [33]. | Single genes or short amplicons [5] [34]. |

| Cost-Effectiveness | Cost-effective for large projects and many genes; higher upfront cost [5] [24]. | Cost-effective for interrogating ≤20 targets [5] [24]. |

| Variant Detection | Comprehensive: SNVs, indels, CNVs, fusions, low-frequency variants [5] [28]. | Limited: Best for SNVs/small indels; low sensitivity for variants <15-20% allele frequency [5]. |

| Workflow & Data Analysis | Complex; requires bioinformatics expertise [24] [33]. | Simple workflow; minimal bioinformatics needed [24]. |

Application in Cancer Research: A Hybrid Approach

In modern cancer research, NGS and Sanger are often used synergistically in a hybrid approach [24]. NGS is used for primary discovery—simultaneously screening hundreds of cancer-related genes in a tumor sample to build a comprehensive genetic profile. Subsequently, Sanger sequencing is employed to validate key NGS-identified mutations, especially those with potential clinical significance or those that will be used as biomarkers in downstream assays [24] [29]. This combination leverages the high-throughput discovery power of NGS with the proven accuracy and ease of Sanger for confirmation.

The following decision tree aids in selecting the appropriate method based on project goals:

Diagram 2: Selecting a Sequencing Method for Cancer Research. This decision tree guides the choice between NGS and Sanger based on project scope, objectives, and resources.
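The selection logic can also be expressed as a small rule-based function. The sketch below is not a reproduction of the diagram; it is an assumed simplification that uses only thresholds stated elsewhere in this article (Sanger being economical for up to about 20 targets and having a detection limit of roughly 15-20% VAF). The function and parameter names are hypothetical.

```python
def recommend_method(n_targets: int,
                     min_vaf_needed: float = 0.20,
                     need_cnv_or_fusions: bool = False) -> str:
    """Suggest 'Sanger' or 'NGS' from project scope and sensitivity needs."""
    if n_targets < 1:
        raise ValueError("n_targets must be at least 1")
    # CNVs, fusions, and other complex alterations call for NGS.
    if need_cnv_or_fusions:
        return "NGS"
    # Variants expected below roughly 15% VAF are beyond Sanger's sensitivity.
    if min_vaf_needed < 0.15:
        return "NGS"
    # A small number of targets is where Sanger remains economical.
    if n_targets <= 20:
        return "Sanger"
    return "NGS"


if __name__ == "__main__":
    print(recommend_method(3))
    print(recommend_method(3, min_vaf_needed=0.05))
    print(recommend_method(61))
```

Real projects would also weigh budget, turnaround time, and available bioinformatics expertise, which are not encoded here.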

Essential Research Reagent Solutions

A successful NGS experiment in cancer research relies on a suite of specialized reagents and tools. The following table details key components of the research toolkit.

Table 3: Essential Research Reagent Solutions for the NGS Workflow

| Item | Function in the NGS Workflow |

|---|---|

| Nucleic Acid Isolation Kits | Extract DNA/RNA from complex sample types (e.g., FFPE, liquid biopsies); critical for yield, purity, and quality [28] [31]. |

| Library Preparation Kits | Fragment nucleic acids and ligate platform-specific adapters and indexes for sequencing [31] [32]. |

| Target Enrichment Panels | Probe sets (e.g., hybrid capture or amplicon) to isolate specific cancer-related genes for targeted sequencing [28] [33]. |

| Sequence Adapters & Barcodes | Oligonucleotides that enable fragment binding to the flow cell and sample multiplexing [31] [32]. |

| Quality Control Assays | Fluorometric and electrophoretic tools (e.g., Qubit, Bioanalyzer) to quantify and qualify samples and libraries pre-sequencing [30] [31]. |

| Bioinformatics Software | Computational tools for base calling, alignment, variant calling, and annotation (e.g., GATK, VarScan, IGV) [29]. |

The NGS workflow—from meticulous library preparation to sophisticated data analysis—provides an unparalleled platform for deciphering the complex genomic landscape of cancer. While Sanger sequencing retains its value for focused applications, the comprehensive nature of NGS has made it the cornerstone of modern oncology research. It enables the discovery of novel driver mutations, the characterization of tumor heterogeneity, and the identification of biomarkers for precision medicine. The choice between these technologies is not a matter of superiority but of strategic alignment with the research objective, whether it is the deep, focused verification of a known variant or the broad, hypothesis-free exploration of the entire cancer genome.

Hybrid-Capture vs. Amplicon-Based Targeted Panels for Solid Tumors

Next-generation sequencing (NGS) has revolutionized genomic profiling in oncology, enabling comprehensive molecular characterization of solid tumors. Two principal methodologies—hybridization capture and amplicon sequencing—dominate targeted NGS approaches for detecting somatic alterations in cancer genomes [35] [36]. The selection between these techniques involves critical trade-offs in performance characteristics, including sensitivity, specificity, workflow efficiency, and genomic coverage [35]. As precision medicine increasingly relies on accurate molecular diagnostics, understanding the technical and performance distinctions between these platforms becomes essential for clinical researchers and drug development professionals.

This comparison guide evaluates hybrid-capture and amplicon-based panels within the context of NGS validation against traditional Sanger sequencing for cancer gene research. While Sanger sequencing previously served as the gold standard for mutation detection, its limitations in throughput, sensitivity, and cost-effectiveness for analyzing multiple genomic regions have led to widespread adoption of NGS technologies [15] [36]. Targeted NGS panels provide a balanced approach, focusing on clinically relevant genomic regions with deeper sequencing coverage and simpler data analysis compared to whole-genome or whole-exome sequencing [36].

Technical Comparison of NGS Methodologies

Fundamental Workflow Differences

Hybridization capture employs biotinylated oligonucleotide probes (baits) designed with homology to genes of interest. These probes selectively hybridize with fragmented DNA libraries, which are then captured using streptavidin-coated magnetic beads to enrich target regions before sequencing [16] [36]. This solution-based capture method provides flexibility in panel design and efficiently covers large genomic regions, including exonic areas with complex architecture [36].

Amplicon sequencing utilizes polymerase chain reaction (PCR) with primers specifically designed to flank target regions of interest. This method directly amplifies target sequences through multiple PCR cycles, creating overlapping amplicons that collectively cover the targeted genes [37] [38]. The amplification-based approach provides inherent target enrichment through primer specificity, resulting in high on-target rates [35].

Table 1: Core Technological Differences Between Hybrid-Capture and Amplicon-Based NGS

| Feature | Hybridization Capture | Amplicon Sequencing |

|---|---|---|

| Enrichment Mechanism | Solution-based probe hybridization | PCR amplification with target-specific primers |

| Number of Workflow Steps | More extensive protocol [35] | Fewer steps, streamlined process [35] |

| Panel Design Flexibility | Virtually unlimited panel size [35] | Flexible, usually fewer than 10,000 amplicons [35] |

| Input DNA Requirements | Generally higher input requirements | Effective with limited DNA input [36] |

| Optimal Application Scope | Larger target regions, exome sequencing [36] | Smaller, focused gene panels [36] |

Performance Characteristics and Analytical Metrics

The technical differences between these methodologies translate directly into distinct performance profiles that influence their suitability for specific research applications.

Hybrid-capture panels demonstrate superior uniformity of coverage across targeted regions, which is critical for reliable detection of copy number variations (CNVs) and structural variants [38] [36]. These panels also generate lower background noise and fewer false positives due to reduced amplification artifacts and more efficient removal of duplicate reads [35]. The method's solution-phase hybridization allows for more comprehensive coverage of difficult genomic regions, including those with high guanine-cytosine content [36].

Amplicon-based panels typically achieve higher on-target rates because primer-directed amplification provides more specific enrichment of targeted regions [35]. These panels require less input DNA, making them particularly suitable for precious clinical samples with limited material [37] [36]. The streamlined workflow enables shorter turnaround times, a significant advantage in clinical research settings requiring rapid results [35].
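Two of the metrics contrasted above, on-target rate and coverage uniformity, can be defined in a few lines. In the sketch below, uniformity is taken as the fraction of target positions covered at or above 20% of the mean depth; that cutoff is one commonly used convention and is an assumption here, since the cited sources do not specify a formula. Function names are illustrative.

```python
from typing import Sequence


def on_target_rate(on_target_reads: int, total_reads: int) -> float:
    """Fraction of sequenced reads that map to the targeted regions."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    if not 0 <= on_target_reads <= total_reads:
        raise ValueError("on_target_reads must be between 0 and total_reads")
    return on_target_reads / total_reads


def coverage_uniformity(depths: Sequence[int],
                        fraction_of_mean: float = 0.2) -> float:
    """Fraction of positions with depth >= fraction_of_mean * mean depth."""
    if not depths:
        raise ValueError("depths must not be empty")
    mean_depth = sum(depths) / len(depths)
    threshold = fraction_of_mean * mean_depth
    return sum(d >= threshold for d in depths) / len(depths)


if __name__ == "__main__":
    print(on_target_rate(900, 1000))
    print(coverage_uniformity([1000, 1000, 1000, 10]))
```

A panel can score well on one metric and poorly on the other: primer-driven enrichment tends to push the on-target rate up, while amplicon dropouts or uneven amplification show up as reduced uniformity.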

Table 2: Performance Comparison of Hybrid-Capture vs. Amplicon-Based NGS

| Performance Metric | Hybridization Capture | Amplicon Sequencing |
|---|---|---|
| On-Target Rate | Moderate due to off-target hybridization [35] | Naturally higher due to primer specificity [35] |
| Coverage Uniformity | Greater uniformity across targets [35] | Variable coverage between amplicons [35] |
| Variant Detection Sensitivity | High sensitivity for SNVs, indels, and CNVs [16] [39] | High for SNVs and indels; variable for CNVs [38] |
| False Positive Rate | Lower noise levels, fewer false positives [35] | Higher potential for amplification artifacts [35] |
| Turnaround Time | More time-intensive process [35] | Less time required from sample to results [35] |
| Multiplexing Capacity | Higher plexity achievable [35] | Limited by primer compatibility [35] |
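
The on-target rate and coverage uniformity compared in Table 2 can be made concrete with a short sketch. The function names, the toy read counts and depths, and the 0.2x mean-depth cutoff used for uniformity are illustrative assumptions, not definitions taken from the cited studies.

```python
from statistics import mean


def on_target_rate(on_target_reads: int, total_mapped_reads: int) -> float:
    """Fraction of mapped reads that overlap the targeted regions."""
    if total_mapped_reads <= 0:
        raise ValueError("total_mapped_reads must be positive")
    return on_target_reads / total_mapped_reads


def coverage_uniformity(depths: list[float], fraction_of_mean: float = 0.2) -> float:
    """Fraction of target positions covered at >= fraction_of_mean * mean depth.

    The 0.2 default is an assumed, commonly used cutoff; laboratories may
    define uniformity differently.
    """
    if not depths:
        raise ValueError("depths must not be empty")
    cutoff = fraction_of_mean * mean(depths)
    return sum(d >= cutoff for d in depths) / len(depths)


# Hypothetical per-position depths: an even profile and one with dropouts
even_profile = [480, 510, 495, 520, 500, 505, 490, 500]
uneven_profile = [1500, 1400, 30, 1450, 20, 1600, 40, 1460]

print(on_target_rate(850_000, 1_000_000))   # 0.85
print(coverage_uniformity(even_profile))    # 1.0
print(coverage_uniformity(uneven_profile))  # 0.625
```

In this toy example the uneven profile has a higher mean depth than the even one, yet three of its eight positions fall below the cutoff. Mean depth alone therefore does not describe how reliably every target is covered.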

Experimental Data and Validation Studies

Validation of Hybrid-Capture Panels for Clinical Applications

A 2025 study comprehensively validated a hybridization capture-based panel targeting 61 cancer-associated genes for solid tumor profiling [16]. The researchers developed and optimized the TTSH-oncopanel using a hybridization-capture target enrichment method with library kits from Sophia Genetics, compatible with the automated MGI SP-100RS library preparation system. Sequencing was performed on the MGI DNBSEQ-G50RS platform with cPAS sequencing technology [16].

The validation study demonstrated exceptional analytical performance, with the assay detecting 794 mutations, including all 92 known variants previously identified by orthogonal methods. Overall performance metrics showed 99.99% repeatability and 99.98% reproducibility across multiple runs. The assay achieved 98.23% sensitivity for detecting unique variants, with 99.99% specificity, 97.14% precision, and 99.99% accuracy, each reported with a 95% confidence interval [16]. The study established a minimum detection threshold of 2.9% variant allele frequency (VAF) for both single nucleotide variants (SNVs) and insertion-deletion mutations (indels), with optimal DNA input determined to be ≥50 ng [16].
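
The detection threshold is expressed as a variant allele frequency, which is the share of reads at a position that support the variant. The sketch below shows how such a threshold might be applied to candidate calls. Only the 2.9% value comes from the study [16]; the function names and the read counts are hypothetical.

```python
LOD_VAF = 0.029  # 2.9% VAF threshold reported for the panel in [16]


def variant_allele_frequency(alt_reads: int, total_reads: int) -> float:
    """VAF = variant-supporting reads / total reads at the position."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return alt_reads / total_reads


def is_reportable(alt_reads: int, total_reads: int, min_vaf: float = LOD_VAF) -> bool:
    """True when the observed VAF meets or exceeds the validated threshold."""
    return variant_allele_frequency(alt_reads, total_reads) >= min_vaf


# Hypothetical candidate calls: (label, variant reads, total depth)
candidates = [("variant_A", 45, 1000), ("variant_B", 12, 1000)]

for label, alt, depth in candidates:
    vaf = variant_allele_frequency(alt, depth)
    print(f"{label}: VAF {vaf:.1%}, reportable={is_reportable(alt, depth)}")
```

Here the 4.5% call passes and the 1.2% call falls below the validated limit. A production pipeline would typically apply further filters, such as minimum depth and strand balance, that are not modeled in this sketch.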

Notably, this hybridization capture approach detected clinically actionable mutations in key cancer genes including KRAS, EGFR, ERBB2, PIK3CA, TP53, and BRCA1. The average turnaround time from sample processing to results was reduced to 4 days, significantly improving upon the 3-week timeframe typically associated with outsourced testing [16].

Performance Evaluation of Amplicon-Based Panels

A 2024 study evaluated the performance of an amplicon-based large panel NGS assay (Oncomine Comprehensive Assay Plus) for detecting MET and HER2 amplification in lung and breast cancers compared to conventional testing methods [38]. This multicenter analysis demonstrated the assay's capability to detect various biomarker types—including single nucleotide variants/indels, copy number variants, fusions, microsatellite instability, tumor mutational burden, and homologous recombination deficiency—in a single workflow [37].

For MET amplification detection in lung cancers, the amplicon-based assay demonstrated 80% sensitivity (4 of 5 FISH-positive cases) and 97.7% specificity (42 of 43 FISH-negative cases), with an overall concordance of 95.8% with fluorescence in situ hybridization (FISH) [38]. For HER2 amplification in breast cancers, the assay showed 66.7% sensitivity (6 of 9 IHC/FISH-positive cases) and 100% specificity (all HER2-negative cases were negative on NGS), with an overall concordance of 93.5% [38].
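
The MET figures follow directly from the reported case counts, as the sketch below shows. The Wilson score interval is added here as an illustration of the uncertainty that comes with small case numbers; it is an assumption of this example and is not a statistic reported by the study.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# MET amplification, NGS vs. FISH, using the counts quoted above [38]
tp, fn = 4, 1    # FISH-positive cases: detected / missed by NGS
tn, fp = 42, 1   # FISH-negative cases: negative / positive by NGS

sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
concordance = (tp + tn) / (tp + fn + tn + fp)

print(f"sensitivity {sensitivity:.1%}")  # 80.0%
print(f"specificity {specificity:.1%}")  # 97.7%
print(f"concordance {concordance:.1%}")  # 95.8%

low, high = wilson_interval(tp, tp + fn)
print(f"sensitivity 95% interval: {low:.1%} to {high:.1%}")
```

With only five FISH-positive cases, the interval around the 80% sensitivity estimate spans roughly 38% to 96%, so the point estimate should be read with caution.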

A critical finding was that all false-negative cases occurred in samples with low-level gene amplification (MET:CEP7 or HER2:CEP17 FISH ratio <3). This limitation highlights a significant challenge for amplicon-based approaches in detecting subtle copy number alterations, potentially due to factors such as inadequate tumor purity, suboptimal DNA quality, or technical limitations in CNV calling from amplicon-based data [38].

Comparative Performance in Detection Efficiency

Hybrid-capture panels demonstrate robust performance across variant types, with particular strength in detecting copy number variations and structural variants. The deeper, more uniform coverage enables more accurate allele frequency quantification and improved detection of subclonal mutations [16] [36].

Amplicon-based panels excel in detecting single nucleotide variants and small indels with high sensitivity, especially when tumor content is limited. However, their performance in copy number variant detection can be inconsistent, particularly for low-level amplifications [38]. The 2024 study revealed that while the overall concordance with conventional methods was high (95.8% for MET and 93.5% for HER2), sensitivity was lower (80% for MET and 66.7% for HER2) because low-level amplifications were missed, so negative results require careful interpretation [38].

Figure 1: Comparative Workflows for Hybrid-Capture and Amplicon-Based NGS. The diagram illustrates the fundamental procedural differences between the two target enrichment approaches, highlighting key advantages of each method.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of targeted NGS panels requires carefully selected reagents, platforms, and analytical tools. The following table summarizes key solutions utilized in the cited studies:

Table 3: Essential Research Reagents and Platforms for Targeted NGS

| Category | Specific Products/Platforms | Application/Function |
|---|---|---|
| Hybrid-Capture Panels | TTSH-oncopanel (61 genes) [16] | Comprehensive solid tumor profiling with high sensitivity and specificity |
| Amplicon Panels | Oncomine Comprehensive Assay Plus (501 genes) [37] [38] | Detection of SNVs, indels, CNVs, fusions, and complex biomarkers |
| Library Prep Systems | MGI SP-100RS [16], Ion Chef System [37] | Automated library preparation to reduce human error and increase consistency |
| Sequencing Platforms | MGI DNBSEQ-G50RS [16], Ion GeneStudio S5 Plus [37], Illumina MiSeq [40] | Massively parallel sequencing with platform-specific chemistry approaches |
| Analytical Software | Sophia DDM with OncoPortal Plus [16], Ion Reporter [37] | Variant calling, annotation, and clinical interpretation using bioinformatics pipelines |
| Reference Standards | HD701, HD789, HD827 (Horizon) [16] [37] | Quality control, assay validation, and limit of detection studies |

The choice between hybrid-capture and amplicon-based targeted panels for solid tumor profiling depends primarily on research objectives, sample characteristics, and desired performance metrics. Hybrid-capture panels offer advantages for comprehensive genomic profiling, demonstrating superior performance in detecting copy number variations and structural variants, with higher reproducibility and lower false-positive rates [16] [35]. Amplicon-based panels provide a streamlined workflow with faster turnaround times, higher on-target rates, and better performance with limited DNA input, making them suitable for focused mutation profiling [35] [37] [38].

Both technologies have demonstrated robust validation against orthogonal methods including Sanger sequencing, with hybrid-capture panels achieving 99.99% accuracy and amplicon-based panels showing >94% concordance for most variant types [16] [37]. The emerging trend toward automated library preparation and integrated bioinformatics solutions continues to enhance the reproducibility and standardization of both approaches across research laboratories [16] [37].

For cancer gene research requiring maximal sensitivity for diverse variant types including CNVs, hybrid-capture panels represent the optimal choice. For projects prioritizing rapid turnaround, cost-efficiency, and focused interrogation of known mutational hotspots, amplicon-based panels provide an effective solution. As NGS technologies continue to evolve, both methodologies will maintain important roles in advancing precision oncology research.

The analysis of circulating tumor DNA (ctDNA) represents a cornerstone of precision oncology, offering a minimally invasive method for tumor genotyping, monitoring treatment response, and detecting residual disease. The accurate detection of somatic mutations in ctDNA is technically challenging due to the low abundance of tumor-derived DNA in a high background of normal cell-free DNA. For years, Sanger sequencing (SGS) was the standard for DNA sequencing; however, its low sensitivity (limit of detection ~15–20% variant allele frequency, VAF) and limited throughput make it unsuitable for detecting the low-frequency variants typical of ctDNA [6] [5]. The advent of Next-Generation Sequencing (NGS) has fundamentally transformed this landscape. NGS provides massively parallel sequencing, enabling high-depth coverage that confers a significantly lower limit of detection (down to 0.1–0.5% VAF) and the ability to interrogate hundreds of genes simultaneously from a limited quantity of input material [41] [42] [5]. This guide provides an objective comparison of validated NGS-based ctDNA assays against traditional Sanger sequencing, framing the discussion within the broader thesis that NGS is an indispensable tool for modern cancer genomics research.

Performance Comparison: NGS vs. Sanger Sequencing

The following tables summarize key performance metrics from recent studies, highlighting the superior capabilities of NGS for ctDNA analysis.

Table 1: Comparative Analytical Performance of NGS ctDNA Assays vs. Sanger Sequencing

| Metric | Sanger Sequencing (SGS) | Targeted NGS for ctDNA | Key Evidence from Validation Studies |
|---|---|---|---|
| Limit of Detection (LoD) | ~15–20% VAF [5] | 0.1% to 0.5% VAF [43] [42] | The PAN100 panel demonstrated an LoD of 0.3% VAF [41]. Northstar Select achieved a 95% LoD of 0.15% VAF for SNVs/Indels [42]. |
| Sensitivity for Low-Frequency Variants | Low; misses subclonal mutations [6] | High; enables detection of rare variants [6] [5] | A multi-site evaluation found mutations above 0.5% VAF were detected with high sensitivity, but performance declined below this threshold [43]. |
| Multiplexing Capability | Single DNA fragment per reaction [5] | Millions of fragments simultaneously; hundreds to thousands of genes [5] | Targeted panels (e.g., 32 to 101 genes) allow parallel detection of SNVs, Indels, CNVs, and fusions from a single assay [41] [19]. |
| Concordance with Tissue NGS | Not routinely used for ctDNA due to low sensitivity | Moderate to high, depending on the assay | The PAN100 panel showed 74.2% overall positive percent agreement (PPA) with tissue NGS [41]. The HP2 assay showed 94% concordance for actionable variants [19]. |
| Variant Types Detected | SNVs, small Indels | SNVs, Indels, CNVs, fusions, MSI [42] [19] | Comprehensive panels like Northstar Select (84 genes) and HP2 (32 genes) report on multiple variant classes from a DNA-only workflow [42] [19]. |

Table 2: Performance of Specific NGS ctDNA Assays from Validation Studies

| Assay Name | Genes Covered | Key Variant Types | Reported Analytical Sensitivity (LoD) | Reported Specificity, PPA, or Comparative Performance |
|---|---|---|---|---|
| PAN100 Panel [41] | 101 | SNVs, Indels | 0.3% VAF | 73.1% PPA (SNVs), 80.0% PPA (Indels) vs. tissue |
| Northstar Select [42] | 84 | SNVs, Indels, CNVs, Fusions, MSI | 0.15% VAF (SNVs/Indels) | Outperformed on-market assays, finding 51% more pathogenic SNVs/Indels |
| Hedera Profiling 2 (HP2) [19] | 32 | SNVs, Indels, CNVs, Fusions, MSI | 0.5% VAF (for reference standards) | 96.92% Sensitivity, 99.67% Specificity (SNVs/Indels) |

Experimental Protocols for ctDNA NGS Validation

Robust validation is critical for implementing NGS-based ctDNA tests. The following protocols are synthesized from published validation studies and best-practice guidelines [44] [45].

Sample Preparation and DNA Extraction

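- Sample Collection: Blood samples are collected in cell-stabilizing tubes (e.g., Streck) or in standard EDTA tubes when plasma can be separated promptly. The aim is to prevent leukocyte lysis, which releases genomic DNA and dilutes the ctDNA fraction.

- Plasma Isolation: Centrifugation is performed to separate plasma from cellular components, within a few hours of collection when non-stabilizing tubes are used.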

- Cell-free DNA Extraction: cfDNA is isolated from plasma using commercial silica-membrane or magnetic bead-based kits. The extracted cfDNA is quantified using fluorescence-based assays (e.g., Qubit) and quality-checked using fragment analyzers to confirm a peak at ~160-170 bp [43] [46].

Library Preparation and Target Enrichment

Two primary methods are used for target enrichment in ctDNA NGS assays:

- Hybrid Capture-Based: Following library construction with adapter ligation, biotinylated probes complementary to the target genomic regions (e.g., 101-gene panel) are used to capture the sequences of interest. This method is less prone to allele dropout and can cover larger genomic regions, including introns for fusion detection [41] [44].

- Amplicon-Based: Multiplex PCR primers are used to amplify specific target regions directly from the cfDNA. This method is highly efficient for small target regions but can be susceptible to artifacts from PCR errors or allele dropout if a primer binding site is mutated [6] [44].

To mitigate sequencing errors and enable the detection of very low-frequency variants, Unique Molecular Identifiers (UMIs) are incorporated during library preparation. Each original DNA molecule is tagged with a unique barcode, allowing bioinformatics tools to group duplicate reads and correct for errors introduced during PCR and sequencing [43].
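
The UMI-based error correction described above can be sketched as a simple majority vote within each read family. This is a minimal illustration under stated assumptions: reads are represented as hypothetical `(umi, start_position, sequence)` tuples, families are defined by exact UMI and position matches, and base qualities are ignored. Production tools typically also tolerate mismatches within the UMI itself and weigh base qualities.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size: int = 2) -> dict:
    """Collapse reads sharing a UMI and start position into one consensus each.

    reads: iterable of (umi, start_position, sequence) tuples (assumed format).
    Families smaller than min_family_size are dropped because a single read
    cannot be error-corrected against its duplicates.
    """
    families = defaultdict(list)
    for umi, pos, seq in reads:
        families[(umi, pos)].append(seq)

    consensus = {}
    for key, seqs in families.items():
        if len(seqs) < min_family_size:
            continue
        length = min(len(s) for s in seqs)
        bases = []
        for i in range(length):
            base, count = Counter(s[i] for s in seqs).most_common(1)[0]
            # Require a strict majority; otherwise mask the position as N
            bases.append(base if count > len(seqs) / 2 else "N")
        consensus[key] = "".join(bases)
    return consensus


# Hypothetical reads: the third read of the first family carries a PCR error
reads = [
    ("AACGT", 100, "ACGTAC"),
    ("AACGT", 100, "ACGTAC"),
    ("AACGT", 100, "ACCTAC"),
    ("GGTCA", 100, "ACGAAC"),
    ("GGTCA", 100, "ACGAAC"),
    ("TTTTT", 250, "GGGCCC"),  # singleton family, discarded
]

for family, seq in umi_consensus(reads).items():
    print(family, seq)
```

The isolated C in the first family is outvoted and disappears from the consensus, while the A shared by every read in the second family is retained as a candidate true variant.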

Sequencing and Bioinformatic Analysis

- Sequencing: Libraries are sequenced on a high-throughput NGS platform (e.g., Illumina, Ion Torrent) to achieve a high depth of coverage, often >10,000x, to confidently identify low-frequency variants [43].

- Bioinformatic Analysis: The pipeline involves the following steps:

  - Alignment: Reads are aligned to a reference genome (e.g., hg19).

  - Consensus Building: Reads with identical UMIs are grouped to generate a consensus sequence, correcting for random errors.

  - Variant Calling: Somatic variants (SNVs, Indels) are called against a matched normal sample or a process control. Specialized algorithms are used for calling CNVs and fusions from ctDNA data [43] [44].
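
The link between sequencing depth and the ability to call low-frequency variants can be illustrated with a simple binomial sampling model. The sketch below assumes that each read independently carries the variant with probability equal to the VAF and that a call requires a minimum number of supporting reads. The function names and the five-read threshold are hypothetical, and the model ignores sequencing error, the finite number of input cfDNA molecules, and the loss of effective depth after UMI collapsing.

```python
import math


def log_binom_pmf(k: int, n: int, p: float) -> float:
    """Log of the Binomial(n, p) probability mass at k, stable for large n."""
    return (
        math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
        + k * math.log(p) + (n - k) * math.log1p(-p)
    )


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) at a given depth."""
    if not 0 < vaf < 1:
        raise ValueError("vaf must be strictly between 0 and 1")
    if depth < 0:
        raise ValueError("depth must be non-negative")
    upper = min(min_alt_reads, depth + 1)
    p_below = sum(math.exp(log_binom_pmf(k, depth, vaf)) for k in range(upper))
    return max(0.0, 1.0 - p_below)


# A 0.5% VAF variant, requiring at least 5 supporting reads (assumed threshold)
for depth in (500, 2_000, 10_000):
    prob = detection_probability(depth, vaf=0.005, min_alt_reads=5)
    print(f"depth {depth:>6}x: P(detect) = {prob:.3f}")
```

Under these assumptions a 0.5% VAF variant is usually missed at 500x, where only 2.5 supporting reads are expected, and is sampled almost with certainty at 10,000x, where about 50 are expected.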

The workflow for ctDNA analysis is summarized in the diagram below.

Critical Challenges and Technical Considerations in ctDNA NGS

Despite its advantages, NGS-based ctDNA analysis faces several inherent challenges that validation must address.