Next-Generation Sequencing in Cancer Molecular Profiling: A Comprehensive Guide for Research and Drug Development


Abstract

Next-generation sequencing (NGS) has fundamentally transformed cancer research and therapeutic development by enabling comprehensive genomic profiling of tumors. This article provides a detailed exploration of NGS technology, from its foundational principles and clinical applications in precision oncology to its crucial role in accelerating drug discovery. It examines key methodological approaches for detecting somatic variants, including single-nucleotide variants (SNVs), insertions/deletions (indels), copy number alterations (CNAs), and structural variants. The content addresses significant implementation challenges such as analytical validation, data interpretation complexities, and reimbursement barriers, while providing practical frameworks for troubleshooting and optimization. Furthermore, it discusses rigorous validation guidelines and comparative effectiveness research essential for clinical translation. Designed for researchers, scientists, and drug development professionals, this resource synthesizes current evidence and best practices to support the effective integration of NGS into cancer research pipelines and precision medicine strategies.

The Genomic Revolution: Understanding NGS Technology and Its Impact on Cancer Biology

Next-generation sequencing (NGS) has fundamentally transformed cancer molecular profiling research, enabling comprehensive genomic characterization that guides diagnostic, prognostic, and therapeutic decisions. This technical guide details the core principles of NGS workflows, from initial library preparation through final data analysis, with specific emphasis on applications in oncology. We provide detailed methodologies for key experiments, quantitative comparisons of current technologies, and standardized bioinformatics approaches tailored to clinical cancer research. The integration of robust NGS methodologies into oncology pipelines has been essential for identifying actionable mutations, tracking clonal evolution, and advancing personalized treatment strategies for cancer patients.

Next-generation sequencing technologies provide massively parallel sequencing capabilities that allow researchers to analyze millions of DNA fragments simultaneously. This high-throughput approach has enabled comprehensive molecular profiling of tumors, revealing the genetic alterations driving oncogenesis, progression, and treatment resistance [1]. The core principle of NGS—massive parallelism—has led to a 96% decrease in sequencing costs per genome since the Human Genome Project, making large-scale cancer genomics studies feasible for research and clinical applications [1].

In cancer research, NGS facilitates a range of applications from targeted panels focusing on known oncogenes and tumor suppressor genes to whole-genome sequencing that reveals complex structural variations and novel drivers. The versatility of NGS platforms allows for analysis of diverse sample types, including challenging formalin-fixed, paraffin-embedded (FFPE) tumor tissues commonly available in pathology archives [2]. As the technology continues to evolve with improved accuracy and throughput, NGS has become an indispensable tool for advancing precision oncology and targeted drug development.

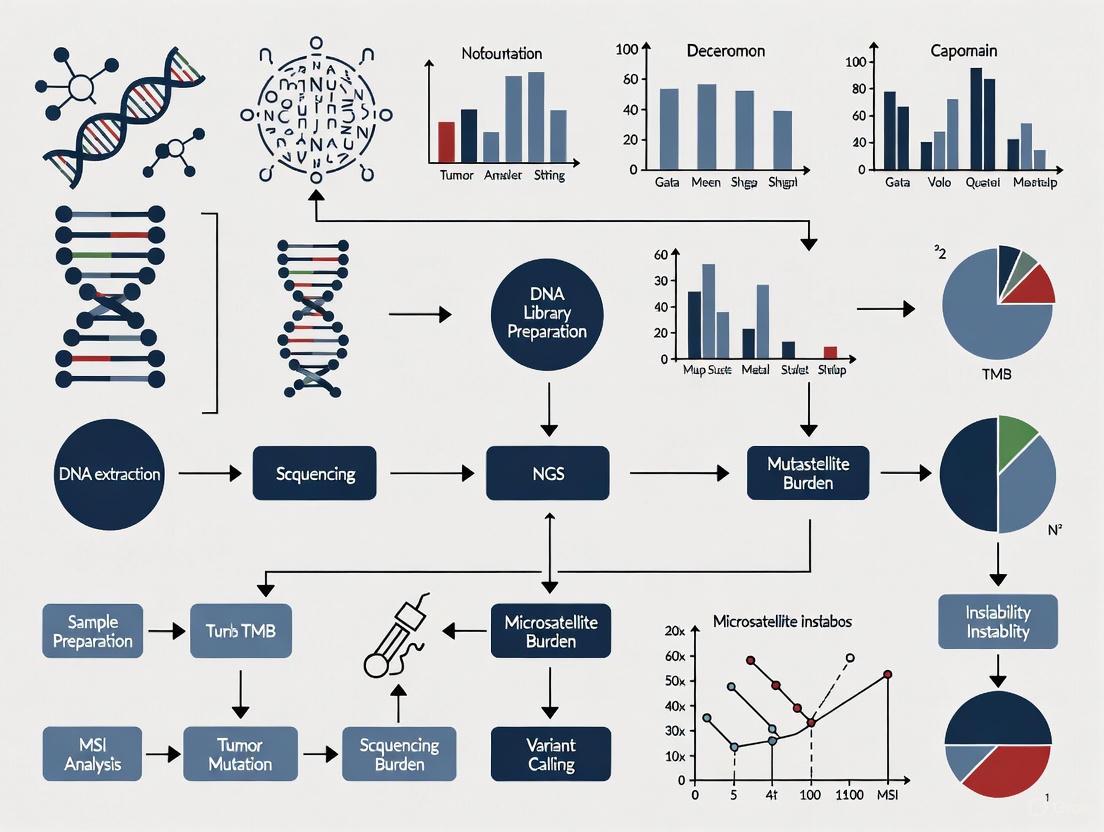

NGS Workflow: From Sample to Insight

The complete NGS workflow encompasses multiple critical stages, each requiring rigorous quality control to ensure data integrity for downstream cancer research applications. The following diagram illustrates the comprehensive pathway from biological sample to clinical insight in cancer profiling:

Sample Preparation and Quality Control

Proper sample preparation is foundational to successful NGS in cancer research, where starting material is often limited or degraded.

Nucleic Acid Extraction: DNA or RNA is extracted from tumor samples, which can include fresh frozen tissue, FFPE blocks, or liquid biopsies such as plasma cell-free DNA (cfDNA) [3]. For FFPE samples, which are common in retrospective cancer studies, specialized extraction kits are required to address formalin-induced cross-linking and fragmentation. The RecoverAll Total Nucleic Acid Isolation Kit is designed specifically for this challenging material [2].

Quality Assessment: Rigorous quality control of extracted nucleic acids is critical. Spectrophotometers (e.g., NanoDrop) assess sample concentration and purity through A260/A280 ratios (~1.8 for DNA, ~2.0 for RNA) [4]. Electrophoresis systems (e.g., Agilent TapeStation or Bioanalyzer) evaluate nucleic acid integrity. This is particularly important for RNA sequencing, where the RNA Integrity Number (RIN) predicts sequencing success [4]. For FFPE-derived DNA, fragment size distribution analysis is essential, as samples with extensive degradation (<200 bp) may not be suitable for certain NGS workflows [2].
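The QC checks described above can be expressed as a small screening function. This is a minimal Python sketch using hypothetical field names. The A260/A280 targets (~1.8 DNA, ~2.0 RNA) and the <200 bp degradation cutoff come from the text. The +/- 0.2 purity tolerance and the RIN >= 7 cutoff are illustrative assumptions, not validated acceptance criteria.

```python
from dataclasses import dataclass
from typing import List, Optional

# From the text: A260/A280 targets and the <200 bp degradation cutoff.
PURITY_TARGET = {"DNA": 1.8, "RNA": 2.0}
MIN_MEAN_FRAGMENT_BP = 200
# Illustrative assumptions, not validated acceptance criteria.
PURITY_TOLERANCE = 0.2
MIN_RIN = 7.0


@dataclass
class SampleQC:
    sample_id: str
    nucleic_acid: str                         # "DNA" or "RNA"
    a260_a280: float                          # spectrophotometer purity ratio
    rin: Optional[float] = None               # RNA Integrity Number (RNA only)
    mean_fragment_bp: Optional[float] = None  # e.g., FFPE DNA size profile


def qc_flags(s: SampleQC) -> List[str]:
    """Return QC warnings for one sample; an empty list means nothing was flagged."""
    flags = []
    target = PURITY_TARGET[s.nucleic_acid]
    if abs(s.a260_a280 - target) > PURITY_TOLERANCE:
        flags.append(f"A260/A280 {s.a260_a280:.2f} outside {target} +/- {PURITY_TOLERANCE}")
    if s.nucleic_acid == "RNA" and s.rin is not None and s.rin < MIN_RIN:
        flags.append(f"RIN {s.rin} below {MIN_RIN}")
    if s.mean_fragment_bp is not None and s.mean_fragment_bp < MIN_MEAN_FRAGMENT_BP:
        flags.append(f"mean fragment {s.mean_fragment_bp} bp suggests extensive degradation")
    return flags


print(qc_flags(SampleQC("FFPE-01", "DNA", 1.85, mean_fragment_bp=150)))
```

In this sketch a flag does not reject a sample. It only surfaces samples that may need re-extraction or a workflow suited to degraded input.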

Table 1: Essential Research Reagent Solutions for NGS Library Preparation

| Reagent/Category | Specific Examples | Function in NGS Workflow | Cancer Research Application |
|---|---|---|---|
| Nucleic Acid Extraction Kits | GeneJet FFPE DNA Purification Kit, RecoverAll Total Nucleic Acid Isolation Kit | Isolate and purify nucleic acids from challenging sample types | Enable analysis of archival FFPE tumor tissues [2] |
| Library Preparation Kits | KAPA HyperPlus Kit, Illumina AmpliSeq v2 Hotspot Panel | Fragment DNA and attach platform-specific adapters | Prepare sequencing libraries from low-input tumor samples [2] |
| Target Enrichment Systems | SeqCap EZ Target Capture System, AmpliSeq Cancer Panels | Selectively enrich genomic regions of interest | Focus sequencing on known cancer-associated genes [2] [3] |
| Target Enrichment Methods | Hybridization capture, Amplicon-based approaches | Enrich for specific genomic regions | Focus on cancer-relevant genes; hybridization capture allows novel variant discovery [2] [1] |

Library Preparation Methods

Library preparation converts extracted nucleic acids into a format compatible with sequencing platforms through fragmentation, adapter ligation, and optional amplification.

Fragmentation and Adapter Ligation: DNA is fragmented by physical (sonication) or enzymatic methods to optimal sizes (100-800 bp) [3]. Platform-specific adapters containing sequencing primer binding sites are then ligated to the fragment ends. These adapters often include sample-specific index sequences (barcodes) that enable multiplexing, meaning multiple samples can be pooled in a single sequencing run, which significantly reduces per-sample costs [1].
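Multiplexing works because every read carries its sample's index, so pooled reads can be sorted back to their samples after the run. The Python sketch below illustrates the idea. It assumes a single index read per cluster and uses a hypothetical sample sheet. In practice, the sequencer vendor's demultiplexing software performs this step, and the one-mismatch tolerance shown here is an assumption.

```python
from typing import Dict, Optional


def hamming(a: str, b: str) -> int:
    """Count mismatched positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def assign_sample(read_index: str, sample_indexes: Dict[str, str],
                  max_mismatches: int = 1) -> Optional[str]:
    """Return the sample whose index is uniquely closest to read_index.

    Reads with no index within max_mismatches, or that tie between samples,
    stay unassigned (None) instead of being guessed.
    """
    best_sample: Optional[str] = None
    best_dist = max_mismatches + 1
    tie = False
    for sample, index in sample_indexes.items():
        if len(index) != len(read_index):
            continue
        dist = hamming(read_index, index)
        if dist < best_dist:
            best_sample, best_dist, tie = sample, dist, False
        elif dist == best_dist:
            tie = True
    return None if tie else best_sample


sample_sheet = {"tumor_A": "ACGTACGT", "tumor_B": "TTGCAAGC"}  # hypothetical indexes
print(assign_sample("ACGTACGA", sample_sheet))  # prints tumor_A (one mismatch)
```

The sketch leaves reads that tie between two indexes unassigned rather than guessing. This is also why index sets are normally designed so that every pair of indexes differs at several positions.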

Target Enrichment Strategies: In cancer research, targeted sequencing approaches are commonly employed for their cost-effectiveness and depth of coverage for clinically relevant genes. The two primary enrichment methods are:

- Hybridization Capture: Uses biotinylated probes (e.g., SeqCap EZ system) to pull down target regions from fragmented libraries [2]. This method provides more uniform coverage and better capability to detect novel variants within targeted regions.

- Amplicon-Based: Utilizes PCR primers (e.g., Illumina AmpliSeq) to selectively amplify regions of interest [2]. This approach is highly efficient for small target regions but may miss variants in primer-binding sites.

A recent feasibility study on colorectal cancer FFPE samples demonstrated 94% concordance between these two methods for detecting actionable variants across 15 shared cancer-related genes [2].
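Concordance between two enrichment methods, or two laboratories, comes from comparing their variant call sets. The Python sketch below keys each call as a hypothetical (chrom, pos, ref, alt) tuple. It reports two ratios: shared calls over all distinct calls, and the share of one assay's calls recovered by the other. Studies define concordance in different ways, so both definitions here are assumptions and not necessarily the calculation used in [2].

```python
from typing import Dict, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def concordance(calls_a: Set[Variant], calls_b: Set[Variant]) -> Dict[str, float]:
    """Summarize agreement between two variant call sets."""
    shared = calls_a & calls_b
    union = calls_a | calls_b
    return {
        "shared": len(shared),
        "only_a": len(calls_a - calls_b),
        "only_b": len(calls_b - calls_a),
        # Shared calls divided by all distinct calls from either assay.
        "overall": len(shared) / len(union) if union else 1.0,
        # Fraction of assay A's calls that assay B also reports.
        "a_recovered_by_b": len(shared) / len(calls_a) if calls_a else 1.0,
    }
```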

Sequencing Platforms and Technologies

The NGS landscape in 2025 offers diverse platforms with distinct characteristics suited to different applications in cancer research. The following diagram compares the core technology approaches of major sequencing platforms:

Table 2: Comparison of Current NGS Platforms (2025)

| Platform | Technology | Read Length | Accuracy | Primary Cancer Applications | Throughput Range |
|---|---|---|---|---|---|
| Illumina | Sequencing by synthesis with reversible terminators [1] | 50-300 bp [5] | High (Q30: 99.9%) [4] | Targeted panels, whole exome, RNA-seq, ChIP-seq [5] | Up to 16 Tb/run (NovaSeq X) [6] |
| Pacific Biosciences (Revio) | Single Molecule Real-Time (SMRT) sequencing with HiFi circular consensus [6] | 10-25 kb | Very High (Q30-Q40: 99.9-99.99%) [6] | Structural variant detection, fusion genes, haplotype phasing [6] | 360 Gb/run [6] |
| Oxford Nanopore (Q20+ Kit14) | Nanopore-based electronic signal detection with duplex reading [6] | 1 kb to >2 Mb | High (Simplex: Q20/~99%, Duplex: Q30/>99.9%) [6] | Structural variants, epigenetic modifications, rapid diagnostics [6] | Varies by device (MinION to PromethION) |

Bioinformatics Analysis Pipeline

The bioinformatics pipeline transforms raw sequencing data into interpretable results through a multi-stage process requiring specialized computational tools and reference databases.

NGS Data Formats and Quality Control

Standardized file formats enable interoperability between analytical tools throughout the NGS pipeline. The following diagram illustrates the transformation of data through these formats from sequencing to variant calling:

Primary Analysis (Base Calling): Sequencing instruments generate raw data in platform-specific formats (BCL for Illumina, POD5 for Nanopore, BAM for PacBio) that are converted to FASTQ format [5]. FASTQ files contain nucleotide sequences alongside quality scores for each base, representing the fundamental unit of raw NGS data [7].
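Each FASTQ record spans four lines: an @-prefixed identifier, the sequence, a + separator, and an ASCII-encoded quality string of the same length. Below is a minimal Python reader. It assumes uncompressed input and the Phred+33 encoding used by current Illumina instruments. Production pipelines would normally rely on an established parsing library instead of this sketch.

```python
import io
from typing import Iterator, List, NamedTuple, TextIO


class FastqRecord(NamedTuple):
    name: str
    sequence: str
    qualities: List[int]  # per-base Phred scores


def read_fastq(handle: TextIO, offset: int = 33) -> Iterator[FastqRecord]:
    """Yield records from a four-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\r\n")
        if not header:
            return  # end of input
        sequence = handle.readline().rstrip("\r\n")
        separator = handle.readline().rstrip("\r\n")
        quality = handle.readline().rstrip("\r\n")
        if not header.startswith("@") or not separator.startswith("+"):
            raise ValueError(f"malformed FASTQ record near {header!r}")
        if len(sequence) != len(quality):
            raise ValueError(f"sequence/quality length mismatch in {header!r}")
        name = header[1:].split(" ", 1)[0]  # drop the optional description
        yield FastqRecord(name, sequence, [ord(c) - offset for c in quality])


example = io.StringIO("@read1 sample=T1\nACGT\n+\nII#5\n")
for record in read_fastq(example):
    print(record.name, record.sequence, record.qualities)  # read1 ACGT [40, 40, 2, 20]
```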

Quality Assessment: Tools like FastQC provide comprehensive quality metrics including per-base sequence quality, adapter contamination, and GC content [7]. For cancer samples, special attention should be paid to potential contaminants and sample degradation indicators. The quality score (Q-score) is particularly important, with Q30 (99.9% accuracy) being the standard threshold for high-quality data [4].
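The Q-score is a log-scaled error probability, Q = -10 log10(P_error). Q20, Q30 and Q40 therefore correspond to 1 in 100, 1 in 1,000 and 1 in 10,000 base-call errors (99%, 99.9% and 99.99% accuracy). The Python sketch below converts between the two scales and computes the share of bases at or above Q30, a commonly reported run metric. The function names are illustrative.

```python
import math
from typing import Sequence


def phred_to_error(q: float) -> float:
    """Invert Q = -10 * log10(P_error) to get the base-call error probability."""
    return 10 ** (-q / 10)


def error_to_phred(p_error: float) -> float:
    return -10 * math.log10(p_error)


def fraction_at_or_above(qualities: Sequence[int], threshold: int = 30) -> float:
    """Share of bases whose Phred score is >= threshold (e.g., %>=Q30)."""
    if not qualities:
        return 0.0
    return sum(q >= threshold for q in qualities) / len(qualities)


for q in (20, 30, 40):
    p = phred_to_error(q)
    print(f"Q{q}: error probability {p:g}, accuracy {1 - p:.2%}")
```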

Read Trimming and Filtering: Preprocessing tools such as Cutadapt, Trimmomatic, or NanoFilt remove low-quality bases, adapter sequences, and artifacts [4]. This step is crucial for FFPE-derived data, where degradation and artifacts are more common.
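Quality trimming can be made concrete with the BWA-style 3' algorithm. The Cutadapt documentation states that its quality-trimming option uses the same algorithm as BWA. The procedure walks inward from the 3' end, adds (cutoff - quality) to a running sum, and cuts where that sum peaks. It stops early once the sum turns negative. The Python sketch below reimplements this idea for illustration only. It is not Cutadapt's code, and it does not show adapter removal.

```python
from typing import List, Tuple


def quality_trim_index(qualities: List[int], cutoff: int = 20) -> int:
    """Return the 3' cut position for BWA-style quality trimming (keep [:index]).

    Walks from the 3' end adding (cutoff - q) to a running sum, remembers the
    position where the sum peaks, and stops once the sum turns negative.
    """
    stop = len(qualities)
    running = best = 0
    for i in range(len(qualities) - 1, -1, -1):
        running += cutoff - qualities[i]
        if running < 0:
            break
        if running > best:
            best, stop = running, i
    return stop


def trim_read(sequence: str, qualities: List[int], cutoff: int = 20) -> Tuple[str, List[int]]:
    cut = quality_trim_index(qualities, cutoff)
    return sequence[:cut], qualities[:cut]


print(trim_read("ACGTAC", [40, 40, 40, 40, 10, 10]))  # ('ACGT', [40, 40, 40, 40])
```

Using a running sum instead of cutting at the first low-quality base lets an isolated poor base near the 3' end stay in place when good bases follow it.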

Secondary Analysis: Alignment and Variant Calling

Read Alignment: Processed reads are aligned to a reference genome using aligners such as BWA (for DNA) or STAR (for RNA), generating SAM/BAM files [8]. Alignment determines the genomic origin of each read, which enables variant identification. For cancer samples, the recommended reference is the GRCh38 (hg38) build, because it covers clinically relevant regions more comprehensively than older builds [8].

Variant Calling: Specialized algorithms identify differences between the sample and reference genome. The consensus recommendations for clinical NGS bioinformatics pipelines include calling multiple variant types [8]:

- Single nucleotide variants (SNVs) and small insertions/deletions (indels)

- Copy number variants (CNVs)

- Structural variants (SVs) including insertions, inversions, and translocations

- Short tandem repeats (STRs)

- Loss of heterozygosity (LOH) regions
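Small variants in a VCF can be typed from their REF and ALT alleles. The Python sketch below assumes left-aligned, normalized, biallelic records in which indels share the usual anchor base. The "MNV" and "complex" labels and the simple symbolic-allele check are illustrative conventions. CNV, STR and LOH calls typically come from dedicated callers, each with its own output fields.

```python
def classify_variant(ref: str, alt: str) -> str:
    """Classify a normalized, biallelic VCF REF/ALT pair by variant type."""
    # Symbolic alleles (<DEL>, <DUP>, ...) and breakend notation signal SVs.
    if alt.startswith("<") or "[" in alt or "]" in alt:
        return "SV"
    if len(ref) == 1 and len(alt) == 1:
        return "SNV"
    if len(ref) == len(alt):
        return "MNV"        # multi-nucleotide substitution
    if len(alt) > len(ref) and alt.startswith(ref):
        return "insertion"  # ALT extends the shared anchor base(s)
    if len(ref) > len(alt) and ref.startswith(alt):
        return "deletion"   # REF extends the shared anchor base(s)
    return "complex"


for ref, alt in [("C", "T"), ("A", "ATTG"), ("ATTG", "A"), ("A", "<DUP>")]:
    print(ref, alt, classify_variant(ref, alt))
```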

For cancer applications, both germline (inherited) and somatic (tumor-specific) variants are typically identified, requiring paired tumor-normal analysis when possible.
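When a matched normal is available, a first-pass separation of somatic and germline calls compares variant allele fractions (VAFs) between the two samples. The Python sketch below is a deliberately simplified heuristic. All of its thresholds are hypothetical: minimum depth 20, tumor VAF >= 5% and normal VAF <= 2% for a somatic candidate, and normal VAF >= 20% for germline. Production somatic callers model sequencing error and tumor purity statistically rather than applying fixed cutoffs.

```python
def origin_call(tumor_alt: int, tumor_depth: int, normal_alt: int, normal_depth: int,
                min_depth: int = 20, min_tumor_vaf: float = 0.05,
                max_normal_vaf_somatic: float = 0.02,
                min_normal_vaf_germline: float = 0.20) -> str:
    """Label a variant 'germline', 'somatic_candidate', 'uncertain', or 'insufficient_depth'."""
    if tumor_depth < min_depth or normal_depth < min_depth:
        return "insufficient_depth"
    tumor_vaf = tumor_alt / tumor_depth
    normal_vaf = normal_alt / normal_depth
    if normal_vaf >= min_normal_vaf_germline:
        return "germline"           # clearly present in the patient's normal tissue
    if tumor_vaf >= min_tumor_vaf and normal_vaf <= max_normal_vaf_somatic:
        return "somatic_candidate"  # present in tumor, essentially absent in normal
    return "uncertain"              # e.g., low tumor VAF or low-level signal in normal


print(origin_call(tumor_alt=20, tumor_depth=100, normal_alt=0, normal_depth=80))
```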

Tertiary Analysis: Annotation and Interpretation

Variant Annotation: Called variants in VCF format are annotated with biological information using tools that incorporate databases of population frequency (gnomAD), functional prediction (SIFT, PolyPhen), and clinical significance (ClinVar, COSMIC) [1]. For cancer, databases like CIViC and OncoKB provide therapeutic, prognostic, and diagnostic annotations for specific mutations.
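Annotation tools read and write VCF, a tab-delimited format whose first eight columns are CHROM, POS, ID, REF, ALT, QUAL, FILTER and INFO. The INFO column holds semicolon-separated key=value pairs and bare flags. The Python sketch below parses one data line into those fields. The example INFO keys are hypothetical, because the keys that annotators add vary by tool and database release.

```python
from typing import Any, Dict


def parse_vcf_line(line: str) -> Dict[str, Any]:
    """Parse the eight fixed columns of one VCF data line (sample columns are ignored)."""
    fields = line.rstrip("\r\n").split("\t")
    if len(fields) < 8:
        raise ValueError("a VCF data line needs at least 8 tab-separated columns")
    chrom, pos, vid, ref, alt, qual, filt, info = fields[:8]
    info_dict: Dict[str, Any] = {}
    if info != ".":
        for entry in info.split(";"):
            key, sep, value = entry.partition("=")
            info_dict[key] = value if sep else True  # bare keys are flags
    return {
        "chrom": chrom,
        "pos": int(pos),          # 1-based position
        "id": vid,
        "ref": ref,
        "alts": alt.split(","),   # multi-allelic sites list several ALTs
        "qual": None if qual == "." else float(qual),
        "filter": filt,
        "info": info_dict,
    }


# Hypothetical record; POP_AF and SOMATIC stand in for annotator-specific keys.
record = parse_vcf_line("chr1\t1000\t.\tG\tA\t50\tPASS\tDP=120;POP_AF=0.0001;SOMATIC")
print(record["info"])  # {'DP': '120', 'POP_AF': '0.0001', 'SOMATIC': True}
```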

Variant Filtering and Prioritization: In cancer research, this critical step identifies clinically actionable variants from background noise and benign polymorphisms. Strategies include:

- Frequency-based filtering against population databases

- Functional impact prediction (missense, truncating, splice-site)

- Pathway analysis and known cancer gene databases

- Hotspot mutation analysis for recurrently altered positions
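These strategies are often chained as simple rules before expert review. The Python sketch below combines three of them: a population-frequency cutoff, a consequence filter and a cancer-gene check. It then ranks recurrent hotspot hits first. The 1% frequency cutoff, the consequence labels and the data structure are hypothetical placeholders for a laboratory's own validated criteria.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

# Hypothetical criteria for illustration; real cutoffs come from a validated SOP.
MAX_POPULATION_AF = 0.01
DAMAGING_CONSEQUENCES = {"missense", "nonsense", "frameshift", "splice_site"}


@dataclass
class AnnotatedVariant:
    gene: str
    protein_change: str
    consequence: str
    population_af: float       # e.g., gnomAD allele frequency; 0.0 if absent
    in_cancer_gene_list: bool  # membership in a curated cancer gene set


def prioritize(variants: List[AnnotatedVariant],
               hotspots: Set[Tuple[str, str]]) -> List[AnnotatedVariant]:
    """Filter out common, non-damaging, or off-list variants; rank hotspots first."""
    kept = [
        v for v in variants
        if v.population_af <= MAX_POPULATION_AF
        and v.consequence in DAMAGING_CONSEQUENCES
        and v.in_cancer_gene_list
    ]
    # False sorts before True, so hotspot hits come first; the sort is stable.
    return sorted(kept, key=lambda v: (v.gene, v.protein_change) not in hotspots)
```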

Recent recommendations emphasize using multiple tools for structural variant calling and in-house datasets for filtering recurrent technical artifacts [8].

Experimental Design and Validation in Cancer Research

Method Validation Protocols

For clinical cancer research, rigorous validation of NGS workflows is essential. The Next-Generation Sequencing Quality Initiative (NGS QI) provides frameworks for validation plans and standard operating procedures [9]. Key validation parameters include:

- Accuracy and Precision: Comparison to orthogonal methods (e.g., Sanger sequencing) and replicate sequencing

- Analytical Sensitivity: Detection of variants at low allele frequencies (critical for heterogeneous tumor samples)

- Specificity: False positive rates across variant types

- Reproducibility: Inter-run and inter-operator consistency
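The parameters above reduce to counts measured against an orthogonal or reference result: true positives (TP), false positives (FP), false negatives (FN) and, where defined, true negatives (TN). The Python sketch below computes sensitivity, positive predictive value (PPV) and specificity. It also adds a Wilson score 95% interval for sensitivity, one common way to express uncertainty when validation sets are small. The choice of interval and the function names are assumptions, not requirements drawn from the NGS QI materials.

```python
import math
from typing import Dict, Optional, Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n == 0:
        return (0.0, 1.0)
    p = successes / n
    denom = 1 + z**2 / n
    center = p + z**2 / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return ((center - margin) / denom, (center + margin) / denom)


def validation_metrics(tp: int, fp: int, fn: int, tn: Optional[int] = None) -> Dict[str, object]:
    metrics: Dict[str, object] = {
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "ppv": tp / (tp + fp) if tp + fp else float("nan"),
        "sensitivity_95ci": wilson_interval(tp, tp + fn),
    }
    if tn is not None:
        metrics["specificity"] = tn / (tn + fp) if tn + fp else float("nan")
    return metrics


print(validation_metrics(tp=95, fp=5, fn=5, tn=9895))
```

For variant calling, TN is often ill-defined, because every reference position is a potential negative. For that reason PPV is frequently reported alongside specificity, or in place of it.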

A recent feasibility study implementing NGS in the Chilean public health system demonstrated 80.5% concordance for actionable variants in colorectal cancer samples compared to a validated laboratory, with 98.4% of previously detected variants successfully identified in their implementation [2].

Quality Management and Standards

Implementing a robust quality management system (QMS) is recommended for clinical cancer NGS applications. The NGS QI provides assessment tools and key performance indicators to monitor assay performance over time [9]. Regular monitoring of metrics including coverage uniformity, on-target rates, and variant calling sensitivity ensures consistent performance.
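Two of the metrics named above can be computed from aligned-base counts and from per-position depth over the target regions. The Python sketch below assumes another tool has already extracted those values from the BAM file. Uniformity is defined here as the share of target positions covered at >= 0.2x the mean depth. This is one commonly used convention and is an assumption in this sketch.

```python
from typing import Sequence


def on_target_rate(on_target_bases: int, total_aligned_bases: int) -> float:
    """Fraction of aligned bases that fall within the targeted regions."""
    return on_target_bases / total_aligned_bases if total_aligned_bases else 0.0


def coverage_uniformity(depths: Sequence[int], fraction_of_mean: float = 0.2) -> float:
    """Fraction of target positions with depth >= fraction_of_mean x mean depth."""
    if not depths:
        return 0.0
    cutoff = fraction_of_mean * (sum(depths) / len(depths))
    return sum(d >= cutoff for d in depths) / len(depths)


# Hypothetical per-position depths for a small target region.
depths = [480, 510, 495, 60, 20, 530]
print(f"uniformity: {coverage_uniformity(depths):.2f}")
print(f"on-target rate: {on_target_rate(7_200_000, 9_000_000):.2f}")
```

If these values are tracked per run, they can serve as the kind of key performance indicators described above.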

For clinical production, bioinformatics should operate at standards similar to ISO 15189, utilizing off-grid clinical-grade high-performance computing systems, standardized file formats, and strict version control [8]. Reproducibility should be ensured through containerized software environments (Docker, Singularity), and pipelines must be thoroughly documented and tested for accuracy.

The core principles of NGS—from meticulous library preparation through rigorous bioinformatics analysis—provide the foundation for robust cancer molecular profiling research. As sequencing technologies continue to evolve with improvements in accuracy, throughput, and multi-omic capabilities, their integration into oncology research pipelines will further advance our understanding of cancer biology and treatment. The standardized workflows, validation frameworks, and quality control measures outlined in this guide provide a roadmap for implementing NGS in cancer research that generates reliable, reproducible, and clinically actionable genomic insights. Future developments in single-cell sequencing, spatial transcriptomics, and long-read technologies will continue to expand the research and clinical applications of NGS in precision oncology.

The advent of DNA sequencing technologies has fundamentally transformed biological research and clinical diagnostics, with next-generation sequencing (NGS) representing one of the most significant technological breakthroughs since the development of Sanger sequencing in 1977. This paradigm shift is particularly evident in oncology, where the comprehensive genomic profiling enabled by NGS has ushered in a new era of precision oncology. The ability to rapidly and cost-effectively sequence entire cancer genomes allows researchers and clinicians to identify the genetic alterations driving tumorigenesis, thereby facilitating personalized treatment strategies tailored to the specific molecular profile of a patient's cancer [10]. This technical guide provides a comparative analysis of NGS versus traditional sequencing methods, with a specific focus on their application in cancer molecular profiling research for scientists, researchers, and drug development professionals.

The transformative impact of NGS becomes evident when considering the limitations of traditional approaches. Prior to NGS, cancer genetic profiling relied heavily on single-gene assays or small panels that could only detect a limited set of predefined mutations, potentially missing rare or novel genetic alterations that contribute to cancer development and progression [10]. The massively parallel nature of NGS enables the simultaneous analysis of hundreds to thousands of cancer-related genes, providing a comprehensive view of the complex genomic landscape of tumors that was previously unattainable with traditional methods.

Technological Foundations and Comparative Specifications

Fundamental Principles of Sequencing Technologies

Traditional Sanger sequencing, developed by Frederick Sanger in 1977, operates on the principle of chain termination with dideoxynucleotides (ddNTPs) [11]. The method generates DNA fragments of varying lengths, each terminated at a specific base, which are then separated by capillary electrophoresis to determine the sequence [10]. The key limitation of this technology lies in its design: it sequences only one DNA fragment at a time, making it prohibitively slow and expensive for large-scale projects [11]. The Human Genome Project relied on Sanger sequencing and took 13 years and nearly $3 billion to complete the first human genome sequence [11].

Next-generation sequencing takes a fundamentally different approach built on massively parallel sequencing. Instead of processing single DNA fragments, NGS platforms sequence millions to billions of DNA fragments simultaneously [11] [10]. The core NGS workflow involves: (1) library preparation through fragmentation of DNA and adapter ligation; (2) cluster generation through amplification to create sequencing features; (3) cyclic sequencing by synthesis with fluorescently labeled nucleotides; and (4) alignment and data analysis using bioinformatics tools [11]. This parallel architecture gives NGS its throughput advantage, compressing sequencing timelines from years to hours or days while dramatically reducing costs [11].

Direct Technical Comparison

Table 3: Technical Comparison of Sanger Sequencing vs. Next-Generation Sequencing

| Feature | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Throughput | Low - processes one DNA fragment at a time [11] | Extremely high - processes millions to billions of fragments simultaneously [11] |
| Cost per Human Genome | ~$3 billion (Human Genome Project) [11] | Under $1,000, with some services as low as $600 [11] [12] |
| Read Length | Long (500-1000 base pairs) [11] | Shorter (50-600 base pairs for short-read NGS) [11] |
| Primary Applications | Ideal for sequencing single genes or confirming specific variants [10] | Whole-genome sequencing, transcriptomics, epigenetics, metagenomics [10] |
| Data Output | Limited data output [10] | Massive amounts of data (terabases per run) [6] |
| Human Genome Sequencing Time | Years [11] | Hours to days [11] |
| Accuracy | High per-base accuracy (>99.9%) [11] | High overall accuracy achieved through depth of coverage [11] |
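The "depth of coverage" in the last row of the table can be estimated with the Lander-Waterman relationship C = N x L / G, where N is the number of reads, L the read length and G the genome size. The Python sketch below inverts this relationship to estimate how many reads a target mean depth requires. The ~3.1 Gb genome size and 150 bp reads are illustrative assumptions. The estimate also ignores duplicates, unmapped reads and uneven coverage, all of which raise the real requirement.

```python
import math


def mean_coverage(num_reads: int, read_length: int, genome_size: int) -> float:
    """Lander-Waterman expected mean depth: C = N * L / G."""
    return num_reads * read_length / genome_size


def reads_needed(target_depth: float, read_length: int, genome_size: int) -> int:
    """Invert C = N * L / G and round up to a whole number of reads."""
    return math.ceil(target_depth * genome_size / read_length)


GENOME_SIZE = 3_100_000_000  # approximate human genome size, assumed
n = reads_needed(30, 150, GENOME_SIZE)
print(f"{n:,} reads of 150 bp for ~30x mean coverage")
```

Under these assumptions, 30x requires about 620 million 150 bp reads, or 310 million read pairs.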

Evolution to Third-Generation Sequencing

The sequencing technology landscape continues to evolve with the emergence of third-generation sequencing platforms, which address one of the key limitations of mainstream NGS technologies: short read lengths. Platforms from Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) enable the sequencing of much longer DNA fragments—thousands to tens of thousands of bases—without the need for fragmentation [6]. PacBio achieves this through Single Molecule Real-Time (SMRT) sequencing, which observes DNA polymerization in real time within microscopic wells called zero-mode waveguides [6]. Oxford Nanopore employs a fundamentally different approach by measuring changes in electrical current as DNA molecules pass through protein nanopores [6].

These long-read technologies are particularly valuable in cancer research for resolving complex genomic regions that are challenging for short-read NGS, including repetitive elements, structural variants, and gene fusions [11]. While early long-read technologies suffered from higher error rates, significant improvements have been made. PacBio's HiFi reads now achieve over 99.9% accuracy through circular consensus sequencing, while ONT's latest duplex sequencing chemistry exceeds Q30 (>99.9% accuracy) [6]. The convergence of technologies continues, with short-read companies adding long-read capabilities and vice versa, providing researchers with an increasingly sophisticated toolkit for cancer genomics.

Experimental Design and Methodologies for Cancer Profiling

Comprehensive Genomic Profiling Workflow in Cancer Research

The application of NGS in cancer research follows a standardized yet adaptable workflow designed to maximize DNA yield and sequencing quality from often limited and degraded tumor specimens. The BALLETT study (Belgian Approach for Local Laboratory Extensive Tumor Testing), a large-scale multi-center investigation involving 872 patients with advanced cancers, provides an exemplary model of a robust NGS-based cancer profiling protocol [13]. This study demonstrated the feasibility of implementing comprehensive genomic profiling (CGP) across multiple laboratories with a 93% success rate and a median turnaround time of 29 days from inclusion to molecular tumor board report [13].

Key Research Reagent Solutions for NGS in Cancer Studies

Table 4: Essential Research Reagents and Platforms for NGS-Based Cancer Profiling

| Reagent/Platform Category | Specific Examples | Research Function in Cancer Studies |
|---|---|---|
| Commercial CGP Panels | FoundationOne, Tempus, OncoDEEP, MI Profile [14] | Standardized targeted sequencing of cancer-related genes for consistent analysis across studies |
| Library Preparation Kits | Illumina-compatible kits, QIAseq xHYB Long-Read Panels [15] [16] | Fragment DNA and attach adapters for sequencing; specialized kits enable long-read or hybrid capture |
| NGS Platforms | Illumina NovaSeq X, PacBio Revio, Oxford Nanopore [16] [6] | High-throughput sequencing instruments with varying capabilities for short/long-read data |
| Automation Systems | Automated liquid handlers, library prep stations [15] | Increase throughput, reduce human error, and improve reproducibility in sample processing |
| Bioinformatics Tools | DRAGEN platform, various variant callers [17] | Process raw sequencing data, identify mutations, and annotate potential clinical significance |

Analytical Considerations for Cancer NGS

The analytical phase of NGS-based cancer profiling requires specialized approaches to address the unique challenges of tumor genomes. Unlike germline sequencing, cancer sequencing must account for tumor heterogeneity, variable tumor purity, and the distinction between somatic (acquired) and germline (inherited) variants [14]. The BALLETT study implemented a rigorous bioinformatics pipeline that identified not only single nucleotide variants and small insertions/deletions but also copy number variations, gene fusions, and genome-wide biomarkers including tumor mutational burden (TMB), microsatellite instability (MSI), and homologous recombination deficiency (HRD) [13].
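TMB is usually expressed as somatic mutations per megabase of sequenced territory. The Python sketch below shows that arithmetic. Assays differ in which variant classes they count, how they remove germline and artifact calls, and how they define the effective panel size. The counted classes and the 10 mut/Mb default cutoff are therefore assumptions here and do not represent the BALLETT method. The 10 mut/Mb value is the threshold used in the 2020 FDA tissue-agnostic approval of pembrolizumab.

```python
from typing import FrozenSet, Iterable

# Assumed for illustration; assays define counted variant classes differently.
COUNTED_CLASSES: FrozenSet[str] = frozenset({"missense", "nonsense", "frameshift", "inframe_indel"})
TMB_HIGH_CUTOFF = 10.0  # mutations/Mb, assumed default


def tumor_mutational_burden(variant_classes: Iterable[str], territory_mb: float,
                            counted: FrozenSet[str] = COUNTED_CLASSES) -> float:
    """Count eligible somatic variants and divide by the sequenced territory in Mb."""
    if territory_mb <= 0:
        raise ValueError("territory_mb must be positive")
    n = sum(1 for c in variant_classes if c in counted)
    return n / territory_mb


classes = ["missense"] * 14 + ["nonsense"] + ["synonymous"] * 4  # hypothetical calls
tmb = tumor_mutational_burden(classes, territory_mb=1.2)
print(f"TMB = {tmb:.1f} mut/Mb; TMB-high: {tmb >= TMB_HIGH_CUTOFF}")
```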

Tumor-only sequencing designs, commonly used in cancer research, present specific analytical challenges. The BALLETT study protocol addressed this by treating variants with a variant allele frequency (VAF) greater than 50% as potentially germline in origin and confirming them with validated germline assays when a hereditary cancer syndrome was clinically suspected [13]. Actionable alterations were classified according to established frameworks such as OncoKB, which incorporates FDA approval status, clinical guideline support, and strength of supporting evidence [13]. This careful approach to variant interpretation makes it more likely that research findings can translate to clinical applications.
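The tumor-only rule described above amounts to a simple VAF screen. The Python sketch below flags calls with a VAF strictly above 50% for germline follow-up, mirroring the BALLETT protocol. The input tuple layout is hypothetical. The screen only selects candidates for a validated germline assay and does not by itself establish germline origin.

```python
from typing import Iterable, List, Tuple


def flag_possible_germline(calls: Iterable[Tuple[str, int, int]],
                           vaf_threshold: float = 0.5) -> List[Tuple[str, float]]:
    """Return (variant_id, vaf) for tumor-only calls with VAF above the threshold.

    Each call is (variant_id, alt_reads, total_depth).
    """
    flagged = []
    for variant_id, alt_reads, depth in calls:
        if depth == 0:
            continue  # no coverage, so no VAF to evaluate
        vaf = alt_reads / depth
        if vaf > vaf_threshold:
            flagged.append((variant_id, vaf))
    return flagged


calls = [("var1", 62, 110), ("var2", 18, 120), ("var3", 0, 0)]  # hypothetical calls
print(flag_possible_germline(calls))
```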

Applications in Cancer Molecular Profiling Research

Comprehensive Genomic Characterization in Sarcoma Research

A recent multicenter study investigating advanced soft tissue and bone sarcomas exemplifies the power of NGS in characterizing molecularly complex cancers [14]. This research employed four different commercial NGS kits to analyze 81 patients with metastatic disease, identifying a total of 223 genomic alterations across the cohort, with at least one type of genomic alteration detectable in 90.1% of tumors [14]. The most frequently mutated genes were TP53 (38%), RB1 (22%), and CDKN2A (14%), revealing key insights into the molecular drivers of these rare malignancies [14].

Critically, this study demonstrated that NGS identified actionable mutations in 22.2% of sarcoma patients, rendering them eligible for FDA-approved targeted therapies that would not have been considered based on conventional histopathological diagnosis alone [14]. Additionally, NGS led to a reclassification of diagnosis in four patients, highlighting its utility not only in therapeutic decision-making but also as a powerful diagnostic tool in cases with ambiguous histological features [14]. The functional analysis of genomic alterations revealed potentially targetable changes in key pathways including genomic stability regulation (TP53, MDM2), cell cycle regulation (RB1, CDKN2A/B), and the phosphoinositide-3 kinase pathway (PTEN, PIK3CA) [14].

Large-Scale Implementation Studies

The BALLETT study provides compelling evidence for the feasibility and utility of large-scale NGS implementation in cancer research [13]. In this comprehensive analysis of 756 patients with advanced cancers across 32 different tumor types, actionable genomic markers were identified in 81% of patients. This is substantially higher than the 21% actionability rate that traditionally reimbursed, small gene panels would have detected [13]. The most frequently altered genes in this pan-cancer analysis were TP53 (46%), KRAS (13%), PIK3CA (11%), APC (9%), and TERT (8%) [13].

The study also demonstrated the importance of genome-wide biomarkers detectable only through comprehensive NGS approaches. Tumor mutational burden (TMB-high) was identified in 16% of patients, with particularly high frequencies in lung cancer, melanoma, and urothelial carcinomas [13]. Microsatellite instability (MSI-high) was detected in eight patients, all of whom also exhibited high TMB [13]. Homologous recombination deficiency (HRD) status was analyzed for 100 patients, with 11% showing positive results, including five breast and two ovarian carcinomas [13]. These biomarkers have significant implications for immunotherapy response and targeted treatment approaches.

Emerging Applications: Liquid Biopsies and Resistance Monitoring

Beyond comprehensive tissue profiling, NGS enables several emerging applications that are transforming cancer research. Liquid biopsies, which involve sequencing circulating tumor DNA (ctDNA) from blood samples, provide a non-invasive method for cancer detection, monitoring treatment response, and identifying emerging resistance mechanisms [11]. This approach is particularly valuable for tracking tumor evolution in response to targeted therapies, as cancer cells often develop resistance through additional genetic alterations that can be detected through serial liquid biopsy sampling [11].

The high sensitivity of NGS also facilitates minimal residual disease (MRD) detection, allowing researchers to identify molecular evidence of residual cancer after treatment that would be undetectable by conventional imaging methods [10]. This application has significant implications for understanding cancer recurrence and developing more effective adjuvant therapy strategies. Furthermore, NGS is playing an increasingly important role in immuno-oncology research by enabling comprehensive analysis of tumor-immune interactions, T-cell receptor repertoires, and biomarkers of immunotherapy response such as TMB and MSI [10].
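A binomial sampling model shows why sequencing depth matters for low-level signals such as MRD. At depth n and true variant fraction f, the number of variant-supporting reads is approximately Binomial(n, f). The Python sketch below computes the chance of observing at least k such reads. It assumes independent reads and ignores sequencing errors, PCR duplicates and the limited number of DNA molecules in a sample. The result is therefore an optimistic upper bound, not an assay's actual limit of detection.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(X >= min_alt_reads) for X ~ Binomial(depth, vaf).

    Sums the lower tail directly, which is adequate for the modest
    min_alt_reads values typically used as calling thresholds.
    """
    p_below = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return max(0.0, 1.0 - p_below)


for depth in (500, 2000, 10000):
    p = detection_probability(depth, vaf=0.001, min_alt_reads=3)
    print(f"depth {depth:>6}: P(>=3 variant reads at 0.1% VAF) = {p:.3f}")
```

Under this model, the probability of detecting a 0.1% VAF signal rises steeply with depth. This is one reason low-VAF applications use much deeper sequencing than routine tissue profiling.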

The comparative analysis of NGS versus traditional sequencing methodologies reveals a fundamental technological shift that has transformed cancer molecular profiling research. The massively parallel architecture of NGS provides unprecedented throughput and cost-efficiency, enabling comprehensive genomic characterization that was scientifically and economically unfeasible with Sanger sequencing. This technological advancement has identified actionable genomic targets in the majority of patients with advanced cancers—81% in the BALLETT study compared to just 21% with conventional approaches—highlighting the critical importance of comprehensive genomic profiling in modern oncology research [13].

The applications of NGS in cancer research continue to expand, from diagnostic reclassification and therapeutic targeting to liquid biopsy monitoring and analysis of novel biomarkers such as TMB and HRD. As sequencing technologies continue to evolve, with improvements in long-read sequencing, single-cell analysis, and multi-omic integration, researchers will gain increasingly sophisticated tools to decipher the complex molecular landscape of cancer. For the research community, embracing these technologies and addressing their associated challenges in data analysis, standardization, and implementation will be essential for advancing our understanding of cancer biology and developing more effective, personalized cancer therapies.

Cancer is not a single disease but a complex ecosystem characterized by profound heterogeneity, which represents one of the most significant barriers to effective treatment. Tumor heterogeneity exists at multiple levels—between different patients (inter-tumor heterogeneity) and within individual tumors and patients (intra-tumor heterogeneity) [18]. This variability stems from an evolutionary process where tumors accumulate genetic alterations over time, leading to diverse subpopulations of cancer cells (clones) with distinct molecular profiles [18]. These competing cellular populations exist within a microenvironment comprising various non-cancerous cells, including immune cells, fibroblasts, and vascular endothelial cells, further compounding the complexity [18].

Next-generation sequencing (NGS) has emerged as a transformative technology for deciphering this complexity, enabling comprehensive genomic profiling that reveals the intricate molecular architecture of tumors. Traditional Sanger sequencing processes single DNA fragments sequentially, whereas NGS performs massively parallel sequencing of millions of fragments simultaneously [10]. This technological leap has significantly reduced the time and cost of genomic analysis while providing unprecedented resolution for detecting the genetic alterations that drive cancer progression and therapeutic resistance [10]. The application of NGS in oncology has fundamentally advanced our understanding of tumor biology and is now an essential component of precision medicine approaches aimed at tailoring treatments to the specific molecular characteristics of individual patients' tumors.

Understanding Tumor Heterogeneity: Models and Molecular Mechanisms

Conceptual Models of Tumor Evolution

The development and progression of tumors are governed by two primary, non-exclusive models that explain the emergence of heterogeneity. The clonal evolution model (stochastic model) posits that tumors evolve through a stepwise accumulation of genomic and epigenetic alterations that provide selective advantages to certain cell lineages, leading to their expansion while other populations are depleted [18]. This dynamic process results in continuous tumor remodeling with distinct dimensions of heterogeneity. In contrast, the cancer stem cell (CSC) model (hierarchical model) proposes that tumors are maintained by a subpopulation of cells with stem-like properties that can differentiate into multiple cell types within the tumor [18]. In reality, both models often co-occur, with CSCs frequently representing the cells that acquire critical mutations driving clonal expansion.

Multi-Level Heterogeneity in Cancer

Tumor heterogeneity manifests across multiple molecular dimensions, each contributing to the overall complexity of the disease:

- Genomic heterogeneity: Variations in DNA sequences, including somatic mutations, copy number alterations, and structural rearrangements that differ between tumor regions and individual cells [18].

- Transcriptomic heterogeneity: Differences in gene expression patterns and RNA processing that lead to phenotypic diversity despite identical genetic backgrounds [19].

- Epigenetic heterogeneity: Variable epigenetic modifications that regulate gene expression without altering the underlying DNA sequence, contributing to cellular plasticity and adaptive responses [19].

Table 1: Common Genetic Alterations Across Cancer Types Based on TCGA Data

| Cancer Type | Sample Size | Significantly Altered Genes |
|---|---|---|
| Glioblastoma | 206 | TP53, ERBB2, NF1, PARK2, AKT3, FGFR2, PIK3R1 |
| Lung Adenocarcinoma | 230 | TP53, KRAS, EGFR, STK11, KEAP1, BRAF, MET |
| Breast Cancer | 510 | PIK3CA, TP53, GATA3, CDH1, RB1, MLL3, MAP3K1 |
| Colorectal Cancer | 276 | APC, TP53, KRAS, PIK3CA, FBXW7, SMAD4 |
| Clear Cell Renal Cell Carcinoma | 446 | VHL, PBRM1, BAP1, SETD2, HIF1A |

Data derived from TCGA analysis illustrates the diverse mutational landscapes across different cancer types [18].

NGS Methodologies for Deciphering Tumor Heterogeneity

Core NGS Technology and Workflow

Next-generation sequencing represents a major advance over traditional sequencing methods, enabling comprehensive genomic analysis with far greater speed and throughput. The fundamental NGS workflow consists of four critical steps:

Sample Preparation and Library Construction: Nucleic acids (DNA or RNA) are extracted from tumor samples and fragmented into appropriately sized pieces (typically around 300 bp). Adapters—synthetic oligonucleotides with specific sequences—are then ligated to these fragments, creating a sequencing library. The library may undergo enrichment steps to isolate specific genomic regions of interest, such as exons or cancer-related genes [10].

Sequencing Reaction: The prepared library is loaded onto a sequencing platform where fragments are amplified and sequenced simultaneously through massive parallel sequencing. The most common technology (Illumina) involves immobilizing library fragments on a flow cell surface, amplifying them to form clusters of identical sequences, and then determining the sequence through cyclic fluorescence detection as fluorescently labeled nucleotides are incorporated [10].

Data Generation and Primary Analysis: The sequencing instrument detects signals from each cluster in real time, converting them into raw sequence data (reads) along with per-base quality scores. The enormous data output, often terabytes per run on high-throughput instruments, requires sophisticated computational infrastructure [10].

Bioinformatic Analysis: Specialized software aligns the generated reads to a reference genome, identifies variations (including single nucleotide variants, insertions/deletions, copy number alterations, and structural variants), and interprets the biological significance of these findings in the context of cancer biology [10].
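The per-base quality scores produced during primary analysis are usually stored in FASTQ files as Phred scores, where Q = -10·log10(P_error). The sketch below assumes the Phred+33 ASCII encoding used by current Illumina FASTQ output and shows how those characters map back to error probabilities; the function names are illustrative only.

```python
def decode_fastq_qualities(qual_string: str, offset: int = 33) -> list:
    """Decode an ASCII-encoded FASTQ quality string (Phred+33 assumed by default)."""
    return [ord(ch) - offset for ch in qual_string]


def phred_to_error_prob(q: int) -> float:
    """Probability that a base call with Phred score q is wrong: 10^(-q/10)."""
    return 10 ** (-q / 10)


def mean_error_rate(qual_string: str) -> float:
    """Average per-base error probability across one read."""
    quals = decode_fastq_qualities(qual_string)
    return sum(phred_to_error_prob(q) for q in quals) / len(quals)


if __name__ == "__main__":
    # A toy four-line FASTQ record: header, sequence, separator, qualities
    record = ["@read1", "ACGTACGT", "+", "IIIII?5+"]
    print(decode_fastq_qualities(record[3]))
    print(mean_error_rate(record[3]))
```

A score of Q20 therefore corresponds to a 1% chance that the base is wrong, and Q30 to 0.1%, which is why these values recur as filtering thresholds later in the pipeline.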

Comparative Analysis: NGS vs. Traditional Sequencing

Table 2: Comparison of NGS and Sanger Sequencing Technologies

| Feature | Next-Generation Sequencing | Sanger Sequencing |
|---|---|---|
| Cost-effectiveness | More cost-effective for large-scale projects | More cost-effective for small-scale projects (few targets) |
| Speed | Rapid for large numbers of targets | Time-consuming at scale |
| Application | Whole-genome, exome, transcriptome sequencing | Ideal for sequencing single genes |
| Throughput | Millions of fragments sequenced in parallel | One fragment per reaction |
| Data output | Large amount of data | Limited data output |
| Clinical utility | Detects multiple mutation types, including structural variants | Limited to variants within the sequenced amplicon |

NGS offers significant advantages in throughput, comprehensiveness, and efficiency for analyzing complex tumor genomes [10].

Advanced NGS Applications for Heterogeneity Analysis

Several sophisticated NGS-based approaches have been developed specifically to address the challenges of tumor heterogeneity:

Single-Cell Sequencing (SCS): This technology enables genomic, transcriptomic, or epigenomic profiling of individual cells, providing single-cell resolution for analyzing intra-tumor heterogeneity. By classifying tumor cells from multiple spatial regions within a biopsy into distinct subpopulations, SCS allows researchers to trace tumor cell lineages and elucidate mechanisms of therapeutic failure and resistance [18].

Spatial Transcriptomics Integration: Novel computational approaches like Tumoroscope integrate somatic point mutation data from spatial transcriptomics (ST) reads, clone genotypes reconstructed from bulk DNA-seq, and cancer cell counts from H&E-stained images to unravel the clonal composition of each spot within a tumor sample. This enables precise spatial mapping of clones and their mutual relationships [20].

Liquid Biopsies: Analysis of circulating tumor DNA (ctDNA) and circulating tumor cells (CTCs) from blood samples provides a non-invasive method for monitoring tumor heterogeneity and evolution over time, offering insights into therapeutic response and emergence of resistance [19].

Diagram 1: Single-Cell Sequencing Workflow. SCS enables resolution of tumor heterogeneity at the individual cell level [18].

Experimental Design and Protocols for Heterogeneity Studies

Comprehensive Genomic Profiling (CGP) in Multi-Center Studies

Large-scale genomic studies require standardized protocols to ensure reproducible and comparable results across institutions. The Belgian Approach for Local Laboratory Extensive Tumor Testing (BALLETT) study exemplifies a well-designed framework for implementing CGP in clinical decision-making for patients with advanced cancers. This multi-center study enrolled 872 patients from 12 hospitals and established a consortium of nine local NGS laboratories using fully standardized methodology [13].

The study demonstrated a 93% success rate for CGP profiling across diverse tumor types, with a median turnaround time of 29 days from inclusion to molecular tumor board report. The protocol identified actionable genomic markers in 81% of patients—substantially higher than the 21% actionability rate using nationally reimbursed small panels [13]. This highlights the superior capability of CGP for uncovering therapeutic targets in heterogeneous tumors.

Integrated Spatial Genomic Analysis Protocol

The Tumoroscope methodology represents an advanced experimental approach for integrating multiple data types to reconstruct spatial tumor heterogeneity:

Sample Processing: Fresh-frozen tumor tissues are subjected to parallel processing for H&E staining, bulk DNA sequencing, and spatial transcriptomics [20].

Image Analysis: H&E-stained tissue images are analyzed using custom QuPath scripts to identify ST spots within cancer cell-containing regions and estimate cell counts for each spot [20].

Clone Reconstruction: Somatic mutations and allele-specific copy number data from bulk DNA-seq are analyzed using established methods (VarDict, FALCON-X, and Canopy) to reconstruct cancer clones, their frequencies, and genotypes [20].

Probabilistic Deconvolution: The Tumoroscope model integrates (i) estimated cell counts per spot, (ii) alternate and total read counts for mutations in ST spots, and (iii) clone genotypes and frequencies to infer the proportions of each clone in every spot [20].

Gene Expression Profiling: A regression model uses the inferred clone proportions as independent variables and the spot-level gene expression as dependent variables, so that the fitted coefficients give the expression profile of each clone [20].

Diagram 2: Tumoroscope Integrated Analysis. This framework combines multiple data types to spatially map tumor clones [20].
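The final regression step can be illustrated as a least-squares problem. This is a simplified stand-in, not Tumoroscope's implementation: it assumes spot expression is a linear mixture E ≈ P·G, where P holds the inferred clone proportions per spot and G the unknown per-clone expression profiles, solves for G with NumPy's `lstsq`, and clips negative estimates to zero.

```python
import numpy as np


def clonal_expression_profiles(spot_expression, clone_proportions):
    """Estimate per-clone expression profiles by ordinary least squares.

    spot_expression:   (n_spots, n_genes) observed expression per ST spot
    clone_proportions: (n_spots, n_clones) inferred clone proportions per spot
    Returns G with shape (n_clones, n_genes) such that
    spot_expression ~= clone_proportions @ G.
    """
    G, *_ = np.linalg.lstsq(clone_proportions, spot_expression, rcond=None)
    return np.clip(G, 0.0, None)  # expression levels cannot be negative


if __name__ == "__main__":
    # Toy example: 4 spots, 2 clones, 3 genes
    P = np.array([[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.8, 0.2]])
    G_true = np.array([[10.0, 0.0, 5.0], [2.0, 8.0, 5.0]])
    E = P @ G_true
    print(clonal_expression_profiles(E, P))
```

The published model may use a different noise model or constraints; the point here is only that clone profiles appear as regression coefficients once proportions are treated as predictors.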

Molecular Tumor Board Implementation

Structured interpretation of complex NGS data requires multidisciplinary expertise. Molecular tumor boards (MTBs) comprising oncologists, pathologists, geneticists, molecular biologists, and bioinformaticians provide a critical framework for translating genomic findings into clinically actionable recommendations [21]. Comparative analysis of independent MTBs reveals that interpretation of single nucleotide variants and clinically validated biomarkers shows relatively high agreement (66% mean overlap coefficient), whereas interpretation of gene expression changes, preclinically validated biomarkers, and combination therapies remains challenging, highlighting areas requiring further standardization [21].
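Agreement between two boards can be quantified with the overlap (Szymkiewicz-Simpson) coefficient, |A ∩ B| / min(|A|, |B|), which equals 1 whenever one board's recommendations are a subset of the other's. The sketch assumes this standard definition; how [21] assembled the recommendation sets and averaged across patients is not reproduced here, and the treatment labels are hypothetical.

```python
def overlap_coefficient(a: set, b: set) -> float:
    """Overlap coefficient |A & B| / min(|A|, |B|); 0.0 if either set is empty."""
    if not a or not b:
        return 0.0
    return len(a & b) / min(len(a), len(b))


def mean_overlap(pairs) -> float:
    """Mean overlap coefficient over (board_A, board_B) recommendation pairs, one per patient."""
    scores = [overlap_coefficient(a, b) for a, b in pairs]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Hypothetical recommendations from two boards for one patient
    board_a = {"PARP inhibitor", "immune checkpoint inhibitor", "mTOR inhibitor"}
    board_b = {"PARP inhibitor", "immune checkpoint inhibitor", "MEK inhibitor", "EGFR inhibitor"}
    print(overlap_coefficient(board_a, board_b))
```

Dividing by the smaller set, rather than the union as in the Jaccard index, avoids penalizing a board that simply lists more options.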

Key Findings and Clinical Implications

Prevalence of Actionable Alterations in Advanced Cancers

Large-scale genomic profiling studies have demonstrated the high frequency of potentially actionable alterations across diverse cancer types. The BALLETT study identified 1,957 pathogenic or likely pathogenic SNVs/indels, 80 pathogenic gene fusions, and 182 amplifications across 276 different genes in 756 patients [13]. The most frequently altered genes included TP53 (46% of patients), KRAS (13%), PIK3CA (11%), APC (9%), and TERT (8%) [13]. Additionally, genome-wide biomarkers with therapeutic implications were common, with 16% of patients exhibiting high tumor mutational burden (TMB-high) and 11% showing homologous recombination deficiency (HRD) in tested cases [13].

Impact on Treatment Selection and Outcomes

The comprehensive assessment of tumor genomics directly influences therapeutic decision-making. In the BALLETT study, the national molecular tumor board recommended biomarker-directed treatments for 69% of patients, with 23% ultimately receiving matched therapies [13]. Real-world evidence confirms that patients receiving treatment following concordant MTB recommendations experience significantly longer overall survival compared to those receiving treatment based on discrepant recommendations or physician's choice alone [21]. The most frequently identified treatment classes include PARP inhibitors, mTOR inhibitors, immunotherapy (immune checkpoint inhibitors), and various receptor tyrosine kinase inhibitors [21].

Table 3: Actionability of Genomic Findings in Advanced Cancers (BALLETT Study)

| Metric | Value | Implication |
|---|---|---|
| CGP success rate | 93% (756/814 patients) | Reliable implementation in clinical setting |
| Patients with ≥1 actionable marker | 81% (616/756 patients) | High potential for treatment personalization |
| Actionability with standard small panels | 21% | Roughly 4-fold lower than with CGP |
| Patients with multiple actionable alterations | 41% (311/756 patients) | Opportunity for combination therapies |
| Patients receiving MTB-recommended therapy | 23% | Gap between biomarker identification and treatment implementation |

Comprehensive genomic profiling significantly expands therapeutic options for patients with advanced cancers [13].
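The percentages in Table 3 follow directly from the reported counts, which makes them easy to re-derive. The short check below uses only the numbers in the table; note that the "4-fold" figure is a crude ratio of two rates (81% vs 21%) and comes out closer to 3.9.

```python
# Counts reported for the BALLETT study in Table 3
cgp_attempted = 814
cgp_successful = 756
with_actionable = 616
with_multiple = 311
small_panel_rate = 0.21  # actionability with nationally reimbursed small panels

success_rate = cgp_successful / cgp_attempted
actionable_rate = with_actionable / cgp_successful
multiple_rate = with_multiple / cgp_successful
fold_increase = actionable_rate / small_panel_rate

print(f"success {success_rate:.0%}, actionable {actionable_rate:.0%}, "
      f"multiple {multiple_rate:.0%}, fold increase {fold_increase:.1f}x")
```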

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 4: Essential Research Tools for NGS-Based Heterogeneity Studies

| Category | Specific Tools/Platforms | Function in Heterogeneity Research |
|---|---|---|
| NGS Platforms | Illumina NovaSeq X, Ion Torrent, PacBio Sequel, Oxford Nanopore | High-throughput sequencing with varying read lengths and applications |
| Single-Cell Technologies | 10X Genomics, Fluidigm C1 | Isolation and processing of individual cells for genomic analysis |
| Spatial Omics Technologies | 10X Visium, NanoString GeoMx | Preservation of spatial context in transcriptomic analysis |
| Bioinformatics Tools | DeepVariant, SubcloneSeeker, MethylPurify | Variant calling, clonal decomposition, methylation analysis |
| Data Integration Frameworks | Tumoroscope, Canopy | Probabilistic modeling integrating multiple data types |
| Reference Databases | TCGA, cBioPortal, COSMIC | Contextualization of findings within population-level data |

This toolkit enables comprehensive characterization of tumor heterogeneity at multiple molecular levels [10] [20] [18].

The field of tumor heterogeneity research continues to evolve rapidly, driven by technological innovations and increasing integration of multi-omics approaches. Several promising directions are emerging:

Artificial Intelligence in Genomic Analysis: AI and machine learning algorithms are becoming indispensable for analyzing complex genomic datasets. Tools like Google's DeepVariant utilize deep learning to identify genetic variants with greater accuracy than traditional methods, while AI models analyzing polygenic risk scores help predict disease susceptibility and treatment response [22].

Multi-Omics Integration: Combining genomics with transcriptomics, proteomics, metabolomics, and epigenomics provides a more comprehensive view of biological systems, linking genetic information with molecular function and phenotypic outcomes [22]. This approach is particularly valuable for understanding complex diseases like cancer, where genetics alone does not provide a complete picture.

Liquid Biopsy Applications: The use of circulating tumor DNA (ctDNA) assays offers high specificity and sensitivity for monitoring tumor heterogeneity and detecting minimal residual disease, representing a reliable tool for assessing treatment response [23].

In conclusion, next-generation sequencing has fundamentally transformed our understanding of tumor heterogeneity, revealing the complex genomic architecture that underlies cancer progression and therapeutic resistance. By enabling comprehensive molecular profiling at unprecedented resolution, NGS provides the critical tools necessary to decode this complexity and advance personalized cancer treatment. As sequencing technologies continue to evolve and computational methods become more sophisticated, the integration of NGS into routine oncologic practice promises to further refine our approach to molecularly-driven cancer care, ultimately improving outcomes for patients with diverse malignancies.

The comprehensive molecular characterization of cancer has revealed that the disease is fundamentally driven by acquired genomic aberrations. These alterations span a broad spectrum of types and sizes, ranging from single nucleotide variants (SNVs) to large structural variants (SVs) that can reorganize the genome [24]. Next-generation sequencing (NGS) has revolutionized cancer genomics by enabling researchers to identify these changes in an unbiased, genome-wide fashion, providing unprecedented insights into cancer biology and treatment opportunities [25]. The application of NGS in cancer research has demonstrated that cancer is characterized by a small number of frequently mutated genes and a long tail of infrequent mutations in a large number of genes [25]. This understanding forms the foundation of precision oncology, where molecular profiling guides targeted therapeutic interventions.

The genomic alterations in cancer cells encompass several major categories: single nucleotide variants (SNVs), small insertions and deletions (indels), copy number alterations (CNAs), and structural variants (SVs). Each category contributes differently to oncogenesis, with SVs alone affecting more base pairs in the genome than SNVs and being known drivers of carcinogenesis in at least 30% of cancers [24]. The identification and interpretation of these variants through NGS-based molecular profiling have become crucial components of both cancer research and clinical oncology, enabling informed treatment recommendations based on tumor-specific biomarker status [26].

Methodologies for NGS-Based Variant Detection

Preprocessing and Alignment of Sequencing Data

The initial steps in NGS data analysis are critical for ensuring the quality and reliability of downstream variant calling. NGS platforms generate hundreds of millions of sequence reads per instrument run, which must undergo rigorous quality control procedures. Following each sequencing run, standardized instrument manufacturer-defined pipelines process the signal-based data into sequence reads, including routine quality control on a per-lane or per-region basis to provide metrics of success for each data set [25].

A crucial quality control consideration is read duplication, where the same DNA fragment begets multiple reads or read pairs. This artifact has been attributed to the initial PCR-based library amplification steps and can affect as many as 10% of read pairs. Removal of duplicate reads is advantageous to most downstream analytical approaches since these reads may contain PCR-introduced errors that masquerade as variant nucleotides. The Picard suite provides tools for the de-duplication process that operate on both single-end and paired-end data [25]. In addition to de-duplication, data sets containing reads with insufficient read length, base quality, mapping quality, or paired-end reads having an atypical distribution of insert sizes should be flagged, soft-trimmed, and discarded when necessary to ensure data quality.
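The core idea of duplicate marking can be sketched in a few lines: read pairs whose outer positions and orientation coincide are treated as copies of one original fragment, and only one representative is kept. This is a simplified illustration with a hypothetical `ReadPair` record, similar in spirit to Picard MarkDuplicates (which by default keeps the pair with the highest summed base quality); the real tool also handles clipping, unpaired reads, and optical duplicates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadPair:
    """Hypothetical simplified read-pair record."""
    name: str
    chrom: str
    start: int             # 5' position of read 1
    mate_start: int        # 5' position of read 2
    strand: str            # orientation of read 1, "+" or "-"
    base_quality_sum: int  # summed base qualities of both reads


def mark_duplicates(pairs):
    """Return the names of pairs flagged as PCR duplicates.

    Pairs sharing chromosome, both 5' positions, and orientation are grouped;
    within each group the pair with the highest summed base quality is kept.
    """
    best = {}
    for p in pairs:
        key = (p.chrom, p.start, p.mate_start, p.strand)
        if key not in best or p.base_quality_sum > best[key].base_quality_sum:
            best[key] = p
    kept = {p.name for p in best.values()}
    return {p.name for p in pairs if p.name not in kept}


if __name__ == "__main__":
    pairs = [
        ReadPair("r1", "chr1", 100, 400, "+", 900),
        ReadPair("r2", "chr1", 100, 400, "+", 950),  # same fragment as r1, better quality
        ReadPair("r3", "chr1", 100, 420, "+", 800),  # different mate position: distinct fragment
    ]
    print(mark_duplicates(pairs))
```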

For alignment, BWA-MEM is predominantly used prior to SV detection, as it provides secondary alignments to reads mapping to multiple locations rather than placing the reads randomly [24]. The reference genome used also influences alignment performance, with studies adopting GRCh38 (hg38) showing improved alignments and fewer false-positive variants compared to GRCh37 (hg19) [24].

Experimental Design Considerations

Effective detection of somatic variants in cancer requires careful experimental design, particularly regarding sequencing depth and the inclusion of matched normal samples. In practice, a minimum of 20% allele frequency is required for reliable variant detection from tumor-normal pairs, with increasing sequencing depth to 75x-90x for tumor samples improving sensitivity for detecting low-frequency variants [24]. The use of paired tumor-normal samples enables the identification of tumor-unique (somatic) variation by distinguishing variants acquired in tumor cells from those present in the germline or as mosaic variants in healthy cells [24].
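Why depth matters can be made concrete with a binomial model: if variant-supporting reads at a site follow Binomial(depth, VAF), the chance of seeing at least the minimum number of supporting reads rises with depth. For a heterozygous clonal variant in a diploid region, the expected VAF is roughly half the tumor purity, so impure samples push VAFs toward the detection limit. This sketch ignores sequencing errors, mapping bias, and copy-number changes, and the `min_alt_reads` threshold is an illustrative parameter.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least `min_alt_reads` variant reads) when alt reads ~ Binomial(depth, vaf)."""
    p_below = sum(
        comb(depth, k) * vaf ** k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_below


if __name__ == "__main__":
    # A 10% VAF variant (e.g. a clonal heterozygous mutation at ~20% purity)
    for depth in (30, 60, 90):
        print(depth, round(detection_probability(depth, vaf=0.10, min_alt_reads=5), 3))
```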

Table 1: Key Computational Tools for Detecting Genomic Alterations in Cancer

| Variant Type | Software Tools | Methodology | Key Applications |
|---|---|---|---|
| SNV Detection | SAMtools, SOAPsnp | Bayesian statistical approaches for genotype probabilities | Germline SNP calling |
| Somatic SNV Detection | VarScan, SomaticSniper, SNVmix | Heuristic or probabilistic models comparing tumor-normal pairs | Identification of tumor-specific point mutations |
| Indel Detection | Pindel, GATK Indel Genotyper | Pattern growth approach or heuristic cutoffs | Small insertion/deletion discovery |
| Structural Variant Detection | DELLY, LUMPY, Manta, SvABA, GRIDSS | Combinatorial algorithms integrating multiple read-alignment patterns | Detection of SVs across broad size ranges |
| Copy Number Alteration Detection | EWT, SegSeq, CMDS | Read-depth normalization and change-point analysis | Identification of amplifications and deletions |

Single Nucleotide Variants (SNVs) in Cancer

Biological Significance and Detection Methods

Single nucleotide variants represent the most frequent type of genomic alteration in cancer, arising from errors in DNA replication and repair. These point mutations can have profound functional consequences depending on their genomic context, including activating oncogenes through gain-of-function mutations or inactivating tumor suppressor genes through loss-of-function mutations. Notable examples include recurrent mutations in the KRAS oncogene in pancreatic and colorectal cancers, TP53 tumor suppressor mutations across multiple cancer types, and the IDH1 R132C mutations identified in acute myeloid leukemia (AML) through NGS approaches [25].

The comparison of tumor genomes with their matched constitutional genomes enables the identification of tumor-unique somatic variation in an unbiased, genome-wide fashion. Numerous SNV detection algorithms for NGS data have been developed, with SAMtools and SOAPsnp utilizing Bayesian statistics to compute probabilities of all possible genotypes [25]. However, these tools initially expected a heterozygous variant allele frequency of 50%, which is valid for germline sites but does not hold for somatic sites in most tumors because of normal contamination and/or tumor heterogeneity. This limitation has driven the development of callers designed specifically for somatic mutations, such as SNVmix, which uses a probabilistic binomial mixture model and accommodates deviations in allele frequency by fitting its parameters with an expectation-maximization algorithm [25].
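The benefit of learning allele fractions instead of fixing them at 50% can be shown with a small expectation-maximization fit. This is a simplified two-component sketch in the spirit of SNVmix, not its actual model (which is richer and models genotype classes): component 0 captures reference sites with a low error rate, component 1 captures variant sites whose allele fraction is estimated from the data. Inputs are hypothetical (alt_reads, depth) pairs.

```python
import math


def binom_logpmf(k: int, n: int, p: float) -> float:
    """Log probability of k successes in n trials with success probability p."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log(1 - p))


def fit_binomial_mixture(sites, p_init=(0.01, 0.3), n_iter=50):
    """EM for a two-component binomial mixture over (alt_reads, depth) pairs.

    Returns mixing weights, per-component allele fractions, and per-site
    responsibilities (posterior probability of each component).
    """
    p = list(p_init)
    w = [0.5, 0.5]
    resp = []
    for _ in range(n_iter):
        # E-step: posterior probability of each component for each site
        resp = []
        for k, n in sites:
            logs = [math.log(w[j]) + binom_logpmf(k, n, p[j]) for j in range(2)]
            m = max(logs)
            weights = [math.exp(v - m) for v in logs]
            total = sum(weights)
            resp.append([x / total for x in weights])
        # M-step: update mixing weights and allele fraction of each component
        for j in range(2):
            r = [row[j] for row in resp]
            w[j] = max(sum(r) / len(sites), 1e-9)
            alt = sum(rj * k for rj, (k, _) in zip(r, sites))
            depth = sum(rj * n for rj, (_, n) in zip(r, sites))
            if depth > 0:
                p[j] = min(max(alt / depth, 1e-6), 1 - 1e-6)
    return w, p, resp


if __name__ == "__main__":
    # Four error-only sites and four subclonal variant sites near 15% VAF
    sites = [(0, 100), (1, 100), (0, 100), (1, 100),
             (14, 100), (15, 100), (16, 100), (15, 100)]
    weights, fractions, _ = fit_binomial_mixture(sites)
    print(weights, fractions)
```

A caller that insisted on a 50% heterozygous fraction would fit the 15% sites poorly; the mixture instead recovers an allele fraction near 0.15 for the variant component.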

VarScan and SomaticSniper are two algorithms developed specifically for somatic point mutation discovery. VarScan assesses coverage, base quality, and the number of strands observed for each allele, infers variant allele frequency from read counts, and determines somatic status with Fisher's exact test. This approach makes VarScan well suited for somatic mutation detection in data sets with varying coverage depths, such as those from targeted capture [25]. SomaticSniper uses Bayesian theory to calculate the probability of differing genotypes in the tumor and normal samples, reporting as its 'somatic' score the Phred-scaled probability that the tumor and normal genotypes are identical [25]. These tools have been applied to the analysis of hundreds of tumor and normal pairs in projects such as The Cancer Genome Atlas and the Pediatric Cancer Genome Project.
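The Fisher's exact test idea can be sketched on a 2×2 table of variant and reference read counts in tumor and normal. The version below is one-sided (asking whether the tumor is enriched for the variant allele) and written with `math.comb` so it has no dependencies; it is an illustration of the test, not VarScan's code, and VarScan's full classification logic (germline, somatic, LOH) involves additional rules.

```python
from math import comb


def fisher_exact_greater(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher's exact test for the 2x2 table

                 alt   ref
        tumor     a     b
        normal    c     d

    Returns P(tumor alt count >= a) under the hypergeometric null with fixed margins.
    """
    row1, col1, n = a + b, a + c, a + b + c + d
    denom = comb(n, col1)
    upper = min(row1, col1)
    return sum(comb(row1, x) * comb(n - row1, col1 - x) for x in range(a, upper + 1)) / denom


def somatic_p_value(tumor_ref: int, tumor_alt: int, normal_ref: int, normal_alt: int) -> float:
    """Convenience wrapper taking read counts in the order a caller usually reports them."""
    return fisher_exact_greater(tumor_alt, tumor_ref, normal_alt, normal_ref)


if __name__ == "__main__":
    # 20% VAF in tumor, no variant reads in normal: strong somatic evidence
    print(somatic_p_value(tumor_ref=80, tumor_alt=20, normal_ref=100, normal_alt=0))
    # Same VAF in tumor and normal: consistent with a germline variant
    print(somatic_p_value(tumor_ref=45, tumor_alt=5, normal_ref=45, normal_alt=5))
```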

Figure 1: Computational Workflow for Somatic SNV Detection in Paired Tumor-Normal Samples

Technical Considerations and Best Practices

Effective SNV detection requires careful consideration of several technical factors. Base quality scores are crucial for distinguishing true variants from sequencing errors, with most pipelines requiring minimum quality scores typically above Q20. Mapping quality is equally important, as ambiguously mapped reads can lead to false-positive variant calls. The optimal minimum mapping quality threshold depends on the read length and complexity of the genomic region, with higher stringency required in repetitive regions [25].

Strand bias represents another critical consideration, as true variants should be supported by reads from both strands. Significant strand bias may indicate mapping artifacts or other technical issues. Additionally, the position of a variant within a read can affect confidence, with variants near read ends typically requiring more stringent filtering because of higher error rates in these regions. For somatic mutation calling, the minimum supporting reads threshold must balance sensitivity and specificity, with many pipelines requiring at least 3-5 supporting reads in the tumor sample and no more than 1-2 in the normal sample [25].

Table 2: Key Parameters for Somatic SNV Detection

| Parameter | Typical Setting | Purpose | Impact on Results |
|---|---|---|---|
| Minimum Base Quality | Q20-Q30 | Filter sequencing errors | Higher values increase specificity but may reduce sensitivity |
| Minimum Mapping Quality | 20-40 | Filter ambiguous alignments | Reduces false positives in repetitive regions |
| Minimum Supporting Reads (Tumor) | 3-5 | Ensure variant evidence | Higher values reduce false positives but may miss low-frequency variants |
| Maximum Supporting Reads (Normal) | 1-2 | Confirm somatic status | Lower values reduce false positives from germline contamination |
| Minimum Allele Frequency | 5-10% | Filter low-frequency artifacts | Balances detection sensitivity against technical artifacts; may also exclude genuine subclonal variants |
| Strand Bias Filter | p-value > 0.05 | Remove technical artifacts | Eliminates variants supported by only one strand |
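The parameters in Table 2 translate naturally into a per-candidate filter. The sketch assumes a hypothetical `Candidate` summary produced by an upstream pileup step (including a precomputed strand-bias p-value) and uses defaults from the lower end of each typical range; real pipelines tune these per assay.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical per-site summary from an upstream pileup step."""
    mean_base_quality: float
    mean_mapping_quality: float
    tumor_alt_reads: int
    tumor_depth: int
    normal_alt_reads: int
    alt_forward_reads: int
    alt_reverse_reads: int
    strand_bias_p: float


def failed_filters(c, min_bq=20, min_mq=20, min_tumor_alt=3,
                   max_normal_alt=1, min_vaf=0.05, min_strand_p=0.05):
    """Return the names of filters the candidate fails (an empty list means PASS)."""
    vaf = c.tumor_alt_reads / c.tumor_depth if c.tumor_depth else 0.0
    checks = {
        "base_quality": c.mean_base_quality >= min_bq,
        "mapping_quality": c.mean_mapping_quality >= min_mq,
        "tumor_support": c.tumor_alt_reads >= min_tumor_alt,
        "normal_support": c.normal_alt_reads <= max_normal_alt,
        "allele_frequency": vaf >= min_vaf,
        # require alt reads on both strands and no significant strand bias
        "strand_bias": (c.alt_forward_reads > 0 and c.alt_reverse_reads > 0
                        and c.strand_bias_p > min_strand_p),
    }
    return [name for name, ok in checks.items() if not ok]


if __name__ == "__main__":
    good = Candidate(32.0, 55.0, 12, 100, 0, 7, 5, 0.6)
    one_strand = Candidate(32.0, 55.0, 12, 100, 0, 12, 0, 0.001)
    print(failed_filters(good), failed_filters(one_strand))
```

Returning the names of failed filters, rather than a single boolean, mirrors the common practice of recording filter reasons so that borderline calls can be reviewed later.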

Small Insertions and Deletions (Indels)

Detection Challenges and Computational Approaches

Small insertions and deletions (indels) represent another class of common genomic alterations in cancer, with particular importance in microsatellite unstable tumors where defects in DNA mismatch repair lead to elevated rates of indel mutations. While existing alignment tools are generally adequate for mapping reads that contain SNVs, they typically lack the necessary accuracy and sensitivity for reads that overlap indels or structural variants [25]. Most tools by default allow only two mismatches and no gaps in the 'seeded' regions (e.g., the first 28 bp in a read), which prohibits indel-containing reads from aligning to the reference genome correctly.

Paired-end mapping is tremendously helpful in identifying larger indels, when read pair alignment occurs in flanking regions and allows the inference of altered intervening sequences [25]. Specialized tools have been developed to address the challenges of indel detection, with Pindel taking a pattern growth approach borrowed from protein data analysis to detect breakpoints of indels from paired-end reads [25]. While Pindel achieves high specificity, it can suffer from lower sensitivity primarily due to not allowing mismatches during the pattern matching process. SAMtools represents another approach that summarizes short indel information by correcting the effect of flanking tandem repeats, though it tends to produce a large number of indel calls that require additional filtering [25].

Local de novo assembly or multiple alignments around candidate indel sites have proven effective for reducing the number of false-positive indels. This process was used in the analysis of whole-genome data from a basal-like breast cancer and is currently one of the methods utilized in advanced pipelines for indel detection [25]. The GATK Indel Genotyper employs a heuristic cutoff-based approach similar to VarScan, collecting raw statistics such as coverage, numbers of indel-supporting reads, read mapping qualities, and mismatch counts, which are useful for post-filtering of the initial calls [25].

Somatic Indel Identification and Validation

Currently, somatic indel identification is generally achieved by simple subtraction of indels also found present in the normal sample. However, this approach has limitations, particularly for indels with low allele frequency or those occurring in technically challenging genomic regions. A probabilistic model for somatic indel detection represents an unmet need in the field [25]. Such a model would ideally account for the specific error profiles associated with indel detection, including the higher likelihood of alignment errors in repetitive regions and the potential for PCR artifacts to generate false-positive calls.
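Simple subtraction only works if tumor and normal calls describe the same indel the same way. In a repeat, one deleted "T" from "TTTT" can be reported at several positions, so calls are usually left-aligned first (as tools like `bcftools norm` do). The sketch below assumes a hypothetical call format of (kind, 0-based position, sequence), where an insertion's sequence goes immediately before `ref[pos]`, and then performs the subtraction described above.

```python
def left_align(ref: str, kind: str, pos: int, seq: str) -> tuple:
    """Shift an indel to its leftmost equivalent position in `ref`.

    kind is "ins" or "del"; pos is 0-based. For a deletion, seq must equal
    ref[pos:pos + len(seq)]; for an insertion, seq is inserted before ref[pos].
    """
    if kind == "del" and ref[pos:pos + len(seq)] != seq:
        raise ValueError("deleted sequence does not match the reference")
    # While the base to the left equals the last indel base, the event can slide left
    while pos > 0 and ref[pos - 1] == seq[-1]:
        seq = seq[-1] + seq[:-1]  # rotate the repeat unit
        pos -= 1
    return (kind, pos, seq)


def somatic_indels(ref: str, tumor_calls, normal_calls) -> set:
    """Normalize both call sets, then subtract indels also present in the normal."""
    tumor = {left_align(ref, *call) for call in tumor_calls}
    normal = {left_align(ref, *call) for call in normal_calls}
    return tumor - normal


if __name__ == "__main__":
    ref = "GATTTTCAGGCA"
    tumor = [("del", 4, "T"), ("ins", 10, "G")]
    normal = [("del", 3, "T")]  # same deletion, reported at a different offset
    print(somatic_indels(ref, tumor, normal))
```

Without normalization, the tumor deletion at position 4 and the normal deletion at position 3 would look different and the germline event would be wrongly reported as somatic.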

Validation of putative indel mutations often requires orthogonal methods, such as Sanger sequencing or specialized PCR assays, particularly for indels in homopolymer runs or other low-complexity sequences where alignment uncertainty is high. For clinical applications, careful manual review of aligned reads in visualization tools such as the Integrative Genomics Viewer (IGV) is often necessary to confirm the validity of putative indel calls [25]. The development of more robust statistical frameworks for somatic indel calling remains an active area of research in cancer genomics.

Copy Number Alterations (CNAs) in Cancer

Detection Methods and Normalization Approaches

Copy number alterations, including large amplifications or deletions of chromosomal segments, represent an important class of somatic alteration in cancer with significant functional consequences. Amplifications of oncogenes such as MYC and ERBB2 (HER2) can drive tumor progression, while deletions of tumor suppressor genes like CDKN2A contribute to unchecked cell proliferation. While SNP genotyping data have long been utilized for studying CNAs in cancer, whole-genome sequencing of tumor and matched normal samples enables the identification of CNAs at a scale and precision unmatched by traditional array-based approaches [25].

Accurate inference of copy number from sequence data requires normalization procedures to address certain biases inherent in NGS data. GC content bias arises from mechanistic differences between NGS platforms, while read mapping bias originates from the computational difficulties of assigning relatively short sequences (25-450 bp) to their correct locations in a large, complex reference genome [25]. Approaches have been developed for both GC-based coverage normalization and mapping bias correction. Following these corrections, the unique (non-redundant) read depth can serve as the basis for copy number estimation [25].
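One simple form of GC-based coverage normalization scales each genomic bin so that bins with similar GC content share the same median depth. The sketch below assumes binned read depths and per-bin GC fractions are already available and uses median scaling within GC strata; many production tools fit a smoother curve (for example LOESS) instead, and mapping-bias correction is not shown.

```python
from statistics import median


def gc_normalize(depths, gc_fractions, n_strata=10):
    """Median-based GC correction for binned read depth.

    Each bin's depth is multiplied by (global median depth) / (median depth of
    bins in the same GC stratum), removing systematic GC-dependent coverage shifts.
    """
    strata = [min(int(gc * n_strata), n_strata - 1) for gc in gc_fractions]
    by_stratum = {}
    for s, d in zip(strata, depths):
        by_stratum.setdefault(s, []).append(d)
    global_median = median(depths)
    stratum_median = {s: median(v) for s, v in by_stratum.items()}
    return [d * global_median / stratum_median[s] if stratum_median[s] > 0 else 0.0
            for s, d in zip(strata, depths)]


if __name__ == "__main__":
    # GC-rich bins are over-covered in this toy example although copy number is equal
    depths = [100, 100, 100, 150, 150, 150]
    gc = [0.40, 0.42, 0.41, 0.65, 0.66, 0.64]
    print(gc_normalize(depths, gc))
```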

Several computational approaches have been developed specifically for CNA detection from NGS data. The EWT algorithm employs a change-point detection method to identify transitions in copy number states, while SegSeq utilizes local change-point analysis and merging to define CNA regions [25]. CMDS focuses specifically on copy number alteration calling in sample populations, enabling the identification of recurrent CNAs across multiple tumors [25]. These methods typically segment the genome into regions of constant copy number, then assign absolute copy number states through comparison with matched normal samples or through ploidy estimation algorithms.
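The segmentation idea can be illustrated with a basic binary segmentation: find the split point that most reduces the within-segment sum of squared errors, accept it if the reduction exceeds a penalty, and recurse on both halves. This is a generic change-point sketch, not the EWT or SegSeq algorithm, and the `penalty` and `min_size` defaults are hypothetical values that would need tuning to the noise level of real data.

```python
def _sse(x):
    """Sum of squared deviations from the segment mean."""
    m = sum(x) / len(x)
    return sum((v - m) ** 2 for v in x)


def binary_segmentation(values, penalty=0.5, min_size=2, start=0, end=None):
    """Recursively split log2 ratios into segments of roughly constant copy number.

    Returns a list of (start, end) half-open index intervals.
    """
    if end is None:
        end = len(values)
    total = _sse(values[start:end])
    best_gain, best_k = 0.0, None
    for k in range(start + min_size, end - min_size + 1):
        gain = total - _sse(values[start:k]) - _sse(values[k:end])
        if gain > best_gain:
            best_gain, best_k = gain, k
    if best_k is None or best_gain < penalty:
        return [(start, end)]
    return (binary_segmentation(values, penalty, min_size, start, best_k)
            + binary_segmentation(values, penalty, min_size, best_k, end))


if __name__ == "__main__":
    # Toy log2 ratios: neutral, a single-copy gain region (~+1), then neutral again
    log2_ratios = [0.02, -0.01, 0.0, 0.03, 0.98, 1.02, 1.0, 0.97, -0.01, 0.01, 0.0, -0.02]
    print(binary_segmentation(log2_ratios))
```

Each resulting segment would then be assigned a copy-number state, for example by comparing its mean log2 ratio with the matched normal or with a purity- and ploidy-aware model.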

Analytical Considerations for CNA Detection

The accurate detection of CNAs in cancer genomes presents several unique challenges beyond those encountered in germline copy number variation analysis. Tumor samples frequently exhibit aneuploidy, in which cells carry an abnormal number of chromosomes, complicating the baseline for copy number estimation. Additionally, intratumor heterogeneity can result in multiple subclonal populations with different CNA profiles, making it difficult to determine the true cellular prevalence of any specific alteration [24].

Normal contamination represents another significant challenge, as the presence of non-cancer cells in the tumor sample dilutes the signal from tumor-specific CNAs. This effect can be mitigated through computational methods that estimate purity and ploidy, then adjust the copy number estimates accordingly. Tools such as ASCAT and ABSOLUTE have been developed specifically for this purpose, using allele-specific copy number information to simultaneously estimate tumor purity, ploidy, and absolute copy number states [24].
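Once purity and ploidy are known, converting an observed depth ratio into an absolute copy number is a short calculation. Assuming diploid normal cells and a ratio R normalized to the sample's average copy number, the expected ratio for a segment with tumor copy number C is R = (p·C + 2(1 − p)) / (p·ψ + 2(1 − p)), with purity p and tumor ploidy ψ. Solving for C gives the function below. ASCAT and ABSOLUTE estimate p and ψ jointly from allele-specific data; this sketch covers only the final conversion step.

```python
import math


def absolute_copy_number(log2_ratio: float, purity: float, ploidy: float) -> float:
    """Invert R = (p*C + 2(1-p)) / (p*psi + 2(1-p)) for the tumor copy number C.

    Assumes diploid contaminating normal cells and a log2 ratio centered on the
    sample's average copy number.
    """
    r = 2 ** log2_ratio
    normal_term = 2 * (1 - purity)
    return (r * (purity * ploidy + normal_term) - normal_term) / purity


if __name__ == "__main__":
    # At 60% purity, a 4-copy amplification in a diploid tumor yields only R = 1.6,
    # so naively reading 2 * R would suggest about 3.2 copies.
    observed = math.log2(1.6)
    print(absolute_copy_number(observed, purity=0.6, ploidy=2.0))
```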

For targeted sequencing approaches, such as those using gene panels, CNA detection requires specialized methods that compare coverage in target regions to a reference set of normal samples. These approaches are particularly challenging for detecting focal amplifications and deletions, as the limited genomic coverage reduces statistical power. Despite these challenges, CNA detection from targeted sequencing data has proven clinically valuable, particularly for the detection of clinically actionable amplifications in genes such as ERBB2, EGFR, and MET.
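A minimal version of panel-based CNA calling compares each target's normalized read count with the median of a reference set of normal samples. The sketch assumes hypothetical per-target count dictionaries, scales each sample by its total counts, and median-centers the resulting log2 ratios so that a strongly amplified gene does not shift the baseline of all other targets. Real tools add GC and target-size corrections and statistical calling on top of this.

```python
import math
from statistics import median


def target_log2_ratios(tumor, normals):
    """Median-centered log2 ratios of tumor vs a panel of normals.

    tumor:   {target_name: read_count}
    normals: list of {target_name: read_count}, one per reference normal sample
    """
    def scale(sample):
        total = sum(sample.values())
        return {t: c / total for t, c in sample.items()}

    t_scaled = scale(tumor)
    n_scaled = [scale(n) for n in normals]
    raw = {t: math.log2(t_scaled[t] / median([n[t] for n in n_scaled])) for t in tumor}
    center = median(list(raw.values()))
    return {t: v - center for t, v in raw.items()}


if __name__ == "__main__":
    targets = ["ERBB2_1", "ERBB2_2", "EGFR_1", "MET_1", "TP53_1"]
    normals = [{t: 100 for t in targets} for _ in range(3)]
    tumor = {"ERBB2_1": 400, "ERBB2_2": 400, "EGFR_1": 100, "MET_1": 100, "TP53_1": 100}
    print(target_log2_ratios(tumor, normals))
```

In this toy case the two ERBB2 targets come out at a log2 ratio of 2 (a four-fold relative gain), while the other targets sit at 0.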

Structural Variants (SVs) in Cancer Genomics

Diversity and Detection of Structural Variants

Structural variants encompass a broad range of genomic alterations that affect genome organization, including translocations, inversions, deletions, duplications, and insertions above the size range typically used to define indels (often >50 bp). SVs are a major contributor to genomic variation in cancer, affecting more base pairs in the genome than SNVs and having serious phenotypic impact [24]. Some SVs are known to drive carcinogenesis directly, with SVs resulting in gene fusions representing the first recurrent mutations observed in many pediatric cancers [24].

In short-read sequencing data, SVs can be detected based on distinctive patterns in aligned reads. Discordant read-pairs that align with an abnormal distance and/or orientation to the reference genome are particularly suited for detecting large SVs. Split or soft-clipped reads, which are partially mapped reads, can indicate breakpoints with base-pair resolution [24]. The latest generation of SV detection algorithms combines multiple read-alignment patterns to detect SVs across a broad range of types and sizes. DELLY, LUMPY, Manta, SvABA, and GRIDSS employ sophisticated methodologies that achieve high performance in detecting both germline and somatic SVs [24].
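The discordant read-pair signal can be sketched as a classifier over pair orientation and distance. For standard Illumina paired-end libraries, a concordant pair has the left read on the forward strand and the right read on the reverse strand at roughly the expected insert size; deletions stretch that distance, same-strand pairs suggest inversions, outward-facing pairs suggest tandem duplications, and mates on different chromosomes suggest translocations. The insert-size defaults here are hypothetical library parameters, and real callers such as DELLY cluster many such pairs and add split-read evidence before reporting an SV.

```python
def classify_pair(chrom1, pos1, strand1, chrom2, pos2, strand2,
                  mean_insert=350.0, sd_insert=50.0, n_sd=4.0):
    """Assign a read pair to the SV signature its alignment suggests."""
    if chrom1 != chrom2:
        return "translocation"
    # Order the two reads left to right so orientation can be read consistently
    (left_pos, left_strand), (right_pos, right_strand) = sorted(
        [(pos1, strand1), (pos2, strand2)])
    if left_strand == right_strand:
        return "inversion"
    if left_strand == "-" and right_strand == "+":
        return "tandem duplication"
    if right_pos - left_pos > mean_insert + n_sd * sd_insert:
        return "deletion"
    return "concordant"


if __name__ == "__main__":
    print(classify_pair("chr1", 1000, "+", "chr1", 1350, "-"))  # expected insert size
    print(classify_pair("chr1", 1000, "+", "chr1", 5000, "-"))  # mates too far apart
    print(classify_pair("chr1", 1000, "+", "chr9", 500, "-"))   # mates on different chromosomes
```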

Because the optimal detection algorithm differs by SV type and size range, full-spectrum SV detection with high recall and precision currently requires multiple algorithms [24]. How best to combine the resulting callsets remains an area of active development, and various tools and in-house pipelines are currently in use. Simple integration strategies merge SVs by reciprocal overlap or breakpoint distance, while more complex solutions combine this with read-evidence integration, local assembly, or machine learning [24]. Once overlapping variants are merged, the SV callsets from multiple algorithms can be integrated by taking either their union or their intersection. The intersection strategy is often preferred in cancer research and clinical applications, where high precision takes priority over recall [24].
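The simple breakpoint-distance strategy, followed by an intersection, can be sketched as shown below. Two calls are treated as the same event if they share a chromosome and SV type and both breakpoints lie within a tolerance window. An event is kept if enough callers report it. The 500 bp window, the greedy merge order, and the names `SV` and `intersect_callsets` are illustrative assumptions. Dedicated merging tools such as SURVIVOR implement more complete versions of this idea.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SV:
    chrom: str
    start: int
    end: int
    svtype: str

def same_event(a: SV, b: SV, max_dist: int) -> bool:
    """Breakpoint-distance matching: same type and chromosome, and both
    breakpoints within max_dist base pairs of each other."""
    return (a.chrom == b.chrom and a.svtype == b.svtype
            and abs(a.start - b.start) <= max_dist
            and abs(a.end - b.end) <= max_dist)

def intersect_callsets(callsets, max_dist=500, min_support=2):
    """Greedily merge calls from several callers, then keep events reported
    by at least min_support callers (min_support=1 gives the union)."""
    merged = []  # (representative SV, set of caller indices supporting it)
    for caller_idx, calls in enumerate(callsets):
        for sv in calls:
            for representative, callers in merged:
                if same_event(representative, sv, max_dist):
                    callers.add(caller_idx)
                    break
            else:
                merged.append((sv, {caller_idx}))
    return [rep for rep, callers in merged if len(callers) >= min_support]

caller_a = [SV("chr1", 10_000, 20_000, "DEL"), SV("chr2", 5_000, 8_000, "DUP")]
caller_b = [SV("chr1", 10_100, 19_950, "DEL"), SV("chr3", 1_000, 4_000, "INV")]
high_precision = intersect_callsets([caller_a, caller_b])  # only the chr1 deletion
```

Raising `min_support` trades recall for precision, which mirrors the intersection strategy favored in clinical settings.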

Figure 2: Multi-evidence Approach for Structural Variant Detection in Cancer Genomes

Complex Genomic Rearrangements in Cancer

Recent research has highlighted the prevalence and importance of complex genomic rearrangements (CGRs) in cancer, including phenomena such as chromothripsis (where chromosomes undergo massive shattering and reorganization), chromoplexy (involving interlinked rearrangements across multiple chromosomes), and extrachromosomal DNA (ecDNA) that can amplify oncogenes [27]. In pediatric solid tumors, CGRs have been observed in 47% of tumors, and in the majority of these cases, the CGRs affect cancer driver genes or result in unfavorable chromosomal alterations [27]. The presence of CGRs is associated with more adverse clinical events, highlighting their potential for incorporation into risk stratification or exploitation for targeted treatments [27].

The detection and interpretation of CGRs present unique challenges beyond those encountered with simple SVs. The sheer complexity of these events, with dozens or even hundreds of breakpoints concentrated in localized genomic regions, requires specialized analytical approaches. Tools such as ShatterSeek and ComplexFill have been developed specifically for identifying and characterizing chromothripsis and other complex rearrangement patterns. Additionally, the circular nature of ecDNA molecules necessitates specialized detection approaches, as their rearrangement patterns differ from those of linear chromosomal fragments.
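Because complex rearrangements concentrate many breakpoints in localized regions, a common first step is to look for dense breakpoint clusters along a chromosome. The sketch below groups sorted breakpoint positions whose consecutive gaps stay below a threshold and reports clusters with many breakpoints. This is only a simplified screening heuristic with assumed thresholds. It does not implement ShatterSeek's statistical criteria, such as copy-number oscillation and tests on fragment joins, and the function name is hypothetical.

```python
def breakpoint_clusters(positions, max_gap=1_000_000, min_breakpoints=10):
    """Group breakpoints on one chromosome into runs in which consecutive
    breakpoints are at most max_gap bp apart; return dense runs as
    (first position, last position, breakpoint count)."""
    clusters = []
    current = []
    for pos in sorted(positions):
        if current and pos - current[-1] > max_gap:
            clusters.append(current)
            current = []
        current.append(pos)
    if current:
        clusters.append(current)
    return [(c[0], c[-1], len(c)) for c in clusters if len(c) >= min_breakpoints]

# Twelve breakpoints within ~110 kb plus three isolated breakpoints.
chrom_breakpoints = [50_000_000 + i * 10_000 for i in range(12)] + [1_000_000, 120_000_000, 200_000_000]
candidates = breakpoint_clusters(chrom_breakpoints)  # [(50000000, 50110000, 12)]
```

A region flagged this way would still need the copy-number and join-pattern analyses described above before it could be classified as chromothripsis or another CGR.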

Distinguishing Somatic from Germline SVs

The detection of tumor-specific somatic SVs aims to identify variants that occur only in a patient's tumor cells. Typically, paired tumor-normal samples are used to classify SVs as germline, mosaic-normal, or tumor-specific variants [24]. This process involves two main steps: the detection of SVs in both samples, followed by differential analysis of the callsets. Somatic SV detection algorithms differ in how they identify tumor-specific SVs from paired tumor-normal samples and, as a result, can classify the same event differently [24].

DELLY and LUMPY use ad hoc filtering, whereby SVs supported by at least one read from the normal sample are removed from the tumor SV callset. This makes them highly sensitive to tumor-in-normal contamination, because a single contaminating read in the normal sample is enough to discard a genuine somatic SV [24]. In contrast, Manta uses a probabilistic scoring system for somatic SVs that integrates evidence from tumor and normal reads, while SvABA uses both the tumor and normal data during assembly before distinguishing somatic variants [24]. GRIDSS applies extensive rule-based filtering to both single break-ends and breakpoints [24]. Specialized somatic SV detection tools such as Lancet and Varlociraptor account for challenges specific to the identification of tumor-specific SVs, including differences in SV breakpoints and types between tumor and normal samples, the presence of complex rearrangements, and issues inherent to analyzing tumor samples such as contamination, polyploidy, and heterogeneity [24].
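The difference between a hard filter and a contamination-aware rule can be made concrete. In the sketch below, the hard filter discards any SV with normal-sample support. The alternative asks whether the observed normal support is plausible under a binomial model of tumor-in-normal contamination. Both functions are hypothetical illustrations. The contamination fraction and tumor variant allele fraction are assumed to be known, and neither function reproduces Manta's or any other tool's actual scoring model.

```python
from math import comb

def binom_sf(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

def hard_filter_is_somatic(normal_alt: int) -> bool:
    """Ad hoc rule: any supporting read in the normal sample means not somatic."""
    return normal_alt == 0

def contamination_aware_is_somatic(normal_alt: int, normal_depth: int,
                                   tumor_vaf: float, contamination: float,
                                   alpha: float = 0.05) -> bool:
    """Keep the SV as somatic if its normal-sample support is consistent with
    contamination, i.e. not improbably high under
    Binomial(normal_depth, contamination * tumor_vaf)."""
    if normal_alt == 0:
        return True
    expected_rate = contamination * tumor_vaf
    return binom_sf(normal_alt, normal_depth, expected_rate) >= alpha
```

With 30x normal coverage, a tumor VAF of 0.4, and an assumed 5% contamination, one supporting normal read is expected about 45% of the time. The contamination-aware rule therefore keeps the SV, whereas the hard filter discards it. Fifteen supporting reads would instead indicate a germline event under either rule.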

Table 3: Computational Tools for Structural Variant Analysis in Cancer

| Tool | Primary Methodology | Variant Types Detected | Somatic Classification Approach |
|---|---|---|---|
| DELLY | Integrated read-pair, split-read, and read-depth | Deletions, duplications, inversions, translocations | Filtering of normal-supported variants |
| LUMPY | Probabilistic framework combining multiple signals | Deletions, duplications, inversions, translocations | Filtering of normal-supported variants |
| Manta | Joint assembly and scoring of tumor-normal pairs | Deletions, duplications, inversions, translocations | Integrated somatic likelihood model |
| SvABA | Assembly-based variant calling | Deletions, insertions, translocations | Joint tumor-normal assembly |
| GRIDSS | Break-end assembly with quality scoring | Deletions, duplications, inversions, translocations | Extensive rule-based filtering |

Table 4: Essential Computational Tools and Databases for Cancer Genomic Analysis

| Resource Category | Specific Tools/Databases | Primary Function | Application in Cancer Genomics |
|---|---|---|---|
| Sequence Alignment | BWA-MEM, Bowtie2 | Map sequencing reads to reference genome | Foundation for all variant detection pipelines |
| Variant Calling | VarScan, SomaticSniper, Strelka | Identify somatic mutations | Detection of SNVs, indels in tumor-normal pairs |
| Structural Variant Detection | DELLY, Manta, GRIDSS | Detect large-scale genomic rearrangements | Identification of SVs including gene fusions |
| Copy Number Analysis | GATK CNV, Sequenza, ASCAT | Infer copy number alterations | Detection of amplifications and deletions |
| Visualization | IGV, Pairoscope | Visual exploration of genomic data | Validation and interpretation of variant calls |
| Annotation | ANNOVAR, VEP, FuncAssociate | Functional consequence prediction | Prioritization of biologically relevant variants |
| Data Integration | cBioPortal, IntOGen | Multi-omics data aggregation | Pathway analysis and cross-cancer comparisons |

The comprehensive characterization of genomic alterations in cancer through NGS technologies has fundamentally transformed our understanding of cancer biology and treatment. The integration of SNV, indel, CNA, and SV analyses provides a more complete picture of the molecular events driving individual tumors, enabling more precise classification and targeted therapeutic approaches. As sequencing technologies continue to evolve, particularly with the increasing adoption of long-read sequencing that can resolve complex genomic regions more effectively, our ability to detect and interpret the full spectrum of cancer-associated variants will continue to improve.

The analytical approaches discussed in this review highlight both the tremendous progress made in computational methods for variant detection and the ongoing challenges in this field. The integration of multiple algorithms and data types remains essential for achieving high sensitivity and specificity across different variant classes. As we move toward increasingly comprehensive genomic profiling in both research and clinical settings, the continued refinement of these methodologies will be crucial for realizing the full potential of precision oncology and for developing more effective, targeted cancer treatments based on the unique molecular alterations present in each patient's tumor.

Precision oncology represents a fundamental shift from histology-based to molecularly driven cancer treatment. This evolution has been powered by advances in genomic technologies, moving from single-gene tests to comprehensive genomic profiling (CGP). Next-generation sequencing (NGS) serves as the cornerstone of this transformation, enabling simultaneous analysis of hundreds of cancer-associated genes to identify actionable biomarkers for targeted therapy selection [28] [29]. The development of precision oncology was initially constrained by technological limitations, with treatment decisions relying on single-gene tests such as immunohistochemistry (IHC) for hormone receptor status in breast cancer and PCR-based methods for detecting EGFR mutations in lung cancer [29]. The advent of NGS has revolutionized this landscape, making multigene panels and CGP standard tools in clinical oncology and accelerating the development of targeted therapies, especially for rare molecularly defined cancer subtypes [29].

The Technological Evolution of Molecular Profiling

From Single-Gene Analysis to Comprehensive Genomic Profiling

The initial era of precision oncology relied on single-gene testing methodologies. IHC established the paradigm for biomarker-driven therapy by detecting estrogen and progesterone receptor expression to guide endocrine treatment in breast cancer [29]. Similarly, quantitative HER2 IHC was crucial for identifying patients eligible for trastuzumab therapy [29]. For mutation detection, techniques including Sanger sequencing and PCR-based genotyping were used to screen for somatic EGFR mutations in lung cancer patients to guide treatment with EGFR-selective kinase inhibitors [29].

The limitations of these single-gene approaches became apparent as knowledge of cancer genomics expanded. Testing genes sequentially consumed valuable tissue samples and time, potentially delaying critical treatment decisions [30]. The need for more comprehensive profiling led to the development of multigene NGS panels. Because they test many genes concurrently, these panels can screen large patient populations for both standard and rare biomarkers, which makes trials of therapies targeting rare molecular subtypes feasible [29].

Comprehensive Genomic Profiling and the Role of NGS

Comprehensive genomic profiling uses NGS to perform detailed genomic analysis of cancers in a single assay, assessing hundreds of genes simultaneously [31] [32]. CGP interrogates multiple variant types, including single nucleotide variants (SNVs), short insertions and deletions (indels), copy-number variants (CNVs), and gene fusions [13]. Additionally, it can identify genome-wide biomarkers such as tumor mutational burden (TMB), microsatellite instability (MSI), and homologous recombination deficiency (HRD) [13] [28].
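Tumor mutational burden is, at its core, a simple ratio: eligible somatic mutations divided by the size, in megabases, of the coding territory the assay assesses. The sketch below shows that arithmetic under assumed eligibility rules: coding, non-germline, and VAF at or above 5%. The variant schema and function name are hypothetical, and each clinical assay defines its own filters and territory. The 10 mutations/Mb cutoff shown is the TMB-high threshold used in the FDA's tissue-agnostic approval of pembrolizumab.

```python
def tumor_mutational_burden(variants, territory_mb, min_vaf=0.05):
    """Eligible somatic mutations per megabase of assessed coding territory.

    variants: list of dicts with hypothetical keys 'vaf', 'coding', 'germline'.
    The eligibility rules below are illustrative assumptions; clinical assays
    define their own filters.
    """
    eligible = [
        v for v in variants
        if v["coding"] and not v["germline"] and v["vaf"] >= min_vaf
    ]
    return len(eligible) / territory_mb

TMB_HIGH_CUTOFF = 10.0  # mutations per megabase

calls = (
    [{"vaf": 0.20, "coding": True, "germline": False}] * 13   # counted
    + [{"vaf": 0.50, "coding": True, "germline": True}] * 2   # germline: excluded
    + [{"vaf": 0.02, "coding": True, "germline": False}]      # below VAF floor
    + [{"vaf": 0.30, "coding": False, "germline": False}]     # non-coding
)
tmb = tumor_mutational_burden(calls, territory_mb=1.25)  # 13 / 1.25 = 10.4 mut/Mb
is_tmb_high = tmb >= TMB_HIGH_CUTOFF
```

Because the denominator is small for targeted panels, a difference of only a few counted mutations can move a sample across the cutoff. This is why filtering rules and territory size matter for TMB comparability across assays.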

The analytical scope of CGP is demonstrated by assays such as the TruSight Oncology Comprehensive (TSO Comprehensive) test, which interrogates over 500 genes from a solid tumor sample [32]. This approach provides a more complete molecular portrait of a patient's cancer compared to single-gene tests or small panels, significantly increasing the likelihood of identifying clinically actionable biomarkers [13] [32].

Complementary Sequencing Approaches

While targeted NGS panels form the current backbone of clinical genomic profiling, complementary sequencing approaches provide additional layers of molecular information:

- Whole-Genome Sequencing (WGS): Interrogates the entire ~3.2 billion base pair human genome, enabling unbiased detection of SNVs, indels, CNVs, structural rearrangements, and mutations in non-coding regions. WGS is considered the gold standard for detecting germline variants associated with hereditary cancer predisposition syndromes and complex structural variants [28].

- Whole-Exome Sequencing (WES): Targets the 1-2% of the genome that encodes proteins, providing high coverage of the exonic regions where most actionable alterations reside, at lower cost and complexity than WGS [28].

- Whole-Transcriptome Sequencing (RNA-Seq): Provides a dynamic representation of gene expression, enabling identification of oncogenic gene fusions, alternative splicing events, and quantitative transcript levels. RNA-Seq is particularly valuable for detecting clinically actionable fusions that may evade DNA-based sequencing [28].
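The cost difference between WGS and WES follows largely from the amount of sequence needed, which is the target territory multiplied by the desired mean depth. The sketch below makes that arithmetic explicit. The 30x WGS depth, the 100x WES depth, and the 1.5% exome fraction (within the 1-2% range above) are illustrative assumptions, not recommendations. The optional `usable_fraction` parameter is a hypothetical way to account for reads lost to duplicates or off-target capture.

```python
HUMAN_GENOME_BP = 3.2e9  # approximate genome size, as stated above

def raw_bases_needed(target_bp: float, mean_depth: float,
                     usable_fraction: float = 1.0) -> float:
    """Bases to sequence to reach mean_depth over target_bp; usable_fraction
    (< 1) inflates the requirement for duplicate or off-target reads."""
    return target_bp * mean_depth / usable_fraction

wgs_bases = raw_bases_needed(HUMAN_GENOME_BP, mean_depth=30)          # ~96 Gb
wes_bases = raw_bases_needed(0.015 * HUMAN_GENOME_BP, mean_depth=100)  # ~4.8 Gb
```

Under these assumptions, an exome sequenced to more than three times the depth still needs roughly one twentieth of the sequence output of a 30x genome. Any capture inefficiency narrows that gap.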

Clinical Implementation and Impact of CGP

Enhanced Actionable Biomarker Detection

The superior ability of CGP to identify clinically relevant biomarkers is demonstrated by real-world studies. The Belgian BALLETT study, a nationwide multicenter trial, assessed the feasibility of using CGP in clinical decision-making for patients with advanced cancers [13]. This study enrolled 872 patients from 12 Belgian hospitals, with CGP successfully performed in 93% of cases [13].

Table 1: Actionable Biomarker Detection in the BALLETT Study (n=756 patients)

| Metric | CGP with 523-Gene Panel | Standard Small Panels (Estimated) |
|---|---|---|
| Patients with ≥1 actionable marker | 81% (616 patients) | 21% (160 patients) |
| Patients with multiple actionable alterations | 41% (311 patients) | Not reported |
| Patients with both actionable alteration and immunotherapy biomarker | 14% (104 patients) | Not reported |
| Most frequently altered genes | TP53 (46%), KRAS (13%), PIK3CA (11%), APC (9%) | Limited to genes in small panels |
| Immunotherapy biomarkers identified | TMB-high: 16% (124 patients); MSI-high: 8 patients | Limited detection capability |

The BALLETT study also demonstrated the feasibility of decentralized CGP implementation across nine local NGS laboratories using standardized methodology, with a median turnaround time of 29 days from inclusion to molecular tumor board report [13]. This highlights the potential for broader access to CGP when expertise is distributed across multiple centers situated close to clinicians and patients.

Comparison of Sequencing Methodologies in Breast Cancer

The clinical impact of testing breadth is further illustrated in advanced HR+/HER2- breast cancer. A prospective, multicenter study compared single-gene testing using the SiMSen-Seq (SSS) assay for PIK3CA hotspot mutations against broader panel-based sequencing using the AVENIO ctDNA Expanded assay (77 genes) [33].
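The concordance between two assays, reported as overall agreement in Table 2, is usually computed from a 2×2 table of per-patient calls. Overall percent agreement does not account for agreement expected by chance, so Cohen's kappa is often reported alongside it. The sketch below computes both. The counts in the usage line are hypothetical and are not data from the study.

```python
def agreement_stats(both_pos: int, a_only: int, b_only: int, both_neg: int):
    """Overall percent agreement and Cohen's kappa for two binary assays.

    both_pos: mutation detected by both assays
    a_only / b_only: detected by only one assay
    both_neg: detected by neither
    """
    n = both_pos + a_only + b_only + both_neg
    observed = (both_pos + both_neg) / n
    a_pos_rate = (both_pos + a_only) / n
    b_pos_rate = (both_pos + b_only) / n
    # Agreement expected if the two assays' calls were independent.
    expected = a_pos_rate * b_pos_rate + (1 - a_pos_rate) * (1 - b_pos_rate)
    kappa = (observed - expected) / (1 - expected)
    return observed, kappa

overall, kappa = agreement_stats(40, 5, 3, 52)  # hypothetical counts
```

With these illustrative counts, the overall agreement is 0.92 while kappa is about 0.84. The gap shows how chance agreement inflates raw concordance when many patients are negative on both assays.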

Table 2: Single-Gene vs. Panel Sequencing in Advanced HR+/HER2- Breast Cancer

| Parameter | SiMSen-Seq (Single-Gene) | AVENIO (77-Gene Panel) |
|---|---|---|
| PIK3CA mutation detection rate | 38.4% | 36.85% |
| Concordance for PIK3CA | Reference | 92.6% overall agreement |