Navigating the Signal: Advanced Strategies to Minimize False Positives in ctDNA Detection

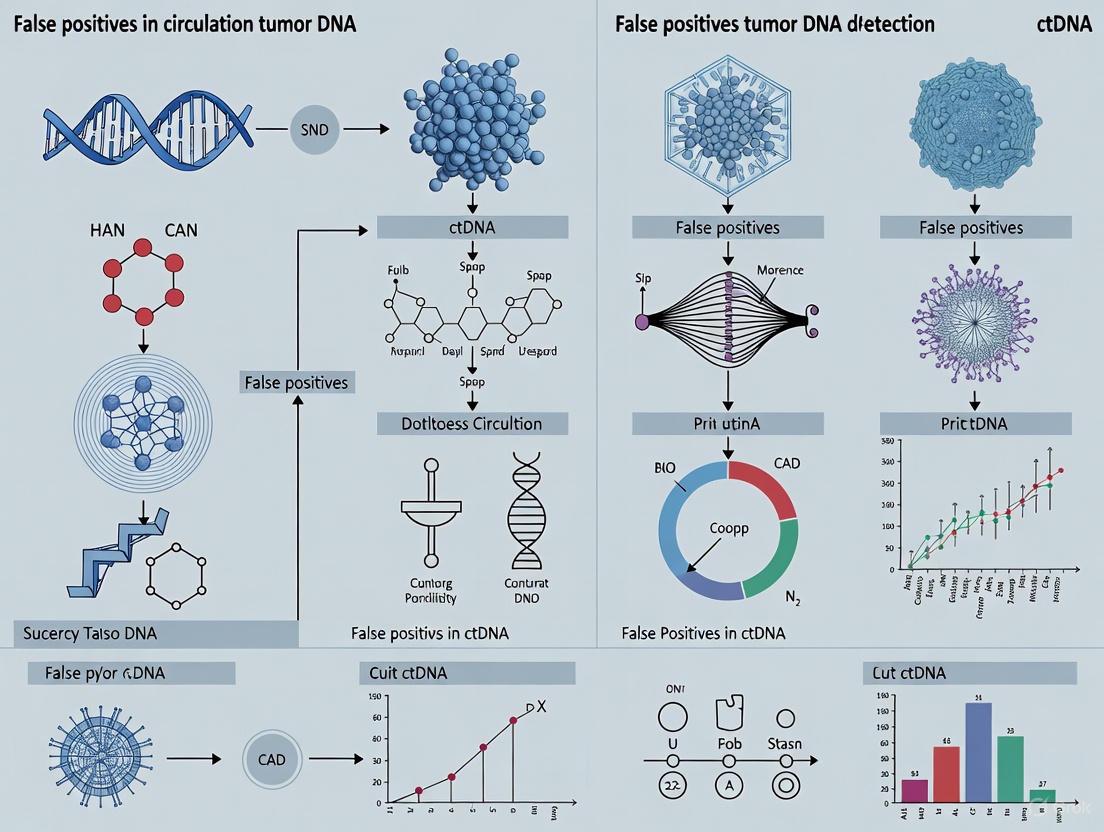

Abstract

This article provides a comprehensive analysis of the challenges and innovative solutions surrounding false positives in circulating tumor DNA (ctDNA) detection, a critical barrier in liquid biopsy applications. Aimed at researchers, scientists, and drug development professionals, it explores the biological and technical origins of false signals, from low variant allele frequencies and pre-analytical variability to sequencing artifacts. The scope encompasses a review of cutting-edge methodological enhancements—including ultrasensitive assays, multimodal analysis, and sophisticated bioinformatics—designed to improve specificity. Furthermore, the article evaluates validation frameworks and comparative performance metrics essential for translating these technological advances into robust, clinically actionable tools for early cancer detection, treatment monitoring, and minimal residual disease assessment.

Understanding the Root Causes: Biological and Technical Sources of False Positives in ctDNA Analysis

FAQs and Troubleshooting Guides

Low ctDNA Abundance and Detection

FAQ: Why is ctDNA particularly difficult to detect in patients with early-stage cancer?

The primary challenge is the very low concentration of circulating tumor DNA (ctDNA) in the bloodstream during early-stage disease. ctDNA can constitute less than 0.1% of the total cell-free DNA (cfDNA), the majority of which originates from the normal turnover of hematopoietic cells [1] [2]. This creates a significant "needle in a haystack" scenario, where the tumor-derived signal is vastly outnumbered by wild-type DNA from healthy cells [1]. Furthermore, tumor shedding heterogeneity means that some early-stage tumors may release very little DNA into the circulation, sometimes leading to undetectable levels with current technologies [2].
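The scale of the problem can be made concrete with a rough calculation. The sketch below assumes that one haploid human genome weighs about 3.3 pg (a commonly used approximation) and that every genome copy in the sample is recovered; the function names are illustrative and do not come from any particular pipeline.

```python
# Rough estimate of how many mutant molecules a plasma sample can contain.
# Assumption: ~3.3 pg of DNA per haploid human genome copy.
HAPLOID_GENOME_PG = 3.3


def genome_equivalents(cfdna_ng: float) -> float:
    """Approximate number of haploid genome copies in a cfDNA mass (ng)."""
    return cfdna_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_copies(cfdna_ng: float, vaf: float) -> float:
    """Expected mutant copies at one locus, with vaf given as a fraction."""
    return genome_equivalents(cfdna_ng) * vaf


if __name__ == "__main__":
    for vaf in (0.01, 0.001, 0.0001):
        copies = expected_mutant_copies(10.0, vaf)
        print(f"10 ng cfDNA, VAF {vaf:.2%}: ~{copies:.1f} mutant copies per locus")
```

Under these assumptions, 10 ng of cfDNA holds roughly 3,000 genome equivalents, so a variant at 0.01% VAF is expected to contribute well under one mutant copy per locus. No amount of sequencing depth can recover a molecule that was never in the tube.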

Troubleshooting Guide: My assay is failing to detect ctDNA in samples from early-stage patients. What are the key methodological considerations to improve sensitivity?

- Employ Tumor-Informed Assays: Use sequencing data from a patient's tumor tissue (e.g., from an FFPE block) to create a personalized assay that tracks multiple patient-specific mutations. This increases the breadth of detectable alterations, compensating for low levels of any single mutation [3] [4].

- Utilize Ultra-Sensitive Detection Platforms: Move beyond standard NGS. Techniques like PhasED-seq (which targets multiple single-nucleotide variants on the same DNA fragment) or duplex sequencing can achieve detection sensitivities down to 0.001% variant allele frequency (VAF) [1] [5].

- Optimize Pre-Analytical Workflow: Use specialized blood collection tubes (e.g., Streck tubes) that stabilize blood cells to prevent lysis, which would dilute the ctDNA fraction with wild-type DNA. Implement bead-based or enzymatic size selection during library preparation to enrich for shorter ctDNA fragments (90-150 bp), which can increase the mutant signal several-fold [1] [3].

False Positives and Specificity

FAQ: What are the common sources of false positive results in ctDNA detection, and how can they be mitigated?

False positives can arise from several sources, including sequencing errors, sample cross-contamination, and biological phenomena like Clonal Hematopoiesis of Indeterminate Potential (CHIP) [6].

CHIP is an age-related condition where hematopoietic stem cells acquire mutations, which are then present in the DNA these cells release into the blood. When a ctDNA test detects a mutation derived from CHIP and not the tumor, it is a false positive [6]. Mutations in genes like ATM and CHEK2 are frequently associated with CHIP [6].

Troubleshooting Guide: I am observing mutations in my ctDNA data that were not present in the primary tumor sequencing. How can I determine if this is due to CHIP, tumor heterogeneity, or an artifact?

- Confirm with Paired Whole Blood: The most effective method to rule out CHIP is to sequence a matched whole blood sample (or buffy coat) alongside the plasma. Mutations found in both the plasma cfDNA and the cellular DNA from blood are likely of hematopoietic origin, not tumor-derived [6].

- Apply CHIP Filtering Bioinformatically: If a paired whole blood sample is unavailable, use existing databases of common CHIP mutations to filter out these variants during bioinformatics analysis. Some commercial assays explicitly filter CHIP mutations to reduce false positives [4].

- Validate with Orthogonal Assays: If a new mutation is suspected to be a true resistance mutation from the tumor, confirm it using an orthogonal technology (e.g., ddPCR) or by tracking its VAF longitudinally. True tumor-derived mutations may increase over time with disease progression, while artifacts will not [5].

Analytical Validation and Standardization

FAQ: How can I validate the performance of a new ultrasensitive ctDNA assay in my lab?

Robust validation is critical for reliable results. Key performance metrics to define are the Limit of Detection (LOD), sensitivity, and specificity using contrived and clinical samples [3].

Troubleshooting Guide: How do I establish a reliable limit of detection (LOD) for my assay?

- Use Spike-In Controls: Create dilution series of tumor cell line DNA or synthetic DNA fragments with known mutations into wild-type cfDNA or plasma. This helps establish the lowest VAF at which the assay can reliably and reproducibly detect the mutant allele [3] [5].

- Determine Technical LOD vs. Clinical LOD: The technical LOD is the lowest VAF detectable in a dilution series. The clinical LOD should be established using clinical samples with known outcomes and might be higher than the technical LOD. For MRD detection, an LOD of 0.01% VAF is often targeted [4].

- Assay Breadth is Critical: For tumor-informed assays, the probability of detecting ctDNA is a function of the number of mutations tracked. Validate that your panel's breadth yields an "effective LOD" low enough for your intended clinical application [3].
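The relationship between panel breadth and detection probability can be sketched with a simple Poisson sampling model. This is an illustrative simplification, not a validated assay model: it assumes every tracked mutation is clonal and present at the same VAF, that molecules are sampled independently, and that recovery is perfect. The function name and parameters are hypothetical.

```python
import math


def detection_probability(n_mutations: int,
                          genome_equivalents: float,
                          vaf: float,
                          min_molecules: int = 1) -> float:
    """P(at least `min_molecules` mutant molecules across all tracked loci).

    Assumes the total mutant count is Poisson distributed with mean
    n_mutations * genome_equivalents * vaf (vaf given as a fraction).
    """
    lam = n_mutations * genome_equivalents * vaf
    # Probability of observing fewer than min_molecules mutant molecules.
    p_below = sum(math.exp(-lam) * lam ** i / math.factorial(i)
                  for i in range(min_molecules))
    return 1.0 - p_below


if __name__ == "__main__":
    for n in (1, 4, 16, 50):
        p = detection_probability(n, genome_equivalents=3000, vaf=0.0001,
                                  min_molecules=2)
        print(f"{n:>2} tracked mutations: P(detect) = {p:.3f}")
```

Because the expected number of mutant molecules scales with the number of tracked loci, tracking more mutations lowers the VAF at which the sample as a whole can be called positive, even though the per-locus LOD is unchanged.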

Experimental Protocols for Key Methodologies

Protocol 1: Tumor-Informed ctDNA Analysis for MRD Detection

This protocol outlines a method for detecting Minimal Residual Disease (MRD) with high sensitivity and specificity by first sequencing the tumor to identify patient-specific mutations [4].

1. Sample Collection and Processing:

- Tissue Sample: Obtain formalin-fixed paraffin-embedded (FFPE) tumor tissue block or slides [4].

- Blood Sample: Draw blood into cell-stabilizing tubes (e.g., Streck tubes). Process within the time window specified by the tube manufacturer (typically 3-5 days) [3].

- Plasma Separation: Centrifuge blood using a two-step protocol (e.g., 1600 × g for 10 min, then transfer plasma and spin at 16,000 × g for 10 min) to remove residual cells [3].

- cfDNA Extraction: Extract cfDNA from plasma using a commercial kit optimized for short fragments. Quantify yield using a fluorometer [3].

2. Whole Exome Sequencing (WES) of Tumor and Normal DNA:

- Perform WES on DNA from the FFPE tumor and a matched normal sample (e.g., buffy coat).

- Bioinformatic Analysis: Identify somatic single nucleotide variants (SNVs) and structural variants (SVs) specific to the tumor. Select a set of 16-50 high-confidence, clonal mutations for tracking in plasma [4].

3. Custom Panel Design and ctDNA Sequencing:

- Design a custom NGS panel (e.g., a multiplex PCR panel) targeting the selected patient-specific mutations.

- Sequence the plasma cfDNA using this custom panel with high depth (e.g., >100,000x coverage) [4].

- Use Unique Molecular Identifiers (UMIs) to tag individual DNA molecules before amplification to correct for PCR errors and sequencing artifacts [5].

4. Bioinformatic Analysis and MRD Calling:

- Generate consensus sequences from reads sharing the same UMI to eliminate errors.

- Align sequences to the reference genome and count mutant molecules.

- A sample is classified as MRD-positive if ≥2 tumor-derived molecules are detected across the set of tracked mutations. The result is quantified as Mean Tumor Molecules per mL (MTM/mL) [4].
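The calling rule in step 4 can be expressed in a few lines. The sketch below is a minimal illustration of the "at least two tumor-derived molecules" threshold described above; the input layout (one record per error-corrected consensus molecule) and the function name are assumptions, and the MTM/mL quantification is not reproduced because its formula is not given here.

```python
from typing import Dict, Iterable, Mapping, Tuple


def call_mrd(consensus_observations: Iterable[Tuple[str, str]],
             tracked_variants: Mapping[str, str],
             min_molecules: int = 2) -> Dict[str, object]:
    """Classify a plasma sample as MRD-positive or MRD-negative.

    consensus_observations: (variant_id, observed_allele) pairs, one per
        consensus molecule covering a tracked site.
    tracked_variants: variant_id -> tumor-specific alternate allele.
    """
    # Count consensus molecules that carry the tumor-specific allele.
    mutant_molecules = sum(
        1 for variant_id, allele in consensus_observations
        if tracked_variants.get(variant_id) == allele
    )
    return {
        "mutant_molecules": mutant_molecules,
        "mrd_positive": mutant_molecules >= min_molecules,
    }
```

Pooling evidence across all tracked sites, rather than requiring a threshold at each site, is what lets a personalized panel call samples in which no single mutation is observed more than once.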

Protocol 2: Duplex Sequencing for Ultra-Error-Suppressed Detection

This protocol describes a high-accuracy sequencing method that sequences both strands of a DNA duplex to achieve an extremely low error rate, ideal for detecting very low VAF variants [5].

1. Library Preparation with Double-Stranded Barcoding:

- Extract cfDNA as described in Protocol 1.

- During library preparation, use UMIs that uniquely tag each individual double-stranded DNA molecule. The two strands of the same original molecule receive the same UMI.

2. Sequencing and Strand Separation:

- Perform high-depth NGS on the prepared libraries.

- Bioinformatically separate the sequencing reads based on their UMIs, grouping reads that originated from the same original DNA molecule.

3. Consensus Sequence Generation:

- For each group of reads from the same original molecule, generate a consensus sequence for each of the two strands.

- A true mutation is called only if it is present in the consensus sequences of both strands at the same genomic position. Errors introduced during PCR or sequencing, which typically affect only one strand, are thus filtered out [5].
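The two-level consensus logic above can be sketched as follows. This is a toy illustration rather than a production duplex caller: it assumes reads have already been grouped by UMI, trimmed to the same coordinates, and converted to reference orientation, with the strand of origin recorded as "+" or "-". The thresholds and function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Tuple


def strand_consensus(seqs: List[str], min_fraction: float = 0.7) -> str:
    """Majority base per position; 'N' where no base reaches min_fraction."""
    consensus = []
    for column in zip(*seqs):
        base, count = Counter(column).most_common(1)[0]
        consensus.append(base if count / len(column) >= min_fraction else "N")
    return "".join(consensus)


def duplex_consensus(reads: Iterable[Tuple[str, str, str]],
                     min_reads_per_strand: int = 2) -> Dict[str, str]:
    """Build duplex consensus sequences from (umi, strand, sequence) reads.

    A base is kept only when both strand consensuses agree; otherwise 'N'.
    Families lacking enough reads from either strand are dropped.
    """
    families = defaultdict(lambda: {"+": [], "-": []})
    for umi, strand, seq in reads:
        families[umi][strand].append(seq)

    result = {}
    for umi, strands in families.items():
        if min(len(strands["+"]), len(strands["-"])) < min_reads_per_strand:
            continue
        top = strand_consensus(strands["+"])
        bottom = strand_consensus(strands["-"])
        result[umi] = "".join(
            a if a == b and a != "N" else "N" for a, b in zip(top, bottom)
        )
    return result
```

A substitution that appears in only one strand's consensus, as PCR errors and single-strand damage typically do, is masked to "N" instead of being reported as a variant.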

Data Presentation

Table 1: Comparison of Ultrasensitive ctDNA Detection Technologies

| Technology | Key Principle | Reported Sensitivity (LOD) | Key Advantage | Primary Challenge |
|---|---|---|---|---|
| Structural Variant (SV) Assays [1] | Tracks tumor-specific chromosomal rearrangements (e.g., translocations). | <0.01% VAF (parts-per-million) | High specificity; low background in normal cells. | Requires tumor sequencing for breakpoint identification. |
| PhasED-Seq [1] | Targets multiple phased SNVs on a single DNA fragment. | <0.0001% VAF | Extremely high sensitivity for ultra-low tumor fraction. | Complex bioinformatic analysis. |
| Duplex Sequencing [5] | Sequences both strands of DNA duplex; true variants are found on both. | ~0.001% VAF (1000x higher accuracy than NGS) | Extremely low error rate; high confidence in variants. | Inefficient use of reads; higher input DNA requirements. |
| Personalized MRD Assays [4] | Tumor-informed, multiplex PCR tracking 16-50 patient-specific variants. | 0.01% VAF | High sensitivity and specificity; filters CHIP. | Turnaround time of 3-4 weeks for initial assay design. |
| Nanomaterial Electrochemical Sensors [1] | Uses nanomaterials (e.g., graphene) to transduce DNA binding into electrical signals. | Attomolar concentration | Rapid results (minutes); potential for point-of-care use. | Still in research phase; pre-analytical variability. |

Table 2: Sources of False Positives and Recommended Mitigation Strategies

| Source of False Positive | Description | Recommended Mitigation Strategy |
|---|---|---|
| Clonal Hematopoiesis (CHIP) [6] | Somatic mutations from blood cells, common in ATM, CHEK2, DNMT3A. | Sequence paired white blood cell/buffy coat and filter overlapping mutations [6] [4]. |
| Sequencing Errors/Artifacts [1] [5] | Errors introduced during PCR amplification or sequencing. | Use Unique Molecular Identifiers (UMIs) and consensus sequencing [5]. |
| Pre-analytical Variation [3] | White blood cell lysis during transport, adding wild-type DNA. | Use specialized blood collection tubes (Streck, PAXgene) and standardized processing protocols [3]. |
| Index Hopping | Misassignment of reads between samples during multiplex sequencing. | Use unique dual indices (UDIs) and bioinformatic filtering. |
| Cross-Contamination | Physical contamination between samples during processing. | Implement strict laboratory workflows (pre- and post-PCR separation) and use uracil-DNA glycosylase (UDG) treatment. |

Methodology and Workflow Visualizations

[Figure: Tumor-Informed MRD Analysis Workflow]

[Figure: CHIP Mutation Identification Workflow]

[Figure: Duplex Sequencing Error Correction]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for ctDNA Research

| Reagent/Kit | Function | Key Consideration |
|---|---|---|
| Cell-Stabilizing Blood Collection Tubes (e.g., Streck, PAXgene) [3] [4] | Prevents white blood cell lysis during transport/storage, preserving the native ctDNA profile. | Stability windows differ (e.g., up to 5 days); must adhere to manufacturer protocols. |
| cfDNA Extraction Kits (e.g., QIAamp Circulating Nucleic Acid Kit) | Isolates short-fragment cfDNA from plasma with high efficiency and purity. | Optimized for low analyte concentrations; elution volume affects final concentration. |
| Unique Molecular Identifiers (UMIs) [5] | Short random nucleotide sequences used to tag individual DNA molecules before PCR. | Allows for bioinformatic error correction by generating consensus reads from molecules with the same UMI. |
| Hybrid Capture or Multiplex PCR Panels | Enriches for genomic regions of interest from the cfDNA library for targeted sequencing. | Personalized panels (tumor-informed) offer higher sensitivity for MRD than fixed panels [4]. |
| Library Preparation Kits for Low-Input DNA | Converts small amounts of cfDNA into sequencing libraries with high efficiency and minimal bias. | Critical for samples with low total cfDNA yield; should minimize PCR duplicates. |

In circulating tumor DNA (ctDNA) detection research, distinguishing true somatic variants from technical noise is not just a procedural hurdle—it is a fundamental requirement for accurate clinical interpretation. Technical noise, comprising artifacts introduced during sequencing preparation, PCR amplification, and the sequencing process itself, can mimic low-frequency somatic variants, leading to false positives. This challenge is particularly acute in liquid biopsy applications, where the true biological signal from ctDNA can be present at very low allelic fractions, often below 1% in early-stage cancer [5]. The presence of clonal hematopoiesis of indeterminate potential (CHIP) further complicates this landscape, as age-related somatic mutations in hematopoietic cells can be detected in plasma and misinterpreted as tumor-derived variants [6]. This article provides a comprehensive troubleshooting framework to help researchers identify, mitigate, and correct for these technical artifacts, thereby enhancing the reliability of ctDNA analysis in both research and clinical settings.

FAQ: Understanding Technical Artifacts in ctDNA Sequencing

What are the primary sources of technical artifacts in ctDNA sequencing?

Technical artifacts originate from multiple steps in the sequencing workflow. The major sources include:

- PCR artifacts introduced during amplification, including stochastic fluctuations in early cycles, polymerase errors in later cycles, and GC-content bias [7].

- Library preparation artifacts caused by steps such as acoustic shearing of DNA, which can induce specific base substitutions, including C:G > A:T and C:G > G:C transversions, due to guanine oxidation [8].

- Sequencing run errors from the sequencer chemistry itself, though these are largely removable by quality score filtering [8].

- Biological confounders such as CHIP, where somatic mutations from blood cells are detected in plasma and mistaken for tumor-derived variants [6].

Why is low-input ctDNA particularly vulnerable to technical artifacts?

Low-input ctDNA samples are highly susceptible to PCR stochasticity, the random fluctuation in which molecules are amplified in early PCR cycles. When starting with minimal template copies, this stochastic selection process can dramatically skew sequence representation after amplification [7]. In later PCR cycles, polymerase errors become more common but typically remain at low copy numbers. The combination of these factors means that artifacts can constitute a significant proportion of the final sequencing data when the actual biological target is scarce, effectively lowering the signal-to-noise ratio and making true variant calling more challenging.

How can CHIP be distinguished from true tumor-derived mutations?

CHIP represents a significant source of biological false positives in ctDNA research. To distinguish CHIP mutations from tumor variants:

- Paired sample analysis: Sequence matched whole-blood or peripheral blood leukocyte (PBL) DNA alongside plasma samples. Mutations present in both plasma and cellular blood components likely originate from hematopoietic cells [6] [8].

- Age consideration: CHIP is age-related and more common in older populations. In one study, patients with ATM and CHEK2 mutations detected only in ctDNA (not tumor tissue) had a higher median age (74 years) compared to those with tissue-confirmed mutations (70-71 years) [6].

- Gene-specific suspicion: Be particularly cautious with genes commonly affected by CHIP, including ATM, CHEK2, DNMT3A, TET2, and ASXL1 [6].

What are the key indicators of poor-quality sequencing data?

Reviewing sequencing chromatograms is essential for identifying poor-quality data. Key indicators include:

- High baseline noise: Excessive multicolored peaks at baseline levels, making true peaks difficult to identify [9].

- Mis-spaced peaks: Irregular spacing between peaks, often indicating erroneous base calling or insertion artifacts [9].

- Declining resolution later in the read: Peak broadening and decreased separation between peaks in later cycles is normal, but excessive degradation makes basecalls unreliable [9].

- Heterozygous (double) peaks: Single positions showing two different colored peaks may indicate true heterozygosity but can be misinterpreted by basecallers [9].

Troubleshooting Guide: Identifying and Resolving Common Issues

High Duplicate Read Rates and Low Library Complexity

| Symptoms | Possible Causes | Solutions |
|---|---|---|
| High percentage of PCR duplicates in sequencing output [10]. | Excessive PCR cycles leading to overamplification [7] [10]. | Reduce number of PCR cycles; optimize cycle number for input DNA amount [11]. |
| Low complexity libraries despite sufficient starting material. | Poor fragmentation or inefficient ligation [10]. | Optimize fragmentation parameters; verify fragment size distribution before proceeding [10]. |
| Low yield leading to required overamplification. | PCR inhibitors in template DNA (phenol, salts, etc.) [12] [11]. | Re-purify input DNA using clean columns or beads; use polymerases tolerant to inhibitors [11]. |

Elevated Background Noise and False Positive Variant Calls

| Symptoms | Possible Causes | Solutions |
|---|---|---|
| High number of low-allelic fraction variants that don't validate. | DNA damage during library prep (e.g., cytosine deamination) [8]. | Use unique molecular identifiers (UMIs) to distinguish true mutations from artifacts [5]. |
| Specific transversion patterns (C:G > A:T, C:G > G:C). | Oxidative DNA damage during acoustic shearing [8]. | Use milder shearing conditions or enzyme-based fragmentation; consider blood collection tubes with preservatives. |
| Artifactual variants particularly in GC-rich regions. | PCR bias due to variable amplification efficiencies [7]. | Use polymerases formulated for high-GC content; add PCR enhancers/co-solvents [12] [11]. |
| Apparent mutations in genes associated with CHIP (ATM, CHEK2). | Clonal hematopoiesis detected in plasma [6]. | Sequence matched whole-blood DNA to identify and filter CHIP mutations [6]. |

Poor Library Yield and Failed Libraries

| Symptoms | Possible Causes | Solutions |
|---|---|---|
| Low final library concentration [10]. | Poor input DNA quality or quantity [10] [11]. | Accurately quantify input DNA using fluorometric methods (Qubit) rather than UV absorbance [10]. |
| Adapter dimer peaks in electropherogram [10]. | Inefficient ligation or incorrect adapter concentration [10]. | Titrate adapter:insert molar ratios; ensure fresh ligase and optimal reaction conditions [10]. |
| No or minimal amplification products. | PCR inhibitors carried over from sample collection [12]. | Dilute template to reduce inhibitor concentration; use polymerases with high tolerance to inhibitors [12]. |
| Smearing or non-specific bands on gels. | Suboptimal PCR conditions [12]. | Increase annealing temperature; use hot-start polymerases; redesign primers [12] [11]. |

Experimental Protocols for Artifact Identification and Mitigation

Protocol: Paired Plasma-Whole Blood Analysis to Identify CHIP

Purpose: To distinguish true tumor-derived ctDNA mutations from somatic mutations originating from hematopoietic cells (CHIP).

Materials:

- Blood collection tubes (e.g., Streck Cell-Free DNA BCT or K2-EDTA)

- Plasma separation equipment (centrifuge)

- DNA extraction kits for plasma and whole blood

- PCR reagents, UMI-adapter ligation kit

- High-sensitivity sequencing platform

Methodology:

- Sample Collection: Collect peripheral blood in appropriate tubes. Process plasma within recommended timeframes to prevent cell lysis.

- Plasma Separation: Perform double centrifugation (e.g., 800 × g for 10 minutes, then 14,000 × g for 10 minutes) to obtain cell-free plasma.

- Cell Pellet Retention: Retain the cellular pellet from the first centrifugation for matched whole-blood DNA extraction.

- DNA Extraction: Extract cfDNA from plasma using a silica-membrane or bead-based method. Extract genomic DNA from the cellular pellet using standard methods.

- Library Preparation: Prepare sequencing libraries from both plasma cfDNA and cellular gDNA using identical protocols with UMIs.

- Sequencing and Analysis: Sequence both libraries. Identify variants present in both plasma and cellular DNA as CHIP-derived. Filter these from subsequent tumor-specific variant calls [6] [8].

Troubleshooting Notes: Consider using specialized collection tubes with preservatives if immediate processing isn't possible. Ensure sufficient sequencing depth for both samples to detect low-frequency CHIP mutations.
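The filtering step in this protocol amounts to a set comparison between plasma and matched blood-cell variant calls. The sketch below is one minimal way to express it; the dictionary layout, the function name, and the `min_wbc_vaf` threshold are illustrative assumptions rather than values from the cited studies, and any coordinates used with it are the caller's own.

```python
from typing import Dict, Hashable, Tuple

Variant = Hashable  # e.g. a (chrom, pos, ref, alt) tuple


def partition_by_wbc_support(
    plasma_vafs: Dict[Variant, float],
    wbc_vafs: Dict[Variant, float],
    min_wbc_vaf: float = 0.001,  # illustrative threshold; tune per assay
) -> Tuple[Dict[Variant, float], Dict[Variant, float]]:
    """Split plasma variants into tumor candidates and likely CHIP variants.

    A plasma variant also seen in matched blood-cell DNA at or above
    min_wbc_vaf is treated as likely hematopoietic in origin.
    """
    tumor_candidates: Dict[Variant, float] = {}
    likely_hematopoietic: Dict[Variant, float] = {}
    for variant, plasma_vaf in plasma_vafs.items():
        if wbc_vafs.get(variant, 0.0) >= min_wbc_vaf:
            likely_hematopoietic[variant] = plasma_vaf
        else:
            tumor_candidates[variant] = plasma_vaf
    return tumor_candidates, likely_hematopoietic
```

The comparison is only as good as the depth of the blood-cell library: a CHIP clone below that library's detection limit will pass through as a tumor candidate, which is why the note above recommends sufficient depth for both samples.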

Protocol: UMI-Based Error Correction for Low-Frequency Variant Detection

Purpose: To distinguish true low-frequency variants from PCR and sequencing errors using unique molecular identifiers.

Materials:

- UMI-containing adapters

- High-fidelity DNA polymerase

- Standard NGS library preparation reagents

- Bioinformatics tools for UMI consensus calling

Methodology:

- Library Preparation with UMIs: During library prep, ligate UMI-containing adapters to DNA fragments. Each original DNA molecule receives a unique random barcode.

- PCR Amplification: Amplify the library with a high-fidelity polymerase. Keep the cycle number as low as practical (typically 10-16 cycles) to limit polymerase errors and duplicate reads.

- Sequencing: Sequence the library with sufficient depth to ensure multiple reads per original molecule.

- Consensus Calling: Bioinformatically group reads originating from the same original DNA molecule using their UMIs. Generate a consensus sequence for each molecule, requiring mutations to be present in multiple reads from the same original molecule.

- Variant Calling: Call variants based on consensus sequences rather than individual reads, dramatically reducing false positives from polymerase errors [5].

Advanced Applications: For ultra-high accuracy, use duplex sequencing methods that tag and sequence both strands of DNA duplexes, requiring mutations to be present on both strands for validation [5].
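The consensus and variant-calling steps of the single-strand UMI workflow can be sketched as below. This is a simplified illustration, assuming reads in a UMI family cover identical coordinates and share an orientation; the family-size and agreement thresholds, like the function names, are hypothetical defaults rather than recommended settings.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def umi_consensus(reads: Iterable[Tuple[str, str]],
                  min_family_size: int = 3,
                  min_fraction: float = 0.9) -> Dict[str, str]:
    """Collapse (umi, sequence) reads into one consensus per UMI family."""
    families = defaultdict(list)
    for umi, seq in reads:
        families[umi].append(seq)

    consensus = {}
    for umi, seqs in families.items():
        if len(seqs) < min_family_size:
            continue  # too few reads to out-vote a PCR or sequencing error
        bases = []
        for column in zip(*seqs):
            base, count = Counter(column).most_common(1)[0]
            bases.append(base if count / len(seqs) >= min_fraction else "N")
        consensus[umi] = "".join(bases)
    return consensus


def count_variant_molecules(consensus: Dict[str, str],
                            reference: str) -> Counter:
    """Count consensus molecules supporting each (pos, ref, alt) mismatch."""
    counts: Counter = Counter()
    for seq in consensus.values():
        for pos, (ref_base, base) in enumerate(zip(reference, seq)):
            if base not in ("N", ref_base):
                counts[(pos, ref_base, base)] += 1
    return counts
```

Counting molecules instead of reads also removes the distortion caused by uneven amplification, since a heavily duplicated family still contributes a single observation.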

Data Interpretation and Analysis Strategies

Quantitative Characterization of Background Noise

Understanding the expected baseline noise in sequencing data is crucial for setting appropriate variant calling thresholds. The following table summarizes key error rates and their common causes based on empirical data:

| Error Type | Typical Frequency | Primary Contributing Factors | Potential Mitigation Strategies |
|---|---|---|---|
| C:G > A:T Transversions | High (2/3 attributed to shearing) | Guanine oxidation during acoustic shearing [8]. | Enzyme-based fragmentation; antioxidant additives. |
| C > T Transitions | Variable (~20% from hybrid selection) | Cytosine deamination during library prep [8]. | UMI-based error correction; lower-temperature incubation. |
| A > G / A > T Substitutions | Localized to fragment ends | DNA breakage during shearing [8]. | Optimized shearing conditions; fragment end trimming. |
| PCR Stochasticity | Major source of skew in low-input | Random sampling in early PCR cycles [7]. | Increase input DNA; reduce PCR cycles; use digital PCR. |
| Polymerase Errors | Common in later PCR cycles | Misincorporation by DNA polymerase [7]. | Use high-fidelity polymerases; UMI consensus calling. |
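One practical way to use the signatures in this table is to tabulate the substitution spectrum of low-frequency calls and look for an excess of a damage-associated class. The sketch below collapses each substitution with its reverse complement (so G>T and C>A both count as C:G > A:T); the function names are illustrative, and what counts as an "excess" is left to the analyst because no threshold is given here.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A"}


def substitution_class(ref: str, alt: str) -> str:
    """Strand-collapsed substitution label, e.g. ('G', 'T') -> 'C:G>A:T'."""
    if ref in ("A", "G"):
        # Report relative to the pyrimidine of the reference base pair.
        ref, alt = COMPLEMENT[ref], COMPLEMENT[alt]
    return f"{ref}:{COMPLEMENT[ref]}>{alt}:{COMPLEMENT[alt]}"


def substitution_spectrum(calls: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Fraction of (ref, alt) single-base calls in each substitution class."""
    counts = Counter(substitution_class(ref, alt) for ref, alt in calls)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}
```

A spectrum dominated by C:G>A:T among unvalidated low-VAF calls would be consistent with the oxidation artifact described above, whereas a tumor-derived signal would not be expected to follow the library-preparation damage pattern.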

Chromatogram Interpretation Guide

Systematic review of sequencing chromatograms is essential for identifying problematic data:

- High-Quality Sequence: Characterized by evenly spaced, single-color peaks with minimal baseline noise. Peak heights may vary up to 3-fold, which is normal [9].

- Problematic Indicators:

  - Mis-spaced peaks: Suggest potential base calling errors or insertion artifacts.

  - Heterozygous peaks: Single positions showing two different colored peaks may represent true heterozygosity or technical artifacts.

  - Declining quality at read ends: Decreasing resolution at later cycles is normal, but requires cautious interpretation [9].

- Basecaller Errors: Automated basecallers may mis-call nucleotides, especially in regions with:

  - G-A dinucleotides (often show extra spacing)

  - Low peak heights amid noisy baselines

  - Late-cycle sequences with poor resolution [9]

Manual verification is particularly important for variant positions and their immediate context.

Visual Guide to Artifact Identification and Mitigation

This decision workflow helps systematically classify potential variants based on their characteristics and laboratory observations.

Experimental Strategy to Minimize Technical Artifacts

This experimental strategy outlines key steps in both wet lab and computational processes to minimize technical artifacts throughout the ctDNA analysis workflow.

Research Reagent Solutions for Artifact Reduction

The following table provides essential reagents and their specific functions in mitigating technical artifacts:

| Reagent Type | Specific Examples | Function in Artifact Reduction | Application Notes |
|---|---|---|---|
| High-Fidelity Polymerases | PrimeSTAR HS, Q5 High-Fidelity | Reduced misincorporation errors during amplification [12] [11]. | Use hot-start versions to prevent nonspecific amplification. |
| UMI Adapters | IDT for Illumina, Twist UMI | Enable consensus sequencing to distinguish true variants from artifacts [5]. | Critical for low-frequency variant detection; increases sequencing requirements. |
| Fragmentation Enzymes | Nextera Tagmentase | Alternative to acoustic shearing (e.g., on Covaris instruments) to reduce oxidation artifacts [8]. | Enzyme-based methods avoid oxidative damage associated with shearing. |
| GC-Rich Additives | GC Enhancer, DMSO, betaine | Improve amplification efficiency in GC-rich regions, reducing bias [12] [11]. | Optimize concentration for each template; test different additives. |
| Specialized Blood Collection Tubes | Streck Cell-Free DNA BCT, PAXgene | Preserve blood samples and prevent leukocyte lysis and gDNA release [6]. | Essential for CHIP distinction; enables sample transport without processing. |
| Bead-Based Cleanup Kits | AMPure XP, NucleoSpin | Remove adapter dimers and perform size selection to improve library quality [10]. | Critical for removing ligation artifacts; optimize bead:sample ratio. |

Frequently Asked Questions (FAQs)

Q1: What are the most critical pre-analytical factors that can lead to false-positive results in ctDNA detection? The most critical pre-analytical factors include the selection of blood collection tubes and handling time, the efficiency of cfDNA extraction, and the prevention of in vitro DNA damage. Using EDTA tubes without proper processing within a few hours can lead to leukocyte lysis and the release of wild-type genomic DNA, diluting the ctDNA fraction and increasing background noise. Inefficient extraction kits can cause selective loss of short cfDNA fragments, while prolonged sample storage or improper temperature can introduce oxidative damage that mimics true mutations during sequencing [13] [14].

Q2: How quickly should plasma be separated from whole blood, and why is this so important? Plasma should be separated from whole blood within a few hours of collection—optimally within 2 to 6 hours. This rapid processing is crucial because delays can lead to the lysis of white blood cells in the sample. This lysis releases large quantities of wild-type genomic DNA, which drastically dilutes the already scarce circulating tumor DNA (ctDNA). This dilution lowers the variant allele frequency (VAF) of true mutations, making them harder to distinguish from technical background noise and significantly increasing the risk of false-negative results [13].
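The dilution effect described in Q2 is simple arithmetic: leaked genomic DNA adds wild-type copies to the denominator while the number of mutant copies stays fixed. The sketch below assumes copy number is proportional to DNA mass and that the leaked DNA carries no mutant alleles; the function name is illustrative.

```python
def diluted_vaf(original_vaf: float,
                cfdna_ng: float,
                leaked_gdna_ng: float) -> float:
    """VAF after wild-type genomic DNA from lysed leukocytes is added.

    original_vaf is a fraction (0.001 == 0.1%); masses are per mL of plasma.
    """
    if cfdna_ng <= 0 or leaked_gdna_ng < 0:
        raise ValueError("cfdna_ng must be > 0 and leaked_gdna_ng must be >= 0")
    # Mutant copies are unchanged; total copies grow with the leaked gDNA.
    return original_vaf * cfdna_ng / (cfdna_ng + leaked_gdna_ng)


if __name__ == "__main__":
    for leaked in (0.0, 10.0, 40.0):
        vaf = diluted_vaf(0.001, cfdna_ng=10.0, leaked_gdna_ng=leaked)
        print(f"{leaked:>4.0f} ng leaked gDNA -> VAF {vaf:.4%}")
```

Under these assumptions, a sample that starts at 0.1% VAF drops to 0.05% once leaked gDNA equals the original cfDNA mass, which can push a variant below an assay's limit of detection.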

Q3: Can the choice of blood collection tube itself impact my ctDNA results? Yes, absolutely. The choice of collection tube is a fundamental pre-analytical decision.

- K2-EDTA tubes: Are widely used but require plasma separation within a strict timeframe (a few hours) to prevent cell lysis [13].

- Cell-stabilizing tubes: Specialized tubes designed to preserve blood cell integrity for longer periods (e.g., up to several days) are available. These are highly recommended when immediate processing is not feasible, as they maintain sample quality and reduce background variability [13].

Q4: What is the purpose of molecular barcodes in ctDNA sequencing, and how do they reduce errors? Molecular barcodes, also known as unique identifiers (UIDs) or unique molecular identifiers (UMIs), are short, random DNA sequences ligated to individual cfDNA molecules before any amplification steps. They function as unique molecular tags. By tracking all PCR-amplified descendants of the original molecule, bioinformatic pipelines can generate a consensus sequence. This process effectively filters out errors that are randomly introduced during library preparation, PCR amplification, or sequencing, thereby suppressing false positives and allowing for the accurate detection of true low-frequency variants [14] [15].

Q5: Our lab is validating a new ctDNA panel. How many healthy donor samples are recommended for establishing a background error model? While there is no universal mandate, studies have shown that using a cohort of around 12-14 healthy donor samples is a practical and effective approach for characterizing the assay-specific background error profile. This sample size provides sufficient data to model position-specific and sequence context-specific errors, which can then be applied to polish and correct data from patient samples, enhancing specificity [16]. A Bayesian statistical approach can further improve the robustness of background error estimation, especially when dealing with small sample sizes [16].
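A background error model of the kind described in Q5 can be sketched as a per-position error rate pooled over healthy donors, followed by a test of whether a patient's alternate-allele count exceeds what that rate predicts. The example below is a deliberately simple stand-in, not the method of any cited tool: it uses a Beta prior expressed as pseudo-counts (the `prior_alt` and `prior_total` defaults are arbitrary illustrative values) and a plain binomial tail probability.

```python
import math
from typing import Sequence


def posterior_error_rate(alt_counts: Sequence[int],
                         depths: Sequence[int],
                         prior_alt: float = 1.0,
                         prior_total: float = 10000.0) -> float:
    """Posterior-mean error rate at one position, pooled across donors.

    The prior acts as pseudo-counts, keeping the estimate above zero
    when few donors are available.
    """
    return (sum(alt_counts) + prior_alt) / (sum(depths) + prior_total)


def binomial_tail(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), computed in log space."""
    if k <= 0:
        return 1.0
    below = 0.0
    for i in range(k):
        log_pmf = (math.lgamma(n + 1) - math.lgamma(i + 1)
                   - math.lgamma(n - i + 1)
                   + i * math.log(p) + (n - i) * math.log1p(-p))
        below += math.exp(log_pmf)
    return max(0.0, 1.0 - below)


def exceeds_background(alt_count: int, depth: int, error_rate: float,
                       alpha: float = 1e-4) -> bool:
    """True if the observed alt count is unlikely under background error."""
    return binomial_tail(alt_count, depth, error_rate) < alpha
```

In practice the significance threshold would need to account for the number of positions tested, and position-specific rates are most useful when healthy-donor libraries are prepared with exactly the same workflow as the patient samples.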

Troubleshooting Guide

Table 1: Common Pre-analytical Issues and Corrective Actions

| Problem Area | Specific Issue | Potential Consequence | Corrective Action |
|---|---|---|---|
| Sample Collection | Use of inappropriate collection tube; Prolonged hold time before processing. | Leukocyte lysis, gDNA contamination, false negatives. | Use cell-stabilizing tubes for extended holds; Process EDTA tubes within 2-6 hours of draw [13]. |
| Plasma Processing | Incomplete centrifugation; Multiple freeze-thaw cycles of plasma. | Cellular contamination; Degradation of cfDNA, fragmentation. | Perform double centrifugation (e.g., 1,600-3,000 × g); Aliquot plasma to avoid repeated thawing [13]. |
| cfDNA Extraction | Use of methods with low recovery of short fragments. | Loss of ctDNA (which is often shorter), reduced sensitivity. | Select and validate kits optimized for short-fragment recovery [13] [17]. |
| Library Prep & Sequencing | Oxidative DNA damage during hybridization capture. | G>T transversion artifacts, false positives. | Optimize hybridization time; Employ error-suppression bioinformatics tools (e.g., iDES, TNER) [14] [16]. |
| Quality Control | Inaccurate quantification of low-concentration cfDNA. | Suboptimal sequencing input, failed libraries. | Use fluorescent-based assays (e.g., Qubit) over UV spectrometry for accurate quantitation [17]. |

Table 2: Assay Characteristics and Their Impact on Variant Calling

| Assay Characteristic | Performance Range | Impact on Variant Calling |

|---|---|---|

| cfDNA Input | Low (<20 ng), Med (20-50 ng), High (>50 ng) | Sensitivity drops significantly with low inputs, particularly for VAFs <0.5%. |

| Variant Allele Frequency (VAF) | Low (0.1-0.5%), Intermediate (0.5-2.5%) | All assays show substantially higher sensitivity in the intermediate VAF range. |

| Sequencing Depth | <5,000x to >10,000x | Higher depth (>10,000x) generally enables better detection of low-frequency variants. |

| On-target Rate | ≥50% (considered acceptable) | Lower on-target rates, often associated with low cfDNA input, reduce assay efficiency. |

| Extraction Efficiency | Variation between assays (e.g., 16% to >90%) | Low extraction efficiency directly reduces the number of molecules available for sequencing. |

Experimental Protocols for Key Methodologies

Protocol 1: Implementing a Molecular Barcoding Workflow for Error Suppression

This protocol is adapted from methods used to achieve high specificity in detecting low-frequency variants [14] [15].

1. Adapter Ligation:

- Use sequencing adapters that incorporate a combination of exogenous and endogenous barcodes.

- A recommended design includes:

  - A 4-base degenerate UID in the index region for single-strand tracking.

  - A 2-base UID on each end of the insert, sequenced as part of the main read, for double-stranded (duplex) tracking.

- Ligate these barcoded adapters to each end of the purified cfDNA fragments using a high-efficiency DNA ligase.

2. Library Amplification and Target Enrichment:

- Amplify the barcoded library with a low-cycle PCR to minimize the introduction of polymerase errors.

- Enrich for your target regions using hybrid capture with biotinylated baits. Note that prolonged hybridization times (e.g., up to 3 days) can increase oxidative damage artifacts [14].

3. Sequencing and Bioinformatics Analysis:

- Sequence the library to a high deduplicated mean depth (e.g., >10,000x) to ensure sufficient coverage of original molecules.

- Process the data through a bioinformatic pipeline that:

  - Groups reads by their barcode and mapping coordinates.

  - Generates a consensus sequence for each unique molecule.

  - For duplex sequencing, pairs the consensus sequences from the two complementary strands to achieve the highest possible accuracy.
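The grouping and consensus steps above can be sketched in a few lines. This is a minimal illustration and not the pipeline used in [14] [15]: the read tuple layout, the function name `call_consensus`, and the `min_family_size` and `min_agreement` thresholds are all assumptions chosen for the example, and duplex strand pairing is omitted.

```python
from collections import Counter, defaultdict

def call_consensus(reads, min_family_size=3, min_agreement=0.9):
    """Collapse reads into one consensus sequence per barcode family.

    reads: iterable of (umi, chrom, start, sequence) tuples (hypothetical layout).
    Reads sharing a UMI and mapping coordinates are treated as PCR copies
    of a single original cfDNA molecule.
    """
    families = defaultdict(list)
    for umi, chrom, start, seq in reads:
        families[(umi, chrom, start)].append(seq)

    consensus = {}
    for key, seqs in families.items():
        if len(seqs) < min_family_size:
            continue  # too few copies to outvote a random error
        length = min(len(s) for s in seqs)
        bases = []
        for i in range(length):
            base, count = Counter(s[i] for s in seqs).most_common(1)[0]
            # Mask positions where the family does not agree strongly enough.
            bases.append(base if count / len(seqs) >= min_agreement else "N")
        consensus[key] = "".join(bases)
    return consensus

reads = [
    ("ACGT", "chr7", 100, "TTGACCA"),
    ("ACGT", "chr7", 100, "TTGACCA"),
    ("ACGT", "chr7", 100, "TTGTCCA"),  # isolated error in one PCR copy
    ("GGCA", "chr7", 100, "TTGACCA"),  # singleton family, discarded
]
lenient = call_consensus(reads, min_agreement=0.6)  # majority vote wins
strict = call_consensus(reads)                      # disagreement is masked
```

With the lenient threshold the isolated error is outvoted; with the stricter default the disputed position is masked as `N` rather than called, which trades some sensitivity for specificity.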

Protocol 2: Establishing a Background Error Model with Healthy Donor Samples

This protocol outlines the use of the TNER (Tri-Nucleotide Error Reducer) method to create a robust background model, which is particularly effective with small sample sizes [16].

1. Data Collection:

- Sequence cfDNA from a cohort of healthy donors (n ≥ 12 is a practical target) using your validated ctDNA assay and standard workflow.

- Generate a file of all observed "mutations" in these healthy samples, which represent your technical background noise.

2. Model Estimation:

- Categorize every potential base substitution in your target panel into one of the 96 possible tri-nucleotide contexts (TNCs). This accounts for the mutated base and its immediate 5' and 3' neighboring bases.

- For each TNC group $i$, model the number of error reads $X$ at base position $j$ with coverage $N$ as a binomial distribution: $X_{ij} \sim \mathrm{Binom}(N_j, \pi_{ij})$, where $\pi_{ij}$ is the position-specific error rate.

- Apply a Bayesian framework with a Beta prior distribution for $\pi$, using the method of moments to estimate the prior parameters from the average mutation error rate and variance within each TNC across all healthy samples.

3. Application to Patient Data:

- For each position in a patient sample, calculate a posterior mean estimate of the background error rate. This is a weighted average (shrinkage estimator) of the global TNC error rate and the observed error rate at that specific position.

- Use this robust, position-aware background estimate to distinguish true low-frequency variants from technical artifacts in patient cfDNA sequencing data.
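The shrinkage estimate described in steps 2 and 3 can be illustrated with a small Beta-binomial sketch. It is not the TNER implementation: the function names, the use of population variance, and the example numbers are assumptions made for illustration only.

```python
from statistics import mean, pvariance

def beta_prior_from_moments(rates):
    """Method-of-moments Beta(alpha, beta) prior from per-position error
    rates observed within one tri-nucleotide context in healthy donors."""
    m = mean(rates)
    v = pvariance(rates)
    if not 0 < v < m * (1 - m):
        raise ValueError("variance incompatible with a Beta distribution")
    common = m * (1 - m) / v - 1
    return m * common, (1 - m) * common

def posterior_error_rate(alpha, beta, error_reads, coverage):
    """Posterior mean of the position-specific error rate.

    Equivalent to a weighted average of the prior mean alpha / (alpha + beta)
    and the observed rate error_reads / coverage, with more weight on the
    observed rate as coverage grows.
    """
    return (alpha + error_reads) / (alpha + beta + coverage)

# Hypothetical error rates for positions sharing one TNC.
tnc_rates = [1e-4, 2e-4, 3e-4]
alpha, beta = beta_prior_from_moments(tnc_rates)

# A position with 10 error reads at 10,000x in the pooled healthy data.
estimate = posterior_error_rate(alpha, beta, error_reads=10, coverage=10_000)
```

The estimate falls between the context-wide mean (2e-4 here) and the raw positional rate (1e-3), which is what stabilizes the background model when only a dozen or so healthy samples are available.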

Workflow Visualization

Diagram 1: Pre-analytical cfDNA Processing Workflow

Diagram 2: Molecular Barcoding & Error Suppression

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Robust ctDNA Analysis

| Item | Function & Importance | Key Considerations |

|---|---|---|

| Cell-Stabilizing Blood Tubes | Preserves leukocyte integrity for several days at room temperature, preventing gDNA contamination. | Critical for multi-center studies or when rapid processing is logistically challenging [13]. |

| Short-Fragment Optimized cfDNA Kits | Maximizes recovery of short (~166 bp) cfDNA fragments, which are enriched for tumor-derived DNA. | Kit performance varies; extraction efficiency should be validated as it directly impacts input [13] [17]. |

| Molecular Barcoded Adapters | Tags each original DNA molecule with a unique identifier for bioinformatic error suppression. | Look for designs that support both single-strand (SSCS) and double-strand (DSCS) consensus sequencing [14] [15]. |

| Biotinylated Hybrid-Capture Baits | Enriches for specific genomic regions of interest from the complex cfDNA library. | In-house or commercial bait performance (on-target rate) can vary; oxidative damage can be introduced during long hybridizations [14] [15]. |

| Fluorometric Quantification Kits | Accurately measures low concentrations of cfDNA for optimal library input. | Essential for avoiding under- or over-loading libraries, which affects sequencing quality and variant detection sensitivity [17]. |

FAQ: Understanding the Interference

What is clonal hematopoiesis (CHIP) and why does it interfere with ctDNA analysis?

Clonal hematopoiesis of indeterminate potential (CHIP) is an age-related condition in which hematopoietic stem cells acquire somatic mutations and expand in the blood, without causing overt hematologic cancer [18]. These mutations are frequently detected in genes such as DNMT3A, TET2, ASXL1, JAK2, TP53, and SF3B1 [18]. Since over 80% of cell-free DNA (cfDNA) in healthy individuals originates from hematopoietic cells, these CHIP mutations are released into the bloodstream and can be detected by next-generation sequencing (NGS) assays [19]. This presents a significant biological confounding factor for early cancer detection assays that rely on identifying somatic mutations in cfDNA, as it can be challenging to distinguish whether a detected mutation originates from a clonal hematopoietic cell or a solid tumor [19].

Can benign inflammatory conditions also cause false positives in ctDNA tests?

Yes, emerging evidence indicates that CHIP-associated mutations can alter immune cell function and promote a pro-inflammatory state [18]. For instance, macrophages deficient in TET2 or DNMT3A show increased expression of inflammatory mediators such as IL-6 and IL-1B in response to stimuli [18]. Chronic inflammatory conditions can therefore be associated with clonal expansions, and the mutations shed by those expanded clones add background signal that complicates the accurate detection of tumor-derived DNA.

Which genes commonly mutated in CHIP are most likely to cause false positives?

The most common CHIP mutations occur in DNMT3A (the most frequently mutated), TET2, and ASXL1 [18] [19]. Mutations in these genes are highly prevalent in individuals without cancer. It is important to note that while TP53 mutations are also found in CHIP, they appear to be less common in the cfDNA of healthy individuals, as one study identified only one TP53 mutation in a healthy participant's sample [19]. Activating mutations in oncogenes like KRAS can also originate from CHIP, indicating that the specificity of an oncogenic alteration for a solid tumor may be gene-dependent [19].

At what variant allele frequency (VAF) is CHIP typically detected?

CHIP is formally defined by a variant allele fraction (VAF) of ≥2% (corresponding to ~4% of cells for heterozygous mutations) [18]. However, clonal hematopoiesis variants can be present at much lower frequencies (<0.1% VAF), which poses a significant challenge for detection and filtering [19]. The risk of hematologic cancer and other adverse outcomes increases with clone size, while very small clones (VAF below roughly 1-2%, i.e., 0.01-0.02 expressed as a fraction) have minimal clinical consequence [18].

Troubleshooting Guides & Experimental Protocols

Guide 1: Implementing a CHIP Filtering Strategy

A multi-faceted approach is required to effectively distinguish CHIP-related signals from true tumor-derived mutations.

Step 1: Annotate Mutations Against a CHIP Database

Prior to analysis, curate a list of genes and specific mutations highly associated with CHIP (e.g., specific loss-of-function variants in DNMT3A and TET2). Flag any variants detected in cfDNA that match this database. Be aware that while their presence suggests CHIP, it does not definitively rule out a concurrent tumor [19].

Step 2: Perform Paired White Blood Cell (WBC) Sequencing

This is the most important wet-lab confirmation step. Sequence DNA from the patient's matched white blood cells to the same unique coverage depth as the cfDNA.

- Protocol: Isolate genomic DNA from peripheral blood mononuclear cells (PBMCs) or buffy coat. Use the same targeted NGS panel and bioinformatic pipeline applied to the cfDNA analysis. A mutation present in both the cfDNA and the matched WBC sample is highly likely to be of clonal hematopoietic origin [19].

- Technical Note: Conventional WBC sequencing (e.g., whole-exome sequencing at ~415x depth) is insufficient to detect low-frequency CHIP variants (<0.1% VAF). To achieve 95% sensitivity for a variant at 0.1% VAF, a unique (deduplicated) sequencing depth of approximately 3,000x is required [19].
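The depth figure in the technical note can be reproduced with a simple binomial argument. The sketch below assumes that a single variant-supporting read counts as detection and that reads are independent draws; real callers usually demand more supporting reads, so treat the result as a lower bound. The function name is hypothetical.

```python
import math

def min_depth_for_detection(vaf, sensitivity=0.95):
    """Smallest unique depth N such that P(at least one variant read) >= sensitivity.

    Derived from 1 - (1 - vaf)**N >= sensitivity.
    """
    if not 0 < vaf < 1 or not 0 < sensitivity < 1:
        raise ValueError("vaf and sensitivity must lie strictly between 0 and 1")
    return math.ceil(math.log(1 - sensitivity) / math.log(1 - vaf))

# VAF of 0.1% expressed as a fraction; the result is close to 3,000x.
depth = min_depth_for_detection(0.001)
```

The same function shows why ~415x coverage falls short: at that depth the chance of sampling even one read from a 0.1% VAF variant is roughly one in three.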

Step 3: Analyze Mutational Function

Scrutinize the functional impact of the variant. The absence of classic oncogene activating mutations (e.g., in KRAS, BRAF) in healthy cfDNA suggests that their detection may be more specific for solid malignancies, though this is not absolute [19]. Filtering out non-activating mutations in CHIP-associated genes can reduce false positives.

Step 4: Correlate with Other Clinical Information

Consider the patient's age, as the prevalence of CHIP increases significantly with age. Also, review any history of non-malignant conditions linked to CHIP, such as cardiovascular disease or inflammatory states [18].
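Steps 1 to 3 can be expressed as a small triage function. This is only a sketch of the decision logic under stated assumptions: the class, field names, and output labels are hypothetical, the gene set is taken from the genes named in this guide rather than from a curated variant database, and the clinical correlation in Step 4 is left to human review.

```python
from dataclasses import dataclass
from typing import Optional

# Genes named in this guide as frequently mutated in CHIP. A real database
# would list specific variants, not just gene symbols.
CHIP_GENES = {"DNMT3A", "TET2", "ASXL1", "JAK2", "TP53", "SF3B1"}

@dataclass
class PlasmaVariant:
    gene: str
    found_in_matched_wbc: Optional[bool]  # None means WBC was not sequenced
    activating_oncogene_mutation: bool = False

def triage(variant: PlasmaVariant) -> str:
    # Step 2 outranks the others: presence in matched WBC DNA points to CHIP.
    if variant.found_in_matched_wbc:
        return "likely CHIP"
    chip_gene = variant.gene in CHIP_GENES  # Step 1 annotation
    if variant.found_in_matched_wbc is None:
        if chip_gene:
            return "unresolved: sequence matched WBC"
        return "unconfirmed: no WBC control"
    # From here on the variant is absent from the matched WBC sample.
    if chip_gene and not variant.activating_oncogene_mutation:  # Step 3
        return "review: CHIP gene, absent from WBC"
    return "candidate tumor-derived"
```

A KRAS activating mutation that is absent from matched WBC DNA would come out as a tumor-derived candidate, whereas a TET2 variant without a WBC control stays unresolved.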

The following diagram illustrates the decision-making workflow for a CHIP filtering strategy:

Guide 2: Optimizing Wet-Lab Protocols for Specificity

Technical artifacts and low-input DNA can exacerbate false positive rates. The following protocols focus on improving analytical specificity.

Protocol: Error-Controlled Library Preparation

Use library construction kits that incorporate unique molecular identifiers (UMIs). UMIs are short random sequences ligated to each original DNA molecule before amplification. This allows consensus reads to be built from multiple PCR duplicates, correcting errors introduced during amplification and sequencing.

- Method: After plasma centrifugation and cfDNA extraction, use a commercial library prep kit that supports duplex UMIs (tagging both strands of the DNA molecule). While this approach offers very high specificity, it can reduce library complexity. Single-strand UMI approaches offer a balance between sensitivity and error correction [19].

- Performance: Endogenous duplex barcoding can achieve a background error rate of 2 × 10⁻⁷ errors per base, which is ~50-fold lower than digital error-suppression with single-strand barcoding [19].

Protocol: Adequate cfDNA Input and Sequencing Depth

Sensitivity and specificity decrease dramatically with low cfDNA inputs.

- Recommendation: Use a minimum of 20-30 ng of cfDNA input for library preparation. Studies show that performance varies significantly with inputs below 20 ng [17] [20]. Furthermore, ensure that your final deduplicated sequencing depth is sufficient for your intended limit of detection. For detecting variants at 0.1% VAF, a depth of several thousand-fold is required [19] [17].
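A quick calculation shows why input mass limits low-VAF detection. The sketch below assumes roughly 3.3 pg of DNA per haploid human genome, a commonly used approximation; the function names and the `conversion_efficiency` parameter are hypothetical and stand in for losses during extraction and library preparation.

```python
HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome, in picograms

def genome_equivalents(input_ng):
    """Number of haploid genome copies contained in a given cfDNA mass."""
    return input_ng * 1000 / HAPLOID_GENOME_PG

def expected_mutant_molecules(input_ng, vaf, conversion_efficiency=1.0):
    """Expected count of variant-bearing molecules that reach the sequencer.

    vaf is a fraction (0.001 for 0.1%); conversion_efficiency is the assumed
    share of input molecules converted into sequenceable library.
    """
    return genome_equivalents(input_ng) * vaf * conversion_efficiency

copies_20ng = genome_equivalents(20)                       # about 6,000 copies
mutants_ideal = expected_mutant_molecules(20, 0.001)       # about 6 molecules
mutants_half = expected_mutant_molecules(20, 0.001, 0.5)   # about 3 molecules
```

At 20 ng and 0.1% VAF only a handful of mutant molecules exist per locus even before losses, so no amount of additional sequencing can recover a variant whose molecules were never captured.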

Protocol: Orthogonal Validation

For critical low-frequency variants (e.g., VAF < 0.5%), confirm the result using an orthogonal technology, such as digital PCR (dPCR). This is especially useful for validating potential oncogenic drivers before making clinical decisions.

Quantitative Data on ctDNA Assay Performance

The following tables summarize key performance metrics from recent evaluations of ctDNA assays, which highlight the challenges of low-VAF detection.

Table 1: Assay Sensitivity at Different VAFs and Inputs [17]

| cfDNA Input | VAF 0.1% | VAF 0.5% | VAF 2.5% | Key Challenge |

|---|---|---|---|---|

| Low (<20 ng) | Substantial decrease and variability in sensitivity | Lower sensitivity vs. medium/high input | High sensitivity | High risk of false negatives; low sequencing depth |

| Medium (20-50 ng) | Increased sensitivity vs. low input | ~90% sensitivity or higher for most assays | High sensitivity | Recommended minimum input |

| High (>50 ng) | Best sensitivity | High sensitivity | High sensitivity | Optimal for low-VAF detection |

Table 2: Inter-laboratory Comparison of ctDNA Detection [21]

| Variant Allele Frequency (VAF) | Detection Performance | Technical Requirement |

|---|---|---|

| 1% | Easily identified with high congruence between labs and platforms | Standard NGS protocols with well-validated pipelines |

| 0.1% | Challenging; performance varies widely | Requires error-corrected sequencing (e.g., UMIs) and deep sequencing |

The Scientist's Toolkit: Research Reagent Solutions

This table lists essential materials and their specific functions for conducting reliable ctDNA studies that account for CHIP.

Table 3: Key Reagents for CHIP-Aware ctDNA Analysis

| Research Reagent / Tool | Primary Function | Technical Notes |

|---|---|---|

| Targeted NGS Panels (500+ genes) | Simultaneous profiling of tumor- and CHIP-associated mutations in a single assay. | Large panels (e.g., >1 Mb) increase the chance of detecting CHIP. Include genes like DNMT3A, TET2, ASXL1 [19]. |

| Duplex UMI Adapter Kits | Error-controlled library preparation for ultra-specific variant calling. | Reduces background sequencing errors; critical for low-VAF work but can lower library complexity [19]. |

| cfDNA Extraction Kits | Isolation of high-integrity, short-fragment cfDNA from plasma. | High and consistent extraction efficiency is vital for accurate quantification and avoiding false negatives [17]. |

| WBC Genomic DNA Extraction Kits | Preparation of matched control DNA for CHIP filtering. | Essential for the definitive identification of clonal hematopoietic mutations. |

| Bioinformatic Variant Callers | Distinguishing true low-frequency variants from technical artifacts. | Software is critical; validate performance for different mutation types (SNVs, Indels) [21]. |

| Synthetic ctDNA Reference Standards | Analytical validation and cross-assay performance benchmarking. | Contains predefined mutations at known VAFs (e.g., 0.1%, 0.5%, 1%) to validate sensitivity and specificity [17]. |

FAQ: Core Concepts and Troubleshooting

This guide addresses frequently asked questions to help researchers navigate key metrics and common challenges in circulating tumor DNA (ctDNA) detection.

Limit of Detection (LOD)

Q1: What is the Limit of Detection (LOD), and why is it critical for ctDNA analysis?

The Limit of Detection (LOD) is the lowest concentration of an analyte that can be reliably distinguished from a blank sample with a stated confidence level [22]. In ctDNA research, the analyte is the tumor-derived variant, and the "blank" is the background of wild-type DNA and sequencing noise.

- Clinical Significance: ctDNA analysis often targets low-frequency variants, sometimes at VAFs as low as 0.1% [23]. A well-defined LOD is essential to distinguish true tumor-derived variants from false positives caused by the assay's intrinsic error rate [23].

- Statistical Definition: The LOD is not zero. It is defined by accepting specific probabilities of error. A common approach sets the LOD at a concentration where the risk of a false negative (β error) is 5% [24]. This typically requires a signal approximately 3 standard deviations above the mean of the blank [22] [24].

Q2: How do I troubleshoot an LOD that is higher than expected?

A high LOD reduces your assay's sensitivity. Key areas to investigate are summarized in the table below.

Table: Troubleshooting a High Limit of Detection

| Issue Area | Potential Cause | Corrective Action |

|---|---|---|

| Sample & Prep | High background noise from non-tumor DNA (e.g., clonal hematopoiesis) [6]. | Use matched normal samples (e.g., buffy coat) to identify and filter somatic mutations from hematopoietic cells [6] [25]. |

| Sample & Prep | Inefficient DNA extraction or library preparation. | Optimize protocols and use high-quality reagents. Increase input DNA where feasible. |

| Instrument & Analysis | Low sequencing depth or coverage. | Increase sequencing depth to improve the signal-to-noise ratio [23]. |

| Instrument & Analysis | Suboptimal variant calling parameters or algorithms. | Implement ensemble genotyping (combining multiple callers) or machine learning filters (e.g., logistic regression) to reduce false positives without sacrificing sensitivity [25]. |

Variant Allele Frequency (VAF)

Q3: What does Variant Allele Frequency (VAF) tell me, and how is it calculated?

Variant Allele Frequency (VAF) is the proportion of sequencing reads that carry a specific variant at a particular genomic locus [23] [26]. It is calculated as:

VAF = (Number of mutated reads) / (Total number of reads at the locus) × 100% [23]

VAF provides crucial insights into tumor biology:

- Clonality: A high VAF suggests the mutation is clonal (present in most tumor cells), while a low VAF may indicate subclonality or mosaicism [23] [26].

- Germline vs. Somatic: In tumor-only sequencing, a VAF near 50% or 100% may suggest a germline heterozygous or homozygous variant, respectively, while somatic variants can show a wide range of VAFs [23].

- Tumor Burden: In liquid biopsies, the VAF of driver mutations can be a surrogate for tumor fraction in the blood [26].
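The VAF formula above is a point estimate, and at low read counts its uncertainty is substantial. The following sketch computes the VAF together with a Wilson score interval; the choice of the Wilson interval and the function name are assumptions for illustration, not part of the cited definition.

```python
import math

def vaf_with_interval(alt_reads, total_reads, z=1.96):
    """Return (VAF, lower bound, upper bound), all in percent.

    The bounds form a Wilson score interval; z = 1.96 gives ~95% coverage.
    """
    if total_reads <= 0 or not 0 <= alt_reads <= total_reads:
        raise ValueError("need 0 <= alt_reads <= total_reads and total_reads > 0")
    p = alt_reads / total_reads
    denom = 1 + z * z / total_reads
    centre = (p + z * z / (2 * total_reads)) / denom
    half = z * math.sqrt(p * (1 - p) / total_reads
                         + z * z / (4 * total_reads ** 2)) / denom
    return 100 * p, 100 * max(0.0, centre - half), 100 * min(1.0, centre + half)

# Five variant reads out of 5,000 at the locus: VAF = 0.1%.
vaf, low, high = vaf_with_interval(5, 5000)
```

For this example the interval spans roughly 0.04% to 0.23%, a reminder that a single low-VAF measurement should not be over-interpreted as a precise tumor fraction.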

Q4: Why can VAF be misleading, and how can I improve its interpretation?

VAF is a powerful metric but requires careful interpretation. The following diagram illustrates the key factors that influence observed VAF.

To improve VAF interpretation:

- Account for Tumor Purity: Normalize VAF based on an estimate of the tumor fraction in the sample [26].

- Use Paired Normal Samples: Always sequence a matched normal sample (e.g., from blood or tissue) to distinguish true somatic variants from germline polymorphisms or CHIP-derived mutations [6] [25].

- Validate with Orthogonal Methods: For critical low-VAF variants, confirm results using a different technology (e.g., digital PCR) [27].

Specificity

Q5: How is specificity defined in the context of diagnostic tests, and how is it calculated?

Specificity measures a test's ability to correctly identify the absence of a condition [28]. It is the proportion of true negatives out of all subjects who do not have the disease.

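Specificity = True Negatives (D) / [True Negatives (D) + False Positives (B)] [28]

Here D and B denote the true-negative and false-positive cells of the standard 2×2 diagnostic table.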

A highly specific test has a low rate of false positives. In ctDNA testing, this means the assay correctly reports "no variant" when the tumor-derived mutation is truly absent.

Q6: My assay is generating false positives. What are the common sources and solutions?

False positives undermine the validity of your results. The table below outlines common sources and mitigation strategies.

Table: Troubleshooting False Positive Variant Calls

| Source of False Positive | Description | Mitigation Strategy |

|---|---|---|

| Clonal Hematopoiesis (CHIP) | Age-related mutations in blood cells are detected in plasma, mimicking ctDNA [6]. | Sequence paired buffy coat DNA to identify and filter CHIP mutations [6]. |

| Sequencing/Base-Calling Errors | Errors during cluster generation or sequencing, often in homopolymer regions [23]. | Use duplex sequencing; apply quality filters (e.g., base quality score); employ ensemble genotyping with multiple callers [25]. |

| PCR Artifacts | Errors introduced during PCR amplification in library prep. | Use high-fidelity polymerases; reduce PCR cycles; incorporate unique molecular identifiers (UMIs) to tag original molecules [26]. |

| Alignment Artifacts | Misalignment of reads to the reference genome, especially around indels. | Use optimized alignment algorithms and a high-quality reference genome [25]. |

Essential Research Reagent Solutions

The following reagents and materials are critical for robust ctDNA analysis.

Table: Key Reagents and Materials for ctDNA Research

| Item | Function / Application |

|---|---|

| Matched Normal DNA | Typically from peripheral blood leukocytes (buffy coat). Essential for distinguishing somatic tumor mutations from germline variants and CHIP [6] [25]. |

| Cell-free DNA Collection Tubes | Specialized blood collection tubes that stabilize nucleated cells and prevent genomic DNA contamination of plasma, preserving the integrity of ctDNA. |

| High-Fidelity DNA Polymerase | Used during library preparation to minimize errors introduced by PCR amplification, reducing false positive variant calls [26]. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences that tag individual DNA molecules before amplification. Allows bioinformatic correction of PCR and sequencing errors, significantly improving specificity [26]. |

| Orthogonal Validation Assay (e.g., dPCR) | An independent technology (like digital PCR) used to confirm variants identified by NGS, especially those at low VAF or of high clinical significance [27]. |

Experimental Protocol: Determining Limit of Detection for an NGS Assay

This protocol outlines a method for empirically determining the LOD of your NGS assay for a specific variant.

1. Principle

The LOD is estimated by analyzing replicates of samples with known, low concentrations of the target variant. The LOD is the lowest concentration at which the variant is detected with a probability of at least 95% (i.e., β = 0.05) [22] [24].

2. Materials and Reagents

- Synthetic DNA or cell line DNA with the target variant.

- Wild-type genomic DNA (from a confirmed negative source).

- Your standard NGS library preparation kit.

- Your sequencing platform.

3. Procedure

- Step 1: Prepare Dilution Series. Serially dilute the variant DNA into wild-type DNA to create samples spanning a range of expected VAFs (e.g., 2%, 1%, 0.5%, 0.1%, 0.01%).

- Step 2: Replicate Analysis. Process a minimum of 20 replicates for each VAF level, including a blank (wild-type DNA only), following your complete analytical procedure from extraction to sequencing [24].

- Step 3: Data Analysis. For each VAF level, calculate the detection rate (number of replicates where the variant was called / total number of replicates).

4. Data Interpretation and LOD Calculation

- Probit Analysis: Fit a probit model of detection probability against log(VAF). The LOD is the VAF at which the fitted detection probability reaches 95% [22].

- Alternative Method: The LOD can be defined as the lowest VAF level where ≥ 19 out of 20 replicates (95%) are detected [29].
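The alternative hit-rate method can be sketched as follows. The replicate calls are invented illustrative data, and the function name is hypothetical. The function walks down from the highest VAF level and stops at the first level that misses the required detection rate, so a lucky result at a very low level cannot be reported as the LOD.

```python
def hit_rate_lod(calls_by_vaf, required_rate=0.95):
    """Lowest VAF level (in %) detected in at least required_rate of replicates.

    calls_by_vaf maps each VAF level to a list of booleans, one per replicate.
    Returns None if even the highest level fails.
    """
    lod = None
    for vaf in sorted(calls_by_vaf, reverse=True):
        calls = calls_by_vaf[vaf]
        if not calls or sum(calls) / len(calls) < required_rate:
            break
        lod = vaf
    return lod

# Invented example: 20 replicates per level of the dilution series.
replicate_calls = {
    2.0: [True] * 20,
    1.0: [True] * 20,
    0.5: [True] * 19 + [False],      # 19/20 = 95%, passes
    0.1: [True] * 12 + [False] * 8,  # 60%, fails
    0.01: [False] * 20,
}
lod = hit_rate_lod(replicate_calls)
```

In this example the LOD is 0.5% VAF. Blank replicates should be evaluated separately, since any call in wild-type-only samples indicates a specificity problem rather than a sensitivity limit.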

Enhancing Specificity: Next-Generation Assays and Multimodal Approaches for Accurate ctDNA Detection

FAQs: Addressing False Positives in ctDNA Detection

False positives in circulating tumor DNA (ctDNA) analysis can arise from several biological and technical challenges. A significant source is Clonal Hematopoiesis of Indeterminate Potential (CHIP), an age-related condition where hematopoietic cells acquire somatic mutations. A large proportion of cell-free DNA (cfDNA) in plasma derives from these cells, which can lead to false positive results when testing blood samples for certain gene mutations, such as those in ATM and CHEK2 [6].

Multimodal analysis mitigates this by cross-validating signals across different biological layers. For instance, a mutation flagged by a single-analyte approach might be corroborated or refuted by examining the methylation or fragmentation profile of the same DNA fragment. A signal is only considered a true positive if it is supported by multiple features, thereby filtering out noise from non-tumor sources like CHIP [6] [30].

Our single-analyte mutation panel has poor sensitivity for early-stage cancer detection. How can integrating fragmentomics and methylomics improve this?

The low abundance of ctDNA in early-stage disease is a fundamental challenge, often resulting in false negatives with single-analyte tests. Integrating fragmentomics and methylomics significantly boosts sensitivity by capturing a larger set of cancer-derived signals [31] [30].

Methylation changes are among the earliest events in tumorigenesis and involve widespread alterations across the genome. Profiling these changes in cfDNA provides a strong, abundant signal for cancer detection [30]. Fragmentomics analyzes the patterns of how DNA is fragmented in the blood. Cancer cells exhibit different DNA fragmentation patterns compared to healthy cells due to differences in nuclear organization and nuclease activity. These fragmentation patterns are a rich source of cancer-specific information [31] [30].

By combining mutations, methylation, and fragmentomics, assays can achieve high sensitivity even at low sequencing depths. For example, the SPOT-MAS assay, which integrates these modalities, demonstrated a sensitivity of 73.9% for Stage I and 62.3% for Stage II cancers across five cancer types at 97% specificity, using shallow genome-wide sequencing [31].

How can we accurately determine the tissue of origin (TOO) for a cancer signal detected in plasma?

Single-analyte mutation profiles are often not tissue-specific. Multimodal signatures, particularly methylation patterns, are highly effective for tissue of origin (TOO) localization because methylation is strongly tied to cell and tissue identity [31] [30].

The workflow involves:

- Building a Reference Database: Creating a comprehensive map of tissue-specific methylation patterns and fragmentation profiles.

- Profiling Plasma cfDNA: Analyzing the methylation and fragmentation features of the unknown cfDNA sample.

- Pattern Matching: Using machine learning classifiers to compare the plasma sample's multimodal profile against the reference database to predict the most likely tissue of origin.

The SPOT-MAS assay, for instance, achieved a TOO accuracy of 0.7 using its multimodal approach [31]. Similarly, the THEMIS approach utilizes combined methylation and fragmentation profiling at tissue-specific accessible chromatin regions to accurately locate the origin of cancer signals [30].

Troubleshooting Guides

Issue: Suspected CHIP Interference in Mutation Calls

Problem: You are detecting mutations in genes like ATM or CHEK2 in plasma, but these are not validated in matched tumor tissue samples, leading to potential false positives in your study.

Investigation and Solution:

| Step | Action | Purpose and Additional Context |

|---|---|---|

| 1. Confirm CHIP | Perform sequencing on matched whole-blood or buffy coat DNA for the patient. | Confirms if the variant is present in hematopoietic cells, strongly indicating CHIP [6]. |

| 2. Multimodal Verification | Analyze the same sample for methylation and fragmentation patterns. | A true tumor-derived signal should have concordant abnormalities in methylation/fragmentomics; a CHIP mutation will lack these supporting features [6] [30]. |

| 3. Age Correlation | Check the patient's age. | CHIP is age-related; a higher median age in patients with mutations detected only in ctDNA (not tissue) is concordant with CHIP [6]. |

Issue: Low Detection Sensitivity in Early-Stage Patients

Problem: Your current ctDNA assay, based solely on somatic mutations, is failing to detect a sufficient fraction of early-stage (I & II) cancer patients.

Investigation and Solution:

| Step | Action | Purpose and Additional Context |

|---|---|---|

| 1. Assay Expansion | Integrate methylomics and fragmentomics into your sequencing workflow. | These features provide abundant, complementary cancer signals beyond rare mutations, increasing the chance of detecting low-volume disease [31] [30]. |

| 2. Low-Pass Sequencing | Adopt a shallow whole-genome sequencing approach for fragmentomics and copy-number analysis. | This cost-effectively covers the entire genome, capturing widespread fragmentation and methylation changes without the high cost of deep targeted sequencing [31] [30]. |

| 3. Machine Learning | Train a composite model using features from all modalities. | Composite models built on classifiers such as SVM or logistic regression, combining methylation, fragmentation, and mutation scores, have been shown to significantly boost sensitivity for early-stage cancers [30]. |

Experimental Protocols & Data

Detailed Methodology: SPOT-MAS Multimodal Assay

The following protocol outlines the workflow for the SPOT-MAS assay, which simultaneously profiles multiple ctDNA features [31].

1. Sample Preparation:

- Input: Collect 4-10 mL of plasma from peripheral blood.

- cfDNA Extraction: Isolate cell-free DNA using a commercial kit (e.g., cfPure Extraction Kit) designed to maximize recovery of 100-500 bp DNA fragments.

- Library Preparation: Prepare sequencing libraries from the extracted cfDNA.

2. Sequencing:

- Utilize targeted and shallow genome-wide sequencing at an average depth of ~0.55x haploid genome coverage.

3. Multimodal Feature Extraction:

- Methylomics (Methylation Profiling): Identify and quantify differentially methylated regions (DMRs) across the genome.

- Fragmentomics: Calculate the fragment size distribution of cfDNA. Cancer-derived fragments often have a different size profile compared to healthy cfDNA.

- Copy Number Alteration (CNA): Assess the genome for regions with abnormal copy numbers.

- End Motifs (EMs): Analyze the frequency of 4-base sequences at the ends of DNA fragments.
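Two of the features listed above, fragment size and end motifs, are simple to compute once fragment sequences are available. The sketch below is not the SPOT-MAS implementation: the function name, the 150 bp cutoff for "short" fragments, and the toy sequences are assumptions for illustration, and a real pipeline would derive fragments from aligned paired-end reads.

```python
from collections import Counter

def fragment_features(fragments, short_max=150, motif_len=4):
    """Summarize fragment sizes and 5' end motifs for a set of cfDNA fragments.

    fragments: list of DNA strings, each a full fragment read 5' to 3'.
    Returns (fraction of fragments <= short_max bp, {motif: frequency}).
    """
    if not fragments:
        raise ValueError("no fragments supplied")
    sizes = [len(f) for f in fragments]
    short_fraction = sum(size <= short_max for size in sizes) / len(sizes)

    motifs = Counter(f[:motif_len].upper() for f in fragments if len(f) >= motif_len)
    total = sum(motifs.values())
    motif_freq = {motif: count / total for motif, count in motifs.items()}
    return short_fraction, motif_freq

# Toy fragments of 144, 164, 166, and 104 bp.
toy_fragments = [
    "CCCA" + "A" * 140,
    "CCCA" + "T" * 160,
    "TGGA" + "C" * 162,
    "CCTG" + "G" * 100,
]
short_fraction, motif_freq = fragment_features(toy_fragments)
```

Both outputs are per-sample feature vectors that can be passed, alongside methylation and copy-number features, to the classifier described in the next step.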

4. Data Analysis and Machine Learning:

- Use the discovery cohort to train a machine learning model (e.g., ensemble classifier) to distinguish cancer from healthy controls using the extracted multi-modal features.

- Apply the trained model to the validation cohort for blinded performance evaluation of cancer detection and tissue-of-origin localization.

Quantitative Performance of Multimodal Assays

The table below summarizes the performance of different multimodal assays as reported in recent studies, demonstrating their high sensitivity and specificity.

Table 1: Performance Metrics of Multimodal ctDNA Assays

| Assay Name | Cancer Types Covered | Overall Sensitivity | Stage I Sensitivity | Stage II Sensitivity | Specificity | Tissue of Origin (TOO) Accuracy |

|---|---|---|---|---|---|---|

| SPOT-MAS [31] | Breast, Colorectal, Gastric, Lung, Liver | 72.4% | 73.9% | 62.3% | 97.0% | 0.7 |

| THEMIS [30] | 7 cancer types | 73% (at 99% spec) | Reported for early-stage combined | Reported for early-stage combined | 99% | Accurate (specific metric not provided) |

Key Signaling Pathways and Biological Rationale

Multimodal assays are powerful because they tap into complementary biological pathways involved in cancer. The following diagram illustrates the relationship between these biological processes and the analytical modalities used to detect them.

Multimodal Detection of Cancer Biology

Biological Rationale:

- Mutations/CNAs arise from genomic instability, a core hallmark of cancer. However, detecting these in early-stage disease is challenging due to low variant allele frequency [6].

- Methylation changes are driven by epigenetic dysregulation, which is an early event in tumorigenesis. Methylation patterns are tissue-specific, aiding in tumor origin localization [30].

- Fragmentomics reflects aberrant chromatin organization and nucleosome positioning in cancer cells. This provides a source of cancer-specific information that does not depend on detecting mutations and is reported to be highly sensitive [31] [30].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Multimodal ctDNA Analysis

| Item | Function / Explanation |
|---|---|
| cfDNA Extraction Kit (e.g., cfPure) | Rapid and efficient purification of cell-free DNA from plasma/serum, maximizing recovery of short (100-500 bp) fragments, which is critical for yield [32]. |
| Enzymatic Methylation Conversion Reagents | A bisulfite-free method (e.g., using TET2/APOBEC enzymes) to detect methylation with minimal DNA damage, preserving DNA for concurrent fragmentomics analysis [30]. |
| Whole-Genome Sequencing Library Prep Kit | Prepares libraries for shallow whole-genome sequencing, enabling genome-wide analysis of fragmentation and copy number alterations. |
| Targeted Methylation Panel | A set of probes to enrich for genomic regions known to have cancer-specific methylation patterns, allowing for deeper sequencing of key areas. |
| Bioinformatic Pipelines (fragment size analysis, methylation calling, copy number variation, end motif analysis) | Custom or commercial software suites are essential for processing raw sequencing data and extracting the quantitative features for each modality [31] [30]. |
| Matched Tumor Tissue DNA | For tumor-informed analysis, used to design patient-specific panels or to validate clonal mutations and distinguish them from CHIP [33]. |
| Matched Buffy Coat DNA | Serves as a germline control to filter out polymorphisms and is essential for confirming CHIP-derived mutations [6]. |

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind using structural variants to reduce background noise in ctDNA detection? The core principle is that each cancer possesses a unique set of somatic structural rearrangements. PCR assays can be designed to span the specific breakpoint junctions of these rearrangements. Because these exact junctions are absent from the normal human genome, the assay will only amplify DNA from tumor-derived ctDNA, effectively eliminating false-positive signals from background noise present in normal cell-free DNA [34].

Q2: My assay has no signal or a very weak signal. What could be the cause? A weak or absent signal can result from several factors [35]:

- Low Abundance of ctDNA: The fraction of ctDNA in the total cell-free DNA may be extremely low [36].

- Reagent Issues: The reagents, such as primers or probes, may not be functional, or the quality of the isolated DNA may be poor.

- Assay Design: The designed assay may not be optimal. It is recommended to design assays for rearrangements that cause a copy number change, as these are present in the vast majority of tumor cells, and to ensure breakpoints are in unique genomic sequence to maximize specificity [34].

Q3: I am observing high background noise in my sequencing-based ctDNA assay. How can I suppress it? High background in sequencing-based assays is often caused by technical errors introduced during library preparation and sequencing [36]. To suppress this noise:

- Use Molecular Barcodes: Implement unique molecular identifiers (barcodes) to distinguish true mutations from PCR amplification errors [36].

- Apply Computational Polishing: Use specialized bioinformatics tools, such as TNER (Tri-Nucleotide Error Reducer) or iDES (integrated Digital Error Suppression), which model the background error rate using data from healthy control subjects to filter out technical artifacts [36].

- Ensure Sufficient Coverage: Use ultra-deep sequencing (e.g., >10,000x coverage) to confidently detect low-frequency variants [36].
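
As a rough illustration of the computational polishing idea, the sketch below pools alternate-allele counts from healthy controls into a position-specific background rate and keeps a candidate variant only if its read count is unlikely under that rate. It is not the TNER or iDES algorithm. The function names, the pseudocount, and the significance threshold are assumptions chosen for the example.

```python
from math import exp, lgamma, log


def _log_binom_pmf(i, n, p):
    """Log of the Binomial(n, p) probability of exactly i successes."""
    return (lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1)
            + i * log(p) + (n - i) * log(1 - p))


def binomial_sf(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p), with 0 < p < 1."""
    if k <= 0:
        return 1.0
    return max(0.0, 1.0 - sum(exp(_log_binom_pmf(i, n, p)) for i in range(k)))


def background_rate(control_alt, control_depth, pseudocount=0.5):
    """Pooled error rate at one position across healthy control samples.

    The pseudocount (an assumption) keeps the rate above zero when no
    control shows any alternate reads.
    """
    return (sum(control_alt) + pseudocount) / (sum(control_depth) + 1.0)


def passes_polishing(alt_reads, depth, rate, alpha=1e-3):
    """Keep the call only if this many alt reads is unlikely to be background."""
    return binomial_sf(alt_reads, depth, rate) < alpha


if __name__ == "__main__":
    rate = background_rate([1, 0, 2, 1], [10_000] * 4)
    print(passes_polishing(2, 10_000, rate), passes_polishing(12, 10_000, rate))
```

In practice the threshold would also need correction for the number of positions tested, since thousands of sites are screened in a single panel.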

Q4: My assay results are highly variable between replicates. What should I check? High variability often stems from technical execution [35]:

- Pipetting Errors: Use a calibrated multichannel pipette and prepare a master mix for your working solution to ensure consistency.

- Reagent Quality: Avoid using old or degraded reagents. Use fresh, newly prepared reagents for each experiment.

- Data Normalization: Incorporate an internal control for normalization. In a dual-reporter system, this helps account for variances in cell viability, transfection efficiency, and pipetting [35].

Q5: Could a structural variant near my gene of interest lead to a false-positive FISH result? Yes. Case studies have shown that structural variants with breakpoints located within the binding sequence of a FISH probe can produce a signal pattern identical to a true gene rearrangement, leading to a false-positive interpretation. In such cases, orthogonal validation with next-generation sequencing (whole-genome or RNA sequencing) is required to confirm the finding [37].

Troubleshooting Guide

| Problem | Possible Cause | Solution |
|---|---|---|
| No/Wrong Assay Window | Incorrect instrument setup or filter selection [38]. | Verify instrument setup and use exactly the recommended emission filters. Test setup with control reagents [38]. |
| Weak or No Signal | Low ctDNA fraction; low transfection efficiency; non-functional reagents; weak promoter activity [36] [35]. | Check reagent functionality; optimize transfection; scale up sample volume; use a stronger promoter [35]. |
| High Background Noise | Sequencing artifacts; contaminated reagents; non-specific amplification [36]. | Use error-suppression algorithms (e.g., TNER); use fresh reagents; validate assay specificity with control DNA [36]. |
| High Variability Between Replicates | Pipetting errors; use of different reagent batches; lack of normalization [35]. | Prepare a master mix; use calibrated pipettes; normalize data using an internal control (e.g., dual-reporter assay) [35]. |
| Unexpected Negative Result | The specific SV may not be present in the metastatic lesion due to tumor heterogeneity. | Sequence the primary tumor to identify multiple, patient-specific SVs and design several independent PCR assays to track them [34]. |
| Apparent False Positive in FISH | SV breakpoint within the FISH probe-binding region, not the gene itself [37]. | Confirm findings with a higher-resolution method like whole-genome sequencing or RNA sequencing [37]. |

Experimental Protocols

Protocol 1: Identifying Patient-Specific SVs via Whole-Genome Sequencing

This protocol outlines the steps for discovering tumor-specific structural variants from a primary tumor sample [34] [39].

- DNA Extraction: Extract high-quality, high-molecular-weight genomic DNA from fresh-frozen primary tumor tissue and matched normal (germline) tissue.

- Library Preparation & Sequencing: Prepare a whole-genome sequencing library. For optimal SV discovery, use long-insert paired-end sequencing (e.g., fragment sizes of 400-500 bp). Sequence the library on a next-generation sequencing platform to a sufficient physical coverage (e.g., >20X) [39].

- SV Calling: Map the sequenced reads to the reference human genome (e.g., GRCh38). Identify putative somatic genomic rearrangements as clusters of "discordantly mapping" read-pairs—pairs that do not map within the expected insert size or orientation [34].

- Validation & Annotation: Confirm rearrangements as real and somatic by performing PCR and Sanger sequencing across the rearrangement junction in both tumor and germline DNA. This provides base-pair resolution of the breakpoint [34].
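
The SV calling step can be illustrated with a simplified discordant-pair filter. This sketch assumes a standard paired-end library in forward-reverse orientation. The `ReadPair` structure, the insert-size window, the bin size, and the minimum support are all hypothetical values for illustration. Real SV callers also use split reads, mapping quality, and matched-normal filtering, none of which appear here.

```python
from collections import defaultdict
from typing import NamedTuple


class ReadPair(NamedTuple):
    chrom1: str
    pos1: int       # leftmost mapped coordinate of read 1
    strand1: str    # '+' or '-'
    chrom2: str
    pos2: int
    strand2: str


def is_discordant(pair, min_insert=200, max_insert=700):
    """True if the pair maps outside the expected insert size or orientation."""
    if pair.chrom1 != pair.chrom2:
        return True  # mates on different chromosomes
    left, right = sorted([(pair.pos1, pair.strand1), (pair.pos2, pair.strand2)])
    if (left[1], right[1]) != ("+", "-"):
        return True  # not the expected forward-reverse orientation
    return not (min_insert <= right[0] - left[0] <= max_insert)


def cluster_discordant(pairs, bin_size=500, min_support=3):
    """Group discordant pairs by coarse position bins and keep supported clusters."""
    clusters = defaultdict(list)
    for pair in pairs:
        if is_discordant(pair):
            key = (pair.chrom1, pair.pos1 // bin_size,
                   pair.chrom2, pair.pos2 // bin_size)
            clusters[key].append(pair)
    return {k: v for k, v in clusters.items() if len(v) >= min_support}
```

Fixed-width bins can split a true cluster that straddles a bin boundary, so this is only suitable for showing the principle. Each surviving cluster is a candidate rearrangement that still needs the PCR and Sanger confirmation described in the validation step.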

Protocol 2: Detecting SVs in Plasma via qPCR

This protocol describes how to use quantitative PCR to detect and monitor tumor-specific SVs in patient plasma [34].

- Assay Design: Design nested, real-time PCR assays that amplify across the tumor-specific rearrangement junction(s) identified in Protocol 1. Criteria for assay design:

- Prefer rearrangements that cause a copy-number change.

- Ensure breakpoints are in unique genomic sequence.

- Keep the maximum PCR product size below 200 bp to accommodate fragmented ctDNA [34].

- Plasma DNA Extraction: Collect blood in EDTA-containing tubes and process within 2 hours. Isolate cell-free DNA from 2-10 mL of plasma using a commercial cfDNA extraction kit.

- qPCR Setup and Run:

- Prepare a standard curve by serially diluting tumor DNA (positive control) in normal DNA or water.

- Include controls: tumor DNA (positive), normal DNA and water (negative), and primers for a non-rearranged genomic region (to quantify total plasma DNA).

- Run the qPCR reaction using the designed junction-specific assays.

- Data Analysis: The assay can detect a single copy of the tumor genome in a background of normal DNA. Quantify the tumor DNA burden by comparing the Ct values of patient samples to the standard curve [34].
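
The standard-curve quantification in the data analysis step amounts to a linear fit of Ct against log10 of input copies, followed by inverting that line for each patient sample. The sketch below shows the arithmetic. The function names and the example dilution values are illustrative assumptions, not data from the source.

```python
from math import log10


def fit_standard_curve(copies, ct_values):
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    xs = [log10(c) for c in copies]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ct_values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def copies_from_ct(ct, slope, intercept):
    """Invert the standard curve to estimate template copies in a sample."""
    return 10 ** ((ct - intercept) / slope)


def amplification_efficiency(slope):
    """1.0 means perfect doubling per cycle (slope of about -3.32)."""
    return 10 ** (-1.0 / slope) - 1.0


if __name__ == "__main__":
    # Hypothetical 10-fold dilution series of tumor DNA.
    slope, intercept = fit_standard_curve(
        [10, 100, 1000, 10000], [35.0, 31.68, 28.36, 25.04]
    )
    print(slope, intercept, copies_from_ct(30.02, slope, intercept))
```

Running the same inversion on the non-rearranged control region gives total plasma DNA copies, and the ratio of the two estimates approximates the tumor-derived fraction.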

Core Concepts and Workflows

Diagram: SV-Based ctDNA Detection Principle

Diagram: Troubleshooting High Background in NGS

Research Reagent Solutions

| Item | Function |
|---|---|
| Long-Insert Paired-End Sequencing Kit | Enables genome-wide discovery of structural variants by identifying discordantly mapped read pairs [34]. |
| Cell-free DNA Extraction Kit | Isolates fragmented circulating tumor DNA from blood plasma samples for downstream analysis [34]. |
| Nested PCR Primers | Designed to span patient-specific SV breakpoint junctions; nested design increases sensitivity and specificity for detecting low-abundance ctDNA [34]. |
| Molecular Barcodes (UMIs) | Unique sequences added to DNA fragments during library prep to tag original molecules, allowing bioinformatics tools to correct for PCR and sequencing errors [36]. |
| Error-Suppression Software (e.g., TNER) | A computational tool that uses a binomial model and tri-nucleotide context to estimate and subtract background sequencing noise, enhancing variant calling specificity [36]. |
| Dual Luciferase Reporter Assay System | Used in assay development and validation to normalize for variables like transfection efficiency and cell viability, reducing experimental variability [35]. |

For researchers in oncology drug development, detecting circulating tumor DNA (ctDNA) at variant allele frequencies (VAF) below 0.1% represents both a critical capability and a significant technical challenge. Ultra-deep sequencing with error-correction methodologies enables monitoring of minimal residual disease (MRD) and therapy response, but requires meticulous optimization to distinguish true tumor-derived variants from false positives arising from sequencing artifacts and clonal hematopoiesis of indeterminate potential (CHIP) [40] [6]. This technical support guide provides actionable strategies to achieve reliable sub-0.1% VAF detection while controlling for confounding biological and technical factors.

Technical Foundations: Core Principles for Enhanced Sensitivity

Error-Correction Methodologies in NGS

Molecular Barcoding (Unique Molecular Identifiers - UMIs)

- Principle: Tag individual DNA molecules before amplification with unique barcodes [40]

- Function: Enables bioinformatic consensus building to correct for PCR and sequencing errors

- Impact: Reduces error rates from 0.5-2% to approximately 0.0001% [40]
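
The consensus-building step can be sketched as a majority vote within each UMI family. This is a simplified single-strand illustration: the input format, the minimum family size, and the agreement threshold are assumptions. Production tools additionally handle errors in the UMI sequence itself and, for duplex methods, agreement between the two strands.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=3, min_agreement=0.7):
    """Collapse reads sharing a UMI and position into one consensus base.

    reads: iterable of (umi, position, base) tuples.
    Returns {(umi, position): consensus_base} for families that pass both
    the size and the agreement thresholds (both illustrative assumptions).
    """
    families = defaultdict(list)
    for umi, position, base in reads:
        families[(umi, position)].append(base)

    consensus = {}
    for key, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few copies to outvote a PCR or sequencing error
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[key] = base
    return consensus


if __name__ == "__main__":
    reads = [("AAT", 100, "T")] * 4 + [("GCA", 100, b) for b in "CCTC"]
    print(umi_consensus(reads))
```

An error that appears in only one copy of a family is outvoted, whereas a true variant is present in every copy derived from the original molecule.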

Multiple Sequence Alignment (MSA) Approaches

- Principle: Groups similar reads and constructs alignments to identify errors [41]

- Function: Utilizes contextual information from surrounding sequences

- Advantage: Higher precision compared to k-mer based methods [41]

Machine Learning-Enhanced Correction

- Principle: Employs random decision forests to replace hand-crafted correction rules [41]

- Benefit: Reduces false-positive corrections by up to two orders of magnitude [41]

- Example: CARE 2.0 software demonstrates significantly improved precision [41]

Advanced Enzymatic and Molecular Techniques

Quantitative Blocker Displacement Amplification (QBDA)

- Principle: Integrates UMIs with blocker displacement amplification for variant enrichment [42]

- Performance: Achieves calibration-free VAF quantitation below 0.01% with low-depth sequencing [42]

- Application: Particularly valuable for MRD monitoring in AML [42]

Frequently Asked Questions (FAQs)

Q1: What is the minimum sequencing depth required to reliably detect variants below 0.1% VAF?

- A: Experimental validation indicates that a depth above 3,000× is required for detection at 0.4% VAF, with proportionally greater depth needed at lower VAFs [40]. For detection below 0.01% VAF, specialized methods like QBDA sequencing are recommended [42].
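
The relationship between depth and VAF can be made concrete with a simple sampling model. The sketch below assumes that mutant reads follow a binomial distribution and that a call requires a fixed minimum number of supporting reads. The function names, the five-read threshold, and the 95% target are illustrative assumptions, and the model ignores sequencing errors and UMI collapsing.

```python
from math import comb


def detection_probability(depth, vaf, min_alt_reads=5):
    """P(at least min_alt_reads mutant reads) under Binomial(depth, vaf)."""
    p_miss = sum(
        comb(depth, i) * vaf**i * (1 - vaf) ** (depth - i)
        for i in range(min_alt_reads)
    )
    return 1.0 - p_miss


def min_depth_for(vaf, min_alt_reads=5, target=0.95, step=500, max_depth=200_000):
    """Smallest depth (in multiples of step) reaching the target probability."""
    depth = step
    while depth <= max_depth:
        if detection_probability(depth, vaf, min_alt_reads) >= target:
            return depth
        depth += step
    return None  # target not reachable within max_depth


if __name__ == "__main__":
    for vaf in (0.004, 0.001):
        print(vaf, detection_probability(3000, vaf), min_depth_for(vaf))
```

Sequencing depth is only useful up to the number of unique cfDNA molecules actually captured, so raising depth cannot compensate for low plasma input.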

Q2: How does clonal hematopoiesis (CHIP) interfere with ctDNA analysis, and how can we mitigate it?

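- A: Hematopoietic cells contribute the majority of cell-free DNA in plasma (up to 90%), so CHIP mutations carried by these cells are shed into the cfDNA pool and can be misread as tumor-derived variants, creating false positives [6]. Effective mitigation strategies include: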

Q3: What bioinformatic filters effectively reduce false positives without compromising sensitivity?

- A: Implement a multi-layered filtering approach: