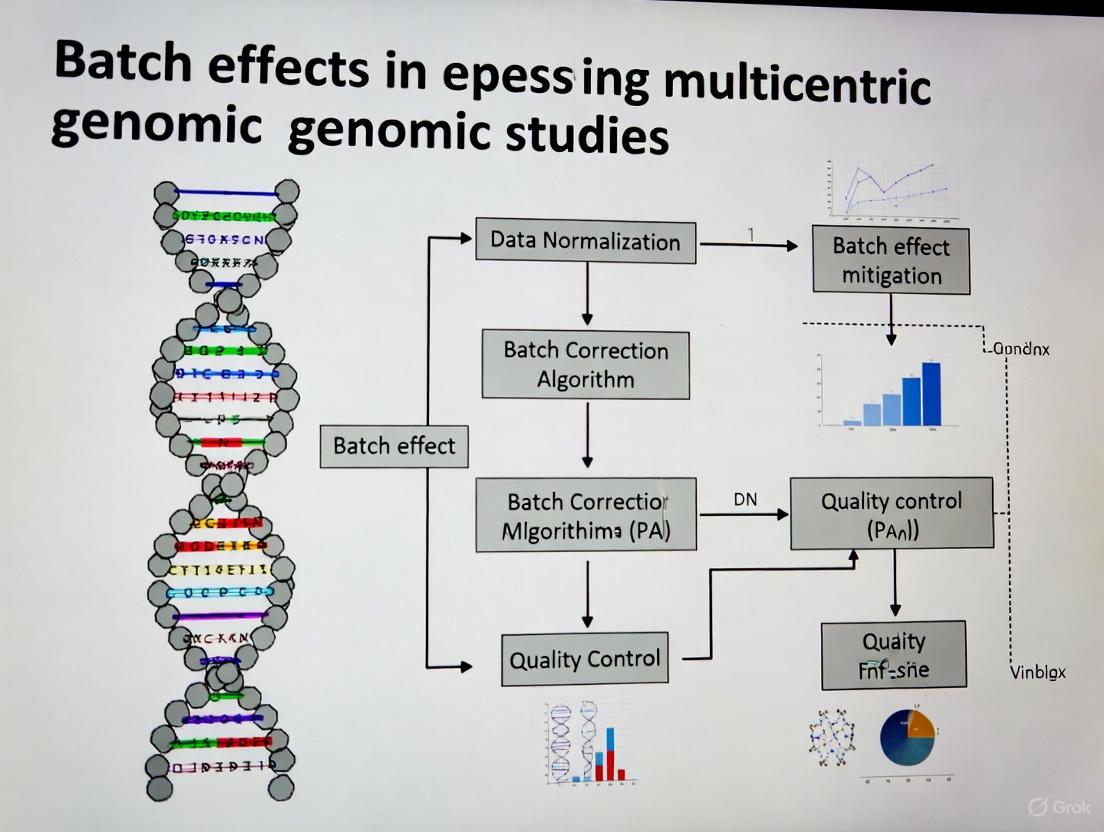

Mitigating Batch Effects in Multicentric Genomic Studies: A Comprehensive Guide from Detection to Correction

This article provides a systematic framework for researchers, scientists, and drug development professionals to address the critical challenge of batch effects in multi-center genomic studies.

Mitigating Batch Effects in Multicentric Genomic Studies: A Comprehensive Guide from Detection to Correction

Abstract

This article provides a systematic framework for researchers, scientists, and drug development professionals to address the critical challenge of batch effects in multi-center genomic studies. It covers the foundational understanding of how technical variations from different labs, platforms, and reagent batches can confound biological signals and lead to irreproducible results. The content details state-of-the-art methodologies for batch effect correction, including empirical Bayes frameworks like ComBat, deep learning approaches for single-cell data, and tools like HarmonizR for handling missing values in proteomics. It further offers practical strategies for troubleshooting and optimizing experimental design, such as the use of bridge samples and sample randomization. Finally, it outlines robust validation techniques and comparative analyses of correction algorithms to ensure biological signals are preserved while technical noise is removed, enabling reliable data integration and robust biomedical discovery.

Understanding the Enemy: What Are Batch Effects and Why Do They Threaten Genomic Discovery?

Frequently Asked Questions

What is a batch effect? A batch effect is a systematic technical variation in data caused by non-biological factors during an experiment. These variations are unrelated to the study's scientific objectives but can lead to inaccurate conclusions if their presence is correlated with an outcome of interest [1] [2] [3].

What are common causes of batch effects? Batch effects can arise at nearly every stage of a high-throughput study [1] [2] [3]. Common sources include:

- Reagent Variations: Changes in reagent lots or antibody batches [1] [4].

- Personnel Differences: Variations in technique between different technicians [1] [4].

- Instrumentation: Differences in instruments, calibration, or instrument drift over time [1] [5].

- Experimental Conditions: Variations in laboratory temperature, humidity, sample processing times, or protocols [1] [6].

- Study Design: Flawed or confounded study design where samples are not randomized across batches [2] [3].

How can I detect batch effects in my data? You can use both visual and quantitative methods to identify batch effects:

- Visual Methods:

- PCA Plot: Perform Principal Component Analysis (PCA) and color the data points by batch. Clustering of samples by batch, rather than biological condition, signals a batch effect [7] [8] [6].

- t-SNE/UMAP Plot: Visualize data using t-SNE or UMAP. If cells or samples from different batches form separate clusters, it indicates a batch effect [7] [8].

- Quantitative Metrics: Metrics like k-nearest neighbor batch effect test (kBET) or Adjusted Rand Index (ARI) provide a less biased assessment of batch effect severity [7] [8].

- Visual Methods:

What is the difference between normalization and batch effect correction? These are distinct steps that address different technical issues [7]:

- Normalization operates on the raw count matrix to correct for differences in sequencing depth, library size, and gene length across samples.

- Batch Effect Correction aims to remove technical variations caused by different sequencing platforms, reagent lots, personnel, or time. It often, but not always, uses normalized data as its input.

What are the signs of overcorrection? Overcorrection occurs when batch effect removal also removes genuine biological signal. Key signs include [7] [8]:

- Distinct biological cell types or sample groups are incorrectly clustered together.

- A complete overlap of samples from very different biological conditions.

- Cluster-specific markers are comprised of ubiquitous genes (e.g., ribosomal genes) or there is a significant overlap of markers between clusters.

- A notable absence of expected canonical markers for known cell types.

How do I choose a batch effect correction method? No single method is universally best. Selection depends on your data type and structure. The table below summarizes common algorithms. It is recommended to test multiple methods and validate the results visually and quantitatively [7] [8].

| Method Name | Primary Application | Key Principle | Considerations |

|---|---|---|---|

| ComBat-seq [6] | Bulk RNA-seq (count data) | Empirical Bayes framework | Good for small sample sizes; works directly on counts. |

| Harmony [7] [9] | Single-cell RNA-seq | Iterative clustering and correction | Fast runtime; good performance in benchmarks. |

| Seurat Integration [7] [9] | Single-cell RNA-seq | Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNN) | Widely used; can have lower scalability for very large datasets. |

| MNN Correct [7] [9] | Single-cell RNA-seq | Mutual Nearest Neighbors | Can be computationally intensive. |

| SVR (in metaX) [5] | Metabolomics | Support Vector Regression | QC-based; requires quality control samples; models signal drift. |

| Ensemble Learning [10] | Genomic Classifiers | Integrates predictions from models trained per batch | A different strategy; can be more robust to high heterogeneity. |

Troubleshooting Guides

Guide 1: Diagnosing Batch Effects in Multicentric Genomic Studies

Objective: To systematically identify the presence and severity of batch effects in data from multiple centers.

Protocol:

- Data Preparation: Begin with a normalized count or expression matrix. Ensure you have comprehensive metadata that includes the batch identifier (e.g., sequencing center, processing date) and key biological variables (e.g., disease status, cell type).

- Visual Inspection with PCA:

- Perform PCA on the normalized data.

- Generate a scatter plot of the first two principal components (PC1 vs. PC2).

- Interpretation: Color the data points by

batch. If the points cluster strongly by batch, a significant batch effect is present. Next, color the points bybiological condition. If the batch-driven clustering is stronger than the biology-driven clustering, correction is necessary [6].

- Visual Inspection with UMAP:

- Quantitative Assessment:

This workflow for diagnosis can be visualized as follows:

Guide 2: Correcting Batch Effects in RNA-seq Data from Multiple Centers

Objective: To apply and evaluate a batch effect correction method for bulk RNA-seq data.

Protocol (Using ComBat-seq):

- Input Data Preparation: ComBat-seq works directly on raw count data. Ensure your data is in a count matrix (genes x samples) and you have a vector specifying the batch for each sample [6].

- Run ComBat-seq:

- In R, use the

ComBat_seqfunction from thesvapackage. - The basic command is:

- In R, use the

- Validation of Correction:

- Repeat Visualization: Perform PCA on the corrected count matrix (you may need to normalize it first for visualization). Generate the same PCA and UMAP plots as in the diagnostic guide. Successful correction should show batches mixed together, with clustering driven by biological condition [6].

- Check for Overcorrection: Ensure that known biological groups (e.g., case vs. control) remain separable after correction. A loss of this separation indicates overcorrection [8].

Guide 3: An Alternative Strategy via Ensemble Learning for Classifiers

Objective: To build a robust genomic classifier that is less sensitive to batch effects from multiple centers.

Rationale: Instead of merging and correcting data, this method builds models on individual batches and integrates their predictions, which can be more robust to high heterogeneity [10].

Protocol:

- Subset Data by Batch: Split your multi-center training data by batch or study center.

- Train Base Learners: Train a chosen prediction algorithm (e.g., random forest, logistic regression) on each batch independently.

- Integrate via Ensemble:

- Make Final Predictions: The final prediction for a new sample is a weighted average of the predictions from all base models.

The choice between a traditional correction pipeline and an ensemble approach can be guided by the nature of your data, as shown below:

The Scientist's Toolkit: Essential Research Reagent Solutions

For longitudinal or multicentric studies, proactive planning with these materials is crucial for mitigating batch effects.

| Item | Function in Mitigating Batch Effects |

|---|---|

| Bridge/Anchor Samples | A consistent control sample (e.g., aliquots from a large PBMC pool) included in every batch to monitor and quantify technical drift across batches [4]. |

| Pooled QC Samples | A quality control sample made by pooling a small amount of all experimental samples. Inserted at regular intervals during a run to monitor and model instrument drift [5]. |

| Internal Standards (Metabolomics) | Isotopically labeled compounds added to each sample to correct for variations in sample preparation and instrument response [5]. |

| Single Lot of Reagents | Using reagents (especially antibodies with tandem dyes) from a single manufacturing lot for an entire study to avoid lot-to-lot variability [1] [4]. |

| Fluorescent Cell Barcoding Kits | Kits to uniquely label individual samples with different fluorescent tags, allowing them to be pooled, stained, and acquired in a single tube, eliminating staining and acquisition variability [4]. |

FAQs: Understanding Batch Effects in Multicentric Studies

Q1: What are batch effects and why are they a critical problem in multicentric studies? Batch effects are non-biological, technical variations introduced into data due to differences in experimental conditions across different batches. These can arise from using different labs, platforms, reagent lots, or personnel [3]. They are critical because they can obscure true biological signals, reduce statistical power, and lead to misleading or irreproducible conclusions [3] [11]. In severe cases, they have led to incorrect patient classifications in clinical trials and the retraction of high-profile scientific articles [3].

Q2: What are the most common sources of batch effects? The common sources of batch effects can be categorized and are present throughout the experimental workflow:

- Laboratory and Platform Sources: Data generated in different labs, on different instruments (e.g., sequencers, scanners), or using different analysis software can exhibit strong batch effects [3] [12] [13].

- Reagent and Kit Sources: Variations between different lots of reagents, kits, or staining solutions are a frequent source of technical variation [3] [14]. Shortages can exacerbate this by forcing labs to use alternative or lower-quality reagents [14].

- Personnel and Procedural Sources: Differences in how individual technicians perform protocols (e.g., manual pipetting, sample handling) can introduce variation [15]. Personnel shortages can lead to overwork and increased error rates, while staff turnover can create expertise gaps [14] [16].

- Sample Preparation and Storage Sources: Inconsistencies in how samples are collected, processed, fixed, or stored before analysis are a major contributor to batch effects [3] [13].

Q3: In a confounded study design, why do most batch-effect correction algorithms fail? A confounded design occurs when a biological factor of interest (e.g., a specific disease group) is processed entirely in a separate batch [11]. In this scenario, the technical variation (batch effect) is perfectly mixed with the biological variation you want to study. Most computational algorithms struggle to distinguish between the two, meaning they might remove the genuine biological signal along with the technical noise [11]. Using a reference material, which is measured in every batch, provides a stable anchor to correct against. The ratio-based method is particularly effective here, as it scales the data from study samples relative to the reference, effectively canceling out the batch-specific noise [11].

Q4: How can I check if my dataset has batch effects? Several open-source tools are available to diagnose batch effects. For omics data, this often involves visualization techniques like PCA or t-SNE plots to see if samples cluster more strongly by batch (e.g., processing date) than by biological group [3] [17]. For medical images, tools like Batch Effect Explorer (BEEx) can qualitatively and quantitatively identify batch effects by analyzing image features like intensity and texture across different sites or scanners [12].

Troubleshooting Guides

Guide 1: Troubleshooting Low Yield in Sequencing Library Preparation

Low library yield is a common issue that can severely impact downstream data quality and introduce biases. The following table outlines the common causes and solutions.

| Observation / Symptom | Potential Cause | Corrective Action |

|---|---|---|

| Low labeled DNA recovery [18] or low final library concentration [15]. | Poor input DNA quality or homogeneity. DNA may be degraded or contain contaminants (phenol, salts) that inhibit enzymes. | Re-purify input DNA. Ensure homogenization and check concentration with a fluorescence-based assay (e.g., Qubit) [18] [15]. |

| Low yield determined by fluorometry [15]. | Inaccurate quantification of input DNA. UV absorbance (e.g., NanoDrop) can overestimate concentration by counting contaminants. | Use fluorometric methods (Qubit, PicoGreen) for template quantification. Calibrate pipettes and use master mixes to reduce error [15]. |

| Unexpected fragment size distribution; inefficient ligation. | Suboptimal fragmentation or ligation. Over- or under-shearing DNA, or poor ligase performance. | Optimize fragmentation parameters (time, enzyme concentration). Titrate adapter-to-insert molar ratios and ensure fresh ligase buffer [15]. |

| Sharp peak at ~70-90 bp on electropherogram (adapter dimers). | Overly aggressive purification or size selection. Using an incorrect bead-to-sample ratio leads to loss of desired fragments. | Optimize bead-based cleanup ratios. Avoid over-drying beads, which leads to inefficient resuspension [15]. |

Guide 2: Troubleshooting Batch Effects from Reagent and Personnel Variations

This guide addresses systemic issues related to resource management and personnel that can introduce batch effects across multiple experiments or sites.

| Observation / Symptom | Potential Cause | Corrective Action |

|---|---|---|

| Results vary significantly between different reagent lots. | Reagent lot-to-lot variability. Different lots may have slightly different compositions or activities. | Use reference materials. Incorporate a common reference material (e.g., Quartet reference materials) in every batch/experiment to monitor and correct for lot-specific variations [11]. |

| Intermittent, hard-to-diagnose failures that correlate with the operator. [15] | Personnel-based variation. Deviations from standard protocols due to different technicians' techniques (e.g., pipetting, mixing, timing). | Standardize and automate. Implement detailed SOPs, use master mixes, and automate repetitive tasks where possible. Introduce "waste plates" to catch pipetting errors and use checklists [15]. |

| Increased error rates and inconsistencies over time. | Personnel shortages and burnout. A shrinking workforce leads to overwork, reduced efficiency, and higher error rates [16]. | Cross-training and task prioritization. Cross-train staff to diversify skills and allow for coverage. Prioritize critical tasks and delegate effectively to manage workload [14]. |

| Inability to reproduce results from another lab. | Confounded study design and unaccounted technical variation. Biological groups are processed in separate batches/labs, and technical variation is not measured or corrected. | Implement a ratio-based correction. If a reference material was used, apply a ratio-based method (scaling study samples to the reference) to integrate data across labs [11]. |

Experimental Protocols for Batch Effect Mitigation

Protocol 1: Reference Material-Based Ratio Method for Multiomics Data

This protocol is highly effective for correcting batch effects in confounded study designs, where biological groups and batches are intertwined [11].

Methodology:

- Select a Reference Material: Choose a well-characterized and stable reference material. In the Quartet Project, reference materials are derived from immortalized cell lines available for DNA, RNA, protein, and metabolite analyses [11].

- Concurrent Profiling: In every batch of your experiment (whether defined by time, lab, or reagent lot), profile both your study samples and one or more aliquots of the reference material under identical conditions [11].

- Data Transformation: For each feature (e.g., gene, protein) in each study sample, transform the absolute measurement (e.g., intensity, count) into a ratio value. The denominator is the corresponding feature's measurement from the reference material profiled in the same batch.

- Downstream Analysis: Use the resulting ratio-scale data for all integrative downstream analyses, such as clustering, differential expression, and predictive modeling.

Protocol 2: Dissimilarity Matrix Correction for Clustering Analysis

This method directly corrects the sample-to-sample distance matrix instead of the original data matrix, which is useful for clustering applications in RNA-seq data [17].

Methodology:

- Preprocessing and Dissimilarity Calculation: Preprocess your multi-batch dataset (e.g., log transformation). Calculate an N x N dissimilarity matrix between all samples, for example, using 1 minus the correlation coefficient [17].

- Identify Reference Batch: View the dissimilarity matrix as a combination of blocks (within-batch and between-batch). Select the largest within-batch block as your reference block [17].

- Apply Interpolating Quantile Normalization: Use an algorithm to normalize all other blocks within the dissimilarity matrix with respect to the reference block. This can be done by either:

- Clustering: Perform clustering (e.g., hierarchical clustering) on the corrected dissimilarity matrix to recover the true biological sample patterns.

Visualization of Workflows and Relationships

Batch Effect Troubleshooting Pathway

Ratio-Based Correction for Confounded Designs

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential materials for implementing effective batch effect correction strategies.

| Item | Function in Mitigating Batch Effects |

|---|---|

| Reference Materials (e.g., Quartet) | Provides a stable, well-characterized benchmark measured across all batches and labs. Enables the ratio-based correction method, which is robust in confounded study designs [11]. |

| Master Mixes | Pre-mixed, aliquoted reagents reduce pipetting steps and operator-to-operator variation, enhancing reproducibility and minimizing personnel-based batch effects [15]. |

| Automated Liquid Handlers | Automates repetitive pipetting tasks, standardizing protocols across different users and labs, thereby reducing a major source of technical variation [16]. |

| Fluorometric Quantification Kits (e.g., Qubit) | Provides accurate, specific quantification of nucleic acids or proteins, unlike UV absorbance, which is skewed by contaminants. Prevents yield issues rooted in inaccurate input measurements [15]. |

| BEEx Software | An open-source tool for qualitatively and quantitatively assessing batch effects in medical images from different sites or scanners, enabling prescreening before analysis [12]. |

FAQs: Understanding Batch Effects and Their Consequences

What are batch effects and why are they a critical concern in genomic studies? Batch effects are technical variations introduced into high-throughput data due to changes in experimental conditions, such as different processing times, reagent lots, laboratory personnel, or sequencing instruments [2]. These variations are unrelated to the biological factors of interest but can profoundly impact data analysis. In the most benign cases, they increase variability and reduce statistical power to detect real biological signals. In worse scenarios, they can lead to incorrect conclusions, irreproducible findings, and invalidated research, potentially causing economic losses and even affecting patient treatment decisions [2].

Can you provide a real-world example of how severe the impact of batch effects can be? A stark example comes from a clinical trial where a change in the RNA-extraction solution caused a shift in gene-based risk calculations. This technical variation resulted in incorrect classification outcomes for 162 patients, 28 of whom subsequently received incorrect or unnecessary chemotherapy regimens [2]. This case highlights the direct, real-world consequences that batch effects can have on human health and treatment efficacy.

How do batch effects contribute to the "reproducibility crisis" in science? Batch effects are a paramount factor contributing to irreproducibility. A survey by Nature found that 90% of respondents believed there was a reproducibility crisis, with over half considering it significant [2]. Batch effects from reagent variability and experimental bias can lead to rejected papers, discredited research findings, and financial losses. For instance, the Reproducibility Project: Cancer Biology team failed to reproduce over half of high-profile cancer studies, with batch effects across laboratories being a significant hurdle [2].

Are batch effects still a relevant problem with modern, large-scale omics data? Yes, batch effects remain highly relevant. As data expands in size and complexity, particularly with the advent of high-resolution technologies like single-cell RNA sequencing, batch effect correction has become even more important [19]. The increased complexity of next-generation biotechnological data means increased complexities in batch effect management. Experts forecast that batch effects will not only remain relevant in the age of big data but will become even more important to address [19].

What is the key difference between normalization and batch effect correction? Normalization and batch effect correction address different technical variations. Normalization operates on the raw count matrix and mitigates issues like sequencing depth across cells, library size, and amplification bias caused by gene length. In contrast, batch effect correction mitigates variations arising from different sequencing platforms, timing, reagents, or different conditions and laboratories [7].

Troubleshooting Guides

Guide 1: How to Detect and Diagnose Batch Effects

Before correcting batch effects, you must first assess whether they are present in your data. The following workflow provides a systematic approach for detection and diagnosis.

Diagram 1: Workflow for detecting and diagnosing batch effects in omics data.

Step-by-Step Instructions:

Visual Inspection with Principal Component Analysis (PCA):

- Action: Perform PCA on your raw data and create a scatter plot of the top principal components (PCs).

- Interpretation: If the plot shows clear separation of samples based on their batch identity (e.g., all samples from batch 1 cluster together, separate from batch 2), this signals the presence of batch effects [7] [8].

- Tools: This can be done with standard statistical packages in R or Python.

Visual Inspection with t-SNE or UMAP:

- Action: Perform clustering analysis and visualize cell groups on a t-SNE or UMAP plot. Label the cells based on their batch number and biological group (e.g., case/control).

- Interpretation: In the presence of batch effects, cells from different batches tend to form distinct clusters, even if they share the same biological characteristics. After successful batch correction, you expect a more cohesive mixing of cells from different batches based on biological similarities [7] [8].

Quantitative Assessment with Metrics:

- Action: Use quantitative metrics to objectively evaluate the level of batch effect with less human bias.

- Interpretation: Several metrics can be used. The table below summarizes key metrics and their interpretation.

Table 1: Quantitative Metrics for Assessing Batch Effects [7] [8].

| Metric | Full Name | Interpretation |

|---|---|---|

| kBET | k-nearest neighbor batch effect test | Measures how well batches are mixed at a local level. Lower rejection rates indicate better correction. |

| ARI | Adjusted Rand Index | Measures the similarity between two clusterings. Used to compare clustering results before and after correction. |

| NMI | Normalized Mutual Information | Measures the agreement between two clusterings, adjusted for chance. |

| Graph iLISI | Graph-integrated Local Inverse Simpson's Index | Measures the mixing of batches in a shared neighborhood graph. Values closer to 1 indicate better mixing. |

Guide 2: How to Correct Batch Effects and Avoid Over-correction

Once batch effects are diagnosed, selecting an appropriate correction method is crucial. The following guide outlines a standard protocol for correction and validation.

Protocol: Reference-Material-Based Ratio Method for Multiomics Studies

This protocol is based on a comprehensive study from the Quartet Project, which found the ratio-based method to be highly effective, especially when batch effects are confounded with biological factors [11].

Principle: Expression profiles of each study sample are transformed to ratio-based values using expression data from a concurrently profiled reference material as the denominator. This scaling effectively minimizes technical variations across batches [11].

Table 2: Research Reagent Solutions for Batch Effect Correction.

| Item / Reagent | Function in Batch Effect Mitigation |

|---|---|

| Reference Materials (RMs) | Well-characterized control samples (e.g., Quartet Project RMs) profiled in every batch to provide a stable baseline for ratio-based scaling [11]. |

| Standardized Reagent Lots | Using the same lot of key reagents (e.g., RNA-extraction kits, enzymes) across all batches to minimize a major source of technical variation [2]. |

| Platform-Specific Controls | Controls provided by platform manufacturers (e.g., 10x Genomics, Fluidigm) to monitor technical performance within and across runs. |

Procedure:

Experimental Design:

- Plan your study so that a common reference material (RM) is included in every processing batch alongside your study samples.

- Ideally, distribute samples from different biological groups evenly across batches (a balanced design). However, the ratio method is also powerful in confounded scenarios where this is not possible [11].

Data Generation:

- Process all samples and the reference material concurrently within each batch using identical protocols.

- Generate your omics data (e.g., RNA-seq, proteomics) as per standard laboratory protocols.

Data Transformation (Ratio Calculation):

- For each feature (e.g., gene, protein) in each study sample within a batch, calculate a ratio value:

Ratio_value = Absolute_feature_value_study_sample / Absolute_feature_value_Reference_Material

- This creates a new, normalized matrix of ratio-based expression values.

- For each feature (e.g., gene, protein) in each study sample within a batch, calculate a ratio value:

Downstream Analysis:

- Use the transformed ratio-based matrix for all subsequent biological analyses (e.g., differential expression, clustering).

Validation and Checking for Over-correction:

After applying any batch correction method, it is vital to check for signs of over-correction, where genuine biological signal has been erroneously removed [7] [8].

Diagram 2: A guide to diagnosing over-correction after applying batch effect correction algorithms.

Troubleshooting Common Problems:

- Problem: Distinct biological cell types are clustered together in UMAP/t-SNE plots after correction.

- Solution: This is a sign of over-correction. Try a different, potentially less aggressive batch correction method (e.g., switch from a strong deep learning model to Harmony or Seurat) [8].

- Problem: Batch effects remain strong after correction in the PCA plot.

- Solution: The chosen method may be inadequate for the strength of your batch effect. Verify you have used the method correctly. Consider a more powerful method or ensure that you are including all relevant batch factors in your model.

- Problem: The results are not reproducible in a downstream analysis like differential expression.

- Solution: Ensure that batch was included as a covariate in your statistical model during differential analysis, rather than relying on pre-corrected data alone [6]. Also, re-check the diagnostic plots for residual batch effects.

Guide 3: Choosing a Batch Effect Correction Algorithm

The choice of algorithm depends on your data type and experimental scenario. The following table summarizes commonly used algorithms.

Table 3: Overview of Common Batch Effect Correction Algorithms (BECAs).

| Algorithm | Primary Application | Key Principle | Considerations |

|---|---|---|---|

| ComBat / ComBat-seq [6] | Bulk RNA-seq (ComBat-seq for counts) | Empirical Bayes framework to adjust for batch effects. | Powerful but can be prone to over-correction if batches are confounded with biology [11]. |

| Harmony [7] [11] | Single-cell RNA-seq, Multiomics | Iteratively clusters cells and removes batch effects via PCA-based dimensionality reduction. | Known for fast runtime and good performance in many benchmarks [8]. |

| Seurat (CCA) [7] | Single-cell RNA-seq | Uses Canonical Correlation Analysis (CCA) and Mutual Nearest Neighbors (MNNs) as "anchors" to align datasets. | Well-established and widely used, though can have lower scalability than some newer methods [8]. |

| MNN Correct [7] | Single-cell RNA-seq | Detects Mutual Nearest Neighbors (MNNs) across batches to estimate and remove the batch effect. | Computationally intensive as it works in high-dimensional gene expression space. |

| Ratio-based (e.g., Ratio-G) [11] | Multiomics (Transcriptomics, Proteomics, Metabolomics) | Scales absolute feature values of study samples relative to a concurrently profiled reference material. | Highly effective in confounded scenarios; requires careful planning to include reference material in all batches [11]. |

| scGen [7] | Single-cell RNA-seq | Employs a variational autoencoder (VAE) model trained on a reference dataset to correct batch effects. | A deep learning approach that can model complex, non-linear batch effects. |

Why should I be concerned about batch effects in my multi-center study?

Batch effects are technical variations that are unrelated to your study's biological or clinical questions. In multi-center genomic studies, where data is collected from different locations, machines, and over time, these effects are notoriously common. If not corrected, they can dilute true biological signals, reduce the statistical power of your study, and lead to increased false positives or false negatives. In the worst cases, they can cause irreproducible results, misleading conclusions, and even lead to retracted papers [3].

The table below summarizes profound real-world impacts of uncorrected batch effects.

| Case Study | Impact of Batch Effect | Consequence |

|---|---|---|

| Clinical Trial Gene Expression Analysis [3] | A change in RNA-extraction solution caused a shift in gene expression profiles. | Incorrect risk classification for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy. |

| Cross-Species Transcriptomics [3] | Human and mouse data were generated 3 years apart on different platforms. | Misleading conclusion that cross-species differences outweighed cross-tissue differences; after correction, data clustered by tissue type. |

| High-Profile Retracted Paper [3] | Sensitivity of a fluorescent serotonin biosensor was dependent on the batch of fetal bovine serum (FBS). | Key results could not be reproduced when the FBS batch was changed, leading to the article's retraction. |

How can I identify if my data has significant batch effects?

Before any correction, it is crucial to diagnose the presence and extent of batch effects. The following workflow outlines a standard diagnostic process, and the table below details common methods.

| Method | Description | What to Look For |

|---|---|---|

| Principal Component Analysis (PCA) | A linear dimensionality reduction technique. | In the plot of the first few principal components, samples cluster strongly by batch (e.g., lab, sequencing run) rather than by biological condition [8]. |

| UMAP/t-SNE | Non-linear dimensionality reduction techniques. | When batch labels are overlaid on the plot, cells or samples from different batches form distinct clusters instead of mixing by cell type or disease state [8]. |

| Clustering & Heatmaps | Unsupervised clustering of samples based on gene expression. | The resulting dendrogram or heatmap shows samples primarily grouping by batch identifier [8]. |

| Quantitative Metrics (kBET) | The k-nearest neighbor batch effect test provides a quantitative score. | kBET measures local batch mixing. A low acceptance rate indicates that batches are not well-mixed, confirming a significant batch effect [19] [8]. |

What are the key methods for correcting batch effects, and how do I choose?

Multiple batch effect correction algorithms (BECAs) exist, and the choice depends on your data type and experimental design. The field is rapidly evolving, especially with the rise of single-cell technologies and deep learning methods [19].

Method 1: Empirical Bayes Frameworks (e.g., ComBat)

This is one of the most widely used families of methods. ComBat uses an empirical Bayes framework to adjust for batch-specific mean and variance, pooling information across all genes. Its Python implementation, pyComBat, has been shown to offer identical correction power to the original R version with faster computation times [20].

- Best for: Microarray and bulk RNA-seq data (ComBat-Seq for count data).

- Experimental Protocol for ComBat-Seq on RNA-seq data:

- Input Data: Start with a matrix of raw RNA-seq counts.

- Software Implementation: Use the

pycombat_seqfunction from theinmoosePython package or theComBat_seqfunction from thesvaR package. - Define Variables: Specify the

batchvariable (e.g., plate ID, sequencing run). You can also include amodargument to specify biological covariates of interest (e.g., disease status) to preserve during correction. - Output: The function returns a batch-corrected matrix of integer counts, which can be used for downstream differential expression analysis [20].

Method 2: Matrix Factorization and PCA-Based Methods (e.g., Harmony, SVA)

These methods identify and remove unwanted variation, often represented by principal components or surrogate variables, that is associated with batch.

- Best for: Single-cell RNA-seq (scRNA-seq) data integration and complex study designs.

- Experimental Protocol for Harmony on scRNA-seq data:

- Input Data: A normalized and scaled expression matrix (e.g., from Seurat or Scanpy), or a PCA projection of the data.

- Software Implementation: Use the

harmonypackage in R or Python. - Run Integration: Apply the

RunHarmonyfunction (in Seurat) or theharmonypy.runfunction in Python, providing the object, the name of the batch covariate (group.by.vars), and the number of PCA dimensions to use. - Output: A corrected low-dimensional embedding (e.g., Harmony dimensions) where cells are clustered by cell type rather than batch. These embeddings are used for downstream clustering and UMAP visualization [8].

Method 3: Bridging Control-Based Methods (e.g., BAMBOO, SERRF)

These methods require specific experimental designs that include repeated control samples (bridging controls or quality control samples) across all batches. They use these controls to model and correct for technical variation directly.

- Best for: Proteomics (e.g., PEA data), metabolomics, and lipidomics studies where such controls are feasible.

- Experimental Protocol for BAMBOO on Proteomics Data:

- Experimental Design: Include a minimum of 8-12 bridging controls (BCs) on every measurement plate. These are identical aliquots of the same sample pool [21].

- Quality Filtering: Remove BCs that are outliers based on their total batch effect amount. Proteins with too many measurements below the limit of detection should be flagged.

- Model Estimation: Use robust linear regression on the BC data to estimate plate-wide adjustment factors (slope and intercept).

- Apply Correction: Adjust the non-control samples on each plate using the estimated model and protein-specific adjustment factors [21].

The following table benchmarks several popular methods based on independent studies.

| Method | Data Type | Key Strengths | Reported Limitations / Performance |

|---|---|---|---|

| ComBat/pyComBat [20] | Microarray, bulk RNA-seq | Works with small sample sizes; fast parametric version. | Can be affected by outliers in bridging controls [21]. |

| Harmony [8] | scRNA-seq | Fast; good performance and scalability in benchmarks. | Less scalable than some newer deep learning methods [19]. |

| BAMBOO [21] | Proteomics (PEA) | Robust to outliers; corrects protein, sample, and plate-wide effects. | Requires bridging controls to be included in experimental design. |

| SERRF [22] | Metabolomics/Lipidomics | Uses random forest to model complex errors; leverages correlation between compounds. | Requires QC samples; web-based or custom script implementation. |

| limma (with PCs) [23] | Microarray | Flexible; including 2-3 PCs as covariates in the model can be effective. | Performance is best with sufficient sample size (e.g., >40 total samples) [23]. |

| scANVI [8] | scRNA-seq | Top-performing in comprehensive benchmarks; uses deep learning. | Lower computational scalability [8]. |

What are the common pitfalls and how can I avoid them?

Pitfall 1: Over-Correction and Loss of Biological Signal

Aggressive batch effect correction can sometimes remove genuine biological variation. Signs of over-correction include:

- Distinct cell types clustering together on a UMAP plot that should be separate [8].

- A complete overlap of samples from very different biological conditions, suggesting minor but important differences have been removed [8].

- Cluster-specific markers being dominated by housekeeping genes (e.g., ribosomal genes) with widespread expression [8].

- Solution: If you see these signs, try a less aggressive correction method or adjust the parameters of your current method (e.g., using fewer dimensions for correction).

Pitfall 2: Confounded Study Design

This is a critical issue that is difficult to fix computationally. It occurs when a batch variable is perfectly correlated with a biological variable of interest.

- Example: Running all control samples on one plate and all treatment samples on another plate. In this case, it is impossible to distinguish whether the differences are due to the treatment or the plate [3] [24].

- Solution: Always randomize samples across batches whenever possible. A confounded design often cannot be salvaged, highlighting the supreme importance of proper experimental planning [24].

Pitfall 3: Applying the Wrong Correction Tool

Using a method designed for bulk data on single-cell data, or vice-versa, can yield poor results. Single-cell data has unique characteristics, such as high dropout rates and cell-to-cell variation, that require specialized tools [3] [19].

- Solution: Select a BECA that is appropriate for your data type (e.g., bulk vs. single-cell, RNA-seq vs. metabolomics) and has been validated in the literature for that purpose.

Pitfall 4: Ignoring Sample Imbalance

In single-cell studies, sample imbalance (different numbers of cells per cell type across batches) is common and can negatively impact integration results.

- Solution: Be aware of this issue and refer to benchmarking studies that evaluate integration methods specifically under imbalanced conditions [8].

The Scientist's Toolkit: Essential Reagents & Materials for Batch Effect Management

Proper experimental planning is the first and best defense against batch effects. The following materials are crucial for mitigating and correcting technical variation.

| Item | Function in Batch Effect Management |

|---|---|

| Bridging Controls (BCs) | Aliquots of the same sample pool run on every batch/plate. They are used to quantify and model technical variation across runs, enabling methods like BAMBOO [21]. |

| Quality Control (QC) Samples | Similar to BCs, these are typically pooled samples analyzed at regular intervals throughout the analytical sequence. They are essential for methods like SERRF and LOESS to model and correct for instrumental drift [22]. |

| Validated Reagent Lots | Using a single, validated lot of critical reagents (e.g., fetal bovine serum, enzymes) for an entire study prevents reagent lot-specific batch effects, which have been known to cause irreproducible results and retractions [3]. |

| Internal Standards (IS) | For mass spectrometry-based omics (proteomics, metabolomics), known amounts of synthetic compounds spiked into each sample. They correct for variation in sample preparation and instrument response [25]. |

| Tissue-Mimicking Quality Control Standards | In mass spectrometry imaging (MSI), a homogeneous, synthetic material (e.g., propranolol in gelatin) spotted alongside tissue sections. It monitors technical variation from sample preparation and instrument performance [25]. |

Frequently Asked Questions

What is the "fluctuating sensitivity" problem in omics data? The core assumption in quantitative omics is that instrument readout (I) has a fixed, linear relationship with analyte abundance (C): I = f(C). In reality, the sensitivity function (f) fluctuates due to changes in experimental conditions (e.g., different reagent lots, operators, or instruments). This makes the same biological concentration appear as different measured values across batches, creating batch effects [2] [3].

What is the most reliable method to correct for these fluctuations? Evidence from large-scale multiomics studies shows that a ratio-based method is highly effective, especially in common but challenging scenarios where batch effects are completely confounded with biological groups of interest. This method scales the absolute feature values of study samples relative to those of a concurrently profiled reference material in each batch [11].

Can I correct for batch effects if my study design is flawed? While correction algorithms exist, a flawed or confounded study design remains a critical source of irreproducibility. If all samples from one biological group are processed in a single batch and all samples from another group in a different batch, it becomes nearly impossible to distinguish true biological signals from technical artifacts, even with advanced algorithms [2] [3] [11]. Proper, randomized study design is paramount.

Are batch effects more severe in any specific omics technology? Yes. Single-cell RNA-seq (scRNA-seq) technologies suffer from higher technical variations compared to bulk RNA-seq due to lower RNA input, higher dropout rates, and greater cell-to-cell variation. These factors make batch effects more complex and pronounced in single-cell data [2] [3].

Troubleshooting Guides

Problem 1: Poor Discrimination of Biological Groups After Data Integration

Issue: After integrating data from multiple batches or centers, your biological groups of interest (e.g., healthy vs. diseased) do not cluster together in a PCA plot.

Diagnosis: This indicates that technical variations (batch effects) are stronger than the biological signal, obscuring the patterns you want to study.

Solution:

- Diagnose: Use Principal Component Analysis (PCA) to visualize your data before correction. Color the data points by batch and by biological group. If points cluster strongly by batch, a batch effect is present.

- Correct: Apply a batch effect correction algorithm (BECA). The following table summarizes the performance of various methods in different scenarios, based on large-scale multiomics assessments [11].

Table 1: Performance Overview of Batch Effect Correction Algorithms (BECAs)

| Algorithm | Brief Description | Balanced Batch-Group Scenario | Confounded Batch-Group Scenario |

|---|---|---|---|

| Ratio-Based (e.g., Ratio-G) | Scales data relative to a common reference material measured in each batch. | Effective | Highly Effective - Highly recommended for this challenging case |

| ComBat | Empirically Bayesian framework to adjust for batch effects. | Effective | Limited Effectiveness - Can remove biological signal |

| Harmony | Uses PCA and clustering to integrate datasets. | Effective | Performance varies by data type and structure |

| BMC (Per Batch Mean-Centering) | Centers the mean of each feature within a batch to zero. | Effective | Not Recommended - Fails in confounded designs |

| SVA | Estimates and removes surrogate variables representing batch. | Effective | Limited Effectiveness |

| RUV (RUVg, RUVs) | Uses control genes or samples to remove unwanted variation. | Effective | Limited Effectiveness |

Implementation Protocol: Ratio-Based Correction

- Materials: A well-characterized, stable reference material (e.g., commercial reference standards or a pooled sample from your study).

- Method:

- Experimental Design: Include the same reference material in every batch of your experiment.

- Data Generation: Process study samples and the reference material concurrently under identical conditions in each batch.

- Calculation: For each feature (e.g., gene, protein) in a given batch, transform the absolute measurement (Istudy) of every study sample into a ratio relative to the measurement of the reference material (Iref) in the same batch.

- Formula:

Corrected_Value = I_study / I_ref

This workflow transforms your data from an absolute intensity scale, which is susceptible to fluctuating sensitivity (f), to a relative ratio scale, which is more stable and comparable across batches [11].

Problem 2: Inconsistent Findings in a Longitudinal or Multi-Center Study

Issue: Biomarkers or differential features identified in one batch, center, or timepoint fail to validate in another.

Diagnosis: This is a classic symptom of batch effects confounding biological conclusions. In longitudinal studies, the timing of sample processing is often perfectly correlated with the exposure time, making it impossible to separate technical from biological changes [2] [3].

Solution:

- Standardize with Reference Materials: Implement a standardized operating procedure (SOP) across all centers and timepoints that mandates the use of common reference materials in every processing batch.

- Utilize Reference-Based BECAs: Apply the ratio-based method during data analysis to anchor all measurements to a consistent baseline [11].

- Centralized QC: If possible, perform all assays in a single, centralized lab. If not, establish a system where a central lab distributes pre-qualified reference materials and reagents (e.g., RNA-extraction solutions, reagent lots) to all participating centers to minimize variability at the source [2] [26].

Problem 3: Failure to Reproduce Published Results

Issue: You cannot replicate a key finding from a high-profile paper in your own lab.

Diagnosis: Batch effects are a paramount factor contributing to the "reproducibility crisis" in science. Differences in reagent batches (e.g., fetal bovine serum) or other unrecorded experimental conditions can make results irreproducible [2] [3].

Solution:

- Troubleshoot Reagents: If a specific reagent is critical to the protocol, attempt to source it from the same lot used in the original publication.

- Document Everything: Meticulously record all protocol details, including reagent lot numbers, instrument models, software versions, and operator IDs. This metadata is crucial for diagnosing batch effects later.

- Validate with Orthogonal Methods: Where possible, confirm key omics findings using an orthogonal, low-throughput method (e.g., qPCR for transcriptomics, Western blot for proteomics) to ensure the finding is biological and not a technical artifact [27].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials for Mitigating Batch Effects

| Item | Function in Batch Effect Control |

|---|---|

| Certified Reference Materials (CRMs) | Commercially available or community-developed standards (e.g., from the Quartet Project) with well-characterized properties. Provides a gold-standard baseline for scaling data across batches [11]. |

| Internal Standard Pool | A pooled sample created from a representative subset of your own study samples. Serves as a cost-effective, study-specific reference material for ratio-based correction. |

| Standardized Reagent Lots | Purchasing large lots of critical reagents (e.g., enzymes, buffers, serum) for use across an entire multi-center study. Minimizes a major source of technical variation [2] [26]. |

| Process Control Samples | Samples with known expected values (e.g., synthetic spikes) that are not part of the biological study. Used to monitor the performance and sensitivity (f) of the assay platform over time. |

The Correction Toolbox: Statistical and Deep Learning Methods for Data Harmonization

Frequently Asked Questions (FAQs)

1. What is the core principle behind the ComBat method for batch effect correction? ComBat and its successors, like ComBat-seq and ComBat-ref, use an empirical Bayes framework to adjust for systematic non-biological variations, or batch effects, in datasets. These methods estimate parameters for location (additive effects) and scale (multiplicative effects) from the data itself and use these estimates to adjust the data from all batches towards a common overall mean, thereby preserving biological signals while removing technical artifacts [28] [3].

2. My data is RNA-seq count data. Should I use the original ComBat or ComBat-seq? You should use ComBat-seq. The original ComBat was designed for microarray data or normalized, continuous data. ComBat-seq uses a negative binomial regression model specifically for RNA-seq count data, which preserves the integer nature of the counts and has been shown to provide better statistical power for downstream differential expression analysis [28].

3. What is the key innovation in the newer ComBat-ref method? ComBat-ref introduces a reference batch approach. It selects the batch with the smallest dispersion as a reference and adjusts all other batches toward this reference. This strategy is particularly effective when batches have different dispersion parameters, as it helps maintain high statistical power comparable to data without batch effects [28].

4. Can batch effects really lead to incorrect scientific conclusions? Yes, profoundly. The literature documents instances where batch effects, such as a change in RNA-extraction solution, led to incorrect gene-based risk calculations for patients, some of whom subsequently received incorrect chemotherapy regimens. In other cases, what appeared to be significant cross-species differences were later attributed to batch effects after re-analysis [3].

5. What are some common sources of batch effects I should document? Batch effects can originate at nearly every stage of a study [3]:

- Study Design: Non-randomized sample collection or a confounded design where batch is correlated with an outcome.

- Sample Preparation: Variations in reagents, kits, personnel, or lab protocols.

- Instrumentation: Using different machines or the same machine calibrated differently over time.

- Data Generation: Processing samples in different sequencing lanes or on different days.

Troubleshooting Guides

Problem: Low Statistical Power After Batch Correction You run ComBat but find that your downstream differential expression (DE) analysis has low sensitivity (high false negative rate).

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| High dispersion differences between batches. | Check the dispersions of your batches before correction. A large disparity suggests this issue. | Consider using ComBat-ref, which is specifically designed to handle batches with varying dispersions by aligning them to a stable reference batch [28]. |

| Using ComBat on raw counts. | Check your input data format. | For RNA-seq count data, use ComBat-seq instead of the standard ComBat to properly model the data distribution [28]. |

| Over-correction removing biological signal. | If possible, compare the corrected data to a known biological truth. | Ensure your model is correctly specified. If the batch effect is mild, using a simpler model (e.g., including batch as a covariate in DESeq2 or edgeR) might be preferable [3]. |

Problem: Inconsistent or Misleading Results After Correction The batch-corrected data yields results that contradict established biology or show unexpected patterns.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Batch effect is confounded with a biological variable of interest (e.g., all cases processed in one batch and controls in another). | Examine your study design matrix. Check for high correlation between batch and biology. | This is a fundamental design flaw that is extremely difficult to correct computationally. The best solution is prevention through randomized sample processing. Results from confounded studies should be interpreted with extreme caution [3]. |

| The chosen method is inappropriate for the data type. | Verify that the assumptions of your chosen ComBat method match your data (e.g., counts vs. continuous). | Switch to the method suited for your data (e.g., ComBat-seq for counts). Exploratory data analysis (PCA plots) before and after correction can help assess performance [28] [3]. |

Experimental Protocols & Data Presentation

Summary of ComBat-ref Performance vs. Other Methods The following table summarizes key comparative metrics as reported in simulation studies. Performance was evaluated based on the ability to detect differentially expressed (DE) genes while controlling false discoveries [28].

| Method | Data Type | Key Model / Approach | True Positive Rate (TPR) | False Positive Rate (FPR) | Key Use Case |

|---|---|---|---|---|---|

| ComBat-ref | RNA-seq Counts | Negative Binomial GLM with Reference Batch | High (Comparable to batch-free data) | Controlled with FDR | Batches with significant dispersion differences |

| ComBat-seq | RNA-seq Counts | Negative Binomial GLM with Mean Dispersion | High (but lower than ComBat-ref with high disp_FC) | Controlled with FDR | Standard batch correction for count data |

| ComBat | Microarray / Continuous | Empirical Bayes on Normalized Data | Moderate | Low | Continuous, normally distributed data |

| NPMatch | Various | Nearest-Neighbor Matching | Good | High (>20% in simulations) | -- |

Detailed Methodology for Implementing ComBat-ref This protocol outlines the key steps for implementing the ComBat-ref method as described in the literature [28].

Data Preparation and Modeling:

- Format your RNA-seq data as a count matrix (genes x samples).

- Model the count data using a negative binomial generalized linear model (GLM). The model is specified as:

log(μ_ijg) = α_g + γ_ig + β_cjg + log(N_j) - Where:

μ_ijgis the expected expression of gene g in sample j from batch i.α_gis the global background expression for gene g.γ_igis the effect of batch i on gene g.β_cjgis the effect of biological condition c on gene g.N_jis the library size for sample j (can be replaced with other normalization factors).

Dispersion Estimation and Reference Selection:

- Pool the gene count data within each batch and estimate a batch-specific dispersion parameter,

λ_i. - Compare the dispersion estimates for all batches.

- Select the batch with the smallest dispersion (

λ_1) as the reference batch.

- Pool the gene count data within each batch and estimate a batch-specific dispersion parameter,

Data Adjustment:

- For all batches

ithat are not the reference batch, adjust the gene expression levels using the formula:log(μ~_ijg) = log(μ_ijg) + γ_1g - γ_ig - This adjusts the expected counts from other batches toward the reference batch's parameters.

- Set the adjusted dispersion for all batches to that of the reference batch,

λ~_i = λ_1.

- For all batches

Count Adjustment:

- The final adjusted counts,

n~_ijg, are calculated by matching the cumulative distribution function (CDF) of the original distributionNB(μ_ijg, λ_i)at the original countn_ijgand the CDF of the adjusted distributionNB(μ~_ijg, λ~_i)at the new countn~_ijg. This ensures the adjusted data remains as integer counts.

- The final adjusted counts,

Workflow Visualization

The following diagram illustrates the logical workflow and decision points for applying ComBat methods within a genomic analysis pipeline.

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational tools and statistical concepts essential for implementing ComBat and related batch correction methods.

| Item / Concept | Function / Description | Relevance to Combat |

|---|---|---|

| Negative Binomial Model | A statistical distribution used to model count data where the variance exceeds the mean (overdispersion). | ComBat-seq and ComBat-ref use this model instead of a normal distribution, making them suitable for raw RNA-seq count data [28]. |

| Empirical Bayes Framework | A statistical method that borrows information across all genes to compute stable parameter estimates for batch effects. | The core of all ComBat methods; it provides robust estimates of batch effect parameters, especially for studies with a small number of samples per batch [28] [3]. |

| Dispersion Parameter | In negative binomial models, this parameter quantifies the extra variance (overdispersion) in the data beyond what a Poisson model would expect. | ComBat-ref innovates by using this parameter to select the most stable batch as a reference, improving correction when dispersions vary widely [28]. |

| R Statistical Language | An open-source programming environment for statistical computing and graphics. | The primary platform for running ComBat and its variants (e.g., via the sva package). Essential for implementing the described methodologies [28]. |

| DESeq2 / edgeR | Bioconductor packages specifically designed for differential expression analysis of RNA-seq count data. | The primary tools for downstream analysis after using ComBat-seq or ComBat-ref. These tools use the adjusted integer counts for robust DE analysis [28]. |

Frequently Asked Questions (FAQs)

Q1: What makes deep learning-based autoencoders superior to traditional methods for single-cell data integration?

Autoencoders, particularly variational autoencoders (VAEs), offer a powerful framework for single-cell data integration because they can learn complex, non-linear relationships in the data and project it into a lower-dimensional, batch-corrected latent space [19]. Unlike linear methods, they are better equipped to handle the high dimensionality, sparsity, and technical noise inherent in single-cell RNA-sequencing (scRNA-seq) data [29]. They scale linearly with the number of cells, making them suitable for datasets of millions of cells [29]. Furthermore, their flexibility allows for the incorporation of specialized count-based loss functions (e.g., Negative Binomial or Zero-Inflated Negative Binomial) that appropriately model scRNA-seq data, unlike mean squared error loss used on log-transformed data [29] [30].

Q2: My integrated data shows good batch mixing, but my known cell types are no longer distinct. What is happening?

This is a classic sign of overcorrection or loss of biological variation [31]. It occurs when the integration process removes not only technical batch effects but also biologically meaningful signal. This can happen if:

- The integration method is too aggressive: Methods that do not distinguish between biological and technical variation, such as those relying solely on high Kullback-Leibler (KL) divergence regularization, can strip away both [32].

- Incorrect assumptions of cell type alignment: Some methods, particularly those using adversarial learning, may incorrectly "mix" cell types that have unbalanced proportions across batches if the model assumes all differences are technical [32].

- Solution: Consider using a semi-supervised approach that incorporates prior cell type knowledge (e.g., STACAS, scANVI) to guide the integration and preserve biological structure [31]. Alternatively, explore models with better priors and consistency losses, such as those combining VampPrior and cycle-consistency (e.g., sysVI) [32].

Q3: How do I choose the right noise model or loss function for my autoencoder?

The choice depends on your scRNA-seq technology and the characteristics of your data [29]:

- Negative Binomial (NB): Recommended for UMI-based datasets (e.g., 10x Genomics), where evidence for zero-inflation is often weaker [29]. It models overdispersed count data effectively.

- Zero-Inflated Negative Binomial (ZINB): May be more suitable for non-UMI, full-length transcript protocols where dropout events are more pronounced [29].

- Guidance: You can perform a likelihood ratio test between NB and ZINB fits on your data to determine if zero-inflation is statistically significant [29]. Starting with a Negative Binomial model is often a good default for UMI data.

Q4: What are the key architectural choices for building an effective denoising autoencoder for scRNA-seq data?

Empirical studies provide specific guidance for optimizing autoencoder design [30]:

- Architecture: Deeper and narrower networks (more layers with fewer neurons per layer) generally lead to better imputation performance compared to wide, shallow networks.

- Activation Functions: The sigmoid and tanh activation functions consistently outperform ReLU and others for scRNA-seq imputation tasks. This differs from common practices in computer vision.

- Regularization: Applying regularization (e.g., L1/L2) improves imputation accuracy and the quality of downstream analyses like cell clustering and differential expression.

Troubleshooting Common Experimental Issues

Problem: Failure to Integrate Datasets with Substantial Batch Effects

Scenario: You are trying to integrate datasets from different biological systems (e.g., human and mouse, organoid and primary tissue, single-cell and single-nuclei RNA-seq), and standard cVAE methods are failing to align the batches effectively.

Diagnosis: Standard cVAE models and their default regularization may be insufficient for "substantial batch effects" where technical and biological differences are deeply confounded [32].

Solution: Implement an advanced cVAE model with stronger and more specific regularization constraints.

- Recommended Model: Use a model that combines a VampPrior and cycle-consistency loss (e.g., the sysVI model) [32].

- Why it works:

- VampPrior: A multimodal prior that better captures the complex structure of biological data, improving the preservation of biological variation.

- Cycle-Consistency Loss: Ensures that translating a cell's profile from one batch to another and back again preserves its original identity, leading to more accurate and biologically meaningful integration.

- Avoid: Relying solely on increasing KL divergence regularization strength, as it removes both biological and technical information non-discriminately [32].

Problem: Inefficient Scaling and Long Runtime with Large Datasets

Scenario: The data integration process is prohibitively slow or runs out of memory when processing a large-scale dataset (e.g., >100,000 cells).

Diagnosis: Not all methods are optimized for computational efficiency on large data. The choice of algorithm and its implementation critically impacts scalability.

Solution:

- Select Scalable Algorithms: Prioritize methods known for their efficiency. Benchmarks indicate that Harmony, LIGER, and Seurat are capable of handling large datasets [33]. Due to its fast runtime, Harmony is often recommended as a first attempt [33].

- Leverage GPU Acceleration: Many deep learning frameworks (e.g., scvi-tools) support GPU acceleration, which can dramatically reduce training times for models like scVI, scANVI, and DCA [29].

- Check Implementation: Ensure you are using the most recent versions of software and following best practices for large data, such as using sparse matrix representations and appropriate batch sizes.

Problem: Poor Integration Results on Complex Data with Cell Type Imbalances

Scenario: Integration works well for abundant cell types but fails for rare cell populations, or it incorrectly merges distinct cell types that are unique to different batches.

Diagnosis: This is a common challenge when batches have highly variable cell type compositions. Unsupervised methods may mistake a rare cell type for noise or incorrectly align transcriptionally similar but biologically distinct cell types.

Solution: Adopt a semi-supervised integration strategy.

- Method: Use tools like STACAS or scANVI that can incorporate prior cell type annotations [31].

- Workflow:

- Generate an initial, preliminary cell type annotation (e.g., by clustering and annotating each batch individually or using an automated classifier).

- Provide these labels—even if they are incomplete or partially inaccurate—to the semi-supervised integration algorithm.

- The method will use this information to guide the search for mutual nearest neighbors (MNNs) or to structure the latent space, ensuring that only cells of the same type are aligned across batches, thereby preserving rare populations and preventing incorrect merging [31].

- Robustness: These methods are designed to be robust to incomplete and imprecise input labels, making them practical for real-world scenarios [31].

Experimental Protocols & Methodologies

Detailed Protocol: Denoising scRNA-seq Data with a Deep Count Autoencoder (DCA)

This protocol outlines the steps for denoising single-cell data using DCA, which can also serve as a preprocessing step for integration [29].

I. Preprocessing

- Data Input: Start with a raw count matrix (cells x genes). Filter out low-quality cells and genes based on standard QC metrics (mitochondrial counts, number of genes/cell).

- Normalization: Normalize the count data for sequencing depth (e.g., counts per 10,000) and log-transform. Optionally, identify highly variable genes to subset the matrix and reduce computational load.

II. Model Configuration and Training

- Software Installation: Install DCA via Python PIP (

pip install dca) or use its integration within the Scanpy preprocessing package. - Noise Model Selection: Choose the appropriate noise model based on your data. As a default for UMI data, use the Negative Binomial (

--type nb) model. For non-UMI data, consider Zero-Inflated Negative Binomial (--type zinb). A likelihood ratio test can guide this choice [29]. - Architecture: The default DCA architecture is typically three hidden layers (e.g., 64, 32, 64 neurons). This follows the "deeper and narrower" principle found to be effective [30].

- Training: Execute the DCA command on your input data. For example:

dca your_data.h5ad output_dir --type nb. The model will learn to reconstruct a denoised expression matrix.

III. Output and Downstream Analysis

- Output: The primary output is a denoised expression matrix, where the counts have been imputed and technical noise suppressed.

- Analysis: Use this denoised matrix for downstream tasks like clustering, visualization (PCA, UMAP), and trajectory inference. Denoising with DCA has been shown to improve the clarity of these analyses [29].

Workflow Diagram: Semi-Supervised Integration with STACAS

The following diagram illustrates the workflow for the STACAS integration method [31].

Performance Benchmarking Data

Table 1: Benchmarking of Batch-Effect Correction Methods

A comprehensive benchmark evaluating 14 methods across multiple datasets provides the following insights into popular algorithms [33].

| Method | Underlying Principle | Scalability to Large Datasets | Key Strength / Recommended Scenario |

|---|---|---|---|

| Harmony | Linear PCA with iterative clustering | Excellent / Fastest runtime | General-purpose; first choice for balanced and confounded scenarios [11] [33]. |

| Seurat v4 | CCA + Mutual Nearest Neighbors (MNN) | Good | High performance in preserving biological variation; robust for diverse data [33]. |

| LIGER | Integrative Non-negative Matrix Factorization (iNMF) | Good | Separates shared and dataset-specific factors; good for cross-species or when biological differences are expected [33]. |

| scVI / scANVI | Variational Autoencoder (VAE) | Excellent (with GPU) | Handles non-linear effects; scalable. scANVI (semi-supervised) improves performance with cell type labels [31] [33]. |

| FastMNN | PCA + Mutual Nearest Neighbors | Good | A fast variant of the original MNN approach, effective for many use cases [33]. |

| DCA | Deep Count Autoencoder | Excellent (with GPU) | Superior for denoising and imputation as a preprocessing step; uses count-based loss [29]. |

Table 2: Evaluation Metrics for Integration Quality

It is crucial to use multiple metrics to evaluate integration success, balancing batch mixing with biological preservation [31].

| Metric | What It Measures | Ideal Value | Interpretation Notes |

|---|---|---|---|

| Cell-type LISI (cLISI) | Preservation of biological variation (cell type separation). | Close to 1 | Measures local cell type purity. A value of 1 indicates all neighbors are the same cell type [31]. |

| Integration LISI (iLISI) | Mixing of batches. | Close to the number of batches being mixed. | Measures local batch diversity. Can be misleading if biological variation is also removed [31]. |

| Per-Cell-type iLISI (CiLISI) | Mixing of batches within the same cell type. | Close to 1 (normalized) | Recommended. A cell type-aware batch mixing metric that does not penalize biological separation [31]. |

| Cell-type ASW | Separation between different cell types. | Close to 1 | Average silhouette width for cell labels. Higher values indicate better-defined clusters [31]. |

| kBET | Local batch mixing based on chi-square test. | Low rejection rate (e.g., <0.1) | Measures if local batch composition matches the global expectation. A low rejection rate indicates good mixing [33]. |

Table 3: Key Computational Tools for Autoencoder-Based Integration

| Tool / Resource | Function | Key Feature | Access |

|---|---|---|---|

| scvi-tools (Python) | A comprehensive library for deep probabilistic analysis of single-cell omics. | Contains implementations of scVI, scANVI, and other VAE models. The sysVI model for substantial batch effects is also available here [32]. | https://scvi-tools.org |

| DCA (Python CLI) | Denoising single-cell data using a deep count autoencoder. | Specialized for scRNA-seq count data with NB/ZINB loss; often used as a preprocessing step [29]. | pip install dca / GitHub |

| STACAS (R) | Semi-supervised integration using reciprocal PCA and cell type labels. | Leverages prior cell type knowledge to guide integration and prevent overcorrection [31]. | GitHub |

| Seurat (R) | A general toolkit for single-cell genomics, including integration. | Provides the popular Seurat Integration (anchor-based) method and workflows [33]. | https://satijalab.org/seurat/ |

| Scanpy (Python) | A general toolkit for single-cell genomics, including integration. | Works seamlessly with scvi-tools and DCA; provides a full ecosystem for analysis [29]. | https://scanpy.readthedocs.io |

HarmonizR FAQs: Core Principles and Applications

What is the primary function of HarmonizR?

HarmonizR is a data harmonization tool designed to reduce batch effects across independent proteomic and metabolomic datasets without relying on data imputation. It achieves this through a missing-value-tolerant matrix dissection strategy, enabling the use of established batch-effect correction methods like ComBat and limma's removeBatchEffect() on datasets with significant missing values [34].

How does HarmonizR's approach to missing values differ from imputation? Traditional imputation methods estimate missing values, which can be error-prone and skew results if the values are not missing at random. HarmonizR instead dissects the data matrix into smaller sub-matrices containing proteins/features present in common sets of batches. It performs batch-effect correction on these complete sub-matrices before recombining them, thereby preserving the integrity of the original data and avoiding the introduction of imputation-related artifacts [34].

What types of batch effects can HarmonizR correct? HarmonizR has been successfully demonstrated to correct for technical variances arising from different tissue preservation techniques, varied LC-MS/MS instrumentation setups, and diverse quantification approaches in proteomic studies. It is designed to handle the complex batch effects common in multi-center, multi-platform omics studies [34] [3].

What are the key recent improvements to the HarmonizR framework? Recent updates to HarmonizR introduce two major enhancements:

- Blocking Strategy: Neighboring batches are grouped and treated as a single unit during matrix dissection, drastically reducing the number of sub-matrices and improving computational runtime without affecting the granularity of the batch-effect correction [35].

- Singular Feature Adjustment: Features that would otherwise be discarded because they appear in a unique combination of batches are "cropped" to fit a more common batch pattern, rescuing valuable data. This has been shown to increase feature rescue by up to 103.9% in tested datasets [35].

Troubleshooting Common Experimental Issues

My dataset has a very large number of batches, and HarmonizR is running slowly. What can I do?

Use the blocking parameter. This is a strategy specifically implemented to address runtime inefficiency in datasets with many batches. By blocking batches together (e.g., setting the parameter to 2 groups two neighboring batches as one during dissection), you severely reduce the number of sub-matrices created, which accelerates processing. The batch effect correction itself still operates on the original, unblocked batch information [35].

After data integration, I am concerned about losing proteins that only appear in one batch. Does HarmonizR handle these? Yes. Proteins or metabolites that are detected in only a single batch do not undergo harmonization, as there is no cross-batch technical variation to correct. However, these features are not discarded; they are retained and added back into the final harmonized matrix, ensuring all available data is preserved for downstream analysis [34].

What should I do if I suspect my study design has confounded batch and biological effects? This is a challenging scenario where biological groups of interest are completely aligned with batch groups (e.g., all controls in one batch and all cases in another). While HarmonizR is an effective tool, its performance, like most batch-effect correction algorithms, can be limited in severely confounded scenarios. The best practice is proactive study design. If possible, process samples in a randomized order across batches. Furthermore, consider using a ratio-based scaling approach with reference materials, where expression profiles of study samples are transformed relative to a common reference sample processed in every batch. This method has been shown to be particularly effective in confounded designs [36].

Experimental Protocols and Data

Protocol: Implementing HarmonizR for Proteomic Data Integration

- Input Data Preparation: Combine your individual, pre-processed datasets into a single matrix. Rows should represent proteins, and columns should represent samples. The matrix must include batch annotation for each sample.

- Matrix Dissection: The HarmonizR algorithm scans the input matrix and creates sub-data frames. Each sub-matrix contains only those proteins that share an identical pattern of batch presence (i.e., are found in the same combination of batches) [34].

- Batch Effect Correction: For each sub-matrix, run the selected batch-effect correction algorithm (ComBat or limma's

removeBatchEffect()). ComBat can be used in parametric or non-parametric mode, with or without scale adjustment, depending on the distribution of your data [34]. - Matrix Reintegration: The corrected sub-matrices are merged to reconstruct the full, harmonized dataset. Proteins found in only one batch are added back to this final matrix without correction [34].

Performance Comparison of Batch-Effect Correction Strategies