Mastering Noise in cfDNA Sequencing: A Comprehensive Guide to Preprocessing Techniques for Reliable Clinical Data

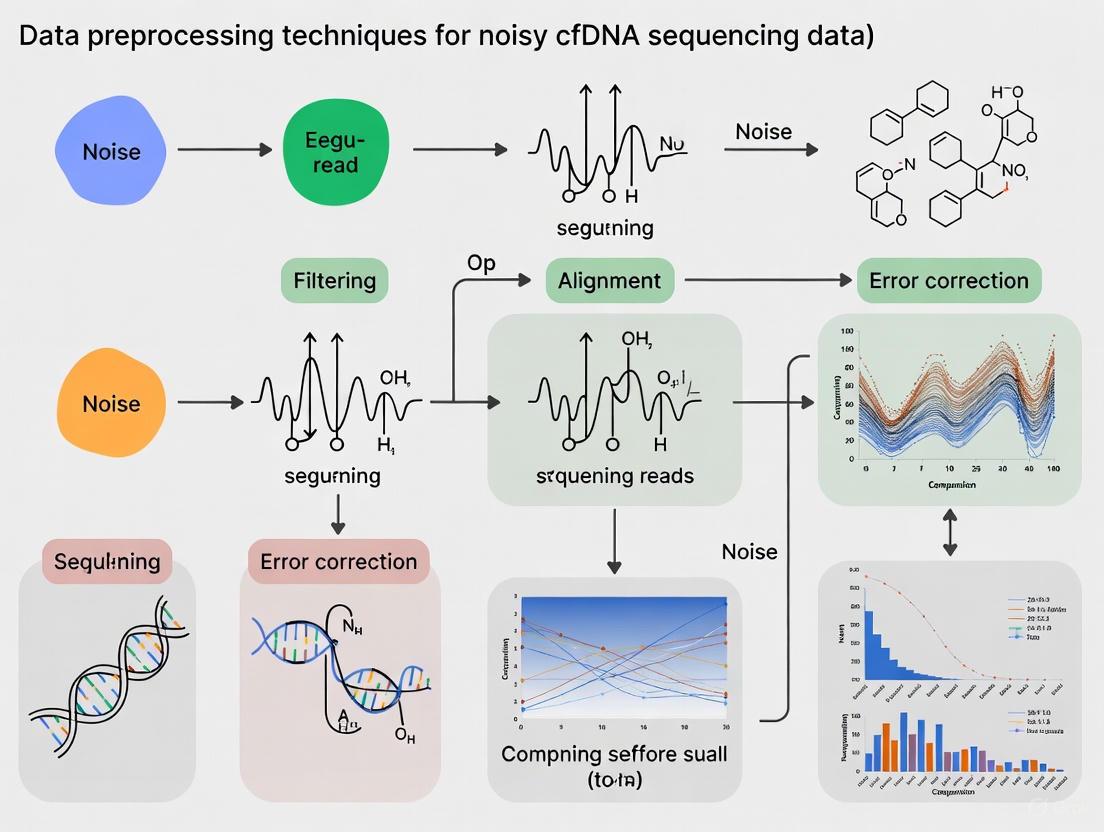

This article provides a comprehensive overview of data preprocessing techniques designed to mitigate noise in cell-free DNA (cfDNA) sequencing data, a critical challenge in liquid biopsy applications.


Abstract

This article provides a comprehensive overview of data preprocessing techniques designed to mitigate noise in cell-free DNA (cfDNA) sequencing data, a critical challenge in liquid biopsy applications. Aimed at researchers, scientists, and drug development professionals, it covers the foundational sources of noise, from pre-analytical variables to bioinformatic artifacts. The content explores a suite of methodological solutions, including novel machine learning and optimal transport algorithms, and offers practical troubleshooting and optimization strategies. Finally, it presents a framework for the validation and comparative analysis of different preprocessing tools, emphasizing their impact on downstream clinical interpretation and the future trajectory of reliable cfDNA analysis in precision medicine.

Understanding the Signal and the Noise: Foundational Concepts in cfDNA Sequencing Artifacts

FAQs: Understanding Noise in Sensitive Sequencing

What constitutes "noise" in the context of low-frequency variant detection? In sensitive sequencing applications like cell-free DNA (cfDNA) analysis, noise encompasses both biological and technical artifacts that obscure true genetic variants. Biological noise includes environmental contamination from reagents or sample collection, while technical noise arises from sequencing errors, alignment inaccuracies, and annotation errors in reference genomes. In low-biomass samples, such as microbial cfDNA, this contamination can represent a significant portion of the sequenced material, sometimes exceeding 100 pg of DNA, which critically impacts the interpretation of results [1] [2].

Why is low-frequency variant detection particularly vulnerable to noise? The detection of low-frequency variants is vulnerable because the signal from true variants (such as a cancer mutation in ctDNA or a microbial pathogen in metagenomic cfDNA) can be at a similar or even lower level than the background error rate of the sequencing process itself. For instance, the variant allele frequency (VAF) for early cancer detection or monitoring minimal residual disease can be below 0.01% [3]. At this level, the true signal is easily drowned out by stochastic sequencing errors and systematic biases.
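
A back-of-envelope calculation can make this scale concrete. The sketch below compares the expected number of reads carrying a true variant with the expected number of reads showing the same base purely by error. The VAF of 0.01% is taken from the text; the depth of 20,000x and the per-base error rate of 0.1% are illustrative assumptions, not values from the cited studies.

```python
def expected_read_counts(depth, vaf, error_rate):
    """Expected reads at one site carrying a true variant vs. the same base by error."""
    true_variant_reads = depth * vaf
    # Simplifying assumption: errors split evenly among the three other bases,
    # so one third of erroneous reads mimic any given alternative allele.
    matching_error_reads = depth * error_rate / 3
    return true_variant_reads, matching_error_reads


# VAF of 0.01% comes from the text; depth and error rate are illustrative only.
true_reads, error_reads = expected_read_counts(depth=20_000, vaf=0.0001, error_rate=0.001)
print(f"true variant reads: {true_reads:.1f}; look-alike error reads: {error_reads:.1f}")
```

Under these assumed numbers the look-alike errors outnumber the true variant reads, which is why error suppression has to happen before variant calling rather than after it.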

How does data preprocessing influence the false positive rate? The choice of data preprocessing tools and algorithms directly impacts the balance between sensitivity and specificity. Inadequate preprocessing can lead to significant fluctuations in mutation frequency detection and even cause completely erroneous results in downstream applications like HLA typing [4]. One study demonstrated that a specialized bioinformatics filter (LBBC) dramatically improved specificity for urinary tract infection diagnosis from 3.3% to 91.8%, while maintaining 100% sensitivity, by systematically removing digital and physical contamination [1] [2].

Troubleshooting Guides: Identifying and Resolving Common Issues

Guide 1: Addressing High False Positive Variant Calls

Problem: An unusually high number of low-frequency variants are detected, many of which are suspected to be false positives.

| Possible Cause | Investigation | Corrective Action |

|---|---|---|

| Environmental Contamination | Check for batch-specific covariation in the abundance of microbial taxa or background alleles [1] [2]. | Implement batch variation analysis to identify and filter contaminants. Include and sequence negative controls (e.g., no-template controls) in every batch [1]. |

| Inhomogeneous Genome Coverage | Compute the coefficient of variation (CV) in per-base coverage for identified species or regions. Compare it to the CV of a uniformly sampled genome [1] [2]. | Filter out taxa or genomic regions where the observed CV significantly exceeds the expected uniform CV, as this indicates alignment crosstalk [1]. |

| Inadequate Data Preprocessing | Evaluate the quality scores along reads and the adapter content in raw FASTQ files. | Select a preprocessing tool (e.g., Cutadapt, FastP, Trimmomatic) carefully, as their performance can vary and directly impact downstream analysis [4]. |

Guide 2: Managing Poor Sequencing Quality in cfDNA Experiments

Problem: Sequencing data from cfDNA samples has low-quality scores, high background noise, or a low signal-to-noise ratio, making variant calling challenging.

| Possible Cause | Investigation | Corrective Action |

|---|---|---|

| Suboptimal Library Preparation | Review the library preparation kit's compatibility with short, fragmented cfDNA. | Use library prep protocols optimized for short, degraded cfDNA, such as single-stranded DNA library preparation, which can improve recovery of microbial cfDNA by up to 70-fold [1] [2]. |

| Low Input DNA Quality/Quantity | Use capillary electrophoresis (e.g., Bioanalyzer) to profile cfDNA fragment size. A peak at ~167 bp indicates good quality [3]. | Optimize plasma separation using a two-step centrifugation protocol to prevent genomic DNA contamination. Use specialized blood collection tubes (e.g., Streck cfDNA BCT) if processing delays are expected [3]. |

| Sequencer-Specific Issues | Compare the error rate distribution of your run to high-quality reference datasets (e.g., from GIAB) [5]. | For data with high error rates, consider using variant callers that are more robust to noise or applying more stringent post-processing filters. Be aware that low-quality data can significantly increase computational processing time [5]. |

Experimental Protocols: Methodologies for Robust Detection

Protocol: Implementing the Low Biomass Background Correction (LBBC) Filter

The LBBC workflow is designed to filter both digital crosstalk and physical contamination in metagenomic cfDNA sequencing data [1] [2].

1. Sequence Alignment and Quantification:

- Align sequencing reads to microbial reference genomes using an alignment tool of your choice.

- Quantify the genomic abundance of each species using a maximum likelihood estimation tool like GRAMMy to handle closely related genomes.

2. Calculate Coverage Uniformity (for Digital Crosstalk Filtering):

- For each identified taxon, compute the coefficient of variation (CV) of its per-base genome coverage.

- Calculate the expected CV for a uniformly sequenced genome of the same size and sequencing depth.

- Filter out taxa where the difference between the observed and expected CV (ΔCV) exceeds a defined threshold (e.g., ΔCVmax = 2.00).

3. Analyze Batch Variation (for Physical Contamination Filtering):

- Calculate the absolute abundance (in picograms) of each species' DNA, considering its relative abundance and genome size.

- Analyze the variation in absolute abundance for each species across all samples in the same processing batch.

- Filter out species with a within-batch variation below a defined threshold (e.g., variance < 3.16 pg²), as this indicates consistent background contamination.

4. Apply Negative Control Filter:

- Remove any species present in the experimental samples at an abundance less than 10-fold the abundance observed in the negative control samples.
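
The coverage-uniformity step (step 2) can be sketched in a few lines. This is a minimal illustration and not the published LBBC implementation: it assumes the expected CV of a uniformly sequenced genome can be approximated with a Poisson model (CV = 1/sqrt(mean depth)), and it takes ΔCV as observed minus expected. The function names and toy coverage vectors are hypothetical.

```python
import math
import statistics


def delta_cv(per_base_coverage):
    """Observed CV of per-base coverage minus the CV expected under uniform sampling."""
    mean_depth = statistics.fmean(per_base_coverage)
    if mean_depth == 0:
        return math.inf  # no coverage at all: treat as failing the filter
    observed_cv = statistics.pstdev(per_base_coverage) / mean_depth
    # Assumption: uniform sampling is approximated as Poisson, so CV = 1 / sqrt(mean depth).
    expected_cv = 1.0 / math.sqrt(mean_depth)
    return observed_cv - expected_cv


def passes_uniformity_filter(per_base_coverage, delta_cv_max=2.00):
    """Keep a taxon only if its coverage is not much spikier than uniform sampling."""
    return delta_cv(per_base_coverage) <= delta_cv_max


even_taxon = [1, 0, 1, 2] * 250            # reads spread across the genome
spiky_taxon = [50] * 10 + [0] * 990        # reads piled onto one short region
print(passes_uniformity_filter(even_taxon), passes_uniformity_filter(spiky_taxon))
```

The spiky taxon fails because nearly all of its reads map to a small region, the pattern the text attributes to digital crosstalk.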

Diagram of the LBBC Bioinformatics Workflow

Protocol: The DEEPGEN™ Variant Calling Assay for Liquid Biopsy

This protocol outlines the wet-lab and computational steps for the DEEPGEN™ assay, optimized for low-frequency variant detection in ctDNA [6].

1. Library Preparation and Sequencing:

- Extract cfDNA using a validated kit (e.g., QIAsymphony DSP Circulating DNA Kit).

- Prepare NGS libraries without fragmentation, as cfDNA is already optimally fragmented (200-300 nt).

- During adapter ligation, use adapters containing a sample index and a Unique Molecular Identifier (UMI).

- Perform target enrichment using a custom panel of primers covering clinically relevant genomic targets.

- Sequence on an Illumina platform (e.g., NovaSeq 6000) to a high raw sequencing depth (e.g., ~150,000x).

2. Bioinformatics Processing:

- Consensus Sequence Building: Group reads by their UMI and primer sequence. A consensus sequence is retained only if its UMI family contains at least 3 reads (family size ≥ 3), which filters out errors that appear in only a few copies.

- Variant Calling: Align unique consensus fragments to the reference genome (GRCh37/hg19) using a dynamic Smith-Waterman algorithm. Record single-nucleotide variants (SNVs), multi-nucleotide polymorphisms (MNPs), and short insertions/deletions (INDELs) based on a predefined whitelist.
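
The consensus-building step can be illustrated with a simple majority vote. This is a sketch of the general UMI-consensus idea, not the DEEPGEN™ implementation: it assumes reads in a family are already aligned to the same start and compares them position by position, truncating to the shortest read. The function name, tuple layout, and toy reads are hypothetical.

```python
from collections import Counter, defaultdict


def build_consensus(reads, min_family_size=3):
    """Collapse reads into one consensus per (UMI, primer) family.

    reads: iterable of (umi, primer, sequence) tuples.
    Families with fewer than min_family_size reads are discarded.
    """
    families = defaultdict(list)
    for umi, primer, sequence in reads:
        families[(umi, primer)].append(sequence)

    consensus = {}
    for key, sequences in families.items():
        if len(sequences) < min_family_size:
            continue  # too few copies to separate real bases from errors
        length = min(len(s) for s in sequences)
        # Majority vote at each position across the family.
        consensus[key] = "".join(
            Counter(s[i] for s in sequences).most_common(1)[0][0]
            for i in range(length)
        )
    return consensus


reads = [
    ("AAC", "P1", "ACGT"),
    ("AAC", "P1", "ACGT"),
    ("AAC", "P1", "ACTT"),   # one copy carries an error at position 3
    ("GGT", "P1", "ACGA"),
    ("GGT", "P1", "ACGA"),   # family of two: dropped
]
print(build_consensus(reads))
```

The single discordant base in the first family is outvoted, and the two-read family is removed because it falls below the minimum family size.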

Data Presentation: Quantitative Impact of Noise and Tools

Table 1: Impact of Read Quality on Variant Calling Accuracy

Data derived from benchmarking pipelines on sequencing data with artificially introduced noise ("shift") [5].

| Pipeline/Tool | Baseline SNP Error Count (HiSeq2500) | SNP Error Count at +2.0 SD Quality Shift | % Increase in Errors |

|---|---|---|---|

| GATK | 1,900 | 3,800 | 100% |

| DeepVariant | 1,550 | 2,600 | 68% |

| Strelka2 | 2,100 | 4,400 | 110% |

| Freebayes | 3,900 | 9,200 | 136% |
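
The last column of Table 1 follows directly from the two error counts. As a quick check, the snippet below recomputes the percentage increase for each pipeline from the values in the table; only the variable and function names are introduced here.

```python
# (baseline SNP errors, SNP errors at +2.0 SD quality shift), copied from Table 1
snp_errors = {
    "GATK": (1900, 3800),
    "DeepVariant": (1550, 2600),
    "Strelka2": (2100, 4400),
    "Freebayes": (3900, 9200),
}


def percent_increase(baseline, shifted):
    """Relative increase of shifted over baseline, in percent."""
    return 100.0 * (shifted - baseline) / baseline


increases = {tool: round(percent_increase(b, s)) for tool, (b, s) in snp_errors.items()}
print(increases)
```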

Table 2: Performance of Noise-Filtering Techniques

Comparison of methods for improving specificity in low-frequency variant detection [1] [6] [2].

| Technique | Principle | Application Context | Reported Specificity/Sensitivity |

|---|---|---|---|

| LBBC Filter | Filters based on coverage uniformity and batch variation of absolute abundance. | Metagenomic cfDNA sequencing for infection diagnosis. | Sensitivity: 100%; Specificity: 91.8% (vs. 3.3% unfiltered). |

| UMI-Based Consensus (DEEPGEN™) | Groups reads from original molecules using UMIs to create a high-fidelity consensus. | Low-frequency variant calling in liquid biopsy (ctDNA). | LOD(90) at 0.18% VAF; effective down to 0.09% VAF. |

| Simple Abundance Threshold | Filters out taxa/variants below a fixed relative abundance threshold. | General metagenomics. | Sensitivity: 81.5%; Specificity: 96.7% (may miss low abundance true signals). |

| Optimal Transport (Domain Adaptation) | Corrects for technical biases (e.g., from different library prep kits) using optimal transport theory. | Integrating cfDNA cohorts from different preanalytical sources. | Improves cancer signal isolation and enables cohort merging [7]. |

The Scientist's Toolkit: Essential Reagents & Materials

Key Research Reagent Solutions

| Item | Function | Specific Example / Note |

|---|---|---|

| Specialized Blood Collection Tubes (BCTs) | Prevents white blood cell lysis during transport/storage, preserving cfDNA profile and reducing wild-type genomic DNA background. | Streck cfDNA BCT, Roche Cell-Free DNA Collection Tube [3]. |

| cfDNA Extraction Kits | Optimized for purification of short, fragmented cfDNA from plasma. Automated options enhance reproducibility. | QIAamp Circulating Nucleic Acid Kit (Qiagen) consistently shows high performance [3]. |

| Single-Stranded DNA Library Prep Kit | Increases recovery of short, degraded DNA fragments, boosting sensitivity for microbial or viral cfDNA. | Can improve recovery of microbial cfDNA relative to host cfDNA by up to 70-fold [1] [2]. |

| Unique Molecular Identifiers (UMIs) | Short random nucleotide sequences added to each DNA molecule before PCR amplification, enabling bioinformatic error correction by grouping reads from the original molecule. | Critical for distinguishing true low-frequency variants from PCR/sequencing errors; used in the DEEPGEN™ assay [6]. |

| Hybridization Capture Probes | Used to enrich for a predefined set of genomic targets (e.g., cancer hotspots) from cfDNA libraries, increasing on-target coverage. | Custom panels (e.g., from Integrated DNA Technologies) allow focused investigation [4] [6]. |

Optimal Pre-Analytical and Analytical Workflow for Low-Frequency Variant Detection

FAQ: Core Concepts and Definitions

What are the major classes of sequencing noise in cfDNA research? In circulating cell-free DNA (cfDNA) sequencing, particularly for low-biomass samples, two major classes of sequencing noise critically impact data quality:

- Digital Crosstalk: Bioinformatic artifacts arising from errors in sequence alignment and annotation, or from contaminant sequences present in reference genomes themselves. This creates inhomogeneous coverage of microbial reference genomes [1].

- Physical Contamination: Environmental DNA introduced during sample collection, reagents, or laboratory processing. This includes microbial DNA from kits, human operators, or cross-contamination between samples [8] [9].

Why is low-biomass cfDNA research particularly vulnerable to these noise types? The total biomass of microbial-derived cfDNA in clinical isolates like blood and urine is inherently low. This makes metagenomic cfDNA sequencing highly susceptible to contamination and alignment noise, where the contaminant "noise" can easily overwhelm the true biological "signal" [1] [9].

How can I quickly determine if my data is affected by digital crosstalk versus physical contamination?

Table 1: Diagnostic Features of Sequencing Noise Types

| Feature | Digital Crosstalk | Physical Contamination |

|---|---|---|

| Primary Origin | Bioinformatic processes, reference genome errors [1] | Environmental sources, reagents, human operators [8] |

| Manifestation in Data | Inhomogeneous genome coverage; spikes in specific genomic regions [1] | Reproducible microbial taxa across samples in a batch [1] |

| Dependence on Sample Biomass | Indirect (affects signal interpretation) | Direct inverse relationship (lower biomass = greater proportional impact) [9] |

| Primary Mitigation Strategy | Computational filtering (e.g., LBBC, SIFT-seq) [1] [9] | Experimental controls, cleanroom protocols, DNA-free reagents [8] |

FAQ: Troubleshooting and Problem Resolution

My negative controls show microbial reads. Is my dataset useless? Not necessarily. The presence of microbial reads in controls confirms the need for rigorous bioinformatic correction but does not automatically invalidate results. The study that introduced Low Biomass Background Correction (LBBC) found that standard negative control subtraction alone removed only ~46% of the physical contaminant species that LBBC identified [1]. Implement contamination-aware pipelines such as SIFT-seq or LBBC, which use batch variation analysis and uniformity-of-coverage metrics to distinguish contaminants from true signals [1] [9].

After analysis, I'm detecting unexpected or atypical microbial species. How do I validate these findings? First, apply computational filters for both digital crosstalk and physical contamination. Then, assess the following:

- Uniformity of Coverage: For digital crosstalk, compute the coefficient of variation (CV) in per-base genome coverage. True signals should have coverage uniformity consistent with a uniformly sequenced genome [1].

- Batch Correlation: For physical contamination, identify species whose abundance correlates across samples processed in the same batch, as these are likely reagent or environmental contaminants [1].

- Biomass Correlation: Examine if species abundance inversely correlates with total DNA concentration, which is characteristic of contamination [1].

- Experimental Validation: Where possible, use orthogonal methods (e.g., PCR, culture) to confirm findings.
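
The biomass-correlation check above can be prototyped with a plain Pearson correlation. The sketch below uses made-up numbers to show the pattern expected for a contaminant: its relative abundance falls as the total DNA concentration of the sample rises. The variable names and data are hypothetical, and a rank-based correlation may be a better choice for real, skewed data.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Toy data: total cfDNA concentration per sample and one taxon's relative abundance.
total_dna_ng_per_ml = [0.5, 1.0, 2.0, 4.0, 8.0]
suspect_taxon_fraction = [0.40, 0.20, 0.10, 0.05, 0.025]

r = pearson_r(total_dna_ng_per_ml, suspect_taxon_fraction)
print(f"r = {r:.2f}")
```

A clearly negative coefficient flags the taxon for closer inspection; it is not proof of contamination on its own.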

My variant analysis shows hundreds of unexpected SNPs. Could noise be the cause? Yes. A comprehensive evaluation of over 4,000 bacterial samples found that contamination is pervasive and can introduce large biases in variant analysis, resulting in hundreds of false positive and negative SNPs even with slight contamination [10]. Always run a taxonomic classifier to remove contaminant reads before variant calling [10].

Experimental Protocols: Methodologies for Noise Mitigation

Protocol 1: Implementing a Taxonomic Filter for Physical Contamination Removal

This protocol uses Kraken, a metagenomic read classifier, to remove contaminant reads before variant calling [10].

Procedure:

- Taxonomic Classification: Run Kraken on raw sequencing reads against a standardized database.

- Read Filtering: Extract only reads classified under the target organism's taxonomy.

- Variant Calling: Perform mapping and SNP calling on the filtered read set.

- Validation: Compare variant profiles before and after filtering; significant reductions in SNP counts indicate effective contamination removal.

Key Application: This method was validated on a dataset of 2,600 samples across 13 species, significantly improving variant calling accuracy, especially for non-fixed SNPs [10].
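
The read-filtering step can be sketched as a small parser over Kraken's per-read output, which is tab-separated with the classification flag (C or U), the read ID, and the assigned taxonomy ID in the first three columns. The function name and the set of accepted taxonomy IDs are illustrative assumptions; in practice the set has to contain the target taxon and all of its descendants, and the output must contain plain numeric taxonomy IDs.

```python
def target_read_ids(kraken_lines, target_taxids):
    """Return IDs of reads that Kraken classified under one of target_taxids.

    kraken_lines:  iterable of lines from Kraken's per-read output
    target_taxids: set of taxonomy IDs (as strings) accepted as the target organism
    """
    keep = set()
    for line in kraken_lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 3 or fields[0] != "C":
            continue  # skip malformed lines and unclassified reads
        read_id, taxid = fields[1], fields[2]
        if taxid in target_taxids:
            keep.add(read_id)
    return keep


# Toy output; the taxonomy IDs are examples chosen for illustration.
kraken_output = [
    "C\tread1\t1773\t150\t1773:120",
    "C\tread2\t1747\t150\t1747:120",
    "U\tread3\t0\t150\t0:120",
    "C\tread4\t83332\t150\t83332:120",
]
print(sorted(target_read_ids(kraken_output, {"1773", "83332"})))
```

The resulting ID set can then be used to subset the FASTQ files before mapping and SNP calling.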

Protocol 2: Low Biomass Background Correction (LBBC) for Comprehensive Noise Filtering

LBBC is a bioinformatics workflow that addresses both digital crosstalk and physical contamination in metagenomic cfDNA sequencing datasets [1].

Procedure:

1. Digital Crosstalk Removal:

- Calculate the coefficient of variation (CV) in per-base genome coverage for all identified species.

- Remove taxa where the difference between the observed CV and the expected CV of a uniformly sampled genome of the same size exceeds the threshold (ΔCV > ΔCVmax, e.g., ΔCVmax = 2.00).

2. Physical Contamination Filtering:

- Estimate absolute abundance of microbial DNA using a maximum likelihood model (e.g., GRAMMy).

- Perform batch variation analysis on absolute abundance.

- Filter species showing minimal within-batch variation (σ² < σ²min, e.g., σ²min = 3.16 pg²), indicating non-biological, systematic contamination.

3. Negative Control Subtraction:

- Remove species whose abundance in a sample is less than 10-fold their observed representation in the negative controls.

Validation: When applied to urinary cfDNA, this protocol achieved 100% diagnostic sensitivity and 91.8% specificity for UTI detection, compared to 3.3% specificity without LBBC filtering [1].
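
The physical-contamination and negative-control steps can be combined in a short sketch. It is not the published LBBC code: it assumes absolute abundances in picograms are already available per species and sample, uses the sample variance across one batch, and applies the 3.16 pg² and 10-fold thresholds quoted above. The function name, data layout, and toy values are hypothetical.

```python
import statistics


def filter_physical_contaminants(batch_pg, control_pg,
                                 min_variance_pg2=3.16, fold_over_control=10.0):
    """Return {species: [sample indices kept]} after batch-variance and control filters.

    batch_pg:   {species: [absolute abundance in pg for each sample of one batch]}
    control_pg: {species: absolute abundance in pg in the negative control}
    """
    kept = {}
    for species, abundances in batch_pg.items():
        # Near-constant abundance across a batch suggests background contamination.
        if statistics.variance(abundances) < min_variance_pg2:
            continue
        floor = fold_over_control * control_pg.get(species, 0.0)
        samples = [i for i, pg in enumerate(abundances) if pg > 0 and pg >= floor]
        if samples:
            kept[species] = samples
    return kept


batch = {
    "taxon_A": [0.0, 45.0, 0.2, 0.1],   # varies strongly between samples
    "taxon_B": [1.0, 1.2, 0.9, 1.1],    # nearly constant across the batch
}
controls = {"taxon_A": 0.05, "taxon_B": 1.0}
print(filter_physical_contaminants(batch, controls))
```

Here taxon_B is dropped as batch background, and taxon_A is retained only in the one sample where it clearly exceeds ten times its level in the negative control.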

Protocol 3: SIFT-Seq for Contamination-Resistant Metagenomic Sequencing

Sample-Intrinsic microbial DNA Found by Tagging and sequencing (SIFT-seq) is a wet-lab and computational method that tags sample-intrinsic DNA before isolation, making it robust against downstream contamination [9].

Procedure:

1. Chemical Tagging:

- Treat plasma or urine samples with bisulfite salts before DNA isolation.

- This converts unmethylated cytosines in sample-intrinsic DNA to uracils.

2. Library Preparation and Sequencing:

- Proceed with standard DNA isolation and library preparation.

- During sequencing, uracils are read as thymines.

3. Bioinformatic Filtering:

- Remove host cfDNA via mapping and k-mer matching.

- Flag and remove sequences containing >3 cytosines or one CG dinucleotide as likely contaminants (lacking bisulfite conversion).

- Apply species-level filtering to remove reads from C-poor regions in reference genomes.

Performance: SIFT-seq reduced contaminant genera by up to three orders of magnitude in clinical cfDNA samples and completely removed the common skin contaminant C. acnes from 62 of 196 samples [9].
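
The read-level rule from the bioinformatic filtering step (more than 3 cytosines, or a CG dinucleotide) is easy to express in code. This is a minimal sketch of that rule only, not the SIFT-seq pipeline: it assumes each read is given in the orientation of the converted strand and ignores host removal and the species-level filter. The function name and example reads are hypothetical.

```python
def is_likely_contaminant(read, max_cytosines=3):
    """Flag a read that looks unconverted: too many C bases or any CG dinucleotide."""
    sequence = read.upper()
    return sequence.count("C") > max_cytosines or "CG" in sequence


reads = {
    "converted_read": "ATTGTTTAGTTATTG",     # no cytosines left after conversion
    "borderline_read": "TTCATTCATTCA",       # exactly 3 cytosines, no CG
    "unconverted_read": "ATCGTTACCGTTACG",   # several cytosines and CG dinucleotides
}
flags = {name: is_likely_contaminant(seq) for name, seq in reads.items()}
print(flags)
```

Reads flagged True would be discarded as DNA that entered the workflow after the tagging step.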

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagent Solutions for Sequencing Noise Mitigation

| Reagent/Material | Function in Noise Mitigation | Application Notes |

|---|---|---|

| DNA-free collection swabs/vessels [8] | Prevents introduction of contaminant DNA during sample collection | Single-use, pre-sterilized; critical for low-biomass samples |

| Nucleic acid degrading treatments (e.g., bleach, UV-C light) [8] | Decontaminates reusable equipment and surfaces | Removes cell-free DNA that survives standard sterilization |

| Bisulfite salts [9] | Chemical tagging for SIFT-seq protocol | Tags sample-intrinsic DNA; does not require enzymes that can be contamination sources |

| Personal Protective Equipment (PPE) [8] | Barriers against human-derived contamination | Cleanroom suits, masks, multiple glove layers reduce operator-introduced DNA |

| Negative control materials [8] | Identifies contamination sources during sampling | Empty collection vessels, air-exposed swabs, sample preservation solutions |

Workflow Visualization: Noise Identification and Mitigation

Diagram 1: Computational workflow for simultaneous noise mitigation

Diagram 2: SIFT-seq integrated experimental and computational workflow

In the field of liquid biopsy, the analysis of cell-free DNA (cfDNA) has emerged as a powerful, non-invasive tool for disease detection and monitoring. However, the journey from sample collection to sequencing is fraught with potential biases that can compromise data integrity. The pre-analytical phase—encompassing sample collection, processing, and DNA extraction—is particularly vulnerable, contributing to an estimated 60-70% of all laboratory errors [11] [12]. These confounders introduce systematic noise that can obscure true biological signals, presenting a significant challenge for researchers working with low-abundance targets such as circulating tumor DNA (ctDNA). This technical guide addresses the most impactful pre-analytical variables, providing troubleshooting advice and methodological context to help researchers minimize bias and enhance the reliability of their cfDNA sequencing data.

Troubleshooting Guides & FAQs

Sample Collection & Handling

Q: How does the choice of blood collection tube affect my cfDNA profile, and how can I mitigate bias?

The type of blood collection tube is a primary confounder as it determines the sample's stability between venipuncture and processing. Using standard EDTA tubes requires plasma separation within 6 hours of draw to prevent genomic DNA contamination from leukocyte lysis [13] [14]. Specialist cell-stabilizing tubes contain preservatives that prevent cell lysis and nuclease activity, allowing for longer storage at room temperature (often up to several days). However, it is critical to note that different tube types can systematically alter the observed cell-free DNA methylation profile due to varying effects on leukocyte stability [14] [15]. Mitigation requires strict adherence to processing timelines based on your tube type and consistency across a study cohort.

Q: What are the key centrifugation parameters to isolate plasma cfDNA while minimizing cellular contamination?

A two-step centrifugation protocol is the gold standard for preparing platelet-poor plasma, which is essential to minimize contamination by genomic DNA from cells and cell fragments.

Table 1: Centrifugation protocol for plasma preparation

| Step | Relative Centrifugal Force (RCF) | Temperature | Duration | Purpose |

|---|---|---|---|---|

| First Spin | 1,600 - 2,000 x g | 4°C | 10-20 minutes | To separate plasma from blood cells |

| Second Spin | 16,000 x g | 4°C | 10-20 minutes | To remove remaining platelets and cell debris |

Deviations from this protocol, especially in the second, high-speed spin, can leave residual platelets and leukocytes that lyse and release genomic DNA, diluting the rare cfDNA molecules of interest [13] [14]. Process samples promptly after the first centrifugation, as delays can lead to cell degradation and contamination.
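
Because the protocol is specified in relative centrifugal force (x g) while many centrifuges are set in rpm, a conversion is often needed. The sketch below uses the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r the rotor radius in centimetres. The rotor radius of 8.4 cm is a placeholder assumption; the actual value must be taken from the rotor documentation.

```python
import math

RCF_CONSTANT = 1.118e-5  # standard factor for radius in cm and speed in rpm


def rcf_from_rpm(rpm, rotor_radius_cm):
    """Relative centrifugal force (x g) produced at a given speed and radius."""
    return RCF_CONSTANT * rotor_radius_cm * rpm ** 2


def rpm_for_rcf(rcf_g, rotor_radius_cm):
    """Speed in rpm needed to reach a target relative centrifugal force."""
    return math.sqrt(rcf_g / (RCF_CONSTANT * rotor_radius_cm))


rotor_radius_cm = 8.4  # hypothetical rotor; read the real value from the rotor manual
for label, rcf in [("first spin", 1_600), ("second spin", 16_000)]:
    print(f"{label}: {rcf} x g is about {rpm_for_rcf(rcf, rotor_radius_cm):.0f} rpm")
```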

DNA Extraction & Analysis

Q: How does the DNA extraction method introduce bias in my cfDNA results, particularly for diverse sample types?

The DNA extraction method is a major source of bias, primarily through its cell lysis efficiency and DNA recovery mechanics. Different kits show vastly different performance based on the sample type and the microbial or cellular communities present [16] [17]. For instance, in activated sludge samples, kits without a bead-beating step significantly underestimated resistant bacterial phyla like Actinobacteria and Nitrospirae while overestimating others [16]. Similarly, for oral microbiome studies, protocols incorporating bead-beating produced more accurate community structure representations than purely enzymatic or chemical lysis methods [17]. This bias arises from the varying toughness of cell walls; methods that fail to lyse certain cells will miss their DNA entirely. Therefore, the selection of an extraction kit must be validated for your specific sample matrix and research question.

Q: Why does the extraction bias matter for my cfDNA study, and how can I choose the right kit?

The choice of extraction kit directly impacts diagnostic sensitivity because it determines the yield, fragment size distribution, and purity of the isolated cfDNA. Commercial kits vary significantly in how efficiently they recover short-fragment cfDNA, which is often the most biologically relevant [18] [13]. A kit that preferentially recovers longer DNA fragments may systematically under-represent the true abundance of cfDNA, which has a characteristic peak at ~166 bp. To choose the right kit, prioritize one that has been validated for high-efficiency recovery of short DNA fragments. Before committing to a large-scale study, conduct a pilot experiment comparing the yield and fragment profile of 2-3 leading kits against a spiked-in synthetic control of known concentration and size to benchmark performance.

Essential Methodologies & Protocols

Evaluating DNA Extraction Kit Performance

To quantitatively assess the potential bias introduced by different DNA extraction kits, researchers can adopt a mock community approach, as used in evaluating kits for activated sludge and oral microbiome studies [16] [17].

Protocol:

- Create a Mock Community: Combine equal numbers of cells from 5-10 different bacterial species (or other relevant cells) with varying cell wall structures (e.g., Gram-positive vs. Gram-negative). This creates a sample with a known "true" composition.

- Extract DNA: Subject identical aliquots of the mock community to DNA extraction using the different kits or methods under evaluation. Ensure multiple replicates (n≥3) for each kit.

- Quantify and Sequence: Measure DNA concentration and purity, then perform 16S rRNA gene amplicon sequencing (for microbial communities) or whole-genome sequencing.

- Analyze Bias: Compare the observed microbial community structure or genomic recovery from each kit to the known composition of the mock community. Key metrics include:

- Total DNA yield.

- Purity (A260/A280 ratio).

- Observed vs. Expected abundance of each species or group.

- Richness (number of OTUs/species).

Table 2: Hypothetical results from a DNA extraction kit evaluation using a mock microbial community

| DNA Extraction Kit | Cell Lysis Method | Total DNA Yield (ng/µl) | A260/A280 | Observed/Expected for Gram+ Bacteria | Observed/Expected for Gram- Bacteria |

|---|---|---|---|---|---|

| Kit A | Bead-beating + Chemical | 45.2 | 1.85 | 0.95 | 1.02 |

| Kit B | Chemical + Enzymatic | 18.7 | 1.78 | 0.45 | 1.38 |

| Kit C | Bead-beating | 50.1 | 1.82 | 1.10 | 0.98 |

This table illustrates how Kit B, lacking a vigorous bead-beating step, would significantly under-represent Gram-positive bacteria (Observed/Expected = 0.45) while over-representing easier-to-lyse Gram-negative bacteria, thereby introducing substantial bias.
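
The observed/expected metric used in the table can be computed directly from read counts. The following sketch assumes a mock community with known expected fractions and per-taxon read counts from one kit; the taxon labels and counts are invented for illustration and do not correspond to any kit in the table.

```python
def observed_over_expected(observed_counts, expected_fractions):
    """Ratio of observed to expected relative abundance for each mock-community member."""
    total = sum(observed_counts.values())
    if total == 0:
        raise ValueError("no reads observed")
    return {
        taxon: (observed_counts.get(taxon, 0) / total) / expected
        for taxon, expected in expected_fractions.items()
    }


# Hypothetical four-member mock community mixed in equal proportions.
expected = {"gram_pos_1": 0.25, "gram_pos_2": 0.25, "gram_neg_1": 0.25, "gram_neg_2": 0.25}
observed = {"gram_pos_1": 120, "gram_pos_2": 105, "gram_neg_1": 400, "gram_neg_2": 375}

ratios = observed_over_expected(observed, expected)
for taxon, ratio in ratios.items():
    print(f"{taxon}: {ratio:.2f}")
```

Ratios well below 1 for the Gram-positive members and above 1 for the Gram-negative members would point to incomplete lysis, as described for Kit B.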

A Unified Computational Workflow for cfDNA Data Preprocessing

To mitigate technical biases introduced during sequencing of cfDNA samples, specialized computational correction steps are required. The cfDNA UniFlow workflow is a Snakemake-based pipeline designed to standardize this preprocessing [19]. The workflow takes raw sequencing data and applies a series of steps to correct for errors and biases, culminating in a comprehensive report.

Unified cfDNA Preprocessing Workflow

The workflow's Preprocessing & QC stage involves read merging, adapter trimming, and quality filtering to ensure only high-quality fragments are aligned to the reference genome [19]. The critical Bias Correction & Signal Extraction module includes specialized tools like cfDNA_GCcorrection, which calculates sample-specific weights for fragments based on their length and GC content to correct for uneven recovery, a common technical confounder [19]. This structured approach ensures consistent data quality, which is a prerequisite for robust downstream analysis.
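
The idea of weighting fragments by length and GC content can be shown with a toy example. This is a conceptual sketch and not the cfDNA_GCcorrection algorithm: it assumes an expected, bias-free distribution over (length, GC%) bins is supplied as input, and it sets each bin's weight to expected fraction divided by observed fraction. All names and numbers are hypothetical.

```python
from collections import Counter


def gc_length_weights(fragments, expected_fraction):
    """Per-bin weights = expected fraction / observed fraction.

    fragments:         list of (length_bp, gc_percent) tuples, one per fragment
    expected_fraction: {(length_bp, gc_percent): fraction expected without bias}
    """
    observed = Counter(fragments)
    total = len(fragments)
    return {
        bin_key: expected_fraction[bin_key] / (count / total)
        for bin_key, count in observed.items()
        if bin_key in expected_fraction
    }


fragments = [(166, 40)] * 6 + [(166, 60)] * 2      # GC-rich fragments under-recovered
expected = {(166, 40): 0.5, (166, 60): 0.5}         # hypothetical unbiased expectation
weights = gc_length_weights(fragments, expected)
corrected = {bin_key: Counter(fragments)[bin_key] * w for bin_key, w in weights.items()}
print(weights, corrected)
```

After weighting, both bins contribute equally, which is the effect a sample-specific correction aims for when recovery differs by fragment composition.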

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their critical functions in minimizing pre-analytical bias in cfDNA studies.

Table 3: Key research reagents and their functions in cfDNA analysis

| Reagent / Kit | Primary Function | Key Consideration to Minimize Bias |

|---|---|---|

| Cell-Stabilizing Blood Collection Tubes | Preserves blood sample integrity during transport and storage. | Prevents leukocyte lysis and release of genomic DNA, which dilutes rare cfDNA variants. Allows for longer processing windows. |

| Bead-Beating DNA Extraction Kits | Mechanical disruption of cells for DNA liberation. | Essential for unbiased lysis of cells with resistant walls (e.g., Gram-positive bacteria, some eukaryotic cells). Kits without beads can severely under-represent certain populations [16] [17]. |

| Size-Selection Magnetic Beads | Selection of DNA fragments by size. | Critical for enriching the short-fragment cfDNA (e.g., ~166 bp) and removing longer genomic DNA fragments, thereby improving the signal-to-noise ratio for detecting rare variants [20]. |

| Bisulfite Conversion Kits | Chemical treatment for detecting DNA methylation. | Conversion efficiency is paramount. Incomplete conversion leads to false positive signals for methylation and introduces significant noise in epigenetic analyses [14]. |

| DNA Extraction Kits Validated for Short Fragments | Isolation and purification of nucleic acids. | Not all kits recover short DNA fragments with equal efficiency. Using a kit validated for high recovery of short fragments prevents systematic loss of cfDNA [18] [13]. |

The study of low-biomass microbial environments, such as certain human tissues (e.g., fetal tissues, blood) and ultra-clean environments (e.g., deep subsurface, treated drinking water), is fraught with a unique set of challenges. In these contexts, the target microbial DNA signal can be exceptionally low, bringing it perilously close to the limits of detection for standard DNA-based sequencing methods. Consequently, the inevitability of contamination from external sources becomes a critical concern, as even minute amounts of contaminating DNA can disproportionately influence results and lead to spurious conclusions [8]. This technical guide addresses the primary sources of this contamination and provides actionable protocols for its prevention, identification, and removal, with a specific focus on applications in cell-free DNA (cfDNA) sequencing for cancer detection and other liquid biopsy diagnostics.

Frequently Asked Questions (FAQs)

FAQ 1: What defines a "low-biomass" sample, and why is it particularly vulnerable to contamination? A low-biomass sample contains a very small amount of target microbial or cfDNA. In microbiome research, this includes samples like human blood, fetal tissues, and deep subsurface soils [8]. In cfDNA analysis, the analyte is present in very low concentrations (e.g., 1–50 ng/mL in healthy individuals) and is highly fragmented [21]. The vulnerability arises because the low target DNA "signal" can be easily swamped by the contaminant "noise," which is derived from reagents, kits, laboratory environments, and personnel. This can critically impact both PCR-based assays and shotgun metagenomics, distorting ecological patterns, evolutionary signatures, or causing false-positive pathogen or mutation detection [8] [22].

FAQ 2: What are the most common sources of DNA contamination in a laboratory setting? Contamination is ubiquitous and can be introduced at every stage, from sample collection to data analysis. The primary sources are:

- Laboratory Reagents and Kits: DNA extraction kits, molecular grade water, and PCR reagents are well-documented sources of contaminating microbial DNA [22].

- Human Operators and Laboratory Environment: Contaminants can be introduced from skin, hair, and clothing of personnel, as well as from aerosols [8].

- Sampling Equipment: Contamination can originate from collection vessels, swabs, and tools that have not been properly decontaminated [8].

- Cross-Contamination: Transfer of DNA between samples can occur during processing, for example, through well-to-well leakage in plates [8].

- Biological Confounders (for cfDNA): In cfDNA analysis, a key biological confounder is clonal haematopoiesis of indeterminate potential (CHIP), where somatic mutations from blood cells can be mistaken for tumor-derived variants, leading to false positives [23].

FAQ 3: What are the best practices for collecting blood samples to ensure high-quality cfDNA? To minimize genomic DNA contamination and preserve cfDNA integrity:

- Use Plasma Over Serum: Serum tends to have higher genomic DNA contamination from white blood cell (WBC) lysis during clotting [24].

- Minimize Cell Lysis: Use an appropriate needle size, avoid prolonged tourniquet time, and prevent harsh temperature changes or excessive agitation during transport [24].

- Rapid Processing: Isolate plasma within 6 hours of blood collection when using EDTA tubes, or follow manufacturer instructions for specialized cell-free blood collection tubes containing stabilizers [24].

- Careful Centrifugation: Perform double centrifugation of plasma to minimize carryover of WBCs and avoid contact with the buffy coat layer [24].

Troubleshooting Guides

Problem: Inconsistent or Failed cfDNA Library Preparations

| Potential Cause | Diagnostic Steps | Corrective Action |

|---|---|---|

| Suboptimal DNA quantification | Check A260/280 ratio on NanoDrop; use fluorometry or PCR-based methods. | Use qPCR or ddPCR for accurate quantification of low-abundance cfDNA. Fluorometric methods should include Poly(A) RNA for reliable performance [24]. |

| Insufficient cfDNA input | Review fragment analyzer profile and QC metrics from preprocessing pipelines. | Increase plasma input volume (e.g., 2-5 mL) for extraction to obtain more cfDNA [24]. |

| Inadequate removal of sequencing adapters | Check FastQC reports in cfDNA UniFlow or similar workflows for adapter content. | Ensure proper adapter trimming using tools like NGmerge or Trimmomatic within standardized preprocessing pipelines [19]. |

| Carryover of enzymatic inhibitors | Assess DNA purity (A260/280 ratio); run control PCR. | Ensure complete removal of contaminants during extraction by using silica column or magnetic bead-based kits with thorough wash steps [25] [24]. |

Problem: High Background Noise in cfDNA Sequencing Data

| Potential Cause | Diagnostic Steps | Corrective Action |

|---|---|---|

| High gDNA contamination | Analyze fragment size profile; a peak at ~165 bp indicates good cfDNA, while a smear suggests gDNA. | Optimize blood drawing and plasma separation protocol (see FAQ 3). Perform double centrifugation [24]. |

| CHIP (Clonal Haematopoiesis) | Sequence matched peripheral blood cell DNA to the same depth as cfDNA to identify CHIP variants. | Apply a "CHIP-filter" in variant calling to remove somatic mutations originating from blood cells [23]. |

| Technical biases (e.g., GC-bias) | Use cfDNA UniFlow's cfDNA_GCcorrection module to estimate and visualize GC bias [19]. | Implement GC-bias correction methods that attach weights to reads based on fragment length and GC content [19]. |

| Reagent-derived contamination | Sequence negative control samples (e.g., blank extractions) concurrently. | Sequence and process negative controls alongside patient samples. Use these to inform contaminant removal bioinformatically [8] [22]. |
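
The "CHIP-filter" row above can be illustrated with a small sketch. It is a minimal example under stated assumptions, not the filter used in [23]: the function name `chip_filter`, the variant tuple layout, the 0.5% white blood cell VAF threshold, and the example coordinates are all hypothetical choices made for illustration.

```python
from typing import Dict, Tuple

# (chromosome, position, reference allele, alternate allele)
Variant = Tuple[str, int, str, str]


def chip_filter(cfdna_vafs: Dict[Variant, float],
                wbc_vafs: Dict[Variant, float],
                wbc_vaf_threshold: float = 0.005) -> Dict[Variant, float]:
    """Keep cfDNA variants that are absent, or below threshold, in matched WBC DNA.

    The threshold is an illustrative assumption and should be tuned to the
    assay's error profile and sequencing depth.
    """
    kept = {}
    for variant, vaf in cfdna_vafs.items():
        # A variant also observed in blood cells is attributed to clonal
        # haematopoiesis rather than to the tumor, so it is dropped.
        if wbc_vafs.get(variant, 0.0) >= wbc_vaf_threshold:
            continue
        kept[variant] = vaf
    return kept


# Hypothetical example: one variant is shared with the matched WBC sample.
cfdna = {
    ("chr17", 7674220, "C", "T"): 0.012,
    ("chr2", 25234373, "C", "T"): 0.020,
}
wbc = {("chr2", 25234373, "C", "T"): 0.018}
filtered = chip_filter(cfdna, wbc)
print(filtered)
```

Because the comparison relies on the variant being detectable in blood cells, the matched WBC DNA needs comparable depth to the cfDNA, as noted in the diagnostic step.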

Experimental Protocols

Protocol: Implementing a Contamination-Aware Sampling and DNA Extraction Workflow

This protocol is critical for any low-biomass or cfDNA study to minimize and monitor contamination.

I. Materials (Research Reagent Solutions)

- Decontamination Solution: Sodium hypochlorite (bleach) or commercial DNA removal solutions [8]

- Personal Protective Equipment (PPE): Powder-free gloves, cleansuits, face masks, and shoe covers [8]

- DNA-Free Collection Vessels: Autoclaved or UV-C sterilized plasticware, sealed until use [8]

- Stabilized Blood Collection Tubes: e.g., Cell-free DNA Blood Collection Tubes [24]

- Automated cfDNA Extraction Kit: e.g., chemagic cfDNA kit based on M-PVA Magnetic Beads [24]

- Exogenous DNA Control: e.g., synthetic spike-in DNA to monitor extraction efficiency [24]

II. Methodology

- Pre-Sampling Decontamination: Decontaminate all surfaces and reusable equipment with 80% ethanol (to kill microbes) followed by a nucleic acid degrading solution (e.g., bleach) to remove trace DNA. Note that sterility is not the same as being DNA-free [8].

- Sample Collection:

- For Environmental/Low-Biomass Microbiome: Personnel should wear appropriate PPE (gloves, cleansuits) to limit sample contact. Use single-use, DNA-free swabs and vessels [8].

- For Blood/Plasma for cfDNA: Draw blood using stabilized collection tubes. Minimize cell lysis during phlebotomy. Isolate plasma via double centrifugation within 6 hours of collection [24].

- Include Controls: Process multiple negative controls alongside your samples. These are essential for downstream bioinformatic filtering. Examples include blank extractions sequenced concurrently with the samples [8] [22].

- DNA Extraction:

- Use kits designed for high sensitivity and low input, such as those employing magnetic beads.

- Automate the extraction where possible (e.g., on a chemagic 360 instrument) to increase throughput, reduce hands-on time, and maintain consistency [24].
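
The negative controls collected in this protocol feed into bioinformatic filtering later on. As a rough sketch of how that can work, the example below flags a taxon as a likely contaminant when its relative abundance in a sample is not clearly higher than in the negative controls. This simple ratio rule, the function name `flag_contaminants`, and the `min_ratio` default are assumptions for illustration, not a method prescribed by [8] or [22].

```python
from typing import Dict, List


def relative_abundance(counts: Dict[str, int]) -> Dict[str, float]:
    """Convert raw read counts per taxon into fractions of the total."""
    total = sum(counts.values())
    return {taxon: n / total for taxon, n in counts.items()} if total else {}


def flag_contaminants(sample_counts: Dict[str, int],
                      control_counts: List[Dict[str, int]],
                      min_ratio: float = 10.0) -> Dict[str, bool]:
    """Flag taxa whose sample abundance is < min_ratio x their control abundance.

    min_ratio is a hypothetical threshold; taxa never seen in any control
    are not flagged.
    """
    sample_rel = relative_abundance(sample_counts)
    control_rels = [relative_abundance(c) for c in control_counts]
    flags = {}
    for taxon, frac in sample_rel.items():
        max_ctrl = max((c.get(taxon, 0.0) for c in control_rels), default=0.0)
        flags[taxon] = max_ctrl > 0 and frac < min_ratio * max_ctrl
    return flags


# Hypothetical read counts for one sample and one blank extraction.
sample = {"Escherichia": 900, "Ralstonia": 100}
blanks = [{"Ralstonia": 50, "Bradyrhizobium": 50}]
flags = flag_contaminants(sample, blanks)
print(flags)
```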

III. Workflow Visualization The following diagram summarizes the key stages of the contamination-aware workflow.

Protocol: Bioinformatic Preprocessing and Contaminant Removal for cfDNA

This protocol utilizes the unified cfDNA UniFlow workflow [19] to ensure consistent and bias-aware processing of cfDNA sequencing data, which is vital for distinguishing true signal from noise.

I. Materials

- Computational Environment: A computer or cluster with Snakemake installed.

- cfDNA UniFlow Pipeline: Available from the GitHub repository (https://github.com/kircherlab/cfDNA-UniFlow).

- Reference Genome: e.g., GRCh38/hg38.

II. Methodology

- Core Preprocessing:

  - Input: Provide raw FASTQ files or existing BAM files.

  - Adapter Removal & Merging: Use NGmerge to remove sequencing adapters, correct errors, and merge reads based on overlap consensus.

  - Mapping: Map reads to a reference genome using bwa-mem2.

  - Filtering: Filter reads based on length to exclude short fragments.

  - Duplicate Marking: Mark duplicate reads using SAMtools markdup [19].

- Quality Control:

  - Generate post-alignment statistics (SAMtools stats) and quality metrics (FastQC).

  - Calculate coverage metrics (Mosdepth).

  - Aggregate all QC results into a unified HTML report using MultiQC [19].

- Bias Correction and Signal Extraction (Utility Modules):

  - GC-Bias Correction: Run the in-house cfDNA_GCcorrection method. This estimates the expected fragment distribution based on GC content and fragment length, then calculates correction weights for each read [19].

  - Copy Number Alteration (CNA) Estimation: Use ichorCNA to identify CNAs and estimate tumor fraction [19].

  - CHIP-Filtering: For variant calling, use the matched white blood cell DNA sequence (sequenced to the same depth as cfDNA) to filter out mutations associated with clonal haematopoiesis [23].
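
To make the idea of GC-bias correction weights concrete, the sketch below computes one weight per (fragment length, GC percent) bin as the ratio of the expected to the observed fraction of fragments. It is a simplified illustration of the weighting principle only and is not the cfDNA_GCcorrection implementation; the function name, the binning, and the example counts are assumptions.

```python
from collections import Counter
from typing import Dict, Tuple

# (fragment length in bp, GC content in percent)
Bin = Tuple[int, int]


def correction_weights(observed: Counter, expected: Counter) -> Dict[Bin, float]:
    """Return expected/observed fraction per bin for every observed bin."""
    obs_total = sum(observed.values())
    exp_total = sum(expected.values())
    if obs_total == 0 or exp_total == 0:
        raise ValueError("observed and expected counts must be non-empty")
    weights = {}
    for bin_key, n_obs in observed.items():
        if n_obs == 0:
            continue  # nothing to reweight in an empty bin
        obs_frac = n_obs / obs_total
        exp_frac = expected.get(bin_key, 0) / exp_total
        # Under-represented bins get weights > 1, over-represented bins < 1.
        weights[bin_key] = exp_frac / obs_frac
    return weights


# Hypothetical counts: high-GC fragments are over-represented in the data.
observed = Counter({(167, 40): 50, (167, 60): 150})
expected = Counter({(167, 40): 100, (167, 60): 100})
weights = correction_weights(observed, expected)
print(weights)
```

Each read would then carry the weight of its bin, so that the weighted fragment counts follow the expected distribution while the total weighted count stays the same.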

III. Workflow Visualization The following diagram illustrates the key stages of the cfDNA UniFlow preprocessing pipeline.

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key solutions and materials for establishing a reliable low-biomass and cfDNA research workflow.

| Item | Function & Application | Key Considerations |

|---|---|---|

| Specialized Blood Collection Tubes | Contain stabilizers to prevent white blood cell lysis and preserve cfDNA profile post-phlebotomy [24]. | Use over serum tubes. Follow manufacturer's instructions for storage time after collection. |

| Automated cfDNA Extraction Kits (e.g., magnetic bead-based) | Concentrate cfDNA from large plasma volumes with high efficiency and consistency; reduce manual error [24]. | Look for high recovery of short fragments. Automation increases throughput and reduces hands-on time. |

| Exogenous Controls (Spike-in DNA) | Non-human DNA sequence added to samples to monitor extraction efficiency and potential inhibition [24]. | Allows for normalization and provides a quality check for the entire wet-lab workflow. |

| qPCR/ddPCR Quantification Kits | Accurately quantify low-abundance cfDNA; essential for normalizing input into downstream assays like NGS [24]. | Prefer over spectrophotometric methods for low-concentration samples. Targets short fragments (e.g., ALU115). |

| Fragment Analyzer | Assess size distribution of extracted cfDNA; confirms expected peak at ~165 bp and absence of high molecular weight gDNA [24]. | Used for qualitative assessment, not primary quantification. |

| Unified Computational Workflow (e.g., cfDNA UniFlow) | Standardized, scalable pipeline for preprocessing cfDNA data, including QC, GC-bias correction, and signal extraction [19]. | Ensures reproducibility, reduces technical biases between studies, and aggregates results in a unified report. |

In the analysis of noisy cell-free DNA (cfDNA) sequencing data, bioinformatic artifacts originating from reference genomes pose significant challenges to accurate interpretation. These artifacts—encompassing alignment errors due to sequence inaccuracies and annotation issues from flawed gene models—can severely compromise variant calling, transcript quantification, and downstream biological conclusions. This technical support center provides targeted troubleshooting guides and FAQs to help researchers identify, mitigate, and resolve these critical issues within their cfDNA research workflows.

FAQ: Addressing Common Reference Genome Challenges

Q1: What are the most common types of errors found in reference sequence databases? Reference sequence databases frequently contain several pervasive errors that impact analysis:

- Taxonomic Misannotation: Sequences may be incorrectly labeled, affecting 3.6% of prokaryotic genomes in GenBank and approximately 1% in RefSeq [26]. This can lead to false positive or false negative taxonomic assignments.

- Database Contamination: Systematic evaluations have identified over 2 million contaminated sequences in NCBI GenBank and 114,000 in RefSeq [26]. This includes vector sequences, adapter contaminants, or DNA from other species.

- Inappropriate Inclusion/Exclusion and Sequence Content Errors: This includes sequences with unspecific taxonomic labels (e.g., annotated only to a high-level rank like "Bacteria") or those with technical artifacts that skew analysis [26].

- Use of Incorrect Reference Genome Versions: Using an outdated or improperly indexed reference genome is a common pitfall that leads to misalignments and erroneous variant calls [27].

Q2: How do alignment errors specifically impact the analysis of noisy cfDNA data? In cfDNA analysis, where the circulating tumor DNA (ctDNA) fraction can be as low as 0.01% of the total cell-free DNA, alignment errors are magnified [28]. Using a reference genome with contamination or misannotated regions can cause true, low-frequency variant reads to be misaligned or filtered out. This directly increases false negative rates and reduces the sensitivity of detecting minimal residual disease (MRD) or early-stage cancer signals [28]. The low signal-to-noise ratio inherent to cfDNA makes it exceptionally vulnerable to these artifacts.

Q3: What strategies can mitigate the effects of a flawed reference genome?

- Database Curation: For critical applications, use or create curated reference databases. This involves filtering out known contaminated sequences, verifying taxonomic labels, and including only high-quality, representative genomes [26].

- Leverage Non-Plasma cfDNA Sources: For cancers like colorectal cancer, harvesting cfDNA from stool or peritoneal fluid can provide a higher ctDNA fraction and be more representative of the primary tumor, thus reducing reliance on a perfect plasma-based reference [28].

- Use of Structured Pipelines: Employ robust, version-controlled workflows (e.g., Snakemake, Nextflow) that explicitly document the reference genome version and indexing method. This reduces human error and improves reproducibility [27] [29].

- Validation with Orthogonal Methods: Cross-check key genetic variants identified through sequencing with alternative methods like targeted PCR to rule out artifacts introduced by reference-related misalignment [30].

Troubleshooting Guide: Alignment and Annotation Artifacts

Problem 1: Low Mapping Rates and Misalignments

Symptoms: Unexplained low alignment rates, unusual coverage gaps, or high rates of reads flagged as secondary/supplementary alignments.

| Possible Cause | Diagnostic Steps | Corrective Actions |

|---|---|---|

| Incorrect reference genome version or indexing [27] | Check log files from aligner (e.g., BWA, STAR) for index used. Verify version (e.g., hg38 vs. hg19) matches annotation files. | Download the correct version from a trusted source (e.g., GENCODE, Ensembl) and re-index it with your aligner. |

| Reference genome contamination [26] | BLAST a subset of unaligned reads. Check for high levels of alignment to vectors or non-target species. | Use a curated database that has filtered out known contaminants or switch to a more rigorously maintained reference set. |

| Sequence content errors in reference [26] | Investigate regions with consistently poor coverage or zero reads across multiple samples. | Mask problematic regions in the reference genome or use an alternate assembly if available. |
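
One way to carry out the version check in the first row is to compare the contig names and lengths recorded in an alignment header with those in the reference index. The sketch below assumes the header text has already been extracted (for example with `samtools view -H`) and that a FASTA index (.fai) is available; the function names are hypothetical.

```python
from typing import Dict, Iterable, List


def contigs_from_sam_header(header_lines: Iterable[str]) -> Dict[str, int]:
    """Collect contig name -> length from @SQ lines of a SAM/BAM header."""
    contigs = {}
    for line in header_lines:
        if not line.startswith("@SQ"):
            continue
        fields = dict(f.split(":", 1) for f in line.rstrip("\n").split("\t")[1:])
        contigs[fields["SN"]] = int(fields["LN"])
    return contigs


def contigs_from_fai(fai_lines: Iterable[str]) -> Dict[str, int]:
    """Collect contig name -> length from the first two columns of a .fai index."""
    contigs = {}
    for line in fai_lines:
        if not line.strip():
            continue
        name, length = line.split("\t")[:2]
        contigs[name] = int(length)
    return contigs


def reference_mismatches(bam_contigs: Dict[str, int],
                         ref_contigs: Dict[str, int]) -> List[str]:
    """Describe contigs that are missing or differ in length between the two."""
    problems = []
    for name, length in bam_contigs.items():
        if name not in ref_contigs:
            problems.append(f"{name}: missing from reference index")
        elif ref_contigs[name] != length:
            problems.append(
                f"{name}: length {length} in BAM vs {ref_contigs[name]} in reference")
    return problems


# Example: a BAM aligned to hg38 checked against an hg19 index (chr1 only).
header = ["@HD\tVN:1.6\tSO:coordinate", "@SQ\tSN:chr1\tLN:248956422"]
fai = ["chr1\t249250621\t6\t50\t51"]
problems = reference_mismatches(contigs_from_sam_header(header),
                                contigs_from_fai(fai))
print(problems)
```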

Problem 2: Annotation-Related Errors in Downstream Analysis

Symptoms: Abnormally high rates of "gene dropouts" (genes with zero counts in RNA-seq), unexpected exon-intron structures, or an inflation of lineage-specific genes in comparative genomics.

| Possible Cause | Diagnostic Steps | Corrective Actions |

|---|---|---|

| Low-quality gene prediction [31] [32] | Run BUSCO to assess annotation completeness. Use GeneValidator to identify problematic gene models [31]. | Re-annotate the genome using a top-performing tool (e.g., BRAKER3, TOGA, StringTie) and integrate RNA-seq evidence [32]. |

| Mixing genome annotation methods in comparative analysis [31] | Check if annotations for compared species were generated using different pipelines or evidence. | Re-annotate all genomes in the comparison using a consistent, high-quality pipeline to minimize artificial inflation of differences [31]. |

| Use of default, uncurated annotations | Verify the source of the annotation (e.g., automated pipeline vs. manually curated). | For well-studied organisms, use community-curated annotations from resources like Ensembl or RefSeq. |

Problem 3: High Duplication Rates and Low Library Complexity in cfDNA

Symptoms: High PCR duplication rates reported by tools like Picard MarkDuplicates, indicating a low diversity of unique DNA fragments.

| Possible Cause | Diagnostic Steps | Corrective Actions |

|---|---|---|

| Over-amplification during library prep [33] | Review the number of PCR cycles used in library preparation. Check BioAnalyzer/Fragment Analyzer traces for smearing or adapter dimer peaks. | Optimize the number of PCR cycles. Use a two-step indexing approach instead of one-step to reduce artifacts [33]. |

| Low input DNA or degraded sample [33] | Check BioAnalyzer/Fragment Analyzer profiles for RNA Integrity Number (RIN) or DNA Degradation Index (DDI). Use fluorometric quantification (Qubit) over absorbance (NanoDrop). | Re-purify the input sample using clean columns or beads to remove inhibitors. Increase input DNA if possible, and use specialized protocols for degraded samples like FFPE. |
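
For a quick look at the duplication symptom, the fraction of reads carrying the duplicate flag can be computed directly from SAM records after duplicate marking. This is a simplified read-level estimate, assuming duplicates were already marked (SAM flag 0x400); it will not match Picard MarkDuplicates metrics exactly, which are computed differently.

```python
from typing import Iterable

UNMAPPED, SECONDARY, DUPLICATE, SUPPLEMENTARY = 0x4, 0x100, 0x400, 0x800


def duplication_rate(sam_lines: Iterable[str]) -> float:
    """Fraction of primary mapped reads flagged as duplicates."""
    total = duplicates = 0
    for line in sam_lines:
        if line.startswith("@"):
            continue  # header line
        flag = int(line.split("\t", 2)[1])
        if flag & (UNMAPPED | SECONDARY | SUPPLEMENTARY):
            continue  # count each mapped read once, via its primary alignment
        total += 1
        if flag & DUPLICATE:
            duplicates += 1
    return duplicates / total if total else 0.0


# Hypothetical records: only the first two SAM columns matter here.
records = [
    "@HD\tVN:1.6",
    "r1\t99\tchr1\t100",
    "r1\t147\tchr1\t250",
    "r2\t1123\tchr1\t100",   # 1024 (duplicate) + 99
    "r2\t1171\tchr1\t250",   # 1024 (duplicate) + 147
    "r3\t4\t*\t0",           # unmapped, ignored
]
rate = duplication_rate(records)
print(rate)
```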

Essential Workflows for Error Mitigation

Workflow 1: Pre-Alignment Reference Genome Quality Control

This workflow should be performed before initiating any large-scale sequencing project to validate the reference genome.

Workflow 2: Systematic Diagnosis of Alignment Failures

Follow this logical path when encountering poor alignment results to isolate the root cause.

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential materials and tools for troubleshooting and improving analyses reliant on reference genomes.

| Item | Function & Application | Key Considerations |

|---|---|---|

| FastQC [27] [29] | Assesses raw sequence data quality; identifies adapter contamination, overrepresented sequences, and low-quality bases. | First-line QC tool. Generates an HTML report. Should be run before and after read trimming. |

| Trimmomatic / Cutadapt [27] | Removes adapter sequences, primers, and low-quality bases from raw sequencing reads. | Critical for preventing misalignment due to adapter contamination. Parameters (e.g., quality threshold) should be tuned for your data. |

| BUSCO [31] | Benchmarks Universal Single-Copy Orthologs to assess the completeness of a genome assembly or annotation. | Provides a quantitative measure (e.g., % of conserved genes found) to compare the quality of different annotations or assemblies. |

| BRAKER3 / TOGA [32] | Automated genome annotation pipelines. BRAKER uses protein and RNA-seq evidence; TOGA uses whole-genome alignment for annotation transfer. | Top performers in cross-species benchmarks. Choice depends on data availability and taxonomic group [32]. |

| BWA / STAR [29] | Standard tools for aligning sequencing reads to a reference genome. BWA is common for DNA-seq; STAR for RNA-seq. | Ensure the reference index is built from the same genome version used for annotation. Version control is critical. |

| SAMtools / GATK [29] | Process alignment files (SAM/BAM). SAMtools for basic operations; GATK for variant discovery and genotyping. | Follow best-practice workflows for data preprocessing and variant calling to minimize reference-related artifacts. |

| Curation Tools (e.g., ANI calculators) [26] | Tools that calculate Average Nucleotide Identity to detect taxonomically misannotated genomes in a database. | Essential for building custom, high-quality reference databases for sensitive applications like clinical metagenomics or cfDNA analysis [26]. |

From Theory to Practice: A Toolkit of Preprocessing and Noise-Filtering Methods

Frequently Asked Questions (FAQs)

Q1: Why was a short, genuine genomic sequence mistakenly trimmed by Cutadapt as an adapter?

This occurs because of Cutadapt's error-tolerant, partial-match search. By default, the tool trims a match between the end of a read and the start of the adapter that is as short as 3 bp. According to the Cutadapt documentation, the number of allowed errors (mismatches, insertions, deletions) scales with the length of the matching segment at the default maximum error rate of 0.1, so very short overlaps must match exactly. Even so, a few coincidentally matching bases at the end of a genuine genomic sequence are enough for it to be identified as adapter and trimmed [34].

- Solution: You can adjust the -O/--overlap parameter (minimum overlap) to require a longer minimum match between the read and the adapter sequence, making the search more stringent and reducing false positives [34].
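
A short calculation shows why a longer minimum overlap reduces accidental trimming. The sketch below assumes uniformly random, independent bases and exact matches only, and uses a union bound over overlap lengths, so it gives an approximate upper bound rather than Cutadapt's actual behavior; the function name is hypothetical.

```python
def chance_match_probability(min_overlap: int, max_overlap: int = 20) -> float:
    """Upper bound on the chance that a random read end matches an adapter prefix.

    For each overlap length k, the last k bases of the read equal the first
    k bases of the adapter with probability 0.25 ** k; the bound sums over k.
    """
    return sum(0.25 ** k for k in range(min_overlap, max_overlap + 1))


p_overlap_3 = chance_match_probability(3)
p_overlap_5 = chance_match_probability(5)
print(f"min overlap 3: {p_overlap_3:.4%}")
print(f"min overlap 5: {p_overlap_5:.4%}")
```

Under these assumptions, raising the minimum overlap from 3 to 5 lowers the bound from roughly 2% of reads to roughly 0.13%, a 16-fold reduction.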

Q2: My trimming report shows adapters were "trimmed," but all reads are still in the output file and seem unchanged. What does "trimmed" mean?

In this context, "trimmed" means that the adapter sequence and any subsequent bases were cut from the read, not that the entire read was removed or discarded. The shortened read is still written to the output file. If you need to filter out reads that became too short after trimming, you must use the -m or --minimum-length option to remove them [35].

Q3: After processing with fastp, my FastQC report shows new warnings for "Sequence Length Distribution" and "GC Content." Did fastp make my data worse?

Not necessarily. These new warnings are often expected and indicate that fastp has done its job correctly.

- Sequence Length Distribution: A warning here is normal after trimming because reads are shortened by different amounts, making their lengths variable rather than uniform [36].

- GC Content: Trimming can alter the overall composition of the read set, which may shift the GC content away from the theoretical distribution that FastQC uses. It is more important to compare the GC content to what is biologically expected for your organism [36]. As noted in one community discussion, the per-base sequence quality often visibly improves after fastp processing, which is a primary goal [36].

Q4: For a paired-end (PE) library, should I provide the reverse primer sequence for the R2 read as its reverse complement?

No, by default, you should provide all adapter sequences in the same 5' to 3' orientation as the reads. Cutadapt does not automatically consider the reverse complement of the adapters or the reads. If you are unsure, you may need to test both the original sequence and its reverse complement to see which one works [37].

Troubleshooting Common Issues

Cutadapt Trimming Specificity and Output

The table below summarizes common issues and solutions when using Cutadapt, based on real user experiences from support forums [34] [35] [37].

| Problem | Possible Cause | Diagnostic Steps | Solution & Recommended Parameters |

|---|---|---|---|

| Unexpected trimming of genuine genomic sequence [34]. | Overly liberal adapter matching with a short minimum overlap and default error rate. | Check the Cutadapt log file's "Overview of removed sequences" table. It shows the length and error count of all trimmed sequences. | Increase the stringency by setting a longer minimum overlap: -O 5 (long form: --overlap 5) |

| No reads are removed after trimming; output file has the same number of reads as input [35]. | Misunderstanding of "trimming" vs. "filtering." Cutadapt trims (shortens) reads by default but does not remove them from the output. | Compare the read lengths in the input and output FASTQ files (for example, with a short script that tallies sequence lengths). You will notice the output sequences are shorter. | Use the -m/--minimum-length parameter to discard reads that become too short after trimming: -m 20 |

| Incorrect primer/adapter not trimmed in single-end mode [37]. | The reverse primer might be provided in the wrong orientation. | Manually inspect a subset of raw reads to confirm the exact sequence and location of the adapter. | Provide the adapter sequence in the same 5' to 3' orientation as the read. Test with the reverse complement sequence if necessary. |

| Low trimming rate for a known adapter. | The adapter type (3' or 5') might be mis-specified. | Review the official Cutadapt guide to confirm the correct adapter type and option (-a for 3', -g for 5') [38]. | Use -g ^ADAPTER for an anchored 5' adapter (must be at the very start of the read). Use -a ADAPTER$ for an anchored 3' adapter (must be at the very end) [38]. |
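
To see the difference between trimming and filtering in practice, it helps to tally read lengths before and after Cutadapt. The sketch below assumes uncompressed FASTQ text with exactly four lines per record; the function name and the example reads are hypothetical.

```python
from collections import Counter
from typing import Iterable


def read_length_counts(fastq_lines: Iterable[str]) -> Counter:
    """Count reads per sequence length in 4-line-per-record FASTQ text."""
    counts = Counter()
    for i, line in enumerate(fastq_lines):
        if i % 4 == 1:  # the second line of each record holds the sequence
            counts[len(line.strip())] += 1
    return counts


# Hypothetical input and trimmed output for two reads.
raw = ["@r1", "ACGTACGTAC", "+", "IIIIIIIIII",
       "@r2", "TTGCATTGCA", "+", "IIIIIIIIII"]
trimmed = ["@r1", "ACGTACG", "+", "IIIIIII",
           "@r2", "TTGCATTGCA", "+", "IIIIIIIIII"]

raw_counts = read_length_counts(raw)
trimmed_counts = read_length_counts(trimmed)
print(sum(raw_counts.values()), sum(trimmed_counts.values()))
print(dict(trimmed_counts))
```

The read count is unchanged while one read is shorter, which is the expected outcome of trimming without a minimum-length filter.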

FastP Quality Control and Validation

The table below addresses common points of confusion when using fastp for quality control.

| Problem | Possible Cause | Diagnostic Steps | Solution & Recommended Parameters |

|---|---|---|---|

| How to run an analysis/preview mode to assess data quality without writing large output files? | Uncertainty about whether the output parameters (-o, -O) are mandatory. According to the fastp documentation, they are optional: when no output file names are given, no FASTQ output is written, but QC is still performed. | Run fastp with only the input files and report options (--html, --json) and confirm that the reports are generated. | Use a two-step strategy. First, run fastp (optionally on a subset of data) to generate the QC report and decide on parameters. A user's initial approach for BGI/MGI data was to first generate a report to diagnose quality before a full run [39]. |

| Interpreting FastQC warnings that appear only after running fastp [36]. | Expected consequences of trimming, not a degradation of data quality. | Compare the "Per base sequence quality" plot in FastQC before and after fastp. You will likely see quality improvements in the tails of the reads. | Trust the fastp report and the improved per-base quality. The length distribution warning is expected, and GC content can be checked against known biological expectations. |

| Need for comprehensive quality control in a single tool. | Using multiple, separate tools for QC and trimming can be inefficient. | Compare the fastp HTML report with a separate FastQC report. The fastp report consolidates both pre- and post-filtering statistics [40]. | Rely on the fastp HTML report, which provides all-in-one QC, including quality curves, base content, adapter content, and duplication rates, both before and after filtering [40]. |

Essential Workflows and Protocols

Standard Preprocessing Workflow for cfDNA Data

The following diagram illustrates a robust, generalized workflow for preprocessing cfDNA sequencing data, incorporating best practices from recent literature [41].

Detailed Protocol: A Two-Step Quality Control Strategy with FastP

This protocol is adapted from a user's approach for metagenomic data [39], which is highly relevant to the noisy data context of cfDNA research.

Preliminary Quality Assessment (Analysis-Only Mode):

- Objective: To diagnose the initial quality of the raw data and inform the parameters for the main filtering step.

- Command Example:

- Key Parameters:

  - --html/--json: Generate the quality control reports.

  - --adapter_sequence/--adapter_sequence_r2: Manually specify known adapters for your library kit.

  - --trim_poly_g: Especially important for data from NovaSeq/NextSeq platforms.

- Output Analysis: The HTML report will show adapter content, per-base quality, and poly-G tails, allowing you to decide if manual adapter specification or poly-G trimming is needed for the main run [39].
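
A preliminary run along these lines could be assembled as follows. This is a sketch, not the command from [39]: the file names are placeholders, the helper name is hypothetical, and only the parameters listed above are used. Output FASTQ paths are left optional because, per the fastp documentation, QC reports are still produced when no outputs are specified; pass them if your setup requires output files.

```python
import shlex
from typing import List, Optional


def fastp_preview_command(r1: str, r2: str, report_prefix: str,
                          adapter_r1: Optional[str] = None,
                          adapter_r2: Optional[str] = None,
                          trim_poly_g: bool = False,
                          out_r1: Optional[str] = None,
                          out_r2: Optional[str] = None) -> List[str]:
    """Build a fastp argument list for a report-focused preliminary run."""
    cmd = ["fastp", "-i", r1, "-I", r2,
           "--html", f"{report_prefix}.html",
           "--json", f"{report_prefix}.json"]
    if adapter_r1:
        cmd += ["--adapter_sequence", adapter_r1]
    if adapter_r2:
        cmd += ["--adapter_sequence_r2", adapter_r2]
    if trim_poly_g:
        cmd.append("--trim_poly_g")
    if out_r1 and out_r2:
        cmd += ["-o", out_r1, "-O", out_r2]
    return cmd


# Placeholder file names; run the result with subprocess.run(preview, check=True).
preview = fastp_preview_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                                "sample_preview", trim_poly_g=True)
print(shlex.join(preview))
```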

Comprehensive Filtering and Trimming:

- Objective: To execute the actual data cleaning with optimized parameters based on the preliminary report.

- Command Example:

- Key Parameters:

  - -l 50: Discard reads shorter than 50 bp after processing.

  - -q 20: Set the qualified quality threshold to Q20.

  - -e 30: Discard reads with an average quality below Q30.

  - --correction: Enable base correction in overlapping regions for paired-end reads.
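
The main filtering run with the parameters listed above might be assembled like this. It is an illustrative sketch rather than the original command: file names are placeholders, the helper name is hypothetical, and the thresholds simply mirror the values given in the key parameters.

```python
import shlex
from typing import List


def fastp_filter_command(r1: str, r2: str, out_r1: str, out_r2: str,
                         report_prefix: str,
                         min_length: int = 50,
                         qualified_phred: int = 20,
                         min_avg_quality: int = 30) -> List[str]:
    """Build a fastp argument list for paired-end filtering and trimming."""
    return [
        "fastp",
        "-i", r1, "-I", r2,            # paired-end inputs
        "-o", out_r1, "-O", out_r2,    # filtered outputs
        "-l", str(min_length),         # discard reads shorter than this
        "-q", str(qualified_phred),    # per-base qualified quality threshold
        "-e", str(min_avg_quality),    # discard reads below this average quality
        "--correction",                # overlap-based base correction (PE only)
        "--html", f"{report_prefix}.html",
        "--json", f"{report_prefix}.json",
    ]


# Placeholder file names; run the result with subprocess.run(command, check=True).
command = fastp_filter_command("sample_R1.fastq.gz", "sample_R2.fastq.gz",
                               "clean_R1.fastq.gz", "clean_R2.fastq.gz",
                               "sample_filtered")
print(shlex.join(command))
```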

The Scientist's Toolkit: Research Reagent Solutions

For cfDNA studies, the choice of wet-lab reagents, particularly the library preparation kit, can introduce significant bias in downstream fragmentomic analysis. The following table lists common kits and their considerations, as evaluated in a recent study [41].

| Library Kit Name | Primary Application/Focus | Key Characteristics/Considerations |

|---|---|---|

| SureSelect XT HS2 (XTHS2) [41] | Targeted sequencing; sensitive mutation detection | Contains dual sample barcodes and dual molecular barcodes (UMIs), which help mitigate index hopping and improve mutation calling accuracy. |

| NEBNext Enzymatic Methyl-seq (EM_seq) [41] | Multi-omics (Methylation & Fragmentomics) | Allows for simultaneous analysis of genetic and epigenetic markers from the same library, which is valuable for multi-modal AI models in cancer detection. |

| Watchmaker DNA Library Prep Kit [41] | General cfDNA library prep | The study found it yielded a significantly higher fraction of mitochondrial DNA reads, which could be a confounder or a feature depending on the research question. |

| ThruPLEX Tag-Seq [41] | General cfDNA library prep | Known to produce a higher number of mismatches during alignment compared to other kits, which is an important factor for studies focused on single-nucleotide variations (SNVs). |

Frequently Asked Questions (FAQs)

Q1: What is the primary source of contamination that LBBC aims to correct? A1: LBBC (low-biomass background correction) primarily targets contamination from "low biomass" sources, where trace amounts of foreign DNA (e.g., from reagents, lab surfaces, or sample cross-talk) constitute a significant portion of the sequenced material in samples with very low native DNA content, such as plasma cfDNA.

Q2: How does LBBC differ from traditional background noise filters? A2: Traditional filters often rely on databases of known contaminants or simple abundance thresholds. LBBC is a data-driven method that does not require a priori knowledge. It identifies contaminants by analyzing two intrinsic properties of sequencing data: uneven coverage distribution across the genome (Coverage Uniformity) and systematic variation across experimental batches (Batch Variation).

Q3: My negative controls show minimal reads. Do I still need to apply LBBC? A3: Yes. Even with clean controls, low-level, batch-specific contamination can be present in your experimental samples and can bias downstream analyses, especially for rare variant detection in cfDNA. LBBC uses the controls to model this background, which may be below the threshold of casual observation but statistically significant across a batch.

Q4: What are the minimum sample and batch sizes required for a robust LBBC analysis? A4: While requirements can vary, a general guideline is:

- Minimum Samples per Batch: 8-10 samples (including controls).

- Minimum Batches: 3 or more distinct processing batches. Smaller sample or batch sizes reduce the statistical power to reliably distinguish batch-specific contaminants from true biological signal.

Q5: After applying LBBC, what is an acceptable post-correction contamination level? A5: The goal is to minimize contamination to a level where it does not interfere with your specific biological question. For cfDNA rare variant calling, a common benchmark is to reduce the contamination signal to below the expected variant allele frequency (e.g., <0.1% for ultra-deep sequencing).

Troubleshooting Guides

Problem: Over-Filtering of True Signal (High False Negative Rate) After LBBC

- Symptoms: True biological signals (e.g., known cancer mutations) are being filtered out alongside contaminants.

- Potential Causes & Solutions:

- Cause 1: Over-correction due to mis-specification of batch groups.

- Solution: Re-evaluate your batch definitions. Ensure batches are defined by distinct library prep kits, reagent lots, or processing dates, not arbitrary groupings.

- Cause 2: Genomic regions of genuine low coverage in your sample type are being mistaken for contaminant regions.

- Solution: Incorporate a sample-specific "coverage mask" based on a high-quality reference sample or a validated set of regions known to have low mappability in cfDNA.


Problem: Inconsistent LBBC Performance Across Batches

- Symptoms: Some batches show excellent contamination removal, while others show little to no effect.

- Potential Causes & Solutions:

- Cause 1: Extreme batch effect where one batch is an outlier, dominating the correction model.

- Solution: Implement a robust statistical method (e.g., median-based normalization instead of mean) that is less sensitive to outliers. Visually inspect PCA plots of coverage to identify and potentially exclude severe outlier batches.

- Cause 2: Insufficient sequencing depth in one or more batches.

- Solution: Ensure uniform and adequate sequencing depth (e.g., >100x for cfDNA) across all batches. Low-depth batches lack the statistical power for accurate coverage uniformity analysis.


Problem: LBBC Fails to Remove Known Contaminant Signal

- Symptoms: Reads from a known contaminant (e.g., E. coli) are still present post-correction.

- Potential Causes & Solutions:

- Cause 1: The contaminant is present uniformly across all batches and samples, making it indistinguishable from the true background via batch variation analysis.

- Solution: Combine LBBC with a database-driven approach. Use a "blacklist" of known contaminant genomes (e.g., phiX, common lab bacteria) to remove these reads as a primary filtering step before applying LBBC.
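A blacklist pre-filter of this kind can be sketched in a few lines. The example assumes alignments have already been reduced to `(read_id, contig)` pairs from a competitive alignment against human plus contaminant references; the contig names and read identifiers are hypothetical placeholders.

```python
def split_by_blacklist(alignments, blacklist_contigs):
    """Separate alignments that hit blacklisted contigs from those to keep."""
    blacklist = set(blacklist_contigs)
    kept, removed = [], []
    for read_id, contig in alignments:
        if contig in blacklist:
            removed.append((read_id, contig))
        else:
            kept.append((read_id, contig))
    return kept, removed

# Hypothetical contig names for known contaminant genomes.
blacklist = ["phiX174", "ecoli_K12"]
alignments = [
    ("read1", "chr1"),
    ("read2", "phiX174"),
    ("read3", "chr7"),
    ("read4", "ecoli_K12"),
]
kept, removed = split_by_blacklist(alignments, blacklist)
```

Running this step first removes uniformly present contaminants that batch-variation analysis cannot see, and LBBC is then applied only to the retained reads.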

Experimental Protocols

Protocol 1: Generating Data for LBBC Analysis

- Sample Preparation:

- Extract cfDNA from plasma using a silica-membrane based kit.

- Quantify using a fluorescence-based assay (e.g., Qubit dsDNA HS Assay).

- Library Construction:

- Use a minimum of 8 patient cfDNA samples and 2 negative controls (e.g., water) per batch.

- Construct sequencing libraries using a targeted or whole-genome kit.

- Crucially, process at least 3 independent batches on different days using different reagent lots.

- Sequencing:

- Sequence on an Illumina platform to a minimum depth of 50x for WGS and 500x for targeted panels.

- Pool samples from multiple batches on the same sequencing lane to avoid confounding sequencing run with library prep batch.

Protocol 2: Computational Implementation of LBBC

- Data Preprocessing:

- Align FASTQ files to a reference genome (e.g., hg38) using BWA-MEM.

- Sort and index BAM files using SAMtools.

- Calculate read depth in non-overlapping genomic bins (e.g., 1 kb bins) using `mosdepth`.

- Coverage Uniformity & Batch Variation Modeling:

- Construct a coverage matrix (bins x samples).

- Perform Principal Component Analysis (PCA) on the normalized coverage matrix.

- Identify bins with strong loadings on batch-associated principal components (PCs). These represent regions with high batch variation.

- Contaminant Filtering:

- Remove or down-weight reads aligning to the identified contaminant bins from the final BAM files.

- Recalculate coverage metrics on the corrected BAM files.
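The modeling steps of Protocol 2 can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not a reference implementation: the batch association of each principal component is measured with eta-squared (between-batch variance over total variance), and the `top_fraction` cut-off and the simulated data are choices made for the example.

```python
import numpy as np

def batch_eta_squared(scores, batches):
    """Fraction of the variance in one PC's scores explained by batch labels."""
    grand_mean = scores.mean()
    total = np.sum((scores - grand_mean) ** 2)
    if total == 0:
        return 0.0
    between = 0.0
    for label in np.unique(batches):
        group = scores[batches == label]
        between += group.size * (group.mean() - grand_mean) ** 2
    return float(between / total)

def batch_associated_bins(coverage, batches, n_components=3, top_fraction=0.05):
    """Return the bins loading most strongly on the most batch-driven PC.

    coverage: bins x samples read-depth matrix; batches: one label per sample.
    """
    coverage = np.asarray(coverage, dtype=float)
    batches = np.asarray(batches)
    # Median-normalise each sample, then log-transform (pseudocount avoids log(0)).
    normalised = np.log2((coverage + 1.0) / (np.median(coverage, axis=0) + 1.0))
    # Centre each bin across samples so PCA captures between-sample variation.
    centred = normalised - normalised.mean(axis=1, keepdims=True)
    # PCA via SVD with samples as observations (rows) and bins as variables.
    u, s, vt = np.linalg.svd(centred.T, full_matrices=False)
    scores = u * s
    n_components = min(n_components, scores.shape[1])
    eta = [batch_eta_squared(scores[:, k], batches) for k in range(n_components)]
    batch_pc = int(np.argmax(eta))
    n_top = max(1, int(round(top_fraction * coverage.shape[0])))
    top_bins = np.argsort(-np.abs(vt[batch_pc]))[:n_top]
    return np.sort(top_bins), batch_pc, eta[batch_pc]

# Simulated data: 200 bins, 3 batches of 4 samples, contaminant in bins 0-9 of batch B.
rng = np.random.default_rng(0)
coverage = rng.poisson(100, size=(200, 12)).astype(float)
batches = np.repeat(["A", "B", "C"], 4)
coverage[:10, 4:8] *= 3.0
bins, pc, eta2 = batch_associated_bins(coverage, batches)
```

In this simulation the first component separates batch B from the others, and the ten highest-loading bins are the spiked ones. On real data the threshold for calling a bin batch-associated needs to be tuned against the negative controls.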

Data Presentation

Table 1: Impact of LBBC on Simulated cfDNA Data with 0.5% Contamination

| Metric | Pre-LBBC | Post-LBBC | % Change |

|---|---|---|---|

| Mean Contamination Level | 0.50% | 0.07% | -86.0% |

| True Positive Rate (TPR) | 95.2% | 94.8% | -0.4% |

| False Discovery Rate (FDR) | 35.1% | 8.5% | -75.8% |

| Number of Significant Bins (FDR < 0.05) | 12,450 | 1,105 | -91.1% |
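The "% Change" column in Table 1 is a relative change, (post − pre) / pre × 100. The short check below reproduces the column from the pre and post values; for the TPR row the relative change (about −0.42%) and the absolute difference (−0.4 percentage points) happen to round to the same figure.

```python
def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0

table_rows = {
    "contamination": (0.50, 0.07),
    "tpr": (95.2, 94.8),
    "fdr": (35.1, 8.5),
    "significant_bins": (12450, 1105),
}
changes = {name: round(percent_change(pre, post), 1)
           for name, (pre, post) in table_rows.items()}
```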

Table 2: Key Research Reagent Solutions for LBBC Experiments

| Item | Function in LBBC Context |

|---|---|

| cfDNA Extraction Kit | Isolate and purify low-concentration, fragmented cfDNA from plasma with minimal contamination. |

| Ultra-Pure Water | Serve as a negative control to detect contamination introduced during library preparation. |

| Targeted Sequencing Panel | Enrich for specific genomic regions of interest, allowing for deeper sequencing to better distinguish signal from background. |

| Unique Molecular Indices (UMIs) | Tag individual DNA molecules pre-amplification to correct for PCR duplicates and sequencing errors, improving variant calling accuracy post-LBBC. |

| Different Reagent Lots | Essential for creating the batch variation required to statistically identify lot-specific contaminants. |

Visualizations

LBBC Workflow

LBBC Core Concept

Frequently Asked Questions (FAQs)

Q1: What is DAGIP and what specific problem does it solve in cfDNA research? DAGIP is a novel data correction method that uses optimal transport theory and deep learning to correct for pre-analytical biases in cell-free DNA (cfDNA) sequencing data. It explicitly corrects for technical confounders introduced by variables such as library preparation protocols or sequencing platforms, which are major sources of non-biological variation that can obscure true biological signals. This allows for improved cancer detection, copy number alteration analysis, and fragmentomic analysis by alleviating sources of variation not of biological origin [42] [43].

Q2: What types of cfDNA data modalities can DAGIP correct? DAGIP is designed to correct multiple cfDNA data modalities, including:

- Genome-wide copy-number profiles (coverage profiles)

- Fragmentomics data (fragment size distributions)

- End motif frequencies

- Nucleosome positioning patterns

The method operates in the original data space, making the corrections transparently interpretable, which is crucial for genetic research [42] [43].

Q3: How does DAGIP differ from traditional bias correction methods like GC-content correction? Unlike traditional methods that focus primarily on GC-content and mappability biases, DAGIP uses a sample-to-sample relationship approach guided by optimal transport theory. While methods like LOESS GC-content correction decorrelate per-bin GC-content from normalized read counts, DAGIP exploits information from the entire dataset to correct each individual sample, providing more comprehensive bias removal and better cancer signal isolation [42] [43].

Q4: What are the minimum data requirements to use DAGIP? DAGIP requires two groups of matched samples (preferably paired) sequenced under different protocols. The data should be structured as matrices where rows represent samples (e.g., coverage, methylation, or fragmentomic profiles) and columns represent features (e.g., genomic bins or DMRs). One group serves as the source domain (protocol 2) and the other as the target domain (protocol 1) for the correction [44].

Q5: Can DAGIP be used to integrate cohorts from different studies? Yes, a key advantage of DAGIP is its ability to integrate cohorts from different studies by explicitly correcting for technical biases introduced by different pre-analytical settings. This allows researchers to combine datasets produced by different sequencing pipelines or collected at different centers, effectively increasing the statistical power for downstream analyses [42].

Troubleshooting Guides

Issue 1: Installation and Dependency Problems

Problem: Errors during installation or when importing the DAGIP package.

Solution:

- Ensure all Python dependencies are installed, including rpy2

- Install the required R packages: dplyr, GenomicRanges, and dryclean

- Install the DAGIP package using: `python setup.py install --user`

- Verify your environment can execute both Python and R code [44]

Issue 2: Data Formatting and Preparation Errors

Problem: The fit_transform() method fails with dimension or data type errors.

Solution:

- Ensure your input data (X and Y) are NumPy arrays with identical feature dimensions

- Confirm that rows represent profiles and columns represent features

- Verify that samples from protocols 1 and 2 are properly matched

- Check that no missing or infinite values are present in the arrays
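The checks above can be run before calling `fit_transform()`. The helper below is a hypothetical pre-flight function written for this guide, not part of the DAGIP package; the commented-out call at the end only uses the class and method names mentioned in this article, and the exact import path and signature should be confirmed against the DAGIP documentation.

```python
import numpy as np

def check_dagip_inputs(X, Y):
    """Validate source (X) and target (Y) matrices laid out as samples x features."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    if X.ndim != 2 or Y.ndim != 2:
        raise ValueError("X and Y must be 2-D arrays (samples x features)")
    if X.shape[1] != Y.shape[1]:
        raise ValueError(
            f"feature mismatch: X has {X.shape[1]} columns, Y has {Y.shape[1]}"
        )
    if not (np.isfinite(X).all() and np.isfinite(Y).all()):
        raise ValueError("X and Y must not contain NaN or infinite values")
    return X, Y

# Toy matrices: 4 protocol-2 samples and 5 protocol-1 samples over 6 features.
X = np.ones((4, 6))
Y = np.zeros((5, 6))
X_checked, Y_checked = check_dagip_inputs(X, Y)

# Assumed usage, based only on the names used in this guide:
# model = DomainAdapter()
# X_corrected = model.fit_transform(X_checked, Y_checked)
```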

Issue 3: Poor Bias Correction Performance

Problem: The corrected data shows minimal improvement or unexpected artifacts.

Solution:

- Verify that your source and target domain samples are well-matched biologically

- Ensure adequate sample size in both domains for reliable transport plan estimation

- Check that the neural network architecture and training parameters are appropriate for your data type

- Consider adjusting the optimal transport parameters based on your specific data characteristics

- Validate results using known biological controls if available [42]

Issue 4: Model Saving/Loading Failures

Problem: Errors when saving or loading trained models.

Solution:

- Use the exact same package versions when saving and loading models

- Ensure sufficient write permissions in the save directory

- Specify the complete file path when saving the model
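The path and permission checks can be automated before a model is saved. The helper below is a hypothetical convenience function using only the Python standard library; the file name is a placeholder, and the resulting path would be passed to whichever save method your DAGIP version provides.

```python
import os
from pathlib import Path

def prepare_save_path(path):
    """Return an absolute path whose parent directory exists and is writable."""
    full_path = Path(path).expanduser().resolve()
    # Create missing parent directories so the save call does not fail on them.
    full_path.parent.mkdir(parents=True, exist_ok=True)
    if not os.access(full_path.parent, os.W_OK):
        raise PermissionError(f"cannot write to {full_path.parent}")
    return full_path
```

Resolving the path up front also makes it easy to log exactly where a model was written, which helps when the same file must later be loaded with matching package versions.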

DAGIP Parameters and Performance Metrics

Table 1: Key Experimental Parameters for DAGIP Implementation

| Parameter Category | Specific Parameter | Recommended Setting | Function |

|---|---|---|---|

| Data Input | Feature Type | Coverage profiles, fragment sizes | Defines the input data modality |

| Data Input | Sample Matching | Paired or biologically matched | Ensures valid domain translation |

| Data Input | Matrix Orientation | Rows: samples, Columns: features | Proper data structure |

| Optimal Transport | Cost Function | Quadratic (‖x_i − y_j‖²) | Determines transport energy |

| Optimal Transport | Transport Plan | Sample-to-sample mapping | Guides correction direction |

| Neural Network | Architecture | Deep learning model | Estimates technical bias |

| Neural Network | Training | Paired samples | Learns bias correction function |
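To make the quadratic cost and the sample-to-sample transport plan concrete, the sketch below builds the cost matrix C[i, j] = ‖x_i − y_j‖² and computes an entropy-regularised plan with Sinkhorn iterations. This illustrates the general optimal transport idea only; it is not DAGIP's solver, and the regularisation strength and iteration count are assumptions.

```python
import numpy as np

def quadratic_cost(X, Y):
    """C[i, j] = squared Euclidean distance between row i of X and row j of Y."""
    diff = X[:, None, :] - Y[None, :, :]
    return np.sum(diff ** 2, axis=2)

def sinkhorn_plan(C, reg=0.1, n_iter=500):
    """Entropy-regularised transport plan between uniformly weighted samples."""
    n, m = C.shape
    a = np.full(n, 1.0 / n)  # mass on each source sample
    b = np.full(m, 1.0 / m)  # mass on each target sample
    scale = reg * max(C.max(), 1e-12)  # scale the cost so `reg` is unit-free
    K = np.exp(-C / scale)
    u = np.ones(n)
    for _ in range(n_iter):
        v = b / (K.T @ u)
        u = a / (K @ v)
    return u[:, None] * K * v[None, :]

# Three source profiles and slightly shifted target profiles (toy values).
X = np.array([[0.0, 0.0], [5.0, 5.0], [10.0, 0.0]])
Y = X + 0.5
C = quadratic_cost(X, Y)
plan = sinkhorn_plan(C)
```

Each row of `plan` describes how the mass of one source sample is distributed over the target samples. Here almost all mass stays on the diagonal, so each source profile is matched to its shifted counterpart.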

Table 2: Comparison of Bias Correction Methods in cfDNA Analysis

| Method | Approach | Data Modalities | Interpretability | Dependencies |

|---|---|---|---|---|

| DAGIP | Optimal transport + deep learning | Coverage, fragmentomics, end motifs | High (original space) | Python, R packages |

| GC-content LOESS | Local regression | Coverage profiles | Medium | None |

| BEADS | Read-level reweighting | Coverage profiles | Medium | None |

| dryclean | Robust PCA | Coverage profiles | Low | R |

| LIQUORICE | Fragment-level weighting | Coverage profiles | Medium | None |

Experimental Protocols

Protocol 1: Basic DAGIP Workflow for Coverage Profile Correction

Purpose: Correct technical biases in coverage profiles from different sequencing protocols.

Materials:

- NumPy arrays of coverage profiles (samples × bins)

- Matched samples from two different protocols

- DAGIP installation with dependencies

Procedure:

- Data Preparation: Format coverage data as NumPy matrices where rows are samples and columns are genomic bins

- Domain Specification: Assign protocol 2 data to X (source domain) and protocol 1 data to Y (target domain)

- Model Initialization: Create DomainAdapter instance

- Model Training and Transformation: Call `fit_transform()` to correct source domain data

- Model Saving: Save trained model for future use

- New Data Correction: Use the `transform()` method to correct new samples independently

Validation: Compare principal component analysis (PCA) plots before and after correction to confirm reduced technical variation while preserving biological signals [42] [44].

Protocol 2: Multi-modal cfDNA Analysis with DAGIP

Purpose: Correct technical biases across multiple cfDNA data types simultaneously.

Materials:

- Coverage profiles (read counts per genomic bin)

- Fragment size distributions

- End motif frequency profiles

- Nucleosome positioning data

Procedure:

- Feature Concatenation: Combine different data modalities into a unified feature matrix

- Normalization: Apply appropriate normalization to each data type

- DAGIP Application: Apply standard DAGIP workflow to the multi-modal matrix

- Post-processing: Separate corrected modalities for downstream analysis

- Validation: Assess preservation of known biological relationships across modalities

Note: This approach is particularly valuable for integrated analyses that leverage complementary information from different cfDNA features [42].
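The concatenation, normalization, and post-processing steps of Protocol 2 can be sketched as below. Per-feature z-scoring is used purely as an example of "appropriate normalization" and is an assumption, as are the function names; the correction step itself is omitted, so the example only shows that modalities can be stacked and then recovered in their original units.

```python
import numpy as np

def concat_modalities(modalities):
    """Z-score each modality (samples x features) and stack them column-wise.

    Returns the stacked matrix plus the column slices and statistics needed
    to split the matrix back into modalities in their original units.
    """
    blocks, slices, stats = [], {}, {}
    start = 0
    for name, block in modalities.items():
        block = np.asarray(block, dtype=float)
        mean = block.mean(axis=0)
        std = block.std(axis=0)
        std[std == 0] = 1.0  # constant features: avoid division by zero
        blocks.append((block - mean) / std)
        slices[name] = slice(start, start + block.shape[1])
        stats[name] = (mean, std)
        start += block.shape[1]
    return np.hstack(blocks), slices, stats

def split_modalities(matrix, slices, stats):
    """Undo the stacking and z-scoring for each modality."""
    return {
        name: matrix[:, sl] * stats[name][1] + stats[name][0]
        for name, sl in slices.items()
    }

# Toy data: 4 samples with 6 coverage bins and 3 fragment-size features.
rng = np.random.default_rng(0)
modalities = {
    "coverage": rng.poisson(100, size=(4, 6)).astype(float),
    "fragment_sizes": rng.normal(167.0, 5.0, size=(4, 3)),
}
stacked, slices, stats = concat_modalities(modalities)
recovered = split_modalities(stacked, slices, stats)
```

When two domains are corrected together, the same means and standard deviations should be applied to both so that the normalization does not itself remove or introduce a domain shift.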

Workflow Visualization

Research Reagent Solutions

Table 3: Essential Materials for DAGIP Implementation

| Category | Item/Software | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Computational Tools | DAGIP Python Package | Core bias correction algorithm | Install via: python setup.py install --user |

| Computational Tools | R with dplyr, GenomicRanges | Data manipulation and genomic processing | Required dependency |

| Computational Tools | dryclean R package | Background correction reference | Used in comparative analyses |

| Computational Tools | NumPy | Numerical data processing | Handles matrix operations |

| Data Types | Coverage Profiles | Read count per genomic bin | Primary input for CNA detection |

| Data Types | Fragment Size Distributions | Fragment length frequencies | Fragmentomics analysis |

| Data Types | End Motif Frequencies | 4-nucleotide end patterns | Fragmentomics biomarker |

| Data Types | Methylation Profiles | DNA methylation patterns | Multi-modal integration |

| Validation Methods | PCA Visualization | Technical variation assessment | Pre- vs. post-correction comparison |

| Validation Methods | Biological Controls | Known positive/negative samples | Performance validation |

| Validation Methods | Domain Classifiers | Domain shift measurement | Quantify correction effectiveness |

Advanced Troubleshooting

Issue 5: Handling Large-Scale Genomic Data

Problem: Memory errors when processing whole-genome coverage data.

Solution:

- Implement data chunking for large matrices

- Consider binning genomic regions to reduce dimensionality

- Use memory-mapped arrays for large datasets

- Ensure adequate RAM allocation for transport plan computation
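Three of these suggestions (chunking, coarser binning, and memory-mapped arrays) can be combined as in the sketch below. It uses `numpy.memmap` with a temporary file; the matrix size, aggregation factor, and chunk size are toy values chosen for illustration.

```python
import os
import tempfile
import numpy as np

def aggregate_bins(coverage, factor):
    """Sum adjacent bins (columns) in groups of `factor`, dropping any remainder."""
    n_samples, n_bins = coverage.shape
    usable = (n_bins // factor) * factor
    grouped = coverage[:, :usable].reshape(n_samples, usable // factor, factor)
    return grouped.sum(axis=2)

def aggregate_in_chunks(coverage, factor, chunk_rows=2):
    """Aggregate a few samples at a time so only one chunk is held in RAM."""
    parts = [
        aggregate_bins(np.asarray(coverage[i:i + chunk_rows]), factor)
        for i in range(0, coverage.shape[0], chunk_rows)
    ]
    return np.vstack(parts)

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "coverage.dat")
    # Disk-backed samples x bins matrix; only the accessed slices are loaded.
    mm = np.memmap(path, dtype=np.float32, mode="w+", shape=(5, 10))
    mm[:] = np.arange(50, dtype=np.float32).reshape(5, 10)
    mm.flush()
    reduced = aggregate_in_chunks(mm, factor=5)
    del mm  # release the file handle before the directory is removed
```

Aggregating ten 1 kb bins into one 10 kb bin, for example, cuts the feature dimension tenfold, which reduces the memory needed by any step that operates on the full feature matrix.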

Issue 6: Domain Shift Validation

Problem: Uncertainty about whether correction successfully reduced technical biases.

Solution:

- Train domain classifiers (e.g., SVM) pre- and post-correction

- Use PCA to visualize separation between domains before and after correction

- Validate preservation of known biological signals using positive controls

- Assess improvement in downstream task performance (e.g., cancer detection accuracy) [42] [43]
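The domain classifier check can be sketched without extra dependencies. Instead of the SVM mentioned above, the example uses a leave-one-out nearest-centroid classifier, a deliberately simpler stand-in; the "corrected" matrix is simulated by removing a known shift and is not DAGIP output.

```python
import numpy as np

def loo_nearest_centroid_accuracy(features, domains):
    """Leave-one-out accuracy of predicting the domain label from the features.

    Values near chance (0.5 for two balanced domains) suggest little residual
    domain shift. Each domain needs at least two samples.
    """
    features = np.asarray(features, dtype=float)
    domains = np.asarray(domains)
    labels = np.unique(domains)
    indices = np.arange(len(features))
    correct = 0
    for i in indices:
        keep = indices != i  # hold out sample i when computing centroids
        centroids = [features[keep & (domains == lab)].mean(axis=0) for lab in labels]
        distances = [np.linalg.norm(features[i] - c) for c in centroids]
        correct += int(labels[int(np.argmin(distances))] == domains[i])
    return correct / len(features)

# Simulated profiles: protocol 2 carries a constant technical offset of 5.
rng = np.random.default_rng(1)
a = rng.normal(0.0, 1.0, size=(20, 5))
b = rng.normal(0.0, 1.0, size=(20, 5))
domains = np.array(["protocol1"] * 20 + ["protocol2"] * 20)
before = np.vstack([a, b + 5.0])
after = np.vstack([a, b])  # stand-in for a successfully corrected matrix

acc_before = loo_nearest_centroid_accuracy(before, domains)
acc_after = loo_nearest_centroid_accuracy(after, domains)
```

A drop in domain-classification accuracy after correction is evidence that technical variation was reduced, but it should always be paired with a check that known biological signals are still detectable.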