Managing the NGS Data Deluge: Scalable Storage and Analysis Strategies for Biomedical Research



Abstract

Next-Generation Sequencing (NGS) generates terabytes of data, posing significant storage, management, and analysis challenges for researchers and drug development professionals. This article provides a comprehensive guide to navigating the entire NGS data lifecycle. It covers foundational cloud and security principles, methodological approaches for analysis and workflow automation, strategies for troubleshooting and cost optimization, and finally, a comparative analysis of validation techniques and infrastructure solutions to ensure accuracy and scalability in biomedical and clinical research.

The NGS Data Landscape: Understanding the Scale and Core Challenges

Quantifying the Data Deluge: NGS Output Scales

The volume of data generated by Next-Generation Sequencing (NGS) instruments varies significantly based on the platform and run type, directly impacting storage and computational planning. The table below summarizes the typical raw data output and the resulting file sizes for common sequencing platforms, illustrating the scale of data management required from benchtop to production-level operations [1].

Table 1: NGS Instrument Output and Data Storage Requirements

| Instrument | Run Type | Output (Gigabases) | Run Folder Size (Gigabytes) |
|---|---|---|---|
| MiSeq | 2x150 bp | 5 | 16–18 |
| MiSeq | 2x300 bp | 15 | 22–26 |
| NextSeq500 | 2x150 bp High output | 120 | 60–70 |
| HiSeq2500 | 2x125 bp High output | 500 | 295–310 |
| NovaSeq | 2x150 bp S2 flowcell | 1000 | 730 |
| NovaSeq | 2x150 bp S4 flowcell | 2500 | 2190 |
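To turn Table 1 into a storage budget, multiply each run-folder size by the number of runs planned. The Python sketch below does this, assuming the midpoint of each size range in the table and decimal units (1 TB = 1,000 GB); the yearly run mix in the example is hypothetical.

```python
# Approximate run-folder sizes in GB, taken from Table 1.
# Where the table gives a range, the midpoint is used (an assumption).
RUN_FOLDER_GB = {
    ("MiSeq", "2x150 bp"): 17.0,
    ("MiSeq", "2x300 bp"): 24.0,
    ("NextSeq500", "2x150 bp High output"): 65.0,
    ("HiSeq2500", "2x125 bp High output"): 302.5,
    ("NovaSeq", "2x150 bp S2 flowcell"): 730.0,
    ("NovaSeq", "2x150 bp S4 flowcell"): 2190.0,
}


def annual_raw_storage_tb(runs_per_year):
    """Estimate raw run-folder storage in decimal TB (1 TB = 1,000 GB).

    runs_per_year maps (instrument, run type) keys of RUN_FOLDER_GB to run counts.
    """
    total_gb = sum(RUN_FOLDER_GB[key] * count for key, count in runs_per_year.items())
    return total_gb / 1000.0


if __name__ == "__main__":
    # Hypothetical yearly plan: 50 NovaSeq S4 runs and 100 MiSeq 2x150 bp runs.
    plan = {("NovaSeq", "2x150 bp S4 flowcell"): 50, ("MiSeq", "2x150 bp"): 100}
    print(f"{annual_raw_storage_tb(plan):.1f} TB")  # 111.2 TB
```

This covers raw run folders only; FASTQ, BAM, and VCF derivatives add to the total.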

Recent advances demonstrate a trend toward higher data yields on smaller instruments. One 2025 study reported a flexible, production-scale project on a benchtop sequencer that processed 807 samples across 313 flow cells, with a median of 96.6% of bases at or above Q30 (%Q30) and a median %Q40 of 89.31% [2]. This shows that benchtop instruments can now generate data at a scale once reserved for production-scale machines.
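%Q30 is the percentage of base calls with a Phred quality of at least 30, that is, an estimated error probability of at most 10^(-30/10) = 1 in 1,000 (Q40 corresponds to 1 in 10,000). The sketch below computes it from FASTQ quality strings, assuming the standard Phred+33 (Sanger) encoding and unwrapped four-line FASTQ records; a call such as percent_at_or_above(read_fastq_qualities("sample_R1.fastq.gz")) would use a placeholder file name.

```python
import gzip


def percent_at_or_above(quality_strings, threshold=30, offset=33):
    """Percentage of base calls whose Phred quality is >= threshold.

    Assumes Phred+33 (Sanger) encoding: quality = ord(character) - 33.
    """
    total = 0
    passing = 0
    for qual in quality_strings:
        for ch in qual:
            total += 1
            if ord(ch) - offset >= threshold:
                passing += 1
    return 100.0 * passing / total if total else 0.0


def read_fastq_qualities(path):
    """Yield the quality line (4th line of each 4-line record) from a FASTQ file."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt") as handle:
        for line_number, line in enumerate(handle):
            if line_number % 4 == 3:
                yield line.rstrip("\n")


if __name__ == "__main__":
    # 'I' = Q40, '?' = Q30, '5' = Q20
    print(percent_at_or_above(["II?5"]))                # 75.0
    print(percent_at_or_above(["II?5"], threshold=40))  # 50.0
```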

Experimental Protocols for Production-Scale Sequencing

Protocol: Production-Scale hWGS on a Benchtop Sequencer

This protocol, adapted from a 2025 study, outlines a method for achieving high-quality human Whole Genome Sequencing (hWGS) on a benchtop instrument [2].

- Objective: To perform >30x coverage human whole-genome sequencing on a benchtop sequencer at production scale (hundreds of samples).

- Key Experimental Steps:

  - Library Preparation: Prepare sequencing libraries using a standardized kit. The study demonstrated flexibility by also testing libraries with large insert sizes (1 kb+) and protocols for rapid WGS.

  - Pre-pool QC (Quality Control): To screen library quality and maximize sample yield, perform 48-plex pre-pool QC runs. These provide over 1x coverage per sample before full-depth sequencing, offering useful sample-level insights.

  - Sample Pooling: Pool samples based on the QC results to ensure balanced representation.

  - Sequencing: Load the pooled libraries onto the benchtop sequencer. The study used standard settings for trio (three-plex) sequencing to consistently achieve >30x coverage.

  - Rapid Sequencing (Optional Use Case): For time-critical applications, a 2x100 bp human WGS run at >30x can be sequenced in under 12 hours, with file generation completed in less than one additional hour.

- Key Quality Metrics:

  - Median %Q30: 96.6%

  - Median %Q40: 89.31%

  - Coverage: >30x
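Two pieces of arithmetic sit behind this protocol: how much output a target depth requires, and how to re-balance the pool after the QC run. The sketch below assumes a haploid human genome size of about 3.1 Gb and mean coverage C = (reads × read length) / genome size. The re-balancing rule (scale each library's volume by the inverse of its share of QC reads) is a common heuristic assumed here, not necessarily the method used in [2].

```python
def required_gigabases(target_coverage, genome_size_gb=3.1, n_samples=1):
    """Usable output (Gb) needed for a mean depth, from C = (reads x read length) / genome size."""
    return target_coverage * genome_size_gb * n_samples


def rebalance_pool(qc_reads, current_volumes_ul, total_volume_ul):
    """Re-scale per-library pooling volumes toward equal read shares.

    Each library's volume is multiplied by (mean reads / its reads) from the QC run,
    so under-represented libraries get more volume; the result is then scaled so the
    volumes sum to total_volume_ul. This is a heuristic, not a validated protocol.
    """
    mean_reads = sum(qc_reads.values()) / len(qc_reads)
    raw = {lib: current_volumes_ul[lib] * mean_reads / reads for lib, reads in qc_reads.items()}
    scale = total_volume_ul / sum(raw.values())
    return {lib: volume * scale for lib, volume in raw.items()}


if __name__ == "__main__":
    print(round(required_gigabases(30, n_samples=3), 1))  # 279.0 Gb for a >30x trio
    # Library B took three times as many QC reads as A at equal volume.
    print(rebalance_pool({"A": 100_000, "B": 300_000}, {"A": 5.0, "B": 5.0}, 10.0))
```

In practice the run must yield more than the computed minimum, because duplicates, unmapped reads, and trimmed bases do not contribute to usable coverage. In the pooling example, library A ends up with three times the volume of B, offsetting its threefold under-representation.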

The following workflow diagram summarizes this experimental protocol.

Protocol: Standardized NGS Data Analysis Workflow

A robust bioinformatics pipeline is crucial for handling the data deluge. The following is a generalized, standardized workflow for NGS data analysis [3] [4].

- Objective: To transform raw sequencing data into aligned, quantified, and interpreted results in a reproducible manner.

- Key Experimental Steps:

  - Quality Control (QC): Use tools like FastQC on raw FASTQ files to check base quality, adapter contamination, and overrepresented sequences.

  - Trimming/Filtering: Use tools like Trimmomatic or Cutadapt to remove low-quality bases, sequencing adapters, and other contaminants based on QC results.

  - Alignment: Map the cleaned reads to a reference genome (e.g., hg38) using an appropriate aligner, such as BWA for DNA sequencing or the splice-aware STAR for RNA-seq. The reference genome must be downloaded and indexed for the chosen aligner.

  - Quantification/Variant Calling: Depending on the experiment, perform variant calling (e.g., with GATK), gene expression quantification, or other analyses.

  - Visualization & Interpretation: Use visualization tools and annotation databases to interpret the biological significance of the results.
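For a paired-end DNA sample, the workflow above can be sketched as a sequence of command lines. The Python sketch below only builds and prints them (a dry run). The adapter sequence, quality and length cut-offs, read-group string, and file names are illustrative assumptions. It also assumes the reference was indexed beforehand (bwa index, samtools faidx, gatk CreateSequenceDictionary), and it omits duplicate marking and base quality recalibration, which production germline pipelines normally include.

```python
import shlex
from pathlib import Path

# Assumption: Illumina TruSeq-style libraries; this is the common adapter prefix.
ADAPTER = "AGATCGGAAGAGC"


def build_steps(sample, r1, r2, ref, outdir="results", threads=8):
    """Return (command, stdout_file) pairs for QC -> trim -> align -> sort -> call.

    stdout_file is set only for bwa mem, which writes SAM to standard output.
    """
    out = Path(outdir)
    trim1 = out / f"{sample}_R1.trim.fastq.gz"
    trim2 = out / f"{sample}_R2.trim.fastq.gz"
    sam = out / f"{sample}.sam"
    bam = out / f"{sample}.sorted.bam"
    vcf = out / f"{sample}.vcf.gz"
    # bwa expects the literal characters backslash-t and converts them to tabs.
    read_group = f"@RG\\tID:{sample}\\tSM:{sample}\\tPL:ILLUMINA"
    return [
        (["fastqc", "-o", str(out), r1, r2], None),
        (["cutadapt", "-a", ADAPTER, "-A", ADAPTER, "-q", "20", "-m", "30",
          "-o", str(trim1), "-p", str(trim2), r1, r2], None),
        (["bwa", "mem", "-t", str(threads), "-R", read_group, ref,
          str(trim1), str(trim2)], str(sam)),
        (["samtools", "sort", "-@", str(threads), "-o", str(bam), str(sam)], None),
        (["samtools", "index", str(bam)], None),
        (["gatk", "HaplotypeCaller", "-R", ref, "-I", str(bam), "-O", str(vcf)], None),
    ]


if __name__ == "__main__":
    for cmd, stdout_file in build_steps("S1", "S1_R1.fastq.gz", "S1_R2.fastq.gz", "hg38.fa"):
        redirect = f" > {stdout_file}" if stdout_file else ""
        print(shlex.join(cmd) + redirect)
```

To execute a step, create the output directory first and pass the command to subprocess.run(cmd, check=True), redirecting stdout to the listed file for bwa mem. Beyond a prototype, a workflow manager such as Snakemake or Nextflow handles dependencies, retries, and resumption more robustly.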

Troubleshooting Guides and FAQs

Troubleshooting Common NGS Data Analysis Bottlenecks

Table 2: Common NGS Data Analysis Pitfalls and Solutions

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |
|---|---|---|---|
| Sequencing Errors & Quality [4] [5] | Low-quality reads, adapter contamination, high duplication rates. | Degraded DNA/RNA; sample contaminants; inaccurate quantification; over- or under-fragmentation. | Perform rigorous QC (FastQC); trim/filter reads; use fluorometric quantification (Qubit) rather than UV absorbance alone; re-purify input samples [3] [5]. |
| Tool Variability & Standardization [4] | Conflicting results from different algorithms or pipelines. | Use of different alignment or variant calling methods without standardization. | Build pipelines with a workflow manager (e.g., Snakemake, Nextflow) and keep them under version control to reduce inconsistencies and improve reproducibility [3] [4]. |
| Computational Demands [4] [1] | Analyses are slow or fail; large datasets (e.g., WGS) cannot be processed. | Insufficient RAM, CPU, or storage; non-optimized workflows. | Invest in more powerful servers or use cloud computing (AWS, Google Cloud); optimize workflows for efficiency [6] [1]. |

Frequently Asked Questions (FAQs)

Q1: My sequencing run finished, but my analysis pipeline failed due to low-quality reads. What went wrong and how can I prevent this?

A: The problem likely originated during library preparation, not the sequencing run itself [5]. Common causes include:

- Degraded or contaminated nucleic acids: Contaminants carried over from extraction can inhibit the enzymes used during library preparation. Always check sample purity (260/230 and 260/280 ratios) and re-purify if necessary [5].

- Inaccurate quantification: Using only NanoDrop can overestimate usable material. Use a fluorometric method like Qubit for accurate quantification of double-stranded DNA [5].

- Adapter dimer formation: This can be caused by a suboptimal adapter-to-insert ratio or inefficient purification. Titrate adapter concentrations and ensure proper cleanup to remove dimers [5].

- Preventative Measure: Implement a pre-pool QC run, as described in the protocol above, to catch library issues before committing to full-depth sequencing [2].
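The purity check in the first bullet can be automated as a simple screen before library preparation. The sketch below uses common rule-of-thumb targets, assumed here: a 260/280 ratio of about 1.8 for DNA and 2.0 for RNA, with 260/230 flagged below 1.8. Kit manufacturers' own acceptance criteria should take precedence.

```python
def purity_flags(a260_280, a260_230, nucleic_acid="DNA"):
    """Return warnings for spectrophotometer purity ratios outside rule-of-thumb ranges.

    Assumed targets: 260/280 ~1.8 (DNA) or ~2.0 (RNA), flagged if more than 0.1 below;
    260/230 flagged below 1.8. These cut-offs are illustrative, not kit-specific.
    """
    target_280 = 1.8 if nucleic_acid.upper() == "DNA" else 2.0
    flags = []
    if a260_280 < target_280 - 0.1:
        flags.append("low 260/280: possible protein or phenol carryover")
    if a260_230 < 1.8:
        flags.append("low 260/230: possible salt, guanidine, or carbohydrate carryover")
    return flags


if __name__ == "__main__":
    print(purity_flags(1.85, 2.10))  # []
    print(purity_flags(1.60, 1.50))  # two warnings -> re-purify before library prep
```

Ratios only describe purity, not usable quantity, so a passing sample should still be quantified fluorometrically.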

Q2: What are the best strategies for the long-term storage of large-scale NGS data?

A: There are three primary strategies, each with a different trade-off between cost, storage burden, and reproducibility [1]:

- Complete storage of raw data: Store the instrument output (.bcl/.cbcl base call files), intermediate files (.fastq, .bam), and final results (.vcf). This offers full reproducibility but has the highest storage cost.

- Storage for repeatable analysis: Archive .fastq and/or .bam files along with all software versions and parameter settings. This allows the primary analysis to be repeated without storing the very largest raw instrument files.

- Storage of results only: Keep only the final variant calls and analysis reports. This is the cheapest option, but the sample must be re-sequenced for any re-analysis. For large-scale WGS projects, the cost of storage can sometimes outweigh the cost of re-sequencing a few samples [1].
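Whether to keep data or rely on re-sequencing can be framed as an expected-cost comparison. It weighs the cost of storing a sample for the retention period against the cost of re-sequencing multiplied by the probability that re-analysis is ever needed. The sketch below assumes a 120 GB WGS data set and per-GB storage prices taken from the 2020 cloud pricing table later in this article. The re-sequencing cost and re-analysis probability are hypothetical placeholders.

```python
def storage_cost(size_gb, price_per_gb_month, months):
    """Cost (USD) of keeping one sample's files for the retention period."""
    return size_gb * price_per_gb_month * months


def expected_resequencing_cost(resequencing_cost, reanalysis_probability):
    """Expected cost (USD) if data are discarded and re-sequenced only when needed."""
    return resequencing_cost * reanalysis_probability


def cheaper_option(size_gb, price_per_gb_month, months, resequencing_cost, reanalysis_probability):
    keep = storage_cost(size_gb, price_per_gb_month, months)
    discard = expected_resequencing_cost(resequencing_cost, reanalysis_probability)
    return ("store" if keep <= discard else "re-sequence"), keep, discard


if __name__ == "__main__":
    # Hypothetical: 120 GB WGS, 10 years, $1,000 re-sequencing, 5% chance of re-analysis.
    for label, price in [("hot (2.3 cents/GB-month)", 0.023), ("deep archive (0.099 cents)", 0.00099)]:
        choice, keep, discard = cheaper_option(120, price, 120, 1000, 0.05)
        print(f"{label}: store ${keep:.2f} vs expected re-sequencing ${discard:.2f} -> {choice}")
```

With these placeholder numbers, 10 years of hot storage (about $331) exceeds the expected re-sequencing cost ($50), whereas a deep-archive tier (about $14) makes storage the cheaper option. The model ignores whether residual specimen is still available, turnaround requirements, and regulatory retention obligations, any of which can decide the question on its own.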

Q3: My NGS analysis is too slow on my local server. What are my options?

A: Computational limits are a common bottleneck [4]. You can:

- Optimize your workflow: Use efficient, standardized pipelines to reduce unnecessary steps and resource use [4].

- Upgrade hardware: Invest in more powerful in-house servers with greater CPU, RAM, and fast storage.

- Leverage cloud computing: Platforms like Amazon Web Services (AWS) or Google Cloud Genomics provide scalable infrastructure. You pay for only the compute and storage you use, which is ideal for large, intermittent projects and avoids the problem of over- or under-provisioning in-house IT [6] [1].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Platforms and Technologies in NGS

| Item / Technology | Function / Description | Example Providers / Platforms |
|---|---|---|
| High-Throughput Benchtop Sequencer | Provides production-scale sequencing data on a benchtop instrument. | Element Biosciences AVITI24, Illumina NovaSeq X, MGI DNBSEQ-T1+ [2] [7] |
| Long-Read / Portable Sequencer | Enables real-time sequencing, long reads for superior coverage, and portable use. | Oxford Nanopore Technologies (MinION), PacBio Sequel [6] [7] |
| Library Preparation Kits | Reagent kits for converting DNA/RNA samples into sequence-ready libraries. | A dominant product segment; kits from Illumina, Thermo Fisher, QIAGEN [8] |
| Automated Library Prep Systems | Instruments that automate library preparation to increase throughput, reproducibility, and reduce human error. | Agilent Magnis NGS Prep System, Revvity chemagic 360 [8] [7] |
| Cloud Computing Platform | Provides scalable computational power and storage for massive NGS data analysis. | Amazon Web Services (AWS), Google Cloud Genomics, Microsoft Azure [6] [1] |
| Bioinformatics Pipeline Tools | Frameworks for creating reproducible and scalable data analysis workflows. | Snakemake, Nextflow [3] |

Next-Generation Sequencing (NGS) has revolutionized biological research and clinical diagnostics by enabling the sequencing of millions of DNA fragments simultaneously [9]. While this technology provides unprecedented insights, it generates massive datasets that present significant storage and management challenges. The core challenges revolve around three key areas: the immense volume of data created, the high velocity at which it is produced, and the complexities of long-term archiving for future research and compliance.

The global NGS data storage market, estimated at USD 3.15 billion in 2025, reflects the scale of this challenge, with projections indicating a compound annual growth rate (CAGR) of 14.62% through 2032 [10]. Researchers and institutions must develop robust strategies to manage this data deluge effectively, ensuring data remains accessible, secure, and usable for years to come.

Understanding Data Volume and Velocity

The Data Volume Challenge

NGS technologies produce extraordinarily large datasets. A single high-output sequencing run can generate terabytes of raw data, and large-scale projects can accumulate petabytes [10].

Key Volume Statistics:

- The NIH Sequence Read Archive (SRA) alone contains over 36 petabytes of raw sequencing data from more than nine million experiments [10].

- Global NGS data generation is projected to reach 800 million terabytes in 2025, with annual growth exceeding 35% through 2033 [11].

- Cloud storage repositories for NGS data require massive infrastructure, with estimated annual expenditures exceeding $500 million globally in 2025 [11].

The Data Velocity Challenge

The speed of data generation from modern sequencers often outpaces the development of storage infrastructure and analytical capabilities.

Velocity Drivers:

- Modern high-throughput sequencers like Illumina's NovaSeq X can process entire human genomes in hours rather than years [6] [9].

- The continuous evolution of sequencing technologies has reduced costs from billions of dollars per genome to under $1,000, democratizing access and accelerating data production [9].

- Real-time sequencing technologies, such as Oxford Nanopore, provide immediate data streams that require simultaneous processing and storage [6] [8].

Long-Term Archiving Solutions

Storage Media Comparison

Selecting appropriate storage media requires balancing capacity, durability, cost, and access frequency. The table below compares modern archiving technologies:

| Storage Solution | Capacity Range | Estimated Durability | Relative Cost | Best Use Cases |
|---|---|---|---|---|
| LTO-8 Tape | 12 TB native, 30 TB compressed (per cartridge) | Up to 30 years | Low | Large-scale institutional archives, infrequently accessed data [12] |
| Cloud Archiving | Virtually unlimited | Maintained by provider | Variable (pay-as-you-go) | Collaborative projects, scalable needs [12] [13] |
| Cold Storage HDDs | 10-24+ TB | Up to 10 years | Medium | Data requiring occasional access [12] |
| M-DISC | 25-100 GB | Up to 1,000 years | Medium per GB | Critical legal, regulatory, or foundational data [12] |
| DNA Data Storage | ~215 PB/gram | Thousands of years | Very High (currently) | Experimental archival for highest-value data [12] [14] |
| BDXL Discs | Up to 128 GB | 30-50 years | Low | Small to medium datasets, portable archives [12] |

Emerging Storage Technologies

DNA Data Storage: This promising approach encodes digital data into synthetic DNA sequences, offering unparalleled density—theoretically up to 215 petabytes per gram [14]. While currently prohibitively expensive (estimated at $800 million per terabyte), research continues to reduce costs and improve accessibility [14]. DNA storage is particularly valuable for archival purposes due to its stability over millennia under proper conditions.
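The core idea of DNA data storage is a mapping from bits to nucleotides. The sketch below uses the simplest possible scheme, two bits per base (A=00, C=01, G=10, T=11); this mapping is an illustrative assumption. Practical encodings avoid long homopolymers, balance GC content, and add addressing and error correction, none of which is shown here.

```python
BASES = "ACGT"  # 2 bits per base: 00 -> A, 01 -> C, 10 -> G, 11 -> T


def bytes_to_dna(data: bytes) -> str:
    """Encode each byte as four nucleotides, most significant bit pair first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def dna_to_bytes(seq: str) -> bytes:
    """Invert bytes_to_dna; the sequence length must be a multiple of 4."""
    if len(seq) % 4:
        raise ValueError("sequence length must be a multiple of 4")
    values = [BASES.index(base) for base in seq]
    return bytes(
        (values[i] << 6) | (values[i + 1] << 4) | (values[i + 2] << 2) | values[i + 3]
        for i in range(0, len(values), 4)
    )


if __name__ == "__main__":
    encoded = bytes_to_dna(b"Hi")
    print(encoded)                # CAGACGGC
    print(dna_to_bytes(encoded))  # b'Hi'
```

Note that a zero byte encodes to "AAAA", exactly the kind of homopolymer run that real schemes are designed to avoid because it is error-prone to synthesize and sequence.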

Optical Archiving Systems: Professional optical systems offer capacities of 300 GB to 1.5 TB per disc with durability up to 100 years, making them suitable for broadcasting, government, and long-term digital preservation [12].

Data Management Workflow

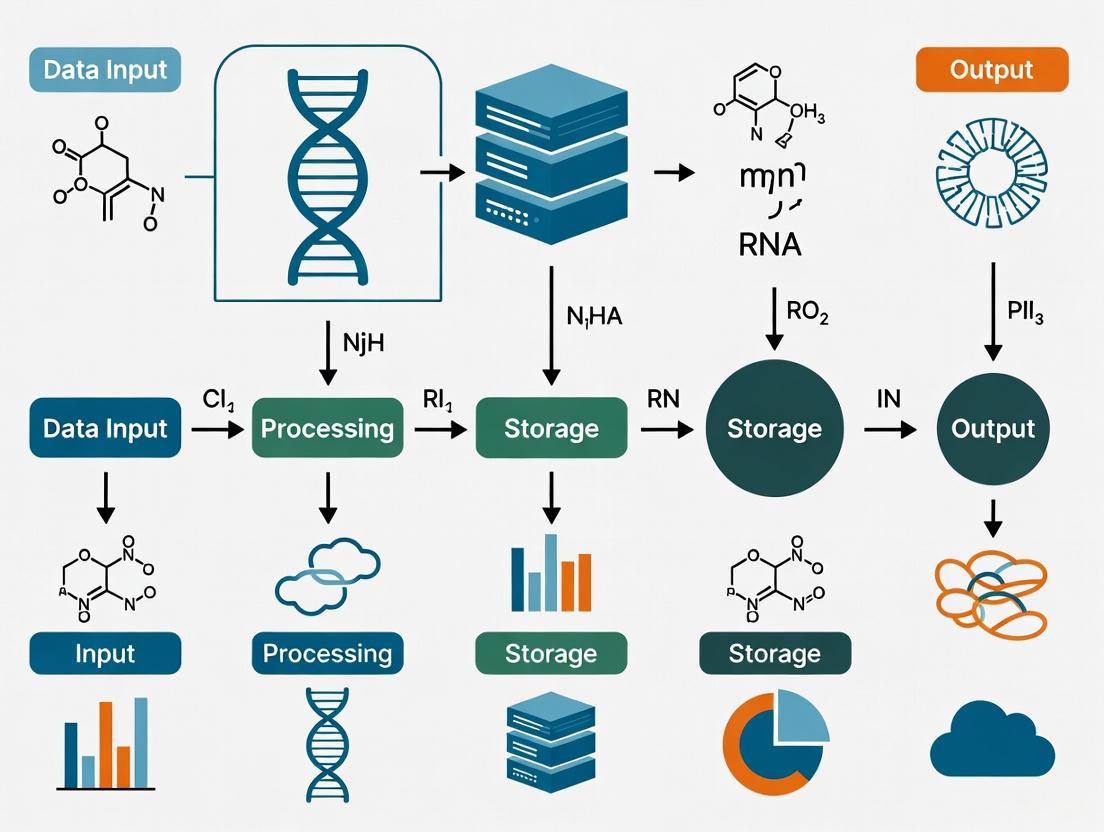

The following diagram illustrates the complete NGS data lifecycle from generation to long-term archiving, highlighting key decision points for storage tiering:

NGS Data Lifecycle and Storage Tiering

Troubleshooting Guides

FAQ: Managing Large NGS Datasets

Q: Our research institute is experiencing rapidly increasing storage costs from NGS data. What strategies can help control expenses? A: Implement a tiered storage architecture with policy-based lifecycle management:

- Move data automatically between performance tiers (fast SSD), capacity tiers (hard disks), and archival tiers (cloud archive or tape) based on access patterns [10] [13]

- Use specialized genomic file formats like CRAM, which provides 30-60% better compression than BAM by storing only differences from reference genomes [15]

- Deploy storage-level data deduplication to eliminate redundant copies of identical files or data blocks (e.g., the same sequencing data stored under several projects) [10]
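The first bullet, moving data between tiers by access pattern, reduces to a rule that maps time since last access to a tier. The sketch below uses hypothetical 30- and 180-day thresholds. Real policies usually also consider file type, project status, and minimum-storage-duration charges on archive tiers.

```python
from datetime import date


def choose_tier(last_access: date, today: date, hot_days: int = 30, warm_days: int = 180) -> str:
    """Map days since last access to a tier (thresholds are illustrative)."""
    idle_days = (today - last_access).days
    if idle_days <= hot_days:
        return "performance"  # e.g. SSD / NVMe
    if idle_days <= warm_days:
        return "capacity"     # e.g. HDD arrays
    return "archive"          # e.g. tape or cloud archive


def plan_moves(last_access_by_path, current_tier_by_path, today):
    """Return {path: new_tier} for files whose tier should change."""
    moves = {}
    for path, last_access in last_access_by_path.items():
        target = choose_tier(last_access, today)
        if target != current_tier_by_path.get(path):
            moves[path] = target
    return moves
```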

Q: How can we ensure long-term data integrity for archived NGS datasets? A: Establish a comprehensive data integrity strategy:

- Implement regular integrity checking using checksum validation to detect data degradation or corruption [13]

- Plan for periodic data refreshing by copying archived data to new media every 3-5 years for magnetic media or 5-10 years for optical media [12] [13]

- Maintain multiple copies in geographically dispersed locations using replication strategies [13]

- Use write-once-read-many (WORM) storage for regulatory compliance to prevent accidental or malicious modification [13]
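Checksum validation, the first bullet, can be done with SHA-256 manifests that are written at ingest and re-verified on a schedule. The sketch below streams files in chunks so multi-gigabyte FASTQ or BAM files are never loaded into memory. It writes the manifest in the "checksum, two spaces, path" layout that GNU sha256sum also uses; the chunk size is an arbitrary choice.

```python
import hashlib
from pathlib import Path


def sha256sum(path, chunk_size=8 * 1024 * 1024):
    """Hash a file in 8 MiB chunks so large FASTQ/BAM files are never fully loaded."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(paths, manifest_path):
    """Write '<sha256>  <path>' lines, the same layout GNU sha256sum produces."""
    with open(manifest_path, "w") as manifest:
        for path in paths:
            manifest.write(f"{sha256sum(path)}  {path}\n")


def verify_manifest(manifest_path):
    """Return the paths that are missing or whose checksum no longer matches."""
    failures = []
    with open(manifest_path) as manifest:
        for line in manifest:
            expected, path = line.rstrip("\n").split("  ", 1)
            if not Path(path).is_file() or sha256sum(path) != expected:
                failures.append(path)
    return failures
```

Call write_manifest once at ingest and store the manifest alongside the archive. A scheduled job calling verify_manifest then reports any corrupted or lost file, which should trigger restoration from a replica.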

Q: What are the best practices for balancing cloud vs. on-premises storage for NGS data? A: Most organizations benefit from hybrid approaches:

- Store actively analyzed data on-premises or in cloud hot storage for performance [10] [16]

- Archive processed data in cloud cold storage services (e.g., Amazon S3 Glacier) for cost efficiency [12] [10]

- Consider keeping sensitive patient data in private clouds or on-premises to maintain compliance with regulations like HIPAA and GDPR [6] [10]

- Use cloud bursting capabilities for computationally intensive analyses while maintaining primary storage locally [10]

Q: How do we handle the challenge of obsolete storage media and formats? A: Develop a technology refreshment strategy:

- Monitor industry trends and plan for media migration before formats become obsolete [13]

- Use open, standardized file formats (e.g., FASTQ, BAM, VCF) rather than proprietary formats [15]

- Implement emulation techniques to recreate legacy software environments if needed to access old data [13]

- Consider encapsulation: store data together with its metadata and software requirements to ensure future interpretability [13]

Common Error Resolution

Problem: Slow analysis performance due to storage bottlenecks

- Solution: Implement distributed storage architectures that parallelize I/O operations across multiple nodes. Use NVMe flash storage for hot data and index files to accelerate the random access patterns common in genomic analysis [10].

Problem: Difficulty locating specific datasets in large archives

- Solution: Enhance metadata management using rich metadata supported by object storage systems. Implement standardized naming conventions and project taxonomy. Deploy scientific data management systems specifically designed for genomic data [10] [13].
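A naming convention is easiest to enforce when it is machine-checkable. The sketch below validates and parses file names against a hypothetical convention, <PROJECT>_<SAMPLE>_<ASSAY>_<YYYYMMDD>.<ext>. The fields, allowed assay codes, and extensions are assumptions, to be replaced by your institution's own taxonomy.

```python
import re

# Hypothetical convention: <PROJECT>_<SAMPLE>_<ASSAY>_<YYYYMMDD>.<ext>
NAME_RE = re.compile(
    r"^(?P<project>[A-Z0-9]+)_(?P<sample>[A-Za-z0-9-]+)_(?P<assay>WGS|WES|RNA|PANEL)"
    r"_(?P<date>\d{8})\.(?P<ext>fastq\.gz|bam|cram|vcf\.gz)$"
)


def parse_name(filename):
    """Return metadata parsed from a compliant file name, or None if it does not match."""
    match = NAME_RE.match(filename)
    return match.groupdict() if match else None


def make_name(project, sample, assay, yyyymmdd, ext):
    """Build a file name and refuse to return one that breaks the convention."""
    name = f"{project}_{sample}_{assay}_{yyyymmdd}.{ext}"
    if parse_name(name) is None:
        raise ValueError(f"non-compliant name: {name}")
    return name
```

The date field is only checked for eight digits, not for a valid calendar date. Parsed fields can be written straight into an object store's metadata or a catalog, so names and metadata stay consistent.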

Problem: Data security and compliance concerns

- Solution: Implement comprehensive encryption for data both at rest and in transit. Establish strict access controls and audit trails. Use WORM storage for regulatory compliance where data must be preserved in unalterable form [6] [13].

Essential Research Reagents and Solutions

The table below details key resources for managing NGS data storage challenges:

| Resource Category | Specific Solutions | Function & Application |
|---|---|---|
| Storage Hardware | LTO-8 Tape Libraries, High-density HDD Arrays | Provides scalable capacity for large-scale genomic archives [12] |
| Cloud Platforms | AWS Genomics CLI, Google Cloud Genomics, Azure Bioinformatic | Offers scalable, on-demand storage and analysis environments [10] [16] |
| Data Management Software | SAMtools, Picard, BioContainers | Handles format conversion, compression, and data manipulation [15] |
| File Formats | CRAM, BAM, VCF, FASTQ | Standardized formats ensure interoperability and efficient storage [15] |
| Metadata Catalogs | NCBI SRA, ENA, gnomAD | Centralized repositories for dataset discovery and metadata management [10] |
| Workflow Systems | Nextflow, Snakemake, Cromwell | Orchestrates distributed storage and computing across environments [9] |
| Security Tools | Encryption Key Management, Audit Logging | Ensures compliance with data protection regulations [6] [13] |

Managing the volume, velocity, and long-term archiving requirements of NGS data demands sophisticated strategies and technologies. By implementing tiered storage architectures, selecting appropriate media based on access patterns, and establishing robust data management practices, research institutions can transform their data challenges into actionable insights. The future will likely bring continued innovation in storage technologies, particularly in emerging areas like DNA-based storage, which may eventually provide revolutionary solutions for preserving our growing genomic understanding for generations to come.

The management of Next-Generation Sequencing (NGS) data presents significant challenges in storage, computation, and security. Cloud-based solutions offer a powerful alternative to local infrastructure, providing scalable computational resources, cost-effective storage tiers, and robust security frameworks designed to meet the stringent requirements of genomic research [17] [18] [19]. For researchers and drug development professionals, the cloud eliminates substantial upfront investments in physical hardware, replacing capital expenditure with a flexible, pay-as-you-go operational model [18] [20]. This shift allows research teams to scale their computational power on-demand, processing large datasets rapidly without being constrained by local server capacities [20].

Adoption is further driven by the integration of specialized tools and services. Major cloud providers offer platforms tailored for the life sciences, providing specialized environments for bioinformatic analysis, multi-omics data integration, and collaborative research [21] [22] [23]. These environments are built with compliance in mind, adhering to standards such as HIPAA and GDPR, which is critical for handling sensitive clinical and genomic data [17] [19].

Troubleshooting Guides

FAQ: How do I reduce cloud computing costs for routine NGS analysis?

- Problem: High compute costs for processing FASTQ files.

- Solution: Select virtual machine (VM) types based on your pipeline's requirements: high-CPU VMs for CPU-intensive pipelines such as Sentieon, and GPU-attached instances for GPU-accelerated tools such as Parabricks. For fault-tolerant batch jobs, use spot or preemptible instances, which can reduce compute costs by 60-80% [18].

- Prevention: Perform small-scale benchmarking with a subset of your data to accurately right-size computing resources before processing full datasets [18].

FAQ: Why is my data storage cost higher than projected?

- Problem: Inflated costs due to data stored in incorrect storage tiers.

- Solution: Implement a lifecycle policy that transitions data automatically to cheaper storage tiers. Move primary data files (FASTQ, BAM) from "hot" storage (such as Amazon S3 Standard or Google Cloud Regional storage) to "cold" archival storage (such as Amazon S3 Glacier Deep Archive or Google Cloud Coldline) after 3 months; this can reduce storage costs by over 90% over a 10-year period [17].

- Prevention: Design a data management strategy at the project's outset that defines the lifecycle for each data type based on its re-access probability [17] [19].
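On AWS, such a lifecycle policy is a JSON document attached to a bucket. The sketch below builds one in Python. The prefixes, the rule IDs, and the 90-day threshold (chosen to match the three months above) are assumptions. GLACIER and DEEP_ARCHIVE are the storage-class identifiers S3 uses for its archive tiers.

```python
import json


def lifecycle_rule(prefix, archive_after_days=90, storage_class="DEEP_ARCHIVE", expire_after_days=None):
    """Build one S3 lifecycle rule that transitions objects under `prefix` to an archive class."""
    name = prefix.strip("/").replace("/", "-") or "all"
    rule = {
        "ID": f"archive-{name}",
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": archive_after_days, "StorageClass": storage_class}],
    }
    if expire_after_days is not None:
        rule["Expiration"] = {"Days": expire_after_days}
    return rule


# Hypothetical bucket layout: raw FASTQ and aligned BAM files under separate prefixes.
config = {"Rules": [lifecycle_rule("raw/fastq/"), lifecycle_rule("aligned/bam/")]}

if __name__ == "__main__":
    print(json.dumps(config, indent=2))
```

The dictionary can be applied with boto3's put_bucket_lifecycle_configuration(Bucket=..., LifecycleConfiguration=config), or saved to a file for the aws s3api put-bucket-lifecycle-configuration command. Archive classes carry minimum storage durations and retrieval fees, so data likely to be re-accessed within a few months is better left in a warmer tier. Google Cloud Storage and Azure Blob Storage use their own lifecycle schemas.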

FAQ: How can I ensure my NGS data in the cloud is secure and compliant?

- Problem: Ensuring data security and regulatory compliance (e.g., HIPAA).

- Solution: Leverage the cloud provider's built-in security features. This includes enabling encryption both at rest and in transit, using identity and access management (IAM) controls to enforce the principle of least privilege, and ensuring that your cloud provider signs a Business Associate Agreement (BAA) [17] [19].

- Prevention: Choose cloud providers that validate their infrastructure against industry standards like ISO 27001, HIPAA, and GDPR, and conduct regular security audits [19].

FAQ: I am experiencing slow data transfer speeds to the cloud. How can I improve this?

- Problem: Bottlenecks while uploading large sequencing files.

- Solution: Use the cloud provider's accelerated or managed transfer options (e.g., Amazon S3 Transfer Acceleration, AWS DataSync, Google Cloud Storage Transfer Service) or high-speed transfer software such as IBM Aspera. These tools use optimized network paths and parallel transfers to speed up uploads. Ensure a reliable, high-bandwidth internet connection and consider transferring data during off-peak hours.

- Prevention: For ongoing, large-scale transfers, explore physical data shipment options like AWS Snowball, which can be more cost-effective and faster than internet-based transfer for terabytes of data.
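Much of what transfer tools do is split large files into parts that upload in parallel. The sketch below chooses a part size for an Amazon S3 multipart upload within S3's published limits (parts of 5 MiB to 5 GiB, at most 10,000 parts per upload). It also gives a rough transfer-time estimate; the 64 MiB preferred part size and 80% link efficiency are assumptions.

```python
import math

MIB = 1024 ** 2
GIB = 1024 ** 3
MIN_PART = 5 * MIB    # S3 minimum part size (all parts except the last)
MAX_PART = 5 * GIB    # S3 maximum part size
MAX_PARTS = 10_000    # S3 maximum number of parts per upload


def choose_part_size(file_size, preferred=64 * MIB):
    """Smallest whole-MiB part size >= preferred that keeps the upload within 10,000 parts."""
    needed = math.ceil(file_size / MAX_PARTS)
    part = max(preferred, needed, MIN_PART)
    part = math.ceil(part / MIB) * MIB  # round up to a whole MiB
    if part > MAX_PART:
        raise ValueError("file too large for a single multipart upload")
    return part


def transfer_hours(file_size_bytes, bandwidth_mbit_s, efficiency=0.8):
    """Rough wall-clock estimate; efficiency is an assumed fraction of nominal bandwidth."""
    return file_size_bytes * 8 / (bandwidth_mbit_s * 1e6 * efficiency) / 3600
```

The chosen size can be passed to boto3 as TransferConfig(multipart_chunksize=part_size, max_concurrency=10) and supplied to upload_file(..., Config=...). At an assumed 80% of a 1 Gbit/s link, 1 TB takes roughly 2.8 hours, which helps judge when shipping a physical device becomes the faster option.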

Cloud Storage Cost Analysis

The cost of storing NGS data in the cloud varies dramatically based on the storage class, with archival tiers offering the most significant savings for long-term data retention [17]. The following table summarizes the cost structures across major cloud providers, providing a basis for comparison.

Table: Comparative Cloud Storage Tiers and Costs for NGS Data (based on 2020 pricing data reported in [17])

| Vendor | Storage Tier | Cost (US cents per GB-month) | Retrieval Time | Retrieval Cost (US cents per GB) |
|---|---|---|---|---|
| AWS | S3 Standard | 2.1–2.3 | Immediate | - |
| | S3 Infrequent Access (IA) | 1.25 | Immediate | 1.0 |
| | Glacier | 0.4 | 3–5 hours | 0.25–3.0 |
| | Deep Glacier | 0.099 | 12–48 hours | 0.25–2.0 |
| GCP | Regional | 2.0–2.3 | Immediate | - |
| | Nearline | 1.0 | Immediate | 1.0 |
| | Coldline | 0.7 | Immediate | 2.0 |
| | Archive | 0.25 | Immediate | 5.0 |
| Azure | LRS Hot | 1.7–2.08 | Immediate | - |
| | LRS Cool | 1.0–1.5 | Immediate | 1.0 |
| | LRS Archive | 0.099–0.2 | <15 hours | 2.0 |
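To compare tiers for a concrete data volume, multiply the size by the per-GB-month price. The sketch below encodes the upper bound of each price range in the table above. These are 2020 list prices, so they should be refreshed from current provider pricing before being used for planning.

```python
# US cents per GB-month: upper bound of each range in the 2020 table above.
PRICE_CENTS = {
    ("AWS", "S3 Standard"): 2.3,
    ("AWS", "S3 Infrequent Access (IA)"): 1.25,
    ("AWS", "Glacier"): 0.4,
    ("AWS", "Deep Glacier"): 0.099,
    ("GCP", "Regional"): 2.3,
    ("GCP", "Nearline"): 1.0,
    ("GCP", "Coldline"): 0.7,
    ("GCP", "Archive"): 0.25,
    ("Azure", "LRS Hot"): 2.08,
    ("Azure", "LRS Cool"): 1.5,
    ("Azure", "LRS Archive"): 0.2,
}


def monthly_cost_usd(size_gb, vendor, tier):
    """Monthly storage cost in USD (cents converted to dollars)."""
    return size_gb * PRICE_CENTS[(vendor, tier)] / 100


if __name__ == "__main__":
    size_gb = 6_000  # 6 TB in decimal units
    for (vendor, tier), _ in sorted(PRICE_CENTS.items(), key=lambda item: item[1]):
        print(f"{vendor:5} {tier:28} ${monthly_cost_usd(size_gb, vendor, tier):9.2f}/month")
```

For 6 TB (6,000 GB), S3 Standard comes to about $138 per month and Deep Glacier to about $5.94, before the retrieval charges listed separately in the table.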

Effective cost management requires a strategic approach to data lifecycle management. The table below illustrates how different data retention strategies can impact the cost per test over a ten-year period.

Table: Impact of Data Lifecycle Strategy on Storage Cost (for 1000 exomes/year, 6 TB/year) [17]

| Strategy | Description | Cost per Test (over 10 years) |
|---|---|---|
| Strategy A | All data stored in "hot" storage (e.g., AWS S3) for 10 years. | $12.39 |
| Strategy B | Data in "hot" storage for 2 years, then moved to "cold" storage (e.g., Glacier) for 8 years. | $3.29 |
| Strategy C | Data in "hot" storage for 3 months, then moved to "cold" storage (e.g., Deep Glacier) for 10 years. | $0.88 |
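The ordering of the three strategies can be reproduced with a simple per-test model: one test's data sits in the hot tier for a fixed number of months and in the cold tier for the rest of a 10-year retention period. The sketch below uses 6 GB per exome and the upper-bound 2020 prices from the cloud pricing table. It ignores retrieval, compression, and growth, so its absolute figures come out higher than those above, which are produced by the more detailed model in [17].

```python
def cost_per_test(size_gb, retention_months, hot_months, hot_price, cold_price):
    """Storage cost (USD) for one test: hot tier first, cold tier for the remaining months."""
    hot = min(hot_months, retention_months)
    cold = retention_months - hot
    return size_gb * (hot * hot_price + cold * cold_price)


EXOME_GB = 6     # 6 TB/year spread over 1,000 exomes
MONTHS = 120     # 10-year retention
HOT = 0.023      # S3 Standard, USD per GB-month
GLACIER = 0.004  # Glacier, USD per GB-month
DEEP = 0.00099   # Deep Glacier, USD per GB-month

strategies = {
    "A (hot for 10 years)": cost_per_test(EXOME_GB, MONTHS, MONTHS, HOT, HOT),
    "B (hot 2 years, then Glacier)": cost_per_test(EXOME_GB, MONTHS, 24, HOT, GLACIER),
    "C (hot 3 months, then Deep Glacier)": cost_per_test(EXOME_GB, MONTHS, 3, HOT, DEEP),
}

if __name__ == "__main__":
    for name, cost in strategies.items():
        print(f"{name}: ${cost:.2f} per test")
```

This prints about $16.56, $5.62, and $1.11 per test. The ordering and the roughly 93% saving of strategy C over A agree with the published figures even though the absolute values differ.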

Experimental Protocols

Protocol: Rapid NGS Analysis on Google Cloud Platform (GCP)

This protocol provides a methodology for deploying and benchmarking ultra-rapid germline variant calling pipelines on GCP, as demonstrated in recent literature [18].

1. Experimental Design

- Objective: Benchmark Sentieon DNASeq and Clara Parabricks Germline pipelines in terms of runtime, cost, and resource utilization on GCP.

- Samples: Use five publicly available whole-exome (WES) and five whole-genome (WGS) samples from the Sequence Read Archive (SRA).

- Pipelines: Process raw FASTQ files to VCF using both pipelines with their default parameters.

2. Cloud Deployment and VM Configuration

- Sentieon DNASeq Setup (CPU-based):

  - VM Series: N1 series.

  - Machine Type: n1-highcpu-64 (64 vCPUs, 57.6 GB memory).

  - Estimated Cost: ~$1.79 per hour [18].

- NVIDIA Clara Parabricks Setup (GPU-based):

  - VM Configuration: 48 vCPUs, 58 GB memory, one NVIDIA T4 GPU.

  - Estimated Cost: ~$1.65 per hour [18].

3. Execution and Data Analysis

- For Sentieon, transfer the license file and software to the VM using Secure Copy Protocol (SCP); Parabricks does not require this step.

- Launch both pipelines with their default execution steps, including alignment, duplicate marking, base quality recalibration, and variant calling.

- Metrics: Record the total runtime for each sample, total cost per sample (based on VM uptime), and monitor CPU/GPU and memory utilization.
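Cost per sample follows directly from runtime and the hourly VM rate. The sketch below uses the hourly rates listed above and assumes the VM is billed only while the pipeline runs (no idle or setup time). The runtimes used in any call are up to the reader; none come from [18].

```python
# Hourly VM rates quoted in the protocol above (USD).
HOURLY_RATE = {"sentieon": 1.79, "parabricks": 1.65}


def cost_per_sample(runtime_minutes: float, hourly_rate: float) -> float:
    """VM cost for one sample, assuming uptime equals pipeline runtime."""
    return runtime_minutes / 60.0 * hourly_rate


def summarize(runtimes_minutes, hourly_rate):
    """Aggregate per-sample costs for a benchmarking batch."""
    costs = [cost_per_sample(m, hourly_rate) for m in runtimes_minutes]
    return {
        "samples": len(costs),
        "total_cost": sum(costs),
        "mean_cost": sum(costs) / len(costs),
        "max_runtime_min": max(runtimes_minutes),
    }
```

For example, summarize([95, 110, 102], HOURLY_RATE["sentieon"]) would summarize three hypothetical WGS runs. Idle time between samples, disk, and egress charges would need to be added for a full cost picture.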

Protocol: Cost Analysis for Long-Term NGS Data Archival

This methodology outlines the use of a specialized online calculator (ngscosts.info) to forecast long-term storage costs for a clinical laboratory [17].

1. Parameter Input

- Provide yearly test volumes for WGS, WES, and/or panels.

- Input data storage sizes (defaults: 120 GB for WGS, 6 GB for WES, 1 GB for panels) or use custom values.

- Define key parameters: annual growth rate, data compression factor, data retention policy (in years), and case re-access rate.

2. Cost Simulation

- The tool models complex forecasts over 1–20 year timeframes.

- It calculates the total amount of data stored each year, accounting for growth and compression.

- The tool applies cost calculations across different storage tiers, factoring in lifecycle transition policies.

3. Output and Analysis

- Visualization: An easy-to-interpret chart shows total data stored over time.

- Cost Breakdown: Outputs include yearly costs, total lifetime cost, and a critical marginal "cost per test" estimate.

- Strategy Comparison: Enables quick exploration and comparison of dozens of storage options across three major cloud providers.
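The volume side of such a forecast can be sketched in a few lines. The function below is not the ngscosts.info model but a simplified illustration. It assumes test volume grows at a fixed annual rate, that a compression factor divides stored size (2.0 means data shrinks to half), and that each yearly cohort is deleted once it exceeds the retention period.

```python
def forecast_storage(tests_per_year, gb_per_test, years, growth_rate=0.0,
                     compression_factor=1.0, retention_years=None):
    """Data held (GB) at the end of each year under a simple cohort model."""
    if retention_years is not None and retention_years < 1:
        raise ValueError("retention_years must be at least 1")
    yearly_added = []
    stored = []
    for year in range(years):
        # Volume written this year, after growth and compression.
        volume = tests_per_year * (1 + growth_rate) ** year * gb_per_test / compression_factor
        yearly_added.append(volume)
        # Keep only cohorts still inside the retention window.
        kept = yearly_added if retention_years is None else yearly_added[-retention_years:]
        stored.append(sum(kept))
    return stored
```

Multiplying each year's stored volume by a tier price (and adding lifecycle transitions) turns this volume forecast into a yearly cost forecast.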

Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and data management "reagents" — essential platforms and tools used in modern cloud-based NGS research.

Table: Essential Cloud Platforms and Tools for NGS Research

| Item | Function |
|---|---|
| Terra (Azure/Broad Institute) | An open-source, scalable platform for secure, collaborative biomedical data analysis. It provides access to genomic data and community-developed workflows [23]. |
| Illumina Connected Analytics | A cloud-based platform for secure and scalable multi-omics data management, analysis, and exploration, offering specialized tools for NGS data [19]. |
| DRAGEN Bio-IT Platform | Provides accurate, ultra-rapid secondary analysis of NGS data (e.g., alignment, variant calling) via hardware-accelerated algorithms, available on-premises and in cloud environments [19]. |
| Sentieon DNASeq | A highly optimized, CPU-based software pipeline that provides accelerated, accurate secondary analysis for germline and somatic variants, often deployed on cloud VMs [18]. |
| NVIDIA Clara Parabricks | A GPU-accelerated software suite that uses graphical processing units to dramatically speed up NGS data analysis pipelines like germline and somatic variant calling [18]. |
| Cloud Lifecycle Policies | Automated policies that manage data retention and transfer, moving data from expensive "hot" storage to low-cost "cold" storage after a defined period to optimize costs [17]. |

Regulatory Framework and Key Definitions

Core Data Protection Regulations for NGS Research

Your research involving human genomic data is governed by a complex framework of data protection regulations. Understanding the scope and requirements of these frameworks is the first step toward ensuring compliance.

- HIPAA (Health Insurance Portability and Accountability Act): U.S. regulation that protects Protected Health Information (PHI) and electronic Protected Health Information (ePHI). For healthcare organizations, proposed 2025 updates to the HIPAA Security Rule would make encryption explicitly mandatory for all ePHI at rest and in transit, removing the previous "addressable" designation that allowed for flexibility [24].

- GDPR (General Data Protection Regulation): EU regulation that protects personal data of EU residents, which applies globally to any organization processing such data. GDPR Article 32 requires "appropriate technical and organisational measures" including "the pseudonymisation and encryption of personal data" [25] [26].

- State-Level Regulations: Various U.S. states have enacted their own data protection laws. Connecticut and Utah have expanded child protection laws to require encryption of minors' data "at all times during processing," a requirement that explicitly extends to data "during active use" [24].

Essential Terminology

- Protected Health Information (PHI/ePHI): Under HIPAA, any individually identifiable health information that is created, stored, or transmitted [27] [28].

- Personal Data: Under GDPR, any information relating to an identified or identifiable natural person [25] [26].

- Data-in-Use Encryption: Protection of information while it is actively being processed, for example in memory or during computation [24].

- Data at Rest: Information not actively being accessed, such as files on hard drives or stored emails [28].

- Data in Transit: Any form of digital information currently being transferred between systems, such as file uploads to cloud services or emails sent over the internet [28].

Troubleshooting Common Compliance Issues

Data Transfer and Storage Problems

Issue: "I need to transfer large NGS datasets to cloud storage, but I'm unsure if our encryption method meets compliance requirements."

- Solution: Implement encryption that satisfies both HIPAA and GDPR standards for data in transit.

- Technical Implementation: Use Transport Layer Security (TLS) version 1.2 or higher following NIST Special Publication 800-52 guidelines, or implement IPsec VPNs following NIST Special Publication 800-77 [28].

- Data-in-Transit Protocols: Ensure all data transfers use encrypted protocols (SFTP, HTTPS) rather than unencrypted alternatives (FTP, HTTP).

- Verification Steps: Regularly test and verify encryption protocols using vulnerability scanning tools to ensure ongoing compliance [24] [28].
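The transit requirements above can also be enforced on the client side. The sketch below uses Python's standard `ssl` module to build a context that refuses anything older than TLS 1.2 and verifies server certificates. It also rejects plaintext URL schemes before a transfer starts.

The helper names (`make_transfer_tls_context`, `require_encrypted_scheme`) and the scheme allow-list are illustrative assumptions, not part of any compliance toolkit. Passing these checks does not by itself establish HIPAA or GDPR compliance.

```python
import ssl
from urllib.parse import urlparse

# Illustrative allow-list of schemes that move data over an encrypted channel.
ENCRYPTED_SCHEMES = {"https", "sftp", "ftps"}

def make_transfer_tls_context() -> ssl.SSLContext:
    """Client TLS context: system CA trust, hostname checks, TLS 1.2 minimum."""
    ctx = ssl.create_default_context()  # enables certificate + hostname verification
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse TLS 1.0/1.1 handshakes
    return ctx

def require_encrypted_scheme(url: str) -> str:
    """Raise if the URL would send data unencrypted (e.g. ftp:// or http://)."""
    scheme = urlparse(url).scheme.lower()
    if scheme not in ENCRYPTED_SCHEMES:
        raise ValueError(f"refusing unencrypted transfer scheme: {scheme!r}")
    return url
```

For example, `urllib.request.urlopen(require_encrypted_scheme(url), context=make_transfer_tls_context())` fails fast on an `http://` URL. It also fails the handshake against a server that offers only TLS 1.0 or 1.1.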

Issue: "Our NGS data is stored in the cloud, but I'm concerned about vulnerabilities during data analysis."

- Solution: Implement advanced encryption techniques that protect data during processing.

- Homomorphic Encryption (HE): Consider emerging technologies like SQUiD (Secure Queryable Database), which uses homomorphic encryption to enable direct computations on encrypted genetic data in the cloud without decryption [29].

- Multi-Layer Encryption: For highly sensitive medical data, implement layered approaches combining information technology (IT) layer encryption (e.g., Blowfish algorithm) with biotechnology (BT) layer encryption using physical DNA characteristics [30].

- Technical Consideration: While homomorphic encryption provides superior security for data-in-use, be aware of increased computational requirements and storage overhead [29].
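To make "computation on encrypted data" concrete, the sketch below implements textbook Paillier encryption, an additively homomorphic scheme. It sums encrypted genotype dosages without decrypting any individual value.

This is not SQUiD's scheme or API. Paillier is swapped in here only because it is short enough to show in full. The tiny hard-coded primes make it insecure by design: real deployments use vetted libraries and moduli of 2048 bits or more. The code requires Python 3.8+ for `pow(x, -1, n)`.

```python
import math
import secrets

# Toy primes for illustration ONLY (insecure). Real keys use >= 2048-bit moduli.
P, Q = 1009, 1013

def keygen(p: int = P, q: int = Q):
    """Return (public n, private (lam, mu)) using generator g = n + 1."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # with g = n + 1, mu reduces to lam^-1 mod n
    return n, (lam, mu)

def encrypt(n: int, m: int) -> int:
    """c = g^m * r^n mod n^2, with fresh random r coprime to n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def decrypt(n: int, priv, c: int) -> int:
    """m = L(c^lam mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n

def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the hidden plaintexts (mod n)."""
    return (c1 * c2) % (n * n)

if __name__ == "__main__":
    n, priv = keygen()
    dosages = [0, 1, 2, 1]  # hypothetical alt-allele counts for four samples
    total = encrypt(n, dosages[0])
    for d in dosages[1:]:
        total = add_encrypted(n, total, encrypt(n, d))  # computed on ciphertexts
    print(decrypt(n, priv, total))  # 4
```

Only the private-key holder can decrypt the total. The party performing the additions sees only ciphertexts. The example also shows the overhead noted above: each small integer becomes a ciphertext modulo n², far larger than the plaintext it hides.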

Data Processing and Analysis Challenges

Issue: "When we process genomic data in memory, there's a period where decrypted data is vulnerable to memory attacks."

- Solution: Implement data-in-use encryption technologies.

- Technical Approach: Deploy solutions that maintain encryption even during active processing, addressing the vulnerability gap where traditional encryption falls short [24].

- Implementation Benefit: This approach specifically addresses compliance requirements in states like Texas and California that provide safe harbor from breach notifications only if data remains encrypted when compromised, including in memory [24].

- Practical Consideration: Work with your IT department to evaluate encryption solutions that offer cryptographic agility, allowing algorithm updates without application changes as standards evolve [24].

Issue: "We need to enable collaborative research on our genomic datasets while maintaining compliance with multiple regulatory frameworks."

- Solution: Implement secure, queryable encrypted database architectures.

- Technical Implementation: Deploy systems like SQUiD that use public key-switching techniques, enabling multiple authenticated researchers to query encrypted genotype-phenotype data without decrypting the underlying dataset [29].

- Access Control: Establish strict authentication protocols and maintain detailed audit trails of all data access, which helps demonstrate compliance across multiple frameworks [24] [29].

- Data Minimization: Implement query interfaces that return only the specific information needed for analysis rather than full datasets, adhering to GDPR's data minimization principle [26].
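The access-control, audit, and minimization points above can be combined in a single query layer. The sketch below makes several assumptions: an in-memory genotype table and a hypothetical set of authorized researcher IDs.

An authorized caller receives only an aggregate carrier count for one variant, never per-sample genotypes. Every request, allowed or refused, is appended to an audit log. The small-cell suppression threshold of 5 is an illustrative choice, not a regulatory figure.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class MinimizedVariantQuery:
    """Aggregate-only query layer over a per-sample genotype table (hypothetical)."""
    genotypes: dict[str, dict[str, int]]  # variant_id -> {sample_id: alt dosage}
    authorized: set[str]                  # researcher IDs allowed to query
    min_cell: int = 5                     # suppress counts smaller than this
    audit_log: list[dict] = field(default_factory=list)

    def carrier_count(self, researcher: str, variant_id: str):
        allowed = researcher in self.authorized
        # Record every request, including refused ones, with a UTC timestamp.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "researcher": researcher,
            "variant": variant_id,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{researcher} is not authorized")
        calls = self.genotypes.get(variant_id, {})
        carriers = sum(1 for dosage in calls.values() if dosage > 0)
        # Return only the aggregate; small counts are suppressed as None.
        return carriers if carriers >= self.min_cell else None
```

Returning counts instead of rows keeps each response to the minimum needed for the analysis. The audit log gives compliance reviewers a record of who asked for what and when.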

Compliance Implementation Guide

Encryption Standards Comparison

Table 1: Encryption Standards for NGS Data Protection

| Standard/Algorithm | Recommended Use | Key Size | Compliance Alignment |
|---|---|---|---|
| AES (Advanced Encryption Standard) | Data at rest (full disk, virtual disk, file/folder encryption) | 128-bit minimum; 256-bit for highly sensitive data | HIPAA-recommended; GDPR "appropriate" measure [28] |
| Transport Layer Security (TLS) | Data in transit over networks | Version 1.2 or higher | Aligns with NIST SP 800-52 for HIPAA; GDPR-compliant [28] |
| IPsec VPNs | Secure network connections | Following NIST SP 800-77 | HIPAA-compliant for data in transit [28] |
| Homomorphic Encryption | Data-in-use during analysis/querying | Varies by implementation | Emerging standard for ultra-secure genomic data analysis [29] |
| Blowfish Algorithm | Multi-layer encryption approaches | Varies by implementation | Used in specialized DNA data storage applications [30] |

NGS Data Encryption Workflow

NGS Data Encryption Pathway: This workflow illustrates the comprehensive encryption process for genomic data from raw sequencing files through to secure analysis.

Multi-Layer Security Architecture

Multi-Layer Security Framework: This diagram shows the defense-in-depth approach for ultra-secure medical data storage, combining information technology (IT) and biotechnology (BT) encryption layers.

Frequently Asked Questions (FAQs)

Q1: Is encryption explicitly required by HIPAA, or is it optional?

A: Under the current Security Rule, encryption is an "addressable" specification. Organizations must implement it where reasonable and appropriate, or document why not and adopt an equivalent alternative. The proposed 2025 updates to the HIPAA Security Rule would make encryption of ePHI mandatory for both data at rest and in transit, removing the "addressable" designation. Until the rule is finalized, treat encryption as the expected primary safeguard, and document any alternative measures and the reasoning behind them [24] [28].

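Q2: What are the specific encryption algorithms recommended for protecting genomic data?

A: For general data protection, NIST guidance points to:

- AES with 128-bit or higher keys for data at rest [28]

- TLS 1.2+ or IPsec VPNs for data in transit [28]

For specialized genomic applications, emerging approaches include:

- Homomorphic encryption platforms such as SQUiD for querying encrypted genotype-phenotype data [29]

- Combined compression and encryption tools such as SCA-NGS for large NGS datasets [31]

- Multi-layer IT/BT encryption for ultra-secure archival storage of sensitive medical data [30]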

Q3: How does GDPR's encryption requirement differ from HIPAA's? A: While both require encryption, they differ in specificity:

- HIPAA: Provides clearer technical guidelines through NIST publications and increasingly specifies algorithms and key sizes [28].

- GDPR: Takes a principles-based approach, requiring "appropriate technical and organisational measures" without specifying exact algorithms, leaving implementation details to organizations based on risk assessment [25].

Q4: What special encryption considerations exist for NGS data compared to other health data? A: NGS data presents unique challenges:

- Volume: NGS datasets are extremely large, making efficient encryption crucial for practical storage and transfer [31] [32].

- Format Complexity: NGS data includes multiple components (headers, bases, quality scores) that may benefit from different encryption approaches [31].

- Analysis Requirements: Traditional encryption that requires decryption for analysis creates vulnerabilities, making homomorphic encryption particularly valuable for genomic data [29].
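The format-complexity point is easier to see when a FASTQ file is split into its component streams. The sketch below assumes well-formed, uncompressed four-line FASTQ records; it does not handle wrapped sequences.

It separates headers, bases, and quality strings so that each stream could be compressed or encrypted with a different method, in the spirit of tools like SCA-NGS. It is not SCA-NGS's actual algorithm.

```python
from typing import Iterable

def split_fastq_streams(lines: Iterable[str]):
    """Split 4-line FASTQ records into (headers, bases, qualities) lists."""
    headers, bases, quals = [], [], []
    record = []
    for line in lines:
        record.append(line.rstrip("\r\n"))
        if len(record) == 4:
            header, seq, plus, qual = record
            if not header.startswith("@") or not plus.startswith("+"):
                raise ValueError(f"malformed record near header {header!r}")
            if len(seq) != len(qual):
                raise ValueError(f"sequence/quality length mismatch in {header!r}")
            headers.append(header)  # identifiers: highly repetitive text
            bases.append(seq)       # nucleotides: 4-letter alphabet
            quals.append(qual)      # Phred scores: noisiest, hardest to compress
            record = []
    if record:
        raise ValueError("truncated FASTQ: incomplete final record")
    return headers, bases, quals
```

Because the three streams have very different statistics, each can get its own codec, and a policy could apply different protection to each stream (for example, stronger protection for bases than for run identifiers).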

Q5: What are the consequences of non-compliance with these encryption standards? A: Non-compliance carries significant consequences:

- Financial penalties: Up to €20 million or 4% of global annual turnover, whichever is higher, under GDPR; substantial fines under HIPAA [26].

- Loss of safe harbor: Organizations may lose breach notification exemptions if data wasn't properly encrypted when compromised [24].

- Reputational damage: Data breaches can erode patient/participant trust and research collaborations [26].

Research Reagent Solutions: Encryption Tools

Table 2: Essential Encryption Tools for Secure NGS Research

| Tool/Category | Primary Function | Application in NGS Research |
|---|---|---|
| Full Disk Encryption (FDE) | Encrypts entire storage devices | Protection of servers/workstations storing raw NGS data [28] |
| Virtual Disk Encryption (VDE) | Encrypts virtual machines and cloud disk images | Secure cloud-based analysis environments [28] |
| Homomorphic Encryption Platforms (e.g., SQUiD) | Enables computation on encrypted data | Secure querying of genotype-phenotype databases without decryption [29] |
| Secure Compression Algorithms (e.g., SCA-NGS) | Combined compression and encryption | Efficient, secure storage and transfer of large NGS datasets [31] |
| Multi-Layer DNA Encryption | Biological and digital layer encryption | Ultra-secure archival storage of sensitive medical genomic data [30] |
| Transport Layer Security (TLS) | Network transmission encryption | Secure data transfer between sequencing centers, storage, and analysis locations [28] |

From Raw Data to Insights: Storage Architectures and Analysis Workflows

Next-Generation Sequencing (NGS) has revolutionized genomics, but it produces vast amounts of data that require robust, scalable storage solutions [33]. The global NGS data storage market is projected to reach approximately $3,500 million in 2025, growing at a Compound Annual Growth Rate (CAGR) of around 18% through 2033 [11]. With global data creation projected to grow to 181 zettabytes by the end of 2025 and NGS data generation alone estimated to be in the range of 800 million terabytes in 2025, selecting the right data backbone architecture is a critical strategic decision for any research organization [11].

This technical support guide provides a comprehensive comparison of cloud, on-premises, and hybrid storage models specifically for NGS research environments. We include troubleshooting guidance and FAQs to help researchers, scientists, and drug development professionals navigate the specific challenges of managing large genomic datasets.

Model Comparison: Quantitative Analysis

The table below summarizes the core characteristics of each storage model across key decision-making parameters relevant to NGS research.

Table 1: Storage Model Comparison for NGS Data Backbones

| Parameter | Cloud Model | On-Premises Model | Hybrid Model |
|---|---|---|---|
| Cost Structure | Operational Expenditure (OpEx); pay-as-you-go [34] [35] | High Capital Expenditure (CapEx) for hardware [34] | Balanced CapEx and OpEx [34] |
| Scalability | Elastic, virtually unlimited, on-demand [36] [35] | Limited by physical hardware; slow, costly upgrades [34] [35] | Flexible; scale on-premises baseline, burst to cloud for peaks [36] [37] |
| Data Security & Control | Shared responsibility model with provider; advanced features but less direct control [36] [34] | Complete physical and administrative control [34] | Strategic control; sensitive data on-prem, less critical data in cloud [36] [37] |
| Performance & Latency | Subject to network conditions; potential variability [34] | Predictable, low-latency on local network [34] | Optimized; low-latency for on-prem data, cloud for distributed collaboration [36] |
| Compliance & Data Sovereignty | Provider-dependent; must ensure compliance with HIPAA/GDPR [6] [35] | Full internal responsibility; easier to demonstrate for audits [34] | Flexibility to keep regulated data on-prem to meet specific laws [36] |
| IT Management Overhead | Managed by provider; reduces internal IT burden [35] | High overhead; requires specialized in-house team [34] | Moderate; requires expertise to manage both environments [36] |

Architectural Diagrams & Data Flow

Logical Data Flow in a Hybrid NGS Environment

The following diagram illustrates how data moves through a hybrid architecture, which combines the control of on-premises systems with the scalability of the cloud.

Diagram 1: NGS Data Flow in a Hybrid Model

Decision Workflow for Model Selection

This workflow helps researchers determine the most suitable storage model based on their project's specific requirements and constraints.

Diagram 2: Storage Model Selection Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Building a scalable data backbone requires both digital and physical components. The table below details key solutions for managing NGS data workflows.

Table 2: Key Research Reagent Solutions for NGS Data Management

| Solution Category | Specific Examples | Function & Application in NGS Research |
|---|---|---|
| Cloud Platforms | AWS, Google Cloud Genomics, Microsoft Azure [33] [6] [38] | Provides scalable, on-demand infrastructure for storing and computing on massive NGS datasets, enabling global collaboration. |
| Unified Storage Platforms | IBM Spectrum Scale, Dell EMC, Qumulo [33] [39] | Integrates block, file, and object storage into a single architecture to simplify data management and break down silos. |
| Data Management & Analytics | Fabric Genomics, QIAGEN, DNAnexus [11] | Platforms that integrate data storage with advanced analytical capabilities, enabling efficient querying and analysis of vast genomic datasets. |
| Specialized HDDs/SSDs | High-Capacity SMR HDDs, NVMe SSDs [40] | High-capacity Hard Disk Drives (HDDs) offer cost-effective bulk storage, while Solid-State Drives (SSDs) provide high IOPS for rapid data access during analysis. |

Troubleshooting Guides & FAQs

FAQ 1: Our cloud costs for NGS data analysis are spiraling. What are the primary strategies for regaining control?

Unmanaged cloud storage and compute costs can quickly exceed budgets. A 2025 analysis indicates that 21% of enterprise cloud expenditure is wasted on idle or underutilized resources [34].

Troubleshooting Steps:

- Implement a FinOps Culture: Adopt financial operations practices where technical and financial teams collaborate to monitor cloud spend, set budgets, and forecast costs [35].

- Architect to Minimize Egress Fees: Data egress fees (charges for moving data out of the cloud) can be substantial. Design workflows that keep data within the cloud provider's ecosystem for analysis and use architectures like federated analytics to analyze data in place without moving it [35].

- Apply Data Lifecycle Policies: Use cloud-native tools to automatically tier data. Move raw sequencing files that are infrequently accessed to cheaper archival storage classes (e.g., Amazon S3 Glacier) soon after primary analysis is complete [39] [35].

- Leverage Commitment Discounts: For predictable, steady-state workloads, utilize the cloud providers' discounted commitment plans (e.g., AWS Savings Plans, Reserved Instances) to significantly reduce compute costs.
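A FinOps review usually starts by finding the idle or underutilized resources described above. This sketch assumes you have already exported average CPU utilization per instance from your provider's monitoring service.

The input format, the 10% threshold, and the instance names are all hypothetical. The code simply ranks candidates for downsizing or shutdown by estimated monthly spend.

```python
from dataclasses import dataclass

@dataclass
class InstanceUsage:
    name: str
    hourly_cost: float      # USD per hour, from your billing export
    avg_cpu_percent: float  # average over the review window

def flag_underutilized(usage, cpu_threshold=10.0, hours_per_month=730):
    """Return (name, estimated monthly USD) for instances below the CPU
    threshold, most expensive first."""
    flagged = [
        (u.name, round(u.hourly_cost * hours_per_month, 2))
        for u in usage
        if u.avg_cpu_percent < cpu_threshold
    ]
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

CPU is only one signal. Check memory, GPU, and disk I/O utilization before resizing or stopping anything that a pipeline may still depend on.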

FAQ 2: We are experiencing unacceptable latency when analyzing large BAM files from the cloud, slowing down our research. How can we improve performance?

Performance variability is a common challenge when processing large files over a network.

Troubleshooting Steps:

- Verify Cloud Region Selection: Ensure your cloud compute instances and storage buckets are in the same geographic region. Cross-region data transfer introduces significant latency [34].

- Optimize File Access Patterns: Instead of downloading entire BAM files, use index files (.bai) and tools like `samtools` that can read specific regions of interest directly from cloud storage, transferring only the necessary data [6].

- Use High-Performance Compute Instances: For computationally intensive tasks like variant calling, select cloud instances optimized for compute (high-CPU) or memory (high-RAM). Using NVMe-based local instance storage for temporary files during processing can also boost speed [40].

- Consider a Hybrid Approach: If latency remains a critical barrier for core workflows, consider a hybrid model. Store and process the most latency-sensitive components on-premises while using the cloud for less critical tasks, archival, and collaboration [37].
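The region-based access pattern above can be scripted. This sketch only assembles and runs a `samtools view` command. It assumes samtools is installed and that its underlying htslib was built with support for the remote scheme you use (for example `s3://` or `https://`).

With that support in place, htslib locates the `.bai` index next to the BAM and fetches only the blocks covering the requested region. The bucket path and coordinates in the usage line are placeholders.

```python
from __future__ import annotations

import re
import subprocess

REGION_RE = re.compile(r"^[\w.\-]+:\d+-\d+$")  # e.g. chr17:43044295-43125364

def region_view_cmd(bam_url: str, region: str, out_bam: str) -> list[str]:
    """Build a samtools command that extracts one region as a BAM file."""
    if not REGION_RE.match(region):
        raise ValueError(f"expected CONTIG:START-END, got {region!r}")
    # -b: BAM output; -o: output path; the region follows the input file.
    return ["samtools", "view", "-b", "-o", out_bam, bam_url, region]

def extract_region(bam_url: str, region: str, out_bam: str) -> None:
    """Run the command; raises CalledProcessError if samtools fails."""
    subprocess.run(region_view_cmd(bam_url, region, out_bam), check=True)
```

Usage: `extract_region("s3://example-bucket/sample.bam", "chr17:43044295-43125364", "region.bam")`. Only the indexed blocks for that interval cross the network, rather than the whole BAM.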

FAQ 3: Our institutional policies require strict data sovereignty. Can we use cloud services while complying with these regulations?

Yes, but it requires careful planning. Data sovereignty laws require that data is stored and processed within specific geographic boundaries [36].

Troubleshooting Steps:

- Select Sovereign Cloud Options: Major cloud providers offer "sovereign cloud" solutions or allow you to specify the region where your data will reside. Ensure you provision all storage and compute resources exclusively within your country's or region's approved data centers [40].

- Enable Data Residency and Compliance Features: Use cloud provider tools that enforce data residency controls, preventing data from being replicated or moved to an unapproved region [36] [35].

- Implement Encryption and Access Controls: Use customer-managed encryption keys and robust Identity and Access Management (IAM) policies to maintain strict control over who can access the data, regardless of its location [36].

- Document Your Architecture: For audit purposes, clearly document your cloud architecture, data flows, and the controls in place to maintain sovereignty. This demonstrates due diligence to compliance officers and regulators.
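Part of documenting sovereignty is proving that nothing was provisioned outside approved locations. Assume you can export a resource inventory as (resource ID, region) pairs from your provider's asset or tagging tools. A check like the one below then lists violations for the audit record.

The region names and approved set in the test are examples only.

```python
def sovereignty_violations(inventory, approved_regions):
    """Return (resource_id, region) pairs located outside the approved
    regions, sorted by resource ID. Region matching is case-insensitive."""
    approved = {r.lower() for r in approved_regions}
    return sorted(
        (resource_id, region)
        for resource_id, region in inventory
        if region.lower() not in approved
    )
```

Running this on a schedule and archiving its (ideally empty) output gives auditors dated evidence that residency controls held over time.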

FAQ 4: How do we choose between building an on-premises cluster versus using the cloud for a new, large-scale NGS project?

The decision hinges on weighing long-term total cost of ownership (TCO) against the need for flexibility.

Decision Protocol:

- Analyze Workload Predictability: If your compute and storage needs are steady and predictable for the next 3-5 years, an on-premises solution may have a lower TCO. For projects with unknown or highly variable demands, the cloud's elasticity is more cost-effective [34].

- Calculate the True TCO: For on-premises, factor in not just hardware costs, but also data center space, power, cooling, hardware maintenance contracts, and the full cost of the specialized IT staff required for support. For cloud, model costs based on expected data volume, compute hours, and egress fees [34].

- Evaluate Technical Debt: Consider the long-term burden of maintaining and refreshing on-premises hardware. Cloud infrastructure is maintained and upgraded by the provider, freeing your team to focus on research [35].

- Start with a Pilot Project: Run a pilot using a hybrid approach. Keep initial data acquisition and primary analysis on a scalable on-premises system, then use the cloud for a specific secondary analysis project. This provides hands-on data to inform your final, larger-scale decision [33].
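The TCO comparison in the steps above reduces to simple arithmetic once the inputs are estimated. The sketch below totals on-premises costs over a planning horizon: hardware plus annual facilities, maintenance, and staff. It compares these with a usage-based cloud model of storage, compute hours, and egress.

Every figure in the test is a placeholder to be replaced with your own quotes and forecasts. The model also ignores discounting, hardware resale value, and price changes.

```python
from dataclasses import dataclass

@dataclass
class OnPremCosts:
    hardware: float            # upfront CapEx
    annual_facilities: float   # data center space, power, cooling
    annual_maintenance: float  # hardware support contracts
    annual_staff: float        # share of specialized IT staff cost

@dataclass
class CloudUsage:
    storage_tb_months: float   # TB stored x months, per year
    price_per_tb_month: float
    compute_hours: float       # instance hours per year
    price_per_hour: float
    egress_gb: float           # GB moved out per year
    price_per_gb_egress: float

def onprem_tco(c: OnPremCosts, years: int) -> float:
    """Hardware once, plus recurring costs for each year of the horizon."""
    annual = c.annual_facilities + c.annual_maintenance + c.annual_staff
    return c.hardware + annual * years

def cloud_tco(u: CloudUsage, years: int) -> float:
    """Pure pay-as-you-go: recurring usage costs only."""
    annual = (u.storage_tb_months * u.price_per_tb_month
              + u.compute_hours * u.price_per_hour
              + u.egress_gb * u.price_per_gb_egress)
    return annual * years
```

A result favoring on-premises holds only if the workload is as steady as assumed in the first step. Rerun the comparison with pessimistic and optimistic usage to see how sensitive the answer is.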

Implementing Automated NGS Pipelines with Nextflow and Snakemake

Troubleshooting Guides

Pipeline Execution Failures: A Diagnostic Framework

Issue: Pipeline fails at different stages of execution. The troubleshooting approach varies significantly depending on when the error occurs.

Diagnosis and Solutions:

- Error Before First Process: Often related to outdated Nextflow versions or core configuration. Update Nextflow using `nextflow self-update` and verify the installation [41].

- Error During First Process: Typically indicates missing software dependencies or incorrect configuration profiles. Ensure correct Docker, Singularity, or Conda profiles are specified [41].

- Error During Run or Output Generation: Check individual process error logs in the Nextflow work directory for tool-specific failures. The system reports "Missing output file(s)" when expected process outputs aren't generated [41].

Diagnostic Table: Execution Failure Symptoms and Solutions

| Failure Timing | Common Symptoms | Immediate Actions | Long-term Prevention |
|---|---|---|---|
| Before First Process | Version compatibility errors, syntax errors | Update Nextflow, validate pipeline syntax [41] | Maintain updated Nextflow installation |
| During First Process | Container errors, missing command errors | Verify container setup, check configuration profiles [41] | Use standardized dependency profiles |
| During Run | "Missing output file(s)" error, process-specific failures | Check .command.log and .command.err in work directory [41] | Implement comprehensive quality control steps [3] |

Data Quality and Input File Issues

Issue: Pipeline failures due to problematic input data or quality issues.

Diagnosis and Solutions:

- Poor Quality Reads: Use FastQC to identify adapter contamination, overrepresented sequences, or low base quality. Follow with trimming tools like Trimmomatic or Cutadapt [3].

- Incorrect Reference Genomes: Ensure correct genome version (e.g., hg38) and proper indexing for your aligner. Mismatches cause misalignment [3].

- File Format Incompatibility: Verify FASTQ, BAM file structure and compression compatibility. Check paired-end/single-end designation and read length consistency [3].
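Several of the input checks above can be automated before a pipeline launches. This sketch assumes four-line FASTQ records with Phred+33 quality encoding, optionally gzip-compressed.

It confirms that paired files contain the same number of reads. It also reports the fraction of bases at Q30 or above, the %Q30 metric quoted earlier in this article. It is a lightweight pre-flight check, not a replacement for FastQC.

```python
import gzip
from pathlib import Path

def fastq_stats_from_lines(lines):
    """Return (read_count, fraction of bases with Phred+33 quality >= 30)."""
    it = iter(lines)
    reads = bases = q30 = 0
    for header in it:
        if not header.strip():
            continue  # tolerate blank lines between/after records
        seq, plus, qual = (next(it, "").rstrip("\r\n") for _ in range(3))
        if not header.startswith("@") or not plus.startswith("+") or len(seq) != len(qual):
            raise ValueError(f"malformed record: {header.strip()!r}")
        reads += 1
        bases += len(qual)
        q30 += sum(1 for ch in qual if ord(ch) - 33 >= 30)
    return reads, (q30 / bases if bases else 0.0)

def fastq_stats(path):
    """Open plain or .gz FASTQ as text and compute its stats."""
    path = Path(path)
    opener = gzip.open if path.suffix == ".gz" else open
    with opener(path, "rt") as fh:
        return fastq_stats_from_lines(fh)

def check_pair(r1, r2):
    """Raise if paired-end files disagree on read count; return both stats."""
    s1, s2 = fastq_stats(r1), fastq_stats(r2)
    if s1[0] != s2[0]:
        raise ValueError(f"R1 has {s1[0]} reads but R2 has {s2[0]}")
    return s1, s2
```

A read-count mismatch between R1 and R2 usually means a truncated transfer or a mislabeled pair. Catching it here is far cheaper than discovering it mid-alignment.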

Computational Resource and Integration Problems

Issue: Failures related to resource management, particularly in HPC or cloud environments.

Diagnosis and Solutions:

- Concurrency Issues: When integrating workflow systems, Snakemake may execute Nextflow rules sequentially instead of concurrently. The `handover: True` directive can impact parallel execution [42].

- Resource Exhaustion: Monitor disk space to avoid running out of space during pipeline execution. Check compute resource allocation in configuration profiles [41].

- Permission Errors: "Access/permission denied" errors can occur when submitting jobs to grid schedulers. Verify execution permissions and profile configuration [42].
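Running out of disk mid-run is cheaper to prevent than to debug. A pre-flight check like the one below uses Python's standard `shutil.disk_usage`. It refuses to launch when the work directory's filesystem has less free space than an estimate you supply.

The 3x-input-size multiplier is a hypothetical rule of thumb for intermediate files, not a Nextflow or Snakemake default. Calibrate it from your past runs.

```python
import shutil
from pathlib import Path

def required_bytes(inputs, multiplier=3.0):
    """Estimate scratch space as a multiple of total input size (assumption)."""
    return int(sum(Path(p).stat().st_size for p in inputs) * multiplier)

def ensure_free_space(work_dir, needed_bytes):
    """Raise before launch if work_dir's filesystem lacks needed_bytes free;
    otherwise return the number of free bytes."""
    free = shutil.disk_usage(work_dir).free
    if free < needed_bytes:
        raise RuntimeError(
            f"only {free / 1e9:.1f} GB free in {work_dir}, "
            f"need ~{needed_bytes / 1e9:.1f} GB"
        )
    return free
```

Call `ensure_free_space(work_dir, required_bytes(fastq_files))` in a launch wrapper, where `fastq_files` is your list of input paths. This turns a late-stage "No space left on device" failure into an immediate, explicit error.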

Frequently Asked Questions (FAQs)

Platform Selection and Comparison

Q1: When should I choose Nextflow vs. Snakemake for my NGS analysis?

A: Your choice depends on computational environment, project scale, and team expertise:

- Choose Nextflow for large-scale distributed workflows, cloud execution (AWS, Google Cloud, Azure), and production-ready bioinformatics pipelines. Its dataflow programming model simplifies parallel execution [43] [44].

- Choose Snakemake for smaller to medium projects, quick prototyping, and if your team has strong Python expertise. Its readable, Python-based syntax is more accessible to beginners [43] [44].

Comparison Table: Nextflow vs. Snakemake Feature Analysis

| Feature | Nextflow | Snakemake |
|---|---|---|
| Language Base | Groovy-based DSL [43] | Python-based syntax [43] |
| Learning Curve | Steeper learning curve [43] | Easier for Python users [43] |
| Parallel Execution | Excellent (dataflow model) [43] | Good (dependency graph) [43] |
| Scalability | High (supports cloud, HPC, containers) [43] | Moderate (limited native cloud support) [43] |
| Container Support | Docker, Singularity, Conda [43] | Docker, Singularity, Conda [43] |
| Cloud Integration | Built-in AWS, Google Cloud, Azure [43] | Requires additional tools for cloud usage [43] |
| Reproducibility | Strong (workflow versioning, automatic caching) [43] | Strong (containerized environments) [43] |
| Best Use Cases | Large-scale bioinformatics, HPC, cloud workflows [43] | Python-centric projects, quick prototyping, academic research [43] |

Q2: How do these platforms address data management and reproducibility for large NGS datasets?

A: Both platforms strongly emphasize reproducibility through containerization (Docker/Singularity), environment management, and workflow versioning. The nf-core community framework of Nextflow pipelines provides particularly strong standardization for FAIR (Findable, Accessible, Interoperable, and Reusable) compliance, essential for managing large NGS datasets [45].

Implementation and Debugging

Q3: Where do I find error logs when my pipeline fails?

A: Nextflow creates detailed log files in each task's subdirectory of its work directory. Key files include:

- `.command.log`: Combined STDOUT and STDERR from the tool [41]

- `.command.err`: STDERR from the failed process [41]

- `.command.out`: STDOUT from the process [41]

- `.exitcode`: Process exit status [41]

In addition, `.nextflow.log` in the launch directory provides comprehensive logging for the whole pipeline run [41].
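With many tasks in a run, finding the failing one by hand is tedious. This sketch scans a Nextflow work directory for tasks whose `.exitcode` file holds a non-zero value, then prints the tail of each task's `.command.err`.

It assumes the default two-level layout (`work/ab/cdef.../`). Tasks killed before writing `.exitcode` are not caught, so `.nextflow.log` remains the authoritative record.

```python
from pathlib import Path

def failed_tasks(work_dir="work"):
    """Yield (task_dir, exit_code) for tasks whose .exitcode is non-zero."""
    for exit_file in sorted(Path(work_dir).glob("*/*/.exitcode")):
        code = exit_file.read_text().strip()
        if code and code != "0":
            yield exit_file.parent, code

def stderr_tail(task_dir, lines=20):
    """Return the last `lines` lines of a task's .command.err, if present."""
    err = Path(task_dir) / ".command.err"
    if not err.exists():
        return ""
    return "\n".join(err.read_text().splitlines()[-lines:])

if __name__ == "__main__":
    for task_dir, code in failed_tasks():
        print(f"== {task_dir} (exit {code})")
        print(stderr_tail(task_dir))
```

Pasting this output, along with the matching `.command.sh` from the same task directory, into a help request gives maintainers the details they usually ask for first.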

Q4: Why does my pipeline fail immediately during the first process?

A: This typically indicates dependency issues. Verify that:

- Docker daemon is running (if using Docker) [41]

- Correct configuration profile (e.g., `-profile docker`, `-profile singularity`, or `-profile conda`) is specified [41]

- Software containers are accessible and properly configured [41]

Q5: How can I troubleshoot poor quality NGS data affecting my results?

A: Implement systematic quality control:

- Always run FastQC before analysis to check base quality and adapter contamination [3]

- Perform trimming with tools like Trimmomatic for low-quality reads [3]

- Verify reference genome version and indexing [3]

- Check for consistent metadata and file naming [3]

Experimental Protocols for NGS Analysis

Standardized RNA-seq Analysis Protocol

Objective: Process raw RNA-seq data from FASTQ files to gene expression counts using reproducible, automated workflows.

Methodology:

Quality Control and Trimming

- Execute FastQC for initial quality assessment

- Remove adapters and low-quality bases using Trimmomatic

- Generate pre-alignment QC reports [3]

Alignment and Quantification

- Map reads to reference genome using STAR aligner

- Generate gene-level read counts with featureCounts

- Perform post-alignment QC metrics collection [46]

Result Compilation and MultiQC Report

- Aggregate QC metrics from all steps using MultiQC

- Review computational resource usage via Nextflow/Snakemake reports

- Validate output file completeness and structure [47]
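The alignment, counting, and reporting steps in the protocol correspond to command lines like those assembled below. This is only a sketch: a Nextflow or Snakemake process would normally own these commands, and the file names are placeholders.

The flags are a common minimal set:

- STAR reading gzip-compressed paired FASTQ and writing a coordinate-sorted BAM.
- featureCounts counting read pairs, which needs `--countReadPairs` on Subread 2.0.2 or later.

Check each tool's documentation for your versions and set strandedness to match your library prep.

```python
from __future__ import annotations

def star_cmd(r1: str, r2: str, genome_dir: str, prefix: str, threads: int = 8) -> list[str]:
    """STAR alignment of paired, gzipped FASTQ to a coordinate-sorted BAM."""
    return [
        "STAR", "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", r1, r2,
        "--readFilesCommand", "zcat",  # decompress .fastq.gz on the fly
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--outFileNamePrefix", prefix,
    ]

def star_bam(prefix: str) -> str:
    """File name STAR writes for --outSAMtype BAM SortedByCoordinate."""
    return f"{prefix}Aligned.sortedByCoord.out.bam"

def featurecounts_cmd(bams: list[str], gtf: str, out: str, threads: int = 8) -> list[str]:
    """Gene-level counts for paired-end BAMs (unstranded, featureCounts' default)."""
    return ["featureCounts", "-T", str(threads), "-p", "--countReadPairs",
            "-a", gtf, "-o", out, *bams]

def multiqc_cmd(results_dir: str) -> list[str]:
    """Aggregate FastQC, STAR, and featureCounts logs into one report."""
    return ["multiqc", results_dir]
```

Keeping each command as a list, rather than a shell string, avoids quoting problems with unusual sample names. It also makes the exact invocation easy to log for reproducibility.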

Workflow Diagram: NGS Data Analysis Process

NGS Data Analysis Workflow

Essential Research Reagent Solutions

Table: Key Bioinformatics Tools for NGS Analysis

| Tool Name | Function | Application in NGS |

|---|---|---|

| FastQC | Quality control analysis | Assesses read quality, adapter contamination, sequence biases [3] |

| Trimmomatic/Cutadapt | Read trimming and adapter removal | Removes low-quality bases and adapter sequences [3] |

| STAR | Spliced transcript alignment | Aligns RNA-seq reads to reference genome [46] |

| featureCounts | Gene expression quantification | Counts reads mapping to genomic features [46] |

| MultiQC | Quality control aggregation | Compiles QC metrics from multiple tools into a single report [47] |

| Docker/Singularity | Containerization platforms | Ensures reproducible software environments [45] [43] |

Workflow Integration Architecture

Workflow System Integration

Community Support Channels

Both Nextflow and Snakemake have strong community support ecosystems:

- Nextflow/nf-core: Active Slack channel with over 10,000 users, GitHub issue tracking, bytesize webinars, and hackathons [45] [41]

- Snakemake: GitHub discussions, community forum, and academic support networks [43] [44]

When seeking help, provide complete error logs, command parameters, configuration details, and steps to reproduce the issue [41].

Leveraging Cloud Platforms (AWS, GCP, Azure) for Elastic Compute and Storage

Frequently Asked Questions (FAQs)

Q1: What are the primary cost drivers when running NGS pipelines in the cloud? The main costs are compute resources (virtual machines, especially those with GPUs) and data egress fees (transferring data out of the cloud provider's network) [18] [48]. Storage costs, while significant, can be optimized through tiered storage classes. For example, on Google Cloud Platform, a benchmark showed compute costs ranging from approximately $6 to over $100 per sample depending on the pipeline and sequencing type (WES/WGS), while data egress can cost around $0.09-$0.12 per GB [18] [48].
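The egress range quoted above adds up quickly at NGS scale. As an illustrative payload, take the NovaSeq S4 run-folder size given earlier in this article (2,190 GB).

The sketch below computes the cost of moving one such run folder out of the cloud at both ends of the $0.09 to $0.12 per GB range. It treats the rate as flat; real provider pricing is tiered by volume and destination, and free allowances are ignored.

```python
def egress_cost(gigabytes: float, usd_per_gb: float) -> float:
    """Flat-rate egress estimate in USD (ignores tiering and free allowances)."""
    return round(gigabytes * usd_per_gb, 2)

# NovaSeq 2x150 bp S4 run folder size from the output table earlier in this article.
S4_RUN_FOLDER_GB = 2190

if __name__ == "__main__":
    for rate in (0.09, 0.12):
        print(f"${rate}/GB -> ${egress_cost(S4_RUN_FOLDER_GB, rate):,.2f}")
```

At these rates, moving a single S4 run folder out costs roughly $197 to $263. Repeated downloads for re-analysis multiply that, which is why keeping analysis in the same cloud region matters.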

Q2: Which cloud storage option is best for high-performance, large-scale NGS workloads? For large-scale NGS workloads requiring high throughput, Azure Managed Lustre is optimized for HPC and genomics, offering bandwidth up to 512 GB/s [49]. AWS S3 is a mature object storage solution that automatically scales to handle massive concurrency [48], while Google Cloud Storage excels in raw throughput for large sequential transfers, benefiting from Google's global network [48].

Q3: How can I automate a multi-step NGS analysis pipeline in the cloud? You can use event-driven architectures and orchestration tools. On AWS, services like AWS Step Functions and Amazon EventBridge can orchestrate pipelines triggered by events (e.g., a new file uploaded to S3) [50]. Alternatively, purpose-built services like AWS HealthOmics can manage the entire lifecycle of NGS workflows, handling scheduling, compute allocation, and retries for you [50].

Q4: My pipeline failed with a "Permission Denied" error on a cloud storage bucket. What should I check? This is typically an Identity and Access Management (IAM) issue. Verify that the compute resource (e.g., VM, container) has been granted the necessary permissions to read from and write to the specified storage bucket. Each cloud provider has its own access-control system where these policies are configured: AWS IAM, Google Cloud IAM, and Azure role-based access control backed by Microsoft Entra ID (formerly Azure AD) [51].
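On AWS, the fix is usually a policy attached to the role your compute uses. The sketch below generates a minimal least-privilege IAM policy document. It grants bucket listing, plus read and write on objects under one prefix.

The bucket name and prefix are placeholders. GCP IAM and Azure RBAC express the same idea with their own role and scope models.

```python
import json

def s3_pipeline_policy(bucket: str, prefix: str = "") -> dict:
    """Least-privilege S3 policy: list the bucket, read/write objects under prefix."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # ListBucket applies to the bucket ARN itself ...
                "Sid": "ListBucket",
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {   # ... while object actions apply to bucket/key ARNs.
                "Sid": "ReadWriteObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            },
        ],
    }

if __name__ == "__main__":
    print(json.dumps(s3_pipeline_policy("example-ngs-bucket", "runs/"), indent=2))
```

A frequent cause of "Permission Denied" is granting object actions on the bucket ARN instead of the `bucket/*` object ARN, or the reverse. Keeping the two statements separate, as above, avoids that mix-up.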

Q5: My NGS analysis is running slower than expected. What are the common bottlenecks? Common bottlenecks include:

- Insufficient Compute Resources: The virtual machine may have too few CPUs or not enough memory for the specific pipeline step (e.g., alignment, variant calling) [18].

- Storage Performance: Using a standard storage class instead of a high-performance option (like Premium Blob or Azure NetApp Files) can slow down I/O-intensive operations [49].

- Improper Parallelization: Some pipeline tools can distribute work across multiple cores or nodes; ensure this is configured correctly [18].

Troubleshooting Guides

Issue 1: Managing Cloud Storage Costs for Large Genomic Datasets

Problem: The costs of storing large volumes of genomic data (FASTQ, BAM, VCF files) are becoming unsustainable.

Solution: Implement a data lifecycle management policy to automatically move data to cheaper storage tiers based on access frequency [51] [50].

Step 1: Classify your data. Determine which data is actively used and which is archived.

- Hot/Frequent Access: Recent datasets currently under analysis (use Standard storage tiers).

- Cool/Infrequent Access: Processed data from completed projects that may be needed for occasional re-analysis (use Infrequent Access or Cool tiers).

- Cold/Archive: Raw data that must be kept for long-term reproducibility but is rarely accessed (use Archive or Glacier tiers).

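Step 2: Configure lifecycle rules. Use the cloud provider's console or API to set up rules that automatically transition data between tiers after a set number of days. For example, move raw FASTQ files to an archive tier once primary analysis is complete.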
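As an example of such rules on AWS, the sketch below builds an S3 lifecycle configuration that mirrors the tiers in Step 1 and prints it as JSON. The prefixes (`raw/`, `processed/`) and day counts are assumptions; the storage-class names are S3's own.

You can save the output to a file and apply it with `aws s3api put-bucket-lifecycle-configuration --bucket <bucket> --lifecycle-configuration file://lifecycle.json`. Alternatively, pass the same dictionary to boto3's `put_bucket_lifecycle_configuration`. S3 requires objects to spend at least 30 days in Standard before transitioning to Standard-IA.

```python
import json

def ngs_lifecycle_config(raw_prefix="raw/", processed_prefix="processed/"):
    """S3 lifecycle rules: archive raw reads, cool down processed outputs."""
    return {
        "Rules": [
            {
                "ID": "archive-raw-sequencing-data",
                "Filter": {"Prefix": raw_prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": 30, "StorageClass": "GLACIER"},        # cold tier
                    {"Days": 180, "StorageClass": "DEEP_ARCHIVE"},  # long-term
                ],
            },
            {
                "ID": "cool-processed-results",
                "Filter": {"Prefix": processed_prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": 90, "StorageClass": "STANDARD_IA"},    # infrequent
                ],
            },
        ]
    }

if __name__ == "__main__":
    # Redirect to a file, e.g.: python make_lifecycle.py > lifecycle.json
    print(json.dumps(ngs_lifecycle_config(), indent=2))
```

Archive tiers carry retrieval delays and minimum-storage-duration charges. Confirm that rarely accessed raw data really is rarely accessed before moving it.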

Step 3: Leverage cost-saving features.

Issue 2: Selecting the Right Compute Instance for Rapid NGS Analysis

Problem: An NGS pipeline is taking too long to run, delaying critical research outcomes.

Solution: Benchmark pipelines on different instance types to find the optimal balance of speed and cost [18].

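Step 1: Choose between CPU- and GPU-accelerated pipelines. For example, Sentieon DNASeq is CPU-accelerated, whereas Clara Parabricks is GPU-accelerated [18].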

Step 2: Run a controlled benchmark.

- Use a small, representative dataset (e.g., one WES sample).

- Launch different virtual machines tailored to each pipeline's requirements.

- Process the same data on each machine, meticulously recording the total runtime and all associated cloud costs.

Step 3: Analyze results and select instance.

- Compare the performance and cost per sample. A benchmark on GCP found that both Sentieon (on an n1-highcpu-64 instance) and Clara Parabricks (on an instance with a T4 GPU) are viable for ultra-rapid analysis, with the best choice depending on your specific throughput and budget requirements [18].

The table below summarizes the benchmark configuration from a study comparing ultra-rapid NGS pipelines on GCP [18].

| Pipeline | Virtual Machine Configuration | Baseline Cost (per hour) | Best For |
|---|---|---|---|
| Sentieon DNASeq | 64 vCPUs, 57 GB Memory | $1.79 | CPU-accelerated processing [18] |
| Clara Parabricks | 48 vCPUs, 58 GB Memory, 1x NVIDIA T4 GPU | $1.65 | GPU-accelerated processing [18] |
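The per-sample comparison in Step 3 is the runtime multiplied by the hourly VM price, divided by the samples processed. The sketch below uses the baseline hourly prices from the table above.

It contains no benchmark runtimes. Any runtimes you pass in, including those in the test, are placeholders for the wall-clock times you measured in Step 2.

```python
# Baseline VM prices (USD/hour) from the benchmark configuration table above.
HOURLY_USD = {
    "Sentieon DNASeq": 1.79,
    "Clara Parabricks": 1.65,
}

def cost_per_sample(pipeline: str, runtime_minutes: float, samples_per_run: int = 1) -> float:
    """USD per sample = hourly price x runtime hours / samples in that run."""
    hours = runtime_minutes / 60
    return round(HOURLY_USD[pipeline] * hours / samples_per_run, 2)
```

A cheaper hourly rate does not guarantee a cheaper sample: a faster pipeline on a pricier VM can still win on cost per sample. Compare using your own WES or WGS data in your chosen region.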

Issue 3: Building a Scalable and Automated NGS Pipeline Architecture

Problem: Manually triggering analysis steps and moving data between pipeline stages is inefficient and error-prone.

Solution: Design a serverless, event-driven architecture for full automation [50].

The following workflow diagram illustrates an automated, event-driven pipeline architecture for NGS data processing on a cloud platform.

Step 1: Implement the core workflow.

- Storage Setup: Create dedicated cloud storage buckets for input data (raw FASTQ files) and output data (processed BAM/VCF files) [50].

- Compute Setup: Configure a managed compute service like AWS Batch or use a specialized service like AWS HealthOmics to run your containerized pipeline tools (e.g., Sentieon, Parabricks) [50].

- Orchestration: Use a service like AWS Step Functions to define the sequence of your pipeline stages (QC -> Alignment -> Variant Calling) [50].

Step 2: Automate with events.

- Configure an event notification on your input storage bucket (e.g., Amazon S3 Event Notifications) [50].

- When a new sequencing file is uploaded, this event automatically triggers the orchestration service, which starts the pipeline on the compute cluster without any manual intervention.
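The trigger in Step 2 typically lands in a small function that reads the uploaded object's location from the S3 event notification and starts the workflow. The sketch below shows only the parsing step, which is pure Python. It relies on S3 event records carrying `s3.bucket.name` and a URL-encoded `s3.object.key`.

The FASTQ suffix filter, the workflow input shape, and the `handler` wiring are assumptions. The commented-out boto3 call marks where a Step Functions execution would be started.

```python
from __future__ import annotations

import json
from urllib.parse import unquote_plus

FASTQ_SUFFIXES = (".fastq.gz", ".fq.gz")  # assumption: only gzipped FASTQ triggers runs

def workflow_inputs(event: dict) -> list[dict]:
    """Turn an S3 event notification into one workflow input per new FASTQ."""
    inputs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        key = unquote_plus(record["s3"]["object"]["key"])  # keys arrive URL-encoded
        if key.endswith(FASTQ_SUFFIXES):
            inputs.append({"fastq_uri": f"s3://{bucket}/{key}"})
    return inputs

def handler(event, context):
    """Lambda-style entry point (hypothetical wiring)."""
    for payload in workflow_inputs(event):
        # boto3.client("stepfunctions").start_execution(
        #     stateMachineArn=STATE_MACHINE_ARN, input=json.dumps(payload))
        print(json.dumps(payload))
```

For paired-end data, you would normally wait for both mates, or for a run-completion marker file, before starting. That coordination is omitted here.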

Step 3: Enable monitoring.

- Use cloud monitoring tools (e.g., Amazon CloudWatch, Azure Monitor) to track pipeline progress, success rates, and resource utilization for ongoing optimization [50].

The Scientist's Toolkit: Essential Cloud Services for NGS Research

The table below details key cloud services and components used to build scalable NGS research platforms.

| Category / Item | Function | Provider |
|---|---|---|
| Object Storage | | |
| Amazon S3 | Durable, scalable object storage for raw (FASTQ) and processed (BAM, VCF) data [50]. | AWS |
| Google Cloud Storage | High-performance object storage integrated with GCP's analytics and AI services [51]. | GCP |
| Azure Blob Storage | Enterprise-grade object storage with deep integration into the Microsoft ecosystem [51]. | Azure |
| High-Performance Compute | | |
| AWS Batch | Fully managed service for running batch computing jobs at any scale [50]. | AWS |
| Google Compute Engine | Scalable VMs for running CPU/GPU-accelerated NGS pipelines such as Sentieon and Parabricks [18]. | GCP |
| Azure HPC VMs | Virtual machines optimized for high-performance computing workloads [49]. | Azure |
| Specialized Workflow Services | | |
| AWS HealthOmics | Purpose-built managed service for storing, analyzing, and querying genomic data [50]. | AWS |
| Orchestration & Automation | | |
| AWS Step Functions | Coordinates multiple AWS services into serverless workflows (e.g., multi-step NGS pipelines) [50]. | AWS |
| Amazon EventBridge | Serverless event bus that connects application data from different sources [50]. | AWS |

Quantitative Data Comparison for Cloud Storage

The tables below summarize key performance metrics and cost considerations for cloud storage services relevant to NGS data.

Table 1: Performance Characteristics of Select Azure HPC Storage Options [49]

| Storage Solution | Max Bandwidth | Max IOPS | Latency | Ideal NGS Workload Use Case |
|---|---|---|---|---|
| Azure Standard Blob | 15 GB/s | 20,000 | <100 ms | General data lake, cost-effective core storage [49] |
| Azure Premium Blob | 15 GB/s | 20,000 | <10 ms | Datasets with many medium-sized files [49] |
| Azure NetApp Files | 10 GiB/s | 800,000 | <1 ms | Small-file datasets (<512 KiB), high IOPS [49] |
| Azure Managed Lustre | Up to 512 GB/s | >100,000 | <2 ms | Large-scale simulations, genomics, bandwidth-intensive workloads [49] |
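Choosing among these options depends partly on the file-size profile of a dataset. The helper below is a sketch that uses only POSIX `find` to count how many files fall under the 512 KiB small-file threshold from the table; the function name is illustrative, and the share of small files at which a high-IOPS tier pays off is a judgment call left to you.

```bash
# profile_file_sizes DIR
# Prints "small=<n> total=<m>", where small counts regular files under
# 512 KiB (524288 bytes) and total counts all regular files below DIR.
profile_file_sizes() {
  dir=$1
  small=$(find "$dir" -type f -size -524288c | wc -l | tr -d ' ')
  total=$(find "$dir" -type f | wc -l | tr -d ' ')
  echo "small=$small total=$total"
}
```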

Table 2: Sample Cloud Storage and Egress Pricing (Approximate) [52] [48]

| Service / Tier | Standard/Hot (per GB-month) | Infrequent Access/Cool (per GB-month) | Archive/Cold (per GB-month) | Egress (per GB, first 10 TB) |
|---|---|---|---|---|
| AWS S3 | $0.023 | $0.010 | $0.003 (Glacier) | $0.09 [48] |
| Google Cloud Storage | $0.020 | $0.010 (Nearline) | $0.006 (Coldline) | $0.12 [48] |
| Azure Blob Storage | $0.0184 (LRS) | $0.020 | $0.003 | $0.087 [48] |
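These per-GB prices translate into budgets with simple arithmetic. The sketch below assumes 1 TB = 1000 GB for simplicity and flat pricing; it ignores the tiered egress rates beyond the first 10 TB as well as the retrieval fees that archive tiers charge. The function name is illustrative.

```bash
# tier_cost TB PRICE_PER_GB [MONTHS]
# Storage: pass the per-GB-month price and the number of months.
# Egress: pass the per-GB egress price and omit MONTHS (defaults to 1).
# Assumes 1 TB = 1000 GB and a single flat price (no tiering, no retrieval fees).
tier_cost() {
  awk -v tb="$1" -v p="$2" -v m="${3:-1}" \
    'BEGIN { printf "%.2f\n", tb * 1000 * p * m }'
}
```

For example, keeping 100 TB in S3 Standard for a year at the table's $0.023 rate gives `tier_cost 100 0.023 12` = 27600.00 USD, versus `tier_cost 100 0.003 12` = 3600.00 USD in Glacier; downloading 10 TB once at $0.09/GB adds `tier_cost 10 0.09` = 900.00 USD.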

Next-Generation Sequencing (NGS) has become a key tool in clinical diagnostics, substantially increasing diagnostic yield over traditional methods, particularly for critically ill patients in intensive care units, where time-to-result is crucial [18]. However, the widespread adoption of NGS creates substantial computational challenges for data analysis and interpretation [18]. Ultra-rapid analysis tools such as Sentieon DNASeq and NVIDIA Clara Parabricks Germline have emerged to address these bottlenecks, but their computational demands often exceed the resources available in many healthcare facilities [18].

Cloud platforms, particularly Google Cloud Platform (GCP), offer scalable solutions that enable healthcare providers to access these advanced genomic tools without maintaining expensive local infrastructure [18]. This technical support center provides essential troubleshooting guidance and performance benchmarks to help researchers and clinicians effectively implement these accelerated solutions within their NGS workflows, framed within the broader context of data storage and management for large-scale genomic datasets.

Technical Support Center: Troubleshooting Guides and FAQs

Sentieon DNASeq Troubleshooting Guide

Common Error Messages and Solutions

Problem: "Error: can not open file (xxx) in mode(r), Too many open files"

- Root Cause: The system limit for concurrently open files is set too low for Sentieon's operations [53].

- Solution:

  - Check the current limit with `ulimit -n`.

  - Edit `/etc/security/limits.conf` as root and raise the soft and hard `nofile` (open files) limits.

  - On Ubuntu systems, also add `ulimit -n 16384` to your `~/.bashrc`.

  - Log out and back in for the changes to take effect [53].
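The exact `limits.conf` entries are not spelled out in this guide. The sketch below assumes the standard `<domain> <type> <item> <value>` format and reuses 16384, the value from the Ubuntu step; applying it to all users via the `*` domain is also an assumption, and you may prefer to name the account that runs Sentieon instead. The function name is illustrative.

```bash
# nofile_limits [N]
# Prints limits.conf entries that raise the soft and hard open-file (nofile)
# limits to N (default 16384) for all users ("*"). Review before applying.
nofile_limits() {
  n=${1:-16384}
  printf '*\tsoft\tnofile\t%s\n*\thard\tnofile\t%s\n' "$n" "$n"
}
```

After reviewing the output, it could be appended as root with `nofile_limits 16384 | sudo tee -a /etc/security/limits.conf`.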

Problem: "Contig XXX from vcf/bam is not present in the reference" or "Contig XXX has different size in vcf/bam than in the reference"

- Root Cause: Input VCF or BAM file is incompatible with the reference FASTA file, likely due to using files processed with different references [53].

- Solution: Ensure all input files (BAM, VCF) and reference files are generated using the same reference genome build [53].
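A quick preflight check can catch this mismatch before a long run. The sketch below compares the `##contig` lines of a plain-text VCF header with the `.fai` index of the reference FASTA, whose first two columns are contig name and length. For a BAM, the `@SQ` lines printed by `samtools view -H` carry the same information in their `SN:` and `LN:` fields and could be checked the same way. Both function names are illustrative.

```bash
# vcf_contigs VCF: print "name length" for each ##contig header line.
# Assumes an uncompressed VCF; for .vcf.gz, decompress with `gzip -dc` first.
vcf_contigs() {
  awk '/^##contig=</ {
    s = $0
    sub(/^##contig=</, "", s); sub(/>$/, "", s)
    id = ""; len = ""
    n = split(s, kv, ",")
    for (i = 1; i <= n; i++) {
      if (kv[i] ~ /^ID=/) id = substr(kv[i], 4)
      else if (kv[i] ~ /^length=/) len = substr(kv[i], 8)
    }
    if (id != "" && len != "") print id, len
  }' "$1"
}

# check_contigs VCF FAI: report contigs that are missing from the reference
# .fai or have a different length there; exit non-zero if any are found.
check_contigs() {
  vcf_contigs "$1" | awk '
    NR == FNR { ref[$1] = $2; next }
    !($1 in ref) { print "missing_in_reference", $1; bad = 1; next }
    ref[$1] != $2 { print "length_mismatch", $1, $2, ref[$1]; bad = 1 }
    END { exit bad }' "$2" -
}
```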

Problem: "Readgroup XX is present in multiple BAM files with different attributes"

- Root Cause: Multiple input BAM files contain readgroups with the same ID but different attributes [53].

- Solution: Modify the BAM files so that RG IDs are unique, using `samtools addreplacerg` [53].

- Generate the FASTA file index.

- Generate the sequence dictionary [53]:

```bash
java -jar picard.jar CreateSequenceDictionary REFERENCE=reference.fasta OUTPUT=reference.dict
```
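The exact `samtools addreplacerg` invocation for the read-group fix is not given in this guide. As a hedged sketch, the helper below prints (but does not run) one command per input BAM that assigns a file-specific read group ID built from the sample name and file name, so the commands can be reviewed first. The `PL:ILLUMINA` tag and the `_rg.bam` output name are assumptions for illustration. Because `-r` with the default `overwrite_all` mode applies one read group to every read in a file, this fits BAMs that each hold a single read group.

```bash
# rg_rename_cmds SAMPLE BAM...
# Dry run: prints one `samtools addreplacerg` command per BAM, giving each
# file a unique read group ID of the form <SAMPLE>_<file basename>.
# PL:ILLUMINA and the "_rg.bam" output suffix are illustrative assumptions.
rg_rename_cmds() {
  sample=$1; shift
  for bam in "$@"; do
    base=$(basename "$bam" .bam)
    printf "samtools addreplacerg -r 'ID:%s_%s' -r 'SM:%s' -r 'PL:ILLUMINA' -o '%s_rg.bam' '%s'\n" \
      "$sample" "$base" "$sample" "${bam%.bam}" "$bam"
  done
}
```

For example, `rg_rename_cmds S1 lane1.bam lane2.bam` prints two commands with IDs `S1_lane1` and `S1_lane2`; piping the reviewed output to `sh` would execute them.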

Known Limitations and Workarounds

- Gzipped VCF files: Sentieon does not support standard gzip-compressed VCF files, only bgzip-compressed ones [53].

  - Workaround: Use `gunzip` followed by `bgzip`, or use `sentieon util vcfconvert` [53].

- Gzipped FASTA files: Not supported; decompress with `gunzip` before use [53].

- FASTQ quality format: Sentieon requires Sanger (Phred+33) quality encoding and will not detect or convert older Illumina formats [53].
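Both input-format constraints can be checked before a run. In the sketch below, `is_bgzf` inspects the gzip header for the `BC` extra subfield that bgzip writes, and `guess_phred_offset` applies a common heuristic to a sample of FASTQ quality strings. Both names are illustrative, and the heuristic can report `ambiguous` for uniformly high-quality Phred+33 data, so treat its output as a hint rather than a verdict.

```bash
# is_bgzf FILE: succeeds if FILE starts with a BGZF block header, i.e. a gzip
# header with FLG.FEXTRA set and a "BC" extra subfield (as written by bgzip).
is_bgzf() {
  hdr=$(head -c 14 "$1" | od -An -tx1 -v | tr -d ' \n')
  [ "$(printf '%s' "$hdr" | cut -c1-8)" = "1f8b0804" ] &&
    [ "$(printf '%s' "$hdr" | cut -c25-28)" = "4243" ]
}

# guess_phred_offset [N]: reads FASTQ on stdin, inspects up to N quality lines
# (default 1000) and prints phred33, phred64, ambiguous or unknown.
# Heuristic: any quality character below ';' (ASCII 59) implies Phred+33;
# all characters >= '@' (64) with some above 'J' (74) suggests Phred+64.
guess_phred_offset() {
  awk -v n="${1:-1000}" '
    BEGIN { for (i = 33; i < 127; i++) ord[sprintf("%c", i)] = i; min = 999; max = 0 }
    NR % 4 == 0 {
      for (j = 1; j <= length($0); j++) {
        c = ord[substr($0, j, 1)]
        if (c < min) min = c
        if (c > max) max = c
      }
      if (NR / 4 >= n) exit
    }
    END {
      if (min == 999) print "unknown"
      else if (min < 59) print "phred33"
      else if (min >= 64 && max > 74) print "phred64"
      else print "ambiguous"
    }'
}
```

A VCF that fails `is_bgzf` can be rewritten with the workaround named above, for example `gunzip -c in.vcf.gz | bgzip -c > out.vcf.gz`.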

NVIDIA Clara Parabricks Troubleshooting Guide

License and Installation Issues

Problem: License not working

- Potential Causes and Solutions:

  - Incorrect file path: Ensure the license is stored at `/opt/parabricks/license.bin` [54] [55].

  - Expired license: Contact NVIDIA through the developer forums to request an extension [54].

  - Firewall blocking: If the server cannot connect to the licensing server, add parabricks.com to your firewall allowlist [55].

  - Wrong file type: The license file must have a `.bin` extension [55].

Problem: Parabricks does not run with Singularity containerization

- Solution: If you see initialization errors, run the following command [54]:

```bash
nvidia-modprobe -u -c=0
```

- Note: This is only a concern with Parabricks versions prior to v4.0 [56].

Session Management

Problem: Analysis terminates when SSH connection is lost

- Solutions:
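No specific remedies are listed above. One widely used approach, offered here as an assumption rather than as the vendors' recommendation, is to start long runs detached from the terminal with `nohup` (or inside a `tmux` or `screen` session) so the process survives a dropped SSH connection. The wrapper name below is illustrative.

```bash
# run_detached LOGFILE COMMAND [ARGS...]
# Starts COMMAND immune to hangups (SIGHUP), with stdin from /dev/null and
# stdout/stderr written to LOGFILE, then prints its PID for later checks.
run_detached() {
  log=$1; shift
  nohup "$@" > "$log" 2>&1 < /dev/null &
  echo "$!"
}
```

For example, `run_detached sample1.log ./run_pipeline.sh sample1`, where `run_pipeline.sh` is a hypothetical wrapper around your analysis, followed by `tail -f sample1.log` after reconnecting.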

Hardware Compatibility

Problem: Can I use Parabricks on my video card?

- Requirements:

- Solution: Check the specific hardware requirements in the Parabricks documentation.
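The requirements themselves are not listed above. As a sketch for checking one common constraint, GPU memory, the filter below parses the CSV output of `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits`. The minimum in MiB is a parameter you must take from the Parabricks documentation for your version, and other requirements such as driver version or GPU architecture are not checked. The function name is illustrative.

```bash
# gpu_memory_check MIN_MIB
# Reads "name, memory_MiB" lines on stdin (nvidia-smi CSV, no header, no units)
# and prints OK or TOO_SMALL for each GPU against the MIN_MIB threshold.
gpu_memory_check() {
  awk -F', *' -v min="$1" '{
    status = ($2 + 0 >= min + 0) ? "OK" : "TOO_SMALL"
    print status, $1, $2 " MiB"
  }'
}
```

Usage: `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits | gpu_memory_check "$MIN_MIB"`.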

Comprehensive FAQ Section

General NGS Analysis Questions

Q: What are the key advantages of cloud-based NGS analysis over on-premises solutions? A: Cloud platforms eliminate the need for expensive local infrastructure, which typically costs $150,000-$250,000 up front plus 30% in annual maintenance [18]. Instead, healthcare providers move to an operational-expenditure model, paying only for the resources they use while maintaining compliance with regulatory requirements [18].
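A first-order break-even estimate follows from these figures. The sketch below assumes the 30% maintenance is charged on the initial cost every year, and it ignores staff, power, storage, and egress, so it only frames the comparison. The function names are illustrative.

```bash
# onprem_tco CAPEX MAINT_RATE YEARS
# Total on-premises cost: initial cost plus yearly maintenance charged as a
# fraction (MAINT_RATE) of the initial cost.
onprem_tco() {
  awk -v c="$1" -v r="$2" -v y="$3" 'BEGIN { printf "%.0f\n", c + c * r * y }'
}

# breakeven_hours TCO RATE_USD_PER_HOUR
# Number of cloud VM hours the same budget would buy.
breakeven_hours() {
  awk -v t="$1" -v r="$2" 'BEGIN { printf "%.0f\n", t / r }'
}
```

With the lower bound from [18] and a hypothetical five-year horizon, `onprem_tco 150000 0.30 5` gives 375000, which `breakeven_hours 375000 1.65` converts to roughly 227273 hours on the $1.65/hour Parabricks VM.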

Q: How do I choose between Sentieon and Parabricks for my institution? A: Consider your existing infrastructure and expertise. Sentieon is CPU-optimized while Parabricks leverages GPU acceleration. Benchmarking shows comparable performance, so the decision may depend on your specific workflow requirements and computational resources [18].

Technical Implementation Questions

Q: What are the essential steps for preparing reference files?

A: Both tools require properly formatted reference genomes including BWA index files (.amb, .ann, .bwt, .pac, .sa), FASTA index (.fai), and sequence dictionary (.dict) [53].
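Assuming the standard tool for each file type (`bwa index` for the BWA files, `samtools faidx` for `.fai`, and Picard's `CreateSequenceDictionary` for `.dict`), the helper below prints the preparation commands as a dry run. It assumes `bwa`, `samtools`, and `picard.jar` are available; the function name is illustrative. Printing rather than executing keeps the sketch side-effect free; pipe the output to `sh` to run it.

```bash
# prep_reference_cmds FASTA
# Dry run: prints the commands that build the reference index files.
prep_reference_cmds() {
  fa=$1
  base=${fa%.*}
  echo "bwa index $fa"          # creates .amb .ann .bwt .pac .sa
  echo "samtools faidx $fa"     # creates .fai
  echo "java -jar picard.jar CreateSequenceDictionary REFERENCE=$fa OUTPUT=$base.dict"   # creates .dict
}
```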

Q: How can I manage large-scale genomic data efficiently? A: Use cloud-based solutions such as Google Cloud Platform or AWS, which host public datasets like SRA without end-user charges when they are accessed from the same cloud region [57]. Also store data in compressed, indexable formats, such as BAM for alignments and bgzip-compressed VCF for variants, to keep storage costs down.

Performance Benchmarking and Experimental Protocols

Benchmarking Methodology

Recent benchmarking studies provide critical performance data for informed decision-making:

Experimental Design

Researchers benchmarked Sentieon DNASeq (v202308) and Clara Parabricks Germline (v4.0.1-1) on GCP using five whole-exome (WES) and five whole-genome (WGS) samples from publicly available SRA data [18]. The WES data were derived from a study on lymphoproliferation, immunodeficiency, and HLH-like phenotypes and were sequenced on an Illumina NextSeq 500 with 75 bp paired-end reads [18]. The WGS data came from Illumina's Polaris project and were sequenced on an Illumina HiSeq X with 150 bp reads [18].

Virtual Machine Configuration

- Sentieon VM: 64 vCPUs, 57GB memory (n1-highcpu-64), cost: $1.79/hour [18]

- Parabricks VM: 48 vCPUs, 58GB memory, 1 T4 NVIDIA GPU, cost: $1.65/hour [18]

Both pipelines were executed with default parameters, including alignment, duplicate marking, base recalibration, and variant calling from raw FASTQ to VCF [18].
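To reproduce this kind of measurement on your own data, each pipeline run can be wrapped so that wall-clock time and the corresponding VM cost are recorded together. The wrapper below is a sketch with an illustrative name; it measures only the command it runs (not VM boot or data transfer), has one-second resolution, and ignores the provider's billing granularity. The hourly rate is whatever your VM actually costs, for example $1.79 or $1.65 from the configurations above.

```bash
# timed_cost RATE_USD_PER_HOUR COMMAND [ARGS...]
# Runs COMMAND, then prints elapsed wall-clock seconds and the VM cost for
# that interval to stderr. Returns COMMAND's exit status.
timed_cost() {
  rate=$1; shift
  start=$(date +%s)
  "$@"
  rc=$?
  end=$(date +%s)
  awk -v s="$((end - start))" -v r="$rate" \
    'BEGIN { printf "elapsed_s=%d cost_usd=%.2f\n", s, s / 3600 * r }' >&2
  return "$rc"
}
```

For example, `timed_cost 1.65 ./run_pipeline.sh sample1`, where `run_pipeline.sh` is a hypothetical script that runs FASTQ-to-VCF for one sample.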

Benchmark Results and Comparative Analysis

The table below summarizes the quantitative benchmarking data from the comparative analysis:

Table 1: Performance Benchmarking of Sentieon and Parabricks on GCP

| Metric | Sentieon DNASeq | Clara Parabricks |
|---|---|---|
| VM Configuration | 64 vCPUs, 57 GB memory | 48 vCPUs, 58 GB memory, 1x NVIDIA T4 GPU |
| Hourly Cost | $1.79 | $1.65 |
| Processing Approach | CPU-optimized | GPU-accelerated |
| Performance Conclusion | Comparable performance | Comparable performance |
| Key Advantage | Efficient CPU utilization | GPU acceleration for compatible workloads |

Workflow Visualization

The following diagram illustrates the experimental workflow and troubleshooting pathways for both Sentieon and Parabricks:

Diagram 1: NGS Analysis Workflow and Troubleshooting Pathways

Computational Infrastructure Solutions

Table 2: Essential Research Reagents and Computational Solutions

| Resource Type | Specific Solution | Function/Purpose |
|---|---|---|
| Accelerated Analysis Tools | Sentieon DNASeq | CPU-optimized pipeline for rapid variant calling |
| | NVIDIA Clara Parabricks | GPU-accelerated pipeline for genomic analysis |
| Cloud Platforms | Google Cloud Platform (GCP) | Scalable infrastructure for NGS analysis |
| | Amazon Web Services (AWS) | Alternative cloud computing resources |
| Reference Data | Genome Reference Consortium | Maintains the human reference genome assembly |
| | 1000 Genomes Project | Provides population genetic variation data |
| Data Repositories | Sequence Read Archive (SRA) | Stores and distributes raw sequencing data |
| | UK Biobank | Provides controlled-access genomic and phenotypic data |

Data Management and Workflow Solutions

Containerization Technologies: Docker and Singularity enable reproducible analysis environments, encapsulating software dependencies to ensure consistent results across different computational platforms [57].

Workflow Management Systems: Platforms like Nextflow and Snakemake facilitate scalable, reproducible genomic analyses through structured pipeline definition and execution [57].

Data Format Standards: SAM/BAM for alignments and VCF for variants represent de facto standard formats developed through large-scale collaborations like the 1000 Genomes Project, ensuring interoperability between tools [57].
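Because VCF is a plain, tab-separated format (header lines start with `#`, and the chromosome sits in the first column of each data line), generic tools can read it directly. The one-liner below, a sketch with an illustrative name that assumes an uncompressed VCF, counts variant records per contig.

```bash
# count_variants_per_contig VCF
# Skips '#' header lines and counts data lines by CHROM (column 1),
# printing "contig count" pairs sorted by contig name.
count_variants_per_contig() {
  awk -F'\t' '!/^#/ { n[$1]++ } END { for (c in n) print c, n[c] }' "$1" | sort
}
```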

The implementation of ultra-rapid NGS analysis tools like Sentieon and Parabricks on cloud platforms represents a transformative approach to genomic data management in research and clinical settings. By leveraging the scalable infrastructure of cloud computing and the optimized performance of these specialized pipelines, researchers and healthcare providers can significantly reduce time-to-diagnosis for critical conditions while managing computational costs effectively.

The troubleshooting guides and performance benchmarks provided in this technical support center equip genomic scientists with practical solutions to common implementation challenges, facilitating broader adoption of these accelerated analysis methodologies. As the field continues to evolve with increasing data volumes and analytical complexity, such optimized computational workflows will become increasingly essential for extracting meaningful insights from large-scale genomic datasets.

Optimizing Your NGS Data Strategy: Cost Management and Performance Tuning