Machine Learning in Genomic Cancer Data: From Big Data to Precision Oncology


Abstract

This article provides a comprehensive introduction to machine learning (ML) applications in genomic cancer data, tailored for researchers, scientists, and drug development professionals. It covers the foundational need for ML in managing the scale and complexity of cancer genomics, explores key methodologies like convolutional and graph neural networks for tasks such as variant calling and multi-omics integration, and addresses critical challenges including data heterogeneity and model interpretability. Finally, it outlines the path for clinical validation and the future role of ML in advancing precision medicine, offering a holistic view from data analysis to clinical application.

The Genomic Data Deluge: Why Machine Learning is Indispensable in Modern Oncology

The field of genomics is undergoing a data explosion, driven by drastic reductions in the cost of high-throughput sequencing technologies [1]. We have entered the era of millions of available genomes, where each human genome—composed of billions of nucleotides—can occupy over 200 gigabytes of storage as raw sequence data [2]. The total global data generated from processing human genomic sequences is projected to require approximately 40 exabytes of storage capacity [2]. This massive data accumulation represents an unprecedented computational challenge as researchers scale their analyses from single-gene investigations to whole-population studies, particularly within genomic cancer research where integrating multi-omics data is essential for advancing precision medicine [3].

This data deluge is not merely a storage problem but represents a fundamental shift in biological research methodology. The transition from studying single genes in isolation to analyzing entire genomes across populations has revealed extraordinary complexity in genomic information processing [1]. In cancer research, this comprehensive approach is crucial for understanding tumor heterogeneity, identifying driver mutations, and developing personalized treatment strategies [4] [5]. The integration of artificial intelligence and machine learning methods has become indispensable for extracting meaningful patterns from these vast genomic datasets, enabling researchers to translate multidimensional biological information into clinically actionable knowledge [3] [5].

The Scale of Genomic Big Data

Quantitative Dimensions of Genomic Data

The exponential growth of genomic data presents substantial challenges across multiple dimensions—volume, velocity, variety, and complexity—that collectively define the big data paradigm in genomics [1] [2].

Table 1: Quantitative Dimensions of Genomic Data Scale

| Data Dimension | Scale Metrics | Research Implications |
|---|---|---|
| Individual Genome | >200 GB per raw sequence [2] | Requires high-memory computing nodes for assembly and analysis |
| Population Studies | Petabytes to exabytes for millions of genomes [2] | Demands distributed computing frameworks like Spark or Hadoop [1] |
| Variant Burden | >4 million variants per human genome [6] | Creates interpretation challenges with high false-positive rates |
| Data Generation Rate | Drastically decreasing sequencing costs [1] | Enables large-scale projects but exacerbates storage and analysis bottlenecks |
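To put the figures quoted above in proportion, the following is a back-of-the-envelope sketch only. It assumes decimal units (1 GB = 10^9 bytes, 1 EB = 10^18 bytes) and treats the 40-exabyte projection as if it were all raw sequence at 200 GB per genome, which is a simplification; the function name is illustrative.

```python
# Rough storage arithmetic using the figures cited in the text.
# Assumption: decimal (SI) units; real systems may report binary units.
GB = 10**9
EB = 10**18


def genomes_that_fit(total_bytes: int, bytes_per_genome: int) -> int:
    """Return how many genomes of a given size fit in a storage budget."""
    return total_bytes // bytes_per_genome


raw_genome_bytes = 200 * GB   # "over 200 gigabytes" per raw genome [2]
projected_bytes = 40 * EB     # "approximately 40 exabytes" projected [2]

n_genomes = genomes_that_fit(projected_bytes, raw_genome_bytes)
print(f"{n_genomes:,} raw genomes at 200 GB each fit in 40 EB")
```

Because 200 GB is quoted as a lower bound per genome, the result is best read as an upper bound on the number of raw genomes that such a budget could hold.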

Multi-Omics Data Integration

In cancer genomics, the challenge extends beyond DNA sequence data to include diverse data modalities that must be integrated for comprehensive analysis. These include:

- Epigenomic data: DNA methylation patterns, histone modifications, and chromatin accessibility from assays like bisulfite sequencing and ChIP-seq [1]

- Transcriptomic data: Gene expression quantification from RNA-Seq, including alternative splicing and fusion transcripts [7]

- Proteomic and metabolomic data: Protein expression and metabolic pathway activities [3]

- Clinical and phenotypic data: Patient outcomes, treatment responses, and pathology reports [6] [5]

The integration of these diverse data types creates both computational and analytical challenges, as researchers must develop methods to normalize, harmonize, and jointly analyze data from fundamentally different biochemical sources and measurement technologies [3].

Genomic Data Processing Workflows

Standardized Processing Pipelines

Genomic data processing follows standardized computational workflows that transform raw data into biologically interpretable information. The National Cancer Institute's Genomic Data Commons (GDC) has established robust pipelines for processing various types of genomic data, providing a framework for large-scale cancer genomics research [7].

Table 2: Genomic Data Processing Pipelines

| Pipeline Type | Input Data | Key Processing Steps | Output Data |
|---|---|---|---|
| DNA-Seq Somatic Variant Analysis | Tumor/Normal BAM/FASTQ [7] | Alignment, co-cleaning, variant calling (MuSE, Mutect2, Pindel, Varscan2), annotation | Somatic MAF files, annotated variants |
| RNA-Seq Gene Expression | RNA-Seq FASTQ [7] | Two-pass alignment, gene quantification (STAR), normalization (FPKM, FPKM-UQ) | Gene expression values, fusion transcripts |
| Single-Cell RNA-Seq | scRNA-Seq FASTQ [7] | CellRanger counting, Seurat analysis, dimensional reduction | Filtered/raw counts, cluster coordinates, differential expression |
| miRNA-Seq Analysis | miRNA-Seq FASTQ [7] | Alignment, isoform detection, RPM normalization | miRNA expression levels, isoform data |
| Methylation Analysis | Methylation array intensities [7] | Beta value calculation, germline information masking | Methylation beta values |
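The FPKM and FPKM-UQ normalizations named in the RNA-Seq row can be illustrated with a small sketch. This uses the commonly cited definitions (fragments per kilobase per million, with the upper-quartile variant replacing the total count by the 75th-percentile gene count). It does not reproduce the GDC implementation: the gene names and counts are invented, the nearest-rank percentile is a simplification, and the choice of which genes enter the denominator is left out.

```python
import math


def fpkm(read_count: float, gene_length_bp: float, total_reads: float) -> float:
    """Fragments per kilobase of transcript per million mapped reads."""
    return read_count * 1e9 / (gene_length_bp * total_reads)


def upper_quartile(counts: list[float]) -> float:
    """75th percentile by the nearest-rank method (a simplifying assumption)."""
    ordered = sorted(counts)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def fpkm_uq(read_count: float, gene_length_bp: float, uq_count: float) -> float:
    """FPKM variant that scales by the upper-quartile gene count."""
    return read_count * 1e9 / (gene_length_bp * uq_count)


# Hypothetical toy sample: gene -> (read count, gene length in bp)
sample = {
    "GENE_A": (500, 1000),
    "GENE_B": (1000, 2000),
    "GENE_C": (1500, 1500),
    "GENE_D": (2000, 4000),
}
total = sum(count for count, _ in sample.values())
uq = upper_quartile([count for count, _ in sample.values()])

for gene, (count, length) in sample.items():
    print(gene, round(fpkm(count, length, total), 1), round(fpkm_uq(count, length, uq), 1))
```

The upper-quartile variant is less sensitive to a handful of very highly expressed genes dominating the total read count, which is the usual motivation for offering both.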

End-to-End Genomic Data Flow

The journey of genomic data from instrument to clinical interpretation involves multiple transformation steps and data repositories. The framework developed by the NIH National Human Genome Research Institute (NHGRI) IGNITE Network consortium illustrates this complex data flow, which applies to both germline and somatic testing in cancer genomics [6].

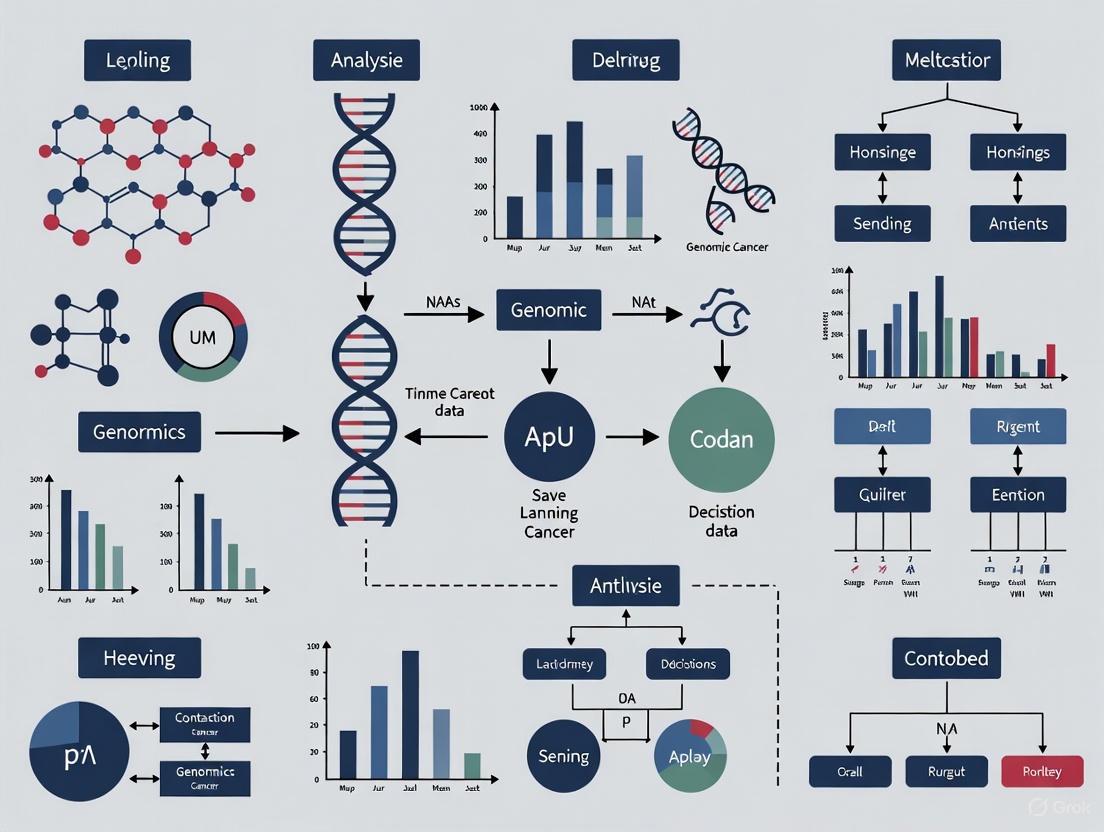

Diagram 1: Genomic Data Analysis Workflow

This data flow framework highlights the critical pathway from raw data generation to clinical application, with particular importance in cancer genomics for identifying actionable mutations and informing treatment decisions [6]. The process requires specialized bioinformatics pipelines that transform sequencing signals into variant calls, followed by annotation and interpretation against established knowledge bases like dbSNP, OMIM, and ClinVar [7] [6].

Computational Frameworks for Genomic Analysis

High-Performance Computing Requirements

The computational intensity of genomic analysis necessitates specialized computing frameworks capable of handling terabyte-scale datasets [1]. Key requirements include:

- Parallel processing capabilities: Genomic data processing is inherently parallelizable, with different genomic regions often analyzed independently [2]

- Large memory capacity: De novo genome assembly and variant calling require substantial RAM, with some operations needing hundreds of gigabytes [1]

- High-performance storage: Parallel file systems that support simultaneous access by multiple computing nodes are essential for collaborative research [2]

- Accelerated computing: GPU-accelerated algorithms are increasingly important for deep learning applications in genomics [2]
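The region-level parallelism described in the first requirement can be sketched as follows. This is a toy illustration: the per-region task (GC fraction) and the region names are placeholders, and a thread pool is used only to keep the example portable. CPU-bound production work would more plausibly use processes or a cluster scheduler.

```python
from concurrent.futures import ThreadPoolExecutor


def gc_fraction(sequence: str) -> float:
    """Stand-in for a per-region analysis: fraction of G/C bases."""
    if not sequence:
        return 0.0
    gc_count = sum(1 for base in sequence.upper() if base in "GC")
    return gc_count / len(sequence)


def analyze_regions(regions: dict[str, str], max_workers: int = 4) -> dict[str, float]:
    """Run the per-region task independently on each region and collect results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with region names.
        results = list(pool.map(gc_fraction, regions.values()))
    return dict(zip(regions.keys(), results))


# Hypothetical regions keyed by coordinate label
toy_regions = {"chr1:1-8": "ACGTGGCC", "chr2:1-4": "ATAT"}
print(analyze_regions(toy_regions))
```

The key property is that no region's result depends on another's, so the same scatter and gather shape carries over to per-chromosome variant calling.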

Cloud-Based Genomic Data Management

Cloud computing platforms have become essential for genomic data storage and analysis, providing scalable solutions to address the substantial storage and computational demands [8] [2].

Table 3: Cloud Storage Considerations for Genomic Data

| Requirement | Technical Specifications | Implementation Examples |
|---|---|---|
| Scalability | Ability to scale to exabytes across billions of files [2] | AWS HealthOmics, Google Cloud Life Sciences |
| Security & Compliance | HIPAA compliance, in-flight/at-rest encryption [2] | AWS S3 encryption, Azure Blob Storage security |
| Data Access Patterns | Parallel file access, object storage support [2] | WEKA cloud file systems, AWS ParallelCluster |
| Cost Management | Hot/cold storage tiers, lifecycle policies [8] [2] | Amazon S3 Glacier Deep Archive (90% cost savings) |
| Collaboration Features | Secure data sharing, global accessibility [8] | DNAnexus, Seven Bridges platforms on AWS |

Leading genomics organizations including Ancestry, Illumina, and Genomics England leverage cloud platforms to securely store, analyze, and collaborate on genomic data while adhering to data sovereignty requirements [8]. The Registry of Open Data on AWS hosts more than 70 life sciences databases, including The Cancer Genome Atlas, providing researchers with access to large-scale genomic datasets [8].

Machine Learning and AI Applications in Genomic Cancer Research

AI-Driven Genomic Analysis

Artificial intelligence, particularly deep learning, has become indispensable for analyzing complex genomic data in cancer research [4] [5]. These methods excel at identifying patterns in high-dimensional data that may elude traditional statistical approaches.

- Variant Prioritization: Deep learning models can prioritize clinically significant variants from the millions identified in whole genome sequencing, reducing interpretation burden [6] [5]

- Gene Expression Classification: Convolutional neural networks (CNNs) and recurrent neural networks (RNNs) can classify cancer subtypes based on gene expression patterns [4]

- Regulatory Element Prediction: AI models predict non-coding regulatory elements and their potential impact on gene expression in cancer [1] [5]

- Multi-Omics Integration: Transformer-based architectures integrate genomic, transcriptomic, and epigenomic data to predict therapeutic responses [4]
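To make the expression-based classification task concrete, here is a deliberately simple stand-in: a nearest-centroid classifier in plain Python, not the CNN or RNN models mentioned above. The two-gene expression vectors and subtype labels are invented for illustration.

```python
import math


def centroid(rows: list[list[float]]) -> list[float]:
    """Column-wise mean of a list of expression vectors."""
    return [sum(column) / len(rows) for column in zip(*rows)]


def fit(samples: list[list[float]], labels: list[str]) -> dict[str, list[float]]:
    """Compute one mean expression profile (centroid) per subtype label."""
    grouped: dict[str, list[list[float]]] = {}
    for vector, label in zip(samples, labels):
        grouped.setdefault(label, []).append(vector)
    return {label: centroid(rows) for label, rows in grouped.items()}


def predict(centroids: dict[str, list[float]], vector: list[float]) -> str:
    """Assign the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vector))


# Hypothetical training data: rows are samples, columns are two genes
train = [[5.0, 1.0], [6.0, 1.5], [1.0, 5.0], [1.5, 6.0]]
labels = ["subtype_A", "subtype_A", "subtype_B", "subtype_B"]

model = fit(train, labels)
print(predict(model, [5.5, 1.2]))
```

Real expression matrices have thousands of genes and far fewer samples, which is why feature selection, regularization, and held-out validation matter much more than this toy suggests.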

AI in Cancer Diagnosis and Treatment

In clinical oncology, AI applications are transforming cancer diagnosis, treatment selection, and outcome prediction [4] [5].

Diagram 2: AI Applications in Genomic Cancer Research

AI systems demonstrate remarkable performance in cancer diagnostics, with studies showing that deep learning models can match or exceed human expert performance in tasks such as mammogram interpretation [4] [5]. For instance, Google Health's AI system reduced false positives by 5.7% and false negatives by 9.4% in breast cancer screening compared to radiologists [5]. In pathology, AI-powered digital pathology platforms can detect micrometastases and rare cancer subtypes that might be overlooked by human pathologists [5].

Successful genomic cancer research requires both wet-lab reagents and computational resources. The following table outlines key components of the modern genomic researcher's toolkit.

Table 4: Essential Research Reagents and Computational Resources

| Category | Specific Tools/Reagents | Function/Purpose |
|---|---|---|
| Sequencing Technologies | Illumina NGS, PacBio SMRT, Oxford Nanopore | Generate raw genomic sequence data from tumor and normal samples [1] [7] |
| Alignment Tools | BWA-MEM, STAR, HISAT2 | Map sequencing reads to reference genome (GRCh38) [7] |
| Variant Callers | MuSE, Mutect2, Pindel, Varscan2, GATK | Identify somatic and germline variants from aligned reads [7] |
| AI/ML Frameworks | TensorFlow, PyTorch, Scikit-learn | Develop predictive models for classification and outcome prediction [4] [5] |
| Genomic Databases | dbSNP, ClinVar, OMIM, COSMIC, TCGA | Annotate variants and provide clinical interpretations [7] [6] |
| Workflow Management | Nextflow, Snakemake, Cromwell | Orchestrate complex multi-step genomic analyses [7] |
| Visualization Tools | IGV, UCSC Genome Browser, Circos | Visualize genomic data, variants, and rearrangements [7] |
| Cloud Platforms | AWS HealthOmics, DNAnexus, Seven Bridges | Scalable storage and computation for collaborative research [8] |

Future Perspectives and Emerging Challenges

Emerging Technologies and Approaches

The field of genomic data science continues to evolve rapidly, with several emerging technologies poised to address current limitations and generate new data types:

- Third-generation sequencing: Long-read technologies from PacBio and Oxford Nanopore provide more complete haplotype resolution and access to repetitive regions but introduce new computational challenges due to higher error rates [1]

- Single-cell sequencing: Enables resolution of cellular heterogeneity within tumors but generates extremely sparse data requiring specialized statistical methods [1]

- Spatial transcriptomics: Combines gene expression data with spatial localization within tissues, creating massive image-based datasets [1] [3]

- Live-cell imaging and genomics: Integration of dynamic imaging data with genomic profiles to understand temporal dynamics in cancer progression [1]

Ethical and Computational Challenges

As genomic data generation accelerates, several challenges must be addressed to realize its full potential in cancer research:

- Data privacy and security: Genomic data is inherently identifiable and requires robust security frameworks, especially when sharing across institutions [8] [2]

- Algorithmic bias: AI models trained on limited populations may not generalize across diverse ethnic groups, potentially exacerbating health disparities [4] [9]

- Interpretability: The "black box" nature of many deep learning models limits clinical adoption, creating a need for explainable AI in genomic medicine [4] [10]

- Data standardization: Inconsistent data formats and annotation standards hinder data sharing and integrative analyses across research groups [6] [3]

The exponential growth of genomic data represents both a formidable challenge and unprecedented opportunity in cancer research. By leveraging advanced computational frameworks, machine learning algorithms, and cloud-based infrastructure, researchers can translate this wealth of genomic information into improved understanding of cancer biology and enhanced patient care through precision oncology approaches. The continued development of scalable analytical methods will be essential for harnessing the full potential of population-scale genomic data to address the complexity of cancer.

Next-Generation Sequencing (NGS) has fundamentally transformed oncology research by enabling comprehensive genomic profiling of tumors at unprecedented resolution and scale. This family of technologies serves as the foundational data generation engine for modern precision oncology, facilitating the identification of genetic alterations that drive cancer progression, treatment resistance, and metastatic potential. The emergence of machine learning in genomic cancer research has further amplified the value of NGS-derived data, creating synergistic partnerships where high-quality genomic data trains predictive algorithms that in turn extract previously inaccessible biological insights from complex datasets.

The technological evolution from Sanger sequencing to NGS represents a paradigm shift in throughput and capability. Unlike traditional methods that process single DNA fragments sequentially, NGS platforms perform massively parallel sequencing, simultaneously processing millions of DNA fragments [11] [12]. This architectural advancement has dramatically reduced the time and cost associated with comprehensive genomic analysis while providing the rich, multidimensional datasets required for sophisticated machine learning applications. The continuous improvement in sequencing chemistries, detection methods, and bioinformatics pipelines has established NGS as an indispensable tool for researchers and drug development professionals seeking to decode the molecular complexity of cancer.

This technical guide examines the three primary NGS-based approaches for mutation detection and genomic profiling: Whole Genome Sequencing (WGS), Whole Exome Sequencing (WES), and targeted NGS panels. We will explore their respective technical specifications, experimental considerations, and applications within the context of machine learning-driven cancer genomics research, providing researchers with a framework for selecting appropriate methodologies for specific research objectives.

Core Definitions and Genomic Coverage

Whole Genome Sequencing (WGS) provides the most comprehensive approach by sequencing the entire genome, including all coding and non-coding regions. This method captures approximately 3 billion base pairs of the human genome, enabling detection of variants across intergenic, intronic, and regulatory regions alongside the protein-coding exons [13] [14]. The comprehensive nature of WGS makes it particularly valuable for discovering novel biomarkers in non-coding regions and identifying structural variants that may be missed by targeted approaches.

Whole Exome Sequencing (WES) focuses specifically on the protein-coding regions of the genome (exons), which constitute approximately 1-2% of the genome (~30-40 million base pairs) but harbor an estimated 85% of known disease-causing variants [15] [16]. This targeted approach provides deep coverage of clinically relevant regions while generating substantially less data than WGS, making it a cost-effective option for many research applications focused on coding variants.

Targeted NGS Panels sequence a predefined set of genes or genomic regions known to be associated with specific cancer types or pathways. These panels typically cover from dozens to hundreds of genes, with extreme focus enabling very high sequencing depth (often 500x-1000x or higher) that facilitates detection of low-frequency variants in heterogeneous tumor samples [17] [18]. The limited scope makes targeted panels efficient for clinical applications where specific actionable mutations guide treatment decisions.
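Why very high depth helps with low-frequency variants can be illustrated with a simple binomial model. This is a sketch under strong assumptions: each read independently carries the variant with probability equal to the variant allele fraction, sequencing error is ignored, and the threshold of five supporting reads is an arbitrary illustrative choice.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant reads) when reads ~ Binomial(depth, vaf)."""
    p_below_threshold = sum(
        comb(depth, i) * vaf**i * (1 - vaf) ** (depth - i)
        for i in range(min_alt_reads)
    )
    return 1.0 - p_below_threshold


# Compare a WGS-like depth with panel-like depths for a 1% allele fraction.
for depth in (30, 200, 1000):
    p = detection_probability(depth, vaf=0.01, min_alt_reads=5)
    print(f"depth {depth:>4}x: P(detect) = {p:.4f}")
```

Under this model a 1% variant is almost never supported by five reads at 30x but usually is at 1000x, which matches the qualitative argument for deep targeted panels in heterogeneous tumors.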

Comparative Technical Specifications

Table 1: Comparative analysis of key genomic sequencing technologies

| Feature | Targeted NGS Panels | Whole Exome Sequencing (WES) | Whole Genome Sequencing (WGS) |
|---|---|---|---|
| Genomic Coverage | 0.01-5 Mb (targeted genes) | ~30-40 Mb (exonic regions) | ~3,000 Mb (entire genome) |
| Sequencing Depth | Very high (500-1000x+) | High (100-200x) | Moderate (30-60x) |
| Variant Types Detected | SNVs, Indels, CNVs, specific fusions | SNVs, Indels, some CNVs | SNVs, Indels, CNVs, SVs, non-coding variants |
| Data Volume per Sample | Low (1-5 GB) | Moderate (10-20 GB) | High (80-100 GB) |
| Primary Strengths | Cost-effective for focused questions; ideal for low-quality samples | Balanced approach for known and novel coding variants | Most comprehensive variant detection |
| Key Limitations | Limited to predefined targets; poor for novel gene discovery | Misses non-coding and regulatory variants; lower depth than panels | Higher cost; data storage/analysis challenges; VUS in non-coding regions |
| Best Applications | Validation studies; clinical testing; profiling known cancer genes | Rare disease diagnosis; novel gene discovery in coding regions | Comprehensive biomarker discovery; structural variant analysis |
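The depth and coverage figures in the table are linked by the standard relationship: mean depth = (number of reads × read length) / target size. The sketch below inverts that relationship to estimate read counts. It assumes uniform coverage and 100% on-target reads, both optimistic, and uses a 150 bp read length and a 35 Mb exome as illustrative choices.

```python
def reads_needed(mean_depth: int, target_size_bp: int, read_length_bp: int) -> int:
    """Reads required for a mean depth, rounded up (uniform coverage assumed)."""
    total_bases = mean_depth * target_size_bp
    return -(-total_bases // read_length_bp)  # ceiling division on integers


# Illustrative targets based on the sizes in the table above
wgs_reads = reads_needed(mean_depth=30, target_size_bp=3_000_000_000, read_length_bp=150)
wes_reads = reads_needed(mean_depth=100, target_size_bp=35_000_000, read_length_bp=150)
panel_reads = reads_needed(mean_depth=1000, target_size_bp=1_000_000, read_length_bp=150)

print(f"WGS 30x:     {wgs_reads:,} reads")
print(f"WES 100x:    {wes_reads:,} reads")
print(f"Panel 1000x: {panel_reads:,} reads")
```

Even at 1000x, a 1 Mb panel needs far fewer reads than a 30x genome, which is the arithmetic behind the data-volume row. In practice capture inefficiency and duplicate reads push the real requirement higher.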

Table 2: Diagnostic performance and clinical utility across sequencing methods

| Performance Metric | Targeted Panels | WES | WGS |
|---|---|---|---|
| Diagnostic Yield | Varies by panel design | ~50-53% in rare diseases [15] [14] | ~61% in pediatric cohorts [14] |
| Ability to Detect CNVs/SVs | Limited to panel design | Limited sensitivity [19] [16] | Comprehensive detection [13] [14] |
| Turnaround Time | 4-7 days [17] | 2-4 weeks | 3-6 weeks |
| Cost per Sample (Relative) | $ | $$ | $$$ |
| Actionable Findings in Cancer | 22-26% of cases [18] | 17.5% of genetic variance [13] | 90% of genetic signal [13] |

Experimental Protocols and Methodologies

Sample Preparation and Library Construction

The initial phase of any NGS workflow involves nucleic acid extraction and quality control. For cancer genomics applications, both fresh-frozen and Formalin-Fixed Paraffin-Embedded (FFPE) tissue specimens are commonly used, each presenting unique challenges. FFPE samples often contain fragmented DNA requiring specialized extraction methods and quality assessment [17]. The minimum input requirement for most NGS assays is ≥50 nanograms of DNA, though this varies by platform and application [17].

Library preparation involves several standardized steps:

- DNA Fragmentation: Mechanical or enzymatic fragmentation of genomic DNA to appropriate size distributions (typically 200-500 bp).

- Adapter Ligation: Addition of platform-specific adapter sequences to fragment ends enabling amplification and sequencing.

- Target Enrichment: Method-dependent selection of genomic regions of interest, such as hybridization-based capture for exomes and targeted panels; WGS skips this step.

- Library Amplification: PCR amplification to generate sufficient material for sequencing.

- Quality Control: Quantitative and qualitative assessment of final libraries before sequencing.

For WGS, the library preparation process is typically simpler, often employing tagmentation-based approaches (e.g., Illumina Nextera) that combine fragmentation and adapter insertion in a single step [14]. The availability of automated library preparation systems (e.g., MGI SP-100RS) has improved reproducibility while reducing hands-on time and potential for contamination [17].

Sequencing Platforms and Data Generation

Multiple sequencing platforms are available, each with distinct characteristics and applications:

Illumina platforms utilize sequencing-by-synthesis chemistry with fluorescently labeled nucleotides, providing high accuracy (99.9%) and high throughput [11] [12]. These systems generate short reads (75-300 bp) ideal for detecting single nucleotide variants and small indels. Common instruments include NovaSeq 6000, NextSeq 500, and MiSeq, with NovaSeq 6000 being widely used for WGS applications aiming for 30x coverage [14].

MGI Tech platforms employ combinatorial Probe-Anchor Synthesis (cPAS) and DNA Nanoball (DNB) technologies, offering an alternative to Illumina with competitive accuracy and lower operating costs [17]. The DNBSEQ-G50RS platform demonstrates precise SNP and indel detection capabilities suitable for both targeted and whole genome applications.

Third-Generation Technologies including Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) generate long reads (10kb+) that excel in resolving complex structural variants and repetitive regions [20]. While these platforms traditionally had higher error rates, recent improvements have enhanced their utility in cancer genomics for characterizing fusion genes and complex rearrangements.

Bioinformatics Analysis and Machine Learning Integration

Primary and Secondary Analysis Workflows

The computational analysis of NGS data follows a structured pipeline transforming raw sequencing data into biologically meaningful variant calls:

Diagram 1: NGS data analysis workflow

Primary Analysis begins with base calling and quality assessment using tools like FastQC. Sequencing reads are then aligned to a reference genome (e.g., GRCh38) using aligners such as BWA-MEM or STAR [11] [12]. This step generates BAM files containing aligned reads used for subsequent analysis.
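As a small illustration of the quality-assessment step, the sketch below parses four-line FASTQ records and converts Phred+33 quality characters to scores, where a score Q corresponds to an error probability of 10^(-Q/10). It is not a substitute for FastQC: the example reads and the mean-quality threshold of 30 are invented for illustration.

```python
def phred_to_error_probability(q: float) -> float:
    """Convert a Phred quality score to a base-call error probability."""
    return 10 ** (-q / 10)


def mean_read_quality(quality_string: str, offset: int = 33) -> float:
    """Mean Phred score of a read, assuming Phred+33 encoding."""
    scores = [ord(ch) - offset for ch in quality_string]
    return sum(scores) / len(scores)


def parse_fastq(text: str):
    """Yield (name, sequence, quality) from simple four-line FASTQ records."""
    lines = text.strip().splitlines()
    for i in range(0, len(lines) - 3, 4):
        header, sequence, _separator, quality = lines[i:i + 4]
        yield header[1:], sequence, quality


def filter_reads(text: str, min_mean_quality: float = 30.0) -> list[str]:
    """Return names of reads whose mean quality meets the threshold."""
    return [
        name
        for name, _sequence, quality in parse_fastq(text)
        if mean_read_quality(quality) >= min_mean_quality
    ]


# Hypothetical reads: 'I' encodes Q40 and '5' encodes Q20 under Phred+33.
EXAMPLE_FASTQ = """@read1
ACGT
+
IIII
@read2
ACGT
+
5555
"""

print(filter_reads(EXAMPLE_FASTQ))
```

A per-base accuracy of 99.9% corresponds to Q30, which is why Q30 is a common reporting threshold for short-read data.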

Secondary Analysis involves variant detection using specialized callers:

- SNVs and Indels: GATK, DRAGEN, Strelka2 [19]

- Copy Number Variants (CNVs): CNVkit, DRAGEN CNV [17] [14]

- Structural Variants (SVs): Manta, DRAGEN SV [19]

- Repeat Expansions: ExpansionHunter [19]

The DRAGEN (Dynamic Read Analysis for GENomics) platform exemplifies integrated secondary analysis, providing highly accurate and efficient variant calling through hardware-accelerated processing [13] [14].

Machine Learning Applications in Variant Interpretation

Machine learning has become increasingly integral to genomic data analysis, particularly in distinguishing driver mutations from passenger mutations and predicting variant pathogenicity. Key applications include:

Variant Prioritization: ML models such as PrimateAI-3D use deep learning to predict variant pathogenicity based on evolutionary conservation and biochemical constraints, with studies showing significant correlation between PrimateAI-3D scores and variant effect sizes [13]. These tools help researchers prioritize potentially functional variants from the thousands identified in each tumor sample.

Variant Calling Optimization: ML algorithms improve variant calling accuracy by integrating multiple sequence features and quality metrics. The Sophia DDM software exemplifies this approach, using machine learning for rapid variant analysis and visualization of mutated and wild type hotspot positions [17].

Predictive Biomarker Discovery: ML models applied to WGS and WES data can identify complex genomic signatures predictive of treatment response or clinical outcomes. These approaches are particularly valuable for interpreting the non-coding genome captured by WGS but not by WES or targeted panels [13].

Research Reagent Solutions and Experimental Tools

Table 3: Essential research reagents and platforms for genomic sequencing

| Reagent Category | Specific Examples | Primary Function | Application Notes |
|---|---|---|---|
| Library Prep Kits | Illumina DNA PCR-Free Prep; Twist Library Preparation EF Kit 2.0 | Fragment DNA, add adapters, amplify libraries | PCR-free methods reduce bias for WGS [19] [14] |
| Target Enrichment | Twist Exome 2.0; Comprehensive Exome spike-in; Custom capture probes | Hybridization-based capture of genomic regions | Custom panels enable focused sequencing of cancer genes [17] [19] |
| Sequencing Kits | NovaSeq 6000 S4 Reagent; NextSeq 500/550 High Output Kit | Provide enzymes, buffers, and flow cells for sequencing | Platform-specific reagents determine read length and output [14] |
| Automation Systems | Zephyr G3 NGS Workstation; MGI SP-100RS | Automate library preparation steps | Improve reproducibility and throughput [17] |
| Reference Materials | HG001 (NA12878); HG002 (NA24385); HD701 | Quality control and assay validation | Essential for establishing assay performance [17] [19] |

Technology Selection Guidelines for Research Applications

Decision Framework for Methodology Selection

Choosing the appropriate genomic approach requires careful consideration of research objectives, sample characteristics, and computational resources. The following decision framework provides guidance for selecting optimal methodologies:

Diagram 2: Technology selection decision tree

Targeted NGS Panels are optimal when [17] [18]:

- Research focuses on established cancer genes with known clinical utility

- Sample quantity/quality is limited (e.g., liquid biopsies, degraded FFPE)

- Budget and timeline constraints require cost-effective, rapid turnaround

- High sensitivity for low-frequency variants is critical

Whole Exome Sequencing is recommended when [15] [16]:

- Investigating heterogeneous conditions without clear molecular etiology

- Conducting novel gene discovery within coding regions

- Balancing comprehensive coverage with budget considerations

- Studying rare diseases where 50-53% diagnostic yields are typical

Whole Genome Sequencing is preferable when [13] [14]:

- Pursuing comprehensive biomarker discovery including non-coding regions

- Detecting complex structural variants and copy number changes

- Studying diseases with suspected regulatory or deep intronic mutations

- Establishing reference data for long-term research programs
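The selection criteria above can be expressed as a small rule-based helper. This is one possible encoding, not the decision tree from the original diagram. The field names are hypothetical, and the precedence (non-coding or structural-variant needs first, then panel criteria, with WES as the default) is an assumption that a real study would revisit when criteria conflict.

```python
from dataclasses import dataclass


@dataclass
class StudyProfile:
    """Hypothetical summary of a study's requirements."""
    needs_noncoding_or_sv: bool       # regulatory, deep intronic, or complex SVs
    known_gene_targets: bool          # established cancer genes are the focus
    low_input_or_degraded: bool       # e.g. liquid biopsy or degraded FFPE
    needs_low_vaf_sensitivity: bool   # low-frequency variants are critical


def suggest_method(profile: StudyProfile) -> str:
    """Return a suggested sequencing approach under the assumed precedence."""
    if profile.needs_noncoding_or_sv:
        return "WGS"
    if (
        profile.known_gene_targets
        or profile.low_input_or_degraded
        or profile.needs_low_vaf_sensitivity
    ):
        return "Targeted panel"
    # Default: coding-region discovery balanced against budget
    return "WES"


print(suggest_method(StudyProfile(False, True, True, True)))
print(suggest_method(StudyProfile(False, False, False, False)))
print(suggest_method(StudyProfile(True, False, False, False)))
```

Budget and turnaround time are left out on purpose; they tend to act as constraints on whichever option the scientific criteria favor rather than as independent branches.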

Emerging Trends and Future Directions

The field of genomic sequencing continues to evolve rapidly, with several emerging trends shaping future research applications:

Multi-Omics Integration: Combining genomic data with transcriptomic, epigenomic, and proteomic profiles provides systems-level understanding of cancer biology. WGS serves as the foundational layer for these integrated analyses [11].

Long-Read Sequencing: Third-generation sequencing technologies are overcoming traditional limitations in resolving complex genomic regions, with PacBio and Oxford Nanopore platforms enabling direct detection of epigenetic modifications and phased variant information [20].

Single-Cell Genomics: Applying NGS technologies at single-cell resolution reveals tumor heterogeneity and clonal evolution patterns not apparent in bulk tissue analyses, with implications for understanding therapy resistance [11] [12].

AI-Enhanced Analysis: Deep learning approaches are increasingly being applied directly to raw sequencing data, potentially bypassing traditional alignment and variant calling steps to directly predict functional consequences [13] [16].

The selection of appropriate genomic sequencing technologies represents a critical decision point in cancer research study design. Targeted NGS panels, WES, and WGS each offer distinct advantages and limitations that must be balanced against research objectives, resources, and analytical capabilities. As machine learning becomes increasingly integrated into genomic analysis, the rich datasets generated by these technologies—particularly comprehensive WGS data—will continue to drive innovations in cancer diagnosis, treatment selection, and drug development. Researchers should consider establishing institutional capabilities for all three approaches, recognizing that the optimal technology varies across research questions and that multi-platform approaches often provide the most robust findings.

The advancement of machine learning (ML) in genomic cancer research is fundamentally reliant on large-scale, well-curated public databases. The Cancer Genome Atlas (TCGA), the Catalogue Of Somatic Mutations In Cancer (COSMIC), and the Cancer Cell Line Encyclopedia (CCLE) represent three cornerstone resources that provide complementary data types for training and validating predictive models. TCGA offers molecular characterization of over 20,000 primary cancer and matched normal samples spanning 33 cancer types, providing extensive multi-omics data from patient tumors [21]. COSMIC serves as the most detailed and comprehensive resource for exploring the effect of somatic mutations in human cancer, containing nearly 6 million coding mutations across 1.4 million tumour samples curated from over 26,000 publications [22]. CCLE provides comprehensive molecular profiling of cancer cell lines, enabling functional genomics and drug sensitivity studies [23] [24]. Together, these resources form a powerful ecosystem for developing ML approaches that can decipher cancer heterogeneity, predict drug response, and identify novel therapeutic targets.

Table 1: Key Characteristics of Major Genomic Resources for ML Training

| Resource | Primary Data Type | Sample/Cell Line Count | Key Applications in ML | Access Method |
|---|---|---|---|---|
| TCGA [21] | Multi-omics patient data | >20,000 primary cancer samples; 33 cancer types | Cancer subtype classification; Survival prediction; Biomarker discovery | Genomic Data Commons Data Portal |
| COSMIC [25] [22] | Somatic mutations & mutational signatures | ~6 million coding mutations; 1.4 million tumour samples | Mutational pattern analysis; Etiology identification; Signature extraction | COSMIC web portal (cancer.sanger.ac.uk) |
| CCLE [23] [24] | Cell line molecular profiles & drug response | >1,000 cancer cell lines | Drug sensitivity prediction; Preclinical modeling; Functional genomics | DepMap portal; CCLE website |

Data Modalities Available for ML Training

Table 2: Data Modalities Available Across Genomic Resources

| Data Modality | TCGA | COSMIC | CCLE | ML Application Examples |
|---|---|---|---|---|
| Genomic | Whole exome/genome sequencing; Copy number variations | Comprehensive somatic mutations; Copy number variants | Copy number aberrations; Mutations | Feature selection for classification; Mutation impact prediction |
| Transcriptomic | RNA-seq; miRNA; lncRNA | Gene expression variants | Gene expression; miRNA | Gene expression-based subtyping; Biomarker identification |
| Epigenomic | DNA methylation | Differentially methylated CpGs | DNA methylation | Epigenetic regulation analysis; Methylation-based classification |
| Proteomic | RPPA protein arrays | Limited protein data | Limited protein data | Protein signaling network analysis |
| Drug Response | Limited clinical treatment data | Drug resistance mutations | IC50 values; Drug sensitivity | Drug response prediction; Synergistic drug combination discovery |

Integration Frameworks and Methodologies

Data Alignment and Preprocessing Protocols

Effective integration of these resources requires sophisticated data alignment strategies. A critical challenge in combining TCGA patient data with CCLE cell line profiles involves the systematic differences between tumor samples and in vitro models. Celligner is an unsupervised alignment method that maps gene expression of tumor samples to the expression profiles of cell lines, addressing the technical and biological variances between these systems [24]. The alignment process involves contrastive Principal Component Analysis (cPCA), which identifies directions of variance that are enriched in one dataset relative to the other. Experimental protocols typically remove the top four contrastive principal components (cPC1-4) between DEPMAP and TCGA transcriptomes, which significantly reduces the correlation of tumor dependencies with tumor purity [26].
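The component-removal step can be illustrated with a minimal sketch, assuming NumPy and small synthetic matrices in place of real TCGA and DEPMAP transcriptomes. This is not Celligner's implementation: the function names, the contrast strength `alpha`, and the simulated "purity-like" signal are all hypothetical choices made for illustration.

```python
import numpy as np

def contrastive_components(target, background, alpha=1.0, n_components=4):
    """Top eigenvectors of cov(target) - alpha * cov(background)."""
    t = target - target.mean(axis=0)
    b = background - background.mean(axis=0)
    cov_t = t.T @ t / (t.shape[0] - 1)
    cov_b = b.T @ b / (b.shape[0] - 1)
    # The contrast matrix is symmetric, so eigh returns real eigenpairs.
    eigvals, eigvecs = np.linalg.eigh(cov_t - alpha * cov_b)
    order = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, order]  # shape: genes x n_components, orthonormal columns

def remove_components(matrix, components):
    """Center the matrix, then project out the given directions."""
    centered = matrix - matrix.mean(axis=0)
    return centered - centered @ components @ components.T

# Synthetic stand-ins: rows are samples, columns are genes.
rng = np.random.default_rng(0)
tumors = rng.normal(size=(60, 20))
cell_lines = rng.normal(size=(40, 20))
# Simulate variance present only in tumors (for example, a purity-like signal).
tumors[:, 0] += rng.normal(scale=5.0, size=60)

cpcs = contrastive_components(tumors, cell_lines, alpha=1.0, n_components=4)
tumors_clean = remove_components(tumors, cpcs)
cell_lines_clean = remove_components(cell_lines, cpcs)
```

After removal, both matrices have no remaining variance along the four contrastive directions, so downstream alignment or modeling sees only the shared structure plus whatever the chosen `alpha` did not flag as dataset-specific.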

For mutational signature analysis, COSMIC provides SigProfiler, a compilation of bioinformatic tools that address all steps needed for signature identification from raw data [25]. The standard workflow involves: (1) mutation matrix generation from raw sequencing data, (2) decomposition of mutational catalogs into signatures, (3) assignment of signatures to samples, and (4) comparison with reference signatures in the COSMIC database. The current reference includes six different variant classes: single base substitutions (SBS), doublet base substitutions (DBS), small insertions and deletions (ID), copy number (CN) signatures, structural variations (SV), and RNA single base substitutions [25].

ML Model Architectures for Resource Integration

Several pioneering studies have demonstrated effective frameworks for integrating these resources. The CellHit pipeline combines predictive models with Celligner alignment to identify cell lines whose transcriptomic profiles most closely match patient tumors, enabling translation of drug sensitivity predictions from cell lines to patients [23]. This approach uses XGBoost models trained on GDSC and PRISM drug sensitivity datasets, achieving a Pearson correlation coefficient of ρ = 0.89 for IC50 prediction [23].

To translate gene dependencies from cell lines to TCGA patient tumors, recent approaches have used elastic-net regularization for feature selection and modeling, training predictive models on genome-wide CRISPR-Cas9 knockout screens from DEPMAP and applying them to TCGA patient tumors [26]. This hybrid dependency map (TCGADEPMAP) leverages the experimental strengths of DEPMAP while adding the patient relevance of TCGA, successfully predicting lineage dependencies and oncogene essentialities [26].

Experimental Protocols and Workflows

Multi-Omics Similarity Scoring Framework

The CTDPathSim2.0 pipeline provides a comprehensive methodology for computing similarity scores between patient tumors and cell lines using multi-omics data [24]. This protocol enables researchers to identify the most relevant cell lines for specific cancer types or individual patients:

Data Acquisition and Processing: Download matched DNA methylation, gene expression, and copy number aberration (CNA) data from TCGA for tumor samples and from CCLE for cell lines. Perform quality control and normalization for each platform separately.

Immune Cell Deconvolution: Apply quadratic programming deconvolution algorithms to bulk tumor gene expression and DNA methylation data using reference signatures from immune and other non-tumor cell types (B cells, NK cells, CD4+ T cells, CD8+ T cells, monocytes, adipocytes, cortical neurons, and vascular endothelial cells). This step accounts for tumor microenvironment heterogeneity.

Pathway Activity Calculation: Compute enriched biological pathways for patient tumor samples and cancer cell lines using patient-specific and cell line-specific differentially expressed (DE), differentially methylated (DM), and differentially aberrated (DA) genes. Use reference pathway databases such as Reactome.

Similarity Score Computation: Calculate Spearman correlation coefficients to generate gene expression-, DNA methylation-, and CNA-based similarity scores. Integrate these scores using weighted combinations based on data quality and biological relevance for specific cancer types.

Validation and Application: Validate similarity scores by assessing whether top-ranked cell lines recapitulate drug response patterns observed in patient tumors for FDA-approved drugs specific to each cancer type.
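The similarity score computation step above can be sketched as follows, using only the Python standard library. This is an illustrative sketch, not the CTDPathSim2.0 code: the function names, the toy pathway activity vectors, the per-modality scores, and the modality weights are hypothetical placeholders.

```python
from math import sqrt

def average_ranks(values):
    """Rank values (1-based), giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def combined_similarity(scores, weights):
    """Weighted average of per-modality similarity scores."""
    total = sum(weights[m] for m in scores)
    return sum(scores[m] * weights[m] for m in scores) / total

# Toy pathway activity vectors for one tumor and one cell line.
tumor_expr = [2.1, 0.4, 3.3, 1.0, 0.7]
cell_expr = [1.8, 0.9, 2.9, 1.1, 0.2]
expr_score = spearman(tumor_expr, cell_expr)  # 0.9 for these toy values

# Methylation and CNA scores are assumed to be computed the same way.
scores = {"expression": expr_score, "methylation": 0.6, "cna": 0.4}
weights = {"expression": 0.5, "methylation": 0.3, "cna": 0.2}  # hypothetical weights
overall = combined_similarity(scores, weights)
```

Ranking all cell lines by `overall` for a given tumor would then yield the candidate list that the validation step compares against observed drug response.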

Mutational Signature Extraction Protocol

COSMIC provides standardized workflows for extracting mutational signatures from genomic data [25]:

Variant Calling and Classification: Process whole genome or whole exome sequencing data through standardized variant calling pipelines. Classify mutations according to COSMIC standards: 96 single base substitution (SBS) types (considering trinucleotide context), 78 doublet base substitution (DBS) types, and 83 small insertion/deletion (ID) types.

Mutational Catalog Generation: Create a mutational matrix for your dataset, with samples as rows and mutation types as columns. Normalize counts based on sequencing coverage and trinucleotide frequencies.

Signature Extraction: Use SigProfiler (available through COSMIC) to decompose the mutational catalogs into signatures. Apply non-negative matrix factorization (NMF) with multiple initializations to ensure robust results.

Signature Assignment: Compare extracted signatures to the reference COSMIC mutational signatures (v3.5). Assign cosine similarity scores to identify matching known signatures. Signatures with cosine similarity >0.85 are generally considered matches.

Etiology Interpretation: Interpret the biological or environmental processes underlying the identified signatures using COSMIC's detailed annotation of each signature's proposed etiology, associated cancer types, and potential underlying mechanisms.
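Two pieces of this protocol lend themselves to a short sketch: enumerating the 96 SBS channels and matching an extracted signature against references by cosine similarity. The code below uses only the standard library and is not SigProfiler. The reference profiles are toy vectors, not real COSMIC signatures, and the helper names are hypothetical. The NMF extraction step itself is omitted.

```python
from itertools import product
from math import sqrt

# Substitutions are reported relative to the pyrimidine base of the pair.
SUBSTITUTIONS = ["C>A", "C>G", "C>T", "T>A", "T>C", "T>G"]
BASES = "ACGT"

# 6 substitutions x 4 bases (5' flank) x 4 bases (3' flank) = 96 channels,
# written in the common "A[C>T]G" notation.
SBS_CHANNELS = [
    f"{five}[{sub}]{three}"
    for sub in SUBSTITUTIONS
    for five, three in product(BASES, BASES)
]

def build_catalog(mutations):
    """Count mutations per channel; returns a vector ordered like SBS_CHANNELS."""
    counts = dict.fromkeys(SBS_CHANNELS, 0)
    for channel in mutations:
        counts[channel] += 1
    return [counts[channel] for channel in SBS_CHANNELS]

def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def match_signature(extracted, references, threshold=0.85):
    """Return (best reference name or None, best score) using the >0.85 rule of thumb."""
    scored = [(name, cosine_similarity(extracted, ref)) for name, ref in references.items()]
    best_name, best_score = max(scored, key=lambda pair: pair[1])
    return (best_name if best_score > threshold else None), best_score

# Toy reference profiles (hypothetical, for illustration only).
toy_references = {
    "toy_C>T_rich": [1.0 if "C>T" in ch else 0.0 for ch in SBS_CHANNELS],
    "toy_flat": [1.0] * len(SBS_CHANNELS),
}
# A noisy copy of the C>T-rich profile stands in for an NMF-extracted signature.
extracted = [w + 0.05 for w in toy_references["toy_C>T_rich"]]
match, score = match_signature(extracted, toy_references)

# One sample's catalog row built from already-classified mutations.
sample_catalog = build_catalog(["A[C>T]G", "A[C>T]G", "T[C>A]A"])
```

In a real analysis the catalog rows for all samples would be stacked into the mutational matrix, decomposed by NMF, and each resulting signature passed through a matching step like `match_signature`.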

Visualization of Key Workflows

Multi-Omics Data Integration Workflow

Diagram: Workflow for genomic resource integration.

Cell Line to Patient Translation Framework

Diagram: Cell line to patient translation.

Table 3: Essential Computational Tools for Genomic Resource Utilization

| Tool/Resource | Function | Application Context | Access/Implementation |

|---|---|---|---|

| SigProfiler [25] | Mutational signature extraction and analysis | Identification of mutational patterns from tumor sequencing data | Python package; COSMIC integration |

| Celligner [24] | Alignment of cell line and tumor transcriptomics | Bridging preclinical models and patient data for translation | R package; available through GitHub |

| Elastic-net Regularization [26] | Feature selection for high-dimensional genomic data | Building predictive models of gene essentiality and drug response | Standard implementation in scikit-learn, GLMNET |

| XGBoost [23] | Gradient boosting for structured data | Drug sensitivity prediction with multi-omics features | Python/R packages with GPU support |

| cPCA (contrastive PCA) [26] | Dimensionality reduction emphasizing dataset differences | Removing technical artifacts when integrating different data sources | Python implementation available |

| CTDPathSim2.0 [24] | Multi-omics similarity scoring between tumors and cell lines | Identifying representative cell lines for specific cancer types | R software package |

The integration of TCGA, COSMIC, and CCLE represents a powerful paradigm for advancing machine learning applications in cancer genomics. These resources provide complementary data types that, when properly integrated through sophisticated computational methods, enable robust prediction of cancer subtypes, drug responses, and patient outcomes. Current methodologies including multi-omics alignment, mutational signature analysis, and cross-resource validation provide a strong foundation, yet challenges remain in addressing tumor heterogeneity, improving clinical translatability of cell line models, and developing interpretable ML approaches that provide biological insights alongside predictions [27].

Future directions in the field include the development of more sophisticated alignment methods that better capture tumor microenvironment complexity, the integration of emerging data types such as single-cell sequencing and spatial transcriptomics, and the implementation of privacy-preserving federated learning approaches to enable multi-institutional collaboration without data sharing [27]. As these technical advances progress, the seamless integration of TCGA, COSMIC, and CCLE will continue to drive innovations in precision oncology, ultimately enabling more personalized and effective cancer treatments.

The field of cancer genomics is undergoing a massive transformation, driven by the widespread adoption of Next-Generation Sequencing (NGS). Our DNA holds a wealth of information vital for future healthcare, but its sheer volume and complexity create a significant bottleneck between data generation and clinical application [28]. The process of converting raw sequence data into actionable clinical insights represents one of the most significant challenges in modern oncology research and drug development.

Next-Generation Sequencing has revolutionized genomics by making large-scale DNA and RNA sequencing faster, cheaper, and more accessible than ever [29]. However, this progress has unleashed a data deluge of unprecedented scale. A single human genome generates about 100 gigabytes of data, and with millions of genomes being sequenced globally, the totals are staggering [28]. By 2025, genomic data is projected to reach 40 exabytes (one exabyte is a billion gigabytes), creating analytical challenges that outpace traditional computational methods [28]. This growth strains even supercomputers and outpaces the hardware improvements described by Moore's Law, so analysis pipelines struggle to keep up and critical insights are delayed [28].

The integration of artificial intelligence and machine learning offers promising solutions to these challenges. AI and machine learning algorithms have emerged as indispensable tools in genomic data analysis, uncovering patterns and insights that traditional methods might miss [29]. For cancer researchers and drug development professionals, understanding this bottleneck—and the technologies emerging to address it—is crucial for advancing precision oncology and delivering personalized cancer treatments.

The Genomic Data Analysis Pipeline: From Sequencing to Interpretation

The journey from raw sequence to clinical insight follows a multi-stage analytical pipeline, each with its own computational challenges and requirements. Understanding this workflow is essential for identifying where bottlenecks occur and how they can be mitigated.

Pipeline Stages and Technical Challenges

Table 1: Stages in Genomic Data Analysis and Associated Challenges

| Pipeline Stage | Primary Function | Key Technical Challenges | Common Tools/Approaches |

|---|---|---|---|

| Primary Analysis | Base calling, quality scoring | Handling massive raw data volumes from sequencers; real-time processing demands | Illumina DRAGEN, Oxford Nanopore tools |

| Secondary Analysis | Read alignment, variant calling | Computational intensity; sequencing errors; algorithm variability | BWA-MEM, STAR, GATK, DeepVariant [28] |

| Tertiary Analysis | Biological interpretation, pathway analysis | Data integration; distinguishing driver from passenger mutations; clinical correlation | AI/ML models, multi-omics integration |

The analytical process begins with primary analysis, where raw signals from sequencing instruments are converted into nucleotide sequences with corresponding quality scores. The computational demands here are substantial, with modern sequencers generating terabytes of data per run [29].

Secondary analysis is where the most significant computational bottlenecks have traditionally occurred. This stage involves aligning sequences to a reference genome and identifying genetic variants, a process complicated by several factors. Sequencing errors can introduce false variants, making proper quality control essential for ensuring reliability [30]. Different alignment algorithms or variant calling methods may produce conflicting results, complicating interpretation [30]. Large datasets from whole-genome or transcriptome studies often require powerful servers and optimized workflows [30].

Tertiary analysis focuses on biological interpretation—connecting genetic variants to clinical meaning. This represents the most complex challenge, requiring integration of diverse datasets and distinguishing biologically significant mutations from benign variations. As Kevin Boehm, MD, PhD, of Memorial Sloan Kettering Cancer Center notes, "We can't just lump all of these histologies together and infer genomic features. Each granular subtype must be considered separately" [31].

Visualizing the Analytical Workflow

The following diagram illustrates the complete genomic data analysis pipeline, highlighting the flow from raw data to clinical insights and key decision points:

Diagram 1: Genomic data analysis pipeline showing key stages and interpretation bottleneck.

AI and Machine Learning Solutions for Genomic Interpretation

Artificial intelligence, particularly machine learning and deep learning, is revolutionizing how we approach the genomic interpretation bottleneck. These technologies offer powerful pattern recognition capabilities that can scale to accommodate the massive datasets typical in cancer genomics.

Core AI Models in Genomic Analysis

Table 2: AI/ML Models and Their Applications in Genomic Cancer Research

| AI Model Type | Primary Applications in Genomics | Key Advantages | Performance Metrics |

|---|---|---|---|

| Convolutional Neural Networks (CNNs) | Sequence pattern recognition; variant calling; image analysis of histopathology | Excellent at identifying spatial patterns in sequence data | DeepVariant achieves >99% accuracy in variant calling [28] |

| Recurrent Neural Networks (RNNs) | Processing sequential genomic data; predicting protein structures | Captures long-range dependencies in sequences | LSTM networks effectively model gene regulatory elements [28] |

| Transformer Models | Gene expression prediction; variant effect prediction | Handles complex relationships across entire genomes | State-of-the-art in predicting non-coding variant effects [28] |

| Generative Models | Creating synthetic patient data; designing novel proteins | Augments limited datasets; generates realistic synthetic data | Models trained on synthetic patients reached 68.3% accuracy vs 67.9% for models trained on real data [31] |

The relationship between artificial intelligence, machine learning, and deep learning is hierarchical: all deep learning is machine learning, and all machine learning is AI [28]. In genomics, ML and especially DL are leveraged to tackle complex, high-dimensional genetic data [28].

Within machine learning, several learning paradigms are particularly relevant to genomic analysis:

- Supervised Learning: The model is trained on a "labeled" dataset where the correct output is known. For instance, training a model on thousands of genomic variants that have been expertly labeled as either "pathogenic" or "benign" enables classification of new, unseen variants [28].

- Unsupervised Learning: The model works with unlabeled data to find hidden patterns or structures. This is useful for exploratory analysis, such as clustering patients into distinct subgroups based on their gene expression profiles, potentially revealing new disease subtypes that respond differently to treatment [28].

- Reinforcement Learning: This involves an AI agent learning to make a sequence of decisions in an environment to maximize a cumulative reward. In genomics, this could optimize treatment strategies over time or create novel protein sequences [28].

AI-Driven Variant Calling and Interpretation

Variant calling in genomics involves identifying all differences in a person's DNA compared to a reference—a process akin to finding every typo in a giant instruction manual [28]. With millions of potential variants in a genome, traditional methods are slow, computationally expensive, and struggle with accuracy, especially for complex variants.

AI and hardware acceleration have together dramatically improved both the speed and accuracy of this process. GPU acceleration on chips such as NVIDIA's H100 has been especially important: tools like NVIDIA Parabricks can accelerate genomic tasks by up to 80x, reducing processes that took hours to minutes [28].

Google's DeepVariant reframes variant calling as an image classification problem. It creates images of the aligned DNA reads around a potential variant site and uses a deep neural network to classify these images, distinguishing true variants from sequencing errors with remarkable precision [28]. This approach often outperforms older statistical methods.
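To make the "variant calling as image classification" idea concrete, here is a minimal sketch of turning aligned reads around a candidate site into small grids that a classifier could consume. It is a deliberately simplified, hypothetical encoding and does not reproduce DeepVariant's actual input format: it uses only two grids (base identity and mismatch flags), assumes gap-free alignments, and all names are illustrative.

```python
BASE_CODES = {"A": 1, "C": 2, "G": 3, "T": 4}  # 0 is reserved for "no coverage"

def encode_pileup(reference, reads, center, half_width=2):
    """Encode reads around `center` as two grids (rows = reads, columns = positions).

    reads: list of (start_position, sequence) pairs aligned without gaps.
    Returns (base_grid, mismatch_grid).
    """
    window = range(center - half_width, center + half_width + 1)
    base_grid, mismatch_grid = [], []
    for start, seq in reads:
        base_row, mismatch_row = [], []
        for pos in window:
            offset = pos - start
            if 0 <= offset < len(seq):
                base = seq[offset]
                base_row.append(BASE_CODES.get(base, 0))
                mismatch_row.append(int(base != reference[pos]))
            else:
                # The read does not cover this position.
                base_row.append(0)
                mismatch_row.append(0)
        base_grid.append(base_row)
        mismatch_grid.append(mismatch_row)
    return base_grid, mismatch_grid

reference = "ACGTACGTAC"
reads = [
    (0, "ACGTGCGT"),  # carries G instead of A at position 4
    (2, "GTGCGTAC"),  # carries G instead of A at position 4
    (3, "TACGT"),     # matches the reference; does not cover position 2
]
base_grid, mismatch_grid = encode_pileup(reference, reads, center=4)

# Fraction of reads supporting a non-reference base at the candidate site.
center_column = [row[2] for row in mismatch_grid]
alt_fraction = sum(center_column) / len(center_column)
```

A convolutional network would take stacks of such grids as input channels and output a genotype class for the candidate site, which is why the problem maps naturally onto image classification.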

Beyond single-letter changes, AI excels at detecting large Structural Variants (SVs)—deletions, duplications, inversions, and translocations of large DNA segments. These SVs are often linked to severe genetic diseases and cancers but are notoriously difficult to detect with standard methods [28].

Visualizing AI-Enhanced Genomic Analysis

The following diagram illustrates how AI and multi-omics data integration are transforming traditional genomic analysis workflows:

Diagram 2: AI-enhanced genomic analysis workflow compared to traditional approaches.

Multi-Omics Integration: Beyond the Genome

While genomics provides valuable insights into DNA sequences, it is only one piece of the puzzle for understanding cancer biology. Multi-omics approaches combine genomics with other layers of biological information to provide a comprehensive view of biological systems [29].

Components of Multi-Omics Analysis

Multi-omics integration combines several data layers:

- Transcriptomics: RNA expression levels that indicate which genes are actively being transcribed [29].

- Proteomics: Protein abundance and interactions that represent functional molecules in cells [29].

- Metabolomics: Metabolic pathways and compounds that reflect the functional state of biological systems [29].

- Epigenomics: Epigenetic modifications such as DNA methylation that regulate gene expression without changing the DNA sequence itself [29].

This integrative approach provides a more complete picture of biological systems, linking genetic information with molecular function and phenotypic outcomes [29]. In 2025, population-scale genome studies are expanding to an entirely new phase of multiomic analysis enabled by direct interrogation of molecules, moving beyond molecular proxies like cDNA for transcriptomes or bisulfite conversion for methylomes [32].

Applications in Cancer Research

Multi-omics has proven particularly valuable in oncology, where it helps dissect the tumor microenvironment, revealing interactions between cancer cells and their surroundings [29]. By integrating genetic, epigenetic, and transcriptomic data with HiFi accuracy, scientists can uncover the full complexity of biological systems—transforming our understanding of health, disease, and the possibilities for intervention [32].

AI's integration with multi-omics data has further enhanced its capacity to predict biological outcomes, contributing to advancements in precision medicine [29]. As noted by researchers, "By combining these insights with AI-powered analytics, researchers can unravel complex biological mechanisms, accelerating breakthroughs in rare diseases, cancer, and population health" [32].

Experimental Protocols and Methodologies

AI-Assisted Histopathological Image Analysis

Recent advances demonstrate how AI can extract genomic information from standard histopathology images, potentially expanding access to precision oncology.

Protocol: Integrated Histologic-Genomic Analysis

- Sample Preparation: Collect hematoxylin and eosin (H&E)-stained tumor tissue samples from patients with confirmed diagnoses [31].

- Digital Imaging: Digitize H&E images at high resolution (40x magnification recommended) using whole-slide scanners [31].

- AI Model Architecture:

  - Implement AEON model to analyze H&E images from approximately 80,000 samples to identify histologic patterns [31].

  - Combine pattern recognition with information from cancer classification system OncoTree to classify histologic subtypes [31].

  - Integrate Paladin model to infer genomic properties from histologic patterns based on established genotype-phenotype relationships [31].

- Validation: Compare AI-generated classifications with pathologist annotations and genomic sequencing data where available [31].

Performance Metrics: This approach has demonstrated 78% accuracy in classifying cancer subtypes and successfully reclassified tumors into more granular subtypes than initially assigned by pathologists [31].

Synthetic Patient Data Generation

To address data scarcity limitations in AI model development, researchers have created methods for generating synthetic patient data.

Protocol: Synthetic Patient Generation for Model Training

- Data Collection: Compile clinical information and digitized histology images from real patient cohorts with appropriate ethical approvals [31].

- Model Training: Train generative AI models on the real patient data to learn connections between clinical and histologic features [31].

- Reference Mapping: Develop a similarity map that plots real patients based on their clinical and histologic features, with shorter distances indicating greater similarity [31].

- Synthetic Generation: Use the reference map as a guide for generating realistic synthetic patients with both clinical data and corresponding histologic images [31].

- Model Validation: Train diagnostic and predictive models on synthetic data and compare performance against models trained on real patient data [31].

Performance Metrics: When trained on data from 1,000 synthetic lung cancer patients, AI models predicted immunotherapy responses with 68.3% accuracy compared to 67.9% accuracy when trained on data from 1,630 real patients [31].

Table 3: Key Research Reagents and Computational Tools for Genomic Cancer Research

| Tool/Category | Specific Examples | Primary Function | Application in Cancer Genomics |

|---|---|---|---|

| Sequencing Platforms | Illumina NovaSeq X; Oxford Nanopore | Generate raw sequence data | Whole genome sequencing; transcriptomics; structural variant detection [32] [29] |

| AI-Based Analytical Tools | DeepVariant; NVIDIA Parabricks; AEON; Paladin | Variant calling; pattern recognition; predictive modeling | Accurate variant identification; histologic-genomic correlation [28] [31] |

| Data Integration Frameworks | Cloud-based platforms (AWS, Google Cloud Genomics) | Multi-omics data integration; collaborative analysis | Secure data sharing; scalable computation; cross-institutional collaboration [29] |

| Synthetic Data Generators | Custom generative AI models | Create realistic synthetic patient data | Augment training datasets; preserve patient privacy [31] |

| Visualization Tools | Spatial transcriptomics platforms; TensorBoard | Data exploration; model interpretation | Tumor microenvironment mapping; model explainability [32] |

The field of genomic data interpretation is rapidly evolving, with several emerging trends poised to further transform how we approach the bottleneck between raw sequence data and clinical insight.

Spatial biology represents one of the most promising frontiers. The year 2025 is poised to be a breakthrough year for spatial biology, with new high-throughput sequencing-based technologies enabling large-scale, cost-effective studies [32]. Direct sequencing of genomic variations such as cancer mutations, gene edits, and immune receptor sequences in single cells within their native spatial context in tissue will allow researchers to explore complex cellular interactions and disease mechanisms with unparalleled biological precision [32].

Cloud computing will continue to play an essential role in addressing computational challenges. The volume of genomic data generated by NGS and multi-omics is staggering, often exceeding terabytes per project [29]. Cloud computing has emerged as a solution, providing scalable infrastructure to store, process, and analyze this data efficiently [29]. Platforms like Amazon Web Services (AWS) and Google Cloud Genomics can handle vast datasets with ease, enabling global collaboration where researchers from different institutions can work on the same datasets in real time [29].

Ethical considerations and data privacy will remain critical concerns. The rapid growth of genomic datasets has amplified concerns around data privacy and ethical use [29]. Breaches in genomic data can lead to identity theft, genetic discrimination, and misuse of personal health information [29]. Ensuring informed consent for data sharing in multi-omics studies is complex but essential, and addressing equity issues in accessibility to genomic services across different regions will be crucial for preventing health disparities [29].

In conclusion, while the bottleneck in genomic data interpretation remains a significant challenge in cancer research, the integration of artificial intelligence, multi-omics approaches, and cloud computing is creating new pathways to overcome these limitations. As these technologies continue to mature and evolve, they hold the promise of accelerating our understanding of cancer biology and delivering on the potential of precision oncology for all patients.

The integration of artificial intelligence (AI) in genomic cancer research is transforming oncological discovery and therapeutic development. This whitepaper deconstructs the AI landscape—differentiating between weak AI, strong AI, machine learning, and deep learning—and provides a technical framework for their application in multi-omics cancer data analysis. We present standardized experimental protocols, computational workflows, and essential research reagents to equip computational biologists and oncology researchers with the tools to leverage these technologies effectively, with a particular focus on the MLOmics database as a benchmark resource.

Core AI Concepts and Terminology

Weak AI vs. Strong AI: A Fundamental Dichotomy

The current AI landscape is fundamentally divided into two categories: Weak AI and Strong AI.

- Weak AI, also known as Narrow AI or Artificial Narrow Intelligence (ANI), refers to systems designed and trained for a specific task or a narrow range of tasks [33] [34]. These systems excel in their predefined domains but lack general intelligence, consciousness, or the ability to apply knowledge to unrelated problems. Virtually all AI in use today falls under this category [35].

- Strong AI, also known as Artificial General Intelligence (AGI), is a theoretical form of AI that would possess general cognitive abilities comparable to a human being [33] [34]. Such a system would be capable of understanding, learning, and applying knowledge across a wide range of tasks, demonstrating reasoning, creativity, and autonomous problem-solving. Strong AI remains hypothetical and is not yet realized [35].

Table 1: Comparative Analysis of Weak AI vs. Strong AI

| Aspect | Weak AI (Narrow AI) | Strong AI (Artificial General Intelligence) |

|---|---|---|

| Scope & Functionality | Task-specific; focused on a narrow domain [34] | General intelligence; wide range of tasks across domains [34] |

| Cognitive Abilities | Operates on predefined algorithms and learned patterns; no true understanding [34] | Possesses general cognitive abilities, self-awareness, and genuine understanding [34] |

| Consciousness | No consciousness or self-awareness [34] | Theoretical self-awareness and consciousness [34] |

| Autonomy | Requires human oversight and intervention [34] | Would function autonomously, making independent decisions [34] |

| Adaptability | Limited to specific functions; not easily adaptable to new tasks [34] | Highly adaptable; can learn from experiences in novel situations [34] |

| Current Status | Widely deployed and in use today [33] [34] | Purely theoretical; subject of ongoing research [33] [34] |

Machine Learning and Deep Learning within the AI Hierarchy

Machine Learning (ML) is a subset of AI that provides systems the ability to automatically learn and improve from experience without being explicitly programmed. Deep Learning (DL) is a further subset of ML that uses artificial neural networks with multiple layers (deep architectures) to learn complex patterns in large amounts of data [36].

- Machine Learning in Genomics: Traditional ML methods like Support Vector Machines (SVM) and Random Forests (RF) have been widely used for tasks such as molecular subtyping and variant classification [37] [36]. They are particularly effective when feature sets are well-defined and data volumes are moderate.

- Deep Learning in Genomics: DL methods, including convolutional neural networks (CNNs) and recurrent neural networks (RNNs), excel at identifying highly complex patterns in large, raw genomic datasets [36]. They automatically learn relevant features from the data, reducing the need for extensive feature engineering. A key application in cancer genomics is the use of deep learning for variant calling, with tools like Google’s DeepVariant achieving greater accuracy than traditional methods [29].

AI Applications in Genomic Cancer Research: Methodologies and Protocols

The analysis of multi-omics data—integrating genomics, transcriptomics, epigenomics, and proteomics—is pivotal for uncovering the complex mechanisms of cancer. AI models are essential for interpreting these vast, interconnected datasets.

Experimental Protocol: Pan-Cancer and Cancer Subtype Classification

Objective: To develop a machine learning model that can classify tissue samples into specific cancer types (pan-cancer classification) or into known molecular subtypes within a specific cancer (e.g., BRCA, COAD) [37].

Dataset:

- Primary Resource: MLOmics database [37].

- Data Type: Multi-omics data (mRNA expression, miRNA expression, DNA methylation, Copy Number Variations) across 8,314 patient samples and 32 cancer types.

- Feature Versions: Utilize the "Top" feature version from MLOmics, which contains the most significant features selected via ANOVA test and Benjamini-Hochberg correction to reduce noise [37].
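The Benjamini-Hochberg step used to build the "Top" feature version can be sketched in a few lines of standard-library Python. This is a generic illustration of the procedure, not the MLOmics pipeline: the p-values are invented, and in practice they would come from a per-feature ANOVA across cancer classes (for example via `scipy.stats.f_oneway`). The 0.05 cutoff is an assumed threshold.

```python
def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values (q-values) in the original feature order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each q-value is the minimum of
    # p * m / rank over its own rank and all larger ranks (keeps q monotone).
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical ANOVA p-values for five features.
p_values = [0.001, 0.008, 0.039, 0.041, 0.60]
q_values = benjamini_hochberg(p_values)

# Keep features whose adjusted p-value passes the assumed 0.05 cutoff.
selected = [i for i, q in enumerate(q_values) if q < 0.05]
```

With these toy values only the first two features survive: the third and fourth have raw p-values below 0.05 but adjusted values just above it, which is the kind of borderline feature the correction is meant to filter out.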

Methodology:

- Data Partitioning: Randomly split the dataset into three subsets:

  - Training Set (70%): Used to learn model parameters.

  - Validation Set (15%): Used for hyperparameter tuning and model selection.

  - Test Set (15%): Used for the final, unbiased evaluation of generalization performance [36].

- Model Selection and Training:

  - Baseline Models: Implement classical machine learning models, such as Support Vector Machines and Random Forests, as baselines.

  - Deep Learning Models: Reproduce and evaluate advanced deep learning models designed for omics data.

  - Training: Train each model on the training set. For deep learning models, use techniques like dropout and L2 regularization to mitigate overfitting [36].

- Model Evaluation:

  - Metrics: Calculate precision, recall, and F1-score on the held-out test set [37] [36]. Due to potential class imbalance in cancer datasets, these metrics are more informative than simple accuracy [36].

  - Validation: Monitor validation performance during training; stop training when validation performance plateaus or begins to decrease to prevent overfitting [36].
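The partitioning, evaluation, and early-stopping steps of this protocol can be sketched without any ML framework. The code below is a standard-library illustration under stated assumptions: the split is a plain random split (a stratified split may be preferable for rare cancer types), the labels and validation losses are toy values, and the `patience` parameter is a hypothetical choice.

```python
import random

def split_indices(n, train=0.70, val=0.15, seed=0):
    """Shuffle sample indices and split them roughly 70/15/15."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_train = int(n * train)
    n_val = int(n * val)
    return indices[:n_train], indices[n_train:n_train + n_val], indices[n_train + n_val:]

def macro_scores(y_true, y_pred):
    """Macro-averaged precision, recall, and F1 across all observed classes."""
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    n = len(labels)
    return sum(precisions) / n, sum(recalls) / n, sum(f1s) / n

def early_stopping_epoch(val_losses, patience=2):
    """Return the epoch with the best validation loss before patience runs out."""
    best_loss, best_epoch, waited = float("inf"), 0, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break
    return best_epoch

train_idx, val_idx, test_idx = split_indices(8314)

# Toy predictions with an under-represented class (LUAD).
y_true = ["BRCA", "BRCA", "BRCA", "COAD", "COAD", "LUAD"]
y_pred = ["BRCA", "BRCA", "COAD", "COAD", "COAD", "BRCA"]
precision, recall, f1 = macro_scores(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

stop_at = early_stopping_epoch([0.9, 0.7, 0.6, 0.62, 0.65, 0.5], patience=2)
```

In the toy example, accuracy is about 0.67 while macro F1 is about 0.49, because the single LUAD sample is missed entirely. That gap is the reason the protocol prefers class-aware metrics over accuracy.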

AI-Driven Cancer Classification Workflow

Experimental Protocol: Novel Cancer Subtype Discovery via Clustering

Objective: To identify previously unknown molecular subtypes within a specific cancer type using unsupervised clustering algorithms [37].

Dataset:

- Primary Resource: MLOmics unlabeled rare cancer datasets [37].

- Data Type: Multi-omics data from cancers where established subtypes are not fully defined.

Methodology:

- Dimensionality Reduction: Employ autoencoders, a type of neural network designed for nonlinear dimensionality reduction, to compress the high-dimensional omics data into a lower-dimensional latent space that captures the essential biological variation [36].

- Clustering: Apply clustering algorithms (e.g., k-means, hierarchical clustering) to the latent representations generated by the autoencoder.

- Validation:

  - Stability Analysis: Evaluate the robustness of clusters across different algorithm initializations.

  - Biological Significance: Perform enrichment analysis (e.g., GO, KEGG pathways) on the marker features of each cluster to assess their biological coherence and clinical relevance [37]. MLOmics provides support for linking results to bio-knowledge bases like KEGG for this purpose [37].

  - Survival Analysis: Conduct Kaplan-Meier survival analysis to determine if the identified subtypes have significant differences in patient outcomes [37].
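
To make the survival-analysis step concrete, the Kaplan-Meier (product-limit) estimator can be written in plain Python as below. This is a minimal sketch with made-up follow-up times; the function name and input layout are assumptions for illustration. In practice the curve would be computed once per discovered cluster, and a log-rank test (not shown) would typically be used to compare clusters.

```python
def kaplan_meier(times, events):
    """Product-limit survival estimate.

    times  : follow-up time for each patient
    events : 1 if the event (e.g., death) was observed, 0 if censored
    Returns a list of (time, survival_probability) at each observed event time.
    """
    at_risk = len(times)
    survival = 1.0
    curve = []
    for t in sorted(set(times)):
        deaths = sum(1 for ti, e in zip(times, events) if ti == t and e == 1)
        leaving = sum(1 for ti in times if ti == t)
        if deaths > 0:
            # Multiply by the conditional probability of surviving past t.
            survival *= 1.0 - deaths / at_risk
            curve.append((t, survival))
        # Both events and censored patients leave the risk set after time t.
        at_risk -= leaving
    return curve


# Toy cohort: five patients, two of them censored (made-up numbers).
toy_times = [5, 8, 8, 12, 15]
toy_events = [1, 1, 0, 1, 0]
for time_point, prob in kaplan_meier(toy_times, toy_events):
    print(time_point, round(prob, 3))
```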

Success in AI-driven genomic research relies on a curated set of computational tools and data resources.

Table 2: Key Research Reagent Solutions for AI in Genomic Cancer Research

| Resource / Tool | Type | Primary Function in Research |
|---|---|---|
| MLOmics Database [37] | Data Repository | Provides preprocessed, model-ready multi-omics cancer data (mRNA, miRNA, methylation, CNV) for 32 cancer types, enabling fair benchmarking. |
| TCGA (The Cancer Genome Atlas) [37] | Data Source | The foundational source of raw genomic and clinical data for many cancer studies, accessible via the GDC Data Portal. |
| DeepVariant [29] | Software Tool | A deep learning-based variant caller that converts sequencing data into called genetic variants with high accuracy. |
| CNN (Convolutional Neural Network) [36] | Algorithm | Used for identifying spatially invariant patterns in data; applicable to sequence motifs in DNA or identifying features from genomic matrices. |
| Autoencoder [36] | Algorithm | An unsupervised deep learning model for nonlinear dimensionality reduction, crucial for visualizing and clustering high-dimensional omics data. |
| Cloud Computing Platforms (AWS, Google Cloud) [29] | Infrastructure | Provide scalable computational power and storage necessary for processing terabytes of genomic data and training complex models. |
| STRING [37] | Bio-Knowledge Base | A database of known and predicted protein-protein interactions, used for functional enrichment analysis of gene sets identified by AI models. |
| KEGG (Kyoto Encyclopedia of Genes and Genomes) [37] | Bio-Knowledge Base | A resource linking genomic information with higher-order functional pathways, used to interpret the biological meaning of AI-derived features. |

AI Technology Hierarchy & Applications

Future Directions and Ethical Considerations

The future of AI in genomics points toward the deeper integration of multi-omics data, single-cell analysis, and spatial transcriptomics, powered by increasingly sophisticated AI models [29]. A significant challenge is the transition from highly accurate but narrow ("weak") AI systems toward the flexibility and generalizability of "strong" AI. Key innovations on the horizon include the use of AI for polygenic risk prediction and the application of foundation models pre-trained on large-scale genomic datasets [29].

Ethical considerations are paramount. The handling of sensitive genomic data demands strict adherence to privacy regulations like HIPAA and GDPR, often facilitated by secure cloud computing environments [29]. Furthermore, researchers must proactively address potential biases in AI models that could lead to health disparities, and ensure transparency and interpretability in AI-driven discoveries to maintain scientific rigor and trust [33] [29].

ML Architectures in Action: Practical Applications for Cancer Detection and Subtyping

Convolutional Neural Networks (CNNs) for Image Analysis and Variant Calling with Tools like DeepVariant

The application of Convolutional Neural Networks (CNNs) represents a paradigm shift in how researchers approach the complexity of genomic cancer data. CNNs, which have revolutionized image processing, are now transforming genomic analysis by interpreting DNA sequence data as specialized images, enabling unprecedented accuracy in identifying cancer-driving genetic mutations [28]. This approach is particularly valuable in cancer research, where precise variant calling can reveal somatic mutations that drive tumorigenesis, inform prognosis, and guide targeted therapy selection [38] [39].

DeepVariant, developed at Google, pioneered this approach by reframing variant calling as an image classification problem [38] [40]. By converting aligned sequencing reads into multi-channel pileup images, DeepVariant's CNN architecture can distinguish true biological variants from sequencing artifacts with high precision [41] [40]. This capability is especially crucial in cancer genomics, where detecting low-frequency somatic variants against a background of normal tissue requires exceptional sensitivity and specificity [39].

The integration of CNNs into cancer genomics workflows addresses several longstanding challenges. Traditional variant calling methods often struggle with the high error rates of single-molecule sequencing technologies and the complexities of tumor heterogeneity [42] [39]. CNN-based approaches like DeepVariant and Clairvoyante have demonstrated superior performance across diverse sequencing platforms, making them particularly suitable for cancer research applications where data may originate from multiple sources [42] [41].

Core CNN Architectures for Genomic Data

Fundamental Architecture and Operations

Convolutional Neural Networks process genomic data through a series of hierarchical layers that automatically learn to extract increasingly abstract features. The convolutional layer applies filters that slide across the input data to detect local patterns through weight sharing and spatial hierarchies [43]. This operation can be mathematically represented as features generated through the convolution of inputs with learned kernels, followed by non-linear activation functions. Pooling layers, typically using max or average operations, progressively reduce spatial dimensions while retaining the most salient features, providing translation invariance and computational efficiency [43].
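
The convolution, activation, and pooling operations described above can be illustrated on a short DNA string. The sketch below is a toy example in plain Python rather than a trained network: the kernel is set by hand to respond to the motif "TATA", whereas a real CNN would learn its kernel weights from data. All names are hypothetical.

```python
BASES = "ACGT"


def one_hot(sequence):
    """Encode a DNA string as a list of 4-element rows (A, C, G, T)."""
    return [[1.0 if base == b else 0.0 for b in BASES] for base in sequence]


def conv1d_relu(encoded, kernel):
    """Valid 1D convolution (cross-correlation) followed by ReLU.

    encoded : L x 4 one-hot matrix; kernel : K x 4 weight matrix.
    The same kernel weights are reused at every position (weight sharing).
    """
    k = len(kernel)
    out = []
    for start in range(len(encoded) - k + 1):
        total = sum(
            encoded[start + i][c] * kernel[i][c]
            for i in range(k)
            for c in range(4)
        )
        out.append(max(0.0, total))  # ReLU non-linearity
    return out


def max_pool(values, size):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(values[i:i + size]) for i in range(0, len(values) - size + 1, size)]


# Hand-set kernel matching "TATA"; the response peaks where the motif occurs.
tata_kernel = one_hot("TATA")
feature_map = conv1d_relu(one_hot("GCTATAGC"), tata_kernel)
pooled = max_pool(feature_map, 2)
print(feature_map)  # [2.0, 0.0, 4.0, 0.0, 2.0]
print(pooled)       # [2.0, 4.0]
```

The pooled output keeps the strong motif response while halving the length, which is the dimension reduction and tolerance to small shifts that pooling provides.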

In genomic applications, CNNs process sequencing data converted into image-like representations. The network learns characteristic patterns associated with true genetic variants versus sequencing errors through multiple layers of feature extraction [40]. The final fully connected layers integrate these extracted features to perform classification tasks, such as determining variant zygosity or distinguishing somatic from germline mutations in cancer samples [38].

Specialized CNN Architectures for Genomics

Several specialized CNN architectures have been developed specifically for genomic variant calling:

DeepVariant employs a modified Inception v3 architecture, which uses multi-scale convolutional filters to capture features at different resolutions simultaneously [38] [40]. This enables the model to detect both local sequence patterns and broader genomic context, which is crucial for accurate variant identification in complex cancer genomes.

Clairvoyante utilizes a compact five-layer convolutional network optimized for simultaneous prediction of variant type, zygosity, alternative allele, and indel length [42]. This multi-task architecture improves efficiency and accuracy by leveraging shared features across related prediction tasks.

MobileNetV2 has been adapted for genomic analysis in frameworks like DeepChem-Variant, offering improved computational efficiency through inverted residual blocks and linear bottlenecks [40]. This is particularly valuable for large-scale cancer genomics studies requiring analysis of thousands of tumor samples.

Table 1: CNN Architectures for Genomic Variant Calling

| Architecture | Key Features | Genomics Applications | Advantages |
|---|---|---|---|
| Inception v3 (DeepVariant) | Multi-scale convolutional filters, auxiliary classifiers | General variant calling, cancer somatic mutation detection | Captures features at multiple resolutions, high accuracy |
| Custom 5-layer CNN (Clairvoyante) | Compact design, multi-task learning | Simultaneous variant type and zygosity calling | Computational efficiency, optimized for SMS data |
| MobileNetV2 (DeepChem-Variant) | Inverted residuals, linear bottlenecks | Resource-constrained environments, large-scale studies | Reduced computational requirements, maintained accuracy |

DeepVariant: Framework and Workflow

Core Methodology

DeepVariant transforms variant calling into an image classification problem through a sophisticated pipeline that converts aligned sequencing data into standardized pileup images [38] [40]. The workflow begins with aligned reads in BAM format, which are processed to generate candidate variant positions. For each candidate position, DeepVariant creates a multi-channel tensor representation that encodes various aspects of the sequencing data [40].

The pileup image generation process represents sequencing reads as rows in an image, with columns corresponding to genomic positions around the candidate variant. Six distinct channels capture different data characteristics: (1) base identity (A, C, G, T), (2) base quality scores, (3) mapping quality, (4) strand information, (5) read supports variant, and (6) base differs from reference [40]. This rich representation enables the CNN to learn complex patterns distinguishing true variants from sequencing artifacts, which is particularly valuable in cancer genomics where tumor samples often have lower quality and higher noise levels.
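
A toy version of this multi-channel encoding is sketched below. It is meant only to show the shape of the data (channels x reads x positions) and the role of each of the six channels. The read record layout, the function name, and every numeric scaling are assumptions made for the example and do not reproduce DeepVariant's actual encoding.

```python
BASE_CODE = {"A": 0.25, "C": 0.5, "G": 0.75, "T": 1.0}  # illustrative scaling only


def pileup_tensor(reads, reference, window_start, alt_base, variant_pos):
    """Build a channels x reads x positions tensor from toy read records.

    Each read is a dict with keys: start, bases, quals, mapq, reverse.
    Positions a read does not cover stay at 0.0 in every channel.
    """
    width = len(reference)
    n_channels = 6
    tensor = [[[0.0] * width for _ in reads] for _ in range(n_channels)]
    for row, read in enumerate(reads):
        # Does this read carry the candidate alternate allele?
        offset = variant_pos - read["start"]
        supports = (
            0 <= offset < len(read["bases"]) and read["bases"][offset] == alt_base
        )
        for i, base in enumerate(read["bases"]):
            col = read["start"] + i - window_start
            if not 0 <= col < width:
                continue
            tensor[0][row][col] = BASE_CODE.get(base, 0.0)         # base identity
            tensor[1][row][col] = min(read["quals"][i], 40) / 40.0  # base quality
            tensor[2][row][col] = min(read["mapq"], 60) / 60.0      # mapping quality
            tensor[3][row][col] = 1.0 if read["reverse"] else 0.5   # strand
            tensor[4][row][col] = 1.0 if supports else 0.5          # read supports variant
            tensor[5][row][col] = 1.0 if base != reference[col] else 0.5  # differs from ref
    return tensor


# Two made-up reads around a candidate G>T change at position 102.
toy_reads = [
    {"start": 100, "bases": "ACTTA", "quals": [40, 40, 20, 40, 40], "mapq": 60, "reverse": False},
    {"start": 101, "bases": "CGTA", "quals": [30, 30, 30, 30], "mapq": 30, "reverse": True},
]
toy_tensor = pileup_tensor(toy_reads, "ACGTA", 100, "T", 102)
print(len(toy_tensor), len(toy_tensor[0]), len(toy_tensor[0][0]))  # 6 2 5
```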

Workflow Implementation

The following diagram illustrates the complete DeepVariant workflow for processing genomic data into variant calls:

Diagram 1: DeepVariant analysis workflow

The implementation begins with read alignment, which maps sequencing reads to a reference genome using tools like BWA-MEM for DNA sequencing data or STAR for RNA-seq data [28]. The resulting BAM file undergoes candidate variant detection, where potential variant positions are identified based on statistical evidence of variation from the reference [40]. For each candidate position, the pileup image generator creates the multi-channel tensor representation, which serves as input to the trained CNN model.

The CNN processes these images through its convolutional and fully connected layers, ultimately producing genotype probabilities for each candidate site [38]. The final output is a standardized VCF file containing the identified variants with quality metrics, ready for downstream analysis in cancer genomics pipelines.
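
The last step, turning network outputs into a genotype call, can be sketched as follows. The example assumes three genotype classes (homozygous reference, heterozygous, homozygous alternate) and reports a Phred-scaled genotype quality in the sense of the VCF `GQ` field. The function names, the logits, and the cap of 99 are assumptions for illustration, not DeepVariant's post-processing code.

```python
import math

GENOTYPES = ("0/0", "0/1", "1/1")  # hom-ref, het, hom-alt


def softmax(logits):
    """Numerically stable softmax over raw network outputs."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def call_genotype(logits, max_gq=99):
    """Pick the most probable genotype and a Phred-scaled genotype quality.

    GQ = -10 * log10(probability that the chosen genotype is wrong),
    truncated to an integer and capped at max_gq (an assumed cap).
    """
    probs = softmax(logits)
    best = max(range(len(probs)), key=lambda i: probs[i])
    error = 1.0 - probs[best]
    if error <= 0.0:
        gq = max_gq
    else:
        gq = min(max_gq, int(-10.0 * math.log10(error)))
    return GENOTYPES[best], gq, probs


# Made-up logits that put about 98% of the probability on the heterozygous call.
genotype, gq, probs = call_genotype([0.0, math.log(98.0), 0.0])
print(genotype, gq, [round(p, 3) for p in probs])
```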

Experimental Protocols and Performance

Benchmarking Methodology

Rigorous evaluation of CNN-based variant callers follows standardized protocols to ensure reproducibility and comparability. The Genome in a Bottle (GIAB) consortium provides benchmark truth sets for several reference genomes, including HG001, HG002, and HG003, which serve as gold standards for performance assessment [41]. These truth sets enable quantitative comparison of variant calling methods using well-established metrics.

Performance evaluation typically focuses on precision (positive predictive value), recall (sensitivity), and F1-score (harmonic mean of precision and recall) [42] [41]. For cancer applications, additional metrics like somatic validation rate and allele frequency concordance are often included. Benchmarking experiments generally compare CNN-based methods against established traditional variant callers such as GATK HaplotypeCaller, Strelka2, and Octopus across multiple sequencing technologies and coverage depths [41].
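
For reference, the three headline metrics follow directly from counts of true-positive, false-positive, and false-negative variant calls against a truth set. The sketch below uses made-up counts, and the function name is hypothetical.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from variant-level counts.

    tp : called variants that are in the truth set
    fp : called variants that are not in the truth set
    fn : truth-set variants that were not called
    """
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2.0 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1


# Toy counts: 90 correct calls, 10 spurious calls, 30 missed variants.
p, r, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(round(p, 4), round(r, 4), round(f1, 4))  # 0.9 0.75 0.8182
```

Because F1 is a harmonic mean, it is pulled toward the weaker of the two metrics, so a caller cannot score well by trading away recall for precision or vice versa.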

Performance Comparison

Table 2: Performance Comparison of Variant Calling Methods on HG003 (35x WGS)

| Method | SNP Precision | SNP Recall | Indel Precision | Indel Recall | F1-Score |
|---|---|---|---|---|---|
| DeepVariant-AF | 0.9985 | 0.9978 | 0.9962 | 0.9864 | 0.9947 |
| DeepVariant | 0.9982 | 0.9974 | 0.9951 | 0.9849 | 0.9938 |
| GATK HC | 0.9943 | 0.9957 | 0.9724 | 0.9658 | 0.9821 |
| Strelka2 | 0.9951 | 0.9962 | 0.9815 | 0.9724 | 0.9863 |
| Octopus | 0.9938 | 0.9965 | 0.9742 | 0.9687 | 0.9835 |

Data source: [41]

Recent advances incorporate population-level information directly into the variant calling process. DeepVariant-AF extends the standard DeepVariant architecture by adding an input channel that encodes population allele frequencies from the 1000 Genomes Project, and the model is trained with this additional channel [41]. This approach reduces errors relative to population-agnostic models, particularly for rare variants and in lower-coverage datasets (20x and below), which is highly relevant for cancer studies with limited tumor material [41].

The performance advantage of CNN-based methods is especially pronounced in challenging genomic regions, including segmental duplications, HLA regions, and low-complexity sequences, which are often problematic for traditional variant callers [38]. In cancer genomics, these regions frequently harbor biologically significant mutations, making robust variant calling in these areas particularly valuable.