Leveraging Transfer Learning for Accurate Cancer Prediction with Limited Genomic Data

Abstract

This article addresses the critical challenge of developing robust cancer prediction models when genomic data is scarce, a common scenario in clinical and research settings. We explore how transfer learning (TL) mitigates data limitations by leveraging knowledge from large-scale source domains, such as public cell line databases or image repositories. The content covers foundational TL concepts, details methodological applications for genomic and imaging data, provides strategies for troubleshooting and optimization, and presents rigorous validation frameworks. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current evidence to guide the effective implementation of TL, ultimately enhancing the accuracy and clinical applicability of cancer prediction tools.

Why Transfer Learning? Overcoming Data Scarcity in Cancer Genomics

In cancer genomics, predictive modeling is constrained by a dual challenge: large, labeled genomic datasets are scarce, and genomic data are inherently high-dimensional. Data scarcity arises from the high costs and logistical complexities of sequencing, particularly for rare cancer subtypes, leaving cohorts that are often too small to train complex models [1] [2]. High dimensionality, in which each sample is characterized by thousands to millions of features (e.g., genes, mutations) while the number of samples remains limited, increases the risk of overfitting and complicates the extraction of robust biological insights [3]. Together, these factors pose a significant barrier to the clinical translation of AI in oncology. Transfer learning addresses this barrier by adapting knowledge from large-scale, data-rich source domains (such as general cancer genome atlases) to improve performance on data-poor target tasks, narrowing the gap between limited data and model generalizability [4].

Quantifying the Challenge: Data and Dimensionality in Perspective

The scale of the data problem in genomics is immense. The following table summarizes the data characteristics and performance impacts observed in contemporary genomic studies.

Table 1: Data Characteristics and Performance Impacts in Genomic Studies

| Aspect | Exemplary Data / Metrics | Context / Impact |
|---|---|---|
| Data Volume | Human Genome: Over 3 billion base pairs; TCGA: >10,000 genomes, 2.5 petabytes of multi-omics data [2]. | Creates storage and processing bottlenecks; necessitates robust computational frameworks [5]. |
| Sequencing Cost | ~$525 per genome (as of 2022) [2]. | A limiting factor for assembling large-scale cohorts, especially for rare cancers. |
| Dimensionality | Single-cell RNA-seq: Tens of thousands of genes (features) per sample [6]. | "Curse of dimensionality"; data sparsity increases overfitting risk and complicates analysis [3]. |
| Model Performance | Deep learning models reduce false-negative rates in variant calling by 30–40% compared to traditional pipelines [1]. | Demonstrates AI's potential but is contingent on sufficient, high-quality data. |
| Feature Selection Impact | Proposed deep learning feature selection method achieved average improvements of 1.5% in accuracy and ~1.8% in precision/recall [3]. | Highlights the critical role of dimensionality reduction in improving model efficacy. |

Application Note: A Transfer Learning Protocol for Limited Genomic Data

This protocol outlines a methodology for adapting large-scale genomic foundation models to specific cancer prediction tasks with limited data, based on the approach described by Jiahui Yu (2025) [4].

Background and Principle

Pre-trained genomic "language models" (e.g., DNA-BERT, Nucleotide Transformer) have learned rich, generalizable representations of genomic sequence context from population-scale germline data. The principle of this protocol is to fine-tune these models on a smaller, targeted dataset of cancer genomes. This allows the model to leverage its pre-existing knowledge of genomic "grammar" while specializing in the detection of somatic variations and other cancer-specific alterations, thereby overcoming the limitations of a small dataset.

Experimental Materials and Reagents

Table 2: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description | Example Tools / Sources |
|---|---|---|
| Source (Pre-trained) Model | Provides the foundational knowledge of genomic sequences. | DNA-BERT, Nucleotide Transformer, Evo [4] [7]. |
| Target Domain Dataset | The smaller, task-specific cancer genomic dataset for model adaptation. | ICGC Pan-Cancer cohort, TCGA, or in-house WGS/WES data [1] [4]. |
| Raw Sequencing Data | Direct model input, forcing it to learn representations of complex alterations. | WGS/WES data in BAM or FASTA format [4] [6]. |
| High-Performance Computing (HPC) Infrastructure | Provides the computational power required for model fine-tuning. | Cloud platforms (AWS, Google Cloud) or local clusters with GPUs [5] [6]. |
| Explainability Toolkit | Interprets model predictions and validates biological plausibility. | Attention visualization, SHAP, feature occlusion tests [4] [6]. |

Step-by-Step Workflow Protocol

Step 1: Model and Data Acquisition

- 1.1. Obtain a pre-trained genomic language model (e.g., DNA-BERT).

- 1.2. Secure your target domain dataset. For cancer prediction, this should consist of raw sequencing data (e.g., BAM files) from a cohort like ICGC Pan-Cancer, encompassing the cancer types of interest [4].

Step 2: Data Preprocessing

- 2.1. If not using raw data, perform standard bioinformatics preprocessing on the target data: alignment to a reference genome, duplicate removal, and base quality recalibration [6].

- 2.2. Partition the target dataset into training, validation, and hold-out test sets. The hold-out test set must be sequestered before any model fitting or parameter tuning begins [8].
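The partitioning in Step 2.2 can be sketched as follows. This is a minimal illustration using only the Python standard library; the function name `stratified_split`, the 70/15/15 fractions, and the toy cancer-type labels are assumptions made for the example, not part of the cited protocol.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists, stratified by class label."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_class):
        idxs = by_class[label]
        rng.shuffle(idxs)
        # Reserve at least one sample per class for test and validation.
        n_test = max(1, round(len(idxs) * fractions[2]))
        n_val = max(1, round(len(idxs) * fractions[1]))
        test.extend(idxs[:n_test])
        val.extend(idxs[n_test:n_test + n_val])
        train.extend(idxs[n_test + n_val:])
    return train, val, test

# Toy labels standing in for a small target cohort (assumed for illustration).
labels = ["LUAD"] * 20 + ["BRCA"] * 20 + ["COAD"] * 10
train_idx, val_idx, test_idx = stratified_split(labels)
```

The test indices are fixed once by the seed and should be set aside before any fitting. If a cohort contains several samples per patient, splitting by patient rather than by sample is likely needed to keep the hold-out set truly independent.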

Step 3: Model Fine-Tuning

- 3.1. Initialize the model architecture with weights from the pre-trained source model.

- 3.2. Replace the model's final output layer to match the number of classes in your target task (e.g., cancer type classification).

- 3.3. Train (fine-tune) the model on the training split of your target data. Use the validation split for hyperparameter optimization and to monitor for overfitting. A lower learning rate for the pre-trained layers is typically used to avoid catastrophic forgetting [4].
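Steps 3.1 to 3.3 can be sketched in PyTorch as below. The tiny `backbone` is a hypothetical stand-in for a pre-trained genomic language model (a real one would be loaded from a checkpoint), and the layer sizes, class count, and learning rates are illustrative assumptions rather than values from the cited protocol.

```python
import torch
from torch import nn

# Hypothetical stand-in for a pre-trained encoder (Step 3.1); in practice its
# weights would be loaded from a published checkpoint.
backbone = nn.Sequential(nn.Linear(64, 32), nn.ReLU(), nn.Linear(32, 16), nn.ReLU())

num_classes = 5                     # assumed number of cancer types
head = nn.Linear(16, num_classes)   # new output layer for the target task (Step 3.2)
model = nn.Sequential(backbone, head)

# Lower learning rate for pre-trained layers, higher for the new head (Step 3.3).
optimizer = torch.optim.AdamW([
    {"params": backbone.parameters(), "lr": 1e-5},
    {"params": head.parameters(), "lr": 1e-3},
])
loss_fn = nn.CrossEntropyLoss()

# One fine-tuning step on random placeholder data.
torch.manual_seed(0)
x = torch.randn(8, 64)
y = torch.randint(0, num_classes, (8,))
optimizer.zero_grad()
loss = loss_fn(model(x), y)
loss.backward()
optimizer.step()
```

Using separate parameter groups keeps the pre-trained weights close to their starting point while the randomly initialized head adapts quickly, which is one common way to limit catastrophic forgetting.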

Step 4: Model Validation and Interpretation

- 4.1. Generalization Assessment: Evaluate the final fine-tuned model's performance on the sequestered hold-out test set and, if possible, on independent external cohorts with varying sequencing technologies [4] [9].

- 4.2. Biological Interpretation: Implement explainability techniques.

- Use attention mechanisms to identify which genomic segments the model "focuses on" for its predictions.

- Perform feature occlusion tests, systematically masking parts of the input sequence to observe changes in prediction confidence [4].

- Compare the model-derived important features against established driver-gene databases (e.g., COSMIC) to assess biological plausibility and clinical relevance.
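The feature occlusion test in Step 4.2 can be illustrated with a short sketch. The `toy_predict` function is a deliberately simple stand-in for a fine-tuned model, and the window size and mask character are assumptions; a real model would return a class probability for the masked sequence.

```python
def occlusion_scores(sequence, predict, window=3, mask_char="N"):
    """Drop in prediction confidence when each window of the sequence is masked."""
    baseline = predict(sequence)
    scores = []
    for start in range(len(sequence) - window + 1):
        masked = sequence[:start] + mask_char * window + sequence[start + window:]
        scores.append((start, baseline - predict(masked)))
    return scores

# Toy stand-in for a model: high confidence only if the motif "TATA" is present.
def toy_predict(seq):
    return 1.0 if "TATA" in seq else 0.1

scores = occlusion_scores("GGCTATAGCC", toy_predict)
important_starts = [start for start, drop in scores if drop > 0]
```

Windows whose masking causes a large drop mark the regions the prediction depends on; these positions can then be compared against known driver genes as described above.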

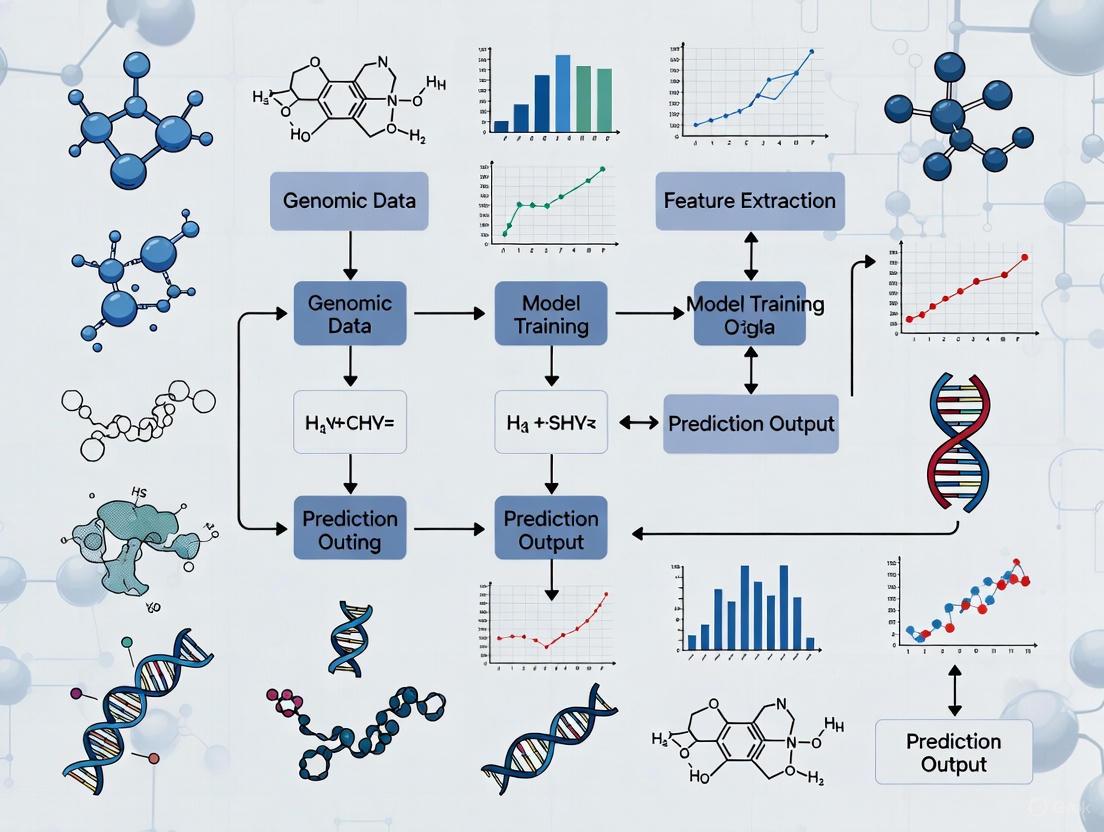

The following diagram visualizes the end-to-end workflow of this transfer learning protocol.

Complementary Protocol: Advanced Feature Selection for High-Dimensional Data

For scenarios not using raw sequencing data but pre-processed feature matrices (e.g., gene expression counts), advanced feature selection is critical. This protocol is based on a novel deep learning and graph-based method [3].

Background and Principle

Traditional feature selection methods often struggle with the complex, non-linear relationships in high-dimensional genomic data. This protocol uses a deep similarity measure to capture intricate dependencies between features, models the feature space as a graph, and employs community detection to identify and select the most influential, non-redundant features from each cluster.

Step-by-Step Workflow Protocol

Step 1: Graph Representation

- 1.1. Input the high-dimensional dataset (e.g., rows=samples, columns=genes/features).

- 1.2. Model the feature space as a graph, where each node represents a feature (e.g., a gene).

- 1.3. Use a deep learning model to calculate a sophisticated similarity measure between every pair of features (nodes), which serves as the weight of the edge connecting them. This step captures complex, non-linear relationships [3].

Step 2: Feature Clustering

- 2.1. Apply a community detection algorithm (e.g., Louvain method) to the constructed graph. This algorithm automatically identifies clusters (communities) of highly interconnected, similar features without requiring a pre-specified number of clusters [3].

Step 3: Influential Feature Selection

- 3.1. Within each identified cluster, calculate a node centrality measure (e.g., PageRank centrality) for every feature.

- 3.2. Select the feature with the highest centrality score from each cluster as the most influential and representative feature for that group.

- 3.3. The union of these selected features from all clusters forms the final, reduced feature set for downstream model training [3].
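The three steps can be sketched with the standard library alone, under clearly simplified assumptions: absolute Pearson correlation stands in for the deep similarity measure, connected components of a thresholded graph stand in for Louvain communities, and PageRank is computed by plain power iteration. The feature names and values are hypothetical, and this is not the implementation from [3].

```python
import math

def pearson(a, b):
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb) if va > 0 and vb > 0 else 0.0

def select_features(columns, threshold=0.8, damping=0.85, iters=50):
    """columns: dict mapping feature name -> list of values across samples."""
    names = list(columns)
    # Step 1: weighted feature graph; |Pearson r| replaces the deep similarity.
    weight = {a: {} for a in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            w = abs(pearson(columns[a], columns[b]))
            if w >= threshold:
                weight[a][b] = weight[b][a] = w
    # Step 2: connected components replace Louvain community detection.
    clusters, seen = [], set()
    for start in names:
        if start in seen:
            continue
        stack, comp = [start], []
        seen.add(start)
        while stack:
            node = stack.pop()
            comp.append(node)
            for nb in weight[node]:
                if nb not in seen:
                    seen.add(nb)
                    stack.append(nb)
        clusters.append(comp)
    # Step 3: weighted PageRank by power iteration; keep the top node per cluster.
    selected = []
    for comp in clusters:
        rank = {n: 1.0 / len(comp) for n in comp}
        for _ in range(iters):
            rank = {
                n: (1 - damping) / len(comp) + damping * sum(
                    rank[m] * weight[m][n] / sum(weight[m].values())
                    for m in weight[n])
                for n in comp
            }
        selected.append(max(comp, key=rank.get))
    return selected

columns = {
    "g1": [1, 2, 3, 4, 5],
    "g2": [2, 4, 6, 8, 10.5],
    "g3": [1.1, 2.0, 2.9, 4.2, 5.0],
    "g4": [5, 1, 4, 2, 3],
}
selected = select_features(columns)
```

In this toy input, `g1`, `g2`, and `g3` are nearly collinear and collapse into one cluster with a single representative, while `g4` forms its own cluster and is kept.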

The logical flow of this feature selection method is illustrated below.

In the field of machine learning applied to biomedical research, transfer learning (TL) represents a powerful paradigm that enables the knowledge gained from solving one problem to be applied to a different but related problem [10]. This approach is particularly transformative for cancer prediction, where acquiring large-scale, labeled genomic and histopathological datasets is often prohibitively expensive, time-consuming, and limited by patient privacy concerns [11] [12]. By leveraging transfer learning, researchers can develop robust models that perform effectively even with limited target data, accelerating the pace of discovery in precision oncology.

The foundational concepts of transfer learning can be defined as follows:

- Source Domain: The original domain from which knowledge is drawn, typically characterized by a large dataset and a specific task on which a model has been pre-trained. In cancer research, common source domains include large public repositories like The Cancer Genome Atlas (TCGA), the Genomics of Drug Sensitivity in Cancer (GDSC) database, or even general-purpose image collections like ImageNet for histopathological image analysis [12] [13]. The source domain provides the initial feature representations and model weights that will be adapted.

- Target Domain: The new, specific domain of interest where knowledge from the source domain is applied. In the context of cancer prediction with limited genomic data, this could be a smaller, institution-specific cohort of patients, a different cancer type, or a new predictive task such as forecasting resistance to a specific therapeutic agent [13] [14]. The target domain often has a different data distribution than the source domain, necessitating careful adaptation strategies.

- Fine-Tuning: A specific technique within transfer learning that involves taking a pre-trained model (from the source domain) and continuing the training process on data from the target domain [15]. This is not merely a process of initializing weights but involves carefully updating the model's parameters using a lower learning rate to prevent catastrophic forgetting of generally useful features while adapting to the specifics of the target task [12] [15].

Transfer Learning Strategies and Types

The application of transfer learning can be categorized based on the relationship between the source and target domains and tasks. Understanding these categories helps researchers select the most appropriate strategy for their specific challenge in cancer prediction.

Table 1: Types of Transfer Learning in Biomedical Research

| Type | Description | Example in Cancer Research |
|---|---|---|
| Inductive Transfer Learning | Source and target domains are the same, but the tasks differ. The pre-trained model is fine-tuned for a new function [10] [15]. | A model pre-trained on general cancer cell line gene expression data (source task: proliferation rate prediction) is fine-tuned to predict the response to a newly developed drug (target task) [13]. |
| Transductive Transfer Learning | Source and target tasks are identical, but the domains differ in data distribution [10] [15]. | A model trained to classify lung cancer subtypes using data from one medical center (source domain) is adapted to perform the same classification on data from a new hospital with different imaging protocols (target domain) [14]. |
| Unsupervised Transfer Learning | Used when there is little to no labeled data available in both the source and target domains. The model learns to transfer feature representations without task-specific labels [10] [15]. | Applying a model pre-trained on unlabeled genomic sequences from a common database to cluster unlabeled single-cell RNA-seq data from a novel tumor sample. |

Fine-Tuning: A Detailed Technical Protocol

Fine-tuning is the practical engine of transfer learning. It involves a nuanced process of continuing the training of a pre-trained model on a new dataset. The core principle is to use a lower learning rate than that used for training from scratch, which allows the model to make small, precise adjustments to its weights without overwriting the generally useful features learned from the source domain [15].

Strategic Approaches to Fine-Tuning

Researchers can select from several fine-tuning strategies based on the size and similarity of their target dataset to the source data:

- Feature Extraction (Frozen Features): All pre-trained weights are kept frozen, and only newly added classifier layers are trained. This is highly efficient and ideal for very small target datasets that are similar to the source [15].

- Partial Fine-Tuning: This is the most common approach. Early layers (which capture universal features like edges and textures in images, or basic sequence patterns in genomics) are frozen, while later layers (which combine these into more task-specific features) are fine-tuned [12] [15].

- Full Fine-Tuning: All weights in the pre-trained model are updated. This is computationally expensive but can yield superior performance when the target dataset is large and/or significantly different from the source domain [15].

- Discriminative Fine-Tuning: Different learning rates are applied to different layers of the network. Earlier layers, which contain more general features, are updated with a much smaller learning rate, while later layers use a higher rate to adapt more quickly to the new task [15].
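The four strategies differ mainly in which layers are updated and how fast. A small helper makes this concrete; the function name `layerwise_learning_rates`, the decay factor, and the four-layer example are illustrative assumptions, and a rate of 0.0 is used here simply to denote a frozen layer.

```python
def layerwise_learning_rates(n_layers, top_lr=1e-3, decay=0.5, n_frozen=0):
    """Learning rate per layer, index 0 = earliest layer.

    The last layer gets top_lr; each earlier layer gets the next layer's rate
    multiplied by decay. The first n_frozen layers get 0.0 (frozen).
    """
    rates = [top_lr * decay ** (n_layers - 1 - i) for i in range(n_layers)]
    return [0.0 if i < n_frozen else r for i, r in enumerate(rates)]

feature_extraction = layerwise_learning_rates(4, n_frozen=4)  # only a new head is trained
partial = layerwise_learning_rates(4, decay=1.0, n_frozen=2)  # early layers frozen
full = layerwise_learning_rates(4, decay=1.0)                 # all layers, same rate
discriminative = layerwise_learning_rates(4, decay=0.5)       # smaller rates for early layers
```

In a deep learning framework, such a list would typically be turned into per-layer optimizer parameter groups, with frozen layers excluded from gradient computation.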

A Generic Experimental Protocol for Fine-Tuning in Cancer Prediction

The following protocol provides a template for fine-tuning a pre-trained model on a limited genomic or histopathological dataset for a cancer prediction task.

Table 2: Key Hyperparameters for Fine-Tuning

| Hyperparameter | Recommended Setting | Rationale |
|---|---|---|
| Initial Learning Rate | 10-100x smaller than for training from scratch (e.g., 1e-4 to 1e-5) [15] | Prevents catastrophic forgetting of pre-trained features. |
| Learning Rate Schedule | Cyclic Learning Rates or Warm Restarts [15] | Helps the model escape local minima in the loss landscape. |
| Optimizer | Adam, SGD with Nesterov momentum | Proven stable optimizers for fine-tuning tasks. |
| Batch Size | As large as computational resources allow | Improves training stability; can be smaller than for source training. |
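As an illustration of the warm-restart schedule listed in the table, the sketch below computes a cosine-annealed learning rate that resets at the start of every cycle. The cycle length and the rate bounds are assumed values, and the cycle length is kept fixed for simplicity (some variants lengthen it after each restart).

```python
import math

def sgdr_learning_rate(step, cycle_len, lr_max=1e-4, lr_min=1e-6):
    """Cosine-annealed learning rate that restarts every cycle_len steps."""
    t_cur = step % cycle_len  # position within the current cycle
    cosine = 0.5 * (1 + math.cos(math.pi * t_cur / cycle_len))
    return lr_min + (lr_max - lr_min) * cosine

schedule = [sgdr_learning_rate(s, cycle_len=10) for s in range(30)]
```

Each cycle starts at `lr_max`, decays toward `lr_min`, and then jumps back up, which gives the optimizer periodic opportunities to leave a poor region of the loss landscape.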

Protocol Steps:

- Select a Pre-trained Model: Choose a model pre-trained on a large, relevant source domain. For histopathology, this could be a Convolutional Neural Network (CNN) like InceptionV3, DenseNet, or Xception pre-trained on ImageNet or a specialized pathology foundation model [12] [14]. For genomics, a model pre-trained on a large pan-cancer omics dataset like GDSC is suitable [13].

- Modify the Model Architecture:

- Remove the final task-specific layer(s) of the pre-trained model (e.g., the classification head for ImageNet).

- Introduce new layers on top of the pre-trained base, configured for the target task (e.g., a new fully connected layer with the number of neurons matching the desired cancer classification categories) [10] [12].

- Configure the Fine-Tuning Strategy:

- For small, similar target data: Freeze all pre-trained layers and train only the new layers.

- For moderate target data: Freeze early layers and fine-tune later layers.

- For large, dissimilar target data: Unfreeze all layers for full fine-tuning with a very low learning rate.

- Train the Model on the Target Domain:

- Use a much lower learning rate (see Table 2) for the pre-trained layers.

- Apply robust data augmentation (e.g., rotation, flipping for images; noise injection for genomic data) to prevent overfitting on the limited target dataset [12] [15].

- Monitor performance on a held-out validation set from the target domain and employ early stopping to halt training when performance plateaus.
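The early-stopping rule in the final step can be written as a small framework-independent helper. The class name, the patience of three epochs, and the validation losses below are placeholders for illustration.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

val_losses = [0.90, 0.70, 0.65, 0.66, 0.67, 0.66, 0.64]  # placeholder values
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break
```

In practice the model weights from the best epoch would also be saved and restored, since the final epochs before stopping are by construction not the best ones.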

Visualizing the Fine-Tuning Workflow for Cancer Prediction

The following diagram illustrates the end-to-end workflow for applying transfer learning and fine-tuning to a cancer prediction task, from data preparation to model deployment.

Fine-Tuning Workflow for Cancer Prediction

Case Study: Application in Colorectal Cancer Histopathology

A 2025 study provides a clear example of the successful application of these core principles. The research aimed to improve the classification of colorectal cancer (CRC) histopathological images [12].

- Source Domain: The ImageNet dataset, a large-scale general image repository, and pre-trained CNN architectures including DenseNet121, InceptionV3, and Xception.

- Target Domain: Multiple CRC histopathological image datasets totaling 10,613 images from public and private repositories.

- Fine-Tuning Protocol: The researchers implemented a structured fine-tuning approach. They did not simply train the final layer but performed algorithmic fine-tuning at varying depths of the pre-trained networks, creating the models CRCHistoDense, CRCHistoIncep, and CRCHistoXcep.

- Results and Efficacy: The fine-tuned models achieved exceptional test accuracies of 99.34%, 99.48%, and 99.45%, respectively, across all datasets. Statistical tests confirmed these were significant improvements over baseline methods, demonstrating that targeted fine-tuning based on CNN architecture characteristics dramatically enhances both classification performance and generalizability in cancer diagnostics [12].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key resources and computational tools essential for conducting transfer learning experiments in cancer research.

Table 3: Research Reagent Solutions for Transfer Learning

| Item / Resource | Function / Description | Example in Context |
|---|---|---|
| Pre-trained Models | Provide the foundational feature extractors and initial weights. | Models like DenseNet121, InceptionV3, Xception [12], or pathology foundation models [14]. |
| Genomic Databases | Serve as large source domains for pre-training or benchmarking. | GDSC, TCGA, PDX Encyclopedia [13]. |
| Digital Histopathology Slides | The raw data for image-based target tasks. | H&E-stained whole slide images (WSIs) of tumor tissues [12] [14]. |
| Cloud Computing Platforms | Provide the computational power required for training and fine-tuning deep learning models. | Amazon SageMaker (e.g., SageMaker JumpStart for pre-trained models) [10]. |
| Data Augmentation Tools | Artificially expand the size and diversity of limited target datasets to prevent overfitting. | Libraries for image rotation, flipping, color jitter; or noise injection for genomic data. |

Transfer learning has emerged as a pivotal methodology in computational biology, particularly for cancer prediction using high-dimensional genomic data where sample sizes are often limited. This approach leverages knowledge from a source domain (with abundant data) to improve model performance in a target domain (with scarce data), simultaneously enhancing predictive accuracy and reducing computational costs [16] [17]. Within oncology, where the acquisition of large, labeled genomic datasets is often prohibitively expensive and time-consuming, transfer learning provides a framework to overcome the "curse of dimensionality" and build more robust, generalizable predictive models for tasks such as cancer diagnosis, subtyping, and survival prediction [18] [19].

Quantitative Evidence of Performance Enhancement

Empirical studies across various cancer genomics tasks consistently demonstrate that transfer learning strategies can significantly boost model performance, especially when the target dataset is small. The following table summarizes key quantitative findings from recent research.

Table 1: Performance Benefits of Transfer Learning in Cancer Genomics

| Target Task | Source Data/Task | Transfer Method | Performance Gain | Reference |
|---|---|---|---|---|
| Lung Cancer Progression-free Interval Prediction | Pan-Cancer (31 tumor types) | CNN pre-training & fine-tuning | Improved prediction vs. non-TL models | [19] |
| Identification of Genome-Matched Therapies | Nationwide CGP database (Japan) | XGBoost (Implied TL context) | AUROC: 0.819 | [20] |
| Cancer Prediction (Gene Expression) | Large Pan-Cancer Microarray Data | Supervised Transfer (Large to Small Set) | Performance matched state-of-the-art only for large training sets; TL was beneficial for small sets | [18] |
| Cancer Prediction (Gene Expression) | Unlabeled Pan-Cancer Data | Unsupervised Pre-training (Autoencoder) | Strongly improved model performance in some cases for small target datasets | [18] |
| Mid-term Load Forecasting (COVID-19 context) | 26 Provinces in Normal Conditions | CNN-based BEST-L Framework | Higher accuracy vs. traditional methods; effective knowledge transfer with small samples | [21] |

Beyond raw performance, a major benefit of transfer learning is a reduction in the required training time and computational resources. By repurposing pre-trained models or networks, researchers can reduce the number of training epochs, the volume of training data needed, and the requisite processor units [16]. This efficiency is critical in biomedical research, where computational resources can be a limiting factor.

Detailed Experimental Protocols

This section outlines specific methodologies for implementing transfer learning in cancer genomic studies, detailing the protocols that generated the results discussed above.

Protocol: Robust Transfer Learning for High-Dimensional Generalized Linear Models

This protocol is designed to handle outliers and data contamination, which are common in real-world biomedical data [22].

- Source Model Pre-training: Train a generalized linear model (GLM) on a large-scale source genomic dataset (e.g., TCGA Pan-Cancer data). The training employs minimum γ-divergence to ensure robustness against outliers in the source data.

- Knowledge Transfer: The parameters (weights and biases) learned from the source model are transferred to initialize the target model. This provides a robust starting point that is already attuned to genomic data patterns.

- Target Model Fine-tuning: The initialized target model is then fine-tuned on the smaller, target genomic dataset. The fine-tuning process continues to use the robust minimum γ-divergence estimator to maintain performance even if the small target set contains outliers [22].

Protocol: Unsupervised Pre-training for Cancer Subtype Classification

This protocol uses unsupervised learning on a large, unlabeled dataset to learn a generally useful representation of gene expression data [18].

- Feature Representation Learning: Train a deep autoencoder (e.g., variational or denoising) on a large pan-cancer gene expression dataset (e.g., >10,000 samples). The objective is to reconstruct the input data, forcing the encoder to learn a compressed, meaningful representation.

- Model Initialization: The encoder layers of the trained autoencoder are copied to form the initial hidden layers of a multilayer perceptron (MLP) classifier designed for a specific cancer subtype prediction task.

- Supervised Fine-tuning: A new output layer matching the number of target subtypes is appended to the initialized MLP. The entire network is then trained (fine-tuned) on the smaller, labeled target dataset. The pre-trained weights serve as an informed starting point, accelerating convergence and improving generalization [18].

Protocol: CNN-Based Survival Prediction Using Gene-Expression Images

This protocol adapts convolutional neural networks, which excel with spatially coherent data, to unstructured gene expression data [19].

- Data Transformation: Convert one-dimensional gene-expression vectors into two-dimensional "gene-expression images." This can be done using domain-specific knowledge, such as arranging genes by their relative position on the chromosome or using algorithms like DeepInsight that project features onto a 2D space to create local motifs [19].

- Pan-Cancer Pre-training: Train a CNN architecture (e.g., ResNet) on a large set of these images derived from a pan-cancer dataset (e.g., 31 tumor types from TCGA) for a surrogate task, such as tumor-type classification.

- Task-Specific Fine-tuning: Replace the final classification layer of the pre-trained CNN to predict a clinical outcome like lung cancer progression-free interval. Fine-tune the network on the smaller target dataset of gene-expression images. The early CNN layers, which have learned to detect general genomic "shapes" and patterns, are particularly transferable [19].
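The data transformation step can be sketched for the simplest case, in which genes are laid out row by row in a fixed order and the grid is zero-padded to a square. The gene names, their ordering, and the expression values are hypothetical; this does not reproduce DeepInsight or the exact layout used in [19].

```python
import math

def to_expression_image(expression, gene_order, fill=0.0):
    """Arrange a gene -> value mapping into a square 2D grid, row by row."""
    values = [expression.get(g, fill) for g in gene_order]
    side = math.ceil(math.sqrt(len(values)))
    values += [fill] * (side * side - len(values))  # pad to a full square
    return [values[r * side:(r + 1) * side] for r in range(side)]

# Hypothetical genes listed in an assumed chromosomal order.
gene_order = ["GENE_A", "GENE_B", "GENE_C", "GENE_D", "GENE_E"]
sample = {"GENE_A": 2.1, "GENE_B": 0.0, "GENE_C": 5.3, "GENE_D": 1.2, "GENE_E": 3.3}
image = to_expression_image(sample, gene_order)
```

Because the same `gene_order` is used for every sample, each pixel position always refers to the same gene, which is what allows convolutional filters learned during pan-cancer pre-training to be reused on the target task.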

Workflow Visualization

The following diagram illustrates the logical sequence and decision points in a generalized transfer learning workflow for cancer genomics.

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of the protocols above relies on key computational reagents and datasets. The following table catalogues essential resources for transfer learning in cancer genomics.

Table 2: Key Research Reagents for Transfer Learning in Cancer Genomics

| Reagent / Resource | Type | Primary Function | Example Use Case |
|---|---|---|---|
| MLOmics Database [23] | Processed Multi-omics Database | Provides off-the-shelf, uniformly processed multi-omics data for 32 TCGA cancer types, ideal for source pre-training or target task evaluation. | Pan-cancer classification; biomarker discovery. |
| C-CAT Database [20] | Clinical-Genomic Real-World Database | Offers a large-scale, real-world dataset linking comprehensive genomic profiling (CGP) results to clinical outcomes, useful for source training. | Predicting identification of genome-matched therapies. |
| TCGA (The Cancer Genome Atlas) [24] [23] | Genomic Data Portal | A foundational, multi-omics resource for many cancer types. Requires significant processing to be model-ready. | Source data for pre-training autoencoders or CNNs. |
| Pre-trained Autoencoders [18] | Model Weights / Architecture | Provides a pre-learned, low-dimensional representation of gene expression data, serving as a feature extractor or model initializer for small target datasets. | Initializing MLPs for cancer subtype prediction. |
| XGBoost [20] | Machine Learning Algorithm | A powerful, tree-based boosting algorithm that can be used in a transfer context and offers high interpretability via methods like SHAP. | Predicting clinical outcomes from clinical and genomic features. |
| ResNet / CNN Architectures [19] [17] | Model Architecture | Deep neural network architectures that can be pre-trained on source data (e.g., gene-expression images) and fine-tuned for target tasks like survival prediction. | Predicting cancer progression from genomic data. |

In the field of oncology, the development of robust predictive models for tasks such as drug sensitivity assessment, cancer subtype classification, and mutation detection is often hampered by the limited availability of high-quality, labeled genomic and clinical data. Transfer learning has emerged as a powerful strategy to overcome this bottleneck by leveraging knowledge gained from large, diverse source domains to improve performance on target tasks with limited data [25]. The effectiveness of this approach, however, is fundamentally dependent on the selection and utilization of appropriate pre-training data sources. This Application Note details the major categories of data repositories—cancer cell line databases, pan-cancer patient data consortia, and cancer imaging archives—that provide the foundational resources for pre-training models in computational oncology. Furthermore, it provides standardized protocols for implementing a transfer learning workflow from data pre-processing to model fine-tuning, enabling researchers to effectively leverage these diverse data sources to build more accurate and generalizable predictive models for cancer research and personalized treatment.

Data Repositories for Pre-training

Table 1: Major Data Repositories for Pre-training Cancer Models

| Repository Name | Data Type | Key Features | Sample Scale | Primary Use Cases |
|---|---|---|---|---|
| Genomics of Drug Sensitivity in Cancer (GDSC) [26] | Gene expression, drug sensitivity | Largest in vitro drug-sensitivity database; 286 drugs across 686 cell lines [27] | 958 cell lines, 282 drugs [26] | Drug-sensitivity prediction models |
| Cancer Cell Line Encyclopedia (CCLE) [26] | Gene expression, drug response | Drug sensitivity data for 24 drugs; 7 overlap with GDSC [26] | 472 cell lines [26] | Model validation and comparison |
| The Cancer Genome Atlas (TCGA) [18] | Multi-omics, clinical data | Pan-cancer data; 33 cancer types [28] | >20,000 primary cancer and matched normal samples [28] | Pan-cancer and cancer-specific classification |
| Cancer Research Data Commons (CRDC) [29] [28] | Genomic, proteomic, imaging | Federated, cloud-based infrastructure integrating multiple data commons | >9.4 petabytes from 354 studies [28] | Centralized access to diverse NCI data resources |
| Beat Acute Myeloid Leukaemia (Beat AML) [26] | Patient-derived cell culture data | Drug sensitivity for 213 AML patient-derived cell cultures | 213 patients, 109 drugs [26] | Translation from cell lines to patient-derived models |
| Patient-Derived Organoid (PDO) Data [26] | Organoid drug response | Closely resembles patient tumor response [26] | 44 PDOs, 25 drugs [26] | Biomimetic drug response prediction |

Experimental Protocols

Protocol 1: Implementing a Transfer Learning Workflow from Cell Lines to Patient-Derived Models

This protocol describes a method to pre-train a model on abundant cell line data (GDSC) and fine-tune it on smaller, more clinically relevant patient-derived data (e.g., Beat AML or PDOs) to predict drug sensitivity [26].

Materials

- Data: GDSC database (source domain), target patient-derived dataset (e.g., Beat AML, PDO)

- Software: Python environment with deep learning libraries (PyTorch/TensorFlow)

- Computing Resources: GPU-enabled system for efficient model training

Procedure

- Source Model Pre-training:

- Input Features: Process gene expression profiles (e.g., RNA-seq) from GDSC cell lines and drug representations (e.g., one-hot encoding or SMILES strings) [27].

- Output Target: Use the half-maximal inhibitory concentration (IC50) values as the regression target.

- Model Architecture: Train a deep neural network (e.g., a Multilayer Perceptron or a specialized architecture like PaccMann [26]) to predict IC50 from the input features. Use a tissue-aware data split to prevent data leakage [27].

- Validation: Monitor the Pearson correlation coefficient between predicted and experimental IC50 values on a held-out validation set.

- Target Data Alignment and Preprocessing:

- Data Alignment: Employ alignment techniques like Celligner [27] to correct for batch effects and distribution shifts between the gene expression profiles of cell lines (source) and patient-derived samples (target).

- Feature Harmonization: Ensure the input feature space (e.g., gene sets) matches between the source and target domains.

- Model Transfer and Fine-tuning:

- Parameter Transfer: Initialize the target model (which shares the architecture with the source model) with the pre-trained weights from the source model.

- Fine-tuning: Re-train the model on the target domain data (e.g., Beat AML or PDO drug response). A lower learning rate is typically used to adapt the weights without overwriting the general features learned during pre-training.

- Evaluation: Evaluate the fine-tuned model on a held-out test set from the target domain. The primary performance metric is the Pearson correlation between predicted and observed drug sensitivity, averaged across cell lines or patients (cell cold-start) [26].
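The evaluation step above can be illustrated with a minimal sketch. It assumes predictions and measurements are stored as nested dictionaries (sample, then drug), which is a hypothetical layout chosen for illustration; the function names are not from the cited work.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length sequences of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return float("nan")  # correlation is undefined for a constant vector
    return cov / (sd_x * sd_y)

def mean_per_sample_pearson(observed, predicted):
    """Average Pearson r over held-out samples; each sample maps drug -> response."""
    scores = []
    for sample_id, obs in observed.items():
        drugs = sorted(obs)
        r = pearson([obs[d] for d in drugs],
                    [predicted[sample_id][d] for d in drugs])
        if not math.isnan(r):
            scores.append(r)
    return sum(scores) / len(scores)

# Toy held-out patients (hypothetical values, for illustration only).
observed = {
    "patient_1": {"drugA": 1.0, "drugB": 2.0, "drugC": 3.0},
    "patient_2": {"drugA": 3.0, "drugB": 1.0, "drugC": 2.0},
}
predicted = {
    "patient_1": {"drugA": 1.1, "drugB": 2.1, "drugC": 3.1},
    "patient_2": {"drugA": 2.0, "drugB": 1.0, "drugC": 3.0},
}
score = mean_per_sample_pearson(observed, predicted)
```

Averaging per sample rather than pooling all pairs keeps one well-predicted patient from dominating the score.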

Troubleshooting

- If performance on the target domain is poor, check the alignment of feature distributions between source and target data.

- Experiment with "freezing" earlier layers of the network during initial fine-tuning stages to preserve general features.
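As a rough illustration of layer freezing, the sketch below uses a deliberately tiny model (one linear "encoder" layer followed by a scalar head) written in plain Python. The model, data, and hyperparameters are all hypothetical; in a real workflow the same idea would be expressed through the chosen deep learning library.

```python
def forward(params, x):
    hidden = sum(w * xi for w, xi in zip(params["encoder"], x))  # early layer
    return params["head_w"] * hidden + params["head_b"], hidden  # late layer

def mse(params, data):
    return sum((forward(params, x)[0] - y) ** 2 for x, y in data) / len(data)

def fine_tune(params, data, lr=0.05, epochs=200, freeze_encoder=True):
    """SGD on the target data; the encoder is left untouched when frozen."""
    params = {"encoder": list(params["encoder"]),
              "head_w": params["head_w"], "head_b": params["head_b"]}
    for _ in range(epochs):
        for x, y in data:
            pred, hidden = forward(params, x)
            err = pred - y
            # Gradients of the squared error at the current parameters.
            grad_encoder = [2 * err * params["head_w"] * xi for xi in x]
            params["head_w"] -= lr * 2 * err * hidden
            params["head_b"] -= lr * 2 * err
            if not freeze_encoder:
                params["encoder"] = [w - lr * g for w, g
                                     in zip(params["encoder"], grad_encoder)]
    return params

# Hypothetical pre-trained weights and a small target-domain dataset.
pretrained = {"encoder": [0.5, -0.2], "head_w": 1.0, "head_b": 0.0}
target_data = [((1.0, 0.0), 2.0), ((0.0, 1.0), 0.6),
               ((1.0, 1.0), 1.6), ((2.0, 1.0), 2.6)]

before = mse(pretrained, target_data)
tuned = fine_tune(pretrained, target_data)
after = mse(tuned, target_data)
```

Here only the head adapts to the target data, so the representation learned on the source domain is preserved exactly.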

Protocol 2: Self-Pretraining on Task-Specific Genomic Data

For tasks where large-scale general pre-training is not feasible, self-pretraining on unlabeled task-specific sequences is a compute-efficient alternative [30]. This protocol is applicable to tasks like gene finding or chromatin profiling.

Materials

- Data: Unlabeled DNA or RNA sequences specific to the target task (e.g., genomic regions of interest from ENCODE).

- Software: PyTorch with standard deep learning modules.

Procedure

- Self-Supervised Pre-training:

- Model Setup: Use a residual convolutional neural network (CNN) as an encoder. Attach a masked language modeling (MLM) head [30].

- Input: One-hot encoded DNA sequences. Tokens are randomly masked with a probability of 0.15.

- Training: Train the model to reconstruct the original sequence, using a cross-entropy loss calculated only at the masked positions (L_MLM).

- Supervised Fine-tuning:

- Head Replacement: Remove the MLM head and replace it with a task-specific predictor (e.g., a classifier for exon/intron regions) [30].

- Fine-tuning: Train the entire model (encoder and new head) end-to-end on the labeled downstream task using an appropriate loss function (e.g., cross-entropy for classification).
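The masking and loss computation in the self-supervised stage can be sketched as follows. This is a minimal illustration in plain Python: representing a masked token as an all-zero column is an assumption made here, and the "model output" is a placeholder uniform distribution rather than a real encoder.

```python
import math
import random

ALPHABET = "ACGT"

def one_hot(seq):
    return [[1.0 if base == a else 0.0 for a in ALPHABET] for base in seq]

def mask_sequence(seq, mask_prob=0.15, seed=0):
    """One-hot encode seq and mask each position independently with mask_prob."""
    rng = random.Random(seed)
    encoded = one_hot(seq)
    masked_positions = []
    for i in range(len(seq)):
        if rng.random() < mask_prob:
            encoded[i] = [0.0] * len(ALPHABET)  # assumed [MASK] representation
            masked_positions.append(i)
    return encoded, masked_positions

def mlm_loss(pred_probs, seq, masked_positions):
    """Mean cross-entropy over masked positions only."""
    if not masked_positions:
        return 0.0
    total = 0.0
    for i in masked_positions:
        target = ALPHABET.index(seq[i])
        total -= math.log(pred_probs[i][target])
    return total / len(masked_positions)

seq = "ACGTTGCAACGTAGCTAGCTAGGATCCA" * 4
inputs, masked = mask_sequence(seq)

# Placeholder predictions: an untrained model guessing uniformly over A, C, G, T.
uniform = [[0.25] * len(ALPHABET) for _ in seq]
loss = mlm_loss(uniform, seq, masked)
```

With uniform predictions the loss equals ln 4, which gives a useful reference point: a pre-trained encoder should fall below it.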

Troubleshooting

- For gene finding, adding a Conditional Random Field (CRF) layer on top of the fine-tuned model can enforce global label consistency (e.g., valid exon-intron transitions), significantly improving performance [30].

Workflow Visualization

Transfer Learning Workflow for Drug Response Prediction

Data Validation and Preprocessing Framework

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Resources

| Tool/Resource | Type | Function | Access |

|---|---|---|---|

| Cancer Research Data Commons (CRDC) [29] | Data Infrastructure | Federated, cloud-based platform providing centralized access to NCI's genomic, proteomic, and imaging data. | https://datacommons.cancer.gov/ |

| Genomic Data Commons (GDC) [28] | Data Repository | Primary portal for accessing harmonized genomic data from projects like TCGA. | Via CRDC |

| Imaging Data Commons (IDC) [28] | Data Repository | Provides curated cancer imaging archives for model development and validation. | Via CRDC |

| Celligner [27] | Computational Tool | Algorithm to align RNA-seq data from cell lines and patient tumors, correcting for batch effects. | https://github.com/broadinstitute/celligner |

| Transformer Architectures (e.g., PharmaFormer [31]) | Model Architecture | Neural networks designed to handle sequential data (e.g., genes, DNA sequences), effective for integrating multi-modal inputs. | Custom implementation |

| Cloud Resources (SB-CGC, ISB-CGC) [28] | Computing Platform | Secure cloud workspaces with pre-configured analytical tools and workflows for analyzing CRDC data. | Via CRDC |

| Autoencoders (VAE, DAE) [18] | Model Architecture | Used for unsupervised pre-training to learn compressed, informative representations of gene expression data. | Standard DL libraries |

Practical Strategies and Cutting-Edge Models for Genomic and Multimodal Data

The genomic characterization of cancer cell lines, coupled with high-throughput drug sensitivity screening, has established resources like the Cancer Cell Line Encyclopedia (CCLE) and the Genomics of Drug Sensitivity in Cancer (GDSC) as fundamental tools for precision oncology. These databases provide systematic measurements of drug response across hundreds of cancer cell lines, enabling the development of machine learning models that predict drug sensitivity based on genomic features. However, a significant challenge arises from the distributional shifts between different pharmacogenomic databases, which can limit model generalizability and performance when applied to new data sources or clinical samples.

Transfer learning (TL) methodologies offer a powerful solution to these challenges by leveraging knowledge from a data-rich source domain (e.g., one pharmacogenomic database) to improve predictive performance and generalization in a target domain (e.g., another database or patient data), especially when the target dataset is limited. This Application Note provides detailed protocols for implementing transfer learning across CCLE and GDSC, facilitating robust drug sensitivity prediction even with limited genomic data.

Table 1: Key Public Pharmacogenomic Databases for Transfer Learning

| Database | Primary Focus | Key Content | Utility in Transfer Learning |

|---|---|---|---|

| GDSC (Genomics of Drug Sensitivity in Cancer) [32] [33] | Oncology drug sensitivity | ~1000 cell lines, ~250 compounds; Genomic features (mutations, gene expression), drug sensitivity (IC50, AUC) | Primary source domain; Large-scale data for pre-training. |

| CCLE (Cancer Cell Line Encyclopedia) [32] [33] | Cancer cell line characterization | ~1000 cell lines, ~500 compounds; Genomic features, drug sensitivity data. | Primary or secondary source/target domain; Often used with GDSC. |

| PRISM [32] | Drug repurposing | Predominantly non-oncology drugs screened for anti-cancer activity. | Extends predictions to non-oncology drug space. |

| DrugComb [32] | Drug combination sensitivity | Includes single drug and drug combination screening data. | Source for combination therapy modeling. |

Understanding Data Heterogeneity and Integration Challenges

A critical first step in any transfer learning project is recognizing and addressing the inherent inconsistencies between source and target data. Direct comparisons of drug sensitivity values (e.g., IC50) between GDSC and CCLE have historically shown discordance, which arises from differences in experimental protocols, assay conditions, and drug sensitivity metrics.

To enable meaningful data integration and model transfer, researchers have developed standardized metrics. The area under the dose response curve adjusted for the range of tested concentrations (adjusted AUC) is one such robust metric that allows for the integration of heterogeneous data from CCLE, GDSC, and other resources like the Cancer Therapeutics Response Portal (CTRP) by calculating sensitivity over a shared concentration range [33]. This adjustment mitigates technical biases and facilitates a more reliable comparison and pooling of data across studies, forming a solid foundation for subsequent transfer learning.
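To make the idea of a shared concentration range concrete, the sketch below integrates a dose-response curve over the log10 window that two studies have in common and divides by the window width. It is an illustrative normalization under stated assumptions (linear interpolation, trapezoidal integration, hypothetical viability values), not the exact definition used in [33].

```python
import math

def interp(x, xs, ys):
    """Linear interpolation of ys over sorted xs, for xs[0] <= x <= xs[-1]."""
    pairs = list(zip(xs, ys))
    for (x0, y0), (x1, y1) in zip(pairs, pairs[1:]):
        if x0 <= x <= x1:
            t = (x - x0) / (x1 - x0)
            return y0 + t * (y1 - y0)
    raise ValueError("x lies outside the measured concentration range")

def range_adjusted_auc(concs, viability, lo, hi):
    """Area under the curve on log10([lo, hi]), normalized by the window width."""
    xs = [math.log10(c) for c in concs]
    a, b = math.log10(lo), math.log10(hi)
    # Window endpoints plus every measured point strictly inside the window.
    grid = [a] + [x for x in xs if a < x < b] + [b]
    vals = [interp(x, xs, viability) for x in grid]
    area = sum((grid[i + 1] - grid[i]) * (vals[i] + vals[i + 1]) / 2
               for i in range(len(grid) - 1))
    return area / (b - a)

# Two hypothetical studies that tested overlapping concentration ranges (in uM).
study_a = ([0.01, 0.1, 1.0, 10.0], [1.0, 0.9, 0.5, 0.1])
study_b = ([0.1, 1.0, 10.0, 100.0], [0.95, 0.6, 0.2, 0.05])
shared_lo, shared_hi = 0.1, 10.0

auc_a = range_adjusted_auc(*study_a, shared_lo, shared_hi)
auc_b = range_adjusted_auc(*study_b, shared_lo, shared_hi)
```

Because both values are computed over the same window, they can be compared or pooled without the bias introduced by each study's own dose range.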

Transfer Learning Protocols for Drug Sensitivity Prediction

This section outlines two distinct computational approaches for implementing transfer learning between CCLE and GDSC, moving from latent variable models to more recent federated learning frameworks.

Protocol 1: Latent Variable Cost Optimization

This protocol involves mapping data from both source (e.g., CCLE) and target (e.g., GDSC) domains into a shared latent space where their distributions are aligned, allowing for knowledge transfer [34].

- Primary Objective: To improve drug sensitivity prediction in a target database with limited samples by leveraging the larger dataset of a source database.

- Applications: Enhancing prediction in a newly established or sparsely populated drug sensitivity dataset using a larger, complementary database.

Step-by-Step Procedure:

Data Preprocessing and Feature Selection:

- Identify overlapping cell lines and drugs between CCLE and GDSC.

- Use the Adjusted AUC as the drug sensitivity metric to ensure comparability [33].

- For gene expression data, perform feature selection. A common method is using the ReliefF algorithm to select the top 200 genes from each dataset and taking their intersection as the final feature set [34].

- Normalize the gene expression data (e.g., Z-score normalization).

Model Implementation and Training:

- Implement the Combined Latent Prediction (CLP) model, which has been shown to outperform other latent variable methods [34].

- The CLP model learns a joint latent variable representation for the input gene expression data from both source and target domains and also maps the output sensitivity data into a shared latent space.

- The cost function is optimized using the available target domain data (e.g., a small subset of GDSC) and the entire source domain data (e.g., CCLE).

Prediction and Validation:

- Use the trained model to predict drug sensitivities for the held-out samples in the target domain.

- Validate model performance by comparing predicted versus experimental AUC values using Pearson correlation or mean squared error.
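The preprocessing step of this procedure (intersecting the top-ranked genes and Z-scoring expression) might look like the sketch below. The gene rankings are assumed to come from a feature-scoring method such as ReliefF, which is not implemented here; gene names and values are placeholders.

```python
import statistics

def shared_top_genes(ranking_a, ranking_b, k=200):
    """Intersect the top-k genes of two rankings, keeping the order of ranking_a."""
    top_b = set(ranking_b[:k])
    return [gene for gene in ranking_a[:k] if gene in top_b]

def zscore_columns(matrix):
    """Z-score each column (gene) of a samples x genes matrix."""
    columns = list(zip(*matrix))
    means = [statistics.mean(col) for col in columns]
    sds = [statistics.pstdev(col) for col in columns]
    return [[(v - m) / s if s > 0 else 0.0
             for v, m, s in zip(row, means, sds)]
            for row in matrix]

# Hypothetical rankings (most informative gene first) from each database.
ranking_source = ["TP53", "EGFR", "KRAS", "MYC"]
ranking_target = ["KRAS", "TP53", "BRAF", "EGFR"]
features = shared_top_genes(ranking_source, ranking_target, k=3)

# Toy expression matrix restricted to the shared features (rows are cell lines).
expression = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
normalized = zscore_columns(expression)
```

In practice the statistics used for normalization would be computed on training data only and then reused for held-out samples.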

Figure 1: Latent Variable Optimization Workflow

Protocol 2: Federated Learning for Privacy-Preserving Integration

Federated Learning (FL) is a decentralized approach that enables model training across multiple datasets without sharing raw data, thus preserving privacy and addressing data governance concerns while leveraging multi-source data to improve generalizability [35].

- Primary Objective: To build a robust, generalized drug sensitivity prediction model by learning from multiple pharmacogenomic datasets (CCLE, GDSC, gCSI) without centralizing the data.

- Applications: Collaborative model development across different institutions where data sharing is restricted, or to improve model robustness to inter-dataset inconsistencies.

Step-by-Step Procedure:

Data Preparation and Feature Engineering on Each Client:

- For each dataset (CCLE, GDSC), perform client-specific preprocessing.

- Gene Selection: Filter genes based on the L1000 gene set, which is sufficient to predict transcriptome changes upon drug treatment. Further reduce dimensionality using mutual information [35].

- Drug Representation: Obtain Mol2Vec embeddings (300 dimensions) from the SMILES codes of each drug to numerically represent molecular structure [35].

- Tissue Type Encoding: Use one-hot encoding for the tissue type of each cell line.

- Concatenate the processed gene expression, drug embedding, and tissue type vector to form the final input feature.

Federated Model Architecture and Training:

- A central server coordinates the training and maintains a global model.

- Each client (e.g., CCLE, GDSC) trains the model locally on its data for a few epochs.

- The clients send their model updates (e.g., gradients or weights) to the central server.

- The server aggregates these updates (e.g., using Federated Averaging) to improve the global model.

- The updated global model is then sent back to the clients for the next round of training.

Model Inference:

- The final trained global model can be deployed for drug sensitivity prediction on new data from any of the participating domains or new, similar domains.
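The training rounds described in this procedure can be sketched with a toy linear model. The code below is a minimal illustration of Federated Averaging in plain Python, weighting each client by its sample count; the client names, data, and hyperparameters are hypothetical, and a real deployment would use a neural network and a federated learning framework.

```python
def local_update(weights, data, lr=0.1, epochs=5):
    """A few epochs of SGD for y = w0 + w1 * x on one client's private data."""
    w0, w1 = weights
    for _ in range(epochs):
        for x, y in data:
            err = w0 + w1 * x - y
            w0 -= lr * 2 * err
            w1 -= lr * 2 * err * x
    return [w0, w1]

def federated_average(client_weights, client_sizes):
    """Server-side aggregation: sample-size-weighted mean of client weights."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
            for i in range(n_params)]

# Each client keeps its raw data locally; both follow y = 1 + 2x in this toy setup.
clients = {
    "client_A": [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)],
    "client_B": [(0.25, 1.5), (0.75, 2.5)],
}

global_weights = [0.0, 0.0]
for _ in range(50):  # communication rounds
    updates = [local_update(global_weights, data) for data in clients.values()]
    sizes = [len(data) for data in clients.values()]
    global_weights = federated_average(updates, sizes)
```

Only the weight vectors cross the client boundary; the `(x, y)` pairs never leave the client that owns them.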

Table 2: Comparison of Featured Transfer Learning Methods

| Method | Core Mechanism | Data Privacy | Key Advantage | Reported Performance Gain |

|---|---|---|---|---|

| Latent Variable (CLP) [34] | Projects source and target data into a shared latent space. | Low (Requires data centralization) | Effective for direct knowledge transfer between two specific databases. | Superior to non-TL models for 6/7 drugs tested [34]. |

| Federated Learning [35] | Decentralized training; only model updates are shared. | High | Enables multi-institutional collaboration without raw data sharing, improves generalizability. | Outperforms single-database models and traditional FL approaches [35]. |

| scDEAL (Deep Transfer) [36] | Harmonizes bulk and single-cell RNA-seq data via domain adaptation. | Low (Requires data centralization) | Predicts drug response at single-cell resolution, revealing heterogeneity. | High accuracy (Avg. F1-score: 0.892) on six scRNA-seq datasets [36]. |

Figure 2: Federated Learning Setup

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Data Resources

| Tool/Resource | Type | Function in Workflow | Access/Reference |

|---|---|---|---|

| Adjusted AUC Metric | Analytical Metric | Standardizes drug sensitivity measurements across studies with different experimental setups, enabling direct data comparison [33]. | Custom calculation; see [33]. |

| PharmacoGx R Package [35] | Software Tool | Provides unified interface to access and analyze multiple pharmacogenomic databases (CCLE, GDSC, gCSI) within R. | Bioconductor. |

| Mol2Vec [35] | Algorithm | Generates numerical vector representations (embeddings) of drug molecules from their SMILES strings, capturing structural information. | Python package. |

| L1000 Gene Set [35] | Gene Panel | A reduced set of ~1000 landmark genes whose expression is sufficient to accurately impute the rest of the transcriptome, used for dimensionality reduction. | Broad Institute. |

| scDEAL Framework [36] | Software Tool | A deep transfer learning framework for predicting drug response in single-cell RNA-seq data by leveraging bulk cell-line data. | GitHub repository. |

| PharmacoDB [35] | Database Portal | Integrates multiple pharmacogenomic datasets, allowing users to easily identify overlapping cell lines and drugs across studies. | https://pharmacodb.ca/ |

Advanced Applications and Future Directions

Emerging research demonstrates the expanding frontier of transfer learning in pharmacogenomics. The integration of Large Language Models (LLMs) shows promise for tasks such as linking drugs to their mechanisms of action (MOA) by processing unstructured biological text, thereby enriching input features for sensitivity prediction models [27]. Furthermore, the scDEAL framework exemplifies the power of deep transfer learning to bridge the gap between bulk cell line data and single-cell RNA-seq data from clinical samples, allowing for the prediction of drug response heterogeneity within tumors [36]. These advanced protocols enable the translation of pre-clinical findings to clinically relevant predictions, bringing us closer to the goal of true precision oncology.

The integration of advanced deep learning architectures like Transformers and Convolutional Neural Networks (CNNs) is revolutionizing computational oncology. These models are particularly vital for cancer prediction using limited genomic data, a common challenge in clinical settings. By leveraging transfer learning, models pre-trained on large, general genomic datasets can be fine-tuned for specific cancer prediction tasks, effectively overcoming the data scarcity problem. This application note details the protocols and experimental methodologies underpinning these architectures, providing a framework for researchers and drug development professionals to implement these powerful tools.

Key Architectures and Their Quantitative Performance

Advanced architectures for genomic and imaging data in oncology can be broadly categorized into several types, each with distinct strengths. The following table summarizes the performance of key models as reported in recent literature.

Table 1: Performance Metrics of Advanced Architectures in Oncology Applications

| Model Name | Architecture Type | Primary Application | Key Dataset(s) | Reported Performance | Reference |

|---|---|---|---|---|---|

| TransBreastNet | CNN-Transformer Hybrid | Breast cancer subtype & stage classification | Internal mammogram dataset | 95.2% accuracy (subtype), 93.8% accuracy (stage) | [37] |

| DNABERT-2 / Nucleotide Transformer | Transformer | Genetic mutation classification (SNVs, Indels, Duplications) | Custom real-world and synthetic genomic datasets | High performance on F1, recall, accuracy, and precision metrics | [38] |

| DeepVariant | CNN | Germline and somatic variant calling | GIAB, TCGA | 99.1% SNV accuracy | [1] |

| DNN-based TL with MI | Deep Neural Network with Transfer Learning | Drug response prediction | GDSC, PDX, TCGA | Outperformed other methods based on AUCPR | [13] |

| MAGPIE | Attention-based Multimodal Neural Network | Variant prioritization | Rare disease cohorts | 92% variant prioritization accuracy | [1] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of these models requires a suite of computational tools and data resources.

Table 2: Key Research Reagent Solutions for Implementation

| Item Name | Type | Function/Application | Example/Note |

|---|---|---|---|

| Pre-trained Genomic Models | Software Model | Foundation for transfer learning on limited genomic data. | DNABERT-2, Nucleotide Transformer, GENA-LM [38]. |

| Curated Genomic Datasets | Data | Training, fine-tuning, and benchmarking models for cancer genomics. | TCGA, ICGC Pan-Cancer, COSMIC, CCLE, GDSC [4] [13] [1]. |

| Synthetic Data Generators | Algorithm | Addressing class imbalance in genomic data by generating realistic sequences. | WGAN-GP (Wasserstein Generative Adversarial Network with Gradient Penalty) [38]. |

| Multi-omics Fusion Frameworks | Computational Method | Integrating diverse data types (e.g., gene expression, mutations, CNAs) for a holistic view. | Late integration pipelines, attention-based fusion mechanisms [13] [1]. |

| Cloud Computing Platforms | Infrastructure | Providing scalable storage and computation for large genomic and imaging datasets. | Amazon Web Services (AWS), Google Cloud Genomics, Microsoft Azure [39]. |

Experimental Protocols and Workflows

Protocol 1: Implementing a Hybrid CNN-Transformer for Cancer Subtype Classification

This protocol outlines the methodology for developing a hybrid architecture, as exemplified by TransBreastNet, for classifying cancer subtypes from multimodal data [37].

1. Data Preparation and Preprocessing:

- Input Modalities: Collect mammogram images and structured clinical metadata (e.g., hormone receptor status, tumor size).

- Image Preprocessing: Apply standard normalization and augmentation techniques (e.g., random flipping, rotation) to the images to increase robustness.

- Metadata Encoding: Encode categorical clinical variables and normalize continuous variables.

2. Spatial Feature Extraction with CNN:

- Architecture: Utilize a CNN backbone (e.g., ResNet, EfficientNet) pretrained on natural images.

- Procedure: Feed preprocessed images through the CNN to extract high-dimensional spatial feature maps representing lesion morphology.

- Output: A feature vector encapsulating the spatial characteristics of the lesion.

3. Temporal/Sequential Modeling with Transformer:

- Input: If longitudinal data (multiple time-point images) is available, use the CNN-extracted features from each time point as a sequence.

- Synthetic Sequences: If longitudinal data is scarce, generate synthetic temporal sequences using augmentation techniques.

- Transformer Encoder: Pass the sequence of feature vectors through a Transformer encoder. The self-attention mechanism will model the temporal progression and dependencies between sequential scans.

- Output: A context-aware feature representation of the lesion's evolution.

4. Multimodal Feature Fusion:

- Process: Concatenate or use a weighted fusion mechanism to combine the spatial features from the CNN, the temporal features from the Transformer, and the encoded clinical metadata.

- Objective: To create a unified, context-rich feature representation for final prediction.

5. Dual-Task Prediction Head:

- Architecture: Employ a dual-head classifier consisting of fully connected layers.

- Task 1 - Subtype Classification: One head predicts the breast cancer subtype (e.g., Luminal A, HER2+).

- Task 2 - Stage Prediction: The other head predicts the disease progression stage.

- Training: Train the entire model end-to-end using a combined loss function (e.g., weighted cross-entropy for both tasks).
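One way to read the combined loss in step 5 is as a weighted sum of two cross-entropy terms, one per prediction head. The sketch below shows that reading in plain Python; the task weight `alpha` and the optional per-class weights are assumptions for illustration, not values reported for TransBreastNet.

```python
import math

def log_softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]

def cross_entropy(logits, target, class_weights=None):
    """Cross-entropy for one sample, optionally scaled by a per-class weight."""
    weight = 1.0 if class_weights is None else class_weights[target]
    return -weight * log_softmax(logits)[target]

def dual_task_loss(subtype_logits, subtype_target,
                   stage_logits, stage_target, alpha=0.5):
    """Weighted sum of the subtype loss and the stage loss."""
    subtype_loss = cross_entropy(subtype_logits, subtype_target)
    stage_loss = cross_entropy(stage_logits, stage_target)
    return alpha * subtype_loss + (1 - alpha) * stage_loss

# Toy example: 4 subtype classes and 3 stage classes, untrained (all-zero) logits.
loss = dual_task_loss([0.0] * 4, 2, [0.0] * 3, 1)
```

Tuning `alpha` trades off the two tasks; class weights can additionally up-weight rare subtypes or stages.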

Protocol 2: Transfer Learning with Transformers for Mutation Classification

This protocol describes the use of pre-trained transformer models for classifying genetic mutations from sequence data, a critical task for personalized cancer therapy [4] [38].

1. Data Curation and Tokenization:

- Dataset: Prepare a dataset of DNA sequences centered on mutation sites. This includes sequences from both cancerous and non-cancerous genomes.

- Class Imbalance Handling: For rare mutation types, use a generative model like WGAN-GP to create synthetic genomic sequences and balance the dataset.

- Tokenization: Convert DNA sequences (A, C, G, T) into tokens understandable by the model. For DNABERT-2, this involves Byte Pair Encoding (BPE).

2. Model Selection and Initialization:

- Foundation Models: Initialize the model using a pre-trained transformer like DNABERT-2 or Nucleotide Transformer. These models are pre-trained on large-scale genomic datasets (e.g., 1000 Genomes, multi-species genomes) and understand genomic "syntax".

- Parameter Freezing: Initially, freeze the weights of the pre-trained layers to retain the general genomic knowledge.

3. Model Fine-Tuning:

- Task-Specific Head: Add a new classification head on top of the pre-trained model, tailored for the specific mutation types (e.g., SNVs, Indels, Duplications).

- Progressive Unfreezing: Unfreeze the upper layers of the pre-trained model and train them together with the new classification head on the curated mutation dataset with a low learning rate. This adapts the general knowledge to the specific task.

- Hyperparameter Tuning: Use frameworks like Optuna to optimize hyperparameters, including learning rate and batch size.

4. Model Evaluation and Interpretation:

- Performance Metrics: Evaluate the model on a held-out test set using metrics such as F1 score, recall, accuracy, and precision, especially for imbalanced classes.

- Explainability: Apply attention visualization techniques to identify which parts of the input sequence the model deems most important for its prediction, adding a layer of biological interpretability.
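For the evaluation step, per-class metrics matter because overall accuracy can hide poor performance on rare mutation types. The following is a small sketch in plain Python with made-up labels; in practice an established metrics library would typically be used.

```python
def per_class_metrics(y_true, y_pred):
    """Precision, recall, and F1 for every label seen in y_true or y_pred."""
    report = {}
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[label] = {"precision": precision, "recall": recall, "f1": f1}
    return report

def macro_f1(report):
    """Unweighted mean of per-class F1, so rare classes count equally."""
    return sum(m["f1"] for m in report.values()) / len(report)

# Hypothetical, imbalanced test set: 6 SNVs, 3 indels, 1 duplication.
y_true = ["SNV"] * 6 + ["Indel"] * 3 + ["Dup"]
y_pred = ["SNV"] * 5 + ["Indel"] + ["Indel"] * 2 + ["SNV"] + ["Dup"]

report = per_class_metrics(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
macro = macro_f1(report)
```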

Protocol 3: Multi-omics Integration for Drug Response Prediction

This protocol details a DNN-based transfer learning approach to predict cancer drug response by integrating multiple omics data types [13].

1. Multi-omics Data Preprocessing and Homogenization:

- Data Types: Collect gene expression, somatic mutation, and copy number aberration (CNA) data from sources like GDSC (for training) and TCGA or PDX (for validation).

- Normalization: Log-transform and normalize gene expression data (e.g., to TPM or RMA). Binarize non-synonymous mutations. Process CNA data relative to ploidy.

- Batch Effect Removal: Use bioinformatics packages (e.g., the sva R package) to remove batch effects between different datasets (e.g., GDSC vs. TCGA).

2. Feature Selection and Unionization:

- Differential Expression Analysis: For each drug pathway class (e.g., mitosis, DNA replication), identify Differentially Expressed Genes (DEGs) between sensitive and resistant samples in the GDSC dataset.

- Unionized Feature Set: Create a unionized set of DEGs from all drugs within the same pathway class. This feature set captures a robust transcriptional signature of drug response for that pathway.

3. Building a Pan-Drug Model with Transfer Learning:

- Architecture: Construct a Deep Neural Network (DNN) with multiple hidden layers designed to take the unionized multi-omics features as input.

- Pre-training: Train the DNN model on the large, diverse GDSC dataset. This creates a "pan-drug" model that learns general resistance mechanisms within a pathway.

- Transfer Learning: Fine-tune the pre-trained model on smaller, target datasets (e.g., TCGA or PDX) to adapt it to specific patient populations or drug profiles.

4. Prediction and Biological Insight Generation:

- Inference: Use the fine-tuned model to predict drug response (sensitive/resistant) on new patient samples.

- Mechanism Exploration: Perform pathway enrichment analysis (e.g., on genes weighted heavily by the model) to uncover potential biological mechanisms driving drug resistance, such as LDHB-mediated pyruvate metabolism in paclitaxel resistance.
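The feature unionization in step 2 of this protocol amounts to pooling per-drug DEG lists within each pathway class. A minimal sketch, assuming the DEG lists have already been computed and using placeholder drug and gene identifiers:

```python
def unionized_features(degs_by_drug, pathway_of_drug):
    """Union the DEG sets of all drugs that share a pathway class."""
    features = {}
    for drug, genes in degs_by_drug.items():
        features.setdefault(pathway_of_drug[drug], set()).update(genes)
    # Sort for a stable column order in the model's input matrix.
    return {pathway: sorted(genes) for pathway, genes in features.items()}

# Placeholder inputs: DEGs per drug and the pathway class of each drug.
degs_by_drug = {
    "drug_1": ["GENE_A", "GENE_B"],
    "drug_2": ["GENE_B", "GENE_C"],
    "drug_3": ["GENE_D"],
}
pathway_of_drug = {
    "drug_1": "mitosis",
    "drug_2": "mitosis",
    "drug_3": "DNA replication",
}

feature_sets = unionized_features(degs_by_drug, pathway_of_drug)
```

Each pathway class then gets one input feature set, shared by every drug in that class.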

Predicting how a patient will respond to anti-cancer therapy remains a cornerstone challenge in precision oncology. A significant hurdle is the limited availability of large-scale clinical drug response data, which is essential for training robust deep learning models. Transfer learning, which leverages knowledge from a data-rich source domain to improve performance in a data-scarce target domain, presents a powerful strategy to overcome this bottleneck [25] [40] [34].

PharmaFormer is a state-of-the-art framework that exemplifies this approach. It is a custom Transformer-based deep learning model designed to predict clinical drug responses by integrating the vast pharmacogenomic data from traditional cancer cell lines with the high biological fidelity of patient-derived organoids (PDOs) [31]. This model addresses the critical limitation of organoids—their time-consuming and costly culture process—by using transfer learning to compensate for the currently limited organoid drug response data [31]. By initially pre-training on pan-cancer 2D cell line data and then fine-tuning on tumor-specific organoid data, PharmaFormer achieves dramatically improved accuracy in predicting patient outcomes, thereby accelerating precision medicine and drug development [31].

Technology and Model Architecture

The core innovation of PharmaFormer lies in its specialized Transformer architecture and its strategic application of a transfer learning paradigm. The model processes cellular gene expression profiles and drug molecular structures separately through distinct feature extractors before integrating them for prediction [31].

Model Architecture and Workflow

The PharmaFormer model processes inputs through a structured pathway to generate its drug response predictions. The following diagram illustrates the high-level, three-stage workflow of the PharmaFormer framework, from pre-training to clinical application.

The internal architecture of the PharmaFormer model is detailed in the following diagram, which shows the flow of data from input features to the final prediction.

Key Technical Components

Dual-Feature Input Processing: The model accepts two primary types of input data. The gene expression profile, typically from bulk RNA-seq data, is processed through a gene feature extractor consisting of two linear layers with a ReLU activation function. Simultaneously, the drug's molecular structure, represented as a Simplified Molecular-Input Line Entry System (SMILES) string, is processed through a drug feature extractor that employs Byte Pair Encoding (BPE) followed by a linear layer and ReLU activation [31].

Transformer Encoder Core: The concatenated and reshaped features from both input streams are fed into a custom Transformer encoder. This encoder consists of three layers, each equipped with eight self-attention heads [31]. The self-attention mechanism allows the model to weigh the importance of different genes and molecular features dynamically when making a prediction, capturing complex, non-linear interactions.

Transfer Learning Strategy: PharmaFormer is constructed in three critical stages. First, a pre-trained model is developed using gene expression profiles from over 900 cell lines and the area under the dose–response curve (AUC) for over 100 drugs from the Genomics of Drug Sensitivity in Cancer (GDSC) database. Second, this pre-trained model is fine-tuned using a limited dataset of tumor-specific organoid drug response data, employing L2 regularization to optimize parameters. Finally, the fine-tuned model is applied to predict clinical drug responses using gene expression data from patient tumor tissues, such as those available from The Cancer Genome Atlas (TCGA) [31].

Application Notes and Experimental Protocols

This section provides a detailed, actionable protocol for replicating the key experiments that validate PharmaFormer's predictive performance, from data acquisition to clinical correlation.

Protocol 1: Benchmarking PharmaFormer Pre-trained Models

Objective: To establish the benchmark performance of the PharmaFormer pre-trained model against classical machine learning algorithms using pan-cancer cell line data [31].

Step-by-Step Methodology:

- Data Acquisition and Preprocessing:

- Source: Download gene expression profiles and drug sensitivity data (Area Under the Curve, AUC) for over 100 drugs and 900 cancer cell lines from the Genomics of Drug Sensitivity in Cancer (GDSC, version 2) database [31].

- Feature Selection: Use the provided gene expression matrices and compute drug descriptors from SMILES strings.

- Data Partitioning: For robust evaluation, apply a 5-fold cross-validation strategy. Randomly divide the dataset into five non-overlapping subsets.

- Model Training and Comparison:

- PharmaFormer Pre-training: Train the PharmaFormer model from scratch on the GDSC data using the architecture described above under "Technology and Model Architecture". Use four subsets for training and one for testing in each fold.

- Baseline Models: Train classical machine learning models on the same data splits for comparison. These should include:

- Support Vector Regression (SVR)

- Multi-Layer Perceptron (MLP)

- Random Forest (RF)

- k-Nearest Neighbors (KNN)

- Ridge Regression

- Performance Metric: For each drug, calculate the Pearson correlation coefficient between the predicted and experimentally measured AUC values across all cell lines.

- Validation and Analysis:

- Perform a stratified cross-validation, retaining 20% of cell lines for prediction and using 80% for training, to assess performance across different tissue types and TCGA tumor subgroups.

- Analyze the predictive accuracy for individual FDA-approved drugs and compare performance between targeted therapies and conventional chemotherapies.
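The 5-fold partitioning used in this protocol can be sketched in plain Python as shown below. The seed and sample count are arbitrary; whether to split by cell line, by drug, or by cell line and drug pair is a design decision this sketch does not settle.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train, test) index lists for k non-overlapping folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # near-equal fold sizes
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

# Example: 23 samples split into five folds (four for training, one for testing).
splits = list(k_fold_indices(n_samples=23, k=5))
```

Using the same `splits` for PharmaFormer and every baseline keeps the comparison fair, since all models see identical training and test samples in each fold.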

Expected Outcomes and Analysis: The pre-trained PharmaFormer model is expected to outperform classical models. The results should be compiled into a table for clear comparison.

Table 1: Benchmarking Performance of PharmaFormer Against Classical Machine Learning Models

| Model | Average Pearson Correlation Coefficient | Key Strengths |
|---|---|---|
| PharmaFormer (Pre-trained) | 0.742 [31] | Captures complex interactions in gene expression and drug structure |
| Support Vector Regression (SVR) | 0.477 [31] | Effective in high-dimensional spaces |
| Multi-Layer Perceptron (MLP) | 0.375 [31] | Can model non-linear relationships |
| Random Forest (RF) | 0.342 [31] | Handles mixed data types, robust to outliers |
| Ridge Regression | 0.377 [31] | Reduces overfitting via regularization |
| k-Nearest Neighbors (KNN) | 0.388 [31] | Simple, instance-based learning |

Protocol 2: Fine-tuning with Patient-Derived Organoids

Objective: To adapt the pre-trained PharmaFormer model to a specific tumor type (e.g., colon cancer) using a limited dataset of patient-derived organoids (PDOs) and enhance its clinical predictive power [31].

Step-by-Step Methodology:

Organoid Data Collection:

- Source: Generate or acquire a dataset from patient-derived colon cancer organoids. The dataset should include bulk RNA-seq data and corresponding drug sensitivity measurements (AUC) for key drugs like 5-fluorouracil and oxaliplatin [31].

- Dataset Size: The fine-tuning dataset can be relatively small, e.g., data from 29 organoids as used in the original study [31].

Transfer Learning via Fine-tuning:

- Model Initialization: Load the pre-trained PharmaFormer model from Protocol 1.

- Training Configuration:

- Use the organoid pharmacogenomic data as the new training set.

- Employ L2 regularization (weight decay) to prevent overfitting on the small dataset.

- Set a lower learning rate compared to pre-training to ensure stable adaptation.

- Train the model for a sufficient number of epochs until validation loss converges.

Model Output:

- The result of this protocol is an organoid-fine-tuned PharmaFormer model specific to colon cancer.
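The fine-tuning configuration above can be sketched in PyTorch as follows. Everything concrete here is an assumption: a small feed-forward network stands in for the pre-trained PharmaFormer, random tensors stand in for the 29 organoid samples, and the learning rate, weight decay, and patience values are illustrative rather than taken from [31].

```python
import copy
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in for the pre-trained network loaded from Protocol 1.
pretrained = nn.Sequential(nn.Linear(16, 8), nn.ReLU(), nn.Linear(8, 1))

# Synthetic stand-in for 29 organoid samples (features -> drug AUC).
x, y = torch.randn(29, 16), torch.rand(29, 1)
x_train, y_train, x_val, y_val = x[:23], y[:23], x[23:], y[23:]

model = copy.deepcopy(pretrained)
# Small learning rate for stable adaptation; weight_decay adds the L2 penalty.
optimizer = torch.optim.Adam(model.parameters(), lr=1e-4, weight_decay=1e-2)
loss_fn = nn.MSELoss()

best_val, best_state, patience, bad_epochs = float("inf"), None, 10, 0
for epoch in range(200):
    model.train()
    optimizer.zero_grad()
    loss = loss_fn(model(x_train), y_train)
    loss.backward()
    optimizer.step()

    model.eval()
    with torch.no_grad():
        val_loss = loss_fn(model(x_val), y_val).item()
    if val_loss < best_val - 1e-6:
        # Keep a copy of the best weights seen so far.
        best_val, best_state, bad_epochs = val_loss, copy.deepcopy(model.state_dict()), 0
    else:
        bad_epochs += 1
        if bad_epochs >= patience:  # validation loss has stopped improving
            break

model.load_state_dict(best_state)  # restore the best checkpoint
```

Stopping on a validation plateau is one way to read "until validation loss converges". With only a few dozen samples the validation estimate is noisy, so repeating the split may give a more reliable picture.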

Protocol 3: Clinical Response Prediction and Validation

Objective: To apply the fine-tuned PharmaFormer model to predict drug response in real-world patient cohorts and validate predictions against clinical outcomes [31].

Step-by-Step Methodology:

Patient Data Preparation:

- Source: Fetch bulk RNA-seq data from The Cancer Genome Atlas (TCGA) for a specific cohort (e.g., colon adenocarcinoma - COAD).

- Annotation: Collect corresponding data on pharmaceutical therapy strategies and overall survival for these patients [31].

Prediction and Risk Stratification:

- Input: Process the patient tumor RNA-seq data and the relevant drug SMILES strings using the fine-tuned PharmaFormer model.

- Output: The model generates a continuous drug response prediction score for each patient-drug pair.

- Stratification: Dichotomize patients into "drug-sensitive" and "drug-resistant" groups based on a pre-defined cutoff (e.g., median split) of the prediction scores.

Clinical Validation:

- Analysis: Perform survival analysis using the Kaplan-Meier method to compare the overall survival between the sensitive and resistant groups.

- Statistical Test: Calculate the Hazard Ratio (HR) with a 95% confidence interval using a Cox proportional-hazards model to quantify the difference in survival risk.
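The stratification and survival steps above can be illustrated with a NumPy-only sketch. In practice a dedicated survival-analysis library would normally be used. The simulated cohort, the assumption that a higher predicted score means lower sensitivity, and all function names are hypothetical.

```python
import numpy as np

def median_split(scores):
    """Return 1 (predicted resistant) for scores above the cohort median, else 0."""
    scores = np.asarray(scores, dtype=float)
    return (scores > np.median(scores)).astype(int)

def kaplan_meier(time, event):
    """Kaplan-Meier survival estimate at each distinct event time."""
    time, event = np.asarray(time, dtype=float), np.asarray(event, dtype=int)
    event_times = np.unique(time[event == 1])
    survival, s = [], 1.0
    for t in event_times:
        at_risk = np.sum(time >= t)
        deaths = np.sum((time == t) & (event == 1))
        s *= 1.0 - deaths / at_risk
        survival.append(s)
    return event_times, np.array(survival)

def cox_hazard_ratio(time, event, group, n_iter=25):
    """Hazard ratio and 95% CI for one binary covariate (Newton-Raphson, Breslow ties)."""
    time, event = np.asarray(time, dtype=float), np.asarray(event, dtype=int)
    x = np.asarray(group, dtype=float)
    beta = 0.0
    for _ in range(n_iter):
        grad, info = 0.0, 0.0
        for i in np.flatnonzero(event == 1):
            at_risk = time >= time[i]
            w = np.exp(beta * x[at_risk])
            mean_x = np.sum(w * x[at_risk]) / np.sum(w)
            mean_x2 = np.sum(w * x[at_risk] ** 2) / np.sum(w)
            grad += x[i] - mean_x            # score of the partial likelihood
            info += mean_x2 - mean_x ** 2    # observed information
        beta += grad / info
    se = 1.0 / np.sqrt(info)
    return np.exp(beta), (np.exp(beta - 1.96 * se), np.exp(beta + 1.96 * se))

# Simulated cohort: the predicted-resistant group is given a 3x higher hazard.
rng = np.random.default_rng(0)
group = median_split(rng.uniform(size=200))
time = rng.exponential(1.0 / np.where(group == 1, 0.3, 0.1))
event = (time < 15).astype(int)       # administrative censoring at t = 15
time = np.minimum(time, 15)

hr, (ci_low, ci_high) = cox_hazard_ratio(time, event, group)
km_times, km_surv = kaplan_meier(time[group == 1], event[group == 1])
```

A confidence interval that excludes 1 indicates that the two predicted groups differ in survival risk at the 5% level. The Newton iteration here assumes both groups contain events, and it does not converge when the groups are perfectly separated.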

Expected Outcomes and Analysis: The organoid-fine-tuned model is expected to show a superior correlation with clinical outcomes compared to the pre-trained model. This is indicated by a higher Hazard Ratio, meaning a greater separation in survival between the predicted sensitive and resistant groups.

Table 2: Clinical Validation of PharmaFormer for Two Cancer Types

| Cancer Type | Therapeutic Compound | Model Version | Hazard Ratio (95% Confidence Interval) |
|---|---|---|---|
| Colon Cancer | 5-Fluorouracil | Pre-trained | 2.50 (1.12 - 5.60) [31] |
| Colon Cancer | 5-Fluorouracil | Organoid-Fine-Tuned | 3.91 (1.54 - 9.39) [31] |
| Colon Cancer | Oxaliplatin | Pre-trained | 1.95 (0.82 - 4.63) [31] |
| Colon Cancer | Oxaliplatin | Organoid-Fine-Tuned | 4.49 (1.76 - 11.48) [31] |
| Bladder Cancer | Gemcitabine | Pre-trained | 1.72 (0.85 - 3.49) [31] |
| Bladder Cancer | Gemcitabine | Organoid-Fine-Tuned | 4.91 (1.18 - 20.49) [31] |
| Bladder Cancer | Cisplatin | Pre-trained | 1.80 (0.87 - 4.72) [31] |
| Bladder Cancer | Cisplatin | Organoid-Fine-Tuned | 6.01 (1.XX - XX.XX) [31] |

The Scientist's Toolkit

This section catalogs the essential reagents, datasets, and software required to implement the PharmaFormer framework, providing a practical resource for researchers.

Table 3: Essential Research Reagents and Resources for PharmaFormer

| Category / Item | Specification / Source | Function in the Protocol |
|---|---|---|
| Pharmacogenomic Databases | | |
| GDSC | Genomics of Drug Sensitivity in Cancer (v2) [31] | Source domain dataset for pre-training; provides gene expression and drug AUC for ~900 cell lines. |
| TCGA | The Cancer Genome Atlas [31] | Target domain dataset for clinical validation; provides patient tumor RNA-seq, therapy, and survival data. |
| Software & Libraries | | |
| Deep Learning Framework | PyTorch or TensorFlow | For implementing custom Transformer architecture, linear layers, and ReLU activation. |
| Chemical Informatics | RDKit | For processing drug SMILES strings and generating molecular features. |
| Computational Resources | | |
| GPU | NVIDIA V100/A100 or equivalent | Essential for efficient training of the Transformer model on large genomic datasets. |
| Biological Models | | |
| Patient-Derived Organoids | Tumor-specific (e.g., colon, bladder) [31] | Target domain biomimetic model; provides high-fidelity pharmacogenomic data for fine-tuning. |

Cancer prediction and prognosis have been revolutionized by the integration of multimodal data, including histopathology images, radiology scans, and genomic profiles. Such integration provides a comprehensive view of the complex biological mechanisms driving cancer progression [41]. However, a significant challenge in clinical practice is the scarcity of large, well-annotated genomic datasets, which can limit the development of robust predictive models. Transfer learning has emerged as a powerful strategy to mitigate this limitation by leveraging knowledge from related domains or larger source datasets to improve prediction in data-scarce target environments [42] [43]. This Application Note details practical protocols and fusion techniques for integrating genomic data with histopathological and radiological images, with a specific focus on frameworks that enable effective learning when genomic data is limited.

Multimodal Fusion Strategies in Computational Oncology

Categorization of Fusion Techniques

Integrating disparate data modalities requires specific fusion strategies, which can be categorized based on the stage at which integration occurs.

- Early Fusion (Data-Level Fusion): Raw or pre-processed data from different modalities are combined into a single input vector before being fed into a machine learning model. This approach can capture complex, low-level interactions but is often hampered by high dimensionality and heterogeneity of the input data [44].

- Intermediate Fusion (Feature-Level Fusion): Features are first extracted separately from each modality using dedicated encoders (e.g., CNNs for images, MLPs for genomic data). These feature representations are then integrated in a shared latent space using operations like Kronecker product [45] or concatenation, allowing the model to learn cross-modal interactions [44].

- Late Fusion (Prediction-Level Fusion): Separate models are trained independently on each modality. Their predictions are subsequently combined, often through weighted averaging or stacking. This method is particularly robust when dealing with highly imbalanced dimensionalities across modalities or missing data [44].

Quantitative Comparison of Fusion Performance

The table below summarizes the comparative performance of these fusion strategies as demonstrated in recent oncology studies, particularly in survival prediction tasks.

Table 1: Performance comparison of multimodal fusion strategies in cancer outcome prediction

| Fusion Strategy | Representative Model/Study | Key Advantage | Reported Performance |
|---|---|---|---|
| Intermediate Fusion | Pathomic Fusion [45] | Models pairwise feature interactions via Kronecker product with gating. | Outperformed unimodal models and late fusion in glioma & ccRCC survival prediction. |
| Late Fusion | AZ-AI Multimodal Pipeline [44] | High resistance to overfitting with highly heterogeneous and high-dimensional data. | Consistently outperformed single-modality approaches in TCGA lung, breast, and pan-cancer datasets. |
| Early Fusion | Integrative Genomics Workflow [46] | Directly combines imaging and genomic features for model input. | Risk index correlated strongly with survival, outperforming single-modality models in ccRCC. |

Protocols for Multimodal Data Integration

This section provides detailed experimental protocols for implementing a transfer learning-enhanced, multimodal fusion pipeline, suitable for scenarios with limited genomic data.

Protocol 1: Pathomic Fusion with Transfer Learning

This protocol adapts the Pathomic Fusion framework for a transfer learning context where a source domain with abundant genomic data is used to boost performance in a target domain with limited data [45] [42].

Workflow Diagram: Pathomic Fusion with Transfer Learning

Step-by-Step Procedure:

Source Domain Pre-training:

- Input: Paired histology images (Whole Slide Images, WSIs) and genomic data (e.g., RNA-Seq, mutations) from a large source cohort (e.g., TCGA).

- Histology Feature Extraction: Process WSIs using a Convolutional Neural Network (CNN) to extract image-based features and/or a Graph Convolutional Network (GCN) on cell graphs to capture cellular morphology and spatial relationships [45].

- Genomic Feature Processing: Utilize standard normalized formats (e.g., RPKM for RNA-Seq). Apply dimensionality reduction if necessary.

- Multimodal Fusion: Implement the Pathomic Fusion layer, which takes the Kronecker product of the gated histology and genomic feature representations. This explicitly models pairwise feature interactions across modalities [45].

- Model Training: Train a survival prediction model (e.g., Cox proportional hazards) using the fused feature tensor as input. The trained model's fusion layers and feature extractors serve as the pre-trained model.
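The fusion step can be illustrated with a simplified NumPy sketch. The gate below (a sigmoid over the concatenated features) and the random weights are assumptions for illustration only. Appending a constant 1 to each vector, so that the unimodal features survive in the product, reflects a common description of the Pathomic Fusion design and should be checked against the reference implementation [45].

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gated_kronecker_fusion(h_img, h_gen, w_gate_img, w_gate_gen):
    """Gate each modality using both modalities, then take the Kronecker product."""
    joint = np.concatenate([h_img, h_gen])
    g_img = sigmoid(w_gate_img @ joint) * h_img   # gated histology features
    g_gen = sigmoid(w_gate_gen @ joint) * h_gen   # gated genomic features
    # A trailing 1 keeps the unimodal terms alongside the pairwise products.
    g_img, g_gen = np.append(g_img, 1.0), np.append(g_gen, 1.0)
    return np.kron(g_img, g_gen), g_gen

# Random stand-ins for encoder outputs and gate weights (hypothetical sizes).
rng = np.random.default_rng(0)
h_img, h_gen = rng.normal(size=4), rng.normal(size=3)
w_gate_img, w_gate_gen = rng.normal(size=(4, 7)), rng.normal(size=(3, 7))

fused, gated_gen = gated_kronecker_fusion(h_img, h_gen, w_gate_img, w_gate_gen)
print(fused.shape)  # (4 + 1) * (3 + 1) entries
```

The fused vector grows with the product of the two feature sizes, which is one reason to keep the per-modality embeddings small before fusion.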

Target Domain Transfer Learning:

- Input: The often smaller target dataset with paired histology images and limited genomic data.

- Model Initialization: Initialize the histology and genomic encoders with the weights from the source domain pre-trained model.

- Fine-Tuning: Retrain the entire model on the target dataset. Optionally, use a lower learning rate for the pre-trained layers to avoid catastrophic forgetting. The model can now leverage generalizable features learned from the large source domain to make robust predictions in the target domain, despite its limited genomic data [42].
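One way to apply a lower learning rate to the pre-trained layers is through optimizer parameter groups, as sketched below in PyTorch. The `FusionSurvivalNet` class, its layer sizes, the commented-out checkpoint path, and the learning rates are hypothetical placeholders, not part of the cited frameworks.

```python
import torch
from torch import nn

class FusionSurvivalNet(nn.Module):
    """Hypothetical two-encoder model with an outer-product fusion and a risk head."""
    def __init__(self, d_img=32, d_gen=64, d_hid=8):
        super().__init__()
        self.histology_encoder = nn.Sequential(nn.Linear(d_img, d_hid), nn.ReLU())
        self.genomic_encoder = nn.Sequential(nn.Linear(d_gen, d_hid), nn.ReLU())
        self.risk_head = nn.Linear(d_hid * d_hid, 1)

    def forward(self, img, gen):
        h_i, h_g = self.histology_encoder(img), self.genomic_encoder(gen)
        # Batched outer product, flattened to one fused vector per sample.
        fused = torch.einsum("bi,bj->bij", h_i, h_g).flatten(1)
        return self.risk_head(fused)

model = FusionSurvivalNet()
# model.load_state_dict(torch.load("source_pretrained.pt"))  # hypothetical checkpoint

# Pre-trained encoders move slowly; the task-specific head adapts faster.
optimizer = torch.optim.Adam([
    {"params": model.histology_encoder.parameters(), "lr": 1e-5},
    {"params": model.genomic_encoder.parameters(), "lr": 1e-5},
    {"params": model.risk_head.parameters(), "lr": 1e-3},
])

risk = model(torch.randn(4, 32), torch.randn(4, 64))  # one risk score per patient
```

Freezing the encoders entirely (setting `requires_grad` to `False` on their parameters) is a stricter alternative when the target dataset is very small.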

Protocol 2: Late Fusion for Heterogeneous Data

This protocol is ideal when dealing with highly heterogeneous data types or when certain data modalities are missing for some patients [44].

Workflow Diagram: Late Fusion for Survival Prediction

Step-by-Step Procedure:

Unimodal Model Training:

- Train predictive models independently for each data modality.

- Genomic Model: Use a model like Ridge Regression or a Feedforward Network on genomic features (e.g., eigengenes from gene co-expression modules) [46] [43].

- Histology Model: Train a CNN (e.g., EfficientNetV2, ResNet) or a GCN on cell graphs derived from WSIs [45] [47] [48].

- Radiology Model: Train a 3D CNN or other deep learning architecture on radiological scans (e.g., CT, MRI) [41].

- Output: Each model produces a unimodal risk score or survival prediction.

Prediction Fusion with a Meta-Learner:

- Input: The unimodal predictions (risk scores) from each model on a validation set.

- Meta-Learner Training: Train a fusion model (the meta-learner) that learns the optimal way to combine these unimodal predictions. This can be a simple weighted average or a more complex model like a linear classifier [44].

- Inference: For a new patient, predictions from all unimodal models are generated and fed into the meta-learner to produce the final, fused prediction.
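The weighted-average form of the meta-learner can be sketched as follows. Deriving the weights from each model's validation concordance index is an assumption made for illustration, not the method of [44]. The function names and toy values are hypothetical, and risk scores are assumed to be on a comparable scale across modalities.

```python
import numpy as np

def concordance_index(time, event, risk):
    """Harrell's C: fraction of comparable pairs where higher risk fails earlier."""
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        for j in range(len(time)):
            if event[i] == 1 and time[i] < time[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable

def validation_weights(time, event, unimodal_risks):
    """Weight each modality by how far its validation C-index exceeds chance (0.5)."""
    c = np.array([concordance_index(time, event, r) for r in unimodal_risks])
    raw = np.clip(c - 0.5, 0.0, None)
    return raw / raw.sum()

def late_fusion(scores, weights):
    """Weighted average over the modalities available for each patient (NaN = missing)."""
    scores = np.asarray(scores, dtype=float)   # shape: (n_patients, n_modalities)
    w = (~np.isnan(scores)) * weights          # zero weight where a modality is missing
    return np.nansum(scores * w, axis=1) / w.sum(axis=1)

# Toy validation set: four patients, all with observed events.
val_time, val_event = [1, 2, 3, 4], [1, 1, 1, 1]
risk_genomic, risk_histology = [4, 3, 2, 1], [4, 3, 1, 2]
weights = validation_weights(val_time, val_event, [risk_genomic, risk_histology])

# New patients: the second one has no genomic score.
fused = late_fusion([[1.0, 2.0], [np.nan, 2.0]], weights)
```

Renormalizing the weights over the modalities that are present is what lets this scheme tolerate missing data. If no modality beats chance on the validation set, the weights are undefined and a uniform average is a reasonable fallback.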

Successful implementation of the above protocols relies on a suite of software tools, datasets, and computational resources.

Table 2: Key research reagents and computational tools for multimodal fusion studies

| Category | Item | Function and Application |
|---|---|---|
| Data Sources | The Cancer Genome Atlas (TCGA) | Primary source for paired histopathology images, genomic data (mutations, CNV, RNA-Seq), and clinical data for multiple cancer types [45] [46]. |
| Data Sources | Cancer Digital Slide Archive (CDSA) | Platform for hosting and visualizing digitized whole-slide images from TCGA and other projects [46]. |
| Software & Libraries | Pathomic Fusion Framework | Open-source code providing implementation of the multimodal fusion strategy using Kronecker product and gating-based attention [45]. |
| Software & Libraries | AstraZeneca–AI (AZ-AI) Multimodal Pipeline | A reusable Python library for multimodal feature integration, dimensionality reduction, and survival model training and evaluation [44]. |
| Software & Libraries | BGLR R Package | Used for implementing Bayesian generalized linear regression models, including GBLUP for genomic prediction [42]. |
| Computational Methods | Graph Convolutional Networks (GCNs) | Used to learn features from cell graphs constructed from histology images, capturing cell community structure [45]. |
| Computational Methods | Supervised Contrastive Learning (SCL) | A deep learning technique used in frameworks like HistopathAI to improve feature representation, especially with imbalanced datasets [48]. |