Integrating Multi-Omics Data for Precision Cancer Classification: Methods, Applications, and Future Directions

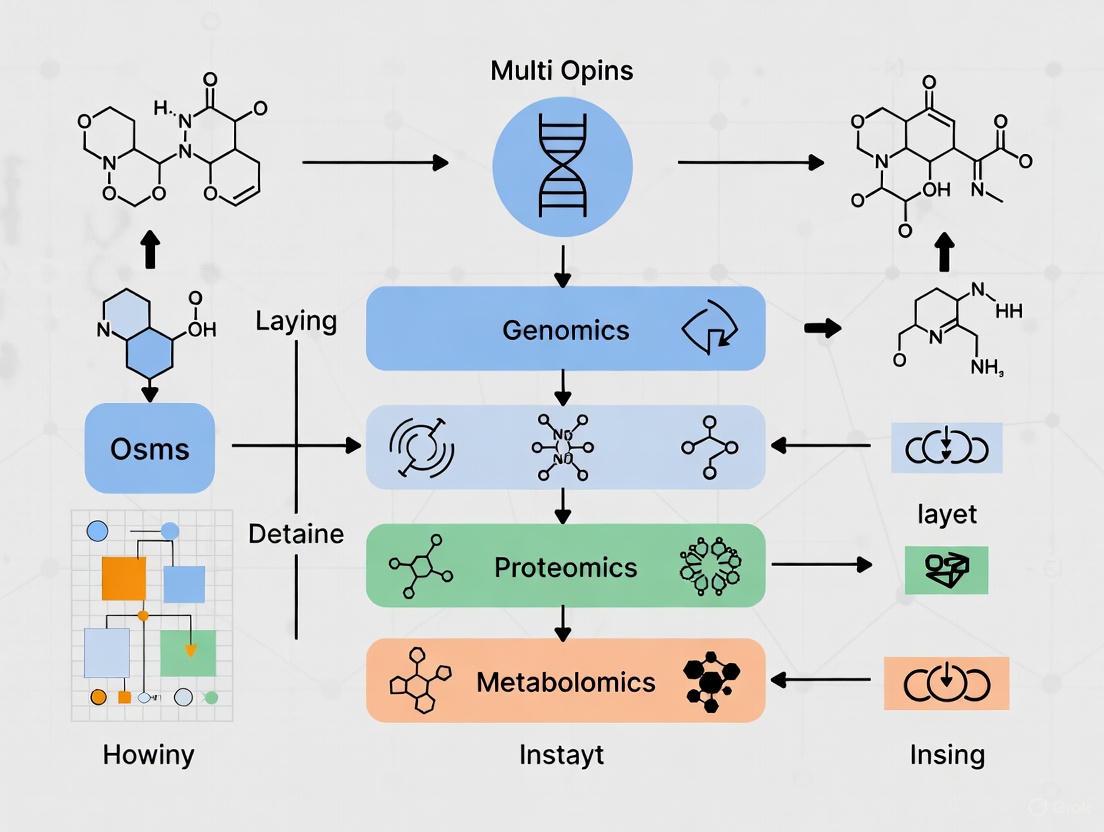


Abstract

The integration of multi-omics data is revolutionizing cancer research by providing a holistic view of tumor biology, moving beyond the limitations of single-omics approaches. This article offers a comprehensive resource for researchers and drug development professionals, detailing the foundational principles of multi-omics layers—genomics, transcriptomics, proteomics, and epigenomics. It explores advanced computational methodologies for data integration, from statistical models to deep learning, and provides a practical guide for navigating common challenges like data heterogeneity and dimensionality. Through comparative analysis of tools and validation frameworks, the article equips scientists with the knowledge to enhance cancer subtype classification, identify novel biomarkers, and accelerate the development of personalized therapeutic strategies.

The Multi-Omics Landscape in Oncology: Building a Comprehensive Molecular Portrait of Cancer

Multi-omics approaches represent a transformative paradigm in biological research, particularly in complex disease fields like oncology. These technologies enable a comprehensive understanding of disease mechanisms by integrating data across multiple molecular layers. In cancer research, multi-omics integration has revolutionized our understanding of tumor biology by providing unprecedented insights into the molecular intricacies of various cancers, including breast, lung, gastric, and pancreatic cancers and glioblastoma [1]. The core omics technologies (genomics, transcriptomics, proteomics, and metabolomics) form the foundational pillars of this approach, each contributing unique insights into cancer biology while overcoming the limitations of single-marker analyses [2]. By harmonizing multi-dimensional data, researchers can now reveal driver mutations, dynamic signaling pathways, and metabolic-immune crosstalk, offering systemic solutions to critical bottlenecks in gastrointestinal tumor research and beyond [2].

The technological advances in these fields have been dramatic, especially in DNA sequencing where costs have decreased from billions to under $1,000 per genome while speed has increased exponentially [3]. This progress has created a virtual flood of completely sequenced genomes being deposited in public databases—over 2,000 eukaryotic genomes, 600 archaeal genomes, and nearly 12,000 bacterial genomes to date, with tens of thousands more projects in progress [3]. This explosion of data provides the raw material for multi-omics integration, enabling researchers to ask fundamental questions about patterns common to all genomes, gene organization, feature types, and evolutionary evidence [3].

Table 1: Core Omics Technologies: Overview and Applications in Cancer Research

| Omics Technology | Analytical Focus | Key Applications in Cancer | Common Technologies |
|---|---|---|---|
| Genomics | DNA sequence and structure | Identifying driver mutations, copy number variations, SNPs | WGS, WES, targeted panels, liquid biopsy |
| Transcriptomics | RNA expression profiles | Gene expression profiling, molecular subtyping, immune microenvironment | RNA-seq, scRNA-seq, microarrays |
| Proteomics | Protein structure and function | Biomarker discovery, drug target identification, signaling pathways | Mass spectrometry, protein arrays |
| Metabolomics | Metabolic pathways and regulation | Early diagnosis, metabolic reprogramming, therapeutic response | LC-MS, GC-MS, NMR spectroscopy |

Detailed Technology Analysis

Genomics

Genomics involves the detailed analysis of the complete set of DNA, including all genes, with focus on sequencing, structure, function, and evolution [1]. Through comprehensive analysis of DNA sequences and structural changes in cancers—using methods like whole-genome sequencing (WGS) and whole-exome sequencing (WES)—genomics reveals critical correlations between tumor heterogeneity and genetic complexity [2]. The higher the tumor heterogeneity, the greater its genetic complexity, providing fundamental insights into the molecular mechanisms of tumorigenesis [2].

Cancer mutations are broadly categorized into driver mutations and passenger mutations. Driver mutations provide growth advantage to cells and are directly involved in the oncogenic process, typically occurring in genes involved in key cellular processes like cell growth regulation, apoptosis, and DNA repair [1]. For example, mutations in the TP53 gene are found in approximately 50% of all human cancers, highlighting its crucial role in maintaining cellular integrity [1]. Next-generation sequencing (NGS) technologies have revolutionized cancer research by enabling comprehensive analysis of entire genomes, exomes, or transcriptomes with high accuracy, allowing scientists to identify numerous cancer-associated mutations [1].

Copy number variations (CNVs) represent another critical genomic alteration, involving duplications or deletions of large DNA regions leading to variations in gene copies [1]. These variations significantly influence cancer development by altering gene dosage, potentially leading to overexpression of oncogenes or underexpression of tumor suppressor genes [1]. A well-established example is HER2 gene amplification in approximately 20% of breast cancers, leading to HER2 protein overexpression associated with aggressive tumor behavior and poor prognosis [1]. This discovery led to targeted therapies like trastuzumab, significantly improving patient outcomes [1].

Single-nucleotide polymorphisms (SNPs), the most common genetic variation among people, also play crucial roles in cancer susceptibility and treatment response [1]. While most SNPs have no health effect, some significantly impact cancer development or drug responses—for example, SNPs in BRCA1 and BRCA2 genes increase breast and ovarian cancer risk [1]. Pharmacogenomics studies using SNP data can predict patient responses to cancer therapies, improving treatment efficacy and reducing toxicity [1].

Table 2: Genomic Variations in Cancer Biology

| Variation Type | Description | Cancer Examples | Clinical Implications |
|---|---|---|---|
| Driver Mutations | Provide growth advantage to cancer cells | TP53 mutations (50% of cancers) | Critical for cancer development and progression; potential therapeutic targets |
| Copy Number Variations (CNVs) | Duplications/deletions of DNA regions | HER2 amplification (20% of breast cancers) | Altered gene dosage; leads to oncogene overexpression or tumor suppressor underexpression |
| Single-Nucleotide Polymorphisms (SNPs) | Single-base genetic variations | BRCA1/BRCA2 SNPs (breast/ovarian cancer) | Affect cancer susceptibility and drug response; enable personalized treatment approaches |

Transcriptomics

Transcriptomics provides a unique approach for studying dynamic molecular characteristics of cancers by evaluating RNA expression profiles and regulatory networks [2]. Unlike genomics, which focuses on static DNA variations, transcriptomics captures dynamic changes in gene expression, revealing complex interactions between tumor cells and their microenvironment [2]. RNA sequencing (RNA-seq), the principal transcriptomics technology, comprehensively detects expression levels of mRNA, lncRNA, and microRNA, systematically mapping gene expression profiles in various gastrointestinal tumors and identifying abnormal activation patterns of critical signaling pathways like TGF-β and PI3K-Akt [2].

In colorectal cancer, overexpression of WNT pathway target genes (e.g., MYC and AXIN2) is strongly linked to the adenoma-carcinoma sequence progression [2]. Similarly, high Claudin 18.2 expression in gastric cancer has emerged as a target for antibody-drug conjugate development [2]. Transcriptomics also serves as a key component of tumor immune microenvironment research, enabling characterization of immune cell subsets (e.g., T cells and macrophages) by examining RNA expression in tumor tissues [2]. In esophageal cancer, high PD-L1 mRNA expression often indicates an immunosuppressive microenvironment, while CD8+ T cell-related gene expression correlates with immunotherapy response [2].

Transcriptomics-based immune scoring systems (e.g., CIBERSORT) have been developed to predict patient responses to checkpoint inhibitors, supporting precision immunotherapy [2]. Additionally, transcriptomics reveals gene expression patterns associated with cancer-associated fibroblasts (CAF) and matrix remodeling, strongly correlated with tumor invasion and metastasis [2]. For instance, TGF-β signaling pathway activation in gastric cancer through high expression of CAF markers (e.g., FAP and ACTA2) suggests matrix remodeling as a potential therapeutic target [2].

Figure: Transcriptomics workflow from sample to analysis

Proteomics

Proteomics focuses on the study of the structure and function of proteins, the main functional products of gene expression [1]. This field directly measures protein levels and modifications, providing critical links between genotype and phenotype [1]. Proteomics offers several advantages, including the ability to identify post-translational modifications that dramatically alter protein function, but also faces challenges due to proteins' complex structures, dynamic ranges, and the much larger proteome compared to the genome [1].

Applications in cancer research include biomarker discovery, drug target identification, and functional studies of cellular processes [1]. In gastrointestinal tumors, proteomics provides important information on core proteins and the immune microenvironment [2]. Advancements in mass spectrometry have been particularly transformative, enhancing the correlation between molecular profiles and clinical features and refining the prediction of therapeutic responses [1]. These technological improvements have enabled more comprehensive profiling of protein expression patterns, phosphorylation states, and other modifications that drive cancer progression.

The integration of proteomics with genomics—termed proteogenomics—has created particularly powerful insights for cancer research [1]. This approach helps validate genomic findings at the protein level and identifies instances where mRNA expression does not correlate with protein abundance due to post-transcriptional regulation. For example, in breast cancer, proteogenomic analyses have revealed novel protein isoforms and phosphorylation events that would not be detectable through genomic or transcriptomic approaches alone, opening new avenues for therapeutic intervention.

Metabolomics

Metabolomics involves the comprehensive analysis of metabolites within a biological sample, reflecting the biochemical activity and physiological state of cells or tissues [1]. This field provides unique insights into metabolic pathways and their regulation, offering a direct link to phenotype and capturing real-time physiological status [1]. In cancer research, metabolomics has emerged as a promising approach for early diagnosis, with applications in disease diagnosis, nutritional studies, and toxicology/drug metabolism [1].

Cancer cells undergo metabolic reprogramming to support their rapid growth and proliferation, a hallmark known as the Warburg effect where cancer cells preferentially utilize glycolysis even under oxygen-rich conditions [2]. Metabolomics can clarify mutation-induced metabolic phenotypes, such as how KRAS mutations drive specific metabolic alterations that support tumor growth [2]. In colorectal cancer, integrated multi-omics approaches have demonstrated how APC gene deletion activates the Wnt/β-catenin pathway, which subsequently drives glutamine metabolic reprogramming through upregulation of glutamine synthetase [2].

Metabolomics faces technical challenges including the highly dynamic nature of the metabolome influenced by numerous factors, limited reference databases, and technical variability/sensitivity issues [1]. However, advances in analytical technologies like liquid chromatography-mass spectrometry (LC-MS), gas chromatography-mass spectrometry (GC-MS), and nuclear magnetic resonance (NMR) spectroscopy have significantly improved metabolite coverage and quantification accuracy. Recent application notes highlight optimized systems for assessing mitochondrial respiration and glycolysis in complex biological samples, enabling real-time, high-sensitivity metabolic profiling with consistent, reproducible results [4].

Experimental Protocols

Integrated Multi-Omics Sample Processing Protocol

Objective: To obtain comprehensive molecular profiles from a single tumor specimen through coordinated genomics, transcriptomics, proteomics, and metabolomics analyses.

Materials:

- Fresh frozen tumor tissue specimens (snap-frozen in liquid nitrogen within 30 minutes of resection)

- RNA stabilization reagents (e.g., RNAlater)

- Tissue homogenization equipment (e.g., bead mill homogenizer)

- DNA, RNA, protein, and metabolite extraction kits

- Quality control instruments (e.g., Bioanalyzer, spectrophotometer)

Procedure:

Tissue Partitioning:

- Cryosection the frozen tissue into sequential slices (10-20 μm thickness)

- Allocate alternate sections to DNA/RNA, protein, and metabolite extraction

- Stain adjacent sections with H&E for histological validation

Nucleic Acids Co-Extraction:

- Homogenize tissue slices in TRIzol reagent (100 mg tissue/mL)

- Separate organic and aqueous phases by centrifugation

- Recover RNA from the aqueous phase and DNA from the interphase and organic phase

- Purify RNA using silica membrane columns

- Treat the RNA with DNase I (30 minutes, 37°C) to remove residual genomic DNA

- Precipitate DNA from the interphase/organic phase and wash extensively

Protein Extraction:

- Homogenize tissue in RIPA buffer with protease/phosphatase inhibitors

- Centrifuge at 14,000×g for 15 minutes at 4°C

- Collect supernatant for proteomic analysis

- Quantify protein concentration by BCA assay

Metabolite Extraction:

- Homogenize tissue in 80% methanol (pre-chilled to -80°C)

- Vortex vigorously for 30 seconds

- Incubate at -20°C for 1 hour

- Centrifuge at 14,000×g for 15 minutes at 4°C

- Collect supernatant for metabolomic analysis

Quality Control:

- DNA: A260/A280 ratio ≥1.8, fragment analysis

- RNA: RIN ≥7.0 on Bioanalyzer

- Protein: Clear of degradation on SDS-PAGE

- Metabolites: Stable intensity values in QC samples
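
The acceptance criteria above can be applied as a simple per-specimen gate before any data integration. The following is a minimal sketch, not part of the published protocol: the field names, the `SampleQC` class, and the coefficient-of-variation cutoff used to stand in for "stable intensity values" are illustrative assumptions, while the A260/A280 and RIN thresholds are taken from the list above.

```python
from dataclasses import dataclass

# Thresholds taken from the Quality Control list above.
MIN_A260_A280 = 1.8
MIN_RIN = 7.0


@dataclass
class SampleQC:
    """QC readouts for one specimen; field names are illustrative."""
    sample_id: str
    a260_a280: float        # DNA purity ratio from spectrophotometry
    rin: float              # RNA Integrity Number from the Bioanalyzer
    protein_intact: bool    # no visible degradation on SDS-PAGE
    qc_intensity_cv: float  # CV of metabolite intensities in QC samples


def qc_failures(s: SampleQC, max_cv: float = 0.30) -> list[str]:
    """Return the omics layers that fail QC (empty list means all pass).

    max_cv is an assumed cutoff for "stable intensity"; the protocol
    itself does not give a number.
    """
    failures = []
    if s.a260_a280 < MIN_A260_A280:
        failures.append("DNA")
    if s.rin < MIN_RIN:
        failures.append("RNA")
    if not s.protein_intact:
        failures.append("protein")
    if s.qc_intensity_cv > max_cv:
        failures.append("metabolites")
    return failures


good = SampleQC("T01", a260_a280=1.85, rin=8.2, protein_intact=True, qc_intensity_cv=0.12)
poor = SampleQC("T02", a260_a280=1.60, rin=6.1, protein_intact=True, qc_intensity_cv=0.45)
print(qc_failures(good))  # []
print(qc_failures(poor))  # ['DNA', 'RNA', 'metabolites']
```

Returning the failing layers, rather than a single pass/fail flag, lets a specimen with degraded RNA still contribute to the genomic or proteomic analyses.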

LC-MS/MS Metabolomics Profiling Protocol

Objective: To identify and quantify polar and non-polar metabolites from tumor tissue extracts.

Materials:

- UHPLC system with C18 and HILIC columns

- High-resolution mass spectrometer (e.g., Q-Exactive)

- Solvents: LC-MS grade water, acetonitrile, methanol

- Ammonium acetate and ammonium hydroxide for mobile phase

- Internal standards: 13C-labeled amino acid mix

Chromatography Conditions:

- Reverse Phase (C18):

  - Column: 2.1 × 100 mm, 1.7 μm

  - Mobile phase A: Water with 0.1% formic acid

  - Mobile phase B: Acetonitrile with 0.1% formic acid

  - Gradient: 1% B to 99% B over 15 minutes

  - Flow rate: 0.4 mL/min

  - Column temperature: 45°C

- HILIC:

  - Column: 2.1 × 100 mm, 1.7 μm

  - Mobile phase A: 95:5 water:acetonitrile with 10 mM ammonium acetate

  - Mobile phase B: acetonitrile

  - Gradient: 85% B to 30% B over 12 minutes

  - Flow rate: 0.5 mL/min

  - Column temperature: 40°C
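
To make the two gradients concrete, the sketch below computes the mobile phase B percentage at a given time, assuming each gradient is a single linear ramp between the listed endpoints. Hold and re-equilibration steps are not modelled because the conditions above do not specify them; the function and variable names are illustrative.

```python
def percent_b(t_min: float, start_b: float, end_b: float, duration_min: float) -> float:
    """Mobile phase B (%) at time t for one linear gradient segment.

    Times outside the segment are clamped to its endpoints.
    """
    t = min(max(t_min, 0.0), duration_min)
    return start_b + (end_b - start_b) * t / duration_min


# Gradient endpoints and durations as listed in the conditions above.
C18 = dict(start_b=1.0, end_b=99.0, duration_min=15.0)
HILIC = dict(start_b=85.0, end_b=30.0, duration_min=12.0)

print(percent_b(7.5, **C18))    # 50.0
print(percent_b(6.0, **HILIC))  # 57.5
```

The reverse-phase run moves toward higher organic content, whereas the HILIC run moves toward higher aqueous content, which is why the B percentage falls over time in the second case.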

Mass Spectrometry Parameters:

- Ionization: ESI positive and negative modes

- Spray voltage: ±3.5 kV

- Capillary temperature: 320°C

- Resolution: 70,000

- Scan range: m/z 70-1050

- Collision energy: Stepped (20, 40, 60 eV)

Data Processing:

- Convert raw files to mzML format

- Feature detection and alignment (XCMS, OpenMS)

- Compound identification against databases (HMDB, METLIN)

- Statistical analysis (MetaboAnalyst)

- Pathway enrichment analysis (KEGG, Reactome)
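
Compound identification against a mass database usually starts from a mass-accuracy check. The sketch below shows the parts-per-million comparison for singly protonated or deprotonated ions. It is an illustration only: the two-entry `LIBRARY` stands in for HMDB or METLIN, the 5 ppm tolerance is an assumed value, and the function names are hypothetical.

```python
PROTON_MASS = 1.007276  # Da

# Monoisotopic neutral masses in Da; a two-entry stand-in for a real database.
LIBRARY = {"glucose": 180.06339, "lactic acid": 90.03169}


def adduct_mz(neutral_mass: float, mode: str) -> float:
    """m/z of the singly charged [M+H]+ ("pos") or [M-H]- ("neg") ion."""
    if mode == "pos":
        return neutral_mass + PROTON_MASS
    if mode == "neg":
        return neutral_mass - PROTON_MASS
    raise ValueError("mode must be 'pos' or 'neg'")


def ppm_error(observed: float, theoretical: float) -> float:
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def annotate(observed_mz: float, mode: str, tol_ppm: float = 5.0) -> list[str]:
    """Names of library compounds whose ion m/z lies within tol_ppm."""
    return [
        name
        for name, mass in LIBRARY.items()
        if abs(ppm_error(observed_mz, adduct_mz(mass, mode))) <= tol_ppm
    ]


print(annotate(179.0563, "neg"))  # ['glucose']
print(annotate(150.0000, "pos"))  # []
```

An accurate-mass match cannot separate isomers that share a formula, so candidate annotations would still need retention time or MS/MS fragment evidence.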

Figure: Multi-omics integration pathway for cancer research

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Multi-Omics Cancer Studies

| Reagent Category | Specific Products | Application | Technical Considerations |
|---|---|---|---|
| Nucleic Acid Extraction | TRIzol, AllPrep kits, QIAamp DNA FFPE | Co-extraction of DNA/RNA from limited specimens | Maintain RNA integrity (RIN >7.0); assess DNA fragmentation |
| Library Preparation | Illumina TruSeq, KAPA HyperPrep, SMARTer | NGS library construction for genomic/transcriptomic analysis | Optimize for input amount; incorporate unique molecular identifiers |
| Protein Digestion | Trypsin/Lys-C mix, RIPA buffer, protease inhibitors | Mass spectrometry sample preparation | Control digestion time/temperature; prevent modifications |
| Metabolite Extraction | 80% methanol, acetonitrile:water (1:1) | Polar/non-polar metabolite recovery | Maintain cold chain; process rapidly to preserve labile metabolites |
| Quality Control | Bioanalyzer/RIN, Qubit/BioRad, Standard reference materials | Assessment of sample quality across omics | Implement pre-analytical scoring system; establish acceptance criteria |
| Internal Standards | SIS peptides, 13C-labeled metabolites, ERCC RNA spikes | Quantification normalization across platforms | Use early in extraction to correct for technical variability |

The integration of core omics technologies represents a paradigm shift in cancer research, moving beyond single-marker analyses to comprehensive molecular portraits of tumors. As these technologies continue to advance—with improvements in third-generation sequencing, mass spectrometry sensitivity, and computational integration methods—their impact on cancer classification and personalized treatment will only intensify [1] [2]. The future of multi-omics research lies in addressing current challenges related to data heterogeneity, algorithm generalization, and clinical translation costs while leveraging emerging opportunities in single-cell multi-omics, artificial intelligence, and spatial molecular profiling [2].

The promise of multi-omics approaches extends beyond basic research to clinical applications, where integrated molecular profiling could revolutionize cancer diagnosis, prognosis, and treatment selection. As standardization improves and costs decrease, multi-omics profiling may become routine in oncology practice, enabling truly personalized cancer therapy based on the complete molecular landscape of each patient's tumor [1]. This comprehensive approach holds the potential to significantly improve patient outcomes through more effective and targeted treatment strategies, ultimately fulfilling the promise of precision oncology [1].

Cancer is a genetic disease characterized by the accumulation of molecular variations that confer a growth advantage to cells. The integration of multi-omics data—spanning genomics, epigenomics, transcriptomics, and proteomics—has become crucial for deciphering the complex molecular mechanisms underlying carcinogenesis [5]. Driver mutations, copy number variations (CNVs), and single nucleotide polymorphisms (SNPs) represent three fundamental classes of molecular alterations that collectively contribute to cancer development, progression, and therapeutic resistance [6] [7] [8]. The identification and characterization of these variations provide not only deeper insights into cancer biology but also valuable biomarkers for diagnosis, prognosis, and personalized treatment strategies.

This application note outlines the key molecular variations in cancer, detailing experimental protocols for their detection and analysis within an integrated multi-omics framework. We present standardized methodologies for identifying driver mutations, CNVs, and SNPs, along with practical guidance for data integration and interpretation to advance cancer classification research.

Driver Mutations

Driver mutations are genomic alterations that provide a selective growth advantage to cancer cells and are positively selected during tumor evolution [6]. These mutations occur more frequently than expected from genome-wide mutation rates and are enriched in hallmark cancer pathways and driver genes. Traditionally, driver mutation detection focused on protein-coding regions; however, increasing evidence underscores the significance of non-coding variants in cancer development, with highly recurrent mutations observed in promoters (e.g., TERT), 3'UTRs (e.g., NOTCH1), and 5'UTRs (e.g., TAOK2, BCL2, CXCL14) [6].

Table 1: Classes of Driver Mutations and Their Functional Impacts

| Mutation Class | Genomic Location | Functional Impact | Example Genes | Cancer Association |
|---|---|---|---|---|
| Coding Mutations | CDS (Coding Sequence) | Alters amino acid sequence, protein function | TTN, TP53, KRAS | Disrupts protein function (e.g., TTN domain folding in LUAD) [6] |
| Promoter Mutations | Promoter regions | Alters transcription factor binding, gene expression | TERT | Upregulates expression in melanoma, CNS, bladder, thyroid cancers [6] |
| 3'UTR Mutations | 3' Untranslated Region | Affects mRNA stability, translation, splicing | NOTCH1 | Enhances activity in chronic lymphocytic leukemia [6] |
| 5'UTR Mutations | 5' Untranslated Region | Modifies mRNA translation efficiency | TAOK2, BCL2, CXCL14 | Alters translation in various cancers [6] |
| Splice Site Mutations | Exon-intron boundaries | Disrupts normal RNA splicing | Multiple genes | Generates aberrant protein isoforms |

Computational tools like geMER (genome-wide Mutation Enrichment Region) identify candidate driver genes by detecting mutation enrichment regions within both coding and non-coding elements, demonstrating that 94.3% of mutations align with functional genomic elements [6]. The Core Driver Gene Set (CDGS) concept has emerged, comprising genes that broadly promote carcinogenesis across multiple cancers, with one study identifying a CDGS of 25 genes for 25 cancer types [6].

Copy Number Variations (CNVs)

CNVs are structural alterations involving gains or losses of DNA segments larger than 1 kilobase, affecting a greater fraction of the genome than SNPs [7]. In cancer, CNVs can range from focal amplifications or deletions to whole-genome doubling events and chromothripsis (massive chromosomal rearrangements) [9]. CNVs contribute to oncogenesis by altering gene dosage, disrupting regulatory regions, and creating genomic instability.

Table 2: Types and Clinical Significance of CNVs in Cancer

| CNV Category | Genomic Scale | Biological Significance | Detection Methods | Clinical Association |
|---|---|---|---|---|
| Focal CNVs | < Several Mb | Amplifies oncogenes or deletes tumor suppressors | WGS, WES, SNP arrays | EGFR amplification in glioblastoma, MYCN in neuroblastoma |
| Arm-Level CNVs | Whole chromosome arms | Indicates chromosomal instability | WGS, SNP arrays | 1q gain in various cancers [9] |
| Whole-Genome Doubling (WGD) | Entire genome | Promotes tumor evolution, therapeutic resistance | Ploidy analysis | Poor prognosis across multiple cancers [9] |
| Chromothripsis | Multiple chromosomes | "Genomic catastrophe" with clustered rearrangements | WGS | Associated with aggressive disease [9] |
| Extrachromosomal DNA (ecDNA) | Circular DNA molecules | Amplifies oncogenes, promotes heterogeneity | WGS, single-cell methods | Oncogene amplification, drug resistance [10] |

Pan-cancer analyses have identified 21 copy number signatures that explain copy number patterns in 97% of TCGA samples, with 17 signatures linked to biological phenomena including whole-genome doubling, aneuploidy, loss of heterozygosity, homologous recombination deficiency, and chromothripsis [9]. These signatures reflect the activity of diverse mutational processes and have clinical implications for prognosis and treatment response.

Single Nucleotide Polymorphisms (SNPs)

SNPs are single base pair substitutions that represent the most frequent form of genetic variation. Strictly, the term refers to germline (constitutional) variants, some of which predispose to cancer; single-base changes acquired in tumor cells are somatic single-nucleotide variants (SNVs) and can drive oncogenesis. While early cancer genetics focused on SNPs as risk factors, contemporary research emphasizes their integrated analysis with other variation types.

Advanced detection methods like Uni-C (Uniform Chromosome Conformation Capture) enable comprehensive profiling of SNPs and INDELs (insertions-deletions) at the single-cell level, achieving 86.4% genomic coverage in individual cells [10]. This approach facilitates the identification of driver gene mutations and neoantigen prediction in circulating tumor cells (CTCs), advancing early detection and treatment strategies [10].

Experimental Protocols for Multi-Omics Integration

Protocol 1: Identification of Driver Mutations Using geMER

Purpose: To identify candidate driver genes by detecting mutation enrichment regions within coding and non-coding genomic elements.

Materials:

- Input Data: Whole-genome or whole-exome sequencing data (BAM/VCF formats)

- Software: geMER pipeline

- Reference Databases: COSMIC CGC, TCGA mutation data

Procedure:

- Data Preprocessing: Process somatic mutations from WGS/WES data across cancer types

- Genomic Element Mapping: Align mutations to five genomic elements: CDS (41.2%), promoters (10.3%), splice sites (32.9%), 3'UTRs (11.3%), and 5'UTRs (4.3%)

- Mutation Enrichment Analysis: Apply modified Kolmogorov-Smirnov test to detect mutation enrichment patterns along gene transcripts

- Candidate Driver Identification: Identify genes with significant mutation enrichment (adj. p < 0.05)

- Validation: Compare against COSMIC CGC database; evaluate using F1 score and CGC enrichment metrics

Performance Metrics: geMER is reported to outperform other methods (ActiveDriverWGS, OncodriveFML, DriverPower) across most cancer types, particularly in PRAD, READ, and OV, and to recover a higher proportion of CGC genes [6].
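
The enrichment, multiple-testing, and validation steps of Protocol 1 can be illustrated on toy data. The sketch below uses a plain one-sample Kolmogorov-Smirnov test against a uniform distribution with an asymptotic p-value, followed by Benjamini-Hochberg adjustment and an F1 score. It is not the geMER implementation, whose modified K-S statistic is not described here; the gene names, positions, and reference set are invented for illustration.

```python
import math


def ks_uniform(positions: list[int], length: int) -> tuple[float, float]:
    """One-sample K-S test of mutation positions against a uniform
    distribution along an element of the given length.

    Returns (D, p); p comes from the asymptotic Kolmogorov series and is
    only approximate when there are few mutations.
    """
    x = sorted(p / length for p in positions)
    n = len(x)
    d = max(max((i + 1) / n - v, v - i / n) for i, v in enumerate(x))
    lam = (math.sqrt(n) + 0.12 + 0.11 / math.sqrt(n)) * d
    p = 2.0 * sum((-1) ** (k - 1) * math.exp(-2.0 * (k * lam) ** 2) for k in range(1, 101))
    return d, min(max(p, 0.0), 1.0)


def benjamini_hochberg(pvals: list[float]) -> list[float]:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running = min(running, pvals[i] * m / rank)
        adjusted[i] = running
    return adjusted


def f1_score(predicted: set[str], reference: set[str]) -> float:
    """Harmonic mean of precision and recall against a reference gene set."""
    tp = len(predicted & reference)
    if tp == 0:
        return 0.0
    precision, recall = tp / len(predicted), tp / len(reference)
    return 2 * precision * recall / (precision + recall)


# Toy input: (mutation positions, transcript length). Names are hypothetical.
genes = {
    "GENE_A": (list(range(481, 521, 2)), 1000),  # 20 mutations in a hotspot
    "GENE_B": (list(range(25, 1000, 50)), 1000),  # 20 evenly spread mutations
}
names = list(genes)
pvals = [ks_uniform(*genes[g])[1] for g in names]
adj = benjamini_hochberg(pvals)
candidates = [g for g, q in zip(names, adj) if q < 0.05]
print(candidates)  # ['GENE_A']
print(round(f1_score(set(candidates), {"GENE_A", "GENE_C"}), 3))  # 0.667
```

Testing against a uniform background is the simplest possible null model; a production pipeline would also need to account for sequence context and regional mutation rates.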

Protocol 2: Pan-Cancer CNV Signature Analysis

Purpose: To decipher copy number signatures across multiple cancer types and experimental platforms.

Materials:

- Input Data: Copy number profiles from WGS, WES, or SNP6 microarray data

- Software: Copy number signature framework

- Reference Data: TCGA cohort (9,873 cancers, 33 cancer types)

Procedure:

- Copy Number Profiling: Generate allele-specific copy number profiles using platform-optimized calling strategies

- Feature Encoding: Encode copy number profiles into 48-dimensional vectors based on:

  - Total copy number (TCN)

  - Heterozygosity status (LOH)

  - Segment size

- Matrix Construction: Create copy number matrices for all samples

- Signature Decomposition: Apply non-negative matrix factorization to identify shared patterns

- Signature Attribution: Quantify the number of segments attributed to each signature per sample

- Biological Interpretation: Categorize signatures into six groups based on prevalent features

Output: 21 distinct pan-cancer copy number signatures (CN1-CN21) that accurately reconstruct 97% of TCGA samples, with strong concordance across platforms (median cosine similarity >0.8) [9].
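
The feature-encoding step can be sketched as a lookup from each segment to one of 48 categories, followed by counting. The protocol above names the three features but not the bin edges, so the binning below is an assumption chosen to yield exactly 48 categories; it should be checked against the framework in [9] before use. The cosine similarity function illustrates the kind of cross-platform concordance measure quoted in the output line.

```python
import math
from collections import Counter

# Assumed bins (not given in the text above), arranged to total 48 categories.
TCN_BINS = {"LOH": ["1", "2", "3-4", "5-8", "9+"], "het": ["2", "3-4", "5-8", "9+"]}
SIZE_BINS = ["0-100kb", "100kb-1Mb", "1Mb-10Mb", "10Mb-40Mb", ">40Mb"]
HOMDEL_SIZE_BINS = ["0-100kb", "100kb-1Mb", ">1Mb"]

CATEGORIES = [f"homdel:0:{s}" for s in HOMDEL_SIZE_BINS] + [
    f"{h}:{t}:{s}" for h in ("LOH", "het") for t in TCN_BINS[h] for s in SIZE_BINS
]


def tcn_bin(tcn: int) -> str:
    if tcn <= 2:
        return str(tcn)
    if tcn <= 4:
        return "3-4"
    return "5-8" if tcn <= 8 else "9+"


def size_bin(length_bp: int, homdel: bool = False) -> str:
    if length_bp <= 100_000:
        return "0-100kb"
    if length_bp <= 1_000_000:
        return "100kb-1Mb"
    if homdel:
        return ">1Mb"
    if length_bp <= 10_000_000:
        return "1Mb-10Mb"
    return "10Mb-40Mb" if length_bp <= 40_000_000 else ">40Mb"


def categorize(major: int, minor: int, length_bp: int) -> str:
    """Category of one allele-specific segment; assumes major >= minor."""
    tcn = major + minor
    if tcn == 0:
        return f"homdel:0:{size_bin(length_bp, homdel=True)}"
    state = "LOH" if minor == 0 else "het"
    return f"{state}:{tcn_bin(tcn)}:{size_bin(length_bp)}"


def encode(segments: list[tuple[int, int, int]]) -> list[int]:
    """Count segments per category, giving one 48-dimensional sample vector."""
    counts = Counter(categorize(*seg) for seg in segments)
    return [counts.get(c, 0) for c in CATEGORIES]


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Toy segments as (major copies, minor copies, length in bp); values are made up.
segments = [(1, 1, 50_000_000), (2, 0, 5_000_000), (0, 0, 200_000), (6, 1, 800_000)]
vec = encode(segments)
print(len(vec), sum(vec))  # 48 4
print(cosine(vec, vec))    # 1.0
```

Stacking one such vector per sample gives the samples-by-48 matrix that the non-negative matrix factorization step then decomposes into signatures.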

Protocol 3: Single-Cell Multi-Omics Integration for Genomic Alteration Detection

Purpose: To comprehensively detect genomic alterations (SNPs, INDELs, CNVs, structural variants) at single-cell resolution.

Materials:

- Technology: Uni-C (Uniform Chromosome Conformation Capture)

- Reagents: Ethylene glycol bis (succinimidyl succinate) (EGS), formaldehyde, phi29 DNA polymerase, α-thiol-modified ddNTPs, exonuclease-resistant random primers

- Equipment: High-throughput sequencer

Procedure:

- Dual Crosslinking: Treat cells with EGS and formaldehyde to preserve chromatin spatial conformation

- Chromatin Fragmentation: Use 4-base cutter restriction endonuclease

- Proximity Ligation: Perform end-repair and proximity ligation in same reaction mixture

- Single-Nucleus Amplification:

  - Employ phi29 DNA polymerase with dNTPs and α-thiol-modified ddNTPs

  - Control product size (<2 kb) to prevent over-amplification

  - Reduce amplification time to ~2 hours

- Library Preparation & Sequencing: Size selection, library preparation, high-throughput sequencing

- Data Integration: Combine 3D chromatin interaction data with whole-genome sequencing data

Performance: Achieves 86.4% genomic coverage at 14.6× sequencing depth per cell; identifies an average of 1.82 million SNPs and 0.28 million INDELs per cell with 86.2% true positive rate after filtering [10].

Data Integration and Analytical Workflows

Multi-Omics Integration Strategies

Integrating molecular variation data with other omics layers requires sophisticated computational approaches. Three primary integration strategies are employed:

- Early Integration: Simple concatenation of features from each omics layer into a single matrix

- Middle Integration: Using machine learning models to consolidate data without concatenating features

- Late Integration: Performing analysis on each omics layer separately, then merging results

Middle integration approaches, particularly those utilizing machine learning and deep learning, have demonstrated superior performance for cancer subtype classification and biomarker discovery [8].
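
The difference between early and late integration is easiest to see on toy data. The sketch below concatenates features per sample (early) and merges per-layer subtype calls by majority vote (late). Sample identifiers, feature values, and subtype labels are invented, and majority voting is only one possible merge rule. Middle integration is not shown because it depends on a trained joint model.

```python
from collections import Counter

# Toy per-sample features for two omics layers; all values are made up.
expression = {"S1": [2.1, 0.3], "S2": [0.4, 1.9]}
methylation = {"S1": [0.80], "S2": [0.15], "S3": [0.55]}


def early_integration(*layers: dict[str, list[float]]) -> dict[str, list[float]]:
    """Concatenate features for the samples present in every layer."""
    shared = sorted(set.intersection(*(set(layer) for layer in layers)))
    return {s: [x for layer in layers for x in layer[s]] for s in shared}


def late_integration(*per_layer_calls: dict[str, str]) -> dict[str, str]:
    """Merge per-layer subtype calls by majority vote.

    Ties go to the label encountered first, following Counter ordering.
    """
    merged = {}
    for sample in per_layer_calls[0]:
        votes = Counter(calls[sample] for calls in per_layer_calls if sample in calls)
        merged[sample] = votes.most_common(1)[0][0]
    return merged


matrix = early_integration(expression, methylation)
print(matrix)  # {'S1': [2.1, 0.3, 0.8], 'S2': [0.4, 1.9, 0.15]}

# Hypothetical subtype calls from three separately analyzed layers.
calls_rna = {"S1": "Luminal", "S2": "Basal"}
calls_meth = {"S1": "Luminal", "S2": "Luminal"}
calls_prot = {"S1": "Luminal", "S2": "Basal"}
print(late_integration(calls_rna, calls_meth, calls_prot))  # {'S1': 'Luminal', 'S2': 'Basal'}
```

Early integration drops sample S3 because it lacks expression data, which shows how missing layers shrink a concatenated matrix. Late integration tolerates such gaps but cannot learn interactions between layers.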

Machine Learning Approaches for Multi-Omics Integration

Table 3: Comparison of Multi-Omics Integration Methods

| Method | Category | Primary Use | Advantages | Limitations |
|---|---|---|---|---|
| MOFA+ | Statistical-based | Dimensionality reduction, feature selection | Identifies latent factors explaining variation across omics; outperforms in BC subtyping (F1=0.75) [11] | Unsupervised, may miss subtype-specific signals |
| MOGCN | Deep learning (Graph CNN) | Cancer subtyping, biomarker identification | Captures non-linear relationships; integrates biological networks | Lower performance in BC subtyping vs. MOFA+ [11] |
| Autoencoder-based | Deep learning | Dimension reduction, latent feature extraction | Learns compressed representations; enables integration of heterogeneous data | Requires careful tuning; black box interpretation |
| Similarity Network Fusion (SNF) | Network-based | Cancer subtyping | Effectively integrates different data types using sample similarity networks | Computationally intensive for large datasets [12] |

Table 4: Key Research Reagent Solutions for Multi-Omics Cancer Studies

| Resource | Type | Function | Access |
|---|---|---|---|
| TCGA (The Cancer Genome Atlas) | Data Portal | Multi-omics data for >20,000 primary cancer and matched normal samples across 33 cancer types | https://portal.gdc.cancer.gov/ [8] |
| MLOmics | Database | Preprocessed multi-omics data for machine learning (8,314 samples, 32 cancers) | Open database with Original, Aligned, and Top feature versions [13] |
| COSMIC | Database | Curated multi-omics data for cell lines and tumors, focus on genomics | https://cancer.sanger.ac.uk/cosmic [8] |
| DepMap | Portal | CRISPR screens with multi-omics characterization of cell lines and drug screens | https://depmap.org/portal/ [8] |
| Uni-C | Technology | Single-cell 3D chromatin and genomic alteration profiling | Protocol described in [10] |
| geMER | Algorithm | Identifies candidate driver genes in coding and non-coding regions | http://bio-bigdata.hrbmu.edu.cn/geMER/ [6] |

Workflow Visualization

Figure: Multi-omics integration and analysis workflow

Figure: Copy number signature analysis pipeline

The comprehensive characterization of driver mutations, CNVs, and SNPs through integrated multi-omics approaches provides unprecedented insights into cancer biology and creates new opportunities for precision oncology. The experimental protocols and analytical frameworks outlined in this application note offer researchers standardized methodologies for detecting and interpreting these key molecular variations. As single-cell technologies and artificial intelligence approaches continue to advance, they will further enhance our ability to decipher cancer complexity and develop more effective classification systems and targeted therapies.

The integration of these molecular variation data with other omics layers—including transcriptomics, epigenomics, and proteomics—will be essential for developing a holistic understanding of cancer mechanisms and advancing personalized treatment strategies for cancer patients.

Cancer is fundamentally a complex and heterogeneous disease, characterized by uncontrolled cell growth that can invade surrounding tissues and spread to distant organs. Traditional methods of diagnosis, often relying on single-omics data such as gene expression, DNA methylation, or miRNA profiles, frequently fail to capture the full molecular landscape of a tumor [14] [15]. This limitation is particularly evident in challenging clinical scenarios, such as identifying the tissue of origin when cancer has metastasized to other organs [14]. An analysis limited to a single molecular level is insufficient for understanding the complex pathogenesis of cancer and struggles to meet the need for precise molecular subtyping, treatment selection, and prognosis [16]. The inherent shortcomings of single-omics approaches have catalyzed a paradigm shift toward multi-omics integration, which provides a more comprehensive and holistic perspective by concurrently analyzing multiple strata of biological data [17]. This document outlines the quantitative evidence against single-omics approaches, provides detailed protocols for multi-omics integration, and equips researchers with the necessary tools to advance cancer classification research.

Quantitative Evidence: The Performance Gap Between Single and Multi-Omics

Published studies report that multi-omics integration outperforms single-omics approaches in key cancer research tasks, including classification, subtyping, and clustering. The following tables summarize the performance figures reported in recent studies; because the studies differ in cohort composition and task definition, the values are indicative rather than a head-to-head benchmark.

Table 1: Comparative Accuracy in Cancer Type and Subtype Classification

| Data Type | Task | Reported Accuracy | Citation |

|---|---|---|---|

| Multi-omics (mRNA, miRNA, Methylation) | Classifying 30 cancer types by tissue of origin | 96.67% (± 0.07) | [14] |

| Multi-omics (mRNA, miRNA, Methylation) | Identifying cancer stages | 83.33% to 93.64% | [14] |

| Multi-omics (mRNA, miRNA, Methylation) | Identifying cancer subtypes | 87.31% to 94.0% | [14] |

| Gene Expression (mRNA) only | Classifying 31 tumor types | 90% | [18] |

| miRNA only | Classifying 32 tumor types | 92% sensitivity | [18] |

Table 2: Clustering Performance for Cancer Subtyping Using Multi-omics Data

| Cancer Type | Subtypes | Metric | Performance | Citation |

|---|---|---|---|---|

| BRCA (Breast) | 5 | NMI | Refer to source study | [16] |

| GBM (Glioblastoma) | 4 | ARI | Refer to source study | [16] |

| LUAD (Lung Adenocarcinoma) | 3 | ACC | Refer to source study | [16] |

The superiority of multi-omics data is visually apparent in clustering analyses. For instance, a t-distributed stochastic neighbor embedding (t-SNE) analysis using cancer-associated multi-omics latent variables showed clear separation between 30 different cancer types. In contrast, t-SNE plots generated from single-omics data—gene expression, miRNA, and methylation separately—showed significant intermingling and co-clustering of distinct cancer types, demonstrating that single-omics data fails to adequately distinguish between them due to intra-tumor heterogeneity [14].

Experimental Protocols for Multi-Omics Integration

Protocol 1: Biologically Informed Deep Learning for Pan-Cancer Classification

This protocol details a hybrid feature selection and deep learning framework for classifying cancer by tissue of origin, stage, and subtype [14].

1. Sample and Data Collection

- Source: Obtain data from public repositories such as The Cancer Genome Atlas (TCGA) or use pre-processed databases like MLOmics [13].

- Omic Types: Collect mRNA expression, miRNA expression, and DNA methylation data.

- Sample Size: The referenced study used 7,632 samples from 30 different cancer types [14].

2. Biologically Informed Feature Selection

- Gene Set Enrichment Analysis (GSEA): Preprocess the gene expression data and perform GSEA to identify genes involved in molecular functions, biological processes, and cellular components (p < 0.05) [14].

- Univariate Cox Regression: Subject the enriched genes to univariate Cox regression analysis using clinical and gene expression data to identify genes linked with patient survival (p < 0.05) [14].

- Multi-Omics Linking: Identify miRNA molecules that target the survival-associated genes, and screen for CpG sites located in the promoter regions of these genes.

- Output: Generate three distinct data matrices: an expression matrix of prognostic genes, a miRNA expression matrix, and a DNA methylation matrix.

3. Data Integration and Dimensionality Reduction with an Autoencoder

- Framework: Construct a deep learning autoencoder (e.g., CNC-AE).

- Input: Concatenate the three processed matrices (mRNA, miRNA, methylation) into a single input.

- Encoding: The encoder network transforms the multi-omics data into latent vectors. Fine-tune the dimensions of the bottleneck layer (e.g., 64 latent variables for each cancer type) [14].

- Training: Train the autoencoder to minimize the reconstruction loss (e.g., Mean Squared Error). A low MSE (0.03-0.29) indicates the model has successfully learned the cancer-specific patterns [14].

- Output: Use the latent variables, termed Cancer-associated Multi-omics Latent Variables (CMLV), for downstream classification tasks.

4. Classification

- Model: Construct an Artificial Neural Network (ANN) classifier.

- Input: The CMLV from the autoencoder.

- Output: Classify tissue of origin, cancer stage, and subtype.

Protocol 2: Multi-Omics Clustering for Cancer Subtyping (MOCSS)

This protocol describes an unsupervised method for cancer subtyping by learning shared and specific information from multi-omics data [16].

1. Data Preprocessing

- Data Types: Collect mRNA expression, miRNA expression, and DNA methylation data for the cancer type of interest.

- Normalization: Normalize the original data for each omics type using Min-Max Normalization to map all values to a [0, 1] range using the formula:

\[ X^{*} = \frac{X - X_{\min}}{X_{\max} - X_{\min}} \] [16]
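
The normalization step can be sketched in plain Python. This is a minimal illustration, not the MOCSS implementation: it assumes a samples-by-features layout, applies the formula per feature (the source does not state whether scaling is per feature or per matrix), and maps constant features to 0.0 to avoid division by zero. The function name `min_max_normalize` and the toy values are hypothetical.

```python
from typing import List


def min_max_normalize(matrix: List[List[float]]) -> List[List[float]]:
    """Scale each feature (column) of a samples-by-features matrix to [0, 1]."""
    if not matrix:
        return []
    n_features = len(matrix[0])
    # Column-wise minimum and maximum over all samples.
    col_min = [min(row[j] for row in matrix) for j in range(n_features)]
    col_max = [max(row[j] for row in matrix) for j in range(n_features)]
    normalized = []
    for row in matrix:
        new_row = []
        for j, value in enumerate(row):
            span = col_max[j] - col_min[j]
            # Assumption: a constant feature is mapped to 0.0 instead of
            # dividing by zero.
            new_row.append((value - col_min[j]) / span if span > 0 else 0.0)
        normalized.append(new_row)
    return normalized


# Toy mRNA matrix: 3 samples x 2 genes (illustrative values only).
mrna = [[2.0, 10.0], [4.0, 10.0], [6.0, 10.0]]
scaled = min_max_normalize(mrna)
```

Each omics matrix would be passed through the function separately, so that every data type ends up on the same [0, 1] scale before representation learning.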

2. Shared and Specific Representation Learning

- Model Architecture: For each omics data type, employ two separate autoencoders: one to extract shared (consistent) information and another to extract specific (unique) information.

- Orthogonality Constraint: Apply an orthogonality constraint to the learned representations to reduce redundancy and mutual interference between the shared and specific information.

- Contrastive Learning: Use contrastive learning to align the shared information extracted from the different omics data in a common subspace, thereby strengthening their consistency.

3. Clustering and Subtype Identification

- Feature Fusion: For each sample, combine the learned shared and specific representations into a unified feature vector.

- Clustering Algorithm: Apply the K-means clustering algorithm to the unified representation matrix of all samples to obtain cluster labels.

- Validation: Evaluate the clustering performance using metrics such as Normalized Mutual Information (NMI), Adjusted Rand Index (ARI), and Accuracy (ACC) against known ground-truth labels if available.
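
To make the validation step concrete, the following sketch computes the Adjusted Rand Index from its contingency-table definition using only the standard library. The function name and the toy labels are illustrative assumptions; a real analysis would more typically rely on an established library implementation.

```python
from collections import Counter
from math import comb
from typing import Sequence


def adjusted_rand_index(truth: Sequence[int], pred: Sequence[int]) -> float:
    """Adjusted Rand Index between two labelings of the same samples."""
    if len(truth) != len(pred):
        raise ValueError("label sequences must have the same length")
    n = len(truth)
    if n < 2:
        raise ValueError("at least two samples are required")
    # Pair counts within contingency cells, true classes, and predicted clusters.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(truth, pred)).values())
    sum_truth = sum(comb(c, 2) for c in Counter(truth).values())
    sum_pred = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_truth * sum_pred / comb(n, 2)
    max_index = (sum_truth + sum_pred) / 2
    if max_index == expected:
        # Degenerate case (e.g., both labelings put every sample in one group).
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


# Toy example: cluster IDs are permuted but the grouping is identical.
known_subtypes = [0, 0, 1, 1, 2, 2]
kmeans_labels = [1, 1, 0, 0, 2, 2]
ari_perfect = adjusted_rand_index(known_subtypes, kmeans_labels)
ari_partial = adjusted_rand_index(known_subtypes, [0, 0, 0, 1, 1, 1])
```

Because the index is invariant to label permutation, arbitrary K-means cluster IDs do not need to be matched to subtype names before scoring.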

Visualization of Multi-Omics Workflows

Multi-Omics Integration and Classification Workflow

Shared and Specific Information Learning for Subtyping

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Multi-Omics Cancer Research

| Resource Type | Name / Example | Function and Application |

|---|---|---|

| Public Data Repositories | The Cancer Genome Atlas (TCGA) | Primary source for raw, multi-omics cancer data from patient samples [18] [16]. |

| Preprocessed ML-Ready Databases | MLOmics | Provides off-the-shelf, preprocessed multi-omics data (mRNA, miRNA, methylation, CNV) with aligned features and significance filters, ready for machine learning models [13]. |

| Computational Frameworks & Tools | Autoencoders (e.g., CNC-AE), MOCSS, Subtype-GAN, XOmiVAE | Enable dimensionality reduction, data integration, and model training for classification and subtyping tasks [14] [13] [16]. |

| Bioinformatics Programming Languages | R, Python | Core languages for data preprocessing, statistical analysis (e.g., Cox regression, ANOVA), and implementing machine learning models [19]. |

| Analysis Packages & Platforms | Seurat, Scanpy, MindWalk HYFT Platform | Support comprehensive analysis workflows, including normalization, integration, clustering, and visualization of multi-omics data [20] [19]. |

| Biological Knowledge Bases | STRING, KEGG | Used for functional enrichment analysis, pathway mapping, and validating the biological relevance of identified features [13]. |

The integration of multi-omics data represents a transformative approach in cancer research, enabling a holistic view of the complex molecular interactions that drive oncogenesis. Large-scale public data resources have become indispensable for systematically mapping the genetic, epigenetic, transcriptomic, and proteomic alterations across cancer types. These resources provide the foundational data necessary for developing machine learning models that can classify cancer types, identify novel subtypes, and predict therapeutic vulnerabilities. This application note details the experimental and computational protocols for leveraging four pivotal resources—TCGA, ICGC, CPTAC, and DepMap—within a multi-omics cancer classification framework.

Table 1: Core Characteristics of Major Public Cancer Data Resources

| Resource | Primary Data Types | Sample Focus | Key Applications | Access Portal |

|---|---|---|---|---|

| TCGA (The Cancer Genome Atlas) | Genomics, Epigenomics, Transcriptomics [21] | >20,000 primary tumors across 33 cancer types [21] | Cancer classification, driver gene identification, molecular subtyping | Genomic Data Commons (GDC) Data Portal [21] |

| CPTAC (Clinical Proteomic Tumor Analysis Consortium) | Proteomics, Phosphoproteomics, Genomics, Transcriptomics [22] [23] | >1,000 tumors across 10 cancer types [22] | Proteogenomic analysis, connecting genomic alterations to protein-level phenotypes [23] | Proteomic Data Commons (PDC) [23] |

| DepMap (Cancer Dependency Map) | CRISPR screens, Omics data, Drug response [24] | Cancer cell lines [8] | Identifying cancer vulnerabilities and therapeutic targets [24] | DepMap Portal [24] |

| ICGC (International Cancer Genome Consortium) | Genomics, Transcriptomics [8] | Tumor data [8] | International collaborative genomics, cross-population analyses | ICGC Data Portal [8] |

Data Access and Preprocessing Protocols

Data Retrieval and Harmonization

Efficient access to multi-omics data requires specialized portals and Application Programming Interfaces (APIs). TCGA data are accessible through the Genomic Data Commons (GDC) Data Portal, which provides web-based analysis and visualization tools [21]. For programmatic access, the CPTAC program has developed a Python API that streams quantitative data directly into pandas dataframes, facilitating integration with machine learning packages such as scikit-learn and PyTorch [23]. Similarly, the R/Bioconductor tool TCGAbiolinks has been expanded to stream CPTAC pan-cancer data [23].

Data harmonization presents significant challenges due to differing sample collection protocols, experimental platforms, and data processing pipelines. CPTAC has addressed this by creating a harmonized dataset where all proteogenomic data has been reprocessed using standardized computational workflows [23]. For transcriptomics data from TCGA, crucial steps include platform identification (e.g., "Illumina Hi-Seq"), conversion of RSEM estimates to FPKM values, and logarithmic transformation [13].

Multi-Omics Data Processing Workflow

The following diagram illustrates the standardized workflow for processing multi-omics data from major resources for cancer classification research:

Diagram 1: Multi-omics Data Processing Workflow. This workflow outlines the standardized pipeline for preparing heterogeneous omics data for machine learning applications.

For genomic data processing, the key steps include identifying copy-number variations (CNVs), filtering somatic mutations, identifying recurrent genomic alterations using tools like the GAIA package, and annotating genomic regions with BiomaRt [13]. DNA methylation data processing requires identifying methylation regions from metadata, performing median-centering normalization with the limma R package, and selecting promoters with minimum methylation levels in normal tissues [13].

Feature Processing for Machine Learning

MLOmics provides a structured approach for creating machine learning-ready datasets with three feature versions [13]:

- Original: Contains the full set of genes directly extracted from collected omics files.

- Aligned: Filters non-overlapping genes and selects genes shared across different cancer types, with z-score normalization.

- Top: Identifies the most significant features using multi-class ANOVA with Benjamini-Hochberg correction (FDR < 0.05), followed by z-score normalization.

This stratified approach enables researchers to select the appropriate feature set complexity for their specific classification task, balancing biological comprehensiveness with computational efficiency.
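
The Benjamini-Hochberg step used for the Top feature version can be sketched as follows. This is a generic implementation of the procedure, not MLOmics code; the function name and the example p-values are hypothetical, and the ANOVA that would produce the p-values is not shown.

```python
from typing import List


def benjamini_hochberg(p_values: List[float]) -> List[float]:
    """Return BH-adjusted p-values (q-values) in the original input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical per-feature ANOVA p-values.
anova_p = [0.001, 0.008, 0.039, 0.041, 0.60]
q_values = benjamini_hochberg(anova_p)
# Keep features whose adjusted p-value falls below the FDR threshold.
top_features = [i for i, q in enumerate(q_values) if q < 0.05]
```

In this toy input, two features with raw p-values below 0.05 are still excluded after correction, which shows why the adjusted threshold is stricter than an unadjusted one.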

Experimental Protocols for Multi-Omics Integration

Pan-Cancer Classification Protocol

Pan-cancer classification aims to distinguish different cancer types based on their molecular profiles, providing crucial insights for diagnosis and treatment. The following protocol outlines a standardized workflow for developing and validating classification models:

Table 2: Experimental Protocol for Pan-Cancer Classification

| Step | Procedure | Tools & Techniques | Quality Control |

|---|---|---|---|

| Data Collection | Retrieve multi-omics data from TCGA, CPTAC, or ICGC portals | GDC Data Portal, PDC, TCGAbiolinks R package [21] [23] | Verify sample metadata completeness and experimental platform consistency |

| Feature Selection | Apply ANOVA-based feature selection (BH-adjusted p < 0.05) [13] | MLOmics Top feature set, scikit-learn SelectKBest | Control false discovery rate; ensure features present across cancer types |

| Model Training | Implement ensemble classifiers with cross-validation | XGBoost, Random Forest, SVM [13] | 10-fold cross-validation; hyperparameter tuning via grid search |

| Validation | Assess performance on independent test sets | Precision, Recall, F1-score, NMI, ARI [13] | Compare against established baselines; compute confidence intervals |

The computational workflow for pan-cancer classification integrates multiple data types and machine learning approaches as shown below:

Diagram 2: Pan-Cancer Multi-Omics Classification Pipeline. This workflow demonstrates the integration of multiple omics data types through different strategies for cancer classification.

For transcriptomics data, the protocol includes converting scaled gene-level RSEM estimates to FPKM values using the edgeR package, removing non-human miRNA expressions using species annotations from miRBase, and applying logarithmic transformations [13]. For DNA methylation data, median-centering normalization is performed to adjust for systematic biases and technical variations across samples [13].
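
The two transformations named above can be illustrated with a short standard-library sketch. It assumes FPKM values are already available (the RSEM-to-FPKM conversion is not shown), uses a pseudocount of 1 before taking log2, and centers each sample on its own median; the source does not specify these details, so they are labeled assumptions, as are the function names and toy values.

```python
import math
from statistics import median
from typing import List

Matrix = List[List[float]]  # rows = samples, columns = features


def log2_transform(matrix: Matrix, pseudocount: float = 1.0) -> Matrix:
    """Apply log2(x + pseudocount) to every expression value."""
    return [[math.log2(v + pseudocount) for v in row] for row in matrix]


def median_center_samples(matrix: Matrix) -> Matrix:
    """Subtract each sample's median so that every sample has median 0."""
    centered = []
    for row in matrix:
        center = median(row)
        centered.append([v - center for v in row])
    return centered


# Toy FPKM values: 2 samples x 3 genes.
fpkm = [[0.0, 3.0, 7.0], [1.0, 1.0, 15.0]]
log_expr = log2_transform(fpkm)

# Toy methylation beta values: 2 samples x 3 CpG sites.
beta = [[0.2, 0.5, 0.8], [0.1, 0.4, 0.9]]
beta_centered = median_center_samples(beta)
```

The pseudocount keeps zero-expression genes finite after the log transform, and the centering removes sample-wide shifts without changing the ordering of values within a sample.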

Translational Dependency Mapping Protocol

The integration of TCGA with DepMap enables the creation of translational dependency maps that predict gene essentiality in patient tumors. This protocol adapts cancer cell line dependencies to patient tumors through machine learning:

Step 1: Model Training on DepMap Data

- Retrieve genome-wide CRISPR-Cas9 knockout screens and multi-omics characterization of cancer cell lines from DepMap [25].

- Train elastic-net regression models to predict gene essentiality scores using gene expression features [25].

- Apply tenfold cross-validation to select models with minimum error (Pearson's r > 0.2; FDR < 1×10^(-3)) [25].

Step 2: Transcriptional Alignment

- Perform quantile normalization of expression data from both DepMap and TCGA.

- Apply contrastive Principal Component Analysis (cPCA) to remove top principal components (cPC1-4) that represent technical variations between cell lines and tumors [25].

- Validate alignment by assessing reduced correlation between predicted essentialities and tumor purity.
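
The quantile normalization in Step 2 can be sketched as below. This is a minimal, generic version written for illustration: it assumes both datasets have already been restricted to the same genes in the same order, breaks ties by gene position rather than averaging, and does not cover the cPCA step. The function and variable names are hypothetical.

```python
from typing import List


def quantile_normalize(samples: List[List[float]]) -> List[List[float]]:
    """Force every sample (row) to share the same value distribution."""
    n_genes = len(samples[0])
    sorted_samples = [sorted(s) for s in samples]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [
        sum(s[k] for s in sorted_samples) / len(samples) for k in range(n_genes)
    ]
    normalized = []
    for sample in samples:
        # Gene indices ordered by expression within this sample.
        order = sorted(range(n_genes), key=lambda g: sample[g])
        out = [0.0] * n_genes
        for rank, gene in enumerate(order):
            out[gene] = reference[rank]
        normalized.append(out)
    return normalized


# Toy profiles over the same three genes.
cell_line_profile = [5.0, 2.0, 3.0]
tumor_profile = [4.0, 1.0, 9.0]
aligned = quantile_normalize([cell_line_profile, tumor_profile])
```

After normalization, each profile keeps its own gene ranking but draws its values from the shared reference distribution, which removes scale differences between cell-line and tumor data before models are transferred.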

Step 3: Dependency Prediction in Patient Tumors

- Apply the trained models to TCGA transcriptomic profiles to predict gene essentiality in patient tumors [25].

- Validate predictions by confirming known lineage dependencies and oncogene associations (e.g., KRAS essentiality in pancreatic adenocarcinoma) [25].

This approach successfully identified patient-translatable synthetic lethalities, including PAPSS1/PAPSS2 and CNOT7/CNOT8, which were subsequently validated in vitro and in vivo [25].

Table 3: Essential Research Reagents and Computational Resources for Multi-Omics Cancer Research

| Resource | Type | Function | Application Example |

|---|---|---|---|

| CPTAC Python API [23] | Computational Tool | Streams processed proteogenomic data directly into pandas dataframes | Enables seamless integration with scikit-learn and PyTorch for machine learning |

| TCGAbiolinks [23] | R/Bioconductor Package | Accesses and analyzes TCGA and CPTAC data within R environment | Facilitates comprehensive bioinformatic analysis and visualization |

| DepMap Data Explorer [24] | Web-based Tool | Interactive exploration of cancer dependencies and omics data | Identification of candidate therapeutic targets based on genetic dependencies |

| MLOmics Database [13] | Processed Dataset | Provides off-the-shelf multi-omics data for machine learning | Benchmarking classification algorithms on standardized pan-cancer datasets |

| OmicsEV [23] | Quality Control Tool | Evaluates multi-omics data quality using multiple metrics | Assessing data depth, normalization effectiveness, and batch effects |

| FragPipe Pipeline [23] | Proteomics Processing | Provides high-depth proteomic and phosphoproteomic quantification | Processing mass spectrometry data for proteogenomic integration |

Concluding Remarks

The integration of multi-omics data from TCGA, CPTAC, DepMap, and ICGC provides unprecedented opportunities for advancing cancer classification and therapeutic development. The experimental protocols outlined in this application note provide a structured framework for leveraging these resources through standardized computational workflows, validated machine learning approaches, and rigorous analytical techniques. As these data resources continue to expand and evolve, they will undoubtedly yield novel insights into cancer biology and accelerate the development of precision oncology approaches.

Computational Strategies for Multi-Omics Integration: From Statistics to Deep Learning

Multi-omics data integration has emerged as a cornerstone of modern cancer research, providing a powerful framework to address the profound molecular heterogeneity of tumors. By combining information from various molecular layers—such as genomics, transcriptomics, epigenomics, and proteomics—researchers can achieve a more comprehensive understanding of cancer biology than is possible with any single data type. The computational integration of these diverse datasets is primarily accomplished through three strategic paradigms: early, late, and intermediate (middle) fusion. Each paradigm offers distinct advantages and limitations for specific research scenarios in cancer classification, biomarker discovery, and therapeutic development. This article delineates these integration strategies, providing structured comparisons, detailed experimental protocols, and practical toolkits to guide their application in cancer research.

Fusion Paradigms: Core Concepts and Workflows

The integration of multi-omics data involves combining datasets from different molecular levels (e.g., genome, transcriptome, epigenome) to achieve a holistic view of a biological system. The choice of integration strategy significantly impacts the analysis outcome, influencing everything from data preprocessing to model interpretability. The three primary fusion paradigms—early, late, and intermediate—differ fundamentally in the stage at which data from different omics layers are combined.

Early Fusion

Early fusion, also known as data-level integration, involves concatenating raw or pre-processed features from multiple omics datasets into a single, unified matrix before analysis [26]. This approach allows machine learning models to directly learn from the combined feature space and capture potential interactions between different molecular layers from the outset.

Workflow Diagram: Early Fusion

Late Fusion

Late fusion, or decision-level integration, involves building separate models for each omics data type and combining their predictions at the final stage [26] [27]. This approach preserves the unique characteristics of each data modality and mitigates the challenges of heterogeneous data distributions.

Workflow Diagram: Late Fusion

Intermediate Fusion

Intermediate fusion (or middle fusion) represents a hybrid approach that integrates concepts from both early and late fusion. In this strategy, separate feature extractors or encoders are used for each omics type, but integration occurs through shared representation learning before the final prediction layer [28] [14]. This enables the model to capture both modality-specific patterns and cross-modal interactions.

Workflow Diagram: Intermediate Fusion

Comparative Analysis of Fusion Strategies

Table 1: Comparative Analysis of Multi-Omics Fusion Strategies for Cancer Classification

| Feature | Early Fusion | Late Fusion | Intermediate Fusion |

|---|---|---|---|

| Integration Stage | Data level (raw/preprocessed features) | Decision level (model predictions) | Feature level (latent representations) |

| Technical Implementation | Feature concatenation before model training | Separate models with prediction aggregation | Joint representation learning |

| Handling of Data Heterogeneity | Poor (requires extensive normalization) | Excellent (models tailored to each modality) | Good (modality-specific encoders) |

| Capture of Cross-Modal Interactions | High (direct access to all features) | Low (independent modeling) | High (explicit interaction modeling) |

| Model Complexity | Single, potentially large model | Multiple, potentially simpler models | Multiple interconnected components |

| Robustness to Missing Modalities | Poor (requires complete data) | Good (can omit modalities) | Moderate (architecture-dependent) |

| Interpretability Challenges | High (difficult to trace modality contributions) | Low (clear modality-specific contributions) | Moderate (requires specialized techniques) |

| Representative Cancer Study | MLOmics pan-cancer classification [13] | NSCLC subtype classification [29] | ELSM (cfDNA fragmentation) [28], Autoencoder integration [14] |

Table 2: Performance Comparison of Fusion Strategies in Published Cancer Studies

| Study | Cancer Type | Omics Types | Fusion Strategy | Reported Performance |

|---|---|---|---|---|

| ELSM [28] | Pan-cancer (10 types) | 13 cfDNA fragmentomic features | Intermediate (hybrid) | AUC: 0.972 (pan-cancer), 0.922 (gastric cancer) |

| Autoencoder Framework [14] | Pan-cancer (30 types) | mRNA, miRNA, methylation | Intermediate (autoencoder) | Accuracy: 96.67% (tissue of origin) |

| NSCLC Study [29] | Non-small cell lung cancer | mRNA, miRNA, DNA methylation | Late (weighted average) | Superior to single-omics baselines |

| AMOGEL [30] | BRCA, KIPAN | mRNA, miRNA, DNA methylation | Intermediate (graph-based) | Outperformed state-of-the-art models |

| MLOmics [13] | Pan-cancer (32 types) | mRNA, miRNA, methylation, CNV | Early (feature concatenation) | Baseline for comparison studies |

Experimental Protocols and Implementation

Protocol 1: Implementing Early Fusion for Pan-Cancer Classification

Objective: Classify cancer types using concatenated multi-omics features.

Materials and Reagents:

- Multi-omics dataset (e.g., MLOmics [13] with mRNA, miRNA, methylation, CNV)

- Computing environment with Python/R and necessary libraries (scikit-learn, pandas, numpy)

- Feature selection tools (ANOVA, LASSO)

Procedure:

- Data Preprocessing: Normalize each omics dataset independently using z-score normalization or platform-specific methods [13].

- Feature Selection: Apply ANOVA-based feature selection to identify top differentially expressed features across cancer types. Apply Benjamini-Hochberg correction to control false discovery rate [13].

- Feature Concatenation: Combine selected features from all omics types into a unified feature matrix, ensuring sample alignment.

- Model Training: Implement classifiers (XGBoost, SVM, Random Forest) on the concatenated dataset using cross-validation [13].

- Performance Evaluation: Assess using precision, recall, F1-score, and AUC-ROC metrics.

Technical Notes: Early fusion often faces the "curse of dimensionality," requiring robust feature selection to avoid overfitting, particularly with limited samples [26].
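
The feature concatenation step, including sample alignment, can be sketched as follows. The sketch assumes each omics table is a mapping from sample ID to an already selected and normalized feature vector; the type alias, function name, and sample IDs are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Tuple

OmicsTable = Dict[str, List[float]]  # sample ID -> feature vector


def concatenate_omics(tables: List[OmicsTable]) -> Tuple[List[str], List[List[float]]]:
    """Keep samples present in every table and join their features in table order."""
    if not tables:
        raise ValueError("at least one omics table is required")
    shared = set(tables[0])
    for table in tables[1:]:
        shared &= set(table)
    # Sort IDs so that row order is reproducible.
    sample_ids = sorted(shared)
    fused = [
        [value for table in tables for value in table[sid]] for sid in sample_ids
    ]
    return sample_ids, fused


# Toy tables; sample "S3" lacks miRNA and methylation data and is dropped.
mrna = {"S1": [0.1, 0.2], "S2": [0.3, 0.4], "S3": [0.5, 0.6]}
mirna = {"S1": [1.0], "S2": [2.0]}
methylation = {"S2": [0.9, 0.8], "S1": [0.7, 0.6]}
ids, fused_matrix = concatenate_omics([mrna, mirna, methylation])
```

Dropping incomplete samples is the simplest alignment policy and illustrates why early fusion is sensitive to missing modalities; imputation would be an alternative design choice.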

Protocol 2: Implementing Late Fusion for NSCLC Subtyping

Objective: Classify NSCLC subtypes using separate omics models with decision-level integration.

Materials and Reagents:

- NSCLC multi-omics dataset (e.g., TCGA NSCLC with mRNA, miRNA, methylation)

- Machine learning libraries supporting ensemble methods

- Weighted averaging algorithm for prediction fusion

Procedure:

- Modality-Specific Modeling: Train separate classification models (e.g., SVM, Random Forest) for each omics type[masked].

- Prediction Generation: Obtain probability estimates for each class from all modality-specific models.

- Decision Fusion: Apply weighted average fusion, assigning weights based on individual model performance on validation data[masked].

- Model Evaluation: Compare fused predictions against ground truth using accuracy and AUC metrics.

- Gene Discovery: Identify top features from each modality-specific model and integrate findings.

Technical Notes: Late fusion is particularly valuable when omics data have different statistical properties or when dealing with missing modalities for some samples [27].
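
The decision fusion step can be illustrated with a small weighted-average sketch. It assumes each modality-specific model has already produced class probabilities for one sample and that a validation score (for example, accuracy) is available per modality; normalizing the weights by the sum of scores is one simple choice rather than the scheme used in the cited study. All names and numbers are hypothetical.

```python
from typing import Dict, List


def weighted_average_fusion(
    probabilities: Dict[str, List[float]],
    validation_scores: Dict[str, float],
) -> List[float]:
    """Fuse per-modality class probabilities, weighting by validation score."""
    if not probabilities:
        raise ValueError("at least one modality is required")
    # Only modalities available for this sample contribute to the weights.
    total = sum(validation_scores[m] for m in probabilities)
    n_classes = len(next(iter(probabilities.values())))
    fused = [0.0] * n_classes
    for modality, probs in probabilities.items():
        weight = validation_scores[modality] / total
        for c, p in enumerate(probs):
            fused[c] += weight * p
    return fused


classes = ["LUAD", "LUSC"]  # illustrative NSCLC subtype labels
scores = {"mrna": 0.9, "mirna": 0.6, "methylation": 0.5}
sample_probs = {
    "mrna": [0.7, 0.3],
    "mirna": [0.4, 0.6],
    "methylation": [0.6, 0.4],
}
fused = weighted_average_fusion(sample_probs, scores)
predicted = classes[fused.index(max(fused))]

# A sample without methylation data is fused from the remaining modalities.
partial = weighted_average_fusion(
    {"mrna": [0.7, 0.3], "mirna": [0.4, 0.6]}, scores
)
```

Because the weights are renormalized over the modalities present, the same function handles samples with a missing omics layer, which is the robustness property noted above.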

Protocol 3: Implementing Intermediate Fusion Using Autoencoders

Objective: Integrate multi-omics data through latent space representation for cancer classification.

Materials and Reagents:

- Multi-omics dataset (mRNA, miRNA, methylation)

- Deep learning framework (TensorFlow, PyTorch)

- Autoencoder architecture with modality-specific encoders

Procedure:

- Biologically Informed Feature Selection: Apply gene set enrichment analysis and Cox regression to identify survival-associated features [14].

- Modality-Specific Encoding: Process each omics type through separate encoder networks to generate latent representations.

- Feature Fusion: Concatenate latent representations from all modalities in the bottleneck layer [14].

- Joint Representation Learning: Train the autoencoder to minimize reconstruction loss while maintaining biological relevance.

- Classification: Use the latent representations (CMLVs) to train a classifier (ANN) for cancer type, stage, and subtype prediction [14].

Technical Notes: The autoencoder architecture in [14] used bottleneck layers of size 64 for each cancer type, with reconstruction loss (MSE) ranging from 0.03 to 0.29, indicating effective representation learning.

Protocol 4: Implementing ELSM Framework for cfDNA-Based Cancer Detection

Objective: Detect cancer using cell-free DNA fragmentation patterns via hybrid early-late fusion.

Materials and Reagents:

- cfDNA whole-genome sequencing data from plasma

- 13 fragmentomic feature spaces (size distribution, end motifs, etc.)

- Neural network framework with attention mechanisms

Procedure:

- Fragmentomic Feature Extraction: Compute 13 different fragmentation patterns from cfDNA WGS data [28].

- Sample-Level Modality Evaluation: Quantify modality-specific contributions per sample by comparing predictions with individual modalities added/removed [28].

- Projection Layer Processing: Process each modality through configurable projection layers with residual connections.

- Attention-Based Fusion: Apply attention mechanisms to weight modality contributions dynamically.

- Model Output: Generate cancer probability scores through a fully connected layer with Softmax/Sigmoid activation [28].

Technical Notes: ELSM's innovation lies in its sample-level modality evaluation, which precisely captures modality-specific differences across individual samples, enhancing fusion effectiveness [28].
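
The idea of attention-based fusion can be illustrated with a generic dot-product attention sketch. This is not the ELSM architecture: it omits projection layers, residual connections, and the sample-level modality evaluation, and it uses a fixed query vector where a trained network would learn one. All names and values are hypothetical.

```python
import math
from typing import List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(
    embeddings: List[List[float]], query: List[float]
) -> Tuple[List[float], List[float]]:
    """Weight modality embeddings by softmax(query . embedding) and sum them."""
    scores = [sum(q * e for q, e in zip(query, emb)) for emb in embeddings]
    weights = softmax(scores)
    fused = [
        sum(w * emb[d] for w, emb in zip(weights, embeddings))
        for d in range(len(query))
    ]
    return weights, fused


# Three toy modality embeddings for one sample (2 dimensions each).
modality_embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query_vector = [1.0, 1.0]  # stands in for a learned parameter
weights, fused_embedding = attention_fuse(modality_embeddings, query_vector)
```

The resulting weights differ from sample to sample because they depend on each sample's embeddings, which is what allows modality contributions to be weighted dynamically.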

Table 3: Essential Resources for Multi-Omics Fusion Research

| Resource Category | Specific Tools/Databases | Function and Application |

|---|---|---|

| Multi-Omics Databases | MLOmics [13], TCGA, UCSC Genome Browser [18] | Provide integrated multi-omics datasets for model training and validation |

| Bioinformatics Platforms | STRING, KEGG [13] [30] | Offer prior biological knowledge for network-based integration and validation |

| Machine Learning Libraries | scikit-learn, XGBoost [13] | Implement classical ML algorithms for early and late fusion approaches |

| Deep Learning Frameworks | TensorFlow, PyTorch | Enable implementation of complex intermediate fusion architectures |

| Specialized Algorithms | Autoencoders [14], Graph Neural Networks [30], ELSM [28] | Provide specialized architectures for intermediate fusion implementation |

| Evaluation Metrics | AUC-ROC, Precision, Recall, F1-Score [13] | Quantify model performance for cancer classification tasks |

The strategic selection of integration paradigms—early, late, or intermediate fusion—represents a critical decision point in multi-omics cancer research. While early fusion offers simplicity and direct feature interaction, it struggles with data heterogeneity. Late fusion provides robustness but may miss important cross-modal relationships. Intermediate fusion strikes a balance, leveraging the strengths of both approaches through sophisticated representation learning. The ELSM framework [28] and autoencoder approaches [14] demonstrate how hybrid strategies can achieve superior performance in real-world cancer classification tasks. As multi-omics technologies continue to evolve, these integration paradigms will play an increasingly vital role in translating complex molecular measurements into clinically actionable insights for cancer diagnosis, prognosis, and treatment selection.

Cancer is a complex and heterogeneous disease, characterized by molecular alterations across multiple biological layers. The integration of multi-omics data—including genomics, transcriptomics, epigenomics, and proteomics—has emerged as a crucial strategy for unraveling this complexity, enabling improved cancer classification, biomarker discovery, and personalized treatment strategies [31]. Among the computational methods developed for this purpose, statistical and unsupervised models, particularly Multi-Omics Factor Analysis (MOFA+) and various matrix factorization approaches, have demonstrated significant utility in capturing the shared and specific variations across different omics modalities [32] [33].

These unsupervised methods are essential for reducing high-dimensional multi-omics data into lower-dimensional latent representations, which can reveal underlying biological structures without requiring prior label information. This capability is particularly valuable for cancer subtyping, where the objective is to discover novel molecular subtypes rather than predict predefined classes [32]. The application of these models has led to ground-breaking discoveries in cancer biology, providing insights into disease mechanisms and potential therapeutic targets [34].

Theoretical Foundations of MOFA+ and Matrix Factorization

Multi-Omics Factor Analysis (MOFA+)

MOFA+ is an unsupervised Bayesian framework that extends Factor Analysis to multi-omics settings. It models multiple omics datasets as linear combinations of latent factors that capture shared sources of variation across different data modalities [35] [32]. The model assumes that each omics data matrix \( X_i \) of dimensions \( m \times n_i \) (with \( m \) samples and \( n_i \) features) can be decomposed as:

\[ X_i = Z W_i^T + \epsilon_i \]

where \( Z \) is the latent factor matrix (\( m \times k \)) shared across all omics, \( W_i \) is the omics-specific weight matrix (\( n_i \times k \)), and \( \epsilon_i \) is the residual noise. The Bayesian framework incorporates sparsity-promoting priors to automatically select relevant features and distinguish between shared and modality-specific signals [36] [37]. This formulation allows MOFA+ to handle different data types and account for technical noise while identifying factors that represent key biological processes.
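To make the dimensions of the factor model concrete, here is a minimal NumPy simulation of the decomposition. It is a sketch, not a MOFA+ fit. It assumes samples are stored as rows and noise is Gaussian. The view names `rna` and `methylation` and all sizes are hypothetical. Zeroing one column of a weight matrix imitates what the sparsity priors aim for: a factor that is inactive in one view.

```python
import numpy as np

rng = np.random.default_rng(0)

m, k = 200, 3                                  # samples, latent factors (illustrative)
n_features = {"rna": 50, "methylation": 40}    # hypothetical views and sizes

# Shared factors Z (m x k) and one weight matrix W_i (n_i x k) per view.
Z = rng.normal(size=(m, k))
W = {view: rng.normal(size=(n, k)) for view, n in n_features.items()}

# Switch the third factor off in the methylation view (a view-specific factor).
W["methylation"][:, 2] = 0.0

# X_i = Z W_i^T + eps_i with Gaussian noise of known standard deviation.
noise_sd = 0.1
X = {view: Z @ W[view].T + noise_sd * rng.normal(size=(m, n))
     for view, n in n_features.items()}

# A factor is "active" in a view if its weight column is non-zero.
active = {view: np.linalg.norm(W[view], axis=0) > 0 for view in W}

# After subtracting the factor model, only noise-level variation remains.
resid_sd = {view: float(np.std(X[view] - Z @ W[view].T)) for view in X}

for view in X:
    print(view, X[view].shape, active[view], round(resid_sd[view], 3))
```

In a real analysis, \( Z \) and \( W_i \) are unknown and are inferred from the data. The simulation only illustrates which matrix carries the sample information and which carries the feature information.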

Matrix Factorization Approaches

Matrix factorization methods for multi-omics data decompose multiple omics matrices into lower-dimensional representations that capture essential biological information. Several variants have been developed:

- Integrative Non-negative Matrix Factorization (intNMF): Extends NMF to the multi-omics setting, producing non-negative factors that often yield more interpretable biological representations [32].

- Multi-Layer Matrix Factorization (MLMF): Processes multi-omics feature matrices through multi-layer linear or nonlinear factorization, decomposing original data into latent feature representations unique to each omics type before fusing them into a consensus form [38].

- Joint and Individual Variation Explained (JIVE): Decomposes omics data into two parts: a joint structure shared across all omics and individual structures specific to each omics layer [32].

These methods differ in their mathematical formulations, constraints, and assumptions about factor distributions, leading to variations in their performance and applicability across different biological contexts [32].
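As an illustration of the shared-factor idea behind integrative NMF, the sketch below factorizes several non-negative views with one common sample matrix and a separate coefficient matrix per view. It uses standard multiplicative updates for the squared-error objective. This is an assumption made for brevity and is not the algorithm implemented in the intNMF package. The function name `joint_nmf` and the toy data are hypothetical.

```python
import numpy as np

def joint_nmf(views, k, n_iter=200, seed=0, eps=1e-9):
    """Approximate each non-negative view X_i (samples x features_i) as W @ H_i,
    where W (samples x k) is shared by all views and H_i is view-specific."""
    rng = np.random.default_rng(seed)
    m = views[0].shape[0]
    W = rng.random((m, k)) + eps
    Hs = [rng.random((k, X.shape[1])) + eps for X in views]
    losses = []
    for _ in range(n_iter):
        # Multiplicative update of each view-specific coefficient matrix.
        Hs = [H * (W.T @ X) / (W.T @ W @ H + eps) for X, H in zip(views, Hs)]
        # Multiplicative update of the shared matrix, pooling all views.
        numer = sum(X @ H.T for X, H in zip(views, Hs))
        denom = W @ sum(H @ H.T for H in Hs) + eps
        W = W * numer / denom
        # Total squared reconstruction error across views.
        losses.append(float(sum(np.sum((X - W @ H) ** 2)
                                for X, H in zip(views, Hs))))
    return W, Hs, losses

# Toy data: 60 samples in two groups, measured in two views (20 and 15 features).
rng = np.random.default_rng(1)
W_true = np.zeros((60, 2))
W_true[:30, 0] = 1.0
W_true[30:, 1] = 1.0
views = [W_true @ rng.random((2, p)) + 0.01 * rng.random((60, p)) for p in (20, 15)]

W, Hs, losses = joint_nmf(views, k=2)
clusters = W.argmax(axis=1)   # one common heuristic for reading clusters off W
print(losses[0], losses[-1])
```

Because the sample matrix is shared, every view pulls the same latent representation toward its own structure. This is the property that makes this family of methods attractive for subtype discovery.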

Comparative Performance Analysis

Benchmarking Studies

Comprehensive benchmarking studies have evaluated various multi-omics integration methods to establish their relative strengths and weaknesses. A notable large-scale benchmark compared nine joint dimensionality reduction (jDR) approaches using simulated data, TCGA cancer data, and single-cell multi-omics data [32]. The results demonstrated that performance depends on the application context: intNMF excelled in clustering tasks, while Multiple Co-Inertia Analysis (MCIA) performed well across multiple contexts.

MOFA+ vs. Deep Learning Approaches

A direct comparison between MOFA+ and deep learning-based approaches provides insights into the relative strengths of statistical versus neural methods. A 2025 study comparing MOFA+ with MoGCN (a graph convolutional network approach) for breast cancer subtyping revealed that MOFA+ outperformed MoGCN in feature selection, achieving a higher F1 score (0.75) in nonlinear classification models [35]. MOFA+ also identified 121 biologically relevant pathways compared to 100 pathways identified by MoGCN, with key pathways including Fc gamma R-mediated phagocytosis and the SNARE pathway, both implicated in immune responses and tumor progression [35].

Table 1: Performance Comparison Between MOFA+ and MoGCN for Breast Cancer Subtyping

| Evaluation Metric | MOFA+ | MoGCN |
|---|---|---|
| F1 Score (Nonlinear Model) | 0.75 | Lower than MOFA+ |
| Relevant Pathways Identified | 121 | 100 |
| Key Pathways | Fc gamma R-mediated phagocytosis, SNARE pathway | Not Specified |
| Clustering Quality | Higher Calinski-Harabasz index, Lower Davies-Bouldin index | Inferior to MOFA+ |

Multi-Method Comparative Analysis

Research comparing ten different factorization algorithms applied to a TCGA breast cancer dataset comprising transcriptomics, proteomics, and microRNA profiles revealed that methods with similar mathematical foundations tend to produce correlated results [39]. Specifically, PCA, MOFA, and NMF showed high similarity, while CCA-based methods (SGCCA, RGCCA) formed a separate cluster. MCIA diverged significantly from other methods, highlighting how different algorithmic assumptions can lead to varying biological interpretations [39].
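One simple way to quantify whether two methods recover similar factors is to correlate their factor matrices column by column. The sketch below is a minimal illustration of that idea and is not the procedure used in [39]. The function name `factor_similarity` and the synthetic factors are assumptions. Absolute correlations are used because the sign and order of factors are arbitrary in most factorization methods.

```python
import numpy as np

def factor_similarity(A, B):
    """Absolute Pearson correlation between every column of A and every column
    of B. A and B are samples x factors matrices over the same samples."""
    A_std = (A - A.mean(axis=0)) / A.std(axis=0)
    B_std = (B - B.mean(axis=0)) / B.std(axis=0)
    return np.abs(A_std.T @ B_std) / A.shape[0]

# Synthetic example: method B returns the factors of method A in a different
# order, with flipped signs and a little noise.
rng = np.random.default_rng(0)
factors_a = rng.normal(size=(100, 3))
factors_b = -factors_a[:, [2, 0, 1]] + 0.1 * rng.normal(size=(100, 3))

sim = factor_similarity(factors_a, factors_b)
best_match = sim.argmax(axis=1)        # best partner in B for each factor of A
print(np.round(sim, 2))
print(best_match)
```

Averaging the best-match correlations gives a single similarity score per pair of methods, which can then be clustered to group methods with related behavior.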

Table 2: Characteristics of Major Multi-Omics Integration Methods

| Method | Category | Key Features | Strengths | Limitations |
|---|---|---|---|---|
| MOFA+ | Factor Analysis | Bayesian framework, latent factors | Handles missing data, interpretable | Requires large sample size for optimal performance |
| intNMF | Matrix Factorization | Non-negative constraints | Effective clustering, interpretable parts | Linear decomposition |
| DIABLO | Supervised Integration | Sparse generalized CCA | Excellent classification performance | Requires predefined classes |
| MCIA | Dimensionality Reduction | Co-inertia analysis | Effective across diverse contexts | Omics-specific factors |
| JIVE | Matrix Factorization | Joint + individual variation | Separates shared/unique variation | Complex implementation |

Experimental Protocols and Application Notes

Standard Protocol for MOFA+ Application in Cancer Subtyping

Objective: To identify breast cancer subtypes through unsupervised integration of transcriptomics, epigenomics, and microbiome data using MOFA+.

Dataset: 960 invasive breast carcinoma samples from TCGA with the following subtype distribution: 168 Basal, 485 Luminal A, 196 Luminal B, 76 HER2-enriched, and 35 Normal-like [35].

Step-by-Step Protocol:

Data Preprocessing

- Download normalized host transcriptomics, epigenomics, and microbiomics data from cBioPortal.

- Apply batch effect correction using unsupervised ComBat for transcriptomics and microbiomics data.

- Apply Harman method for methylation data batch effect correction.

- Filter out features with zero expression in 50% of samples.

- Retain features: D = 20,531 for transcriptome, D = 1,406 for microbiome, D = 22,601 for epigenome.
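The zero-expression filter in the preprocessing steps can be sketched in a few lines of NumPy. This is a minimal illustration under two assumptions that the protocol does not spell out: the matrix is stored as samples by features, and a feature is removed when zeros make up at least 50% of its values. The function name `filter_sparse_features` is hypothetical.

```python
import numpy as np

def filter_sparse_features(X, max_zero_fraction=0.5):
    """Drop features (columns) whose fraction of zero values reaches the cutoff.
    Returns the filtered matrix and the boolean mask of kept columns."""
    zero_fraction = np.mean(X == 0, axis=0)
    keep = zero_fraction < max_zero_fraction
    return X[:, keep], keep

# Toy matrix: 4 samples x 3 features; the first feature is zero in 3 of 4 samples.
X = np.array([[0, 1, 5],
              [0, 2, 0],
              [3, 0, 7],
              [0, 4, 8]], dtype=float)

X_filtered, keep = filter_sparse_features(X)
print(keep, X_filtered.shape)
```

The same function would be applied to each omics layer separately, after batch correction and before model training.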

MOFA+ Model Training

- Implement MOFA+ with its R package (R v 4.3.2).

- Set training parameters: a maximum of 400,000 iterations, with training stopped earlier if the convergence threshold is reached.

- Select Latent Factors (LFs) explaining minimum 5% variance in at least one data type.

- Extract feature loading scores from the latent factor explaining highest shared variance.
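The factor-selection rule in the training steps (keep factors that explain at least 5% of variance in at least one data type) can be illustrated as follows. MOFA+ reports variance explained directly. The sketch below recomputes it as a coefficient of determination per factor and view, which is assumed here to be a reasonable stand-in for the package output. The simulated factors, view names, and function names are hypothetical.

```python
import numpy as np

def variance_explained_per_factor(X, Z, W):
    """R^2 of each factor in one view.
    X: samples x features, Z: samples x k, W: features x k."""
    X_c = X - X.mean(axis=0)
    total = np.sum(X_c ** 2)
    r2 = np.empty(Z.shape[1])
    for j in range(Z.shape[1]):
        recon = np.outer(Z[:, j], W[:, j])      # contribution of factor j alone
        recon -= recon.mean(axis=0)
        r2[j] = 1.0 - np.sum((X_c - recon) ** 2) / total
    return r2

def select_factors(r2_by_view, min_r2=0.05):
    """Indices of factors that reach min_r2 in at least one view."""
    r2 = np.vstack(list(r2_by_view.values()))   # views x factors
    return np.flatnonzero((r2 >= min_r2).any(axis=0))

# Simulated example: factor 0 is strong in both views, factor 1 only in "rna",
# and factor 2 is weak everywhere.
rng = np.random.default_rng(0)
m, k = 300, 3
Z = rng.normal(size=(m, k))
scales = {"rna": [1.0, 1.0, 0.05], "methylation": [1.0, 0.0, 0.05]}
W = {v: rng.normal(size=(40, k)) * np.array(s) for v, s in scales.items()}
X = {v: Z @ W[v].T + rng.normal(size=(m, 40)) for v in W}

r2_by_view = {v: variance_explained_per_factor(X[v], Z, W[v]) for v in X}
selected = select_factors(r2_by_view)
print({v: np.round(r, 3) for v, r in r2_by_view.items()}, selected)
```

A factor that is strong in only one view is still retained by this rule, which is what allows modality-specific signals to survive the selection step.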

Feature Selection

- Select top 100 features per omics layer based on absolute loadings from the most informative latent factor.

- Combine selected features into a unified input of 300 features per sample.
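The feature-selection step amounts to ranking features by the absolute value of their loading on the chosen factor and keeping the top 100 per layer. A minimal sketch follows, assuming the loadings of that factor are available as one vector per omics layer. The random loadings, the reduced layer sizes, and the function name `top_features` are placeholders for illustration only.

```python
import numpy as np

def top_features(loadings, names, n_top=100):
    """Names of the n_top features with the largest absolute loading."""
    order = np.argsort(-np.abs(loadings))[:n_top]
    return [names[i] for i in order]

# Toy layer sizes (far smaller than the real 20,531 / 1,406 / 22,601 features).
rng = np.random.default_rng(0)
view_sizes = {"transcriptome": 500, "microbiome": 300, "epigenome": 400}

selected = {}
for view, n in view_sizes.items():
    loadings = rng.normal(size=n)                 # stand-in for one factor's weights
    names = [f"{view}_{i}" for i in range(n)]
    selected[view] = top_features(loadings, names, n_top=100)

# Concatenate the three selections into one 300-feature input.
combined = [name for view in selected for name in selected[view]]
print(len(combined))
```

Ranking on absolute values matters because a strongly negative loading is as informative about a factor as a strongly positive one.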

Downstream Analysis

- Apply t-SNE for visualization and cluster quality assessment.

- Calculate Calinski-Harabasz index (higher values indicate better clustering) and Davies-Bouldin index (lower values indicate better clustering).

- Evaluate biological relevance through pathway enrichment analysis of selected transcriptomic features.
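The two clustering indices named in the downstream analysis can be written out directly from their definitions. The NumPy sketch below is for illustration. In practice, an established implementation such as the one in scikit-learn would normally be preferred. The input `X` may be a t-SNE embedding or the selected feature matrix, and `labels` may be subtype labels or cluster assignments; which combination the cited study used is not specified here. The synthetic data are hypothetical.

```python
import numpy as np

def calinski_harabasz(X, labels):
    """Ratio of between-cluster to within-cluster dispersion (higher is better)."""
    n, classes = X.shape[0], np.unique(labels)
    overall = X.mean(axis=0)
    between = within = 0.0
    for c in classes:
        pts = X[labels == c]
        centroid = pts.mean(axis=0)
        between += len(pts) * np.sum((centroid - overall) ** 2)
        within += np.sum((pts - centroid) ** 2)
    k = len(classes)
    return float((between / (k - 1)) / (within / (n - k)))

def davies_bouldin(X, labels):
    """Average worst-case similarity between clusters (lower is better)."""
    classes = np.unique(labels)
    centroids = np.array([X[labels == c].mean(axis=0) for c in classes])
    scatter = np.array([np.mean(np.linalg.norm(X[labels == c] - centroids[i], axis=1))
                        for i, c in enumerate(classes)])
    k = len(classes)
    worst = []
    for i in range(k):
        ratios = [(scatter[i] + scatter[j]) / np.linalg.norm(centroids[i] - centroids[j])
                  for j in range(k) if j != i]
        worst.append(max(ratios))
    return float(np.mean(worst))

# Two well-separated synthetic groups, scored with true and with shuffled labels.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0, 1, size=(50, 2)), rng.normal(5, 1, size=(50, 2))])
labels = np.repeat([0, 1], 50)
shuffled = rng.permutation(labels)

ch_true, ch_shuf = calinski_harabasz(X, labels), calinski_harabasz(X, shuffled)
db_true, db_shuf = davies_bouldin(X, labels), davies_bouldin(X, shuffled)
print(ch_true, ch_shuf, db_true, db_shuf)
```

Scoring shuffled labels alongside the real ones gives a quick baseline for how much of an index value reflects genuine structure.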

Protocol for Matrix Factorization with Transfer Learning (MOTL)

Objective: Enhance matrix factorization for limited-sample multi-omics datasets using transfer learning.

Rationale: Traditional matrix factorization requires large sample sizes for meaningful representation. MOTL addresses this limitation by transferring knowledge from large, heterogeneous learning datasets to small target datasets [36].

Step-by-Step Protocol:

Learning Dataset Preparation

- Curate a large, heterogeneous multi-omics dataset (e.g., Recount2 compendium with 70,000+ human samples).

- Apply MOFA to learning dataset to infer reference weight matrices.

Target Dataset Processing

- Preprocess small target multi-omics dataset (e.g., glioblastoma samples).

- Align feature spaces between learning and target datasets.

Transfer Learning Implementation

- Apply MOTL framework to factorize target dataset with respect to reference weight matrices.

- Use Bayesian transfer learning to infer latent factors for target dataset.

Validation

- Compare clustering performance with and without transfer learning.

- Assess cancer status and subtype delineation using domain-specific metrics.
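The core idea of the transfer step, which is to hold the reference weights fixed and infer only the factor values of the small target dataset, can be illustrated with a ridge-regularized least-squares projection. This is a deliberate simplification and not the Bayesian inference used by MOTL [36]. It also assumes that the columns of the target matrix are already aligned to the rows of the reference weight matrix. The function name `infer_factors` and all data are hypothetical.

```python
import numpy as np

def infer_factors(X_target, W_ref, ridge=1e-3):
    """Least-squares estimate of Z in X_target ~ Z @ W_ref.T with W_ref fixed.
    X_target: samples x features, W_ref: features x k."""
    k = W_ref.shape[1]
    X_c = X_target - X_target.mean(axis=0)          # center each feature
    gram = W_ref.T @ W_ref + ridge * np.eye(k)
    # Solves (W^T W + ridge * I) Z^T = W^T X^T for Z^T.
    return np.linalg.solve(gram, W_ref.T @ X_c.T).T

# Hypothetical reference weights learned elsewhere, and a 12-sample target cohort.
rng = np.random.default_rng(0)
W_ref = rng.normal(size=(100, 4))
Z_true = rng.normal(size=(12, 4))
X_target = Z_true @ W_ref.T + 0.01 * rng.normal(size=(12, 100))

Z_hat = infer_factors(X_target, W_ref)
print(np.abs(Z_hat - (Z_true - Z_true.mean(axis=0))).max())
```

With only 12 samples, estimating 100 x 4 weights from scratch would be poorly constrained. Fixing them reduces the problem to 4 unknowns per sample, which is the intuition behind borrowing strength from a large learning dataset.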

Signaling Pathways and Biological Insights

MOFA+ application in breast cancer has revealed enrichment in several key pathways that offer insights into tumor biology. The identification of Fc gamma R-mediated phagocytosis is particularly significant as this pathway plays a crucial role in immune response, connecting antibody-mediated recognition to phagocytic clearance of target cells [35]. This suggests potential mechanisms by which tumors might evade immune surveillance. The SNARE pathway, also identified through MOFA+ analysis, is involved in intracellular membrane trafficking and vesicle fusion, processes that are frequently dysregulated in cancer and contribute to tumor progression and metastasis [35].
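Pathway enrichment of this kind is commonly assessed with a hypergeometric over-representation test, although the test used in the cited study is not stated here. The sketch below uses only the Python standard library. The background size (20,531 transcriptomic features) and the selection size (100) come from the protocol above, while the pathway size and overlap are hypothetical numbers chosen purely for illustration.

```python
from math import comb

def enrichment_p_value(n_background, n_pathway, n_selected, n_overlap):
    """Probability of seeing at least n_overlap pathway genes among n_selected
    genes drawn at random from n_background genes (hypergeometric upper tail)."""
    upper = min(n_pathway, n_selected)
    total = comb(n_background, n_selected)
    tail = sum(comb(n_pathway, x) * comb(n_background - n_pathway, n_selected - x)
               for x in range(n_overlap, upper + 1))
    return tail / total

# Hypothetical example: a 90-gene pathway with 6 genes among the 100 selected.
p = enrichment_p_value(n_background=20531, n_pathway=90, n_selected=100, n_overlap=6)
print(p)
```

When many pathways are tested, the resulting p-values need multiple-testing correction before any pathway is reported as enriched.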

[Diagram: multi-omics integration workflow using MOFA+ and the key biological pathways identified in breast cancer subtyping]

Research Reagent Solutions

Table 3: Essential Computational Tools for Multi-Omics Integration

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| MOFA+ | R Package | Unsupervised multi-omics integration | Bayesian factor analysis for capturing shared variation |
| intNMF | R Package | Non-negative matrix factorization | Cancer subtyping with non-negative constraints |
| DIABLO | R Package (mixOmics) | Supervised multi-omics integration | Classification and biomarker discovery |
| TCGA Data | Database | Multi-omics cancer datasets | Source of validated cancer omics data |
| cBioPortal | Web Resource | Cancer genomics data portal | Data access and preliminary analysis |
| ComBat | R Package (sva) | Batch effect correction | Removing technical variability |
| MOTL | Computational Framework | Transfer learning for multi-omics | Matrix factorization with limited samples |
| Omics Playground | Analytics Platform | Multi-omics analysis suite | Method comparison and visualization |

MOFA+ and matrix factorization methods represent powerful unsupervised approaches for multi-omics integration in cancer research. The comparative analyses demonstrate that MOFA+ excels in feature selection and biological interpretability for cancer subtyping, particularly in breast cancer where it has identified novel pathway associations [35]. Matrix factorization methods more broadly offer flexible frameworks for decomposing complex multi-omics data into interpretable latent components.

Future methodological developments are likely to focus on several key areas. Transfer learning approaches, such as MOTL, address the critical challenge of analyzing limited-sample datasets by leveraging information from larger heterogeneous learning datasets [36]. Adaptive integration frameworks that use evolutionary algorithms like genetic programming show promise for optimizing feature selection and integration strategies [37]. Furthermore, methods capable of handling missing omics data, such as MLMF, will expand the applicability of these approaches to real-world clinical datasets where complete multi-omics profiling may not always be feasible [38].

As the field advances, the combination of multiple integration methods through consensus approaches may help identify more robust biomarkers and subtypes, ultimately accelerating the translation of multi-omics discoveries into clinical applications for cancer diagnosis, prognosis, and treatment selection.

The integration of multi-omics data has revolutionized cancer research by providing a comprehensive view of the molecular landscape of tumors. Multi-omics approaches simultaneously analyze various molecular layers, including genomics, transcriptomics, epigenomics, and proteomics, to uncover complex biological interactions that drive cancer progression [1]. These integrative strategies have demonstrated significant potential for improving cancer classification accuracy, identifying novel biomarkers, and enabling personalized treatment approaches [40] [31]. The advent of high-throughput sequencing technologies has enabled the generation of extensive multi-omics datasets, with large-scale archives like The Cancer Genome Atlas (TCGA) providing comprehensive molecular profiling across numerous cancer types [41].