Improving Precision in Copy Number Quantification: Advanced dPCR Methods, Optimization Strategies, and Comparative Validation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals seeking to enhance the precision of copy number quantification. Covering foundational principles to advanced applications, it explores the evolution from qPCR to digital PCR technologies, detailing their mechanisms and comparative advantages. The scope includes practical methodological guidance for assay design, troubleshooting common pitfalls, and data interpretation. A significant focus is given to recent technological advancements and validation strategies, incorporating 2025 research on platform comparisons and novel allele-specific dPCR methods. This resource synthesizes current best practices to enable robust, reproducible copy number analysis in both research and clinical settings.

The Evolution of Copy Number Quantification: From qPCR Fundamentals to dPCR Precision

Defining Copy Number Variation and Its Biomedical Significance

FAQs: Core Concepts of Copy Number Variation

1. What is a Copy Number Variation (CNV)? A Copy Number Variation (CNV) is a type of structural variation in the genome characterized by the gain or loss of a DNA segment, typically larger than 50 base pairs. This results in a deviation from the normal diploid copy number, creating differences between individuals of the same species [1] [2] [3]. CNVs encompass duplications (gains) and deletions (losses) of genomic regions and are an essential source of genetic diversity, influencing population diversity, evolution, and the development of various diseases [1].

2. Why are CNVs biologically and medically significant? CNVs are crucial because they can directly alter gene dosage, which in turn can affect gene expression levels, disrupt genomic architecture, and modify phenotypes. In medicine, they are recognized for their role in the molecular diagnosis of many diseases [1]. Specifically, in cancer research, CNVs are linked to structural variations that can lead to the activation of oncogenes or the inactivation of tumor suppressor genes, driving cancer development and progression [4] [5]. Their large effect sizes and high penetrance also make them invaluable for investigating the etiology of complex disorders like neurodevelopmental diseases [6].

3. What is the difference between a CNV and a Single Nucleotide Variant (SNV)? While both CNVs and SNVs are types of genetic variations, they differ fundamentally in scale and mechanism. SNVs are changes at a single nucleotide position. In contrast, CNVs involve larger genomic segments, from 50 base pairs to several megabases, and represent an imbalance (gain or loss) in copy number [2] [7]. A key functional distinction is that CNVs exclusively impact gene dosage, and they often have higher reversion rates compared to SNVs, allowing for more rapid and sometimes reversible phenotypic changes [7].

Troubleshooting Guides for CNV Analysis

Guide 1: Addressing Challenges in CNV Calling from Sequencing Data

CNV calling is a critical but complex step in genomic analysis. Here are solutions to common issues.

Problem: Inconsistent CNV calls between different algorithms.

- Cause: Different computational tools use distinct statistical models and normalization strategies, leading to varying sensitivities and specificities [8] [5].

- Solution:

  - Do not rely on a single standard tool. For the most precise results, consider using multiple CNV calling tools and comparing the consensus [4].

  - Select a tool that is appropriate for your sequencing data type (e.g., WGS, WES, targeted panels, or scRNA-seq) [4].

  - Refer to benchmarking studies to choose a well-performing tool for your specific data type. For example, CNVkit performs well for WES and WGS data, while FACETS can handle WGS, WES, and targeted panels [4].
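The consensus idea in the first solution can be sketched in a few lines. This is a minimal illustration, assuming each caller's output has already been reduced to (chromosome, start, end, type) records. The names `CnvCall`, `reciprocal_overlap`, and `consensus_calls`, the coordinates, and the 50% reciprocal-overlap threshold are all hypothetical choices for illustration, not settings taken from CNVkit, FACETS, or any other tool.

```python
from typing import List, NamedTuple


class CnvCall(NamedTuple):
    chrom: str
    start: int  # 0-based, inclusive
    end: int    # exclusive
    kind: str   # "gain" or "loss"


def reciprocal_overlap(a: CnvCall, b: CnvCall) -> float:
    """Return the smaller of the two overlap fractions (0.0 if disjoint)."""
    if a.chrom != b.chrom or a.kind != b.kind:
        return 0.0
    shared = min(a.end, b.end) - max(a.start, b.start)
    if shared <= 0:
        return 0.0
    return min(shared / (a.end - a.start), shared / (b.end - b.start))


def consensus_calls(calls_a: List[CnvCall], calls_b: List[CnvCall],
                    min_overlap: float = 0.5) -> List[CnvCall]:
    """Keep calls from caller A that are supported by a call from caller B."""
    return [a for a in calls_a
            if any(reciprocal_overlap(a, b) >= min_overlap for b in calls_b)]


# Hypothetical calls from two tools on the same sample.
tool_a = [CnvCall("chr1", 1_000, 5_000, "gain"), CnvCall("chr2", 200, 900, "loss")]
tool_b = [CnvCall("chr1", 1_500, 5_500, "gain"), CnvCall("chr2", 5_000, 6_000, "loss")]
supported = consensus_calls(tool_a, tool_b)
print(supported)  # only the chr1 gain is supported by both tools
```

Reciprocal overlap is only one way to define agreement; a stricter or looser threshold changes the trade-off between false positives and missed calls.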

Problem: Low specificity (too many false positives) or low sensitivity (missed true CNVs).

- Cause: The sensitivity and specificity of CNV detection are highly dependent on technical factors like sequencing coverage, read length, and the choice of a reference genome [4] [5]. The purity of the DNA input and sample preparation method (e.g., FFPE vs. frozen samples) can also significantly impact results [4].

- Solution:

  - For bulk sequencing: Ensure high and uniform sequencing coverage. With Whole Genome Sequencing (WGS), coverage tends to be more uniform, providing better sensitivity and specificity than targeted approaches [4].

  - For scRNA-seq data: The choice of a high-quality set of euploid reference cells for normalization is critical. Performance can be greatly affected by the dataset size and the number/type of CNVs in the sample [8].

  - Benchmark your pipeline: Use a positive control cell line with known CNVs, such as the breast cancer cell line HCC1395, to validate your workflow [5].

Problem: Accurate determination of genome ploidy and cellular subclones.

- Cause: Aneuploidy (an abnormal number of chromosomes) is a major feature of cancer, and tumors are often heterogeneous, containing subpopulations of cells with distinct CNV profiles [4] [8].

- Solution:

  - For bulk sequencing, use tools like HATCHet, which is designed to infer copy-number aberrations and whole-genome duplications jointly across multiple tumor samples and thereby resolve subclonal architecture [4].

  - For single-cell resolution, employ scRNA-seq CNV callers like InferCNV or Numbat, which can group cells into subclones with the same CNV profile, revealing intra-tumor heterogeneity [8].

Guide 2: Overcoming Obstacles in Validating Pathogenic CNVs

Moving from a computational call to a biologically and clinically validated CNV is a key step.

Problem: Determining the pathogenicity of a CNV in a patient.

- Cause: Not all CNVs are disease-causing; they are also a source of normal population diversity [1] [3].

- Solution:

  - Overlap with known genes: Determine if the CNV overlaps with genes already established as having a disease association, such as PRKN in early-onset Parkinson's disease or SNCA duplications in autosomal dominant Parkinson's [9].

  - Inheritance pattern: Analyze whether the CNV is de novo or inherited, and if it fits an expected recessive (e.g., homozygous or compound heterozygous) or dominant model [9].

  - Functional validation: Use orthogonal molecular methods to confirm the CNV and its functional impact.

Problem: Selecting an orthogonal method for experimental validation.

- Cause: Computational calls from sequencing data require confirmation with an independent technique.

- Solution: The table below lists common validation methods and their applications.

| Method | Function & Application |
|---|---|
| MLPA (Multiplex Ligation-dependent Probe Amplification) | Used to validate CNVs in specific genes (e.g., it validated 87% of CNVs in PD-related genes in a large study) [9]. |
| qPCR (Quantitative PCR) | Provides a quantitative measure of gene copy number for targeted validation of specific loci [9]. |
| FISH (Fluorescent In Situ Hybridization) | Used to visually confirm large structural rearrangements and CNVs on chromosomes [2]. |
| Array CGH (Comparative Genomic Hybridization) | A legacy platform for genome-wide CNV profiling, though with lower resolution and sensitivity than sequencing-based methods [2]. |

The Scientist's Toolkit: Essential Reagents & Computational Tools

| Category | Item | Function & Explanation |
|---|---|---|
| Wet-Lab Reagents | Formalin-Fixed Paraffin-Embedded (FFPE) or Frozen Tissue | Common sample sources; FFPE can introduce more noise and impact CNV calling accuracy compared to frozen samples [4]. |
| | Matched Normal Sample (e.g., blood or adjacent tissue) | Serves as a crucial reference to distinguish somatic (tumor-specific) CNVs from germline (inherited) polymorphisms [4]. |
| | Agilent SureSelect Target Enrichment System | Used for exome capture to enrich for coding regions before sequencing, allowing for CNV detection in exomes [3]. |
| Computational Tools | CNVkit | Analyzes both whole-exome (WES) and whole-genome (WGS) sequencing data for CNV detection [4]. |
| | FACETS | Used for Fraction and Allele-Specific Copy Number Estimates from Tumor Sequencing; works with WGS, WES, and panel data [4]. |
| | InferCNV | A popular tool for inferring CNVs from single-cell RNA-seq data, useful for exploring tumor heterogeneity [8] [5]. |
| | CopyKAT | Another scRNA-seq CNV caller that uses a statistical model to identify cellular subpopulations [5]. |
| | DRAGEN | A scalable bioinformatics platform for identifying variants of all sizes, offering efficient CNV calling [4]. |
| Reference Databases | Genomic Data Commons (GDC) | An NCI resource providing pipelines and data for analyzing and visualizing CNV data, particularly in cancer [4]. |
| | gnomAD | Genome Aggregation Database; used to assess the population frequency of CNVs and identify rare variants [9]. |

Experimental Protocols for Key CNV Analyses

Protocol 1: Read-Depth Based CNV Detection from Whole-Genome Sequencing Data

This is a standard protocol for identifying large deletions and duplications from short-read sequencing data [2].

- Sequence Mapping: Map the Illumina whole-genome sequencing reads to a repeat-masked reference genome (e.g., using the mrsFAST or BWA-MEM aligner) [2].

- Read Depth Calculation: Calculate the depth of coverage (read count) in non-overlapping bins across the entire genome.

- Normalization and GC Correction: Normalize the read depth to account for technical biases, such as variations in GC content.

- CNV Calling: Use a read-depth based CNV detection algorithm (e.g., mrCaNaVaR, CNVnator, or cn.MOPS) to identify genomic regions with a significant deviation from the expected diploid coverage [2] [3].

- Segmentation (optional): Some tools perform segmentation to merge adjacent bins with similar copy number states, defining the boundaries of the CNV.

- Annotation and Filtering: Annotate the called CNVs with gene information and filter out low-confidence calls.
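The read-depth, normalization, and calling steps above can be illustrated with a toy example. This is a minimal sketch under simplifying assumptions: bins are already counted, GC correction is done by dividing each bin by the median count of bins in the same GC stratum, and the sample is mostly diploid. The function name, the 5% GC stratum width, and the data are hypothetical; this is not the algorithm used by mrCaNaVaR, CNVnator, or cn.MOPS.

```python
from statistics import median
from typing import Dict, List


def gc_corrected_copy_number(counts: List[float], gc: List[float],
                             gc_step: float = 0.05,
                             ploidy: int = 2) -> List[float]:
    """Estimate per-bin copy number from read counts with a simple GC correction.

    Each bin's count is divided by the median count of the bins in the same
    GC stratum, then scaled by the expected ploidy.
    """
    strata = [int(g / gc_step) for g in gc]
    by_stratum: Dict[int, List[float]] = {}
    for s, c in zip(strata, counts):
        by_stratum.setdefault(s, []).append(c)
    stratum_median = {s: median(v) for s, v in by_stratum.items()}
    return [ploidy * c / stratum_median[s] if stratum_median[s] > 0 else float("nan")
            for s, c in zip(strata, counts)]


# Hypothetical bin counts: one elevated bin at 40% GC, one depleted bin at 60% GC.
counts = [100, 102, 98, 150, 101, 80, 82, 40, 79]
gc = [0.40] * 5 + [0.60] * 4
copy_numbers = gc_corrected_copy_number(counts, gc)
print([round(cn, 2) for cn in copy_numbers])
```

In this toy data the elevated bin comes out near three copies and the depleted bin near one, while the remaining bins stay close to two. Real callers add segmentation and statistical testing on top of this kind of normalization.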

Protocol 2: Inferring CNVs from Single-Cell RNA-Seq Data

This protocol allows for the study of copy number heterogeneity within a tumor sample using scRNA-seq data [8] [5].

- Data Pre-processing: Generate a gene expression matrix (counts per gene per cell) from the raw scRNA-seq data.

- Reference Selection: Manually annotate or use a tool to automatically identify a set of euploid (diploid) cells to use as a reference for normalization. This is a critical step that greatly influences the results [8].

- Normalization: Normalize the expression of the analyzed cells (putative tumor cells) against the reference cells to control for technical variation and strong gene-specific expression.

- CNV Inference: Input the normalized data into a scRNA-seq CNV caller (e.g., InferCNV, CopyKAT, or Numbat). These methods use different approaches, such as Hidden Markov Models (HMMs) or segmentation, to infer regions of gain and loss [8] [5].

- Visualization and Clustering: Visualize the inferred CNV profiles as a heatmap and cluster cells based on their CNV signatures to identify distinct subclonal populations within the tumor.

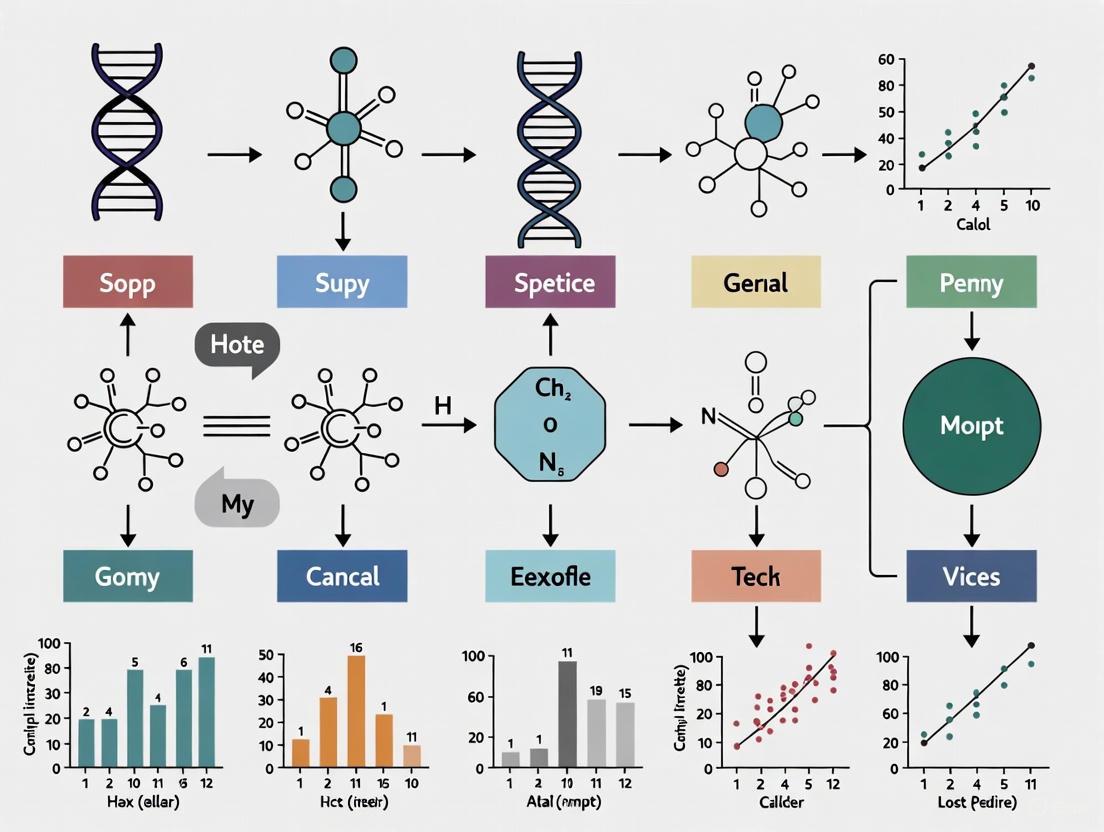

Workflow and Decision Diagrams

CNV Analysis Workflow

Tool Selection Logic

Core Principles of qPCR

Quantitative PCR (qPCR) is a powerful molecular technique that allows for the real-time detection and quantification of nucleic acids. Unlike conventional PCR, which relies on end-point analysis, qPCR monitors the amplification of DNA during each cycle via fluorescent signals. The core principle revolves around the cycle threshold (Ct) value, which is the cycle number at which the amplification curve crosses a fluorescence threshold, indicating a detectable level of product. A lower Ct value corresponds to a higher starting concentration of the target template [10].

The quantification is achieved by comparing the Ct values of unknown samples to a standard curve generated from samples with known concentrations. The linear part of this curve defines the assay's quantitative range, bounded by the Limit of Detection (LOD), the lowest concentration that can be detected, and the Limit of Quantification (LOQ), the lowest concentration that can be quantified reliably [10]. The efficiency (E) of a qPCR assay, ideally between 90% and 110%, is calculated from the slope of the standard curve (E = [(10^(-1/slope)) - 1] × 100) and reflects how perfectly the DNA doubles each cycle. Deviations indicate potential issues with reaction inhibitors or primer design [10].
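The efficiency formula above is easy to check numerically. The short sketch below implements it directly; the function name and the two example slopes are illustrative assumptions, not values from the cited work.

```python
def amplification_efficiency(slope: float) -> float:
    """Percent efficiency from the standard-curve slope (Ct vs. log10 concentration)."""
    return (10 ** (-1.0 / slope) - 1.0) * 100.0


# A slope near -3.32 corresponds to near-perfect doubling each cycle.
print(round(amplification_efficiency(-3.32), 1))
# A shallower doubling (slope of -3.6) falls just below the 90% guideline.
print(round(amplification_efficiency(-3.6), 1))
```

A slope steeper than about -3.6 or flatter than about -3.1 therefore places the assay outside the 90% to 110% window mentioned above.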

Troubleshooting Guides and FAQs

Abnormal Amplification Curves

1. The amplification curve does not reach a plateau phase.

- Causes: Low template concentration (e.g., Ct value around 35), too few amplification cycles, or low amplification efficiency of the reagents [11].

- Solutions: Increase the template concentration, increase the number of amplification cycles, or optimize the reaction conditions (e.g., increase Mg²⁺ concentration) [11].

2. The amplification curve shows a "sagging" plateau.

- Causes: Degradation of the amplification product or SYBR Green dye, improper tube sealing leading to reagent evaporation, or cDNA concentration that is too high [11].

- Solutions: Improve sample purity to prevent degradation, ensure tube caps are sealed properly, or dilute the template cDNA [11].

3. The amplification curve is irregular or "zigzag" at the plateau.

- Causes: Poor RNA purity, high levels of impurities, or overuse of the qPCR instrument leading to unstable fluorescence collection [11].

- Solutions: Re-extract high-quality RNA, dilute the RNA template to reduce impurity concentration, or perform instrument calibration [11].

4. What causes poor repeatability between technical replicates?

- Causes: Pipetting errors, inadequate mixing of reagents, low template copy number, or not using a proper calibration dye like ROX [11].

- Solutions: Calibrate pipettes, mix the reaction system thoroughly, use more replicates for low-concentration samples, and use ROX dye as a passive reference if compatible with your master mix [11].

Abnormal Melting Curves

5. The melting curve has a double peak, with the lower peak Tm below 80°C.

- Cause: This typically indicates the formation of primer-dimers [11].

- Solutions: Increase the annealing temperature, decrease the primer concentration, or redesign the primers to avoid self-complementarity [11].

6. The melting curve has a double peak, with the lower peak Tm above 80°C.

- Causes: Poor primer specificity leading to non-specific amplification products, or genomic DNA (gDNA) contamination [11].

- Solutions: Use BLAST to check primer specificity and redesign if necessary. Include a no-reverse-transcriptase (NRT) control to check for gDNA contamination and treat samples with DNase if needed [11].

7. The melting curve shows messy or spurious peaks.

- Causes: Contamination of the reaction system, reagent degradation due to improper storage, or a mismatch between the ROX dye concentration and the instrument settings [11].

- Solutions: Systematically check for contamination in water, primers, and enzymes. Use new reagent batches. Calibrate the instrument regularly and ensure the correct ROX reference is selected in the software [11].

Experimental Protocols for Enhanced Precision

Protocol 1: Validating a qPCR Assay Using a Standard Curve

This protocol is essential for establishing a reliable quantitative assay.

- Standard Preparation: Serially dilute (e.g., 10-fold or 3-fold dilutions) a standard of known concentration (e.g., plasmid DNA, synthetic oligonucleotide, or hybrid amplicon) [10] [12].

- qPCR Run: Amplify the standard dilutions and unknown samples in the same qPCR run.

- Generate Standard Curve: Plot the Ct values (y-axis) against the logarithm of the known starting concentrations (x-axis) [10].

- Assess Linearity and Efficiency: Determine the linear range of the assay (where data points fall on a straight line). Calculate the amplification efficiency using the slope of the standard curve [10].

- Quantify Unknowns: Rearrange the standard curve equation (Ct = m·x + b, where x is the log10 of the starting concentration) to x = (Ct - b)/m, then calculate the starting concentration of each unknown as 10^x [10].
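The fitting and back-calculation steps of this protocol can be sketched as follows. This is a minimal example assuming an ordinary least-squares fit of Ct against log10 concentration; the function names and the dilution series are hypothetical.

```python
import math
from typing import List, Tuple


def fit_standard_curve(concentrations: List[float],
                       cts: List[float]) -> Tuple[float, float]:
    """Least-squares fit of Ct = m * log10(concentration) + b. Returns (m, b)."""
    xs = [math.log10(c) for c in concentrations]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(cts) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, cts))
    m = sxy / sxx
    b = mean_y - m * mean_x
    return m, b


def quantify(ct: float, m: float, b: float) -> float:
    """Back-calculate the starting concentration from an observed Ct."""
    return 10 ** ((ct - b) / m)


# Hypothetical 10-fold dilution series (copies per reaction) and measured Ct values.
standards = [1e6, 1e5, 1e4, 1e3, 1e2]
standard_cts = [15.0, 18.3, 21.6, 24.9, 28.2]
m, b = fit_standard_curve(standards, standard_cts)
unknown = quantify(23.25, m, b)
print(f"slope={m:.2f}, intercept={b:.2f}, unknown={unknown:.0f} copies")
```

Only Ct values that fall inside the range covered by the standards should be quantified this way; extrapolating beyond the linear range is not supported by the curve.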

Protocol 2: Correcting for Artifact Amplification Using Melting Curve Analysis

When non-specific products are amplified alongside the target, the quantitative result can be biased. This protocol allows for data correction [13].

- Perform qPCR with Melting Curve: Run the qPCR assay using an intercalating dye like SYBR Green I, ensuring a melting curve analysis is performed at the end of the cycling protocol.

- Identify Melting Peaks: Identify the melting peak(s) corresponding to the specific target product and the artifact(s).

- Apply Correction Model: The main assumptions for correction are:

  - The melting peak of the correct product can be identified.

  - The PCR efficiencies of all amplified products are similar.

  - The relative size of the melting peaks reflects the relative concentrations of the products.

- Calculate Corrected Fluorescence: Determine the fraction of the total fluorescence associated with the correct product and use this to correct the quantification cycle (Cq) or the reported concentration [13].
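One way to picture the final step is shown below. This is a simplified sketch that follows directly from the three stated assumptions, not the published correction model from [13]: if a fraction f of the signal belongs to the correct product and all products amplify with the same per-cycle factor E, the target-only concentration is f times the reported value and the target-only Cq is shifted by -log(f)/log(E). The function name and the example numbers are hypothetical.

```python
import math
from typing import Tuple


def correct_for_artifact(cq: float, concentration: float,
                         target_fraction: float,
                         amplification_factor: float = 2.0) -> Tuple[float, float]:
    """Adjust Cq and concentration for the share of signal from the target.

    target_fraction: fraction of the total melting-peak signal assigned to
        the correct product (0 < fraction <= 1).
    amplification_factor: fold increase per cycle (2.0 means 100% efficiency).
    """
    if not 0.0 < target_fraction <= 1.0:
        raise ValueError("target_fraction must be in (0, 1]")
    corrected_concentration = concentration * target_fraction
    # Less target than total product means the target alone would cross
    # the threshold later, so the corrected Cq is higher than the observed one.
    corrected_cq = cq - math.log(target_fraction) / math.log(amplification_factor)
    return corrected_cq, corrected_concentration


# Half of the melting-peak signal belongs to the correct product.
corrected_cq, corrected_conc = correct_for_artifact(25.0, 1000.0, 0.5)
print(corrected_cq, corrected_conc)
```

With half of the signal coming from an artifact and perfect doubling, the corrected Cq is one cycle later and the corrected concentration is half the reported value.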

Data Presentation

Table 1: Performance Comparison of Digital PCR Platforms for Copy Number Analysis

This table summarizes key metrics from a comparative study of two dPCR platforms, relevant for researchers considering high-precision copy number quantification [14].

| Parameter | QIAcuity One ndPCR | QX200 ddPCR |
|---|---|---|
| LOD (copies/µL input) | 0.39 | 0.17 |
| LOQ (copies/µL input) | 1.35 | 4.26 |
| LOQ (copies/reaction) | 54 | 85.2 |
| Dynamic Range (input used) | <0.5 to >3000 copies/µL | <0.5 to >3000 copies/µL |
| Precision (CV) with Oligos | 7% - 11% | 6% - 13% |
| Impact of Restriction Enzyme | Less affected by choice (EcoRI vs HaeIII) | Precision significantly improved with HaeIII vs. EcoRI |
| Average CV with HaeIII | < 14.6% | < 5% |

Table 2: Research Reagent Solutions for qPCR

A selection of key reagents and their functions in a qPCR workflow.

| Item | Function / Explanation |
|---|---|
| SYBR Green I Dye | Fluorescent dye that binds double-stranded DNA (dsDNA). A cost-effective option for detection, but requires melting curve analysis to confirm specificity [15]. |
| TaqMan Probes | Sequence-specific oligonucleotide probes that provide higher specificity than intercalating dyes. Rely on the 5' nuclease activity of Taq polymerase to separate a reporter dye from a quencher [15]. |
| Molecular Beacons | Hairpin-shaped probes that fluoresce only upon hybridization to the specific target sequence, reducing background signal [15]. |
| Restriction Enzymes (e.g., HaeIII) | Used in dPCR to digest genomic DNA and improve accessibility to tandemly repeated gene targets, thereby enhancing quantification precision [14]. |
| Hybrid Amplicon Standard | A synthetic DNA fragment containing target sequences (e.g., WPRE and RPP30). Serves as a consistent and well-defined reference control for assay validation [12]. |

Workflow Visualization

Standard Curve Workflow

Artifact Correction Workflow

Troubleshooting Guide

Common Digital PCR Experimental Issues and Solutions

Table 1: Troubleshooting Common dPCR Experimental Challenges

| Problem Area | Specific Issue | Possible Causes | Recommended Solutions |
|---|---|---|---|
| Template DNA | Poor integrity or purity [16] | DNA degradation during isolation; residual PCR inhibitors (phenol, EDTA) [16] | Minimize shearing during isolation; re-purify DNA with 70% ethanol wash [16] |
| | Insufficient quantity [16] | Low input DNA concentration | Increase input DNA amount; use DNA polymerases with high sensitivity [16] |
| | Complex targets (GC-rich) [16] | Difficult-to-denature templates | Use PCR additives/co-solvents; increase denaturation time/temperature [16] |
| Primers | Problematic design [16] | Non-specific binding; primer-dimer formation | Verify specificity; avoid complementary sequences at 3' ends; use design tools [16] |
| | Suboptimal concentration [17] | Primer concentrations too high or low | Optimize concentrations (typically 0.1-1 μM); test "high" concentrations (900 nM) [17] |
| Partition Analysis | "Rain" (intermediate fluorescence) [17] | Delayed PCR onset; partial inhibition; damaged droplets [17] | Optimize annealing/extension temperature; adjust oligonucleotide concentrations [17] |
| | Poor separation of positive/negative clusters [17] | Suboptimal probe design or thermal cycling | Use objective separation value algorithms; optimize thermal cycler parameters [17] |
| Reaction Setup | Suboptimal Mg²⁺ concentration [16] | Incorrect Mg²⁺ levels for polymerase | Optimize Mg²⁺ concentration; account for EDTA or high dNTPs [16] |
| | Inappropriate polymerase [16] | Enzyme not suited for application | Use hot-start polymerases for specificity; high-processivity enzymes for complex targets [16] |
| Quantification | Not in "digital range" [18] | Sample too concentrated | Sufficiently dilute sample so some partitions contain template and others do not [18] |

Frequently Asked Questions (FAQs)

1. What is the fundamental principle that enables dPCR to perform absolute quantification without a standard curve?

dPCR achieves absolute quantification by partitioning a PCR reaction into thousands of nanoscale reactions, so that each contains zero, one, or a few nucleic acid molecules. After amplification, the fraction of positive partitions is counted, and the original target concentration is calculated using Poisson distribution statistics. This partition-and-count method eliminates the need for a calibration curve required by qPCR [19] [20] [21].
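The partition-and-count calculation can be written out explicitly. If p is the fraction of positive partitions, the Poisson estimate of the mean number of copies per partition is λ = -ln(1 - p), and dividing by the partition volume gives the concentration. The sketch below is a minimal illustration; the function name and the example counts and partition volume are assumptions, and real instruments use their own calibrated partition volumes.

```python
import math


def copies_per_microliter(positive: int, total: int,
                          partition_volume_nl: float) -> float:
    """Poisson-corrected target concentration in the partitioned reaction."""
    if not 0 <= positive < total:
        raise ValueError("need at least one negative partition")
    fraction_positive = positive / total
    # Mean copies per partition: lambda = -ln(1 - p)
    mean_copies = -math.log(1.0 - fraction_positive)
    return mean_copies / (partition_volume_nl * 1e-3)  # convert nL to µL


# Hypothetical run: 5,000 positive partitions out of 20,000 at 0.70 nL each.
concentration = copies_per_microliter(5000, 20000, 0.70)
print(f"{concentration:.1f} copies/µL")
```

The Poisson correction matters because a positive partition may contain more than one molecule; simply counting positives would underestimate the concentration as the positive fraction grows.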

2. How does dPCR compare to qPCR and other methods for copy number variation (CNV) analysis?

dPCR provides highly accurate and precise CNV analysis, with lower variability and higher resolution for detecting small fold changes (e.g., from five to six copies) compared to qPCR or microarray methods. It offers absolute quantification of target DNA with extremely high sensitivity, making it suitable for rare targets or precious samples [22].

3. What are the key advantages of droplet digital PCR (ddPCR) specifically?

ddPCR utilizes water-in-oil emulsion droplets to partition samples, generating millions of monodisperse droplets at high speeds. Key advantages include high sensitivity and specificity, absolute quantification without a standard curve, high reproducibility, good tolerance to PCR inhibitors, and high efficacy compared to conventional molecular methods [19].

4. My ddPCR results show a phenomenon called "rain." What is it and how can I minimize it?

"Rain" refers to droplets exhibiting fluorescence intensity between the clear positive and negative populations, which can hinder accurate threshold setting. It is often attributed to delayed PCR onset, partial PCR inhibition in individual droplets, or damaged droplets. To minimize rain, optimize annealing/extension temperature and oligonucleotide concentrations. Employ computer-based algorithms to evaluate assay performance and establish objective criteria for analysis [17].

5. How do I ensure my sample is in the optimal "digital range" for accurate quantification?

The sample must be sufficiently diluted so that some partitions contain template and others do not. If a run yields no negative partitions at all, the sample is too concentrated and you are not in the digital range. Also check that the threshold is set properly in the analysis software; you may need to set it manually to ensure correct separation between positive and negative partitions [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for dPCR Experiments

| Reagent/Material | Function | Application Notes |
|---|---|---|
| ddPCR Supermix for Probes | Provides optimized buffer, dNTPs, polymerase, and stabilizers for probe-based assays [17]. | Standard master mix for hydrolysis probe assays; ensures consistent droplet generation. |
| Hydrolysis Probes (e.g., FAM, HEX/VIC) | Sequence-specific fluorescent detection of amplified targets [17]. | FAM and HEX/VIC common for multiplexing; use HPLC-grade purification [17]. |
| Hot-Start DNA Polymerase | Reduces non-specific amplification by remaining inactive until high-temperature activation [16]. | Crucial for assay specificity; prevents primer-dimer formation during reaction setup. |
| Droplet Generator Cartridge | Microfluidic device for partitioning aqueous reaction mix into thousands of oil-encapsulated droplets [17]. | Creates monodisperse droplets; compatible with specific dPCR systems. |
| Surfactant-Containing Oil | Continuous phase for droplet generation; prevents droplet coalescence during thermal cycling [20]. | Essential for droplet stability, especially during harsh temperature variations of PCR protocol. |
| Mg²⁺ Solution (MgCl2 or MgSO4) | Cofactor for DNA polymerase activity; concentration critically affects reaction efficiency and specificity [16]. | Concentration requires optimization; check polymerase preference for salt type [16]. |
| PCR Additives (e.g., DMSO, GC Enhancer) | Assist in denaturing complex templates (GC-rich sequences, secondary structures) [16]. | Use lowest effective concentration; may require adjustment of annealing temperature. |

Experimental Workflow for Absolute Quantification

The following diagram illustrates the core workflow of a droplet digital PCR (ddPCR) experiment, from sample preparation to absolute quantification.

Optimized Protocol for Copy Number Variation Analysis

Methodology for Precise CNV Quantification

This protocol is adapted from validated approaches for analyzing copy number variations using digital PCR, particularly relevant for oncology research and genetic disease studies [22].

Sample Preparation:

- Extract genomic DNA using standardized methods (e.g., Maxwell 16 instrument) to minimize shearing and maintain integrity [17].

- For limited samples (<1000 cells), consider crude lysate preparation methods that eliminate DNA extraction steps to prevent target loss. Use lysis Buffer 2 from SuperScript IV CellsDirect cDNA Synthesis Kit with a viscosity breakdown step prior to droplet formation [23].

- Assess DNA purity spectrophotometrically (A260/A280 ratio ~1.8-2.0). Repurify if contaminants are suspected [16].

Reaction Setup:

- Prepare 20-22 µL reactions containing:

  - 1× ddPCR Supermix for Probes

  - 900 nM forward and reverse primers (optimized concentration)

  - 250 nM hydrolysis probes (FAM for target, HEX/VIC for reference gene)

  - 5 µL template DNA (adjust volume based on concentration)

- Thoroughly mix reaction components to eliminate density gradients [16].

- Include negative controls (water instead of template) and positive controls if available.

Droplet Generation and Thermal Cycling:

- Load reaction mixture into droplet generator cartridges according to manufacturer instructions.

- Generate droplets ensuring monodisperse size distribution (approximately 0.70nL volume) [23].

- Transfer droplets to 96-well PCR plate and seal properly.

- Amplify using the following thermal cycling conditions:

  - Initial denaturation: 95°C for 10 minutes

  - 40 cycles of:

    - Denaturation: 95°C for 30 seconds

    - Annealing/Extension: Optimize temperature (55-60°C) for 60 seconds [17]

  - Final stabilization: 4°C hold

- Use a ramp rate of 2°C/second for all steps.

Data Acquisition and Analysis:

- Read plate on droplet reader to measure fluorescence in FAM and HEX/VIC channels.

- Analyze data using appropriate software (e.g., QuantaSoft, AnalysisSuite).

- Manually set thresholds if necessary to distinguish positive and negative droplet populations clearly.

- Apply Poisson statistics to calculate absolute copy numbers of both target and reference genes [19] [21].

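- Calculate the copy number ratio using the formula: CNV = (Target copies/µL) / (Reference gene copies/µL). To express the result as copies per genome, multiply the ratio by the known copy number of the reference gene (2 for a single-copy autosomal reference in a diploid genome).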
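The last two analysis steps can be combined in a short calculation. This sketch assumes a duplexed well, so target and reference share the same partitions and the partition volume cancels out of the ratio. It also assumes the reference gene is present at two copies per diploid genome; the function names and droplet counts are hypothetical.

```python
import math


def mean_copies_per_partition(positive: int, total: int) -> float:
    """Poisson estimate of the mean number of copies per partition."""
    return -math.log(1.0 - positive / total)


def copy_number(target_positive: int, reference_positive: int, total: int,
                reference_copies_per_genome: int = 2) -> float:
    """Target copies per genome from a duplexed well (shared partitions)."""
    ratio = (mean_copies_per_partition(target_positive, total)
             / mean_copies_per_partition(reference_positive, total))
    return ratio * reference_copies_per_genome


# Hypothetical well: 18,000 accepted droplets, 5,700 target-positive,
# 4,000 reference-positive.
estimate = copy_number(5700, 4000, 18000)
print(round(estimate, 2))
```

For this example the estimate is close to three copies per genome, which would be consistent with a single-copy gain of the target.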

Quality Control Measures:

- Ensure samples are in the "digital range" with sufficient negative partitions [18].

- Monitor for "rain" and apply correction algorithms if available [17].

- Run technical replicates to assess reproducibility.

- Validate assay performance using control samples with known copy numbers when available.

In copy number quantification research, the reliability of your results hinges on two fundamental performance metrics: the Limit of Detection (LOD) and Limit of Quantification (LOQ). The LOD defines the lowest concentration at which an analyte can be detected but not necessarily quantified, while the LOQ represents the lowest concentration that can be quantitatively measured with acceptable precision and accuracy [24]. Understanding and properly determining these limits is crucial for developing robust assays in gene therapy, cancer research, and environmental monitoring where precise copy number measurement is critical.

Frequently Asked Questions (FAQs)

What are the key differences between LOD and LOQ?

The distinction between these two limits lies in the level of confidence and data quality they provide:

| Feature | Limit of Detection (LOD) | Limit of Quantification (LOQ) |
|---|---|---|
| Definition | Lowest analyte concentration that can be detected | Lowest analyte concentration that can be quantified with acceptable precision and accuracy |
| Signal-to-Noise Ratio | Typically 2:1 to 3:1 [25] | Typically 10:1 [25] |
| Statistical Basis | Mean blank + 1.645 × SD blank (one-sided 95%) [24] | Mean blank + 10 × SD blank [24] |
| Regulatory Guidance | ICH Q2(R1) recommends visual evaluation, signal-to-noise, or statistical methods [26] | ICH Q2(R1) recommends similar approaches with stricter criteria [26] |
| Practical Implication | Answers "Is it there?" | Answers "How much is there?" |

How do I calculate LOD and LOQ for my qPCR/ddPCR experiments?

You can determine these limits using several established methods:

Based on standard deviation of the response and slope: This method uses the calibration curve according to the formulas: LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve [26]. The standard deviation can be derived from the standard error of the calibration curve obtained through regression analysis [26].

Based on signal-to-noise ratio: The LOD is typically set at a signal-to-noise ratio between 2:1 and 3:1, while LOQ uses a ratio of 10:1 [25]. This approach is particularly suitable for analytical methods that exhibit background noise.

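Based on standard deviation of the blank: This method uses the mean and standard deviation of blank samples: LOD = Mean_blank + 3.3 × SD_blank and LOQ = Mean_blank + 10 × SD_blank [24]. The LOD multiplier depends on the convention and confidence level chosen; the comparison table above lists the one-sided 95% form, Mean_blank + 1.645 × SD_blank.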
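The blank-based method reduces to a mean and a standard deviation. The sketch below makes the multipliers explicit parameters so that either convention can be applied; the function name and the blank readings are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Tuple


def limits_from_blanks(blank_signals: List[float],
                       lod_factor: float = 3.3,
                       loq_factor: float = 10.0) -> Tuple[float, float]:
    """Return (LOD, LOQ) in signal units from replicate blank measurements."""
    blank_mean = mean(blank_signals)
    blank_sd = stdev(blank_signals)  # sample SD (n - 1 denominator)
    return blank_mean + lod_factor * blank_sd, blank_mean + loq_factor * blank_sd


# Hypothetical blank readings (e.g., copies/µL reported in no-template controls).
blanks = [0.10, 0.12, 0.08, 0.11, 0.09]
lod, loq = limits_from_blanks(blanks)
print(f"LOD={lod:.3f}, LOQ={loq:.3f}")
```

Passing `lod_factor=1.645` gives the one-sided 95% form instead. Both limits are in the units of the blank signal and still need experimental confirmation with low-concentration samples.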

Why does my assay show high variability near the limit of quantification?

High variability near the LOQ is a common challenge with several potential causes:

- Insufficient template copies: At low concentrations, stochastic effects in partitioning become more pronounced, especially in digital PCR platforms [14].

- Inhibition effects: PCR inhibitors present in samples disproportionately affect low-concentration targets [27].

- Platform-specific limitations: Different digital PCR systems show varying precision profiles, with some platforms exhibiting higher coefficients of variation (CV) at concentration extremes [14].

- Enzyme selection: Restriction enzyme choice can significantly impact precision, particularly for targets with tandem repeats [14].

How can I improve the precision of my copy number quantification assays?

Implement these strategies to enhance your assay precision:

- Optimize restriction enzymes: Studies show that enzyme selection (e.g., HaeIII vs. EcoRI) can dramatically improve precision, reducing CV values from >60% to <5% in some ddPCR applications [14].

- Increase technical replicates: Running multiple replicates of the same sample helps mitigate random variation and provides a better estimate of true precision [28].

- Validate with appropriate controls: Use hybrid amplicons containing both target and reference sequences as quality controls for viral copy-number assays [12].

- Maintain proper technique: Ensure consistent pipetting, use passive reference dyes for normalization, and avoid exceeding 20% sample volume in PCR reactions to prevent optical mixing effects [28].

Experimental Protocols

Protocol 1: Determining LOD and LOQ Using Calibration Curve Method

This method is widely accepted for its scientific rigor in quantitative assays [26]:

Prepare calibration standards: Create a minimum of 5 concentrations spanning the expected range of your assay, including levels near the anticipated limits.

Run samples in replicates: Analyze each calibration level with 3-6 replicates to adequately capture variability.

Perform regression analysis: Use linear regression of the calibration curve to obtain the slope (S) and standard error (σ), which represents the standard deviation about the regression line.

Calculate limits: Apply the formulas LOD = 3.3σ/S and LOQ = 10σ/S.

Experimental validation: Prepare and analyze multiple samples (n ≥ 6) at the calculated LOD and LOQ concentrations to verify they meet performance criteria.
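The regression and calculation steps of Protocol 1 can be sketched as follows. This is an illustrative implementation in plain Python, not a validated tool: it assumes a response that rises linearly with concentration (for example, measured versus nominal copies), takes σ as the residual standard deviation with n - 2 degrees of freedom, and uses hypothetical calibration data.

```python
import math

def lod_loq_from_calibration(conc, response):
    """Least-squares fit of response = intercept + S * conc.

    Returns (S, sigma, LOD, LOQ), where sigma is the residual standard
    deviation about the regression line.
    """
    n = len(conc)
    if n < 3 or n != len(response):
        raise ValueError("need at least 3 paired calibration points")
    mx = sum(conc) / n
    my = sum(response) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, response))
    sigma = math.sqrt(ss_res / (n - 2))
    return slope, sigma, 3.3 * sigma / slope, 10 * sigma / slope

# Hypothetical calibration data: nominal vs. measured copies/uL
nominal = [1, 2, 5, 10, 20]
measured = [1.1, 1.9, 5.2, 9.8, 20.1]
slope, sigma, lod, loq = lod_loq_from_calibration(nominal, measured)
print(f"S = {slope:.3f}, sigma = {sigma:.3f}, LOD = {lod:.2f}, LOQ = {loq:.2f}")
```

The calculated limits are only estimates; the experimental validation step above remains necessary to confirm them.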

Protocol 2: Validating Copy Number Variation Using ddPCR

This protocol is adapted from studies demonstrating high concordance with pulsed field gel electrophoresis (PFGE) for CNV analysis [29]:

Assay design: Design primer-probe sets for both target and reference genes. Test multiple primer-probe combinations (typically 3) to select for optimal specificity and sensitivity [30].

Partitioning and amplification: Partition the PCR reaction into approximately 20,000 nanodroplets. Amplify using optimized cycling conditions: initial denaturation at 95°C for 10 minutes, followed by 40 cycles of 95°C for 15 seconds and 60°C for 30-60 seconds [27].

Droplet reading and analysis: Read positive and negative droplets using a droplet reader. Apply Poisson statistics to determine absolute copy numbers of target and reference sequences.

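Copy number calculation: Calculate the target copy number per diploid genome using the formula: copy number = 2 × (target copies/reference copies), where the factor of 2 assumes a reference gene present at two copies per diploid genome.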

Precision assessment: Determine inter-assay and intra-assay precision by testing multiple replicates across different runs. Acceptable precision should typically show <10% CV for most applications [29].
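The droplet analysis and copy number steps of Protocol 2 can be sketched in a few lines. The example assumes a duplex reaction, so target and reference share the same accepted droplets, and a reference gene at two copies per diploid genome. Function names and droplet counts are hypothetical. Because both assays share the same partition volume, the volume cancels in the ratio and is not needed.

```python
import math

def mean_copies_per_partition(positive, total):
    """Poisson-corrected mean number of target copies per partition."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total; saturated wells cannot be quantified")
    return -math.log(1.0 - positive / total)

def copy_number(target_positive, reference_positive, total, reference_copies=2):
    """Target copies per genome, scaled by the reference copies per genome."""
    lam_ref = mean_copies_per_partition(reference_positive, total)
    if lam_ref == 0:
        raise ValueError("reference assay has no positive partitions")
    lam_target = mean_copies_per_partition(target_positive, total)
    return reference_copies * lam_target / lam_ref

# Hypothetical well: 20,000 accepted droplets, 7,000 target-positive, 5,000 reference-positive
print(round(copy_number(7000, 5000, 20000), 2))
```

With these counts the estimate is close to 3 copies, which would be consistent with a single-copy gain. Note that the naive ratio of positive droplets (7,000/5,000 = 1.4) would give 2.8 and understate the copy number, which is why the Poisson correction matters.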

Troubleshooting Guides

Problem: Inconsistent results between technical replicates

Possible causes and solutions:

- Cause: Pipetting inaccuracies, especially with viscous samples or detergent-containing buffers [28].

- Solution: Regularly calibrate pipettes, use tips that fit snugly, and pay special attention to minimum volume requirements. Visually confirm consistent liquid delivery across all wells [28].

- Cause: Inadequate mixing of sealed plates leading to optical anomalies [28].

- Solution: Centrifuge sealed plates to bring liquids to the bottom and remove trapped air bubbles. For sample volumes exceeding 20% of reaction volume, vortex sealed plates briefly to prevent "optical mixing" [28].

Problem: Poor assay sensitivity failing to detect low copy numbers

Possible causes and solutions:

- Cause: Excessive baseline noise masking low-level signals [25].

- Solution: Optimize detector settings rather than relying solely on mathematical smoothing, which can eliminate legitimate low-level signals [25].

- Cause: Suboptimal primer-probe design leading to inefficient amplification [27].

- Solution: Develop and test multiple primer-probe sets (typically three), targeting codon-optimized sequences that do not share homology with endogenous sequences [30].

- Cause: PCR inhibition from sample matrix components [27].

- Solution: Include matrix DNA in standard and QC samples during assay development to identify inhibition issues. Dilute samples or implement additional purification steps if needed.

Research Reagent Solutions

Essential materials for copy number quantification experiments:

| Reagent/Equipment | Function | Application Notes |

|---|---|---|

| TaqMan Probes | Sequence-specific detection with fluorescent reporters | Superior specificity vs. dye-based methods; enables multiplexing [27] |

| Restriction Enzymes (HaeIII) | Digest genomic DNA to improve target accessibility | Critical for precision in repetitive regions; HaeIII showed better precision than EcoRI in ddPCR [14] |

| Passive Reference Dye | Normalize for volume variations and optical anomalies | Improves precision by correcting for well-to-well volume differences [28] |

| Hybrid Amplicon Controls | Reference standards containing target and reference sequences | Alternative to plasmid/cell line controls for validating viral copy-number assays [12] |

| Digital PCR Systems | Absolute quantification via sample partitioning | Higher precision for CNV analysis vs. qPCR, especially at higher copy numbers [29] |

Workflow and Relationship Visualizations

Diagram: LOD and LOQ determination workflow.

Diagram: LOD and LOQ calculation methods.

The Impact of Genetic Complexity on Quantification Accuracy

Key Challenges in CNV Quantification

Accurate copy number quantification is compromised by several inherent sources of biological and technical complexity. The table below summarizes the primary challenges and their impacts on data accuracy.

| Challenge | Impact on Quantification Accuracy | Underlying Cause |

|---|---|---|

| Tumor Purity & Heterogeneity [31] [32] | Low tumor purity confounds CNA signals; subclonal populations create mixed read signals. | Mixtures of normal and tumor cells, plus multiple tumor subclones within a single sample. |

| Whole-Genome Duplication (WGD) [31] | Conflates estimates of tumor ploidy and purity, leading to incorrect absolute copy-number calls. | Doubling of the entire chromosome set, altering the baseline copy number. |

| Complex Structural Variants [32] | Detection performance varies significantly by CNV type (e.g., tandem vs. interspersed duplications). | Differences in the genomic architecture of deletions and duplications. |

| Technical Variation [32] | Accuracy is highly dependent on sequencing depth and the specific bioinformatics tool used. | Limitations of sequencing technologies and algorithmic approaches. |

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My copy-number analysis from a bulk tumor sample shows ambiguous results. What could be the issue?

A: A common issue is the deconvolution of mixed signals from multiple cell populations. The observed read-depth ratio (RDR) and B-allele frequency (BAF) are a weighted average of the signals from normal cells and different tumor clones [31]. This problem is intensified by low tumor purity. For accurate, allele-specific copy-number calling from bulk samples, consider using multi-sample analysis tools like HATCHet, which jointly analyzes several tumor samples from the same patient to resolve these ambiguities [31].
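The weighted-average effect can be illustrated for a single genomic segment. The sketch below is a toy forward model and does not reproduce HATCHet's code or interface: each clone is given as a hypothetical tuple of (proportion, copies of allele A, copies of allele B), and BAF is reported as the minor-allele fraction.

```python
def mixed_segment_signal(clones):
    """Return (fractional total copy number, expected BAF) for one segment.

    clones: list of (proportion, copies_A, copies_B); proportions must sum to 1.
    """
    if abs(sum(u for u, _, _ in clones) - 1.0) > 1e-9:
        raise ValueError("clone proportions must sum to 1")
    frac_a = sum(u * a for u, a, _ in clones)
    frac_b = sum(u * b for u, _, b in clones)
    total = frac_a + frac_b
    baf = min(frac_a, frac_b) / total if total > 0 else float("nan")
    return total, baf

# 40% normal cells (1,1) mixed with 60% tumor cells carrying copy-neutral LOH (2,0)
total, baf = mixed_segment_signal([(0.4, 1, 1), (0.6, 2, 0)])
print(total, round(baf, 3))
```

In this toy case the fractional copy number stays at 2, so read depth alone shows nothing unusual and only the shifted BAF reveals the event. Different combinations of purity and clone copy numbers can produce similar averages, which is the ambiguity that joint multi-sample analysis aims to resolve.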

Q2: How does tumor purity affect my CNV detection, and how can I account for it?

A: Tumor purity is a fundamental property that greatly impacts the accuracy and reliability of CNV detection [32]. In the absence of normal sample controls, low tumor purity can cause signal confounding, making true CNVs harder to distinguish from noise [32].

- Solution: Use tools that explicitly estimate and account for tumor purity and ploidy. For instance, a re-implementation of the ASCAT algorithm uses BAF and read counts, and can be strengthened by incorporating the variant-allele frequency of truncal mutations (e.g., TP53) for more robust purity/ploidy estimates [33]. The formula used is:

Purity = 2 / ((CN / VAF) - (CN - 2)) [33].
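A small worked example of the purity formula follows. The interpretation of the symbols is an assumption rather than something stated in the text: CN is taken as the tumor copy number at the mutated locus, VAF as the observed variant-allele frequency, and the formula is algebraically consistent with the case where every tumor copy at the locus carries the mutation and normal cells contribute two wild-type copies. The function name is hypothetical.

```python
def purity_from_truncal_vaf(cn, vaf):
    """Purity = 2 / ((CN / VAF) - (CN - 2)), as given in the text."""
    if cn <= 0 or not 0 < vaf <= 1:
        raise ValueError("need cn > 0 and 0 < vaf <= 1")
    return 2.0 / ((cn / vaf) - (cn - 2))

# Tumor with one remaining, mutated copy (CN = 1) and an observed VAF of 0.5
print(round(purity_from_truncal_vaf(1, 0.5), 3))
# Copy-neutral LOH (CN = 2) with an observed VAF of 0.6
print(round(purity_from_truncal_vaf(2, 0.6), 3))
```

As a sanity check under the stated assumption, a purity of 2/3 with one mutated tumor copy gives a VAF of (2/3) / (2/3 + 2 × 1/3) = 0.5, which matches the first example.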

Q3: I have identified a copy-number alteration, but the gene expression of the affected gene does not change. Why?

A: A high CNA does not necessarily translate directly to the gene expression level [33]. The activity of a gene at the mRNA level is regulated by multiple mechanisms beyond copy number, including DNA methylation, micro-RNAs, and other transcriptional and post-transcriptional controls. To identify genes whose expression is truly driven by CNAs, you can apply a Copy-Number Impact (CNI) analysis, which quantifies the degree to which a gene's expression is influenced by its underlying copy number [33].

Q4: Why do I get different CNV calls when using different detection tools on the same dataset?

A: Different tools employ distinct algorithms (e.g., read-depth, paired-end mapping, split-read) and have varying sensitivities to factors like variant length, sequencing depth, and tumor purity [32]. Comprehensive benchmarking studies show that no single method is superior in all scenarios [32]. It is good practice to:

- Benchmark Tools: Compare the performance of several tools on simulated data with known truth sets.

- Understand Strengths: Select a tool based on your specific context (e.g., a read-depth-based tool like CNVnator for WGS, or a combination-method tool like Manta for complex SVs) [32].

Experimental Protocols for Robust CNV Analysis

Protocol: A Multi-Sample, Joint CNV Inference Workflow Using HATCHet

Principle: Simultaneously analyzing multiple related samples (e.g., multi-region or longitudinal) resolves ambiguities that are unsolvable with single-sample analysis [31].

Detailed Workflow:

Input Data Preparation:

Global Clustering:

- Cluster RDRs and BAFs jointly across all samples from the same patient. This leverages the shared evolutionary history of the samples to identify consistent genomic segments [31].

Estimation of Fractional Copy Numbers:

- Infer the allele-specific fractional copy numbers (the average copy number in the mixed sample) for each segment. HATCHet performs this step twice, under assumptions of both the presence and absence of a Whole-Genome Duplication (WGD), to create two alternative models [31].

Copy-Number Deconvolution via Matrix Factorization:

- Solve the problem of factoring the fractional copy-number matrices into two components: the clone-specific copy-number matrices (A, B) and the matrix of clone proportions (U) across samples [31].

- Key Step: Apply constraints during factorization, such as a maximum copy number and a minimum clone proportion, to ensure a biologically plausible solution [31].

Model Selection:

- Select the final solution (including the number of clones and the occurrence of WGD) using a model-selection criterion that balances the fit to the data with model complexity [31].

Diagram 1: HATCHet multi-sample CNV analysis workflow.

Protocol: Assessing the Functional Impact of CNAs on Gene Expression

Principle: Quantify the degree to which a gene's expression is regulated by its copy-number alteration, distinguishing CNA-driven genes from those regulated by other mechanisms [33].

Detailed Workflow:

Data Acquisition:

- Obtain matched whole-genome sequencing (WGS) and RNA-seq data from the same set of tumor samples.

- Critical Preprocessing: Decompose bulk RNA-seq data into its cellular components (e.g., using a tool like PRISM) to extract cancer-cell-specific expression profiles. This ensures that expression data is matched to the tumor cell genetics from WGS [33].

Copy-Number and Expression Profiling:

- Perform absolute copy-number estimation from WGS data (e.g., using an ASCAT-based method) [33].

- Quantify gene expression levels from the processed RNA-seq data.

Copy-Number Impact (CNI) Modeling:

- Fit a statistical model (e.g., a Poisson model) that relates the absolute copy number of a gene to its expression level across all samples [33].

- Calculate a gene-specific CNI value, which quantifies the strength of this relationship.

Pathway-Level Analysis:

- Aggregate gene-level CNI scores to the pathway level to identify biological processes most strongly influenced by CNAs [33].

Diagram 2: Assessing functional CNA impact on expression.

The Scientist's Toolkit: Research Reagent Solutions

The table below catalogs key bioinformatics tools and resources essential for accurate CNV quantification and interpretation.

| Tool Name | Primary Function | Key Application Note |

|---|---|---|

| HATCHet [31] | Joint inference of allele-specific CNAs & WGD from multiple samples. | Resolves subclonal copy-number heterogeneity; superior in multi-sample scenarios. |

| ASCAT [33] | Estimates tumor purity, ploidy, and allele-specific CNAs. | Robust method; can be enhanced with TP53 VAF for better ploidy selection [33]. |

| CNVkit [32] | Read-depth-based CNV detection for WGS and targeted sequencing. | Widely used; good overall performance in benchmarking studies [32]. |

| Control-FREEC [32] | Detects CNVs and calculates BAF from NGS data. | Can operate without a matched normal sample. |

| Manta [32] | SV and CNA caller using paired-end and split-read evidence. | Effective at detecting a range of complex structural variants. |

| GATK [33] | CNV segmentation and calling workflow. | Part of a comprehensive suite; follows established best practices. |

| PRISM [33] | Decomposes bulk RNA-seq into cell-type-specific expression profiles. | Crucial for matching expression data to tumor genetics in heterogeneous samples. |

| AION [34] | Automated CNV prioritization & classification using ACMG/ClinGen guidelines. | Aids in clinical interpretation and pathogenicity assessment of detected CNVs. |

Advanced Methodologies: Implementing dPCR and Novel Approaches for Precision Analysis

Digital PCR (dPCR) is a third-generation PCR technology that enables absolute quantification of nucleic acids without the need for a standard curve [20]. It works by partitioning a PCR reaction into thousands of individual reactions, so that each partition contains either 0, 1, or a few nucleic acid targets. After endpoint amplification, the target concentration is computed using Poisson statistics based on the fraction of positive partitions [20]. This technology offers powerful advantages including high sensitivity, absolute quantification, high accuracy, and reproducibility, making it particularly valuable for copy number quantification research [20].
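The Poisson step can be written out explicitly. In the notation below, which is introduced here for illustration, n is the number of valid partitions, k the number of positive partitions, λ the mean number of target copies per partition, and V_p the partition volume. A partition is negative only if it received no target copies:

```latex
P(\text{negative}) = e^{-\lambda}
\quad\Rightarrow\quad
\hat{\lambda} = -\ln\!\left(1 - \frac{k}{n}\right),
\qquad
\hat{C} = \frac{\hat{\lambda}}{V_p}
```

For example, with n = 20,000 partitions and k = 5,000 positives, the estimate is λ = -ln(0.75) ≈ 0.288 copies per partition, slightly more than the raw positive fraction of 0.25 because some positive partitions contain more than one copy.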

Two major partitioning methods have emerged: droplet-based dPCR (ddPCR) which uses water-in-oil emulsion droplets, and nanoplate-based dPCR which uses microchambers embedded in a solid chip [20] [35]. Understanding the differences between these platforms is crucial for researchers seeking to optimize precision in their quantification experiments.

Platform Comparison: Technical Specifications

The choice between nanoplate and droplet-based systems involves multiple technical considerations that directly impact experimental outcomes, particularly for precision-focused copy number quantification research.

Table 1: Technical Comparison of dPCR Platforms

| Feature | Nanoplate-Based dPCR | Droplet-Based dPCR (ddPCR) |

|---|---|---|

| Partitioning Method | Microchambers in solid chip [20] | Water-in-oil emulsion droplets [20] |

| Number of Partitions | 8,500 - 26,000 per well [35] | About 20,000 (standard systems); up to millions (specialized systems) [35] |

| Partition Volume | ~10 nL [35] | 10 - 100 pL [35] |

| Workflow Integration | Integrated instrument (partitioning, thermocycling, imaging) [35] | Multiple instruments (generator, thermocycler, reader) [35] |

| Multiplexing Capability | Up to 5-plex reported [35] | Typically 2-4 colors [35] |

| Throughput | 312-1,248 reactions/run [35] | 480 reactions/5 plates [35] |

| Sample Turnaround Time | ~2 hours for complete run [35] | Up to 21 hours for 480 samples [35] |

| Risk of Contamination | Lower (closed system) [36] | Higher (multiple transfer steps) [35] |

| Partition Uniformity | High (fixed geometry) [35] | Variable (droplet size variation) [35] |

Table 2: Performance Comparison in Research Applications

| Application | Nanoplate Performance | Droplet Performance | Evidence |

|---|---|---|---|

| DNA Methylation Analysis | Specificity: 99.62%; Sensitivity: 99.08% [37] | Specificity: 100%; Sensitivity: 98.03% [37] | Strong correlation between methods (r=0.954) [37] |

| Viral Detection (HAdV) | LoD: 0.95 cp/μl [36] | Comparable sensitivity for low copy numbers | Both suitable for low viral load detection [36] |

| Dynamic Range | 770.4 to 0.9476 cp/μl [36] | ~4 orders of magnitude [38] | Nanoplate shows excellent linearity (r²=0.9986) [36] |

| Inhibitor Tolerance | Moderate | High due to partitioning [38] | ddPCR may perform better with complex samples [38] |

Frequently Asked Questions (FAQs)

Q1: Which platform offers better precision for low copy number quantification? Both platforms demonstrate excellent sensitivity, with studies showing comparable performance for low copy number detection. Recent research on DNA methylation analysis found both technologies achieved sensitivities >98% and specificities >99% [37]. The precision at very low copy numbers (<10 copies/μl) may be marginally better with droplet systems due to higher partition numbers, but nanoplate systems provide sufficient sensitivity for most clinical applications [36].
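The link between partition number and precision can be approximated with a standard delta-method result for the Poisson estimator: the variance of the estimated mean copies per partition, λ, is roughly (e^λ - 1) / n for n partitions. The sketch below is a simplified model with a hypothetical function name. It compares platforms at the same λ, whereas in practice the same sample concentration yields a different λ on each platform because partition volumes differ.

```python
import math

def relative_se_percent(lam, n_partitions):
    """Approximate relative standard error (%) of the Poisson estimate of lam."""
    if lam <= 0 or n_partitions <= 0:
        raise ValueError("lam and n_partitions must be positive")
    se = math.sqrt((math.exp(lam) - 1.0) / n_partitions)
    return 100.0 * se / lam

# Sparse target (lam = 0.01 copies per partition) at two partition counts
for n in (8500, 20000):
    print(f"{n:>6} partitions -> relative SE of about {relative_se_percent(0.01, n):.1f}%")
```

Under these assumptions, moving from 8,500 to 20,000 partitions lowers the relative error from about 11% to about 7%, which illustrates why more partitions help most when targets are sparse.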

Q2: How does workflow complexity differ between platforms? Nanoplate systems offer a significant advantage in workflow simplicity. The process involves pipetting the reaction mix directly into the nanoplate, with subsequent partitioning, thermocycling, and imaging occurring within a single integrated instrument [35]. In contrast, droplet systems require multiple instruments: a droplet generator, a conventional thermocycler, and a droplet reader, with transfer steps between each that increase hands-on time and contamination risk [35].

Q3: What are the cost considerations for each platform? While specific pricing varies by manufacturer and region, nanoplate systems generally have higher per-sample consumable costs but lower operational costs due to reduced hands-on time and training requirements. Droplet systems may have lower reagent costs but require more expensive instrumentation and specialized training [35]. The total cost of ownership should factor in throughput needs, with nanoplate systems being more cost-effective for high-throughput laboratories [35].

Q4: Can I transfer assays between platforms easily? Assay transfer requires re-optimization due to differences in chemistry, partition volumes, and thermal cycling conditions. The primer and probe sequences may remain the same, but concentration optimization is typically necessary. Proprietary master mixes with precise formulations are required for each platform, making direct transfer challenging [38].

Q5: Which platform is better for multiplex applications? Nanoplate systems currently offer advantages for higher-order multiplexing, with some systems supporting up to 5-plex detection [35]. This capability is valuable for complex applications like cancer biomarker panels or pathogen detection where multiple targets need simultaneous quantification. Droplet systems typically support 2-4 colors, limiting multiplexing complexity [35].

Troubleshooting Guides

Common Experimental Issues and Solutions

Table 3: Troubleshooting Guide for dPCR Experiments

| Problem | Possible Causes | Solutions |

|---|---|---|

| Low Precision/High Variation | Insufficient partitions; uneven partition volume; poor reaction mix homogeneity | Ensure adequate partition number; verify partition quality; mix reagents thoroughly before partitioning [16] |

| Inconsistent Results Between Replicates | Pipetting errors; partition instability; temperature gradients | Use calibrated pipettes and proper technique; check droplet stability (ddPCR) or chip integrity (nanoplate); verify thermal cycler calibration [39] |

| Unexpected Negative Partitions | Inhibitors in sample; suboptimal primer design; poor amplification efficiency | Purify template DNA to remove inhibitors [16]; verify primer specificity and optimize concentrations [39]; adjust annealing temperature and cycling conditions [16] |

| High Background or False Positives | Non-specific amplification; probe degradation; contamination | Optimize annealing temperature [39]; prepare fresh probe solutions; implement strict contamination controls [39] |

| Rain Effect (Droplet Systems Only) | Damaged droplets; non-specific amplification; irregular droplet size | Optimize surfactant concentration; increase annealing temperature; ensure proper droplet generation [35] |

Platform-Specific Troubleshooting

For Droplet-Based Systems:

- Droplet Coalescence: Ensure proper oil-surfactant combination and avoid temperature fluctuations during handling [35]

- Low Droplet Yield: Check droplet generator nozzles for clogs and verify aqueous:oil flow rate ratios

- Poor Data Quality from Rain: Optimize template amount and thermal cycling conditions to reduce intermediate amplification [35]

For Nanoplate-Based Systems:

- Incomplete Partition Filling: Verify proper loading technique and check for air bubbles in wells

- Imaging Issues: Ensure plate surface cleanliness and verify camera focus calibration

- Cross-Contamination Between Wells: Check seal integrity and avoid overfilling wells

Experimental Protocols for Platform Evaluation

Protocol for Method Validation and Comparison

When evaluating dPCR platforms for copy number quantification research, proper experimental design is crucial. The following protocol outlines a comprehensive approach for platform comparison:

Sample Preparation:

- Use standardized reference material with known concentration

- Prepare serial dilutions covering the expected dynamic range (e.g., 10⁶ to 10⁰ copies/μl)

- Include biological replicates (n≥3) and technical replicates (n≥3) for each concentration

- Use the same master mix composition adjusted for each platform's requirements

Data Collection Parameters:

- Record the number of partitions generated for each reaction

- Document fluorescence amplitude and separation between positive/negative populations

- Calculate precision (CV%) across replicates for each concentration level

- Assess accuracy by comparing measured concentration to expected values

Analysis Methodology:

- Apply Poisson correction for all concentration calculations

- Use appropriate statistical tests to compare platform performance (e.g., Bland-Altman analysis, linear regression)

- Evaluate limit of detection (LoD) and limit of quantification (LoQ) using established guidelines [36]
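Two of the metrics named above, replicate CV% and Bland-Altman agreement between platforms, can be computed with the standard library alone. This is a minimal sketch with hypothetical function names and made-up paired measurements. It uses the conventional bias ± 1.96 × SD limits of agreement on untransformed differences, which is an assumption about how the comparison would be set up.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation (%) across replicate measurements."""
    return 100.0 * stdev(values) / mean(values)

def bland_altman(a, b):
    """Return (bias, lower limit, upper limit) for paired measurements a and b."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [x - y for x, y in zip(a, b)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical copies/uL for the same four samples on two platforms
platform_a = [10.2, 20.5, 41.0, 79.0]
platform_b = [9.8, 21.0, 40.0, 81.0]
bias, lower, upper = bland_altman(platform_a, platform_b)
print(f"bias = {bias:.3f}, limits of agreement = ({lower:.2f}, {upper:.2f})")
print(f"replicate CV = {cv_percent([9, 10, 11]):.1f}%")
```

When the dilution series spans several orders of magnitude, the differences tend to scale with concentration, so applying the same analysis to log-transformed values is often more informative.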

Protocol for Optimal Assay Design

Regardless of platform selection, these core principles ensure robust dPCR assay performance:

Primer and Probe Design:

- Design amplicons of 60-100 bp for optimal amplification efficiency

- Verify target specificity using BLAST or similar tools [36]

- For methylation-specific applications, design primers to target bisulfite-converted sequences [40]

- Test multiple primer-probe combinations to identify optimal performance

Reaction Optimization:

- Titrate primer and probe concentrations (typically 0.1-1 μM for primers) [16]

- Optimize annealing temperature using gradient PCR if available [35]

- Validate assay performance with positive and negative controls

- Establish acceptance criteria for partition fluorescence separation

Essential Research Reagent Solutions

Table 4: Key Reagents for dPCR Experiments

| Reagent Category | Specific Examples | Function | Optimization Tips |

|---|---|---|---|

| Polymerase Enzymes | Hot-start DNA polymerases, High-fidelity enzymes | Catalyze DNA amplification with reduced nonspecific products | Use hot-start enzymes to prevent primer-dimer formation [16] |

| Master Mix Components | dNTPs, Mg²⁺, Buffers, Stabilizers | Provide optimal chemical environment for amplification | Optimize Mg²⁺ concentration (typically 1-5 mM) for each assay [39] |

| Probe Systems | Hydrolysis probes (FAM, HEX, etc.), EvaGreen dye | Enable specific target detection and multiplexing | Avoid repeated freeze-thaw cycles; protect from light [16] |

| Partitioning Reagents | Surfactants (ddPCR), Surface treatments (nanoplate) | Enable stable partition formation and maintenance | Use fresh surfactant solutions; verify proper concentration [35] |

| Sample Preparation Kits | cfDNA extraction kits, Bisulfite conversion kits | Isolate and prepare nucleic acids for detection | Follow manufacturer instructions precisely for consistent yield [40] |

| Reference Materials | Synthetic oligonucleotides, Certified reference standards | Enable assay validation and quantification | Use standards traceable to international reference systems |

The choice between nanoplate-based and droplet-based dPCR systems depends on specific application requirements and laboratory constraints. Nanoplate systems offer advantages in workflow simplicity, reduced contamination risk, and higher multiplexing capabilities, making them suitable for high-throughput laboratories and clinical applications requiring rapid turnaround [35]. Droplet systems provide superior partition numbers and established protocols, benefiting applications requiring maximum sensitivity and laboratories with established droplet workflows [37].

For precision in copy number quantification research, both platforms demonstrate excellent performance when properly optimized and validated. Recent comparative studies show strong correlation between measurements obtained from both platforms (r=0.954) [37], suggesting that proper assay validation and optimization may be more important than the specific platform choice. Researchers should consider their specific needs for throughput, multiplexing, sensitivity, and workflow integration when selecting the most appropriate dPCR platform for their copy number quantification research.

Quantitative PCR (qPCR) and digital PCR (dPCR) are fundamental techniques for quantifying DNA copy number variations, plasmid copy numbers in bacteria, and viral vector copies in gene therapy products. [41] [42] [43] These methods enable researchers to measure gene dosage effects, monitor segregational stability of plasmids during fermentation processes, and support biodistribution and safety studies for cell and gene therapies. [42] [43] The precision of these assays hinges on robust primer and probe design, appropriate validation methodologies, and careful troubleshooting of experimental parameters. This technical support center addresses common challenges and provides detailed protocols to enhance reproducibility and accuracy in copy number quantification research.

Primer and Probe Design Fundamentals

Core Design Principles

What are the critical parameters for designing qPCR primers? Well-designed primers are essential for specific and efficient amplification. Follow these evidence-based guidelines: [41] [43]

- GC Content and Length: Aim for 50-60% GC content with primer lengths of 18-24 base pairs. [41]

- Amplicon Length: Ideal amplification products should be 75-200 base pairs, as shorter fragments typically amplify with higher efficiency. [41]

- Sequence Specificity: Ensure primers are unique to the target sequence and do not contain repetitive elements (>4 repeats of single bases) or polymorphisms at annealing sites. [41] Always verify specificity using tools like NCBI's BLAST.

- Structural Considerations: Avoid regions with secondary structures or direct repeats that may cause misalignment. [16]

- 3' End Design: Ensure primers do not contain consecutive G or C nucleotides at the 3' ends to prevent primer-dimer formation. [16]
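The sequence-level rules in this list are simple enough to screen automatically before ordering oligos. The checker below is an illustrative sketch, not a replacement for dedicated design software: the function name and test sequence are hypothetical, "consecutive G or C at the 3' end" is interpreted as the last two bases both being G or C, and secondary structure, polymorphisms, and genome-wide specificity are not assessed.

```python
import re

def check_primer(seq):
    """Return a list of rule violations for a primer (an empty list means it passes)."""
    seq = seq.upper()
    if not re.fullmatch(r"[ACGT]+", seq):
        raise ValueError("primer must contain only A, C, G, T")
    issues = []
    if not 18 <= len(seq) <= 24:
        issues.append(f"length {len(seq)} outside 18-24 nt")
    gc = 100.0 * (seq.count("G") + seq.count("C")) / len(seq)
    if not 50.0 <= gc <= 60.0:
        issues.append(f"GC content {gc:.0f}% outside 50-60%")
    if re.search(r"(.)\1{4,}", seq):  # five or more identical bases in a row
        issues.append("homopolymer run longer than 4 bases")
    if re.search(r"[GC]{2}$", seq):  # assumed reading of the 3' end rule
        issues.append("consecutive G/C at the 3' end")
    return issues

print(check_primer("AGCTGACCTGAAGTCCATGA"))  # made-up 20-mer
print(check_primer("AAAAATTTTTGG"))
```

A candidate that passes this screen still needs the BLAST check and empirical testing described above.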

How should hydrolysis probes (like TaqMan) be designed for optimal performance? For probe-based detection systems, which offer greater specificity than intercalating dyes, follow these design rules: [41]

- Melting Temperature (Tm): The probe Tm should be 5-10°C higher than the primer Tm. [41]

- Length and Composition: Design probes <30 nucleotides with a GC content of 30-80%. The probe should anneal to the strand that has more Gs than Cs. [41]

- 5' End Consideration: Avoid guanine (G) at the 5' end as it can quench fluorescence signal even after hydrolysis. [41]

- Specificity Enhancement: For transgene detection, target the junction between the transgene and neighboring vector components (e.g., promoter/5' or 3' untranslated regions) to distinguish vector-derived sequences from endogenous counterparts. [43]

Design Strategy and Validation

What strategic approach should I take when designing primers and probes for a new assay? Adopt a systematic design and screening process: [43]

- Utilize Design Software: Leverage tools like PrimerQuest (IDT), Primer Express, Geneious, or Primer3 with customized PCR parameters rather than relying solely on default settings. [43]

- Empirical Testing: Design and test at least 3 primer and probe sets since in silico predictions don't always translate to actual performance. [43]

- Specificity Verification: Use NCBI's Primer-BLAST for preliminary specificity assessment, then confirm empirically in genomic DNA or total RNA from naïve host tissues. [43]

- Cross-Species Validation: If applicable, screen candidate primers in target tissues/biofluids from all species planned for non-clinical studies, plus human materials. [43]

- Platform Considerations: Primers and probes functioning well in qPCR typically work in dPCR, though dPCR may tolerate slightly suboptimal PCR efficiency better. [43]

Experimental Protocols and Validation

qPCR Protocol for Copy Number Analysis

What is a standardized protocol for qPCR-based copy number quantification? This protocol adapts established methodologies for robust CNV analysis: [41]

Materials:

- Samples: Genomic DNA from test and control samples

- DNA Isolation Kit: (e.g., Puregene reagents, Gentra Systems)

- Reaction Mix: 2X SYBR mix containing enzyme, buffer, dNTPs (e.g., Roche Molecular Biochemicals)

- Primers: For gene of interest and reference gene

- Equipment: Robotic liquid handling equipment, qPCR-compatible 96-well plates, optical plate seals, Nanodrop ND-1000, centrifuge with microtiter plate rotor, LightCycler instrument

Procedure:

- DNA Preparation: Isolate DNA and dissolve in 100-500 μl TE buffer (10 mM Tris/0.1 mM EDTA, pH 8). [41]

- Quantification: Measure DNA concentration by spectrophotometry and dilute to working concentration (e.g., 20 ng/μl). [41]

- Primer Dilution: Dilute PCR primers to final concentration of 0.2-1 μM. [41]

- Reaction Setup:

- Total reaction volume: 10-50 μl

- Genomic DNA: 6-50 ng (commonly 7.5 ng for 10 μl reaction)

- Primers: 10 pmol of each primer

- SYBR Green I master mix: 1 μl (Roche)

- Hybridization probes: 2 pmol of each (if using probe-based detection) [41]

- Plate Preparation: Load triplicates of standard curves, control DNA samples, and experimental samples for both internal control gene and gene of interest on the same plate. [41]

- Sealing and Centrifugation: Seal plate tightly and briefly centrifuge at 500×g to ensure all liquid is at well bottom and remove bubbles. [41]

- PCR Amplification:

- Use manufacturer's default protocol or custom protocol (e.g., 95°C for 10 min followed by 35 cycles of 95°C for 15 s, 58°C for 5 s, 72°C for 25 s, with fluorescent detection at 76°C for 1 s). [41]

- Data Collection: Record the cycle number at which each well crosses the fluorescence threshold (Ct value). [41]

- Data Analysis:

Figure 1: qPCR Workflow for Copy Number Analysis
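A common way to convert Ct values into a relative copy number is the comparative Ct (2^-ΔΔCt) calculation, sketched below. It is offered as one possible approach and is not necessarily the analysis this protocol intends, since the protocol also loads standard curves. The function name and Ct values are hypothetical, and the calculation assumes near-100% amplification efficiency for both assays and a calibrator sample with a known copy number (two by default).

```python
def copy_number_ddct(ct_target_sample, ct_ref_sample,
                     ct_target_calibrator, ct_ref_calibrator,
                     calibrator_copies=2, efficiency=2.0):
    """Relative copy number via the comparative Ct method.

    efficiency is the per-cycle amplification factor (2.0 means perfect doubling).
    """
    delta_sample = ct_target_sample - ct_ref_sample
    delta_calibrator = ct_target_calibrator - ct_ref_calibrator
    ddct = delta_sample - delta_calibrator
    return calibrator_copies * efficiency ** (-ddct)

# Target crosses the threshold one cycle earlier than in the calibrator
print(copy_number_ddct(24.0, 25.0, 25.0, 25.0))
```

Mean Ct values from the triplicate wells would normally be used as inputs. If the two assays differ noticeably in efficiency, a standard-curve-based calculation is the safer choice.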

Digital PCR Validation Protocol

How can I implement digital PCR for precise copy number quantification? Digital PCR provides absolute quantification without standard curves and offers enhanced precision for copy number analysis: [14] [12]

Materials:

- dPCR System: QX200 droplet digital PCR (Bio-Rad) or QIAcuity One nanoplate digital PCR (QIAGEN)

- Restriction Enzymes: HaeIII or EcoRI for improved DNA accessibility

- Master Mix: Platform-specific reaction mix

- Reference Materials: Synthetic oligonucleotides or hybrid amplicons as quantification standards

Procedure:

- Template Preparation:

- Reaction Assembly:

- Prepare reaction mix according to platform specifications

- Include reference amplicon containing both target and reference sequences (e.g., WPRE-RPP30 hybrid amplicon) as quality control [12]

- Partitioning:

- For ddPCR: Generate approximately 20,000 droplets per sample

- For ndPCR: Partition into nanoscale chambers [14]

- Amplification: Perform end-point PCR with platform-optimized thermal cycling conditions

- Signal Detection:

- For ddPCR: Scan droplets with laser to detect fluorescence

- For ndPCR: Image entire nanoplate for fluorescence reading [14]

- Quantification Analysis:
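Quantification in dPCR generally rests on a Poisson correction of the fraction of positive partitions. The following is a minimal sketch of that calculation, not the analysis software of either platform: the function names are hypothetical, the 0.00085 μL droplet volume is an assumed nominal value used only for illustration, and the reference locus is assumed to be present at two copies per genome.

```python
import math

def copies_per_partition(positive, total):
    """Poisson-corrected mean copies per partition: lambda = -ln(1 - p)."""
    if not 0 < positive < total:
        raise ValueError("need at least one positive and one negative partition")
    return -math.log(1.0 - positive / total)

def concentration(positive, total, partition_volume_ul):
    """Target concentration in copies per microliter of reaction."""
    return copies_per_partition(positive, total) / partition_volume_ul

def lambda_confidence_interval(positive, total, z=1.96):
    """Approximate 95% interval for lambda from the binomial error on p."""
    p = positive / total
    se = math.sqrt(p * (1.0 - p) / total)
    low = max(p - z * se, 0.0)
    high = min(p + z * se, 1.0 - 1e-12)
    return -math.log(1.0 - low), -math.log(1.0 - high)

def copy_number(target_positive, reference_positive, total, reference_copies=2):
    """Copy number = reference_copies * lambda_target / lambda_reference."""
    return (reference_copies
            * copies_per_partition(target_positive, total)
            / copies_per_partition(reference_positive, total))

# Hypothetical well: 20,000 accepted droplets, duplexed target and reference.
total = 20000
print("lambda (target):", copies_per_partition(5000, total))
print("95% interval:", lambda_confidence_interval(5000, total))
print("copies/uL:", concentration(5000, total, partition_volume_ul=0.00085))
print("copy number:", copy_number(5000, 3500, total))
```

Because the correction is logarithmic, the interval widens sharply as the positive fraction approaches 0 or 1, which is why template input is usually adjusted so that a substantial share of partitions remains negative.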

Troubleshooting Common Experimental Issues

PCR Performance Problems

What should I do when my qPCR assay shows poor amplification efficiency? Poor efficiency can stem from multiple factors. Consult this troubleshooting guide: [16]

| Problem Area | Possible Causes | Recommended Solutions |

|---|---|---|

| DNA Template | Poor integrity or purity; insufficient quantity | Minimize shearing during isolation; re-purify to remove inhibitors (phenol, EDTA); increase input amount; evaluate integrity by gel electrophoresis [16] |

| Primers | Problematic design; insufficient quantity; degradation | Redesign primers avoiding repeats and secondary structures; optimize concentration (0.1-1 μM); use fresh aliquots [16] |

| Reaction Components | Inappropriate DNA polymerase; insufficient Mg2+; excess additives | Use hot-start polymerases; optimize Mg2+ concentration; reduce concentration of additives (DMSO, formamide) [16] |

| Thermal Cycling | Suboptimal temperatures or cycle times | Optimize denaturation time/temperature; adjust annealing temperature in 1-2°C increments; extend extension time for long targets [16] |

How can I address nonspecific amplification in my copy number assays? Nonspecific products compromise quantification accuracy. Implement these solutions: [16]

- Primer Design: Ensure primers are specific to target with minimal homology to other regions; avoid complementary sequences at 3' ends [16]

- Hot-Start Enzymes: Use polymerases with hot-start technology to prevent nonspecific amplification during reaction setup [16]

- Annealing Optimization: Raise the annealing temperature toward the optimum, which is typically 3-5°C below the primer Tm; use a gradient cycler for optimization; consider touchdown PCR [16]

- Mg2+ Concentration: Reduce Mg2+ concentration to prevent nonspecific products [16]

- Cycle Number: Reduce number of cycles to prevent accumulation of nonspecific amplicons [16]

Data Quality and Interpretation Issues

Why do my copy number results fall between integers (e.g., 1.3) making interpretation difficult? Non-integer copy number values represent a common challenge with several potential causes: [41]

- Biological Factors: Somatic mosaicism in samples can produce intermediate values [41]

- Technical Variability: Irreproducible DNA isolation, suboptimal reaction efficiency, or poor precision in measurements [41] [42]

- Data Analysis Approach: Using relative quantification without proper efficiency correction [42]
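For the mosaicism case, a non-integer value can be read as a mixture of two cell populations. The sketch below shows the arithmetic under a simple two-population assumption (normal cells plus one variant clone); the function name and the example values are hypothetical, and the model ignores technical bias entirely.

```python
def mosaic_fraction(observed_cn, variant_cn, normal_cn=2.0):
    """Fraction of cells carrying the variant, assuming two populations.

    Solves observed = f * variant_cn + (1 - f) * normal_cn for f.
    A result outside 0..1 means the two-population model does not fit.
    """
    if variant_cn == normal_cn:
        raise ValueError("variant and normal copy numbers must differ")
    return (observed_cn - normal_cn) / (variant_cn - normal_cn)

# A measured copy number of 1.3 with a heterozygous deletion (1 copy)
# corresponds to approximately 70% of cells carrying the deletion.
print(mosaic_fraction(1.3, variant_cn=1.0))
```

A value that falls outside the 0 to 1 range is a hint that the intermediate result is more likely technical than biological.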

Solutions:

- Run samples in triplicate and replicate the experiment to improve reliability [41]

- Use whole cells as template source to avoid DNA isolation variability [42]

- Apply relative quantification methods that account for different amplification efficiencies of target and reference amplicons [42]

- Consider digital PCR for absolute quantification without standard curves [14]

How can I improve the reproducibility of my plasmid copy number determinations during fermentation processes? Fermentation monitoring presents unique challenges addressed by these methods: [42]

- Sample Treatment: Immediately heat-treat whole cells at 95°C for 10 minutes prior to storage at -20°C to preserve nucleic acid quantity and quality [42]

- Template Source: Use minimally processed whole cells instead of purified DNA to avoid extraction variability [42]

- Calculation Method: Apply relative quantification that considers different amplification efficiencies for chromosomal and plasmid amplicons [42]

- Dynamic Range: Ensure quantification range of 2 log units (100 to 10,000 bacteria per well) to cover all fermentation time points [42]
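The efficiency-aware calculation mentioned above can be sketched as follows. This is one commonly used form of the relationship, written under stated assumptions rather than copied from [42]: both amplicons are read at the same fluorescence threshold, the chromosomal amplicon is present once per chromosome, and the function names and Ct values are hypothetical.

```python
def amplification_factor(slope):
    """Per-cycle amplification factor from a standard-curve slope (2.0 = ideal)."""
    return 10 ** (-1.0 / slope)

def plasmid_copy_number(ct_chromosome, ct_plasmid,
                        factor_chromosome=2.0, factor_plasmid=2.0):
    """Plasmid copies per chromosome with separate amplification factors.

    PCN = factor_chromosome ** ct_chromosome / factor_plasmid ** ct_plasmid
    """
    return (factor_chromosome ** ct_chromosome) / (factor_plasmid ** ct_plasmid)

# With ideal doubling, a 5-cycle lead for the plasmid amplicon means 2**5 copies.
print(plasmid_copy_number(20.0, 15.0))

# The same Ct values with slightly different, hypothetical amplification factors.
print(plasmid_copy_number(20.0, 15.0, factor_chromosome=1.95, factor_plasmid=1.90))
```

The second call illustrates why the correction matters: small differences between the two amplification factors are raised to the power of the Ct value, so they shift the estimate substantially.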

Validation Parameters and Acceptance Criteria

What validation parameters should I establish for regulated bioanalysis of cell and gene therapies? For regulated environments, implement comprehensive validation testing: [43]

Table: Key Validation Parameters for PCR-Based Copy Number Assays

| Validation Parameter | Assessment Method | Recommended Acceptance Criteria |

|---|---|---|

| Accuracy and Bias | Comparison of measured vs. expected values in reference materials | % Recovery within established limits (e.g., 80-120%) [12] |

| Precision | Repeatability (within-run) and intermediate precision (between-run) | Coefficient of variation (CV) ≤ 25% for LLOQ, ≤ 20% for other levels [43] |

| Limit of Quantification (LOQ) | Lowest concentration with acceptable accuracy and precision | CV ≤ 25% and % recovery within 80-120% [43] |

| Linearity and Range | Series of dilutions across expected concentration range | R² ≥ 0.98 [14] |

| Specificity | Amplification in presence of potentially interfering substances | No significant impact on quantification [43] |

| Robustness | Deliberate variations in method parameters | CV within acceptable precision limits [12] |
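The precision and accuracy criteria in the table reduce to two simple statistics. The sketch below computes them for a set of replicate measurements and applies the example limits quoted above (CV ≤ 25% at the LLOQ, ≤ 20% elsewhere, recovery 80-120%); the function names and replicate values are hypothetical, and the limits should be replaced by those fixed in your own validation plan.

```python
import statistics

def percent_cv(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def percent_recovery(values, expected):
    """Mean measured value as a percentage of the expected value."""
    return 100.0 * statistics.mean(values) / expected

def meets_criteria(values, expected, is_lloq=False,
                   recovery_limits=(80.0, 120.0)):
    """Apply the example CV and recovery limits from the table."""
    cv_limit = 25.0 if is_lloq else 20.0
    low, high = recovery_limits
    return (percent_cv(values) <= cv_limit
            and low <= percent_recovery(values, expected) <= high)

# Hypothetical replicate results (copies/uL) for a reference material at 100 copies/uL.
replicates = [95.0, 105.0, 100.0]
print("CV %:", percent_cv(replicates))
print("recovery %:", percent_recovery(replicates, expected=100.0))
print("pass:", meets_criteria(replicates, expected=100.0))
```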

Research Reagent Solutions

Table: Essential Materials for Copy Number Quantification Assays

| Reagent/Equipment | Function | Considerations |

|---|---|---|

| SYBR Green Master Mix | Intercalating dye for qPCR detection | Cost-effective; requires melting curve analysis to verify specificity [41] |

| Hydrolysis Probes (TaqMan) | Sequence-specific detection with fluorescent reporter | Higher specificity; enables multiplexing; more expensive [41] [43] |

| Hot-Start DNA Polymerases | Enzymes with reduced activity at room temperature | Improve specificity by preventing primer-dimer formation [16] |

| Restriction Enzymes (HaeIII) | Digest genomic DNA to improve target accessibility | Enhances precision, especially for ddPCR and complex templates [14] |

| Reference Assays (e.g., RNase P) | Endogenous reference for normalization | Should have a known copy number that is stable across samples [44] |

| Hybrid Amplicon Standards | Synthetic reference materials for validation | Contain both target and reference sequences; useful for quality control [12] |

Figure 2: Primer/Probe Development and Validation Workflow

Platform Selection Guide

When should I choose dPCR over qPCR for copy number quantification? Digital PCR offers advantages in specific scenarios: [14]

- Required Precision: dPCR demonstrates higher precision (lower CV values), particularly beneficial for detecting small fold-changes [14]

- Inhibition Tolerance: dPCR is less susceptible to PCR inhibitors common in environmental and complex biological samples [14]

- Low Abundance Targets: dPCR shows superior sensitivity for targets present at very low copy numbers [14]

- Absolute Quantification: dPCR provides direct absolute quantification without standard curves [14]

Limitations of dPCR:

- Dynamic range is similar to qPCR [14]

- Higher cost per sample than qPCR [41] [14]

- Requires platform-specific optimization and validation [43] [14]

Comparative Performance: In platform comparisons, the QX200 ddPCR system showed LOD of 0.17 copies/μL and LOQ of 4.26 copies/μL, while the QIAcuity One ndPCR system demonstrated LOD of 0.39 copies/μL and LOQ of 1.35 copies/μL. [14]

Technical Support Center

Frequently Asked Questions (FAQs)

1. What is the primary advantage of allele-specific dPCR over the classic dPCR approach for copy number analysis? The primary advantage is enhanced precision and sensitivity, particularly for detecting copy number (CN) alterations below 4.6 and in samples with significant heterogeneity, such as liquid biopsies or formalin-fixed paraffin-embedded (FFPE) specimens. The SNP-based method can detect a CN of 2.1 in approximately 75% of experiments, a significant improvement over the ~40% detection rate of the classic approach [45].

2. When should I use the classic dPCR approach instead of the allele-specific method? The classic approach remains a valid choice when a stable and reliable genomic reference locus has been identified and validated. Both methods perform equally well under ideal conditions, but the classic approach can fail if the reference locus itself is unstable in the sample being tested [46].

3. My dPCR results show high variability. What could be the cause? High variability can stem from several factors related to sample quality and reaction setup. Key areas to investigate are:

- Template Integrity: Degraded DNA can lead to smeared results. Assess DNA integrity by gel electrophoresis and ensure it is stored in molecular-grade water or TE buffer [16].

- Template Purity: Residual PCR inhibitors like phenol, EDTA, or salts can inhibit the polymerase. Re-purify your DNA or use polymerases with high inhibitor tolerance [16].

- Reaction Non-homogeneity: Ensure all reagent stocks and prepared reactions are mixed thoroughly to eliminate density gradients formed during storage and setup [16].

4. How can I improve the precision of my dPCR measurements? Precision can be improved by:

- Platform and Enzyme Choice: Some digital PCR platforms demonstrate higher precision with specific restriction enzymes. For instance, one study found higher precision using HaeIII instead of EcoRI, especially for a droplet-based system [14].

- Optimizing Additives: The use of PCR additives or co-solvents (e.g., GC Enhancer) can help denature difficult templates, but their concentration should be optimized as excess can reduce precision [16].

5. What are the critical steps in designing primers for allele-specific dPCR? The core principle is to design primers whose 3' terminal nucleotide is complementary to the allele-specific variant you wish to detect. A mismatch at the 3' end is refractory to primer extension under optimized conditions, so the non-matching allele is not amplified. To enhance specificity, you can also engineer an additional mismatch at the nucleotide immediately 5' of the variant site [47].
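The design principle can be sketched in a few lines. This is an illustration under stated assumptions, not a validated design tool: the function name, the template sequence, and the base-substitution table used for the extra mismatch are hypothetical, and the primer is written on the sense strand so that its 3' base pairs with the variant on the opposite strand.

```python
# Arbitrary substitution used to introduce the deliberate penultimate mismatch.
MISMATCH_SWAP = {"A": "C", "C": "A", "G": "T", "T": "G"}

def allele_specific_primer(template, variant_index, allele,
                           length=20, extra_mismatch=False):
    """Forward primer whose 3' terminal base is the chosen allele.

    template       sense-strand sequence around the variant (5' to 3')
    variant_index  0-based position of the variant in template
    allele         base to place at the primer's 3' end
    extra_mismatch if True, alter the base immediately 5' of the variant
    """
    start = variant_index - length + 1
    if start < 0:
        raise ValueError("not enough upstream sequence for this primer length")
    bases = list(template[start:variant_index].upper()) + [allele.upper()]
    if extra_mismatch:
        bases[-2] = MISMATCH_SWAP[bases[-2]]
    return "".join(bases)

# Hypothetical template with a G/A variant at index 21.
template = "ACGTACGTACGTACGTACGTAGCTAGCTAG"
print(allele_specific_primer(template, 21, "G"))
print(allele_specific_primer(template, 21, "A"))
print(allele_specific_primer(template, 21, "A", extra_mismatch=True))
```

The two allele-specific primers differ only at their last base, which is what makes discrimination depend on extension from the 3' end; which substitution works best for the additional mismatch has to be determined empirically.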

Troubleshooting Guides

Problem 1: Low or No Amplification Yield

| Possible Cause | Recommendations & Solutions |

|---|---|

| Insufficient Template Quality/Quantity | Check DNA integrity by gel electrophoresis [16]; use a spectrophotometer to check the 260/280 ratio (≥1.8 for pure DNA) and confirm the absence of inhibitors [48]; increase the amount of input DNA template or the number of PCR cycles [16] |

| Suboptimal Thermal Cycling Conditions | Optimize the annealing temperature in 1–2°C increments (the optimum is typically 3–5°C below the lowest primer Tm) [16]; ensure the denaturation temperature and time are sufficient to fully separate double-stranded DNA, especially for GC-rich targets [16] |

| Primer-Related Issues | Verify primer design: length (18-30 nt), GC content (40-60%), and Tm of primer pairs within 5°C of each other [48]; optimize primer concentration, typically between 0.1–1 µM [16] |
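The primer design limits listed in the table are easy to screen automatically before ordering oligonucleotides. The sketch below is a rough pre-check only: the function names and sequences are hypothetical, and the Tm is estimated with the simple Wallace rule (2°C per A/T, 4°C per G/C), which is an assumption made for brevity; a nearest-neighbor calculation is preferable for final designs.

```python
def gc_percent(seq):
    """GC content of a primer in percent."""
    seq = seq.upper()
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)

def wallace_tm(seq):
    """Crude Tm estimate in degrees C: 2 * (A + T) + 4 * (G + C)."""
    seq = seq.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc

def check_primer_pair(forward, reverse):
    """Return a list of rule violations; an empty list means no issue found."""
    problems = []
    for name, seq in (("forward", forward), ("reverse", reverse)):
        if not 18 <= len(seq) <= 30:
            problems.append(f"{name}: length {len(seq)} nt outside 18-30 nt")
        if not 40.0 <= gc_percent(seq) <= 60.0:
            problems.append(f"{name}: GC {gc_percent(seq):.0f}% outside 40-60%")
    tm_gap = abs(wallace_tm(forward) - wallace_tm(reverse))
    if tm_gap > 5:
        problems.append(f"Tm difference {tm_gap} C exceeds 5 C")
    return problems

# Hypothetical primer pair that satisfies all three rules.
print(check_primer_pair("AGCTGACCTGAAGGCTCTGA", "TCAGGTCCAGCATGTTCAGG"))

# A short, AT-only forward primer triggers every check.
print(check_primer_pair("ATATATATATAT", "TCAGGTCCAGCATGTTCAGG"))
```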

Problem 2: Multiple or Non-Specific Products

| Possible Cause | Recommendations & Solutions |

|---|---|

| Low Annealing Specificity | Increase the annealing temperature stepwise to improve stringency [16] [48]; shorten the annealing time to minimize binding to non-specific sequences [16] |

| Excess Reaction Components | Lower the primer concentration to reduce primer-dimer formation and non-specific annealing [16]; review and optimize Mg2+ concentration, as excess Mg2+ can promote non-specific amplification [16] |

| Inappropriate Polymerase | Use a hot-start DNA polymerase to suppress enzyme activity during reaction setup, thereby preventing non-specific amplification at lower temperatures [16] |

Problem 3: Inaccurate Copy Number Quantification

| Possible Cause | Recommendations & Solutions |

|---|---|

| Suboptimal dPCR Setup | Ensure proper partitioning and know your system's LOD and LOQ, since the limits of detection and quantification vary by platform [14]; use a restriction enzyme to digest the DNA, which can improve the accessibility of the target sequence and increase precision [14] |

| Low Reaction Fidelity | Use high-fidelity DNA polymerases for applications requiring accurate sequencing or cloning [16]; ensure dNTP concentrations are balanced, as unbalanced nucleotides increase the error rate [16]; reduce the number of PCR cycles if possible, as high cycle numbers increase misincorporation [16] |

Experimental Protocols

Protocol 1: Implementing SNP-Based dPCR for Copy Number Alteration