Evaluating Feature Selection Methods: A Comprehensive Guide for Biomedical Data Analysis and Drug Discovery

Abstract

This article provides a comprehensive framework for evaluating feature selection methods, tailored for researchers and professionals in drug development and biomedical sciences. It explores the foundational principles of feature selection, details the three primary methodological categories (filter, wrapper, and embedded methods), and addresses critical troubleshooting and optimization strategies for high-dimensional biological data. The guide further presents rigorous validation and comparative benchmarking approaches, drawing on recent studies in drug response prediction and single-cell RNA sequencing to illustrate performance evaluation across diverse biomedical applications. The content synthesizes key insights to enhance model interpretability, predictive accuracy, and computational efficiency in precision medicine initiatives.

Why Feature Selection Matters: Foundational Concepts and Challenges in Biomedical Data

The Critical Role of Feature Selection in High-Dimensional Biomedical Data

High-dimensional biomedical datasets, characterized by a vast number of features relative to sample size, present significant challenges for analysis in fields such as disease diagnostics, biomarker discovery, and drug development. The curse of dimensionality can lead to overfitting, increased computational complexity, and reduced model interpretability [1] [2]. Feature selection (FS) has emerged as a critical preprocessing step that addresses these challenges by identifying and retaining the most informative features while eliminating irrelevant or redundant ones [3].

This guide provides an objective comparison of feature selection methodologies, evaluating their performance across various biomedical applications. By synthesizing experimental data from recent studies, we aim to offer researchers and drug development professionals evidence-based guidance for selecting appropriate FS techniques to enhance model accuracy, stability, and clinical relevance.

Comparative Performance of Feature Selection Methods

Classification Accuracy and Feature Reduction

Experimental comparisons across multiple biomedical datasets reveal significant performance differences among feature selection methods. The following table summarizes results from controlled benchmarking studies:

Table 1: Performance Comparison of Feature Selection Methods on Biomedical Datasets

| Feature Selection Method | Dataset | Reported Performance | Feature Reduction | Classifier(s) Used |
|---|---|---|---|---|
| BF-SFLA [4] | High-dimensional biomedical data | Significant improvement reported | Not specified | K-NN, C4.5 Decision Tree |
| TMGWO-SVM [2] | Wisconsin Breast Cancer | 96.0% accuracy | 4 features retained | SVM |
| Ensemble FS (Waterfall) [3] | BioVRSea (Biosignal) | F1-score maintained or increased by up to 10% | >50% | SVM, Random Forest |
| Ensemble FS (Waterfall) [3] | SinPain (Medical Imaging) | F1-score maintained or increased by up to 10% | >50% | SVM, Random Forest |
| DR-RPMODE [5] | 16 classification datasets | Outperformed 7 comparison algorithms | Significant reduction achieved | K-NN |
| Embedded Methods (RFI, RFE) [6] | CWRU Bearing, NASA Battery | >98.4% average F1-score | ~33% (to 10 features) | SVM, LSTM |

The Two-phase Mutation Grey Wolf Optimization (TMGWO) hybrid approach demonstrated superior performance in feature selection and classification accuracy compared to other experimental methods, achieving 96% accuracy on the Breast Cancer dataset using only 4 features [2]. Similarly, the BF-SFLA (Bacterial Foraging-Shuffled Frog Leaping Algorithm) obtained better feature subsets and improved classification accuracy compared to improved genetic algorithms, particle swarm optimization, and the basic shuffled frog leaping algorithm [4].

Stability and Robustness Metrics

Stability—the robustness of feature selection to perturbations in training data—is crucial for biomarker discovery. The Adjusted Stability Measure (ASM) accounts for chance selection and provides a more reliable assessment than unadjusted measures [7]:

Table 2: Stability Performance of Classifier-Based Feature Selection Methods

| Feature Selection Method | Average Features Selected | Adjusted Stability (ASM) | Unadjusted Stability (USM) |
|---|---|---|---|
| Support Vector Machine (SVM) | 38 | ~0.25 | ~0.52 |
| Logistic Regression (LR) | 32 | ~0.20 | ~0.48 |
| Naïve Bayes (NB) | 54 | ~0.05 | ~0.68 |

The data demonstrates that Naïve Bayes, while appearing more stable according to unadjusted measures, actually performs worse when correction for chance is applied, primarily due to its selection of larger feature subsets [7]. This highlights the importance of using appropriate stability metrics that account for random selection effects.
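The chance effect described above can be reproduced with a short sketch. It assumes the adjusted measure takes the chance-corrected form (r − k₁k₂/n) / (min(k₁, k₂) − max(0, k₁ + k₂ − n)) for two subsets of sizes k₁ and k₂ drawn from n features with overlap r, and it uses the Jaccard index as a stand-in for an unadjusted measure; the exact definitions used in [7] may differ. Purely random selections of 54 out of 100 features look far more "stable" than random selections of 10 under Jaccard, while the adjusted score stays near zero for both, because larger subsets overlap more by chance alone.

```python
import random
from itertools import combinations


def adjusted_similarity(s1, s2, n_features):
    """Chance-corrected overlap of two feature subsets (assumed ASM-style form)."""
    s1, s2 = set(s1), set(s2)
    k1, k2 = len(s1), len(s2)
    r = len(s1 & s2)                          # observed overlap
    expected = k1 * k2 / n_features           # overlap expected for random subsets
    max_r = min(k1, k2)                       # largest possible overlap
    min_r = max(0, k1 + k2 - n_features)      # smallest possible overlap
    if max_r == min_r:                        # overlap is forced; carries no information
        return 0.0
    return (r - expected) / (max_r - min_r)


def jaccard(s1, s2, n_features=None):
    """Unadjusted overlap |intersection| / |union| (n_features is unused)."""
    s1, s2 = set(s1), set(s2)
    union = s1 | s2
    return len(s1 & s2) / len(union) if union else 1.0


def average_stability(subsets, n_features, similarity):
    """Mean pairwise similarity over all pairs of selected subsets."""
    pairs = list(combinations(subsets, 2))
    return sum(similarity(a, b, n_features) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    rng = random.Random(0)
    n = 100
    for k in (10, 54):
        # 20 purely random "selections" of k features: no genuine stability at all.
        subsets = [rng.sample(range(n), k) for _ in range(20)]
        print(f"k={k}: Jaccard={average_stability(subsets, n, jaccard):.2f}, "
              f"adjusted={average_stability(subsets, n, adjusted_similarity):.2f}")
```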

Experimental Protocols and Methodologies

Benchmarking Frameworks and Evaluation Metrics

A comprehensive Python framework for benchmarking feature selection algorithms evaluates multiple performance aspects [1]:

- Selection Accuracy: Measures how effectively relevant features are chosen

- Stability: Assesses consistency of selected features under data variations

- Prediction Performance: Evaluates impact on classifier performance

- Computational Efficiency: Measures algorithm runtime requirements

- Redundancy: Quantifies correlation among selected features

The framework employs multiple datasets from domains such as gene expression in cancer patients and hemogram examination data from COVID-19 patients, ensuring robust evaluation across diverse biomedical contexts [1].

Ensemble Feature Selection for Healthcare Data

Recent research introduced a scalable ensemble feature selection strategy for multi-biometric healthcare datasets [3]. The methodology employs a two-stage approach:

- Tree-based feature ranking to initially assess feature importance

- Greedy backward feature elimination to refine the feature subset

The resulting subsets are combined using a specific merging strategy to produce a single set of clinically relevant features. This "waterfall selection" approach demonstrated effective dimensionality reduction, achieving over 50% decrease in feature subsets while maintaining or improving classification metrics when tested with Support Vector Machine and Random Forest models [3].
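The two stages can be sketched with scikit-learn as follows. This is an illustrative reconstruction, not the implementation from [3]: the dataset (scikit-learn's copy of the Wisconsin breast cancer data), keeping the top half of features after ranking, the target of 8 final features, and the SVM used during backward elimination are all assumptions, and the merging strategy is not reproduced because the text does not describe it.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_breast_cancer(return_X_y=True)

# Stage 1: tree-based ranking by impurity importance; keep the top half
# (the one-half cut-off is an illustrative assumption).
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)
ranked = np.argsort(forest.feature_importances_)[::-1]
stage1 = ranked[: X.shape[1] // 2]

# Stage 2: greedy backward elimination on the surviving features; at each step
# the feature whose removal gives the best 5-fold CV accuracy is dropped.
svm = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
backward = SequentialFeatureSelector(
    svm, n_features_to_select=8, direction="backward", scoring="accuracy", cv=5
)
backward.fit(X[:, stage1], y)
final = stage1[backward.get_support()]   # map back to original column indices

print("stage 1 kept:", sorted(stage1.tolist()))
print("final subset:", sorted(final.tolist()))
```

Selection here uses the full dataset for brevity; an unbiased performance estimate would repeat both stages inside each training fold.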

Workflow for High-Dimensional Feature Selection

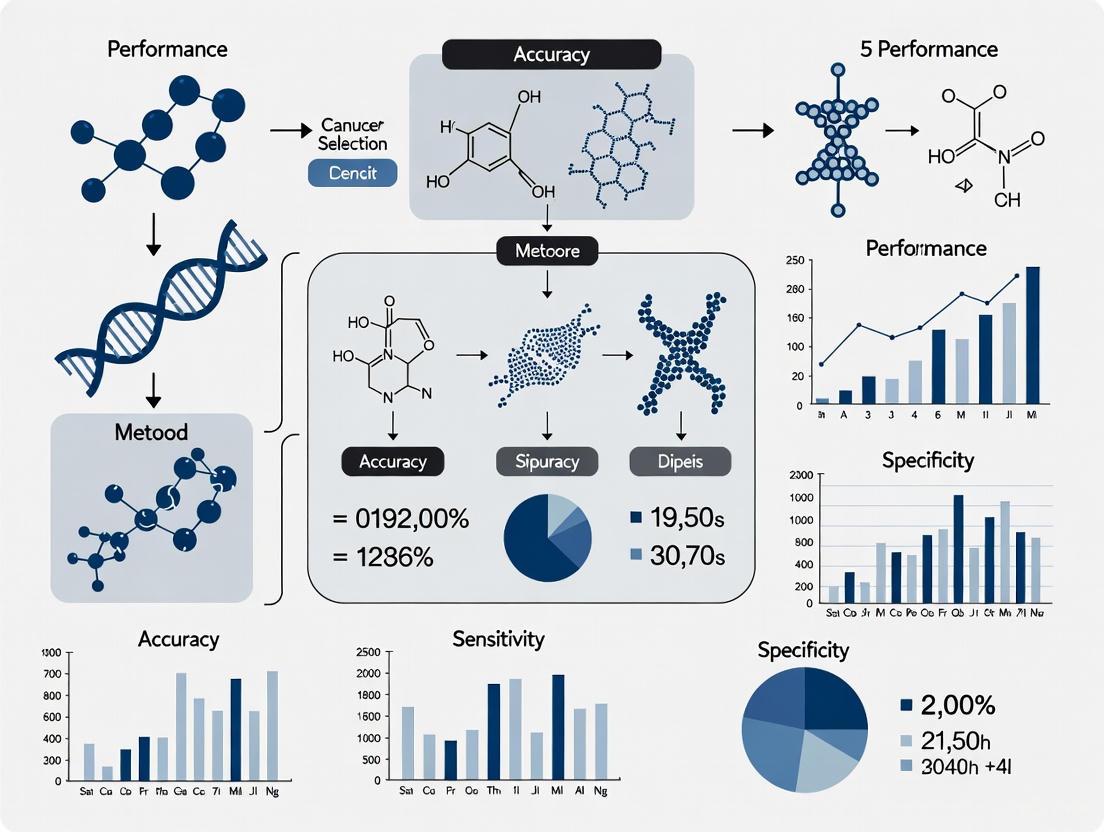

The following diagram illustrates the typical experimental workflow for high-dimensional feature selection in biomedical research:

Advanced Feature Selection Algorithms

Multi-Objective Evolutionary Approaches

The DR-RPMODE algorithm addresses high-dimensional feature selection through a hybrid approach combining fast dimensionality reduction with multi-objective differential evolution [5]. The method consists of two key phases:

- DR Phase: Uses freezing and activation operators to remove irrelevant and redundant features

- RPMODE Phase: Implements multi-objective differential evolution with redundant and preference processing

Experimental results on 16 classification datasets demonstrate that DR-RPMODE outperforms comparison algorithms, with advantages becoming more pronounced as data dimensionality increases [5].

Hybrid Nature-Inspired Algorithms

BF-SFLA improves upon the basic shuffled frog leaping algorithm by introducing chemokine operation and balanced grouping strategies, which maintain balance between global optimization and local optimization while reducing the possibility of the algorithm falling into local optima [4]. This approach is particularly effective for high-dimensional biomedical data containing many irrelevant or weakly correlated features that impact disease diagnosis efficiency.

Domain-Specific Applications

Single-Cell RNA Sequencing Data

Feature selection critically affects performance in scRNA-seq data integration and querying [8]. Benchmarking studies reveal that:

- Highly variable feature selection remains effective for producing high-quality integrations

- The number of selected features significantly impacts integration quality

- Batch-aware feature selection methods improve integration when dealing with data from multiple sources

- Feature selection interacts with integration models, affecting downstream analysis including query mapping and label transfer
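The ideas behind highly variable and batch-aware feature selection can be illustrated with a simplified, NumPy-only sketch. It is not the scanpy or Seurat implementation benchmarked in [8]; the normalization to 10,000 counts per cell, the 20 quantile bins of mean expression, and the rule of ranking genes by the number of batches in which they are highly variable (ties broken by gene index) are assumptions chosen for illustration.

```python
import numpy as np


def dispersion_hvg(counts, n_top=200, n_bins=20):
    """Indices of the n_top most variable genes (simplified dispersion-based HVG).

    counts: cells x genes array of raw counts.
    """
    lib = counts.sum(axis=1, keepdims=True)
    # Library-size normalize to 10,000 counts per cell (assumed target), then log1p.
    logn = np.log1p(counts / np.maximum(lib, 1) * 1e4)
    mean = logn.mean(axis=0)
    var = logn.var(axis=0, ddof=1)
    # Log dispersion (variance-to-mean ratio); small constants avoid log(0).
    disp = np.log(var / np.maximum(mean, 1e-12) + 1e-12)
    # Z-score dispersion within bins of similar mean expression, so each gene is
    # compared only with genes expressed at a comparable level.
    edges = np.quantile(mean, np.linspace(0, 1, n_bins + 1))
    bins = np.clip(np.searchsorted(edges, mean, side="right") - 1, 0, n_bins - 1)
    z = np.zeros_like(disp)
    for b in np.unique(bins):
        idx = bins == b
        sd = disp[idx].std()
        if sd > 0:
            z[idx] = (disp[idx] - disp[idx].mean()) / sd
    return np.argsort(z)[::-1][:n_top]


def batch_aware_hvg(counts, batches, n_top=200):
    """Rank genes by how many batches call them highly variable (ties by gene index)."""
    votes = np.zeros(counts.shape[1], dtype=int)
    for b in np.unique(batches):
        votes[dispersion_hvg(counts[batches == b], n_top)] += 1
    return np.argsort(-votes, kind="stable")[:n_top]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    base = rng.gamma(2.0, 5.0, size=500)            # 500 genes, mean ~10 counts
    rates = np.tile(base, (400, 1))                 # 400 cells
    group = rng.random(400) < 0.5
    rates[np.ix_(group, np.arange(20))] *= 8.0      # genes 0-19 differ between cell groups
    counts = rng.poisson(rates)
    batches = np.repeat([0, 1], 200)                # two synthetic batches
    print(sorted(dispersion_hvg(counts, n_top=50).tolist())[:20])
    print(sorted(batch_aware_hvg(counts, batches, n_top=50).tolist())[:20])
```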

Industrial Fault Diagnosis with Biomedical Parallels

While not strictly biomedical, research on industrial fault classification provides valuable insights for biomedical signal processing [6]. Embedded feature selection methods like Random Forest Importance (RFI) and Recursive Feature Elimination (RFE) achieved exceptional performance (average F1-score exceeding 98.40%) using only 10 selected features from time-domain sensor data. These approaches show potential for adaptation to biomedical signal processing applications such as EEG and EMG analysis.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Feature Selection Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| Python Benchmarking Framework [1] | Comprehensive evaluation of FS algorithms | General biomedical data analysis |
| scikit-feature Repository [5] | Provides benchmark datasets and algorithms | Method development and testing |
| WEKA [7] | Implementation of classifier-based FS | Stability analysis and method comparison |
| R Boruta Package [9] | Random forest-based variable selection | Regression modeling of continuous outcomes |
| aorsf R Package [9] | Oblique random forest feature selection | High-dimensional continuous outcome data |
| Open Problems in Single-cell Analysis [8] | Benchmarking platform for scRNA-seq | Single-cell data integration and mapping |

Feature selection plays a critical role in overcoming the challenges posed by high-dimensional biomedical data. In the studies reviewed here, advanced methods, particularly hybrid evolutionary approaches and ensemble techniques, outperformed traditional feature selection algorithms in classification accuracy, feature reduction, and model interpretability.

The optimal choice of feature selection method depends on specific data characteristics and analytical goals. For knowledge discovery tasks such as biomarker identification, stability becomes as important as accuracy. Researchers should consider the interplay between feature selection, classifier choice, and domain-specific requirements when designing analytical workflows for biomedical data analysis.

Future research directions include developing more scalable algorithms for ultra-high-dimensional data, improving method stability without sacrificing accuracy, and creating standardized benchmarking frameworks specific to biomedical applications.

Feature selection represents a critical preprocessing step in machine learning pipelines, particularly within scientific research domains where high-dimensional data is prevalent. The core objectives driving feature selection implementation include enhancing model performance, improving computational efficiency, and increasing model interpretability—all essential considerations for researchers, scientists, and drug development professionals working with complex biological and chemical datasets [10] [11]. By strategically reducing the feature space to only the most relevant variables, feature selection methods help mitigate the curse of dimensionality, reduce overfitting, decrease training times, and yield more parsimonious models that are easier to interpret and explain to stakeholders [12] [11].

The theoretical foundation of feature selection rests on its ability to address the challenges inherent in high-dimensional data analysis. As the number of features increases, data points grow more distant within the model space, creating sparse regions that make pattern recognition more difficult for machine learning algorithms [11]. This phenomenon, known as the curse of dimensionality, can severely impair model performance unless addressed through techniques like feature selection or additional data collection [2]. For scientific researchers dealing with -omics data, high-throughput screening results, or complex clinical datasets, feature selection provides a methodological approach to isolate the most biologically or chemically significant variables from thousands of potential candidates.

Methodological Framework: Categories of Feature Selection Techniques

Feature selection methods can be broadly categorized into three distinct approaches—filter, wrapper, and embedded methods—each with characteristic mechanisms, strengths, and limitations. Understanding these methodological categories is essential for selecting appropriate techniques for specific research contexts and data characteristics.

Filter Methods

Filter methods employ statistical measures to evaluate the relevance of features independently of any specific machine learning algorithm [10] [11]. These techniques assess the relationship between each input variable and the target variable using statistical tests such as correlation coefficients, chi-square tests, or information gain [12] [11]. The primary advantage of filter methods lies in their computational efficiency and model independence, making them particularly suitable for high-dimensional datasets during preliminary feature screening [10]. However, most filter methods score each feature individually, so they may overlook interactions between features and ignore the characteristics of the learning algorithm that is ultimately used [10].

Common filter techniques include:

- Pearson's correlation coefficient: Measures linear relationships between continuous variables [11] [13]

- Chi-square test: Assesses independence between categorical variables [11]

- ANOVA (Analysis of Variance) F-test: Tests whether the mean of a continuous feature differs across the classes of a categorical target [11]

- Mutual information: Captures both linear and non-linear dependencies between variables [11]

- Variance threshold: Removes features with variability below a specified threshold [11]
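Several of these filters are available in scikit-learn's `sklearn.feature_selection` module. The sketch below applies them to scikit-learn's copy of the Wisconsin breast cancer data; the variance cut-off of 1e-4 and the choice of ten features are illustrative assumptions. The chi-square test is left out because it is intended for non-negative count or categorical features. For a binary target, the two-group ANOVA F statistic is a monotone function of the squared Pearson (point-biserial) correlation, so those two rankings agree here.

```python
from functools import partial

import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import (
    SelectKBest,
    VarianceThreshold,
    f_classif,
    mutual_info_classif,
)

X, y = load_breast_cancer(return_X_y=True)  # 569 samples, 30 continuous features

# Variance threshold: drop near-constant features. Variance is scale-dependent,
# so the cut-off (an illustrative assumption) only makes sense on unscaled data.
vt = VarianceThreshold(threshold=1e-4).fit(X)
X_vt = X[:, vt.get_support()]

# Absolute Pearson correlation between each remaining feature and the binary target.
pearson = np.array([abs(np.corrcoef(X_vt[:, j], y)[0, 1]) for j in range(X_vt.shape[1])])
top_pearson = set(np.argsort(pearson)[::-1][:10].tolist())

# ANOVA F-test and mutual information, each keeping its 10 highest-scoring features.
anova = SelectKBest(f_classif, k=10).fit(X_vt, y)
mi = SelectKBest(partial(mutual_info_classif, random_state=0), k=10).fit(X_vt, y)
top_anova = set(anova.get_support(indices=True).tolist())
top_mi = set(mi.get_support(indices=True).tolist())

print(f"{X_vt.shape[1]} of {X.shape[1]} features pass the variance threshold")
print("Pearson and ANOVA pick the same features:", top_pearson == top_anova)
print("ANOVA/MI overlap:", len(top_anova & top_mi), "of 10")
```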

Wrapper Methods

Wrapper methods evaluate feature subsets by training a specific machine learning algorithm and assessing its performance using metrics such as accuracy or F1-score [10] [12]. These approaches employ search strategies to explore the feature space, making them computationally intensive but often yielding superior performance for the specific algorithm employed [10]. Because they score features jointly through the model, wrapper methods can capture feature interactions; however, repeatedly optimizing the subset against the same data increases the risk of overfitting, particularly with limited samples [10].

Prominent wrapper approaches include:

- Forward selection: Iteratively adds features until performance no longer improves [11]

- Backward elimination: Starts with all features and removes the least important ones sequentially [11]

- Recursive Feature Elimination (RFE): Eliminates features based on their relative importance rankings [12] [11]

- Exhaustive feature selection: Tests all possible feature combinations to identify the optimal subset [11]
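Forward selection and RFE are both implemented in scikit-learn (`SequentialFeatureSelector` also supports `direction="backward"`). The sketch below assumes logistic regression as the wrapped model and a target of five features; scaling the whole dataset once before selection is a shortcut that slightly leaks information and would be moved inside cross-validation in a real evaluation. The last step counts how many subsets an exhaustive search over 5-feature combinations would have to evaluate, which shows why that approach is rarely practical.

```python
import math

from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import RFE, SequentialFeatureSelector
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X = StandardScaler().fit_transform(X)      # scaled once up front for brevity
model = LogisticRegression(max_iter=1000)  # assumed wrapped model

# Forward selection: greedily add the feature that most improves 5-fold CV accuracy.
forward = SequentialFeatureSelector(
    model, n_features_to_select=5, direction="forward", scoring="accuracy", cv=5
).fit(X, y)

# RFE: fit, drop the feature with the smallest |coefficient|, refit, until 5 remain.
rfe = RFE(model, n_features_to_select=5, step=1).fit(X, y)

# Exhaustive search: every 5-feature subset of 30 would need its own CV run.
n_subsets = math.comb(X.shape[1], 5)

print("forward:", forward.get_support(indices=True))
print("RFE:    ", rfe.get_support(indices=True))
print("5-feature subsets of 30:", n_subsets)
```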

Embedded Methods

Embedded methods integrate feature selection directly into the model training process, offering a balanced approach that combines the efficiency of filter methods with the performance-oriented nature of wrapper methods [10] [12]. These techniques leverage the intrinsic properties of algorithms to perform feature selection during model construction, often through regularization mechanisms or importance scoring [11]. Tree-based models, for instance, naturally provide feature importance scores based on how much they reduce impurity across all trees in the ensemble [11].

Key embedded techniques include:

- LASSO regression (L1 regularization): Adds a penalty on the absolute values of the coefficients to the loss function, shrinking them and setting the coefficients of less important features exactly to zero [11]

- Random Forest importance: Uses Gini impurity or information gain across multiple decision trees to rank feature relevance [11]

- Gradient boosting: Sequentially builds predictors that correct previous errors, highlighting influential features [11]

- SelectFromModel: A scikit-learn meta-transformer that keeps the features whose importance weights (coefficients or feature importances) from a fitted estimator exceed a threshold [12]
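Two of these embedded techniques can be combined with `SelectFromModel` in a few lines. The sketch below uses L1-penalized logistic regression (the classification analogue of LASSO) and random forest impurity importance; the regularization strength `C=0.1`, the 200 trees, and the "median" importance threshold are illustrative assumptions rather than recommended settings.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)

# L1-penalized logistic regression: features whose coefficients are driven
# exactly to zero are discarded. Scaling first makes the penalty comparable
# across features.
l1_pipe = make_pipeline(
    StandardScaler(),
    SelectFromModel(LogisticRegression(penalty="l1", solver="liblinear", C=0.1)),
).fit(X, y)
kept_l1 = l1_pipe[-1].get_support(indices=True)

# Random forest impurity importance: keep features at or above the median importance.
rf_selector = SelectFromModel(
    RandomForestClassifier(n_estimators=200, random_state=0), threshold="median"
).fit(X, y)
kept_rf = rf_selector.get_support(indices=True)

print(f"L1 kept {len(kept_l1)} features:", kept_l1)
print(f"RF kept {len(kept_rf)} features:", kept_rf)
```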

Hybrid and Advanced Approaches

Recent methodological advances have introduced hybrid frameworks that combine elements from multiple feature selection paradigms. These approaches aim to leverage the complementary strengths of different techniques while mitigating their individual limitations [2]. Particularly promising are hybrid metaheuristic algorithms that optimize feature subsets using nature-inspired computation, such as the Two-phase Mutation Grey Wolf Optimization (TMGWO), Improved Salp Swarm Algorithm (ISSA), and Binary Black Particle Swarm Optimization (BBPSO) [2]. These sophisticated methods have demonstrated remarkable performance in high-dimensional classification tasks, achieving accuracy improvements of up to 18.62% compared to baseline approaches across various datasets [2].
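To make the metaheuristic idea concrete, the sketch below implements a plain binary grey wolf optimizer wrapped around a k-NN classifier. It is deliberately not TMGWO: the two-phase mutation from [2] is omitted, and the sigmoid transfer function with a slope of 10, the fitness weighting (0.99 on cross-validated error, 0.01 on the fraction of features kept), and the small population and iteration counts are assumptions chosen to keep the example fast.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def fitness(mask, X, y, alpha=0.99):
    """Value to minimize: weighted 5-fold CV error plus fraction of features kept."""
    if not mask.any():
        return 1.0                                     # empty subsets are worst
    clf = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
    acc = cross_val_score(clf, X[:, mask], y, cv=5).mean()
    return alpha * (1.0 - acc) + (1.0 - alpha) * mask.mean()


def binary_gwo(X, y, n_wolves=8, n_iter=10, seed=0):
    """Basic binary grey wolf optimizer (assumed sigmoid binarization, no mutation)."""
    rng = np.random.default_rng(seed)
    dim = X.shape[1]
    pos = rng.random((n_wolves, dim)) < 0.5             # each wolf is a feature mask
    scores = np.array([fitness(m, X, y) for m in pos])
    best_i = int(np.argmin(scores))
    best_mask, best_score = pos[best_i].copy(), scores[best_i]
    for t in range(n_iter):
        a = 2.0 - 2.0 * t / n_iter                      # shrinks from 2 toward 0
        leaders = pos[np.argsort(scores)[:3]].astype(float)  # alpha, beta, delta
        for i in range(n_wolves):
            current = pos[i].astype(float)
            moves = []
            for leader in leaders:
                A = 2.0 * a * rng.random(dim) - a       # step size and direction
                C = 2.0 * rng.random(dim)               # random emphasis on the leader
                D = np.abs(C * leader - current)
                moves.append(leader - A * D)
            step = np.mean(moves, axis=0)
            # Sigmoid transfer: continuous step -> probability of selecting each feature.
            prob = 1.0 / (1.0 + np.exp(-10.0 * (step - 0.5)))
            pos[i] = rng.random(dim) < prob
            scores[i] = fitness(pos[i], X, y)
            if scores[i] < best_score:                  # keep the best mask ever seen
                best_mask, best_score = pos[i].copy(), scores[i]
    return best_mask, best_score


if __name__ == "__main__":
    X, y = load_breast_cancer(return_X_y=True)
    mask, score = binary_gwo(X, y)
    print(f"{mask.sum()} features selected, fitness {score:.4f}")
```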

Figure 1: Methodological Workflow of Feature Selection Techniques

Comparative Performance Analysis

The efficacy of feature selection methods varies significantly across datasets, problem domains, and evaluation metrics. This section presents empirical comparisons based on recent scientific studies to provide objective performance assessments.

Performance Across Methodological Categories

Comprehensive evaluations across diverse domains reveal distinct performance patterns among the three primary feature selection categories. In IoT intrusion detection scenarios, filter methods employing feature subset selection (FSS) approaches such as Correlation-based Feature Selection (CFS) demonstrated particular effectiveness, achieving F1 scores above 0.99 while reducing feature dimensionality by over 60% [14]. These methods outperformed both filter feature ranking (FFR) techniques, which sometimes selected correlated attributes, and wrapper approaches, which exhibited lengthy execution times despite producing algorithm-specific optimizations [14].
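Correlation-based Feature Selection scores a subset of k features by the merit k·r̄_cf / √(k + k(k−1)·r̄_ff), where r̄_cf is the mean feature-target correlation and r̄_ff the mean feature-feature correlation, so it rewards subsets that are predictive but not redundant. The sketch below pairs that merit with a greedy forward search. Using absolute Pearson correlation (rather than the symmetrical uncertainty often used for discrete data) and stopping at the first non-improving step are simplifying assumptions, and this is not the configuration evaluated in [14].

```python
import numpy as np


def cfs_merit(subset, r_cf, r_ff):
    """CFS merit of a subset given |feature-target| and |feature-feature| correlations."""
    k = len(subset)
    mean_cf = r_cf[subset].mean()
    if k == 1:
        mean_ff = 0.0
    else:
        block = r_ff[np.ix_(subset, subset)]
        mean_ff = (block.sum() - np.trace(block)) / (k * (k - 1))  # off-diagonal mean
    return k * mean_cf / np.sqrt(k + k * (k - 1) * mean_ff)


def cfs_forward(X, y):
    """Greedy forward search that stops once no addition raises the merit.

    Assumes no constant columns (their correlations would be undefined).
    """
    n_features = X.shape[1]
    r_cf = np.abs([np.corrcoef(X[:, j], y)[0, 1] for j in range(n_features)])
    r_ff = np.abs(np.corrcoef(X, rowvar=False))
    selected, best = [], -np.inf
    remaining = list(range(n_features))
    while remaining:
        merit, j = max((cfs_merit(selected + [j], r_cf, r_ff), j) for j in remaining)
        if merit <= best:
            break
        selected.append(j)
        remaining.remove(j)
        best = merit
    return selected, best


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x0 = rng.normal(size=500)
    x1 = x0 + 0.1 * rng.normal(size=500)   # near-duplicate of x0 (redundant)
    x2 = rng.normal(size=500)              # independent informative feature
    x3 = rng.normal(size=500)              # pure noise
    y = (x0 + x2 + 0.5 * rng.normal(size=500) > 0).astype(float)
    print(cfs_forward(np.column_stack([x0, x1, x2, x3]), y))
```

On this synthetic data the search keeps one of the two near-duplicates plus the independent informative feature, which is the redundancy-avoiding behavior the merit is designed to produce.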

In environmental forecasting applications, research comparing multiple feature selection methods for predicting carbon dioxide emissions found that hybrid approaches integrating filter methods (Pearson correlation), wrapper methods (sequential forward/backward selection), and embedded methods (LASSO regression) significantly enhanced model performance despite small sample sizes [13]. The integration of feature selection with extreme gradient boosting (XGBoost) produced superior results under Gaussian noise conditions, outperforming both statistical models (ridge regression, NGBM) and deep learning approaches (LSTM) in terms of mean squared error and mean absolute percentage error metrics [13].

Hybrid Method Performance in High-Dimensional Classification

Recent advances in hybrid feature selection methods have demonstrated remarkable performance in high-dimensional classification tasks, particularly in biomedical domains. As shown in Table 1, the Two-phase Mutation Grey Wolf Optimization (TMGWO) algorithm combined with Support Vector Machines achieved 96% classification accuracy on the Wisconsin Breast Cancer Diagnostic dataset using only 4 features, outperforming both traditional methods and recent Transformer-based approaches like TabNet (94.7%) and FS-BERT (95.3%) [2].

Table 1: Performance Comparison of Hybrid Feature Selection Methods on Benchmark Datasets

| Method | Dataset | Accuracy | Precision | Recall | Features Selected |
|---|---|---|---|---|---|
| TMGWO-SVM | Breast Cancer (Wisconsin) | 96.0% | 95.8% | 96.2% | 4 |
| ISSA-KNN | Breast Cancer (Wisconsin) | 94.5% | 94.2% | 94.8% | 5 |
| BBPSO-RF | Breast Cancer (Wisconsin) | 95.2% | 95.0% | 95.4% | 6 |
| TabNet (Transformer) | Breast Cancer (Wisconsin) | 94.7% | 94.5% | 95.0% | 8 |
| FS-BERT (Transformer) | Breast Cancer (Wisconsin) | 95.3% | 95.1% | 95.5% | 7 |
| TMGWO-MLP | Differentiated Thyroid Cancer | 93.8% | 93.5% | 94.1% | 5 |
| ISSA-LR | Sonar Dataset | 89.7% | 89.3% | 90.1% | 12 |

The performance advantages of hybrid methods extend beyond simple accuracy metrics. The TMGWO approach incorporates a two-phase mutation strategy that enhances the balance between exploration and exploitation during the feature selection process [2]. Similarly, the Improved Salp Swarm Algorithm (ISSA) integrates adaptive inertia weights, elite salps, and local search techniques to boost convergence accuracy, while Binary Black Particle Swarm Optimization (BBPSO) streamlines the PSO framework through a velocity-free mechanism that preserves global search efficiency while improving computational performance [2].

Computational Efficiency Comparison

Computational requirements represent a critical consideration in feature selection, particularly for resource-constrained environments or large-scale datasets. Filter methods consistently demonstrate superior computational efficiency due to their statistical nature and model independence [10] [11]. Wrapper methods, while often producing optimized feature subsets for specific algorithms, incur significant computational overhead from repeated model training and validation cycles [10] [14]. Embedded methods strike a balance between these extremes, offering algorithm-specific optimization without the exhaustive search procedures of wrapper methods [11].

In practical applications, the computational advantages of filter methods make them particularly suitable for initial feature screening in high-dimensional domains, while wrapper and embedded methods prove more effective during later optimization stages where model performance outweighs efficiency concerns [14]. This efficiency-performance tradeoff necessitates careful consideration based on specific research constraints and objectives.
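The efficiency gap can be measured directly. The sketch below times one selector from each family on scikit-learn's breast cancer data; the target of ten features, logistic regression as the underlying model, and 5-fold CV inside the forward search are assumptions. Absolute timings depend entirely on hardware, so only the relative ordering is meaningful.

```python
import time

from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import (
    RFE,
    SelectFromModel,
    SelectKBest,
    SequentialFeatureSelector,
    f_classif,
)
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X = StandardScaler().fit_transform(X)
model = LogisticRegression(max_iter=1000)

selectors = {
    "filter: ANOVA F-test": SelectKBest(f_classif, k=10),
    "embedded: L1 logistic regression": SelectFromModel(
        LogisticRegression(penalty="l1", solver="liblinear", C=0.1)
    ),
    "wrapper: RFE": RFE(model, n_features_to_select=10),
    "wrapper: forward selection (5-fold CV)": SequentialFeatureSelector(
        model, n_features_to_select=10, direction="forward", cv=5
    ),
}

timings = {}
for name, selector in selectors.items():
    start = time.perf_counter()
    selector.fit(X, y)                      # one complete selection run
    timings[name] = time.perf_counter() - start
    print(f"{name:<40s} {timings[name]:.4f} s")
```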

Table 2: Methodological Tradeoffs in Feature Selection Techniques

| Method Category | Computational Efficiency | Model Performance | Risk of Overfitting | Interpretability |
|---|---|---|---|---|
| Filter Methods | High | Moderate | Low | High |
| Wrapper Methods | Low | High | Moderate to High | Moderate |
| Embedded Methods | Moderate | High | Moderate | Moderate |
| Hybrid Methods | Variable | Very High | Low with proper validation | Moderate |

Experimental Protocols and Validation Frameworks

Robust experimental design is essential for meaningful evaluation of feature selection methods. This section outlines standard protocols and validation methodologies employed in rigorous feature selection research.

Standard Experimental Protocol

A comprehensive feature selection evaluation framework typically incorporates the following methodological components:

Dataset Selection and Partitioning: Experiments should utilize multiple benchmark datasets with varying characteristics (dimensionality, sample size, feature types) to ensure generalizable conclusions. The Wisconsin Breast Cancer Diagnostic dataset, Sonar dataset, and Differentiated Thyroid Cancer recurrence dataset represent examples of commonly employed benchmarks [2]. Standard practice involves partitioning data into training, validation, and test sets, often employing k-fold cross-validation (typically k=10) to mitigate sampling bias [2].
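Partitioning only protects against optimistic estimates if feature selection is refit inside each training fold; selecting features on the full dataset before cross-validation lets the test folds influence the selection. The sketch below shows the effect on pure noise, where no method should beat roughly 50% accuracy. The 60 samples, 2,000 noise features, k = 20, and 5 folds (rather than 10, given so few samples) are illustrative assumptions.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2000))          # 60 samples, 2,000 pure-noise features
y = rng.integers(0, 2, size=60)          # labels unrelated to X
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: the 20 "best" features are chosen using all samples, test folds included.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=cv).mean()

# Correct: the selector is a pipeline step, so it is refit on each training fold only.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
proper = cross_val_score(pipe, X, y, cv=cv).mean()

print(f"selection outside CV: {leaky:.2f}   selection inside CV: {proper:.2f}")
```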

Performance Metric Selection: Multiple evaluation metrics provide complementary insights into method performance. Common classification metrics include accuracy, precision, recall, F1-score, and area under the ROC curve [2] [14]. For regression tasks, mean squared error, mean absolute error, and R-squared values are frequently employed [13].

Baseline Establishment: Comparative analyses must include appropriate baselines, such as performance without feature selection, performance with established feature selection methods, and recent state-of-the-art approaches [2].

Statistical Validation: Significance testing (e.g., paired t-tests, ANOVA) should accompany performance comparisons to ensure observed differences are statistically significant rather than random variations [13].
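A paired comparison requires both pipelines to be scored on identical folds. The sketch below does this with SciPy's `ttest_rel`; the two pipelines compared (ten ANOVA-selected features versus all features, both with logistic regression) are assumptions. Because cross-validation folds share training data, fold scores are not independent and the plain paired t-test tends to understate uncertainty, so corrected resampled tests or repeated cross-validation are often preferred in practice.

```python
import numpy as np
from scipy.stats import ttest_rel
from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
# A fixed random_state makes both calls below use exactly the same 10 splits.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)

with_fs = make_pipeline(StandardScaler(), SelectKBest(f_classif, k=10),
                        LogisticRegression(max_iter=1000))
without_fs = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

scores_fs = cross_val_score(with_fs, X, y, cv=cv)
scores_all = cross_val_score(without_fs, X, y, cv=cv)

# Paired over folds; p is NaN if every fold difference is identical.
t_stat, p_value = ttest_rel(scores_fs, scores_all)
print(f"mean difference {np.mean(scores_fs - scores_all):+.3f}, "
      f"t = {t_stat:.2f}, p = {p_value:.3f}")
```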

Robustness Assessment: Introducing noise (e.g., Gaussian noise) to datasets provides valuable insights into method stability and generalization capability [13]. Similarly, testing performance across different training-test splits assesses robustness to data sampling variations.
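The noise-injection check can be scripted by adding zero-mean Gaussian noise of increasing standard deviation to the features and re-running the cross-validated pipeline at each level, while also recording how many of the originally selected features survive. The noise levels, the ten-feature ANOVA filter, and the classifier are assumptions; standardizing before adding noise simply expresses σ in units of each feature's standard deviation.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
X = StandardScaler().fit_transform(X)    # sigma below is in feature-SD units
rng = np.random.default_rng(0)
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
pipe = make_pipeline(SelectKBest(f_classif, k=10), LogisticRegression(max_iter=1000))

# Features chosen on the clean data, used as the reference for stability.
clean_sel = set(SelectKBest(f_classif, k=10).fit(X, y).get_support(indices=True).tolist())

results, overlaps = {}, {}
for sigma in (0.0, 0.5, 1.0, 2.0):
    X_noisy = X + rng.normal(scale=sigma, size=X.shape)
    results[sigma] = cross_val_score(pipe, X_noisy, y, cv=cv).mean()
    noisy_sel = SelectKBest(f_classif, k=10).fit(X_noisy, y).get_support(indices=True)
    overlaps[sigma] = len(clean_sel & set(noisy_sel.tolist()))
    print(f"sigma={sigma}: accuracy {results[sigma]:.3f}, "
          f"{overlaps[sigma]}/10 clean features still selected")
```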

Validation in Resource-Constrained Scenarios

For research applications involving small sample sizes or limited computational resources, specialized validation protocols are necessary. Studies focusing on small-sample scenarios, such as Taiwan's CO₂ emissions prediction, employ data augmentation techniques and rigorous cross-validation schemes to ensure reliable performance estimation despite limited data [13]. In such contexts, feature selection becomes particularly critical to prevent overfitting and enhance model generalizability.

Computational efficiency validation should include measurements of training time, inference time, and memory requirements under standardized hardware configurations [14]. For embedded or IoT applications, these efficiency metrics may outweigh marginal accuracy improvements when making method selection decisions.

Figure 2: Experimental Validation Framework for Feature Selection Methods

Research Reagents and Computational Tools

Implementation of feature selection methods requires both computational tools and methodological frameworks. The following table outlines essential "research reagents" for conducting rigorous feature selection experiments.

Table 3: Essential Research Reagents for Feature Selection Experiments

| Tool Category | Specific Tools/Libraries | Primary Function | Application Context |
|---|---|---|---|
| Python Libraries | Scikit-learn, SciPy, NumPy | Implementation of filter, wrapper, and embedded methods | General-purpose feature selection |
| Specialized FS Frameworks | MLxtend, Feature-engine | Advanced wrapper and hybrid methods | Research requiring custom FS pipelines |
| Benchmark Datasets | Wisconsin Breast Cancer, Sonar, UCI Repository | Method evaluation and benchmarking | Comparative performance studies |
| Metaheuristic Libraries | Custom implementations (TMGWO, ISSA, BBPSO) | Nature-inspired optimization for feature selection | High-dimensional problem domains |
| Statistical Analysis Tools | StatsModels, R Statistical Environment | Significance testing and result validation | Experimental validation phase |
| Visualization Tools | Matplotlib, Seaborn, Graphviz | Result interpretation and workflow presentation | Results communication and reporting |

This comparative evaluation demonstrates that feature selection method performance is highly context-dependent, with different approaches excelling under specific data characteristics and research objectives. Filter methods provide computational efficiency and interpretability, wrapper methods offer performance optimization for specific algorithms, embedded methods balance efficiency with performance, and hybrid methods push performance boundaries in high-dimensional domains.

The empirical evidence indicates that while traditional methods remain relevant for many applications, emerging hybrid approaches show particular promise for complex scientific domains. The TMGWO algorithm's ability to achieve 96% accuracy with only 4 features on the Breast Cancer dataset exemplifies this potential [2]. Similarly, the integration of multiple feature selection approaches in environmental forecasting demonstrates how methodological synergy can enhance performance even with limited samples [13].

Future research directions should focus on developing more adaptive feature selection methods that automatically adjust to dataset characteristics, enhancing method scalability for ultra-high-dimensional domains, and improving integration with deep learning architectures. Additionally, standardized benchmarking platforms and evaluation protocols would facilitate more reproducible comparisons across studies. For drug development professionals and scientific researchers, these advances will continue to improve the ability to extract actionable insights from complex high-dimensional data while maintaining computational feasibility and interpretability.

Navigating the Curse of Dimensionality in Genomics and Transcriptomics Data

In the era of high-throughput sequencing, genomics and transcriptomics datasets routinely encompass tens of thousands of features—from genes to genetic variants—creating unprecedented analytical challenges. The curse of dimensionality (COD) represents a fundamental obstacle where the immense number of features causes data sparsity, computational inefficiency, and impaired statistical power. This phenomenon is particularly acute in single-cell RNA sequencing (scRNA-seq) data, where technical noise combines with high dimensionality to obscure true biological signals [15]. Feature selection and dimensionality reduction techniques have emerged as critical computational strategies to overcome these limitations, enabling researchers to extract meaningful biological insights from complex omics data.

Understanding the Curse of Dimensionality in Omics Data

The curse of dimensionality manifests through several distinct statistical problems in high-dimensional omics data. In scRNA-seq data, which typically measure more than 10,000 genes per cell, COD causes three primary issues: loss of closeness (COD1), where distance metrics become unreliable; inconsistency of statistics (COD2), where variance measures fail to converge; and inconsistency of principal components (COD3), where technical noise overwhelms biological signal [15]. These problems fundamentally compromise downstream analyses, including clustering, differential expression testing, and trajectory inference.
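The loss of closeness (COD1) can be seen even without biological data. In the sketch below, points are drawn uniformly from the unit hypercube (an assumption chosen for simplicity; this is neither scRNA-seq data nor the method of [15]) and the relative contrast (d_max − d_min) / d_min between a random query point and its farthest and nearest neighbors is computed. As the number of dimensions grows, the contrast shrinks toward zero, so the "nearest" neighbors are barely closer than the farthest ones.

```python
import numpy as np


def relative_contrast(dims=(2, 10, 100, 1000, 10000), n_points=500, seed=0):
    """(max distance - min distance) / min distance from one random query point."""
    rng = np.random.default_rng(seed)
    contrast = {}
    for d in dims:
        points = rng.random((n_points, d))        # uniform in the unit hypercube
        query = rng.random(d)
        dist = np.linalg.norm(points - query, axis=1)
        contrast[d] = (dist.max() - dist.min()) / dist.min()
    return contrast


if __name__ == "__main__":
    for d, c in relative_contrast().items():
        print(f"{d:>6} dimensions: relative contrast {c:.3f}")
```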

Technical noise in scRNA-seq data arises from multiple sources, including low detection rates (approximately 1-60% of the transcriptome, with an average of <10%), random dropouts, and amplification biases [15]. This noise accumulates across thousands of features, creating a dimensionality problem that conventional normalization alone cannot resolve. The resulting data sparsity impedes the identification of true cell-type clusters and transitional states, ultimately limiting the biological insights attainable from large-scale sequencing experiments.

Comparative Analysis of Dimensionality Reduction Techniques

Principal Component Analysis (PCA)

PCA stands as the most widely used linear dimensionality reduction method, identifying orthogonal principal components that capture maximum variance in the data. The algorithm involves standardization of input variables, covariance matrix computation, eigenvector decomposition, and projection of data onto the principal components [16]. While computationally efficient and easily interpretable, PCA assumes linear relationships and may miss complex nonlinear structures in omics data. Its performance is particularly affected by the curse of dimensionality, as technical noise can dominate the leading principal components [15].
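The four PCA steps listed above map directly onto a few lines of NumPy. This is a teaching sketch that assumes no constant features (standardization divides by each feature's standard deviation); library implementations such as scikit-learn's `PCA` typically work from a singular value decomposition instead of an explicit covariance matrix, which is more numerically stable.

```python
import numpy as np


def pca(X, n_components):
    """Return component scores and explained-variance ratios of the leading components."""
    # 1. Standardize each variable to zero mean and unit variance.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # 2. Covariance matrix of the standardized data (i.e. the correlation matrix).
    cov = np.cov(Z, rowvar=False)
    # 3. Eigendecomposition; eigh returns eigenvalues in ascending order, so reverse.
    eigvals, eigvecs = np.linalg.eigh(cov)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # 4. Project the data onto the leading eigenvectors (principal axes).
    scores = Z @ eigvecs[:, :n_components]
    explained = eigvals[:n_components] / eigvals.sum()
    return scores, explained


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    latent = rng.normal(size=(200, 2))                  # 2 hidden factors
    X = latent @ rng.normal(size=(2, 50)) + 0.3 * rng.normal(size=(200, 50))
    scores, explained = pca(X, n_components=5)
    print(np.round(explained, 3))
```

Because the data are generated from two hidden factors, the first two components should carry most of the variance, and the component scores are uncorrelated by construction.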

Manifold Learning Techniques

Nonlinear manifold learning methods have gained prominence for visualizing and analyzing complex omics data:

- t-Distributed Stochastic Neighbor Embedding (t-SNE): Converts pairwise similarities between data points into joint probabilities and minimizes the Kullback-Leibler divergence between the probabilities in the high-dimensional space and those in the low-dimensional embedding, excelling at revealing cluster structures [16].

- Uniform Manifold Approximation and Projection (UMAP): Balances preservation of local and global data structures with superior speed and scalability compared to t-SNE [16].

- Isomap (Isometric Mapping): Extends classical multidimensional scaling by incorporating geodesic distances, effectively preserving global properties when the manifold is isometric to Euclidean space [16].
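t-SNE and Isomap are available in scikit-learn's `sklearn.manifold` module, and `trustworthiness` (also in `sklearn.manifold`) gives a quick check of how well an embedding preserves local neighborhoods. The sketch below uses scikit-learn's handwritten-digits data as a stand-in for an expression matrix; the 500-sample subset, a perplexity of 30, and 10 Isomap neighbors are assumptions. UMAP is provided by the separate umap-learn package rather than scikit-learn and is left out to keep the example dependency-free.

```python
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE, Isomap, trustworthiness

X, y = load_digits(return_X_y=True)
X, y = X[:500], y[:500]                     # 500 samples, 64 features

embeddings = {
    "t-SNE": TSNE(n_components=2, perplexity=30, init="pca",
                  random_state=0).fit_transform(X),
    "Isomap": Isomap(n_neighbors=10, n_components=2).fit_transform(X),
}

for name, emb in embeddings.items():
    # Score in [0, 1]; it penalizes points that become embedding neighbors
    # without being near neighbors in the original space.
    score = trustworthiness(X, emb, n_neighbors=5)
    print(f"{name}: shape {emb.shape}, trustworthiness {score:.3f}")
```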

Feature Selection Approaches

Unlike feature projection, feature selection methods retain original features while selecting informative subsets:

- Filter Methods: Use statistical measures independent of machine learning models, including low-variance filters, correlation-based selection, and significance testing [16].

- Wrapper Methods: Evaluate different feature subsets by training models on them to find optimal combinations, although they are computationally intensive [16].

- Embedded Methods: Integrate feature selection within model training, such as LASSO regularization or Random Forest importance scores [16].
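As a concrete illustration of the filter category, the sketch below applies a low-variance filter followed by a greedy correlation-based redundancy filter. The function names and the thresholds (`1e-8` for variance, `0.9` for absolute correlation) are illustrative assumptions. scikit-learn's `VarianceThreshold` provides the first step out of the box.

```python
import numpy as np


def variance_filter(X, threshold=1e-8):
    """Indices of columns whose variance exceeds `threshold`."""
    return np.flatnonzero(X.var(axis=0) > threshold)


def correlation_filter(X, max_abs_corr=0.9):
    """Greedily keep a column unless it is highly correlated with an already kept column."""
    R = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(R[j, k] <= max_abs_corr for k in kept):
            kept.append(j)
    return np.array(kept)


rng = np.random.default_rng(1)
a, b, c = rng.normal(size=(3, 100))
X = np.column_stack([
    a,
    b,
    np.full(100, 3.0),                 # constant feature: carries no information
    a + 0.01 * rng.normal(size=100),   # near-duplicate of `a`: redundant
    c,
])

step1 = variance_filter(X)                      # drops the constant column
step2 = step1[correlation_filter(X[:, step1])]  # drops the near-duplicate
print(step2.tolist())
```

The variance filter runs first because a constant column has an undefined correlation with every other column.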

Table 1: Comparison of Dimensionality Reduction Techniques

| Technique | Type | Key Advantages | Limitations | Best Suited Applications |
|---|---|---|---|---|
| PCA | Linear | Computationally efficient, preserves global structure | Assumes linearity, sensitive to scaling | Initial exploratory analysis, noise reduction |
| t-SNE | Nonlinear | Excellent cluster separation, intuitive visualization | Computational intensity, perplexity tuning | Cell type identification, cluster visualization |
| UMAP | Nonlinear | Preserves local/global structure, faster than t-SNE | Parameter sensitivity, less established | Large dataset integration, trajectory inference |
| Highly Variable Genes | Feature Selection | Biological interpretability, computational efficiency | May miss subtle patterns, batch effects | Reference atlas construction, differential expression |

Benchmarking Performance and Stability

Recent comprehensive benchmarks reveal critical insights into feature selection performance. A registered report in Nature Methods that benchmarked feature selection methods for scRNA-seq data integration showed that Highly Variable Genes (HVG) selection, particularly the scanpy implementation of the Seurat algorithm, consistently produces high-quality integrations and effective query mapping [8]. The study evaluated variants of over 20 feature selection methods across five metric categories: batch effect removal, conservation of biological variation, query-to-reference mapping, label transfer quality, and detection of unseen populations.
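The core idea behind dispersion-based HVG selection can be sketched in NumPy. This is a simplified approximation loosely modeled on the Seurat-style recipe: mean and dispersion are computed on the non-log scale, genes are binned by mean expression, and dispersion is z-scored within each bin. It is not Scanpy's implementation. The function name, the bin count, and the handling of single-gene bins are assumptions. In practice, one would call `scanpy.pp.highly_variable_genes(adata, flavor="seurat", n_top_genes=2000)`.

```python
import numpy as np


def highly_variable_genes(log_expr, n_top=2000, n_bins=20):
    """Rank genes by mean-binned normalized dispersion (simplified Seurat-style sketch)."""
    X = np.expm1(log_expr)                     # undo log1p to return to the count scale
    mean = X.mean(axis=0)
    var = X.var(axis=0, ddof=1)
    score = np.full(X.shape[1], -np.inf)      # genes that cannot be scored are ranked last
    ok = (mean > 0) & (var > 0)
    log_mean = np.log1p(mean[ok])
    log_disp = np.log(var[ok] / mean[ok])      # dispersion = variance / mean
    # Bin genes by mean expression so dispersion is compared among similarly expressed genes.
    edges = np.linspace(log_mean.min(), log_mean.max(), n_bins + 1)
    bins = np.digitize(log_mean, edges[1:-1])  # bin index in 0..n_bins-1
    z = np.zeros_like(log_disp)                # single-gene or flat bins keep z = 0 in this sketch
    for b in np.unique(bins):
        in_bin = bins == b
        sd = log_disp[in_bin].std(ddof=1) if in_bin.sum() > 1 else 0.0
        if sd > 0:
            z[in_bin] = (log_disp[in_bin] - log_disp[in_bin].mean()) / sd
    score[ok] = z
    return np.argsort(-score)[:n_top]


# Toy data: 500 cells x 200 genes; genes 0-9 are markers that differ between two cell groups.
rng = np.random.default_rng(0)
counts = rng.poisson(5.0, size=(500, 200)).astype(float)
group = np.arange(500) < 250
counts[:, :10] = np.where(group[:, None],
                          rng.poisson(0.5, size=(500, 10)),
                          rng.poisson(9.5, size=(500, 10)))

hvg = highly_variable_genes(np.log1p(counts), n_top=10, n_bins=2)
print(sorted(hvg.tolist()))
```

The marker genes have roughly the same average expression as the background genes. However, their between-group differences inflate the variance-to-mean ratio, which is what the within-bin z-score detects.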

Stability, meaning the consistency of the selected feature set under perturbations of the data, varies considerably across methods. Filter methods generally offer greater stability because each feature is scored by a fixed statistical criterion. Wrapper methods may achieve higher accuracy at the cost of reduced stability, since small changes in the training data can change which subset the search returns. The development of specialized frameworks for benchmarking feature selection algorithms has enabled more rigorous comparisons of these trade-offs [1].
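One common way to quantify this kind of stability is to rerun a selector on bootstrap resamples and average the pairwise Jaccard similarity of the selected subsets. The sketch below assumes a simple top-k correlation filter as the selector and uses hypothetical helper names. Other stability indices, such as Kuncheva's index, correct for chance overlap and may be preferable in practice.

```python
from itertools import combinations

import numpy as np


def top_k_by_correlation(X, y, k=5):
    """Filter selector: indices of the k features with the largest |Pearson r| to y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    r = (Xc * yc[:, None]).sum(axis=0) / np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    return frozenset(np.argsort(-np.abs(r))[:k].tolist())


def selection_stability(X, y, selector, n_boot=20, seed=0):
    """Mean pairwise Jaccard similarity of subsets selected on bootstrap resamples."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    subsets = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)       # sample rows with replacement
        subsets.append(selector(X[idx], y[idx]))
    jaccard = [len(a & b) / len(a | b) for a, b in combinations(subsets, 2)]
    return float(np.mean(jaccard))


rng = np.random.default_rng(42)
X = rng.normal(size=(200, 50))
y_signal = X[:, :5].sum(axis=1) + rng.normal(size=200)   # 5 truly relevant features
y_noise = rng.normal(size=200)                           # no relevant features

s_sig = selection_stability(X, y_signal, top_k_by_correlation)
s_noise = selection_stability(X, y_noise, top_k_by_correlation)
print(round(s_sig, 3), round(s_noise, 3))
```

When a real signal is present, the same features are selected on almost every resample. When it is absent, the chosen subset shifts from one resample to the next.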

Table 2: Performance Metrics for Feature Selection Methods in scRNA-seq Integration

| Metric Category | Specific Metrics | High-Performing Methods | Key Findings |
|---|---|---|---|
| Integration (Batch) | Batch PCR, CMS, iLISI | Highly Variable Genes | Batch-aware selection improves integration quality |
| Integration (Bio) | bNMI, cLISI, ldfDiff | Lineage-specific features | Biological conservation requires specialized selection |
| Query Mapping | Cell distance, mLISI, qLISI | HVG with 2,000 features | Larger feature sets improve mapping precision |
| Unseen Populations | Milo, Unseen distance | Balanced feature selection | Detection requires preserving rare population signals |

Experimental Protocols for Method Evaluation

Benchmarking Framework Design

Robust evaluation of feature selection methods requires a structured approach. The Python framework proposed by Barbieri et al. provides a modular system for comparing algorithms across multiple dimensions: selection accuracy, redundancy, prediction performance, stability, reliability, and computational efficiency [1]. This framework employs multiple datasets with known ground truths to assess method performance under controlled conditions.

Metric Selection and Validation

Effective benchmarking depends on appropriate metric selection. A 2025 Nature Methods study established a rigorous metric selection process to profile evaluation measures before comparative analysis [8]. This involves:

- Assessing metric score ranges using random and highly variable feature sets

- Evaluating correlations with technical dataset features

- Identifying orthogonal metrics to avoid redundancy

- Establishing baseline performance using control methods
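The redundancy check in the third step can be approximated by computing rank correlations between candidate metrics across many benchmark runs. Metrics that closely track an already retained metric are then discarded greedily. The metric names, the synthetic scores, and the `0.9` cutoff below are hypothetical, and the cited study's exact procedure may differ.

```python
import numpy as np


def spearman_matrix(S):
    """Spearman correlation between columns of S (ties are not averaged in this sketch)."""
    ranks = S.argsort(axis=0).argsort(axis=0).astype(float)
    return np.corrcoef(ranks, rowvar=False)


def non_redundant_metrics(scores, names, max_abs_rho=0.9):
    """Keep metrics in order, dropping any whose |rho| with a kept metric exceeds the cutoff."""
    rho = np.abs(spearman_matrix(scores))
    kept = []
    for j in range(scores.shape[1]):
        if all(rho[j, k] <= max_abs_rho for k in kept):
            kept.append(j)
    return [names[j] for j in kept]


# Hypothetical scores for 30 benchmark runs (rows) on 4 metrics (columns).
rng = np.random.default_rng(7)
m_a = rng.uniform(size=30)
scores = np.column_stack([
    m_a,
    rng.uniform(size=30),
    np.sqrt(m_a),            # monotone transform of metric_a: perfectly rank-redundant
    rng.uniform(size=30),
])
names = ["metric_a", "metric_b", "metric_c", "metric_d"]
kept_metrics = non_redundant_metrics(scores, names)
print(kept_metrics)
```

Rank correlation is used here because two metrics that order methods identically are redundant for benchmarking, even if their raw scales differ.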

Data Processing Workflow

A standardized preprocessing and analysis pipeline ensures comparable results:

Experimental workflow for evaluating feature selection methods

Advanced Solutions and Emerging Approaches

RECODE: Resolution of the Curse of Dimensionality

RECODE is a recent approach designed specifically to resolve the curse of dimensionality (COD) in scRNA-seq data with unique molecular identifiers (UMIs). Unlike imputation methods, which attempt to recover missing values, RECODE reduces noise using random sampling theory without performing dimension reduction [15]. This parameter-free, deterministic algorithm recovers expression values for all genes, including lowly expressed ones. This enables precise delineation of cell fate transitions and identification of rare cell populations with complete gene information.

Multi-Omics Data Integration

Dimension reduction techniques have evolved to address the challenges of integrating multiple data types. Methods like Multiple Co-Inertia Analysis (MCIA) enable simultaneous exploratory analysis of diverse omics datasets, identifying linear relationships that explain correlated structures across data types [17]. These approaches can reveal biological insights obscured when analyzing single data types independently, such as connecting genetic variants to expression changes and pathway alterations.

Commercial Bioinformatics Platforms

Integrated bioinformatics suites like Partek Flow provide user-friendly implementations of dimensionality reduction techniques, including PCA, t-SNE, and UMAP, making these methods accessible to researchers without advanced computational expertise [18]. These platforms offer standardized workflows for analyzing diverse data types, from bulk RNA-Seq to single-cell and spatial transcriptomics, facilitating reproducible research.

Research Reagent Solutions

Table 3: Essential Tools for Genomics and Transcriptomics Data Analysis

| Tool/Platform | Type | Primary Function | Applications |
|---|---|---|---|
| Partek Flow | Commercial Platform | Visual analysis of multiomic data | Bulk RNA-Seq, scRNA-seq, spatial transcriptomics |
| Scanpy | Python Library | Single-cell analysis toolkit | HVG selection, clustering, trajectory inference |
| Seurat | R Package | Single-cell genomics analysis | Integration, visualization, multimodal data |
| RECODE | Algorithm | Noise reduction for high-dimensional data | Resolving COD in scRNA-seq with UMIs |
| MCIA | Algorithm | Multivariate data integration | Multi-omics exploratory analysis |

The curse of dimensionality remains a significant challenge in genomics and transcriptomics, but a diverse arsenal of computational strategies continues to evolve. No single approach universally outperforms the others across all scenarios; the optimal method depends on specific data characteristics, analytical goals, and computational constraints. Highly variable feature selection provides a robust default strategy for many single-cell applications, while specialized methods like RECODE offer powerful alternatives for specific data types. As multi-omics integration becomes increasingly central to biological discovery, developing and benchmarking dimensionality reduction techniques will remain crucial for extracting meaningful patterns from increasingly complex and high-dimensional data.

In the realm of modern biomedical research, particularly in drug development and diagnostic innovation, the explosion of high-dimensional data presents both unprecedented opportunities and formidable challenges. The proliferation of omics technologies, high-content screening, and biomedical imaging has enabled researchers to collect millions of features from individual samples. However, this wealth of data is often contaminated with irrelevant features, redundant variables, and inherent biological noise that can obscure meaningful signals and lead to overfitting, reduced model performance, and misleading biological interpretations. The core challenge lies in distinguishing true biological signals from the confounding noise that permeates experimental data, a task that requires sophisticated feature selection methodologies.

The curse of dimensionality is particularly acute in biomedical contexts where sample sizes are often limited due to cost, ethical, or practical constraints, yet feature dimensions can reach into the tens or hundreds of thousands. This imbalance exacerbates the risk of identifying spurious correlations that fail to validate in subsequent experiments. Furthermore, biological noise—stemming from stochastic molecular events, measurement artifacts, and individual heterogeneity—creates additional layers of complexity that must be addressed through robust computational approaches. This guide systematically compares feature selection strategies designed to overcome these challenges, providing researchers with evidence-based guidance for selecting appropriate methods for their specific research contexts.

Comparative Analysis of Feature Selection Performance

Rigorous benchmarking studies provide crucial insights into how different feature selection approaches perform under various biological scenarios. The following analysis synthesizes findings from multiple recent studies to offer a comprehensive performance comparison.

Table 1: Benchmarking Performance of Feature Selection Methods Across Biological Datasets

| Feature Selection Method | Classification Accuracy Range | Optimal Feature Reduction | Biological Validation Rate | Computational Efficiency | Key Strengths |
|---|---|---|---|---|---|
| Hybrid Sequential (HSFS) [19] | 96.5-99.8% | 42,334 to 58 features (99.86% reduction) | 100% (ddPCR confirmed) | Moderate | Exceptional biomarker identification; validated biological relevance |
| Random Forest Importance (embedded) and RFE (wrapper) [6] | >98.4% (F1-score) | 15 to 10 features (33% reduction) | Industrial validation | High | Robust performance; reduced computational complexity |
| Highly Variable Genes [8] | Varies by metric | 2,000 features recommended | scRNA-seq benchmarked | High | Effective for single-cell data integration |
| Multi-Model Super-Feature [20] | >99% | Not specified | FTIR spectral validation | Low | Superior predictive accuracy; enhanced interpretability |
| DRF-FM (Bi-level MOEA) [21] | Superior to competitors | Minimized feature count | Synthetic and real data | Moderate | Optimal balance between features and accuracy |

Table 2: Methodological Classification and Application Domains

| Method Category | Specific Techniques | Primary Applications | Noise Robustness | Redundancy Handling |
|---|---|---|---|---|
| Wrapper Methods | Sequential Feature Selection, Recursive Feature Elimination [6] | Industrial fault diagnosis, biomarker discovery | Moderate | High |
| Embedded Methods | Random Forest Importance, LASSO [19] [6] | Transcriptomics, prognostic modeling | High | Moderate |
| Filter Methods | Fisher Score, Mutual Information [6] | Signal processing, preliminary feature screening | Low to Moderate | Low |
| Multi-Objective Evolutionary | DRF-FM, NSGA-II [21] | Complex biological systems, high-dimensional data | High | High |
| Hybrid Approaches | Hybrid Sequential Feature Selection [19] | Rare disease diagnostics, biomarker validation | High | High |

Detailed Experimental Protocols and Methodologies

Hybrid Sequential Feature Selection for mRNA Biomarker Discovery

Recent research on Usher syndrome demonstrates a sophisticated hybrid sequential feature selection approach to identify robust mRNA biomarkers from high-dimensional transcriptomic data [19]. The protocol began with an initial dataset of 42,334 mRNA features derived from immortalized B-lymphocytes of Usher syndrome patients and healthy controls. The methodology employed a multi-stage filtering approach:

- Variance Thresholding: Initial filtering removed low-variance features unlikely to contribute discriminative signal.

- Recursive Feature Elimination: Iterative model training and feature elimination based on importance rankings.

- LASSO Regression: Applied L1 regularization to further sparsify the feature set and eliminate redundant features.

- Nested Cross-Validation: The entire process was embedded within a nested cross-validation framework to prevent overfitting and ensure generalizability.
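A minimal scikit-learn sketch of this kind of pipeline (variance threshold, then RFE, then an L1-penalized selection step) is shown below. The pipeline is wrapped in nested cross-validation, so every selection step is refit inside each training fold. The synthetic data, estimators, feature counts, and `C` grid are assumptions for illustration. The Usher syndrome study's actual models and hyperparameters are not reproduced here. For a classification target, the L1 step uses L1-penalized logistic regression rather than LASSO regression.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, SelectFromModel, VarianceThreshold
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a wide transcriptomic matrix: 120 samples x 500 features.
X, y = make_classification(n_samples=120, n_features=500, n_informative=10,
                           n_redundant=10, random_state=0)

pipeline = Pipeline([
    ("variance", VarianceThreshold(threshold=0.0)),             # drop constant features
    ("scale", StandardScaler()),
    ("rfe", RFE(LogisticRegression(max_iter=1000),              # recursive elimination,
                n_features_to_select=100, step=0.1)),           # 10% of features per round
    ("l1", SelectFromModel(LogisticRegression(penalty="l1", solver="liblinear"))),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Inner loop tunes the L1 strength; outer loop estimates generalization.
param_grid = {"l1__estimator__C": [0.5, 1.0, 2.0]}
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)
search = GridSearchCV(pipeline, param_grid, cv=inner_cv, scoring="accuracy")
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="accuracy")
print("nested-CV accuracy per outer fold:", outer_scores.round(3))

# Refit on all data to inspect the final selected subset.
search.fit(X, y)
n_selected = int(search.best_estimator_.named_steps["l1"].get_support().sum())
print("features kept after the L1 step:", n_selected)
```

Placing every selection step inside the pipeline is what prevents the outer-fold test samples from influencing which features are chosen.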

The selected biomarkers were validated using multiple machine learning models, including Logistic Regression, Random Forest, and Support Vector Machines, all of which demonstrated robust classification performance. Crucially, biological relevance was confirmed through experimental validation using droplet digital PCR (ddPCR), which verified consistent expression patterns for top-ranked mRNA biomarkers [19]. This rigorous approach successfully reduced the feature set from 42,334 to 58 top mRNA biomarkers (99.86% reduction) while maintaining classification accuracy exceeding 96.5%.

Embedded Feature Selection for Industrial Fault Diagnostics

A comprehensive benchmark study evaluated feature selection techniques for industrial fault classification using time-domain features [6]. The research compared five Feature Selection Methods (FSMs): Fisher Score (FS), Mutual Information (MI), Sequential Feature Selection (SFS), Recursive Feature Elimination (RFE), and Random Forest Importance (RFI). The experimental workflow encompassed:

- Feature Extraction: 15 time-domain features were extracted from raw sensor data, including Minimum, Maximum, Mean, Standard Deviation, Root Mean Square, Skewness, Kurtosis, Variance, Peak-to-Peak, Impulse Factor, Crest Factor, Shape Factor, and Hjorth Parameters (Mobility and Complexity).

- Feature Selection Application: Each FSM was applied to identify the most discriminative features for fault detection.

- Classifier Training: Selected features were used to train both Support Vector Machine (SVM) and Long Short-Term Memory (LSTM) models.

- Performance Validation: Models were rigorously evaluated on two publicly available datasets: the Case Western Reserve University (CWRU) bearing dataset and the NASA Ames Prognostics Center of Excellence (PCoE) lithium-ion battery dataset.
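The named time-domain features have standard textbook definitions, sketched below in NumPy for a single signal window. The list above names fourteen features explicitly, so only those are computed here. The exact definitions used in the cited benchmark are not specified, for example whether kurtosis is raw or excess, or whether variance uses the sample or population form. The conventions chosen below are therefore assumptions.

```python
import numpy as np


def hjorth_mobility(x):
    """sqrt(var(dx) / var(x)), with dx the first difference of the signal."""
    return np.sqrt(np.var(np.diff(x)) / np.var(x))


def time_domain_features(x):
    """Fourteen common time-domain statistics of one signal window (illustrative definitions)."""
    x = np.asarray(x, dtype=float)
    mu, sigma = x.mean(), x.std()               # population (ddof=0) statistics
    rms = np.sqrt(np.mean(x ** 2))
    abs_mean = np.mean(np.abs(x))
    peak = np.max(np.abs(x))
    mobility = hjorth_mobility(x)
    return {
        "minimum": x.min(),
        "maximum": x.max(),
        "mean": mu,
        "std": sigma,
        "rms": rms,
        "skewness": np.mean((x - mu) ** 3) / sigma ** 3,
        "kurtosis": np.mean((x - mu) ** 4) / sigma ** 4,   # raw (non-excess) kurtosis
        "variance": sigma ** 2,
        "peak_to_peak": x.max() - x.min(),
        "impulse_factor": peak / abs_mean,
        "crest_factor": peak / rms,
        "shape_factor": rms / abs_mean,
        "hjorth_mobility": mobility,
        "hjorth_complexity": hjorth_mobility(np.diff(x)) / mobility,
    }


# A pure sine sampled over whole periods has well-known reference values.
t = np.arange(1000) / 1000.0
features = time_domain_features(np.sin(2 * np.pi * 5 * t))
for name, value in features.items():
    print(f"{name:>18s}: {value:.4f}")
```

For a pure sine, the crest factor is close to the square root of 2, the raw kurtosis is close to 1.5, and the Hjorth complexity is close to 1. These values make a sine a convenient sanity check for the implementation.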

The results demonstrated that embedded methods, particularly Random Forest Importance, achieved superior performance with an average F1-score exceeding 98.4% using only 10 selected features, highlighting how strategic feature reduction enhances model performance while minimizing computational complexity [6].
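Random Forest Importance ranking, followed by training a classifier on the top-ranked features, can be sketched with scikit-learn. The synthetic 15-feature dataset, the RBF `SVC`, and the choice of 10 retained features are assumptions that only mirror the setup described above. Impurity-based importances, as used here, are computed on training data and can favor continuous or high-cardinality features, so permutation importance is a common cross-check.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for 15 extracted features; with shuffle=False the first 5 are informative.
X, y = make_classification(n_samples=600, n_features=15, n_informative=5,
                           n_redundant=0, n_classes=3, shuffle=False, random_state=0)

# Inspect the importance ranking on the full data (for illustration only).
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)
ranking = np.argsort(forest.feature_importances_)[::-1]
top10 = ranking[:10]
print("top-10 features by importance:", sorted(top10.tolist()))

# For evaluation, refit the selection inside each CV fold to avoid leakage.
model = make_pipeline(
    SelectFromModel(RandomForestClassifier(n_estimators=200, random_state=0),
                    threshold=-np.inf, max_features=10),   # keep exactly the top 10
    StandardScaler(),
    SVC(kernel="rbf"),
)
f1 = cross_val_score(model, X, y, cv=5, scoring="f1_macro")
print("macro F1 per fold:", f1.round(3))
```

Setting `threshold=-np.inf` makes `SelectFromModel` select purely by rank, so `max_features` fixes the subset size.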

Benchmarking Feature Selection for Single-Cell RNA Sequencing Integration

A landmark registered report in Nature Methods systematically benchmarked feature selection methods for single-cell RNA sequencing (scRNA-seq) data integration and querying [8]. This extensive study evaluated variants of over 20 feature selection methods using metrics spanning five critical categories: batch effect removal, conservation of biological variation, quality of query-to-reference mapping, label transfer quality, and ability to detect unseen populations. The benchmarking pipeline employed:

- Metric Selection and Characterization: Careful profiling of metrics to select those that effectively measure performance, are not overly associated with technical factors, and are non-redundant.

- Baseline Scaling Approach: Using diverse baseline methods (all features, 2,000 highly variable features, 500 random features, 200 stably expressed features) to establish effective ranges for each metric and enable meaningful comparison.

- Comprehensive Evaluation: Assessing methods on multiple datasets with different technical characteristics to ensure robust conclusions.
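One simple reading of the baseline-scaling idea is min-max scaling of each raw metric score against the spread of the baseline scores. Under this scaling, 0 corresponds to the worst baseline and 1 to the best. The baseline names echo the list above, but the numeric scores are invented for illustration, and the exact scaling used in the cited study may differ.

```python
def scale_to_baselines(raw_scores, baseline_names, higher_is_better=True):
    """Min-max scale method scores against the range spanned by the baseline methods."""
    baseline_values = [raw_scores[name] for name in baseline_names]
    lo, hi = min(baseline_values), max(baseline_values)
    if hi == lo:
        raise ValueError("baselines do not span a range for this metric")
    scaled = {}
    for method, value in raw_scores.items():
        s = (value - lo) / (hi - lo)
        # For metrics where lower is better, flip so that 1 still means "best baseline".
        scaled[method] = s if higher_is_better else 1.0 - s
    return scaled


# Invented raw scores for a single metric.
raw = {
    "all_features": 0.50,
    "hvg_2000": 0.80,
    "random_500": 0.20,
    "stable_200": 0.30,
    "candidate_method": 0.65,
}
baselines = ["all_features", "hvg_2000", "random_500", "stable_200"]
scaled = scale_to_baselines(raw, baselines)
print({k: round(v, 3) for k, v in scaled.items()})
```

After scaling, a method's score is directly interpretable relative to the controls, and scores from metrics with very different native ranges can be averaged meaningfully.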

The study reinforced common practice by demonstrating that highly variable feature selection is effective for producing high-quality integrations, while providing further guidance on the number of features to select, batch-aware feature selection, and lineage-specific feature selection [8]. This work highlights the critical importance of selecting appropriate feature selection strategies for specific biological applications and data types.

Visualizing Feature Selection Workflows and Methodologies

Feature Selection Strategy Workflow for Addressing Key Challenges

Taxonomy of Feature Selection Methods

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Computational Tools for Feature Selection Research

| Reagent/Tool | Function/Application | Example Use Case | Key Considerations |
|---|---|---|---|
| Immortalized B-Lymphocytes [19] | Non-invasive source for mRNA biomarker studies | Usher syndrome biomarker discovery | Readily available via blood draw; immortalizable with EBV |
| Droplet Digital PCR (ddPCR) [19] | Absolute quantification of nucleic acids | Experimental validation of computationally identified mRNA biomarkers | High sensitivity and precision for low-abundance targets |
| scRNA-seq Platforms [8] | Single-cell transcriptomic profiling | Feature selection for cell atlas construction | Enables analysis of cellular heterogeneity; batch effect challenges |
| Time-Domain Feature Extractors [6] | Signal processing for industrial diagnostics | Bearing fault detection and battery health prognostics | Captures statistical properties of temporal signals |
| Random Forest Classifiers [19] [6] | Embedded feature selection and classification | Biomarker discovery and industrial fault detection | Provides native feature importance metrics |
| Support Vector Machines (SVM) [6] | Supervised classification with selected features | Fault classification in industrial systems | Effective in high-dimensional spaces with appropriate kernels |
| Multi-Objective Evolutionary Algorithms [21] | Simultaneous optimization of feature count and accuracy | Complex biological data with competing objectives | Balances multiple performance metrics effectively |
| Nested Cross-Validation Frameworks [19] | Robust model evaluation and hyperparameter tuning | Preventing overfitting in high-dimensional data | Computationally intensive but essential for reliability |

The comprehensive comparison presented in this guide demonstrates that no single feature selection method universally outperforms all others across every biomedical application. Rather, the optimal approach depends on specific data characteristics, analytical goals, and practical constraints. Hybrid sequential feature selection has proven exceptionally effective for biomarker discovery, achieving remarkable dimensionality reduction (from 42,334 to 58 features) while maintaining biological relevance validated through ddPCR [19]. Embedded methods like Random Forest Importance offer robust performance for industrial diagnostics, achieving F1-scores exceeding 98.4% with reduced feature sets [6]. For specialized applications like single-cell RNA sequencing, highly variable feature selection remains the established standard, though specific implementation details significantly impact performance [8].

The critical challenge of balancing feature reduction with predictive accuracy finds promising solutions in multi-objective evolutionary approaches that systematically navigate the trade-off between these competing goals [21]. Furthermore, multi-model consensus strategies that identify "super-features" consistently prioritized across multiple algorithms demonstrate superior classification accuracy (>99%) while enhancing interpretability [20]. As biomedical data continues to grow in complexity and dimensionality, the strategic selection and implementation of feature selection methodologies will remain essential for extracting meaningful biological insights from the confounding background of irrelevant features, redundancy, and biological noise.
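The core notion that such multi-objective methods optimize is Pareto dominance. A candidate subset is kept only if no other subset is at least as good on both feature count and error while being strictly better on one of them. The filter below is a brute-force illustration with invented candidates. It is not DRF-FM or NSGA-II, which additionally search the space of subsets evolutionarily.

```python
def dominates(a, b):
    """True if a is no worse than b on both objectives and strictly better on at least one."""
    return (a[0] <= b[0] and a[1] <= b[1]) and (a[0] < b[0] or a[1] < b[1])


def pareto_front(candidates):
    """Non-dominated candidates; each value is (n_features, error), both minimized."""
    return {
        name: objs
        for name, objs in candidates.items()
        if not any(dominates(other, objs) for other in candidates.values())
    }


# Invented (feature count, validation error) pairs for five candidate subsets.
candidates = {
    "A": (5, 0.20),
    "B": (10, 0.12),
    "C": (12, 0.15),
    "D": (40, 0.10),
    "E": (5, 0.25),
}
front = pareto_front(candidates)
print(sorted(front))
```

Subset C is dropped because B uses fewer features and has lower error. Subset E is dropped because A matches its size with lower error. The remaining subsets represent genuine trade-offs between compactness and accuracy.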

Understanding the Impact on Overfitting and Model Generalization

In the field of machine learning, the twin challenges of overfitting and underfitting represent a fundamental trade-off that directly impacts a model's ability to generalize. Overfitting occurs when a model learns the training data too closely, including its noise and random fluctuations, resulting in poor performance on new, unseen data [22]. In contrast, underfitting happens when a model is too simplistic to capture the underlying patterns in the data, performing poorly on both training and test datasets [22]. The balance between these two extremes is governed by the bias-variance tradeoff, where high bias leads to underfitting and high variance leads to overfitting [23].

Feature selection plays a crucial role in managing this balance. The process of selecting a subset of relevant features for model construction helps mitigate overfitting by reducing the model's complexity and eliminating noise [24]. For researchers and drug development professionals, understanding how different feature selection methods impact generalization is essential for building robust, reliable predictive models that can translate from experimental settings to real-world applications, such as patient outcome prediction or single-cell RNA sequencing analysis [8].
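The effect can be made concrete with a small simulation. Ordinary least squares is fit on 60 samples using only the 5 informative predictors, then refit after appending 50 pure-noise predictors. The sample sizes and noise model are assumptions chosen for illustration. Because the models are nested and fit on the same training data, training error can only decrease as features are added, while test error typically rises sharply.

```python
import numpy as np

rng = np.random.default_rng(0)
n_train, n_test, n_informative, n_noise = 60, 2000, 5, 50


def simulate(n):
    """Design matrix: 5 informative columns followed by 50 irrelevant ones."""
    X = rng.normal(size=(n, n_informative + n_noise))
    y = X[:, :n_informative].sum(axis=1) + rng.normal(size=n)
    return X, y


X_train, y_train = simulate(n_train)
X_test, y_test = simulate(n_test)


def ols_mse(columns):
    """Fit OLS on the chosen columns; return (train MSE, test MSE)."""
    coef, *_ = np.linalg.lstsq(X_train[:, columns], y_train, rcond=None)
    train = np.mean((X_train[:, columns] @ coef - y_train) ** 2)
    test = np.mean((X_test[:, columns] @ coef - y_test) ** 2)
    return train, test


informative_only = ols_mse(np.arange(n_informative))
all_features = ols_mse(np.arange(n_informative + n_noise))
print("informative only   (train, test):", np.round(informative_only, 2))
print("with noise columns (train, test):", np.round(all_features, 2))
```

The gap between training and test error in the second model is the signature of overfitting that feature selection aims to prevent.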

Methodological Approaches to Feature Selection

Categories of Feature Selection Techniques

Feature selection methods can be broadly classified into three main categories, each with distinct mechanisms and implications for model generalization:

- Filter Methods: These techniques select features based on statistical properties (such as correlation) independently of any machine learning model. They are computationally efficient and provide a quick way to remove obviously redundant features but may overlook feature interactions [24].

- Wrapper Methods: These approaches evaluate feature subsets by actually training and testing models on them. While computationally intensive, they can capture feature interactions and often yield high-performing feature sets. Recursive Feature Elimination (RFE) is a prominent example [24].

- Embedded Methods: These techniques integrate feature selection directly into the model training process. Algorithms like Lasso Regression (L1 regularization) automatically perform feature selection by penalizing less important coefficients, offering a practical balance between performance and efficiency [24].
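A bare-bones coordinate-descent Lasso shows how L1 regularization produces exact zeros: each coefficient update is a soft-thresholding step. This NumPy sketch minimizes the objective documented for scikit-learn's `Lasso`, (1/(2n))·||y - Xw||² + alpha·||w||₁. It uses a fixed iteration count instead of a convergence check, and the data and `alpha` are illustrative assumptions.

```python
import numpy as np


def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma; values within [-gamma, gamma] become exactly 0."""
    return np.sign(z) * max(abs(z) - gamma, 0.0)


def lasso_coordinate_descent(X, y, alpha, n_iter=200):
    """Minimize (1/(2n))||y - Xw||^2 + alpha*||w||_1 on centered data (intercept = mean of y)."""
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    col_norm = (Xc ** 2).sum(axis=0) / n
    w = np.zeros(p)
    residual = yc.copy()
    for _ in range(n_iter):
        for j in range(p):
            residual += Xc[:, j] * w[j]              # remove feature j's current contribution
            rho = Xc[:, j] @ residual / n            # correlation with the partial residual
            w[j] = soft_threshold(rho, alpha) / col_norm[j]
            residual -= Xc[:, j] * w[j]              # add back the updated contribution
    return w


rng = np.random.default_rng(3)
X = rng.normal(size=(200, 20))
y = 2.0 * X[:, 0] + 1.5 * X[:, 1] + 1.0 * X[:, 2] + rng.normal(size=200)

w = lasso_coordinate_descent(X, y, alpha=0.4)
print("non-zero coefficients:", np.flatnonzero(w).tolist())
print(np.round(w[:3], 2))
```

The 17 irrelevant features end with coefficients of exactly zero, which is what makes the Lasso a feature selector. The retained coefficients are shrunk toward zero by roughly `alpha`.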

Experimental Workflow for Evaluating Generalization

The evaluation of how feature selection impacts overfitting and generalization follows a systematic workflow that ensures rigorous assessment. The diagram below illustrates this process:

Comparative Performance of Feature Selection Methods

Quantitative Comparison Across Domains

Different feature selection methods exhibit varying effectiveness depending on the application domain, dataset characteristics, and computational constraints. The table below summarizes experimental findings from multiple studies:

Table 1: Performance comparison of feature selection methods across different studies

| Domain | Feature Selection Method | Key Performance Metrics | Impact on Overfitting/Generalization | Citation |
|---|---|---|---|---|
| Diabetes Disease Progression Prediction | Filter Method (Correlation) | R²: 0.4776, MSE: 3021.77 | Removed only one redundant feature, good baseline performance | [24] |
| Diabetes Disease Progression Prediction | Wrapper Method (RFE) | R²: 0.4657, MSE: 3087.79 | Reduced feature set by half but slightly reduced accuracy | [24] |
| Diabetes Disease Progression Prediction | Embedded Method (Lasso) | R²: 0.4818, MSE: 2996.21 | Best balance of accuracy and generalization with 9 features retained | [24] |
| Building Energy Consumption Prediction | Feature Extraction Only | 29-68% median prediction improvement vs. baseline | Noticeable accuracy improvements without significant overfitting | [25] |
| Building Energy Consumption Prediction | Feature Extraction + Selection | Limited additional improvement | High computational cost with minimal practical value for generalization | [25] |
| Single-cell RNA-seq Data Integration | Highly Variable Feature Selection | Effective batch correction and biological variation preservation | Produced high-quality integrations suitable for reference atlases | [8] |
| Single-cell RNA-seq Data Integration | Random Feature Selection | Poor integration quality | Inability to capture meaningful biological patterns | [8] |
| Traumatic Brain Injury Mortality Prediction | Context-Specific Feature Selection | AUC: 0.98 (Manaus model) | Significantly enhanced accuracy by tailoring to local contexts | [26] |

Experimental Protocols and Evaluation Metrics

Benchmarking Framework for Single-Cell RNA Sequencing

The comprehensive benchmarking study on single-cell RNA sequencing data integration employed rigorous experimental protocols to assess how feature selection affects generalization [8]. The methodology included:

- Dataset Selection: Multiple scRNA-seq datasets representing diverse biological conditions and technical variations.

- Feature Selection Methods: Evaluation of over 20 feature selection methods, including highly variable genes, batch-aware selection, and random baselines.

- Integration Techniques: Application of multiple data integration algorithms, including scVI (single-cell Variational Inference).

- Evaluation Framework: Five categories of metrics assessing batch effect removal, biological variation conservation, query mapping quality, label transfer accuracy, and detection of unseen populations.

- Metric Scaling: Robust scaling of metrics using baseline methods to enable fair comparison across techniques.

This protocol revealed that feature selection significantly impacts integration quality and subsequent generalization to query samples, with highly variable feature selection generally producing the most robust integrations [8].

Diabetes Disease Progression Study

The comparative analysis of feature selection methods for diabetes disease progression prediction followed this experimental design [24]:

- Dataset: Diabetes dataset from scikit-learn containing 442 patient records with 10 baseline features.

- Comparison Framework: Three feature selection methods (Filter, Wrapper/RFE, Embedded/Lasso) evaluated using identical baseline conditions.

- Model Training: Linear Regression models trained on selected feature subsets.

- Evaluation Method: 5-fold cross-validation with R² score and Mean Squared Error (MSE) as performance metrics.

- Generalization Assessment: Consistent testing protocol across all methods to evaluate performance on unseen data.

The embedded method (Lasso) demonstrated superior generalization capabilities, achieving the best balance between model complexity and predictive accuracy [24].
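A compact scikit-learn sketch of this comparison is shown below. The correlation cutoff (0.8), the RFE target of 5 features, and the use of `LassoCV` with `SelectFromModel` are assumptions about details the summary does not state, so the printed scores are not expected to match the reported values exactly. The RFE and Lasso selection steps sit inside pipelines and are refit in every training fold. The correlation filter uses no target information.

```python
import numpy as np
from sklearn.datasets import load_diabetes
from sklearn.feature_selection import RFE, SelectFromModel
from sklearn.linear_model import LassoCV, LinearRegression
from sklearn.model_selection import KFold, cross_validate
from sklearn.pipeline import make_pipeline

X, y = load_diabetes(return_X_y=True)          # 442 samples, 10 baseline features
cv = KFold(n_splits=5, shuffle=True, random_state=0)


def drop_correlated(X, threshold=0.8):
    """Label-free filter: drop a feature if |r| with an earlier kept feature exceeds threshold."""
    R = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(R[j, k] <= threshold for k in kept):
            kept.append(j)
    return X[:, kept]


candidates = {
    "filter (correlation)": (make_pipeline(LinearRegression()), drop_correlated(X)),
    "wrapper (RFE, 5 features)": (
        make_pipeline(RFE(LinearRegression(), n_features_to_select=5), LinearRegression()), X),
    "embedded (Lasso)": (
        make_pipeline(SelectFromModel(LassoCV(cv=5)), LinearRegression()), X),
}

results = {}
for name, (model, features) in candidates.items():
    scores = cross_validate(model, features, y, cv=cv,
                            scoring=("r2", "neg_mean_squared_error"))
    results[name] = (scores["test_r2"].mean(), -scores["test_neg_mean_squared_error"].mean())
    print(f"{name:28s} R2={results[name][0]:.3f}  MSE={results[name][1]:.1f}")
```

Using the same `KFold` splitter for all three strategies keeps the comparison on identical train/test partitions, matching the "identical baseline conditions" described above.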

Table 2: Key research reagents and computational tools for feature selection experiments

| Resource/Tool | Type | Primary Function | Application Context |
|---|---|---|---|
| scikit-learn | Software Library | Provides implementations of filter, wrapper, and embedded methods | General machine learning workflows [24] |
| Highly Variable Gene Selection | Algorithm | Identifies genes with high cell-to-cell variation | Single-cell RNA sequencing analysis [8] |
| Lasso Regression (L1 Regularization) | Embedded Method | Performs feature selection during model training by shrinking coefficients | Regression problems with many features [24] |
| Recursive Feature Elimination (RFE) | Wrapper Method | Recursively removes least important features based on model performance | Model-specific feature selection [24] |
| Hybrid Sine Cosine - Firehawk Algorithm | Metaheuristic Method | Optimizes feature subsets using hybrid optimization | High-dimensional datasets [27] |
| K-fold Cross-Validation | Evaluation Technique | Assesses model generalization across data splits | Model validation and selection [22] |
| Wattile Software | Energy Forecasting Tool | Automated feature engineering for building energy prediction | Time-series forecasting [25] |
| MLE-bench Benchmark | Evaluation Framework | Standardized assessment of ML engineering capabilities | Comparative evaluation of automated ML systems [28] |

Implications for Research and Practical Applications

Domain-Specific Considerations

The impact of feature selection on overfitting and generalization varies significantly across application domains, necessitating tailored approaches:

Biomedical Research and Drug Development: In single-cell RNA sequencing analysis, feature selection must balance batch effect correction with preservation of biological variation. Highly variable feature selection has proven effective for constructing reliable reference cell atlases, which are crucial for mapping query samples and identifying novel cell populations [8]. The selection of appropriate features directly influences the utility of these resources for downstream analysis and discovery.

Clinical Prediction Models: The study on traumatic brain injury mortality prediction demonstrated that context-specific feature selection strongly affects model generalization across different populations [26]. A model trained in São Paulo performed poorly, with a drop in AUC, when applied to data from Manaus, highlighting the importance of incorporating region-specific features. This finding has significant implications for developing clinical decision support systems that maintain performance across diverse healthcare settings.

Building Energy Forecasting: Research in energy consumption prediction revealed that while feature extraction substantially improves accuracy, adding sophisticated feature selection methods provided limited practical benefits despite significant computational costs [25]. This suggests that in some domains, straightforward feature engineering may offer better returns on investment than complex selection algorithms.

Strategic Recommendations for Researchers

Based on the comparative analysis of feature selection methods, researchers should consider the following strategic approaches to optimize model generalization:

Prioritize Embedded Methods for Balanced Performance: Embedded methods like Lasso regression often provide the optimal balance between performance and computational efficiency, automatically performing feature selection while maintaining model generalization [24].

Validate Across Multiple Metrics: As demonstrated in scRNA-seq benchmarking, evaluating feature selection methods using multiple metrics (batch correction, biological conservation, query mapping) provides a more comprehensive assessment of generalization capabilities [8].

Consider Domain-Specific Requirements: The effectiveness of feature selection methods depends heavily on domain-specific characteristics. Context-aware feature selection, as shown in traumatic brain injury prediction, can dramatically improve model generalization to specific populations or conditions [26].

Account for Computational Constraints: In applications requiring frequent retraining or deployment at scale, the computational cost of wrapper methods may be prohibitive. Filter methods or simple embedded methods often provide reasonable performance with significantly lower computational requirements [25] [24].

Address Generalization Gaps Systematically: Research on AI agents for machine learning highlights the challenge of generalization gaps during automated model development. Implementing rigorous evaluation protocols and regularization techniques is essential for maintaining performance on held-out test sets [28].

The relationship between feature selection, overfitting, and model generalization represents a critical consideration in machine learning research and application. Through comparative analysis across diverse domains, embedded methods like Lasso regression frequently provide the most practical balance of performance and generalization, while domain-specific considerations often dictate the optimal approach. For biomedical researchers and drug development professionals, selecting appropriate feature selection strategies directly impacts the translational potential of predictive models, enabling more reliable insights from high-dimensional biological data. As automated machine learning systems advance, developing more sophisticated feature selection approaches that explicitly optimize for generalization remains an important frontier in methodology development.

A Practical Guide to Feature Selection Techniques and Their Biomedical Applications

In the field of high-dimensional data analysis, particularly within bioinformatics and drug development, feature selection has become a fundamental preprocessing step. The explosion of data dimensionality in applications such as genomics, transcriptomics, and clinical informatics presents significant challenges including the curse of dimensionality, increased computational costs, and reduced model interpretability [1]. Feature selection methods broadly fall into three categories: filter methods, wrapper methods, and embedded methods [29]. This guide focuses specifically on filter methods, which are model-agnostic approaches that select features based on statistical properties of the data rather than their performance with a specific predictive model.

Filter methods operate by ranking features according to statistical criteria such as correlation, mutual information, or variance, then selecting the top-ranked features [30]. Their principal advantages include computational efficiency, scalability to very high-dimensional datasets, and independence from any specific learning algorithm [31]. This makes them particularly valuable for initial screening of features in ultra-high-dimensional settings where the number of features dramatically exceeds the number of observations [32].

Within the broader context of performance evaluation for feature selection methods, understanding the relative strengths and weaknesses of different filter approaches is crucial for building robust and interpretable predictive models in scientific research and drug development.

Comprehensive Comparison of Filter Method Performance

Quantitative Performance Metrics Across Studies

Table 1: Comparative Performance of Filter Methods Across Multiple Benchmark Studies

| Filter Method | Predictive Performance | Stability | Computational Speed | Key Strengths | Primary Datasets Evaluated |
|---|---|---|---|---|---|
| Variance Filter | Competitive predictive accuracy [30] | High [30] | Very fast [30] | Simplicity; effective with high-dimensional data [30] | Gene expression survival data (11 datasets) [30] |
| Correlation-adjusted Regression Scores (CARs) | Similar to top performers [30] | Moderate [30] | Fast [30] | Multivariate: accounts for correlations among features [30] | Gene expression survival data [30] |
| Jensen-Shannon Divergence | Effective for binary classification [32] | High [32] | Fast [32] | Model-free; handles categorical data [32] | Ultra-high-dimensional simulated and real data [32] |
| Conditional Mutual Information Maximization (CMIM) | High predictive accuracy [33] | Moderate [33] | Moderate | Balances relevance and redundancy [33] | COVID-19 clinical data [33] |
| Highly Variable Genes | Superior for single-cell data integration [8] | Method-dependent [8] | Fast [8] | Preserves biological variation [8] | Single-cell RNA sequencing data [8] |
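To make "balances relevance and redundancy" concrete, the sketch below implements the greedy CMIM criterion for discrete features: each candidate's score is the minimum, over already-selected features, of its conditional mutual information with the target, so a feature that merely duplicates a selected one drops to a score near zero. This is a minimal illustration assuming discrete (or pre-discretized) features and plug-in entropy estimates; it is not the implementation used in [33], and the helper names (`entropy`, `cmim`, etc.) are hypothetical.

```python
import numpy as np
from collections import Counter

def entropy(*cols):
    """Plug-in joint Shannon entropy (nats) of one or more discrete columns."""
    counts = np.array(list(Counter(zip(*cols)).values()), dtype=float)
    p = counts / counts.sum()
    return float(-(p * np.log(p)).sum())

def mutual_info(x, y):
    # I(X;Y) = H(X) + H(Y) - H(X,Y)
    return entropy(x) + entropy(y) - entropy(x, y)

def cond_mutual_info(x, y, z):
    # I(X;Y|Z) = H(X,Z) + H(Y,Z) - H(X,Y,Z) - H(Z)
    return entropy(x, z) + entropy(y, z) - entropy(x, y, z) - entropy(z)

def cmim(X, y, k):
    """Greedy CMIM: pick the feature whose worst-case conditional relevance is highest."""
    n_features = X.shape[1]
    # score[j] = min over selected s of I(Y; X_j | X_s), initialised to I(Y; X_j)
    score = np.array([mutual_info(X[:, j], y) for j in range(n_features)])
    selected = []
    for _ in range(k):
        candidates = [j for j in range(n_features) if j not in selected]
        best = max(candidates, key=lambda j: score[j])
        selected.append(best)
        # Penalise remaining candidates that are redundant given the new pick
        for j in candidates:
            if j != best:
                score[j] = min(score[j], cond_mutual_info(X[:, j], y, X[:, best]))
    return selected

# Toy data: the target depends on f0 and f2; column 1 duplicates column 0
rng = np.random.default_rng(0)
f0, f2, noise = (rng.integers(0, 2, 500) for _ in range(3))
y = 2 * f0 + f2
X = np.column_stack([f0, f0.copy(), f2, noise])
selected = cmim(X, y, k=2)
```

On this toy data the duplicate column is never chosen alongside its original, because its conditional information given the original is essentially zero; a purely relevance-ranked filter would score both copies identically.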

Table 2: Specialized Filter Methods for Specific Data Types

| Filter Method | Optimal Application Context | Key Limitations | Representative Performance |
|---|---|---|---|
| VWMRmR | Multi-omics data integration [34] | Computational complexity | Best accuracy on 3 of 5 omics datasets [34] |
| ANOVA F-test | Continuous features with categorical outcomes [33] | Assumes normally distributed features with equal within-group variances | Effective initial screening [33] |
| Mean Decrease Gini | Random forest-based feature importance [33] | Model-dependent: derived from a fitted random forest, although often used as a filter | Identifies non-linear relationships [33] |
| Kolmogorov Filter | Ultra-high-dimensional binary classification [32] | Limited to binary outcomes | Strong theoretical guarantees [32] |
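Both the Kolmogorov filter and the Jensen-Shannon divergence filter score each feature by how differently it is distributed in the two classes of a binary outcome. The sketch below is one way to compute such scores, assuming a binary 0/1 target; it uses the two-sample Kolmogorov-Smirnov statistic for the former and, for the latter, histograms each feature on shared quantile bins (our assumption for continuous data; the procedure in [32] may differ). Note that SciPy's `jensenshannon` returns the JS *distance*, so it is squared here to obtain the divergence.

```python
import numpy as np
from scipy.stats import ks_2samp
from scipy.spatial.distance import jensenshannon

def kolmogorov_scores(X, y):
    """KS statistic between the class-conditional distributions of each feature."""
    return np.array([ks_2samp(X[y == 0, j], X[y == 1, j]).statistic
                     for j in range(X.shape[1])])

def js_scores(X, y, n_bins=10):
    """Jensen-Shannon divergence (base 2, in [0, 1]) between binned class histograms."""
    scores = []
    for j in range(X.shape[1]):
        # Shared quantile edges so both classes are histogrammed on the same grid
        edges = np.unique(np.quantile(X[:, j], np.linspace(0, 1, n_bins + 1)))
        if len(edges) < 2:          # constant feature: no separation possible
            scores.append(0.0)
            continue
        p, _ = np.histogram(X[y == 0, j], bins=edges)
        q, _ = np.histogram(X[y == 1, j], bins=edges)
        # jensenshannon normalises p and q and returns a distance; square it
        scores.append(jensenshannon(p, q, base=2) ** 2)
    return np.array(scores)

def top_k(scores, k):
    """Indices of the k highest-scoring features, best first."""
    return np.argsort(scores)[::-1][:k]

# Simulated example: only feature 3 differs between the two classes
rng = np.random.default_rng(1)
y = rng.integers(0, 2, 400)
X = rng.normal(size=(400, 5))
X[:, 3] += 2.0 * y
ks_top = top_k(kolmogorov_scores(X, y), k=1)
js_top = top_k(js_scores(X, y), k=1)
```

Both scores are univariate and model-free, which is what makes them cheap enough for ultra-high-dimensional screening; neither accounts for redundancy between features.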

Experimental Protocols for Benchmarking Filter Methods

Standardized Evaluation Framework

Recent comprehensive benchmarks have established rigorous methodologies for evaluating filter methods. Barbieri et al. developed a modular Python framework that enables consistent comparison of feature selection algorithms across multiple dimensions: selection accuracy, redundancy, prediction performance, stability, and computational efficiency [1]. Their experimental protocol involves:

Multiple Dataset Application: Each filter method is applied across diverse high-dimensional datasets, including gene expression data, clinical records, and multi-omics data [1] [34].

Stability Assessment: The robustness of each filter method is evaluated by measuring the consistency of selected features under perturbations of the training data, using metrics like the Jaccard index or Kuncheva's index [1].

Predictive Performance Validation: Selected feature subsets are evaluated by training predictive models (e.g., random forests, support vector machines) and assessing their performance on held-out data via cross-validation [1] [31].

Statistical Significance Testing: Performance differences between methods are tested for statistical significance using appropriate non-parametric tests to ensure observed differences are not due to random chance [1].
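The stability and predictive-performance steps above can be sketched with scikit-learn as follows. This is a minimal illustration on synthetic data, not the Barbieri et al. framework [1]: it assumes bootstrap resampling as the perturbation, an ANOVA F filter (`SelectKBest(f_classif)`) as the method under test, and a random forest as the downstream model. Kuncheva's index corrects the overlap of two size-k subsets for the overlap expected by chance among n features.

```python
import numpy as np
from itertools import combinations
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

def jaccard(a, b):
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)

def kuncheva(a, b, n_features):
    """Kuncheva's consistency index for two subsets of equal size k < n_features."""
    k = len(a)
    r = len(set(a) & set(b))
    return (r * n_features - k ** 2) / (k * (n_features - k))

def selection_stability(X, y, k, n_resamples=20, seed=0):
    """Mean pairwise Jaccard and Kuncheva indices over bootstrap-perturbed selections."""
    rng = np.random.default_rng(seed)
    subsets = []
    for _ in range(n_resamples):
        idx = rng.choice(len(y), size=len(y), replace=True)  # bootstrap perturbation
        selector = SelectKBest(f_classif, k=k).fit(X[idx], y[idx])
        subsets.append(np.flatnonzero(selector.get_support()))
    pairs = list(combinations(subsets, 2))
    return (float(np.mean([jaccard(a, b) for a, b in pairs])),
            float(np.mean([kuncheva(a, b, X.shape[1]) for a, b in pairs])))

# Synthetic high-dimensional data: 200 samples, 500 features, 10 informative
X, y = make_classification(n_samples=200, n_features=500, n_informative=10,
                           random_state=0)
jac, kun = selection_stability(X, y, k=20)

# The filter sits inside the pipeline, so it is refit on each training fold only,
# which avoids leaking test-fold information into the selection step
pipe = make_pipeline(SelectKBest(f_classif, k=20),
                     RandomForestClassifier(random_state=0))
cv_acc = cross_val_score(pipe, X, y, cv=5).mean()
```

Placing the filter inside the pipeline matters: selecting features on the full dataset before cross-validation would inflate `cv_acc`. Per-fold scores from several methods can then be compared with a non-parametric test such as `scipy.stats.wilcoxon` or `scipy.stats.friedmanchisquare`.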

Domain-Specific Benchmarking Protocols

In specialized domains, tailored experimental protocols have been developed:

For single-cell RNA sequencing data, a recent Nature Methods study established a comprehensive benchmarking pipeline evaluating feature selection methods using metrics beyond batch correction, including query mapping accuracy, label transfer quality, and detection of unseen cell populations [8]. Their protocol involves:

Baseline Scaling: Metric scores are scaled relative to baseline methods (all features, highly variable features, random features, and stably expressed features) to establish effective ranges for each dataset [8].

Multi-faceted Metric Selection: Careful selection of non-redundant metrics covering integration quality, biological conservation, and practical utility [8].

Batch-Aware Evaluation: Assessment of method performance when technical batch effects are present, which is crucial for real-world applications [8].
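The baseline-scaling step can be illustrated with a simple min-max rescaling against the range spanned by the baseline methods on a given dataset and metric. This is a sketch under an assumption: the exact scaling used in [8] may differ, and the method names and scores below are hypothetical values chosen only to show the arithmetic.

```python
def scale_to_baselines(method_scores, baseline_scores):
    """Min-max scale metric scores against the range spanned by baseline methods.

    Assumed convention: 0 = worst baseline, 1 = best baseline for this dataset
    and metric; values outside [0, 1] indicate a method worse than every
    baseline or better than all of them.
    """
    lo, hi = min(baseline_scores.values()), max(baseline_scores.values())
    if hi == lo:
        raise ValueError("baselines span a zero-width range; scaling is undefined")
    return {name: (s - lo) / (hi - lo) for name, s in method_scores.items()}

# Hypothetical raw scores for one metric on one dataset
baselines = {"all_features": 0.62, "hvg": 0.71, "random": 0.48, "stable": 0.45}
methods = {"method_A": 0.69, "method_B": 0.74}
scaled = scale_to_baselines(methods, baselines)
# method_A -> (0.69 - 0.45) / (0.71 - 0.45), about 0.92; method_B exceeds 1
```

Scaling per dataset and metric puts scores from metrics with very different natural ranges on a common footing before they are aggregated.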

For clinical predictive modeling, studies such as the COVID-19 outcome prediction analysis employ robust evaluation protocols including:

Data Preprocessing: Handling of missing values, outlier detection, and appropriate scaling (e.g., Robust Scaling) to mitigate the impact of extreme values [33].

Stratified Splitting: Use of random stratified splits (typically 70%/30%) to maintain class distribution between training and test sets [33].

Class Imbalance Handling: Application of techniques such as oversampling or specialized algorithms to address imbalanced outcomes common in clinical datasets [33].
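A minimal scikit-learn sketch of this clinical protocol is shown below. The dataset is synthetic, and median imputation and random oversampling are our illustrative choices, not necessarily those of [33]; outlier detection is omitted. The key design point is that the imputer, the `RobustScaler`, and the oversampling are all fitted or applied on the training split only, so the 30% test split stays untouched.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import RobustScaler
from sklearn.utils import resample

# Synthetic stand-in for an imbalanced clinical dataset (~10% positive outcomes)
X, y = make_classification(n_samples=1000, n_features=30, weights=[0.9, 0.1],
                           random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.05] = np.nan      # inject ~5% missing values

# 70/30 stratified split keeps the outcome ratio equal in both partitions
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y,
                                          random_state=0)

# Fit imputation and scaling on the training split, then apply to both splits
imputer = SimpleImputer(strategy="median").fit(X_tr)
scaler = RobustScaler().fit(imputer.transform(X_tr))  # median/IQR resists outliers
X_tr = scaler.transform(imputer.transform(X_tr))
X_te = scaler.transform(imputer.transform(X_te))

# Random oversampling of the minority class (label 1), training split only
minority = X_tr[y_tr == 1]
n_extra = int((y_tr == 0).sum() - len(minority))
extra = resample(minority, replace=True, n_samples=n_extra, random_state=0)
X_bal = np.vstack([X_tr, extra])
y_bal = np.concatenate([y_tr, np.ones(n_extra, dtype=y_tr.dtype)])
```

Oversampling before the split would place copies of the same patient in both training and test sets and overstate performance, which is why it is applied last and only to the training data.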

Visualizing Filter Method Workflows and Relationships

Generalized Filter Method Evaluation Workflow

Diagram 1: A generalized workflow for benchmarking filter methods in high-dimensional data, incorporating both predictive performance and stability assessments as key evaluation criteria.

Taxonomic Relationships of Filter Methods

Diagram 2: Taxonomic relationships among major filter method families, showing connections and methodological evolution across different approaches.

Table 3: Essential Software Tools and Packages for Filter Method Implementation

| Tool/Package | Primary Function | Supported Filter Methods | Implementation Language | Key Reference |
|---|---|---|---|---|
| mlr3filters | Comprehensive feature selection | 22+ filter methods including correlation, information gain, and variance-based | R [31] | Bommert et al. [31] |
| scikit-learn Feature Selection | Basic filter method implementation | Variance threshold, correlation-based, mutual information | Python [29] | Pedregosa et al. |
| Python Benchmarking Framework | Comparative analysis of feature selection | Custom implementation of multiple filter methods | Python [1] | Barbieri et al. [1] |
| Boruta | Hybrid feature selection | Wrapper around random forest with permutation importance | R/Python [33] | Kursa et al. |

Table 4: Key Statistical Measures Used in Filter Methods

| Statistical Measure | Feature Types | Target Variable | Key Properties | Typical Applications |
|---|---|---|---|---|
| Pearson Correlation | Continuous | Continuous | Measures linear relationships | Initial screening of continuous features [24] |
| Mutual Information | Any | Any | Captures non-linear dependencies | General-purpose filtering [34] |
| Jensen-Shannon Divergence | Any | Categorical | Model-free, information-theoretic | Ultra-high-dimensional classification [32] |
| ANOVA F-statistic | Continuous | Categorical | Tests differences between group means | Omics data with categorical outcomes [34] |
| Variance | Continuous | None (unsupervised) | Identifies low-information features | Pre-filtering in single-cell analysis [8] |
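The measures in Table 4 can be computed with SciPy and scikit-learn as sketched below on simulated data (Jensen-Shannon divergence needs binning first and is omitted here). The simulation is an assumption built to show what each measure detects: feature 0 shifts with a three-group label, feature 1 is linearly related to a continuous target, feature 2 depends on that target symmetrically (non-linearly), and feature 3 is nearly constant.

```python
import numpy as np
from scipy.stats import pearsonr
from sklearn.feature_selection import (VarianceThreshold, f_classif,
                                       mutual_info_regression)

rng = np.random.default_rng(0)
n = 1000
y_class = rng.integers(0, 3, n)              # categorical target, three groups
y_cont = rng.normal(size=n)                  # continuous target

X = rng.normal(size=(n, 4))
X[:, 0] += y_class                           # group-mean shift: ANOVA F detects it
X[:, 1] = y_cont + 0.3 * rng.normal(size=n)  # linear dependence on y_cont
X[:, 2] = y_cont ** 2 + 0.1 * rng.normal(size=n)  # symmetric, non-linear dependence
X[:, 3] *= 0.01                              # near-constant, low-information feature

pearson = np.array([pearsonr(X[:, j], y_cont)[0] for j in range(4)])
mi = mutual_info_regression(X, y_cont, random_state=0)   # kNN-based MI estimate
f_stat, _ = f_classif(X, y_class)                         # one-way ANOVA F per feature
keep = VarianceThreshold(threshold=1e-3).fit(X).get_support()
```

Feature 2 illustrates the row for Mutual Information: its Pearson correlation with `y_cont` is close to zero because the dependence is symmetric, yet its mutual information is large. The variance filter, being unsupervised, drops feature 3 without looking at either target.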

Based on comprehensive benchmarking studies, several key findings emerge regarding filter method performance. First, no single filter method outperforms all others across every dataset and application domain [31]. However, certain methods perform consistently well: the simple variance filter performed strongly on gene expression survival data [30], while highly variable feature selection remains the standard approach in single-cell RNA sequencing analysis [8].

For multi-omics data and complex classification tasks, information-theoretic methods such as VWMRmR and Jensen-Shannon divergence often achieve superior performance by effectively capturing non-linear relationships and handling feature interactions [34] [32]. The stability of filter methods varies considerably, with simpler methods typically demonstrating higher robustness to data perturbations [1] [30].

These findings highlight the importance of contextual method selection based on dataset characteristics, computational constraints, and analytical goals. For researchers working with novel data types or specialized applications, implementing a systematic benchmarking approach following established experimental protocols is essential for identifying the optimal filter method for their specific use case.

Feature selection (FS) is a critical preprocessing step in machine learning (ML) that aims to identify the most relevant subset of features from the original data. By reducing dimensionality, it mitigates the curse of dimensionality, combats overfitting, enhances model interpretability, and improves computational efficiency [1]. FS methods are broadly categorized into three groups: filter methods, which select features based on statistical measures independent of any ML model; embedded methods, where feature selection is incorporated into the model training process (e.g., LASSO); and wrapper methods, which evaluate feature subsets based on their performance on a specific ML model [35] [36].

Wrapper methods employ a search algorithm to explore the space of possible feature subsets and use the predictive performance of a predetermined learning algorithm to assess the quality of a given subset [37]. This model-specific approach often leads to superior performance compared to filter methods, as it captures complex feature interactions and dependencies tailored to the classifier used [14] [6]. However, this performance gain comes at a significant computational cost, as the model must be trained and validated repeatedly for each candidate subset [35] [36]. This guide provides a comparative analysis of wrapper methods against other FS paradigms, detailing their operational principles, experimental performance, and implementation protocols to inform their application in scientific research, particularly in drug development.
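The search-plus-evaluation loop that defines a wrapper method, and the reason for its cost, can be seen in a minimal greedy sequential forward selection. This sketch assumes logistic regression as the wrapped model and mean 5-fold cross-validated accuracy as the subset score; scikit-learn also provides library implementations (`SequentialFeatureSelector` and `RFE` in `sklearn.feature_selection`). The `n_fits` counter shows how the number of model fits grows with the number of candidate features at every step.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

def forward_selection(model, X, y, max_features, cv=5):
    """Greedy sequential forward selection scored by cross-validated accuracy."""
    selected, remaining = [], list(range(X.shape[1]))
    best_score, n_fits = -np.inf, 0
    while remaining and len(selected) < max_features:
        # Evaluate every one-feature extension of the current subset
        trial = {}
        for j in remaining:
            trial[j] = cross_val_score(model, X[:, selected + [j]], y, cv=cv).mean()
            n_fits += cv
        j_best = max(trial, key=trial.get)
        if trial[j_best] <= best_score:   # stop when no candidate improves the score
            break
        best_score = trial[j_best]
        selected.append(j_best)
        remaining.remove(j_best)
    return selected, best_score, n_fits

# shuffle=False places the 3 informative features in columns 0-2
X, y = make_classification(n_samples=300, n_features=15, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
subset, score, fits = forward_selection(LogisticRegression(max_iter=1000), X, y,
                                        max_features=5)
```

Because the same folds are used both to choose the subset and to score it, `score` is optimistically biased; an unbiased estimate requires running the entire selection inside an outer cross-validation loop, which multiplies the already large number of fits.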

Comparative Performance Analysis of Feature Selection Methods

The performance of wrapper methods is best understood in comparison to filter and embedded techniques. The table below synthesizes findings from multiple benchmark studies across various domains, including bioinformatics, IoT security, and industrial diagnostics.

Table 1: Comparative Analysis of Feature Selection Method Categories

| Method Category | Key Characteristics | Representative Algorithms | Reported Performance (Example Findings) | Advantages | Disadvantages |
|---|---|---|---|---|---|
| Wrapper Methods | Use a specific ML model to evaluate subsets; performance-driven. | Recursive Feature Elimination (RFE), Sequential Feature Selection (SFS) [6] | F1-score > 0.99 for IoT intrusion detection with ~60% feature reduction [14]; enhanced Random Forest performance in metabarcoding data analysis [36]. | Often high predictive accuracy; captures feature interactions specific to the model. | Computationally expensive and slow [35] [36]; risk of overfitting if not properly validated. |
| Embedded Methods | Perform feature selection as part of the model training process. | LASSO, Random Forest Importance (RFI), BP_ADMM [35] [6] | 77% accuracy for arrhythmia and 100% for an oncological database (BP_ADMM) [35]; ~98.4% F1-score for industrial fault diagnosis [6]. | Balance between accuracy and efficiency; less computationally intensive than wrappers. | Model-specific (e.g., LASSO for linear models); slower than filter methods [35]. |