Ensuring Reproducible Results: A Deep Dive into Inter-laboratory Variability of Digital PCR ctDNA Assays

Abstract

The analysis of circulating tumor DNA (ctDNA) using digital PCR (dPCR) is transforming cancer management through non-invasive monitoring of minimal residual disease and treatment response. However, the transition of these assays from research to clinical practice is contingent upon demonstrating robust inter-laboratory reproducibility. This article provides a comprehensive analysis for researchers and drug development professionals on the factors affecting the consistency of dPCR-based ctDNA testing. We explore the foundational principles of dPCR technology, methodological workflows and their clinical applications, key sources of pre-analytical and analytical variability, and strategies for analytical validation and cross-platform comparison. Synthesizing evidence from recent clinical trials and multi-laboratory studies, this review outlines the critical pathway toward standardizing dPCR ctDNA assays to ensure reliable, reproducible results across different laboratories and platforms.

The Digital PCR Revolution: Core Principles and the Critical Need for Reproducibility in ctDNA Analysis

Introduction to dPCR: Partitioning, End-point Analysis, and Absolute Quantification

Digital PCR (dPCR) represents the third generation of Polymerase Chain Reaction technology, enabling the direct, absolute, and precise quantification of nucleic acids without the need for standard curves. By partitioning a sample into thousands of individual reactions, dPCR allows for single-molecule detection and counting using Poisson statistics. This guide explores the core principles of dPCR, objectively compares its performance to quantitative PCR (qPCR), and provides supporting experimental data within the context of circulating tumor DNA (ctDNA) assay reproducibility.

Core Principles of Digital PCR

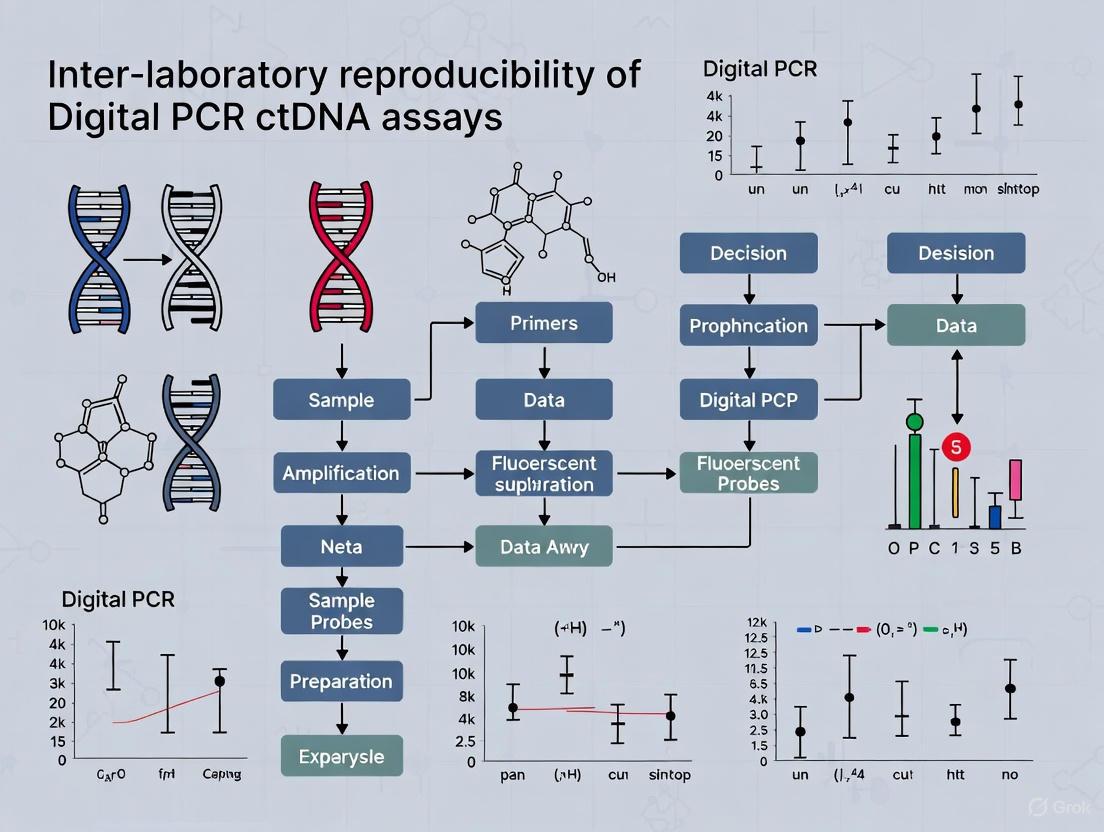

The fundamental workflow of dPCR involves four key steps, which transform the measurement of nucleic acids from an analog to a digital process.

Partitioning

The PCR mixture containing the sample is partitioned into tens of thousands of individual micro-reactions. This is achieved either through water-in-oil droplet emulsification (Droplet Digital PCR, or ddPCR) or by loading the mixture into fixed microchambers or nanowells on a chip (chip-based dPCR). This step effectively dilutes the target molecules across the partitions so that each contains zero, one, or a few target sequences [1] [2].

End-point PCR Amplification

Each partition undergoes a traditional PCR amplification to the endpoint, meaning the reaction runs for a fixed number of cycles regardless of the initial starting concentration. In positive partitions (those containing at least one target molecule), the amplified target generates a strong fluorescent signal. Partitions without the target remain dim [1].

End-point Fluorescence Analysis

After amplification is complete, a detector reads the fluorescence of each partition. Unlike qPCR, which monitors fluorescence in real-time, dPCR makes a single, end-point measurement to classify each partition as positive or negative based on its fluorescence intensity [1] [2].

Absolute Quantification via Poisson Statistics

The ratio of positive to total partitions is used to calculate the absolute concentration of the target molecule in the original sample, applying Poisson statistics. This calculation accounts for the fact that some positive partitions may have contained more than one target molecule at the outset, and provides a precise, copy-per-volume count without reference to external standards [1] [3] [2].
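The Poisson correction described above can be sketched in a few lines. This is a minimal illustration, not any vendor's software: the function name, the example counts, and the 0.85 nL partition volume are assumptions chosen for the example, and real instruments apply their own partition volumes and quality filters.

```python
import math

def dpcr_concentration(positive, total, partition_volume_nl):
    """Estimate target concentration (copies per microlitre of reaction)."""
    if not 0 <= positive < total:
        # If every partition is positive the Poisson estimate is undefined.
        raise ValueError("need 0 <= positive < total")
    # Fraction of partitions containing at least one target molecule.
    p = positive / total
    # Poisson correction: mean copies per partition, lambda = -ln(1 - p).
    lam = -math.log(1.0 - p)
    # Convert copies per partition to copies per microlitre (1 uL = 1000 nL).
    return lam / (partition_volume_nl / 1000.0)

# Hypothetical run: 1,200 positive partitions out of 18,000 accepted,
# with an assumed partition volume of 0.85 nL.
conc = dpcr_concentration(1200, 18000, 0.85)
print(f"{conc:.1f} copies/uL")
```

Because some positive partitions hold more than one molecule, the corrected estimate is always slightly higher than the naive ratio of positive partitions to total volume, and the gap widens as the positive fraction grows.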

Image 1 above illustrates this core workflow and the underlying logic of quantification.

dPCR vs. qPCR: A Technical Performance Comparison

The key differences between dPCR and qPCR stem from their fundamental approaches to measurement. The following table summarizes their comparative performance based on recent studies.

Table 1: Comprehensive Comparison of dPCR and qPCR Performance Characteristics

| Performance Characteristic | Digital PCR (dPCR) | Quantitative PCR (qPCR) | Supporting Experimental Evidence |
|---|---|---|---|
| Quantification Method | Absolute, via Poisson statistics [1] | Relative, requires a standard curve [1] | |
| Precision & Reproducibility | Higher precision; lower coefficient of variation; superior inter-laboratory reproducibility [1] [4] | Lower precision; more susceptible to inter-assay variability [5] [4] | dPCR demonstrated superior consistency and precision in quantifying respiratory viruses compared to RT-qPCR [5]. |
| Sensitivity | Higher sensitivity for low-abundance targets; can detect rare mutations at frequencies as low as 0.1% to 0.001% [6] [3] | Lower sensitivity for rare targets; performance affected by inhibitors and background DNA [1] | In a study of early-stage breast cancer, dPCR detected ctDNA representing ≤ 0.1% of cell-free DNA [7]. |
| Tolerance to Inhibitors | High; partitioning reduces the effective concentration of inhibitors in each reaction [1] [8] | Low; presence of inhibitors can significantly reduce amplification efficiency [1] | A study on cyanobacteria found ddPCR provided more precise and accurate analysis for environmental samples with PCR inhibitors [8]. |
| Dynamic Range | Narrower; limited by the number of partitions [1] | Wider; suitable for measuring large concentration differences [1] [4] | A study on Infectious Bronchitis Virus found qPCR had a wider quantification range than dPCR [4]. |
| Throughput & Workflow | Moderate; requires partitioning step. However, less need for replicates [1] | High; faster cycling and no partitioning step. May require replicates for precision [1] | |
| Cost Considerations | Higher instrument cost [5] [1] | Lower instrument cost, but requires costs for calibration standards [1] | Noted as a current limitation for the routine implementation of dPCR [5]. |

Experimental Data in ctDNA Research

The application of dPCR in detecting circulating tumor DNA (ctDNA) for minimal residual disease (MRD) monitoring and cancer prognosis highlights its clinical value. The following experiments demonstrate its performance in real-world oncological studies.

TRICIA Trial: Prognostic Risk Stratification in TNBC

This trial evaluated the use of a tumor-informed ddPCR assay for risk stratification in triple-negative breast cancer (TNBC) patients with residual disease after neoadjuvant chemotherapy [9].

- Objective: To determine if ctDNA detection could identify patients at high and low risk of recurrence, thereby guiding adjuvant therapy.

- Methods: Plasma from 92 patients was collected at multiple timepoints: post-NAC/pre-surgery (T1), post-surgery (T2), during adjuvant capecitabine (T3), and post-treatment (T4). ctDNA was analyzed using droplet digital PCR (ddPCR) assays.

- Key Findings: The lack of ctDNA detection at the post-chemotherapy, pre-operative timepoint was highly prognostic, with 95% distant-disease relapse-free survival. Conversely, ctDNA detection was associated with a high risk of recurrence. Furthermore, capecitabine treatment was associated with clearance of ctDNA in 41% of cases, which correlated with a better prognosis [9].

COMBI-AD Trial: Predicting Melanoma Recurrence

This biomarker analysis from a phase 3 trial validated ddPCR for detecting BRAF-mutant ctDNA as a prognostic biomarker in resected stage III melanoma [10].

- Objective: To investigate whether baseline and longitudinal ctDNA measurements could predict survival outcomes in patients receiving adjuvant therapy.

- Methods: Analytically validated, mutation-specific ddPCR assays were used to measure BRAFV600E or BRAFV600K ctDNA in 597 baseline plasma samples from the COMBI-AD trial.

- Key Findings: ctDNA was detectable in 13% (79/597) of baseline samples. Detection was strongly associated with worse recurrence-free survival and overall survival in both placebo and combination therapy groups. Patients with adverse longitudinal ctDNA kinetics had markedly shorter median recurrence-free survival (8.31 and 5.32 months) compared to those with favorable kinetics (19.25 months and "not reached") [10].

Table 2: Summary of Key Experimental Findings from Recent ctDNA Studies Using dPCR

| Study (Trial Name) | Cancer Type | dPCR Platform | Key Clinical Utility Finding | Sensitivity/Concordance |
|---|---|---|---|---|
| TRICIA Trial [9] | Triple-Negative Breast Cancer | Droplet Digital PCR (ddPCR) | ctDNA negativity post-therapy predicted 95% relapse-free survival. | ctDNA detected in 97% of patients before clinical relapse. |
| COMBI-AD Trial [10] | Stage III Melanoma | Droplet Digital PCR (ddPCR) | Baseline ctDNA detection was a strong prognostic biomarker for worse RFS and OS. | ctDNA detected in 13% of baseline patients; strong association with outcomes. |
| Sánchez-Martín et al. [7] | Early-Stage Breast Cancer | ddPCR & Absolute Q pdPCR | Both systems were effective for ctDNA analysis in early-stage disease. | Concordance > 90% in ctDNA positivity between the two dPCR platforms. |

Essential Research Reagent Solutions

The successful implementation of dPCR assays, particularly for ctDNA analysis, relies on a suite of specialized reagents and platforms.

Table 3: Key Research Reagent Solutions for dPCR-based ctDNA Analysis

| Item Category | Specific Examples | Function in the Workflow |
|---|---|---|
| dPCR Platforms | QIAcuity (Qiagen), QX200 ddPCR System (Bio-Rad), QuantStudio Absolute Q (Thermo Fisher) [7] [2] | Instrumentation for sample partitioning, thermal cycling, and fluorescence reading. |
| Nucleic Acid Extraction Kits | MagMax Viral/Pathogen Kit (Thermo Fisher) [5] | Isolation of high-quality cell-free DNA (including ctDNA) from plasma samples. |
| Liquid Biopsy dPCR Assays | Absolute Q Liquid Biopsy dPCR Assays (Thermo Fisher) [6] | Pre-designed, validated assays for reproducible detection of known somatic mutations. |
| Master Mixes | dPCR Master Mixes (various vendors) | Optimized buffers, nucleotides, and enzymes for efficient amplification in partitioned reactions. |
| Partitioning Consumables | DG8 Cartridges & Droplet Generation Oil (Bio-Rad), QIAcuity Nanoplate (Qiagen) | Microfluidic chips, cartridges, and oils required to create the thousands of individual partitions. |

Digital PCR, with its core principles of partitioning, end-point analysis, and absolute quantification, offers a robust tool for applications requiring high precision and sensitivity. As the summarized data shows, dPCR demonstrates superior performance over qPCR in detecting rare targets and providing reproducible data, which is paramount for inter-laboratory studies, especially in the ctDNA field for cancer monitoring. While factors like dynamic range and initial cost may favor qPCR for some applications, the proven ability of dPCR to generate reliable, calibration-free quantitative data makes it an indispensable technology in modern molecular diagnostics and life science research.

In the field of precision oncology, the analysis of circulating tumor DNA (ctDNA) has emerged as a powerful, non-invasive tool for cancer monitoring and treatment selection. Among the various detection technologies, digital PCR (dPCR) has established itself as a cornerstone for sensitive and reproducible ctDNA analysis. This guide objectively compares the performance of dPCR with alternative methods, focusing on its exceptional sensitivity for rare alleles and its role in promoting inter-laboratory reproducibility in ctDNA research.

Circulating tumor DNA (ctDNA) refers to small fragments of tumor-derived DNA found in the bloodstream, carrying tumor-specific genetic alterations. A significant challenge in its analysis is its vanishingly low concentration, especially in early-stage cancers or minimal residual disease (MRD), where it can constitute less than 0.1% of the total cell-free DNA (cfDNA) and be present at fewer than 100 copies per milliliter of plasma [11]. This low abundance demands techniques with exceptional sensitivity and specificity. Digital PCR (dPCR) and next-generation sequencing (NGS) are the two primary methods used, each with distinct advantages and limitations. This article will demonstrate how dPCR's unique approach makes it ideally suited for applications requiring the detection of rare alleles and low-copy targets.

Head-to-Head Technology Comparison

The following table summarizes the core characteristics of dPCR against other common nucleic acid detection technologies in the context of ctDNA analysis.

Table 1: Comparison of Key Technologies for ctDNA Analysis

| Feature | Digital PCR (dPCR) | Quantitative PCR (qPCR) | Next-Generation Sequencing (NGS) |
|---|---|---|---|
| Principle | Absolute quantification via end-point fluorescence of partitioned samples [2] | Relative quantification based on amplification cycle threshold (Ct) [2] | Massively parallel sequencing, often with error-correction methods [12] |
| Sensitivity (VAF) | High (0.1% - 0.5%) [13] [14] | Low (~1-10%) [15] | Variable (Very High <0.1% to ~1%) with advanced error correction [15] [12] |
| Quantification | Absolute, without a standard curve [16] [2] | Relative, requires a standard curve [17] | Semi-quantitative (VAF); quantitative NGS (qNGS) emerging [16] |
| Multiplexing Capability | Low to moderate (typically 2-4 plex) [15] | Low (typically 1-2 plex) | Very High (dozens to hundreds of targets) [12] |
| Throughput & Turnaround | Fast (hours); ideal for rapid, targeted results [15] | Fast (hours) | Slow (days); complex data analysis [18] |
| Tumor Genotype Knowledge | Required | Required | Not required for tumor-agnostic panels [16] |
| Inter-lab Reproducibility | High, as demonstrated in multi-center validations [13] | Moderate, dependent on standard curve | Variable; can be improved with standardized controls [11] [19] |

VAF: Variant Allele Frequency

The Digital PCR Workflow and Principle

dPCR's sensitivity stems from its fundamental workflow, which partitions a sample into thousands of individual reactions. The following diagram illustrates this core principle.

Diagram 1: The dPCR Workflow. The sample is partitioned, amplified, and analyzed to provide an absolute count of target molecules.

This partitioning step is crucial. By diluting the target molecules across thousands of nanoliter-sized droplets or microchambers, dPCR effectively enriches rare mutant alleles by separating them from the abundant wild-type DNA background. This eliminates PCR competition and allows for the detection of a single mutant molecule in a background of thousands of wild-type sequences [2]. The absolute quantification is achieved by counting the positive partitions and applying a Poisson statistical correction, which eliminates the need for a standard curve and reduces a potential source of inter-assay variability [16] [2].

Experimental Validation: Protocol and Data from a Reproducibility Study

Robust validation is key to clinical adoption. The following details a methodology and results from a study that validated a dPCR assay for BRAF mutations, demonstrating high inter-laboratory reproducibility.

- Objective: To clinically validate and assess the inter-laboratory reproducibility of droplet digital PCR (ddPCR) assays for detecting BRAF V600E and V600K mutations in plasma ctDNA.

- Sample Preparation: Blood samples were collected in EDTA tubes or specialized blood collection tubes (BCTs) containing cell-stabilizing preservatives. Plasma was obtained through a double centrifugation protocol:

  - First step: 380–3,000 g for 10 minutes at room temperature.

  - Second step: 12,000–20,000 g for 10 minutes at 4°C [11].

  Cell-free DNA (cfDNA) was then extracted from the plasma using a silica membrane column kit (e.g., QIAamp Circulating Nucleic Acid Kit) and eluted in a defined buffer volume [11] [13].

- Assay Configuration: The ddPCR reaction mixture was prepared using:

  - Extracted cfDNA template.

  - BRAF V600E or V600K-specific primer/probe assays (FAM-labeled).

  - A reference assay for a wild-type genomic locus (HEX-labeled).

  - ddPCR Supermix for Probes.

- Partitioning and Amplification: The reaction mixture was partitioned into ~20,000 nanoliter-sized droplets using a droplet generator. The emulsified sample was then transferred to a 96-well plate and PCR-amplified on a conventional thermal cycler using a standardized protocol.

- Data Analysis: The plate was loaded into a droplet reader, which measured the fluorescence (FAM and HEX) in each droplet. Using Poisson statistics, the instrument software calculated the absolute concentration (copies/μL) and variant allele frequency (VAF) of the BRAF mutation in the original sample [13].
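The data-analysis step above can be sketched as follows. This is an illustrative calculation only, not the instrument software's algorithm. It assumes the FAM channel counts mutant alleles and the HEX channel counts wild-type alleles at the same locus; if the reference assay instead counted total genomes, the denominator would be the reference count alone. Function names and droplet counts are hypothetical.

```python
import math

def copies_per_partition(positive, total):
    """Poisson-corrected mean number of target copies per partition."""
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total")
    return -math.log(1.0 - positive / total)

def variant_allele_frequency(fam_positive, hex_positive, total):
    """VAF = mutant / (mutant + wild-type), each Poisson-corrected.

    Assumes FAM = mutant allele and HEX = wild-type allele (see lead-in).
    Double-positive droplets count towards both channels.
    """
    mutant = copies_per_partition(fam_positive, total)
    wild_type = copies_per_partition(hex_positive, total)
    if mutant + wild_type == 0:
        raise ValueError("no template detected in either channel")
    return mutant / (mutant + wild_type)

# Hypothetical well: 12 FAM-positive and 2,400 HEX-positive droplets
# out of 16,000 accepted droplets.
vaf = variant_allele_frequency(12, 2400, 16000)
print(f"VAF = {vaf:.2%}")
```

Correcting each channel separately matters most when the wild-type channel is heavily loaded, since uncorrected counts would then understate the wild-type background and overstate the VAF.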

Key Validation Data and Performance Metrics

The validation study yielded the following performance characteristics, summarized in the table below.

Table 2: Performance Metrics from a Clinical ddPCR Validation Study for BRAF Mutations [13]

| Performance Metric | Result for BRAF V600E | Result for BRAF V600K |
|---|---|---|
| Limit of Detection (LOD) | 0.5% VAF | 0.5% VAF |
| Accuracy (Concordance with tumor tissue) | 100% (n=36) | 100% (n=30) |
| Inter-laboratory Reproducibility | 100% concordance across 12 plasma samples | 100% concordance across 12 plasma samples |
| Precision | High, with low inter-assay coefficient of variation | High, with low inter-assay coefficient of variation |

This study underscores that dPCR assays can be rigorously validated to achieve a limit of detection of 0.5% VAF with 100% inter-laboratory concordance across the 12 plasma samples tested, a critical benchmark for reliable MRD detection and therapy monitoring [13].
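Inter-laboratory concordance of the kind reported above is typically summarized as percent agreement, sometimes alongside a chance-corrected statistic such as Cohen's kappa. The sketch below shows both. The call lists are hypothetical placeholders rather than data from the cited study, and kappa is added here as a common complement, not as something the study reports.

```python
import math

def percent_agreement(calls_a, calls_b):
    """Fraction of samples on which two labs made the same call."""
    if len(calls_a) != len(calls_b) or not calls_a:
        raise ValueError("need two equal-length, non-empty call lists")
    return sum(a == b for a, b in zip(calls_a, calls_b)) / len(calls_a)

def cohens_kappa(calls_a, calls_b):
    """Chance-corrected agreement for binary (True/False) calls."""
    n = len(calls_a)
    observed = percent_agreement(calls_a, calls_b)
    pos_a = sum(calls_a) / n
    pos_b = sum(calls_b) / n
    # Agreement expected by chance from each lab's marginal positivity rate.
    expected = pos_a * pos_b + (1 - pos_a) * (1 - pos_b)
    if expected == 1.0:
        return math.nan  # undefined when both labs give one identical class
    return (observed - expected) / (1 - expected)

# Hypothetical calls on 12 shared plasma samples (True = mutation detected).
lab_a = [True] * 5 + [False] * 7
lab_b = [True] * 5 + [False] * 7
print(percent_agreement(lab_a, lab_b), cohens_kappa(lab_a, lab_b))
```

With only 12 samples, a single discordant call moves percent agreement by more than eight points, which is one reason larger sample panels are preferred for reproducibility claims.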

The Researcher's Toolkit: Essential Reagents for dPCR ctDNA Analysis

Successful dPCR analysis relies on a set of core reagents and materials. The table below details these essential components.

Table 3: Research Reagent Solutions for dPCR-based ctDNA Analysis

| Item | Function | Example Products / Notes |
|---|---|---|
| Blood Collection Tubes (BCTs) | Preserves blood sample integrity by preventing leukocyte lysis and release of wild-type DNA during transport/storage [11]. | cfDNA BCT (Streck), PAXgene Blood ccfDNA (Qiagen) [11]. |
| Nucleic Acid Extraction Kit | Isolates cell-free DNA from plasma with high efficiency and purity. | QIAamp Circulating Nucleic Acid Kit (Qiagen), Maxwell RSC ccfDNA LV Plasma Kit (Promega) [11] [16]. |
| dPCR Supermix | Optimized buffer containing polymerase, dNTPs, and MgCl₂ for robust amplification in partitioned formats. | ddPCR Supermix for Probes (Bio-Rad) [13]. |
| Target-Specific Assays | Fluorescently-labeled probes and primers for specific detection of mutant and reference wild-type sequences. | TaqMan SNP Genotyping Assays [13]. |
| Quantification Standards (QS) | Synthetic DNA molecules spiked into samples to enable absolute quantification and control for extraction/amplification losses, used in quantitative NGS (qNGS) [16]. | Custom-designed synthetic DNA fragments [16]. |

In conclusion, digital PCR stands out as an ideal platform for ctDNA applications where high sensitivity for predefined, low-frequency mutations is paramount. Its strengths in absolute quantification, high reproducibility, and operational simplicity make it a powerful tool for monitoring treatment response, detecting minimal residual disease, and tracking the emergence of resistance mutations in a clinical research setting. While NGS offers unparalleled multiplexing for discovery and broad profiling, dPCR provides a robust, reproducible, and highly sensitive solution for targeted liquid biopsy assays, firmly establishing its role in the advancing field of precision oncology.

Circulating tumor DNA (ctDNA) sequencing has been rapidly adopted in precision oncology, offering a minimally invasive alternative to tissue biopsies for molecular stratification, therapeutic monitoring, and post-treatment surveillance [20]. However, the detection of ctDNA presents significant technical challenges due to its low abundance in plasma (typically <1% of total cell-free DNA) and the limited input material available from standard blood draws [20] [21]. These factors create substantial hurdles for achieving reproducible results across different testing platforms and laboratories.

Inter-laboratory reproducibility represents a fundamental challenge for the clinical implementation of ctDNA assays. Discordant results between alternative assays or parallel ctDNA and tumor-biopsy tests have been reported, highlighting the pressing need for standardized proficiency testing [20]. Understanding the variables that impact analytical performance across different technology platforms and laboratories is essential for establishing confidence in ctDNA testing results, particularly as these assays are increasingly incorporated into clinical trial endpoints and treatment decision-making [22] [23].

Evaluating the Scope of Variability: Key Multi-Center Studies

The SEQC2 Consortium: Cross-Platform Performance Assessment

The Sequencing Quality Control Phase 2 (SEQC2) project conducted a comprehensive multi-site, cross-platform evaluation of five industry-leading ctDNA assays across twelve participating clinical and research facilities [20]. This rigorous proficiency testing utilized standardized cell line-derived reference samples to measure the impact of variables at each step of the ctDNA sequencing workflow. The study revealed that while mutations above 0.5% variant allele frequency (VAF) were detected with high sensitivity, precision, and reproducibility by all assays, performance below this threshold became unreliable and varied widely between assays, especially when input material was limited [20].

A critical finding from this research was that missed mutations (false negatives) were more common than erroneous candidates (false positives), indicating that reliable sampling of rare ctDNA fragments represents the key challenge for ctDNA assays [20]. The study also found that participating assays were generally robust to technical variables between test labs—from plasma extraction to sequencing workflow stages—and were impacted largely by random, rather than systematic variation [20].

Comparative Performance of ctDNA Detection Technologies

Recent studies have directly compared different technological approaches to ctDNA detection, revealing substantial variability in performance characteristics. A 2025 study comparing droplet digital PCR (ddPCR) and next-generation sequencing (NGS) in localized rectal cancer demonstrated significantly different detection rates between the two platforms [24]. In the development cohort, ddPCR detected ctDNA in 24/41 (58.5%) patients while the NGS panel detected ctDNA in only 15/41 (36.6%) of the same baseline plasma samples (p = 0.00075) [24].

This performance disparity highlights how technological approach contributes to inter-assay variability. The authors noted that ddPCR allows for absolute quantification of targeted DNA mutations with high specificity and lower operational costs compared to NGS, though its application is limited to known mutations [24]. This tradeoff between the sensitivity of targeted approaches and the comprehensive profiling capability of NGS panels represents a key consideration for laboratories selecting ctDNA testing methodologies.

Table 1: Key Multi-Center Studies Evaluating ctDNA Assay Reproducibility

| Study | Number of Platforms/Labs | Key Findings on Reproducibility | Major Variability Factors Identified |
|---|---|---|---|
| SEQC2 Consortium [20] | 5 assays across 12 facilities | High reproducibility for variants >0.5% VAF; significant variability below 0.5% VAF | Input material quantity, coverage depth, random sampling |
| Chinese Platform Comparison [25] | 9 commercial assays | Variations in extraction efficiency, sensitivity, and reproducibility, particularly at lower inputs | DNA extraction efficiency, quantification methods, sequencing depth |
| ddPCR vs. NGS in Rectal Cancer [24] | 2 detection platforms | ddPCR detected ctDNA in 58.5% vs. NGS 36.6% of same samples (p=0.00075) | Technology platform, detection thresholds, panel design |

Methodological Approaches for Assessing Reproducibility

Standardized Reference Materials and Study Designs

Well-designed reproducibility studies employ carefully controlled reference materials to enable direct comparison between platforms while eliminating biological variability as a confounding factor. The 2024 analytical evaluation of nine ctDNA sequencing assays used 23 contrived reference samples comprising two sample types (diluted cell-free DNA and synthetic plasma) with precisely defined variant allele frequencies (0.1%, 0.5%, 1%, and 2.5%) and input amounts (10 ng, 30 ng, and 50 ng) [25]. This design allowed systematic evaluation of sensitivity, specificity, and reproducibility across different VAF and input conditions for multiple variant types, including single nucleotide variants (SNVs), insertions/deletions (InDels), structural variants (SVs), and copy number variants (CNVs) [25].
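To see why the lowest-VAF, lowest-input conditions in such a design are the hardest, it helps to convert each condition into an expected number of mutant molecules. The sketch below does this for the VAF and input grid described above. It assumes roughly 3.3 pg of DNA per haploid human genome (about 303 genome equivalents per ng) and ignores extraction and library-preparation losses, so the values are upper bounds; the helper names are illustrative.

```python
# Assumed mass of one haploid human genome, in picograms.
PG_PER_HAPLOID_GENOME = 3.3

def genome_equivalents(input_ng):
    """Approximate haploid genome copies in a given cfDNA mass."""
    return input_ng * 1000.0 / PG_PER_HAPLOID_GENOME

def expected_mutant_copies(input_ng, vaf):
    """Mean number of mutant molecules available before any losses."""
    return genome_equivalents(input_ng) * vaf

VAFS = [0.001, 0.005, 0.01, 0.025]   # 0.1%, 0.5%, 1%, 2.5%
INPUTS_NG = [10, 30, 50]

for ng in INPUTS_NG:
    row = ", ".join(
        f"{vaf:.1%}: {expected_mutant_copies(ng, vaf):6.1f}" for vaf in VAFS
    )
    print(f"{ng:>2} ng -> {row}")
```

Under these assumptions, a 10 ng input at 0.1% VAF contains only about three mutant molecules on average, so replicate-to-replicate differences at that condition are dominated by sampling chance rather than by assay chemistry.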

These reference samples contained 45 hotspot alterations in 25 genes, enabling assessment of both intra-lab and inter-lab reproducibility through replicate testing [25]. The use of such standardized materials provides a critical foundation for meaningful cross-platform comparisons, as they eliminate the tumor heterogeneity and pre-analytical variables that complicate patient-derived samples.

Analytical Metrics for Quantifying Reproducibility

Comprehensive reproducibility assessment requires evaluation of multiple performance metrics across different experimental conditions:

Sensitivity and Variant Allele Frequency: Studies consistently demonstrate that reproducibility is highly dependent on VAF, with significantly better concordance at higher allele frequencies. The SEQC2 project found that while mutations above 0.5% VAF were detected with high reproducibility, performance below this threshold declined substantially and varied widely between assays [20]. Similarly, the multi-platform evaluation in China found a substantial increase in sensitivity for ctDNA samples from VAF 0.1% to 0.5% for all assays, with minimal improvements from 0.5% to 2.5% VAF [25].

Impact of Input Material: The quantity of input DNA significantly affects reproducibility, particularly for low-frequency variants. Research has shown that increasing DNA input quantity generally improves fragment depth, sensitivity, and reproducibility [20]. Lower cfDNA inputs tend to result in lower deduplicated mean depth and reduced on-target rates, directly impacting detection capability [25].

Coverage Depth and Uniformity: Fragment depth represents a critical variable in ctDNA assays, with high coverage essential for sensitive detection of low-frequency mutations [20]. Beyond total depth, even coverage across target regions proves important for ensuring high sensitivity and reproducibility [20]. Studies have observed wide variation in sequencing depth across different assays, with some platforms achieving >10,000× coverage while others remained below 5,000×, significantly impacting their ability to detect low-frequency variants [25].

Diagram 1: Comprehensive ctDNA testing workflow highlighting key stages where variability can impact reproducibility. The diagram incorporates critical quality control elements (in green) and error-correction steps (in red) essential for reliable results.

Critical Variables Impacting Inter-laboratory Reproducibility

Technical Factors Contributing to Variability

Multiple technical variables throughout the ctDNA testing workflow contribute to inter-laboratory variability:

DNA Extraction and Quantification Efficiency: Significant variation has been observed in ctDNA extraction efficiency and quantification accuracy across different platforms. In comparative studies, extraction efficiency for plasma samples ranged from 16% to much higher values depending on the assay, directly impacting downstream sensitivity [25]. Accurate quantification is particularly crucial as samples with lower input tend to have lower deduplicated mean depth and reduced on-target rates [25].

Unique Molecular Identifiers (UMIs): The implementation of UMIs represents a critical factor in reducing false positives and improving reproducibility. Studies have demonstrated that UMIs enable effective consensus error correction, minimizing the detection of false positives arising from PCR and sequencing errors [20]. The SEQC2 project recommended that wherever possible, UMIs should be employed for consensus error correction in ctDNA sequencing assays [20].
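The idea behind UMI consensus error correction can be illustrated with a simplified sketch: reads sharing a UMI are grouped into a family, and a base is reported for that family only if enough reads agree. The thresholds, data layout, and function name below are assumptions for illustration; production pipelines also account for mapping position, strand, base quality, and UMI sequencing errors.

```python
from collections import Counter, defaultdict

def umi_consensus(reads, min_family_size=2, min_agreement=0.8):
    """Collapse reads at one position into one consensus base per UMI family.

    reads: iterable of (umi, base) tuples observed at a single position.
    Families smaller than min_family_size, or without a sufficiently
    dominant base, are discarded rather than guessed.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = []
    for bases in families.values():
        if len(bases) < min_family_size:
            continue  # a singleton cannot be error-corrected
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus.append(base)
    return consensus

# Hypothetical reads: one family with a single PCR/sequencing error,
# one clean mutant family, and one uncorrectable singleton.
reads = (
    [("AAC", "G")] * 5 + [("AAC", "T")]   # error "T" is outvoted
    + [("GTT", "T")] * 4                  # consistent mutant molecule
    + [("CCA", "T")]                      # singleton, dropped
)
print(umi_consensus(reads))
```

In this toy input the lone "T" inside the first family is treated as a technical error, while the second family supports "T" as a genuine molecule, which is the distinction that reduces false positives.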

Target Enrichment Methods: Both amplicon and hybrid-capture enrichment methods are used in ctDNA testing, with studies showing broadly comparable performance between the two approaches when fragment depth is equivalent [20]. However, each method has distinct characteristics—amplicon methods can enable sensitive, cost-effective detection of ctDNA mutations in single genes or mutation hotspots, while hybrid-capture panels offer more comprehensive coverage suitable for unbiased surveillance [20].

Table 2: Critical Technical Variables Affecting ctDNA Assay Reproducibility

| Variable Category | Specific Factors | Impact on Reproducibility | Recommended Best Practices |
|---|---|---|---|
| Pre-Analytical | Blood collection tubes, plasma processing time, cfDNA extraction method | High impact; affects DNA yield and quality | Standardize protocols, use validated extraction kits |
| Input Material | DNA quantity, DNA quality, variant allele frequency | Critical for low VAF variants (<0.5%) | Minimum 20 ng input, higher for low VAF detection |
| Workflow | Enrichment method (amplicon vs. capture), UMI implementation, sequencing depth | Moderate to high impact; UMIs significantly reduce false positives | Implement UMIs, ensure adequate coverage (>5000×) |
| Bioinformatic | Variant calling algorithms, quality thresholds, error correction | High impact for low-frequency variants | Standardize pipelines, use duplex sequencing methods |

Analytical Challenges in Low-Frequency Variant Detection

The reliable detection of mutations below 0.5% VAF remains a key challenge for ctDNA sequencing assays and represents a major source of inter-laboratory variability [20]. Several factors contribute to this technical challenge:

Random Sampling Limitations: The detection of ctDNA fragments occurs by random sampling from a background of non-cancerous cell-free DNA. For low-frequency mutations (VAF < 0.5%), coverage has a pronounced impact on detection sensitivity, with this relationship modeled by a sigmoidal function [20]. The number of sequence fragments containing a given mutation follows a Poisson distribution, with a median fragment count proportional to the product of VAF and global fragment-depth [20].

Coverage Requirements: Simulation studies have demonstrated that at maximum depth, >99% of mutations can be detected by at least two independent fragments. However, any decrease in coverage or increase in detection stringency (i.e., requiring >2 supporting fragments) causes a reduction in sensitivity, particularly for variants below 0.5% VAF [20].
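The interplay between VAF, fragment depth, and the number of supporting fragments required can be explored with a small Poisson model. This is a simplified sketch rather than the simulation used in the cited study: it assumes the mutant fragment count at a position is Poisson-distributed with mean VAF × depth and ignores sequencing error and coverage non-uniformity.

```python
import math

def detection_probability(vaf, depth, min_fragments=2):
    """P(at least `min_fragments` mutant fragments) under a Poisson model."""
    lam = vaf * depth  # expected number of mutant fragments at this position
    # Probability of seeing fewer than the required number of fragments.
    p_below = sum(
        math.exp(-lam) * lam ** i / math.factorial(i)
        for i in range(min_fragments)
    )
    return 1.0 - p_below

for depth in (1000, 5000, 10000):
    for vaf in (0.001, 0.005):
        p2 = detection_probability(vaf, depth, min_fragments=2)
        p3 = detection_probability(vaf, depth, min_fragments=3)
        print(f"depth {depth:>5}, VAF {vaf:.1%}: "
              f"P(>=2) = {p2:.3f}, P(>=3) = {p3:.3f}")
```

Under this model, raising the evidence threshold from two to three fragments costs little at high depth and 0.5% VAF but removes a large share of detections at 0.1% VAF, which mirrors the sensitivity drop described above.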

Sequence-Specific Effects: Mutations in challenging genomic contexts, such as regions with high or low GC-content, low sequence complexity, or suboptimal alignability, are detected with lower sensitivity [20]. Additionally, in hybrid-capture sequencing, mutations in exon edge regions are detected with lower sensitivity than central regions due to lower coverage, creating an "exon edge-effect" that can contribute to variability [20].

Essential Research Reagents and Solutions for Reproducible ctDNA Testing

Achieving reproducible ctDNA testing results requires careful selection and implementation of specialized research reagents and solutions throughout the testing workflow. The following table details key components essential for reliable inter-laboratory performance.

Table 3: Essential Research Reagent Solutions for Reproducible ctDNA Testing

| Reagent Category | Specific Examples | Function in Workflow | Impact on Reproducibility |

|---|---|---|---|

| Blood Collection Tubes | Streck Cell-Free DNA BCT tubes [24] | Stabilize nucleated blood cells during transport and storage | Critical for preventing genomic DNA contamination and preserving ctDNA integrity |

| Reference Standards | Cell line-derived reference materials [20], contrived cfDNA/plasma samples [25] | Provide standardized materials with known variants at defined VAFs | Enable cross-platform performance comparison and proficiency testing |

| UMI Adapters | Unique Molecular Identifiers [20] | Tag individual DNA molecules before amplification | Enable consensus error correction to distinguish true mutations from technical artifacts |

| Target Enrichment | Hybrid-capture probes [20], amplicon panels [24] | Enrich cancer-relevant genomic regions from total cfDNA | Impact coverage uniformity and sensitivity for variant detection |

| Size Selection | Bead-based or enzymatic size selection [21] | Enrich shorter DNA fragments (90-150 bp) characteristic of ctDNA | Improve signal-to-noise ratio by leveraging fragmentomic properties |

Strategies for Improving Reproducibility and Standardization

Methodological Enhancements for Reduced Variability

Several methodological approaches can significantly improve the reproducibility of ctDNA testing across laboratories:

Fragment Size Selection: Utilizing the distinct size profile of tumor-derived cfDNA (typically 90-150 bp) compared to non-tumor DNA (which tends to be longer) provides an opportunity to improve sensitivity and reproducibility [21]. Bead-based or enzymatic size selection methods specifically designed to enrich shorter fragments can increase the fractional abundance of ctDNA in sequencing libraries several-fold, particularly enhancing the detection of low-frequency variants when combined with error-corrected next-generation sequencing [21].

Error-Corrected Sequencing Methods: Advanced sequencing approaches that improve error correction can significantly enhance reproducibility. Duplex Sequencing, which tags and sequences each of the two strands of a DNA duplex, represents the gold standard for high-accuracy sequencing [12]. Newer methods including SaferSeqS, NanoSeq, Singleton Correction, and Concatenating Original Duplex for Error Correction (CODEC) offer improved efficiency while maintaining high accuracy [12]. These approaches can achieve 1000-fold higher accuracy than conventional NGS while using up to 100-fold fewer reads than duplex sequencing [12].

Alternative Detection Approaches: Structural variant (SV)-based ctDNA assays can mitigate many challenges associated with single nucleotide variant detection by identifying tumor-specific rearrangements with breakpoint sequences unique to the tumor [21]. These approaches can achieve parts-per-million sensitivity with high specificity, as normal cells lack these specific rearrangement combinations [21]. Similarly, phased variant approaches, such as PhasED-Seq, improve sensitivity by targeting multiple single-nucleotide variants on the same DNA fragment [21].

Quality Framework and Standardization Initiatives

Establishing robust quality frameworks and standardization initiatives is essential for improving inter-laboratory reproducibility:

Regulatory Guidance and Standards Development: The FDA has issued guidance on the use of ctDNA in early-stage solid tumor drug development, focusing on standardization and harmonization of ctDNA assays and methodologies with particular attention to assay considerations for assessing molecular residual disease (MRD) [22]. Such regulatory frameworks provide essential direction for assay validation and implementation.

Coordinated Research Consortia: Initiatives like the Friends of Cancer Research ctDNA for Monitoring Treatment Response (ctMoniTR) project represent coordinated efforts to synchronize data generation across multiple stakeholders, including pharmaceutical companies, diagnostic labs, government health officials, patient advocates, and academic researchers [23]. By aggregating data from multiple clinical trials early in the endpoint development process, these consortia aim to establish standardized evidence generation more efficiently than individual organizations working in isolation [23].

Analytical Validation Guidelines: Comprehensive analytical validation should include assessment of sensitivity, specificity, and reproducibility across the entire range of intended use, with particular attention to low VAF detection. The SEQC2 project recommendations emphasize that well-characterized reference standards can directly measure analytic performance characteristics in the absence of confounding biological variables and serve as valuable tools for comparing ctDNA assays [20].

Diagram 2: Comprehensive framework for assessing and improving ctDNA testing reproducibility, incorporating technical assessment, quality standards, and harmonization initiatives.

The reproducibility of ctDNA testing across laboratories remains challenged by multiple technical factors, particularly at variant allele frequencies below 0.5%. The limited input material, random sampling constraints, and methodological variations contribute significantly to inter-laboratory variability. However, coordinated efforts through research consortia, standardized reference materials, and advanced methodological approaches offer promising pathways toward improved harmonization.

Future progress will depend on continued development and implementation of standardized protocols, reference materials, and proficiency testing programs. Methodological advances including error-corrected sequencing approaches, fragmentomic analyses, and structural variant-based detection hold promise for enhancing reproducibility, particularly for minimal residual disease detection where sensitivity demands are highest. As the field moves toward greater standardization, the establishment of consensus guidelines and quality metrics will be essential for ensuring that ctDNA testing can reliably inform clinical decision-making and drug development programs across different testing environments.

The reproducibility of circulating tumor DNA (ctDNA) assays, particularly digital PCR (dPCR) applications, is a critical challenge in molecular diagnostics and drug development. Pre-analytical variables—those factors affecting the sample before it is analyzed—introduce significant variability that can compromise data integrity and cross-study comparisons. This guide objectively compares the impact of key pre-analytical factors, including blood collection tubes, processing delays, and storage conditions, on sample stability, providing a foundation for standardizing protocols in ctDNA research.

Blood Collection Tubes: A Comparative Analysis

The choice of blood collection tube is the first critical decision in the ctDNA workflow. Different tubes contain specific additives designed to either stabilize blood cells or preserve the cell-free DNA fraction, directly influencing the yield and quality of extracted ctDNA.

Table 1: Comparison of Common Blood Collection Tubes for ctDNA Analysis

| Tube Type (Cap Color) | Additive | Primary Mechanism | Key Advantages | Key Limitations & Considerations |

|---|---|---|---|---|

| EDTA (Lavender/Purple) | EDTA (chelator) | Binds calcium ions to prevent coagulation [26] [27]. | Inexpensive and widely available; suitable for a wide range of molecular tests [26]. | Requires rapid processing (typically within 2-6 hours at 4°C) to prevent white blood cell lysis and background wild-type DNA release [11] [28]. |

| Streck-type (White) | Cell-stabilizing agents | Cross-links cells to inhibit lysis and nuclease activity [11]. | Allows room temperature transport/storage for up to 7 days [11]; excellent for preserving ctDNA integrity during shipping. | Higher cost than conventional tubes; may not be compatible with multi-analyte liquid biopsy panels that include circulating tumor cells [11]. |

| Citrate (Light Blue) | Sodium citrate | Weak calcium chelator [27]. | Standard for coagulation studies. | Less common for primary ctDNA analysis. |

| Heparin (Green) | Heparin | Antithrombin effect [26] [27]. | Can be used for various plasma-based tests. | Heparin can inhibit PCR and is generally not recommended for PCR-based ctDNA assays [26]. |

| Serum Tubes (Red/Gold) | Clot activator and gel | Accelerates clotting and separates serum [26] [27]. | Ideal for serology and many chemistry tests. | The clotting process can entrap tumor cells and release genomic DNA, diluting the ctDNA fraction. Not recommended for ctDNA analysis [11]. |

Experimental Protocols for Key Pre-analytical Studies

Protocol: Evaluating Processing Delay and Holding Temperature

A seminal study investigating the impact of pre-processing delays and holding temperatures on protein biomarkers provides a robust methodological template applicable to ctDNA research [29].

- Sample Collection: Venous blood was drawn from healthy subjects and ICU patients into citrate and EDTA tubes [29].

- Experimental Conditions: Samples were subjected to different pre-processing conditions [29]:

  - Reference Protocol: 1 hour at room temperature (RT) before processing.

  - Immediate Cooling: Placed immediately on ice for 1 hour.

  - Delayed Processing: Held for 3 hours at either RT or 4°C.

- Processing: All tubes were centrifuged (2,500 x g for 15 minutes), and plasma was aliquoted and frozen at -80°C [29].

- Analysis: Analytes were measured using multiplex assays (Luminex) and statistical analysis, including Receiver-Operating Characteristic (ROC) curves, was performed to assess the impact on data classification [29].
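The centrifugation step in the protocol above is specified as relative centrifugal force (2,500 x g), not as rotor speed, so laboratories with different rotors need different rpm settings to reproduce it. The sketch below uses the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r the rotor radius in centimetres. The rotor radii are hypothetical examples, not values from the cited study.

```python
import math

RCF_CONSTANT = 1.118e-5  # standard factor for radius in cm and speed in rpm


def rpm_to_rcf(rpm, rotor_radius_cm):
    """Relative centrifugal force (x g) produced at a given rotor speed."""
    return RCF_CONSTANT * rotor_radius_cm * rpm ** 2


def rcf_to_rpm(rcf_g, rotor_radius_cm):
    """Rotor speed (rpm) needed to reach a target relative centrifugal force."""
    return math.sqrt(rcf_g / (RCF_CONSTANT * rotor_radius_cm))


# Hypothetical rotor radii, used only to show that the same g-force
# corresponds to different rpm settings on different instruments.
for radius_cm in (8.0, 10.0, 15.0):
    rpm = rcf_to_rpm(2500, radius_cm)
    print(f"radius {radius_cm:>4} cm -> {rpm:,.0f} rpm for 2,500 x g")
```

Recording the target g-force in an SOP and converting it to rpm per instrument is one way to keep the centrifugation step comparable between sites.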

Protocol: Impact of Extended Processing Delays on Plasma Markers

This protocol assesses the stability of biomarkers relevant to critical illness, including cell-free DNA (cfDNA), under extended delays, mimicking real-world shipping conditions [30].

- Sample Collection: Blood was collected from ICU patients and healthy volunteers into citrate and EDTA tubes [30].

- Delay Simulation: Blood from each tube was aliquoted and stored under various conditions [30]:

  - Temperatures: Room temperature (23°C) vs. 4°C.

  - Delay Durations: 0, 24, 48, and 72 hours.

- Processing and Storage: After the delay, tubes were centrifuged at 2,500 x g for 15 minutes. Plasma was aliquoted and frozen at -80°C for batch analysis [30].

- Downstream Analysis:

  - cfDNA Quantification: Using the Picogreen dsDNA Assay Kit.

  - Cytokine/Chemokine Analysis: Using multiplex immunoassays (Meso Scale Discovery).

  - Thrombin Generation: Measured with a Technothrombin TGA reagent kit to assess clotting potential [30].

Quantitative Data on Processing Delays and Temperature

The following tables synthesize experimental data on how processing delays and storage temperatures affect sample integrity.

Table 2: Impact of Processing Delay and Temperature on Sample Integrity

| Analyte Category | Delay Duration | Holding Temperature | Observed Effect | Experimental Context |

|---|---|---|---|---|

| Cytokines (e.g., IL-8, IL-9, RANTES, PAI-1) | 1 hour | Ice (4°C) | Significant decrease in serum levels compared to RT; creates viscous, hard-to-handle serum [29]. | Blood from healthy subjects [29]. |

| Various Cytokines & Diabetes Proteins | 3 hours | RT vs. 4°C | Effect was analyte-specific; no single optimal temperature for all. Some showed little change (leptin, insulin), while others were significantly altered [29]. | Blood from healthy subjects [29]. |

| Cell-free DNA (cfDNA), Cytokines, Clotting Factors | Up to 72 hours | RT vs. 4°C | Most biomarkers were significantly affected by delays, with effects varying by analyte. Citrate and EDTA plasma showed different stability profiles [30]. | Blood from ICU patients and healthy volunteers [30]. |

| General cfDNA Integrity | >6 hours (EDTA tubes) | RT | Risk of increased wild-type DNA background from white blood cell lysis, diluting the ctDNA fraction [11] [28]. | Standard protocol for liquid biopsy [11]. |

Pre-analytical Impact on ctDNA Testing

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials and Reagents for Pre-analytical ctDNA Workflows

| Item | Function | Considerations for Inter-laboratory Reproducibility |

|---|---|---|

| Cell-Stabilizing Blood Tubes (e.g., Streck, PAXgene) | Preserves nucleated blood cell integrity, preventing release of genomic DNA and stabilizing ctDNA during transport [11]. | Critical for multi-center studies to minimize variability from shipping delays. Allows room temperature storage for up to 7 days [11]. |

| EDTA Blood Collection Tubes | Prevents coagulation by chelating calcium ions; standard for many molecular assays [11] [27]. | Requires strict adherence to a short processing window (2-6h) and consistent temperature (4°C) to prevent sample degradation [11] [28]. |

| Double-Spin Centrifugation Protocol | 1st slow spin (e.g., 380-3,000 g) to separate cells; 2nd high-speed spin (e.g., 12,000-20,000 g) to clear residual platelets and debris from plasma [11]. | Detailed protocol must be harmonized across labs. Variations in g-force and time can affect platelet contamination and cfDNA yield [11] [28]. |

| Specialized cfDNA Extraction Kits | Isolate and purify the low-concentration cfDNA fraction from plasma. Methods include silica membrane columns and magnetic beads [11]. | Kits from different manufacturers have varying efficiencies and propensities to lose short DNA fragments. Using the same kit and lot across a study improves reproducibility [11] [28]. |

| Ultra-Low Temperature Freezers (-80°C) | For long-term storage of extracted cfDNA and plasma samples. | Freeze-thaw cycles must be minimized by storing plasma in small, single-use aliquots. Thawing should be done slowly on ice [11] [28]. |

Strategies for Mitigating Pre-analytical Variability

To enhance the inter-laboratory reproducibility of dPCR-based ctDNA assays, proactive mitigation strategies are essential.

- Adopt Cell-Stabilizing Tubes for Multi-Center Trials: For studies involving sample shipment from multiple clinical sites, the use of cell-stabilizing blood collection tubes is the most effective strategy to control for variability introduced by transportation logistics [11].

- Implement Standardized SOPs with Narrow Tolerances: Laboratory protocols must be highly detailed, specifying exact parameters for centrifugation (g-force, time, temperature), plasma volume for extraction, and acceptance criteria for DNA quality [11] [31].

- Harmonize Pre-analytical Protocols Across Networks: As called for by the Association for Molecular Pathology, consistent reporting and adoption of best practices for pre-analytical variables are needed to ensure high-quality, comparable data across the industry [31].

- Monitor Environmental Transport Conditions: External specimen transport is susceptible to climate variations that can cause pre-analytical errors. Monitoring or controlling temperature and agitation during transport is necessary for sample integrity [32].
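The idea of an SOP with narrow tolerances can be sketched as an automated check on each sample's pre-analytical record. The limits below are taken from the ranges quoted in this guide (6 hours for EDTA tubes, 7 days for cell-stabilizing tubes, 380-3,000 g and 12,000-20,000 g for the two spins) and are illustrative only; a real SOP would define and validate its own values. The record fields and the function name `sop_deviations` are hypothetical.

```python
from typing import List, NamedTuple

# Illustrative limits based on ranges quoted in this guide (assumptions,
# not validated acceptance criteria).
MAX_HOURS_TO_PROCESSING = {"EDTA": 6.0, "cell_stabilizing": 7 * 24.0}
FIRST_SPIN_G = (380.0, 3000.0)
SECOND_SPIN_G = (12000.0, 20000.0)


class SampleRecord(NamedTuple):
    sample_id: str
    tube_type: str
    hours_to_processing: float
    first_spin_g: float
    second_spin_g: float


def sop_deviations(rec: SampleRecord) -> List[str]:
    """Return a list of human-readable SOP deviations (empty if none)."""
    issues = []
    limit = MAX_HOURS_TO_PROCESSING.get(rec.tube_type)
    if limit is None:
        issues.append(f"unknown tube type: {rec.tube_type}")
    elif rec.hours_to_processing > limit:
        issues.append(
            f"processing delay {rec.hours_to_processing} h exceeds {limit} h"
        )
    if not FIRST_SPIN_G[0] <= rec.first_spin_g <= FIRST_SPIN_G[1]:
        issues.append("first spin outside 380-3,000 x g")
    if not SECOND_SPIN_G[0] <= rec.second_spin_g <= SECOND_SPIN_G[1]:
        issues.append("second spin outside 12,000-20,000 x g")
    return issues


late_edta = SampleRecord("S2", "EDTA", 8.0, 1600.0, 16000.0)
print(sop_deviations(late_edta))
```

Flagging deviations at accessioning, before extraction, lets a multi-center study exclude or annotate compromised samples consistently across sites.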

Circulating tumor DNA (ctDNA) analysis has emerged as a transformative paradigm in oncology, enabling non-invasive, real-time assessment of tumor burden, genetic heterogeneity, and therapeutic response [21]. This liquid biopsy approach provides a less invasive alternative to tissue biopsies with lower sampling bias and procedural risk [21]. However, the clinical utility of ctDNA analysis depends critically on the reproducibility of detection technologies, particularly for applications involving low variant allele frequencies (VAF) below 0.1% in early-stage disease and minimal residual disease (MRD) monitoring [21] [12].

The fundamental challenge stems from the biologically low abundance of ctDNA, which can represent less than 0.1% of total circulating cell-free DNA (cfDNA) [21] [25]. This technical challenge is compounded by pre-analytical variability, analytical platform differences, and bioinformatic processing inconsistencies that collectively impact the reliability of results across laboratories [21] [25]. As ctDNA analysis becomes increasingly integrated into clinical decision-making for treatment selection and patient risk stratification, ensuring reproducible measurements across platforms and institutions has become a clinical imperative with direct consequences for patient outcomes.

Technology Landscape: dPCR Versus NGS Platforms

Digital PCR Platforms and Principles

Digital PCR (dPCR) represents the third generation of PCR technology, following conventional PCR and real-time quantitative PCR (qPCR) [2]. The fundamental principle involves partitioning a PCR mixture into thousands of individual reactions so that each partition contains zero, one, or a few nucleic acid targets according to a Poisson distribution [2]. Following PCR amplification, the fraction of positive partitions is counted via endpoint measurement, allowing absolute quantification of target concentration without calibration curves [2]. This partitioning enables single-molecule detection with high sensitivity, absolute quantification, and exceptional reproducibility [2].

Two primary partitioning methods have emerged: water-in-oil droplet emulsification (droplet digital PCR or ddPCR) and microchamber-based systems [2]. The ddPCR approach disperses samples into picoliter to nanoliter droplets within an immiscible oil phase, while microchamber systems use arrays of microscopic wells embedded in solid chips [2]. The commercialization of dPCR platforms has accelerated since Fluidigm introduced the first nanofluidic system in 2006, with major platforms now available from Bio-Rad, Qiagen, Thermo Fisher, and Roche [2].

Next-Generation Sequencing Approaches

Next-generation sequencing (NGS) platforms for ctDNA analysis employ targeted panels, whole-exome, or whole-genome sequencing approaches to detect a broader spectrum of genomic alterations without requiring prior knowledge of specific mutations [12]. These methods include tagged-amplicon deep sequencing (TAm-Seq), Safe-Sequencing System (Safe-SeqS), CAncer Personalized Profiling by deep Sequencing (CAPP-Seq), and targeted error correction sequencing (TEC-Seq) [12]. A critical advancement in NGS methodology involves unique molecular identifiers (UMIs), which are molecular barcodes tagged onto DNA fragments before PCR amplification to distinguish true mutations from sequencing artifacts [12]. Techniques such as Duplex Sequencing, which tags and sequences both strands of DNA duplexes, provide gold-standard error correction but with reduced efficiency [12].
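How UMIs separate true mutations from amplification or sequencing artifacts can be illustrated with a deliberately simplified consensus step: reads sharing a UMI are treated as copies of one original molecule, and a base is accepted only if the family is large enough and mostly in agreement. This sketch ignores errors in the UMI itself, alignment position, and strand information, all of which production pipelines handle. The thresholds and names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=3, min_agreement=0.7):
    """Collapse (umi, base) observations at one position into consensus calls.

    Returns {umi: consensus_base} for UMI families that pass both thresholds.
    Thresholds are illustrative assumptions, not recommended settings.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few copies to trust a consensus
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base
    return consensus


# Hypothetical observations at a single genomic position.
example_reads = [
    ("AAAC", "T"), ("AAAC", "T"), ("AAAC", "T"),                 # consistent variant
    ("GGTA", "C"), ("GGTA", "C"), ("GGTA", "C"), ("GGTA", "T"),  # one artifact read
    ("TTGC", "T"),                                               # singleton, dropped
]
print(umi_consensus(example_reads))
```

In this toy input, the lone "T" inside the "GGTA" family is outvoted and the singleton family is discarded, so only one molecule supports the variant. Duplex methods go further by requiring agreement between the two strands of the same original duplex.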

Comparative Performance Data

Direct comparisons between dPCR and NGS platforms reveal significant differences in detection capabilities and reproducibility. A 2025 study in rectal cancer patients demonstrated that ddPCR detected ctDNA in 58.5% (24/41) of baseline plasma samples compared to 36.6% (15/41) for targeted NGS panels (p = 0.00075) [24]. This superior detection sensitivity came with the additional advantage of 5-8.5-fold lower operational costs compared to NGS [24].

Table 1: Performance Comparison of ddPCR versus NGS for ctDNA Detection in Localized Rectal Cancer

| Parameter | ddPCR | NGS Panel | Statistical Significance |

|---|---|---|---|

| Detection rate in development cohort (n=41) | 58.5% (24/41) | 36.6% (15/41) | p = 0.00075 |

| Detection rate in validation cohort (n=26) | 80.8% (21/26) | Not reported | Not applicable |

| Cost comparison | Reference (1×) | 5-8.5× higher | Not applicable |

| Optimal variant allele frequency range | 0.01% and above | Generally >0.1% | Methodologically dependent |

The performance differential becomes particularly pronounced at very low variant allele frequencies. A comprehensive 2024 evaluation of nine ctDNA sequencing assays revealed substantial variability in sensitivity, particularly with low DNA inputs (<20 ng) and VAFs below 0.5% [25]. While some NGS assays achieved sensitivity above 95% for single nucleotide variants (SNVs) at VAFs of 0.5%, performance dropped significantly at 0.1% VAF across all platforms [25]. Assays with larger panel sizes (>1 Mb) generally demonstrated lower sensitivity at these challenging thresholds, highlighting the fundamental tradeoff between breadth of genomic coverage and detection sensitivity [25].

Experimental Protocols: Assessing Reproducibility

Sample Collection and Processing

Standardized pre-analytical protocols are critical for reproducible ctDNA analysis. Recommended protocols involve collecting 3×9 mL of blood into specialized cell-free DNA collection tubes (e.g., Streck Cell Free DNA BCT) [24]. Plasma separation should occur within specified timeframes, followed by cfDNA extraction using validated kits [24] [25]. Extraction efficiency varies significantly between platforms, with studies reporting extraction efficiencies as low as 16% for some methods, directly impacting downstream sensitivity and reproducibility [25].

The quantity and quality of extracted cfDNA must be rigorously quantified before analysis, with fluorometric methods (e.g., Qubit) preferred over spectrophotometric approaches for accuracy [25]. Input DNA amounts significantly affect assay performance, with thresholds generally set at <20 ng (low), 20-50 ng (medium), and >50 ng (high) [25]. Studies demonstrate that samples with low cfDNA inputs tend to have lower sequencing depth and on-target rates, directly impacting sensitivity and reproducibility [25].
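The effect of input mass on sensitivity becomes clearer when nanograms are converted to genome copies. The sketch below assumes roughly 3.3 pg of DNA per haploid human genome and, optimistically, that every input molecule is available to the assay. The function names are illustrative.

```python
HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome, in pg


def genome_equivalents(input_ng):
    """Approximate number of haploid genome copies in a given cfDNA mass."""
    return input_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_copies(input_ng, vaf):
    """Expected mutant copies at a given VAF, assuming no loss in the workflow."""
    return genome_equivalents(input_ng) * vaf


# Input thresholds mirror the low/medium/high boundaries quoted above.
for input_ng in (20, 50):
    for vaf in (0.005, 0.001, 0.0001):
        copies = expected_mutant_copies(input_ng, vaf)
        print(f"{input_ng} ng at VAF {vaf:.2%}: ~{copies:.1f} mutant copies")
```

At 20 ng this corresponds to about 6,000 genome copies, so a 0.1% variant is represented by only around six molecules before any extraction or library losses, which is why low-input samples show the largest run-to-run and lab-to-lab variation.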

dPCR Workflow and Analysis

The following diagram illustrates the core dPCR workflow that enables highly reproducible ctDNA detection:

Diagram 1: dPCR Workflow for Reproducible ctDNA Detection

The dPCR process begins with partitioning of the PCR mixture containing the sample into thousands of individual reactions [2]. Following amplification, endpoint fluorescence analysis distinguishes positive from negative partitions [2]. Absolute quantification is calculated using Poisson statistics based on the fraction of positive partitions, providing calibration-free measurement that enhances reproducibility across laboratories [2].
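The Poisson calculation described above can be written out in a few lines. The sketch converts the fraction of positive partitions into mean copies per partition, λ = −ln(1 − p), and then into copies per microlitre using a partition volume that the caller must supply; that volume is platform-specific and is treated here as an assumed input. The confidence interval uses a simple normal approximation on p, chosen for brevity and not because any particular instrument software uses it.

```python
import math


def dpcr_concentration(positive, total, partition_volume_nl, z=1.96):
    """Estimate target concentration (copies/uL of reaction) from partition counts.

    Returns (estimate, (lower, upper)). The interval is a normal-approximation
    (Wald) interval on the positive fraction, transformed through the Poisson
    correction; it is an illustrative choice.
    """
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total (saturated runs are not quantifiable)")

    def to_concentration(fraction):
        lam = -math.log(1.0 - fraction)            # mean copies per partition
        return lam / (partition_volume_nl * 1e-3)  # nL converted to uL

    p = positive / total
    se = math.sqrt(p * (1.0 - p) / total)
    p_low = max(p - z * se, 0.0)
    p_high = min(p + z * se, 1.0 - 1e-12)
    return to_concentration(p), (to_concentration(p_low), to_concentration(p_high))


# Hypothetical run: 2,000 positive partitions out of 20,000, 1 nL each.
estimate, (low, high) = dpcr_concentration(2000, 20000, partition_volume_nl=1.0)
print(f"{estimate:.1f} copies/uL (approx. 95% CI {low:.1f}-{high:.1f})")
```

Because the estimate depends only on counts and partition volume, two laboratories that agree on those inputs obtain the same concentration without sharing a calibration curve.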

For tumor-informed dPCR assays, the process typically involves initial sequencing of primary tumor tissue to identify mutations, followed by design of custom probes for the highest frequency mutations [24]. This approach allows detection of somatic alterations at frequencies as low as 0.01% VAF by dividing 2-9 μL of extracted DNA into 20,000 droplets and calculating absolute quantities based on PCR-positive and negative droplets [24].
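For a two-channel assay of the kind described above, the variant allele frequency follows from applying the Poisson correction to the mutant and wild-type channels separately and taking the ratio. The droplet counts below are hypothetical and the function names are illustrative. The sketch also assumes the two targets distribute independently across droplets.

```python
import math


def copies_per_droplet(positive, total):
    """Poisson-corrected mean copies per droplet for one channel."""
    return -math.log(1.0 - positive / total)


def fractional_abundance(mutant_positive, wildtype_positive, total):
    """Mutant fraction (VAF) from mutant- and wild-type-positive droplet counts."""
    mutant = copies_per_droplet(mutant_positive, total)
    wildtype = copies_per_droplet(wildtype_positive, total)
    return mutant / (mutant + wildtype)


# Hypothetical well: 20,000 droplets, 8,000 wild-type positive, 2 mutant positive.
vaf = fractional_abundance(2, 8000, 20000)
print(f"Estimated VAF: {vaf:.4%}")
```

In this example only two droplets carry the mutant signal, so the estimate near 0.02% rests on very few events. Reporting the underlying positive-droplet counts alongside the VAF makes such borderline calls easier to compare between laboratories.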

Analytical Validation Standards

Comprehensive validation of ctDNA assays requires assessment of multiple performance parameters across different variant types and allele frequencies. The international multicenter validation of the Hedera Profiling 2 ctDNA test panel exemplifies this approach, evaluating sensitivity, specificity, and reproducibility for single-nucleotide variants (SNVs), insertions and deletions (Indels), fusions, copy number variations, and microsatellite instability status [33]. Using reference standards with variants at 0.5% allele frequency, the assay demonstrated sensitivity of 96.92% and specificity of 99.67% for SNVs/Indels, with 100% sensitivity for fusion detection [33].

Table 2: Analytical Performance Metrics for Reproducible ctDNA Detection

| Performance Metric | Target Threshold | Impact on Reproducibility |

|---|---|---|

| Sensitivity for SNVs/Indels (0.5% VAF) | >95% | Reduces false negatives in MRD detection |

| Specificity | >99.5% | Prevents false positives in treatment selection |

| Limit of Detection | 0.01%-0.1% VAF | Enables early-stage cancer applications |

| Intra-assay reproducibility | CV < 10% | Ensures consistent results across repeated measurements |

| Inter-laboratory concordance | >90% | Supports decentralized testing models |

| Extraction efficiency | >80% | Maintains assay sensitivity with limited samples |

For dPCR platforms, key validation parameters include intra-assay precision (typically CV < 10%), inter-laboratory concordance (>90%), and minimal detectable allele frequency (0.01% for many applications) [24] [2]. These metrics collectively determine the reliability of ctDNA measurements for clinical decision-making, particularly when monitoring molecular response through quantitative changes in ctDNA levels [12].
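The two precision metrics named above are simple to compute once replicate data exist. The sketch below calculates a percent coefficient of variation from replicate measurements and a percent agreement between positive/negative calls from two laboratories; all input values are hypothetical and serve only to show the arithmetic.

```python
import statistics


def coefficient_of_variation(values):
    """Percent CV: sample standard deviation divided by the mean, times 100."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def percent_agreement(calls_a, calls_b):
    """Percentage of samples on which two laboratories made the same call."""
    if len(calls_a) != len(calls_b):
        raise ValueError("both laboratories must report the same samples")
    agree = sum(a == b for a, b in zip(calls_a, calls_b))
    return 100.0 * agree / len(calls_a)


# Hypothetical replicate concentrations (copies/uL) from one run.
replicates = [10.0, 11.0, 9.0, 10.0]
print(f"Intra-assay CV: {coefficient_of_variation(replicates):.1f}%")

# Hypothetical ctDNA-positive (True) / negative (False) calls on five samples.
lab_a = [True, True, False, False, True]
lab_b = [True, True, False, True, True]
print(f"Inter-laboratory agreement: {percent_agreement(lab_a, lab_b):.0f}%")
```

Overall agreement can look high when most samples are negative, so validation reports often list positive and negative agreement separately in addition to a single concordance figure.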

Clinical Implications of Reproducibility

Risk Stratification in Solid Tumors

Reproducible ctDNA detection directly impacts risk stratification across multiple cancer types. In breast cancer, structural variant-based ctDNA assays can assess residual disease months to years after resection and adjuvant therapy, with detectable ctDNA after treatment completion associated with significantly higher rates of clinical recurrence [21]. Similarly, in colorectal cancer, longitudinal ctDNA monitoring during and after adjuvant chemotherapy provides more reliable recurrence risk assessment than carcinoembryonic antigen (CEA) and imaging, enabling precision treatment intensification or de-escalation [21].

The consequences of poor reproducibility are particularly significant in the minimal residual disease (MRD) setting, where false-negative results may provide false reassurance, while false-positive findings could lead to unnecessary adjuvant therapy [21] [12]. Studies demonstrate that patients with stage II-III colorectal cancers with detectable ctDNA after curative-intent therapy have recurrence risks of 80-100%, underscoring the critical importance of reproducible detection [24].

Treatment Response Monitoring

Reproducible ctDNA quantification enables more accurate treatment response assessment than traditional imaging modalities. In non-small cell lung cancer (NSCLC), declines in ctDNA levels predict radiographic response to therapy more accurately than follow-up imaging [21]. Additionally, resistance mutations often appear in plasma weeks before clinical or radiographic evidence of disease progression, providing a critical window for treatment modification [21].

For B-cell lymphoma, ctDNA-based MRD assays demonstrate superior sensitivity and informativeness compared to standard PET or CT imaging, detecting subclinical disease not visible on imaging [21]. The reproducibility of these measurements across laboratories determines their utility for guiding immunochemotherapy decisions in aggressive disease variants [21].

Essential Research Reagent Solutions

The following reagent solutions are critical for ensuring reproducible ctDNA analysis in research and clinical settings:

Table 3: Essential Research Reagent Solutions for Reproducible ctDNA Analysis

| Reagent/Category | Function | Examples/Specifications |

|---|---|---|

| Cell-free DNA Blood Collection Tubes | Preserves blood samples for ctDNA analysis | Streck Cell Free DNA BCT; prevents white blood cell lysis and background DNA release |

| cfDNA Extraction Kits | Isolate cell-free DNA from plasma | Magnetic bead-based systems; silica membrane columns; evaluate by extraction efficiency |

| dPCR Master Mixes | Enable partitioned amplification | Probe-based chemistry (e.g., TaqMan); EvaGreen dye-based; optimized for partition stability |

| Mutation-Specific Assays | Detect tumor-derived mutations | Custom TaqMan assays; primer-probe sets for hotspot mutations; tumor-informed designs |

| Reference Standards | Validate assay performance | Seraseq ctDNA Reference Materials; Horizon Multiplex I gDNA; characterized variants at known VAF |

| Unique Molecular Identifiers (UMIs) | Reduce sequencing errors | Molecular barcodes ligated to DNA fragments; enable error correction in NGS workflows |

| Bioinformatics Pipelines | Analyze sequencing data | Variant calling algorithms; Poisson statistics for dPCR; error suppression methods |

Future Directions and Standardization Initiatives

Emerging technologies promise to further enhance the reproducibility and clinical utility of ctDNA analysis. Structural variant-based ctDNA assays, nanomaterial-based electrochemical sensors, magnetic nano-electrode platforms, and fragment-enriched library preparation methods have improved sensitivity to attomolar concentrations [21]. Approaches leveraging phased variants (multiple SNVs on the same DNA fragment), such as PhasED-Seq, demonstrate enhanced sensitivity for ctDNA detection [21].

The integration of artificial intelligence-based error suppression methods and microfluidic point-of-care devices may represent the next horizon for ctDNA liquid biopsy technology, potentially reducing inter-laboratory variability [21]. Additionally, multiplexed CRISPR-based ctDNA assays offer promising approaches to enhance specificity while maintaining sensitivity [21].

Standardization initiatives focusing on pre-analytical variables, analytical validation standards, and bioinformatic pipelines are essential for improving reproducibility across platforms and laboratories [25]. The development of well-characterized reference materials and standardized reporting metrics will enable more meaningful comparisons between studies and facilitate the integration of ctDNA analysis into routine clinical practice [33] [25].

As these technologies evolve, the fundamental imperative remains ensuring that reproducibility keeps pace with sensitivity, enabling clinicians to make critical patient management decisions with confidence in the reliability of ctDNA results across testing locations and over time.

From Blood Draw to Data: Standardized dPCR Workflows and Clinical Applications

The analysis of circulating tumor DNA (ctDNA) via liquid biopsy has revolutionized oncological diagnostics and treatment monitoring, offering a minimally invasive window into tumor genetics [34]. The pre-analytical phase—encompassing blood collection, transport, and plasma processing—is a critical determinant of data quality and reliability. Variations in this phase significantly impact the inter-laboratory reproducibility of sensitive downstream applications like digital PCR (dPCR) [35] [31]. Among pre-analytical variables, the choice of blood collection tube is paramount. This guide objectively compares the performance of two primary tube types: the traditional K2EDTA tube and modern cell-stabilizing tubes (e.g., Streck Cell-Free DNA BCT), providing researchers with experimental data and protocols to inform their study designs.

Technical Comparison of Blood Collection Tubes

Mechanism of Action and Primary Applications

- EDTA Tubes: K2EDTA tubes function as an anticoagulant by chelating calcium ions, which are essential cofactors in the coagulation cascade. This effectively prevents blood from clotting, preserving cellular components for analysis [36] [37]. They are the gold standard for routine hematology tests like the complete blood count (CBC) [36].

- Cell-Stabilizing Tubes (e.g., Streck cfDNA BCT): These tubes contain a proprietary preservative that not only prevents coagulation but also actively stabilizes white blood cell membranes. This dual action minimizes cell lysis and the subsequent release of wild-type genomic DNA into the plasma, thereby preserving the native cell-free DNA (cfDNA) profile. They also contain nuclease inhibitors to minimize cfDNA degradation [38] [39].

Direct Performance Comparison for ctDNA Analysis

The most significant operational difference lies in the allowable time between blood draw and plasma processing. The table below summarizes key performance characteristics based on clinical studies.

Table 1: Performance Comparison of EDTA and Cell-Stabilizing Tubes for ctDNA Analysis

| Characteristic | K2EDTA Tubes | Cell-Stabilizing Tubes (Streck cfDNA BCT) |
|---|---|---|
| Mechanism of Action | Calcium chelation to prevent clotting [36] [37] | Cell membrane stabilization and nuclease inhibition [38] [39] |
| Max Storage Time (Room Temperature) | 4-6 hours to prevent genomic DNA contamination [40] [34] | Up to 3-7 days (studied in cancer patients) [40]; up to 14 days per manufacturer [39] |
| Genomic DNA Contamination | Significant increase after 6 hours due to cell lysis [38] [34] | No significant increase in gDNA after 3 days [40] |
| Impact on ctDNA Mutation Detection | Reliable if processed within 4-6 hours; risk of false negatives due to dilution after lysis [34] | Highly comparable mutation allele frequencies to EDTA baselines after 3-day storage [40] |
| Ideal Use Case | Single-site studies with immediate processing capabilities [34] | Multi-center clinical trials; biobanking; shipping samples to central labs [38] [39] |

Supporting Experimental Data and Protocols

Key Experimental Findings

The cited studies support the use of Streck cfDNA BCTs for extended room-temperature storage, although not all preservative tubes performed equally.

- Prevention of Cellular Lysis: A pivotal study comparing Streck BCT and PAXgene tubes in metastatic breast cancer patients measured plasma DNA concentration using droplet digital PCR (ddPCR). Blood stored in BCT tubes for 7 days showed no evidence of cell lysis. In contrast, PAXgene tubes showed an order of magnitude increase in genome equivalents, indicating substantial cellular lysis and genomic DNA contamination [38].

- Reliable Mutation Detection after Storage: A 2023 study with colorectal, pancreatic, and non-small cell lung cancer patients found that cfDNA yield, gDNA contamination levels, and mutational load were highly comparable between samples collected in K2EDTA tubes (processed within 6 hours) and those collected in Streck cfDNA BCTs and stored for 3 days at room temperature. This confirms that BCTs maintain sample integrity for reliable ctDNA analysis [40].
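To make the "order of magnitude" comparison concrete, the sketch below computes the fold change in plasma genome equivalents between a stored tube and a promptly processed baseline. The function names, the example values, and the 2-fold flagging threshold are illustrative assumptions, not values taken from the cited studies.

```python
def fold_change(stored_ge_per_ml: float, baseline_ge_per_ml: float) -> float:
    """Ratio of genome equivalents per mL plasma: stored tube vs. baseline."""
    if baseline_ge_per_ml <= 0:
        raise ValueError("baseline must be positive")
    return stored_ge_per_ml / baseline_ge_per_ml


def suggests_lysis(stored_ge_per_ml: float, baseline_ge_per_ml: float,
                   threshold: float = 2.0) -> bool:
    """Flag a sample whose wild-type DNA rose by at least `threshold`-fold.

    The default threshold is a hypothetical placeholder; a laboratory would
    set it from its own validation data.
    """
    return fold_change(stored_ge_per_ml, baseline_ge_per_ml) >= threshold


# Hypothetical numbers: a ten-fold rise is flagged, a 5% rise is not.
print(fold_change(12000, 1200))
print(suggests_lysis(12000, 1200))
print(suggests_lysis(1260, 1200))
```

A rising wild-type background matters because it dilutes the mutant fraction even when the absolute number of mutant copies is unchanged.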

Detailed Experimental Protocol for Method Comparison

The following workflow, derived from the methodologies of the cited studies, provides a template for comparing tube performance in a validation study [38] [40].

Workflow Summary: Tube Comparison Protocol

- Patient Cohort & Blood Draw: Enroll patients (e.g., with metastatic breast cancer). Collect venous blood using a standard phlebotomy technique [38].

- Tube Allocation: Distribute blood into different tube types, including K2EDTA (as a baseline control), PAXgene, and Streck cfDNA BCTs [38].

- Storage Conditions: Process a subset of all tube types within 2 hours of collection. Store the remaining PAXgene and BCT tubes at room temperature for a defined period (e.g., 3-7 days) [38] [40].

- Plasma Processing: Isolate plasma using a double-centrifugation protocol to ensure a cell-free sample.

- cfDNA Extraction & Analysis: Extract cfDNA from plasma using a dedicated kit (e.g., QIAamp Circulating Nucleic Acid Kit). Analyze the eluted DNA using ddPCR to quantify total wild-type DNA (a measure of contamination) and specific mutations (e.g., PIK3CA E545K and H1047R in breast cancer) [38].
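The ddPCR readout in the last step can be turned into a variant allele frequency with the standard Poisson correction, which accounts for droplets that contain more than one target molecule. This is a minimal sketch: the function names and droplet counts are hypothetical, and it assumes mutant and wild-type targets are counted in separate channels over the same set of accepted droplets.

```python
import math


def copies_per_partition(positive: int, total: int) -> float:
    """Poisson estimate of mean target copies per droplet.

    lambda = -ln(1 - p), where p is the fraction of positive droplets.
    """
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total and total > 0")
    return -math.log(1.0 - positive / total)


def variant_allele_frequency(mutant_positive: int, wildtype_positive: int,
                             total: int) -> float:
    """VAF = mutant copies / (mutant + wild-type copies)."""
    lam_mut = copies_per_partition(mutant_positive, total)
    lam_wt = copies_per_partition(wildtype_positive, total)
    if lam_mut + lam_wt == 0:
        raise ValueError("no target detected in either channel")
    return lam_mut / (lam_mut + lam_wt)


# Hypothetical run: 10 mutant-positive and 1000 wild-type-positive droplets
# out of 15000 accepted droplets.
print(f"VAF = {variant_allele_frequency(10, 1000, 15000):.4%}")
```

Because both estimates share the same droplet count and droplet volume, those terms cancel in the ratio, so the VAF is less sensitive to volume errors than an absolute concentration.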

The Scientist's Toolkit: Essential Research Reagents

The table below lists key materials and their functions for conducting studies on blood collection tubes, as derived from the experimental protocols.

Table 2: Essential Reagents and Kits for Blood Collection Tube Research

| Item | Function/Application | Example Product/Brand |
|---|---|---|
| Blood Collection Tubes | Sample acquisition and initial preservation. | K2EDTA tubes [36]; Streck Cell-Free DNA BCT [39]; PAXgene Blood ccfDNA tubes [38] |
| cfDNA Extraction Kit | Isolation and purification of cell-free DNA from plasma. | QIAamp Circulating Nucleic Acid Kit (Qiagen) [38] [40] |
| Digital PCR System | Absolute quantification of DNA molecules and low-frequency mutations. | Bio-Rad QX200 Droplet Digital PCR System [38] |
| qPCR Assay Reagents | Quantification of total cfDNA and assessment of genomic DNA contamination. | LINE-1 qPCR assays (short and long amplicons) [40] |
| Plasma Storage Tubes | Long-term preservation of processed plasma at ultra-low temperatures. | Cryotubes for storage at -80°C [40] [34] |

The choice between EDTA and cell-stabilizing blood collection tubes is fundamentally dictated by the logistical needs of the study and the requirement for inter-laboratory reproducibility.

- For single-site research where plasma can be reliably processed within a narrow 4-6 hour window, K2EDTA tubes remain a cost-effective and valid option [34].

- For multi-center clinical trials, biobanking, or any study involving sample shipment, cell-stabilizing tubes (e.g., Streck cfDNA BCT) are strongly recommended. Their ability to maintain sample integrity at room temperature for several days minimizes pre-analytical variability introduced by transport delays, directly enhancing the reliability and reproducibility of ctDNA data across different laboratories [38] [40].

Standardizing blood collection protocols using cell-stabilizing tubes is a critical step toward achieving robust and comparable liquid biopsy results in global research efforts.

The analysis of circulating tumor DNA (ctDNA) from liquid biopsies has become integral to modern precision oncology, enabling non-invasive cancer diagnosis, treatment selection, and disease monitoring [35] [41]. However, the diagnostic accuracy of these assays is significantly impacted by sample quality and pre-analytical variables, with circulating cell-free DNA (cfDNA) extraction representing a critical source of inter-laboratory variability [35] [42]. Circulating tumor DNA typically constitutes less than 1% of total cfDNA in plasma, and this fraction can be even lower in early-stage cancers or minimal residual disease [42] [25]. Efficient and standardized extraction of these low-abundance, fragmented molecules is therefore paramount for obtaining reliable and reproducible results across different laboratories [43] [42].

This guide objectively compares the performance of various cfDNA extraction methods, provides supporting experimental data, and outlines quality control approaches that can enhance inter-laboratory reproducibility for digital PCR-based ctDNA assays.

Comparative Performance of cfDNA Extraction Methods

Extraction Efficiency and DNA Yield

Multiple studies have systematically evaluated the efficiency of commercially available cfDNA extraction kits, revealing significant differences in performance characteristics.

Table 1: Comparison of cfDNA Extraction Kit Performance from Plasma Samples

| Extraction Method | Type | Reported Yield | Recovery Efficiency | Key Characteristics | Primary Reference |
|---|---|---|---|---|---|
| QIAamp Circulating Nucleic Acid Kit (CNA) | Manual/Semi-automated | Consistently highest | ~84% (for 180 bp spike-in) | High yield of short fragments; superior for low VAF detection | [43] [42] [44] |
| QIAamp MinElute ccfDNA Kit (ME) | Automated (QIAcube) | Lower than CNA | Not reported | Enables high-volume plasma input (8 mL); higher VAF in some cases | [42] |
| Maxwell RSC ccfDNA Plasma Kit (RSC) | Automated | Lower than CNA | Not reported | Higher variant allelic frequency in some mutations; reproducible | [35] [42] |
| QIAsymphony DSP Circulating DNA Kit (SYM) | Automated | Lower than CNA | Not reported | Fully automated; good reproducibility but lower yield | [43] |
| Zymo Quick ccfDNA Serum & Plasma Kit | Manual | Lower than CNA | ~59% (for 180 bp spike-in; reported for urine [44]) | Not reported | [42] [44] |

A comprehensive evaluation of extraction methods using samples from 18 healthy donors revealed that the QIAamp Circulating Nucleic Acid Kit (manual and semi-automated) outperformed other methods, showing significantly higher recovery rates and cfDNA quantity without compromising quality or introducing high-molecular-weight DNA contamination [43]. This study also found all methods to be reproducible with no significant day-to-day variability.

When evaluating cancer patient-derived plasma, the CNA kit consistently demonstrated the highest yield of total ccfDNA and short-sized fragments across 21 samples from patients with gastrointestinal stromal tumors or non-small cell lung carcinoma [42]. However, the Maxwell RSC kit occasionally showed higher variant allelic frequencies for specific mutations, suggesting that yield alone does not fully represent extraction performance for ctDNA analysis [42].

Impact on Downstream Mutation Detection

The choice of extraction method directly influences the sensitivity of subsequent mutation detection assays. In a comparison study, while the CNA kit generally yielded more mutant copies per mL of plasma, the Maxwell RSC kit demonstrated superior mutant detection in some cases, highlighting that the optimal extraction method may be mutation-dependent [42].

For high-volume plasma processing, the QIAamp MinElute ccfDNA kit, which processes 8 mL of plasma, showed higher variant allelic frequencies compared to the CNA kit using 2 mL of plasma, despite lower total yields [42]. This finding is particularly relevant for clinical applications where detecting low-frequency mutations is critical.
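Mutant copies per mL of plasma depend on plasma input and elution volume, whereas VAF is a ratio, so the two metrics can rank kits differently. The sketch below converts a dPCR concentration into copies per mL of plasma. The function name and all volumes are illustrative assumptions, and the reported concentration is assumed to refer to the full reaction volume.

```python
def copies_per_ml_plasma(conc_copies_per_ul_reaction: float,
                         reaction_volume_ul: float,
                         template_volume_ul: float,
                         elution_volume_ul: float,
                         plasma_volume_ml: float) -> float:
    """Back-calculate target copies per mL of plasma from a dPCR readout."""
    volumes = (reaction_volume_ul, template_volume_ul,
               elution_volume_ul, plasma_volume_ml)
    if min(volumes) <= 0:
        raise ValueError("all volumes must be positive")
    # Copies loaded into the reaction.
    copies_in_reaction = conc_copies_per_ul_reaction * reaction_volume_ul
    # Concentration in the eluate that was used as template.
    copies_per_ul_eluate = copies_in_reaction / template_volume_ul
    # Total copies recovered from the extraction.
    total_copies = copies_per_ul_eluate * elution_volume_ul
    return total_copies / plasma_volume_ml


# Hypothetical example: 2 copies/uL in a 20 uL reaction, 8 uL template,
# 50 uL eluate, extracted from 2 mL plasma.
print(copies_per_ml_plasma(2.0, 20.0, 8.0, 50.0, 2.0))
```

Normalizing to plasma volume in this way makes results from a 2 mL and an 8 mL extraction comparable, while the total number of mutant molecules available for detection still scales with the input volume.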

Table 2: Impact of Extraction Method on Mutation Detection in Patient-Derived Plasma

| Performance Metric | QIAamp CNA Kit | Maxwell RSC Kit | QIAamp MinElute Kit |
|---|---|---|---|
| Total ccfDNA yield | Highest | Lower | Lower (but from 8 mL input) |
| Short fragment (137 bp) recovery | Highest | Lower | Not reported |
| Mutant copies detection | Higher in 2/4 cases | Higher in 2/4 cases | Not reported |
| Variant Allelic Frequency | Lower in 3/4 cases | Higher in 3/4 cases | Higher than CNA |
| Best suited for | Maximizing total yield for multi-analyte tests | Detecting higher VAF mutations | Processing large plasma volumes |

Standardization Through Quality Control and Spike-In Materials

Spike-In Controls for Process Monitoring

The implementation of standardized quality control materials represents a promising approach to monitoring extraction efficiency across laboratories. An interlaboratory study demonstrated that adding exogenous spike-in materials to plasma samples before cfDNA extraction effectively monitors process performance without deleteriously interfering with endogenous ccfDNA recovery [35] [45].

The recommended approach uses spike-in materials containing exogenous sequences with fragment lengths approximating ccfDNA. For example, a spike-in containing an Arabidopsis sequence can be added to plasma before extraction, with its recovery quantified by digital PCR [35]. This method performed consistently across different extraction protocols and blood collection devices, with dPCR quantification demonstrating good repeatability (generally CV <5%) [35] [45].
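Repeatability here is expressed as the coefficient of variation (CV), the sample standard deviation divided by the mean. A minimal sketch of that calculation for replicate dPCR measurements of a spike-in follows; the function name and replicate values are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(replicates: list[float]) -> float:
    """Coefficient of variation (%) using the sample standard deviation."""
    if len(replicates) < 2:
        raise ValueError("need at least two replicates")
    avg = mean(replicates)
    if avg == 0:
        raise ValueError("mean is zero; CV is undefined")
    return stdev(replicates) / avg * 100.0


# Hypothetical spike-in concentrations (copies/uL) from three replicates.
replicate_values = [98.0, 100.0, 102.0]
print(f"CV = {cv_percent(replicate_values):.1f}%")
```

The same function can be applied within a laboratory (repeatability) or to per-laboratory means (between-laboratory variation), provided the two are reported separately.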

The CEREBIS spike-in control was specifically designed to evaluate recovery efficiency for both cfDNA extraction and bisulfite modification. This synthetic, non-human DNA fragment mimics mononucleosomal cfDNA size and contains cytosine-free regions to assess bisulfite conversion efficiency [44]. Studies using this control have established reproducible extraction efficiencies specific for each method: 84.1% (± 8.17) for the QIAamp kit in plasma, and 58.7% (± 11.1) for the Zymo kit in urine [44].

Reference Materials for Assay Validation

For validating the detection of low-frequency mutations, novel reference materials have been developed. One approach created an SI-traceable "ctDNA" reference material by gravimetrically mixing a 152 bp BRAF V600E PCR amplicon with sonicated wild-type genomic DNA [46] [47]. ddPCR measurements of this material agreed closely with the gravimetric values across mutant frequencies from 53.9% down to 0.1%, with a limit of quantification of 0.1% [46].

Interlaboratory assessments using such reference materials have identified potential sources of systematic error, such as uncorrected droplet volume in certain ddPCR platforms [46] [47]. Correcting these technical variables significantly improved between-laboratory consistency in copy number measurements [47].
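In droplet-based dPCR, concentration is obtained by dividing the Poisson-estimated copies per droplet by the droplet volume, so an inaccurate volume biases every result by the same factor. The sketch below shows the rescaling under that assumption. The function name and the volumes are hypothetical; real values must come from the platform documentation or an independent measurement.

```python
def rescale_concentration(reported_copies_per_ul: float,
                          assumed_droplet_nl: float,
                          measured_droplet_nl: float) -> float:
    """Rescale a reported concentration to a measured droplet volume.

    concentration = lambda / droplet_volume, so replacing the assumed volume
    with the measured one multiplies the result by assumed / measured.
    """
    if assumed_droplet_nl <= 0 or measured_droplet_nl <= 0:
        raise ValueError("droplet volumes must be positive")
    return reported_copies_per_ul * assumed_droplet_nl / measured_droplet_nl


# Hypothetical case: software assumes 1.0 nL droplets, measurement gives 0.8 nL.
print(rescale_concentration(100.0, 1.0, 0.8))
```

Ratios such as VAF are unaffected by this factor, so the correction matters mainly for absolute copy number comparisons between laboratories.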

Experimental Protocols for Extraction Efficiency Evaluation

Protocol: Interlaboratory QC Assessment Using Spike-In Controls

Principle: Add exogenous DNA spike-in to plasma samples before extraction to monitor and compare extraction efficiency across laboratories [35] [45].

Materials:

- Spike-in material (e.g., plasmid-derived with Arabidopsis sequence or CEREBIS)

- Plasma samples (healthy donor or patient-derived)

- cfDNA extraction kits for comparison

- Digital PCR system for quantification

Procedure:

- Spike-in Addition: Add a standardized amount of spike-in material (e.g., CEREBIS with 180 bp fragment) to each plasma sample before extraction.

- cfDNA Extraction: Perform extraction according to manufacturer's protocols for each kit being evaluated.

- Quantification: Measure spike-in recovery using target-specific dPCR assays.

- Data Analysis: Calculate extraction efficiency as (measured spike-in concentration / expected spike-in concentration) × 100%.
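The data analysis step can be sketched as follows. The record layout, kit labels, and copy numbers are hypothetical placeholders; only the efficiency formula comes from the procedure above.

```python
from statistics import mean


def extraction_efficiency(measured: float, expected: float) -> float:
    """Extraction efficiency (%) = measured / expected spike-in x 100."""
    if expected <= 0:
        raise ValueError("expected spike-in amount must be positive")
    return measured / expected * 100.0


def mean_efficiency_by_kit(records):
    """Average efficiency per kit from (kit, measured, expected) tuples."""
    by_kit = {}
    for kit, measured, expected in records:
        by_kit.setdefault(kit, []).append(
            extraction_efficiency(measured, expected))
    return {kit: mean(values) for kit, values in by_kit.items()}


# Hypothetical spike-in recoveries (copies) for two kits.
records = [
    ("kit_A", 8000.0, 10000.0),
    ("kit_A", 9000.0, 10000.0),
    ("kit_B", 6000.0, 10000.0),
]
for kit, efficiency in mean_efficiency_by_kit(records).items():
    print(f"{kit}: {efficiency:.1f}%")
```

Measured and expected amounts must be expressed in the same units (for example, total copies per extraction) for the percentage to be meaningful.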

Validation: This protocol was validated in a five-laboratory study using various blood collection devices (PAXgene, Streck) and extraction methods, demonstrating consistency across platforms and the ability to highlight efficiency differences between methods [35].

Protocol: Comprehensive Extraction Method Comparison

Principle: Systematically compare multiple extraction methods using patient-derived plasma samples with comprehensive downstream analysis [42].

Materials:

- Patient-derived plasma samples (e.g., cancer patients)

- Extraction kits for comparison (e.g., CNA, RSC, MinElute, Zymo)