Ensuring Precision in Oncology: A Deep Dive into Inter-Laboratory Reproducibility of NGS Cancer Panels

Next-generation sequencing (NGS) has become a cornerstone of precision oncology, yet the consistency of results across different laboratories is paramount for clinical trust and drug development.

Abstract

Next-generation sequencing (NGS) has become a cornerstone of precision oncology, yet the consistency of results across different laboratories is paramount for clinical trust and drug development. This article provides a comprehensive analysis of inter-laboratory reproducibility for NGS cancer panels, tailored for researchers, scientists, and drug development professionals. It explores the foundational importance of reproducibility, examines methodological variables influencing concordance, presents strategies for troubleshooting and optimization, and reviews validation frameworks and comparative performance data. By synthesizing findings from recent multi-institutional studies and technological advancements, this resource aims to equip professionals with the knowledge to implement robust, reliable NGS testing in oncology research and clinical trials.

The Critical Bedrock: Why Inter-Laboratory Reproducibility is Non-Negotiable in Precision Oncology

Defining Reproducibility and Concordance in the Context of Multi-Gene Panels

Next-generation sequencing (NGS) based multi-gene panels have become fundamental tools in precision oncology, enabling comprehensive molecular profiling for therapy selection. However, their translation into clinical practice faces a significant challenge: ensuring that results are consistent and reproducible across different testing laboratories. In the context of multi-gene panels, reproducibility refers to the consistency of results when the same sample is tested multiple times under varying conditions (different laboratories, instruments, or operators), while concordance measures the agreement between different testing methodologies or platforms when analyzing the same biological sample. The clinical implications of variability in molecular testing are substantial, as treatment decisions increasingly rely on the accurate detection of specific genetic alterations. This guide objectively compares the performance of different testing approaches and panels, providing researchers and drug development professionals with experimental data critical for evaluating analytical robustness in multi-gene cancer testing.

Performance Metrics Comparison Across Multi-Gene Testing Approaches

The analytical performance of molecular tests is quantified through specific metrics that collectively define their reliability. The table below summarizes key performance data from recent validation studies of different testing approaches.

Table 1: Analytical Performance Metrics of Selected Multi-Gene Testing Approaches

| Test/Panel Name | Validation Set / Targets | Sensitivity | Specificity | Reproducibility | Concordance with Comparator | Key Technology |
|---|---|---|---|---|---|---|
| In-house NGS (50-gene) [1] | 283 NSCLC samples | 99.2% (DNA), 98% (RNA) | Not specified | 95.2% (interlaboratory) | Not specified | Targeted NGS |
| TTSH-Oncopanel (61-gene) [2] | 43 unique samples | 98.23% (unique variants) | 99.99% | 99.98% (inter-run), 99.99% (intra-run) | 100% with orthogonal methods | Hybridization-capture NGS |
| HDPCR NSCLC Panel [3] | 15 variants in 9 genes | Limit of detection 0.1-0.9% MAF (DNA targets) | Not specified | >97% | >97% with Oncomine Precision Assay | Digital PCR |
| 35-Gene Hereditary Cancer Panel [4] | 4820 variants across 35 genes | 99.9% | 100% | 99.8% (reproducibility), 100% (repeatability) | Not specified | NGS with hybrid capture |
| SiRe Panel (568 mutations) [5] | 6 genes (EGFR, KRAS, NRAS, BRAF, cKIT, PDGFRα) | Not specified | Not specified | 100% (inter-laboratory concordance) | Intra-class correlation of 0.989 for allelic frequencies | Targeted NGS |

Beyond the core metrics above, additional performance characteristics provide further insight into test reliability. The TTSH-Oncopanel demonstrated a limit of detection of 2.9% variant allele frequency (VAF) for both SNVs and INDELs, and all alterations were detected in repeat tests with a coefficient of variation below 0.1 [2]. The 35-gene hereditary cancer panel was validated across 4820 variants, including single nucleotide variants and small insertions and deletions, with 99.9% sensitivity and 100% specificity [4]. The HDPCR NSCLC panel achieved turnaround times of less than 4 hours, excluding extraction time, which is considerably shorter than the 4-day turnaround reported for the NGS panels above [3].
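
As a minimal sketch of how the headline metrics in Table 1 are derived, the snippet below computes sensitivity, specificity, and overall agreement from variant-level counts against a truth set. The class name, function names, and the counts are illustrative assumptions and are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Variant-level counts of panel calls against a reference truth set."""
    tp: int  # variant in the truth set and called by the panel
    fp: int  # called by the panel but absent from the truth set
    tn: int  # reference position correctly reported as wild-type
    fn: int  # variant in the truth set but missed by the panel


def _ratio(numerator: int, denominator: int) -> float:
    if denominator == 0:
        raise ValueError("metric undefined: denominator is zero")
    return numerator / denominator


def sensitivity(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    return _ratio(c.tn, c.tn + c.fp)


def overall_agreement(c: ConfusionCounts) -> float:
    return _ratio(c.tp + c.tn, c.tp + c.fp + c.tn + c.fn)


# Hypothetical counts chosen only to exercise the functions.
counts = ConfusionCounts(tp=98, fp=1, tn=9899, fn=2)
print(f"sensitivity       = {sensitivity(counts):.2%}")
print(f"specificity       = {specificity(counts):.2%}")
print(f"overall agreement = {overall_agreement(counts):.2%}")
```

Because true negatives usually dominate targeted-panel truth sets, specificity and overall agreement tend to sit near 100% even when sensitivity is noticeably lower, which is one reason to report the metrics separately.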

Table 2: Sample Requirements and Turnaround Time Comparison

| Test/Panel Name | Recommended DNA Input | Sample Types Validated | Turnaround Time (TAT) | Key Limitations |
|---|---|---|---|---|
| In-house NGS (50-gene) [1] | Not specified | NSCLC tissue samples | 4 days (median) | Not specified |
| TTSH-Oncopanel (61-gene) [2] | ≥50 ng | Clinical tissues, EQA samples, reference controls | 4 days | High VAF threshold (2.9%) |
| HDPCR NSCLC Panel [3] | 7.5-40 ng total DNA | FFPE tissue specimens | <4 hours (excl. extraction) | Limited to 15 variants |
| Lung Cancer Compact Panel [6] | Not specified | Cytology specimens, FFPE | Not specified | Focused on 8 genes |
| SiRe Panel [5] | Not specified | Colon/lung cancer tissue samples | Not specified | Limited to 6 genes |

Experimental Protocols for Determining Reproducibility and Concordance

Multi-Institutional Validation of Targeted NGS Panels

The Italian multi-institutional study evaluating a 50-gene NSCLC panel employed a two-phase validation approach. In the first (retrospective) phase, 21 samples underwent interlaboratory testing with DNA and RNA sequencing. The second (prospective) phase involved intralaboratory testing of 262 samples across participating institutions. The study measured sequencing success rate, interlaboratory concordance, and correlation between observed and expected variant allele fractions (R²=0.94). This design allowed researchers to isolate variability attributable to laboratory-specific factors from technical variability of the assay itself [1].
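
The observed-versus-expected VAF correlation mentioned above can be sketched as the squared Pearson correlation, which equals the R² of a simple linear regression. This is an assumption about the form of the statistic, since the source reports only the value; the VAF lists below are invented for illustration.

```python
def r_squared(expected: list, observed: list) -> float:
    """Squared Pearson correlation between expected and observed VAFs."""
    n = len(expected)
    if n != len(observed) or n < 2:
        raise ValueError("need two equally sized lists with at least 2 points")
    mean_x = sum(expected) / n
    mean_y = sum(observed) / n
    sxx = sum((x - mean_x) ** 2 for x in expected)
    syy = sum((y - mean_y) ** 2 for y in observed)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(expected, observed))
    if sxx == 0 or syy == 0:
        raise ValueError("R^2 undefined when either series is constant")
    return (sxy * sxy) / (sxx * syy)


# Hypothetical expected VAFs (%) of a reference standard and the VAFs
# (%) a laboratory might observe for the same variants.
expected_vaf = [5.0, 10.0, 15.0, 25.0, 40.0]
observed_vaf = [4.6, 10.9, 13.8, 26.1, 38.7]
print(f"R^2 = {r_squared(expected_vaf, observed_vaf):.3f}")
```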

A similar approach was used in the evaluation of the SiRe panel across five Italian laboratories. In this study, participating institutions analyzed a common set of 20 NSCLC and colorectal cancer samples using identical panel parameters. Each institution then prospectively analyzed an additional 40 routine samples (160 total) to assess reproducibility of NGS run parameters across sites. Concordance was assessed for both mutation detection and allelic frequency distribution, with the latter quantified using intra-class correlation coefficient [5].
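
To illustrate how agreement on allelic frequencies across sites can be summarized, the sketch below computes a one-way random-effects intra-class correlation, ICC(1,1). The source does not state which ICC form the study used, so this choice is an assumption, and the VAF matrix is invented.

```python
def icc_one_way(ratings: list) -> float:
    """ICC(1,1): rows are variants (targets), columns are laboratories."""
    n = len(ratings)
    if n < 2:
        raise ValueError("need at least 2 variants")
    k = len(ratings[0])
    if k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("every variant needs the same number (>=2) of labs")
    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    # Between-variant and within-variant mean squares from one-way ANOVA.
    ms_between = k * sum((m - grand_mean) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    ) / (n * (k - 1))
    denominator = ms_between + (k - 1) * ms_within
    if denominator == 0:
        raise ValueError("ICC undefined: no variance in the data")
    return (ms_between - ms_within) / denominator


# Hypothetical VAFs (%) for four variants measured at three sites.
vaf_by_lab = [
    [12.1, 11.8, 12.4],
    [33.0, 34.2, 32.5],
    [5.2, 4.9, 5.6],
    [48.7, 47.9, 49.3],
]
print(f"ICC(1,1) = {icc_one_way(vaf_by_lab):.3f}")
```

Values close to 1 indicate that almost all variance comes from true differences between variants rather than from differences between laboratories.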

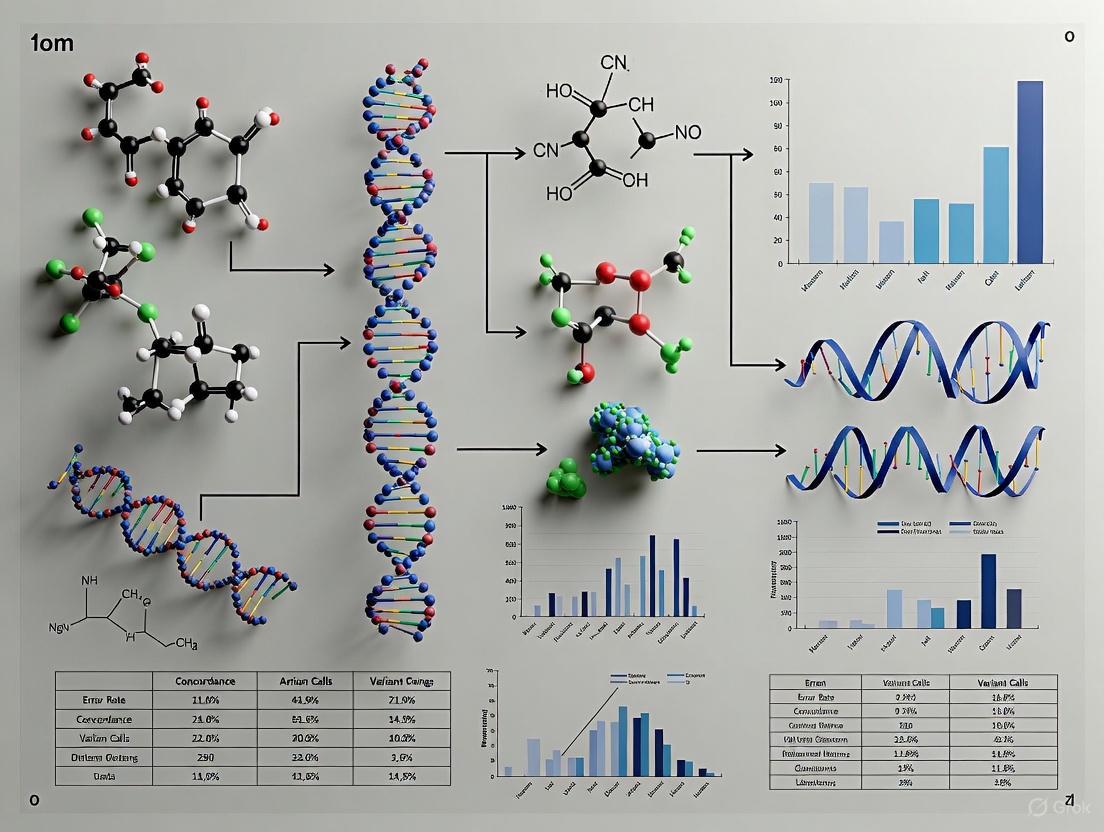

Figure 1: Workflow of Multi-Institutional Validation Study for NGS Cancer Panels

Comprehensive Analytical Validation Framework

The TTSH-Oncopanel validation followed a rigorous three-step protocol to evaluate performance. First, sequencing quality was assessed using reference standards and tumor samples. Second, the somatic mutation landscape was analyzed in 40 diverse tumor specimens to establish reliability and concordance with other NGS methods. Third, clinical relevance was evaluated for routine clinical implementation. Specific experiments included:

- Input Titration: DNA input requirements were determined by testing reference material HD701 at varying concentrations (10-100 ng) to establish the minimum input yielding reliable results [2].

- Limit of Detection: Serial dilutions of HD701 were analyzed to determine the minimum variant allele frequency detectable with 100% sensitivity, established at 2.9% VAF [2].

- Precision Studies: Repeatability (intra-run precision) was assessed by sequencing 5 samples with different barcodes in duplicates or triplicates within a single run. Reproducibility (inter-run precision) was evaluated by comparing replicates of 15 unique samples across different runs [2].
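
The limit-of-detection and precision experiments above can be sketched as follows. The rule used here (the lowest dilution level at which that level and every higher level is detected in all replicates) and all numbers are illustrative assumptions, not the protocol or data of the cited validation.

```python
from __future__ import annotations

from statistics import mean, stdev


def limit_of_detection(detections: dict[float, list[bool]]) -> float | None:
    """Lowest VAF level such that it and all higher levels were detected
    in every replicate; None if even the highest level was missed."""
    lod = None
    for vaf in sorted(detections, reverse=True):
        calls = detections[vaf]
        if calls and all(calls):
            lod = vaf
        else:
            break
    return lod


def coefficient_of_variation(values: list[float]) -> float:
    """Sample standard deviation divided by the mean (unitless ratio)."""
    if len(values) < 2:
        raise ValueError("need at least 2 replicate measurements")
    m = mean(values)
    if m == 0:
        raise ValueError("CV undefined for a mean of zero")
    return stdev(values) / m


# Hypothetical dilution series: VAF level (%) -> detection per replicate.
dilution_series = {
    10.0: [True, True, True],
    5.0: [True, True, True],
    2.9: [True, True, True],
    1.0: [True, False, True],
}
print("LoD (% VAF):", limit_of_detection(dilution_series))

# Hypothetical VAFs (%) of one control variant across repeated runs.
control_vafs = [5.1, 4.9, 5.0, 5.2, 4.8]
print(f"CV = {coefficient_of_variation(control_vafs):.3f}")
```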

For the 35-gene hereditary cancer panel, validation utilized well-characterized DNA specimens from the NIGMS Human Genetic Cell Repository whose variants had been previously characterized by the 1000 Genomes Project and the Coriell Catalog. This approach allowed for blinded validation against established truth sets [4].

Alternative Sample Type Validation

The cPANEL trial prospectively evaluated the use of cytology specimens as alternatives to traditional FFPE tissues for NGS testing. The study collected cytology specimens via transbronchial brushing, needle aspiration washing, and pleural effusion, preserving them in a nucleic acid stabilizer. The primary endpoint was the success rate of gene analysis compared to conventional tissue specimens. The study demonstrated a 98.4% success rate with cytology specimens, with high concordance (97.3%) to other companion diagnostic methods. The research also compared nucleic acid yield and quality between matched FFPE and cytology samples, finding the latter offered significantly higher quality DNA [6].

Essential Research Reagent Solutions

Successful implementation of reproducible multi-gene panel testing requires specific reagent systems and reference materials. The table below details key solutions used in the validation studies discussed in this guide.

Table 3: Essential Research Reagents for Multi-Gene Panel Validation

| Reagent Category | Specific Product | Function/Purpose | Validation Context |
|---|---|---|---|
| Reference Materials | HD701 (Horizon Discovery) | Limit of detection and input titration studies | TTSH-Oncopanel validation [2] |
| Reference Materials | NIST GIAB Reference Materials | Benchmarking variant calls against truth sets | Targeted panel performance metrics [7] |
| Reference Materials | Coriell Institute DNA samples | Analytical validation with previously characterized variants | 35-gene hereditary cancer panel [4] |
| Nucleic Acid Stabilizer | GM tube (GeneMetrics) | Preserves DNA/RNA in cytology specimens | cPANEL trial [6] |
| Nucleic Acid Extraction | Maxwell RSC FFPE Kits (Promega) | Nucleic acid extraction from challenging samples | Various panel validations [6] |
| Target Enrichment | TruSight Inherited Disease Panel (Illumina) | Hybrid-capture based target enrichment | GIAB reference material evaluation [7] |
| Target Enrichment | Ion AmpliSeq Inherited Disease Panel (ThermoFisher) | Amplicon-based target enrichment | GIAB reference material evaluation [7] |
| Analysis Software | Sophia DDM with OncoPortal Plus | Variant analysis and clinical interpretation | TTSH-Oncopanel [2] |

Figure 2: End-to-End Workflow for Multi-Gene Panel Testing Showing Key Reagent Integration Points

Factors Influencing Reproducibility and Concordance

Several technical factors significantly impact the reproducibility and concordance of multi-gene panel testing:

Sample Quality and Input Requirements: The quality and quantity of input nucleic acids profoundly affect assay performance. The TTSH-Oncopanel validation demonstrated that while some mutations could be detected with inputs as low as 25 ng, consistent detection of all expected variants required ≥50 ng input [2]. The HDPCR NSCLC panel was specifically designed to work with limited input amounts (7.5-40 ng total DNA), making it suitable for samples with limited material [3]. The cPANEL trial further demonstrated that cytology specimens preserved in nucleic acid stabilizer could yield higher quality DNA than FFPE samples, potentially improving reproducibility [6].

Bioinformatics Pipeline Standardization: Variant calling and interpretation pipelines represent a significant source of variability in multi-gene panel testing. The Association for Molecular Pathology and College of American Pathologists jointly recommend using an error-based approach that identifies potential sources of errors throughout the analytical process [8]. Standardization of bioinformatics pipelines was a key factor in achieving 100% inter-laboratory concordance with the SiRe panel across five institutions [5].

Coverage Requirements and Panel Design: The depth of sequencing coverage and uniformity across targeted regions significantly impacts detection sensitivity. The National Institute of Standards and Technology recommends using Genome in a Bottle reference materials to establish coverage-dependent sensitivity metrics for targeted panels [7]. The SiRe panel's focused design on 568 clinically relevant mutations across just six genes contributed to its high inter-laboratory reproducibility, suggesting that narrower, more focused panels may offer advantages for standardized testing [5].
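
The link between coverage and detection sensitivity can be illustrated with a simple binomial model: at a given depth and true VAF, what is the probability of observing at least a minimum number of variant-supporting reads? This is a simplified sketch that ignores sequencing error, duplicates, and allelic bias; the five-read calling threshold is a hypothetical parameter, while the 2.9% VAF and 650x depth echo figures quoted elsewhere in this article.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant reads) under Binomial(depth, vaf)."""
    if depth < 0 or min_alt_reads < 0 or not 0.0 <= vaf <= 1.0:
        raise ValueError("depth and min_alt_reads must be >= 0, vaf in [0, 1]")
    # Probability of seeing fewer variant reads than the calling threshold.
    p_below = sum(
        comb(depth, k) * vaf ** k * (1.0 - vaf) ** (depth - k)
        for k in range(min(min_alt_reads, depth + 1))
    )
    return 1.0 - p_below


# Hypothetical calling rule: at least 5 variant-supporting reads.
for depth in (100, 250, 650, 1000):
    p = detection_probability(depth, vaf=0.029, min_alt_reads=5)
    print(f"depth {depth:>4}x, VAF 2.9%: P(detect) = {p:.4f}")
```

Under this model, detection of a low-frequency variant is unreliable at roughly 100x and becomes near certain only at several hundred reads, which is consistent with the emphasis on deep, uniform coverage.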

The establishment of reproducible and concordant multi-gene panel testing requires meticulous validation across multiple dimensions. Current data demonstrate that both large (50- to 61-gene) and focused (6-gene) panels can exceed 95% concordance when implemented with standardized protocols: 95.2% and 100% inter-laboratory concordance for the 50-gene and SiRe panels, respectively, and 99.98% inter-run reproducibility for the TTSH-Oncopanel [1] [2] [5]. The choice between broader and more targeted panels involves trade-offs between comprehensive genomic assessment and optimization for reproducibility, with narrower panels potentially offering advantages for standardized testing across multiple sites. As molecular testing continues to evolve, adherence to established validation frameworks [8] and utilization of well-characterized reference materials [7] will remain critical for ensuring that multi-gene panels deliver consistent, reliable results across diverse laboratory settings, a fundamental requirement for both clinical decision-making and drug development research.

The Impact on Clinical Decision-Making and Patient Outcomes

Next-generation sequencing (NGS) cancer panels have revolutionized oncology by enabling comprehensive genomic profiling of tumors, thereby facilitating personalized treatment strategies. However, their full integration into clinical practice is contingent upon demonstrating consistent performance and reliable inter-laboratory reproducibility. Inconsistent variant calling between different laboratories, even when using the same raw sequencing data, poses significant challenges for clinical decision-making and genetic data sharing [9]. This guide objectively compares the performance of various NGS panels and platforms, evaluating their concordance with established orthogonal methods and their reproducibility across different testing environments. The findings underscore the critical importance of standardized protocols and validation frameworks for ensuring that NGS-derived genomic information can be trusted for therapeutic decisions, ultimately impacting patient outcomes in precision oncology.

Performance Comparison of NGS Panels vs. Orthogonal Methods

The analytical and clinical performance of NGS panels is typically benchmarked against established orthogonal methods, such as polymerase chain reaction (PCR), fluorescence in situ hybridization (FISH), and Sanger sequencing. The concordance rates between these methodologies provide a critical measure of reliability for clinical application.

Table 1: Concordance of NGS Panels with Orthogonal Methods Across Cancer Types

| Cancer Type | Gene/Alteration | Orthogonal Method | Sensitivity of NGS (%) | Specificity of NGS (%) | Citation |
|---|---|---|---|---|---|
| Colorectal Cancer | KRAS mutation | PCR | 87.4 | 79.3 | [10] |
| Colorectal Cancer | NRAS mutation | PCR | 88.9 | 98.9 | [10] |
| Colorectal Cancer | BRAF mutation | PCR | 77.8 | 100.0 | [10] |
| Non-Small Cell Lung Cancer | EGFR mutation | PCR/Pyrosequencing | 86.2 | 97.5 | [10] |
| Non-Small Cell Lung Cancer | ALK fusion | IHC/FISH | 100.0 | 100.0 | [10] |
| Breast Cancer | ERBB2 amplification | IHC/ISH | 53.7 | 99.4 | [10] |
| Gastric Cancer | ERBB2 amplification | IHC/ISH | 62.5 | 98.2 | [10] |
| Multiple Solid Tumours | 92 known variants | Various | 100.0 | N/A | [2] |

Data from a large-scale study comparing the K-MASTER NGS panel with standard diagnostic tests reveals a variable degree of agreement, which is gene- and alteration-specific [10]. While detection of fusions like ALK showed perfect concordance, sensitivity for detecting ERBB2 amplification was lower, potentially due to differences in the genomic regions probed or the limitations of NGS in calling focal amplifications compared to ISH [10]. In contrast, a separate validation study of a 61-gene oncopanel demonstrated 100% detection of all 92 known variants from orthogonal methods, indicating that well-validated NGS panels can achieve exceptionally high sensitivity [2].
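
When comparing point estimates such as those in the table above, it can help to attach a confidence interval, because the number of orthogonally confirmed positives differs between alterations. The sketch below uses the Wilson score interval; the counts are hypothetical and were not taken from the cited study, which is summarized here only as percentages.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a proportion (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("require n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * sqrt(p * (1.0 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical example: 22 of 41 comparator-positive cases detected by NGS.
low, high = wilson_interval(successes=22, n=41)
print(f"sensitivity = {22 / 41:.1%}, 95% CI {low:.1%} to {high:.1%}")
```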

A key advantage of NGS is its ability to interrogate multiple genes simultaneously from a small tissue sample, which is crucial when tumor material is limited [11]. This comprehensive profiling is particularly valuable given the complex clonal evolution and tumor heterogeneity observed in cancers, where traditional single-gene tests are insufficient to capture the complete mutational landscape [11].

Inter-Laboratory Reproducibility and Variant Calling

The reproducibility of NGS results across different laboratories is a cornerstone of reliable clinical genomics. Inconsistent results can directly impact clinical decisions, such as the selection of targeted therapies.

Table 2: Inter-Laboratory and Inter-Platform Reproducibility Metrics

| Study Focus | Metric | Performance | Citation |
|---|---|---|---|
| UMA Panel (Multiple Myeloma) | Balanced Accuracy for CNA/t-IgH vs. FISH | > 93% | [12] |
| UMA Panel (Multiple Myeloma) | Inter-laboratory Robustness | Confirmed | [12] |
| 61-Gene Oncopanel (Solid Tumours) | Assay Repeatability (Intra-run) | 99.99% | [2] |
| 61-Gene Oncopanel (Solid Tumours) | Assay Reproducibility (Inter-run) | 99.98% | [2] |
| Breast Cancer Variant Calling | ClinVar Significant Variants Detected by Only One Caller | 16.5% | [9] |
| MiSeq vs. Ion Proton | Concordance for Somatic Variants | 100% | [13] |

The Unique Molecular Assay (UMA) panel for multiple myeloma demonstrated a balanced accuracy of over 93% compared to FISH and showed robust inter-laboratory reproducibility for genomic alteration calls, a critical validation for clinical-grade diagnostics [12]. Similarly, a solid tumor oncopanel demonstrated 99.99% repeatability and 99.98% reproducibility, with a long-term coefficient of variation of less than 0.1 for repeated controls [2].
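
Balanced accuracy, the metric quoted for the comparison with FISH, is the mean of sensitivity and specificity. The short sketch below shows the calculation with invented counts; it is not a reconstruction of the cited study's data.

```python
def balanced_accuracy(tp: int, fn: int, tn: int, fp: int) -> float:
    """Mean of sensitivity and specificity against a comparator method."""
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    sens = tp / (tp + fn)
    spec = tn / (tn + fp)
    return 0.5 * (sens + spec)


# Hypothetical comparison of NGS calls with FISH for one alteration.
score = balanced_accuracy(tp=90, fn=10, tn=96, fp=4)
print(f"balanced accuracy = {score:.1%}")
```

Unlike overall accuracy, this metric is not inflated when negative cases greatly outnumber positive ones, which is typical for individual copy number alterations or translocations.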

A critical study on breast cancer patients revealed that different variant-calling algorithms (GATK HaplotypeCaller, VarScan, and MuTect2) detected significantly different sets of variants from the same raw data [9]. On average, 16.5% of clinically significant variants (annotated in ClinVar) were detected by only one variant caller. This highlights that the choice of bioinformatics pipeline alone can introduce substantial variation, potentially affecting patient management [9]. Conversely, a comparison of the MiSeq and Ion Proton platforms with their respective panels showed 100% concordance for detecting somatic variants in genomic regions covered by both panels, including 27 variants with low allele frequency (<15%) [13]. This suggests that a combined workflow can be highly effective for verifying somatic variants.
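
The caller-discordance figure above can be reproduced in principle with simple set operations. The sketch below assumes each caller's output has been normalized to comparable variant keys and that the denominator is the set of clinically significant variants found by at least one caller; both are assumptions, and the variant keys are invented.

```python
from __future__ import annotations


def fraction_single_caller(
    calls_by_caller: dict[str, set[str]], significant: set[str]
) -> float:
    """Share of detected significant variants reported by exactly one caller."""
    detected = set().union(*calls_by_caller.values())
    relevant = significant & detected
    if not relevant:
        raise ValueError("no significant variant was detected by any caller")
    single = [
        v for v in relevant
        if sum(v in calls for calls in calls_by_caller.values()) == 1
    ]
    return len(single) / len(relevant)


# Hypothetical normalized variant keys (chrom:pos:ref>alt) per caller.
calls = {
    "HaplotypeCaller": {"17:100:G>A", "13:200:C>T", "17:300:T>C"},
    "VarScan": {"17:100:G>A", "13:200:C>T"},
    "MuTect2": {"17:100:G>A", "13:400:A>G"},
}
clinvar_significant = {
    "17:100:G>A", "13:200:C>T", "17:300:T>C", "13:400:A>G", "2:500:G>T",
}
print(f"{fraction_single_caller(calls, clinvar_significant):.1%}")
```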

Figure: NGS Clinical Testing Workflow

Experimental Protocols and Key Methodologies

Targeted Sequencing and Validation Protocol

The experimental protocols for validating NGS panels are rigorous and multi-faceted. The following methodology is adapted from recent high-impact studies [10] [2] [12]:

- Sample Selection and DNA Extraction: The study includes formalin-fixed, paraffin-embedded (FFPE) tumor tissues and reference control samples (e.g., HD701). DNA is extracted using standardized kits, and quantification is performed using fluorometric methods. A minimum input of 50 ng DNA is typically required for robust sequencing [2].

- Library Preparation and Target Enrichment: Library preparation uses hybridization-capture-based methods (e.g., Sophia Genetics or SureSelect Agilent kits) [2] [12]. The process involves DNA fragmentation, adapter ligation, and sample indexing. Libraries are enriched for target genes using custom-designed biotinylated oligonucleotide probes.

- Sequencing: Sequencing is performed on platforms such as the MGI DNBSEQ-G50RS or Illumina sequencers [2]. The required sequencing depth is high, with a median coverage of >650x, to reliably detect low-frequency variants [10] [2].

- Bioinformatic Analysis: Raw sequencing data is processed through a bioinformatics pipeline, which includes base calling, read alignment to a reference genome (e.g., GRCh37/38), and variant calling. For somatic variants, a minimum variant allele frequency (VAF) threshold is set (e.g., 1-5% for actionable variants) [10] [2].

- Validation and Concordance Testing: The NGS results are compared against orthogonal methods. For mutations, this involves PCR-based methods; for fusions, IHC/FISH; and for copy number alterations, SNP arrays [10] [12]. Sensitivity, specificity, and concordance rates are calculated.

- Reproducibility Assessment: Intra-run (repeatability) and inter-run (reproducibility) precision are assessed by sequencing replicate samples within and across different sequencing runs. Inter-laboratory reproducibility is tested by analyzing the same sample set in different laboratories [2] [12].
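
The reproducibility step above can be sketched as a pairwise comparison of variant call sets from replicates, whether the replicates come from the same run, different runs, or different laboratories. The agreement measure used here (shared calls divided by all calls in either replicate) is one possible definition and is an assumption; studies that also count concordant negative positions will report higher percentages.

```python
from __future__ import annotations

from itertools import combinations


def pairwise_concordance(replicates: list[set[str]]) -> float:
    """Mean over all replicate pairs of |shared calls| / |calls in either|."""
    if len(replicates) < 2:
        raise ValueError("need at least 2 replicates")
    scores = []
    for a, b in combinations(replicates, 2):
        union = a | b
        # Two replicates with no calls at all agree completely.
        scores.append(len(a & b) / len(union) if union else 1.0)
    return sum(scores) / len(scores)


# Hypothetical variant calls for one sample sequenced in three runs.
runs = [
    {"KRAS:G12D", "TP53:R175H", "PIK3CA:E545K"},
    {"KRAS:G12D", "TP53:R175H", "PIK3CA:E545K"},
    {"KRAS:G12D", "TP53:R175H"},
]
print(f"mean pairwise concordance = {pairwise_concordance(runs):.1%}")
```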

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Solutions for NGS Panel Validation

| Item | Function in the Experiment | Example |
|---|---|---|
| FFPE Tumor Samples | Provides the source of tumor DNA for sequencing; represents real-world clinical material. | Colorectal, breast, NSCLC, and gastric cancer samples [10]. |
| Reference Control DNA | Serves as a positive control for assay performance and variant calling accuracy. | HD701 Reference Standard [2]. |
| DNA Extraction Kits | Isolate high-quality genomic DNA from FFPE tissues, a critical step for library success. | Various standardized kits (specific products not named) [10] [12]. |
| Hybridization Capture Kit | Enriches DNA libraries for the specific genes/regions targeted by the panel. | SureSelect Agilent [12], Sophia Genetics [2]. |
| Sequencing Platform | Performs massively parallel sequencing of the enriched libraries. | MGI DNBSEQ-G50RS [2], Illumina MiSeq [13]. |
| Bioinformatics Pipeline | Transforms raw sequencing data into annotated variant calls; includes alignment and variant calling tools. | Sophia DDM [2], GATK, VarScan [9]. |

Figure: Variant Calling & Concordance Workflow

Impact on Clinical Decision-Making and Patient Outcomes

The integration of reproducible NGS panels into clinical diagnostics directly influences patient care by providing a more comprehensive and accurate genomic profile to guide therapy. This impact is evident in several key areas:

- Informed Therapeutic Selection: NGS panels identify actionable mutations in genes like KRAS, EGFR, ERBB2, PIK3CA, and BRCA1, which are critical for matching patients with targeted therapies [2]. This moves treatment beyond a "one-size-fits-all" approach to precision interventions.

- Timely Clinical Interventions: Reducing the turnaround time (TAT) for NGS results is a significant factor in patient management. The development of in-house panels has demonstrated a reduction of TAT from 3 weeks to as little as 4 days, enabling more timely and personalized clinical interventions [2].

- Comprehensive Risk Stratification: In diseases like multiple myeloma, NGS panels enable precise risk stratification according to systems like R2-ISS by simultaneously detecting mutations (e.g., TP53), copy number alterations (e.g., gain/amp(1q)), and immunoglobulin translocations, providing a more detailed prognostic picture than FISH alone [12].

- Overcoming Tumor Heterogeneity: The capacity of NGS to interrogate hundreds of targets in one test is essential for understanding clonal evolution and tumor mutation heterogeneity, allowing clinicians to adapt treatment strategies as the disease progresses [11].

The body of evidence confirms that NGS cancer panels are powerful tools for precision oncology, showing high overall concordance with orthogonal methods and demonstrating excellent inter-laboratory reproducibility when validated protocols are followed. However, challenges remain, particularly in the detection of specific alteration types like gene amplifications and in the standardization of bioinformatic pipelines. Discrepancies in variant calling can directly affect the identification of clinically actionable variants, underscoring the non-negotiable need for standardized NGS workflows and data-sharing practices. As the field advances, the continued focus on rigorous validation, reproducibility studies, and reduced turnaround times will be paramount. This ensures that NGS technology can reliably fulfill its promise to improve clinical decision-making and patient outcomes by providing a robust foundation for personalized cancer therapy.

Implications for Biomarker Discovery and Clinical Trial Integrity

Next-generation sequencing (NGS) has fundamentally transformed biomarker discovery and clinical trial design in oncology, enabling comprehensive genomic profiling that guides personalized treatment strategies. However, the transition from research discovery to clinical application faces a significant challenge: ensuring inter-laboratory reproducibility of NGS cancer panels. Consistent biomarker identification across different testing sites is paramount for clinical trial integrity, as it ensures patient stratification accuracy, reliable endpoint assessment, and valid cross-trial comparisons. This guide objectively compares the performance of various NGS approaches and protocols, focusing specifically on their demonstrated reproducibility and implications for robust biomarker development.

Table: Key Performance Metrics Across NGS Cancer Panel Studies

| Study & Panel Type | Genes/Targets | Concordance Rate | Sensitivity | Specificity | TAT (Days) |
|---|---|---|---|---|---|
| In-House Multi-Institutional (NSCLC) [1] | 50 genes | 95.2% (Inter-lab) | N/A | N/A | 4 |
| TTSH-Oncopanel (Solid Tumors) [2] | 61 genes | 99.98% (Reproducibility) | 98.23% | 99.99% | 4 |
| UMA Panel (Multiple Myeloma) [12] | 82 genes / 0.46 Mbp | >93% (balanced accuracy vs. FISH) | N/A | N/A | N/A |
| Tissue-Based NGS (Meta-Analysis) [14] | Variable | High for SNVs | 93% (EGFR) | 97% (EGFR) | 19.75 |
| Liquid Biopsy NGS (Meta-Analysis) [14] | Variable | High for SNVs | ~80% (EGFR) | 99% (EGFR) | 8.18 |

Comparative Performance Analysis of NGS Approaches

Inter-laboratory Reproducibility and Concordance

The consistency of results across different testing laboratories is a cornerstone of clinical trial integrity. A multi-institutional Italian study evaluating an in-house 50-gene NGS panel for non-small cell lung cancer (NSCLC) demonstrated a 95.2% inter-laboratory concordance rate in a retrospective analysis of 21 samples, with a 100% sequencing success rate for both DNA and RNA [1]. Similarly, the Unique Molecular Assay (UMA) for multiple myeloma was explicitly validated across two laboratories (Bologna and Milan), showing a balanced accuracy of over 93% compared to fluorescence in situ hybridization (FISH) for detecting copy number alterations and immunoglobulin heavy chain translocations [12]. The TTSH-Oncopanel demonstrated exceptional reproducibility (99.98%) and repeatability (99.99%) in its validation, which was crucial for its implementation in a clinical setting previously reliant on external laboratories [2].

Diagnostic Accuracy and Variant Detection Sensitivity

Diagnostic accuracy, measured by sensitivity and specificity against standard methods, is critical for reliable biomarker identification. A comprehensive meta-analysis of 56 studies involving 7,143 advanced NSCLC patients found that tissue-based NGS had a sensitivity of 93% and specificity of 97% for detecting EGFR mutations, and 99% sensitivity for ALK rearrangements [14]. For liquid biopsy, NGS performed well for single-gene mutations like EGFR, BRAF V600E, and KRAS G12C (sensitivity ~80%, specificity 99%), but showed limited sensitivity for detecting gene rearrangements (ALK, ROS1, RET, NTRK) [14]. The TTSH-Oncopanel validation reported a sensitivity of 98.23% and a specificity of 99.99% for detecting unique variants, with a limit of detection for variant allele frequency (VAF) set at 2.9% for both SNVs and INDELs [2].

Operational Efficiency and Turnaround Time

Turnaround time (TAT) directly impacts clinical trial enrollment and patient management. In-house NGS testing significantly reduces TAT compared to outsourcing. The Italian multi-institutional study reported a median TAT of 4 days from sample processing to final report [1], while the TTSH-Oncopanel also achieved a 4-day average TAT, a substantial improvement over the 3-week TAT experienced when using external laboratories [2]. The meta-analysis by Navarro et al. confirmed that liquid biopsy NGS has a significantly shorter TAT (8.18 days) compared to tissue-based methods (19.75 days, p<0.001) [14].

Detailed Experimental Protocols for Reproducibility

Protocol 1: Hybridization-Capture Based NGS (TTSH-Oncopanel)

The TTSH-Oncopanel employs a hybridization-capture target enrichment method, a common and robust approach for clinical NGS.

- Library Preparation: The process uses library kits from Sophia Genetics, compatible with the automated MGI SP-100RS library preparation system. Compared with manual methods, automation reduces human error and contamination risk and improves consistency [2].

- Sequencing: Sequencing is performed on the MGI DNBSEQ-G50RS sequencer employing cPAS (combinatorial probe-anchor synthesis) technology, which provides high SNP and INDEL detection accuracy [2].

- Bioinformatic Analysis: The Sophia DDM software platform, which incorporates machine learning algorithms, is used for variant analysis and visualization. The software connects mutational profiles to clinical annotations via OncoPortal Plus, using a four-tiered system for classifying clinical significance [2].

- Quality Control Metrics: The panel requires ≥50 ng of DNA input. Sequencing runs must meet specific quality thresholds, including >99% of processed reads with a base call quality ≥Q20 and >98% of target regions covered at ≥100x unique molecular coverage [2].
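
The quality thresholds listed above lend themselves to an automated gate applied before variant interpretation. The sketch below encodes the three stated thresholds; the class, field, and function names are hypothetical, and the example run values are invented.

```python
from __future__ import annotations

from dataclasses import dataclass

# Thresholds as stated in the protocol summary above.
MIN_DNA_INPUT_NG = 50.0        # input must be >= 50 ng
MIN_PCT_READS_Q20 = 99.0       # must be > 99% of reads at >= Q20
MIN_PCT_TARGETS_100X = 98.0    # must be > 98% of targets at >= 100x


@dataclass(frozen=True)
class RunMetrics:
    dna_input_ng: float
    pct_reads_q20: float
    pct_targets_100x: float


def qc_failures(m: RunMetrics) -> list[str]:
    """Return a description of each failed threshold (empty list = pass)."""
    failures = []
    if m.dna_input_ng < MIN_DNA_INPUT_NG:
        failures.append(f"DNA input {m.dna_input_ng} ng is below 50 ng")
    if m.pct_reads_q20 <= MIN_PCT_READS_Q20:
        failures.append(f"reads at Q20 or better: {m.pct_reads_q20}%")
    if m.pct_targets_100x <= MIN_PCT_TARGETS_100X:
        failures.append(f"targets at 100x or more: {m.pct_targets_100x}%")
    return failures


# Hypothetical run that fails only on coverage uniformity.
run = RunMetrics(dna_input_ng=60.0, pct_reads_q20=99.4, pct_targets_100x=97.2)
problems = qc_failures(run)
print("PASS" if not problems else "FAIL: " + "; ".join(problems))
```

Applying the same coded thresholds at every site removes one source of operator-dependent variation in deciding which runs are reportable.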

Protocol 2: Multi-Institutional Validation (NSCLC Panel)

This protocol emphasizes inter-laboratory standardization for a multi-institutional study.

- Study Design: The validation was conducted in two phases. The first was a retrospective inter-laboratory study using 21 samples. The second was a prospective intra-laboratory study analyzing 262 samples [1].

- Reagent Standardization: A key to ensuring reproducibility was that reagents for the inter-laboratory validation phase were provided by a single agreement with Thermo Fisher Scientific, minimizing batch-to-batch variability [1].

- Performance Assessment: The study evaluated sequencing success rate, inter-laboratory concordance, and correlation between observed and expected variant allele fractions (R² = 0.94) [1].

- Variant Characterization: The prospective phase identified 285 relevant variants, with 81.1% being SNVs/INDELs, 9.8% copy number variants (CNVs), and 9.1% gene fusions. It also detected co-mutations in 20.5% of samples with main oncogenic drivers [1].

Impact on Biomarker Discovery and Clinical Trial Design

Enhancing Biomarker Discovery through Comprehensive Profiling

Reproducible NGS panels facilitate the discovery of complex biomarker signatures beyond single-gene alterations. The in-house NSCLC study identified co-mutations with potential clinical relevance in 20.5% of samples positive for main oncogenic drivers, and alterations in other relevant genes in 11% of wild-type samples [1]. This comprehensive profiling is essential for identifying resistance mechanisms and developing combination therapies. The UMA panel for multiple myeloma successfully integrated the detection of mutations, copy number alterations, and translocations into a single assay, enabling precise risk stratification according to the R2-ISS system [12]. This holistic approach is superior to sequential single-gene tests for uncovering the complex genomic landscape of tumors.

Strengthening Clinical Trial Integrity and Patient Stratification

The reproducibility of NGS panels directly impacts the integrity of clinical trials by ensuring consistent patient stratification across multiple trial sites. Biomarker-guided patient selection has been shown to significantly increase success rates in drug development (10.7% vs. 1.6%) [15]. Reproducible NGS is critical for the accurate assessment of established immunotherapy biomarkers such as tumor mutational burden (TMB), microsatellite instability (MSI), and PD-L1, though the latter suffers from technical variability due to different antibody clones and scoring systems [15]. The implementation of validated, reproducible in-house panels reduces turnaround time, facilitating faster patient screening and enrollment, which is particularly crucial for trial candidates with advanced disease [1] [2].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Reagents and Platforms for Reproducible NGS

| Reagent/Platform | Function | Example Use Case |
|---|---|---|
| Automated Library Prep Systems (e.g., MGI SP-100RS) | Standardizes library construction, reduces manual error and variability. | Used in TTSH-Oncopanel validation for consistent library prep [2]. |
| Hybridization-Capture Kits (e.g., Sophia Genetics, Agilent SureSelect) | Enriches for target genomic regions using biotinylated oligonucleotide probes. | Core enrichment method for TTSH-Oncopanel and UMA Panel [2] [12]. |
| Benchtop Sequencers (e.g., MGI DNBSEQ-G50, Illumina MiSeq i100) | Provides the sequencing platform; choice impacts read length, accuracy, and cost. | MGI DNBSEQ-G50 used for TTSH-Oncopanel; Illumina MiSeq i100 validated for rapid NGS [2] [16]. |
| Bioinformatic Pipelines & Software (e.g., Sophia DDM, Custom Pipelines) | Analyzes raw sequencing data, calls variants, and filters artifacts. | Sophia DDM with machine learning used for TTSH-Oncopanel analysis [2]. |
| Validated Reference Standards | Serves as positive controls for assay performance, sensitivity, and limit of detection. | HD701 control used for LOD and long-term reproducibility in TTSH-Oncopanel [2]. |

The inter-laboratory reproducibility of NGS cancer panels is not merely a technical benchmark but a fundamental prerequisite for robust biomarker discovery and clinical trial integrity. Evidence demonstrates that standardized, validated in-house panels can achieve high inter-laboratory concordance (>95%), excellent sensitivity and specificity (>98%), and significantly reduced turnaround times (~4 days). The consistent implementation of detailed experimental protocols, including automated library preparation, standardized reagents, and validated bioinformatic pipelines, is critical for generating reliable, comparable data across multiple research and clinical sites. As oncology continues to advance toward personalized, biomarker-driven therapies, ensuring the reproducibility of the genomic tools used in drug development will be paramount for delivering effective and safe treatments to patients.

Next-Generation Sequencing (NGS) has fundamentally transformed oncology, enabling comprehensive genomic profiling that guides precision therapy. As this technology transitions from research laboratories to clinical diagnostics, inter-laboratory reproducibility has emerged as a critical challenge with direct implications for patient care. The consistency of NGS results across different testing sites is foundational to reliable molecular diagnostics, affecting treatment decisions, clinical trial outcomes, and regulatory approvals. This guide examines the current landscape of NGS cancer panel reproducibility through a systematic analysis of performance data, experimental protocols, and standardization efforts involving stakeholders across the healthcare ecosystem. Understanding these factors is essential for researchers, clinical laboratories, and drug developers who depend on accurate, reproducible genomic data to advance cancer care.

Performance Comparison of NGS Approaches

The analytical performance of NGS panels varies significantly based on technology platform, gene content, and application. The following tables summarize key performance metrics from recent multi-institutional studies, providing a comparative view of NGS reproducibility across different testing scenarios.

Table 1: Inter-laboratory Reproducibility of NGS Assays

| Study / Panel | Cancer Type | Genes Targeted | Concordance Rate | Sequencing Success Rate | Key Metrics |
|---|---|---|---|---|---|
| Italian Multi-Institutional Study [1] | NSCLC | 50 genes | 95.2% inter-laboratory concordance | 99.2% (DNA), 98% (RNA) | Median TAT: 4 days; Detected 285 relevant variants |
| K-MASTER Panel [10] | Colorectal, NSCLC, Breast, Gastric | 183-409 genes | Variable by gene/alteration | 96.8% | Sensitivity: 53.7-100%; Specificity: 79.3-100% depending on alteration type |
| Targeted NGS for GMO Detection [17] | Oilseed rape (model system) | Specific edited loci | High reproducibility between facilities | N/A | Effective detection of 0.1% GMO spike; low inter-lab variation for targeted NGS |
| TTSH-Oncopanel [2] | Pan-cancer solid tumors | 61 genes | 100% for known variants | >98% target coverage ≥100x | Sensitivity: 98.23%; Specificity: 99.99%; Reproducibility: 99.98% |
| UMA Panel (Multiple Myeloma) [12] | Hematologic (MM) | 82 genes + CNA + translocations | >93% vs. FISH | Median coverage: 233x (≥4M reads/sample) | Balanced accuracy >93% for CNA and IgH translocations |

Table 2: Platform-Specific Performance Characteristics

| Platform / Panel | Technology | Coverage | VAF Sensitivity | Variant Types Detected | Strengths |
|---|---|---|---|---|---|
| Foundation One (F1) [18] | Hybridization capture | ~250x | Not specified | SNVs, indels, CNAs, chromosomal rearrangements | Comprehensive genomic profile |
| Paradigm Cancer Diagnostic (PCDx) [18] | PCR-based, Ion PGM | >5,000x | 4% for SNVs, 7% for indels | SNVs, indels, CNAs, mRNA expression | Faster TAT (9 days earlier than F1); deeper coverage |
| TTSH-Oncopanel [2] | Hybridization capture, DNBSEQ-G50RS | Median 1671x | 2.9% for SNVs/indels | SNVs, indels | High sensitivity/specificity; reduced TAT (4 days) |
| UMA Panel [12] | Custom capture-based | Median 233x | Not specified | SNVs, indels, CNA, IgH translocations | Comprehensive MM profiling; validated vs. FISH/SNP arrays |
| Short-read Targeted [17] | Illumina, amplicon | Not specified | Effective at 0.1% spike | SNVs, indels | High inter-lab reproducibility; standardized workflows |

Experimental Protocols for Reproducibility Assessment

Multi-Institutional Validation Frameworks

Robust assessment of NGS reproducibility requires carefully designed experiments that evaluate consistency across laboratories, platforms, and sample types. The Italian multi-institutional study on NSCLC employed a two-phase validation approach [1]. In the first retrospective phase, 21 samples underwent interlaboratory testing with identical wet-lab protocols and bioinformatics pipelines. The second prospective phase evaluated intralaboratory consistency across 262 clinical samples. This design allowed researchers to isolate variables affecting reproducibility while assessing real-world performance.

The K-MASTER project implemented a comparative validation approach against orthogonal methods [10]. Researchers compared NGS results for actionable mutations (KRAS, NRAS, BRAF in colorectal cancer; EGFR, ALK, ROS1 in NSCLC; ERBB2 in breast/gastric cancers) with established clinical methods including PCR, pyrosequencing, IHC, and FISH. Discordant results underwent additional verification using droplet digital PCR (ddPCR), providing a robust truth-set for calculating sensitivity and specificity.
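
Once discordant cases have been resolved against an orthogonal method, sensitivity and specificity follow from a simple tally of true and false calls. The sketch below assumes one binary result per sample (alteration present or absent) and uses hypothetical sample identifiers; it is meant to show the arithmetic, not the project's actual analysis code.

```python
from typing import Dict, Tuple


def sensitivity_specificity(ngs_calls: Dict[str, bool],
                            truth: Dict[str, bool]) -> Tuple[float, float]:
    """Compare per-sample NGS calls to an orthogonal truth set.

    Both arguments map a sample ID to True (alteration present) or False.
    """
    tp = fp = tn = fn = 0
    for sample, is_positive in truth.items():
        called = ngs_calls[sample]
        if is_positive and called:
            tp += 1
        elif is_positive and not called:
            fn += 1
        elif not is_positive and called:
            fp += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Hypothetical truth set (e.g., after ddPCR adjudication) and NGS calls.
truth = {"s1": True, "s2": True, "s3": False, "s4": False}
ngs = {"s1": True, "s2": False, "s3": False, "s4": False}
print(sensitivity_specificity(ngs, truth))
```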

Inter-laboratory Reproducibility Study Design

A comprehensive study on NGS reproducibility for genetically modified organism detection established a standardized framework applicable to cancer panels [17]. Researchers prepared 36 spiked samples with known admixtures (0.1% and 1.0% GE GMO content) and distributed replicate sets to three independent NGS service providers. Each laboratory followed their standard workflows for both short-amplicon (Illumina) and long-amplicon (PacBio) sequencing, mimicking real-world variability in laboratory protocols. This approach directly measured inter-laboratory variance while controlling for sample quality and composition.

Analytical Validation Metrics

The TTSH-Oncopanel validation established comprehensive performance benchmarks for reproducibility assessment [2]. Researchers evaluated:

- Repeatability (intra-run precision): Sequencing the same sample with different barcodes within a single run

- Reproducibility (inter-run precision): Comparing replicates of 15 unique samples across different runs

- Long-term reproducibility: Repeated testing of positive controls over multiple runs

- Limit of detection: Titrating DNA input (10-100ng) and variant allele frequencies (2.9% minimum VAF established)

This systematic approach identified sources of technical variability, including low VAF variants, regions with high background noise, and insufficient read support that required filtering from reproducibility calculations.
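
One way to see how low-VAF filtering affects a reproducibility figure is to compare the variant calls from two replicate runs after excluding variants below the detection floor. The sketch below is an assumption-laden illustration: it defines concordance as the share of evaluable variants called in both runs, which is not necessarily the definition used in the TTSH-Oncopanel validation, and the variant keys and VAFs are hypothetical.

```python
from typing import Dict


def filtered_concordance(run_a: Dict[str, float],
                         run_b: Dict[str, float],
                         min_vaf: float = 0.029) -> float:
    """Fraction of evaluable variants called in both replicate runs.

    Each run maps a variant key to its VAF. A variant is evaluable if its
    VAF reaches `min_vaf` in at least one run; variants below the floor in
    every run are excluded from the calculation.
    """
    evaluable = [
        v for v in set(run_a) | set(run_b)
        if max(run_a.get(v, 0.0), run_b.get(v, 0.0)) >= min_vaf
    ]
    if not evaluable:
        raise ValueError("no variants at or above the VAF floor")
    concordant = sum(1 for v in evaluable if v in run_a and v in run_b)
    return concordant / len(evaluable)


# Hypothetical replicate call sets; the 1% EGFR call falls below the 2.9% floor.
run_1 = {"KRAS:G12D": 0.25, "TP53:R175H": 0.10, "EGFR:L858R": 0.01}
run_2 = {"KRAS:G12D": 0.24, "TP53:R175H": 0.11}
print(filtered_concordance(run_1, run_2))
```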

Standardized Workflows and Reagent Solutions

Consistent results across laboratories depend on standardized materials and protocols. The following section details essential research reagent solutions and their functions in ensuring NGS reproducibility.

Table 3: Essential Research Reagent Solutions for Reproducible NGS

| Reagent / Material | Function in NGS Workflow | Impact on Reproducibility |
|---|---|---|
| Formalin-Fixed Paraffin-Embedded (FFPE) Tumor Tissues [10] [18] | Source of tumor DNA for clinical sequencing | Standardized extraction and quality control essential for consistent yields |
| Reference Standard Controls (HD701, HD780) [10] [2] | Positive controls with known variants and allele frequencies | Enable cross-lab performance comparison and limit of detection determination |
| Hybridization Capture Probes (SureSelect, Sophia Genetics) [2] [12] | Target enrichment for relevant genomic regions | Consistent coverage uniformity across target regions minimizes false negatives |
| DNA Library Preparation Kits (MGI, Illumina, Thermo Fisher) [17] [2] | Fragment processing and adapter ligation | Standardized fragmentation and amplification reduce technical artifacts |
| Bioinformatic Pipelines (Sophia DDM, Custom Algorithms) [2] [12] | Variant calling, annotation, and filtering | Consistent variant identification and classification across datasets |

Reference Materials and Standardized Protocols

The ATCC has addressed reproducibility challenges by developing standardized sequencing pipelines from authenticated biological materials [19]. Their ISO 9001-compliant database provides reference-quality whole-genome sequences from over 4,500 microbial strains and 400 cell lines, enabling benchmarking of laboratory-specific protocols against gold-standard references.

For clinical NGS, the Unique Molecular Assay (UMA) panel for multiple myeloma demonstrates how customized targeted sequencing can overcome limitations of traditional diagnostics [12]. By integrating detection of mutations, copy number alterations, and IgH translocations in a single streamlined assay (0.46 Mbp footprint), the UMA panel achieves >93% concordance with FISH while enabling inter-laboratory reproducibility through standardized wet-lab and bioinformatic protocols.

Stakeholder Engagement and Regulatory Considerations

The reproducibility of NGS data involves multiple stakeholders across the development and implementation pipeline. Each group has distinct responsibilities in ensuring consistent, reliable results.

Regulatory Pathways and Standardization Efforts

Regulatory agencies recognize that traditional single-analyte companion diagnostic models are insufficient for NGS-based tests. The FDA has explored flexible regulatory pathways that can accommodate rapidly evolving NGS technologies while ensuring reliability [20]. This includes potential use of "special controls" for certain markers and categorization based on available evidence levels.

Standardization initiatives are critical for reproducibility. The National Institute of Standards and Technology (NIST) Genome in a Bottle program provides standardized reference materials, while professional societies develop guidelines for best practices [20]. Efforts to harmonize minimal reportable information for sequencing and establish quality metrics enable cross-platform comparability.

Reimbursement and Clinical Implementation

Reproducibility directly impacts test reimbursement and clinical adoption. Payers require evidence of analytical validity and clinical utility before covering NGS tests [20]. The lack of test-specific CPT codes that communicate test quality, intent of use, or clinical trial eligibility creates barriers to appropriate reimbursement. Transparent collaboration between laboratories, regulators, and payers is essential to establish value-based reimbursement models that recognize the comprehensive genomic profiling provided by NGS panels while ensuring reliable results across testing sites.

Inter-laboratory reproducibility of NGS cancer panels requires coordinated efforts across research laboratories, clinical diagnostics facilities, regulatory agencies, and industry partners. Current data demonstrates that targeted NGS panels can achieve >95% concordance between laboratories when standardized protocols, reference materials, and bioinformatic pipelines are implemented. The evolution from single-analyte tests to comprehensive genomic profiling necessitates new validation frameworks that maintain reliability while accommodating technological innovation. As NGS becomes increasingly integrated into routine cancer care, continued focus on reproducibility standards will be essential for ensuring that patients receive accurate molecular information to guide their treatment regardless of testing location.

Inside the Black Box: Methodological Variables Driving NGS Concordance Across Labs

Targeted next-generation sequencing (NGS) has become an indispensable tool in cancer genomics, enabling focused analysis of genomic regions of interest. The two predominant methods for target enrichment—hybridization capture and amplicon sequencing—each present distinct advantages and limitations that impact their utility in research and clinical diagnostics. This comparative analysis examines the technical performance, experimental workflow, and inter-laboratory reproducibility of these methods within the context of NGS cancer panel validation. Recent multi-institutional studies demonstrate that both methods can achieve greater than 95% inter-laboratory concordance when standardized protocols are implemented, with in-house NGS testing reducing turnaround times from 3 weeks to just 4 days. By synthesizing performance metrics from recent validation studies, this guide provides researchers with objective data to inform method selection for cancer genomics applications.

Next-generation sequencing (NGS) has revolutionized genomic analysis, with targeted sequencing emerging as a cost-effective approach that focuses on specific genomic regions while omitting irrelevant portions of the genome [21]. Target enrichment is a critical pre-sequencing step that enables this focused analysis by amplifying or capturing genomic regions of interest from the whole genome background [22]. The two primary enrichment methods—hybridization capture and amplicon sequencing—employ fundamentally different technologies with significant implications for workflow efficiency, data quality, and reproducibility [21] [22].

The selection between these methods carries particular importance in cancer research and diagnostics, where factors such as variant detection accuracy, input DNA requirements, and technical reproducibility directly impact clinical decision-making [2] [1]. With the increasing implementation of in-house NGS testing in molecular pathology laboratories, understanding the performance characteristics of these enrichment methods becomes essential for ensuring reliable, reproducible results across institutions [1].

This analysis examines the fundamental principles, performance metrics, and experimental considerations of hybridization capture and amplicon-based methods, with particular emphasis on their application in cancer genomics and inter-laboratory reproducibility study contexts.

Fundamental Principles and Methodologies

Hybridization Capture Technology

Hybridization capture, one of the two main target enrichment approaches, uses long, biotinylated oligonucleotide baits (probes) that hybridize to specific genomic regions of interest [23]. The process begins with random shearing of DNA samples followed by ligation of sequencing adaptors to create sequencing libraries [23]. Biotinylated baits designed to complement target regions are then hybridized to these libraries, and the target-bound complexes are isolated using streptavidin-coated magnetic beads [23] [22].

This method offers several design flexibilities, including tiling baits to cover large contiguous regions and overlapping baits to ensure comprehensive coverage without gaps [23]. A significant advantage of hybridization capture is its capacity for pre-capture multiplexing, where multiple samples are pooled before target enrichment, thereby conserving reagents and improving workflow efficiency [23]. The method is particularly valuable for applications requiring high accuracy for mutation detection and superior performance with complex genomic regions [23].

Amplicon Sequencing Technology

Amplicon sequencing relies on polymerase chain reaction (PCR) amplification of targeted genomic regions using sequence-specific primers [22]. Multiple primers are designed to flank regions of interest and are typically used in multiplexed PCR reactions to simultaneously amplify all target regions [22]. The resulting amplicons (PCR products) have sequencing adapters attached either through ligation or as part of the primer design, creating a library of enriched DNA ready for sequencing [22].

This method has evolved to include several technological variations that enhance its application. Long-range PCR utilizes specialized polymerases to amplify longer DNA fragments (3-20 kb), reducing the number of primers needed and improving amplification uniformity [22]. Anchored multiplex PCR employs only one target-specific primer combined with a universal primer, enabling detection of novel fusions without prior knowledge of both sequences [22]. Droplet PCR and microfluidics-based approaches compartmentalize reactions to minimize primer interference and improve uniformity while reducing reagent requirements [22].

Comparative Performance Analysis

Technical Performance Metrics

Direct comparisons between hybridization capture and amplicon sequencing reveal distinct performance characteristics that influence their suitability for specific applications.

Table 1: Core Method Characteristics Comparison [21] [24]

| Feature | Hybridization Capture | Amplicon Sequencing |
|---|---|---|
| Number of Steps | More steps | Fewer steps |
| Number of Targets per Panel | Virtually unlimited by panel size | Flexible, usually fewer than 10,000 amplicons |
| Total Time | More time | Less time |
| Cost per Sample | Varies | Generally lower cost per sample |
| Typical Gene Content | Larger, typically >50 genes | Smaller, typically <50 genes |
| Variant Type Coverage | Comprehensive for all variant types | Ideal for SNVs and indels |

Table 2: Performance Metrics from Experimental Studies [25] [2]

| Metric | Hybridization Capture | Amplicon Sequencing |
|---|---|---|
| On-Target Rate | Lower due to off-target capture | Naturally higher due to specific primer design |
| Coverage Uniformity | Superior (≥99% reported) | Lower; greater variability between regions |
| Variant Detection Sensitivity | 98.23% (validated in oncopanels) | High for known targets |
| Variant Detection Specificity | 99.99% (validated in oncopanels) | High for known targets |
| False Positive Rate | Lower | Higher due to PCR errors |
| Reproducibility | 99.99% repeatability, 99.98% reproducibility | Platform-dependent |

A comprehensive evaluation of whole-exome sequencing approaches found that while amplicon methods achieved higher on-target rates, hybridization capture demonstrated better coverage uniformity [25]. The latter also exhibited lower noise levels and fewer false positives, making it particularly suitable for detecting rare variants [21]. Amplicon sequencing, however, showed advantages in workflow simplicity and required fewer hands-on steps [21] [24].

Experimental Validation Data

Recent validation studies of cancer panels provide empirical performance data for these enrichment methods. A hybridization capture-based oncopanel targeting 61 cancer-associated genes demonstrated exceptional performance in detecting clinically actionable mutations in genes such as KRAS, EGFR, ERBB2, PIK3CA, TP53, and BRCA1 [2]. The assay achieved 98.23% sensitivity for detecting unique variants with 99.99% specificity at 95% confidence intervals [2].

For reproducibility assessment, the same study evaluated both inter-run and intra-run precision. The results showed 99.99% repeatability and 99.98% reproducibility at 95% confidence intervals, with remarkable consistency in variant allele fractions between replicate algorithm runs [2]. The minimum detectable variant allele frequency (VAF) was established at 2.9% for both single nucleotide variants (SNVs) and insertions/deletions (indels) [2].

Another multi-institutional study evaluating in-house NGS testing for non-small cell lung cancer (NSCLC) demonstrated a 100% sequencing success rate for DNA and RNA, with 95.2% interlaboratory concordance and a strong correlation (R² = 0.94) between observed and expected variant allele fractions [1]. The implementation of in-house testing significantly reduced turnaround time from approximately 3 weeks to a median of 4 days from sample processing to molecular report [2] [1].

Inter-Laboratory Reproducibility and Implementation

The reproducibility of NGS cancer panels across different laboratories is a critical consideration for both research consortia and clinical implementation. A key study examining the Unique Molecular Assay (UMA) panel for multiple myeloma genomics demonstrated that hybridization capture-based approaches can achieve high inter-laboratory concordance when standardized protocols are implemented [12].

This validation involved sequencing 207 DNA samples across two laboratories (Bologna and Milan) using a customized capture-based NGS panel designed to detect genomic aberrations in multiple myeloma [12]. The assay achieved a balanced accuracy of over 93% compared to traditional fluorescence in situ hybridization (FISH) for detecting copy number alterations and immunoglobulin heavy chain translocations [12]. The study attributed this reproducibility to several factors:

- Standardized bioinformatic pipelines for variant calling and analysis

- Uniform library preparation protocols across participating laboratories

- Comprehensive validation against orthogonal methods including FISH and SNP arrays

- Clear quality metrics for sequencing performance, including coverage requirements
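
Balanced accuracy, the metric reported for the comparison against FISH, is the mean of sensitivity and specificity. It is often preferred when positive and negative cases are unevenly represented, since plain accuracy can then be dominated by the majority class. The following is a minimal sketch with invented counts, not figures from the UMA validation.

```python
def balanced_accuracy(tp: int, fn: int, tn: int, fp: int) -> float:
    """Mean of sensitivity and specificity computed from confusion-matrix counts."""
    if (tp + fn) == 0 or (tn + fp) == 0:
        raise ValueError("need at least one positive and one negative reference case")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


# Hypothetical counts: NGS calls versus FISH as the reference method.
print(balanced_accuracy(tp=9, fn=1, tn=80, fp=20))
```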

Similar reproducibility was observed in the Italian multi-institutional experience with NSCLC testing, where the prospective phase demonstrated a 99.2% sequencing success rate for DNA and 98% for RNA [1]. This study identified 285 relevant variants across different alteration types, with co-mutations of potential clinical relevance detected in 20.5% of samples positive for main oncogenic drivers [1].

The implementation of in-house NGS testing with standardized enrichment methods has demonstrated significant benefits in operational efficiency. Laboratories reported reducing turnaround times from 3 weeks to just 4 days by bringing testing in-house rather than relying on external providers [2] [1]. This acceleration facilitates more timely clinical interventions while maintaining high analytical performance.

Application in Cancer Genomics

Method Selection Guidelines

The choice between hybridization capture and amplicon sequencing depends on specific research objectives, sample characteristics, and technical requirements.

Table 3: Application-Based Method Selection [21] [24] [26]

| Application | Recommended Method | Rationale |
|---|---|---|
| Large Gene Panels (>50 genes) | Hybridization Capture | More efficient for larger target regions |
| Small to Medium Panels (<50 genes) | Amplicon Sequencing | Cost-effective with streamlined workflow |
| Rare Variant Detection | Hybridization Capture | Lower noise and fewer false positives |
| Low DNA Input Samples | Amplicon Sequencing | More efficient with limited starting material |
| Complex Genomic Regions | Hybridization Capture | Superior performance with repeats |
| Known Fusion Detection | Amplicon Sequencing | High sensitivity for characterized fusions |
| Novel Fusion Discovery | Hybridization Capture | Ability to detect uncharacterized rearrangements |
| Copy Number Variation Analysis | Hybridization Capture | More accurate for quantitative assessments |

Experimental Protocols

For researchers designing validation studies for NGS cancer panels, specific experimental protocols have demonstrated success in recent publications:

Hybridization Capture Protocol for Solid Tumors [2]:

- DNA Input: ≥50 ng of DNA extracted from clinical tissues, FFPE samples, or reference controls

- Library Preparation: Automated library preparation systems (e.g., MGI SP-100RS)

- Target Enrichment: Hybridization with biotinylated oligonucleotide probes targeting genes of interest

- Sequencing: Platforms with combinatorial probe-anchor synthesis technology (e.g., MGI DNBSEQ-G50RS)

- Quality Control: Minimum of 4 million reads per sample, with ≥98% of target regions covered at ≥100× molecular coverage

- Bioinformatic Analysis: Machine learning-based variant calling with validation against orthogonal methods

Amplicon Sequencing Protocol for Cancer Hotspots [26]:

- DNA Input: Adaptable to low inputs (1-10 ng), suitable for FFPE and liquid biopsy samples

- Library Preparation: Multiplex PCR-based approaches (e.g., CleanPlex technology)

- Target Enrichment: Single-tube amplification with target-specific primers

- Sequencing: Compatible with Illumina, MGI, and Ion Torrent platforms

- Quality Control: Uniformity of coverage across amplicons, minimum read depth of 500× for low-frequency variants

- Variant Calling: Pipeline optimized for amplicon-based data with management of allele drop-out

The Scientist's Toolkit

Table 4: Essential Research Reagent Solutions for Target Enrichment

| Reagent Solution | Function | Example Products |
|---|---|---|
| Hybridization Capture Panels | Enrich large genomic regions through probe hybridization | xGen Exome Research Panel, SureSelect, SeqCap EZ [23] [25] |
| Amplicon Sequencing Panels | Target specific regions through multiplex PCR amplification | CleanPlex Panels, Ion AmpliSeq, HaloPlex [26] [25] |
| Library Preparation Kits | Prepare sequencing libraries with adapters and barcodes | Illumina DNA Prep with Enrichment, Sophia Genetics Library Kits [2] [24] |
| Automated Library Preparation Systems | Standardize and accelerate library prep workflow | MGI SP-100RS, Automated MGI System [2] |
| Unique Molecular Identifiers (UMIs) | Enable error correction and accurate variant quantification | UMI Adapters for Hybridization Capture [23] |
| Bioinformatic Analysis Pipelines | Analyze sequencing data and call variants | Sophia DDM, Custom Bioinformatics Pipelines [2] [12] |

Hybridization capture and amplicon sequencing represent complementary approaches for target enrichment in cancer genomics, each with distinct strengths and optimal applications. Hybridization capture excels in comprehensive variant detection, reproducibility across laboratories, and applications requiring large gene content or discovery of novel variants. Amplicon sequencing offers advantages in workflow efficiency, cost-effectiveness for smaller panels, and performance with challenging sample types.

Recent multi-institutional validation studies demonstrate that both methods can achieve greater than 95% inter-laboratory concordance when implemented with standardized protocols and bioinformatic pipelines. The selection between these methods should be guided by specific research goals, sample characteristics, and operational constraints. As NGS continues to be integrated into routine clinical practice, ongoing refinement of both enrichment technologies will further enhance their reproducibility, sensitivity, and utility for personalized cancer treatment.

The Role of Automation in Library Preparation for Reducing Human Error

Next-Generation Sequencing (NGS) has become indispensable in oncology research and drug development. However, the complexity of manual library preparation introduces significant variability, posing a major challenge for inter-laboratory reproducibility of cancer panels. Automation addresses this critical bottleneck by standardizing processes, enhancing precision, and minimizing human intervention, thereby ensuring that genomic data is reliable and comparable across different research settings.

Quantitative Evidence: Manual vs. Automated Library Preparation

Experimental data from recent studies demonstrates that automated workflows significantly improve key performance metrics compared to manual processing.

Table 1: Performance Metrics of Manual vs. Automated NGS Library Preparation

| Performance Metric | Manual Processing | Automated Processing | Improvement & Citation |
|---|---|---|---|
| Hands-on Time | ~23 hours per run [27] | ~6 hours per run [27] | 74% reduction [27] |
| Overall Process Time | 42.5 hours [27] | 24 hours [27] | ~44% reduction [27] |
| Coefficient of Variation (% On-Target Reads) | Higher (specific value not given) [28] | Threefold reduction [28] | Marked improvement in reproducibility [28] |
| Sample Throughput | Limited by operator capacity [29] | Up to 384 libraries per day [30] | Massive scalability for large studies [30] |
| Data Quality (% Aligned Reads) | ~85% [27] | ~90% [27] | Enhanced data quality for analysis [27] |
| Variant Detection Concordance | N/A (Reference) | Pearson r = 0.94 [31] | Highly comparable to manual reference [31] |
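
The percentage reductions in the table follow directly from the reported hours, and the coefficient of variation (CV) used for on-target reads is the sample standard deviation divided by the mean. The short sketch below reproduces the time arithmetic from the table values; the on-target percentages passed to the CV function are invented, since the source does not report the underlying measurements.

```python
import statistics
from typing import Sequence


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


def coefficient_of_variation(values: Sequence[float]) -> float:
    """Sample standard deviation as a percentage of the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hours taken from the table above.
print(percent_reduction(23, 6))      # about 73.9, reported as 74%
print(percent_reduction(42.5, 24))   # about 43.5, reported as ~44%

# Hypothetical % on-target reads across replicate libraries.
print(coefficient_of_variation([8.0, 10.0, 12.0]))
```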

Table 2: Comparison of Automation Platforms for NGS Library Preparation

| Platform / Solution | Throughput | Key Features | Supported Kits/Chemistries |
|---|---|---|---|
| Open Microfluidic Platform (e.g., Vivalytic) [31] | Low-to-medium | Integrated purification & quantification; shuttling PCR; designed for smaller labs [31] | Customizable protocols (e.g., NEBNext Ultra II Library Kit) [31] |
| Agilent Bravo Automated Liquid Handling Platform [28] | Up to 96 samples | Improved reproducibility; reduced variance in % on-target reads [28] | SureSeq NGS Library Preparation Kit; enzymatic fragmentation workflows [28] |
| Tecan DreamPrep NGS [30] | Up to 96 samples per run (high capacity) | Open platform; integrated plate reader for QC; long walk-away times [30] | Open platform (compatible with various kits); Tecan's proprietary NGS reagents [30] |
| Tecan DreamPrep NGS Compact [30] | 8-48 samples per day | Smaller footprint; upgradable configurations; on-deck thermal cycler [30] | Open platform compatible with several NGS protocols [30] |
| Automated MGI SP-100RS System [32] | Not specified | Open platform for third-party kits; reduces human error and contamination risk [32] | Hybridization-capture based library kits (e.g., from Sophia Genetics) [32] |

Detailed Experimental Protocols

The following sections detail specific automated methodologies cited in the performance data, providing a blueprint for implementation.

Automated Library Preparation on an Open Microfluidic Platform

This protocol was used to generate the high concordance data (Pearson r = 0.94) shown in Table 1 [31].

- Platform: Vivalytic lab-on-a-chip cartridge and analyzer (Bosch Healthcare Solutions GmbH) [31].

- Sample Type: Cell-free DNA (cfDNA) reference material with known mutations at variable allelic frequencies [31].

- Reagents: NEBNext Ultra II Library Kit for Illumina [31].

- Workflow Steps:

  - Multiplex PCR: Target enrichment was performed on the cfDNA samples.

  - Enzyme Reactions: The workflow integrated end-repair, adapter ligation, and adapter finalization steps.

  - Nucleic Acid Purification: Three short purifications using carboxylated magnetic beads (Solid Phase Reversible Immobilization, SPRI) were implemented within the cartridge.

  - Indexing PCR: An Index-PCR (iPCR) was performed to barcode libraries.

  - Integrated Quantification: The cartridge included a built-in quantification step.

- Control: The entire process was run in parallel with a manual reference workflow for comparison [31].

- Sequencing & Analysis: Final libraries were sequenced on an Illumina MiSeq system. Data analysis involved alignment with BWA-MEM and visualization with the Integrative Genomics Viewer (IGV) [31].
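
To make the concordance metric concrete, the sketch below computes a Pearson correlation between variant allele frequencies (VAFs) measured by a manual and an automated workflow. The VAF values are hypothetical placeholders, not data from the cited study, and the function is a plain-Python illustration rather than the analysis code used in [31].

```python
from math import sqrt
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical VAFs (%) for the same variants in both workflows.
manual_vaf = [0.5, 1.0, 2.5, 5.0, 10.0]
automated_vaf = [0.6, 0.9, 2.7, 4.6, 10.4]

r = pearson_r(manual_vaf, automated_vaf)
print(f"Pearson r = {r:.3f}")
```

A high r shows that the two workflows rank and scale VAFs similarly. It does not by itself rule out a systematic offset, so a per-variant difference plot is a useful companion check.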

Automated Hybridization-Based Library Preparation

This protocol yielded the threefold reduction in the coefficient of variation for % on-target reads [28].

- Platform: Agilent Bravo Automated Liquid Handling Platform [28].

- Sample Type: Genomic DNA [28].

- Reagents: SureSeq NGS Library Preparation Kit and panels (e.g., myPanel Custom Myeloid Panel) [28].

- Workflow Steps:

  - DNA Fragmentation: Genomic DNA was enzymatically sheared using NEBNext dsDNA Fragmentase into 150–250 bp fragments on the Bravo instrument [28].

  - Library Preparation & Hybridization: The Bravo system automated the entire SureSeq NGS library preparation protocol, including hybridization and washing steps for target enrichment [28].

  - Bead-Based Purification: AMPure bead clean-ups were performed automatically on the platform [28].

- Sequencing & Analysis: Resulting libraries were sequenced on an Illumina MiSeq (2 x 150 bp). Sequencing data was processed using dedicated software (SureSeq Interpret Software) [28].
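
The reproducibility metric reported for this protocol is the coefficient of variation (CV) of % on-target reads, i.e. the sample standard deviation divided by the mean. The sketch below shows the calculation on hypothetical replicate values; the numbers are placeholders chosen for illustration and are not taken from [28].

```python
from statistics import mean, stdev
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """CV in percent: sample standard deviation divided by the mean."""
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(values) / m * 100.0


# Hypothetical % on-target reads across replicate libraries.
manual_on_target = [62.0, 70.0, 55.0, 74.0, 66.0]
automated_on_target = [68.0, 70.0, 67.0, 71.0, 69.0]

cv_manual = coefficient_of_variation(manual_on_target)
cv_automated = coefficient_of_variation(automated_on_target)

print(f"CV manual:    {cv_manual:.1f}%")
print(f"CV automated: {cv_automated:.1f}%")
print(f"Fold reduction: {cv_manual / cv_automated:.1f}x")
```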

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Kits for Automated NGS Library Preparation

| Item | Function | Example Use Case |

|---|---|---|

| NEBNext Ultra II Library Kit | Provides enzymes and buffers for end-repair, dA-tailing, adapter ligation, and library amplification [31]. | Used in the automated microfluidic workflow for classical ligation-based library preparation, ideal for cfDNA samples [31]. |

| SureSeq NGS Library Preparation Kit | Facilitates hybridization-based target enrichment, requiring automated hybridization and washing steps [28]. | Automated on the Agilent Bravo platform for consistent, high-performance target sequencing [28]. |

| Magnetic Beads (e.g., AMPure XP) | Solid-phase reversible immobilization (SPRI) for size selection and purification of nucleic acids between reaction steps [31] [28]. | A cornerstone of automation, enabling hands-free cleanup and concentration of libraries on nearly all liquid handling platforms [31] [28]. |

| QIAseq Library Kits | Used for targeted DNA genotyping and other NGS applications on automated systems [30]. | Compatible with Tecan's Fluent automation workstation for high-throughput library prep [30]. |

Workflow Visualization: From Manual Steps to Automated Process

The following diagram illustrates the transition from a manual, variable-prone workflow to a streamlined, reproducible automated process.

Key Mechanisms for Error Reduction

Automation mitigates human error through several core mechanisms:

- Precision Liquid Handling: Automated systems use precise robotic dispensing, reducing the pipetting inaccuracies and volume inconsistencies that are common in manual protocols [33]. This directly improves library yield uniformity and variant calling accuracy.

- Standardized Protocol Execution: By following predefined, validated scripts, automated platforms ensure that incubation times, temperatures, and reaction conditions are identical for every sample in every run, which is vital for inter-laboratory reproducibility [33].

- Reduced Contamination Risk: The use of disposable tips and minimal human intervention significantly lowers the risk of cross-contamination between samples [33].

- Integrated Quality Control: Advanced systems incorporate real-time quality control checks, flagging samples that fail pre-defined thresholds before they consume valuable sequencing resources [33]. Technologies like Tecan's NuQuant enable rapid, sample-saving quantification directly on the deck [30].
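
The integrated quality-control mechanism can be pictured as a simple gate applied to each library before sequencing. The sketch below is a hypothetical illustration: the class, field names, and threshold values are assumptions made for this example and do not describe any vendor's software or validated acceptance criteria.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LibraryQC:
    sample_id: str
    concentration_nm: float   # library concentration in nM
    mean_fragment_bp: float   # mean fragment size in bp


# Assumed thresholds for illustration only; real cut-offs are assay-specific.
MIN_CONCENTRATION_NM = 2.0
FRAGMENT_RANGE_BP = (200.0, 500.0)


def qc_failures(lib: LibraryQC) -> List[str]:
    """Return the list of failed checks for one library (empty if it passes)."""
    reasons = []
    if lib.concentration_nm < MIN_CONCENTRATION_NM:
        reasons.append("low concentration")
    low, high = FRAGMENT_RANGE_BP
    if not low <= lib.mean_fragment_bp <= high:
        reasons.append("fragment size out of range")
    return reasons


libraries = [
    LibraryQC("S1", 8.1, 320.0),
    LibraryQC("S2", 1.2, 310.0),
    LibraryQC("S3", 6.4, 140.0),
]

# Keep only libraries with at least one failed check.
flagged: Dict[str, List[str]] = {}
for lib in libraries:
    reasons = qc_failures(lib)
    if reasons:
        flagged[lib.sample_id] = reasons

print(flagged)
```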

The integration of automation into NGS library preparation is increasingly a practical requirement rather than a luxury. The data summarized above show that automated systems reduce hands-on time, improve key sequencing metrics and, most importantly, reduce operator-induced variability. For researchers and drug developers working to ensure inter-laboratory reproducibility of cancer panel testing, a cornerstone of precision oncology, adopting robust, automated library preparation protocols is a critical step toward generating reliable, comparable, and clinically actionable genomic data.

Next-generation sequencing (NGS) has revolutionized genomics, becoming a fundamental tool for researchers across diverse disciplines, from basic biology to clinical diagnostics [34]. The advent of advanced NGS platforms has transformed the field of genomics by allowing the parallel sequencing of millions to billions of DNA fragments, unlocking new opportunities for understanding genetic variation and disease mechanisms [34]. However, this rapid technological expansion has introduced significant challenges in inter-laboratory reproducibility, particularly for sensitive applications such as cancer genomic profiling where consistent variant detection directly impacts clinical decision-making.

This guide provides an objective comparison of current sequencing platforms and chemistries, framing performance characteristics within the critical context of assay reproducibility. For research and clinical teams navigating the complex NGS landscape, understanding how platform selection, chemistry differences, and analytical parameters contribute to variability is essential for generating reliable, comparable data across laboratories.

Sequencing Technology Generations and Principles

DNA sequencing technologies have evolved rapidly over the past two decades, leading to the emergence of three distinct generations [34]. First-generation sequencing, dominated by Sanger's chain termination method, provided read lengths of up to a few hundred nucleotides but was limited by low throughput [34]. Second-generation sequencing (next-generation sequencing) revolutionized the field by enabling massively parallel sequencing of thousands to millions of DNA fragments simultaneously, dramatically increasing throughput while reducing costs [34]. These platforms include Illumina (sequencing-by-synthesis), Ion Torrent (semiconductor sequencing), and SOLiD (sequencing by ligation) [34]. Third-generation sequencing introduced the ability to sequence single molecules and produce much longer reads (thousands to tens of thousands of bases), represented by Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT) [35].

The following diagram illustrates the core workflow for NGS data generation and analysis, a process that remains fundamentally similar across platforms despite their technological differences.

Figure 1: Core NGS Workflow. The process begins with library preparation, where DNA is fragmented and adapters are added. This is followed by amplification, the sequencing run itself, and then three stages of computational analysis [36] [37].

Comparative Analysis of Major Sequencing Platforms

Technical Specifications

The table below summarizes the key technical characteristics of major sequencing platforms available as of 2025, highlighting the diversity of performance characteristics that can impact reproducibility.

Table 1: Sequencing Platform Technical Specifications and Performance Characteristics

| Platform | Technology | Read Length | Accuracy | Throughput Range | Key Strengths | Primary Limitations |

|---|---|---|---|---|---|---|

| Illumina | Sequencing-by-Synthesis | 36-300 bp (short-read) [34] | >99.9% (Q30) [36] | Low to Ultra-high (e.g., NovaSeq X: 16 Tb/run) [35] | High accuracy, established workflows | Short reads limit SV detection [34] |

| Ion Torrent | Semiconductor sequencing | 200-400 bp [34] | Similar to Illumina for most applications [38] | Low to Medium | Fast run times, simple workflow | Homopolymer errors [34] |

| PacBio HiFi | Single Molecule Real-Time (SMRT) | 10,000-25,000 bp average [34] | >99.9% (Q30) via circular consensus [35] | Medium to High | Long reads, high accuracy, epigenetic detection | Higher cost per sample [34] |

| Oxford Nanopore | Nanopore sensing | 10,000-30,000 bp average [34] | ~99% (Q20) simplex; >99.9% (Q30) duplex [35] | Low to Ultra-high (PromethION) | Longest reads, real-time analysis, portability | Higher error rate for simplex reads [34] [35] |
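
The Q-scores quoted in the Accuracy column use the Phred scale, where Q = -10 · log10(p) and p is the per-base error probability. The sketch below converts between the two; the function names are illustrative.

```python
import math


def phred_to_accuracy(q: float) -> float:
    """Per-base accuracy implied by a Phred quality score."""
    return 1.0 - 10.0 ** (-q / 10.0)


def error_prob_to_phred(p: float) -> float:
    """Phred quality score for a per-base error probability 0 < p <= 1."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    return -10.0 * math.log10(p)


for q in (20, 30):
    print(f"Q{q}: accuracy = {phred_to_accuracy(q):.4%}")
```

On this scale Q20 corresponds to one expected error in 100 bases and Q30 to one in 1,000, which is why the table pairs "~99%" with Q20 and ">99.9%" with Q30.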

Performance Comparison Data

Direct comparative studies provide the most valuable insights for platform selection. The table below synthesizes experimental data from controlled studies evaluating platform performance.

Table 2: Experimental Performance Comparison Across Sequencing Platforms

| Comparison Focus | Methodology | Key Concordance Finding | Discordance Analysis |

|---|---|---|---|

| Illumina MiSeq vs. Ion Torrent S5 Plus [38] | Parallel processing of samples for AMR gene analysis; Common bioinformatics workflow | No statistically significant differences for most genes; Results closely comparable | Single significant difference for the tet(40) gene, potentially due to short amplicon length |

| Tumor-Only vs. Paired Tumor-Normal Panels [39] | Identical DNA samples analyzed on different CLIA-certified panels; 30 patients | 71.8% overall discordance rate | FFPE samples showed significantly higher discordance (p<0.05); 32.3% of TO-only variants were germline; 30.3% had AF <5% |

| Liquid Biopsy Validation [40] | Reference standards & 137 clinical samples; Orthogonal validation | 96.92% sensitivity, 99.67% specificity for SNVs/Indels at 0.5% AF; 100% for fusions | 94% concordance for ESMO Level I variants in clinical samples |
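
Sensitivity and specificity figures such as those in the liquid biopsy row are derived from a comparison against an orthogonal reference. The sketch below shows the standard definitions; the counts are hypothetical and are not the counts behind the values reported in [40].

```python
def sensitivity(tp: int, fn: int) -> float:
    """Fraction of reference-positive variants that the assay detected."""
    if tp + fn == 0:
        raise ValueError("no reference-positive calls")
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Fraction of reference-negative positions that the assay called negative."""
    if tn + fp == 0:
        raise ValueError("no reference-negative calls")
    return tn / (tn + fp)


# Hypothetical confusion-matrix counts, for illustration only.
tp, fn, tn, fp = 95, 5, 990, 10

print(f"Sensitivity: {sensitivity(tp, fn):.2%}")
print(f"Specificity: {specificity(tn, fp):.2%}")
```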

Factors Impacting Inter-Laboratory Reproducibility

Sample and Pre-Analytical Variables

The journey toward reproducible NGS results begins with sample quality and handling. Research has demonstrated that sample type significantly impacts reproducibility, with fresh frozen (FF) tissues showing superior concordance compared to formalin-fixed paraffin-embedded (FFPE) samples [39]. In one comparative study, FFPE samples exhibited significantly higher discordance rates (p < 0.05) between different NGS panels, attributed to factors like DNA fragmentation and lower amplifiable DNA quality [39].

The Q-value, representing the ratio of PCR-amplifiable DNA to total double-stranded DNA, serves as a critical quality metric. Studies have systematically classified samples based on DNA library concentrations (e.g., ≥5 nM vs. <5 nM), with lower-concentration libraries demonstrating reduced concordance in inter-assay comparisons [39]. Even when using the same FFPE block, substantial discordance (55.3%) can occur between technical replicates from sequentially sliced sections, highlighting the impact of tissue heterogeneity and sampling region [39].
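
The two quality measures described above can be written down directly. In the sketch below, the Q-value follows the definition given in the text and the 5 nM cut-off follows the stratification described in [39]; the function names, input units, and stratum labels are assumptions made for this example.

```python
def q_value(amplifiable_dna: float, total_dsdna: float) -> float:
    """Ratio of PCR-amplifiable DNA to total double-stranded DNA.

    Both inputs must use the same unit (e.g. ng/uL).
    """
    if total_dsdna <= 0:
        raise ValueError("total dsDNA must be positive")
    return amplifiable_dna / total_dsdna


def library_stratum(concentration_nm: float, cutoff_nm: float = 5.0) -> str:
    """Classify a library as 'high' (>= cutoff) or 'low' (< cutoff)."""
    return "high" if concentration_nm >= cutoff_nm else "low"


# Hypothetical sample: 12 ng/uL amplifiable out of 40 ng/uL total dsDNA.
print(f"Q-value: {q_value(12.0, 40.0):.2f}")
print(f"Library stratum at 3.8 nM: {library_stratum(3.8)}")
```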

Analytical and Bioinformatics Factors

The following diagram illustrates how wet-lab and computational factors converge in the NGS workflow, creating multiple potential sources of variability.

Figure 2: Sources of Inter-Assay Variability. Technical differences in both wet-lab procedures and bioinformatics analysis contribute significantly to discordance between NGS results [39] [36].

Variant calling and filtering approaches significantly influence reproducibility, particularly for low-frequency variants. Studies show that approximately 30% of variants detected in only one of two compared assays had allele frequencies below 5%, with some representing artifactual calls [39]. The use of tumor-only versus paired tumor-normal sequencing also dramatically impacts results, with one study finding that 32.3% of variants reported only in a tumor-only panel were consistent with germline polymorphisms that were correctly filtered out in a paired tumor-normal approach [39].
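
A comparison of this kind can be sketched as set operations on variant calls keyed by position and allele. The example below is a minimal illustration under stated assumptions: discordance is defined here as the fraction of the union of calls seen in only one assay, which may differ from the definition used in [39], and the variant coordinates and allele frequencies are made-up placeholders.

```python
from typing import Dict, Tuple

Variant = Tuple[str, int, str, str]  # (chromosome, position, ref, alt)


def compare_assays(
    assay_a: Dict[Variant, float],
    assay_b: Dict[Variant, float],
    low_af: float = 0.05,
) -> Dict[str, float]:
    """Summarize discordance between two assays' variant calls.

    Each input maps a variant to its allele frequency (0-1) in that assay.
    """
    shared = assay_a.keys() & assay_b.keys()
    # Variants reported by exactly one assay, with the AF from that assay.
    discordant = {v: af for v, af in assay_a.items() if v not in assay_b}
    discordant.update({v: af for v, af in assay_b.items() if v not in assay_a})
    union_size = len(shared) + len(discordant)
    n_low = sum(1 for af in discordant.values() if af < low_af)
    return {
        "discordance_rate": len(discordant) / union_size if union_size else 0.0,
        "low_af_fraction": n_low / len(discordant) if discordant else 0.0,
    }


# Placeholder calls, not real variants.
panel_a = {("chr1", 1000, "A", "G"): 0.31, ("chr2", 2000, "C", "T"): 0.04,
           ("chr3", 3000, "G", "A"): 0.22}
panel_b = {("chr1", 1000, "A", "G"): 0.29, ("chr4", 4000, "T", "C"): 0.12}

summary = compare_assays(panel_a, panel_b)
print(summary)
```

Reporting the share of discordant calls below the allele-frequency threshold separately makes it easier to tell threshold effects apart from genuine disagreement between assays.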

Database selection for antimicrobial resistance gene analysis has demonstrated variable performance, with the Comprehensive Antibiotic Resistance Database (CARD) identifying the highest number of genes compared to other databases [38]. This highlights how functional annotation resources can introduce variability in comparative genomic studies.

Experimental Protocols for Reproducibility Assessment

Inter-Assay Comparison Methodology