Ensuring Precision in Oncology: A Comprehensive Guide to NGS Quality Control Metrics for Cancer Diagnostics


Abstract

Next-generation sequencing (NGS) has become a cornerstone of precision oncology, enabling comprehensive genomic profiling that guides diagnosis, prognostication, and therapeutic selection. However, the clinical utility of NGS data depends on rigorous quality control (QC) throughout the workflow. This article provides researchers, scientists, and drug development professionals with a detailed framework for implementing robust NGS QC metrics. We cover foundational principles, methodological applications for both tissue and liquid biopsy samples, troubleshooting for common pitfalls, and best practices for analytical validation. By synthesizing current standards and emerging practices, this guide aims to support the generation of reliable, clinically actionable genomic data that can safely inform patient care and therapeutic development.

The Bedrock of Reliability: Foundational NGS QC Metrics and Their Critical Role in Precision Oncology

The Four Stages of the NGS Workflow

Next-generation sequencing (NGS) is a high-throughput methodology that enables the massively parallel sequencing of millions of DNA fragments simultaneously [1]. In clinical oncology, this technology is pivotal for identifying tumor profiles essential for selecting targeted therapies and improving personalized patient care [2]. The workflow can be distilled into four critical stages, each with specific quality control (QC) checkpoints to ensure data accuracy and reliability.

Table 1: Core Stages of the NGS Workflow and Their Purpose

| Workflow Stage | Primary Purpose | Key Output |

|---|---|---|

| 1. Nucleic Acid Isolation | To extract genetic material (DNA or RNA) from a sample with sufficient yield, purity, and integrity for sequencing [3] [4] [5]. | High-quality genomic DNA or RNA. |

| 2. Library Preparation | To fragment the nucleic acids and attach adapter sequences, creating a "library" of molecules that are compatible with the sequencer [3] [4]. | A library of adapter-ligated DNA fragments. |

| 3. Sequencing | To determine the nucleotide sequence of every fragment in the library in a massively parallel manner [3] [6]. | Raw sequencing data (FASTQ files). |

| 4. Data Analysis | To process, analyze, and interpret the massive volume of raw data to generate meaningful biological insights [3] [4]. | Aligned sequences, variant calls, and annotated reports. |

Stage 1: Nucleic Acid Isolation

The process begins with the extraction of nucleic acids (DNA or RNA) from a sample, such as a tumor biopsy, which is often formalin-fixed and paraffin-embedded (FFPE) [2]. The quality of the input material is the first major determinant of success. Key considerations and QC metrics include [4] [5]:

- Yield: Obtain nanograms to micrograms of nucleic acid, which can be challenging with limited samples like biopsies or cell-free DNA (cfDNA) [4].

- Purity: Isolates must be free of contaminants like phenol, ethanol, or heparin that can inhibit enzymes used in later steps. Purity is assessed using UV spectrophotometry, with ideal A260/A280 ratios of ~1.8 for DNA and A260/A230 ratios >1.8 [7] [4].

- Quality/Integrity: Assess the molecular weight and intactness of the nucleic acids. For DNA, this means high molecular weight and intact strands; for RNA, minimal degradation is critical. Methods include fluorometric assays and gel-based electrophoresis. For FFPE-derived DNA, a QC ratio (e.g., Q129/Q41 ≥0.4) can be used to confirm suitability [2].

Stage 2: Library Preparation

In this step, the extracted nucleic acids are fragmented and modified into a sequenceable library [3] [6]. For RNA, this involves reverse transcription to cDNA first [1]. The process involves:

- Fragmentation: Shearing DNA into short fragments (e.g., 200-500 bp) [6].

- Adapter Ligation: Attaching platform-specific oligonucleotide adapters to the fragment ends. These adapters often contain barcodes (indexes) that allow multiple samples to be pooled and sequenced simultaneously in a process called multiplexing [4] [5].

- Library Amplification: Amplifying the library using PCR, especially when starting with low quantities of input material [4].

- QC Checkpoints: The prepared library must be quantified (e.g., via fluorometry or qPCR) and its size distribution assessed (e.g., via Bioanalyzer). A critical QC is checking for and removing adapter dimers—sharp peaks at ~70-90 bp that can dominate sequencing runs and reduce useful data output [7] [8].

Stage 3: Sequencing

The library is loaded onto a sequencer, where the DNA fragments are clonally amplified and sequenced. The most common method is sequencing by synthesis (SBS) [3].

- Clonal Amplification: Each DNA fragment is locally amplified on a flow cell to form a cluster, providing a strong enough signal for detection [4] [6].

- Base Detection: In Illumina's SBS, fluorescently labeled, reversibly terminated nucleotides are incorporated one at a time. After each incorporation, the flow cell is imaged to identify the base at every cluster [4] [6].

- QC Metrics: Key run-level metrics include chip loading (>70%), percentage of usable sequences (>55%), and low quality reads (<20%) [2].

Stage 4: Data Analysis

The raw signal data is converted into actionable biological knowledge through a multi-stage bioinformatic process [4].

Table 2: Key Stages in NGS Data Analysis

| Analysis Stage | Key Processes |

|---|---|

| Processing | Base calling, demultiplexing, adapter trimming, and quality filtering [4] [5]. |

| Analysis | Read alignment to a reference genome, variant calling, and annotation [4]. |

| Interpretation | Determining the biological and clinical significance of the findings, such as identifying actionable mutations in cancer genes [4]. |

For cancer diagnostics, sample-level QC is vital. This includes ensuring on-target reads (>90%), coverage uniformity (>90%), and that a high percentage of amplicons or genomic regions meet a minimum coverage depth (e.g., ≥95% of amplicons with 500x coverage) to confidently detect somatic variants down to a specific allele frequency (e.g., ≥5%) [2].
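
The sample-level thresholds above can be expressed as a simple automated gate. The following is a minimal sketch, assuming the pipeline already reports the three percentages; the class name, field names, and function name are hypothetical, and the cutoffs are the example values quoted in the text rather than universal requirements.

```python
from dataclasses import dataclass

# Example thresholds quoted in the text; each assay sets its own during validation.
MIN_ON_TARGET_PCT = 90.0           # on-target reads must exceed this value
MIN_UNIFORMITY_PCT = 90.0          # coverage uniformity must exceed this value
MIN_AMPLICONS_AT_DEPTH_PCT = 95.0  # amplicons at >=500x must reach at least this value


@dataclass
class SampleQC:
    on_target_pct: float          # % of reads mapping to targeted regions
    uniformity_pct: float         # % coverage uniformity reported by the pipeline
    amplicons_at_500x_pct: float  # % of amplicons with at least 500x coverage


def failed_metrics(qc):
    """Return the names of metrics that miss their threshold (empty list means pass)."""
    failures = []
    if qc.on_target_pct <= MIN_ON_TARGET_PCT:
        failures.append("on_target_pct")
    if qc.uniformity_pct <= MIN_UNIFORMITY_PCT:
        failures.append("uniformity_pct")
    if qc.amplicons_at_500x_pct < MIN_AMPLICONS_AT_DEPTH_PCT:
        failures.append("amplicons_at_500x_pct")
    return failures


if __name__ == "__main__":
    sample = SampleQC(on_target_pct=96.0, uniformity_pct=94.0, amplicons_at_500x_pct=98.0)
    print(failed_metrics(sample) or "PASS")
```

Returning the list of failed metrics, rather than a single pass/fail flag, makes it easier to trace a failure back to the workflow stage that caused it.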

Troubleshooting Common NGS Workflow Issues

Frequently Asked Questions

Q1: My sequencing run returned a high percentage of adapter dimers. What went wrong and how can I fix it? A high adapter dimer peak (~70-90 bp) indicates that adapter-adapter ligation products were not sufficiently removed before sequencing [7] [8].

- Root Cause: This is typically a library preparation issue, often due to a suboptimal adapter-to-insert molar ratio during ligation or an inefficient size selection cleanup step [7].

- Solution: Perform an additional cleanup or size selection step after library preparation to remove short fragments. Titrate your adapter concentration and ensure your purification beads are used at the correct sample-to-bead ratio [7] [8].

Q2: I am getting low library yield after preparation. What are the potential causes? Low library yield can stem from problems at multiple points in the preparation workflow [7].

- Root Causes:

- Poor Input Quality: Degraded DNA or RNA, or the presence of enzymatic inhibitors from the isolation step [7].

- Inefficient Ligation: Poor ligase performance, incorrect reaction conditions, or faulty fragmentation [7].

- Overly Aggressive Cleanup: Sample loss during purification or size selection steps [7].

- Diagnostic Strategy: Check the electropherogram profile for abnormalities. Use fluorometric methods (Qubit) over UV spectrophotometry (NanoDrop) for accurate quantification of usable material. Trace backwards from the failed step to identify the source of the problem [7].

Q3: How does FFPE sample processing impact my NGS results, and how can I manage it? FFPE processing is known to fragment and damage nucleic acids, which can lead to lower yields, higher failure rates, and false-negative results due to amplicon drop-outs [2] [9].

- Impact: The formalin fixation time and the sample's location within the paraffin block can cause variable quality degradation [9].

- Quality Management:

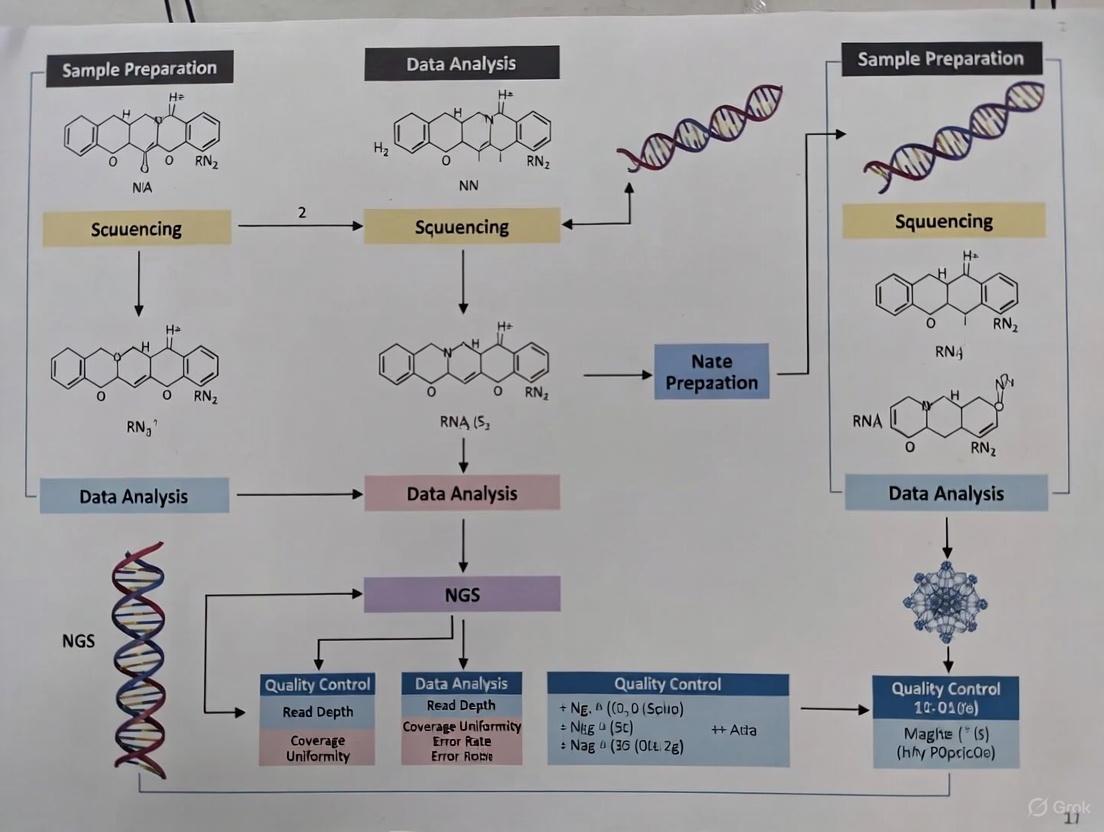

Workflow Visualization

The following diagrams illustrate the logical flow of the entire NGS process and the specific library preparation stage.

NGS Workflow with QC Checkpoints

Library Preparation Steps and Failure Points

Table 3: Key Research Reagent Solutions for NGS in Cancer Diagnostics

| Item | Function | Application Note |

|---|---|---|

| Nucleic Acid Isolation Kits | Extract DNA/RNA from complex samples like FFPE tissue or liquid biopsies, maximizing yield and purity while removing inhibitors [4]. | Select kits validated for your specific sample type (e.g., FFPE, cfDNA). |

| Library Prep Kits | Provide the enzymes and buffers for fragmenting, end-repairing, A-tailing, adapter ligating, and amplifying the sequencing library [4] [5]. | Choose based on input amount, sample type, and desired application (e.g., whole genome, targeted). |

| Adapter/Oligo Mixes | Double-stranded or single-stranded oligonucleotides containing sequences for binding to the flow cell and indexing (barcoding) samples [1] [5]. | Critical for multiplexing. Sequences are platform-specific. |

| Target Enrichment Panels | Designed to capture and sequence specific genomic regions of interest, such as a comprehensive cancer gene panel, rather than the whole genome [9] [5]. | Faster and more cost-effective for profiling known cancer-associated genes. |

| Reference Standards | Commercially available control samples with a known set of mutations at defined allele frequencies [9]. | Essential for validating assay performance, determining sensitivity/specificity, and monitoring cross-lab reproducibility. |

| Internal Standards (Spike-ins) | Synthetic molecules spiked into each sample to control for technical variability and enable precise measurement of error rates for each variant [10]. | Particularly valuable for detecting low-frequency variants in ctDNA liquid biopsies [10]. |

FAQs & Troubleshooting Guides

Q1: My DNA sample has a low A260/A280 ratio (<1.8). What contaminants are likely present, and how can I clean the sample? A: A low A260/A280 ratio typically indicates protein contamination. For remediation, perform an additional purification step.

- Protocol: Ethanol Precipitation for DNA Clean-up:

- Add 0.1 volume of 3M sodium acetate (pH 5.2) to your DNA sample.

- Add 2 volumes of ice-cold 100% ethanol.

- Incubate at -20°C for 30 minutes.

- Centrifuge at >12,000 x g for 15 minutes at 4°C.

- Carefully decant the supernatant.

- Wash the pellet with 500 µL of 70% ethanol.

- Centrifuge again for 5 minutes, discard supernatant, and air-dry the pellet.

- Resuspend the DNA in nuclease-free water or TE buffer.

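Q2: My RNA sample has a high A260/A280 ratio (>2.2). What does this mean? A: A ratio significantly above 2.2 is unusual and can reflect carryover from the extraction process (e.g., using TRIzol) or a measurement artifact such as a blank that does not match the sample buffer. Residual guanidine thiocyanate and other chaotropic salts absorb mainly near 230 nm, so check the A260/A230 ratio as well; a low value there points to salt carryover, which can inhibit downstream enzymatic reactions. A column-based clean-up protocol is recommended to remove these salts.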

Q3: My sample has a good concentration and purity, but my NGS library preparation failed. Could sample integrity be the issue? A: Yes. Quantity and purity do not assess the fragmentation of the nucleic acids. For RNA, a low RIN (<7 for most cancer transcriptome applications) indicates degradation, leading to 3' bias and loss of full-length transcript information. For DNA, a degraded sample will produce short fragments, compromising library complexity.

Q4: What is an acceptable RIN value for RNA-Seq of patient-derived cancer samples? A: While a RIN of 8-10 is ideal, clinically derived samples (e.g., FFPE tissue) often have lower integrity. The following table provides general guidance:

| Sample Type | Minimum Recommended RIN | Rationale |

|---|---|---|

| Fresh Frozen Tissue | 8.0 | Ensures high-quality, full-length transcripts for accurate gene expression analysis. |

| FFPE Tissue | 6.5 - 7.0 | Acknowledges inherent degradation; specialized library prep kits are required. |

| Liquid Biopsy (Cell-Free RNA) | N/A | RIN is not applicable due to short, fragmented nature; use DV200 instead (>30% is favorable). |

Q5: How do I interpret the DV200 metric for highly fragmented RNA? A: DV200 is the percentage of RNA fragments longer than 200 nucleotides. It is a more reliable metric than RIN for degraded samples.

| DV200 Value | Usability for RNA-Seq |

|---|---|

| ≥ 30% | Generally suitable for sequencing with specialized kits. |

| < 30% | Low success rate; requires ultra-low input or single-cell protocols. |
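
To make the DV200 definition concrete, here is a minimal sketch that computes it from a binned fragment-size trace. It assumes the electropherogram has already been exported as paired lists of fragment sizes and signal intensities; the function names are hypothetical, and instrument software performs this calculation with its own region settings.

```python
def dv200(fragment_sizes_nt, signal):
    """Percentage of total signal coming from fragments longer than 200 nt."""
    if len(fragment_sizes_nt) != len(signal):
        raise ValueError("sizes and signal must have the same length")
    total = sum(signal)
    if total <= 0:
        raise ValueError("total signal must be positive")
    above = sum(s for size, s in zip(fragment_sizes_nt, signal) if size > 200)
    return 100.0 * above / total


def usability(dv200_pct, cutoff_pct=30.0):
    """Apply the 30% guidance from the table above."""
    if dv200_pct >= cutoff_pct:
        return "generally suitable with specialized kits"
    return "low success rate expected"


if __name__ == "__main__":
    sizes = [100, 150, 300, 1000]   # illustrative bin centers in nucleotides
    trace = [10.0, 10.0, 10.0, 10.0]  # illustrative signal per bin
    value = dv200(sizes, trace)
    print(f"DV200 = {value:.1f}% -> {usability(value)}")
```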

Experimental Protocols

Protocol 1: Spectrophotometric Assessment of Nucleic Acid Quantity and Purity

- Instrument Calibration: Blank the spectrophotometer (e.g., NanoDrop) with the same buffer used to elute/resuspend your sample.

- Measurement: Apply 1-2 µL of sample to the pedestal and measure the absorbance at 230nm, 260nm, and 280nm.

- Data Analysis:

- Concentration (ng/µL): A260 x 50 (for DNA) or A260 x 40 (for RNA).

- Purity (A260/A280): Ratio of A260/A280. Ideal: ~1.8 (DNA), ~2.0 (RNA).

- Contaminant Check (A260/A230): Ratio of A260/A230. Ideal: >2.0. Lower values indicate salt or solvent carryover.
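
The data-analysis step of Protocol 1 reduces to three small calculations. The sketch below assumes absorbance values normalized to a 1 cm path length and an undiluted sample; the function name and dictionary keys are illustrative only.

```python
# Conversion factors from the protocol: ng/uL per A260 unit.
CONVERSION_FACTOR = {"DNA": 50.0, "RNA": 40.0}


def assess_absorbance(a230, a260, a280, kind="DNA"):
    """Return concentration and the two purity ratios for one reading."""
    factor = CONVERSION_FACTOR[kind]
    return {
        "conc_ng_per_ul": a260 * factor,
        "a260_a280": a260 / a280,  # ideal ~1.8 (DNA) or ~2.0 (RNA)
        "a260_a230": a260 / a230,  # ideal >2.0; lower suggests salt or solvent carryover
    }


if __name__ == "__main__":
    reading = assess_absorbance(a230=0.45, a260=1.00, a280=0.54, kind="DNA")
    for name, value in reading.items():
        print(f"{name}: {value:.2f}")
```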

Protocol 2: Fluorometric Quantification using Qubit

- Prepare Working Solution: Mix the Qubit reagent with the buffer at a 1:200 ratio.

- Prepare Standards: Add 190 µL of working solution to each of two tubes and add 10 µL of the provided standards.

- Prepare Samples: Add 199 µL of working solution to assay tubes and add 1 µL of sample.

- Incubate and Read: Vortex, incubate for 2 minutes, and read on the Qubit fluorometer. Select the appropriate assay (e.g., dsDNA HS, RNA HS).
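
When many samples are quantified at once, the working-solution volumes are easy to miscalculate. The following sketch scales the volumes in Protocol 2, assuming 200 µL final volume per tube, a 1:200 ratio interpreted as 1 part reagent in 200 parts working solution, and one extra tube of overage for pipetting loss; the function name and the overage default are assumptions, and the kit manual remains the authority.

```python
def qubit_working_solution(n_samples, n_standards=2, overage_tubes=1, tube_volume_ul=200.0):
    """Volumes of reagent and buffer needed to prepare the working solution."""
    n_tubes = n_samples + n_standards + overage_tubes
    total_ul = n_tubes * tube_volume_ul
    reagent_ul = total_ul / 200.0       # 1 part reagent per 200 parts working solution
    buffer_ul = total_ul - reagent_ul
    return {"tubes": n_tubes, "reagent_ul": reagent_ul, "buffer_ul": buffer_ul}


if __name__ == "__main__":
    plan = qubit_working_solution(n_samples=8)
    print(f"Prepare for {plan['tubes']} tubes: "
          f"{plan['reagent_ul']:.1f} uL reagent + {plan['buffer_ul']:.1f} uL buffer")
```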

Protocol 3: Assessment of RNA Integrity (RIN) using Agilent Bioanalyzer

- Chip Preparation: Prime the RNA Nano chip with gel-dye mix using the provided syringe.

- Sample Loading: Load 5 µL of marker into the appropriate well. Load 1 µL of each RNA sample (or ladder) into subsequent wells.

- Run: Place the chip in the Bioanalyzer and run the "RNA Nano" program.

- Analysis: The software automatically calculates the RIN (1-10) by analyzing the electrophoretic trace.

Visualizations

NGS QC Workflow Decision Tree

Impact of Failed QC Metrics on NGS Data

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Kit | Function |

|---|---|

| Qubit dsDNA/RNA HS Assay Kits | Fluorometric quantification specific to dsDNA or RNA; far less affected by common contaminants than UV absorbance. |

| Agilent Bioanalyzer RNA Nano Kit | Microfluidics-based system for evaluating RNA integrity and concentration (RIN). |

| TapeStation Systems & Screentapes | Alternative to Bioanalyzer for automated electrophoresis of DNA and RNA. |

| AMPure XP Beads | Solid-phase reversible immobilization (SPRI) beads for DNA size selection and clean-up. |

| RNase Inhibitors | Essential additives in RNA reactions to prevent degradation by RNases. |

| DNase I, RNase-free | For removing genomic DNA contamination from RNA samples prior to RNA-Seq. |

| FFPE RNA/DNA Extraction Kits | Specialized kits designed to recover nucleic acids from cross-linked, degraded tissues. |

Core Concepts FAQ

What is a Q Score and why is it critical for cancer diagnostics?

A Q Score (Quality Score) is a Phred-scaled measure that estimates the probability that a given base in a sequencing read was called incorrectly. It is defined by the equation \( Q = -10 \log_{10}(e) \), where \( e \) is the estimated probability of an incorrect base call [11]. In cancer diagnostics, high Q Scores are non-negotiable because they minimize false-positive variant calls, which could directly lead to inaccurate therapeutic conclusions [11] [12].

Key Q Score Benchmarks [11]:

| Quality Score | Probability of Incorrect Base Call | Base Call Accuracy |

|---|---|---|

| Q20 | 1 in 100 | 99% |

| Q30 (Common Benchmark) | 1 in 1,000 | 99.9% |

| Q40 | 1 in 10,000 | 99.99% |

For clinical applications, a Q score above 30 is generally considered good quality, and bases with a Q score below 20 should be considered low quality [13] [12].
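
The Phred relationship and the benchmark table can be checked with a few lines of code. This sketch assumes Phred+33 encoded quality strings, the encoding used by current FASTQ files; the function names are illustrative.

```python
import math


def phred_to_error_prob(q):
    """Error probability e for a Phred score Q, from Q = -10 * log10(e)."""
    return 10 ** (-q / 10)


def error_prob_to_phred(e):
    """Phred score Q for an error probability e."""
    return -10 * math.log10(e)


def decode_phred33(quality_string):
    """Convert a FASTQ quality string (Phred+33 ASCII) to integer Q scores."""
    return [ord(ch) - 33 for ch in quality_string]


def fraction_at_least(quals, threshold=30):
    """Fraction of bases with Q >= threshold (for example, the share of Q30 bases)."""
    return sum(q >= threshold for q in quals) / len(quals)


if __name__ == "__main__":
    for q in (20, 30, 40):
        print(f"Q{q}: error probability {phred_to_error_prob(q):.4%}")
    quals = decode_phred33("IIII5555####")  # illustrative quality string
    print(f"Fraction of bases at Q30 or above: {fraction_at_least(quals):.2f}")
```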

How do Sequencing Depth and Coverage differ, and what are their targets?

Although often used interchangeably, sequencing depth and coverage are distinct concepts that are both vital for reliable variant detection [14].

- Sequencing Depth (or Read Depth): Refers to the average number of times a specific nucleotide is read during sequencing. It is expressed as a multiple, such as 100x [14] [15].

- Coverage: Refers to the percentage of the target genome or region that has been sequenced at least once [14]. High coverage ensures there are no gaps in the data that could cause you to miss a critical mutation.

Recommended Average Coverage Depth for Common Oncology NGS Methods [15]:

| Sequencing Method | Recommended Average Depth |

|---|---|

| Whole Genome Sequencing (WGS) | 30x - 50x |

| Whole-Exome Sequencing (WES) | ≥ 100x |

| Targeted Panels (e.g., for rare variants) | Often much higher (e.g., 500x-1000x+) |

What is Coverage Uniformity and why does it matter?

Coverage uniformity describes how evenly sequencing reads are distributed across the target genome. Two datasets can have the same average coverage (e.g., 30x), but their scientific value can differ drastically if one has poor uniformity [16]. In cancer diagnostics, low-coverage regions can lead to false negatives and missed variants, compromising the test's clinical utility [15] [16].
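
The point that equal mean coverage can hide very different uniformity is easy to demonstrate numerically. The sketch below uses one common convention, assumed here rather than taken from the cited sources: uniformity is the percentage of target positions covered at 20% of the mean depth or more. The depth values are made up for illustration.

```python
from statistics import mean


def uniformity_pct(depths, fraction_of_mean=0.2):
    """Percentage of positions with depth >= fraction_of_mean * mean depth."""
    cutoff = fraction_of_mean * mean(depths)
    return 100.0 * sum(d >= cutoff for d in depths) / len(depths)


# Two illustrative per-position depth profiles with the same mean (30x).
even_profile = [28, 30, 32, 30, 29, 31, 30, 30]
skewed_profile = [2, 3, 90, 4, 85, 1, 50, 5]

if __name__ == "__main__":
    for name, depths in (("even", even_profile), ("skewed", skewed_profile)):
        print(f"{name}: mean depth {mean(depths):.0f}x, "
              f"uniformity {uniformity_pct(depths):.1f}%")
```

In the skewed profile most positions fall far below the mean, so a variant located there could be missed even though the headline depth looks adequate.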

What is Cluster Density and how does it impact my run?

In Illumina platforms, cluster density measures the number of DNA clusters generated per square millimeter on a flow cell during cluster generation. Achieving the manufacturer's recommended density is crucial for optimal data output and quality [17] [18].

- Too High: Leads to overlapping clusters, misidentification of signals, and a lower percentage of clusters passing filter (% PF).

- Too Low: Results in suboptimal data yield, wasting sequencing capacity and increasing cost per sample.

Troubleshooting Guides

How to Diagnose and Fix Poor Q Scores

Detailed Protocols:

Assess Data Quality with FastQC:

Trim and Filter Reads:

- Use tools like CutAdapt or Trimmomatic to remove low-quality bases (e.g., those with Q < 20) and adapter sequences [13].

- Command-line example (conceptual):

trimmomatic SE -phred33 input.fastq output_trimmed.fastq LEADING:20 TRAILING:20 SLIDINGWINDOW:4:20 MINLEN:36
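
To show what a sliding-window quality trim does conceptually, here is a simplified pure-Python sketch using the same window size, quality cutoff, and minimum length as the command above. It is an illustration of the idea only, not Trimmomatic's implementation, and it omits the LEADING and TRAILING steps; the function name is hypothetical.

```python
def sliding_window_trim(seq, quals, window=4, min_avg_q=20, min_len=36):
    """Cut the read at the first window whose mean quality drops below min_avg_q.

    Returns (trimmed_seq, trimmed_quals), or None if the trimmed read is
    shorter than min_len and should be discarded.
    """
    keep = len(seq)
    for start in range(len(seq) - window + 1):
        window_quals = quals[start:start + window]
        if sum(window_quals) / window < min_avg_q:
            keep = start  # drop everything from the failing window onward
            break
    if keep < min_len:
        return None
    return seq[:keep], quals[:keep]


if __name__ == "__main__":
    read = "ACGT" * 12 + "AC"             # 50-base illustrative read
    qualities = [35] * 40 + [5] * 10       # quality collapses near the 3' end
    result = sliding_window_trim(read, qualities)
    print("discarded" if result is None else f"kept {len(result[0])} bases")
```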

Verify Sequencing Run Metrics:

- Consult your platform's run report (e.g., from Illumina's SAV) for key metrics [17].

- Phasing/Prephasing: Should be < 0.1% per cycle. High values indicate loss of synchrony during sequencing [17].

- Cluster Density: Ensure it is within the instrument's recommended range (e.g., for MiSeq, 1,000-1,200 K/mm²) [17]. Adjust library loading concentration for future runs.

How to Resolve Inadequate Coverage or Poor Uniformity

Detailed Protocols:

Calculate and Diagnose Coverage:

- Use the Lander/Waterman equation to estimate or verify coverage: \( C = LN / G \), where:

- \( C \): Coverage

- \( L \): Read length

- \( N \): Number of reads

- \( G \): Haploid genome length [15].

- Generate a coverage histogram using your alignment data (e.g., from BAM files). An ideal distribution is Poisson-like with a small standard deviation. A broad spread indicates poor uniformity [15].
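
The Lander/Waterman relationship can be used in both directions: to estimate coverage from a finished run, or to plan how many reads a target coverage requires. The sketch below assumes a haploid human genome length of roughly 3.1 billion bases and counts each read of a pair separately; both are stated assumptions, and for targeted panels the target region size would replace the genome length.

```python
import math


def expected_coverage(read_length, n_reads, genome_length):
    """Lander/Waterman estimate: C = L * N / G."""
    return read_length * n_reads / genome_length


def reads_needed(target_coverage, read_length, genome_length):
    """Rearranged form: N = C * G / L, rounded up to a whole read."""
    return math.ceil(target_coverage * genome_length / read_length)


if __name__ == "__main__":
    HUMAN_GENOME_BP = 3_100_000_000  # approximate haploid length (assumption)
    n = reads_needed(target_coverage=30, read_length=150, genome_length=HUMAN_GENOME_BP)
    print(f"Reads needed for 30x at 150 bp: {n:,}")
    print(f"Check: {expected_coverage(150, n, HUMAN_GENOME_BP):.1f}x")
```

This is a theoretical average; duplicates, off-target reads, and unmappable regions reduce the usable coverage actually achieved.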

Optimize Wet-Lab Procedures:

- Sample QC: For DNA, use spectrophotometry (e.g., NanoDrop) to confirm an A260/A280 ratio of ~1.8. For RNA, use an instrument like the Agilent Bioanalyzer or TapeStation to obtain an RNA Integrity Number (RIN, or the TapeStation's RINe equivalent); a score of 8+ is ideal for most applications [13].

- Library Preparation: Use library prep kits designed to minimize bias in GC-rich or other difficult-to-sequence regions, which are common in cancer genomes [13] [16].

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in NGS Workflow | Key Considerations for Cancer Diagnostics |

|---|---|---|

| Nucleic Acid Extraction Kits | Isolate DNA/RNA from patient samples (tissue, blood, FFPE). | Yield and purity (A260/280) are critical; FFPE samples require specialized protocols [13]. |

| Library Preparation Kits | Prepare nucleic acid fragments for sequencing by adding adapter sequences. | Choice depends on application (WGS, WES, RNA-Seq); must be compatible with sequencer [13] [18]. |

| Quality Control Instruments (e.g., Agilent Bioanalyzer/TapeStation, Qubit Fluorometer) | Assess sample quality, quantity, and library fragment size. | Essential for verifying input material integrity and final library quality before sequencing [13] [18]. |

| Indexed Adapters | Enable multiplexing of multiple samples in a single sequencing run. | Unique dual indexing is recommended to minimize index hopping and cross-contamination [17]. |

| Sequencing Flow Cells & Reagent Kits (e.g., Illumina S1-S4, P1-P4) | Execute the sequencing-by-synthesis reaction on the instrument. | Selection balances required output, read length, and cost [18]. Monitor cluster density for optimal performance [17]. |

| Positive Controls (e.g., PhiX) | Monitor sequencing performance, error rate, and cluster identification. | Should be spiked into every run as an in-run quality control measure [11] [17]. |

Fundamental QC Differences Between FFPE and Liquid Biopsy Samples

What are the primary QC challenges unique to FFPE tissue samples?

FFPE samples present specific challenges due to the fixation and embedding process. Formalin fixation causes cross-linking and fragmentation of nucleic acids, which can impact sequencing quality. The most critical QC parameters include:

- Tumor Purity and Cellularity: The percentage of tumor nuclei significantly impacts assay success. One large real-world study (n=1,204) found that tumor purity below 35% markedly increases the rate of qualified or invalid results. Computational tumor purity estimation during sequencing provides the most accurate QC assessment [19].

- FFPE Block Storage Time: Blocks stored longer than three years show increased failure rates, though this effect is less impactful than tumor purity. The Japanese Society of Pathology recommends using blocks under three years old for genomic studies [19].

- DNA Integrity: While formalin fixation fragments DNA, the DNA Integrity Number (DIN) shows variable correlation with storage time and QC status, with cancer-type specific degradation patterns observed [19].

What specific QC parameters are critical for liquid biopsy (ctDNA) samples?

Liquid biopsy quality control focuses on pre-analytical factors and ctDNA recovery:

- Plasma Processing Protocols: Standardized centrifugation is crucial to prevent cellular DNA contamination. Two-step centrifugation (4°C, 2,000 × g, 10 minutes) effectively separates plasma from buffy coat [20].

- cfDNA Concentration and Input: Minimum 20ng of cell-free DNA is typically required for library preparation. Input below this threshold risks assay failure [20].

- Sequencing Depth: Mean effective depths >1,400× are necessary for reliable detection at low variant allele frequencies (VAFs), with one study establishing this as a critical QC metric [20].
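
A short calculation helps explain why both the input mass and the effective depth matter at low VAF: the number of mutant molecules physically present in the tube sets a ceiling that sequencing depth cannot exceed. The sketch below assumes about 3.3 pg of DNA per haploid human genome, a widely used approximation that does not come from the cited studies; the function names are illustrative.

```python
HAPLOID_GENOME_MASS_PG = 3.3  # approximate mass of one haploid human genome (assumption)


def genome_equivalents(input_ng):
    """Approximate number of haploid genome copies in a given mass of cfDNA."""
    return input_ng * 1000.0 / HAPLOID_GENOME_MASS_PG


def expected_mutant_copies(input_ng, vaf):
    """Expected number of mutant molecules for a variant at the given allele fraction."""
    return genome_equivalents(input_ng) * vaf


if __name__ == "__main__":
    for vaf in (0.01, 0.002, 0.001):
        copies = expected_mutant_copies(input_ng=20, vaf=vaf)
        print(f"20 ng input, VAF {vaf:.1%}: about {copies:.0f} mutant copies")
```

Under these assumptions, 20 ng of cfDNA carries only a handful of mutant molecules at 0.1% VAF, before any loss during library preparation.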

Quantitative Performance Metrics Comparison

Table 1: Analytical Performance Benchmarks for FFPE Tissue vs. Liquid Biopsy NGS

| Performance Parameter | FFPE Tissue Samples | Liquid Biopsy Samples |

|---|---|---|

| Typical Input Requirements | ≥50ng DNA [21] | ≥20ng cfDNA [20] |

| Recommended Sequencing Depth | ≥500× (for 2% VAF) [21] | >1,400× mean effective depth [20] |

| Variant Allele Frequency (VAF) Detection Limit | 0.5%-1% [21] | 0.1%-0.2% [20] [22] |

| Sensitivity | 84.62%-100% (depends on VAF) [21] | 98.5% (vs. ddPCR) [22] |

| Specificity | 100% [21] | 98.9% (vs. ddPCR) [22] |

| Target Coverage | ≥99% of bases covered at ≥50× [21] | Varies by panel design |
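
The link between sequencing depth and the detectable VAF in the table above can be illustrated with a simple binomial model. This is a minimal sketch, assuming error-free reads sampled independently and a caller that requires a fixed minimum number of variant-supporting reads; the threshold of 5 reads is hypothetical, and validated assays determine detection limits empirically.

```python
from math import comb


def prob_at_least_k_alt_reads(depth, vaf, k):
    """P(X >= k) for X ~ Binomial(depth, vaf): chance of seeing k or more variant reads."""
    p_fewer = sum(comb(depth, i) * vaf**i * (1 - vaf) ** (depth - i) for i in range(k))
    return 1.0 - p_fewer


if __name__ == "__main__":
    MIN_ALT_READS = 5  # hypothetical caller threshold
    for depth, vaf in ((100, 0.02), (500, 0.02), (500, 0.002), (1400, 0.002)):
        p = prob_at_least_k_alt_reads(depth, vaf, MIN_ALT_READS)
        print(f"depth {depth}x, VAF {vaf:.1%}: P(>= {MIN_ALT_READS} alt reads) = {p:.3f}")
```

Real assays also contend with sequencing errors and duplicate reads, which is why liquid biopsy workflows report effective depth after molecular barcode collapsing rather than raw depth.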

Table 2: Success Rate Influencing Factors in Real-World Practice

| Factor | Impact on FFPE Samples | Impact on Liquid Biopsy Samples |

|---|---|---|

| Tumor Purity/Cellularity | Most significant factor; >35% tumor nuclei recommended [19] | Not applicable (no direct tumor cells) |

| Sample Age | Significant degradation after 3 years [19] | Fresh samples only (plasma) |

| Sample Type | Biopsy specimens fail more frequently than surgical specimens [19] | Plasma processing critical |

| Cancer Type | Pancreatic and biliary tract cancers show highest failure rates [19] | Varies by cancer type and stage |

| Pre-analytical Handling | Cold ischemic time and fixation duration matter [19] | Centrifugation protocols and tube types crucial |

Experimental Workflows and Methodologies

How do experimental protocols differ for FFPE versus liquid biopsy samples?

FFPE Sample Processing Protocol [23] [19]:

- Sample Selection and Sectioning: Select FFPE blocks with >35% tumor nuclei. Cut 5-10 μm sections using a microtome.

- DNA/RNA Extraction: Use specialized kits designed for FFPE samples (e.g., QIAamp DNA FFPE Tissue Kit, Maxwell RSC FFPE Plus DNA Kit). These kits include steps to reverse cross-links and fragment DNA to appropriate sizes.

- Quality Assessment: Quantify DNA using fluorometric methods (Qubit dsDNA HS Assay). Assess fragment size using Agilent TapeStation. A260/A280 ratio should be 1.7-2.2.

- Library Preparation: Employ hybridization capture-based methods (e.g., Agilent SureSelectXT) targeting cancer-related genes. Input DNA typically 50-200ng.

- Sequencing: Sequence to minimum 500× coverage for 2% VAF detection, with 99% of targets covered at ≥50×.

Liquid Biopsy Processing Protocol [20] [22]:

- Blood Collection and Processing: Collect 14-20mL peripheral blood in cell-free DNA BCT tubes (Streck). Process within one week of collection.

- Plasma Separation: Two-step centrifugation (4°C, 2,000 × g, 10 minutes) to separate plasma from buffy coat.

- cfDNA Extraction: Isolate from 4mL plasma using specialized cfDNA extraction kits (e.g., Nucleic Acid Extraction Kit, QIAamp Circulating Nucleic Acid Kit).

- Quality Assessment: Quantify cfDNA using Qubit dsDNA HS Assay. Minimum 20ng input required for library preparation.

- Library Preparation: Use error-reduction methods like Unique Molecular Identifiers (UMIs) or Molecular Amplification Pools (MAPs). These approaches track original molecules to reduce sequencing errors.

- Sequencing: Ultra-deep sequencing (>1,400× mean effective depth) to detect variants at 0.1-0.2% VAF.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Kits for FFPE and Liquid Biopsy NGS

| Reagent/Kits | Function/Purpose | Sample Type |

|---|---|---|

| QIAamp DNA FFPE Tissue Kit | DNA extraction from FFPE with cross-link reversal | FFPE Tissue |

| Maxwell RSC FFPE Plus DNA Kit | Automated extraction of high-quality DNA from FFPE | FFPE Tissue |

| Nucleic Acid Extraction Kit | Optimized cfDNA extraction from plasma | Liquid Biopsy |

| QIAamp Circulating Nucleic Acid Kit | Simultaneous extraction of cfDNA and cfRNA | Liquid Biopsy |

| Agilent SureSelectXT | Hybridization capture-based target enrichment | Both |

| Cell-Free DNA BCT Tubes | Blood collection tubes that stabilize nucleated blood cells | Liquid Biopsy |

| Qubit dsDNA HS Assay | Accurate quantification of low-concentration DNA | Both |

| Agilent TapeStation | Fragment size distribution analysis | Both |

Troubleshooting Common QC Failure Scenarios

Why does my FFPE sample keep failing QC, and how can I improve success rates?

The most common causes of FFPE sample failure and their solutions include:

- Low Tumor Purity (<35%): This is the primary reason for failure. Solution: Enrich tumor content through macrodissection or microdissection of FFPE sections prior to DNA extraction [19].

- Extended FFPE Block Storage: Blocks older than three years have increased failure rates. Solution: When possible, select recently prepared blocks or request recuts from pathology archives [19].

- Insufficient DNA Input: Low DNA yield from small biopsies. Solution: Optimize extraction protocols for small samples and use whole genome amplification if necessary, acknowledging potential biases [23] [19].

Why is my liquid biopsy assay sensitivity lower than expected?

Low sensitivity in liquid biopsy assays typically results from:

- Insufficient Sequencing Depth: Sensitivity drops dramatically below 1,400× mean effective depth. Solution: Increase sequencing depth or use molecular barcoding techniques like UMIs or MAPs to improve signal-to-noise ratio [20] [22].

- Suboptimal Plasma Processing: Cellular contamination from improper centrifugation. Solution: Implement strict two-step centrifugation protocols and process samples within 24-72 hours of blood draw [20].

- Low ctDNA Fraction: Early-stage cancers often have low ctDNA concentration. Solution: Increase plasma input volume (4-10mL) and utilize more sensitive error-suppression technologies [22].

Concordance Between Sample Types and Clinical Implications

How concordant are results between matched FFPE and liquid biopsy samples?

Concordance varies significantly by cancer stage and technical factors:

- Stage-Specific Performance: In stage IV NSCLC, liquid biopsy shows >99% positive and negative percentage agreement with tissue testing. In stage III disease, sensitivity drops to 28.57% while specificity remains high (99.20%) [20].

- Complementary Alterations: Different CGP tests applied to the same patients detect overlapping but non-identical variant profiles. One study found 55% sensitivity between platforms, with each detecting unique clinically relevant variants [24].

- Actionable Mutation Detection: Liquid biopsy identifies NCCN-recommended targetable mutations in 45.59% of stage III/IV NSCLC patients, demonstrating clinical utility comparable to tissue testing [20].
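
Positive and negative percentage agreement, as quoted above, are computed from a 2x2 comparison of liquid biopsy calls against tissue results. The sketch below shows the arithmetic, assuming tissue testing is the comparator; the counts are invented for illustration and are not taken from the cited studies.

```python
def percent_agreement(both_pos, liquid_only, tissue_only, both_neg):
    """PPA and NPA of liquid biopsy relative to tissue testing.

    both_pos:    variant called in liquid biopsy and in tissue
    liquid_only: called in liquid biopsy, not in tissue
    tissue_only: called in tissue, missed by liquid biopsy
    both_neg:    called in neither
    """
    ppa = 100.0 * both_pos / (both_pos + tissue_only)
    npa = 100.0 * both_neg / (both_neg + liquid_only)
    return {"ppa_pct": ppa, "npa_pct": npa}


if __name__ == "__main__":
    # Hypothetical counts for illustration only.
    result = percent_agreement(both_pos=45, liquid_only=2, tissue_only=5, both_neg=98)
    print(f"PPA {result['ppa_pct']:.1f}%, NPA {result['npa_pct']:.1f}%")
```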

Next-generation sequencing (NGS) has revolutionized cancer diagnostics, enabling comprehensive genomic profiling for personalized therapy. However, the accuracy of these results is highly dependent on sample quality. Researchers and clinicians routinely face three significant challenges: degraded samples, low tumor purity, and contamination. These pre-analytical variables can introduce artifacts, skew variant allele frequencies, and lead to false positives or negatives, ultimately compromising clinical decision-making. This guide provides targeted troubleshooting strategies and FAQs to help navigate these common QC hurdles, ensuring the generation of reliable and actionable NGS data.

Troubleshooting Guides

Challenge: Degraded or Low-Quality Samples

Formalin-fixed paraffin-embedded (FFPE) tissues are a primary source for cancer diagnostics but are prone to nucleic acid degradation, which can hinder analysis or yield unreliable results [25] [26].

- Problem Identification: A common indicator is the failure to generate libraries of sufficient size or quantity for sequencing. This can manifest as low coverage, poor variant detection, or assay failure.

- Root Cause: The formalin fixation process causes cross-linking and fragmentation of DNA and RNA [25] [26]. Extended fixation times or suboptimal storage can exacerbate this degradation.

- Mitigation Strategies:

- Use Paired Fresh-Frozen (FF) Tissue: When possible, use FF tissue as a primary source. Studies demonstrate that FF tissues provide higher-quality genetic material, resulting in superior performance for detecting small variants, tumor mutational burden (TMB), and microsatellite instability (MSI) compared to FFPE samples [25] [26].

- Optimize Nucleic Acid Extraction: For FFPE samples, use specialized kits designed for cross-linked and fragmented nucleic acids, such as the AllPrep DNA/RNA FFPE kit, and incorporate a gentle deparaffinization step [26].

- Implement Robust QC: Quantify DNA using fluorometric methods (e.g., Qubit) and assess fragment size distribution with an instrument like the Agilent Bioanalyzer. Check purity by UV spectrophotometry and ensure the DNA has an A260/A280 ratio between 1.7 and 2.2 before library preparation [23].

- Employ DNA Repair Enzymes: Use dedicated FFPE DNA repair mixes during library preparation to correct damage caused by formalin fixation [27].

Challenge: Low Tumor Purity

Tumor purity, or the proportion of tumor cells in a sample, is a critical factor for accurate variant calling, especially for copy number alterations and homologous recombination deficiency (HRD) scoring [28].

- Problem Identification: Low tumor purity can lead to false-negative results for copy number variants (CNVs) and an underestimation of variant allele frequencies (VAFs). It is a major confounder for HRD score determination [28].

- Root Cause: The biopsy contains a high proportion of non-cancerous cells, such as stromal, immune, or normal epithelial cells.

- Mitigation Strategies:

- Enhance Tumor Purity Estimation: Move beyond conventional pathology estimates. Implement digital pathology to determine tumor cell content more accurately. Studies show conventional pathology can systematically overestimate tumor purity by ~8% compared to digital methods [28].

- Bioinformatic Correction: Use computational tools that explicitly account for tumor purity and ploidy during CNV and HRD analysis. Tools like Sequenza and ASCAT can incorporate tumor purity estimates to improve the accuracy of genomic instability scores [28]. For low-pass whole-genome sequencing (lpWGS), newer tools like BACDAC can calculate ploidy and purity even with low effective tumor coverage [29].

- Macrodissection: Prior to nucleic acid extraction, a pathologist should mark regions of interest on an H&E-stained slide. Manual macrodissection of these tumor-rich areas from subsequent sections can significantly enrich tumor cell content [23].
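
The effect of purity on variant allele frequency described above can be made concrete with a small calculation. The sketch below is illustrative only: it assumes a clonal somatic variant, a simple two-population mixture of tumor and normal cells, and a normal copy number of 2. The function name `expected_vaf` and its parameters are hypothetical and not taken from any of the cited tools.

```python
def expected_vaf(purity: float, mutant_copies: int = 1,
                 tumor_copy_number: int = 2,
                 normal_copy_number: int = 2) -> float:
    """Expected VAF of a clonal somatic variant in a tumor/normal mixture.

    VAF = (purity * mutant copies) /
          (purity * tumor copy number + (1 - purity) * normal copy number)
    """
    if not 0.0 <= purity <= 1.0:
        raise ValueError("purity must be between 0 and 1")
    mutant_alleles = purity * mutant_copies
    total_alleles = (purity * tumor_copy_number
                     + (1.0 - purity) * normal_copy_number)
    return mutant_alleles / total_alleles


# A heterozygous variant in a diploid region at decreasing tumor purity.
for purity in (0.8, 0.5, 0.2):
    print(f"purity={purity:.0%}  expected VAF={expected_vaf(purity):.2f}")
```

Under these assumptions, a heterozygous variant drops from an expected VAF of 0.40 at 80% purity to 0.10 at 20% purity, which is why low-purity samples push true variants toward the limit of detection.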

Challenge: Contamination and Sequencing Artifacts

Artifacts can be introduced at various stages, from sample handling to library preparation and sequencing, leading to false-positive variant calls [30] [31] [32].

- Problem Identification: The presence of unexpected low-VAF variants, specific patterns of "noise" on certain chromosomes (e.g., 7, 11, 16, 19), or chimeric reads in alignment files [33] [31] [32].

- Root Cause:

- Sample Handling: Cross-contamination between samples or contaminating salts and solvents [30] [27].

- Library Preparation: DNA fragmentation methods can introduce artifacts. Enzymatic fragmentation has been shown to generate significantly more artifactual SNVs and indels than sonication [32]. Biases in primer binding ("mispriming") also contribute [30].

- Sequencing Process: Unexplained run-specific noise events at discrete sequencing cycles can generate high-coverage noise sequences that mimic true alleles [31].

- Mitigation Strategies:

- Prevent Cross-Contamination: Sterilize workstations and tools thoroughly. Handle one sample at a time and include DNA-free negative controls in every batch to detect contamination [30].

- Choose Fragmentation Method Wisely: If possible, use sonication over enzymatic fragmentation to reduce artifact burden. If using enzymes, be aware of the potential for artifacts derived from palindromic sequences (PS) and inverted repeat sequences (IVS) [32].

- Automate Library Prep: Use liquid handling robots to minimize pipetting errors and inconsistencies, reducing batch effects and operator-related variability [30].

- Bioinformatic Filtering: Employ specialized algorithms to create artifact "blacklists." Tools like ArtifactsFinder can identify and filter variants likely caused by specific sequence structures in the genome [32].

Frequently Asked Questions (FAQs)

Q1: Our FFPE samples often fail NGS QC. What is the most effective way to improve success rates? A1: The most impactful step is to ensure high-quality input material. If available, prioritize using fresh-frozen (FF) tissue, as it provides higher-quality nucleic acids and reduces issues associated with FFPE samples [25] [26]. For FFPE, implement gentle, optimized extraction protocols with dedicated repair enzymes and rigorous QC of DNA quantity and size before proceeding to library prep [26] [27] [23].

Q2: How does tumor purity affect specific biomarkers like HRD scores, and how can we improve accuracy? A2: Homologous recombination deficiency (HRD) scoring is strongly dependent on accurate tumor purity [28]. Low purity leads to inaccurate allele-specific copy number calling, which directly impacts the HRD score. For correct determination, combine digital pathology for precise tumor cell content estimation with bioinformatic tools (e.g., Sequenza) that are informed by this purity value [28].

Q3: We see consistent, low-level noise on chromosomes 7, 11, 16, and 19 in our NGS data. Is this biological or technical? A3: This is likely a technical artifact. Studies in preimplantation genetic testing for aneuploidy (PGT-A) and other NGS applications have identified recurring artifacts on these specific chromosomes [33]. These are often introduced during whole genome amplification or library preparation and can be mistaken for true mosaicism or CNVs. Awareness of these common artifact locations is crucial, and repeating library preparation can help normalize them [33].

Q4: What are the best practices to minimize batch effects in library preparation? A4: To minimize batch effects:

- Randomize sample processing across different batches.

- Include positive controls in each batch to monitor performance [30].

- Use multiplexing kits that offer high auto-normalization to achieve consistent read depths across samples, reducing the need for individual normalization [30].

- Automate the library preparation process where possible to reduce operator-related variability [30].

Table 1: Impact of Sample Type on NGS Quality Metrics

This table summarizes key findings from a comparative study of 69 paired Fresh-Frozen (FF) and Formalin-Fixed Paraffin-Embedded (FFPE) samples using the Illumina TruSight Oncology 500 assay [25] [26].

| Quality Metric / Alteration Type | Performance in FF Samples | Performance in FFPE Samples | Concordance Note |

|---|---|---|---|

| Small Variants (SNVs/Indels) | Superior quality and detection | More prone to unreliable results | High concordance |

| Tumor Mutational Burden (TMB) | More reliable detection | Less reliable detection | High concordance |

| Microsatellite Instability (MSI) | More reliable detection | Less reliable detection | High concordance |

| Splice Variants | --- | --- | Lower concordance |

| Gene Fusions | --- | --- | Lower concordance |

| Copy Number Variants (CNVs) | --- | --- | Lower concordance |

Table 2: Comparison of Tumor Purity Estimation Methods

This table compares different methods for determining tumor purity, a critical parameter for accurate genomic analysis [28].

| Estimation Method | Principle | Advantages | Limitations |

|---|---|---|---|

| Conventional Pathology | Microscopic inspection of H&E slides by a pathologist. | Standard practice, readily available. | Systematically overestimates purity (~8% vs. digital). Subjective. |

| Digital Pathology | Digital image analysis of H&E slides using software (e.g., QuPath). | More accurate, quantitative, reproducible. | Requires specialized equipment and software. |

| Bioinformatic (Sequenza) | Computational estimation from WES data. | Does not require additional wet-lab work. | Accuracy depends on sequencing depth and sample quality. |

| Bioinformatic (Sclust) | Computational estimation from WES data. | Does not require additional wet-lab work. | Accuracy depends on sequencing depth and sample quality. |

Table 3: Common NGS Artifacts and Their Mitigation

This table outlines common artifacts, their characteristics, and strategies to address them [33] [31] [32].

| Artifact Type | Common Causes | How to Identify | Recommended Mitigation |

|---|---|---|---|

| Fragmentation Artifacts | Enzymatic or sonication fragmentation during library prep. | Chimeric reads with inverted repeat or palindromic sequences; low-VAF SNVs/indels. | Use sonication over enzymes; employ bioinformatic filters (e.g., ArtifactsFinder). |

| Run-specific Noise Spikes | Unexplained errors during sequencing cycles. | Spikes in substitutions/indels at specific cycle positions across an entire run. | Re-sequence the library; develop quality-based noise thresholds. |

| Chromosome-specific Artifacts | Errors in DNA amplification or library prep. | Recurrent aneuploidy-like signals on chr7, 11, 16, 19. | Be aware of common artifact locations; use updated NGS kits. |

| Sample Cross-Contamination | Improper sample handling. | Detection of alleles in negative controls; mixed profiles. | Use single-use reagents; handle one sample at a time; include negative controls. |

Experimental Protocols

Detailed Methodology: Comparative Analysis of FF and FFPE Samples

The following protocol is adapted from Loderer et al., which compared NGS metrics between paired FF and FFPE samples [26].

Sample Collection and Processing:

- Obtain informed consent and ethical approval.

- Immediately after surgical resection, deliver the unfixed specimen to the pathology lab.

- A pathologist selects a tumor tissue sample of sufficient volume and divides it into two adjacent parallel aliquots.

- FFPE Aliquot: Fixed in 10% neutral buffered formalin for 24 hours at room temperature, then processed and embedded in paraffin using standard clinical protocols.

- FF Aliquot: A tissue volume of ~3.4 mm³ is submerged in RNAprotect Tissue Reagent and stored at -80°C.

Nucleic Acid Extraction:

- FFPE DNA/RNA: Cut four 20 µm sections. Use the AllPrep DNA/RNA FFPE kit with a gentle deparaffinization step (incubation with solution at 56°C for 3 min). Elute in nuclease-free water [26].

- FF DNA/RNA: Extract from the frozen tissue aliquot using a compatible protocol.

Quality Assessment:

- Quantification: Use a fluorometer (e.g., Qubit) for dsDNA and RNA.

- Tumor Cell Content: For FFPE sections, a pathologist determines the tumor cell ratio (>20% required) from an H&E-stained slide subsequent to the sections used for extraction.

Library Preparation and Sequencing:

- Use the Illumina TruSight Oncology 500 (TSO 500) assay according to the manufacturer's instructions for comprehensive genomic profiling.

- Sequence on an appropriate Illumina platform (e.g., NovaSeq 6000).

Data Analysis:

- Annotate all identified alterations using clinical genomics software (e.g., PierianDx Clinical Genomics Workspace).

- Compare quality control metrics and variant concordance between the paired FF and FFPE samples.

Workflow Visualization

[Figure: NGS QC Troubleshooting Pathway. A systematic approach to addressing the three core QC challenges, leading from problem identification to validated solutions and reliable data output.]

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Tool | Primary Function | Application Context |

|---|---|---|

| RNAprotect Tissue Reagent | Stabilizes nucleic acids immediately after tissue resection to prevent degradation. | Preservation of RNA and DNA for Fresh-Frozen (FF) tissue biobanking [26]. |

| AllPrep DNA/RNA FFPE Kit | Simultaneous extraction of high-quality DNA and RNA from challenging FFPE tissue sections. | Nucleic acid isolation from archived clinical FFPE samples for comprehensive profiling [26]. |

| Illumina TruSight Oncology 500 (TSO 500) | Comprehensive hybrid-capture assay for detecting SNVs, CNVs, fusions, TMB, and MSI. | Genomic profiling of solid tumors in both FFPE and FF samples in a clinical research setting [25] [26]. |

| Qubit Fluorometer & dsDNA HS Assay | Highly accurate fluorescent quantification of double-stranded DNA concentration. | Critical quality control step to ensure adequate and accurate DNA input for library prep [26] [23]. |

| Agilent Bioanalyzer / TapeStation | Microfluidic electrophoresis for assessing DNA integrity and library fragment size distribution. | QC of extracted nucleic acids and final sequencing libraries to check for degradation and appropriate size selection [23]. |

| PierianDx Clinical Genomics Workspace | Cloud-based software for the annotation, interpretation, and reporting of NGS variants. | Analysis and clinical interpretation of variants detected by the TSO 500 assay [25] [26]. |

| Digital Pathology Software (e.g., QuPath) | Open-source software for digital image analysis to quantitatively assess tumor cell content. | Accurate and reproducible determination of tumor purity from H&E-stained slides [28]. |

From Data to Diagnosis: A Methodological Guide to NGS QC in Clinical Cancer Genomics

In cancer diagnostics research, the accuracy of next-generation sequencing (NGS) data is paramount. The first critical step in most NGS workflows, including whole-genome and transcriptome sequencing for tumor profiling, is the quality control (QC) of raw sequence data [34] [1]. This process helps identify issues that could compromise downstream analysis and lead to incorrect clinical interpretations.

FastQC is a widely used tool that provides a simple way to perform quality control checks on raw sequence data from high-throughput sequencing pipelines [35]. It offers a modular set of analyses to quickly assess whether your data has any problems you need to be aware of before proceeding with further analysis. For cancer researchers, this initial QC step is vital for ensuring the reliability of data used to identify genetic alterations, guide targeted therapies, and monitor disease progression [1] [23].

Understanding the FASTQ File Format

Before using FastQC, it's helpful to understand the data it analyzes. NGS raw data is typically stored in FASTQ files, which contain both the sequence reads and quality information for each base call [34].

Structure of a FASTQ File: Each sequence read in a FASTQ file consists of four lines:

- Line 1: Always begins with '@' followed by sequence identifier information

- Line 2: The actual nucleotide sequence

- Line 3: Always begins with a '+' character

- Line 4: Encoded quality scores for each base in Line 2 [34]
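
The four-line record structure above maps directly onto a small reader. The following is a minimal sketch in plain Python, assuming uncompressed, well-formed four-line records (no line-wrapped sequences); `FastqRecord` and `read_fastq` are illustrative names, not part of FastQC or any library.

```python
import io
from typing import Iterator, NamedTuple, TextIO


class FastqRecord(NamedTuple):
    identifier: str  # Line 1 without the leading '@'
    sequence: str    # Line 2
    quality: str     # Line 4 (ASCII-encoded quality scores)


def read_fastq(handle: TextIO) -> Iterator[FastqRecord]:
    """Yield records from a FASTQ stream, four lines at a time."""
    while True:
        header = handle.readline()
        if not header:
            return  # end of file
        header = header.rstrip("\r\n")
        if not header:
            continue  # tolerate blank lines between records
        sequence = handle.readline().rstrip("\r\n")
        separator = handle.readline().rstrip("\r\n")
        quality = handle.readline().rstrip("\r\n")
        # Reading by position matters: '@' is also a valid quality character,
        # so a record start cannot be detected from the first character alone.
        if not header.startswith("@"):
            raise ValueError(f"expected '@' at record start, got: {header!r}")
        if not separator.startswith("+"):
            raise ValueError(f"expected '+' separator, got: {separator!r}")
        if len(sequence) != len(quality):
            raise ValueError("sequence and quality lengths differ")
        yield FastqRecord(header[1:], sequence, quality)


example = io.StringIO("@read1\nACGT\n+\nIIII\n@read2\nGGCA\n+\n!!5I\n")
records = list(read_fastq(example))
for record in records:
    print(record.identifier, record.sequence, record.quality)
```

For gzip-compressed files, the same function could be given a handle opened with `gzip.open(path, "rt")`.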

Quality Score Encoding: The quality scores in Line 4 use Phred quality scores encoded in ASCII characters. The most common encoding is Phred+33 (fastqsanger). These scores represent the probability that a base was called incorrectly, calculated as Q = -10 × log₁₀(P), where P is the probability of an erroneous base call [34].

Table: Interpretation of Phred Quality Scores

| Phred Quality Score | Probability of Incorrect Base Call | Base Call Accuracy |

|---|---|---|

| 10 | 1 in 10 | 90% |

| 20 | 1 in 100 | 99% |

| 30 | 1 in 1,000 | 99.9% |

| 40 | 1 in 10,000 | 99.99% |

Each quality character maps to a score by subtracting 33 from its ASCII code (for Phred+33 encoding), so the quality of every nucleotide in a read can be determined directly from Line 4 [34].
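
As a worked example of the encoding and the formula Q = -10 × log₁₀(P), the sketch below decodes a Phred+33 quality string and converts each score back to an error probability. It assumes Phred+33 input; the function names are illustrative.

```python
from typing import List


def phred33_scores(quality_string: str) -> List[int]:
    """Decode a Phred+33 quality string into integer quality scores."""
    return [ord(character) - 33 for character in quality_string]


def error_probability(q: int) -> float:
    """Invert Q = -10 * log10(P) to get P = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)


quality_line = "!+5?I"  # ASCII codes 33, 43, 53, 63, 73
for character, q in zip(quality_line, phred33_scores(quality_line)):
    print(f"{character!r}: Q{q:<2d}  P(error) = {error_probability(q):.4f}")
```

The characters in the example were chosen to land on Q0, Q10, Q20, Q30, and Q40, matching the rows of the table above.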

Running FastQC: A Step-by-Step Protocol

Basic Command Line Usage

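The basic syntax for running FastQC from the command line is straightforward: `fastqc sample.fastq.gz`, where the file name is a placeholder for your own FASTQ file.

To process multiple files simultaneously, list them in a single invocation, for example `fastqc sample_R1.fastq.gz sample_R2.fastq.gz`.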
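
In a pipeline, the same invocation is often scripted. The following is a minimal sketch, assuming the `fastqc` executable is on the PATH and using its `--outdir` and `--threads` options; the helper names, file names, and default output directory are hypothetical.

```python
import shutil
import subprocess
from pathlib import Path
from typing import List, Sequence


def build_fastqc_command(fastq_files: Sequence[str],
                         outdir: str = "fastqc_out",
                         threads: int = 1) -> List[str]:
    """Assemble the argument list for a single FastQC invocation."""
    if not fastq_files:
        raise ValueError("at least one FASTQ file is required")
    return ["fastqc", "--outdir", outdir, "--threads", str(threads), *fastq_files]


def run_fastqc(fastq_files: Sequence[str], outdir: str = "fastqc_out",
               threads: int = 1) -> None:
    """Run FastQC, creating the output directory first."""
    if shutil.which("fastqc") is None:
        raise RuntimeError("fastqc executable not found on PATH")
    # FastQC expects the output directory to exist already.
    Path(outdir).mkdir(parents=True, exist_ok=True)
    subprocess.run(build_fastqc_command(fastq_files, outdir, threads), check=True)


# Show the command without executing it (file names are placeholders).
print(" ".join(build_fastqc_command(
    ["tumor_R1.fastq.gz", "tumor_R2.fastq.gz"], outdir="qc", threads=2)))
```

Passing the arguments as a list rather than a shell string avoids quoting problems with unusual file names.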

Output Files

After execution, FastQC generates:

- An HTML report (.html) containing the visual QC report

- A compressed data file (.zip) with the underlying data for each module [36]
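
The compressed archive can also be read programmatically, which is convenient for automated pass/fail triage. The sketch below assumes the archive contains a tab-separated `summary.txt` with one line per module in the order status, module name, file name; the function names and the in-memory example archive are illustrative only.

```python
import io
import zipfile
from typing import Dict


def parse_fastqc_summary(text: str) -> Dict[str, str]:
    """Map each module name to its PASS/WARN/FAIL status."""
    statuses: Dict[str, str] = {}
    for line in text.splitlines():
        fields = line.split("\t")
        if len(fields) < 2:
            continue  # skip blank or malformed lines
        status, module = fields[0], fields[1]
        statuses[module] = status
    return statuses


def summary_from_zip(zip_source) -> Dict[str, str]:
    """Read summary.txt from a FastQC zip (path or file-like object)."""
    with zipfile.ZipFile(zip_source) as archive:
        for name in archive.namelist():
            if name.endswith("summary.txt"):
                return parse_fastqc_summary(archive.read(name).decode("utf-8"))
    raise FileNotFoundError("summary.txt not found in archive")


# Build a tiny stand-in archive in memory so the example is self-contained.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr(
        "tumor01_fastqc/summary.txt",
        "PASS\tBasic Statistics\ttumor01.fastq.gz\n"
        "FAIL\tAdapter Content\ttumor01.fastq.gz\n",
    )
buffer.seek(0)
statuses = summary_from_zip(buffer)
print(statuses)
```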

Aggregating Multiple Reports with MultiQC

When working with multiple samples (common in cancer studies), use MultiQC to aggregate all FastQC reports into a single, interactive report by running `multiqc .` in the directory that holds them.

This command searches the current directory for FastQC reports and compiles them into one comprehensive HTML file [37].

Interpreting FastQC Reports: Key Modules and Cancer Research Context

FastQC reports consist of multiple analysis modules. Understanding how to interpret these in the context of your specific experiment is crucial.

Basic Statistics

- What it shows: File name, file type, encoding, total sequences, sequence length, and %GC content.

- What to look for: Ensure the number of sequences and GC content align with expectations for your organism and sample type [34] [36].

Per Base Sequence Quality

- What it shows: Distribution of quality scores at each position across all reads using a boxplot format.

- Interpretation guide:

- Expected pattern: Quality scores may start lower for the first few bases, then remain high before potentially declining toward the end of reads due to signal decay or phasing in Illumina sequencing [34] [36].

- Concerning patterns: Sudden drops in quality, consistently low quality across all positions, or large proportions of low-quality bases [34].

Per Base Sequence Content

- What it shows: Proportion of each nucleotide (A, T, C, G) at every position.

- Cancer research context: For RNA-seq data (common in cancer transcriptomics), this module typically shows biased nucleotide composition at the beginning of reads due to random hexamer priming. This is normal and expected, despite FastQC flagging it as a "FAIL" [34] [38] [36].

Per Sequence GC Content

- What it shows: Distribution of GC content across all sequences compared to a theoretical normal distribution.

- Interpretation guide: Sharp peaks or broad distributions may indicate contamination or over-represented sequences. In cancer research, this could reveal microbial contamination in tumor samples or highly expressed oncogenes [34] [39].

Sequence Duplication Levels

- What it shows: Percentage of sequences that are duplicated at various levels.

- Cancer research context: High duplication levels are expected in RNA-seq of tumor samples with highly expressed genes or in targeted amplicon sequencing. This may not indicate a problem but rather biological reality [38].

Overrepresented Sequences

- What it shows: Sequences that appear in more than 0.1% of the total reads.

- Troubleshooting tip: Use BLAST to identify overrepresented sequences that FastQC cannot match to its built-in contaminant list; these could indicate contaminants or highly expressed genes of interest in cancer pathways [34].
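
The 0.1% criterion can be illustrated with a simple count. This is a simplified sketch that counts every read exactly; FastQC applies its own sampling and read truncation for this module, which the sketch does not reproduce. The function name and example reads are hypothetical.

```python
import itertools
from collections import Counter
from typing import Iterable, List, Tuple


def overrepresented_sequences(sequences: Iterable[str],
                              threshold: float = 0.001) -> List[Tuple[str, float]]:
    """Return (sequence, fraction) pairs exceeding the threshold, highest first."""
    counts = Counter(sequences)
    total = sum(counts.values())
    if total == 0:
        return []
    hits = [(sequence, count / total)
            for sequence, count in counts.items()
            if count / total > threshold]
    return sorted(hits, key=lambda item: item[1], reverse=True)


# 1,997 distinct 6-mers plus three copies of an adapter-like 13-mer.
background = ["".join(bases)
              for bases in itertools.islice(itertools.product("ACGT", repeat=6), 1997)]
reads = ["AGATCGGAAGAGC"] * 3 + background
hits = overrepresented_sequences(reads)
for sequence, fraction in hits:
    print(f"{sequence}\t{fraction:.2%}")
```

Sequences reported this way are the ones worth checking against adapter lists or submitting to BLAST.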

Table: Common FastQC Warnings/Fails and Their Clinical Research Implications

| Module | Common Flag | Is This Concerning? | Potential Cause | Action |

|---|---|---|---|---|

| Per base sequence content | FAIL (RNA-seq) | Usually not | Random hexamer bias | Typically ignore for RNA-seq [38] |

| Per sequence GC content | WARN/FAIL | Possibly | Contamination, low diversity | Investigate further [34] |

| Sequence duplication | FAIL (RNA-seq) | Usually not | Highly expressed transcripts | Expected for RNA-seq [38] |

| Adapter content | FAIL | Yes | Adapter read-through | Trim adapters [37] |

Troubleshooting Common Quality Issues

Issue 1: Poor Quality at Read Ends

- Problem: Significant quality drop at the 3' end of reads.

- Cause: Expected signal decay in Illumina sequencing [34] [36].

- Solution: Trim low-quality ends using tools like Trimmomatic [37].

Issue 2: Adapter Contamination

- Problem: Detection of adapter sequences in reads.

- Cause: Library fragments shorter than read length.

- Solution: Trim adapter sequences before alignment [37] [38].

Issue 3: Unexpected GC Distribution

- Problem: GC content distribution doesn't match theoretical expectation.

- Cause: Could indicate contamination or specialized library type.

- Solution: Compare the distribution against what is expected for the organism and library type, and screen for contaminants. In cancer metagenomics studies, the shift may reflect microbial content of interest that warrants further investigation [39].

[Figure: Workflow diagram summarizing the key steps in raw data QC and troubleshooting.]

The Scientist's Toolkit: Essential Research Reagents and Software

Table: Essential Tools for NGS Quality Control in Cancer Research

| Tool/Reagent | Function/Purpose | Application Context |

|---|---|---|

| FastQC | Comprehensive quality control tool for raw NGS data | Initial QC for all NGS-based cancer studies [35] |

| MultiQC | Aggregate multiple QC reports into a single interface | Essential for studies with multiple patient samples [37] |

| Trimmomatic | Read trimming tool to remove adapters and low-quality bases | Pre-processing step after identifying QC issues [37] |

| Bioanalyzer/TapeStation | Quality control of nucleic acids before sequencing | Assess DNA/RNA integrity prior to library prep [23] |

| FFPE DNA/RNA Extraction Kits | Specialized kits for extracting nucleic acids from archived samples | Critical for cancer research using clinical archives [23] |

| Targeted Enrichment Panels | Gene panels for capturing cancer-relevant genes | Tumor profiling with focused gene sets [23] |

Frequently Asked Questions (FAQs)

Q1: My RNA-seq data failed the "Per base sequence content" module. Should I be concerned? A: Typically, no. This "failure" is expected for RNA-seq data because "random" hexamer priming during library preparation is not perfectly random, which creates biased nucleotide composition at the beginning of reads. This is a technical artifact of the method rather than an indication of poor data quality [34] [38] [36].

Q2: What percentage of reads is acceptable for adapter contamination? A: Any non-zero adapter content should be addressed, as adapters can interfere with alignment. Tools like Trimmomatic or Cutadapt can remove these sequences. Even a small percentage of adapter contamination is worth trimming before alignment [37].

Q3: How do I interpret high sequence duplication levels in my cancer RNA-seq data? A: High duplication levels may reflect biological reality rather than technical issues in cancer studies. Highly expressed oncogenes or tumor-specific transcripts will naturally produce duplicate reads. Only be concerned if duplication levels are extreme and correlate with other quality issues [38].

Q4: What quality threshold should I use for filtering cancer NGS data? A: While specific thresholds depend on your application, the generally recommended minimum quality score is Q20 (99% accuracy) for variant calling in cancer studies. However, more stringent thresholds (Q30) are preferred for detecting low-frequency variants in heterogeneous tumor samples [40].

Q5: How can I quickly compare quality metrics across multiple tumor samples? A: Use MultiQC, which automatically compiles FastQC reports from multiple samples into a single interactive report, allowing easy comparison of quality metrics across your entire sample set [37].

Effective quality control of raw NGS data using FastQC is a critical first step in ensuring the reliability of cancer genomics research. By understanding how to properly interpret FastQC reports in the context of specific experiment types—particularly recognizing which "failures" are expected for certain assays like RNA-seq—researchers can avoid discarding good data while identifying true quality issues that need addressing. Implementing robust QC practices enables more accurate detection of cancer-associated variants and ultimately supports the development of more precise diagnostic and therapeutic approaches.

In the context of cancer diagnostics research, the quality of next-generation sequencing (NGS) data directly determines the reliability of variant calling and subsequent clinical interpretations. Effective pre-processing of raw sequencing data is not merely a preliminary step but a fundamental component that ensures the detection of true somatic mutations, copy number variations, and fusion events while minimizing false positives caused by technical artifacts. Formalin-fixed paraffin-embedded (FFPE) tissues, widely used in oncology due to their long-term storage stability, present specific challenges including nucleic acid degradation and increased adapter contamination, making rigorous pre-processing essential for accurate comprehensive genomic profiling [25] [26].

This guide addresses common challenges researchers encounter during NGS pre-processing and provides troubleshooting solutions framed within the stringent requirements of cancer genomics, where identifying clinically actionable variants with high confidence is paramount.

Frequently Asked Questions (FAQs)

Q1: Why is adapter removal particularly crucial when working with FFPE-derived cancer samples?

Adapter contamination occurs when the DNA fragment being sequenced is shorter than the read length, resulting in the sequencing of adapter sequences ligated during library preparation. This is especially problematic with FFPE samples because formalin fixation causes DNA fragmentation, producing shorter inserts [25] [41]. When adapter sequences remain in reads, they can prevent correct alignment to the reference genome and lead to misleading mismatches that hinder accurate SNP calling and variant detection [41]. In cancer diagnostics, this can directly impact the identification of clinically significant variants used for treatment selection.
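
The read-through mechanism described above can be illustrated with a simplified 3' adapter trimmer. This sketch uses exact string matching only; production tools such as Cutadapt or AdapterRemoval tolerate mismatches and use alignment, which is not reproduced here. The function name, the `min_overlap` default, and the example reads are illustrative assumptions.

```python
def trim_3prime_adapter(read: str, adapter: str, min_overlap: int = 3) -> str:
    """Remove a 3' adapter (full or partial) from a read using exact matching."""
    # Case 1: the insert is short enough that the whole adapter was sequenced.
    position = read.find(adapter)
    if position != -1:
        return read[:position]
    # Case 2: the read ends partway into the adapter, so only a prefix of the
    # adapter is present at the 3' end. Try the longest prefix first.
    longest = min(len(adapter) - 1, len(read))
    for length in range(longest, min_overlap - 1, -1):
        if read.endswith(adapter[:length]):
            return read[:-length]
    return read  # no adapter detected


ADAPTER = "AGATCGGAAGAGC"  # common Illumina adapter prefix
print(trim_3prime_adapter("ACGTTGCAAGATCGGAAGAGCACAC", ADAPTER))  # full adapter present
print(trim_3prime_adapter("ACGTTGCAAGATCG", ADAPTER))             # partial adapter at 3' end
print(trim_3prime_adapter("ACGTTGCA", ADAPTER))                   # no adapter
```

A small `min_overlap` removes more adapter remnants but also trims a few genuine bases by chance, which is the same trade-off exposed by the overlap settings of real trimmers.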

Q2: What quality score threshold should I use for trimming low-quality bases in cancer panels?

For Illumina data used in cancer panel sequencing (e.g., TruSight Oncology 500), a minimum quality score (Q) of 30 is recommended, which corresponds to a base call accuracy of 99.9% [13] [42]. This stringent threshold ensures that only high-confidence bases contribute to variant calling. For platforms with inherently higher error rates, such as Oxford Nanopore Technologies, a lower threshold (e.g., Q7) may be appropriate [42]. Adapter removal should generally be performed before quality trimming, as in the protocol later in this guide, because adapters are easier to detect while the read is still intact.

Q3: How does sample type (FFPE vs. fresh-frozen) impact pre-processing decisions?

Fresh-frozen (FF) tissue generally yields higher-quality nucleic acids compared to FFPE samples. A recent study comparing paired FFPE and FF samples using the Illumina TruSight Oncology 500 assay demonstrated that FF tissue serves as a superior source of genetic material for detecting small variants, microsatellite instability, and tumor mutational burden [25] [26]. FFPE samples typically require more stringent quality trimming and often benefit from overlapping paired-read collapsing to reconstruct shorter fragments. When working with FFPE samples, consider implementing read merging to combine overlapping paired-end reads into single, higher-quality consensus sequences [41].

Q4: What metrics indicate successful pre-processing before proceeding to alignment?

After pre-processing, your data should meet these key quality indicators:

- Adapter Content: <0.1% in FastQC reports

- Per Base Sequence Quality: Q-score >30 across all bases

- Read Length Distribution: Majority of reads remain after trimming (minimally >70% of original length)

- Ambiguous Bases: N content <5% of total bases

Systematic removal of lower quality samples within datasets has been shown to improve the clustering of disease and control samples in downstream analyses [40].

Q5: When should I use read merging versus maintaining paired-end information?

Read merging (collapsing) is recommended when sequencing short inserts from fragmented DNA, such as that from FFPE samples, where paired-end reads overlap. Merging overlapping reads generates a single, higher-quality consensus sequence and can significantly improve the detection of true variants [41] [42]. However, for non-overlapping pairs or when analyzing structural variants where paired-end information is crucial for detection, maintain the separate paired reads. Tools like AdapterRemoval v2 can identify overlapping regions and merge reads in a quality-aware manner while preserving non-overlapping pairs [43].
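
To show what merging involves, the sketch below collapses a read pair by finding the longest exact overlap between read 1 and the reverse complement of read 2. It is a deliberate simplification: it requires a perfect match in the overlap, ignores base qualities, and does not handle inserts shorter than the read length, whereas tools such as AdapterRemoval v2 merge in a quality-aware manner. All names and the example fragment are hypothetical.

```python
from typing import Optional

_COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(sequence: str) -> str:
    """Return the reverse complement of a DNA sequence."""
    return sequence.translate(_COMPLEMENT)[::-1]


def merge_pair(read1: str, read2: str, min_overlap: int = 10) -> Optional[str]:
    """Merge a read pair on the longest exact overlap, or return None."""
    read2_forward = reverse_complement(read2)
    longest = min(len(read1), len(read2_forward))
    for overlap in range(longest, min_overlap - 1, -1):
        if read1[-overlap:] == read2_forward[:overlap]:
            return read1 + read2_forward[overlap:]
    return None  # keep as a separate pair


# A 32 bp fragment sequenced with 24 bp paired-end reads (16 bp overlap).
fragment = "ACGTTGCATGCCGATAGCTAGGCTTAACGGAT"
read1 = fragment[:24]
read2 = reverse_complement(fragment[-24:])
merged = merge_pair(read1, read2)
print(merged == fragment)
```

Returning `None` for pairs without sufficient overlap mirrors the recommendation above to keep non-overlapping pairs separate, so that paired-end information remains available for structural variant detection.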

Experimental Protocols: Implementing a Robust Pre-Processing Workflow

Standardized Quality Control Protocol for Raw Sequencing Data

- Initial Quality Assessment: Run FastQC on raw FASTQ files to generate baseline quality metrics including per base sequence quality, adapter content, and GC content [13] [40].

- Multi-Tool QC Verification: Use multiple QC tools to increase sensitivity and specificity of problem detection. Combine FastQC with platform-specific tools like NanoPlot for long-read data [13] [40].

- Quality Metric Documentation: Record key metrics including total reads, Q30 score, GC content, and adapter contamination levels for inclusion in experimental records.

- Sample Quality Classification: Implement data-driven guidelines, such as those derived from ENCODE project analysis, to classify files by quality based on thresholds appropriate for your specific experimental conditions [40].

Comprehensive Trimming and Adapter Removal Protocol

[Figure: Workflow diagram illustrating the sequential steps for comprehensive NGS data pre-processing.]

Detailed Protocol Steps:

- Demultiplexing: Separate multiplexed samples by barcodes using tools like BBDuk or AdapterRemoval v2's demultiplexing function. Always perform this step before adapter trimming [42].

- Adapter Trimming: Use AdapterRemoval v2 (for high throughput and accurate alignment-based detection) or Cutadapt with appropriate adapter sequences. For Illumina data, use standard adapter sequences provided by the manufacturer [41] [13].

- Quality Trimming: Trim low-quality bases from read ends using a sliding window approach. For Illumina data in cancer applications, use a minimum quality threshold of Q30 [42]. Trim ambiguous bases (N) from both ends of reads.

- Read Merging: For paired-end data with overlapping reads, use AdapterRemoval v2 or BBMerge to combine read pairs into single consensus sequences with recalculated quality scores [41] [42].

- Length Filtering: Discard reads falling below a minimum length threshold (typically 20-25 bp) as these are unlikely to map uniquely to the reference genome.

- Post-Processing QC: Re-run FastQC on trimmed files to verify improvement in quality metrics and ensure adapter contamination has been successfully removed [13].
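
The quality trimming and length filtering steps above can be sketched as follows. This is a simplified illustration of a sliding-window approach, not a reimplementation of Trimmomatic or any other tool: it scans from the 5' end, cuts at the start of the first window whose mean quality falls below the threshold, and then discards reads that end up too short. Function names and defaults (window of 4, Q30, 20 bp) are assumptions chosen to match the values discussed in this guide.

```python
from typing import Optional, Tuple


def sliding_window_trim(sequence: str, quality: str, window: int = 4,
                        min_mean_q: float = 30.0,
                        offset: int = 33) -> Tuple[str, str]:
    """Cut the read where the windowed mean quality first drops below threshold."""
    scores = [ord(character) - offset for character in quality]
    for start in range(len(scores)):
        current = scores[start:start + window]  # shorter windows near the 3' end
        if sum(current) / len(current) < min_mean_q:
            return sequence[:start], quality[:start]
    return sequence, quality


def trim_and_filter(sequence: str, quality: str, min_length: int = 20,
                    **trim_options) -> Optional[Tuple[str, str]]:
    """Quality-trim a read, then drop it if it is shorter than min_length."""
    trimmed_sequence, trimmed_quality = sliding_window_trim(
        sequence, quality, **trim_options)
    if len(trimmed_sequence) < min_length:
        return None
    return trimmed_sequence, trimmed_quality


# Seven Q40 bases ('I') followed by three Q2 bases ('#').
print(sliding_window_trim("ACGTACGTAC", "IIIIIII###"))
print(trim_and_filter("ACGTACGTAC", "IIIIIII###", min_length=20))
```

In the example, the window starting at the sixth base has a mean quality of 21, so the read is cut to its first five bases and then removed by the 20 bp length filter.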

Tool Comparison and Selection Guide

Table 1: Comparison of Adapter Trimming and Quality Control Tools

| Tool | Primary Function | Strengths | Considerations for Cancer Genomics |

|---|---|---|---|

| AdapterRemoval v2 [41] [43] | Adapter trimming, read merging | High throughput with SIMD optimization, handles multiple adapter sets, quality-aware merging | Particularly suitable for FFPE samples with short inserts; improves mutation detection in low-quality samples |

| Cutadapt [13] [44] | Adapter trimming | Simple workflow, precise adapter sequence matching | Effective for standard adapter layouts; may struggle with highly degraded samples |

| Trimmomatic [13] [44] | Quality trimming, adapter removal | Sliding window quality trimming, multi-threaded | Provides flexible trimming parameters for different quality thresholds |

| FastQC [13] [40] | Quality control | Comprehensive visual report, established standard | Requires experience to interpret results in context of cancer genomics; compare against ENCODE guidelines [40] |

| BBDuk [42] | Trimming, filtering | Integrated in Geneious Prime, user-friendly interface | Good for labs using Geneious ecosystem; may lack advanced features of command-line tools |

Table 2: Key Quality Metrics and Target Thresholds for Cancer NGS Data

| Quality Metric | Calculation Method | Target Threshold | Impact on Cancer Variant Calling |

|---|---|---|---|

| Q30 Score [13] | Percentage of bases with quality score ≥30 | >80% | Higher scores reduce false positive variant calls |

| Adapter Content [41] | Percentage of reads containing adapter sequence | <0.1% | Prevents misalignment that can obscure true somatic variants |

| Reads Passing Filters [13] | Percentage of reads retained after trimming | >70% | Ensures sufficient coverage for detecting low-frequency variants |

| Average Read Length | Mean length after trimming | >50 bp (FFPE), >75 bp (FF) | Longer reads improve mapping accuracy and fusion detection |

| Unmapped Read Rate [40] | Percentage of reads failing to align | <10% | High rates may indicate persistent adapter content or quality issues |
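
Several thresholds in the table above lend themselves to an automated check. The sketch below computes the Q30 percentage from Phred+33 quality strings and compares a sample's metrics against the table's targets. The function names and the example values are hypothetical, and the thresholds are simply those listed in the table rather than universal standards.

```python
from typing import Dict, Iterable


def percent_q30(quality_strings: Iterable[str], offset: int = 33) -> float:
    """Percentage of bases with a quality score of at least 30."""
    total_bases = 0
    q30_bases = 0
    for quality in quality_strings:
        for character in quality:
            total_bases += 1
            if ord(character) - offset >= 30:
                q30_bases += 1
    return 100.0 * q30_bases / total_bases if total_bases else 0.0


def check_sample(pct_q30: float, pct_adapter: float,
                 pct_reads_retained: float, pct_unmapped: float) -> Dict[str, bool]:
    """Return True for each metric that meets its target threshold."""
    return {
        "q30": pct_q30 > 80.0,
        "adapter_content": pct_adapter < 0.1,
        "reads_retained": pct_reads_retained > 70.0,
        "unmapped_rate": pct_unmapped < 10.0,
    }


# Two toy reads: 7 of 10 bases are at or above Q30.
q30 = percent_q30(["IIII?", "II5#!"])
result = check_sample(q30, pct_adapter=0.05, pct_reads_retained=85.0, pct_unmapped=4.0)
print(f"Q30 = {q30:.1f}%")
for metric, passed in result.items():
    print(f"{metric}: {'PASS' if passed else 'FAIL'}")
```

Recording the per-metric outcome, rather than a single pass/fail flag, makes it easier to see which step of pre-processing needs to be revisited.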

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Platforms for NGS Pre-processing

| Reagent/Solution | Function | Application in Cancer NGS |

|---|---|---|

| Illumina TruSight Oncology 500 [25] [26] | Comprehensive genomic profiling assay | Simultaneously analyzes 523 cancer-related genes for small variants, fusions, CNVs, TMB, and MSI |

| AllPrep DNA/RNA FFPE Kit [26] | Nucleic acid extraction | Simultaneous DNA/RNA extraction from precious FFPE samples; maximizes yield from limited material |

| Qubit dsDNA HS Assay [26] [23] | DNA quantification | Fluorometric measurement specific for double-stranded DNA; more accurate for FFPE samples than spectrophotometry |

| Agilent SureSelectXT Target Enrichment [23] | Library preparation | Hybrid capture-based target enrichment for focused cancer panels; effective with degraded DNA |

| Agilent High Sensitivity DNA Kit [23] | Library quality control | Assesses size distribution and quantity of sequencing libraries before sequencing |

Troubleshooting Common Issues

Problem: High adapter content persists after trimming. Solution: Verify you're using the correct adapter sequences for your library preparation kit. For Illumina data, standard adapters are publicly available [13]. For paired-end reads, use tools like AdapterRemoval v2 that leverage information from both reads to identify adapter contamination with higher sensitivity, even for very short adapter fragments [41] [43].

Problem: Excessive read loss during quality trimming. Solution: If >50% of reads are discarded, consider relaxing the quality threshold (to Q20) while increasing sequencing depth to compensate. For FFPE samples with inherent quality issues, implement read merging to rescue reads that would otherwise be discarded [41]. Always assess input DNA quality using methods like the Agilent TapeStation to identify samples with severe degradation before sequencing [13].

Problem: Poor concordance in variant detection between FFPE and fresh-frozen pairs. Solution: This is a recognized challenge in cancer genomics. Focus on optimizing pre-processing parameters specifically for FFPE samples. A recent study found lower concordance for splice variants, fusions, and copy number variants compared to small variants when comparing FFPE and fresh-frozen pairs [25] [26]. Consider using fresh-frozen tissue as the primary source when possible, or apply specialized FFPE-optimized pre-processing workflows.
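To make the effect of the quality threshold on read loss concrete, the sketch below implements a simplified sliding-window trim. It is written in the spirit of window-based trimmers but is not Trimmomatic's exact algorithm; the function names, the window size of 4, and the Phred+33 encoding are assumptions made for illustration.

```python
def phred_scores(quality_string, offset=33):
    """Decode a FASTQ quality string (assumed Phred+33) into integer scores."""
    return [ord(char) - offset for char in quality_string]


def sliding_window_trim(sequence, quality_string, window=4, min_mean_q=20):
    """Cut the read at the start of the first window whose mean quality
    falls below min_mean_q. Reads shorter than the window are returned
    unchanged."""
    scores = phred_scores(quality_string)
    if len(sequence) != len(scores):
        raise ValueError("sequence and quality string must have equal length")
    for start in range(0, max(len(scores) - window + 1, 0)):
        window_scores = scores[start:start + window]
        if sum(window_scores) / window < min_mean_q:
            return sequence[:start], quality_string[:start]
    return sequence, quality_string


# Toy read: six high-quality bases ('I' = Q40) followed by four poor ones ('#' = Q2).
read = "ACGTACGTAC"
qual = "IIIIII####"
print(sliding_window_trim(read, qual, min_mean_q=30))
print(sliding_window_trim(read, qual, min_mean_q=20))
```

On this toy read, the relaxed Q20 threshold keeps one more base than Q30, which illustrates why lowering the threshold can rescue reads that would otherwise fall below a minimum-length filter.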

Implementing rigorous pre-processing practices for adapter removal and quality trimming establishes the foundation for reliable cancer genomic analysis. The selection of appropriate tools and thresholds should be guided by sample type (FFPE vs. fresh-frozen), sequencing platform, and specific research questions. By adhering to the protocols and troubleshooting guidelines presented here, researchers can significantly improve the quality of their NGS data, leading to more accurate detection of cancer-associated variants and ultimately, more reliable diagnostic and therapeutic decisions.

Fundamental Concepts and Definitions

What is Variant Allele Frequency (VAF) and how is it calculated? Variant Allele Frequency (VAF) is a critical metric in next-generation sequencing (NGS): the proportion of sequencing reads covering a given genomic position that contain a specific genetic variant. The basic calculation formula is:

VAF = (Number of reads containing the variant) / (Total reads at that position) × 100%

For example, if a targeted NGS panel yields 1,000 reads at a given position and 50 of those reads show a variant, the VAF would be calculated as 5% [45]. In oncology, VAF is particularly valuable as it provides insights into tumor heterogeneity, clonal evolution, and can serve as a biomarker for monitoring treatment response and disease progression [46].
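The calculation above is simple enough to express directly. The function name below is illustrative; the input checks are an added convenience rather than part of the definition.

```python
def variant_allele_frequency(variant_reads, total_reads):
    """Return VAF as a percentage of reads supporting the variant."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    if not 0 <= variant_reads <= total_reads:
        raise ValueError("variant_reads must be between 0 and total_reads")
    return 100.0 * variant_reads / total_reads


# The worked example from the text: 50 variant reads out of 1,000.
print(variant_allele_frequency(50, 1000))  # 5.0
```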

How do VAF sensitivity and specificity differ in clinical NGS applications? In NGS-based cancer diagnostics, VAF sensitivity refers to the ability to correctly detect low-frequency variants present in a small percentage of cells, which is crucial for applications like minimal residual disease (MRD) monitoring. VAF specificity indicates the assay's ability to distinguish true variants from sequencing errors and false positives, ensuring that reported variants are biologically real rather than technical artifacts [45].

These two metrics trade off against each other: as the detection threshold is lowered to capture lower-VAF variants, background technical noise makes false positives more likely, so specificity becomes harder to maintain. Achieving an optimal balance requires careful consideration of sequencing depth, error rates, and bioinformatic filtering strategies [45] [47].

Technical Factors Influencing VAF Performance

What is the relationship between sequencing depth and VAF sensitivity? Sequencing depth (coverage) directly determines VAF sensitivity, with deeper sequencing enabling more reliable detection of low-frequency variants. The probabilistic nature of sequencing means that with limited reads, there is higher uncertainty in VAF measurement and greater potential to miss rare variants [45].

The table below illustrates how sequencing depth affects confidence in detecting a 1% VAF variant:

| Sequencing Depth | Variant Reads | Confidence in 1% VAF | Recommended Application |

|---|---|---|---|

| 100x | ~1 read | Low: High probability of missing variant | Germline variants (~50% VAF) |

| 1,000x | ~10 reads | Moderate: Suitable for higher VAF somatic variants | Routine somatic testing |

| 10,000x | ~100 reads | High: Reliable low VAF detection | MRD, liquid biopsy, resistance mutations |

Higher sequencing depth reduces the impact of sampling effects and sequencing errors, providing greater confidence in VAF calculations. For instance, detecting a single variant read out of 100 total reads (1% VAF) has high uncertainty, whereas detecting 100 variant reads out of 10,000 total reads (same 1% VAF) provides substantially more reliable measurement [45]. This principle is particularly important in hematological malignancies and solid tumors where detecting clonal mutations at low frequencies is crucial for clinical decision-making [45].
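The sampling effect described here can be quantified with a binomial model. The sketch below assumes reads are sampled independently and that a caller requires at least five supporting reads before reporting a variant; that threshold and the function name are assumptions chosen for illustration, and sequencing error is ignored.

```python
from math import comb


def prob_at_least(min_variant_reads, depth, vaf):
    """P(X >= min_variant_reads) for X ~ Binomial(depth, vaf)."""
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_variant_reads)
    )
    return 1.0 - p_fewer


# Probability of seeing at least 5 variant reads for a true 1% VAF variant.
for depth in (100, 1000, 10000):
    print(depth, round(prob_at_least(5, depth, 0.01), 4))
```

Under these assumptions the probability is well below 1% at 100x, above 95% at 1,000x, and essentially 1 at 10,000x, which matches the qualitative ranking in the table above.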

What methodological factors affect VAF sensitivity and specificity? Multiple technical factors throughout the NGS workflow influence VAF performance:

Tumor Purity: The percentage of tumor cells in the sample sets the expected VAF. A heterozygous mutation present in all tumor cells will show a VAF of approximately 50% in a diploid genome with 100% tumor purity, and proportionally less in samples with lower tumor content [48].

Sample Type: Formalin-fixed paraffin-embedded (FFPE) tissues may exhibit DNA damage that introduces artifacts, reducing specificity. Circulating tumor DNA (ctDNA) samples typically have very low VAF variants (often <1%), requiring exceptional sensitivity [47].

Library Preparation Method: Hybrid capture-based methods generally offer better uniformity and fewer amplification artifacts compared to amplicon-based approaches, though the latter can achieve higher depth with less sequencing [48].

Unique Molecular Identifiers (UMIs): Incorporating UMIs during library preparation improves specificity by enabling error correction and distinguishing true biological variants from PCR and sequencing errors [10].

Bioinformatic Pipelines: Variant calling algorithms significantly impact both sensitivity and specificity. Combining multiple callers and implementing sophisticated filtering strategies can enhance performance, particularly for low-VAF variants [47].
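The tumor purity effect listed above can be written as a short formula. The sketch below assumes a clonal mutation (present in every tumor cell) and a diploid normal compartment; the function and parameter names are illustrative.

```python
def expected_vaf(purity, mutant_copies=1, tumor_copy_number=2, normal_copy_number=2):
    """Expected VAF (as a fraction) of a clonal mutation.

    Mutant alleles come only from tumor cells; the denominator counts all
    alleles at the locus contributed by tumor and normal cells.
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    mutant_alleles = purity * mutant_copies
    total_alleles = purity * tumor_copy_number + (1 - purity) * normal_copy_number
    return mutant_alleles / total_alleles


print(expected_vaf(1.0))  # heterozygous, diploid, pure tumor
print(expected_vaf(0.4))  # same mutation at 40% tumor purity
```

For a heterozygous mutation in a diploid tumor this reduces to half the purity, so a sample with 40% tumor content is expected to show roughly 20% VAF.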

Validation and Quality Control Methods

What are the recommended approaches for validating VAF sensitivity? Robust validation of VAF sensitivity requires carefully designed experiments using reference materials with known mutation frequencies:

Limit of Detection (LOD) Studies: Determine the minimum VAF detectable with high confidence by testing serial dilutions of reference standards. For example, one study established a minimum detectable VAF of 2.9% for both SNVs and INDELs using a 61-gene oncopanel [49].

Titration Experiments: Assess performance across a range of VAFs and DNA inputs. One validation study demonstrated that ≥50ng DNA input was necessary to reliably detect all expected mutations, with sensitivity declining substantially at lower inputs [49].

Precision Studies: Evaluate repeatability (intra-run precision) and reproducibility (inter-run precision) through replicate testing. One reported assay achieved 99.99% repeatability and 99.98% reproducibility for variant detection [49].

The wet-lab protocol for VAF sensitivity validation typically involves:

- Obtain characterized reference standards with known mutations

- Prepare serial dilutions in wild-type DNA to simulate different VAF levels (e.g., 10%, 5%, 2.5%, 1%)

- Process dilutions through entire NGS workflow in replicates

- Sequence with intended coverage depth

- Analyze variant calling performance at each VAF level

- Establish LOD as the lowest VAF where variants are detected with ≥95% probability [48] [49]
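The final step of the protocol above can be sketched as follows. The replicate outcomes are invented for illustration and do not come from any cited study; the function name and the rule that every higher dilution level must also pass are assumptions.

```python
from typing import Dict, List, Optional


def limit_of_detection(results: Dict[float, List[bool]],
                       min_rate: float = 0.95) -> Optional[float]:
    """Return the lowest VAF level (in %) for which that level and every
    higher level reach the required detection rate, or None if none does."""
    lod = None
    for vaf in sorted(results, reverse=True):
        calls = results[vaf]
        if not calls:
            raise ValueError(f"no replicates recorded for VAF {vaf}%")
        detection_rate = sum(calls) / len(calls)
        if detection_rate < min_rate:
            break
        lod = vaf
    return lod


# Hypothetical replicate outcomes (True = expected variant was called).
dilution_results = {
    10.0: [True] * 20,
    5.0: [True] * 20,
    2.5: [True] * 20,
    1.0: [True] * 12 + [False] * 8,
}
print(limit_of_detection(dilution_results))
```

With 20 replicates per level, a single missed call already brings the observed rate to the 95% boundary, so the number of replicates should be chosen with the desired confidence in mind.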

What quality control metrics ensure reliable VAF measurement? Implementing comprehensive QC checks throughout the NGS workflow is essential:

Pre-analytical QC: Pathologist review of solid tumor samples to estimate tumor cell percentage; DNA quality and quantity assessment [48].

Sequencing QC: Monitor metrics including average base call quality (Q-score ≥20 expected), percentage of target regions covered at minimum depth (e.g., ≥100x), and coverage uniformity (>99% ideal) [49].

Bioinformatic QC: Novel methods like EphaGen estimate the probability of missing variants from a defined spectrum, providing diagnostic sensitivity estimation superior to conventional coverage metrics [50].

Internal Standards: Synthetic spike-in controls enable calculation of technical error rates, limit of blank, and limit of detection for each variant position in each sample [10].
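The sequencing QC metrics above can be computed from per-base depths over the target regions. This is a minimal sketch: the source does not define coverage uniformity, so the definition used here (percentage of target bases at or above 0.2 times the mean depth) is an assumption, as are the function name and the toy depths.

```python
def coverage_summary(depths, min_depth=100, uniformity_fraction=0.2):
    """Summarize per-base depths over the target regions."""
    if not depths:
        raise ValueError("depths must not be empty")
    n = len(depths)
    mean_depth = sum(depths) / n
    at_min_depth = sum(d >= min_depth for d in depths)
    near_mean = sum(d >= uniformity_fraction * mean_depth for d in depths)
    return {
        "mean_depth": mean_depth,
        "pct_at_min_depth": 100 * at_min_depth / n,
        "pct_uniform": 100 * near_mean / n,
    }


# Toy target of ten bases with one coverage dropout.
summary = coverage_summary([500] * 9 + [50])
print(summary)
```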

The following workflow diagram illustrates the key stages where QC metrics should be applied in NGS testing:

Troubleshooting Common VAF Issues

How can I improve detection of low-VAF variants? Several strategies can enhance sensitivity for low-frequency variants:

Increase Sequencing Depth: Higher coverage directly improves low-VAF detection. One study recommended depths >1,000x for reliable detection of variants below 5% VAF [47].

Implement UMIs: Unique Molecular Identifiers enable accurate error correction and improve signal-to-noise ratio, facilitating detection of variants at frequencies as low as 0.1% with certain technologies [10].

Optimize Bioinformatics: Employ specialized variant callers designed for low-frequency variants (e.g., LoFreq) and implement stringent filtering against background error profiles [47].

Fragment Size Selection: For ctDNA analysis, select shorter DNA fragments (∼100–150 bp) which are enriched for tumor-derived DNA compared to longer fragments from non-malignant cells [46].
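The UMI-based error correction mentioned above works by collapsing reads that share a UMI into one consensus call per original molecule. The sketch below shows the idea at a single genomic position. It is a simplified illustration, not the method of any particular tool, and the family-size and agreement thresholds are assumed values.

```python
from collections import Counter, defaultdict


def umi_consensus(observations, min_family_size=3, min_agreement=0.6):
    """Collapse (umi, base) observations at one position into one consensus
    base per UMI family. Small or discordant families are discarded."""
    families = defaultdict(list)
    for umi, base in observations:
        families[umi].append(base)

    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few reads to trust the family
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base
    return consensus


# Toy data: reference base C, candidate variant T.
reads = (
    [("AAC", "T")] * 3                               # true variant molecule
    + [("GGT", "C"), ("GGT", "C"), ("GGT", "T")]     # one PCR/sequencing error
    + [("TTA", "C")] * 4                             # reference molecule
    + [("CAG", "C")]                                 # singleton, discarded
)
calls = umi_consensus(reads)
print(calls)
```

In the toy data the raw reads contain four T observations, but only one of the three retained molecules carries the variant, because the isolated T in the second family is outvoted by the other reads from the same molecule.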

What are common causes of false positive VAF results and how can they be mitigated? False positive variant calls can arise from multiple sources:

FFPE Artifacts: Cytosine deamination in FFPE samples causes C>T/G>A artifacts. Mitigation strategies include using damage-repair enzymes, duplex sequencing, and bioinformatic filters [48].

Clonal Hematopoiesis: Somatic mutations in blood cells can be misattributed as tumor variants. Sequencing matched normal DNA (e.g., from peripheral blood) enables identification and filtering of these variants [46].

PCR Errors: Amplification artifacts introduced during library preparation can mimic low-frequency variants. Using high-fidelity polymerases, limiting PCR cycles, and implementing UMIs can reduce these errors [45] [10].

Mapping Errors: Reads from repetitive or homologous regions can be aligned incorrectly, producing spurious variant calls. Improved alignment algorithms and manual inspection of difficult genomic regions can address this issue [48].
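As one example of a bioinformatic filter for the FFPE artifacts described above, low-VAF C>T/G>A calls can be flagged for closer review. The 5% cutoff and the function name below are illustrative assumptions, not validated values, and a real pipeline may also weigh other evidence such as read orientation.

```python
# Substitution classes associated with cytosine deamination (both strands).
DEAMINATION_LIKE = {("C", "T"), ("G", "A")}


def is_possible_ffpe_artifact(ref, alt, vaf_pct, max_vaf_pct=5.0):
    """Flag single-base C>T or G>A calls below an assumed VAF cutoff."""
    substitution = (ref.upper(), alt.upper())
    return substitution in DEAMINATION_LIKE and vaf_pct < max_vaf_pct


print(is_possible_ffpe_artifact("C", "T", 2.1))   # low-VAF C>T: flagged
print(is_possible_ffpe_artifact("C", "T", 35.0))  # high-VAF C>T: not flagged
print(is_possible_ffpe_artifact("A", "G", 2.1))   # other substitution: not flagged
```

A flag of this kind is best treated as a prompt for review rather than automatic removal, since true low-frequency C>T mutations also occur.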

The following table outlines common issues and solutions for VAF specificity:

| Problem | Potential Causes | Recommended Solutions |

|---|---|---|

| High false positive rate | FFPE damage, PCR errors, clonal hematopoiesis | Use UMIs, repair enzymes, matched normal sequencing, bioinformatic filtering [46] [48] [10] |

| Inconsistent VAF measurements | Low sequencing depth, coverage dropouts | Increase coverage (>1,000x), improve library uniformity, optimize target enrichment [45] [49] |

| Systematic VAF underestimation | Allele dropout, amplification bias | Use hybrid capture methods, optimize primer/probe design, validate with orthogonal methods [48] |

| High variant calling variability | Inadequate bioinformatic parameters | Standardize variant calling pipelines, use multiple callers, implement machine learning approaches [50] [49] |

Clinical Applications and Interpretation

What VAF thresholds are clinically relevant in cancer diagnostics? Clinically relevant VAF thresholds vary by application and sample type: