Defining Precision: A Comprehensive Guide to the Analytical Sensitivity and Specificity of Next-Generation Sequencing Panels

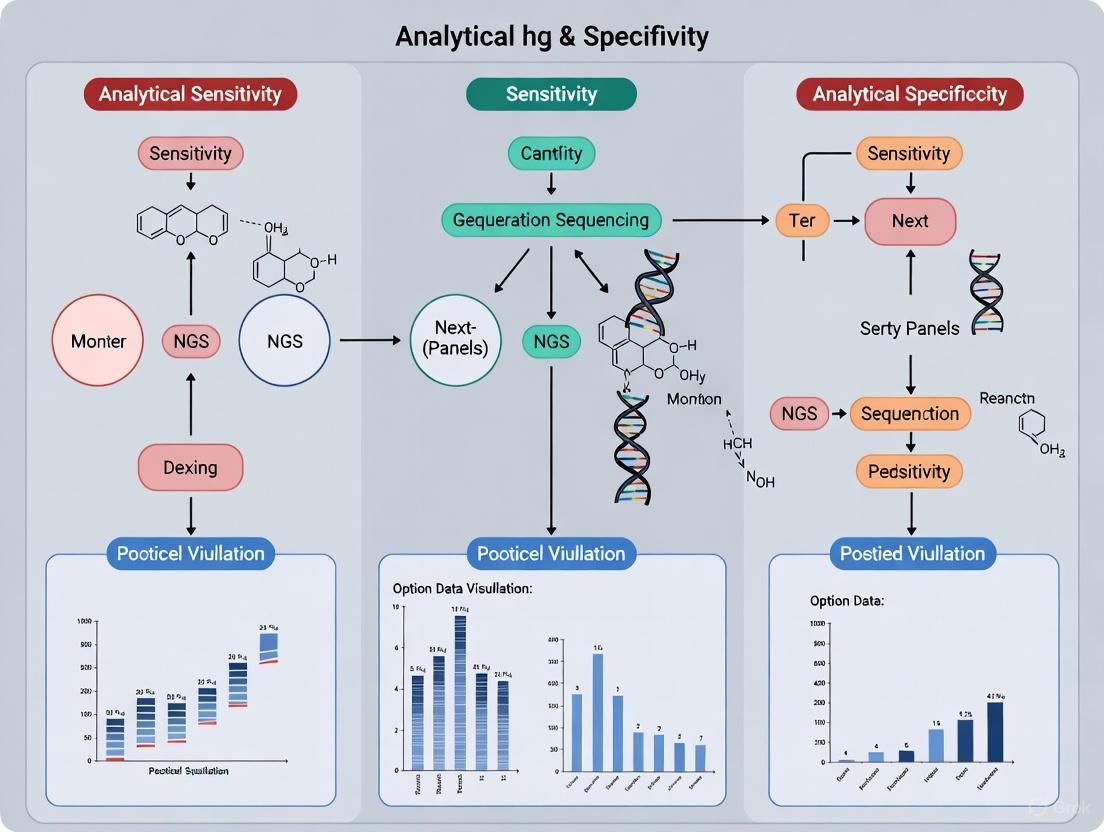


Abstract

This article provides a comprehensive overview of the critical performance metrics—analytical sensitivity and specificity—for Next-Generation Sequencing (NGS) panels in a clinical and research context. Aimed at researchers, scientists, and drug development professionals, it covers foundational definitions, methodological approaches for assay design and implementation, strategies for troubleshooting and optimization, and rigorous frameworks for test validation and comparison. By synthesizing current guidelines, best practices, and recent validation data, this resource aims to support the development of robust, reliable NGS assays that underpin precision medicine and therapeutic development.

Core Concepts: Understanding Sensitivity and Specificity in NGS Diagnostics

Next-generation sequencing (NGS) has revolutionized genomic analysis across basic research, clinical diagnostics, and drug development. The technology's value in these high-stakes applications hinges entirely on the rigorous demonstration of its analytical performance. For researchers, scientists, and drug development professionals, understanding and verifying the key metrics of analytical sensitivity, specificity, precision, and accuracy is not merely a technical formality but a fundamental requirement for generating reliable, interpretable, and actionable data.

These metrics form the backbone of assay validation, providing a quantitative framework to assess how well an NGS test performs against a known reference. They answer critical questions: Can the test reliably detect true positive mutations (sensitivity)? Can it correctly identify true negative results (specificity)? Are the results consistent across repeated runs (precision)? And how close are the test results to the true genetic variant profile (accuracy)? This guide objectively compares the performance of different NGS approaches and alternative technologies, supported by experimental data, to inform strategic decisions in assay development and platform selection.

Quantitative Comparison of NGS Performance Across Applications

The performance of an NGS assay is not a single universal value but varies based on the application, variant type, and technology platform. The following tables summarize key performance metrics from recent studies across different genomic contexts.

Table 1: Performance Metrics of NGS Across Different Testing Contexts

| Application / Test Focus | Sensitivity (%) | Specificity (%) | Precision (%) | Accuracy (%) | Reference / Context |
|---|---|---|---|---|---|
| Solid Tumour (61-gene Oncopanel) [1] [2] | 98.23 | 99.99 | 97.14 | 99.99 | Validation against orthogonal methods |
| NSCLC (Tissue, EGFR) [3] | 93 | 97 | N/R | N/R | Meta-analysis vs. standard techniques |
| NSCLC (Tissue, ALK) [3] | 99 | 98 | N/R | N/R | Meta-analysis vs. standard techniques |
| Spinal Muscular Atrophy (SMN1) [4] | 100 | 100 | 100 | 100 | vs. MLPA (for 0, 1, ≥2 copies) |
| Gastrointestinal Cancer (93-gene panel) [5] | >99 (for SNVs/Indels) | 97.4 (SNVs), 93.6 (Indels) | N/R | N/R | Validation with reference DNA |

Abbreviations: N/R: Not Reported; NSCLC: Non-Small Cell Lung Cancer. Note: Performance can vary based on DNA input, variant allele frequency (VAF), and sample type.

A critical differentiator in NGS performance is the type of genomic alteration being detected. The limit of detection (LOD), often defined as the lowest variant allele frequency (VAF) an assay can reliably identify, is a direct reflection of its analytical sensitivity. Furthermore, the choice between in-house developed tests and commercial kits represents a significant decision point for laboratories, each with implications for performance, cost, and flexibility.

Table 2: Comparing Detection Capabilities and Test Types

| Aspect | Performance / Characteristic | Context / Implication |
|---|---|---|
| Limit of Detection (LOD) | ~3% VAF for SNVs and Indels [2] | Defined during analytical validation; crucial for detecting low-frequency variants. |
| Commercial NGS Panels | Pre-designed, clinically validated [3] | Standardized, often with regulatory approval; easier implementation. |
| In-House NGS Panels | Customizable, cost-effective [1] [6] | Can be tailored to specific research needs; requires extensive internal validation. |
| Turnaround Time (In-House vs. Outsourced) | 4 days (in-house) vs. ~3 weeks (outsourced) [1] [2] | In-house testing facilitates more timely clinical decisions and research progress. |

Experimental Protocols for Establishing Key Metrics

Validating an NGS assay requires carefully designed experiments to empirically determine each performance metric. The following outlines standard protocols for this validation.

Sample Selection and Reference Materials

The foundation of a robust validation is the use of well-characterized reference materials. These include:

- Cell Line DNA: Commercial reference standards (e.g., HD701) with known mutations at defined allele frequencies are used to titrate input DNA and establish sensitivity and LOD [1] [2] [5].

- Clinical Samples: Archived, previously characterized formalin-fixed, paraffin-embedded (FFPE) tumor samples or liquid biopsy specimens are run in parallel to confirm concordance with orthogonal methods (e.g., PCR, Sanger sequencing, FISH) [3] [5].

- Orthogonal Methods: Results from the NGS assay are compared to those from established, often single-gene, tests considered the "gold standard" for that specific variant [4].

Experimental Workflow for Validation

The typical workflow is a multi-step process that evaluates the assay from sample to result.

Data Analysis and Metric Calculation

After sequencing, variants are called, and the results are compared against the "truth set" (the known variants in the reference materials) to populate a contingency table. The key metrics are then calculated as follows [1] [2] [4]:

Analytical Sensitivity (Recall): The ability of the test to correctly identify true positive variants.

Sensitivity = (True Positives) / (True Positives + False Negatives)

Analytical Specificity: The ability of the test to correctly identify true negative regions.

Specificity = (True Negatives) / (True Negatives + False Positives)

Precision (Positive Predictive Value): The proportion of positive test results that are true positives.

Precision = (True Positives) / (True Positives + False Positives)

Accuracy: The overall agreement between the test results and the true condition.

Accuracy = (True Positives + True Negatives) / (Total Tests)
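The four formulas above can be sketched in a few lines of Python. This is a minimal illustration, not the calculation used in any cited study: the class name `ConfusionCounts`, the function `validation_metrics`, and the example counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from comparing assay calls against the truth set."""
    tp: int  # truth-set variants that the assay called
    fp: int  # assay calls with no matching truth-set variant
    tn: int  # reference positions correctly reported as wild-type
    fn: int  # truth-set variants that the assay missed


def _ratio(numerator: int, denominator: int) -> float:
    # Return NaN instead of raising when a metric is undefined.
    return numerator / denominator if denominator else float("nan")


def validation_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Compute the four metrics exactly as defined in the text."""
    total = c.tp + c.fp + c.tn + c.fn
    return {
        "sensitivity": _ratio(c.tp, c.tp + c.fn),
        "specificity": _ratio(c.tn, c.tn + c.fp),
        "precision": _ratio(c.tp, c.tp + c.fp),
        "accuracy": _ratio(c.tp + c.tn, total),
    }


# Hypothetical counts, not taken from any cited study.
counts = ConfusionCounts(tp=95, fp=2, tn=9900, fn=3)
metrics = validation_metrics(counts)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Because true negatives usually outnumber every other cell by orders of magnitude in a targeted panel, specificity and accuracy tend to sit close to 100% even when sensitivity and precision differ noticeably, which is why the latter two are often the more informative pair.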

The Scientist's Toolkit: Essential Reagents and Materials

Implementing a validated NGS test requires a suite of reliable reagents and platforms. The table below details key solutions used in the featured studies.

Table 3: Key Research Reagent Solutions for Targeted NGS

| Item / Solution | Function / Role | Example Use-Case |
|---|---|---|
| Hybridization-Capture Library Kits | Target enrichment by capturing genomic regions of interest using biotinylated oligonucleotide probes. | Sophia Genetics kit for 61-gene oncopanel [1] [2]. |
| Automated Library Prep Systems | Automates library preparation to reduce human error, contamination risk, and improve consistency. | MGI SP-100RS system [1] [2]. |
| Benchtop Sequencers | High-throughput sequencing platforms that form the core of NGS testing. | MGI DNBSEQ-G50RS [1], Illumina MiSeq [7], Thermo Fisher Ion S5 [7]. |
| Bioinformatics Software | Analyzes sequencing data, performs variant calling, and filters results; some platforms use machine learning. | Sophia DDM with OncoPortal Plus [1] [2]. |
| Multiplex Ligation-Dependent Probe Amplification (MLPA) | A gold standard method for copy number variation detection, used for orthogonal confirmation. | Confirmatory testing for SMN1 copy number in SMA [4]. |

Interrelationships of Performance Metrics in NGS Validation

Understanding how the four key metrics interrelate is crucial for a holistic interpretation of an assay's performance. They are intrinsically linked, and optimization efforts often involve balancing these metrics against one another.

A primary relationship exists between sensitivity and precision. Setting a lower variant allele frequency (VAF) threshold for variant calling can increase sensitivity by capturing more true positive variants. However, this can also increase false positives from sequencing artifacts, thereby reducing precision. A high-quality, well-validated NGS assay achieves a balance where both sensitivity and precision are maximized at a defined, optimal threshold [2]. High accuracy and specificity are foundational, indicating that the test is fundamentally sound and correctly identifies the wild-type background.
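The threshold trade-off can be made concrete with a small sketch. The candidate calls below are invented purely for illustration, and `metrics_at_threshold` is a hypothetical helper rather than part of any variant caller.

```python
# Each candidate call: (observed VAF, whether the variant is in the truth set).
# Hypothetical values chosen only to illustrate the trade-off.
CANDIDATES = [
    (0.32, True), (0.11, True), (0.051, True), (0.031, True),
    (0.018, True), (0.034, False), (0.015, False), (0.012, False),
]


def metrics_at_threshold(candidates, min_vaf):
    """Sensitivity and precision when only calls with VAF >= min_vaf are kept."""
    total_true = sum(1 for _, is_true in candidates if is_true)
    kept = [is_true for vaf, is_true in candidates if vaf >= min_vaf]
    tp = sum(kept)          # kept calls that are real variants
    fp = len(kept) - tp     # kept calls that are artifacts
    sensitivity = tp / total_true if total_true else float("nan")
    precision = tp / (tp + fp) if kept else float("nan")
    return sensitivity, precision


for threshold in (0.05, 0.03, 0.01):
    sens, prec = metrics_at_threshold(CANDIDATES, threshold)
    print(f"min VAF {threshold:.2f}: sensitivity={sens:.2f}, precision={prec:.3f}")
```

With this toy data, lowering the threshold from 5% to 1% raises sensitivity from 0.60 to 1.00 while precision falls from 1.00 to 0.625. A real validation would choose the threshold from reference-standard data rather than from a handful of invented calls.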

The rigorous definition and measurement of analytical sensitivity, specificity, precision, and accuracy are non-negotiable for the credible application of NGS in research and drug development. Data from recent studies confirm that well-validated NGS panels, whether commercial or custom in-house designs, can achieve performance metrics exceeding 98% sensitivity and 99% specificity for key variant types like SNVs and indels, with accuracy often reported at 99.99% [1] [2] [6]. This performance is comparable to, and often surpasses, that of conventional single-gene assays while providing a comprehensive genomic profile.

For professionals in the field, this underscores the importance of a thorough validation strategy based on reference standards and orthogonal confirmation. The choice between testing options should be informed by these validated performance characteristics, alongside practical considerations such as turnaround time and cost. As NGS technology continues to evolve, these core metrics will remain the essential language for assessing its reliability and driving its informed adoption across genomics-driven fields.

The advent of next-generation sequencing (NGS) has fundamentally transformed genomic research and clinical diagnostics, enabling the simultaneous analysis of millions of DNA fragments to uncover genetic variations with unprecedented resolution [7]. In the realm of precision oncology and genetic disease research, accurate detection of diverse variant types—including single nucleotide variations (SNVs), insertions/deletions (indels), copy number variations (CNVs), and gene fusions—has emerged as a critical requirement for guiding therapeutic decisions and understanding disease mechanisms [2] [3]. Each variant class presents unique detection challenges; SNVs and small indels require high base-level accuracy, while CNVs demand precise quantification of genomic copy number, and fusions need specialized algorithms to identify structural rearrangements and chimeric transcripts [8].

The establishment of rigorous performance benchmarks across these variant types is not merely an academic exercise but a fundamental prerequisite for clinical implementation. Current trends in genomic analysis reveal a significant limitation: many studies and diagnostic pipelines focus on only subsets of variant types independently due to the technical challenges of joint detection and accurate reporting [8] [9]. This fragmented approach obscures the complex interactions between different variant classes and potentially misses clinically significant findings. Research by Bianchi et al. highlights this gap, noting that "a common shortfall is their focus on either SNVs/Indels or CNVs separately, thus omitting a comprehensive pipeline that addresses all variants simultaneously" [9].

This article establishes a structured framework for evaluating the analytical performance of NGS methodologies across the full spectrum of variant types, with particular emphasis on their applications in cancer genomics and rare disease research. By synthesizing recent benchmarking data from multiple studies and consortia, we provide researchers and clinicians with standardized metrics for assessing variant calling accuracy, sensitivity, and specificity across different technological platforms and analytical pipelines.

Performance Metrics and Benchmarking Standards

Established Benchmarking Frameworks and Gold Standard Datasets

The creation of reliable performance benchmarks for variant calling relies on well-characterized reference materials and standardized evaluation methodologies. The Genome in a Bottle (GIAB) Consortium, in collaboration with the National Institute of Standards and Technology (NIST), has developed extensively validated human genome benchmarks that serve as gold standards for evaluating variant calling performance [10] [11]. These benchmarks have evolved substantially over time, with recent expansions incorporating long-read and linked-read sequencing technologies to include difficult-to-map regions and segmental duplications that challenge short-read technologies [12]. The latest benchmarks cover approximately 92% of the autosomal GRCh38 assembly for the HG002 sample, including medically relevant genes such as PMS2 that were previously problematic for variant calling [12].

The Global Alliance for Genomics and Health (GA4GH) has established best practices for benchmarking variant calls, providing a standardized framework for performance assessment [10]. This framework employs sophisticated evaluation tools such as hap.py, which enables stratified performance analysis across different genomic contexts—distinguishing performance in high-confidence regions versus challenging repetitive elements, and segmenting results by variant type and functional genomic category [10]. This stratified approach is particularly valuable for identifying specific weaknesses in variant calling pipelines that might be masked by overall performance metrics.
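To show what stratification by variant type means in practice, here is a deliberately simplified sketch. It is not hap.py and does not reproduce its haplotype-aware matching: it compares variants by exact `(chrom, pos, ref, alt)` match, and the function names and example variants are hypothetical.

```python
from collections import defaultdict


def variant_type(ref: str, alt: str) -> str:
    """Classify by allele length; multi-base substitutions are not handled separately."""
    return "SNV" if len(ref) == 1 and len(alt) == 1 else "indel"


def stratified_metrics(truth: set, calls: set) -> dict:
    """Per-variant-type recall and precision from exact-match comparison.

    Variants are (chrom, pos, ref, alt) tuples.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for v in truth | calls:
        kind = variant_type(v[2], v[3])
        if v in truth and v in calls:
            counts[kind]["tp"] += 1
        elif v in calls:
            counts[kind]["fp"] += 1
        else:
            counts[kind]["fn"] += 1
    result = {}
    for kind, c in counts.items():
        recall_den = c["tp"] + c["fn"]
        precision_den = c["tp"] + c["fp"]
        result[kind] = {
            "recall": c["tp"] / recall_den if recall_den else float("nan"),
            "precision": c["tp"] / precision_den if precision_den else float("nan"),
        }
    return result


# Hypothetical truth set and call set.
truth = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T"),
         ("chr2", 3000, "GA", "G"), ("chr2", 4000, "T", "TCC")}
calls = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T"),
         ("chr2", 3000, "GA", "G"), ("chr3", 5000, "G", "GA")}

by_type = stratified_metrics(truth, calls)
for kind in sorted(by_type):
    print(kind, by_type[kind])
```

Pooled over all variants, this toy call set has 3 of 4 truth variants detected, which hides the fact that every miss and every false call is an indel. Stratified reporting is intended to expose exactly that kind of weakness.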

For cancer genomics, additional benchmarking resources include commercially available reference standards with predetermined variant profiles. Studies validating pan-cancer NGS panels frequently utilize samples from the Horizon Discovery HD701 series, which contains 13 predefined mutations across key cancer genes at known allele frequencies, enabling precise determination of analytical sensitivity and limit of detection [2]. Similarly, the Hedera Profiling 2 (HP2) circulating tumor DNA test was validated using reference standards with variants spiked in at 0.5% allele frequency, demonstrating the high sensitivity required for liquid biopsy applications [13].

Key Performance Metrics for Variant Calling

The analytical performance of variant detection pipelines is quantified through standardized metrics that provide comprehensive assessment of accuracy:

- Sensitivity (Recall): The proportion of true positive variants correctly identified by the assay, calculated as TP/(TP+FN) [2] [11]

- Specificity: The proportion of true negative positions (sites with no variant in the reference standard) that the assay correctly reports as negative, calculated as TN/(TN+FP) [2] [3]

- Precision: The proportion of called variants that are true positives, calculated as TP/(TP+FP) [2] [11]

- Accuracy: The overall agreement between the test results and the reference standard, calculated as (TP+TN)/(TP+FP+TN+FN) [2]

- Limit of Detection (LOD): The lowest variant allele frequency (VAF) at which a mutation can be reliably detected, typically established through dilution series [2]

Different variant types exhibit characteristically different performance profiles. SNVs generally achieve the highest sensitivity and precision, often exceeding 99% in well-powered assays, while indels typically show slightly lower performance (96-98%) due to the increased complexity of alignment and calling [11]. CNV detection sensitivity varies considerably based on exon coverage and bioinformatic approach, and fusion detection presents unique challenges, particularly in liquid biopsy contexts where breakpoints may be present at very low frequencies [13] [3].

Table 1: Standard Performance Metrics by Variant Type

| Variant Type | Typical Sensitivity Range | Typical Specificity Range | Key Technical Challenges |
|---|---|---|---|
| SNVs | 93-99.9% [2] [3] [11] | 97-99.99% [2] [3] | Low allele fractions, sequencing artifacts |
| Indels | 80-98% [2] [11] | >99% [2] | Homopolymer regions, alignment errors |
| CNVs | 85-95% (tissue) [13] | >95% [13] | Coverage uniformity, ploidy estimation |
| Fusions | 80-99% [13] [3] | >98% [13] [3] | Breakpoint resolution, low tumor fraction |

Comparative Performance of Variant Calling Technologies

Platform and Software Performance Benchmarks

Rigorous benchmarking studies have revealed substantial differences in performance across variant calling pipelines, with important implications for both research and clinical applications. A comprehensive evaluation of 45 different combinations of read alignment and variant calling tools demonstrated that the choice of variant caller has a greater impact on accuracy than the selection of read aligner [10]. Among the tools evaluated, DeepVariant consistently showed superior performance and robustness, with other actively developed tools such as Clair3, Octopus, and Strelka2 also performing well, though with greater dependence on input data quality and type [10].

For researchers without extensive bioinformatics expertise, several user-friendly commercial solutions have emerged. A recent benchmark of four non-programming variant calling software packages revealed notable performance differences [11]. Illumina's DRAGEN Enrichment achieved the highest precision and recall scores, exceeding 99% for SNVs and 96% for indels across three GIAB reference samples (HG001, HG002, and HG003) [11]. The CLC Genomics Workbench also demonstrated strong performance with significantly faster run times (6-25 minutes), while Partek Flow, using the union of variant calls from Freebayes and Samtools, showed the lowest indel calling performance with considerably longer processing times (3.6-29.7 hours) [11].

The integration of machine learning and pangenome references represents the cutting edge of variant calling innovation. DRAGEN's approach, which uses multigenome mapping with pangenome references, hardware acceleration, and machine learning-based variant detection, demonstrates how comprehensive variant detection can be achieved in approximately 30 minutes of computation time from raw reads to variant detection [8]. This platform incorporates specialized methods for medically relevant genes with high sequence similarity to pseudogenes or paralogs, including HLA, SMN, GBA, and LPA [8].

Table 2: Performance Comparison of Selected Variant Calling Pipelines

| Variant Caller | SNV Sensitivity | SNV Precision | Indel Sensitivity | Indel Precision | Runtime (WES) |
|---|---|---|---|---|---|
| DRAGEN | >99% [8] [11] | >99% [8] [11] | >96% [8] [11] | >96% [8] [11] | ~30 min [8] |
| DeepVariant | >99% [10] | >99% [10] | >95% [10] | >95% [10] | Hours [10] |
| GATK | 98-99% [10] | 98-99% [10] | 90-95% [10] | 90-95% [10] | Hours [10] |
| Strelka2 | >98% [10] | >98% [10] | >94% [10] | >94% [10] | Hours [10] |

Technology-Specific Performance Characteristics

The performance of variant detection assays varies significantly based on the technological approach and sample type. Hybrid capture-based targeted sequencing panels have demonstrated high accuracy for SNV and indel detection, with a sensitivity of 98.23% and a specificity of 99.99% (each reported with a 95% confidence interval) when validated using reference standards [2]. These panels achieve comprehensive coverage of targeted regions, with >98% of target bases reaching at least 100× unique molecular coverage, enabling reliable detection of variants at allele frequencies as low as 2.9% [2].
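A point estimate such as a sensitivity percentage is more informative when reported with its confidence interval. The sketch below uses the Wilson score interval, which is one common choice for binomial proportions; the source does not state which interval method the cited study used, and the counts shown are hypothetical.

```python
import math


def wilson_interval(successes: int, trials: int,
                    z: float = 1.959964) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.959964 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    p_hat = successes / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    half_width = (z / denom) * math.sqrt(
        p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)
    )
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical validation: 58 of 60 expected variants detected.
low, high = wilson_interval(58, 60)
print(f"sensitivity = {58 / 60:.4f}, 95% CI = ({low:.4f}, {high:.4f})")
```

The interval narrows as the number of truth-set variants grows, so two assays with the same observed sensitivity can carry very different levels of evidence depending on how many variants were tested.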

In liquid biopsy applications, specialized ctDNA assays such as the Hedera Profiling 2 (HP2) panel have shown robust performance for detecting SNVs and indels at very low allele frequencies (0.5%), with demonstrated sensitivities of 96.92% and specificities of 99.67% [13]. However, fusion detection in liquid biopsy presents greater challenges, with significantly variable sensitivity depending on the specific genes involved [13] [3]. For example, while EGFR T790M mutations can be detected with high sensitivity in plasma, ALK, ROS1, RET, and NTRK rearrangements show limited sensitivity in liquid biopsy compared to tissue testing [3].

The turnaround time for NGS-based variant detection represents another critical performance metric with direct clinical implications. Comprehensive in-house targeted sequencing panels have reduced turnaround time from about 3 weeks with outsourced testing to approximately 4 days [2]. Similarly, liquid biopsy testing shows significantly shorter turnaround times compared to tissue-based profiling (8.18 days versus 19.75 days, p < 0.001), facilitating more timely clinical decision-making [3].

Experimental Protocols for Validation Studies

Benchmarking Study Design and Methodology

Robust validation of variant calling performance requires carefully controlled experimental designs that incorporate reference materials, orthogonal validation methods, and standardized analysis pipelines. The following protocol outlines key considerations for conducting comprehensive variant calling benchmarks:

Sample Selection and Preparation:

- Incorporate GIAB reference materials (e.g., HG001-HG007) with established truth sets to enable standardized performance comparisons [10] [11]

- Include commercially available reference standards with predefined variant profiles (e.g., Horizon Discovery HD701) at multiple input concentrations to establish limits of detection [2]

- Utilize clinical samples with orthogonal validation data to assess real-world performance across variant types [13]

- Ensure DNA input meets minimum requirements (typically ≥50 ng for tissue-based assays) to maintain variant detection sensitivity [2]

Sequencing and Data Generation:

- Employ standardized library preparation protocols suitable for the variant types being investigated (e.g., hybridization capture for comprehensive profiling, amplicon-based for focused panels) [2]

- Sequence to appropriate coverage depths based on variant detection requirements (typically >500× mean coverage for targeted panels, >100× for whole exome) [2] [10]

- Include both tissue and liquid biopsy samples when assessing liquid biopsy performance, with matched samples when possible [13] [3]
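The link between coverage depth and the ability to see a low-frequency variant can be sketched with a simple binomial model. This is an idealized approximation: it assumes each read samples the variant allele independently with probability equal to the VAF and ignores sequencing error, duplicates, and capture bias. The `min_alt_reads` threshold of 5 is a hypothetical caller setting.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


# Probability of observing >= 5 supporting reads for a 3% VAF variant.
for depth in (100, 250, 500, 1000):
    p = detection_probability(depth, vaf=0.03, min_alt_reads=5)
    print(f"depth {depth:>4}x: P(detect) = {p:.4f}")
```

Under these assumptions, a variant at 3% VAF is expected to yield only about 3 supporting reads at 100× depth, so deeper coverage is needed before it can be called reliably. This is one reason targeted panels are typically sequenced far deeper than exomes.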

Data Analysis and Validation:

- Process raw sequencing data through standardized bioinformatic pipelines including read alignment, duplicate marking, and base quality recalibration [10]

- Apply multiple variant calling algorithms specifically tuned for different variant types (e.g., Manta for structural variants, ExomeDepth for CNVs) [8] [9]

- Validate variant calls using orthogonal technologies such as digital PCR, Sanger sequencing, or immunohistochemistry depending on variant type [13] [3]

- Assess performance using standardized metrics (sensitivity, specificity, precision, accuracy) with stratification by variant type, genomic context, and allele frequency [2] [10]

Protocol for Determining Limit of Detection

Establishing the limit of detection (LOD) for variant calling is particularly important for clinical applications, especially in oncology where low-frequency variants may have therapeutic implications. The following stepped protocol is adapted from validated approaches used in recent studies [2] [13]:

1. Prepare Sample Dilutions: Serially dilute reference standards with known variant allele frequencies (e.g., HD701) with wild-type DNA to create a series of samples with progressively lower variant allele frequencies (e.g., 10%, 5%, 2.5%, 1%, 0.5%) [2]

2. Process Replicate Samples: For each dilution level, process a minimum of 3-5 replicates across different sequencing runs to assess technical reproducibility [2]

3. Sequence and Analyze: Sequence all samples using the standardized NGS protocol and process through the established bioinformatic pipeline [2]

4. Determine Detection Rate: For each variant at each dilution level, calculate the detection rate as the percentage of replicates in which the variant was called at the expected position with the correct genotype [2]

5. Establish LOD: Define the LOD as the lowest allele frequency at which variants are detected with ≥95% detection rate across replicates [2]

6. Validate with Clinical Samples: Confirm established LOD using clinical samples with known low-frequency variants as determined by orthogonal methods such as digital PCR [13]

This protocol typically establishes LODs between 2.9% and 5.0% for SNVs and indels in tissue-based assays [2], while specialized liquid biopsy assays can achieve LODs of 0.5% or lower for certain variant types [13].
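The detection-rate and LOD steps of the protocol can be sketched as follows. The replicate outcomes are invented for illustration, and `estimate_lod` is a hypothetical helper. As one design assumption beyond the protocol text, the scan stops at the first dilution level that fails, so a lower level that happens to pass afterwards is not reported as the LOD.

```python
def estimate_lod(detections: dict[float, list[bool]], min_rate: float = 0.95):
    """Return the lowest VAF level meeting min_rate, scanning from high to low VAF.

    `detections` maps each dilution level (VAF) to per-replicate outcomes,
    where True means the variant was called. Returns None if even the
    highest level fails.
    """
    lod = None
    for vaf in sorted(detections, reverse=True):
        calls = detections[vaf]
        if not calls:
            raise ValueError(f"no replicates recorded for VAF {vaf}")
        rate = sum(calls) / len(calls)
        if rate < min_rate:
            break  # first failing level ends the scan
        lod = vaf
    return lod


# Hypothetical replicate outcomes for one variant across a dilution series.
replicates = {
    0.10: [True] * 5,
    0.05: [True] * 5,
    0.025: [True] * 5,
    0.01: [True, True, False, True, False],
    0.005: [False] * 5,
}
print(f"Estimated LOD: {estimate_lod(replicates):.1%} VAF")
```

Note that with only 3-5 replicates per level, a ≥95% detection rate can only be met when every replicate detects the variant (4 of 5 is 80%), so the confidence that can be placed on the resulting LOD depends heavily on the replicate count.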

Essential Research Reagents and Computational Tools

The consistent and accurate detection of genetic variants across different classes requires carefully selected research reagents and computational resources. The following toolkit represents essential components for establishing and maintaining robust variant detection pipelines in research and clinical settings:

Table 3: Essential Research Reagents and Computational Tools for Variant Detection

| Category | Specific Tools/Reagents | Function | Considerations |
|---|---|---|---|
| Reference Materials | GIAB references (HG001-HG007) [10] [11] | Benchmarking standard | Provide truth sets for multiple variant types |
| Reference Materials | Commercial standards (Horizon HD701) [2] | Limit of detection studies | Include known variants at defined frequencies |
| Wet Lab Reagents | Hybridization capture kits [2] | Target enrichment | Better uniformity than amplicon-based approaches |
| Wet Lab Reagents | PCR-free library prep kits [10] | Whole genome sequencing | Reduce amplification artifacts |
| Alignment Tools | BWA-MEM [10] | Read alignment | Gold standard for short reads |
| Alignment Tools | Isaac aligner [10] | Read alignment | Optimized for Illumina data |
| Variant Callers | DRAGEN [8] [11] | Multi-variant detection | Integrated platform with hardware acceleration |
| Variant Callers | DeepVariant [10] [11] | SNV/indel calling | Deep learning-based approach |
| Variant Callers | GATK HaplotypeCaller [9] [10] | Germline variant calling | Widely adopted in research communities |
| Variant Callers | ExomeDepth/cn.MOPS [9] | CNV calling | Read depth-based CNV detection |
| Variant Callers | Manta [8] | Structural variant calling | Integrated in DRAGEN platform |
| Validation Tools | hap.py [10] | Benchmarking | Implements GA4GH benchmarking standards |
| Validation Tools | VCAT [11] | Performance assessment | User-friendly benchmarking interface |
| Computational Resources | DRAGEN server [8] | Accelerated processing | Hardware-optimized for speed |
| Computational Resources | High-performance computing cluster [9] | Data analysis | Essential for large-scale studies |

Workflow Visualization of Comprehensive Variant Detection

The following diagram illustrates the integrated bioinformatic workflow for comprehensive variant detection from NGS data, highlighting the parallel processing paths required for different variant types:

Diagram 1: Comprehensive variant detection workflow illustrating parallel processing paths for different variant types

The establishment of comprehensive performance benchmarks across the full spectrum of variant types represents a critical foundation for the advancement of precision medicine. Through systematic evaluation of variant calling methodologies, this analysis demonstrates that while modern NGS technologies can achieve high accuracy for SNVs and indels (exceeding 99% sensitivity and specificity for many platforms), significant challenges remain for consistent detection of structural variants, CNVs, and fusions, particularly in challenging genomic contexts and liquid biopsy applications [8] [13] [3].

The evolving landscape of variant detection is characterized by several promising trends. The integration of machine learning approaches directly into variant calling pipelines, as demonstrated by tools like DeepVariant and DRAGEN, continues to push performance boundaries, especially for difficult-to-detect variant types [8] [10]. The adoption of pangenome references that better represent human genetic diversity shows particular promise for improving variant detection in structurally complex regions of the genome that have traditionally posed challenges for short-read technologies [8]. Additionally, the development of specialized methods for medically relevant genes with high sequence similarity to pseudogenes (e.g., PMS2, SMN1, GBA) addresses critical gaps in clinical variant detection [8] [12].

For researchers and clinicians implementing NGS-based variant detection, this benchmarking analysis highlights several key considerations. First, the selection of variant calling pipelines should be guided by the specific variant types most relevant to the research or clinical question, as performance varies substantially across variant classes. Second, rigorous validation using appropriate reference materials and orthogonal methods remains essential, particularly for clinical applications. Finally, the field would benefit from continued development of more diverse reference materials that better represent global genetic diversity and expanded truth sets that include challenging variant types in medically relevant genes.

As genomic technologies continue to evolve, the establishment of comprehensive, standardized benchmarks across all variant types will be essential for ensuring that research findings are robust and clinical applications are safe and effective. The integration of multi-platform sequencing data, advanced computational methods, and diverse reference materials represents the most promising path toward truly comprehensive variant detection that can fully support the goals of precision medicine.

The performance of a targeted next-generation sequencing (NGS) panel is not a matter of chance but a direct consequence of deliberate test design choices. The selection of panel content, definition of target regions, and clarity of intended use collectively establish the analytical foundation for detecting somatic variants in cancer [14]. As clinical laboratories increasingly adopt NGS for cancer testing, understanding how these design elements dictate performance goals has become crucial for developing reliable assays that inform diagnostic classification, guide therapeutic decisions, and provide prognostic insights [14]. This guide examines the fundamental relationship between test design decisions and the resulting analytical performance metrics, providing researchers and developers with evidence-based frameworks for optimizing NGS panel design and validation.

Core Design Elements and Their Performance Implications

Panel Content Selection and Target Region Definition

The genetic composition of an NGS panel establishes the fundamental boundaries of its analytical capabilities. Design decisions must balance clinical relevance with technical feasibility, considering whether to focus on mutational hotspots, entire gene sequences, or specific variant types including single-nucleotide variants (SNVs), insertions and deletions (indels), copy number alterations (CNAs), and structural variants (SVs) [14].

Hotspot versus Comprehensive Coverage: Targeted panels may cover specific mutational hotspots of clinical significance (e.g., exons 18-21 of EGFR) or provide comprehensive coverage of entire coding and non-coding regions relevant to a gene [14]. The choice between these approaches directly impacts the panel's utility: hotspot panels offer cost efficiency for detecting established biomarkers, while comprehensive designs enable novel variant discovery and more accurate CNA assessment [14].

Variant Type Capabilities: Panel design must explicitly consider the types of variants to be detected. SNVs and small indels represent the most common mutation types in solid tumors and hematological malignancies [14]. However, detecting CNAs requires sufficient probe or amplicon coverage across the gene, as measurements from a single hotspot region lack the accuracy of probes covering all exonic regions [14]. Similarly, structural variant detection demands specialized approaches, such as intron-spanning hybridization capture probes for DNA-based fusion detection or RNA sequencing for transcriptome-level fusion identification [14].

Intended Use and Performance Requirements

The intended clinical or research application directly dictates the performance specifications required of an NGS panel. Key considerations include sample types (solid tumors versus hematological malignancies), variant frequency expectations, and necessary detection limits [14].

Tumor Purity and Limit of Detection: The required sensitivity of a panel is directly influenced by the expected tumor purity of samples. For solid tumors, pathologist review and potential microdissection are necessary to enrich tumor content and establish reliable variant detection thresholds [14]. The limit of detection (LOD) must be established according to variant type, with studies demonstrating LODs of approximately 2.8% for SNVs, 10.5% for indels, and 6.8% for large indels (≥4 bp) [15].
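The link between tumor purity, expected variant allele frequency (VAF), and detectability can be illustrated with a simple binomial model. The sketch below is not taken from the cited studies. It assumes a clonal heterozygous variant in a diploid region (so expected VAF is half the tumor purity), ignores sequencing error, and uses a hypothetical calling rule that requires a minimum number of variant-supporting reads. The function names and the threshold of 8 reads are illustrative assumptions.

```python
from math import comb


def expected_vaf(tumor_purity: float) -> float:
    """Expected VAF of a clonal heterozygous variant in a diploid region (assumption)."""
    return tumor_purity / 2


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant reads) when reads ~ Binomial(depth, vaf)."""
    # Sum the probability of seeing fewer than min_alt_reads variant reads,
    # then take the complement.
    p_below = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1 - p_below


if __name__ == "__main__":
    vaf = expected_vaf(0.20)  # 20% tumor purity gives a 10% expected VAF
    for depth in (100, 250, 500, 1000):
        p = detection_probability(depth, vaf, min_alt_reads=8)  # hypothetical threshold
        print(f"depth={depth:5d}  P(detect)={p:.4f}")
```

Under these assumptions, the detection probability rises with depth at a fixed VAF, which is why low-purity samples tend to call for deeper sequencing or prior tumor enrichment.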

Turnaround Time and Throughput: Practical considerations such as required turnaround time influence design choices between large comprehensive panels and focused targeted approaches. Research demonstrates that optimized in-house panels can reduce turnaround time from 3 weeks to just 4 days while maintaining high sensitivity (98.23%) and specificity (99.99%) [2].

Performance Metrics and Experimental Validation

Establishing Analytical Performance

Robust validation of NGS panels requires systematic assessment using well-characterized reference materials and standardized metrics. The Association for Molecular Pathology (AMP) and College of American Pathologists (CAP) have established best practice guidelines for determining positive percent agreement and positive predictive value for each variant type [14].

Table 1: Key Performance Metrics for Targeted NGS Panels

| Metric | Definition | Acceptance Criteria | Influencing Factors |
|---|---|---|---|
| Sensitivity | Ability to detect true positive variants | ≥96.98% for known mutations [15] | Depth of coverage, panel design [16] |
| Specificity | Ability to exclude false positives | ≥99.99% [15] | Probe specificity, bioinformatic filtering [17] |
| Reproducibility | Consistency across runs and operators | ≥99.99% inter-operator concordance [15] | Standardized protocols, quality controls [2] |
| Limit of Detection | Lowest variant allele frequency reliably detected | SNVs: 2.8%; Indels: 10.5% [15] | Sequencing depth, tumor purity [14] |
| Coverage Uniformity | Evenness of sequencing depth across targets | Fold-80 penalty <2.0 [17] | Probe design, GC content, target capture efficiency [16] |
| On-target Rate | Percentage of reads mapping to intended regions | Varies by panel size; higher is better [17] | Probe design, hybridization efficiency [18] |

Reference Materials and Validation Protocols

The National Institute of Standards and Technology (NIST) Genome in a Bottle (GIAB) reference materials provide essential resources for benchmarking panel performance [19] [20]. These well-characterized genomes with high-confidence variant calls enable standardized performance assessment across different panels and platforms.

Experimental Protocol for Panel Validation:

- Sample Selection: Utilize GIAB reference materials or commercially available characterized samples encompassing all variant types of interest [19]

- Sequencing Runs: Process samples across multiple runs, operators, and instruments to assess reproducibility [15]

- Data Analysis: Compare variant calls to truth sets using standardized tools like the GA4GH Benchmarking application [19]

- Metric Calculation: Determine sensitivity, specificity, precision, and accuracy with confidence intervals [2]

- Coverage Analysis: Assess depth and uniformity across all target regions [16]

Recent validation of a 61-gene oncopanel demonstrated the effectiveness of this approach, achieving 99.99% reproducibility and 99.98% repeatability across 43 unique samples [2]. The assay detected 794 mutations, including all 92 known variants previously identified by orthogonal methods, which is consistent with rigorous validation protocols supporting reliable performance [2].
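The metric calculation step can be sketched as follows. This is an illustrative example rather than the method used in the cited studies: it computes positive percent agreement (PPA) and positive predictive value (PPV) with Wilson score confidence intervals. The choice of the Wilson interval, the function names, and the false-positive count in the usage example are assumptions.

```python
from math import sqrt


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def agreement_metrics(tp: int, fp: int, fn: int) -> dict[str, tuple[float, tuple[float, float]]]:
    """PPA = TP/(TP+FN) and PPV = TP/(TP+FP), each with a Wilson interval."""
    return {
        "PPA": (tp / (tp + fn), wilson_interval(tp, tp + fn)),
        "PPV": (tp / (tp + fp), wilson_interval(tp, tp + fp)),
    }


if __name__ == "__main__":
    # 92 of 92 known variants detected (from the text); fp=0 is a hypothetical value.
    for name, (value, (low, high)) in agreement_metrics(tp=92, fp=0, fn=0).items():
        print(f"{name}: {value:.4f} (95% CI {low:.4f} to {high:.4f})")
```

Reporting the interval alongside the point estimate matters for small truth sets: even a perfect 92 of 92 result leaves a lower confidence bound noticeably below 100%.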

Design Optimization and Technical Considerations

Coverage and Specificity Parameters

The technical execution of panel design directly influences key performance metrics including on-target rates, coverage uniformity, and variant detection sensitivity.

Sequencing Depth and Coverage: Depth of coverage refers to the average number of reads aligning to a target region, while breadth of coverage describes the proportion of the target region sequenced at a specified depth [16]. Higher depth enables more reliable detection of low-frequency variants, but must be balanced against cost and throughput requirements [17].

On-target Efficiency: The proportion of sequencing reads mapping to intended target regions is a crucial efficiency metric [17]. Factors influencing on-target rates include panel size, probe design quality, and the presence of challenging genomic regions with extreme GC content or repetitive elements [18]. Well-designed panels typically achieve on-target rates exceeding 80% [16].

Coverage Uniformity: The evenness of read distribution across target regions significantly impacts variant calling reliability [16]. The Fold-80 base penalty metric quantifies uniformity by measuring how much additional sequencing is required to bring 80% of target bases to the mean coverage [17]. Ideal uniformity yields a Fold-80 penalty of 1.0, while values above 2.0 indicate substantial coverage variability that may require additional sequencing to avoid gaps in variant detection [17].
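The depth, breadth, and uniformity definitions above can be made concrete with a short calculation over per-base depths. This is a minimal sketch, assuming the common formulation of the Fold-80 penalty as mean depth divided by the 20th-percentile depth (the depth that 80% of target bases meet or exceed). The function name, the simple percentile rule, and the example depths are hypothetical.

```python
def coverage_summary(depths: list[int], min_depth: int) -> dict[str, float]:
    """Summarize per-base depths for a target region.

    depths    : read depth at each target base
    min_depth : depth threshold used for breadth of coverage
    """
    if not depths:
        raise ValueError("depths must not be empty")
    n = len(depths)
    mean_depth = sum(depths) / n
    # Breadth: fraction of target bases covered at or above the threshold.
    breadth = sum(d >= min_depth for d in depths) / n
    # 20th-percentile depth: roughly 80% of bases are covered at least this deeply.
    p20 = sorted(depths)[(n * 20) // 100]
    fold_80 = mean_depth / p20 if p20 > 0 else float("inf")
    return {"mean_depth": mean_depth, "breadth": breadth, "fold_80": fold_80}


if __name__ == "__main__":
    example = [20, 40, 50, 100, 100, 100, 120, 150, 160, 160]  # hypothetical depths
    print(coverage_summary(example, min_depth=100))
```

A perfectly even target gives a Fold-80 penalty of 1.0 under this formulation, and the value grows as more bases fall well below the mean.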

Managing Technical Artifacts and Biases

Effective panel design must anticipate and mitigate common technical challenges that can compromise data quality.

GC Bias: Regions with extremely high or low GC content are often unevenly represented in sequencing data [17]. This bias can be introduced during library preparation, hybrid capture, or the sequencing process itself. Strategies to minimize GC bias include using robust library preparation workflows, optimizing PCR conditions, and employing well-designed probes [17].

Duplicate Reads: Sequencing reads mapped to identical genomic coordinates provide no additional information and inflate coverage estimates [17]. High duplication rates typically result from low-input library preparation, PCR over-amplification, or low-complexity libraries. Paired-end sequencing and appropriate sample input can help minimize duplication rates [17].
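Duplicate handling can be illustrated with a small sketch that groups reads by mapping position and unique molecular identifier (UMI). It is a simplification and not the algorithm of any particular tool: it matches UMIs exactly, whereas production pipelines usually tolerate sequencing errors in the UMI. The `Read` structure and its fields are hypothetical.

```python
from typing import NamedTuple


class Read(NamedTuple):
    chrom: str
    start: int
    strand: str
    umi: str
    mean_quality: float


def deduplicate(reads: list[Read]) -> list[Read]:
    """Keep the highest-quality read per (chrom, start, strand, UMI) group."""
    best: dict[tuple[str, int, str, str], Read] = {}
    for read in reads:
        key = (read.chrom, read.start, read.strand, read.umi)
        if key not in best or read.mean_quality > best[key].mean_quality:
            best[key] = read
    return list(best.values())


def duplication_rate(reads: list[Read]) -> float:
    """Fraction of reads removed as duplicates."""
    if not reads:
        return 0.0
    return 1 - len(deduplicate(reads)) / len(reads)


if __name__ == "__main__":
    reads = [
        Read("chr7", 55_191_800, "+", "ACGT", 34.0),
        Read("chr7", 55_191_800, "+", "ACGT", 36.5),  # PCR duplicate of the read above
        Read("chr7", 55_191_800, "+", "TTAG", 35.0),  # same position, different molecule
        Read("chr7", 55_191_950, "-", "ACGT", 33.0),
    ]
    print(len(deduplicate(reads)), duplication_rate(reads))
```

Including the UMI in the grouping key is what lets reads from distinct original molecules survive even when they share identical coordinates, which coordinate-only deduplication would collapse.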

Table 2: Technical Optimization Strategies for NGS Panel Design

| Challenge | Impact on Performance | Optimization Strategies |
|---|---|---|
| High/Low GC Regions | Reduced coverage in specific genomic contexts | Probe redesign, optimized hybridization conditions, specialized library prep [17] |
| Sequence Homology | Off-target capture and misalignment | Strategic masking of repetitive elements, stringent alignment parameters [18] |
| Amplification Bias | Uneven coverage and false positives | Limit PCR cycles, use unique molecular identifiers (UMIs) [18] |
| Low Input Samples | Increased duplicates and reduced library complexity | Implement whole-genome amplification, specialized low-input protocols [2] |
| Complex Structural Variants | Limited detection of fusions and rearrangements | Combine DNA and RNA approaches, intron-spanning designs [14] |

Comparative Performance Across Applications

Tissue versus Liquid Biopsy Applications

The intended sample type significantly influences panel design choices and performance expectations. Tissue samples remain the gold standard for tumor profiling, while liquid biopsies offer non-invasive alternatives for circulating tumor DNA (ctDNA) analysis [3].

Meta-analyses of NGS performance in advanced non-small cell lung cancer demonstrate high accuracy in tissue for EGFR mutations (sensitivity: 93%, specificity: 97%) and ALK rearrangements (sensitivity: 99%, specificity: 98%) [3]. In liquid biopsy, NGS effectively detects EGFR, BRAF V600E, KRAS G12C, and HER2 mutations (sensitivity: 80%, specificity: 99%) but shows limited sensitivity for ALK, ROS1, RET, and NTRK rearrangements [3].

Liquid biopsy applications present unique design challenges, including lower tumor DNA fraction and increased potential for clonal hematopoiesis interference [3]. These factors necessitate specialized approaches such as error-corrected sequencing, unique molecular identifiers, and significantly higher sequencing depths to achieve reliable detection of low-frequency variants [2].

Commercial versus Custom Panels

The choice between commercial and laboratory-developed panels involves trade-offs between standardization and customization.

Commercial Panels: Offer standardized content, validated protocols, and regulatory compliance advantages [15]. The NCI-MATCH trial successfully employed a commercial panel across four clinical laboratories, achieving 96.98% sensitivity for 265 known mutations with 99.99% mean inter-operator concordance [15].

Custom Panels: Enable focus on specific research questions, inclusion of novel targets, and optimization for local patient populations [2]. Recent work demonstrates that custom panels can achieve performance metrics comparable to commercial solutions, with one 61-gene oncopanel reporting 99.99% specificity and 98.23% sensitivity for unique variants [2].

Visualization of NGS Panel Design and Optimization Workflow

NGS Panel Design and Optimization Workflow: This diagram illustrates the sequential process of targeted panel development, from initial design choices through validation and implementation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for NGS Panel Development and Validation

| Reagent/Material | Function | Application Notes |
|---|---|---|
| GIAB Reference Materials | Benchmarking variant detection performance | Five well-characterized human genomes with high-confidence variant calls [19] |
| Characterized Cell Lines | Analytical validation controls | FFPE pellets with known variant profiles [15] |
| Hybridization Capture Probes | Target region enrichment | Solution-based biotinylated oligonucleotides; design impacts specificity [14] |
| Library Preparation Kits | NGS library construction | Compatibility with automation systems improves consistency [2] |
| Unique Molecular Identifiers (UMIs) | Error correction and duplicate removal | Molecular barcodes to distinguish PCR duplicates from unique fragments [18] |
| Bioinformatic Pipelines | Variant calling and analysis | Standardized tools like GA4GH Benchmarking for performance assessment [19] |

The performance goals of targeted NGS panels are intrinsically defined by their design parameters. Panel content establishes the genetic landscape for variant detection, while target region specification determines the technical approach for capturing different variant types. The intended use context, including sample types, clinical applications, and practical constraints, directly dictates the required sensitivity, specificity, and operational characteristics. Evidence from method validation studies demonstrates that rigorously designed and optimized panels can achieve high performance, with a reported sensitivity of 96.98% and specificity of 99.99% across four clinical laboratories [15]. By understanding these fundamental relationships between design choices and performance outcomes, researchers and clinical developers can create targeted NGS panels that reliably meet their specific application requirements while maintaining analytical rigor and operational efficiency.

Next-Generation Sequencing (NGS) has revolutionized genomic medicine, enabling comprehensive analysis of genetic variations associated with human diseases. The analytical sensitivity and specificity of NGS panels are critical parameters that determine their clinical utility and reliability. To ensure standardized practices across laboratories, professional organizations including the Association for Molecular Pathology (AMP) and the College of American Pathologists (CAP), as well as national programs such as the French Genomic Medicine Initiative (PFMG2025), treated here as a representative medical genome initiative, have developed comprehensive guidelines and frameworks. This guide objectively compares the recommendations from these organizations, with a specific focus on their implications for the analytical performance of NGS panels in research and clinical settings. Understanding these evolving standards is essential for researchers, scientists, and drug development professionals who rely on accurate genomic data to advance precision medicine.

Organizational Guidelines and Strategic Frameworks

Association for Molecular Pathology (AMP)

AMP has established itself as a leader in developing standards for molecular testing, with a significant focus on variant interpretation and reporting in oncology. The organization is currently updating its landmark 2017 guideline through a collaborative working group with the American Society of Clinical Oncology (ASCO) and CAP [21] [22]. These updated recommendations, scheduled for discussion at the AMP 2025 Annual Meeting, reflect technological advancements and address classification challenges that have emerged since the original publication [21].

A key proposed update to the AMP/ASCO/CAP framework addresses a critical gap in the original four-tier system for somatic variant classification [23]. The proposed 2025 update introduces a new Tier IIE classification for variants that are "oncogenic or likely oncogenic based on oncogenicity assessment but lacking clear evidence of clinical diagnostic, prognostic, or therapeutic significance in the tumor tested based on the currently available clinical evidence" [23]. This addition is intended to resolve a longstanding dilemma in variant interpretation, in which laboratories had to choose between classifying oncogenic variants without clear clinical utility as Variants of Uncertain Significance (VUS/Tier III) or overstating clinical evidence to place them in higher tiers [23].

AMP is also actively developing guidelines for other critical applications, including molecular testing strategies for measurable residual disease (MRD) monitoring in acute myeloid leukemia (AML) and expert consensus recommendations for detecting homologous recombination deficiency (HRD) in cancer [22]. These efforts demonstrate AMP's comprehensive approach to establishing standards that enhance test accuracy and clinical relevance.

College of American Pathologists (CAP)

CAP provides detailed technical guidance for implementing NGS tests through its collaborative worksheets, initially developed in 2018 with AMP representation and subsequently refined through partnership with the Clinical and Laboratory Standards Institute (CLSI) [24]. These structured worksheets guide laboratories through the entire lifecycle of an NGS test, with a current focus on germline applications and ongoing work to expand to somatic oncology applications [24].

The seven CAP worksheets provide a systematic framework for NGS test implementation [24]:

- Test Familiarization: Strategic considerations prior to test development

- Test Content Design: Guidance on gene/variant selection and reference materials

- Assay Design and Optimization: Translation of design requirements into operational assays

- Test Validation: Analytical performance metrics, validation study design, and data analysis

- Quality Management: Procedure monitors for all testing phases

- Bioinformatics and IT: Computational infrastructure and pipeline validation

- Interpretation and Reporting: Variant interpretation, classification, and reporting standards

These worksheets are incorporated into the CLSI MM09 guideline, "Human Genetic and Genomic Testing Using Traditional and High-Throughput Nucleic Acid Sequencing Methods," which provides step-by-step recommendations for designing, validating, reporting, and quality management of clinical NGS tests [24]. This comprehensive approach ensures laboratories implement robust NGS tests with demonstrated analytical sensitivity and specificity.

Medical Genome Initiative (Represented by PFMG2025)

The 2025 French Genomic Medicine Initiative (PFMG2025) represents a large-scale national implementation of genomic medicine that exemplifies the principles of the Medical Genome Initiative [25]. France has invested €239 million in this program with the ambition to integrate genome sequencing into routine clinical practice, focusing initially on rare diseases, cancer genetic predisposition, and cancers [25].

PFMG2025 has established a structured genomic healthcare pathway that includes multidisciplinary meetings for case review, standardized information sheets and consent forms, and specific analysis strategies for different clinical scenarios [25]. For rare diseases and cancer predisposition, short-read genome sequencing is performed, preferably including trio- or duo-based family analysis. For cancers, the program utilizes genome sequencing, exome sequencing, and RNAseq from frozen tumor tissues in addition to germline genome sequencing to detect actionable somatic variants [25].

The initiative represents one of the most comprehensive implementations of genomic medicine, providing real-world data on performance metrics at a national scale. As of December 2023, the program had returned 12,737 results for rare disease/cancer genetic predisposition patients with a median delivery time of 202 days and a diagnostic yield of 30.6%, and 3,109 results for cancer patients with a median delivery time of 45 days [25].

Table 1: Key Characteristics of Guideline Organizations

| Organization | Primary Focus Areas | Guideline Update Status | Key Deliverables |
|---|---|---|---|
| AMP | Somatic variant classification, MRD monitoring, HRD detection | 2025 Update in progress (AMP/ASCO/CAP variant interpretation guidelines) [21] [22] | Tiered variant classification system, Technical standards [23] |
| CAP | NGS test implementation, quality management, validation | Ongoing updates to NGS worksheets; MM09 guideline published 2023 [24] | Structured worksheets, Laboratory accreditation standards [24] |
| Medical Genome Initiative (PFMG2025) | National implementation, rare diseases, cancer | Operational since 2016; continuous expansion [25] | Genomic medicine pathways, Performance metrics, Economic sustainability models [25] |

Comparative Analysis of Methodological Recommendations

Test Validation and Verification

The CAP NGS worksheets provide the most detailed technical requirements for test validation, outlining specific analytical performance metrics with associated formulas and suggested reference materials [24]. This comprehensive approach includes guidance on validation study design and subsequent data analysis, enabling laboratories to establish robust evidence for their test's analytical sensitivity and specificity.

In contrast, AMP's guidelines focus more heavily on the interpretation and reporting aspects of testing, with the updated AMP/ASCO/CAP variant classification guidelines providing a framework for categorizing variants based on their oncogenic strength and clinical significance [21] [23]. This framework indirectly influences test validation requirements by defining the types of variants that must be reliably detected.

PFMG2025 represents a real-world implementation of these principles at scale, with common protocols established across sequencing laboratories and a structured genomic healthcare pathway that ensures standardized practices [25]. The program's reported diagnostic yield of 30.6% for rare diseases and cancer predisposition provides a benchmark for assessing the clinical effectiveness of comprehensive genomic testing approaches [25].

Quality Management and Ongoing Monitoring

CAP's quality management worksheet provides an overview of procedure monitors for the pre-analytical, analytical, and post-analytical phases of NGS-based testing [24]. This comprehensive approach ensures continuous monitoring of test performance throughout its lifecycle.

AMP addresses quality considerations through its detailed specifications for variant interpretation, aiming to reduce inconsistencies in classification practices across laboratories [23]. The clarification of the Tier IIE category for oncogenic variants lacking clear clinical significance represents a significant advancement in classification precision, potentially reducing false-positive interpretations of clinical utility [23].

PFMG2025 has implemented a nationwide quality framework through its network of clinical laboratories and a national facility for secure data storage and intensive calculation [25]. The program's use of multidisciplinary meetings for case review and its network of genomic pathway managers to assist with prescription quality represent innovative approaches to maintaining testing standards at scale [25].

Table 2: Performance Metrics from Large-Scale Genomic Medicine Implementation

| Performance Metric | Rare Diseases/Cancer Predisposition | Cancers | Source |
|---|---|---|---|
| Number of results returned | 12,737 | 3,109 | [25] |
| Median delivery time | 202 days | 45 days | [25] |
| Diagnostic yield | 30.6% | Not specified | [25] |
| Investment to date | €239 million (French government) | | [25] |
| Clinical utility rate | Ranged from 4-100% across studies (literature) | | [26] |

Experimental Protocols and Data Generation

NGS Test Validation Protocol

The CAP guidelines provide a structured approach for NGS test validation based on their Test Validation worksheet [24]. The following protocol outlines key steps for establishing analytical sensitivity and specificity:

Sample Selection and Preparation

- Select well-characterized reference materials with known variants across different genomic contexts

- Include samples with variants at various allele frequencies (5%, 10%, 15%, 20%, 50%) to establish limit of detection

- Ensure coverage of variant types relevant to the test's intended use (SNVs, indels, CNVs, fusions)

- Incorporate challenging genomic regions (e.g., GC-rich, homologous, pseudogenes)
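One way to turn a dilution series into a limit-of-detection estimate is sketched below. It assumes a hit-rate rule that is common in assay validation but is not specified in the source: the LOD is taken as the lowest tested VAF at which at least 95% of replicates are detected, with every higher level also meeting that rate. The function name and all replicate counts are hypothetical.

```python
from typing import Optional


def estimate_lod(
    hit_table: dict[float, tuple[int, int]], min_rate: float = 0.95
) -> Optional[float]:
    """Return the lowest VAF level meeting min_rate, scanning from high to low VAF.

    hit_table maps a VAF level to (replicates detected, replicates tested).
    Scanning stops at the first level that fails, so a lower level that passes
    by chance after a failure is not reported as the LOD.
    """
    lod = None
    for vaf in sorted(hit_table, reverse=True):
        detected, total = hit_table[vaf]
        if total == 0 or detected / total < min_rate:
            break
        lod = vaf
    return lod


if __name__ == "__main__":
    # Hypothetical replicate results at the allele frequencies listed above.
    series = {
        0.50: (20, 20),
        0.20: (20, 20),
        0.15: (20, 20),
        0.10: (19, 20),
        0.05: (14, 20),
    }
    print(estimate_lod(series))
```

In practice the estimate would be made separately for each variant type, since the text reports different detection limits for SNVs and indels.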

Sequencing and Analysis

- Process samples across multiple runs to assess inter-run reproducibility

- Include technical replicates to determine intra-run precision

- Sequence at multiple coverage depths to establish minimum coverage requirements

- Process data through the entire bioinformatics pipeline, including alignment, variant calling, and annotation

Data Analysis and Performance Calculation

- Calculate analytical sensitivity: (Number of true positives)/(Number of true positives + Number of false negatives)

- Determine analytical specificity: (Number of true negatives)/(Number of true negatives + Number of false positives)

- Establish precision: Percentage agreement between replicate samples

- Assess reproducibility: Concordance between different runs, operators, and instruments
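The formulas above can be applied to variant call sets as in the following sketch. It makes several simplifying assumptions that are not part of the CAP worksheet: variants are matched by exact (chromosome, position, ref, alt) identity, true negatives are approximated as assessed positions minus all other outcomes, and replicate agreement is measured as the shared fraction of calls between two replicates. All names and example variants are hypothetical.

```python
Variant = tuple[str, int, str, str]  # (chromosome, position, ref, alt)


def confusion_counts(
    truth: set[Variant], calls: set[Variant], assessed_sites: int
) -> tuple[int, int, int, int]:
    """Return (TP, FP, FN, TN) for calls compared against a truth set."""
    tp = len(truth & calls)
    fn = len(truth - calls)
    fp = len(calls - truth)
    # Assumption: every assessed position yields at most one outcome.
    tn = assessed_sites - tp - fn - fp
    return tp, fp, fn, tn


def sensitivity(tp: int, fn: int) -> float:
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    return tn / (tn + fp)


def replicate_agreement(a: set[Variant], b: set[Variant]) -> float:
    """Fraction of all calls from two replicates that appear in both."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


if __name__ == "__main__":
    truth = {("chr7", 100, "A", "T"), ("chr7", 200, "G", "C"),
             ("chr12", 300, "C", "T"), ("chr17", 400, "T", "G")}
    calls = {("chr7", 100, "A", "T"), ("chr7", 200, "G", "C"),
             ("chr12", 300, "C", "T"), ("chr1", 500, "G", "A")}
    tp, fp, fn, tn = confusion_counts(truth, calls, assessed_sites=1000)
    print(sensitivity(tp, fn), specificity(tn, fp), replicate_agreement(truth, calls))
```

Because true negatives vastly outnumber variants in most panels, specificity computed this way tends to sit very close to 100%, which is one reason per-variant-type positive predictive value is often reported alongside it.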

Variant Interpretation Workflow

The AMP/ASCO/CAP guidelines provide a standardized approach for variant interpretation in cancer [23]. The following workflow is adapted from their tiered classification system:

Oncogenicity Assessment

- Evaluate variant using established oncogenicity criteria (population frequency, functional studies, computational predictions, etc.)

- Classify as oncogenic, likely oncogenic, uncertain, likely benign, or benign

- For tumor testing, compare variant frequency in matched normal tissue (if available)

Clinical Significance Evaluation

- Assess therapeutic implications (FDA-approved therapies, clinical trials)

- Evaluate prognostic associations

- Determine diagnostic utility

- Review evidence strength (well-powered studies, consensus guidelines, case reports)

Tier Assignment

- Tier I: Variants with strong clinical significance

- Tier II: Variants with potential clinical significance (including new IIE category for oncogenic variants lacking clinical evidence)

- Tier III: Variants of unknown significance

- Tier IV: Benign or likely benign variants

Figure 1: AMP/ASCO/CAP Variant Classification Workflow. This diagram illustrates the decision process for classifying somatic variants according to the updated AMP/ASCO/CAP guidelines, including the new Tier IIE category [23].
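The tier assignment step can be sketched as a small decision function. This is a deliberately simplified illustration and not the guideline's actual decision logic: the three-level evidence scale, the order in which rules are applied, and all names are assumptions made for the example.

```python
from enum import Enum


class Oncogenicity(Enum):
    ONCOGENIC = "oncogenic"
    LIKELY_ONCOGENIC = "likely oncogenic"
    UNCERTAIN = "uncertain"
    LIKELY_BENIGN = "likely benign"
    BENIGN = "benign"


class ClinicalEvidence(Enum):  # hypothetical three-level summary of evidence strength
    STRONG = "strong"
    POTENTIAL = "potential"
    NONE = "none"


def assign_tier(oncogenicity: Oncogenicity, evidence: ClinicalEvidence) -> str:
    """Map an oncogenicity call and a clinical evidence level to a tier label."""
    # Assumed rule order: benign calls first, then clinical evidence, then oncogenicity.
    if oncogenicity in (Oncogenicity.BENIGN, Oncogenicity.LIKELY_BENIGN):
        return "IV"
    if evidence is ClinicalEvidence.STRONG:
        return "I"
    if evidence is ClinicalEvidence.POTENTIAL:
        return "II"
    if oncogenicity in (Oncogenicity.ONCOGENIC, Oncogenicity.LIKELY_ONCOGENIC):
        return "IIE"  # oncogenic, but no clear clinical evidence in the tumor tested
    return "III"


if __name__ == "__main__":
    print(assign_tier(Oncogenicity.LIKELY_ONCOGENIC, ClinicalEvidence.NONE))
```

Keeping oncogenicity and clinical evidence as separate inputs mirrors the two-stage structure of the workflow and makes the Tier IIE case explicit instead of folding it into Tier III.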

Essential Research Reagents and Materials

The implementation of NGS tests requiring high analytical sensitivity and specificity depends on several critical reagents and materials. The following table outlines key components and their functions in ensuring reliable test performance.

Table 3: Essential Research Reagent Solutions for NGS Testing

| Reagent/Material | Function | Performance Considerations |
|---|---|---|
| Reference standards | Positive controls for validation and QC | Should cover variant types and frequencies relevant to test claims |
| NGS library prep kits | Convert nucleic acids to sequenceable libraries | Impact on GC bias, duplicate rates, and coverage uniformity |
| Capture probes | Target enrichment for panel/exome sequencing | Coverage of critical regions, specificity, off-target rates |
| Bioinformatics tools | Variant calling, annotation, interpretation | Sensitivity/specificity balance, handling of challenging regions |
| QC metrics | Monitor assay performance | Minimum coverage, uniformity, duplicate rates, quality scores |

Implications for Analytical Sensitivity and Specificity Research

The guidelines from AMP, CAP, and implementation frameworks like PFMG2025 have significant implications for research on analytical sensitivity and specificity of NGS panels. The six-tiered efficacy model for genomic sequencing, which includes technical efficacy, diagnostic accuracy efficacy, diagnostic thinking efficacy, therapeutic efficacy, patient outcome efficacy, and societal efficacy, provides a comprehensive framework for evaluating test performance beyond basic analytical metrics [26].

Research should focus on generating evidence across all six tiers, with particular attention to the relationship between analytical performance and clinical utility. The PFMG2025 experience demonstrates that real-world implementation requires careful consideration of organizational frameworks, multidisciplinary collaboration, and economic sustainability [25]. Future research should explore how analytical sensitivity and specificity at the technical level (tier 1) translate to diagnostic thinking efficacy (tier 3) and therapeutic efficacy (tier 4) in different clinical contexts.

The evolving regulatory landscape, with ongoing updates to AMP/ASCO/CAP guidelines and CAP/CLSI standards, underscores the importance of establishing robust validation protocols that can adapt to new evidence and technologies. Research that systematically compares different approaches to NGS test validation and quality management will provide valuable insights for laboratories implementing these complex tests.

Figure 2: Six-Tiered Efficacy Model for Genomic Sequencing. This hierarchical model outlines the different levels for evaluating the effectiveness of genomic sequencing, from technical performance to broader societal impact [26].

The regulatory and professional guidelines from AMP, CAP, and large-scale implementations like PFMG2025 provide complementary frameworks for ensuring the analytical sensitivity and specificity of NGS panels. AMP's focus on variant interpretation standards, CAP's comprehensive test implementation worksheets, and PFMG2025's real-world operational model collectively address the multifaceted challenges of genomic test quality. The ongoing updates to these guidelines, particularly AMP's clarification of oncogenic variants without immediate clinical utility, demonstrate the dynamic nature of this field and the need for continuous refinement of standards. Researchers and drug development professionals should consider these evolving guidelines when designing validation studies and implementing NGS tests to ensure reliable results that advance precision medicine while maintaining rigorous quality standards.

From Theory to Practice: Methodological Approaches for Robust NGS Panel Design

Next-generation sequencing (NGS) has revolutionized genomic research, enabling comprehensive analysis of genetic variations across diverse applications. For targeted sequencing, the choice of enrichment method—amplicon-based or hybridization-capture—represents a critical decision point that directly impacts data quality, workflow efficiency, and research outcomes. Within the broader context of analytical sensitivity and specificity research for NGS panels, understanding the technical and performance characteristics of these two predominant approaches is fundamental. This guide provides an objective comparison grounded in experimental data to inform researchers, scientists, and drug development professionals in selecting the optimal method for their specific research objectives.

Methodological Fundamentals and Workflows

The core distinction between amplicon-based and hybridization-capture approaches lies in their fundamental mechanisms for target enrichment, which directly influences their workflow complexity, required reagents, and overall efficiency.

Amplicon-Based Sequencing Workflow

Amplicon sequencing utilizes polymerase chain reaction (PCR) to create DNA sequences known as amplicons from specific genomic regions of interest [27]. In this method, multiple pairs of primers are designed to target and amplify these regions simultaneously through multiplex PCR. The resulting amplicons are then converted into sequencing libraries by adding platform-specific adapters and sample-specific barcodes, which allow for sample multiplexing and adherence to sequencing flow cells [27]. This approach features a relatively streamlined workflow with fewer processing steps compared to hybridization capture methods [28].

Hybridization-Capture-Based Workflow

Hybridization capture employs biotinylated oligonucleotide probes (baits) that are complementary to the genomic regions of interest [27]. The process begins with fragmentation of genomic DNA, followed by enzymatic end-repair and ligation of platform-specific adapters containing sample indexes [27] [29]. The adapter-ligated libraries are then hybridized with the bait probes, and target-probe hybrids are captured using streptavidin-coated magnetic beads. After washing to remove non-specifically bound DNA, the enriched targets are amplified and prepared for sequencing. This method involves more processing steps than amplicon-based approaches but offers greater flexibility in target design [28].

Amplicon-Based versus Hybridization-Capture Workflows: This diagram illustrates the key procedural differences between the two enrichment workflows.

Comparative Performance Analysis

Extensive validation studies have quantified the performance characteristics of both enrichment methods across multiple parameters. The following table summarizes key metrics based on experimental data:

Table 1: Performance Comparison of Amplicon-Based and Hybridization-Capture Methods

| Performance Parameter | Amplicon-Based | Hybridization-Capture | Experimental Context |
|---|---|---|---|
| Sensitivity for SNVs/Indels | 94.8% concordance [30] | 96.92-97.14% [13] [2] | Validation against orthogonal methods [30] [13] [2] |
| Specificity | Not explicitly reported | 99.67-99.99% [13] [2] | Reference standards and replicate analysis [13] [2] |
| Limit of Detection (VAF) | ~5% [27] | 0.38-2.9% [31] [2] | Serial dilution experiments [31] [2] |
| On-Target Rate | Higher [29] | Lower but more uniform coverage [29] | Whole-exome sequencing comparison [29] |
| Coverage Uniformity | Lower [29] | Higher [28] [29] | Whole-exome sequencing comparison [29] |
| Variant Type Capability | SNVs, Indels, known fusions [28] | SNVs, Indels, CNVs, fusions, complex biomarkers [30] [13] | Multi-biomarker validation studies [30] [13] |
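As a reminder of how sensitivity and specificity figures of this kind are derived, the following minimal sketch computes both metrics from a confusion matrix and attaches a Wilson score interval. The counts are hypothetical and are not taken from any of the cited studies; the function names are illustrative only.

```python
import math


def sensitivity(tp: int, fn: int) -> float:
    """Fraction of reference-positive variants that the assay called."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Fraction of reference-negative positions that the assay left uncalled."""
    return tn / (tn + fp)


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical validation counts (assumption, for illustration only).
tp, fn = 95, 3       # known variants detected / missed
tn, fp = 9990, 10    # known wild-type positions correctly negative / falsely called

sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
sens_lo, sens_hi = wilson_interval(tp, tp + fn)
print(f"sensitivity = {sens:.4f} (95% CI {sens_lo:.4f} to {sens_hi:.4f})")
print(f"specificity = {spec:.4f}")
```

Reporting the interval alongside the point estimate makes it clear how much a figure based on a small number of reference variants can be trusted.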

Beyond the metrics above, analytical sensitivity comparisons indicate that hybridization capture is better able to detect low-frequency variants, with one study achieving 100% sensitivity for single-nucleotide variants (SNVs) at 0.38% variant allele frequency (VAF), insertions and deletions (InDels) at 0.33% VAF, and fusions at 0.33% VAF [31]. In contrast, amplicon-based methods typically have a limit of detection of around 5% VAF, making them less suitable for detecting low-frequency variants such as those found in circulating tumor DNA (ctDNA) or heterogeneous tumor samples [27].
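To see why a lower limit of detection demands more sequencing depth, the sketch below models the number of variant-supporting reads as a binomial draw and asks how likely it is to observe at least a minimum number of them. This is a simplified sampling model: it ignores sequencing error, duplicate reads, and strand bias, and the threshold of 5 supporting reads is an assumption rather than a value from the cited studies.

```python
import math


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least `min_alt_reads` variant reads) under Binomial(depth, vaf)."""
    p_below = sum(
        math.comb(depth, i) * vaf**i * (1 - vaf) ** (depth - i)
        for i in range(min_alt_reads)
    )
    return 1.0 - p_below


def minimum_depth(vaf: float, min_alt_reads: int, target: float = 0.95,
                  step: int = 50, max_depth: int = 1_000_000) -> int:
    """Smallest depth (in multiples of `step`) reaching the target probability."""
    for depth in range(step, max_depth + 1, step):
        if detection_probability(depth, vaf, min_alt_reads) >= target:
            return depth
    raise ValueError("target probability not reached within max_depth")


# Compare the two VAF levels quoted in the text (5% and 0.38%).
for vaf in (0.05, 0.0038):
    depth = minimum_depth(vaf, min_alt_reads=5)
    print(f"VAF {vaf:.2%}: about {depth}x unique depth for 95% detection")
```

Under these assumptions, the depth needed at 0.38% VAF is more than an order of magnitude greater than at 5% VAF, which is one reason low-frequency applications place heavier demands on unique coverage.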

The breadth of variant detection also differs substantially between methods. Hybridization-capture panels have demonstrated robust performance in detecting multiple variant types simultaneously, including copy number variations (CNVs) with 96.5% concordance, fusions with 94.2% concordance, and complex biomarkers such as microsatellite instability (MSI) and tumor mutational burden (TMB) [30]. Amplicon-based approaches are primarily optimized for SNVs and Indels, with more limited capability for detecting structural variants and complex biomarkers [28].

Practical Implementation Considerations

When selecting an enrichment method for research or clinical applications, practical considerations around workflow, scalability, and resource requirements significantly influence decision-making.

Table 2: Practical Implementation Considerations

| Parameter | Amplicon-Based | Hybridization-Capture |
|---|---|---|
| DNA Input Requirements | 10-100 ng [27] | 1-250 ng (library prep) + 500 ng (capture) [27] |
| Workflow Steps | Fewer steps [28] | More steps [28] |
| Hands-on Time | Less [28] | More [28] |
| Total Time to Results | Shorter (~1.5 days) [28] | Longer (~3-4 days) [28] [2] |
| Cost Per Sample | Generally lower [28] | Generally higher [28] |
| Panel Scalability | Flexible, usually <10,000 amplicons [28] | Virtually unlimited [28] |
| Multiplexing Capacity | Moderate | High |

The computational requirements also differ between these approaches. Amplicon-based data analysis is generally more straightforward, with variant calling focused on specific targeted regions. However, careful bioinformatic processing is required to address PCR artifacts and primer alignment issues. Hybridization-capture data analysis involves more complex processing for duplicate marking, local alignment optimization, and coverage uniformity normalization, but benefits from more uniform coverage distribution across targets [29].

Application-Specific Recommendations

Based on comparative performance data and practical implementation factors, each enrichment method demonstrates distinct advantages for specific research applications.

Recommended Applications for Amplicon-Based Sequencing

- Germline variant detection (SNPs, Indels) in inherited disease research [28] [27]

- CRISPR edit validation and engineered mutation verification [28] [27]

- Small target panels focusing on known hotspot mutations (<10,000 amplicons) [28]

- Resource-limited settings requiring rapid turnaround time and lower cost per sample [28]

- Studies with degraded DNA where shorter amplicons can be designed [30]

Recommended Applications for Hybridization-Capture Sequencing

- Comprehensive genomic profiling in oncology research [28] [30]

- Exome sequencing and large gene panel applications [28] [29]

- Rare variant detection in heterogeneous samples (e.g., ctDNA) [28] [31]

- Complex biomarker assessment including TMB, MSI, and HRD [30]

- Variant discovery across broad genomic regions [28]

The following diagram illustrates the decision-making process for selecting the appropriate enrichment method based on key experimental parameters:
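The same selection criteria can also be written down as a small rule set. The sketch below is only an illustration of the recommendations listed above: the thresholds (about 5% VAF and about 10,000 amplicons) are taken from the comparison tables, while the class, field, and function names are hypothetical, and real assay selection would weigh cost, turnaround time, and sample quality as well.

```python
from dataclasses import dataclass


@dataclass
class PanelRequirements:
    lowest_vaf_of_interest: float          # e.g. 0.01 for 1% VAF
    n_target_regions: int                  # approximate number of amplicons/targets
    needs_cnv_or_complex_biomarkers: bool  # CNVs, TMB, MSI, HRD, novel fusions


def suggest_enrichment(req: PanelRequirements) -> tuple[str, list[str]]:
    """Return a suggested method plus the reasons that triggered it."""
    reasons = []
    if req.needs_cnv_or_complex_biomarkers:
        reasons.append("CNVs or complex biomarkers required")
    if req.lowest_vaf_of_interest < 0.05:
        reasons.append("variants expected below ~5% VAF")
    if req.n_target_regions > 10_000:
        reasons.append("panel exceeds ~10,000 amplicons")
    if reasons:
        return "hybridization-capture", reasons
    return "amplicon-based", ["small panel, higher-VAF SNVs/indels"]


# A hotspot panel for germline variants versus a ctDNA profiling panel.
print(suggest_enrichment(PanelRequirements(0.30, 200, False)))
print(suggest_enrichment(PanelRequirements(0.005, 3_000, True)))
```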

Essential Research Reagent Solutions

Successful implementation of either enrichment method requires specific reagent systems and specialized tools. The following table outlines core components needed for establishing these workflows in a research setting:

Table 3: Essential Research Reagent Solutions for Target Enrichment Methods

| Reagent Category | Specific Examples | Function | Compatibility |
|---|---|---|---|
| Library Preparation Kits | Ion Torrent NGS Reverse Transcription Kit [30], Swift Rapid Library Preparation Kit [32] | Convert nucleic acids to sequence-ready libraries | Platform-specific |
| Target Enrichment Probes | Burning Rock Biotech 101-gene panel [31], Twist Bioscience double-stranded DNA probes [32] | Hybridize to and enrich specific genomic regions | Hybridization-capture |
| Target Amplification Primers | Oncomine Comprehensive Assay Plus primers [30], Custom-designed PCR primers [33] | Amplify specific genomic regions via PCR | Amplicon-based |
| Nucleic Acid Extraction Kits | QIAamp Circulating Nucleic Acid Kit [31], MagPure Universal DNA Kit [31] | Isolate high-quality DNA/RNA from various sample types | Universal |
| Target Capture Reagents | Sophia Genetics capture library kits [2], SureSelectXT Target Enrichment System [29] | Enable hybridization and capture of target regions | Hybridization-capture |
| Sequence Adapters & Barcodes | Illumina platform adapters [31], Unique molecular barcodes [32] | Facilitate platform sequencing and sample multiplexing | Platform-specific |
| Quality Control Assays | Qubit dsDNA HS Assay Kit [31], Agilent Bioanalyzer assays [33] | Quantify and qualify nucleic acids throughout workflow | Universal |

The choice between amplicon-based and hybridization-capture enrichment methods represents a significant decision point in targeted NGS panel design and implementation. Amplicon-based methods offer advantages in workflow simplicity, speed, and cost-efficiency for smaller target panels and higher VAF applications. In contrast, hybridization-capture approaches provide superior sensitivity for low-frequency variants, broader variant type detection, and greater scalability for large genomic regions. Within the framework of analytical sensitivity and specificity research, this comparative analysis demonstrates that method selection must be guided by specific research objectives, sample characteristics, and practical resource constraints. As NGS technologies continue to evolve, both enrichment strategies will maintain important roles in advancing genomic research and precision medicine applications.

Next-generation sequencing (NGS) has revolutionized genomic research and clinical diagnostics, enabling the parallel analysis of millions of DNA fragments. The analytical sensitivity and specificity of NGS panels are paramount for generating reliable data, particularly in clinical contexts where results directly impact patient management. Achieving optimal performance requires careful optimization of critical wet-lab parameters throughout the workflow. This guide objectively compares the impact of DNA input, library preparation methods, and sequencing depth on NGS panel performance, providing a structured framework for researchers and drug development professionals to maximize data quality and reproducibility.

DNA Input: Balancing Quantity and Library Complexity

The quantity of DNA used to initiate library preparation is a fundamental parameter that directly influences the complexity and quality of the resulting sequencing library. Library complexity refers to the number of unique DNA molecules represented in the library, which is critical for achieving uniform coverage and sensitive variant detection.

Impact of Low DNA Input

- Reduced Library Complexity: Using very low DNA input increases the risk that the final library will not adequately represent the original diversity of DNA molecules in the sample. This occurs because PCR amplification, while capable of generating unlimited product from limited input, cannot create information that was not present in the original template [34].

- Compromised Assay Sensitivity: As DNA input decreases, a growing share of reads are duplicates (produced by overamplification of the same molecules) rather than unique reads (each derived from a distinct input DNA molecule), so the depth of unique coverage may be insufficient for confident variant calling [34].

- Technical Variability: Fluctuations in library complexity due to low DNA input can complicate variant detection, often resulting in technical replicates with vastly different estimates of variant allelic fraction [34].
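A quick back-of-the-envelope calculation shows why low input caps sensitivity. The sketch below converts DNA mass into haploid genome copies, assuming the commonly used approximation of about 3.3 pg per haploid human genome, and then estimates how many variant-bearing molecules are present at a given VAF. It assumes every input molecule is converted into the library, which real workflows do not achieve, so the figures are upper bounds.

```python
HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome (assumption)


def haploid_copies(input_ng: float) -> float:
    """Approximate number of haploid genome copies in `input_ng` of human DNA."""
    return input_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_copies(input_ng: float, vaf: float) -> float:
    """Expected number of variant-bearing molecules at one locus (upper bound)."""
    return haploid_copies(input_ng) * vaf


for ng in (10, 25, 50, 100):
    copies = haploid_copies(ng)
    mutants = expected_mutant_copies(ng, vaf=0.01)
    print(f"{ng:>3} ng: ~{copies:,.0f} genome copies, ~{mutants:.0f} mutant copies at 1% VAF")
```

With only a few dozen variant molecules available at the lowest inputs, random sampling alone can produce the replicate-to-replicate swings in allelic fraction described above, and no amount of PCR amplification can add molecules that were never captured.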

Optimal DNA Input Recommendations

Recent studies have systematically evaluated DNA input requirements for targeted NGS panels. The following table summarizes key experimental findings:

Table 1: DNA Input Requirements for NGS Library Preparation

| Study Context | Minimum DNA Input | Optimal DNA Input | Key Observations | Source |
|---|---|---|---|---|
| TTSH-Oncopanel Validation | ≤25 ng detected only 8/13 mutations | ≥50 ng detected all 13 mutations | Two EGFR mutations showed low quality at 50 ng input; ≥50 ng established as requisite | [2] |
| General Library Preparation | Not specified | More starting material means less amplification | Higher input generally improves library complexity by reducing PCR amplification bias | [35] |
| Unique Molecular Identifier Tracking | Library complexity compromised at low input | Input should provide sufficient unique molecules | Depth of coverage with unique reads must be tracked to maintain sensitivity | [34] |

Experimental Protocol: DNA Input Titration

To establish minimum DNA input requirements for a custom NGS panel, the following titration protocol was employed in recent validation studies [2]:

- Sample Selection: Use a well-characterized reference standard (e.g., HD701) with known mutations across different allelic frequencies.

- DNA Titration: Prepare aliquots of the reference standard at varying concentrations (e.g., 10 ng, 25 ng, 50 ng, 100 ng).

- Library Preparation: Process all samples using the standardized NGS library preparation protocol.

- Sequencing and Analysis: Sequence all libraries and compare the detection of known variants.

- Quality Assessment: Establish the minimum input that reliably detects all expected variants with acceptable quality metrics.

This systematic approach ensures that the selected DNA input quantity maintains assay sensitivity while accommodating typical sample limitations in clinical practice.
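The final step of the titration, choosing the minimum input, can be sketched as follows. The function takes the variants detected at each input level and returns the smallest input at which that level and every higher level recovered all expected variants. The variant identifiers and per-input results are placeholders; only the 8-of-13 and 13-of-13 pattern mirrors the figures reported in [2].

```python
def minimum_reliable_input(detected_by_input: dict[float, set[str]],
                           expected: set[str]):
    """Smallest input (ng) such that it and all higher inputs detect every expected variant.

    Returns None if even the highest input misses a variant.
    """
    passing = None
    for input_ng in sorted(detected_by_input, reverse=True):
        if expected <= detected_by_input[input_ng]:
            passing = input_ng   # this level passes; keep looking lower
        else:
            break                # first failure from the top ends the search
    return passing


# Placeholder variant IDs standing in for the 13 known reference mutations.
expected = {f"var{i}" for i in range(1, 14)}

# Hypothetical titration results (assumption, for illustration only).
results = {
    10: {f"var{i}" for i in range(1, 7)},    # 6 of 13 detected
    25: {f"var{i}" for i in range(1, 9)},    # 8 of 13 detected
    50: set(expected),                       # all 13 detected
    100: set(expected),                      # all 13 detected
}

print(minimum_reliable_input(results, expected))
```

Walking down from the highest input prevents a single lucky low-input replicate from being mistaken for a reliable threshold. In practice the criterion would also include quality metrics such as coverage and variant quality scores, not detection alone.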

Library Preparation: Methodologies and Efficiency Comparisons

Library preparation is the process of converting extracted DNA into a format compatible with the sequencing platform. The efficiency of this process significantly impacts the quality and quantitative accuracy of NGS results.

Library Preparation Workflow

The following diagram illustrates the core steps in a standard NGS library preparation workflow:

Comparison of Library Preparation Methods

Two primary approaches dominate targeted NGS for oncology specimens: hybrid capture-based and amplification-based methods [14]. The choice between methods influences several performance characteristics, including uniformity, ability to detect different variant types, and susceptibility to specific artifacts.

Table 2: Comparison of Library Preparation Methodologies

| Parameter | Hybrid Capture-Based | Amplification-Based (Amplicon) |
|---|---|---|
| Principle | Solution-based biotinylated oligonucleotide probes hybridize to target regions | PCR primers flanking target regions amplify regions of interest |
| Variant Types Detected | SNVs, indels, CNAs, gene fusions (with appropriate design) | Primarily SNVs and small indels |
| Advantages | Tolerates mismatches in probe binding site, reducing allele dropout; flexible for adding new targets | Faster protocol; requires less DNA input; higher on-target rates |
| Disadvantages | Longer protocol; higher sample input requirements; more expensive | Susceptible to allele dropout from primer binding site variants; limited to targeted regions |
| GC Bias | Less prone to extreme GC bias | Can have significant amplification bias in high or low GC regions |

Efficiency Comparisons Across Commercial Kits

A systematic comparison of nine commercially available library preparation kits revealed significant variations in efficiency [36]:

- Protocol Efficiency: Kits that combine several steps (end-repair, A-tailing, adapter ligation) into a single reaction demonstrated final yields 4 to 7 times higher than conventional kits with separate steps.

- Adapter Ligation Yield: The efficiency of the adapter ligation step varied by more than a factor of 10 between kits (3.5% to 100%), with low ligation efficiency potentially impairing original library complexity.

- PCR Bias: The yield of the PCR enrichment step was inversely correlated with the yield of the ligation step, with lower adapter-ligated DNA inputs leading to greater amplification yields.

- Fragment Size Bias: Different kits showed varying propensities to alter the representation of different fragment sizes in the final library despite using identical input DNA and cleanup conditions.
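The effect of ligation efficiency on library complexity can be illustrated with a simple model. The sketch below treats the number of adapter-ligated molecules as input molecules multiplied by ligation efficiency, then estimates how many distinct molecules are observed when reads are drawn uniformly at random from that pool. Uniform sampling is an idealization (real PCR and capture are biased), and the input and read counts are hypothetical; only the 3.5% and 100% efficiencies come from the comparison above.

```python
import math


def ligated_molecules(input_molecules: float, ligation_efficiency: float) -> float:
    """Unique adapter-ligated molecules available for amplification."""
    return input_molecules * ligation_efficiency


def expected_unique_reads(n_reads: float, complexity: float) -> float:
    """Expected distinct molecules seen when sampling `n_reads` uniformly
    (with replacement) from a library of `complexity` unique molecules."""
    return complexity * (1.0 - math.exp(-n_reads / complexity))


def duplicate_fraction(n_reads: float, complexity: float) -> float:
    """Share of reads that are duplicates of an already-observed molecule."""
    return 1.0 - expected_unique_reads(n_reads, complexity) / n_reads


input_molecules = 10_000   # hypothetical molecules covering one locus
n_reads = 5_000            # hypothetical reads sequenced at that locus

for efficiency in (0.035, 1.0):
    complexity = ligated_molecules(input_molecules, efficiency)
    unique = expected_unique_reads(n_reads, complexity)
    dup = duplicate_fraction(n_reads, complexity)
    print(f"ligation {efficiency:.1%}: ~{unique:,.0f} unique reads, {dup:.0%} duplicates")
```

Under this model, a kit with low ligation efficiency yields a library in which most reads are duplicates, so additional sequencing adds little new information regardless of the final PCR yield.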

Sequencing Depth and Coverage: Fundamentals and Optimization

Sequencing depth and coverage are distinct but related parameters that must be optimized to ensure comprehensive and reliable variant detection.

Definitions and Relationship