Decoding Cancer Complexity: How Next-Generation Sequencing Unravels Tumor Heterogeneity for Precision Oncology

Abstract

Next-generation sequencing (NGS) has fundamentally transformed our understanding and investigation of cancer heterogeneity, moving beyond organ-based classification to a molecular-level understanding of tumor evolution, resistance, and metastasis. This article provides a comprehensive resource for researchers and drug development professionals, exploring the foundational principles of NGS in characterizing genomic diversity. It delves into advanced methodological applications like single-cell sequencing and liquid biopsy, addresses critical troubleshooting and optimization challenges in clinical implementation, and validates NGS findings through comparative analyses with real-world data. By synthesizing current evidence and future directions, this review underscores the indispensable role of NGS in advancing personalized cancer therapeutics and overcoming the clinical challenges posed by tumor heterogeneity.

The Genomic Landscape of Cancer: Foundational Concepts of Heterogeneity and NGS

Cancer is not a single disease but a collection of genetically and phenotypically diverse malignancies characterized by extensive heterogeneity at multiple levels. This heterogeneity is one of the most significant challenges in oncology today, influencing diagnosis, treatment selection, and, ultimately, patient outcomes. Intratumoral heterogeneity (ITH) refers to the genetic, epigenetic, and phenotypic diversity observed within a single tumor, where distinct cellular sub-populations coexist and evolve [1]. In contrast, intertumoral heterogeneity describes the variations observed between tumors of the same histological type from different patients, driven by differences in genetic background, environmental exposures, and etiological factors [2]. Together, these dimensions of heterogeneity create a complex biological landscape that confounds traditional therapeutic approaches and drives drug resistance, metastasis, and disease recurrence.

The clinical implications of tumor heterogeneity are profound. ITH enables Darwinian selection within the tumor ecosystem, where pre-existing resistant subclones or newly evolved variants can survive therapy and initiate relapse [1]. From a diagnostic perspective, heterogeneity challenges the representativeness of single biopsies, as they may miss critical subclonal populations that dictate therapeutic response. Furthermore, heterogeneity complicates biomarker development, as molecular signatures may vary spatially within a tumor and temporally throughout disease progression and treatment [3]. Understanding and addressing these heterogeneities is therefore paramount for advancing precision oncology and improving patient outcomes.

Molecular Mechanisms Driving Heterogeneity

Genetic and Non-Genetic Mechanisms

Tumor heterogeneity arises through multiple interconnected mechanisms that operate at different molecular levels. The primary drivers can be categorized into genetic, epigenetic, and microenvironmental factors that collectively shape the tumor's evolutionary trajectory.

Genetic instability forms the foundation of ITH, generating diverse subclonal populations through various mechanisms. This includes an elevated point mutation rate, chromosomal segregation errors, and copy number alterations that accumulate during tumor progression [1]. The tolerance for genomic instability in cancer cells allows them to withstand increased mutational burdens, with certain therapies even exacerbating this process by inducing a hypermutator phenotype [1].

Epigenetic modifications represent another crucial layer of heterogeneity, independent yet often complementary to genetic changes. These include DNA methylation patterns, histone modifications, and chromatin remodeling that create phenotypic diversity without altering the underlying DNA sequence [1]. Epigenetic plasticity enables rapid adaptation to therapeutic pressures and microenvironmental changes, contributing to functional heterogeneity among cancer cells.

Microenvironmental influences further shape heterogeneity through dynamic interactions between tumor cells and their surrounding stroma. The tumor microenvironment (TME) comprises various cell types, including immune cells, cancer-associated fibroblasts, and endothelial cells, which secrete signaling molecules, create metabolic gradients, and exert selective pressures that influence tumor evolution [1]. Spatial variations in oxygen tension, nutrient availability, and mechanical forces within the TME create distinct ecological niches that support and maintain phenotypic diversity.

Spatial and Temporal Dimensions of Heterogeneity

Heterogeneity manifests across both spatial and temporal dimensions, each with distinct clinical implications. Spatial heterogeneity refers to the regional variations observed within a single tumor mass, between primary and metastatic lesions, and among different metastatic sites [1]. For instance, significant genetic discordance often exists between primary tumors and their metastases, with site-specific factors driving genetic divergence after initial colonization [1]. Even within a single tumor tissue block, coexisting subpopulations with different genotypes (e.g., EGFR mutant and wild-type cells in NSCLC) can demonstrate varied responses to targeted therapies [1].

Temporal heterogeneity reflects the dynamic evolution of tumors over time, particularly under therapeutic pressure. Successive biopsies have revealed that chemotherapy can alter the mutational spectrum and induce molecular changes, with targeted therapies exerting particularly strong selective pressures that enrich for resistant subclones [1]. The genomic instability of cancer cells, combined with the asymmetric distribution of extrachromosomal DNA to daughter cells, results in continuous evolution and accumulated variation, producing molecular and phenotypic profiles that diverge from the original primary tumor [1].

Table 1: Mechanisms Driving Tumor Heterogeneity

| Mechanism Category | Specific Processes | Impact on Heterogeneity |
|---|---|---|
| Genetic Instability | Point mutations, chromosomal rearrangements, copy number alterations, extrachromosomal DNA amplification | Generates diverse subclones with varying genetic backgrounds and selective advantages |
| Epigenetic Modulation | DNA methylation changes, histone modifications, chromatin remodeling, non-coding RNA regulation | Creates phenotypic plasticity and adaptive responses without genetic changes |
| Microenvironmental Influences | Hypoxia, nutrient gradients, stromal interactions, immune pressure | Creates selective niches that maintain and shape phenotypic diversity |
| Tumor Evolution | Branched evolution, clonal selection, therapy-induced mutagenesis | Drives temporal changes and therapy resistance through Darwinian selection |

Advanced Technologies for Mapping Heterogeneity

Next-Generation Sequencing and Its Applications

Next-generation sequencing (NGS) has emerged as a transformative technology for dissecting tumor heterogeneity at unprecedented resolution. Unlike traditional Sanger sequencing, which processes DNA fragments individually, NGS enables massive parallel sequencing of millions of fragments simultaneously, significantly reducing time and cost while providing comprehensive genomic data [4]. This technological advancement has made large-scale genomic profiling feasible in clinical settings, enabling detailed characterization of heterogeneity patterns.

The core NGS workflow involves several critical steps: sample preparation, library construction, sequencing, and data analysis [4]. For tumor heterogeneity studies, sample preparation often requires careful microdissection of distinct morphological regions or single-cell isolation to resolve spatial heterogeneity [5]. Library construction fragments the genomic DNA and attaches adapters for sequencing, with targeted enrichment strategies often employed to focus on cancer-relevant genes [4]. The sequencing phase then generates massive datasets that undergo sophisticated bioinformatic processing for variant calling, copy number analysis, and phylogenetic reconstruction [6].

Various NGS approaches offer complementary insights into heterogeneity. Whole-genome sequencing (WGS) provides the most comprehensive view of genetic alterations, including non-coding regions, while whole-exome sequencing (WES) focuses on protein-coding regions at higher depth [4]. Targeted sequencing panels offer cost-effective profiling of established cancer genes with enhanced sensitivity for detecting low-frequency subclones [7]. Beyond DNA sequencing, RNA sequencing reveals transcriptional heterogeneity and can identify expressed gene fusions, while single-cell RNA sequencing (scRNA-seq) resolves cellular hierarchies and rare subpopulations within tumors [5].
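A simple binomial model shows why sequencing depth governs the detection of low-frequency subclones. In this minimal sketch, each read is assumed to carry the variant allele independently, with probability equal to the variant allele frequency (VAF). Sequencing error, PCR duplicates, and capture bias are ignored. The rule "at least five supporting reads" is a hypothetical calling threshold, and the function name is illustrative. Neither comes from any cited pipeline.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """Probability of seeing at least `min_alt_reads` variant reads at a locus.

    Simplifying assumption: each of `depth` reads carries the variant allele
    independently with probability `vaf` (binomial sampling). Sequencing
    error, duplicates and capture bias are ignored.
    """
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


# Hypothetical subclone: 10% of tumour cells, heterozygous, diploid locus,
# 100% tumour purity -> expected VAF = 0.10 / 2 = 0.05.
SUBCLONE_VAF = 0.05
for depth in (30, 150, 500):
    p = detection_probability(depth, SUBCLONE_VAF, min_alt_reads=5)
    print(f"{depth:>4}x  P(>=5 variant reads) = {p:.3f}")
```

Under these assumptions, such a subclone is unlikely to be detected at 30x and is almost always detected at 500x. This is the rationale for the deeper targeted panels listed in Table 2.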

Table 2: NGS Approaches for Studying Tumor Heterogeneity

| NGS Method | Resolution | Key Applications in Heterogeneity | Limitations |
|---|---|---|---|
| Whole-Genome Sequencing (WGS) | Base pair to chromosomal level | Comprehensive identification of SNVs, indels, structural variations, CNVs across entire genome | Higher cost, computational burden, lower depth for rare subclones |
| Whole-Exome Sequencing (WES) | Coding regions at ~100-200x depth | Detection of coding mutations across tumor subclones | Misses non-coding and regulatory alterations |
| Targeted Gene Panels | Selected genes at >500x depth | High-sensitivity detection of low-frequency subclones, clinical utility | Limited to predefined gene set |
| Single-Cell DNA/RNA Sequencing | Individual cell level | Resolution of cellular hierarchies, rare subpopulations, phylogenetic relationships | Technical noise, high cost, computational complexity |
| Spatial Transcriptomics | Tissue context with gene expression | Mapping gene expression patterns to histological locations, revealing microenvironmental niches | Lower resolution than scRNA-seq, specialized equipment |

Spatial Profiling and Artificial Intelligence Approaches

While NGS provides detailed molecular information, preserving spatial context is essential for understanding the architectural organization of heterogeneity. Spatial transcriptomics has emerged as a powerful innovation that enables precise allocation of gene expression to distinct histological features within tissue sections [5]. This technology bridges the gap between traditional histopathology and molecular profiling by capturing transcriptomic data while maintaining spatial coordinates.

In a study of mixed neuroendocrine-non-neuroendocrine neoplasms (MiNEN), spatial transcriptomics revealed distinct transcriptional profiles aligned with histologically annotated compartments (e.g., adenocarcinoma, neuroendocrine carcinoma, precursor lesions) [5]. Notably, the study uncovered transcriptomic subclusters within morphologically homogeneous neuroendocrine carcinoma regions in two of three cases, demonstrating that heterogeneity often extends beyond what morphology can reveal [5]. These subclusters differed significantly in immune regulation, proliferation signaling, and cell-cycle control, and showed divergent predicted chemotherapy-response signatures [5].

Artificial intelligence (AI) and deep learning approaches complement molecular profiling by extracting quantitative morphological features from digital pathology images. In a comprehensive study of breast cancer intra-tumor heterogeneity, researchers developed an AI-based algorithm that extracted and quantified 162 morphological features from whole-slide images [8]. These features demonstrated significant association with patient outcomes, and when combined into an overall heterogeneity score, stratified luminal breast cancer patients into low- and high-risk groups [8]. The AI approach revealed associations between high heterogeneity scores and aggressive tumor characteristics, including larger tumor size, poor differentiation, high proliferation, and low estrogen receptor expression [8].

Experimental Protocols for Heterogeneity Analysis

Multi-Region Sequencing Protocol

Comprehensive assessment of ITH requires sophisticated sampling strategies and analytical approaches. The following protocol outlines a standardized method for multi-region sequencing to resolve spatial heterogeneity:

Sample Collection and Processing:

- Macrodissection of Tumor Regions: Following pathological review of H&E-stained slides, distinct morphological regions are marked by an experienced pathologist for separate analysis [5]. Regions should include various histological patterns and suspected precursor lesions when present.

- DNA Extraction: Using the Maxwell RSC FFPE Plus DNA Kit or equivalent, extract genomic DNA from each macrodissected region. Quantify DNA concentration using fluorometric methods (e.g., Qubit dsDNA HS Assay) and assess purity via spectrophotometry (A260/A280 ratio between 1.7 and 2.2) [7].

- Quality Control: Ensure a minimum DNA input of 20 ng with minimal fragmentation. For heavily degraded FFPE samples, consider specialized repair protocols before library preparation.
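The input and purity thresholds above can be encoded as a simple pre-library gate. This is a minimal sketch. The 20 ng and 1.7-2.2 limits come from the text. The data structure, field names, and example readings are hypothetical, and the sketch does not assess fragmentation.

```python
from dataclasses import dataclass


@dataclass
class DnaSample:
    region_id: str
    concentration_ng_per_ul: float  # fluorometric (e.g. Qubit) reading
    volume_ul: float                # volume available for library preparation
    a260_a280: float                # spectrophotometric purity ratio


MIN_INPUT_NG = 20.0
A260_A280_RANGE = (1.7, 2.2)


def qc_failures(sample: DnaSample) -> list[str]:
    """Return the reasons a sample fails pre-library QC (empty list = pass)."""
    failures = []
    total_ng = sample.concentration_ng_per_ul * sample.volume_ul
    if total_ng < MIN_INPUT_NG:
        failures.append(f"input {total_ng:.1f} ng < {MIN_INPUT_NG:.0f} ng")
    lo, hi = A260_A280_RANGE
    if not lo <= sample.a260_a280 <= hi:
        failures.append(f"A260/A280 {sample.a260_a280:.2f} outside {lo}-{hi}")
    return failures


# Hypothetical readings for three macrodissected regions
regions = [
    DnaSample("R1", 1.5, 20, 1.85),  # 30 ng, acceptable purity
    DnaSample("R2", 0.6, 20, 1.90),  # 12 ng, too little input
    DnaSample("R3", 2.0, 20, 1.55),  # 40 ng, ratio below range
]
for s in regions:
    print(s.region_id, qc_failures(s) or "PASS")
```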

Library Preparation and Sequencing:

- Library Construction: Using the Agilent SureSelectXT Target Enrichment System or equivalent, prepare sequencing libraries with unique dual indices for each sample to enable multiplexing [7].

- Target Enrichment: Hybridize libraries to custom bait panels (e.g., SNUBH Pan-Cancer v2.0 targeting 544 genes) to enrich for cancer-relevant genomic regions [7].

- Sequencing: Pool libraries in equimolar ratios and sequence on Illumina platforms (NextSeq 550Dx or NovaSeq 6000) to achieve minimum mean coverage of 500x with >80% of bases at 100x coverage [7].
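Each region can be checked against these coverage targets before variant calling. This minimal sketch assumes that per-base depths over the targeted bases have already been extracted, for example with `samtools depth` restricted to the panel's target regions. The function names and toy depth profiles are hypothetical.

```python
def coverage_metrics(per_base_depth: list[int], min_depth: int = 100) -> tuple[float, float]:
    """Return (mean depth, fraction of targeted bases at >= min_depth)."""
    if not per_base_depth:
        raise ValueError("no targeted bases supplied")
    n = len(per_base_depth)
    mean_depth = sum(per_base_depth) / n
    fraction_at_min = sum(d >= min_depth for d in per_base_depth) / n
    return mean_depth, fraction_at_min


def passes_coverage_qc(per_base_depth: list[int], min_mean: float = 500.0,
                       min_depth: int = 100, min_fraction: float = 0.80) -> bool:
    """Mean coverage >= 500x and more than 80% of bases at >= 100x."""
    mean_depth, fraction = coverage_metrics(per_base_depth, min_depth)
    return mean_depth >= min_mean and fraction > min_fraction


# Toy depth profiles over 1,000 targeted bases (hypothetical)
uniform = [620] * 900 + [80] * 100      # mean 566x, 90% of bases >= 100x
uneven = [2000] * 250 + [60] * 750      # mean 545x, but only 25% of bases >= 100x
for name, depths in (("uniform", uniform), ("uneven", uneven)):
    mean_depth, fraction = coverage_metrics(depths)
    print(f"{name}: mean {mean_depth:.0f}x, {fraction:.0%} >= 100x, pass={passes_coverage_qc(depths)}")
```

The uneven profile shows why the uniformity criterion matters. A high mean alone can hide large under-covered stretches where low-frequency subclonal variants would be missed.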

Bioinformatic Analysis:

- Variant Calling: Align reads to reference genome (hg19/GRCh37) using BWA-MEM, then call somatic variants using Mutect2 with minimum variant allele frequency threshold of 2% [7].

- Clonal Decomposition: Use computational tools such as PyClone or SciClone to infer subclonal architecture and cellular prevalences across tumor regions based on variant allele frequencies and copy number profiles.

- Phylogenetic Reconstruction: Construct phylogenetic trees representing evolutionary relationships between tumor regions using tools such as PhyloWGS or CITUP, based on shared and private mutations.
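PyClone and SciClone fit probabilistic models, but a simple point estimate can illustrate the quantity they estimate. This sketch assumes a known tumor purity, local copy number, and mutation multiplicity. It converts VAF to cancer cell fraction (CCF) by inverting the standard tumor-normal mixture relation. It then labels mutations as truncal, shared, or private across regions, using the 2% VAF threshold from the variant-calling step. Function names and example values are hypothetical. This is not how PyClone, SciClone, PhyloWGS, or CITUP are invoked.

```python
def cancer_cell_fraction(vaf: float, purity: float, tumour_cn: int,
                         multiplicity: int = 1, normal_cn: int = 2) -> float:
    """Point estimate of the fraction of cancer cells carrying a mutation.

    Inverts VAF = purity * CCF * multiplicity /
                  (purity * tumour_cn + normal_cn * (1 - purity)),
    and caps the result at 1.0 because sampling noise can push it above 1.
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    total_copies = purity * tumour_cn + normal_cn * (1 - purity)
    return min(vaf * total_copies / (purity * multiplicity), 1.0)


def classify_across_regions(vafs_by_mutation: dict[str, list[float]],
                            min_vaf: float = 0.02) -> dict[str, str]:
    """Label mutations by how many sampled regions they are detected in."""
    labels = {}
    for mutation, vafs in vafs_by_mutation.items():
        detected = sum(v >= min_vaf for v in vafs)
        if detected == len(vafs):
            labels[mutation] = "truncal"   # in every sampled region (candidate trunk)
        elif detected > 1:
            labels[mutation] = "shared"    # branch shared by a subset of regions
        elif detected == 1:
            labels[mutation] = "private"   # found in a single region
        else:
            labels[mutation] = "not detected"
    return labels


# Hypothetical three-region data (VAFs for regions R1, R2, R3)
vafs = {
    "mut_A": [0.30, 0.27, 0.29],
    "mut_B": [0.12, 0.09, 0.00],
    "mut_C": [0.00, 0.00, 0.08],
}
print(classify_across_regions(vafs))
# Diploid locus, 60% purity: a VAF of 0.30 is consistent with a clonal mutation
print(cancer_cell_fraction(0.30, purity=0.6, tumour_cn=2))
```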

Spatial Transcriptomics Protocol

For integrating gene expression with histological context, the following spatial transcriptomics protocol enables mapping of transcriptional heterogeneity:

Tissue Preparation and Processing:

- Sample Selection: Identify FFPE tissue blocks containing representative tumor regions with diverse morphological patterns. Verify RNA integrity meets quality threshold (DV200 > 50%) [5].

- Sectioning: Cut 5 μm sections onto Visium Spatial Gene Expression slides (10x Genomics) containing 6.5 mm × 6.5 mm capture areas with spatially barcoded oligo-dT primers [5].

- H&E Staining and Imaging: Deparaffinize sections, perform H&E staining, and image at high resolution using a brightfield slide scanner to document tissue morphology and spatial context [5].

Library Construction and Sequencing:

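- Probe Hybridization and Capture: In the FFPE workflow, whole-transcriptome probe pairs hybridize to target RNA and are ligated. The tissue is then treated with RNase and permeabilized, which releases the ligated probes so that they bind to the spatially barcoded capture oligonucleotides on the slide surface [5].

- Probe Extension: Extend the captured probes to incorporate the spatial barcodes and unique molecular identifiers (UMIs).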

- Library Preparation: Amplify cDNA and construct sequencing libraries following the Visium Spatial Gene Expression for FFPE protocol (10x Genomics) [5].

- Sequencing: Sequence libraries on Illumina platforms (NextSeq 550 or equivalent) to achieve minimum depth of 25,000 read pairs per spot [5].
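The per-spot depth target translates directly into a sequencing budget. This minimal worked sketch assumes the number of tissue-covered spots is read from the H&E image; a 6.5 mm × 6.5 mm Visium capture area holds roughly 5,000 spots. The run yield used below is a hypothetical figure and should be replaced with the instrument's actual specification.

```python
READ_PAIRS_PER_SPOT = 25_000  # minimum depth from the protocol above


def required_read_pairs(spots_under_tissue: int,
                        read_pairs_per_spot: int = READ_PAIRS_PER_SPOT) -> int:
    """Read pairs needed so every tissue-covered spot reaches the target depth."""
    return spots_under_tissue * read_pairs_per_spot


def sections_per_run(run_yield_read_pairs: int, spots_under_tissue: int,
                     read_pairs_per_spot: int = READ_PAIRS_PER_SPOT) -> int:
    """How many equally sized sections fit on one run at the target depth."""
    return run_yield_read_pairs // required_read_pairs(spots_under_tissue,
                                                        read_pairs_per_spot)


# Hypothetical section covering 3,000 spots and a hypothetical 400 M read-pair run
print(required_read_pairs(3_000))              # 75000000
print(sections_per_run(400_000_000, 3_000))    # 5
```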

Data Integration and Analysis:

- Alignment and Processing: Process sequencing data through the Space Ranger pipeline (10x Genomics) to align reads to the reference transcriptome (GRCh38) and assign gene counts to spatial barcodes [5].

- Pathologist Annotation: Annotate histological regions of interest (e.g., adenocarcinoma, neuroendocrine carcinoma, stroma) on the H&E image using Loupe Browser or equivalent software [5].

- Differential Expression: Perform differential gene expression analysis between annotated regions using Seurat or equivalent packages in R [5].

- Gene Set Enrichment: Conduct gene set enrichment analysis (GSEA) to identify pathway-level differences between tumor compartments and subclusters [5].
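Before running a full statistical test in Seurat, a simplified calculation can illustrate the direction and size of a region-level difference. First, scale each spot's counts to a common library size. Then average the scaled values per annotated region and take a log2 fold change with a pseudocount of 1. This minimal sketch shows the idea behind library-size normalization and fold-change estimation; it is not Seurat's implementation. The gene names, region labels, and counts are toy values, and no significance testing or multiple-testing correction is performed.

```python
import math
from statistics import mean


def scaled_expression(spot_counts: dict[str, int], gene: str,
                      scale: float = 10_000) -> float:
    """Counts for `gene` scaled to a common library size of `scale` per spot."""
    total = sum(spot_counts.values())
    return spot_counts.get(gene, 0) / total * scale if total else 0.0


def region_log2_fold_change(spots: dict[str, dict[str, int]],
                            region_of: dict[str, str], gene: str,
                            region_a: str, region_b: str) -> float:
    """log2((mean_a + 1) / (mean_b + 1)) of scaled expression between regions."""
    a = [scaled_expression(c, gene) for s, c in spots.items() if region_of[s] == region_a]
    b = [scaled_expression(c, gene) for s, c in spots.items() if region_of[s] == region_b]
    if not a or not b:
        raise ValueError("both regions need at least one spot")
    return math.log2((mean(a) + 1) / (mean(b) + 1))


# Toy counts for four annotated spots (NEC = neuroendocrine carcinoma, ADC = adenocarcinoma)
spots = {
    "s1": {"ne_marker": 90, "adeno_marker": 10},
    "s2": {"ne_marker": 70, "adeno_marker": 30},
    "s3": {"ne_marker": 5, "adeno_marker": 95},
    "s4": {"ne_marker": 15, "adeno_marker": 85},
}
region_of = {"s1": "NEC", "s2": "NEC", "s3": "ADC", "s4": "ADC"}
print(round(region_log2_fold_change(spots, region_of, "ne_marker", "NEC", "ADC"), 2))
```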

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Heterogeneity Studies

| Reagent/Material | Specific Examples | Function in Heterogeneity Research |
|---|---|---|
| Nucleic Acid Extraction Kits | QIAamp DNA FFPE Tissue Kit, Maxwell RSC RNA FFPE Kit | Isolate high-quality DNA/RNA from challenging FFPE specimens for reliable sequencing results |
| Target Enrichment Systems | Agilent SureSelectXT, Illumina TruSight Oncology | Enrich cancer-relevant genomic regions for efficient sequencing and sensitive variant detection |
| Library Preparation Kits | Illumina DNA Prep, NEBNext Ultra II DNA | Prepare sequencing libraries with high complexity and minimal bias for accurate representation of subclones |
| Spatial Transcriptomics Kits | 10x Genomics Visium Spatial Gene Expression for FFPE | Capture transcriptomic data while preserving spatial information in tissue sections |
| Single-Cell Isolation Platforms | 10x Genomics Chromium, BD Rhapsody | Partition individual cells for high-resolution profiling of cellular heterogeneity |
| Multiplex Tissue Imaging Reagents | Akoya Biosciences OPAL (multiplex immunofluorescence), Fluidigm Maxpar (metal-tagged antibodies for imaging mass cytometry) | Simultaneously detect multiple protein markers in situ to characterize phenotypic heterogeneity |
| Cell Culture Matrices | Cultrex BME, Matrigel | Support 3D organoid growth to model tumor heterogeneity and microenvironment interactions ex vivo |
| NGS Validation Reagents | IDT xGen Lockdown Probes, Archer VariantPlex | Orthogonal validation of discovered variants to confirm heterogeneity patterns |

Clinical Implications and Therapeutic Applications

Impact on Treatment Resistance and Prognosis

Tumor heterogeneity directly enables therapeutic resistance through multiple mechanisms. Pre-existing resistant subclones present within heterogeneous tumors can be selected under therapeutic pressure, leading to outgrowth and clinical relapse [1]. Additionally, functional heterogeneity and cellular plasticity allow tumors to adapt to targeted therapies through non-genetic mechanisms, including epigenetic reprogramming and phenotype switching [1]. The presence of multiple resistance mechanisms within different subclones often necessitates combination therapies that simultaneously target multiple vulnerabilities.

Real-world data show that genomic profiling frequently identifies actionable alterations, but far fewer patients go on to receive matched therapy. In a real-world study of NGS implementation involving 990 patients with advanced solid tumors, 26.0% harbored tier I variants (strong clinical significance), while 86.8% carried tier II variants (potential clinical significance) [7]. Among patients with tier I variants, 13.7% received NGS-based therapy, with response rates varying by cancer type [7]. These findings highlight both the clinical actionability of comprehensive genomic characterization and the current limitations in matching patients to effective therapies based on genomic findings.

Novel Therapeutic Strategies Addressing Heterogeneity

Emerging therapeutic approaches specifically aim to overcome heterogeneity-driven resistance. Combination therapies targeting multiple pathways simultaneously address co-existing driver alterations in different subclones [1]. Evolutionary-informed therapies apply principles from evolutionary biology to suppress resistance development, including adaptive therapy approaches that maintain sensitive cells to compete with resistant populations [1]. Immunotherapeutic strategies leverage the immune system's capacity to recognize diverse neoantigens presented by heterogeneous tumor populations, though heterogeneity can also enable immune escape through various mechanisms [1].

Patient-derived organoids (PDOs) represent a powerful platform for addressing heterogeneity in personalized therapy development. These three-dimensional structures derived from patient tumors retain genetic, epigenetic, and phenotypic features of the primary malignancy, including its heterogeneity patterns [2]. PDOs can be used for high-throughput drug screening to identify effective therapeutic combinations that address the complete spectrum of subclonal populations within an individual's tumor [2]. Co-culture systems incorporating immune cells further enable evaluation of immunotherapeutic approaches in a patient-specific context [2].

The comprehensive characterization of intra- and intertumoral heterogeneity represents both a central challenge and promising frontier in oncology. While NGS technologies have dramatically advanced our understanding of heterogeneity patterns and their clinical implications, translating these insights into improved patient outcomes requires continued methodological innovation. Future progress will depend on several key developments: the integration of multi-omics data across spatial and temporal dimensions; the refinement of single-cell and spatial profiling technologies to enhance resolution and accessibility; the development of sophisticated computational models to predict evolutionary dynamics and therapeutic responses; and the implementation of clinical trial designs that account for and target tumor heterogeneity.

As these advancements mature, they promise to transform oncology from a discipline often confounded by heterogeneity to one that leverages understanding of tumor diversity for more effective, personalized cancer care. The ongoing convergence of sequencing technologies, spatial profiling, artificial intelligence, and functional modeling approaches will ultimately enable clinicians to navigate the complex landscape of tumor heterogeneity and design therapeutic strategies that preempt resistance and improve long-term outcomes for cancer patients.

Next-generation sequencing (NGS) has revolutionized oncology research by enabling comprehensive genomic analysis that transcends traditional single-gene approaches. This transformation has been particularly profound in cancer heterogeneity studies, where pan-cancer genomic profiling provides unprecedented insights into shared molecular pathways across diverse malignancies. The evolution from targeted gene investigations to comprehensive genomic profiling (CGP) has revealed common driver mutations and molecular signatures that operate independently of tumor origin, fundamentally reshaping cancer classification systems. This technical review examines the experimental frameworks, computational methodologies, and research applications of NGS technologies in characterizing tumor heterogeneity, with specific emphasis on their implications for drug discovery and development. We detail standardized protocols for pan-cancer analysis and demonstrate how CGP identifies actionable alterations across cancer types, facilitating biomarker-driven therapeutic development and enabling a more nuanced understanding of treatment resistance mechanisms.

The advent of next-generation sequencing technologies has fundamentally transformed cancer research methodologies, enabling a systematic transition from single-gene investigations to genome-wide analyses. This technological evolution has positioned NGS as a cornerstone of precision oncology, providing researchers with powerful tools to decipher the complex genomic architecture of human malignancies [4]. Pan-cancer analysis approaches leverage NGS to assess frequently mutated genes and genomic abnormalities common to many different cancers, regardless of tumor origin, revealing that although all cancers are molecularly distinct, many share common driver mutations [9].

The implications for cancer heterogeneity research are profound, as NGS facilitates the comprehensive molecular characterization of tumors across traditional histopathological classifications. Large-scale pan-tumor projects such as The Cancer Genome Atlas have made significant contributions to our understanding of DNA and RNA variants across many cancer types, establishing new frameworks for classifying cancers based on molecular signatures rather than solely on tissue of origin [9]. This paradigm shift has been particularly valuable for understanding the molecular basis of therapeutic response and resistance, enabling drug development professionals to identify targetable pathways operating across multiple cancer types.

Technological Evolution: From Single-Gene Assays to Comprehensive Genomic Profiling

The Limitation of Traditional Sequencing Approaches

Traditional approaches to cancer genomic analysis relied heavily on single-gene assays and Sanger sequencing, which presented significant limitations for comprehensive tumor profiling. Single-gene assays interrogate only one or a few genes and therefore cannot capture the broader genomic complexity of a tumor [4]. These methods cannot detect mutations in non-coding regions that may contribute to cancer development, and they may miss opportunities for early detection and treatment optimization [4]. Furthermore, iterative single-gene testing can deplete tissue and necessitate repeat biopsies, creating practical challenges in research settings [10].

Sanger sequencing, while groundbreaking for its time, processes one DNA fragment at a time, making it laborious, costly, and time-consuming for large-scale analysis [11]. It exhibits lower sensitivity, with a detection limit typically around 15-20%, and is not cost-effective for analyzing more than 20 targets [11]. While Sanger sequencing offers a familiar workflow and can sequence up to 1000 base pairs, its limited throughput and scalability make it less suitable for comprehensive genomic analyses required for understanding cancer heterogeneity [11].

The NGS Revolution: Principles and Advantages

Next-generation sequencing represents a revolutionary leap in genomic technology, enabling the rapid sequencing of entire genomes or targeted genomic regions with unprecedented speed and accuracy [4]. Unlike traditional Sanger sequencing, NGS allows for massively parallel sequencing, processing millions of fragments simultaneously, which has significantly reduced the time and cost associated with sequencing [4]. This technological advancement has made comprehensive genomic analysis accessible for widespread research use.

The core NGS workflow involves several key steps: sample preparation, library construction, sequencing, and data analysis [4]. During library preparation, genomic samples (DNA or cDNA) are fragmented, and adapters are attached to the fragments [4]. These adapters are required for anchoring the fragments to the sequencing platform and for subsequent amplification and sequencing [4]. For sequencing, the library is denatured to single-stranded DNA and amplified to generate sufficient signal for base identification [4]. Several NGS technologies exist. Illumina sequencing is the most common: library fragments are immobilized on a solid surface (flow cell) and amplified into clusters of identical sequences [4].

Table 1: Comparison of Sequencing Technologies

| Feature | Next-Generation Sequencing | Sanger Sequencing |
|---|---|---|
| Cost-effectiveness | Cost-effective for large-scale projects | Cost-effective for small-scale projects |
| Speed | Rapid sequencing | Time-consuming |
| Application | Whole-genome sequencing, targeted sequencing | Ideal for sequencing single genes |
| Throughput | Millions of fragments sequenced simultaneously | One sequence at a time |
| Data output | Large amount of data | Limited data output |
| Clinical utility | Detects mutations and structural variants | Identifies specific mutations |

NGS provides several critical advantages for cancer research:

- Massively parallel sequencing enables concurrent analysis of millions of DNA fragments [4]

- Markedly increased sequencing depth and sensitivity, detecting low-frequency variants down to ~1% variant allele frequency [11]

- Superior discovery power, detecting novel or rare variants, structural rearrangements, and large chromosomal abnormalities at single-nucleotide resolution [11]

- Comprehensive genomic coverage with sample multiplexing makes NGS cost-effective for screening large numbers of samples [11]

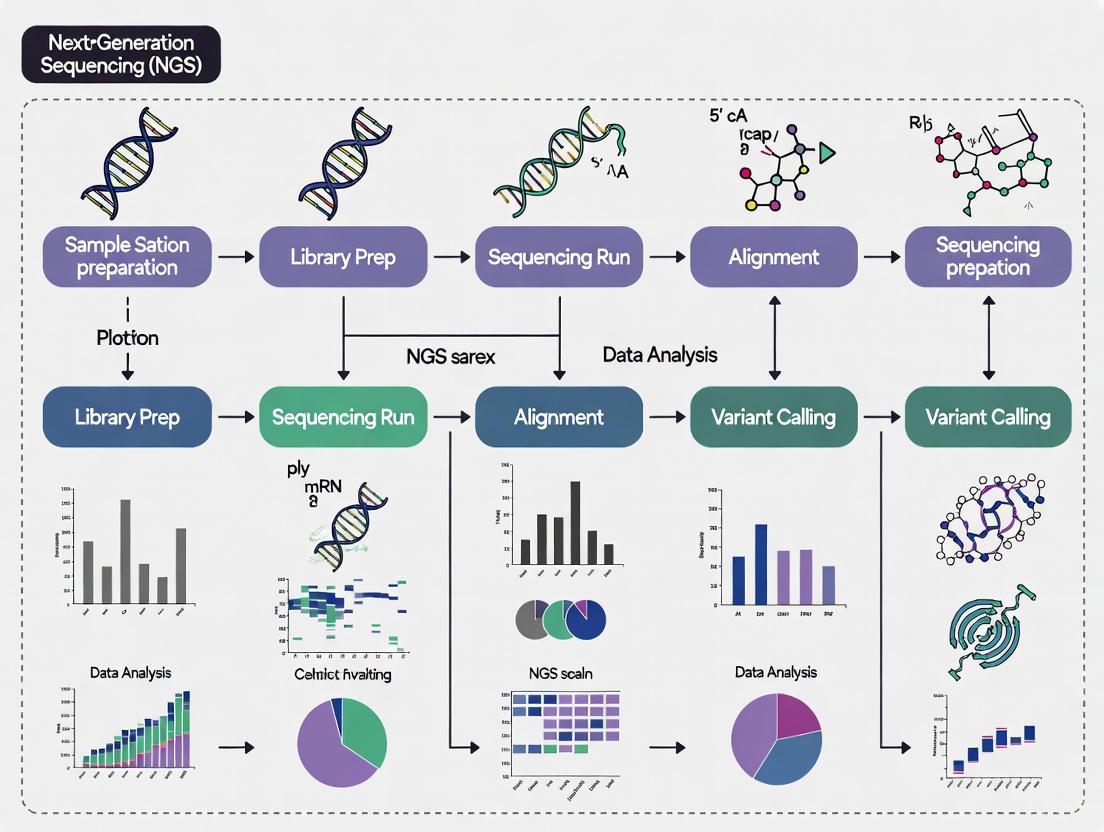

Diagram 1: NGS Workflow for Genomic Profiling

Pan-Cancer Genomic Profiling: Methodologies and Applications

Comprehensive Genomic Profiling Platforms

Comprehensive genomic profiling is among the most comprehensive applications of NGS technology in cancer research, enabling simultaneous analysis of multiple genomic alteration classes across hundreds of cancer-related genes. CGP detects biomarkers at nucleotide-level resolution and typically covers all major genomic variant classes: single nucleotide variants (SNVs), indels, copy number variants (CNVs), fusions, and splice variants [10]. Additionally, CGP can detect genomic signatures such as tumor mutational burden (TMB) and microsatellite instability (MSI), maximizing the ability to find clinically actionable alterations [10].

The research utility of CGP is particularly valuable for consolidating biomarker detection into a single multiplex assay, eliminating the need for iterative testing [10]. With a single test, researchers can simultaneously detect both common and rare biomarkers to increase the likelihood of identifying actionable alterations, potentially providing faster results and limiting the input of precious biopsy samples [10]. This approach is especially valuable for rare cancers or limited samples, where tissue availability is a significant constraint.
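TMB is usually reported as the number of qualifying somatic mutations divided by the size of the sequenced region in megabases. The sketch below shows that arithmetic under stated assumptions. Several choices are hypothetical: which variant classes count, the VAF floor, and the 1.2 Mb panel footprint. Counting rules differ between assays and must follow each assay's validated definition. Germline filtering is assumed to have happened upstream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SomaticVariant:
    gene: str
    kind: str   # e.g. "SNV", "indel", "CNV", "fusion"
    vaf: float


def tmb_per_megabase(variants: list[SomaticVariant], panel_bp: int,
                     min_vaf: float = 0.05,
                     counted_kinds: frozenset[str] = frozenset({"SNV", "indel"})) -> float:
    """Qualifying somatic mutations per megabase of sequenced territory."""
    if panel_bp <= 0:
        raise ValueError("panel size must be positive")
    qualifying = sum(1 for v in variants if v.kind in counted_kinds and v.vaf >= min_vaf)
    return qualifying / (panel_bp / 1_000_000)


# Hypothetical call set: 12 SNVs + 2 indels qualify; a low-VAF SNV and a CNV do not
calls = [SomaticVariant(f"gene{i}", "SNV", 0.10) for i in range(12)]
calls += [SomaticVariant("geneX", "indel", 0.20), SomaticVariant("geneY", "indel", 0.15)]
calls += [SomaticVariant("geneZ", "SNV", 0.03), SomaticVariant("geneW", "CNV", 0.40)]
print(f"{tmb_per_megabase(calls, panel_bp=1_200_000):.1f} mutations/Mb")
```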

Table 2: Major NGS Platforms and Their Research Applications

| Platform | Sequencing Technology | Amplification Type | Read Length (bp) | Primary Research Applications |
|---|---|---|---|---|
| Illumina | Sequencing by synthesis | Bridge PCR | 36-300 | Whole-genome sequencing, transcriptome analysis, targeted sequencing |
| Ion Torrent | Sequencing by synthesis | Emulsion PCR | 200-400 | Targeted sequencing, gene expression profiling |
| PacBio SMRT | Single molecule real-time | Without PCR | 10,000-25,000 | Full-length transcript sequencing, complex structural variation |
| Nanopore | Ionic current measurement through nanopores | Without PCR | 10,000-30,000 | Real-time sequencing, direct RNA sequencing, epigenetics |
| 454 Pyrosequencing | Pyrosequencing | Emulsion PCR | 400-1000 | Targeted gene sequencing, metagenomics |

Experimental Design and Protocol Implementation

Sample Preparation and Quality Control

Robust sample preparation is fundamental to successful pan-cancer genomic profiling. The first step in NGS involves the extraction and preparation of DNA or RNA from the sample of interest, with quality and quantity of nucleic acids assessed to ensure they meet sequencing requirements [4]. For DNA sequencing, this typically involves extracting genomic DNA from cells or tissues, while RNA sequencing requires isolation of total RNA followed by reverse transcription to generate complementary DNA (cDNA) [4].

For formalin-fixed paraffin-embedded (FFPE) samples - common in cancer research - specific protocols have been established. In validated pan-cancer approaches, manual microdissection of representative tumor areas with sufficient tumor cellularity is performed [7]. Genomic DNA is extracted using specialized kits (e.g., QIAamp DNA FFPE Tissue kit), with concentration quantified using fluorometric methods (e.g., Qubit dsDNA HS Assay) and purity measured by spectrophotometry (e.g., NanoDrop) [7]. Minimum input requirements are typically at least 20 ng of DNA with A260/A280 ratio between 1.7 and 2.2 [7].

Library Preparation and Target Enrichment

Library preparation for pan-cancer profiling typically uses hybrid capture-based target enrichment. Genomic DNA is fragmented and adapters are ligated [4] [7]. Hybrid capture is then performed according to standardized protocols using kits such as the Agilent SureSelectXT Target Enrichment System [7]. Final library size and quantity are assessed on a bioanalyzer (e.g., Agilent 2100 Bioanalyzer). Typical acceptance criteria are 250-400 bp for library size and a defined concentration threshold (e.g., 2 nM) [7].
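Bioanalyzer sizing and fluorometric concentration are often combined to express a library in molar terms before a threshold such as 2 nM is applied. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA. Two choices are assumptions based on the figures quoted above: treating 2 nM as a minimum, and treating 250-400 bp as the acceptance window for mean fragment size. The function names are hypothetical.

```python
# Approximate mean molar mass of one base pair of double-stranded DNA (g/mol).
DS_DNA_G_PER_MOL_PER_BP = 660.0


def library_molarity_nm(conc_ng_per_ul: float, mean_size_bp: float) -> float:
    """Convert a mass concentration into molarity (nM) for a dsDNA library.

    nM = (ng/uL * 1e6) / (660 g/mol/bp * mean fragment size in bp)
    """
    return conc_ng_per_ul * 1e6 / (DS_DNA_G_PER_MOL_PER_BP * mean_size_bp)


def library_passes_qc(conc_ng_per_ul: float, mean_size_bp: float,
                      size_window=(250, 400), min_nm=2.0) -> tuple[bool, float]:
    """Check the size window and a minimum molarity (both assumed cut-offs)."""
    molarity = library_molarity_nm(conc_ng_per_ul, mean_size_bp)
    size_ok = size_window[0] <= mean_size_bp <= size_window[1]
    return size_ok and molarity >= min_nm, molarity


print(library_passes_qc(2.0, 320))
```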

For pan-cancer panels targeting hundreds of genes, quality control throughout library preparation is critical. In the validation of the CANSeq™Kids pan-cancer panel, conditions were optimized to work with as little as 5 ng of nucleic acid input and as little as 20% neoplastic content, demonstrating the sensitivity achievable with optimized protocols [13]. The validation established limits of detection of 5% allele fraction for SNVs and INDELs, 5 copies for gene amplifications, and 1,100 reads for gene fusions [13].
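A laboratory might apply validated limits of detection as reporting floors, so that calls below them are flagged rather than reported. The following minimal sketch works under that assumption. The `Call` record and its type labels are hypothetical; only the three thresholds come from the CANSeq™Kids validation [13].

```python
from dataclasses import dataclass

# Limits of detection reported for the CANSeq(TM)Kids validation [13].
MIN_VAF = {"SNV": 0.05, "INDEL": 0.05}
MIN_AMPLIFICATION_COPIES = 5
MIN_FUSION_READS = 1100


@dataclass
class Call:
    """Hypothetical variant call; the meaning of `value` depends on `kind`."""
    kind: str     # "SNV", "INDEL", "AMP" or "FUSION"
    value: float  # VAF (0-1), copy number, or fusion-supporting reads


def at_or_above_lod(call: Call) -> bool:
    """True if the call meets the validated limit of detection for its class."""
    if call.kind in MIN_VAF:
        return call.value >= MIN_VAF[call.kind]
    if call.kind == "AMP":
        return call.value >= MIN_AMPLIFICATION_COPIES
    if call.kind == "FUSION":
        return call.value >= MIN_FUSION_READS
    raise ValueError(f"unsupported call type: {call.kind!r}")
```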

Sequencing and Data Analysis

Pan-cancer profiling uses various sequencing platforms depending on research needs. Illumina is the most commonly used: library fragments are immobilized on a flow cell and amplified by bridge PCR to form clusters [4]. Sequencing by synthesis then proceeds as fluorescently labeled nucleotides are incorporated into the growing DNA strands, with the instrument imaging the fluorescent signal after each incorporation cycle [4]. Other platforms use different chemistries and detection methods [4]:

- Ion Torrent relies on semiconductor-based detection.
- Pacific Biosciences uses single-molecule real-time (SMRT) sequencing.

Bioinformatic analysis represents a critical component of pan-cancer profiling. The enormous data volume generated by NGS presents significant interpretation challenges [4]. Standardized pipelines typically include:

- Sequence alignment to reference genomes (e.g., GRCh37/hg19, GRCh38)

- Variant calling using specialized tools (e.g., Mutect2 for SNVs and small INDELs)

- Annotation of identified variants (e.g., using SnpEff)

- Copy number analysis (e.g., using CNVkit)

- Fusion detection (e.g., using LUMPY) [7]

For comprehensive profiling, additional analyses include detection of microsatellite instability status (using tools such as mSINGS) and calculation of tumor mutational burden [7].
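At its core, TMB is a count of eligible somatic mutations divided by the size of the sequenced territory in megabases. Eligibility rules differ between assays, including the VAF floor, germline filtering, and whether synonymous or hotspot variants count. The filters and dictionary keys below are therefore illustrative assumptions, not the rules used in [7].

```python
def tumor_mutational_burden(variants: list[dict], evaluable_bp: int,
                            min_vaf: float = 0.05) -> float:
    """Eligible somatic mutations per megabase of evaluable panel territory.

    Each variant is a hypothetical dict with boolean "somatic" and "coding"
    flags and a "vaf" value; real pipelines derive these from annotated VCFs.
    """
    eligible = [
        v for v in variants
        if v["somatic"] and v["coding"] and v["vaf"] >= min_vaf
    ]
    return len(eligible) / (evaluable_bp / 1_000_000)


calls = [
    {"somatic": True, "coding": True, "vaf": 0.12},
    {"somatic": True, "coding": True, "vaf": 0.03},   # below the VAF floor
    {"somatic": False, "coding": True, "vaf": 0.48},  # germline, excluded
    {"somatic": True, "coding": False, "vaf": 0.20},  # non-coding, excluded
]
print(tumor_mutational_burden(calls, evaluable_bp=1_000_000))
```

Because the denominator is the evaluable territory rather than the nominal panel size, regions with insufficient coverage should be excluded from `evaluable_bp` for TMB values to be comparable between samples.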

Diagram 2: Pan-Cancer Analysis Framework

Research Applications in Cancer Heterogeneity Studies

Revealing Shared Molecular Pathways Across Cancers

Pan-cancer genomic profiling has enabled the discovery of molecular commonalities across histologically distinct cancer types, revealing that tumors originating from different tissues often share fundamental molecular pathways. The Cancer Genome Atlas (TCGA) Research Network has demonstrated through pan-cancer analysis that molecular similarities among tumors can transcend tissue-of-origin classifications [9]. These findings have reshaped our understanding of cancer biology, highlighting that shared driver mutations exist across many different cancers regardless of tumor origin [9].

This approach has proven particularly valuable for identifying targetable pathways in rare cancers or those with limited treatment options. For example, NTRK fusions have been identified across multiple histologically distinct tumor types, occurring in less than 1% of all cancers but presenting a highly targetable alteration with TRK inhibitors [14]. Similarly, tumor mutational burden (TMB) and microsatellite instability (MSI) have emerged as predictive biomarkers for immunotherapy response across diverse cancer types, demonstrating how pan-cancer analysis can identify therapeutic opportunities that transcend traditional classification systems [10] [14].

Tumor Reclassification and Diagnostic Refinement

Comprehensive genomic profiling (CGP) has demonstrated significant utility in refining, and sometimes reclassifying, tumor diagnoses based on molecular features rather than histopathology alone. In certain cases, CGP results may be inconsistent with the initial pathological diagnosis and clinical presentation. Such cases warrant a secondary clinicopathological review to explore alternative diagnoses more consistent with the genomic results [15]. This molecularly driven reclassification can have profound implications for treatment selection and clinical trial eligibility.

A study of 28 cases in which CGP results prompted diagnostic re-evaluation found two primary patterns [15]:

1. Disease reclassification, involving a change from one distinct indication to another.
2. Disease refinement, in which cancers of unknown primary (CUP) are assigned a more definitive tumor classification.

Specific examples included non-small cell lung cancer reclassified as renal cell carcinoma, sarcoma reclassified as melanoma, and neuroendocrine carcinoma reclassified as prostate carcinoma on the basis of molecular findings [15]. These reclassifications enabled more precise therapeutic targeting based on the underlying molecular drivers.

Biomarker Discovery for Targeted Therapies

Pan-cancer approaches have dramatically accelerated the discovery of predictive biomarkers for targeted therapy development. By analyzing molecular patterns across diverse cancer types, researchers can identify genomic alterations that are rare in individual cancer types but collectively significant as therapeutic targets. From a single test, CGP can provide [14]:

- more complete information on common oncogenic drivers, such as EGFR, KRAS, and BRAF;
- new information on complex or rare biomarkers, such as MET exon 14 skipping and NTRK1, NTRK2, or NTRK3 fusions.

The efficiency of CGP for biomarker discovery is particularly valuable in the context of tissue limitations. Sequential testing of single biomarkers or use of limited molecular diagnostic panels may quickly exhaust sample availability [14]. Professional guidelines now recommend that broad molecular profiling be conducted as part of biomarker testing for eligible patients using a validated test, which can help minimize tissue use and potential wastage [14]. This approach maximizes the information obtained from limited tissue resources, a critical consideration in cancer research.

Table 3: Key Genomic Alterations Identified Through Pan-Cancer Profiling

| Gene/Alteration | Alteration Type | Primary Cancer Types | Targeted Therapies |
|---|---|---|---|
| EGFR | SNVs, Indels | NSCLC, Glioblastoma | EGFR TKIs (erlotinib, osimertinib) |
| KRAS | SNVs | Pancreatic, Colorectal, NSCLC | KRAS G12C inhibitors (sotorasib) |
| BRAF V600E | SNV | Melanoma, Colorectal, NSCLC | BRAF/MEK inhibitors (dabrafenib/trametinib) |
| NTRK fusions | Gene fusion | Multiple rare tumors | TRK inhibitors (larotrectinib, entrectinib) |
| MSI-High | Genomic signature | Colorectal, Endometrial, Multiple | Immune checkpoint inhibitors |
| TMB-High | Genomic signature | Melanoma, Lung, Bladder | Immune checkpoint inhibitors |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of pan-cancer genomic profiling requires specialized reagents, platforms, and computational tools. The following research toolkit outlines essential components for establishing robust NGS workflows in cancer research settings.

Table 4: Research Reagent Solutions for Pan-Cancer Genomic Profiling

| Category | Product/Platform | Specifications | Research Application |
|---|---|---|---|
| Pan-Cancer Panels | TruSight Oncology 500 | 523 cancer-relevant genes, TMB, MSI | Comprehensive genomic profiling for clinical research |
| Targeted Panels | AmpliSeq for Illumina Cancer HotSpot Panel v2 | Hotspot regions of 50 genes | Targeted investigation of known cancer hotspots |
| RNA Pan-Cancer | TruSight RNA Pan-Cancer | 1385 oncology genes | Gene expression, variant and fusion detection |
| Sequencing Platforms | Illumina NextSeq 550 | Desktop sequencer | Flexible throughput for targeted to whole-genome sequencing |
| Automation Systems | Agilent SureSelectXT | Automated target enrichment | Streamlined library preparation for large gene panels |
| Analysis Software | Illumina DRAGEN Bio-IT Platform | Secondary analysis of sequencing data | Ultra-rapid processing of somatic datasets |
| QC Instruments | Agilent 2100 Bioanalyzer | Microfluidics-based analysis | Assessment of DNA/RNA quality and library quantification |

The evolution from single-gene analysis to pan-cancer genomic profiling represents a fundamental transformation in cancer research methodology. Next-generation sequencing technologies have enabled this paradigm shift, providing researchers with powerful tools to decipher the molecular complexity of cancer across traditional histological boundaries. Comprehensive genomic profiling approaches have revealed shared molecular pathways operating across diverse cancer types, facilitating biomarker-driven therapeutic development and enabling more precise tumor classification systems.

For cancer heterogeneity studies, pan-cancer profiling offers unprecedented insights into the molecular basis of treatment response and resistance. It enables drug development professionals to identify targetable alterations that are rare in individual cancer types but collectively significant. NGS technologies continue to evolve, with advances in single-cell sequencing, liquid biopsy applications, and computational analytics. Their impact on cancer research is therefore likely to expand, further refining our understanding of cancer biology and accelerating the development of personalized therapeutic approaches.

Next-Generation Sequencing (NGS) has fundamentally transformed oncology research by providing unprecedented capabilities to characterize the complex genomic architecture of cancers. The profound genetic heterogeneity inherent in malignant tumors, both between patients (inter-tumor heterogeneity) and within individual patients (intra-tumor heterogeneity), represents a significant challenge for effective cancer management and treatment [16]. NGS technologies address this challenge by enabling comprehensive genomic profiling that reveals the molecular basis of cancer development, progression, and therapeutic resistance [16] [6]. Through massively parallel sequencing of millions of DNA fragments, NGS facilitates the identification of key genetic alterations across entire genomes, transcriptomes, and epigenomes, providing researchers and clinicians with a powerful toolset for precision oncology [16] [6].

The application of NGS in cancer heterogeneity studies primarily focuses on three critical areas: identifying driver mutations that initiate and promote tumor growth, detecting structural variants that redefine genomic architecture, and reconstructing clonal evolution that underlies treatment resistance and disease progression [16] [17]. This technical guide explores these core applications, detailing the experimental methodologies, analytical frameworks, and practical implementations of NGS that are essential for advancing our understanding of cancer biology and developing more effective, personalized treatment strategies.

NGS Technologies and Workflows for Cancer Genomics

Technology Platforms and Selection Criteria

The selection of an appropriate NGS platform is a critical strategic decision that directly influences the success of cancer genomics research. Second-generation platforms (e.g., Illumina) dominate the landscape with their exceptionally high throughput, low error rates (typically 0.1–0.6%), and cost-effectiveness, making them suitable for a wide range of applications from whole-genome sequencing to targeted panels [16] [18]. Third-generation technologies (e.g., PacBio, Oxford Nanopore) introduce distinctive approaches with their long-read capabilities, enabling better resolution of complex genomic variations, including structural variants and repetitive regions that are challenging for short-read platforms [16] [19].

Table 1: Comparison of Primary NGS Technologies in Cancer Research

| Technology | Read Length | Error Profile | Optimal Applications in Cancer Research | Throughput Range |
|---|---|---|---|---|
| Illumina | 75-300 bp (short) | Low error rate (0.1-0.6%) | Whole genome, exome, transcriptome, targeted sequencing; variant calling | High to ultra-high |
| Oxford Nanopore | Ultra-long (100,000+ bp) | Higher error rate, random errors | Structural variant detection, complex rearrangement resolution, epigenetics | Flexible |
| PacBio | 3-10 kb (long) | Higher error rate, random errors | Full-length transcript sequencing, complex structural variants, haplotype phasing | Medium |

The fundamental advantage of NGS over traditional sequencing methods lies in its massively parallel architecture, which enables concurrent analysis of millions of DNA fragments [16]. Parallel processing brings three benefits [16]:

- markedly greater sequencing depth;
- higher sensitivity, detecting low-frequency variants down to ~1% variant allele frequency;
- substantially shorter turnaround times: an entire human genome can now be sequenced in approximately one week, compared with years using Sanger technology.

This comprehensive genomic coverage, together with the higher capacity afforded by sample multiplexing, makes NGS particularly valuable for profiling the complex mutational landscape of tumors [16].
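A simple binomial model shows why depth matters for detecting variants at ~1% VAF. If a variant is present in a fraction f of sequenced molecules, the number of supporting reads at depth d is approximately Binomial(d, f). The sketch below assumes that model and a hypothetical caller requirement of at least five supporting reads. Real variant callers also model base quality, sequencing error, and PCR duplicates, which this sketch ignores.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least `min_alt_reads` variant-supporting reads) at a given depth.

    Assumes reads are independent draws with success probability `vaf`
    and ignores sequencing error and PCR duplicates.
    """
    p_fewer = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


for depth in (100, 500, 1000):
    print(depth, round(detection_probability(depth, vaf=0.01, min_alt_reads=5), 3))
```

Under these assumptions, a 1% variant is almost never reached at 100x, whereas roughly 1000x makes detection likely. This is consistent with the deep coverage targets recommended later for low-frequency variants.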

Essential NGS Workflow for Cancer Analysis

The NGS workflow encompasses multiple critical steps from sample preparation to data interpretation, each requiring rigorous quality control to ensure reliable results for cancer heterogeneity studies.

Diagram 1: Comprehensive NGS workflow for cancer genomics applications, spanning experimental, bioinformatics, and interpretation phases.

The workflow begins with sample preparation and quality control, where factors such as nucleic acid quality, tumor content, and appropriate sample type selection directly impact data quality [20]. For cancer samples, particularly formalin-fixed paraffin-embedded (FFPE) tissues, special considerations are necessary due to potential DNA degradation and cross-linking [20]. The library preparation step converts the extracted nucleic acids into sequencing-ready formats, with targeted approaches often preferred for clinical cancer samples due to their higher sensitivity and lower input requirements [20] [21].

Following sequencing, the primary analysis phase involves base calling and quality assessment, while secondary analysis includes alignment to reference genomes and initial variant calling [6]. The tertiary analysis represents a critical stage for cancer studies, encompassing variant annotation, filtering, and interpretation to distinguish driver mutations from passenger mutations, and determining clinical actionability based on evidence databases [6]. Throughout this workflow, quality control metrics must be rigorously monitored, including coverage uniformity, mapping quality, and sensitivity for variant detection [21].

Identifying Driver Mutations in Cancer

Methodologies for Driver Mutation Detection

Driver mutations confer a selective growth advantage on cancer cells and have been functionally implicated in oncogenesis, progression, and treatment response [16]. Their identification is crucial for understanding tumor biology and guiding targeted therapy decisions. NGS enables comprehensive detection of driver mutations across multiple variant classes, including single nucleotide variants (SNVs), small insertions and deletions (indels), and copy number alterations [20].

Liquid biopsy approaches using cell-free DNA (cfDNA) have emerged as powerful non-invasive tools for detecting driver mutations, particularly in advanced cancers where tissue biopsies may be challenging to obtain. A study analyzing plasma cfDNA from 117 stage I-IV lung adenocarcinoma cases demonstrated that cancer-specific mutations could be detected in approximately 72% of cases across all stages, with detection rates increasing with advancing disease stage [22]. The concordance between cfDNA and tumor tissue also correlated with disease stage, ranging from 0% in stage I to 75% in stage IV, highlighting the potential of liquid biopsy for identifying therapeutic targets, especially in advanced disease [22].

Table 2: NGS Methodologies for Driver Mutation Identification in Cancer

| NGS Approach | Target Region | Optimal Sample Types | Advantages | Limitations |
|---|---|---|---|---|
| Whole Genome Sequencing (WGS) | Entire genome | High-quality DNA from fresh-frozen tissue | Comprehensive variant detection; identifies novel/non-coding drivers | High cost; large data storage; interpretation challenges |
| Whole Exome Sequencing (WES) | Protein-coding exons (1-2% of genome) | High-quality DNA from blood or fresh-frozen tissue | Focused on coding regions; higher depth than WGS | Misses non-coding variants; not recommended for FFPE |
| Targeted Sequencing Panels | Pre-selected cancer-related genes | FFPE, fine-needle aspirates, liquid biopsies | High sensitivity for low-frequency variants; cost-effective; fast turnaround | Limited to known targets; discovery power restricted |
| Liquid Biopsy NGS | Circulating tumor DNA in blood | Plasma from blood samples | Non-invasive; enables monitoring; captures heterogeneity | Lower sensitivity in early-stage disease; limited by ctDNA fraction |

Experimental Protocol for Targeted Driver Mutation Detection

Targeted NGS panels have become the most widely used approach in clinical oncology research due to their sensitivity, cost-effectiveness, and faster turnaround times [20] [21]. The following protocol outlines a robust method for identifying driver mutations using targeted panels:

Step 1: Sample Preparation and Quality Control

- Extract DNA from tumor samples (FFPE, fresh-frozen, or liquid biopsy) using specialized kits designed for the specific sample type [20].

- Quantify DNA using fluorescence-based methods (e.g., Qubit) rather than UV spectrophotometry to avoid overestimation from contaminants [20].

- Assess DNA quality and fragmentation: for FFPE samples, use agarose gel electrophoresis or fragment analyzers; ensure minimum DNA input of 50 ng for optimal results [21].

- Evaluate tumor content through pathological review; typical minimum requirement is 10-20% tumor cells; perform macrodissection if necessary to enrich tumor content [20].
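The minimum tumor-content requirement follows from how admixture with normal cells dilutes the variant signal. Assume a diploid locus and a heterozygous, clonal mutation. The expected VAF is then about half the tumor fraction, so 10-20% tumor content gives an expected VAF of only about 5-10%. The sketch below shows this relationship. Its general copy-number form is a standard approximation, not a formula given in [20], and the parameter names are illustrative.

```python
def expected_vaf(purity: float, tumor_cn: int = 2, mutant_copies: int = 1,
                 normal_cn: int = 2) -> float:
    """Expected VAF of a clonal somatic mutation in an admixed sample.

    purity        : fraction of cells in the sample that are tumor cells
    tumor_cn      : total copy number of the locus in tumor cells
    mutant_copies : number of those copies carrying the mutation
    normal_cn     : copy number in contaminating normal cells
    """
    mutant_alleles = purity * mutant_copies
    total_alleles = purity * tumor_cn + (1 - purity) * normal_cn
    return mutant_alleles / total_alleles


for p in (0.1, 0.2, 0.5):
    print(f"purity {p:.0%}: expected VAF {expected_vaf(p):.1%}")
```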

Step 2: Library Preparation

- For hybridization capture-based approaches: Shear DNA to appropriate fragment sizes (200-500 bp), followed by end-repair, A-tailing, and adapter ligation [21].

- Hybridize with biotinylated oligonucleotide probes targeting cancer-associated genes; the TTSH-oncopanel targeting 61 genes exemplifies this approach [21].

- Wash to remove non-specific binding and amplify captured libraries using PCR (typically 8-12 cycles).

- For amplicon-based approaches: Use multiplex PCR to amplify targeted regions simultaneously.

Step 3: Sequencing and Data Analysis

- Sequence libraries on appropriate platforms (e.g., Illumina MiSeq/NextSeq, Ion Torrent) with sufficient coverage depth (typically 500-1000x for tumor samples) [21].

- Process raw data through bioinformatics pipelines: quality control (FastQC), alignment (BWA, STAR), variant calling (GATK, VarScan), and annotation (ANNOVAR, VEP) [6].

- Filter variants based on quality metrics, population frequency, and functional impact.

- Focus on known driver genes and pathways (e.g., KRAS, EGFR, TP53, PIK3CA) and interpret variants using clinical databases (OncoKB, CIViC, COSMIC) [6] [21].
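The filtering and prioritization steps above can be sketched as follows. All thresholds, field names, and the short driver-gene list (taken from the examples in the last step) are illustrative assumptions. A production pipeline would read these values from annotated VCF fields, such as VEP consequence terms and population allele frequencies, and would consult curated databases such as OncoKB or CIViC rather than a hard-coded list.

```python
from dataclasses import dataclass
from typing import Optional

# Sequence Ontology consequence terms (as emitted by VEP) treated as
# potentially functional in this sketch; the selection is an assumption.
FUNCTIONAL_CONSEQUENCES = {
    "missense_variant", "stop_gained", "frameshift_variant",
    "splice_acceptor_variant", "splice_donor_variant",
    "inframe_insertion", "inframe_deletion",
}
DRIVER_GENES = {"KRAS", "EGFR", "TP53", "PIK3CA"}


@dataclass
class Variant:
    gene: str
    depth: int
    alt_reads: int
    qual: float
    population_af: Optional[float]  # population allele frequency; None if unseen
    consequence: str


def passes_filters(v: Variant, min_depth=250, min_alt_reads=5,
                   min_qual=30.0, max_population_af=0.01) -> bool:
    """Apply quality, population-frequency, and functional-impact filters."""
    if v.depth < min_depth or v.alt_reads < min_alt_reads or v.qual < min_qual:
        return False  # insufficient evidence for the call
    if v.population_af is not None and v.population_af > max_population_af:
        return False  # likely a common germline polymorphism
    return v.consequence in FUNCTIONAL_CONSEQUENCES


def prioritize(variants: list[Variant]) -> list[Variant]:
    """Keep filtered variants, listing known driver genes first."""
    kept = [v for v in variants if passes_filters(v)]
    return sorted(kept, key=lambda v: v.gene not in DRIVER_GENES)
```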

With rigorous validation, the study reported in [21] achieved a sensitivity of 98.23% and a specificity of 99.99% for variant detection using this type of protocol, with a variant allele frequency threshold as low as 2.9%.
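Validation figures like these are usually computed by comparing calls against reference material with a known variant profile, such as the HD701 reference control cited in [21]. The sketch below is a minimal version of that comparison. It assumes each variant is keyed by (chromosome, position, ref, alt) and that the number of assessed sites is known, so that true negatives can be counted. The function name and the placeholder variants in the usage note are hypothetical.

```python
Key = tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def sensitivity_specificity(called: set[Key], truth: set[Key],
                            assessed_sites: int) -> tuple[float, float]:
    """Per-site sensitivity and specificity against a reference truth set.

    `assessed_sites` is the number of sites evaluated in the comparison,
    so true negatives are the assessed sites that are neither called nor true.
    """
    tp = len(called & truth)
    fp = len(called - truth)
    fn = len(truth - called)
    tn = assessed_sites - tp - fp - fn
    return tp / (tp + fn), tn / (tn + fp)
```

For example, suppose a truth set has four placeholder variants and a run recovers three of them plus one false call, out of 100 assessed sites. Sensitivity is then 3/4 and specificity 95/96. Because true negatives usually vastly outnumber positives, specificity close to 100% is expected even for modest assays, which is why sensitivity at a stated VAF is the more informative figure.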

Detecting Structural Variants in Cancer Genomes

Complex Structural Variants in Cancer

Structural variants (SVs), defined as genetic alterations involving 50 base pairs or more, play crucial roles in cancer development by disrupting tumor suppressor genes, activating oncogenes, and generating novel fusion transcripts [19]. While simple deletions and duplications represent the most common SVs, complex structural variants—involving clustered breakpoints originating from a single event—are increasingly recognized as important drivers in cancer pathogenesis [19].

Recent research has revealed that complex SVs constitute approximately 8.4% of all de novo structural variants, making them the third most common type after simple deletions and tandem duplications [19]. These complex rearrangements can be classified into distinct subtypes, including reciprocal inversions, reciprocal translocations, and templated insertions, each with different mechanistic origins and functional consequences [19]. In cancer genomics, the accurate detection and characterization of these complex SVs is essential for understanding the full spectrum of genomic alterations driving tumorigenesis.

Methodologies for Structural Variant Detection

The detection of structural variants presents significant technical challenges, particularly for short-read sequencing technologies whose limited read lengths often result in fragmented or incomplete representations of complex genomic rearrangements [19]. However, rigorous analytical approaches applied to large-scale datasets have enabled substantial progress in SV detection.

Table 3: Approaches for Structural Variant Detection in Cancer

| Method | Principle | SV Types Detected | Advantages | Limitations |
|---|---|---|---|---|
| Short-read WGS | Detection of discordant read pairs, split reads, and read depth changes | Deletions, duplications, inversions, translocations | High throughput; cost-effective; well-established pipelines | Limited resolution in repetitive regions; may miss complex SVs |
| Long-read WGS | Single-molecule sequencing spanning entire SVs | All SV types, including complex rearrangements | Complete characterization of complex SVs; phased haplotypes | Higher cost; lower throughput; higher error rates |
| Targeted RNA-seq | Sequencing transcriptome to detect fusion genes | Gene fusions, exon-skipping events | Direct evidence of functional consequences; high sensitivity | Limited to expressed genes; misses non-genic SVs |
| Hybrid Approaches | Integration of multiple data types | Comprehensive SV profiling | Complementary strengths; higher validation rate | Complex analysis; resource intensive |

The development of specialized bioinformatics tools has been critical for advancing SV detection. Pipelines incorporating multiple callers (e.g., Manta, Delly, Lumpy) followed by rigorous filtering and manual inspection have demonstrated high validation rates in large-scale studies [19]. For clinical applications, targeted approaches focusing on known cancer-relevant SVs (e.g., BCR-ABL, EML4-ALK, NTRK fusions) offer a practical alternative to whole-genome methods.

Experimental Protocol for Structural Variant Detection

Step 1: Sample and Library Preparation

- Extract high-molecular-weight DNA (≥50 ng) from tumor samples; fresh-frozen tissue is preferred over FFPE for SV detection due to better DNA quality [20].

- Prepare sequencing libraries with insert sizes optimized for SV detection (300-800 bp); larger insert sizes facilitate detection of larger SVs.

- For targeted approaches, use hybridization capture with probes designed to cover known breakpoint regions and cancer-associated genes.

Step 2: Sequencing and Primary Analysis

- Sequence using appropriate platform: short-read (Illumina) for cost-effective coverage, or long-read (PacBio, Oxford Nanopore) for complex regions.

- Achieve sufficient coverage: ≥30x for WGS, ≥100x for targeted approaches.

- Perform quality control: assess read length distribution, mapping quality, and coverage uniformity.
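The coverage targets above translate into read counts through the relation mean coverage = reads × read length / target size. This minimal sketch assumes a haploid human genome size of roughly 3.1 Gb and counts each read of a pair separately. The `usable_fraction` parameter is a hypothetical correction for duplicate and off-target reads, which in practice must be estimated from pilot data. The Poisson term is the classic Lander-Waterman estimate of bases left uncovered.

```python
import math


def reads_required(mean_coverage: float, target_bp: float, read_length_bp: int,
                   usable_fraction: float = 1.0) -> int:
    """Reads needed so that reads * length / target size reaches `mean_coverage`.

    `usable_fraction` discounts reads lost to duplicates or off-target capture.
    """
    return math.ceil(mean_coverage * target_bp / (read_length_bp * usable_fraction))


def fraction_with_zero_coverage(mean_coverage: float) -> float:
    """Lander-Waterman (Poisson) estimate of bases receiving no reads."""
    return math.exp(-mean_coverage)


print(reads_required(30, 3.1e9, 150))
```

At 30x with 150 bp reads, this yields about 620 million reads (310 million pairs). The Poisson estimate assumes perfectly uniform sampling, so real gaps in GC-rich or repetitive regions will exceed it.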

Step 3: SV Calling and Interpretation

- Run multiple SV callers with different detection principles (read-pair, read-depth, split-read, assembly-based).

- Merge and filter calls based on supporting evidence, quality scores, and population frequency.

- Annotate SVs for functional impact: gene disruptions, fusion genes, regulatory element alterations.

- Prioritize cancer-relevant SVs using databases like COSMIC, DGVa, and ClinGen [19] [6].
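The merge step can be as simple as clustering calls whose breakpoints agree within a tolerance and keeping clusters supported by more than one tool. This sketch makes three assumptions: events are intrachromosomal with both breakpoints on the same chromosome, the tolerance is 100 bp, and at least two callers must agree. Translocations would need an additional partner-chromosome check. Dedicated merging tools such as SURVIVOR implement more careful versions of this idea; the classes and functions here are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SVCall:
    caller: str   # e.g., "Manta", "Delly", "Lumpy"
    chrom: str
    start: int
    end: int
    svtype: str   # e.g., "DEL", "DUP", "INV"


def same_event(a: SVCall, b: SVCall, tolerance_bp: int) -> bool:
    """Same chromosome and type, with both breakpoints within the tolerance."""
    return (a.chrom == b.chrom and a.svtype == b.svtype
            and abs(a.start - b.start) <= tolerance_bp
            and abs(a.end - b.end) <= tolerance_bp)


def consensus_svs(calls: list[SVCall], min_callers: int = 2,
                  tolerance_bp: int = 100) -> list[list[SVCall]]:
    """Greedily cluster calls and keep clusters supported by several tools."""
    clusters: list[list[SVCall]] = []
    for call in sorted(calls, key=lambda c: (c.chrom, c.start)):
        for cluster in clusters:
            if same_event(cluster[0], call, tolerance_bp):
                cluster.append(call)
                break
        else:  # no existing cluster matched this call
            clusters.append([call])
    # Count distinct tools, so repeated calls from one caller do not add support.
    return [c for c in clusters if len({x.caller for x in c}) >= min_callers]
```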

Long-read sequencing technologies are particularly valuable for resolving complex SVs that remain ambiguous with short-read data. The enhanced contiguity of long reads enables direct spanning of breakpoint junctions and more accurate reconstruction of complex rearrangement structures [19].

Analyzing Clonal Evolution in Tumors

Understanding Tumor Heterogeneity and Evolution

Cancer evolution is characterized by branching phylogenies, where subclones with unique genetic profiles emerge at different time points and anatomical locations, contributing to therapeutic resistance and disease progression [17]. The dynamic nature of tumor clonal architecture presents a major challenge for cancer treatment, as resistant subclones may expand under selective pressure from therapies [17]. Understanding these evolutionary trajectories is therefore essential for designing effective treatment strategies that can anticipate or prevent resistance.

Clonal evolution analysis leverages somatic mutations as natural barcodes to reconstruct the phylogenetic history of tumors and quantify the prevalence of distinct subclones. Advanced cancers typically exhibit significant intra-tumor heterogeneity, with multiple co-existing subclones harboring unique combinations of mutations [23] [17]. Monitoring changes in this clonal composition over time and in response to therapy provides critical insights into the evolutionary dynamics that underlie treatment failure and disease relapse.

Computational Methods for Clonal Reconstruction

Several computational approaches have been developed to reconstruct clonal population structures from bulk or single-cell sequencing data. MyClone is a recent advance in this field: a probabilistic method that processes read counts and copy number information for single nucleotide variants from deep sequencing data to determine the mutational composition of clones and their cancer cell fractions [23].

Diagram 2: Computational workflow for clonal evolution analysis from NGS data, showing the progression from raw data to evolutionary interpretation.

Compared to existing methods, MyClone demonstrates enhanced clustering accuracy and cancer cell fraction prediction when applied to deep-targeted sequencing data and bulk tumor sequencing data with deep coverage [23]. Additionally, it achieves substantial improvements in computational speed, making it suitable for clinical applications where timely analysis is critical for treatment decision-making [23].

Experimental Protocol for Clonal Evolution Analysis

Step 1: Study Design and Sample Collection

- Collect multiple samples from the same patient: spatially distinct regions of the same tumor (multi-region sequencing) and/or serial samples over time (longitudinal monitoring) [17].

- Include liquid biopsy samples for non-invasive monitoring of clonal dynamics through circulating tumor DNA [22] [23].

- Sequence matched normal tissue to distinguish somatic from germline variants.

Step 2: Sequencing and Variant Calling

- Perform deep sequencing (≥500x coverage) to detect low-frequency subclones; ultra-deep sequencing (≥1000x) is preferred for liquid biopsy samples [23].

- Use targeted panels focusing on known cancer genes or whole-exome sequencing for discovery approaches.

- Call variants using sensitive methods optimized for low variant allele frequencies.

Step 3: Clonal Reconstruction

- Estimate copy number alterations and tumor purity using tools like ASCAT, Sequenza, or PureCN.

- Calculate cancer cell fractions (CCFs) for each mutation by adjusting variant allele frequencies for copy number and purity.

- Cluster mutations based on their CCF distributions across samples using methods like PyClone, PhyloWGS, or MyClone [23].

- Build phylogenetic trees representing the evolutionary relationships between subclones.
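The copy-number and purity adjustment in the CCF step can be written explicitly. This sketch gives a single point estimate and assumes known purity, integer copy number, and mutation multiplicity, the kinds of values that ASCAT, Sequenza, or PureCN provide. Methods such as PyClone or MyClone instead model uncertainty in these quantities, so treat this only as an illustration of the underlying arithmetic.

```python
def cancer_cell_fraction(vaf: float, purity: float, tumor_cn: int,
                         mutant_copies: int = 1, normal_cn: int = 2) -> float:
    """Point estimate of the fraction of cancer cells carrying a mutation.

    Inverts VAF = purity * mutant_copies * CCF
                  / (purity * tumor_cn + (1 - purity) * normal_cn)
    and caps the result at 1.0, since values above 1 usually reflect noise
    or a mis-specified multiplicity.
    """
    total_alleles = purity * tumor_cn + (1 - purity) * normal_cn
    ccf = vaf * total_alleles / (purity * mutant_copies)
    return min(ccf, 1.0)
```

For example, at 50% purity on a diploid locus, a VAF of 0.25 implies a clonal mutation (CCF 1.0), whereas a VAF of 0.10 implies a subclone present in about 40% of cancer cells.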

Step 4: Interpretation and Clinical Translation

- Identify truncal mutations (present in all samples) representing early events in tumorigenesis.

- Detect branch-specific mutations associated with spatial heterogeneity or temporal evolution.

- Correlate clonal dynamics with treatment response to identify resistance mechanisms.

- In metastatic breast cancer, applications of this approach have identified mutated genes potentially associated with drug resistance or sensitivity, informing combination therapy strategies [23].
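Once CCFs are available for each region or time point, the truncal-versus-branch distinction above reduces to asking in how many samples a mutation is detected. This minimal sketch assumes a hypothetical presence cut-off of CCF ≥ 0.1. Real analyses would use the clusters and trees from the reconstruction step rather than per-mutation thresholds. The example data are placeholders, not results from [23].

```python
def classify_mutations(ccf_table: dict[str, dict[str, float]],
                       min_ccf: float = 0.1) -> dict[str, str]:
    """Label each mutation by the set of samples in which it is detected.

    ccf_table maps mutation id -> {sample id -> CCF}; a mutation counts as
    present in a sample when its CCF is at least `min_ccf`.
    """
    labels = {}
    for mutation, ccfs in ccf_table.items():
        present = [sample for sample, ccf in ccfs.items() if ccf >= min_ccf]
        if present and len(present) == len(ccfs):
            labels[mutation] = "truncal"
        elif len(present) > 1:
            labels[mutation] = "shared branch"
        elif len(present) == 1:
            labels[mutation] = "private branch"
        else:
            labels[mutation] = "not detected"
    return labels


# Hypothetical three-region example (mutation and region names are placeholders).
example = {
    "mut_A": {"R1": 0.95, "R2": 0.92, "R3": 0.97},
    "mut_B": {"R1": 0.40, "R2": 0.35, "R3": 0.00},
    "mut_C": {"R1": 0.00, "R2": 0.02, "R3": 0.60},
}
print(classify_mutations(example))
```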

The integration of clonal evolution analysis into clinical trials and practice enables more dynamic treatment adaptation, with approaches such as adaptive therapy, extinction therapy, and reflexive control therapies showing promise for managing evolutionary-driven resistance [17].

Successful implementation of NGS applications in cancer heterogeneity research requires access to specialized reagents, computational tools, and reference databases. The following toolkit compiles essential resources referenced in the studies discussed.

Table 4: Essential Research Reagent Solutions for NGS Cancer Studies

| Resource Category | Specific Examples | Function/Application | Key Features |
|---|---|---|---|
| Targeted Sequencing Panels | Oncomine Precision Assay [22], TTSH-oncopanel (61 genes) [21] | Focused profiling of cancer-related genes | High sensitivity; rapid turnaround; FFPE-compatible |
| Library Prep Kits | Sophia Genetics library kits [21], AmpliSeq panels [20] | Preparation of sequencing libraries from limited samples | Automated compatibility; low input requirements |
| Reference Standards | HD701 reference control [21] | Assay validation and quality control | Known variant profile; enables sensitivity determination |
| Bioinformatics Tools | Sophia DDM [21], MyClone [23], BWA [16], GATK [16] | Data analysis, variant calling, clonal reconstruction | Machine learning integration; user-friendly interfaces |
| Clinical Interpretation Databases | OncoPortal Plus [21], COSMIC [6], dbSNP [6] | Variant annotation and clinical significance | Tiered evidence system; curated therapeutic associations |

In addition to wet laboratory reagents, computational resources play an indispensable role in NGS cancer studies. Cloud-based platforms have streamlined the storage, management, and processing of the vast datasets generated by NGS technologies, making large-scale genomic analyses more accessible to research institutions and clinical laboratories [6]. These platforms often integrate with established bioinformatics pipelines and databases, facilitating the translation of raw sequencing data into biologically and clinically meaningful insights.

Next-Generation Sequencing has fundamentally transformed our approach to studying and treating cancer by providing unprecedented insights into driver mutations, structural variants, and clonal evolution. The applications detailed in this technical guide, from targeted detection of actionable mutations to comprehensive reconstruction of tumor evolutionary histories, represent powerful approaches for addressing the profound challenge of cancer heterogeneity. As these methodologies continue to mature and integrate into clinical practice, they promise to advance personalized cancer treatment by matching patients with optimal therapies based on the molecular characteristics of their tumors.

Looking ahead, several emerging trends are poised to further expand the impact of NGS in cancer research:

- **Multi-omics integration.** Combining genomic, transcriptomic, epigenomic, and proteomic profiles offers a more comprehensive understanding of cancer biology and therapeutic vulnerabilities [16] [6].
- **Single-cell sequencing.** These technologies resolve heterogeneity within tumors at the level of individual cells, revealing rare subpopulations that may drive resistance and metastasis [6] [24].
- **Spatial transcriptomics.** By preserving the architectural context of cellular communities within tumor microenvironments, it adds another dimension to our understanding of cancer heterogeneity [16].
- **Artificial intelligence and machine learning.** Applied to NGS data, these methods hold considerable potential for pattern recognition, predictive modeling, and discovering previously unrecognized relationships between genomic alterations and clinical outcomes [16] [6].

As these advancements mature, the ongoing challenges of data interpretation, standardization, accessibility, and ethical considerations must be addressed to fully realize the potential of NGS in cancer research and clinical oncology [6]. Through continued dedication to technological innovation and biological discovery, NGS will remain an indispensable tool in the ongoing effort to understand and overcome cancer heterogeneity.

The evolution of DNA sequencing from the first-generation Sanger method to massively parallel Next-Generation Sequencing (NGS) represents a foundational technological revolution in genomics research. This shift has been particularly transformative in oncology, enabling unprecedented insights into the complex genomic landscape of cancer heterogeneity. NGS technologies now allow researchers to decode entire cancer genomes, transcriptomes, and epigenomes at single-nucleotide resolution, providing the comprehensive data required to decipher tumor evolution, clonal dynamics, and resistance mechanisms. This technical review examines the core principles, performance metrics, and experimental methodologies underlying this paradigm shift, with a specific focus on applications in cancer heterogeneity studies that are driving the development of personalized therapeutic strategies.

The landscape of genomic analysis has undergone a radical transformation since the completion of the Human Genome Project, which relied on Sanger sequencing and required over a decade and nearly $3 billion to generate the first human genome sequence [25]. The advent of massively parallel NGS technologies has fundamentally redefined the scale and scope of possible genetic investigations, compressing similar sequencing endeavors into a matter of hours at a cost below $1,000 per genome [25]. This dramatic improvement in throughput and cost-efficiency has positioned NGS as an indispensable tool in molecular biology and clinical diagnostics.

In oncology research, this technological shift has been particularly impactful. Cancer is fundamentally a disease of the genome, characterized by accumulating genetic alterations that drive uncontrolled growth, metastasis, and therapeutic resistance [16]. The complex heterogeneity within tumors – both spatial and temporal – presents a formidable scientific challenge that requires deep, comprehensive genomic profiling to unravel. While Sanger sequencing provided excellent accuracy for focused studies of individual genes, its low-throughput, serial approach was ill-suited for capturing the full genomic complexity of malignancies [4]. NGS has filled this critical gap, enabling researchers to simultaneously interrogate millions of DNA fragments across hundreds to thousands of cancer-related genes in a single assay [16] [4].

The clinical implementation of NGS in oncology has demonstrated significant utility: real-world studies show that approximately 26% of patients with advanced cancer harbor clinically actionable mutations detectable through comprehensive NGS profiling [7]. This capability has reframed cancer from a tissue-defined disease into a molecularly defined constellation of subtypes, each with distinct therapeutic vulnerabilities. This review examines the technical foundations of this sequencing revolution, its application in deciphering cancer heterogeneity, and the methodological frameworks enabling these advances.

Fundamental Principles: Sanger Sequencing vs. Massively Parallel NGS

Core Methodological Differences

The fundamental distinction between Sanger sequencing and NGS lies in their underlying biochemistry and processing architecture. Sanger sequencing, also known as chain-termination sequencing, relies on the selective incorporation of dideoxynucleoside triphosphates (ddNTPs) during DNA synthesis [26]. These chain-terminating nucleotides lack the 3'-hydroxyl group necessary for continued DNA strand elongation, resulting in DNA fragments of varying lengths that can be separated by capillary electrophoresis and detected via fluorescent labels [26] [12]. This process generates a single, long contiguous read per reaction, typically ranging from 500 to 1,000 base pairs, with exceptional per-base accuracy exceeding 99.999% (Phred score > Q50) [26].
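Because the accuracy figure above is quoted as a Phred score, a short conversion makes it concrete. The sketch below uses the standard Phred definition, Q = -10 log10(P_error), so Q50 corresponds to one expected error per 100,000 bases. The function names are illustrative and not taken from any particular library.

```python
import math


def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score Q into a per-base error probability.

    Phred scores are defined as Q = -10 * log10(P_error),
    so P_error = 10 ** (-Q / 10).
    """
    return 10 ** (-q / 10)


def error_prob_to_phred(p: float) -> float:
    """Inverse transform: the Phred score for a given per-base error probability."""
    if not 0 < p <= 1:
        raise ValueError("error probability must be in (0, 1]")
    return -10 * math.log10(p)


if __name__ == "__main__":
    # Compare typical quality thresholds with the Q50 quoted for Sanger reads.
    for q in (20, 30, 50):
        p = phred_to_error_prob(q)
        print(f"Q{q}: error = {p:.0e}, accuracy = {100 * (1 - p):.3f}%")
```

Working in log space is why Phred scores are convenient: every additional 10 points means a tenfold lower error probability.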

In contrast, NGS employs massively parallel sequencing, processing millions to billions of DNA fragments simultaneously in a single run [16] [12]. The most prevalent NGS methodology, Sequencing by Synthesis (SBS), uses fluorescently labeled, reversible-terminator nucleotides that are incorporated one base at a time across millions of clustered DNA fragments immobilized on a solid surface [27] [26]. After each incorporation cycle, the fluorescent signal is imaged, the fluorophore and terminating block are cleaved, and the process repeats, building up the DNA sequence base by base [27]. This parallel architecture enables the extraordinary throughput that characterizes NGS technologies.
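The cycle-by-cycle logic of SBS can be illustrated with a deliberately simplified toy model. It assumes that each template string is written in the order it is read, that every cluster incorporates exactly one complementary base per cycle, and that imaging errors occur at a fixed, hypothetical `error_rate`. Real instruments also contend with phasing, signal decay, and cluster crosstalk, none of which are modeled here.

```python
import random

# Watson-Crick pairing used to derive the incorporated base.
COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A"}


def sbs_run(templates, n_cycles, error_rate=0.0, seed=0):
    """Toy model of cyclic reversible-terminator sequencing.

    In every cycle, each cluster incorporates one blocked, labeled nucleotide
    complementary to the next template base; all clusters are imaged in the
    same cycle, so read length equals the number of cycles completed.
    """
    rng = random.Random(seed)
    reads = ["" for _ in templates]
    for cycle in range(n_cycles):
        for i, template in enumerate(templates):
            if cycle >= len(template):
                continue  # template exhausted: nothing left to incorporate
            base = COMPLEMENT[template[cycle]]
            if rng.random() < error_rate:
                # Simulated miscall during imaging: report a different base.
                base = rng.choice([b for b in "ACGT" if b != base])
            reads[i] += base
        # Cleavage of dye and blocking group would happen here before the next cycle.
    return reads


if __name__ == "__main__":
    print(sbs_run(["ACGTAC", "GGTTAA"], n_cycles=4))
```

The point of the sketch is structural: the inner loop runs over all clusters within a single cycle, which is the parallelism that distinguishes SBS from one-fragment-per-reaction Sanger sequencing.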

Performance and Economic Comparison

The transition from Sanger sequencing to NGS has fundamentally altered the economics and capabilities of genomic analysis. The table below summarizes the key performance characteristics of each approach:

Table 1: Performance and Economic Comparison of Sanger Sequencing vs. NGS

| Aspect | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Throughput | Single DNA fragment at a time [16] | Millions to billions of fragments simultaneously [16] [12] |
| Sequencing Speed | Years for a human genome [16] | Approximately one week for a human genome [16] |
| Cost Trajectory | ~$3 billion for first human genome [25] | Under $1,000 per human genome [25] |
| Detection Sensitivity | ~15-20% variant allele frequency [16] | ~1% variant allele frequency [16] |
| Read Length | 500-1000 base pairs [26] | 50-300 bp (short-read); 10,000+ bp (long-read) [12] |
| Applications in Oncology | Single gene mutation analysis [4] | Comprehensive genomic profiling, tumor heterogeneity studies, liquid biopsies [16] [4] |
| Data Output | Limited data per run [4] | Gigabases to terabases per run [27] |
| Economic Efficiency | Cost-effective for 1-20 targets [16] | Cost-effective for large-scale projects and multiple samples [16] [28] |

The economic advantage of NGS becomes particularly evident in large-scale projects. While Sanger sequencing maintains lower per-run costs for small targets, its cost structure scales linearly with the number of sequences analyzed [28]. In contrast, NGS leverages multiplexing capabilities to process hundreds of samples simultaneously, dramatically reducing the per-sample cost for comprehensive genomic analyses [26]. This efficiency has made large-scale cancer genomics initiatives economically feasible, enabling population-level studies of cancer genomics and biomarker discovery.
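The linear-versus-multiplexed cost argument can be made explicit with a simple model. All prices below are hypothetical placeholders chosen only for illustration, not quoted costs; the model assumes one Sanger reaction per target per sample, and an NGS panel whose cost does not depend on how many targets it covers.

```python
import math


def sanger_cost(n_targets: int, n_samples: int, cost_per_reaction: float) -> float:
    # One reaction per target per sample: cost scales linearly with both.
    return n_targets * n_samples * cost_per_reaction


def ngs_cost(n_samples: int, run_cost: float, per_sample_prep: float,
             samples_per_run: int) -> float:
    # Samples are multiplexed: pay for enough runs, plus per-sample library prep.
    runs = math.ceil(n_samples / samples_per_run)
    return runs * run_cost + n_samples * per_sample_prep


def crossover_targets(n_samples, cost_per_reaction, run_cost, per_sample_prep,
                      samples_per_run, max_targets=10_000):
    """Smallest number of targets at which Sanger becomes more expensive than NGS."""
    ngs = ngs_cost(n_samples, run_cost, per_sample_prep, samples_per_run)
    for t in range(1, max_targets + 1):
        if sanger_cost(t, n_samples, cost_per_reaction) > ngs:
            return t
    return None  # no crossover within the searched range


if __name__ == "__main__":
    # Hypothetical prices: $5 per Sanger reaction, $1,500 per NGS run,
    # $100 library prep per sample, 24 samples multiplexed per run.
    t = crossover_targets(24, 5.0, 1500.0, 100.0, 24)
    print(f"Sanger is cheaper only below {t} targets per sample in this model")
```

The exact crossover depends entirely on the assumed prices, but its existence follows from the structure of the two cost functions: one grows with every added target, the other does not.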

NGS Methodologies and Experimental Frameworks in Cancer Research

Core NGS Workflow for Cancer Genomics

The implementation of NGS in cancer research follows a standardized workflow with specific considerations for tumor samples. The process begins with sample acquisition, which can include tumor tissue biopsies, liquid biopsies (blood samples for circulating tumor DNA analysis), or single-cell suspensions [4] [7]. Nucleic acids are then extracted, with quality control being particularly critical for degraded samples from formalin-fixed paraffin-embedded (FFPE) tissues commonly used in pathology archives [7].

Library preparation is a crucial step in which DNA is fragmented and platform-specific adapters are ligated to the fragments [4]. Targeted sequencing approaches, which are common in oncology, use hybrid capture with biotinylated probes to enrich cancer-relevant genes [7]. The prepared libraries are then sequenced on NGS platforms; Illumina's Sequencing by Synthesis technology currently dominates the clinical oncology landscape because of its high accuracy and throughput [27] [12].
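Adapters commonly carry sample-specific index sequences, which is what allows many libraries to share one run and be separated afterwards. The sketch below shows index-based demultiplexing under two assumptions: the index sequences and sample names are hypothetical, and a read is assigned only if exactly one sample index lies within a one-mismatch Hamming distance, a tolerance commonly used in practice but not specified in the text above.

```python
from typing import Dict, Optional


def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("index sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def assign_sample(observed_index: str, sample_indices: Dict[str, str],
                  max_mismatches: int = 1) -> Optional[str]:
    """Return the sample whose index is within max_mismatches of the read's index.

    Returns None when no index is close enough, or when the match is ambiguous
    (more than one sample within the mismatch tolerance).
    """
    hits = [name for name, idx in sample_indices.items()
            if hamming(observed_index, idx) <= max_mismatches]
    return hits[0] if len(hits) == 1 else None


if __name__ == "__main__":
    # Hypothetical 8-nt indices for a tumor/normal pair and a second tumor.
    indices = {"tumor_A": "ACGTACGT", "normal_A": "TGCATGCA", "tumor_B": "GATCGATC"}
    for observed in ("ACGTACGT", "ACGTACGA", "AAAAAAAA"):
        print(observed, "->", assign_sample(observed, indices))
```

Rejecting ambiguous matches rather than picking the closest index is a conservative choice: in a tumor/normal design, misassigning a read between paired samples could create or mask an apparent somatic variant.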

Table 2: Key NGS Platforms and Their Applications in Cancer Research

| Platform | Sequencing Technology | Read Length | Primary Applications in Oncology | Limitations |
|---|---|---|---|---|
| Illumina | Sequencing by Synthesis (SBS) | 75-300 bp [12] | Whole genome, exome, and transcriptome sequencing; targeted panels [27] [12] | Higher cost for large genomes; short reads limit structural variant detection [12] |
| Oxford Nanopore | Nanopore sensing | 10,000-30,000 bp [12] | Detection of large structural variants, fusion genes, epigenetic modifications [12] | Higher error rates (up to 15%) requiring computational correction [12] |
| Pacific Biosciences (PacBio) | Single Molecule Real-Time (SMRT) | 10,000-25,000 bp [12] | Characterization of complex genomic regions, haplotype phasing [12] | Higher cost per sample; requires high molecular weight DNA [12] |
| Ion Torrent | Semiconductor sequencing | 200-400 bp [12] | Targeted sequencing; rapid turnaround time applications [12] | Homopolymer sequence errors [12] |

The final stage involves bioinformatic analysis of the massive datasets generated. This includes sequence alignment to reference genomes, variant calling to identify mutations, and interpretation of the clinical significance of detected alterations [4]. In cancer studies, specialized algorithms are employed to distinguish somatic (tumor-specific) mutations from germline variants, determine tumor mutational burden, assess microsatellite instability, and reconstruct clonal architecture from variant allele frequencies [7].
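Two of the downstream calculations mentioned above reduce to short formulas. The sketch assumes (1) tumor mutational burden is the count of eligible somatic mutations divided by the sequenced coding territory in megabases, although assays differ in which variants they count, and (2) cancer cell fraction (CCF) is inferred from VAF with the common purity and copy-number correction, VAF = purity x multiplicity x CCF / (purity x CN_tumor + 2 x (1 - purity)), with diploid normal cells. The function names and example values are hypothetical.

```python
def tumor_mutational_burden(n_somatic_mutations: int, panel_coding_mb: float) -> float:
    """Somatic mutations per megabase of sequenced coding territory."""
    if panel_coding_mb <= 0:
        raise ValueError("panel size must be positive")
    return n_somatic_mutations / panel_coding_mb


def cancer_cell_fraction(vaf: float, purity: float,
                         tumor_copy_number: int = 2, multiplicity: int = 1) -> float:
    """Estimate the fraction of cancer cells carrying a mutation.

    Solves VAF = purity * m * CCF / (purity * CN_tumor + 2 * (1 - purity)) for CCF,
    assuming diploid normal cells, and caps the result at 1.0 because
    sampling noise can push the raw estimate above 1.
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    denominator = purity * tumor_copy_number + 2 * (1 - purity)
    ccf = vaf * denominator / (purity * multiplicity)
    return min(ccf, 1.0)


if __name__ == "__main__":
    # Hypothetical panel: 15 eligible somatic mutations over 1.5 Mb of coding sequence.
    print("TMB:", tumor_mutational_burden(15, 1.5), "mut/Mb")
    # Hypothetical sample with 60% tumor purity, mutations in diploid regions.
    for vaf in (0.30, 0.06):
        print(f"VAF {vaf:.2f} -> CCF {cancer_cell_fraction(vaf, 0.6):.2f}")
```

With 60% purity, a heterozygous clonal mutation in a diploid region is expected at a VAF of about 0.30, whereas a VAF of 0.06 implies the mutation is carried by only about a fifth of cancer cells. Clustering mutations by CCF is one way subclonal architecture is reconstructed.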

Essential Research Reagents and Solutions for NGS in Cancer Studies

The successful implementation of NGS in cancer research requires specialized reagents and materials optimized for challenging tumor-derived samples. The following table details key components of the NGS workflow:

Table 3: Essential Research Reagents and Solutions for NGS in Cancer Studies

| Reagent Category | Specific Examples | Function in NGS Workflow | Considerations for Cancer Samples |
|---|---|---|---|
| Nucleic Acid Extraction Kits | QIAamp DNA FFPE Tissue Kit [7] | Isolation of high-quality DNA from tumor samples | Optimized for cross-linked FFPE DNA; removes inhibitors that affect library preparation |
| Library Preparation Kits | Agilent SureSelectXT Target Enrichment System [7] | Fragmentation, adapter ligation, and target enrichment | Efficient capture of degraded DNA; compatibility with low-input samples from biopsies |
| Target Enrichment Panels | SNUBH Pan-Cancer v2.0 (544 genes) [7] | Selective capture of cancer-relevant genomic regions | Comprehensive coverage of oncogenes, tumor suppressors, biomarkers (TMB, MSI) |
| Sequencing Reagents | Illumina SBS chemistry [27] | Nucleotides and enzymes for sequence determination | Balanced error rates; optimized for variant detection at low allele frequencies |
| Quality Control Tools | Qubit dsDNA HS Assay, Bioanalyzer [7] | Quantification and quality assessment of DNA and libraries | Sensitive detection for limited samples; accurate sizing of fragmented DNA |
| Bioinformatics Tools | MuTect2, CNVkit, LUMPY [7] | Variant calling, copy number analysis, fusion detection | Specialized algorithms for low VAF detection; tumor-normal comparison |

Applications in Cancer Heterogeneity Studies

Deciphering Tumor Evolution and Clonal Architecture

The massively parallel nature of NGS has enabled unprecedented insights into the dynamic landscape of intratumoral heterogeneity, a fundamental challenge in oncology. Deep sequencing approaches allow researchers to identify and quantify multiple subclonal populations within individual tumors based on their unique mutational signatures [16]. By sequencing at high coverage depths (often >500x for tumor samples), NGS can detect minor subclones present at frequencies as low as 1-2%, which would be undetectable by Sanger sequencing with its ~15-20% detection limit [16] [7].
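Why sequencing depth governs subclone detection can be seen from a binomial sampling model. The sketch assumes that the number of variant-supporting reads at a site follows Binomial(depth, VAF) and that a caller needs a fixed minimum number of supporting reads (the `min_alt_reads` threshold of 5 is a hypothetical choice). It ignores sequencing error, PCR duplicates, and mapping bias, so it gives an optimistic upper bound rather than the behavior of any specific caller.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant reads) when alt reads ~ Binomial(depth, vaf).

    Computed as 1 minus the probability of observing fewer than
    min_alt_reads supporting reads.
    """
    p_below = sum(
        comb(depth, k) * vaf ** k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_below


if __name__ == "__main__":
    # Hypothetical caller requirement: at least 5 supporting reads.
    for vaf in (0.01, 0.02):
        for depth in (100, 500, 1000):
            p = detection_probability(depth, vaf, min_alt_reads=5)
            print(f"VAF {vaf:.0%}, depth {depth}x: P(detect) = {p:.3f}")
```

At 100x, a subclone at 1% VAF contributes about one supporting read on average, so it is almost never detectable; raising depth into the hundreds or thousands is what brings 1-2% subclones within reach.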

Longitudinal application of NGS through liquid biopsies provides a non-invasive method for monitoring clonal dynamics during treatment [16] [4]. The analysis of circulating tumor DNA (ctDNA) in blood samples enables real-time tracking of tumor evolution, including the emergence of resistant subclones often months before clinical progression is radiographically detectable [25] [4]. This capability has profound implications for adaptive therapy approaches and understanding resistance mechanisms to targeted therapies.

Biomarker Discovery and Therapeutic Targeting

NGS has dramatically expanded the repertoire of actionable biomarkers in oncology, moving beyond single-gene markers to comprehensive mutational signatures. By simultaneously profiling hundreds of cancer-associated genes, NGS panels can identify targetable alterations across multiple signaling pathways, including alterations in EGFR, ALK, ROS1, BRAF, and KRAS, among others [16] [7]. This comprehensive approach is particularly valuable for tumors with complex genomic landscapes, such as pancreatic cancer, ovarian cancer, and glioblastoma.