Data Preprocessing and Augmentation for Medical Imaging: A 2025 Guide for AI-Driven Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging data preprocessing and augmentation to overcome the critical challenge of limited and imbalanced medical imaging data. Covering foundational concepts, advanced methodological applications, troubleshooting for real-world optimization, and rigorous validation frameworks, it synthesizes current best practices and emerging trends. Readers will gain actionable insights into building more robust, generalizable, and clinically impactful AI models for diagnostic and therapeutic innovation, with a specific focus on applications in the pharmaceutical pipeline.

Why Data Preprocessing and Augmentation Are Fundamental to Medical AI

The advancement of artificial intelligence (AI) in medical imaging is fundamentally constrained by the quality, quantity, and diversity of the underlying datasets. Data scarcity, particularly for rare diseases or specific patient populations, limits the ability to train robust models. Data imbalance, where certain classes or demographic groups are underrepresented, leads to models that fail to generalize. Data bias, stemming from unrepresentative data collection or processing, can cause AI systems to perform poorly for underrepresented patient groups, potentially exacerbating existing healthcare disparities [1] [2]. One stark example is in pediatric care; while AI is transforming healthcare, only 17% of FDA-approved medical AI devices are labeled for pediatric use, which a recent preprint links to a fundamental data gap, finding that children represent less than 1% of the data in public medical imaging datasets [3]. This article details the scope of these challenges and provides actionable protocols and solutions for researchers to build more reliable and equitable medical imaging AI.

Quantifying the Data Challenge

The scale of scarcity, imbalance, and bias in medical imaging can be characterized through recent empirical findings. The following tables summarize key quantitative evidence of these challenges.

Table 1: Evidence of Data Scarcity and Imbalance in Medical Imaging AI

| Evidence Type | Domain | Finding | Source/Reference |
|---|---|---|---|
| Pediatric Data Gap | Public Medical Imaging Datasets | Children represent <1% of available data. | Erdman et al. [3] |
| FDA Approval Disparity | Medical AI Devices | Only 17% of FDA-approved AI devices are labeled for pediatric use. | Erdman et al. [3] |
| Demographic Reporting | Public Chest Radiograph Datasets | Only 17% of 23 public datasets reported race or ethnicity. | Yi et al. [4] |
| Risk of Bias (ROB) | Healthcare AI Models | 50% of sampled AI studies demonstrated a high risk of bias. | Kumar et al. [1] |

Table 2: Impact of Data Preprocessing and Augmentation on Model Performance

| Technique | Task | Impact on Performance | Notes |
|---|---|---|---|
| Hybrid Data Augmentation | Corneal Topographic Map Classification | Achieved 99.54% accuracy, significantly outperforming individual techniques. | Combines traditional transformations and Generative Adversarial Networks (GANs) [5]. |
| Data Augmentation (General) | Medical Image Analysis (across organs/modalities) | Found to be beneficial across all organs, modalities, and tasks. | Highest performance increase associated with heart, lung, and breast applications [6]. |
| Histogram Equalization (HE) | Chest X-ray Preprocessing | Can lead to poorer generalizability on external validation sets. | Suggests potential overfitting and information loss; model performance is highly dependent on preprocessing [7]. |
| DICOM VOI LUT Preprocessing | Chest X-ray (Pneumothorax) | Improves model robustness by using pixel values closer to clinical standards. | Mimics the standard clinical workflow for radiologists [7]. |

Experimental Protocols for Bias Mitigation and Data Enhancement

Protocol 1: Systematic Evaluation of Dataset Demographics

Objective: To identify and quantify potential age, sex, race, and ethnicity biases in a medical imaging dataset before model development.

Materials: The dataset (in DICOM, .nii, or other format), computing environment with Python, and relevant libraries (e.g., Pandas, SimpleITK, pydicom).

Methodology:

- Data Extraction: For each subject, extract available demographic metadata. For DICOM files, this includes tags for Patient Age (0010, 1010), Patient Sex (0010, 0040), and other relevant fields. If demographics are stored in a separate spreadsheet, ensure it is properly linked.

- Data Summary: Calculate summary statistics (counts, percentages) for each demographic variable.

- Gap Analysis: Identify which demographic variables are missing or incomplete. Report the percentage of records with missing data for each variable.

- Representation Analysis: Compare the demographic distribution of your dataset to the target population or broader census data to identify underrepresentation.

Deliverable: A demographic summary report and a table similar to Table 1, specific to your dataset.
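The summary, gap, and representation steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a prescribed implementation: it assumes the demographics have already been extracted into one dictionary per subject (for DICOM files, pydicom datasets expose these values as attributes such as `PatientAge` and `PatientSex`), and the field names `age_group`, `sex`, and `race` as well as the reference percentages are hypothetical placeholders.

```python
from collections import Counter


def summarize_demographics(records, fields=("age_group", "sex", "race")):
    """Return counts, percentages, and missing-data rate for each field.

    `records` is a list of dicts, one per subject. A value counts as missing
    when the key is absent or the value is None or an empty string.
    """
    n = len(records)
    report = {}
    for field in fields:
        values = [r.get(field) for r in records]
        present = [v for v in values if v not in (None, "")]
        counts = Counter(present)
        report[field] = {
            "counts": dict(counts),
            "percent": {k: 100.0 * c / n for k, c in counts.items()},
            "missing_percent": 100.0 * (n - len(present)) / n if n else 0.0,
        }
    return report


def representation_gap(observed_percent, reference_percent):
    """Observed minus reference share, in percentage points, per category."""
    return {
        category: observed_percent.get(category, 0.0) - reference
        for category, reference in reference_percent.items()
    }


# Toy records; field names and values are illustrative only.
records = [
    {"sex": "F", "age_group": "adult"},
    {"sex": "M", "age_group": "adult"},
    {"sex": "", "age_group": "child"},
    {"age_group": "adult"},
]
report = summarize_demographics(records, fields=("sex", "age_group"))
# Hypothetical target-population shares, not real census figures.
gap = representation_gap(report["age_group"]["percent"], {"child": 22.0, "adult": 78.0})
```

A negative gap flags a category that is underrepresented relative to the chosen reference population.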

Protocol 2: Implementing a Hybrid Data Augmentation Pipeline

Objective: To increase the effective size and diversity of a training dataset, thereby improving model robustness and mitigating overfitting.

Materials: Training dataset, deep learning framework (e.g., PyTorch, TensorFlow).

Methodology:

- Apply Affine Transformations: Use a combination of random rotations (e.g., ±10°), translations, scaling, and flipping. These are simple but effective and often achieve the best trade-off between performance and complexity [6].

- Apply Pixel-Level Transformations: Introduce variations in image appearance using techniques like adjusting brightness, contrast, and adding Gaussian noise.

- Integrate Generative Models (for severe scarcity): For selected organs or conditions with extreme data scarcity, employ Generative Adversarial Networks (GANs) or other generative models to create synthetic, realistic images [6] [5]. The hybrid of traditional and generative methods has been shown to achieve top performance [5].

- Validation: Always reserve a completely separate, non-augmented validation set to monitor performance and ensure the augmentation is not introducing unrealistic artifacts.
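The traditional part of this pipeline (affine and pixel-level transformations) might look like the NumPy sketch below. It assumes 2D grayscale images already scaled to [0, 1]; the function names and parameter ranges are illustrative choices rather than values from the cited studies. Rotation and scaling are omitted because they need an interpolation routine from an imaging library, and the generative (GAN) step is out of scope for a short example.

```python
import numpy as np


def random_flip(img, rng, p=0.5):
    """Horizontal flip with probability p.

    Flipping changes laterality, so check that it is anatomically acceptable
    for the modality and task before enabling it.
    """
    return img[:, ::-1] if rng.random() < p else img


def random_shift(img, rng, max_shift=10):
    """Translate by up to max_shift pixels per axis, padding with zeros."""
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    h, w = img.shape
    out = np.zeros_like(img)
    src = img[max(0, -dy):h - max(0, dy), max(0, -dx):w - max(0, dx)]
    out[max(0, dy):max(0, dy) + src.shape[0],
        max(0, dx):max(0, dx) + src.shape[1]] = src
    return out


def random_intensity(img, rng, brightness=0.1, contrast=0.1, noise_std=0.02):
    """Random gain (contrast), offset (brightness), and additive Gaussian noise."""
    gain = 1.0 + rng.uniform(-contrast, contrast)
    bias = rng.uniform(-brightness, brightness)
    noisy = gain * img + bias + rng.normal(0.0, noise_std, size=img.shape)
    return np.clip(noisy, 0.0, 1.0)


def augment(img, rng):
    """Compose the transforms; call once per training sample per epoch."""
    return random_intensity(random_shift(random_flip(img, rng), rng), rng)


rng = np.random.default_rng(0)
image = rng.random((64, 64))  # stand-in for a normalized training image
augmented = augment(image, rng)
```

Because the parameters are redrawn on every call, each epoch sees a different variant of the same image, while the validation set is passed through unchanged.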

Protocol 3: Clinical-Standard DICOM Preprocessing for Chest X-Rays

Objective: To preprocess DICOM images in a way that retains diagnostically relevant information and improves model generalizability across datasets.

Materials: Raw DICOM files from chest X-rays, DICOM processing library.

Methodology:

- Extract Raw Pixels: Access the original pixel array from the DICOM tag (7fe0, 0010) [7].

- Apply VOI LUT Transformation: Transform the raw pixels using the DICOM Values-of-Interest Look-Up Table (VOI LUT) function (tag 0028, 1056). This can be a linear or non-linear (sigmoid) transformation specified by the manufacturer to produce P-values for clinical presentation [7].

- Avoid Non-Standard Enhancements: Refrain from applying aggressive image enhancements like Histogram Equalization (HE) as a default, as they can lead to information loss and poor performance on external datasets [7].

- Normalization: Finally, normalize the processed pixel values to a standard range (e.g., 0 to 1) for model consumption.

Deliverable: A dataset of preprocessed images that closely resemble the images used by radiologists in clinical practice.
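To make the VOI LUT and normalization steps concrete, the sketch below spells out the arithmetic of the LINEAR and SIGMOID windowing functions defined in the DICOM standard, with the output range fixed to [0, 1] so that windowing and normalization happen in one step. pydicom ships a helper named `apply_voi_lut` that reads the relevant tags from a dataset; the functions here are a hand-written NumPy illustration under the assumption that the window center and width have already been read from the file, and they ignore explicit LUT sequences and photometric inversion.

```python
import numpy as np


def voi_linear(pixels, center, width):
    """DICOM LINEAR windowing function, output scaled to [0, 1]."""
    if width <= 1:
        raise ValueError("this sketch requires a window width greater than 1")
    x = np.asarray(pixels, dtype=np.float64)
    y = (x - (center - 0.5)) / (width - 1.0) + 0.5
    # Values below or above the window saturate at 0 and 1 respectively.
    return np.clip(y, 0.0, 1.0)


def voi_sigmoid(pixels, center, width):
    """DICOM SIGMOID windowing function, output in (0, 1)."""
    x = np.asarray(pixels, dtype=np.float64)
    return 1.0 / (1.0 + np.exp(-4.0 * (x - center) / width))


# Toy 12-bit-like pixel values; center and width are illustrative only.
raw = np.array([[0, 1024], [2048, 4095]])
windowed = voi_linear(raw, center=2048, width=4096)
```

Which function applies to a given file depends on its VOI LUT Function tag, so a real pipeline would branch on that value rather than hard-code one of the two.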

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Medical Imaging Data Preprocessing and Augmentation

| Tool / Reagent | Function | Application Note |
|---|---|---|
| SimpleITK / pydicom | Python libraries for reading medical image formats (DICOM, .nii, .mha). | Essential for accessing raw pixel data and metadata. Prefer DICOM over preprocessed .jpg to retain control [7] [8]. |
| ITK-SNAP | Free software for 3D medical image visualization and segmentation. | Used for exploring image structure, creating annotations, and verifying segmentation results [8]. |
| DICOM VOI LUT | A transformation that converts raw pixel values to clinically meaningful "P-values". | Critical for standardizing image presentation. Using this mimics the radiologist's workflow and improves model robustness [7]. |
| TorchIO | A Python library for efficient preprocessing, augmentation, and patch-based sampling of 3D medical images. | Simplifies the implementation of complex spatial and intensity transformations in a deep learning pipeline [6]. |
| Generative Adversarial Networks (GANs) | A class of AI models that generate new, synthetic data instances that resemble the training data. | Used in hybrid augmentation strategies to address severe data scarcity for specific conditions or populations [6] [5]. |
| Fairness Metrics (e.g., Demographic Parity, Equalized Odds) | Statistical tools to measure performance differences between demographic groups. | No single metric is universal; must be selected based on clinical context to evaluate and prove algorithmic fairness [4] [1]. |
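The two fairness metrics named in the table can be computed directly from binary predictions and a group label per subject. The sketch below is a minimal NumPy illustration with hypothetical function names and toy data: demographic parity compares the rate of positive predictions across groups, while equalized odds compares true-positive and false-positive rates.

```python
import numpy as np


def group_rates(y_true, y_pred, groups):
    """Positive-prediction rate, TPR, and FPR for each demographic group."""
    y_true, y_pred, groups = map(np.asarray, (y_true, y_pred, groups))
    rates = {}
    for g in np.unique(groups):
        in_group = groups == g
        t, p = y_true[in_group], y_pred[in_group]
        rates[g.item()] = {
            "positive_rate": float(p.mean()),
            "tpr": float(p[t == 1].mean()) if (t == 1).any() else float("nan"),
            "fpr": float(p[t == 0].mean()) if (t == 0).any() else float("nan"),
        }
    return rates


def demographic_parity_difference(rates):
    """Largest gap in positive-prediction rate between any two groups."""
    positive = [r["positive_rate"] for r in rates.values()]
    return max(positive) - min(positive)


def equalized_odds_difference(rates):
    """Largest gap in TPR or FPR between any two groups."""
    gaps = []
    for key in ("tpr", "fpr"):
        values = np.array([r[key] for r in rates.values()])
        gaps.append(np.nanmax(values) - np.nanmin(values))
    return float(max(gaps))


# Toy example: both groups receive positives at the same rate,
# but the classifier is far less accurate for group "B".
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
rates = group_rates(y_true, y_pred, groups)
```

In this toy case the demographic parity difference is zero while the equalized odds difference is 0.5, which illustrates why no single metric is sufficient on its own.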

Addressing the core challenges of scarcity, imbalance, and bias is not optional but a prerequisite for developing trustworthy AI in medical imaging. As summarized in the workflows and protocols, solutions require a multi-faceted approach: a rigorous, standardized preprocessing methodology that respects clinical standards [7]; strategic data augmentation to expand and balance training data [6] [5]; and a committed, ongoing effort to audit datasets and models for demographic representation and fairness [3] [4] [1]. By integrating these practices throughout the AI lifecycle, from data curation to deployment, researchers can mitigate the risks of biased algorithms and pave the way for equitable, reliable, and generalizable medical imaging AI.

In medical imaging research, the scarcity of large, well-annotated datasets remains a significant bottleneck for developing robust deep-learning models [9]. Two fundamental techniques to address this challenge are data preprocessing and data augmentation. While these terms are sometimes used interchangeably, they represent distinct phases in the model development pipeline with different objectives.

This application note provides a clear, operational distinction between preprocessing and augmentation. We define data preprocessing as a set of deterministic, mandatory operations applied to all images to standardize data and correct acquisition artifacts, ensuring data quality and consistency. In contrast, we define data augmentation as a set of randomized, optional transformations applied during model training to artificially expand the dataset and improve model generalization [10]. We present quantitative performance comparisons, detailed experimental protocols, and visual workflows to equip researchers with practical guidelines for implementing these techniques effectively.

Conceptual Distinctions and Definitions

Core Objectives and Methodologies

Data Preprocessing involves operations that prepare raw medical data for analysis. The goal is to format data and reduce acquisition artifacts to create a standardized input for deep learning models. Preprocessing is typically applied consistently to all images in the dataset (both training and validation) and is often necessary to ensure the data is in a clinically meaningful state for interpretation [7] [8]. Key characteristics include:

- Deterministic Application: The same operations and parameters are applied to every image.

- Data Quality Focus: Aims to enhance signal quality, standardize pixel values, and format data correctly.

- Mandatory Nature: Considered an essential, non-negotiable step for model input.

Data Augmentation involves artificially expanding a training dataset by creating modified versions of existing images. The goal is to increase the amount and variability of training data to prevent overfitting and improve model robustness [6] [11] [9]. It is applied randomly and only during the model training phase. Key characteristics include:

- Randomized Application: Transformations are applied with random parameters during training.

- Data Quantity & Variety Focus: Aims to expose the model to a wider range of anatomical and pathological variations.

- Optional, Strategic Nature: A tactical choice to improve model performance and generalization.
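The distinction can be expressed as a small loading function: preprocessing runs on every image in every split, while augmentation runs only when a `training` flag is set. The following is a minimal sketch with hypothetical function names, using min-max scaling and a flip-plus-noise transform purely as stand-ins for a real preprocessing and augmentation stack.

```python
import numpy as np


def preprocess(img):
    """Deterministic: identical output for identical input, used for all splits."""
    img = img.astype(np.float32)
    lo, hi = img.min(), img.max()
    return (img - lo) / (hi - lo) if hi > lo else np.zeros_like(img)


def augment(img, rng):
    """Randomized: a different variant on each call, used for training only."""
    if rng.random() < 0.5:
        img = img[:, ::-1]
    return np.clip(img + rng.normal(0.0, 0.02, size=img.shape), 0.0, 1.0)


def load_sample(img, training, rng=None):
    """Always preprocess; augment only when building training batches."""
    x = preprocess(img)
    if training:
        x = augment(x, rng if rng is not None else np.random.default_rng())
    return x


raw = np.arange(16).reshape(4, 4)  # stand-in for a raw image
val_a = load_sample(raw, training=False)
val_b = load_sample(raw, training=False)
rng = np.random.default_rng(0)
train_a = load_sample(raw, training=True, rng=rng)
train_b = load_sample(raw, training=True, rng=rng)
```

Two validation loads of the same image are identical, whereas two training loads differ, which is exactly the behavior the definitions above call for.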

Operational Workflow

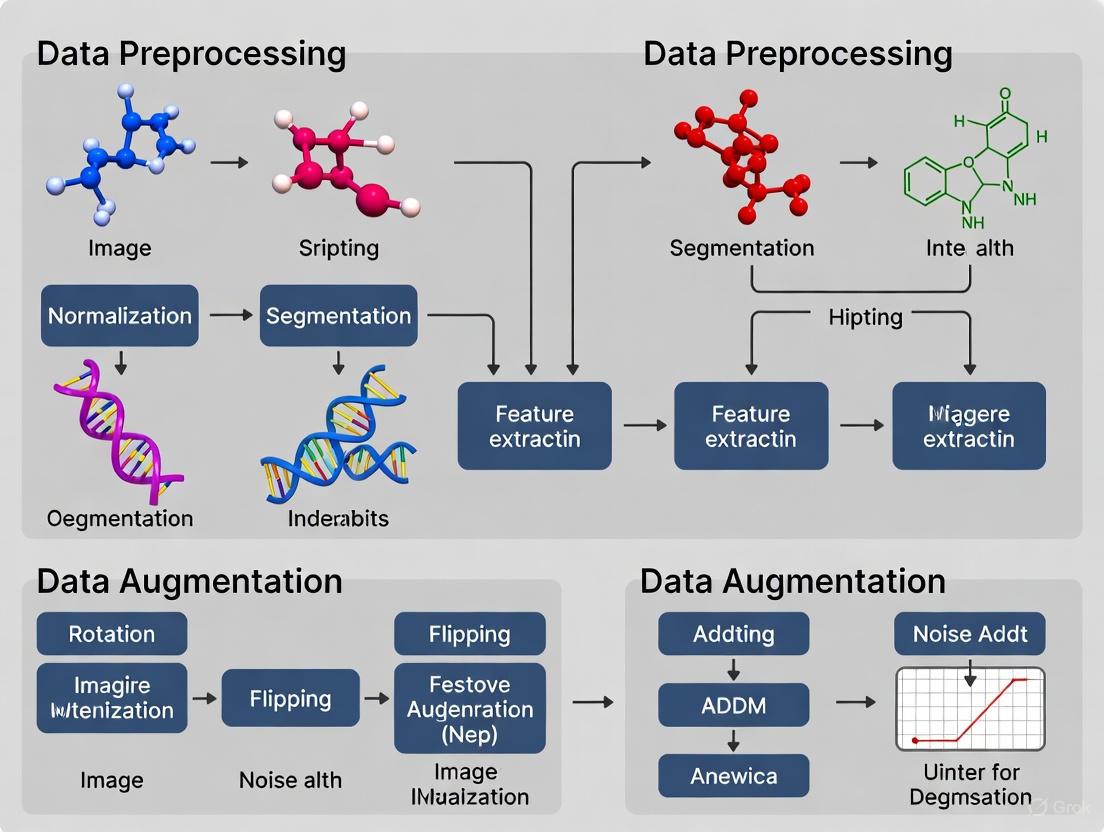

The following diagram illustrates the distinct roles and sequential relationship of preprocessing and augmentation in a typical medical image analysis pipeline.

Quantitative Performance Comparison

Empirical evidence consistently shows that the choice and combination of preprocessing and augmentation techniques significantly impact model performance. The tables below summarize key findings from recent systematic evaluations.

Table 1: Impact of Preprocessing Techniques on Diagnostic Accuracy (Adapted from [12])

| Preprocessing Method | Reported Effectiveness | Key Strengths / Impact on Performance |
|---|---|---|
| Median-Mean Hybrid Filter | 87.5% Efficiency Rate | Effective noise reduction while preserving edges; improves generalizability. |
| Unsharp Masking + Bilateral Filter | 87.5% Efficiency Rate | Enhances edge clarity and detail; combines sharpening with noise reduction. |
| CLAHE + Median Filter | Evaluated | Contrast enhancement coupled with noise suppression. |
| DICOM VOI LUT Transformation | Clinically Standardized [7] | Retains diagnostically significant features; aligns data with clinical workflow. |

Table 2: Performance of Deep Learning Models with Various Preprocessing Techniques (Sourced from [12])

| Deep Learning Model | Efficiency Ratio | Computational Efficiency | Recommended Preprocessing Pairing |
|---|---|---|---|
| EfficientNet-B4 | 75% | High | Median-Mean Hybrid Filter |
| MobileNetV2 | 75% | 34% shorter runtime | Unsharp Masking + Bilateral Filter |
| DenseNet-169 | Evaluated | Standard | CLAHE + Butterworth |
| ResNet-50 | Evaluated | Standard | Multiple |

Table 3: Effectiveness of Augmentation Techniques Across Medical Image Modalities (Sourced from [9])

| Augmentation Technique | Brain MR | Lung CT | Breast Mammography | Eye Fundus |
|---|---|---|---|---|
| Geometric (Rotation, Flip) | High | High | Medium | High |
| Intensity Adjustment | Medium | Medium | High | Low |
| Advanced (MixUp, CutMix) | High [13] | High | Evaluated | Evaluated |
| GAN-based Synthesis | Evaluated | Evaluated | High | Medium |

Detailed Experimental Protocols

Protocol 1: Standardized DICOM Preprocessing for Chest X-rays

This protocol is essential for creating a consistent dataset from raw DICOM files, which is critical for model generalizability [7].

Research Reagent Solutions

| Item / Tool | Function / Explanation |
|---|---|
| PyDICOM / SimpleITK | Python libraries for reading and processing DICOM files and metadata. |
| DICOM VOI LUT Function | Applies manufacturer-specific transformation to convert raw pixels to P-values for clinical presentation. |
| HU Value Scaling (for CT) | Converts raw pixel data to standardized Hounsfield Units using rescale slope and intercept. |
| NumPy | For efficient array operations and conversion of image data. |

Methodology

- Data Reading: Use SimpleITK or PyDICOM to load the DICOM file and extract the pixel array and metadata tags [8].

- VOI LUT Application: Apply the Values-of-Interest Look-Up Table (VOI LUT) transformation using the windowing parameters stored in DICOM tags (0028,1050) Window Center and (0028,1051) Window Width; the function type, linear or non-linear (sigmoid), is given by (0028,1056) VOI LUT Function. This transformation maps the raw pixel values to a range optimized for clinical display [7].

- Normalization: Scale the resulting pixel intensities to a fixed range, typically [0, 1], to ensure stable model training.

Validation Scheme

- Assess preprocessing consistency by comparing pixel value distributions across multiple datasets and manufacturers.

- Evaluate the impact on model performance by training pneumothorax classification models on datasets preprocessed with VOI LUT versus aggressive histogram equalization. Models using VOI LUT show better generalizability to external validation sets [7].

Protocol 2: Augmentation for Medical Image Segmentation

This protocol details the HSMix method, a local image-editing augmentation technique designed for segmentation tasks where contour preservation is crucial [13].

Research Reagent Solutions

| Item / Tool | Function / Explanation |
|---|---|
| Superpixel Algorithm (e.g., SLIC) | Decomposes images into homogeneous regions, providing the structural basis for contour-aware mixing. |
| Saliency Map Generator | Calculates pixel-wise importance coefficients used for soft brightness mixing. |
| U-Net (or variant) | A standard deep learning architecture used for semantic segmentation of medical images. |

Methodology

- Hard Mixing:
  - Select two training images and their corresponding segmentation masks (Image A, Mask A; Image B, Mask B).
  - Generate superpixels for both Image A and Image B.
  - Randomly select a set of superpixels from Image B and paste them into the corresponding location in Image A.
  - Perform the identical cut-and-paste operation on Mask B and Mask A to create the augmented segmentation mask.
- Soft Mixing:
  - Using the same superpixel regions defined in the hard mixing step, perform a pixel-wise blending between Image A and Image B.
  - The blending coefficient for each pixel is determined by its saliency value within the superpixel, rather than using a fixed ratio for the entire image.
  - Apply the same soft mixing operation to the pair of segmentation masks.
- Training: The augmented images and masks from both hard and soft mixing are used to train the segmentation model.
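The hard and soft mixing steps can be sketched as follows. This is a simplified illustration of the steps as listed above, not the HSMix authors' implementation. Two loudly stated assumptions: a regular grid of square cells (`grid_labels`, a hypothetical helper) stands in for a real superpixel algorithm such as SLIC, and the saliency map is passed in as a precomputed array in [0, 1] rather than being derived from the image.

```python
import numpy as np


def grid_labels(height, width, cell=8):
    """Stand-in for superpixels: label each pixel by the grid cell it falls in."""
    rows = np.arange(height)[:, None] // cell
    cols = np.arange(width)[None, :] // cell
    n_cols = -(-width // cell)  # ceiling division
    return rows * n_cols + cols


def hard_mix(img_a, mask_a, img_b, mask_b, labels, rng, frac=0.3):
    """Paste a random subset of regions from B into A; do the same for the masks."""
    ids = np.unique(labels)
    k = max(1, int(frac * ids.size))
    chosen = rng.choice(ids, size=k, replace=False)
    region = np.isin(labels, chosen)
    mixed_img = np.where(region, img_b, img_a)
    mixed_mask = np.where(region, mask_b, mask_a)
    return mixed_img, mixed_mask, region


def soft_mix(img_a, mask_a, img_b, mask_b, region, saliency):
    """Blend A and B inside the selected regions with a per-pixel coefficient."""
    alpha = np.where(region, saliency, 0.0)
    mixed_img = (1.0 - alpha) * img_a + alpha * img_b
    mixed_mask = (1.0 - alpha) * mask_a + alpha * mask_b  # soft label
    return mixed_img, mixed_mask


rng = np.random.default_rng(0)
img_a, img_b = np.zeros((16, 16)), np.ones((16, 16))
mask_a = np.zeros((16, 16), dtype=int)
mask_b = np.ones((16, 16), dtype=int)
labels = grid_labels(16, 16, cell=4)
hard_img, hard_mask, region = hard_mix(img_a, mask_a, img_b, mask_b, labels, rng, frac=0.25)
soft_img, soft_mask = soft_mix(img_a, mask_a, img_b, mask_b, region, np.full((16, 16), 0.5))
```

Swapping `grid_labels` for a true superpixel segmentation is what would make the pasted regions follow image contours, which is the property the method relies on.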

Validation Scheme

- Performance is measured using segmentation metrics like Dice Similarity Coefficient (DSC) and Hausdorff Distance on held-out test sets.

- Compare HSMix against baseline augmentation methods (e.g., CutOut, CutMix, MixUp). HSMix has demonstrated superior performance by preserving contour information and creating a more diverse augmentation space [13].
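For reference, the Dice Similarity Coefficient is 2|A∩B| / (|A| + |B|) and the closely related Intersection over Union is |A∩B| / |A∪B|, where A and B are the predicted and ground-truth foreground pixel sets. A minimal NumPy sketch for binary masks is shown below; the small `eps` term is an implementation choice that makes two empty masks score 1.0 instead of dividing by zero. Hausdorff distance is not shown because it is more conveniently computed with a dedicated library routine.

```python
import numpy as np


def dice(pred, target, eps=1e-7):
    """Dice Similarity Coefficient for two binary masks."""
    pred, target = np.asarray(pred).astype(bool), np.asarray(target).astype(bool)
    intersection = np.logical_and(pred, target).sum()
    return (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)


def iou(pred, target, eps=1e-7):
    """Intersection over Union (Jaccard index) for two binary masks."""
    pred, target = np.asarray(pred).astype(bool), np.asarray(target).astype(bool)
    intersection = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    return (intersection + eps) / (union + eps)


pred = np.array([[1, 1], [0, 0]])
target = np.array([[1, 0], [0, 0]])
dsc, jaccard = dice(pred, target), iou(pred, target)
```

Here one of the two predicted pixels overlaps the single target pixel, so the Dice score is 2/3 and the IoU is 1/2.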

The strategic integration of preprocessing and augmentation is paramount. A recommended workflow is to first establish a robust, standardized preprocessing pipeline based on clinical standards (like DICOM VOI LUT), and then strategically select augmentation techniques that address specific data limitations and task requirements [7] [9].

The following diagram synthesizes the decision-making process for building an effective data preparation pipeline, connecting the foundational choices of preprocessing with the tactical selection of augmentation.

In conclusion, preprocessing and augmentation are complementary but distinct tools. Preprocessing ensures data quality and clinical relevance, forming a reliable foundation for any model. Augmentation strategically enhances model robustness and generalizability by simulating data variation. The most successful medical imaging AI projects will be those that rigorously apply both, with a clear understanding of their unique roles in the research pipeline.

Deep learning has revolutionized medical image analysis, but its success depends on large, diverse datasets that are often unavailable in clinical settings due to privacy concerns, annotation costs, and inherent data limitations [6]. Most manually annotated medical datasets suffer from severe class imbalance, with specific conditions or patient demographics significantly underrepresented [6] [14]. These limitations lead to three fundamental challenges: model overfitting on limited training examples, poor generalization to unseen data or diverse populations, and prohibitive data collection costs [15] [16]. Data preprocessing and augmentation strategies have emerged as crucial solutions to these challenges, enabling more robust and clinically viable AI systems without requiring extensive new data collection [6].

The unique characteristics of medical images—including subtle pathological features, low inter-class variance, high intra-class variability, and diverse imaging modalities—necessitate specialized augmentation approaches tailored to the medical domain [17] [18]. This document presents comprehensive application notes and experimental protocols for implementing effective data augmentation strategies that enhance model robustness, prevent overfitting, and reduce dependency on large-scale data collection in medical imaging research.

Data Augmentation Techniques: Comparative Analysis

Taxonomy of Augmentation Methods

Data augmentation techniques for medical imaging can be broadly categorized into transformation-based methods (applying image manipulations to existing data) and synthetic data generation (creating new samples through generative models) [6]. Transformation-based methods include affine transformations (rotation, scaling, translation), elastic deformations, and intensity modifications, while synthetic generation encompasses Generative Adversarial Networks (GANs), variational autoencoders, and more recent diffusion models [6] [16].

Advanced mix-based augmentation strategies have shown particular promise for medical imaging applications. These methods semantically combine multiple images and their corresponding labels to generate novel training examples [18]. The table below summarizes the performance of prominent mix-based techniques across different medical imaging tasks and model architectures:

Table 1: Performance Comparison of Mix-Based Augmentation Techniques on Medical Imaging Tasks

| Augmentation Method | Dataset | Backbone Architecture | Accuracy (%) | Key Advantages |
|---|---|---|---|---|
| MixUp | Brain Tumor MRI | ResNet-50 | 79.19 | Smooths decision boundaries, effective for data scarcity |
| SnapMix | Brain Tumor MRI | ViT-B | 99.44 | Preserves critical spatial features using activation maps |
| YOCO | Eye Disease Fundus | ResNet-50 | 91.60 | Enhances local and global diversity through subregion augmentation |
| CutMix | Eye Disease Fundus | ViT-B | 97.94 | Maintains spatial context while expanding sample variety |
| KeepMask | Multi-organ Segmentation | U-Net | +3.2% IoU vs baseline | Preserves foreground integrity, transplantable across models |
| KeepMix | Multi-class Segmentation | DeepLabV3 | +2.7% mIoU vs baseline | Perturbs background without affecting target organs |

Domain-Specific Considerations

The effectiveness of augmentation strategies varies significantly across medical specialties, organs, and imaging modalities [6]. Research indicates that the highest performance increases associated with data augmentation are observed for cardiac, pulmonary, and breast imaging applications [6]. This variability necessitates careful selection of augmentation techniques based on the specific clinical context and imaging characteristics.

For segmentation tasks, techniques like KeepMask and KeepMix have demonstrated particular value by ensuring the reliability of foreground structures (organs or lesions) while perturbing less clinically relevant background areas [19]. These approaches can be seamlessly transplanted across various model architectures and adapted for both binary and multi-class segmentation problems, making them particularly valuable for resource-constrained research environments [19].

Experimental Protocols for Augmentation Implementation

Protocol 1: Evaluation Framework for Augmentation Techniques

Objective: Systematically compare and evaluate data augmentation strategies for medical image classification.

Materials:

- Medical image dataset (e.g., DermaMNIST, BloodMNIST, OCTMNIST)

- Deep learning framework (PyTorch or TensorFlow)

- Computational resources (GPU recommended)

Methodology:

- Data Preparation:
  - Split dataset into training (70%), validation (15%), and test (15%) sets
  - Apply baseline normalization specific to imaging modality
  - Establish un-augmented baseline performance metrics
- Augmentation Implementation:
  - Implement multiple augmentation strategies in parallel:
    - Basic transformations: Rotation (±15°), flipping (horizontal/vertical), scaling (0.8-1.2x)
    - Mix-based methods: Implement MixUp (α=0.2), CutMix (α=1.0), and SnapMix
    - Advanced techniques: Implement KeepMask for segmentation tasks
  - For each technique, generate augmented training sets (3-5x original size)
- Model Training & Evaluation:
  - Train identical model architectures on each augmented dataset
  - Use consistent optimization parameters (learning rate: 0.001, batch size: 32)
  - Validate on unaugmented validation set after each epoch
  - Evaluate final models on held-out test set
  - Perform statistical analysis of performance differences (p<0.05)
- Robustness Assessment:
  - Test model performance on corrupted data (MedMNIST-C benchmark)
  - Evaluate cross-domain generalization where possible
  - Assess fairness across patient subgroups (age, sex, ethnicity)

Deliverables: Comparative performance metrics, robustness analysis, computational efficiency assessment.
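The two mix-based methods with explicit parameters in this protocol, MixUp (α=0.2) and CutMix (α=1.0), can be sketched in NumPy as below. Both draw a mixing weight λ from a Beta(α, α) distribution: MixUp blends the whole images and their labels with that weight, while CutMix pastes a rectangle from the second image and sets the label weight to the fraction of pixels kept from the first. The sketch assumes 2D images and one-hot label vectors; the function names are illustrative, and SnapMix is omitted because it additionally requires class activation maps from a trained network.

```python
import numpy as np


def mixup(x1, y1, x2, y2, rng, alpha=0.2):
    """Blend two images and their one-hot labels with weight lam ~ Beta(alpha, alpha)."""
    lam = rng.beta(alpha, alpha)
    return lam * x1 + (1.0 - lam) * x2, lam * y1 + (1.0 - lam) * y2, lam


def cutmix(x1, y1, x2, y2, rng, alpha=1.0):
    """Paste a random box from x2 into x1; weight labels by the area kept from x1."""
    h, w = x1.shape[:2]
    lam = rng.beta(alpha, alpha)
    cut = np.sqrt(1.0 - lam)  # box side ratio so that box area is about (1 - lam)
    box_h, box_w = int(h * cut), int(w * cut)
    cy, cx = rng.integers(h), rng.integers(w)
    top, bottom = max(cy - box_h // 2, 0), min(cy + box_h // 2, h)
    left, right = max(cx - box_w // 2, 0), min(cx + box_w // 2, w)
    mixed = x1.copy()
    mixed[top:bottom, left:right] = x2[top:bottom, left:right]
    # Recompute lam from the clipped box so labels match the actual pixel share.
    lam = 1.0 - (bottom - top) * (right - left) / (h * w)
    return mixed, lam * y1 + (1.0 - lam) * y2, lam


rng = np.random.default_rng(0)
x1, x2 = np.zeros((32, 32)), np.ones((32, 32))
y1, y2 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
mix_x, mix_y, mix_lam = mixup(x1, y1, x2, y2, rng)
cut_x, cut_y, cut_lam = cutmix(x1, y1, x2, y2, rng)
```

In practice these functions are usually applied per batch inside the training loop, so the "3-5x" expansion in the protocol corresponds to how many mixed variants are drawn per original sample.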

Protocol 2: KeepMask Augmentation for Medical Segmentation

Objective: Implement and validate the KeepMask augmentation technique to improve segmentation accuracy while preserving critical anatomical structures.

Materials:

- Medical image segmentation dataset with corresponding masks

- Segmentation model (U-Net, DeepLab, or similar)

- KeepMask implementation [19]

Methodology:

- Background-Foreground Separation:
  - Use provided ground truth masks to identify foreground (target) regions
  - Apply morphological operations to ensure mask continuity
  - Generate inverse masks for background regions
- KeepMask Application:
  - Select two training samples (Image A, Mask A; Image B, Mask B)
  - Extract foreground from Image A using Mask A
  - Extract background from Image B using inverse of Mask B
  - Combine foreground from A with background from B
  - Apply analogous operation to corresponding segmentation masks
- KeepMix Variant:
  - For multi-class segmentation, extend KeepMask to preserve multiple foreground classes
  - Implement class-specific preservation parameters based on clinical importance
  - Ensure anatomical plausibility in combined images
- Model Training:
  - Incorporate KeepMask-augmented samples into training pipeline (25-50% of batch)
  - Use compound loss function (Dice + Cross-Entropy)
  - Implement progressive augmentation scheduling (increasing intensity over epochs)
- Validation:
  - Quantitative evaluation using Dice coefficient, IoU, and Hausdorff distance
  - Qualitative assessment by clinical experts for anatomical plausibility
  - Comparison with conventional augmentation baselines

Deliverables: Segmentation performance metrics, qualitative results, clinical validation report.
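The KeepMask application steps listed in the methodology can be sketched in NumPy as follows. This follows the steps as written in this protocol and is not the reference implementation from [19]. One detail is an assumption of this sketch: pixels that belong to B's foreground but are not covered by A's foreground have no source in either extraction step, so they are filled with a constant `fill` value.

```python
import numpy as np


def keepmask(img_a, mask_a, img_b, mask_b, fill=0.0):
    """Combine the foreground of A with the background of B.

    Returns the combined image and its mask. The mask keeps only A's labels,
    because B's foreground is deliberately excluded from the result.
    """
    foreground_a = mask_a > 0
    background_b = mask_b == 0
    combined = np.full(img_a.shape, fill, dtype=np.float64)
    combined[background_b] = img_b[background_b]  # background taken from B
    combined[foreground_a] = img_a[foreground_a]  # A's foreground takes priority
    combined_mask = np.where(foreground_a, mask_a, 0)
    return combined, combined_mask


# Toy example: A has a target in the top-left corner, B in the bottom-right.
img_a, img_b = np.full((4, 4), 2.0), np.full((4, 4), 5.0)
mask_a = np.zeros((4, 4), dtype=int)
mask_a[:2, :2] = 1
mask_b = np.zeros((4, 4), dtype=int)
mask_b[2:, 2:] = 1
combined, combined_mask = keepmask(img_a, mask_a, img_b, mask_b)
```

A multi-class (KeepMix-style) variant would keep integer class labels in `mask_a` unchanged, which the `np.where` call above already permits.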

Implementation Workflows

Medical Image Augmentation Workflow: This diagram illustrates the comprehensive pipeline for implementing data augmentation in medical imaging applications, progressing through four critical phases from raw data to deployable model.

Augmentation Technique Pathways: This diagram outlines three distinct augmentation methodologies suitable for medical imaging applications, each addressing different aspects of model robustness and data scarcity.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Tools and Resources for Medical Imaging Augmentation Research

| Tool/Resource | Type | Function | Example Implementations |
|---|---|---|---|
| MedMNIST+ Dataset Collection | Benchmark Datasets | Standardized evaluation across multiple imaging modalities and tasks | DermaMNIST, BloodMNIST, OCTMNIST, PneumoniaMNIST [17] |
| KeepMask/KeepMix | Augmentation Algorithm | Preserves foreground integrity while augmenting background context | Custom implementation per [19] |
| MixUp/CutMix/SnapMix | Mix-based Augmentation | Generates novel samples by semantically combining images and labels | TorchIO, Albumentations, Custom PyTorch/TensorFlow [18] |
| Generative Models (GANs/VAEs) | Synthetic Data Generation | Creates entirely new training samples from data distribution | StyleGAN, DCGAN, VAE implementations [6] |
| Robustness Evaluation Benchmarks | Evaluation Framework | Assesses model performance under corruption and distribution shifts | MedMNIST-C, Corruption robustness metrics [17] [15] |
| Fairness Assessment Tools | Bias Evaluation | Measures performance disparities across patient subgroups | Group fairness metrics (demographic parity, equality of opportunity) [14] |

Data preprocessing and augmentation represent foundational components of robust medical imaging AI systems. The protocols and application notes presented herein demonstrate that strategic data augmentation can simultaneously address the interconnected challenges of model overfitting, data scarcity, and collection costs while enhancing generalization capabilities [6] [20]. The systematic implementation of these techniques enables researchers to extract maximum value from limited medical datasets while building more reliable and equitable diagnostic systems.

Future research directions should focus on developing organ-specific and modality-specific augmentation policies, advancing learnable augmentation techniques that adapt to dataset characteristics, and creating more sophisticated fairness-aware augmentation strategies that proactively address performance disparities across patient demographics [6] [14]. Additionally, generative AI offers a promising way to create diverse synthetic training examples while preserving patient privacy [21]. As medical AI continues to evolve, systematic data augmentation methodologies will remain essential for building trustworthy, robust, and clinically applicable diagnostic systems.

Application Note

This document details standard protocols and application notes for the critical stages of modern drug development, with a specific focus on the role of data preprocessing and augmentation in medical imaging research. The methodologies outlined herein support the broader thesis that robust data handling is fundamental to generating reliable, reproducible results across the drug development pipeline, from initial target discovery to final clinical trial analysis.

Target Identification and Validation

Target identification is the foundational step in drug discovery, involving the pinpointing of biological entities (e.g., proteins, genes) whose modulation is expected to have a therapeutic effect. Target validation then confirms the role of this entity in the disease process and its potential as a druggable target. [22]

Cutting-edge techniques in this phase increasingly rely on artificial intelligence (AI) and high-throughput screening. For instance, one novel framework, optSAE + HSAPSO, integrates a stacked autoencoder (SAE) for robust feature extraction with a hierarchically self-adaptive particle swarm optimization (HSAPSO) algorithm for adaptive parameter optimization. This approach has demonstrated 95.52% accuracy in drug classification and target identification on datasets from DrugBank and Swiss-Prot, while also reducing computational cost to 0.010 seconds per sample. [23] Other standard laboratory protocols include:

- Design and Preliminary Screen of Antisense Oligonucleotides: Used to inhibit gene expression and validate target function. [22]

- A Robust siRNA Screening Approach: Enables large-scale transfection in multiple human cancer cell lines for functional genomics. [22]

- Click Chemistry for Target Engagement Studies: Confirms direct binding between a drug candidate and its intended target. [22]

- Fragment Screening via ¹⁹F NMR Spectroscopy: A powerful method for assessing target ligandability and identifying initial hit compounds. [22]

AI-Enhanced Druggability Assessment and Candidate Selection

The assessment of a target's "druggability" and the selection of candidate molecules have been transformed by AI. Traditional methods like support vector machines (SVMs) and XGBoost often struggle with the complexity and scale of modern pharmaceutical datasets. Deep learning models address these limitations by automatically learning intricate molecular patterns. [23]

The optSAE + HSAPSO framework is a prime example of this advancement. The stacked autoencoder compresses high-dimensional input data (e.g., molecular descriptors, protein sequences) into a lower-dimensional, informative representation. The HSAPSO algorithm then optimizes the model's hyperparameters, dynamically balancing exploration and exploitation during training. This results in a model with superior performance, faster convergence, and greater resilience to data variability compared to state-of-the-art methods. [23] This AI-driven prioritization significantly accelerates the identification of viable clinical candidates.

Medical Image Preprocessing and Augmentation in Clinical Research

Medical imaging is a critical biomarker in many clinical trials, particularly in neurology and oncology. Standardized image preprocessing is essential for reliable quantification. The Centiloid method, for example, provides a standardized scale for quantifying brain amyloid burden from PET scans, but has a high failure rate in populations with anatomical differences, such as individuals with Down syndrome (DS). [24]

A study developed and evaluated five alternative preprocessing pipelines (PPMs) to improve the success rate of Centiloid processing. These pipelines were constructed from combinations of steps including image origin reset, filtering, MRI bias correction, and MRI skull stripping. This approach successfully improved the processing success rate in a DS cohort from 61.3% to 95.6%, demonstrating the profound impact of tailored preprocessing on data yield and quality. [24]

Data augmentation, the artificial expansion of a dataset using transformations, is equally vital for training robust AI models in healthcare. It reduces data collection requirements, prevents model overfitting, and enhances the model's ability to generalize to real-world, imperfect images. [25] [11]

Table 1: Key Medical Image Preprocessing and Augmentation Techniques

| Technique Category | Specific Method | Primary Function | Common Tools / Libraries |

|---|---|---|---|

| Medical Image Reading | SimpleITK, ITK-SNAP, pydicom | Handles 3D medical formats (.dcm, .nii, .mha) and visualization. [8] | Python, ITK-SNAP |

| Preprocessing | Normalization (e.g., to Hounsfield Units), Standardization, Skull Stripping, Bias Field Correction | Optimizes data for neural networks; standardizes and harmonizes data across sites. [24] [8] | SPM, PMOD, SimpleITK |

| Geometric Augmentation | Flipping, Rotation, Translation, Cropping, Shearing | Teaches models invariance to object orientation and position. [11] | TensorFlow, PyTorch, OpenCV |

| Color & Lighting Augmentation | Brightness/Contrast Adjustment, Color Jittering, Grayscale Conversion | Makes models robust to varying acquisition conditions and camera types. [11] | TensorFlow, PyTorch, OpenCV |

| Advanced & Generative Augmentation | MixUp, CutMix, CutOut, Generative Adversarial Networks (GANs) | Combines multiple images or generates new, realistic synthetic images to improve generalization. [25] [11] | TensorFlow, PyTorch |
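The normalization and standardization entries in the table can be illustrated with a minimal sketch. It assumes CT data stored as raw integers that map to Hounsfield Units through the DICOM rescale slope and intercept. The function names, the default slope and intercept, and the clipping window are illustrative assumptions, not values taken from the cited sources; in practice the slope and intercept are read from each image header.

```python
import numpy as np

def to_hounsfield(raw, slope=1.0, intercept=-1024.0):
    """Convert stored CT values to Hounsfield Units: HU = raw * slope + intercept.

    The defaults are placeholders; real values come from the image header.
    """
    return raw.astype(np.float32) * slope + intercept

def window_and_scale(hu, lower=-1000.0, upper=400.0):
    """Clip HU values to [lower, upper] and rescale linearly to [0, 1]."""
    clipped = np.clip(hu, lower, upper)
    return (clipped - lower) / (upper - lower)

def standardize(image, eps=1e-8):
    """Shift to zero mean and scale to unit variance."""
    return (image - image.mean()) / (image.std() + eps)

# Toy 2x2 "slice" of raw stored values.
raw = np.array([[0, 1024], [1424, 3000]], dtype=np.int16)
hu = to_hounsfield(raw)
scaled = window_and_scale(hu)
zscored = standardize(scaled)
```

Clipping before scaling keeps a few extreme values (for example metal implants) from compressing the intensity range that carries the soft-tissue signal.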

Clinical Trial Analysis and Trends in 2025

Analysis of clinical trial initiations in 2025 indicates a strong recovery and growth in the sector. According to data from TA Scan and GlobalData, the first half of 2025 saw 6,071 Phase I-III interventional trials begin globally, a 20% increase from the same period in 2024. This surge is driven by stronger biotech funding, fewer trial cancellations, and more efficient operational processes. [26] [27]

Table 2: Clinical Trial Initiations and Trends in H1 2025

| Metric | H1 2024 | H1 2025 | Change & Key Observations |

|---|---|---|---|

| Total Trial Initiations | 4,972 | 6,071 | +20% Year-over-Year (YoY), returning to 2021/pre-pandemic levels. [27] |

| Phase 1 Trials | 1,187 | 1,560 | +21% YoY, indicating a healthy early-stage pipeline. [27] |

| Phase 2 Trials | 1,711 | 2,278 | Significant jump, now the primary growth engine. [27] |

| Leading Therapeutic Area | Oncology | Oncology | Top 10 therapeutic areas are all oncology; Thoracic cancer saw the fastest growth (25%). [27] |

| Key Regional Hubs | - | - | North America (2,134 trials), Europe (1,488 trials), and East Asia/China (1,268 trials). [27] |

Visual aids are increasingly critical for communicating the results of these trials. As emphasized by regulatory guidelines, tools like visual synopses and graphical abstracts enhance comprehension for a diverse audience, including patients and healthcare professionals, thereby supporting patient-focused drug development. [28]

Protocols

Protocol 1: Preprocessing of Amyloid PET Imaging for Centiloid Standardization

This protocol outlines a procedure to improve the success rate of Centiloid processing for magnetic resonance imaging (MRI) and amyloid positron emission tomography (PET) scans, particularly in cohorts with anatomical variations.

1. Reagents and Materials

- T1-weighted MRI scan and corresponding amyloid PET scan (e.g., [¹¹C]PiB) in DICOM or NIfTI format.

- Function: Source medical images for analysis.

- Computing workstation with SPM8 (or later) software and MATLAB/Python environment.

- Function: Provides the computational platform for image processing.

- In-house white matter and gray matter only template (TPM_MRI.nii).

- Function: A brain-tissue-only registration target to improve warping accuracy.

- Montreal Neurological Institute (MNI) 152 template.

- Function: Standard brain atlas for spatial normalization.

2. Preprocessing Steps

- Step 1: Image Preparation. If using multiframe PET scans, perform frame-to-frame motion correction and average the frames (e.g., 50-70 min post-injection) to create a single-frame image. [24]

- Step 2: Preprocessing Combination (PPM). Apply a combination of the following preprocessing steps to the MRI scan to enhance subsequent coregistration and warping:

- Image origin reset.

- Filtering.

- MRI bias field correction.

- MRI skull stripping. [24]

- Step 3: Rigid Registration to Template. Use the SPM8 "Coregister" function to rigidly align the (preprocessed) subject MRI scan to the MNI152 template. Use the synthetic TPM_MRI.nii (GM + 1.7*WM) as the reference image. [24]

- Step 4: PET to MRI Coregistration. Rigidly register the subject PET image to the aligned subject MRI image using SPM8 "Coregister". [24]

- Step 5: Non-linear Warping. Perform non-linear warping of the MRI image to the MNI152 template using the SPM8 Unified Segmentation method. Apply the resulting deformation parameters to the co-registered PET image. [24]

- Step 6: Quantification. Extract tracer concentrations from the warped PET image using the standard Centiloid cortical region of interest (ROI) and the whole cerebellum ROI. Calculate the cortical-to-cerebellum ratio and convert to Centiloid units via the established linear transformation. [24]

3. Analysis and Quality Assurance

Evaluate the success of processing by checking the alignment of the warped images with the MNI template and the plausibility of the extracted ROI values. The implementation of this protocol with five accepted PPMs has been shown to increase processing success rates from 61.3% to 95.6% in a Down syndrome cohort. [24]
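The quantification in Step 6 reduces to a ratio followed by a linear rescaling, sketched here under stated assumptions. The function names are hypothetical. The default anchor values (mean ratio of young controls mapped to 0, mean ratio of typical Alzheimer's disease patients mapped to 100) are those commonly quoted for the standard [¹¹C]PiB pipeline with a whole-cerebellum reference; they are an assumption here and should be checked against the calibration used at each site.

```python
def cortical_ratio(cortical_mean, cerebellum_mean):
    """Cortical-to-cerebellum uptake ratio from the two ROI means."""
    return cortical_mean / cerebellum_mean

def to_centiloid(ratio, ratio_young_control=1.009, ratio_typical_ad=2.076):
    """Linear rescaling so that the young-control anchor maps to 0 and the
    typical-AD anchor maps to 100. Anchor defaults are assumptions."""
    span = ratio_typical_ad - ratio_young_control
    return 100.0 * (ratio - ratio_young_control) / span

# Hypothetical ROI means extracted from a warped PET image.
ratio = cortical_ratio(cortical_mean=1.8, cerebellum_mean=1.2)
centiloid = to_centiloid(ratio)
```

Values below 0 or above 100 are possible, because the scale is anchored to group means rather than bounded.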

Centiloid Preprocessing Workflow

Protocol 2: Deep Learning-Based Data Augmentation for Medical Image Analysis

This protocol describes the application of data augmentation techniques to improve the training of deep learning models for medical imaging tasks such as classification and segmentation.

1. Reagents and Materials

- Curated dataset of medical images (e.g., CT, MRI, X-ray) with corresponding labels.

- Function: The base training data for the model.

- Python programming environment with deep learning libraries (e.g., TensorFlow/Keras, PyTorch, OpenCV).

- Function: Provides the functions and classes to implement augmentation.

- GPU-accelerated computing hardware.

- Function: Speeds up the training process, especially when using real-time augmentation.

2. Procedure

- Step 1: Define Augmentation Strategy. Select a set of transformations suitable for the medical task and imaging modality. A comprehensive strategy often includes:

- Geometric Transformations: Random horizontal/vertical flip, random rotation (e.g., ±15°), random translation (shift), random zoom. [11]

- Photometric Transformations: Random adjustments to brightness, contrast, hue, and saturation. Random application of blur or sharpening filters. [11]

- Advanced Techniques: Consider using CutOut (random erasing) to simulate occlusions, or MixUp/CutMix to blend images and labels. [25] [11]

- Step 2: Implementation. Augmentation can be implemented in two ways:

- Offline/Ahead-of-Time: Apply transformations to the entire dataset and save the expanded set of images to disk. This is simple but storage-intensive.

- Online/Real-Time: Integrate the transformation functions into the data loader, so new random variations are created on-the-fly during each training epoch. This is more efficient and provides nearly infinite variability. [11]

- Step 3: Integration and Training. Feed the augmented images into the deep learning model (e.g., a Convolutional Neural Network) during the training phase. Monitor performance on a separate, non-augmented validation set to ensure the model is generalizing and not overfitting to the augmented artifacts.
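The online variant in Step 2 can be sketched as a generator that draws fresh random transformations every time a batch is requested. This is a minimal NumPy illustration rather than the data loader API of any particular framework. The names `random_augment` and `augmented_batches` and the parameter ranges are assumptions, and the horizontal flip should only be used where the anatomy makes it label-preserving.

```python
import numpy as np

def random_augment(image, rng):
    """Return a randomly flipped, brightness-shifted copy of a 2D image in [0, 1]."""
    out = image.copy()
    if rng.random() < 0.5:              # random horizontal flip
        out = np.fliplr(out)
    offset = rng.uniform(-0.1, 0.1)     # illustrative brightness range
    return np.clip(out + offset, 0.0, 1.0)

def augmented_batches(images, labels, batch_size, rng):
    """Yield one epoch of shuffled mini-batches, augmenting each image on the fly."""
    order = rng.permutation(len(images))
    for start in range(0, len(images), batch_size):
        idx = order[start:start + batch_size]
        batch = np.stack([random_augment(images[i], rng) for i in idx])
        yield batch, labels[idx]

rng = np.random.default_rng(seed=0)
images = rng.random((10, 8, 8))         # ten toy 8x8 images
labels = np.arange(10) % 2
epoch = list(augmented_batches(images, labels, batch_size=4, rng=rng))
```

Calling `augmented_batches` again for the next epoch produces different variations of the same images, which is what distinguishes the online approach from saving an expanded dataset to disk. The validation set would bypass `random_augment` entirely.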

3. Analysis and Notes

The success of augmentation is evaluated by comparing the model's performance on a held-out test set with and without the use of augmentation. Key metrics include accuracy, sensitivity, specificity, and area under the ROC curve. Effective augmentation should lead to higher performance and better generalization to unseen clinical data. [25]

Data Augmentation Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Materials for Featured Protocols

| Item | Function / Application |

|---|---|

| ITK-SNAP / SimpleITK | Software and library for reading, visualizing, and processing 3D medical images (e.g., DICOM, NIfTI). [8] |

| SPM (Statistical Parametric Mapping) | A widely used software package for the analysis of brain imaging data sequences, essential for the Centiloid protocol. [24] |

| TensorFlow / PyTorch | Open-source libraries for building and training deep learning models, including the implementation of data augmentation pipelines. [11] |

| siRNA / Antisense Oligonucleotides | Research reagents used in target validation to selectively silence or inhibit the expression of a candidate gene. [22] |

| Fragment Libraries (for ¹⁹F NMR) | Curated collections of small, simple molecules used in fragment-based drug discovery to identify initial hits against a target. [22] |

| Clinical Trials Database (e.g., GlobalData, TA Scan) | Intelligence platforms used for analyzing clinical trial trends, sponsor activities, and regional growth patterns. [26] [27] |

A Technical Deep Dive: Core and Advanced Augmentation Techniques

Data augmentation is a fundamental strategy in medical imaging research to overcome limitations posed by small, imbalanced datasets and to improve the generalization of deep learning models. By applying label-preserving transformations, researchers can artificially expand the diversity and size of training data. This document details the application notes and experimental protocols for basic geometric and photometric transformations, framed within a broader thesis on data preprocessing and augmentation. These techniques are essential for building robust, clinically viable AI systems for classification, segmentation, and detection tasks [6] [18].

Transformation Definitions and Specifications

Geometric Transformations

Geometric transformations modify the spatial arrangement of pixels in an image. They are crucial for teaching models to be invariant to changes in object orientation and position, which is vital in medical imaging where anatomy can appear in different views [29] [30].

- Rotation: Rotates the image around a specified axis by a defined angle. In 2D, this uses a rotation matrix. For 3D medical volumes (e.g., MRI, CT), rotation can be applied around different planes (axial, coronal, sagittal) [29] [31].

- Flipping/Reflection: Creates a mirrored version of the image. Horizontal flipping reverses the order of pixels in each row, while vertical flipping reverses the order of pixels in each column [29] [31].

- Translation: Shifts all pixels of an image horizontally, vertically, or both, according to specified offset values (tx, ty). This can simulate variations in the positioning of an organ or lesion within the image frame [29] [31].

- Scaling: Enlarges or reduces the image dimensions. Isotropic (uniform) scaling is often preferred in medical imaging to preserve anatomical proportions [29] [31].
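The rotation, flipping, and translation definitions above can be written in a few lines of NumPy. This is a sketch for 2D images under simplifying assumptions: nearest-neighbor sampling, rotation about the image center, and constant padding for regions that move into view. The function names are illustrative, and a production pipeline would normally rely on a library routine with proper interpolation.

```python
import numpy as np

def rotate(image, angle_deg, fill=0.0):
    """Rotate a 2D image about its center using the 2D rotation matrix.

    Inverse mapping: for every output pixel, look up the source pixel.
    """
    h, w = image.shape
    theta = np.deg2rad(angle_deg)
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0
    ys, xs = np.mgrid[0:h, 0:w]
    x_rel, y_rel = xs - cx, ys - cy
    src_x = np.rint(np.cos(theta) * x_rel + np.sin(theta) * y_rel + cx).astype(int)
    src_y = np.rint(-np.sin(theta) * x_rel + np.cos(theta) * y_rel + cy).astype(int)
    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.full_like(image, fill)
    out[valid] = image[src_y[valid], src_x[valid]]
    return out

def translate(image, tx, ty, fill=0.0):
    """Shift a 2D image by tx pixels to the right and ty pixels down."""
    h, w = image.shape
    out = np.full_like(image, fill)
    if abs(tx) >= w or abs(ty) >= h:    # shifted entirely out of frame
        return out
    src_y = slice(max(0, -ty), min(h, h - ty))
    src_x = slice(max(0, -tx), min(w, w - tx))
    dst_y = slice(max(0, ty), min(h, h + ty))
    dst_x = slice(max(0, tx), min(w, w + tx))
    out[dst_y, dst_x] = image[src_y, src_x]
    return out

image = np.arange(16, dtype=float).reshape(4, 4)
flipped_h = np.fliplr(image)            # reverses pixel order within each row
flipped_v = np.flipud(image)            # reverses pixel order within each column
rotated = rotate(image, 180)
shifted = translate(image, tx=1, ty=0)
```

For 3D volumes the same idea applies plane by plane, with the rotation acting on two of the three axes at a time.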

Photometric Transformations

Photometric transformations alter the pixel intensity values to make models robust to changes in image acquisition, such as variations in lighting and scanner settings [32].

- Contrast Adjustment: Modifies the difference between the darkest and lightest areas of an image. In X-ray imaging, this can emulate changes in acquisition parameters like kilovoltage (kV), which directly affect image contrast [32].

- Brightness Adjustment: Adds or subtracts a constant value to all pixel intensities, simulating changes in exposure or the number of photons (mAs) [32].
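Both photometric adjustments are simple pointwise operations, sketched here for images normalized to [0, 1]. The function names are illustrative. Scaling about the image mean is one common way to define a contrast factor and is an assumption here, since the text does not fix a specific formula.

```python
import numpy as np

def adjust_brightness(image, offset):
    """Add a constant offset to every pixel, then clip to the valid range."""
    return np.clip(image + offset, 0.0, 1.0)

def adjust_contrast(image, factor):
    """Stretch (factor > 1) or compress (factor < 1) intensities about the mean."""
    mean = image.mean()
    return np.clip((image - mean) * factor + mean, 0.0, 1.0)

image = np.array([[0.2, 0.4], [0.6, 0.8]])
brighter = adjust_brightness(image, 0.1)
higher_contrast = adjust_contrast(image, 1.3)
```

The clipping step is where diagnostic detail can be lost: large offsets or factors push pixels to 0 or 1 and erase the differences between them.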

Table 1: Standard Parameters for Basic Transformations in Medical Imaging

| Transformation Category | Specific Technique | Common Parameter Ranges | Medical Imaging Considerations |

|---|---|---|---|

| Geometric | Rotation | ±5° to 15° (conservative); ±180° (broad) | Small angles often suffice; large rotations may create anatomically implausible images [31]. |

| Geometric | Flipping (2D) | Horizontal and/or Vertical | Anatomical symmetry determines applicability (e.g., horizontal flip often valid for brain MRI) [29] [31]. |

| Geometric | Translation | ±10% to 20% of image dimension | Useful for centering objects; requires padding for empty regions [29] [31]. |

| Geometric | Scaling (Zoom) | 0.8x to 1.2x (typical) | Simulates differences in distance to object or field of view [31]. |

| Photometric | Contrast Adjustment | Factor range: [0.7, 1.3] | Must preserve critical diagnostic features; avoid extreme values that mask lesions [32]. |

| Photometric | Brightness Adjustment | Offset range: [-0.2, 0.2] (normalized) | Simulates variations in radiation dose (mAs) or scanner gain [32]. |

Experimental Protocols

Protocol 1: Evaluating a Single Transformation

Objective: To assess the individual impact of a specific geometric or photometric transformation on model performance for a defined medical imaging task (e.g., tumor classification).

Materials:

- Medical image dataset (e.g., brain tumor MRI [18])

- Deep learning framework (e.g., PyTorch, TensorFlow)

- Computing hardware with GPU acceleration

Methodology:

- Dataset Partitioning: Split the dataset into training, validation, and test sets, ensuring no data leakage.

- Baseline Model Training: Train a baseline model (e.g., ResNet-50) using only the original training images.

- Augmented Model Training: Train an identical model architecture from scratch, applying the target transformation (e.g., random rotation within ±10°) to the training images in each epoch.

- Performance Comparison: Evaluate both models on the same, untouched test set. Compare key metrics such as accuracy, sensitivity, specificity, and Area Under the Curve (AUC).
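The metrics named in the performance comparison step can be computed directly from test-set labels and model scores. The sketch below uses only the standard library and assumes binary labels (1 = positive). The helper names are illustrative, and the AUC is computed by its rank interpretation: the probability that a randomly chosen positive case scores higher than a randomly chosen negative case.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, sensitivity, and specificity from binary labels and predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),   # true positive rate
        "specificity": tn / (tn + fp),   # true negative rate
    }

def auc(y_true, scores):
    """Area under the ROC curve via pairwise comparison (ties count as half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

y_true = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
y_pred = [int(s >= 0.5) for s in scores]   # illustrative threshold
metrics = classification_metrics(y_true, y_pred)
area = auc(y_true, scores)
```

Running the same functions on the baseline and augmented models, with the same test set and threshold, gives the side-by-side comparison the protocol calls for.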

Protocol 2: Benchmarking a Transformation Pipeline

Objective: To systematically compare the performance of multiple transformation strategies and their combinations against a baseline.

Materials:

- As in Protocol 1.

Methodology:

- Define Strategies: Establish several training conditions:

- Baseline: No augmentation.

- Geometric-only: A combination of rotation, flipping, and scaling.

- Photometric-only: A combination of contrast and brightness adjustments.

- Combined: All geometric and photometric transformations applied.

- Consistent Training: Train separate models for each strategy, keeping all other hyperparameters (learning rate, number of epochs, etc.) constant.

- Comprehensive Evaluation: Compare final performance on the test set. A published systematic review suggests that combining affine (geometric) and pixel-level (photometric) transformations often achieves an excellent trade-off between performance and complexity [6].

The following workflow diagrams the benchmarking process for data augmentation strategies.

Performance Benchmarks

Empirical evidence from recent literature demonstrates the significant benefits of data augmentation. A comprehensive systematic review of over 300 articles found data augmentation to be beneficial across all organs, modalities, and tasks, with the highest performance increases noted for heart, lung, and breast applications [6]. Furthermore, advanced mix-based strategies show considerable promise.

Table 2: Performance Impact of Advanced Mix-based Augmentation Strategies (Adapted from MediAug Benchmark [18])

| Backbone Model | Augmentation Strategy | Brain Tumor Classification Accuracy (%) | Eye Disease Classification Accuracy (%) |

|---|---|---|---|

| ResNet-50 | MixUp | 79.19 | - |

| ResNet-50 | YOCO | - | 91.60 |

| ViT-B | SnapMix | 99.44 | - |

| ViT-B | CutMix | - | 97.94 |
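Two of the mix-based strategies in the table, MixUp and CutMix, are easy to state in code. The sketch below shows the core operations on a single pair of 2D images with one-hot labels. It is a simplified illustration and not the MediAug benchmark implementation. The function names and the fixed box coordinates are assumptions; in practice the mixing weight is typically sampled from a Beta distribution and the box position is drawn at random.

```python
import numpy as np

def mixup(x1, y1, x2, y2, lam):
    """Blend two images and their labels with weight lam in [0, 1]."""
    return lam * x1 + (1.0 - lam) * x2, lam * y1 + (1.0 - lam) * y2

def cutmix(x1, y1, x2, y2, top, left, height, width):
    """Paste a box from x2 into x1; labels are mixed by the pasted area fraction.

    Assumes the box lies fully inside the image.
    """
    out = x1.copy()
    out[top:top + height, left:left + width] = x2[top:top + height, left:left + width]
    lam = 1.0 - (height * width) / (x1.shape[0] * x1.shape[1])
    return out, lam * y1 + (1.0 - lam) * y2

x_a, y_a = np.zeros((4, 4)), np.array([1.0, 0.0])
x_b, y_b = np.ones((4, 4)), np.array([0.0, 1.0])

rng = np.random.default_rng(seed=0)
lam = rng.beta(1.0, 1.0)                     # illustrative Beta(1, 1) draw
mixed_x, mixed_y = mixup(x_a, y_a, x_b, y_b, lam)
cut_x, cut_y = cutmix(x_a, y_a, x_b, y_b, top=1, left=1, height=2, width=2)
```

Unlike the basic transformations, both methods change the label as well as the image, so the training loss must accept soft labels.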

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Medical Image Augmentation

| Item | Function/Application | Example/Notes |

|---|---|---|

| PyTorch / TensorFlow | Deep Learning Frameworks | Provide modular APIs for implementing data augmentation pipelines (e.g., torchvision.transforms). |

| OpenCV (cv2) | Computer Vision Library | Used for implementing core transformation functions like cv2.warpAffine for geometric manipulations [29]. |

| Scipy | Scientific Computing | Offers multi-dimensional image processing functions like ndimage.zoom and ndimage.rotate for 3D medical volumes [31]. |

| NiBabel | Medical Image I/O | Python library for reading and writing neuroimaging data formats (e.g., NIfTI) [31]. |

| DICOM Standard | Medical Image Format & Metadata | The universal standard for storing and transmitting medical images and their critical metadata (e.g., kV, mAs) [32]. |

Implementation Workflow

A standard implementation workflow for applying basic transformations in a training pipeline involves both geometric and photometric steps, as visualized below.

Pixel-level image manipulation forms a critical foundation for data preprocessing and augmentation in medical imaging research. These techniques—encompassing noise injection, blurring, and sharpening with kernel filters—directly address key challenges in developing robust deep learning models, including limited dataset sizes, variable image quality, and the need for enhanced feature visibility. Within a comprehensive data augmentation pipeline, these methods serve to artificially expand training datasets, improve model generalization, and ultimately enhance diagnostic accuracy for researchers, scientists, and drug development professionals working with medical imaging data. This document provides detailed application notes and experimental protocols for implementing these advanced pixel-level techniques in medical research contexts, with a focus on quantitative outcomes and reproducible methodologies.

Technique-Specific Quantitative Performance

The efficacy of pixel-level techniques is quantitatively assessed through standardized image quality metrics. The following table summarizes the performance characteristics of noise reduction and edge enhancement techniques as established in recent research.

Table 1: Quantitative Performance of Denoising and Edge Enhancement Techniques

| Technique Category | Specific Method | Performance Metrics | Key Findings |

|---|---|---|---|

| Hybrid Denoising | Adaptive Median Filter (AMF) + Modified Decision-Based Median Filter (MDBMF) | PSNR: improvement up to 2.34 dB; MSE: up to 15% improvement; SSIM: improvement up to 0.07; IEF: improvement >20%; FOM: 0.68; VIF: 0.61 | Significantly outperforms BPDF, AT2FF, and SVMMF; effectively preserves edges and structural similarity [33]. |

| Deep Learning Denoising | Fully Convolutional Neural Network (FCNN) with Wavelet Filter | Segmentation accuracy: 98.84%; BMD correlation: 0.9928 | Outperforms standalone noise reduction algorithms for femur segmentation in DXA images [34]. |

| Edge Enhancement | Endoscopic Edge Enhancement (Various Levels) | Sharpness increase: factor of 3; noise increase: factor of 4 | Measured level range: 0 to 1.3; enhances perceived sharpness but amplifies noise [35]. |

Detailed Experimental Protocols

Protocol 1: Hybrid Denoising for High-Density Impulse Noise

This protocol outlines the application of a hybrid Adaptive Median Filter (AMF) and Modified Decision-Based Median Filter (MDBMF) algorithm, designed to remove high-density salt-and-pepper noise (10-90%) while preserving critical edge information in medical images [33].

Materials and Equipment

- Source Images: Nine benchmark images, including standard and medical datasets (e.g., Chest and Liver images) [33].

- Noise Introduction: Algorithm for adding bipolar impulse noise with varying densities (10% to 90%) [33].

- Computing Environment: MATLAB or Python with an image processing toolbox.

Step-by-Step Procedure

Noise Detection with AMF:

- For each pixel in the noisy image, select an initial filtering window (e.g., 3x3).

- Within the window, calculate the median, minimum, and maximum intensity values.

- If the median value is not an extreme value (neither min nor max), proceed to the replacement check. If it is, increase the window size and repeat until a maximum window size is reached.

- This adaptive process dynamically identifies pixels corrupted by impulse noise [33].

Noise Removal with MDBMF:

- For a pixel identified as noisy, process it with the MDBMF.

- The filter replaces the corrupted pixel value with the median of its non-noisy neighbors.

- If all pixels in the window are noisy, the mean of the window is used as the replacement value.

- This selective recovery ensures that intact regions of the image remain unaffected [33].
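The detection and removal stages can be approximated in a short sketch. This is a simplified illustration and not the algorithm published in [33]. It assumes 8-bit images, flags pixels at the extreme values 0 and 255 as impulse noise, grows the window until at least one clean neighbor is found, and falls back to the window mean when none exists. Function names and the maximum window size are assumptions.

```python
import numpy as np

def add_salt_and_pepper(image, density, rng):
    """Corrupt a fraction `density` of pixels with 0 (pepper) or 255 (salt)."""
    noisy = image.copy()
    corrupt = rng.random(image.shape) < density
    salt = rng.random(image.shape) < 0.5
    noisy[corrupt & salt] = 255
    noisy[corrupt & ~salt] = 0
    return noisy

def hybrid_median_denoise(noisy, max_window=7):
    """Replace flagged pixels with the median of their clean neighbors."""
    h, w = noisy.shape
    out = noisy.astype(np.float64)
    is_noisy = (noisy == 0) | (noisy == 255)     # simplified impulse detector
    for y, x in zip(*np.nonzero(is_noisy)):
        replaced = False
        for size in range(3, max_window + 1, 2):  # adaptive window growth
            r = size // 2
            y0, y1 = max(0, y - r), min(h, y + r + 1)
            x0, x1 = max(0, x - r), min(w, x + r + 1)
            window = noisy[y0:y1, x0:x1]
            clean = window[~is_noisy[y0:y1, x0:x1]]
            if clean.size > 0:
                out[y, x] = np.median(clean)
                replaced = True
                break
        if not replaced:                          # every pixel in the window is noisy
            out[y, x] = window.mean()
    return out

rng = np.random.default_rng(seed=0)
clean_image = np.full((32, 32), 100, dtype=np.uint8)
noisy_image = add_salt_and_pepper(clean_image, density=0.3, rng=rng)
restored = hybrid_median_denoise(noisy_image)
```

Pixels that are not flagged are copied through unchanged, which is what keeps intact regions unaffected. A limitation of this simplified detector is that genuine pixel values of 0 or 255 are also treated as noise.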

Performance Validation:

- Compare the denoised output against the original, noise-free image.

- Calculate quantitative metrics including PSNR, MSE, SSIM, IEF, FOM, and VIF to validate performance against state-of-the-art methods [33].
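Three of the listed metrics have short closed-form definitions, sketched below with NumPy. The function names are illustrative. IEF is written here in its usual form, the ratio of the squared error before restoration to the squared error after restoration. SSIM, FOM, and VIF need more machinery and are omitted from this sketch.

```python
import numpy as np

def mse(reference, test):
    """Mean squared error between two images."""
    diff = reference.astype(np.float64) - test.astype(np.float64)
    return float(np.mean(diff ** 2))

def psnr(reference, test, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    err = mse(reference, test)
    return float("inf") if err == 0 else float(10.0 * np.log10(peak ** 2 / err))

def ief(reference, noisy, restored):
    """Image enhancement factor: error before restoration over error after."""
    ref = reference.astype(np.float64)
    before = np.sum((noisy.astype(np.float64) - ref) ** 2)
    after = np.sum((restored.astype(np.float64) - ref) ** 2)
    return float(before / after)

reference = np.full((4, 4), 100.0)
noisy = reference + 10.0       # toy "noisy" image
restored = reference + 5.0     # toy "restored" image
```

An IEF above 1 means the restored image is closer to the reference than the noisy input was. Casting to floating point before subtracting avoids wrap-around when the inputs are unsigned 8-bit arrays.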

Protocol 2: Edge Enhancement and Sharpness Quantification

This protocol provides a method to objectively quantify the level of edge enhancement applied by a video processor and measure its effects on image sharpness and noise, particularly relevant for endoscopic and laryngoscopic imaging [35].

Materials and Equipment

- Test Target: Rez checker target matte (or similar standardized test chart with slanted edges and gray patches) [35].

- Imaging System: Flexible digital endoscope connected to its video processor with adjustable edge enhancement settings [35].

- Image Capture: Frame grabber (e.g., Epiphan DVI2USB3) to record uncompressed images (e.g., 24-bit RGB bitmap) from the processor's output [35].

- Analysis Software: Custom MATLAB script for ISO12233-compliant analysis [35].

Step-by-Step Procedure

Image Acquisition:

- Position the endoscope tip at a representative operational distance (e.g., 30mm) from the test target.

- Adjust illumination to avoid pixel saturation in the brightest gray patch.

- For each level of edge enhancement (from zero to maximum), capture an image of the test target.

- Ensure consistent white balance and avoid ambient light interference [35].

Image Analysis and Linearization:

- Automatically identify Regions of Interest (ROIs) containing slanted edges and gray patches.

- Convert RGB values to luminance (Y) using the formula: Y = 0.2125*R + 0.7154*G + 0.0721*B.

- Estimate the system's gamma value (γ) by fitting the log of normalized luminance values from the gray patches against their known status-T densities.

- Linearize the luminance values in the edge ROIs using: Y_lin = 255 * (Y/255)^(1/γ) [35].
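The luminance conversion and linearization can be sketched as follows. The two formulas are taken from the steps above. The gamma estimate is an interpretation rather than the published script: it assumes patch reflectance equals 10^(-density), so that log10(Y/255) falls linearly with density with slope -γ. All function names are illustrative.

```python
import numpy as np

def rgb_to_luminance(rgb):
    """Y = 0.2125*R + 0.7154*G + 0.0721*B for an array whose last axis is RGB."""
    weights = np.array([0.2125, 0.7154, 0.0721])
    return rgb.astype(np.float64) @ weights

def estimate_gamma(patch_luminance, patch_density):
    """Fit log10(Y/255) against density; gamma is the negated slope (assumption)."""
    log_y = np.log10(np.asarray(patch_luminance, dtype=np.float64) / 255.0)
    slope, _intercept = np.polyfit(np.asarray(patch_density, dtype=np.float64), log_y, 1)
    return float(-slope)

def linearize(y, gamma):
    """Y_lin = 255 * (Y / 255) ** (1 / gamma)."""
    return 255.0 * (np.asarray(y, dtype=np.float64) / 255.0) ** (1.0 / gamma)

# Synthetic gray patches encoded with a known gamma of 0.45.
densities = np.array([0.1, 0.5, 1.0, 1.5])
encoded = 255.0 * (10.0 ** -densities) ** 0.45
gamma = estimate_gamma(encoded, densities)
linear = linearize(encoded, gamma)
```

On the synthetic patches the fit recovers the encoding gamma, and the linearized values are proportional to patch reflectance again, which is the precondition for the edge measurements that follow.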

Quantifying Edge Enhancement Level:

- Measure the Step Response (SR) from the linearized slanted-edge ROI.

- Subtract the SR of the image with no edge enhancement from the SR of the image with enhancement applied.

- The Edge Enhancement Level is calculated as the peak-to-peak difference of the resulting signal, normalized by the step size [35].
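The three steps above amount to a difference of two step responses followed by a normalization. The sketch below assumes both step responses are 1D arrays sampled on the same grid, and estimates the step size from the flat plateaus of the unenhanced response. The function name, the `plateau` parameter, and the plateau-based step estimate are assumptions.

```python
import numpy as np

def edge_enhancement_level(sr_enhanced, sr_baseline, plateau=5):
    """Peak-to-peak of (enhanced - baseline), divided by the baseline step size."""
    sr_enhanced = np.asarray(sr_enhanced, dtype=np.float64)
    sr_baseline = np.asarray(sr_baseline, dtype=np.float64)
    difference = sr_enhanced - sr_baseline
    # Step size: high plateau minus low plateau of the unenhanced response.
    step = abs(sr_baseline[-plateau:].mean() - sr_baseline[:plateau].mean())
    return float((difference.max() - difference.min()) / step)

# Toy example: an ideal step, and the same step with 20% under/overshoot.
baseline = np.array([0.0] * 10 + [1.0] * 10)
enhanced = baseline.copy()
enhanced[9], enhanced[10] = -0.2, 1.2
level = edge_enhancement_level(enhanced, baseline)
```

Normalizing by the step size makes the level independent of the contrast of the particular edge used for the measurement.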

Measuring Sharpness and Noise:

- Compute the Modulation Transfer Function (MTF) from the linearized slanted-edge data.

- Characterize sharpness by reporting the spatial frequency at which the MTF decays to 50%.

- Measure noise by calculating the weighted sum of variances from the luminance and chrominance channels on a uniform gray patch [35].
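The sharpness measurement can be illustrated with a simplified 1D version: differentiate the edge profile to obtain the line spread function, take the magnitude of its Fourier transform, and locate the 50% point. This sketch does not implement the slanted-edge oversampling of ISO 12233, and the function names are assumptions. Frequencies are reported in cycles per pixel.

```python
import numpy as np

def mtf_from_edge(edge_profile):
    """Return (frequencies, MTF) from a linearized 1D edge profile."""
    lsf = np.diff(np.asarray(edge_profile, dtype=np.float64))  # line spread function
    mtf = np.abs(np.fft.rfft(lsf))
    mtf = mtf / mtf[0]                                         # normalize to 1 at DC
    freqs = np.fft.rfftfreq(lsf.size, d=1.0)
    return freqs, mtf

def mtf50(freqs, mtf):
    """First frequency at which the MTF drops below 0.5 (linear interpolation)."""
    below = np.nonzero(mtf < 0.5)[0]
    if below.size == 0:
        return None
    i = below[0]
    f0, f1, m0, m1 = freqs[i - 1], freqs[i], mtf[i - 1], mtf[i]
    return float(f0 + (0.5 - m0) * (f1 - f0) / (m1 - m0))

# Synthetic edge blurred by a Gaussian with sigma = 2 pixels.
x = np.arange(-64, 65)
sigma = 2.0
gaussian_lsf = np.exp(-x ** 2 / (2 * sigma ** 2))
edge = np.cumsum(gaussian_lsf)
freqs, mtf = mtf_from_edge(edge)
sharpness = mtf50(freqs, mtf)
```

For a Gaussian blur the MTF is itself Gaussian, so the expected value is sqrt(ln 2 / (2π²)) / sigma, roughly 0.094 cycles per pixel here, which gives a simple sanity check for the implementation.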

Kernel Filter Operations: A Foundational Workflow

The following diagram illustrates the universal workflow for applying a kernel filter to a medical image, which forms the basis for many blurring and sharpening operations.

Diagram 1: Kernel Filter Application Workflow

Table 2: Common Kernel Filters and Their Medical Imaging Applications

| Kernel Type | Kernel Matrix | Primary Effect | Typical Medical Application |

|---|---|---|---|

| Identity | [0, 0, 0; 0, 1, 0; 0, 0, 0] | Leaves image unchanged. | Baseline for filter development [36]. |

| Sharpening | [0, -1, 0; -1, 5, -1; 0, -1, 0] | Emphasizes differences in adjacent pixels, increasing perceived vividness and edge acuity. | Enhancing subtle edges in radiographs or retinal scans prior to analysis [36]. |

| Unsharp Masking (Sample) | [-1/8, -1/8, -1/8; -1/8, 2, -1/8; -1/8, -1/8, -1/8] | Enhances edges in all directions by subtracting a blurred version from the original [37]. | General edge enhancement for diagnostic clarity [37] [35]. |

| Gaussian Blur (3x3 approx.) | [1/16, 1/8, 1/16; 1/8, 1/4, 1/8; 1/16, 1/8, 1/16] | De-emphasizes pixel differences, reducing noise and creating a smoothing effect. | Preprocessing for noise reduction prior to segmentation or edge detection [38] [36]. |

| Mean Blur | [1/9, 1/9, 1/9; 1/9, 1/9, 1/9; 1/9, 1/9, 1/9] | Simplest smoothing filter; replaces each pixel with the average of its neighbors. | Basic noise reduction (can blur edges significantly) [38]. |

| Edge Detection (Horizontal) | [1, 2, 1; 0, 0, 0; -1, -2, -1] (Sobel) | Highlights horizontal edges and lines. | Isolating specific anatomical structures oriented horizontally [37] [36]. |

| Edge Detection (Vertical) | [1, 0, -1; 2, 0, -2; 1, 0, -1] (Sobel) | Highlights vertical edges and lines. | Isolating specific anatomical structures oriented vertically [37] [36]. |
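Applying any of the smoothing or sharpening kernels in the table follows the same sliding-window pattern. The sketch below implements 2D convolution with edge-replicated padding in plain NumPy. The function name and the padding mode are assumptions, and a library routine would normally be used for speed.

```python
import numpy as np

KERNELS = {
    "identity": np.array([[0, 0, 0], [0, 1, 0], [0, 0, 0]], dtype=np.float64),
    "sharpen": np.array([[0, -1, 0], [-1, 5, -1], [0, -1, 0]], dtype=np.float64),
    "gaussian": np.array([[1, 2, 1], [2, 4, 2], [1, 2, 1]], dtype=np.float64) / 16.0,
    "mean": np.full((3, 3), 1.0 / 9.0),
}

def apply_kernel(image, kernel):
    """Convolve a 2D image with a small kernel, replicating edge pixels as padding."""
    flipped = np.flipud(np.fliplr(kernel))       # flip for true convolution
    kh, kw = flipped.shape
    pad_y, pad_x = kh // 2, kw // 2
    padded = np.pad(image.astype(np.float64), ((pad_y, pad_y), (pad_x, pad_x)), mode="edge")
    h, w = image.shape
    out = np.zeros((h, w), dtype=np.float64)
    # Accumulate one shifted, weighted copy of the image per kernel element.
    for dy in range(kh):
        for dx in range(kw):
            out += flipped[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

rng = np.random.default_rng(seed=0)
image = rng.random((16, 16))
blurred = apply_kernel(image, KERNELS["gaussian"])
sharpened = apply_kernel(image, KERNELS["sharpen"])
```

Each of these four kernels sums to 1, so flat regions keep their intensity and only local differences are smoothed or amplified.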

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Medical Image Filtering Experiments

| Item Name | Function/Application | Example/Specification |

|---|---|---|

| Standardized Test Target | Objective quantification of sharpness (MTF), noise, and edge enhancement levels. | Rez checker target matte (Imatest) with slanted edges and gray patches [35]. |

| Frame Grabber | Capturing uncompressed, high-fidelity images directly from medical video processors for analysis. | Epiphan DVI2USB3.0 [35]. |

| Medical Image Datasets | Benchmarking and validating algorithm performance on clinically relevant data. | DermaMNIST, BloodMNIST, OCTMNIST, Fitzpatrick17k, custom clinical datasets (e.g., DXA femur images) [34] [17]. |

| Computing Environment & Libraries | Implementing, training (for DL methods), and applying filtering algorithms. | Python with Skimage, PyTorch, TorchIO; MATLAB with Image Processing Toolbox [39]. |

| Quantitative Metrics Software | Standardized calculation of image quality metrics to enable fair comparison between techniques. | Custom MATLAB/Python scripts for PSNR, SSIM, MTF, etc., compliant with standards like ISO12233 [35]. |

Integrated Preprocessing and Augmentation Workflow

The most effective application of these pixel-level techniques is often within a sequential pipeline designed to prepare medical images for deep learning models. The following diagram depicts a robust integrated workflow that combines preprocessing and augmentation.

Diagram 2: Integrated Preprocessing and Augmentation Pipeline

Generative Artificial Intelligence (GenAI) has emerged as a transformative force in scientific research, particularly in the field of medical imaging where data scarcity, class imbalance, and privacy concerns are significant obstacles [6] [40]. These models offer a powerful solution for synthetic data creation, enabling researchers to augment limited datasets and accelerate the development of robust, generalizable AI systems [41]. The field has witnessed rapid evolution from early Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) to the current dominance of diffusion models, each offering distinct advantages for scientific image synthesis [42] [43]. Within medical imaging research, these technologies are primarily applied to overcome data limitations through realistic data augmentation, to generate rare or critical pathological cases for training, and to create privacy-preserving synthetic datasets for method development and sharing [6] [44]. This article details the core architectures, their specific applications, and provides practical experimental protocols for implementing generative AI within medical imaging research workflows.

Core Generative Architectures: Mechanisms and Comparative Analysis

Architectural Foundations and Workflows

Variational Autoencoders (VAEs) operate on the principle of probabilistic latent variable models. They learn to encode input data into a lower-dimensional latent space characterized by a known probability distribution (typically Gaussian) and then decode samples from this space back to the original data domain [42] [45]. This enforced structure of the latent space facilitates smooth interpolation and data generation. VAEs are trained by maximizing the evidence lower bound (ELBO), which balances reconstruction fidelity with the closeness of the latent distribution to its prior [42].
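For reference, the training objective described above can be written out explicitly. The notation is an assumption chosen for illustration: q_φ(z|x) is the encoder, p_θ(x|z) the decoder, and p(z) the prior. The second line gives the closed form of the KL term for the common case of a diagonal Gaussian encoder with mean μ and variance σ² and a standard normal prior over a d-dimensional latent space.

```latex
\mathcal{L}(\theta, \phi; x)
  = \underbrace{\mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big]}_{\text{reconstruction fidelity}}
  - \underbrace{D_{\mathrm{KL}}\big(q_\phi(z \mid x) \,\|\, p(z)\big)}_{\text{closeness to the prior}}

D_{\mathrm{KL}}\big(\mathcal{N}(\mu, \operatorname{diag}(\sigma^2)) \,\|\, \mathcal{N}(0, I)\big)
  = -\frac{1}{2} \sum_{j=1}^{d} \big(1 + \log \sigma_j^2 - \mu_j^2 - \sigma_j^2\big)
```

Maximizing the first term rewards accurate reconstructions, while the second term penalizes encoders whose latent distribution drifts from the prior, which is the balance the paragraph describes.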

Generative Adversarial Networks (GANs) employ an adversarial training framework between two neural networks: a generator and a discriminator. The generator creates synthetic images from random noise, while the discriminator learns to distinguish between real and generated images [46] [47]. This competition drives both networks to improve, resulting in the generator producing highly realistic data. Architectures like StyleGAN allow for fine-grained control over image attributes by manipulating the latent space [42] [47].

Diffusion Models generate data through an iterative denoising process. They operate via a forward process that gradually adds noise to data until it becomes pure noise, and a reverse process where a neural network learns to progressively remove this noise to reconstruct the data from random noise [43]. Models like Denoising Diffusion Probabilistic Models (DDPMs) and Latent Diffusion Models (LDMs) have set new standards for image quality and diversity [42] [43]. LDMs, in particular, perform this diffusion in a compressed latent space, significantly improving computational efficiency [43].
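The forward (noising) process has a closed form that lets any noise level be sampled in a single step, sketched below in NumPy. The linear schedule and its endpoints are a common choice from the DDPM literature and are assumptions here, as are the function names. The reverse process needs a trained network and is not shown.

```python
import numpy as np

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances; the endpoints are illustrative defaults."""
    return np.linspace(beta_start, beta_end, num_steps)

def forward_diffuse(x0, t, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise."""
    noise = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise
    return xt, noise

betas = linear_beta_schedule(1000)
alpha_bar = np.cumprod(1.0 - betas)     # cumulative fraction of signal retained

rng = np.random.default_rng(seed=0)
x0 = rng.random((8, 8))                 # toy "image"
x_early, _ = forward_diffuse(x0, t=10, alpha_bar=alpha_bar, rng=rng)
x_late, _ = forward_diffuse(x0, t=999, alpha_bar=alpha_bar, rng=rng)
```

At early steps the sample is still dominated by the original image, while at the final step almost no signal remains. During training, the network is given `xt` and `t` and is typically asked to predict the `noise` that was added.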

The workflows of these three fundamental architectures are visualized below.

Quantitative Performance Comparison

The selection of an appropriate generative model requires careful consideration of performance trade-offs. The following table synthesizes quantitative findings from comparative evaluations across multiple scientific imaging domains, including microCT scans, composite fibers, and plant root images [42].

Table 1: Comparative Performance of Generative Models in Scientific Imaging

| Model Architecture | Perceptual Quality (FID) | Structural Coherence (SSIM) | Training Stability | Computational Cost | Key Strengths |
|---|---|---|---|---|---|
| GANs (e.g., StyleGAN) | High (Low FID) | High | Low | Moderate | High perceptual quality, fine-grained control [42] [46] |
| VAEs | Moderate (Higher FID) | Moderate | High | Low | Stable training, meaningful latent space, fast generation [42] [45] |
| Diffusion Models | Very High (Lowest FID) | High | High | Very High | State-of-the-art image quality, diversity, avoidance of mode collapse [42] [43] |

Evaluation metrics critical for scientific validation include Fréchet Inception Distance (FID) for perceptual quality, Structural Similarity Index (SSIM) for structural coherence, and Learned Perceptual Image Patch Similarity (LPIPS) for assessing feature-level diversity [42]. Quantitative metrics alone are insufficient for scientific applications; domain-expert validation remains essential to verify the scientific relevance and accuracy of generated images [42] [45].
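As an illustration of what SSIM measures, the sketch below computes a simplified, single-window version over the whole image. This is an assumption made for brevity: standard SSIM averages the same expression over local sliding windows, and for real evaluations an established library implementation is preferable. The function name is hypothetical; the constants 0.01 and 0.03 are the conventional stabilizing values.

```python
import numpy as np

def global_ssim(x, y, data_range=1.0, k1=0.01, k2=0.03):
    """Single-window SSIM: compares mean (luminance), variance (contrast), and covariance (structure)."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    c1 = (k1 * data_range) ** 2  # stabilizes the luminance term
    c2 = (k2 * data_range) ** 2  # stabilizes the contrast/structure term
    mx, my = x.mean(), y.mean()
    vx, vy = x.var(), y.var()
    cov = ((x - mx) * (y - my)).mean()
    return float(((2 * mx * my + c1) * (2 * cov + c2)) / ((mx ** 2 + my ** 2 + c1) * (vx + vy + c2)))
```

Identical images score 1, and the score drops as structure, contrast, or brightness diverge, which is why SSIM complements distribution-level metrics such as FID.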

Application Notes: Use Cases in Medical Imaging Research

Data Augmentation for Enhanced Model Robustness

Generative models significantly improve the performance and robustness of deep learning models in medical image analysis, particularly in data-scarce environments. A systematic review of over 300 articles found consistent benefits across all organs, modalities, and tasks, from classification to segmentation [6]. The strategic application of different generative models can mitigate specific challenges.

For instance, a 2025 study on lower limb MRI segmentation demonstrated that data augmentation dramatically improves model resilience to motion artifacts [48]. Models trained with MRI-specific augmentations maintained segmentation quality (Dice score: 0.79±0.14 vs. 0.58±0.22 without augmentation) and measurement precision (Mean Absolute Deviation: 5.7±9.5° vs. 20.6±23.5°) even under severe artifact conditions [48].
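The source does not specify how the study simulated MRI-specific artifacts. One common way to approximate motion artifacts, shown here purely as an assumption, is to perturb a random subset of k-space lines with the phase ramp that an in-plane translation would produce. The function name and parameters below are hypothetical.

```python
import numpy as np

def simulate_motion_artifact(image, corrupt_fraction=0.3, max_shift=3.0, seed=None):
    """Approximate motion ghosting by shifting a random subset of k-space rows.

    Each selected row (treated here as one phase-encode line) is multiplied by
    the Fourier-shift phase ramp for a random horizontal displacement, as if
    the subject had moved while that line was acquired.
    """
    rng = np.random.default_rng(seed)
    kspace = np.fft.fft2(image)
    n_rows, n_cols = image.shape
    freqs = np.fft.fftfreq(n_cols)  # cycles per pixel along the row direction
    n_bad = int(corrupt_fraction * n_rows)
    rows = rng.choice(n_rows, size=n_bad, replace=False)
    shifts = rng.uniform(-max_shift, max_shift, size=n_bad)  # displacement in pixels
    for row, shift in zip(rows, shifts):
        kspace[row, :] *= np.exp(-2j * np.pi * freqs * shift)
    # Magnitude image, as in a typical MR reconstruction
    return np.abs(np.fft.ifft2(kspace))
```

Applied on the fly during training with randomly drawn severity, a transform of this kind exposes the segmentation model to degraded inputs while the ground-truth masks stay unchanged.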

The DreamOn framework exemplifies advanced augmentation, using a conditional GAN to generate REM-dream-inspired interpolations between image classes [47]. This approach creates challenging samples near decision boundaries, resulting in substantial improvements in classification accuracy under high-noise conditions compared to standard augmentation strategies [47].

Overcoming Ultra Low-Data Regimes

Generative AI enables complex medical image analysis in ultra low-data regimes where annotated samples are exceptionally scarce. The GenSeg framework addresses this challenge through a generative deep learning approach that produces high-quality image-mask pairs optimized specifically for segmentation performance [44].

Using multi-level optimization, GenSeg generates synthetic data that directly improves segmentation outcomes, demonstrating strong generalization across 11 medical image segmentation tasks and 19 datasets [44]. When trained with only 50-100 samples, models augmented with GenSeg improved on baseline models by 10-20 absolute percentage points, and matched baseline performance with 8-20 times fewer labeled samples [44].

Domain-Specific Image Synthesis and Evaluation

Clinical evaluation of synthetic medical images requires rigorous validation protocols. The Clinical Evaluation of Medical Image Synthesis (CEMIS) protocol provides a comprehensive framework for assessing synthetic image quality, diversity, realism, and clinical utility [45].

In a case study on wireless capsule endoscopy, the TIDE-II model (a VAE-based architecture) generated high-resolution synthetic images of inflammatory bowel disease that were systematically evaluated by 10 international WCE specialists [45]. The evaluation assessed texture quality, anatomical structure plausibility, and diagnostic relevance, demonstrating that generative models can produce clinically plausible images for rare conditions [45].

Table 2: Research Reagent Solutions for Generative AI in Medical Imaging

| Reagent Category | Specific Examples | Function in Research Pipeline |
|---|---|---|
| Generative Model Architectures | StyleGAN, Stable Diffusion, DDPM, VAE | Core engines for synthetic data generation; choice depends on fidelity needs and computational constraints [42] [43] [46] |
| Evaluation Metrics | FID, SSIM, LPIPS, CLIPScore | Quantitative assessment of image quality, diversity, and semantic alignment [42] |
| Domain-Specific Datasets | BUSI (Breast Ultrasound), Kvasir-Capsule, BraTS (Brain Tumor) | Benchmark datasets for training and validation; often include expert annotations [47] [45] |
| Clinical Validation Protocols | CEMIS, Visual Turing Tests, Expert Consensus Reviews | Essential for verifying clinical relevance and utility of synthetic images [45] |
| Segmentation Frameworks | nnU-Net, DeepLab, UNet | Downstream task models for evaluating utility of synthetic data in applications [48] [44] |

Experimental Protocols

Protocol: Evaluating Augmentation Strategies for AI Robustness

Objective: To systematically evaluate how different data augmentation strategies affect a deep learning model's segmentation performance under variable artifact severity [48].

Materials and Methods:

- Imaging Data: Axial T2-weighted MR images of lower limbs (hips, knees, ankles)

- AI Model: nnU-Net architecture for automatic bone segmentation and torsional angle quantification

- Training Groups: Three models trained with (1) no augmentation, (2) standard nnU-Net augmentations, and (3) standard plus MRI-specific artifact simulations

- Test Set: 600 MR image stacks from 20 healthy participants, each imaged five times under standardized motion conditions

- Artifact Grading: Two radiologists independently grade artifact severity as none, mild, moderate, or severe

Experimental Workflow:

- Acquire baseline MRI scans without induced motion

- Acquire additional scans under breath-synchronized foot motion and gluteal contraction conditions

- Train three separate nnU-Net models with different augmentation strategies

- Evaluate segmentation quality using Dice Similarity Coefficient (DSC)

- Compare torsional angle measurements between manual and automatic methods using Mean Absolute Deviation (MAD) and Intraclass Correlation Coefficient (ICC)

Validation Metrics:

- Segmentation quality: Dice Similarity Coefficient (DSC)

- Measurement accuracy: Mean Absolute Deviation (MAD), Intraclass Correlation Coefficient (ICC), Pearson's correlation coefficient (r)

- Statistical analysis: Linear Mixed-Effects Model to account for repeated measures [48]
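The two headline metrics of this protocol can be computed as in the following minimal sketch. It assumes binary segmentation masks and paired angle measurements in degrees; the function names are illustrative, and the ICC and mixed-effects analysis are left to a statistics package.

```python
import numpy as np

def dice_coefficient(pred_mask, true_mask):
    """Dice Similarity Coefficient: 2 * |A and B| / (|A| + |B|) for binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    denom = pred.sum() + true.sum()
    if denom == 0:
        return 1.0  # convention chosen here: two empty masks agree perfectly
    return float(2.0 * np.logical_and(pred, true).sum() / denom)

def mean_absolute_deviation(automatic, manual):
    """Mean absolute difference between automatic and manual measurements."""
    automatic = np.asarray(automatic, dtype=float)
    manual = np.asarray(manual, dtype=float)
    return float(np.mean(np.abs(automatic - manual)))
```

For example, masks `[1, 1, 0, 0]` and `[0, 1, 1, 0]` share one of four labeled pixels in total and give a DSC of 0.5, while automatic angles of 10° and 20° against manual readings of 12° and 17° give a MAD of 2.5°.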

Protocol: Clinical Evaluation of Synthetic Image Quality

Objective: To clinically evaluate the quality, diversity, and diagnostic utility of synthetic medical images using a standardized protocol [45].

Materials and Methods:

- Synthetic Data Generation: TIDE-II model (VAE-based) trained on WCE images from KID 2 and Kvasir-Capsule datasets

- Evaluation Framework: Clinical Evaluation of Medical Image Synthesis (CEMIS) protocol

- Experts: 10 WCE specialists with 5-27 years of experience

- Assessment Procedures: Five distinct evaluation modes (A1-A5)

Experimental Workflow:

- Individual Image Assessment (A1): Experts evaluate 50 real and synthetic images for quality, texture, anatomy, and pathology likelihood using Likert scales

- Real vs. Synthetic Discrimination (A2): Experts attempt to distinguish real from synthetic images in paired comparisons

- Similarity Assessment (A3): Experts evaluate similarity between real and corresponding synthetic images

- Group Plausibility Ranking (A4): Experts rank groups of images by plausibility

- Diagnostic Accuracy Assessment (A5): Experts identify pathological findings in synthetic images and rate diagnostic difficulty

Validation Metrics:

- Image Quality Score (IQS): Mean rating across quality, texture, and anatomy

- Realism Score: Percentage of synthetic images mistaken for real

- Plausibility Score: Ranking of image groups by clinical plausibility

- Diagnostic Accuracy: Correct identification of pathological findings in synthetic images [45]
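The first two metrics above reduce to simple aggregations over expert responses. The sketch below follows the one-line definitions in this list; the exact scoring rules of the CEMIS protocol are not given here, so the function names and the data layout (one Likert rating per criterion per image, one real/synthetic judgment per image) are assumptions.

```python
import numpy as np

def image_quality_score(quality, texture, anatomy):
    """Mean Likert rating pooled across the quality, texture, and anatomy criteria."""
    ratings = np.stack([quality, texture, anatomy]).astype(float)
    return float(ratings.mean())

def realism_score(is_synthetic, judged_real):
    """Percentage of synthetic images that the expert labeled as real."""
    is_synthetic = np.asarray(is_synthetic, dtype=bool)
    judged_real = np.asarray(judged_real, dtype=bool)
    n_synthetic = is_synthetic.sum()
    if n_synthetic == 0:
        raise ValueError("no synthetic images in the evaluation set")
    return float(100.0 * np.logical_and(is_synthetic, judged_real).sum() / n_synthetic)
```

In practice these would be computed per expert and then summarized across the panel, so that inter-rater variability remains visible alongside the pooled score.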

The following diagram illustrates the key decision points and methodological considerations for researchers implementing generative AI solutions for medical imaging challenges.

Generative AI models have fundamentally expanded the possibilities for medical imaging research by addressing critical data limitations. GANs, VAEs, and diffusion models each offer distinct advantages, with the optimal choice depending on specific research requirements regarding image quality, training stability, and computational resources [42]. The implementation of rigorous experimental protocols and comprehensive evaluation frameworks like CEMIS is essential for ensuring the scientific validity and clinical utility of synthetic data [45]. As these technologies continue to mature, future developments will likely focus on improved model interpretability, reduced computational costs, standardized verification protocols, and enhanced capabilities for cross-modality synthesis [42] [40]. By integrating these generative approaches into research workflows, scientists can accelerate innovation in medical image analysis while navigating the challenges of data privacy, scarcity, and imbalance.

In medical imaging, deep learning models face significant challenges due to limited annotated datasets, class imbalance, and the need for robust generalization in clinical practice. Data augmentation has emerged as a crucial strategy to artificially expand training datasets and improve model performance. While traditional augmentation techniques involve basic image manipulations, and generative approaches create entirely new samples, hybrid strategies combine the strengths of both paradigms. These integrated approaches range from simple combinations of transformations to sophisticated learning-based methods that generate challenging interpolations, offering powerful solutions to address dataset limitations and enhance model robustness across various medical imaging modalities and clinical tasks [6] [16].

The unique characteristics of medical images—including their anatomical consistency, diagnostic significance of subtle features, and domain-specific artifacts—necessitate specialized augmentation approaches. Hybrid methods effectively bridge the gap between the computational efficiency of traditional techniques and the data diversity offered by generative models, enabling more effective regularization of deep neural networks without compromising anatomical plausibility [6]. This approach is particularly valuable for enhancing performance on underrepresented classes, improving segmentation accuracy of anatomical structures, and increasing resistance to image degradation commonly encountered in clinical settings [47] [48].

Comparative Analysis of Hybrid Augmentation Techniques

Performance Metrics Across Medical Imaging Tasks

Table 1: Quantitative Performance of Hybrid Augmentation Methods Across Medical Imaging Modalities

| Augmentation Method | Architecture | Dataset | Task | Performance Metrics |
|---|---|---|---|---|
| MixUp [49] | ResNet-50 | Brain MRI | Tumor Classification | Accuracy: 79.19% |
| SnapMix [49] | ViT-B | Brain MRI | Tumor Classification | Accuracy: 99.44% |
| YOCO [49] | ResNet-50 | Eye Fundus | Disease Classification | Accuracy: 91.60% |
| CutMix [49] | ViT-B | Eye Fundus | Disease Classification | Accuracy: 97.94% |
| DreamOn [47] | ResNet-18 | Breast Ultrasound | Classification | Substantial improvement in high-noise robustness |
| RPS [50] | Multiple CNNs/Transformers | Lung CT | Cancer Diagnosis | Accuracy: 97.56%, AUROC: 98.61% |
| MRI-Specific Augmentation [48] | nnU-Net | Lower Limb MRI | Segmentation | DSC: 0.79±0.14 (severe artifacts) |
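Two of the methods in the table, MixUp and CutMix, are simple enough to sketch in a few lines. The version below is a minimal illustration for single 2D images with one-hot labels, assuming a Beta-distributed mixing coefficient; the function names and default `alpha` values are illustrative and are not the settings used in the cited studies.

```python
import numpy as np

def mixup(x1, y1, x2, y2, alpha=0.4, seed=None):
    """MixUp: blend two images and their labels with the same coefficient lam."""
    rng = np.random.default_rng(seed)
    lam = rng.beta(alpha, alpha)
    return lam * x1 + (1.0 - lam) * x2, lam * y1 + (1.0 - lam) * y2, lam

def cutmix(x1, y1, x2, y2, alpha=1.0, seed=None):
    """CutMix: paste a random rectangle from x2 into x1; labels are mixed by area."""
    rng = np.random.default_rng(seed)
    lam = rng.beta(alpha, alpha)
    h, w = x1.shape[:2]
    cut_h, cut_w = int(h * np.sqrt(1.0 - lam)), int(w * np.sqrt(1.0 - lam))
    cy, cx = int(rng.integers(h)), int(rng.integers(w))
    top, bottom = max(cy - cut_h // 2, 0), min(cy + cut_h // 2, h)
    left, right = max(cx - cut_w // 2, 0), min(cx + cut_w // 2, w)
    mixed = x1.copy()
    mixed[top:bottom, left:right] = x2[top:bottom, left:right]
    # Recompute the weight from the patch actually pasted, since clipping at the border shrinks it
    lam_adj = 1.0 - (bottom - top) * (right - left) / (h * w)
    return mixed, lam_adj * y1 + (1.0 - lam_adj) * y2, lam_adj
```

MixUp changes every pixel and can soften fine detail, whereas CutMix keeps local texture intact inside and outside the patch at the cost of an artificial boundary; both return soft labels that must be paired with a loss accepting non-integer targets.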

Methodological Characteristics and Clinical Applications

Table 2: Characteristics and Applications of Hybrid Augmentation Strategies

| Technique | Core Mechanism | Advantages | Clinical Considerations | Optimal Use Cases |

|---|---|---|---|---|

| MixUp [47] [49] | Linear interpolation of image-label pairs | Smooths decision boundaries, prevents overconfidence | May blur fine anatomical details; requires careful parameter tuning | General classification tasks with sufficient inter-class separation |

| CutMix [50] [49] | Replaces image regions with patches from other images | Preserves spatial context, maintains localization information | Patch boundaries may create artificial edges; label proportionality critical | Organ segmentation, lesion detection requiring spatial awareness |