Cost-Effectiveness in Modern Oncology: An Economic Analysis of Genomic, AI-Driven, and Traditional Testing Approaches

Abstract

This article provides a comprehensive analysis of the cost-effectiveness of evolving cancer testing modalities, tailored for researchers, scientists, and drug development professionals. It explores the foundational economic principles and the shifting paradigm towards precision medicine. The analysis delves into methodological frameworks for economic evaluation and presents real-world applications across various cancer types, including non-small cell lung cancer (NSCLC), colorectal cancer, and prostate cancer. It further addresses key challenges in implementation and optimization strategies, such as biomarker prevalence and technology integration. Finally, the article offers a comparative validation of testing strategies—from comprehensive genomic profiling and liquid biopsy to artificial intelligence (AI)-assisted pathology—synthesizing evidence on their value in improving patient outcomes and optimizing healthcare resource allocation.

The Economic Imperative and Evolving Paradigm of Cancer Diagnostics

The Rising Global Burden of Cancer and Healthcare Costs

Cancer remains a leading cause of global mortality, with an estimated 10 million deaths annually worldwide, creating substantial economic burdens on healthcare systems [1]. National costs for cancer care in the United States alone are projected to exceed $245 billion by 2030 [2]. This economic burden stems from multiple factors, including late-stage diagnosis, expensive targeted therapies, and the complex management of advanced disease. In this challenging landscape, cost-effectiveness analysis has emerged as a critical tool for evaluating healthcare interventions, balancing clinical benefits against economic constraints.

The emergence of genomic technologies represents a paradigm shift in oncology, offering unprecedented opportunities for personalized medicine through improved risk stratification, earlier detection, and more targeted treatment approaches. This article provides a comparative analysis of the cost-effectiveness of various cancer testing modalities, including comprehensive genomic profiling, multicancer early detection tests, and genetic risk-stratified screening, to inform researchers, scientists, and drug development professionals about their value in contemporary cancer care.

Comparative Analysis of Cancer Testing Modalities

Table 1: Cost-Effectiveness Comparison of Advanced Cancer Testing Approaches

| Testing Modality | Cancer Type/Context | Incremental Cost-Effectiveness Ratio (ICER) | Key Clinical Benefits |
|---|---|---|---|
| Comprehensive Genomic Profiling (CGP) | Advanced Non-Small-Cell Lung Cancer (US) | $174,782 per life-year gained [3] | Improved average overall survival by 0.10 years compared with small panel testing [3] |
| Comprehensive Genomic Profiling (CGP) | Advanced Non-Small-Cell Lung Cancer (Germany) | $63,158 per life-year gained [3] | Higher percentage of patients receiving targeted therapies [3] |
| CGP (with increased treatment access) | Advanced Non-Small-Cell Lung Cancer (US) | $86,826 per life-year gained [3] | Improved cost-effectiveness with broader treatment access [3] |
| Multicancer Early Detection (MCED) + Usual Care | Multiple cancer types (US, age 50-79) | $66,048 per QALY gained [2] | Shifted 7,200 cancers to earlier stages at diagnosis per 100,000 individuals [2] |
| Polygenic Risk Score (PRS) Stratified Screening | Breast Cancer (Taiwan) | $75.71 per QALY gained [4] | Enables tailored screening intensity based on genetic risk [4] |

Table 2: Testing Performance Characteristics and Economic Parameters

| Testing Modality | Sensitivity Range by Stage | False Positive Rate | Test Cost/Price | Population Studied |
|---|---|---|---|---|
| Multicancer Early Detection (MCED) | Stage I: 22-61% (varies by cancer); Stage IV: 95-96% [2] | 0.5% [2] | $949 [2] | Adults aged 50-79 years [2] |
| Comprehensive Genomic Profiling | Not reported | Not reported | Not reported | Patients with advanced non-small-cell lung cancer [3] |
| Polygenic Risk Score Screening | Not reported | Not reported | Not reported | 35-year-old Taiwanese women without a family history of breast cancer [4] |

Methodologies in Cost-Effectiveness Research

Partitioned Survival Modeling

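The partitioned survival model (PSM) has become a standard methodology for evaluating the cost-effectiveness of cancer interventions and was used in several of the studies analyzed in this review [3] [5] [6]. The PSM typically incorporates three mutually exclusive health states: progression-free survival (PFS), progressive disease (PD), and death [5]. Unlike a Markov model, a PSM does not use explicit transition probabilities. Instead, the proportion of patients in each state at each time point is read directly from the PFS and overall survival (OS) curves, which are usually derived from clinical trial data: the PFS state equals the PFS curve, the PD state equals the OS curve minus the PFS curve, and the death state equals one minus the OS curve.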
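The partitioning logic can be sketched in Python. This is a minimal illustration, not the model used in the cited studies: it assumes Weibull PFS and OS curves with invented parameters, monthly cycles evaluated at the cycle midpoint, no discounting, and hypothetical per-cycle costs and utilities. The function names are illustrative.

```python
import math


def weibull_survival(t, shape, scale):
    """Weibull survival function S(t) = exp(-(t / scale) ** shape)."""
    return math.exp(-((t / scale) ** shape))


def partition_states(t, pfs_params, os_params):
    """Return (progression-free, progressed, dead) proportions at time t.

    Occupancy is read directly off the two survival curves; PFS is capped
    at OS so the progressed-disease share can never become negative.
    """
    s_os = weibull_survival(t, *os_params)
    s_pfs = min(weibull_survival(t, *pfs_params), s_os)
    return s_pfs, s_os - s_pfs, 1.0 - s_os


def psm_outcomes(pfs_params, os_params, utilities, cost_per_cycle,
                 horizon_years=10.0, cycle_years=1 / 12):
    """Accumulate undiscounted QALYs and costs over the time horizon."""
    n_cycles = round(horizon_years / cycle_years)
    qalys = costs = 0.0
    for i in range(n_cycles):
        t = (i + 0.5) * cycle_years  # evaluate occupancy at the cycle midpoint
        pf, pd, _dead = partition_states(t, pfs_params, os_params)
        qalys += (pf * utilities["PFS"] + pd * utilities["PD"]) * cycle_years
        costs += pf * cost_per_cycle["PFS"] + pd * cost_per_cycle["PD"]
    return qalys, costs


# Hypothetical inputs: (shape, scale in years) for each curve.
qalys, costs = psm_outcomes(
    pfs_params=(1.2, 1.5),
    os_params=(1.1, 3.0),
    utilities={"PFS": 0.75, "PD": 0.60},
    cost_per_cycle={"PFS": 8000.0, "PD": 3000.0},
)
print(f"QALYs: {qalys:.2f}, costs: {costs:,.0f}")
```

Running the same function for the intervention and comparator arms yields the incremental costs and QALYs that feed the ICER.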

In practice, researchers extract survival curve data from clinical trials using tools like GetData Graph Digitizer software, then reconstruct and simulate survival curves using various distribution models including Weibull, log-logistic, Gompertz, gamma, exponential, and log-normal distributions [5]. The optimal distribution is selected based on statistical criteria such as the Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC), complemented by visual inspection to ensure clinical validity [5].
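Selecting among candidate distributions by AIC and BIC can be illustrated with the two simplest candidates. The sketch below assumes reconstructed individual patient data (event or censoring times in months, with an event indicator) and fits only the exponential and Weibull distributions by maximum likelihood, using a closed-form profile likelihood for the Weibull scale and a golden-section search over the shape. The data values and function names are invented; the other distributions named above (log-logistic, Gompertz, gamma, log-normal) would need a general-purpose optimizer or a dedicated survival-analysis package.

```python
import math


def weibull_profile_loglik(k, times, events):
    """Censored Weibull log-likelihood, maximised over the scale for fixed shape k.

    With theta = scale ** k, the MLE is theta = sum(t ** k) / d, where d is
    the number of observed events. k = 1 gives the exponential model.
    """
    d = sum(events)
    sum_tk = sum(t ** k for t in times)
    sum_log_t_events = sum(math.log(t) for t, e in zip(times, events) if e)
    return (d * math.log(k) - d * math.log(sum_tk / d)
            + (k - 1) * sum_log_t_events - d)


def fit_weibull_shape(times, events, lo=0.05, hi=20.0, tol=1e-8):
    """Golden-section search for the shape that maximises the profile likelihood."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    x1, x2 = b - inv_phi * (b - a), a + inv_phi * (b - a)
    while b - a > tol:
        if weibull_profile_loglik(x1, times, events) > weibull_profile_loglik(x2, times, events):
            b, x2 = x2, x1
            x1 = b - inv_phi * (b - a)
        else:
            a, x1 = x1, x2
            x2 = a + inv_phi * (b - a)
    return (a + b) / 2


def compare_models(times, events):
    """Log-likelihood, AIC and BIC for exponential (1 parameter) and Weibull (2 parameters)."""
    n = len(times)
    fits = {
        "exponential": (1, weibull_profile_loglik(1.0, times, events)),
        "weibull": (2, weibull_profile_loglik(fit_weibull_shape(times, events), times, events)),
    }
    return {
        name: {"loglik": ll, "AIC": 2 * p - 2 * ll, "BIC": p * math.log(n) - 2 * ll}
        for name, (p, ll) in fits.items()
    }


# Invented reconstructed data: months to event or censoring; 1 = event, 0 = censored.
times = [2.1, 3.5, 4.0, 5.2, 6.8, 7.5, 8.1, 9.9, 11.3, 12.0]
events = [1, 1, 1, 1, 0, 1, 1, 0, 1, 0]
results = compare_models(times, events)
best_by_aic = min(results, key=lambda m: results[m]["AIC"])
print(best_by_aic, results)
```

The lowest AIC or BIC only ranks statistical fit within the observed period; the extrapolated tails still need the visual and clinical plausibility checks described above.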

Hybrid Cohort-Level Modeling for Early Detection

For evaluating multicancer early detection tests, researchers have developed hybrid cohort-level models that combine state transitions with decision tree analysis [2]. This approach compares annual MCED testing plus usual care screening against usual care alone for cancer detection over a lifetime horizon. The model estimates cancer diagnoses in the usual care arm based on age-specific and stage-specific incidence rates for each cancer type, typically sourced from comprehensive registries like the Surveillance, Epidemiology, and End Results (SEER) program [2].

These models incorporate stage shift analysis, where MCED testing enables earlier detection and diagnosis of cancer compared to usual care alone. The magnitude of stage shifting is estimated by accounting for MCED testing frequency, sensitivity, and cancer progression speed (dwell time) by cancer type and stage [2]. Advanced models also consider differential survival based on cell-free DNA detectability status, recognizing that cancers detectable by MCED tests may have different biological aggressiveness [2].
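The interplay of test frequency, stage-specific sensitivity, and dwell time can be illustrated with a deliberately simplified interception model. This is not the model from [2]: it assumes a cancer passes through preclinical stages in sequence, that screening times are uniformly random relative to each stage's dwell window, that repeat tests detect independently, and that a cancer missed by screening surfaces clinically at its usual-care stage. The sensitivities, dwell times, and function names below are invented for illustration.

```python
import math


def detection_probability(sensitivity, dwell_years, interval_years):
    """Chance that at least one screen falls inside a dwell window and detects the cancer.

    With a random screening phase, a window of length D holds floor(D / I)
    screens, plus one more with probability equal to the fractional remainder.
    """
    opportunities = dwell_years / interval_years
    k = math.floor(opportunities)
    extra = opportunities - k
    miss_k = (1 - sensitivity) ** k
    return extra * (1 - miss_k * (1 - sensitivity)) + (1 - extra) * (1 - miss_k)


def stage_at_diagnosis(clinical_stage, sensitivity, dwell, interval_years=1.0):
    """Distribution of diagnosis stage for a cancer that usual care finds at clinical_stage."""
    distribution = {}
    p_still_undetected = 1.0
    # Only interception in an earlier stage counts as a stage shift.
    for stage in range(1, clinical_stage):
        p = detection_probability(sensitivity[stage], dwell[stage], interval_years)
        distribution[stage] = p_still_undetected * p
        p_still_undetected *= 1 - p
    distribution[clinical_stage] = p_still_undetected
    return distribution


# Hypothetical stage-specific inputs for a single cancer type.
sensitivity = {1: 0.40, 2: 0.70, 3: 0.85}
dwell = {1: 2.0, 2: 1.0, 3: 0.5}  # years spent preclinically in each stage
print(stage_at_diagnosis(4, sensitivity, dwell))
```

Longer dwell times or shorter testing intervals raise the interception probability, which is why fast-progressing cancers gain less stage shift from annual testing.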

Network Meta-Analysis for Comparative Effectiveness

When direct comparative evidence between interventions is limited, network meta-analysis (NMA) provides a valuable methodological approach. This technique was employed in the evaluation of first-line treatments for recurrent or metastatic head and neck cancer, enabling indirect comparison of multiple treatment strategies across different clinical trials [6]. NMA extends beyond traditional pairwise comparisons by simultaneously evaluating and ranking multiple treatment strategies in terms of overall efficacy, making it increasingly valuable in pharmacoeconomic evaluations [6].
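The basic building block of indirect comparison is the anchored (Bucher) method, which compares treatment A with treatment C through a comparator B shared by both trials. A full network meta-analysis estimates all contrasts jointly and relies on transitivity and consistency assumptions that this sketch does not check. The sketch assumes hazard ratios reported with standard errors on the log scale; the input values and function name are hypothetical.

```python
import math


def bucher_indirect(hr_ab, se_log_ab, hr_cb, se_log_cb, z=1.959964):
    """Indirect hazard ratio of A vs C via common comparator B, with a 95% CI.

    On the log scale the two trial effects subtract and their variances add.
    """
    log_hr_ac = math.log(hr_ab) - math.log(hr_cb)
    se_ac = math.sqrt(se_log_ab ** 2 + se_log_cb ** 2)
    return (
        math.exp(log_hr_ac),
        math.exp(log_hr_ac - z * se_ac),
        math.exp(log_hr_ac + z * se_ac),
    )


# Hypothetical trials: A vs B (HR 0.80) and C vs B (HR 0.90).
hr, lower, upper = bucher_indirect(0.80, 0.10, 0.90, 0.12)
print(f"HR A vs C: {hr:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

Because the variances add, the indirect interval is always wider than either trial's own interval, which is one reason indirect evidence carries more uncertainty into the economic model.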

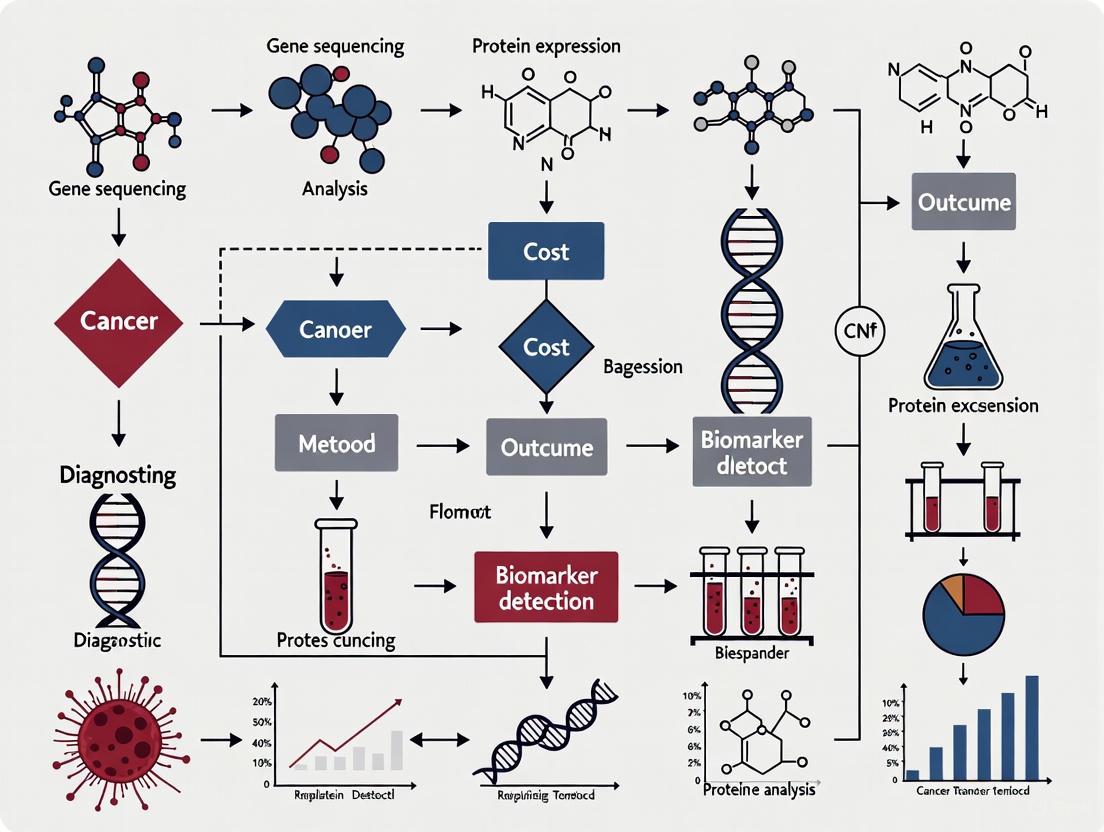

Cost-Effectiveness Analysis Workflow

Key Research Reagents and Technologies

Table 3: Essential Research Reagents and Technologies in Genomic Cancer Testing

| Reagent/Technology | Primary Function | Application in Research |
|---|---|---|
| Cell-free DNA (cfDNA) Methylation Patterns | Detection of shared cancer signals across multiple cancer types [2] | Multicancer early detection testing [2] |
| Polygenic Risk Scores (PRS) | Aggregate risk assessment based on multiple single nucleotide polymorphisms (SNPs) [4] | Risk-stratified screening for breast cancer [4] |
| Comprehensive Genomic Profiling (CGP) | Broad genomic analysis to identify targetable mutations [3] | Guidance for matched targeted therapies in advanced cancers [3] |
| Programmed Death-Ligand 1 (PD-L1) Staining | Assessment of tumor immunogenicity and prediction of immunotherapy response [5] [6] | Patient selection for immune checkpoint inhibitor therapies [5] [6] |
| Next-Generation Sequencing Panels | Simultaneous analysis of multiple cancer-related genes [3] | Identification of actionable genomic alterations in tumor tissue [3] |

Figure: Signaling Pathways in Cancer Testing and Treatment (Testing-Informed Treatment Pathways)

Discussion

Economic Value Across Testing Modalities

The cost-effectiveness of genomic testing varies significantly across cancer types, stages, and healthcare systems. Comprehensive genomic profiling in advanced non-small-cell lung cancer demonstrates substantially different ICERs between the United States ($174,782 per life-year gained) and Germany ($63,158 per life-year gained), highlighting the importance of regional healthcare economics in determining value [3]. This disparity may reflect differences in drug pricing, healthcare delivery systems, and implementation costs between countries.

For early detection, MCED testing presents a compelling economic value proposition at $66,048 per QALY gained, primarily through substantial reductions in late-stage cancer diagnoses and their associated high treatment costs [2]. The test's ability to detect over 50 cancer types, particularly those without recommended screening protocols, addresses a significant gap in current cancer control strategies. Risk-stratified breast cancer screening using polygenic risk scores appears even more favorable at $75.71 per QALY gained, suggesting that precision prevention approaches may offer exceptional value in appropriate populations [4].

Methodological Considerations in Economic Evaluation

The partitioned survival model is a common analytical framework in the economic evaluations reviewed here, though specific implementations vary substantially between studies [3] [5] [6]. These models require careful handling of survival curve fitting, utility values, and long-term extrapolation beyond clinical trial observation periods. The selection of appropriate survival distributions (e.g., Weibull, log-logistic, Gompertz) significantly influences cost-effectiveness results and requires both statistical rigor and clinical validation [5].

Recent methodological innovations include the incorporation of differential survival based on molecular detectability status, recognizing that cancers detectable by emerging technologies like MCED tests may have different biological behaviors than undetectable cancers [2]. This represents an important advancement in the methodological sophistication of economic evaluations, moving beyond simple stage-shift models to incorporate the biological implications of testing technologies.

Implications for Research and Development

For researchers and drug development professionals, these findings highlight the growing importance of economic evidence in the development and adoption of new cancer technologies. The favorable ICERs reported for comprehensive genomic profiling in some settings, such as Germany or scenarios with broader access to matched treatment, support continued investment in broad-panel molecular diagnostics, particularly for guiding targeted therapies in advanced cancers [3] [1]. Similarly, the exceptional economic value of risk-stratified screening approaches suggests significant potential for precision prevention strategies that optimize resource allocation based on individual risk [4].

Future research should address evidence gaps in low- and middle-income countries, which bear 65% of global cancer mortality yet where the cost-effectiveness of genomic medicine remains largely unexamined [1]. Additionally, more economic evaluations are needed for rare cancers and cancers of unknown primary, where genomic technologies may offer particularly significant clinical benefits despite limited current evidence [1].

The rising global burden of cancer and associated healthcare costs necessitates careful evaluation of the economic value of emerging testing technologies. Current evidence demonstrates that comprehensive genomic profiling, multicancer early detection tests, and genetic risk-stratified screening can provide cost-effective approaches to cancer care across various clinical contexts and healthcare systems. The substantial regional variation in cost-effectiveness highlights the importance of local economic conditions and healthcare infrastructures in technology adoption.

For researchers and drug development professionals, these findings underscore the critical relationship between molecular testing, treatment personalization, and economic value in contemporary oncology. As genomic technologies continue to evolve, ongoing economic evaluations will be essential for guiding their responsible integration into healthcare systems, ensuring that scientific advancements translate into sustainable improvements in cancer outcomes despite growing economic pressures.

Precision medicine represents a fundamental shift in oncology, moving away from the traditional one-size-fits-all approach toward therapies tailored to the unique molecular characteristics of a patient's tumor. This approach recognizes that cancers with similar histology may harbor different genetic drivers, requiring different therapeutic strategies. By leveraging advanced genomic technologies, precision medicine aims to match patients with treatments that target the specific molecular alterations driving their cancer, potentially leading to improved outcomes and reduced toxicity.

The concept of 'precision cancer medicine' (PCM) stands as one of the most promising frontiers in the evolving landscape of oncology. By tailoring treatment to the unique genetic and molecular profile of each patient's tumor, PCM offers a vision of cancer treatment that is more effective, less toxic, and personalized [7]. However, it is crucial to distinguish between 'precision medicine' and 'personalized medicine,' terms often used interchangeably but with important distinctions. True personalized medicine would involve a comprehensively tailored treatment based on the predictive power from a joint analysis of all possible biomarkers, not only genomics, and selected from all available drugs [7]. In contrast, current precision medicine primarily focuses on genomics-guided approaches that stratify patients into subgroups based on molecular characteristics [7].

Comparative Analysis of Testing Approaches

Economic and Performance Characteristics of Genomic Testing Modalities

Table 1: Comparison of Genomic Testing Approaches in Oncology

| Testing Approach | Typical Gene Coverage | Cost-Effectiveness Profile | Key Applications | Strengths | Limitations |
|---|---|---|---|---|---|
| Single-Gene Tests | 1 gene | Cost-effective for single biomarkers | Testing for individual high-impact mutations (e.g., EGFR in NSCLC) | Inexpensive, readily accessible, fast turnaround | Detects only a single mutation; inefficient when multiple biomarkers are needed |
| Targeted NGS Panels | 2-52 genes | Cost-effective when 4+ genes require assessment [8] [9] | Comprehensive biomarker profiling for treatment selection; residual disease monitoring | Simultaneous detection of multiple biomarkers; reduces turnaround time and healthcare staff requirements [8] [9] | Limited to predefined gene sets; may miss novel biomarkers |
| Comprehensive Genomic Profiling (CGP) | Hundreds of genes | Generally not cost-effective for routine use [8] [9] | Research settings; complex diagnostic cases; clinical trial enrollment | Unbiased discovery; identifies rare alterations; broad molecular portrait | Higher cost; complex data interpretation; uncertain clinical utility for many findings |
| Whole Genome/Exome Sequencing | Entire exome or genome | Not yet cost-effective for routine clinical care | Discovery research; diagnosis of rare cancers; tertiary care centers | Most comprehensive coverage; identifies non-coding variants | Highest cost; extensive data storage needs; majority of findings of unknown significance |

Methodological Framework for Economic Evaluation

The assessment of precision medicine interventions relies on standardized health economic methodologies. Cost-effectiveness analysis in healthcare typically uses quality-adjusted life years (QALYs), which incorporate both the quality and the quantity of life gained from healthcare interventions. The incremental cost-effectiveness ratio (ICER) is the primary metric, representing the additional cost per QALY gained [10]. Thresholds for cost-effectiveness are often set between $50,000 and $150,000 per QALY in the United States, representing the amount generally considered reasonable to gain one additional year of life in perfect health [10].

Economic evaluations of precision medicine must account for multiple cost components beyond the initial test price, including:

- Direct testing costs: Reagents, equipment, and personnel for test administration

- Downstream treatment costs: Targeted therapies guided by test results

- Healthcare system costs: Staff requirements, hospital visits, and infrastructure

- Long-term outcomes: Survival benefits, quality of life improvements, and reduced adverse events

Holistic analysis demonstrates that next-generation sequencing reduces turnaround time, healthcare staff requirements, number of hospital visits, and hospital costs, providing economic advantages beyond simple test cost comparisons [8] [9].
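The holistic comparison can be made concrete with a break-even calculation between sequential single-gene testing and a single NGS panel. The sketch assumes each single-gene assay carries its own handling overhead (staff time, repeat visits, sample logistics) while the panel incurs that overhead once; it ignores downstream treatment costs and outcomes. Every price and function name below is invented and is not taken from [8] or [9].

```python
def single_gene_total(n_genes, assay_cost, overhead_per_assay):
    """Cost of testing n genes one at a time, each with its own overhead."""
    return n_genes * (assay_cost + overhead_per_assay)


def break_even_genes(panel_cost, panel_overhead, assay_cost, overhead_per_assay,
                     max_genes=100):
    """Smallest gene count at which one panel costs no more than single-gene tests."""
    panel_total = panel_cost + panel_overhead
    for n in range(1, max_genes + 1):
        if panel_total <= single_gene_total(n, assay_cost, overhead_per_assay):
            return n
    return None  # the panel never breaks even within max_genes


# Hypothetical prices in a single currency.
print(break_even_genes(panel_cost=1200, panel_overhead=150,
                       assay_cost=300, overhead_per_assay=100))
```

With these invented prices the panel breaks even at four genes; real break-even points shift with local test prices and with how much overhead each additional assay adds.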

Evidence Base: Precision Medicine in Practice

Clinical and Economic Outcomes Across Cancer Types

Table 2: Cost-Effectiveness Evidence for Precision Medicine Applications

| Cancer Type/Context | Precision Medicine Approach | Comparator | ICER or Cost Metric | Key Outcomes |
|---|---|---|---|---|
| Non-Small Cell Lung Cancer (EGFR+) | EGFR testing + gefitinib | Conventional chemotherapy | $110,000-$150,000 per QALY [10] | Improved progression-free survival and quality of life; at the upper end of the cost-effectiveness threshold |
| Advanced NSCLC (CGP vs. small panels) | Comprehensive Genomic Profiling | Small panel testing (SP) | $174,782 per life-year gained (US); $63,158 per life-year gained (Germany) [11] | Improved overall survival (0.10 years); higher percentage receiving targeted therapies |
| Hereditary Breast/Ovarian Cancer | NGS for BRCA + personalized risk assessment | Conventional risk assessment | Cost-effective in specific situations [12] | Favorable ICER (<$50,000/QALY) in high-risk populations; cost savings from avoided cancer cases |
| Pediatric High-Risk Cancer | Multi-omics profiling (WGS, RNAseq, methylation) | Conventional diagnostic workup | $12,743 per patient for program access [13] | Identifies molecular causes and actionable targets in refractory childhood cancers |
| Lung Cancer Screening (High-risk) | Liquid biopsy (EarlyCDT-Lung) | Standard clinical diagnosis | $75,436 per QALY (Brazilian context) [14] | Exceeded local cost-effectiveness thresholds; potentially cost-effective in populations with >4% prevalence |

Implementation Considerations and Real-World Evidence

The real-world implementation of precision medicine reveals substantial variability in costs and outcomes. The Zero Childhood Cancer Precision Medicine Programme in Australia demonstrated costs of $12,743 per patient for program access, $14,262 per identification of molecular cause, and $21,769 per multidisciplinary tumor board recommendation [13]. These figures highlight the significant infrastructure and expertise required for comprehensive precision oncology.

The clinical impact of precision medicine extends beyond traditional cost-effectiveness metrics. Comprehensive genomic profiling in advanced non-small cell lung cancer has been shown to change management and improve survival in real-world settings [11]. However, the percentage of patients who ultimately benefit from genomics-guided precision medicine remains limited, as many tumors lack actionable mutations, and inherent or acquired treatment resistance is often observed [7].

Experimental Approaches and Methodologies

Technical Workflows in Precision Oncology

Table 3: Essential Research Reagents and Platforms for Precision Medicine Studies

| Research Reagent/Platform | Primary Function | Application in Precision Medicine |
|---|---|---|
| Next-Generation Sequencers | High-throughput DNA/RNA sequencing | Whole genome, exome, transcriptome, and targeted panel sequencing for mutation detection |
| Whole Genome Sequencing (WGS) | Comprehensive analysis of entire genome | Identification of coding and non-coding variants, structural rearrangements |
| RNA Sequencing | Transcriptome analysis | Gene expression profiling, fusion detection, alternative splicing analysis |
| Methylation Arrays | Epigenetic profiling | Methylation pattern analysis for classification and prognostic stratification |
| High-Throughput Drug Screening (HTS) | In vitro drug sensitivity testing | Functional assessment of treatment response in patient-derived cells |
| Patient-Derived Xenografts (PDX) | In vivo drug efficacy testing | Evaluation of treatment response in animal models maintaining tumor heterogeneity |
| Circulating Tumor DNA Assays | Liquid biopsy analysis | Non-invasive tumor genotyping, monitoring treatment response, minimal residual disease detection |

Computational Analysis and Clinical Interpretation Workflow

Figure: Precision Medicine Analysis Workflow, from sample collection to clinical reporting

This workflow represents the standardized process for implementing precision medicine in oncology, beginning with sample collection of tumor and matched normal tissue, proceeding through comprehensive molecular profiling and computational analysis, and culminating in clinical interpretation and reporting through multidisciplinary tumor boards [13].

Future Directions and Implementation Challenges

Addressing Current Limitations

While precision medicine shows significant promise, several challenges remain. Currently, only a minority of patients benefit from genomics-guided precision medicine [7]. Many tumors lack actionable mutations, and even when targets are identified, inherent or acquired treatment resistance often occurs. The concept of precision medicine is sometimes overstated in public discourse, as true personalized medicine would require integration of multiple biomarker layers beyond genomics, including pharmacokinetics, pharmacogenomics, other 'omics' biomarkers, imaging, histopathology, patient nutrition, comorbidity, and concomitant medications [7].

Additional limitations include the need for better clinical trial designs to demonstrate utility. Many current trials of tumor-agnostic approaches report surrogate endpoints rather than true clinical benefit, with considerable attrition at each step of the process and difficulty drawing definitive conclusions due to heterogeneous patient populations and lack of control groups [7]. More selective patient recruitment based on comprehensive tumor biology knowledge, earlier intervention in the treatment course, and combination therapies targeting multiple genomic aberrations represent promising directions for future research [7].

Advancing Toward Truly Personalized Cancer Medicine

The future evolution of precision medicine will require integrating multiple data layers to create comprehensive patient-specific models. This includes:

- Multi-omics integration: Combining genomic, transcriptomic, proteomic, and metabolomic data

- Pharmacokinetic and pharmacogenomic factors: Enabling individualized drug dosing

- Microbiome analysis: Understanding how commensal organisms influence drug metabolism and efficacy

- Digital health technologies: Continuous monitoring of treatment response and toxicity

- Artificial intelligence: Identifying complex patterns across diverse data types to predict treatment response

Only by integrating information from many such biomarkers into complex, AI-generated treatment predictors will precision medicine advance toward truly personalized cancer medicine [7]. Principles for such an approach have been outlined and should form the basis for future clinical trials [7].

Precision medicine represents a transformative approach to oncology that increasingly demonstrates both clinical benefit and economic viability in specific contexts. The evidence base supports the cost-effectiveness of targeted genetic testing in high-risk populations, NGS panels when multiple genes require assessment, and comprehensive genomic profiling in advanced cancers where therapeutic matching improves outcomes. However, significant challenges remain in expanding the proportion of patients who benefit, integrating multidimensional data sources, and demonstrating value across diverse healthcare systems and populations. As the field evolves toward increasingly personalized approaches, continued rigorous economic evaluation alongside clinical validation will be essential to ensure the sustainable implementation of these transformative technologies.

In an era of advancing but often expensive medical technologies, health economic evaluations have become indispensable for informing resource allocation decisions, particularly in oncology. These analyses provide a structured framework to compare the value of different healthcare interventions. At the core of this evaluation lie three fundamental metrics: the Quality-Adjusted Life Year (QALY), the Incremental Cost-Effectiveness Ratio (ICER), and the Willingness-to-Pay (WTP) Threshold. Together, these metrics form a standardized methodology for assessing whether a new intervention, such as a novel cancer testing approach, provides sufficient health benefit to justify its cost. The QALY serves as the standardized measure of health benefit, integrating both survival and quality of life. The ICER then calculates the cost to achieve each unit of this benefit compared to an alternative. Finally, the WTP threshold provides the decision-making benchmark against which the ICER is judged. For researchers and drug development professionals in oncology, understanding the interplay of these metrics is crucial for demonstrating the value proposition of new technologies within constrained healthcare budgets.

The Quality-Adjusted Life Year (QALY)

Definition and Calculation

The Quality-Adjusted Life Year (QALY) is a generic measure of disease burden that combines both the quality and the quantity of life lived into a single index number [15]. It is the primary outcome measure used in cost-utility analysis, a form of economic evaluation that allows for comparisons across different disease areas and treatments [16]. The core principle of the QALY is that a year of life lived in perfect health is assigned a value of 1.0 QALY, whereas a year of life lived in a state of less than perfect health is assigned a value between 0 (equivalent to death) and 1 [17] [18]. Health states considered "worse than death" can theoretically have negative values [15].

The calculation of QALYs is mathematically straightforward. It involves multiplying the utility weight (a measure of health-related quality of life) associated with a particular health state by the duration of time spent in that state [16] [17]. The formula is:

QALYs = Utility Weight × Time (in years)

For example, if a patient lives for 5 years with a utility weight of 0.8 (indicating a health state valued at 80% of perfect health), they would accumulate 4 QALYs (5 × 0.8) [17]. Similarly, 1 year of life lived with a utility of 0.5 yields 0.5 QALYs, meaning the individual values that year of compromised health as much as half a year in perfect health [15].
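The same multiplication extends to a sequence of health states by summing utility × time across periods. The sketch below also shows optional discounting, which the text above does not discuss but which HTA guidelines commonly require; the 3.5% rate in the example is an illustrative assumption, and appropriate rates vary by jurisdiction. Discounting is applied in continuous time so that fractional years are handled exactly. The function name is illustrative.

```python
import math


def total_qalys(periods, annual_discount_rate=0.0):
    """Sum utility x duration over consecutive health-state periods.

    periods: (utility, years) tuples in chronological order. With a positive
    discount rate, each period's utility is integrated against exp(-r t),
    where r is the continuous-time equivalent of the annual rate.
    """
    r = math.log1p(annual_discount_rate)
    qalys, start = 0.0, 0.0
    for utility, years in periods:
        if r == 0.0:
            qalys += utility * years
        else:
            qalys += utility * (math.exp(-r * start) - math.exp(-r * (start + years))) / r
        start += years
    return qalys


print(total_qalys([(0.8, 5)]))            # the 5-year, utility 0.8 example above
print(total_qalys([(0.8, 2), (0.5, 3)]))  # hypothetical decline after progression
print(total_qalys([(0.8, 5)], annual_discount_rate=0.035))
```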

Measurement of Utilities

The utility weights central to QALY calculation are typically derived from multi-attribute utility (MAU) instruments [18]. These instruments consist of a questionnaire that patients complete to describe their health state and a scoring algorithm, based on public preferences, that converts this description into a utility value.

Common MAU instruments include [18]:

- EQ-5D (EuroQol 5-Dimension): Covers mobility, self-care, usual activities, pain/discomfort, and anxiety/depression [17] [15].

- SF-6D (Short-Form 6-Dimension): Derived from the SF-36 or SF-12 health surveys.

- HUI (Health Utilities Index): A comprehensive system for measuring health status and health-related quality of life.

When clinical trials do not include these MAU instruments, researchers may use statistical mapping to predict utility values from other clinical outcome assessments (COAs), thereby bridging the evidence gap for cost-effectiveness analysis [18].

Figure: Conceptual workflow of QALY calculation, from defining the health state to arriving at the final metric

The Incremental Cost-Effectiveness Ratio (ICER)

Definition and Calculation

The Incremental Cost-Effectiveness Ratio (ICER) is a statistic that summarizes the cost-effectiveness of a healthcare intervention [19]. It represents the additional cost required to generate one additional unit of health benefit (measured in QALYs) when compared to an alternative treatment, typically the current standard of care [20]. The ICER is the fundamental output of a cost-utility analysis and is used by health technology assessment (HTA) bodies worldwide to inform reimbursement decisions.

The ICER is calculated using the following formula [19]:

ICER = (Cost of New Intervention − Cost of Comparator) / (Effectiveness of New Intervention − Effectiveness of Comparator)

Where:

- Cost includes all relevant healthcare costs associated with the interventions.

- Effectiveness is measured in QALYs gained.

For example, if a new digital product for managing heart failure costs £4,000 more than the standard care and generates 4 additional QALYs, the ICER would be £1,000 per QALY gained (£4,000 / 4 QALYs) [17].
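The ratio itself is a one-line calculation, but a practical implementation also has to handle cases where the ratio is not meaningful. The sketch below, with an illustrative function name, reports dominance instead of a ratio when the new intervention is no more costly and at least as effective (or the reverse), and reproduces the £4,000 / 4 QALY example using hypothetical absolute values.

```python
def icer(cost_new, qaly_new, cost_old, qaly_old):
    """Return (label, ratio) comparing a new intervention with its comparator."""
    d_cost = cost_new - cost_old
    d_qaly = qaly_new - qaly_old
    if d_qaly >= 0 and d_cost <= 0:
        # No extra cost for at least equal benefit: no ratio needed.
        label = "equivalent" if d_qaly == 0 and d_cost == 0 else "dominant"
        return label, None
    if d_qaly <= 0 and d_cost >= 0:
        return "dominated", None  # costs at least as much for no more benefit
    # Remaining cases: costlier and more effective, or cheaper and less effective
    # (in the latter, the ratio is the saving per QALY forgone).
    return "ratio", d_cost / d_qaly


# Example from the text: £4,000 extra cost for 4 extra QALYs.
print(icer(cost_new=14_000, qaly_new=10, cost_old=10_000, qaly_old=6))
```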

Use in Decision-Making and Controversies

The ICER provides a common denominator that allows decision-makers to compare diverse health interventions across different disease areas [19]. For instance, the ICER can help determine whether a new cancer drug provides better value for money than a new surgical technique or a public health initiative.

However, the use of ICERs is not without controversy. A primary concern is that it can be seen as a form of healthcare rationing [19]. Critics argue that a strict cost-per-QALY threshold may limit patient access to treatments, particularly for people with severe illnesses or rare conditions. Because of these ethical concerns, the Affordable Care Act prohibited the use of cost-per-QALY thresholds in Medicare coverage decisions in the United States [19] [15]. In response to such limitations, some HTA bodies, such as England's NICE, have adopted higher, more flexible thresholds for end-of-life care and treatments for rare diseases [19].

Figure: ICER decision framework, from calculation of the ICER to the final funding decision

Willingness-to-Pay (WTP) Thresholds

Definition and Purpose

The Willingness-to-Pay (WTP) Threshold represents the maximum amount a healthcare system is willing to pay for one additional QALY gained [21]. It serves as the critical decision rule or benchmark against which the ICER is judged [19]. If the ICER of a new intervention falls below this threshold, it is typically deemed "cost-effective" and is a candidate for funding. Conversely, if the ICER exceeds the threshold, the intervention is considered poor value for money and is unlikely to be recommended for reimbursement [19].
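The decision rule can equivalently be written as an incremental net monetary benefit (NMB): the monetary value of the QALY gain at the threshold minus the incremental cost. When the QALY gain is positive, a positive NMB is the same as an ICER below the threshold, and the NMB form avoids dividing by a small or zero QALY difference. The incremental values below are hypothetical, chosen only so that their ratio equals the $66,048 per QALY reported for MCED testing [2]; the thresholds span the $50,000 to $150,000 range commonly cited for the United States. Function names are illustrative.

```python
def net_monetary_benefit(d_cost, d_qaly, wtp_per_qaly):
    """Incremental NMB = threshold x QALY gain - incremental cost."""
    return wtp_per_qaly * d_qaly - d_cost


def is_cost_effective(d_cost, d_qaly, wtp_per_qaly):
    """Cost-effective at this threshold if the NMB is strictly positive."""
    return net_monetary_benefit(d_cost, d_qaly, wtp_per_qaly) > 0


# Hypothetical increments whose ratio is $66,048 per QALY.
d_cost, d_qaly = 33_024.0, 0.5
for wtp in (50_000, 100_000, 150_000):
    print(wtp, is_cost_effective(d_cost, d_qaly, wtp))
```

Because the verdict flips between the $50,000 and $100,000 thresholds, reporting results across a range of thresholds shows how sensitive a recommendation is to the choice of WTP.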

The establishment of a WTP threshold is fundamentally about opportunity cost—the health benefits that are forgone when resources are allocated to one intervention instead of another. By setting a threshold, payers aim to maximize the total health benefits delivered to the population from a limited budget [21].

Variations in Thresholds Across Health Systems

There is no universal WTP threshold, and values vary significantly between countries and healthcare systems. Different methodologies are used to set these thresholds, including benchmarking against past decisions, using multiples of a country's GDP per capita, or attempting to estimate the health displaced by new expenditures [21].

Table: Examples of Willingness-to-Pay Thresholds in Different Jurisdictions

| Country/Region | WTP Threshold (per QALY) | Notes | Source |
|---|---|---|---|
| England and Wales (NICE) | £20,000 - £30,000 | Standard range; higher thresholds for end-of-life and rare diseases. | [17] [19] |
| Canada (CADTH) | $50,000 (CAD) | Used for both oncology and non-oncology drugs since late 2020. | [22] |
| United States | ~$100,000 - $150,000 | Commonly cited informal threshold, though not used for Medicare. | [20] |
| International Survey | $36,000 - $77,000 (USD) | Range found in a multi-country study (UK, US, Japan, etc.). | [21] |

The table above illustrates the lack of global consensus. For example, Canada's CADTH employs a threshold of $50,000 CAD per QALY for all drugs, a level that has been shown to necessitate significant price reductions for many oncology products [22]. In contrast, decision-makers in the United States often reference an informal threshold of approximately $100,000 per QALY [20]. International surveys reveal an even wider variation, with estimates ranging from $36,000 per QALY in the UK to $77,000 per QALY in Taiwan when adjusted for comparative price levels [21].

Interrelationship and Application in Cancer Testing

The Integrated Decision-Making Framework

In practice, QALYs, ICERs, and WTP thresholds function as an integrated system for health technology assessment. The logical flow begins with the estimation of QALYs for the intervention and comparator, which is used to calculate the ICER. This ICER is then evaluated against the pre-determined WTP threshold to arrive at a reimbursement recommendation. This framework is applied globally by HTA bodies like NICE in England and the Canadian Agency for Drugs and Technologies in Health (CADTH) to determine access to new cancer therapies and diagnostics [22] [17] [18].

Impact on Oncology Drug Access

The application of this framework, particularly the WTP threshold, has a direct and measurable impact on patient access to new oncology drugs. A study of CADTH recommendations between 2020 and 2022 revealed that to meet the $50,000 per QALY threshold, 57% (59/103) of oncology drug assessments required a price reduction of greater than 70% off the list price [22]. Furthermore, 8% (8/103) were deemed not cost-effective even at a 100% price reduction [22]. This demonstrates the powerful influence of these metrics on pricing and market access.
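The "price reduction required to meet the threshold" can be derived by solving the ICER inequality for the drug price. The sketch below is a simplified, hypothetical model, not the method of the CADTH study: it assumes incremental cost equals the drug's cost after a fractional reduction r plus other incremental costs that are not negotiable. It also shows how an assessment can fail even at a 100% reduction: if the other costs alone exceed WTP × ΔQALY, the required reduction is above 1.0.

```python
def required_price_reduction(drug_cost: float, other_costs: float,
                             delta_qaly: float, wtp: float) -> float:
    """Fractional list-price reduction needed for ICER <= wtp.

    Assumes incremental cost = drug_cost * (1 - r) + other_costs, where only the
    drug price is negotiable and delta_qaly > 0. A result above 1.0 means the
    threshold cannot be met even if the drug were free.
    """
    if delta_qaly <= 0:
        raise ValueError("a QALY gain is required")
    affordable_drug_cost = wtp * delta_qaly - other_costs  # max drug spend within threshold
    return max(0.0, 1.0 - affordable_drug_cost / drug_cost)


# Hypothetical inputs at a $50,000/QALY threshold
print(f"{required_price_reduction(200_000, 10_000, 0.5, 50_000):.1%}")  # 92.5%
print(f"{required_price_reduction(200_000, 30_000, 0.5, 50_000):.1%}")  # 102.5%
```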

The study also found a temporal impact: the median time to price negotiation for assessments requiring at least a 70% price reduction was 4.8 months, compared to 2.6 months for those requiring a smaller reduction [22]. This shows that the degree of cost-effectiveness, as measured by the required price reduction to meet the WTP threshold, can directly affect the speed at which new treatments reach patients.

Case Study: Cost-Effectiveness of Genomic Profiling in NSCLC

A concrete application of these metrics in cancer testing is illustrated by a 2025 cost-effectiveness analysis of Comprehensive Genomic Profiling (CGP) versus Small Panel (SP) testing in patients with advanced non-small-cell lung cancer (NSCLC) in the United States and Germany [3].

Table: Cost-Effectiveness Results for CGP vs. SP Testing in Advanced NSCLC

| Parameter | United States | Germany | Notes |
|---|---|---|---|
| Incremental Overall Survival | 0.10 years | 0.10 years | Model input from real-world data. |
| Base Case ICER | $174,782 per LY | $63,158 per LY | LY = Life Year. |
| ICER (Scenario: More Patients Treated) | $86,826 per LY | $29,235 per LY | More patients receiving targeted therapy. |

The study used a partitioned survival model informed by real-world data. It found that while CGP improved average overall survival, it was also associated with higher costs because more patients received matched targeted therapies [3]. The resulting ICER was $174,782 per life-year gained in the US and $63,158 in Germany [3]. Because these ICERs are expressed per life-year rather than per QALY, they can be compared only approximately with per-QALY WTP thresholds, and each country would judge them against its own informal benchmark. The analysis also showed that the ICER was highly sensitive to model assumptions: when the scenario assumed that a higher proportion of patients received treatment, the ICER became more favorable, falling to $86,826 in the US [3]. This case highlights how these key metrics are used to quantify the value of advanced cancer testing and how results can vary by healthcare system and underlying assumptions.
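Because ICER = ΔCost / ΔLY, the reported figures imply an approximate incremental cost per patient. This back-calculation is illustrative and is not a result reported in [3]. It is also only approximate, because the 0.10-year survival gain is rounded: a true value anywhere between 0.095 and 0.105 years would shift the implied cost by up to about 5%.

```python
def implied_incremental_cost(icer_value: float, delta_effect: float) -> float:
    """Back-calculate incremental cost from an ICER: delta_cost = ICER * delta_effect."""
    return icer_value * delta_effect


delta_ly = 0.10  # reported incremental overall survival in years (rounded)
base_case_icers = {"United States": 174_782, "Germany": 63_158}  # per life-year gained [3]

for country, value in base_case_icers.items():
    cost = implied_incremental_cost(value, delta_ly)
    print(f"{country}: ~${cost:,.0f} per patient")
# United States: ~$17,478 per patient
# Germany: ~$6,316 per patient
```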

Researchers conducting cost-effectiveness analyses in oncology rely on a specific set of tools and resources to generate robust evidence on QALYs and ICERs. The following table summarizes key resources and their functions.

Table: Key Research Reagent Solutions for Cost-Effectiveness Analysis

| Tool/Resource | Function in Research | Relevance to Metrics | Source |
|---|---|---|---|
| Multi-Attribute Utility (MAU) Instruments (e.g., EQ-5D, SF-6D, HUI) | Standardized questionnaires to measure health-related quality of life and generate utility weights. | Foundational for calculating QALYs. Essential for populating economic models. | [17] [18] |
| PROQOLID Database | A comprehensive online database providing detailed information on over 7,000 clinical outcome assessments (COAs), including their development, validity, and translations. | Aids in selecting the most appropriate COA or MAU for a given study population and therapeutic area. | [18] |
| HERC Mapping Database | A database from the Health Economics Research Centre that catalogs studies which have statistically mapped non-preference-based COAs to utility instruments like the EQ-5D. | Crucial for deriving utility values when a MAU instrument was not used in a clinical trial, enabling QALY calculation. | [18] |
| Partitioned Survival Model | A common type of health economic model that uses survival curves to partition a patient cohort into different health states over time (e.g., progression-free, progressed, dead). | The primary framework for estimating long-term survival (life-years) and assigning utility values to different health states, leading to QALY estimation. Used to calculate the ICER. | [3] |
| Real-World Evidence (RWE) | Data derived from electronic health records, registries, and other non-randomized sources that reflect routine clinical practice. | Informs key model parameters (e.g., overall survival, treatment patterns) with data from real-world settings, making the resulting ICER more generalizable. | [3] |
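The partitioned survival logic in the table can be sketched in a few lines. This is a minimal, hypothetical Python sketch: it assumes exponential progression-free survival (PFS) and overall survival (OS) curves parameterized by their medians, whereas real analyses fit parametric survival models to trial or real-world data. The utility weights (which would come from MAU instruments such as EQ-5D), the monthly cycle, and the 3% discount rate are all illustrative assumptions.

```python
import math


def partitioned_survival(pfs_median, os_median, u_pf, u_pd,
                         horizon_years=10.0, cycle_years=1 / 12, discount_rate=0.03):
    """Minimal partitioned survival model with exponential PFS and OS curves.

    State membership at time t: progression-free = S_pfs(t);
    progressed = S_os(t) - S_pfs(t); dead = 1 - S_os(t).
    Returns discounted (life-years, QALYs) per patient.
    """
    lam_pfs = math.log(2) / pfs_median   # exponential hazard implied by the median
    lam_os = math.log(2) / os_median
    n_cycles = round(horizon_years / cycle_years)
    ly = qaly = 0.0
    for i in range(n_cycles):
        t = (i + 0.5) * cycle_years      # evaluate curves at mid-cycle
        s_os = math.exp(-lam_os * t)
        s_pfs = min(math.exp(-lam_pfs * t), s_os)  # PFS cannot exceed OS
        pf, pd = s_pfs, s_os - s_pfs
        disc = 1 / (1 + discount_rate) ** t
        ly += (pf + pd) * cycle_years * disc
        qaly += (pf * u_pf + pd * u_pd) * cycle_years * disc
    return ly, qaly


# Hypothetical inputs for two testing strategies (not taken from [3])
ly_a, qaly_a = partitioned_survival(pfs_median=0.8, os_median=1.6, u_pf=0.75, u_pd=0.60)
ly_b, qaly_b = partitioned_survival(pfs_median=0.6, os_median=1.4, u_pf=0.75, u_pd=0.60)
print(f"Incremental LYs: {ly_a - ly_b:.3f}, incremental QALYs: {qaly_a - qaly_b:.3f}")
```

The incremental QALYs from such a model, together with incremental costs, feed directly into the ICER.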

The metrics of QALYs, ICERs, and WTP thresholds form the cornerstone of modern health economic evaluation, providing a standardized, though not uncontested, framework for assessing the value of medical interventions. For researchers and developers in the field of oncology, a deep understanding of these concepts is critical. The QALY integrates survival and quality of life into a single measure of benefit. The ICER quantifies the economic efficiency of a new technology compared to existing standards. Finally, the WTP threshold represents the societal or systemic benchmark for value, completing the chain from clinical research to reimbursement policy. As the case of cancer testing and therapeutics demonstrates, the interaction of these metrics directly influences which innovations reach patients and at what price. While debates about their methodological limitations and ethical implications continue, their role in guiding resource allocation in increasingly constrained healthcare systems worldwide is likely to grow.

Precision oncology represents a paradigm shift in cancer care, moving away from empirical chemotherapy towards treatments tailored to the genomic profile of a patient's tumor. This approach leverages advanced diagnostic technologies like comprehensive genomic profiling (CGP) to identify targetable mutations and select optimal targeted therapies. While these advancements have improved patient outcomes, they have also introduced significant economic challenges. The rising costs of cancer care, projected to exceed $245 billion in the U.S. by 2030, have intensified the focus on understanding the specific drivers of expense throughout the precision oncology pipeline [23]. For researchers and drug development professionals, analyzing these cost components is essential for developing more efficient technologies, optimizing resource allocation, and demonstrating the value of genomic-guided treatment approaches.

This analysis examines the cost structures of two fundamental components of modern oncology: the diagnostic sequencing process that enables treatment selection, and the targeted therapeutics that constitute the treatment itself. By dissecting these cost drivers and presenting comparative cost-effectiveness data, this guide provides a framework for evaluating the economic efficiency of different approaches to precision cancer medicine.

Cost Drivers in Diagnostic Sequencing

Microcosting Analysis of Genomic Profiling

Advanced molecular diagnostics form the foundation of precision oncology by identifying the genetic alterations that drive cancer progression. A detailed microcosting study of genomic profiling within Norway's National Infrastructure for Precision Diagnostics revealed a total cost of $2,944 per sample using the TruSight Oncology 500 broad gene panel, with costs ranging from $2,366 to $4,307 when accounting for estimation uncertainties [24].

Table 1: Cost Breakdown for Genomic Profiling in Precision Cancer Medicine

| Cost Category | Contribution to Total Cost | Key Findings |
|---|---|---|
| Consumables | Major driver | Highest material cost component across workflow |
| Personnel | Major driver | Significant contributor across analyses; potential bottleneck for scaling |
| Equipment/Overhead | Variable component | Automation adds cost but enables higher throughput |
| Bioinformatics | 21.3%-58.3% | Highly variable; bespoke analyses increase cost substantially (genome sequencing study in rare disease [25]) |

The study developed a flexible costing framework that calculated expenses by workflow steps and cost categories, identifying consumables and personnel as the most resource-intensive cost categories across all analyses [24]. Importantly, the research highlighted how operational factors influence overall costs, noting that automating the resource-intensive library preparation step enabled a higher weekly batch size with slightly lower costs per sample ($2,881) despite the additional equipment investment [24].
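A costing framework organized by workflow step and cost category can be sketched as follows. This is a hypothetical Python illustration, not the Norwegian study's model, and every number in it is invented. It distinguishes per-sample costs from per-run costs (here, an assumed hands-on library preparation time and shared sequencing consumables) that are spread across the batch, which is why larger batches lower the cost per sample. Automation could be represented as an added per-run equipment item that makes a larger batch size feasible.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CostItem:
    step: str                # workflow step, e.g. "library preparation"
    category: str            # cost category, e.g. "consumables", "personnel"
    per_sample: float        # cost incurred for every sample
    per_batch: float = 0.0   # cost incurred once per run and shared by the batch


def cost_per_sample(items, batch_size):
    """Total cost per sample, plus breakdowns by workflow step and by cost category."""
    by_step, by_category = defaultdict(float), defaultdict(float)
    for item in items:
        cost = item.per_sample + item.per_batch / batch_size
        by_step[item.step] += cost
        by_category[item.category] += cost
    return sum(by_step.values()), dict(by_step), dict(by_category)


# Hypothetical inputs in USD (not the Norwegian study's figures)
items = [
    CostItem("library preparation", "consumables", per_sample=700),
    CostItem("library preparation", "personnel", per_sample=0, per_batch=2_400),
    CostItem("sequencing", "consumables", per_sample=0, per_batch=9_600),
    CostItem("sequencing", "equipment", per_sample=0, per_batch=1_200),
    CostItem("bioinformatics", "personnel", per_sample=250),
]
for batch in (8, 16):
    total, _, by_category = cost_per_sample(items, batch)
    print(batch, round(total), {k: round(v) for k, v in by_category.items()})
```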

Bioinformatics and Specialized Analysis Costs

The bioinformatics component of genomic sequencing is a substantial and highly variable cost driver. Evidence from outside oncology illustrates the range: research on genome sequencing for Indigenous children with suspected rare diseases found that bioinformatics accounted for 21.3% to 58.3% of total costs, and that bespoke analyses, needed because this population is underrepresented in reference genomic databases, increased expenses substantially [25]. With standard bioinformatics, costs ranged from C$3,645 for singletons to C$7,402 for trios; with advanced, bespoke bioinformatics, they rose to C$5,344 for singletons and C$9,760 for trios [25]. Analysis time ranged from 71 hours for standard analyses to 215 hours for advanced analyses, highlighting the substantial personnel resources involved in data interpretation [25].
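The premium for bespoke bioinformatics follows directly from the reported figures. The short Python calculation below uses only the costs cited from [25]; the helper name is illustrative.

```python
# Reported per-case costs (C$) with standard vs. bespoke bioinformatics [25]
costs = {
    "singleton": {"standard": 3_645, "bespoke": 5_344},
    "trio": {"standard": 7_402, "bespoke": 9_760},
}


def bespoke_premium(standard: int, bespoke: int):
    """Absolute (C$) and relative increase from switching to bespoke bioinformatics."""
    diff = bespoke - standard
    return diff, diff / standard


for design, c in costs.items():
    diff, rel = bespoke_premium(c["standard"], c["bespoke"])
    print(f"{design}: +C${diff:,} ({rel:.1%})")
# singleton: +C$1,699 (46.6%)
# trio: +C$2,358 (31.9%)
```

The relative premium is smaller for trios because part of their cost (sequencing two additional samples) is unaffected by the choice of analysis.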

Cost Drivers in Targeted Cancer Therapeutics

Escalating Prices for Targeted Therapies

Targeted cancer therapies command premium prices that have escalated substantially over the past decade. In the U.S., the average monthly launch price for targeted therapies has increased from approximately $10,950 during 2012-2014 to over $27,800 by 2025, an increase of more than 150% in just over a decade [26]. Some therapies now exceed $30,000 per month, creating significant financial burdens for healthcare systems and patients [26].
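The percentage increase, and an annualized growth rate, can be checked from the two reported prices. The 12-year span used for the annualized figure is an assumption (taking 2013, the midpoint of 2012-2014, as the start year).

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


def annualized_growth(old: float, new: float, years: float) -> float:
    """Compound annual growth rate implied by a change over `years` years."""
    return (new / old) ** (1 / years) - 1


print(f"{percent_change(10_950, 27_800):.0f}% increase")  # 154% increase
# Assumption: 2013 (midpoint of 2012-2014) to 2025, i.e. 12 years
print(f"~{annualized_growth(10_950, 27_800, years=12):.1%} per year")
```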

Several factors drive these rising costs, including extensive research and development expenses, complex manufacturing processes for biological therapies, and market dynamics that limit competition for targeted agents. Additionally, the targeted nature of these treatments means they are developed for smaller patient populations, requiring higher prices to recoup development costs [26].

International Price Variations

Significant international price variations for targeted therapies reveal how healthcare systems and pricing policies influence costs. Comparative data shows Germany's average monthly cost is approximately $5,900, while Canada offers therapies in the $3,000-$4,000 monthly range [26]. Australia's pricing before subsidies ranges from $5,000-$11,000 monthly [26]. These differences reflect varying approaches to drug price negotiation, healthcare financing, and value assessment across healthcare systems.

Cost-Effectiveness Analysis of Testing Approaches

Comprehensive Genomic Profiling vs. Small Panel Testing

A critical consideration in precision oncology is whether the additional information provided by broader genomic testing approaches justifies their higher upfront costs compared to more limited testing. A 2025 cost-effectiveness analysis compared comprehensive genomic profiling (CGP) versus small panel (SP) testing in patients with advanced non-small-cell lung cancer using real-world data from the Syapse study [3].

Table 2: Cost-Effectiveness Analysis: CGP vs. Small Panel Testing in Advanced NSCLC

| Parameter | United States | Germany | Notes |
|---|---|---|---|
| Overall Survival Benefit | +0.10 years | +0.10 years | Consistent benefit observed |
| Incremental Cost-Effectiveness Ratio (ICER) | $174,782/LYG | $63,158/LYG | Base case scenario |
| ICER with More Patients Treated | $86,826/LYG | $29,235/LYG | Increased treatment rates improve cost-effectiveness |
| Key Cost Driver | Higher percentage of patients receiving targeted therapies | Higher percentage of patients receiving targeted therapies | Drug costs primary factor |

The analysis demonstrated that CGP improved average overall survival by 0.10 years compared with SP testing. The resulting incremental cost-effectiveness ratio (ICER) was $174,782 per life-year gained in the U.S. and $63,158 in Germany [3]. Scenario analyses revealed that increasing the number of patients receiving matched targeted therapies significantly improved cost-effectiveness, decreasing ICERs to $86,826 in the U.S. and $29,235 in Germany [3].

Value-Based Assessment and Considerations

When evaluating the economic value of precision oncology approaches, researchers must consider both quantitative metrics and qualitative factors. The U.S. cost-effectiveness ratios for some targeted therapies exceed $174,000 per life-year gained, necessitating careful value assessment [26]. Quality-Adjusted Life Year (QALY) considerations incorporate both survival and quality of life, providing a more comprehensive measure of therapeutic value [26].

The economic evaluation must balance clinical benefits against financial impact, considering not only drug costs but also potential reductions in unnecessary treatment, improved resource allocation, and the value of hope for patients with limited alternatives. This balanced approach is essential for drug development professionals seeking to optimize the value proposition of new targeted therapies and diagnostic approaches.

Experimental Protocols and Methodologies

Microcosting Methodology for Genomic Profiling

The microcosting study from Norway employed a comprehensive methodology to capture all cost components associated with genomic profiling [24]. Site visits and structured discussions with staff at Oslo University Hospital informed the diagnostic workflow, validation of the costing framework, and resource use inputs. The research team developed a flexible costing framework that enabled calculation of costs per sample, by workflow steps and cost categories. Sensitivity analyses addressed alternative resource use estimates, higher batch sizes, and investment costs for automation of the library preparation step. This rigorous methodology provides a template for researchers conducting similar economic evaluations of diagnostic technologies.

Cost-Effectiveness Analysis Design

The cost-effectiveness analysis of CGP versus small panel testing utilized a partitioned survival model to estimate life years and drug acquisition costs associated with each testing strategy [3]. Key model parameters were informed by real-world data derived from the Syapse study. The analysis considered three patient pathways: those receiving matched targeted therapy, matched immunotherapy, or no matched therapy. Scenario analyses tested the robustness of the findings, and sensitivity analyses explored the impact of varying key parameters. This methodology demonstrates how real-world evidence can be incorporated into economic evaluations of precision oncology approaches.
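The three-pathway structure can be illustrated with a share-weighted calculation. This Python sketch is hypothetical: it reduces each pathway to a single expected life-year and drug-cost value (in the published analysis these came from a partitioned survival model), ignores testing costs, and uses invented numbers throughout. It shows the mechanism the section describes: a strategy that routes more patients to matched therapy gains life-years but also incurs higher drug acquisition costs.

```python
from dataclasses import dataclass


@dataclass
class Pathway:
    life_years: float   # expected life-years for patients on this pathway
    drug_cost: float    # expected drug acquisition cost on this pathway


def strategy_outcomes(pathways, shares):
    """Share-weighted expected life-years and drug costs for one testing strategy."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("pathway shares must sum to 1")
    ly = sum(shares[name] * p.life_years for name, p in pathways.items())
    cost = sum(shares[name] * p.drug_cost for name, p in pathways.items())
    return ly, cost


# Hypothetical per-pathway outcomes, shared by both strategies
pathways = {
    "matched targeted therapy": Pathway(life_years=2.0, drug_cost=150_000),
    "matched immunotherapy": Pathway(life_years=1.5, drug_cost=120_000),
    "no matched therapy": Pathway(life_years=0.8, drug_cost=20_000),
}
# Hypothetical pathway shares: CGP routes more patients to matched targeted therapy
cgp = {"matched targeted therapy": 0.30, "matched immunotherapy": 0.40, "no matched therapy": 0.30}
sp = {"matched targeted therapy": 0.20, "matched immunotherapy": 0.45, "no matched therapy": 0.35}

ly_cgp, cost_cgp = strategy_outcomes(pathways, cgp)
ly_sp, cost_sp = strategy_outcomes(pathways, sp)
print(f"ICER: ${(cost_cgp - cost_sp) / (ly_cgp - ly_sp):,.0f} per life-year gained")
```

Changing the shares is the simplest way to reproduce the kind of scenario analysis reported in [3], in which treating more patients shifted the ICER.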

Visualizing Cost Drivers and Workflows

Precision Oncology Cost Driver Analysis

Comprehensive vs Small Panel Testing Value Assessment

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Precision Oncology

| Reagent/Platform | Function | Application in Research |
|---|---|---|
| TruSight Oncology 500 | Broad gene panel for genomic profiling | Identification of targetable mutations in cancer [24] |
| PEG-based Hydrogels | 3D cell culture matrix | Creating more physiologically relevant cancer models for drug testing [27] |
| CellTiter-Glo 3D | Viability assay for 3D cultures | Measuring treatment efficacy in complex disease models [27] |
| Producer Cell Lines | Viral vector production | Gene therapy development; potential for cost reduction [28] |
| Affinity Chromatography | Purification technology | Selective capture of full capsids in viral vector manufacturing [28] |
| Organotypic Models | Advanced 3D culture systems | Studying metastasis and tumor microenvironment interactions [27] |

The economic landscape of modern oncology is characterized by significant investments in both diagnostic technologies and targeted therapeutics. The primary cost drivers identified—consumables and personnel in genomic profiling, and drug acquisition costs for targeted therapies—present opportunities for optimization through technological innovation and operational improvements. For researchers and drug development professionals, understanding these cost structures is essential for advancing the field in an economically sustainable manner. The growing precision oncology market, projected to reach $158.9 billion by 2029, underscores both the financial stakes and the opportunity for continued innovation [29]. Future progress will depend on developing more efficient technologies, implementing value-based care models, and demonstrating the economic as well as clinical benefits of precision approaches to cancer care.

The landscape of cancer diagnostics has expanded significantly, moving beyond traditional tissue biopsy to include minimally invasive liquid biopsy, comprehensive genomic profiling (CGP), and artificial intelligence (AI)-assisted tools. These modalities offer complementary approaches for tumor characterization, treatment selection, and disease monitoring within precision oncology. Integrating them into clinical practice requires careful consideration of their respective technical capabilities, clinical applications, and economic impacts. Health technology assessments must now evaluate not only clinical benefits and costs but also factors such as test feasibility, the patient and physician journey, the wider implications of diagnostic results, laboratory organization, and scientific spillover [30]. This guide provides an objective comparison of these testing modalities, focusing on their performance characteristics, experimental protocols, and cost-effectiveness to inform researchers and drug development professionals.

Technical Comparison of Testing Modalities

Tissue Biopsy vs. Liquid Biopsy

Table 1: Comparative Analysis of Tissue and Liquid Biopsy

| Parameter | Tissue Biopsy | Liquid Biopsy |
|---|---|---|
| Invasiveness | Invasive (surgical or needle-based procedure) | Minimally invasive (blood draw) |
| Analytes Detected | Tumor tissue, cancer cells | ctDNA, cfRNA, CTCs, EVs, TEPs [31] |
| Tumor Heterogeneity | Limited to sampled region | Captures heterogeneity from multiple tumor sites [31] |
| Clinical Applications | Gold standard for initial diagnosis and molecular profiling [31] | Monitoring treatment response, detecting MRD, tracking resistance [31] [32] |
| Turnaround Time | Typically longer (days to weeks) | Shorter (days) [31] |
| Sensitivity in Early-Stage Cancer | High (direct tissue examination) | Limited due to low analyte concentration [31] |
| Spatial Information | Preserved | Lost |
| Feasibility of Serial Sampling | Limited due to invasiveness | High, enabling real-time monitoring [31] [32] |
| Tumor Evolution Tracking | Single time point | Dynamic, captures clonal evolution [31] |

Tissue biopsy remains the gold standard for initial cancer diagnosis and characterization, providing essential histopathological information and material for molecular testing [31]. However, liquid biopsy has emerged as a complementary tool that analyzes circulating tumor DNA (ctDNA), cell-free RNA (cfRNA), circulating tumor cells (CTCs), extracellular vesicles (EVs), and tumor-educated platelets (TEPs) [31]. Its most significant advantage is the capacity for real-time monitoring of tumor dynamics, assessment of treatment response, and detection of minimal residual disease (MRD) or early recurrence [31]. Serial sampling of biofluids allows clinicians to track tumor evolution, monitor disease progression, and gain insights into tumor heterogeneity and clonal evolution [31]. A key limitation of liquid biopsy is reduced sensitivity in early-stage cancers due to low concentrations of circulating analytes [31].

Small Panel Testing vs. Comprehensive Genomic Profiling

Table 2: Small Panel Testing vs. Comprehensive Genomic Profiling

| Parameter | Small Panel (SP) Testing | Comprehensive Genomic Profiling (CGP) |
|---|---|---|
| Number of Genes | Limited (often 10-50 genes) | Extensive (≥200 genes, WES, or WGS) [30] |
| Technologies | IHC, FISH, Sanger sequencing, targeted NGS panels [30] | Large NGS panels, Whole Exome Sequencing (WES), Whole Genome Sequencing (WGS) [30] |
| Tissue Requirements | Lower | Higher |
| Diagnostic Cost | €63-€526 (depending on tumor type) [30] | Approximately €2,925 per cancer patient [30] |
| Actionable Targets Identified | Limited to predefined alterations | Broad, including rare and novel biomarkers |
| Therapy Guidance | Standard targeted therapies | Personalized therapy, clinical trial matching [3] [11] |
| Turnaround Time | Shorter | Longer |
| Incidental Findings | Minimal | More likely, requiring interpretation |

Small panel testing includes single-gene technologies such as immunohistochemistry (IHC), Sanger sequencing, fluorescence in situ hybridization (FISH), and targeted next-generation sequencing (NGS) panels [30]. These approaches are often part of standard care assays for biomarker testing. In contrast, comprehensive genomic profiling (CGP) includes NGS panels containing ≥200 genes, whole exome sequencing (WES), or whole genome sequencing (WGS) [30]. The diagnostic costs for these approaches vary significantly, with CGP costing approximately €2,925 per cancer patient compared to €63-€526 for targeted approaches depending on tumor type [30]. CGP provides more comprehensive genomic information, potentially identifying more actionable targets for personalized therapy and clinical trial matching [3] [11].

Cost-Effectiveness Analysis of Testing Approaches

Economic Evaluations of Genomic Testing

Table 3: Cost-Effectiveness Evidence for Genomic Testing Modalities

| Testing Modality | Cancer Type | Incremental Cost-Effectiveness Ratio (ICER) | Key Outcomes |
|---|---|---|---|
| Comprehensive Genomic Profiling (CGP) | Advanced NSCLC (US) | $174,782 per life-year gained [3] [11] | Improved OS by 0.10 years vs. SP; higher percentage receiving targeted therapies [3] [11] |
| Comprehensive Genomic Profiling (CGP) | Advanced NSCLC (Germany) | $63,158 per life-year gained [3] [11] | More cost-effective than in US setting [3] [11] |
| Liquid Biopsy (Autoantibody test) | Lung cancer screening (Brazil) | $75,435.63 per QALY [33] | Exceeded willingness-to-pay threshold in Brazil; only cost-effective if prevalence >4.0% [33] |
| Genomic Medicine | Breast and ovarian cancer | Likely cost-effective for prevention and early detection [1] | Convergent evidence supports cost-effectiveness [1] |
| Genomic Medicine | Colorectal and endometrial cancers | Likely cost-effective for prevention and early detection [1] | Strong evidence for Lynch syndrome applications [1] |

Cost-effectiveness analyses provide critical insights for healthcare decision-makers evaluating genomic testing technologies. A 2025 study comparing CGP versus small panel testing in advanced non-small cell lung cancer (NSCLC) demonstrated that CGP improved average overall survival by 0.10 years compared to SP testing [3] [11]. This survival benefit resulted from a higher percentage of patients receiving matched targeted therapies with CGP (cohort A in the Syapse study) [11]. The incremental cost-effectiveness ratio (ICER) of CGP versus SP was $174,782 per life-year gained in the United States and $63,158 per life-year gained in Germany [3] [11]. The study noted that increasing the number of patients receiving treatment decreased the ICERs ($86,826 in the United States and $29,235 in Germany), while switching from immunotherapy plus chemotherapy to chemotherapy alone increased the ICERs ($223,226 in the United States and $83,333 in Germany) [3] [11].

For liquid biopsy, a cost-effectiveness assessment of an autoantibody blood test (EarlyCDT-Lung) for early lung cancer detection in Brazil found an ICER of $75,435.63 per quality-adjusted life year (QALY) gained, far exceeding Brazil's willingness-to-pay threshold ($7,017.54-$21,052.62 per QALY) [33]. The analysis concluded that liquid biopsy screening would become cost-effective only in settings where lung cancer prevalence exceeds 4.0%, assuming no significant cost reductions or accuracy improvements [33].
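A deliberately simple, hypothetical model (not the Brazilian analysis in [33]) shows why screening cost-effectiveness depends on prevalence: the test cost is paid for everyone screened, but QALYs are gained only for true-positive cases, so the ICER falls as prevalence rises. The model assumes a fixed work-up cost per positive result and a fixed QALY gain per early-detected case, and ignores downstream treatment costs, overdiagnosis, and time horizons. Setting the ICER equal to the threshold gives a closed-form break-even prevalence.

```python
def screening_icer(prevalence, test_cost, workup_cost, sensitivity, specificity, qaly_gain):
    """Cost per QALY of screening vs. no screening in a deliberately simple model.

    Everyone screened pays test_cost; every positive (true or false) triggers
    workup_cost; only true positives gain qaly_gain from earlier detection.
    """
    positive_rate = prevalence * sensitivity + (1 - prevalence) * (1 - specificity)
    delta_cost = test_cost + positive_rate * workup_cost
    delta_qaly = prevalence * sensitivity * qaly_gain
    return delta_cost / delta_qaly


def break_even_prevalence(wtp, test_cost, workup_cost, sensitivity, specificity, qaly_gain):
    """Prevalence at which screening_icer equals wtp (solves (a + b*p) / (c*p) = wtp)."""
    a = test_cost + (1 - specificity) * workup_cost
    b = (sensitivity - (1 - specificity)) * workup_cost
    c = sensitivity * qaly_gain
    if c * wtp <= b:
        return None  # the ICER never falls to the threshold at any prevalence
    return a / (c * wtp - b)  # a value above 1.0 also means the threshold is unreachable


# Hypothetical inputs (not the parameters used in [33])
params = dict(test_cost=100, workup_cost=500, sensitivity=0.4, specificity=0.9, qaly_gain=2.0)
p_star = break_even_prevalence(20_000, **params)
print(f"Break-even prevalence: {p_star:.2%}")  # 0.95%
```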

A broader systematic review of genomic medicine in cancer control found convergent cost-effectiveness evidence for the prevention and early detection of breast and ovarian cancer, and for colorectal and endometrial cancers (particularly Lynch syndrome) [1]. For cancer treatment, the use of genomic testing for guiding therapy was highly likely to be cost-effective for breast and blood cancers [1].

AI-Assisted Tools in Cancer Diagnostics

Table 4: Economic Impact of AI in Healthcare Applications

| AI Application | Clinical Context | Economic Outcome | Key Findings |
|---|---|---|---|
| ML-based Risk Prediction | Atrial fibrillation screening | ICER: £4,847-£5,544 per QALY [34] | Substantially below NHS threshold of £20,000 per QALY [34] |
| AI-driven Screening | Diabetic retinopathy | ICER: $1,107.63 per QALY [34] | Reduced per-patient screening costs by 14-19.5% [34] |
| AI Feature Selection | Oncology | Significant cost reductions [34] | Improved economic performance through enhanced clinical precision [34] |
| AI Integration | Liquid Biopsy | Improved diagnostic accuracy [31] | Multimodal approaches combining multiple biomarkers show promise [31] |

Artificial intelligence shows significant potential to improve the economic value of cancer diagnostics. A systematic review of the cost-effectiveness and budget impact of AI in healthcare found that AI interventions improve diagnostic accuracy, increase quality-adjusted life years, and reduce costs, largely by minimizing unnecessary procedures and optimizing resource use [34]. Several interventions achieved incremental cost-effectiveness ratios well below accepted thresholds [34]. In oncology specifically, AI-driven feature selection yielded significant cost reductions through enhanced clinical precision and resource utilization [34].

AI integration has also shown promise in enhancing the diagnostic accuracy of liquid biopsy. AI methods that integrate multiple data sources have improved diagnostic accuracy, and multimodal approaches that combine biomarkers such as ctDNA, CTCs, EVs, and TEPs may provide a more comprehensive view of tumor characteristics [31]. The global market for AI in cancer diagnostics, valued at $1.07 billion in 2024 and expected to reach $2.61 billion by 2034, reflects growing investment in and adoption of these technologies [35].

Experimental Protocols and Methodologies

Liquid Biopsy Workflow and Protocols

The liquid biopsy workflow involves several critical steps from sample collection to data analysis. For circulating tumor cell (CTC) isolation, the FDA-approved CellSearch system uses a two-step process. First, the sample is centrifuged to separate out blood components, and CTCs are captured by immunomagnetic enrichment using anti-EpCAM antibodies conjugated to magnetic ferrofluid beads. Second, immunofluorescence staining with anti-cytokeratin antibodies and DAPI nuclear stain, together with the CD45 leukocyte marker, distinguishes CTCs from contaminating blood cells [31]. The cells are scanned to detect EpCAM+/Cytokeratin+/DAPI+/CD45− cells, which are considered CTC candidates [31]. A limitation of this approach is that it primarily identifies epithelial CTCs and may miss mesenchymal CTCs that have undergone epithelial-mesenchymal transition (EMT) [31].
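The phenotypic rule for CTC candidates can be expressed as a boolean filter. This is a hypothetical Python sketch of the gating logic only: the `Event` class is illustrative and does not reflect the CellSearch software, which works from fluorescence images with intensity thresholds and operator review. It also makes the EMT limitation concrete, since a cell that is not captured by EpCAM enrichment is never counted.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """One imaged object (hypothetical, simplified to marker calls)."""
    epcam: bool        # captured via anti-EpCAM ferrofluid enrichment
    cytokeratin: bool  # anti-cytokeratin immunofluorescence positive
    dapi: bool         # nucleus present (DAPI positive)
    cd45: bool         # leukocyte marker positive


def is_ctc_candidate(e: Event) -> bool:
    """EpCAM+/Cytokeratin+/DAPI+/CD45- phenotype."""
    return e.epcam and e.cytokeratin and e.dapi and not e.cd45


events = [
    Event(epcam=True, cytokeratin=True, dapi=True, cd45=False),   # epithelial CTC candidate
    Event(epcam=True, cytokeratin=False, dapi=True, cd45=True),   # carried-over leukocyte
    Event(epcam=True, cytokeratin=True, dapi=False, cd45=False),  # anucleate debris
    Event(epcam=False, cytokeratin=True, dapi=True, cd45=False),  # EpCAM-low cell after EMT
]
print(sum(is_ctc_candidate(e) for e in events))  # 1
```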

Alternative technologies include ScreenCell, which isolates and sorts cells by size using a microporous membrane filter [31]. Novel approaches such as immunomagnetic beads conditioned with graphene nanosheets (protein corona disguised immunomagnetic beads, or PIMBs) have also been developed to enhance CTC enrichment [31]. These conditioned beads can be disguised with blood proteins to prevent the adsorption, and subsequent detection, of non-specific proteins [31]. PIMBs disguised with Human Serum Albumin (HSA) achieved a leukocyte depletion of approximately 99.996% and recovered 62 to 505 CTCs from 1.5 mL of blood from cancer patients [31].
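Depletion percentages close to 100% are easier to compare as log reductions, and converting one into residual cell counts puts the CTC yield in context. In this Python sketch, the leukocyte concentration of about 7 × 10^6 per mL of whole blood is an assumption (a mid-range adult value), not a figure from [31].

```python
import math


def log_depletion(percent_removed: float) -> float:
    """Convert a percentage depletion into a log10 reduction."""
    return -math.log10(1 - percent_removed / 100)


def residual_leukocytes(percent_removed: float, leukocytes_per_ml: float, volume_ml: float) -> float:
    """Leukocytes expected to remain after depletion."""
    return leukocytes_per_ml * volume_ml * (1 - percent_removed / 100)


print(f"{log_depletion(99.996):.1f}-log depletion")  # 4.4-log depletion
# Assumption: ~7 x 10^6 leukocytes per mL of whole blood
print(f"~{residual_leukocytes(99.996, 7e6, 1.5):.0f} leukocytes left in 1.5 mL")  # ~420
```

Under that assumption, a few hundred leukocytes would remain alongside the 62 to 505 recovered CTCs, which is why downstream identification still matters after enrichment.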

For ctDNA analysis, the general workflow involves blood collection in specialized tubes, plasma separation, nucleic acid extraction, library preparation, sequencing, and bioinformatic analysis. The choice of specific protocols depends on the intended application, such as mutation detection, copy number variation analysis, or epigenetic profiling.

Liquid Biopsy Workflow

Comprehensive Genomic Profiling Methodology

Comprehensive genomic profiling requires robust experimental protocols to ensure accurate and reproducible results. The general workflow begins with sample acquisition, either from tissue or liquid biopsy sources, followed by DNA/RNA extraction, quality control, library preparation, sequencing, and comprehensive bioinformatic analysis.

For tissue-based CGP, the process typically involves:

- Sample Selection and Macrodissection: Pathologist-reviewed FFPE tissue sections with adequate tumor content (>20-30% tumor cellularity)

- Nucleic Acid Extraction: DNA and/or RNA extraction using validated kits

- Quality Control: Assessment of DNA/RNA quantity, quality, and fragmentation

- Library Preparation: Construction of sequencing libraries with unique molecular identifiers (UMIs) to reduce errors

- Target Enrichment: Hybridization-based capture using comprehensive gene panels

- Sequencing: High-throughput sequencing on NGS platforms

- Bioinformatic Analysis: Pipeline for alignment, variant calling, annotation, and interpretation
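
One reason for the tumor cellularity requirement in the list above is that non-tumor cells dilute the variant allele fraction (VAF) of somatic mutations. The following is a minimal sketch. It assumes a clonal heterozygous mutation, a diploid tumor without copy-number changes, and equal DNA content per cell. The function and its parameters are illustrative.

```python
def expected_vaf(purity: float, mutant_copies: int = 1, tumor_ploidy: int = 2,
                 normal_ploidy: int = 2) -> float:
    """Expected VAF of a clonal somatic variant in a mixed tumor/normal sample.

    purity: fraction of cells that are tumor cells (0-1).
    mutant_copies: mutated alleles per tumor cell at the locus.
    """
    if not 0.0 <= purity <= 1.0:
        raise ValueError("purity must be between 0 and 1")
    mutant_alleles = purity * mutant_copies
    total_alleles = purity * tumor_ploidy + (1.0 - purity) * normal_ploidy
    return mutant_alleles / total_alleles


for purity in (0.1, 0.2, 0.3, 0.6):
    print(f"tumor cellularity {purity:.0%}: expected VAF {expected_vaf(purity):.1%}")
```

At 20% cellularity, a heterozygous clonal variant is expected at only about 10% VAF. Subclonal variants would fall lower still.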

For liquid biopsy CGP, the protocol is similar but requires additional sensitivity to detect low-frequency variants, often employing unique molecular identifiers (UMIs) and error-suppression algorithms to distinguish true somatic variants from sequencing artifacts.
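
UMI-based error suppression can be illustrated with a toy consensus step. Reads that share a UMI are assumed to derive from the same original molecule, so a base seen in only a minority of a family's reads is treated as a PCR or sequencing error. The sketch below works at a single genomic position. The input format and the `min_family_size` and `min_agreement` thresholds are hypothetical. A production pipeline would typically also need to handle errors within the UMI sequences themselves, read alignment, and strand information.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=3, min_agreement=0.7):
    """Collapse reads that share a UMI into one consensus base per original molecule.

    reads: iterable of (umi, base) pairs observed at one genomic position.
    Returns {umi: consensus_base}; small or discordant families are discarded.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few reads to out-vote a random error
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base
    return consensus


reads = (
    [("AAT", "C")] * 5    # molecule carrying the reference base C
    + [("AAT", "T")]      # one erroneous read in the same family
    + [("GCA", "T")] * 4  # a genuine mutant molecule: all reads show T
    + [("TTG", "C")] * 2  # family too small, discarded
)
calls = umi_consensus(reads)
mutant_molecules = sum(1 for base in calls.values() if base == "T")
print(calls, mutant_molecules)  # {'AAT': 'C', 'GCA': 'T'} 1
```

In this toy example, the raw reads show the mutant base T in 5 of 12 reads. After consensus, only one of the two retained molecules carries it, and the isolated error in family AAT no longer counts as evidence for the variant.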

Diagram: CGP testing workflow.

Research Reagent Solutions and Essential Materials

Table 5: Key Research Reagents for Cancer Testing Modalities

| Reagent/Material | Function | Application Context |
|---|---|---|
| Anti-EpCAM Antibodies | Immunomagnetic capture of CTCs | CTC isolation in liquid biopsy [31] |
| Magnetic Ferrofluid Beads | Cell separation using magnetic fields | CTC enrichment in CellSearch system [31] |
| Anti-cytokeratin Antibodies | CTC identification via immunofluorescence | Discrimination of CTCs from contaminating blood cells [31] |
| DAPI Nuclear Stain | Nuclear staining for cell identification | CTC confirmation (nucleated cells) [31] |
| CD45 Antibodies | Leukocyte marker (negative selection) | Exclusion of hematopoietic cells in CTC analysis [31] |
| Graphene Nanosheets | Surface conditioning for beads | Protein corona disguised immunomagnetic beads (PIMBs) [31] |
| Human Serum Albumin (HSA) | Protein disguise for beads | Prevention of non-specific protein adsorption in PIMBs [31] |
| Next-Generation Sequencing Kits | Library preparation and target enrichment | CGP and small panel testing [30] |
| Unique Molecular Identifiers (UMIs) | Error suppression and quantification | Liquid biopsy CGP to detect low-frequency variants |

The essential reagents for cancer testing modalities vary by technology platform. For CTC isolation, key reagents include anti-EpCAM antibodies for immunomagnetic capture, magnetic ferrofluid beads for cell separation, anti-cytokeratin antibodies for CTC identification via immunofluorescence, DAPI nuclear stain for cell confirmation, and CD45 antibodies for negative selection of leukocytes [31]. Novel reagent solutions such as graphene nanosheets and Human Serum Albumin (HSA) are used in protein corona disguised immunomagnetic beads (PIMBs) to prevent non-specific protein adsorption and enhance CTC enrichment efficiency [31].

For genomic profiling approaches, essential materials include next-generation sequencing kits for library preparation and target enrichment, unique molecular identifiers (UMIs) for error suppression and accurate quantification of low-frequency variants in liquid biopsy, and various bioinformatic tools for data analysis and interpretation [30].

The spectrum of cancer testing modalities offers complementary approaches with distinct advantages and limitations. Tissue biopsy remains essential for initial diagnosis, while liquid biopsy enables minimally invasive longitudinal monitoring and assessment of tumor dynamics. Small panel testing offers a cost-effective approach for focused biomarker assessment, whereas comprehensive genomic profiling enables broad detection of actionable alterations across many genes. AI-assisted tools can improve the accuracy and efficiency of several testing modalities and have shown favorable cost-effectiveness in a number of clinical applications.

The choice between these modalities depends on clinical context, cancer type, stage, and specific clinical questions. Economic evaluations indicate that while advanced technologies like CGP and liquid biopsy may have higher upfront costs, they can provide value through improved patient outcomes and more targeted treatment selection. Future developments in testing technologies, coupled with more sophisticated AI integration and reduced sequencing costs, are likely to further transform the cancer diagnostic landscape, enabling more personalized and effective cancer care.

Frameworks and Real-World Applications in Cost-Effectiveness Analysis

In the field of health technology assessment, mathematical models serve as critical tools for evaluating the cost-effectiveness of cancer interventions, including screening programs and therapeutic agents. These models project long-term health outcomes and economic impacts by simulating disease progression and intervention effects within defined populations. Within oncology, two distinct yet complementary modeling approaches have emerged as standards for economic evaluations: partitioned survival models (PSMs) and microsimulation models such as the Microsimulation SCreening Analysis (MISCAN) platform [36] [37]. PSMs are commonly employed for technology appraisals of pharmaceutical products, directly utilizing survival curves from clinical trials to estimate time spent in different health states [37] [38]. In contrast, microsimulation models like MISCAN-Colon operate at the individual level, simulating the life histories of many individuals to capture heterogeneous disease pathways and complex intervention scenarios, particularly in cancer screening [39] [40]. The choice between these approaches can substantially influence cost-effectiveness results, and each offers distinct advantages for specific research questions in cancer control.

Table 1: Fundamental Characteristics of Modeling Approaches

| Feature | Partitioned Survival Model (PSM) | Microsimulation Model (MISCAN) |
|---|---|---|
| Model Structure | Cohort-level approach | Individual-level simulation |
| Analytical Foundation | Direct analysis of aggregate survival curves (PFS, OS) | Simulation of discrete event state transitions in continuous time [39] |
| Disease Process Representation | Limited to defined health states (e.g., progression-free, progressed, death) | Comprehensive natural history from adenoma initiation to cancer death [39] [40] |
| Handling of Heterogeneity | Limited to subgroup analysis | Incorporates individual characteristics (age, sex, race, lesion location) [39] |
| Primary Applications | Drug reimbursement decisions, clinical trial extrapolation | Cancer screening evaluation, public health policy planning [39] [36] |

Theoretical Foundations and Model Structures

Partitioned Survival Modeling Framework

Partitioned Survival Models (PSMs) employ a relatively straightforward structure that partitions the modeled cohort among mutually exclusive health states, typically progression-free, post-progression, and death [41]. Membership in these health states is determined directly from clinical trial survival curves, without modeling transitions between states. Specifically, the proportion of patients in the progression-free state is defined by the progression-free survival (PFS) curve, while the proportion in the post-progression state is calculated as the difference between the overall survival (OS) and PFS curves [37]. This approach directly utilizes the primary endpoints collected in oncology trials, providing transparency and requiring fewer structural assumptions than state-transition models. However, PSMs lack a structural link between intermediate clinical endpoints (like disease progression) and survival, which limits the ability to explore how changes in time-to-progression might impact overall survival in sensitivity analyses [37] [38].
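
The partitioning logic described above can be sketched in a few lines. The exponential curves and their hazards below are hypothetical placeholders for parametric curves fitted to trial PFS and OS data. The sketch computes state occupancy at selected times and the expected months spent in each state over a fixed horizon. These are life-years only, before any utility weighting or discounting.

```python
import math


def exp_survival(rate_per_month):
    """Exponential survival curve S(t) = exp(-rate * t), a stand-in for a fitted curve."""
    return lambda t: math.exp(-rate_per_month * t)


def state_occupancy(pfs, os_curve, t):
    """Partition the cohort at time t into progression-free, post-progression and dead."""
    s_os = os_curve(t)
    pf = min(pfs(t), s_os)  # guard: fitted PFS must not exceed OS
    return pf, s_os - pf, 1.0 - s_os


def area_under(curve, horizon, step=0.01):
    """Trapezoidal area under a curve on [0, horizon], i.e. expected time in those states."""
    n = int(round(horizon / step))
    return sum(0.5 * (curve(i * step) + curve((i + 1) * step)) * step for i in range(n))


pfs = exp_survival(0.10)       # hypothetical: median PFS of about 7 months
os_curve = exp_survival(0.04)  # hypothetical: median OS of about 17 months
for month in (0, 6, 12, 24):
    pf, pp, dead = state_occupancy(pfs, os_curve, month)
    print(f"month {month:>2}: PF={pf:.3f} PP={pp:.3f} dead={dead:.3f}")

horizon = 120  # months
pf_months = area_under(lambda t: min(pfs(t), os_curve(t)), horizon)
pp_months = area_under(os_curve, horizon) - pf_months
print(f"progression-free: {pf_months:.2f} months, post-progression: {pp_months:.2f} months")
```

Because PFS and OS are extrapolated independently, fitted curves can cross at late times. The `min` guard caps progression-free membership at OS, which is a pragmatic fix rather than a structural link between the two endpoints.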

The PSM framework possesses notable advantages in terms of transparency and implementation efficiency. Because PSMs directly use investigator-assessed endpoints from clinical trials, they offer a clear audit trail connecting model inputs to trial results. This characteristic makes them particularly accessible to decision-makers who need to understand the relationship between trial data and model projections [37]. Additionally, PSMs can be developed without access to individual patient data, relying instead on published survival curves. This practical advantage has contributed to their widespread adoption for reimbursement submissions of oncology drugs. However, this simplicity comes with analytical limitations, particularly when attempting to model complex treatment sequences or disease processes with multiple possible pathways [37].

Microsimulation Modeling Framework

Microsimulation models, exemplified by MISCAN-Colon, operate on fundamentally different principles than PSMs. Rather than tracking cohort aggregates, microsimulation models generate virtual populations in which each simulated individual experiences a unique life history with multiple possible health events [39] [40]. The MISCAN-Colon model specifically implements discrete event state transitions in continuous time, simulating the complete natural history of colorectal cancer from adenoma initiation through potential cancer development and progression [39]. This approach begins by generating a time of birth and non-CRC death for each simulated individual, then modeling adenoma development through a non-homogeneous Poisson process that depends on age, sex, and race [39].
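
The first step described above gives each simulated individual a birth year and an age at death from causes other than colorectal cancer. It can be sketched as follows. The abridged life table, its conditional death probabilities, and the birth-year range are hypothetical. A real implementation would draw on full national life tables, and placing each death uniformly within its age band is a simplification.

```python
import random

# Hypothetical abridged life table: (band start, band end, probability of dying from
# non-CRC causes within the band given survival to its start). Illustrative values only.
LIFE_TABLE = [(0, 40, 0.05), (40, 60, 0.10), (60, 80, 0.40), (80, 100, 1.0)]


def sample_other_cause_death_age(rng: random.Random) -> float:
    """Walk through the age bands, dying in each with its conditional probability."""
    for start, end, q in LIFE_TABLE:
        if rng.random() < q:
            return rng.uniform(start, end)  # uniform placement within the band (simplification)
    return float(LIFE_TABLE[-1][1])  # unreachable while the last band has q = 1.0


def simulate_person(rng: random.Random, birth_years=(1930, 1960)) -> dict:
    """Birth year plus age at death from causes other than colorectal cancer."""
    return {
        "birth_year": rng.randint(*birth_years),
        "other_cause_death_age": sample_other_cause_death_age(rng),
    }


rng = random.Random(42)
cohort = [simulate_person(rng) for _ in range(10_000)]
mean_age = sum(p["other_cause_death_age"] for p in cohort) / len(cohort)
print(f"mean age at other-cause death: {mean_age:.1f}")  # expected value under this table is about 75.9
```

Adenoma onset and cancer progression are then simulated only within each person's lifespan, so death from other causes acts as a competing risk.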

The MISCAN framework incorporates sophisticated disease biology by modeling two distinct adenoma types—progressive and nonprogressive—with different malignant potential [39]. Progressive adenomas can transition through three size categories (small, medium, large) before potentially developing into preclinical cancer stages (I-IV). Each adenoma is assigned a specific location within the large intestine, and transition probabilities between states can depend on multiple factors including lesion location, age, sex, and calendar time [39]. This granular representation enables the model to capture the complex interplay between individual risk factors, screening test characteristics, and disease progression, making it particularly valuable for evaluating screening interventions that act at different points in the natural history of cancer development.

Diagram 1: Structural comparison of partitioned survival, state transition, and microsimulation modeling approaches. PSMs derive health state membership directly from survival curves, while state transition models use explicit transition probabilities (TPs). Microsimulation models simulate multiple potential pathways for each individual, including competing mortality risks.

Methodological Implementation and Experimental Protocols

MISCAN-Colon Model Implementation

The MISCAN-Colon model employs a sophisticated microsimulation framework that generates virtual individuals with specific demographic characteristics and simulates their potential colorectal cancer pathways through continuous time [39]. The model implementation follows a structured protocol beginning with the generation of a hypothetical population resembling the target demographic (typically the U.S. population) in terms of life expectancy and CRC risk [36]. For each simulated person, the model first generates a time of birth and a time of death from causes other than colorectal cancer, establishing the individual's lifespan framework without cancer mortality [39]. The model then simulates adenoma development using a non-homogeneous Poisson process, where the individual-level hazard rate ratio is drawn from a gamma distribution and may depend on sex and race [39].
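
The adenoma onset process described above is a non-homogeneous Poisson process whose individual-level rate is scaled by a gamma-distributed frailty. One generic way to simulate such a process is Lewis-Shedler thinning. This is not necessarily the algorithm used inside MISCAN-Colon. In the sketch below, the following are all hypothetical and are not the published MISCAN-Colon parameterization:

- the baseline age-specific rate
- the gamma shape of 2 (scaled to mean 1)
- the `rate_ratio` argument, which stands in for sex or race effects

```python
import random


def baseline_rate(age: float) -> float:
    """Hypothetical adenoma onset rate per year: zero before 20, rising linearly after."""
    return 0.0 if age < 20.0 else 0.0005 * (age - 20.0)


def simulate_adenoma_onsets(rng: random.Random, death_age: float, rate_ratio: float = 1.0,
                            frailty_shape: float = 2.0, max_age: float = 100.0) -> list:
    """Adenoma onset ages for one person, by thinning a homogeneous Poisson process."""
    # Individual frailty ~ Gamma(shape, scale=1/shape), so its mean is 1.
    frailty = rng.gammavariate(frailty_shape, 1.0 / frailty_shape)

    def rate(age: float) -> float:
        return frailty * rate_ratio * baseline_rate(age)

    end = min(death_age, max_age)
    lam_max = rate(max_age)  # valid upper bound because the baseline never decreases
    onsets, t = [], 0.0
    if lam_max <= 0.0:
        return onsets
    while True:
        t += rng.expovariate(lam_max)         # candidate from the dominating process
        if t >= end:
            return onsets
        if rng.random() < rate(t) / lam_max:  # keep with probability rate(t) / lam_max
            onsets.append(t)


rng = random.Random(7)
counts = [len(simulate_adenoma_onsets(rng, death_age=80.0)) for _ in range(20_000)]
mean_count = sum(counts) / len(counts)
print(f"mean adenomas by age 80: {mean_count:.2f}")  # theory: 0.0005 * 60**2 / 2 = 0.9
```

The gamma frailty leaves the population-average rate unchanged but makes adenomas cluster in high-risk individuals, which matters when screening tests are evaluated at the individual level.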

Once initiated, each adenoma progresses through the model's natural history framework, being assigned to one of seven locations within the large intestine and categorized into three size classifications: small (1-5 mm), medium (6-9 mm), and large (10+ mm) [39]. A critical feature of MISCAN-Colon is its differentiation between progressive and nonprogressive adenomas, with only progressive adenomas having the potential to develop into cancer. The probability that an adenoma is progressive depends on the age of adenoma onset [39]. Progressive medium and large adenomas can transition to stage I preclinical cancer, with progression rates faster for larger adenomas. Preclinical cancers then progress through stages I-IV, with the possibility of clinical diagnosis at any stage due to symptom development or screening detection [39].
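
The adenoma natural history described above can be sketched as a small state machine. The following elements follow the text:

- the size thresholds (1-5, 6-9, 10+ mm)
- the seven locations
- the rule that only progressive medium or large adenomas can become stage I preclinical cancer

The probability that an adenoma is progressive and all transition probabilities are hypothetical. The sketch also steps through states without the continuous-time dwell times that MISCAN-Colon simulates, and it does not model growth of nonprogressive adenomas.

```python
import random

N_LOCATIONS = 7  # locations within the large intestine

# Hypothetical transition probabilities between consecutive states.
TRANSITIONS = {
    "small adenoma": [("medium adenoma", 1.0)],
    "medium adenoma": [("large adenoma", 0.6), ("preclinical I", 0.4)],
    "large adenoma": [("preclinical I", 1.0)],
    "preclinical I": [("preclinical II", 0.7), ("clinical I", 0.3)],
    "preclinical II": [("preclinical III", 0.6), ("clinical II", 0.4)],
    "preclinical III": [("preclinical IV", 0.5), ("clinical III", 0.5)],
    "preclinical IV": [("clinical IV", 1.0)],
}


def size_class(diameter_mm: float) -> str:
    """Map an adenoma diameter to the small (1-5 mm), medium (6-9 mm), large (10+ mm) classes."""
    if diameter_mm < 6:
        return "small"
    if diameter_mm < 10:
        return "medium"
    return "large"


def p_progressive(onset_age: float) -> float:
    """Hypothetical: the chance an adenoma is progressive rises with age at onset."""
    return min(0.9, 0.1 + 0.01 * max(0.0, onset_age - 30.0))


def simulate_adenoma(rng: random.Random, onset_age: float):
    """Return (location index, sequence of states) for one adenoma."""
    location = rng.randrange(N_LOCATIONS)
    path = ["small adenoma"]
    if rng.random() >= p_progressive(onset_age):
        return location, path  # nonprogressive: never enters a cancer state
    while path[-1] in TRANSITIONS:
        states, weights = zip(*TRANSITIONS[path[-1]])
        path.append(rng.choices(states, weights=weights)[0])
    return location, path


print(size_class(4), size_class(8), size_class(12))  # small medium large
rng = random.Random(3)
print(simulate_adenoma(rng, onset_age=65))
```

In the full model, a path can be cut short by death from other causes, or by detection and removal at screening. The latter is how screening interventions act on the natural history.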

Table 2: MISCAN-Colon Model Parameters and Input Sources

| Parameter Category | Specific Parameters | Data Sources |
|---|---|---|
| Demographic Inputs | Age-specific mortality, population distribution | National vital statistics, census data |
| Adenoma Natural History | Adenoma incidence, progression rates, size distribution | Epidemiological studies, autopsy studies [39] |
| Cancer Progression | Sojourn times by stage, stage distribution | SEER registry data, clinical studies [39] |
| Test Characteristics | Sensitivity by lesion size/type, specificity | Clinical validation studies [36] |
| Survival Outcomes | Stage-specific CRC survival, other-cause mortality | SEER program data, clinical trials [39] |

Partitioned Survival Model Implementation

The implementation of Partitioned Survival Models follows a more direct approach focused on extrapolating clinical trial endpoints. The experimental protocol begins with obtaining time-to-event data from clinical trials, typically in the form of Kaplan-Meier curves for progression-free survival (PFS) and overall survival (OS) [37]. The first critical step involves selecting appropriate parametric survival distributions (e.g., exponential, Weibull, log-normal, log-logistic, generalized gamma) to fit the observed trial data. Statistical goodness-of-fit measures such as Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC) guide the selection of the most appropriate distribution for each endpoint [37].
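
The distribution-selection step can be illustrated for two of the candidate families. The sketch below fits exponential and Weibull models to right-censored data by maximum likelihood. The exponential fit is closed-form. The Weibull fit maximizes a profile likelihood over the shape parameter by golden-section search. The two models are then compared with AIC = 2k - 2 ln L and BIC = k ln n - 2 ln L, where k is the number of parameters and n the number of patients. The simulated PFS data are hypothetical, and in practice dedicated survival-analysis software and the full set of candidate distributions would be used.

```python
import math
import random


def exponential_fit(times, events):
    """Closed-form exponential MLE with right censoring; returns (max log-likelihood, k)."""
    d, exposure = sum(events), sum(times)
    rate = d / exposure
    return d * math.log(rate) - rate * exposure, 1


def weibull_profile_loglik(shape, times, events):
    """Weibull log-likelihood with the scale set to its MLE for the given shape."""
    d = sum(events)
    sum_t_shape = sum(t ** shape for t in times)
    sum_log_event_t = sum(math.log(t) for t, e in zip(times, events) if e)
    return d * math.log(shape) - d * math.log(sum_t_shape / d) + (shape - 1) * sum_log_event_t - d


def weibull_fit(times, events, lo=0.05, hi=20.0, iters=60):
    """Maximize the (concave) profile log-likelihood over shape by golden-section search."""
    def f(k):
        return weibull_profile_loglik(k, times, events)

    g = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c, e = b - g * (b - a), a + g * (b - a)
    fc, fe = f(c), f(e)
    for _ in range(iters):
        if fc > fe:   # maximum lies in [a, e]
            b, e, fe = e, c, fc
            c = b - g * (b - a)
            fc = f(c)
        else:         # maximum lies in [c, b]
            a, c, fc = c, e, fe
            e = a + g * (b - a)
            fe = f(e)
    shape = (a + b) / 2
    return f(shape), 2, shape


def aic(loglik, k):
    return 2 * k - 2 * loglik


def bic(loglik, k, n):
    return k * math.log(n) - 2 * loglik


# Hypothetical trial: Weibull(scale 12, shape 1.8) PFS times in months, censored at 24 months.
rng = random.Random(1)
raw = [rng.weibullvariate(12.0, 1.8) for _ in range(300)]
times = [min(t, 24.0) for t in raw]
events = [1 if t < 24.0 else 0 for t in raw]
n = len(times)

ll_exp, k_exp = exponential_fit(times, events)
ll_wei, k_wei, shape_hat = weibull_fit(times, events)
print(f"exponential: AIC={aic(ll_exp, k_exp):.1f} BIC={bic(ll_exp, k_exp, n):.1f}")
print(f"weibull:     AIC={aic(ll_wei, k_wei):.1f} BIC={bic(ll_wei, k_wei, n):.1f} shape={shape_hat:.2f}")
```

Lower AIC and BIC favor the Weibull here because the simulated hazard increases over time. In reimbursement modeling, statistical fit is usually weighed alongside the clinical plausibility of the extrapolated tail, since the two can disagree beyond the trial follow-up.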