Comprehensive NGS Protocols for Advanced Tumor Profiling: From Foundational Principles to Clinical Validation

Abstract

This article provides a comprehensive guide to next-generation sequencing (NGS) protocols for tumor profiling, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of NGS technology and its transformative role in precision oncology. The content details methodological approaches for various applications, including somatic variant detection, liquid biopsies, and immunotherapy biomarker identification. Practical strategies for optimizing wet-lab and bioinformatics workflows are discussed, alongside rigorous protocols for analytical validation and comparative assessment against traditional methods. By synthesizing current standards and emerging trends, this resource aims to support the implementation of robust, clinically actionable NGS-based genomic profiling in cancer research and therapeutic development.

The Technological Foundation of NGS in Oncology: Principles, Platforms, and Workflows

Next-generation sequencing (NGS) represents a fundamental shift from traditional sequencing methods, enabling the simultaneous analysis of millions to billions of DNA fragments [1]. This core principle of massive parallelism has revolutionized genomic research by making large-scale sequencing projects dramatically faster and more cost-effective than previously possible [1]. The technology has been particularly transformative in oncology, where comprehensive genomic profiling of tumors provides critical insights for precision medicine approaches [2]. Whereas the first human genome sequence required over a decade and nearly $3 billion to complete using Sanger sequencing, NGS can now sequence an entire genome in days for under $1,000 [1]. This remarkable advancement in throughput and accessibility forms the foundation for modern tumor profiling research and therapeutic development.

Table 1: Key Differences Between Sanger Sequencing and Next-Generation Sequencing

| Feature | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Throughput | Low (single fragment per reaction) | Ultra-high (millions to billions of fragments per run) |
| Cost per Genome | High (approximately $3 billion for first human genome) | Significantly lower (under $1,000 per genome) |
| Speed | Slow (days for individual genes) | Rapid (whole genomes in days, targeted panels in hours) |
| Accuracy | Very high (gold standard for validation) | High, with deep coverage providing robust variant detection |
| Scalability | Limited to small regions or single genes | Highly scalable, from targeted panels to whole genomes |

The NGS process transforms biological samples into interpretable genetic data through four integrated phases: nucleic acid extraction, library preparation, sequencing, and data analysis [3] [4]. Each stage requires specific technical considerations to ensure data quality and reliability, particularly when working with clinical tumor samples, which often present challenges such as low input material or degradation [5].

Nucleic Acid Extraction and Quality Control

The initial step in any NGS workflow involves isolating high-quality genetic material from biological samples [3]. For tumor profiling, sample sources may include fresh tissue, formalin-fixed paraffin-embedded (FFPE) blocks, blood (for liquid biopsy), or fine-needle aspirates [5]. Success in subsequent workflow stages depends heavily on the yield, purity, and integrity of extracted nucleic acids [3].

Critical quality assessment methods include:

- UV spectrophotometry (A260/A280 and A260/A230 ratios) to evaluate sample purity and detect contaminants

- Fluorometric assays (e.g., Qubit) for accurate quantification of specific nucleic acid types

- Microfluidic electrophoresis (e.g., Bioanalyzer, TapeStation) to assess fragment size distribution and RNA integrity numbers (RIN) [3]

For challenging samples with limited starting material, such as small tumor biopsies, whole genome amplification (WGA) or whole transcriptome amplification (WTA) may be employed to generate sufficient material for library preparation [3]. The enzyme Phi29 DNA polymerase is particularly valuable for this application due to its high processivity, reduced amplification bias, and ability to synthesize DNA isothermally [3].

Library Preparation: From Raw Nucleic Acids to Sequence-Ready Libraries

Library preparation converts purified nucleic acids into a format compatible with sequencing instruments through a series of enzymatic reactions [1]. This critical process involves fragmenting DNA or cDNA, attaching platform-specific adapter sequences, and often incorporating sample indexes to enable multiplexing [6].

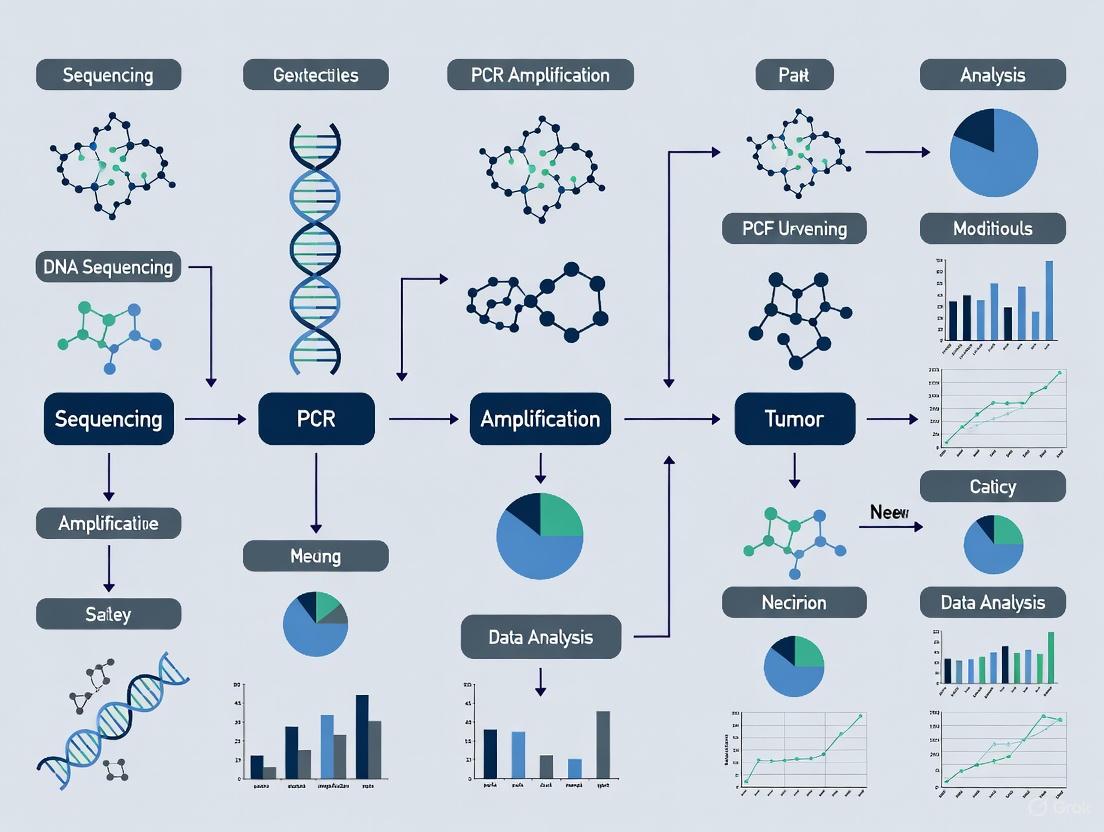

The following diagram illustrates the core workflow for NGS library preparation:

Key considerations during library preparation include:

- Fragmentation Methods: Physical (e.g., sonication) or enzymatic (e.g., tagmentation) approaches to generate optimal fragment sizes [1]

- Adapter Design: Platform-specific sequences that enable binding to flow cells and serve as priming sites; may include unique molecular identifiers (UMIs) for error correction [6]

- Target Enrichment: For tumor profiling, hybridization capture or amplicon-based approaches focus sequencing power on clinically relevant genes [1]

- PCR Amplification: Necessary for low-input samples but requires optimization to minimize duplicates and bias [5]
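The error-correction role of UMIs mentioned above can be illustrated with a minimal sketch. It assumes reads have already been aligned to the same genomic position, that all sequences in a group have equal length, and that UMIs match exactly; the function name `umi_consensus` and the input layout are hypothetical. Production tools additionally tolerate UMI mismatches and weigh base qualities, which this sketch does not attempt.

```python
from collections import Counter, defaultdict


def umi_consensus(reads):
    """Collapse reads sharing a UMI into one consensus sequence per UMI.

    `reads` is an iterable of (umi, sequence) pairs that are assumed to map
    to the same genomic position and to have equal length (illustrative
    simplification, not a description of any specific tool).
    """
    # Group reads into families by their exact UMI string.
    families = defaultdict(list)
    for umi, sequence in reads:
        families[umi].append(sequence)

    consensus = {}
    for umi, sequences in families.items():
        # Majority vote at each position: an error introduced during PCR or
        # sequencing is outvoted by the other copies of the same molecule.
        consensus[umi] = "".join(
            Counter(column).most_common(1)[0][0] for column in zip(*sequences)
        )
    return consensus


if __name__ == "__main__":
    example_reads = [
        ("AACG", "ACGT"),
        ("AACG", "ACGT"),
        ("AACG", "ACTT"),  # one copy carries an error at the third base
        ("TTGA", "ACTT"),  # a different original molecule
    ]
    print(umi_consensus(example_reads))
```

Grouping by UMI before voting is what separates a true low-frequency variant (present in every copy of one molecule) from an amplification or sequencing error (present in only some copies).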

Sequencing Chemistry and Cluster Amplification

Modern NGS platforms utilize sophisticated chemistry to determine nucleotide sequences [7]. The Illumina platform, widely used in clinical research, employs sequencing-by-synthesis (SBS) with reversible terminators [3]. Prior to sequencing, library fragments undergo clonal amplification on a flow cell to create clusters of identical molecules, generating sufficient signal for detection [3].

Two primary amplification methods are used:

- Bridge Amplification: On non-patterned flow cells, DNA fragments form bridges with complementary oligos, creating clonal clusters through repeated denaturation and amplification cycles [3]

- Exclusion Amplification (ExAmp): On patterned flow cells, each DNA fragment is individually amplified in a predefined location, preventing polyclonal cluster formation [3]

During sequencing, fluorescently labeled nucleotides with reversible terminators are incorporated one base at a time, with imaging occurring after each incorporation [3]. The terminator is then cleaved to enable the next cycle. Base calling software converts the fluorescence data into sequence reads with associated quality scores (Q-scores), where Q30 represents 99.9% accuracy [1].
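The relationship between a Q-score and base-calling accuracy follows the Phred definition, Q = -10 log10(P), where P is the probability that a base call is wrong. The short sketch below converts between the two and decodes a FASTQ quality string; the function names are illustrative, and the ASCII offset of 33 is the common Phred+33 encoding, stated here as an assumption about the input files.

```python
import math


def phred_to_error_prob(q):
    """Probability that a base call with Phred score q is incorrect."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p):
    """Phred score corresponding to an error probability p (0 < p <= 1)."""
    return -10 * math.log10(p)


def decode_fastq_quality(quality_string, offset=33):
    """Convert a FASTQ quality string to a list of integer Phred scores.

    Assumes Phred+33 encoding unless another offset is given.
    """
    return [ord(char) - offset for char in quality_string]


if __name__ == "__main__":
    for q in (20, 30, 40):
        accuracy = 1 - phred_to_error_prob(q)
        print(f"Q{q}: accuracy = {accuracy:.4%}")
    print(decode_fastq_quality("I?5"))
```

Because the scale is logarithmic, every 10-point increase in Q corresponds to a tenfold reduction in the expected error rate.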

Data Analysis: From Raw Images to Biological Insights

The massive data output from NGS instruments requires sophisticated computational pipelines for interpretation [1]. The analysis workflow occurs in three distinct phases:

- Primary Analysis: Conversion of raw imaging data into sequence reads (FASTQ files) with quality scores [1]

- Secondary Analysis: Alignment of reads to a reference genome (e.g., GRCh38) and variant calling to identify mutations (SNPs, indels, CNVs, SVs), generating BAM and VCF files [1]

- Tertiary Analysis: Annotation of variants using databases (e.g., dbSNP, gnomAD, ClinVar) and interpretation according to established guidelines (e.g., ACMG) to determine clinical significance [1]

For tumor profiling, this process identifies actionable genomic alterations that inform treatment decisions [2].

Key NGS Library Preparation Technologies

Library preparation methods have evolved to address diverse research needs and sample types. Three principal technologies dominate current NGS workflows, each with distinct advantages for specific applications [6].

Table 2: Comparison of Major NGS Library Preparation Technologies

| Technology | Mechanism | Advantages | Common Applications |
|---|---|---|---|
| Bead-Linked Transposome Tagmentation | Transposomes bound to beads simultaneously fragment DNA and add adapters | Uniform reaction, reduced hands-on time, minimal sample input | Whole genome sequencing, ATAC-seq |
| Adapter Ligation | DNA fragmentation followed by enzymatic ligation of adapters | High complexity libraries, compatibility with degraded samples | FFPE samples, ancient DNA, microbiome studies |
| Amplicon-Based Prep | PCR with primers containing adapters and target-specific sequences | Simple workflow, high sensitivity for variant detection | Targeted sequencing, liquid biopsy, infectious disease |

NGS in Tumor Profiling: Applications and Protocols

The implementation of NGS in oncology has transformed cancer diagnostics and treatment selection. Comprehensive genomic profiling (CGP) enables simultaneous assessment of multiple biomarker classes from limited tumor material [2].

Comprehensive Genomic Profiling for Precision Oncology

CGP utilizes large NGS panels to identify clinically actionable alterations across various genomic variant types [2]. The BALLETT study, a nationwide Belgian initiative, demonstrated the feasibility of this approach across 12 hospitals, achieving a 93% success rate with a median turnaround time of 29 days [2]. This study identified actionable genomic markers in 81% of patients with advanced cancers, substantially higher than the 21% detection rate using nationally reimbursed small panels [2].

Key genomic alterations detected in tumor profiling include:

- Single nucleotide variants (SNVs) and insertions/deletions (indels): The most common alterations, with TP53 (46%), KRAS (13%), and PIK3CA (11%) being frequently mutated in advanced cancers [2]

- Gene fusions: Oncogenic rearrangements such as NTRK and RET fusions that may qualify patients for targeted therapies [8]

- Copy number alterations: Amplifications of oncogenes like HER2 that inform treatment selection [2]

- Genome-wide biomarkers: Tumor mutational burden (TMB), microsatellite instability (MSI), and homologous recombination deficiency (HRD) that predict response to immunotherapy or targeted agents [2]

Table 3: Tumor Genomic Alterations Detected by Comprehensive Genomic Profiling

| Alteration Type | Detection Method | Clinical Significance | Frequency in Advanced Cancers |
|---|---|---|---|
| SNVs/Indels | Hybridization capture or amplicon-based NGS | Targeted therapy selection, prognosis | 1957 alterations in 756 patients [2] |
| Gene Fusions | RNA sequencing or DNA-based fusion panels | Tumor-agnostic therapy targets | 80 fusions in 756 patients [2] |
| Copy Number Variants | Coverage depth analysis | Amplification-targeted therapies | 182 amplifications in 756 patients [2] |
| TMB-High | Genome-wide mutational counting | Immunotherapy response prediction | 16% of patients (124/756) [2] |
| MSI-High | Microsatellite region analysis | Immunotherapy response prediction | 1% of patients (8/756) [2] |

Molecular Tumor Boards and Clinical Interpretation

The complexity of CGP data necessitates multidisciplinary review through molecular tumor boards (MTBs) [9]. These expert panels, comprising oncologists, pathologists, geneticists, and bioinformaticians, translate genomic findings into clinically actionable treatment recommendations [2]. In the BALLETT study, the national MTB recommended treatments for 69% of patients, with 23% ultimately receiving matched therapies [2]. The Precision Oncology Program (POP) similarly integrates real-world data and advanced proteomics through MTB review to inform personalized treatment decisions [9].

Essential Research Reagents and Solutions

Successful NGS experimentation requires carefully selected reagents and materials optimized for each workflow step. The following table details critical components for tumor profiling applications.

Table 4: Essential Research Reagents for NGS-Based Tumor Profiling

| Reagent Category | Specific Examples | Function and Importance |
|---|---|---|
| Nucleic Acid Extraction Kits | FFPE DNA/RNA isolation kits, cell-free DNA extraction kits | High-quality input material from challenging samples; critical for success rates [5] |
| Library Preparation Kits | Illumina DNA Prep, Illumina RNA Prep, hybrid capture kits | Convert nucleic acids to sequence-ready libraries; impact library complexity and bias [6] |
| Target Enrichment Systems | Hybridization capture baits, amplicon panels | Focus sequencing on cancer-relevant genes; improve cost-efficiency for tumor profiling [1] |
| Quality Control Reagents | Fluorometric dyes, qPCR quantification kits, fragment analyzers | Ensure library quality and optimal sequencing performance; prevent failed runs [3] |
| Sequence Adapters and Indexes | Unique dual indexes, unique molecular identifiers (UMIs) | Enable sample multiplexing and accurate variant detection; reduce index hopping and errors [6] |
| Sequencing Controls | PhiX control library, positive control DNA | Monitor sequencing performance and base calling accuracy; essential for clinical validation [6] |

Next-generation sequencing has fundamentally transformed oncology research and clinical practice by providing comprehensive insights into tumor genomics. The core principles of massive parallelism, combined with continuously improving library preparation methods and analysis pipelines, enable researchers to identify actionable alterations that guide therapeutic decisions. As the BALLETT study demonstrates, standardized CGP approaches successfully identify actionable targets in most patients with advanced cancers, highlighting the critical role of NGS in advancing precision oncology. The ongoing development of more efficient library preparation technologies, enhanced sequencing chemistries, and sophisticated bioinformatics pipelines will further solidify NGS as an indispensable tool for tumor profiling and drug development.

Next-generation sequencing (NGS) technologies have fundamentally transformed genomic research, enabling massively parallel DNA sequencing that is faster and far cheaper per base than traditional Sanger sequencing [10] [11]. The evolution of these technologies has progressed through distinct generations, from first-generation methods (Sanger sequencing) to second-generation short-read platforms (Illumina and Ion Torrent), and more recently to third-generation long-read technologies (Pacific Biosciences and Oxford Nanopore) [7]. This technological progression has been driven by continuous improvements in throughput, read length, accuracy, and cost-effectiveness, making comprehensive genomic profiling accessible for both research and clinical applications.

In the context of tumor profiling research, NGS has become an indispensable tool for precision oncology. It enables comprehensive genomic characterization of tumors, identifying actionable mutations, immunotherapy biomarkers, and complex structural variations that drive cancer progression [11] [12]. The selection of an appropriate NGS platform is a critical strategic decision that directly influences the feasibility and success of research projects, as each platform offers distinct advantages and limitations for specific applications [10]. This comparative analysis examines the technical specifications, performance characteristics, and practical implementation of major NGS platforms, with particular emphasis on their applications in cancer genomics and tumor profiling research.

Second-Generation Short-Read Platforms

Illumina platforms utilize a sequencing-by-synthesis approach with fluorescently labeled, reversible-terminator nucleotides [10]. DNA libraries are loaded onto a flow cell where they undergo cluster generation through bridge amplification, forming millions of clusters of identical sequences. During sequencing, the system cycles through the four labeled nucleotides, with DNA polymerase incorporating a complementary base at each cluster. A high-resolution camera captures the fluorescent signal emitted, and after imaging, the terminator is chemically cleaved to allow incorporation of the next base [10]. This cyclical process enables the instrument to read hundreds of millions of clusters in parallel, generating massive amounts of data with high accuracy. A key advantage of Illumina technology is its capability for paired-end sequencing, where both ends of each DNA fragment are sequenced, effectively doubling the information per fragment and significantly aiding in read alignment and detection of structural variants [10].

Ion Torrent platforms employ a fundamentally different approach based on semiconductor technology [10]. Instead of optical detection, the platform measures the hydrogen ions (pH changes) released during nucleotide incorporation. DNA libraries are prepared similarly to other NGS platforms, but amplification is performed via emulsion PCR on microscopic beads. Each DNA-coated bead is deposited into a well on a semiconductor chip containing millions of wells. As the sequencer cycles through each DNA base, incorporation of a complementary base releases a proton, causing a minute pH change detected by an ion-sensitive sensor under each well [10]. This direct translation of chemical signals into digital data eliminates the need for lasers or cameras, resulting in more compact instruments and simplified maintenance. However, this method faces challenges with homopolymer regions, where precise counting of identical consecutive bases can be difficult, leading to insertion/deletion errors [10].

Third-Generation Long-Read Platforms

Pacific Biosciences (PacBio) pioneered single-molecule real-time (SMRT) sequencing, which involves observing individual DNA polymerase molecules in real time as they incorporate fluorescently labeled nucleotides [7]. The system uses specialized structures called zero-mode waveguides (ZMWs) that create illuminated chambers where single polymerase molecules are immobilized. As nucleotides are incorporated, the fluorescent pulse is detected and used to determine the sequence. The platform's key innovation is HiFi (High-Fidelity) sequencing, which involves circularizing DNA fragments to form SMRTbell templates [7]. The polymerase continuously reads around the circular molecule multiple times (typically 10-20 passes), and consensus sequencing from these multiple observations generates highly accurate long reads (Q30-Q40 accuracy) ranging from 10-25 kilobases [7].

Oxford Nanopore Technologies (ONT) utilizes an entirely different approach based on protein nanopores embedded in an electrically resistant polymer membrane [7]. As single-stranded DNA molecules pass through these nanopores, they cause characteristic disruptions in ionic current that are measured and interpreted by sophisticated machine learning algorithms to determine the nucleotide sequence. A significant advancement is the introduction of duplex sequencing, where both strands of a double-stranded DNA molecule are sequenced in succession [7]. The basecaller then aligns the two reads and compares them to correct random errors, achieving accuracy exceeding Q30 (>99.9%), rivaling short-read platforms while maintaining the advantage of extremely long read lengths (tens of kilobases or more) [7].

Table 1: Comparison of NGS Platform Sequencing Chemistries and Core Features

| Platform | Sequencing Chemistry | Detection Method | Template Preparation | Key Innovation |
|---|---|---|---|---|
| Illumina | Sequencing-by-synthesis with reversible terminators | Fluorescent imaging | Bridge amplification on flow cell | Reversible terminator chemistry enabling base-by-base sequencing |
| Ion Torrent | Semiconductor sequencing | pH change detection | Emulsion PCR on beads | Direct translation of chemical signals to digital data |
| PacBio | Single Molecule Real-Time (SMRT) sequencing | Fluorescent detection in zero-mode waveguides | SMRTbell library preparation | Circular consensus sequencing for high-fidelity long reads |
| Oxford Nanopore | Nanopore sequencing | Ionic current disruption | Native DNA library preparation | Protein nanopores for single-molecule, label-free sequencing |

Performance Specifications and Technical Comparison

Throughput and Output Characteristics

NGS platforms vary significantly in their throughput capabilities, output volumes, and run times, making them suitable for different applications and scales of operation [10]. Illumina offers the most comprehensive range of instruments, from benchtop systems like the MiSeq to production-scale sequencers like the NovaSeq X series, which can output up to 16 terabases of data in a single run (approximately 26 billion reads per flow cell) [7]. Run times correspondingly vary from a few hours for smaller runs to 1-2 days for the largest datasets. Illumina platforms generate highly uniform read lengths determined by the number of sequencing cycles (e.g., 2×150 or 2×300 cycles for paired-end reads), with all reads in a run typically being the same length [10].
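When matching a platform's output to a tumor panel, a common back-of-the-envelope calculation relates read count, read length, and target size to expected mean depth. The sketch below is one way to express that arithmetic for paired-end runs. The on-target and duplicate fractions are assumptions that the user must supply from their own assay data, and the function names are illustrative.

```python
import math


def expected_mean_depth(read_pairs, read_length, target_size_bp,
                        on_target_fraction=1.0, duplicate_fraction=0.0):
    """Approximate mean depth over the target for a paired-end run.

    usable bases = pairs * 2 * read length * on-target * (1 - duplicates)
    """
    usable_bases = (read_pairs * 2 * read_length
                    * on_target_fraction * (1 - duplicate_fraction))
    return usable_bases / target_size_bp


def read_pairs_needed(target_depth, read_length, target_size_bp,
                      on_target_fraction=1.0, duplicate_fraction=0.0):
    """Read pairs required per sample to reach a target mean depth."""
    usable_bases_per_pair = (2 * read_length
                             * on_target_fraction * (1 - duplicate_fraction))
    return math.ceil(target_depth * target_size_bp / usable_bases_per_pair)


if __name__ == "__main__":
    # Hypothetical 2 Mb panel sequenced 2x150 at 200x mean depth, assuming
    # 60% of reads on target and 20% duplicates (illustrative values only).
    pairs = read_pairs_needed(200, 150, 2_000_000,
                              on_target_fraction=0.6, duplicate_fraction=0.2)
    print(f"Read pairs per sample: {pairs:,}")
```

Dividing a run's total read-pair yield by the per-sample requirement gives a rough upper bound on how many samples can be multiplexed; real runs need additional margin for uneven pooling.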

Ion Torrent systems provide more moderate throughput, with output ranging from millions to tens of millions of reads depending on chip size [10]. For example, the mid-range Genexus sequencer produces approximately 15-60 million reads, while high-capacity S5 chips can generate up to 130 million reads. The platform's key advantage is rapid turnaround time, with small runs completing in just a few hours and integrated systems like the Genexus automating the entire workflow from sample to result in approximately 14-24 hours [10]. Ion Torrent generates single-end reads only, with read lengths that can vary within a run (typically ~400-600 bases on newer systems) as fragments may finish sequencing at different cycles [10].

Pacific Biosciences' Revio system, launched in 2023, provides high-throughput long-read sequencing with HiFi chemistry, while Oxford Nanopore offers flexible sequencing capacity through various flow cell options, with the unique capability of real-time sequencing and adaptive sampling [7]. Nanopore's MinION device, a USB-sized sequencer, exemplifies the platform's versatility, bringing sequencing capabilities to unconventional environments.

Table 2: Performance Specifications of Major NGS Platforms

| Platform | Maximum Output (per run) | Read Length | Run Time | Error Profile |
|---|---|---|---|---|
| Illumina | Up to 16 Tb (NovaSeq X) [7] | 75-300 bp (per end) [10] | 1-48 hours [10] | Substitution errors (<0.1-0.5%) [10] |
| Ion Torrent | Up to ~130 million reads (S5 chip) [10] | ~400-600 bases (single-end) [10] | 2-24 hours [10] | Indels in homopolymer regions (~1% error rate) [10] |
| PacBio HiFi | Varies by instrument | 10-25 kb [7] | 0.5-30 hours | Random errors, correctable via CCS [7] |
| Oxford Nanopore | Varies by flow cell | Tens of kb, up to 100+ kb [7] | Real-time; 1-72 hours | Mostly indels, improved with duplex sequencing [7] |

Accuracy and Error Profiles

Each NGS platform exhibits distinct error profiles that significantly impact their suitability for specific applications. Illumina platforms are renowned for their high accuracy, with error rates typically well below 1% (often around 0.1-0.5% per base) [10]. This high fidelity makes Illumina data particularly trusted for applications requiring precise variant detection, such as single nucleotide variant (SNV) calling in cancer genomics. The predominant error type in Illumina sequencing is substitution errors rather than insertions or deletions.

Ion Torrent systems tend to have higher raw error rates (approximately 1% per base), roughly two to ten times the 0.1-0.5% typical of Illumina sequencing [10]. The technology's well-known limitation is its accuracy in homopolymer regions (stretches of identical bases), where the method of measuring cumulative proton release struggles to precisely count long runs of the same nucleotide, leading to insertion/deletion errors [10] [13]. This characteristic must be carefully considered when studying genomic regions rich in homopolymers.
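To judge how exposed a target region is to homopolymer-related indel errors, it can help to list the homopolymer runs it contains. The following sketch scans a sequence for runs of identical bases; the minimum run length of 4 is an arbitrary illustrative threshold, not a value taken from the cited sources.

```python
from itertools import groupby


def homopolymer_runs(sequence, min_length=4):
    """Return (start, base, length) for each run of identical bases.

    `start` is a 0-based offset into `sequence`. Only runs of at least
    `min_length` bases are reported (threshold is an assumption).
    """
    runs = []
    position = 0
    for base, group in groupby(sequence.upper()):
        length = sum(1 for _ in group)
        if length >= min_length:
            runs.append((position, base, length))
        position += length
    return runs


if __name__ == "__main__":
    for start, base, length in homopolymer_runs("ACGTTTTTACCCCG"):
        print(f"{base} x{length} at offset {start}")
```

Indel calls that fall inside or next to the reported runs are natural candidates for extra scrutiny or orthogonal confirmation.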

Pacific Biosciences' HiFi reads combine the length advantages of long-read sequencing with high accuracy (Q30-Q40, or 99.9-99.99%) through circular consensus sequencing [7]. By generating multiple observations of the same DNA fragment, random errors are effectively averaged out, producing highly accurate consensus sequences. Oxford Nanopore has dramatically improved its accuracy with recent chemistry advances; simplex reads now achieve approximately Q20 (~99%) accuracy, while duplex reads regularly exceed Q30 (>99.9%) [7]. This improvement has expanded Nanopore's applications to include low-frequency variant detection and methylation-aware diagnostics.

Application in Tumor Profiling Research

Comprehensive Genomic Profiling in Oncology

NGS has become central to precision oncology, enabling comprehensive genomic profiling that identifies actionable mutations, biomarkers for immunotherapy response, and mechanisms of therapy resistance [11] [12]. In clinical oncology practice, NGS-based tumor profiling can identify targetable genomic alterations in a significant proportion of patients. For example, one real-world study of 990 patients with advanced solid tumors found that 26.0% harbored tier I variants (strong clinical significance), and 86.8% carried tier II variants (potential clinical significance) [14]. Among patients with tier I variants, 13.7% received NGS-based therapy, with 37.5% of those with measurable lesions achieving partial response [14].

The application of NGS in sarcoma research demonstrates its utility in characterizing complex tumors. A study of 81 patients with soft tissue and bone sarcomas identified genomic alterations in 90.1% of patients, with the most frequent mutations in TP53 (38%), RB1 (22%), and CDKN2A (14%) [12]. Actionable mutations were identified in 22.2% of patients, rendering them eligible for FDA-approved targeted therapies. Furthermore, NGS led to reclassification of diagnosis in four patients, demonstrating its value not only in therapeutic decision-making but also as a powerful diagnostic tool [12].

Tumor Diagnosis Recharacterization

Comprehensive genomic profiling can reveal inconsistencies between primary diagnosis and molecular findings, leading to diagnostic reclassification with significant therapeutic implications [15]. In a study of 28 cases where NGS findings were inconsistent with initial pathological diagnosis, secondary clinicopathological review resulted in disease reclassification or refinement for all cases [15]. These included reclassification events where initial diagnoses of non-small cell lung cancer, sarcoma, neuroendocrine carcinoma, and other tumors were reclassified to different tumor types based on molecular findings. Additionally, disease refinement events occurred where initial diagnoses of carcinoma of unknown primary were refined to specific tumor types including NSCLC, cholangiocarcinoma, melanoma, and others [15].

The biomarkers driving these diagnostic changes included single nucleotide variants, indels, gene fusions, and high tumor mutational burden. For example, diagnostically informative biomarkers included RET M918T (medullary thyroid carcinoma), TMPRSS2-ERG fusion (prostate carcinoma), FGFR2-ITPR2 fusion (cholangiocarcinoma), and various EGFR mutations (NSCLC) [15]. These findings highlight the value of CGP beyond therapy selection, supporting its complementary use in diagnostic confirmation to enable precision medicine strategies.

Experimental Protocols for Tumor Profiling

Sample Preparation and Library Construction

Robust sample preparation is critical for successful NGS-based tumor profiling. The following protocol outlines the key steps for DNA library preparation from formalin-fixed paraffin-embedded (FFPE) tumor specimens, based on established methodologies from clinical NGS implementation studies [14]:

Protocol 1: DNA Library Preparation from FFPE Tumor Tissue

Manual Microdissection and DNA Extraction

- Select representative tumor areas with sufficient tumor cellularity via hematoxylin and eosin staining

- Perform manual microdissection to enrich tumor content (>20% tumor cellularity recommended)

- Extract genomic DNA using the QIAamp DNA FFPE Tissue kit (Qiagen)

- Quantify DNA concentration using the Qubit dsDNA HS Assay kit on the Qubit 3.0 Fluorometer

- Assess DNA purity using a NanoDrop spectrophotometer (A260/A280 ratio between 1.7 and 2.2 acceptable)

- Use at least 20 ng of input DNA for library generation
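The acceptance criteria in the steps above (tumor cellularity, A260/A280 ratio, minimum input mass) can be encoded as a simple pre-library checklist. This is a minimal sketch: the `DnaSample` record and its field names are hypothetical, and the thresholds are copied from the protocol text rather than from any kit documentation.

```python
from dataclasses import dataclass


@dataclass
class DnaSample:
    sample_id: str
    tumor_cellularity_pct: float     # estimated from the H&E review
    a260_a280: float                 # spectrophotometric purity ratio
    concentration_ng_per_ul: float   # fluorometric concentration
    volume_ul: float                 # available eluate volume


def qc_failures(sample):
    """Return a list of human-readable reasons the sample fails input QC."""
    failures = []
    if sample.tumor_cellularity_pct <= 20:
        failures.append("tumor cellularity not above 20%")
    if not 1.7 <= sample.a260_a280 <= 2.2:
        failures.append("A260/A280 outside 1.7-2.2")
    total_ng = sample.concentration_ng_per_ul * sample.volume_ul
    if total_ng < 20:
        failures.append("less than 20 ng DNA available")
    return failures


if __name__ == "__main__":
    sample = DnaSample("S1", 35.0, 1.85, 2.5, 20.0)
    print(sample.sample_id, qc_failures(sample) or "passes input QC")
```

Returning the list of reasons, rather than a single pass/fail flag, makes it easier to decide whether re-extraction or further microdissection is the appropriate remedy.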

Library Preparation

- Perform library preparation using hybrid capture-based method (Agilent SureSelectXT Target Enrichment System)

- Fragment DNA to optimal size (250-400 bp) if necessary

- Repair DNA ends and add 3' adenylation

- Ligate platform-specific adapters with unique dual indexes for sample multiplexing

- Amplify adapter-ligated DNA with limited-cycle PCR (typically 8-12 cycles)

- Assess library quality and quantity using Agilent 2100 Bioanalyzer with High Sensitivity DNA Kit

- Proceed with libraries meeting quality thresholds (size: 250-400 bp, concentration: ≥2 nM)
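Library concentration is usually measured in ng/µL, while the threshold above is stated in nM. The conversion uses the approximate average mass of a double-stranded DNA base pair (about 660 g/mol). The sketch below applies that conversion and the size and concentration thresholds from the protocol; the function names are illustrative.

```python
def library_molarity_nm(concentration_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA library concentration from ng/uL to nM.

    Uses ~660 g/mol per base pair as the average mass of dsDNA.
    """
    return concentration_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def library_passes(concentration_ng_per_ul, mean_fragment_bp):
    """Apply the protocol thresholds: size 250-400 bp and at least 2 nM."""
    size_ok = 250 <= mean_fragment_bp <= 400
    molarity_ok = library_molarity_nm(concentration_ng_per_ul,
                                      mean_fragment_bp) >= 2.0
    return size_ok and molarity_ok


if __name__ == "__main__":
    concentration, size = 1.0, 330
    molarity = library_molarity_nm(concentration, size)
    print(f"{concentration} ng/uL at {size} bp = {molarity:.2f} nM, "
          f"pass = {library_passes(concentration, size)}")
```

The mean fragment size would typically come from the Bioanalyzer trace and the mass concentration from a fluorometric assay, as described in the steps above.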

Target Enrichment and Sequencing

- Hybridize libraries to biotinylated probes targeting cancer-relevant genes (e.g., 500+ gene panel)

- Capture probe-bound target DNA by streptavidin bead binding, followed by washing

- Amplify captured libraries with post-capture PCR

- Normalize and pool enriched libraries for sequencing

- Load onto appropriate sequencing platform (Illumina NextSeq 550Dx or similar)

- Sequence with minimum 200x average depth coverage, with >80% of targets at 100x coverage
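The depth requirement in the final step can be checked per sample once per-target mean depths are available. The sketch below assumes those depths have already been computed by a coverage tool and are supplied as a plain list; the function name and the returned dictionary layout are hypothetical.

```python
def coverage_qc(target_depths, min_mean_depth=200,
                min_target_depth=100, min_fraction=0.80):
    """Evaluate the protocol's depth criteria for one sample.

    `target_depths` holds one mean depth value per target region.
    Passing requires a mean depth of at least `min_mean_depth` and more
    than `min_fraction` of targets at or above `min_target_depth`.
    """
    if not target_depths:
        raise ValueError("target_depths must not be empty")
    mean_depth = sum(target_depths) / len(target_depths)
    covered = sum(1 for depth in target_depths if depth >= min_target_depth)
    fraction = covered / len(target_depths)
    return {
        "mean_depth": mean_depth,
        "fraction_at_min_depth": fraction,
        "passed": mean_depth >= min_mean_depth and fraction > min_fraction,
    }


if __name__ == "__main__":
    depths = [300, 250, 220, 150, 400, 90, 310, 280, 260, 500]
    print(coverage_qc(depths))
```

Samples that fail either criterion are usually candidates for re-sequencing or for reporting with an explicit limitation on regions of low coverage.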

Data Analysis and Variant Calling

The computational analysis of NGS data from tumor profiling requires a standardized bioinformatics pipeline to ensure accurate variant detection and interpretation:

Protocol 2: Bioinformatics Analysis for Somatic Variant Detection

Primary Data Analysis and Quality Control

- Demultiplex sequencing data using bcl2fastq or similar tools

- Assess sequencing quality metrics (Q-score distribution, base composition, etc.)

- Verify sample identity and check for cross-contamination using genetic fingerprints

Sequence Alignment and Processing

- Align reads to reference genome (hg19/GRCh37) using optimized aligners (BWA-MEM, NovoAlign)

- Process aligned BAM files: coordinate sorting, duplicate marking, and local realignment

- Perform base quality score recalibration using machine learning approaches

- Calculate coverage statistics and uniformity metrics across target regions

Variant Calling and Annotation

- Call single nucleotide variants and small indels using Mutect2 or similar variant callers

- Apply filters for minimum read depth (recommended ≥200x) and variant allele frequency (VAF ≥2%)

- Identify copy number variations using CNVkit (average CN ≥5 considered amplification)

- Detect gene fusions using structural variant callers (LUMPY, with read counts ≥3 considered positive)

- Annotate variants using SnpEff and clinical databases (ClinVar, COSMIC, OncoKB)

- Calculate tumor mutational burden (TMB) as number of eligible variants per megabase

- Determine microsatellite instability (MSI) status using mSINGS or similar algorithms
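The depth and VAF filters and the TMB calculation listed above reduce to simple arithmetic once variant calls are in hand. The sketch below assumes calls have been parsed into a minimal record holding depth and alternate-allele read count; the `VariantCall` class is hypothetical, and which variants count as "eligible" for TMB is panel-specific and deliberately left to the caller.

```python
from dataclasses import dataclass


@dataclass
class VariantCall:
    gene: str
    depth: int       # total reads covering the position
    alt_reads: int   # reads supporting the variant allele

    @property
    def vaf(self):
        """Variant allele frequency as a fraction between 0 and 1."""
        return self.alt_reads / self.depth if self.depth else 0.0


def passes_filters(call, min_depth=200, min_vaf=0.02):
    """Apply the protocol filters: depth >= 200x and VAF >= 2%."""
    return call.depth >= min_depth and call.vaf >= min_vaf


def tumor_mutational_burden(eligible_variant_count, panel_size_bp):
    """TMB in mutations per megabase of sequenced panel territory."""
    return eligible_variant_count / (panel_size_bp / 1e6)


if __name__ == "__main__":
    calls = [
        VariantCall("TP53", 500, 25),   # 5% VAF at 500x
        VariantCall("KRAS", 150, 30),   # fails the depth filter
        VariantCall("PIK3CA", 800, 8),  # 1% VAF, fails the VAF filter
    ]
    kept = [call.gene for call in calls if passes_filters(call)]
    print("Retained:", kept)
    print("TMB:", tumor_mutational_burden(15, 1_500_000), "mut/Mb")
```

Keeping the thresholds as function parameters makes it straightforward to document the values used in a given validation and to adjust them for assays with different depth targets.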

Clinical Interpretation and Reporting

- Classify variants according to AMP/ASCO/CAP guidelines (Tiers I-IV)

- Annotate therapeutic implications using evidence-based frameworks (OncoKB)

- Generate clinical reports with actionable findings prioritized

- Integrate findings with clinicopathological data for comprehensive assessment

Research Reagent Solutions for NGS-Based Tumor Profiling

Table 3: Essential Research Reagents and Kits for NGS-Based Tumor Profiling

| Product Category | Example Products | Key Features | Application in Tumor Profiling |
|---|---|---|---|
| DNA Extraction Kits | QIAamp DNA FFPE Tissue Kit (Qiagen) [14] | Optimized for challenging FFPE samples; removes inhibitors | Extraction of high-quality DNA from archival tumor specimens |
| Library Preparation Kits | Agilent SureSelectXT [14]; Illumina Nextera XT [16] | Streamlined workflow; compatibility with low-input DNA | Construction of sequencing libraries from tumor DNA |
| Target Enrichment Panels | SNUBH Pan-Cancer v2 (544 genes) [14]; FoundationOne CDx | Comprehensive cancer gene coverage; TMB and MSI analysis | Capturing coding regions of cancer-relevant genes for sequencing |
| Sequence Capture Reagents | Twist Core Exome [17]; IDT xGen Pan-Cancer Panel | Uniform coverage; high on-target rates | Hybrid capture-based enrichment of target genomic regions |
| Quality Control Tools | Agilent Bioanalyzer kits [14]; Qubit assays | Accurate quantification of DNA and libraries | Quality assessment of input DNA and final libraries before sequencing |
| NGS Control Materials | Horizon Multiplex I cfDNA Reference Standard; Seraseq FFPE Tumor DNA | Defined variant allele frequencies; FFPE-like damage | Process controls for assay validation and quality monitoring |

Workflow Visualization and Experimental Design

The following diagrams illustrate key experimental workflows and analytical processes in NGS-based tumor profiling:

[Figure: Comprehensive Genomic Profiling Workflow]

[Figure: Tumor Profiling Data Analysis Pipeline]

The comparative analysis of major NGS platforms reveals a dynamic technological landscape with multiple options optimized for different applications in tumor profiling research. Illumina systems remain the gold standard for high-throughput, accurate short-read sequencing, while Ion Torrent offers rapid turnaround times with simpler workflows. Third-generation platforms from PacBio and Oxford Nanopore provide long-read capabilities that are increasingly competitive in accuracy while enabling more comprehensive genomic characterization.

For tumor profiling applications, the selection of an appropriate NGS platform involves careful consideration of multiple factors including required throughput, read length, accuracy needs, budget constraints, and intended applications. Hybrid approaches that combine multiple technologies may offer the most comprehensive solution for complex genomic analyses. As sequencing technologies continue to evolve, trends toward multi-omic integration, spatial transcriptomics, and ultra-high throughput will further expand the capabilities of NGS in precision oncology [7].

The implementation of robust experimental protocols and standardized analytical pipelines is essential for generating clinically actionable results from NGS-based tumor profiling. With proper validation and quality control, these technologies provide powerful tools for advancing cancer research and enabling personalized treatment strategies based on the unique molecular characteristics of each patient's tumor.

Next-generation sequencing (NGS) has revolutionized tumor profiling research by enabling comprehensive genomic analysis with unprecedented speed and accuracy [18]. For researchers and drug development professionals, mastering the core workflow components—sample preparation, library construction, and sequencing reactions—is fundamental to generating reliable, clinically actionable genomic data. The massively parallel sequencing capability of NGS allows millions of DNA fragments to be sequenced simultaneously, a stark contrast to traditional Sanger sequencing that processes single DNA fragments sequentially [11] [19]. This technological leap has transformed cancer research, particularly in identifying driver mutations, fusion genes, and predictive biomarkers across diverse cancer types [11]. This application note details the essential protocols and methodologies underpinning robust NGS workflows specifically for tumor genomic studies, providing a structured framework for implementation in research and diagnostic settings.

Core NGS Workflow Components

Sample Preparation and Quality Control

The initial phase of the NGS workflow is critical, as the quality of the starting material directly impacts all subsequent steps and the ultimate reliability of sequencing data. For tumor profiling, this typically begins with extracting nucleic acids from formalin-fixed paraffin-embedded (FFPE) tissue specimens or liquid biopsy samples [14].

Protocol: Nucleic Acid Extraction from FFPE Tumor Specimens

- Deparaffinization and Lysis: Incubate FFPE tissue sections in xylene or a commercial deparaffinization solution to remove paraffin, followed by rehydration through an ethanol series. Digest tissue using proteinase K in an appropriate buffer at 56°C until fully lysed [14].

- Nucleic Acid Purification: Isolate genomic DNA using silica-based membrane technology (e.g., QIAamp DNA FFPE Tissue kit). Bind DNA to the membrane, wash with ethanol-based buffers, and elute in a low-salt buffer or nuclease-free water [14].

- Quality and Quantity Assessment: Precisely quantify DNA concentration using fluorometric methods (e.g., Qubit dsDNA HS Assay). Assess purity by measuring A260/A280 ratio (target: 1.7-2.2) via spectrophotometry (e.g., NanoDrop) [14]. Evaluate DNA integrity and fragment size distribution using microfluidic capillary electrophoresis (e.g., Agilent Bioanalyzer).

Successful sequencing requires a minimum of 20 ng of high-quality DNA with minimal degradation [14]. For samples with lower tumor cellularity, manual microdissection of representative tumor areas is recommended to ensure sufficient material for analysis [14].

Library Construction

Library construction prepares nucleic acid fragments for the sequencing platform by adding platform-specific adapters and, in many cases, amplifying the material to generate sufficient signal for detection [18].

Protocol: Library Preparation via Hybridization Capture

- DNA Fragmentation and Repair: Fragment purified genomic DNA to approximately 300 base pairs using acoustic shearing or enzymatic fragmentation (e.g., NEBNext Ultra II FS DNA Library Prep Kit). Perform end repair to generate blunt-ended, 5'-phosphorylated fragments, then dA-tailing to add a single 3'-dA overhang for adapter ligation [18] [20].

- Adapter Ligation: Ligate platform-specific adapter sequences (synthetic oligonucleotides) to both ends of the DNA fragments using T4 DNA ligase. These adapters facilitate binding to the sequencing flow cell and serve as primer binding sites for amplification and sequencing [18].

- Target Enrichment: For targeted sequencing panels (e.g., SNUBH Pan-Cancer v2.0 Panel covering 544 genes), hybridize adapter-ligated libraries with biotinylated probes complementary to genomic regions of interest [14]. Capture probe-bound fragments using streptavidin-coated magnetic beads, then wash away non-specific fragments. Amplify the enriched library using PCR with primers complementary to the adapter sequences [18].

- Library Quality Control: Precisely quantify the final library using quantitative PCR and assess size distribution and quality via microfluidic capillary electrophoresis (e.g., Agilent High Sensitivity DNA Kit) [14]. Libraries should demonstrate a tight size distribution (typically 250–400 bp) and sufficient concentration (≥2 nM) for sequencing [14].
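When a library has been measured by mass (for example, by fluorometry) rather than by qPCR against molar standards, its molarity can be estimated from the mean fragment length. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA; the function names and example values are illustrative, and the 2 nM and 250-400 bp limits are the ones quoted in the quality-control step above.

```python
def library_molarity_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Estimate library molarity (nM) from mass concentration and mean fragment size.

    Assumes an average mass of 660 g/mol per base pair of dsDNA.
    """
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def library_passes_qc(conc_ng_per_ul, mean_fragment_bp, min_nm=2.0, size_range_bp=(250, 400)):
    """Check the mean size window (250-400 bp) and the minimum concentration (>=2 nM)."""
    low, high = size_range_bp
    in_size_window = low <= mean_fragment_bp <= high
    return in_size_window and library_molarity_nm(conc_ng_per_ul, mean_fragment_bp) >= min_nm


# Hypothetical library: 1.0 ng/uL with a 330 bp mean fragment size
print(round(library_molarity_nm(1.0, 330), 2), library_passes_qc(1.0, 330))
```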

Table 1: Key Library Construction Methods for Tumor Profiling

| Method | Principle | Best For | Advantages | Limitations |
|---|---|---|---|---|
| Hybridization Capture | Solution-based hybridization with biotinylated probes [14] | Large gene panels (>500 genes), whole exome | Comprehensive coverage, high specificity | Requires more input DNA, longer workflow |
| Amplicon-Based | Multiplex PCR amplification of target regions [21] | Small to medium panels, low DNA input | Fast workflow, low input requirements | Limited to predefined targets, primer bias |
| Ligation-Based | Sequencing by oligonucleotide ligation and detection [22] | Detection of specific variants | Reduced amplification bias | Lower throughput, complex data analysis |

Sequencing Reactions

The sequencing reaction phase involves the actual determination of nucleotide sequences through various technology-dependent detection methods.

Protocol: Sequencing by Synthesis (Illumina Platform)

- Cluster Generation: Denature the adapter-flanked library into single strands and load onto a flow cell. Bridge amplification occurs as fragments bind to complementary oligos on the flow cell surface, creating clusters of identical DNA fragments (approximately 1,000 copies per cluster) [18] [19].

- Sequencing Cycle: Incorporate fluorescently-labeled reversible terminator nucleotides one at a time. After each incorporation, excite the flow cell with a laser and image the fluorescence emitted by each cluster to identify the base. Chemically cleave the fluorescent dye and terminating group to enable incorporation of the next nucleotide [18] [19].

- Data Acquisition: Repeat the sequencing cycle for the desired read length (typically 75-300 bp). For paired-end sequencing, perform a second round of sequencing from the opposite end of each fragment to generate higher quality data and improve mapping accuracy [19].
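Read length and paired-end mode determine how many read pairs a run must yield to reach a given depth. The estimate below is a back-of-the-envelope sketch: the panel size, on-target rate, and duplicate rate are hypothetical inputs that vary by assay, and the formula ignores read overlap within short fragments.

```python
import math


def read_pairs_required(mean_depth, target_bp, read_length_bp=150,
                        on_target_rate=0.6, duplicate_rate=0.2):
    """Estimate read pairs needed to reach a mean depth over a target region.

    Usable bases per pair = 2 reads x read length x on-target fraction x unique fraction.
    """
    usable_bases_per_pair = 2 * read_length_bp * on_target_rate * (1 - duplicate_rate)
    return math.ceil(mean_depth * target_bp / usable_bases_per_pair)


# Hypothetical 1.5 Mb panel sequenced to 500x mean depth with 2x150 bp reads
print(read_pairs_required(mean_depth=500, target_bp=1_500_000))
```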

Different NGS platforms employ distinct detection methods. Ion Torrent sequencing detects hydrogen ions released during DNA polymerization, while Pacific Biosciences' single-molecule real-time (SMRT) sequencing detects fluorescence in real time as DNA polymerase incorporates nucleotides [22]. Oxford Nanopore platforms measure changes in electrical current as DNA molecules pass through protein nanopores [22].

Table 2: Comparison of Major NGS Platforms for Tumor Profiling

| Platform | Technology | Read Length | Error Profile | Tumor Profiling Applications |
|---|---|---|---|---|
| Illumina | Sequencing by synthesis with reversible dye terminators [11] [22] | 75-300 bp (short) [19] | Low per-base error rate (0.1-0.6%) [11] | Targeted panels, whole genome, whole exome |
| Ion Torrent | Semiconductor sequencing detecting H+ ions [22] | 200-400 bp (short) [22] | Homopolymer errors [22] | Targeted gene panels, rapid sequencing |
| Pacific Biosciences | Single-molecule real-time (SMRT) sequencing [11] [22] | 10,000-25,000 bp (long) [22] | Random errors, higher per-base error rate | Complex structural variants, fusion genes |
| Oxford Nanopore | Nanopore-based electrical signal detection [11] [22] | 10,000-30,000 bp (long) [22] | Higher error rates, particularly indels [22] | Real-time sequencing, epigenetic modifications |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for NGS Tumor Profiling

| Reagent/Category | Function | Example Products | Application Notes |
|---|---|---|---|
| DNA Extraction Kits | Purify genomic DNA from FFPE tissue | QIAamp DNA FFPE Tissue kit [14] | Optimized for degraded cross-linked DNA from archived samples |
| DNA Quantitation Assays | Precisely measure DNA concentration | Qubit dsDNA HS Assay [14] | Fluorometric method superior for FFPE-derived DNA |
| Library Prep Kits | Fragment DNA, add adapters, amplify targets | NEBNext Ultra II FS DNA Library Prep [20] | Integrated enzymatic fragmentation and library construction |
| Target Enrichment Kits | Capture genomic regions of interest | Agilent SureSelectXT Target Enrichment [14] | Solution-based hybridization for custom gene panels |
| Sequence Adaptors | Platform-specific oligos for binding | NEBNext Multiplex Oligos [20] | Include barcodes for sample multiplexing |
| Quality Control Kits | Assess library size and quantity | Agilent High Sensitivity DNA Kit [14] | Critical for optimal cluster density on flow cell |

The essential components of the NGS workflow—sample preparation, library construction, and sequencing reactions—form an integrated system that enables comprehensive tumor genomic profiling. Successful implementation requires meticulous attention to each step, from initial nucleic acid extraction through final sequencing reactions. The protocols and reagents detailed in this application note provide a foundation for generating high-quality NGS data suitable for identifying actionable mutations, guiding targeted therapy selection, and advancing precision oncology research. As NGS technologies continue to evolve toward single-cell resolution, liquid biopsy applications, and integrated multi-omics approaches [11], these core workflow principles will remain essential for researchers and drug development professionals working to translate genomic insights into improved cancer outcomes.

The advent of precision oncology has fundamentally transformed cancer management, shifting the paradigm from histology-based classification to molecularly-driven therapeutic decision-making. Next-generation sequencing (NGS) and Sanger sequencing represent two distinct technological generations that enable clinicians and researchers to decipher the genomic alterations driving tumorigenesis. While Sanger sequencing, developed in 1977, provided the foundational technology for reading DNA and played a crucial role in the Human Genome Project, NGS has emerged as a revolutionary approach that leverages massively parallel sequencing to comprehensively profile cancer genomes [7] [23]. Understanding the technical capabilities, limitations, and appropriate clinical applications of each platform is essential for optimizing oncologic research and molecular diagnostics.

The selection between NGS and Sanger sequencing depends on multiple factors, including the scope of genomic interrogation required, desired sensitivity, turnaround time, and cost considerations. In modern oncology practice, each technology maintains a distinct role: NGS provides an unbiased, comprehensive view of the cancer genome, while Sanger sequencing offers a highly accurate, focused analysis of specific genomic regions [24] [11]. This application note delineates the operational parameters, clinical utility, and implementation protocols for both sequencing platforms within oncology research and diagnostics, with particular emphasis on their respective strengths in tumor profiling.

Technology Comparison: Key Operational Differences

The fundamental distinction between Sanger sequencing and NGS lies in their underlying biochemistry and detection methodologies. Sanger sequencing utilizes the chain termination method, employing dideoxynucleoside triphosphates (ddNTPs) to halt DNA synthesis at specific bases. The resulting fragments are separated by capillary electrophoresis, generating a single, long contiguous read per reaction [24]. In contrast, NGS employs massively parallel sequencing, simultaneously processing millions to billions of DNA fragments on solid surfaces or in microfluidic chambers through various chemistries, including sequencing-by-synthesis, ion semiconductor, or nanopore-based detection [24] [25].

Table 1: Technical and Operational Comparison of Sanger Sequencing and NGS

| Parameter | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Fundamental Method | Chain termination with ddNTPs | Massively parallel sequencing (e.g., SBS, ion detection) |
| Throughput | Low to medium (single fragment per reaction) | Extremely high (millions to billions of fragments simultaneously) |
| Read Length | 500-1000 bp (long contiguous reads) | 50-300 bp (short-read) to >10,000 bp (long-read) |
| Sensitivity (Variant Detection) | ~15-20% variant allele frequency (VAF) | ~1-5% VAF (down to 1% with sufficient coverage) |
| Cost per Base | High | Very low |
| Cost per Run/Experiment | Low (for small projects) | High (capital and reagent costs) |
| Time per Run | Fast (minutes to hours for individual reactions) | Hours to days (including library preparation) |
| Primary Applications in Oncology | Single-gene variant confirmation, validation of NGS findings, PCR product sequencing | Comprehensive genomic profiling, whole-genome/exome sequencing, transcriptomics, epigenomics |
| Variant Detection Capability | Limited to specific targeted regions | Single-nucleotide variants (SNVs), insertions/deletions (indels), copy number variations (CNVs), structural variants (SVs), fusion genes |
| Multiplexing Capability | Limited or none | High (hundreds of samples can be barcoded and pooled) |
| Bioinformatics Requirements | Basic (sequence alignment software) | Advanced (specialized pipelines, high-performance computing) |

The dramatically different operational characteristics of these technologies directly impact their suitability for various research and clinical applications in oncology. Sanger sequencing provides exceptional accuracy for focused analyses but lacks the scalability required for comprehensive genomic profiling. Conversely, NGS enables unparalleled discovery power through its ability to simultaneously detect multiple variant classes across hundreds of genes, albeit with more complex infrastructure requirements [24] [11].

Throughput and Cost Analysis

The economic and operational efficiencies of NGS and Sanger sequencing follow fundamentally different trajectories based on project scale. Sanger sequencing exhibits a low initial instrument cost and remains cost-effective for analyzing single genes or a limited number of targets. However, its sequential processing approach results in a high cost per base, making comprehensive genomic analyses prohibitively expensive and time-consuming [24]. The limited throughput of Sanger sequencing restricts its utility in oncology applications requiring broad genomic assessment, as analyzing hundreds of genes would necessitate hundreds to thousands of individual reactions.

NGS fundamentally altered the economics of genomic sequencing through its massively parallel architecture. While the initial capital investment for an NGS platform is substantial, the technology delivers a dramatically lower cost per base, making large-scale projects financially viable [24]. This economy of scale is particularly advantageous in oncology, where simultaneous assessment of hundreds of cancer-related genes, transcriptome profiling, and epigenetic markers may be required for comprehensive molecular characterization. The capacity for high-degree multiplexing, where hundreds of barcoded samples are pooled and sequenced simultaneously, further optimizes reagent use and operational efficiency [24] [26].
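The effect of multiplexing on per-sample cost is straightforward arithmetic, sketched below. Every figure in the example is a hypothetical placeholder rather than a quoted price; the point is only that the fixed run cost is divided across pooled samples while library preparation remains a per-sample cost.

```python
def cost_per_sample(run_reagent_cost: float, samples_per_run: int,
                    library_prep_cost_per_sample: float) -> float:
    """Per-sample cost when a fixed run cost is shared across barcoded samples."""
    if samples_per_run < 1:
        raise ValueError("samples_per_run must be at least 1")
    return run_reagent_cost / samples_per_run + library_prep_cost_per_sample


# Hypothetical run cost of 4000 and library prep cost of 150 per sample
for n_samples in (8, 32):
    print(n_samples, cost_per_sample(4000, n_samples, 150))
```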

Table 2: Economic Considerations for Sequencing Platforms in Oncology

| Cost Factor | Sanger Sequencing | Next-Generation Sequencing |
|---|---|---|
| Instrument Cost | Lower initial investment | Substantial capital investment ($250,000-$1,000,000+) |
| Cost per Base | High | Extremely low (enables large-scale projects) |
| Cost per Genome | Prohibitively high for WGS | $80-$200 (down from $3 billion in early 2000s) |
| Reagent Cost per Run | Low for individual reactions | High per run, but low per sample when multiplexed |
| Labor Costs | High for large gene panels (manual processing) | Lower per data point (automated workflows) |
| Infrastructure/Bioinformatics | Minimal | Significant ongoing investment required |
| Optimal Use Case by Scale | 1-20 targets | 20+ targets or multiple samples |

The remarkable reduction in NGS costs has been particularly transformative for oncology research and clinical applications. The cost of sequencing a human genome has plummeted from approximately $3 billion during the Human Genome Project to as low as $80-$200 in 2025, a reduction of more than 99.999% [27] [26] [28]. This precipitous cost decline has enabled the implementation of large-scale cancer genomics initiatives and made genomic profiling accessible for routine clinical care. Leading NGS platforms capable of achieving the $100-$200 genome include Illumina's NovaSeq X series, Complete Genomics' DNBSEQ-T20x2 and T7 platforms, and Ultima Genomics' UG100 [26] [28].

It is crucial to consider the total cost of ownership beyond sequencing reagents alone. Additional expenses include library preparation, bioinformatics infrastructure, data storage, and specialized personnel. These hidden costs can substantially impact the overall economics of NGS implementation, particularly in clinical settings requiring rigorous quality control, validation, and data management [29].

Clinical Utility in Oncology

The distinct technical capabilities of NGS and Sanger sequencing have established complementary roles for these technologies in clinical oncology. Appropriate technology selection depends on the specific clinical or research question, with each platform offering unique advantages for particular applications.

Sanger Sequencing Applications in Oncology

Sanger sequencing maintains a vital role in modern oncology practice, primarily in scenarios requiring high accuracy for focused genomic regions:

- Validation of NGS Findings: Confirmatory testing of clinically significant variants initially identified through NGS, leveraging Sanger's high per-base accuracy over short, focused regions [24] [11]. This practice is particularly important for verifying therapeutic targets or diagnostic markers before initiating treatment.

- Single-Gene Diagnostic Tests: Interrogation of known cancer-associated genes in hereditary cancer syndromes (e.g., BRCA1/2 in familial breast cancer) when NGS-based testing is unavailable or unnecessary [24].

- Quality Control and Verification: Essential for validating DNA constructs, CRISPR-Cas9 gene editing outcomes, and synthetic genes in basic and translational cancer research [23].

- Low-Complexity Mutation Detection: Testing for recurrent mutations in known loci, such as specific single-nucleotide polymorphisms (SNPs) or small insertions/deletions (indels) with established clinical significance [24].

The operational simplicity, long read lengths (500-1000 bp), and exceptional accuracy (Phred score > Q50 or 99.999%) of Sanger sequencing make it ideally suited for these focused applications [24]. Furthermore, the minimal bioinformatics requirements and established validation frameworks facilitate implementation in clinical laboratory settings.

NGS Applications in Oncology

NGS has become the cornerstone of precision oncology, enabling comprehensive genomic profiling that guides diagnosis, prognostication, therapeutic selection, and monitoring of treatment response [11]. Key applications include:

- Comprehensive Genomic Profiling: Simultaneous assessment of hundreds of cancer-related genes to identify actionable mutations, including SNVs, indels, CNVs, and SVs, in a single assay [11] [14]. This approach is particularly valuable in advanced malignancies with complex genomic landscapes.

- Whole-Genome Sequencing (WGS): Unbiased analysis of the entire genome, enabling detection of novel structural variations, non-coding mutations, and complex rearrangements that may drive tumorigenesis [24].

- Whole-Exome Sequencing (WES): Focused sequencing of protein-coding regions to identify causative mutations in Mendelian cancer predisposition syndromes or somatic alterations in tumors [24].

- Transcriptome Sequencing (RNA-Seq): Quantitative and qualitative analysis of gene expression, fusion transcripts, alternative splicing, and non-coding RNAs that may serve as diagnostic, prognostic, or predictive biomarkers [24] [11].

- Liquid Biopsy: Detection of circulating tumor DNA (ctDNA) in blood samples to enable non-invasive tumor genotyping, monitoring of minimal residual disease (MRD), and assessment of treatment resistance [11].

- Immuno-oncology Biomarker Discovery: Evaluation of tumor mutational burden (TMB), microsatellite instability (MSI) status, and neoantigen load to predict response to immune checkpoint inhibitors [11] [14].

- Epigenomic Profiling: Mapping of DNA methylation patterns, chromatin accessibility, and histone modifications that regulate gene expression in cancer cells [24].

The massively parallel nature of NGS provides unprecedented sensitivity for detecting low-frequency variants present in heterogeneous tumor samples. With sufficient coverage depth, NGS can reliably identify variants with allele frequencies as low as 1-5%, a crucial capability for analyzing subclonal populations in treatment-resistant cancers [24] [11]. Furthermore, the ability to multiplex hundreds of samples in a single run significantly improves operational efficiency and reduces per-sample costs for high-volume testing.
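The dependence of sensitivity on coverage depth can be illustrated with a simple binomial model: if each read at a site independently carries the variant with probability equal to the VAF, the chance of seeing at least a minimum number of supporting reads follows directly. This is a simplified sketch that ignores sequencing error, strand bias, and caller-specific filters; the minimum of five supporting reads is an assumption made for the example.

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    p_fewer = sum(
        comb(depth, k) * vaf ** k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_fewer


# Compare a 1% VAF variant at two depths, requiring >=5 supporting reads
for depth in (100, 1000):
    print(depth, round(detection_probability(depth, 0.01, 5), 4))
```

Under this model a 1% variant is rarely supported by five reads at 100x but usually is at 1000x, which is why low-frequency variant detection is tied to deep coverage.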

Experimental Protocols for Tumor Profiling

NGS-Based Comprehensive Genomic Profiling Protocol

The following protocol outlines a standardized workflow for targeted NGS-based comprehensive genomic profiling of solid tumors, adapted from established clinical pipelines [14].

Sample Preparation and Quality Control

- Tumor Specimen Selection: Identify formalin-fixed paraffin-embedded (FFPE) tumor blocks with adequate tumor cellularity (>20% tumor nuclei). Hematoxylin and eosin (H&E) stained sections should be reviewed by a qualified pathologist to annotate regions of interest for macrodissection.

- DNA Extraction: Using the QIAamp DNA FFPE Tissue Kit (Qiagen):

- Cut 4-8 sections of 5-10 μm thickness from selected FFPE blocks.

- Deparaffinize with xylene and wash with ethanol.

- Digest tissue with proteinase K at 56°C for 3 hours to overnight.

- Isolate DNA using spin columns according to manufacturer's instructions.

- Elute DNA in 30-50 μL of elution buffer.

- DNA Quantification and Quality Assessment:

- Quantify DNA using the Qubit dsDNA HS Assay Kit on the Qubit 3.0 Fluorometer.

- Assess DNA purity using NanoDrop Spectrophotometer (A260/A280 ratio between 1.7-2.2).

- Evaluate DNA fragmentation using the Agilent 2100 Bioanalyzer with the Agilent High Sensitivity DNA Kit.

- Inclusion Criteria: Minimum 20 ng DNA with A260/A280 ratio 1.7-2.2 and adequate fragmentation (majority of fragments between 200-500 bp).
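The inclusion criteria above can be expressed as a simple check. In this sketch the function name and inputs are hypothetical; "majority of fragments" is interpreted as more than half of the measured fragments falling in the 200-500 bp window, which is an assumption about how the criterion would be applied.

```python
def dna_passes_inclusion(conc_ng_per_ul: float, elution_volume_ul: float,
                         a260_a280: float, frac_fragments_200_500bp: float) -> bool:
    """Check yield (>=20 ng), purity (A260/A280 1.7-2.2), and fragment-size criteria."""
    total_ng = conc_ng_per_ul * elution_volume_ul
    sufficient_yield = total_ng >= 20
    acceptable_purity = 1.7 <= a260_a280 <= 2.2
    acceptable_size = frac_fragments_200_500bp > 0.5  # "majority" read as >50%
    return sufficient_yield and acceptable_purity and acceptable_size


# Hypothetical extract: 1.5 ng/uL in 40 uL, A260/A280 of 1.85, 62% of fragments in range
print(dna_passes_inclusion(1.5, 40, 1.85, 0.62))
```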

Library Preparation and Target Enrichment

- Library Preparation: Using the Agilent SureSelectXT Target Enrichment System:

- Fragment DNA to 150-200 bp using ultrasonication (Covaris S2) or enzymatic fragmentation.

- Repair DNA ends and add 'A' bases to 3' ends.

- Ligate Illumina-compatible adapters with unique dual indices for sample multiplexing.

- Amplify ligated DNA with 8-10 PCR cycles.

- Validate library size distribution (250-400 bp) using the Agilent High Sensitivity DNA Kit.

- Target Enrichment:

- Hybridize libraries to biotinylated RNA baits targeting a pan-cancer gene panel (e.g., 544 genes as in the SNUBH Pan-Cancer v2.0 Panel [14]).

- Incubate at 65°C for 16-24 hours.

- Capture hybridized fragments using streptavidin-coated magnetic beads.

- Wash to remove non-specifically bound DNA.

- Amplify captured libraries with 12-14 PCR cycles.

- Library Quantification and Normalization: Quantify final libraries using qPCR and normalize to 2-4 nM for sequencing.
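Normalization to a target molarity follows the usual dilution relation C1 x V1 = C2 x V2. The helper below is a minimal sketch; the stock concentration and final volume in the example are hypothetical.

```python
def dilution_volumes(stock_nm: float, target_nm: float, final_volume_ul: float):
    """Return (library volume, diluent volume) in uL to reach target_nm in final_volume_ul."""
    if target_nm > stock_nm:
        raise ValueError("library is already below the target concentration")
    library_ul = target_nm * final_volume_ul / stock_nm
    return library_ul, final_volume_ul - library_ul


# Hypothetical 10 nM library normalized to 4 nM in a 20 uL final volume
print(dilution_volumes(10.0, 4.0, 20.0))
```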

Sequencing and Data Analysis

- Sequencing:

- Denature and dilute libraries to appropriate loading concentration (1.2-1.8 pM).

- Load onto Illumina NextSeq 550Dx or similar sequencing platform.

- Sequence using 2×150 bp paired-end chemistry, targeting at least 100× coverage across a minimum of 80% of targets and a mean depth of 500-800×.

- Bioinformatics Analysis:

- Demultiplex reads based on dual indices.

- Align to reference genome (hg19/GRCh37) using optimized aligners (e.g., BWA-MEM).

- Perform base quality score recalibration and local realignment.

- Call variants using validated pipelines:

- SNVs/indels: Mutect2 with minimum VAF ≥ 2% [14]

- CNVs: CNVkit with amplification threshold ≥ 5 copies

- Structural variants: LUMPY with read count ≥ 3 for positive calls

- Annotate variants using SnpEff and clinical databases.

- Calculate TMB and MSI status using established algorithms.

- Variant Interpretation and Reporting:

- Classify variants according to AMP/ASCO/CAP guidelines:

- Tier I: Variants of strong clinical significance

- Tier II: Variants of potential clinical significance

- Tier III: Variants of unknown significance

- Tier IV: Benign or likely benign variants [14]

- Generate clinical report highlighting actionable findings.
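The four-tier scheme lends itself to a small ordered type for sorting report content. The sketch below is illustrative only: the variant labels are placeholders, and treating Tiers I and II as the "actionable" set for report highlighting is an assumption rather than a rule stated in the protocol.

```python
from enum import IntEnum


class Tier(IntEnum):
    I = 1    # strong clinical significance
    II = 2   # potential clinical significance
    III = 3  # unknown significance
    IV = 4   # benign or likely benign


def prioritize_for_report(classified):
    """Sort (variant_label, Tier) pairs so the most significant tiers come first."""
    return sorted(classified, key=lambda item: item[1])


def actionable(classified):
    """Labels of Tier I/II variants (assumed here to be the highlighted findings)."""
    return [label for label, tier in prioritize_for_report(classified) if tier <= Tier.II]


calls = [("variant_A", Tier.III), ("variant_B", Tier.I),
         ("variant_C", Tier.IV), ("variant_D", Tier.II)]
print(actionable(calls))
```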

[Figure: NGS Tumor Profiling Workflow: Sample to Report Pathway]

Sanger Sequencing Validation Protocol

This protocol describes the standard workflow for validating NGS-derived variants using Sanger sequencing, ensuring high-confidence variant detection for clinical reporting.

PCR Amplification

- Primer Design:

- Design primers flanking the target variant using software such as Primer3.

- Ensure amplicon size of 300-500 bp for optimal sequencing quality.

- Position primers to avoid known polymorphisms, repetitive elements, and secondary structures.

- Verify specificity using BLAST against the reference genome.

- PCR Reaction Setup:

- Prepare 25 μL reactions containing:

- 10-20 ng genomic DNA

- 1× PCR buffer

- 1.5 mM MgCl₂

- 0.2 mM dNTPs

- 0.2 μM forward and reverse primers

- 1 U high-fidelity DNA polymerase

- Perform thermal cycling:

- Initial denaturation: 95°C for 2 minutes

- 35 cycles: 95°C for 30 seconds, 58-62°C for 30 seconds, 72°C for 45 seconds

- Final extension: 72°C for 5 minutes

- PCR Product Purification:

- Treat with exonuclease I and shrimp alkaline phosphatase to remove excess primers and dNTPs.

- Incubate at 37°C for 30 minutes followed by 80°C for 15 minutes for enzyme inactivation.

- Alternatively, use commercial PCR purification kits.
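Pipetting volumes for the PCR setup follow from the final concentrations listed above and the stock concentrations on hand. The stock values below (10x buffer, 25 mM MgCl2, 10 mM dNTPs, 10 uM primers) and the 10% overage are assumptions for illustration; template DNA, polymerase, and water are added separately and are not modeled here.

```python
def component_volume_ul(final_conc: float, stock_conc: float,
                        reaction_volume_ul: float = 25.0) -> float:
    """Volume of stock needed per reaction (C1 x V1 = C2 x V2, matching units)."""
    return final_conc * reaction_volume_ul / stock_conc


# name: (final concentration from the protocol, ASSUMED stock concentration)
COMPONENTS = {
    "PCR buffer (x)": (1.0, 10.0),
    "MgCl2 (mM)": (1.5, 25.0),
    "dNTPs (mM)": (0.2, 10.0),
    "forward primer (uM)": (0.2, 10.0),
    "reverse primer (uM)": (0.2, 10.0),
}


def master_mix_volumes(n_reactions: int, overage: float = 0.10,
                       reaction_volume_ul: float = 25.0) -> dict:
    """Scale per-reaction volumes to n reactions plus a pipetting overage."""
    scale = n_reactions * (1 + overage)
    return {
        name: component_volume_ul(final, stock, reaction_volume_ul) * scale
        for name, (final, stock) in COMPONENTS.items()
    }


for name, volume in master_mix_volumes(10).items():
    print(f"{name}: {volume:.2f} uL")
```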

Sequencing Reaction and Electrophoresis

- Cycle Sequencing:

- Prepare 10 μL reactions containing:

- 1-5 ng purified PCR product

- 1× sequencing buffer

- 0.5 μM sequencing primer (forward or reverse)

- 0.5 μL BigDye Terminator v3.1

- Perform thermal cycling:

- Initial denaturation: 96°C for 1 minute

- 25 cycles: 96°C for 10 seconds, 50°C for 5 seconds, 60°C for 4 minutes

- Purification of Sequencing Products:

- Remove unincorporated dyes using ethanol/sodium acetate precipitation or commercial purification plates.

- Resuspend in 10-15 μL of Hi-Di formamide.

- Capillary Electrophoresis:

- Denature samples at 95°C for 2 minutes and immediately place on ice.

- Load onto ABI 3500 or similar genetic analyzer.

- Run using standard sequencing module with POP-7 polymer.

- Data Analysis:

- Perform base calling using Sequencing Analysis Software.

- Align sequences to reference using programs such as Sequencher or Geneious.

- Manually inspect chromatograms for variant confirmation.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Reagents and Materials for Sequencing-Based Tumor Profiling

| Category | Specific Products/Kits | Application | Key Features |
|---|---|---|---|
| DNA Extraction | QIAamp DNA FFPE Tissue Kit (Qiagen) | Isolation of high-quality DNA from FFPE tumor specimens | Optimized for fragmented DNA, removes PCR inhibitors |
| DNA Quantification | Qubit dsDNA HS Assay Kit (Invitrogen) | Accurate quantification of double-stranded DNA | Fluorometric specificity for dsDNA, insensitive to RNA |
| DNA Quality Assessment | Agilent High Sensitivity DNA Kit (Bioanalyzer) | Evaluation of DNA fragmentation and size distribution | Microfluidics-based analysis, requires small sample input |
| NGS Library Preparation | SureSelectXT Target Enrichment System (Agilent) | Library preparation and hybrid capture-based target enrichment | Compatible with FFPE DNA, flexible target design |
| NGS Sequencing | Illumina NextSeq 550Dx, MiSeqDx | Clinical-grade sequencing platforms | FDA-cleared systems, integrated data analysis |
| Sanger Sequencing | BigDye Terminator v3.1 (Applied Biosystems) | Cycle sequencing for variant validation | Optimized chemistry, high signal-to-noise ratio |
| Capillary Electrophoresis | ABI 3500 Genetic Analyzer (Applied Biosystems) | Fragment separation and detection for Sanger sequencing | 8-capillary array, high base-calling accuracy |
| Variant Annotation | SnpEff, ANNOVAR | Functional annotation of genetic variants | Open-source tools, comprehensive database integration |
| Variant Interpretation | ClinVar, OncoKB, COSMIC | Clinical interpretation of cancer variants | Expert-curated databases, therapy-specific annotations |

Technology Application Map: NGS and Sanger Sequencing Roles

The complementary roles of NGS and Sanger sequencing in modern oncology reflect a sophisticated approach to genomic medicine that leverages the unique strengths of each technology. NGS provides the comprehensive, unbiased profiling capability essential for deciphering the complex genomic landscape of cancer, while Sanger sequencing delivers the exceptional accuracy required for definitive validation of critical findings. This synergistic relationship enables clinicians and researchers to balance breadth of genomic interrogation with analytical precision, optimizing patient care and research outcomes.

Future developments in sequencing technology will likely further refine these roles. Third-generation sequencing platforms offering long-read capabilities, real-time analysis, and direct epigenetic detection continue to mature, potentially addressing current limitations in structural variant detection and haplotype phasing [7]. Meanwhile, ongoing innovations in Sanger sequencing, including microfluidic integration and enhanced detection chemistries, promise to maintain its relevance for focused applications requiring the highest accuracy [23]. As the cost of comprehensive genomic profiling continues to decline, the strategic implementation of both technologies within integrated diagnostic workflows will be essential for advancing precision oncology and delivering on the promise of personalized cancer care.

Comprehensive Genomic Profiling (CGP) represents a transformative approach in oncology that utilizes next-generation sequencing (NGS) technologies to perform detailed genomic analysis of cancers [30]. Unlike traditional single-gene tests that focus on a limited set of mutations, CGP simultaneously analyzes hundreds of gene markers across the tumor genome, providing unprecedented insights into the complex molecular landscape of individual cancers [31]. This comprehensive analysis identifies clinically relevant mutations that can be targeted with specific drug therapies, making CGP an indispensable tool for advancing precision oncology and moving beyond the limitations of histology-based treatment decisions [31] [2].

The clinical implementation of CGP has demonstrated significant impact on patient management. In the large-scale BALLETT study, which analyzed 872 patients with advanced cancers, CGP successfully identified actionable genomic markers in 81% of patients—substantially higher than the 21% detection rate achievable using nationally reimbursed small panels [2]. This enhanced detection capability directly translates to improved therapeutic matching, with studies confirming that patients receiving CGP-guided targeted therapies experience significantly longer progression-free survival (PFS) and overall survival (OS) across multiple tumor types [2].

Key Analytical Targets and Detection Capabilities

CGP provides a consolidated approach to biomarker detection by simultaneously evaluating multiple genomic alteration types and complex biomarkers that traditionally required separate testing methodologies. The comprehensive nature of this analysis enables a more complete understanding of tumor biology and therapeutic opportunities.

Table 1: Genomic Alterations Detectable by Comprehensive Genomic Profiling

| Alteration Type | Detection Capability | Clinical Significance |
|---|---|---|
| Single Nucleotide Variants (SNVs) | Base substitutions | Driver mutations, therapeutic targets |
| Insertions/Deletions (Indels) | Small sequence additions/removals | Protein function alteration |
| Copy Number Variations (CNVs) | Gene amplifications/deletions | Oncogene activation, tumor suppressor loss |
| Gene Rearrangements | Structural variants, gene fusions | Novel oncogenic drivers |
| Tumor Mutational Burden (TMB) | Mutations per megabase | Immunotherapy response predictor |
| Microsatellite Instability (MSI) | Repetitive DNA sequence stability | Immunotherapy eligibility |
| Homologous Recombination Deficiency (HRD) | DNA repair deficiency | PARP inhibitor sensitivity |

The detection frequency of these alterations varies significantly across cancer types. In advanced Non-Small Cell Lung Cancer (NSCLC), for instance, CGP identifies clinically actionable alterations in approximately 45% of patients, with KRAS G12C mutations (18%) and EGFR alterations (14%) being among the most common [31]. In advanced soft tissue and bone sarcomas, CGP reveals a different molecular landscape, with TP53 mutations (38%), RB1 alterations (22%), and CDKN2A mutations (14%) predominating [12]. This tumor-specific variation underscores the importance of comprehensive rather than targeted mutation testing, particularly for cancers with complex genomic architectures.

CGP Workflow and Experimental Protocol

The successful implementation of CGP requires meticulous attention to each step of the analytical process, from sample acquisition through data interpretation. The following workflow outlines the standardized protocol for CGP analysis:

Sample Preparation and Quality Control

The initial phase begins with nucleic acid extraction from formalin-fixed paraffin-embedded (FFPE) tumor tissue, which remains the most common sample type for CGP analysis [31]. The quality and quantity of extracted DNA and RNA are assessed against platform-specific requirements, which typically include a minimum of 50 ng DNA for library construction [31]. For cases where tissue samples are inadequate, liquid biopsy alternatives using circulating tumor DNA from plasma can be employed, though this approach may have limitations in genomic coverage [31] [2]. Sample age does not significantly impact CGP success rates, enabling the utilization of archival tissue specimens [2].
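The input requirement above can be expressed as a simple pre-library gate. The following is a minimal sketch, not a validated laboratory rule: it assumes the yield is estimated as fluorometric concentration multiplied by the available elution volume, and the names `Extract`, `passes_input_qc`, and `triage` are hypothetical. The 50 ng threshold is the figure quoted in the text and should be replaced by the requirement of the platform actually in use.

```python
from dataclasses import dataclass

# Minimum DNA input quoted in the text; real thresholds are platform-specific.
MIN_DNA_INPUT_NG = 50.0


@dataclass
class Extract:
    """One nucleic acid extract (hypothetical record layout)."""
    sample_id: str
    concentration_ng_per_ul: float  # e.g. a fluorometric dsDNA reading
    volume_ul: float                # elution volume available for library prep

    @property
    def total_ng(self) -> float:
        # Total yield = concentration x volume
        return self.concentration_ng_per_ul * self.volume_ul


def passes_input_qc(extract: Extract, min_ng: float = MIN_DNA_INPUT_NG) -> bool:
    """Return True when the extract holds at least the minimum input mass."""
    return extract.total_ng >= min_ng


def triage(extracts):
    """Split sample IDs into (proceed, review) lists based on input mass only."""
    proceed = [e.sample_id for e in extracts if passes_input_qc(e)]
    review = [e.sample_id for e in extracts if not passes_input_qc(e)]
    return proceed, review


if __name__ == "__main__":
    batch = [Extract("S1", 2.5, 30.0), Extract("S2", 0.8, 40.0)]
    print(triage(batch))
```

Samples placed in the review list are the natural candidates for re-extraction or for the liquid biopsy alternative mentioned above. A production gate would also consider fragment size distribution and tumor content, which this sketch deliberately leaves out.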

Library Construction and Target Enrichment

Library preparation involves fragmenting the genomic DNA to appropriate sizes (approximately 300 bp) and attaching platform-specific adapter sequences [18]. These adapters are essential for fragment amplification and sequencing platform attachment. Following adapter ligation, target enrichment is performed using either PCR amplification with specific primers or hybridization-based capture with exon-specific probes to isolate coding regions of interest [18]. The constructed libraries undergo rigorous quality assessment through quantitative PCR and other metrics to ensure they meet sequencing standards before proceeding to the sequencing reaction.

Sequencing Reaction and Data Generation

CGP relies on massively parallel sequencing, which processes millions of DNA fragments simultaneously, a significant advance over traditional Sanger sequencing, which processes fragments individually [18]. The most commonly employed technology is Illumina sequencing, which involves immobilizing library fragments on a flow cell surface, amplifying them through bridge PCR to form clusters of identical sequences, and then performing cyclic fluorescence-based nucleotide incorporation detection [18]. Other platforms such as Ion Torrent and Pacific Biosciences employ different detection methodologies, including semiconductor-based detection and single-molecule real-time sequencing [18].

Bioinformatic Analysis and Variant Calling

The massive data output from CGP requires sophisticated bioinformatics pipelines for processing and interpretation. Initial steps include sequence alignment to reference genomes, followed by variant calling to identify mutations, copy number alterations, and structural rearrangements [18]. Additional algorithms assess complex biomarkers such as tumor mutational burden (TMB), calculated as mutations per megabase, and microsatellite instability (MSI) status [12] [2]. The analytical challenge lies in distinguishing driver mutations from passenger mutations and accurately interpreting the clinical significance of identified variants.
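Because TMB is defined above as mutations per megabase, the calculation itself is a single normalization. The sketch below illustrates only that arithmetic. The function name `tumor_mutational_burden` and the example panel size are assumptions for illustration; which variants are eligible for counting (for example, whether synonymous variants are included and how germline variants are filtered) differs between assays and is not modeled here.

```python
def tumor_mutational_burden(somatic_mutation_count: int, panel_size_bp: int) -> float:
    """Return TMB in mutations per megabase.

    somatic_mutation_count: number of eligible somatic mutations after
        assay-specific filtering (the filtering itself is out of scope here).
    panel_size_bp: size in base pairs of the sequenced region over which
        mutations were counted.
    """
    if panel_size_bp <= 0:
        raise ValueError("panel_size_bp must be positive")
    if somatic_mutation_count < 0:
        raise ValueError("somatic_mutation_count must be non-negative")
    # Scale the count to a per-megabase (1,000,000 bp) rate.
    return somatic_mutation_count * 1_000_000 / panel_size_bp


if __name__ == "__main__":
    # Hypothetical example: 12 eligible mutations over a 1.2 Mb region.
    print(tumor_mutational_burden(12, 1_200_000))
```

Normalizing by the counted region is what makes values from panels of different sizes nominally comparable, although smaller panels yield noisier estimates because each additional mutation shifts the rate by a larger amount.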

Key Reagent Solutions for CGP Implementation

The successful implementation of CGP requires specialized reagents and platforms designed to handle the complexity of genomic analysis. The following table outlines essential research reagent solutions and their functions in the CGP workflow:

Table 2: Essential Research Reagent Solutions for Comprehensive Genomic Profiling

| Reagent Category | Specific Examples | Function in Workflow |
|---|---|---|
| Commercial CGP Panels | FoundationOne CDx, FoundationOne Liquid CDx, Tempus xT | Targeted gene panels for comprehensive mutation profiling |
| Nucleic Acid Extraction Kits | FFPE DNA/RNA extraction kits, plasma ctDNA kits | High-quality nucleic acid isolation from various sample types |
| Library Preparation Kits | Hybridization capture kits, amplicon-based kits | Fragment end-repair, adapter ligation, target enrichment |
| Sequencing Reagents | Illumina sequencing chemistry, Ion Torrent reagents | Fluorescently-labeled nucleotides, polymerase enzymes |
| Bioinformatic Tools | Variant callers, TMB algorithms, fusion detectors | Automated variant identification and annotation |

Commercial CGP panels such as FoundationOne CDx interrogate 324 genes for substitutions, indels, copy number alterations, rearrangements, and genomic signatures including TMB and MSI [31]. The analytical validation of these platforms ensures reliable detection of clinically actionable biomarkers across diverse cancer types, enabling their implementation in both research and clinical settings.

Analytical Considerations and Quality Metrics

The implementation of CGP requires careful attention to analytical performance characteristics and quality metrics. In the BALLETT study, CGP was successful in 93% of enrolled patients (814 of 872), with variability observed based on tumor type and laboratory procedures [2]. The median turnaround time from sample acquisition to final report was 29 days, though this varied significantly across institutions (range: 18-45 days) [2]. This timeline represents a critical consideration for clinical implementation, particularly in advanced cancer settings where treatment decisions are time-sensitive.

The complexity of CGP data interpretation necessitates multidisciplinary collaboration through Molecular Tumor Boards (MTBs), where oncologists, pathologists, geneticists, and bioinformaticians collectively review findings and generate clinical recommendations [2]. In the BALLETT study, MTBs provided treatment recommendations for 69% of patients, with 23% ultimately receiving matched therapies [2]. The primary barriers to implementation included drug accessibility, clinical trial eligibility, and patient performance status—highlighting that technological capability alone is insufficient without corresponding systemic support.

Comprehensive Genomic Profiling represents a fundamental advancement in cancer diagnostics, consolidating multiple biomarker assessments into a unified platform that provides unprecedented insights into tumor biology. The ability to simultaneously evaluate hundreds of genes and complex genomic signatures positions CGP as an essential tool for precision oncology, enabling the identification of actionable therapeutic targets across diverse cancer types. As the technology continues to evolve and implementation barriers are addressed, CGP promises to become increasingly integral to cancer research and drug development, ultimately improving outcomes for patients with advanced malignancies through more personalized treatment approaches.

Implementing NGS in Tumor Profiling: Targeted Panels, Liquid Biopsies, and Clinical Applications

Targeted Next-Generation Sequencing (NGS) has revolutionized oncological research by enabling researchers to sequence specific genomic regions of interest while omitting irrelevant portions of the genome. This approach significantly reduces the time and cost associated with whole-genome sequencing while providing deeper coverage of targeted regions, facilitating the identification of both known and novel variants within a defined gene set [32]. For cancer research, targeted NGS panels allow high-throughput analysis of large genomic regions in a single, efficient assay, providing significantly higher sensitivity for discovering rare somatic mutations that often serve as important cancer drivers [33].

The fundamental principle behind target enrichment is that DNA libraries can be modified to deliberately overrepresent specific genetic loci prior to sequencing [34]. By focusing only on regions relevant to cancer biology, researchers can reallocate resources to achieve deeper, higher-quality data, which is particularly valuable for detecting low-frequency variants in heterogeneous tumor samples or minimal residual disease [35]. Two primary methodologies have emerged for target enrichment: amplicon-based sequencing and hybridization capture-based sequencing. Each approach offers distinct advantages and limitations that must be carefully considered when designing tumor profiling studies [32].
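The trade-off described above, where narrowing the target buys depth, can be made concrete with a back-of-the-envelope calculation. The sketch below is illustrative only: the read count, read length, on-target fraction, and target sizes are hypothetical inputs, and the detection model is a plain binomial that ignores sequencing error, duplicates, and uneven coverage. The function names are likewise assumptions.

```python
from math import comb


def mean_target_depth(total_reads: int, read_length_bp: int,
                      on_target_fraction: float, target_size_bp: int) -> float:
    """Approximate mean depth: on-target sequenced bases / target size."""
    return total_reads * read_length_bp * on_target_fraction / target_size_bp


def prob_at_least_k_alt_reads(depth: int, vaf: float, k: int) -> float:
    """P(X >= k) for X ~ Binomial(depth, vaf).

    Models the chance of sampling at least k variant-supporting reads at a
    site with the given depth and variant allele fraction (vaf).
    """
    p_fewer = sum(
        comb(depth, i) * vaf**i * (1.0 - vaf) ** (depth - i)
        for i in range(k)
    )
    return max(0.0, 1.0 - p_fewer)


if __name__ == "__main__":
    reads, read_len = 40_000_000, 150  # same hypothetical run in both cases

    # Whole genome (~3.1 Gb, every read counted as on-target)
    wgs_depth = mean_target_depth(reads, read_len, 1.0, 3_100_000_000)
    # 1.5 Mb panel with an assumed 60% of reads on target
    panel_depth = mean_target_depth(reads, read_len, 0.6, 1_500_000)

    for label, depth in (("genome", wgs_depth), ("panel", panel_depth)):
        p = prob_at_least_k_alt_reads(int(depth), vaf=0.01, k=5)
        print(f"{label}: ~{depth:.0f}x, P(>=5 alt reads at 1% VAF) = {p:.3g}")
```

Under these assumed inputs, the same sequencing output that covers a genome only about twice covers the panel thousands of times, which is why a 1% variant that is effectively invisible genome-wide becomes readily sampled in the enriched library.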

Key Methodological Differences

Amplicon-Based Sequencing