CNN vs. RNN in Cancer Genomics: A Comprehensive Performance Comparison for Precision Oncology

Abstract

Deep learning architectures, particularly Convolutional and Recurrent Neural Networks, are revolutionizing cancer genomics by enabling the analysis of high-dimensional data for improved detection, classification, and treatment selection. This article provides a systematic comparison of CNN and RNN performance across key applications in cancer research, including gene expression-based classification, somatic variant detection, and integration with histopathological data. We explore foundational principles, methodological adaptations for genomic sequences, and strategies to overcome challenges such as data heterogeneity and model interpretability. By synthesizing evidence from recent studies and benchmarking efforts, this review offers actionable insights for researchers and clinicians selecting optimal deep-learning frameworks to advance precision oncology, highlighting future directions for clinical translation and multimodal data integration.

Understanding CNN and RNN Architectures: Core Principles for Genomic Data Analysis

In the field of cancer genomics, the selection of an appropriate neural network architecture is a fundamental decision that directly impacts the performance and efficacy of computational models. As high-throughput technologies generate increasingly complex and voluminous genomic data, deep learning architectures offer powerful tools for extracting meaningful patterns. This guide provides an objective comparison of three foundational architectures—Multi-Layer Perceptron (MLP), Convolutional Neural Network (CNN), and Recurrent Neural Network (RNN)—specifically for cancer genomics research. We evaluate their performance based on experimental data, detail key methodologies, and provide visualizations of their application workflows to inform researchers, scientists, and drug development professionals.

The core building blocks of MLP, CNN, and RNN architectures process genomic information differently, leading to distinct strengths and limitations for specific tasks in cancer research.

Multi-Layer Perceptrons (MLPs), also known as fully connected networks, form the most basic type of artificial neural network. In an MLP, each neuron is connected to every neuron in the previous and subsequent layers. For genomic data, the input layer typically receives a vector representing the expression levels of thousands of genes [1]. These models excel at learning global, non-linear relationships across the entire input feature set but lack inherent mechanisms to capture spatial or sequential dependencies in the data.

Convolutional Neural Networks (CNNs) were originally designed for processing image data but have been successfully adapted for genomic sequences. They utilize mathematical convolution operations and pooling layers to automatically extract hierarchical features [2]. Their strength lies in identifying local patterns—such as motifs in a DNA sequence or specific gene expression signatures—regardless of their position, making them highly efficient for detecting characteristic genomic markers of cancer [3] [1].
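To make the idea of position-independent local pattern detection concrete, here is a minimal pure-Python sketch of a 1D convolution followed by global max pooling. The kernel values and the two toy input vectors are hypothetical and chosen only for illustration; real models learn their kernels and are usually built with a deep learning framework.

```python
def conv1d(signal, kernel):
    """Valid 1D cross-correlation (the operation deep learning libraries call convolution)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def global_max_pool(feature_map):
    """Keep only the strongest response, discarding where it occurred."""
    return max(feature_map)


# Hypothetical kernel that responds to a local "high, high, low" pattern.
kernel = [1.0, 1.0, -1.0]

# The same toy pattern placed early and late in two otherwise empty vectors.
early = [0, 2, 2, 0, 0, 0, 0, 0]
late = [0, 0, 0, 0, 0, 2, 2, 0]

print(global_max_pool(conv1d(early, kernel)))
print(global_max_pool(conv1d(late, kernel)))
```

Both inputs yield the same pooled response, which is the sense in which a convolution plus pooling detects a pattern regardless of its position.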

Recurrent Neural Networks (RNNs), including variants like Long Short-Term Memory (LSTM) networks, are specialized for sequential data. They process inputs step-by-step while maintaining an internal "memory" of previous information through recurrent connections [2] [1]. This architecture is particularly suited for modeling genomic sequences where the order of elements (e.g., nucleotides in a gene or temporal changes in gene expression) carries critical biological meaning [1].
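The step-by-step processing with an internal memory can be sketched with a single-unit Elman-style recurrence. This is an illustrative simplification, not an LSTM: the weights `w_in` and `w_rec` are hypothetical fixed values, whereas a trained network would learn them.

```python
import math


def rnn_forward(inputs, w_in, w_rec, bias=0.0):
    """One-unit recurrence: h_t = tanh(w_in * x_t + w_rec * h_{t-1} + bias)."""
    h = 0.0  # initial memory
    states = []
    for x in inputs:
        h = math.tanh(w_in * x + w_rec * h + bias)
        states.append(h)
    return states


# Same elements, different order: the final hidden state differs,
# which is how the recurrence encodes sequence order.
signal_first = rnn_forward([1.0, 0.0, 0.0], w_in=1.0, w_rec=0.5)
signal_last = rnn_forward([0.0, 0.0, 1.0], w_in=1.0, w_rec=0.5)
print(signal_first[-1], signal_last[-1])
```

The early signal has partly decayed by the last step while the late signal is still strong, so the two orderings are distinguishable from the final state alone.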

Table 1: Performance Comparison of Neural Network Architectures in Cancer Genomics Applications.

| Architecture | Reported Best Accuracy | Application Context | Key Strength | Primary Limitation |

|---|---|---|---|---|

| MLP | Varies significantly with model configuration [4] | Predicting radiosensitivity from gene expression data [4] | Fast initial convergence and short training time per epoch [4] | Lower prediction accuracy and high performance dependence on model configuration [4] |

| CNN | High accuracy; often superior to MLP for gene expression [4] [1] | Radiosensitivity prediction [4]; Cancer-type classification [3] [1] | High prediction accuracy, low training fluctuations, efficient at capturing local spatial features [4] [1] | Requires transformation of gene data into image-like formats in some applications [1] |

| RNN | Effective for sequence and time-series modeling [1] | Analyzing gene sequences and temporal expression patterns [2] [1] | Models long-range dependencies and sequential dependencies in data [1] | Higher computational cost, more susceptible to overfitting with small datasets [1] |

| Hybrid (1D-CNN + RNN) | 100% (Brain cancer classification on CuMiDa dataset) [3] | Multi-class classification of brain cancer from gene expression data [3] | Combines local feature detection (CNN) with sequence modeling (RNN) for superior performance [3] | Increased model complexity and computational demands [3] |

Detailed Experimental Protocols

To ensure the reproducibility of the cited performance benchmarks, this section outlines the key methodological details from the featured experiments.

CNN and MLP for Radiosensitivity Prediction

A direct comparison of MLP and CNN models was conducted to predict the clonogenic surviving fraction at 2 Gy (SF2)—a measure of cellular radiosensitivity—using microarray gene expression data from the National Cancer Institute-60 (NCI-60) cell line panel [4].

- Data Source: Publicly available gene expression data and clonogenic SF2 values from the NCI-60 cell lines.

- Model Variants: The study compared three distinct MLP architectures and four different CNN models.

- Training Protocol: Models were trained and evaluated with k-fold cross-validation to obtain a more robust performance estimate.

- Performance Metrics: A prediction was counted as accurate when its absolute error was below 0.02 or its relative error was below 10%. The study also compared models on secondary metrics such as training time per epoch, training fluctuations, and computational resource requirements [4].
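The evaluation steps in this protocol can be sketched as follows. This is a hedged illustration, not the study's code: the helper names are hypothetical, the number of folds is an assumption (the protocol above does not state it), and the SF2 values in the usage lines are made up.

```python
import random


def is_accurate(predicted, observed, abs_tol=0.02, rel_tol=0.10):
    """True if the absolute error is < abs_tol or the relative error is < rel_tol."""
    abs_err = abs(predicted - observed)
    rel_err = abs_err / abs(observed) if observed != 0 else float("inf")
    return abs_err < abs_tol or rel_err < rel_tol


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        yield train, folds[i]


# Made-up SF2 predictions versus observations.
print(is_accurate(0.51, 0.50))  # small absolute error
print(is_accurate(0.85, 0.80))  # larger absolute error, but under 10% relative
print(is_accurate(0.30, 0.20))  # fails both criteria

# k=5 is an assumed value for illustration only.
for train, test in k_fold_indices(60, k=5):
    print(len(train), len(test))
```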

Hybrid 1D-CNN and RNN for Brain Cancer Classification

A state-of-the-art result was achieved using a hybrid deep-learning model for classifying five categories of brain cancer from gene expression data [3].

- Dataset: The GSE50161 brain cancer gene expression dataset from the Curated Microarray Database (CuMiDa). It contains 54,676 genes and 130 samples across five classes: Ependymoma, Glioblastoma, Medulloblastoma, Pilocytic Astrocytoma, and normal tissue [3].

- Data Partitioning: The dataset was split into 80% for training and 20% for testing.

- Model Architecture:

  - 1D-CNN Stage: A one-dimensional convolutional network was applied directly to the gene expression profile vectors to extract local features.

  - RNN Stage: The features learned by the CNN were then fed into a recurrent neural network to model dependencies and contextual information.

- Hyperparameter Optimization: A final version of the model used Bayesian optimization (BO) to automatically find the best hyperparameters, maximizing classification performance [3].

- Performance Benchmarking: The hybrid model's performance was compared against six conventional machine learning models (Support Vector Machine, Random Forest, etc.) previously applied to the same dataset [3].
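The 80%/20% partitioning step can be sketched as a per-class (stratified) split, which keeps every class represented in the test set when samples are scarce. Stratification is an assumption here, and the toy labels below are placeholders with equal class sizes, not the actual class counts of GSE50161.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices) with test_fraction taken from each class."""
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)

    rng = random.Random(seed)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


# Placeholder labels: five classes with ten samples each.
classes = ["ependymoma", "glioblastoma", "medulloblastoma",
           "pilocytic_astrocytoma", "normal"]
toy_labels = [c for c in classes for _ in range(10)]

train_idx, test_idx = stratified_split(toy_labels)
print(len(train_idx), len(test_idx))
```

With only 130 samples in the real dataset, a 20% test set is small, so a single split gives a noisy accuracy estimate; repeating the split with different seeds is one way to gauge that variability.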

Workflow Visualization

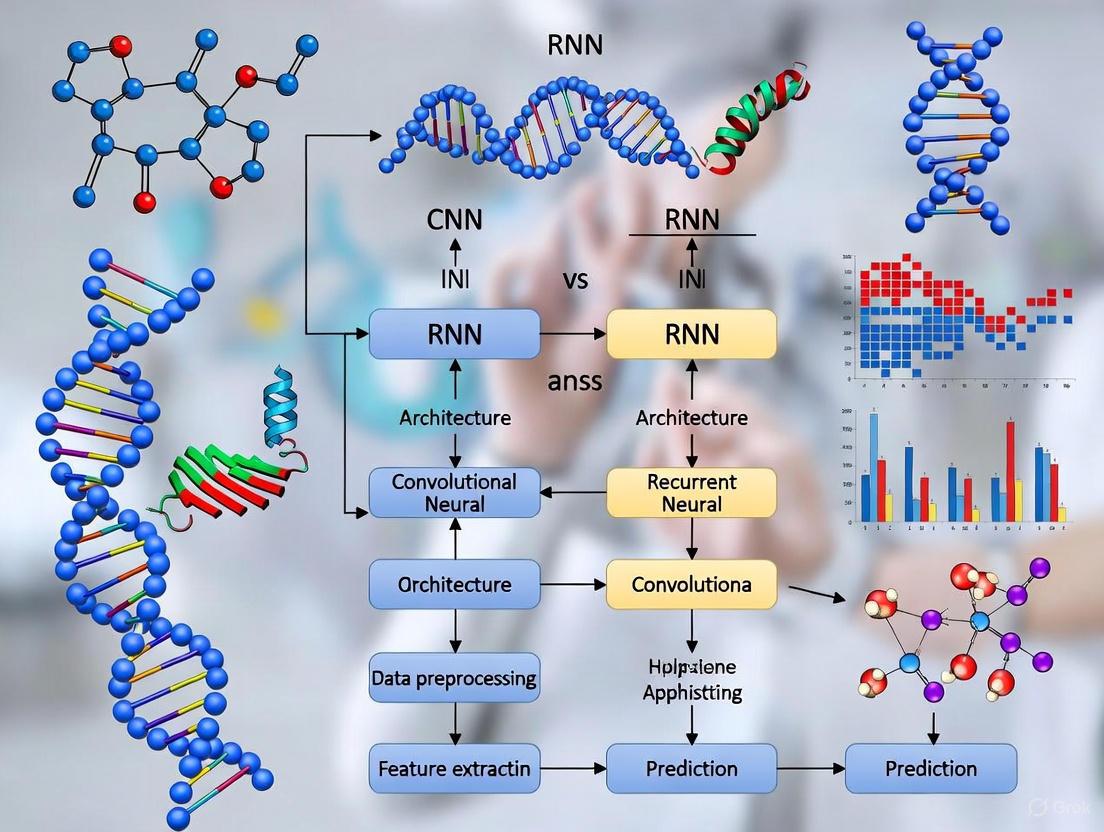

The following diagram illustrates the workflow of the hybrid 1D-CNN and RNN model, which achieved the highest performance in brain cancer classification as discussed in the experimental protocols [3].

Diagram 1: Hybrid 1D-CNN and RNN workflow for brain cancer classification.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of deep learning models in cancer genomics relies on a foundation of specific data resources and computational tools. The table below details key components used in the featured experiments.

Table 2: Key Research Reagents and Materials for Cancer Genomics with Deep Learning.

| Item Name | Type | Function in Research |

|---|---|---|

| NCI-60 Cell Line Panel [4] | Biological Dataset | A panel of 60 diverse human cancer cell lines used as a benchmark for therapeutic discovery and genomic studies, including radiosensitivity prediction. |

| CuMiDa (Curated Microarray Database) [3] | Genomic Database | A publicly accessible, curated repository of cancer microarray datasets, specifically designed for benchmarking machine learning algorithms. |

| GSE50161 (Brain Cancer Dataset) [3] | Genomic Dataset | A specific gene expression dataset within CuMiDa containing 130 samples of five brain tissue classes, used for multi-class cancer classification. |

| Bayesian Hyperparameter Optimization [3] | Computational Method | An automated technique for searching hyperparameter settings (values such as learning rate or layer size that are fixed before training) to minimize the loss function and maximize performance. |

| k-Fold Cross-Validation [4] | Statistical Protocol | A model validation technique that repeatedly trains on all but one fold and tests on the held-out fold to estimate how well a predictive model will generalize to an independent dataset, helping to detect overfitting. |

Convolutional Neural Networks (CNNs) have emerged as powerful computational tools for analyzing genomic data by extracting spatially localized patterns. While originally developed for image processing, CNNs are well suited to genomics because they can identify hierarchical features and local dependencies within biological sequences and expression profiles [5]. This capability is particularly valuable in cancer research, where detecting subtle genomic patterns can lead to more accurate diagnosis and classification.

CNNs excel at learning representations of genomic elements through their architecture of stacked convolutional layers with shared weights, non-linear activation functions, and pooling operations. This allows them to detect sequence motifs in regulatory DNA, identify co-expression patterns from transcriptomic data, and recognize characteristic signatures of cancer subtypes from high-dimensional genomic measurements [5] [6]. The spatial feature extraction capabilities of CNNs provide distinct advantages over other neural network architectures for many genomic applications.

Performance Comparison: CNN vs. RNN in Cancer Genomics

Direct comparisons between CNN and Recurrent Neural Network (RNN) architectures reveal distinct strengths and optimal applications for each approach in cancer genomics. The table below summarizes quantitative performance comparisons across multiple studies:

Table 1: Performance comparison of CNN vs. RNN frameworks in cancer genomics

| Study & Architecture | Primary Application | Dataset | Performance Metrics | Key Strengths |

|---|---|---|---|---|

| GONF Framework (CNN with mRMR) [7] | Cancer type classification | TCGA & AHBA datasets | 97% accuracy (TCGA), 95% accuracy (AHBA) | High accuracy for spatial feature extraction from gene expression |

| 1D-CNN/2D-CNN Models [8] | Cancer type prediction | TCGA (10,340 samples, 33 cancer types) | 93.9-95.0% accuracy across 34 classes | Excellent at classifying tumor vs. normal and cancer subtypes |

| RNN Framework for Mutation Progression [9] [10] | Cancer severity prediction & mutation progression | TCGA mutation sequences | ~60% accuracy, similar to existing diagnostics | Effective for temporal progression modeling of mutations |

| RCANE (Hybrid CNN-RNN) [11] | SCNA prediction from RNA-seq | TCGA, DepMap cell lines | F1 scores: 0.80 (sensitivity), 0.97 (specificity) | Combines spatial (CNN) and sequential (LSTM) modeling advantages |

The performance differential highlights a fundamental principle: CNNs generally outperform RNNs for classification tasks relying on spatial patterns in genomic data, while RNNs excel at modeling temporal progression and sequential dependencies. The GONF framework demonstrates state-of-the-art performance by integrating minimum Redundancy Maximum Relevance (mRMR) gene selection with CNN architecture, effectively reducing dimensionality while preserving biologically relevant features [7].
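The mRMR idea of keeping genes that are informative about the class label while avoiding genes that duplicate each other can be sketched with a greedy selection over discretized features. This is a generic illustration of the difference form of mRMR, not the GONF implementation; the gene names and toy values are hypothetical, and expression values are assumed to be already discretized.

```python
import math
from collections import Counter


def mutual_information(xs, ys):
    """Mutual information (in nats) between two equal-length discrete sequences."""
    n = len(xs)
    px, py, pxy = Counter(xs), Counter(ys), Counter(zip(xs, ys))
    return sum(
        (c / n) * math.log(c * n / (px[x] * py[y]))
        for (x, y), c in pxy.items()
    )


def mrmr_select(features, labels, n_select):
    """Greedily pick features maximizing relevance minus mean redundancy."""
    relevance = {name: mutual_information(vals, labels)
                 for name, vals in features.items()}
    selected = []
    while len(selected) < min(n_select, len(features)):
        best_name, best_score = None, -math.inf
        for name, vals in features.items():
            if name in selected:
                continue
            redundancy = 0.0
            if selected:
                redundancy = sum(
                    mutual_information(vals, features[s]) for s in selected
                ) / len(selected)
            score = relevance[name] - redundancy
            if score > best_score:
                best_name, best_score = name, score
        selected.append(best_name)
    return selected


# Toy data: four classes; gene_a and gene_b carry complementary information,
# gene_a_dup is an exact copy of gene_a and therefore redundant.
labels = [0, 0, 1, 1, 2, 2, 3, 3]
features = {
    "gene_a": [0, 0, 0, 0, 1, 1, 1, 1],
    "gene_a_dup": [0, 0, 0, 0, 1, 1, 1, 1],
    "gene_b": [0, 0, 1, 1, 0, 0, 1, 1],
}
print(mrmr_select(features, labels, n_select=2))
```

All three toy genes are equally relevant on their own, but the duplicate is skipped in the second round because its redundancy with the already selected gene cancels its relevance.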

Table 2: Architectural advantages for different genomic data types

| Data Type | Optimal Architecture | Key Advantages | Limitations |

|---|---|---|---|

| Gene expression profiles [7] [8] | CNN (1D/2D) | Captures co-expression patterns; identifies biomarker combinations | Less effective for time-series progression |

| Mutation sequences over time [9] [10] | RNN (LSTM) | Models evolutionary trajectories; predicts future mutations | Lower accuracy for static classification |

| RNA-seq for SCNA prediction [11] | Hybrid (CNN + LSTM) | Captures both local patterns and long-range dependencies | Increased computational complexity |

| Genomic sequences for regulatory elements [5] | CNN with tailored filter sizes | Identifies sequence motifs and regulatory grammars | Filter size must match biological context |

Experimental Protocols and Methodologies

CNN Protocols for Cancer Classification

The high-performing CNN architectures share several methodological commonalities despite application differences. The GONF framework employs a sophisticated pipeline that integrates image processing techniques such as Hough Transform and Watershed segmentation for preprocessing microarray-derived visual data, followed by a six-layer CNN architecture with dropout regularization and max-pooling [7]. This approach effectively addresses the high dimensionality, noise, and sparsity inherent in microarray data.

For TCGA pan-cancer classification, researchers have developed multiple CNN configurations:

- 1D-CNN: Processes gene expression as vectors using one-dimensional kernels with stride equal to kernel size to capture global features [8]

- 2D-Vanilla-CNN: Reshapes expression data into 2D matrix format using standard 2D convolution kernels [8]

- 2D-Hybrid-CNN: Employs matrix input with 1D kernels, combining benefits of both approaches [8]

These models typically incorporate shallower architectures (1-3 convolutional layers) rather than the very deep networks used in computer vision, as genomic datasets have limited samples relative to the number of parameters [8]. This design choice helps prevent overfitting while maintaining high predictive accuracy.
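Two of the input-handling ideas above can be sketched in a few lines: reshaping an expression vector into a 2D matrix, and a 1D convolution whose stride equals its kernel size so that windows do not overlap. Zero padding of the last row and the function names are assumptions made for this illustration; the cited study's exact preprocessing may differ.

```python
import math


def reshape_to_matrix(vector, n_cols, pad_value=0.0):
    """Lay a 1D expression vector out row by row, padding the final row."""
    n_rows = math.ceil(len(vector) / n_cols)
    padded = list(vector) + [pad_value] * (n_rows * n_cols - len(vector))
    return [padded[r * n_cols:(r + 1) * n_cols] for r in range(n_rows)]


def strided_conv1d(vector, kernel):
    """1D convolution with stride equal to kernel size (non-overlapping windows)."""
    k = len(kernel)
    return [
        sum(vector[i + j] * kernel[j] for j in range(k))
        for i in range(0, len(vector) - k + 1, k)
    ]


print(reshape_to_matrix([1, 2, 3, 4, 5], n_cols=2))
print(strided_conv1d([1, 2, 3, 4, 5, 6], kernel=[1, 1, 1]))
```

Because the windows do not overlap, each output summarizes a distinct block of genes, which shortens the feature map by a factor of the kernel size and keeps the parameter count low for small-sample datasets.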

RNN Protocols for Mutation Progression

The RNN framework for oncogenic mutation progression employs a different approach tailored to sequential data. The methodology involves isolating mutation sequences from TCGA, applying a novel preprocessing algorithm to filter key mutations by frequency, then feeding this data into an RNN with Long Short-Term Memory (LSTM) units to predict cancer severity [10]. The model then probabilistically combines RNN predictions with drug-target databases to recommend treatments and predict future mutations.

This approach relies on the recurrent memory of the LSTM units, which carry context across a mutation sequence in much the same way that language models carry context across the words in a sentence [10]. Any attention mechanism is an additional component layered on the recurrent states, not a built-in property of RNNs. The comparatively low accuracy (approximately 60%) reflects the greater difficulty of predicting progression dynamics compared with static classification.
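The frequency-based filtering step can be illustrated with a simple stand-in: keep only mutations that occur in at least a minimum fraction of patients. The source describes its preprocessing algorithm only as filtering key mutations by frequency, so the threshold rule, the function name, and the toy sequences below are all assumptions.

```python
from collections import Counter


def filter_by_frequency(patient_mutations, min_fraction=0.05):
    """Drop mutations seen in fewer than min_fraction of patients, preserving order."""
    n_patients = len(patient_mutations)
    # Count each mutation at most once per patient.
    counts = Counter(m for seq in patient_mutations for m in set(seq))
    keep = {m for m, c in counts.items() if c / n_patients >= min_fraction}
    return [[m for m in seq if m in keep] for seq in patient_mutations]


# Toy mutation sequences (one list per patient); GENE_X is a placeholder name.
toy_sequences = [
    ["TP53", "KRAS"],
    ["TP53", "GENE_X"],
    ["KRAS", "TP53"],
]
print(filter_by_frequency(toy_sequences, min_fraction=0.5))
```

Filtering this way shrinks the vocabulary the RNN must embed while leaving the order of the remaining mutations intact.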

Diagram: CNN workflow for genomic data.

Molecular Insights: What CNNs Learn from Genomes

CNN architectures learn biologically meaningful representations from genomic data, though the specific patterns detected depend on architectural choices. Studies systematically varying filter size and max-pooling parameters demonstrate that CNNs can learn either partial motif representations or whole motif representations in their first-layer filters depending on the network's capacity for hierarchical feature assembly in deeper layers [5].

When CNN architectures foster hierarchical representation learning (assembling partial features into whole features in deeper layers), first-layer filters tend to learn distributed representations (partial motifs). Conversely, when architectural constraints limit hierarchical building in deeper layers, first-layer filters learn more interpretable localist representations (whole motifs) [5]. This principle enables intentional CNN design choices based on whether interpretability or performance is prioritized.
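The difference between whole-motif and partial-motif first-layer filters can be illustrated by scanning a one-hot encoded sequence with two hand-written filters. In a real CNN the filter weights are learned; here they are set by hand to an example motif (TATA) and a fragment of it (TA), purely as an assumption for illustration.

```python
BASES = "ACGT"


def one_hot(seq):
    """Encode a DNA string as a list of 4-element rows (A, C, G, T)."""
    return [[1.0 if base == b else 0.0 for b in BASES] for base in seq]


def scan(seq, filt):
    """Slide a (width x 4) filter along the sequence; return one score per position."""
    x = one_hot(seq)
    width = len(filt)
    return [
        sum(x[i + j][c] * filt[j][c] for j in range(width) for c in range(4))
        for i in range(len(seq) - width + 1)
    ]


sequence = "GGTATACC"
whole_motif_filter = one_hot("TATA")  # localist: matches the entire motif
partial_motif_filter = one_hot("TA")  # distributed: matches only a fragment

print(scan(sequence, whole_motif_filter))
print(scan(sequence, partial_motif_filter))
```

The whole-motif filter produces a single clear peak at the motif position, which is easy to interpret. The partial filter fires at each fragment, and it would take a deeper layer to combine those hits into a motif call.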

For cancer type prediction, CNN interpretation using guided saliency techniques has identified biologically relevant marker genes. One study discovered 2,090 cancer markers (approximately 108 per class on average) with confirmed differential expression concordance [8]. In breast cancer, for instance, CNNs identified well-known markers including GATA3 and ESR1 without prior biological knowledge [8].

Diagram: CNN hierarchical feature learning from genomic sequences.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential research reagents and computational resources for genomic CNN studies

| Resource Type | Specific Examples | Function in Research | Key Characteristics |

|---|---|---|---|

| Genomic Datasets | TCGA [8] [11] [12] | Training and validation data | Comprehensive pan-cancer molecular data |

| Genomic Datasets | AHBA [7] | Benchmark dataset | Gene expression across brain regions |

| Genomic Datasets | DepMap [11] | Model fine-tuning | Cancer cell line molecular data |

| Bioinformatics Tools | TCGAbiolinks [8] | Data acquisition and preprocessing | R/Bioconductor package for TCGA access |

| Bioinformatics Tools | Annovar [6] | Variant annotation | Functional annotation of genetic variants |

| Bioinformatics Tools | BCFtools/VCFTools [6] | Variant filtering | Manipulation and analysis of VCF files |

| Deep Learning Frameworks | TensorFlow/PyTorch | Model implementation | Flexible deep learning platforms |

| Deep Learning Frameworks | Custom CNN architectures [7] [8] | Specific model designs | Tailored for genomic data structure |

| Validation Resources | Drug-target databases [10] | Therapeutic prediction | Connecting mutations to treatments |

| Validation Resources | Pathway databases (KEGG, GO) [13] | Biological interpretation | Functional enrichment analysis |

Convolutional Neural Networks show clear advantages for spatial feature extraction from genomic data and, in the studies reviewed here, outperform RNN-based approaches on cancer classification tasks. The reported accuracy of CNN frameworks (up to 97% for cancer type classification) highlights their capability to identify biologically relevant patterns in high-dimensional genomic data [7].

Future developments will likely focus on hybrid architectures that combine CNN spatial feature extraction with RNN temporal modeling where appropriate, as demonstrated by the RCANE framework for somatic copy number aberration prediction [11]. Additional advances will come from improved interpretability methods such as saliency maps and attribution techniques that bridge computational findings with biological mechanisms [8] [5].

As genomic datasets continue to expand in size and complexity, CNN architectures will play an increasingly vital role in translating molecular measurements into clinically actionable insights, ultimately advancing precision oncology through more accurate diagnosis, prognosis, and treatment selection.

In the field of cancer genomics, the ability to accurately interpret sequential genomic data is paramount for early detection, prognosis prediction, and personalized treatment strategies. Deep learning architectures have emerged as powerful tools for this task, with Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) representing two fundamentally different approaches to pattern recognition in genetic sequences. While CNNs excel at identifying local spatial patterns and motif structures within DNA sequences, RNNs and their variants, notably Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks, are designed to model sequential dependencies and temporal dynamics, capturing long-range contextual information that is often critical for understanding genomic function and regulation [14] [2].

The sequential nature of genomic data presents unique analytical challenges. DNA sequences exhibit complex dependencies where nucleotides distant in the sequence can influence biological function through intricate three-dimensional structures and regulatory mechanisms. RNN-based architectures address this challenge by processing sequences step-by-step while maintaining a memory of previous elements through their hidden states, making them particularly suited for tasks such as mutation progression prediction, gene expression classification, and pathway analysis in cancer genomics [10]. This review provides a comprehensive performance comparison between these architectural paradigms, synthesizing experimental evidence from recent studies to guide researchers in selecting appropriate models for specific genomic analysis tasks.

Biological Foundations: Sequential Dependencies in Genomic Data

Genomic sequences fundamentally encode biological information through ordered nucleotides that exhibit complex dependencies across multiple spatial scales. At the molecular level, DNA serves as the fundamental genetic blueprint governing development, functioning, growth, and reproduction of all living organisms [14]. The precise sequence of nucleotides forms functional elements including genes, regulatory regions, and structural domains, with alterations through germline and somatic mutations potentially leading to cancer and other genetic disorders [14].

The sequential nature of genomic information manifests in several biologically significant patterns that RNNs are particularly well-suited to model. Coding sequences follow grammatical rules where nucleotide triplets (codons) sequentially determine amino acid sequences in proteins. Regulatory motifs often appear in specific spatial configurations, with transcription factor binding sites exhibiting distance-dependent cooperative interactions. Splicing signals involve coordinated recognition of splice donor and acceptor sites that may be separated by long intronic sequences. Furthermore, higher-order chromatin structure creates functional relationships between genomically distant elements through looping and spatial organization [14] [10].

Cancer genomics specifically reveals the critical importance of sequential patterns, where accumulation of mutations in driver genes follows temporal sequences that influence disease progression and therapeutic response [10]. The sequential activation and suppression of biological pathways in oncogenesis represents another dimension where order and timing of molecular events determine clinical outcomes. These multi-level sequential dependencies create an analytical domain where RNNs' innate capacity to model context and temporal relationships provides distinct advantages over position-independent approaches.

Experimental Comparisons: RNNs versus CNNs in Genomic Applications

Performance Benchmarking Across Applications

Table 1: Performance comparison of CNN, RNN, and hybrid architectures across genomic tasks

| Application Domain | Model Architecture | Performance Metric | Result | Reference |

|---|---|---|---|---|

| Brain cancer gene expression classification | 1D-CNN + RNN with Bayesian optimization | Accuracy | 100% | [3] |

| Brain cancer gene expression classification | 1D-CNN + RNN | Accuracy | 90% | [3] |

| Brain cancer gene expression classification | Support Vector Machine (SVM) | Accuracy | 95% | [3] |

| DNA sequence classification | LSTM + CNN hybrid | Accuracy | 100% | [15] |

| DNA sequence classification | DeepSea (CNN) | Accuracy | 76.59% | [15] |

| DNA sequence classification | Random Forest | Accuracy | 69.89% | [15] |

| Oncogenic mutation progression prediction | RNN with embedding | Accuracy | >60% | [10] |

Experimental results suggest that hybrid architectures combining CNNs and RNNs can outperform either architecture alone, although the gain is not automatic. The integration of the local feature detection capabilities of CNNs with the sequential modeling strengths of RNNs is particularly beneficial for genomic applications [3] [15]. For brain cancer classification using gene expression data, a hybrid 1D-CNN and RNN model with Bayesian hyperparameter optimization achieved perfect classification accuracy (100%), ahead of both a traditional SVM baseline (95%) and the same hybrid architecture without optimization (90%) [3]. The unoptimized hybrid therefore trailed the SVM, which indicates that hyperparameter tuning, and not the architecture alone, accounts for much of the reported advantage.

Similarly, in DNA sequence classification, a strategically designed LSTM and CNN hybrid achieved 100% accuracy, dramatically outperforming CNN-based implementations like DeepSea (76.59%) and traditional machine learning methods including random forest (69.89%) and logistic regression (45.31%) [15]. This performance advantage stems from the model's ability to simultaneously capture local sequence motifs through convolutional operations and long-range dependencies through recurrent connections, effectively addressing the multi-scale nature of genomic information.

For oncogenic mutation progression prediction, an RNN framework with embedding layers achieved accuracy exceeding 60%, comparable to existing cancer diagnostics while providing the additional capability of projecting future mutation pathways and potential treatment recommendations [10]. This demonstrates RNNs' unique value in temporal projection tasks that require modeling of sequential patterns across time, a capability not inherently present in CNN architectures.

Architectural Strengths and Limitations

Table 2: Characteristics of deep learning architectures for genomic sequence analysis

| Architecture | Strengths | Limitations | Ideal Genomic Applications |

|---|---|---|---|

| RNN/LSTM/GRU | Models long-range dependencies; Processes variable-length sequences; Captures temporal dynamics | Computationally intensive; Vanishing gradient problem (mitigated, not eliminated, by LSTM/GRU gating); Requires large datasets | Mutation progression prediction; Gene expression time series; Pathway analysis |

| CNN | Excels at local pattern detection; Position-invariant feature recognition; Parallelizable computation | Limited contextual window; Fixed-length processing; Less effective for long-range dependencies | Motif discovery; Regulatory element prediction; Sequence classification |

| Hybrid (CNN+RNN) | Captures both local and global sequence contexts; Synergistic feature learning; State-of-the-art performance | Complex architecture design; Increased hyperparameter space; Higher computational demand | Comprehensive genome annotation; Cancer subtype classification; Functional genomics |

The comparative analysis of architectural characteristics reveals complementary strengths that inform model selection for specific genomic tasks. RNN variants (LSTM, GRU) demonstrate particular proficiency in modeling long-range dependencies and temporal dynamics, making them ideal for mutation progression prediction and gene expression time series analysis [2] [10]. Their sequential processing approach naturally aligns with the directional nature of genomic sequences and biological pathways.

CNN architectures excel at detecting local patterns and position-invariant features, providing superior performance for motif discovery, regulatory element prediction, and straightforward sequence classification tasks [2] [16]. Their parallelizable computation offers efficiency advantages for whole-genome scanning applications. However, their limited contextual window and fixed-length processing constraints reduce effectiveness for applications requiring integration of distant sequence elements.

Hybrid architectures strategically combine convolutional and recurrent layers to capture both local and global sequence contexts, achieving state-of-the-art performance across multiple genomic classification tasks [3] [15]. The synergistic feature learning enabled by these architectures comes with increased complexity in design and higher computational demands, creating practical implementation challenges for large-scale genomic analyses.

Methodological Protocols for Genomic Sequence Analysis

RNN Framework for Mutation Progression Prediction

Experimental Protocol [10]:

- Data Acquisition and Preprocessing: Isolate mutation sequences from The Cancer Genome Atlas (TCGA) database. Implement a preprocessing algorithm to filter key mutations by mutation frequency, reducing dimensionality while retaining biologically significant variants.

- Sequence Embedding: Transform genomic sequences into continuous vector representations using embedding layers, enabling the model to learn semantic relationships between genetic elements.

- Model Architecture: Implement a multi-layer LSTM network with attention mechanisms to process mutation sequences while preserving contextual information across time steps. The attention mechanism assigns larger weights to the most informative earlier hidden states, so that the prediction at the current time step draws more heavily on them.

- Training Configuration: Utilize teacher forcing during training with backpropagation through time. Implement gradient clipping and learning rate scheduling to stabilize training.

- Prediction and Treatment Recommendation: Employ the RNN's hidden states to predict cancer severity and future mutation progression. Integrate drug-target databases to generate targeted treatment recommendations based on projected mutation pathways.
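Two of the mechanisms named in this protocol, attention over earlier hidden states and gradient clipping, can be sketched in plain Python. The dot-product form of attention and clipping by global L2 norm are assumptions for illustration; the protocol does not specify which variants were used, and the vectors below are toy values.

```python
import math


def attention_context(hidden_states, query):
    """Dot-product attention: softmax weights over hidden states and their weighted sum."""
    scores = [sum(h_d * q_d for h_d, q_d in zip(h, query)) for h in hidden_states]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    context = [
        sum(w * h[d] for w, h in zip(weights, hidden_states))
        for d in range(len(query))
    ]
    return weights, context


def clip_by_norm(gradient, max_norm):
    """Rescale the gradient so that its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in gradient))
    if norm <= max_norm or norm == 0.0:
        return list(gradient)
    return [g * max_norm / norm for g in gradient]


# Toy hidden states from two earlier time steps and a query from the current step.
weights, context = attention_context([[1.0, 0.0], [0.0, 1.0]], query=[2.0, 0.0])
print(weights, context)

print(clip_by_norm([3.0, 4.0], max_norm=1.0))
```

The first hidden state aligns with the query and therefore receives the larger weight. The clipped gradient keeps its direction while its norm is capped, which is what stabilizes backpropagation through long sequences.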

Diagram 1: RNN framework for mutation progression prediction and treatment recommendation

Hybrid CNN-RNN Architecture for Gene Expression Classification

Experimental Protocol [3]:

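- Data Preparation: Obtain brain cancer gene expression data from the Curated Microarray Database (CuMiDa). Partition the data into training (80%) and held-out (20%) portions while maintaining class distribution; if a separate validation set is needed for tuning, it can be taken from the held-out portion (for example 10% validation and 10% testing).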

- Input Representation: Apply Z-score normalization to gene expression values. For sequence-based approaches, implement one-hot encoding or k-mer embeddings to represent genomic sequences.

- Hybrid Architecture Design:

  - Implement 1D convolutional layers with increasing filter sizes (16, 32, 64) to extract local genomic patterns and motifs.

  - Incorporate max-pooling operations after convolutional layers for dimensionality reduction.

  - Connect convolutional feature maps to bidirectional LSTM layers with 64-100 units to capture long-range dependencies in genomic sequences.

- Model Optimization: Apply Bayesian hyperparameter optimization to tune layer configurations, learning rates, and regularization parameters. Utilize dropout (0.2-0.5) and L2 regularization to prevent overfitting.

- Training and Evaluation: Train with categorical cross-entropy loss using Adam optimizer. Evaluate performance using accuracy, precision, recall, and F1-score across multiple cancer subtypes.
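The normalization and evaluation steps in this protocol can be sketched without any framework. The sketch assumes Z-scores are computed per gene across samples using the population standard deviation, and that per-class scores are macro-averaged; both are assumptions, and the toy values and labels are illustrative only.

```python
import statistics


def z_score(values):
    """Standardize one gene's expression values to zero mean and unit variance."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return [(v - mean) / sd if sd > 0 else 0.0 for v in values]


def per_class_metrics(y_true, y_pred):
    """Return {class: (precision, recall, f1)} computed one class versus the rest."""
    metrics = {}
    pairs = list(zip(y_true, y_pred))
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == cls and p == cls for t, p in pairs)
        fp = sum(t != cls and p == cls for t, p in pairs)
        fn = sum(t == cls and p != cls for t, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        metrics[cls] = (precision, recall, f1)
    return metrics


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    scores = per_class_metrics(y_true, y_pred)
    return sum(f1 for _, _, f1 in scores.values()) / len(scores)


print(z_score([1.0, 2.0, 3.0]))
print(per_class_metrics(["a", "a", "b", "b"], ["a", "b", "b", "b"]))
print(macro_f1(["a", "a", "b", "b"], ["a", "b", "b", "b"]))
```

Macro averaging weights every class equally, which matters when classes are imbalanced. To avoid leakage, the normalization statistics should be estimated on the training portion and then applied to the test portion.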

Diagram 2: Hybrid CNN-RNN architecture for gene expression classification

Table 3: Essential research reagents and computational resources for genomic deep learning

| Resource Category | Specific Tools/Databases | Application in Genomic Analysis | Key Features |
|---|---|---|---|
| Genomic Databases | The Cancer Genome Atlas (TCGA) | Provides comprehensive mutation and expression data across cancer types | Multi-dimensional data including genomic, transcriptomic, and clinical information |
| Genomic Databases | Curated Microarray Database (CuMiDa) | Offers curated gene expression datasets for cancer classification | 78 datasets across 13 cancer types with standardized processing |
| Genomic Databases | Brain Cancer Gene Database (BCGene) | Specialized resource for brain cancer genomics | 40 categories of brain cancer with associated genetic markers |
| Sequence Encoders | One-hot Encoding | Basic sequence representation for deep learning models | Simple binary representation of nucleotides |
| Sequence Encoders | K-mer Embeddings | Statistical representation of sequence segments | Captures local sequence composition and context |
| Sequence Encoders | Neural Word Embeddings | Learned continuous representations of genomic elements | Captures semantic similarities between sequence patterns |
| Computational Frameworks | TensorFlow/Keras | Deep learning model implementation and training | High-level API for rapid prototyping of architectures |
| Computational Frameworks | Bayesian Optimization | Hyperparameter tuning for model optimization | Efficient search through high-dimensional parameter spaces |

The experimental workflows and predictive pipelines for genomic sequence analysis depend on specialized computational resources and biological datasets. High-quality genomic databases form the foundation for training and validating deep learning models, with TCGA providing comprehensive mutation profiles across cancer types, CuMiDa offering curated gene expression datasets specifically optimized for classification tasks, and BCGene delivering specialized information for brain cancer genomics [3] [10].

Sequence encoding methods represent a critical preprocessing step that transforms raw genomic sequences into numerical representations compatible with deep learning architectures. One-hot encoding provides a fundamental representation scheme, while k-mer embeddings capture local sequence composition through overlapping fixed-length segments. Neural word embeddings offer more sophisticated learned representations that capture semantic relationships between genomic elements, potentially enhancing model performance for tasks requiring understanding of functional similarity [14] [15].
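As a minimal sketch of the first two encoding schemes, the snippet below one-hot encodes a DNA string and counts its overlapping k-mers. The helper names are hypothetical, and the choice to map ambiguous bases such as N to an all-zero row is an assumption, one of several conventions in use. Counting k-mers is only the tokenization step; a k-mer embedding would additionally map each k-mer to a learned or statistical vector.

```python
import numpy as np
from collections import Counter

NUCLEOTIDES = "ACGT"

def one_hot_encode(seq):
    """Return a (len(seq), 4) matrix; unknown bases (e.g. N) become all-zero rows."""
    index = {base: i for i, base in enumerate(NUCLEOTIDES)}
    encoded = np.zeros((len(seq), 4), dtype=np.float32)
    for pos, base in enumerate(seq.upper()):
        if base in index:
            encoded[pos, index[base]] = 1.0
    return encoded

def kmer_counts(seq, k=3):
    """Count overlapping fixed-length segments (k-mers) of the sequence."""
    seq = seq.upper()
    return Counter(seq[i:i + k] for i in range(len(seq) - k + 1))

example = "ACGTAC"
encoded = one_hot_encode(example)   # one row per nucleotide, one column per base
counts = kmer_counts(example, k=3)  # k-mers: ACG, CGT, GTA, TAC
```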

Computational frameworks including TensorFlow and Keras enable efficient implementation of complex architectures, while Bayesian optimization tools systematically navigate the high-dimensional hyperparameter spaces characteristic of hybrid deep learning models. These resources collectively provide the infrastructure necessary for developing, training, and validating RNN-based genomic sequence analysis pipelines.

The comparative analysis of RNNs and CNNs for genomic sequence analysis reveals a complex performance landscape shaped by architectural strengths aligned with specific biological questions. RNN variants including LSTMs and GRUs demonstrate superior capabilities for modeling temporal dynamics and long-range dependencies in genomic sequences, making them particularly valuable for mutation progression prediction, pathway analysis, and time-series gene expression modeling [2] [10]. CNN architectures excel at detecting local sequence motifs and position-invariant patterns, providing efficient solutions for regulatory element prediction and sequence classification tasks [16].

Hybrid architectures that strategically integrate convolutional and recurrent layers have achieved state-of-the-art performance across multiple genomic applications, leveraging CNNs for local feature detection and RNNs for contextual sequence modeling [3] [15]. The reported 100% classification accuracy for brain cancer gene expression and human DNA sequences illustrates the potential of these integrated approaches [3] [15].

Future research directions should focus on developing more efficient attention mechanisms for modeling ultra-long genomic sequences, optimizing computational requirements for whole-genome analysis, and improving model interpretability to extract biologically meaningful insights from trained networks. As genomic datasets continue to expand in scale and complexity, the strategic integration of RNN-based sequential modeling with complementary architectural elements will play an increasingly vital role in advancing cancer genomics and precision medicine.

Cancer research has entered an era of big data, driven by breakthroughs in high-throughput technologies that generate massive amounts of molecular and phenotypic information [17]. The analysis of these complex datasets requires sophisticated computational approaches and has become foundational to precision oncology. Multi-omics approaches integrate various biological data layers—including genomic, transcriptomic, and epigenetic information—to provide a comprehensive view of cancer biology that transcends what any single data type can reveal [18]. This integrated perspective is essential for understanding the complex molecular interactions and dysregulations associated with specific tumor cohorts.

The value of multi-omics integration lies in its capacity to link genetic information with molecular function and phenotypic outcomes, enabling researchers to dissect the tumor microenvironment, reveal interactions between cancer cells and their surroundings, and identify biomarkers for disease progression and treatment response [18]. For instance, combining genomics with metabolomics has identified biomarkers for heart diseases, while multi-omics studies have helped unravel the complex pathways involved in neurodegenerative conditions like Parkinson's and Alzheimer's [18]. In cancer research specifically, this approach helps reveal how genetic mutations influence cellular behavior and metabolism, thereby improving our understanding of disease mechanisms and therapeutic targets.

Machine learning, particularly deep learning models including Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), has demonstrated substantial potential for analyzing these complex multi-omics datasets to enhance cancer detection, diagnosis, and treatment planning [2] [19]. These models can autonomously extract valuable features from large-scale datasets, thus enhancing early detection accuracy and providing innovative approaches for precision diagnosis and personalized treatment [2]. The performance of these models, however, depends critically on both the quality of the input data and the architectural choices suited to the specific characteristics of genomic data structures.

Molecular Data Types in Cancer Genomics

Genomic Data: DNA-Level Alterations

Genomic data provides insights into the DNA sequence and its variations, serving as a fundamental data type for understanding cancer genetics. Whole genome data encompasses the complete DNA sequence of an individual and identifies genetic variants associated with cancer, including mutations, copy number variants (CNVs), and structural variants [2] [17]. These variations can be quantified using specific formulas that assess the contribution of different mutations to cancer development, incorporating factors such as mutation effect functions and location weights [2].

Somatic mutation data helps identify specific molecular features of cancers, guiding the selection of targeted therapies [2]. For example, mutations in BRCA1 and BRCA2 genes are strongly associated with an elevated risk of breast and ovarian cancer [2]. Technologies for generating genomic data include whole-exome and whole-genome sequencing, which reveal DNA nucleotide mutations, copy number alterations, and large structural variants such as genome rearrangements [17]. Single-cell genome sequencing, though challenging, is possible on a limited number of cells, providing higher resolution insights into tumor heterogeneity [17].

Transcriptomic Data: Gene Expression Profiles

Transcriptomic data captures the expression levels of RNA molecules, reflecting the active genetic processes within cells. This data type provides dynamic information about which genes are being transcribed and to what extent, offering insights into the functional state of cancer cells [19]. Gene expression can play a fundamental role in the early detection of cancer, as it is indicative of the biochemical processes in tissue and cells, as well as the genetic characteristics of an organism [19].

The primary technologies for generating transcriptomic data include microarrays and RNA-sequencing (RNA-Seq) methods [19]. RNA-Seq offers several advantages over microarray technologies, including greater specificity and resolution, increased sensitivity to differential expression, and a greater dynamic range [19]. Additionally, RNA-Seq can be used to examine the transcriptome of any species to determine the amount of RNA at a specific time. Single-cell RNA sequencing (scRNA-seq) technologies have further advanced the field by allowing transcriptomic profiling at the individual cell level, revealing tumor heterogeneity at unprecedented resolution [17]. Spatial transcriptomic techniques represent another advancement, generating gene expression data with spatial location information based on positional barcoding or in situ sequencing [17].

Epigenomic Data: Regulatory Modifications

Epigenomic data captures modifications to DNA and associated proteins that regulate gene expression without altering the underlying DNA sequence. These modifications include DNA methylation, histone modifications, and chromatin accessibility, all of which play crucial roles in cancer development and progression [18] [17]. DNA methylation involves the addition of methyl groups to cytosine bases in DNA, typically leading to gene silencing when it occurs in promoter regions [17]. Technologies for profiling DNA methylation include bisulfite sequencing and BeadChip arrays, with single-cell bisulfite sequencing now enabling methylation readouts at single-cell resolution [17].

Chromatin accessibility data, generated through techniques such as ATAC-seq or DNase I-seq, reveals accessible chromatin regions that represent active regulatory elements in the genome [17]. Histone modification data, obtained through chromatin immunoprecipitation followed by sequencing (ChIP-seq), identifies the genome-wide location of DNA-binding proteins or histones with diverse modifications that influence gene expression [17]. These epigenetic markers provide critical information about the regulatory landscape of cancer cells, offering insights into how gene expression programs are dysregulated in tumors beyond what can be explained by genetic mutations alone.

Table 1: Key Data Types in Cancer Genomics

| Data Type | Molecular Level | Key Technologies | Biological Information Captured |
|---|---|---|---|
| Genomic | DNA | Whole-genome sequencing, Whole-exome sequencing | DNA sequence variations, mutations, copy number alterations, structural variants |
| Transcriptomic | RNA | RNA-Seq, Microarrays, scRNA-seq | Gene expression levels, transcript isoforms, fusion genes, non-coding RNA expression |
| Epigenomic | DNA modifications & chromatin | Bisulfite sequencing, ATAC-seq, ChIP-seq | DNA methylation patterns, chromatin accessibility, histone modifications |

Machine Learning Approaches for Multi-Omics Data Analysis

Convolutional Neural Networks (CNNs) in Genomics

Convolutional Neural Networks (CNNs) represent one of the most widely used deep learning architectures for genomic data analysis, particularly for sequence-based classification tasks [2] [20] [19]. CNNs automatically extract key features from genomic sequences through locally sensing the input data via convolutional layers, effectively capturing spatial patterns in genomic sequences [2] [19]. The mathematical foundation of CNNs involves convolution operations that apply filters across input sequences to detect locally relevant patterns, followed by pooling operations that reduce dimensionality while preserving salient features [2].
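The convolution and pooling operations described here can be illustrated without a deep learning framework. In the sketch below, a single filter is hand-set to the one-hot pattern of a hypothetical motif ("TATA") instead of being learned, and it is slid across a toy sequence. In a trained CNN the filter weights would be learned from data, and many filters would run in parallel.

```python
import numpy as np

def one_hot(seq, alphabet="ACGT"):
    """Encode a DNA string as a (length, 4) matrix."""
    return np.array([[float(base == a) for a in alphabet] for base in seq])

def conv1d(x, kernel):
    """Valid cross-correlation of a (length, channels) input with a (width, channels) kernel."""
    width = kernel.shape[0]
    return np.array([np.sum(x[i:i + width] * kernel)
                     for i in range(x.shape[0] - width + 1)])

def max_pool1d(x, size):
    """Non-overlapping max pooling; a trailing remainder shorter than `size` is dropped."""
    usable = (len(x) // size) * size
    return x[:usable].reshape(-1, size).max(axis=1)

motif_filter = one_hot("TATA")             # hand-set filter (assumption, not learned)
sequence = one_hot("GGCTATAAGGCC")         # toy sequence containing the motif
activation = conv1d(sequence, motif_filter)  # one score per window position
pooled = max_pool1d(activation, size=3)      # shorter map, strongest responses kept
```

The activation peaks at the window where the motif starts, and pooling keeps that peak while reducing the length of the feature map.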

CNNs have demonstrated remarkable success in various cancer genomics applications, including the identification of regulatory elements such as promoters, enhancers, and transcription factor binding sites [21]. Their ability to learn hierarchical representations of genomic sequences makes them particularly well-suited for detecting motifs and other local sequence patterns predictive of functional genomic elements. For cancer classification using gene expression data, some studies have transformed gene expression profiles into two-dimensional image-like arrays with rows and columns that serve as inputs to CNN models, leveraging the architecture's capacity to capture local spatial relations in input data [19].
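One simple way to obtain such an image-like array is to zero-pad the expression vector to the next perfect square and reshape it row by row, as sketched below. The function name is hypothetical and the row-major layout is an assumption; which genes end up as neighbors depends entirely on the gene ordering, and published methods differ in how they choose it.

```python
import numpy as np

def expression_to_image(profile):
    """Zero-pad a 1D expression profile and reshape it into a square 2D array."""
    profile = np.asarray(profile, dtype=np.float32)
    side = int(np.ceil(np.sqrt(profile.size)))
    padded = np.zeros(side * side, dtype=np.float32)
    padded[:profile.size] = profile
    return padded.reshape(side, side)

# Toy profile of 10 "genes" becomes a 4 x 4 array with 6 padded zeros.
image = expression_to_image(np.arange(10))
```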

Specialized frameworks such as GenomeNet-Architect have been developed to optimize CNN architectures specifically for genomic data [20]. This framework uses neural architecture search to identify optimal network configurations for genome sequence data, resulting in models that outperform expert-guided architectures. On viral classification tasks, models optimized through this approach reduced misclassification rates by 19%, with 67% faster inference and 83% fewer parameters compared to the best-performing deep learning baselines [20].

Recurrent Neural Networks (RNNs) for Sequential Genomic Data

Recurrent Neural Networks (RNNs) represent another important class of deep learning architectures particularly well-suited for processing sequential data, including genomic sequences and time-series gene expression data [2] [19]. Unlike CNNs, which excel at detecting local patterns, RNNs are characterized by their ability to model temporal dependencies and long-range relationships in sequential data by preserving information from previous time steps through recurrent connections [2]. This makes them advantageous for processing genetic data, medical records, and other sequential biological data types.

Standard RNNs suffer from the vanishing gradient problem, which limits their effectiveness in processing long sequences. To address this limitation, variants such as Long Short-Term Memory Networks (LSTMs) and Gated Recurrent Units (GRUs) have been introduced, incorporating gating mechanisms that mitigate the vanishing gradient problem [2]. These RNN variants are widely used in genomics, particularly in cancer prediction and progression analysis [2]. For instance, LSTMs are employed to predict cancer occurrence and progression based on gene expression data, while GRUs are used to detect cancer-associated mutations and analyze temporal patterns in gene sequences [2].
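To make the gating idea concrete, the following NumPy sketch implements a single GRU step and applies it along a random input sequence. The weights are random and untrained, the parameter names are hypothetical, and the state update uses one of the two common sign conventions for the update gate (some references swap the roles of `z` and `1 - z`). The update gate lets the previous state pass through largely unchanged when `z` is near zero, which is the mechanism that helps gradients survive across long sequences.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_gru(input_dim, hidden_dim, seed=0):
    """Random, untrained GRU parameters (illustration only)."""
    rng = np.random.default_rng(seed)
    params = {}
    for gate in ("z", "r", "h"):
        params["W" + gate] = rng.normal(0, 0.1, (hidden_dim, input_dim))
        params["U" + gate] = rng.normal(0, 0.1, (hidden_dim, hidden_dim))
        params["b" + gate] = np.zeros(hidden_dim)
    return params

def gru_step(x, h, p):
    """One GRU update: gates decide how much of the old state to keep."""
    z = sigmoid(p["Wz"] @ x + p["Uz"] @ h + p["bz"])             # update gate
    r = sigmoid(p["Wr"] @ x + p["Ur"] @ h + p["br"])             # reset gate
    h_cand = np.tanh(p["Wh"] @ x + p["Uh"] @ (r * h) + p["bh"])  # candidate state
    return (1.0 - z) * h + z * h_cand                            # gated blend

params = init_gru(input_dim=4, hidden_dim=8)
sequence = np.random.default_rng(1).normal(size=(50, 4))  # 50 steps, 4 features
h = np.zeros(8)
for x in sequence:
    h = gru_step(x, h, params)  # the hidden state carries context forward
```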

RNNs have shown particular utility in applications requiring modeling of dependencies across genomic sequences, such as predicting splicing patterns, identifying non-coding variants, and analyzing time-course gene expression data during cancer progression. However, RNNs typically require more computational resources and are more susceptible to overfitting with small datasets compared to CNN architectures [19].

Comparative Performance of CNN vs. RNN Architectures

The comparative performance of CNN and RNN architectures for cancer genomics applications depends on multiple factors, including the specific analytical task, data characteristics, and model configuration. CNNs generally demonstrate advantages in processing genomic sequences for classification tasks where local patterns (e.g., transcription factor binding sites, splice sites) are highly predictive [20] [21]. Their architectural bias toward translation invariance and local connectivity aligns well with the properties of many functional genomic elements that are defined by short, conserved sequence motifs.

RNNs, particularly LSTM and GRU variants, typically excel in tasks requiring modeling of long-range dependencies in sequential data, such as predicting RNA secondary structure or analyzing temporal gene expression patterns [2] [19]. The ability of RNNs to maintain internal state information across sequence positions enables them to capture relationships between distant genomic elements that may influence regulatory function.

Hybrid architectures that combine convolutional and recurrent layers have emerged as powerful alternatives, leveraging the strengths of both approaches [20]. For example, some models place RNN layers on top of convolutional layers to first detect local patterns and then model global sequence dependencies [20]. The DanQ model exemplifies this hybrid approach, using convolutional layers to detect motifs in DNA sequences followed by a bidirectional LSTM layer to capture long-range regulatory interactions [20].

Table 2: Comparison of CNN and RNN Architectures for Cancer Genomics

| Feature | CNN | RNN (LSTM/GRU) |
|---|---|---|
| Primary Strength | Local pattern detection | Long-range dependency modeling |
| Typical Applications | Regulatory element prediction, motif discovery, sequence classification | Time-series gene expression, splice site prediction, RNA structure prediction |
| Data Requirements | Large labeled datasets | Sequential data with temporal dependencies |
| Computational Efficiency | High (parallelizable) | Moderate to low (sequential processing) |
| Interpretability | Moderate (visualization of filters) | Lower (internal states less interpretable) |
| Common Hybrid Approaches | CNN layers for feature extraction followed by RNN layers for sequence modeling | RNN layers stacked on CNN feature extractors for sequence modeling |

Experimental Framework and Benchmarking

Standardized Datasets for Model Evaluation

Robust evaluation of CNN and RNN models requires carefully curated benchmark datasets that enable fair comparison across different architectures and approaches. Several community resources have been developed to address this need. The MLOmics database provides a comprehensive collection of cancer multi-omics data specifically designed for machine learning applications, containing 8,314 patient samples covering all 32 cancer types with four omics types: mRNA expression, microRNA expression, DNA methylation, and copy number variations [22]. This database offers multiple feature versions (Original, Aligned, and Top) to support different analytical needs and includes extensive baselines with classical machine learning methods and deep learning approaches for comparison [22].

For genomic sequence classification, the genomic-benchmarks collection provides curated datasets focusing on regulatory elements (promoters, enhancers, open chromatin regions) from model organisms including human, mouse, and roundworm [21]. These benchmarks are distributed as a Python package with utilities for data processing, cleaning procedures, and interfaces for popular deep learning frameworks, facilitating standardized evaluation and reproducibility [21].

The Cancer Genome Atlas (TCGA) represents one of the most comprehensive resources for cancer genomics data, containing 2.5 petabytes of raw data encompassing transcriptomic, proteomic, genomic, and epigenomic data for more than 10,000 cancer genomes and matched normal samples across 33 cancer types [17]. This resource has been instrumental in advancing cancer research, with thousands of publications and NIH grants citing TCGA data according to PubMed searches [17].

Experimental Protocols for Model Training and Validation

Standardized experimental protocols are essential for ensuring fair comparison between CNN and RNN architectures. The MLOmics database provides well-defined protocols for pan-cancer and cancer subtype classification tasks, including standardized data splits, evaluation metrics, and baseline implementations [22]. For classification tasks, common evaluation metrics include precision, recall, and F1-score, while clustering tasks typically employ normalized mutual information (NMI) and adjusted Rand index (ARI) to assess agreement between clustering results and true labels [22].
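For multi-class cancer classification, the classification metrics are usually computed per class and then averaged. The sketch below shows macro-averaging with NumPy; the function name is hypothetical, and whether a given benchmark uses macro, micro, or weighted averaging is an assumption to check against its protocol.

```python
import numpy as np

def macro_scores(y_true, y_pred):
    """Macro-averaged precision, recall, and F1 over the classes present in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    precisions, recalls, f1s = [], [], []
    for cls in np.unique(y_true):
        tp = np.sum((y_pred == cls) & (y_true == cls))
        fp = np.sum((y_pred == cls) & (y_true != cls))
        fn = np.sum((y_pred != cls) & (y_true == cls))
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    return float(np.mean(precisions)), float(np.mean(recalls)), float(np.mean(f1s))

# Toy labels for three subtypes (synthetic, for illustration only).
precision, recall, f1 = macro_scores([0, 0, 1, 1, 2, 2], [0, 1, 1, 1, 2, 0])
```

Macro-averaging weights every class equally, which matters when rare subtypes have few samples.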

Proper handling of the high dimensionality of genomic data is crucial for model performance. Feature selection techniques, such as filter methods (removing irrelevant features based on statistical relationships), wrapper methods (using classification algorithms to evaluate feature importance), and embedded approaches (integrating feature selection with model training), are commonly employed to address this challenge [19]. Additionally, techniques such as transfer learning have been used to tackle the problem of small training datasets by transferring information from models trained on large datasets to those with limited samples [19].
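A filter method can be as simple as ranking genes by a statistic computed independently of any classifier. The sketch below keeps the `k` genes with the highest variance across samples. This is an unsupervised filter chosen for brevity and is an assumption, not a method the cited studies are confirmed to use; supervised filters would instead score each gene against the class labels.

```python
import numpy as np

def top_variance_features(X, k):
    """Return the (sorted) column indices of the k highest-variance features."""
    variances = X.var(axis=0)
    return np.sort(np.argsort(variances)[-k:])

# Synthetic matrix (assumption): 50 samples, 6 genes, two of them highly variable.
rng = np.random.default_rng(0)
scales = np.array([0.1, 5.0, 0.1, 3.0, 0.1, 0.1])
X = rng.normal(size=(50, 6)) * scales

selected = top_variance_features(X, k=2)
X_reduced = X[:, selected]  # keep only the selected genes
```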

For architecture optimization, frameworks like GenomeNet-Architect employ model-based optimization to jointly tune network layout and hyperparameters, using multi-fidelity approaches that initially evaluate configurations with shorter training times before devoting more resources to promising candidates [20]. This approach has demonstrated significant improvements over manually designed architectures, highlighting the importance of systematic architecture search for genomic applications.
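The multi-fidelity idea, cheap evaluations first and more budget for promising candidates, can be illustrated with successive halving. This is a generic scheme swapped in for illustration and is not claimed to be the algorithm GenomeNet-Architect uses, which the source describes as model-based optimization. The `evaluate` function is a hypothetical stand-in for training a model for `budget` epochs and returning a validation score.

```python
import math

def successive_halving(configs, evaluate, min_budget=1, eta=2):
    """Repeatedly keep the best 1/eta of the configurations and multiply the budget by eta."""
    budget = min_budget
    survivors = list(configs)
    while len(survivors) > 1:
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget), reverse=True)
        survivors = ranked[:max(1, len(survivors) // eta)]
        budget *= eta  # survivors earn a longer training run next round
    return survivors[0]

def evaluate(config, budget):
    """Toy score (assumption): best near a learning rate of 1e-3, sharper with more budget."""
    quality = -abs(math.log10(config["learning_rate"]) + 3)
    return quality * (1 - 0.5 ** budget)

candidates = [{"learning_rate": lr}
              for lr in (1e-1, 1e-2, 1e-3, 1e-4, 1e-5, 1e-6, 3e-3, 3e-4)]
best = successive_halving(candidates, evaluate)
```

With eight candidates and `eta=2`, the loop runs three rounds with budgets 1, 2, and 4, so most of the compute goes to the configurations that survive the early cuts.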

Performance Metrics and Comparison Results

Comprehensive evaluation of CNN and RNN models requires multiple performance metrics that capture different aspects of model capability. For cancer classification tasks, common metrics include accuracy, area under the receiver operating characteristic curve (AUC-ROC), precision-recall curves, and F1-scores [22] [19]. Additionally, model interpretability and computational efficiency (training and inference time, memory requirements) are important practical considerations for real-world deployment.
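For a binary task such as tumor versus normal, AUC-ROC equals the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The sketch below computes it directly from that definition with NumPy. The function name is hypothetical, and the pairwise comparison is quadratic in the number of samples, so it is meant for illustration, not for large cohorts.

```python
import numpy as np

def roc_auc(y_true, scores):
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly; ties count half."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]  # every positive score minus every negative score
    wins = np.sum(diff > 0) + 0.5 * np.sum(diff == 0)
    return float(wins / (len(pos) * len(neg)))

# Toy example: three of the four positive/negative pairs are ordered correctly.
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```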

Benchmark studies have demonstrated that deep learning-based methods generally outperform conventional machine learning approaches for cancer classification using gene expression data [19]. Several approaches employing multi-layer perceptron (MLP) or CNN networks in combination with efficient feature engineering and transfer learning techniques have achieved test accuracies upwards of 90% [19]. However, performance remains sensitive to various parameters, and further improvements are needed for generalization and robustness.

The optimal architecture choice depends significantly on the specific analytical task. For viral classification from genomic sequences, optimized CNN architectures have achieved 19% reduction in misclassification rates with 67% faster inference and 83% fewer parameters compared to the best-performing deep learning baselines [20]. For tasks involving time-series gene expression data or modeling of long-range dependencies in sequences, RNN architectures typically demonstrate superior performance despite their higher computational requirements [2] [19].

Research Reagents and Computational Tools

Essential Research Reagents and Databases

Successful implementation of CNN and RNN models for cancer genomics research relies on access to high-quality data resources and computational tools. The following table summarizes key resources used in the field:

Table 3: Essential Research Reagents and Computational Tools

| Resource Name | Type | Function/Application | Key Features |
|---|---|---|---|
| MLOmics [22] | Database | Machine learning-ready cancer multi-omics data | 8,314 patient samples, 32 cancer types, 4 omics types, standardized preprocessing |
| TCGA [17] | Data Repository | Comprehensive cancer genomics data | 2.5 PB of raw data, 33 cancer types, multiple omics data types |
| Genomic Benchmarks [21] | Dataset Collection | Genomic sequence classification benchmarks | Curated datasets for regulatory elements, interface for deep learning libraries |
| GenomeNet-Architect [20] | Software Framework | Neural architecture optimization for genomics | Automated architecture search, domain-specific search space, multi-fidelity optimization |
| STRING [22] | Database | Protein-protein interaction networks | Functional protein associations, network analysis |
| KEGG [22] | Database | Biological pathways and functional hierarchies | Pathway maps, gene functional annotation |

Experimental Workflows and Data Processing

Standardized workflows for data processing are critical for ensuring reproducible results in cancer genomics research. For genomic data, typical processing steps include adapter trimming and quality filtering using tools like Trimmomatic, alignment to reference genomes using BWA, duplicate read marking, and variant calling using tools like GATK or DeepVariant [2] [23]. For transcriptomic data from RNA-Seq experiments, processing typically involves converting scaled gene-level RSEM estimates into FPKM values using packages like edgeR, removing features with zero expression in a significant proportion of samples, and applying logarithmic transformations to normalize data distributions [22].
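The last two transcriptomic steps, dropping mostly-zero features and log-transforming, can be sketched as follows. The 50% zero threshold and the pseudocount of 1 are assumptions made for illustration; the source only specifies "a significant proportion" of samples, and the function name is hypothetical.

```python
import numpy as np

def filter_and_log_transform(expr, max_zero_fraction=0.5, pseudocount=1.0):
    """Drop genes that are zero in too many samples, then apply log2(x + pseudocount)."""
    expr = np.asarray(expr, dtype=float)
    zero_fraction = (expr == 0).mean(axis=0)   # per-gene share of zero values
    keep = zero_fraction <= max_zero_fraction
    return np.log2(expr[:, keep] + pseudocount), keep

# Toy matrix (samples x genes): the first gene is zero in 3 of 4 samples.
expr = np.array([[0, 10, 3],
                 [0, 20, 0],
                 [0, 30, 7],
                 [5, 40, 1]])
log_expr, kept = filter_and_log_transform(expr)
```

The pseudocount keeps the logarithm defined for the zeros that remain after filtering.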

Epigenomic data processing varies by data type. For DNA methylation data, standard approaches include median-centering normalization to adjust for systematic biases using packages like limma, and selecting promoters with minimum methylation when multiple promoters exist for a gene [22]. For chromatin accessibility data from ATAC-seq, processing typically involves identifying accessible regions, filtering artifacts, and normalizing for sequencing depth and technical variation.

The following diagram illustrates a typical multi-omics data processing and analysis workflow for cancer genomics:

Multi-Omics Data Analysis Workflow

The integration of genomic, transcriptomic, and epigenetic data provides a powerful foundation for advancing cancer research through deep learning approaches. Both CNN and RNN architectures offer distinct advantages for different aspects of cancer genomics analysis, with CNNs excelling at local pattern recognition in genomic sequences and RNNs demonstrating strengths in modeling temporal dependencies and long-range interactions in sequential data [2] [20] [19]. The choice between these architectures depends on the specific analytical task, data characteristics, and practical constraints such as computational resources and interpretability requirements.

Future research directions in this field include developing more sophisticated hybrid architectures that combine the strengths of CNNs and RNNs while addressing their respective limitations [20] [19]. Improved model interpretability remains a critical challenge, as clinical adoption requires transparency in model decision-making processes [2] [24]. Additionally, addressing data heterogeneity and improving model generalization across different populations and sequencing platforms will be essential for robust clinical applications [2].

The creation of standardized benchmarks and data resources, such as MLOmics and genomic-benchmarks, represents significant progress toward reproducible and comparable research in computational cancer genomics [22] [21]. Continued development of these community resources, coupled with advances in neural architecture search and automated machine learning for genomics, will likely accelerate progress in the field [20]. As these technologies mature and validation in clinical settings expands, deep learning approaches for multi-omics data integration are poised to make substantial contributions to precision oncology, potentially improving cancer detection, diagnosis, and treatment selection for patients.

Why Deep Learning for Cancer Genomics? Addressing High Dimensionality and Complex Patterns

Cancer genomics presents a formidable analytical challenge characterized by high-dimensional data, where the number of features (genes) vastly exceeds the number of samples, and complex, non-linear patterns that underlie cancer development and progression. Traditional statistical and machine learning methods often struggle to capture the intricate interactions within biological systems, creating a pressing need for more sophisticated analytical approaches [7]. Deep learning has emerged as a powerful solution to these challenges, offering the capacity to automatically learn hierarchical representations from raw genomic data without relying on manual feature engineering [2] [3].

Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) represent two dominant deep learning architectures applied to cancer genomics, each with distinct strengths for particular data types and analytical tasks. While CNNs excel at identifying spatial patterns and local dependencies in structured data, RNNs specialize in capturing temporal relationships and dependencies in sequential data [2]. The selection between these architectures depends on multiple factors including data structure, the specific biological question, and the desired output. This guide provides an objective comparison of their performance, supported by experimental data and detailed methodologies, to inform researchers and drug development professionals in selecting appropriate tools for cancer genomic analysis.

Architectural Strengths: CNN vs. RNN for Genomic Data

Convolutional Neural Networks (CNNs) in Cancer Genomics

CNNs employ a series of convolutional layers that act as learned filters, scanning input data to detect spatially-local patterns through parameter sharing and hierarchical feature learning. In genomics, this architecture proves particularly valuable for identifying functionally relevant patterns that may be distributed across genomic coordinates or within transformed data representations [8].

The fundamental strength of CNNs lies in their ability to detect local patterns through their kernel-based architecture, which slides across input data to identify features regardless of their absolute position. This translational invariance makes them exceptionally suited for genomic applications where meaningful biological signals—such as transcription factor binding sites or conserved protein domains—may occur at various positions within a sequence or data structure [7]. Additionally, their hierarchical nature enables them to build increasingly complex representations from simple features, mirroring how complex biological systems are organized.

Recurrent Neural Networks (RNNs) in Cancer Genomics

RNNs and their variants (LSTMs and GRUs) incorporate internal memory mechanisms that process sequential inputs while maintaining information about previous elements through hidden states. This architecture naturally aligns with the sequential nature of genomic data, whether considering nucleotide sequences in DNA or temporal progression in cancer evolution [2] [3].

The unique advantage of RNN architectures lies in their capacity to model dependencies across time steps or sequence positions, with LSTMs and GRUs specifically addressing the vanishing gradient problem through gating mechanisms that regulate information flow [2]. This makes them particularly suitable for modeling biological sequences where long-range dependencies are critical, such as understanding how mutations in non-coding regulatory elements might influence downstream gene expression in cancer pathways.

Architectural Comparison and Selection Guidelines

Table 1: Architectural Comparison Between CNN and RNN for Cancer Genomics

| Feature | Convolutional Neural Networks (CNNs) | Recurrent Neural Networks (RNNs/LSTMs) |
|---|---|---|
| Core Strength | Spatial pattern recognition | Sequential dependency modeling |
| Data Compatibility | Structured data (2D matrices, images), spatially-related features | Sequential data (time series, nucleotide sequences) |
| Memory Mechanism | Parameter sharing across spatial dimensions | Internal state memory across sequence positions |
| Feature Hierarchy | Built through stacked convolutional layers | Built through sequential processing steps |
| Computational Efficiency | Highly parallelizable | Sequential processing can limit parallelism |
| Common Genomic Applications | Gene expression classification, protein structure prediction, network analysis [8] [12] | Cancer progression modeling, sequence mutation analysis, temporal expression patterns [3] |

Figure 1: Architectural comparison of CNN and RNN pathways for genomic data analysis

Performance Comparison: Experimental Evidence

Cancer Type Classification Performance

Multiple studies have demonstrated the effectiveness of both CNN and RNN architectures in classifying cancer types from genomic data, with performance often exceeding 90% accuracy across diverse datasets.

Table 2: Performance Comparison of Deep Learning Models in Cancer Genomics

| Study | Architecture | Cancer Types | Dataset | Accuracy | Key Findings |
|---|---|---|---|---|---|
| Milad et al. (2020) [8] | 1D-CNN | 33 cancer types | TCGA (10,340 samples) | 93.9-95.0% | Lightweight model identified 2,090 cancer marker genes, including known markers (GATA3, ESR1) |
| Chen et al. (2025) [12] | CNN + PPI Networks | 11 cancer types | TCGA (6,136 samples) | 95.4% (cancer type); 97.4% (tumor vs. normal) | Integration of protein-protein interaction networks improved biological interpretability |
| Brain Cancer Study (2024) [3] | Hybrid (1D-CNN + RNN) | 5 classes (4 brain tumor types + normal tissue) | CuMiDa (130 samples) | 100% | Bayesian optimization raised accuracy from 90% to 100% |
| Gene-Optimized Framework (2025) [7] | mRMR-CNN Hybrid | Multiple cancers | TCGA & AHBA | 97% (TCGA); 95% (AHBA) | Integration of feature selection with CNN reduced false positives/negatives |

The experimental evidence indicates that CNN architectures currently dominate cancer type classification tasks, particularly when dealing with structured genomic data. The 1D-CNN implementation by Milad et al. demonstrated that even relatively simple CNN architectures can achieve high accuracy (93.9-95.0%) while remaining computationally efficient and interpretable [8]. Similarly, the CNN model integrating protein-protein interaction networks developed by Chen et al. achieved remarkable accuracy (95.4%) across 11 cancer types by transforming genomic data into 2D network representations [12].

Hybrid approaches that combine architectural elements have also performed well. The Bayesian-optimized 1D-CNN + RNN model for brain cancer classification reached 100% accuracy on the 130-sample CuMiDa dataset (up from 90% before optimization), suggesting that strategic combination of architectures can leverage their complementary strengths [3].

Handling Data Challenges: High Dimensionality and Limited Samples

A critical challenge in cancer genomics is the "curse of dimensionality," where datasets contain thousands of genes but only hundreds of samples. Both CNN and RNN architectures address this challenge through different regularization strategies and architectural constraints.

CNNs effectively manage high dimensionality through parameter sharing in convolutional layers and progressive dimensionality reduction in pooling layers. The Gene-Optimized Neural Framework (GONF) combined minimum Redundancy Maximum Relevance (mRMR) feature selection with a deep CNN to achieve 97% accuracy on TCGA data while significantly reducing model complexity [7]. This approach demonstrates how strategic feature selection paired with CNN architecture can optimize performance while maintaining biological interpretability.
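
A back-of-the-envelope calculation shows why parameter sharing matters at this input size. The sketch below compares the weight count of a fully connected layer with that of a 1D convolutional layer on a 7,100-gene input; the layer sizes (128 dense units, 32 filters, kernel and stride of 71, pool size 2) are illustrative assumptions, not the settings of any cited study.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_units + n_units


def conv1d_params(kernel_size, in_channels, n_filters):
    """Weights plus biases of a 1D convolutional layer (shared across positions)."""
    return kernel_size * in_channels * n_filters + n_filters


def pooled_length(n_inputs, kernel_size, stride, pool):
    """Output positions after a 'valid' convolution followed by max-pooling."""
    conv_len = (n_inputs - kernel_size) // stride + 1
    return conv_len // pool


if __name__ == "__main__":
    n_genes = 7100  # padded input length used in the 1D-CNN protocol
    print("dense :", dense_params(n_genes, 128))
    print("conv1d:", conv1d_params(71, 1, 32))
    print("positions after conv + pool:", pooled_length(n_genes, 71, 71, 2))
```

Under these assumptions the dense layer needs 908,928 parameters while the convolutional layer needs 2,304, and pooling leaves 50 positions per filter for the downstream layers.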

RNNs address sequential dependencies in genomic data through their memory mechanisms, but may require more samples for effective training due to their parameter-intensive nature. The hybrid 1D-CNN + RNN approach addressed this by using the CNN component for feature extraction before sequence processing by the RNN, thereby reducing the parameter space and improving training efficiency [3].

Experimental Protocols and Methodologies

CNN Protocol for Cancer Type Prediction

The experimental protocol for CNN-based cancer classification typically involves structured data preparation, model configuration with convolutional and pooling layers, and comprehensive validation.

Data Preparation and Preprocessing:

- Data Source: The Cancer Genome Atlas (TCGA) pan-cancer RNA-Seq data, containing 10,340 tumor samples and 713 normal samples across 33 cancer types [8]

- Normalization: Gene expression values are transformed using log2(FPKM + 1) to stabilize variance and normalize distribution

- Gene Filtering: Removal of low-information genes (mean < 0.5 or standard deviation < 0.8 across all samples), leaving 7,091 genes for analysis

- Structuring: For 1D-CNN, genes are organized into vectors with zero-padding to standardize input dimensions; for 2D-CNN, vectors are reshaped into matrix formats resembling images [8]
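
The preparation steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration on a samples-by-genes list of lists; the function names are hypothetical, and the use of the population standard deviation is an assumption, since the source does not say which estimator was used.

```python
import math
from statistics import mean, pstdev


def log2_transform(fpkm_rows):
    """Apply log2(FPKM + 1) to every value (rows = samples, columns = genes)."""
    return [[math.log2(v + 1.0) for v in row] for row in fpkm_rows]


def filter_genes(rows, min_mean=0.5, min_sd=0.8):
    """Return indices of genes kept, i.e. NOT (mean < min_mean or sd < min_sd)."""
    keep = []
    for g in range(len(rows[0])):
        column = [row[g] for row in rows]
        if mean(column) >= min_mean and pstdev(column) >= min_sd:
            keep.append(g)
    return keep


def zero_pad(vector, target_len):
    """Right-pad a gene vector with zeros to a fixed input length."""
    if len(vector) > target_len:
        raise ValueError("vector is longer than target_len")
    return vector + [0.0] * (target_len - len(vector))


if __name__ == "__main__":
    fpkm = [[0.0, 7.0, 0.0], [0.0, 7.0, 3.0], [0.0, 7.0, 15.0]]  # toy data
    logged = log2_transform(fpkm)
    kept = filter_genes(logged)
    inputs = [zero_pad([row[g] for g in kept], 4) for row in logged]
    print(kept, inputs)
```

In the protocol described above, the same padding step would take the 7,091 retained genes to an input length of 7,100.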

Model Architecture and Training:

- Input Layer: 1D vector of 7,100 gene expression values (including padding) or 2D matrix representation

- Convolutional Layers: 1D or 2D kernels that scan input data to detect local patterns and feature hierarchies

- Pooling Layers: Max-pooling operations to reduce spatial dimensions while retaining salient features

- Fully Connected Layers: Integration of extracted features for final classification

- Output Layer: Softmax activation for multi-class cancer type prediction

- Training Parameters: Adam optimizer with categorical cross-entropy loss, batch sizes of 32-64, and learning rates typically between 0.0001 and 0.001 [8] [12]
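
To show how these layers fit together, here is a dependency-free sketch of the forward pass only: convolution with ReLU, max-pooling, a single dense layer, and softmax. It is a simplification under stated assumptions: the weights are random rather than trained, the hidden fully connected layer is folded into the output layer, and all names (`init_params`, `forward`, and so on) are hypothetical. A real model would be built and trained in a framework such as TensorFlow, PyTorch, or Keras.

```python
import math
import random


def conv1d(x, kernels, biases, stride=1):
    """'Valid' 1D convolution followed by ReLU; returns one feature map per kernel."""
    k = len(kernels[0])
    maps = []
    for w, b in zip(kernels, biases):
        fmap = []
        for start in range(0, len(x) - k + 1, stride):
            s = b + sum(wi * x[start + i] for i, wi in enumerate(w))
            fmap.append(max(0.0, s))
        maps.append(fmap)
    return maps


def max_pool(maps, size):
    """Non-overlapping max-pooling along each feature map."""
    return [[max(m[i:i + size]) for i in range(0, len(m) - size + 1, size)]
            for m in maps]


def dense(v, weights, biases):
    return [b + sum(w * x for w, x in zip(row, v))
            for row, b in zip(weights, biases)]


def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


def init_params(n_inputs, n_filters, kernel, stride, pool, n_classes, seed=0):
    """Random, untrained weights sized to match the layer shapes."""
    rng = random.Random(seed)
    conv_len = (n_inputs - kernel) // stride + 1
    flat_len = n_filters * (conv_len // pool)

    def rand_vec(n):
        return [rng.uniform(-0.1, 0.1) for _ in range(n)]

    return {"kernels": [rand_vec(kernel) for _ in range(n_filters)],
            "kernel_bias": [0.0] * n_filters, "stride": stride, "pool": pool,
            "W": [rand_vec(flat_len) for _ in range(n_classes)],
            "b": [0.0] * n_classes}


def forward(x, p):
    """Gene vector -> class probabilities."""
    maps = max_pool(conv1d(x, p["kernels"], p["kernel_bias"], p["stride"]), p["pool"])
    flat = [v for m in maps for v in m]
    return softmax(dense(flat, p["W"], p["b"]))


if __name__ == "__main__":
    params = init_params(n_inputs=20, n_filters=2, kernel=5, stride=5, pool=2, n_classes=3)
    print(forward([float(i % 4) for i in range(20)], params))
```

The toy dimensions keep the example fast; the protocol above would correspond to an input length of 7,100 and one output per cancer type.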

Validation and Interpretation:

- Validation Strategy: Hold-out validation with 75% training, 15% validation, and 10% testing splits; some studies employ k-fold cross-validation

- Interpretation Methods: Guided saliency maps and attention mechanisms to identify genes with highest contribution to classification decisions [8]

- Biological Validation: Confirmation of identified marker genes through differential expression analysis and literature mining
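
A hold-out split with the proportions above can be sketched as follows. The helper name `holdout_split` and the fixed seed are assumptions for illustration; the split is a plain random shuffle and is not stratified by cancer type, which a real pipeline may want.

```python
import random


def holdout_split(n_samples, fractions=(0.75, 0.15, 0.10), seed=0):
    """Shuffle sample indices and cut them into train / validation / test."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = round(fractions[0] * n_samples)
    n_val = round(fractions[1] * n_samples)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder, so no sample is dropped
    return train, val, test


if __name__ == "__main__":
    train, val, test = holdout_split(200)
    print(len(train), len(val), len(test))
```

Assigning the remainder to the test set guarantees that the three subsets are disjoint and together cover every sample, even when the fractions do not divide the sample count evenly.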

RNN/Hybrid Protocol for Sequential Genomic Analysis

The hybrid 1D-CNN + RNN protocol exemplifies how sequential modeling can be integrated with spatial feature extraction for enhanced genomic analysis.

Data Preparation and Preprocessing:

- Data Source: Curated Microarray Database (CuMiDa) specifically filtered for brain cancer datasets (GSE50161)

- Dataset Characteristics: 54,676 genes across 130 samples in 5 classes (four brain tumor types: Ependymoma, Glioblastoma, Medulloblastoma, and Pilocytic Astrocytoma, plus normal tissue)

- Normalization: Z-score standardization or min-max scaling applied to gene expression values

- Structuring: Data organized as sequential inputs while preserving sample-to-feature relationships [3]
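
Both normalization options mentioned above are simple per-gene transforms. The sketch below uses hypothetical helper names and fits the statistics on training values before applying them elsewhere, a common precaution against information leaking from held-out samples; the source does not state how the original study handled this.

```python
from statistics import mean, pstdev


def fit_zscore(train_values):
    """Estimate mean and standard deviation for one gene from training samples."""
    return mean(train_values), pstdev(train_values)


def apply_zscore(values, mu, sd):
    """Standardize values with previously fitted statistics."""
    if sd == 0:
        return [0.0 for _ in values]  # constant gene: no variation to scale
    return [(v - mu) / sd for v in values]


def minmax_scale(values):
    """Rescale one gene's values to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


if __name__ == "__main__":
    train_gene = [1.0, 2.0, 3.0]
    mu, sd = fit_zscore(train_gene)
    print(apply_zscore(train_gene, mu, sd))
    print(apply_zscore([4.0], mu, sd))   # held-out sample, training statistics
    print(minmax_scale([2.0, 4.0, 6.0]))
```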

Hybrid Model Architecture and Training:

- 1D-CNN Component: Initial convolutional layers for local pattern detection and feature extraction from gene expression vectors

- RNN Component: LSTM or GRU layers to model dependencies and interactions within the feature space

- Bayesian Optimization: Hyperparameter tuning using Bayesian optimization to identify optimal architecture configurations, learning rates, and regularization parameters

- Training Parameters: Adaptive moment estimation (Adam) with learning rate decay, gradient clipping to address exploding gradients, and class-weighted loss functions to handle imbalanced datasets [3]
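
Two of the training safeguards listed above, class-weighted loss and gradient clipping, are easy to illustrate in isolation. The sketch below is an assumption-laden example rather than the study's implementation: the inverse-frequency weighting scheme (`n / (k * count)`), the clipping by global L2 norm, and all function names are choices made here for illustration.

```python
import math
from collections import Counter


def balanced_class_weights(labels):
    """Inverse-frequency weights: rarer classes receive larger weights."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * m) for c, m in counts.items()}


def weighted_cross_entropy(probs, label, weights):
    """Cross-entropy for one sample, scaled by the weight of its true class."""
    return -weights[label] * math.log(probs[label])


def clip_by_global_norm(grads, max_norm):
    """Rescale a gradient vector so its L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm == 0.0 or norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


if __name__ == "__main__":
    labels = ["glioblastoma"] * 6 + ["normal"] * 2   # toy, imbalanced labels
    w = balanced_class_weights(labels)
    print(w)
    print(weighted_cross_entropy({"glioblastoma": 0.7, "normal": 0.3}, "normal", w))
    print(clip_by_global_norm([3.0, 4.0], max_norm=1.0))
```

With these weights, a misclassified sample from the minority class contributes more to the loss than one from the majority class, and clipping preserves the gradient direction while bounding its magnitude.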

Validation and Interpretation:

- Validation Strategy: Stratified k-fold cross-validation (typically k=5 or k=10) to ensure representative distribution of cancer subtypes

- Performance Metrics: Multi-class accuracy, precision-recall curves, F1-score, and confusion matrix analysis

- Biological Interpretation: Functional enrichment analysis of genes identified as important by the model, pathway analysis using KEGG or GO databases
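
The validation steps above can be sketched without external libraries. The example below assigns samples to stratified folds and computes a macro-averaged F1 score; the function names and the round-robin assignment are illustrative assumptions, and libraries such as scikit-learn provide maintained equivalents.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Assign sample indices to k folds so each class is spread evenly."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    position = 0  # running counter so leftovers rotate across folds
    for y in sorted(by_class):
        members = by_class[y]
        rng.shuffle(members)
        for i in members:
            folds[position % k].append(i)
            position += 1
    return folds


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    scores = []
    for c in sorted(set(y_true)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    labels = ["A"] * 10 + ["B"] * 5
    for fold in stratified_kfold(labels, k=5):
        print(sorted(labels[i] for i in fold))
    print(macro_f1(["A", "A", "B", "B"], ["A", "B", "B", "B"]))
```

Each fold serves once as the held-out set while the remaining folds are used for training, so every sample is evaluated exactly once.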

Figure 2: Generalized workflow for deep learning applications in cancer genomics

Essential Research Reagents and Computational Tools

Successful implementation of deep learning approaches in cancer genomics requires both biological datasets and computational frameworks. The following table summarizes key resources mentioned across the evaluated studies.

Table 3: Essential Research Reagents and Computational Tools for Cancer Genomics Deep Learning

| Resource Category | Specific Examples | Function/Purpose | Key Features |
|---|---|---|---|
| Genomic Databases | The Cancer Genome Atlas (TCGA) | Provides comprehensive pan-cancer genomic data | 33 cancer types, multi-omics data, clinical correlations [8] [12] |
| Genomic Databases | Curated Microarray Database (CuMiDa) | Specially curated microarray data for cancer classification | 78 datasets, 13 cancer types, quality-controlled [3] |
| Protein Networks | BioGRID, DIP, IntAct, MINT | Protein-protein interaction data for network-based analysis | 16,433 proteins, 181,868 interactions for biological context [12] |
| Computational Frameworks | TensorFlow, PyTorch, Keras | Deep learning model development and training | Flexible architecture design, GPU acceleration, extensive documentation |
| Model Interpretation | Guided Saliency, XAI Techniques | Identification of important features and biomarkers | Reveals model decision processes, validates biological relevance [8] [25] |
| Preprocessing Tools | TCGAbiolinks, scikit-learn | Data acquisition, normalization, and feature selection | Streamlined workflows, integration with analysis pipelines [8] |

The experimental evidence demonstrates that both CNN and RNN architectures offer powerful approaches for addressing the fundamental challenges of high dimensionality and complex patterns in cancer genomics. CNN architectures currently show superior performance in cancer type classification tasks, particularly with structured genomic data, achieving accuracies between 93% and 97% across multiple studies [8] [7] [12]. RNN and hybrid approaches excel in capturing sequential dependencies and have performed strongly in specific applications, with one hybrid model achieving 100% accuracy in brain cancer classification on a 130-sample dataset [3].

The future of deep learning in cancer genomics lies in several promising directions: improved model interpretability through explainable AI techniques [25], sophisticated multimodal data integration combining genomic, imaging, and clinical data [2] [26], and development of more biologically-informed architectures that incorporate prior knowledge about gene networks and pathways [12]. As these technologies mature, they hold increasing potential for clinical translation in cancer diagnosis, prognosis, and personalized treatment selection.

Researchers selecting between CNN and RNN approaches should consider both their data structure and analytical objectives. CNN architectures are generally preferred for classification tasks involving structured genomic measurements, while RNN and hybrid approaches show particular promise for modeling temporal progression, sequential dependencies, and complex feature interactions in genomic data.

Methodological Implementations and Real-World Applications in Cancer Research

CNN Applications in Cancer Type Prediction from Gene Expression Profiles

Convolutional Neural Networks (CNNs), a cornerstone of deep learning, have demonstrated remarkable success in image recognition tasks. Their application has expanded into genomics, where they are increasingly used to predict cancer types from gene expression profiles. This capability is vital for precision oncology, as accurate cancer typing can inform targeted treatment strategies and improve patient outcomes. This guide explores the application of CNNs in this domain by examining seminal studies, detailing their experimental protocols, and quantitatively comparing their performance with alternative methods, including Recurrent Neural Networks (RNNs), within the broader context of cancer genomics research.

Key Methodologies and Experimental Protocols

Researchers have developed several innovative CNN architectures to process structured genomic data for cancer classification. The following section details the foundational experimental approaches from key studies in the field.

CNN Models for Pan-Cancer Classification

A pivotal 2020 study introduced several CNN models designed to classify tumor and non-tumor samples into 33 designated cancer types or as normal using data from The Cancer Genome Atlas (TCGA) [8].

- Dataset: The study utilized gene expression profiles from 10,340 tumor samples (33 cancer types) and 713 matched normal tissue samples. A total of 7,091 genes remained after filtering for high expression and variability [8].

- Model Architectures: Three distinct CNN models were implemented based on different input structuring and convolution schemes [8]:

  - 1D-CNN: This model treats the gene expression vector as a 1D input. Its architecture includes a 1D convolutional layer, a max-pooling layer, a fully connected (FC) layer, and a final prediction layer.

  - 2D-Vanilla-CNN: The gene expression data is reshaped into a 2D matrix to construct an image-like input. The model then applies standard 2D convolutional kernels, followed by a max-pooling layer, an FC layer, and a prediction layer.

  - 2D-Hybrid-CNN: This model takes a 2D matrix as input but applies 1D convolutional kernels, combining aspects of the other two architectures.

- Training and Interpretation: The models were trained with a constrained number of parameters to prevent overfitting on the limited sample size. The 1D-CNN model was interpreted using a guided saliency technique, which identified 2,090 potential cancer marker genes, including well-known markers like GATA3 and ESR1 for breast cancer [8].
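
The difference between the input structures can be illustrated with a small sketch: reshaping a padded gene vector into a matrix, then sliding a 1D kernel along each row as in the hybrid scheme. The 100 × 71 grid used in the comments is an assumption chosen because it matches a padded length of 7,100; the source does not state the matrix shape, and the helper names are hypothetical.

```python
def reshape_to_matrix(vector, n_rows, n_cols):
    """Reshape a flat gene vector into an image-like matrix, row by row."""
    if len(vector) != n_rows * n_cols:
        raise ValueError("vector length must equal n_rows * n_cols")
    return [vector[r * n_cols:(r + 1) * n_cols] for r in range(n_rows)]


def conv_rows(matrix, kernel):
    """Slide a 1D kernel along every row of a 2D input ('valid' positions only)."""
    k = len(kernel)
    return [[sum(w * row[c + i] for i, w in enumerate(kernel))
             for c in range(len(row) - k + 1)]
            for row in matrix]


if __name__ == "__main__":
    toy = reshape_to_matrix(list(range(6)), n_rows=2, n_cols=3)
    print(toy)
    print(conv_rows(toy, [1, 1]))
    # Assumed grid for a padded 7,100-gene vector: 100 rows x 71 columns.
    grid = reshape_to_matrix([0.0] * 7100, 100, 71)
    print(len(grid), len(grid[0]))
```

Because genes have no natural 2D ordering, neighbouring cells in such a matrix reflect only the chosen gene order, which is one motivation for testing 1D kernels on the same input.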

Spectral CNN with Protein-Protein Interaction (PPI) Networks

Another significant approach integrated genomic data with biological networks to create 2D images for CNN analysis [12].

- Dataset and Preprocessing: RNA-Seq data from 5,528 tumors and 608 normal tissues across 11 cancer types were collected from TCGA. A universal PPI network with 6,261 genes and 28,439 interactions was compiled from public databases [12].

- Spectral Clustering and 2D Representation: The core of this methodology is the use of spectral clustering to transform the complex cancer-specific PPI network into a 2D image. The Laplacian matrix of the network was computed, and its eigenvalues and eigenvectors were used to map the network into a 2D space, preserving its topological structure. Gene expression values were then assigned to the nodes in this 2D representation [12].

- CNN Architecture: The generated 2D images were fed into a CNN architecture consisting of three successive convolutional layers (with 5x5, 3x3, and 3x3 kernels) and pooling layers, followed by three fully connected hidden layers and a final output layer for classifying the 11 cancer types and normal tissue [12].
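
The spectral mapping step can be written compactly. The formulation below is a sketch under assumptions: it uses the unnormalized graph Laplacian and takes the eigenvectors of the two smallest nonzero eigenvalues as coordinates, which is the standard spectral embedding; the source does not specify which Laplacian variant or which eigenvectors were used. Here $A$ is the adjacency matrix of the PPI network with $n$ genes, $D$ is its degree matrix, and $e_i$ is the expression value of gene $i$.

```latex
\begin{aligned}
L &= D - A, \qquad D_{ii} = \sum_{j=1}^{n} A_{ij} \\
L\,v_k &= \lambda_k v_k, \qquad 0 = \lambda_1 \le \lambda_2 \le \dots \le \lambda_n \\
\text{gene } i &\;\mapsto\; (x_i, y_i) = \bigl(v_2(i),\; v_3(i)\bigr) \\
\text{image}\bigl[\operatorname{bin}(x_i),\, \operatorname{bin}(y_i)\bigr] &= e_i
\end{aligned}
```

Genes that are tightly connected in the network receive nearby coordinates, so local image neighbourhoods seen by the convolutional kernels correspond to network neighbourhoods. The discretization function $\operatorname{bin}(\cdot)$ that maps coordinates to pixel positions is a placeholder for whatever gridding scheme is used.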

The workflow for this approach is summarized in the diagram below.

Performance Comparison: CNN vs. RNN and Other Methods