Beyond BERT: Applying Sentence Transformers like SBERT and SimCSE for Advanced DNA Sequence Representation

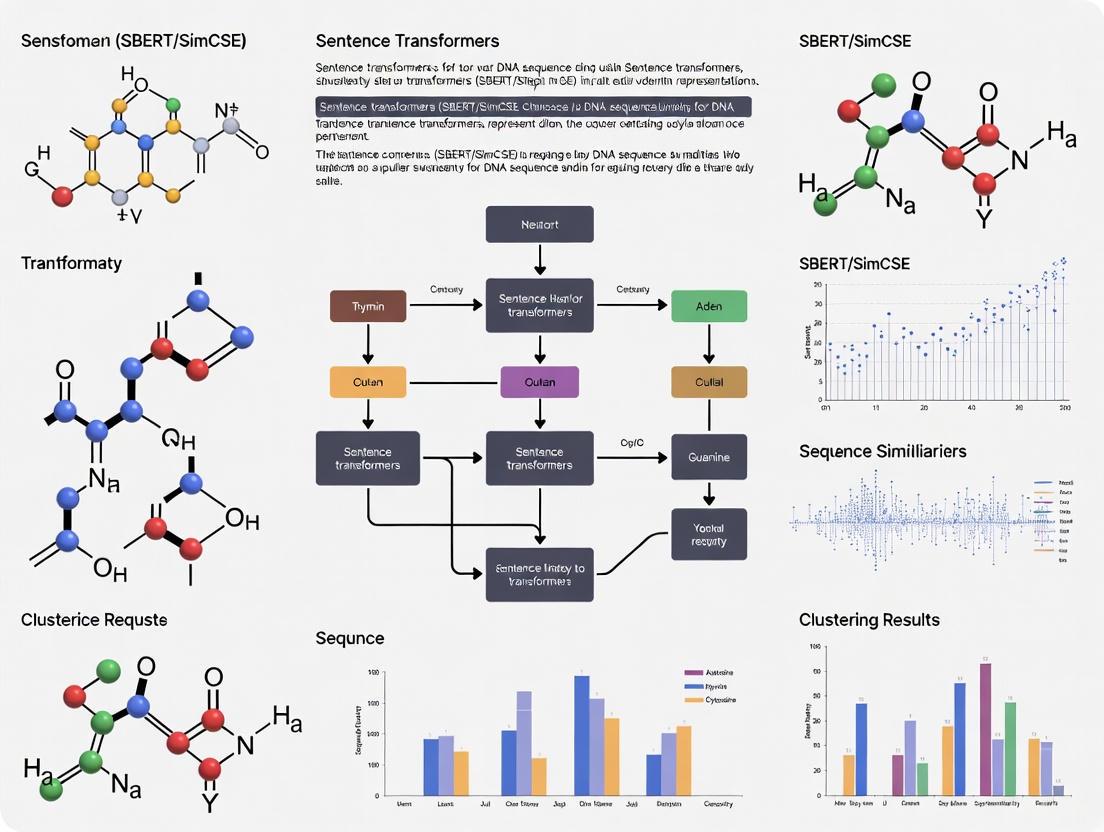

This article explores the transformative application of Sentence Transformer models, specifically SBERT and SimCSE, for generating powerful numerical representations of DNA sequences.


Abstract

This article explores the transformative application of Sentence Transformer models, specifically SBERT and SimCSE, for generating powerful numerical representations of DNA sequences. Originally designed for natural language, these models are being fine-tuned to capture the semantic meaning within genomic data, enabling tasks from species clustering to cancer detection. We provide a comprehensive guide covering the foundational principles, methodological steps for adaptation and fine-tuning, key optimization strategies for handling genomic data, and a critical validation against specialized DNA foundation models. Aimed at researchers and bioinformaticians, this review synthesizes current evidence and practical insights, demonstrating how these models offer a compelling balance of performance and computational efficiency for genomic analysis.

From Language to Genetics: The Foundational Principles of Sentence Transformers for DNA

Model Architectures and Core Mechanisms

Sentence Transformer models, such as SBERT and SimCSE, represent a significant evolution in generating dense, semantically meaningful sentence embeddings. These models are built upon the transformer architecture and are specifically designed to overcome the limitations of vanilla transformer models like BERT for sentence-level tasks.

SBERT (Sentence-BERT) is based on a siamese or triplet network architecture which allows for the efficient computation of sentence embeddings. The core innovation of SBERT lies in its ability to derive fixed-sized sentence embeddings that capture semantic meaning, making it suitable for tasks like semantic similarity comparison, clustering, and information retrieval.

SimCSE (Simple Contrastive Learning of Sentence Embeddings) introduces a strikingly simple yet powerful method for improving sentence embeddings using contrastive learning. The model comes in two variants: unsupervised and supervised. The unsupervised SimCSE passes the same sentence twice through the same encoder with different dropout masks applied, using the resulting embeddings as positive pairs. The supervised SimCSE leverages natural language inference (NLI) datasets, where entailment pairs are treated as positives and contradiction pairs as negatives [1].

The training mechanism for SimCSE employs contrastive learning objectives. For unsupervised SimCSE, the model is trained to predict the input sentence itself using dropout as noise. The input sentence is passed twice through the encoder, resulting in two embeddings (positive pairs) with different dropout masks. Other sentences in the same mini-batch are treated as negative examples, and the model learns to identify the positive pair among the negatives [1] [2].

Application to DNA Sequence Representation

The application of Sentence Transformer models to DNA sequence analysis represents an emerging frontier in computational biology. Research has demonstrated that models like SBERT and SimCSE, when fine-tuned on genomic data, can generate powerful DNA sequence embeddings that capture biological significance.

In practical applications, DNA sequences are preprocessed using k-mer tokenization before being fed into transformer models. The k-mer approach breaks down long DNA sequences into subsequences of length k (typically k=6 for DNA transformer models), which are then treated analogously to words in natural language processing [1]. This transformation allows the sentence transformer architectures to process DNA sequences effectively.

Recent studies have shown that fine-tuned sentence transformer models can generate DNA embeddings that surpass specialized genomic models like DNABERT in multiple tasks, though they may not always exceed the performance of the largest nucleotide transformers [1]. This demonstrates the transfer learning capability of these architectures from natural language to biological sequences.

Table 1: Performance Comparison of DNA Sequence Embedding Methods

| Model | Architecture Type | Key Applications | Reported Performance | Computational Requirements |

|---|---|---|---|---|

| Fine-tuned SimCSE | Sentence Transformer | Multiple DNA benchmark tasks | Exceeds DNABERT in multiple tasks [1] | Moderate; balances accuracy against compute cost [1] |

| DNABERT | Domain-specific DNA Transformer | Promoter regions, TFBS identification [1] | Baseline for comparison | 100M parameters [1] |

| Nucleotide Transformer | Foundational DNA Transformer | General DNA tasks | Highest raw classification accuracy [1] | High (500M-2.5B parameters) [1] |

| SBERT/SimCSE for Cancer Detection | Sentence Transformer + ML | Cancer type classification | 73-75% accuracy with XGBoost [3] | Practical for resource-constrained environments |

Experimental Protocols and Implementation

DNA-Specific Fine-Tuning Protocol

Objective: Fine-tune a pre-trained SimCSE model on DNA sequences to generate biologically meaningful embeddings.

Materials and Requirements:

- DNA sequences in FASTA or text format

- Pre-trained SimCSE model checkpoint

- Computational environment with GPU acceleration

- Python with PyTorch and Sentence Transformers library

Procedure:

- Data Preparation:

- Collect DNA sequences of interest (e.g., 3000 sequences from specific genomic regions)

- Convert sequences to k-mer tokens (k=6 recommended) using sliding window approach

- Format sequences as plain text files with one sequence per line

- Model Configuration:

- Initialize with a pre-trained SimCSE checkpoint

- Set training parameters:

The analogy of DNA-as-language is a powerful framework in computational genomics, where nucleotides are treated as letters, and sequences of these nucleotides form "words" or "sentences" that can be interpreted by machine learning models. Central to this approach is tokenization, the process of converting raw DNA sequences into discrete units, or tokens, that serve as the input for advanced neural network architectures like transformers. The k-mer tokenization strategy, which breaks a long sequence into shorter overlapping or non-overlapping fragments of length k, is a critical and widely adopted method. Its design directly influences a model's ability to capture biologically meaningful patterns, such as transcription factor binding sites or splice sites [4] [5].

This Application Note frames k-mer tokenization within the context of applying Sentence Transformer models, specifically SBERT and SimCSE, to DNA sequence representation research. These models, which excel at generating dense, meaningful sentence embeddings in natural language processing, can be similarly trained to produce powerful, information-rich embeddings for DNA sequences. By doing so, they offer a promising path for tasks such as functional element classification, variant effect prediction, and regulatory sequence design [2] [6].

k-mer Tokenization: Core Concepts and Methodologies

Defining k-mer Tokenization Strategies

Tokenization is the foundational step that transforms a continuous DNA string into a sequence of discrete tokens. For a DNA sequence, the most basic tokenization is character-level, where each nucleotide (A, T, C, G) becomes a single token. However, this fails to capture any contextual information between adjacent bases. K-mer tokenization addresses this by defining tokens as contiguous subsequences of k nucleotides. The strategy for generating these k-mers from a sequence significantly impacts model performance and computational efficiency [4] [5].

The two primary k-mer tokenization strategies are:

- Fully Overlapping k-mers: A sliding window moves one nucleotide at a time, generating tokens that share k-1 nucleotides with their neighbors. For a sequence of length L, this produces L - k + 1 tokens.

- Non-overlapping k-mers: The sequence is split into contiguous blocks of k nucleotides. This generates approximately L / k tokens, significantly fewer than the overlapping method.

Table 1: Comparison of k-mer Tokenization Strategies for a Sequence "ATGCCT" with k=3.

| Strategy | Tokens Generated | Number of Tokens |

|---|---|---|

| Non-overlapping | ["ATG", "CCT"] | 2 |

| Fully Overlapping | ["ATG", "TGC", "GCC", "CCT"] | 4 |
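The two strategies in the table above can be reproduced with a few lines of Python. This is a minimal sketch, not code from any cited tool: the function name `kmer_tokenize` is hypothetical, and dropping a trailing fragment shorter than k in the non-overlapping case is an assumption.

```python
from typing import List


def kmer_tokenize(sequence: str, k: int, overlapping: bool = True) -> List[str]:
    """Split a DNA string into k-mers.

    overlapping=True  -> sliding window with stride 1 (L - k + 1 tokens)
    overlapping=False -> contiguous blocks with stride k; a trailing fragment
                         shorter than k is dropped (an assumption of this sketch)
    """
    sequence = sequence.upper()
    stride = 1 if overlapping else k
    return [sequence[i:i + k] for i in range(0, len(sequence) - k + 1, stride)]


print(kmer_tokenize("ATGCCT", k=3, overlapping=True))   # ['ATG', 'TGC', 'GCC', 'CCT']
print(kmer_tokenize("ATGCCT", k=3, overlapping=False))  # ['ATG', 'CCT']
```

Using a single stride parameter keeps both strategies in one code path, which makes it easier to hold everything else constant when comparing them.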

The choice of k involves a fundamental trade-off. A larger k value increases the vocabulary size (growing as 4^k), which allows the model to learn more complex, longer motifs but also demands more memory and data for effective training. A smaller k results in a more manageable vocabulary and shorter input sequences but may fail to capture meaningful biological words [5]. Research indicates that models with overlapping k-mers can become overly reliant on token identity itself, struggling to learn longer-range sequence context, whereas non-overlapping strategies can be more computationally efficient while still achieving competitive performance on many tasks [7].
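The trade-off can be made concrete with some simple arithmetic. The sketch below computes the number of possible k-mers (4^k) and the token counts for both strategies; the helper names are hypothetical, the optional `n_special_tokens` argument is an assumption meant to cover tokens such as padding or mask symbols that a real tokenizer adds, and the non-overlapping count uses floor division (trailing fragment dropped), so it is only approximate.

```python
def kmer_vocab_size(k: int, n_special_tokens: int = 0) -> int:
    """Number of distinct k-mers over {A, C, G, T}, plus optional special tokens."""
    return 4 ** k + n_special_tokens


def token_count(seq_len: int, k: int, overlapping: bool) -> int:
    """Tokens produced for a sequence of length seq_len."""
    if seq_len < k:
        return 0
    # Overlapping: L - k + 1. Non-overlapping: roughly L / k (floor here).
    return seq_len - k + 1 if overlapping else seq_len // k


for k in (3, 4, 5, 6):
    print(
        f"k={k}: vocab={kmer_vocab_size(k)}, "
        f"overlapping={token_count(100, k, True)}, "
        f"non-overlapping={token_count(100, k, False)}"
    )
```

Going from k=3 to k=6 multiplies the vocabulary by 64 while shortening an overlapping token sequence by only three tokens, which is why the choice of strategy matters more for sequence length than the choice of k does.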

Connecting Tokenization to Sentence Transformer Fine-Tuning

The quality of the embeddings produced by models like SimCSE is deeply connected to the tokenization process. A well-designed tokenizer provides a meaningful vocabulary from which the model can learn robust representations. In natural language processing, SimCSE works by passing the same sentence through the same model twice with different dropout masks, creating two slightly different embeddings for the same sentence. The learning objective is to minimize the distance between these two embeddings while maximizing their distance from the embeddings of other sentences in the same batch [2] [8].

This framework can be directly adapted for DNA sequences. A DNA sequence, once tokenized into a series of k-mers, is treated as a "sentence." The SimCSE model can then be trained to generate embeddings such that semantically or functionally similar DNA sequences (e.g., sequences from the same enhancer class) are close together in the embedding space. Research has demonstrated the viability of this approach, with models like simcse-dna being successfully fine-tuned on k-mer tokens from the human genome for various downstream classification tasks [6].

Quantitative Analysis of k-mer Performance

The performance of transformer models using different k-mer tokenization strategies has been systematically evaluated across various genomic tasks. The following tables summarize key findings from recent studies, providing a guide for researchers in selecting tokenization parameters.

Table 2: Impact of k-mer Strategies on Model Performance and Efficiency. Performance is measured by the F1-score on a promoter identification task, while efficiency is represented by the number of tokens generated for a sequence of length L=100 [5] [7].

| k value | Tokenization Strategy | Vocabulary Size | ~Tokens for L=100 | Reported F1-Score |

|---|---|---|---|---|

| 3 | Fully Overlapping | 69 | 98 | 0.78 |

| 3 | Non-overlapping | 69 | 34 | 0.76 |

| 4 | Fully Overlapping | 261 | 97 | 0.80 |

| 4 | Non-overlapping | 261 | 25 | 0.79 |

| 5 | Fully Overlapping | 1029 | 96 | 0.81 |

| 5 | Non-overlapping | 1029 | 20 | 0.80 |

| 6 | Fully Overlapping | 4101 | 95 | 0.82 |

| 6 | Non-overlapping (AgroNT) | 4101 | 18 | 0.85 |

Table 3: Performance of DNA-Specific Language Models on Benchmark Tasks (Accuracy). Models were evaluated on a range of tasks (T1-T8) including splice site and regulatory element prediction. Results are shown for a LightGBM (LGBM) classifier on top of the model's embeddings [6].

| Model | T1 | T2 | T3 | T4 | T5 | T6 | T7 | T8 |

|---|---|---|---|---|---|---|---|---|

| simcse-dna (Proposed) | 0.64 ± 0.01 | 0.66 ± 0.0 | 0.90 ± 0.02 | 0.61 ± 0.01 | 0.78 ± 0.0 | 0.49 ± 0.0 | 0.33 ± 0.0 | 0.81 ± 0.01 |

| DNABERT | 0.62 ± 0.01 | 0.65 ± 0.01 | 0.90 ± 0.02 | 0.65 ± 0.01 | 0.83 ± 0.0 | 0.49 ± 0.0 | 0.33 ± 0.0 | 0.75 ± 0.01 |

| Nucleotide Transformer (NT) | 0.63 ± 0.01 | 0.66 ± 0.0 | 0.91 ± 0.02 | 0.72 ± 0.0 | 0.85 ± 0.0 | 0.80 ± 0.0 | 0.59 ± 0.01 | 0.97 ± 0.0 |

Experimental Protocols

Protocol 1: Fine-tuning a SimCSE Model for DNA Sequences

This protocol details the process of adapting the SimCSE framework to generate embeddings for DNA sequences tokenized as k-mers [2] [8] [6].

Principle: Contrastive learning is used to train a transformer model such that a DNA sequence and a slightly noised version of itself (created via dropout) are mapped to similar embeddings, while being distinguished from other sequences in the batch.

The Scientist's Toolkit:

- Software & Libraries: Python, PyTorch, Hugging Face Transformers, Sentence Transformers, SimCSE package.

- Computing Resources: A GPU with sufficient VRAM is highly recommended for efficient training.

- Biological Data: A set of DNA sequences in FASTA format. For unsupervised SimCSE, a large corpus (e.g., 10k-1M sequences) from a reference genome is typical.

Procedure:

Data Preparation:

- Obtain your DNA sequences in FASTA format.

- (Optional) Pre-process sequences: Filter for quality, normalize length, or split into fixed-length windows.

- Define a k-mer tokenization strategy (k value, overlapping vs. non-overlapping). Convert each DNA sequence into a list of k-mer tokens. For example, the sequence ATGCCT with k=3 and overlapping windows becomes ['ATG', 'TGC', 'GCC', 'CCT'].

- The list of k-mer tokens for a sequence is treated as a "sentence." The training data is formatted as a list of InputExample objects where the texts field for each sequence contains [sentence, sentence] (the same sentence twice).
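The data preparation steps above can be sketched with the standard library alone. The helper names `read_fasta` and `to_kmer_sentence` are hypothetical, the toy FASTA text is invented for illustration, and joining k-mers with spaces is an assumption about how the "sentence" is presented to the tokenizer.

```python
from typing import Dict


def read_fasta(text: str) -> Dict[str, str]:
    """Parse FASTA-formatted text into {record_id: sequence}."""
    records: Dict[str, str] = {}
    current_id = None
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            header = line[1:].split()
            current_id = header[0] if header else f"record_{len(records)}"
            records[current_id] = ""
        elif current_id is not None:
            records[current_id] += line.upper()
    return records


def to_kmer_sentence(sequence: str, k: int = 3) -> str:
    """Join overlapping k-mers with spaces so each k-mer reads as one word."""
    return " ".join(sequence[i:i + k] for i in range(len(sequence) - k + 1))


# Toy input, invented for illustration only.
fasta_text = ">seq1 toy example\nATGC\nCT\n>seq2\nGGATCC\n"
sentences = [to_kmer_sentence(seq, k=3) for seq in read_fasta(fasta_text).values()]

# Unsupervised SimCSE pairs each sentence with itself; dropout supplies the noise.
training_pairs = [[s, s] for s in sentences]
print(training_pairs[0])  # ['ATG TGC GCC CCT', 'ATG TGC GCC CCT']
```

Each inner list in `training_pairs` is what would be passed as the texts field of one InputExample.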

Model Initialization:

- Initialize a transformer model suitable for your data (e.g., distilroberta-base or a pre-trained DNA model like DNABERT).

- Add a pooling layer on top of the transformer to create a fixed-sized embedding for the entire sequence. Mean pooling is often a robust choice.

- Combine the transformer and pooling layers into a SentenceTransformer model.

Training Loop Configuration:

- Create a DataLoader to feed the training data in batches.

- Define the loss function. For SimCSE, the MultipleNegativesRankingLoss is used, which aligns the embeddings of the same sentence and contrasts them against all other sentences in the batch.

- Call the model.fit() method, passing the data loader and the loss function. Typical training involves 1-3 epochs.
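The model initialization and training configuration steps can be put together roughly as follows. This is a sketch under stated assumptions rather than the cited authors' training script: it follows the classic `model.fit()` interface of the Sentence Transformers library (newer releases also offer a trainer-based API), the function names are hypothetical, and `batch_size=32` and `max_seq_length=256` are illustrative values. The heavy imports sit inside the training function so that the pair-building helper runs without them.

```python
from typing import List


def build_simcse_pairs(kmer_sentences: List[str]) -> List[List[str]]:
    """Unsupervised SimCSE input: every sentence is paired with itself."""
    return [[s, s] for s in kmer_sentences]


def train_unsup_simcse(
    kmer_sentences: List[str],
    base_model: str = "distilroberta-base",
    batch_size: int = 32,   # illustrative value, not taken from the source
    epochs: int = 1,
):
    """Fine-tune an encoder with MultipleNegativesRankingLoss on k-mer sentences."""
    # Imported lazily: torch and sentence-transformers must be installed to train.
    from torch.utils.data import DataLoader
    from sentence_transformers import InputExample, SentenceTransformer, losses, models

    transformer = models.Transformer(base_model, max_seq_length=256)
    # Pooling defaults to mean pooling over token embeddings.
    pooling = models.Pooling(transformer.get_word_embedding_dimension())
    model = SentenceTransformer(modules=[transformer, pooling])

    examples = [InputExample(texts=pair) for pair in build_simcse_pairs(kmer_sentences)]
    loader = DataLoader(examples, batch_size=batch_size, shuffle=True)
    # In-batch negatives: every other sentence in the batch acts as a negative.
    loss = losses.MultipleNegativesRankingLoss(model)

    model.fit(train_objectives=[(loader, loss)], epochs=epochs, show_progress_bar=True)
    return model
```

One caveat: a natural-language checkpoint ships with a subword tokenizer, so it will likely split each k-mer "word" into smaller pieces unless the vocabulary is extended with the k-mers. Whether that matters for a given task is an empirical question.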

Model Validation & Saving:

- Evaluate the model on a downstream task (e.g., sequence classification) or via intrinsic measures (e.g., clustering analysis) to assess embedding quality.

- Save the fine-tuned model for future inference.

Protocol 2: Benchmarking k-mer Tokenization Strategies

This protocol provides a methodology for empirically comparing different k-mer tokenization strategies to identify the optimal one for a specific genomic task [4] [5] [7].

Principle: Train multiple transformer models that are identical in architecture and training regimen but differ only in their tokenization strategy. Evaluate their performance on a held-out test set for a defined downstream task to determine the most effective strategy.

Procedure:

Define Benchmark Task and Dataset:

- Select a clear downstream task, such as splice site prediction or promoter classification.

- Split your data into training, validation, and test sets.

Initialize Tokenizers and Models:

- Select a range of k values to test (e.g., 3, 4, 5, 6).

- For each k, prepare two tokenizers: one for fully overlapping and one for non-overlapping k-mers.

- For each tokenizer, initialize a pre-trained transformer model (e.g., a BERT architecture). It is critical to keep all other model hyperparameters constant.

Fine-tune Models:

- Fine-tune each model (e.g., BERT-k3-overlap, BERT-k6-non-overlap) on the training set of the benchmark task.

- Use the validation set for early stopping and hyperparameter tuning.

Evaluate and Compare:

- Run the fine-tuned models on the test set.

- Record key performance metrics (e.g., Accuracy, F1-score, AUPRC) and computational metrics (e.g., training time, memory footprint, number of tokens per sequence).

- Compare results across all models to select the best-performing tokenization strategy for your task and data.
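The benchmark design above amounts to a small grid of runs that differ only in tokenization. The sketch below enumerates that grid and picks the best run from a dictionary of scores. The function names and dictionary keys are hypothetical, and the scores must come from your own evaluation; none are supplied here.

```python
from itertools import product
from typing import Dict, List, Sequence


def build_benchmark_grid(
    k_values: Sequence[int] = (3, 4, 5, 6),
    strategies: Sequence[str] = ("overlap", "non-overlap"),
    arch: str = "BERT",
) -> List[Dict]:
    """One config per (k, strategy); everything else is held constant."""
    grid = []
    for k, strategy in product(k_values, strategies):
        grid.append(
            {
                "run_name": f"{arch}-k{k}-{strategy}",
                "k": k,
                # Stride 1 gives fully overlapping k-mers, stride k gives blocks.
                "stride": 1 if strategy == "overlap" else k,
            }
        )
    return grid


def select_best(scores: Dict[str, float]) -> str:
    """Return the run name with the highest validation or test metric."""
    return max(scores, key=scores.get)


for config in build_benchmark_grid():
    print(config["run_name"], "stride =", config["stride"])
```

Recording token counts and training time alongside the metric for each `run_name` makes the efficiency side of the comparison explicit.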

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools for DNA Language Modeling.

| Item Name | Type | Function/Application | Example/Reference |

|---|---|---|---|

| gReLU Framework | Software Framework | A comprehensive Python framework for DNA sequence modeling, supporting data prep, model training, interpretation, and sequence design. | [9] |

| SimCSE | Python Package | A simple method for contrastive learning of sentence embeddings, adaptable for DNA sequences. | [2] [8] |

| Hugging Face Transformers | Python Library | Provides thousands of pre-trained transformer models and a unified API for training and inference. | [8] [6] |

| DNABERT / AgroNT | Pre-trained Model | Foundational DNA language models pre-trained on human or plant genomes, ready for fine-tuning. | [5] [7] |

| Reference Genome Sequences | Biological Data | The standard genomic sequence for a species, used as a corpus for pre-training or as a reference for inference. | hg19, GRCh38 [7] |

| Functional Genomic Annotations | Biological Data | Labels for genomic regions (e.g., promoters, enhancers) used for supervised fine-tuning and evaluation. | ENCODE, Ensembl |

The application of contrastive learning and sentence embeddings to DNA sequence analysis represents a paradigm shift in bioinformatics. By drawing parallels between natural language and biological sequences, researchers can leverage powerful transformer-based models to convert DNA into numerical representations, or embeddings, that capture complex functional and semantic properties [10]. These embeddings facilitate tasks such as sequence classification, function prediction, and genome-wide alignment by positioning semantically similar sequences close together in a vector space [11] [12]. This document outlines the core theoretical concepts, provides quantitative performance comparisons, and details experimental protocols for applying sentence transformer methodologies to genomic research, forming a foundational component of a broader thesis on DNA sequence representation.

Core Conceptual Framework

From Natural Language to DNA Sequences

The foundational analogy enabling this research posits that nucleotide sequences can be treated as a formal language. In this framework, k-mers (contiguous subsequences of length k) serve as the basic vocabulary tokens, analogous to words in natural language [11]. A DNA sequence is thus tokenized into overlapping k-mers, which are fed into transformer models initially developed for NLP. The transformer's self-attention mechanism is well suited to genomics because it processes an entire input sequence at once, capturing long-range dependencies and contextual relationships between nucleotides that earlier sequential models struggled to retain [10].

Contrastive Learning in Vector Spaces

Contrastive learning trains models to organize data in a vector space by directly comparing examples. The core objective is to learn an embedding function that maps similar data points close together while pushing dissimilar points far apart [13].

- Positive and Negative Pairs: Model learning occurs through positive pairs (semantically similar sequences) and negative pairs (dissimilar sequences). For DNA, positive pairs can be created via data augmentation techniques like simulated mutagenesis or sampling homologous regions, while negative pairs might involve sequences from different functional classes or genomic loci [13] [12].

- Contrastive Loss Functions: Loss functions like InfoNCE (Information Noise Contrastive Estimation) formalize this objective by maximizing agreement between positive pairs and minimizing agreement between negative pairs within a training batch [13]. The model learns to be sensitive to small variations that alter biological function while remaining invariant to non-functional changes.
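To make the loss concrete, here is a minimal pure-Python sketch of an InfoNCE-style objective with in-batch negatives. For anchor i with positive i, the per-example loss is -log(exp(s_ii/τ) / Σ_j exp(s_ij/τ)), where s is cosine similarity. The function names are hypothetical, and the temperature of 0.05 is an assumed default; a real implementation would use a tensor library.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def info_nce(
    anchors: List[Sequence[float]],
    positives: List[Sequence[float]],
    temperature: float = 0.05,  # assumed value
) -> float:
    """Mean InfoNCE loss; positives[j] with j != i act as negatives for anchors[i]."""
    total = 0.0
    for i, anchor in enumerate(anchors):
        logits = [cosine(anchor, p) / temperature for p in positives]
        # Numerically stable log-sum-exp over the whole batch.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(x - peak) for x in logits))
        total += log_denominator - logits[i]
    return total / len(anchors)


aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)  # True: correctly paired embeddings give a lower loss
```

The loss is near zero when each anchor is closest to its own positive and grows when a negative in the batch is more similar than the true positive.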

Semantic Similarity for Genomic Sequences

In genomic embedding spaces, semantic similarity refers to functional or structural relatedness rather than literal sequence identity. For example, two promoter sequences from different genes may share high semantic similarity despite having different nucleotide sequences, as both perform similar regulatory functions [11]. This conceptual framework enables researchers to search for functionally similar regions across the genome without relying solely on sequence homology.

Performance Benchmarking

Model Comparison on DNA Classification Tasks

Quantitative evaluation across diverse genomic tasks demonstrates the efficacy of transformer-based approaches. The following table compares fine-tuned sentence transformers against specialized DNA models on benchmark classification tasks, measured by Matthews Correlation Coefficient (MCC) where available [11] [14].

Table 1: Performance comparison of DNA language models on classification tasks

| Model | Parameters | Promoter Prediction (MCC) | Enhancer Prediction (MCC) | Splice Site Prediction (MCC) | Computational Cost |

|---|---|---|---|---|---|

| Fine-tuned SimCSE (Sentence Transformer) [11] | ~100-300M | 0.79 | 0.81 | 0.88 | Moderate |

| DNABERT [11] | 100M+ | 0.75 | 0.78 | 0.85 | High |

| Nucleotide Transformer (500M) [11] [14] | 500M | 0.82 | 0.84 | 0.90 | Very High |

| BPNet (Supervised Baseline) [14] | ~28M | 0.68 | 0.72 | 0.75 | Low |
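For readers less familiar with the metric used in the table above, the Matthews Correlation Coefficient for binary classification can be computed directly from confusion-matrix counts. This is a small sketch with a hypothetical function name; returning 0.0 when the denominator vanishes is a common convention and is assumed here.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """MCC = (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0  # convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denominator


print(matthews_corrcoef(tp=5, tn=5, fp=0, fn=0))  # 1.0 for perfect predictions
```

MCC ranges from -1 to 1 and uses all four cells of the confusion matrix, which makes it more informative than accuracy when classes are imbalanced, as is common for genomic elements.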

Sequence Alignment Performance

For sequence alignment, a fundamental genomics task, the Embed-Search-Align (ESA) framework with contrastive learning achieves 99% accuracy when aligning 250-base reads to the human genome, rivaling conventional alignment tools like Bowtie and BWA-MEM [12]. The following table compares alignment performance across methods.

Table 2: Sequence alignment performance comparison

| Method | Alignment Accuracy (%) | Requires Reference Indexing | Robust to Variants | Basis of Comparison |

|---|---|---|---|---|

| DNA-ESA (Contrastive) [12] | 99% | No | Yes | Embedding Similarity |

| BWA-MEM [12] | >99% | Yes | Moderate | Edit Distance |

| Nucleotide Transformer (Baseline) [12] | <70% | No | Limited | Embedding Similarity |

| Bowtie [12] | >99% | Yes | Limited | Edit Distance |

Experimental Protocols

Protocol 1: Fine-tuning a Sentence Transformer for DNA Sequences

This protocol adapts the SimCSE model for DNA sequence representation learning, based on methodologies demonstrating competitive performance with domain-specific models [11].

Research Reagents and Materials

Table 3: Essential research reagents for fine-tuning sentence transformers

| Item | Specification/Example | Function/Purpose |

|---|---|---|

| Pre-trained Model | SimCSE (bert-base-uncased) [11] | Provides initial weights for transfer learning |

| DNA Sequence Data | 3,000+ sequences (e.g., from human genome) [11] | Domain-specific training corpus |

| Tokenization Tool | K-mer tokenizer (k=6) [11] | Converts sequences to model-readable tokens |

| Training Framework | Sentence Transformers Library [15] | Provides training loops and loss functions |

| Computational Environment | GPU with 16GB+ VRAM [11] | Enables efficient model training |

Step-by-Step Procedure

Data Preparation:

- Collect a minimum of 3,000 DNA sequences relevant to your research domain [11].

- Split sequences into fixed-length segments (e.g., 512-3120 nucleotides) depending on model constraints.

- Tokenize sequences using k-mer segmentation (k=6 is recommended). As a short illustration with k=3, the sequence "ATCGGA" becomes the tokens ["ATC", "TCG", "CGG", "GGA"] [11].

Model Initialization:

- Load a pre-trained SimCSE model checkpoint using the SentenceTransformer class.

- Optionally, modify the tokenizer vocabulary to include DNA-specific tokens if necessary.

Training Configuration:

Model Fine-tuning:

- Execute training using the configured parameters.

- Monitor loss convergence; typical training completes within one epoch for DNA data [11].

Embedding Generation:

- Use the fine-tuned model's encode() method to generate embeddings for downstream tasks.

- Store embeddings in a vector database for efficient similarity search [12].

Diagram 1: Sentence transformer fine-tuning workflow for DNA.

Protocol 2: DNA Sequence Alignment Using Contrastive Embeddings

This protocol implements the Embed-Search-Align paradigm for mapping sequencing reads to a reference genome using contrastively learned embeddings [12].

Research Reagents and Materials

- Reference Genome: FASTA file (e.g., human reference GRCh38)

- DNA Read Simulator: ART or similar tool for generating synthetic reads [12]

- DNA-ESA Model: Pre-trained contrastive encoder for DNA [12]

- Vector Database: FAISS or similar for efficient similarity search [12]

Step-by-Step Procedure

Reference Genome Processing:

- Segment the reference genome into overlapping fragments (e.g., 250-base windows with 50-base overlap).

- Generate embeddings for all fragments using the DNA-ESA model and store in a vector database [12].

Read Processing:

- Generate or obtain sequencing reads (250-base length is standard).

- Encode reads using the same DNA-ESA model to produce query embeddings [12].

Similarity Search:

- For each read embedding, query the vector database for the most similar reference embeddings using cosine similarity.

- Return the top-k candidates (k=5-10) for further analysis [12].

Alignment Determination:

- Select the reference fragment with the highest similarity score as the alignment position.

- Compute confidence metrics based on the similarity score differential between top candidates [12].

Diagram 2: Embed-Search-Align workflow for DNA sequence alignment.
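The segmentation and similarity search steps of this protocol can be sketched without any external dependency. The code below is a brute-force stand-in, not the DNA-ESA implementation: the function names are hypothetical, the embeddings are assumed to come from whatever encoder you use, and a production setup would replace the linear scan with a vector index such as FAISS.

```python
import math
from typing import List, Sequence, Tuple


def sliding_windows(genome: str, size: int = 250, overlap: int = 50) -> List[Tuple[int, str]]:
    """Return (start, fragment) pairs; consecutive windows share `overlap` bases.

    A trailing remainder shorter than one stride is not covered in this sketch.
    """
    stride = size - overlap
    last_start = max(len(genome) - size, 0)
    return [(s, genome[s:s + size]) for s in range(0, last_start + 1, stride)]


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def top_k(
    query: Sequence[float],
    index: List[Tuple[int, Sequence[float]]],
    k: int = 5,
) -> List[Tuple[int, float]]:
    """Rank reference fragments by cosine similarity to the read embedding."""
    scored = [(position, cosine(query, emb)) for position, emb in index]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


# Toy 2-dimensional embeddings, invented to show the call pattern only.
toy_index = [(0, [1.0, 0.0]), (200, [0.0, 1.0]), (400, [1.0, 1.0])]
print([position for position, _ in top_k([1.0, 0.1], toy_index, k=2)])  # [0, 400]
```

The gap between the first and second similarity scores returned by `top_k` is one simple candidate for the confidence metric mentioned in the alignment step.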

Protocol 3: BlendCSE for Enhanced Transferability

The BlendCSE framework combines multiple learning objectives to produce embeddings with superior transferability across diverse genomic applications [17].

Research Reagents and Materials

- Base Pre-trained Model: BERT or RoBERTa architecture

- Multi-task Training Data: Labeled and unlabeled DNA sequences

- Data Augmentation Pipeline: Methods for generating sequence variations

Step-by-Step Procedure

Objective 1 - Masked Language Modeling:

- Continue pre-training with the standard MLM objective to maintain token-level understanding and prevent catastrophic forgetting [17].

Objective 2 - Self-supervised Contrastive Learning (SimSiam):

- Apply data augmentation to create positive pairs (e.g., via slight sequence perturbations).

- Implement the SimSiam architecture with a predictor network to learn augmentation-invariant features [17].
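One simple way to produce the "slight sequence perturbations" mentioned above is random point substitution. The sketch below is an assumption about what such an augmentation could look like, not the BlendCSE pipeline itself: the function name and the 2% default mutation rate are hypothetical.

```python
import random
from typing import Optional

NUCLEOTIDES = "ACGT"


def point_mutate(sequence: str, rate: float = 0.02, seed: Optional[int] = None) -> str:
    """Substitute each A/C/G/T with a different base with probability `rate`."""
    rng = random.Random(seed)
    mutated = []
    for base in sequence:
        if base in NUCLEOTIDES and rng.random() < rate:
            # Always pick a base different from the original.
            mutated.append(rng.choice([b for b in NUCLEOTIDES if b != base]))
        else:
            mutated.append(base)  # unchanged (also covers N and other symbols)
    return "".join(mutated)


original = "ATGCCTGGATCC" * 10
view_a = point_mutate(original, rate=0.05, seed=1)
view_b = point_mutate(original, rate=0.05, seed=2)
# (view_a, view_b) can serve as a positive pair for contrastive training.
print(len(original) == len(view_a) == len(view_b))  # True
```

Substitutions keep the sequence length fixed, so k-mer positions stay aligned between the two views. Whether a given mutation rate preserves biological function is task dependent and should be checked rather than assumed.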

Objective 3 - Supervised Contrastive Learning:

- Use labeled DNA data (e.g., promoter/non-promoter sequences) for supervised contrastive learning.

- Employ a Siamese network structure to pull embeddings from the same class closer together [17].

Joint Optimization:

- Combine all three objectives into a single loss function with appropriate weighting.

- Train the model with multi-task learning, balancing the contributions of each objective [17].

The Scientist's Toolkit

Essential Research Reagent Solutions

Table 4: Key resources for DNA sentence embedding research

| Category | Specific Tool/Resource | Application Context | Access/Reference |

|---|---|---|---|

| Pre-trained Models | Nucleotide Transformer (500M-2.5B) [14] | Foundation model for genomic tasks | Hugging Face Hub |

| Training Libraries | Sentence Transformers [15] | Fine-tuning and embedding generation | PyPI Install |

| Contrastive Algorithms | Contrastive Tension (CT) [16] | Self-supervised sentence embedding training | GitHub Repository |

| DNA-Specific Models | DNABERT [11] | Domain-specific pre-trained transformer | Academic Publication |

| Vector Stores | FAISS [12] | Efficient similarity search for alignment | Meta Open Source |

| Evaluation Frameworks | SentEval [16] | Benchmarking embedding quality | GitHub Repository |

Advanced Applications and Future Directions

The application of contrastive learning and semantic similarity concepts to DNA sequences continues to evolve. Promising research directions include:

- Multi-modal Integration: Combining DNA sequence embeddings with epigenetic marks, protein-binding data, and structural information to create unified genomic representations [18].

- Transfer Learning for Rare Variants: Leveraging models pre-trained on large genomic datasets to improve prediction of pathogenic variants in rare diseases [14].

- Single-Cell Analysis: Applying sentence embedding techniques to single-cell sequencing data to uncover novel cell states and developmental trajectories [10].

- Explainable AI: Interpreting attention mechanisms in DNA transformers to identify biologically meaningful sequence motifs and regulatory patterns [11] [14].

These approaches, built on the core concepts of contrastive learning and semantic embeddings, are poised to significantly advance computational genomics and therapeutic development.

A Practical Guide to Implementing and Applying DNA Sentence Transformers

The application of natural language processing (NLP) models to genomic sequences represents a paradigm shift in computational biology. Sentence-transformers, a class of models that generate semantically meaningful embeddings for sentences and paragraphs, can be adapted to DNA sequences by treating genetic elements as textual data [11]. This protocol details the fine-tuning of SimCSE, a powerful sentence transformer, for generating DNA sequence embeddings, enabling researchers to leverage transfer learning for various genomic prediction tasks [11]. The resulting model produces dense vector representations that capture functional and structural similarities between DNA sequences, facilitating applications in promoter identification, transcription factor binding site prediction, and cancer classification [11] [19].

Framed within broader thesis research on sentence transformers for DNA sequence representation, this approach demonstrates that embeddings from a fine-tuned natural language model can, in certain settings, outperform those derived from larger domain-specific language models pretrained exclusively on genomic data, while offering a favorable balance between performance and computational efficiency [11]. This makes the technique particularly valuable for resource-constrained environments [11].

Background and Principle

Sentence Transformers and SimCSE

Traditional transformer models like BERT require complex inference computations for similarity tasks between numerous sentence pairs [11]. Sentence transformers overcome this limitation by producing sentence embeddings directly usable with standard similarity metrics [11]. SimCSE (Simple Contrastive Learning of Sentence Embeddings) employs contrastive learning to generate high-quality sentence embeddings [11]. The unsupervised variant uses dropout as noise, passing the same input sentence twice through the encoder to create positive pairs, while other sentences in the mini-batch are treated as negatives [11]. The model is then trained to identify the positive pair within the batch [11]. Supervised SimCSE incorporates annotated sentence pairs from Natural Language Inference (NLI) datasets, treating entailment pairs as positives and contradiction pairs as negatives [11].

DNA as Language

Genomic sequences can be conceptualized as text written in a four-letter nucleotide alphabet (A, C, G, T). The k-mer fragmentation approach, which breaks DNA sequences into subsequences of length k, serves as the "tokenization" step for applying NLP methods [11]. For example, a DNA sequence ATCGGA can be tokenized into 3-mers: ATC, TCG, CGG, GGA. This representation allows transformer models to capture patterns and contextual relationships within genetic sequences, similar to how they process natural language [11].

Materials and Equipment

Research Reagent Solutions

Table 1: Essential research reagents and computational materials

| Item Name | Specification/Function |

|---|---|

| Pre-trained SimCSE Model | Initialized with princeton-nlp/unsup-simcse-bert-base-uncased checkpoint [11] |

| Genomic Sequences | DNA sequences in FASTA or raw text format; human reference genome or task-specific sequences [11] [19] |

| k-mer Tokenizer | Python script to fragment DNA sequences into overlapping k-mers (k=6 recommended) [11] [19] |

| Training Scripts | Modified SimCSE training scripts adapted for DNA data [11] |

| Computational Environment | Python 3.7+, PyTorch, Transformers library, Sentence-Transformers library [11] |

| Evaluation Datasets | Eight benchmark tasks including promoter regions, TFBS, and cancer classification [11] |

Hardware Requirements

For model fine-tuning, a GPU with at least 8GB VRAM is recommended (e.g., NVIDIA V100, RTX 2080 Ti). The memory requirement increases with batch size and sequence length. The fine-tuning process described in this protocol was successfully performed on a single GPU, making it accessible for individual research laboratories [11].

Experimental Protocol

Data Preparation and k-mer Tokenization

Diagram: DNA data preparation workflow

- Sequence Acquisition: Obtain DNA sequences in FASTA format from public genomic repositories (e.g., ENCODE, NCBI) or project-specific collections [11] [20].

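- k-mer Tokenization: Fragment each sequence into overlapping k-mers (k=6 recommended) so that it can be passed to the model as a "sentence" of k-mer tokens [11] [19].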

- Data Splitting: Partition tokenized sequences into training (≈80%), validation (≈10%), and test (≈10%) sets, maintaining class balance for classification tasks [20].
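The data-splitting step can be sketched with the standard library alone. The helper below is a hypothetical illustration (its name, the rounding rule, and the fixed seed are assumptions): it shuffles the indices of each class separately, so the 80/10/10 proportions hold within every class.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_split(labels: Sequence[int], fractions=(0.8, 0.1, 0.1),
                     seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Return train/validation/test index lists, splitting each class separately."""
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = int(round(fractions[0] * len(indices)))
        n_val = int(round(fractions[1] * len(indices)))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]   # whatever remains goes to the test set
    return train, val, test


labels = [0] * 50 + [1] * 50               # placeholder labels for 100 sequences
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))  # 80 10 10
```

scikit-learn's `train_test_split` with its `stratify` argument is a common alternative when that library is already part of the environment.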

Model Configuration and Training

Diagram: Model fine-tuning workflow

Environment Setup:

- Install required packages: `sentence-transformers`, `transformers`, `torch`, `numpy`, `pandas`.

- Import `AutoTokenizer` and `AutoModel` from the transformers library [19].

Model Initialization:

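- Load the pre-trained SimCSE model and tokenizer from the princeton-nlp/unsup-simcse-bert-base-uncased checkpoint using `AutoModel` and `AutoTokenizer` [11] [19].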

Training Configuration:

- Set training hyperparameters [11]:

- Batch size: 16

- Maximum sequence length: 312 tokens

- Learning rate: 5e-5 (default for BERT fine-tuning)

- Number of epochs: 1

- Optimizer: AdamW with weight decay


Fine-tuning Execution:

- Use the modified SimCSE training script adapted for DNA data.

- Pass each DNA sequence through the model twice with different dropout masks to generate positive pairs [11].

- Other sequences in the mini-batch serve as negative examples.

- The model learns to maximize similarity between positive pairs while minimizing similarity to negatives.
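The objective described in these steps can be sketched in PyTorch as follows. This is an illustration under stated assumptions, not the training script from [11]: the small `encoder` is a toy stand-in for the transformer, the random `batch` replaces real tokenized DNA, and the temperature of 0.05 is the value used in the original SimCSE work rather than one stated in this protocol.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def simcse_unsup_loss(z1: torch.Tensor, z2: torch.Tensor,
                      temperature: float = 0.05) -> torch.Tensor:
    """InfoNCE loss: row i of z1 should match row i of z2 and no other row."""
    # Pairwise cosine similarities between the two views, shape (batch, batch).
    sim = F.cosine_similarity(z1.unsqueeze(1), z2.unsqueeze(0), dim=-1) / temperature
    labels = torch.arange(z1.size(0), device=z1.device)  # positives sit on the diagonal
    return F.cross_entropy(sim, labels)


torch.manual_seed(0)
# Toy stand-in for the transformer encoder; dropout is the only source of noise.
encoder = nn.Sequential(nn.Linear(32, 16), nn.Dropout(p=0.1), nn.Tanh())
encoder.train()                           # keep dropout active, as during fine-tuning

batch = torch.randn(16, 32)               # stand-in for 16 encoded DNA sequences
z1, z2 = encoder(batch), encoder(batch)   # two passes, two different dropout masks
loss = simcse_unsup_loss(z1, z2)
loss.backward()                           # gradients flow back into the encoder
print(float(loss))
```

Because every other sequence in the mini-batch acts as a negative, the batch size directly controls how many negatives each positive pair is contrasted against.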

Embedding Extraction and Downstream Application

Generate Embeddings:

- Process tokenized DNA sequences through the fine-tuned model.

- The `pooler_output` contains the sentence embeddings for downstream tasks [19].
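A minimal sketch of the embedding step, assuming the Hugging Face `transformers` API and the checkpoint named in Table 1. The helper names `load_model`, `to_kmer_sentence`, and `embed_sequences` are hypothetical, and a fine-tuned model directory would normally replace the base checkpoint.

```python
from typing import List

import torch
from transformers import AutoModel, AutoTokenizer

# Checkpoint listed in Table 1; replace with the path of the fine-tuned model.
CHECKPOINT = "princeton-nlp/unsup-simcse-bert-base-uncased"


def load_model(checkpoint: str = CHECKPOINT):
    """Download (or read from cache) the tokenizer and encoder."""
    return AutoTokenizer.from_pretrained(checkpoint), AutoModel.from_pretrained(checkpoint)


def to_kmer_sentence(sequence: str, k: int = 6) -> str:
    """Space-separated overlapping k-mers, so each k-mer is treated as a word."""
    return " ".join(sequence[i:i + k] for i in range(len(sequence) - k + 1))


@torch.no_grad()
def embed_sequences(sequences: List[str], tokenizer, model, k: int = 6,
                    max_length: int = 312) -> torch.Tensor:
    """Return one pooler_output vector per DNA sequence."""
    model.eval()  # disable dropout so repeated calls give identical embeddings
    sentences = [to_kmer_sentence(s, k) for s in sequences]
    inputs = tokenizer(sentences, padding=True, truncation=True,
                       max_length=max_length, return_tensors="pt")
    return model(**inputs).pooler_output
```

Calling `tokenizer, model = load_model()` followed by `embed_sequences(["ATCGGATTACAGGCT"], tokenizer, model)` returns a tensor with one row per input sequence. With the stock uncased vocabulary, each k-mer is lowercased and split into WordPiece sub-tokens, so long sequences can reach the 312-token limit and be truncated.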

Apply to Prediction Tasks:

- Use embeddings as features for machine learning classifiers (e.g., LightGBM, Random Forest, SVM) [19].

- Perform standard train-test split on embeddings and labels.

- Train classifier and evaluate performance on held-out test set.
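The downstream step can be illustrated with scikit-learn. The embeddings below are synthetic placeholders (random vectors with a class-dependent shift), so the printed accuracy says nothing about real DNA data; the Random Forest settings are likewise assumptions chosen for the sketch.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
# Placeholder data: 200 fake 768-dimensional "embeddings" in two shifted classes.
labels = np.repeat([0, 1], 100)
embeddings = rng.normal(size=(200, 768)) + labels[:, None] * 0.5

# Hold out 20% of the embeddings, keeping the class ratio the same in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    embeddings, labels, test_size=0.2, stratify=labels, random_state=0)

clf = RandomForestClassifier(n_estimators=200, random_state=0)
clf.fit(X_train, y_train)
accuracy = accuracy_score(y_test, clf.predict(X_test))
print(f"held-out accuracy: {accuracy:.2f}")
```

In practice `embeddings` would be the array of model outputs for the labeled sequences, and the classifier could be swapped for LightGBM or an SVM without changing the surrounding code.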

Performance Benchmarking

Quantitative Evaluation

Table 2: Performance comparison of embedding methods across DNA tasks

| Model | Parameter Count | Colorectal Cancer Detection Accuracy | TATA Sequence Detection Accuracy | Computational Efficiency |

|---|---|---|---|---|

| Fine-tuned SimCSE (proposed) | ~110M (base BERT) | 91% [19] | 98% [19] | High (single GPU, 1 epoch) [11] |

| DNABERT-6 | ~110M | Lower than proposed model in multiple tasks [11] | Not reported | Medium [11] |

| Nucleotide Transformer (500M) | ~500M | Not reported | Not reported | Low (significant computing expenses) [11] |

Table 3: Cancer type classification performance using DNA embeddings with ensemble methods

| Cancer Type | Abbreviation | Classification Accuracy |

|---|---|---|

| Breast Cancer gene 1 | BRCA-1 | 100% [20] |

| Kidney Renal Clear Cell Carcinoma | KIRC-2 | 100% [20] |

| Colorectal Adenocarcinoma | COAD-3 | 100% [20] |

| Lung Adenocarcinoma | LUAD-4 | 98% [20] |

| Prostate Adenocarcinoma | PRAD-5 | 98% [20] |

Comparative Analysis

The fine-tuned SimCSE model generates DNA embeddings that exceed DNABERT performance in multiple tasks while using a similar parameter count [11]. Although the Nucleotide Transformer achieves slightly higher raw classification accuracy in some benchmarks, it does so at substantial computational cost (roughly 500M parameters), which makes it impractical for resource-constrained environments [11]. The SimCSE approach offers a favorable balance: competitive performance with significantly lower computational requirements [11].

For downstream classification, ensemble methods combining Logistic Regression with Gaussian Naive Bayes have demonstrated exceptional performance when using DNA sequence embeddings, achieving up to 100% accuracy on specific cancer types [20]. This underscores the utility of DNA embeddings as features for traditional machine learning approaches.

Troubleshooting and Optimization

- Low Performance on Downstream Tasks: Increase k-mer size to 6 if using smaller values, ensure training data is representative of test distribution, and try supervised contrastive learning if labeled data is available.

- Memory Issues During Training: Reduce batch size (minimum 8), decrease maximum sequence length, and use gradient accumulation.

- Overfitting: Apply dropout regularization, use early stopping based on validation performance, and increase training dataset size (3000 sequences were used in the original study) [11].

- Embedding Extraction Optimization: Utilize batch processing for large datasets and consider dimensionality reduction techniques (PCA, UMAP) for visualization.

Applications in Genomic Research

The fine-tuned DNA sentence transformer enables diverse applications in genomic research:

- Functional Element Prediction: Identify promoters, enhancers, and transcription factor binding sites [11].

- Cancer Classification: Detect cancer cases and subtypes from DNA sequences with high accuracy [19] [20].

- Sequence Retrieval and Clustering: Find similar DNA sequences in large databases using semantic search capabilities [11].

- Multi-task Learning: Train simultaneously on multiple genomic tasks leveraging the shared embedding representation.

This protocol establishes a foundation for applying sentence transformer fine-tuning to genomic sequences, providing researchers with a powerful tool for DNA sequence representation and analysis.

In the context of applying Sentence Transformer models like SBERT and SimCSE to genomic sequences, DNA sequences must first be converted into a format that these natural language processing models can understand. k-mers, which are substrings of length k from a biological sequence, serve as this fundamental "vocabulary" for representing DNA [21] [22]. The process of converting raw DNA into k-mers is a critical preprocessing step that enables transformer-based models to learn meaningful, context-aware embeddings of genomic elements, forming the foundation for downstream tasks such as promoter identification, splice site prediction, and transcription factor binding site detection [11] [5].

This document outlines the standard protocols for preprocessing raw DNA sequences into model-ready k-mers, specifically tailored for fine-tuning sentence transformer models like SimCSE for genomic applications [11].

Core Concepts and Terminology

Definition and Calculation of k-mers

A k-mer is a contiguous subsequence of length k from a longer DNA sequence. For a given sequence of length L, the total number of overlapping k-mers is L - k + 1 [23]. The following example illustrates 3-mer extraction from a sample DNA sequence:

Example: Sequence = ATCGATCAC

| Offset | 0 | 1 | 2 | 3 | 4 | 5 | 6 |

|---|---|---|---|---|---|---|---|

| 3-mer | ATC | TCG | CGA | GAT | ATC | TCA | CAC |

Critical k-mer Concepts for DNA Language Models

- Reverse Complement and Canonical k-mers: DNA is double-stranded. A k-mer from one strand (`ATC`) has a reverse complement on the opposite strand (`GAT`). A canonical k-mer is the lexicographically smaller of a k-mer and its reverse complement (here `ATC`), ensuring each sequence region is represented uniquely regardless of which strand was sequenced [23].

- k-mer Counting: The total k-mer count is the number of all k-mers extracted. The distinct k-mer count is the number of different k-mers, each counted once no matter how often it occurs. Unique k-mers are those that appear exactly once in a genome and are particularly valuable as specific markers for genomic regions [23].

- k-mer Spectrum: A plot showing the multiplicity of each k-mer versus the number of k-mers with that multiplicity, useful for analyzing sequence composition and identifying repeats [21].
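These concepts can be checked on the example sequence with a few lines of standard-library Python. The helper names are illustrative, and the sketch assumes sequences contain only A, C, G, and T.

```python
from collections import Counter
from typing import List

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(kmer: str) -> str:
    """Complement every base, then reverse the string."""
    return kmer.translate(_COMPLEMENT)[::-1]


def canonical(kmer: str) -> str:
    """Lexicographically smaller of a k-mer and its reverse complement."""
    return min(kmer, reverse_complement(kmer))


def overlapping_kmers(sequence: str, k: int) -> List[str]:
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]


kmers = overlapping_kmers("ATCGATCAC", 3)
counts = Counter(kmers)

print(len(kmers))                                  # total count: 7 (L - k + 1 = 9 - 3 + 1)
print(len(counts))                                 # distinct count: 6 (ATC occurs twice)
print(sum(1 for c in counts.values() if c == 1))   # unique k-mers: 5
print(dict(Counter(counts.values())))              # spectrum: {2: 1, 1: 5}
print(reverse_complement("ATC"), canonical("GAT")) # GAT ATC
```

Counting canonical forms instead (`Counter(canonical(km) for km in kmers)`) merges `ATC` with `GAT` and `TCG` with `CGA`, which is what makes the representation strand-independent.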

Experimental Protocols and Workflows

k-mer Tokenization Strategies for Genomic Language Models

Tokenization is the process of breaking a DNA sequence into k-mers (tokens) that serve as model input. The strategy choice significantly impacts model performance and computational efficiency [5].

Protocol: Implementing k-mer Tokenization

- Input: Raw DNA sequence (e.g., `ATCGATCAC`), parameter `k`.

- Select Tokenization Strategy:

- Fully Overlapping: Slide a window of length `k` one nucleotide at a time. For `k=3`, `ATCGATCAC` becomes `['ATC', 'TCG', 'CGA', 'GAT', 'ATC', 'TCA', 'CAC']`. This preserves the most contextual information [5].

- Non-Overlapping: Extract consecutive k-mers without overlap. For `k=3`, `ATCGATCAC` becomes `['ATC', 'GAT', 'CAC']`. This is more computationally efficient [5].

- AgroNT Method: A hybrid approach using non-overlapping 6-mers, reverting to single nucleotides when encountering ambiguous 'N' bases or sequence ends [5].

- Output: A list of k-mer tokens ready for model ingestion.
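The three strategies in this protocol can be sketched as two small functions. `tokenize_with_stride` covers the fully overlapping (stride 1) and non-overlapping (stride k) cases; `tokenize_hybrid` is an approximation of the hybrid rule as described above, written from that description rather than taken from the AgroNT code.

```python
from typing import List


def tokenize_with_stride(sequence: str, k: int, stride: int) -> List[str]:
    """stride=1 gives fully overlapping k-mers, stride=k gives non-overlapping ones."""
    return [sequence[i:i + k] for i in range(0, len(sequence) - k + 1, stride)]


def tokenize_hybrid(sequence: str, k: int = 6) -> List[str]:
    """Non-overlapping k-mers, falling back to single bases at 'N' or the sequence end."""
    tokens, i = [], 0
    while i < len(sequence):
        window = sequence[i:i + k]
        if len(window) == k and "N" not in window:
            tokens.append(window)       # clean, full-length window: emit one k-mer
            i += k
        else:
            tokens.append(sequence[i])  # ambiguous or short window: emit one base
            i += 1
    return tokens


print(tokenize_with_stride("ATCGATCAC", k=3, stride=1))
# ['ATC', 'TCG', 'CGA', 'GAT', 'ATC', 'TCA', 'CAC']
print(tokenize_with_stride("ATCGATCAC", k=3, stride=3))
# ['ATC', 'GAT', 'CAC']
print(tokenize_hybrid("ATCGATNACGTACGTAC", k=6))
# ['ATCGAT', 'N', 'ACGTAC', 'G', 'T', 'A', 'C']
```

Note that the stride-based non-overlapping version drops a trailing partial window, so it yields ⌊L / k⌋ tokens; keeping the remainder as a shorter final token would give ⌈L / k⌉.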

Table 1: Comparison of k-mer Tokenization Strategies for a Sequence of Length L

| Strategy | Number of Tokens | Context Preservation | Computational Load |

|---|---|---|---|

| Fully Overlapping | L - k + 1 | High | High |

| Non-Overlapping | ⌈L / k⌉ | Low | Low |

| AgroNT Method | ⌈L / k⌉ (approx.) | Medium | Medium |

Workflow: From FASTA to Model Input

The following Graphviz diagram illustrates the complete preprocessing pipeline, from raw DNA sequences to input suitable for fine-tuning a sentence transformer model. This workflow is adapted from methodologies used in recent genomic language model research [11] [5].

Diagram 1: DNA to k-mer preprocessing workflow.

Detailed Protocol Steps:

- Sequence Cleaning & Normalization: Input raw DNA sequences in FASTA format. Remove any sequence headers and non-nucleotide characters (e.g., spaces, line breaks). Convert all nucleotides to uppercase to ensure consistency.

- k-mer Tokenization: Apply the chosen tokenization strategy (fully overlapping, non-overlapping, or hybrid) using the selected `k` value. This step is critical for creating the foundational tokens the model will learn from [5].

- Canonical k-mer Conversion (Optional): For each k-mer generated, compute its reverse complement and retain only the canonical (lexicographically smaller) version. This ensures the model is invariant to the strand from which the sequence was derived [23].

- Output: The final output is a sequence of k-mer tokens, which serves as the direct input for fine-tuning a sentence transformer model like SimCSE on genomic data [11].
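The cleaning and normalization step can be sketched without external dependencies. The parser below is a simplified illustration: the function names are hypothetical, and keeping the ambiguity code `N` while discarding every other non-nucleotide character is an assumption made for this sketch.

```python
import re
from typing import Dict, List


def clean_sequence(raw: str) -> str:
    """Uppercase and drop everything that is not A, C, G, T or N."""
    return re.sub(r"[^ACGTN]", "", raw.upper())


def parse_fasta(text: str) -> Dict[str, str]:
    """Map each record name (first word of the header) to its cleaned sequence."""
    chunks: Dict[str, List[str]] = {}
    name = None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith(">"):
            header = line[1:].split()
            name = header[0] if header else f"record_{len(chunks) + 1}"
            chunks[name] = []
        elif line and name is not None:
            chunks[name].append(line)   # sequence lines may wrap over several rows
    return {n: clean_sequence("".join(parts)) for n, parts in chunks.items()}


fasta_text = ">seq1 example record\natcg atc\nGGNNxx\n>seq2\nTTAA\n"
print(parse_fasta(fasta_text))  # {'seq1': 'ATCGATCGGNN', 'seq2': 'TTAA'}
```

For large files, an established parser such as Biopython's `SeqIO` is usually a better fit than a hand-written reader.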

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Tools for k-mer Analysis and Model Fine-Tuning

| Tool/Reagent | Function/Application | Specifications/Protocol Notes |

|---|---|---|

| Sentence Transformers Library | Provides the model architecture (e.g., SimCSE) and training scripts for fine-tuning on custom k-mer data [11]. | A standard fine-tuning protocol involves 1 epoch, batch size of 16, and a maximum sequence length of 312 tokens [11]. |

| Hugging Face Transformers | A library used to implement and pretrain BERT models with custom k-mer tokenizers [5]. | Enables the definition of custom k-mer tokenizers with configurable vocabulary sizes calculated as 4^k + 5 (for 4 nucleotides and 5 special tokens) [5]. |

| K-mer Analysis Toolkit (KAT) | A suite of tools for k-mer spectrum analysis and quality control of sequences and assemblies [23]. | Useful for pre-processing analysis, such as generating k-mer spectra to assess sequence complexity and identify repeats before model training [23]. |

| Custom k-mer Tokenizer | A script to segment DNA sequences into k-mers based on a chosen strategy (overlapping vs. non-overlapping) [11] [5]. | Critical parameter: k (window size). A fully overlapping tokenizer slides the window by 1 nucleotide, while a non-overlapping tokenizer has a step size equal to k [5]. |

| Reference Genome Dataset | A high-quality genomic sequence (e.g., human reference genome hg38) used for pretraining or as a data source [11]. | In pretraining, models are often trained on sequences of fixed length (e.g., 510 bp) extracted with a stride (e.g., 255 bp) from the reference [5]. |

Parameter Optimization and Performance Benchmarking

Selection of k-mer Size (k)

The choice of k involves a trade-off between biological meaningfulness and computational feasibility [5].

Table 3: Biological Significance and Modeling Trade-offs of k-mer Sizes

| k value | Biological Significance / Key Forces | Modeling Impact / Trade-off |

|---|---|---|

| k=3 (Codons) | Directly corresponds to codons, the fundamental units of the genetic code. Usage is heavily influenced by Codon Usage Bias (CUB), which is linked to tRNA abundance and translational efficiency [21]. | Captures protein-coding information but may miss broader regulatory patterns. Vocabulary size is manageable at 64. |

| k=4 to k=6 | k=4+ mer frequencies serve as a phylogenetic "signature." k=6 is often used in models (e.g., DNABERT-6, AgroNT) as it provides a good balance, being long enough to capture specific motifs like transcription factor binding sites [11] [21] [5]. | A sweet spot for many tasks. k=6 is a common default, offering good specificity. Vocabulary size is 4,096, which is manageable. |

| k > 6 | Can capture longer, more specific functional motifs and complex regulatory patterns. | Dramatically increases vocabulary size (e.g., 65,536 for k=8) and computational cost. May require more data to train effectively [5]. |
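The vocabulary sizes quoted in Tables 2 and 3 follow from 4^k possible k-mers plus the special tokens. The sketch below builds such a vocabulary explicitly; the five BERT-style special token names are an assumption, since the source only states their number.

```python
from itertools import product
from typing import Dict

# Assumed BERT-style special tokens; the source specifies only that there are five.
SPECIAL_TOKENS = ["[PAD]", "[UNK]", "[CLS]", "[SEP]", "[MASK]"]


def build_kmer_vocab(k: int) -> Dict[str, int]:
    """Map every special token and every possible k-mer over ACGT to an integer id."""
    kmers = ["".join(bases) for bases in product("ACGT", repeat=k)]
    return {token: idx for idx, token in enumerate(SPECIAL_TOKENS + kmers)}


for k in (3, 6, 8):
    print(k, 4 ** k, len(build_kmer_vocab(k)))
# 3 64 69
# 6 4096 4101
# 8 65536 65541
```

Each increment of k multiplies the number of k-mers, and with it the size of the embedding matrix, by four.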

Benchmarking Model Performance

The effectiveness of a fine-tuned model using k-mer tokenized DNA can be evaluated on benchmark genomic tasks. Research indicates that a SimCSE model fine-tuned on DNA with k=6 can outperform specialized models like DNABERT on several tasks, while offering a favorable balance between performance and computational cost compared to much larger models like the Nucleotide Transformer [11].

Diagram 2: Trade-offs between k value, performance, and cost.

The integration of artificial intelligence with genomic medicine is revolutionizing oncology, enabling earlier and more precise cancer detection. A particularly promising advancement lies in applying sentence transformer models—deep learning architectures designed to generate dense, meaningful numerical representations of text—to raw DNA sequence data. Framed within broader research on sentence transformers like SBERT and SimCSE for DNA sequence representation, this approach bypasses traditional, often manual, feature extraction steps. It allows models to learn directly from the fundamental chemical code of life, capturing complex patterns indicative of malignant transformations [24] [25]. This protocol details the application of these models for the detection and classification of various cancer types, including colorectal, breast, lung, and prostate cancers, from tumor DNA.

The core principle involves treating DNA sequences as textual sentences composed of a four-letter alphabet (A, T, C, G). Sentence transformers convert these "sentences" into high-dimensional vector embeddings that preserve semantic biological relationships. Similar DNA sequences, which may represent conserved functional domains or mutation patterns, are mapped to nearby points in the vector space. These embeddings subsequently serve as powerful input features for standard machine learning classifiers, creating a highly effective pipeline for distinguishing cancerous from normal tissue and for identifying specific cancer subtypes [24].

Key Methodologies and Experimental Protocols

Core Workflow: From DNA to Diagnosis

The general workflow for using sentence transformers in cancer detection involves a sequence of critical steps, from data preparation to model inference. The following diagram illustrates this end-to-end pipeline:

Detailed Experimental Protocol

Objective: To classify matched tumor/normal tissue pairs as cancerous or normal using raw DNA sequences and sentence transformer-based feature representation.

Step-by-Step Procedure:

Data Acquisition and Preparation:

- Obtain raw DNA sequencing data from matched tumor and normal tissues from public repositories or institutional databases. For a standard experiment, data from hundreds of patients may be used [20].

- Ensure data is formatted as FASTA or FASTQ files.

- Split the dataset into training, validation, and independent hold-out test sets (e.g., 80/10/10 split). It is critical to strictly prevent any data leakage between these splits [20].

DNA Sequence Preprocessing and K-mer Tokenization:

- Quality Control: Process raw sequences using tools like Trimmomatic or FastQC to remove low-quality reads and adapter sequences.

- K-mer Tokenization: This is a crucial step that converts continuous DNA sequences into discrete "words" the transformer model can understand.

- Slide a window of size `k` (e.g., k=3 to k=6) over each DNA sequence.

- Record each overlapping k-mer. For example, the sequence `ATCG` with k=3 would yield the k-mers: `ATC`, `TCG`.

- This process transforms a long DNA string into a "sentence" of k-mer tokens (e.g., `[ATC, TCG, ...]`).

Generating Sequence Embeddings with Sentence Transformers:

- Model Selection: Choose a sentence transformer model. As demonstrated in recent research, both SBERT (2019) and the unsupervised SimCSE (2021) have been successfully applied to this task [24] [25].

- Embedding Generation: Pass the k-mer tokens through the selected sentence transformer model.

- The model computes a dense, fixed-size vector representation (embedding) for each DNA sequence based on its k-mer composition and the contextual relationships between k-mers.

- These embeddings are designed such that biologically similar sequences are closer together in the vector space.

Model Training and Classification:

- Use the generated DNA sequence embeddings as feature inputs for a machine learning classifier.

- Classifier Choice: As identified in comparative studies, tree-based ensembles like XGBoost often yield top performance. Alternatively, deep learning models like Convolutional Neural Networks (CNNs) can also be applied [24].

- Train the classifier on the embeddings from the training set, using the validation set for hyperparameter tuning.

Model Evaluation:

- Perform final evaluation on the held-out test set to assess generalization performance.

- Report standard metrics including Accuracy, Precision, Recall, F1-Score, and Area Under the ROC Curve (AUC).
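For reference, the label-based metrics listed above can be computed directly from the confusion-matrix counts. The function below is a hypothetical helper for the binary tumor (1) versus normal (0) case, and the labels are made-up illustration data.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 for labels where 1 = tumor, 0 = normal."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}


y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
print(binary_metrics(y_true, y_pred))
# {'accuracy': 0.75, 'precision': 0.75, 'recall': 0.75, 'f1': 0.75}
```

AUC is computed from predicted scores or probabilities rather than hard labels, for example with scikit-learn's `roc_auc_score`.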

The internal process of the Sentence Transformer, from k-mers to a final numerical vector, is visualized below:

Performance Comparison and Data Presentation

Performance of Sentence Transformer Methods

The table below summarizes the performance of a cancer detection system using SBERT and SimCSE for DNA sequence representation, followed by an XGBoost classifier, as reported in a 2023 study [24] [25].

Table 1: Performance of Sentence Transformer-based Cancer Detection on Colorectal Cancer DNA Sequences

| Sentence Transformer Model | Classifier | Overall Accuracy (%) | Key Findings |

|---|---|---|---|

| SBERT (2019) | XGBoost | 73 ± 0.13 | Provides a strong baseline for DNA representation. |

| Unsupervised SimCSE (2021) | XGBoost | 75 ± 0.12 | Marginally outperforms SBERT, demonstrating the value of improved contrastive learning. |

| SBERT | Random Forest | < 75 | Generally lower accuracy than XGBoost. |

| SBERT | LightGBM | < 75 | Competitive but not superior to XGBoost. |

| SBERT | CNN | < 75 | Deep learning classifier shows comparable but not superior results in this setup. |

Comparison with Other State-of-the-Art Methods

To provide context, the table below compares the performance of the sentence transformer approach with other advanced machine learning and deep learning methods applied to cancer detection across different data modalities [20] [26] [27].

Table 2: Comparative Performance of Various AI Models in Cancer Detection and Classification

| Cancer Type | Method / Framework | Data Modality | Reported Accuracy / AUC | Key Feature |

|---|---|---|---|---|

| Multiple (BRCA, KIRC, etc.) | Blended Ensemble (Logistic Regression + Gaussian NB) | DNA Sequences | Up to 100% (specific types), AUC: 0.99 [20] | Lightweight, interpretable model. |

| Breast | TransBreastNet (CNN-Transformer Hybrid) | Mammogram Images | 95.2% (Macro Accuracy) [28] | Incorporates temporal lesion progression. |

| Breast, Prostate, etc. | HistoViT (Vision Transformer) | Histopathological Images | 99.32% (Breast), 96.92% (Prostate) [27] | Leverages self-attention for global context in images. |

| Multiple | AutoCancer (Automated Multimodal Transformer) | Liquid Biopsy (Genomic) | Outperforms existing methods across cohorts [29] | Integrates feature selection and architecture search. |

| Gene Sequences | DNASimCLR (Contrastive Learning) | Microbial/Gene Sequences | Up to 99% [30] | Unsupervised feature learning for sequences. |

The Scientist's Toolkit: Research Reagent Solutions

This section outlines the essential computational tools and data resources required to implement the described protocol.

Table 3: Essential Research Reagents and Computational Tools for DNA-Based Cancer Detection

| Item Name / Resource | Type | Function / Application in the Protocol |

|---|---|---|

| Matched Tumor/Normal DNA Pairs | Biological Data | The fundamental input data required for supervised learning, enabling the model to distinguish cancer-specific mutations from benign variants. |

| SBERT (Sentence-BERT) | Software / Model | A sentence transformer model used to generate semantically meaningful embeddings from k-mer tokenized DNA sequences [24] [25]. |

| SimCSE (Unsupervised) | Software / Model | An alternative sentence transformer that uses contrastive learning to create enhanced sentence/DNA sequence embeddings, often yielding marginal performance gains [24] [25]. |

| XGBoost (eXtreme Gradient Boosting) | Software / Library | A leading machine learning classifier that frequently achieves top performance when trained on sentence transformer-derived DNA sequence embeddings [24]. |

| K-mer Tokenization Script | Computational Tool | A custom script (e.g., in Python) that breaks down long DNA sequences into shorter, overlapping k-mers, preparing the data for the transformer model. |

| Scikit-learn | Software / Library | A fundamental Python library for machine learning, used for data splitting, preprocessing, model evaluation, and implementing auxiliary classifiers. |

| PyTorch / Transformers Library | Software / Library | Standard deep learning frameworks used to load, configure, and run the sentence transformer models (SBERT, SimCSE). |

The accurate differentiation of species from genomic sequences is a critical task in biology, ecology, and drug development, supporting efforts in biodiversity conservation, epidemiology, and microbiome research [31]. Traditional methods often rely on well-characterized reference genomes, which is a significant limitation given the vast genetic diversity in nature that remains uncharacterized [32]. DNABERT-S emerges as a specialized genome foundation model that generates species-aware DNA embeddings, enabling DNA sequences from different species to naturally cluster and segregate in the embedding space without relying on reference genomes [31] [33]. This application note details the protocols and experimental methodologies for employing DNABERT-S, a model built upon DNABERT-2 and fine-tuned using advanced contrastive learning techniques, for species identification and metagenomic binning. The content is framed within broader research on adapting sentence transformer architectures, specifically models like SimCSE, for DNA sequence representation [11].

DNABERT-S Model and Core Technology

DNABERT-S is a transformer-based model that builds upon the pre-trained DNABERT-2 architecture. Its primary innovation lies in its training methodology, which is specifically designed to produce embeddings that are effective for species differentiation [34] [32].

Key Technological Innovations

- Curriculum Contrastive Learning (C²LR): This two-phase training strategy progressively introduces more challenging samples. Phase I uses a Weighted SimCLR objective with hard-negative sampling to teach the model to group sequences from the same species and separate sequences from different species. Phase II employs Manifold Instance Mixup (MI-Mix), which creates harder training examples by mixing the hidden representations of DNA sequences at randomly selected model layers, forcing the model to develop more robust and discriminative features [31] [32].

- Species-Aware Embeddings: The model maps variable-length DNA sequences into a fixed-size vector space (768 dimensions). In this space, sequences from the same species are positioned proximally, while sequences from different species are distally located, facilitating unsupervised clustering and classification [32].

The following diagram illustrates the core training workflow of DNABERT-S.

Diagram 1: DNABERT-S Curriculum Contrastive Learning Workflow.

Quantitative Performance Evaluation

DNABERT-S has been rigorously evaluated on multiple datasets. The table below summarizes its performance against other baseline methods in species clustering, as measured by the Adjusted Rand Index (ARI), a metric for clustering similarity where higher values indicate better performance [31].

Table 1: Performance Comparison (Adjusted Rand Index) on Species Clustering Tasks.

| Model | Synthetic Dataset | Marine Dataset | Plant Dataset | Average ARI |

|---|---|---|---|---|

| DNABERT-S | 68.21 | 53.98 | 51.43 | 53.80 |

| DNABERT-2 | 15.73 | 13.24 | 15.70 | 14.21 |

| Nucleotide Transformer (NT-v2) | 8.69 | 4.92 | 7.00 | 5.97 |

| HyenaDNA | 20.04 | 16.54 | 24.06 | 19.55 |

| DNA2Vec | 24.68 | 16.07 | 20.13 | 18.10 |

| TNF (Tetra-Nucleotide Frequency) | 38.75 | 25.65 | 25.80 | 26.47 |

The data show that DNABERT-S achieves an average ARI of 53.80, approximately double that of the strongest baseline (TNF, 26.47) [31]. In metagenomic binning tasks, DNABERT-S recovered over 40% more species with an F1-score >0.5 in synthetic datasets and over 80% more in more realistic datasets compared to the strongest baselines [32]. For few-shot species classification, DNABERT-S trained with just 2 examples per class (2-shot) was able to outperform other models trained with 10 examples per class (10-shot), demonstrating high data efficiency [31] [32].

Experimental Protocols

This section provides detailed methodologies for key experiments involving DNABERT-S.

Protocol: Generating Species-Aware DNA Embeddings

Purpose: To convert raw DNA sequences into numerical embeddings suitable for downstream tasks like clustering or classification.

Materials: DNABERT-S model (available on Hugging Face as zhihan1996/DNABERT-S) [34].

Methodology:

- Sequence Tokenization: Tokenize input DNA sequences with the tokenizer distributed with DNABERT-S. Like DNABERT-2, on which it is built, DNABERT-S uses Byte Pair Encoding (BPE) rather than fixed-length k-mers.

- Model Inference: Pass the tokenized sequences through the DNABERT-S model to obtain the hidden state representations for all tokens.

- Embedding Pooling: Apply mean pooling to the hidden states across the sequence length dimension to generate a fixed-size, 768-dimensional sentence embedding for the input DNA sequence [34].
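The pooling step can be sketched independently of the model. The function below averages token vectors over the sequence axis; masking out padded positions is an assumption added here for batched inputs of unequal length, and the tiny tensors stand in for real hidden states.

```python
import torch


def mean_pool(hidden_states: torch.Tensor, attention_mask: torch.Tensor) -> torch.Tensor:
    """Average token vectors over the sequence axis, ignoring padded positions."""
    mask = attention_mask.unsqueeze(-1).to(hidden_states.dtype)  # (batch, tokens, 1)
    summed = (hidden_states * mask).sum(dim=1)                   # (batch, hidden)
    counts = mask.sum(dim=1).clamp(min=1.0)                      # avoid division by zero
    return summed / counts


# One sequence with two real tokens and one padded position (2-dimensional vectors).
hidden = torch.tensor([[[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]])
mask = torch.tensor([[1, 1, 0]])
print(mean_pool(hidden, mask))  # tensor([[2., 3.]])
```

With DNABERT-S hidden states of shape (batch, tokens, 768), the same call returns one 768-dimensional embedding per sequence.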

Protocol: Unsupervised Species Clustering

Purpose: To group a collection of unlabeled DNA sequences into clusters corresponding to their species of origin.

Materials: A set of unlabeled DNA sequences; DNABERT-S embeddings; a clustering algorithm (e.g., K-means, UMAP + HDBSCAN).

Methodology:

- Embedding Generation: Generate DNA embeddings for all sequences in the dataset using the embedding protocol above (Generating Species-Aware DNA Embeddings).

- Dimensionality Reduction (Optional): Use UMAP or t-SNE to reduce the embeddings to 2 or 3 dimensions for visualization.

- Clustering: Apply a clustering algorithm to the embeddings. The number of clusters (K) can be estimated using methods like the elbow method if the species count is unknown.

- Validation: Evaluate the clustering quality using metrics like Adjusted Rand Index (ARI) if ground truth labels are available for validation.
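The clustering and validation steps can be sketched with scikit-learn. The embeddings below are synthetic, well-separated Gaussian blobs standing in for DNABERT-S outputs, so the resulting ARI is not indicative of performance on real metagenomic data.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import adjusted_rand_score

rng = np.random.default_rng(0)
n_species, per_species, dim = 3, 40, 768

# Placeholder embeddings: one Gaussian blob per "species".
centers = rng.normal(scale=3.0, size=(n_species, dim))
true_labels = np.repeat(np.arange(n_species), per_species)
embeddings = centers[true_labels] + rng.normal(size=(n_species * per_species, dim))

# Cluster the embeddings, then compare cluster ids with the known species labels.
kmeans = KMeans(n_clusters=n_species, n_init=10, random_state=0)
predicted = kmeans.fit_predict(embeddings)
ari = adjusted_rand_score(true_labels, predicted)
print(f"ARI: {ari:.2f}")
```

ARI is invariant to how cluster ids are numbered, so the predicted ids do not need to be matched to species labels before scoring.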

Protocol: Few-Shot Species Classification

Purpose: To train a classifier to identify the species of a DNA sequence using very few labeled examples.

Materials: A small set of labeled DNA sequences (e.g., 2-10 examples per species); DNABERT-S embeddings; a simple classifier (e.g., k-Nearest Neighbors).

Methodology:

- Embedding Generation: Generate DNA embeddings for all labeled training sequences.

- Classifier Training: Train a k-NN classifier on the embeddings and their corresponding species labels.

- Inference: Generate the embedding for a query sequence and use the trained k-NN classifier to predict its species.

- Studies show that a k-NN classifier using DNABERT-S embeddings can slightly outperform traditional alignment-based methods like MMseqs2, even though DNABERT-S uses only a small portion of the genome compared to the entire reference genome used by MMseqs2 [32].
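A 2-shot version of this protocol can be sketched with scikit-learn's `KNeighborsClassifier`. As before, the embeddings are synthetic placeholders, and the choice of one neighbor with cosine distance is an assumption made for the illustration.

```python
import numpy as np
from sklearn.neighbors import KNeighborsClassifier

rng = np.random.default_rng(1)
n_species, shots, dim = 5, 2, 768
centers = rng.normal(scale=3.0, size=(n_species, dim))  # one prototype per "species"


def sample_embeddings(labels: np.ndarray) -> np.ndarray:
    """Placeholder for model embeddings: the species prototype plus Gaussian noise."""
    return centers[labels] + rng.normal(size=(len(labels), dim))


train_labels = np.repeat(np.arange(n_species), shots)   # 2 labeled examples per species
query_labels = np.repeat(np.arange(n_species), 20)      # 20 held-out queries per species

knn = KNeighborsClassifier(n_neighbors=1, metric="cosine")
knn.fit(sample_embeddings(train_labels), train_labels)
accuracy = knn.score(sample_embeddings(query_labels), query_labels)
print(f"2-shot accuracy: {accuracy:.2f}")
```

Because k-NN has no training phase beyond storing the labeled embeddings, adding a new species only requires embedding a few of its sequences.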

The Scientist's Toolkit

Table 2: Essential Research Reagents and Resources for DNABERT-S.

| Item | Specification / Source | Function / Purpose |

|---|---|---|

| Pre-trained Model | Hugging Face: zhihan1996/DNABERT-S [34] | Core model for generating species-aware DNA embeddings. |

| Tokenization Scheme | Byte Pair Encoding (BPE), inherited from DNABERT-2 | Breaks down continuous DNA sequences into discrete tokens for the transformer model. |

| Training Data | Publicly available benchmark datasets (e.g., CAMI2, Genbank) [32] | Used for model fine-tuning and evaluation; contains diverse genomic sequences. |

| Evaluation Benchmark | 23-28 diverse datasets for clustering and classification [31] [32] | Standardized benchmark for assessing model performance on species differentiation tasks. |

| Computational Framework | Python, Hugging Face Transformers, PyTorch [34] | Software environment for model loading, inference, and fine-tuning. |

Workflow Visualization for Metagenomic Binning

The following diagram outlines a complete workflow for using DNABERT-S in a metagenomic binning application, from sample collection to final bin assessment.

Diagram 2: Metagenomic Binning Pipeline with DNABERT-S.

The systematic identification of cis-regulatory elements (CREs), such as promoters, enhancers, and transcription factor binding sites (TFBS), is fundamental to understanding gene regulatory networks [35]. These elements are typically short, non-coding DNA sequences (6-20 bp) that serve as binding platforms for transcription factors (TFs) to precisely modulate gene expression [35]. In the context of a broader thesis on sentence transformers for DNA sequence representation, this application note explores how fine-tuned Sentence-BERT (SBERT) and SimCSE models provide an effective computational method for predicting these functional genomic elements directly from DNA sequence, offering a powerful alternative to traditional experimental methods like ChIP-seq and DAP-seq [11] [1] [35].

The adaptation of natural language processing models to DNA sequences relies on treating DNA as a textual language where k-mers (contiguous subsequences of length k) serve as the fundamental tokens [11] [1]. Sentence transformers, specifically designed to generate semantically meaningful embeddings for entire sequences, can be fine-tuned on genomic data to produce dense vector representations where similar DNA sequences (e.g., those sharing regulatory functions) are located close together in the embedding space [11] [25] [1]. This approach has demonstrated competitive performance against specialized DNA models like DNABERT while maintaining computational efficiency [11] [1].
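As a rough illustration of treating k-mers as tokens, the sketch below splits a sequence into overlapping or non-overlapping k-mers and joins them into a space-separated "sentence" that a text tokenizer could consume. The function names `kmer_tokenize` and `to_sentence` and the `stride` parameter are assumptions made for this example, not part of any cited library.

```python
def kmer_tokenize(sequence, k=6, stride=1):
    """Split a DNA sequence into k-mers.

    stride=1 gives overlapping k-mers; stride=k gives non-overlapping ones.
    Trailing bases that do not fill a whole k-mer are dropped.
    """
    sequence = sequence.upper()
    return [sequence[i:i + k] for i in range(0, len(sequence) - k + 1, stride)]

def to_sentence(sequence, k=6, stride=1):
    """Join k-mers with spaces so a text tokenizer sees them as words."""
    return " ".join(kmer_tokenize(sequence, k, stride))

overlapping = kmer_tokenize("ATGCCA", k=3)
non_overlapping = kmer_tokenize("ATGCCATT", k=3, stride=3)
sentence = to_sentence("ATGCCAGT", k=6)
print(overlapping, non_overlapping, sentence)
```

A sequence of length n yields n - k + 1 overlapping k-mers, so overlapping tokenization produces much longer inputs than the non-overlapping variant for the same sequence.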

Performance Comparison of DNA Representation Models

Table 1: Performance comparison of DNA embedding methods on regulatory prediction tasks

| Model | Architecture | Tokenization | Reported AUC/Accuracy | Computational Demand | Key Advantages |

|---|---|---|---|---|---|

| Fine-tuned Sentence Transformer (SimCSE) | Sentence Transformer | 6-mer | Exceeded DNABERT on multiple tasks [11] | Moderate | Balanced performance & efficiency [11] |

| Nucleotide Transformer | Transformer (BERT-style) | Non-overlapping 6-mer | Highest raw accuracy [11] | Very High | State-of-the-art accuracy [11] |

| DNABERT | Transformer (BERT) | Overlapping k-mer (k=3-6) | 78.6% AUC on RNA-protein tasks [1] | High | Domain-specific pretraining [1] |

| LOGO (ALBERT-based) | ALBERT | Not specified | >70% on promoter tasks [1] | Low (≈1M parameters) | High parameter efficiency [1] |

| AWD-LSTM | LSTM | k-mer | 97-98% on DNA-protein binding [1] | Moderate | Effective for binding sites [1] |

Table 2: Experimental results for DNA methylation site prediction using transformer models

| Model | Methylation Site | AUC/Accuracy | Dataset | Reference |

|---|---|---|---|---|

| Ensemble of BERT, DistilBERT, ALBERT, XLNet, ELECTRA | 6mA, 4mC, 5hmC | 74-96% | DNA methylation dataset + taxonomic lineage | [1] |

| BERT-based model | DNA 6mA sites | 79.3% | DNA 6mA dataset | [1] |

| BERT-based model | General DNA methylation | 80+% | iDNA-MS, ENCODE data | [1] |

| ELECTRA | Promoter prediction, TFBS | 80-86% | GRCh38, EPDnew, ENCODE ChIP-Seq | [1] |

Experimental Protocols

Protocol 1: Fine-tuning Sentence Transformers for DNA Sequences

Purpose: To adapt sentence transformer models for DNA sequence analysis to predict regulatory elements and protein-binding sites.

Materials:

- Hardware: Computer with GPU capability (e.g., NVIDIA V100 with 80GB RAM) [36]

- Software: Python, sentence-transformers library, transformers library [11] [1]

- Biological Data: DNA sequences in FASTA format (e.g., 3000 DNA sequences for fine-tuning) [1]

Procedure:

- Data Preprocessing:

- Model Setup:

- Fine-tuning:

- Embedding Generation:

- Validation:

Protocol 2: Predicting Protein-Binding Sites with Seq2Bind

Purpose: To identify critical binding residues between proteins using fine-tuned protein language models.

Materials:

- Webserver: Seq2Bind webserver (https://agrivax.onrender.com/seq2bind/scan) [36]

- Models: Pre-trained ESM2, ProtBERT, ProtT5 models [36]

- Data: Protein sequences or PDB files for protein complexes [36]

Procedure:

- Data Preparation:

- Binding Affinity Prediction:

- Alanine Mutation Scanning:

- Validation:
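The mutation scanning step named in the procedure can be sketched generically: each residue is replaced by alanine in turn, and the change in a predicted score is recorded. This is not Seq2Bind's implementation; `alanine_scan` and `toy_score` are hypothetical names, and the scoring function is a deliberately trivial stand-in for a real binding-affinity predictor.

```python
def alanine_scan(sequence, score_fn):
    """Mutate each non-alanine residue to alanine and report the score change."""
    baseline = score_fn(sequence)
    effects = []
    for pos, residue in enumerate(sequence):
        if residue == "A":
            continue  # already alanine, nothing to mutate
        mutant = sequence[:pos] + "A" + sequence[pos + 1:]
        # Store 1-based position, original residue, and score difference.
        effects.append((pos + 1, residue, score_fn(mutant) - baseline))
    # Most negative change first: these positions matter most to the score.
    return sorted(effects, key=lambda e: e[2])

def toy_score(sequence):
    """Hypothetical stand-in for an affinity predictor: counts W and Y residues."""
    return sequence.count("W") + sequence.count("Y")

ranked = alanine_scan("MKWAYL", toy_score)
print(ranked[:2])
```

In practice `score_fn` would wrap a model prediction for the mutated sequence; positions whose substitution lowers the score the most are candidates for critical binding residues.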

Workflow Visualization

DNA Regulatory Element Prediction Workflow - This diagram illustrates the complete pipeline from raw DNA sequences to regulatory element predictions using fine-tuned sentence transformers, culminating in experimental validation.

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for regulatory element prediction

| Resource | Type | Purpose/Function | Access |

|---|---|---|---|

| Seq2Bind Webserver | Computational Tool | Predicts binding affinity and identifies critical binding residues from protein sequences | https://agrivax.onrender.com/seq2bind/scan [36] |

| Sentence Transformers Library | Software Library | Provides models and methods for generating sentence embeddings from text/DNA | Python package [11] [1] |

| SKEMPI 2.0 Database | Biological Database | Contains protein complexes with experimentally determined thermodynamic data | Public database [36] |

| ENCODE Data | Genomic Dataset | Provides comprehensive maps of regulatory elements across human genome | Public consortium data [1] [35] |

| DAP-seq | Experimental Method | Identifies genome-wide TF binding sites in vitro using affinity purification | Wet lab protocol [35] |

| ChIP-seq | Experimental Method | Identifies genome-wide TF binding sites in vivo using immunoprecipitation | Wet lab protocol [35] |

| Nucleotide Transformer | Pre-trained Model | DNA language model for various genomic prediction tasks | Hugging Face Model Hub [11] [1] |

| DNABERT | Pre-trained Model | Domain-specific transformer pre-trained on human reference genome | Hugging Face Model Hub [11] [1] |

Technical Implementation Diagram

Sentence Transformer Architecture for DNA - This technical diagram shows the internal architecture of fine-tuned sentence transformers for DNA sequence processing, from k-mer tokenization to final regulatory element prediction.

The application of sentence transformers for predicting regulatory elements and protein-binding sites represents a significant advancement in computational genomics. By fine-tuning models like SimCSE on DNA sequences, researchers can generate powerful embeddings that capture the semantic meaning of regulatory syntax, enabling accurate prediction of promoters, transcription factor binding sites, and other functional elements [11] [25] [1]. While specialized DNA models like Nucleotide Transformer may achieve slightly higher accuracy in some tasks, fine-tuned sentence transformers offer an excellent balance between performance and computational efficiency, making them particularly valuable for resource-constrained environments [11] [1]. As these methods continue to evolve, they will play an increasingly important role in decoding the regulatory logic of genomes and accelerating therapeutic development.

Within the broader scope of utilizing sentence transformers (SBERT/SimCSE) for DNA sequence representation, a critical phase involves leveraging the generated embeddings for predictive modeling. Sentence transformers convert raw DNA sequences into dense, fixed-length numerical vectors that capture semantic biological meaning [25] [37]. These embeddings serve as powerful feature inputs for traditional machine learning classifiers, such as XGBoost and Random Forest, enabling tasks like cancer detection from genomic data without manual feature engineering [25] [11]. This document outlines detailed application notes and protocols for this integration, providing a practical framework for researchers and drug development professionals.

Quantitative Performance Data

Table 1 summarizes the performance of various machine learning classifiers when provided with sentence transformer embeddings for a cancer detection task, specifically using raw DNA sequences from tumor/normal pairs of colorectal cancer patients [25].

Table 1: Classifier Performance with Different DNA Sequence Embeddings

| Classifier | SBERT Embedding Accuracy (%) | SimCSE Embedding Accuracy (%) |

|---|---|---|

| XGBoost | 73 ± 0.13 | 75 ± 0.12 |

| Random Forest | Performance Data Not Specified | Performance Data Not Specified |

| LightGBM | Performance Data Not Specified | Performance Data Not Specified |

| CNNs | Performance Data Not Specified | Performance Data Not Specified |

According to [25], XGBoost achieved the highest accuracy of the classifiers evaluated, with SimCSE embeddings giving a marginal improvement over SBERT embeddings (75% vs. 73%). Accuracy figures for the other classifiers are not specified in Table 1.

Experimental Protocols

Protocol 1: Generating DNA Sequence Embeddings with Sentence Transformers

Objective: To convert raw DNA sequences into fixed-length, semantic vector embeddings using a fine-tuned sentence transformer model.

Materials:

- DNA Sequences: FASTA files containing matched tumor/normal pairs.

- Computing Environment: Python with PyTorch and the SentenceTransformers library.

- Pretrained Model: A Sentence Transformer model (e.g., `distilroberta-base`) fine-tuned on DNA sequences [11] [2].

Methodology:

- Sequence Tokenization (k-mer Splitting): Segment the raw DNA sequences into overlapping k-mers (e.g., k=6). This transforms a long sequence into a series of "words" that the transformer can process [11].

- Example: The sequence `ATGCCA` would become `['ATG', 'TGC', 'GCC', 'CCA']` for k=3.

- Model Fine-Tuning (Optional but Recommended): For optimal performance on genomic data, fine-tune a general-purpose sentence transformer on a corpus of DNA sequences. The SimCSE framework, which uses contrastive learning, is highly effective [11] [2].

- Framework: Unsupervised SimCSE.

- Key Parameter: Dropout is used as the only noise source for generating positive pairs.

- Loss Function: `MultipleNegativesRankingLoss` [2].

- Embedding Generation: Pass the k-mer tokenized sequences through the (fine-tuned) model using the `model.encode()` function to generate a fixed-size dense vector for each DNA sequence [38].
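To make the contrastive objective in the fine-tuning step more concrete, the sketch below computes an in-batch ranking loss in plain Python: each sequence's second view is its positive, and the other sequences in the batch serve as negatives. It is a simplified illustration rather than the library's implementation; the function names are hypothetical, and the similarity scale of 20 is an assumption chosen to resemble a commonly used default.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def in_batch_contrastive_loss(anchors, positives, scale=20.0):
    """Mean cross-entropy where positives[i] is the correct match for anchors[i]
    and every other positives[j] in the batch acts as a negative."""
    losses = []
    for i, anchor in enumerate(anchors):
        logits = [scale * cosine_similarity(anchor, p) for p in positives]
        max_logit = max(logits)  # subtract the max for numerical stability
        log_sum_exp = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
        losses.append(log_sum_exp - logits[i])
    return sum(losses) / len(losses)

# Two views of the same batch, as if each sequence had been encoded twice
# under different dropout masks (toy 2-dimensional vectors).
view_1 = [[1.0, 0.0], [0.0, 1.0]]
view_2 = [[0.9, 0.1], [0.1, 0.9]]

aligned_loss = in_batch_contrastive_loss(view_1, view_2)
shuffled_loss = in_batch_contrastive_loss(view_1, view_2[::-1])
print(aligned_loss, shuffled_loss)
```

The loss is near zero when each anchor is closest to its own second view and grows large when the pairing is wrong, which is the pressure that pulls the two dropout views of the same DNA sequence together during training.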

Protocol 2: Training a Downstream XGBoost Classifier

Objective: To train an XGBoost model for classification (e.g., cancer vs. normal) using the generated sentence embeddings as features.

Materials:

- Feature Matrix: The sentence embeddings generated from Protocol 1.

- Labels: Corresponding binary or multi-class labels for each DNA sequence (e.g., disease state).

- Software: Python with XGBoost and scikit-learn libraries.

Methodology:

- Dataset Construction: Assemble a feature matrix where each row is a DNA sequence represented by its sentence embedding vector. Ensure labels are aligned with the rows.

- Data Partitioning: Split the dataset into training and testing sets (e.g., 80/20 split) while preserving class distribution.

- Classifier Training:

- Initialize an XGBoost classifier.

- Train the model on the training set using the embedding vectors as features and the labels as the target variable.

- Utilize cross-validation on the training set to tune key hyperparameters (e.g., `max_depth`, `learning_rate`, `n_estimators`).

- Performance Evaluation: Use the trained model to make predictions on the held-out test set. Report standard metrics such as accuracy, precision, recall, F1-score, and AUC-ROC [25].
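The partitioning and evaluation steps above can be sketched without any external dependencies. The example below performs a class-preserving 80/20 split and computes accuracy, precision, recall, and F1 for a binary task; the function names and toy labels are assumptions for illustration, and the classifier training step itself is omitted.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with each class split at about the same ratio."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for one positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(y_true), "precision": precision,
            "recall": recall, "f1": f1}

labels = [1] * 10 + [0] * 10          # toy tumor (1) / normal (0) labels
train_idx, test_idx = stratified_split(labels)
metrics = binary_metrics([1, 1, 0, 0], [1, 0, 0, 0])
print(len(train_idx), len(test_idx), metrics)
```

In a real pipeline these helpers would typically be replaced by scikit-learn's `train_test_split` with its `stratify` argument and its metric functions, with an `xgboost.XGBClassifier` fitted on the training rows of the embedding matrix between the two steps.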

Workflow Visualization

The following diagram illustrates the complete integrated workflow, from raw DNA sequence to final classification result.

Diagram Title: End-to-End Workflow for DNA Sequence Classification

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item | Function/Description | Example/Reference |

|---|---|---|

| SentenceTransformers Library | Python framework for loading, using, and fine-tuning sentence embedding models. | [38] |

| SimCSE (Unsupervised) | Contrastive learning framework for training sentence embeddings without labeled data, using dropout as noise. | [2] [8] |

| DNABERT / Nucleotide Transformer | Domain-specific transformer models pretrained on genomic data; serve as benchmarks. | [11] [39] |

| k-mer Tokenization | Preprocessing method to break DNA sequences into subsequences of length k, creating a "vocabulary" for the model. | [25] [11] |

| XGBoost Library | Scalable and optimized library for gradient boosting, widely used for tabular data classification. | [25] |

| MultipleNegativesRankingLoss | Loss function used in SimCSE training that maximizes agreement between positive pairs and minimizes it with negatives in the same batch. | [2] |

Optimizing Performance and Overcoming Challenges in Genomic Deployment