Beyond Accuracy: A Comprehensive Guide to Performance Metrics for Cancer Classification Models

Abstract

This article provides a comprehensive guide to performance metrics for cancer classification models, tailored for researchers, scientists, and drug development professionals. It covers foundational concepts like the confusion matrix, accuracy, precision, recall, and F1-score, and explores their application in complex, real-world scenarios such as multi-omics data integration and multi-class cancer subtype classification. The guide addresses critical challenges including class imbalance and the precision-recall trade-off, and outlines robust validation methodologies and statistical testing for comparative model analysis. By synthesizing these elements, the article aims to equip professionals with the knowledge to select, interpret, and validate metrics that align with both clinical imperatives and research objectives in oncology.

Core Concepts: Understanding the Basic Metrics for Cancer Classification

In the high-stakes field of cancer classification research, the accurate evaluation of diagnostic models is paramount. For researchers, scientists, and drug development professionals, a model's predictive performance directly influences clinical insights and potential patient outcomes. Among the available evaluation tools, the confusion matrix stands as the fundamental, interpretable cornerstone for assessing binary classification models. It provides a detailed breakdown of a model's predictions versus actual outcomes, forming the basis for critical metrics like sensitivity and precision. This guide explores the architecture of the confusion matrix, its derived metrics, and their practical application in cancer diagnostics, providing a structured framework for objective model comparison.

Demystifying the Confusion Matrix: A Detailed Breakdown

A confusion matrix, sometimes called an error matrix, is a specific table layout that visualizes the performance of a classification algorithm [1]. It moves beyond simple accuracy by providing a granular view of where a model succeeds and, crucially, where it becomes "confused" [2] [1].

In its simplest form for binary classification, the matrix is a 2x2 table that cross-references the actual conditions with the predicted conditions, creating four distinct outcomes [3] [2] [4]:

- True Positive (TP): The model correctly predicts the positive class. In a cancer context, this is a patient with cancer correctly identified as having cancer [1].

- True Negative (TN): The model correctly predicts the negative class. This is a healthy patient correctly identified as not having cancer [1].

- False Positive (FP): The model incorrectly predicts the positive class. This is a healthy patient wrongly flagged as having cancer—a Type I error [3] [5].

- False Negative (FN): The model incorrectly predicts the negative class. This is a patient with cancer who is missed by the model—a Type II error [3] [5].

The following diagram illustrates the logical relationship between these components and the key metrics derived from them.

Key Metrics Derived from the Confusion Matrix

The raw counts within the confusion matrix are used to calculate powerful metrics that evaluate model performance from different perspectives. The choice of which metric to prioritize depends heavily on the specific clinical or research objective [5].

| Metric | Formula | Clinical Interpretation in Cancer Diagnostics |

|---|---|---|

| Accuracy | (TP + TN) / Total [3] [5] | The overall proportion of correct diagnoses. Can be misleading if the dataset is imbalanced [5] [1]. |

| Recall (Sensitivity) | TP / (TP + FN) [3] [5] | The model's ability to correctly identify all patients who actually have cancer. Critical for minimizing missed diagnoses [2] [5]. |

| Precision | TP / (TP + FP) [3] [5] | The accuracy of the model's positive predictions. Important when the cost of false alarms (unnecessary biopsies) is high [2] [5]. |

| Specificity | TN / (TN + FP) [3] [6] | The model's ability to correctly identify healthy patients. The complement of the False Positive Rate (Specificity = 1 - FPR) [2] [4]. |

| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) [3] [5] | The harmonic mean of precision and recall. Provides a single balanced metric for imbalanced datasets [3] [5]. |
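As a minimal sketch of how the formulas in the table above translate into code, the following Python snippet tallies the four outcomes from paired label lists and derives each metric. The function names (`confusion_counts`, `binary_metrics`, `safe_div`) and the toy labels are illustrative assumptions, not taken from any cited study. Ratios with a zero denominator are returned as 0.0 here purely by convention.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Tally TP, TN, FP, FN for a binary task; `positive` marks the cancer class."""
    tp = tn = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1
            else:
                fp += 1
        else:
            if actual == positive:
                fn += 1
            else:
                tn += 1
    return tp, tn, fp, fn


def safe_div(numerator, denominator):
    """Return 0.0 instead of raising when a metric is undefined (assumed convention)."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(tp, tn, fp, fn):
    """Compute the five metrics from the table using the raw counts."""
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "recall": recall,
        "precision": precision,
        "specificity": safe_div(tn, tn + fp),
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


# Toy labels (hypothetical): 1 = cancer, 0 = no cancer
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
metrics = binary_metrics(tp, tn, fp, fn)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this toy case one cancer is missed and one healthy patient is flagged, so recall and precision are both 3/4 while specificity is 5/6.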

Experimental Protocols & Data Presentation in Cancer Research

To illustrate the practical application of these metrics, consider the following experimental data synthesized from recent studies on cancer classification models. The table below provides a comparative analysis of different AI model architectures, highlighting their performance across key confusion matrix-derived metrics.

Table 1: Comparative Performance of Recent Cancer Classification Models

| Model / Study | Cancer Focus | Data Modality | Accuracy | Recall (Sensitivity) | Precision | Specificity |

|---|---|---|---|---|---|---|

| DenseNet121 with Multi-Scale Feature Fusion [7] | Breast Cancer | Histopathological Images (BreaKHis) | 97.1% | 92.0% (Malignant) | Not Reported | 93.8% (Benign) |

| Stacked Deep Learning Ensemble [8] | Multi-Cancer (5 types) | Multiomics (RNA-seq, Methylation) | 98.0% | Implied High | Implied High | Implied High |

| ResNet-SVM Hybrid [7] | Breast Cancer | Mammogram & Ultrasound Fusion | 99.22% | Not Reported | Not Reported | Not Reported |

| Optimized Bayesian CNN (OBCNN) [7] | Breast Cancer (IDC) | Histopathology Images | Not Reported | Demonstrated Robustness | Demonstrated Robustness | Demonstrated Robustness |

Detailed Experimental Methodology

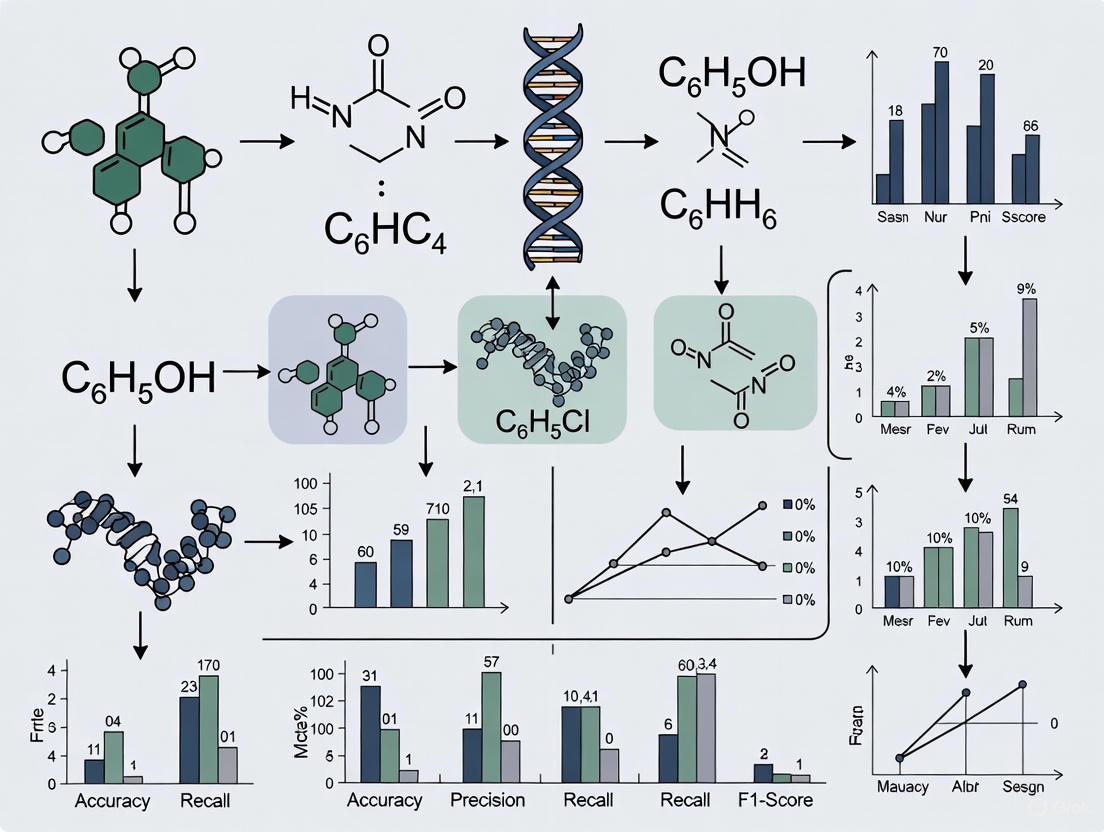

The performance metrics in Table 1 are the result of rigorous experimental protocols. The following diagram outlines a generalized workflow for developing and evaluating a cancer classification model, from data preparation to performance validation.

Key Experimental Steps:

- Data Collection & Curation: Models are trained on curated biomedical datasets. For example, the DenseNet121 model for breast cancer used the publicly available BreaKHis dataset of histopathological images [7], while the multiomics ensemble model utilized RNA sequencing and methylation data from The Cancer Genome Atlas (TCGA) [8].

- Data Preprocessing: This critical step ensures data quality and model stability before any training takes place.

- Model Training with Cross-Validation: To ensure robustness and avoid overfitting, models are often trained using techniques like 5-fold cross-validation, especially on limited medical data [7].

- Performance Evaluation: Predictions on a held-out test set are compiled into a confusion matrix, which is then used to calculate the final performance metrics [7] [8].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The development of high-performing cancer classification models relies on a suite of computational "reagents" and datasets. The table below details these essential components and their functions.

Table 2: Key Research Reagent Solutions for Cancer Classification Models

| Item Name | Category | Function / Description |

|---|---|---|

| The Cancer Genome Atlas (TCGA) | Datasets | A comprehensive public dataset containing molecular characterization and clinical data from over 20,000 primary cancer and matched normal samples spanning 33 cancer types [8]. |

| BreaKHis | Datasets | A public dataset of histopathological breast cancer biopsy images, used for developing and testing image-based classification models [7]. |

| Convolutional Neural Network (CNN) | Algorithm | A deep learning architecture highly effective for analyzing image data (e.g., histopathological slides, mammograms) by learning spatial hierarchies of features [7] [8]. |

| Stacking Ensemble | Algorithm | An advanced technique that combines multiple machine learning models (e.g., SVM, RF, CNN) using a meta-learner to improve overall predictive performance and robustness [8]. |

| Autoencoder | Tool | A neural network used for unsupervised feature extraction and dimensionality reduction, crucial for handling high-dimensional omics data [8]. |

| Synthetic Minority Over-sampling Technique (SMOTE) | Tool | An algorithm used to address class imbalance in datasets by generating synthetic samples of the underrepresented class, preventing model bias [8]. |

The confusion matrix is an indispensable tool for objectively evaluating binary classification models in cancer research. By providing a detailed breakdown of a model's predictive behavior, it enables researchers to move beyond simplistic accuracy and understand the true diagnostic capabilities of their models. The derived metrics—recall, precision, specificity, and F1-score—each tell a different part of the story, allowing scientists to select and optimize models based on specific clinical priorities, whether that is minimizing deadly false negatives or reducing costly false positives. As AI continues to integrate into biomedical research and diagnostics, the rigorous, metric-driven framework provided by the confusion matrix will remain the foundation for validating and comparing the performance of these powerful tools.

In the development of cancer classification models, the selection of appropriate performance metrics is not merely a technical formality but a foundational aspect of clinical relevance and model utility. Models that distinguish malignant from benign tissues or classify cancer subtypes must be evaluated beyond simple correctness, as the real-world costs of different types of errors—missing a cancer versus raising a false alarm—are profoundly asymmetric [5] [9]. For researchers, scientists, and drug development professionals, understanding the trade-offs encapsulated by accuracy, precision, recall, and specificity is critical for translating algorithmic predictions into reliable diagnostic tools. This guide provides a comprehensive comparison of these core metrics, grounded in their application to cancer classification research, and supported by experimental data from contemporary studies.

The evaluation of a classification model begins with the confusion matrix, a table that breaks down predictions into four fundamental categories [10] [11]. True Positives (TP) and True Negatives (TN) are cases where the model correctly identifies the positive class (e.g., malignant cancer) and the negative class (e.g., benign), respectively. False Positives (FP) occur when the model incorrectly labels a negative case as positive (a "false alarm"), while False Negatives (FN) occur when it misses a positive case (a "missed detection") [12] [9]. In medical diagnostics, a False Negative in cancer detection is often considered a more severe error than a False Positive, as it could delay life-saving treatment [11] [13].

Metric Definitions and Formulas

Core Definitions and Mathematical Formulations

Accuracy measures the overall proportion of correct predictions made by the model across both positive and negative classes [5] [14]. It is calculated as:

Accuracy = (TP + TN) / (TP + TN + FP + FN)

While intuitive, accuracy can be a misleading metric for imbalanced datasets, which are common in medical contexts where the number of healthy patients often far exceeds the number of sick patients. A model that simply always predicts "negative" could achieve high accuracy while being clinically useless [10] [11].

Precision (also known as Positive Predictive Value) measures the proportion of positive predictions that are actually correct. It answers the question: "When the model predicts cancer, how often is it right?" [5] [12]. It is calculated as:

Precision = TP / (TP + FP)

High precision indicates that the model is reliable when it flags a case as positive. This is crucial in scenarios where subsequent procedures are costly, invasive, or carry significant psychological burden [10] [9].

Recall (also known as Sensitivity or True Positive Rate, TPR) measures the model's ability to correctly identify actual positive cases. It answers the question: "Of all the patients who truly have cancer, what fraction did the model successfully find?" [5] [12]. It is calculated as:

Recall = TP / (TP + FN)

A high recall is paramount in applications like cancer screening, where the cost of missing a disease (a False Negative) is unacceptably high [5] [11].

Specificity (also known as True Negative Rate, TNR) measures the model's ability to correctly identify actual negative cases. It answers the question: "Of all the patients who are truly healthy, what fraction did the model correctly clear?" [10] [11]. It is calculated as:

Specificity = TN / (TN + FP)

It is the complement of the False Positive Rate (FPR), which is defined as:

FPR = 1 - Specificity = FP / (TN + FP) [5] [12]

Visualizing the Relationships

The following diagram illustrates the logical relationships between the core metrics and the confusion matrix components from which they are derived.

Comparative Analysis in Cancer Classification

Performance of Deep Learning Models on Breast Cancer Histopathology

A 2025 study evaluating 11 different deep learning algorithms for classifying breast cancer biopsy images into benign and malignant categories provides a clear comparison of these metrics in a realistic research setting [15]. The models were trained and tested on a dataset of 10,000 images. The table below summarizes the performance of the top-performing model and provides a comparative benchmark.

- Experimental Protocol: The study used a dataset of 10,000 histopathological images (6,172 IDC-negative and 3,828 IDC-positive). The data was split into 80% for training, 10% for validation, and 10% for testing. Various deep learning architectures, including DenseNet201, ResNet50, and VGG16, were trained and evaluated on the held-out test set [15].

Table 1: Performance Metrics of DenseNet201 on Breast Cancer Classification

| Model | Accuracy | Precision | Recall | F1-Score | AUC |

|---|---|---|---|---|---|

| DenseNet201 | 89.4% | 88.2% | 84.1% | 86.1% | 95.8% |

- Result Interpretation: The DenseNet201 model achieved a high overall Accuracy of 89.4%. Its Precision of 88.2% indicates that when it predicts a sample as malignant, it is correct about 88% of the time. The Recall of 84.1% shows it successfully identifies over 84% of all actual malignant cases. The F1-Score (the harmonic mean of precision and recall) of 86.1% reflects a strong balance between the two metrics [15].
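The reported F1-score can be checked directly from the precision and recall in the table. The short Python sketch below does that, and contrasts it with a deliberately lopsided, hypothetical model (precision 0.99, recall 0.10, not from the study) to show how the harmonic mean falls well below the arithmetic mean when the two metrics diverge. The helper name `f1_score` is an illustrative choice.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Precision and recall reported for DenseNet201 in the table above.
reported_f1 = f1_score(0.882, 0.841)

# Hypothetical lopsided model for contrast (values are an assumption).
skewed_f1 = f1_score(0.99, 0.10)
skewed_mean = (0.99 + 0.10) / 2

print(f"DenseNet201 F1: {reported_f1:.3f}")
print(f"Lopsided model: F1 = {skewed_f1:.3f}, arithmetic mean = {skewed_mean:.3f}")
```

The first value agrees with the 86.1% in the table. For the lopsided model the arithmetic mean would still look moderate, whereas the F1-score stays close to the weaker of the two metrics.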

Analysis of a Hybrid Ensemble for Skin Cancer Classification

Further insights can be drawn from a 2025 study on skin cancer classification, which proposed a hybrid deep learning ensemble model. The analysis of its confusion matrix offers a granular view of the trade-offs between sensitivity and specificity [13].

- Experimental Protocol: This study utilized a publicly available Kaggle dataset of 10,000 dermoscopic images. The proposed hybrid model combined a CNN-LSTM architecture and a DenseNet-like model, with a Gradient Boosting Classifier acting as a meta-learner to integrate their predictions. Performance was evaluated on a separate test set [13].

Table 2: Confusion Matrix Analysis of a Hybrid Skin Cancer Model (Normalized)

| True Label | Predicted: Benign | Predicted: Malignant |

|---|---|---|

| Benign | True Negative (TN): 94% | False Positive (FP): 6% |

| Malignant | False Negative (FN): 11% | True Positive (TP): 89% |

Data derived from the normalized confusion matrix of the meta-learner model [13].

- Result Interpretation: From this matrix, key metrics can be derived. The Recall (Sensitivity) is 89% (TP / [TP + FN] = 89% / [89% + 11%]). The Specificity is 94% (TN / [TN + FP] = 94% / [94% + 6%]). This demonstrates a critical balance: the model is highly effective at correctly identifying benign lesions (high specificity) while remaining robust at catching malignant ones (high recall). Its false negative rate of 11% was the lowest among the models compared in the study [13].
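A row-normalized confusion matrix gives recall and specificity directly, but precision cannot be read from it without knowing how common malignant cases are in the population being tested. The following Python sketch applies Bayes' rule to the rates in the table above. The prevalence values (50%, 10%, 1%) are hypothetical assumptions chosen for illustration and do not come from the study.

```python
# Row-normalized rates from the confusion matrix above (each row sums to 1).
tnr, fpr = 0.94, 0.06   # benign row: specificity and false positive rate
fnr, tpr = 0.11, 0.89   # malignant row: false negative rate and recall


def precision_at_prevalence(tpr, fpr, prevalence):
    """Positive predictive value for a given malignant fraction (Bayes' rule)."""
    true_positive_mass = tpr * prevalence
    false_positive_mass = fpr * (1 - prevalence)
    return true_positive_mass / (true_positive_mass + false_positive_mass)


# Hypothetical prevalence scenarios (assumed, not reported in the study).
for prevalence in (0.50, 0.10, 0.01):
    ppv = precision_at_prevalence(tpr, fpr, prevalence)
    print(f"prevalence = {prevalence:.2f} -> precision = {ppv:.3f}")
```

Under these assumptions the same recall and specificity yield a precision of roughly 0.94 in a balanced test set, about 0.62 at 10% prevalence, and about 0.13 at 1% prevalence, which is why normalized matrices should be read alongside the class distribution.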

The Metric Selection Guide for Cancer Research

Choosing which metric to optimize is a strategic decision driven by the clinical and research context. The following workflow diagrams the decision process for selecting a primary evaluation metric.

Guidance for Researchers

Prioritize Recall when the primary goal is to identify all positive cases and the cost of a False Negative is high. This is the case in initial cancer screening programs (e.g., mammography, skin cancer checks) where missing a malignant case is unacceptable, and following up on a false alarm is an acceptable trade-off [5] [9]. As one source states, in a scenario checking for a dangerous insect species, it makes sense to maximize recall because "false alarms (FP) are low-cost, and false negatives are highly costly" [5].

Prioritize Precision when it is critical that positive predictions are highly trustworthy. This is often the case in a second-stage confirmation or when deciding to initiate invasive, costly, or risky treatments (e.g., chemotherapy, surgery). A high precision ensures that patients are not subjected to undue harm and resources are not wasted on false alarms [10] [9].

Use the F1-Score when you need a single metric to compare models and there is a need to balance both False Positives and False Negatives, especially on imbalanced datasets. The F1-score is the harmonic mean of precision and recall, which penalizes extreme values more than the arithmetic mean, thus providing a more conservative estimate of performance [5] [14].

Report Specificity alongside Sensitivity when correctly identifying negative cases is a key measure of success. This is important for assessing the overall diagnostic capability of a test and for understanding the rate of false alarms, which can cause patient anxiety and lead to unnecessary follow-up procedures [11] [13].
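In practice, prioritizing recall or precision often comes down to where the decision threshold is placed on the model's output score. The sketch below, using hypothetical scores and labels, shows how lowering the threshold raises recall at the expense of precision and vice versa. The function name `precision_recall_at` and the convention of reporting precision as 0.0 when nothing is predicted positive are assumptions made for this example.

```python
def precision_recall_at(scores, labels, threshold):
    """Precision and recall when every score >= threshold is called positive."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        predicted_positive = score >= threshold
        if predicted_positive and label == 1:
            tp += 1
        elif predicted_positive and label == 0:
            fp += 1
        elif not predicted_positive and label == 1:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0  # assumed convention
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical model scores (probability of malignancy) and true labels.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.35, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]

for threshold in (0.30, 0.50, 0.85):
    precision, recall = precision_recall_at(scores, labels, threshold)
    print(f"threshold = {threshold:.2f}: precision = {precision:.2f}, recall = {recall:.2f}")
```

With this toy data, a screening-style threshold of 0.30 finds every malignant case but admits two false alarms, while a confirmation-style threshold of 0.85 produces no false alarms but misses three of the five malignant cases.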

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key computational tools and methodologies frequently employed in modern cancer classification research, as evidenced by the cited studies.

Table 3: Key Computational Tools in Cancer Classification Research

| Tool / Solution | Function in Research | Exemplar Use Case |

|---|---|---|

| Convolutional Neural Networks (CNNs) | Automatically extract hierarchical features from medical images for classification. | Used as backbone architectures (e.g., DenseNet, ResNet) in breast and skin cancer image classification [15] [13]. |

| Ensemble Learning & Meta-Learners | Combines predictions from multiple models to improve overall accuracy, robustness, and generalization. | A Gradient Boosting meta-learner was used to combine CNN-LSTM and DenseNet models for skin cancer classification, achieving top performance [13]. |

| Data Augmentation Techniques | Artificially expands the training dataset by applying random transformations (flips, contrast changes) to improve model generalization. | Applied to dermoscopic images to mitigate overfitting and improve model robustness to real-world variation [13]. |

| Stratified Cross-Validation | A resampling procedure that ensures each fold of the data retains the same class distribution as the whole dataset, leading to a more reliable performance estimate. | Crucial for robust model evaluation, particularly with imbalanced medical datasets, to ensure metrics are not biased [16]. |

| ROC-AUC Analysis | Evaluates the model's performance across all possible classification thresholds, providing a comprehensive view of the trade-off between Sensitivity and Specificity. | Reported as a key metric (e.g., AUC of 0.974) to demonstrate the high discriminative power of the skin cancer ensemble model [13]. |
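The ROC-AUC entry in the table above can be made concrete with its ranking interpretation: the AUC equals the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case. The following Python sketch computes it by direct pairwise comparison on hypothetical scores. This is a quadratic-time illustration under stated assumptions, not the procedure used in the cited study.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")
    correct = 0.0
    for pos_score in positives:
        for neg_score in negatives:
            if pos_score > neg_score:
                correct += 1.0
            elif pos_score == neg_score:
                correct += 0.5
    return correct / (len(positives) * len(negatives))


# Hypothetical scores and labels (1 = malignant, 0 = benign).
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.35, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]

auc = roc_auc(scores, labels)
print(f"AUC = {auc:.2f}")
```

Because the calculation depends only on the ordering of scores, the AUC is unaffected by the choice of classification threshold, which is what makes it a threshold-independent summary.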

Pan-cancer research represents a transformative approach in oncology, moving beyond the study of individual cancer types to uncover the shared and unique molecular mechanisms that drive cancer pathogenesis across tissues. This paradigm shift is powered by the integration of multi-omics data—comprehensive molecular profiles spanning the genome, transcriptome, epigenome, and proteome. The systematic collection and analysis of these data types enable researchers to dissect tumor heterogeneity, identify novel biomarkers, and develop more accurate classification models that transcend traditional, histology-based cancer typology [17]. The critical role of data in these endeavors cannot be overstated; the quality, volume, and integration of multi-omics datasets directly dictate the performance and clinical applicability of the computational models built upon them. This guide provides an objective overview of the multi-omics data landscape in pan-cancer research, comparing the performance of various data types and analytical methodologies in the critical task of cancer classification.

Multi-Omics Data Types and Their Roles in Pan-Cancer Analysis

Multi-omics data provides a multi-layered view of the biological processes involved in carcinogenesis. The table below summarizes the key omics data types used in pan-cancer studies, their descriptions, and their primary analytical strengths.

Table 1: Key Multi-Omics Data Types in Pan-Cancer Research

| Omics Data Type | Biological Description | Role in Pan-Cancer Analysis |

|---|---|---|

| mRNA Expression | Quantifies messenger RNA levels, reflecting gene activity [17]. | Identifies dysregulated oncogenes and tumor suppressor genes; used for molecular subtyping and prognostic stratification [17] [18]. |

| miRNA Expression | Measures levels of small non-coding RNAs that regulate gene expression post-transcriptionally [17]. | Serves as diagnostic and prognostic biomarkers; helps classify tumor types based on regulatory profiles [17]. |

| lncRNA Expression | Profiles long non-coding RNAs involved in epigenetic and transcriptional regulation [17]. | Provides potential diagnostic markers; helps distinguish between tumor types and understand regulatory mechanisms in cancer [17]. |

| Copy Number Variation (CNV) | Identifies gains or losses of genomic DNA segments [17]. | Pinpoints amplified oncogenes and deleted tumor suppressor genes; reveals genomic instability patterns across cancers [17]. |

| DNA Methylation | Maps epigenetic modifications that alter gene expression without changing DNA sequence [19]. | Used for epigenetic subtyping; identifies silenced tumor suppressor genes and provides insights into cancer development [20]. |

| Proteomics | Quantifies protein abundance and post-translational modifications [20]. | Connects genomic alterations to functional phenotypes; identifies activated pathways and therapeutic targets [20]. |

Performance Comparison of Omics Data and Models in Classification

The choice of omics data and computational model significantly impacts classification performance. The following tables compare the effectiveness of different approaches based on published studies.

Table 2: Performance of Machine Learning Models on RNA-Seq Data for Cancer Type Classification

This table compares the performance of various machine learning models applied to a pan-cancer RNA-Seq dataset from TCGA, which included 801 samples across five cancer types (BRCA, KIRC, COAD, LUAD, PRAD) [18].

| Machine Learning Model | Reported Accuracy (%) | Key Strengths / Context |

|---|---|---|

| Support Vector Machine (SVM) | 99.87% (5-fold cross-validation) [18] | Achieved the highest accuracy in this comparative study [18]. |

| Random Forest | Performance reported; accuracy not specified [18] | Utilized for feature selection and classification; robust to noise [18]. |

| K-Nearest Neighbors (KNN) | Performance reported [18] | Applied in combination with genetic algorithms for feature selection [17]. |

| Decision Tree | Performance reported [18] | Provides interpretable models [18]. |

| Artificial Neural Network (ANN) | Performance reported [18] | A baseline deep learning approach [18]. |

Table 3: Performance of Data Types and Advanced Models in Pan-Cancer Classification

This table synthesizes findings from multiple studies that utilized different omics data and more complex models, including deep learning, for pan-cancer classification.

| Data Type / Model | Reported Performance | Study Context / Key Findings |

|---|---|---|

| Convolutional Neural Network (CNN) | 95.59% precision in classifying 33 cancers [17] | Leveraged guided Grad-CAM for biomarker identification, adding interpretability [17]. |

| miRNA Expression + Random Forest | 92% sensitivity in classifying 32 tumor types [17] | Combined genetic algorithms with Random Forest for feature selection and classification [17]. |

| mRNA Expression + KNN | 90% precision in classifying 31 tumor types [17] | Used a genetic algorithm for feature selection prior to classification [17]. |

| Denoising Autoencoder + Multi-Kernel Learning | Superior performance with NMI gains up to 0.78 [21] | Effectively integrated multi-omics data for cancer subtyping in LGG and KIRC [21]. |

| Large Language Models (GPT-4o) | 81.9% accuracy on free-text EHR diagnoses [22] | Demonstrated strong performance in categorizing unstructured clinical notes into 14 cancer types [22]. |

| BioBERT | 90.8% accuracy on structured ICD codes [22] | A domain-specific model that excelled in processing structured clinical data [22]. |

Experimental Protocols for Pan-Cancer Classification

To ensure reproducibility and robust model performance, researchers follow standardized experimental workflows. Below is a detailed protocol for a typical pan-cancer classification study using machine learning on omics data.

Data Acquisition and Curation

- Data Source: The Cancer Genome Atlas (TCGA) is the primary source for publicly available, harmonized multi-omics data. Repositories like the UCI Machine Learning Repository and the MLOmics database provide pre-processed, model-ready datasets [18] [19]. For example, the "Gene Expression Cancer RNA-Seq" dataset from UCI contains 801 samples from five cancer types, with expression data for 20,531 genes [18].

- Data Types: Studies may focus on a single omics type (e.g., mRNA) or integrate multiple types (e.g., mRNA, miRNA, methylation, CNV) [17] [19].

Data Preprocessing and Feature Selection

This step is critical for handling the high-dimensionality of omics data, where the number of features (genes) far exceeds the number of samples.

- Normalization: Data is normalized to correct for technical variability. For RNA-Seq data, FPKM values are often log-transformed [19].

- Quality Control: Features with zero expression in more than 10% of samples or with undefined values are removed [19].

- Feature Selection: Dimensionality reduction is performed to identify the most informative genes and prevent overfitting. Common methods include:

- Lasso Regression (L1 Regularization): Performs variable selection by shrinking some coefficients to exactly zero, leaving a subset of relevant features [18].

- Ridge Regression (L2 Regularization): Shrinks coefficients to reduce model complexity and multicollinearity without eliminating features entirely [18].

- ANOVA Testing: Used to identify genes with significant variance across multiple cancer types, selecting the top features for the "Top" feature version in the MLOmics database [19].
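The quality-control and normalization steps above can be sketched in a few lines of Python. The example below drops genes that are zero in more than 10% of samples or contain undefined values, then log-transforms the remaining expression values. The function name `filter_and_log_transform`, the base-2 logarithm, the pseudo-count of 1, and the toy matrix are all assumptions for illustration; the cited pipeline may differ in these details.

```python
import math


def filter_and_log_transform(matrix, gene_names, max_zero_fraction=0.10):
    """Apply a simple QC filter, then log2(x + 1) to the surviving genes.

    matrix: list of samples, each a list of per-gene expression values.
    Returns (transformed_matrix, kept_gene_names).
    """
    n_samples = len(matrix)
    kept_names, kept_columns = [], []
    for j, name in enumerate(gene_names):
        column = [row[j] for row in matrix]
        # Drop genes with undefined values (None or NaN).
        if any(v is None or math.isnan(v) for v in column):
            continue
        # Drop genes that are zero in too many samples.
        zero_fraction = sum(1 for v in column if v == 0) / n_samples
        if zero_fraction > max_zero_fraction:
            continue
        kept_names.append(name)
        kept_columns.append([math.log2(v + 1.0) for v in column])
    # Transpose back to a samples-by-genes layout.
    transformed = [list(sample) for sample in zip(*kept_columns)]
    return transformed, kept_names


# Toy expression matrix (hypothetical): 5 samples x 3 genes.
gene_names = ["GENE_A", "GENE_B", "GENE_C"]
matrix = [
    [3.0, 0.0, 1.0],
    [7.0, 2.0, float("nan")],
    [1.0, 5.0, 2.0],
    [15.0, 4.0, 3.0],
    [31.0, 6.0, 4.0],
]

transformed, kept = filter_and_log_transform(matrix, gene_names)
print(kept)
print(transformed)
```

Here GENE_B is removed because it is zero in 20% of samples and GENE_C because it contains an undefined value, leaving only GENE_A in the transformed matrix.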

Model Training and Validation

- Model Selection: A range of classifiers are evaluated, from traditional ML models (e.g., SVM, Random Forest) to deep learning architectures (e.g., CNN, Denoising Autoencoders) [17] [18].

- Validation Strategy: A rigorous validation protocol is essential for reliable performance estimates:

- Train-Test Split: The dataset is split, typically with 70% of samples for training and 30% held out for testing [18].

- K-Fold Cross-Validation: The data is partitioned into k folds (e.g., k=5); the model is trained on k-1 folds and validated on the remaining fold, repeated until each fold has been used for validation. This provides a more robust performance measure [18].

- Evaluation Metrics: Models are evaluated using metrics such as:

- Accuracy: The proportion of correct predictions among the total predictions.

- Precision, Recall, and F1-Score: Metrics that provide a more nuanced view of performance, especially for imbalanced datasets [19].

- Normalized Mutual Information (NMI) and Adjusted Rand Index (ARI): Used to evaluate the quality of clustering in subtyping tasks [19].
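The k-fold procedure described above can be illustrated with a small index generator. This is a minimal sketch in plain Python: the function name `k_fold_indices`, the fixed random seed, and the use of 801 samples (the size of the UCI RNA-Seq dataset mentioned earlier) are illustrative choices. It does not stratify by class; a stratified variant would additionally balance cancer types within each fold.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (training_indices, validation_indices) for each of k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # shuffle once, reproducibly
    base, extra = divmod(n_samples, k)    # the first `extra` folds get one more sample
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        validation = indices[start:start + size]
        training = indices[:start] + indices[start + size:]
        yield training, validation
        start += size


folds = list(k_fold_indices(801, k=5))
for number, (training, validation) in enumerate(folds, start=1):
    print(f"fold {number}: train = {len(training)}, validation = {len(validation)}")
```

Each sample appears in exactly one validation fold, so averaging a metric over the five folds uses every sample for validation once while never scoring a model on data it was trained on.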

The following diagram illustrates the standard workflow for building a pan-cancer classification model.

Figure 1: Standard Pan-Cancer Classification Workflow.

Integrated Multi-Omics Analysis: A Pathway to Deeper Insights

While single-omics analyses are powerful, integrating multiple data types provides a more holistic view of cancer biology. Advanced computational frameworks are required to fuse these disparate data layers effectively. The DAE-MKL (Denoising Autoencoder-Based Multi-Kernel Learning) framework is one such method that integrates genomic, transcriptomic, and epigenomic data [21]. Denoising Autoencoders (DAEs) first extract non-linearly transformed features from each omics data type, reducing noise and redundancy. These refined feature representations are then integrated using Multi-Kernel Learning (MKL), which constructs a composite kernel to capture complex relationships across omics layers, ultimately leading to more accurate identification of cancer subtypes [21]. This approach has been validated on real datasets from TCGA, identifying subtypes of low-grade glioma and kidney renal clear cell carcinoma with significant survival differences [21].

The following diagram illustrates this integrative architecture.

Figure 2: Multi-Omics Integration with DAE-MKL.
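To make the multi-kernel idea more tangible, the sketch below builds one RBF kernel per omics view and combines them into a composite kernel as a weighted sum. This is a simplified illustration only, not the DAE-MKL implementation from [21]: the toy feature vectors stand in for autoencoder outputs, the weights are fixed by hand rather than learned, and the names `rbf_kernel`, `composite_kernel`, and the `gamma` values are assumptions.

```python
import math


def rbf_kernel(view, gamma):
    """Pairwise RBF similarities exp(-gamma * ||x_i - x_j||^2) for one omics view."""
    n = len(view)
    kernel = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            squared_distance = sum((a - b) ** 2 for a, b in zip(view[i], view[j]))
            kernel[i][j] = math.exp(-gamma * squared_distance)
    return kernel


def composite_kernel(kernels, weights):
    """Weighted sum of per-view kernels; weights are normalized to sum to 1."""
    if len(kernels) != len(weights):
        raise ValueError("need exactly one weight per kernel")
    if any(w < 0 for w in weights) or sum(weights) == 0:
        raise ValueError("weights must be non-negative and not all zero")
    total = sum(weights)
    normalized = [w / total for w in weights]
    n = len(kernels[0])
    return [
        [sum(w * k[i][j] for w, k in zip(normalized, kernels)) for j in range(n)]
        for i in range(n)
    ]


# Hypothetical low-dimensional features for 3 samples from two omics layers.
expression_features = [[0.1, 0.9], [0.2, 0.8], [0.9, 0.1]]
methylation_features = [[0.5], [0.4], [0.9]]

kernels = [
    rbf_kernel(expression_features, gamma=1.0),
    rbf_kernel(methylation_features, gamma=2.0),
]
combined = composite_kernel(kernels, weights=[0.6, 0.4])
for row in combined:
    print([round(value, 3) for value in row])
```

The resulting matrix can be passed to any kernel-based clustering or classification method. In a full multi-kernel learning setup the weights would be optimized rather than chosen manually.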

Successful pan-cancer research relies on a suite of publicly available data resources, computational tools, and software libraries. The table below details key components of the modern pan-cancer research toolkit.

Table 4: Essential Resources for Pan-Cancer Multi-Omics Research

| Resource / Tool Name | Type / Category | Primary Function in Research |

|---|---|---|

| The Cancer Genome Atlas (TCGA) | Data Repository | The cornerstone resource providing comprehensive, multi-omics data for over 10,000 tumor samples across 33 cancer types [17] [20]. |

| MLOmics | Processed Database | An open, unified database providing off-the-shelf, preprocessed multi-omics datasets (mRNA, miRNA, methylation, CNV) for machine learning, including "Original," "Aligned," and "Top" feature versions [19]. |

| UCSC Genome Browser | Data Portal & Visualization | An interactive platform that integrates various types of molecular data (e.g., CNV, methylation, gene expression) and supports efficient data analysis and visualization [17]. |

| BioBERT | Computational Model | A domain-specific language model pre-trained on biomedical literature, fine-tuned for tasks like classifying cancer diagnoses from clinical text in EHRs [22]. |

| cBioPortal | Analysis Portal | A web resource for exploring, visualizing, and analyzing multi-dimensional cancer genomics data from TCGA and other studies, including mutation pattern analysis [23]. |

| Python (scikit-learn, PyTorch, TensorFlow) | Programming Software | The primary programming environment for implementing data preprocessing, feature selection, machine learning, and deep learning models [18]. |

| R (survival, limma, maftools) | Statistical Software | Widely used for statistical analysis, differential expression, survival analysis, and genomic data visualization [20] [23]. |

The landscape of pan-cancer research is unequivocally data-driven. The performance of cancer classification and subtyping models is directly contingent on the richness of the underlying multi-omics data and the sophistication of the methods used to integrate and analyze it. As this guide has illustrated, while models like SVMs can achieve remarkably high accuracy on single-omics data, the future of precision oncology lies in the seamless integration of diverse molecular data types—from genomics and transcriptomics to proteomics and epigenomics. Frameworks like DAE-MKL that effectively reduce noise and leverage complementary information from multiple omics layers are demonstrating superior performance in identifying clinically relevant cancer subtypes. For researchers and drug developers, the path forward involves leveraging centralized, model-ready resources like MLOmics, adhering to rigorous experimental and validation protocols, and continuously adopting advanced integrative analytical methods. This disciplined, data-centric approach is critical for translating the vast potential of pan-cancer studies into tangible improvements in cancer diagnosis, prognosis, and treatment.

While traditional metrics like accuracy, precision, and recall provide valuable isolated insights into model performance, their individual limitations are particularly pronounced in cancer classification research. The introduction of composite scores, primarily the F1 score, represents a critical advancement for evaluating models where class imbalance is common and both false positives and false negatives carry significant clinical consequences [14] [24]. This guide objectively compares the performance of models using single metrics against those evaluated with the F1 score, providing researchers and drug development professionals with experimental data and methodologies to inform their model validation protocols.

The Critical Limitation of Single Metrics in Cancer Diagnostics

In cancer classification, datasets are often inherently imbalanced, with rare cancer types or positive disease cases vastly outnumbered by normal samples or more common cancers [14]. In such contexts, relying solely on accuracy can be profoundly misleading.

- The Accuracy Deception: A model that simply always predicts "no cancer" on a dataset where 95% of samples are truly negative will achieve 95% accuracy, yet fail completely at its primary task of identifying cancer cases [25]. This metric measures overall correctness but fails when the cost of errors is unevenly distributed [24].

- Precision and Recall Trade-Offs: Precision (the measure of correctness among positive predictions) and Recall (the measure of ability to find all positive instances) often exist in tension [14].

- High Precision, Low Recall: A model is cautious, generating few false positives but missing many true positive cases (e.g., failing to identify actual cancers).

- High Recall, Low Precision: A model is aggressive, catching most positive cases but also generating many false alarms (e.g., over-diagnosing healthy patients as having cancer) [25].

- The Composite Solution: The F1 score, calculated as the harmonic mean of precision and recall, balances this trade-off, providing a single metric that only achieves a high value when both precision and recall are high [14] [25]. The formula is: F1 Score = 2 * (Precision * Recall) / (Precision + Recall) [14].
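The accuracy deception and the F1 formula can be checked with a few lines of Python. This is a minimal sketch using a hypothetical cohort of 1,000 samples at 5% prevalence. The helper name `binary_metrics` and the convention of reporting 0.0 when a ratio is undefined are assumptions made for illustration and are not taken from the cited studies.

```python
def binary_metrics(tp, fp, fn, tn):
    """Return accuracy, precision, recall, and F1 from confusion-matrix counts.

    Ratios with a zero denominator are reported as 0.0 (an assumed convention).
    """
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical cohort: 1,000 samples, 50 of them cancers (5% prevalence).
# A classifier that always predicts "no cancer" produces no positive calls.
always_negative = binary_metrics(tp=0, fp=0, fn=50, tn=950)
print(always_negative)
```

The always-negative classifier scores 95% accuracy while its recall and F1 are both zero, which is the gap the composite score is meant to expose.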

Quantitative Performance Comparison: Single Metrics vs. F1 Score

The following tables summarize experimental data from recent cancer classification studies, demonstrating model performance across single metrics and the composite F1 score.

Table 1: Performance Metrics of Recent Multi-Cancer Classification Deep Learning Models

| Model / Framework | Cancer Types | Accuracy | Precision | Recall | F1 Score | Reference / Dataset |

|---|---|---|---|---|---|---|

| GraphVar (Multi-representation DL) | 33 types from TCGA | 99.82% | 99.85% | 99.82% | 99.82% | [26] |

| CancerDet-Net (Vision Transformer) | 9 subtypes across 4 types (Lung, Colon, Skin, Breast) | 98.51% | Data Not Specified | Data Not Specified | >98.00%* | LC25000, ISIC 2019, BreakHis [27] |

| CNN-RF / CNN-LR (Hybrid Model) | Skin Cancer | 99.00% | Data Not Specified | Data Not Specified | >98.00%* | HAM10000 [28] |

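*Note: For studies reporting accuracy >98%, the F1 score is inferred to be similarly high, on the assumption that a major discrepancy between metrics would have been reported. Values marked with an asterisk are therefore estimates rather than reported results.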

Table 2: Comparative Performance in a Binary Classification Scenario with Class Imbalance

| Evaluation Metric | Model A (High Accuracy) | Model B (High F1 Score) |

|---|---|---|

| Description | Naive model that predominantly predicts the majority class. | Balanced model optimized for the F1 score. |

| Accuracy | 95.0% | 90.0% |

| Precision | 50.0% | 85.0% |

| Recall | 10.0% | 80.0% |

| F1 Score | 16.7% | 82.4% |

Table 2 illustrates a hypothetical scenario common in cancer screening. While Model A appears superior in accuracy, its low F1 score reveals poor effectiveness at identifying the positive class. Model B, with a high F1 score, is clinically more useful. [24] [25]
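As a worked check, the F1 values in Table 2 follow directly from the listed precision and recall via the harmonic-mean formula. The superscripts A and B are labels added here for the two hypothetical models.

```latex
\[
F_1^{A} = \frac{2 \times 0.50 \times 0.10}{0.50 + 0.10} = \frac{0.10}{0.60} \approx 0.167,
\qquad
F_1^{B} = \frac{2 \times 0.85 \times 0.80}{0.85 + 0.80} = \frac{1.36}{1.65} \approx 0.824
\]
```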

Experimental Protocols and Methodologies

To ensure the validity and reproducibility of composite score evaluations, researchers should adhere to rigorous experimental protocols. The following methodologies are drawn from cited studies.

Data Preparation and Model Training for Multi-Cancer Classification

The GraphVar framework provides a robust protocol for developing a classifier evaluated with high F1 scores [26]:

- Data Sourcing: Somatic variant data (e.g., Mutation Annotation Format files) are retrieved from repositories like The Cancer Genome Atlas (TCGA).

- Cohort Curation: A rigorous multi-step pipeline removes duplicate patient entries and verifies each sample corresponds to a unique patient to prevent data leakage.

- Data Partitioning: The dataset is partitioned at the patient level into three mutually exclusive sets:

- Training Set (70%): For model training.

- Validation Set (10%): For hyperparameter optimization and early stopping.

- Independent Test Set (20%): For the final, unbiased evaluation of the fully trained model.

- Stratified sampling is employed to preserve the proportional representation of all cancer types in each partition.

- Multi-Representation Learning:

- Variant Map Construction: Gene-level variants are encoded into spatial variant maps (images), with color channels denoting different mutation types (e.g., SNPs, insertions, deletions).

- Numeric Feature Matrix: A separate matrix captures quantitative properties like allele frequencies and mutation spectra.

- Model Architecture & Training:

- A dual-stream deep neural network is used. A ResNet-18 backbone extracts features from variant images, while a Transformer encoder models the numeric feature matrix.

- Features from both streams are concatenated into a comprehensive feature vector and passed to a classification head.
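The patient-level, stratified 70/10/20 partition described above can be sketched as follows. This is an illustrative sketch and not the GraphVar code: the function name `stratified_patient_split`, the toy cohort, and the choice to give any rounding remainder to the test set are all assumptions. It also assumes cohort curation has already left exactly one label per patient.

```python
import random
from collections import defaultdict

def stratified_patient_split(patient_to_label, fractions=(0.7, 0.1, 0.2), seed=0):
    """Split patient IDs into train/validation/test sets, stratified by label.

    Assumes one label per patient, i.e. duplicates were removed beforehand.
    """
    by_label = defaultdict(list)
    for patient_id, label in patient_to_label.items():
        by_label[label].append(patient_id)

    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted(by_label):
        ids = sorted(by_label[label])
        rng.shuffle(ids)
        n_train = round(fractions[0] * len(ids))
        n_val = round(fractions[1] * len(ids))
        train += ids[:n_train]
        val += ids[n_train:n_train + n_val]
        test += ids[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical cohort: 100 BRCA patients and 40 LUAD patients.
cohort = {f"P{i:03d}": ("BRCA" if i < 100 else "LUAD") for i in range(140)}
train, val, test = stratified_patient_split(cohort)
print(len(train), len(val), len(test))
```

Because the split is made over patient IDs rather than over samples, no patient can contribute data to more than one partition, and each cancer type keeps roughly the same share in all three sets.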

GraphVar Experimental Workflow: The process from data sourcing to model evaluation, highlighting the independent test set for unbiased F1 score calculation. [26]

The Double-Loop Cross-Validation Protocol

For robust feature selection and classifier evaluation without a single held-out test set, the Amsterdam Classification Evaluation Suite (ACES) implements a Double-Loop Cross-Validation (DLCV) protocol [29].

- Outer Loop (Performance Estimation):

- The dataset (D) is split into five parts.

- Iteratively, four parts serve as the training set, and one part is the test set.

- Inner Loop (Model Selection):

- The training set from the outer loop is again split into five parts.

- This inner set is used to train models with different numbers of features and select the optimal model configuration.

- Feature Selection & Classification:

- Within the inner loop, feature selection methods (e.g., single-gene or composite-feature methods) provide a ranked list of features.

- Classifiers (e.g., Nearest Mean Classifiers) are trained by sequentially adding features according to their ranking.

- The model performance from the inner loop guides the selection of the best model to be evaluated on the outer loop's test set.

- Performance Aggregation: The process is repeated for all outer and inner folds, and the results are aggregated to produce a final, stable performance estimate, including the F1 score.
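The double-loop structure can be sketched at the level of sample indices. The code below is a minimal illustration and not the ACES implementation: the function names, the `evaluate` callback, and the toy scoring function are hypothetical. The point it shows is that model selection in the inner loop only ever sees the outer training indices.

```python
import random
from statistics import mean

def kfold(indices, k, seed):
    """Shuffle the indices and deal them into k nearly equal folds."""
    idx = list(indices)
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def all_but(folds, held_out):
    """Concatenate every fold except the one at position held_out."""
    return [i for j, fold in enumerate(folds) if j != held_out for i in fold]

def double_loop_cv(n_samples, candidates, evaluate, k=5, seed=0):
    """Nested CV: the inner loop picks a configuration, the outer loop scores it.

    evaluate(config, fit_idx, eval_idx) -> score is a caller-supplied callback
    that trains on fit_idx and returns a metric (e.g. F1) on eval_idx.
    """
    outer_folds = kfold(range(n_samples), k, seed)
    outer_scores = []
    for o, test_idx in enumerate(outer_folds):
        train_idx = all_but(outer_folds, o)
        inner_folds = kfold(train_idx, k, seed + 1 + o)

        def inner_score(config):
            # Mean validation score using outer-training samples only.
            return mean(
                evaluate(config, all_but(inner_folds, v), val_idx)
                for v, val_idx in enumerate(inner_folds)
            )

        best = max(candidates, key=inner_score)
        outer_scores.append(evaluate(best, train_idx, test_idx))
    return mean(outer_scores), outer_scores

# Toy stand-in for "train a classifier with n_features and score it":
# it pretends that 3 features is optimal regardless of the data.
def toy_evaluate(n_features, fit_idx, eval_idx):
    assert not set(fit_idx) & set(eval_idx)  # no sample is both fitted and scored
    return 1.0 - 0.1 * abs(n_features - 3)

estimate, per_fold = double_loop_cv(50, candidates=[1, 2, 3, 4, 5], evaluate=toy_evaluate)
print(estimate, per_fold)
```

In a real study the callback would run feature ranking and classifier training on `fit_idx`, so that feature selection is repeated inside every fold rather than performed once on the full dataset.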

Double-Loop Cross-Validation: This protocol ensures strict separation between training and testing data for reliable F1 score estimation. [29]

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key solutions and materials essential for conducting rigorous cancer classification research with composite metric evaluation.

Table 3: Essential Research Reagents and Computational Tools for Cancer Classification

| Item / Solution | Function / Application | Specific Use-Case Example |

|---|---|---|

| The Cancer Genome Atlas (TCGA) | A comprehensive public repository of genomic, epigenomic, and clinical data covering over 20,000 primary cancer and matched normal samples across 33 cancer types. | Sourcing somatic variant data (MAF files) for training and testing multi-cancer classification models like GraphVar [26]. |

| Spatial Transcriptomics (ST) Slides | Glass slides with oligonucleotides to capture mRNAs from histological tissue sections while maintaining spatial information. | Generating spatially-resolved gene expression data for breast cancer region classification (DCIS vs. IDC) in machine learning models [30]. |

| Amsterdam Classification Evaluation Suite (ACES) | A Python package for objective evaluation of classification and feature-selection methods, including DLCV protocols. | Standardized performance comparison of single-gene classifiers versus composite-feature classifiers on large, pooled breast cancer gene expression datasets [29]. |

| PRO-CTCAE (Patient-Reported Outcomes) | A library of items designed for patient-reported adverse event monitoring in oncology clinical trials. | Developing composite grading algorithms to map multiple symptom attributes (frequency, severity, interference) into a single, clinically actionable toxicity grade [31]. |

| PyTorch / scikit-learn | Open-source software libraries for deep learning (PyTorch) and traditional machine learning (scikit-learn). | Implementing deep learning frameworks (e.g., GraphVar) [26] and support vector machine classifiers for spatial transcriptomics data [30]. |

The move beyond single metrics to composite scores like the F1 score is not merely a technical adjustment but a fundamental necessity for advancing cancer classification research. As evidenced by state-of-the-art models, the F1 score provides a balanced and stringent assessment that aligns with clinical needs, especially when dealing with imbalanced datasets where both false positives and false negatives are critical. By adopting rigorous experimental protocols, such as those demonstrated by GraphVar and ACES, and leveraging essential research tools, scientists and drug developers can ensure their models are robust, reliable, and truly fit for the purpose of improving cancer diagnostics and patient outcomes.

From Theory to Practice: Applying Metrics in Complex Cancer Research Scenarios

In the field of oncology research, accurate evaluation of classification models is not merely a statistical exercise—it can directly impact clinical decision-making and patient outcomes. Machine learning models for cancer classification must reliably distinguish between multiple cancer types, disease stages, or molecular subtypes, often working with imbalanced datasets where some categories are naturally rare yet clinically significant. Macro and micro averaging provide two distinct philosophical approaches to summarizing model performance across multiple classes, each with different implications for how we prioritize certain types of classification errors in medical applications [32].

The choice between macro and micro averaging becomes particularly crucial in cancer informatics, where the clinical cost of misclassifying a rare but aggressive cancer type may far outweigh the cost of misclassifying more common variants. Understanding these metrics enables researchers to select evaluation frameworks that align with clinical priorities, ensuring that models are optimized for patient benefit rather than merely abstract statistical performance [16].

Conceptual Foundations: Macro vs. Micro Averaging

Core Definitions and Mathematical Formulations

In multi-class classification settings, performance metrics such as precision, recall, and F1-score cannot be directly computed as in binary classification without first establishing an aggregation method. Macro and micro averaging represent two fundamentally different approaches to this challenge [33].

Macro-averaging calculates metrics independently for each class and then computes the arithmetic mean, thereby treating all classes equally regardless of their frequency in the dataset. For a multi-class system with N classes, the macro-averaged precision is calculated as:

[ \text{Macro-P} = \frac{\sum_{i=1}^{N} P_i}{N} ]

where ( P_i ) represents the precision for class i [33].

Micro-averaging aggregates the contributions of all classes by summing all true positives, false positives, and false negatives across all classes, then calculating the metrics based on these global sums. The micro-averaged precision is calculated as:

[ \text{Micro-P} = \frac{\sum_{i=1}^{N} TP_i}{\sum_{i=1}^{N} TP_i + \sum_{i=1}^{N} FP_i} ]

where ( TP_i ) and ( FP_i ) represent true positives and false positives for class i, respectively [34] [35].

Visualizing the Calculation Pathways

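The fundamental difference between macro and micro averaging lies in the order of the aggregation operations applied to the per-class confusion matrices. Macro-averaging computes the metric for each class first and then averages the per-class results, whereas micro-averaging pools the counts across all classes first and then computes the metric once.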

Key Behavioral Differences in Imbalanced Scenarios

The conceptual differences between macro and micro averaging lead to dramatically different behaviors when dealing with imbalanced datasets, which are common in medical applications [34].

Consider a hypothetical cancer classification system with four classes:

- Class A: 1 TP and 1 FP → Precision = 0.5

- Class B: 10 TP and 90 FP → Precision = 0.1

- Class C: 1 TP and 1 FP → Precision = 0.5

- Class D: 1 TP and 1 FP → Precision = 0.5

The macro-average precision would be ( (0.5 + 0.1 + 0.5 + 0.5) / 4 = 0.4 ), while the micro-average precision would be ( (1 + 10 + 1 + 1) / (2 + 100 + 2 + 2) = 13/106 ≈ 0.123 ) [34].

This example demonstrates how macro-averaging can present a more optimistic view by giving equal weight to each class's performance, while micro-averaging provides a more pessimistic but data-volume-weighted perspective that strongly reflects performance on the majority class [34].
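The two averages in the four-class example can be reproduced in a few lines. This is a minimal sketch in plain Python; the variable names are illustrative.

```python
# (TP, FP) per class, taken from the four-class example above.
counts = {"A": (1, 1), "B": (10, 90), "C": (1, 1), "D": (1, 1)}

# Macro: compute precision per class, then take the unweighted mean.
per_class_precision = {c: tp / (tp + fp) for c, (tp, fp) in counts.items()}
macro_p = sum(per_class_precision.values()) / len(per_class_precision)

# Micro: pool the counts across classes, then compute precision once.
total_tp = sum(tp for tp, _ in counts.values())
total_fp = sum(fp for _, fp in counts.values())
micro_p = total_tp / (total_tp + total_fp)

print(f"macro precision = {macro_p:.3f}")  # 0.400
print(f"micro precision = {micro_p:.3f}")  # 0.123
```

Class B contributes 100 of the 106 positive predictions, so its low precision dominates the micro average but counts for only one quarter of the macro average.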

Experimental Evidence from Cancer Classification Research

Multiomics Cancer Type Classification

A 2025 study on multiomics cancer classification provides compelling real-world evidence of how these averaging techniques perform in practice. Researchers developed a stacked deep learning ensemble to classify five common cancer types in Saudi Arabia: breast, colorectal, thyroid, non-Hodgkin lymphoma, and corpus uteri [8]. The model integrated RNA sequencing, somatic mutation, and DNA methylation profiles using a stacking ensemble of five established methods: support vector machine, k-nearest neighbors, artificial neural network, convolutional neural network, and random forest [8].

Table 1: Performance Metrics for Multiomics Cancer Classification

| Data Type | Accuracy | Note on Averaging |

|---|---|---|

| Multiomics (Integrated) | 98% | Demonstrates micro-like behavior |

| RNA Sequencing | 96% | Majority class influence |

| Methylation | 96% | Majority class influence |

| Somatic Mutation | 81% | Lower performance on sparse data |

The high accuracy (98%) with multiomics data integration essentially reflects a micro-averaged perspective, as it gives equal weight to each instance rather than each class [8]. The dataset exhibited notable class imbalance, with breast cancer (BRCA) having 1,223 cases while non-Hodgkin lymphoma (NHL) had only 481 cases in the RNA sequencing data [8]. Despite this imbalance, the overall accuracy remained high, suggesting the model performed well on the majority classes.

Cancer Diagnosis Categorization in Electronic Health Records

Another 2025 study evaluated large language models and BioBERT for classifying cancer diagnoses from both structured ICD codes and unstructured free-text entries in electronic health records [32]. This research specifically utilized weighted macro F1-scores, recognizing the importance of accounting for class imbalance in clinical applications.

Table 2: Performance on Cancer Diagnosis Categorization

| Model | Data Format | Weighted Macro F1-Score | Accuracy |

|---|---|---|---|

| BioBERT | ICD Codes | 84.2 | 90.8% |

| GPT-4o | ICD Codes | ~84.0 | 90.8% |

| GPT-4o | Free-text | 71.8 | 81.9% |

| BioBERT | Free-text | 61.5 | 81.6% |

The researchers explicitly chose weighted macro F1-score as a primary metric because it "balances precision and recall across all diagnosis categories while assigning greater influence to frequently occurring diagnoses via sample weights" [32]. This approach ensured that performance on common categories meaningfully impacted the overall score while still considering the model's ability to classify less frequent diagnoses—an essential consideration for clinical deployment where even rare cancers must be identified correctly.

Calculation Methodologies and Workflows

Practical Computation of Averaging Metrics

The computational workflows for macro and micro averages run as parallel pathways over the same per-class confusion matrices and differ only in the step at which aggregation occurs.

Important Mathematical Properties

A critical mathematical relationship emerges in micro-averaging: for multi-class classification where each instance receives a single label, the micro-averaged precision, micro-averaged recall, micro F1-score, and overall accuracy are all numerically identical [35]. This occurs because:

[ \text{Micro-P} = \frac{\sum TP}{\sum TP + \sum FP} = \frac{\sum TP}{\sum TP + \sum FN} = \text{Micro-R} ]

when each data point is assigned to exactly one class, and:

[ \text{Accuracy} = \frac{\sum TP}{\text{Total Instances}} = \frac{\sum TP}{\sum TP + \sum FP} = \text{Micro-P} ]

since in single-label classification, every false positive for one class is necessarily a false negative for another class, making (\sum FP = \sum FN) [35].
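The identity can be verified numerically on a small single-label example. The labels and predictions below are hypothetical and serve only to show that each misclassification adds one false positive and one false negative.

```python
from collections import Counter

# Hypothetical true and predicted cancer types for 10 samples.
y_true = ["BRCA", "BRCA", "BRCA", "COAD", "COAD", "THCA", "THCA", "THCA", "NHL", "UCEC"]
y_pred = ["BRCA", "BRCA", "COAD", "COAD", "THCA", "THCA", "THCA", "BRCA", "NHL", "UCEC"]

tp, fp, fn = Counter(), Counter(), Counter()
for truth, pred in zip(y_true, y_pred):
    if truth == pred:
        tp[truth] += 1
    else:
        fp[pred] += 1   # wrongly assigned to the predicted class ...
        fn[truth] += 1  # ... and therefore missed for the true class

sum_tp, sum_fp, sum_fn = sum(tp.values()), sum(fp.values()), sum(fn.values())
micro_p = sum_tp / (sum_tp + sum_fp)
micro_r = sum_tp / (sum_tp + sum_fn)
micro_f1 = 2 * micro_p * micro_r / (micro_p + micro_r)
accuracy = sum_tp / len(y_true)

print(micro_p, micro_r, micro_f1, accuracy)
```

All four quantities equal 0.7 here (up to floating-point rounding in the F1 term). The identity no longer holds in multi-label settings, where one sample can produce a false positive without a matching false negative.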

Guidelines for Metric Selection in Cancer Research

Decision Framework for Researchers

Selecting between macro and micro averaging depends primarily on the clinical context, class distribution characteristics, and the relative importance of minority classes in the specific research application. The following decision framework provides guidance for researchers:

Table 3: Metric Selection Guide for Cancer Classification Research

| Scenario | Recommended Metric | Rationale | Clinical Example |

|---|---|---|---|

| Balanced classes with equal clinical importance | Macro-average | Treats all cancer types equally | Classifying common cancers with similar prevalence |

| Imbalanced data with majority classes dominating | Micro-average | Reflects performance on most frequent cases | Screening where common cancers represent most cases |

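| Imbalanced data with critical minority classes | Weighted Macro-average, reported alongside per-class metrics | Support weighting keeps common classes influential, so per-class recall is needed to keep rare but lethal cancers visible | Identifying rare pediatric cancers with high mortality |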

| Need for intuitive, overall performance measure | Micro-average (same as accuracy) | Easily interpretable for clinical stakeholders | Communicating model performance to hospital administrators |

| Focus on specific rare cancer detection | Per-class metrics + Macro-average | Ensures minority class performance is visible | Early detection of rare but aggressive cancer subtypes |

Special Considerations for Medical Applications

In cancer research, the choice of evaluation metric should align with clinical priorities. If all cancer types are considered equally important regardless of prevalence, macro-averaging provides a more appropriate evaluation framework [34]. However, if the clinical application will predominantly encounter majority classes, micro-averaging may better reflect real-world performance [35].

For datasets with significant class imbalance where all classes remain clinically important, the weighted macro-average offers a pragmatic compromise. This approach calculates the macro-average but weights each class's contribution according to its support (the number of true instances), thus providing a balance between the macro and micro perspectives [36].
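The effect of support weighting is easiest to see side by side with the unweighted macro average. The per-class F1 scores and supports below are hypothetical values chosen for illustration.

```python
# Hypothetical per-class F1 scores and supports (number of true instances).
per_class = {
    "common_type": {"f1": 0.95, "support": 900},
    "less_common_type": {"f1": 0.80, "support": 90},
    "rare_type": {"f1": 0.40, "support": 10},
}

# Unweighted macro average: every class counts equally.
macro_f1 = sum(c["f1"] for c in per_class.values()) / len(per_class)

# Support-weighted average: each class counts in proportion to its size.
total_support = sum(c["support"] for c in per_class.values())
weighted_f1 = sum(c["f1"] * c["support"] for c in per_class.values()) / total_support

print(f"macro F1    = {macro_f1:.3f}")     # 0.717
print(f"weighted F1 = {weighted_f1:.3f}")  # 0.931
```

In this toy case the weak score on the rare class pulls the macro average down to about 0.72 but barely moves the weighted average, which is a reason to report per-class metrics whenever a rare class is clinically important.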

Essential Research Reagent Solutions

The experimental protocols cited in this guide utilize several key computational tools and frameworks that constitute essential research reagents for conducting similar investigations in cancer classification research.

Table 4: Essential Research Reagents for Cancer Classification Metrics Research

| Reagent/Solution | Function | Example Implementation |

|---|---|---|

| Python Machine Learning Stack | Core computational environment | Scikit-learn for metric calculation |

| Statistical Bootstrap Methods | Uncertainty quantification for metrics | 95% confidence intervals for F1-scores |

| Multiomics Data Integration Platforms | Handling diverse biological data types | TCGA and LinkedOmics dataset access |

| Deep Learning Frameworks | Implementing complex classification models | TensorFlow, PyTorch for neural networks |

| Natural Language Processing Tools | Processing clinical text data | BioBERT for biomedical text classification |

| Ensemble Learning Methodologies | Combining multiple classification approaches | Stacking SVMs, KNN, ANN, CNN, and Random Forest |

Macro and micro averaging provide complementary perspectives on model performance in multi-class cancer classification tasks. The experimental evidence from recent cancer informatics research demonstrates that metric selection should be driven by clinical requirements rather than statistical convenience. While micro-averaging offers an intuitive volume-weighted perspective that often aligns with overall accuracy, macro-averaging ensures that rare cancer types receive appropriate consideration in model evaluation. Weighted macro-averaging represents a particularly valuable approach for imbalanced medical datasets where all classes hold clinical significance. As cancer classification models continue to evolve in complexity and clinical application, thoughtful metric selection will remain essential for ensuring that these tools deliver meaningful improvements in patient care and oncological outcomes.

In the high-stakes domain of cancer classification, the pursuit of model performance often leads researchers to a deceptive benchmark: accuracy. This metric, defined as the proportion of correct predictions among all classifications, becomes particularly misleading when dealing with imbalanced medical datasets where healthy patients significantly outnumber those with disease [37] [5]. Consider a model designed to detect a cancer type present in only 5% of a population. A naive classifier that simply predicts "no cancer" for every case would achieve 95% accuracy, creating the illusion of competence while failing completely at its intended purpose [37]. This phenomenon, known as the accuracy paradox, underscores a critical limitation in traditional evaluation approaches for medical machine learning applications.

The challenge of imbalanced data is particularly pronounced in cancer diagnostics and prognosis, where the number of diseased patients is naturally smaller than healthy individuals [38] [39]. Standard machine learning algorithms, designed with the assumption of relatively balanced class distributions, frequently develop a bias toward the majority class, effectively ignoring the rare cases that are often of greatest clinical interest [39]. The consequences of such oversights can be dire—false negatives in cancer detection may delay critical treatments, adversely affecting patient outcomes and survival rates [39]. Consequently, researchers must look beyond accuracy to metrics that more accurately reflect model performance on imbalanced datasets, particularly those that prioritize correct identification of the minority class.

Beyond Accuracy: Essential Evaluation Metrics for Imbalanced Data

When evaluating cancer classification models on imbalanced datasets, researchers should consider multiple metrics that collectively provide a more nuanced understanding of model performance. The following table summarizes key evaluation metrics and their relevance to imbalanced cancer classification problems:

| Metric | Formula | Clinical Interpretation | When to Prioritize |

|---|---|---|---|

| Precision | ( \frac{TP}{TP + FP} ) | When the model predicts cancer, how often is it correct? | When false positives (unnecessary biopsies) are clinically concerning [5] [40] |

| Recall (Sensitivity) | ( \frac{TP}{TP + FN} ) | What proportion of actual cancer cases were detected? | When false negatives (missed cancers) are dangerous [5] [39] |

| F1 Score | ( 2 \times \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}} ) | Harmonic mean balancing precision and recall | When seeking a single metric that balances both false positives and false negatives [37] [40] |

| ROC AUC | Area under ROC curve | Model's ability to distinguish cancerous from non-cancerous cases across thresholds | When overall ranking performance is important and dataset isn't severely imbalanced [40] [41] |

| PR AUC | Area under Precision-Recall curve | Model performance focused specifically on the positive (cancer) class | Preferred over ROC AUC for imbalanced data [41] |

For cancer classification, the choice of metric should reflect clinical priorities. Recall becomes paramount when missing a cancer case (false negative) could have severe consequences, as early detection significantly improves outcomes [5]. Precision gains importance when false positives lead to invasive follow-up procedures that carry their own risks and costs [40]. The F1 score provides a balanced perspective when both types of errors need consideration.

The ROC AUC (Receiver Operating Characteristic Area Under Curve) represents the probability that a randomly chosen positive instance (cancer case) is ranked higher than a randomly chosen negative instance (non-cancer case) [41]. However, with imbalanced medical data, the Precision-Recall AUC (PR AUC) often provides a more informative assessment as it focuses specifically on the model's performance regarding the positive class, without being influenced by the abundance of true negatives [41].
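The ranking interpretation of ROC AUC can be computed directly by comparing every positive score with every negative score. This is a small sketch for intuition; the function name and the toy scores are hypothetical, and library implementations use faster rank-based methods.

```python
def roc_auc_pairwise(y_true, scores):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair. Cost is O(n_pos * n_neg), which is
    fine for small examples only.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical model scores: 1 = cancer, 0 = non-cancer.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.8, 0.5, 0.3, 0.2, 0.1]
print(roc_auc_pairwise(y_true, scores))  # 12 of 15 pairs are ordered correctly
```

Because the statistic depends only on how positives rank against negatives, adding many easy negatives can raise it without any change in how reliable the positive calls are, which is why PR AUC is often examined alongside it on imbalanced data.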

Experimental Insights: Performance Comparisons in Cancer Classification

Recent research provides compelling evidence for the necessity of alternative metrics and techniques when working with imbalanced cancer datasets. A comprehensive 2024 study evaluated 19 resampling methods and 10 classifiers across five cancer datasets, revealing significant performance differences between methods [38].

Table: Classifier Performance on Imbalanced Cancer Data (Adapted from [38])

| Classifier | Mean Performance (%) | Key Strengths | Optimal Resampling Partner |

|---|---|---|---|

| Random Forest | 94.69% | Robustness, handles high-dimensional data | SMOTEENN |

| Balanced Random Forest | 94.69% | Built-in handling of class imbalance | (Native implementation) |

| XGBoost | 94.69% | Handling complex non-linear relationships | SMOTEENN |

| Baseline (No Resampling) | 91.33% | - | - |

Table: Resampling Method Performance on Cancer Data (Adapted from [38])

| Resampling Method | Mean Performance (%) | Category | Key Characteristics |

|---|---|---|---|

| SMOTEENN | 98.19% | Hybrid | Combines oversampling and cleaning |

| IHT | 97.20% | Under-sampling | Removes noisy majority class instances |

| RENN | 96.48% | Under-sampling | Removes instances misclassified by k-NN |

The experimental protocol employed in this research involved systematic comparison across multiple diagnostic and prognostic cancer datasets, including the Wisconsin Breast Cancer Database, Lung Cancer Detection Dataset, and SEER Breast Cancer Dataset [38]. Researchers applied resampling techniques from three categories (oversampling, undersampling, and hybrid methods) before training and evaluating classifiers using appropriate metrics for imbalanced data. The performance advantage of hybrid sampling methods like SMOTEENN highlights the effectiveness of combining synthetic minority oversampling with cleaning of the majority class.

In a separate study focused on osteosarcoma classification, researchers found that combining random oversampling with the Extra Trees algorithm achieved 97.8% area under the ROC curve with acceptably low false alarm and misdetection rates [42]. This further reinforces the importance of combining appropriate data-level techniques with well-suited algorithms for optimal performance on imbalanced medical data.

Methodological Approaches: Techniques for Handling Imbalanced Data

Data-Level Techniques: Resampling Methods

Resampling methods modify the training dataset to create a more balanced distribution between classes, enabling standard algorithms to learn more effectively from minority class examples:

Oversampling: Increasing the representation of the minority class by creating copies of existing instances or generating synthetic examples [37] [39]. The Synthetic Minority Oversampling Technique (SMOTE) creates synthetic samples by interpolating between existing minority class instances, though it may not preserve non-linear relationships in the data [37].

Undersampling: Reducing the majority class instances by randomly removing examples or employing more sophisticated selection methods [37] [39]. Techniques like RENN (Repeated Edited Nearest Neighbors) remove majority class instances that are misclassified by k-nearest neighbors, effectively cleaning the decision boundary [38].

Hybrid Methods: Combining both oversampling and undersampling approaches for improved effectiveness [38] [39]. SMOTEENN, the top-performing method in recent cancer research, first applies SMOTE to generate synthetic minority instances, then uses ENN (Edited Nearest Neighbors) to remove both majority and minority instances identified as noisy [38].
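The interpolation step at the heart of SMOTE can be sketched in a few lines. This is a simplified illustration of the idea and not the imbalanced-learn implementation: the function name `smote_like`, the choice of `k`, and the toy points are assumptions, and the ENN cleaning step used by SMOTEENN is not shown.

```python
import random

def smote_like(minority, n_new, k=2, seed=0):
    """Generate n_new synthetic points by interpolating toward a near neighbour.

    Each synthetic point lies on the segment between a randomly chosen
    minority sample and one of its k nearest minority neighbours.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        x = rng.choice(minority)
        # Rank the other minority samples by squared Euclidean distance to x.
        others = [p for p in minority if p is not x]
        others.sort(key=lambda p: sum((a - b) ** 2 for a, b in zip(x, p)))
        neighbour = rng.choice(others[:k])
        gap = rng.random()  # position along the segment from x to neighbour
        synthetic.append(tuple(a + gap * (b - a) for a, b in zip(x, neighbour)))
    return synthetic

# Hypothetical minority-class samples with two features each.
minority = [(1.0, 1.0), (1.2, 0.9), (0.8, 1.1), (1.1, 1.3)]
new_points = smote_like(minority, n_new=3)
print(new_points)
```

Resampling of this kind is normally applied to the training portion only, so that validation and test sets keep the original class distribution.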

Algorithm-Level Techniques and Threshold Tuning

Beyond manipulating training data, several algorithm-level approaches can enhance performance on imbalanced cancer datasets:

Cost-Sensitive Learning: Modifying algorithms to impose heavier penalties for misclassifying minority class instances [37]. This approach aligns with clinical reality where the cost of missing a cancer case typically far exceeds the cost of a false alarm.

Ensemble Methods: Combining multiple models to improve overall performance and robustness [38] [42]. Random Forest and Balanced Random Forest have demonstrated particular effectiveness on imbalanced cancer data, as evidenced by their top performance in comparative studies [38].

Threshold Tuning: Adjusting the default classification threshold (typically 0.5) to optimize for specific metrics [37]. Increasing the threshold makes the model more conservative in predicting cancer, potentially improving precision, while decreasing the threshold makes it more sensitive, potentially improving recall. This approach allows clinicians to calibrate models based on specific clinical requirements and risk tolerance.
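The effect of moving the threshold can be seen by recomputing precision and recall at several cut-offs. The probabilities below are hypothetical, and the helper name `precision_recall_at` is introduced here for illustration.

```python
def precision_recall_at(y_true, probs, threshold):
    """Precision and recall when predicting cancer for probs >= threshold."""
    preds = [int(p >= threshold) for p in probs]
    tp = sum(1 for y, yhat in zip(y_true, preds) if y == 1 and yhat == 1)
    fp = sum(1 for y, yhat in zip(y_true, preds) if y == 0 and yhat == 1)
    fn = sum(1 for y, yhat in zip(y_true, preds) if y == 1 and yhat == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Hypothetical predicted probabilities for 10 patients (1 = cancer).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
probs = [0.95, 0.80, 0.55, 0.35, 0.60, 0.40, 0.30, 0.20, 0.10, 0.05]

for threshold in (0.3, 0.5, 0.7):
    p, r = precision_recall_at(y_true, probs, threshold)
    print(f"threshold={threshold:.1f}  precision={p:.2f}  recall={r:.2f}")
```

On this toy data, lowering the threshold to 0.3 recovers every cancer case at the price of more false alarms, while raising it to 0.7 removes the false alarms but misses half of the cancers. In practice the threshold would be chosen on validation data, not on the test set.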

Diagram: Comprehensive Approach to Handling Imbalanced Cancer Data

Successfully navigating the challenges of imbalanced cancer datasets requires access to appropriate datasets, algorithms, and evaluation frameworks. The following table outlines key resources for researchers working in this domain:

Table: Research Reagent Solutions for Imbalanced Cancer Classification

| Resource Category | Specific Tools & Datasets | Function & Application | Access Information |

|---|---|---|---|

| Public Cancer Datasets | Wisconsin Breast Cancer DB [38] | Binary classification (benign/malignant); 699 samples | Publicly available via Kaggle |

| | Lung Cancer Detection Dataset [38] | Risk assessment with demographic/clinical factors; 309 samples | Publicly available via Kaggle |

| | SEER Breast Cancer Dataset [38] | Prognostic modeling with clinical outcomes; 4024 patients | Publicly available via Kaggle |

| Resampling Algorithms | SMOTE [38] [39] | Synthetic minority oversampling to balance class distribution | Implemented in imbalanced-learn (Python) |

| | SMOTEENN [38] | Hybrid approach combining oversampling and cleaning | Implemented in imbalanced-learn (Python) |

| | RENN, IHT [38] | Undersampling methods that remove noisy majority instances | Implemented in imbalanced-learn (Python) |

| Classification Algorithms | Random Forest [38] | Ensemble method demonstrating top performance on cancer data | scikit-learn, Python |

| | Balanced Random Forest [38] | Random Forest variant that balances each bootstrap sample by under-sampling | Implemented in imbalanced-learn (Python) |

| | XGBoost [38] | Gradient boosting effective with complex non-linear relationships | XGBoost library, Python |

| Evaluation Metrics | PR AUC [41] | Focused assessment of positive class performance | scikit-learn, Python |

| | F1 Score [37] [40] | Balanced measure of precision and recall | scikit-learn, Python |

| | Recall/Sensitivity [5] [39] | Critical for minimizing false negatives in cancer detection | scikit-learn, Python |

The critical evaluation of model performance on imbalanced cancer datasets demands a nuanced approach that moves beyond traditional accuracy metrics. As demonstrated by comparative studies, employing appropriate evaluation metrics—particularly recall, F1 score, and PR AUC—provides a more clinically relevant assessment of model capability [38] [41]. Furthermore, combining strategic resampling techniques like SMOTEENN with robust classifiers such as Random Forest delivers substantially improved performance on minority class prediction without sacrificing overall model quality [38].

Future research directions in this domain include developing more sophisticated hybrid approaches that integrate data-level and algorithm-level solutions [39], creating domain-specific evaluation metrics that incorporate clinical costs and benefits, and advancing interpretability methods that build trust in model predictions among healthcare professionals [43]. As machine learning continues to transform cancer diagnostics and prognosis, maintaining rigorous, clinically-informed evaluation standards will be essential for deploying models that genuinely enhance patient care and outcomes.

In the development of cancer classification models, from early detection to prognosis prediction, evaluating model performance is as crucial as the algorithm design itself. The Receiver Operating Characteristic (ROC) curve and the Area Under this Curve (AUC) provide a comprehensive framework for assessing diagnostic accuracy across all possible decision thresholds [44] [45]. This is particularly vital in clinical settings, where the consequences of false negatives (missed cancers) and false positives (unnecessary biopsies) must be carefully balanced based on the specific clinical context [44] [46].

The ROC curve visually represents the trade-off between a model's sensitivity (ability to correctly identify cancer cases) and its 1-specificity (tendency to falsely classify healthy cases as cancer) at every possible classification threshold [44] [45]. The AUC summarizes this curve into a single numeric value representing the model's overall ability to distinguish between positive (cancer) and negative (non-cancer) classes [47] [48]. For cancer researchers, this provides an essential tool for selecting optimal models and classification thresholds suited to specific clinical requirements, whether for highly sensitive cancer screening or highly specific confirmatory testing [44].

Core Concepts and Clinical Interpretation

The Anatomy of a ROC Curve

The ROC curve is created by plotting the True Positive Rate (TPR), also known as sensitivity or recall, against the False Positive Rate (FPR), which equals 1-specificity [44] [45]. Each point on the curve represents a sensitivity/specificity pair corresponding to a particular decision threshold [44] [48].

- True Positive Rate (Sensitivity): The proportion of actual cancer cases correctly identified by the model [45]. Calculated as TP/(TP+FN), where TP=True Positives and FN=False Negatives [49].

- False Positive Rate (1-Specificity): The proportion of actual non-cancer cases incorrectly flagged as cancer [45]. Calculated as FP/(FP+TN), where FP=False Positives and TN=True Negatives [49].
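The two definitions above can be sketched in a few lines. The labels and scores are hypothetical, and `tpr_fpr` is an illustrative helper name; each threshold yields one (TPR, FPR) pair, that is, one point on the ROC curve.

```python
def tpr_fpr(y_true, y_score, threshold):
    """Return (TPR, FPR) when 'cancer' is predicted for score >= threshold."""
    tp = fn = fp = tn = 0
    for y, s in zip(y_true, y_score):
        predicted_cancer = s >= threshold
        if y == 1 and predicted_cancer:
            tp += 1          # cancer case correctly flagged
        elif y == 1:
            fn += 1          # cancer case missed
        elif predicted_cancer:
            fp += 1          # non-cancer case incorrectly flagged
        else:
            tn += 1          # non-cancer case correctly cleared
    return tp / (tp + fn), fp / (fp + tn)


# Hypothetical labels (1 = cancer) and model scores for six patients.
y_true = [1, 1, 0, 1, 0, 0]
y_score = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

# One ROC point per threshold, from strict to lenient.
operating_points = {t: tpr_fpr(y_true, y_score, t) for t in (0.85, 0.65, 0.5, 0.1)}
for t, (tpr, fpr) in operating_points.items():
    print(f"threshold={t:.2f}  TPR={tpr:.2f}  FPR={fpr:.2f}")
```

Lowering the threshold moves the operating point up and to the right: more cancer cases are caught, at the price of more false alarms.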

The curve's shape reveals critical information about model performance. A curve arching toward the upper-left corner indicates strong discriminatory power, while a curve following the diagonal suggests performance no better than random guessing [44] [45].

Understanding the AUC Metric

The Area Under the ROC Curve (AUC) quantifies the overall performance across all thresholds [44] [47]. The AUC value ranges from 0 to 1 and has a probabilistic interpretation: it represents the probability that the model will rank a randomly chosen positive instance (e.g., a cancer case) higher than a randomly chosen negative instance (e.g., a non-cancer case) [44] [48].
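The probabilistic interpretation can be checked directly by comparing every positive case with every negative case. The sketch below assumes hypothetical labels and scores; `auc_by_ranking` is an illustrative name, and ties are counted as half a win, the usual convention.

```python
def auc_by_ranking(y_true, y_score):
    """Fraction of (positive, negative) pairs in which the positive scores higher."""
    positives = [s for y, s in zip(y_true, y_score) if y == 1]
    negatives = [s for y, s in zip(y_true, y_score) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # correctly ranked pair
            elif p == n:
                wins += 0.5   # tie counts as half
    return wins / (len(positives) * len(negatives))


# Hypothetical labels (1 = cancer) and model scores.
y_true = [1, 1, 0, 1, 0, 0]
y_score = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

auc = auc_by_ranking(y_true, y_score)
print(f"AUC = {auc:.3f}")  # 8 of the 9 pairs are ranked correctly
```

This pairwise loop is quadratic in the number of cases, so it is suited to illustration rather than large cohorts.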

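Three reference shapes anchor the interpretation of AUC values: a perfect classifier's curve passes through the upper-left corner (AUC = 1.0), a random classifier's curve follows the diagonal (AUC = 0.5), and a useful real-world model falls between the two (0.5 < AUC < 1.0).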

Clinical Meaning of AUC in Cancer Diagnostics

In clinical oncology, the AUC represents an "optimistic" estimate of global diagnostic accuracy when diseased and non-diseased groups are balanced [46]. Research has demonstrated that the AUC provides an upward-biased measure of the proportion of correct classifications at an optimal accuracy cut-off, with the magnitude of bias depending on the shape of the ROC curve [46]. This understanding is essential when translating model performance metrics to expected real-world clinical performance.

For cancer detection, an AUC of 0.8 means there is an 80% probability that the model will correctly rank a random cancer case higher than a random non-cancer case [44] [48]. As a general guideline in medical diagnostics, AUC values of 0.9-1.0 are considered excellent, 0.8-0.9 good, 0.7-0.8 fair, and 0.5-0.7 poor [48].

Experimental Applications in Cancer Research

Case Study: Breast MRI Protocol Comparison

A 2025 study directly compared the diagnostic accuracy of abbreviated versus full MRI protocols for detecting breast lobular carcinoma using ROC analysis [50] [51]. This research exemplifies the application of ROC/AUC methodology in clinical cancer imaging research.

Table 1: Diagnostic Performance of MRI Protocols for Breast Lobular Carcinoma Detection

| Protocol | AUC | Sensitivity | Specificity | Clinical Implications |
|---|---|---|---|---|
| Full MRI Protocol | 1.0 | 100% | 100% | Gold standard performance |
| Abbreviated Protocol (Radiologist A) | 0.920 | 100% | 73.3% | High sensitivity, reduced specificity |
| Abbreviated Protocol (Radiologist B) | 0.922 | 100% | 53.5% | Maintained sensitivity, significantly reduced specificity |

The study demonstrated that while the abbreviated protocol maintained perfect sensitivity (critical for cancer screening), it showed significantly reduced specificity compared to the full protocol [50]. This trade-off has direct clinical implications: higher false positive rates may lead to unnecessary biopsies and patient anxiety, despite the protocol's advantage of being faster and more cost-effective [50] [51].
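To make the specificity gap concrete, the sketch below converts the specificities in Table 1 into expected false positives. The cohort of 1,000 patients without cancer is a hypothetical figure chosen for illustration and does not come from the study.

```python
# Specificities as reported in Table 1.
specificity = {
    "Full MRI protocol": 1.000,
    "Abbreviated protocol (Radiologist A)": 0.733,
    "Abbreviated protocol (Radiologist B)": 0.535,
}

# Hypothetical cohort size: patients who do NOT have cancer.
n_without_cancer = 1000

# Expected false positives = (1 - specificity) * number of cancer-free patients.
false_positives = {
    name: round((1 - spec) * n_without_cancer) for name, spec in specificity.items()
}

for name, fp in false_positives.items():
    print(f"{name}: about {fp} false positives per {n_without_cancer} cancer-free patients")
```

Under this assumption, the abbreviated protocol would flag roughly 267 to 465 cancer-free patients per 1,000 for follow-up, compared with none under the full protocol.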

Implementation in Microbiome-Cancer Research

Machine learning approaches using microbiome data for cancer characterization represent another significant application of ROC/AUC analysis [52]. Studies have explored using microbial abundance profiles as features for classifiers to distinguish cancer patients from healthy controls [52].

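The experimental workflow typically involves profiling microbial abundance in samples from cancer patients and healthy controls, training classifiers on those profiles, and evaluating the classifiers with ROC analysis.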

In this domain, Random Forests and Logistic Regression have shown promising results, though model generalizability remains challenging due to dataset limitations and technical artifacts in microbiome data [52]. ROC analysis provides the standard framework for evaluating and comparing these models across all classification thresholds.

Comparative Analysis of Classification Models

Performance Across Algorithm Types

Different machine learning algorithms produce distinct ROC characteristics when applied to cancer classification tasks. The following table summarizes typical performance patterns:

Table 2: Comparative Performance of Machine Learning Models in Cancer Classification

| Model Type | Typical AUC Range | Strengths | Limitations | Common Cancer Applications |
|---|---|---|---|---|
| Logistic Regression | 0.75-0.90 | Interpretable, stable, fast training | Limited complex pattern detection | Preliminary screening models |
| Random Forest | 0.80-0.95 | Handles high dimensionality, robust to outliers | Black box, can overfit | Microbiome-based classification [52] |
| Deep Learning | 0.85-0.98 | Automatic feature extraction, high accuracy | Large data requirements, computationally intensive | Medical imaging analysis |
| Support Vector Machines | 0.78-0.92 | Effective in high-dimensional spaces | Sensitive to parameter tuning | Genomic data classification |

Practical Implementation Framework

Implementing ROC analysis requires specific computational tools and methodologies. The following code framework illustrates a typical implementation for comparing multiple models:
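A minimal, dependency-free sketch of such a comparison is given here. The test labels and the two score lists are hypothetical stand-ins for held-out predictions from two fitted models, and the helper names are illustrative. In practice, scikit-learn's `roc_curve` and `roc_auc_score` provide equivalent, well-tested implementations.

```python
def roc_curve_points(y_true, y_score):
    """Return (FPR, TPR) points from a threshold sweep, strictest threshold first."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    points = [(0.0, 0.0)]  # threshold above every score: nothing is flagged
    for t in sorted(set(y_score), reverse=True):
        tp = sum(1 for y, s in zip(y_true, y_score) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, y_score) if s >= t and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_auc(points):
    """Area under a piecewise-linear curve given as (x, y) points sorted by x."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical held-out labels (1 = cancer) and per-model predicted scores.
y_test = [1, 1, 1, 1, 0, 0, 0, 0]
model_scores = {
    "logistic_regression": [0.9, 0.7, 0.6, 0.3, 0.8, 0.4, 0.2, 0.1],
    "random_forest": [0.9, 0.8, 0.7, 0.4, 0.5, 0.3, 0.2, 0.1],
}

# Evaluate every model on the same test set and rank by AUC.
results = {name: trapezoid_auc(roc_curve_points(y_test, scores))
           for name, scores in model_scores.items()}
for name, auc in sorted(results.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: AUC = {auc:.4f}")

best_model = max(results, key=results.get)
```

Scoring all models against the same held-out labels keeps the comparison fair; a difference in AUC on a test set this small would still need a statistical test before any conclusion is drawn.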

Research Reagent Solutions for Cancer Classification Studies

Table 3: Essential Research Materials for Microbiome-Based Cancer Classification Studies

| Reagent/Resource | Function | Application in Cancer Research |
|---|---|---|
| DNA/RNA Extraction Kits | Nucleic acid isolation from samples | Obtain genetic material from tissue, fecal, or blood samples [52] |
| 16S rRNA Sequencing Reagents | Taxonomic profiling of bacteria | Characterize microbiome composition in cancer vs normal samples [52] |
| Shotgun Metagenomics Kits | Comprehensive genomic analysis | Identify functional potential of cancer-associated microbiomes [52] |
| The Cancer Genome Atlas (TCGA) Data | Reference genomic datasets | Benchmarking and validation of classification models [52] |
| Computational Frameworks (scikit-learn, TensorFlow) | Model implementation and evaluation | Develop and validate cancer classification algorithms [47] |

Advanced Considerations and Limitations

Threshold Selection for Clinical Deployment

Choosing the optimal operating point on the ROC curve represents a critical decision in clinical implementation [44]. The choice depends on the relative clinical consequences of false positives versus false negatives:

- Conservative Threshold (Low FPR): Appropriate when false positives have serious consequences (e.g., unnecessary invasive biopsies, patient trauma) [44]

- Sensitive Threshold (High TPR): Essential when missing true positives is dangerous (e.g., cancer screening where early detection is critical) [44]

- Balanced Threshold: Suitable when costs of false positives and false negatives are roughly equivalent [44]
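One way to turn these three scenarios into a concrete rule is to assign a relative cost to each error type and pick the threshold that minimizes total cost. The sketch below is one possible approach, not a method prescribed by the cited sources; the cost weights, labels, and scores are all hypothetical.

```python
def pick_threshold(y_true, y_score, cost_fn, cost_fp):
    """Return (threshold, total_cost) minimizing cost_fn * FN + cost_fp * FP."""
    best_threshold, best_cost = None, float("inf")
    for t in sorted(set(y_score)):  # every observed score is a candidate threshold
        fn = sum(1 for y, s in zip(y_true, y_score) if y == 1 and s < t)
        fp = sum(1 for y, s in zip(y_true, y_score) if y == 0 and s >= t)
        cost = cost_fn * fn + cost_fp * fp
        if cost < best_cost:  # strict comparison keeps the lowest threshold on ties
            best_threshold, best_cost = t, cost
    return best_threshold, best_cost


# Hypothetical labels (1 = cancer) and predicted scores.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_score = [0.95, 0.80, 0.55, 0.30, 0.60, 0.45, 0.35, 0.20, 0.10, 0.05]

# Hypothetical cost ratios for the three scenarios described above.
sensitive = pick_threshold(y_true, y_score, cost_fn=10, cost_fp=1)      # screening
conservative = pick_threshold(y_true, y_score, cost_fn=1, cost_fp=10)   # confirmatory
balanced = pick_threshold(y_true, y_score, cost_fn=1, cost_fp=1)

print("sensitive:", sensitive)
print("conservative:", conservative)
print("balanced:", balanced)
```

On this toy data, the screening costs select a low threshold (0.30) that misses no cancers, the confirmatory costs select a high threshold (0.80) that produces no false positives, and equal costs land in between (0.55).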

Limitations and Complementary Metrics

While ROC/AUC provides valuable insights, several limitations must be considered:

Class Imbalance Concerns: ROC curves can present an overly optimistic view when dealing with highly imbalanced datasets common in cancer research (where healthy individuals may far outnumber cancer cases) [44] [49]. In such cases, precision-recall curves may offer more meaningful evaluation [44] [52].

Clinical Relevance: The AUC represents an aggregate measure across all thresholds, but clinical practice typically operates at a single threshold [46]. Additional metrics such as positive and negative predictive values may be more directly informative for clinical decision-making.
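Predictive values depend on disease prevalence as well as on sensitivity and specificity, which a short calculation makes explicit. The sensitivity, specificity, and prevalence figures below are hypothetical, and `predictive_values` is an illustrative helper.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied to a population with the given prevalence."""
    tp = sensitivity * prevalence                # diseased, test positive
    fn = (1 - sensitivity) * prevalence          # diseased, test negative
    fp = (1 - specificity) * (1 - prevalence)    # healthy, test positive
    tn = specificity * (1 - prevalence)          # healthy, test negative
    return tp / (tp + fp), tn / (tn + fn)


# Same hypothetical test (90% sensitivity, 90% specificity) in two populations.
ppv_screening, npv_screening = predictive_values(0.90, 0.90, prevalence=0.01)
ppv_balanced, npv_balanced = predictive_values(0.90, 0.90, prevalence=0.50)

print(f"1% prevalence:  PPV={ppv_screening:.3f}  NPV={npv_screening:.3f}")
print(f"50% prevalence: PPV={ppv_balanced:.3f}  NPV={npv_balanced:.3f}")
```

With these assumed figures, the same test has a PPV of 0.90 in a balanced cohort but only about 0.08 at 1% prevalence, so most positive results in a screening population would be false alarms even though the ROC curve is unchanged.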

Shape Considerations: The clinical meaning of AUC depends on the shape of the ROC curve, with different curve shapes potentially having identical AUC values but different clinical implications [46].
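A small constructed example shows how two curves can share an AUC yet behave differently at clinically relevant operating points. Both curves are idealized, piecewise-linear shapes invented for illustration, and the helper names are hypothetical.

```python
def trapezoid_auc(points):
    """Area under a piecewise-linear ROC curve given as (FPR, TPR) points."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


def tpr_at_fpr(points, fpr):
    """Highest TPR on the curve at the given FPR, using linear interpolation."""
    best = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= fpr <= x1:
            if x1 == x0:
                best = max(best, y1)  # vertical segment: take its top
            else:
                best = max(best, y0 + (y1 - y0) * (fpr - x0) / (x1 - x0))
    return best


# Curve A: 60% of cancers detected with no false positives, then a slow climb.
curve_a = [(0.0, 0.0), (0.0, 0.6), (1.0, 1.0)]
# Curve B: steady climb that reaches 100% sensitivity at a 40% false positive rate.
curve_b = [(0.0, 0.0), (0.4, 1.0), (1.0, 1.0)]

auc_a, auc_b = trapezoid_auc(curve_a), trapezoid_auc(curve_b)
print(f"AUC A = {auc_a:.2f}, AUC B = {auc_b:.2f}")
print(f"TPR at FPR 0.10: A = {tpr_at_fpr(curve_a, 0.10):.2f}, B = {tpr_at_fpr(curve_b, 0.10):.2f}")
print(f"TPR at FPR 0.40: A = {tpr_at_fpr(curve_a, 0.40):.2f}, B = {tpr_at_fpr(curve_b, 0.40):.2f}")
```

Both curves have an AUC of 0.80, but curve A is preferable when false positives must stay low (for example, confirmatory testing), while curve B is preferable when near-complete sensitivity is required (for example, screening).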

ROC curve analysis and AUC quantification provide an essential framework for evaluating cancer classification models across all decision thresholds. These tools enable researchers to make informed decisions about model selection and threshold determination based on specific clinical requirements. The application of these methods spans diverse domains from medical imaging to microbiome-based classification, demonstrating their fundamental importance in oncology research.

As cancer characterization models continue to evolve, ROC/AUC analysis will remain central to validating their performance and ensuring their appropriate implementation in clinical practice. Future directions include addressing class imbalance challenges, improving model generalizability across diverse populations, and developing standardized reporting guidelines for ROC analysis in cancer research.