Analytical Validation in Cancer Molecular Diagnostics: Standards, Methods, and Clinical Translation


Abstract

This article provides a comprehensive overview of the principles and practices of analytical validation for molecular diagnostics in oncology. Aimed at researchers, scientists, and drug development professionals, it explores the foundational standards required for robust test development, examines current and emerging methodologies from NGS to liquid biopsy, addresses common troubleshooting and optimization challenges, and outlines rigorous validation and comparative frameworks. The content synthesizes recent advancements and regulatory considerations to guide the development of accurate, reliable, and clinically actionable diagnostic assays for precision oncology.

The Bedrock of Reliability: Foundational Principles and Regulatory Standards for Cancer Diagnostics

Analytical validation is a fundamental process in molecular diagnostics that confirms a test or technology performs as intended in a controlled, pre-clinical setting. It provides the essential evidence that an assay is reliable, reproducible, and fit for its purpose before it is used in clinical decision-making. For researchers and drug development professionals, robust analytical validation is the cornerstone of credible experimental and clinical results. It ensures that the data generated accurately reflects biological reality rather than technical artifact. Within this framework, sensitivity, specificity, and precision emerge as the three pillars upon which the validity of any diagnostic measurement rests. These parameters are quantitatively assessed to define the operational performance characteristics of an assay, creating a foundation of trust for subsequent clinical validation and application.

The roles of these key parameters are distinct yet interconnected. Sensitivity measures the test's ability to correctly identify true positives, minimizing false negatives, while specificity measures its ability to correctly identify true negatives, minimizing false positives [1] [2]. Precision is used in two senses in this guide: as a classification metric it is the positive predictive value (PPV), which describes the reliability of positive results, and as an analytical property it describes the reproducibility of repeated measurements [3]. Understanding the rigorous assessment of these parameters is critical for evaluating the quality of molecular diagnostics, especially in oncology where diagnostic results directly influence therapeutic choices. This guide compares how different cutting-edge molecular profiling assays demonstrate these parameters through their experimental validation.

Core Parameter Definitions and Calculations

Foundational Definitions and Statistical Framework

The evaluation of diagnostic test accuracy relies on a standardized statistical framework based on a 2x2 contingency table, which compares test results against a known truth condition [1]. From this table, key performance metrics are derived:

- Sensitivity (True Positive Rate): The proportion of individuals with the disease who are correctly identified by the test as positive [1] [2]. The formula is: Sensitivity = True Positives / (True Positives + False Negatives) [1]. A highly sensitive test is crucial for "ruling out" disease when the result is negative, as it misses few true cases [2] [4].

- Specificity (True Negative Rate): The proportion of individuals without the disease who are correctly identified by the test as negative [1] [2]. The formula is: Specificity = True Negatives / (True Negatives + False Positives) [1]. A highly specific test is valuable for "ruling in" disease when the result is positive, as few healthy individuals are incorrectly classified [2] [4].

- Precision (Positive Predictive Value - PPV): The proportion of positive test results that are true positives [1] [3]. The formula is: Precision (PPV) = True Positives / (True Positives + False Positives) [1]. Unlike sensitivity and specificity, PPV is influenced by disease prevalence in the population being tested [1] [4].

Table 1: Diagnostic Test Outcome Matrix and Key Metrics

| Test Result vs. Truth | Condition Present | Condition Absent |
|---|---|---|
| Positive | True Positive (TP) | False Positive (FP) |
| Negative | False Negative (FN) | True Negative (TN) |

| Metric | Definition | Focus | Formula |
|---|---|---|---|
| Sensitivity | Ability to detect true positives | Disease detection | TP / (TP + FN) |
| Specificity | Ability to correctly identify true negatives | Specific identification | TN / (TN + FP) |
| Precision (PPV) | Accuracy of positive calls | Result reliability | TP / (TP + FP) |
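The formulas in Table 1 can be sketched in a few lines of Python. This is a minimal illustration, not any assay's actual analysis code; the `ConfusionCounts` class, the function names, and the example counts are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Cell counts of a 2x2 table comparing a test against the reference truth."""
    tp: int  # test positive, condition present
    fp: int  # test positive, condition absent
    fn: int  # test negative, condition present
    tn: int  # test negative, condition absent


def _ratio(numerator: int, denominator: int) -> float:
    # A metric is undefined if no samples fall in its denominator.
    if denominator == 0:
        raise ValueError("metric is undefined when its denominator is zero")
    return numerator / denominator


def sensitivity(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    return _ratio(c.tn, c.tn + c.fp)


def ppv(c: ConfusionCounts) -> float:
    return _ratio(c.tp, c.tp + c.fp)


# Hypothetical counts chosen only to illustrate the arithmetic.
example = ConfusionCounts(tp=90, fp=5, fn=10, tn=95)
print(f"sensitivity={sensitivity(example):.3f}")
print(f"specificity={specificity(example):.3f}")
print(f"PPV={ppv(example):.3f}")
```

Keeping the four cell counts together in one object makes it harder to mix up which count belongs in which denominator.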

Relationships and Trade-offs Between Parameters

A fundamental understanding for researchers is that sensitivity and specificity often exist in a trade-off relationship [1] [2]. Adjusting the threshold or cut-off point for a positive test result typically increases one of these metrics at the expense of the other [2] [5]. This relationship is visually represented in receiver operating characteristic (ROC) curves, which plot the true positive rate (sensitivity) against the false positive rate (1 - specificity) across various thresholds [3]. The optimal balance depends on the clinical or research context. For a life-threatening disease with an effective treatment, a highly sensitive test is prioritized to avoid missing cases. Conversely, if a positive test leads to an invasive follow-up procedure, high specificity becomes more critical to minimize false positives [2] [4]. Precision is profoundly impacted by disease prevalence; even tests with high sensitivity and specificity can have low precision (PPV) when screening for rare conditions because the number of false positives may overwhelm the true positives [1] [4].
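The dependence of PPV on prevalence follows from Bayes' rule and can be sketched as below. The function name and the sensitivity, specificity, and prevalence values are illustrative assumptions, not figures from any cited assay.

```python
def ppv_from_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Positive predictive value via Bayes' rule for a given disease prevalence."""
    true_pos = sensitivity * prevalence                   # diseased and test-positive
    false_pos = (1.0 - specificity) * (1.0 - prevalence)  # healthy but test-positive
    if true_pos + false_pos == 0:
        raise ValueError("no positive results expected; PPV is undefined")
    return true_pos / (true_pos + false_pos)


# Illustrative values: the same test applied to populations of differing prevalence.
for prevalence in (0.001, 0.01, 0.10, 0.50):
    value = ppv_from_prevalence(sensitivity=0.99, specificity=0.99, prevalence=prevalence)
    print(f"prevalence={prevalence:.3f}  PPV={value:.3f}")
```

Under these assumed values, a test with 99% sensitivity and 99% specificity yields a PPV of roughly 9% when only 1 in 1,000 people tested has the disease, because false positives from the large healthy group outnumber true positives.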

Comparative Performance of Molecular Diagnostic Assays

Comprehensive Genomic Profiling Assays

Comprehensive genomic profiling assays represent the cutting edge of precision oncology, utilizing next-generation sequencing (NGS) to analyze a wide spectrum of genomic alterations. The validation of these assays demands rigorous demonstration of sensitivity, specificity, and precision across diverse genomic contexts.

MI Cancer Seek is an FDA-approved comprehensive molecular test that utilizes whole exome and whole transcriptome sequencing. Its clinical and analytical validation demonstrated non-inferiority to other FDA-approved companion diagnostic (CDx) tests, achieving >97% positive percent agreement (sensitivity) and >97% negative percent agreement (specificity) [6]. The assay is designed to maximize information from limited patient tissue, requiring a minimum input of only 50 ng of DNA and RNA for simultaneous analysis. This high level of accuracy supports its use as a safe and effective option for comprehensive molecular profiling in oncology, enabling biomarker-directed therapy selection [6].

FoundationOneCDx, another major comprehensive genomic profiling assay, has also undergone extensive clinical and analytical validation. Its exact sensitivity and specificity figures are not reported in the sources cited here, but it is a widely recognized and validated assay for solid tumors and serves as a benchmark in the field [6].

Table 2: Comparative Analytical Performance of Select Diagnostic Assays

| Assay / Test | Technology / Method | Reported Sensitivity | Reported Specificity | Key Application Context |
|---|---|---|---|---|
| MI Cancer Seek [6] | Whole Exome & Whole Transcriptome Sequencing | >97% (PPA) | >97% (NPA) | Solid tumor profiling, 8 CDx indications |
| OncoSeek [7] | AI-enabled protein tumor markers (PTMs) | 58.4% (overall) | 92.0% (overall) | Multi-cancer early detection (MCED) |
| Galleri [8] | Methylation-based liquid biopsy | Not reported in the cited source | >99.5% (in case-control studies) | Multi-cancer early detection (MCED) |

Multi-Cancer Early Detection (MCED) Tests

MCED tests represent a revolutionary approach to oncology, aiming to detect multiple cancers from a single blood sample. Their validation presents unique challenges, as performance must be characterized across a wide array of cancer types and stages.

OncoSeek is an AI-empowered, blood-based MCED test that measures seven protein tumor markers (PTMs). In a large-scale validation study encompassing 15,122 participants from seven centers, the assay demonstrated an overall sensitivity of 58.4% and a specificity of 92.0% [7]. Performance varied significantly by cancer type, with sensitivities ranging from 38.9% for breast cancer to 83.3% for bile duct cancer, highlighting how the same test can have varying analytical performance across different biological contexts [7]. The area under the curve (AUC) for the overall cohort was 0.829, indicating good classification ability.

The Galleri MCED test illustrates the critical importance of validation context. In retrospective case-control studies, it demonstrated a specificity of greater than 99.5% [8]. However, the test's developers emphasize that performance in such controlled studies can differ from performance in prospective, interventional studies within the intended-use population. For instance, they note that another MCED test, CancerSEEK, reported a specificity of >99% in a case-control study [8], but when evaluated in a clinical trial, its specificity was 95.3%—a more than four-fold increase in the false-positive rate [8]. This underscores that analytical performance claimed from early-stage studies may not translate directly to real-world clinical settings, and validation in the intended-use population is paramount.
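The "more than four-fold" figure above can be checked with a short calculation. This sketch treats the reported ">99%" case-control specificity as exactly 99%, which is an assumption that makes the computed fold change a lower bound; the function names are hypothetical.

```python
def false_positive_rate(specificity: float) -> float:
    """Fraction of cancer-free individuals who receive a positive result."""
    return 1.0 - specificity


def fold_increase_in_fpr(specificity_before: float, specificity_after: float) -> float:
    """Ratio of false-positive rates when specificity changes between studies."""
    return false_positive_rate(specificity_after) / false_positive_rate(specificity_before)


case_control_specificity = 0.99    # ">99%" treated as 99% (assumption)
clinical_trial_specificity = 0.953  # figure quoted in the text

fold = fold_increase_in_fpr(case_control_specificity, clinical_trial_specificity)
per_10k_before = round(10_000 * false_positive_rate(case_control_specificity))
per_10k_after = round(10_000 * false_positive_rate(clinical_trial_specificity))
print(f"fold increase in false-positive rate: {fold:.1f}")
print(f"false positives per 10,000 cancer-free people: {per_10k_before} vs {per_10k_after}")
```

A drop of a few percentage points in specificity looks small, but expressed as false positives per 10,000 people screened it is the difference between about 100 and about 470 under these assumptions.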

Experimental Protocols for Parameter Validation

General Workflow for Test Validation

The validation of sensitivity, specificity, and precision follows a structured experimental pathway designed to comprehensively challenge the assay's performance limits. The process begins with the establishment of a "ground truth" or reference standard, which serves as the benchmark against which the new test is compared [3]. Subsequent steps involve testing well-characterized sample sets that include both positive and negative cases to populate the 2x2 contingency matrix from which all metrics are calculated [1]. A critical phase is the determination of the test's cutoff value, which directly governs the balance between sensitivity and specificity; this is often explored using ROC curves [3]. Finally, the assay's precision is assessed through repeatability and reproducibility experiments, measuring its consistency across different runs, operators, and laboratories [7].
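Cutoff selection with an ROC curve can be sketched as follows. The example uses Youden's J statistic (sensitivity + specificity - 1) as one common criterion; the cited studies do not state which criterion they used, and the scores and labels below are invented for illustration.

```python
from typing import List, Sequence, Tuple


def roc_points(scores: Sequence[float], labels: Sequence[int]) -> List[Tuple[float, float, float]]:
    """Return (threshold, true positive rate, false positive rate) per candidate cutoff.

    A sample is called positive when its score is >= the threshold.
    Labels are 1 for condition present and 0 for condition absent.
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one positive and one negative sample")
    points = []
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        points.append((threshold, tp / positives, fp / negatives))
    return points


def best_by_youden(points: List[Tuple[float, float, float]]) -> Tuple[float, float, float]:
    """Pick the cutoff maximizing Youden's J = TPR - FPR."""
    return max(points, key=lambda p: p[1] - p[2])


# Hypothetical assay scores with reference-standard labels.
scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 1, 0, 0, 0]
threshold, tpr, fpr = best_by_youden(roc_points(scores, labels))
print(f"cutoff={threshold}, sensitivity={tpr:.2f}, specificity={1 - fpr:.2f}")
```

Youden's J weights sensitivity and specificity equally. When the clinical context favors one over the other, as discussed earlier, a different criterion such as a fixed minimum specificity may be more appropriate.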

Key Methodologies from Featured Studies

Validation of MI Cancer Seek: The validation process for this NGS-based assay was designed to demonstrate non-inferiority to existing FDA-approved CDx tests [6]. The methodology involved analyzing a large number of samples with pre-determined mutation statuses across the genes covered by the test. The protocol simultaneously analyzed DNA and RNA from a minimal tissue input (50 ng), assessing accuracy by comparing its results to the outcomes of established, validated comparator assays. The study measured Positive Percent Agreement (PPA, analogous to sensitivity) and Negative Percent Agreement (NPA, analogous to specificity), with both values exceeding 97% [6].

Large-Scale Multi-Centre Validation of OncoSeek: This study integrated four additional cohorts with previously published data, creating a massive validation set of 15,122 participants from seven centers in three countries [7]. The experimental protocol was designed to test robustness across variables. It included:

- Repetitive Experiments: A subset of samples was tested across different laboratories (SeekIn and Shenyou) using the same platform (Roche Cobas e401) and also across different sample types (plasma and serum from the same patients) and different Roche instruments (Cobas e411 and e601) [7].

- Consistency Assessment: The correlation of protein tumor marker (PTM) results was evaluated using Pearson correlation coefficients, which reached 0.99 to 1.00, demonstrating high inter-laboratory and inter-platform reproducibility [7].

- Performance Calculation: For each cohort and the combined "ALL" cohort, sensitivity and specificity were calculated based on the test's ability to classify cancer patients versus non-cancer individuals, with results visualized using ROC curves [7].
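The consistency assessment described above rests on the Pearson correlation coefficient, which can be computed as in this sketch. The paired marker concentrations are invented for illustration and are not data from the OncoSeek study.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between paired measurements."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 pairs")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant data")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical results for the same five samples measured at two laboratories.
lab_a = [1.2, 3.4, 5.1, 8.0, 12.5]
lab_b = [1.3, 3.3, 5.0, 8.2, 12.4]
print(f"inter-laboratory Pearson r = {pearson_r(lab_a, lab_b):.4f}")
```

A high correlation shows that two sites rank and scale samples consistently, but it does not rule out a constant offset between them, so agreement analyses often pair it with a check of the mean difference.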

The Scientist's Toolkit: Essential Research Reagents and Materials

The consistent and accurate validation of diagnostic assays relies on a standardized set of high-quality materials and reagents. The following table details key components used in the featured experiments and their critical functions in the validation workflow.

Table 3: Essential Research Reagents and Materials for Diagnostic Validation

| Item / Reagent | Function in Validation | Example from Context |
|---|---|---|
| Biobanked Samples | Provide the "ground truth" for calculating sensitivity/specificity; include both positive (disease) and negative (control) specimens. | Used in all large-scale studies [6] [7] to establish accuracy metrics. |
| Reference Standard / Gold Standard Test | Serves as the benchmark against which the new test is validated. | FDA-approved CDx tests used as comparator for MI Cancer Seek [6]; NDI database for mortality validation [9]. |
| Quantification Platforms | Instrumentation for precisely measuring analyte levels (e.g., DNA, RNA, proteins). | Roche Cobas e411/e601, Bio-Rad Bio-Plex 200 used for OncoSeek [7]. |
| Protein Tumor Markers (PTMs) | Analyte targets for tests based on protein signatures. | Panel of seven selected PTMs used in the OncoSeek assay [7]. |
| NGS Library Prep Kits | Reagents for preparing sequencing libraries from DNA and RNA. | Implicitly required for whole exome and whole transcriptome sequencing in MI Cancer Seek [6]. |
| Bioinformatic Analysis Pipelines | Software and algorithms for processing raw data into interpretable results. | AI-algorithm for OncoSeek [7]; analysis pipelines for NGS data in MI Cancer Seek [6]. |

Critical Considerations in Validation Study Design

A sophisticated understanding of validation data requires scrutiny of the study design from which it originated. Several factors can significantly influence the reported performance of an assay.

- Impact of Imperfect Gold Standards: A foundational assumption is that the reference test is 100% accurate. However, this is often not the case [9]. An imperfect gold standard can systematically bias results. For example, a simulation study showed that decreasing gold standard sensitivity leads to underestimation of test specificity, with the effect magnified at higher disease prevalence [9]. At 98% prevalence, a gold standard with 99% sensitivity suppressed a test's measured specificity from 100% to below 67% [9].

- Variation Across Healthcare Settings: Test accuracy is not necessarily a fixed attribute. A meta-epidemiological study found that sensitivity and specificity can vary in both direction and magnitude between non-referred (e.g., primary care) and referred (e.g., specialist care) settings [10]. These differences did not follow a universal pattern, varying by test and condition, which underscores the importance of considering the validation setting when interpreting performance data [10].

- Study Population and Intended Use: Perhaps the most critical consideration is whether a test has been validated in its intended-use population [8]. Performance in retrospective case-control studies—which often use readily available samples from sick patients and healthy controls—can be markedly different from performance in prospective, interventional studies that screen a general population with low disease prevalence [8]. This difference arises from factors like spectrum bias, disease prevalence, and the "healthy volunteer" effect. True clinical validation is only established in studies that reflect real-world conditions [8].
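The effect of an imperfect gold standard on measured specificity can be approximated with a closed-form expected-value calculation. This is a simplified sketch, not the simulation from [9]: it assumes the gold standard never produces false positives and that errors of the index test and the gold standard are independent. The function and parameter names are hypothetical.

```python
def measured_specificity(
    prevalence: float,
    gold_sensitivity: float,
    test_sensitivity: float = 1.0,
    test_specificity: float = 1.0,
) -> float:
    """Expected specificity of an index test when scored against an imperfect gold standard.

    Assumes the gold standard has perfect specificity and that the two tests
    err independently given true disease status.
    """
    truly_negative = 1.0 - prevalence
    missed_by_gold = prevalence * (1.0 - gold_sensitivity)  # diseased, but labeled negative
    gold_negative = truly_negative + missed_by_gold
    if gold_negative == 0:
        raise ValueError("no gold-standard negatives; specificity is undefined")
    # Index-test negatives among everyone the gold standard labels negative.
    test_negative = truly_negative * test_specificity + missed_by_gold * (1.0 - test_sensitivity)
    return test_negative / gold_negative


# A perfect index test scored against a gold standard with 99% sensitivity.
for prevalence in (0.10, 0.50, 0.90, 0.98):
    value = measured_specificity(prevalence, gold_sensitivity=0.99)
    print(f"prevalence={prevalence:.2f}  measured specificity={value:.3f}")
```

Under these assumptions the measured specificity of a truly perfect test falls to roughly two-thirds at 98% prevalence, the same order of magnitude as the simulated result quoted above; the published simulation may differ in its exact assumptions.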

The development and approval of Companion Diagnostics (CDx) require navigation through a complex, multi-agency regulatory landscape in the United States. This framework is primarily governed by the Food and Drug Administration (FDA), which oversees test approval and categorization, and the Clinical Laboratory Improvement Amendments (CLIA), which regulate laboratory testing quality standards. A critical understanding of these interconnected pathways is essential for researchers and drug development professionals aiming to bring novel cancer diagnostics to the clinical market. The FDA's recent shift from enforcement discretion to active regulation of Laboratory Developed Tests (LDTs) marks the most significant regulatory change in decades, fundamentally altering the compliance timeline for many molecular assays [11].

CLIA establishes the foundational quality standards for all laboratory testing performed on human specimens, with regulations enforced by the Centers for Medicare & Medicaid Services (CMS) [12]. Tests are categorized based on their complexity—waived, moderate, or high—which determines the stringency of the applicable CLIA requirements [13]. For commercially available FDA-cleared or approved tests, the FDA itself assigns the complexity category during the pre-market review process [13]. The College of American Pathologists (CAP) offers an accreditation program that often exceeds basic CLIA requirements, providing an additional layer of quality assurance through peer-based inspections and specialized checklists [12]. For CDx developers, understanding the interplay between FDA pre-market review and ongoing CLIA compliance, potentially enhanced by CAP accreditation, is crucial for strategic planning.

Comparative Analysis of Regulatory Bodies

The successful commercialization of a companion diagnostic hinges on meeting the distinct yet overlapping requirements of the FDA, CLIA, and voluntary accreditation bodies like CAP. The table below summarizes the core focus and requirements of each.

Table 1: Key Regulatory and Accreditation Bodies for CDx Development

| Agency / Body | Primary Role & Focus | Key Requirements for CDx | Applicability to CDx |
|---|---|---|---|
| FDA | Pre-market review and approval of tests as medical devices; ensures safety and effectiveness [11]. | Premarket Approval (PMA), 510(k), or De Novo classification; Quality System Regulation (QSR); labeling requirements [11]. | Mandatory for CDx claims. Phased implementation for LDTs from 2025-2028 [11]. |
| CLIA (via CMS) | Regulates laboratory operations and quality standards for patient testing [12] [13]. | Quality control, proficiency testing, personnel qualifications, quality assurance [12]. | Mandatory for any laboratory performing clinical testing for patient care [12]. |
| CAP | Voluntary accreditation that often exceeds CLIA standards through peer-based inspections [12]. | Adherence to detailed checklists for all laboratory disciplines; stricter proficiency testing standards [12] [14]. | Voluntary, but demonstrates a higher commitment to quality and is often required by sponsors. |

The FDA's Evolving Role and Phased Implementation for LDTs

The FDA's authority over CDx is exercised through its standard medical device regulatory pathways. The agency categorizes tests as waived, moderate complexity, or high complexity based on seven specific criteria, which directly influence their CLIA requirements [12] [15] [13]. A pivotal recent development is the FDA's April 2024 final rule, which phases out its enforcement discretion policy for Laboratory Developed Tests (LDTs). This rule explicitly includes LDTs in the definition of in vitro diagnostic products (IVDs), subjecting them to medical device regulations under a structured, multi-year timeline [11]. Readers should note that a federal district court in Texas vacated this rule in March 2025, so the timeline below describes the rule as issued and should be checked against the FDA's current position before compliance planning.

Table 2: FDA Phased Compliance Timeline for Laboratory Developed Tests (LDTs)

| Stage | Deadline | Key Requirements |
|---|---|---|
| Stage 1 | May 6, 2025 | Medical Device Reporting (MDR), correction and removal reporting, and complaint handling [11]. |
| Stage 2 | May 6, 2026 | Establishment registration & device listing, labeling, and investigational use requirements [11]. |
| Stage 3 | May 6, 2027 | Implementation of Quality System Requirements (21 CFR Part 820) [11]. |
| Stage 4 | November 6, 2027 | Premarket Approval (PMA) submissions for high-risk IVDs [11]. |
| Stage 5 | May 6, 2028 | 510(k) or De Novo submissions for moderate- and low-risk IVDs [11]. |

CLIA Compliance and Laboratory Certification

CLIA compliance is non-negotiable for any laboratory reporting patient-specific results. For non-waived testing (moderate and high complexity), laboratories must earn a CLIA certificate by meeting specific quality standards. There are several pathways to certification. A laboratory may obtain a Certificate of Compliance by passing a state agency survey, or a Certificate of Accreditation by being surveyed by an approved accreditation organization like CAP or COLA, which have "deeming authority" from CMS [12]. These accrediting bodies evaluate laboratories to ensure they not only meet but often exceed CLIA standards, providing sponsors with greater confidence in data quality [12].

Recent Regulatory Updates for 2025

The regulatory environment is dynamic, with key changes taking effect in 2025. CLIA updates now classify hemoglobin A1c as a regulated analyte, with CMS setting a performance criterion of target value ±8% for proficiency testing. CAP-accredited laboratories, however, must meet a stricter ±6% accuracy threshold, illustrating how accreditation can impose higher standards [16] [14]. Personnel qualifications have also been revised; nursing degrees no longer automatically qualify as equivalent to biological science degrees for high-complexity testing, though equivalency pathways exist [16]. Furthermore, proficiency testing criteria for unexpected antibody detection have tightened, requiring 100% accuracy compared to the previous 80% [14].
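The difference between the two hemoglobin A1c acceptance limits can be illustrated with a simple tolerance check. The function name and the sample values are hypothetical, and real proficiency testing programs define target values and grading rules in more detail than this sketch captures.

```python
def within_tolerance(measured: float, target: float, tolerance_pct: float) -> bool:
    """True if the measured value lies within target +/- tolerance_pct percent."""
    if target <= 0:
        raise ValueError("target value must be positive")
    return abs(measured - target) <= target * tolerance_pct / 100.0


CLIA_HBA1C_TOLERANCE_PCT = 8.0  # CMS criterion cited in the text
CAP_HBA1C_TOLERANCE_PCT = 6.0   # stricter CAP criterion cited in the text

# Hypothetical proficiency sample: target 7.0 (% HbA1c), laboratory reports 7.5.
target, measured = 7.0, 7.5
print("meets CLIA limit:", within_tolerance(measured, target, CLIA_HBA1C_TOLERANCE_PCT))
print("meets CAP limit: ", within_tolerance(measured, target, CAP_HBA1C_TOLERANCE_PCT))
```

In this invented case the result deviates by about 7.1% from the target, so it would satisfy the ±8% criterion but not the ±6% one.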

Analytical Validation Case Study: MI Cancer Seek

A recent example of a comprehensively validated CDx is the MI Cancer Seek assay from Caris Life Sciences. This FDA-approved test is a whole exome and whole transcriptome sequencing-based comprehensive molecular profiling assay intended for adult and pediatric tumor profiling [6]. Its validation provides a robust model for the analytical rigor required for regulatory approval.

Experimental Protocol for Test Validation

The clinical and analytical validation study for MI Cancer Seek followed a rigorous protocol to demonstrate its safety and efficacy as a CDx. The key methodological components included:

- Sample Input and Processing: The assay was validated to work with a minimal input of 50 ng of DNA and RNA, co-extracted from formalin-fixed paraffin-embedded (FFPE) tumor samples. This is a critical validation step given the frequent sample limitations in oncology [6].

- Comparative Agreement Study: The test's performance was benchmarked against other FDA-approved companion diagnostic tests. The study demonstrated a >97% negative percent agreement (NPA) and positive percent agreement (PPA), establishing non-inferiority and reliability [6].

- Precision, Sensitivity, and Specificity Analysis: The validation included standard measurements of analytical precision (repeatability and reproducibility), as well as assessments of sensitivity and specificity to confirm the test's accuracy in detecting a wide range of genomic alterations, including mutations in PIK3CA, EGFR, BRAF, and KRAS/NRAS [6].

- Biomarker Detection Performance: The assay was specifically validated for complex biomarkers crucial for immunotherapy, such as Tumor Mutational Burden (TMB) and Microsatellite Instability (MSI), showing near-perfect accuracy for MSI status in colorectal and endometrial cancers [17].
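Agreement statistics such as PPA and NPA are usually reported with a confidence interval so that a point estimate can be compared against a pre-specified acceptance threshold. The sketch below uses the Wilson score interval as one common choice; the source does not state which statistical method the MI Cancer Seek study used, and the counts are invented.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


def percent_agreement(concordant: int, total: int) -> float:
    """PPA uses comparator-positive samples; NPA uses comparator-negative samples."""
    return concordant / total


# Hypothetical counts: 196 of 200 comparator-positive samples also called positive.
ppa = percent_agreement(196, 200)
low, high = wilson_interval(196, 200)
print(f"PPA={ppa:.3f}, 95% CI=({low:.3f}, {high:.3f})")
```

With these invented counts the point estimate is 98%, yet the lower confidence bound is near 95%, which shows why sample size matters when a study claims agreement above a fixed threshold.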

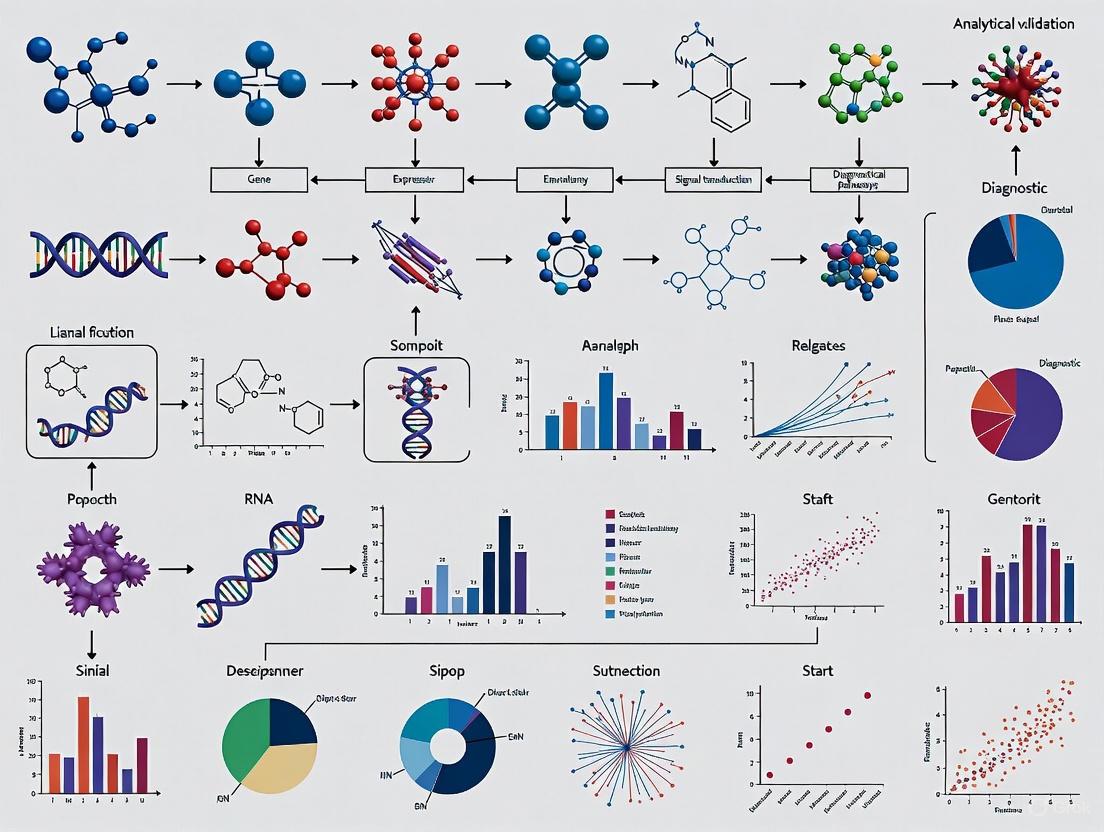

Diagram 1: MI Cancer Seek Assay Workflow

Performance Data and Comparison

The validation data for MI Cancer Seek demonstrates its capability as a comprehensive profiling tool. The following table summarizes key performance metrics as reported in its validation study, providing a benchmark for expected data quality in CDx submissions.

Table 3: MI Cancer Seek Analytical Validation Performance Summary

| Validation Parameter | Performance Metric | Experimental Detail |
|---|---|---|
| Analytical Concordance | >97% NPA and PPA | Comparison against other FDA-approved CDx tests [6]. |
| Tissue Input Requirement | 50 ng DNA and RNA | Validated using FFPE tumor samples [6]. |
| MSI Detection Accuracy | Near-perfect concordance | Specifically in colorectal and endometrial cancers [17]. |
| Key Alterations Detected | Mutations in PIK3CA, EGFR, BRAF, KRAS/NRAS; TMB; MSI | Broad biomarker coverage supporting therapy selection [17]. |

Essential Research Reagent Solutions for CDx Development

The development and validation of a CDx like MI Cancer Seek require a suite of specialized reagents and materials. The table below details key components of the "research toolkit" for comprehensive molecular profiling.

Table 4: Essential Research Reagent Solutions for Molecular Profiling Assays

| Reagent / Material | Function in Assay Workflow | Application in Validation |
|---|---|---|
| FFPE Tumor Tissue Sections | Source of tumor DNA and RNA; mimics real-world clinical samples. | Used for analytical validation with minimal input (50 ng) [6]. |
| Nucleic Acid Co-Extraction Kits | Simultaneous purification of DNA and RNA from a single sample. | Maximizes patient tissue and ensures analyte integrity for sequencing [6]. |
| Whole Exome & Transcriptome Library Prep Kits | Prepares sequencing libraries to target all protein-coding genes and expressed RNA. | Enables comprehensive biomarker detection from a single test [6]. |
| Multiplex NGS Panels | Allows parallel sequencing of multiple genes for variant detection. | Validated for detection of SNVs, indels, CNAs, fusions, TMB, and MSI [6]. |
| Reference Standard Materials | Samples with known genomic alterations. | Used for determining assay sensitivity, specificity, and precision [6]. |

Successfully navigating the regulatory landscape for companion diagnostics demands a strategic, integrated approach that views FDA pre-market review and CLIA/CAP laboratory compliance not as separate hurdles, but as interconnected components of a single product lifecycle. The recent FDA final rule on LDTs and updated 2025 CLIA standards signal a clear regulatory trend toward greater scrutiny and higher quality demands. For researchers and drug development professionals, early and continuous engagement with these requirements is paramount. Building a robust analytical validation dossier, as demonstrated by the MI Cancer Seek case study, with a focus on precise performance metrics and rigorous experimental protocols, provides the foundation for not only regulatory approval but also clinical confidence in the era of precision oncology.

The Critical Role of Reference Materials and Standardized Procedures in Biomarker Verification

In the era of precision medicine, cancer biomarkers are indispensable tools for guiding clinical decisions, from risk assessment and early detection to treatment selection and therapeutic monitoring [18]. The journey of a biomarker from initial discovery to routine clinical application is long and arduous, requiring rigorous validation to ensure it delivers reliable, actionable information [18] [19]. This process rests on two foundational pillars: analytical validation, which assesses the assay's performance characteristics, and clinical validation, which establishes its ability to inform about a clinical condition [20]. A biomarker is only as good as the procedure used to measure it, and even a biomarker with strong clinical potential will fail if the assay lacks accuracy, reproducibility, and reliability [19] [20].

Within this framework, biomarker verification represents a critical early stage where initial discoveries are assessed for analytical robustness. The core objective of verification is to determine whether a biomarker assay performs with sufficient precision, sensitivity, and accuracy to justify proceeding to larger-scale clinical validation studies [21]. This phase demands standardized procedures and well-characterized reference materials (RMs) to ensure that results are consistent, comparable, and trustworthy across different laboratories and platforms [21] [22]. Without these standards, the promise of precision medicine is jeopardized by inconsistent results and a lack of analytical reliability.

The Necessity of Standards and Reference Materials

The Challenge of Analytical Variability

The development of cancer biomarker tests, particularly for novel analytes like circulating tumor DNA (ctDNA), faces a significant hurdle: the wide variety of measurement technologies can lead to profound inconsistencies that undermine progress in the field [23]. For ctDNA analysis, the challenge is especially acute because somatic variant alleles are typically present in very low concentrations relative to the background of germline DNA, pushing the limits of assay sensitivity and requiring meticulous validation [22]. Similarly, in measurements of DNA methylation—a key hallmark of many cancers—the bisulfite treatment process, considered the gold standard, can introduce false positives and lead to DNA fragmentation, resulting in biased data [22]. These technical variabilities mean that without a common ground for calibrating instruments and evaluating performance, results from different laboratories and technology platforms cannot be meaningfully compared or trusted.

Reference Materials as a Solution

Reference Materials are well-characterized, homogeneous, and stable samples that serve as a benchmark to ensure measurement methods are working correctly [24]. They are essential for several key aspects of the verification process [22] [24]:

- Assay Development and Validation: RMs provide a known quantity of an analyte to help optimize and test new assay protocols.

- Quality Control: They can be run alongside patient samples to verify that an entire testing process is under control.

- Harmonization: By using the same standard, different laboratories and different assays can align their results, ensuring that a patient receives the same diagnosis regardless of where they are tested.

- Regulatory Pathways: The availability of recognized RMs can accelerate the regulatory approval of novel diagnostic technologies [23].

Major initiatives recognize this need. The National Institute of Standards and Technology (NIST) develops RMs for cancer biomarkers, such as SRM 2373 for HER2 gene copy number measurement and RM 8366 for EGFR and MET gene copy numbers [22]. Furthermore, the Biomarkers Consortium's ctDNA Quality Control Materials Project brings together stakeholders from industry, academia, and government to develop a set of nationally recognized standards for ctDNA testing, which is vital for advancing liquid biopsy applications [23].

Standardized Procedures in Biomarker Verification

Principles of Analytical Validation

Analytical validation is the systematic process of evaluating a biomarker assay to confirm that its performance characteristics are fit for its intended purpose [25]. It is a prerequisite for establishing the assay's clinical utility [19] [20]. This process requires the unambiguous identification of the biomarker and a clear definition of the test's intended use context (e.g., prognosis, prediction) [20]. A rigorously validated assay must be demonstrated to be accurate, reproducible, and reliable [19]. The core components of analytical validation are detailed in the table below.

Table 1: Core Components of Analytical Validation for Biomarker Assays

| Performance Characteristic | Definition | Importance in Verification |

|---|---|---|

| Accuracy | The closeness of agreement between the test result and the true value of the analyte. | Ensures the assay provides unbiased, "true" results, which is fundamental to all clinical applications [25]. |

| Precision | The closeness of agreement between repeated measurements of the same sample under specified conditions. | Demonstrates the assay's reproducibility and reliability across different runs, operators, and days [20]. |

| Sensitivity (Analytical) | The lowest concentration of an analyte that an assay can reliably detect. | Critical for detecting low-abundance biomarkers, such as ctDNA in early-stage cancer [22]. |

| Specificity | The ability of the assay to detect only the intended analyte, without cross-reactivity. | Minimizes false-positive results by ensuring the signal is generated by the target biomarker [25]. |

| Linearity | The ability of the assay to produce results that are directly proportional to the analyte concentration in the sample. | Confirms that the assay provides quantitative results across a defined measuring range [25]. |
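
The accuracy, precision, and linearity rows above can each be reduced to a simple statistic. The following is a minimal sketch, assuming replicate measurements of a reference material with an assigned value; the function names and all numbers are hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev

def percent_bias(measured, assigned):
    """Accuracy as relative bias (%) of the mean measurement vs. the assigned value."""
    return (mean(measured) - assigned) / assigned * 100.0

def percent_cv(measured):
    """Precision as coefficient of variation (%): sample SD divided by the mean."""
    return stdev(measured) / mean(measured) * 100.0

def linear_fit(x, y):
    """Ordinary least-squares slope, intercept, and R^2 for a dilution series."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical replicate VAF measurements (%) of a material assigned 5.0% VAF
replicates = [4.8, 5.1, 5.0, 4.9, 5.3]
bias = percent_bias(replicates, 5.0)
cv = percent_cv(replicates)

# Hypothetical dilution series: expected vs. observed VAF (%)
expected = [0.5, 1.0, 2.0, 5.0, 10.0]
observed = [0.55, 0.98, 2.1, 4.9, 10.2]
slope, intercept, r2 = linear_fit(expected, observed)

print(f"bias = {bias:+.2f}%, CV = {cv:.2f}%")
print(f"slope = {slope:.3f}, intercept = {intercept:.3f}, R^2 = {r2:.4f}")
```

Acceptance limits for each statistic are assay-specific and would be fixed in the validation plan before data are generated.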

Controlling Bias and Ensuring Reproducibility

Beyond the core performance metrics, the design of verification studies is paramount to success. Bias, a systematic shift from the truth, is one of the greatest causes of failure in biomarker studies [18]. Bias can enter during patient selection, specimen collection, processing, and data analysis. To mitigate this, verification procedures must incorporate randomization and blinding [18]. For example, specimens from cases and controls should be randomly assigned to testing plates to control for "batch effects," and the individuals generating the biomarker data should be blinded to the clinical outcomes to prevent assessment bias [18]. Furthermore, the pre-specification of an analytical plan, which defines outcomes, hypotheses, and success criteria before data are generated, is essential to avoid data-driven analyses that are less likely to be reproducible in an independent dataset [18].
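
One way to put the randomization and blinding described above into practice is to deal specimens onto plates within each group and then issue blinded identifiers. The sketch below is illustrative only: the function name, the identifier format, and the 12-case/12-control example are assumptions, not part of any cited protocol.

```python
import random
from collections import Counter

def randomize_to_plates(specimens, n_plates, seed=0):
    """Assign specimens to plates so each plate receives a balanced mix of groups.

    specimens: list of (specimen_id, group) tuples, e.g. group = "case" or "control".
    Returns a list of dicts holding the specimen, group, plate, and a blinded ID.
    """
    rng = random.Random(seed)              # fixed seed keeps the layout reproducible
    by_group = {}
    for spec_id, group in specimens:
        by_group.setdefault(group, []).append(spec_id)

    assignments = []
    for group, ids in by_group.items():
        rng.shuffle(ids)                   # random order within each group
        for i, spec_id in enumerate(ids):
            assignments.append({"specimen": spec_id, "group": group,
                                "plate": i % n_plates + 1})  # deal round-robin

    rng.shuffle(assignments)               # blinded IDs then carry no group information
    for k, row in enumerate(assignments, start=1):
        row["blinded_id"] = f"S{k:03d}"
    return assignments

# Hypothetical study: 12 cases and 12 controls spread over 3 plates
specimens = [(f"case-{i}", "case") for i in range(12)] + \
            [(f"ctrl-{i}", "control") for i in range(12)]
layout = randomize_to_plates(specimens, n_plates=3)
per_plate = Counter((row["plate"], row["group"]) for row in layout)
print(sorted(per_plate.items()))
```

In practice the key linking blinded IDs to groups would be held by someone who does not run or analyze the assay, and well positions within each plate could be randomized in the same way.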

Comparative Analysis of Reference Material Applications

The utility of reference materials is best illustrated through specific examples. The following table compares the application and impact of different standardized materials in verifying assays for distinct types of biomarkers.

Table 2: Comparison of Reference Materials for Different Biomarker Types

| Biomarker Type / Material | Intended Use | Key Features & Composition | Impact on Assay Verification |

|---|---|---|---|

| Genomic DNA: HER2 (SRM 2373) [22] | Verify assays for HER2 gene copy number variation (CNV) in breast cancer. | Certified values for HER2 gene copy number ratio to reference genes. | Enabled harmonization of HER2 CNV measurements across qPCR, dPCR, and NGS platforms, ensuring accurate patient selection for anti-HER2 therapy. |

| Circulating Tumor DNA (ctDNA) [22] [23] | Validate "liquid biopsy" assays for detecting somatic variants in blood. | Customizable DNA fragments with specific cancer variants spiked into a background of normal cfDNA at defined variant allele fractions (VAFs). | Provides a controlled material to validate the sensitivity and specificity of ctDNA assays, especially for detecting low-frequency variants. |

| Methylated DNA (Phase I & II) [22] | Control for DNA methylation measurements, e.g., for cancer early detection. | Genomic DNA (Phase I) and cell-free DNA (Phase II) with defined methylation patterns. | Helps identify false positives from incomplete bisulfite conversion and standardizes methylation quantification across labs and technologies. |

Experimental Workflows and Protocols

A Generalized Workflow for Biomarker Verification

The following diagram outlines a logical workflow for biomarker verification, highlighting the iterative role of reference materials and standardized protocols.

Detailed Protocol: Verification of a ctDNA Assay

This protocol provides a detailed methodology for using NIST-style ctDNA test materials to verify the analytical sensitivity of a sequencing-based liquid biopsy assay.

Objective: To determine the limit of detection (LoD) for a panel of somatic single-nucleotide variants (SNVs) in a ctDNA assay.

Materials:

- NIST ctDNA Test Materials (or equivalent): Comprising a mix of synthetic ~160 bp DNA fragments harboring specific SNVs and a background of sheared normal genomic DNA [22].

- DNA Quantification Kit (e.g., fluorometric).

- Next-Generation Sequencing Library Prep Kit.

- Bioinformatics Pipeline for variant calling.

Procedure:

- Reconstitution and Dilution: Reconstitute the NIST ctDNA test material according to the manufacturer's instructions. Perform a serial dilution to create a panel of samples with variant allele fractions (VAFs) spanning the expected LoD (e.g., 2%, 1%, 0.5%, 0.1%, 0.01%).

- Library Preparation and Sequencing: For each dilution level (including a negative control with 0% VAF), perform library preparation in a minimum of n=5 replicates to assess reproducibility. Sequence all libraries on the designated NGS platform to achieve a minimum coverage of 10,000x.

- Data Analysis: Process the raw sequencing data through the established bioinformatics pipeline to generate VCF files.

- Calculate Sensitivity: For each variant at each VAF level, sensitivity is calculated as (Number of replicates where variant was correctly called / Total number of replicates) * 100.

- Calculate Specificity: Assess the false positive rate in the negative control and across the genome in regions not expected to contain variants.

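- Determine LoD: The LoD is defined as the lowest VAF at which all targeted variants are detected with ≥95% sensitivity and ≥99.9% specificity. With five replicates per level, the ≥95% criterion is met only when a variant is called in every replicate, so resolving detection rates more finely requires additional replicates.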
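
The sensitivity, specificity, and LoD calculations in the procedure above can be sketched as follows. This is a minimal illustration under stated assumptions: the variant names and replicate calls are invented, and the rule of stopping at the first failing dilution level is one reasonable reading of "lowest VAF", not a requirement taken from the protocol.

```python
def hit_rate(calls):
    """Percentage of replicates in which the variant was called (calls: list of bools)."""
    return 100.0 * sum(calls) / len(calls)

def specificity(n_false_positives, n_negative_positions):
    """Percentage of known-negative positions with no variant call."""
    return 100.0 * (1.0 - n_false_positives / n_negative_positions)

def limit_of_detection(results, min_hit_rate=95.0):
    """Lowest VAF at which every variant meets min_hit_rate, with no failing level above it.

    results: {vaf_percent: {variant_name: [bool per replicate]}}
    Returns None if even the highest VAF level fails.
    """
    lod = None
    for vaf in sorted(results, reverse=True):      # walk from highest to lowest VAF
        if all(hit_rate(c) >= min_hit_rate for c in results[vaf].values()):
            lod = vaf
        else:
            break                                  # stop at the first failing level
    return lod

# Hypothetical calls for two variants, five replicates per dilution level
T, F = True, False
results = {
    2.0:  {"SNV_A": [T] * 5,         "SNV_B": [T] * 5},
    1.0:  {"SNV_A": [T] * 5,         "SNV_B": [T] * 5},
    0.5:  {"SNV_A": [T] * 5,         "SNV_B": [T] * 5},
    0.1:  {"SNV_A": [T] * 5,         "SNV_B": [T, T, T, T, F]},
    0.01: {"SNV_A": [T, F, F, F, F], "SNV_B": [F] * 5},
}
print("LoD (% VAF):", limit_of_detection(results))
print("Specificity (%):", specificity(n_false_positives=2, n_negative_positions=100_000))
```

Here the sensitivity criterion alone is applied to find the LoD; the specificity check on negative controls is computed separately and would need to pass as well.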

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Biomarker Verification

| Reagent / Material | Function in Verification | Example Application |

|---|---|---|

| Certified Genomic DNA RMs | Calibrate and validate assays for gene copy number variation and mutations. | Using SRM 2373 to ensure accuracy of HER2 amplification testing by qPCR or NGS [22]. |

| Cell-free DNA RMs | Act as a commutable control for liquid biopsy assay development. | Using characterized ctDNA materials with known VAFs to establish the sensitivity and precision of a new ctDNA panel [22] [23]. |

| DNA Methylation RMs | Control for biases introduced during bisulfite conversion and quantify methylation levels. | Using Phase II methylated cfDNA RMs to compare the performance of different methylation detection assays [22]. |

| Stable, Multiplexed Controls | Monitor the performance of complex, multi-analyte assays over time. | A plasmid-based control containing multiple SNVs, indels, and fusions to run as a within-batch control for an NGS cancer panel. |

| Standardized Nucleic Acid Extraction Kits | Ensure consistent yield and quality of analytes from raw specimens, controlling for pre-analytical variability. | Using the same validated kit for extracting DNA from plasma across all samples in a verification study to minimize introduction of bias [18]. |

The path from a promising biomarker discovery to a clinically useful diagnostic test is paved with analytical rigor. Reference materials and standardized procedures are not merely supportive elements but are foundational to the entire verification process. They provide the objective benchmarks needed to ensure that biomarker assays are accurate, reproducible, and comparable across the global diagnostic and research landscape. As technologies evolve towards analyzing ever more challenging analytes like low-frequency ctDNA and complex epigenetic markers, the demand for high-quality standards will only intensify. The ongoing collaborative efforts by organizations like NIST, the FNIH Biomarkers Consortium, and international alliances are critical to fulfilling this demand. By steadfastly adhering to the principles of analytical validation and leveraging these essential tools, researchers and drug developers can confidently translate biomarker discoveries into reliable tests that truly advance the field of precision oncology.

The landscape of cancer diagnostics and therapeutic development is undergoing a fundamental transformation, moving from a traditional "one-drug-fits-all" approach to a biomarker-driven personalized medicine paradigm [26]. This shift places molecular biomarkers at the center of clinical decision-making, requiring robust and validated assays that can reliably inform treatment selection. Advances in biotechnology, particularly next-generation sequencing (NGS) and liquid biopsy applications, have redefined what constitutes a biomarker, enabling assays to provide biological information down to the single-cell level [26]. The journey of a biomarker assay from initial discovery to clinical application is a complex, multi-stage process requiring rigorous analytical validation, clinical verification, and demonstration of clear clinical utility. This guide objectively compares the performance of current biomarker technologies and platforms, providing researchers and drug development professionals with a structured framework for navigating this critical pathway.

Biomarker Assay Development Roadmap: From Discovery to Clinical Utility

The transition of a biomarker assay to clinical use follows a defined pathway encompassing discovery, translation, and qualification [26]. The following diagram illustrates this roadmap, highlighting key activities, stakeholders, and decision points at each phase.

This development pathway requires careful consideration at each transition point. The discovery phase leverages large-scale multi-omics data initiatives and computational approaches like genome-wide association studies (GWAS) and quantitative systems pharmacology (QSP) to identify biologically relevant and druggable targets [26]. Success in this phase depends heavily on a deep understanding of disease pathophysiology and the efficient utilization of real-world data [26]. During the translation phase, promising biomarkers are developed into robust, clinically feasible assays, with optimization of technical parameters and platform selection. The qualification phase involves rigorous analytical validation and clinical verification to establish the relationship between the biomarker measurement and clinical endpoints. Finally, clinical implementation requires demonstration of utility in real-world settings, leading to incorporation into clinical guidelines and routine patient management.

Comparative Analysis of Biomarker Technologies and Platforms

Performance Metrics for Molecular Biomarker Assays

Well-chosen biomarkers with strong analytical and clinical performance are essential for increasing the efficiency of clinical trials and drug discovery [27]. The field has moved toward standardized frameworks for comparing biomarkers on predefined criteria including precision in capturing change and clinical validity [27]. The table below summarizes key performance metrics for recently developed biomarker assays across different technological approaches.

Table 1: Comparative Performance Metrics of Select Biomarker Assays

| Assay/Platform | Technology | Indication | Sensitivity | Specificity | Key Performance Characteristics | Regulatory Status |

|---|---|---|---|---|---|---|

| OncoDetect (Exact Sciences) [28] | Tumor-informed MRD (ctDNA) | Stage II-IV Colorectal Cancer | N/A | N/A | 24-37x increased recurrence risk when ctDNA-positive; Tracks ~5,000 variants; LOD: <1 part per million [28] | Clinical validation completed; Next-gen launch 2026 [28] |

| MI Cancer Seek (Caris) [6] | Whole Exome & Whole Transcriptome Sequencing | Solid Tumors (Companion Diagnostic) | >97% PPA (Positive Percent Agreement) | >97% NPA (Negative Percent Agreement) | 50 ng minimum input; MSI identification: near-perfect accuracy in colorectal/endometrial cancer [17] [6] | FDA-approved; 8 CDx indications [6] |

| DNA Methylation Biomarkers (Liquid Biopsy) [29] | Various (WGBS, RRBS, Targeted) | Multi-Cancer Early Detection | Varies by marker and cancer type | Varies by marker and cancer type | Early emergence in tumorigenesis; Stable epigenetic marks; Enrichment in cfDNA due to nuclease resistance [29] | Few FDA-approved (Epi proColon, Shield); Others with Breakthrough Device designation [29] |

| Structural MRI Biomarkers [27] | Volumetric MRI | Alzheimer's Disease (MCI & Dementia) | N/A | N/A | High precision in detecting change: Ventricular volume & hippocampal volume best; Clinical validity varies by group [27] | Research use; Framework for surrogate endpoint evaluation [27] |

Analytical Validation Frameworks and Statistical Considerations

Robust analytical validation is fundamental to establishing clinical utility. A standardized statistical framework has been proposed to operationalize comparison criteria for biomarkers, including precision in capturing change and clinical validity [27]. This framework enables inference-based comparisons of biomarker performance, which is particularly valuable when evaluating multiple candidate biomarkers simultaneously. Key considerations in analytical validation include:

Precision: The ability of a biomarker to capture change over time with small variance relative to the estimated change [27]. For example, in Alzheimer's disease, ventricular volume and hippocampal volume showed the best precision in detecting change over time in individuals with both mild cognitive impairment and dementia [27].

Clinical Validity: The association between the biomarker measurement and clinically relevant endpoints. This varies by disease state and population, requiring careful stratification in validation studies [27].

Limit of Detection (LOD): Particularly critical for liquid biopsy applications where tumor-derived material may be present at extremely low concentrations. Next-generation approaches like the MAESTRO technology aim for detection below 1 part per million [28].

Reproducibility: Consistency of results across different laboratory conditions, operators, and sample types. MI Cancer Seek, for example, maintains precision across different lab conditions and varying DNA input levels [17] [6].

Experimental Protocols for Key Biomarker Validation Studies

Liquid Biopsy DNA Methylation Analysis Workflow

DNA methylation biomarkers in liquid biopsies represent a promising approach for minimally invasive cancer detection and monitoring, though few have achieved routine clinical implementation [29]. The following workflow outlines a comprehensive protocol for development and validation of DNA methylation-based biomarkers.

Key Methodological Considerations:

Sample Collection & Processing: Plasma is generally preferred over serum for ctDNA analysis due to less contamination from genomic DNA of lysed cells and higher stability of ctDNA [29]. For cancers with direct access to local fluids (e.g., urine for bladder cancer, bile for biliary tract cancers), these sources often provide higher biomarker concentration and reduced background noise [29].

Bisulfite Conversion: The cornerstone of most DNA methylation analysis methods, this chemical treatment converts unmethylated cytosines to uracils while leaving methylated cytosines unchanged. Alternative enzymatic methods (e.g., EM-seq) better preserve DNA integrity, particularly valuable with limited DNA quantities in liquid biopsies [29].

Discovery vs. Targeted Approaches: Whole-genome bisulfite sequencing (WGBS) and reduced representation bisulfite sequencing (RRBS) provide broad methylome coverage for biomarker discovery. For clinical validation, targeted methods like digital PCR (dPCR) offer highly sensitive, locus-specific analysis appropriate for low-abundance targets [29].

Bioinformatic Analysis: Specialized pipelines account for bisulfite conversion rates, align sequences to reference genomes, and calculate methylation levels at individual CpG sites. The stability of cancer-specific DNA methylation patterns, which often emerge early in tumorigenesis, makes them particularly attractive as biomarkers [29].
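
Two of the quantities mentioned above, the bisulfite conversion rate and the per-CpG methylation level, are simple read-count ratios. The sketch below assumes the conversion rate is estimated from cytosines known to be unmethylated (for example, an unmethylated spike-in control); the 0.99 threshold and all counts are illustrative assumptions rather than values from the cited work.

```python
MIN_CONVERSION = 0.99   # illustrative QC threshold, not a cited requirement

def conversion_rate(converted_reads, unconverted_reads):
    """Fraction of known-unmethylated cytosines read as T (converted) rather than C."""
    return converted_reads / (converted_reads + unconverted_reads)

def methylation_level(methylated_reads, unmethylated_reads):
    """Per-CpG methylation level: C reads / (C + T reads) after conversion.

    Returns None when the site has no coverage.
    """
    total = methylated_reads + unmethylated_reads
    return methylated_reads / total if total else None

# Hypothetical counts at control cytosines and at one CpG site of interest
rate = conversion_rate(converted_reads=9950, unconverted_reads=50)
level = methylation_level(methylated_reads=30, unmethylated_reads=70)

print(f"conversion rate = {rate:.3f}, passes QC = {rate >= MIN_CONVERSION}")
print(f"methylation level = {level:.2f}")
```

Because unconverted unmethylated cytosines are read as methylated, a low conversion rate inflates apparent methylation, which is why the check comes before any per-site quantification.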

Comprehensive Genomic Profiling Validation Protocol

The validation of comprehensive genomic profiling assays like MI Cancer Seek follows a rigorous protocol to establish performance characteristics for companion diagnostic applications [6]. The experimental framework includes:

Sample Requirements: Validation of performance with minimal input (50 ng) of formalin-fixed paraffin-embedded (FFPE) tissue, representing real-world challenging samples [17] [6].

Analytical Accuracy: Comparison against FDA-approved companion diagnostic tests with calculation of positive percent agreement (PPA) and negative percent agreement (NPA), demonstrating >97% concordance for key biomarkers [6].

Precision Assessment: Evaluation of repeatability (same operator, same instrument) and reproducibility (different operators, different instruments) across multiple runs and days [6].

Limit of Detection (LOD) Determination: Serial dilutions of known positive samples to establish the lowest analyte concentration detectable with stated accuracy [6].

Specificity Evaluation: Testing against samples with known negative status and potentially cross-reactive substances to establish assay specificity [6].
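
Positive and negative percent agreement, as used in the accuracy step above, are computed from a 2x2 comparison against the comparator test. The following sketch adds a Wilson score interval to show how much uncertainty surrounds a point estimate; the counts are hypothetical, and the choice of the Wilson interval is an assumption rather than something specified in the cited validation.

```python
from math import sqrt

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95% coverage)."""
    if n == 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half

def agreement(tp, fp, fn, tn):
    """PPA and NPA of a candidate assay against a comparator.

    tp: both positive; fn: comparator positive, candidate negative;
    fp: comparator negative, candidate positive; tn: both negative.
    """
    return {
        "PPA": (tp / (tp + fn), wilson_interval(tp, tp + fn)),
        "NPA": (tn / (tn + fp), wilson_interval(tn, tn + fp)),
    }

# Hypothetical concordance counts: 100 comparator-positive, 200 comparator-negative samples
stats = agreement(tp=98, fp=1, fn=2, tn=199)
for name, (estimate, (low, high)) in stats.items():
    print(f"{name} = {estimate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

With only 100 comparator-positive samples, a PPA point estimate of 98% has a lower confidence bound near 93%, so sample size matters when an agreement threshold such as >97% is claimed.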

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful development and validation of biomarker assays depends on a suite of specialized research reagents and platforms. The following table details key solutions and their applications in biomarker research and development.

Table 2: Essential Research Reagent Solutions for Biomarker Assay Development

| Research Reagent / Platform | Function | Application in Biomarker Development |

|---|---|---|

| Next-Generation Sequencing Kits (e.g., Whole Exome, Whole Transcriptome) | Comprehensive genomic and transcriptomic profiling | Identification of novel biomarkers; Companion diagnostic development; Tumor mutational burden assessment [6] |

| Bisulfite Conversion Kits | Chemical conversion of unmethylated cytosines to uracils | DNA methylation biomarker discovery; Preservation of methylation patterns during sequencing [29] |

| Digital PCR Reagents | Absolute quantification of target sequences without standard curves | Validation of biomarker candidates; Low-abundance target detection in liquid biopsies [29] |

| Cell-Free DNA Extraction Kits | Isolation of circulating cell-free DNA from plasma, urine, other liquids | Liquid biopsy applications; Maximize yield and integrity of scarce ctDNA [29] |

| Immunoassay Reagents (e.g., antibodies, detection systems) | Protein biomarker detection and quantification | Measurement of protein biomarkers; Validation of proteomic discoveries [30] |

| MAESTRO Technology (Broad Institute) | Whole-genome sequencing for MRD detection with ultra-low LOD | Molecular residual disease monitoring; Tracking up to 5,000 patient-specific variants [28] |

| Bioinformatic Pipelines (e.g., for methylation analysis, variant calling) | Computational analysis of sequencing data | Methylation calling; Variant identification; Data normalization and interpretation [29] |

The successful transition of biomarker assays from discovery to clinical utility requires navigating a complex pathway of technical validation, clinical verification, and demonstration of patient benefit. The comparison of current technologies reveals a rapidly evolving landscape where liquid biopsy applications, comprehensive genomic profiling, and sensitive molecular residual disease detection are pushing the boundaries of cancer diagnostics. As the field advances, standardized statistical frameworks for biomarker comparison [27] and increased utilization of real-world evidence [26] will be crucial for identifying the most promising biomarkers and accelerating their implementation into clinical practice. The ongoing innovation in sensitivity, as demonstrated by technologies capable of detecting ctDNA below 1 part per million [28], promises to further expand the clinical utility of biomarker-driven approaches across the cancer care continuum. For researchers and drug development professionals, maintaining rigor in analytical validation while embracing innovative technologies will be essential to delivering on the promise of precision oncology.

Next-Generation Tools: Methodological Advances and Their Clinical Applications in Oncology

Performance Comparison of WES/WTS Assays and Alternatives

Comprehensive Genomic Profiling (CGP) using Whole Exome and Whole Transcriptome Sequencing (WES/WTS) represents a significant advancement in precision oncology. This section provides a data-driven comparison of leading WES/WTS assays against alternative genomic testing approaches, highlighting key performance metrics and clinical implications.

Commercially Available WES/WTS Assays

Table 1: Comparison of Commercially Available WES/WTS Comprehensive Genomic Profiling Assays

| Assay Name | Developer/Company | Key Technological Features | FDA Status | Reported Clinical Performance |

|---|---|---|---|---|

| OncoExTra | Exact Sciences | Whole exome, whole transcriptome, tumor-normal sequencing; detects SNVs, indels, CNAs, fusions, TMB, MSI | Laboratory-developed test (LDT) | Identified therapeutically actionable alterations in 92.0% of patient samples (n=11,091); 29.2% had on-label biomarkers [31]. |

| MI Cancer Seek | Caris Life Sciences | Combined WES/WTS; simultaneous DNA/RNA extraction from minimal tissue input (50 ng) [32] | FDA-approved Companion Diagnostic | Positive/Negative Percent Agreement: 97-100% for CDx claims; detects SNVs/indels in 228 genes, MSI, TMB [32]. |

| WES/WTS (Unspecified) | Academic/Research Implementation | Paired tumor-normal sequencing; enables accurate TMB calculation and germline variant discrimination [33] | Research Use | In NSCLC, cost $14,602 less per patient than sequential single-gene testing, with only a minimal difference in survival between the two strategies [33]. |

WES/WTS Versus Alternative Testing Strategies

Table 2: Economic and Clinical Outcome Comparison in Advanced NSCLC

| Testing Approach | Incremental Cost per Patient vs. WES/WTS (USD) | Median Overall Survival | Key Limitations |

|---|---|---|---|

| WES/WTS | Lowest cost (Baseline) | Increased by 3.9 months vs. no testing [33] | Requires specialized infrastructure and bioinformatics expertise [34]. |

| No Genomic Testing | + $8,809 [33] | Baseline (Lowest) | Patients are excluded from potentially life-extending targeted therapies [33]. |

| Sequential Single-Gene Testing | + $14,602 [33] | Minimal benefit vs. WES/WTS [33] | Misses fusions without RNA sequencing; cannot identify TMB; risks tissue exhaustion [33]. |

| DNA Sequencing Alone | + $400 - $1,724 [33] | Not Specified | Misses 2.3%-13.0% of actionable alterations (RNA fusions) depending on prevalence [33]. |

The economic model demonstrates that WES/WTS is not only clinically superior but also cost-saving, primarily by efficiently matching patients to effective targeted therapies and avoiding the cumulative cost of multiple single-gene tests [33].

Detection of Structural Variants and Copy Number Alterations

Table 3: Analytical Performance in Detecting Complex Genomic Alterations

| Alteration Type | Testing Modality | Reported Performance / Findings | Context |

|---|---|---|---|

| Gene Fusions | WES/WTS (OncoExTra) | Detected clinically relevant fusions in 7.5% of samples; up to 42.0% in prostate cancer [31]. | Broad, unbiased detection across the transcriptome. |

| Copy Number Variants (CNVs) | WES (in Multiple Myeloma) | Specificity ≥91%; sensitivity 67-83% for large CNVs. Sensitivity dropped for FISH-detected gains/losses <20% [35]. | Less sensitive than FISH for subclonal CNVs but provides genome-wide data. |

| TERT Promoter Mutations | WES (OncoExTra) | Identified in 8.4% of solid tumor samples, including common and rare variants [31]. | Interrogates non-coding regions of clinical significance. |

Experimental Protocols and Validation Methodologies

The rigorous validation of WES/WTS assays is critical for their translation into clinical practice. This section outlines the standard experimental workflows and benchmarking methodologies used to establish the performance metrics described in the previous section.

Sample Preparation and Library Construction

A robust and standardized workflow is essential for generating high-quality, reliable sequencing data, especially from challenging but clinically ubiquitous FFPE tissue samples.

- Sample Quality Control (QC): DNA from FFPE tissues is rigorously quantified using fluorometry (e.g., Qubit) and assessed for fragmentation. QC steps include calculating a DNA Integrity Number (DIN) via TapeStation and performing multiplex PCR (e.g., for GAPDH) to determine an Average Yield Ratio, which estimates DNA fragmentation [36].

- Library Preparation: A typical protocol involves mechanically shearing 200-300 ng of genomic DNA to a fragment size of 100-700 bp using a focused ultrasonicator (e.g., Covaris E210). The sheared DNA undergoes end repair, A-tailing, and adapter ligation. For transcriptome analysis, RNA is extracted and converted to cDNA before library prep. Libraries are uniquely dual-indexed to enable multiplexing [37] [36].

- Hybridization Capture: Libraries are enriched using solution-based probes targeting the exome and transcriptome. Key commercial probe sets include Twist Exome 2.0 (Twist Bioscience), xGen Exome Hyb Panel v2 (Integrated DNA Technologies), TargetCap Core Exome Panel v3.0 (BOKE Bioscience), and EXome Core Panel (Nanodigmbio Biotechnology) [37]. The hybridization reaction is typically incubated for a defined period (e.g., 1 to 24 hours) before captured libraries are amplified via PCR for sequencing [37] [36].

Analytical Validation and Benchmarking

To establish analytical validity, WES/WTS assays are benchmarked against gold standards and orthogonal methods.

- Variant Calling Performance: Benchmarking utilizes well-characterized reference standards from the Genome in a Bottle (GIAB) consortium (e.g., NA12878/HG001). Variant call files (VCFs) generated by different software are compared against GIAB's high-confidence truth sets using tools like the Variant Calling Assessment Tool (VCAT) or hap.py. Performance is measured by Precision (True Positives / [True Positives + False Positives]) and Recall (True Positives / [True Positives + False Negatives]) for SNVs and indels [38].

- Orthogonal Confirmation: Assay performance for specific biomarkers is validated against established companion diagnostics or methods. For example, in the validation of MI Cancer Seek, results for mutations (e.g., PIK3CA, EGFR, BRAF, KRAS), TMB, and MSI were compared to other FDA-approved tests, demonstrating >97% positive and negative percent agreement [32] [39].

- Limit of Detection (LOD): Analytical sensitivity is determined by testing variants at low allele frequencies. The OncoExTra assay demonstrated that 9.8% (558 of 5,690) of hotspot alterations linked to therapy were detected at a variant allele frequency (VAF) of <5%, highlighting its capability to identify subclonal alterations [31].
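
The precision and recall formulas given for variant calling performance can be illustrated with a small set comparison. This is a deliberately simplified sketch: it matches variants by exact (chromosome, position, ref, alt) key, whereas dedicated tools such as hap.py also reconcile different representations of the same variant and restrict scoring to high-confidence regions. The variants listed are invented for the example.

```python
def benchmark(called, truth):
    """Precision, recall, and F1 for two sets of variants keyed by (chrom, pos, ref, alt)."""
    tp = len(called & truth)     # called and present in the truth set
    fp = len(called - truth)     # called but absent from the truth set
    fn = len(truth - called)     # in the truth set but not called
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return {"TP": tp, "FP": fp, "FN": fn,
            "precision": precision, "recall": recall, "F1": f1}

# Hypothetical truth set and call set
truth = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T"),
         ("chr2", 3000, "G", "A"), ("chr3", 4000, "T", "TA")}
called = {("chr1", 1000, "A", "G"), ("chr1", 2000, "C", "T"),
          ("chr2", 3000, "G", "A"), ("chr4", 5000, "G", "C")}

metrics = benchmark(called, truth)
print(metrics)
```

Reporting SNVs and indels separately, as the text describes, amounts to running the same comparison on each subset.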

Key Research Reagent Solutions

Table 4: Essential Research Reagents and Kits for WES/WTS Workflows

| Reagent / Kit | Function | Example Products / Providers |

|---|---|---|

| Nucleic Acid Extraction Kits | Isolate high-quality DNA and RNA from FFPE and fresh frozen tissues. | GeneRead DNA FFPE Kit (Qiagen), QIAamp DNA Mini Kit [36]. |

| Library Preparation Kits | Convert extracted DNA and RNA into sequencing-ready libraries with adapters and barcodes. | MGIEasy UDB Universal Library Prep Set (MGI), KAPA library prep kits (Roche), SureSelect XT (Agilent) [37] [36]. |

| Exome Capture Panels | Enrich for exonic regions of the genome through solution-based hybridization. | Twist Exome 2.0, IDT xGen Exome Hyb Panel v2, SeqCap EZ (Roche), SureSelect XT (Agilent) [37] [36]. |

| Variant Calling Software | Identify somatic and germline genetic variants from sequenced data without requiring programming expertise. | DRAGEN Enrichment (Illumina), CLC Genomics Workbench, Partek Flow, Varsome Clinical [38]. |

| Reference Standards | Benchmark variant calling accuracy and assay performance. | Genome in a Bottle (GIAB) samples (e.g., NA12878), PancancerLight gDNA Reference Standard [37] [38]. |

Integration into the Precision Oncology Ecosystem

The full potential of WES/WTS is realized only when embedded within a supportive healthcare and research infrastructure that facilitates data interpretation and clinical action.

Clinical Utility and Actionability

The primary value of WES/WTS lies in its ability to inform treatment decisions. A key framework for interpreting results is the ESMO Scale for Clinical Actionability of molecular Targets (ESCAT), which ranks alteration-drug matches based on levels of evidence [34]. In a large-scale analysis of over 10,000 solid tumors, WES/WTS identified biomarkers associated with on-label FDA-approved therapies in 29.2% of samples and supported off-label matched therapy in an additional 28.0% [31]. Furthermore, the combined DNA/RNA approach is critical, as tests using DNA sequencing alone can miss 2.3% to 13.0% of actionable alterations, primarily RNA-detectable fusions, depending on their prevalence in a given cancer type [33].

The Learning Healthcare System

WES/WTS generates comprehensive, standardized datasets that are invaluable beyond individual patient care. When linked with clinical outcome data in a learning healthcare system, this information fuels hypothesis generation for basic research and identifies molecularly defined patient subgroups for basket and umbrella clinical trials [34]. This ecosystem creates a virtuous cycle: comprehensive profiling supports trial enrollment, and trial results, in turn, enhance the clinical utility of the profiling data.

The evolution of precision oncology has been significantly accelerated by the advent of liquid biopsy, which enables non-invasive profiling of tumor genetics through the detection of circulating tumor DNA (ctDNA). Analytical validation of these assays is paramount for their translation into clinical practice, particularly for the detection of key variant types—single nucleotide variants (SNVs), insertions/deletions (indels), and copy number variations (CNVs). This review provides a comparative analysis of recently validated ctDNA assays, focusing on their analytical performance metrics, underlying technologies, and implications for cancer research and drug development.

Methodological Frameworks for Assay Validation

Core Experimental Protocols

Analytical validation of ctDNA assays follows rigorous methodological standards to establish sensitivity, specificity, and reproducibility. Key experimental approaches include:

Limit of Detection (LOD) Studies: Assays are tested against serial dilutions of reference materials with known variant allele frequencies (VAFs) to determine the lowest concentration at which variants can be reliably detected. The LOD is typically established at 95% detection probability (LOD95) [40] [41].

Analytical Specificity Assessment: Specificity is evaluated using plasma or cfDNA from cancer-free donors to determine false positive rates. This includes assessing background error rates and interference from clonal hematopoiesis [40] [42].

Reproducibility and Precision Testing: Intra-run, inter-run, and inter-operator variability is assessed through repeated testing of contrived samples across different days, operators, and reagent lots [42] [41].

Orthogonal Validation: Results from novel assays are frequently confirmed using established technologies such as droplet digital PCR (ddPCR) to verify true positives, particularly for variants detected at low VAFs [40] [43].

Head-to-Head Comparisons: Prospective studies directly compare the performance of new assays against commercially available alternatives using identical patient samples, providing real-world performance data [40] [43].
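As a rough illustration of how a LOD95 can be read off a dilution series, the sketch below computes the detection (hit) rate at each tested VAF and reports the lowest level at which that level and every higher one reach 95%. The function names, replicate counts, and the dilution data are hypothetical; formal validation studies often fit a probit or logistic model to the hit rates rather than using this simple empirical rule.

```python
from collections import defaultdict


def hit_rates(observations):
    """Return {vaf: fraction of replicates detected} from (vaf, detected) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for vaf, detected in observations:
        totals[vaf] += 1
        if detected:
            hits[vaf] += 1
    return {vaf: hits[vaf] / totals[vaf] for vaf in totals}


def empirical_lod95(observations, threshold=0.95):
    """Lowest tested VAF at which that level and all higher levels meet the threshold.

    Returns None if the highest tested level already falls short.
    """
    rates = hit_rates(observations)
    lod = None
    # Walk from the highest VAF downwards and stop at the first failing level.
    for vaf in sorted(rates, reverse=True):
        if rates[vaf] >= threshold:
            lod = vaf
        else:
            break
    return lod


# Hypothetical dilution series: (VAF in percent, detected?), 20 replicates per level.
series = (
    [(0.50, True)] * 20
    + [(0.25, True)] * 20
    + [(0.15, True)] * 19 + [(0.15, False)] * 1
    + [(0.10, True)] * 15 + [(0.10, False)] * 5
)

print(empirical_lod95(series))
```

With these invented data, the 0.15% level is detected in 19 of 20 replicates and the 0.10% level in only 15 of 20, so the empirical rule selects 0.15%.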

Critical Technical Parameters

The analytical sensitivity of ctDNA assays is influenced by several technical factors:

- cfDNA Input Mass: Sensitivity decreases with lower input DNA (<20 ng) compared to medium (20-50 ng) or high (>50 ng) inputs [41]

- Sequencing Depth: Higher deduplicated mean depth (>10,000x) improves detection capability for low-frequency variants [41]

- On-Target Rate: Assays with on-target rates ≥50% are considered acceptable, though higher rates improve efficiency [41]
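The dependence of sensitivity on input mass can be made concrete with a back-of-the-envelope calculation. The sketch below assumes roughly 3.3 pg of DNA per haploid human genome (a commonly used approximation) and models the number of mutant molecules in the tube as Poisson distributed. It ignores extraction and library conversion losses, so it gives an optimistic upper bound rather than a prediction of assay performance; all function names are illustrative.

```python
import math

# Assumption: approximate mass of one haploid human genome, in picograms.
HAPLOID_GENOME_PG = 3.3


def genome_equivalents(input_ng):
    """Approximate number of haploid genome copies in a given cfDNA mass."""
    return input_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_molecules(input_ng, vaf):
    """Expected mutant copies at a variant allele fraction given as a proportion."""
    return genome_equivalents(input_ng) * vaf


def prob_at_least(k, mean):
    """Poisson probability of sampling at least k mutant molecules."""
    below = sum(math.exp(-mean) * mean ** i / math.factorial(i) for i in range(k))
    return 1.0 - below


# Compare a low and a medium input at a VAF of 0.1% (0.001 as a proportion).
for ng in (5, 20):
    mean = expected_mutant_molecules(ng, 0.001)
    print(ng, round(mean, 2), round(prob_at_least(1, mean), 3))
```

Under these assumptions, 20 ng of cfDNA corresponds to about 6,000 genome equivalents, which at 0.1% VAF is only about six mutant molecules before any losses. This is why low-input samples limit detection no matter how deeply they are sequenced.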

Comparative Performance of ctDNA Assays

The table below summarizes the analytical performance of recently validated ctDNA assays across different variant types:

| Assay Name | Technology | Genes Covered | SNV/Indel LOD95 (VAF) | CNV LOD95 (Copies) | Fusion LOD95 (VAF) | Key Performance Findings |
|---|---|---|---|---|---|---|
| Northstar Select | smNGS [43] | 84 | 0.15% [40] | 2.11 (amp), 1.80 (loss) [40] | 0.30% [40] | 51% more pathogenic SNV/indels, 109% more CNVs vs. comparators [40] |
| 33-gene ctDNA Panel | NGS [44] | 33 | Not specified | Not specified | Not specified | 76% sensitivity for Tier I variants vs. tissue; 14.3% more actionable variants with ctDNA [44] |
| PhasED-Seq MRD Assay | PhasED-Seq [42] | Not specified | 0.7 parts per million [42] | Not specified | Not specified | 90.62% positive percent agreement for MRD detection in DLBCL [42] |
| Multi-Assay Benchmark | Various NGS [41] | 25-523 | Varies by assay (0.1-0.5%) | Varies by assay | Varies by assay | Substantial sensitivity variability at VAF <0.5%; best-performing assays reached ~0.95 sensitivity at VAF 0.5% [41] |

Detection of Copy Number Variations

CNV detection presents particular challenges in liquid biopsy due to the non-specific nature of aneuploidies. Advanced assays now demonstrate improved capability to differentiate focal "driver" amplifications from broad chromosomal aneuploidies, which lack specific therapeutic targets [43]. The Northstar Select assay achieves a five-fold better LOD95 for CNVs compared to many first-generation liquid biopsies, with sensitivity down to 2.11 copies for amplifications and 1.80 for losses [40] [43].

Performance in Low-Shedding Tumors

Enhanced sensitivity is particularly beneficial for tumors with traditionally low ctDNA shedding, such as central nervous system cancers. One study reported detection rates of 87% in CNS cancers, significantly higher than the 27-55% range reported with other platforms [43]. This improved detection capability reduces null reports (reports with no pathogenic or actionable results) by 45% [40] [43].

Research Reagent Solutions for ctDNA Analysis

The table below outlines essential research reagents and materials used in advanced ctDNA assay workflows:

| Research Reagent | Function in Workflow | Application Example |
|---|---|---|
| Hybrid Capture Probes [42] | Target enrichment for specific genomic regions | PhasED-Seq MRD assay for B-cell malignancies |
| Single-Molecule Counting Templates [43] | Enable quantitative molecule counting for smNGS | Northstar Select's ultrasensitive detection |
| Plasma Collection Tubes (e.g., Streck, EDTA) [41] | Blood sample stabilization for ctDNA preservation | Multi-center clinical sample collection |
| Droplet Digital PCR Reagents [40] | Orthogonal validation of NGS-detected variants | Confirmation of low-VAF variants in validation studies |
| Unique Molecular Identifiers (UMIs) [41] | Error correction and artifact removal in NGS | Reducing false positives in low-frequency variant detection |
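To show in principle how UMIs support error correction, the sketch below groups reads that share a UMI and alignment position into families and takes a per-base majority vote, so that an error present in only one PCR copy is outvoted. This is a deliberately simplified illustration and not the method of any assay cited here. The read tuples, the `min_family_size` parameter, and the function name are hypothetical, and production pipelines also handle UMI sequencing errors, base qualities, and duplex strands.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=2):
    """Collapse reads into one consensus sequence per (UMI, position) family.

    reads: iterable of (umi, position, sequence) tuples.
    Families smaller than min_family_size are dropped because a single read
    cannot be error-corrected by voting.
    """
    families = defaultdict(list)
    for umi, position, sequence in reads:
        families[(umi, position)].append(sequence)

    consensus = {}
    for key, sequences in families.items():
        if len(sequences) < min_family_size:
            continue
        length = min(len(s) for s in sequences)
        # Majority vote at each base; ties resolve to the first base encountered.
        consensus[key] = "".join(
            Counter(s[i] for s in sequences).most_common(1)[0][0]
            for i in range(length)
        )
    return consensus


# Hypothetical reads: one family of three (one copy carries an error) and a singleton.
reads = [
    ("AACGT", 1200, "ACGTAC"),
    ("AACGT", 1200, "ACGTAC"),
    ("AACGT", 1200, "ACTTAC"),  # simulated PCR or sequencing error at the third base
    ("GGTCA", 1200, "ACGTAC"),  # singleton family, discarded
]

print(umi_consensus(reads))
```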

Technological Workflow for ctDNA Assay Validation

The following diagram illustrates the core workflow for analytical validation of ctDNA assays:

Implications for Research and Drug Development

The enhanced sensitivity of modern ctDNA assays has significant implications for cancer research and therapeutic development:

Clinical Trial Stratification: More sensitive detection of molecular minimal residual disease (MRD) can improve patient stratification for clinical trials and serve as a potential surrogate endpoint [42].

Therapeutic Resistance Monitoring: The ability to detect emerging resistance mutations at lower VAFs enables earlier intervention and treatment adaptation [45].

Tumor Evolution Tracking: Ultrasensitive assays provide insights into tumor heterogeneity and evolution under therapeutic pressure by capturing subclonal variants that were previously undetectable [40] [45].

First-Line Testing Feasibility: With 76% sensitivity for Tier I variants relative to tissue and Tier I/II variants identified in 65% of patients, ctDNA assays are increasingly viable as first-line tests, particularly when tissue is limited or unavailable [44] [46].

The analytical validation of ctDNA assays for detection of SNVs, indels, and CNVs has reached a sophisticated stage, with newer technologies demonstrating significantly improved sensitivity compared to earlier approaches. The implementation of single-molecule counting methods and phased variant detection has enabled reliable detection of variants at VAFs below 0.5%, addressing a critical limitation in liquid biopsy applications. For researchers and drug development professionals, these technological advances offer enhanced capabilities for biomarker discovery, therapy response monitoring, and understanding tumor evolution. As validation standards continue to evolve, the integration of these highly sensitive assays into research protocols promises to accelerate the development of more effective, personalized cancer therapeutics.

The analysis of gene expression and the detection of structural alterations, such as fusion genes, are fundamental to advancing precision oncology. While DNA sequencing reveals the genetic blueprint, RNA sequencing (RNA-seq) provides a dynamic view into the active transcriptional landscape of a cell, offering unique insights into cancer biology. The advent of high-throughput technologies has positioned RNA-seq as a powerful, multi-faceted tool that can simultaneously interrogate gene expression profiles, identify fusion transcripts, discover novel isoforms, and even detect RNA modifications within a single test [47] [48]. This capability is crucial because fusion genes are major drivers of many cancers, and specific targeted therapies are approved for fusion-positive malignancies, such as those involving the ALK, ROS1, NTRK, and RET genes [48]. This guide provides an objective comparison of RNA-seq methodologies, supported by recent experimental data, to inform their application and validation in cancer research and diagnostic development.

Comparative Analysis of RNA-Sequencing Technologies

RNA-seq protocols can be broadly categorized by read length (short-read vs. long-read) and their technical approach. Each offers distinct advantages and limitations for transcript-level analysis.

Short-Read vs. Long-Read RNA Sequencing

Short-read sequencing (e.g., Illumina) has been the workhorse for transcriptomics due to its high throughput and accuracy. However, its fundamental limitation is the inference of transcript isoforms from fragmented sequences, which complicates the accurate quantification of highly similar alternative isoforms and the unambiguous detection of fusion events [49]. In contrast, long-read sequencing technologies, exemplified by Oxford Nanopore Technologies (ONT) and PacBio Iso-Seq, sequence entire RNA transcripts in a single pass. This directly reveals the complete structure of RNA molecules, thereby more robustly identifying major isoforms, novel transcripts, and fusion genes [49] [50].

Table 1: Comparison of Key RNA-Sequencing Technologies and Their Performance Characteristics.

| Technology / Protocol | Read Length | Key Strengths | Key Limitations | Primary Applications |
|---|---|---|---|---|
| Illumina (Short-read) | Short (75-300 bp) | High throughput, low cost per base, high base-level accuracy [47]. | Inference of isoforms and fusions from fragments, limited in complex regions [49] [51]. | Gene-level expression quantification, splicing analysis (via junctions), miRNA analysis [47] [52]. |
| PacBio Iso-Seq | Long (full-length) | High accuracy circular consensus sequencing, direct isoform discovery [49]. | Lower throughput, higher DNA input requirements, generally higher cost [49]. | Definitive isoform identification and quantification, novel transcript discovery. |
| Nanopore Direct RNA | Long (full-length) | Sequences native RNA, enables detection of RNA modifications (e.g., m6A) [49]. | Lower throughput, requires more input RNA. | Isoform analysis, epitranscriptome (RNA modification) discovery. |
| Nanopore direct cDNA | Long (full-length) | Amplification-free, avoids PCR biases [49]. | Requires significant input RNA. | Accurate transcript quantification, isoform analysis. |
| Nanopore PCR-cDNA | Long (full-length) | Highest throughput, lowest input RNA requirement for long-read protocols [49]. | Subject to PCR amplification biases [49]. | Fusion detection, isoform analysis when material is limited. |

Performance in Fusion Gene Detection

Multiple studies have benchmarked the performance of RNA-seq for detecting fusion genes—a critical application in cancer diagnostics.

In a study of 101 acute leukemia patients, RNA-seq demonstrated an 83.3% sensitivity compared to a composite result from conventional diagnostics (karyotyping, FISH, RT-PCR). It identified 52 fusion genes in 51 (50.5%) patients, with the highest detection rate in B-cell acute lymphoblastic leukemia (70.3%) [51]. Notably, RNA-seq clarified previously unspecified rearrangements and detected 12 novel and rare fusions in 56 cases that had tested negative by conventional methods, highlighting its superior discovery power [51].
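A point estimate of sensitivity from a cohort of this size carries considerable uncertainty, so validation reports usually attach a confidence interval. The sketch below computes a Wilson score interval for a proportion. The counts in the example (25 detected out of 30 reference-positive cases) are hypothetical and chosen only because they give 83.3%; they are not taken from the cited study.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return centre - half_width, centre + half_width


# Hypothetical counts: 25 of 30 reference-positive cases detected by the new assay.
detected, reference_positive = 25, 30
low, high = wilson_interval(detected, reference_positive)
print(f"sensitivity = {detected / reference_positive:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

The Wilson interval is used here rather than the simpler normal approximation because it behaves better for small samples and for proportions near 0 or 1, both of which are common in validation cohorts.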

Another pivotal study compared fusion detection in matched formalin-fixed paraffin-embedded (FFPE) and fresh-frozen (FF) colorectal cancer tissues from 29 patients. Despite theoretical concerns about RNA degradation in FFPE samples, the research found no statistically significant difference in the number of chimeric transcripts detected between the two sample types [48]. This is a critical finding for clinical workflows, which heavily rely on FFPE archives. The study used the STAR-Fusion software and identified a known frequent fusion (KANSL1-ARL17A/B) and 93 new fusion genes, including a potentially clinically actionable in-frame fusion of LRRFIP2 and ALK [48].

Experimental Protocols and Workflows

A robust analytical pipeline is paramount for deriving reliable biological insights from RNA-seq data. The workflow encompasses sample preparation, sequencing, and computational analysis.

Sample Preparation and Sequencing

Methodologies vary based on the sample type and technology.

- FFPE vs. Fresh-Frozen Samples: For the comparative fusion detection study [48], FFPE samples were fixed for 16 hours, while matched fresh tissues were stored in RNAlater. RNA was extracted from both using the QIAGEN RNeasy Kit. Library construction used the KAPA RNA Hyper with rRNA Erase kit, and sequencing was performed on a GenoLab M sequencer with paired-end 75 bp reads.

- The SG-NEx Protocol Benchmark: The Singapore Nanopore Expression (SG-NEx) project provides a comprehensive benchmark [49] [50]. They profiled seven human cell lines using five protocols: Illumina short-read, PacBio IsoSeq, and three Nanopore protocols (Direct RNA, direct cDNA, and PCR-cDNA). This design allows for direct comparison of the impact of fragmentation, amplification, and sequencing principle on transcript-level analysis.

- Leukemia Fusion Detection Protocol: In the acute leukemia study [51], whole RNA-seq was performed using the Illumina Stranded mRNA Prep kit, representing a standard, widely adopted short-read workflow for fusion screening in a clinical context.

Bioinformatics Analysis for Differential Expression and Fusion Detection

The computational transformation of raw sequencing data into meaningful results involves several standardized steps.

- Preprocessing and Quantification: A typical robust pipeline for RNA-seq data involves quality control of raw reads with FastQC, trimming of adapters and low-quality bases with Trimmomatic, and quantification of transcript abundance using alignment-free tools like Salmon [52].

- Normalization and Differential Expression: Normalization, such as the Trimmed Mean of M-values (TMM) method in edgeR, is critical to account for technical variability [52]. For identifying differentially expressed genes (DEGs), several statistical methods are available, including dearseq, voom-limma, edgeR, and DESeq2, each with specific strengths depending on experimental design and sample size [52].

- Fusion Detection: The specialized tool STAR-Fusion is widely used to detect chimeric transcripts from RNA-seq data [48]. It works by aligning reads to a reference genome and identifying supporting evidence for fusion events through junction reads and spanning fragments.
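The idea of weighing junction reads against spanning fragments can be sketched as a simple post-filter on fusion candidates. The record structure, gene names, counts, and thresholds below are all hypothetical and do not reproduce the output format or default filters of STAR-Fusion or any other tool; a validated pipeline would set such thresholds empirically from control samples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FusionCandidate:
    """Hypothetical summary of the read evidence supporting one fusion call."""
    name: str
    junction_reads: int      # reads that cross the fusion breakpoint itself
    spanning_fragments: int  # read pairs with one mate in each partner gene


def passes_evidence(candidate, min_junction=2, min_total=5):
    """Require direct breakpoint support plus a minimum of total supporting evidence.

    The thresholds are illustrative assumptions, not recommended values.
    """
    total = candidate.junction_reads + candidate.spanning_fragments
    return candidate.junction_reads >= min_junction and total >= min_total


candidates = [
    FusionCandidate("GENEA--GENEB", junction_reads=12, spanning_fragments=7),
    FusionCandidate("GENEC--GENED", junction_reads=1, spanning_fragments=9),
    FusionCandidate("GENEE--GENEF", junction_reads=2, spanning_fragments=1),
]

retained = [c.name for c in candidates if passes_evidence(c)]
print(retained)
```

Requiring at least some junction reads, rather than total evidence alone, reflects the fact that only reads crossing the breakpoint define the exact fusion junction.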

The diagram below illustrates the core logical workflow for an integrative RNA-seq analysis encompassing both gene expression and fusion detection.

Successful implementation and, crucially, analytical validation of RNA-seq assays require access to high-quality, well-characterized reagents and resources.

Table 2: Key Research Reagent Solutions for RNA-Seq Assay Development and Validation.

| Resource / Reagent | Function / Purpose | Example Products / Sources |
|---|---|---|
| RNA Extraction Kits | Isolate high-quality, intact RNA from diverse sample types (e.g., fresh frozen, FFPE). | QIAGEN RNeasy Kit [48] |
| RNA Library Prep Kits | Prepare sequencing libraries from RNA; type depends on protocol (cDNA vs. direct RNA). | Illumina Stranded mRNA Prep [51], KAPA RNA Hyper with rRNA Erase [48] |
| Spike-in Control RNAs | Added to samples in known quantities to monitor technical performance, quantify absolute expression, and evaluate sensitivity. | Sequins, ERCC, SIRVs [49] |