Advancing Genomic Analysis: A Comprehensive Guide to Hybrid LSTM-CNN Models

Abstract

Hybrid models combining Long Short-Term Memory (LSTM) networks and Convolutional Neural Networks (CNN) represent a transformative approach in genomic sequence analysis. These models pair CNNs, which detect local patterns and conserved motifs, with LSTMs, which capture the long-range dependencies critical for understanding gene regulation and function. This article explains the foundations of these architectures, details their methodology for diverse applications (from DNA classification and essential gene prediction to COVID-19 severity forecasting), and addresses key challenges such as data imbalance and model interpretability. We further compare the performance of these models against traditional machine learning and standalone deep learning methods, underscoring the superior accuracy and robust generalization capabilities of hybrid LSTM-CNN frameworks. This resource is tailored for researchers, scientists, and drug development professionals seeking to implement these tools in genomic research and precision medicine.

Understanding the Power of Hybrid LSTM-CNN Models in Genomics

The field of genomic sequence analysis is being transformed by deep learning, yet the complexity of biological data demands specialized architectural solutions. Genomic data possesses a hierarchical structure; local sequence motifs, such as transcription factor binding sites, exert immediate functional influences, while long-range dependencies, like those found in gene regulatory networks, control broader phenotypic outcomes [1]. Standard models often capture only one of these facets. The hybrid CNN-LSTM architecture directly addresses this duality, strategically combining Convolutional Neural Networks (CNNs) for extracting salient local patterns with Long Short-Term Memory (LSTM) networks for modeling contextual, long-range dependencies [2]. This synergy is particularly powerful for tasks in precision medicine and drug development, enabling more accurate prediction of disease severity from viral sequences, classification of functional genomic elements, and identification of pathogenic mutations [3] [2].

Core Architectural Components and Synergy

Convolutional Neural Networks (CNNs) for Local Pattern Extraction

CNNs operate as powerful feature detectors within biological sequences. Their architecture is designed to scan input data using filters (or kernels) that identify conserved motifs, functional domains, and other spatially local patterns critical to biological function [2].

- Operation Principle: A CNN applies these filters across the input sequence in a sliding-window fashion. Each filter is specialized to recognize a specific type of local feature, generating a feature map that highlights the presence and location of that feature throughout the sequence [1].

- Biological Relevance: In proteins, local relations between residues—such as conserved motifs and structural interactions—strongly influence biological properties. In DNA, CNNs can identify promoter regions, transcription factor binding sites, and other short, regulatory sequence patterns [2] [1]. The CNN component effectively builds a representation of the sequence from these foundational, local elements.
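
To make the sliding-window operation concrete, the following minimal sketch (pure Python, no deep learning framework) scans one convolutional filter across a one-hot encoded DNA sequence. The filter weights and bias are hand-set for illustration so that the filter responds only to the hypothetical motif TATA; in a trained CNN these weights are learned from data.

```python
BASES = "ACGT"

def one_hot(seq):
    """Encode a DNA string as 4-element 0/1 vectors in A, C, G, T order."""
    return [[1 if base == b else 0 for b in BASES] for base in seq.upper()]

def conv1d(encoded, kernel, bias=0.0):
    """Slide one filter over the sequence (no padding) and apply a ReLU.

    Returns a feature map of length L - k + 1, one score per window.
    """
    k = len(kernel)
    feature_map = []
    for start in range(len(encoded) - k + 1):
        score = bias
        for offset in range(k):
            # Dot product between the filter column and the one-hot base at this offset
            score += sum(w * x for w, x in zip(kernel[offset], encoded[start + offset]))
        feature_map.append(max(0.0, score))  # ReLU keeps only positive evidence
    return feature_map

# Hypothetical filter: +1 for the motif base at each position, -1 for any other base.
tata_filter = [[1.0 if b == m else -1.0 for b in BASES] for m in "TATA"]

fmap = conv1d(one_hot("GCTATAAGC"), tata_filter, bias=-3.0)
print(fmap)  # [0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
```

The single non-zero entry marks the window where the motif occurs (starting at index 2). A real CNN layer applies many such filters in parallel, producing one feature map per filter.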

Long Short-Term Memory (LSTM) for Long-Range Dependency Capture

LSTMs are a specialized form of Recurrent Neural Network (RNN) engineered to overcome the vanishing gradient problem, which plagues standard RNNs and makes it difficult for them to learn long-term dependencies in sequential data [4] [5].

- Gated Architecture: The key to LSTM's effectiveness lies in its gating mechanism [4] [5]:

- Forget Gate: Determines what information from the previous cell state should be discarded.

- Input Gate: Decides what new information from the current input should be stored in the cell state.

- Output Gate: Controls what information from the cell state is output as the hidden state for the current time step.

- Biological Relevance: This gating mechanism allows the LSTM to maintain information over long sequence distances. This is crucial in genomics and proteomics, where residues or bases distant in the linear chain may have interdependent functions, such as in the formation of active sites or the interaction between a gene and its distant enhancer [2].
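
The gating equations can be illustrated with a single LSTM time step written in plain Python. Besides the three gates listed above, the standard LSTM formulation also computes a tanh candidate vector that the input gate scales before it is written to the cell state; the sketch includes it. The weights are random placeholders (an assumption for illustration), not trained values.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def matvec(matrix, vector):
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def lstm_step(x, h_prev, c_prev, params):
    """One LSTM time step.

    params maps each gate name to (weights, bias); every weight row acts on
    the concatenation [h_prev, x].
    """
    z = list(h_prev) + list(x)

    def gate(name, activation):
        weights, bias = params[name]
        return [activation(s + b) for s, b in zip(matvec(weights, z), bias)]

    f = gate("forget", sigmoid)        # share of the old cell state to keep
    i = gate("input", sigmoid)         # share of the candidate to write
    g = gate("candidate", math.tanh)   # new candidate values
    o = gate("output", sigmoid)        # share of the cell state to expose
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, g)]
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

def init_params(hidden, inputs, seed=0):
    rng = random.Random(seed)
    return {
        name: ([[rng.uniform(-0.5, 0.5) for _ in range(hidden + inputs)]
                for _ in range(hidden)], [0.0] * hidden)
        for name in ("forget", "input", "candidate", "output")
    }

# Run a hidden-size-3 LSTM over three one-hot encoded bases (A, T, C).
params = init_params(hidden=3, inputs=4)
h, c = [0.0] * 3, [0.0] * 3
for x in ([1, 0, 0, 0], [0, 0, 0, 1], [0, 1, 0, 0]):
    h, c = lstm_step(x, h, c, params)
print(h)
```

Because the cell state is updated additively (f * c_prev + i * g), a forget gate close to 1 lets information pass through many steps largely unchanged, which helps gradients survive across long sequences.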

Synergistic Integration: The CNN-LSTM Hybrid

The hybrid model leverages the strengths of both architectures in a complementary, sequential pipeline.

- Information Flow: The raw sequence is first processed by the CNN layers. The CNN acts as a high-resolution feature extractor, transforming the raw sequence data into a richer representation of salient local features [1] [2].

- Contextual Modeling: This sequence of feature vectors is then fed into the LSTM layer, which analyzes the sequential relationships and dependencies between the locally extracted features. It learns how these local patterns interact and combine over long ranges to influence the overall function or property being predicted [2].

- Analogy: This process is analogous to understanding a text: the CNN identifies the key words and short phrases (local motifs), while the LSTM understands the context, narrative, and long-range grammatical structure (long-range dependencies) that gives the entire text its meaning [6].

Quantitative Performance in Genomic Analysis

Empirical studies show that the CNN-LSTM hybrid architecture outperforms traditional machine learning methods and often surpasses standalone deep learning models in genomic classification tasks. Table 1 summarizes the DNA sequence classification benchmark reported in [1].

Table 1: Performance Comparison of Various Models on DNA Sequence Classification

| Model | Reported Accuracy | Key Application Context |
|---|---|---|
| Hybrid LSTM + CNN | 100% [1] | Human DNA sequence classification |
| XGBoost | 81.50% [1] | DNA sequence classification |
| Random Forest | 69.89% [1] | DNA sequence classification |
| DeepSea | 76.59% [1] | Genomic annotation |
| k-Nearest Neighbor | 70.77% [1] | DNA sequence classification |
| Logistic Regression | 45.31% [1] | DNA sequence classification |
| DeepVariant | 67.00% [1] | Variant calling |
| Graph Neural Network | 30.71% [1] | DNA sequence classification |

Beyond classification, hybrid models show significant promise in clinical prediction tasks. One study predicting COVID-19 severity from spike protein sequences and clinical data achieved an F1-score of 82.92% and an ROC-AUC of 0.9084 [2]. In cancer genomics, deep learning models have reduced false-negative rates in somatic variant detection by 30–40% compared to traditional bioinformatics pipelines [3]. Tools like MAGPIE, which uses an attention-based multimodal neural network, have achieved 92% accuracy in prioritizing pathogenic variants from sequencing data [3].

Application Notes & Experimental Protocols

Application Note: COVID-19 Severity Prediction from Spike Protein Sequences

Objective: To develop a predictive model for COVID-19 disease severity (Mild vs. Severe) using SARS-CoV-2 spike protein sequences and associated patient metadata [2].

Background: The spike protein is critical for viral entry and exhibits high mutation rates. Predicting severity based on viral genetics can aid in early intervention and resource allocation [2].

Table 2: Research Reagent Solutions for Genomic Sequence Analysis

| Reagent / Resource | Function / Description | Example Source / Tool |
|---|---|---|
| GISAID Database | Repository for viral genomic sequences and associated metadata; primary data source. | http://www.gisaid.org [2] |
| Biopython Library | A suite of tools for computational molecular biology; used for sequence analysis and feature extraction. | ProteinAnalysis module [2] |
| One-Hot Encoding | Preprocessing technique to represent nucleotide or amino acid sequences in a numerical, machine-readable format. | Standard pre-processing [1] |
| Physicochemical Descriptors | Numerical representations of biochemical properties (e.g., hydrophobicity, charge) for amino acids. | Kyte-Doolittle scale, Hopp-Woods scale [2] |
| Domain-Aware Encoding | A weighting scheme that emphasizes functionally critical regions of a sequence, such as the Receptor-Binding Domain (RBD). | Residues 319-541 in SARS-CoV-2 spike [2] |

Protocol Details:

Data Acquisition and Curation:

- Retrieve spike protein FASTA sequences and associated patient metadata from the GISAID database.

- Apply inclusion criteria: complete genome, human host, high coverage (<1% undefined bases), and presence of patient status.

- Standardize free-text patient status into "Mild" and "Severe" clinical categories [2].
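
The curation rules above can be expressed as a small filter over metadata records. This is a sketch under stated assumptions: the record field names (host, complete, genome, patient_status) and the keyword lists used to map free-text status to Mild or Severe are hypothetical, since the exact mapping used in [2] is not given here, and GISAID exports use their own column names.

```python
# Hypothetical keyword lists; mild phrases are checked first so that
# "Not hospitalized" is not caught by the severe keyword "hospitalized".
MILD_TERMS = ("not hospitalized", "outpatient", "asymptomatic", "mild")
SEVERE_TERMS = ("hospitalized", "icu", "intensive care", "deceased", "severe")

def n_fraction(genome):
    """Share of undefined bases ('N') in a nucleotide sequence."""
    return genome.upper().count("N") / len(genome) if genome else 1.0

def standardize_status(text):
    """Map free-text patient status to 'Mild', 'Severe', or None if unmappable."""
    t = text.lower()
    if any(term in t for term in MILD_TERMS):
        return "Mild"
    if any(term in t for term in SEVERE_TERMS):
        return "Severe"
    return None

def curate(records):
    """Keep complete, human-host, high-coverage records with a usable status."""
    kept = []
    for rec in records:
        if rec["host"].lower() != "human" or not rec["complete"]:
            continue
        if n_fraction(rec["genome"]) >= 0.01:  # high coverage: < 1% undefined bases
            continue
        label = standardize_status(rec.get("patient_status", ""))
        if label is not None:
            kept.append({**rec, "severity": label})
    return kept

records = [
    {"host": "Human", "complete": True, "genome": "ACGT" * 250, "patient_status": "Hospitalized"},
    {"host": "Human", "complete": True, "genome": "N" * 20 + "A" * 980, "patient_status": "Outpatient"},
    {"host": "Human", "complete": True, "genome": "A" * 1000, "patient_status": "Not hospitalized"},
    {"host": "Mink", "complete": True, "genome": "A" * 1000, "patient_status": "Deceased"},
    {"host": "Human", "complete": True, "genome": "A" * 1000, "patient_status": "unknown"},
]
print([r["severity"] for r in curate(records)])  # ['Severe', 'Mild']
```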

Feature Engineering:

- Global Physicochemical Descriptors: For each amino acid sequence, compute:

- Amino Acid Composition (AAC): Normalized frequency of each residue.

- Mean hydrophobicity (Kyte-Doolittle scale).

- Net charge at pH 7.4.

- Predicted secondary structure content (helix, strand, coil).

- Region-Specific Encoding:

- Define the Receptor-Binding Domain (RBD), e.g., residues 319-541.

- Represent each residue by a vector of properties (polarity, charge, hydrophobicity).

- Apply a position-specific weighting scheme (e.g., weight of 5 for RBD residues, 1 for others) to emphasize functionally critical regions [2].

- Clinical and Epidemiological Data: One-hot encode demographic variables (age, gender) and viral lineage data.

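
Two of the global descriptors listed above, amino acid composition and mean Kyte-Doolittle hydrophobicity, reduce to simple counting. The sketch below computes them in pure Python and skips non-standard symbols such as X; it is an illustration rather than the exact feature code of [2]. For net charge and secondary structure content, Biopython's ProteinAnalysis class (referenced in [2]) provides charge_at_pH() and secondary_structure_fraction().

```python
# Kyte-Doolittle hydropathy values for the 20 standard amino acids.
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
AMINO_ACIDS = sorted(KD)

def standard_residues(seq):
    return [r for r in seq.upper() if r in KD]

def amino_acid_composition(seq):
    """Normalized frequency of each standard residue (values sum to 1)."""
    residues = standard_residues(seq)
    return {aa: residues.count(aa) / len(residues) for aa in AMINO_ACIDS}

def mean_hydrophobicity(seq):
    """Average Kyte-Doolittle value, i.e. the GRAVY score."""
    residues = standard_residues(seq)
    return sum(KD[r] for r in residues) / len(residues)

example_seq = "MFVFLVLLPLVSSQCVNLTX"  # short example input; 'X' is ignored
aac = amino_acid_composition(example_seq)
print(round(aac["L"], 3), round(mean_hydrophobicity(example_seq), 3))
```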

Model Implementation and Training:

- Architecture: Implement a hybrid model where initial CNN layers perform local feature extraction from the engineered sequence input. The output feature maps from the CNN are then fed into an LSTM layer to model long-range dependencies across the sequence. Finally, the output of the LSTM is combined with clinical data and processed by fully connected layers for the final prediction.

- Training: Use a standardized split of data into training, validation, and test sets. Train the model using an appropriate optimizer and loss function for binary classification, monitoring for overfitting on the validation set [2].
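
The final fusion step can be sketched as follows: the LSTM's summary vector is concatenated with one-hot encoded clinical variables and passed to a dense layer with a sigmoid output for P(Severe), trained with binary cross-entropy. For brevity this uses a single dense unit instead of a stack of fully connected layers, omits lineage features, and the category lists and numeric values are hypothetical placeholders.

```python
import math

AGE_BANDS = ("<40", "40-64", "65+")   # hypothetical binning of age
GENDERS = ("female", "male")

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def one_hot_category(value, categories):
    return [1.0 if value == c else 0.0 for c in categories]

def severity_probability(lstm_summary, clinical, weights, bias):
    """Concatenate sequence and clinical features, then apply one sigmoid unit."""
    fused = list(lstm_summary) + list(clinical)
    if len(fused) != len(weights):
        raise ValueError("weights must match the fused feature length")
    return sigmoid(sum(w * x for w, x in zip(weights, fused)) + bias)

def binary_cross_entropy(p, label, eps=1e-12):
    """Loss for one example; label is 1 for Severe, 0 for Mild."""
    p = min(max(p, eps), 1.0 - eps)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))

lstm_summary = [0.2, -0.1, 0.4]  # placeholder for the LSTM's final hidden state
clinical = one_hot_category("65+", AGE_BANDS) + one_hot_category("male", GENDERS)
weights = [0.5, -0.3, 0.8, -0.2, 0.1, 0.6, 0.0, 0.2]  # placeholder learned weights
p = severity_probability(lstm_summary, clinical, weights, bias=-0.5)
print(round(p, 3), round(binary_cross_entropy(p, 1), 3))
```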

Application Note: Human DNA Sequence Classification

Objective: To accurately classify human DNA sequences, distinguishing them from those of other species, by identifying characteristic local and long-range patterns [1].

Background: DNA sequence classification is a fundamental task in genomics for identifying regulatory regions, genetic variations, and functional elements. The complex and hierarchical nature of genomic information makes it well-suited for hybrid deep-learning approaches [1].

Experimental Workflow:

Data Preprocessing:

- Sequence Representation: Convert DNA sequences (A, C, G, T) into a numerical format using one-hot encoding. This creates a binary matrix where each base is represented by a unique 4-dimensional vector [1].

- Data Partitioning: Split the data into training, validation, and test sets, ensuring no data leakage between sets.
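
One way to check the no-leakage requirement is to fingerprint every sequence and flag any fingerprint that appears in more than one split. The sketch below also treats a sequence and its reverse complement as the same molecule, an assumption that suits double-stranded DNA tasks but may be too strict for strand-specific ones. Near-duplicates (e.g., sequences differing by a few bases) would need a similarity-based check instead.

```python
import hashlib

COMPLEMENT = str.maketrans("ACGTN", "TGCAN")

def canonical(seq):
    """Uppercased sequence or its reverse complement, whichever sorts first."""
    s = seq.strip().upper()
    return min(s, s.translate(COMPLEMENT)[::-1])

def fingerprint(seq):
    return hashlib.sha256(canonical(seq).encode("ascii")).hexdigest()

def leaked_fingerprints(train, val, test):
    """Fingerprints of sequences that occur in more than one split."""
    seen = {}
    for name, seqs in (("train", train), ("val", val), ("test", test)):
        for fp in {fingerprint(s) for s in seqs}:
            seen.setdefault(fp, set()).add(name)
    return {fp for fp, splits in seen.items() if len(splits) > 1}

leaks = leaked_fingerprints(["ACGTT", "GGGA"], ["aacgt"], ["CCCC"])
print(len(leaks))  # 1: "aacgt" is the reverse complement of "ACGTT"
```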

Hybrid Model Architecture:

- CNN Module: The one-hot encoded sequences are fed into convolutional layers to detect short, conserved motifs (e.g., transcription factor binding sites). This is followed by pooling layers to reduce dimensionality and introduce translational invariance.

- LSTM Module: The feature maps from the CNN are reshaped into a sequence and passed to the LSTM layer. The LSTM learns the long-range grammatical structure of the DNA, capturing how local motifs interact across distances far longer than a single convolutional filter to influence function.

- Classification Head: The final hidden state from the LSTM is passed through a fully connected layer with a softmax activation function to produce the final classification probabilities (e.g., human, chimpanzee, dog) [1].
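
The reshaping and classification head described above can be sketched in pure Python: feature maps are max-pooled, transposed from [filters][positions] into [positions][filters] so that each pooled position becomes one LSTM time step, and the logits derived from the final hidden state are converted to class probabilities with a softmax. The feature-map values and logits below are hypothetical.

```python
import math

def max_pool1d(feature_map, size):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(feature_map[i:i + size])
            for i in range(0, len(feature_map) - size + 1, size)]

def to_time_steps(feature_maps):
    """Reshape [filters][positions] into [positions][filters]."""
    return [list(step) for step in zip(*feature_maps)]

def softmax(logits):
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

maps = [[0.0, 1.0, 3.0, 2.0, 5.0, 4.0],   # filter 1
        [0.5, 0.0, 0.0, 2.5, 1.0, 0.0]]   # filter 2
pooled = [max_pool1d(m, size=2) for m in maps]
steps = to_time_steps(pooled)
print(steps)  # [[1.0, 0.5], [3.0, 2.5], [5.0, 1.0]]

classes = ("human", "chimpanzee", "dog")
probs = softmax([2.0, 1.0, 0.1])  # hypothetical logits from the dense layer
print(classes[probs.index(max(probs))])  # human
```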

Performance Benchmarking:

- As shown in Table 1, the hybrid LSTM+CNN model achieved 100% classification accuracy on the task reported in [1], outperforming all other machine learning and deep learning models evaluated in that benchmark and illustrating the benefit of this architectural synergy [1].

Discussion and Future Perspectives

The integration of CNNs and LSTMs represents a robust framework for genomic sequence analysis, effectively mirroring the multi-scale nature of biological information. However, several challenges and future directions remain.

A primary consideration is model interpretability. While highly accurate, deep learning models are often perceived as "black boxes." Future work should integrate attention mechanisms, which allow the model to highlight which specific regions of the input sequence (e.g., which bases or amino acids) were most influential in making a prediction. This is crucial for generating biologically testable hypotheses and for clinical adoption [3].

Another challenge is data scarcity and quality for specific tasks. Techniques such as transfer learning, where a model pre-trained on a large, general dataset is fine-tuned for a specific application, and federated learning, which allows model training across multiple institutions without sharing sensitive patient data, are promising avenues to overcome these limitations [3].

Finally, the field is moving beyond pure sequence data. The most powerful future models will seamlessly integrate multi-omics data—such as transcriptomics, proteomics, and epigenomics—alongside clinical variables. This will provide a more holistic view of the genotype-phenotype relationship, further advancing drug discovery and personalized medicine [7] [3].

The functional properties of DNA and protein sequences are governed by complex patterns that operate at multiple spatial scales. Local motifs (short, conserved sequences responsible for specific biological functions, such as transcription factor binding sites in DNA or catalytic domains in proteins) are embedded within a broader sequential context that modulates their activity. Integrating these two levels is therefore critical for accurate sequence-to-function modeling [8] [9].

Deep learning architectures, particularly hybrid models combining Convolutional Neural Networks (CNNs) and Long Short-Term Memory networks (LSTMs), are well suited to this biological hierarchy. Their structure mirrors the organization of genomic information: CNNs excel at detecting local, position-invariant motifs, while LSTMs capture the long-range dependencies and grammatical structures formed by the arrangement of these motifs [1] [2]. This document details the application of such hybrid models through specific protocols and notes, providing a framework for their use in genomic analysis and drug discovery.

Key Biological Concepts and Computational Analogs

Table 3: Biological Concepts and Their Computational Counterparts in Hybrid Models

| Biological Concept | Description | Computational Analog in Hybrid CNN-LSTM |
|---|---|---|
| Local Motif | Short, conserved sequence patterns (e.g., zinc fingers, helix-turn-helix) determining specific molecular interactions. | CNN Feature Maps: Filters scan the sequence to detect these local, invariant patterns [2]. |
| Sequential Context | The long-range spatial arrangement and dependency between motifs that influence overall function and regulation. | LSTM Memory Cells: Capture long-term dependencies and the "grammar" of motif arrangement [1] [2]. |
| Nucleotide/Amino Acid Dependency | The statistical relationship between adjacent residues in a sequence. | k-spectrum/k-mer Models: Capture local context and nucleotide dependency at the highest possible resolution [8] [9]. |
| Sequence Resolution | The granularity at which sequence information is considered for analysis. | Model Input Encoding: One-hot encoding, DNA embeddings, or physicochemical feature vectors provide the raw data resolution [1] [2]. |

Application Note: DNA Motif Recognition from Protein Sequence

Background and Rationale

Understanding the binding specificity of DNA-binding proteins is fundamental to deciphering gene regulatory networks. A significant challenge is mechanistically inferring DNA motif preferences directly from protein sequences across diverse families without resorting to extensive wet-lab experiments [8] [9]. The k-spectrum recognition model addresses this by operating at a high resolution to capture complex patterns from protein sequences.

Experimental Results and Performance

The k-spectrum model was validated on millions of k-mer binding intensities spanning the DNA-binding domain families listed below, demonstrating robust performance and generalizability across protein families.

Table 4: Performance of k-spectrum Model on DNA-Binding Protein Families

| DNA-Binding Domain Family | Key Evaluation Metric | Model Performance & Competitive Edge |
|---|---|---|
| bHLH | Multiple metrics measured on millions of k-mer binding intensities | Demonstrated competitive edges in motif recognition [8] [9]. |
| bZIP | Multiple metrics measured on millions of k-mer binding intensities | Demonstrated competitive edges in motif recognition [8] [9]. |
| ETS | Multiple metrics measured on millions of k-mer binding intensities | Demonstrated competitive edges in motif recognition [8] [9]. |
| Forkhead | Multiple metrics measured on millions of k-mer binding intensities | Demonstrated competitive edges in motif recognition [8] [9]. |
| Homeodomain | Multiple metrics measured on millions of k-mer binding intensities | Demonstrated competitive edges in motif recognition [8] [9]. |

Protocol: k-spectrum Recognition Modeling

Objective: To build a model that predicts DNA binding motif sequences from the amino acid sequence of a DNA-binding protein.

Materials:

- Protein Sequences: FASTA files for proteins from DNA-binding domain families (e.g., bHLH, bZIP, ETS, Forkhead, Homeodomain).

- Binding Intensity Data: Experimentally derived k-mer binding intensity data for the proteins of interest.

- Computational Environment: Python with scikit-learn and Biopython libraries.

Methodology:

- Data Acquisition and Curation:

- Collect a curated set of protein sequences (e.g., from UniProt) and their corresponding DNA binding specificity data (e.g., protein binding microarray k-mer intensities from databases such as UniPROBE).

- Ensure sequences are labeled by their DNA-binding domain family.

k-mer Spectrum Feature Extraction:

- For a given value of k, decompose each protein sequence into all possible contiguous amino acid sub-sequences of length k (k-mers).

- Construct a feature vector for each protein sequence that represents the normalized frequency of these k-mers. This step captures the local sequence context and residue dependency [8] [9].
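
The k-mer spectrum step can be sketched as below: each protein is mapped to a fixed-length vector of normalized k-mer frequencies over the 20 standard amino acids. This illustrates the feature extraction only and is not the exact implementation of [8] [9]. The vector length grows as 20^k (400 for k = 2, 8,000 for k = 3), which is why small values of k are typical for dense representations.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def kmer_spectrum(seq, k=2):
    """Normalized frequencies of all 20**k amino acid k-mers, in a fixed order."""
    vocab = ["".join(p) for p in product(AMINO_ACIDS, repeat=k)]
    index = {kmer: i for i, kmer in enumerate(vocab)}
    counts = [0] * len(vocab)
    seq = seq.upper()
    for start in range(len(seq) - k + 1):
        kmer = seq[start:start + k]
        if kmer in index:  # skip k-mers containing non-standard residues such as X
            counts[index[kmer]] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(vocab)

vec = kmer_spectrum("MKVLA", k=2)
print(len(vec), [round(v, 2) for v in vec if v])  # 400 [0.25, 0.25, 0.25, 0.25]
```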

Model Training and Validation:

- Train the k-spectrum model on the feature vectors to predict DNA binding intensities for different k-mers.

- Validate the model using cross-validation and independent test sets. Measure performance using multiple metrics (e.g., AUC, precision, recall) across the different DNA-binding domain families.

Application:

- The trained model can be used to predict the DNA motif binding preferences of novel DNA-binding proteins based solely on their sequence.

- It can also help prioritize the impact of single nucleotide variants (SNVs) on transcription factor binding sites in a genome-wide manner, identifying regulatory variants that may be associated with disease [8] [9].
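
A simple way to use k-mer binding scores for variant prioritization is to compare the best-scoring k-mer overlapping a single nucleotide variant before and after the substitution. The sketch below assumes a lookup table of predicted k-mer binding scores (the values are hypothetical) and uses the maximum over overlapping k-mers as the site score; this heuristic is an illustration, not necessarily the scoring used in [8] [9].

```python
def best_kmer_score(seq, k, scores):
    """Highest score among all k-mers in seq; k-mers missing from the table score 0."""
    return max(scores.get(seq[i:i + k], 0.0) for i in range(len(seq) - k + 1))

def snv_delta(sequence, pos, alt, k, scores):
    """Change in best overlapping k-mer score when sequence[pos] becomes alt."""
    if len(sequence) < k:
        raise ValueError("sequence shorter than k")
    lo = max(0, pos - k + 1)          # only k-mers overlapping pos can change
    hi = min(len(sequence), pos + k)
    ref = sequence[lo:hi]
    alt_seq = ref[:pos - lo] + alt + ref[pos - lo + 1:]
    return best_kmer_score(alt_seq, k, scores) - best_kmer_score(ref, k, scores)

# Hypothetical predicted 4-mer scores around an E-box-like site (CACGTG).
scores = {"CACG": 0.9, "ACGT": 0.8, "CGTG": 0.9, "CATG": 0.3, "ATGT": 0.1}
delta = snv_delta("TTCACGTGTT", pos=4, alt="T", k=4, scores=scores)
print(round(delta, 2))  # -0.6: the C>T change disrupts the highest-scoring k-mers
```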

Application Note: Hybrid CNN-LSTM for DNA Sequence Classification

Background and Rationale

DNA sequence classification is a cornerstone of genomics, essential for identifying regulatory regions, pathogenic mutations, and functional genetic elements. The complexity and long-range dependencies within genomic data pose significant challenges for traditional machine learning models. A hybrid CNN-LSTM architecture was developed to synergistically extract local patterns and long-distance dependencies, achieving superior classification performance [1].

Experimental Results and Performance

The hybrid model was benchmarked against a wide array of traditional machine learning and deep learning models, demonstrating its significant advantage.

Table 5: Performance Comparison of DNA Sequence Classification Models

| Model Type | Specific Model | Reported Classification Accuracy |
|---|---|---|
| Traditional Machine Learning | Logistic Regression | 45.31% |
| Traditional Machine Learning | Naïve Bayes | 17.80% |
| Traditional Machine Learning | Random Forest | 69.89% |
| Traditional Machine Learning | k-Nearest Neighbor | 70.77% |
| Advanced Machine Learning | XGBoost | 81.50% |
| Deep Learning | DeepSea | 76.59% |
| Deep Learning | DeepVariant | 67.00% |
| Deep Learning | Graph Neural Network | 30.71% |
| Hybrid Deep Learning | LSTM + CNN (Proposed) | 100.00% [1] |

Protocol: Implementing a Hybrid CNN-LSTM for DNA Classification

Objective: To classify DNA sequences (e.g., human vs. non-human, enhancer vs. non-enhancer) using a hybrid deep learning model.

Materials:

- DNA Sequences: Labeled genomic sequences in FASTA format.

- Computational Resources: GPU-accelerated environment (e.g., with TensorFlow or PyTorch).

- Data Preprocessing Tools: For one-hot encoding or DNA embedding generation.

Methodology:

- Sequence Preprocessing and Encoding:

- Standardize sequence length by truncating or padding.

- Convert nucleotide sequences into numerical representations. One-hot encoding (A=[1,0,0,0], C=[0,1,0,0], etc.) is a common and effective approach, though learned DNA embeddings can also be used [1].
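
The two preprocessing steps can be sketched in a few lines. This assumes right-padding with the placeholder N and maps N (padding or an ambiguous base) to an all-zero vector, which is one common convention; other pipelines pad on both sides or add a fifth channel for N.

```python
BASES = "ACGT"

def fix_length(seq, length, pad_char="N"):
    """Truncate or right-pad a sequence to exactly `length` characters."""
    seq = seq.upper()[:length]
    return seq + pad_char * (length - len(seq))

def one_hot_encode(seq):
    """A=[1,0,0,0], C=[0,1,0,0], G=[0,0,1,0], T=[0,0,0,1]; anything else -> zeros."""
    return [[1 if base == b else 0 for b in BASES] for base in seq]

batch = [fix_length(s, 6) for s in ("acgtacgtaa", "GATT")]
print(batch)  # ['ACGTAC', 'GATTNN']
encoded = [one_hot_encode(s) for s in batch]  # 2 sequences x 6 positions x 4 channels
```

All-zero padding rows contribute nothing to a convolution filter's weighted sum, so padded positions only ever receive the filter's bias.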

Hybrid Architecture Configuration:

- Input Layer: Accepts the numerically encoded DNA sequences.

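- CNN Module (Local Motif Detector): One or more convolutional layers that scan the encoded sequence for short, conserved motifs, each followed by a pooling layer that reduces dimensionality.

- LSTM Module (Sequential Context Modeler): An LSTM layer that receives the pooled feature maps as a sequence and models the long-range dependencies between the detected motifs.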

- Output Layer: A fully connected layer with a softmax activation function to produce the final classification probabilities.
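
Because the LSTM module's sequence length is whatever the CNN module leaves behind, it helps to check shapes before choosing kernel and pool sizes. The helpers below use the standard output-length formula for 1D convolution (as documented for PyTorch's Conv1d; with no padding and dilation 1 it matches Keras "valid" padding) and for 1D max pooling without padding. The layer sizes are a hypothetical configuration.

```python
def conv1d_out(length, kernel, stride=1, padding=0, dilation=1):
    """Output length of a 1D convolution."""
    return (length + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1

def pool1d_out(length, size, stride=None):
    """Output length of 1D max pooling without padding; stride defaults to size."""
    stride = stride or size
    return (length - size) // stride + 1

# Hypothetical configuration: 1,000-bp input, two convolution + pooling blocks.
steps = 1000
steps = conv1d_out(steps, kernel=12)  # 989
steps = pool1d_out(steps, size=4)     # 247
steps = conv1d_out(steps, kernel=8)   # 240
steps = pool1d_out(steps, size=4)     # 60
print(steps)  # 60 time steps reach the LSTM
```

Pooling shortens the sequence the LSTM must traverse (here from 1,000 positions to 60), which eases long-range modeling at the cost of positional resolution.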

Model Training and Evaluation:

- Compile the model using an optimizer (e.g., Adam), a loss function (e.g., categorical cross-entropy), and track accuracy as a metric.

- Train the model on a labeled dataset, using a separate validation set for hyperparameter tuning to prevent overfitting.

- Evaluate the final model on a held-out test set to report unbiased performance metrics like accuracy, F1-score, and AUC-ROC [1].
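
The evaluation metrics named above can be computed directly from labels and scores. The sketch below handles the binary case in pure Python; for the multi-class DNA task, per-class scores are typically macro-averaged, and scikit-learn's f1_score and roc_auc_score expose average and multi_class options for this. ROC-AUC is computed here as the probability that a randomly chosen positive receives a higher score than a randomly chosen negative, which is quadratic in sample size and meant only for illustration.

```python
def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1_score(y_true, y_pred, positive=1):
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def roc_auc(y_true, scores, positive=1):
    """Share of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

y_true = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.4, 0.35, 0.1, 0.8, 0.7]
y_pred = [int(s >= 0.5) for s in scores]  # threshold at 0.5
print(round(accuracy(y_true, y_pred), 3), round(f1_score(y_true, y_pred), 3),
      round(roc_auc(y_true, scores), 3))  # 0.667 0.667 0.889
```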

Table 6: Key Resources for Sequence Analysis and Modeling

| Resource Name | Type | Function & Application |
|---|---|---|
| MEME Suite [10] | Software Toolkit | Discovers novel motifs (MEME, STREME) and performs motif enrichment analysis (AME, CentriMo) in nucleotide or protein sequences. |
| GISAID [2] | Database | Provides access to annotated viral genomic sequences (e.g., SARS-CoV-2 spike protein), crucial for training predictive models. |
| Sanger Sequencing [11] | Laboratory Technique | Provides gold-standard validation for sequence modifications, engineered constructs, and low-frequency variant detection. |
| Addgene Protocol - Sequence Analysis [12] | Laboratory Protocol | Guidelines for Sanger sequencing primer design and analysis of trace files (.ab1) for plasmid sequence verification. |
| Sage Science HLS2 [13] | Laboratory Instrument | Performs high-molecular-weight DNA size selection for long-read sequencing technologies (e.g., Oxford Nanopore, PacBio). |

Integrated Workflow: From Sequence to Functional Insight

The power of the hybrid modeling approach is fully realized when computational predictions are integrated with experimental validation, for example when characterizing a novel DNA-binding protein.

Next-generation sequencing (NGS) technologies have revolutionized genomics, with Whole Genome Sequencing (WGS) and Whole Exome Sequencing (WES) serving as two foundational approaches for detecting genetic variation [14] [15]. WGS aims to sequence and detect variations across an organism's entire DNA, providing an unbiased view of genetic variation including single nucleotide variants (SNVs), insertions and deletions (indels), structural variants, and copy number variations [14]. In contrast, WES specifically targets the protein-coding exonic regions, which constitute approximately 2% of the genome yet harbor an estimated 90% of known disease-causing variants [15]. The choice between these approaches involves significant trade-offs: WES offers substantial savings in both sequencing and data storage (typically 5-6 GB per file versus 90 GB or more for WGS), while WGS provides more comprehensive variant detection thanks to its broader, more uniform coverage and its ability to interrogate non-coding regions [15]. For researchers applying deep learning models such as hybrid LSTM-CNN architectures to genomic sequences, understanding these data sources and their preprocessing requirements is fundamental to building effective predictive models.

Genomic Data Types: WES vs. WGS

Technical Specifications and Applications

Table 7: Comparison of Whole Exome Sequencing (WES) and Whole Genome Sequencing (WGS)

| Parameter | Whole Exome Sequencing (WES) | Whole Genome Sequencing (WGS) |
|---|---|---|
| Target Region | Protein-coding exons (1-2% of genome) | Entire genome (virtually every nucleotide) |
| Data Volume per Sample | 5-6 GB | 90 GB or more |
| Variant Types Detected | SNVs, small indels, some CNVs | SNVs, indels, structural variants, CNVs, variants in regulatory elements |
| Primary Applications | Rare disease diagnosis, identifying coding variants associated with complex diseases, cancer genomics | Comprehensive variant discovery, non-coding region analysis, structural variant detection, population genomics |
| Cost Considerations | Lower sequencing and data storage costs | Higher sequencing and data storage costs |
| Capture Method | Hybridization-based capture using probes | No capture required |
| Clinical Relevance | Captures ~90% of known disease-causing variants | Potential to identify novel disease mechanisms in non-coding regions |

Implications for Deep Learning Applications

The choice between WES and WGS has significant implications for downstream deep learning applications. WES data provides a focused dataset enriched for clinically relevant variants, reducing the feature space for model training [15]. This can be advantageous for LSTM-CNN models working with limited computational resources or sample sizes. Conversely, WGS offers a more complete genomic context, potentially enabling models to identify complex patterns across coding and non-coding regions that might be missed in exome-only data [14]. The substantially larger data volume of WGS, however, demands more sophisticated data handling and computational resources for model training. For hybrid LSTM-CNN models specifically designed for genomic sequence analysis, WGS data may provide opportunities to learn long-range dependencies across the genome that are inaccessible through exome sequencing alone.

Standardized Preprocessing Workflows

Primary Preprocessing Steps

The transformation of raw sequencing reads into analysis-ready data follows a structured pipeline, from raw reads to variant calling, with quality control at each stage:

Quality Control and Read Preprocessing: Initial quality assessment using tools like FastQC examines base quality scores, GC content distribution, adapter contamination, and sequence length distribution [14]. Artifacts are removed through preprocessing steps including trimming, filtering, and adapter clipping to prevent mapping biases [15].
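
Quality trimming with a threshold such as trim_galore's --quality 20 can be sketched as below. Trim Galore delegates trimming to Cutadapt, whose documentation describes a BWA-style 3' algorithm: subtract the cutoff from each quality value and cut where the quality deficit, accumulated from the 3' end, is largest. This simplified sketch implements that idea for a single read, assumes Phred+33 quality encoding, applies a minimum-length filter analogous to --length 50, and does not perform adapter removal.

```python
def phred_scores(quality, offset=33):
    """Decode a FASTQ quality string (Phred+33 encoding)."""
    return [ord(ch) - offset for ch in quality]

def trim_3prime_index(quals, cutoff=20):
    """Index at which to cut the read (len(quals) means no trimming)."""
    stop, running, best = len(quals), 0, 0
    for i in range(len(quals) - 1, -1, -1):
        running += cutoff - quals[i]   # positive when the base is below the cutoff
        if running < 0:                # high-quality bases outweigh the low tail
            break
        if running > best:
            best, stop = running, i
    return stop

def trim_read(seq, qual, cutoff=20, min_length=50):
    """Return the trimmed (seq, qual), or None if the read becomes too short."""
    stop = trim_3prime_index(phred_scores(qual), cutoff)
    if stop < min_length:
        return None
    return seq[:stop], qual[:stop]

# 'I' encodes Q40 and '#' encodes Q2 in Phred+33.
print(trim_read("ACGTACG", "IIIII##", min_length=3))  # ('ACGTA', 'IIIII')
```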

Alignment to Reference Genome: Processed reads are mapped to a reference genome (e.g., GRCh38/hg38 for human data) using aligners such as BWA-MEM or Bowtie [14] [15]. Aligners typically write SAM output, which is sorted and compressed into BAM format, where each read is positioned against the reference sequence.

Post-Alignment Processing: Critical processing steps include marking duplicate reads (to minimize allelic biases) and base quality score recalibration (BQSR) to correct for systematic errors in base quality scores [14]. The GATK pipeline uses known variant sites from resources like dbSNP, HapMap, and 1000 Genomes for BQSR [14].

Variant Calling and Filtering: Variant calling algorithms like GATK HaplotypeCaller calculate the probability that a genetic variant is truly present in the sample [15]. To avoid false-positive calls, parameters such as maximum read depth per position, minimum number of gapped reads, and base alignment quality recalculation are optimized [15]. Finally, quality filters are applied to retain high-confidence variants.
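
The final hard-filtering step can be illustrated on plain VCF text. The thresholds below (minimum QUAL, minimum and maximum INFO/DP) are hypothetical; in practice such filters are usually applied with tools like GATK VariantFiltration or bcftools filter rather than custom code. The sketch relies only on the fixed VCF column order (CHROM, POS, ID, REF, ALT, QUAL, FILTER, INFO).

```python
def parse_info(info):
    """Parse a VCF INFO field like 'DP=35;AF=0.5;DB' into a dict."""
    fields = {}
    for item in info.split(";"):
        key, _, value = item.partition("=")
        fields[key] = value if value else True  # flags such as DB have no value
    return fields

def passes(line, min_qual=30.0, min_depth=10, max_depth=250):
    cols = line.rstrip("\n").split("\t")
    if cols[5] == ".":                # missing QUAL
        return False
    depth = int(parse_info(cols[7]).get("DP", 0))
    return float(cols[5]) >= min_qual and min_depth <= depth <= max_depth

def hard_filter(lines, **thresholds):
    return [l for l in lines if not l.startswith("#") and passes(l, **thresholds)]

vcf = [
    "##fileformat=VCFv4.2",
    "chr1\t100\t.\tA\tG\t50.2\t.\tDP=35;AF=0.5",
    "chr1\t200\t.\tC\tT\t12.0\t.\tDP=40",    # fails QUAL
    "chr1\t300\t.\tG\tA\t99\t.\tDP=600",     # fails maximum depth
]
print([l.split("\t")[1] for l in hard_filter(vcf)])  # ['100']
```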

Experimental Protocol: GATK Workflow for Variant Discovery

For researchers implementing the GATK workflow, the following protocol provides a detailed methodology:

Environment Setup: Create a dedicated computational environment using Conda to isolate tools and prevent conflicts [14].

Reference Genome Preparation: Download the human reference genome GRCh38 and create alignment indices [14].

This index creation step requires 2-3 hours but only needs to be performed once [14].

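Execute Preprocessing Pipeline:

- Perform quality control:

fastqc sample.fastq

- Trim adapters and low-quality bases:

trim_galore --quality 20 --length 50 sample.fastq

- Align to reference, adding a read group (GATK tools require read-group information):

bwa mem -t 20 -R '@RG\tID:sample\tSM:sample\tPL:ILLUMINA' reference.fa sample_trimmed.fq > sample.sam

- Sort the alignments and convert them to BAM:

samtools sort -o sample.bam sample.sam

- Mark duplicate reads:

gatk MarkDuplicates -I sample.bam -O sample_dedup.bam --METRICS_FILE metrics.txt

- Recalibrate base quality scores (BaseRecalibrator builds the recalibration table; ApplyBQSR writes the recalibrated BAM):

gatk BaseRecalibrator -I sample_dedup.bam -R reference.fa --known-sites known_sites.vcf -O recal_data.table

gatk ApplyBQSR -I sample_dedup.bam -R reference.fa --bqsr-recal-file recal_data.table -O sample_recal.bam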

Variant Calling:

Feature Engineering for Genomic Sequences

Encoding Methods for Deep Learning

Raw DNA sequences comprise nucleotides (A, C, G, T for DNA; A, C, U, G for RNA) that must be converted to numerical representations for deep learning applications [16]. The encoding method significantly impacts model performance, with different approaches offering distinct advantages.

Table 8: Feature Encoding Techniques for Genomic Sequences

| Encoding Method | Description | Advantages | Limitations | Suitable Model Types |
|---|---|---|---|---|
| Label Encoding | Each nucleotide is assigned a unique integer value (e.g., A=0, C=1, G=2, T=3) | Preserves positional information, simple implementation | Creates artificial ordinal relationships between nucleotides | CNN, LSTM, CNN-LSTM |
| K-mer Encoding | DNA sequence is split into overlapping subsequences of length k, which are treated like words in a sentence | Captures local context; non-overlapping k-mers also shorten the sequence | Increases feature dimensionality for large k values | CNN, CNN-Bidirectional LSTM |
| One-Hot Encoding | Each nucleotide is represented as a binary vector (e.g., A=[1,0,0,0], C=[0,1,0,0]) | No artificial ordinal relationships, widely compatible | High dimensionality for long sequences, sparse representation | CNN, LSTM, Hybrid models |
| Physicochemical Descriptors | Incorporates biochemical properties (hydrophobicity, charge, polarity) | Encodes biologically relevant information, domain-specific | Requires domain knowledge, complex implementation | CNN, CNN-LSTM for prediction tasks |

Advanced Feature Engineering Pipeline

For hybrid LSTM-CNN models, a comprehensive feature engineering pipeline can extract both local patterns and global sequence characteristics. An advanced feature extraction process combines the following steps:

K-mer Encoding Implementation: K-mer encoding breaks DNA sequences into overlapping subsequences of length k. For example, the sequence "ATCGGA" with k=3 generates "ATC", "TCG", "CGG", "GGA". This approach effectively reduces sequence dimensionality while capturing local contextual information [16]. Research has demonstrated that CNN and CNN-Bidirectional LSTM models with K-mer encoding can achieve high accuracy (up to 93.16% and 93.13% respectively) in DNA sequence classification tasks [16].
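
The worked example above translates directly into code. With a stride of 1 (overlapping k-mers), a sequence of length L yields L - k + 1 tokens; a stride equal to k gives non-overlapping k-mers and shortens the token sequence roughly k-fold. The integer vocabulary, with 0 reserved for padding, is one common choice (an assumption here) for feeding tokens to an embedding layer.

```python
from itertools import product

def kmer_tokens(seq, k=3, stride=1):
    """Split a DNA sequence into k-mers; stride=1 gives overlapping k-mers."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]

def kmer_vocab(k=3):
    """Map each of the 4**k k-mers to an integer id, reserving 0 for padding."""
    return {"".join(p): i + 1 for i, p in enumerate(product("ACGT", repeat=k))}

tokens = kmer_tokens("ATCGGA", k=3)
print(tokens)                              # ['ATC', 'TCG', 'CGG', 'GGA']
print(" ".join(tokens))                    # ATC TCG CGG GGA
ids = [kmer_vocab(3)[t] for t in tokens]   # integer inputs for an embedding layer
```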

Region-Specific Feature Enhancement: Biologically important regions can be emphasized through position-specific weighting schemes. For instance, in spike protein analysis, residues within the receptor-binding domain (RBD, positions 319-541) might receive 5× higher weight than other regions [2]. Each residue can be represented by a multi-dimensional vector incorporating normalized values of polarity, isoelectric point, hydrophobicity, and binary indicators for physicochemical classes [2].
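A minimal sketch of the positional weighting follows. It assumes 1-based inclusive RBD coordinates (319-541), a 1,273-residue SARS-CoV-2 spike protein, and that the weight simply multiplies each residue's feature vector. How [2] actually applies the weight is not specified here, so `region_weights` and `apply_weights` are hypothetical helpers.

```python
def region_weights(length: int, start: int = 319, end: int = 541,
                   boost: float = 5.0) -> list[float]:
    """Hypothetical helper: `boost` inside [start, end] (1-based, inclusive), else 1.0."""
    return [boost if start <= pos <= end else 1.0 for pos in range(1, length + 1)]


def apply_weights(features: list[list[float]], weights: list[float]) -> list[list[float]]:
    """Scale each residue's feature vector by its positional weight (assumed scheme)."""
    return [[w * x for x in row] for row, w in zip(features, weights)]


w = region_weights(1273)  # SARS-CoV-2 spike protein length
print(sum(1 for x in w if x == 5.0))  # 223 residues in the RBD window
```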

Physicochemical Descriptor Extraction: Global protein features include [2]:

- Amino acid composition (normalized frequency of each residue)

- Sequence length and amino acid diversity

- Mean hydrophobicity (Kyte-Doolittle scale)

- Net charge at physiological pH (7.4)

- Predicted secondary structure content (helix, strand, coil proportions)

- Hydrophilicity index (Hopp-Woods scale)

- Hydrogen bonding potential (frequency of Ser, Thr, Asn, Gln, His, Tyr)
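Several of the global descriptors listed above reduce to simple counting. The sketch below computes composition, length, diversity (taken here to mean the number of distinct residues, an assumption), mean Kyte-Doolittle hydrophobicity, and hydrogen-bonding residue frequency. Net charge, secondary structure content, and the Hopp-Woods index are omitted because they need pKa tables, a structure predictor, or an additional scale.

```python
# Kyte-Doolittle hydropathy values for the 20 standard amino acids.
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5,
      "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9,
      "M": 1.9, "F": 2.8, "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9,
      "Y": -1.3, "V": 4.2}
AMINO_ACIDS = sorted(KD)
H_BOND = set("STNQHY")  # Ser, Thr, Asn, Gln, His, Tyr


def global_descriptors(protein: str) -> dict:
    """Sequence-level descriptors; non-standard symbols (X, gaps, '*') are dropped."""
    seq = [aa for aa in protein.upper() if aa in KD]
    n = len(seq)
    if n == 0:
        raise ValueError("no standard amino acids in sequence")
    return {
        "length": n,  # counts standard residues only
        "diversity": len(set(seq)),
        "composition": {aa: seq.count(aa) / n for aa in AMINO_ACIDS},
        "mean_hydrophobicity": sum(KD[aa] for aa in seq) / n,
        "h_bond_fraction": sum(aa in H_BOND for aa in seq) / n,
    }


d = global_descriptors("MKTAYIAK")
print(d["length"], d["diversity"])  # 8 6
```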

Experimental Protocol: Feature Engineering for LSTM-CNN Models

Sequence Preprocessing:

Multi-Modal Encoding:

Data Partitioning:

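- Split data into training, validation, and test sets (e.g., a 70/15/15 ratio)

- Fit any preprocessing parameters (e.g., normalization statistics) on the training set only and apply them unchanged to the validation and test sets

- Use stratified sampling so that each split has a representative distribution of sequence classes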
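The partitioning rules above can be sketched with the standard library alone. This is an illustrative stratified split that assumes one class label per sequence; the 70/15/15 fractions and the fixed seed are example values.

```python
import random
from collections import defaultdict


def stratified_split(labels: list, fractions=(0.70, 0.15, 0.15), seed: int = 42):
    """Return (train, val, test) index lists with per-class proportions preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_train = round(len(idxs) * fractions[0])
        n_val = round(len(idxs) * fractions[1])
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


labels = ["a"] * 20 + ["b"] * 40
tr, va, te = stratified_split(labels)
print(len(tr), len(va), len(te))  # 42 9 9
```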

Table 3: Key Research Reagent Solutions for Genomic Sequencing Analysis

| Resource Category | Specific Tools/Resources | Function | Application Context |
|---|---|---|---|
| Variant Calling Pipelines | GATK, DeepVariant, Strelka2, FreeBayes | Identify genetic variants from aligned sequencing data | Germline and somatic variant discovery; GATK is the industry standard for germline variants [14] |
| Alignment Tools | BWA-MEM, Bowtie | Map sequencing reads to reference genome | Essential preprocessing step for both WES and WGS [14] [15] |
| Variant Annotation | SnpEff/SnpSift, VEP (Variant Effect Predictor) | Add functional information to identified variants | Critical for interpreting variant effects on genes and proteins [15] |
| Exome Capture Kits | Illumina Nexome, IDT xGen, Agilent SureSelect | Enrich exonic regions through hybridization-based capture | WES-specific; impacts coverage uniformity [15] |
| Reference Genomes | GRCh38/hg38, GRCh37/hg19 | Provide reference sequence for read alignment | Foundation for all alignment and variant calling [14] |
| Variant Databases | dbSNP, HapMap, 1000 Genomes, gnomAD | Provide known variants for filtering and annotation | Used for base quality score recalibration and identifying novel variants [14] |
| Genomic Data Repositories | GISAID, NCBI GenBank | Provide access to genomic sequences and metadata | Essential for obtaining spike protein sequences and clinical metadata [16] [2] |
| Quality Control Tools | FastQC, fastp, Trimmomatic | Assess sequencing data quality and perform preprocessing | Initial QC step to identify potential issues before alignment [14] [15] |

The journey from raw WES/WGS data to feature-engineered sequences suitable for hybrid LSTM-CNN analysis requires meticulous preprocessing and thoughtful feature engineering. The selection between WES and WGS represents a fundamental trade-off between comprehensiveness and practicality, with implications for downstream analytical approaches. Standardized preprocessing workflows, particularly those implemented in GATK, transform raw sequencing reads into high-quality variants through a multi-step process of quality control, alignment, and variant refinement. For deep learning applications, feature engineering methods including K-mer encoding, physicochemical property extraction, and domain-specific weighting create numerical representations that preserve biologically relevant patterns. These processed sequences enable hybrid LSTM-CNN models to effectively capture both local genomic motifs through convolutional operations and long-range dependencies through recurrent connections, ultimately supporting accurate prediction of functional outcomes from genomic sequences.

The analysis of genomic sequences represents one of the most computationally complex challenges in modern biology. Traditional statistical and machine learning methods often struggle with the high-dimensional nature of genomic data, where the number of features (e.g., nucleotides, sequence variants) vastly exceeds the number of samples, and with the intricate long-range dependencies that govern regulatory elements. Hybrid deep learning architectures, particularly those combining Long Short-Term Memory (LSTM) networks and Convolutional Neural Networks (CNN), have emerged as powerful solutions that have been reported to outperform traditional approaches on several benchmarks. These hybrid models capture both local motif-level features and long-range sequential dependencies in DNA sequences, enabling more accurate classification, prediction, and functional annotation. This document outlines the quantitative advantages of these hybrid models and provides detailed protocols for their implementation in genomic research, framed within the context of a broader thesis on hybrid LSTM-CNN models for genomic sequence analysis.

Quantitative Performance Comparison

The superiority of hybrid LSTM-CNN models is demonstrated through substantial improvements in key performance metrics across diverse genomic applications compared to traditional machine learning and single-architecture deep learning models.

Table 1: Performance Comparison of DNA Sequence Classification Models

| Model Type | Specific Model | Accuracy (%) | Application Context | Key Advantage |
|---|---|---|---|---|
| Traditional ML | Logistic Regression | 45.31 | Human DNA Classification | Baseline performance [1] |
| Traditional ML | Naïve Bayes | 17.80 | Human DNA Classification | Baseline performance [1] |
| Traditional ML | Random Forest | 69.89 | Human DNA Classification | Handles non-linearity [1] |
| Advanced ML | XGBoost | 81.50 | Human DNA Classification | Ensemble learning [1] |
| Deep Learning | DeepSEA | 76.59 | Human DNA Classification | CNN-based [1] |
| Deep Learning | DeepVariant | 67.00 | Human DNA Classification | CNN-based [1] |
| Deep Learning | CNN | 93.16 | Virus DNA Classification | Local feature extraction [16] |
| Deep Learning | CNN-Bidirectional LSTM | 93.13 | Virus DNA Classification | Captures sequence context [16] |
| Hybrid DL | LSTM + CNN (Hybrid) | 100.00 | Human DNA Classification | Captures local & long-range patterns [1] |
| Hybrid DL | CNN-LSTM | High* | Genomic Prediction (Crops) | Superior for complex traits [17] |
| Hybrid DL | LSTM-ResNet | High* | Genomic Prediction (Crops) | Best performance on 10/18 traits [17] |

Note: The crop genomics study [17] reported that hybrid models like LSTM-ResNet consistently achieved the highest prediction accuracy but did not provide a single aggregate percentage for the model class.

Table 2: Performance of Hybrid Models in Other Genomic Applications

| Hybrid Model | Application | Key Performance Metrics | Interpretation |
|---|---|---|---|
| LSTM, BiLSTM, CNN, GRU, GloVe Ensemble | Gene Mutation Classification | Training Accuracy: 80.6%, Precision: 81.6%, Recall: 80.6%, F1-Score: 83.1% [18] | Surpassed advanced transformer models in classifying cancer gene mutations. |
| Multi2-Con-CAPSO-LSTM | DNA Methylation Prediction | High Sensitivity, Specificity, Accuracy, and Correlation across 17 species [19] | Robust generalization across different methylation types and species. |
| Hybrid Deep Learning Approach | Low-Volume High-Dimensional Data | Outperformed standalone ML and DL [20] | Effectively addresses the "low n, high d" problem common in genomics. |

Experimental Protocols

Protocol 1: DNA Sequence Classification for Pathogen Identification

This protocol details the methodology for classifying viral DNA sequences (e.g., SARS-CoV-2, the cause of COVID-19, as well as SARS-CoV and MERS-CoV) using a hybrid CNN-LSTM model, achieving over 93% accuracy [16].

1. Data Collection & Preprocessing:

- Data Source: Obtain FASTA format genomic sequences from public databases like NCBI GenBank.

- Handling Class Imbalance: Apply the Synthetic Minority Oversampling Technique (SMOTE) to generate synthetic samples for under-represented virus classes (e.g., MERS, dengue) to match the sample count of the majority class [16].

- Sequence Encoding: Convert categorical nucleotide sequences (A, C, G, T) into numerical representations. Two primary methods are used:

- Label Encoding: Assign a unique integer index to each nucleotide (e.g., A=0, C=1, G=2, T=3), preserving positional information.

- K-mer Encoding: Break the sequence into overlapping fragments of length k (e.g., k=3). These k-mers are treated as words, and the entire sequence is converted into a sentence-like structure. This is subsequently fed into an embedding layer to create dense vector representations [16].

2. Model Architecture (CNN-Bidirectional LSTM):

- Input Layer: Accepts the numerically encoded sequences.

- Convolutional Layers (1D): Use multiple 1D convolutional filters to scan the sequence and detect local, position-invariant motifs (e.g., promoter signals, protein-binding sites). Apply a ReLU activation function.

- Pooling Layer (MaxPooling1D): Follows convolutional layers to reduce spatial dimensionality, improve computational efficiency, and provide basic translational invariance.

- Bidirectional LSTM Layer: The output from the CNN is fed into a Bidirectional LSTM. This layer processes the sequence forwards and backwards, capturing long-range dependencies and contextual information from both directions, which is crucial for understanding gene structure and regulation.

- Fully Connected & Output Layer: The final hidden states are passed through dense layers with dropout for regularization, culminating in a softmax output layer for multi-class classification [16].

3. Training & Evaluation:

- Partition the data into training, validation, and testing sets (e.g., 80/10/10 split).

- Train the model using a categorical cross-entropy loss function and an Adam optimizer.

- Evaluate performance on the held-out test set using accuracy, precision, recall, and F1-score [16].
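Two steps of this protocol lend themselves to a short sketch: SMOTE oversampling and macro-averaged evaluation metrics. The code below is a simplified pure-Python illustration, not the implementation used in [16]; in practice one would typically call `SMOTE().fit_resample(X_train, y_train)` from the imbalanced-learn package and `precision_recall_fscore_support(..., average="macro")` from scikit-learn. It assumes sequences are already encoded as equal-length numeric vectors, and SMOTE should be applied to the training split only so that synthetic samples never reach validation or test data. Interpolating between one-hot vectors yields fractional values, so synthetic samples are no longer strictly one-hot.

```python
import math
import random
from collections import Counter


def smote(minority: list[list[float]], n_new: int, k: int = 5, seed: int = 0) -> list[list[float]]:
    """Create n_new synthetic samples by interpolating a random minority sample
    toward one of its k nearest minority-class neighbors."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        x = minority[i]
        # k nearest neighbors of x by Euclidean distance, excluding x itself
        neighbors = sorted((j for j in range(len(minority)) if j != i),
                           key=lambda j: math.dist(x, minority[j]))[:k]
        nn = minority[rng.choice(neighbors)]
        lam = rng.random()  # interpolation factor in [0, 1)
        synthetic.append([a + lam * (b - a) for a, b in zip(x, nn)])
    return synthetic


def macro_report(y_true: list, y_pred: list) -> dict[str, float]:
    """Accuracy plus precision, recall and F1 averaged equally over classes."""
    classes = sorted(set(y_true) | set(y_pred))
    tp = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    n_pred, n_true = Counter(y_pred), Counter(y_true)
    prec, rec, f1 = [], [], []
    for c in classes:
        p = tp[c] / n_pred[c] if n_pred[c] else 0.0
        r = tp[c] / n_true[c] if n_true[c] else 0.0
        prec.append(p)
        rec.append(r)
        f1.append(2 * p * r / (p + r) if p + r else 0.0)
    n = len(classes)
    return {"accuracy": sum(tp.values()) / len(y_true),
            "precision": sum(prec) / n, "recall": sum(rec) / n, "f1": sum(f1) / n}
```

Macro averaging weights every virus class equally, so a model that ignores a rare class is penalized even when overall accuracy stays high.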

Protocol 2: Genomic Prediction for Complex Crop Traits

This protocol describes the use of hybrid models like LSTM-ResNet for genomic selection in plant breeding, where they have shown superior performance for complex, polygenic traits [17].

1. Data Preparation:

- Genotype Data: Use high-density genome-wide molecular markers, typically Single Nucleotide Polymorphisms (SNPs). The data is structured as a matrix of individuals (rows) by SNPs (columns), with values indicating the allele state.

- Phenotype Data: Collect measurements of the target trait(s) (e.g., yield, drought tolerance) for the same individuals.

- Quality Control & Imputation: Filter SNPs based on minor allele frequency and call rate. Impute missing genotypes if necessary.

2. Model Architecture (LSTM-ResNet):

- Input Layer: Takes the vector of SNP markers for an individual.

- ResNet Blocks: These blocks consist of convolutional layers with skip connections. The skip connections bypass one or more layers and perform identity mapping, which mitigates the vanishing gradient problem, enabling the training of very deep networks. This allows the model to hierarchically learn complex, non-additive genetic interactions (epistasis) [17].

- LSTM Layers: The features extracted by ResNet are sequentially fed into LSTM layers. The LSTM is adept at modeling the linkage disequilibrium (LD) structure and long-range dependencies along the chromosome, effectively capturing the spatial relationships between genetic markers [17].

- Output Layer: A regression or classification output layer to predict the trait value.

3. Training & Analysis:

- The model is trained to minimize the difference between predicted and observed phenotypic values.

- Performance is evaluated by calculating the prediction accuracy (correlation between predicted and observed values) on a validation set.

- Analysis of SNP sampling suggests maintaining SNP counts between 10,000 and 15,000 can optimize computational efficiency without significant loss of predictive power [17].
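The quality-control step and the accuracy metric of this protocol can be sketched as follows. The genotype coding (0, 1, 2 allele dosages with `None` for missing calls), the MAF and call-rate thresholds, and mean imputation are illustrative assumptions rather than settings reported in [17]; production pipelines would typically use a dedicated tool such as PLINK.

```python
import math
from typing import Optional


def qc_and_impute(genotypes: list[list[Optional[int]]], min_maf: float = 0.05,
                  min_call_rate: float = 0.90):
    """Drop SNP columns failing MAF or call-rate filters; mean-impute the rest.

    Rows are individuals, columns are SNPs; values are 0/1/2 dosages or None.
    Returns (filtered_matrix, kept_column_indices).
    """
    n_ind = len(genotypes)
    kept = []  # (column index, mean dosage) for SNPs that pass QC
    for j in range(len(genotypes[0])):
        calls = [row[j] for row in genotypes if row[j] is not None]
        if not calls or len(calls) / n_ind < min_call_rate:
            continue
        p = sum(calls) / (2 * len(calls))  # frequency of the counted allele
        if min(p, 1 - p) < min_maf:
            continue
        kept.append((j, sum(calls) / len(calls)))
    filtered = [[row[j] if row[j] is not None else mean for j, mean in kept]
                for row in genotypes]
    return filtered, [j for j, _ in kept]


def pearson_r(predicted: list[float], observed: list[float]) -> float:
    """Prediction accuracy as the Pearson correlation of predicted vs. observed."""
    n = len(predicted)
    if n != len(observed) or n < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    mp, mo = sum(predicted) / n, sum(observed) / n
    cov = sum((p - mp) * (o - mo) for p, o in zip(predicted, observed))
    sp = math.sqrt(sum((p - mp) ** 2 for p in predicted))
    so = math.sqrt(sum((o - mo) ** 2 for o in observed))
    return cov / (sp * so)
```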

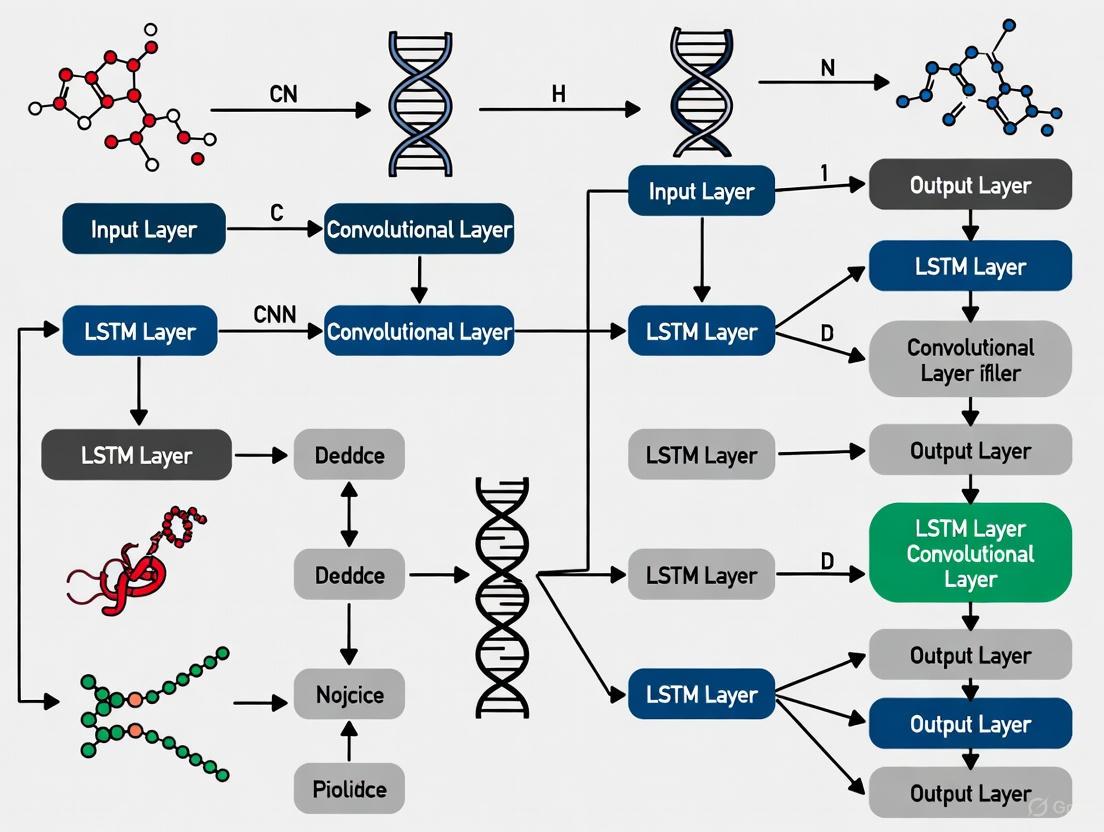

Workflow and Model Architecture Diagrams

The following diagram illustrates the logical flow and key components of a hybrid LSTM-CNN system for genomic sequence analysis.

Genomic Analysis with Hybrid LSTM-CNN Models

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Hybrid Genomic Modeling

| Item Name | Function/Application | Specification Notes |
|---|---|---|
| Genomic Sequence Data | Raw input data for model training and validation. | Sourced from public repositories (e.g., NCBI GenBank, Sequence Read Archive) in FASTA, BAM, or VCF format [16] [21]. |
| SNP Genotyping Array | Provides genotype matrix for genomic prediction. | High-density arrays (e.g., Illumina Infinium) generating 1,000 to 100,000+ SNPs for constructing the input feature matrix [17]. |
| Python with Deep Learning Libraries | Core programming environment for model implementation. | Requires TensorFlow/Keras or PyTorch, along with bioinformatics packages like Biopython for data handling [16] [1]. |
| High-Performance Computing (HPC) Cluster | Accelerates model training and hyperparameter optimization. | Equipped with multiple GPUs (e.g., NVIDIA Tesla) to handle the computational load of large genomic datasets and complex hybrid architectures [17] [21]. |
| SMOTE Algorithm | Addresses dataset imbalance in genomic studies. | A preprocessing technique (e.g., from imbalanced-learn Python library) crucial for mitigating bias against rare viral classes or disease variants [16] [20]. |

Implementing Hybrid Architectures: From DNA Classification to Clinical Prediction

The analysis of genomic sequences represents one of the most complex challenges in modern computational biology, requiring models capable of capturing both local patterns and long-range dependencies within DNA. The integration of Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks addresses this fundamental biological reality. Genomic function is governed not only by local sequence motifs—short, conserved patterns recognized by transcription factors and DNA-binding proteins—but also by long-range regulatory interactions where distant genomic elements influence expression through chromosomal looping and three-dimensional genome architecture. This biological imperative necessitates a hybrid computational approach that can simultaneously detect conserved local features and model their interactions across thousands of nucleotide positions.

The synergistic combination of CNN and LSTM architectures creates a powerful framework for genomic sequence analysis. CNNs excel at identifying local sequence motifs through their convolutional filters that scan input sequences for conserved patterns, effectively recognizing protein-binding sites, nucleotide composition biases, and other short-range signals. Meanwhile, LSTMs process sequential information with gated memory cells, allowing them to learn dependencies between genomic elements separated by considerable distances. This proves particularly valuable for modeling enhancer-promoter interactions, polycistronic gene regulation, and other long-range genomic relationships that defy simple positional analysis. When strategically combined, these architectures form a comprehensive model that mirrors the multi-scale organization of genomic information, from local nucleotide interactions to chromosome-scale regulatory networks.

Quantitative Performance Landscape

Recent research demonstrates the superior performance of hybrid CNN-LSTM architectures compared to traditional machine learning approaches and standalone deep learning models in genomic sequence classification tasks. The quantitative evidence strongly supports the adoption of hybrid models for DNA sequence analysis.

Table 1: Performance Comparison of DNA Sequence Classification Models

| Model Type | Specific Model | Accuracy (%) | Key Strengths |
|---|---|---|---|
| Hybrid Deep Learning | LSTM + CNN | 100.0 | Captures both local patterns and long-range dependencies |
| Traditional ML | Logistic Regression | 45.3 | Interpretability, computational efficiency |
| Traditional ML | Naïve Bayes | 17.8 | Probability-based classification |
| Traditional ML | Random Forest | 69.9 | Handles non-linear relationships |
| Advanced ML | XGBoost | 81.5 | Gradient boosting effectiveness |
| Deep Learning | DeepSEA | 76.6 | Specialized for genomic tasks |
| Deep Learning | DeepVariant | 67.0 | Variant calling accuracy |
| Deep Learning | Graph Neural Networks | 30.7 | Graph-based data representation |

The reported 100% classification accuracy of the LSTM-CNN hybrid model underscores the importance of architectural design in genomic deep learning applications. This performance advantage is attributed to the model's ability to leverage complementary strengths: CNNs provide translational invariance and hierarchical feature learning at local scales, while LSTMs model sequential dependencies across the entire sequence length. The hybrid approach effectively addresses the multi-scale nature of genomic information, where functional elements operate at different spatial resolutions, from three-nucleotide codons to multi-gene regulatory domains spanning hundreds of kilobases.

Table 2: Hyperparameter Configuration for Optimized Hybrid Architecture

| Parameter Category | Specific Setting | Biological Rationale |
|---|---|---|
| CNN Component | Multiple filter sizes (3, 5, 7) | Detect variable-length motifs |
| CNN Component | 128-256 filters per size | Capture diverse sequence features |
| LSTM Component | 120 hidden units | Model long-range dependencies |
| LSTM Component | Bidirectional configuration | Context from both genomic directions |
| Training | Adam optimizer | Efficient gradient-based learning |
| Training | 0.002 learning rate | Stable convergence |
| Training | 200 epochs | Sufficient training iterations |
| Training | Gradient clipping at 1 | Prevent exploding gradients |

Experimental Protocol for Genomic Sequence Classification

Data Acquisition and Preprocessing

The foundation of any successful genomic deep learning project begins with comprehensive data acquisition and rigorous preprocessing. For human DNA sequence classification, researchers can access curated datasets from public genomic repositories such as the UCSC Genome Browser, ENCODE, and specialized databases outlined in recent literature [22]. These resources provide experimentally validated sequences with functional annotations including promoter regions, enhancer elements, and transcription factor binding sites. The initial data acquisition phase should prioritize balanced class distributions and representative sampling across genomic contexts to prevent algorithmic bias and ensure generalizable model performance.

Critical preprocessing steps include sequence normalization, categorical encoding, and appropriate data partitioning. Genomic sequences must be standardized to consistent lengths through padding or trimming, preserving biologically relevant regions while meeting computational requirements. One-hot encoding is the most widely used way to transform nucleotide sequences into numerical representations: each nucleotide (A, C, G, T) becomes a binary vector with a single 1 in its own channel. This approach preserves sequence information without imposing artificial ordinal relationships between nucleotides. The dataset should be partitioned into training (80%), validation (10%), and test (10%) sets using stratified sampling to maintain consistent class distributions across splits. For enhanced model robustness, implement k-fold cross-validation with biologically independent segments to prevent information leakage between training and evaluation phases.
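Fixed-length standardization can be sketched as below. Center-trimming long sequences and right-padding short ones with N (encoded as an all-zero vector) is one common choice assumed here; other pipelines trim to a region of interest or pad on both sides.

```python
def pad_or_trim(seq: str, length: int, pad: str = "N") -> str:
    """Center-trim sequences longer than `length`; right-pad shorter ones with `pad`."""
    seq = seq.upper()
    if len(seq) >= length:
        start = (len(seq) - length) // 2
        return seq[start:start + length]
    return seq + pad * (length - len(seq))


def encode_fixed(seq: str, length: int) -> list[list[int]]:
    """One-hot encode (A, C, G, T order) after standardizing length; 'N' -> all zeros."""
    return [[int(base == c) for c in "ACGT"] for base in pad_or_trim(seq, length)]


batch = [encode_fixed(s, 6) for s in ["ACG", "TTACGGCA"]]
print([len(x) for x in batch])  # [6, 6], i.e. every item has shape (6, 4)
```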

Step-by-Step Model Implementation Protocol

Step 1: Input Layer Configuration

- Define input dimensions based on sequence length and encoding scheme

- For one-hot encoded DNA sequences of length L, input shape = (L, 4)

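- In MATLAB's Deep Learning Toolbox, use sequenceInputLayer with the input size set to the number of channels (4); in Keras, the equivalent is an Input layer with shape (L, 4)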

Step 2: Convolutional Feature Extraction

- Design parallel convolutional pathways with varying filter sizes

- Filter dimensions: 3×4, 5×4, 7×4 (width × input channels)

- Apply 128-256 filters per pathway to capture diverse motif representations

- Follow each convolution with ReLU activation: max(0, x) for nonlinear transformation

- Include batch normalization layers to stabilize training and accelerate convergence

Step 3: Dimensionality Reduction

- Implement max-pooling operations with pool size 2 and stride 2

- This reduces spatial dimensions by half while retaining dominant features

- Alternative: global max-pooling for fixed-size representations regardless of input length

Step 4: Temporal Dependency Modeling

- Route pooled features to bidirectional LSTM layers with 120 hidden units

- Bidirectional processing captures contextual information from both genomic directions

- LSTM memory cells maintain relevant information across long sequence distances

- Configure the LSTM to return only the final time step for sequence classification (OutputMode "last" in MATLAB; return_sequences=False in Keras)

Step 5: Classification Head

- Flatten features into a vector if needed (when only the last time step is returned, the BiLSTM output is already a vector of length 2 × hidden units)

- Include fully connected layer with number of units matching class count

- Apply softmax activation for multi-class probability distribution

- Output layer produces final classification scores
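To check that the dimensions in Steps 1-5 fit together, here is a shape and parameter-count trace in plain Python. It assumes three parallel branches with 128 filters each and "same" padding (so branch outputs can be concatenated along the channel axis), valid max-pooling, Keras-style LSTM parameter counting with one bias vector per gate, and a hypothetical five-class problem; batch-normalization parameters are ignored.

```python
def pool_len(length: int, pool: int = 2, stride: int = 2) -> int:
    """Output length of 'valid' max-pooling."""
    return (length - pool) // stride + 1


def conv1d_params(kernel: int, in_ch: int, filters: int) -> int:
    return kernel * in_ch * filters + filters  # weights + biases


def lstm_params(input_dim: int, units: int) -> int:
    # Four gates, each with input weights, recurrent weights and one bias vector
    return 4 * (units * (input_dim + units) + units)


def trace(seq_len: int, n_classes: int, kernels=(3, 5, 7), filters: int = 128,
          units: int = 120):
    channels = filters * len(kernels)  # 'same' padding: branches share length L
    shapes = [
        ("input", (seq_len, 4)),
        ("concat conv", (seq_len, channels)),
        ("max-pool", (pool_len(seq_len), channels)),
        ("BiLSTM (last step)", (2 * units,)),
        ("softmax", (n_classes,)),
    ]
    params = (sum(conv1d_params(k, 4, filters) for k in kernels)
              + 2 * lstm_params(channels, units)  # forward + backward LSTM
              + (2 * units) * n_classes + n_classes)  # dense output layer
    return shapes, params


shapes, params = trace(1000, 5)
for name, shape in shapes:
    print(name, shape)
print(params)  # 494069
```

Most of the parameters sit in the BiLSTM (484,800 of 494,069 here), which is why concatenating many convolutional channels before the recurrent layer has a large effect on model size.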

Model Training and Optimization Protocol

Training Configuration:

- Initialize optimizer: Adam with learning rate = 0.002, beta1 = 0.9, beta2 = 0.999

- Set batch size: 32-128 based on available memory and dataset size

- Establish training duration: 200 epochs with early stopping patience = 20 epochs

- Define loss function: categorical cross-entropy for multi-class classification

- Implement gradient clipping: threshold = 1.0 to prevent exploding gradients

- Configure validation: monitor validation accuracy for model selection
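The optimizer and clipping settings above can be illustrated with a minimal pure-Python Adam step preceded by gradient clipping. The sketch interprets the 1.0 threshold as a limit on the global L2 norm of the gradients, which is one common convention (clipping each element by value is another). In Keras, comparable settings are exposed through the `learning_rate`, `beta_1`, `beta_2`, and `global_clipnorm` arguments of `tf.keras.optimizers.Adam`.

```python
import math


def clip_by_global_norm(grads: list[float], max_norm: float = 1.0) -> list[float]:
    """Rescale gradients so that their combined L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    return [g * max_norm / norm for g in grads]


class Adam:
    """Minimal Adam optimizer for a flat list of scalar parameters."""

    def __init__(self, lr: float = 0.002, beta1: float = 0.9,
                 beta2: float = 0.999, eps: float = 1e-8):
        self.lr, self.b1, self.b2, self.eps = lr, beta1, beta2, eps
        self.m: list[float] = []
        self.v: list[float] = []
        self.t = 0

    def step(self, params: list[float], grads: list[float]) -> list[float]:
        if not self.m:
            self.m = [0.0] * len(params)
            self.v = [0.0] * len(params)
        self.t += 1
        out = []
        for i, (p, g) in enumerate(zip(params, grads)):
            self.m[i] = self.b1 * self.m[i] + (1 - self.b1) * g      # 1st moment
            self.v[i] = self.b2 * self.v[i] + (1 - self.b2) * g * g  # 2nd moment
            m_hat = self.m[i] / (1 - self.b1 ** self.t)  # bias correction
            v_hat = self.v[i] / (1 - self.b2 ** self.t)
            out.append(p - self.lr * m_hat / (math.sqrt(v_hat) + self.eps))
        return out


opt = Adam()
grads = clip_by_global_norm([3.0, 4.0])  # norm 5 -> rescaled to norm 1
params = opt.step([1.0, 1.0], grads)
```

On the first step the bias-corrected update is close to the learning rate times the gradient's sign, so each parameter moves by about 0.002.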

Regularization Strategy:

- Apply L2 weight regularization: coefficient = 0.001 in convolutional and dense layers

- Implement dropout: rate = 0.5 after LSTM layer and between dense layers

- Utilize batch normalization: after each convolutional layer before activation

- Employ data augmentation: random reverse-complement generation during training
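Reverse-complement augmentation is easy to express on one-hot input. With channel order A, C, G, T, complementing a base (A<->T, C<->G) is the same as reversing the channel axis, so the reverse complement flips both axes. The sketch assumes the label does not change with strand orientation, which holds for many but not all genomic tasks; the sampling probability `p` is an illustrative parameter.

```python
import random
from typing import Optional


def reverse_complement_onehot(x: list[list[int]]) -> list[list[int]]:
    """Reverse-complement a one-hot sequence with channel order A, C, G, T."""
    # Reverse positions (outer list) and channels (inner list) at the same time.
    return [row[::-1] for row in x[::-1]]


def augment(batch: list, p: float = 0.5, seed: Optional[int] = None) -> list:
    """Replace each sequence by its reverse complement with probability p."""
    rng = random.Random(seed)
    return [reverse_complement_onehot(x) if rng.random() < p else x for x in batch]


aac = [[1, 0, 0, 0], [1, 0, 0, 0], [0, 1, 0, 0]]
print(reverse_complement_onehot(aac))  # [[0, 0, 1, 0], [0, 0, 0, 1], [0, 0, 0, 1]] = "GTT"
```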

Performance Monitoring:

- Track training/validation loss curves for overfitting detection

- Monitor multiple metrics: accuracy, precision, recall, F1-score

- Implement learning rate reduction on validation plateau (factor = 0.5, patience = 10)

- Conduct error analysis: examine misclassified sequences for systematic patterns
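The plateau and early-stopping rules above can be sketched as a small controller. It assumes validation loss is the monitored quantity (the protocol monitors validation accuracy for model selection; accuracy works the same way with the comparison reversed) and uses the stated settings: factor 0.5 with patience 10 for learning-rate reduction, and patience 20 for early stopping. Keras offers comparable behavior through its `ReduceLROnPlateau` and `EarlyStopping` callbacks.

```python
class PlateauController:
    """Halve the learning rate after `lr_patience` epochs without improvement and
    request early stopping after `stop_patience` epochs without improvement."""

    def __init__(self, lr: float = 0.002, factor: float = 0.5, lr_patience: int = 10,
                 stop_patience: int = 20, min_delta: float = 0.0):
        self.lr = lr
        self.factor = factor
        self.lr_patience = lr_patience
        self.stop_patience = stop_patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.since_best = 0    # epochs since the last improvement
        self.since_reduce = 0  # epochs since the last improvement or LR cut

    def update(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.since_best = 0
            self.since_reduce = 0
        else:
            self.since_best += 1
            self.since_reduce += 1
            if self.since_reduce >= self.lr_patience:
                self.lr *= self.factor
                self.since_reduce = 0
        return self.since_best >= self.stop_patience
```

Because the learning-rate patience (10) is half the stopping patience (20), a run that stalls gets two learning-rate reductions before training is halted.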

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Hybrid Model Development

| Tool Category | Specific Tool/Platform | Function in Workflow |
|---|---|---|
| Deep Learning Frameworks | TensorFlow with Keras API | Model architecture implementation and training |
| Deep Learning Frameworks | PyTorch | Flexible research prototyping and experimentation |
| Bioinformatics Libraries | Biopython | Genomic sequence processing and manipulation |
| Bioinformatics Libraries | Bedtools | Genomic interval operations and dataset management |
| Data Science Ecosystem | NumPy, Pandas | Numerical computation and data manipulation |
| Data Science Ecosystem | Scikit-learn | Data preprocessing, evaluation metrics, and traditional ML |
| Visualization Tools | Matplotlib, Seaborn | Performance metric visualization and result reporting |
| Visualization Tools | Plotly | Interactive visualization of genomic annotations |
| Specialized Genomics | Selene | Deep learning platform for genomic sequence analysis |
| Development Environment | Jupyter Notebooks | Interactive development and exploratory analysis |

Table 4: Experimental Data Resources for Genomic Sequence Analysis

| Resource Type | Specific Database/Resource | Application Context |
|---|---|---|
| Public Data Repositories | ENCODE (Encyclopedia of DNA Elements) | Functional genomic element annotation |
| Public Data Repositories | UCSC Genome Browser | Genome visualization and data integration |
| Public Data Repositories | NCBI Gene Expression Omnibus (GEO) | Access to published genomic datasets |
| Public Data Repositories | UK Biobank (490,640 WGS datasets) | Large-scale human genetic variation [23] |
| Sequence Databases | Ensembl | Genome annotation and comparative genomics |
| Sequence Databases | NCBI Nucleotide | Reference sequences and curated collections |
| Benchmark Datasets | 140 benchmark datasets across 44 DNA analysis tasks | Model training and comparative evaluation [22] |
| Preprocessing Tools | DeepVariant | Accurate variant calling from sequencing data |
| Preprocessing Tools | CRISPResso2 | Analysis of genome editing outcomes |

Advanced Applications in Genomic Medicine

The integration of CNN-LSTM hybrid models extends beyond basic sequence classification to complex challenges in genomic medicine and therapeutic development. In cancer genomics, these architectures can support tumor subtyping based on mutational profiles and regulatory element alterations, informing personalized treatment strategies. For rare disease diagnosis, hybrid models can help prioritize candidate pathogenic variants in non-coding regions that exome sequencing does not cover, with the potential to improve diagnostic yield for previously undiagnosed conditions.

In pharmaceutical development, CNN-LSTM models can support target identification and validation by predicting the functional consequences of non-coding variants on gene expression and protein function. This capability is particularly valuable for understanding regulatory variant mechanisms in pharmacogenomics, where interindividual differences in drug response often trace to non-coding regions that modulate metabolic enzyme expression. These models can also feed biomarker discovery pipelines that integrate multi-modal genomic data to identify signatures predictive of therapeutic response, enabling more precise patient stratification for clinical trials with the goal of improving success rates in drug development programs.

Workflow Integration and Experimental Validation

The successful implementation of hybrid CNN-LSTM models requires careful attention to biological validation and experimental confirmation. Model predictions should be systematically verified through targeted experimental approaches that test specific biological hypotheses generated by the computational analysis. For genomic element classification, orthogonal validation methods such as reporter assays, CRISPR-based functional screens, and chromatin conformation analyses provide essential confirmation of model predictions. This iterative cycle of computational prediction and experimental validation establishes a rigorous framework for biological discovery while continuously improving model performance through incorporation of additional experimentally derived training data.

Critical considerations for experimental validation include designing appropriate positive and negative control sequences, establishing quantitative metrics for functional assessment, and ensuring biological relevance through cell-type-specific or condition-specific experimental contexts. The integration of wet-lab experimentation with computational modeling creates a powerful virtuous cycle: model predictions guide targeted experiments, while experimental results refine model training, ultimately leading to increasingly accurate and biologically relevant genomic sequence analysis capabilities. This integrated approach maximizes the translational potential of deep learning methodologies in genomic medicine and therapeutic development.

DNA Sequence Classification and Species Identification

DNA sequence classification enables rapid and objective species identification by analyzing variability in specific genomic regions, revolutionizing fields from biodiversity monitoring to pharmaceutical authenticity control [24]. This methodology, known as DNA barcoding, functions like a universal product code for living organisms, allowing non-experts to identify species from small, damaged, or industrially processed materials where traditional morphological identification fails [24]. The fundamental premise relies on comparing short, standardized genomic sequences (approximately 700 nucleotides) against reference databases containing validated sequences from known species [25] [24].
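The comparison against a reference database can be sketched with an uncorrected p-distance and a nearest-match rule. This assumes query and reference barcodes are already aligned to equal length; the 2% identification threshold and the example sequences are illustrative assumptions, and real workflows typically use alignment-based search (for example BLAST) against curated references.

```python
def p_distance(a: str, b: str) -> float:
    """Proportion of differing sites between two aligned, equal-length sequences,
    skipping positions where either sequence has a gap or ambiguous base."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to the same length")
    valid = [(x, y) for x, y in zip(a.upper(), b.upper())
             if x in "ACGT" and y in "ACGT"]
    if not valid:
        raise ValueError("no comparable sites")
    return sum(x != y for x, y in valid) / len(valid)


def identify(query: str, references: dict[str, str], threshold: float = 0.02):
    """Return (closest species, distance); species is None if above threshold."""
    species, dist = min(((name, p_distance(query, seq))
                         for name, seq in references.items()),
                        key=lambda pair: pair[1])
    return (species if dist <= threshold else None, dist)


refs = {"sp_A": "ACGTACGTAC", "sp_B": "ACGTTCGTTC"}  # toy aligned barcodes
print(identify("ACGTACGTAA", refs))                  # (None, 0.1)
print(identify("ACGTACGTAA", refs, threshold=0.15))  # ('sp_A', 0.1)
```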

The technological evolution from Sanger sequencing to next-generation sequencing (NGS) and third-generation sequencing has dramatically enhanced throughput while reducing costs, enabling researchers to process thousands of specimens simultaneously [26] [27]. Concurrently, artificial intelligence methodologies—particularly hybrid LSTM-CNN models—have emerged as powerful tools for analyzing the complex patterns within genomic sequences, offering improved accuracy in species prediction and classification tasks [26] [28].

Key Genomic Regions for Taxonomic Classification

Table 1: Standard DNA Barcode Regions for Major Taxonomic Groups

| Taxonomic Group | Primary Barcode Region | Alternative Regions | Key Characteristics |
|---|---|---|---|
| Animals | Cytochrome c oxidase subunit I (COI) | - | Mitochondrial gene; high mutation rate; proven diagnostic power for metazoans [24] |
| Flowering Plants | rbcL + matK | ITS, trnH-psbA | Chloroplast genes; rbcL offers easy amplification while matK provides higher discrimination [24] |
| Fungi | Internal Transcribed Spacer (ITS) | - | Nuclear ribosomal region; multi-copy nature aids amplification; high variability [24] |
| Green Macroalgae | tufA | rbcL | Chloroplast gene; elongation factor Tu; used when standard plant barcodes fail [24] |

These genomic regions are selected based on several criteria: significant interspecies variability with minimal intraspecies variation, presence across broad taxonomic groups, and reliable amplification using universal primers [24]. For particularly challenging taxonomic distinctions or when working with degraded samples, combining multiple barcode regions significantly enhances discriminatory power and identification confidence [24].

Experimental Protocol: Sample to Sequence

Sample Preparation and DNA Extraction

Proper sample preparation is foundational to successful DNA barcoding. The specific methodology varies by sample type [25]:

- Plant tissues: Surface sterilization followed by tissue disruption using bead beating or grinding in liquid nitrogen. Cell wall digestion may require extended incubation with specialized enzymes.

- Animal tissues: Muscle or epithelial tissues typically provide high-quality DNA with minimal inhibitors. Ethanol preservation is preferred over formalin, which fragments DNA.

- Fungal specimens: Both fruiting bodies and mycelial cultures are suitable, though they may require additional purification steps to remove polysaccharides.

- Processed products: Food and herbal products often require specialized extraction protocols to overcome PCR inhibitors and accommodate degraded DNA.

DNA extraction should prioritize yield, purity, and fragment size suitable for amplification of the target barcode region. While numerous commercial kits are available, protocols must be adapted for specific sample types [25]. Post-extraction quantification via fluorometry or spectrophotometry ensures optimal template concentration for subsequent amplification steps [25].

Target Amplification and Sequencing

Table 2: PCR Amplification Components and Conditions

| Component/Parameter | Specification | Notes |
|---|---|---|
| DNA Template | 1-10 ng/μL | Adjust based on extraction quality and sample type [25] |
| Primer Pair | 0.2-0.5 μM each | Taxon-specific barcode primers (see Table 1) [25] |
| Polymerase | 0.5-1.25 units/reaction | High-fidelity enzymes recommended for complex templates [25] |
| Thermal Cycling | 35 cycles of: 94°C (30s), 50-60°C (30s), 72°C (45-60s) | Annealing temperature primer-specific; extension time depends on amplicon length [25] |
| Amplicon Verification | 1.5-2% agarose gel electrophoresis | Confirm single band of expected size [25] |

Following successful amplification, PCR products require purification to remove primers, enzymes, and nucleotides before sequencing [25]. For traditional Sanger sequencing, which remains the gold standard for individual specimens, the purified product is sequenced bidirectionally to ensure high-quality base calling across the entire barcode region [25] [24]. For high-throughput applications involving multiple specimens or mixed samples, next-generation sequencing platforms with specialized library preparation protocols are employed [26] [27].

Figure: Experimental workflow from sample to sequence.

Computational Analysis Pipeline

Sequence Processing and Quality Control

Raw sequencing data requires substantial preprocessing before biological interpretation. For Sanger sequences, this involves base calling, trace quality assessment, and contig assembly from bidirectional reads [25]. NGS data demands more extensive processing, including adapter trimming, quality filtering, and demultiplexing of pooled samples [26] [27].
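Contig assembly from bidirectional Sanger reads can be illustrated with a deliberately simplified sketch: the reverse read is reverse-complemented and joined to the forward read at the longest exact suffix/prefix overlap. The function names and the `min_overlap` default are hypothetical; real assembly tools also weigh trace quality and tolerate mismatches, which this toy version does not.

```python
from typing import Optional

# Complement table; N (ambiguous base) maps to itself
COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA string (A<->T, C<->G)."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def merge_bidirectional(forward: str, reverse_read: str, min_overlap: int = 20) -> Optional[str]:
    """Join a forward read with the reverse complement of the reverse read.

    Tries the longest possible overlap first and requires an exact match;
    returns None if no overlap of at least `min_overlap` bases is found.
    """
    forward = forward.upper()
    rc = reverse_complement(reverse_read)
    for olen in range(min(len(forward), len(rc)), min_overlap - 1, -1):
        if forward[-olen:] == rc[:olen]:
            return forward + rc[olen:]
    return None
```

For example, if the forward read covers the first 14 bases of a 20-base amplicon and the reverse read covers the last 14, the two share 8 bases and `merge_bidirectional(fwd, rev, min_overlap=5)` reconstructs the full amplicon.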

Quality thresholds must be established a priori—typically requiring Phred quality scores ≥30 (indicating 99.9% base call accuracy) across the majority of the barcode region [25]. Sequences failing quality metrics should be excluded or targeted for re-sequencing to prevent erroneous classifications.
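The Q30 criterion follows from the Phred definition Q = -10·log10(P), so Q30 corresponds to an error probability of 10^-3, i.e. 99.9% accuracy. A minimal per-read check might look like the sketch below, assuming FASTQ quality strings in Phred+33 encoding (used by current Illumina and Sanger-format FASTQ files). The function names and the 80% cut-off, which stands in for "the majority of the barcode region", are hypothetical choices.

```python
from typing import List


def phred_to_error(q: int) -> float:
    """Phred score Q corresponds to error probability P = 10^(-Q/10)."""
    return 10 ** (-q / 10)


def decode_phred33(quality: str) -> List[int]:
    """Decode a FASTQ quality string in Phred+33 encoding into integer scores."""
    return [ord(ch) - 33 for ch in quality]


def passes_quality(quality: str, min_q: int = 30, min_fraction: float = 0.8) -> bool:
    """True if at least `min_fraction` of bases have a Phred score >= `min_q`."""
    scores = decode_phred33(quality)
    if not scores:
        return False
    high = sum(1 for q in scores if q >= min_q)
    return high / len(scores) >= min_fraction
```

Here `1 - phred_to_error(30)` gives 0.999, matching the 99.9% figure; reads for which `passes_quality` returns False would be excluded or flagged for re-sequencing.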

Feature Engineering for Machine Learning

Genomic sequences require transformation into numerical representations compatible with machine learning algorithms like hybrid LSTM-CNN models [28]. The most common approaches include:

K-mer frequency analysis: Decomposes each sequence into all overlapping substrings of length k and records how often each occurs, giving a frequency vector over the 4^k possible k-mers [28]. For example, the sequence "ATGAAGA" with k=3 yields the k-mers "ATG", "TGA", "GAA", "AAG", and "AGA". Optimal k values typically range from 3 to 6, balancing computational efficiency against biological meaningfulness [28].
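A minimal sketch of k-mer frequency encoding using only the standard library. It assumes that k-mers containing ambiguous bases (such as N) are simply skipped, and it orders the 4^k vector positions lexicographically; both are illustrative conventions rather than part of any cited pipeline.

```python
from collections import Counter
from itertools import product
from typing import List


def kmers(seq: str, k: int) -> List[str]:
    """All overlapping substrings of length k (a sequence of length L yields L - k + 1)."""
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def kmer_frequency_vector(seq: str, k: int, alphabet: str = "ACGT") -> List[float]:
    """Relative frequencies of all 4**k k-mers, in lexicographic order (AAA, AAC, ...)."""
    valid = set(alphabet)
    # Skip k-mers with ambiguous bases so the frequencies sum to 1
    counts = Counter(km for km in kmers(seq.upper(), k) if set(km) <= valid)
    total = sum(counts.values()) or 1
    vocabulary = ("".join(p) for p in product(alphabet, repeat=k))
    return [counts[km] / total for km in vocabulary]
```

For "ATGAAGA" with k=3 the vector has 64 entries, five of which are 0.2 (one per observed k-mer); at k=6 it would have 4,096 entries, which is why larger k quickly becomes costly.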

One-hot encoding: Represents each nucleotide as a four-dimensional binary vector (A=[1,0,0,0], C=[0,1,0,0], G=[0,0,1,0], T=[0,0,0,1]) [28]. This approach preserves positional information but produces high-dimensional input for long sequences, since a sequence of length L becomes an L × 4 matrix.
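The encoding above maps directly to a lookup table. This sketch assumes two conventions that are common but not stated in the source: ambiguous bases become all-zero rows, and sequences are truncated or zero-padded to a fixed length (`pad_to`) so they can be batched for a neural network.

```python
from typing import List, Optional, Tuple

Row = Tuple[int, int, int, int]

ENCODING = {
    "A": (1, 0, 0, 0),
    "C": (0, 1, 0, 0),
    "G": (0, 0, 1, 0),
    "T": (0, 0, 0, 1),
}
UNKNOWN: Row = (0, 0, 0, 0)  # assumption: N and other ambiguity codes encode as zeros


def one_hot(seq: str, pad_to: Optional[int] = None) -> List[Row]:
    """Encode a DNA string as an L x 4 list of rows, optionally truncated/padded to `pad_to`."""
    rows = [ENCODING.get(base, UNKNOWN) for base in seq.upper()]
    if pad_to is not None:
        rows = rows[:pad_to] + [UNKNOWN] * max(0, pad_to - len(rows))
    return rows
```

Converting the result with a tensor library (for example `torch.tensor(one_hot(seq), dtype=torch.float32)`) gives the `(length, 4)` input expected by a 1D convolutional layer after transposition.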

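MinHashing: Efficiently approximates the similarity (Jaccard index) between sequences by compressing each sequence's k-mer set into a short signature of minimum hash values; stacking the signatures of many sequences yields a signature matrix, which is particularly valuable for comparing large datasets [28].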
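The MinHash idea rests on one property: for a random hash function, the probability that two sets share the same minimum hash value equals their Jaccard similarity. The sketch below simulates many hash functions by salting BLAKE2b with a seed; the choice of hash, k=5, and 128 hashes are illustrative assumptions, not the settings of any particular tool.

```python
import hashlib
from typing import List, Sequence, Set


def kmer_set(seq: str, k: int) -> Set[str]:
    """Set of distinct overlapping k-mers."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def _seeded_hash(kmer: str, seed: int) -> int:
    # Deterministic 64-bit hash; the seed prefix simulates independent hash functions
    digest = hashlib.blake2b(f"{seed}:{kmer}".encode(), digest_size=8).digest()
    return int.from_bytes(digest, "big")


def minhash_signature(seq: str, k: int = 5, num_hashes: int = 128) -> List[int]:
    """One minimum hash value per simulated hash function."""
    kmers = kmer_set(seq.upper(), k)
    if not kmers:
        raise ValueError("sequence shorter than k")
    return [min(_seeded_hash(km, seed) for km in kmers) for seed in range(num_hashes)]


def estimated_jaccard(sig_a: Sequence[int], sig_b: Sequence[int]) -> float:
    """Fraction of positions where the signatures agree estimates the Jaccard index."""
    if len(sig_a) != len(sig_b):
        raise ValueError("signatures must have equal length")
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def exact_jaccard(a: str, b: str, k: int = 5) -> float:
    sa, sb = kmer_set(a.upper(), k), kmer_set(b.upper(), k)
    return len(sa & sb) / len(sa | sb)
```

Comparing two signatures costs O(num_hashes) regardless of sequence length, which is what makes the approach attractive for large collections; `exact_jaccard` is included only to check the estimate.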


Database Searching and Phylogenetic Placement

For identification (rather than classification), the processed barcode sequence is compared against reference databases using similarity search tools like BLAST (Basic Local Alignment Search Tool) [24]. Multiple databases exist for this purpose:

- BOLD Systems: The Barcode of Life Data System specializing in COI sequences for animal identification [24]

- GenBank: Comprehensive sequence repository at NCBI containing all publicly available DNA sequences [24]

- RefSeq: Curated non-redundant database particularly useful for well-characterized organisms [28]

Top matches are evaluated based on percent identity, query coverage, and e-value. Specimens are typically assigned to species when sequence similarity exceeds 97-99% with a reference sequence, though these thresholds vary by taxonomic group [24]. For novel species or ambiguous matches, phylogenetic analysis using neighbor-joining or maximum likelihood methods provides evolutionary context and confirms placement relative to closest relatives [24].
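The threshold logic for species assignment can be sketched as follows. The `Hit` record, its field names, and every default cut-off (98% identity, 90% query coverage, an e-value ceiling of 1e-20, and a 0.5-point margin for flagging ties between taxa) are hypothetical values chosen for illustration; parsing real BLAST output (for example tabular output with a `qcovs` column) is left out.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Hit:
    """One database hit; field names are illustrative, not BLAST's own column names."""
    taxon: str
    pident: float  # percent identity to the reference sequence
    qcov: float    # percent of the query covered by the alignment
    evalue: float


def assign_species(
    hits: List[Hit],
    identity_threshold: float = 98.0,
    min_qcov: float = 90.0,
    max_evalue: float = 1e-20,
    tie_margin: float = 0.5,
) -> Tuple[str, Optional[str]]:
    """Return (status, taxon) where status is species, ambiguous, below_threshold, or no_match."""
    usable = [h for h in hits if h.qcov >= min_qcov and h.evalue <= max_evalue]
    if not usable:
        return ("no_match", None)
    best = max(usable, key=lambda h: h.pident)
    if best.pident < identity_threshold:
        # Closest relative only; a candidate for phylogenetic placement
        return ("below_threshold", best.taxon)
    # Several references from the same taxon do not count as rivals
    rivals = {h.taxon for h in usable if best.pident - h.pident <= tie_margin}
    if len(rivals) > 1:
        return ("ambiguous", None)
    return ("species", best.taxon)
```

Because the appropriate identity threshold varies by taxonomic group, `identity_threshold` is exposed as a parameter rather than fixed.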

Hybrid LSTM-CNN Architecture for Sequence Classification

Model Architecture and Implementation

Hybrid LSTM-CNN models leverage the complementary strengths of both architectures: CNNs excel at detecting local motifs and position-invariant patterns, while LSTMs capture long-range dependencies and sequential context [26] [28]. For genomic sequence classification, a typical implementation includes:

- Input layer: Accepts k-mer frequency vectors or one-hot encoded sequences

- Convolutional blocks: Multiple 1D convolutional layers with increasing filter sizes to capture nucleotide motifs of varying lengths

- Pooling layers: Reduce dimensionality while preserving important features

- LSTM layers: Process sequential features extracted by convolutional blocks, modeling dependencies across the barcode region

- Fully connected layers: Integrate features for final classification

- Output layer: Softmax activation generating probability distribution across candidate species

This architecture has demonstrated superior performance compared to single-modality networks, particularly for distinguishing closely related species with subtle sequence variations [26] [28].
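A minimal PyTorch sketch of the layer stack listed above, assuming one-hot input of shape `(batch, length, 4)` and two convolutional blocks with increasing kernel sizes (7 and 11). The class name, layer widths, and dropout rate are illustrative choices, not values from the cited studies.

```python
import torch
import torch.nn as nn


class HybridCNNLSTM(nn.Module):
    """Illustrative CNN -> LSTM -> dense classifier for one-hot DNA of shape (batch, length, 4)."""

    def __init__(self, n_classes: int, conv_channels=(64, 128), kernel_sizes=(7, 11),
                 lstm_hidden: int = 64, dense_units: int = 64, dropout: float = 0.3):
        super().__init__()
        blocks, in_ch = [], 4
        for out_ch, k in zip(conv_channels, kernel_sizes):
            blocks += [
                nn.Conv1d(in_ch, out_ch, kernel_size=k, padding=k // 2),  # odd k keeps the length
                nn.ReLU(),
                nn.MaxPool1d(kernel_size=2),  # halves the sequence length
            ]
            in_ch = out_ch
        self.conv = nn.Sequential(*blocks)
        self.lstm = nn.LSTM(input_size=in_ch, hidden_size=lstm_hidden, batch_first=True)
        self.head = nn.Sequential(
            nn.Dropout(dropout),
            nn.Linear(lstm_hidden, dense_units),
            nn.ReLU(),
            nn.Linear(dense_units, n_classes),  # raw logits, one per candidate species
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        h = self.conv(x.transpose(1, 2))  # Conv1d expects (batch, channels, length)
        h = h.transpose(1, 2)             # batch_first LSTM expects (batch, steps, features)
        _, (h_n, _) = self.lstm(h)        # h_n: (num_layers, batch, hidden)
        return self.head(h_n[-1])         # last hidden state summarises the sequence

    @torch.no_grad()
    def predict_proba(self, x: torch.Tensor) -> torch.Tensor:
        """Softmax over candidate species (call model.eval() first to disable dropout)."""
        return torch.softmax(self.forward(x), dim=-1)
```

During training, the logits would go to `nn.CrossEntropyLoss`, which applies log-softmax internally, so the explicit softmax is only needed at inference. For a 658-position input, the two pooling layers leave 164 time steps for the LSTM.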

Figure: Hybrid LSTM-CNN model architecture.

Training Considerations and Optimization

Successful implementation requires careful attention to several factors:

Class imbalance mitigation: Taxonomic databases typically contain disproportionate representation across species. Techniques including oversampling, undersampling, class weighting, or synthetic data generation should be employed [26].
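Class weighting is the simplest of these techniques to add to an existing training loop. A minimal sketch using the inverse-frequency heuristic w_c = n_samples / (n_classes × n_c), the same formula scikit-learn applies for `class_weight="balanced"`; the function name is hypothetical.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def balanced_class_weights(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Inverse-frequency weights: rare species receive proportionally larger weights."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * n_c) for c, n_c in counts.items()}
```

With six specimens of one species and two of another, the weights are 2/3 and 2.0, so both classes contribute equally to the total loss. In PyTorch the weights can be passed, in class-index order, as the `weight` tensor of `nn.CrossEntropyLoss`.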

Regularization strategies: Dropout layers, L2 regularization, and early stopping prevent overfitting, particularly important with limited training data for rare species [26].
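Early stopping, one of the regularization strategies named above, reduces to a small stateful check on the validation loss. This is a minimal sketch; the class name and the `patience`/`min_delta` defaults are illustrative assumptions.

```python
class EarlyStopping:
    """Signal a stop once validation loss has not improved by `min_delta` for `patience` epochs."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss: float, epoch: int) -> bool:
        """Record this epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In practice the model weights would also be checkpointed whenever `best` improves, so that the weights from `best_epoch` can be restored after stopping.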

Hyperparameter optimization: Grid search or Bayesian optimization for filter sizes, LSTM units, learning rates, and batch sizes significantly impact model performance [26].
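Grid search amounts to scoring every combination in the Cartesian product of candidate values. In this sketch, `evaluate` is a hypothetical callback that would train the model with the given settings and return a validation metric (higher is better); the parameter names are illustrative.

```python
from itertools import product
from typing import Any, Callable, Dict, List, Optional, Tuple


def grid_search(
    param_grid: Dict[str, List[Any]],
    evaluate: Callable[[Dict[str, Any]], float],
) -> Tuple[Optional[Dict[str, Any]], float]:
    """Exhaustively score all combinations; ties keep the first combination seen."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = evaluate(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score
```

A grid of three kernel sizes, two LSTM widths, two learning rates, and two batch sizes already requires 24 training runs; the count grows multiplicatively, which is why Bayesian optimization (for example with a library such as Optuna) is often preferred for larger search spaces.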

Interpretability: Visualization techniques like saliency maps highlight the nucleotides and regions most influential in classification decisions, helping researchers check whether the model relies on biologically plausible signals [26].
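One common way to compute such a saliency map is gradient × input: take the gradient of the target class score with respect to the one-hot input and keep, at each position, the value for the base actually present. This is a minimal PyTorch sketch of that single method (alternatives such as integrated gradients exist); `TinyCNN` is a hypothetical stand-in model, and any module mapping `(batch, length, 4)` to class logits could be used instead.

```python
import torch
import torch.nn as nn


def saliency_map(model: nn.Module, one_hot: torch.Tensor, target_class: int) -> torch.Tensor:
    """Gradient x input saliency for a single sequence; `one_hot` has shape (length, 4)."""
    model.eval()
    x = one_hot.unsqueeze(0).clone().requires_grad_(True)  # (1, length, 4)
    logits = model(x)
    logits[0, target_class].backward()  # d(class score) / d(input)
    model.zero_grad()                   # discard parameter gradients from this pass
    # Multiplying by the one-hot input keeps only the observed base at each position
    return (x.grad * x).sum(dim=-1).squeeze(0).detach()  # (length,)


class TinyCNN(nn.Module):
    """Hypothetical stand-in classifier used only to demonstrate saliency_map."""

    def __init__(self, n_classes: int = 3):
        super().__init__()
        self.conv = nn.Conv1d(4, 8, kernel_size=5, padding=2)
        self.fc = nn.Linear(8, n_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        h = torch.relu(self.conv(x.transpose(1, 2)))  # (batch, 8, length)
        return self.fc(h.mean(dim=2))                  # global average pooling
```

Positions with large positive values push the prediction toward `target_class`. For LSTM-based models run on a GPU, cuDNN may require the model to be in training mode for the backward pass, so CPU evaluation is the simpler option for this kind of analysis.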

Research Reagent Solutions

Table 3: Essential Research Reagents for DNA Barcoding Workflows

| Reagent Category | Specific Examples | Function & Application Notes |
|---|---|---|
| DNA Extraction Kits | DNeasy Blood & Tissue (Qiagen), CTAB protocols | Cell lysis and nucleic acid purification; selection depends on sample type and preservation method [25] |
| Polymerase Master Mixes | Platinum Taq (Thermo Fisher), Q5 High-Fidelity (NEB) | PCR amplification with optimized buffer conditions; high-fidelity enzymes recommended for complex templates [25] |
| Barcode-Specific Primers | COI: LCO1490/HCO2198, rbcL: rbcLa-F/rbcLa-R | Universal primer pairs targeting standardized barcode regions; require validation for specific taxonomic groups [25] [24] |
| Sequencing Kits | BigDye Terminator (Thermo Fisher), Nextera XT (Illumina) | Sanger or NGS library preparation; selection determined by throughput requirements and available instrumentation [25] [27] |
| Quality Control Reagents | Qubit dsDNA HS Assay (Thermo Fisher), Bioanalyzer DNA chips (Agilent) | Accurate quantification and size distribution analysis; critical for sequencing success [25] |

Applications in Research and Drug Development

DNA sequence classification enables numerous applications with particular relevance to pharmaceutical research and development:

Authenticity verification of botanical ingredients: Herbal medicines and natural product extracts can be validated against reference standards, detecting adulteration or substitution with inferior species [24]. Studies have demonstrated misidentification in approximately 25% of commercial herbal products [24].

Biomaterial characterization: Cell lines and tissue samples used in research can be authenticated, preventing costly experiments with misidentified biological materials [24].

Bioprospecting and novel compound discovery: Rapid surveys of biodiversity hotspots identify previously uncharacterized species with potential pharmaceutical value [24].

Quality control in production systems: Fermentation systems and biological manufacturing processes can be monitored for microbial contamination using metabarcoding approaches [26].

Integrating AI-enhanced sequence analysis could allow these applications to scale to industrial throughput while maintaining the rigorous accuracy standards demanded by regulatory agencies [26].

The identification of essential genes and long non-coding RNAs (lncRNAs) represents a cornerstone of genomics and therapeutic development. Essential genes constitute the minimal gene set required for organism survival and growth, while lncRNAs (transcripts longer than 200 nucleotides with limited or no protein-coding potential) play crucial regulatory roles in diverse biological processes, including cell proliferation, stress responses, and gene expression regulation [29] [30]. Experimental methods for identifying these elements, such as single-gene knockout and CRISPR screens, are considered gold standards but are time-consuming, resource-intensive, and technically challenging [29] [31]. Computational approaches, particularly hybrid deep learning models that integrate LSTM networks and CNNs, have emerged as powerful alternatives, enabling accurate prediction and functional characterization from genomic sequences [30] [29].

Key Models and Performance Metrics

Recent research has yielded several sophisticated computational models for predicting essential genes and lncRNAs. The table below summarizes the key models and their reported performance metrics.

Table 1: Performance Metrics of Recent Prediction Models

| Model Name | Primary Application | Architecture | Key Performance Metrics | Reference |
|---|---|---|---|---|
| EGP Hybrid-ML | Essential Gene Prediction | GCN + Bi-LSTM + Attention Mechanism | Sensitivity: 0.9122; Accuracy: ~0.9 (average across species) | [29] [32] |
| Hybrid-DeepLSTM | Plant lncRNA Classification | Deep Neural Network with LSTM layers | Accuracy: 98.07% | [30] |
| RPI-SDA-XGBoost | ncRNA-Protein Interaction | Stacked Denoising Autoencoder + XGBoost | Precision: 94.6% on RPI_NPInter v2.0 dataset | [33] |
| CasRx Screening Platform | Functional lncRNA Interrogation | CRISPR/Cas13d-based experimental method | Enabled genome-scale identification of context-specific and common essential lncRNAs | [31] |

Detailed Experimental Protocols

Protocol 1: Essential Gene Prediction with EGP Hybrid-ML

The EGP Hybrid-ML model demonstrates how hybrid architectures effectively tackle the challenges of essential gene prediction [29] [32].

- Data Acquisition and Preprocessing: Obtain essential and non-essential gene sequences from the public Database of Essential Genes (DEG). Process data using CD-HIT algorithm with a sequence identity threshold of 20% to reduce redundancy and eliminate homology bias. Partition the dataset into a training set (70%) and a testing set (30%) [29] [32].

- Multidimensional Multivariate Feature Coding: Implement feature coding to integrate temporal data and sequence information. This involves transforming gene sequences into visualized graphics for feature extraction [29].

- Model Training and Optimization:

- Hardware: Utilize a standard computing setup (e.g., 16 GB RAM, Intel i7 processor).

- Architecture: Employ a hybrid model comprising Graph Convolutional Neural Networks (GCN) to extract features from sequence graphics, and Bidirectional LSTM (Bi-LSTM) with an attention mechanism to assess feature importance and handle long-term dependencies.

- Parameters: Use the Adam optimizer with a learning rate of 0.001 and train for 1000 epochs. Report results as the mean of six repeated trials [29] [32].

- Validation: Conduct cross-species cross-validation experiments to evaluate the model's generalization capability across 31 species from Archaea, Bacteria, and Eukaryotes [29].

Figure 1: Workflow of the EGP Hybrid-ML model for essential gene prediction, integrating GCN for feature extraction from sequence graphics and attention-based Bi-LSTM for classification [29] [32].
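The data-partitioning and reporting steps of this protocol (a 70/30 train/test split after CD-HIT redundancy removal, and results averaged over six repeated trials) can be sketched with the standard library. Stratifying the split by class, so that both sets keep the essential/non-essential ratio, is an assumption of this sketch rather than a detail reported for EGP Hybrid-ML; the function names are hypothetical.

```python
import random
import statistics
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def stratified_split(labels: Sequence[Hashable], train_frac: float = 0.7,
                     seed: int = 0) -> Tuple[List[int], List[int]]:
    """Return train/test index lists, splitting each class separately."""
    rng = random.Random(seed)
    by_class: Dict[Hashable, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train: List[int] = []
    test: List[int] = []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        cut = round(train_frac * len(idxs))
        train += idxs[:cut]
        test += idxs[cut:]
    return sorted(train), sorted(test)


def summarize_trials(scores: Sequence[float]) -> Tuple[float, float]:
    """Mean and sample standard deviation over repeated runs (needs at least two scores)."""
    return statistics.mean(scores), statistics.stdev(scores)
```

Running the full training once per seed (for example seeds 0 to 5) and passing the six test scores to `summarize_trials` gives the mean that the protocol reports, plus a spread that indicates run-to-run variability.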

Protocol 2: Plant lncRNA Identification with Hybrid-DeepLSTM

The Hybrid-DeepLSTM protocol is specifically designed for the statistical analysis-based classification of lncRNAs in plant genomes [30].

- Feature Extraction: Develop a hybrid feature method to extract relevant characteristics from genomic sequences. This includes dinucleotide-based auto-covariance, nucleotide compositions, and pseudo-k-tuple composition. Use composite feature extraction to reduce bias while preserving sequential patterns [30].

- Model Architecture:

- Layer 1 & 2: Two LSTM layers for sequence modeling.

- Hidden and Output Layers: Hybrid-DeepLSTM layers for final classification of plant lncRNA sites [30].

- Simulation and Benchmarking: Execute the model on a benchmark plant genomic dataset. Compare its performance against established models like Gradient Boosting, Autoencoders, and XGBoost classifiers using accuracy as a primary metric [30].
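Of the features listed in the feature-extraction step, dinucleotide-based auto-covariance (DAC) is the least self-explanatory. The sketch below follows the commonly used DAC definition for a single dinucleotide property P: DAC(lag) = 1/(N - lag - 1) · Σ (P(RᵢRᵢ₊₁) - P̄)(P(Rᵢ₊lagRᵢ₊lag₊₁) - P̄), summed over i = 1 … N - lag - 1, where P̄ is the mean of P over the sequence's N - 1 dinucleotides. The exact property indices and lags used by Hybrid-DeepLSTM are not given here, so the caller must supply a property table (for example a published twist or stacking-energy index); no real values are included.

```python
from typing import Dict, List


def dinucleotide_auto_covariance(seq: str, prop: Dict[str, float], max_lag: int = 3) -> List[float]:
    """DAC values for lags 1..max_lag of one dinucleotide property.

    `prop` maps each dinucleotide (e.g. "AT") to a numeric property value;
    a KeyError is raised for dinucleotides missing from the table (e.g. containing N).
    """
    seq = seq.upper()
    values = [prop[seq[i:i + 2]] for i in range(len(seq) - 1)]  # N - 1 dinucleotide values
    if len(values) < 2:
        raise ValueError("sequence too short")
    mean = sum(values) / len(values)
    centred = [v - mean for v in values]
    features = []
    for lag in range(1, max_lag + 1):
        n_pairs = len(values) - lag  # equals N - lag - 1
        if n_pairs <= 0:
            raise ValueError(f"sequence too short for lag {lag}")
        features.append(sum(centred[i] * centred[i + lag] for i in range(n_pairs)) / n_pairs)
    return features
```

Computing DAC for several properties and lags and concatenating the results with composition features gives one fixed-length vector per transcript, regardless of transcript length.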

Protocol 3: Functional Validation via CasRx Screening

Computational predictions require experimental validation. The CasRx platform provides a state-of-the-art method for genome-scale functional interrogation of predicted lncRNAs [31].