Advanced Feature Selection Techniques for High-Dimensional Genomic Data: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive overview of feature selection strategies specifically designed for high-dimensional genomic data, addressing the critical p >> n problem prevalent in modern bioinformatics. It explores foundational concepts, diverse methodological approaches—including filter, wrapper, embedded, and novel hybrid techniques—and addresses key challenges in computational efficiency, biomarker stability, and model optimization. Drawing from recent research, the content offers practical validation frameworks and comparative analyses to guide researchers and drug development professionals in selecting optimal feature selection strategies for genomic prediction, biomarker discovery, and clinical translation.

Understanding the High-Dimensional Genomic Data Landscape and Why Feature Selection is Crucial

In genomic research, the p >> n problem describes the significant statistical and computational challenge that arises when the number of features (p; e.g., single nucleotide polymorphisms or gene expression levels) vastly exceeds the number of observations (n; e.g., individual patients or biological samples) [1] [2]. This scenario is now commonplace with the widespread adoption of whole-genome sequencing (WGS), which can generate millions of genetic variants for a limited number of individuals [1]. The p >> n problem introduces substantial obstacles for accurate statistical inference and machine learning, including difficulties in parameter estimation, heightened risks of overfitting, increased potential for false positive associations, and ambiguous class assignments in classification tasks [1].

Feature selection (FS) has emerged as a critical preprocessing step to address these challenges by identifying the most biologically relevant features, thereby reducing data dimensionality and complexity for downstream analysis [1] [2]. This Application Note provides a structured overview of contemporary feature selection strategies, detailed experimental protocols, and essential computational tools specifically designed for ultra-high-dimensional genomic data.

Feature Selection Strategies for Genomic Data

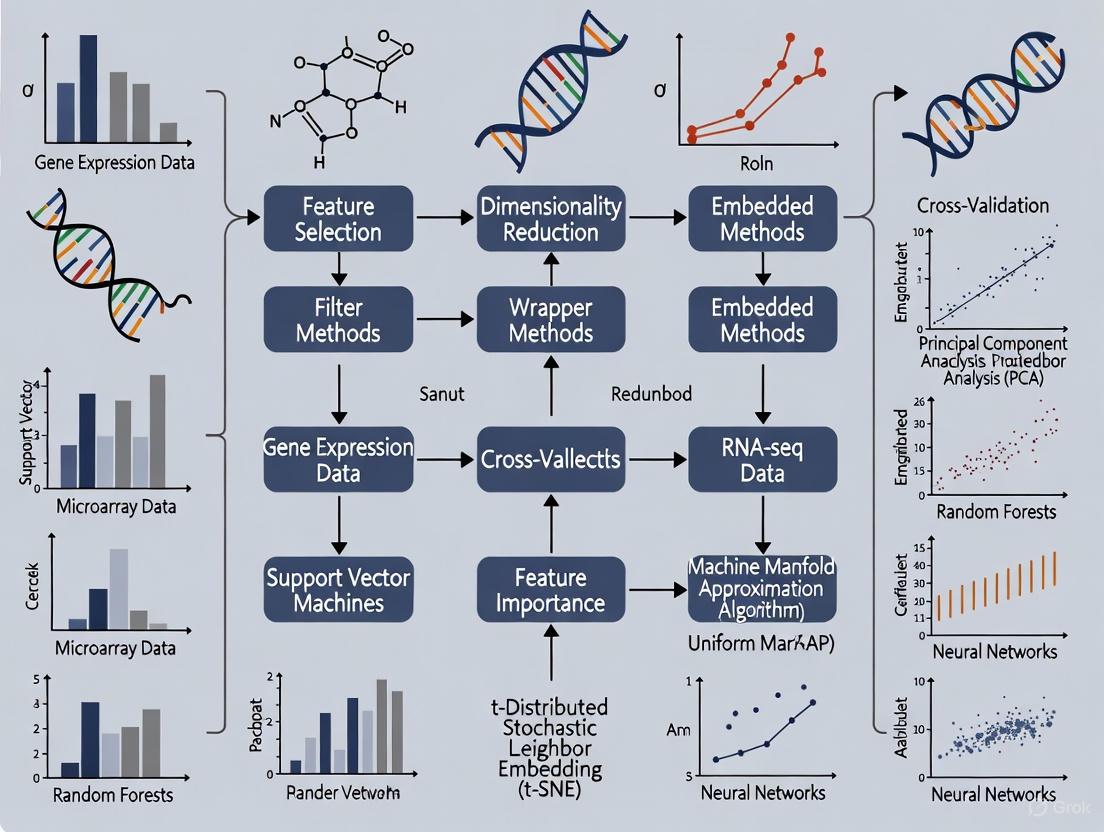

Feature selection methods are broadly classified into three primary categories—filter, wrapper, and embedded methods—with advanced ensemble and hybrid approaches building upon these foundations [2] [3].

Table 1: Categories of Feature Selection Methods

| Method Type | Core Principle | Advantages | Limitations | Genomic Applications |
|---|---|---|---|---|
| Filter Methods | Selects features based on statistical measures (e.g., correlation, mutual information) independent of a classifier. | Computationally fast, scalable, less prone to overfitting. | Ignores feature dependencies and interaction with the classifier. | Pre-filtering of SNPs, initial gene screening. |
| Wrapper Methods | Evaluates feature subsets using a specific classifier's performance (e.g., accuracy). | Considers feature interactions, often high-performing. | Computationally intensive, high risk of overfitting. | SNP set selection for breed classification [1]. |
| Embedded Methods | Performs feature selection as part of the model training process. | Balances performance and efficiency, model-specific. | Tied to a specific learning algorithm. | LASSO regularization in regression models. |
| Ensemble/Hybrid | Combines multiple models or methods (e.g., rank aggregation) to improve robustness. | Increased stability and accuracy, reduces variance. | Complex implementation, computationally demanding. | Supervised Rank Aggregation (SRA) for WGS data [1]. |

Advanced Feature Selection Algorithms

Recent research has introduced sophisticated algorithms to handle the scale and complexity of genomic data:

- Supervised Rank Aggregation (SRA): This ensemble approach combines feature importance scores from multiple models to create a robust overall feature ranking. The Multidimensional SRA (MD-SRA) variant provides an effective balance between classification quality (achieving 95.12% F1-score in breed classification) and computational efficiency, offering a 17x reduction in analysis time and 14x lower data storage requirements compared with one-dimensional SRA (1D-SRA) [1].

- Simultaneous Perturbation Stochastic Approximation (SPSA): A derivative-free optimization algorithm recently applied to large-scale cancer genomic datasets containing 35,924 to 44,894 features. This method treats feature selection as a stochastic optimization problem, efficiently navigating high-dimensional spaces to identify optimal feature subsets for cancer classification [3].

- Dimension Reduction based on Perturbation Theory (DRPT): A linear method that first removes irrelevant features by solving a least squares problem and weighting features, then detects correlations among remaining features through matrix perturbation and clustering. This approach has demonstrated effectiveness on genomic datasets ranging from 9,117 to 267,604 features [4].

Table 2: Performance Comparison of Advanced Feature Selection Methods on Genomic Data

| Method | Dataset Scale | Reduction Rate | Reported Performance | Computational Notes |
|---|---|---|---|---|
| SNP Tagging (LD Pruning) | 11.9M SNPs | 93.51% (to 773K SNPs) | F1-score: 86.87% | Fastest (74 min), minimal storage [1]. |
| 1D-SRA | 11.9M SNPs | 63.14% (to 4.39M SNPs) | F1-score: 96.81% | High resource demand (46.5 hrs, 3.1 TB storage) [1]. |
| MD-SRA | 11.9M SNPs | 67.39% (to 3.89M SNPs) | F1-score: 95.12% | Balanced efficiency (2.7 hrs, 227 MB storage) [1]. |
| SPSA | ~40,000 features | Variable (5-15% top features selected) | Favorable vs. 10 benchmark methods | Effective on large-scale cancer data [3]. |
| DRPT | Up to 267,604 features | Varies by dataset | Favorable vs. 7 state-of-the-art methods | Noise-robust and stable to row/column permutation [4]. |

Experimental Protocols

Protocol 1: Implementing Multidimensional Supervised Rank Aggregation (MD-SRA) for WGS Data

This protocol outlines the steps for applying MD-SRA to whole-genome sequencing data for multi-class classification tasks, adapted from ultra-high-dimensional genomic data classification studies [1].

Research Reagent Solutions

Table 3: Essential Components for MD-SRA Implementation

| Component | Specification | Function/Purpose |
|---|---|---|
| Genomic Dataset | VCF files with 11M+ SNPs from 1800+ individuals | Primary input data for feature selection. |
| Computational Environment | High-performance computing (HPC) cluster with CPU/GPU capabilities | Enables parallel processing of large-scale data. |
| Memory Mapping Tools | Python NumPy memmap or similar | Allows access to large datasets without loading entirely into RAM. |
| Multinomial Logistic Regression | With L1/L2 regularization | Base model for generating initial feature importance scores. |
| Clustering Algorithm | Weighted multidimensional clustering | Groups correlated features based on importance scores. |

Procedure

Data Preparation and Partitioning

- Convert genomic data (VCF format) into a numerical matrix (samples × SNPs).

- Implement memory mapping to handle data larger than system RAM.

- Randomly partition data into 100-500 subsets using stratified sampling to maintain class distributions.
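The data preparation steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the pipeline used in [1]: the matrix sizes, the file name `genotypes.int8`, the 0/1/2 allele-count encoding, and the use of scikit-learn's `StratifiedKFold` to produce disjoint, class-balanced sample subsets are all illustrative choices.

```python
import os
import tempfile

import numpy as np
from sklearn.model_selection import StratifiedKFold

rng = np.random.default_rng(0)
n_samples, n_snps, n_classes = 120, 5000, 4  # toy sizes (assumed)

# Write a toy genotype matrix (0/1/2 allele counts) to disk as int8.
path = os.path.join(tempfile.mkdtemp(), "genotypes.int8")  # hypothetical file name
geno = np.memmap(path, dtype=np.int8, mode="w+", shape=(n_samples, n_snps))
geno[:] = rng.integers(0, 3, size=(n_samples, n_snps), dtype=np.int8)
geno.flush()

# Re-open read-only: rows are read from disk on demand instead of
# loading the whole matrix into RAM.
geno_ro = np.memmap(path, dtype=np.int8, mode="r", shape=(n_samples, n_snps))
labels = np.repeat(np.arange(n_classes), n_samples // n_classes)

# Stratified partition of the samples into disjoint subsets that keep
# the class proportions of the full dataset.
n_subsets = 5
skf = StratifiedKFold(n_splits=n_subsets, shuffle=True, random_state=0)
subsets = [idx for _, idx in skf.split(np.zeros(n_samples), labels)]

# Only the rows of one subset are pulled into memory here.
block = np.asarray(geno_ro[subsets[0]])
print(block.shape, np.bincount(labels[subsets[0]]))
```

For real WGS data the number of subsets would be far larger (the protocol suggests 100-500), and the matrix would be filled from parsed VCF records rather than random integers.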

Base Model Training

- Train multinomial logistic regression models on each data subset.

- Extract feature importance scores (regression coefficients) from each model.

- Store scores in a performance matrix (features × subsets).
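A minimal sketch of the base-model step, assuming scikit-learn's `LogisticRegression` as the multinomial model and the mean absolute coefficient across classes as the per-feature importance score. Both choices, and the synthetic data, are assumptions made for illustration; [1] may define the scores differently.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic stand-in for a (samples x SNPs) matrix with three classes.
X, y = make_classification(
    n_samples=300, n_features=200, n_informative=10, n_redundant=0,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)

n_subsets = 5
skf = StratifiedKFold(n_splits=n_subsets, shuffle=True, random_state=0)

# Performance matrix: one row per feature, one column per data subset.
scores = np.zeros((X.shape[1], n_subsets))
for j, (_, idx) in enumerate(skf.split(X, y)):
    model = LogisticRegression(C=0.1, max_iter=2000)  # L2-penalized by default
    model.fit(X[idx], y[idx])
    # coef_ has shape (n_classes, n_features); collapse the class axis
    # into a single non-negative score per feature.
    scores[:, j] = np.abs(model.coef_).mean(axis=0)

print(scores.shape)
```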

Rank Aggregation via Multidimensional Clustering

- Apply weighted multidimensional clustering to the performance matrix.

- Group features with similar importance profiles across subsets.

- Select representative features from each cluster based on highest average importance.
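The aggregation step can be illustrated with a simple stand-in. The sketch below uses plain k-means from scikit-learn on the rows of a synthetic performance matrix, which is not the weighted multidimensional clustering of [1]; the number of clusters and the toy scores are likewise assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.default_rng(0)
n_features, n_subsets = 500, 8

# Hypothetical performance matrix (features x subsets): the first 20
# features receive consistently high scores, the rest are near zero.
scores = rng.gamma(shape=1.0, scale=0.05, size=(n_features, n_subsets))
scores[:20] += 1.0

# Group features whose importance profiles look alike across subsets.
km = KMeans(n_clusters=10, n_init=10, random_state=0).fit(scores)

# Keep, from every cluster, the feature with the highest mean importance.
mean_importance = scores.mean(axis=1)
representatives = []
for c in range(km.n_clusters):
    members = np.flatnonzero(km.labels_ == c)
    if members.size:  # guard against an empty cluster
        representatives.append(int(members[np.argmax(mean_importance[members])]))
representatives = sorted(representatives)
print(representatives)
```

Selecting one representative per cluster is the most aggressive reading of the protocol; keeping several top-scoring members per cluster is an equally plausible variant.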

Validation and Classification

- Train a deep learning classifier (e.g., Convolutional Neural Network) on the selected feature set.

- Evaluate using k-fold cross-validation, reporting F1-score, precision, and recall.

- Compare against traditional methods (e.g., SNP tagging) for benchmarking.

Protocol 2: SPSA-Based Feature Selection for Cancer Genomic Data

This protocol details the application of Simultaneous Perturbation Stochastic Approximation for feature selection on high-dimensional cancer genomic datasets, based on recent research [3].

Research Reagent Solutions

Table 4: Essential Components for SPSA Implementation

| Component | Specification | Function/Purpose |
|---|---|---|
| Cancer Genomic Dataset | RNA-seq or microarray data (35,000-45,000 features) | Input data for cancer classification. |
| SPSA Algorithm | With Barzilai-Borwein non-monotone gains | Core optimization for feature selection. |
| Classification Models | SVM, Random Forest, Neural Networks | Evaluation of selected feature subsets. |
| Feature Ranking Framework | Based on SPSA-generated weights | Ranks features by importance. |
| Statistical Testing Suite | t-tests, ANOVA, multiple comparison correction | Validates significance of performance differences. |

Procedure

Data Preprocessing and Normalization

- Load cancer genomic dataset (e.g., TCGA RNA-seq data).

- Apply quantile normalization and log2 transformation for gene expression data.

- Remove low-variance features (bottom 10%) to reduce noise.
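The preprocessing steps above might look like the following NumPy sketch. The simulated count matrix, the `+ 1` pseudocount, the choice to log-transform before quantile normalization, and the helper name `quantile_normalize` are assumptions for illustration; ties are broken arbitrarily rather than averaged.

```python
import numpy as np

def quantile_normalize(X):
    """Give every sample (row) the same empirical distribution.

    Each value is replaced by the mean, across samples, of the values
    that share its within-sample rank.
    """
    ranks = np.argsort(np.argsort(X, axis=1), axis=1)
    reference = np.sort(X, axis=1).mean(axis=0)
    return reference[ranks]

rng = np.random.default_rng(0)
# Toy count matrix: 30 samples x 1000 genes.
counts = rng.negative_binomial(5, 0.05, size=(30, 1000)).astype(float)

log_expr = np.log2(counts + 1.0)      # log2 transform with a pseudocount
norm = quantile_normalize(log_expr)   # align sample distributions

# Drop the 10% of genes with the lowest variance across samples.
variances = norm.var(axis=0)
keep = variances > np.quantile(variances, 0.10)
filtered = norm[:, keep]
print(filtered.shape)
```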

SPSA Feature Selection and Ranking

- Initialize SPSA parameters: gain sequences, perturbation size, and iteration count.

- Implement binary SPSA to treat feature selection as stochastic optimization.

- Iteratively update feature weights based on classification performance.

- Rank features by their final weights in descending order.
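To make the optimization loop concrete, here is a small binary SPSA sketch. It is a simplified illustration, not the implementation of [3]: it uses constant gains instead of Barzilai-Borwein gains, a logistic regression with 3-fold cross-validation as the loss, and a tiny synthetic dataset. The values of `a`, `c`, and the iteration count are arbitrary assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Toy data: with shuffle=False the 5 informative features come first.
X, y = make_classification(
    n_samples=150, n_features=40, n_informative=5, n_redundant=0,
    shuffle=False, random_state=0,
)

def loss(w):
    """Cross-validated error of a classifier using features with w >= 0.5."""
    mask = w >= 0.5
    if not mask.any():
        return 1.0  # an empty subset is treated as the worst case
    clf = LogisticRegression(max_iter=1000)
    return 1.0 - cross_val_score(clf, X[:, mask], y, cv=3).mean()

rng = np.random.default_rng(0)
p = X.shape[1]
w = np.full(p, 0.5)   # every feature starts undecided
a, c = 0.1, 0.05      # constant gain and perturbation size (assumed)

best_w, best_loss = w.copy(), loss(w)
for _ in range(30):
    delta = rng.choice([-1.0, 1.0], size=p)  # simultaneous perturbation
    w_plus = np.clip(w + c * delta, 0.0, 1.0)
    w_minus = np.clip(w - c * delta, 0.0, 1.0)
    # Two loss evaluations estimate the whole gradient; dividing by
    # delta equals multiplying by it because its entries are +/-1.
    grad = (loss(w_plus) - loss(w_minus)) / (2.0 * c) * delta
    w = np.clip(w - a * grad, 0.0, 1.0)
    current = loss(w)
    if current < best_loss:
        best_w, best_loss = w.copy(), current

ranking = np.argsort(-best_w)  # features ordered by final weight
print(ranking[:10], round(best_loss, 3))
```

The appeal of SPSA in this setting is that each iteration needs only two loss evaluations regardless of the number of features, whereas a finite-difference gradient would need two per feature.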

Feature Subset Evaluation

- Select top-ranked features at multiple thresholds (5%, 10%, 15%).

- Train classifiers (SVM, Random Forest) on each feature subset.

- Evaluate performance using 10-fold cross-validation with accuracy, precision, and F1-score.

Statistical Validation and Comparison

- Compare SPSA performance against 10 benchmark methods (Boruta, RFE, etc.).

- Perform statistical significance testing (paired t-tests) on classification results.

- Identify optimal feature subset based on performance and computational efficiency.
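The evaluation and comparison steps can be sketched as follows, assuming an ANOVA F-score filter as a stand-in for the SPSA ranking and a linear SVM as the classifier. Selection is placed inside a pipeline so it is refit within every fold, and the same ten folds are reused for each threshold so that per-fold scores are paired.

```python
import numpy as np
from scipy.stats import ttest_rel
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectPercentile, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

X, y = make_classification(
    n_samples=200, n_features=400, n_informative=15, random_state=0
)

# Fixed folds so that scores from different thresholds are paired.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)

def fold_scores(percentile):
    """Per-fold accuracy when keeping the top `percentile` % of features."""
    pipe = make_pipeline(
        SelectPercentile(f_classif, percentile=percentile),
        SVC(kernel="linear"),
    )
    return cross_val_score(pipe, X, y, cv=cv, scoring="accuracy")

scores = {pct: fold_scores(pct) for pct in (5, 10, 15)}
for pct, s in scores.items():
    print(f"top {pct}%: mean accuracy {s.mean():.3f}")

# Paired t-test on the per-fold accuracies of two thresholds.
t_stat, p_value = ttest_rel(scores[5], scores[15])
print(f"t = {t_stat:.2f}, p = {p_value:.3f}")
```

Because cross-validation folds share training samples, the per-fold scores are not fully independent, so the resulting p-values are best read as approximate. When many methods are compared, a multiple comparison correction (as listed in Table 4) would be applied on top.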

Effective navigation of the p >> n problem requires leveraging contemporary computational frameworks and tools.

Table 5: Essential Computational Tools for High-Dimensional Genomic Analysis

| Tool Category | Specific Technologies | Application in Genomic Research |
|---|---|---|
| Workflow Management | Nextflow, Snakemake, Cromwell | Creates reproducible, scalable analysis pipelines for NGS data [5]. |
| Containerization | Docker, Singularity | Ensures environment consistency and portability across computational platforms [5]. |
| Cloud Computing Platforms | AWS HealthOmics, Google Cloud Genomics, Illumina Connected Analytics | Provides scalable storage and processing for large genomic datasets [6] [5]. |
| Variant Calling | DeepVariant (AI-powered), Strelka2 | Accurately identifies genetic variants from sequencing data using deep learning [7] [5]. |
| AI/ML Frameworks | TensorFlow, PyTorch, Scikit-learn | Enables development of custom feature selection and classification models [8]. |
| Data Visualization | Integrated visualization platforms | Enables interactive exploration of complex genomic datasets [5]. |

The p >> n problem in ultra-high-dimensional genomic data presents significant challenges, but they are surmountable through the strategic application of advanced feature selection techniques. Methods such as Multidimensional Supervised Rank Aggregation and Simultaneous Perturbation Stochastic Approximation offer compelling approaches that balance classification performance with computational efficiency. The protocols and tools detailed in this Application Note provide researchers with practical frameworks for implementing these strategies in their genomic studies. As the field evolves, the integration of AI-powered analytics with multi-omics data will further enhance our ability to extract biological insights from increasingly complex, high-dimensional genomic datasets [9] [10].

High-dimensional genomic datasets present a paradigm shift in biological research, enabling unprecedented opportunities for biomarker discovery and clinical diagnostics. However, the analytical landscape of these datasets is fraught with significant challenges that can obscure true biological signals and compromise the validity of research findings. Technical noise, feature redundancy, and multicollinearity represent three fundamental obstacles that researchers must navigate to extract meaningful insights from genomic data. Technical noise stems from various sources including sequencing stochasticity, amplification biases, and background contamination, particularly affecting low-abundance molecular species [11]. Feature redundancy arises from biological systems where multiple genes or proteins perform overlapping functions, or from technological artifacts where correlated measurements capture the same underlying biological phenomenon [12]. Multicollinearity occurs when predictor variables in genomic datasets exhibit strong intercorrelations, complicating the interpretation of individual feature importance and destabilizing model estimates [13]. Within the broader context of feature selection techniques for high-dimensional genomic data research, addressing these intertwined challenges is paramount for developing robust, interpretable, and biologically relevant models that can reliably inform drug development and clinical applications.

Understanding the Core Challenges

Technical noise in genomic datasets encompasses non-biological variations introduced during experimental procedures. In sequencing-based technologies, this noise manifests as background contamination from ambient RNA or DNA, barcode swapping events, amplification biases, and mapping inaccuracies [11] [14]. These technical artifacts are particularly problematic for detecting subtle expression changes in low-abundance transcripts, where noise can constitute a substantial proportion of measured signals. In droplet-based single-cell RNA-seq experiments, for instance, background noise has been demonstrated to account for 3-35% of total counts per cell, significantly impacting marker gene detection and interpretation [14]. The presence of such noise increases false discovery rates in differential expression analysis, reduces power for detecting genuine biological effects, and can lead to spurious conclusions regarding cell-type identification or disease-associated genes.

Feature Redundancy: Biological and Technical Perspectives

Feature redundancy in genomic data operates at two distinct levels. Biologically, redundancy emerges from evolutionary processes that create backup systems within organisms, such as gene families with overlapping functions, parallel metabolic pathways, and correlated gene expression programs [12]. Technically, redundancy arises when multiple genomic features capture the same underlying biological phenomenon due to measurement correlations. This redundancy dilutes statistical power, increases computational complexity, and complicates biological interpretation. From an evolutionary perspective, redundancy is more common in organisms with low mutation rates and small population sizes, while antiredundancy (hypersensitivity to mutation) predominates in organisms with high mutation rates and large populations [12]. This evolutionary principle has practical implications for genomic analysis, as the same molecular system may exhibit different redundancy patterns across species or biological contexts.

Multicollinearity in High-Dimensional Settings

Multicollinearity refers to the phenomenon where genomic features are highly correlated with each other, creating statistical challenges in distinguishing their individual effects. In high-dimensional genomic datasets where the number of features (p) vastly exceeds the number of samples (n), multicollinearity is pervasive rather than exceptional [13]. Strong inter-feature correlations arise from functional biological networks, coordinated regulation of gene expression, and linkage disequilibrium in genetic variants. Multicollinearity inflates variance in coefficient estimates, leading to unstable model performance and unreliable feature importance rankings [13] [15]. This instability is particularly problematic for biomarker discovery, where identifying causal features rather than correlated proxies is essential for understanding disease mechanisms and developing targeted therapies.
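The variance inflation described above can be quantified with the variance inflation factor (VIF), which for standardized predictors equals the corresponding diagonal entry of the inverse correlation matrix. The sketch below is a toy illustration with three simulated features; the helper name `vif` and the correlation of 0.95 are assumptions.

```python
import numpy as np

def vif(X):
    """Variance inflation factor of each column of X.

    Computed as the diagonal of the inverse correlation matrix, so it
    requires more samples than features and no perfectly collinear columns.
    """
    corr = np.corrcoef(X, rowvar=False)
    return np.diag(np.linalg.inv(corr))

rng = np.random.default_rng(0)
n = 500
g1 = rng.normal(size=n)
# g2 is built to correlate with g1 at roughly 0.95 (e.g., co-regulated genes).
g2 = 0.95 * g1 + np.sqrt(1 - 0.95**2) * rng.normal(size=n)
g3 = rng.normal(size=n)  # independent feature
X = np.column_stack([g1, g2, g3])

v = vif(X)
print(np.round(v, 2))
```

For two features with correlation r, the VIF is 1 / (1 - r^2), so a correlation of 0.95 inflates coefficient variance roughly tenfold. When p exceeds n the correlation matrix is singular and the VIF is undefined, which is one reason regularized or selection-based approaches are needed in that regime.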

Quantitative Comparison of Challenges Across Genomic Data Types

Table 1: Impact of Core Challenges Across Different Genomic Data Types

| Data Type | Technical Noise Characteristics | Feature Redundancy Sources | Multicollinearity Patterns |
|---|---|---|---|
| Single-Cell RNA-seq | 3-35% background noise from ambient RNA [14] | Correlated expression programs across cell types | High correlation within gene modules and pathways |
| Bulk RNA-seq | Low-level technical variation affecting low abundance genes [11] | Gene families with overlapping functions | Co-expression networks and regulatory programs |
| Genotyping Arrays | Genotype calling errors, batch effects | Linkage disequilibrium blocks | High correlation between proximal SNPs |
| Whole Genome Sequencing | Sequencing errors, coverage unevenness | Functional element redundancy | Haplotype blocks and structural variants |
| Proteomics | Technical variability in mass spectrometry [13] | Protein complex subunits | Strong inter-protein correlations from biological networks |

Table 2: Performance Comparison of Feature Selection Methods Addressing These Challenges

| Method | Technical Noise Handling | Feature Redundancy Reduction | Multicollinearity Management | Reported Performance |
|---|---|---|---|---|
| ST-CS (Soft-Thresholded Compressed Sensing) | Robust to technical noise through 1-bit quantization and K-Medoids clustering [13] | Enforces sparsity with dual regularization | Balances sparsity and stability via ℓ1 and ℓ2 constraints | AUC: 97.47% with 57% fewer features vs. HT-CS [13] |
| CEFS+ (Copula Entropy FS) | Captures full-order interaction gains between features [16] | Maximum correlation minimum redundancy strategy | Models non-linear dependencies via copula entropy | Highest classification accuracy in 10/15 scenarios [16] |
| WFISH (Weighted Fisher Score) | Prioritizes informative features based on expression differences [17] | Assigns weights to reduce impact of less useful features | Not explicitly addressed | Lower classification errors with RF and kNN classifiers [17] |
| noisyR | Assesses signal distribution variation across replicates [11] | Filters background noise outside consistency range | Not explicitly addressed | Improves consistency of differential expression calls [11] |

Detailed Experimental Protocols

Protocol 1: Implementing ST-CS for Proteomic Data

Principle: Soft-Thresholded Compressed Sensing (ST-CS) integrates 1-bit compressed sensing with K-Medoids clustering to automate feature selection, dynamically partitioning coefficient magnitudes into discriminative biomarkers and noise without manual thresholding [13].

Reagents and Materials:

- High-dimensional proteomic dataset (e.g., mass spectrometry measurements)

- Computational environment with R and Python installed

- R packages: Rdonlp2 for optimization

Procedure:

- Data Preprocessing: Normalize protein intensity measurements using quantile normalization. Standardize features to zero mean and unit variance.

- Linear Decision Function Formulation: Define the decision score for the i-th sample as \( d_i = \langle \mathbf{w}, \mathbf{x}_i \rangle \), where \( \mathbf{w} \) denotes the coefficient vector and \( \mathbf{x}_i \) the proteomic profile.

- Constrained Optimization: Solve the optimization problem: maximize \( \sum_{i=1}^{n} y_i \langle \mathbf{w}, \mathbf{x}_i \rangle \) subject to \( \|\mathbf{w}\|_1 \leq \lambda \) and \( \|\mathbf{w}\|_2^2 \leq 1 \), where \( \lambda \) controls the sparsity-intensity trade-off.

- K-Medoids Clustering: Apply K-Medoids clustering (K=2) to the coefficient magnitudes \( |w_j| \) to automatically separate true biomarkers (large coefficients) from noise (near-zero coefficients).

- Biomarker Identification: Select features corresponding to the cluster with larger coefficient magnitudes as the final biomarker set.

Technical Notes: The dual \( \ell_1 \) and \( \ell_2 \) constraints balance sparsity and stability. The \( \ell_1 \)-norm promotes sparsity by shrinking irrelevant coefficients to zero, while the \( \ell_2 \)-norm controls multicollinearity. The method has demonstrated 20-50% reduction in false discovery rates compared to hard-thresholded approaches [13].
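The procedure above can be sketched end to end in Python. This is an illustrative reading, not the authors' implementation: instead of a general-purpose solver such as Rdonlp2, it uses the fact that a linear objective under joint ℓ1 and ℓ2 constraints is maximized by a soft-thresholded, ℓ2-normalized copy of \( \mathbf{v} = \sum_i y_i \mathbf{x}_i \), with the threshold found by bisection. The tiny 1-D K-Medoids routine, the helper names, the synthetic data, and the value of `lam` are all assumptions.

```python
import numpy as np

def soft_threshold(v, tau):
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)

def solve_l1_l2(v, lam, n_iter=60):
    """Maximize <v, w> s.t. ||w||_1 <= lam and ||w||_2 <= 1 (assumes lam >= 1)."""
    w = v / np.linalg.norm(v)
    if np.abs(w).sum() <= lam:
        return w  # the l1 constraint is inactive
    lo, hi = 0.0, np.abs(v).max()
    for _ in range(n_iter):  # bisection on the soft threshold
        tau = 0.5 * (lo + hi)
        s = soft_threshold(v, tau)
        cand = s / np.linalg.norm(s)
        if np.abs(cand).sum() > lam:
            lo = tau              # still too dense: raise the threshold
        else:
            hi, w = tau, cand     # feasible: remember it, try a lower one
    return w

def two_medoids_1d(values, n_iter=100):
    """Minimal 1-D K-Medoids with K=2 (alternating assignment and update)."""
    medoids = np.array([values.min(), values.max()])
    for _ in range(n_iter):
        labels = np.abs(values[:, None] - medoids[None, :]).argmin(axis=1)
        new = medoids.copy()
        for k in range(2):
            members = np.sort(values[labels == k])
            if members.size:  # a median member minimizes total |distance|
                new[k] = members[(members.size - 1) // 2]
        if np.array_equal(new, medoids):
            break
        medoids = new
    return labels, medoids

# Toy data: 200 samples, 300 features, the first 10 carry class signal.
rng = np.random.default_rng(0)
n, p, k = 200, 300, 10
y = rng.choice([-1.0, 1.0], size=n)
X = rng.normal(size=(n, p))
X[:, :k] += y[:, None]
X = (X - X.mean(axis=0)) / X.std(axis=0)  # zero mean, unit variance

w = solve_l1_l2(X.T @ y, lam=4.0)
labels, medoids = two_medoids_1d(np.abs(w))
selected = np.flatnonzero(labels == np.argmax(medoids))
print(selected)
```

The clustering step replaces a hand-picked cutoff on \( |w_j| \): the cluster whose medoid is larger is taken as the biomarker set, which is the "no manual thresholding" idea described in the Principle paragraph.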

Protocol 2: CEFS+ for Genetic Data with Feature Interactions

Principle: The Copula Entropy Feature Selection (CEFS+) approach combines feature-feature mutual information with feature-label mutual information using a maximum correlation minimum redundancy strategy, specifically designed to capture interaction gains in high-dimensional genetic data [16].

Reagents and Materials:

- Genomic dataset with potentially interacting features (e.g., SNP data, gene expression)

- Python programming environment with scikit-learn

- Specialized CEFS+ implementation for copula entropy calculation

Procedure:

- Data Preparation: Encode genetic variants appropriately (e.g., additive encoding for SNPs). Remove features with excessive missing values (>20%).

- Copula Entropy Calculation: Estimate copula entropy for feature pairs and feature-label combinations using nonparametric estimators.

- Divisibility of Multiple Mutual Information: Apply the proven relationship that the information a feature set carries about a target variable equals the total information shared among the features and the target together, minus the information shared among the features alone.

- Greedy Selection with Rank Strategy: Implement the maximum correlation minimum redundancy criterion with rank stabilization to overcome instability on certain datasets.

- Feature Subset Evaluation: Validate selected features using cross-validation with multiple classifiers (e.g., random forests, SVM).

Technical Notes: CEFS+ specifically addresses the limitation of most feature selection methods in capturing interaction gains, where the value of multiple features together exceeds the sum of their individual values. This is particularly important for genetic data where epistasis and gene-gene interactions play crucial roles in complex traits and diseases [16].
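One way to write out the divisibility relationship used in the procedure above is given below. The notation is an assumption introduced here rather than taken from [16]: \( H \) is Shannon entropy, \( T \) is total correlation (multivariate mutual information), \( H_c = -T \) is copula entropy, \( \mathbf{X}_S \) is the selected feature set, and \( Y \) is the label.

```latex
\begin{aligned}
T(\mathbf{X}_S, Y) &= \sum_{j \in S} H(X_j) + H(Y) - H(\mathbf{X}_S, Y), \\
T(\mathbf{X}_S)    &= \sum_{j \in S} H(X_j) - H(\mathbf{X}_S), \\
I(\mathbf{X}_S; Y) &= H(\mathbf{X}_S) + H(Y) - H(\mathbf{X}_S, Y)
                    = T(\mathbf{X}_S, Y) - T(\mathbf{X}_S)
                    = H_c(\mathbf{X}_S) - H_c(\mathbf{X}_S, Y).
\end{aligned}
```

Subtracting the second line from the first cancels the marginal entropies of the features, which is why the relevance of a whole feature set to the label can be obtained from two copula entropy estimates rather than from a sum of pairwise terms.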

Protocol 3: Background Noise Removal with noisyR

Principle: The noisyR pipeline assesses variation in signal distribution to achieve optimal information consistency across replicates and samples, filtering out technical noise to facilitate meaningful pattern recognition outside the background-noise range [11].

Reagents and Materials:

- Sequencing count matrix or alignment data (BAM files)

- R statistical environment

- noisyR package installed from CRAN

Procedure:

- Data Input: Load unnormalized count matrix or alignment data. For alignment data, specify genomic features of interest.

- Noise Quantification: Execute the noise assessment function to evaluate correlation of expression across subsets of genes in different samples/replicates across all gene abundances.

- Threshold Determination: Calculate sample-specific signal/noise thresholds based on consistency of expression patterns.

- Matrix Filtering: Generate filtered expression matrices excluding genes falling below the consistency thresholds.

- Downstream Analysis Validation: Compare differential expression results, enrichment analyses, and gene regulatory network inferences before and after noise filtering.

Technical Notes: noisyR effectively minimizes technical noise that can obscure patterns in downstream analyses. Applications have demonstrated improved convergence of predictions (differential expression calls, enrichment analyses, and inference of gene regulatory networks) across different analytical approaches after noise filtration [11].

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagent Solutions for Genomic Data Challenges

| Reagent/Resource | Function | Application Context |
|---|---|---|
| CellBender | Quantifies and removes background noise from single-cell data | scRNA-seq experiments with ambient RNA contamination [14] |
| SoupX | Estimates contamination fraction using marker genes and empty droplets | Background noise correction in droplet-based sequencing [14] |
| DecontX | Models background noise using mixture distributions based on cell clusters | Single-cell data decontamination [14] |
| noisyR | Comprehensive noise filtering for sequencing data | Bulk and single-cell RNA-seq denoising [11] |
| ST-CS Implementation | Automated feature selection with compressed sensing and clustering | High-dimensional proteomic and genomic biomarker discovery [13] |
| CEFS+ Package | Copula entropy-based feature selection with interaction capture | Genetic data with epistatic interactions [16] |
| WFISH Algorithm | Weighted Fisher score for gene expression data | Differential expression analysis in classification tasks [17] |

Workflow Visualization

Diagram 1: Comprehensive workflow addressing core challenges in genomic datasets

Diagram 2: ST-CS workflow integrating compressed sensing with clustering

High-dimensional genomic data, characterized by a vastly greater number of features (e.g., genes or single nucleotide polymorphisms, SNPs) than samples (the p >> n problem), presents a fundamental challenge in bioinformatics research [18] [19]. This curse of dimensionality significantly increases the risk of model overfitting, where a model learns noise and spurious correlations specific to the training data and fails to generalize to new, unseen datasets [19] [20]. Feature selection (FS) has emerged as a critical preprocessing step to mitigate these issues. By identifying and retaining only the most informative and non-redundant features, FS directly reduces model complexity, enhances the generalizability of predictive models, and is instrumental in preventing overfitting [16] [19] [21]. This document outlines the application of robust feature selection protocols within high-dimensional genomic research, providing actionable notes and methodologies for scientists and drug development professionals.

The Necessity of Feature Selection in Genomics

The High-Dimensional Genomic Data Landscape

Genomic data, derived from technologies like microarrays, RNA-sequencing, and Whole-Genome Sequencing (WGS), is inherently high-dimensional. For instance, gene expression datasets may profile tens of thousands of genes from only hundreds of samples [22], and WGS can identify millions of SNPs from a much smaller cohort of individuals [18]. This imbalance creates a statistical challenge where models can easily memorize the training data without learning underlying biological patterns.

Overfitting and Its Consequences

Overfitting occurs when a model learns the training data too well, including its noise. In genomics, this is often driven by the inclusion of a large number of trait-irrelevant or neutral markers [20] [21]. The consequences are severe:

- Exaggerated Heritability Estimates: Studies have demonstrated that using all markers in a genomic selection (GS) model without feature selection leads to a significant overestimation of genetic variance and, consequently, trait heritability [20].

- Inflated Prediction Accuracy: Selecting features based on the entire dataset, including the test set, can inflate prediction accuracy by up to 2-fold in cross-validation and up to 9-fold in external validation, providing a misleading assessment of model utility [21].

- Poor Generalizability: An overfitted model performs poorly on independent validation cohorts or in real-world clinical settings, undermining its translational potential [19].
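The inflation caused by selecting features on the full dataset is easy to reproduce on pure noise. The sketch below is a toy demonstration with scikit-learn, not a reproduction of [21]: the data contain no signal at all, so any accuracy well above chance in the first estimate comes entirely from information leaking through feature selection. The dataset size and `k=20` are assumed values.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 5000))  # 5000 pure-noise "markers"
y = np.repeat([0, 1], 30)        # labels unrelated to X

# Leaky: features are chosen using all samples, including those that
# later serve as validation folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_leaky, y, cv=5).mean()

# Honest: selection sits inside the pipeline, so it is refit on the
# training portion of every fold.
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest = cross_val_score(pipe, X, y, cv=5).mean()

print(f"selection on all data: {leaky:.2f}")
print(f"selection inside CV:   {honest:.2f}")
```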

A Framework for Feature Selection Methods

Feature selection techniques can be broadly categorized into three main types, each with distinct mechanisms and implications for model complexity and overfitting. The diagram below illustrates the logical workflow and key characteristics of these categories.

Filter Methods

Filter methods assess feature relevance based on intrinsic data properties, independent of a machine learning classifier [2] [23]. They are fast and computationally efficient, making them suitable for an initial screening of thousands of features.

- Mechanism: Features are ranked using univariate statistical measures, such as differential expression analysis (t-test, p-value), Signal-to-Noise Ratio (SNR), or mutual information [16] [24]. A threshold is then applied to select the top-k features.

- Impact on Overfitting: While efficient, univariate filters often ignore interactions between features (epistasis) and may select redundant features due to linkage disequilibrium (LD) in genetic data, potentially limiting generalization [19].

Wrapper Methods

Wrapper methods evaluate feature subsets based on their performance with a specific predictive model (e.g., a classifier) [2] [23]. They can capture feature interactions but are computationally intensive.

- Mechanism: A search algorithm (e.g., forward/backward selection, genetic algorithms) is used to generate candidate feature subsets, which are then evaluated by training and testing a model. The subset yielding the best model performance is selected [16].

- Impact on Overfitting: These methods carry a high risk of overfitting if the feature selection process is not rigorously cross-validated separately from the model performance evaluation [21]. The search must use only training data.

Embedded Methods

Embedded methods integrate the feature selection process directly into the model training algorithm [2] [23]. They offer a balance between the efficiency of filters and the performance of wrappers.

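- Mechanism: The model itself performs feature selection during training. Examples include LASSO (L1) regularization in regression models, which shrinks the coefficients of uninformative features to exactly zero.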

- Impact on Overfitting: These methods naturally penalize model complexity (e.g., via regularization), which directly helps prevent overfitting [20].
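The three categories described above can be compared side by side on one synthetic dataset. The sketch below assumes scikit-learn and picks one representative per category: an ANOVA F-score filter, forward sequential selection as the wrapper, and L1-penalized logistic regression as the embedded method. The dataset, subset sizes, and penalty strength `C=0.1` are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, SequentialFeatureSelector, f_classif
from sklearn.linear_model import LogisticRegression

X, y = make_classification(
    n_samples=200, n_features=50, n_informative=8, n_redundant=0, random_state=0
)

# Filter: score each feature on its own, keep the top 10.
filt = SelectKBest(f_classif, k=10).fit(X, y)
filter_idx = np.flatnonzero(filt.get_support())

# Wrapper: greedy forward search, each candidate subset scored by
# cross-validated performance of the classifier itself.
sfs = SequentialFeatureSelector(
    LogisticRegression(max_iter=1000),
    n_features_to_select=5, direction="forward", cv=3,
).fit(X, y)
wrapper_idx = np.flatnonzero(sfs.get_support())

# Embedded: the L1 penalty zeroes out coefficients during training, so
# the fitted model doubles as the selector.
l1_model = LogisticRegression(penalty="l1", solver="liblinear", C=0.1).fit(X, y)
embedded_idx = np.flatnonzero(l1_model.coef_.ravel() != 0)

print("filter:  ", filter_idx)
print("wrapper: ", wrapper_idx)
print("embedded:", embedded_idx)
```

The wrapper is by far the slowest of the three here because it refits the classifier for every candidate feature at every step, which mirrors the computational cost noted above.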

Quantitative Comparison of Feature Selection Performance

The effectiveness of feature selection is ultimately quantified by improved model performance on unseen data. The table below summarizes reported performance gains from recent studies applying different FS methods to genomic data.

Table 1: Performance comparison of feature selection methods on genomic classification tasks.

| Feature Selection Method | Dataset Type | Classifier Used | Key Performance Metric | Result | Reference |
|---|---|---|---|---|---|
| CEFS+ (Copula Entropy-based) | High-dimensional genetic data | Multiple Classifiers | Highest Accuracy in Scenarios | 10/15 scenarios achieved highest accuracy | [16] |
| WFISH (Weighted Fisher Score) | Gene expression data | RF, k-NN | Classification Error | Consistently lower error vs. other techniques | [17] |
| MD-SRA (Supervised Rank Aggregation) | WGS (11.9M SNPs) | CNN (Deep Learning) | F1-Score | 95.12% (vs. 86.87% for SNP-tagging) | [18] |
| SNR + Mood median test (Hybrid Filter) | Microarray data | RF, KNN | Classification Accuracy | Significant improvements in accuracy and error reduction | [24] |
| Supervised FS (Scenario 4) | GWAS (Height, HDL, BMI) | G-BLUP, Bayes C | Prediction Accuracy | Effective as flexible alternative to Bayes C | [21] |

Application Notes and Protocols

Protocol 1: A Robust Cross-Validation Workflow for Supervised Feature Selection

A critical protocol to prevent overfitting during feature selection is to keep the test set completely separate. The following workflow, adapted from [21], ensures an unbiased evaluation.

Procedure:

- Initial Split: Divide the full dataset into a Training Set (e.g., 80%) and a Hold-out Test Set (e.g., 20%). The test set must be locked away and not used in any way during feature selection or model tuning.

- Inner Cross-Validation Loop:

  a. Further split the Training Set into K folds (e.g., 5 or 10).

  b. For each fold:

     - Use the other K-1 folds as the Fold Training Set.

     - Perform feature selection (using a Filter, Wrapper, or Embedded method) only on the Fold Training Set.

     - Train a model on the Fold Training Set using the selected features.

     - Evaluate the model on the remaining fold (the Fold Validation Set).

  c. Aggregate the performance across all K folds to estimate the generalizability of the FS method.

- Final Model Training: Once the FS method is validated, apply it to the entire Training Set to get the final set of selected features. Train the final model on the entire Training Set with these features.

- Unbiased Evaluation: Evaluate the final model's performance exactly once on the Hold-out Test Set.
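The procedure above can be sketched with a scikit-learn `Pipeline`, which re-fits the feature selector inside every fold so that validation samples never influence which features are chosen. The synthetic data, the univariate F-test filter, the value `k=50`, and the logistic regression classifier are illustrative assumptions, not the configuration used in [21].

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split
from sklearn.pipeline import Pipeline

# Synthetic p >> n classification data (assumption).
X, y = make_classification(n_samples=200, n_features=1000, n_informative=15,
                           random_state=0)

# Initial split: the hold-out test set is not touched until the very end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Selection and classifier live in one pipeline, so cross_val_score repeats
# the feature selection on each Fold Training Set.
pipe = Pipeline([
    ("select", SelectKBest(f_classif, k=50)),
    ("clf", LogisticRegression(max_iter=1000)),
])
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
cv_scores = cross_val_score(pipe, X_train, y_train, cv=cv)

# Final model: selection + training on the entire Training Set.
pipe.fit(X_train, y_train)

# Unbiased evaluation: a single pass over the hold-out test set.
test_accuracy = pipe.score(X_test, y_test)
print(cv_scores.mean(), test_accuracy)
```

Running the selector on the full dataset before splitting would leak test information into the selected features and inflate both estimates.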

Protocol 2: Implementing a Hybrid Filter Method for Gene Expression Data

This protocol details the steps for applying a hybrid filter method, such as combining Signal-to-Noise Ratio (SNR) with the Mood median test, as described in [24].

Objective: To select a robust subset of genes from high-dimensional, non-normally distributed gene expression data for a classification task (e.g., tumor vs. normal).

Materials & Input Data:

- A gene expression matrix (rows: samples, columns: genes).

- A class label vector (e.g., Case/Control).

Procedure:

- Data Preprocessing:

- Perform standard quality control (remove genes with low expression, impute missing values if necessary).

- Log-transform the data if needed to stabilize variance.

- Calculate Univariate Scores:

- For each gene, calculate the Signal-to-Noise Ratio (SNR). A high SNR indicates a gene whose expression differs significantly between classes relative to within-class variation.

- For the same gene, perform the Mood median test. This non-parametric test assesses whether the medians of the two classes are different, making it robust to outliers and non-normal distributions.

- Compute a Hybrid Score:

- For each gene, compute a combined score, e.g., Md_score = SNR / P_value, where P_value is from the Mood median test. This prioritizes genes with a high SNR and a highly significant (small) P-value.

- Feature Ranking and Selection:

- Rank all genes in descending order based on their Md_score.

- Select the top k genes, where k can be determined by a pre-defined threshold (e.g., top 100) or by evaluating classification performance on a validation set across different values of k (using the cross-validation workflow from Protocol 1).

- Validation:

- Use the selected gene subset to train a classifier (e.g., Random Forest or k-NN) on the training data.

- Assess the model's accuracy, precision, and recall on the independent test set.
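A minimal sketch of the scoring and ranking steps is shown below, using `scipy.stats.median_test` for the Mood median test. The SNR definition used here (absolute difference of class means divided by the sum of class standard deviations) is a common convention and an assumption, since the exact formula of [24] is not reproduced in this protocol; the simulated data and the helper name `hybrid_scores` are also hypothetical.

```python
import numpy as np
from scipy.stats import median_test

rng = np.random.default_rng(0)
n_samples, n_genes = 60, 200
y = np.repeat([0, 1], n_samples // 2)          # class labels (control / case)
X = rng.normal(size=(n_samples, n_genes))      # simulated training expression matrix
X[y == 1, :5] += 2.0                           # genes 0-4 are differentially expressed

def hybrid_scores(X, y, eps=1e-300):
    """Md_score = SNR / P_value for every gene (column) of X."""
    a, b = X[y == 0], X[y == 1]
    # Assumed SNR definition: |mean difference| / (sum of class standard deviations).
    snr = np.abs(a.mean(0) - b.mean(0)) / (a.std(0, ddof=1) + b.std(0, ddof=1))
    # Index 1 of the median_test result is the p-value.
    pvals = np.array([median_test(a[:, j], b[:, j])[1] for j in range(X.shape[1])])
    return snr / np.maximum(pvals, eps)        # eps guards against division by zero

scores = hybrid_scores(X, y)
top_k = np.argsort(scores)[::-1][:10]          # indices of the 10 highest-scoring genes
print(top_k)
```

The scores should be computed on training samples only, with the resulting gene list then applied unchanged to the test set.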

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 2: Key resources for implementing feature selection in genomic studies.

| Category | Item / Solution | Function / Description | Relevance to Genomic Data |
|---|---|---|---|
| Computational Algorithms | Fisher Score / WFISH | Filter method that prioritizes features with large between-class and small within-class variance. | Effective for gene expression data; WFISH is a weighted version for improved performance [17]. |
| Computational Algorithms | Copula Entropy (CEFS+) | Information-theoretic filter that captures full-order interaction gains between features. | Particularly suited for genetic data where gene-gene interactions (epistasis) are important [16]. |
| Computational Algorithms | LASSO (L1 Regularization) | Embedded method that performs feature selection by shrinking some coefficients to zero. | Widely used in GWAS for creating sparse, interpretable models [16] [19]. |
| Computational Algorithms | Supervised Rank Aggregation (SRA) | Ranks and selects features based on aggregated results from multiple supervised criteria. | Designed for ultra-high-dimensional data like WGS; MD-SRA offers a balance of quality and efficiency [18]. |
| Software & Libraries | R GSMX Package | An R package for genomic selection and cross-validation. | Helps control overfitting of heritability in Genomic Selection models [20]. |
| Software & Libraries | Python: Scikit-learn | Provides implementations of various filter, wrapper (e.g., RFE), and embedded (e.g., LASSO) methods. | General-purpose machine learning library for building end-to-end FS and modeling pipelines. |
| Software & Libraries | Deep Learning Frameworks (PyTorch, TensorFlow) | Enable custom implementation of gradient-based feature selection for neural networks. | Allow for feature selection in complex models like CNNs for genomic classification [18] [23]. |
| Data Considerations | Linkage Disequilibrium (LD) Clustering | Pre-processing step to group highly correlated SNPs, selecting one tag-SNP per cluster. | Reduces redundancy in GWAS data, preventing inflation from correlated features [19] [21]. |
| Data Considerations | Principal Components (PCs) | Ancestry principal components used as covariates in models. | Corrects for population stratification, a confounder in genomic analysis [21]. |

Feature selection (FS) is an indispensable pre-processing step in the analysis of high-dimensional genomic data, directly addressing the "small n, large p" problem prevalent in modern genomic research. This article provides a structured taxonomy of FS methodologies—filter, wrapper, embedded, and hybrid approaches—detailing their underlying principles, operational mechanisms, and specific applications within genomics. Supported by comparative performance data from recent studies and complemented by detailed experimental protocols and visual workflows, this review serves as a comprehensive resource for researchers and drug development professionals seeking to enhance model accuracy, computational efficiency, and biological interpretability in genomic studies.

The advent of high-throughput sequencing technologies has revolutionized genomic research by enabling the generation of vast amounts of data. Whole-Genome Sequencing (WGS) and single-cell RNA sequencing (scRNA-seq) often involve measuring hundreds of thousands to millions of features (e.g., Single Nucleotide Polymorphisms or SNPs, gene expressions) across a relatively small number of samples, creating a significant statistical challenge known as the "p >> n" problem [18] [25]. In this context, feature selection becomes a critical pre-processing step for building robust and interpretable models. FS aims to identify and select the most relevant subset of features that contribute meaningfully to the prediction variable or output, thereby improving learning performance, increasing computational efficiency, reducing memory storage, and constructing better generalized models [16]. For genomic data, this is particularly crucial as it helps in pinpointing potential genetic markers and biomarkers relevant to complex traits and diseases [26]. This article establishes a detailed taxonomy of FS methods, providing a structured framework for their application in high-dimensional genomic data research.

A Detailed Taxonomy of Feature Selection Methods

Feature selection methods can be broadly categorized based on their selection strategy and their interaction with learning algorithms. The following sections delineate the four primary categories.

Filter Methods

Principles and Mechanism: Filter methods assess the relevance of features based on the intrinsic properties of the data, without involving any specific learning algorithm. They rely on statistical or information-theoretic measures to evaluate and rank individual features [27] [16]. Common evaluation criteria include distance, information, dependency, and consistency measures.

Common Algorithms: Prominent examples include Chi-square tests, Pearson’s correlation coefficient, Mutual Information, ReliefF, and Symmetrical Uncertainty (SU) [27] [28]. The Max-Relevance-Max-Distance (MRMD) metric is another filter method designed specifically for high-dimensional data, balancing accuracy and stability in the feature ranking process [29].

Genomic Applications: Filter methods are often the first choice for high-dimensional genomic datasets due to their computational efficiency and scalability. They are extensively used in genome-wide association studies (GWAS) to rank SNPs based on their p-values or to select highly variable genes in scRNA-seq data for integration tasks [21] [25].

Wrapper Methods

Principles and Mechanism: Wrapper methods utilize the performance of a specific predetermined learning algorithm to evaluate the usefulness of feature subsets. They search the feature space iteratively, generating candidate subsets and using the classifier's accuracy as the fitness measure [27].

Common Algorithms: These methods often employ search strategies like Sequential Forward Selection (SFS), Sequential Backward Selection (SBS), and heuristic or metaheuristic algorithms such as Genetic Algorithms (GA), Particle Swarm Optimization (PSO), and the Harris Hawks Optimization (HHO) [27] [29].

Genomic Applications: Although computationally intensive, wrapper methods can provide high classification accuracy for specific classifiers. For instance, the Incremental Wrapper-based Subset Selection (IWSS) approach has been used to guide wrapper methods using ranked features from a filter step, proving effective in medical data classification [27].

Embedded Methods

Principles and Mechanism: Embedded methods integrate the feature selection process directly into the model training phase. The selection is embedded within the learning algorithm's optimization objective, making them more efficient than wrapper methods while still being tailored to a specific model [27] [16].

Common Algorithms: Classic examples include decision tree-based algorithms like Random Forest, which provides feature importance scores, and regularization methods like LASSO (L1 regularization) and Elastic Net (a combination of L1 and L2 regularization) [21] [28].

Genomic Applications: Embedded methods like Elastic Net regression are widely used in epigenomics for developing DNA methylation-based estimators of traits like telomere length and biological age [28]. They effectively handle multicollinearity, a common issue in genomic data.

Hybrid and Ensemble Methods

Principles and Mechanism: Hybrid methods combine the strengths of filter and wrapper methods to achieve a balance between computational efficiency and performance. Typically, a filter method is first used to reduce the feature space, and a wrapper method is then applied to refine the selection [27] [29]. Ensemble methods further extend this concept by aggregating the results of multiple feature selection algorithms or models to improve stability and robustness [27].

Common Algorithms: The Ensemble of Filter-based Hybrid Feature Selection (EFHFS) model is one such approach that uses an ensemble of filters for ranking before applying a wrapper like SFS [27]. Other advanced hybrid methods incorporate metaheuristic algorithms like an improved Harris Hawks Optimization with genetic operators [29].

Genomic Applications: These approaches are particularly valuable for capturing complex interactions, such as those between genes. For example, the Copula Entropy-based FS (CEFS+) method was designed to capture the full-order interaction gain between features, proving highly effective on high-dimensional genetic datasets [16].

Performance Comparison of Feature Selection Methods

The following table summarizes the relative performance, strengths, and weaknesses of different feature selection methods as evidenced by recent genomic studies.

Table 1: Comparative Analysis of Feature Selection Methodologies in Genomic Studies

| Method Category | Example Algorithms | Computational Efficiency | Model Accuracy | Key Strengths | Primary Weaknesses |
|---|---|---|---|---|---|
| Filter | GWAS p-values, Highly Variable Genes, MRMD [21] [29] [25] | High | Variable, can be lower | Fast, scalable, model-agnostic | May select redundant features, ignores interaction with classifier |
| Wrapper | Sequential Forward Selection, Genetic Algorithm [27] | Low | High for specific classifiers | Considers feature dependencies, high accuracy | Computationally expensive, prone to overfitting |
| Embedded | LASSO, Elastic Net, Random Forest [21] [28] | Medium | High | Model-specific efficiency, handles multicollinearity | Selection tied to the specific learning model |
| Hybrid/Ensemble | EFHFS, MD-SRA, CEFS+ [18] [27] [16] | Medium to High | Very High | Balances speed and accuracy, robust, handles interactions | Design and implementation can be complex |

A study on ultra-high-dimensional genomic data classifying 1825 individuals into five breeds based on ~11.9 million SNPs demonstrated the efficacy of advanced hybrid methods. The Multidimensional Supervised Rank Aggregation (MD-SRA) approach provided an excellent balance between classification quality (95.12% F1-score) and computational efficiency (17x lower analysis time and 14x lower data storage compared to other methods) [18]. Another study on medical data classification across twenty datasets showed that a proposed hybrid Ensemble-Filter Wrapper approach significantly outperformed 14 state-of-the-art algorithms in terms of accuracy, sensitivity, specificity, and F1-score [27].

Experimental Protocols for Genomic Feature Selection

This section provides a detailed, actionable protocol for applying a hybrid feature selection method to a high-dimensional genomic dataset, such as a DNA methylation array or SNP data.

Protocol: Hybrid Ensemble-Filter Wrapper for Genomic Data

This protocol is adapted from successful methodologies applied in recent literature [27] [29] [28].

I. Research Reagent Solutions and Data Preparation

Table 2: Essential Materials and Tools for Genomic Feature Selection

| Item Name | Function/Description | Example Tools / Packages |
|---|---|---|
| Genomic Dataset | The raw input data containing samples and a high number of genomic features. | DNA methylation array data, SNP data (e.g., PLINK files), scRNA-seq count matrix. |
| Computing Environment | A software environment for statistical computing and scripting. | R (with packages like wateRmelon [28]), Python (with libraries like scikit-learn, scanpy [25]). |
| Filter Method Library | A collection of algorithms for the initial filter-based ranking. | Statistical tests (t-test, ANOVA), Mutual Information, Chi-squared, ReliefF. |
| Wrapper/Classifier | The machine learning model used to evaluate subset performance. | Support Vector Machine (SVM), Random Forest, k-Nearest Neighbors (KNN). |
| Search Strategy | The algorithm used to navigate the feature subset space. | Sequential Forward Selection, Genetic Algorithm, Harris Hawks Optimization. |

Steps:

Data Preprocessing and Partitioning:

- Perform standard quality control on the genomic data (e.g., normalization for methylation data, variant calling for SNP data).

- Partition the entire dataset into training and hold-out test sets using a cross-validation procedure (e.g., 80/20 split or 10-fold cross-validation). It is critical to completely withhold the test set from any part of the initial feature selection process to avoid bias [21].

Ensemble Filter Step (on Training Data only):

- Apply multiple filter methods (e.g., Mutual Information, Chi-square, F-statistic) to the training data. Each method will assign a score or weight to each feature based on its perceived relevance to the outcome.

- Aggregate the rankings from these different filter methods into a single, robust ranked list of features. This ensemble approach mitigates the limitations of any single filter method.

Wrapper-based Subset Selection (on Training Data only):

- Use a greedy search algorithm like Sequential Forward Selection (SFS), guided by the ranked list from the previous step.

- Process: Start with an empty set. Iteratively add the top-ranked feature from the filter list that most improves the performance of a chosen classifier (e.g., SVM, Random Forest) evaluated via cross-validation on the training data.

- Continue this process until a stopping criterion is met (e.g., performance gain falls below a threshold). The output is an optimal feature subset.

Model Validation and Evaluation:

- Train a final model on the entire training set using only the optimal feature subset identified in the wrapper-based subset selection step.

- Evaluate the performance of this model on the completely held-out test set using appropriate metrics (e.g., Accuracy, F1-score, AUC-ROC).
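The steps above can be sketched as follows. This is a simplified illustration rather than the EFHFS implementation of [27]: it aggregates only two filters (ANOVA F-statistic and mutual information) by mean rank, restricts the wrapper to the top 20 candidates, and uses logistic regression with a 0.005 accuracy-gain stopping threshold. All of these choices, and the synthetic data, are assumptions.

```python
import numpy as np
from scipy.stats import rankdata
from sklearn.datasets import make_classification
from sklearn.feature_selection import f_classif, mutual_info_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split

X, y = make_classification(n_samples=150, n_features=300, n_informative=8,
                           n_redundant=0, random_state=1)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.2, stratify=y,
                                          random_state=1)

# Ensemble filter step (training data only): average the ranks of two filters.
f_scores = f_classif(X_tr, y_tr)[0]
mi_scores = mutual_info_classif(X_tr, y_tr, random_state=1)
mean_rank = (rankdata(-f_scores) + rankdata(-mi_scores)) / 2   # rank 1 = best
candidates = np.argsort(mean_rank)[:20]

# Wrapper step: sequential forward selection over the filtered candidates.
clf = LogisticRegression(max_iter=1000)
selected, best = [], 0.0
for _ in range(len(candidates)):
    trials = {j: cross_val_score(clf, X_tr[:, selected + [j]], y_tr, cv=5).mean()
              for j in candidates if j not in selected}
    j_best = max(trials, key=trials.get)
    if trials[j_best] - best < 0.005:      # stop when the gain becomes negligible
        break
    selected.append(j_best)
    best = trials[j_best]

# Final model on the full training set, evaluated once on the held-out test set.
test_accuracy = clf.fit(X_tr[:, selected], y_tr).score(X_te[:, selected], y_te)
print(len(selected), round(best, 3), round(test_accuracy, 3))
```

Restricting the wrapper to a short candidate list is what keeps the search tractable; widening the list trades computing time for a more thorough search.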

The workflow for this protocol is visualized below.

Figure 1: Workflow for a Hybrid Ensemble-Filter Wrapper Feature Selection Protocol.

The Scientist's Toolkit: Implementation Guide

Selecting the most appropriate feature selection method depends on the specific research goals, data characteristics, and computational resources. The following decision diagram can guide researchers in this choice.

Figure 2: Decision Guide for Selecting a Feature Selection Method.

A well-chosen feature selection strategy is paramount for unlocking the full potential of high-dimensional genomic data. Filter methods offer speed, wrapper methods promise high accuracy for targeted models, embedded methods provide an efficient middle ground, and hybrid/ensemble approaches deliver a robust balance of performance and efficiency. As genomic datasets continue to grow in size and complexity, the adoption of these sophisticated FS methodologies, particularly hybrid and ensemble frameworks that can capture complex genetic interactions, will be crucial for advancing biomedical discovery and precision drug development.

A Practical Guide to Feature Selection Algorithms and Their Implementation in Genomic Studies

In the analysis of high-dimensional genomic data, the "curse of dimensionality" – where the number of features (p) vastly exceeds the number of samples (n) – presents significant statistical challenges. These include difficulties in accurate parameter estimation, model interpretability, and an inflated risk of false positive associations [1] [19]. Feature selection is therefore a critical preprocessing step, essential for building robust, generalizable models and for identifying biologically relevant features for downstream analysis [1] [19]. This document details the application notes and experimental protocols for three foundational feature selection methods in genomic research: SNP-tagging, ANOVA, and correlation-based filtering.

The following table summarizes the key characteristics, advantages, and limitations of the three traditional feature selection methods.

Table 1: Comparison of Traditional Statistical and Filter Feature Selection Methods

| Method | Core Principle | Primary Use Case | Key Advantages | Key Limitations |
|---|---|---|---|---|
| SNP-Tagging | Selects a representative SNP from a group in high Linkage Disequilibrium (LD) to reduce redundancy [30]. | Genome-wide association studies (GWAS) to minimize feature correlation and data volume [1] [30]. | Dramatically reduces data dimensionality; computationally efficient; leverages known population genetic structure [1]. | Purely mechanistic; does not consider phenotype; may exclude causal variants in high-LD regions [1] [19]. |
| ANOVA | Evaluates the difference in genotype distributions between pre-defined case and control groups [19]. | Identifying SNPs with statistically significant univariate associations with a phenotype. | Simple, interpretable, and fast; provides a clear p-value for association [31] [19]. | Univariate (ignores feature interactions); performance is sample size and effect size dependent; prone to false positives in structured populations [19]. |
| Correlation-Based Filtering | Ranks SNPs based on the strength of their association with the phenotype, often using likelihoods or p-values from univariate models [31]. | Fine-mapping regions to prioritize SNPs following a GWAS hit [31]. | Directly assesses feature-phenotype relationship; more statistically powerful than tagging for causal variant identification [31]. | Computationally intensive on ultra-high-dimensional data; results can be confounded by local LD structure [1] [31]. |

Quantitative data from a recent study classifying cattle breeds using over 11 million SNPs highlights the practical trade-offs between these methods. SNP-tagging was the most computationally efficient, reducing the feature set by 93.51% in just 74 minutes, but yielded the least satisfactory classification F1-score (86.87%). In contrast, a supervised rank aggregation method (a sophisticated form of correlation-based filtering) achieved a superior F1-score of 96.81% but required 37.7 times more computing time and massive data storage [1].

Experimental Protocols

Protocol 1: Feature Selection via SNP-Tagging

Principle: Leverages Linkage Disequilibrium (LD) to identify a minimal set of tag SNPs that represent the genetic variation of a larger haplotype block, thereby reducing data redundancy [30].

Procedure:

- Data Input: Load genotype data (e.g., in VCF or PLINK format) for your population of interest.

- LD Calculation: Calculate pairwise LD statistics (e.g., r² or D') for all SNPs within a defined genomic window or chromosome. Tools like PLINK or Haploview are standard for this step.

- Define Haplotype Blocks: Partition the genome into haplotype blocks using an algorithm such as the four-gamete rule or based on LD confidence intervals [30].

- Select Tag SNPs: Within each haplotype block, select a subset of SNPs (tag SNPs) that can predict the non-selected SNPs with a high degree of accuracy (e.g., r² > 0.8). Greedy or clustering algorithms are commonly used for this NP-complete problem [30].

- Output: Generate a new genotype dataset containing only the selected tag SNPs.
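For intuition, the tag-selection step can be sketched as a greedy set cover on a pairwise r² matrix. This toy version skips explicit haplotype-block definition and computes r² as the squared Pearson correlation of genotype dosages. In a real analysis a dedicated tool such as PLINK would be used instead. The simulated genotypes and the function name `greedy_tag_snps` are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n_ind, n_blocks, block_size = 200, 10, 5

# Simulate 10 LD blocks: SNPs within a block are near-copies of one another.
base = rng.integers(0, 3, size=(n_ind, n_blocks))
G = np.repeat(base, block_size, axis=1).astype(float)
noise = rng.random(G.shape) < 0.02                    # 2% of genotypes re-drawn
G[noise] = rng.integers(0, 3, size=noise.sum())

def greedy_tag_snps(G, r2_threshold=0.8):
    """Greedily pick tag SNPs until every SNP has r^2 >= threshold with a tag."""
    r2 = np.corrcoef(G, rowvar=False) ** 2
    untagged = set(range(G.shape[1]))
    tags = []
    while untagged:
        idx = sorted(untagged)
        # For each remaining SNP, list the remaining SNPs it would capture.
        cover = {j: [k for k in idx if r2[j, k] >= r2_threshold] for j in idx}
        best = max(idx, key=lambda j: len(cover[j]))  # SNP capturing the most
        tags.append(best)
        untagged -= set(cover[best])                  # includes `best` itself
    return sorted(tags)

tags = greedy_tag_snps(G)
G_tagged = G[:, tags]                                 # reduced genotype matrix
print(f"{len(tags)} tag SNPs represent {G.shape[1]} SNPs")
```

The full pairwise matrix is only feasible for small windows; genome-scale tagging works on sliding windows for this reason.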

Protocol 2: Feature Selection via ANOVA F-Test

Principle: Tests the null hypothesis that the mean value of a continuous phenotype is the same across different genotype groups (e.g., AA, Aa, aa). A low p-value suggests the SNP is associated with phenotypic variation.

Procedure:

- Data Input: A matrix of genotypes (coded as 0, 1, 2 for additive model) and a vector of phenotypic values for all samples.

- Group Means Calculation: For each SNP, calculate the mean phenotypic value for each genotype group.

- Variance Decomposition:

- Calculate the "Between-Group" variance (Mean Square Between, MSB), which measures the variability among the different genotype group means.

- Calculate the "Within-Group" variance (Mean Square Error, MSE), which measures the variability within each genotype group.

- F-Statistic Calculation: Compute the F-statistic as F = MSB / MSE.

- Significance Testing: Compare the calculated F-statistic to the F-distribution with corresponding degrees of freedom (df1 = k-1, df2 = n-k, where k is the number of genotype groups and n is the sample size) to obtain a p-value.

- Multiple Testing Correction: Apply a multiple testing correction (e.g., Bonferroni or False Discovery Rate) to the p-values of all SNPs to control for false positives.

- Output: A ranked list of SNPs based on their p-values or F-statistics, from which top candidates can be selected.
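The procedure above can be sketched with `scipy.stats.f_oneway`, which performs the variance decomposition and F-test in one call, followed by a hand-written Benjamini-Hochberg adjustment for the multiple-testing step. The simulated genotypes and phenotype (with SNP 0 as the only causal variant) and the helper names are assumptions for illustration.

```python
import numpy as np
from scipy.stats import f_oneway

rng = np.random.default_rng(0)
n, p = 300, 100
G = rng.integers(0, 3, size=(n, p))                 # genotypes coded 0/1/2
phenotype = rng.normal(size=n) + 1.0 * G[:, 0]      # SNP 0 is causal (assumption)

def anova_pvalues(G, phenotype):
    """One-way ANOVA p-value per SNP: phenotype grouped by genotype."""
    pvals = np.ones(G.shape[1])
    for j in range(G.shape[1]):
        groups = [phenotype[G[:, j] == g] for g in (0, 1, 2)]
        groups = [g for g in groups if g.size > 1]  # drop empty/singleton groups
        if len(groups) >= 2:
            pvals[j] = f_oneway(*groups).pvalue     # F = MSB / MSE
    return pvals

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values (FDR control)."""
    m = pvals.size
    order = np.argsort(pvals)
    scaled = pvals[order] * m / np.arange(1, m + 1)
    adjusted = np.minimum.accumulate(scaled[::-1])[::-1]   # enforce monotonicity
    out = np.empty(m)
    out[order] = np.clip(adjusted, 0, 1)
    return out

pvals = anova_pvalues(G, phenotype)
qvals = benjamini_hochberg(pvals)
significant = np.flatnonzero(qvals < 0.05)          # SNPs passing FDR < 5%
ranking = np.argsort(pvals)                         # most significant first
```

Looping over SNPs in Python is adequate for a few thousand markers; genome-wide scans would normally rely on vectorized or compiled implementations.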

Protocol 3: Feature Selection via Correlation-Based Likelihood Filtering

Principle: Ranks SNPs based on the likelihood from a univariate logistic regression model, which measures the strength of association between a SNP and a binary phenotype. This method has been shown to be highly effective for fine-mapping [31].

Procedure:

- Data Input: A matrix of genotypes and a vector of binary case-control labels (0/1).

- Model Fitting: For each SNP, fit a univariate logistic regression model: logit(p) = β₀ + β₁ * SNP, where p is the probability of being a case.

- Likelihood Calculation: Obtain the maximum likelihood estimates for the model parameters and compute the log-likelihood of the fitted model.

- Ranking: Rank all SNPs based on their log-likelihood value (higher values indicate a stronger association with the phenotype).

- Filtering: Retain a pre-specified top percentage (e.g., top 5%) or a fixed number of the highest-ranked SNPs [31].

- Output: A reduced set of candidate SNPs for downstream predictive modeling or biological validation.
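A minimal sketch of this protocol with scikit-learn follows. Because `LogisticRegression` is regularized by default, a very large `C` is used here to approximate the unpenalized maximum likelihood fit. That choice, the simulated data (SNP 3 as the causal variant), and the helper name `univariate_loglik` are assumptions for the example rather than details from [31].

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import log_loss

rng = np.random.default_rng(0)
n, p = 400, 50
G = rng.integers(0, 3, size=(n, p)).astype(float)   # genotypes coded 0/1/2
logit = -1.0 + 1.0 * G[:, 3]                        # SNP 3 drives case status
y = (rng.random(n) < 1 / (1 + np.exp(-logit))).astype(int)

def univariate_loglik(G, y):
    """Log-likelihood of logit(p) = b0 + b1 * SNP, fitted separately per SNP."""
    ll = np.empty(G.shape[1])
    for j in range(G.shape[1]):
        xj = G[:, [j]]
        # Large C makes the default L2 penalty negligible (near-MLE fit).
        model = LogisticRegression(C=1e6, max_iter=1000).fit(xj, y)
        # log_loss with normalize=False is the negative total log-likelihood.
        ll[j] = -log_loss(y, model.predict_proba(xj)[:, 1], normalize=False)
    return ll

loglik = univariate_loglik(G, y)
n_keep = max(1, int(0.05 * p))                      # retain the top 5% of SNPs
top_snps = np.argsort(loglik)[::-1][:n_keep]
print(top_snps)
```

Ranking by raw log-likelihood is meaningful here because every model has the same number of parameters and is fitted to the same samples.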

Workflow Visualization

The following diagram illustrates the logical relationship and decision process for implementing these feature selection methods within a genomic research pipeline.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools for Traditional Feature Selection

| Tool / Resource | Type | Primary Function | Relevance to Protocol |
|---|---|---|---|
| PLINK | Software Toolset | Whole-genome association analysis. | Core tool for LD calculation, SNP-tagging, and basic association analysis (ANOVA, correlation) [32]. |
| BCFtools | Software Library | VCF/BCF file manipulation and querying. | Data preprocessing, indexing, and filtering of genomic variants before feature selection [32]. |
| HapMap Project | Public Database | Catalog of human genetic variation and haplotype patterns. | Provides reference LD structures and haplotype blocks for tag SNP selection in human studies [30]. |
| R / Python (scikit-learn) | Programming Environment | Statistical computing and machine learning. | Implementation of ANOVA, logistic regression, and custom filtering scripts; data visualization and analysis [31] [19]. |
| SNP Annotation Databases (e.g., dbSNP) | Public Database | Functional and positional annotation of SNPs. | Annotating and prioritizing selected SNPs post-filtering for biological interpretation [32]. |

Feature selection is a critical preprocessing step in the analysis of high-dimensional genomic data, where datasets often contain tens of thousands of features (e.g., gene expression levels, SNPs) but only a limited number of samples. This dimensionality curse poses significant challenges for building robust predictive models in biomedical research and drug development. Wrapper methods, which evaluate feature subsets using a specific learning algorithm, often provide superior performance by accounting for feature dependencies and interactions. Evolutionary computation algorithms, including Genetic Algorithms (GA), Grey Wolf Optimization (GWO), and Particle Swarm Optimization (PSO), have emerged as powerful search strategies for wrapper-based feature selection, effectively navigating the vast search space of potential feature combinations to identify optimal subsets that maximize predictive accuracy while minimizing dimensionality.

In genomic studies, where biological data is characterized by high noise, redundancy, and multicollinearity, traditional filter methods may overlook biologically relevant feature interactions. Evolutionary approaches overcome these limitations by performing global searches that balance exploration and exploitation. For instance, in genome-wide association studies (GWAS), where each Single Nucleotide Polymorphism (SNP) represents a feature, the risk of overfitting is high when using high-dimensional genomic data without appropriate feature selection [21]. These methods are particularly valuable for identifying biomarker signatures, understanding disease mechanisms, and developing diagnostic classifiers from omics data, making them indispensable tools for modern computational biologists and pharmaceutical researchers.

Algorithmic Foundations and Methodologies

Genetic Algorithms (GAs)

Genetic Algorithms are population-based optimization techniques inspired by Darwinian evolution. In the context of feature selection for genomic data, each chromosome typically represents a feature subset encoded as a binary string, where '1' indicates feature inclusion and '0' indicates exclusion. The GARS (Genetic Algorithm for the identification of a Robust Subset) implementation exemplifies a GA tailored for high-dimensional datasets. Its distinctive characteristic is a fitness function based on Multi-Dimensional Scaling (MDS) and the averaged Silhouette Index (aSI), which evaluates subset quality by measuring class separability in a reduced dimensional space [33].

The GARS workflow operates through five fundamental steps: (1) Population Initialization: Generation of a random set of chromosomes, each representing a candidate feature subset; (2) Fitness Evaluation: Assessment of each chromosome using the MDS-based silhouette score; (3) Selection: Application of tournament or roulette wheel selection to identify promising chromosomes; (4) Crossover: Recombination of parent chromosomes using one-point or two-point crossover to produce offspring; and (5) Mutation: Random replacement of feature indices with new ones to maintain population diversity. This process iterates until convergence, progressively evolving toward feature subsets with optimal discriminatory power [33].
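To make the five steps concrete, the sketch below implements a small genetic algorithm in the spirit of GARS. It is not the GARS package itself: chromosomes are fixed-length arrays of feature indices, MDS is implemented as classical (eigendecomposition-based) MDS in NumPy, and one elite chromosome is carried over per generation. Those design choices, the parameter values, and the simulated three-class data are assumptions.

```python
import numpy as np
from sklearn.metrics import silhouette_score

rng = np.random.default_rng(0)
n, p, chrom_len = 60, 200, 8
y = np.repeat([0, 1, 2], n // 3)                  # three classes
X = rng.normal(size=(n, p))
X[:, :6] += 2.0 * y[:, None]                      # features 0-5 separate the classes

def classical_mds(X, n_components=2):
    """Classical MDS on Euclidean distances via double centering."""
    sq = np.sum(X ** 2, axis=1)
    D2 = np.maximum(sq[:, None] + sq[None, :] - 2 * X @ X.T, 0)
    J = np.eye(len(X)) - 1.0 / len(X)             # centering matrix
    vals, vecs = np.linalg.eigh(-0.5 * J @ D2 @ J)
    top = np.argsort(vals)[::-1][:n_components]
    return vecs[:, top] * np.sqrt(np.maximum(vals[top], 0))

def fitness(chrom):
    """Averaged silhouette index of the classes in the 2D MDS projection."""
    return silhouette_score(classical_mds(X[:, np.unique(chrom)]), y)

def tournament(pop, fit, k=3):
    idx = rng.choice(len(pop), size=k, replace=False)
    return pop[idx[np.argmax(fit[idx])]]

# (1) Population initialization
pop = np.array([rng.choice(p, chrom_len, replace=False) for _ in range(20)])
history = []
for gen in range(15):
    fit = np.array([fitness(c) for c in pop])     # (2) fitness evaluation
    history.append(fit.max())
    children = [pop[np.argmax(fit)].copy()]       # elitism: keep the best chromosome
    while len(children) < len(pop):
        a, b = tournament(pop, fit), tournament(pop, fit)   # (3) selection
        cut = rng.integers(1, chrom_len)
        child = np.concatenate([a[:cut], b[cut:]])          # (4) one-point crossover
        mask = rng.random(chrom_len) < 0.1
        child[mask] = rng.integers(0, p, size=mask.sum())   # (5) mutation
        children.append(child)
    pop = np.array(children)

fit = np.array([fitness(c) for c in pop])
best_subset = np.unique(pop[np.argmax(fit)])
print(best_subset, round(fit.max(), 3))
```

Because the fitness depends only on class separability in the projected space, no classifier is trained during the search, which keeps each evaluation cheap.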

Grey Wolf Optimization (GWO)

The Grey Wolf Optimization algorithm mimics the social hierarchy and hunting behavior of grey wolves in nature. In GWO, solutions are represented as wolves' positions in a multidimensional search space, with the alpha (α), beta (β), and delta (δ) wolves representing the top three solutions, and omega (ω) wolves constituting the remaining population. The mathematical model of GWO consists of three main processes: encircling prey, hunting, and attacking prey [34] [35].
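The position update at the core of GWO can be sketched in a few lines. The example below uses the standard update equations (coefficients A and C, with the control parameter a decreasing linearly from 2 to 0) on a toy objective. The objective, which counts mismatches between a thresholded position and a hypothetical "ideal" feature mask, is an assumption made so the example stays self-contained. A real wrapper would score each mask with a classifier instead.

```python
import numpy as np

rng = np.random.default_rng(0)
dim, n_wolves, n_iter = 30, 12, 40
target = rng.random(dim) < 0.3               # hypothetical ideal feature mask

def cost(position):
    # Toy objective: features are "selected" where position > 0.5.
    return np.sum((position > 0.5) != target)

wolves = rng.random((n_wolves, dim))         # each row is one wolf (candidate solution)
best_cost = []
for t in range(n_iter):
    costs = np.array([cost(w) for w in wolves])
    alpha, beta, delta = wolves[np.argsort(costs)[:3]]   # three best wolves
    best_cost.append(costs.min())
    a = 2 - 2 * t / n_iter                   # shifts from exploration to exploitation
    new = np.zeros_like(wolves)
    for leader in (alpha, beta, delta):
        A = 2 * a * rng.random(wolves.shape) - a
        C = 2 * rng.random(wolves.shape)
        D = np.abs(C * leader - wolves)      # encircling: distance to the leader
        new += leader - A * D                # hunting: move relative to the leader
    wolves = np.clip(new / 3, 0, 1)          # average of the three guided moves

print(best_cost[0], min(best_cost))
```

Binary variants for feature selection typically replace the fixed 0.5 threshold with a transfer function, as the enhanced algorithms listed next do in different ways.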

Recent advancements have produced several enhanced GWO variants for feature selection:

- GWO-SRS: Incorporates a self-repulsion strategy that flattens the wolf pack hierarchy to accelerate convergence and uses time-dependent hybrid transfer functions to balance exploration and exploitation [35].

- GWOGA: A hybrid approach combining GWO with Genetic Algorithm, utilizing chaotic maps and Opposition-Based Learning for population initialization, with GWO driving early optimization and GA refining the search in later stages [34].

- MOBGWO-GMS: A multi-objective binary GWO employing a guided mutation strategy based on Pearson correlation coefficients to navigate local search spaces while maintaining population diversity [36].

Particle Swarm Optimization (PSO)

Particle Swarm Optimization is inspired by the social behavior of bird flocking and fish schooling. In PSO for feature selection, each particle represents a candidate feature subset and moves through the binary search space adjusting its position based on personal experience and social learning. The standard PSO velocity and position update equations are modified for discrete optimization using transfer functions to convert continuous velocities to binary positions [37] [38].
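A single update step of binary PSO with a sigmoid (S-shaped) transfer function might look like the sketch below. The inertia weight, acceleration coefficients, and velocity clamp are conventional illustrative values, and the function name `bpso_step` is hypothetical; neither comes from [37] or [38].

```python
import numpy as np

rng = np.random.default_rng(0)

def bpso_step(pos, vel, pbest, gbest, w=0.7, c1=1.5, c2=1.5, v_max=4.0):
    """One binary-PSO update: continuous velocity, binary position."""
    r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
    # Standard velocity update: inertia + personal experience + social learning.
    vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
    vel = np.clip(vel, -v_max, v_max)          # keep probabilities away from 0 and 1
    prob = 1 / (1 + np.exp(-vel))              # transfer function: velocity -> P(bit = 1)
    pos = (rng.random(pos.shape) < prob).astype(int)
    return pos, vel

# Five particles, each a 0/1 mask over ten features.
pos = rng.integers(0, 2, size=(5, 10))
vel = np.zeros((5, 10))
pbest, gbest = pos.copy(), pos[0].copy()       # placeholders for the best-known masks
new_pos, new_vel = bpso_step(pos, vel, pbest, gbest)
print(new_pos)
```

In a full wrapper, `pbest` and `gbest` would be refreshed after every step by scoring each mask with the chosen classifier on training data.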

Advanced PSO implementations for high-dimensional genomic data include:

- PSO-CSM: Employs a comprehensive scoring mechanism that integrates feature importance (measured by symmetric uncertainty) with population feedback to progressively narrow the feature space [38].

- Guided PSO: Incorporates filter-based methods to guide the search process and prevent premature convergence [37].

- VLPSO: A variable-length PSO representation that allows particles to search in different feature subspaces, enhancing exploration capability for high-dimensional problems [38].

Experimental Protocols and Implementation

Genomic Data Preprocessing Protocol

Proper data preprocessing is essential before applying evolutionary feature selection methods to genomic data. The following protocol ensures data quality and compatibility:

Data Acquisition and Quality Control: Obtain genomic data from reliable sources such as NCBI, TreeFam, or GTEx portals. For gene expression data, verify RNA integrity and sequencing quality metrics. Filter out samples with poor quality and genes with excessive missing values [39] [33].

Normalization: Apply appropriate normalization techniques to remove technical variation. For microarray data, use quantile normalization; for RNA-Seq data, employ TPM (Transcripts Per Million) or FPKM (Fragments Per Kilobase of transcript per Million mapped reads) normalization followed by log2 transformation to stabilize variance [39].
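As an illustration of the RNA-Seq branch of this step, the sketch below converts raw counts to log2-transformed TPM. The function name, the pseudocount of 1, and the samples-by-genes layout are assumptions made for the example. Every sample is assumed to contain at least one non-zero count.

```python
import numpy as np

def counts_to_log2_tpm(counts, gene_lengths_bp, pseudocount=1.0):
    """Convert raw read counts to log2(TPM + pseudocount).

    counts:          (n_samples, n_genes) raw counts
    gene_lengths_bp: (n_genes,) gene or transcript lengths in base pairs
    """
    counts = np.asarray(counts, dtype=float)
    lengths_kb = np.asarray(gene_lengths_bp, dtype=float) / 1000.0
    rate = counts / lengths_kb                          # reads per kilobase
    tpm = rate / rate.sum(axis=1, keepdims=True) * 1e6  # each sample sums to 1e6
    return np.log2(tpm + pseudocount)
```

Because TPM rescales within each sample, the untransformed values always sum to one million per sample, which is a quick sanity check after normalization.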

Handling Alternative Splicing: For gene family analysis, retain the longest mRNA sequence when multiple alternative splicing variants exist to prevent bias in downstream analyses [39].

Data Partitioning: Split the dataset into independent training (70-80%), validation (10-15%), and test (10-15%) sets. Maintain class proportions across splits, especially for imbalanced datasets common in disease studies [33].
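A stratified three-way split can be sketched as below. The helper `stratified_split` is hypothetical and uses only NumPy. The 70/15/15 fractions are one choice within the ranges given above.

```python
import numpy as np

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Return train/validation/test index arrays that preserve class proportions."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    train, val, test = [], [], []
    for cls in np.unique(labels):
        # Shuffle the indices of this class, then cut them by the fractions.
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        test.extend(idx[n_train + n_val:])  # remainder goes to the test set
    return np.array(train), np.array(val), np.array(test)
```

Splitting per class keeps rare disease classes represented in all three sets. Very small classes may still end up empty in the validation or test set and need manual handling.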

Feature Pre-filtering (Optional): For extremely high-dimensional data (>50,000 features), apply mild univariate pre-filtering (e.g., based on variance or basic statistical tests) to reduce computational burden, while retaining >10,000 features for the wrapper method to ensure comprehensive search [4].
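A variance-based pre-filter of this kind might look like the sketch below. The function name is an assumption. The variances should be computed on the training partition only, so that no information from the validation or test samples leaks into the filter.

```python
import numpy as np

def top_variance_features(X_train, n_keep=10_000):
    """Indices of the n_keep highest-variance columns of X_train, in ascending order."""
    variances = X_train.var(axis=0)
    n_keep = min(n_keep, X_train.shape[1])
    keep = np.argsort(variances)[::-1][:n_keep]  # largest variances first
    return np.sort(keep)
```

The returned indices can then be applied unchanged to the validation and test matrices, for example `X_test[:, keep]`.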

GARS Implementation Protocol for Multi-class Genomic Data

The following step-by-step protocol details the implementation of GARS for feature selection in transcriptomic data:

Parameter Configuration: Set population size (typically 50-200 chromosomes), number of generations (100-500), crossover rate (0.7-0.9), mutation rate (0.01-0.1), and chromosome length range (5-100 features). For high-dimensional data, initialize with shorter chromosomes to promote sparse solutions [33].

Fitness Evaluation:

- Extract the feature subset corresponding to each chromosome from the training data.

- Perform Multi-Dimensional Scaling (MDS) using the selected features to project samples into 2D space.

- Calculate the averaged Silhouette Index (aSI) to quantify class separation.

- Apply the fitness function: Fitness = aSI if aSI > 0, otherwise 0 [33].
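The fitness evaluation steps above can be sketched with NumPy alone. This is an illustrative reconstruction and not the GARS source code: classical (Torgerson) MDS on Euclidean distances stands in for whichever MDS variant the original implementation uses, and all function names are hypothetical. At least two classes are assumed to be present.

```python
import numpy as np

def pairwise_distances(X):
    """Euclidean distance matrix between the rows of X."""
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    D = np.sqrt(np.maximum(d2, 0.0))
    np.fill_diagonal(D, 0.0)
    return D

def classical_mds(D, n_components=2):
    """Classical MDS: embed a distance matrix via double centering."""
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n
    B = -0.5 * J @ (D ** 2) @ J
    eigvals, eigvecs = np.linalg.eigh(B)
    order = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, order] * np.sqrt(np.maximum(eigvals[order], 0.0))

def average_silhouette(points, labels):
    """Mean silhouette over all samples (singleton classes score 0)."""
    D = pairwise_distances(points)
    labels = np.asarray(labels)
    classes = np.unique(labels)
    scores = np.zeros(len(labels))
    for i in range(len(labels)):
        same = labels == labels[i]
        if same.sum() == 1:
            continue
        a = D[i, same].sum() / (same.sum() - 1)  # mean distance within own class
        b = min(D[i, labels == c].mean() for c in classes if c != labels[i])
        if max(a, b) > 0:
            scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

def gars_fitness(X_train, y_train, chromosome):
    """Fitness = aSI in the 2-D MDS space if positive, otherwise 0."""
    subset = X_train[:, chromosome]
    coords = classical_mds(pairwise_distances(subset), n_components=2)
    return max(average_silhouette(coords, y_train), 0.0)
```

Here `chromosome` is a list of column indices, so a call such as `gars_fitness(X_train, y_train, [12, 407, 9051])` scores one candidate subset.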

Evolutionary Operations:

- Selection: Apply tournament selection (size 3-5) to choose parent chromosomes while maintaining elitism (preserve top 1-5% solutions).

- Crossover: Implement single-point or two-point crossover on selected parent pairs to generate offspring.

- Mutation: Randomly replace feature indices in chromosomes with new features not currently included, using a low probability to maintain diversity [33].
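The three operators can be sketched for chromosomes stored as lists of feature indices. The helper names and default rates are assumptions for illustration. Duplicate indices created by crossover are simply dropped here, which can shorten a child; the published GARS implementation may handle this differently. Chromosomes are assumed to contain at least two features.

```python
import random

def tournament_select(population, fitness, k=3, rng=random):
    """Pick k random chromosomes and return the fittest of them."""
    contenders = rng.sample(range(len(population)), k)
    return population[max(contenders, key=lambda i: fitness[i])]

def one_point_crossover(parent_a, parent_b, rng=random):
    """Swap the tails of two parents at a random cut point."""
    cut = rng.randint(1, min(len(parent_a), len(parent_b)) - 1)
    return parent_a[:cut] + parent_b[cut:], parent_b[:cut] + parent_a[cut:]

def mutate(chromosome, n_features, rate=0.05, rng=random):
    """Replace each index, with probability `rate`, by a feature not yet included."""
    child = list(chromosome)
    present = set(child)
    for pos in range(len(child)):
        if rng.random() < rate and len(present) < n_features:
            new = rng.randrange(n_features)
            while new in present:
                new = rng.randrange(n_features)
            present.discard(child[pos])
            present.add(new)
            child[pos] = new
    return child

def next_generation(population, fitness, n_features, elite_frac=0.05, rng=random):
    """Build a new population: elites first, then mutated crossover offspring."""
    ranked = sorted(range(len(population)), key=lambda i: fitness[i], reverse=True)
    n_elite = max(1, int(elite_frac * len(population)))
    new_pop = [list(population[i]) for i in ranked[:n_elite]]
    while len(new_pop) < len(population):
        a = tournament_select(population, fitness, rng=rng)
        b = tournament_select(population, fitness, rng=rng)
        for child in one_point_crossover(a, b, rng):
            child = list(dict.fromkeys(child))  # drop duplicated feature indices
            new_pop.append(mutate(child, n_features, rng=rng))
    return new_pop[:len(population)]
```

Passing a seeded `random.Random` instance as `rng` makes a run reproducible.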

Termination and Validation: Run the evolutionary process until convergence (no fitness improvement for 20-50 generations) or until the maximum number of generations is reached. Validate the final feature subset on the independent test set using appropriate classifiers (SVM, Random Forest) and performance metrics (accuracy, AUC-ROC) [33].
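The stopping rule can be expressed as a small driver loop. In this sketch `step` is a hypothetical callable that advances the population by one generation and returns the best fitness found so far; the tolerance `tol` is an added assumption to guard against floating-point noise.

```python
def run_until_converged(step, max_generations=300, patience=30, tol=1e-6):
    """Call step() until fitness stalls for `patience` generations or the cap is hit.

    Returns (best_fitness, generations_run).
    """
    best, stall = float("-inf"), 0
    generation = 0
    for generation in range(1, max_generations + 1):
        current = step()
        if current > best + tol:
            best, stall = current, 0   # improvement: reset the stall counter
        else:
            stall += 1
        if stall >= patience:
            break
    return best, generation
```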

GWO-SRS Protocol for High-Dimensional Feature Selection

This protocol implements the enhanced Grey Wolf Optimizer with Self-Repulsion Strategy:

Initialization:

- Set parameters: population size (20-50 wolves), maximum iterations (100-200), convergence parameter a (decreases linearly from 2 to 0).

- Generate initial population using chaotic maps or Opposition-Based Learning to ensure diversity [35].
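Opposition-Based Learning initialization can be sketched as follows, assuming wolves are encoded as continuous vectors in [0, 1] that are thresholded at 0.5 to obtain feature masks. Both the encoding and the `fitness_fn` signature are assumptions for this example and are not taken from [35].

```python
import numpy as np

def obl_initialize(n_wolves, n_features, fitness_fn, rng):
    """Draw a random population, add its opposite, and keep the fittest half.

    fitness_fn receives a boolean feature mask and returns a score (higher is better).
    """
    base = rng.random((n_wolves, n_features))
    opposite = 1.0 - base            # lb + ub - x with lb = 0, ub = 1
    candidates = np.vstack([base, opposite])
    masks = candidates > 0.5
    scores = np.array([fitness_fn(m) for m in masks])
    best = np.argsort(scores)[::-1][:n_wolves]  # highest scores first
    return candidates[best], scores[best]
```

Evaluating each point together with its opposite doubles the initial fitness evaluations but tends to start the search from a better-spread set of candidates.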

Fitness Evaluation and Hierarchy Establishment:

- Evaluate fitness of each wolf (solution) using classification accuracy with a simple classifier (K-NN) or minimum redundancy maximum relevance criterion.

- Designate the top three solutions as α, β, and δ wolves in the flattened hierarchy [35].

Position Update:

- Calculate convergence factors A and C using the proposed nonlinear equations incorporating trigonometric functions.

- Update positions of ω wolves based on the positions of α, β, and δ wolves using the standard GWO equations adapted for binary search space [35].

- Apply the self-repulsion strategy to the α wolf to avoid local optima by eliminating less relevant features.
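For orientation, the sketch below shows the standard GWO position update with a sigmoid binarization, which is one common binary adaptation. It deliberately uses the textbook linear schedule for `a` and omits the nonlinear trigonometric factors and the self-repulsion step of GWO-SRS, whose exact equations are not given here. All names are illustrative.

```python
import numpy as np

def gwo_step(wolves, alpha, beta, delta, t, max_iter, rng):
    """One binary GWO update (standard equations, not the GWO-SRS variant).

    wolves:             (n_wolves, n_features) array of 0/1 masks
    alpha, beta, delta: (n_features,) masks of the three best wolves
    """
    a = 2.0 * (1.0 - t / max_iter)          # decreases linearly from 2 to 0
    candidates = []
    for leader in (alpha, beta, delta):
        r1 = rng.random(wolves.shape)
        r2 = rng.random(wolves.shape)
        A = 2.0 * a * r1 - a                # controls exploration vs exploitation
        C = 2.0 * r2
        D = np.abs(C * leader - wolves)     # distance to this leader
        candidates.append(leader - A * D)
    continuous = np.mean(candidates, axis=0)  # average of the three pulls
    # Sigmoid transfer function turns the continuous position into a bit probability.
    prob = 1.0 / (1.0 + np.exp(-10.0 * (continuous - 0.5)))
    return (rng.random(wolves.shape) < prob).astype(int)
```

After each step the fitness of every wolf is re-evaluated and the three leaders are reassigned before the next iteration.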

Termination and Feature Subset Selection: Iterate until the stopping criteria are met (parameter a reaches 0 or the maximum number of iterations is reached). Select the feature subset represented by the α wolf as the optimal solution [35].

Performance Comparison and Analysis

Quantitative Performance Metrics

The performance of evolutionary feature selection methods is typically evaluated using multiple criteria. The table below summarizes key metrics and their significance in genomic applications:

Table 1: Performance Metrics for Evolutionary Feature Selection Methods

| Metric | Description | Importance in Genomics |
|---|---|---|
| Classification Accuracy | Proportion of correctly classified instances using selected features | Measures predictive power of identified biomarker signatures |
| Feature Subset Size | Number of features in the final selected subset | Critical for interpretability and cost-effective biomarker development |
| Computational Time | Time required to complete the feature selection process | Practical consideration for high-dimensional genomic data |
| AUC-ROC | Area Under Receiver Operating Characteristic Curve | Assesses diagnostic capability of selected features for disease classification |
| Silhouette Index | Measures cluster separation quality in reduced feature space | Evaluates ability to distinguish biological classes or subtypes |
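Two of these metrics are simple enough to compute directly, which can be useful for checking library output. The sketch below implements accuracy and binary AUC-ROC through the rank-based (Mann-Whitney) formulation. The function names are illustrative, and the pairwise comparison is only practical for modest sample sizes.

```python
import numpy as np

def accuracy(y_true, y_pred):
    """Proportion of predictions that match the true labels."""
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))

def auc_roc(y_true, scores):
    """Binary AUC: probability that a positive outranks a negative (ties count half)."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))
```

For multi-class problems such as tissue or subtype classification, a one-vs-rest average of this binary AUC is a common extension.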

Comparative Performance Analysis

Recent studies demonstrate the competitive performance of evolutionary methods compared to traditional feature selection approaches:

Table 2: Performance Comparison of Evolutionary Feature Selection Methods on Genomic Data

| Method | Dataset | Accuracy | Feature Reduction | Reference |
|---|---|---|---|---|
| GARS | GTEx Brain Regions (11 classes) | 89.1% | ~95% (from 20k to ~100 features) | [33] |
| GWO-SRS | UCI Benchmark Datasets | ~85% (avg) | 80% reduction | [35] |
| PSO-CSM | High-dimensional Microarray | 87.3% (avg) | Selects <0.67% of original features | [38] |
| MOBGWO-GMS | 14 Benchmark Datasets | Superior to 8 comparison algorithms | Optimal trade-off between size and accuracy | [36] |
| DRPT | Genomic Datasets (9k-267k features) | Favorable vs. 7 state-of-the-art methods | Effective irrelevant feature removal | [4] |

The GARS implementation demonstrated particular effectiveness for multi-class genomic data, achieving 89.1% accuracy with an AUC of 0.919 when classifying insect genomes based on gene family distributions [33]. Similarly, a modified GWO optimized for high-dimensional gene expression data selected less than 0.67% of features while improving classification accuracy, demonstrating substantial dimensionality reduction capability [40].

Comparative studies consistently show that evolutionary methods outperform filter-based approaches (such as Selection By Filtering) and embedded methods (like LASSO) in complex multi-class genomic problems, particularly when biological classes have overlapping feature signatures [33]. The hybrid nature of these algorithms enables them to capture nonlinear relationships and feature interactions that are common in genomic regulatory networks but difficult to detect with univariate methods.

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Genomic Feature Selection

| Item | Function/Application | Implementation Notes |
|---|---|---|
| TreeFam Database | Phylogenetic trees of gene families for ortholog assignment | Used for defining gene families and establishing evolutionary relationships [39] |
| Symmetric Uncertainty (SU) | Filter method for evaluating feature-class correlation | Employed in PSO-CSM for initial feature importance scoring [38] |
| Pearson Correlation Coefficient | Measures linear relationships between features | Utilized in MOBGWO-GMS for guided mutation strategy [36] |
| Multi-Dimensional Scaling (MDS) | Dimension reduction for visualization and fitness evaluation | Core component of GARS fitness function [33] |
| ReliefF Algorithm | Filter method for feature weighting based on nearest neighbors | Incorporated in modified GWO for population initialization [40] |
| Support Vector Machine (SVM) | Classifier for wrapper-based feature evaluation | Common choice for fitness evaluation in GA approaches [33] |
| K-Nearest Neighbors (K-NN) | Simple classifier for subset evaluation | Used in GWO variants with leave-one-out cross-validation [36] |

Workflow Visualization

Diagram 1: Workflow for Evolutionary Feature Selection in Genomic Data Analysis

Evolutionary feature selection methods represent powerful approaches for addressing the dimensionality challenges inherent in genomic research. Genetic Algorithms, Grey Wolf Optimization, and Particle Swarm Optimization each offer unique advantages for identifying robust feature subsets that maximize predictive performance while maintaining biological interpretability. The experimental protocols and performance analyses presented provide researchers with practical frameworks for implementing these methods in diverse genomic applications.

Future developments in evolutionary feature selection will likely focus on several key areas: (1) enhanced computational efficiency for ultra-high-dimensional data (e.g., single-cell multi-omics), (2) improved integration of biological knowledge through specialized fitness functions and constraints, (3) multi-objective optimization frameworks that simultaneously optimize predictive accuracy, biological relevance, and implementation cost, and (4) adaptive mechanisms that automatically adjust algorithmic parameters during the search process. As genomic technologies continue to evolve, producing increasingly complex and high-dimensional data, wrapper and evolutionary feature selection methods will remain indispensable tools for extracting biologically meaningful insights and advancing personalized medicine initiatives.

High-dimensional genomic data, characterized by a vast number of features (p) and a relatively small sample size (n), presents significant challenges for statistical analysis and biomarker discovery. Technical noise, feature redundancy, and multicollinearity can obscure true biological signals and lead to model overfitting [13]. Embedded and regularization techniques address these challenges by integrating feature selection directly into the model training process, promoting sparsity and enhancing the interpretability and generalizability of results. These methods are particularly vital in genomic research for identifying biologically relevant features, such as genes or genetic variants, associated with diseases or traits of interest [41] [42].

This document provides application notes and detailed protocols for three prominent embedded techniques: LASSO (Least Absolute Shrinkage and Selection Operator), Elastic Net, and Sparse Partial Least Squares Discriminant Analysis (SPLSDA). LASSO employs L1-norm regularization to perform continuous shrinkage and automatic feature selection [43] [42]. Elastic Net combines L1 and L2-norm penalties to overcome LASSO's limitations in handling highly correlated variables [43] [44]. SPLSDA integrates sparsity into a dimension-reduction framework, making it highly effective for multicollinear data common in genomics [41]. The following sections synthesize the most current research to offer a quantitative comparison, standardized methodologies, and practical implementation guidelines for these powerful tools in genomic research and drug development.
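To illustrate how the L1 and L2 penalties interact, the sketch below fits an Elastic Net by cyclic coordinate descent. It assumes the glmnet-style objective (1/(2n))·‖y − Xβ‖² + λ·[α·‖β‖₁ + ((1 − α)/2)·‖β‖²], where α is the L1 mixing weight (α = 1 gives LASSO), and it assumes X and y have been centered so that no intercept is needed. It is a teaching example, not a replacement for an optimized library solver.

```python
import numpy as np

def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma; values within [-gamma, gamma] become 0."""
    return np.sign(z) * max(abs(z) - gamma, 0.0)

def elastic_net_cd(X, y, lam=0.1, alpha=0.5, n_iter=200, tol=1e-8):
    """Elastic Net coefficients via cyclic coordinate descent (centered X, y)."""
    n, p = X.shape
    beta = np.zeros(p)
    residual = np.asarray(y, dtype=float).copy()  # y - X @ beta with beta = 0
    col_sq = (X ** 2).sum(axis=0) / n
    for _ in range(n_iter):
        max_change = 0.0
        for j in range(p):
            if col_sq[j] == 0:
                continue  # constant column carries no information
            # Correlation of feature j with the partial residual (feature j excluded).
            rho = X[:, j] @ residual / n + col_sq[j] * beta[j]
            # The L1 part zeroes small effects; the L2 part shrinks the remainder.
            new = soft_threshold(rho, lam * alpha) / (col_sq[j] + lam * (1.0 - alpha))
            change = new - beta[j]
            if change != 0.0:
                residual -= X[:, j] * change
                beta[j] = new
                max_change = max(max_change, abs(change))
        if max_change < tol:
            break
    return beta
```

The features with non-zero entries in the returned vector form the selected subset. Lowering α spreads weight across correlated features instead of picking one of them arbitrarily, which is the behavior that motivates Elastic Net for markers in linkage disequilibrium.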

Comparative Performance Analysis

The selection of an appropriate feature selection method depends on the dataset characteristics and research objectives. The following tables summarize the performance of LASSO, Elastic Net, and SPLSDA across various genomic studies.

Table 1: Performance Comparison on Proteomic and Gene Expression Data

| Method | Dataset | Key Performance Metrics | Number of Selected Features |
|---|---|---|---|
| LASSO | CPTAC Proteomic (Intrahepatic Cholangiocarcinoma) [13] | AUC: Matched HT-CS | >86 |
| LASSO | Glioblastoma Data [13] | AUC: 67.80% | Not Specified |
| LASSO | Ovarian Serous Cystadenocarcinoma [13] | AUC: 61.00% | Not Specified |
| LASSO | Leukemia Subtype Classification [44] | Accuracy: 0.9057, Kappa: 0.8852 | Aggressive feature selection |
| Elastic Net | Simulated GWAS Data (Moderate/High LD) [43] | Best compromise between few false positives and many correct selections at α ~0.1 | 161 (QTLMAS 2010 data) |
| Elastic Net | Cattle GWAS (Milk Fat Content) [43] | Identified 1291-1966 SNPs | 1291-1966 |
| Elastic Net | Leukemia Subtype Classification [44] | Accuracy: 0.9057, Kappa: 0.8852 (Highest overall performance) | Aggressive feature selection |
| Elastic Net | LDL-Cholesterol GWAS [42] | Best performance when combined with SVR for association testing | Subset of 5000 SNPs |
| SPLSDA | CPTAC Proteomic (Intrahepatic Cholangiocarcinoma) [13] | AUC: 97.47% | 37 (57% fewer than HT-CS) |
| SPLSDA | Glioblastoma Data [13] | AUC: 71.38% | Not Specified |
| SPLSDA | Ovarian Serous Cystadenocarcinoma [13] | AUC: 70.75% | Not Specified |
| SPLSDA | Multiclass Microarray Data (e.g., Leukemia, SRBCT) [41] | Classification performance similar to other wrappers, superior computational efficiency and interpretability | Varies by dataset |

Table 2: Strengths, Weaknesses, and Ideal Use Cases

| Method | Strengths | Weaknesses | Ideal Application Context |

|---|---|---|---|