A Researcher's Guide to Public Cancer DNA Sequencing Datasets: Access, Analysis, and Application

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on leveraging public datasets for cancer DNA sequence analysis. It covers the foundational landscape of major genomic repositories, practical methodologies for data access and integration, strategies to overcome common analytical challenges, and best practices for clinical validation and database comparison. By synthesizing information from key resources like TCGA, AACR Project GENIE, ICGC, and NIST's latest benchmarks, this guide aims to empower the cancer research community to fully utilize existing data to accelerate precision oncology discoveries.

Navigating the Landscape of Public Cancer Genomic Repositories

Cancer genomics research has been revolutionized by large-scale international consortia that generate and provide public access to comprehensive genomic and clinical datasets. These resources enable researchers to uncover the molecular basis of cancer, identify new therapeutic targets, and advance precision oncology. Three of the most prominent consortia are The Cancer Genome Atlas (TCGA), the International Cancer Genome Consortium Accelerating Research in Genomic Oncology (ICGC ARGO), and the AACR Project GENIE. Each consortium has a distinct operational model and data focus—TCGA provides deeply characterized molecular profiles across cancer types, ICGC ARGO emphasizes longitudinal clinical data integration with genomics, and AACR Project GENIE aggregates real-world clinico-genomic data from participating institutions globally. Together, they provide complementary resources that have become indispensable for contemporary cancer research, drug development, and biomarker discovery.

Table 1: Core Characteristics of Major Cancer Genomics Databases

| Feature | TCGA | ICGC ARGO | AACR Project GENIE |
|---|---|---|---|
| Primary Focus | Pan-cancer molecular characterization [1] | Linking genomic data to detailed clinical outcomes [2] [3] | Real-world clinico-genomic data [4] [5] |
| Data Status | Program closed; data publicly available [6] | Active; data releases ongoing [2] | Active; data releases every 6 months [7] |
| Sample/Donor Count | >20,000 primary cancer and matched normal samples [1] | >5,500 donors (Release 13) [2] | >211,000 patients [4] |
| Key Data Types | WGS, WES, methylation, RNA expression, proteomic, clinical [6] | Genomic, transcriptomic, detailed clinical, treatment history [2] [3] | Somatic sequencing data, limited clinical data [4] [5] |
| Access Portal | NCI Genomic Data Commons (GDC) [1] | ICGC ARGO Platform [2] | cBioPortal, Synapse [4] |

In-Depth Database Profiles

The Cancer Genome Atlas (TCGA)

TCGA was a landmark joint effort between the National Cancer Institute (NCI) and the National Human Genome Research Institute that ran from 2006 to 2018 [1] [6]. It molecularly characterized over 20,000 primary cancer and matched normal samples spanning 33 cancer types, generating over 2.5 petabytes of multi-omics data. The program's legacy continues as a vital resource, with data available through the Genomic Data Commons (GDC) Data Portal, which provides web-based analysis and visualization tools [1] [6]. A key feature of TCGA is its inclusion of matched "normal control" data from tissue adjacent to tumors or from blood samples, which enables precise identification of somatic changes. Because the data were generated with standardized protocols, their uniformity makes them particularly valuable for pan-cancer analyses comparing molecular features across different cancer types [6].

ICGC ARGO

The International Cancer Genome Consortium Accelerating Research in Genomic Oncology is an active international initiative aiming to analyze genomes from 100,000 cancer patients across multiple countries and jurisdictions [3]. A key strength of ICGC ARGO is its rigorous focus on high-quality, harmonized clinical data collection through its Data Dictionary, which defines a minimal set of clinical fields to ensure consistency across global programs [3]. The dictionary uses an event-based, donor-centric model with 79 core and 113 extended fields covering areas like primary diagnosis, treatment, and follow-up. As of September 2025, Release 13 provided data from over 5,500 donors, featuring detailed clinical annotations covering primary diagnosis, treatment history, and follow-up, alongside genomic and transcriptomic files [2]. This design supports longitudinal tracking of a patient's cancer journey, which is critical for understanding disease evolution and treatment response.
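To make the donor-centric, event-based design concrete, here is a minimal Python sketch of how a core entity (donor, specimen) can be linked to repeatable event entities (treatments, follow-ups), and how a pre-treatment specimen might be paired with a post-relapse specimen for a longitudinal comparison. The class and field names (`Specimen`, `interval_days`, `disease_status`, and so on) are simplified, hypothetical stand-ins, not the actual ARGO Data Dictionary schema; all intervals are assumed to be days from primary diagnosis.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Specimen:
    specimen_id: str
    interval_days: int  # days from primary diagnosis to collection (hypothetical field)


@dataclass
class Treatment:
    treatment_type: str
    start_interval_days: int  # days from primary diagnosis (hypothetical field)


@dataclass
class FollowUp:
    interval_days: int
    disease_status: str  # e.g. "relapse" or "no evidence of disease" (hypothetical values)


@dataclass
class Donor:
    """Core entity; treatments and follow-ups are repeatable event entities."""
    donor_id: str
    specimens: List[Specimen] = field(default_factory=list)
    treatments: List[Treatment] = field(default_factory=list)
    follow_ups: List[FollowUp] = field(default_factory=list)


def pre_and_post_relapse(donor: Donor) -> Optional[Tuple[Specimen, Specimen]]:
    """Pair the last pre-treatment specimen with the first post-relapse specimen."""
    if not donor.treatments:
        return None
    first_treatment = min(t.start_interval_days for t in donor.treatments)
    relapses = [f.interval_days for f in donor.follow_ups if f.disease_status == "relapse"]
    if not relapses:
        return None
    first_relapse = min(relapses)
    before = [s for s in donor.specimens if s.interval_days < first_treatment]
    after = [s for s in donor.specimens if s.interval_days >= first_relapse]
    if not before or not after:
        return None
    return (max(before, key=lambda s: s.interval_days),
            min(after, key=lambda s: s.interval_days))
```

The returned pair identifies the two time points whose somatic profiles could then be compared; donors without a recorded relapse or without specimens on both sides of it yield `None`.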

AACR Project GENIE

AACR Project GENIE is a multi-institutional, real-world data registry that aggregates clinico-genomic data from 20 cancer centers worldwide [4] [5] [7]. Its founding principle was that combining data across institutions was necessary to study rare genetic variants and rare cancers, which no single institution could do meaningfully [5]. The registry, which marked its 10th anniversary in 2025, has grown to approximately 250,000 sequenced samples from more than 211,000 patients [4] [7]. Data are released publicly every six months, with GENIE 18.0-public being the current version at the time of writing [4]. Users can explore the data interactively via cBioPortal or download it directly from the Synapse platform, which requires registration and agreement to data use terms [4].
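After downloading a release from Synapse, a common first analysis is the cohort frequency of one specific variant, as in the TP53 R175H example later in this guide. The sketch below assumes a tab-delimited MAF-style mutation file with the standard `Hugo_Symbol`, `HGVSp_Short`, and `Tumor_Sample_Barcode` columns, plus a separate list of all sequenced sample IDs; file names and column layout should be checked against the release actually used.

```python
import csv


def variant_frequency(maf_text, sample_ids, gene, hgvsp):
    """Count sequenced samples carrying a given protein change.

    maf_text:   tab-delimited MAF-style text (lines starting with '#' are skipped)
    sample_ids: every sequenced sample in the cohort; this is the denominator,
                because samples without any mutation never appear in the MAF
    Returns (carrier samples, cohort samples, fraction).
    """
    lines = [line for line in maf_text.splitlines() if not line.startswith("#")]
    cohort = set(sample_ids)
    carriers = {
        row["Tumor_Sample_Barcode"]
        for row in csv.DictReader(lines, delimiter="\t")
        if row["Hugo_Symbol"] == gene
        and row["HGVSp_Short"] == hgvsp
        and row["Tumor_Sample_Barcode"] in cohort
    }
    # A set counts each sample once, even if the variant is listed twice
    return len(carriers), len(cohort), len(carriers) / len(cohort)


if __name__ == "__main__":
    maf = (
        "#version 2.4\n"
        "Hugo_Symbol\tHGVSp_Short\tTumor_Sample_Barcode\n"
        "TP53\tp.R175H\tS1\n"
        "TP53\tp.R273H\tS2\n"
        "KRAS\tp.G12D\tS3\n"
    )
    print(variant_frequency(maf, ["S1", "S2", "S3", "S4"], "TP53", "p.R175H"))
```

Because GENIE pools data from centers using different targeted panels, a careful analysis would further restrict the denominator to samples whose panel actually covers the codon of interest.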

Experimental Methodology and Workflows

Data Generation and Analysis Protocols

The utility of consortium data depends on robust methodologies for data generation and analysis. While wet-lab protocols vary, the bioinformatics pipelines for processing sequencing data follow standardized steps.

Table 2: Bioinformatics Pipeline for NGS Data Analysis

| Step | Input | Process | Output | Key Tools/Standards |
|---|---|---|---|---|
| 1. Raw Data Processing | Sequenced reads (FASTQ) | Trimming of adapters and low-quality bases [8] | Clean FASTQ files | Trimmomatic, Cutadapt |
| 2. Sequence Alignment | Clean FASTQ files | Mapping to a reference genome [8] | BAM/SAM files | BWA, STAR, GRCh38 |
| 3. Variant Calling & Processing | BAM files | Deduplication, recalibration, variant calling [8] | VCF files | GATK, DeepVariant |
| 4. Variant Annotation & Filtering | VCF files | Functional annotation & frequency-based filtering [8] | Annotated VCF | VEP, SnpEff |
| 5. Clinical Interpretation | Annotated variants | Classification based on clinical evidence [8] | Clinical report | ACMG/AMP guidelines [8] |
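Steps 1 to 3 of Table 2 can be sketched as a sequence of command lines. The Python below only builds the argument lists (it does not execute them), which keeps the order and inputs of each step explicit. It assumes cutadapt, BWA, samtools, and GATK4 are installed and that the reference FASTA already has the indexes and sequence dictionary these tools require; sample names, file names, the adapter sequence, and the quality cutoff are placeholders.

```python
# Sketch only: builds (does not run) command lines for steps 1-3 of Table 2.
ADAPTER = "AGATCGGAAGAGC"  # common Illumina adapter prefix (assumed placeholder)


def preprocess_commands(sample, r1, r2, ref="GRCh38.fa",
                        known_sites="known_sites.vcf.gz", threads=8):
    """Per-sample commands: trim -> align -> sort -> mark duplicates -> BQSR."""
    t1, t2 = f"{sample}_R1.trim.fq.gz", f"{sample}_R2.trim.fq.gz"
    # Literal "\t" sequences; bwa expands them into tabs in the @RG header
    read_group = f"@RG\\tID:{sample}\\tSM:{sample}\\tPL:ILLUMINA"
    return [
        # Step 1: adapter removal and 3' quality trimming (Phred cutoff 20)
        ["cutadapt", "-a", ADAPTER, "-A", ADAPTER, "-q", "20",
         "-o", t1, "-p", t2, r1, r2],
        # Step 2: alignment; bwa writes SAM to stdout, to be piped into the
        # samtools sort command that follows ("-" means read from stdin)
        ["bwa", "mem", "-t", str(threads), "-R", read_group, ref, t1, t2],
        ["samtools", "sort", "-o", f"{sample}.sorted.bam", "-"],
        # Step 3a: mark PCR/optical duplicates
        ["gatk", "MarkDuplicates", "-I", f"{sample}.sorted.bam",
         "-O", f"{sample}.dedup.bam", "-M", f"{sample}.dup_metrics.txt"],
        # Step 3b: base quality score recalibration (BQSR)
        ["gatk", "BaseRecalibrator", "-I", f"{sample}.dedup.bam", "-R", ref,
         "--known-sites", known_sites, "-O", f"{sample}.recal.table"],
        ["gatk", "ApplyBQSR", "-R", ref, "-I", f"{sample}.dedup.bam",
         "--bqsr-recal-file", f"{sample}.recal.table",
         "-O", f"{sample}.recal.bam"],
    ]


def somatic_call_command(tumor, normal, ref="GRCh38.fa"):
    """Step 3c: tumor-normal somatic calling; -normal must match the normal's SM tag."""
    return ["gatk", "Mutect2", "-R", ref,
            "-I", f"{tumor}.recal.bam", "-I", f"{normal}.recal.bam",
            "-normal", normal, "-O", f"{tumor}_vs_{normal}.somatic.vcf.gz"]


if __name__ == "__main__":
    for cmd in preprocess_commands("TUMOR01", "TUMOR01_R1.fq.gz", "TUMOR01_R2.fq.gz"):
        print(" ".join(cmd))
    print(" ".join(somatic_call_command("TUMOR01", "NORMAL01")))
```

Raw Mutect2 calls are normally passed through GATK's FilterMutectCalls before annotation in step 4.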

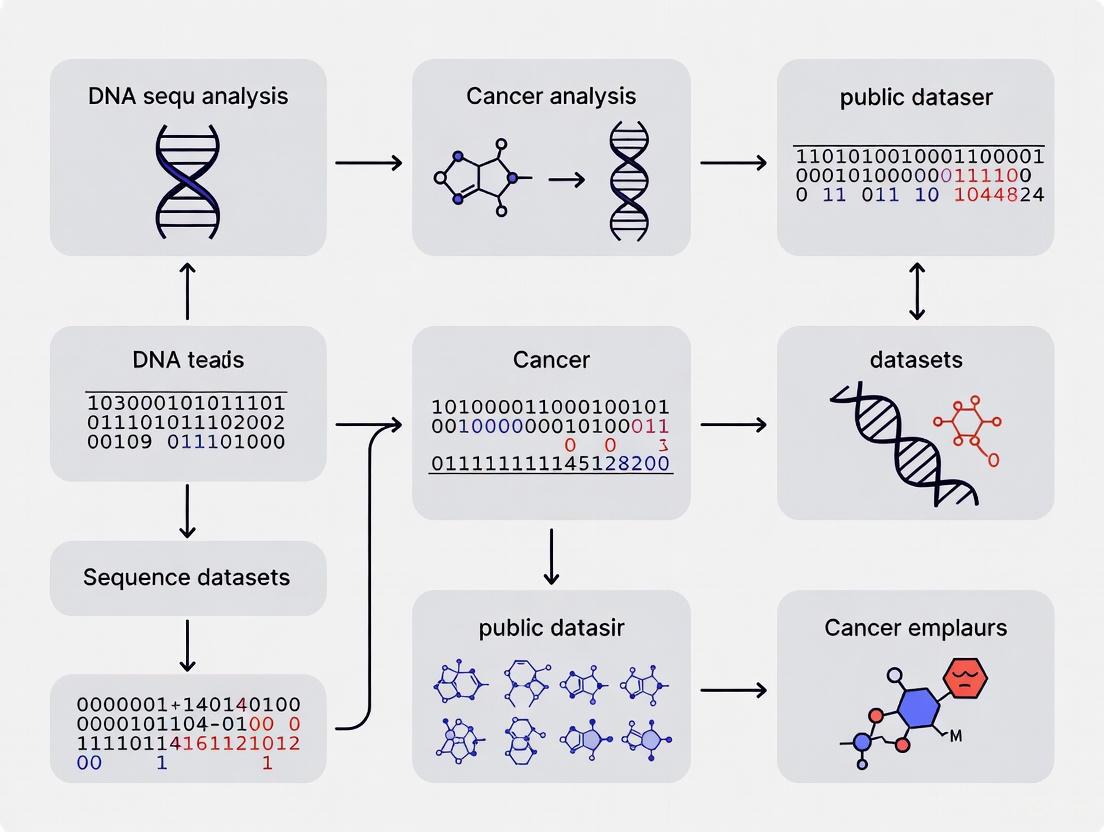

The following diagram illustrates the core bioinformatics workflow for analyzing next-generation sequencing data, from raw data to clinical interpretation:

Representative Research Applications

Studying Rare Cancers with AACR Project GENIE: A 2025 study on collecting duct carcinoma (CDC), a rare kidney cancer, exemplifies using consortium data for validation [5]. Researchers performed whole exome sequencing, RNA sequencing, and DNA methylation profiling on 22 cases. They then validated their findings against 25 CDC samples in the AACR Project GENIE database, revealing novel chromosomal losses (chromosome 22q) and Hippo pathway dysregulation, and identifying a biomarker subset likely to respond to immunotherapy [5].

Biomarker Discovery and Clinical Trial Design: A team at Clasp Therapeutics used AACR Project GENIE to analyze the frequency of a specific p53 mutation (R175H) across over 180,000 tumors [5]. This analysis revealed the mutation occurred in approximately 2% of all tumors, more commonly in tough-to-treat cancers. This data helped define the addressable population for a new T-cell engager therapy, CLSP-1025, and supported a tumor-agnostic approach in the subsequent first-in-human trial [5].

Leveraging ICGC ARGO's Structured Clinical Data: ICGC ARGO's data model enables complex longitudinal studies. Its dictionary structures data into core entities (donor, primary diagnosis, specimen) and event-based entities (treatments, follow-ups) [3]. This allows researchers to analyze how somatic changes evolve from before treatment to after treatment and relapse, correlating these changes with detailed clinical outcomes captured over time.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item/Tool | Function | Application Example |
|---|---|---|
| cBioPortal | Web-based visualization and analysis tool [4] [6] | Interactive exploration of genomic alterations and clinical associations in AACR Project GENIE and TCGA data [4] [9] |
| Genomic Data Commons (GDC) Portal | NCI's primary data portal for TCGA [1] | Accessing and analyzing the most up-to-date, uniformly processed TCGA data [1] [6] |
| ICGC ARGO Data Dictionary | Defines a minimal set of clinical fields for consistent data collection [3] | Ensuring interoperable, high-quality clinical data for cross-study analysis [3] |
| GATK (Genome Analysis Toolkit) | Industry-standard toolkit for variant discovery in high-throughput sequencing data [8] | Identifying somatic mutations from tumor-normal paired sequencing data [8] |
| ACMG/AMP Guidelines | Standardized framework for interpreting sequence variants [8] | Classifying germline variants as Pathogenic, Likely Pathogenic, Uncertain Significance (VUS), Likely Benign, or Benign [8] |

Key Signaling Pathways and Workflows

The following diagram maps the logical workflow for a researcher leveraging multiple consortium databases, from data access to biological insight, illustrating how these resources can be used in an integrated fashion:

Discussion and Future Directions

Major cancer genomics consortia have fundamentally transformed cancer research by providing large-scale, publicly accessible datasets. TCGA, ICGC ARGO, and AACR Project GENIE offer complementary strengths: TCGA provides deep multi-omics characterization, ICGC ARGO offers meticulously curated longitudinal clinical data, and AACR Project GENIE delivers large-scale real-world evidence. The future of these resources lies in their integration with emerging technologies, particularly artificial intelligence (AI). Researchers are already using these datasets to train and refine AI models for cancer diagnosis, prognosis, and treatment prediction [6]. Furthermore, initiatives to increase global representation, including addressing bioinformatics challenges in regions like Latin America, are crucial for ensuring the equitable advancement of precision oncology [8]. As these databases continue to grow and evolve, they will remain foundational for unlocking new discoveries in cancer biology and improving patient care worldwide.

The era of precision oncology is fundamentally reliant on the comprehensive analysis of large-scale genomic data to unravel the complexity of cancer. Centralized data portals have become indispensable infrastructure for the cancer research community, providing integrated access to vast, well-annotated molecular datasets and powerful analytical tools. These platforms enable researchers and drug developers to move beyond single-institution datasets, facilitating discoveries across cancer types through standardized data access. The Cancer Genome Atlas (TCGA) and similar international efforts have generated petabytes of multi-omics data, including genomic, transcriptomic, epigenomic, and proteomic profiles from thousands of tumor samples[citexref:6]. This review focuses on three pivotal portals (cBioPortal, the NCI Genomic Data Commons (GDC), and the UCSC Genome Browser) and examines their specialized capabilities for cancer DNA sequence analysis within the broader ecosystem of public genomic resources. By providing a cross-platform comparison and detailed experimental methodologies, this guide aims to help researchers leverage these resources effectively to accelerate discoveries in cancer biology and therapeutic development.

cBioPortal for Cancer Genomics

The cBioPortal is an open-access platform designed to lower the barrier to complex cancer genomics data analysis. It provides a visualization interface for interactive exploration of molecular profiles and clinical attributes from large-scale cancer genomics projects. Its established value lies in enabling researchers without bioinformatics expertise to query genetic alterations across patient cohorts.
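For scripted access, cBioPortal also exposes a public REST API. The sketch below builds a request for all mutations in one study; it assumes the `/molecular-profiles/{id}/mutations` endpoint with a `sampleListId` parameter and the common naming convention in which a study's mutation profile is `<studyId>_mutations` and its all-samples list is `<studyId>_all`. These names should be confirmed against the portal's API documentation, and the study ID in the example is only illustrative.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API_BASE = "https://www.cbioportal.org/api"


def mutations_url(study_id, projection="SUMMARY"):
    """URL for all mutations in a study's default mutation profile.

    Assumes the '<studyId>_mutations' / '<studyId>_all' naming convention.
    """
    profile_id = f"{study_id}_mutations"
    query = urlencode({"sampleListId": f"{study_id}_all", "projection": projection})
    return f"{API_BASE}/molecular-profiles/{profile_id}/mutations?{query}"


def fetch_json(url):
    """GET an API endpoint and decode the JSON response (network required)."""
    request = Request(url, headers={"Accept": "application/json"})
    with urlopen(request) as response:
        return json.load(response)


if __name__ == "__main__":
    # Illustrative study ID (assumed); fetch_json(url) would perform the request
    print(mutations_url("brca_tcga_pan_can_atlas_2018"))
```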

NCI Genomic Data Commons (GDC)

The GDC serves as a uniform data repository that harmonizes and standardizes cancer genomics data across multiple initiatives, including TCGA and Therapeutically Applicable Research to Generate Effective Therapies (TARGET). The GDC provides not only raw data but also harmonized processing through standardized pipelines for variant calling, gene expression quantification, and methylation analysis. This ensures consistency and reproducibility across studies, making it particularly valuable for pan-cancer analyses seeking to identify common molecular themes across different cancer types[citexref:6].
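Harmonized GDC files can be located programmatically through the GDC API, which accepts a nested JSON filter tree. The sketch below builds a query for open-access masked somatic mutation (MAF) files in one project. The field names and values (`cases.project.project_id`, `data_type` = `Masked Somatic Mutation`, `access` = `open`) reflect the GDC data model as we understand it and should be checked against the current API documentation before use.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

GDC_FILES = "https://api.gdc.cancer.gov/files"


def maf_query_params(project_id, size=100):
    """Query parameters for open-access masked somatic mutation files."""
    filters = {
        "op": "and",
        "content": [
            {"op": "in", "content": {"field": "cases.project.project_id",
                                     "value": [project_id]}},
            {"op": "in", "content": {"field": "data_type",
                                     "value": ["Masked Somatic Mutation"]}},
            {"op": "in", "content": {"field": "access", "value": ["open"]}},
        ],
    }
    return {
        "filters": json.dumps(filters),  # the filter tree is sent as a JSON string
        "fields": "file_id,file_name,cases.submitter_id",
        "format": "JSON",
        "size": str(size),
    }


def list_files(project_id, size=100):
    """Run the query (network required) and return the matching file records."""
    url = f"{GDC_FILES}?{urlencode(maf_query_params(project_id, size))}"
    with urlopen(url) as response:
        return json.load(response)["data"]["hits"]
```

Each returned `file_id` can then be downloaded from the API's data endpoint, `https://api.gdc.cancer.gov/data/<file_id>`.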

UCSC Genome Browser

The UCSC Genome Browser provides an interactive graphical interface for exploring genome annotations across multiple species. Unlike portal-specific resources, it functions as a contextual framework where users can visualize their own genomic data alongside thousands of publicly available annotation "tracks" including gene predictions, expression data, regulatory elements, and variation data. Recent enhancements have incorporated AI-powered tracks such as Google DeepMind's AlphaMissense, which predicts pathogenicity of missense variants, and VarChat, which uses large language models to summarize scientific literature on genomic variants[citexref:2]. After 25 years of continuous operation, it remains "an essential tool for navigating the genome and understanding its structure, function and clinical impact"[citexref:8].
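When overlaying one's own variants on browser tracks, coordinate conventions matter: UCSC data use 0-based, half-open intervals, while VCF positions are 1-based, so an off-by-one shift is a common source of mismatches. The sketch below converts a VCF position and REF allele into a UCSC interval and builds a request to the browser's REST endpoint `getData/sequence`. The endpoint and parameter names follow the UCSC API documentation as we understand it; the documentation shows `;`-separated parameters, and `&` (which `urlencode` produces) is assumed to be accepted as well. The JSON response is expected to carry the sequence in a `dna` field.

```python
from urllib.parse import urlencode

UCSC_SEQUENCE_API = "https://api.genome.ucsc.edu/getData/sequence"


def vcf_to_ucsc(pos, ref_allele):
    """Map a 1-based VCF position and REF allele to a 0-based, half-open interval."""
    start = pos - 1                # 1-based -> 0-based
    end = start + len(ref_allele)  # half-open: end is exclusive
    return start, end


def sequence_url(chrom, pos, ref_allele, genome="hg38", flank=10):
    """URL for the reference sequence around a variant, padded by `flank` bases."""
    start, end = vcf_to_ucsc(pos, ref_allele)
    params = {"genome": genome, "chrom": chrom,
              "start": max(0, start - flank), "end": end + flank}
    return f"{UCSC_SEQUENCE_API}?{urlencode(params)}"


if __name__ == "__main__":
    print(vcf_to_ucsc(100, "A"))           # a single-base REF spans one position
    print(sequence_url("chr1", 100, "A"))
```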

Table 1: Comparative Analysis of Centralized Genomic Data Portals

| Feature | cBioPortal | NCI GDC | UCSC Genome Browser |
|---|---|---|---|
| Primary Focus | Interactive exploration of cancer genomics data | Comprehensive data repository and analysis | Genome annotation visualization |
| Core Strengths | Intuitive visualization of clinical and genomic data | Data harmonization, scalable analysis | Contextual visualization, extensive annotation tracks |
| Data Types | Genomic alterations, clinical data, expression | Raw and processed genomic, transcriptomic, epigenomic data | Genome annotations, conservation, regulation, variation |
| Analytical Tools | OncoPrint, mutation mapper, survival analysis | Bioinformatics pipelines, API access | Track hubs, data visualization, table browser |
| AI/ML Integration | Not covered in this review | Supports AI model training with standardized data | AlphaMissense, VarChat, and other AI-prediction tracks[citexref:2] |

Data Types and Experimental Methodologies

Multi-Omics Data in Cancer Research

Comprehensive cancer analysis relies on integrating multiple molecular data types that provide complementary insights into tumor biology. Centralized portals provide access to these diverse data modalities:

mRNA Expression Data: mRNA carries genetic information transcribed from DNA and provides insights into gene activity. Dysregulation of specific genes can result in uncontrolled cell proliferation, a hallmark of cancer[citexref:6]. Studies have used mRNA expression data to classify tumor types with approximately 90% precision using machine learning approaches[citexref:6].

miRNA Expression Data: miRNAs are small non-coding RNAs that regulate gene expression by degrading mRNAs or inhibiting their translation. They function as key post-transcriptional regulators of oncogenes and tumor suppressor genes[citexref:6]. For example, in non-small cell lung cancer, high let-7 expression reduces cancer cell growth and inhibits differentiation[citexref:6].

Copy Number Variation (CNV): CNV refers to differences in the number of copies of genomic segments, arising from amplifications (gains) or deletions (losses). Copy number alterations affecting genes such as BRCA1, CHEK2, ATM, and BRCA2 have been associated with cancers such as breast cancer[citexref:6].

Epigenomic Modifications: DNA methylation and histone modification patterns regulate gene expression without altering the underlying DNA sequence. These epigenetic marks are frequently dysregulated in cancer and can serve as diagnostic markers.

Genomic Mutations: Somatically acquired mutations in DNA drive cancer development and progression. These include single nucleotide variants (SNVs), small insertions/deletions (indels), and structural variations.
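Copy number data from these portals are often delivered as segment-level log2 copy ratios rather than integer copy numbers. Assuming a diploid genome and a pure tumor sample, the expected ratio is log2(CN / 2), so a single-copy loss gives -1 and a one-copy gain gives about 0.58. The sketch below shows this relationship and a simple gain/loss/neutral call; the ±0.3 thresholds are illustrative assumptions, not a standard.

```python
import math


def log2_copy_ratio(copy_number, ploidy=2):
    """Expected log2 ratio for an integer copy number in a pure tumor sample."""
    if copy_number == 0:
        return float("-inf")  # homozygous deletion
    return math.log2(copy_number / ploidy)


def classify_segment(seg_mean, gain=0.3, loss=-0.3):
    """Label a segment mean (log2 ratio) using illustrative thresholds."""
    if seg_mean >= gain:
        return "gain"
    if seg_mean <= loss:
        return "loss"
    return "neutral"


if __name__ == "__main__":
    for cn in (0, 1, 2, 3, 4):
        ratio = log2_copy_ratio(cn)
        print(cn, round(ratio, 3), classify_segment(ratio))
```

In real tumors, admixed normal cells compress observed ratios toward 0, so absolute copy numbers require tools that also estimate tumor purity and ploidy.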

Table 2: Key Multi-Omics Data Types for Cancer Research

| Data Type | Biological Significance | Research Applications | Example Analysis |
|---|---|---|---|
| mRNA Expression | Gene activity level | Tumor classification, biomarker discovery | Li et al. classified 31 tumor types with 90% precision[citexref:6] |
| miRNA Expression | Post-transcriptional regulation | Therapeutic targeting, diagnostic biomarkers | Wang et al. achieved 92% sensitivity classifying 32 tumor types[citexref:6] |
| Copy Number Variation | Gene dosage alterations | Driver gene identification, pathway analysis | Dagging classifier for CNV-based categorization[citexref:6] |
| DNA Methylation | Epigenetic regulation | Early detection, prognostic stratification | Pan-cancer epigenetic clock development |
| Somatic Mutations | Causal driver events | Targeted therapy, mutational signature analysis | Pathway enrichment and drug-gene interaction mapping |

Standardized Pan-Cancer Analysis Workflow

A generalized workflow for pan-cancer classification provides a framework for systematic analysis across cancer types. It spans data acquisition, preprocessing, feature selection, model training, performance evaluation, and biological validation, with each step standardized so that findings are robust and reproducible.

Detailed Protocol: Pan-Cancer Classification Using Multi-Omics Data

This protocol outlines the steps for developing a machine learning model to classify cancer types using multi-omics data from centralized portals, based on established methodologies in the literature[citexref:6].

Data Acquisition and Preprocessing

Data Download: Access multi-omics data (e.g., mRNA expression, miRNA expression, CNV) through the GDC API or cBioPortal's web interface. Select datasets spanning multiple cancer types with sufficient sample sizes (a minimum of 50 samples per cancer type is recommended).

Data Harmonization: Apply normalization procedures appropriate for each data type. For RNA-Seq data, use TPM (Transcripts Per Million) or FPKM (Fragments Per Kilobase of transcript per Million mapped fragments) normalization followed by log2 transformation with a small pseudocount. For methylation data, normalize beta values (or their logit-transformed M-values) and correct batch effects using ComBat or similar methods.

Quality Control: Remove samples with poor quality metrics (e.g., low mapping rates, extreme outlier profiles). Filter molecular features with low variance or excessive missing values across samples.
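The harmonization and feature-level quality-control steps above can be sketched in plain Python. TPM first divides read counts by feature length in kilobases, then rescales each sample so its values sum to one million; features are then dropped if too many values are missing or if their variance is near zero. The missingness and variance thresholds below are hypothetical defaults, real pipelines would normally use effective transcript lengths reported by the quantifier, and sample-level checks such as mapping rate are not shown.

```python
import math
from statistics import pvariance


def tpm(counts, lengths_bp):
    """Transcripts Per Million from raw read counts and feature lengths (bp)."""
    if len(counts) != len(lengths_bp):
        raise ValueError("counts and lengths must have the same length")
    # Reads per kilobase: corrects for longer genes attracting more reads
    rates = [c / (length / 1000) for c, length in zip(counts, lengths_bp)]
    total = sum(rates)
    if total == 0:
        raise ValueError("sample has no counts")
    # Rescale so that every sample sums to one million
    return [r / total * 1e6 for r in rates]


def log2_transform(values, pseudocount=1.0):
    """log2(x + pseudocount), which keeps zero-expression genes finite."""
    return [math.log2(v + pseudocount) for v in values]


def filter_features(matrix, names, max_missing=0.2, min_variance=0.01):
    """Keep features with few missing values and non-trivial variance.

    matrix: one list per sample, with None marking a missing value.
    """
    n_samples = len(matrix)
    kept = []
    for j, name in enumerate(names):
        observed = [row[j] for row in matrix if row[j] is not None]
        if (n_samples - len(observed)) / n_samples > max_missing:
            continue  # too many missing values
        if len(observed) < 2 or pvariance(observed) < min_variance:
            continue  # (near-)constant feature carries little information
        kept.append(name)
    return kept
```

Unsupervised filters like these can be applied to the whole cohort, but supervised selection such as LASSO should be fitted only on training data to avoid information leaking from the test set.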

Feature Selection and Model Training

Dimensionality Reduction: Apply feature selection methods to reduce computational complexity and mitigate overfitting. For genomic data, use variance-based filtering, followed by recursive feature elimination or LASSO regularization to identify the most discriminative features.

Model Selection: Choose appropriate algorithms based on dataset characteristics. For high-dimensional omics data, random forests, support vector machines, and neural networks often outperform simpler models. Implement them using the scikit-learn, TensorFlow, or PyTorch frameworks.

Training and Validation: Split data into training (70%), validation (15%), and test (15%) sets. Perform k-fold cross-validation (typically k=5 or 10) on the training set to optimize hyperparameters. Evaluate final model performance on the held-out test set.
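A 70/15/15 split is best stratified by cancer type, so that rare types are represented in every partition in roughly the same proportion as in the full cohort. The sketch below shows a minimal stratified split in plain Python with a fixed seed for reproducibility; in practice, scikit-learn's `train_test_split(..., stratify=y)` and `StratifiedKFold` provide the same idea for the split and the cross-validation folds.

```python
import random
from collections import defaultdict


def stratified_split(labels, train_frac=0.70, val_frac=0.15, seed=42):
    """Split sample indices into train/validation/test, stratified by label.

    Each cancer type is split separately; whatever remains after the training
    and validation shares goes to the test set (about 15% with the defaults).
    """
    by_label = defaultdict(list)
    for index, label in enumerate(labels):
        by_label[label].append(index)

    rng = random.Random(seed)  # fixed seed -> reproducible split
    train, val, test = [], [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        n_train = round(len(indices) * train_frac)
        n_val = round(len(indices) * val_frac)
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]
    return train, val, test


if __name__ == "__main__":
    labels = ["BRCA"] * 20 + ["LUAD"] * 20
    tr, va, te = stratified_split(labels)
    print(len(tr), len(va), len(te))
```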

Performance Evaluation and Biological Interpretation

Metrics Calculation: Compute standard classification metrics including accuracy, precision, recall, F1-score, and area under the ROC curve (AUC-ROC). Generate a confusion matrix to identify specific cancer types that are frequently misclassified.

Benchmarking: Compare performance against established baselines and state-of-the-art methods. Apply significance testing (e.g., McNemar's test on paired predictions from the same test set) to determine whether observed improvements are statistically significant.

Biological Validation: Conduct pathway enrichment analysis (using tools like GSEA or Enrichr) on discriminative features to identify biological processes driving classification. Validate findings in independent datasets or through experimental follow-up.
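The evaluation and interpretation steps above can be sketched with the Python standard library. The block computes a confusion count table with per-class precision, recall, and F1; McNemar's test with continuity correction, using the fact that a chi-square variable with 1 degree of freedom has upper tail erfc(sqrt(x / 2)); and a one-sided hypergeometric over-representation p-value for a single pathway. The last is the idea behind Fisher-type enrichment tests such as those used by Enrichr, not GSEA, which is a rank-based method; results across many pathways would still need multiple-testing correction.

```python
import math
from collections import Counter


def classification_report(y_true, y_pred):
    """Confusion counts, per-class precision/recall/F1, and overall accuracy."""
    classes = sorted(set(y_true) | set(y_pred))
    confusion = Counter(zip(y_true, y_pred))  # (true, predicted) -> count
    report = {}
    for c in classes:
        tp = confusion[(c, c)]
        fp = sum(confusion[(t, c)] for t in classes if t != c)
        fn = sum(confusion[(c, p)] for p in classes if p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[c] = {"precision": precision, "recall": recall, "f1": f1}
    accuracy = sum(confusion[(c, c)] for c in classes) / len(y_true)
    return confusion, report, accuracy


def mcnemar(y_true, pred_a, pred_b):
    """McNemar's test (continuity-corrected) for two classifiers on the same samples."""
    triples = list(zip(y_true, pred_a, pred_b))
    b = sum(1 for t, pa, pb in triples if pa == t and pb != t)  # only A correct
    c = sum(1 for t, pa, pb in triples if pa != t and pb == t)  # only B correct
    if b + c == 0:
        return 0.0, 1.0
    stat = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    # Upper tail of a chi-square distribution with 1 degree of freedom
    return stat, math.erfc(math.sqrt(stat / 2))


def overrepresentation_p(n_universe, n_pathway, n_selected, n_overlap):
    """One-sided hypergeometric p-value P(X >= n_overlap)."""
    upper = min(n_pathway, n_selected)
    hits = sum(math.comb(n_pathway, i)
               * math.comb(n_universe - n_pathway, n_selected - i)
               for i in range(n_overlap, upper + 1))
    return hits / math.comb(n_universe, n_selected)
```

For small numbers of discordant predictions (b + c), an exact binomial version of McNemar's test is generally preferred over the chi-square approximation.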

Essential Research Reagents and Computational Tools

Successful utilization of centralized data portals requires both computational resources and biological research reagents for experimental validation.

Table 3: Essential Research Reagents and Computational Tools

| Resource Type | Specific Examples | Function/Application |
|---|---|---|
| Public Data Resources | TCGA Pan-Cancer Atlas, UCSC Genome Browser, dbGaP | Provide foundational multi-omics datasets for analysis[citexref:6] [10] |
| Reference Materials | NIST Genome in a Bottle reference cell lines | Quality control and benchmarking for genomic analyses[citexref:4] |
| Computational Tools | GDC API, UCSC Table Browser, cBioPortal R package | Programmatic data access and analysis |
| ML/DL Frameworks | Scikit-learn, TensorFlow, PyTorch | Implementation of classification algorithms[citexref:6] |
| Visualization Tools | UCSC Genome Browser tracks, OncoPrints, ggplot2 | Data exploration and result presentation |
| Validation Reagents | CRISPR libraries, antibodies, cell lines | Experimental validation of computational findings |

AI and Machine Learning Applications

Artificial intelligence approaches are increasingly integrated with centralized data portals to enhance cancer genomic analysis. The NIST Cancer Genome in a Bottle program provides comprehensively sequenced cancer cell lines that researchers can use to train AI models to detect cancer-causing mutations and identify potential therapeutic approaches[citexref:4]. The UCSC Genome Browser has incorporated AI-powered tracks including Google DeepMind's AlphaMissense, which predicts pathogenic missense variants, and VarChat, which uses large language models to summarize scientific literature on genomic variants[citexref:2]. In pan-cancer classification, deep learning models such as convolutional neural networks have achieved 95.59% accuracy in classifying 33 cancer types, with the added benefit of identifying biomarkers through guided Grad-CAM visualization[citexref:6]. The emerging trend of natural language processing applications includes tools to convert natural language to graph queries for knowledge graphs, with potential extensions to genomic querying[citexref:1].

Future Directions and Challenges

The future of centralized data portals for cancer research will be shaped by several emerging trends and persistent challenges. Key areas of development include:

AI Integration: Deeper incorporation of machine learning for predictive modeling and automated data interpretation, as exemplified by tools like AlphaMissense and VarChat[citexref:2].

Streaming Data Analysis: Development of benchmarks and methods for analyzing "always in motion" streaming genomic data, moving beyond static snapshots to dynamic models of tumor evolution[citexref:1].

Ethical Data Sharing: Expansion of consented data resources following models like the NIST pancreatic cancer cell line, which was developed with explicit patient consent for public data sharing[citexref:4].

Multi-Omics Integration: Advanced methods for combining genomic, transcriptomic, proteomic, and clinical data to build comprehensive models of cancer biology.

Tool Democratization: Continued development of user-friendly interfaces that make complex genomic analyses accessible to researchers without computational expertise.

Persistent challenges include addressing tumor heterogeneity, improving early detection capabilities, managing the increasing scale and complexity of genomic data, and ensuring equitable access to both data and computational resources across the research community. Centralized data portals will continue to evolve to address these challenges, maintaining their position as essential infrastructure for cancer research.

Large-scale public datasets are foundational to modern cancer research, enabling the discovery of molecular subtypes, biomarkers, and therapeutic targets. The Cancer Genome Atlas (TCGA) stands as a landmark program in this field, having molecularly characterized over 20,000 primary cancer and matched normal samples spanning 33 cancer types [1]. This joint effort between the National Cancer Institute (NCI) and the National Human Genome Research Institute generated over 2.5 petabytes of multiomic data, creating an unprecedented resource for the research community [1]. The data, which are freely available through repositories like the Genomic Data Commons (GDC) Data Portal, have already led to significant improvements in our ability to diagnose, treat, and prevent cancer by providing comprehensive molecular profiles of tumor tissues [11] [1].

The power of these datasets lies not only in their scale but also in their integrated data diversity, which combines multiple molecular data types with clinical and pathological annotations. This multi-faceted approach allows researchers to correlate genomic alterations with clinical outcomes, tumor stages, and treatment responses. For instance, TCGA collected diverse data types for each case, including clinical information (e.g., demographics, smoking status, treatment history), molecular analyte metadata, and molecular characterization data (e.g., gene expression values) [11]. Such rich annotation enables researchers to move beyond simple mutation cataloging toward understanding the clinical implications of molecular findings, supporting the development of precision oncology approaches that tailor treatments to individual molecular profiles.

Tumor Type Diversity in Major Atlas Programs

Comprehensive cancer genomics resources encompass a wide spectrum of malignancies, ensuring broad relevance across cancer biology and clinical oncology. TCGA's design included careful selection of cancer types based on incidence, mortality, and availability of tissues, resulting in the characterization of 33 different cancers. The program includes common malignancies such as breast invasive carcinoma (BRCA), lung squamous cell carcinoma (LUSC), colon adenocarcinoma (COAD), and prostate adenocarcinoma (PRAD), as well as less common but molecularly informative cancers such as glioblastoma multiforme (GBM) and ovarian carcinoma (OV) [12]. This diversity enables comparative analyses across tissue types and helps identify pan-cancer patterns of tumorigenesis.

Table 1: Selected Tumor Types in Public Cancer Genomics Datasets

| Cancer Type Abbreviation | Full Name | Selected Characteristics |
|---|---|---|
| BLCA | Bladder Urothelial Carcinoma | High mutation burden; chromatin modification genes mutated |
| BRCA | Breast Invasive Carcinoma | Subtypes based on gene expression; BRCA1/BRCA2 mutations |
| COAD | Colon Adenocarcinoma | Microsatellite instability; APC and TP53 mutations common |
| GBM | Glioblastoma Multiforme | Aggressive brain tumor; EGFR amplification common |
| KIRC | Kidney Renal Clear Cell Carcinoma | VHL mutations leading to HIF accumulation |
| LUSC | Lung Squamous Cell Carcinoma | TP53 mutations nearly universal; smoking-related |
| OV | Ovarian Serous Cystadenocarcinoma | TP53 mutations nearly universal; homologous recombination repair defects |
| PRAD | Prostate Adenocarcinoma | ERG rearrangements (e.g., TMPRSS2-ERG); SPINK1 overexpression in a subset; androgen receptor signaling |
| SKCM | Skin Cutaneous Melanoma | Among the highest mutation burdens; UV signature mutations |
| UCEC | Uterine Corpus Endometrial Carcinoma | Microsatellite instability; POLE mutations in an ultramutated subset |

The selection of these specific cancer types for intensive molecular characterization has enabled researchers to address fundamental questions in cancer biology while accounting for tissue-specific alterations. For example, studies of bladder urothelial carcinoma (BLCA) have revealed frequent mutations in chromatin modification genes, while analyses of kidney renal clear cell carcinoma (KIRC) consistently show alterations in the VHL gene [12]. The inclusion of multiple cancer types originating from the same tissue, such as lung squamous cell carcinoma (LUSC) and lung adenocarcinoma (LUAD), has further enabled investigations into how cells of origin influence oncogenic pathways. This systematic approach across diverse malignancies provides the necessary foundation for identifying both universal and tissue-specific cancer drivers.

Molecular Data Layers: A Multiomic Perspective

Modern cancer genomics employs diverse molecular profiling technologies that collectively provide a comprehensive view of tumor biology. These technologies capture information at multiple regulatory levels—from DNA sequence variations to epigenetic modifications, gene expression, and protein abundance—enabling researchers to build detailed models of oncogenic processes. The integration of these multiomic data layers is essential for understanding the complex mechanisms driving cancer development and progression, as each layer provides complementary biological insights.

Genomic and Epigenomic Characterization

Genomic characterization forms the foundation of cancer genome atlas projects, focusing on identifying alterations in DNA sequence. TCGA employed multiple platforms for genomic analysis, including whole exome sequencing (WES) to capture protein-coding variants across all cancer types, whole genome sequencing (WGS) for a comprehensive view of coding and non-coding regions (for select cases), and SNP microarrays for copy number variation and loss of heterozygosity analysis [11]. These approaches collectively identify somatic mutations (acquired in tumor tissue), copy number alterations (amplifications or deletions of genomic regions), and structural variations (chromosomal rearrangements). The detection of these variations helps pinpoint driver mutations responsible for oncogenic transformation.
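The tumor-normal contrast that separates somatic from germline variants can be illustrated with variant allele fractions (VAF), the share of reads supporting the alternate allele. The sketch below is a deliberately simplified toy: the depth and VAF thresholds are hypothetical, and production callers such as Mutect2 instead model sequencing error, contamination, and tumor purity statistically.

```python
def vaf(alt_reads, depth):
    """Variant allele fraction: share of reads supporting the alternate allele."""
    return alt_reads / depth if depth else 0.0


def candidate_somatic(sites, min_depth=20, min_tumor_vaf=0.05, max_normal_vaf=0.02):
    """Flag sites with alt-allele support in the tumor but not the matched normal.

    sites: dicts with keys id, tumor_alt, tumor_depth, normal_alt, normal_depth.
    Thresholds are illustrative only.
    """
    flagged = []
    for site in sites:
        if min(site["tumor_depth"], site["normal_depth"]) < min_depth:
            continue  # too few reads to judge either sample
        tumor = vaf(site["tumor_alt"], site["tumor_depth"])
        normal = vaf(site["normal_alt"], site["normal_depth"])
        if tumor >= min_tumor_vaf and normal <= max_normal_vaf:
            flagged.append((site["id"], tumor))
    return flagged
```

A heterozygous germline variant shows a VAF near 0.5 in both tumor and normal, so it is excluded by the normal-sample condition, while a somatic variant appears only in the tumor.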

Epigenomic profiling complements genomic analyses by characterizing molecular modifications that regulate gene expression without altering DNA sequence. TCGA extensively utilized DNA methylation arrays to measure genome-wide cytosine methylation patterns, which are frequently disrupted in cancer and can silence tumor suppressor genes [11]. For some tumor types, bisulfite sequencing provided methylation maps at single-nucleotide resolution [11]. Additional epigenomic methods included ATAC-Seq to assess chromatin accessibility, identifying regions of open chromatin associated with active regulatory elements [13]. These epigenomic profiles help explain how cancer cells reprogram gene expression beyond the constraints of their DNA sequence.
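Methylation array data are usually summarized per CpG from two probe intensities, methylated (Meth) and unmethylated (Unmeth). The beta value, Meth / (Meth + Unmeth + offset), estimates the fraction of methylated copies, with an offset of 100 commonly used for Illumina arrays; the M-value, log2((Meth + alpha) / (Unmeth + alpha)), is its logit-scale counterpart and is often preferred for statistical testing because it is not bounded to [0, 1]. The sketch below implements both, plus the beta-to-M conversion; the default offsets are conventional choices rather than requirements.

```python
import math


def beta_value(meth, unmeth, offset=100):
    """Estimated fraction methylated; the offset stabilizes low-intensity probes."""
    return meth / (meth + unmeth + offset)


def m_value(meth, unmeth, alpha=1):
    """log2 ratio of methylated to unmethylated intensity."""
    return math.log2((meth + alpha) / (unmeth + alpha))


def beta_to_m(beta):
    """Convert a beta value in (0, 1) to the M-value scale."""
    return math.log2(beta / (1 - beta))
```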

Transcriptomic and Proteomic Characterization

Transcriptomic analyses measure gene expression levels, providing insights into the functional consequences of genomic and epigenomic alterations. TCGA employed mRNA sequencing using poly(A) enrichment for most cancer types, generating data on gene-level, isoform-specific, and exon-level expression [11]. For some tumor types, total RNA sequencing using ribosomal depletion captured both coding and non-coding RNAs [11]. Additionally, microarray-based expression profiling was used for certain cancer types before RNA sequencing became the standard [11]. Beyond bulk tissue analysis, emerging approaches like single-cell RNA sequencing and spatial transcriptomics resolve expression patterns at cellular resolution within the complex architecture of tumor microenvironments [13].

Proteomic characterization bridges the gap between gene expression and functional protein activity. While technically challenging for large-scale atlas projects, TCGA included reverse-phase protein arrays (RPPA) to quantify protein abundance and post-translational modifications for key signaling pathways across all cancer types [11]. These data provide critical validation of whether genomic and transcriptomic alterations actually translate to changes at the protein level, offering insights into pathway activation states that might not be evident from RNA measurements alone. Advanced integrated methods like Cellular Indexing of Transcriptomes and Epitopes by Sequencing (CITE-Seq) now enable simultaneous measurement of proteins and RNA in single cells, linking gene expression to cancer phenotypes [13].

Table 2: Molecular Data Types in Cancer Genomics Atlas Programs

| Data Layer | Technologies | Data Formats | Key Applications |
|---|---|---|---|
| Genomics | Whole Exome Sequencing (WES), Whole Genome Sequencing (WGS), SNP Microarray | BAM (alignment), VCF (variants), MAF (mutation calls), CEL | Mutation calling, copy number analysis, structural variant detection |
| Epigenomics | DNA Methylation Array, Bisulfite Sequencing, ATAC-Seq | IDAT, BAM, BED (methylation calls) | Promoter methylation analysis, chromatin accessibility mapping |
| Transcriptomics | mRNA Sequencing, Total RNA Sequencing, Microarray | BAM, TXT (normalized expression values), CEL | Differential expression, fusion detection, pathway analysis |
| Proteomics | Reverse-Phase Protein Array (RPPA), CITE-Seq | TIFF, TXT (normalized expression) | Protein quantification, phosphorylation signaling analysis |
| Imaging | Whole Slide Imaging, Radiological Imaging | SVS, DCM | Digital pathology, radiology-genomics correlation |

Clinical Annotations: Bridging Molecular Data and Patient Outcomes

Clinical annotations form the critical link between molecular profiling and patient phenotypes, enabling researchers to connect genomic findings with disease presentation, progression, and treatment response. These annotations encompass demographic information (e.g., age, gender, race), diagnosis and staging data (e.g., TNM classification, Gleason score for prostate cancer), treatment history (e.g., surgical procedures, chemotherapy regimens, radiation therapy), and outcome measures (e.g., overall survival, progression-free survival, development of metastasis) [11] [14]. In TCGA, clinical information is typically available in XML format per patient or as tab-delimited text files grouped by cancer type [11].

The quality and consistency of clinical annotations significantly impact the validity of research conclusions. Studies have demonstrated that rigorous methodologies for clinical data extraction are essential for generating reliable datasets. For example, in prostate cancer research, implementing a defined source hierarchy—specifying which clinical documents take precedence when contradictory information exists—substantially improves data reproducibility [14]. Key elements such as T stage, metastasis date, and castration resistance status have been shown to have lower reproducibility if not carefully defined and extracted, highlighting the importance of standardized data collection protocols [14]. Such meticulous annotation practices ensure that molecular findings can be accurately correlated with clinical outcomes.
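A defined source hierarchy is straightforward to encode. The Python sketch below uses hypothetical document types and an illustrative precedence order; neither is taken from the cited study. For a single clinical field, it returns the value from the highest-priority source and flags whether the sources disagreed, so conflicts can be audited rather than silently overwritten.

```python
# Hypothetical document types, highest precedence first. The ordering is
# illustrative only; a real study would define and document its own hierarchy.
SOURCE_PRIORITY = ["pathology_report", "operative_note", "clinic_note", "imaging_report"]


def resolve_field(observations, priority=SOURCE_PRIORITY):
    """Choose one value for a clinical field from possibly conflicting sources.

    observations: list of (source_type, value) pairs for one patient and field.
    Returns (value, source_type, conflict_flag). Sources outside the hierarchy
    and missing values (None) are ignored.
    """
    rank = {src: i for i, src in enumerate(priority)}
    known = [(rank[src], src, val) for src, val in observations
             if src in rank and val is not None]
    if not known:
        return None, None, False
    known.sort(key=lambda item: item[0])  # lowest rank = highest precedence
    _, best_src, best_val = known[0]
    # Flag disagreement so the record can be reviewed by a curator.
    conflict = len({val for _, _, val in known}) > 1
    return best_val, best_src, conflict
```

For example, if a clinic note records T stage as `T2` but the pathology report records `T3`, the function returns `("T3", "pathology_report", True)`.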

Annotations in systems like the GDC provide essential contextual information about files, cases, or metadata nodes that may impact data analysis [15]. These annotations include comments about why particular patients, samples, or files are absent from the dataset or why they may exhibit critical differences from others. Researchers should review these annotations prior to analysis, as they capture information that cannot be represented through standard data model properties [15]. The GDC automatically includes relevant annotations when downloading data via the Data Transfer Tool, and they can also be searched through the API or annotations page of the GDC Data Portal [15].

Experimental Protocols and Analytical Workflows

Data Access and Preprocessing Pipeline

Accessing and processing data from public cancer genomics resources requires a systematic approach to ensure data quality and analytical reproducibility. The primary portal for TCGA data is the Genomic Data Commons (GDC), which provides unified data access, analysis tools, and documentation [1]. The GDC Data Portal offers web-based interfaces for querying and retrieving data, while the GDC API enables programmatic access for large-scale downloads. For transferring substantial datasets, the GDC Data Transfer Tool efficiently manages large file transfers and automatically includes relevant annotations that might affect analysis [15].
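For programmatic access, a query against the GDC API's `files` endpoint combines a JSON `filters` object with `fields`, `format`, and `size` parameters. The Python sketch below assumes the public endpoint `https://api.gdc.cancer.gov/files` and the field names `cases.project.project_id`, `data_type`, and `access`. These reflect the GDC API as we understand it and should be checked against the current GDC API documentation. `build_files_query` only constructs the URL. The hypothetical helper `fetch_file_hits` performs the network request and is not exercised here.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

GDC_FILES_ENDPOINT = "https://api.gdc.cancer.gov/files"


def build_files_query(project_id, data_type, access="open", size=100):
    """Build a GDC files-endpoint URL filtered by project, data type, and access tier."""
    filters = {
        "op": "and",
        "content": [
            {"op": "in", "content": {"field": "cases.project.project_id", "value": [project_id]}},
            {"op": "in", "content": {"field": "data_type", "value": [data_type]}},
            {"op": "in", "content": {"field": "access", "value": [access]}},
        ],
    }
    params = {
        "filters": json.dumps(filters),
        "fields": "file_id,file_name,data_type,access",
        "format": "JSON",
        "size": str(size),
    }
    return GDC_FILES_ENDPOINT + "?" + urlencode(params)


def fetch_file_hits(url):
    """Run the query and return the list of matching file records (requires network)."""
    with urlopen(url) as resp:
        payload = json.load(resp)
    return payload["data"]["hits"]
```

For example, `build_files_query("TCGA-BRCA", "Masked Somatic Mutation")` targets open-access masked somatic mutation files for the breast cancer project. For bulk downloads, the returned file IDs are better handed to the Data Transfer Tool than fetched one HTTP request at a time.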

The preprocessing of genomic data requires careful attention to platform-specific considerations and quality control metrics. For whole exome sequencing data, the GDC provides aligned reads in BAM format, variant calls in VCF format, and aggregated mutation annotations in MAF files [11]. It is important to note that germline mutation calls and unvalidated non-coding somatic variants are under controlled access due to privacy considerations, while derived data are typically open access [11]. For DNA methylation array data, the GDC provides raw intensity files (IDAT format) as well as processed beta values representing methylation levels [11]. Researchers should consult the extensive documentation provided by the GDC for each data type to understand processing pipelines, normalization methods, and potential batch effects.
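Beta values are conventionally derived from the methylated (M) and unmethylated (U) probe intensities as β = M / (M + U + α), with an offset α of 100 in Illumina's convention. M-values instead use the log ratio log2((M + α) / (U + α)), often with α = 1. The Python sketch below implements these textbook definitions. It is not the GDC's own processing pipeline, which may apply additional preprocessing before reporting beta values.

```python
import math


def beta_value(meth, unmeth, offset=100.0):
    """Fraction methylated in [0, 1); the offset stabilizes low-intensity probes."""
    m, u = max(meth, 0.0), max(unmeth, 0.0)  # clip negative intensities to zero
    return m / (m + u + offset)


def m_value(meth, unmeth, alpha=1.0):
    """log2 ratio of methylated to unmethylated signal; alpha avoids log(0)."""
    return math.log2((max(meth, 0.0) + alpha) / (max(unmeth, 0.0) + alpha))
```

Beta values are easier to interpret biologically, while M-values are closer to homoscedastic and are often preferred for statistical testing.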

Figure: Data processing and integration pipeline.

Genome Deep Learning Methodology

Artificial intelligence approaches, particularly deep learning, have emerged as powerful tools for analyzing complex cancer genomics data. The Genome Deep Learning (GDL) methodology is one such approach: it uses deep neural networks to identify relationships between genomic variations and cancer phenotypes [12]. Its authors report that specific models achieved over 97% accuracy in distinguishing certain cancer types from healthy tissues based solely on whole exome sequencing data [12].

The GDL workflow consists of two main components: data processing and model training. The data processing phase involves: (1) aligning sequencing data to a reference genome and calling variants to obtain mutation files; (2) converting the mutation files into the model's input format; and (3) filtering the data and selecting relevant features [12]. For feature selection, the method ranks point mutations by their frequency of occurrence in each cancer group and selects the top 10,000 mutations as the dimensions for model building [12]. The model training phase uses a deep neural network with four fully connected layers followed by a softmax regression layer for classification [12]. The model uses the Rectified Linear Unit (ReLU) activation function, applies L2 regularization to reduce overfitting, and uses an exponentially decaying learning rate during optimization [12].
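The feature-selection step can be sketched as follows. The cited description does not specify how per-group rankings are combined. This Python sketch therefore assumes that each sample is represented as a set of mutation identifiers, keeps the `top_k` most frequent mutations within each group, and uses their union as the shared feature dimensions. The exponential-decay helper uses the common form `lr0 * decay_rate ** (step / decay_steps)`. All function names are hypothetical.

```python
from collections import Counter


def select_features(samples_by_group, top_k=10_000):
    """Union of the top_k most frequent mutations within each cancer group.

    samples_by_group maps a group label to a list of per-sample mutation sets.
    """
    features = set()
    for samples in samples_by_group.values():
        counts = Counter()
        for mutations in samples:
            counts.update(set(mutations))  # count each mutation once per sample
        # Descending frequency, then identifier, so ties are deterministic.
        ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
        features.update(mut for mut, _ in ranked[:top_k])
    return sorted(features)


def encode_sample(sample_mutations, features):
    """Binary input vector: 1 if the sample carries the feature mutation, else 0."""
    present = set(sample_mutations)
    return [1 if f in present else 0 for f in features]


def exponential_decay(lr0, decay_rate, step, decay_steps):
    """Learning rate after `step` updates under continuous exponential decay."""
    return lr0 * decay_rate ** (step / decay_steps)
```

With several groups, the union can exceed `top_k` dimensions. Matching the reported dimensionality exactly would require the original paper's combination rule.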

Figure: Genome Deep Learning workflow.

Biomarker Discovery and Validation Pipeline

The identification and validation of molecular biomarkers represents a central application of cancer genomics data. A comprehensive biomarker discovery pipeline typically integrates multiple data types and analytical approaches to establish clinical significance. For example, a recent study investigating SLC10A3 as a potential biomarker in head and neck cancer exemplifies this multi-step approach [16]. The methodology involved: (1) analyzing SLC10A3 expression across public datasets including TCGA, CPTAC, and GEO; (2) assessing prognostic relevance using Kaplan-Meier survival analysis and receiver operating characteristic (ROC) curves; (3) performing correlation analysis to identify genes associated with SLC10A3 expression; and (4) conducting protein-protein docking studies to predict functional interactions [16].
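Step (2) relies on two standard estimators. The Python sketch below implements the Kaplan-Meier product-limit estimator, S(t) = ∏ (1 − d_i / n_i) over event times t_i ≤ t, where d_i is the number of events and n_i the number at risk. It also computes ROC AUC as the Mann-Whitney probability that a randomly chosen positive case outscores a randomly chosen negative one, with ties counting one half. This is a textbook illustration, not the cited study's code. In a biomarker analysis, the survival curve would typically be computed separately for high- and low-expression groups and compared with a log-rank test, which is not shown.

```python
def kaplan_meier(times, events):
    """Product-limit survival estimate.

    times: follow-up times; events: 1 if the event (e.g. death) was observed,
    0 if censored. Returns [(t, S(t))] at each distinct event time.
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        # Group all observations tied at time t.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / n_at_risk
            curve.append((t, survival))
        # Events and censorings at t both leave the risk set afterwards.
        n_at_risk -= removed
    return curve


def roc_auc(scores, labels):
    """Probability that a random positive (label 1) outscores a random negative (label 0)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required")
    wins = 0.0
    for p in pos:  # O(n_pos * n_neg); fine for illustration
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))
```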

This integrated approach showed that SLC10A3 was significantly upregulated in head and neck squamous cell carcinoma tumor samples compared to normal tissues, and that higher expression correlated with poorer survival [16]. The correlation analysis identified 26 genes positively associated with SLC10A3, with BCAP31, IRAK1, and UBL4A showing consistent correlation across multiple datasets [16]. Computational protein interaction modeling, using docking and the machine-learning-based Evolutionary Scale Modeling (ESM) framework, further predicted significant binding affinities, suggesting potential functional interactions [16]. This workflow demonstrates how diverse computational approaches applied to public datasets can nominate and characterize potential therapeutic targets.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Resource Category | Specific Tools/Platforms | Application in Cancer Genomics |
|---|---|---|
| Sequencing Platforms | Illumina MiSeq i100, NovaSeq Series | Targeted and genome-wide sequencing; choice depends on throughput needs |
| Library Prep Kits | Illumina TruSeq, Nextera Flex | DNA/RNA library preparation for NGS |
| Data Access Tools | GDC Data Portal, GDC API, Data Transfer Tool | Data query, access, and transfer from public repositories |
| Mutation Callers | Mutect2, VarScan2, GATK | Somatic and germline variant detection |
| Pathway Analysis Tools | GSEA, DAVID, Ingenuity Pathway Analysis | Functional interpretation of genomic alterations |
| Visualization Platforms | IGV, UCSC Genome Browser, cBioPortal | Exploration and visualization of genomic data |
| Statistical Environments | R/Bioconductor, Python | Data processing, statistical analysis, machine learning |

Data Repositories and Knowledgebases

The cancer genomics research ecosystem is supported by numerous publicly accessible data repositories and knowledgebases that serve different specialized functions. The Genomic Data Commons (GDC) represents the primary repository for TCGA data, providing harmonized processing pipelines and unified data access [11] [1]. The Cancer Imaging Archive (TCIA) stores radiological images associated with TCGA cases, including MRI, CT, and PET scans [11]. For proteomic data, the Clinical Proteomic Tumor Analysis Consortium (CPTAC) provides complementary protein-level measurements for selected cancer types [16]. The Gene Expression Omnibus (GEO) serves as a general repository for functional genomics data, including many cancer-related datasets beyond TCGA [16].

Specialized tools have been developed to facilitate access and analysis of these complex datasets. The cBioPortal for Cancer Genomics provides intuitive web-based visualization and analysis of multidimensional cancer genomics data, allowing researchers to interactively explore genetic alterations across patient cohorts and correlate them with clinical outcomes [12]. The UCSC Cancer Genomics Browser offers similar functionality with specialized tools for visualizing genomic data in context with clinical annotations. For programmatic access, the Bioconductor project in R provides hundreds of specialized packages for analyzing cancer genomics data, while Python ecosystems like PyData and scikit-learn offer complementary tools for machine learning and data analysis.

The diversity of tumor types, molecular data layers, and clinical annotations in public cancer genomics datasets provides an unprecedented resource for advancing our understanding of cancer biology and treatment. The integration of genomic, epigenomic, transcriptomic, and proteomic data across multiple cancer types enables researchers to identify both universal and tissue-specific patterns of oncogenesis, while comprehensive clinical annotations facilitate the translation of molecular findings to clinical relevance. As analytical methods continue to evolve—particularly with advances in artificial intelligence and multiomic integration—these foundational datasets will continue to yield new insights into cancer mechanisms, biomarkers, and therapeutic targets.

Future directions in the field include increased emphasis on single-cell analyses to resolve tumor heterogeneity, spatial transcriptomics to contextualize cellular interactions within tumor microenvironments, and longitudinal sampling to understand tumor evolution under therapeutic pressure [13]. The integration of real-world evidence from electronic health records with genomic data will further enhance the clinical relevance of research findings. As these technologies mature, the principles of data diversity, rigorous annotation, and integrated analysis exemplified by TCGA will continue to guide the next generation of cancer genomics research, ultimately advancing toward more precise and effective cancer care.

The shift towards precision oncology is fundamentally driven by the analysis of large-scale genomic datasets. These resources enable researchers to uncover the molecular underpinnings of cancer, identify new therapeutic targets, and develop diagnostic and prognostic biomarkers. For scientists navigating this complex field, understanding the available data, its structure, and the methods to leverage it is paramount. This guide provides a technical overview of major public cancer genomic data repositories, protocols for their access and utilization, and their application across research scenarios from pan-cancer analyses to the study of rare tumors.

A wealth of data is available through coordinated efforts like The Cancer Genome Atlas (TCGA), which has molecularly characterized over 20,000 primary cancer and matched normal samples spanning 33 cancer types, generating over 2.5 petabytes of genomic, epigenomic, transcriptomic, and proteomic data [1]. The Pan-Cancer Atlas (PanCanAtlas) further builds upon this robust dataset by comparing these tumor types to answer overarching questions about cancer [17]. Beyond NCI resources, other portals like the European Genome-phenome Archive (EGA) and dbGaP host a multitude of genomic studies. However, as detailed in later sections, accessing and harmonizing this data presents significant technical and logistical challenges that researchers must be prepared to address [18] [19].

The table below summarizes the primary data sources available to cancer researchers, detailing their hosting organization, primary content, and access model.

Table 1: Major Public Resources for Cancer Genomic Data

| Resource Name | Hosting Organization | Primary Content & Data Types | Access Model |
|---|---|---|---|
| The Cancer Genome Atlas (TCGA) [1] | National Cancer Institute (NCI) | Genomic, epigenomic, transcriptomic, and proteomic data from 33 cancer types. | Open access via the Genomic Data Commons (GDC) Data Portal. |
| Genomic Data Commons (GDC) [19] | National Cancer Institute (NCI) | Unified data repository for raw and processed sequencing data, curated clinical metadata, and pathology images; includes TCGA and other programs. | A mix of open and controlled access. |
| Database of Genotypes and Phenotypes (dbGaP) [18] [19] | National Center for Biotechnology Information (NCBI) | Primarily raw sequencing data with study-specific metadata from a wide range of studies, including many clinical trials. | Controlled access; requires application and approval. |
| European Genome-phenome Archive (EGA) [18] | European Bioinformatics Institute (EBI) | A repository for genotype and phenotype data from a wide array of studies, often used by European consortia. | Controlled access; requires application and approval. |
| Pan-Cancer Atlas (PanCanAtlas) [17] | NCI (hosted by multiple sites, e.g., MSK) | Integrated analyses and datasets from TCGA, focusing on cross-tumor comparisons and emergent themes. | Open access via the GDC and associated portals. |
| Treehouse Childhood Cancer Initiative [18] | University of California Santa Cruz | A compendium of >11,000 tumor gene expression profiles, combining public data and clinical cases, with a focus on pediatric cancers. | Public compendium available online; clinical data access governed by specific Data Use Agreements. |
| Alliance Standardized Translational Omics Resource (A-STOR) [19] | NCI's National Clinical Trials Network (NCTN) | A living repository for multi-omics and associated clinical data from Alliance clinical trials, designed to facilitate rapid, embargoed analyses. | Controlled access for approved investigators during the embargo period; data eventually deposited in public repositories. |

For researchers focusing on specific malignancies, these resources offer granular data. The following table, compiled from a pan-cancer dataset repository, exemplifies the variety of data available for a selection of cancer types within TCGA [20].

Table 2: Exemplary Data Availability for Selected TCGA Cancer Types

| Cancer Type (TCGA Code) | # Cases | Primary Publication | Genomics | Proteomics | Pathology Images | Radiology Images |
|---|---|---|---|---|---|---|
| Glioblastoma (TCGA-GBM) | 523 | Nature 2008 | Yes | 100 Cases | 2,053 svs | 481,158 images (CT, MR, DX) |
| Breast Cancer (TCGA-BRCA) | 1,036 | Nature 2012 | Yes | | 3,111 svs | 230,167 images (MR, MG, CT) |
| Lung Adenocarcinoma (TCGA-LUAD) | 517 | Nature 2014 | Yes | | 1,138 svs | 60,196 images (CT) |
| Acute Myeloid Leukemia (TCGA-LAML) | 135 | NEJM 2013 | Yes | 41 Cases | 120 svs | |
| Colorectal Adenocarcinoma (TCGA-COAD) | 458 | Nature 2012 | Yes | | 1,442 svs | 8,387 images (CT) |

Navigating the Data Access and Integration Workflow

Identifying and obtaining genomic data is a non-linear process often fraught with delays. An analysis of the Treehouse initiative's experience found that it takes an average of 5–6 months to obtain access to and prepare public genomic data for research use [18]. The workflow can be broken down into several key steps, each with its own challenges.

Figure 1: The multi-stage workflow for accessing and preparing public genomic data, highlighting common challenges at each step [18].

Step 1: Finding the Data

Researchers must comb through public repositories, search literature, and often contact authors directly. Common challenges include data being withheld until publication, mislabeled datasets, and incorrect accession links in publications. For example, the Treehouse team encountered instances where RNA-Seq data referenced in a paper was not present in the repository or was incorrectly labeled [18].

Step 2: Obtaining Access

Most genomic data is under controlled access, requiring a detailed application describing the proposed use. A straightforward process can take 2–3 months, but complex cases can take up to 6 months. The resulting Data Use Agreements often have cumbersome requirements, such as yearly progress reports, lists of all personnel touching the data, and in some cases, pre-approval of manuscripts [18].

Decentralization and Harmonization Challenges

A significant barrier in the field is the decentralized nature of clinical trial omics data. Data are often siloed for years to protect the publication rights of the primary study team, making them less relevant by the time they become publicly available. Furthermore, different repositories (e.g., dbGaP, GDC, NCTN Archive) have distinct content and formatting requirements, creating further bottlenecks [19]. Initiatives like A-STOR aim to fill this gap by creating a shared, living repository for multi-omics data from clinical trials, facilitating rapid, parallel analyses while protecting investigators' rights [19].

Experimental Protocols and Analytical Frameworks

Protocol: Assessing Clinical Utility in Rare Cancers

The IMPRESS-Norway trial provides a prospective methodology for evaluating the clinical benefit of genomic-guided therapies in rare cancers [21].

- Objective: To determine the clinical benefit of offering comprehensive genomic profiling and alteration-matched targeted therapies to patients with advanced cancers who had exhausted standard treatment options.

- Patient Population: Patients with advanced rare cancers.

- Intervention: Genomic profiling was performed, and patients were offered matched targeted therapies based on identified alterations.

- Outcome Measurement: The primary efficacy endpoint was the 16-week disease control rate (DCR), defined as the sum of complete response (CR), partial response (PR), and stable disease (SD) rates according to RECIST criteria.

- Analytical Challenge: Distinguishing true drug effect from indolent disease biology in patients with stable disease.

- Statistical Methodology: To address this, researchers can employ:

  - Tumor Growth Kinetics (TGK): Analyzing the rate of tumor growth before and during treatment.

  - Time to Progression (TTP) Ratio: Calculating the ratio of TTP on the new therapy to TTP on the most recent prior therapy (as defined by the Von Hoff criteria). A ratio >1.3 is often considered evidence of clinical benefit [21].
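Both endpoints reduce to simple arithmetic. The Python sketch below assumes that each patient's response at the 16-week assessment is recorded as one of the RECIST categories `CR`, `PR`, `SD`, or `PD`. It applies the TTP-ratio threshold of 1.3 described above as a strict inequality. The function names are hypothetical.

```python
CONTROLLED = {"CR", "PR", "SD"}  # complete response, partial response, stable disease


def disease_control_rate(responses):
    """Fraction of evaluable patients with CR, PR, or SD at the assessment."""
    if not responses:
        raise ValueError("no evaluable patients")
    return sum(r in CONTROLLED for r in responses) / len(responses)


def ttp_ratio(ttp_current, ttp_prior):
    """TTP on the new therapy divided by TTP on the most recent prior therapy."""
    if ttp_prior <= 0:
        raise ValueError("prior TTP must be positive")
    return ttp_current / ttp_prior


def meets_ttp_threshold(ttp_current, ttp_prior, threshold=1.3):
    """True if the TTP ratio exceeds the benefit threshold."""
    return ttp_ratio(ttp_current, ttp_prior) > threshold
```

For example, a patient whose disease progressed after 4 months on the prior line and after 8 months on the matched therapy has a TTP ratio of 2.0, which exceeds the threshold.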

Protocol: Multi-Modal Molecular Investigation

The Rare Tumor Initiative at MD Anderson Cancer Center exemplifies a comprehensive approach to rare cancer profiling [21].

- Objective: To uncover distinct molecular subsets and key tumor-intrinsic and microenvironmental features in rare cancers.

- Technologies Employed:

  - Whole-Exome and Whole-Genome Sequencing: For identifying somatic mutations, copy number alterations, and structural variants.

  - Whole Transcriptome Sequencing (RNA-Seq): For analyzing gene expression, gene fusions, and splicing variants.

  - Multispectral Immunofluorescence Profiling: For characterizing the immune cell composition and functional state within the tumor microenvironment.

- Data Integration: Computational pipelines are used to integrate these multi-modal data streams to define molecular subtypes and identify potential therapeutic vulnerabilities.

The AI-Driven Predictive Pipeline for DNA Sequence Analysis

Artificial intelligence is increasingly used to complement wet-lab methods, accelerating the interpretation of genomic data. A unified AI workflow for DNA sequence analysis can be broken down into four key stages [22] [23].

Figure 2: The four-stage predictive pipeline for AI-based DNA sequence analysis, highlighting the crucial sequence encoding step [22] [23].

- Stage 1: Data Curation: This involves the collection and development of high-quality benchmark datasets from public databases such as TCGA and dbGaP. The quality and relevance of the dataset are foundational to the success of the entire pipeline.

- Stage 2: Sequence Encoding: This is often considered the most crucial stage. Raw DNA sequences (strings of A, C, G, T) are converted into numerical representations (statistical vectors) that AI models can process. Methods include [22] [23]:

  - Traditional Methods: Physico-chemical properties (e.g., using pre-computed values for nucleotides) and statistical methods (e.g., k-mer frequency counts). These capture intrinsic sequence characteristics but may miss complex, long-range relationships.

  - Advanced Methods: Neural word embeddings and language models (e.g., DNABERT). These capture richer syntactic, semantic, and contextual information of nucleotides or k-mers but require large amounts of data and computational power for training.

- Stage 3: AI Predictor: The numerical vectors are fed into a predictive model, typically one of two kinds:

  - Machine Learning Models (e.g., SVMs, Random Forests): Require less data and computational power but may struggle with highly complex relationships.

  - Deep Learning Models (e.g., CNNs, RNNs, Transformers): Can learn highly complex patterns but are data-hungry and computationally intensive.

- Stage 4: Evaluation: The final model is rigorously evaluated using hold-out test sets and appropriate metrics (e.g., accuracy, AUC-ROC) under different experimental settings to ensure its robustness and generalizability.
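Stage 2's statistical encoding can be illustrated with k-mer frequency counts. The Python sketch below slides a window of length `k` along a sequence and counts each k-mer over the fixed ACGT vocabulary (4^k entries, in lexicographic order). It skips windows containing ambiguous bases such as `N` and normalizes counts to frequencies. The resulting fixed-length vector could feed either class of Stage 3 predictor. The function name and the normalization choice are illustrative assumptions.

```python
from itertools import product


def kmer_frequency_vector(seq, k=3, alphabet="ACGT"):
    """Normalized k-mer frequencies over all len(alphabet)**k possible k-mers."""
    seq = seq.upper()
    vocab = ["".join(p) for p in product(alphabet, repeat=k)]  # lexicographic order
    index = {kmer: i for i, kmer in enumerate(vocab)}
    counts = [0] * len(vocab)
    total = 0
    for i in range(len(seq) - k + 1):
        kmer = seq[i:i + k]
        if kmer in index:  # skip windows containing N or other symbols
            counts[index[kmer]] += 1
            total += 1
    if total == 0:
        return [0.0] * len(vocab)
    return [c / total for c in counts]
```

Because every sequence maps to a vector of the same length, sequences of different lengths become directly comparable. The trade-off is that positional and long-range information is discarded, which is the limitation noted above for traditional methods.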

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Genomic Analysis

| Item / Technology | Function in Research |
|---|---|
| Next-Generation Sequencing (NGS) [24] | High-throughput sequencing technology enabling simultaneous sequencing of millions of DNA fragments. It is foundational for comprehensive genomic profiling (CGP) of tumors. |
| Comprehensive Genomic Profiling (CGP) Panels [25] | Targeted NGS panels designed to simultaneously detect a wide variety of somatic genomic alterations (SNVs, indels, fusions, CNVs, TMB, MSI) from a single tissue specimen. |
| Immunohistochemistry (IHC) [25] | A technique that uses antibodies to detect specific protein antigens in tissue sections, used for initial diagnostic workup and biomarker validation. |
| Fluorescence In Situ Hybridization (FISH) [25] | A cytogenetic technique used to detect specific DNA sequences, such as gene rearrangements or amplifications, on chromosomes. |
| Polymerase Chain Reaction (PCR) [25] | A method to amplify specific DNA sequences, often used for validating single-gene alterations detected by NGS. |
| CRISPR Screens [24] | A functional genomics tool that uses CRISPR-Cas9 gene editing to perform high-throughput knockout screens to identify genes critical for specific cancer phenotypes. |
| Cloud Computing Platforms (e.g., AWS, Google Cloud) [24] | Provide the scalable storage and computational power necessary to process and analyze terabyte-scale genomic and multi-omics datasets. |
| AI/ML Tools (e.g., DeepVariant) [24] | Software tools that use artificial intelligence and machine learning to accurately identify genetic variants from sequencing data or predict functional impacts. |
| cBioPortal [19] | An open-access web platform that provides intuitive visualization and analysis tools for complex cancer genomics and clinical data. |

Case Studies: From Data to Clinical Insight

Case Study: Diagnostic Recharacterization via CGP

Comprehensive Genomic Profiling can reveal inconsistencies between a primary diagnosis and the molecular features of a tumor, leading to diagnostic refinement or reclassification. A 2025 study showcased 28 such cases [25].

- Methodology: Cases were selected where CGP findings were inconsistent with the initial diagnosis. A secondary clinicopathological review was triggered, integrating all available data—morphology, IHC, and genomic results—to establish a final, molecularly informed diagnosis.

- Results: The study included two types of events:

  - Disease Reclassification (7 cases): A complete change from one distinct diagnosis to another (e.g., initial diagnoses of NSCLC or sarcoma were reclassified as medullary thyroid carcinoma, melanoma, or prostate carcinoma based on driver alterations such as RET M918T or a TMPRSS2-ERG fusion).

  - Disease Refinement (21 cases): Ambiguous diagnoses like "Carcinoma of Unknown Primary" (CUP) were refined to a specific tumor type (e.g., NSCLC, cholangiocarcinoma) based on alterations like EGFR L858R or FGFR2 fusions.

- Clinical Impact: In all cases, the updated diagnosis unveiled new, more precise therapeutic strategies. For example, a CUP refined to NSCLC with an EGFR L858R mutation would make the patient eligible for EGFR tyrosine kinase inhibitors, an option not considered under the original diagnosis [25].

Case Study: Evaluating Targeted Therapy in Rare Cancers

The prospective IMPRESS-Norway trial provides a framework for assessing the real-world utility of matched targeted therapies in rare cancers [21].

- Intervention: Patients with advanced rare cancers received genomic profiling and were offered targeted therapies matched to identified genomic alterations after exhausting standard options.

- Outcome: Among 158 evaluable patients, the 16-week disease control rate was 46% (comprising 1% complete response, 19% partial response, and 25% stable disease). Ovarian cancer, which can be considered rare depending on the definition, was a frequent malignancy in the cohort (14% of treated patients).

- Key Consideration: The study highlights the challenge of interpreting "stable disease," which could be due to drug effect or an indolent tumor. The use of tools like tumor growth kinetics or TTP ratios is critical to attribute benefit accurately to the therapeutic intervention [21].

The landscape of public cancer genomic datasets provides an unparalleled resource for driving precision oncology forward. From foundational projects like TCGA to focused clinical trial repositories and rare cancer initiatives, these data hold the key to understanding cancer biology and improving patient care. Success in this field requires not only computational skill but also a rigorous understanding of the data access workflow, analytical methodologies, and the biological and clinical context. As AI and multi-omics integration continue to evolve, the potential for extracting meaningful insights from these vast datasets will only grow, further accelerating the translation of genomic discoveries into clinical practice.

Practical Workflows for Data Access, Integration, and Analysis

In cancer DNA sequence analysis research, the management of genomic data is paramount. Data access tiers define the conditions under which researchers can obtain and utilize datasets, balancing the imperative of open science with the ethical obligation to protect participant privacy. The two primary models are open access and controlled access. The choice between these models is determined by the nature of the data, particularly the presence of information that could be used to identify research participants. For cancer genomic data, policies such as the National Institutes of Health (NIH) Genomic Data Sharing (GDS) Policy provide a governing framework, requiring that data sharing practices adhere to strict guidelines to ensure responsible use [26]. This guide details the distinctions between these access tiers, their associated data types, and the procedural workflows researchers must navigate, all within the critical context of advancing cancer research.

Defining Open and Controlled Access

Open Access Data

Open access data is made publicly available on the internet with minimal restrictions, typically limited to requirements for attribution or adherence to a specified license agreement [27]. This model is appropriate for data that has been effectively anonymized and does not contain protected or sensitive information, such as personally identifiable information (PII) or protected health information (PHI) [27].

The core principle is unrestricted access. Investigators can typically access these datasets by registering on a data portal and agreeing to a set of standard data use terms. For example, the Genomic Data Commons (GDC) provides open access data that requires users to adhere to the NIH GDS Policy, which stipulates that researchers must not attempt to re-identify participants and must acknowledge the data source in publications [26]. The benefits of open access are significant: it enhances the visibility, discoverability, and citation of research, complies with funder mandates for data sharing, and accelerates scientific progress by enabling broad reuse and supporting reproducibility [27].

Controlled Access Data

Controlled access sharing is implemented when datasets contain sensitive or regulated information that cannot be shared freely without risking participant confidentiality or violating ethical guidelines [27]. This includes data that could potentially be used to identify human research participants, such as detailed clinical attributes or germline genetic variants.

Access to this data is strictly managed. While the metadata describing the dataset (e.g., title, description, protocols) is often publicly discoverable, the actual data files are secured. External researchers must submit a formal access request, which is then reviewed by a Data Access Committee (DAC) [26]. The DAC evaluates the request based on the proposed research's consistency with the participants' original consent and the data use limitations set by the submitting institution. Approval often involves the execution of a Data Use Agreement (DUA) between the researcher's institution and the data repository [27]. This process is deliberate and secure, ensuring that data is used appropriately for legitimate research purposes.

Table 1: Core Characteristics of Open and Controlled Access

| Feature | Open Access | Controlled Access |
|---|---|---|
| Definition | Data made publicly available with no restrictions beyond attribution [27]. | Data access is restricted and granted only to approved researchers [27]. |
| Data Sensitivity | Contains no protected or sensitive information [27]. | Contains potentially identifying or sensitive participant information [28]. |
| Access Mechanism | Public download after registration and acceptance of data use terms [28]. | Formal application and approval by a Data Access Committee (DAC) [26]. |
| Speed of Access | Fast and immediate. | Slower, due to required review and approvals [27]. |
| Primary Goal | Maximize visibility, reuse, and compliance with funder mandates [27]. | Protect participant privacy and comply with ethical/legal obligations [27]. |

Data Classification and Tiering

A nuanced approach to controlled access involves further classifying sensitive data into tiers based on the potential risk of re-identification. The Human Connectome Project (HCP) provides a clear model for such a tiered system, which is highly applicable to cancer genomics [28].

- Open Access Data: In the HCP example, this includes defaced brain imaging data and non-sensitive behavioral data. The act of "defacing" the images removes identifying features, rendering the data suitable for open sharing [28].

- Tier 1 Restricted Data: This tier includes "potentially identifying but non-sensitive information." Examples from HCP include age (in years), race/ethnicity, and twin status. While not highly sensitive on their own, these attributes could be combined with other data to identify an individual [28].

- Tier 2 Restricted Data: This tier contains data with a "greater potential factor for allowing subjects to be identified," or information that "could be damaging to a subject if it became publicly known." This includes genetic data, detailed health information, and sensitive behaviors such as drug/alcohol use or family psychological history [28].

This tiered model allows for granular data management and access control, ensuring that the level of security is commensurate with the sensitivity of the data.

Table 2: Examples of Data Types by Access Tier

| Data Category | Open Access Examples | Tier 1 (Controlled) Examples | Tier 2 (Controlled) Examples |
|---|---|---|---|
| Genomic & Image Data | Defaced MR images; Somatic mutation calls from TCGA [28] [29]. | N/A | Germline genetic variants; Raw genomic sequencing data [28]. |
| Demographic Data | Age group (e.g., 26-30); Gender [28]. | Exact age (by year); Race; Ethnicity; Handedness [28]. | N/A |
| Clinical & Behavioral Data | Cognitive test scores (e.g., from Flanker Task) [28]. | Life function scores (e.g., Achenbach self-report) [28]. | Drug use history; Family illness history; Specific physiological measures (e.g., glucose levels) [28]. |
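A tiered scheme like the one in Table 2 can be encoded as an ordered lookup. The Python sketch below is illustrative only: the field names and their tier assignments are hypothetical labels loosely following Table 2, and it assumes two rules that are not stated in the source: a dataset's required tier is that of its most sensitive field, and unknown fields default to the most restrictive tier.

```python
from enum import IntEnum


class AccessTier(IntEnum):
    """Ordered so that a larger value means a more restrictive tier."""
    OPEN = 0
    TIER1 = 1
    TIER2 = 2


# Hypothetical field-to-tier mapping, loosely following Table 2.
FIELD_TIERS = {
    "somatic_mutation_calls": AccessTier.OPEN,
    "age_group": AccessTier.OPEN,
    "gender": AccessTier.OPEN,
    "exact_age": AccessTier.TIER1,
    "race": AccessTier.TIER1,
    "ethnicity": AccessTier.TIER1,
    "germline_variants": AccessTier.TIER2,
    "raw_sequencing_reads": AccessTier.TIER2,
    "drug_use_history": AccessTier.TIER2,
}


def required_tier(fields):
    """Tier needed to release a dataset containing `fields`.

    Assumptions: the most restrictive field governs the whole release, and
    unrecognized fields are treated as Tier 2 until someone classifies them.
    """
    return max((FIELD_TIERS.get(f, AccessTier.TIER2) for f in fields), default=AccessTier.OPEN)
```

Defaulting unknown fields to Tier 2 errs on the side of privacy: a newly added variable cannot leak into an open release simply because nobody classified it.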

The Controlled Data Access Workflow

Securing access to controlled data is a multi-stage process that requires careful preparation. The following workflow, common to resources like the NCI's GDC and the American Cancer Society (ACS), outlines the general steps from initial inquiry to data receipt.

Pre-Application Preparation

Before submitting a request, researchers must thoroughly review the available cohort information to ensure the dataset contains the necessary variables and sample types to answer their research question [30]. It is equally critical to understand the Data Use Limitations for the specific dataset, which are set by the submitting institution and listed in public databases like dbGaP [26]. The proposed research use must be consistent with these limitations. Furthermore, researchers should confirm their institutional readiness to handle secure data and enter into a legal DUA.
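Because the proposed use must be consistent with the Data Use Limitations, a quick pre-screen can rule out clearly incompatible datasets early. The Python sketch below assumes dbGaP-style consent group codes (GRU for general research use, HMB for health/medical/biomedical research, DS-<disease> for disease-specific use) and common modifiers (NPU for not-for-profit use only; IRB, PUB, COL, and GSO as added obligations). The function name and disease labels such as "CA" are hypothetical. This is a simplification: it does not replace reading the full limitation text, and it does not replace DAC review.

```python
# Simplified pre-screen against dbGaP-style consent codes (an assumption-laden sketch).
KNOWN_BASES = {"GRU", "HMB", "DS"}
# Modifiers that add obligations rather than forbidding the use outright.
OBLIGATION_MODIFIERS = {"IRB", "PUB", "COL", "GSO"}


def prescreen_use(consent_code: str, research_disease: str, for_profit: bool):
    """Return (compatible, obligations) for a health-related research project.

    consent_code: e.g. "GRU", "HMB-NPU", "DS-CA-IRB".
    research_disease: hypothetical short label for the project's disease focus.
    """
    parts = consent_code.upper().split("-")
    base = parts[0]
    if base not in KNOWN_BASES:
        raise ValueError(f"unrecognized consent code: {consent_code}")
    if base == "DS":
        if len(parts) < 2:
            raise ValueError("a DS consent code must name a disease, e.g. DS-CA")
        allowed_disease, modifiers = parts[1], parts[2:]
        if research_disease.upper() != allowed_disease:
            return False, []
    else:
        # GRU and HMB both permit health-related (e.g., cancer) research.
        modifiers = parts[1:]
    if "NPU" in modifiers and for_profit:
        return False, []
    obligations = [m for m in modifiers if m in OBLIGATION_MODIFIERS]
    return True, obligations
```

A result such as `(True, ["IRB", "PUB"])` means the use looks compatible but the application must document IRB approval and a publication plan.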

The Application Process

The formal application typically requires detailed information about the lead investigator, their institution, and the proposed project. As per ACS guidelines, this often includes [30]:

- A short biography of the principal investigator.

- A concise project title and a single-paragraph description.

- Specification of the study population and datasets required.

- A timeline for the project, especially if aligned with a grant submission.

- Details on any biospecimens needed.

The DAC evaluates requests based on criteria such as the project's scientific merit, feasibility, consistency with the ACS mission, and the research team's qualifications [30]. For NIH-controlled data, authorization must be obtained through the dbGaP system [26].

Post-Approval Management

Upon DAC approval, the researcher's institution typically executes a Data Use Agreement [27]. This legally binding document outlines the standards for appropriate data use, security protocols, ownership of results, and publication expectations, including any requirements for co-authorship [30]. Researchers must then adhere to the technical and ethical terms of the DUA, which include not attempting to re-identify participants and acknowledging the data source in all publications [26]. The GDC and similar repositories may also impose technical limits, such as a cap on concurrent connections (e.g., 250 per IP address), to ensure fair access for all users [26].
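When scripting bulk transfers, one way to stay under a per-IP connection cap is to bound the worker pool. The Python sketch below uses `concurrent.futures.ThreadPoolExecutor` for that purpose. The limit of 250 is the example figure quoted above and should be confirmed with the repository. `make_simulated_fetch` is a hypothetical stand-in for a real download call, included only so the cap can be checked.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

# Example cap quoted in the text; confirm the current limit with the repository.
MAX_CONCURRENT_CONNECTIONS = 250


class ConcurrencyTracker:
    """Context manager that records the peak number of simultaneous transfers."""

    def __init__(self):
        self._lock = threading.Lock()
        self.active = 0
        self.peak = 0

    def __enter__(self):
        with self._lock:
            self.active += 1
            self.peak = max(self.peak, self.active)
        return self

    def __exit__(self, exc_type, exc, tb):
        with self._lock:
            self.active -= 1
        return False


def fetch_all(file_ids, fetch_one, max_connections=MAX_CONCURRENT_CONNECTIONS):
    """Call fetch_one(file_id) for each id, never running more than max_connections at once."""
    with ThreadPoolExecutor(max_workers=max_connections) as pool:
        return list(pool.map(fetch_one, file_ids))


def make_simulated_fetch(tracker, delay=0.01):
    """Hypothetical stand-in for a real download call, used to check the cap."""
    def fetch_one(file_id):
        with tracker:
            time.sleep(delay)
        return file_id
    return fetch_one
```

In practice, a setting well below the published cap leaves headroom for other tools sharing the same IP address.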

Essential Tools for Cancer Genomic Analysis

Researchers working with public cancer genomic datasets rely on a suite of computational tools and platforms for analysis. The following table details key resources, many of which are developed and maintained by groups like the Cancer Genome Computational Analysis (CGCA) group at the Broad Institute [29].

Table 3: Research Reagent Solutions for Cancer DNA Sequence Analysis

| Tool/Platform Name | Type | Primary Function in Analysis |
|---|---|---|
| FireCloud | Cloud-based Platform | A centralized workspace that houses large datasets (e.g., TCGA) and provides robust, scalable workflows for genomic analysis [29]. |
| FireBrowse | Data Portal | A user-friendly, web-based interface for browsing, downloading, and generating summary reports from TCGA data [29]. |
| ABSOLUTE | Computational Algorithm | Estimates tumor purity and ploidy from sequencing data, computing absolute somatic copy-number and mutation multiplicities [29]. |
| MutSig | Computational Algorithm | Identifies genes that are mutated more often than expected by chance, highlighting potential driver genes in a cohort [29]. |
| dRanger | Computational Algorithm | Detects somatic rearrangements by identifying clusters of aberrant paired-end sequencing reads in a tumor sample [29]. |
| POLYSOLVER | Computational Algorithm | Infers HLA types from whole exome sequence data, which is crucial for immuno-oncology studies [29]. |
| TumorPortal | Data Resource | A comprehensive mutational dataset and web resource for exploring somatic mutations in 21 cancer types [29]. |
| GTEx Portal | Data Resource | Provides a reference atlas of gene expression and regulation across normal human tissues, essential for comparing tumor data [29]. |
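The idea behind MutSig, that some genes are "mutated more often than expected by chance", can be illustrated with a one-sided binomial test. The Python sketch below is not MutSig's algorithm. It swaps in a deliberately simple background model: every coding base in every sample is assumed to mutate independently at a single `background_rate`. MutSig-style tools also model per-gene, per-sample, and sequence-context covariates. The function names are hypothetical.

```python
from math import exp, lgamma, log, log1p


def log_binom_pmf(i: int, n: int, p: float) -> float:
    """log P(X = i) for X ~ Binomial(n, p), computed via log-gamma to avoid overflow."""
    return lgamma(n + 1) - lgamma(i + 1) - lgamma(n - i + 1) + i * log(p) + (n - i) * log1p(-p)


def binomial_sf(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    if not 0 < p < 1:
        raise ValueError("p must lie strictly between 0 and 1")
    if k <= 0:
        return 1.0
    if k > n:
        return 0.0
    total = 0.0
    for i in range(k, n + 1):
        term = exp(log_binom_pmf(i, n, p))
        total += term
        # Once i exceeds n * p the terms are non-increasing, so stop when they stop mattering.
        if i > n * p and term < total * 1e-15:
            break
    return min(total, 1.0)


def gene_enrichment_pvalue(observed, coding_bases, background_rate, n_samples):
    """One-sided p-value that a gene carries more mutations than a flat background predicts.

    Simplifying assumption: every coding base in every sample mutates independently at
    the same background_rate; real significance tools model many more covariates.
    """
    return binomial_sf(observed, coding_bases * n_samples, background_rate)
```

For instance, a 2,000-bp coding region across 100 samples at a background rate of 1e-6 is expected to carry about 0.2 mutations, so observing 8 yields a very small p-value. Across thousands of genes, such p-values still need multiple-testing correction.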

Experimental Protocol for Accessing and Analyzing Controlled Data

This section provides a detailed methodology for a researcher to follow when embarking on a project using controlled-access cancer genomic data, from initial discovery to publication.

Protocol: Utilizing Controlled-Access Data from the GDC

Objective: To identify somatically mutated genes in a specific cancer type using controlled-access whole genome sequencing data from the Genomic Data Commons (GDC).

Step 1: Discovery and Project Scoping

- Navigate to the GDC Data Portal or related resource (e.g., FireBrowse) [29].

- Use the public data explorer to identify available datasets for your cancer of interest. Examine the available data types (e.g., WGS, RNA-Seq), sample size, and accompanying clinical data.

- Note the specific dbGaP study accession number (e.g., phs000178) and the associated Data Use Limitations.
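Recording the full accession, including its version and participant-set suffixes, makes it clear which data release an analysis used. The Python sketch below parses accessions of the form `phs000178.v11.p8` (study, version, participant set). The version numbers in the example are placeholders, not a claim about the current TCGA release, and the function name is hypothetical.

```python
import re

# dbGaP study accessions: "phs" + six digits, optionally ".v<version>" and ".p<participant set>".
ACCESSION_RE = re.compile(r"^phs(\d{6})(?:\.v(\d+))?(?:\.p(\d+))?$")


def parse_dbgap_accession(accession: str) -> dict:
    """Split a dbGaP study accession into its study, version, and participant-set parts."""
    m = ACCESSION_RE.match(accession.strip())
    if m is None:
        raise ValueError(f"not a dbGaP study accession: {accession!r}")
    study, version, participant_set = m.groups()
    return {
        "study": f"phs{study}",
        "version": int(version) if version else None,
        "participant_set": int(participant_set) if participant_set else None,
    }
```
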

Step 2: Data Access Request

- Log in to the dbGaP authorized access system using your eRA Commons credentials.

- Submit a Data Access Request for the identified dbGaP study. The request must include:

  - A research use statement that aligns exactly with the dbGaP Data Use Limitations.

  - A detailed research plan outlining the specific aims and analytical methods.

  - Information about key personnel who will handle the data.

- Await review and approval from the NIH Data Access Committee (DAC). This process can take several weeks [26] [30].

Step 3: Data Retrieval and Alignment

- Once approved, log in to the GDC Data Portal with your authorized credentials.

- Use the GDC Data Transfer Tool to securely download the required BAM or FASTQ files for your selected cases.

- Perform quality control on the raw sequencing data using tools like FastQC.

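- If re-processing from FASTQ, align the sequencing reads to the human reference genome (e.g., GRCh38) using a DNA aligner such as BWA-MEM. Splice-aware aligners such as STAR are designed for RNA-Seq reads and are not appropriate for WGS data. GDC-harmonized BAM files are already aligned to GRCh38.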
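The retrieval step can be scripted around the GDC Data Transfer Tool (`gdc-client download -m <manifest> -t <token>`) and followed by an integrity check against the manifest's md5 column. The Python sketch below makes three assumptions: the manifest is the tab-separated file exported from the portal (with `id`, `filename`, and `md5` columns), `gdc-client` is on the PATH, and downloaded files land in one subdirectory per file id. All helper names are hypothetical.

```python
import csv
import hashlib
import subprocess
from pathlib import Path


def read_gdc_manifest(path):
    """Parse a tab-separated GDC manifest (header includes id, filename, md5)."""
    with open(path, newline="") as handle:
        return list(csv.DictReader(handle, delimiter="\t"))


def gdc_client_download_cmd(manifest, token_file):
    """Argument list for the GDC Data Transfer Tool; controlled files require a token."""
    return ["gdc-client", "download", "-m", str(manifest), "-t", str(token_file)]


def run_download(manifest, token_file, out_dir):
    # Run from out_dir so files land there; resolve paths before changing directory.
    cmd = gdc_client_download_cmd(Path(manifest).resolve(), Path(token_file).resolve())
    subprocess.run(cmd, cwd=out_dir, check=True)


def md5sum(path, chunk_size=1 << 20):
    """Stream a (possibly very large) file through MD5 without loading it into memory."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_downloads(manifest_rows, out_dir):
    """Return ids whose file is missing or whose md5 differs from the manifest."""
    failures = []
    for row in manifest_rows:
        # Assumes one subdirectory per file id, as gdc-client lays out downloads.
        target = Path(out_dir) / row["id"] / row["filename"]
        if not target.is_file() or md5sum(target) != row["md5"]:
            failures.append(row["id"])
    return failures
```

Any ids returned by `verify_downloads` can be written to a smaller manifest and downloaded again. Keep the token file out of version control and shared directories.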

Step 4: Somatic Variant Calling and Analysis

- Execute a somatic variant calling pipeline. For example:

  - Use MuTect2 (part of the GATK suite) for calling small somatic SNVs and indels.

  - Use dRanger or similar tools for identifying somatic structural variants [29].

- Annotate the resulting variants using a tool like VEP (Variant Effect Predictor).

- Perform downstream analyses to identify significantly mutated genes, for example with MutSig [29].
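The calling and annotation steps above can be chained as shell commands. The Python sketch below assembles GATK4-style `Mutect2` (tumor-normal mode) and `FilterMutectCalls` invocations, followed by a VEP annotation run. It assumes `gatk` and `vep` are installed, that a local VEP cache exists for the chosen assembly, and that the normal sample name matches the SM tag in the normal BAM. The file names in the usage note and the helper names are placeholders.

```python
import subprocess


def mutect2_cmd(reference, tumor_bam, normal_bam, normal_sample, out_vcf):
    """Tumor-normal Mutect2 call; normal_sample must match the SM tag in the normal BAM."""
    return ["gatk", "Mutect2", "-R", reference,
            "-I", tumor_bam, "-I", normal_bam,
            "-normal", normal_sample, "-O", out_vcf]


def filter_mutect_calls_cmd(reference, raw_vcf, filtered_vcf):
    """Apply Mutect2's own filtering model to the raw calls."""
    return ["gatk", "FilterMutectCalls", "-R", reference, "-V", raw_vcf, "-O", filtered_vcf]


def vep_cmd(in_vcf, out_vcf, assembly="GRCh38"):
    # Assumes a locally installed VEP cache for the chosen assembly.
    return ["vep", "--input_file", in_vcf, "--output_file", out_vcf,
            "--vcf", "--cache", "--offline", "--assembly", assembly]


def run_steps(commands):
    """Run each step in order and stop at the first failure."""
    for cmd in commands:
        subprocess.run(cmd, check=True)
```

A typical call is `run_steps([mutect2_cmd("ref.fa", "tumor.bam", "normal.bam", "NORMAL", "raw.vcf.gz"), filter_mutect_calls_cmd("ref.fa", "raw.vcf.gz", "filtered.vcf.gz"), vep_cmd("filtered.vcf.gz", "annotated.vcf")])`. Returning argument lists instead of running them directly makes each command easy to log alongside the results.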

Step 5: Validation and Reporting

- Validate key findings using orthogonal methods (if possible) or in independent validation cohorts.

- In all oral and written presentations, disclosures, or publications, acknowledge the specific dataset(s) and the NIH-designated data repositories (GDC, dbGaP) as required by the NIH GDS Policy [26].

This section outlines the core bioinformatics pipeline for identifying somatic variants from cancer DNA sequencing data, a foundational process for research utilizing public datasets in oncology. The transition of next-generation sequencing (NGS) from a research tool to a clinical cornerstone for precision oncology makes the understanding of these pipelines imperative [31]. The process transforms raw sequencing data into a structured list of genetic variants that can be mined for insights into tumorigenesis, heterogeneity, and therapeutic targets.

Next-generation sequencing (NGS) allows for the massive parallel sequencing of DNA fragments, providing a comprehensive view of a tumor's genetic landscape at a fraction of the cost and time of traditional methods [31]. In cancer research, this typically involves sequencing matched tumor and normal tissue pairs. The computational analysis of this data is challenging yet crucial, as the accurate identification of somatic mutations—particularly low-frequency variants present in subclones of the tumor—can have significant implications for understanding drug resistance and patient prognosis [32]. The pipeline for this analysis is a multi-step process where raw data is progressively refined into actionable genetic information.
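Why subclonal variants are hard to detect follows from simple arithmetic on tumor purity. The Python sketch below uses the standard admixture relationship: expected VAF = purity × CCF × multiplicity / (purity × tumor copy number + (1 − purity) × normal copy number). It assumes a diploid normal, and it assumes the mutated subclone has the same copy number as the rest of the tumor. The function and parameter names are illustrative.

```python
def expected_vaf(purity, tumor_copy_number, multiplicity=1, ccf=1.0, normal_copy_number=2):
    """Expected variant allele frequency of a somatic SNV in a tumor/normal cell mixture.

    purity: fraction of sampled cells that are tumor cells.
    ccf: cancer cell fraction carrying the mutation (1.0 = clonal).
    multiplicity: mutant copies per mutated tumor cell.
    Assumes the subclone shares the bulk tumor copy number.
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    mutant_copies = purity * ccf * multiplicity
    total_copies = purity * tumor_copy_number + (1 - purity) * normal_copy_number
    return mutant_copies / total_copies
```

At 50% purity in a diploid region, a clonal heterozygous mutation has an expected VAF of 0.25, but a subclone present in 20% of tumor cells drops to 0.05. At 100x depth that is only about five supporting reads, which is why sensitivity for subclonal variants depends so strongly on coverage.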

The journey from raw sequencing data to variant calls follows a structured pathway. The major stages of this pipeline, illustrated in the workflow diagram below, are pre-alignment and sequence alignment, alignment co-cleaning, and somatic variant calling.

Detailed Methodologies and Experimental Protocols

Pre-Alignment and Sequence Alignment

Input: Unaligned reads in FASTQ or BAM format. Output: Aligned reads in BAM format.

Prior to alignment, BAM files submitted to repositories may be split by read group and converted to FASTQ format. Reads that fail the Illumina chastity test are typically filtered out [33].

The alignment step maps the sequenced reads to a reference genome. The choice of algorithm often depends on the read length.

- BWA-MEM is used for mean read lengths greater than or equal to 70 bp [33].

- BWA-aln is used for shorter reads [33].

Protocol: BWA-MEM Alignment

Parameters: -t 8 sets the thread count; -T 0 sets the minimum alignment score for output to zero, so no alignments are filtered out by score; -R defines the read group header line.
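The Python sketch below reconstructs the alignment call from the parameters listed above and pipes the SAM output into `samtools view -b` to write a BAM. It also encodes the 70 bp read-length rule for choosing between BWA-MEM and BWA-aln. Several details are assumptions for illustration: the `PL:ILLUMINA` read-group field, the paired-end FASTQ inputs, and the helper names. `bwa` expects the read-group string to contain escaped `\t` sequences rather than literal tab characters.

```python
import subprocess

MIN_BWA_MEM_READ_LENGTH = 70  # threshold quoted above for choosing BWA-MEM over BWA-aln


def choose_bwa_algorithm(mean_read_length: float) -> str:
    """Pick the BWA algorithm by mean read length."""
    return "mem" if mean_read_length >= MIN_BWA_MEM_READ_LENGTH else "aln"


def bwa_mem_cmd(reference, fastq_1, fastq_2, read_group_id, sample, threads=8):
    # bwa wants backslash-t escapes in -R, not real tab characters.
    read_group = f"@RG\\tID:{read_group_id}\\tSM:{sample}\\tPL:ILLUMINA"
    return ["bwa", "mem", "-t", str(threads), "-T", "0", "-R", read_group,
            reference, fastq_1, fastq_2]


def align_to_bam(bwa_cmd, out_bam):
    """Stream bwa's SAM output straight into samtools to write a BAM file."""
    bwa = subprocess.Popen(bwa_cmd, stdout=subprocess.PIPE)
    samtools = subprocess.Popen(["samtools", "view", "-b", "-o", out_bam, "-"], stdin=bwa.stdout)
    bwa.stdout.close()  # lets bwa receive SIGPIPE if samtools exits early
    samtools_rc = samtools.wait()
    bwa_rc = bwa.wait()
    if samtools_rc != 0 or bwa_rc != 0:
        raise RuntimeError(f"alignment failed (bwa={bwa_rc}, samtools={samtools_rc})")
```

Streaming avoids writing an intermediate SAM file, which for whole-genome data can be several times larger than the BAM.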

Following alignment, read group alignments belonging to a single aliquot are merged, and the data is sorted by coordinate.

Protocol: BAM Sorting with Picard
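The merge-and-sort step described above can be expressed with Picard's `MergeSamFiles` (one `I=` per read-group BAM) and `SortSam`. The Python sketch below uses the `KEY=VALUE` argument style shown in Picard's documentation examples. It assumes a local `picard.jar` and a Java runtime. The helper names and file names are hypothetical.

```python
def picard_merge_cmd(picard_jar, read_group_bams, out_bam):
    """Merge one aliquot's per-read-group BAMs into a single coordinate-sorted BAM."""
    cmd = ["java", "-jar", picard_jar, "MergeSamFiles"]
    cmd += [f"I={bam}" for bam in read_group_bams]
    return cmd + [f"O={out_bam}", "SORT_ORDER=coordinate"]


def picard_sort_cmd(picard_jar, in_bam, out_bam):
    """Coordinate-sort a single BAM with Picard SortSam."""
    return ["java", "-jar", picard_jar, "SortSam",
            f"I={in_bam}", f"O={out_bam}", "SORT_ORDER=coordinate"]
```

Each list can be passed to `subprocess.run(cmd, check=True)`. When an aliquot has only one read group, `SortSam` alone is sufficient.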

Alignment Co-Cleaning

Input: Aligned Reads (BAM). Output: Harmonized Aligned Reads (BAM).

Co-cleaning improves alignment quality by processing the tumor and matched normal BAM files together. This two-step process, often implemented using the Genome Analysis Toolkit (GATK), reduces false positives in subsequent variant calling [33].

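- Indel Local Realignment: Locates and corrects regions with misalignments caused by insertions or deletions, which can otherwise be erroneously scored as substitutions [33]. This step belongs to GATK3-era pipelines. GATK4 no longer includes IndelRealigner, because haplotype-based callers such as MuTect2 reassemble reads locally.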

- Base Quality Score Recalibration (BQSR): Systematically adjusts the base quality scores based on detectable errors, increasing the accuracy of variant calling. The original quality scores are retained for potential downstream use [33].

Protocol: GATK BaseRecalibrator
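The Python sketch below shows BQSR as the two-command GATK4 sequence: `BaseRecalibrator` builds a recalibration table from known variant sites, and `ApplyBQSR` rewrites the quality scores. It passes `--emit-original-quals` so the original scores are retained, matching the description above. Unlike the joint GATK3 co-cleaning described in the text, this sketch processes the tumor and normal BAMs one at a time. The known-sites files (for example, dbSNP and a curated indel set), the output naming scheme, and the helper names are all assumptions.

```python
def base_recalibrator_cmd(reference, in_bam, known_sites, recal_table):
    """Build the recalibration model, masking known polymorphic sites."""
    cmd = ["gatk", "BaseRecalibrator", "-R", reference, "-I", in_bam]
    for vcf in known_sites:
        cmd += ["--known-sites", vcf]
    return cmd + ["-O", recal_table]


def apply_bqsr_cmd(reference, in_bam, recal_table, out_bam):
    """Rewrite base qualities using the recalibration table."""
    return ["gatk", "ApplyBQSR", "-R", reference, "-I", in_bam,
            "--bqsr-recal-file", recal_table,
            "--emit-original-quals", "true",  # keep the original scores in the OQ tag
            "-O", out_bam]


def bqsr_for_pair(reference, bams, known_sites):
    """Recalibration commands for each BAM of a tumor-normal pair, processed separately."""
    steps = []
    for bam in bams:
        stem = bam[:-4] if bam.endswith(".bam") else bam
        table = f"{stem}.recal.table"
        steps.append(base_recalibrator_cmd(reference, bam, known_sites, table))
        steps.append(apply_bqsr_cmd(reference, bam, table, f"{stem}.bqsr.bam"))
    return steps
```
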

Somatic Variant Calling

Input: Co-cleaned Aligned Reads (BAM). Output: Raw Simple Somatic Mutations (VCF).

Variant calling is performed on tumor-normal pairs to identify somatic mutations. There is no single best variant caller, and performance varies significantly depending on the context, such as variant allele frequency and coverage [34] [32]. Therefore, using multiple callers or optimized combinations is often recommended.
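One common way to combine callers is a simple vote: keep a variant only if at least k callers report it. The Python sketch below implements that vote over `(chrom, pos, ref, alt)` keys pulled from minimal VCF lines. It assumes the callers' outputs have already been normalized (left-aligned, multiallelic records split consistently), since otherwise identical indels can receive different keys. Caller names such as `caller_b` are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chrom, pos, ref, alt)


def vcf_variant_keys(lines: Iterable[str]) -> Set[Variant]:
    """Collect (chrom, pos, ref, alt) keys from VCF text lines, skipping headers."""
    keys = set()
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue
        chrom, pos, _id, ref, alt = line.rstrip("\n").split("\t")[:5]
        for allele in alt.split(","):  # one key per alternate allele
            keys.add((chrom, int(pos), ref, allele))
    return keys


def consensus_calls(calls_by_caller: Dict[str, Set[Variant]], min_support: int = 2) -> Set[Variant]:
    """Keep variants reported by at least `min_support` distinct callers."""
    support = Counter()
    for calls in calls_by_caller.values():
        support.update(set(calls))  # each caller votes at most once per variant
    return {variant for variant, n in support.items() if n >= min_support}
```

Raising `min_support` trades sensitivity for precision. Given the dependence on allele frequency and coverage noted above, a low-VAF variant found by only one well-suited caller may still deserve a closer look.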