A Researcher's Guide to LOQ for ctDNA Digital PCR: From Foundational Concepts to Clinical Validation


Abstract

This article provides a comprehensive resource for researchers and drug development professionals on determining the Limit of Quantification (LOQ) for circulating tumor DNA (ctDNA) using digital PCR (dPCR). It covers the foundational principles distinguishing LOQ from Limit of Detection (LOD) and Limit of Blank (LoB), explores methodological frameworks for assay design and characterization, and details optimization strategies to enhance sensitivity and specificity. Furthermore, it examines validation protocols and comparative performance of dPCR against other technologies like next-generation sequencing (NGS), synthesizing key takeaways and future directions for implementing robust ctDNA quantification in cancer research and therapeutic development.

Understanding LOQ, LOD, and LoB: The Essential Pillars of ctDNA Assay Sensitivity

In the field of molecular diagnostics, particularly in the detection of circulating tumor DNA (ctDNA) using digital PCR (dPCR), accurately characterizing an assay's lower limits is not merely a technical formality but a fundamental requirement for clinical utility. The ability to detect minimal residual disease or early treatment response hinges on precisely understanding what constitutes a real signal versus background noise. Three critical metrics form the hierarchical foundation of this understanding: the Limit of Blank (LoB), the Limit of Detection (LOD), and the Limit of Quantitation (LOQ). These parameters establish a continuum of an assay's capability, from distinguishing something from nothing, to reliably detecting an analyte's presence, and finally to measuring it with acceptable precision and accuracy [1] [2].

For ctDNA research, where analyte concentrations can be exceptionally low—often below 0.1% variant allele frequency (VAF)—grasping the distinctions and relationships between LoB, LOD, and LOQ is paramount [3] [4]. These metrics directly inform researchers about the confidence they can place in low-end results, guide the setting of clinical reporting thresholds, and ultimately determine whether an assay is "fit for purpose" in areas like therapy monitoring or recurrence risk assessment [1] [5]. This guide provides a comparative analysis of these core performance metrics, framed within the context of ctDNA dPCR research, to equip scientists with the knowledge needed for rigorous assay validation and interpretation.

Defining the Metrics: Core Concepts and Calculations

The terms LoB, LOD, and LOQ are often mistakenly used interchangeably; however, they represent distinct concepts with specific definitions and calculations standardized by guidelines such as those from the Clinical and Laboratory Standards Institute (CLSI) EP17 [1] [6]. The following table provides a concise comparison of their key characteristics.

Table 1: Core Definitions and Characteristics of LoB, LOD, and LOQ

| Parameter | Definition | Primary Question Answered | Typical Sample Type for Determination | Key Statistical/Performance Focus |
|---|---|---|---|---|
| LoB (Limit of Blank) | The highest apparent analyte concentration expected when replicates of a blank sample (containing no analyte) are tested [1]. | Could the signal observed be explained by background noise alone? | Sample containing no analyte (e.g., blank plasma, buffer) [1]. | Characterizes the background and false-positive rate (Type I error, α) [1]. |
| LOD (Limit of Detection) | The lowest analyte concentration likely to be reliably distinguished from the LoB [1]. | Can we be confident the analyte is present? | Sample containing a low concentration of analyte, commutable with patient specimens [1]. | Balances false positives (α) and false negatives (Type II error, β); about detection, not precise measurement [1] [6]. |
| LOQ (Limit of Quantitation) | The lowest concentration at which the analyte can be quantified with acceptable precision and bias [1] [7]. | Can we reliably put a number on how much is there? | Sample with analyte concentration at or above the LOD [1]. | Defined by predefined goals for imprecision (e.g., CV ≤ 20%) and bias [1] [2] [7]. |

Hierarchical Relationship and Distinctions

These three metrics exist in a progressive hierarchy: LOQ ≥ LOD > LoB [1]. The LOD is always greater than the LoB because a signal must exceed the inherent noise of the blank with a high degree of confidence. The LOQ, in turn, is at or above the LOD, as it imposes stricter requirements, demanding not just detectability but also acceptable quantitative performance [1] [2]. It is crucial to recognize that an assay's "functional sensitivity," often defined as the concentration yielding a 20% coefficient of variation (CV), is a measure of its LOQ, not its LOD [1].

The following diagram illustrates the conceptual relationship between these three limits and the zones of analytical performance they define.

Experimental Protocols for Determination

Adhering to standardized experimental protocols is critical for generating robust, reproducible estimates of LoB, LOD, and LOQ. The CLSI EP17 guideline provides a widely accepted framework for this process [1] [2].

Protocol for Limit of Blank (LoB) Determination

The LoB is established by repeatedly measuring a blank sample to characterize the background noise distribution [1].

- Sample Type: A matrix-matched sample confirmed to contain no analyte. For ctDNA assays, this is typically plasma from healthy donors or a suitable buffer [1] [8].

- Experimental Replicates: A minimum of 60 replicate measurements is recommended when establishing the LoB, while 20 may suffice for verification [1].

- Data Analysis: Calculate the mean ($\text{mean}_{\text{blank}}$) and standard deviation ($\text{SD}_{\text{blank}}$) of the results. The LoB is then derived as $\text{LoB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$ [1]. Assuming the blank results are approximately normally distributed, this formula estimates the 95th percentile of the blank distribution, meaning only 5% of blank measurements are expected to exceed this value due to random noise [1] [6].
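The parametric LoB calculation above can be sketched in a few lines of Python. This is a minimal illustration, assuming the blank replicate results are already expressed in a common unit (for example, mutant copies per reaction); the function name `limit_of_blank` and the replicate values are hypothetical, not data from the cited studies. Because dPCR blanks frequently return mostly zero counts, the normal-distribution assumption behind the 1.645 multiplier may not hold, and a nonparametric percentile estimate may be more appropriate in that case.

```python
import statistics


def limit_of_blank(blank_results: list[float], z: float = 1.645) -> float:
    """Parametric LoB: mean of blank replicates plus z times their SD.

    z = 1.645 corresponds to the one-sided 95th percentile of a normal
    distribution, i.e. an alpha (false-positive) rate of about 5%.
    """
    if len(blank_results) < 2:
        raise ValueError("at least two blank replicates are needed to estimate SD")
    mean_blank = statistics.mean(blank_results)
    sd_blank = statistics.stdev(blank_results)  # sample SD, n - 1 denominator
    return mean_blank + z * sd_blank


if __name__ == "__main__":
    # Hypothetical mutant-copy counts from wild-type-only (blank) reactions.
    blank_copies = [0, 0, 1, 0, 0, 2, 0, 1, 0, 0]
    print(f"LoB = {limit_of_blank(blank_copies):.2f} mutant copies per reaction")
```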

Protocol for Limit of Detection (LOD) Determination

The LOD determination requires testing a low-concentration sample in addition to knowing the LoB, ensuring the analyte can be distinguished from noise [1].

- Sample Type: A sample with a low but known concentration of the analyte, prepared in the same matrix as the blank. For ctDNA dPCR, this could be a synthetic reference material or a patient sample diluted to a low variant allele frequency (VAF) [1] [3].

- Experimental Replicates: Similar to LoB, 60 replicates for establishment and 20 for verification are recommended [1].

- Data Analysis: Calculate the mean and standard deviation ($\text{SD}_{\text{low}}$) of the low-concentration sample. The LOD is then calculated as $\text{LOD} = \text{LoB} + 1.645 \times \text{SD}_{\text{low}}$ [1]. This ensures that 95% of measurements at the LOD concentration will exceed the LoB, resulting in a false-negative rate of only 5% [1].
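A companion sketch computes the parametric LOD from a previously determined LoB and replicates of a low-concentration sample, and checks the acceptance criterion that roughly 95% of results at the LOD exceed the LoB. The function names and example values are hypothetical. The full CLSI EP17 procedure also applies a small-sample correction to the 1.645 multiplier and pools SDs across several low-level samples; both refinements are omitted from this simplified version.

```python
import statistics


def limit_of_detection(lob: float, low_results: list[float], z: float = 1.645) -> float:
    """Parametric LOD: LoB plus z times the SD of a low-concentration sample.

    z = 1.645 targets a beta (false-negative) rate of about 5% at the LOD.
    """
    if len(low_results) < 2:
        raise ValueError("at least two low-level replicates are needed to estimate SD")
    sd_low = statistics.stdev(low_results)
    return lob + z * sd_low


def fraction_above_lob(results: list[float], lob: float) -> float:
    """Fraction of replicate results exceeding the LoB (target: >= 0.95 at the LOD)."""
    return sum(r > lob for r in results) / len(results)


if __name__ == "__main__":
    lob = 1.55  # hypothetical LoB, mutant copies per reaction
    low_sample = [3, 5, 4, 6, 2, 4, 5, 3, 4, 4]  # hypothetical low-level replicates
    print(f"LOD = {limit_of_detection(lob, low_sample):.2f} copies")
    print(f"Fraction above LoB: {fraction_above_lob(low_sample, lob):.2f}")
```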

Protocol for Limit of Quantitation (LOQ) Determination

The LOQ is the concentration where predefined goals for imprecision and bias are met [1] [7].

- Sample Type: Samples with analyte concentrations at or slightly above the estimated LOD.

- Experimental Replicates: Multiple replicates (e.g., 20-60) across multiple runs are tested at several candidate concentrations near the LOD.

- Data Analysis: The precision (CV%) and bias (difference from expected concentration) are calculated for each level. The LOQ is the lowest concentration where the CV is ≤ 20% and the bias is within an acceptable predefined limit (e.g., ±20%) [2] [7]. This is sometimes referred to as the "functional sensitivity" of the assay [1].
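The LOQ rule above (lowest level with CV ≤ 20% and bias within ±20%) can be sketched as a small precision-profile calculation. This is an illustrative sketch under stated assumptions: nominal concentrations are known and positive, the 20% limits are the example goals from the text rather than fixed requirements, and the hypothetical `estimate_loq` walks downward from the highest level so that an isolated passing level below a failing one is not reported as the LOQ.

```python
import statistics
from typing import Optional


def precision_profile(levels: dict[float, list[float]]) -> list[tuple[float, float, float]]:
    """Return (nominal, CV %, bias %) for each nominal level, sorted ascending."""
    profile = []
    for nominal, reps in sorted(levels.items()):
        mean = statistics.mean(reps)
        cv = 100 * statistics.stdev(reps) / mean if mean > 0 else float("inf")
        bias = 100 * (mean - nominal) / nominal
        profile.append((nominal, cv, bias))
    return profile


def estimate_loq(levels: dict[float, list[float]],
                 max_cv: float = 20.0, max_abs_bias: float = 20.0) -> Optional[float]:
    """Lowest level such that it and every higher level meet both goals."""
    loq = None
    for nominal, cv, bias in reversed(precision_profile(levels)):
        if cv <= max_cv and abs(bias) <= max_abs_bias:
            loq = nominal
        else:
            break  # stop at the first failing level when walking downward
    return loq


if __name__ == "__main__":
    # Hypothetical replicate results (copies/reaction) at three nominal levels.
    data = {
        5.0: [2.0, 9.0, 4.0, 7.0, 3.0],
        10.0: [9.0, 11.0, 10.0, 12.0, 8.0],
        20.0: [19.0, 21.0, 20.0, 22.0, 18.0],
    }
    for nominal, cv, bias in precision_profile(data):
        print(f"{nominal:>5}: CV = {cv:.1f}%, bias = {bias:+.1f}%")
    print("Estimated LOQ:", estimate_loq(data))
```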

Table 2: Summary of Experimental Protocols for Determining LoB, LOD, and LOQ

| Parameter | Recommended Number of Replicates (Establishment) | Key Formula(s) | Acceptance Criterion |
|---|---|---|---|
| LoB | 60 | LoB = mean_blank + 1.645(SD_blank) | Defines the upper limit of the blank signal [1]. |
| LOD | 60 | LOD = LoB + 1.645(SD_low_sample) | ≥95% of measurements at LOD exceed the LoB [1]. |
| LOQ | 60 (at multiple levels) | N/A (determined by performance goals) | CV ≤ 20% and bias within acceptable limits (e.g., ±20%) at the LOQ concentration [1] [7]. |

Application in ctDNA dPCR Research

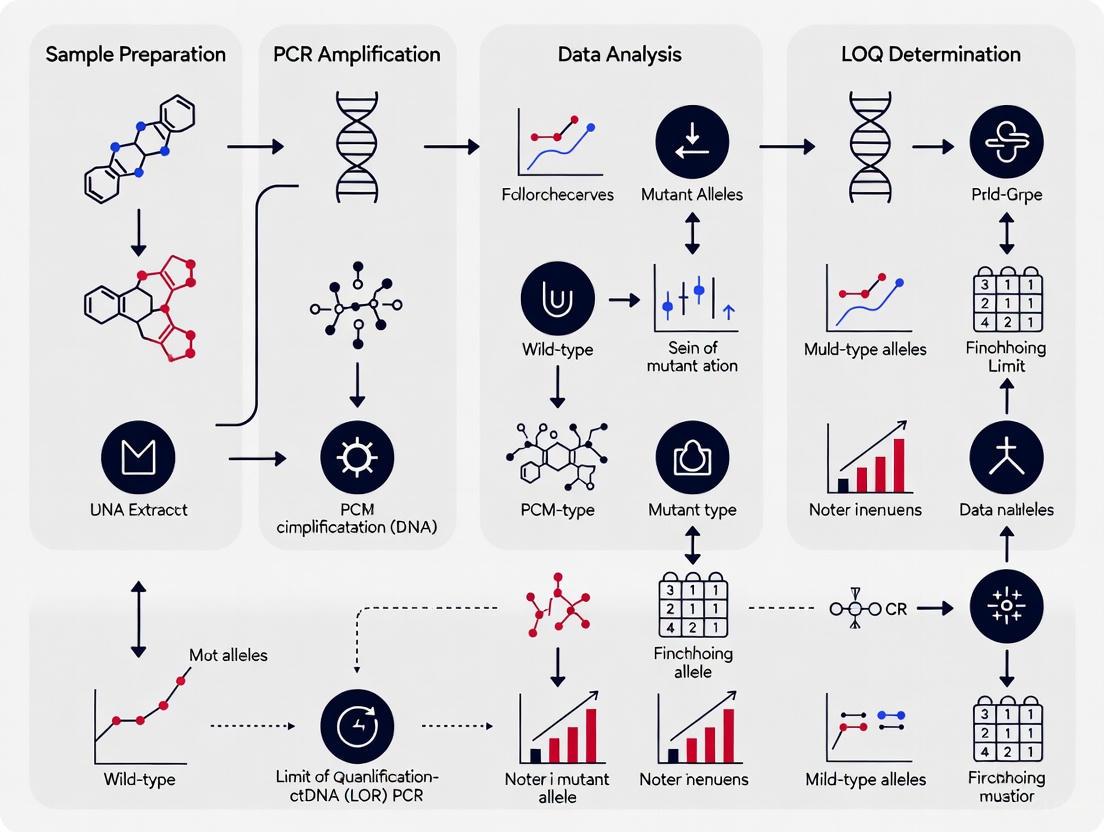

In the context of ctDNA analysis using digital PCR (dPCR), including droplet digital PCR (ddPCR), these metrics take on critical practical significance. The following diagram outlines a typical workflow for establishing and applying these limits in a ctDNA assay.

Performance Comparison: dPCR vs. NGS for ctDNA

The choice of technology profoundly impacts achievable sensitivity. Droplet digital PCR (ddPCR) and next-generation sequencing (NGS) are two primary techniques for ctDNA analysis, each with distinct performance characteristics related to LoB, LOD, and LOQ [3].

- ddPCR: This technique is characterized by a very low background (LoB), contributing to a low LOD. It excels in sensitivity for detecting known, pre-identified mutations. A 2025 study directly compared ddPCR to an NGS panel in localized rectal cancer, finding that ddPCR detected ctDNA in 58.5% (24/41) of baseline plasma samples, significantly higher than the NGS panel's 36.6% (15/41) [3]. This superior detection rate is attributed to ddPCR's ability to reliably detect mutant alleles at VAFs as low as 0.01% [3].

- NGS-Based Panels: While NGS panels can screen for multiple mutations simultaneously (a significant advantage for discovery), they generally have a higher LOD and LOQ compared to targeted dPCR. The same study noted that the higher LOD of NGS meant it missed ctDNA that was detectable by the more sensitive ddPCR assay [3]. Furthermore, the operational costs for ctDNA detection with ddPCR were reported to be 5–8.5-fold lower than with NGS [3].

Correlation with Clinical Tumor Burden

The ability to quantify ctDNA at low levels (i.e., a low LOQ) is crucial as it often correlates with clinical measures of disease burden. A 2025 study on metastatic pancreatic cancer demonstrated a significant correlation between ctDNA quantity and total tumor volume, especially liver metastasis volume [4]. The study established that a liver metastasis tumor volume threshold of 3.7 mL was associated with ctDNA detection with a sensitivity of 85.1% and specificity of 79.2% [4]. This empirical link underscores why validating the LOQ is not just an analytical exercise but a vital step in ensuring that an assay can generate clinically actionable data for monitoring therapy response and tumor dynamics.

Essential Research Reagent Solutions

The successful implementation of a robust ctDNA assay depends on a suite of specialized reagents and materials. The following table details key components and their functions in the context of establishing LoB, LOD, and LOQ.

Table 3: Essential Research Reagents and Materials for ctDNA Assay Validation

| Reagent / Material | Critical Function | Considerations for LoB/LOD/LOQ Determination |
|---|---|---|
| Cell-Free DNA Blood Collection Tubes (e.g., Streck Cell-Free DNA BCT) | Preserves blood samples to prevent white blood cell lysis and release of genomic DNA, which dilutes ctDNA [3]. | Critical for obtaining a true "blank" (healthy donor plasma) with minimal background genomic DNA for LoB studies. |
| Reference Materials (e.g., Synthetic ctDNA, GMAP controls) | Provide a known quantity of mutant analyte for spiking experiments to determine LOD and LOQ [1]. | Must be commutable with patient samples. Used to prepare the low-concentration samples for LOD determination and precision studies for LOQ. |
| dPCR Mutation Detection Assays | Target-specific probes (e.g., TaqMan) for the absolute quantification of mutant alleles [3]. | The specificity of the probe directly influences the background signal (LoB). Design against a high-VAF tumor mutation is key for tumor-informed ddPCR [3]. |
| Methylation-Specific Assays | Target epigenetic modifications (e.g., methylated HOXD8, POU4F1) for ctDNA detection [4]. | An alternative to mutation-based detection. Requires validation of LoB using plasma negative for the methylation marker. |
| NGS Panel Kits (e.g., Ion AmpliSeq Cancer Hotspot Panel v2) | Enable multiplexed detection of somatic alterations across multiple genes [3]. | The panel's inherent error rate and sequencing depth set a higher fundamental LoB and LOD compared to dPCR [3]. |

The hierarchical concepts of Limit of Blank (LoB), Limit of Detection (LOD), and Limit of Quantitation (LOQ) form the bedrock of reliable analytical measurement, especially in the challenging low-concentration environment of ctDNA analysis. A clear understanding of their distinct definitions, experimental protocols for their determination, and their practical implications is non-negotiable for developing assays that are truly "fit for purpose." As the field advances towards using ctDNA for minimal residual disease detection and personalizing therapy, the rigorous application of these principles in digital PCR workflows will be the cornerstone of generating trustworthy, clinically actionable data.

The Critical Role of LOQ in Reliable Low-Frequency Mutation Quantification

In the field of circulating tumor DNA (ctDNA) research, the accurate detection and quantification of low-frequency mutations are paramount for applications in early cancer detection, minimal residual disease monitoring, and therapy response assessment. This guide examines the critical role of the Limit of Quantification (LOQ) in ensuring reliable measurement of these rare genetic variants. By comparing the performance of digital PCR (dPCR) and next-generation sequencing (NGS) technologies, we provide a structured analysis of their quantification capabilities, supported by experimental data and standardized protocols. Understanding and applying LOQ principles is essential for researchers and drug development professionals utilizing ctDNA analysis in precision oncology.

The Limit of Quantification (LOQ) is defined as the lowest concentration at which an analyte can not only be reliably detected but also quantified with acceptable precision and accuracy [1]. In the context of ctDNA analysis, this translates to the lowest mutant allele frequency that can be precisely measured against a background of wild-type DNA. Unlike the Limit of Detection (LOD), which merely confirms presence, the LOQ ensures that quantitative values meet predefined goals for bias and imprecision, making it a crucial parameter for reliable biomarker measurement [9].

Circulating tumor DNA (ctDNA) refers to the fraction of cell-free DNA in blood that originates from tumor cells, released through apoptosis, necrosis, or active secretion [10]. These fragments are typically 70-200 base pairs in size and present unique analytical challenges due to their low concentration in plasma (often 5-10 ng/mL) and rapid clearance from circulation (half-life of 16 minutes to 2.5 hours) [10]. Moreover, the variant allele frequency (VAF) of ctDNA is often well below 1% and can be influenced by factors such as cancer type, stage, and metabolic clearance rate [10].

For clinical applications in precision oncology, particularly in scenarios like early cancer detection or monitoring residual disease after surgery, the ability to confidently quantify these low-frequency mutations is critical. The establishment of a method's LOQ provides researchers with a clear boundary defining the concentration range where quantitative results can be trusted for clinical decision-making.

LOQ Fundamentals and Definitions

Distinguishing Between Blank, Detection, and Quantification

In analytical chemistry and molecular diagnostics, three distinct performance characteristics define the lower limits of an assay:

- Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested. It represents the upper threshold of background noise, calculated as $\text{LoB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}}$ [1].

- Limit of Detection (LOD): The lowest analyte concentration likely to be reliably distinguished from the LoB. It represents the point at which detection is feasible but without guarantee of precise quantification, calculated as $\text{LOD} = \text{LoB} + 1.645 \times \text{SD}_{\text{low}}$, where $\text{SD}_{\text{low}}$ is the standard deviation of a low-concentration sample [1].

- Limit of Quantification (LOQ): The lowest concentration at which the analyte can not only be reliably detected but also quantified with acceptable precision (typically defined by a coefficient of variation ≤20%) and accuracy [1] [9].

The relationship between these parameters is hierarchical, with LoB < LOD ≤ LOQ, establishing progressively stringent requirements for assay performance at low analyte concentrations.

Calculation Methods for LOQ

The LOQ can be determined through several approaches, with the most common being:

- Standard Deviation and Slope Method: LOQ = 10 × σ / S, where σ is the standard deviation of the response and S is the slope of the calibration curve [9].

- Signal-to-Noise Ratio: A signal-to-noise ratio of 10:1 is generally accepted for estimating LOQ in chromatographic methods and other instrumental analyses [9].

- Functional Sensitivity Approach: The concentration that results in a coefficient of variation (CV) of 20% represents a practical LOQ for many bioanalytical applications [1].

These calculation methods emphasize that LOQ is not merely about detection but encompasses both precision and accuracy requirements for reliable quantification.

Technology Comparison for ctDNA Analysis

Methodologies for ctDNA Analysis

The landscape of ctDNA analysis technologies spans from targeted approaches assessing predefined mutations to untargeted methods scanning for unknown variants. The selection of an appropriate platform depends heavily on the application requirements, particularly the necessary sensitivity and quantification reliability.

Quantitative Performance Comparison

Table 1: Analytical Performance of Major ctDNA Detection Technologies

| Technology | Theoretical Sensitivity | Practical LOQ | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Real-Time PCR | 10% MAF [10] | ~25% MAF | Low cost, rapid, simple workflow [10] | Limited sensitivity, not suitable for low-frequency mutations |
| Digital PCR (dPCR/ddPCR) | 0.1% MAF [10] | 0.5-1% MAF | Absolute quantification, high sensitivity, minimal standards needed [11] | Limited to known mutations, lower multiplexing capability |
| BEAMing | 0.02% MAF [10] [11] | 0.1% MAF | Exceptional sensitivity, combination with flow cytometry [10] | Complex workflow, specialized equipment required |
| Targeted NGS | 0.1% MAF [11] | 1-2% MAF | Wider mutation profiling, discovery capability [10] | Higher input requirements, complex data analysis |
| Whole Genome/Exome NGS | 1-5% MAF [11] | 5-10% MAF | Comprehensive genomic coverage [11] | Highest cost, extensive bioinformatics, lowest sensitivity |

Impact of Pre-Analytical Factors on LOQ

The reliable quantification of low-frequency mutations in ctDNA is profoundly influenced by pre-analytical variables that can alter the apparent LOQ of any methodological approach:

- Sample Collection: The interval between venipuncture and processing should be minimized, or specialized blood collection tubes containing preservatives (e.g., Streck or PAXgene) should be utilized to prevent white blood cell lysis and dilution of ctDNA with genomic DNA [11].

- Processing Protocols: Two-step high-speed centrifugation is critical for obtaining platelet-poor plasma, and storage at -80°C with limited freeze-thaw cycles (<3) is recommended to prevent DNA degradation [11].

- ctDNA Extraction: Commercial kits like the QIAamp Circulating Nucleic Acid Kit are commonly employed, but protocols vary significantly in plasma volumes and extraction methods, directly impacting ctDNA yield and quality [11].

- Emerging Technologies: Microfluidics and nanotechnology approaches, such as dielectrophoresis-based microarray devices and nanochip/nanowire-based assays, show promise for improving cfDNA yields and reducing loss during extraction [11].

Standardized operating procedures (SOPs) and strict quality control are essential for maintaining consistent LOQ performance across experiments and laboratories, with guidelines available from organizations such as the European Committee for Standardization (CEN), Cancer ID Consortium, and BloodPAC [11].

Experimental Protocols for LOQ Determination

Establishing LOQ for dPCR Assays

Determining the LOQ for digital PCR assays requires a systematic approach to establish the lowest mutant allele frequency quantifiable with acceptable precision:

- Preparation of Reference Materials: Create dilution series of known mutant DNA in wild-type DNA background, spanning the expected LOQ range (typically 0.1% to 2% VAF).

- Partitioning and Amplification: Perform digital PCR using appropriate platforms (droplet-based or chip-based), ensuring sufficient partitions (typically >10,000) to capture rare mutant alleles.

- Data Collection: Quantify positive and negative partitions for both mutant and reference assays.

- Precision Assessment: Process multiple replicates (n≥5) at each concentration level and calculate the coefficient of variation (CV) for the measured VAF.

- LOQ Determination: Identify the lowest concentration where CV ≤20% is consistently maintained, indicating reliable quantification [1].

This empirical approach establishes a functional LOQ specific to the assay design, target sequence, and sample matrix.
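The partition counts collected in the steps above are converted to concentrations with a Poisson correction, because a positive partition may contain more than one target copy. The sketch below assumes a duplex assay in which mutant and wild-type targets are read in separate fluorescence channels of the same partitions, and that all partitions have the same volume; the function names and counts are hypothetical. Repeating the calculation for each replicate yields the measured VAFs whose CV is assessed in the precision step.

```python
import math


def copies_per_partition(positive: int, total: int) -> float:
    """Poisson-corrected mean copies per partition: lambda = -ln(1 - positive/total)."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("require total > 0 and 0 <= positive < total")
    return -math.log(1.0 - positive / total)


def vaf_from_partitions(mut_positive: int, wt_positive: int, total: int) -> float:
    """Variant allele frequency = mutant copies / (mutant + wild-type copies)."""
    lam_mut = copies_per_partition(mut_positive, total)
    lam_wt = copies_per_partition(wt_positive, total)
    if lam_mut + lam_wt == 0:
        raise ValueError("no positive partitions in either channel")
    return lam_mut / (lam_mut + lam_wt)


if __name__ == "__main__":
    # Hypothetical well: 20,000 partitions, 12 mutant-positive, 6,000 wild-type-positive.
    total = 20_000
    vaf = vaf_from_partitions(12, 6_000, total)
    print(f"mutant copies/partition = {copies_per_partition(12, total):.6f}")
    print(f"VAF = {100 * vaf:.3f}%")
```

Dividing λ by the partition volume (a platform-specific value not given here) would convert copies per partition into copies per microliter of reaction.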

Determining LOQ for NGS-Based Approaches

For NGS methodologies, LOQ establishment requires additional considerations related to sequencing depth and bioinformatic processing:

- Library Preparation: Use standardized input amounts of ctDNA (typically 10-30 ng) and unique molecular identifiers (UMIs) to correct for amplification biases and duplicate reads [11].

- Sequencing Depth: Achieve sufficient coverage (>10,000x read depth per base) to detect low-frequency variants with statistical confidence.

- Variant Calling: Implement duplex sequencing approaches where both strands of original DNA fragments are independently tracked to reduce sequencing errors.

- Precision Calculation: Process multiple replicates across different VAF levels and calculate CV for measured allele frequencies.

- Error Modeling: Account for background error rates using negative control samples and establish statistical confidence thresholds for variant calling.

The LOQ for NGS methods is typically higher than for dPCR due to inherent sequencing errors and more complex data processing pipelines.
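The error-modeling step can be illustrated with a simple binomial background model: given a per-base background error rate estimated from negative controls and a read depth, find the smallest number of variant-supporting reads that background errors alone would reach with probability at most α. This sketch rests on strong simplifying assumptions (independent errors at a single constant rate, no UMI or duplex collapsing); the function name and example values are hypothetical, and production variant callers use more elaborate models.

```python
def min_alt_reads(depth: int, error_rate: float, alpha: float = 0.01) -> int:
    """Smallest k with P(X >= k) <= alpha for X ~ Binomial(depth, error_rate).

    X models the number of reads showing the variant base purely through
    background error. Note: for very large depth * error_rate, q ** depth
    underflows to 0; a log-space implementation would be needed there.
    """
    if not 0.0 <= error_rate < 1.0:
        raise ValueError("error_rate must be in [0, 1)")
    q = 1.0 - error_rate
    pmf = q ** depth  # P(X = 0)
    cdf = 0.0         # P(X <= k - 1); starts at P(X <= -1) = 0
    for k in range(depth + 1):
        if 1.0 - cdf <= alpha:  # tail probability P(X >= k)
            return k
        cdf += pmf
        # Recurrence: P(X = k + 1) = P(X = k) * (depth - k) / (k + 1) * p / q
        pmf *= (depth - k) / (k + 1) * error_rate / q
    return depth + 1  # P(X >= depth + 1) = 0, which always satisfies alpha


if __name__ == "__main__":
    # Hypothetical: 10,000x depth and a background error rate of 1e-3 per base.
    k = min_alt_reads(10_000, 1e-3, alpha=0.01)
    print(f"call a variant only with >= {k} supporting reads "
          f"(~{100 * k / 10_000:.2f}% apparent VAF)")
```

Detection thresholds derived this way bound the LOD; the LOQ additionally requires enough supporting reads for the measured VAF to meet the precision goal.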

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Materials for ctDNA LOQ Studies

| Item | Function | Examples/Specifications |
|---|---|---|
| Cell-Free DNA Blood Collection Tubes | Preserves blood sample integrity, prevents genomic DNA contamination | Streck Cell-Free DNA BCT, PAXgene Blood ccfDNA Tube [11] |
| cfDNA Extraction Kits | Isolation of high-quality ctDNA from plasma | QIAamp Circulating Nucleic Acid Kit, other magnetic bead-based systems [11] |
| dPCR Systems | Partitioning and amplification for absolute quantification | Droplet digital PCR (ddPCR) systems, chip-based dPCR platforms [10] |
| NGS Library Preparation Kits | Preparation of sequencing libraries from low-input ctDNA | Kits with UMI adapters, targeted capture panels [11] |
| Reference Standard Materials | Assay validation and LOQ determination | Seraseq ctDNA Reference Materials, Horizon Multiplex I cfDNA Reference Standards |
| Bioinformatic Tools | Data analysis, variant calling, and statistical quantification | VarScan, MuTect, custom pipelines for low-frequency variant detection [11] |

The reliable quantification of low-frequency mutations in ctDNA represents a significant challenge in molecular oncology, with the Limit of Quantification serving as a critical benchmark for assay performance. As demonstrated in this comparison, digital PCR technologies currently offer superior LOQ for targeted applications, while NGS platforms provide broader genomic coverage at the expense of higher quantification limits. The selection of an appropriate technology must balance sensitivity requirements with practical considerations of cost, throughput, and mutational scope. As ctDNA analysis continues to evolve toward earlier disease detection and minimal residual disease monitoring, ongoing refinement of LOQ parameters through standardized protocols and optimized reagents will be essential for advancing precision oncology applications.

In the pursuit of accurately defining the Limit of Quantification (LOQ) for circulating tumor DNA (ctDNA) using digital PCR (dPCR), researchers must contend with a complex landscape of background noise and false positives. These confounding signals arise from both biological and technical sources, presenting a significant challenge for applications requiring high sensitivity, such as minimal residual disease (MRD) detection and early cancer diagnosis [12] [13]. The fundamental issue is that in early-stage cancers or during MRD monitoring, ctDNA can represent ≤ 0.1% of the total cell-free DNA (cfDNA), meaning that false positive signals at even very low rates can severely impact test specificity and clinical utility [14] [13]. Understanding and mitigating these sources of noise is therefore not merely an analytical exercise but a critical prerequisite for reliable ctDNA quantification, especially at the low end of the dynamic range where clinical decisions are most consequential.

This guide systematically compares the performance of dPCR against emerging technologies, providing structured experimental data and methodologies to help researchers identify and control for key sources of error in ctDNA analysis.

Biological false positives originate not from technical artifacts but from naturally occurring processes within the body. The most significant of these is clonal hematopoiesis.

Clonal Hematopoiesis of Indeterminate Potential (CHIP)

Clonal Hematopoiesis of Indeterminate Potential (CHIP) represents a major biological confounding factor for ctDNA detection assays [12]. CHIP occurs when normal hematopoietic cells accumulate somatic mutations with age, leading to clonal expansions in the absence of dysplasia. Because over 80% of cfDNA in healthy individuals originates from hematopoietic cells, mutations from these clones are released into the bloodstream and can be mistaken for tumor-derived DNA [12].

The prevalence of CHIP increases with age, with one study finding that 60% of healthy participant cfDNA samples harbored at least one non-synonymous mutation or indel [12]. The most commonly mutated gene is DNMT3A, though mutations span many genes. While some of these mutations are indexed in cancer databases like COSMIC, their presence in cfDNA does not necessarily indicate the presence of solid tumor malignancy [12].

Table 1: Characteristics of Clonal Hematopoiesis (CHIP)

| Feature | Description | Impact on ctDNA Detection |
|---|---|---|
| Origin | Hematopoietic cells | Source of non-tumor mutations in plasma |
| Prevalence | Increases with age (60% in healthy elderly) | High false positive rate in older populations |
| Variant Allele Frequency | Can occur at <0.1% [12] | Challenges detection thresholds |
| Commonly Mutated Genes | DNMT3A, others | Mutations can be mistaken for cancer drivers |

Mitigation Strategies for Biological Noise

- CHIP Filtering: Sequencing peripheral blood cell DNA to the same depth as cfDNA sequencing creates a "CHIP-filter" to remove somatic variants originating from hematopoietic cells [12].

- Variant Annotation: Filtering ctDNA analyses for alterations commonly associated with CHIP, such as specific DNMT3A mutations, can reduce false positives [12].

- Oncogene Activation Focus: Detection of oncogene activating mutations in plasma may be more specific for solid malignancies, though this appears to be gene-dependent [12].
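The CHIP-filtering strategy amounts to removing cfDNA variant calls that are also observed in matched peripheral blood cell DNA. A minimal sketch, assuming variants from both compartments have already been called and normalized to the same representation; the `Variant` type, the `chip_filter` function, the genomic coordinates, and the `max_wbc_vaf` tolerance are all hypothetical illustrations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """Normalized small variant; frozen so it can be used as a dict key."""
    chrom: str
    pos: int
    ref: str
    alt: str


def chip_filter(cfdna_calls: dict[Variant, float],
                wbc_calls: dict[Variant, float],
                max_wbc_vaf: float = 0.0) -> dict[Variant, float]:
    """Keep cfDNA calls whose VAF in matched blood-cell DNA is <= max_wbc_vaf.

    With the default of 0.0, any evidence of the variant in blood-cell DNA
    marks it as likely hematopoietic (CHIP) in origin and removes it.
    """
    return {v: vaf for v, vaf in cfdna_calls.items()
            if wbc_calls.get(v, 0.0) <= max_wbc_vaf}


if __name__ == "__main__":
    # Hypothetical coordinates, for illustration only.
    chip_like = Variant("chr2", 1_000_000, "C", "T")
    tumor_like = Variant("chr12", 2_000_000, "C", "A")
    cfdna = {chip_like: 0.004, tumor_like: 0.001}
    wbc = {chip_like: 0.003}  # also present in matched blood cells
    print(chip_filter(cfdna, wbc))  # only tumor_like survives
```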

Technical artifacts arise from the experimental workflow, from sample collection to data analysis. These can be categorized as follows:

Pre-analytical and Analytical Errors

The journey from blood draw to final ctDNA measurement is fraught with potential error sources:

- DNA Damage: During library preparation, DNA damage can introduce artifactual errors that are misclassified as somatic variants [12].

- PCR Errors: Nonspecific fluorescent probe binding and off-target amplification in dPCR can generate false positive signals [15].

- Sequencing Errors: In next-generation sequencing (NGS) methods, base calling errors by the sequencing platform contribute to background noise [12].

- Low Input DNA: Allele drop-out due to limited library complexity can reduce sensitivity for low-frequency variants [12].
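The low-input limitation has a simple quantitative core: the number of mutant fragments in a reaction approximately follows a Poisson distribution, so at very low VAF there is a real chance that no mutant copy is sampled at all, however well the assay itself performs. A minimal sketch, assuming roughly 3.3 pg of DNA per haploid human genome and that every input genome equivalent is loaded and amplifiable; the function names and inputs are hypothetical.

```python
import math

HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome, in pg


def genome_equivalents(input_ng: float) -> float:
    """Approximate haploid genome copies in a given mass of DNA."""
    return input_ng * 1000.0 / HAPLOID_GENOME_PG


def prob_at_least_one_mutant(input_ng: float, vaf: float) -> float:
    """P(>= 1 mutant copy in the reaction), with mutant copies ~ Poisson(GE * VAF)."""
    expected_mutant = genome_equivalents(input_ng) * vaf
    return 1.0 - math.exp(-expected_mutant)


if __name__ == "__main__":
    for vaf in (0.01, 0.001, 0.0001):  # 1%, 0.1%, 0.01%
        p = prob_at_least_one_mutant(10.0, vaf)  # hypothetical 10 ng input
        print(f"VAF {100 * vaf:.2f}%: P(at least one mutant copy) = {p:.3f}")
```

Under this assumption, 10 ng of cfDNA corresponds to roughly 3,000 genome equivalents, so a 0.1% VAF implies only about three expected mutant copies per reaction, which limits both detection and the precision achievable at that level.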

Methodological Comparisons: dPCR vs. NGS

Different detection platforms exhibit distinct noise profiles and performance characteristics. The table below summarizes a direct comparison between droplet digital PCR (ddPCR) and NGS for ctDNA detection in localized rectal cancer [3].

Table 2: Performance Comparison of ddPCR vs. NGS in Rectal Cancer

| Parameter | ddPCR | NGS (HS1 Panel) | Statistical Significance |
|---|---|---|---|
| Baseline Detection Rate (Development) | 24/41 (58.5%) | 15/41 (36.6%) | p = 0.00075 |
| Variant Allele Frequency (VAF) Detection | As low as 0.01% | Threshold set at 0.01% | Not significant |
| Operational Cost | 5–8.5-fold lower [3] | Higher | N/A |
| Multiplexing Capability | Limited (1-2 mutations/assay) | High (50+ genes) | N/A |
| Association with Clinical Stage | Higher detection in advanced stages | Higher detection in advanced stages | Significant |

Another study in early-stage breast cancer compared the QX200 ddPCR system with the Absolute Q plate-based dPCR system, finding that "both systems displayed a comparable sensitivity with no significant differences observed in mutant allele frequency" and possessed "a concordance > 90% in ctDNA positivity" [14].

Advanced Error Suppression Methodologies

To overcome the challenge of background noise, researchers have developed sophisticated error suppression techniques.

Molecular Barcoding Strategies

Unique Molecular Identifiers (UMIs) are short random nucleotide sequences ligated to individual DNA molecules before amplification. This allows bioinformatics tools to distinguish true mutations from PCR or sequencing errors by grouping reads originating from the same original molecule [15].
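The read-grouping idea behind UMIs can be sketched at a single genomic position: reads sharing a UMI are collapsed into one family, and a consensus base is accepted only if the family is large enough and its reads largely agree, so an error introduced in a minority of copies is voted out. This is a simplified single-strand illustration, assuming reads are already aligned and UMIs are error-free; the function name, thresholds, and reads are hypothetical, and real pipelines also correct UMI sequencing errors and pair duplex strands.

```python
from collections import Counter, defaultdict


def umi_consensus(reads: list[tuple[str, str]],
                  min_family_size: int = 3,
                  min_agreement: float = 0.75) -> dict[str, str]:
    """Collapse (umi, base) observations at one position into per-molecule consensus bases."""
    families: dict[str, list[str]] = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = {}
    for umi, bases in families.items():
        if len(bases) < min_family_size:
            continue  # too few reads to outvote a PCR or sequencing error
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus[umi] = base  # one vote per original molecule
    return consensus


if __name__ == "__main__":
    reads = (
        [("AAA", "T")] * 4                       # molecule carrying the variant
        + [("CCC", "G")] * 3 + [("CCC", "A")]    # one erroneous read in a reference family
        + [("GGG", "A")] * 2                     # family too small to trust
    )
    print(umi_consensus(reads))
```

Counting consensus molecules rather than raw reads is what lets the error rate drop: the stray `A` read in family `CCC` and the undersized `GGG` family never reach the variant count.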

The GeneBits workflow employs tumor-informed panels with UMIs combined with ultra-deep sequencing, achieving exceptionally low error rates ranging from 7.4×10⁻⁷ to 7.5×10⁻⁵ for duplex reads [15]. This approach achieves a limit of detection as low as 0.0017% with no false positive calls in mutation-free reference samples [15].

Computational Error Suppression

Computational methods provide powerful post-sequencing approaches to distinguish true variants from technical artifacts:

- TNER (Tri-Nucleotide Error Reducer): This novel background error suppression method uses a Bayesian framework that incorporates tri-nucleotide context to provide a robust estimation of background noise, significantly enhancing specificity without sacrificing sensitivity [16].

- Integrated Digital Error Suppression (iDES): This approach combines molecular barcoding with a background polishing model to reduce technical errors, increasing the percentage of error-free positions from approximately 90% to 98% [16].

Experimental Protocols for Error Control

Duplex Sequencing with Molecular Barcodes

The endogenous duplex barcoding approach described by Liu et al. provides a robust method for error-controlled ctDNA detection [12]:

- Library Preparation: Use the xGen cfDNA & FFPE DNA Library Prep Kit or similar with UMI adapters containing fixed 8-bp sequences from a pool of 32.

- Target Enrichment: Employ hybridization capture with tumor-informed panels covering 20-100 SNVs with 1x, 2x, or 3x tiling densities.

- Sequencing: Sequence on Illumina platforms in paired-end mode (2 × 150 bp) to ultra-high depth.

- Bioinformatic Processing: Use tools like umiVar for UMI-based barcode correction, variant calling, and MRD detection.

This method achieves a background error rate of 2×10⁻⁷ errors per base, approximately 50-fold lower than digital error suppression with single-strand molecular barcoding [12].

CRISPR/Cas12a-Based Detection (PRC-Cas Assay)

For environments where dPCR and NGS are not feasible, emerging technologies like the PRC-Cas assay offer alternative approaches:

- Recombinase Polymerase Amplification (RPA): Achieve exponential amplification of target DNA at 37°C using modified primers to insert protospacer adjacent motif (PAM) sequences.

- CRISPR/Cas12a Detection: Use Cas12a with optimized crRNAs containing single- or double-base mismatches to reduce off-target effects.

- Fluorescent Detection: Measure cleavage of nonspecific ssDNA fluorescent reporters.

This method identifies mutations down to 0.02% VAF with high selectivity and can be completed in 50 minutes, requiring only isothermal incubation rather than thermal cycling [17].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for ctDNA dPCR Analysis

| Reagent/Kit | Function | Application Note |
|---|---|---|
| Streck Cell Free DNA BCT Tubes | Blood collection & cfDNA preservation | Prevents dilution from cellular genomic DNA [3] |
| MagMAX Cell-Free DNA Isolation Kit | cfDNA extraction from plasma | Optimized for low-abundance targets [17] |
| QX200 Droplet Digital PCR System | Absolute quantification of target mutations | Gold standard in the field [14] |
| xGen cfDNA & FFPE DNA Library Prep Kit | Library preparation with UMI adapters | Compatible with low-input cfDNA [15] |
| IDT/Twist Hybridization Capture Probes | Target enrichment for sequencing | Tumor-informed panel design [15] |
| CRISPR/Cas12a (Lba Cas12a) | Sequence-specific detection | Enables rapid, isothermal detection [17] |

The accurate detection and quantification of ctDNA using dPCR requires careful consideration of both biological and technical sources of background noise. CHIP-associated mutations represent a significant biological confounder, particularly in older populations, while technical artifacts from sample preparation through sequencing can generate false positive signals that obscure true ctDNA detection.

Effective error mitigation employs a multi-layered strategy: wet-lab methods like molecular barcoding and optimized library preparation protocols work in concert with computational approaches like TNER to suppress background noise. The choice between dPCR and NGS involves trade-offs between sensitivity, multiplexing capability, and cost, with dPCR offering advantages for focused mutation tracking and NGS providing broader genomic coverage.

As the field progresses toward ever-lower limits of quantification, integrated approaches that address both biological and technical noise will be essential for realizing the full potential of ctDNA as a biomarker for minimal residual disease detection and early cancer diagnosis.

In circulating tumor DNA (ctDNA) research, a significant disparity exists between the theoretical sensitivity of digital PCR (dPCR) technologies and their practical performance in real-world settings. While dPCR platforms can theoretically detect mutant allele frequencies (MAFs) as low as 0.001%-0.01% under ideal conditions, this performance is rarely achieved in clinical studies, particularly for non-metastatic cancers [14] [18]. The core thesis of this guide is that the input DNA mass serves as a fundamental limiting factor that governs the practical limit of quantification (LOQ), often creating a several-order-of-magnitude gap between theoretical capabilities and achievable sensitivity. This comparison guide examines how biological constraints, pre-analytical variables, and technical workflows interact to determine the final assay sensitivity, providing researchers with a framework for evaluating and selecting appropriate methodologies for ctDNA detection and quantification.

Technical Comparison of dPCR Platforms and Methodologies

Platform Performance Characteristics

Different dPCR platforms offer varying technical capabilities that influence their practical performance in ctDNA detection. The table below summarizes key characteristics of major dPCR systems and their reported performance in clinical studies.

Table 1: Performance Comparison of Digital PCR Platforms for ctDNA Analysis

| Platform/Method | Theoretical Sensitivity (MAF) | Reported Practical Sensitivity in Studies | Key Advantages | Key Limitations |
|---|---|---|---|---|
| QX200 Droplet Digital PCR (ddPCR) | 0.001% | 0.01%-0.07% (early-stage cancer) [14] [18] | Established gold standard; high reproducibility [14] | Higher variability and longer workflow compared to plate-based systems [14] |
| Absolute Q Plate-Based Digital PCR (pdPCR) | 0.001% | 0.01%-0.07% (comparable to ddPCR) [14] | More stable compartment number; less hands-on time [14] | Fewer independent validation studies available |
| Quantitative NGS (qNGS) with UMIs/QSs | 0.1% | VAF 0.5% (commercial panels); improved with specialized protocols [19] [20] | Enables absolute quantification independent of background DNA; multi-variant detection [20] | Complex workflow; requires specialized bioinformatics |
| Tumor-Informed ddPCR (High-Volume) | 0.001% | 0.003% VAF achieved with 20-40 mL plasma [18] | Ultra-sensitive detection; combines ctDNA and CTC analysis | Requires large blood volumes; patient-specific assay design |

Impact of Input DNA on Detection Sensitivity

The relationship between input DNA mass and detection sensitivity follows fundamental statistical principles that directly impact assay performance. The absolute number of mutant DNA fragments in a sample represents the ultimate constraint on sensitivity [19]. For example, a 10 mL blood draw from a lung cancer patient with typically low cfDNA levels (~5.23 ± 6.4 ng/mL) might yield only approximately 8,000 haploid genome equivalents (GEs) [19]. With a ctDNA fraction of 0.1%, this provides a mere 8 mutant GEs for the entire analysis, so extraction losses, loading only part of the eluate, and Poisson sampling error can easily push the signal below a reliable detection threshold. Conversely, the same volume from a high-shedding liver cancer patient (~46.0 ± 35.6 ng/mL cfDNA) could provide ~80,000 GEs, yielding 80 mutant GEs at the same 0.1% ctDNA fraction and a much stronger, more readily detectable signal [19].
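The arithmetic above can be reproduced in a short sketch. It assumes about 3.3 pg of DNA per haploid human genome (consistent with the ~300 GE per ng quoted later in this guide), a plasma yield of about 5 mL from a 10 mL draw (the value the ~8,000 GE figure implies), and 100% extraction recovery. These constants and the function names are illustrative assumptions, not values taken from [19].

```python
import math

PG_PER_HAPLOID_GENOME = 3.3  # assumed mass of one haploid human genome, in picograms

def haploid_genome_equivalents(cfdna_ng_per_ml, plasma_ml):
    """Convert a cfDNA concentration and plasma volume into haploid GEs."""
    return cfdna_ng_per_ml * plasma_ml * 1000.0 / PG_PER_HAPLOID_GENOME

def prob_no_mutant_copy(expected_copies):
    """Poisson probability that the analysed DNA contains zero mutant fragments."""
    return math.exp(-expected_copies)

def poisson_cv(expected_copies):
    """CV of the mutant count from Poisson sampling alone (1 / sqrt(lambda))."""
    return 1.0 / math.sqrt(expected_copies)

CTDNA_FRACTION = 0.001  # 0.1%
PLASMA_ML = 5.0         # assumed plasma yield of a 10 mL blood draw

for cancer, conc in [("lung", 5.23), ("liver", 46.0)]:
    ge = haploid_genome_equivalents(conc, PLASMA_ML)
    mutants = ge * CTDNA_FRACTION
    print(f"{cancer}: {ge:,.0f} GEs, {mutants:.1f} mutant copies, "
          f"P(no mutant copy) = {prob_no_mutant_copy(mutants):.1e}, "
          f"Poisson CV = {poisson_cv(mutants):.0%}")
```

Under these assumptions the lung case gives roughly 7,900 GEs and about 8 mutant copies. The chance of zero mutant copies is small, but the Poisson-only CV is around 36%, already above the LOQ precision goals discussed later. The liver case comes out near 70,000 GEs, the same order of magnitude as the rounded figure above. Recovery below 100% and loading only part of the eluate reduce the mutant count further.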

Table 2: Input DNA Requirements for Reliable ctDNA Detection

| Detection Target | Required Input DNA | Minimum Plasma Volume | Theoretical vs. Practical LOQ | Key Dependencies |
|---|---|---|---|---|
| VAF ~0.5% | 60 ng DNA (~20,000 GE after deduplication) [19] | 5-10 mL (conventional) [18] | Theoretical: 0.1%; practical: 0.5% [19] | Tumor shedding capacity; cfDNA concentration |
| VAF ~0.1% | >60 ng DNA; ultra-deep sequencing | 10-20 mL [18] | Theoretical: 0.01%; practical: 0.1% with optimized protocols [19] | Blood collection tube; processing delays |
| VAF ~0.01% | Not achievable with standard volumes; requires 20-40 mL plasma [18] | 20-40 mL [18] | Theoretical: 0.001%; practical: 0.01% with high-volume approach [18] | Input plasma volume; DNA extraction efficiency |
| Early-stage Breast Cancer | DNA from 20-40 mL plasma (vs. conventional 5 mL) [18] | 20-40 mL [18] | Conventional volume (5 mL): 0.07% VAF; high volume (20-40 mL): 0.003% VAF [18] | Mutation selection; background wild-type DNA |
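The plasma volumes in Table 2 follow from the same Poisson reasoning. The sketch below estimates how much plasma is needed for a 95% chance that the analysed cfDNA contains at least one mutant copy, which requires about -ln(0.05) ≈ 3 expected copies. It assumes ~3.3 pg per haploid genome and a hypothetical cfDNA concentration of 5 ng/mL; `plasma_ml_needed` is an illustrative helper. The result is a floor for detection only: quantification at the LOQ needs many more copies.

```python
import math

PG_PER_HAPLOID_GENOME = 3.3  # assumed haploid human genome mass, picograms

def plasma_ml_needed(vaf, cfdna_ng_per_ml, detection_prob=0.95, recovery=1.0):
    """Minimum plasma volume (mL) for P(>= 1 mutant copy) >= detection_prob.

    Treats the number of recovered mutant fragments as Poisson-distributed, so the
    expected count must be at least -ln(1 - detection_prob).
    """
    copies_needed = -math.log(1.0 - detection_prob)   # ~3.0 for 95%
    ge_needed = copies_needed / vaf                    # total GEs that must be analysed
    ge_per_ml = cfdna_ng_per_ml * 1000.0 / PG_PER_HAPLOID_GENOME * recovery
    return ge_needed / ge_per_ml

for vaf in (0.005, 0.001, 0.0001):
    full = plasma_ml_needed(vaf, cfdna_ng_per_ml=5.0)
    half = plasma_ml_needed(vaf, cfdna_ng_per_ml=5.0, recovery=0.5)
    print(f"VAF {vaf:.2%}: {full:.1f} mL (100% recovery), {half:.1f} mL (50% recovery)")
```

At 0.01% VAF the sketch lands near 20 mL at full recovery and near 40 mL at 50% recovery, which brackets the 20-40 mL range in the table.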

Experimental Protocols and Methodologies

High-Sensitivity ctDNA Detection Workflow

The following diagram illustrates the comprehensive workflow for achieving high-sensitivity ctDNA detection, emphasizing critical pre-analytical and analytical steps:

Quantitative NGS (qNGS) with Absolute Quantification

A novel qNGS methodology incorporating unique molecular identifiers (UMIs) and quantification standards (QSs) enables absolute quantification of nucleotide variants independent of fluctuations in non-tumor cfDNA [20]. This approach addresses a key limitation of conventional NGS, which relies on variant allele frequency (VAF) that can be influenced by background wild-type DNA. The method involves:

- QS Design: Synthetic DNA molecules (190 bp) mimicking cfDNA fragment size, engineered with a characteristic mutation for unique identification [20]

- UMI Barcoding: Short random DNA sequences (8-16 nucleotides) added to each DNA molecule during library preparation to track original molecules [20]

- Spike-in Protocol: Known concentration of QS molecules added to plasma sample before cfDNA extraction to correct for sample loss [20]

- Dual Quantification: Simultaneous measurement of both QS molecules and patient cfDNA using the same NGS panel [20]

This method demonstrated strong linearity and high correlation with dPCR in validation studies, enabling simultaneous quantification of multiple variants from a single plasma sample [20].
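The spike-in correction reduces to a simple ratio. The sketch assumes that QS molecules and patient fragments are recovered, UMI-deduplicated, and sequenced with equal efficiency, so the observed fraction of spiked QS copies also estimates recovery for patient molecules. The function name and all numbers are hypothetical and are not taken from [20].

```python
def absolute_copies_per_ml(variant_molecules, qs_molecules, qs_copies_spiked, plasma_ml):
    """Estimate variant copies per mL of plasma from a spike-in quantification standard.

    variant_molecules / qs_molecules: UMI-deduplicated molecule counts observed
    for the patient variant and for the QS after sequencing.
    qs_copies_spiked: known number of QS copies added to the plasma before extraction.
    """
    if qs_molecules <= 0:
        raise ValueError("no QS molecules observed; recovery cannot be estimated")
    recovery = qs_molecules / qs_copies_spiked     # end-to-end recovery of the QS
    input_copies = variant_molecules / recovery    # back-calculated copies in the sample
    return input_copies / plasma_ml

# Hypothetical run: 1,000 QS copies spiked into 4 mL plasma; 400 QS and 12 variant
# molecules observed after deduplication (40% recovery).
print(absolute_copies_per_ml(12, 400, 1000, 4.0), "variant copies per mL plasma")
```

Because the result is scaled by recovery rather than expressed relative to wild-type fragments, it does not shift when the amount of non-tumor cfDNA rises or falls, which is the advantage over VAF described above.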

Essential Research Reagent Solutions

The following table details critical reagents and materials required for implementing high-sensitivity ctDNA detection protocols, along with their specific functions and selection criteria:

Table 3: Essential Research Reagents for ctDNA Analysis

| Reagent/Material | Function | Key Considerations | Example Products |
|---|---|---|---|
| Cell-Free DNA BCTs | Preserves blood sample integrity; prevents genomic DNA contamination from white blood cell lysis | Allows room temperature storage for up to 7 days; critical for multi-center trials [21] | Streck cfDNA BCT; PAXgene Blood ccfDNA; Roche cfDNA tubes [21] |
| cfDNA Extraction Kits | Isolation of high-purity cfDNA from plasma | Silica membrane columns yield more ctDNA than magnetic bead methods [21] | QIAamp Circulating Nucleic Acids Kit; Maxwell RSC ccfDNA Plasma Kit [21] [22] |
| Unique Molecular Identifiers (UMIs) | DNA barcoding to distinguish true mutations from PCR/sequencing errors | Essential for NGS-based ctDNA detection; enables accurate counting of original molecules [19] [20] | Integrated DNA Technologies; Thermo Fisher Scientific |
| Quantification Standards (QSs) | Synthetic DNA spikes for absolute quantification in qNGS | Corrects for sample loss during extraction and library preparation [20] | Custom-designed synthetic DNA fragments (e.g., gBlocks) [20] |
| Restriction Enzymes | Digestion of genomic DNA for reference gene analysis | Pre-digestion improves accuracy of DNA quantification for dPCR [22] | HindIII and other frequent cutters [22] |
| dPCR Master Mixes | Partitioning and amplification of target mutations | Chemistry affects fluorescence resolution and quantification accuracy [22] [14] | ddPCR Supermix; Absolute Q dPCR Master Mix |

Biological and Technical Factors Influencing Practical LOQ

Biological Limitations and Variability

Practical sensitivity in ctDNA analysis is constrained by several biological factors that are frequently overlooked in theoretical sensitivity calculations:

- Tumor DNA Shedding Rate: Varies significantly by cancer type, with lung cancers exhibiting low cfDNA levels (5.23 ± 6.4 ng/mL) while liver cancers show much higher levels (46.0 ± 35.6 ng/mL) [19]

- Tumor Volume and Stage: In non-metastatic gastric cancer, ctDNA detection rates are only ~21% despite using sensitive ddPCR assays, highlighting the impact of tumor burden [23]

- Clonal Hematopoiesis: Can cause false-positive results when mutations originate from hematopoietic cells rather than tumors [19]

- Circadian Dynamics: CTC and ctDNA content fluctuations have been observed at different times of day, suggesting biological rhythms affect release patterns [21]

Technical and Pre-Analytical Considerations

Technical workflow variables introduce additional constraints that impact achievable sensitivity:

- Blood Collection Procedures: Butterfly needles are recommended, while excessively thin needles and prolonged tourniquet use should be avoided [21]

- Centrifugation Protocols: Double centrifugation is essential (first step: 380-3,000 × g for 10 min; second step: 12,000-20,000 × g for 10 min at 4°C) [21]

- Sample Storage Conditions: Plasma should be stored at -80°C, with freeze-thaw cycles minimized by storing in small fractions [21]

- Input DNA Requirements: Achieving 20,000× coverage after deduplication requires a minimum input of 60 ng DNA; at approximately 300 haploid genome equivalents per ng, 60 ng corresponds to roughly 18,000 GEs [19]

The disparity between theoretical and practical sensitivity in ctDNA dPCR analysis primarily stems from the fundamental limitation of input DNA mass and the statistical constraints it imposes on detecting rare mutant molecules in a background of wild-type DNA. While technological advancements continue to push detection limits lower, researchers must consider the biological reality that even the most sensitive assays cannot detect mutations that are not present in the sample volume analyzed. The implementation of high-volume plasma processing (20-40 mL) coupled with optimized pre-analytical protocols and tumor-informed assay designs currently offers the most promising approach for bridging this sensitivity gap. For researchers focusing on LOQ in ctDNA analysis, these methodological considerations are paramount for achieving clinically relevant detection levels, particularly in minimal residual disease monitoring and early-cancer detection applications where ctDNA fractions are exceptionally low.

Establishing a Robust Workflow: From Assay Design to LOQ Determination

Digital PCR (dPCR) has emerged as a powerful technology for the absolute quantification of nucleic acids, offering superior precision and sensitivity compared to traditional quantitative PCR (qPCR). This is particularly crucial in the field of circulating tumor DNA (ctDNA) research, where accurately measuring low-abundance mutations is essential for cancer diagnosis, monitoring treatment response, and tracking minimal residual disease. The exceptional performance of dPCR stems from its partitioning approach, where the PCR reaction is divided into thousands of individual reactions, enabling precise target molecule counting via Poisson statistics without requiring standard curves [24] [25]. This partitioning confers higher resistance to PCR inhibitors present in complex clinical samples, a common challenge when working with ctDNA [25].
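The Poisson correction behind dPCR counting fits in a few lines. If a fraction p of partitions is positive, the mean number of copies per partition is λ = -ln(1 - p), which accounts for partitions that received more than one copy. The partition volume is a platform-specific input; the 0.85 nL used below is an assumed droplet volume for illustration only, so check your instrument's specification. The function name is hypothetical.

```python
import math

def dpcr_copies_per_ul(positive, total, partition_volume_nl):
    """Poisson-corrected target concentration (copies per uL of reaction mix)."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total; saturated runs cannot be quantified")
    p = positive / total
    lam = -math.log1p(-p)                       # mean copies per partition
    return lam / (partition_volume_nl * 1e-3)   # convert nL to uL

# Hypothetical run: 1,000 positive partitions out of 20,000 accepted partitions.
conc = dpcr_copies_per_ul(1000, 20000, partition_volume_nl=0.85)
print(f"{conc:.1f} copies/uL")
```

Without the correction, 1,000 positives / 20,000 partitions / 0.00085 µL would give about 58.8 copies/µL; the gap between corrected and uncorrected values widens as partition occupancy rises.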

The reliability of any dPCR assay, however, fundamentally depends on rigorous assay design. Properly designed primers and probes are paramount for achieving the high specificity and sensitivity needed to distinguish rare mutant alleles from abundant wild-type DNA in ctDNA analysis. Furthermore, incorporating advanced technologies like Locked Nucleic Acid (LNA) nucleotides can significantly enhance assay performance by improving hybridization affinity and specificity. This guide explores the best practices in dPCR assay design, with a specific focus on optimizing the Limit of Quantification (LOQ) for ctDNA applications, and provides a comparative analysis of different dPCR platforms and reagent solutions.

Fundamental Rules for Primer and Probe Design

The foundation of a robust dPCR assay lies in the careful design of primers and probes. Adherence to established design parameters ensures high amplification efficiency, specificity, and reliable quantification.

Core Design Parameters for Primers

Primer design follows several key principles to ensure optimal binding and amplification. The guidelines for dPCR are largely consistent with those for qPCR [26].

- Length and Melting Temperature (Tm): Primers should be 18–30 bases in length, with an optimal Tm between 60–64°C [26] [27]. The Tm values for the forward and reverse primer should not differ by more than 2°C to ensure both primers bind efficiently during each amplification cycle [27].

- GC Content: The GC content should ideally be between 40–60%, which provides sufficient sequence complexity while maintaining specificity [26]. Another source suggests a slightly broader range of 35–65%, with 50% being ideal [27].

- 3' End Sequence: The 3' end of the primer is critical for initiating amplification. It should terminate with a G or C base (a design feature known as a GC clamp) to strengthen binding, but should not contain more than 2 G or C bases in the last 5 bases [26].

- Secondary Structure: Primers must be screened for self-dimers, heterodimers, and hairpin formation. The free energy (ΔG) for any such secondary structures should be weaker (more positive) than -9.0 kcal/mol to prevent non-specific amplification [27].
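Several of these rules are easy to screen automatically. The sketch below checks only length, GC content, and the 3'-end rules; Tm, the Tm difference between primers, and the ΔG of dimers and hairpins require nearest-neighbor thermodynamics and should come from a dedicated design tool. The function name and the example sequences are hypothetical.

```python
def check_primer(seq):
    """Return rule violations for a PCR primer (an empty list means it passes)."""
    seq = seq.upper()
    issues = []
    if not 18 <= len(seq) <= 30:
        issues.append(f"length {len(seq)} nt is outside 18-30")
    gc = (seq.count("G") + seq.count("C")) / len(seq)
    if not 0.40 <= gc <= 0.60:
        issues.append(f"GC content {gc:.0%} is outside 40-60%")
    if seq[-1] not in "GC":
        issues.append("3' end is not a G or C (no GC clamp)")
    if sum(base in "GC" for base in seq[-5:]) > 2:
        issues.append("more than 2 G/C bases in the last 5 positions")
    return issues

print(check_primer("ATGCTAGCATCGATCGTTAC"))   # passes all checks
print(check_primer("GGGCCCGGGCCC"))           # too short, GC-rich, G/C-heavy 3' end
```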

Core Design Parameters for Probes

Hydrolysis probes (e.g., TaqMan probes) are commonly used in dPCR for specific target detection. Their design requires careful consideration of several factors.

- Length and Melting Temperature (Tm): Probes should be 20–30 bases long [27]. The Tm of the probe should be 5–10°C higher than the Tm of the primers to ensure the probe binds before the primers and remains hybridized during extension [26] [27].

- GC Content and Sequence: Similar to primers, probe GC content should be between 35–65% [27]. It is critical to avoid a guanine (G) base at the 5' end, as this can quench the fluorescence of the reporter dye [27]. Furthermore, the probe sequence should not contain runs of four or more consecutive G bases [26].

- Location: The probe should be designed to bind in close proximity to the forward or reverse primer but must not overlap with the primer-binding site on the same strand [27].
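The sequence-level probe rules can be screened the same way. This sketch checks length, GC content, a 5'-terminal G, and runs of four or more G bases; it does not check the probe's Tm offset relative to the primers or its position relative to the primer-binding sites. The function name and sequences are hypothetical.

```python
import re

def check_probe(seq):
    """Return rule violations for a hydrolysis probe (an empty list means it passes)."""
    seq = seq.upper()
    issues = []
    if not 20 <= len(seq) <= 30:
        issues.append(f"length {len(seq)} nt is outside 20-30")
    gc = (seq.count("G") + seq.count("C")) / len(seq)
    if not 0.35 <= gc <= 0.65:
        issues.append(f"GC content {gc:.0%} is outside 35-65%")
    if seq.startswith("G"):
        issues.append("G at the 5' end can quench the reporter dye")
    if re.search("G{4,}", seq):
        issues.append("run of four or more consecutive G bases")
    return issues

print(check_probe("CCTGACGTAGCTAGGCATCGATGCA"))   # passes all checks
print(check_probe("GGGGTACGATCGATCGATCGTA"))      # 5' G and a G-run
```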

Amplicon Design

The region of DNA amplified by the primers, the amplicon, must also be carefully designed.

- Length: For optimal amplification efficiency, amplicon length should typically be 70–150 base pairs [27]. Shorter amplicons are generally amplified with higher efficiency, which is particularly beneficial for degraded samples like FFPE-derived DNA or ctDNA.

- Location: When working with RNA or distinguishing between genomic DNA and processed targets, designing assays to span an exon-exon junction can reduce false-positive signals from genomic DNA contamination [27].

Table 1: Summary of Primer and Probe Design Guidelines

| Parameter | Primers | Probes |
|---|---|---|
| Length | 18–30 bases [27] | 20–30 bases [27] |
| Melting Temperature (Tm) | 60–64°C [27] | 5–10°C higher than primers [26] [27] |
| Tm Difference | ≤ 2°C between primers [27] | - |
| GC Content | 40–60% [26], 35–65% (ideal 50%) [27] | 35–65% [27] |
| Terminal Bases | 3' end: G or C, but ≤ 2 G/C in last 5 bases [26] | 5' end: avoid G [27] |
| Secondary Structure | ΔG > -9.0 kcal/mol for dimers/hairpins [27] | ΔG > -9.0 kcal/mol for dimers/hairpins [27] |

Advanced Techniques: Enhancing Assays with LNA Nucleotides

For challenging applications such as detecting single-nucleotide variants (SNVs) in ctDNA, standard DNA-based probes may not provide sufficient discrimination power. Locked Nucleic Acid (LNA) technology offers a solution to this challenge.

LNA nucleotides are modified nucleotides in which a methylene bridge links the 2'-oxygen and 4'-carbon of the ribose, "locking" the sugar into a rigid conformation [26]. This rigidity improves hybridization thermodynamics and raises the melting temperature (Tm) of the oligonucleotide by several degrees Celsius. As a result, shorter probes can be designed without sacrificing Tm, which can improve specificity [28].

A key application in ctDNA research is the use of LNA nucleotides in competitive allele-specific PCR (castPCR). In this setup, an LNA-modified "Clamp" probe can be designed to bind perfectly to the wild-type sequence, suppressing its amplification. This enables the selective amplification and sensitive detection of the rare mutant allele, dramatically improving the assay's ability to distinguish between closely related sequences and thereby lowering the LOQ for variant detection [28]. The use of LNA in PCR primers has also been shown to increase sensitivity and performance [26].

Experimental Protocols for dPCR Assay Validation

Before deploying a dPCR assay for clinical research, it is imperative to validate its performance characteristics. The following protocols outline key experiments for establishing the Limit of Detection (LOD) and Limit of Quantification (LOQ).

Protocol for Determining Limit of Detection (LOD) and Limit of Quantification (LOQ)

The LOD is the lowest concentration of target that can be detected, while the LOQ is the lowest concentration that can be reliably quantified with acceptable precision and accuracy [25] [29].

- Preparation of Standard Material: Serially dilute a standard of known concentration, such as synthetic oligonucleotides or genomic DNA from cell lines. The dilution series should span a wide range, from a high concentration down to a theoretical concentration near the expected detection limit.

- dPCR Run: Analyze each dilution level in multiple replicates (at least 3-5) using the optimized dPCR assay.

- Data Analysis:

- LOD Calculation: The LOD with 95% confidence (LOD95%) can be estimated statistically, for example by probit analysis: the hit rate (fraction of positive replicates) is modeled against concentration, and the LOD95% is the concentration at which the target is detected in 95% of replicates. A study quantifying HIV DNA reported an LOD95% of 79.7 copies per million cells using this type of approach [25].

- LOQ Determination: The LOQ is typically defined as the lowest concentration at which the coefficient of variation (CV) remains below a predetermined threshold (e.g., 25% or 35%). This is determined by plotting the measured concentration and its corresponding CV% for each dilution level. Another method involves fitting a polynomial model to the data from a dilution series and determining the concentration at which the model fit is acceptable, as demonstrated in a study that found an LOQ of 54 copies/reaction for a nanoplate-based system [29].
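A probit fit of hit rate against log concentration can be sketched with only the Python standard library. It fits P(detect) = Φ(a + b·log10(concentration)) by iteratively reweighted least squares and reports the concentration at 95% fitted detection probability. The function names and the replicate data (20 replicates at each of five hypothetical levels) are illustrative; a validated statistics package should be preferred for formal validation, since it also reports confidence intervals.

```python
import math
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (cdf, pdf, inv_cdf)

def fit_probit(log_conc, positives, replicates, iterations=100, tol=1e-10):
    """Fit P(detect) = Phi(a + b * log10(conc)) by iteratively reweighted least squares."""
    a, b = 0.0, 1.0  # starting intercept and slope on the probit scale
    for _ in range(iterations):
        sw = swx = swxx = swz = swxz = 0.0
        for x, k, n in zip(log_conc, positives, replicates):
            eta = a + b * x
            p = min(max(STD_NORMAL.cdf(eta), 1e-12), 1.0 - 1e-12)
            dens = max(STD_NORMAL.pdf(eta), 1e-12)
            w = n * dens * dens / (p * (1.0 - p))   # IRLS weight
            z = eta + (k / n - p) / dens            # working response
            sw += w
            swx += w * x
            swxx += w * x * x
            swz += w * z
            swxz += w * x * z
        # Solve the 2x2 weighted least-squares normal equations for (a, b).
        det = sw * swxx - swx * swx
        a_new = (swxx * swz - swx * swxz) / det
        b_new = (sw * swxz - swx * swz) / det
        done = abs(a_new - a) < tol and abs(b_new - b) < tol
        a, b = a_new, b_new
        if done:
            break
    return a, b

def lod95(a, b):
    """Concentration at which the fitted detection probability reaches 95%."""
    return 10 ** ((STD_NORMAL.inv_cdf(0.95) - a) / b)

# Hypothetical dilution series: copies/reaction and positives out of 20 replicates.
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
positives = [7, 12, 17, 19, 20]
a, b = fit_probit([math.log10(c) for c in conc], positives, [20] * len(conc))
lod = lod95(a, b)
print(f"LOD95 ~ {lod:.2f} copies/reaction")
```

As a sanity check, a purely Poisson-limited assay has an LOD95% of about 3 copies per reaction, because the probability of loading no copy at that level is e^-3 ≈ 5%; fitted values well above that point to losses or inefficiencies beyond sampling.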

Protocol for Assessing Precision (Repeatability and Reproducibility)

Precision measures the assay's variability under different conditions.

- Repeatability (Intra-assay Precision): Run the same sample with a known target concentration multiple times (e.g., 5-10 replicates) within the same dPCR run. Calculate the Coefficient of Variation (CV%) for the measured concentrations.

- Reproducibility (Inter-assay Precision): Run the same sample across different dPCR runs, different days, and potentially by different operators. Again, calculate the CV% across these measurements.

- Interpretation: A well-optimized assay should show low CVs. For example, a duplex dPCR assay for HIV DNA showed a CV of 8.7% for high concentrations (1,250 copies/10⁶ cells) and 26.9% for low concentrations (150 copies/10⁶ cells) in intra-assay tests [25]. Higher variability at lower concentrations is expected due to Poisson noise.
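The CV% calculation, and the Poisson floor that explains why imprecision rises at low concentration, can be sketched as follows. The replicate values are hypothetical, and the floor of 100/√N assumes N is the mean number of target molecules loaded per reaction while ignoring every other source of error.

```python
import math
from statistics import mean, stdev

def cv_percent(values):
    """Sample coefficient of variation (SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)

def poisson_cv_floor(mean_copies_per_reaction):
    """Minimum CV (%) expected from Poisson sampling of molecules alone."""
    return 100.0 / math.sqrt(mean_copies_per_reaction)

# Hypothetical intra-run replicates (copies per reaction).
replicates = [480.0, 520.0, 500.0, 470.0, 530.0]
print(f"observed CV: {cv_percent(replicates):.1f}%")
for n in (1000, 100, 10):
    print(f"{n:>5} copies/reaction -> Poisson floor {poisson_cv_floor(n):.1f}%")
```

An observed CV close to the Poisson floor indicates that sampling, not the assay chemistry, is limiting precision; a CV far above it points to other sources of variability.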

Diagram 1: dPCR assay validation workflow for LOD, LOQ, and precision.

Comparative Performance of dPCR Platforms

Different dPCR platforms utilize distinct partitioning technologies, which can influence performance metrics critical for ctDNA research, such as sensitivity, precision, and dynamic range. The two main types are droplet-based digital PCR (ddPCR) and nanoplate-based digital PCR (ndPCR).

- Droplet-based dPCR (ddPCR): This method, exemplified by the Bio-Rad QX200 system, partitions the reaction mix into thousands of nanoliter-sized oil-emulsion droplets [24] [29].

- Nanoplate-based dPCR (ndPCR): This method, used by the QIAGEN QIAcuity, employs microfluidic chips with fixed nanowells to partition the reaction, offering a more automated workflow [24] [29].

A recent comparative study highlights the performance characteristics of these platforms. Both platforms quantified gene copy numbers with high accuracy and precision, but their LOD and LOQ differed slightly: the ddPCR system showed a marginally lower LOD, whereas the ndPCR system showed a lower LOQ, and the ddPCR system agreed marginally better with expected values in some concentration ranges [29]. Another study emphasized that ndPCR platforms reduce hands-on time and eliminate variability associated with droplet size and number [25].

Table 2: Performance Comparison of ddPCR vs. ndPCR Platforms

| Performance Metric | ddPCR (QX200) | ndPCR (QIAcuity) | Context & Implications |
|---|---|---|---|
| Limit of Detection (LOD) | ~0.17 copies/µL input [29] | ~0.39 copies/µL input [29] | Both platforms offer high sensitivity suitable for low-abundance targets. |
| Limit of Quantification (LOQ) | ~4.26 copies/µL input [29] | ~1.35 copies/µL input [29] | ndPCR reported a lower LOQ in this study, suggesting reliable quantification at very low concentrations. |
| Precision (CV%) | 6% - 13% (using synthetic oligos) [29] | 7% - 11% (using synthetic oligos) [29] | Both platforms show high precision across their dynamic range. |
| Partitioning Method | Droplet generation [29] | Fixed nanowells [25] | Nanowells offer a more automated workflow and eliminate droplet variability [25]. |
| Impact of Restriction Enzymes | Precision significantly improved with HaeIII vs. EcoRI [29] | Precision less affected by enzyme choice [29] | ndPCR may be more robust to enzymatic fragmentation steps. |

The Scientist's Toolkit: Essential Reagents and Materials

Successful dPCR assay development relies on a suite of specialized reagents and tools. The following table details key solutions and their functions in the workflow.

Table 3: Essential Research Reagent Solutions for dPCR Assay Development

| Item | Function / Purpose | Example Use Case |
|---|---|---|
| LNA Nucleotides | Increase probe Tm and enhance specificity, particularly for SNP detection. | Designing "Clamp" probes in castPCR to suppress wild-type amplification and enrich for mutant allele detection in ctDNA [28]. |
| Double-Quenched Probes | Reduce background fluorescence by adding an internal quencher that shortens the distance between the reporter dye and a quencher, which matters most in longer probes. | Achieving higher signal-to-noise ratios in multiplex assays, leading to clearer positive/negative partition calling [27]. |
| Restriction Enzymes | Fragment complex genomic DNA to improve access to the target sequence and ensure more homogeneous amplification. | Pre-digestion of DNA with enzymes like HaeIII or EcoRI to improve the precision of gene copy number quantification, as shown in protist studies [29]. |
| Automated Nucleic Acid Extraction Systems | Provide high-quality, inhibitor-free nucleic acids from various sample types (e.g., blood, plasma, tissue). | Systems like the KingFisher Flex with MagMax kits are used to extract ctDNA from plasma samples for downstream dPCR analysis [24]. |
| Commercial Assay Design Tools | Bioinformatics platforms that automate and optimize primer and probe design based on established rules. | Tools like IDT's PrimerQuest or QIAGEN's GeneGlobe Assay Wizard help researchers quickly generate high-performance assay sequences [27] [28]. |

The design of primers and probes is a critical determinant of success in dPCR assays, especially for demanding applications like ctDNA quantification where a low LOQ is essential. Adherence to fundamental design rules—governing Tm, length, GC content, and secondary structure—forms the foundation of a robust assay. For maximum specificity and sensitivity in detecting rare variants, the incorporation of LNA nucleotides represents a powerful advanced technique.

The choice of dPCR platform involves trade-offs. While both ddPCR and ndPCR platforms offer high sensitivity and precision, recent comparative studies indicate differences in their LOD/LOQ and robustness to certain experimental variables like DNA fragmentation. Ultimately, a rigorously validated assay, following the outlined protocols for LOD, LOQ, and precision, is non-negotiable for generating reliable, clinically actionable data in ctDNA research. By leveraging the best practices and tools detailed in this guide, researchers can fully harness the power of dPCR to push the boundaries of cancer diagnostics and monitoring.

In the field of molecular diagnostics, particularly in circulating tumor DNA (ctDNA) research, accurately determining the lowest concentration of an analyte that can be reliably measured is crucial for assessing minimal residual disease and early treatment response. The Limit of Quantitation (LOQ) represents the lowest concentration at which an analyte can not only be detected but also quantified with acceptable precision and accuracy under stated experimental conditions [30]. For ctDNA digital PCR (dPCR) applications, where mutant allele frequencies can be extremely low (often below 0.1%), proper LOQ characterization ensures that reported results are both reliable and clinically actionable [31] [32].

The Clinical and Laboratory Standards Institute (CLSI) EP17-A2 guideline, titled "Evaluation of Detection Capability for Clinical Laboratory Measurement Procedures," provides a standardized framework for evaluating detection capability metrics, including Limit of Blank (LoB), Limit of Detection (LoD), and LOQ [33]. This framework is recognized by regulatory bodies like the U.S. Food and Drug Administration (FDA) and is applicable to both commercial in vitro diagnostic tests and laboratory-developed tests, making it particularly valuable for ctDNA assay validation in clinical research and drug development [33].

The importance of EP17-A2 lies in its comprehensive approach to defining an assay's analytical sensitivity. Without such standardization, as noted in scientific literature, "alternative forms for calculating LOD/LOQ frequently lead to dissimilar results," making cross-method comparisons challenging and potentially misleading [30] [8]. This tutorial explores the EP17-A2 framework for LOQ characterization, provides experimental protocols for implementation, and compares it with alternative approaches through the lens of ctDNA dPCR research.

Core Concepts and Definitions

Within the EP17-A2 framework, LOQ is part of a hierarchy of detection capability metrics that must be understood collectively. The guideline defines three critical limits that establish the lower bounds of assay performance [33] [34] [35]:

Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested. LoB is typically defined with a specific confidence level (1-α), often 95%, meaning 95% of blank measurements are expected to fall below this threshold [34] [35]. Mathematically, LoB represents the 95th percentile of the blank distribution.

Limit of Detection (LoD): The lowest analyte concentration likely to be reliably distinguished from the LoB and detectable in a sample with a specified probability (typically 95% with β = 0.05) [34]. The LoD exceeds the LoB and accounts for both the variability of blank measurements and the variability of low-level analyte samples.

Limit of Quantitation (LOQ): The lowest concentration at which an analyte can be quantified with acceptable precision and accuracy under stated experimental conditions [33] [30]. While EP17-A2 provides guidance on LOQ determination, it acknowledges that acceptable precision goals (e.g., 20% coefficient of variation) must be defined based on the assay's intended use.

The relationship between these parameters creates decision thresholds for analytical interpretation. In practice, measurements falling below the LoB are considered "not detected," measurements above the LoB but below the LOQ are "detected but not quantifiable," and measurements at or above the LOQ are both detected and quantifiable with known reliability [34] [35].
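The LoB and LoD calculations are often summarized from EP17-A2 as a nonparametric LoB (the interpolated blank result at rank 0.5 + 0.95·B among B sorted blank results) and a parametric LoD = LoB + c_p·SD_L, where SD_L is the pooled SD of the low-level samples and c_p = 1.645 / (1 - 1/(4(L - K))) for L low-level results from K samples. The sketch implements those formulas with hypothetical function names and data; verify the formulas and rank conventions against the guideline itself before relying on them.

```python
import math
from statistics import variance

def lob_nonparametric(blanks, pct=0.95):
    """LoB as the interpolated blank value at 1-based rank 0.5 + B * pct."""
    data = sorted(blanks)
    rank = 0.5 + len(data) * pct
    lower = math.floor(rank)
    if lower >= len(data):
        return float(data[-1])
    frac = rank - lower
    return data[lower - 1] + frac * (data[lower] - data[lower - 1])

def lod_parametric(lob, low_level_groups, z=1.645):
    """LoD = LoB + c_p * pooled SD of the low-level samples."""
    total = sum(len(g) for g in low_level_groups)      # L: all low-level results
    n_samples = len(low_level_groups)                  # K: number of low-level samples
    dof = total - n_samples
    pooled_var = sum((len(g) - 1) * variance(g) for g in low_level_groups) / dof
    c_p = z / (1 - 1 / (4 * dof))                      # small-sample correction
    return lob + c_p * math.sqrt(pooled_var)

# Hypothetical mutant copies per reaction in 30 wild-type blank replicates.
blanks = [0] * 24 + [1] * 4 + [2] * 2
# Hypothetical low-level samples: 5 samples x 6 replicates each.
low = [
    [6, 8, 5, 7, 9, 6],
    [7, 5, 6, 8, 6, 7],
    [5, 7, 8, 6, 7, 5],
    [8, 6, 7, 5, 6, 9],
    [6, 7, 5, 8, 7, 6],
]
lob = lob_nonparametric(blanks)
lod = lod_parametric(lob, low)
print(f"LoB = {lob:.2f}, LoD = {lod:.2f} copies/reaction")
```

The nonparametric LoB suits dPCR blanks, which are dominated by zero counts and far from normally distributed.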

EP17-A2 Experimental Design for LOQ Characterization

The EP17-A2 guideline recommends a structured, stepped approach to characterizing detection capability metrics. This process begins with LoB determination, progresses to LoD establishment, and culminates in LOQ characterization. Each step requires specific sample types and replication schemes to ensure statistical reliability [33] [34] [35].

The following workflow diagram illustrates the comprehensive experimental design for LOQ characterization according to the EP17-A2 framework:

Sample Preparation and Experimental Replication

Proper sample preparation is fundamental to obtaining valid detection capability estimates. The EP17-A2 guideline emphasizes using matrix-matched samples that closely resemble actual patient specimens [34] [35]. For ctDNA dPCR assays, this means:

Blank samples: Should contain wild-type DNA in a background that mimics patient plasma cfDNA, rather than simple buffer solutions or no-template controls [35]. For circulating tumor DNA applications, appropriate blank samples would be plasma-derived cfDNA from healthy donors or samples with confirmed wild-type status for the target of interest.

Low-level samples: Should be representative positive samples with analyte concentrations near the expected detection limits. For ctDNA assays, these are typically created by spiking synthetic mutant DNA fragments or cell line DNA into wild-type background DNA at concentrations 1-5 times the LoB [34] [35]. The background DNA should match the fragmentation pattern of authentic cfDNA.
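The spike-in arithmetic for low-level samples can be sketched as follows. This assumes the wild-type background has already been quantified in copies (for example with a reference dPCR assay) and that the mutant reference standard is accurately quantified. Both function names are hypothetical.

```python
def mutant_copies_for_vaf(wildtype_copies, target_vaf):
    """Mutant copies to add so that mutant / (mutant + wild-type) equals target_vaf."""
    if not 0 < target_vaf < 1:
        raise ValueError("target_vaf must be between 0 and 1 (exclusive)")
    return wildtype_copies * target_vaf / (1 - target_vaf)


def low_level_targets(lob, multiples=(1, 2, 3, 4, 5)):
    """Candidate low-level sample concentrations at 1-5x the LoB (same unit as lob)."""
    return [lob * m for m in multiples]


if __name__ == "__main__":
    print(mutant_copies_for_vaf(9900, 0.01))  # copies needed for a 1% VAF
    print(low_level_targets(0.2))
```

For example, a background of 9,900 wild-type copies needs 100 mutant copies to reach 1% VAF, because 100 / (100 + 9,900) = 0.01.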

The EP17-A2 protocol specifies minimum replication requirements for statistical reliability [34] [35]:

- LoB determination: Requires at least 30 replicate measurements of blank samples to establish the 95th percentile with confidence.

- LoD determination: Requires a minimum of five independently prepared low-level samples with at least six replicates each (total of 30 measurements).

- LOQ determination: Requires multiple samples across the concentration range of interest with sufficient replication to establish precision profiles.

This replication scheme ensures that estimates account for both within-run and between-run variability, providing realistic performance metrics for actual assay use.

Statistical Calculations and Data Analysis

The EP17-A2 guideline provides specific statistical methods for calculating detection capability metrics. The process employs a non-parametric approach for LoB determination and parametric methods for LoD and LOQ [34] [35]:

LoB Calculation (Non-parametric)

- Sort the N blank measurements in ascending order (ranks 1 to N, where N ≥ 30)

- Calculate the rank position X = 0.5 + (N × P_LoB), where P_LoB = 1 − α (typically 0.95 for 95% confidence)

- LoB = C1 + Y × (C2 − C1), where:

  - C1 = concentration at rank ⌊X⌋ (the integer part of X)

  - C2 = concentration at rank ⌊X⌋ + 1

  - Y = decimal portion of X

For N = 30 and P_LoB = 0.95, X = 29.0, so Y = 0 and the LoB is simply the 29th-ranked blank result.

This non-parametric approach is recommended as it does not assume normal distribution of blank measurements, which is particularly valuable when dealing with the typically skewed distribution of blank results in molecular assays.
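The rank-interpolation steps above can be sketched in Python. This assumes blank results are already expressed in the assay's reporting unit (e.g., copies/µL). `lob_nonparametric` is a hypothetical name, not a function from any library.

```python
import math


def lob_nonparametric(blank_values, p_lob=0.95):
    """Non-parametric LoB by rank interpolation (hypothetical helper).

    blank_values: blank results in the assay's reporting unit.
    p_lob: 1 - alpha, typically 0.95.
    """
    n = len(blank_values)
    if n < 2:
        raise ValueError("need at least two blank measurements")
    ranked = sorted(blank_values)        # ranks 1..N map to indices 0..N-1
    x = 0.5 + n * p_lob                  # fractional rank position X
    rank_low = math.floor(x)             # integer part of X
    y = x - rank_low                     # decimal portion of X
    rank_low = min(max(rank_low, 1), n)  # keep ranks inside 1..N
    rank_high = min(rank_low + 1, n)
    c1 = ranked[rank_low - 1]
    c2 = ranked[rank_high - 1]
    return c1 + y * (c2 - c1)


if __name__ == "__main__":
    blanks = [0.0] * 27 + [0.1, 0.1, 0.3]  # 30 illustrative blank results
    print(lob_nonparametric(blanks))       # X = 29.0, so LoB is the 29th value, 0.1
```

The illustrative blanks mimic a dPCR pattern where most wells report zero mutant copies and a few report sporadic false positives.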

LoD Calculation

- Calculate the pooled standard deviation (SD_L) of the low-level samples: $SD_L = \sqrt{\frac{\sum_{i=1}^{J}(n_i - 1)\,SD_i^2}{\sum_{i=1}^{J}(n_i - 1)}}$, where J = number of low-level samples, n_i = number of replicates of sample i, and SD_i = standard deviation of sample i

- Compute the multiplier $c_p = \frac{1.645}{1 - \frac{1}{4(L - J)}}$, where L = total number of low-level measurements, so that L − J is the degrees of freedom of SD_L

- LoD = LoB + c_p × SD_L

The value 1.645 is the 95th percentile of the standard normal distribution (corresponding to β = 0.05), and the denominator of c_p is a small-sample correction for the degrees of freedom in the SD estimate.
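The LoD steps can be sketched as below. It assumes each low-level sample is given as a list of replicate results (at least two per sample) in the same unit as the LoB. The function names are hypothetical.

```python
import math
from statistics import stdev


def pooled_sd(groups):
    """Pooled SD across low-level samples, weighting each variance by n_i - 1."""
    num = sum((len(g) - 1) * stdev(g) ** 2 for g in groups)
    den = sum(len(g) - 1 for g in groups)
    return math.sqrt(num / den)


def lod_parametric(lob, groups, z=1.645):
    """LoD = LoB + c_p * SD_L, with c_p = z / (1 - 1 / (4 * (L - J)))."""
    j = len(groups)                       # J: number of low-level samples
    total = sum(len(g) for g in groups)   # L: total low-level measurements
    c_p = z / (1 - 1 / (4 * (total - j)))
    return lob + c_p * pooled_sd(groups)


if __name__ == "__main__":
    # Five hypothetical low-level samples, six replicates each (copies/uL)
    samples = [[0.30, 0.42, 0.25, 0.38, 0.33, 0.29]] * 5
    print(lod_parametric(0.1, samples))
```

With five samples of six replicates (L = 30, J = 5), c_p = 1.645 / 0.99 ≈ 1.662, only slightly larger than 1.645.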

LOQ Determination

Unlike LoB and LoD, LOQ is determined based on precision profiles. The LOQ is established as the concentration where measurements achieve predefined precision criteria, typically expressed as coefficient of variation (CV) [30] [36]. The process involves:

- Testing multiple samples across the concentration range from below LoD to above expected LOQ

- Calculating CV at each concentration level

- Defining LOQ as the lowest concentration where CV ≤ 20% (or other acceptable threshold based on assay requirements)

This precision-based approach ensures that measurements at or above the LOQ meet the quantitative needs of the assay's intended use.
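A precision profile and the resulting LOQ can be computed as sketched below. It assumes replicates are grouped by nominal concentration and that CV is computed relative to the measured mean. As a design choice, the sketch reports the lowest tested level from which every higher level also meets the CV limit. This conservative reading of the rule above keeps an isolated low level that passes by chance from setting the LOQ. The helper names are hypothetical.

```python
from statistics import mean, stdev


def precision_profile(levels):
    """levels: dict of nominal concentration -> replicate results; returns CV (%) per level."""
    profile = {}
    for conc, reps in sorted(levels.items()):
        m = mean(reps)
        profile[conc] = 100 * stdev(reps) / m if m > 0 else float("inf")
    return profile


def loq_from_profile(profile, max_cv=20.0):
    """Lowest concentration such that it and all higher levels have CV <= max_cv."""
    loq = None
    for conc in sorted(profile, reverse=True):  # walk down from the highest level
        if profile[conc] <= max_cv:
            loq = conc
        else:
            break                               # first failure ends the passing run
    return loq


if __name__ == "__main__":
    levels = {0.1: [0.05, 0.15, 0.10, 0.02],
              0.5: [0.40, 0.55, 0.50, 0.60],
              1.0: [0.95, 1.05, 1.00, 1.00]}
    profile = precision_profile(levels)
    print(profile)
    print("LOQ:", loq_from_profile(profile))
```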

Comparison with Alternative Approaches

Methodological Comparison

While EP17-A2 provides a comprehensive framework for detection capability evaluation, other approaches exist in the scientific literature. The table below compares EP17-A2 with two alternative methods for LOQ determination:

Table 1: Comparison of LOQ Characterization Approaches

| Method Characteristic | CLSI EP17-A2 Framework | Uncertainty Profile Approach | Calibration Curve Approach |
|---|---|---|---|
| Theoretical Basis | Non-parametric (LoB) and parametric (LoD/LOQ) statistics | Tolerance intervals and measurement uncertainty | Parameters of calibration curve and error propagation |
| Experimental Requirements | 60+ measurements (30 blanks + 30 low-level) | Multiple series with replicates across concentration range | Calibration standards with replication |
| LOQ Definition | Concentration meeting specified precision criteria (e.g., CV ≤ 20%) | Intersection of uncertainty intervals with acceptability limits | Typically 10 × SD of blank / slope, or based on prediction intervals |
| Regulatory Recognition | FDA-recognized standard [33] | Emerging approach, limited regulatory recognition | Referenced in ICH guidelines |
| Applicability to ctDNA dPCR | Well-suited, accounts for digital assay characteristics | Computationally complex, requires sophisticated statistical implementation | May underestimate values for complex biological samples [30] |
| Handling of Complex Matrices | Explicitly requires matrix-matched samples | Can incorporate matrix effects through variance components | Challenging without proper blank matrix |
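The calibration-curve rule in the table (10 × SD of the blank divided by the slope, the LOQ = 10σ/S form used in ICH Q2) can be sketched as follows. This assumes a linear response and an ordinary least-squares slope, and it uses the blank-SD variant of σ. The function names are hypothetical.

```python
from statistics import stdev


def ols_slope(x, y):
    """Ordinary least-squares slope of y on x."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def loq_calibration(blank_responses, conc, response, k=10.0):
    """LOQ = k * SD(blank responses) / calibration slope (k = 10 by convention)."""
    return k * stdev(blank_responses) / ols_slope(conc, response)


if __name__ == "__main__":
    conc = [0, 1, 2, 3]        # hypothetical calibrator concentrations
    response = [0, 2, 4, 6]    # hypothetical instrument responses
    print(loq_calibration([0.1, 0.3], conc, response))
```

Because this rule relies only on blank noise and the slope, it does not test precision at low concentrations directly. This is one reason it may underestimate the LOQ in complex matrices, as noted in the table.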

Research comparing these approaches has demonstrated that "the classical strategy based on statistical concepts provides underestimated values of LOD and LOQ," while graphical methods like uncertainty profiles may offer more realistic assessments for complex samples [30]. However, the regulatory recognition and standardized methodology of EP17-A2 make it the preferred approach for diagnostic applications.

Practical Implementation in ctDNA Analysis

The EP17-A2 framework has been successfully implemented in various ctDNA assay validations. In one study developing multiplex drop-off digital PCR assays for colorectal cancer mutations, researchers achieved a limit of detection of 0.084% to 0.182% mutant allele frequency following EP17 principles [31]. This sensitivity enabled detection of 42.45% of mutations in a cohort of 106 CRC patients that were missed by less sensitive methods.

Another recent study using PhasED-Seq technology for detecting residual disease in B-cell malignancies demonstrated an exceptionally sensitive LoD of 0.7 parts per million with 120 ng input DNA, establishing the LOQ through precision profiling across dilution series [32]. The implementation followed EP17 principles with appropriate blank samples (cancer-free donor cfDNA) and low-level samples (limiting dilutions of clinical specimens).

The following decision logic illustrates how these detection capability metrics guide interpretation of experimental results in ctDNA analysis:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the EP17-A2 framework for LOQ characterization requires careful selection of reagents and materials. The following table outlines essential components for ctDNA dPCR experiments:

Table 2: Essential Research Reagents and Materials for LOQ Characterization in ctDNA dPCR

| Reagent/Material | Specification Requirements | Function in LOQ Characterization |
|---|---|---|
| Blank Sample Matrix | Wild-type plasma cfDNA, matched to patient sample fragmentation | Establishes baseline noise and LoB; critical for matrix effects assessment |
| Reference Standards | Synthetic mutant DNA fragments with verified concentration | Creation of low-level samples for LoD/LOQ determination; enables accurate spike-in |
| dPCR Master Mix | Optimized for cfDNA templates (<200 bp); minimal inhibitor effects | Ensures efficient amplification of low-concentration targets; reduces false negatives |
| Partitioning Oil/Reagents | Low emulsion failure rate; compatible with sample matrix | Creates reproducible partitions for digital quantification; impacts precision |
| Positive Control Templates | Sequence-verified plasmid or synthetic DNA with known mutation | Monitors assay performance across experiments; confirms LoD/LOQ stability |
| Sample Diluent | Matches cfDNA storage buffer (e.g., TE buffer) without background DNA | Maintains analyte stability during serial dilution for precision profiles |

The quality and appropriateness of these materials directly impact the reliability of detection capability estimates. For example, using inappropriate blank matrices (e.g., buffer instead of wild-type cfDNA) can lead to underestimation of LoB and consequently overoptimistic LoD and LOQ values [35]. Similarly, reference standards must be accurately quantified to ensure correct spike-in concentrations for low-level samples.

Advanced Considerations in LOQ Characterization

Statistical Handling of Data Near Detection Limits

A significant challenge in LOQ characterization involves the appropriate statistical treatment of measurements near the limits of detection and quantification. Research demonstrates that simple replacement methods for values below LOQ (e.g., substituting LOQ/2) introduce substantial bias in both mean and standard deviation estimates [37]. As the proportion of replaced values increases, this bias becomes more pronounced, potentially leading to incorrect conclusions.

Superior statistical approaches treat measurements below LOQ as left-censored data and use maximum likelihood estimation or other censored data methods for analysis [37]. These approaches maintain greater fidelity to the underlying distribution, providing more accurate parameter estimates even when up to 90% of observations fall below the LOQ. Implementation requires specialized statistical software but is essential for valid inference with data near detection limits.
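One such censored-data method is maximum likelihood for a normal distribution left-censored at the LOQ. The sketch below fits it in pure Python with the expectation-maximization (EM) algorithm. It assumes the values, or their logarithms, are approximately normal and that at least one value lies at or above the LOQ. `censored_normal_mle` is a hypothetical name, and validated statistical software remains preferable for real analyses.

```python
import math
import random


def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def censored_normal_mle(observed, n_censored, loq, max_iter=1000, tol=1e-10):
    """EM estimate of (mean, SD) for normal data left-censored at `loq`.

    observed: values at or above the LOQ; n_censored: count of values reported "< LOQ".
    """
    if not observed:
        raise ValueError("need at least one value at or above the LOQ")
    n = len(observed) + n_censored
    s1 = sum(observed)                    # sufficient statistics of the observed part
    s2 = sum(v * v for v in observed)
    mu = s1 / len(observed)               # start from observed-only estimates
    var = max(s2 / len(observed) - mu * mu, 1e-12)
    for _ in range(max_iter):
        sd = math.sqrt(var)
        a = (loq - mu) / sd
        lam = _norm_pdf(a) / max(_norm_cdf(a), 1e-300)  # E-step: truncated-normal moments
        e_x = mu - sd * lam                              # E[X | X < loq]
        e_x2 = var * (1.0 - a * lam - lam * lam) + e_x * e_x  # E[X^2 | X < loq]
        new_mu = (s1 + n_censored * e_x) / n             # M-step: complete-data MLE
        new_var = max((s2 + n_censored * e_x2) / n - new_mu * new_mu, 1e-12)
        done = abs(new_mu - mu) < tol and abs(new_var - var) < tol
        mu, var = new_mu, new_var
        if done:
            break
    return mu, math.sqrt(var)


if __name__ == "__main__":
    rng = random.Random(42)
    data = [rng.gauss(10.0, 2.0) for _ in range(2000)]  # simulated: true mean 10, SD 2
    loq = 9.0
    observed = [v for v in data if v >= loq]
    k = len(data) - len(observed)
    substituted = (sum(observed) + k * loq / 2) / len(data)  # "LOQ/2" replacement
    print("LOQ/2 substitution mean:", round(substituted, 2))
    print("censored MLE (mean, SD):", censored_normal_mle(observed, k, loq))
```

In the simulated example, roughly 30% of values fall below the LOQ. The LOQ/2 substitution pulls the mean well below the true value of 10, while the censored estimate stays close to it.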

Application in Clinical Validation Studies

The EP17-A2 framework's utility extends beyond analytical validation to clinical application. In a recent study evaluating high-sensitivity cardiac troponin I (hs-cTnI) assays for non-ST-elevation myocardial infarction (NSTEMI) diagnosis, researchers used EP17-A2 to establish detection limits before assessing clinical performance [36]. In that study, the "LoB strategy demonstrated 100% sensitivity but low PPV (14.0%)," highlighting how an understanding of detection capability informs appropriate clinical implementation strategies.

For ctDNA assays, similar considerations apply. An assay with LOQ at 0.1% variant allele frequency might be excellent for monitoring treatment response but inadequate for early detection where variant frequencies may be below 0.01%. Thus, LOQ characterization must align with the assay's intended use case, whether for residual disease detection, early diagnosis, or therapy selection.
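Input DNA also limits what any LOQ can achieve, because a reaction can only quantify mutant fragments that are actually present. The sketch below estimates the expected number of mutant copies from input mass. It assumes about 3.3 pg per haploid human genome, fully amplifiable input, and simple Poisson sampling, and it ignores extraction and partition losses. The 10 ng input and the function names are hypothetical and are not taken from the studies cited here.

```python
import math

HAPLOID_GENOME_PG = 3.3  # assumed mass of one haploid human genome (~3.3 pg)


def genome_equivalents(input_ng):
    """Haploid genome equivalents in `input_ng` nanograms of DNA."""
    return input_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_copies(input_ng, vaf):
    """Mean number of mutant fragments expected in the reaction at a given VAF."""
    return genome_equivalents(input_ng) * vaf


def prob_no_mutant_copy(input_ng, vaf):
    """Poisson probability that the reaction contains zero mutant fragments."""
    return math.exp(-expected_mutant_copies(input_ng, vaf))


if __name__ == "__main__":
    for vaf in (0.001, 0.0001):  # 0.1% and 0.01% VAF
        print(vaf, round(expected_mutant_copies(10, vaf), 2),
              round(prob_no_mutant_copy(10, vaf), 3))
```

With 10 ng of cfDNA (about 3,030 genome equivalents), 0.1% VAF corresponds to roughly 3 mutant copies, with about a 5% chance of sampling none. At 0.01% VAF, the expectation is about 0.3 copies, with roughly a 74% chance of sampling none. No amount of analytical precision can recover a target that is absent from the reaction.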

The CLSI EP17-A2 framework provides a rigorous, standardized methodology for LOQ characterization that addresses the critical need for reliable quantification at the lower limits of detection. For ctDNA dPCR research, implementing this framework requires careful attention to matrix-matched samples, appropriate replication schemes, and precision-based LOQ determination. While alternative approaches exist, EP17-A2 offers regulatory recognition, methodological comprehensiveness, and proven applicability to complex biological matrices.

As ctDNA technologies continue evolving toward increasingly sensitive detection, proper LOQ characterization becomes ever more essential. The EP17-A2 framework provides the methodological foundation for demonstrating that assays are "fit for purpose" and that reported quantitative results meet the reliability standards required for both research and clinical applications. By implementing this framework, researchers ensure their detection capability claims are defensible, comparable across laboratories, and meaningful for the intended use of the assay.

Preparing Representative Blank and Low-Level ctDNA Samples

The accurate determination of the Limit of Quantification (LOQ) for circulating tumor DNA (ctDNA) using digital PCR (dPCR) is foundational to its application in precision oncology. Achieving reliable LOQ values is heavily dependent on the use of properly prepared representative blank and low-level ctDNA samples. These samples serve as critical controls that establish assay sensitivity, define detection thresholds, and validate performance across the low variant allele frequency (VAF) range essential for detecting minimal residual disease (MRD) and early-stage cancers [38]. The preparation of these samples must account for the challenging reality that ctDNA can represent ≤ 0.1% of total cell-free DNA (cfDNA) in early-stage tumors, and in some contexts, VAF can be less than 0.01% [39] [38]. This article compares methodologies for generating these crucial reference materials, objectively evaluates the performance of leading dPCR platforms, and provides standardized protocols to support robust LOQ determination in ctDNA research.

Technical Comparison of dPCR Platforms for Low-Level ctDNA Analysis

Performance Characteristics of Leading dPCR Systems